Accelerating Biomanufacturing: A High-Throughput Screening Workflow for Metabolic Engineering Strain Development

Abstract

This article provides a comprehensive guide for researchers and scientists on implementing high-throughput screening (HTS) workflows to overcome the central challenge in metabolic engineering: the inability to rationally design high-performing industrial strains. We explore the foundational principles of HTS, detailing automated and miniaturized assay technologies that enable the rapid testing of thousands of genetic constructs. The content covers advanced methodological applications, including CRISPR-based genome editing and AI-driven data analysis, alongside practical strategies for troubleshooting common pitfalls like false positives and data overload. Finally, we examine validation frameworks and comparative analyses that ensure screening results successfully translate to scalable biofactory processes, positioning HTS as an indispensable engine for accelerating the development of robust microbial cell factories for a sustainable bioeconomy.

The Core Challenge: Why Metabolic Engineering Demands High-Throughput Solutions

The Design-Build-Test Bottleneck in Strain Development

In metabolic engineering, the development of efficient microbial cell factories is constrained by the Design-Build-Test (DBT) cycle, which has become the critical bottleneck in strain development pipelines. This iterative process of designing genetic constructs, building them in a host organism, and testing the resulting phenotypes forms the core of synthetic biology and metabolic engineering efforts. Accelerating the cycle is essential for reaching competitive titers, yields, and productivities for target compounds. The conventional, largely manual approach is slow, often requiring months to complete a single iteration while exploring only a small part of the biological design space. Recent advances in automation, bioinformatics, and analytical science aim to overcome these limitations through fully automated Design-Build-Test-Learn (DBTL) pipelines that integrate machine learning and robotic systems to shorten strain development timelines.

Deconstructing the Bottleneck: Core Challenges in Each Phase

The Design Challenge: Navigating Vast Biological Complexity

The Design phase presents the initial bottleneck: engineers must navigate a combinatorially large biological design space with traditional tools. They must select enzymes, regulatory elements, gene orders, and expression levels from a very large number of possible combinations. For a typical pathway with four genes, the combinatorial design space can easily exceed 2,500 possible configurations when variables such as promoter strength, ribosome binding sites, and gene order are considered [1]. Manual design approaches cannot explore this space effectively, leading to suboptimal designs whose inefficiencies propagate through the entire development pipeline. The challenge is compounded by context-dependent effects of biological parts: identical genetic elements can behave differently depending on their genomic location and cellular environment.

The Build Bottleneck: Manual Laboratory Limitations

The Build phase translates digital designs into physical biological constructs, traditionally through labor-intensive molecular biology techniques. Standard cloning protocols, transformation, and quality control checks create significant throughput limitations. Construct assembly remains a primary constraint, with even experienced technicians typically assembling only a few dozen constructs per week. Quality control through sequencing and restriction digest analysis creates additional workflow interruptions. These manual limitations directly restrict the number of design variants that can be physically realized and tested, forcing researchers to make premature decisions about which designs to pursue with inadequate data.

The Test Impediment: Analytical Throughput Constraints

The Test phase represents perhaps the most severe bottleneck in conventional strain development, where analytical methods struggle to provide rapid, quantitative data on strain performance. Standard chromatography-based methods (e.g., HPLC, GC-MS) provide excellent data quality but have limited throughput, typically processing only scores of samples per day with significant manual intervention. This analytical bottleneck means that only a tiny fraction of constructed variants can be thoroughly characterized. Furthermore, cultivation conditions in multi-well plates often introduce significant variability and poor scalability to bioreactor performance, creating additional challenges in reliably identifying top-performing strains.

Table 1: Quantitative Comparison of Traditional vs. Automated DBT Cycle Performance

| Performance Metric | Traditional Manual Approach | Automated DBTL Pipeline | Improvement Factor |
|---|---|---|---|
| Cycle Time | Several months | 1-2 weeks | ~8x faster |
| Constructs per Cycle | Dozens | Hundreds to thousands | ~10-100x increase |
| Data Points Generated | Limited (10s-100s) | Extensive (1000s) | ~100x increase |
| Pathway Optimization Iterations | 1-2 per year | Multiple cycles per month | ~10x increase |

Case Study: Automated DBTL for Flavonoid Production

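A landmark application of an automated DBTL pipeline demonstrates the potential for overcoming the DBT bottleneck in strain development. The study focused on optimizing the microbial production of the flavonoid (2S)-pinocembrin in Escherichia coli and reported a 500-fold improvement in titers, reaching up to 88 mg L⁻¹, through just two DBTL cycles [1]. The 0.002 mg L⁻¹ value quoted as the low end of the titer range would imply a ratio of roughly 44,000 against 88 mg L⁻¹, so the 500-fold figure evidently refers to a different baseline than the lowest observed titer.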

Experimental Protocol and Methodology

The automated pipeline incorporated several key technological innovations at each stage:

Design Phase: The pipeline employed integrated bioinformatics tools including RetroPath for pathway design and Selenzyme for enzyme selection [1]. PartsGenie software optimized ribosome-binding sites and coding sequences, with all designs deposited in a centralized repository (JBEI-ICE) for traceability. A combinatorial library of 2,592 possible pathway configurations was reduced to just 16 representative constructs using design of experiments (DoE) methodologies, achieving a 162:1 compression ratio while maintaining statistical power to identify significant factors.

Build Phase: Automated ligase cycling reaction (LCR) assembly was performed on robotics platforms following automated worklist generation [1]. Commercial DNA synthesis was followed by automated part preparation via PCR, though some manual interventions remained (PCR clean-up and transformation). Quality control was implemented through high-throughput automated plasmid purification, restriction digest, and capillary electrophoresis analysis.

Test Phase: An automated 96-deepwell plate growth and induction pipeline was implemented with fast ultra-performance liquid chromatography coupled to tandem mass spectrometry (UPLC-MS/MS) for quantitative analysis of target products and key intermediates [1]. Custom R scripts automated data extraction and processing, enabling rapid evaluation of all constructs.

Learn Phase: Statistical analysis identified the main factors influencing production, with vector copy number demonstrating the strongest significant effect (P value = 2.00 × 10⁻⁸), followed by chalcone isomerase (CHI) promoter strength (P value = 1.07 × 10⁻⁷) [1]. Weaker effects were observed for chalcone synthase (CHS), 4-coumarate:CoA ligase (4CL), and phenylalanine ammonia-lyase (PAL) promoter strengths.
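The 162:1 compression quoted for the Design phase is simple arithmetic, and a short sketch makes it easy to re-check when factors are added or removed. The factor names and level counts below are illustrative assumptions, chosen only so that their product matches the 2,592 configurations quoted above; they are not taken from the pipeline's design files.

```python
from math import prod

# Hypothetical factors and level counts (assumption): picked so that the
# full-factorial size equals the 2,592 configurations quoted in the text.
factor_levels = {
    "vector_backbone": 4,
    "promoter_gene_2": 3,
    "promoter_gene_3": 3,
    "promoter_gene_4": 3,
    "gene_order": 24,  # 4! orderings of a four-gene pathway
}


def design_space_size(levels):
    """Full-factorial library size: the product of all level counts."""
    return prod(levels.values())


def compression_ratio(full_size, n_screened):
    """Number of full-factorial designs represented by each built construct."""
    return full_size / n_screened


full_size = design_space_size(factor_levels)
ratio = compression_ratio(full_size, n_screened=16)
print(f"{full_size} designs, {ratio:.0f}:1 compression")
```

Adding one more three-level factor to this hypothetical dictionary triples the full-factorial size, which is why a DoE-based reduction becomes necessary very quickly.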

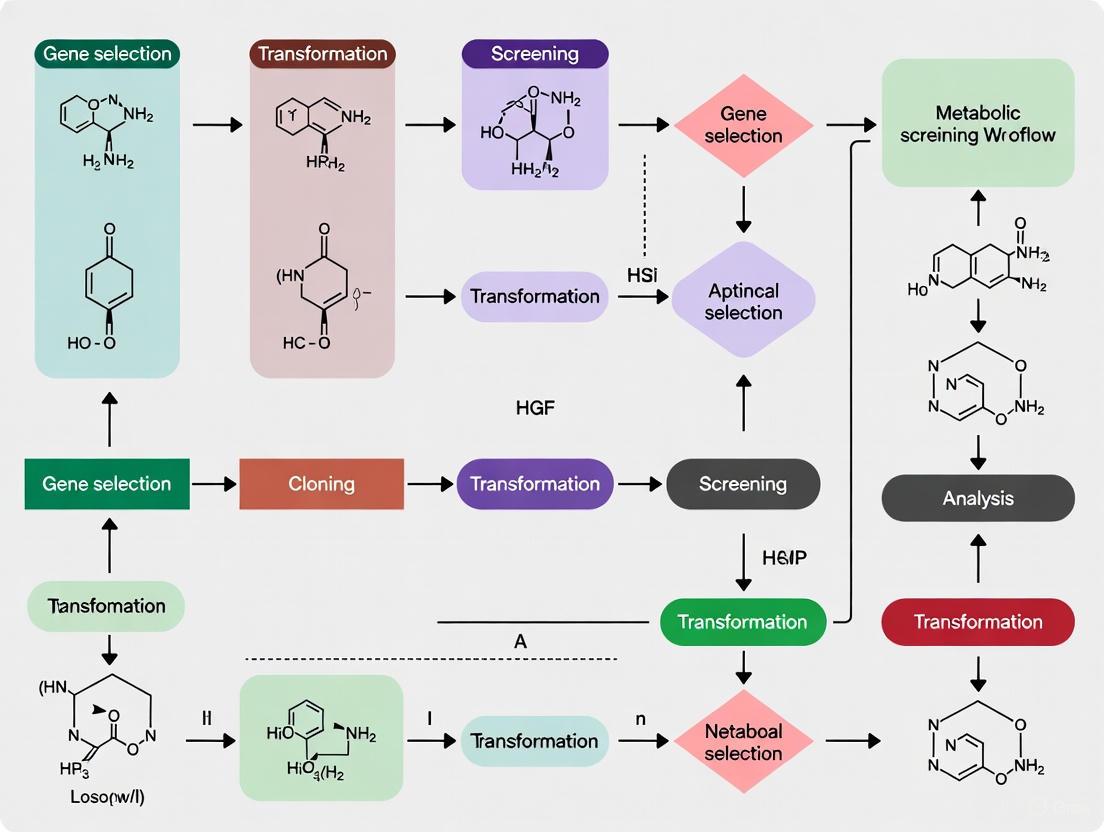

Workflow Visualization

[Figure: Automated DBTL Cycle for Strain Engineering]

Essential Research Reagent Solutions

The implementation of automated DBTL pipelines requires specialized reagents and tools designed for high-throughput workflows. The following table details key research reagent solutions essential for overcoming the DBT bottleneck in strain development.

Table 2: Key Research Reagent Solutions for Automated Strain Development

| Reagent/Tool Category | Specific Examples | Function in Workflow | Throughput Considerations |
|---|---|---|---|
| DNA Assembly Systems | Ligase Cycling Reaction (LCR), Golden Gate Assembly | High-efficiency multi-part DNA construction | Enables parallel assembly of hundreds of constructs |
| Specialized Vectors | p15A, pSC101, ColE1 origins with varying copy numbers [1] | Tunable gene expression levels | Library design with expression level variation |
| Promoter/RBS Libraries | Ptrc, PlacUV5, synthetic RBS variants [1] | Fine-tuning transcriptional and translational regulation | Enables combinatorial optimization of expression |
| Genome Editing Tools | MAGE (Multiplex Automated Genome Engineering), CRISPR-Cas9 | Direct chromosomal modifications | Allows rapid in situ pathway optimization |
| Analytical Standards | Stable isotope-labeled internal standards for MS | Accurate quantification of metabolites and products | Essential for reliable high-throughput screening |
| Specialized Growth Media | Optimized induction media with precursors | Controlled gene expression and precursor supplementation | Standardized cultivation conditions for reproducibility |

Integrated Experimental Protocol for Automated DBTL

Design Phase Protocol

Pathway Design: Utilize RetroPath software for retrobiosynthetic analysis to identify potential pathways to target molecules [1].

Enzyme Selection: Employ Selenzyme web server for automated enzyme selection based on sequence and structural features [1].

Parts Optimization: Use PartsGenie for automated design of genetic parts with optimized ribosome binding sites and codon-optimized coding sequences [2].

Library Design: Apply statistical Design of Experiments (DoE) methods, particularly orthogonal arrays combined with Latin square designs, to reduce combinatorial libraries to tractable sizes [1].

Automated Worklist Generation: Utilize PlasmidGenie to generate assembly recipes and robotics worklists for downstream automation [1].

Build Phase Protocol

DNA Synthesis: Order codon-optimized genes from commercial synthesis providers with standardized vector backbones [1].

Part Preparation: Perform automated PCR amplification and purification of genetic parts using liquid handling robots.

Assembly Reaction: Set up ligase cycling reaction (LCR) assemblies on robotics platforms following automated worklists [1].

Transformation: Transform assembled constructs into suitable E. coli strains (e.g., DH5α) using high-efficiency chemical transformation or electroporation.

Quality Control: Implement high-throughput plasmid purification, restriction digest analysis via capillary electrophoresis, and sequence verification of key constructs [1].

Test Phase Protocol

Cultivation: Inoculate constructs in 96-deepwell plates with optimized media and growth conditions using liquid handling robots.

Induction: Implement automated induction protocols with standardized timing and inducer concentrations.

Metabolite Extraction: Perform automated metabolite extraction using standardized solvent systems.

Quantitative Analysis: Utilize fast UPLC-MS/MS methods with multiple reaction monitoring (MRM) for targeted quantification of products and key intermediates [1].

Data Processing: Apply custom R scripts for automated data extraction, peak integration, and concentration calculation [1].

Learn Phase Protocol

Statistical Analysis: Perform analysis of variance (ANOVA) to identify significant factors influencing production titers.

Machine Learning: Apply regression models and other machine learning approaches to identify complex relationships between design parameters and performance.

Pathway Analysis: Use flux balance analysis and other metabolic modeling techniques to identify potential pathway bottlenecks.

Design Refinement: Incorporate learned parameters into the next Design phase, focusing on the most impactful variables identified.
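The statistical analysis step of the Learn phase can be sketched as a one-way ANOVA on a single design factor. This is a minimal illustration using only the Python standard library, not the published analysis scripts (which the text says were written in R). The titer values and the choice of copy number as the factor are made up for the example. The function returns the F statistic and degrees of freedom; converting F to a P value requires the F distribution, for which a statistics package would normally be used.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA.

    `groups` is a list of lists; each inner list holds the titers measured
    at one level of a single design factor.
    """
    all_values = [x for group in groups for x in group]
    grand_mean = mean(all_values)
    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual measurements around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Made-up titers (mg/L) at three hypothetical copy-number levels.
titers_by_copy_number = [
    [0.1, 0.2, 0.3],
    [1.0, 1.2, 1.1],
    [2.0, 2.2, 2.1],
]
f_stat, df_b, df_w = one_way_anova_f(titers_by_copy_number)
print(f"F({df_b}, {df_w}) = {f_stat:.1f}")
```

A large F relative to the degrees of freedom suggests that the factor explains much of the titer variance, which is the kind of signal used to pick the variables carried into the next Design phase.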

Implementation Framework and Pathway Optimization

The successful implementation of an automated DBTL pipeline requires careful planning of the iterative optimization process. The following visualization illustrates the strategic pathway optimization workflow that enables continuous strain improvement.

[Figure: Pathway Optimization Workflow with Statistical Learning]

The traditional Design-Build-Test bottleneck in strain development is being systematically dismantled through integrated automation, statistical design, and machine learning. The demonstrated 500-fold improvement in product titer through just two DBTL cycles illustrates the transformative potential of these approaches [1]. As these technologies mature and become more accessible, the timeline for developing industrial-grade production strains will shrink from years to months, fundamentally accelerating the pace of innovation in metabolic engineering and synthetic biology. The full integration of artificial intelligence and mechanistic models throughout the DBTL cycle promises to further enhance predictive design capabilities, potentially reducing the experimental burden required to identify optimal strain designs [2]. These advances in high-throughput screening workflows position metabolic engineering to fully deliver on its promise as a manufacturing platform for a sustainable bioeconomy.

Defining High-Throughput Screening (HTS) and Ultra-HTS (uHTS) in a Biomanufacturing Context

High-Throughput Screening (HTS) is an automated methodology for scientific discovery that enables researchers to rapidly conduct hundreds of thousands to millions of biological, genetic, or pharmacological tests [3] [4]. This approach has become a cornerstone in modern drug discovery and metabolic engineering, allowing for the systematic evaluation of vast compound libraries against specific biological targets. The fundamental goal of HTS is to identify "hits"—compounds, antibodies, or genes that modulate a particular biomolecular pathway—which then provide starting points for further design and optimization [3] [5]. In the context of biomanufacturing and metabolic engineering, HTS technologies have revolutionized strain development by accelerating the identification of non-obvious metabolic engineering targets that enhance production of valuable compounds [6].

The evolution from traditional manual screening to HTS began in the late 1980s, when screening capabilities expanded from merely 10-100 compounds per week to thousands [4]. The term "Ultra-High-Throughput Screening" (uHTS) emerged in the mid-1990s as technological advances enabled the screening of 100,000 or more compounds per day [3] [4]. This dramatic increase in throughput has been driven by parallel developments in robotics, miniaturization, detection technologies, and data processing capabilities. The cut-off between HTS and uHTS is somewhat arbitrary, but generally, uHTS refers to screening in excess of 100,000 compounds per day, with some systems capable of screening millions of compounds daily [3] [7].

Core Principles and Technical Specifications

Definition and Key Characteristics

High-Throughput Screening is defined by its use of automated equipment to rapidly test thousands to millions of samples for biological activity at the model organism, cellular, pathway, or molecular level [8]. The process leverages robotics, data processing software, liquid handling devices, and sensitive detectors to maximize throughput while minimizing reagent consumption and human intervention [3]. HTS typically involves screening 10³–10⁶ small molecule compounds of known structure in parallel, though it can also be applied to other substances including chemical mixtures, natural product extracts, oligonucleotides, and antibodies [8].

Ultra-High-Throughput Screening (uHTS) represents the upper echelon of this methodology, conducting hundreds of thousands of biological or chemical screening tests per day [4]. The transition from HTS to uHTS has been facilitated by several key technological developments, including the replacement of radiolabeling assays with luminescence- and fluorescence-based screens, automated plate-handling instrumentation, and significant miniaturization of assay volumes [4].

Technical Specifications and Comparison

Table 1: Technical Comparison of HTS and uHTS Platforms

| Parameter | Traditional HTS | uHTS |
|---|---|---|
| Throughput (tests per day) | 10,000 - 100,000 [7] [9] | >100,000 - millions [3] [4] [9] |
| Standard plate formats | 96, 384, 1536-well [3] [8] | 1536, 3456, 6144-well [3] [9] |
| Assay volume range | 5-50 μL [7] | 1-2 μL [3] [9] |
| Automation level | Integrated robotic systems [3] | Fully automated, often with central robots and scheduling software [7] |
| Primary applications | Primary screening, hit identification [5] | Large library screening, quantitative HTS [8] [9] |

Table 2: Detection Methods Commonly Used in HTS/uHTS

| Detection Method | Principle | Applications | Advantages |
|---|---|---|---|
| Fluorescence Intensity | Measures fluorescence emission [9] | Enzymatic assays, binding studies | High sensitivity, compatibility with HTS formats [9] |
| Fluorescence Resonance Energy Transfer (FRET) | Energy transfer between fluorophores [7] | Protein-protein interactions, enzymatic activity | Ratiometric measurement, reduces false positives [7] |
| Luminescence | Light emission from chemical reactions [4] | Reporter gene assays, cell viability | High signal-to-noise ratio, broad dynamic range [4] |
| Mass Spectrometry | Mass-to-charge ratio of ions [9] | Metabolite screening, ADME assays | Label-free, direct measurement [9] |
| Differential Scanning Fluorimetry | Protein thermal stability shifts [9] | Ligand binding, protein stability | Label-free, requires minimal optimization [9] |

Workflow and Experimental Design

Comprehensive HTS/uHTS Workflow

[Figure: Complete workflow for high-throughput screening in metabolic engineering applications]

Assay Plate Preparation and Design

The key labware in HTS is the microtiter plate, which features a grid of small, open divots called wells [3]. Modern HTS utilizes plates with 96, 192, 384, 1536, 3456, or 6144 wells, with the higher density formats being essential for uHTS applications [3]. A screening facility typically maintains a library of stock plates whose contents are carefully catalogued, from which separate assay plates are created as needed [3]. The process of assay plate preparation involves pipetting small amounts of liquid (often measured in nanoliters) from the wells of a stock plate to the corresponding wells of an empty plate [3].

Effective experimental design in HTS requires careful consideration of plate layout, including the strategic placement of positive and negative controls to monitor assay performance and quality [3]. The development of high-quality HTS assays requires integration of both experimental and computational approaches for quality control, with three critical means of QC being: (1) good plate design, (2) selection of effective positive and negative controls, and (3) development of effective QC metrics to measure the degree of differentiation [3].
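The plate-design point can be made concrete with a small layout generator. This sketch assumes a common but not universal convention of placing negative controls in the first column and positive controls in the last column. The function names and the column choices are hypothetical, and real screens often distribute controls differently to detect edge effects.

```python
from string import ascii_uppercase

# Rows x columns for three standard plate densities.
PLATE_SHAPES = {96: (8, 12), 384: (16, 24), 1536: (32, 48)}


def row_label(index):
    """Map 0 -> 'A', 25 -> 'Z', 26 -> 'AA' (1536-well plates need AA..AF)."""
    label = ""
    index += 1
    while index:
        index, remainder = divmod(index - 1, 26)
        label = ascii_uppercase[remainder] + label
    return label


def plate_layout(n_wells, neg_col=1, pos_col=None):
    """Return {well_id: role}; controls fill one full column each (assumption)."""
    rows, cols = PLATE_SHAPES[n_wells]
    pos_col = cols if pos_col is None else pos_col
    layout = {}
    for r in range(rows):
        for c in range(1, cols + 1):
            if c == neg_col:
                role = "negative_control"
            elif c == pos_col:
                role = "positive_control"
            else:
                role = "sample"
            layout[f"{row_label(r)}{c}"] = role
    return layout


layout_96 = plate_layout(96)
n_samples = sum(role == "sample" for role in layout_96.values())
print(n_samples, "sample wells on a 96-well plate")
```

Reserving two full columns leaves 80 of 96 wells for samples in this layout, a cost that shrinks proportionally on higher-density plates.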

Automation and Robotics Systems

Automation is an essential element in HTS's usefulness and a defining characteristic of uHTS [3]. Typically, an integrated robot system consisting of one or more robots transports assay-microplates from station to station for sample and reagent addition, mixing, incubation, and finally readout or detection [3]. An HTS system can usually prepare, incubate, and analyze many plates simultaneously, further speeding the data-collection process [3]. Modern HTS robots can test up to 100,000 compounds per day, with uHTS systems exceeding this capacity [3].

The automation process often involves multiple layered computers, various operating systems, a single central robot, and complex scheduling software [7]. A central robot is typically equipped with a gripper that can pick and place microplates around a platform, with a single run processing from 400 to 1000 microplates depending on the assay type [7].
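The run sizes quoted above translate directly into well counts, which is a quick way to see where a given setup falls relative to the 100,000-per-day uHTS cut-off mentioned earlier. The sketch below assumes, for illustration only, that one run corresponds to one day and that every well counts as a test (control wells are ignored). The function names are hypothetical.

```python
# Cut-off quoted in the text, which also describes it as somewhat arbitrary.
UHTS_CUTOFF_PER_DAY = 100_000


def wells_per_run(n_plates, wells_per_plate):
    """Total wells processed in one run of the central robot."""
    return n_plates * wells_per_plate


def classify_throughput(tests_per_day):
    """Label a daily test count as 'HTS' or 'uHTS' using the quoted cut-off."""
    return "uHTS" if tests_per_day > UHTS_CUTOFF_PER_DAY else "HTS"


low_end = wells_per_run(400, 384)     # smallest run, 384-well plates
high_end = wells_per_run(1000, 1536)  # largest run, 1536-well plates
for wells in (low_end, high_end):
    print(wells, classify_throughput(wells))
```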

Applications in Metabolic Engineering and Biomanufacturing

Coupled Screening Approaches for Metabolic Engineering

In metabolic engineering for strain development, researchers have developed innovative workflows that couple HTS with targeted screening to identify non-obvious metabolic engineering targets [6]. This approach is particularly valuable when industrially interesting molecules cannot be screened at sufficient throughput using conventional methods. The coupled workflow involves:

- Primary HTS of Common Precursors: Initial high-throughput screening of common precursors (e.g., amino acids) that can be screened either directly or by artificial biosensors [6].

- Low-Throughput Targeted Validation: Subsequent lower-throughput validation of the actual molecule of interest to identify beneficial metabolic engineering targets [6].

This methodology was demonstrated in a study screening 4k-member gRNA libraries, each deregulating 1000 metabolic genes in Saccharomyces cerevisiae [6]. Researchers first screened yeast cells transformed with gRNA library plasmids for individual regulatory targets that improved production of L-tyrosine-derived betaxanthins, identifying 30 targets that increased intracellular betaxanthin content 3.5–5.7 fold [6]. These targets were then validated in high-producing p-coumaric acid and L-DOPA strains, with several targets increasing secreted titers by up to 89% [6].

Quantitative High-Throughput Screening (qHTS)

Quantitative High-Throughput Screening (qHTS) has emerged as a powerful extension of traditional HTS, testing compounds at multiple concentrations to generate concentration-response curves immediately after screening [8]. This approach more fully characterizes the biological effects of chemicals and decreases rates of false positives and false negatives compared to traditional single-concentration screening [8]. In the context of metabolic engineering, qHTS enables more robust identification of optimal genetic modifications or culture conditions by providing complete dose-response relationships rather than single-point data.

Scientists at the NIH Chemical Genomics Center leveraged automation and low-volume assay formats to develop qHTS, enabling pharmacological profiling of large chemical libraries through generation of full concentration-response relationships for each compound [3]. The accompanying curve fitting and cheminformatics software yields half maximal effective concentration (EC50), maximal response, and Hill coefficient (nH) for entire libraries, enabling assessment of nascent structure activity relationships [3].
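The three parameters named above (EC50, maximal response, Hill coefficient) are those of a Hill-type concentration-response model. The sketch below evaluates the commonly used four-parameter form over a dilution series. The exact parameterization and fitting procedure used in the qHTS software are not given in the text, so this form, the function names, and all numeric values are assumptions for illustration.

```python
def hill_response(conc, bottom, top, ec50, hill):
    """Four-parameter Hill model: response at concentration `conc`.

    `bottom` and `top` are the lower and upper plateaus, `ec50` is the
    concentration giving the half-maximal response, and `hill` is the
    Hill coefficient (nH) controlling steepness.
    """
    if conc <= 0 or ec50 <= 0:
        raise ValueError("concentrations must be positive")
    return bottom + (top - bottom) / (1.0 + (ec50 / conc) ** hill)


def dilution_series(top_conc, fold, n_points):
    """Concentrations from `top_conc` downward in `fold`-fold steps."""
    return [top_conc / fold ** i for i in range(n_points)]


# Hypothetical compound: EC50 = 1 uM, nH = 1.5, response scaled 0-100.
concentrations = dilution_series(top_conc=1e-4, fold=5, n_points=7)
curve = [hill_response(c, 0.0, 100.0, 1e-6, 1.5) for c in concentrations]
for c, r in zip(concentrations, curve):
    print(f"{c:.2e} M -> {r:5.1f}")
```

In a real qHTS analysis the parameters would be estimated by fitting this curve to the measured responses for each compound, typically with a nonlinear least-squares routine.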

Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for HTS/uHTS

| Reagent Category | Specific Examples | Function in HTS/uHTS |
|---|---|---|
| Microplates | 96-, 384-, 1536-, 3456-well plates [3] | Primary assay vessel; higher densities enable higher throughput |
| Compound Libraries | ChemBridge, ChemDiv, National Cancer Institute libraries [10] | Source of chemical diversity for screening campaigns |
| Detection Reagents | Fluorescent dyes (e.g., Alamar Blue), luciferase substrates, FRET pairs [7] [9] | Enable detection and quantification of biological activity |
| Cell Lines | Engineered microbial strains, mammalian cell lines, stem cell-derived models [7] | Provide biological context for screening; may be engineered with specific reporters |
| Biosensors | Betaxanthin-based sensors, transcription factor-based reporters [6] | Enable indirect screening of compounds or metabolic states |
| Enzymes & Proteins | Recombinant enzymes, therapeutic targets [9] | Targets for biochemical screening assays |
| Robotic Liquid Handlers | Pipettors, dispensers, plate washers [10] | Automate reagent addition and washing steps |

Advanced Methodologies and Protocols

Experimental Protocol for uHTS in Metabolic Engineering

[Figure: Experimental protocol for ultra-high-throughput screening in metabolic engineering applications, based on published methodologies]

Data Analysis and Hit Selection Protocols

The massive data generation capacity of HTS and uHTS necessitates sophisticated analytical approaches for quality control and hit selection [3]. Key methodologies include:

Quality Control Metrics:

- Z-factor: Measures the separation between positive and negative controls, with values >0.5 indicating excellent assays [3].

- Strictly Standardized Mean Difference (SSMD): Recently proposed for assessing data quality in HTS assays, providing a robust measure of effect size [3].

- Signal-to-background ratio and signal window: Traditional measures of assay robustness and dynamic range [3].
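The first two metrics can be computed directly from control-well readings. The sketch below uses the standard control-based definitions: the Z-factor as 1 - 3(σp + σn)/|μp - μn|, and SSMD for two independent groups as (μp - μn)/√(σp² + σn²). The signal values are made up for illustration.

```python
from math import sqrt
from statistics import mean, stdev


def z_factor(pos, neg):
    """Z-factor from positive and negative control readings."""
    separation = abs(mean(pos) - mean(neg))
    return 1.0 - 3.0 * (stdev(pos) + stdev(neg)) / separation


def ssmd(pos, neg):
    """Strictly standardized mean difference for two independent groups."""
    return (mean(pos) - mean(neg)) / sqrt(stdev(pos) ** 2 + stdev(neg) ** 2)


# Made-up control signals from one plate (arbitrary fluorescence units).
positive_controls = [100, 102, 98, 101, 99]
negative_controls = [10, 12, 8, 11, 9]

z = z_factor(positive_controls, negative_controls)
s = ssmd(positive_controls, negative_controls)
print(f"Z-factor = {z:.3f}, SSMD = {s:.1f}")
```

With these made-up readings the Z-factor is well above the 0.5 threshold quoted above, so the plate would pass this quality check.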

Hit Selection Methods:

- For screens without replicates: z-score, z-score (robust version), SSMD, B-score, and quantile-based methods [3].

- For screens with replicates: t-statistic and SSMD methods that directly estimate variability for each compound [3].
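For a screen without replicates, the robust z-score variant replaces the mean and standard deviation with the median and the median absolute deviation (MAD), so that a few strong hits do not inflate the scale estimate. The sketch below is a minimal version. The threshold of 3 and the sample values are illustrative assumptions, not recommendations from the text.

```python
from statistics import median

# Scales the MAD so it estimates the standard deviation for normal data.
MAD_TO_SD = 1.4826


def robust_z_scores(values):
    """Return (x - median) / (1.4826 * MAD) for each value."""
    center = median(values)
    mad = median([abs(v - center) for v in values])
    if mad == 0:
        raise ValueError("MAD is zero; robust z-score is undefined")
    scale = MAD_TO_SD * mad
    return [(v - center) / scale for v in values]


def select_hits(values, threshold=3.0):
    """Indices of wells whose robust z-score is at or above `threshold`."""
    scores = robust_z_scores(values)
    return [i for i, score in enumerate(scores) if score >= threshold]


# Made-up normalized production signals for eight wells; index 6 is elevated.
signals = [1.0, 1.1, 0.9, 1.05, 0.95, 1.0, 5.0, 1.02]
print(select_hits(signals))
```

This one-sided selection looks only for increased signal, which matches a production screen; a screen for inhibitors would test the lower tail instead.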

The hit selection process must balance statistical significance against practical effect size, because a compound is designated a "hit" only when its effect reaches the desired magnitude [3]. For metabolic engineering applications, this typically means identifying genetic modifications that significantly enhance production of target molecules while maintaining cellular viability and function.

Future Directions and Implementation Considerations

The field of HTS continues to evolve with several emerging trends shaping its application in biomanufacturing and metabolic engineering. Three-dimensional cell culture systems are increasingly being adapted for HTS formats, offering more physiologically relevant models for screening [10]. Advances in microfluidics and lab-on-a-chip technologies enable even greater miniaturization and throughput beyond current uHTS capabilities [4] [9]. The integration of artificial intelligence and machine learning with HTS data generation is creating new opportunities for predictive modeling and experimental design [2].

For research teams considering implementation of HTS technologies, key considerations include:

- Throughput Requirements: Balance between screening capacity and data quality management.

- Automation Level: Assess the trade-offs between full automation and semi-automated approaches.

- Assay Compatibility: Ensure biological assays are suitable for miniaturization and automation.

- Data Infrastructure: Implement robust data management systems capable of handling massive screening datasets.

- Cost-Benefit Analysis: Evaluate the substantial upfront investment against long-term screening needs.

The successful implementation of HTS and uHTS methodologies in metabolic engineering workflows has demonstrated significant potential for accelerating strain development and identifying non-obvious engineering targets that would be difficult to discover through rational design approaches alone [6]. As these technologies continue to advance and become more accessible, their impact on biomanufacturing and sustainable production of valuable compounds is expected to grow substantially.

High-Throughput Screening (HTS) represents a foundational methodology in modern metabolic engineering, enabling the systematic evaluation of vast libraries of microbial strains or enzymes to identify candidates with optimized properties for industrial production. HTS technologies allow researchers to efficiently navigate the immense design space of engineered biological systems, accelerating the design-build-test-learn cycle that is central to strain development [11] [12]. The core principle of HTS involves the miniaturization and parallelization of experimental processes, combined with automation and sophisticated detection technologies, to rapidly test thousands to millions of variants under controlled conditions. In the context of metabolic engineering for strain development, HTS facilitates the identification of strains with enhanced production capabilities for target molecules, improved substrate utilization, and increased robustness to industrial fermentation conditions [11].

The integration of HTS into metabolic engineering workflows has become increasingly critical as computational tools generate larger libraries of potential strain designs. Systems metabolic engineering faces the formidable task of rewiring microbial metabolism to cost-effectively generate high-value molecules from various inexpensive feedstocks. Because cellular systems remain too complex to model accurately, vast collections of engineered organism variants must be systematically created and evaluated through an enormous trial-and-error process to identify manufacturing-ready strains [11]. This review provides a comprehensive technical examination of the essential components that constitute modern HTS platforms, with particular emphasis on their application to metabolic engineering strain development.

Core HTS Technological Components

Automated Liquid Handling Systems

Automated liquid handlers form the operational backbone of any HTS workflow, enabling precise and reproducible transfer of liquids across microtiter plates with minimal human intervention. These systems range from high-end commercial platforms to more accessible low-cost alternatives, each offering distinct advantages for specific applications and budget constraints.

High-End Commercial Systems: Platforms from established manufacturers like Hamilton, Tecan, and Beckman Coulter represent the premium segment of liquid handling technology. These systems offer exceptional precision, flexibility, and integration capabilities, with prices often exceeding $150,000 USD. They typically feature multiple pipetting channels, robotic arm integration for plate movement, and compatibility with various ancillary devices such as incubators and detection modules. The primary advantages of these systems include their high throughput capacity, minimal cross-contamination risk, and robust construction suitable for continuous operation in industrial settings [13].

Low-Cost Accessible Platforms: Recent technological advancements have democratized access to liquid handling automation through more affordable systems. The Opentrons OT-2 represents this category, costing approximately $20,000-30,000 USD and offering comparable basic functionality to premium systems at a fraction of the cost. These platforms typically utilize open-source protocol scripting (Python in the case of the OT-2), providing greater flexibility for customization. While they may have limitations in maximum throughput or integration capabilities, their affordability makes HTS accessible to academic laboratories and smaller biotech companies [13].

Fixed-Tip vs. Disposable Tip Systems: Liquid handlers can be categorized based on their tip management approach. Fixed-tip systems utilize permanent tips that are washed between dispensing operations, significantly reducing plastic waste and consumable costs. However, they require rigorous decontamination protocols to prevent cross-contamination between samples. Disposable tip systems eliminate cross-contamination concerns but generate substantial plastic waste and incur ongoing consumable expenses. Recent developments have established effective calibration and decontamination protocols for fixed-tip systems, making them increasingly viable for biological applications where contamination risk must be minimized [12].

Miniaturized Cultivation Systems

Effective strain screening in metabolic engineering requires miniature cultivation platforms that accurately mimic large-scale fermentation conditions. Several formats have been developed to balance throughput with environmental control.

Microtiter Plates: Standard 96-well, 384-well, and 1536-well plates represent the most common cultivation vessels in HTS. The ongoing trend toward higher density formats increases throughput but presents challenges for adequate oxygen transfer, particularly for aerobic fermentations. For anaerobic phenotyping, special measures must be implemented to establish and maintain oxygen-free conditions, such as the use of sealing films with permeable membranes or integrated anaerobic chambers [12].

Deep-Well Plates: For microbial cultivation, 24-deep-well plates with 2-10 mL culture volumes provide improved aeration compared to standard microtiter plates. The larger well cross-section provides greater liquid surface area and better gas exchange when combined with orbital shaking. These systems support the use of standard shaker-incubators with larger orbits (typically 19 mm) rather than specialized plate shakers with smaller orbits, making them more accessible to laboratories without dedicated HTS equipment [13].

Microfluidic Devices: Lab-on-a-chip technologies represent the cutting edge of miniaturization in cultivation systems. These devices enable extremely high-density screening with thousands to millions of discrete reaction chambers or droplets. Microfluidic platforms offer unparalleled control over environmental conditions and the ability to perform dynamic perturbations, but require specialized equipment and expertise. They are particularly valuable for screening massive libraries where other methods would be prohibitively expensive or time-consuming [11] [14].

Detection and Analysis Technologies

The effectiveness of any HTS campaign ultimately depends on the detection methodologies employed to quantify desired phenotypes. Multiple detection strategies have been developed, each with specific applications in metabolic engineering.

Cell-Based Assays: Cell-based assays account for approximately 39.4% of the HTS technology segment [15] and are central to metabolic engineering applications because they deliver physiologically relevant data. These assays enable direct assessment of strain performance, including growth characteristics, substrate consumption, and product formation. Common detection methods include fluorescence-based readouts, absorbance measurements, and luminescence assays. Recent advancements in live-cell imaging and fluorescence assays have significantly enhanced the information content obtainable from cell-based screening [15].

Label-Free Technologies: These methods detect analytes without requiring fluorescent or other tags, reducing assay complexity and potential interference with biological systems. Techniques include surface plasmon resonance (SPR), isothermal titration calorimetry, and mass spectrometry. While often lower in throughput than labeled approaches, they provide direct binding and kinetic information valuable for enzyme characterization [15].

Ultra-High-Throughput Screening (uHTS): uHTS technologies enable the screening of millions of compounds or strains using highly miniaturized formats (nanoliter volumes) and advanced detection systems. This segment is anticipated to expand with a 12% CAGR through 2035, reflecting its growing importance in exploring vast biological design spaces [15]. uHTS typically employs specialized equipment for liquid handling, detection, and data processing to manage the immense data volumes generated.

Advanced Immunoassays: Recent innovations in detection technology include platforms like nELISA (next-generation enzyme-linked immunosorbent assay), which combines DNA-mediated, bead-based sandwich immunoassays with advanced multicolor bead barcoding. This approach enables highly multiplexed protein quantification with sub-picogram-per-milliliter sensitivity across seven orders of magnitude. While traditionally associated with clinical applications, such technologies have growing relevance in metabolic engineering for quantifying multiple protein expression levels or metabolic enzymes simultaneously [16].

Data Analysis and Visualization Platforms

The massive datasets generated by HTS campaigns require sophisticated computational tools for analysis, interpretation, and visualization. These platforms transform raw screening data into biologically meaningful information to guide strain optimization.

Commercial Analysis Suites: Platforms such as CDD Vault provide integrated solutions for HTS data management, analysis, and visualization. These systems typically include tools for storing, mining, and securely sharing HTS data alongside capabilities for building machine learning models from screening results. Modern implementations utilize web-based visualization modules that enable researchers to interactively explore multidimensional data through scatterplots, histograms, and other graphical representations [17].

Specialized Bioinformatics Tools: For specific data types, specialized analysis packages have been developed. SeqCode represents an example focused on high-throughput sequencing data analysis, providing standardized approaches for generating meta-plots, heatmaps, feature charts, and other visualizations from genomic datasets. Such tools address the critical need for reproducible analysis methods as sequencing costs decrease and dataset sizes increase [18].

Machine Learning Integration: Computational modeling has become increasingly integrated with HTS data analysis. Bayesian models, neural networks, and other machine learning algorithms can identify complex patterns in screening data that might escape conventional analysis. These approaches are particularly valuable for predicting strain performance based on multidimensional screening readouts, enabling more intelligent selection of candidates for further development [17]. Dimensionality reduction techniques such as t-distributed stochastic neighbor embedding (t-SNE) can effectively cluster similarly performing strains, facilitating the identification of promising candidates from large libraries [12].

HTS Applications in Metabolic Engineering Strain Development

Anaerobic Phenotyping for Strain Characterization

A critical application of HTS in metabolic engineering involves the characterization of strain performance under anaerobic conditions, which are relevant for many industrial fermentation processes. Traditional aerobic screening methods may fail to identify strains with optimal performance under anaerobic production conditions, creating a need for specialized screening approaches.

Raj et al. (2021) developed an automation-assisted workflow for anaerobic phenotyping that addresses both technical and sustainability concerns [12]. Their method incorporates eco-friendly automation practices that effectively calibrate and decontaminate fixed-tip liquid handling systems to reduce plastic waste. Additionally, they investigated inexpensive methods to establish anaerobic conditions in microplates, making high-throughput anaerobic screening more accessible to laboratories without specialized equipment.

The validation of this platform included two case studies: an anaerobic enzyme screen and a microbial phenotypic screen. Researchers used the automation platform to investigate conditions under which several strains of E. coli exhibit consistent phenotypes between 0.5 L bioreactors and the scaled-down fermentation platform. The integration of t-SNE analysis enabled effective clustering of similarly performing strains at the bioreactor scale, demonstrating the predictive value of the miniaturized system [12].

High-Throughput Enzyme Discovery and Engineering

Advancements in computational protein design and directed evolution have created enormous libraries of enzyme variants that require characterization. HTS platforms specifically designed for enzyme engineering enable the efficient functional assessment of these variants.

A landmark study by the Beckham Lab (2024) demonstrated a low-cost, robot-assisted pipeline for high-throughput protein purification and characterization [13]. This platform enables the purification of 96 proteins in parallel using small-scale expression in E. coli and an affordable liquid-handling robot, with scalability for processing hundreds of proteins weekly per user. The methodology incorporates several innovations:

- Fixed-tip liquid handling significantly reduces plastic waste generation compared to disposable tip systems

- Culture aeration optimization through the use of 24-deep-well plates with 2 mL cultures

- Automated purification using nickel-charged magnetic beads for affinity capture

- Tag removal via protease cleavage to avoid elution with imidazole, which can interfere with downstream assays

The researchers validated this platform by expressing and purifying 23 poly(ethylene terephthalate) hydrolases, replicated across a 96-well plate. The semi-automated protocol produced purified samples with high reproducibility, achieving sufficient yields and purity for both thermostability measurements and activity analysis across varied reaction conditions [13].

Emerging Compartmentalization Strategies

Ultra-high-throughput screening platforms increasingly rely on compartmentalization strategies to screen enzyme variant libraries containing millions of members or more. These technologies can be broadly categorized into three approaches:

Cellular Compartmentalization: Using cells as discrete reaction compartments represents the most established approach, leveraging natural cellular boundaries to isolate individual variants. This method benefits from the well-developed infrastructure for cell culture and manipulation but is limited by transformation efficiency and the ability to link genotype to phenotype [14].

In Vitro Compartmentalization via Synthetic Droplets: Water-in-oil emulsion droplets function as artificial cells, each containing a single variant alongside necessary reaction components. This approach achieves extremely high compartment densities (up to 10^10 droplets per mL) and enables direct control of reaction conditions. Microfluidic devices are often used to generate monodisperse droplets with precise control over size and content [14].

Microchambers: Arrays of fabricated microwells or surface-tethered reaction zones provide defined locations for screening. These systems facilitate repeated observation of the same variants over time, enabling kinetic analyses. While typically lower in throughput than droplet-based systems, they offer superior spatial organization and tracking capabilities [14].

Quantitative Market Analysis and Growth Projections

The expanding adoption of HTS technologies across academic, industrial, and government research sectors has driven substantial market growth. Understanding these trends provides context for the evolving landscape of HTS in metabolic engineering.

Table 1: High-Throughput Screening Market Projections 2025-2035

| Metric | Value |
|---|---|
| Market Value in 2025 (Estimated) | USD 32.0 billion [15] |
| Market Value in 2035 (Projected) | USD 82.9 billion [15] |
| Forecast CAGR (2025-2035) | 10.0% [15] |
| Historical CAGR (2020-2025) | 14.0% [15] |
| Leading Technology Segment | Cell-Based Assays (39.4% share) [15] |
| Leading Application Segment | Primary Screening (42.7% share) [15] |
| Fastest Growing Technology | Ultra-High-Throughput Screening (12% CAGR) [15] |
| Fastest Growing Application | Target Identification (12% CAGR) [15] |
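
As a quick consistency check on the market figures, the forecast growth rate can be recomputed from the two market values. The sketch below assumes the standard compound annual growth rate formula and a 10-year span (2025 to 2035); the function name `cagr` is illustrative and not taken from the cited report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10%)."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Market values from the table (USD billions); 2025 -> 2035 is 10 years.
forecast = cagr(32.0, 82.9, 10)
print(f"Implied forecast CAGR: {forecast:.1%}")
```

The implied rate rounds to the 10.0% forecast CAGR reported in the table, so the three figures are mutually consistent.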

Table 2: Regional Growth Variations in HTS Adoption

| Country | Projected CAGR (2025-2035) | Key Growth Drivers |
|---|---|---|
| United States | 12.6% [15] | Strong biotechnology startup ecosystem, specialized in HTS technologies [15] |
| United Kingdom | 12.9% [15] | Drug repurposing initiatives, focus on identifying new therapeutic applications for existing compounds [15] |
| China | 13.1% [15] | Rapid expansion of biopharmaceutical industry, increased R&D investment, favorable government policies [15] |
| Japan | 13.7% [15] | Government initiatives toward precision medicine, advanced manufacturing capabilities [15] |
| South Korea | 14.9% [15] | Not reported |

Experimental Protocols for HTS in Metabolic Engineering

Automated Protein Purification Protocol

The high-throughput protein purification protocol developed by the Beckham Lab provides a representative example of an integrated HTS workflow for enzyme characterization [13]. This protocol enables the parallel transformation, inoculation, and purification of 96 enzymes in a well-plate format, with options to process multiple plates consecutively.

Gene Synthesis and Cloning:

- Employ plasmid constructs containing both an affinity tag and protease cleavage site (e.g., pCDB179 with histidine tag and SUMO site)

- Codon-optimize genes for expression in E. coli

- Utilize commercial synthesis and cloning services or in-house methods

Transformation:

- Use chemically competent E. coli cells (e.g., Zymo Mix & Go! E. coli Transformation Kit)

- Combine cells with plasmid DNA using liquid handler

- Incubate on ice for 30 minutes

- Add outgrowth media and incubate at 37°C for 1 hour

- Add antibiotic selection and grow for ~40 hours at 30°C to saturation

Inoculation and Expression:

- Transfer saturated transformation cultures to deep-well plates containing autoinduction media

- Use 24-deep-well plates with 2 mL cultures for improved aeration

- Incubate at 37°C with shaking (250 rpm) for 24 hours

Cell Lysis and Purification:

- Harvest cells by centrifugation

- Resuspend pellets in lysis buffer (50 mM HEPES, 500 mM NaCl, 20 mM imidazole, pH 7.4)

- Lyse cells using repeated freeze-thaw cycles or chemical lysis

- Transfer lysates to plates containing nickel-charged magnetic beads

- Incubate with shaking to allow binding (30 minutes, room temperature)

- Wash beads twice with wash buffer (50 mM HEPES, 500 mM NaCl, 40 mM imidazole, pH 7.4)

- Cleave target protein from beads using SUMO protease (3 hours, room temperature)

- Recover purified protein in the supernatant [13]
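
When preparing the lysis and wash buffers at plate scale, the required reagent masses follow directly from the stated concentrations. The following is a minimal sketch, assuming standard molar masses for the free compounds (HEPES 238.30 g/mol, NaCl 58.44 g/mol, imidazole 68.08 g/mol); the helper `grams_needed` and the dictionary names are illustrative and not part of the published protocol. The pH must still be adjusted to 7.4 separately.

```python
# Molar masses in g/mol (standard values for the free compounds; assumption:
# no salt or hydrate forms are used).
MOLAR_MASS = {"HEPES": 238.30, "NaCl": 58.44, "imidazole": 68.08}

# Concentrations in mM, taken from the lysis and wash buffers in the protocol.
LYSIS_BUFFER = {"HEPES": 50, "NaCl": 500, "imidazole": 20}
WASH_BUFFER = {"HEPES": 50, "NaCl": 500, "imidazole": 40}


def grams_needed(recipe_mm, volume_l):
    """Return grams of each component for `volume_l` litres of buffer."""
    return {
        name: round(conc_mm / 1000.0 * volume_l * MOLAR_MASS[name], 3)
        for name, conc_mm in recipe_mm.items()
    }


for label, recipe in (("lysis", LYSIS_BUFFER), ("wash", WASH_BUFFER)):
    print(label, grams_needed(recipe, volume_l=1.0))
```

The two recipes differ only in imidazole, so a common HEPES/NaCl stock with imidazole added per buffer would be one reasonable way to prepare them.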

Anaerobic Phenotyping Protocol

The automation-assisted anaerobic phenotyping protocol addresses the specific challenges of screening strains under oxygen-free conditions, which are relevant for many metabolic engineering applications involving fermentative production [12].

Anaerobic Chamber Preparation:

- Use commercial anaerobic chambers or create modified atmosphere using anaerobic gas packs

- Validate anaerobic conditions using resazurin indicator (colorless when anaerobic)

- Pre-reduce media by storing in anaerobic conditions for 24-48 hours before use

Culture Setup:

- Inoculate strains into pre-reduced media in microtiter plates using liquid handler

- Seal plates with gas-permeable membranes to maintain anaerobic conditions while allowing gas exchange

- Incubate in anaerobic chambers or modified atmosphere at appropriate temperature

Sampling and Analysis:

- For time-course measurements, use automated sampling systems integrated with anaerobic chambers

- Analyze metabolites, substrates, and products using methods compatible with small volumes:

  - HPLC with autosampler

  - GC-MS for volatile compounds

  - Microplate-based absorbance/fluorescence assays

Data Analysis:

- Apply dimensionality reduction techniques (t-SNE) to identify strain performance clusters

- Compare performance between miniature and bioreactor scales to validate predictive value

- Use machine learning approaches to identify features predictive of industrial performance [12]
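
One simple way to quantify how well the miniature platform predicts bioreactor behavior is a rank correlation between the two scales. The sketch below uses Spearman's rank correlation, which is a choice made here for illustration and not necessarily the statistic used in [12]; the titer values are hypothetical.

```python
def average_ranks(values):
    """1-based ranks, with tied values sharing their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical titers (g/L) for five strains at each scale.
microplate = [0.8, 1.5, 2.1, 0.4, 1.1]
bioreactor = [6.0, 11.5, 14.0, 3.2, 12.0]
rho = spearman(microplate, bioreactor)
print(f"Spearman rho between scales: {rho:.2f}")
```

A coefficient close to 1 indicates that strain rankings are preserved across scales, which matters more for candidate selection than agreement in absolute titers.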

Workflow Visualization

Figure: HTS workflow for metabolic engineering.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagent Solutions for HTS in Metabolic Engineering

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Affinity Purification Resins | Selective capture of target proteins | Nickel-charged magnetic beads for His-tagged proteins; enable automated purification in plate formats [13] |
| Cell Lysis Reagents | Disruption of cells to release intracellular content | Chemical lysis buffers (lysozyme, detergents) or physical methods (freeze-thaw); compatible with automation [13] |
| Autoinduction Media | Protein expression without manual induction | Enables high-throughput expression screening; reduces manual intervention [13] |
| Anaerobic Indicator Solutions | Verification of oxygen-free conditions | Resazurin (redox indicator); colorless when anaerobic; essential for validating anaerobic screening setups [12] |
| Assay Buffers | Provide optimal conditions for enzymatic reactions | HEPES, phosphate, or Tris buffers at appropriate pH and ionic strength; may include cofactors or substrates [13] |
| Detection Reagents | Enable quantification of enzymatic activity or metabolites | Fluorogenic or chromogenic substrates; antibody conjugates for immunoassays; mass spectrometry standards [16] |
| Barcoded Beads | Multiplexed protein detection | Spectral barcoding with fluorophores (AlexaFluor 488, Cy3, Cy5, Cy5.5) for high-plex assays like nELISA [16] |
| DNA Tethers | Spatially separate assay components | Flexible single-stranded DNA oligos for preassembling antibody pairs; enable detection by strand displacement [16] |

The continuous evolution of HTS technologies is transforming metabolic engineering by accelerating the iterative design-build-test-learn cycle that underpins strain development. The essential components of HTS platforms—from automated liquid handlers to advanced detection technologies—have matured to the point where screening millions of variants is becoming routine in both industrial and academic settings. The ongoing market growth, projected to reach USD 82.9 billion by 2035, reflects the expanding adoption of these technologies across diverse applications [15].

Future advancements in HTS for metabolic engineering will likely focus on several key areas: further miniaturization to increase throughput while reducing costs, enhanced integration of experimental and computational workflows, development of more sophisticated scale-down models that better predict industrial performance, and creation of multi-parametric screening approaches that capture complex phenotype characteristics. Additionally, the growing emphasis on sustainability is driving innovation in eco-friendly automation practices that reduce plastic waste and resource consumption [12].

As artificial intelligence and machine learning continue to advance, the synergy between computational prediction and experimental validation through HTS will become increasingly tight, enabling more intelligent exploration of the vast sequence and design spaces available to metabolic engineers. The essential components described in this technical guide provide the foundation upon which the next generation of strain development platforms will be built, ultimately accelerating the creation of microbial cell factories for sustainable chemical production.

The Role of HTS in Accelerating the 'Bench to Biofactory' Pipeline

The transition of a bioprocess from laboratory demonstration to industrial-scale production (the 'bench to biofactory' journey) is a complex and costly endeavor. A significant challenge lies in the vast optimization space that must be navigated to develop robust microbial cell factories. Metabolic engineering, the discipline of rewiring microbial metabolism to produce target compounds, relies on iterative Design-Build-Test-Learn (DBTL) cycles. However, although strain design and construction can now generate thousands of variants, the cycle is often bottlenecked by the "Test" phase, which lags in throughput, robustness, and generalizability [19]. High-Throughput Screening (HTS) technologies are therefore not merely beneficial but essential for closing this capability gap. By enabling the rapid evaluation of immense strain libraries, HTS allows researchers to identify rare, high-performing candidates that would be impossible to find with slower chromatographic methods [19] [20]. The integration of automation, sophisticated biosensors, and advanced data analytics into HTS workflows is accelerating the DBTL cycle, reducing development time and costs, and making economically viable bio-based production a more attainable goal [2].

The following diagram illustrates the central, iterative DBTL paradigm in metabolic engineering, which is powered by HTS.

HTS Methodologies and Experimental Protocols

The effectiveness of an HTS campaign hinges on selecting the appropriate screening method for the biological question and production metric. The following table summarizes the core characteristics of major HTS detection methodologies.

Table 1: Comparison of Key HTS Detection Methodologies

| Method | Typical Daily Throughput (Samples) | Sensitivity (Limit of Detection) | Key Advantages | Primary Limitations |
|---|---|---|---|---|
| Chromatography (LC/GC) | 10 - 100 [19] | mM - µM [19] | High flexibility; confident identification and precise quantification [19]. | Very low throughput; not suitable for large library screening [19]. |
| Biosensors | 1,000 - 10,000 [19] | pM - nM [19] | Excellent throughput; enables real-time monitoring of production in live cells [21]. | Requires development of specific ligand-recognition element; can suffer from cross-talk [19] [21]. |
| Growth-Coupled Selection | >10⁷ [19] [22] | Varies | Extremely high throughput; no specialized equipment needed; directly links production to survival [22]. | Requires extensive strain rewiring; not applicable to all products [22]. |
| MOMS | >10⁷ [20] | 100 nM [20] | Ultra-high sensitivity and throughput; no genetic modification of producer needed; versatile sensor anchoring [20]. | Requires cell surface biotinylation and aptamer coupling [20]. |
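
To make the throughput differences concrete, the short sketch below estimates how many days each method would need to screen a library of a given size. It assumes the upper end of each reported range for chromatography and biosensors and treats the ">10⁷" entries as exactly 10⁷ samples per day, so those two estimates are upper bounds on screening time; the library size of one million variants is a hypothetical example.

```python
import math

# Samples per day, taken from the table (upper end of each range;
# ">10^7" entries are treated as 10^7, a conservative assumption).
DAILY_THROUGHPUT = {
    "Chromatography (LC/GC)": 100,
    "Biosensors": 10_000,
    "Growth-Coupled Selection": 10_000_000,
    "MOMS": 10_000_000,
}


def days_to_screen(library_size: int, samples_per_day: int) -> int:
    """Whole days needed to test every member of the library once."""
    return math.ceil(library_size / samples_per_day)


LIBRARY_SIZE = 1_000_000  # hypothetical library of one million variants
for method, per_day in DAILY_THROUGHPUT.items():
    print(f"{method}: {days_to_screen(LIBRARY_SIZE, per_day)} day(s)")
```

Under these assumptions, chromatography would need 10,000 days for a library that biosensor-based screening covers in 100 days and that selection or MOMS covers within a single day.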

Genetically Encoded Biosensors

Protocol Overview: Genetically encoded biosensors are genetic circuits that convert the intracellular concentration of a target molecule (input) into a measurable signal, such as fluorescence or antibiotic resistance (output) [21]. The most common architectures are transcription factor-based or riboswitch-based.

Transcription Factor (TF)-Based Biosensors:

- Design: A plasmid is constructed containing the gene for a reporter protein (e.g., GFP) under the control of a promoter that is naturally activated or repressed by a TF specific to the target molecule.

- Implementation: The biosensor plasmid is transformed into the microbial strain library. Intracellular production of the target molecule causes it to bind the TF, which then modulates transcription of the reporter gene.

- Screening: Fluorescence-activated cell sorting (FACS) is used to isolate high-fluorescence cells, which correlate with high producer strains [21].

Riboswitch-Based Biosensors:

- Design: An aptamer sequence that binds the target metabolite is fused to a riboswitch element that regulates gene expression at the transcriptional or translational level, controlling the expression of a reporter gene [21].

- Implementation & Screening: Similar to TF-based biosensors, the strain library is transformed with the riboswitch construct and screened via FACS [21].

Application Example: Biosensors have been crucial in discovering and engineering enzymes for metabolic pathways. For instance, a FadR-based biosensor was used to screen for genes that enhance fatty acyl-CoA pools in Saccharomyces cerevisiae, while an ectoine-responsive biosensor has guided the engineering of a more efficient chorismate pathway in E. coli [21].

The MOMS Platform for Extracellular Secretion

Protocol Overview: The Mother Yeast Cell Membrane Surface (MOMS) sensor technology is a recent breakthrough for analyzing extracellular secretions from yeast [20]. It allows for ultrasensitive, high-speed screening without genetic modification of the production strain.

- Cell Surface Biotinylation: Yeast cells from the library are harvested and treated with a membrane-impermeable biotinylation reagent (e.g., sulfo-NHS-LC-biotin). This covalently attaches biotin groups exclusively to proteins on the cell wall [20].

- Sensor Assembly: The biotinylated cells are sequentially incubated with streptavidin and biotin-labeled DNA aptamers. The aptamers are pre-designed to bind specifically to the target secreted molecule (e.g., vanillin, ATP) [20]. This creates a high-density sensor coating (~1.4 × 10⁷ sensors/cell) [20].

- Selective Mother Cell Coating: A key feature is that this sensor coating remains confined to the original "mother" cells during cell division and budding, ensuring the sensor density and signal remain strong [20].

- Screening and Sorting: The MOMS-coated cell library is analyzed using a high-throughput flow cytometer or cell sorter. When a target molecule is secreted and bound by its aptamer on the mother cell surface, it generates a localized fluorescent signal. Cells with signals above a set threshold (e.g., the top 0.05% of producers) are isolated at high speed (up to 3.0 × 10³ cells/second) [20].
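
The sorting step above amounts to choosing a fluorescence gate that retains a fixed top fraction of events, and the analysis time follows from the event rate. The sketch below illustrates both calculations on simulated data; the log-normal signal distribution, the function names, and the library size of 10⁷ cells are assumptions made for illustration, not details from [20].

```python
import random


def top_fraction_threshold(signals, fraction):
    """Lowest signal value that still belongs to the top `fraction` of events."""
    n_keep = max(1, round(len(signals) * fraction))
    return sorted(signals, reverse=True)[n_keep - 1]


def sort_time_minutes(n_cells, cells_per_second):
    """Minutes needed to pass `n_cells` through the sorter at a fixed rate."""
    return n_cells / cells_per_second / 60.0


# Simulated fluorescence intensities (arbitrary units, assumed log-normal).
random.seed(0)
signals = [random.lognormvariate(0.0, 1.0) for _ in range(100_000)]

gate = top_fraction_threshold(signals, 0.0005)  # top 0.05% of events
sorted_cells = [s for s in signals if s >= gate]
print(f"Gate at {gate:.2f} a.u. keeps {len(sorted_cells)} events")

# Time to analyze a hypothetical 1e7-cell library at 3.0e3 cells/second.
print(f"{sort_time_minutes(1e7, 3.0e3):.1f} minutes")
```

At the stated maximum rate, a library of 10⁷ cells would take just under an hour to analyze, which gives a sense of why flow-based sorting suits libraries of this size.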

The workflow of the MOMS platform is detailed below.

Growth-Coupled Selection

Protocol Overview: This powerful method engineers the host strain's metabolism so that the production of the target compound becomes essential for growth and survival [22]. This creates a direct evolutionary pressure to optimize the pathway.

- Strain Rewiring: Key native metabolic genes that are redundant with or compete against the desired synthetic pathway are deleted from the genome. This is often done to create auxotrophs that depend on the synthetic pathway for essential biomass precursors [22].

- Library Transformation: The mutant enzyme or pathway library is introduced into the rewired selection strain.

- Selection: The population of transformed cells is simply inoculated into a minimal medium where the target pathway is necessary to synthesize an essential metabolite. Only cells with functional, efficient pathway variants are able to grow [22].

- Validation: Enriched clones from the selection are validated using analytical methods like LC-MS to quantify production titers.

Application Example: E. coli selection strains have been designed to couple the production of various compounds, including those from central carbon metabolism, amino acids, and energy carriers, to growth [22].

Data Management and Analysis in HTS

The massive datasets generated by HTS campaigns necessitate robust informatics pipelines and careful statistical analysis to avoid false discoveries and derive meaningful biological insights.

Cheminformatics and Hit-Prioritization Workflow

A standard HTS data analysis pipeline involves two major steps after primary data normalization and quality control [23]:

- Hit-Calling: This step identifies a subset of compounds or strains ("hits") that show activity of interest in the primary screen. Researchers use visualization tools to set activity thresholds (e.g., % inhibition or fluorescence intensity) and the minimum percentage of replicates that must pass this threshold. This process is documented to ensure reproducibility [23].

- Cherry-Picking: The initial hit list is prioritized for confirmatory dose-response testing. This involves filtering based on computed chemical properties (e.g., cLogP), removing compounds with reactive functional groups, and selecting structurally related analogs to establish early structure-activity relationships (SAR) [23].
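
The hit-calling rule described above (an activity threshold plus a minimum fraction of replicates that must pass it) can be sketched in a few lines. The strain names, signal values, and cutoffs below are hypothetical, and the function `call_hits` is an illustration of the general idea, not the implementation used in [23].

```python
def call_hits(replicate_signals, threshold, min_fraction_passing):
    """Return sample IDs whose replicates pass the threshold often enough.

    replicate_signals: dict mapping sample ID -> list of replicate readouts.
    threshold: minimum readout for a replicate to count as active.
    min_fraction_passing: required fraction of active replicates (0 to 1).
    """
    hits = []
    for sample_id, values in replicate_signals.items():
        if not values:
            continue  # no data, cannot be called
        passing = sum(1 for v in values if v >= threshold)
        if passing / len(values) >= min_fraction_passing:
            hits.append(sample_id)
    return sorted(hits)


# Hypothetical fluorescence readouts, three replicates per strain.
screen = {
    "strain_A": [1200, 1350, 1280],
    "strain_B": [400, 1500, 380],
    "strain_C": [1100, 990, 1050],
    "strain_D": [300, 310, 295],
}
hits = call_hits(screen, threshold=1000, min_fraction_passing=2 / 3)
print(hits)
```

Recording the threshold and replicate fraction alongside the hit list, as the function arguments make explicit, is what allows the call to be reproduced later.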

Statistical Analysis of High-Dimensional Data

Metabolomics and other omics data used in the "Learn" phase present statistical challenges due to a high number of variables (e.g., metabolites) relative to samples, and strong intercorrelations between these variables [24].

- Univariate vs. Multivariate Methods: Traditional univariate methods (e.g., t-tests with FDR correction) are simple but can be less informative in high-dimensional settings because they ignore variable correlations. They may yield many "false positives" that are merely correlated with true positives [24].

- Sparse Multivariate Models: Methods like LASSO (Least Absolute Shrinkage and Selection Operator) and SPLS (Sparse Partial Least Squares) are particularly suited for HTS data. They perform variable selection and regression simultaneously, identifying a smaller set of variables that collectively predict the outcome [24]. Studies show that as the number of metabolites and the sample size increase, these sparse multivariate methods outperform univariate approaches, achieving higher positive predictive value and fewer false positives [24].
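
For reference, the univariate baseline mentioned above (per-variable tests followed by FDR correction) can be sketched with the Benjamini-Hochberg procedure, one common FDR method; the source does not state which correction was used in [24], so this choice is an assumption. The p-values are hypothetical.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return booleans marking which hypotheses are rejected at FDR `alpha`."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])

    # Find the largest rank k whose p-value satisfies p_(k) <= (k / m) * alpha.
    max_k = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_k = rank

    # Reject every hypothesis ranked at or below that k.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_k:
            rejected[idx] = True
    return rejected


# Hypothetical p-values for ten metabolites.
p_vals = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]
significant = benjamini_hochberg(p_vals, alpha=0.05)
print(sum(significant), "metabolites pass the FDR threshold")
```

Because each variable is tested in isolation, metabolites that are merely correlated with a true signal can still pass this filter, which is the weakness the sparse multivariate methods are meant to address.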

Essential Tools and Reagents for HTS

Table 2: Research Reagent Solutions for HTS Workflows

| Reagent / Tool | Function in HTS | Example Application / Note |
|---|---|---|
| CRISPR-Cas9 Systems | Enables high-throughput, precise genome editing for library construction. | The TUNEYALI method uses CRISPR for promoter swapping in Y. lipolytica [25]. |
| DNA Aptamers | Serve as recognition elements in biosensors and surface sensors; bind specific small molecules. | Used in the MOMS platform to detect metabolites like vanillin and ATP [20]. |
| Transcription Factors | Natural protein-based sensors used in genetically encoded biosensors. | Engineered to respond to non-natural ligands for novel pathway screening [21]. |
| Sulfo-NHS-LC-Biotin | Membrane-impermeable biotinylation reagent for labeling cell surface proteins. | Critical for anchoring the sensor complex in the MOMS protocol [20]. |
| Fluorescent Reporters (e.g., GFP) | Provide a measurable output for biosensors and FACS-based screening. | Fluorescence intensity is correlated with intracellular target metabolite concentration [19] [21]. |
| HTS-Compatible Microplates | Standardized plates (e.g., 384- or 1536-well) for miniaturized and parallel assays. | Fundamental vessel for running millions of chemical or biological tests [26]. |

The integration of advanced HTS technologies is unequivocally compressing the timeline from laboratory concept to industrial biofactory. Methodologies like biosensor-guided sorting and the groundbreaking MOMS platform are shattering previous throughput and sensitivity barriers, allowing for the intelligent interrogation of vast biological design spaces. The future of HTS in metabolic engineering is inextricably linked to the increasing adoption of automation, self-driving laboratories, and sophisticated data management systems [2]. These developments generate the high-quality, large-scale datasets required to power Artificial Intelligence and Machine Learning (AI/ML) models. As these models become more predictive, they will progressively invert the DBTL cycle, shifting the burden from physical screening to in silico design, ultimately leading to more rational and dramatically accelerated strain engineering efforts. The continued evolution of HTS promises to be a cornerstone in the realization of a robust, sustainable, and economically viable bioeconomy.

Building Better Cell Factories: HTS Methods for Strain Construction and Screening

Metabolic engineering aims to rewire microbial metabolism to transform inexpensive feedstocks into valuable molecules, from pharmaceuticals to biofuels [11]. However, a significant challenge persists: cellular systems remain too complex to model accurately, making the rational design of high-performing manufacturing strains exceptionally difficult [25]. Consequently, strain development relies on testing vast collections of engineered variants through an enormous trial-and-error process [11]. This necessitates high-throughput (HTP) methods that allow researchers to build and test numerous genetic hypotheses simultaneously. The TUNEYALI (TUNing Expression in Yarrowia lipolytica) method represents a significant advancement in this domain. It is a CRISPR-Cas9-based platform for HTP gene expression tuning in the industrially relevant yeast Yarrowia lipolytica, enabling the systematic exploration of genetic perturbations to identify optimal configurations for desired phenotypes [25] [27].

The TUNEYALI Platform: Core Methodology and Workflow

Principle and Genetic Design

The foundational principle of TUNEYALI is scarless promoter replacement to precisely modulate gene expression levels [25]. The method involves swapping the native promoter of a target gene with a library of native Y. lipolytica promoters of varying strengths or even removing the promoter entirely. This allows for tuning the expression of each target gene to multiple predefined levels, creating a diverse population of engineered strains for screening [25] [27].

A key innovation of TUNEYALI is its solution to a major bottleneck in library-scale genome editing: ensuring the correct sgRNA and its corresponding repair template co-localize in the same cell. Traditional methods that co-transform pools of separate elements suffer from low editing efficiency due to mispairing. TUNEYALI overcomes this by encoding both the sgRNA and its homologous repair (HR) template on a single plasmid, guaranteeing their coupled delivery [25].

The genetic design of the editing plasmid is as follows:

- Target-Specific sgRNA: Designed to target the promoter region of the gene of interest.

- Homology Arms: Short sequences flanking the insertion site facilitate homologous recombination. The upstream arm matches the region upstream of the native promoter, and the downstream arm matches the start of the coding sequence (CDS).

- SapI Restriction Site: A double SapI site is engineered between the homology arms, allowing for the modular insertion of promoter elements via Golden Gate assembly. The 3-bp overhang generated by SapI corresponds to a start codon (ATG), ensuring scarless fusion between the new promoter and the target gene's CDS [25].
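
The design constraints listed above lend themselves to a simple automated check before constructs are ordered. The following is a minimal sketch, not the authors' pipeline: the function names and the toy sequences in the usage note are hypothetical. It assumes two rules that follow from the description: the downstream arm must begin with ATG, and neither arm should contain an internal SapI recognition site (GCTCTTC or its reverse complement), since such a site would also be cut during Golden Gate assembly.

```python
# Illustrative pre-synthesis checks for a TUNEYALI-style repair template.
# Function names and any example sequences are hypothetical.

SAPI_SITE = "GCTCTTC"     # SapI recognition sequence (top strand)
SAPI_SITE_RC = "GAAGAGC"  # reverse complement of the recognition sequence


def has_internal_sapi_site(seq: str) -> bool:
    """Return True if either orientation of the SapI site occurs in seq."""
    seq = seq.upper()
    return SAPI_SITE in seq or SAPI_SITE_RC in seq


def check_homology_arms(upstream_arm: str, downstream_arm: str) -> list[str]:
    """Collect design problems; an empty list means the arms pass these checks."""
    problems = []
    # The downstream arm matches the start of the CDS, so it should open with ATG.
    if not downstream_arm.upper().startswith("ATG"):
        problems.append("downstream arm does not begin with the ATG start codon")
    # An internal SapI site would be digested during promoter insertion.
    for name, arm in (("upstream", upstream_arm), ("downstream", downstream_arm)):
        if has_internal_sapi_site(arm):
            problems.append(f"{name} arm contains an internal SapI site")
    return problems
```

For example, `check_homology_arms("TTGACCTAGGCATTCAGGTA", "ATGTCTAAGGGTGAAGAA")` returns an empty list, while an arm containing `GCTCTTC` is flagged. A production check would likely add further criteria (arm length, GC content, sgRNA target disruption) that are outside this sketch.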

Experimental Protocol and Workflow

The following diagram illustrates the complete TUNEYALI workflow, from library construction to strain screening:

Figure 1: The TUNEYALI workflow for high-throughput strain development.

Detailed Step-by-Step Protocol:

Library Construction:

- Design and Synthesis: For each target gene, design and synthesize a ~300-500 bp DNA construct containing the gene-specific sgRNA sequence, 62-162 bp homology arms, and the intermediate SapI restriction site [25].

- Plasmid Assembly: Clone each synthesized construct individually into a plasmid backbone using Gibson assembly, creating a target-specific base plasmid [25].

- Promoter Insertion: Mix the individual target plasmids (or a pooled subset) with a library of promoter elements. Perform a Golden Gate assembly reaction using the SapI enzyme to insert the promoters between the homology arms, creating the final editing library [25].

Yeast Transformation and Screening:

- Transformation: Transform the pooled plasmid library into a chosen Y. lipolytica host strain (e.g., a wild-type or betanin-producing strain) [25] [27].

- Phenotypic Screening: Plate the transformed cells and screen the resulting thousands of clones for the phenotype of interest. In the case study, this included improved thermotolerance, altered morphology (loss of pseudohyphal growth), or enhanced betanin production [27].

Variant Identification:

- Isolation and Sequencing: Isolate genomic DNA from clones exhibiting the desired phenotype. Sequence the integrated plasmid region to determine which promoter was inserted and which transcription factor's expression was altered [25]. This directly links the phenotype back to its genetic cause.
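
The variant identification step can be sketched as a lookup of known signature sequences in a sequencing read. This is an illustration only: the signature sequences, names, and exact-substring matching are assumptions made for brevity, whereas a real workflow would typically align reads against the library design files and handle reverse-complement reads and sequencing errors.

```python
# Hypothetical signature sequences; real ones would come from the library design.
PROMOTER_SIGNATURES = {
    "pStrong": "ACGTTGCAAGCT",
    "pWeak": "TTGACCGGTAAC",
}
TARGET_SIGNATURES = {
    "TF_01": "GGATCCATTGCA",
    "TF_02": "CCTAGGTTAACG",
}


def unique_hit(read, signatures):
    """Return the single signature name found in the read, or None if zero or several match."""
    hits = [name for name, sig in signatures.items() if sig in read]
    return hits[0] if len(hits) == 1 else None


def identify_variant(read):
    """Map a read to a (target, promoter) pair; either element is None if ambiguous."""
    read = read.upper()  # only the given strand is searched in this sketch
    return unique_hit(read, TARGET_SIGNATURES), unique_hit(read, PROMOTER_SIGNATURES)
```

Returning `None` for ambiguous reads, rather than guessing, keeps unresolved clones visible so they can be re-sequenced.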

Key Technical Optimization: Homology Arm Length

The efficiency of homologous recombination in CRISPR editing is critically dependent on the length of the homology arms. The TUNEYALI team systematically evaluated this parameter, demonstrating that longer arms significantly increase editing efficiency.

Table 1: Impact of Homology Arm Length on Genome Editing Efficiency in Y. lipolytica [25]

| Homology Arm Length | Total Transformants | Fluorescent (Edited) Colonies | Editing Efficiency |
|---|---|---|---|
| 62 bp | Low | Very few | Low |
| 162 bp | Hundreds | Many | Significantly higher |
| 500 bp | Highest | Highest | Highest (but cost-prohibitive) |

The data showed that while 500 bp arms yielded the highest efficiency, the 162 bp arms provided an optimal balance between high editing efficiency and synthetic DNA cost, making them suitable for large-scale library construction [25].
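
When colony counts from a reporter assay are available, editing efficiency can be expressed as the fraction of fluorescent colonies among all transformants, together with an uncertainty estimate. The sketch below is an assumption about how such counts might be summarized, not the analysis used in [25]; it applies the standard Wilson score interval, and the function name and any counts passed to it are hypothetical.

```python
import math


def editing_efficiency(edited: int, total: int, z: float = 1.96):
    """Return (efficiency, lower, upper) using a Wilson score interval.

    z = 1.96 corresponds to an approximate 95% confidence level.
    """
    if total <= 0 or not 0 <= edited <= total:
        raise ValueError("need 0 <= edited <= total and total > 0")
    p = edited / total
    denom = 1 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    # Clamp to [0, 1] to absorb floating-point rounding at the extremes.
    return p, max(0.0, center - half), min(1.0, center + half)
```

Reporting the interval alongside the point estimate matters most for short arms, where few transformants are recovered and a single colony can shift the apparent efficiency substantially.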

Case Study: Application in Transcription Factor Engineering

To demonstrate its capabilities, the TUNEYALI method was deployed to engineer a library of 56 transcription factors (TFs) in Y. lipolytica. The goal was to identify TFs that, when perturbed, could confer advantageous industrial phenotypes [25] [27].

Experimental Setup:

- Library Scale: A plasmid library was constructed to target 56 different transcription factors.

- Expression Tuning: For each TF, the method enabled tuning its expression to seven distinct levels by replacing its native promoter with promoters of different strengths [27].

- Screening Strains: The library was transformed into both a reference strain and an engineered betanin-producing strain [27].
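
The library dimensions above imply a design space of 56 × 7 = 392 target-promoter combinations. A rough estimate of how many clones must be screened to sample that space can be sketched as follows. The calculation assumes every variant is equally represented in the pool, which real libraries rarely achieve, so the result is best read as a lower bound; the function name is hypothetical.

```python
import math


def clones_for_coverage(library_size: int, probability: float = 0.95) -> int:
    """Clones to screen so that any given variant is sampled at least once
    with the stated probability, assuming uniform representation."""
    if library_size < 2:
        return 1
    # P(variant missed after n clones) = (1 - 1/L)^n, solved for n.
    n = math.log(1.0 - probability) / math.log(1.0 - 1.0 / library_size)
    return math.ceil(n)


n_targets = 56   # transcription factors targeted (from the case study)
n_levels = 7     # expression levels per target (from the case study)
library_size = n_targets * n_levels
clones_needed = clones_for_coverage(library_size)
```

Under these assumptions the required number of clones is roughly three times the library size at 95% probability, which is consistent with a screen on the scale of thousands of colonies.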

Results and Outcomes: The high-throughput screen successfully identified multiple TFs linked to key phenotypes:

- Thermotolerance: Several TFs were identified whose altered expression increased the yeast's tolerance to high temperatures [27].

- Morphology Engineering: Two specific TFs were found that, when perturbed, eliminated the undesirable pseudohyphal growth, a trait that can complicate large-scale fermentations [27].

- Metabolic Production: Several TFs were discovered whose regulatory changes led to increased production of the high-value compound betanin [27].

This case study validates TUNEYALI as a powerful functional genomics tool for uncovering gene-phenotype relationships and for rapidly isolating strains with improved industrial performance.

Essential Research Reagents and Tools

The following table details the key reagents and tools that form the core of the TUNEYALI platform, which are available to the research community.

Table 2: The Scientist's Toolkit: Key Reagents for the TUNEYALI Method

| Research Reagent | Function / Description | Availability / Reference |
|---|---|---|
| TUNEYALI-TF Library | Pre-built plasmid library targeting 56 transcription factors in Y. lipolytica. | Addgene (#217744) [25] |
| TUNEYALI-TF Kit | Toolkit for constructing new target libraries using the TUNEYALI method. | Addgene (#1000000255) [25] |
| CRISPR-Cas9 System | GV393 (U6-sgRNA-EF1a-Cas9-FLAG-P2A-EGFP) or similar vector for expressing sgRNA and Cas9. | [25] [28] |
| Golden Gate Assembly | Uses SapI (Type IIS) restriction enzyme for modular promoter insertion. | [25] |
| Reporter Strain | Y. lipolytica strain ST14141 (ΔURA3::mNG) for validating editing efficiency. | [25] |

Integration into a High-Throughput Metabolic Engineering Workflow

TUNEYALI is a pivotal component in the modern Design-Build-Test-Learn (DBTL) cycle for metabolic engineering. Its value is fully realized when integrated with other HTP technologies.

The "Build" Module: TUNEYALI excels in the "Build" phase, enabling the rapid construction of thousands of genetically diverse variants [25]. Its single-plasmid system ensures high-fidelity editing at a library scale.

The "Test" Module: Effective screening is crucial. This involves HTP cultivation in microplates and precise phenotyping. Advanced methods include:

- Multiplexed Fermentation Monitoring: Using fluorescence-based assays with soluble probes (e.g., BCECF for pH, ruthenium complexes for dissolved oxygen) in standard microplates allows parallel monitoring of physiological parameters like growth, acidification, and oxygen consumption [29].

- Automated Data Mining: Numerical methods can be applied to the rich data from online sensors to automatically extract key physiological descriptors (e.g., growth rate, lag phase duration, acidification rate), enabling objective and high-volume comparison of strain performance [29].
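
As an illustration of automated descriptor extraction, the sketch below estimates the maximum specific growth rate and lag phase duration from a single microplate growth curve. It uses a common tangent approach (sliding-window linear fit on ln(OD), with lag defined as the time at which the steepest tangent crosses the initial ln(OD)); this is an assumed method chosen for simplicity, not necessarily the numerical procedure of [29], and the function names and window size are hypothetical.

```python
import math


def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def growth_descriptors(times_h, od, window=5):
    """Return (mu_max in 1/h, lag in h) from time points and positive OD readings."""
    if len(times_h) != len(od) or len(od) < window:
        raise ValueError("need matching series with at least `window` points")
    log_od = [math.log(v) for v in od]
    best = None
    # Slide a window along the curve and keep the steepest ln(OD) slope.
    for i in range(len(od) - window + 1):
        slope, intercept = fit_line(times_h[i:i + window], log_od[i:i + window])
        if best is None or slope > best[0]:
            best = (slope, intercept)
    mu_max, intercept = best
    if mu_max <= 0:
        raise ValueError("no growth detected")
    # Lag: time at which the steepest tangent reaches the initial ln(OD).
    lag_h = (log_od[0] - intercept) / mu_max
    return mu_max, lag_h
```

Applying the same function to every well gives descriptors that are directly comparable across strains. Noisy readings near the blank would need smoothing or a detection threshold, which this sketch omits.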

The "Learn" Module: The genetic makeup of superior clones identified by screening is determined by sequencing. Tools like CRISPR-detector can be employed for accurate detection and visualization of genome-wide mutations induced by editing, confirming the intended genetic changes and checking for potential off-target effects [30]. The aggregated data from successful clones informs the next DBTL cycle, creating a virtuous cycle of strain improvement.

The relationship between TUNEYALI and these supporting technologies within a metabolic engineering workflow is shown below:

Figure 2: The role of the TUNEYALI platform within an integrated high-throughput DBTL cycle for metabolic engineering.

Within the framework of high-throughput screening (HTS) for metabolic engineering strain development, the construction of high-quality genetic libraries represents a critical initial phase in the Design-Build-Test-Learn (DBTL) cycle [31]. The efficiency of the entire screening workflow is profoundly influenced by the design and diversity of the variant library. Promoter libraries, transcription factor (TF) targeting, and combinatorial assembly techniques are foundational methodologies for generating this necessary genetic diversity. These strategies enable systematic exploration of genetic space, allowing researchers to optimize metabolic flux, engineer complex regulatory circuits, and ultimately identify high-performing production strains. This guide details the core principles, experimental protocols, and quantitative performance of these library design modalities, providing a technical foundation for their application in accelerated strain engineering.

Promoter Library Design and Analysis

Promoter libraries are powerful tools for fine-tuning gene expression levels, which is essential for balancing metabolic pathways and avoiding the accumulation of toxic intermediates or metabolic burden.

Combinatorial Promoter Library Architecture

Combinatorial promoters, which respond to one or more transcription factors, allow for the integration of multiple regulatory signals. A landmark study constructed a library of 288 E. coli promoters with architectures comprising up to three inputs from four different TFs (AraC, LuxR, LacI, TetR) [32]. The library was assembled from modular components:

- Distal Unit: 45 bp region upstream of the -35 box.

- Core Unit: 25 bp region between the -35 and -10 boxes.

- Proximal Unit: 30 bp region downstream of the -10 box.

Each position was represented by 5 unregulated and 11 operator-containing units, varying operator affinity, location, and orientation. This design allowed for varied -10 and -35 boxes, resulting in promoter strengths spanning five decades of dynamic range [32].
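
The size of the combinatorial space implied by this architecture follows directly from the unit counts. The short sketch below works through the arithmetic under the assumption that any unit can be combined with any other across the three positions; the unit labels are placeholders rather than the actual sequences from [32].

```python
from itertools import product

# 5 unregulated + 11 operator-containing units at each position (from the text).
UNITS_PER_POSITION = 5 + 11
POSITIONS = ("distal", "core", "proximal")

# Placeholder labels such as "distal_0"; the real units are DNA sequences.
units = {pos: [f"{pos}_{i}" for i in range(UNITS_PER_POSITION)] for pos in POSITIONS}

# Every promoter is one choice of unit per position.
architectures = list(product(*(units[pos] for pos in POSITIONS)))
design_space = len(architectures)

reported_promoters = 288  # library size reported in the text
fraction_sampled = reported_promoters / design_space
```

With 16 units per position the design space is 16³ = 4,096 architectures, so the 288 promoters described here sample about 7% of the possible combinations.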

Quantitative Analysis of Promoter Function

The function of promoters from the combinatorial library was characterized by measuring expression in response to 16 combinations of four chemical inducers. The analysis defined key functional parameters:

Table 1: Performance of Single-Input Gates (SIGs) from Combinatorial Promoter Library [32]

| Transcription Factor | Type | Uninduced Expression (ALU) | Induced Expression (ALU) | Regulatory Range (r) |
|---|---|---|---|---|
| TetR | Repressor | 26 ± 8 | 2.3 × 10⁶ ± 0.2 × 10⁶ | 8.9 × 10⁴ ± 0.3 × 10⁴ |
| TetR | Repressor | 14 ± 4 | 1.7 × 10⁵ ± 0.1 × 10⁵ | 1.2 × 10⁴ ± 0.4 × 10⁴ |
| LuxR | Activator | 1.3 ± 0.3 | 1.4 × 10³ ± 0.1 × 10³ | 1.1 × 10³ ± 0.3 × 10³ |

Key findings from the library analysis include:

- Repressors exhibited higher maximum regulatory ranges (up to r=10⁵) compared to activators (r=10³) [32].

- Of the unique promoters, 49% changed expression by a factor of 10 or more in response to inducer signals [32].

- No significant regulation was observed without the presence of a corresponding TF operator, confirming the specificity of the designed architectures [32].
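
The regulatory ranges in the single-input gate table are consistent with r being the ratio of induced to uninduced expression. The sketch below recomputes r from the tabulated mean values under that assumption; the definition is inferred from the numbers rather than stated explicitly in the text, and uncertainties are ignored.

```python
# (transcription factor, uninduced ALU, induced ALU, reported r) from the table.
GATES = [
    ("TetR", 26.0, 2.3e6, 8.9e4),
    ("TetR", 14.0, 1.7e5, 1.2e4),
    ("LuxR", 1.3, 1.4e3, 1.1e3),
]


def regulatory_range(uninduced: float, induced: float) -> float:
    """Fold change between induced and uninduced expression (assumed definition of r)."""
    return induced / uninduced


# Relative deviation between the recomputed and reported r for each gate.
deviations = [
    abs(regulatory_range(low, high) - reported) / reported
    for _, low, high, reported in GATES
]
```

All three recomputed values agree with the reported ranges to within a few percent, which is the level of agreement expected given that the table entries are rounded to two significant figures.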

Figure 1: Workflow for constructing and screening a combinatorial promoter library. Modular DNA units are assembled via randomized ligation to generate a vast library, which is then functionally screened under various inducer conditions to quantify expression performance [32].

Transcription Factor Targeting in Library Screening

Transcription factor-based biosensors are indispensable for HTS as they convert intracellular metabolite concentrations into measurable signals, bypassing the need for slow, direct chemical quantification [33].

Biosensor-Based Screening Modalities

TF-based biosensors can be deployed in several screening formats, each with different throughput capacities and technical requirements [33]:

Table 2: High-Throughput Screening Modalities Using Transcription Factor-Based Biosensors

| Screen Method | Throughput Capacity | Organism Examples | Target Molecule | Documented Improvement |
|---|---|---|---|---|
| Well Plate | ~10²-10⁴ variants | E. coli, Y. lipolytica | Glucaric acid, Erythritol | 4-fold improved specific titer [33] |
| Agar Plate | ~10⁴-10⁶ variants | E. coli | Salicylate, Mevalonate | 123% increased production [33] |
| FACS | >10⁸ variants | E. coli, S. cerevisiae, C. glutamicum | Acrylic acid, L-lysine, Fatty acids | 1.6-fold improved kcat/Km, 49.7% increased production [33] |
| Droplet Screening | >10⁹ variants | N/A | N/A | N/A |

Experimental Protocol: FACS with TF-Based Biosensors