Advances in Amino Acid Secretion Phenotype Prediction: From Machine Learning to Clinical Translation


Abstract

Accurate prediction of amino acid secretion phenotypes is revolutionizing biomedical research and therapeutic development. This comprehensive review explores the computational frameworks powering this transformation, from foundational deep mutational scanning and neural networks to cutting-edge ensemble models integrating sequence and structural data. We examine how machine learning approaches capture complex genotype-phenotype relationships, address critical optimization challenges including data scarcity and epistatic effects, and establish robust validation paradigms. For researchers, scientists, and drug development professionals, this synthesis provides actionable insights into selecting appropriate prediction tools, interpreting results within biological contexts, and translating computational predictions into validated therapeutic outcomes across vaccine design, enzyme engineering, and personalized medicine applications.

The Computational Framework: Linking Amino Acid Sequences to Secretion Phenotypes

Deep Mutational Scanning (DMS) has emerged as a powerful experimental framework for systematically quantifying the effects of hundreds of thousands of genetic variants on protein function in a single experiment [1] [2]. This approach represents a paradigm shift from traditional one-variant-at-a-time studies to massively parallel analyses that comprehensively map sequence-function relationships [3]. At its core, DMS solves a fundamental challenge in genetics: our limited ability to predict which mutations will most informatively reveal protein function [2]. Since its systematic introduction approximately a decade ago, DMS has enabled scientific breakthroughs across evolutionary biology, genetics, and biomedical research by providing efficient and economical assessment of genotype-phenotype relationships [1]. The technology has proven particularly valuable for classifying human disease variants of unknown significance, understanding viral evolution including SARS-CoV-2, guiding therapeutic antibody engineering, and revealing fundamental principles of protein structure and function [1] [4] [5]. This review examines the experimental foundations of DMS, comparing methodological approaches and their applications in high-throughput functional characterization, with particular relevance to phenotypic prediction in amino acid secretion research.

Core Methodological Framework of Deep Mutational Scanning

The Fundamental Workflow

The DMS methodology follows a consistent workflow with three critical phases, each with multiple technical options that researchers must select based on their specific experimental goals [1]. Table 1 summarizes the key steps and considerations in a typical DMS experiment.

Table 1: Core Workflow and Technical Considerations in Deep Mutational Scanning

| Experimental Phase | Key Steps | Technical Considerations | Common Pitfalls |
|---|---|---|---|
| Library Generation | 1. Design mutant library; 2. Synthesize oligo pool; 3. Clone into expression system | Choice of mutagenesis method; library coverage and diversity; cloning efficiency | Synthesis biases; inadequate variant representation; frameshifts and truncations |
| Functional Selection | 1. Introduce library to expression system; 2. Apply selection pressure; 3. Collect pre- and post-selection samples | Selection stringency optimization; phenotype-genotype linkage; adequate biological replicates | Overly stringent or weak selection; bottlenecks in population size; poor phenotype-genotype correlation |
| Sequencing & Analysis | 1. High-throughput sequencing; 2. Variant frequency quantification; 3. Fitness score calculation | Sufficient sequencing depth; error correction with UMIs; statistical normalization | Insufficient read depth for rare variants; PCR/sequencing errors; improper normalization for initial biases |

The process begins with creating a comprehensive mutant library, typically through oligo synthesis followed by cloning into expression vectors [1]. The library then undergoes a functional selection that links genetic sequences (genotypes) to functional outputs (phenotypes), enabling enrichment or depletion of variants based on their activity [2] [6]. Finally, high-throughput sequencing quantifies variant frequencies before and after selection, with computational analysis generating fitness scores that reflect each variant's functional impact [6].
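The final step, turning pre- and post-selection counts into a per-variant score, can be sketched as follows. This is a minimal illustration, not the scoring used by any cited study: it assumes a log2 enrichment ratio normalized to wild type, and the function name `enrichment_scores`, the `pseudocount` value, and the variant labels are all hypothetical.

```python
import math

def enrichment_scores(pre_counts, post_counts, wild_type="WT", pseudocount=0.5):
    """Return log2 enrichment of each variant relative to wild type.

    pre_counts / post_counts map variant name -> read count before and
    after selection. The pseudocount (an assumption) avoids log(0) for
    variants that drop out after selection.
    """
    pre_total = sum(pre_counts.values())
    post_total = sum(post_counts.values())

    def log_ratio(variant):
        pre = (pre_counts.get(variant, 0) + pseudocount) / pre_total
        post = (post_counts.get(variant, 0) + pseudocount) / post_total
        return math.log2(post / pre)

    wt_ratio = log_ratio(wild_type)
    # Subtracting the wild-type ratio centers the scale at WT = 0.
    return {variant: log_ratio(variant) - wt_ratio for variant in pre_counts}

# Hypothetical counts: A23V behaves like wild type, G45D is depleted.
pre = {"WT": 1000, "A23V": 500, "G45D": 500}
post = {"WT": 2000, "A23V": 1000, "G45D": 50}
scores = enrichment_scores(pre, post)
```

Under this convention, scores near zero indicate wild-type-like behavior and strongly negative scores indicate depletion during selection.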

Visualization of Core DMS Workflow

[Figure: DMS Experimental Workflow]

This workflow enables the creation of comprehensive sequence-function maps that reveal how mutations affect protein properties. The resulting data can be visualized as heatmaps that display functional scores for each amino acid substitution at every position, providing immediate insight into functionally critical regions [2].

Comparative Analysis of Mutagenesis Methods

Library Generation Techniques

The initial library generation represents a critical foundational step that determines the scope and quality of a DMS experiment. Researchers must select from several established mutagenesis approaches, each with distinct advantages and limitations [1]. Table 2 provides a comparative analysis of the primary methods used for creating DMS libraries.

Table 2: Comparison of Mutagenesis Methods for DMS Library Generation

| Method | Mechanism | Advantages | Limitations | Best Applications |
|---|---|---|---|---|
| Error-Prone PCR | Low-fidelity polymerases introduce random mutations during amplification [1] | Cost-effective; simple protocol; no special equipment needed | Mutation biases (A/T mutations favored); difficult to achieve all amino acid substitutions; multiple simultaneous mutations common [1] | Initial exploratory studies; directed evolution projects; cases where comprehensive saturation is not required |
| Oligo Synthesis with Doped Oligos | Defined percentage of mutations incorporated during oligo synthesis [1] | Customizable mutation rate; reduced biases compared to error-prone PCR; can generate long mutant oligos (up to 300 nt) | Higher cost than error-prone PCR; requires specialized synthesis; potential synthesis errors | Targeted mutagenesis of specific regions; studies requiring defined mutation spectra |
| Oligo Synthesis with NNN Triplets | Oligos containing NNN (or NNK/NNS) codons target each position for all amino acid substitutions [1] | Comprehensive coverage of all 20 amino acids; user-defined mutation sites; compatible with low-cost pool synthesis (e.g., DropSynth) [1] | Higher synthesis costs; some codon bias remains; requires careful library design | Saturation mutagenesis studies; construction of all single-amino-acid variant libraries; precision mapping projects |

The choice between these methods involves trade-offs between completeness, bias, and cost. For comprehensive single-amino-acid substitution libraries, oligo synthesis with NNN triplets currently represents the gold standard, despite higher costs [1]. However, error-prone PCR remains valuable for specific applications where random mutagenesis across longer regions is desirable, particularly when using commercial kits with engineered polymerases that reduce but do not eliminate mutational biases [1].
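To make the NNN versus NNK/NNS trade-off concrete, the sketch below enumerates the codons each degenerate scheme encodes under the standard genetic code and counts amino acids and stop codons. The helper names `expand` and `summarize` are illustrative only.

```python
from itertools import product

# Standard genetic code, codons ordered with bases T, C, A, G at each position.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa
    for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}

# IUPAC degenerate base codes used in the schemes discussed above.
IUPAC = {"N": "ACGT", "K": "GT", "S": "CG"}

def expand(degenerate_codon):
    """List every concrete codon encoded by a degenerate codon such as 'NNK'."""
    return ["".join(c) for c in product(*(IUPAC[b] for b in degenerate_codon))]

def summarize(degenerate_codon):
    """Count codons, distinct amino acids, and stop codons for a scheme."""
    encoded = [CODON_TABLE[c] for c in expand(degenerate_codon)]
    return {
        "codons": len(encoded),
        "amino_acids": len({aa for aa in encoded if aa != "*"}),
        "stops": encoded.count("*"),
    }

for scheme in ("NNN", "NNK", "NNS"):
    print(scheme, summarize(scheme))
```

NNK and NNS each halve the codon count and retain a single stop codon (TAG) while still covering all 20 amino acids, which is one reason they are often preferred over NNN for saturation libraries.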

Emerging Alternatives: Base Editing

CRISPR base editing has recently emerged as an alternative to DMS for functional variant annotation in mammalian cells [7]. This method uses a Cas9 nickase (nCas9) fused to a deaminase enzyme to introduce transition mutations (C>T or A>G) at specific genomic locations, enabling endogenous editing without double-strand breaks [7]. A 2024 direct comparison found that base editing screens can correlate well with gold-standard DMS datasets when the analysis is restricted to high-efficiency single edits, suggesting potential for multiplexed functional annotation [7]. However, base editing faces challenges including variable editing efficiency, bystander edits when multiple editable sites fall within the editing window, and PAM sequence requirements that limit targeting scope [7].

Phenotyping Systems and Selection Strategies

Comparative Platform Analysis

The selection of an appropriate phenotyping platform represents a critical decision point in DMS experimental design, with different model systems offering distinct advantages. Table 3 compares the primary platforms used for high-throughput functional characterization in DMS experiments.

Table 3: Comparison of DMS Phenotyping and Selection Platforms

| Platform | Selection Mechanisms | Key Applications | Technical Considerations |
|---|---|---|---|
| Yeast Surface Display | Folding efficiency via surface expression; ligand binding via fluorescent detection [4] | Antigen-antibody interactions; receptor-ligand binding affinity; protein stability assessment | Eukaryotic glycosylation patterns; quality control machinery similar to mammalian cells; medium throughput capacity |
| Mammalian Cell Systems | Growth-based selection; drug resistance; cell sorting with fluorescent reporters [8] | Human disease variant characterization; endogenous pathway analysis; therapeutic protein engineering | Most relevant cellular context for human proteins; lower throughput than microbial systems; more complex genetic manipulation |
| Bacterial Systems | Growth complementation; toxin resistance; antibiotic selection [9] | Bacterial protein characterization; enzyme evolution; fundamental biophysical studies | Highest throughput capacity; simplified genetics and lower cost; limited for eukaryotic-specific processes |
| In Vitro Display | Ribosome display selection; phage display panning [3] | Antibody engineering; peptide-binding specificity; directed evolution | Largest library diversity potential; no cellular transformation limitations; no native cellular environment |

The SARS-CoV-2 pandemic highlighted the power of yeast display for rapid characterization of viral protein variants. Starr et al. measured how all possible amino acid mutations to the SARS-CoV-2 receptor-binding domain affect ACE2 binding and protein folding [4]. Their platform enabled quantitative measurement of dissociation constants across thousands of variants, revealing both constrained regions suited to vaccine targeting and mutations that enhance receptor binding [4].

Multi-Environment Phenotyping

Traditional DMS experiments typically examine variant effects under a single condition, but emerging approaches now leverage multi-environment phenotyping to reveal condition-dependent functional effects [9]. A 2025 study of a bacterial kinase demonstrated how profiling variant effects across multiple temperatures identified distinct classes of temperature-sensitive and temperature-resistant variants [9]. This approach revealed that temperature-sensitive mutations occur throughout both the protein core and surface, challenging existing paradigms that localized such effects primarily to structural cores [9]. Furthermore, temperature-resistant variants exhibited increased enzymatic activity rather than improved stability, highlighting how multi-condition profiling can uncover unexpected functional relationships [9].

For amino acid secretion research, this multi-environment approach could be particularly valuable for identifying mutations that optimize secretion efficiency under different bioprocessing conditions or in response to metabolic demands.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of DMS requires careful selection of reagents and methodologies throughout the experimental pipeline. The following toolkit summarizes key solutions employed in foundational DMS studies.

Table 4: Essential Research Reagent Solutions for DMS Experiments

| Reagent Category | Specific Examples | Function in DMS Workflow | Implementation Notes |
|---|---|---|---|
| Mutagenesis Reagents | Error-prone PCR kits (commercial mixes with engineered polymerases) [1]; pooled oligonucleotide libraries (Twist Bioscience, Agilent) [7] [4]; DropSynth for cost-effective oligo pool synthesis [1] | Generation of comprehensive variant libraries with controlled diversity | Commercial error-prone kits reduce but do not eliminate polymerase biases; pooled oligos enable precise library design but require validation of synthesis quality |
| Cloning & Expression Systems | Lentiviral vectors (pUltra, Addgene #24129) [7]; yeast display vectors (pCTCON) [4]; mammalian landing pad systems for genomic integration [8] | Delivery and expression of variant libraries in host systems | Lentiviral systems enable stable integration in hard-to-transfect cells; landing pad systems ensure consistent single-copy expression |
| Selection Tools | Fluorescently labeled ligands (ACE2-Fc for SARS-CoV-2 studies) [4] [5]; FACS instrumentation for cell sorting; drug selection markers (puromycin, hygromycin) [7] | Linking genotype to phenotype through functional enrichment | Labeled ligands must be titrated to establish appropriate selection stringency; FACS enables multi-parameter sorting |
| Sequencing & Analysis | Unique Molecular Identifiers (UMIs) for error correction [6]; PacBio SMRT sequencing for long-read barcode linkage [4]; custom analysis pipelines (Enrich, dms_tools) [2] | Accurate variant frequency quantification and fitness score calculation | UMIs are essential for correcting PCR and sequencing errors; specialized software handles the statistical challenges of low-complexity, high-variant-count data |

Data Analysis and Fitness Metric Computation

From Sequencing Reads to Fitness Scores

The transformation of raw sequencing data into reliable fitness scores requires careful computational processing to account for various sources of noise and bias. The standard analytical approach involves comparing variant frequencies before and after selection, typically using a metric such as the enrichment score [6]. For experiments with time-series sampling, growth rates can be calculated using the exponential growth equation:

$$\text{growth rate} = \frac{\ln\left(\frac{\text{MAF}_1 \times \text{Count}_1}{\text{MAF}_0 \times \text{Count}_0}\right)}{\text{Time}_1 - \text{Time}_0}$$

where MAF represents mutant allele frequency, Count indicates cell count, and subscripts 0 and 1 denote initial and final time points, respectively [7]. This approach accounts for population dilution during the selection process and enables calculation of variant-specific growth rates relative to wild-type.
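As a worked illustration of the growth rate equation, the sketch below evaluates it for a single variant. The function name `growth_rate`, the argument names, and the example numbers are hypothetical; the time unit is whatever unit the two sampling times share.

```python
import math

def growth_rate(maf0, count0, maf1, count1, time0, time1):
    """Exponential growth rate of one variant between two time points.

    maf0/maf1: mutant allele frequency at the initial and final time points.
    count0/count1: total cell counts at the same time points.
    """
    if time1 <= time0:
        raise ValueError("time1 must be later than time0")
    # MAF x Count estimates the number of cells carrying the variant.
    variant_cells_start = maf0 * count0
    variant_cells_end = maf1 * count1
    return math.log(variant_cells_end / variant_cells_start) / (time1 - time0)

# Hypothetical example: the variant goes from 1% of 1e6 cells to 2% of 4e6
# cells over 3 days, an 8-fold expansion, i.e. one doubling per day.
rate = growth_rate(maf0=0.01, count0=1e6, maf1=0.02, count1=4e6, time0=0, time1=3)
```

Here `rate` equals ln(8)/3 = ln(2) per day. Computing the same quantity for wild type allows variant rates to be expressed relative to it.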

The implementation of Unique Molecular Identifiers has become standard practice in modern DMS studies to address PCR and sequencing errors [6]. UMIs are short, random DNA sequences attached to each initial DNA molecule before amplification, enabling computational correction by collapsing reads sharing the same UMI into consensus sequences [7]. This process dramatically reduces noise and enables accurate quantification of rare variants that would otherwise be obscured by technical artifacts.
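The collapsing step can be sketched as a per-position majority vote within each UMI group. This is a simplified illustration under stated assumptions: reads are already paired with their UMI, UMIs are matched exactly (no tolerance for errors in the UMI itself), and the `min_reads` cutoff and function name `collapse_by_umi` are hypothetical choices.

```python
from collections import Counter, defaultdict

def collapse_by_umi(reads, min_reads=2):
    """Collapse (umi, sequence) pairs into one consensus sequence per UMI.

    UMI groups with fewer than min_reads reads are discarded because a
    consensus cannot be checked against a second observation.
    """
    groups = defaultdict(list)
    for umi, sequence in reads:
        groups[umi].append(sequence)

    consensus = {}
    for umi, sequences in groups.items():
        if len(sequences) < min_reads:
            continue
        # Keep only reads of the most common length so columns line up.
        length = Counter(len(s) for s in sequences).most_common(1)[0][0]
        aligned = [s for s in sequences if len(s) == length]
        # Majority vote at each position removes isolated PCR/sequencing errors.
        consensus[umi] = "".join(
            Counter(column).most_common(1)[0][0] for column in zip(*aligned)
        )
    return consensus

reads = [
    ("AAC", "ACGT"),
    ("AAC", "ACGT"),
    ("AAC", "ACCT"),  # one read carries an error at position 3
    ("GGT", "TTTT"),  # singleton UMI, dropped
]
consensus = collapse_by_umi(reads)
variant_counts = Counter(consensus.values())
```

Counting consensus sequences rather than raw reads means each original molecule contributes once to the variant frequency, regardless of how many times it was amplified.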

Visualization of Data Analysis Pipeline

[Figure: DMS Data Analysis Pipeline]

Machine Learning Integration

Recent advances have integrated DMS data with machine learning approaches to learn generalizable protein fitness landscapes [10]. Multi-protein training schemes that leverage existing DMS data from diverse proteins can improve fitness predictions for new proteins through transfer learning [10]. These approaches consider both structural environments of mutations and evolutionary contexts from multiple sequence alignments, enabling accurate prediction of variant effects even with limited protein-specific data [10]. For amino acid secretion research, such models could help prioritize mutations that optimize secretion efficiency without requiring exhaustive experimental screening of all possible variants.

Applications and Validation in Biomedical Research

Predictive Power for Real-World Outcomes

DMS data has demonstrated remarkable predictive power for real-world biological phenomena, particularly in understanding viral evolution. The comprehensive DMS of SARS-CoV-2 spike protein by Starr et al. accurately identified mutations that later became prevalent in the pandemic, demonstrating how preemptive functional characterization can anticipate natural evolutionary trajectories [1] [5]. Subsequent work showed that viral growth rates of SARS-CoV-2 clades could be explained in substantial part by measured effects of mutations on spike phenotypes, including ACE2 binding, cell entry, and serum escape [5]. This predictive capability underscores the value of DMS for forecasting evolution of pathogens and designing robust countermeasures that account for likely escape mutations.

In clinical genetics, DMS has enabled systematic classification of variants of unknown significance (VUS) in disease-associated genes [1] [8]. By providing functional measurements for thousands of mutations in single experiments, DMS datasets serve as references for interpreting newly discovered human genetic variants [8]. This approach has been successfully applied to genes such as BRCA1, PTEN, and TP53, where functional scores from DMS correlate with clinical pathogenicity assessments [8]. The move toward mammalian cell DMS platforms further enhances clinical relevance by providing functional data in more physiologically relevant contexts [8].

Technical Validation and Reproducibility

The reliability of DMS data depends critically on appropriate experimental design and validation. Key validation approaches include:

- Replicate concordance: High correlation between biological replicates indicates experimental reproducibility [5]

- Gold standard validation: Comparison with known functional measurements for characterized variants [4]

- Cross-platform validation: Agreement between different experimental systems (e.g., yeast display vs. mammalian cell assays) [4] [5]

Technical pitfalls that can compromise data quality include inadequate library diversity, inappropriate selection stringency, insufficient sequencing depth, and failure to account for initial library biases [6]. Best practices emphasize sequencing the input library deeply to establish baseline variant frequencies, optimizing selection conditions through pilot experiments, implementing UMI-based error correction, and performing adequate biological replicates [6].
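Replicate concordance, the first validation approach listed above, is commonly summarized with a correlation coefficient. The sketch below computes a Pearson correlation over variants scored in both replicates; the choice of Pearson (rather than Spearman) and the names `pearson` and `replicate_concordance` are assumptions made for illustration.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return covariance / (spread_x * spread_y)

def replicate_concordance(replicate_a, replicate_b):
    """Correlate fitness scores for variants present in both replicates."""
    shared = sorted(set(replicate_a) & set(replicate_b))
    if len(shared) < 3:
        raise ValueError("need at least three shared variants")
    return pearson(
        [replicate_a[v] for v in shared],
        [replicate_b[v] for v in shared],
    )

# Hypothetical fitness scores from two biological replicates.
rep1 = {"A23V": 0.1, "G45D": -2.5, "L10P": -1.0, "S77T": 0.3}
rep2 = {"A23V": 0.2, "G45D": -2.2, "L10P": -1.3, "S77T": 0.2, "K5R": 0.0}
r = replicate_concordance(rep1, rep2)
```

Restricting the comparison to shared variants matters because variants lost to bottlenecks in one replicate would otherwise distort the estimate.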

Future Directions and Concluding Perspectives

Deep Mutational Scanning has transformed our ability to map sequence-function relationships at unprecedented scale and resolution. The experimental foundations of DMS continue to evolve with improvements in library synthesis, phenotyping platforms, and computational analysis. For amino acid secretion research and phenotypic prediction, DMS offers a powerful framework for systematically identifying mutations that optimize secretion efficiency, stability, and function. The integration of DMS with machine learning approaches promises to further enhance predictive capabilities, potentially enabling accurate functional prediction from sequence alone.

As DMS methodologies mature, we can anticipate expanded applications in protein engineering, therapeutic development, and functional annotation of human genetic variation. The move toward multi-environment profiling will provide richer functional landscapes that capture context-dependent effects, while advances in base editing and other CRISPR-based approaches may enable more efficient variant characterization in endogenous genomic contexts. Through continued methodological refinement and validation, DMS will remain an essential tool for high-throughput functional characterization across diverse research domains.

Sequence-Structure-Phenotype Paradigm in Protein Science

The sequence-structure-phenotype paradigm posits that a protein's amino acid sequence determines its three-dimensional structure, which in turn dictates its biological function and observable characteristics, or phenotypes [11]. In the specific context of amino acid secretion research, this paradigm provides a foundational framework for understanding how genetic sequences ultimately influence secretory functions, a process critical to cellular communication, drug targeting, and metabolic regulation. The secretory pathway involves the endoplasmic reticulum, Golgi apparatus, and vesicles that transport proteins to their destinations, with the endoplasmic reticulum serving as the crucial entry point where proteins are synthesized, folded, and modified before secretion [12] [13].

Despite this elegant theoretical framework, significant challenges persist in achieving accurate phenotypic predictions, particularly for secretory functions. The relationship between sequence, structure, and phenotype is extraordinarily complex, incorporating evolutionary dynamics, structural flexibility, and neo-functionalization of proteins across different organismal contexts [11]. For secretion specifically, this complexity is compounded by multiple factors including proper targeting to the endoplasmic reticulum via signal peptides, correct folding with chaperone assistance, formation of disulfide bonds in the oxidative environment of the ER, and successful navigation through the entire secretory pathway [12] [13]. Approximately 11% of human genes encode soluble secretory proteins, with an additional 20% encoding transmembrane proteins that enter the secretory pathway [12], highlighting the critical importance and scale of this biological process.

Comparative Analysis of Computational Prediction Methods

Performance Benchmarking of Prediction Tools

Table 1: Performance comparison of protein phenotype prediction tools

| Tool | Approach | Key Applications | Performance Metrics | Experimental Validation |
|---|---|---|---|---|
| Protein-Vec [11] | Multi-aspect information retrieval using contrastive learning | Enzyme Commission number prediction, remote homology detection | 55% exact match accuracy for EC prediction, outperforming CLEAN (45%) | Time-based evaluation on UniProt proteins introduced after May 2022 |
| ESM1b [14] | Protein language model | Variant effect prediction, distinguishing GOF/LOF variants | p < 0.05 for mean phenotype prediction in 6/10 cardiometabolic genes | UK Biobank exomes (200,638 samples), Mt. Sinai BioMe Biobank |
| ProCyon [15] | Multimodal foundation model (11B parameters) | Protein retrieval, question answering, phenotype generation | 72.7% QA accuracy, Fmax 0.743 for retrieval (30.1% improvement over ProtST) | Benchmarking across 14 task types, zero-shot evaluation |
| EA Method [16] | Evolutionary action analysis | Functional impact prediction of missense variants | Top performer in CAGI challenges (2011, 2013, 2015) | Multiple assays testing protein interactions and cellular phenotypes |

Table 2: Specialized capabilities of prediction methodologies

| Method | Sequence Analysis | Structure Integration | Phenotype Prediction | Secretory Pathway Application |
|---|---|---|---|---|
| Protein-Vec | Multi-aspect sequence encoding | TM-scores for structural similarity | Enzyme function, protein families | Limited direct application |
| ESM1b | Deep sequence modeling | Limited structural analysis | Variant pathogenicity, metabolic traits | Indirect via variant effects |
| ProCyon | Sequence encoders | Geometric deep learning for structure | Molecular functions, disease associations, therapeutics | Potential for secretory phenotype prediction |
| coralME [17] | Genome-scale metabolic modeling | Not primary focus | Microbial metabolism, nutrient utilization | Gut microbiome secretion products |

Methodological Approaches and Experimental Designs

Protein-Vec employs a multi-aspect information retrieval system built on a contrastive learning framework, in which the model is trained to identify positive proteins that share functional labels with an anchor protein while separating them from negative proteins with different labels [11]. The architecture uses a mixture-of-experts approach, combining seven single-aspect models (Aspect-Vec) that cover Enzyme Commission numbers, Gene Ontology terms, Pfam families, TM-scores for structural similarity, and Gene3D domain annotations. For evaluation, researchers typically employ time-split validation, in which models are trained on proteins deposited before a certain date and tested on newer additions to databases like UniProt, ensuring realistic performance assessment on novel sequences [11].
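The anchor/positive/negative training signal can be illustrated with a generic triplet margin loss on embedding vectors. This is a sketch of the general contrastive idea only: the source does not specify the exact loss, distance, or margin used by Protein-Vec, so the cosine distance, the `margin` value, and the toy two-dimensional embeddings are all assumptions.

```python
import math

def cosine_distance(u, v):
    """One minus the cosine similarity of two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / norm

def triplet_loss(anchor, positive, negative, margin=0.2):
    """Zero once the positive is closer to the anchor than the negative by
    at least `margin`; otherwise the size of the violation."""
    return max(
        0.0,
        cosine_distance(anchor, positive)
        - cosine_distance(anchor, negative)
        + margin,
    )

# Toy embeddings: the positive shares the anchor's label, the negative does not.
anchor = [1.0, 0.0]
positive = [1.0, 0.1]
negative = [0.0, 1.0]

well_separated = triplet_loss(anchor, positive, negative)  # already satisfied
violated = triplet_loss(anchor, negative, positive)        # roles swapped
```

Minimizing such a loss over many triplets pulls proteins with shared labels together in embedding space, which is what makes nearest-neighbor retrieval of functional annotations possible.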

ESM1b (Evolutionary Scale Modeling) leverages deep learning on evolutionary sequences to predict variant effects without explicit structural input [14]. The methodology involves training transformer models on millions of natural protein sequences from diverse organisms to learn fundamental principles of protein biochemistry. For variant effect prediction, the model computes likelihood scores for amino acid substitutions, with scores less than -7.5 indicating likely pathogenic mutations [14]. Experimental validation typically involves correlation analysis between ESM1b scores and clinical measurements from biobank data, such as lipid levels for cardiometabolic variants or HbA1c for diabetes-related genes, with statistical significance determined through linear regression models [14].
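The thresholding step can be sketched as below. The example assumes the variant score is a log-likelihood ratio between the mutant and wild-type residues at a position, and it uses a hand-written probability table in place of real model output; no ESM library call is shown, and the names `llr_score` and `is_likely_pathogenic` are hypothetical. Only the -7.5 cutoff comes from the text above.

```python
import math

# Cutoff quoted in the text: scores below this suggest a likely pathogenic variant.
PATHOGENIC_THRESHOLD = -7.5

def llr_score(position_probs, wild_type_aa, mutant_aa):
    """Log-likelihood ratio of mutant vs. wild-type residue at one position.

    position_probs maps amino acid -> model probability at that position
    (assumed to come from a protein language model; hand-written here).
    """
    return math.log(position_probs[mutant_aa]) - math.log(position_probs[wild_type_aa])

def is_likely_pathogenic(score, threshold=PATHOGENIC_THRESHOLD):
    """Apply the score cutoff; lower scores mean less tolerated substitutions."""
    return score < threshold

# Hypothetical probabilities at a conserved leucine.
probs = {"L": 0.90, "I": 0.05, "P": 0.0001}

conservative = llr_score(probs, "L", "I")  # about -2.9
disruptive = llr_score(probs, "L", "P")    # about -9.1
```

In this toy case the leucine-to-proline substitution falls below the cutoff while leucine-to-isoleucine does not, matching the intuition that proline is poorly tolerated at conserved hydrophobic positions.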

ProCyon represents a multimodal foundation model that integrates protein sequences, structures, and natural language descriptions through a novel architecture combining protein encoders with large language models [15]. The training utilizes the ProCyon-Instruct dataset containing 33 million protein-phenotype instructions across five knowledge domains: molecular functions, disease phenotypes, therapeutics, protein domains, and protein-protein interactions. Benchmarking involves zero-shot task transfer where the model addresses problems not explicitly seen during training, such as identifying protein domains that bind small molecule drugs or generating phenotypic descriptions for poorly characterized proteins [15].

Experimental Protocols for Validation

Functional Assays for Secretory Phenotype Verification

Secretory Protein Localization and Processing Assays: The classic experimental approach for verifying secretory proteins involves cell fractionation followed by protease protection assays [13]. In this protocol, cells are first disrupted using homogenization to generate microsomes (sealed vesicles derived from endoplasmic reticulum). The microsomal fraction is then treated with proteases such as trypsin with or without detergent. Proteins that are protected from protease digestion in the absence of detergent but become susceptible when membranes are dissolved with detergent are classified as secretory pathway proteins, as they were lumenally located within organelles. This method provides direct evidence of a protein's localization within the secretory pathway.
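The interpretation logic of the protease protection assay reduces to a small decision rule, sketched here. The inputs are assumed to be the fraction of protein signal remaining after protease treatment (for example from band quantification), and the 0.5 `cutoff` and the function name `classify_protection` are hypothetical.

```python
def classify_protection(intact_without_detergent, intact_with_detergent, cutoff=0.5):
    """Interpret a protease protection assay for one protein.

    Each argument is the fraction (0 to 1) of signal that survives protease
    treatment, without and with detergent respectively.
    """
    protected = intact_without_detergent >= cutoff
    digested_with_detergent = intact_with_detergent < cutoff

    if protected and digested_with_detergent:
        # Shielded by the microsomal membrane, exposed once it is dissolved.
        return "lumenal"
    if not protected:
        # Accessible to protease even with membranes intact.
        return "exposed"
    # Survives protease even without a membrane barrier, so the assay
    # cannot distinguish membrane protection from intrinsic resistance.
    return "inconclusive"

results = {
    "secreted_candidate": classify_protection(0.9, 0.05),
    "cytosolic_control": classify_protection(0.1, 0.05),
    "protease_resistant": classify_protection(0.9, 0.8),
}
```

The detergent condition is the essential control: without it, an intrinsically protease-resistant protein would be misread as lumenal.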

Comprehensive Functional Impact Assessment: For thorough phenotypic characterization of variants in secretory proteins, researchers employ multiple assays measuring different aspects of protein function [16]. For example, in studying ADRB2 (a G protein-coupled receptor that traverses the secretory pathway), scientists developed a multifaceted protocol measuring: (1) interactions with downstream binding partners (Gαi, Gαs, and β-arrestin) using co-immunoprecipitation or FRET; (2) receptor endocytosis via fluorescence microscopy or flow cytometry; (3) cAMP concentration changes using ELISA or reporter assays; and (4) cell surface expression through antibody labeling of extracellular epitopes [16]. Dose-response curves are generated for each assay, with data reduced to quantitative parameters including EC50, maximal response, and ligand-induced response. Total functional impact is calculated as the sum of absolute differences between wild-type and mutant measurements across all assays.
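The total functional impact calculation described above can be written directly. The sketch assumes every measurement has already been normalized to a comparable scale (here, wild type = 1.0), since summing raw values with different units would not be meaningful; the assay keys and numbers are hypothetical.

```python
def total_functional_impact(wild_type, mutant):
    """Sum of absolute wild-type vs. mutant differences across all assays.

    Both arguments map assay name -> normalized measurement and must cover
    the same set of assays.
    """
    mismatched = set(wild_type) ^ set(mutant)
    if mismatched:
        raise ValueError(f"assays missing from one profile: {sorted(mismatched)}")
    return sum(abs(mutant[assay] - wild_type[assay]) for assay in wild_type)

# Hypothetical normalized readouts for a receptor variant.
wild_type = {"Gs_coupling": 1.0, "arrestin": 1.0, "cAMP": 1.0, "surface_expr": 1.0}
mutant = {"Gs_coupling": 0.5, "arrestin": 1.2, "cAMP": 0.25, "surface_expr": 1.0}

impact = total_functional_impact(wild_type, mutant)  # 0.5 + 0.2 + 0.75 + 0.0
```

Because absolute differences are used, gains and losses of function both increase the score rather than cancelling out.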

Variant Effect Validation at Biobank Scale: For large-scale validation of secretory phenotype predictions, researchers leverage biobank resources combining exome sequencing with clinical phenotypes [14]. The standard protocol involves: (1) identifying carriers of putative pathogenic variants in genes of interest; (2) quantifying relevant clinical biomarkers (e.g., HbA1c for diabetes-related genes, LDL cholesterol for lipid metabolism genes); (3) assessing penetrance as the percentage of carriers meeting clinical threshold criteria; and (4) correlating computational predictions (e.g., ESM1b scores) with phenotypic severity using statistical models that account for covariates such as age, sex, and genetic background [14]. This approach provides direct evidence of variant effects on secretory functions in human populations.
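Step (3), penetrance as the percentage of carriers meeting a clinical threshold, can be sketched as follows. The function name `penetrance`, the carrier values, and the choice of an HbA1c cutoff of 6.5% are illustrative assumptions rather than details taken from the cited study.

```python
def penetrance(carrier_values, threshold, above=True):
    """Percentage of carriers whose biomarker meets the clinical threshold.

    above=True counts values >= threshold (e.g. elevated HbA1c);
    above=False counts values <= threshold (e.g. abnormally low levels).
    """
    if not carrier_values:
        raise ValueError("no carriers provided")
    meets = [
        (value >= threshold) if above else (value <= threshold)
        for value in carrier_values
    ]
    return 100.0 * sum(meets) / len(meets)

# Hypothetical HbA1c values (%) for four carriers of a putative pathogenic variant.
hba1c = [5.4, 6.7, 7.1, 5.9]
percent_affected = penetrance(hba1c, threshold=6.5)
```

In this toy cohort two of four carriers meet the threshold, giving a penetrance of 50%. Step (4) would then relate such outcomes to prediction scores in a regression that includes covariates like age and sex.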

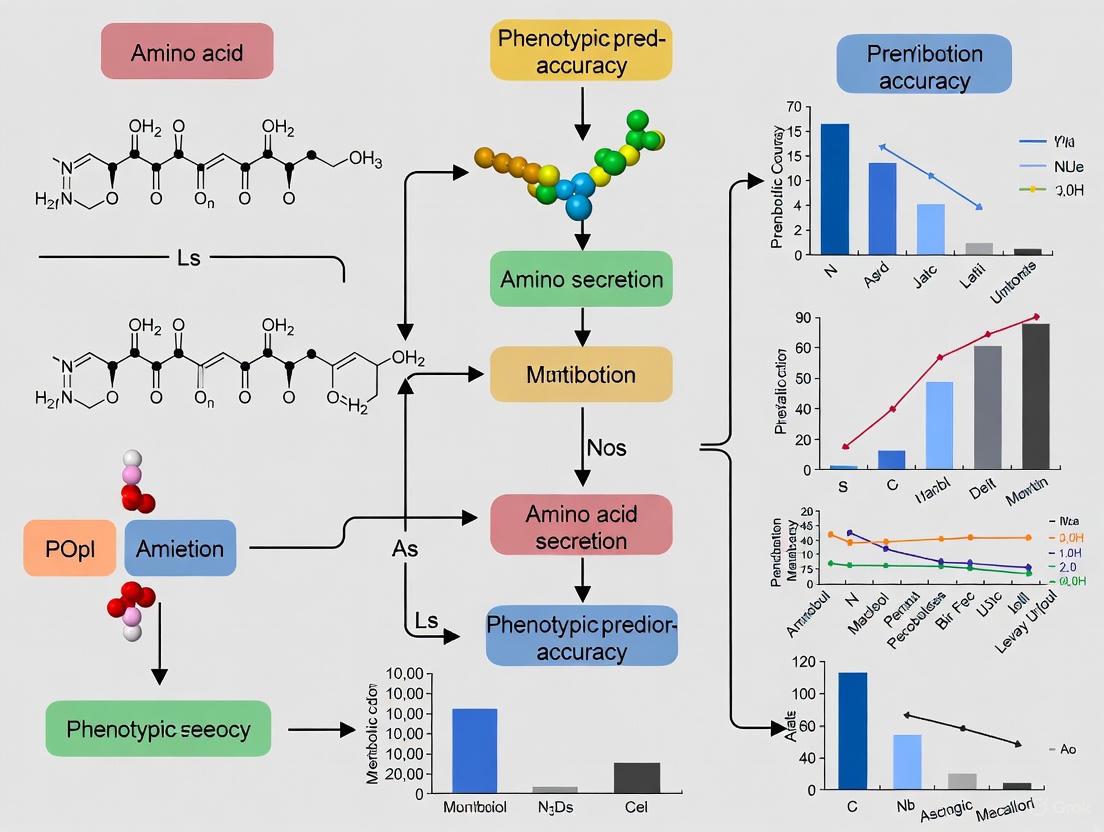

Workflow Visualization for Phenotype Prediction

Diagram 1: Integrated workflow for predicting secretory phenotypes from sequence and structural data

Table 3: Key research reagents and computational resources for secretion studies

| Resource | Type | Primary Function | Application in Secretion Research |
|---|---|---|---|
| UniProt Knowledgebase [11] | Database | Protein sequence and functional information | Reference data for secretory signal peptides and protein families |
| ESM1b Model [14] | Computational Tool | Variant effect prediction | Assessing impact of mutations on secretory protein function |
| ProCyon Model [15] | Multimodal Foundation Model | Protein phenotype prediction | Generating hypotheses about secretory functions for uncharacterized proteins |
| Sec61 Translocon Complex [12] [13] | Biological Machinery | ER protein translocation | Studying endoplasmic reticulum targeting efficiency of secretory proteins |
| Signal Recognition Particle (SRP) [12] [13] | Ribonucleoprotein Complex | Cotranslational targeting to ER | Investigating secretory protein synthesis and membrane integration |
| UK Biobank Exomes [14] | Dataset | Human genetic and phenotypic data | Validating secretory phenotype predictions in population-scale data |
| coralME [17] | Metabolic Modeling Tool | Genome-scale metabolic network reconstruction | Predicting microbial secretion products and nutrient utilization |
| Gene Ontology Annotations [11] [15] | Ontology Database | Standardized functional terminology | Consistent annotation of secretory processes across studies |

The sequence-structure-phenotype paradigm continues to evolve rapidly with advances in computational methods, each offering distinct strengths for predicting secretory functions. Protein language models like ESM1b provide exceptional variant effect prediction, multi-aspect retrieval systems like Protein-Vec enable comprehensive functional annotation, and multimodal foundation models like ProCyon offer unprecedented flexibility in generating phenotypic descriptions. For secretion research specifically, integration of these computational approaches with experimental validation through protease protection assays, functional characterization, and biobank studies creates a powerful framework for bridging genetic information to observable secretory phenotypes.

The future of phenotypic prediction in secretion research lies in more sophisticated integration of multimodal data, improved modeling of secretory pathway dynamics, and enhanced capacity for predicting context-dependent effects of genetic variation. As these tools become more advanced and accessible, they promise to accelerate discovery in secretory biology, with important implications for understanding disease mechanisms, developing therapeutic interventions, and engineering proteins with optimized secretion properties for industrial and biomedical applications.

In the field of protein engineering and biopharmaceutical development, predicting the impact of genetic variations on key biochemical phenotypes is crucial. Among these phenotypes, binding affinity, protein expression, and secretion efficiency represent a fundamental triad that determines the functional success of a protein. Binding affinity dictates how strongly a protein interacts with its molecular partners, such as receptors or antibodies. Protein expression refers to the yield of correctly folded protein within a production system. Secretion efficiency measures the capability of a protein to be translocated across membranes and released from the cell, a critical step in manufacturing and natural protein function. Accurate phenotypic prediction allows researchers to move beyond costly and time-consuming experimental screens, enabling the rational design of proteins with optimized properties for therapeutic and industrial applications [18] [19] [20].

This guide provides a comparative analysis of the experimental and computational methodologies used to quantify these phenotypes, with a specific focus on amino acid substitutions. Its underlying thesis is that integrating high-throughput experimental data with advanced machine learning models significantly enhances prediction accuracy, thereby accelerating research and development.

Quantitative Comparison of Phenotypic Prediction Methods

The table below summarizes the core performance metrics, advantages, and limitations of prominent methods for assessing the impact of amino acid variants.

Table 1: Comparison of Methods for Predicting Phenotypic Impacts of Amino Acid Variants

| Method Category | Key Measurable Phenotypes | Reported Performance Metric | Key Advantages | Primary Limitations |

|---|---|---|---|---|

| Deep Mutational Scanning (DMS) [18] | Binding affinity, protein expression, antibody escape | Neural network predictions achieved a Spearman correlation of 0.78 for ACE2 binding affinity. | High-throughput; generates large-scale sequence-function landscape data. | Requires sophisticated experimental setup and data modeling. |

| Computational ΔΔG Prediction (ICM) [21] | Peptide-protein binding affinity | Significant correlation with experimental ΔΔG values; Uncertainty of ~1 kcal/mol. | Provides atomic-level structural insights; Fast in silico screening. | Accuracy depends on template structure quality; Can miss non-local effects. |

| Signal Peptide Screening [19] [20] | Secretion efficiency, protein yield | Novel designed signal peptides improved secreted yield by up to 3.5-fold in E. coli. | Directly applicable to industrial protein production; Experimental validation. | Results are highly dependent on target protein and host system. |

| Machine Learning Pathogenicity Prediction (MutPred2) [22] | Pathogenicity via structural/functional disruption | AUC of 91.3% for discriminating pathogenic variants; Provides mechanistic hypotheses. | Sequence-based; Models specific molecular alterations (e.g., PTM loss, stability change). | Focused on disease causation; May be less direct for industrial phenotypes. |

Experimental Protocols for Key Phenotypes

Deep Mutational Scanning (DMS) for Binding Affinity and Expression

Objective: To systematically quantify the effects of thousands of single amino acid mutations on binding affinity and protein expression levels.

Detailed Workflow:

- Library Construction: Generate a comprehensive library of mutant genes for the target protein (e.g., SARS-CoV-2 Spike RBD) using saturation mutagenesis or other high-throughput methods.

- Cell Surface Display: The mutant library is expressed on the surface of yeast or mammalian cells, where each cell displays a unique variant.

- Fluorescence-Activated Cell Sorting (FACS):

- Cells are labeled with two fluorescent probes:

- A probe for the expression level (e.g., a tag-specific antibody).

- A probe for binding affinity (e.g., a fluorescently-labeled target protein like ACE2).

- Cells are sorted into bins based on their dual-fluorescence signals, separating variants with high/low expression and high/low binding.

- Sequencing and Data Analysis: High-throughput sequencing of each bin quantifies the enrichment or depletion of every mutation. The resulting data is used to calculate functional scores for binding affinity and expression for each variant in the library [18].
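The final analysis step can be illustrated with a small sketch. It assumes a simplified design with one pre-selection library and one selected bin, and scores each variant as a log2 enrichment ratio normalized to wild type. The function name, the count layout, the pseudocount, and the variant names and read counts are all illustrative assumptions, not the scoring scheme used in [18].

```python
import math

def enrichment_scores(pre_counts, post_counts, wild_type="WT", pseudocount=0.5):
    """Log2 enrichment of each variant relative to wild type.

    pre_counts / post_counts map variant name -> read count before and
    after selection (hypothetical layout). The pseudocount avoids log(0).
    """
    pre_total = sum(pre_counts.values())
    post_total = sum(post_counts.values())

    def log_ratio(variant):
        pre_freq = (pre_counts.get(variant, 0) + pseudocount) / pre_total
        post_freq = (post_counts.get(variant, 0) + pseudocount) / post_total
        return math.log2(post_freq / pre_freq)

    wt_ratio = log_ratio(wild_type)
    # Subtracting the wild-type ratio puts wild type at exactly 0.
    return {variant: log_ratio(variant) - wt_ratio for variant in pre_counts}

# Made-up read counts for two hypothetical variants.
pre = {"WT": 1000, "variant_A": 800, "variant_B": 900}
post = {"WT": 1000, "variant_A": 1600, "variant_B": 90}
scores = enrichment_scores(pre, post)
```

Positive scores indicate enrichment over wild type in the selected bin, negative scores indicate depletion. Real DMS pipelines typically combine several bins and replicates, which this sketch does not attempt.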

Secretion Efficiency Assay via Signal Peptide Screening

Objective: To identify the optimal signal peptide for secreting a target recombinant protein into the culture medium of a production host like E. coli.

Detailed Workflow:

- Signal Peptide Library Design: Construct a library of expression vectors where the gene for the target protein (e.g., ATH35L antigen) is fused to a diverse set of natural or synthetically designed signal peptides. Design can involve swapping the n-, h-, and c-regions of known signal peptides [20].

- Transformation and Fermentation: Introduce the library into the expression host (E. coli BL21) and perform fed-batch fermentation under controlled conditions to produce the recombinant protein.

- Sample Collection and Analysis:

- Collect culture supernatants after fermentation.

- Measure the yield of the secreted target protein using techniques like SDS-PAGE and densitometry or specific activity assays.

- Verify correct signal peptide cleavage via N-terminal sequencing or mass spectrometry.

- Data Interpretation: The secretion efficiency for each signal peptide is quantified as the yield of the target protein in the culture medium. The performance is often reported relative to the yield achieved with the best-performing signal peptide [19] [20].
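The normalization described in the data interpretation step amounts to simple arithmetic. The sketch below divides each secreted yield by the best yield in the screen; the signal peptide names are taken from the examples in this guide, but the yield values and the function name are hypothetical.

```python
def relative_secretion_efficiency(yields_mg_per_l):
    """Normalize secreted yields to the best-performing signal peptide."""
    best = max(yields_mg_per_l.values())
    if best <= 0:
        raise ValueError("at least one signal peptide must give a positive yield")
    return {sp: y / best for sp, y in yields_mg_per_l.items()}

# Hypothetical supernatant yields (mg/L) from a small screen.
yields = {"pelB": 12.0, "dsbA": 30.0, "synthetic_1": 42.0}
relative = relative_secretion_efficiency(yields)
ranked = sorted(relative, key=relative.get, reverse=True)
```

Here `ranked` lists the signal peptides from most to least efficient, and the top entry has a relative efficiency of 1.0 by construction.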

Visualization of Core Workflows

Deep Mutational Scanning and Analysis Workflow

Computational Prediction of Variant Effects

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Reagents for Phenotypic Analysis Experiments

| Reagent / Solution | Function in Experiment | Specific Example / Note |

|---|---|---|

| Signal Peptide Library [19] [20] | Directs the translocation of recombinant proteins to the periplasm or extracellular medium in expression hosts. | Can be natural (e.g., dsbA, pelB) or synthetically designed by swapping n-, h-, and c-regions. |

| Fluorescently-Labeled Ligands [18] | Used as probes in FACS to quantify the binding affinity of cell-surface displayed protein variants. | Target protein (e.g., ACE2) labeled with a fluorophore like FITC or PE. |

| Expression Host Strains [20] | Cellular systems for producing recombinant proteins. Different strains optimize for yield, proper folding, or secretion. | E. coli BL21(DE3) is commonly used for T7 promoter-driven protein expression. |

| AAindex Database [18] | A curated database of numerical indices representing various physicochemical and biochemical properties of amino acids. | Used to featurize protein sequences for machine learning models (e.g., hydrophobicity, long-range energy). |

| Mid-Infrared (MIR) Spectrometer [23] | Enables rapid, high-throughput prediction of amino acid content in complex mixtures like milk, based on absorption spectra. | Used for phenotypic screening where traditional AA analysis is too slow/costly. |

| ICM Software [21] | A computational biology platform for predicting changes in binding free energy (ΔΔG) due to amino acid substitutions. | Utilizes Biased-Probability Monte Carlo simulations for side-chain optimization and scoring. |

Neural Networks for Learning Complex Genotype-Phenotype Relationships

Decoding the relationship between genetic information and observable traits is a central challenge in genetics, with critical implications for understanding disease mechanisms and advancing precision medicine. Despite biological systems being defined by complex, often nonlinear interactions between genes, phenotypes, and environments, traditional methods for genotype-phenotype mapping have changed little in decades, typically focusing on isolated traits and assuming linear, additive genetic effects [24]. This approach can miss substantial biological phenomena. The emergence of complex neural network models offers a powerful alternative, capable of capturing these intricate, nonlinear relationships to improve predictive accuracy. This is particularly relevant for amino acid secretion research, where accurately predicting secretory phenotypes from sequence data can illuminate regulatory pathways and identify therapeutic targets. This guide objectively compares the performance of modern neural network approaches against traditional methods and specialized algorithms, providing researchers with the data needed to select appropriate tools for their phenotypic prediction challenges.

Comparative Performance of Prediction Methods

Quantitative Benchmarks for Variant Effect Prediction

Predicting the effects of coding variants, especially missense mutations, is a major challenge in human genetics. Protein language models, particularly ESM1b, have demonstrated superior performance in classifying variant pathogenicity. The following table summarizes the performance of ESM1b against a leading unsupervised method, EVE, across clinical databases.

Table 1: Performance comparison of ESM1b and EVE on clinical variant classification

| Method | Clinical Benchmark | ROC-AUC | True Positive Rate at 5% FPR |

|---|---|---|---|

| ESM1b | ClinVar (19,925 pathogenic, 16,612 benign variants) | 0.905 | 60% |

| EVE | ClinVar (19,925 pathogenic, 16,612 benign variants) | 0.885 | 49% |

| ESM1b | HGMD/gnomAD (27,754 disease-causing, 2,743 common variants) | 0.897 | 61% |

| EVE | HGMD/gnomAD (27,754 disease-causing, 2,743 common variants) | 0.882 | 51% |

ESM1b, a 650-million-parameter protein language model, was applied to all ~450 million possible missense variants across 42,336 human protein isoforms, outperforming EVE and 44 other prediction methods in classifying pathogenic and benign variants in ClinVar and HGMD [25]. Its strength is particularly evident in the clinically critical low false-positive rate regime. Furthermore, when predicting quantitative experimental measurements from 28 deep mutational scanning (DMS) assays, ESM1b also achieved state-of-the-art performance, validating its accuracy against empirical biochemical data [25].
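To make the two metrics in the table concrete, the sketch below computes ROC-AUC and the true positive rate at a fixed false positive rate from scratch. It assumes that higher scores mean "more likely pathogenic", so a likelihood-ratio score where negative values indicate damage would be negated first. The scores and labels are made up for illustration and are not data from [25].

```python
def roc_auc(scores, labels):
    """ROC-AUC via the pairwise (Mann-Whitney) identity; ties count as 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def tpr_at_fpr(scores, labels, max_fpr=0.05):
    """Highest true positive rate reachable while keeping FPR <= max_fpr."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    best = 0.0
    for threshold in sorted(set(scores)):
        # A variant is called pathogenic when its score is >= threshold.
        fpr = sum(n >= threshold for n in neg) / len(neg)
        if fpr <= max_fpr:
            best = max(best, sum(p >= threshold for p in pos) / len(pos))
    return best

# Made-up variant scores; label 1 = pathogenic, 0 = benign.
raw_scores = [-12.1, -9.4, -8.0, -3.2, -7.5, -2.0, -1.1, -0.3]
labels = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [-s for s in raw_scores]  # flip sign so higher = more pathogenic
auc = roc_auc(scores, labels)
tpr = tpr_at_fpr(scores, labels, max_fpr=0.05)
```

The pairwise AUC is quadratic in the number of variants, which is fine for a toy example but would be replaced by a sort-based implementation on benchmark-sized datasets.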

Prediction from Gene Expression and Other Omics Data

Beyond variant effects, neural networks are also applied to predict complex traits from transcriptomic and multi-omics data. A comprehensive comparison of statistical learning methods for predicting traits like starvation resistance in Drosophila from gene expression data found that no single method universally outperforms others, with accuracy being highly dependent on the specific trait and its genetic architecture [26]. However, integrating multiple types of omics data can enhance model performance.

Table 2: Performance of visible neural networks on multi-omics prediction tasks (BIOS consortium, N=2,940)

| Prediction Task | Omics Data Used | Performance Metric | Result |

|---|---|---|---|

| Smoking Status | Gene Expression + Methylation | Mean AUC | 0.95 (95% CI: 0.90–1.00) |

| Subject Age | Gene Expression + Methylation | Mean Error | 5.16 years (95% CI: 3.97–6.35) |

| LDL Levels | Gene Expression + Methylation | R² (in a single cohort) | 0.07 (95% CI: 0.05–0.08) |

Interpretable ("visible") neural networks that incorporate prior biological knowledge, such as gene and pathway annotations, have been successfully used for such multi-omics predictions. For instance, one study achieved high accuracy in predicting smoking status from blood-based gene expression and methylation data, with interpretation of the model revealing well-replicated genes like AHRR [27]. For regression tasks like age and LDL-level prediction, using multi-omics networks generally improved performance, stability, and generalizability compared to models using only a single type of omic data [27].

Experimental Protocols for Model Training and Evaluation

Workflow for Genome-Wide Variant Effect Prediction with ESM1b

The ESM1b workflow represents a significant shift from homology-based models. The following diagram illustrates the core process for scoring a missense variant.

Workflow for Variant Effect Scoring with ESM1b

Key Experimental Steps [25]:

- Input Preparation: The wild-type and variant protein sequences are formatted for the model. A modified workflow allows ESM1b to handle sequences longer than its default 1,022-amino-acid limit.

- Likelihood Calculation: The ESM1b model, a deep neural network pre-trained on ~250 million protein sequences, processes each sequence. For the specific residue position in question, the model calculates the conditional probability (likelihood) of the observed amino acid given the context of the entire sequence.

- Variant Effect Scoring: The effect of the variant is quantified as a log-likelihood ratio (LLR). The LLR is computed as the logarithm of the variant residue likelihood divided by the wild-type residue likelihood. A strongly negative LLR indicates the variant is highly disruptive and likely pathogenic.

- Benchmarking: For evaluation, the model's predictions are benchmarked against known pathogenic and benign variants from databases like ClinVar and HGMD, as well as against experimental data from deep mutational scans.
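The scoring step above reduces to a difference of log-probabilities at the mutated position. The sketch below assumes the per-position amino acid probabilities have already been obtained from the model; the probability values are invented, the natural logarithm is an assumption (the text only says "logarithm"), and no ESM1b API call is shown.

```python
import math

def llr_score(position_probs, wild_type_aa, variant_aa):
    """Log-likelihood ratio log(P(variant) / P(wild type)) at one position."""
    return math.log(position_probs[variant_aa]) - math.log(position_probs[wild_type_aa])

# Hypothetical model output for a single position (remaining residues omitted).
probs = {"L": 0.70, "I": 0.20, "V": 0.09, "P": 0.01}

conservative = llr_score(probs, "L", "I")  # leucine -> isoleucine
disruptive = llr_score(probs, "L", "P")    # leucine -> proline
```

With these made-up probabilities, both substitutions score below zero, and the proline substitution receives the more negative score, matching the interpretation that strongly negative values flag disruptive variants.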

Protocol for Transfer Learning in Understudied Populations

A common limitation in genetics is the lack of large datasets for specific populations or traits. Transfer learning, where knowledge from a large, well-studied population is applied to a smaller, understudied population, has been shown to be an effective strategy.

Key Experimental Steps [28]:

- Data Generation and Partitioning: Using tools like HAPGEN2 and PhenotypeSimulator, genotype and phenotype data are simulated for both a large population (e.g., CEU) and a small population (e.g., YRI). The data is split into training and testing sets.

- Base Model Training: A deep learning model (e.g., an LSTM or GRU) is first trained on the large population dataset. This model learns the general patterns of genotype-phenotype relationships from the abundant data.

- Model Fine-Tuning (Transfer): The pre-trained model's layers are partially frozen, and the remaining layers are fine-tuned on the much smaller dataset from the target population. This allows the model to adapt its general knowledge to the specific characteristics of the small population.

- Performance Evaluation: The fine-tuned model's accuracy is compared against a model trained exclusively on the small population data. Reported improvements in accuracy for this approach have ranged from 2% to over 14% for different simulated phenotypes [28].

Framework for Multi-Phenotype Prediction with G–P Atlas

The G–P Atlas framework addresses the limitation of single-trait analyses by modeling multiple phenotypes simultaneously, capturing pleiotropy and complex relationships.

G–P Atlas Two-Tiered Architecture

Key Experimental Steps [24]:

- Phenotype Autoencoder Training: A denoising autoencoder is trained to learn a compressed, latent representation (Z) of the multi-phenotype data. The model is trained to reconstruct clean phenotype data from a corrupted input, which forces it to learn robust, underlying relationships between traits.

- Genotype Mapping: In a second training phase, a separate neural network is trained to map genotype data directly into the pre-trained phenotype latent space (Z). The weights of the phenotype decoder are frozen during this step, drastically reducing the number of parameters that need to be learned and making the process highly data-efficient.

- Phenotype Prediction and Interpretation: Once trained, the full model can predict a full suite of phenotypes from an individual's genotype alone. Permutation-based feature ablation is used to identify which genetic loci are most important for predicting specific phenotypes.
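The permutation-based feature ablation mentioned in the interpretation step can be sketched generically. The example below shuffles one genotype column at a time and records the mean increase in squared error. The toy model, the genotype coding (0, 1, 2), and all function names are assumptions for illustration, not the G–P Atlas implementation from [24].

```python
import random

def mse(predict, X, y):
    """Mean squared error of a prediction function over a dataset."""
    return sum((predict(row) - target) ** 2 for row, target in zip(X, y)) / len(y)

def permutation_importance(predict, X, y, n_repeats=20, seed=0):
    """Mean increase in MSE when each genotype column is shuffled."""
    rng = random.Random(seed)
    baseline = mse(predict, X, y)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between locus j and the phenotype
            X_perm = [row[:j] + [value] + row[j + 1:] for row, value in zip(X, column)]
            increases.append(mse(predict, X_perm, y) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances

# Toy stand-in for a trained genotype-to-phenotype model: only locus 0 matters.
def toy_model(genotype):
    return 2.0 * genotype[0]

data_rng = random.Random(1)
X = [[data_rng.choice([0, 1, 2]) for _ in range(3)] for _ in range(50)]
y = [toy_model(row) for row in X]
importances = permutation_importance(toy_model, X, y)
```

In this toy setup only the first locus receives a positive importance, because shuffling the other two columns leaves the predictions unchanged.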

The Scientist's Toolkit: Key Research Reagents & Solutions

For researchers seeking to implement these advanced neural network models, the following table details key software and data resources.

Table 3: Essential research reagents and computational tools for neural network-based phenotypic prediction

| Resource Name | Type | Primary Function in Research | Key Application in Phenotypic Prediction |

|---|---|---|---|

| ESM1b / ESM2 | Pre-trained Protein Language Model | Embeds evolutionary constraints and biophysical properties from protein sequences. | Predicts missense variant effects and protein function from sequence alone [25]. |

| SignalP 6.0 | Specialized Prediction Tool | Uses a protein language model (BERT) to detect signal peptides. | Predicts protein secretion and translocation, directly relevant to amino acid secretion research [29]. |

| singleDeep | End-to-End Software Pipeline | Deep neural networks for analyzing single-cell RNA-Seq data. | Classifies sample phenotypes (e.g., disease status) from complex single-cell transcriptomics [30]. |

| G–P Atlas | Neural Network Framework | A two-tiered denoising autoencoder for mapping genotypes to multiple phenotypes. | Simultaneously predicts many traits from genetic data, capturing pleiotropy and interactions [24]. |

| Visible Neural Networks (e.g., GenNet) | Model Architecture | Neural networks with architecture informed by prior biological knowledge (genes, pathways). | Integrates multi-omics data (e.g., expression, methylation) for interpretable phenotype prediction [27]. |

| UniProt / ClinVar | Curated Biological Databases | Provide annotated protein sequences and classified human genetic variants. | Serve as essential gold-standard datasets for model training and benchmarking [25] [29]. |

| Deep Mutational Scan (DMS) Data | Experimental Dataset | Measures the functional impact of thousands of protein variants in a single experiment. | Provides quantitative data for validating and benchmarking computational predictions [25]. |

The accurate prediction of phenotypes from amino acid sequences is a cornerstone of modern bioinformatics, with profound implications for understanding disease risk, optimizing drug development, and engineering proteins with novel functions. At the heart of this predictive capability lies a critical preprocessing step: how to numerically represent amino acid sequences in a way that captures biologically relevant information for computational models. The choice of feature representation methodology significantly influences the performance of phenotypic prediction models in amino acid secretion research and related fields [31].

Feature encoding schemes fundamentally serve two essential requirements in biological sequence analysis. First, they must provide distinguishability – enabling the model to discriminate between different amino acids. Second, they should offer preservability – capturing the meaningful biological, chemical, and evolutionary relationships among amino acids [31]. The encoding strategy transforms discrete amino acid sequences into continuous vector representations that machine learning algorithms can process, thereby bridging the gap between biological sequences and computational analysis.

This guide provides a comprehensive comparison of the predominant amino acid encoding strategies, from traditional one-hot encoding to advanced physicochemical property-based representations, with a specific focus on their application in phenotypic prediction accuracy for amino acid secretion research. We present structured experimental data, detailed methodologies, and practical frameworks to assist researchers in selecting optimal encoding strategies for their specific biological prediction tasks.

One-Hot Encoding

One-hot encoding represents each of the 20 canonical amino acids as a binary vector of 20 dimensions, with a value of 1 at the position corresponding to the specific amino acid and 0 elsewhere [31] [32]. This approach assumes no prior biological knowledge about amino acid relationships and treats each amino acid as entirely distinct.

- Implementation: Each amino acid is mapped to a unique 20-dimensional binary vector

- Advantages: Simple to implement, preserves original amino acid information without assumptions

- Disadvantages: High dimensionality, ignores known biological relationships, cannot capture similarity between amino acids

- Typical Use Cases: Baseline models, initial prototyping, when biological similarity metrics are unknown or irrelevant
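A minimal sketch of one-hot encoding follows. The alphabetical ordering of the 20 one-letter codes is an arbitrary choice made here, and rejecting non-canonical residues with an error is one possible policy rather than a requirement of the method.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # 20 canonical residues, ordering is arbitrary
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}

def one_hot_encode(sequence):
    """Return one 20-dimensional binary vector per residue."""
    encoded = []
    for aa in sequence.upper():
        if aa not in AA_INDEX:
            raise ValueError(f"non-canonical residue: {aa!r}")
        vector = [0] * len(AMINO_ACIDS)
        vector[AA_INDEX[aa]] = 1
        encoded.append(vector)
    return encoded

encoded = one_hot_encode("MKT")  # 3 residues -> 3 vectors of length 20
```

Every pair of distinct residues is equally far apart in this representation, which is the sense in which one-hot encoding carries no similarity information.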

Substitution Matrices (BLOSUM)

BLOSUM (BLOck SUbstitution Matrix) encoding schemes capture evolutionary relationships between amino acids based on observed substitution patterns in aligned protein families [31]. BLOSUM62, one of the most widely used variants, represents amino acids based on their log-odds probabilities for substitution.

- Implementation: Each amino acid is represented by a score vector derived from substitution frequencies

- Basis: Evolutionary conservation patterns across protein families

- Advantages: Captures evolutionary constraints, biologically meaningful for homology modeling

- Disadvantages: May not optimize task-specific performance, limited to evolutionary information

Physicochemical Property Encoding

Physicochemical encoding schemes represent amino acids based on their intrinsic chemical and physical properties, such as hydrophobicity, steric properties, polarity, and electronic characteristics [32] [33]. The VHSE8 (Vectors of Hydrophobic, Steric, and Electronic properties) scheme represents one such approach, capturing eight key physicochemical dimensions derived from principal component analysis of 15 original physicochemical parameters [31].

- Implementation: Amino acids represented by continuous values across multiple physicochemical dimensions

- Basis: Experimentally measured chemical and physical properties

- Advantages: Directly incorporates structural determinants, chemically intuitive

- Disadvantages: May not capture all relevant biological information, property selection can be arbitrary
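As a simplified illustration of property-based encoding, the sketch below maps each residue to a single physicochemical value, the Kyte-Doolittle hydropathy index, and smooths it with a sliding window. This is a one-dimensional stand-in chosen for brevity, not VHSE8 itself, whose eight component values would need to be taken from the original publication. The example sequence and the window size are arbitrary.

```python
# Kyte-Doolittle hydropathy index (higher = more hydrophobic).
KYTE_DOOLITTLE = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5,
    "Q": -3.5, "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5,
    "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8, "P": -1.6,
    "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}

def encode_hydropathy(sequence):
    """Replace each residue with its hydropathy value."""
    return [KYTE_DOOLITTLE[aa] for aa in sequence.upper()]

def window_mean(values, window=5):
    """Mean over each full sliding window; empty if the input is too short."""
    return [sum(values[i:i + window]) / window
            for i in range(len(values) - window + 1)]

# Arbitrary toy sequence: a hydrophobic stretch followed by a charged one.
profile = window_mean(encode_hydropathy("LLLLLKKKKK"), window=5)
```

A multi-property scheme would return a vector per residue instead of a single number, but the lookup structure stays the same.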

Table 1: Comparison of Fundamental Amino Acid Encoding Schemes

| Encoding Scheme | Dimension | Basis | Information Captured | Computational Efficiency |

|---|---|---|---|---|

| One-Hot | 20 | Categorical identity | Distinguishability only | Moderate (high dimensionality) |

| BLOSUM62 | 20 | Evolutionary substitution patterns | Evolutionary relationships | High (fixed matrix) |

| VHSE8 | 8 | Physicochemical properties | Structural and chemical properties | High (low dimensionality) |

Performance Comparison in Predictive Tasks

Predictive Accuracy Across Architectures

Comparative studies have systematically evaluated encoding schemes across different deep learning architectures and biological prediction tasks. In predicting human leukocyte antigen class II (HLA-II)-peptide interactions, end-to-end learned embeddings achieved performance comparable to classical encodings but with significantly lower dimensionality [31]. A 4-dimensional learned embedding matched the performance of 20-dimensional BLOSUM62 and one-hot encodings, demonstrating the efficiency of learned representations [31].

For protein secondary structure prediction, models utilizing both one-hot and novel chemical encodings based on molecular fingerprints (Morgan and atom-pair fingerprints) achieved superior accuracy compared to using either encoding alone [32]. This hybrid approach achieved state-of-the-art performance while requiring approximately nine times fewer trainable parameters than competing methods [32].

Table 2: Performance Comparison Across Prediction Tasks and Encoding Schemes

| Prediction Task | Best Performing Encoding | Key Metric | Performance Advantage |

|---|---|---|---|

| HLA-II-peptide interaction [31] | Learned embedding (4D) | Validation AUC | Matched 20D classical encodings with 80% fewer encoding dimensions (4 vs. 20) |

| Protein secondary structure [32] | One-hot + chemical encodings | Q3 Accuracy | Superior to single encoding schemes across test sets |

| Protein-protein interaction [31] | Learned embedding (8D) | Validation Accuracy | Exceeded classical encodings with increasing data size |

| Protein function prediction [34] | 1×1 CNN embedding | AUROC | Improved rare GO term classification |

Data Efficiency and Generalization

The performance of different encoding schemes varies significantly with available training data size. For protein-protein interaction prediction, end-to-end learning demonstrated particularly strong advantages as dataset size increased, exceeding the performance of classical encoding schemes at 25%, 75%, and 100% data fractions [31]. This suggests that learned embeddings more effectively leverage large datasets to capture task-relevant amino acid properties.

Physicochemical encodings have shown particular value for sequences with limited homologs, where evolutionary information is scarce [32]. In these scenarios, the inherent chemical properties provide a valuable inductive bias that helps models generalize despite limited evolutionary information.

Advanced and Hybrid Encoding Methodologies

End-to-End Learned Embeddings

Modern deep learning approaches often treat amino acid encoding as a learnable parameter, jointly optimizing the representation with the main prediction task. This end-to-end learning approach allows models to discover task-specific amino acid representations without relying on manually curated features [31].

- Mechanism: Embedding layer with trainable parameters that are updated during model training

- Advantages: Adapts to specific prediction task, discovers relevant features automatically

- Constraints: Requires sufficient training data, may overfit with small datasets

- Performance: Achieves comparable or superior performance to classical encodings with lower dimensionality [31]
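The idea of a trainable embedding can be shown with a deliberately small, dependency-free sketch. Each amino acid gets a randomly initialized vector that is updated by gradient descent on a toy regression target (the fraction of hydrophobic residues). The fixed sum-and-average readout, the hydrophobic set, the sequences, and the hyperparameters are all assumptions for the demo; a real model would learn the embedding jointly with a deeper network in a framework such as TensorFlow.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
HYDROPHOBIC = set("AILMFVW")  # toy label definition, assumed for this demo

def init_embedding(dim, seed=0):
    """Random small initial vectors, one per amino acid."""
    rng = random.Random(seed)
    return {aa: [rng.uniform(-0.1, 0.1) for _ in range(dim)] for aa in AMINO_ACIDS}

def predict(embedding, seq):
    # Fixed readout: sum each residue's embedding components, average over residues.
    return sum(sum(embedding[aa]) for aa in seq) / len(seq)

def mean_squared_error(embedding, data):
    return sum((predict(embedding, s) - y) ** 2 for s, y in data) / len(data)

def train(embedding, data, epochs=200, lr=0.1):
    """Plain stochastic gradient descent on squared error, one sequence at a time."""
    for _ in range(epochs):
        for seq, target in data:
            error = predict(embedding, seq) - target
            grad = 2.0 * error / len(seq)  # gradient per residue occurrence
            for aa in seq:
                for k in range(len(embedding[aa])):
                    embedding[aa][k] -= lr * grad
    return embedding

sequences = ["AILMFV", "KDERKD", "AIKDLV", "GSTNQH", "LLVVKK", "WFADEK"]
data = [(s, sum(aa in HYDROPHOBIC for aa in s) / len(s)) for s in sequences]

embedding = init_embedding(dim=4)
loss_before = mean_squared_error(embedding, data)
train(embedding, data)
loss_after = mean_squared_error(embedding, data)
```

After training, residues that contribute to the target drift apart from those that do not, which is the behavior the validation steps for learned embeddings are meant to inspect.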

Molecular Fingerprint-Based Encodings

Recent approaches have adapted molecular fingerprint techniques from cheminformatics to create novel amino acid representations. Morgan fingerprints and atom-pair fingerprints encode graph fragments of amino acid structures into fixed-length vectors, which are then dimensionally reduced using algorithms like FastMap [32].

- Implementation: Graph-based representation of amino acid structures converted to fixed-length vectors

- Dimensionality Reduction: FastMap algorithm preserves chemical distances between amino acids

- Advantages: Captures detailed chemical structure, provides non-redundant information to one-hot encoding

- Performance: Achieves comparable accuracy to one-hot encoding with reduced dimensionality (18D and 14D vs 20D) [32]

AAindex and Expanded Property Sets

The AAindex database provides comprehensive coverage of 566 experimentally derived and computationally inferred physicochemical properties for amino acids [35] [33]. This extensive collection enables researchers to select property sets specific to their prediction tasks or to create composite representations.

For non-canonical amino acids (ncAAs), which are increasingly important in protein engineering and drug development, computational methods have been developed to estimate AAindex properties based on chemical structure representations (SMILES encoding) [35]. These approaches use stepwise regression analysis to predict physicochemical properties for ncAAs not present in the original database.

Diagram 1: Amino Acid Encoding Workflow for Phenotypic Prediction. This diagram illustrates the transformation of raw amino acid sequences into various encoded representations and their application to different phenotypic prediction tasks.

Experimental Protocols and Implementation

Benchmarking Encoding Schemes

To evaluate different encoding strategies for phenotypic prediction of amino acid secretion, researchers should implement the following experimental protocol:

Data Preparation:

- Curate labeled dataset of amino acid sequences with associated secretion efficiency measurements

- Partition data into training, validation, and test sets with appropriate stratification

- Implement sequence segmentation for long sequences if necessary [34]

Encoding Implementation:

- Implement one-hot encoding as baseline (20 dimensions)

- Integrate BLOSUM62 matrix from standard bioinformatics libraries

- Compute VHSE8 vectors from physicochemical property databases

- Implement embedding layer for end-to-end learning (dimensions: 4, 8, 16, 32)

Model Architecture:

- Standardize deep learning architecture across encoding schemes (e.g., CNN-LSTM hybrid)

- Fix architectural hyperparameters while varying only encoding strategy

- Implement appropriate regularization to prevent overfitting

Evaluation Metrics:

- Primary: AUC-ROC for classification tasks, RMSE for regression tasks

- Secondary: Precision-recall curves, training time, inference speed

- Statistical significance testing between encoding performances
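The data partitioning step of this protocol could be sketched as follows. The example splits sample indices per class so that each subset keeps roughly the same class balance. The 70/15/15 fractions, the class names, and the function name are assumptions; rounding means subset sizes are approximate for small classes.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.7, 0.15, 0.15), seed=0):
    """Return train/validation/test index lists with similar class balance."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test

# Hypothetical binary secretion labels for 60 sequences.
labels = ["secreted"] * 40 + ["retained"] * 20
train_idx, val_idx, test_idx = stratified_split(labels)
```

For protein data, a random split like this can still leak information between close homologs, so clustering by sequence identity before splitting is often advisable; that step is not shown here.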

Protocol for Learned Embeddings

For end-to-end learned embeddings, the following specific protocol is recommended:

Embedding Layer Configuration:

- Initialize with random weights of appropriate dimension

- Allow joint training with main model parameters

- Compare fixed dimensionalities (4, 8, 16, 32) to identify optimal size

Training Procedure:

- Use standard optimizers (Adam) with controlled learning rates

- Implement early stopping based on validation performance

- Monitor for overfitting, especially with higher-dimensional embeddings

Validation:

- Compare performance against classical encoding baselines

- Analyze embedding vectors for biologically meaningful patterns

- Visualize embedding spaces to assess clustering by chemical properties
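The early stopping rule in the training procedure can be expressed independently of any deep learning framework. The sketch below scans a list of validation losses and reports the best epoch and the epoch at which training would halt; the patience value and the loss history are made up for illustration.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of validation losses.

    Training stops once `patience` consecutive epochs fail to improve on
    the best loss by more than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_losses) - 1  # never triggered: ran to the end

# Made-up validation losses: improvement stalls after epoch 3.
history = [0.90, 0.70, 0.62, 0.60, 0.61, 0.63, 0.64, 0.66]
best_epoch, stop_epoch = early_stopping_epoch(history)
```

In practice the weights from `best_epoch` would be restored before evaluation, so that the comparison between embedding dimensionalities is not biased by how long each model was allowed to overfit.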

Research Reagent Solutions for Encoding Implementation

Table 3: Essential Research Resources for Amino Acid Encoding Implementation

| Resource Category | Specific Tools/Databases | Function | Access Information |

|---|---|---|---|

| Amino Acid Property Databases | AAindex [35] [33] | Comprehensive repository of 566 physicochemical properties | https://www.genome.jp/aaindex/ |

| Deep Learning Frameworks | TensorFlow with Keras [34] | Implementation of embedding layers and model architectures | https://www.tensorflow.org/ |

| Bioinformatics Libraries | BioPython | Access to substitution matrices and sequence utilities | https://biopython.org/ |

| Pre-trained Language Models | ESM-2, ProtTrans [36] | Protein-specific embeddings for transfer learning | https://github.com/facebookresearch/esm |

| Structure Prediction Tools | AlphaFold2, RoseTTAFold [36] | Template generation and structural context | https://github.com/deepmind/alphafold |

| Specialized Encoding Tools | AAindexNC [35] | Property prediction for non-canonical amino acids | https://aaindexnc.eimb.ru/ |

The optimal choice of amino acid encoding strategy depends critically on the specific phenotypic prediction task, available data resources, and computational constraints. Based on current experimental evidence:

For novel prediction tasks with limited prior biological knowledge, end-to-end learned embeddings provide the most flexible approach, automatically discovering relevant features while achieving competitive performance with reduced dimensionality [31].

When evolutionary information is particularly relevant to the phenotype (e.g., homology detection), BLOSUM-type substitution matrices offer biologically meaningful representations grounded in evolutionary principles [31].

For structure-related predictions or when evolutionary information is limited, physicochemical property encodings provide valuable inductive biases that improve generalization [32].

Hybrid approaches that combine multiple encoding strategies often achieve superior performance by capturing complementary aspects of amino acid information [32].

As the field advances, the integration of these encoding strategies with protein language models and structure-aware representations will likely push the boundaries of phenotypic prediction accuracy further, enabling more precise engineering of amino acid sequences for desired secretion properties and therapeutic applications.

Machine Learning Architectures for Phenotype Prediction

Convolutional Neural Networks for Spatial Feature Extraction

In amino acid secretion research, accurately predicting phenotypic outcomes from spatial and structural data is central to therapeutic development. Convolutional Neural Networks (CNNs) are a widely used tool for spatial feature extraction because they learn hierarchical representations directly from raw input data. Their architecture is well suited to identifying spatially localized patterns, from simple edges and textures in the initial layers to complex, abstract features in deeper layers, which makes them valuable for analyzing biological data where spatial relationships determine function [37] [38]. This section compares CNN performance against alternative feature extraction methods, with a focus on applications relevant to phenotypic prediction in amino acid secretion studies. It presents summarized experimental data, detailed methodologies, and essential resources for researchers and drug development professionals.

Performance Comparison: CNNs vs. Alternative Feature Extraction Techniques

Table 1: Comparative Performance in Image-Based Classification Tasks

| Feature Extraction Method | Reported Accuracy | Precision | Recall/Sensitivity | Specificity | F1-Score | AUC | Domain (Study) |
|---|---|---|---|---|---|---|---|
| Convolutional Neural Network (CNN) | >99% [39] | N/R | N/R | N/R | N/R | N/R | Meat Adulteration (Thermal) |
| CNN (ResNet50) | 99.2% [40] | N/R | N/R | 99.6% | 99.1% | 0.999 [40] | Breast Cancer (Histopathology) |
| CNN (ConvNeXT) | 99.2% [40] | N/R | N/R | 99.6% | 99.1% | 0.999 [40] | Breast Cancer (Histopathology) |
| Gabor Filter | <99% (Inferior to CNN) [39] | N/R | N/R | N/R | N/R | N/R | Meat Adulteration (Thermal) |
| Handcrafted Features (HF) | ~65% (Balanced Acc.) [41] | N/R | N/R | N/R | N/R | N/R | Parkinson's Dysgraphia (Handwriting) |
| CNN-Learned Features | ~58-60% (Balanced Acc.) [41] | N/R | N/R | N/R | N/R | N/R | Parkinson's Dysgraphia (Handwriting) |

N/R: Not explicitly reported in the source material within the context of the comparison.

Table 2: Comparative Performance in Biochemical Phenotype Prediction from Sequence Data

| Model Type | Performance (Spearman correlation unless noted) | Phenotype | Biological Context |
|---|---|---|---|
| Convolutional Neural Network (CNN) | 0.78 [18] | ACE2 Binding Affinity | SARS-CoV-2 RBD - Human ACE2 Interaction |
| Multilayer Perceptron (MLP) | <0.78 (Inferior to CNN) [18] | ACE2 Binding Affinity | SARS-CoV-2 RBD - Human ACE2 Interaction |
| Linear Regression | 0.49 [18] | ACE2 Binding Affinity | SARS-CoV-2 RBD - Human ACE2 Interaction |
| CNN (Integrated Model) | r² = 0.30 (H. sapiens) [42] | Protein Abundance | Prediction from mRNA & Sequence |
| Previous Sequence-Based Model | ~50% lower r² than CNN [42] | Protein Abundance | Prediction from mRNA & Sequence |

Key Performance Insights

- Superiority in Complex Pattern Recognition: CNNs often outperform traditional machine learning and handcrafted feature methods in tasks that require identifying complex, hierarchical spatial patterns. In a direct comparison for meat adulteration detection, CNNs achieved over 99% accuracy, surpassing traditional techniques such as Local Binary Pattern (LBP), Gray Level Co-occurrence Matrices (GLCM), and Gabor filters [39].

- Effectiveness in Biological Sequence Analysis: The application of CNNs extends beyond image data. In modeling mutational effects on biochemical phenotypes, a sequence-based CNN achieved a Spearman correlation of 0.78 for predicting ACE2 binding affinity of the SARS-CoV-2 receptor-binding domain, compared with 0.49 for linear regression [18]. This demonstrates their utility for extracting local features from amino acid sequence data.

- Context-Dependent Performance: The advantage of CNNs is not absolute. In some tasks, such as language-dependent sentence-writing analysis for Parkinson's disease, carefully designed handcrafted features (~65% balanced accuracy) can outperform features extracted automatically by a pre-trained CNN (~58-60%) [41]. This highlights the importance of matching the tool to the specific data modality and research question.

Experimental Protocols for CNN-Based Phenotypic Prediction

To ensure reproducible and reliable results, adherence to standardized experimental protocols is crucial. Below are detailed methodologies for two key types of experiments cited in the performance comparisons.

Protocol 1: CNN for Image-Based Phenotypic Classification

This protocol is adapted from studies on medical image analysis and food adulteration detection [39] [40].

Data Acquisition & Preprocessing:

- Image Acquisition: Collect raw image data using appropriate sensors (e.g., thermal cameras, microscopes, MRI machines). Standardize resolution and lighting conditions where possible.

- Preprocessing: Apply noise reduction filters and contrast enhancement to highlight relevant features. Normalize pixel values to a standard range (e.g., 0-1).

- Data Augmentation: Artificially expand the training dataset by applying random, realistic transformations to the images, such as rotation, flipping, cropping, and slight color jittering. This technique is critical for preventing overfitting and improving model generalization [37] [38].
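The normalization and augmentation steps above can be sketched in a few lines. This is a simplified illustration, assuming grayscale images stored as nested lists of 8-bit pixel values; the function names are hypothetical, and a real pipeline would typically rely on an image library and add cropping or color jitter.

```python
import random


def normalize(image, max_value=255.0):
    """Scale raw pixel intensities into the range 0-1."""
    return [[pixel / max_value for pixel in row] for row in image]


def flip_horizontal(image):
    """Mirror the image left to right."""
    return [row[::-1] for row in image]


def rotate_90(image):
    """Rotate the image 90 degrees clockwise."""
    return [list(column) for column in zip(*image[::-1])]


def augment(image, rng):
    """Apply a random flip and a random number of quarter turns."""
    out = image
    if rng.random() < 0.5:
        out = flip_horizontal(out)
    for _ in range(rng.randrange(4)):
        out = rotate_90(out)
    return out


rng = random.Random(0)  # fixed seed so the augmentation is reproducible
raw = [[0, 64], [128, 255]]
augmented = augment(normalize(raw), rng)
print(augmented)
```

Flips and quarter turns only rearrange pixels, so the label of the sample is unchanged. Whether a given transformation is "realistic" depends on the imaging modality and should be checked for each dataset.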

Model Architecture & Training:

- Architecture Selection: Choose a well-established CNN backbone (e.g., ResNet, ConvNeXT, EfficientNet) based on the task's complexity and computational constraints [40].

- Transfer Learning: Initialize the model with weights pre-trained on a large, general dataset like ImageNet. This provides a strong starting point for feature extraction [38].

- Fine-Tuning: Replace the final classification layer to match the number of phenotypic classes in your dataset. Use a lower learning rate for the pre-trained layers and a higher one for the new head during training to adapt the learned features to the specific domain.

- Loss Function & Optimization: Use Categorical Cross-Entropy loss for multi-class problems. Optimize with stochastic gradient descent (SGD) or Adam, employing a learning rate scheduler to reduce the rate as training progresses.
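To make the training choices above concrete, the following sketch computes categorical cross-entropy from raw logits and derives per-group learning rates under a step-decay schedule. The decay factor, interval, and the 0.1 backbone scale are illustrative assumptions rather than values from the cited studies, and the function names are hypothetical; deep learning frameworks provide their own equivalents.

```python
import math


def softmax(logits):
    """Convert logits to probabilities, shifting by the max for numerical stability."""
    shift = max(logits)
    exps = [math.exp(z - shift) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def categorical_cross_entropy(logits, true_class):
    """Negative log-probability assigned to the correct class."""
    return -math.log(softmax(logits)[true_class])


def step_decay(base_lr, epoch, drop=0.5, every=10):
    """Multiply the learning rate by `drop` once every `every` epochs."""
    return base_lr * (drop ** (epoch // every))


def param_group_lrs(base_lr, epoch, backbone_scale=0.1):
    """Lower rate for pre-trained layers, higher rate for the new head."""
    head_lr = step_decay(base_lr, epoch)
    return {"backbone": head_lr * backbone_scale, "head": head_lr}


print(categorical_cross_entropy([2.0, 0.5, -1.0], true_class=0))
print(param_group_lrs(base_lr=0.01, epoch=25))
```

Keeping the backbone rate an order of magnitude below the head rate is one common heuristic for preserving pre-trained features while the new classification layer adapts.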

Evaluation:

- Validation: Use a hold-out validation set or k-fold cross-validation to monitor performance during training and select the best model.

- Metrics: Report standard metrics on a blinded test set, including Accuracy, Precision, Recall, Specificity, F1-Score, and the Area Under the Receiver Operating Characteristic Curve (AUC-ROC) [40] [43].
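The metrics listed above follow directly from the confusion matrix and from pairwise score comparisons. The sketch below shows the binary case, assuming labels coded as 0 and 1; the function names are hypothetical, and multi-class problems would require per-class or averaged variants.

```python
def binary_metrics(y_true, y_pred):
    """Accuracy, precision, recall, specificity, and F1 from binary labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)

    def safe_div(num, den):
        # Return 0.0 when a metric is undefined (empty denominator).
        return num / den if den else 0.0

    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    return {
        "accuracy": safe_div(tp + tn, len(y_true)),
        "precision": precision,
        "recall": recall,
        "specificity": safe_div(tn, tn + fp),
        "f1": safe_div(2 * precision * recall, precision + recall),
    }


def roc_auc(y_true, scores):
    """AUC as the probability that a positive outscores a negative (ties count half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative sample")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


print(binary_metrics([1, 1, 0, 0], [1, 0, 0, 0]))
print(roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1]))
```

The pairwise AUC computation is quadratic in the number of samples, which is acceptable for a sketch but slow for large test sets.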

Protocol 2: CNN for Sequence-Based Phenotype Prediction from DMS

This protocol is derived from research modeling mutational effects on biochemical phenotypes like binding affinity and protein expression [18].

Data Preparation:

- Input Encoding: Represent protein or nucleotide sequences numerically. One-hot encoding is a common method, where each amino acid or nucleotide is represented as a binary vector.

- Feature Enrichment: Incorporate an external featurization table (e.g., AAindex) that summarizes intrinsic physicochemical properties of amino acids, such as hydrophobicity, solvent-accessible surface area, and long-range non-bonded energy per atom. This has been shown to significantly improve prediction performance [18].

- Data Splitting: Split the deep mutational scanning (DMS) data into training (e.g., 60%), tuning/validation (e.g., 20%), and testing (e.g., 20%) subsets, ensuring no data leakage between sets.
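A minimal sketch of the 60/20/20 split is shown below. It assumes variants are identified by strings and removes duplicates before splitting so that no identical variant lands in more than one subset; the function name and the variant labels are hypothetical. It does not address subtler leakage, such as closely related variants at the same position.

```python
import random


def split_variants(variants, fractions=(0.6, 0.2, 0.2), seed=0):
    """Shuffle unique variants and partition them into train/val/test subsets."""
    unique = sorted(set(variants))  # deduplicate, then sort for reproducibility
    rng = random.Random(seed)
    rng.shuffle(unique)
    n_train = round(len(unique) * fractions[0])
    n_val = round(len(unique) * fractions[1])
    return {
        "train": unique[:n_train],
        "val": unique[n_train:n_train + n_val],
        "test": unique[n_train + n_val:],  # remainder goes to the test set
    }


# Hypothetical variant identifiers, including one duplicated measurement.
variants = [f"A{i}G" for i in range(10)] + ["A0G"]
splits = split_variants(variants)
print({name: len(items) for name, items in splits.items()})
```

Fixing the seed makes the partition reproducible, which matters when several models are compared on the same held-out variants.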

Model Architecture & Training:

- 1D Convolutional Layers: Apply convolutional filters that slide along the sequence to learn local, position-invariant motifs and patterns. The filters in the first layers often learn to detect simple sequence motifs, which are combined into more complex features in deeper layers.

- Activation and Pooling: Pass outputs through activation functions (e.g., ReLU) and pooling layers to introduce non-linearity and reduce dimensionality.

- Fully Connected Head: The extracted features are flattened and passed through one or more fully connected layers to map them to the final phenotypic readout (e.g., binding affinity score).

- Regularization: Use techniques like dropout during training, which randomly turns off a subset of neurons to prevent the network from over-relying on any single node and to encourage more robust feature learning [18] [37].
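The architecture described above can be traced through a single forward pass. The sketch below is a deliberately small, framework-free illustration with hypothetical function names: it uses global max pooling in place of a pooling-plus-flatten stage, a single linear output unit as the head, and inverted dropout. It is not the architecture of the cited study.

```python
import random


def conv1d(x, kernels, biases):
    """Valid 1D convolution. x: L positions x C channels; each kernel: k x C."""
    k = len(kernels[0])
    out = []
    for start in range(len(x) - k + 1):
        window = x[start:start + k]
        out.append([
            bias + sum(w * v
                       for w_row, x_row in zip(kernel, window)
                       for w, v in zip(w_row, x_row))
            for kernel, bias in zip(kernels, biases)
        ])
    return out  # (L - k + 1) positions x F filters


def relu(feature_map):
    """Element-wise non-linearity."""
    return [[max(0.0, v) for v in row] for row in feature_map]


def global_max_pool(feature_map):
    """Keep the strongest response of each filter across all positions."""
    return [max(column) for column in zip(*feature_map)]


def dropout(vector, rate, rng, training):
    """Inverted dropout: zero units at random and rescale the survivors."""
    if not training or rate == 0.0:
        return list(vector)
    keep = 1.0 - rate
    return [v / keep if rng.random() < keep else 0.0 for v in vector]


def dense(vector, weights, bias):
    """Single linear output unit mapping features to a phenotype score."""
    return bias + sum(w * v for w, v in zip(weights, vector))


def forward(x, kernels, biases, head_w, head_b, rate=0.5, rng=None, training=False):
    pooled = global_max_pool(relu(conv1d(x, kernels, biases)))
    pooled = dropout(pooled, rate, rng or random.Random(0), training)
    return dense(pooled, head_w, head_b)


# Toy input: 3 positions, 2 channels; one filter spanning 2 positions.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
kernels = [[[1.0, 0.0], [0.0, 1.0]]]
print(forward(x, kernels, biases=[0.0], head_w=[0.5], head_b=1.0))
```

Because the same filter is applied at every window, a motif produces the same response wherever it occurs, which is the position-invariance property noted above.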

Validation and Interpretation:

- Performance Assessment: Evaluate the model on the held-out test set using metrics relevant to regression or classification, such as Spearman correlation or Mean Squared Error.

- Motif Analysis: Analyze the weights of the trained convolutional filters to identify sequence motifs that the model has learned to be important for the phenotype. Clustering these filters can reveal known and putative regulatory elements [42].
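For the performance assessment step, Spearman correlation is the Pearson correlation of the ranks, with tied values sharing their average rank. The sketch below implements that definition together with mean squared error; the function names are hypothetical, and in practice a statistics library would normally be used.

```python
import math


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_a * var_b)


def spearman(a, b):
    """Spearman rank correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(a), average_ranks(b))


def mse(y_true, y_pred):
    """Mean squared error between measured and predicted values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


measured = [0.1, 0.4, 0.35, 0.8]
predicted = [0.2, 0.5, 0.3, 0.9]
print(spearman(measured, predicted), mse(measured, predicted))
```

Spearman correlation rewards getting the ordering of variants right even when predicted values are miscalibrated, whereas MSE penalizes the absolute error, so the two metrics can disagree on which model is better.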

Visualizing the CNN Workflow for Biological Data

The following diagram illustrates the core workflow of a CNN for processing different data types relevant to phenotypic prediction, such as images of biological samples or amino acid sequences.

Figure: CNN Workflow for Phenotypic Prediction

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Essential Computational Tools for CNN-Based Research

| Tool / Solution | Function / Description | Relevance to Phenotypic Prediction |
|---|---|---|
| TensorFlow / PyTorch | Open-source libraries for building and training deep learning models. | Provide the flexible framework necessary for implementing and customizing CNN architectures for novel biological data. |
| One-Hot Encoding | A simple method for converting categorical data (e.g., amino acids) into a numerical format. | Essential for representing protein or nucleotide sequences as input for a CNN [18] [42]. |
| AAindex Database | A curated database of numerical indices representing various physicochemical and biochemical properties of amino acids. | Integrating these features (e.g., hydrophobicity) significantly boosts CNN prediction performance for sequence-structure-phenotype tasks [18]. |
| Pre-trained Models (e.g., on ImageNet) | CNNs previously trained on large, generalist datasets. | Serve as a powerful starting point for new tasks via transfer learning, reducing data and computational requirements [38]. |
| Data Augmentation Pipelines | Algorithms for generating modified versions of training data. | Critically prevents overfitting and improves model generalization, especially vital when working with limited biological datasets [37]. |
| Dropout Regularization | A technique that randomly ignores a subset of neurons during training. | Prevents co-adaptation of neurons and overfitting, leading to more robust and generalizable models [18] [38]. |

Convolutional Neural Networks are an effective methodology for spatial feature extraction in many applications relevant to phenotypic prediction in amino acid secretion research. The experimental data and protocols outlined in this section show their capacity to automatically learn relevant, hierarchical features from complex input data, often surpassing traditional methods and other neural network architectures, although carefully designed handcrafted features can still perform better on specific tasks [41]. The choice of model therefore depends on the data modality and research question. Within those limits, CNNs offer a powerful and versatile toolkit for researchers and drug development professionals aiming to improve the accuracy of their phenotypic predictions.

Graph Neural Networks Modeling Protein Structures and Interactions

The accurate prediction of protein structures and their interactions is a cornerstone of modern biological research, with direct implications for understanding cellular functions, disease mechanisms, and drug development. Within this domain, Graph Neural Networks (GNNs) have become important computational tools for modeling biological systems. Unlike traditional sequence-based models, GNNs operate natively on graph-structured data, which makes them well suited to representing proteins as networks of interacting residues or atoms [44] [45]. This capability allows GNNs to capture the topological and spatial relationships that govern protein folding and protein-protein interactions (PPIs), which can improve the accuracy of phenotypic predictions relevant to amino acid secretion research [46]. The integration of GNNs into computational biology pipelines has accelerated the pace of discovery by providing more reliable models of protein function and interaction landscapes, which are essential for predicting how genetic variations influence secretory phenotypes and cellular behavior.

The biological significance of protein interactions extends far beyond structural considerations. PPIs regulate virtually all cellular processes, including signal transduction, metabolic pathways, gene expression regulation, and secretory mechanisms [46] [47]. Disruptions in these interactions can lead to pathological states, making their accurate prediction crucial for understanding disease etiology and developing targeted therapeutics. For researchers investigating amino acid secretion—a process fundamental to nutrient sensing, intercellular communication, and metabolic homeostasis—precise models of protein interaction networks are indispensable. These models help elucidate how proteins involved in synthesis, transport, and regulation coordinate their activities to control secretory fluxes, thereby enabling more accurate phenotypic predictions in both normal and diseased states [47].
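The residue-level graph representation mentioned above can be illustrated with a small sketch. It assumes one 3D coordinate per residue (for example, the alpha carbon), connects residues closer than a distance cutoff, and performs one round of mean-aggregation message passing. The 8 Å cutoff, the 0.5/0.5 mixing weights, and the function names are illustrative assumptions; practical GNN layers apply learned weight matrices and non-linearities rather than fixed averaging.

```python
import math


def contact_graph(coords, cutoff=8.0):
    """Adjacency list linking residues whose coordinates lie within `cutoff`."""
    neighbors = {i: [] for i in range(len(coords))}
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            if math.dist(coords[i], coords[j]) <= cutoff:
                neighbors[i].append(j)
                neighbors[j].append(i)
    return neighbors


def message_passing_step(features, neighbors):
    """Mix each node's features equally with the mean of its neighbors' features."""
    updated = []
    for i, own in enumerate(features):
        nbrs = neighbors[i]
        if nbrs:
            aggregated = [sum(features[j][d] for j in nbrs) / len(nbrs)
                          for d in range(len(own))]
        else:
            aggregated = [0.0] * len(own)  # isolated node receives no message
        updated.append([0.5 * o + 0.5 * a for o, a in zip(own, aggregated)])
    return updated


# Toy example: three residues on a line, with one scalar feature each.
coords = [(0.0, 0.0, 0.0), (5.0, 0.0, 0.0), (20.0, 0.0, 0.0)]
graph = contact_graph(coords)
print(graph)
print(message_passing_step([[1.0], [3.0], [5.0]], graph))
```

Stacking several such steps lets information travel between residues that are distant in sequence but close in space, which is the property that distinguishes graph-based models from purely sequence-based ones.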

Comparative Analysis of GNN Architectures for Protein Modeling