Benchmarking FBA Algorithms for E. coli Genome-Scale Models: A Comprehensive Guide for Predictive Metabolism and Strain Design

Abstract

Flux Balance Analysis (FBA) is a cornerstone constraint-based method for predicting metabolic phenotypes in Escherichia coli, with critical applications in metabolic engineering and biomedical research. However, the accuracy and computational efficiency of FBA predictions are highly dependent on the choice of algorithm, model quality, and optimization solver. This article provides a systematic benchmark of FBA methodologies, from foundational principles and core model architectures to advanced hybrid machine-learning approaches and solver performance. We compare optimization techniques like MOMA and ROOM, evaluate commercial and open-source solvers for linear and mixed-integer problems, and explore the integration of graph neural networks for enhanced gene essentiality prediction. Aimed at researchers and scientists, this review synthesizes current best practices for model selection, troubleshooting, and validation to empower reliable, high-throughput metabolic simulations in E. coli and related organisms.

Foundations of E. coli Metabolic Modeling: From Genome-Scale Reconstructions to Core Models

Genome-scale metabolic models (GEMs) are structured computational knowledge bases that represent an organism's complete metabolic network [1]. These models mathematically describe the biochemical, genetic, and genomic (BiGG) information for an organism, enabling systems-level analysis of metabolic capabilities [2]. GEMs encode gene-protein-reaction (GPR) associations for all of an organism's metabolic genes and can be simulated with constraint-based approaches such as Flux Balance Analysis (FBA) to predict metabolic fluxes [1]. The first GEM was reconstructed for Haemophilus influenzae in 1999, marking the beginning of a rapidly expanding field that has produced models for thousands of organisms across bacteria, archaea, and eukarya [1].

Escherichia coli K-12 MG1655, as a model organism for bacterial genetics, has been the subject of extensive GEM development for nearly two decades [1]. This article traces the evolutionary trajectory of E. coli GEMs from the initial iJE660 model to the sophisticated iML1515 reconstruction, with particular emphasis on their expanding capabilities and performance in predicting metabolic phenotypes.

The Evolutionary Trajectory of E. coli GEMs

Historical Development and Key Milestones

The reconstruction of GEMs for E. coli represents a sustained effort to encapsulate a growing understanding of its metabolism in computational frameworks. The first E. coli GEM, iJE660, was reported in 2000, soon after the first release of the genome sequence of E. coli K-12 MG1655 [1]. This pioneering model established the foundation for subsequent iterations that progressively incorporated new genetic and biochemical discoveries.

The iJE660 model has since received at least four official updates, each extending the coverage of GPR associations and the model's predictive capacity [1]. With each iteration, the models expanded in scope and accuracy, incorporating newly discovered metabolic functions, improved gene-protein-reaction associations, and updated biochemical knowledge. The most recent version, iML1515, contains information on 1,515 open reading frames, more than twice the 660 open reading frames incorporated in the original iJE660 model [1]. This expansion reflects the substantial growth in knowledge about E. coli metabolism over nearly two decades of research.

Comparative Analysis of Model Features and Capabilities

Table 1: Evolution of Key Features in E. coli Genome-Scale Metabolic Models

| Model Feature | iJE660 (2000) | iJO1366 (2011) | iML1515 (2017) |
|---|---|---|---|
| Open Reading Frames | 660 | 1,366 | 1,515 |
| Metabolic Reactions | 739 | 2,583 | 2,719 |
| Unique Metabolites | Not specified | Not specified | 1,192 |
| Gene Essentiality Prediction Accuracy | Not specified | 89.8% | 93.4% |
| Notable Features | First comprehensive reconstruction | Expanded gene & reaction coverage | Protein structures, ROS metabolism, metabolite repair |

The quantitative expansion from iJE660 to iML1515 represents not only increased coverage but also enhanced model quality and functionality. The iML1515 knowledgebase is linked to 1,515 protein structures to provide an integrated modeling framework bridging systems and structural biology [2]. This structural integration enables analysis of sequence-structure-function relationships that was not possible in earlier models. Additionally, iML1515 incorporates metabolism of reactive oxygen species (ROS), metabolite repair pathways, and updated growth maintenance coefficients, reflecting advances in understanding of cellular physiology [2].

In-Depth Analysis of the iML1515 Model

Comprehensive Model Components and Structure

The iML1515 model represents the most complete genome-scale reconstruction of the metabolic network in E. coli K-12 MG1655 to date [2]. Enabling analysis of several data types, including transcriptomes, proteomes, and metabolomes, iML1515 accounts for 1,515 open reading frames and 2,719 metabolic reactions involving 1,192 unique metabolites [2]. The model includes 184 new genes and 196 new reactions compared to its immediate predecessor iJO1366, which contained 2,583 reactions [2].

A key innovation in iML1515 is the enhancement of classic gene-protein-reaction (GPR) relationships to catalytic domain resolution, creating domain-gene-protein-reaction (dGPR) relationships [2]. This approach links genes to specific protein domains rather than just entire proteins, enabling more precise mapping of genetic variations to structural and functional consequences. The model identifies 1,888 unique domains in the structural proteome of iML1515, with most proteins containing more than one domain [2]. This domain-level resolution provides insights into enzyme promiscuity and underground metabolism that were not accessible with previous modeling frameworks.

Advanced Features and Applications

iML1515 incorporates several advanced features that distinguish it from earlier reconstructions. The model includes updated reactive oxygen species (ROS)-generating reactions, increasing their number from 16 to 166 to produce iML1515-ROS [2]. It also includes reported links between genes and transcriptional regulators using a promoter 'barcode' for each gene, indicating whether a metabolic gene is regulated by a given transcription factor and the type of regulation [2].

The applications of iML1515 extend beyond traditional metabolic modeling. The knowledgebase has been used to build metabolic models of E. coli human gut microbiome strains from metagenomic sequencing data and to construct metabolic models for E. coli clinical isolates to predict their metabolic capabilities [2]. Additionally, researchers have utilized iML1515 to perform a comparative structural proteome analysis of 1,122 E. coli strains and identify multi-strain sequence variations [2]. These applications demonstrate how iML1515 serves as a platform for integrating and interpreting diverse data types beyond what was possible with earlier models.

Benchmarking FBA Performance Across E. coli GEMs

Gene Essentiality Prediction as a Benchmark Standard

Gene essentiality prediction represents a fundamental benchmark for evaluating the predictive capability of GEMs and FBA algorithms. This assessment involves simulating gene deletion mutants and determining whether the model predicts growth (non-essential) or no growth (essential) under specific conditions, with results compared to experimental gene essentiality data [2].
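As a minimal sketch of how such a comparison can be scored, the snippet below tallies a confusion matrix from predicted and observed essentiality calls and derives accuracy and the Matthews correlation coefficient (MCC). The gene IDs and calls are made-up toy data, and treating "essential" as the positive class is an assumption of this example rather than a convention taken from the cited studies.

```python
from math import sqrt

def confusion_counts(predicted, observed):
    """Count TP, FP, TN, FN for essentiality calls.

    Both arguments map gene ID -> True if essential. Only genes present
    in both mappings are scored. "Positive" means essential (assumption).
    """
    tp = fp = tn = fn = 0
    for gene in predicted.keys() & observed.keys():
        p, o = predicted[gene], observed[gene]
        if p and o:
            tp += 1
        elif p and not o:
            fp += 1
        elif not p and not o:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn

def accuracy(tp, fp, tn, fn):
    """Fraction of genes whose essentiality call matches the experiment."""
    total = tp + fp + tn + fn
    return (tp + tn) / total if total else 0.0

def mcc(tp, fp, tn, fn):
    """Matthews correlation coefficient; 0.0 when it is undefined."""
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0

# Toy data (hypothetical genes and calls).
predicted = {"g1": True, "g2": True, "g3": False, "g4": False, "g5": False}
observed = {"g1": True, "g2": False, "g3": False, "g4": True, "g5": False}

counts = confusion_counts(predicted, observed)
print("TP, FP, TN, FN:", counts)
print("accuracy:", accuracy(*counts))
print("MCC:", mcc(*counts))
```

Accuracy alone can look favorable when most genes are non-essential, which is one reason a class-balanced score such as MCC is often reported alongside it.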

The iML1515 model outperforms its predecessor on this benchmark. The iML1515 knowledgebase predicted gene essentiality in 16 conditions with an accuracy of 93.4%, compared with 89.8% for iJO1366, which the source reports as a 3.7% increase in predictive accuracy [2]. This improvement reflects both the expanded coverage of metabolic functions and the refined GPR associations in the newer model.

Table 2: Performance Comparison of E. coli GEMs for Gene Essentiality Prediction

| Performance Metric | iJO1366 | iML1515 | Improvement |
|---|---|---|---|
| Overall Accuracy | 89.8% | 93.4% | +3.7% |
| Carbon Sources Tested | Not specified | 16 | - |
| Essential Genes Identified | Not specified | 345 genes (188 in all conditions, 157 condition-specific) | - |
| False Positive Reduction | Baseline | 12.7% decrease with condition-specific models | Significant improvement |

Experimental validation of iML1515 involved genome-wide gene-knockout screens for the entire KEIO collection (3,892 gene knockouts) grown on 16 different carbon sources that represent different substrate entry points into central carbon metabolism [2]. These comprehensive experimental benchmarks provide a robust foundation for assessing model performance across diverse metabolic conditions.

Methodological Advances in FBA Benchmarking

Recent methodological innovations have further enhanced FBA benchmarking capabilities. Flux Balance Analysis (FBA), as the gold standard method, predicts metabolic phenotypes by combining genome-scale metabolic models (GEMs) with an optimality principle [3]. This technique can model many metabolic tasks, such as growth capabilities in various substrates, cell-specific auxotrophies, or responses to drug interventions [3].
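For reference, the optimality principle mentioned above is usually written as a linear program. The formulation below is the standard textbook form, not one quoted from the cited sources. The symbols are assumptions introduced here: $S$ is the stoichiometric matrix, $v$ the vector of reaction fluxes, $c$ the objective weights (typically selecting the biomass reaction), and $v^{\mathrm{lb}}$, $v^{\mathrm{ub}}$ the flux bounds that encode medium composition and reaction reversibility.

```latex
\begin{aligned}
\max_{v} \quad & c^{\top} v \\
\text{subject to} \quad & S\,v = 0, \\
& v^{\mathrm{lb}}_{j} \le v_{j} \le v^{\mathrm{ub}}_{j} \quad \text{for every reaction } j .
\end{aligned}
```

The equality constraint enforces steady state for every internal metabolite. A gene deletion is then typically simulated by setting $v^{\mathrm{lb}}_{j} = v^{\mathrm{ub}}_{j} = 0$ for the reactions that lose all of their catalyzing enzymes.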

A novel approach called Flux Cone Learning (FCL) has demonstrated potential to surpass traditional FBA in predictive accuracy. Using Monte Carlo sampling and supervised learning, FCL identifies correlations between the geometry of the metabolic space and experimental fitness scores from deletion screens [3]. When tested on E. coli, FCL delivered best-in-class accuracy for prediction of metabolic gene essentiality, outperforming the gold standard predictions of Flux Balance Analysis [3]. This machine learning framework represents a significant advancement in computational methods for leveraging the biological knowledge encoded in GEMs like iML1515.

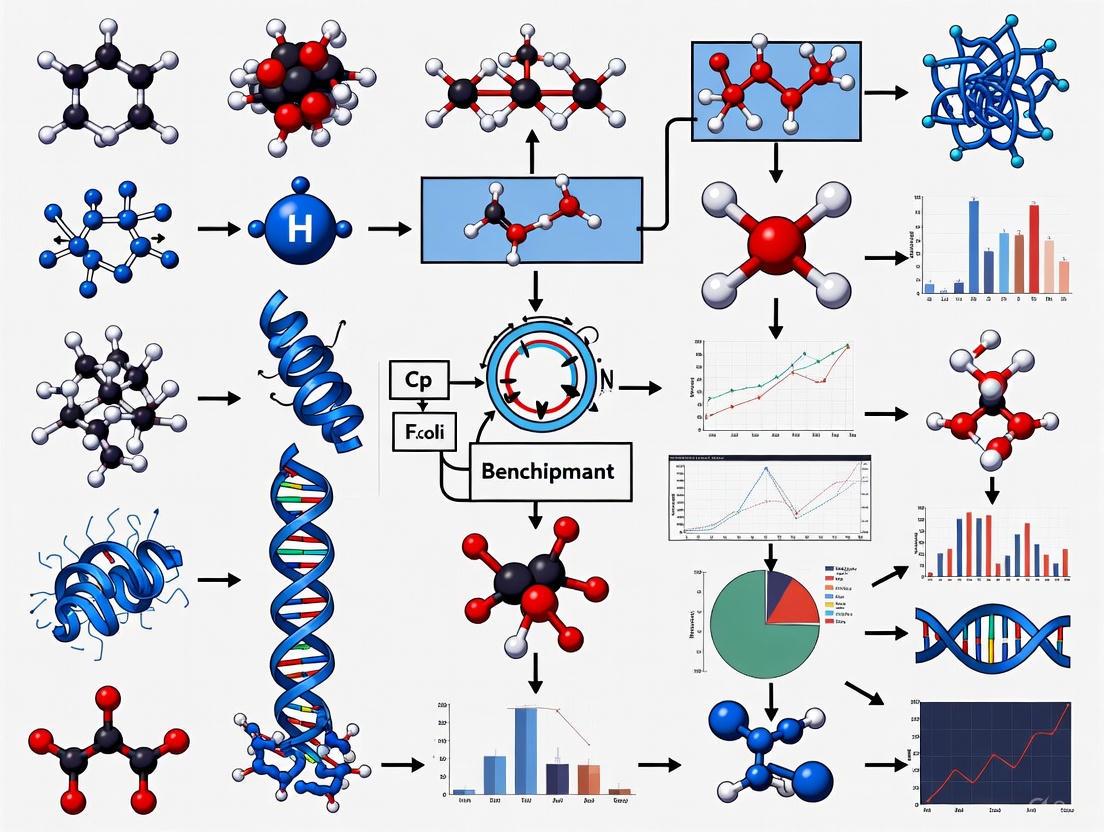

Figure 1: FBA Algorithm Benchmarking Workflow for E. coli GEMs

Experimental Protocols for GEM Validation

Standard Gene Essentiality Screening Protocol

The experimental validation of GEM predictions follows standardized protocols to ensure reproducibility and comparability. For iML1515 validation, researchers conducted experimental genome-wide gene-knockout screens for the entire KEIO collection (3,892 gene knockouts) grown on 16 different carbon sources representing different substrate entry points into central carbon metabolism [2]. The detailed methodology includes:

Strain Preparation: Utilizing the KEIO collection of single-gene knockout mutants in E. coli K-12 BW25113 background.

Growth Conditions: Culturing strains in minimal media with 16 different carbon sources including glucose, xylose, acetic acid, and others that represent different metabolic entry points.

Growth Phenotyping: Measuring growth profiles, including lag-time, maximum growth rate, and growth saturation point (OD) using high-throughput cultivation systems.

Essentiality Classification: Identifying genes essential for growth based on significantly impaired or absent growth compared to wild-type controls.

Model Comparison: Comparing experimental essentiality data with computational predictions across multiple conditions to calculate accuracy metrics.

This comprehensive experimental approach identified 345 genes that were essential in at least one of the 16 conditions: 188 genes essential in all conditions and 157 essential only in specific conditions [2].
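The split between genes essential everywhere and genes essential only in some conditions comes down to set operations over per-condition results. The sketch below illustrates that bookkeeping on invented data. The condition names, gene names, and the `partition_essential_genes` helper are hypothetical and do not reproduce the published screen.

```python
def partition_essential_genes(essential_by_condition):
    """Split essential genes into 'any', 'all', and 'condition-specific' sets.

    essential_by_condition maps a condition name to the set of genes whose
    knockout failed to grow in that condition.
    """
    sets = list(essential_by_condition.values())
    if not sets:
        return set(), set(), set()
    any_condition = set().union(*sets)        # essential in at least one condition
    all_conditions = set.intersection(*sets)  # essential in every condition
    condition_specific = any_condition - all_conditions
    return any_condition, all_conditions, condition_specific

# Toy screen results (hypothetical).
essential_by_condition = {
    "glucose": {"geneA", "geneB", "geneC"},
    "xylose": {"geneA", "geneB", "geneD"},
    "acetate": {"geneA", "geneB", "geneE"},
}

any_c, all_c, specific = partition_essential_genes(essential_by_condition)
print(len(any_c), "essential in at least one condition")
print(len(all_c), "essential in all conditions")
print(len(specific), "condition-specific")
```

By construction the first count equals the sum of the other two, which is the same relationship as the reported 345 = 188 + 157.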

Condition-Specific Model Contextualization Protocol

To enhance prediction accuracy, iML1515 can be contextualized to specific growth conditions using omics data. The protocol involves:

Data Collection: Acquiring proteomics data for E. coli K-12 MG1655 grown on seven carbon sources.

Reaction Removal: Eliminating reactions catalyzed by gene products not expressed in specific conditions.

GPR Adjustment: Modifying gene-protein-reaction associations based on expression patterns.

Model Simulation: Running FBA simulations with condition-specific constraints.

Validation: Comparing predictions with experimental growth data.

This condition-specific modeling approach resulted in an average 12.7% decrease in false-positive predictions and a 2.1% increase in essentiality prediction performance, measured by the Matthews correlation coefficient (MCC), compared to the generic model [2].
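The reaction-removal step can be sketched in a few lines, assuming each GPR has already been expanded into a list of alternative enzyme complexes (isozymes), each given as the set of genes it requires. This data layout, the reaction and gene IDs, and the `contextualize` helper are illustrative assumptions, not the procedure used in the cited work. How a gene is judged "expressed" from proteomics data is left outside the sketch.

```python
def contextualize(reaction_gprs, expressed_genes):
    """Return IDs of reactions kept after removing those with no expressed enzyme.

    reaction_gprs maps reaction ID -> list of enzyme complexes. Each complex
    is a set of gene IDs that must all be expressed; alternative complexes in
    the list act as isozymes. An empty list means the reaction has no gene
    association (for example a spontaneous reaction), and it is kept.
    """
    kept = []
    for rxn_id, complexes in reaction_gprs.items():
        if not complexes or any(c <= expressed_genes for c in complexes):
            kept.append(rxn_id)
    return kept

# Toy network (hypothetical reaction and gene IDs).
reaction_gprs = {
    "R_iso": [{"gA"}, {"gB"}],    # two isozymes, either one suffices
    "R_complex": [{"gC", "gD"}],  # complex that needs both subunits
    "R_spont": [],                # no gene association
    "R_off": [{"gE"}],            # single enzyme
}
expressed = {"gB", "gC"}

kept = contextualize(reaction_gprs, expressed)
print(kept)
```

Here `R_iso` survives because one isozyme is expressed, while `R_complex` is removed because one subunit is missing. Running FBA on the pruned network then gives the condition-specific prediction.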

Table 3: Essential Research Reagents for E. coli GEM Development and Validation

| Reagent/Resource | Function/Application | Example/Source |
|---|---|---|
| KEIO Collection | Genome-wide single-gene knockout mutants for experimental validation | E. coli K-12 BW25113 background |
| Biolog Phenotype Microarray | High-throughput growth profiling under different nutrient conditions | Biolog, Inc. |
| Protein Structure Databases | Linking metabolic genes to protein structures and domains | Protein Data Bank (PDB) |
| Metabolic Databases | Reference biochemical pathways and reaction information | KEGG, ChEBI |
| Transcriptomics Datasets | Condition-specific gene expression data for model contextualization | 333 normalized experiments in iML1515 |
| Monte Carlo Samplers | Generating flux distributions for machine learning approaches | Flux Cone Learning |

The KEIO collection deserves particular emphasis as it provides comprehensive genome-wide coverage of single-gene deletions, enabling systematic experimental validation of model predictions [2]. The integration of protein structure databases allows for the domain-gene-protein-reaction (dGPR) relationships that distinguish iML1515 from earlier models [2]. For advanced machine learning approaches like Flux Cone Learning, Monte Carlo samplers are essential for generating training data that captures the shape of the metabolic space [3].

Emerging Applications and Future Directions

Expanded Applications of Advanced GEMs

The evolution of E. coli GEMs has enabled diverse applications across biotechnology and biomedical research. iML1515 has been applied to build metabolic models of E. coli human gut microbiome strains from metagenomic sequencing data and to construct metabolic models for E. coli clinical isolates to predict their metabolic capabilities [2]. These applications demonstrate the utility of GEMs in understanding the metabolic basis of strain diversity and adaptation.

In metabolic engineering and synthetic biology, GEMs play crucial roles in strain development for chemicals and materials production [1]. The iML1515 model serves as a knowledgebase for designing engineered strains with enhanced production capabilities for valuable compounds. Additionally, compact models derived from iML1515, such as iCH360, provide focused resources for specific applications like enzyme-constrained flux balance analysis, elementary flux mode analysis, and thermodynamic analysis [4].

Innovative Computational Frameworks

The field is witnessing the development of novel computational frameworks that leverage the rich information in GEMs. Flux Cone Learning (FCL) represents a promising approach that uses Monte Carlo sampling and supervised learning to predict deletion phenotypes from the shape of the metabolic space [3]. This method has demonstrated best-in-class accuracy for predicting metabolic gene essentiality in organisms of varied complexity, outperforming standard FBA predictions [3].

Another innovative direction involves the creation of machine learning surrogates for whole-cell models. These surrogates can achieve a 95% reduction in computational time compared with the original whole-cell model while maintaining high accuracy in predicting cell viability [5]. Such approaches illustrate how the holistic understanding gained from comprehensive models can be leveraged for synthetic biology tasks while overcoming computational limitations.

Figure 2: Evolution and Diversification of E. coli Metabolic Models

The evolution of E. coli genome-scale models from iJE660 to iML1515 represents a trajectory of increasing comprehensiveness, accuracy, and application scope. Over this progression, gene coverage more than doubled, from 660 to 1,515 open reading frames, and gene essentiality prediction accuracy improved from 89.8% with iJO1366 to 93.4% with iML1515 [1] [2]. The incorporation of protein structures, domain-level resolution of GPR relationships, and condition-specific modeling capabilities has transformed these models from simple metabolic networks into sophisticated knowledgebases that bridge systems and structural biology.

The benchmarking of FBA algorithms across these model generations demonstrates continuous improvement in predictive performance while also highlighting emerging approaches like Flux Cone Learning that may define the next evolutionary stage [3]. As these models continue to evolve, they will undoubtedly remain indispensable tools for researchers, scientists, and drug development professionals seeking to understand, predict, and engineer microbial metabolism for diverse applications in biotechnology, medicine, and basic science.

Genome-scale metabolic models (GEMs) and core metabolic models (CMMs) serve as fundamental tools in systems biology for investigating cellular metabolism. The choice between a comprehensive GEM and a focused CMM presents a critical trade-off between network coverage and model interpretability, a balance that is paramount when benchmarking Flux Balance Analysis (FBA) algorithms for E. coli research. This guide provides an objective comparison of these model classes to inform their application in metabolic engineering and drug development.

Genome-Scale Models (GEMs) are comprehensive knowledge bases that computationally describe gene-protein-reaction associations for all of an organism's metabolic genes [6]. They aim to represent the complete metabolic network of a cell.

Core Metabolic Models (CMMs) are streamlined, manually curated sub-networks of GEMs that focus on central metabolic pathways essential for producing energy carriers and biosynthetic precursors [4].

The table below summarizes the defining characteristics of each model class.

Table 1: Fundamental Characteristics of GEMs and CMMs

| Characteristic | Genome-Scale Model (GEM) | Core Metabolic Model (CMM) |
|---|---|---|
| Scope & Coverage | Organism-wide metabolism; all known metabolic reactions [7] [6] | Central metabolism; pathways for energy and key biosynthetic precursors [4] |
| Primary Application | Systems-level studies, pan-reactome analysis, strain development, drug target identification [6] | Educational tool, benchmark for novel algorithms, metabolic engineering design, detailed pathway analysis [4] |
| Typical Size (E. coli) | ~1,500 genes, ~2,700 reactions (e.g., iML1515) [4] [6] | ~100 reactions (e.g., ecolicore) [8] |
| Interpretability | Challenging due to size; predictions can be hard to interpret and sometimes biologically unrealistic [4] | High; easy to visualize and interpret flux distributions due to compact size [4] |
| Computational Demand | High for complex methods (e.g., sampling, EFM analysis) [4] [9] | Low; suitable for computationally intensive methods like kinetic modeling and EFM analysis [4] |

Quantitative Performance Comparison in E. coli Case Studies

The performance of GEMs and CMMs can be quantitatively evaluated based on their predictive accuracy for growth, gene essentiality, and metabolite production in E. coli. The following table consolidates key experimental findings.

Table 2: Experimental Performance Metrics for E. coli Models

| Model Name | Type | Key Performance Metric | Result | Experimental Context |
|---|---|---|---|---|
| iML1515 [4] [6] | GEM | Gene Essentiality Prediction | 93.4% Accuracy | Minimal media with 16 different carbon sources |
| GEMsembler Consensus Model [10] | Enhanced GEM | Auxotrophy & Gene Essentiality | Outperformed gold-standard models | Lactiplantibacillus plantarum and Escherichia coli |
| ecolicore [8] | CMM | Gene Essentiality Prediction (FBA) | F1-Score: 0.000 | Comparison against experimental ground truth |
| Topology-Based ML on ecolicore [8] | CMM-derived | Gene Essentiality Prediction | F1-Score: 0.400 | Machine learning model using graph-theoretic features |
| iCH360 [4] | CMM | Biological Realism | Avoids unphysiological bypasses | Manually curated model of energy and biosynthesis metabolism |

Experimental Protocols for Benchmarking

To ensure reproducible and objective comparisons between models and algorithms, standardized experimental protocols are essential. Below are detailed methodologies for two key benchmarking tests.

Protocol 1: Single-Gene Deletion for Gene Essentiality Prediction

This protocol tests a model's ability to predict which gene knockouts will prevent growth.

- Model Setup: Load the model (GEM or CMM) using a toolbox like COBRApy [8]. Set the constraints to simulate the desired growth condition (e.g., minimal glucose medium with appropriate uptake rates).

- Wild-Type Simulation: Perform an FBA simulation with biomass maximization as the objective function to determine the wild-type growth rate (μ_wt) [8].

- Gene Knockout Simulation: For each gene in the model:

  - Evaluate the GPR rule of every reaction associated with the target gene, and constrain to zero flux only those reactions that can no longer be catalyzed (a reaction with a remaining isozyme stays active).

  - Re-solve the FBA problem to compute the mutant growth rate (μ_mut).

- Essentiality Classification: A gene is classified as essential if the predicted mutant growth rate (μ_mut) is a small fraction (e.g., <5%) of the wild-type growth rate (μ_wt) [8].

- Validation: Compare predictions against a curated ground-truth dataset of experimentally verified essential genes (e.g., from the PEC database for E. coli) [8]. Calculate performance metrics like precision, recall, and F1-score.
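The knockout, classification, and validation steps of Protocol 1 can be sketched without committing to a particular toolbox. In the example below the FBA solve is abstracted behind a caller-supplied function, `solve_growth`, which is a hypothetical stand-in for whatever solver call is actually used. The GPR strings, gene names, toy growth function, and "truth" set are likewise invented for illustration.

```python
import re

def reaction_is_active(rule, deleted_genes):
    """Evaluate a Boolean GPR string such as '(gA and gB) or gC'."""
    if not rule.strip():
        return True  # no gene association: unaffected by knockouts
    parts = []
    for tok in re.findall(r"\(|\)|[^\s()]+", rule):
        if tok in ("(", ")"):
            parts.append(tok)
        elif tok.lower() in ("and", "or"):
            parts.append(tok.lower())
        else:
            parts.append(str(tok not in deleted_genes))  # gene ID -> True/False
    # Only 'True', 'False', 'and', 'or' and parentheses reach eval.
    return bool(eval(" ".join(parts), {"__builtins__": {}}, {}))

def predict_essential_genes(gprs, genes, solve_growth, threshold=0.05):
    """Single-gene deletion scan.

    solve_growth(blocked_reactions) stands in for the FBA solve: it returns
    the optimal growth rate with the given reactions constrained to zero flux.
    """
    mu_wt = solve_growth(frozenset())
    essential = set()
    for gene in genes:
        blocked = frozenset(
            rxn for rxn, rule in gprs.items()
            if not reaction_is_active(rule, {gene})
        )
        mu_mut = solve_growth(blocked)
        if mu_mut < threshold * mu_wt:
            essential.add(gene)
    return essential

def precision_recall_f1(predicted, truth):
    """Score predicted essential genes against a ground-truth set."""
    tp = len(predicted & truth)
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(truth) if truth else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1

# Toy setup (hypothetical): growth needs R1 and R2, while R3 is dispensable.
gprs = {"R1": "gA or gB", "R2": "gC and gD", "R3": "gE"}
genes = ["gA", "gB", "gC", "gD", "gE"]

def toy_growth(blocked):
    return 0.0 if {"R1", "R2"} & blocked else 0.9

predicted = predict_essential_genes(gprs, genes, toy_growth)
truth = {"gC", "gE"}  # invented ground truth
print(sorted(predicted), precision_recall_f1(predicted, truth))
```

Deleting `gA` or `gB` leaves `R1` active through the other isozyme, so neither is called essential, whereas losing either subunit of the `R2` complex abolishes growth in this toy network.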

Protocol 2: Consensus Model Assembly with GEMsembler

This protocol, based on the GEMsembler package, creates an improved model by combining multiple input GEMs [10].

- Input Preparation: Collect multiple GEMs for the same organism (e.g., E. coli) reconstructed by different tools (e.g., CarveMe, gapseq, ModelSEED).

- Nomenclature Conversion: Convert metabolite and reaction IDs from all input models to a consistent namespace (e.g., BiGG IDs) to enable cross-model comparisons [10].

- Supermodel Assembly: Assemble all converted models into a single "supermodel" object that tracks the origin of every metabolic feature (metabolite, reaction, gene) [10].

- Consensus Generation: Generate consensus models based on feature confidence levels. For example, a "core3" model would contain only the metabolic features present in at least 3 of the 4 input models [10].

- Performance Evaluation: Test the predictive capacity (e.g., growth, auxotrophy, gene essentiality) of the resulting consensus models against a manually curated gold-standard model and experimental data [10].
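The consensus-generation idea, keeping features supported by at least N input models, can be illustrated with plain Python sets. This is a conceptual sketch only: it does not use or reproduce the GEMsembler API, and the tool names, reaction IDs, and `consensus_features` helper are hypothetical. It also assumes the IDs have already been converted to a shared namespace.

```python
from collections import Counter

def consensus_features(models, min_support):
    """Return features (e.g. reaction IDs) present in at least min_support models.

    models maps a model name to the collection of feature IDs it contains.
    """
    counts = Counter(f for feats in models.values() for f in set(feats))
    return {f for f, n in counts.items() if n >= min_support}

# Four toy input models built by hypothetical tools.
models = {
    "tool_a": {"PGI", "PFK", "FBA", "TPI"},
    "tool_b": {"PGI", "PFK", "FBA"},
    "tool_c": {"PGI", "PFK", "PYK"},
    "tool_d": {"PGI", "TPI", "PYK"},
}

core4 = consensus_features(models, 4)  # present in every input model
core3 = consensus_features(models, 3)  # present in at least 3 of 4
core1 = consensus_features(models, 1)  # union of all inputs

print(sorted(core4), sorted(core3), sorted(core1))
```

Lower support thresholds trade confidence for coverage, which is why the resulting consensus models are then compared against a gold-standard model and experimental data.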

GEMsembler Workflow for Building Consensus Models

Model Selection Guide and Research Applications

When to Use Each Model Class

- Choose a Genome-Scale Model (GEM) when: Your research requires a comprehensive, systems-wide view of metabolism. This is critical for tasks such as predicting organism-wide effects of genetic perturbations, identifying non-obvious drug targets in pathogens, studying host-pathogen interactions, or performing pan-metabolic analyses across multiple strains [6]. GEMs like iML1515 for E. coli are indispensable for simulating growth on diverse carbon sources and for genome-scale strain design [6].

- Choose a Core Metabolic Model (CMM) when: Your focus is on central metabolism, and your priority is high interpretability and computational efficiency. CMMs are ideal for developing and benchmarking new algorithms (e.g., FBA variants, machine learning models) [8], for educational purposes, and for applications where detailed analysis of flux distributions in central pathways is required, such as in certain metabolic engineering projects [4].

Advanced Applications and Workflows

The distinction between GEMs and CMMs is sometimes bridged by innovative workflows that leverage the strengths of both.

- Topology-Based Machine Learning: A CMM (ecolicore) can be used to generate a reaction-reaction graph. Graph-theoretic features (e.g., betweenness centrality) are then computed for each gene, serving as input for a machine learning classifier (e.g., Random Forest) to predict gene essentiality, a method that decisively outperformed traditional FBA in one study [8].

- Building "Goldilocks" Models: Tools like GEMsembler use GEMs as inputs to create refined consensus models [10]. Furthermore, medium-scale models like iCH360 are manually curated from GEMs to strike a balance, including all central metabolic pathways while remaining small enough for thorough curation and complex analyses like Elementary Flux Mode (EFM) analysis [4].
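To make the topology-based workflow in the first item above more concrete, the sketch below builds a reaction-reaction graph from shared metabolites and computes unnormalized betweenness centrality with Brandes' algorithm. It is a standard-library illustration on invented data, not the pipeline from the cited study. Excluding "currency" metabolites such as ATP when linking reactions is an assumption of this example, and mapping reaction scores to genes and training the classifier are left out.

```python
from collections import deque
from itertools import combinations

def reaction_graph(reaction_metabolites, ignore=frozenset()):
    """Undirected graph in which reactions are linked if they share a metabolite."""
    graph = {r: set() for r in reaction_metabolites}
    for r1, r2 in combinations(reaction_metabolites, 2):
        shared = (reaction_metabolites[r1] & reaction_metabolites[r2]) - ignore
        if shared:
            graph[r1].add(r2)
            graph[r2].add(r1)
    return graph

def betweenness_centrality(graph):
    """Unnormalized betweenness for an unweighted, undirected graph (Brandes)."""
    score = dict.fromkeys(graph, 0.0)
    for source in graph:
        # Breadth-first search counting shortest paths from the source.
        order = []
        preds = {v: [] for v in graph}
        sigma = dict.fromkeys(graph, 0)
        dist = dict.fromkeys(graph, -1)
        sigma[source] = 1
        dist[source] = 0
        queue = deque([source])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in graph[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate pair dependencies, farthest nodes first.
        delta = dict.fromkeys(graph, 0.0)
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != source:
                score[w] += delta[w]
    # Each unordered pair of endpoints was counted twice.
    return {v: s / 2 for v, s in score.items()}

# Toy linear pathway (hypothetical IDs). ATP appears everywhere and is ignored.
reaction_metabolites = {
    "R1": {"A", "B", "atp"},
    "R2": {"B", "C", "atp"},
    "R3": {"C", "D", "atp"},
}
graph = reaction_graph(reaction_metabolites, ignore=frozenset({"atp"}))
centrality = betweenness_centrality(graph)
print(centrality)
```

In this toy chain only the middle reaction lies on a shortest path between two others, so it is the only one with a non-zero score. Without the `ignore` set, the shared cofactor would link every reaction to every other and flatten the signal.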

Primary Applications of GEMs and CMMs

Table 3: Key Computational Tools and Resources for Metabolic Modeling

| Tool/Resource Name | Type | Function in Research | Relevance to Model Type |
|---|---|---|---|
| COBRApy [8] | Software Toolbox | A Python package for constraint-based reconstruction and analysis of metabolic models; used for running FBA and other simulations. | Essential for both GEMs and CMMs |
| GEMsembler [10] | Software Package | Compares, combines, and analyzes GEMs built with different tools to build higher-performance consensus models. | Primarily for GEMs |
| BiGG Database [10] | Knowledgebase | A curated database of metabolic reactions and metabolites; used as a standard namespace for model reconciliation. | Primarily for GEMs |
| MetaNetX [10] | Platform | Connects metabolite and reaction namespaces from different databases, facilitating model comparison. | Primarily for GEMs |
| ecolicore Model [8] | Core Model | A well-established model of E. coli central metabolism; used as a benchmark and test network. | CMM |
| iML1515 Model [4] [6] | Genome-Scale Model | The most recent comprehensive GEM for E. coli K-12 MG1655; represents the state-of-the-art in coverage. | GEM |
| opt-yield-FBA [11] | Algorithm | An FBA-based method for calculating optimal yields, enabling dynamic modeling of genome-scale networks without EFM calculation. | Primarily for GEMs |
| ComMet [9] | Method | A framework for comparing metabolic states in large GEMs using flux space sampling, without relying on assumed objective functions. | Primarily for GEMs |

Benchmarking Flux Balance Analysis (FBA) algorithms requires well-defined, standardized metabolic networks that capture essential biological functions while remaining computationally tractable. For Escherichia coli research, the models most valuable for benchmarking are those that combine comprehensive pathway coverage with manual curation to eliminate biologically implausible predictions. Genome-scale models (GEMs) provide extensive coverage but often generate unrealistic metabolic bypasses that complicate algorithm validation [4]. Conversely, overly simplified models miss biosynthetic pathways that are needed to assess prediction accuracy in metabolic engineering contexts. The ideal benchmarking framework incorporates central carbon metabolism alongside biosynthetic networks for amino acids, nucleotides, and lipids, creating a metabolic "sweet spot" that enables rigorous testing of FBA algorithms against experimentally validated phenotypes [4] [12].

The selection of appropriate metabolic pathways for benchmarking directly impacts the assessment of FBA algorithm performance. Models must include sufficient complexity to reveal differences between methods while maintaining biological accuracy. Recent evaluations of E. coli GEMs have demonstrated that inaccurate gene essentiality predictions often stem from incomplete representation of cofactor dependencies and pathway redundancies [12]. By focusing on conserved, high-flux metabolic pathways that are essential for growth and biomass production, researchers can create standardized testing environments that generate comparable results across studies and algorithms.

Essential Metabolic Pathways for Benchmarking

Central Carbon Metabolic Network

The central carbon metabolism represents the core energy conversion system in E. coli and serves as the foundational element for any benchmarking framework. This network includes glycolysis, gluconeogenesis, the pentose phosphate pathway, and the tricarboxylic acid (TCA) cycle, which collectively generate energy, reducing equivalents, and precursor metabolites for biosynthetic processes. The compact iCH360 model, derived from the iML1515 genome-scale reconstruction, provides a manually curated representation of these pathways with comprehensive database annotations and visualization resources [4] [13]. These pathways carry high metabolic flux, are essential for growth under most conditions, and provide critical testing grounds for FBA algorithm performance in predicting carbon utilization efficiencies and metabolic flux distributions.

Central carbon metabolism offers particular value for benchmarking because it contains numerous regulatory checkpoints, branching points, and thermodynamic constraints that challenge simulation algorithms. The iCH360 model enriches stoichiometric representations with thermodynamic and kinetic constants, enabling more sophisticated benchmarking scenarios that assess how well algorithms incorporate these additional constraints [4]. When evaluating FBA methods, the central carbon network reveals differences in how algorithms handle energy balancing, redox cofactor regeneration, and precursor distribution – all critical factors for predicting realistic metabolic phenotypes.

Biosynthetic Pathway Networks

Biosynthetic pathways for amino acids, nucleotides, and lipids transform central metabolic precursors into essential biomass components, creating an extended testing framework for FBA algorithms. The iCH360 model includes curated versions of these biosynthetic networks while representing the conversion of precursors into complex biomass components through a consolidated biomass reaction [4]. This approach maintains pathway completeness for benchmarking while controlling model size. Amino acid biosynthesis pathways are particularly valuable for testing gene essentiality predictions, as they often involve complex regulation and cofactor dependencies that challenge simulation methods.

Nucleotide biosynthesis pathways provide excellent test cases for assessing FBA algorithm performance in predicting auxotrophies and nutrient requirements. These pathways frequently involve salvage pathways and interconversions that create redundant routes, testing how well algorithms handle metabolic redundancy. Lipid biosynthesis pathways, including both saturated and unsaturated fatty acid synthesis, offer opportunities to evaluate FBA predictions under varying environmental conditions, as membrane composition adapts to external factors [4]. Including these complete biosynthetic networks in benchmarking frameworks ensures that FBA algorithms are tested against the full metabolic capabilities required to support growth and replication.

Cofactor Metabolism and Essential Cofactor Pathways

Cofactor metabolism represents a critical, often overlooked component of metabolic networks that significantly impacts FBA benchmarking results. Evaluation of iML1515 predictions against experimental mutant fitness data revealed that inaccurate essentiality predictions frequently involve vitamin and cofactor biosynthesis pathways, including biotin, R-pantothenate, thiamin, tetrahydrofolate, and NAD+ metabolism [12]. These errors likely result from unaccounted cofactor availability in experimental conditions due to cross-feeding between mutants or metabolite carryover, highlighting the importance of carefully defining medium composition in benchmarking studies.

Including cofactor metabolism in benchmarking frameworks tests how well FBA algorithms handle the complex interconnections between cofactor biosynthesis and central metabolism. For example, accurate prediction of NAD+ auxotrophy requires proper representation of NAD+ utilization in central metabolic pathways and its regeneration through biosynthesis. The iCH360 model incorporates extensive biological information on cofactor dependencies, enabling more realistic benchmarking scenarios [4]. Cofactor pathways also provide excellent test cases for assessing thermodynamic constraints, as many cofactor-dependent reactions operate near thermodynamic equilibrium, creating potential bottlenecks that affect flux distributions.

Comparative Performance of Metabolic Models

Table 1: Comparison of E. coli Metabolic Models for Algorithm Benchmarking

| Model | Reactions | Genes | Pathways Included | Primary Benchmarking Applications |
|---|---|---|---|---|
| iCH360 | ~360 | ~360 | Central carbon, amino acid biosynthesis, nucleotide biosynthesis, fatty acid synthesis | Enzyme-constrained FBA, elementary flux mode analysis, thermodynamic analysis |
| iML1515 | 2,712 | 1,515 | Full metabolic network including degradation, cofactor biosynthesis, transport | Genome-scale phenotype prediction, gene essentiality assessment |
| E. coli Core (ECC) | 95 | 137 | Glycolysis, TCA, PPP, limited biosynthesis | Educational purposes, basic FBA implementation |
| ECC2 | ~350 | ~350 | Central metabolism + extended biosynthesis | Stoichiometric modeling, pathway analysis |

The performance characteristics of metabolic models vary significantly based on their scope and curation level, directly impacting their utility for FBA algorithm benchmarking. The iCH360 model represents an intermediate "Goldilocks" option that balances comprehensive coverage of energy metabolism and biosynthesis with manual curation to eliminate unrealistic predictions [4]. This model specifically includes pathways essential for producing energy carriers and biosynthetic precursors while omitting degradation pathways and de novo cofactor biosynthesis that can introduce computational artifacts in benchmarking. The manual curation process applied to iCH360 corrected problematic network structures from the parent iML1515 model, enhancing biological realism for algorithm testing.

Benchmarking studies using mutant fitness data across 25 carbon sources have revealed systematic limitations in genome-scale models like iML1515, particularly in handling isoenzyme gene-protein-reaction mappings and cofactor dependencies [12]. These limitations manifest as false essentiality predictions for genes involved in vitamin and cofactor biosynthesis. Compact models like iCH360 can mitigate these issues through careful manual curation while maintaining sufficient complexity for meaningful algorithm evaluation. For benchmarking studies focused on central metabolic functions, medium-scale models provide computational advantages, enabling more sophisticated analyses like elementary flux mode enumeration and thermodynamic profiling that are infeasible with genome-scale models [4].

Experimental Protocols for Benchmarking Studies

Gene Essentiality Prediction Protocol

Gene essentiality prediction represents a fundamental benchmarking test for FBA algorithms, with standardized protocols enabling direct comparison between methods. The following protocol, adapted from evaluations of E. coli GEMs, provides a robust framework for assessing essentiality prediction accuracy:

Reference Data Curation: Obtain experimentally validated essential gene sets from dedicated databases such as the PEC (Profiling of E. coli Chromosome) database. For E. coli K-12 MG1655 grown on glucose minimal medium, this typically yields approximately 20 essential metabolic genes [8].

Simulation Environment Specification: Define the simulated growth medium composition, typically M9 minimal medium with a single carbon source (e.g., glucose at 10 mM), ammonium as nitrogen source, and standard ion compositions. Critical vitamins and cofactors (biotin, thiamin, etc.) should be explicitly included or excluded based on the benchmarking objectives [12].

Gene Deletion Implementation: For each gene in the model, constrain the flux through all associated enzymatic reactions to zero using the model's gene-protein-reaction rules. For multi-subunit complexes, apply appropriate Boolean logic to simulate complete functional knockout [8].

Growth Prediction: Solve the FBA problem with biomass maximization as the objective function. Genes whose deletion reduces the predicted growth rate below a threshold (typically 1-5% of the wild-type rate) are classified as essential; all others are classified as non-essential [8].

Performance Quantification: Calculate precision, recall, and F1-score by comparing predictions against experimental data. For imbalanced datasets (few essential genes among many non-essential), the area under the precision-recall curve (PR-AUC) is a more robust metric than overall accuracy [12].

This protocol specifically addresses common pitfalls in essentiality benchmarking, including the proper handling of vitamin and cofactor availability in simulation media, which significantly impacts prediction accuracy for biosynthesis pathways [12].
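The growth-threshold classification and scoring steps of this protocol can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used in the cited studies: the gene names, growth rates, reference set, and the 5% default cutoff are all hypothetical placeholders, and the knockout growth rates are assumed to come from a separate FBA gene-deletion scan.

```python
from typing import Dict, Set, Tuple


def classify_essential(ko_growth: Dict[str, float], wt_growth: float,
                       threshold: float = 0.05) -> Set[str]:
    """Genes whose knockout growth is below threshold * wild-type growth."""
    cutoff = threshold * wt_growth
    return {gene for gene, mu in ko_growth.items() if mu < cutoff}


def precision_recall_f1(predicted: Set[str],
                        reference: Set[str]) -> Tuple[float, float, float]:
    """Score predicted essential genes against an experimental reference set."""
    tp = len(predicted & reference)   # essential in prediction and experiment
    fp = len(predicted - reference)   # predicted essential, experimentally not
    fn = len(reference - predicted)   # experimentally essential, missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical inputs: growth rates (1/h) as a knockout scan might return them.
wild_type_growth = 0.87
knockout_growth = {"geneA": 0.0, "geneB": 0.86, "geneC": 0.02,
                   "geneD": 0.40, "geneE": 0.0}
reference_essential = {"geneA", "geneC", "geneD"}  # hypothetical ground truth

predicted_essential = classify_essential(knockout_growth, wild_type_growth)
precision, recall, f1 = precision_recall_f1(predicted_essential,
                                            reference_essential)
print(sorted(predicted_essential), precision, recall, f1)
```

Keeping the threshold as an explicit parameter makes it easy to report how sensitive the scores are to the cutoff, which matters when comparing algorithms that produce small but non-zero growth rates.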

Metabolic Flux Distribution Protocol

Comparing predicted versus measured intracellular flux distributions provides a rigorous quantitative benchmark for FBA algorithms, particularly for central carbon metabolism:

Isotopic Tracer Experimentation: Conduct ¹³C-labeling experiments with specifically labeled glucose (e.g., [1-¹³C] or [U-¹³C] glucose) and measure isotopic labeling patterns in proteinogenic amino acids using GC-MS or LC-MS.

Flux Determination: Use metabolic flux analysis (MFA) software to compute intracellular flux distributions that best fit the experimental labeling data and extracellular flux measurements.

Flux Prediction: Apply FBA algorithms to predict flux distributions for the same growth conditions, using measured uptake and secretion rates as constraints.

Statistical Comparison: Quantify agreement between predicted and measured fluxes using statistical measures such as the weighted residual sum of squares (WRSS) or Pearson correlation coefficients across all comparable fluxes.

This approach specifically tests how well FBA algorithms predict metabolic pathway usage and flux partitioning at key branch points (e.g., glycolysis vs. pentose phosphate pathway), revealing differences in how algorithms handle network redundancy and regulation.
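The statistical comparison step can be illustrated with a small, dependency-free sketch. The flux values and measurement uncertainties below are invented for illustration, and the function names are placeholders rather than part of any published toolkit.

```python
import math
from typing import Sequence


def mean_absolute_error(pred: Sequence[float], meas: Sequence[float]) -> float:
    """Average absolute deviation between predicted and measured fluxes."""
    return sum(abs(p - m) for p, m in zip(pred, meas)) / len(pred)


def pearson_r(pred: Sequence[float], meas: Sequence[float]) -> float:
    """Pearson correlation coefficient between two flux vectors."""
    n = len(pred)
    mean_p, mean_m = sum(pred) / n, sum(meas) / n
    cov = sum((p - mean_p) * (m - mean_m) for p, m in zip(pred, meas))
    ss_p = sum((p - mean_p) ** 2 for p in pred)
    ss_m = sum((m - mean_m) ** 2 for m in meas)
    return cov / math.sqrt(ss_p * ss_m)


def wrss(pred: Sequence[float], meas: Sequence[float],
         sigma: Sequence[float]) -> float:
    """Residual sum of squares weighted by measurement standard deviations."""
    return sum(((p - m) / s) ** 2 for p, m, s in zip(pred, meas, sigma))


# Hypothetical fluxes (mmol/gDW/h) for four comparable reactions.
v_pred = [10.0, 7.0, 3.0, 5.0]   # FBA prediction
v_meas = [10.0, 6.0, 4.0, 5.0]   # 13C-MFA estimate
sigma = [0.5, 0.5, 0.5, 0.5]     # assumed measurement uncertainty

mae = mean_absolute_error(v_pred, v_meas)
r = pearson_r(v_pred, v_meas)
weighted_rss = wrss(v_pred, v_meas, sigma)
print(mae, r, weighted_rss)
```

Correlation alone can look favorable when a few large fluxes dominate, so reporting it alongside an error measure such as WRSS gives a more balanced picture.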

Table 2: Benchmarking Metrics for FBA Algorithm Evaluation

| Performance Category | Specific Metrics | Calculation Method | Interpretation |
|---|---|---|---|
| Gene Essentiality | Precision | TP / (TP + FP) | Proportion of correct essentiality predictions |
| Gene Essentiality | Recall | TP / (TP + FN) | Proportion of essential genes correctly identified |
| Gene Essentiality | F1-Score | 2 × (Precision × Recall) / (Precision + Recall) | Balanced measure of precision and recall |
| Gene Essentiality | Precision-Recall AUC | Area under precision-recall curve | Overall performance accounting for class imbalance |
| Flux Prediction | Mean Absolute Error | Σ\|v_pred - v_meas\| / n | Average flux prediction error |
| Flux Prediction | Pearson Correlation | Cov(v_pred, v_meas) / (σ_pred × σ_meas) | Linear relationship between predicted and measured fluxes |
| Flux Prediction | WRSS | Σ[(v_pred - v_meas)² / σ²] | Goodness-of-fit weighted by measurement uncertainty |
| Computational Efficiency | Solution Time | Time to solve optimization problem | Algorithm speed for practical applications |
| Computational Efficiency | Memory Usage | Peak memory during computation | Resource requirements for large-scale studies |

Visualization of Benchmarking Pathways and Workflows

Central Carbon and Biosynthetic Metabolism

Diagram 1: Central carbon metabolism and biosynthetic network connections. This pathway visualization shows the integration between central carbon metabolism (yellow), energy production (red), and biosynthetic product formation (green), highlighting key metabolites used in FBA benchmarking.

FBA Benchmarking Workflow

Diagram 2: FBA algorithm benchmarking workflow. The standardized process for evaluating FBA algorithms includes model selection, experimental data integration, simulation setup, algorithm testing, and quantitative performance assessment using defined metrics.

Advanced Benchmarking Approaches

Machine Learning and Topological Analysis

Recent advances in metabolic network analysis have demonstrated that machine learning approaches based on network topology can outperform traditional FBA methods for specific prediction tasks such as gene essentiality. A topology-based machine learning model using graph-theoretic features (betweenness centrality, PageRank, closeness centrality) achieved an F1-score of 0.400 for predicting gene essentiality in the E. coli core model, substantially outperforming standard FBA, which failed to identify any known essential genes (F1-score: 0.000) [8]. This approach constructs a reaction-reaction graph from the metabolic model, excluding highly connected currency metabolites, and then computes topological metrics that capture each gene's structural importance within the network architecture.

The superior performance of topological methods for certain prediction tasks highlights the importance of including diverse benchmarking approaches beyond traditional FBA. Topological analysis captures the "keystone" role of certain reactions that occupy critical positions as metabolic bottlenecks or connectors between functional modules, independent of transient flux states [8]. Incorporating these structural benchmarks provides a more comprehensive assessment of metabolic network analysis algorithms, particularly for applications in drug discovery where identifying essential genes is paramount. Benchmarking frameworks should therefore include both functional simulations (FBA) and structural analyses to fully characterize algorithm performance.
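To make the graph construction concrete, the sketch below builds a reaction-reaction graph from a toy network, drops currency metabolites before linking reactions, and computes closeness centrality by breadth-first search. It is an assumption-laden illustration: the six-reaction network, the currency list, and the choice of closeness (the simplest of the three features named above) are ours, not the feature pipeline of the cited study.

```python
from collections import deque
from itertools import combinations
from typing import Dict, Set

# Assumed currency metabolites; real studies choose this list carefully.
CURRENCY = {"atp", "adp", "nad", "nadh", "h2o", "h", "pi"}

# Hypothetical toy network: reaction ID -> metabolites it touches.
reactions: Dict[str, Set[str]] = {
    "HEX1": {"glc", "atp", "adp", "g6p"},
    "PGI": {"g6p", "f6p"},
    "PFK": {"f6p", "atp", "adp", "fdp"},
    "FBA": {"fdp", "dhap", "g3p"},
    "TPI": {"dhap", "g3p"},
    "GAPD": {"g3p", "nad", "nadh", "pi", "13dpg"},
}


def build_reaction_graph(rxns: Dict[str, Set[str]],
                         currency: Set[str]) -> Dict[str, Set[str]]:
    """Link two reactions when they share a non-currency metabolite."""
    graph: Dict[str, Set[str]] = {r: set() for r in rxns}
    for r1, r2 in combinations(rxns, 2):
        if (rxns[r1] & rxns[r2]) - currency:
            graph[r1].add(r2)
            graph[r2].add(r1)
    return graph


def closeness(graph: Dict[str, Set[str]], source: str) -> float:
    """Closeness centrality: reachable nodes / sum of shortest-path lengths."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    total = sum(dist.values())
    return (len(dist) - 1) / total if total else 0.0


graph = build_reaction_graph(reactions, CURRENCY)
scores = {r: closeness(graph, r) for r in graph}
for rxn, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{rxn}: {score:.3f}")
```

Without the currency filter, HEX1 and PFK would be linked through ATP and ADP alone, which is the kind of spurious shortcut the exclusion step is meant to prevent.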

Thermodynamic and Kinetic Constraints

Incorporating thermodynamic and kinetic constraints represents an advanced benchmarking dimension that tests how well algorithms handle biophysical realism. The iCH360 model includes curated thermodynamic and kinetic constants, enabling benchmarking scenarios that assess flux predictions under thermodynamic constraints [4]. Methods that properly account for reaction directionality, energy balancing, and metabolite dilution effects demonstrate improved prediction accuracy for growth rates and gene essentiality across diverse conditions.

Metabolite Dilution Flux Balance Analysis (MD-FBA) addresses a critical limitation in traditional FBA by accounting for the dilution of all intermediate metabolites during growth, not just those included in the biomass equation [14]. This approach is particularly important for metabolites participating in catalytic cycles, many of which are metabolic cofactors. Benchmarking studies have shown that MD-FBA improves predictions of gene essentiality and growth rates compared to standard FBA, especially for cofactor biosynthesis genes [14]. Including MD-FBA as a reference method in benchmarking frameworks helps evaluate how well new algorithms handle the complex interplay between metabolic flux and biomass composition.

Table 3: Essential Research Reagents and Computational Tools for Metabolic Benchmarking

| Resource | Type | Primary Function | Application in Benchmarking |
|---|---|---|---|
| iCH360 Model | Metabolic Model | Manually curated medium-scale model of E. coli metabolism | Reference network for algorithm testing and validation |
| iML1515 Model | Metabolic Model | Comprehensive genome-scale reconstruction of E. coli metabolism | Large-scale testing and comparative performance assessment |
| COBRA Toolbox | Software Package | MATLAB suite for constraint-based modeling | Standardized implementation of FBA and variant algorithms |
| COBRApy | Software Package | Python implementation of COBRA methods | Flexible, scriptable environment for algorithm development |
| PEC Database | Reference Data | Curated essential gene information for E. coli | Ground truth data for essentiality prediction benchmarks |
| RB-TnSeq Data | Experimental Data | High-throughput mutant fitness measurements | Validation dataset for phenotype prediction accuracy |
| KEGG Database | Pathway Resource | Biochemical pathway maps and functional annotations | Pathway analysis and network visualization |
| PANEV R Package | Visualization Tool | Pathway-based network visualization | Interactive exploration of metabolic networks and gene mappings |

Benchmarking FBA algorithms requires carefully selected metabolic pathways that capture essential biological functions while minimizing computational complexity. Central carbon metabolism combined with biosynthetic networks for amino acids, nucleotides, and lipids provides an optimal testing ground that balances these competing demands. The iCH360 model represents a particularly valuable resource for this purpose, offering manual curation, comprehensive annotations, and thermodynamic data that enable sophisticated benchmarking scenarios.

Future advancements in metabolic benchmarking will likely incorporate more sophisticated constraint formulations, including regulatory constraints and proteomic limitations, to better reflect biological reality. Additionally, standardized benchmarking protocols across multiple growth conditions and genetic backgrounds will provide more comprehensive algorithm assessments. As machine learning approaches continue to mature, benchmarking frameworks must evolve to include diverse methodological paradigms beyond traditional optimization-based approaches. Through continued refinement of these benchmarking resources and methods, the metabolic modeling community can develop more accurate, reliable algorithms with enhanced predictive capabilities for basic research and biotechnology applications.

Genome-scale metabolic models (GEMs) provide a computable representation of the complete set of biochemical reactions within a cell, enabling in silico simulation of metabolic capabilities. The critical framework connecting an organism's genetic blueprint to these metabolic functions is the Gene-Protein-Reaction (GPR) association. GPR rules are logical statements, typically using Boolean logic, that describe how gene products (proteins, enzyme subunits, isoforms) collaborate to catalyze specific metabolic reactions [15]. These associations create an essential bridge between genomic information and metabolic phenotype, allowing researchers to simulate how genetic perturbations affect cellular metabolism. In essence, GPRs form the genetic basis for in silico predictions, transforming static reaction networks into genetically-encoded models that can be contextually tailored using omics data.

The reconstruction of accurate GPR rules remains a cornerstone for reliable model predictions, particularly in constraint-based modeling approaches like Flux Balance Analysis (FBA). The integration of GPRs into models such as the expanded E. coli K-12 model (iJR904 GSM/GPR) represented a significant advancement, enabling direct analysis of gene-protein-reaction relationships and facilitating the integration of diverse datasets including genomic, transcriptomic, proteomic, and fluxomic data [16]. Without proper GPR associations, understanding the mechanistic link between genotype and phenotype remains hampered, as models can only describe metabolic phenomena at the reaction level without genetic resolution [17].

GPR Logic and Structure: The Computational Framework

Boolean Representation of Catalytic Mechanisms

GPR rules employ Boolean logic (AND, OR) to represent the complex relationships between genes and the reactions they enable. This logical structure accurately captures the biochemical reality of enzymatic catalysis:

- AND operator: Joins genes encoding different subunits of the same enzyme complex, indicating all specified subunits are required for catalytic activity

- OR operator: Joins genes encoding distinct protein isoforms of the same enzyme or subunit, indicating functional redundancy or alternative catalytic units

This Boolean representation allows complex scenarios to be described, such as multiple oligomeric enzymes behaving as isoforms due to sharing common subunits while possessing distinctive features [15]. The logical framework enables computational interpretation of genetic capabilities and facilitates the integration of gene expression data to create context-specific metabolic models.
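A short sketch shows how such rules can be evaluated under a gene knockout. It assumes rules are written with lowercase `and`/`or` and gene identifiers that are valid Python names, so that the standard `ast` module can parse them; the helper names and the three example rules are illustrative only.

```python
import ast
from typing import Dict, List, Set


def gpr_active(rule: str, knocked_out: Set[str]) -> bool:
    """Return True if the reaction can still be catalyzed after the knockout."""
    if not rule.strip():
        return True  # no GPR (e.g. spontaneous reaction): never disabled

    def evaluate(node: ast.AST) -> bool:
        if isinstance(node, ast.Expression):
            return evaluate(node.body)
        if isinstance(node, ast.BoolOp):
            values = [evaluate(v) for v in node.values]
            # AND: every subunit is needed; OR: any isozyme is sufficient.
            return all(values) if isinstance(node.op, ast.And) else any(values)
        if isinstance(node, ast.Name):
            return node.id not in knocked_out  # a gene is "on" unless deleted
        raise ValueError(f"Unsupported GPR syntax: {ast.dump(node)}")

    return evaluate(ast.parse(rule, mode="eval"))


def disabled_reactions(gprs: Dict[str, str], knocked_out: Set[str]) -> List[str]:
    """Reactions whose flux should be constrained to zero for this knockout."""
    return sorted(r for r, rule in gprs.items()
                  if not gpr_active(rule, knocked_out))


# Illustrative rules: one enzyme complex, one isozyme pair, one rule-free reaction.
gprs = {
    "AKGDH": "sucA and sucB and lpd",
    "PFK": "pfkA or pfkB",
    "SPONT": "",
}

print(disabled_reactions(gprs, {"lpd"}))           # complex loses a subunit
print(disabled_reactions(gprs, {"pfkA"}))          # isozyme compensates
print(disabled_reactions(gprs, {"pfkA", "pfkB"}))  # both isozymes removed
```

Walking the syntax tree instead of calling `eval` keeps the evaluation restricted to gene names and Boolean operators, so a malformed rule raises an error rather than executing arbitrary code.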

Stoichiometric Representation of GPR Associations

Recent advancements have introduced stoichiometric representations of GPR associations that can be directly integrated into the stoichiometric matrix of constraint-based models. This transformation changes the Boolean representation of gene states (on/off) to a real-valued representation, effectively treating enzymes or enzyme subunits as species within the model [17]. This approach explicitly accounts for the individual flux carried by each enzyme or subunit encoded by specific genes, enabling:

- Gene-level phenotype predictions using standard constraint-based methods

- Formulation of objectives and constraints at the gene/protein level

- More accurate prediction of enzyme allocation and flux distributions

Statistical analysis of GPR structures in the iAF1260 E. coli model reveals the complexity of these associations: over 16% of enzymes are protein complexes (with up to 13 subunits), approximately 31% of reactions are catalyzed by multiple isozymes (up to 7), and more than two-thirds (72%) of reactions are catalyzed by at least one promiscuous enzyme [17].

Benchmarking FBA Algorithms: The Critical Role of GPRs

Validation Methodologies for Algorithm Performance

The quality of context-specific models generated through FBA approaches depends significantly on the algorithm choice and its handling of GPR associations. Benchmarking procedures typically employ two major validation categories [18]:

Table 1: Validation Methods for Context-Specific Reconstruction Algorithms

| Validation Type | Specific Methods | Algorithms Utilizing Method |
|---|---|---|
| Consistency Testing | Cross-validation | PRIME, FASTCORE, MBA, FASTCORMICS, iMAT |
| Consistency Testing | Diversity of generated models | GIMME, mCADRE, tINIT, FASTCORMICS |
| Comparison-Based Testing | Comparison with manually curated networks | INIT, MBA |
| Comparison-Based Testing | Comparison with additional databases | mCADRE, RegrEx, iMAT |
| Comparison-Based Testing | Comparison with shRNA knockdown screens | MBA, FASTCORMICS |
| Comparison-Based Testing | Comparison with metabolic exchange rates | PRIME |

Consistency testing evaluates robustness against noise and the ability to distinguish different biological contexts. This includes cross-validation to identify reactions consistently included/excluded despite input variations, and diversity assessment to ensure distinct cell types yield appropriately distinct models [18].
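One simple way to express both ideas numerically is the Jaccard similarity between the reaction sets of extracted models: high similarity is expected between models built from resampled inputs for the same context, and lower similarity between distinct contexts. The sketch below uses this measure as an assumption; the cited benchmarks may use other statistics, and the model names and reaction IDs are hypothetical.

```python
from itertools import combinations
from typing import Dict, Set, Tuple


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Shared reactions divided by the union of reactions in both models."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def pairwise_similarity(models: Dict[str, Set[str]]) -> Dict[Tuple[str, str], float]:
    """Jaccard similarity for every unordered pair of extracted models."""
    return {(m1, m2): jaccard(models[m1], models[m2])
            for m1, m2 in combinations(sorted(models), 2)}


# Hypothetical context-specific models, each given as a set of reaction IDs.
models = {
    "aerobic": {"R1", "R2", "R3", "R4"},
    "aerobic_resampled": {"R1", "R2", "R3"},
    "anaerobic": {"R1", "R2", "R5"},
}

similarity = pairwise_similarity(models)
for pair, value in similarity.items():
    print(pair, round(value, 2))
```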

Comparison-based testing validates reconstructed models against external references, including manually curated networks, additional databases (e.g., BRENDA, HPA), functional genetic screens (shRNA), literature mining, and experimental metabolic exchange rates [18]. Each approach offers distinct insights into algorithm performance, with comprehensive benchmarking requiring multiple validation strategies.

Algorithm Performance in Predictive Modeling

Different algorithm classes exhibit varying performance characteristics in E. coli and other models:

Table 2: Performance Comparison of Model Extraction Methods

| Method | Class | E. coli Performance | Mammalian Performance | Key Characteristics |
|---|---|---|---|---|
| GIMME | Optimization-based | Best-performing [19] | Moderate | Minimizes removal of highly expressed genes |
| iMAT | Optimization-based | Good | Variable | Maximizes consistency with highly expressed genes |
| MBA | Pruning-based | Variable | Most alternate solutions [19] | Evidence-based reaction retention |
| mCADRE | Pruning-based | Good | Best-performing [19] | Most reproducible models |
| gMCS-based | Genetic Minimal Cut Sets | Superior sensitivity [20] | Superior sensitivity [20] | Identifies synthetic lethality |

The gMCS (genetic Minimal Cut Sets) approach represents a paradigm shift by operating directly on the reference metabolic network without prior contextualization, identifying minimal subsets of genes whose simultaneous removal blocks metabolic objectives like biomass production. When validated against genome-scale loss-of-function screens, this method showed significantly higher sensitivity (odds ratio, OR = 3.62, p = 3.78×10⁻¹⁶) than reconstruction-based methods such as GIMME (OR = 2.13, p = 0.003) and iMAT (OR = 1.22, p = 0.44) [20].
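For readers unfamiliar with the statistic, an odds ratio of this kind can be computed from a 2×2 table that crosses predicted essentiality with the outcome of the experimental screen. The sketch below assumes that standard construction; the source does not spell out its exact procedure, and the counts are invented.

```python
def odds_ratio(pred_hit: int, pred_miss: int,
               nonpred_hit: int, nonpred_miss: int) -> float:
    """Odds ratio of a 2x2 table (prediction vs. experimental screen).

    pred_hit:     predicted essential, essential in the screen
    pred_miss:    predicted essential, not essential in the screen
    nonpred_hit:  not predicted essential, essential in the screen
    nonpred_miss: not predicted essential, not essential in the screen
    """
    if pred_miss == 0 or nonpred_hit == 0:
        raise ValueError("Odds ratio is undefined when an off-diagonal cell is zero")
    return (pred_hit * nonpred_miss) / (pred_miss * nonpred_hit)


# Hypothetical counts for one algorithm evaluated against one screen.
example_or = odds_ratio(pred_hit=30, pred_miss=70,
                        nonpred_hit=50, nonpred_miss=350)
print(example_or)
```

An odds ratio above 1 means predicted-essential genes are enriched among experimental hits; a significance test such as Fisher's exact test would normally accompany it but is omitted here.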

Impact of GPR Accuracy on Prediction Outcomes

The critical importance of accurate GPR rules becomes evident in strain design and essentiality predictions. Traditional reaction-level intervention strategies identified by optimization procedures often prove infeasible when translated to gene-level modifications due to GPR complexities, particularly when target reactions involve promiscuous enzymes [17]. A stoichiometric representation of GPR associations enables feasible gene-based strain designs and improves phenotype prediction accuracy.

Experimental comparisons with ¹³C-flux data demonstrate that simple reformulations of simulation methods with gene-wise objective functions result in improved prediction accuracy [17]. The explicit representation of GPR rules allows more biologically realistic allocation of enzyme resources, moving beyond reaction-level abstractions to genetically-grounded metabolic simulations.

Experimental Protocols and Methodologies

GPR Reconstruction and Validation Protocol

Purpose: To reconstruct and validate accurate Gene-Protein-Reaction associations for genome-scale metabolic models.

Materials:

- Genome annotation of target organism

- Reference metabolic models (e.g., Recon, HMR for human; iJO1366 for E. coli)

- Biological databases: KEGG, UniProt, STRING, MetaCyc, Complex Portal

- Computational tools: GPRuler, RAVEN Toolbox, merlin

Procedure:

- Data Acquisition: Mine text and data from biological databases to identify gene-enzyme-reaction relationships [15]

- Boolean Rule Formulation: Construct GPR rules using AND/OR logic based on:

  - Enzyme complex subunits (AND relationships)

  - Isozyme alternatives (OR relationships)

  - Promiscuous enzyme activities (multiple reactions)

- Stoichiometric Transformation: Convert Boolean GPR rules into stoichiometric representations by:

  - Introducing enzyme usage variables

  - Decomposing reversible reactions

  - Handling isozyme-catalyzed reactions [17]

- Model Integration: Incorporate GPR rules into the stoichiometric matrix

- Validation: Assess GPR accuracy through:

  - Comparison with manually curated rules

  - Gene essentiality predictions versus experimental data

  - Flux prediction accuracy with ¹³C-flux data [17]

Technical Notes: GPRuler provides an open-source Python-based framework for automated GPR reconstruction, mining information from nine different biological databases and demonstrating high accuracy in reproducing manually curated GPR rules [15].

Algorithm Benchmarking Protocol for FBA Methods

Purpose: To objectively compare the performance of different FBA algorithms in predicting metabolic phenotypes.

Materials:

- Reference genome-scale model (e.g., iJO1366 for E. coli)

- Gene expression datasets (microarray, RNA-seq)

- Experimental validation data (gene essentiality, flux measurements)

- Computational environments: COBRA Toolbox, MATLAB, Python

Procedure:

- Model Extraction: Generate context-specific models using different algorithms (GIMME, iMAT, MBA, mCADRE) with identical input data [19]

- Phenotype Protection: Explicitly and quantitatively protect flux through required metabolic functions (e.g., biomass production) [19]

- Ensemble Generation: Create multiple models for each algorithm to account for alternate optimal solutions

- Performance Assessment:

  - Statistical Analysis: Use ROC plots and Euclidean distance metrics to identify best-performing models [19]

Technical Notes: The scope of alternate optimal solutions varies significantly by algorithm, with mCADRE generating the most reproducible models and MBA producing the most variable solutions [19]. Quantitative protection of metabolic functions is essential, as qualitative protection alone fails to accurately predict experimentally measured growth rates.
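The ROC-based selection step can be sketched as follows. The sketch assumes that "Euclidean distance" refers to the distance from each model's point in ROC space to the ideal classifier at (FPR = 0, TPR = 1), which is a common convention; the confusion-matrix counts and model names are hypothetical.

```python
import math
from typing import Dict, Tuple


def roc_point(tp: int, fp: int, tn: int, fn: int) -> Tuple[float, float]:
    """Return (false positive rate, true positive rate) for one model."""
    return fp / (fp + tn), tp / (tp + fn)


def distance_to_ideal(fpr: float, tpr: float) -> float:
    """Euclidean distance from (fpr, tpr) to the perfect classifier (0, 1)."""
    return math.hypot(fpr, 1.0 - tpr)


# Hypothetical confusion counts (tp, fp, tn, fn) for an ensemble of models.
ensemble: Dict[str, Tuple[int, int, int, int]] = {
    "model_1": (40, 10, 90, 10),
    "model_2": (30, 5, 95, 20),
    "model_3": (45, 40, 60, 5),
}

distances = {name: distance_to_ideal(*roc_point(*counts))
             for name, counts in ensemble.items()}
best_model = min(distances, key=distances.get)
print(best_model, round(distances[best_model], 3))
```

Ranking by distance to the ideal corner weighs sensitivity and specificity equally, so a different weighting may be preferable when false positives and false negatives carry different costs.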

Pathway Visualization and Logical Relationships

GPR Logical Relationships: This diagram illustrates the Boolean logic underlying GPR associations, showing AND relationships (enzyme complexes requiring multiple subunits) and OR relationships (isozymes providing alternative catalytic routes).

Table 3: Essential Research Reagents and Computational Tools for GPR Research

| Resource Type | Specific Tools/Databases | Application in GPR Research |
|---|---|---|
| Biological Databases | KEGG, UniProt, STRING, MetaCyc, Complex Portal | Source of gene-protein-reaction relationship data [15] |
| Reference Metabolic Models | Recon (human), iJO1366 (E. coli), iJR904 (E. coli) | Benchmarking and validation frameworks [16] [19] |
| GPR Reconstruction Tools | GPRuler, RAVEN Toolbox, merlin, SimPheny | Automated reconstruction of GPR rules [15] |
| Model Extraction Algorithms | GIMME, iMAT, MBA, mCADRE, FASTCORE | Generation of context-specific models [18] [19] |
| Constraint-Based Modeling Tools | COBRA Toolbox, ΔFBA, TIObjFind | Simulation and analysis of metabolic networks [21] [22] |
| Validation Datasets | Gene knockout screens (Achilles), ¹³C-flux data, shRNA screens | Experimental validation of predictions [18] [20] |

Accurate Gene-Protein-Reaction associations form the genetic foundation of reliable in silico predictions in metabolic modeling. The benchmarking of FBA algorithms reveals significant differences in performance, with method selection dependent on the biological context—GIMME excels in prokaryotic systems like E. coli, while mCADRE demonstrates superior performance in complex mammalian models [19]. Emerging approaches that operate directly on genetic minimal cut sets (gMCSs) show promising sensitivity in identifying synthetic lethal interactions without requiring prior network contextualization [20].

The field continues to evolve with automated GPR reconstruction tools like GPRuler improving accuracy and reproducibility, while stoichiometric representations of GPR associations enable gene-level constraint-based analysis [15] [17]. As these methodologies mature, the integration of accurate GPR rules with sophisticated algorithm benchmarking promises to enhance the predictive capabilities of metabolic models, advancing their application in basic research, drug development, and metabolic engineering.

Genome-scale metabolic models (GEMs) provide a structured, mathematical representation of an organism's metabolism, enabling the simulation of physiological states and prediction of metabolic phenotypes. For the well-studied bacterium Escherichia coli, the most recent genome-scale reconstruction, iML1515, accounts for 1,877 metabolites and 2,712 reactions mapped to 1,515 genes [4]. However, the predictive power and biological relevance of these models heavily depend on the quality of their underlying data, annotations, and curation protocols. Standardizing these elements is paramount for ensuring model reproducibility, comparability, and reliability in research and drug development applications.

Flux Balance Analysis (FBA) serves as a cornerstone computational technique for analyzing these metabolic networks, predicting metabolic flux distributions by optimizing a defined cellular objective, such as biomass maximization [22]. Yet, FBA predictions can face challenges in capturing flux variations under different conditions, and their accuracy fundamentally relies on the quality and standardization of the model itself [22]. This guide objectively compares current standards, databases, and curation protocols that underpin model quality for E. coli GEM research, providing researchers with a framework for evaluating and implementing best practices in metabolic modeling.
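For reference, the optimization problem behind FBA is usually written as the linear program below. The notation is the conventional one and is not taken from the cited sources: S is the stoichiometric matrix (metabolites × reactions), v the vector of reaction fluxes, c a weight vector that selects the objective (for biomass maximization, a 1 at the biomass reaction and 0 elsewhere), and v^min, v^max the flux bounds that encode medium composition, reversibility, and gene knockouts.

```latex
\begin{aligned}
\max_{v} \quad & c^{\top} v \\
\text{subject to} \quad & S\,v = 0, \\
& v_i^{\min} \le v_i \le v_i^{\max}, \qquad i = 1, \dots, n
\end{aligned}
```

The equality constraint expresses the steady-state assumption that every internal metabolite is produced and consumed at equal rates; a gene deletion is simulated by setting both bounds of the affected reactions to zero.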

Database Standards and Metabolic Model Repositories

The foundation of any high-quality metabolic model lies in its comprehensive and accurate database annotations. Standardized databases ensure that model components—reactions, metabolites, and genes—are consistently annotated and easily comparable across different models and studies.

Table 1: Core Databases for Metabolic Model Curation and Annotation

| Database Name | Primary Focus | Key Features | Relevance to E. coli Modeling |
|---|---|---|---|
| KEGG | Pathway and functional annotation | Extensive collection of biological pathways; links genes to functions | Foundational database for reaction stoichiometry and pathway mapping [22] |
| EcoCyc | E. coli specific biology | Encyclopedic resource for E. coli K-12 MG1655; curated from literature | Essential for strain-specific model refinement and validation [22] |
| BiGG Models | Curated metabolic reconstructions | Knowledgebase of standardized genome-scale metabolic models | Repository for iML1515 and other E. coli models; enables cross-model comparison [3] |
| MetaNetX | Model reconciliation and analysis | Platform for integrating and analyzing metabolic networks | Automates annotation checks and supports model standardization [23] |

Effective model standardization extends beyond initial database annotations. The iCH360 model, a manually curated medium-scale model derived from iML1515, demonstrates the value of enhanced annotations. This model extends coverage of annotations pointing to external databases and includes additional layers of biological information on catalytic function, protein complex composition, and small molecule regulation [4]. Such enrichment expands the model's applicability for advanced analyses like enzyme-constrained FBA and thermodynamic profiling.

Model Curation Protocols and Quality Assessment

Manual curation remains an irreplaceable step in developing high-quality metabolic models, despite the availability of automated reconstruction tools. Curation protocols ensure biological accuracy and prevent the propagation of errors from databases to functional models.

Curation Workflow and Quality Assurance

The following diagram illustrates a standardized model curation workflow that integrates automated checks with manual review processes:

Standardized quality assessment employs specific metrics to evaluate annotation consistency and model functionality. For data annotation quality, Inter-Annotator Agreement (IAA) metrics such as Cohen's Kappa, Fleiss' Kappa, and Krippendorff's Alpha provide statistical measures of agreement between different curators or annotation sources [24] [25] [26]. These metrics help quantify consistency in manual curation efforts and identify areas requiring clearer guidelines. Additional quality assurance techniques include implementing gold standard testing against validated datasets, conducting random sampling audits of annotations, and establishing consensus pipelines for resolving discrepancies in curation decisions [25] [26].

Protocol Implementation for E. coli Models

For E. coli specific models, implementation of these protocols has demonstrated significant benefits. The iCH360 model was created through manual curation that applied corrections to the original iML1515 reactions based on literature evidence [4]. This process eliminated biologically unrealistic predictions and unphysiological metabolic bypasses that often plague genome-scale models. The curation included enrichment with quantitative data such as thermodynamic and kinetic constants, substantially enhancing the model's utility for advanced analysis methods [4].

Benchmarking FBA Algorithms: Performance Comparison

Standardized models enable rigorous benchmarking of FBA algorithms. Recent methodological advances have introduced frameworks that move beyond traditional biomass maximization objectives to improve prediction accuracy.

Table 2: Performance Comparison of FBA Algorithms for E. coli Metabolic Models

| Algorithm/Method | Core Approach | Experimental Validation | Reported Advantages |
|---|---|---|---|
| Traditional FBA | Biomass maximization single objective | 93.5% accuracy predicting gene essentiality in E. coli on glucose [3] | Established benchmark; computationally efficient |
| TIObjFind | Integrates MPA with FBA; infers context-specific objectives | Better alignment with experimental flux data across conditions [22] | Captures metabolic flexibility; reduces need for pre-defined objectives |
| Flux Cone Learning (FCL) | Machine learning on metabolic space geometry | 95% accuracy predicting gene essentiality; outperforms FBA [3] | No optimality assumption needed; applicable to diverse phenotypes |
| ObjFind | Determines Coefficients of Importance (CoIs) for reactions | Improved prediction of flux distributions [22] | Data-driven objective function identification |

The experimental protocol for benchmarking these algorithms typically involves:

- Model Preparation: Utilizing a standardized model like iML1515 or iCH360 to ensure consistency [4] [3].

- Data Integration: Incorporating experimental flux data or gene essentiality data for validation [22] [3].

- Algorithm Implementation: Applying each algorithm with appropriate parameters (e.g., for TIObjFind, defining start and target reactions for pathway analysis) [22].

- Performance Quantification: Comparing predictions against experimental outcomes using metrics like accuracy, precision, recall, and flux prediction error [3].
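
The performance quantification step in the protocol above can be sketched as follows. This is a minimal example that assumes predictions and experimental calls are both available as gene-to-boolean mappings, with `True` meaning "essential"; the function name and the eight placeholder genes are hypothetical and do not come from any real dataset.

```python
def essentiality_metrics(predicted, observed):
    """Accuracy, precision and recall, treating 'essential' (True) as positive."""
    # Score only genes that have both a prediction and an experimental call.
    genes = sorted(set(predicted) & set(observed))
    tp = sum(predicted[g] and observed[g] for g in genes)
    tn = sum(not predicted[g] and not observed[g] for g in genes)
    fp = sum(predicted[g] and not observed[g] for g in genes)
    fn = sum(not predicted[g] and observed[g] for g in genes)
    return {
        "accuracy": (tp + tn) / len(genes),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }

# Placeholder data: True = essential. Not taken from any real screen.
predicted = {"gene_1": True, "gene_2": True, "gene_3": False, "gene_4": False,
             "gene_5": True, "gene_6": False, "gene_7": False, "gene_8": False}
observed = {"gene_1": True, "gene_2": False, "gene_3": False, "gene_4": True,
            "gene_5": True, "gene_6": False, "gene_7": False, "gene_8": False}
metrics = essentiality_metrics(predicted, observed)
```

With two true positives, one false positive, one false negative, and four true negatives, accuracy is 0.75 while precision and recall are both 2/3. Reporting all three matters because essential genes are usually the minority class, so accuracy alone can look high even when many essential genes are missed.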

Flux Cone Learning represents a particularly significant advancement, as it uses Monte Carlo sampling of the metabolic flux space and supervised learning to correlate flux cone geometry with experimental fitness data, achieving best-in-class accuracy for predicting metabolic gene essentiality in E. coli without requiring an explicit optimality assumption [3].
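
To make the sampling idea concrete, the toy sketch below draws steady-state flux vectors from a hypothetical five-reaction network, once for the wild type and once with one reaction closed to mimic a gene deletion. It is not the FCL implementation from [3]: simple rejection sampling stands in for the Monte Carlo sampler, the network and all names are invented for illustration, and no classifier is trained. It only shows how a deletion changes the shape of the sampled flux space that a supervised learner would then see.

```python
import random

def sample_flux_cone(upper_v1, upper_v2, uptake_max=10.0, n=200, seed=0):
    """Rejection-sample steady-state flux vectors of a toy 5-reaction network.

    Reactions: uptake (-> A), v1 (A -> B), v2 (A -> C), out_b (B ->), out_c (C ->).
    Steady state forces uptake = v1 + v2, out_b = v1 and out_c = v2, so v1 and
    v2 are the only free fluxes.
    """
    rng = random.Random(seed)
    samples = []
    while len(samples) < n:
        v1 = rng.uniform(0.0, upper_v1)
        v2 = rng.uniform(0.0, upper_v2)
        if v1 + v2 <= uptake_max:  # keep only points inside the feasible region
            # Tuple order: (uptake, v1, v2, out_b, out_c)
            samples.append((v1 + v2, v1, v2, v1, v2))
    return samples

wild_type = sample_flux_cone(upper_v1=10.0, upper_v2=10.0)
# A gene deletion is modelled by closing the bounds of its reaction (here v1).
knockout = sample_flux_cone(upper_v1=0.0, upper_v2=10.0)

# Each sample would become one training row labelled with the measured fitness
# of its strain; here we only compare the mean flux through out_b.
mean_wt = sum(s[3] for s in wild_type) / len(wild_type)
mean_ko = sum(s[3] for s in knockout) / len(knockout)
```

In the knockout, every sample has zero flux through `out_b`, whereas the wild-type samples spread over a two-dimensional region. Differences of this kind in the sampled geometry are the signal that a supervised model can correlate with fitness data.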

Context-Specific Model Extraction: Methodologies and Standards

Constructing condition-specific models from global reconstructions using omics data requires standardized extraction protocols to ensure biological relevance. These methodologies integrate transcriptomic data to create models reflective of specific physiological states.

Extraction Method Comparison

The following diagram outlines the standardized workflow for generating context-specific models, highlighting key decision points that affect model quality:

Benchmark studies have quantified the impact of methodological choices on model quality. For RNA-seq data normalization, between-sample methods (RLE, TMM, GeTMM) produce context-specific models with considerably lower variability in the number of active reactions than within-sample methods (FPKM, TPM) [27]. The between-sample methods also capture disease-associated genes more accurately, with an average accuracy of approximately 0.80 for Alzheimer's disease models [27]. Among extraction algorithms, mCADRE generates the most reproducible context-specific models, while models generated using MBA exhibit the most alternate solutions [23]. Algorithm performance also depends on model complexity: GIMME generates the best-performing models for E. coli, while mCADRE is better suited to complex mammalian models [23].

Standardized Extraction Protocol

A standardized protocol for generating context-specific models includes:

- Data Preprocessing: Normalize RNA-seq data using between-sample methods (RLE, TMM, or GeTMM) to minimize technical variability [27].

- Covariate Adjustment: Account for covariates such as age, gender, or post-mortem interval that can affect gene expression patterns [27].

- Algorithm Selection: Choose extraction algorithms based on organism complexity—GIMME for E. coli and mCADRE for mammalian systems [23].

- Phenotype Protection: Explicitly and quantitatively protect metabolic tasks defining essential cellular functions to maintain biological relevance [23].

- Ensemble Evaluation: Screen ensembles of alternate models using receiver operating characteristic plots to identify best-performing models [23].
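
The data preprocessing step above can be illustrated with a minimal sketch of between-sample normalization. It assumes that RLE refers to the median-of-ratios size-factor scheme, and it uses a hypothetical four-gene, two-sample count table; in practice an established RNA-seq package would be used rather than hand-written code.

```python
import math
from statistics import median

def rle_size_factors(counts):
    """Median-of-ratios size factors; counts[gene] lists the per-sample counts."""
    n_samples = len(next(iter(counts.values())))
    # Use only genes with non-zero counts in every sample (log(0) is undefined).
    log_rows = [[math.log(c) for c in row]
                for row in counts.values() if all(c > 0 for c in row)]
    # Per-gene reference: log of the geometric mean across samples.
    log_refs = [sum(row) / n_samples for row in log_rows]
    return [
        math.exp(median(row[j] - ref for row, ref in zip(log_rows, log_refs)))
        for j in range(n_samples)
    ]

# Hypothetical raw counts; sample 2 is sequenced twice as deeply as sample 1.
counts = {
    "gene_a": [10, 20],
    "gene_b": [100, 200],
    "gene_c": [50, 100],
    "gene_d": [0, 3],  # excluded from the size-factor estimate (zero count)
}
factors = rle_size_factors(counts)
normalized = {g: [c / f for c, f in zip(row, factors)] for g, row in counts.items()}
```

Because each size factor is estimated across samples, the twofold depth difference is removed and `gene_a`, `gene_b`, and `gene_c` end up with equal normalized counts in both samples. Within-sample measures such as TPM rescale each sample independently and carry no such cross-sample reference.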

Table 3: Essential Research Reagents and Computational Tools for Standardized Metabolic Modeling

| Resource Category | Specific Tools/Reagents | Function in Workflow | Implementation Notes |
|---|---|---|---|
| Computational Frameworks | COBRApy, TIObjFind, Flux Cone Learning | FBA simulation, objective function identification, phenotype prediction | FCL uses random forest classifiers on flux samples [3]; TIObjFind integrates MPA with FBA [22] |
| Data Integration Tools | iMAT, INIT, mCADRE | Context-specific model extraction from transcriptomic data | iMAT and INIT don't require biological objective definition [27] |
| Quality Assurance Metrics | Cohen's Kappa, Krippendorff's Alpha, Gold Standard Testing | Quantify annotation consistency and model quality | Gold standards should be expert-curated dataset subsets [25] |
| Model Samplers | Monte Carlo Samplers (for FCL) | Characterize flux space geometry for machine learning | Generate 100+ samples/deletion cone for training [3] |
| Reference Datasets | Keio Collection (E. coli knockouts), RNA-seq datasets | Experimental validation of model predictions | Essential for benchmarking algorithm performance [23] |

Standardizing database annotations, curation protocols, and benchmarking methodologies is essential for advancing E. coli metabolic modeling research and applications. The field has progressed significantly from relying solely on comprehensive but sometimes unwieldy genome-scale models to developing purpose-specific, carefully curated models like iCH360 that balance coverage with analytical tractability [4]. Concurrently, FBA algorithms have evolved from single-objective optimization to sophisticated frameworks like TIObjFind and Flux Cone Learning that better capture cellular metabolic objectives and improve predictive accuracy [22] [3].

For researchers and drug development professionals, adopting these standardized approaches ensures that metabolic models generate reliable, reproducible predictions that can effectively guide experimental design and hypothesis generation. As the field moves forward, continued development and adoption of community-wide standards for model quality, annotation, and benchmarking will be crucial for realizing the full potential of metabolic modeling in basic research and biotechnology applications.

FBA Algorithms and Applications: From Standard Optimization to Advanced Strain Design

Flux Balance Analysis (FBA) is a mathematical approach for simulating the metabolism of cells using genome-scale metabolic reconstructions (GEMs) [28]. As a constraint-based modeling technique, FBA enables researchers to predict metabolic flux distributions—the flow of metabolites through a biochemical network—by focusing on the stoichiometry of metabolic reactions and applying an optimization principle [29] [3]. This method has become a cornerstone in systems biology and metabolic engineering due to its ability to analyze large-scale metabolic networks without requiring extensive kinetic parameter data [28].

FBA finds applications across multiple domains, including bioprocess engineering for improving chemical production yields, identification of putative drug targets in pathogens and cancer, rational design of culture media, and studying host-pathogen interactions [28]. The method is particularly valuable for simulating the effect of genetic perturbations, such as gene knockouts, and for predicting optimal genetic modifications to enhance the production of industrially valuable compounds [29].

Core Principles and Mathematical Foundation

The mathematical foundation of FBA rests on representing metabolism as a stoichiometrically balanced system of equations. Genome-scale metabolic models form the basis of this representation, containing all known metabolic reactions for an organism [29]. For E. coli, the iML1515 model serves as a well-curated GEM, representing strain K-12 MG1655 with 1,515 open reading frames, 2,719 metabolic reactions, and 1,192 metabolites [29].

The steady-state assumption is formalized as S · v = 0, where S is the m × n stoichiometric matrix (m metabolites, n reactions) and v is the n-dimensional vector of metabolic fluxes [28]. Each row of this system states that the production and consumption fluxes of one metabolite balance, so its concentration remains constant.

Additional constraints enter as inequalities, lower bound ≤ v ≤ upper bound, which define the minimum and maximum allowable flux for each reaction based on thermodynamic, enzyme-capacity, or substrate-uptake limitations [28].
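
A small numerical check may help make both constraint types concrete. The sketch below uses a hypothetical three-reaction chain (not part of iML1515) and plain Python lists; the helper names are illustrative. It tests whether a candidate flux vector satisfies S · v = 0 and stays within its bounds.

```python
def steady_state_residual(S, v):
    """Return S · v as a list with one entry per metabolite."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]

def within_bounds(v, lower, upper):
    """True if every flux lies between its lower and upper bound."""
    return all(lo <= x <= hi for x, lo, hi in zip(v, lower, upper))

# Toy network (hypothetical): R1: -> A, R2: A -> B, R3: B ->
# Rows are metabolites (A, B); columns are reactions (R1, R2, R3).
S = [
    [1, -1, 0],   # A: produced by R1, consumed by R2
    [0, 1, -1],   # B: produced by R2, consumed by R3
]
lower = [0.0, 0.0, 0.0]
upper = [10.0, 1000.0, 1000.0]   # uptake R1 is capped at 10 flux units

balanced = [5.0, 5.0, 5.0]
unbalanced = [5.0, 3.0, 3.0]     # A would accumulate at 2 units per unit time

residual_ok = steady_state_residual(S, balanced)
residual_bad = steady_state_residual(S, unbalanced)
```

The balanced vector gives a zero residual for both metabolites, while the unbalanced one leaves a residual of 2 for A, which violates the steady-state assumption even though every flux is within its bounds.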

Since the system of equations is typically underdetermined (more reactions than metabolites), FBA identifies a single flux distribution by optimizing a cellular objective function using linear programming [28]. The canonical FBA problem formulation is:

- maximize c^Tv

- subject to S · v = 0

- and lower bound ≤ v ≤ upper bound

where c is a vector of coefficients defining the objective function, typically set to maximize biomass production, ATP yield, or synthesis of a target metabolite [28].
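
A worked example on a hypothetical four-reaction network shows how the optimum arises from the constraints. Assume R1 imports metabolite A (at most 10 flux units), R2 converts A to B, R3 drains B as biomass (the objective), and R4 is a maintenance drain on A that must carry at least 2 units. The network and all numbers are illustrative assumptions.

```latex
\begin{aligned}
S &= \begin{pmatrix} 1 & -1 & 0 & -1 \\ 0 & 1 & -1 & 0 \end{pmatrix},
\qquad c = (0,\, 0,\, 1,\, 0)^{\mathsf{T}}, \\
& 0 \le v_1 \le 10, \qquad v_2,\, v_3 \ge 0, \qquad v_4 \ge 2, \\[4pt]
S v = 0 \;&\Rightarrow\; v_1 = v_2 + v_4, \qquad v_2 = v_3, \\
c^{\mathsf{T}} v = v_3 &= v_1 - v_4 \;\le\; 10 - 2 = 8, \\[4pt]
v^{*} &= (10,\, 8,\, 8,\, 2)^{\mathsf{T}}, \qquad c^{\mathsf{T}} v^{*} = 8 .
\end{aligned}
```

The optimum sits where the uptake bound and the maintenance requirement are both active, which is typical of linear programs: the solution lies at a vertex of the feasible region defined by the bounds and the steady-state equations.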

The following diagram illustrates the workflow of a classic FBA simulation:

Key Assumptions of Classic FBA

Steady-State Metabolism

FBA assumes that the metabolic network operates at steady state, meaning metabolite concentrations remain constant over time as the rates of production and consumption balance each other [28]. This assumption transforms the problem from a system of differential equations to a system of linear equations, significantly reducing computational complexity [29]. However, this simplification means that FBA cannot capture transient metabolic dynamics or metabolite accumulation, which can be critical in engineered systems where metabolite levels trigger regulatory responses [29].

Optimality Principle

Classic FBA operates on the evolutionary assumption that cellular metabolism has been optimized for specific biological objectives, most commonly biomass maximization for microbial growth [28]. The method identifies a single optimal flux distribution from the possible solution space that maximizes or minimizes the defined objective function [30]. While this assumption works well for microorganisms under selective pressure for rapid growth, it may not hold for all biological systems, particularly multicellular organisms or engineered strains where multiple competing objectives may coexist [3] [30].

System Boundary Constraints

FBA requires pre-defined boundaries for metabolic exchanges between the cell and its environment, typically implemented as flux constraints on transport reactions [29]. These constraints are often derived from experimental measurements of substrate uptake rates or known physiological limitations. However, accurate quantification of all exchange fluxes can be challenging, and incorrect boundary constraints can lead to unrealistic flux predictions [29].

Methodological Limitations and Challenges

Prediction Inaccuracies in Genetic Engineering

While FBA successfully predicts metabolic gene essentiality in E. coli with approximately 93.5% accuracy under standard conditions, its predictive power diminishes when analyzing genetically engineered strains [3]. This limitation stems primarily from incomplete annotations of gene interactions in GEMs and the method's inability to account for complex regulatory mechanisms beyond stoichiometric constraints [31]. Recent studies demonstrate that FBA often fails to accurately predict the behavior of cells with engineered pathways due to unmodeled metabolic adaptations and regulatory responses [31].

Omission of Cellular Regulation

Classic FBA does not incorporate transcriptional, translational, or post-translational regulatory mechanisms that modulate enzyme activity in response to metabolic needs [29] [32]. This represents a significant limitation, as cellular metabolism is subject to multi-layered regulation that dynamically adjusts flux distributions. The method's static nature prevents capturing metabolic adaptations to changing environmental conditions or engineered perturbations [32].

Unrealistically High Flux Predictions

A well-documented limitation of FBA is its tendency to predict unrealistically high fluxes through certain pathways [29]. This occurs because the method relies solely on stoichiometric coefficients without considering enzyme kinetics or capacity limitations. The resulting large solution space admits flux distributions that are physiologically infeasible because they would require impossible enzyme concentrations or catalytic rates [29].

Benchmarking FBA Against Contemporary Alternatives

Quantitative Performance Comparison

Table 1: Predictive Accuracy for E. coli Metabolic Gene Essentiality

| Method | Accuracy | Precision | Recall | Key Innovation |
|---|---|---|---|---|
| Classic FBA [3] | 93.5% | 91.2% | 89.8% | Biomass maximization objective |
| Flux Cone Learning (FCL) [3] | 95.0% | 94.1% | 95.3% | Machine learning on flux cone geometry |