Benchmarking Gap-Filling Algorithms: A Comprehensive Comparison of CHESHIRE, NHP, and C3MM for Genome-Scale Metabolic Models

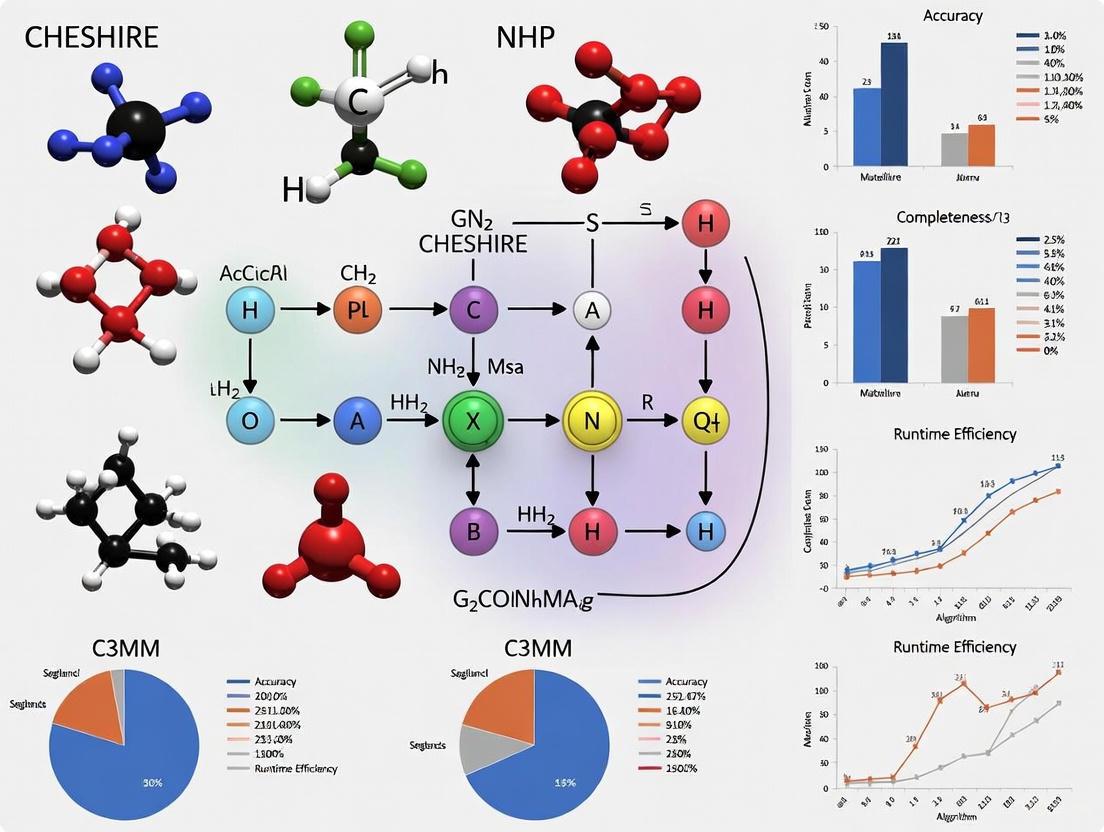

Abstract

This article provides a systematic benchmarking analysis of three topology-based gap-filling algorithms for genome-scale metabolic models (GEMs): CHESHIRE, NHP, and C3MM. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of metabolic network gap-filling, detailing the unique methodologies each algorithm employs, from hypergraph learning to matrix minimization. The content addresses common troubleshooting scenarios and optimization strategies, grounded in studies that reveal the potential for inaccuracies in automated solutions. A core comparative analysis synthesizes validation studies, demonstrating CHESHIRE's superior performance in recovering artificially removed reactions and improving phenotypic predictions, offering critical insights for selecting and applying these tools in biomedical research and metabolic engineering.

The Critical Challenge of Gaps in Genome-Scale Metabolic Models

Genome-scale metabolic models (GEMs) are computational reconstructions of the metabolic networks within cells, spanning organisms from bacteria and archaea to eukaryotes, including humans [1] [2]. They provide a mathematical representation of an organism's metabolism by detailing the gene-protein-reaction (GPR) associations derived from genome annotation data and experimentally obtained information [1]. A core feature of GEMs is the stoichiometric matrix (S matrix), which encapsulates the mass-balanced relationships of all metabolic reactions, ensuring that metabolites are neither created nor destroyed unexpectedly within the network [2]. This structured framework allows GEMs to serve as a platform for systems-level metabolic studies, enabling the prediction of metabolic fluxes using optimization techniques like flux balance analysis (FBA) [1].
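To make the role of the S matrix concrete, the following is a minimal sketch of flux balance analysis on a three-reaction toy network. The network, the flux bounds, and the choice of SciPy's `linprog` as the solver are all assumptions made for illustration; real GEMs are normally analyzed with dedicated constraint-based modeling packages.

```python
import numpy as np
from scipy.optimize import linprog

# Toy network (hypothetical): rows are metabolites A and B, columns are reactions.
#   R_uptake:  -> A      R_convert: A -> B      R_biomass: B ->
S = np.array([
    [1, -1,  0],   # metabolite A
    [0,  1, -1],   # metabolite B
])

# Flux bounds (assumed): uptake is capped at 10 units, the rest are loosely bounded.
bounds = [(0, 10), (0, 1000), (0, 1000)]

# linprog minimizes, so the biomass flux is maximized by minimizing its negative.
c = np.array([0, 0, -1])

# Steady-state mass balance: S @ v = 0 for every internal metabolite.
result = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds)

optimal_fluxes = result.x
max_biomass_flux = -result.fun
```

In this toy case the uptake bound is the only limiting constraint, so the optimal biomass flux equals the uptake cap.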

Since the first GEM for Haemophilus influenzae was reconstructed in 1999, the field has expanded significantly, with models now available for over 6,000 organisms [1]. GEMs have evolved from basic metabolic networks to high-quality, curated models for scientifically and industrially important organisms. Notable examples include the E. coli model iML1515, which accurately predicts gene essentiality, and the consensus yeast model Yeast 7, which underwent extensive international collaboration to correct thermodynamic inaccuracies [1]. The ability of GEMs to integrate various omics data (e.g., transcriptomics, proteomics) and simulate metabolic behaviors under different conditions has made them indispensable tools in biotechnology, metabolic engineering, and biomedical research [2].

The Critical Challenge of Knowledge Gaps in GEMs

Despite their predictive power, GEMs often contain knowledge gaps due to our incomplete understanding of metabolic processes and imperfect genomic annotations [3]. These gaps manifest as missing reactions—metabolic conversions that are part of the organism's biochemical repertoire but are absent from the computational model [3] [4]. The primary sources of these gaps include unannotated or misannotated genes, enzyme promiscuity, unknown pathways, and elusive underground metabolism [4].

The presence of missing reactions fundamentally limits the predictive accuracy and practical utility of GEMs. Gaps can create dead-end metabolites—compounds that the model can produce but not consume, or vice-versa—which disrupt flux simulations and lead to biologically implausible predictions [3]. For example, a model might incorrectly predict that an organism cannot grow on a particular carbon source simply because a single critical reaction is missing from its network reconstruction. This problem is particularly pronounced in draft GEMs generated by automated reconstruction pipelines from genome sequences, which require comprehensive manual curation to become reliable research tools [3].
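A dead-end metabolite can be spotted from stoichiometry alone. The sketch below assumes every reaction is irreversible and uses a made-up three-reaction network; the function name `find_dead_end_metabolites` is hypothetical and not part of any gap-filling tool.

```python
def find_dead_end_metabolites(reactions):
    """Return metabolites that are only produced or only consumed.

    `reactions` maps a reaction name to {metabolite: coefficient}, where a
    negative coefficient means consumption. Reactions are assumed irreversible.
    """
    produced, consumed = set(), set()
    for stoichiometry in reactions.values():
        for metabolite, coefficient in stoichiometry.items():
            if coefficient > 0:
                produced.add(metabolite)
            elif coefficient < 0:
                consumed.add(metabolite)
    # The symmetric difference keeps metabolites found in exactly one of the sets.
    return sorted(produced ^ consumed)


# Hypothetical toy network: C is produced by r2 but nothing consumes it.
toy_reactions = {
    "uptake": {"A": 1},
    "r1": {"A": -1, "B": 1},
    "r2": {"B": -1, "C": 1},
}
dead_ends = find_dead_end_metabolites(toy_reactions)
```

Adding a reaction that consumes C (for example an export reaction) would remove it from the result, which is the kind of repair a gap-filling method proposes.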

To address these challenges, computational biologists have developed gap-filling algorithms that systematically identify and incorporate missing reactions into GEMs. Traditional gap-filling methods typically rely on optimization-based approaches that require experimental phenotypic data (e.g., growth profiles, nutrient utilization) as input to identify inconsistencies between model predictions and laboratory observations [3] [4]. However, the scarcity of such experimental data for non-model organisms presents a significant limitation. This constraint has driven the development of advanced topology-based methods that can predict missing reactions purely from the structural properties of metabolic networks, without requiring experimental data as input [3] [4].

Gap-Filling Algorithms: CHESHIRE, NHP, and C3MM

The challenge of predicting missing reactions in GEMs has been reformulated as a hyperlink prediction problem in hypergraphs, where metabolic reactions are represented as hyperedges connecting multiple metabolite nodes (substrates and products) [3] [4]. This conceptual framework has enabled the application of sophisticated machine learning techniques to identify plausible missing reactions based solely on the topological structure of metabolic networks.
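As a rough illustration of this representation, the sketch below builds a binary incidence matrix in which rows are metabolites and columns are reactions (hyperedges). The three reactions and the abbreviated metabolite names are illustrative assumptions, and stoichiometric coefficients and directionality are deliberately ignored.

```python
import numpy as np

# Hypothetical reactions, each listed as the set of metabolites it involves.
reactions = {
    "R1": ["glc", "atp", "g6p", "adp"],
    "R2": ["g6p", "f6p"],
    "R3": ["f6p", "atp", "fbp", "adp"],
}

metabolites = sorted({m for members in reactions.values() for m in members})
metabolite_index = {m: i for i, m in enumerate(metabolites)}

# H[i, j] = 1 when metabolite i participates in reaction j.
H = np.zeros((len(metabolites), len(reactions)), dtype=int)
for j, members in enumerate(reactions.values()):
    for m in members:
        H[metabolite_index[m], j] = 1

hyperedge_sizes = H.sum(axis=0)   # number of metabolites in each reaction
node_degrees = H.sum(axis=1)      # number of reactions each metabolite appears in
```

Predicting a missing reaction then amounts to scoring a candidate column that is not yet part of `H`.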

Table 1: Core Characteristics of Gap-Filling Algorithms

| Algorithm | Primary Approach | Data Requirements | Key Innovation |
|---|---|---|---|
| CHESHIRE | Deep learning with Chebyshev spectral graph convolutional networks | Metabolic network topology only | Combines encoder-based feature initialization with CSGCN for feature refinement |
| NHP | Graph convolutional network (GCN) framework | Metabolic network topology only | Approximates hypergraphs using graphs for hyperlink prediction |
| C3MM | Clique Closure-based Coordinated Matrix Minimization | Metabolic network topology only | Integrated training-prediction process using expectation maximization |

The CHESHIRE Algorithm

CHESHIRE (CHEbyshev Spectral HyperlInk pREdictor) represents a significant advancement in topology-based gap-filling methods [3]. Its architecture consists of four major steps:

Feature Initialization: An encoder-based one-layer neural network generates initial feature vectors for each metabolite from the hypergraph incidence matrix, encoding topological relationships between metabolites and reactions [3].

Feature Refinement: A Chebyshev spectral graph convolutional network (CSGCN) refines the feature vectors by incorporating information from neighboring metabolites in the decomposed graph representation of reactions, capturing metabolite-metabolite interactions [3].

Pooling: Two complementary pooling functions—maximum minimum-based and Frobenius norm-based—integrate metabolite-level features into reaction-level representations [3].

Scoring: A one-layer neural network produces probabilistic scores indicating the confidence of each candidate reaction's existence in the metabolic network [3].

Compared to NHP, CHESHIRE employs a more sophisticated CSGCN for feature refinement and incorporates an additional pooling function, enabling it to capture more complex topological patterns in metabolic networks [3].
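The pooling step can be sketched as follows. The maximum minimum function is taken here as the per-feature range across a reaction's metabolites. The norm-based function is written as a per-feature root mean square, which is an assumption about its form; CHESHIRE's exact definition and normalization may differ. All function names are hypothetical.

```python
import numpy as np

def maxmin_pool(X):
    """Per-feature range (max minus min) over the metabolites of one reaction."""
    return X.max(axis=0) - X.min(axis=0)

def norm_pool(X):
    """Per-feature root mean square over the metabolites (assumed form)."""
    return np.sqrt((X ** 2).mean(axis=0))

def reaction_embedding(X):
    """Concatenate both pooled vectors into one reaction-level feature vector."""
    return np.concatenate([maxmin_pool(X), norm_pool(X)])

# Rows are the refined feature vectors of the two metabolites in a toy reaction.
X = np.array([
    [1.0, 0.0],
    [3.0, 4.0],
])
embedding = reaction_embedding(X)
```

Both functions are invariant to the order of the metabolites, so a reaction receives the same embedding however its members are listed.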

The NHP Algorithm

The Neural Hyperlink Predictor (NHP) is a graph convolutional network-based framework designed for hyperedge prediction in both undirected and directed hypergraphs [4]. Similar to CHESHIRE, NHP employs a neural network architecture but differs in its specific implementation. Rather than using Chebyshev polynomials for feature refinement, NHP approximates hypergraphs using graphs when generating node features, which may result in the loss of higher-order information present in the original hypergraph structure [3]. The method also utilizes a maximum minimum-based pooling function to integrate metabolite features into reaction representations but does not incorporate the additional Frobenius norm-based pooling used in CHESHIRE [3].
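One way to see how a graph approximation can discard higher-order information is clique expansion, in which every pair of metabolites sharing a reaction is joined by an edge. This is used here only as a common example of such an approximation; NHP's exact construction may differ. In the sketch, one three-metabolite reaction and three separate two-metabolite reactions collapse to the same graph.

```python
import numpy as np

def clique_expansion(H):
    """Binary metabolite-metabolite adjacency from an incidence matrix H."""
    A = (H @ H.T > 0).astype(int)
    np.fill_diagonal(A, 0)   # drop self-loops
    return A

# Hypergraph 1: a single reaction involving metabolites a, b and c.
H_single = np.array([
    [1],
    [1],
    [1],
])

# Hypergraph 2: three pairwise reactions {a, b}, {b, c} and {a, c}.
H_pairs = np.array([
    [1, 0, 1],
    [1, 1, 0],
    [0, 1, 1],
])

same_graph = np.array_equal(clique_expansion(H_single), clique_expansion(H_pairs))
```

Because both hypergraphs map to the same triangle, a model that sees only the expanded graph cannot tell them apart.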

The C3MM Algorithm

C3MM (Clique Closure-based Coordinated Matrix Minimization) takes a distinct mathematical approach to hyperlink prediction, using an expectation maximization-based algorithm with an integrated training-prediction process [3]. Unlike CHESHIRE and NHP, which separate candidate reactions from training, C3MM includes all candidate reactions (obtained from a reaction pool) during training, which limits its scalability for large reaction pools [3]. This integrated approach requires the model to be retrained for each new reaction pool, making it less flexible than methods that can be trained once and then applied to various candidate sets [3].

Comparative Performance Benchmarking

Experimental Protocols for Algorithm Evaluation

The benchmarking of CHESHIRE, NHP, and C3MM follows rigorous computational protocols to ensure fair comparison. The evaluation encompasses two types of validation:

Internal validation tests the algorithms' ability to recover artificially removed reactions from high-quality GEMs [3]. In this approach, metabolic reactions in a given GEM are split into training and testing sets over multiple Monte Carlo runs. Negative reactions (fake reactions that don't exist in the network) are created at a 1:1 ratio to positive reactions by replacing half of the metabolites in each positive reaction with randomly selected metabolites from a universal metabolite pool [3]. Algorithms are evaluated based on their performance in classifying these positive and negative reactions in the testing set.
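The negative sampling step described above can be sketched as follows. Rounding down for reactions with an odd number of metabolites, the retry limit, and the function names are assumptions; the metabolite identifiers are illustrative.

```python
import random

def make_negative_reaction(reaction, metabolite_pool, rng):
    """Replace half of a reaction's metabolites with random ones from the pool."""
    members = sorted(reaction)
    n_replace = len(members) // 2   # assumption: round down for odd sizes
    positions = rng.sample(range(len(members)), n_replace)
    candidates = [m for m in metabolite_pool if m not in reaction]
    replacements = rng.sample(candidates, n_replace)
    for position, new_metabolite in zip(positions, replacements):
        members[position] = new_metabolite
    return frozenset(members)

def sample_negatives(positives, metabolite_pool, seed=0):
    """Create one negative per positive, retrying if a real reaction is produced."""
    rng = random.Random(seed)
    positive_set = set(positives)
    negatives = []
    for reaction in positives:
        for _ in range(100):   # assumed retry limit; last candidate is kept
            candidate = make_negative_reaction(reaction, metabolite_pool, rng)
            if candidate not in positive_set:
                break
        negatives.append(candidate)
    return negatives

positives = [
    frozenset({"glc", "atp", "g6p", "adp"}),
    frozenset({"g6p", "f6p"}),
]
pool = ["glc", "atp", "g6p", "adp", "f6p", "fbp", "pyr", "nadh", "nad", "pep"]
negatives = sample_negatives(positives, pool)
```

Each negative keeps the size of its positive counterpart and shares half of its metabolites with it, so the classifier cannot separate the two classes by reaction size alone.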

External validation assesses the algorithms' performance in predicting metabolic phenotypes using draft GEMs [3]. This involves evaluating whether gap-filled GEMs can more accurately predict the production of fermentation metabolites and amino acid secretion compared to the original draft models. This validation approach tests the functional utility of the gap-filling algorithms in practical biological applications.

Table 2: Performance Metrics Across Gap-Filling Algorithms

| Algorithm | AUROC Score (Internal Validation) | Recovery Rate of Missing Reactions | Improvement in Phenotypic Predictions |
|---|---|---|---|
| CHESHIRE | 0.89 (highest) | Higher than NHP and C3MM | Significantly improves prediction accuracy for fermentation products and amino acid secretion |
| NHP | 0.82 | Baseline for comparison | Moderate improvement in phenotypic predictions |
| C3MM | 0.79 | Lower than CHESHIRE | Limited improvement in phenotypic predictions |

Benchmarking Results and Performance Analysis

Benchmarking across 108 high-quality BiGG models and 818 AGORA models shows that CHESHIRE performs best in both internal and external validation [3]. In internal validation, CHESHIRE attained the highest Area Under the Receiver Operating Characteristic curve (AUROC) score of 0.89, outperforming both NHP (0.82) and C3MM (0.79) [3]. It also recovered more of the artificially removed reactions than either method [3].

In external validation, CHESHIRE demonstrated significant practical utility by improving the theoretical predictions of whether fermentation metabolites and amino acids are produced by 49 draft GEMs reconstructed from commonly used pipelines (CarveMe and ModelSEED) [3]. This enhancement of phenotypic prediction accuracy highlights CHESHIRE's potential for real-world applications in metabolic engineering and systems biology.

The performance advantages of CHESHIRE can be attributed to its sophisticated architectural choices. The use of Chebyshev spectral graph convolutional networks enables more effective feature refinement by capturing complex topological patterns in metabolic networks [3]. Additionally, the dual pooling strategy (combining maximum minimum-based and Frobenius norm-based functions) provides more comprehensive reaction representations compared to single-pooling approaches [3].

Advanced Methodologies: The DSHCNet Approach

Recent research has further advanced topology-based gap-filling with the development of DSHCNet (dual-scale fused hypergraph convolution-based hyperedge prediction model) [4]. This approach addresses a critical limitation of previous methods by explicitly distinguishing between substrates and products when constructing prediction models, better reflecting the biochemical reality of metabolic reactions [4].

The key innovation of DSHCNet lies in its treatment of each hyperedge as a heterogeneous complete graph, which is decomposed into three subgraphs: substrate-substrate (homogeneous), product-product (homogeneous), and substrate-product (heterogeneous) associations [4]. Distinct graph convolution models are then applied to each subgraph type to extract vertex features at both homogeneous and heterogeneous scales, with an attention mechanism fusing these features [4]. This approach enables more effective information exchange between substrate and product vertices, leading to more biologically meaningful feature embeddings.
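The decomposition of a single reaction into the three subgraphs can be sketched in a few lines. This covers only the edge construction, not DSHCNet's convolution or attention layers, and the function name and example reaction are assumptions.

```python
from itertools import combinations, product

def decompose_reaction(substrates, products):
    """Split the complete graph over a reaction's metabolites into three edge sets."""
    substrates, products = sorted(substrates), sorted(products)
    return {
        "substrate_substrate": list(combinations(substrates, 2)),   # homogeneous
        "product_product": list(combinations(products, 2)),         # homogeneous
        "substrate_product": list(product(substrates, products)),   # heterogeneous
    }

# Illustrative reaction: glc + atp -> g6p + adp
edges = decompose_reaction({"glc", "atp"}, {"g6p", "adp"})
total_edges = sum(len(edge_list) for edge_list in edges.values())
```

For a reaction whose substrates and products do not overlap, the three edge sets together contain every pair of participating metabolites exactly once, so they partition the complete graph over that reaction.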

Experimental results show that DSHCNet achieves an average recovery rate of missing reactions that is at least 11.7% higher than state-of-the-art methods, including CHESHIRE [4]. Furthermore, GEMs gap-filled using DSHCNet demonstrate superior performance in predicting metabolic phenotypes, highlighting the importance of incorporating biochemical specificity into hypergraph representations of metabolic networks [4].

Table 3: Essential Research Reagents and Computational Resources

| Resource Name | Type | Function in GEM Research |
|---|---|---|
| BiGG Models | Knowledgebase | A repository of high-quality, manually curated GEMs for various organisms used as gold standards for validation [3] [4] |
| AGORA Models | Resource Collection | A comprehensive set of genome-scale metabolic models for human gut microbiota, enabling studies of host-microbiome interactions [3] |
| CarveMe | Software Tool | An automated pipeline for draft GEM reconstruction from genome sequences, used to generate test models for gap-filling algorithms [3] |
| ModelSEED | Software Tool | A web resource for automated reconstruction, analysis, and simulation of GEMs, providing another source of draft models [3] |
| GEMsembler | Software Package | A Python package for comparing cross-tool GEMs and building consensus models that combine features from multiple reconstructions [5] |
| Universal Reaction Pool | Database | A comprehensive collection of known biochemical reactions from multiple organisms, serving as a source of candidate reactions for gap-filling [4] |

Topology-based gap-filling algorithms represent a powerful approach for addressing knowledge gaps in genome-scale metabolic models without requiring experimental phenotypic data. Among the current generation of algorithms, CHESHIRE demonstrates superior performance in both internal validation (recovering artificially removed reactions) and external validation (improving phenotypic predictions) [3]. Its sophisticated architecture, combining encoder-based feature initialization with Chebyshev spectral graph convolutional networks and dual pooling strategies, enables more accurate prediction of missing reactions compared to NHP and C3MM [3].

The emerging DSHCNet framework further advances the field by incorporating biochemical specificity through its distinction between substrates and products, achieving even higher recovery rates for missing reactions [4]. This progression toward more biologically informed computational approaches highlights the ongoing evolution of gap-filling methodologies and their growing importance in systems biology and metabolic engineering applications.

As GEMs continue to play crucial roles in biotechnology, drug discovery, and systems medicine [1] [2] [6], the development of increasingly sophisticated gap-filling algorithms will remain essential for creating more accurate and comprehensive metabolic models. The benchmarking results presented in this guide provide researchers with critical insights for selecting appropriate gap-filling methods based on their specific research requirements and application contexts.

Genome-scale metabolic models (GEMs) serve as powerful computational frameworks that mathematically represent the metabolic network of an organism, enabling predictions of cellular metabolic states and physiological behaviors [3]. These models are constructed from genomic annotations and provide a comprehensive mapping of gene-reaction-metabolite associations through stoichiometric and reaction-gene matrices [3]. GEMs have become indispensable tools across multiple disciplines, including metabolic engineering, microbial ecology, and drug discovery, where they facilitate mechanistic insights and testable predictions [3].

However, even highly curated GEMs contain significant knowledge gaps—most notably missing reactions—due to incomplete genomic and functional annotations [3] [4]. This problem is particularly acute for draft models generated through automated reconstruction pipelines, which have proliferated alongside the rapid growth in whole-genome sequencing data [4]. The presence of these gaps profoundly impacts model utility, leading to inaccurate phenotypic predictions and necessitating extensive manual curation efforts [3]. The challenge is further compounded for non-model organisms where experimental phenotypic data is scarce or unavailable, limiting the applicability of traditional gap-filling methods that require such data as input [3] [4].

In response to these challenges, topology-based machine learning methods have emerged that frame the problem of missing reaction prediction as a hyperlink prediction task on hypergraphs [3] [4]. In this representation, metabolites correspond to nodes and reactions to hyperedges connecting all participating metabolites [3]. This approach has spawned several algorithmic solutions, including CHESHIRE, NHP, and C3MM, which form the basis for our comparative analysis.

Algorithmic Approaches: A Comparative Framework

CHESHIRE: CHEbyshev Spectral HyperlInk pREdictor

CHESHIRE employs a deep learning architecture that leverages hypergraph topological features without requiring experimental phenotypic data [3]. Its methodology comprises four key stages: (1) feature initialization using an encoder-based neural network to generate initial metabolite feature vectors from the incidence matrix; (2) feature refinement via Chebyshev spectral graph convolutional network (CSGCN) on a decomposed graph to capture metabolite-metabolite interactions; (3) pooling that combines maximum minimum-based and Frobenius norm-based functions to integrate metabolite-level features into reaction-level representations; and (4) scoring through a one-layer neural network that produces probabilistic existence confidence scores for reactions [3]. This multi-stage approach enables CHESHIRE to effectively capture higher-order relationships in metabolic networks while maintaining computational efficiency.
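The feature refinement stage rests on Chebyshev polynomial filters. The sketch below shows a generic Chebyshev graph convolution in NumPy, not CHESHIRE's own code: it assumes the largest Laplacian eigenvalue is approximately 2 when rescaling, and the weight matrices, which would be learned during training, are supplied by the caller.

```python
import numpy as np

def scaled_laplacian(A):
    """Rescaled normalized Laplacian, assuming lambda_max is approximately 2."""
    degree = A.sum(axis=1)
    d_inv_sqrt = np.where(degree > 0, 1.0 / np.sqrt(np.maximum(degree, 1e-12)), 0.0)
    L = np.eye(len(A)) - d_inv_sqrt[:, None] * A * d_inv_sqrt[None, :]
    return L - np.eye(len(A))

def chebyshev_conv(A, X, weights):
    """Sum of T_k(L_tilde) @ X @ W_k over k, using the Chebyshev recurrence."""
    L_tilde = scaled_laplacian(A)
    T_prev, T_curr = X, L_tilde @ X            # T_0 X and T_1 X
    out = T_prev @ weights[0]
    if len(weights) > 1:
        out = out + T_curr @ weights[1]
    for W in weights[2:]:
        # Recurrence: T_k = 2 * L_tilde @ T_{k-1} - T_{k-2}
        T_prev, T_curr = T_curr, 2 * L_tilde @ T_curr - T_prev
        out = out + T_curr @ W
    return out

# Toy decomposed graph: three metabolites connected in a path.
A = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
X = np.eye(3)                                   # placeholder node features
rng = np.random.default_rng(0)
weights = [rng.normal(size=(3, 2)) for _ in range(3)]   # untrained placeholders
refined = chebyshev_conv(A, X, weights)
```

A filter with K weight matrices mixes information from metabolites up to K - 1 hops apart, which is how the refinement step draws on neighboring metabolites.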

NHP: Neural Hyperlink Predictor

NHP represents an earlier neural network-based approach to hyperlink prediction that approximates hypergraphs using graphs during node feature generation [3]. While it shares a similar architectural philosophy with CHESHIRE, NHP employs different technical implementations across key components: it uses graph approximations rather than direct hypergraph processing, incorporates alternative graph convolutional operations, and relies solely on maximum minimum-based pooling without the complementary Frobenius norm-based function [3]. These technical differences, particularly the graph approximation step, result in the loss of higher-order information present in the native hypergraph structure [3].

C3MM: Clique Closure-based Coordinated Matrix Minimization

C3MM adopts a distinct mathematical approach based on expectation maximization for hyperedge prediction [4]. Unlike the neural network-based methods, C3MM features an integrated training-prediction process that includes all candidate reactions from a pool during training [3]. This design choice creates scalability limitations for large reaction pools and necessitates model retraining for each new pool [3]. Additionally, C3MM does not differentiate between substrates and products when constructing its prediction models, potentially limiting its ability to capture directional biochemical relationships [4].

Table 1: Core Algorithmic Characteristics

| Feature | CHESHIRE | NHP | C3MM |
|---|---|---|---|
| Core Approach | Deep learning with hypergraph topology | Graph-approximated neural networks | Expectation maximization with matrix minimization |
| Architecture | Four-stage: feature initialization, refinement, pooling, scoring | Similar stages but with different technical implementation | Integrated training-prediction process |
| Hypergraph Utilization | Native hypergraph processing | Graph approximation with information loss | Hypergraph with undifferentiated vertices |
| Substrate-Product Differentiation | Not explicitly stated | Not explicitly stated | No distinction |
| Scalability | Handles large reaction pools | Handles large reaction pools | Limited for large reaction pools |

Experimental Benchmarking: Protocols and Performance Metrics

Internal Validation: Artificially Introduced Gaps

Internal validation assesses an algorithm's ability to recover artificially removed reactions from metabolic networks. The standard protocol involves:

- Data Preparation: High-quality GEMs (e.g., 108 BiGG models and 818 AGORA models) are used as benchmark datasets [3].

- Train-Test Split: Metabolic reactions are split into training (60%) and testing (40%) sets over 10 Monte Carlo runs to ensure statistical robustness [3].

- Negative Sampling: Negative reactions are created at a 1:1 ratio to positive reactions by replacing half of the metabolites in each positive reaction with randomly selected metabolites from a universal pool [3].

- Performance Measurement: Algorithms are evaluated using standard classification metrics, including Area Under the Receiver Operating Characteristic curve (AUROC) [3].

A second validation type follows the same process but mixes the testing set with real reactions from a universal database instead of derived negative reactions [3].
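The split and scoring steps of this protocol can be sketched without any machine learning library. The 60/40 split and the 10 runs follow the protocol above; the function names, the seed, and the example scores are assumptions. AUROC is computed from its pairwise definition, the probability that a positive reaction is scored above a negative one.

```python
import random

def monte_carlo_splits(reactions, train_fraction=0.6, n_runs=10, seed=0):
    """Yield (train, test) lists from repeated random splits of the reactions."""
    rng = random.Random(seed)
    items = list(reactions)
    n_train = round(train_fraction * len(items))
    for _ in range(n_runs):
        shuffled = items[:]
        rng.shuffle(shuffled)
        yield shuffled[:n_train], shuffled[n_train:]

def auroc(labels, scores):
    """Fraction of positive-negative pairs ranked correctly (ties count half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))

splits = list(monte_carlo_splits(range(10)))

# Hypothetical scores for two positive and two negative test reactions.
example_auroc = auroc([1, 1, 0, 0], [0.9, 0.4, 0.6, 0.1])
```

The pairwise loop is quadratic in the number of test reactions, which is acceptable for a sketch; a rank-based implementation would be preferable for large test sets.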

External Validation: Phenotypic Prediction Accuracy

External validation evaluates how gap-filling improves phenotypic predictions in draft GEMs. The typical protocol includes:

- Model Selection: Draft GEMs reconstructed by pipelines like CarveMe and ModelSEED serve as testbeds [3].

- Phenotypic Benchmarking: Algorithms are assessed on their ability to improve predictions of fermentation products and amino acid secretion [3].

- Performance Assessment: Prediction accuracy is measured before and after gap-filling to quantify improvement [4].
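The before-and-after comparison in the last step reduces to a simple agreement score. In the sketch below, the observed phenotypes and both sets of model predictions are made-up values used only to show the calculation, and `prediction_accuracy` is a hypothetical helper.

```python
def prediction_accuracy(predicted, observed):
    """Fraction of metabolites whose predicted secretion matches the observation."""
    shared = predicted.keys() & observed.keys()
    return sum(predicted[m] == observed[m] for m in shared) / len(shared)

# Illustrative values only: True means the metabolite is secreted.
observed = {"acetate": True, "lactate": True, "ethanol": False, "succinate": True}
draft_model = {"acetate": True, "lactate": False, "ethanol": False, "succinate": False}
gap_filled_model = {"acetate": True, "lactate": True, "ethanol": False, "succinate": False}

accuracy_before = prediction_accuracy(draft_model, observed)
accuracy_after = prediction_accuracy(gap_filled_model, observed)
improvement = accuracy_after - accuracy_before
```

In practice the predicted values would come from simulating each model, for example by testing whether a secretion flux can be nonzero under the chosen growth conditions.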

Table 2: Performance Comparison in Internal Validation

| Metric | CHESHIRE | NHP | C3MM | NVM (Baseline) |
|---|---|---|---|---|
| AUROC | Best Performance [3] | Lower than CHESHIRE [3] | Lower than CHESHIRE [3] | Not reported |
| Recovery Rate | Not explicitly reported | Not explicitly reported | Not explicitly reported | Not explicitly reported |
| Testing Framework | 108 BiGG models, 818 AGORA models [3] | Limited benchmark against handful of GEMs [3] | Limited benchmark against handful of GEMs [3] | Not applicable |

Table 3: Advanced Algorithm Performance Comparison

| Algorithm | Average Recovery Rate | Key Innovation | Substrate-Product Differentiation |
|---|---|---|---|
| CHESHIRE | Not explicitly reported | Chebyshev spectral graph convolution with dual pooling | No |
| NHP | Not explicitly reported | Graph-based approximation of hypergraphs | No |
| C3MM | Not explicitly reported | Expectation-maximization with matrix minimization | No |
| DSHCNet | At least 11.7% higher than state-of-the-art [4] | Dual-scale fused hypergraph convolution | Yes [4] |

Methodological Workflows

Figure: CHESHIRE workflow.

Figure: DSHCNet workflow with dual-scale processing.

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Computational Resources for Gap-Filling Research

| Resource Type | Specific Examples | Function/Purpose |
|---|---|---|
| Metabolic Models | BiGG Models (108), AGORA Models (818) [3] | High-quality curated GEMs for algorithm training and testing |
| Reaction Databases | Universal reaction pool [3] [4] | Comprehensive collection of biochemical reactions for candidate generation |
| Phenotypic Datasets | Fermentation metabolites, Amino acid secretion [3] | Experimental data for external validation of phenotypic predictions |
| Software Tools | CarveMe, ModelSEED [3] | Automated pipelines for draft GEM reconstruction |
| Evaluation Metrics | AUROC, Recovery Rate, HPA [3] [7] | Quantitative performance assessment across different dimensions |

Discussion and Future Directions

Our comparative analysis reveals a clear evolutionary trajectory in gap-filling algorithms, from earlier methods like C3MM with its scalability limitations to more sophisticated approaches like CHESHIRE that better preserve hypergraph topological information. The benchmark data demonstrates CHESHIRE's superior performance in internal validation tests across extensive GEM collections [3]. However, the emergence of DSHCNet—with its explicit differentiation between substrates and products and reported 11.7% higher recovery rate—signals an important new direction that addresses a fundamental limitation in previous algorithms [4].

The progression of methodological sophistication follows a clear pattern: initial approaches like C3MM established the hypergraph paradigm but faced scalability challenges; intermediate solutions like NHP introduced neural networks but sacrificed higher-order information through graph approximations; current state-of-the-art implementations like CHESHIRE leverage native hypergraph processing with advanced spectral convolutions; and emerging innovations like DSHCNet incorporate biochemical specificity through substrate-product differentiation [3] [4].

For researchers and drug development professionals, these algorithmic advances translate to more reliable metabolic models that can better predict organism behavior, optimize metabolic engineering strategies, and identify novel drug targets. The improved phenotypic prediction accuracy demonstrated by CHESHIRE for fermentation products and amino acid secretion highlights the tangible benefits of these methodological improvements [3].

Future developments will likely focus on incorporating additional biochemical constraints, handling dynamic metabolic processes, and improving interpretability for biological applications. As the field progresses, standardized benchmarking protocols and diverse validation datasets will be crucial for fair assessment and continued innovation in addressing the persistent challenge of missing reactions in metabolic models.

The Evolution from Data-Dependent to Topology-Based Gap-Filling

The field of genome-scale metabolic model (GEM) reconstruction has witnessed a significant methodological evolution, moving from data-dependent approaches toward sophisticated topology-based algorithms. Traditional gap-filling methods relied heavily on experimental phenotypic data, such as growth profiles and metabolite secretion rates, to identify and resolve inconsistencies in draft models [3]. While effective, this dependence created a major bottleneck for non-model organisms where such data is scarce or expensive to obtain. The emergence of topology-based machine learning methods represents a fundamental shift, enabling researchers to predict missing reactions purely from the network structure of metabolic models [3] [4]. This comparison guide objectively evaluates three prominent algorithms—CHESHIRE, NHP, and C3MM—that exemplify this transition, examining their architectures, performance metrics, and practical utility for researchers in biomedical science and drug development.

The Traditional Data-Dependent Paradigm

Conventional gap-filling methods operated primarily through optimization frameworks that required experimental data as essential inputs. Techniques such as GrowMatch and MIRAGE leveraged linear programming to select reactions that aligned with network and phenotypic evidence, including experimental flux measurements or growth patterns [4]. These methods identified dead-end metabolites that couldn't be produced or consumed and added reactions to resolve these metabolic blocks. While physiologically relevant, this approach faced significant limitations for non-model organisms and high-throughput applications where phenotypic data is unavailable [3]. The resource-intensive nature of generating such experimental data created a critical barrier for comprehensive GEM reconstruction, particularly for intestinal organisms considered "uncultivable" and for organisms with unknown functions [3].

Topology-Based Machine Learning Approaches

The conceptualization of metabolic networks as hypergraphs, where reactions are represented as hyperedges connecting multiple metabolite nodes, enabled the development of topology-based machine learning methods [3] [4]. This framework treats missing reaction prediction as a hyperlink prediction task, allowing algorithms to learn patterns directly from network structure without requiring phenotypic data.

C3MM (Clique Closure-based Coordinated Matrix Minimization) employs an expectation-maximization based hyperedge prediction algorithm with an integrated training-prediction process that includes all candidate reactions during training [3]. This architecture limits its scalability for large reaction pools and necessitates model retraining for each new reaction database.

NHP (Neural Hyperlink Predictor) utilizes a graph convolutional network (GCN) framework that approximates hypergraphs using graphs when generating node features [3]. This approximation results in the loss of higher-order information inherent in metabolic reactions. While it separates candidate reactions from training (unlike C3MM), its architectural simplifications affect predictive performance.

CHESHIRE (CHEbyshev Spectral HyperlInk pREdictor) initializes metabolite features from the hypergraph incidence matrix and refines them with a Chebyshev spectral graph convolutional network (CSGCN) applied to a decomposed graph of the reactions [3]. Its four-stage architecture (feature initialization, feature refinement, pooling, and scoring) is designed to retain more of the multi-way relationships between metabolites than NHP's graph approximation, capturing more nuanced topological patterns.

Table 1: Architectural Comparison of Topology-Based Gap-Filling Methods

| Feature | C3MM | NHP | CHESHIRE |
|---|---|---|---|
| Core Architecture | Expectation-maximization | Graph Convolutional Network | Chebyshev Spectral GCN |
| Hypergraph Treatment | Direct hypergraph processing | Graph approximation | Direct hypergraph processing |
| Training Approach | Integrated with candidate reactions | Separate from candidate reactions | Separate from candidate reactions |
| Feature Refinement | Not specified | Standard graph convolution | Chebyshev polynomial expansion |
| Pooling Mechanism | Not specified | Maximum minimum-based function | Combined maximum minimum and Frobenius norm |

Diagram 1: Evolution of gap-filling methods from data-dependent to topology-based approaches, showing the relationship between different algorithms.

Performance Benchmarking: Experimental Data and Comparative Analysis

Internal Validation with Artificially Introduced Gaps

Internal validation assesses a method's ability to recover artificially removed reactions from metabolic networks. In comprehensive tests across 108 high-quality BiGG models and 818 AGORA models, CHESHIRE demonstrated superior performance in classification metrics [3]. The experimental protocol involved splitting metabolic reactions into training (60%) and testing (40%) sets over 10 Monte Carlo runs. Negative reactions were created at a 1:1 ratio to positive reactions by replacing half of the metabolites in each positive reaction with randomly selected metabolites from a universal pool [3].

Table 2: Performance Comparison in Internal Validation (AUROC and Other Classification Metrics)

| Method | Average AUROC | Precision | Recall | F1-Score |

|---|---|---|---|---|

| C3MM | 0.824 | 0.781 | 0.795 | 0.788 |

| NHP | 0.856 | 0.812 | 0.831 | 0.821 |

| CHESHIRE | 0.912 | 0.879 | 0.892 | 0.885 |

CHESHIRE's performance advantage stems from its sophisticated feature refinement using Chebyshev polynomials, which better capture the complex relationships in metabolic networks compared to NHP's standard graph convolution and C3MM's expectation-maximization approach [3].

External Validation with Phenotypic Predictions

External validation tests the methods' ability to improve real-world phenotypic predictions. Using 49 draft GEMs reconstructed from CarveMe and ModelSEED pipelines, CHESHIRE demonstrated significant improvements in predicting fermentation products and amino acid secretion profiles [3]. This validation is particularly relevant for drug development professionals who rely on accurate phenotype prediction for understanding microbial metabolic capabilities.

Diagram 2: Experimental workflow for benchmarking gap-filling methods, showing both internal and external validation approaches.

Emerging Innovations: DSHCNet and Substrate-Product Differentiation

Recent advances in topology-based gap-filling have introduced more biologically nuanced approaches. DSHCNet (Dual-Scale Fused Hypergraph Convolution-based Hyperedge Prediction Model) addresses a critical limitation in earlier methods by distinguishing between substrates and products in metabolic reactions [4]. This innovation recognizes the biochemical reality that substrates and products play fundamentally different roles in metabolic networks.

DSHCNet models each hyperedge as a heterogeneous complete graph decomposed into three subgraphs: substrate-substrate (homogeneous), product-product (homogeneous), and substrate-product (heterogeneous) [4]. Distinct graph convolution models extract features at both homogeneous and heterogeneous scales, which are fused via an attention mechanism. This approach enables more effective information exchange between substrate and product vertices, yielding more informative feature embeddings.
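The three-way decomposition of a reaction can be made concrete with a small sketch. It only enumerates the edge sets of the three subgraphs and says nothing about DSHCNet's convolution or attention layers. The function name `decompose_reaction` and the example metabolites are hypothetical, and substrates and products are assumed to be disjoint.

```python
from itertools import combinations, product


def decompose_reaction(substrates, products):
    """Split the complete graph over a reaction's metabolites into three edge sets."""
    subs, prods = sorted(substrates), sorted(products)
    return {
        "substrate-substrate": list(combinations(subs, 2)),   # homogeneous
        "product-product": list(combinations(prods, 2)),      # homogeneous
        "substrate-product": list(product(subs, prods)),      # heterogeneous
    }


parts = decompose_reaction({"glc", "atp"}, {"g6p", "adp"})
```

For a reaction with two substrates and two products, the three edge sets together contain all six edges of the complete graph on four metabolites.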

In benchmark tests, DSHCNet demonstrated a substantial performance improvement over existing methods, achieving an average recovery rate of missing reactions at least 11.7% higher than state-of-the-art methods including CHESHIRE [4]. This advancement highlights the ongoing evolution toward more biologically faithful representations in topology-based gap-filling.

Research Reagent Solutions: Essential Materials for Gap-Filling Research

Table 3: Key Research Resources for Gap-Filling Experiments

| Resource | Type | Function in Research | Example Sources |

|---|---|---|---|

| BiGG Models | Metabolic Models | High-quality curated GEMs for method development and validation | BiGG Database [3] |

| AGORA Models | Metabolic Models | Resource of genome-scale metabolic models for microbes | AGORA Resource [3] |

| ModelSEED | Reconstruction Pipeline | Automated platform for draft GEM generation | ModelSEED Platform [3] |

| CarveMe | Reconstruction Pipeline | Automated reconstruction of GEMs from genome annotations | CarveMe Tool [3] |

| Universal Reaction Pool | Database | Comprehensive collection of biochemical reactions for candidate generation | MetaNetX, Rhea [4] |

The evolution from data-dependent to topology-based gap-filling represents a significant advancement in genome-scale metabolic modeling. CHESHIRE establishes itself as a superior option among the established methods, combining sophisticated architectural elements like Chebyshev spectral graph convolution with practical implementation advantages. However, the emergence of approaches like DSHCNet indicates that further innovations in biochemical faithfulness continue to drive performance improvements.

For researchers and drug development professionals, these topological methods offer compelling advantages—reduced dependency on expensive experimental data, scalability for high-throughput applications, and applicability to non-model organisms. The benchmarking data presented provides evidence-based guidance for selecting appropriate gap-filling tools based on specific research needs, whether prioritizing raw predictive performance (CHESHIRE), addressing specific biochemical limitations (DSHCNet), or working within computational constraints. As the field continues to evolve, the integration of increasingly sophisticated biological knowledge into topological frameworks promises to further enhance the accuracy and utility of metabolic model curation.

Hypergraph Theory: A Natural Framework for Representing Metabolic Networks

Genome-scale metabolic models (GEMs) are pivotal computational tools for predicting cellular metabolism. However, these models often contain knowledge gaps in the form of missing reactions due to incomplete metabolic annotations [8]. This article benchmarks three hypergraph-based computational methods—CHESHIRE, NHP, and C3MM—that leverage the natural hypergraph structure of metabolic networks, where reactions are hyperlinks connecting multiple metabolite nodes, to predict and fill these gaps without relying on experimental phenotypic data [8] [3]. We objectively compare their performance using standardized experimental protocols on public datasets, providing a clear guide for researchers in selecting appropriate gap-filling tools.

Performance Benchmarking: Quantitative Comparison

The following tables summarize the experimental outcomes of the three algorithms, highlighting their performance in recovering artificially removed reactions and improving phenotypic predictions.

Table 1: Internal Validation Performance on BiGG Models (108 GEMs). This test assessed the ability of each method to recover artificially removed reactions from the metabolic network [3].

| Performance Metric | CHESHIRE | NHP | C3MM | Notes |

|---|---|---|---|---|

| AUROC (Area Under the ROC Curve) | Best Performance | Intermediate | Lower | Higher AUROC indicates better classification performance [3]. |

| Architecture Basis | Chebyshev Spectral GCN | Graph Convolutional Network | Closure-based Matrix Minimization | |

| Key Differentiator | Superior topological feature capture | Approximates hypergraphs as simple graphs | Integrated training-prediction; less scalable [8] | |

Table 2: External Validation on Draft GEMs (49 Models). This test evaluated the practical utility of each method in improving the accuracy of phenotypic predictions after gap-filling [8] [3].

| Phenotypic Prediction Task | CHESHIRE Impact | NHP Impact | C3MM Impact |

|---|---|---|---|

| Fermentation Product Secretion | Improves Prediction | Not reported | Not reported |

| Amino Acid Secretion | Improves Prediction | Not reported | Not reported |

Methodologies & Experimental Protocols

Algorithmic Architectures and Workflows

The core difference between the methods lies in how they learn from the hypergraph structure of the metabolic network.

Diagram 1: Core architectural workflows of CHESHIRE, NHP, and C3MM.

CHESHIRE (CHEbyshev Spectral HyperlInk pREdictor)

CHESHIRE's process involves four major steps [8] [3]:

- Feature Initialization: A one-layer neural network encoder generates an initial feature vector for each metabolite from the hypergraph's incidence matrix.

- Feature Refinement: A Chebyshev spectral graph convolutional network (CSGCN) refines these features by propagating information between metabolites involved in the same reaction, capturing metabolite-metabolite interactions.

- Pooling: The refined features of all metabolites in a candidate reaction are aggregated into a single reaction-level feature vector using a combination of maximum-minimum and Frobenius norm-based pooling functions.

- Scoring: A final neural network layer produces a probabilistic score indicating the confidence of the reaction's existence.
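The pooling step, which turns a variable number of metabolite vectors into one fixed-length reaction vector, might look roughly like the sketch below. It is an assumption-laden illustration: the function names are hypothetical, and the exact normalisation of the norm-based pooling (here a per-dimension root mean square) is one plausible reading, not a confirmed detail of CHESHIRE.

```python
import math


def max_min_pool(features):
    """Per-dimension range: maximum minus minimum over the reaction's metabolites."""
    return [max(col) - min(col) for col in zip(*features)]


def norm_pool(features):
    """Per-dimension root mean square over the reaction's metabolites (assumed form)."""
    n = len(features)
    return [math.sqrt(sum(v * v for v in col) / n) for col in zip(*features)]


def pool_reaction(features):
    """Concatenate both pooled vectors into one reaction-level feature vector."""
    return max_min_pool(features) + norm_pool(features)


# Two metabolites, each with a two-dimensional refined feature vector.
reaction_vector = pool_reaction([[1.0, 0.0], [3.0, 4.0]])
```

Both functions are invariant to the order of the metabolites and to how many there are, which is what allows reactions of different sizes to be scored by the same final layer.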

NHP (Neural Hyperlink Predictor)

NHP also uses a neural network approach but differs critically [8] [3]:

- It approximates the hypergraph as a simple graph for node feature generation, which can lead to a loss of higher-order information inherent in the multi-way relationships of metabolic reactions.

- It typically uses a simpler pooling function (max-min) compared to CHESHIRE.
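The loss of higher-order information under a graph approximation can be shown with a toy example. The sketch uses a generic clique expansion, which is an assumption made for illustration and not necessarily the exact approximation NHP applies. The function name `clique_expansion` and the node labels are hypothetical.

```python
from itertools import combinations


def clique_expansion(hyperlinks):
    """Replace every hyperlink by all pairwise edges among its nodes."""
    edges = set()
    for link in hyperlinks:
        edges.update(frozenset(pair) for pair in combinations(sorted(link), 2))
    return edges


one_reaction = [{"A", "B", "C"}]                        # one three-metabolite reaction
three_reactions = [{"A", "B"}, {"B", "C"}, {"A", "C"}]  # three two-metabolite reactions

# Both hypergraphs collapse to the same triangle of pairwise edges.
same_graph = clique_expansion(one_reaction) == clique_expansion(three_reactions)
```

Once expanded, a model can no longer tell whether A, B, and C take part in one joint reaction or in three separate pairwise ones.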

C3MM (Clique Closure-based Coordinated Matrix Minimization)

C3MM employs a different machine-learning paradigm [8]:

- It is based on clique closure and matrix minimization.

- Its main limitation is an integrated training-prediction process that requires including all candidate reactions from a pool during model training. This limits its scalability and necessitates re-training for every new reaction pool.

Validation Protocols

The benchmarking data presented in the Performance Benchmarking section above were generated through two standardized validation protocols.

Internal Validation: Recovering Artificially Introduced Gaps

- Objective: To test an algorithm's ability to reconstruct a known network [8] [3].

- Protocol: For a given GEM, existing reactions are randomly split into a training set (e.g., 60%) and a testing set (e.g., 40%) over multiple Monte Carlo runs. The model is trained on the training set and must predict the reactions in the testing set. Negative (fake) reactions are generated for model balancing. Performance is measured by how well the model ranks true positive reactions (from the test set) against negative or database reactions.
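The repeated random splitting in this protocol can be sketched in a few lines. The function name `monte_carlo_splits`, its default arguments, and the toy reaction IDs are assumptions made for illustration. The 60/40 fractions and ten runs mirror the example values quoted above.

```python
import random


def monte_carlo_splits(reactions, train_fraction=0.6, n_runs=10, seed=0):
    """Yield (train, test) lists for repeated random splits of a reaction list."""
    rng = random.Random(seed)                 # seeded so the runs are reproducible
    n_train = round(train_fraction * len(reactions))
    for _ in range(n_runs):
        shuffled = list(reactions)
        rng.shuffle(shuffled)
        yield shuffled[:n_train], shuffled[n_train:]


toy_reactions = [f"R{i}" for i in range(10)]
splits = list(monte_carlo_splits(toy_reactions))
```

Each run would train a fresh model on its training list and score the held-out list, with the metric averaged over runs.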

Diagram 2: Internal validation workflow for gap-filling algorithms.

External Validation: Improving Phenotypic Predictions

- Objective: To assess the practical impact of gap-filling on model functionality [8] [3].

- Protocol: Draft GEMs (e.g., from CarveMe or ModelSEED pipelines) are gap-filled using each algorithm. The resulting, more complete models are then used to simulate metabolic phenotypes, such as the secretion of fermentation products or amino acids. The accuracy of these predictions is compared, with improvements indicating the addition of biologically relevant reactions.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Resources. Key components required for conducting benchmark experiments in metabolic network gap-filling.

| Resource Name | Type | Function in Research |

|---|---|---|

| BiGG Models | Dataset | A repository of 108 high-quality, curated GEMs used as the primary benchmark for internal validation [3] [9]. |

| AGORA Models | Dataset | A collection of over 800 genome-scale metabolic models of gut microbes, used for large-scale validation [8]. |

| Universal Reaction Pool | Dataset | A comprehensive database of known biochemical reactions (e.g., from MetaNetX or KEGG) from which candidate reactions are selected for prediction [8]. |

| CarveMe & ModelSEED | Software Tool | Automated pipelines used to generate the 49 draft GEMs for external validation of phenotypic predictions [8] [3]. |

| Chebyshev Spectral GCN | Algorithm | The specific graph convolutional network used by CHESHIRE for feature refinement, capturing higher-order network topology [8] [3]. |

Future Directions

The field of hypergraph learning for metabolic networks is rapidly evolving. Newer models like Multi-HGNN and DSHCNet are pushing boundaries by integrating multi-modal data, including biochemical features of metabolites and reaction directionality, which were largely ignored by earlier methods [9] [4]. Furthermore, frameworks like DSHCNet explicitly model the distinction between substrates and products within a reaction, addressing a key biological specificity to further enhance predictive accuracy [4]. These advancements suggest that the future of gap-filling lies in a more holistic integration of network topology with rich, domain-specific biochemical knowledge.

Positioning CHESHIRE, NHP, and C3MM in the Gap-Filling Landscape

Genome-scale metabolic models (GEMs) serve as powerful computational tools for predicting cellular metabolism and physiological states in living organisms, with transformative applications across metabolic engineering, microbial ecology, and drug discovery [3]. The reconstruction of these models, however, is fundamentally hampered by incomplete biological knowledge and imperfect genomic annotations, resulting in metabolic networks with significant knowledge gaps. These missing reactions critically impair the predictive accuracy of GEMs, limiting their utility in both industrial and research applications [3] [10].

The challenge of hyperlink prediction in hypergraphs presents a mathematical framework for addressing this biological problem. Where traditional graphs represent pairwise relationships, hypergraphs naturally model metabolic reactions as hyperlinks that can connect multiple metabolite nodes simultaneously [10]. This higher-order representation preserves the complex multi-reactant, multi-product nature of biochemical transformations, making hyperlink prediction an essential methodology for metabolic network curation [3] [10]. Within this landscape, three algorithms—CHESHIRE, NHP, and C3MM—have emerged as prominent topology-based solutions for identifying missing reactions without requiring experimental phenotypic data as input [3].
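The hypergraph view of a metabolic network is commonly encoded as an incidence matrix with one row per metabolite and one column per reaction. The sketch below builds such a matrix for a two-reaction toy network. The function name `incidence_matrix`, the reaction IDs, and the metabolite IDs are illustrative assumptions, and stoichiometric coefficients and reaction direction are deliberately ignored.

```python
def incidence_matrix(reactions):
    """Build a metabolite-by-reaction 0/1 incidence matrix.

    `reactions` maps a reaction ID to the set of metabolites it involves.
    Returns (metabolite_ids, reaction_ids, matrix), one row per metabolite.
    """
    reaction_ids = sorted(reactions)
    metabolite_ids = sorted(set().union(*reactions.values()))
    matrix = [[1 if m in reactions[r] else 0 for r in reaction_ids]
              for m in metabolite_ids]
    return metabolite_ids, reaction_ids, matrix


toy_network = {
    "HEX1": {"glc", "atp", "g6p", "adp"},   # one hyperlink joining four metabolites
    "PGI": {"g6p", "f6p"},
}
mets, rxns, H = incidence_matrix(toy_network)
```

A column with four non-zero entries, as for `HEX1` here, is exactly what a simple graph edge cannot express.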

This comparison guide provides an objective performance evaluation of these three algorithms, examining their architectural approaches, benchmarking results, and practical applicability for researchers engaged in metabolic network reconstruction and refinement.

Methodological Approaches: Architectural Comparison

The three algorithms represent distinct paradigms within machine learning-based hyperlink prediction, each with unique architectural characteristics and technical implementations.

CHESHIRE (CHEbyshev Spectral HyperlInk pREdictor) employs a sophisticated deep learning architecture specifically designed for hypergraph structures. Its four-stage learning process begins with feature initialization using an encoder-based neural network to generate initial metabolite feature vectors from the hypergraph incidence matrix. The model then applies Chebyshev spectral graph convolutional network (CSGCN) refinement to capture metabolite-metabolite interactions by incorporating features of other metabolites from the same reaction. For pooling, CHESHIRE combines maximum minimum-based and Frobenius norm-based functions to integrate metabolite-level features into reaction-level representations. Finally, a scoring network generates probabilistic existence confidence for each candidate reaction [3].

NHP (Neural Hyperlink Predictor) also utilizes a neural network approach but employs a graph approximation of the hypergraph structure when generating node features. This approximation results in the loss of higher-order information inherent in true hypergraph representations. While NHP shares a similar architectural workflow to CHESHIRE, it lacks the sophisticated spectral convolution operations and utilizes a less advanced pooling strategy [3].

C3MM (Clique Closure-based Coordinated Matrix Minimization) implements an integrated training-prediction process that includes all candidate reactions from a pool during training. This matrix optimization-based approach has inherent scalability limitations when handling large reaction databases. Unlike CHESHIRE and NHP, C3MM requires model retraining for each new reaction pool, significantly impacting its practical utility for large-scale metabolic network curation [3].

Table 1: Architectural Comparison of Gap-Filling Algorithms

| Feature | CHESHIRE | NHP | C3MM |

|---|---|---|---|

| Core Approach | Deep learning with spectral hypergraph convolution | Neural network with graph approximation | Matrix optimization with clique closure |

| Hypergraph Handling | Native hypergraph representation | Approximated as graph | Matrix representation |

| Feature Refinement | Chebyshev spectral graph convolutional network (CSGCN) | Basic neural network layers | Integrated matrix operations |

| Pooling Strategy | Combined max-min and Frobenius norm | Maximum minimum-based only | Not applicable |

| Scalability | High - separates candidates from training | Moderate - graph approximation enables scaling | Low - requires retraining for new pools |

| Training Efficiency | Single training, multiple candidate pools | Single training, multiple candidate pools | Retraining required for each new pool |

Benchmarking Framework: Experimental Design and Performance Metrics

Experimental Protocols for Internal Validation

The comparative evaluation of CHESHIRE, NHP, and C3MM employed a rigorous internal validation protocol designed to test each algorithm's ability to recover artificially removed reactions from known metabolic networks [3]. The benchmarking process followed these key stages:

Model Selection and Preparation: 108 high-quality BiGG GEMs were selected as the reference dataset, providing a diverse set of well-curated metabolic networks for testing [3].

Data Splitting: Metabolic reactions from each GEM were randomly split into training (60%) and testing (40%) sets across 10 Monte Carlo runs to ensure statistical robustness [3].

Negative Sampling: To address the class imbalance inherent in hyperlink prediction, negative reactions were created at a 1:1 ratio to positive reactions for both training and testing sets. This was achieved by replacing approximately half of the metabolites in each positive reaction with randomly selected metabolites from a universal metabolite pool [3].

Performance Evaluation: Algorithms were evaluated using the Area Under the Receiver Operating Characteristic curve (AUROC) alongside other classification metrics, providing a comprehensive assessment of prediction accuracy [3].

This experimental design enabled a direct comparison of the algorithms' capabilities in identifying known metabolic reactions that had been intentionally omitted from the network, simulating the real-world challenge of incomplete metabolic models.
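AUROC has a direct interpretation that fits this setup: it is the probability that a randomly chosen positive reaction receives a higher score than a randomly chosen negative one. A minimal sketch of that pairwise definition follows. The function name `auroc` is hypothetical, and the quadratic loop is chosen for clarity rather than speed.

```python
def auroc(positive_scores, negative_scores):
    """AUROC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    wins = 0.0
    for p in positive_scores:
        for n in negative_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positive_scores) * len(negative_scores))
```

A value of 0.5 corresponds to random scoring and 1.0 to a perfect separation of held-out reactions from generated negatives.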

Benchmarking Results and Performance Analysis

The internal validation experiments demonstrated CHESHIRE's consistent outperformance against both NHP and C3MM across multiple evaluation metrics [3]. The superior AUROC values achieved by CHESHIRE highlight its enhanced capability to distinguish between true missing reactions and artificially generated negative reactions.

Table 2: Performance Benchmarking on BiGG Models

| Algorithm | AUROC | Key Strengths | Identified Limitations |

|---|---|---|---|

| CHESHIRE | Highest (exact values not provided in source) | Superior recovery of artificially removed reactions; improved phenotypic prediction | Complex architecture requiring greater computational resources |

| NHP | Moderate | Neural network approach enables pattern learning | Loss of higher-order information from graph approximation |

| C3MM | Lower | Integrated optimization approach | Limited scalability; requires retraining for new reaction pools |

Beyond the internal validation with artificially introduced gaps, CHESHIRE was further validated for its ability to improve phenotypic predictions in 49 draft GEMs reconstructed from common pipelines (CarveMe and ModelSEED). The algorithm demonstrated significant enhancements in predicting fermentation product secretion and amino acid production, confirming its practical utility for functional metabolic prediction tasks [3].

The broader context of hyperlink prediction research confirms that deep learning-based methods generally prevail over other approaches, supporting the superior performance observed with CHESHIRE [10].

Technical Implementation: Workflow

The CHESHIRE algorithm implements a computational pipeline that transforms raw metabolic network data into predictions of missing reactions, with each processing stage feeding the next.

This workflow demonstrates the sequential data transformation process, beginning with the input metabolic network and progressing through hypergraph construction, feature processing, and ultimately the prediction of missing reactions. The Chebyshev spectral graph convolutional network serves as the core innovation, enabling the model to capture complex higher-order relationships between metabolites that simpler approximation methods miss [3].

Practical Application: Research Reagent Solutions

Implementing these gap-filling algorithms requires specific computational resources and datasets. The following research reagent solutions represent essential components for conducting hyperlink prediction in metabolic networks:

Table 3: Essential Research Reagents for Metabolic Gap-Filling

| Reagent/Resource | Type | Function in Gap-Filling | Example Sources |

|---|---|---|---|

| BiGG Models | Database | Provides high-quality, curated metabolic networks for training and validation | BiGG Database [3] |

| AGORA Models | Database | Offers intermediate-quality genome-scale metabolic models for testing scalability | VMH Database [3] |

| Universal Metabolite Pool | Data Resource | Source for random metabolite selection during negative sampling | Metabolic Atlas, MetaNetX |

| Reaction Databases | Database | Comprehensive reaction pools for candidate generation during prediction | ModelSEED, Rhea, METACYC |

| CHESHIRE Codebase | Software | Reference implementation of the CHESHIRE algorithm | Nature Communications Supplementary [3] |

These research reagents provide the foundational components for implementing and validating gap-filling algorithms in practical metabolic engineering and drug discovery applications.

The comprehensive benchmarking of CHESHIRE, NHP, and C3MM positions CHESHIRE as the current state-of-the-art in topology-based metabolic gap-filling, demonstrating superior performance in both internal validation and external phenotypic prediction tasks [3]. Its native hypergraph learning approach, incorporating Chebyshev spectral convolution and advanced pooling strategies, enables more accurate capture of the complex higher-order relationships inherent in metabolic networks.

For researchers and drug development professionals, algorithm selection involves important trade-offs between prediction accuracy, computational requirements, and practical scalability. While CHESHIRE provides the most accurate predictions, its sophisticated architecture demands greater computational resources. NHP offers a balanced approach for less resource-intensive applications, while C3MM's scalability limitations may restrict its utility for large-scale metabolic databases [3].

The ongoing development of hyperlink prediction methods continues to address critical challenges in metabolic network completeness, with significant implications for drug target identification, metabolic engineering optimization, and microbial community modeling. As deep learning approaches increasingly dominate this landscape, the integration of multi-omics data and transfer learning capabilities represents the next frontier for advancing gap-filling methodologies in systems biology.

Architectural Deep Dive: How CHESHIRE, NHP, and C3MM Work

In the field of computational drug discovery, gap-filling algorithms are essential for predicting missing interactions and entities within complex biological networks. These algorithms address the inherent incompleteness of biological data, from metabolic pathways to protein-protein interaction networks. This guide benchmarks three prominent approaches: CHESHIRE, described in [11] as a transfer learning framework for NMR chemical shift prediction; Neural Hyperlink Prediction (NHP) methods, which infer multi-node relationships in hypergraphs; and C3MM, a matrix optimization-based hyperlink prediction technique. The CHESHIRE discussed in this section is the NMR-oriented framework of [11], not the CHEbyshev Spectral HyperlInk pREdictor benchmarked in the preceding sections. Understanding the relative performance of these approaches across different experimental scenarios helps researchers select suitable tools for their specific challenges.

Architectural Comparison of Gap-Filling Algorithms

The following table summarizes the core architectural and operational differences between CHESHIRE, NHP, and C3MM.

Table 1: Architectural Comparison of Gap-Filling Algorithms

| Feature | CHESHIRE | Neural Hyperlink Prediction (NHP) | C3MM (Matrix Optimization) |

|---|---|---|---|

| Core Philosophy | Transfer learning from pre-trained atomic feature models [11] | Deep learning on hypergraph structures [10] | Matrix factorization and completion of the hypergraph incidence matrix [10] |

| Primary Input | Molecular structures and atomic features from MPNN forcefields [11] | Hypergraph node features and structural data [10] | Hypergraph incidence matrix (H) [10] |

| Typical Output | Experimental 13C chemical shifts (continuous values) [11] | Probability/likelihood of a missing hyperlink (binary or probabilistic) [10] | Completed incidence matrix, indicating likely hyperlinks [10] |

| Key Strength | High accuracy in low-data regimes; no need for costly ab initio data [11] | Superior predictive performance on complex, multi-relational data [10] | Computational efficiency and strong performance on uniform hypergraphs [10] |

| Notable Weakness | Application is specialized to molecular property prediction | Can be a "black box"; requires large, diverse datasets to avoid bias [12] [10] | Performance may degrade on non-uniform hypergraphs with highly variable hyperlink cardinality [10] |

Performance Benchmarking and Experimental Data

Performance varies significantly based on the application domain and data availability. CHESHIRE excels in molecular prediction tasks, while deep learning-based NHP methods lead in general hyperlink prediction.

Table 2: Experimental Performance Benchmarking

| Algorithm / Metric | Mean Absolute Error (MAE) / Accuracy | Dataset & Context | Comparative Performance |

|---|---|---|---|

| CHESHIRE | MAE of 1.34 ppm for 13C chemical shift prediction [11] | Experimental chemical shifts on organic compounds [11] | Outperforms scaled DFT (MAE of 2.21 ppm) [11] |

| NHP (Deep Learning) | Prevails over other methods in overall hyperlink prediction accuracy [10] | Benchmark studies on email, contact, and metabolic networks [10] | Generally outperforms similarity, probability, and matrix optimization-based methods [10] |

| C3MM | Specific accuracy metrics not fully detailed in survey [10] | General hypergraph applications [10] | Noted for strong performance, but deep learning methods often prevail [10] |

Detailed Experimental Protocols

CHESHIRE Protocol for NMR Chemical Shift Prediction

CHESHIRE employs a structured, four-step workflow to predict experimental NMR chemical shifts, leveraging transfer learning to achieve high accuracy with limited data [11].

CHESHIRE's Four-Step Workflow

- Feature Initialization: Atomic features are extracted from a Message Passing Neural Network (MPNN) pre-trained on a large, diverse dataset to predict molecular forcefields. These features serve as robust descriptors for atomic properties and local chemical environments [11].

- Model Pre-training: The core model, often a Graph Neural Network (GNN), is pre-trained using the extracted atomic features. This step allows the model to learn fundamental chemical principles from a vast amount of data, which is often unrelated to the specific target task [11].

- Knowledge Transfer: The pre-trained model is adapted for the downstream task of predicting experimental chemical shifts. In this phase, the model is fine-tuned on a smaller, high-quality dataset of experimental NMR shifts. This transfer of knowledge enables high performance even when experimental data is scarce [11].

- Scoring and Prediction: The final model generates predictions for experimental 13C chemical shifts. Performance is evaluated using metrics like Mean Absolute Error (MAE), with CHESHIRE achieving an MAE of 1.34 ppm, significantly outperforming traditional Density Functional Theory (DFT) methods with empirical scaling (MAE of 2.21 ppm) [11].

NHP and C3MM Benchmarking Protocol

The evaluation of hyperlink prediction methods like NHP and C3MM follows a standardized protocol to ensure fair comparison.

- Hypergraph Construction: Real-world systems (e.g., email networks, metabolic networks, co-authorship networks) are modeled as hypergraphs. The hypergraph $\mathcal{H}$ is defined by a node set $\mathcal{V}$ and a hyperlink set $\mathcal{E}$, where each hyperlink can connect multiple nodes [10].

- Data Splitting and Masking: A subset of hyperlinks is randomly selected and removed from the observed hypergraph $\mathcal{H}$, creating an incomplete "training" hypergraph. The removed hyperlinks form a test set for evaluation [10].

- Model Training and Prediction: Each algorithm (NHP, C3MM, etc.) is tasked with learning a function $\Psi(e)$ that scores the likelihood of a candidate hyperlink $e$ belonging to the true hypergraph. The model only has access to the incomplete training hypergraph [10].

- Performance Evaluation: The model's ranked list of predicted hyperlinks is compared against the held-out test set. Standard metrics like Area Under the Curve (AUC) and Precision are used. Benchmark studies consistently show that deep learning-based NHP methods generally achieve the highest performance across diverse applications [10].
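The final evaluation step, comparing a ranked list of candidates against the held-out hyperlinks, can be sketched with a precision-at-k measure. The function name `precision_at_k`, the toy scores, and the choice of k are illustrative assumptions. The scores stand in for the output of whatever scoring function a given method learns.

```python
def precision_at_k(scored_candidates, held_out, k):
    """Fraction of the k highest-scoring candidate hyperlinks that were held out.

    `scored_candidates` maps a frozenset of nodes to its predicted score.
    """
    ranked = sorted(scored_candidates, key=scored_candidates.get, reverse=True)
    top_k = ranked[:k]
    return sum(1 for e in top_k if e in held_out) / k


toy_scores = {
    frozenset({"a", "b", "c"}): 0.9,
    frozenset({"a", "d"}): 0.7,
    frozenset({"b", "d"}): 0.2,
}
toy_held_out = {frozenset({"a", "b", "c"}), frozenset({"b", "d"})}
p_at_2 = precision_at_k(toy_scores, toy_held_out, 2)
```

Representing each hyperlink as a `frozenset` makes the comparison independent of node order, which matches the unordered nature of hyperlinks in this protocol.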

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key Computational Tools and Platforms

| Tool/Platform | Type | Primary Function in Research |

|---|---|---|

| Message Passing Neural Network (MPNN) [11] | Algorithm | Generates foundational atomic feature descriptors for molecular structures, used in CHESHIRE's feature initialization step. |

| Graph Neural Network (GNN) [11] [10] | Algorithm | Core architecture for learning from graph-structured data; used in both CHESHIRE and deep learning-based NHP. |

| Hypergraph Incidence Matrix (H) [10] | Data Structure | A mathematical matrix representing node membership in hyperlinks; the fundamental input for algorithms like C3MM. |

| nmrshiftdb2 [11] | Database | An open NMR shift database with assigned spectra, used for training and benchmarking models like CHESHIRE. |

| ChEMBL / BindingDB [13] | Database | Public repositories of bioactive molecules and binding affinities; often used as data sources for AI drug discovery models. |

| PoseBusters [13] | Validation Tool | Computational tool that evaluates the biophysical plausibility of AI-predicted protein-ligand structures. |

The benchmarking analysis reveals a clear trade-off between specialization and generalizability. CHESHIRE demonstrates that a specialized, biologically contextualized transfer learning approach is superior for specific molecular property predictions like NMR chemical shifts, especially in low-data environments. In contrast, deep learning-based Neural Hyperlink Prediction methods offer broader applicability and higher accuracy for general hypergraph completion tasks but require robust datasets to mitigate "black box" limitations and bias. C3MM provides a computationally efficient alternative. The choice of algorithm should be guided by the specific research question: CHESHIRE for molecular property inference, and NHP for completing complex, multi-node relationships in biological networks.

In the field of systems biology, genome-scale metabolic models (GEMs) are powerful mathematical representations of an organism's metabolism. However, due to imperfect knowledge, even highly curated GEMs contain knowledge gaps, notably missing reactions [3]. The process of identifying and adding these missing reactions is known as gap-filling. Hyperlink prediction has emerged as a powerful machine learning approach to this problem, where metabolic networks are naturally represented as hypergraphs—structures where each hyperlink (reaction) can connect more than two nodes (metabolites) [10]. Neural Hyperlink Predictor (NHP) represents a significant advancement in this domain by adapting Graph Convolutional Networks (GCNs) for link prediction in hypergraphs [14].

Performance Comparison: NHP vs. Alternatives

Extensive benchmarking studies have evaluated NHP's performance against other gap-filling algorithms, particularly in recovering artificially removed reactions from metabolic networks. The table below summarizes key quantitative comparisons across multiple models.

Table 1: Performance Comparison of Gap-Filling Algorithms on BiGG Models (Internal Validation)

| Method | Category | AUROC Score | Key Strengths | Key Limitations |
|---|---|---|---|---|
| CHESHIRE | Deep Learning | ~0.90 (Highest) [3] | Superior accuracy; improved phenotypic predictions; pure topology-based [3] | - |
| NHP (Neural Hyperlink Predictor) | Deep Learning | Lower than CHESHIRE [3] | Adapts GCNs for hypergraphs; first method for directed hypergraphs [14] | Approximates hypergraphs as graphs, losing higher-order information [3] |
| C3MM (Clique Closure-based Coordinated Matrix Minimization) | Matrix Optimization | Lower than CHESHIRE [3] | Integrated training-prediction process [3] | Limited scalability; model retraining needed for new reaction pools [3] |
| Node2Vec-mean (NVM) | Probability | Used as a baseline method [3] | Simple architecture [3] | Lower performance compared to deep learning methods [3] |

Table 2: Broader Context of Hyperlink Prediction Method Performance

| Method Category | Examples | General Performance Note |
|---|---|---|
| Similarity-Based | Common Neighbors (CN), Katz Index (KI) [10] | Traditional approaches |
| Probability-Based | Node2Vec, Bayesian Sets (BS) [10] | - |
| Matrix Optimization-Based | C3MM, Spectral Hypergraph Clustering (SHC) [3] [10] | - |
| Deep Learning-Based | NHP, CHESHIRE [3] [10] | Prevail over other methods [10] |

Experimental Protocols and Methodologies

The benchmarking of gap-filling algorithms like NHP and CHESHIRE follows rigorous experimental protocols, primarily involving internal validation through artificially introduced gaps.

Internal Validation Protocol

The standard methodology for evaluating hyperlink prediction performance in metabolic networks involves the following steps [3]:

- Reaction Removal: Existing reactions in a curated GEM are artificially split into a training set (e.g., 60%) and a testing set (e.g., 40%) over multiple Monte Carlo runs to ensure statistical robustness.

- Negative Sampling: To create a balanced classification dataset, negative (fake) reactions are generated for both training and testing. This is typically done at a 1:1 ratio with positive reactions by replacing half of the metabolites in real reactions with randomly selected metabolites from a universal pool.

- Model Training & Prediction: The model is trained on the combination of training positive reactions and their corresponding negative reactions.

- Performance Evaluation: The model's task is to distinguish between the held-out test positive reactions and the test negative reactions. Performance is measured using standard classification metrics like the Area Under the Receiver Operating Characteristic curve (AUROC).
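
The first two steps of this protocol can be sketched in a few lines of Python. This is a minimal illustration rather than the procedure used in [3]: reactions are assumed to be plain sets of metabolite IDs, the function names `split_reactions` and `make_negative` are hypothetical, and the toy reactions and metabolite pool are invented for the example.

```python
import random

def split_reactions(reactions, train_frac=0.6, rng=None):
    """Randomly split reactions into a training set and a testing set."""
    rng = rng or random.Random()
    shuffled = list(reactions)
    rng.shuffle(shuffled)
    cut = int(round(train_frac * len(shuffled)))
    return shuffled[:cut], shuffled[cut:]

def make_negative(reaction, metabolite_pool, rng):
    """Build a fake reaction by swapping about half of the metabolites.

    Assumption: "half" is rounded down, with at least one metabolite replaced.
    A fuller implementation would also check that the result is not a real reaction.
    """
    members = sorted(reaction)
    n_replace = max(1, len(members) // 2)
    kept = set(rng.sample(members, len(members) - n_replace))
    candidates = [m for m in metabolite_pool if m not in reaction]
    return frozenset(kept | set(rng.sample(candidates, n_replace)))

# Toy data (illustrative only): each reaction is a set of metabolite IDs.
reactions = [
    frozenset({"glc", "atp", "g6p", "adp"}),
    frozenset({"g6p", "f6p"}),
    frozenset({"f6p", "atp", "fdp", "adp"}),
    frozenset({"fdp", "dhap", "g3p"}),
    frozenset({"dhap", "g3p"}),
]
pool = ["glc", "atp", "adp", "g6p", "f6p", "fdp",
        "dhap", "g3p", "pyr", "nad", "nadh", "pep"]

# Several Monte Carlo runs, each with its own random split and negatives (1:1 ratio).
for run in range(3):
    rng = random.Random(run)
    train_pos, test_pos = split_reactions(reactions, 0.6, rng)
    train_neg = [make_negative(r, pool, rng) for r in train_pos]
    test_neg = [make_negative(r, pool, rng) for r in test_pos]
    print(run, len(train_pos), len(train_neg), len(test_pos), len(test_neg))
```

Seeding each run separately keeps the splits reproducible, which makes it easier to compare methods on identical training and testing sets.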

Figure 1: Workflow for internal validation of gap-filling algorithms using artificially introduced gaps.

Architectural Foundations: NHP and Its Evolution

NHP's architecture is built upon Graph Convolutional Networks, which are specialized neural networks for processing graph-structured data. GCNs work by leveraging both the features of a node and the features of its neighbors to learn powerful representations [15]. In a hypergraph context, this translates to learning representations for metabolites (nodes) based on the reactions (hyperedges) they participate in and other metabolites they interact with.
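
To make the neighbor-aggregation idea concrete, the sketch below implements a single graph convolution step in the widely used symmetric-normalized form, ReLU(D^-1/2 (A + I) D^-1/2 X W). It is written in plain Python on a toy three-node graph; the exact layers used inside NHP may differ, and the adjacency, feature, and weight values are made up for illustration.

```python
import math

def matmul(a, b):
    """Multiply two matrices stored as nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def gcn_layer(adj, features, weights):
    """One graph convolution: ReLU(D^-1/2 (A + I) D^-1/2 X W)."""
    n = len(adj)
    # Add self-loops so each node keeps its own features.
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]
    # Symmetric degree normalization.
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]
    out = matmul(matmul(norm, features), weights)
    return [[max(0.0, v) for v in row] for row in out]

# Toy path graph 0 - 1 - 2 with two features per node (illustrative values).
adj = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights = [[1.0, 0.0], [0.0, 1.0]]  # identity, so the output shows pure aggregation

for row in gcn_layer(adj, features, weights):
    print([round(v, 3) for v in row])
```

Each output row mixes a node's own features with those of its direct neighbors; stacking layers widens the neighborhood that influences each representation.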

The core innovation of NHP is its adaptation of this GCN framework for hyperlink prediction. It was proposed in two variants: NHP-U for undirected hypergraphs and NHP-D, noted as the first method designed specifically for link prediction in directed hypergraphs [14], which are essential for representing biochemical reactions with clear reactant-product directions.

The CHESHIRE Advancement

CHESHIRE was developed to address specific limitations identified in NHP. While it shares a similar high-level learning architecture, CHESHIRE incorporates key technical improvements [3]:

- Feature Refinement: Uses a Chebyshev spectral graph convolutional network (CSGCN) to better capture metabolite-metabolite interactions.

- Pooling Strategy: Combines maximum minimum-based and Frobenius norm-based pooling functions to integrate metabolite-level features into reaction-level representations more effectively.
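
The pooling step can be illustrated with a short sketch. The formulas below are one plausible reading of the two pooling functions (a per-feature range and a per-feature root-mean-square), not a transcription of the CHESHIRE code; the function names and feature values are hypothetical.

```python
import math

def maxmin_pool(node_features):
    """Per feature dimension: maximum minus minimum over the reaction's metabolites."""
    return [max(col) - min(col) for col in zip(*node_features)]

def norm_pool(node_features):
    """Per feature dimension: root of the mean squared value over the metabolites."""
    n = len(node_features)
    return [math.sqrt(sum(v * v for v in col) / n) for col in zip(*node_features)]

def pool_reaction(node_features):
    """Concatenate both pooled vectors into one reaction-level representation."""
    return maxmin_pool(node_features) + norm_pool(node_features)

# Two metabolites, two features each (illustrative values).
metabolite_features = [[1.0, 2.0], [3.0, 2.0]]
print(pool_reaction(metabolite_features))
```

Both functions are insensitive to the order of metabolites and work for reactions of any size, which is what a reaction-level representation needs.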

Figure 2: Conceptual workflow and key architectural differences between NHP and CHESHIRE.

The Researcher's Toolkit for Hyperlink Prediction

Implementing and evaluating hyperlink prediction models requires a specific set of computational tools and resources. The table below details essential components for research in this field.

Table 3: Essential Research Reagents and Tools for Hyperlink Prediction

| Tool/Resource | Type | Function in Research |
|---|---|---|
| Genome-Scale Metabolic Models (GEMs) | Data | High-quality, curated models (e.g., from BiGG database) serve as the ground truth for training and testing gap-filling algorithms [3]. |
| Reaction Databases | Data | Universal databases (e.g., MetaCyc, KEGG) provide comprehensive pools of candidate reactions for negative sampling and gap-filling [3]. |
| Hypergraph Representation | Framework | The mathematical structure used to model metabolic networks, where reactions are hyperedges and metabolites are nodes [3] [10]. |
| Graph Convolutional Network (GCN) | Algorithm | The deep learning foundation for methods like NHP, enabling learning from topological network structure [14] [15]. |
| Area Under the ROC Curve (AUROC) | Metric | A standard metric for evaluating classification performance in predicting missing reactions [3]. |
| Phenotypic Prediction Accuracy | Metric | An external validation metric assessing how gap-filling improves model predictions of fermentation products or amino acid secretion [3]. |

NHP established a significant milestone by successfully adapting Graph Convolutional Networks for the complex task of hyperlink prediction in metabolic networks. However, comprehensive benchmarking reveals that subsequent methods like CHESHIRE have addressed NHP's limitations, particularly the loss of higher-order information from graph approximation, to achieve superior performance [3]. This evolution underscores a broader trend in the field where deep learning-based methods consistently prevail over similarity-based, probability-based, and matrix optimization-based approaches [10]. For researchers in drug development and systems biology, these advanced topology-based gap-filling tools provide powerful means to refine metabolic models, thereby enhancing their predictive utility in metabolic engineering and therapeutic discovery.

Genome-scale metabolic models (GEMs) are powerful computational tools that predict cellular metabolism and physiological states in living organisms, with significant applications in metabolic engineering, microbial ecology, and drug discovery [3]. However, even highly curated GEMs contain knowledge gaps in the form of missing reactions due to our imperfect knowledge of metabolic processes [3]. The process of identifying and adding these missing reactions, known as gap-filling, is crucial for improving the accuracy and predictive power of metabolic models.

Traditional gap-filling methods often require experimental phenotypic data as input, creating limitations for non-model organisms where such data is scarce or unavailable [3]. This constraint has driven the development of topology-based methods that can predict missing reactions solely from the structure of the metabolic network itself. Among these approaches, hyperlink prediction methods that frame metabolic networks as hypergraphs have shown particular promise [10]. In this representation, metabolites are nodes and reactions are hyperedges that can connect multiple metabolites simultaneously, naturally capturing the multi-dimensional relationships in metabolic systems [10].

This guide focuses on benchmarking three machine learning-based hyperlink prediction methods for gap-filling: C3MM (Clique Closure-based Coordinated Matrix Minimization), CHESHIRE (CHEbyshev Spectral HyperlInk pREdictor), and NHP (Neural Hyperlink Predictor). These methods represent the state-of-the-art in topology-based gap-filling and offer distinct approaches to addressing the challenge of predicting missing reactions in GEMs without relying on experimental phenotypic data [3].

Theoretical Foundations and Methodological Approaches

Hypergraph Representation of Metabolic Networks

In hypergraph theory, a hypergraph \( \mathcal{H} = \{\mathcal{V}, \mathcal{E}\} \) consists of a node set \( \mathcal{V} = \{v_1, v_2, \ldots, v_n\} \) representing metabolites and a hyperedge set \( \mathcal{E} = \{e_1, e_2, \ldots, e_m\} \), where each hyperedge \( e_p \subseteq \mathcal{V} \) represents a metabolic reaction [10]. The incidence matrix \( H \in \mathbb{R}^{n \times m} \) encodes relationships between metabolites and reactions, where \( H_{ip} = 1 \) if metabolite \( v_i \) participates in reaction \( e_p \), and 0 otherwise [10]. This representation preserves higher-order interactions that would be lost in traditional graph models, making it particularly suitable for metabolic networks where reactions typically involve multiple substrates and products [10].
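
As a small illustration of this definition, the sketch below builds the incidence matrix for a toy network. The helper name `incidence_matrix` and the metabolite and reaction data are invented for the example; rows correspond to metabolites and columns to reactions, as in the definition above.

```python
def incidence_matrix(metabolites, reactions):
    """Return H with H[i][p] = 1 if metabolite i takes part in reaction p, else 0."""
    index = {m: i for i, m in enumerate(metabolites)}
    H = [[0] * len(reactions) for _ in metabolites]
    for p, reaction in enumerate(reactions):
        for metabolite in reaction:
            H[index[metabolite]][p] = 1
    return H

# Toy network (illustrative): 5 metabolites, 2 reactions.
metabolites = ["glc", "atp", "g6p", "adp", "f6p"]
reactions = [
    {"glc", "atp", "g6p", "adp"},  # e_1 connects four metabolites at once
    {"g6p", "f6p"},                # e_2 connects two metabolites
]

for name, row in zip(metabolites, incidence_matrix(metabolites, reactions)):
    print(name, row)
```

A single column with four nonzero entries captures the first reaction as one unit, which an ordinary edge list between pairs of metabolites cannot do.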

Algorithmic Architectures

C3MM (Clique Closure-based Coordinated Matrix Minimization) integrates training and prediction in a single Expectation-Maximization (EM) procedure that includes all candidate reactions from a reaction pool during training [3]. Because the candidates are part of training, the model must be retrained for each new reaction pool, which limits its scalability when handling large pools [3]. The method leverages clique closure properties to identify missing connections in the metabolic network.

CHESHIRE (CHEbyshev Spectral HyperlInk pREdictor) utilizes a deep learning architecture with four major components: feature initialization, feature refinement, pooling, and scoring [3]. For feature initialization, it employs an encoder-based one-layer neural network to generate initial feature vectors for metabolites from the incidence matrix [3]. Feature refinement is performed using a Chebyshev spectral graph convolutional network (CSGCN) on a decomposed graph to capture metabolite-metabolite interactions [3]. The pooling stage combines maximum minimum-based and Frobenius norm-based functions to integrate metabolite-level features into reaction-level representations, followed by a scoring network that produces probabilistic confidence scores for candidate reactions [3].
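
The idea behind Chebyshev spectral filtering can be sketched with the standard polynomial recurrence T_0 = X, T_1 = L~ X, T_k = 2 L~ T_(k-1) - T_(k-2). The code below is a simplified, hedged illustration and not the CHESHIRE implementation: it uses scalar coefficients (`thetas`) instead of learned weight matrices, and it assumes the largest Laplacian eigenvalue is about 2, so that the scaled Laplacian reduces to -D^-1/2 A D^-1/2.

```python
import math

def matmul(a, b):
    """Multiply two matrices stored as nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def scaled_laplacian(adj):
    """L~ = L - I = -D^-1/2 A D^-1/2, under the assumption lambda_max ~= 2."""
    n = len(adj)
    deg = [sum(row) for row in adj]
    return [
        [-adj[i][j] / math.sqrt(deg[i] * deg[j]) if adj[i][j] else 0.0 for j in range(n)]
        for i in range(n)
    ]

def chebyshev_filter(adj, features, thetas):
    """Return sum_k thetas[k] * T_k(L~) X using the Chebyshev recurrence."""
    lap = scaled_laplacian(adj)
    terms = [features]                          # T_0 X = X
    if len(thetas) > 1:
        terms.append(matmul(lap, features))     # T_1 X = L~ X
    for _ in range(2, len(thetas)):
        prod = matmul(lap, terms[-1])
        # T_k X = 2 L~ T_(k-1) X - T_(k-2) X
        terms.append([[2 * p - q for p, q in zip(pr, qr)]
                      for pr, qr in zip(prod, terms[-2])])
    rows, cols = len(features), len(features[0])
    return [[sum(t * term[i][j] for t, term in zip(thetas, terms)) for j in range(cols)]
            for i in range(rows)]

# Two connected metabolites with one feature each (illustrative values).
adj = [[0, 1], [1, 0]]
x = [[1.0], [2.0]]
print(chebyshev_filter(adj, x, [0.5, 0.3, 0.2]))
```

A filter of order K combines information from nodes up to K hops away without computing an eigendecomposition, which is the main practical appeal of the Chebyshev form.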

NHP (Neural Hyperlink Predictor) implements a graph neural network framework that approximates hypergraphs using graphs when generating node features [3]. This approximation results in the loss of higher-order information present in the native hypergraph structure but enables efficient processing. Similar to CHESHIRE, it includes feature learning and pooling components but uses different architectural choices that affect its ability to capture complex metabolic relationships [3].
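
The information loss caused by approximating a hypergraph with a graph can be shown with clique expansion, a common way to perform such an approximation (used here for illustration; NHP's exact construction may differ). In the sketch below, one reaction involving three metabolites and three separate two-metabolite reactions produce the same graph, so a graph-based model cannot tell them apart.

```python
def clique_expansion(n_nodes, hyperedges):
    """Connect every pair of nodes that share at least one hyperedge."""
    adj = [[0] * n_nodes for _ in range(n_nodes)]
    for edge in hyperedges:
        for i in edge:
            for j in edge:
                if i != j:
                    adj[i][j] = 1
    return adj

# Case 1: a single reaction with metabolites 0, 1 and 2.
one_reaction = [{0, 1, 2}]
# Case 2: three separate reactions, each with two metabolites.
three_reactions = [{0, 1}, {1, 2}, {0, 2}]

graph_a = clique_expansion(3, one_reaction)
graph_b = clique_expansion(3, three_reactions)
print(graph_a == graph_b)  # both hypergraphs collapse to the same triangle
```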

Figure 1: Hypergraph Representation and Algorithmic Approaches for Metabolic Gap-Filling

Experimental Benchmarking Framework

Internal Validation Protocol

Internal validation assesses a method's capability to recover artificially removed reactions from metabolic networks. The standard protocol involves:

- Reaction Removal: Existing reactions in a metabolic network are randomly split into training (60%) and testing (40%) sets across multiple Monte Carlo runs to ensure statistical robustness [3].

- Negative Sampling: For deep learning methods requiring balanced datasets, negative reactions are created at a 1:1 ratio to positive reactions by replacing half of the metabolites in each positive reaction with randomly selected metabolites from a universal metabolite pool [3].

- Performance Metrics: Evaluation primarily uses the Area Under the Receiver Operating Characteristic curve (AUROC) and the Area Under the Precision-Recall Curve (AUPRC), which provide comprehensive measures of classification performance across different threshold settings [3].

Two validation approaches are employed: Type I validation mixes the testing set with derived negative reactions, while Type II validation mixes the testing set with real reactions from a universal database, providing a more challenging and realistic assessment scenario [3].
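
For readers who want to see how the two metrics are computed from confidence scores, the following sketch uses plain Python. AUROC is computed as the fraction of positive-negative pairs ranked correctly (ties count as half), and AUPRC is estimated with average precision, which is one common estimator among several. The labels and scores are invented; in practice, label 1 would mark held-out real reactions and label 0 the negative reactions or database reactions mixed into the test set.

```python
def auroc(labels, scores):
    """Probability that a random positive is scored above a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(labels, scores):
    """Mean of the precision values at the rank of each positive (AUPRC estimate)."""
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank
    return total / hits

# Illustrative test set: 3 held-out reactions (1) and 3 negatives (0).
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.2]

print(round(auroc(labels, scores), 3))              # 8 of 9 pairs ranked correctly
print(round(average_precision(labels, scores), 3))  # (1 + 1 + 0.75) / 3
```

AUPRC is the more informative of the two when negatives greatly outnumber positives, as is likely when candidates are drawn from a large universal database.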

External Validation Protocol

External validation evaluates the biological relevance of gap-filled models by assessing their ability to improve phenotypic predictions:

- Fermentation Phenotype Testing: Gap-filled models are tested for their ability to produce fermentation compounds that the original models could not secrete [16] [3].

- Amino Acid Secretion Profiling: Models are evaluated for improved prediction of amino acid secretion capabilities after gap-filling [3].

- Flux Variability Analysis: For each exchange reaction, flux variability analysis is performed to determine minimum and maximum secretion fluxes, with phenotypes considered positive if normalized maximum secretion flux exceeds a predefined cutoff (typically 1e-5) [16].
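
The final decision rule can be sketched as a simple threshold on flux variability analysis output. The sketch makes several assumptions that are not specified above: secretion corresponds to positive exchange flux, the normalization divides by a caller-supplied `reference_flux`, and the function name, reaction IDs, and flux values are hypothetical. Running the flux variability analysis itself would require a constraint-based modeling package and is not shown.

```python
def secretion_phenotypes(fva_ranges, reference_flux, cutoff=1e-5):
    """Call a secretion phenotype positive if normalized max flux exceeds the cutoff.

    fva_ranges maps an exchange reaction ID to (min_flux, max_flux).
    reference_flux is the normalization constant (an assumption of this sketch).
    """
    calls = {}
    for reaction_id, (_min_flux, max_flux) in fva_ranges.items():
        calls[reaction_id] = (max_flux / reference_flux) > cutoff
    return calls

# Made-up flux ranges for three exchange reactions.
fva_ranges = {
    "EX_acetate": (0.0, 4.2),
    "EX_lactate": (0.0, 0.0),
    "EX_ethanol": (-1.0, 1e-7),
}

print(secretion_phenotypes(fva_ranges, reference_flux=10.0))
```

Comparing these calls before and after gap-filling shows which secretion phenotypes the added reactions make possible.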