Beyond the χ²-Test: A Modern Guide to Goodness of Fit and Model Validation in 13C Metabolic Flux Analysis

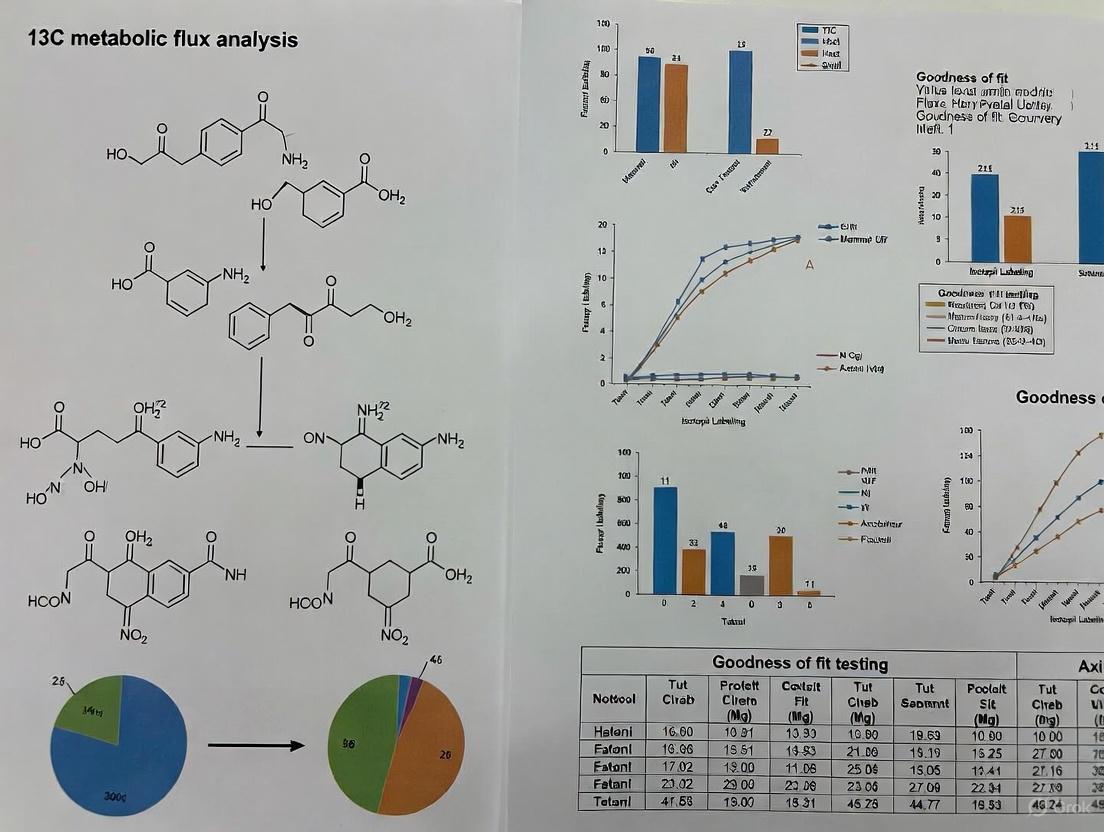



Abstract

This article provides a comprehensive guide to goodness of fit (GOF) testing and model validation for 13C Metabolic Flux Analysis (13C-MFA), a gold-standard technique for quantifying intracellular reaction rates. Aimed at researchers and scientists in metabolic engineering and biomedical research, we explore the foundational role of the χ²-test while highlighting its limitations in modern practice. The scope extends to advanced methodological approaches, including validation-based model selection and Bayesian frameworks, which offer robustness against uncertain measurement errors. We detail common troubleshooting scenarios for poor model fit and present comparative validation techniques to enhance confidence in flux maps. This guide synthesizes current best practices and emerging statistical methodologies to empower researchers in performing statistically rigorous and reproducible flux analysis.

The Critical Role of Goodness of Fit: Why Your 13C-MFA Model's Validity Matters

Defining Goodness of Fit in the Context of 13C-MFA

Goodness of fit (GOF) testing serves as a critical statistical foundation for validating metabolic models in 13C Metabolic Flux Analysis (13C-MFA). This guide compares the predominant GOF method—the χ²-test—with emerging validation-based approaches, providing a structured evaluation of their application, limitations, and performance. We present quantitative data on flux precision, detailed experimental protocols for generating requisite data, and a curated toolkit of software and reagents. This synthesis aims to equip researchers with the knowledge to implement robust model validation protocols, thereby enhancing the reliability of flux estimations in metabolic research and drug development.

In 13C-MFA, "goodness of fit" (GOF) refers to a set of statistical procedures used to evaluate how well a mathematical model of a metabolic network explains experimental isotopic labeling data [1] [2]. The primary goal is to ensure that the estimated fluxes are statistically justified and that the model structure is an accurate representation of the underlying metabolic system. The fidelity of the fitted model is paramount, as it directly impacts the biological interpretation of the results, guiding hypotheses in systems biology and decisions in metabolic engineering [3] [1].

The process of 13C-MFA involves inferring in vivo metabolic fluxes, which cannot be directly measured, by fitting a model to experimental data, primarily Mass Isotopomer Distributions (MIDs) [4] [5]. A model that fits the data poorly may lead to incorrect flux estimates, while overfitting—where a model is overly complex and fits not only the underlying system but also the experimental noise—can reduce its predictive power and obscure the true biological signal [2]. Therefore, rigorous GOF assessment is not a mere formality but a fundamental step in establishing confidence in the model's predictions. The core challenge in model selection lies in choosing the most statistically justified model from a set of alternatives without falling into the traps of underfitting or overfitting [1].

Statistical Frameworks for Goodness of Fit

The statistical evaluation of 13C-MFA models has traditionally relied on one primary method, with a more recent alternative emerging to address its limitations. The following table summarizes the core characteristics of these two approaches.

Table 1: Core Goodness-of-Fit Methods in 13C-MFA

| Method | Underlying Principle | Primary Output | Key Assumptions |
|---|---|---|---|
| χ²-test of Goodness-of-Fit [1] [2] | A weighted sum of squared residuals between model-predicted and measured MIDs is computed and compared to a χ² distribution. | A p-value indicating whether to reject the model (typically p < 0.05) or fail to reject it. | (1) Measurement errors are accurately known. (2) The model is correctly specified. (3) Data points are independent. |
| Validation-based Model Selection [2] | The model is fitted to a training dataset and its predictive power is evaluated on a separate, independent validation dataset. | A Sum of Squared Residuals (SSR) or similar metric for the validation data. The model with the lowest validation SSR is selected. | (1) The training and validation datasets are from the same system but are independent. (2) The validation data contains novel, but not overly dissimilar, information. |

The workflow for applying these methods, from experimental design to final model selection, is illustrated below.

The χ²-test: Applications and Limitations

The χ²-test is the most widely used quantitative GOF and model selection method in 13C-MFA [1]. The test statistic is calculated as:

\[ \chi^2 = \sum_i \frac{(\text{measured}_i - \text{simulated}_i)^2}{\sigma_i^2} \]

where \(\sigma_i\) represents the measurement error for data point \(i\) [2]. The resulting value is compared to a χ² distribution with appropriate degrees of freedom. A model is typically deemed acceptable if the p-value exceeds a threshold of 0.05 [2].
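As a minimal sketch of the statistic defined above, the weighted sum can be computed in a few lines. The function name `weighted_ssr` and all numerical values are illustrative assumptions, not data from any cited study.

```python
def weighted_ssr(measured, simulated, sigma):
    """Variance-weighted sum of squared residuals (the chi-square statistic)."""
    if not (len(measured) == len(simulated) == len(sigma)):
        raise ValueError("measured, simulated and sigma must have equal length")
    return sum(((m - s) / e) ** 2 for m, s, e in zip(measured, simulated, sigma))


# Hypothetical MID of a three-carbon fragment (M+0 .. M+3) and a model prediction.
measured = [0.50, 0.30, 0.15, 0.05]
simulated = [0.52, 0.29, 0.14, 0.05]
sigma = [0.01, 0.01, 0.01, 0.01]  # assumed measurement error per data point

chi2 = weighted_ssr(measured, simulated, sigma)
print(round(chi2, 6))
```

Here the residuals are two, one, one and zero standard deviations, so the statistic is approximately 6. In a real analysis the sum runs over every measured MID value and every external rate included in the fit.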

However, this method has significant limitations. Its correctness is highly dependent on accurate knowledge of measurement errors (σ) [2]. In practice, error estimates from technical or biological replicates may be too low, as they fail to capture all sources of variability, such as instrumental bias or small deviations from metabolic steady-state. When the assumed σ is inaccurate, the χ²-test can become unreliable, leading to the selection of an incorrect model structure and, consequently, biased flux estimates [2].
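The sensitivity to σ can be made explicit with a short worked equation. As an illustrative assumption, suppose every assumed error is off by the same factor \(k\), and write \(r_i\) for the residual of data point \(i\). A uniform rescaling multiplies the objective by a constant, so the best-fit fluxes stay the same and only the statistic changes:

\[ \chi^2_{k} = \sum_i \frac{r_i^2}{(k\,\sigma_i)^2} = \frac{1}{k^2} \sum_i \frac{r_i^2}{\sigma_i^2} = \frac{\chi^2}{k^2} \]

Underestimating the errors by a factor of two (\(k = 1/2\)) therefore inflates the statistic fourfold: a fit with \(\chi^2 = 40\) would be reported as \(\chi^2 = 160\) and could be rejected even though the model structure is unchanged.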

Validation-based Model Selection

To address the limitations of the χ²-test, a validation-based approach has been proposed [2]. This method leverages independent data, which can be:

- A subset of the MID data not used for training (e.g., data from a specific time point).

- Data from a different type of mass spectrometer.

- Labeling data from a parallel tracer experiment that was not included in the initial model fitting [2].

This approach is more robust to uncertainties in measurement error estimates. Since it does not rely on a known σ for the validation data, it avoids the pitfall of model selection being dictated by potentially erroneous error assumptions [2]. Simulation studies have demonstrated that validation-based selection consistently identifies the correct model structure even when the magnitude of measurement error is substantially mis-specified, a scenario where the χ²-test fails [2].

Experimental Protocols for GOF Assessment

Robust GOF assessment begins with a carefully designed experiment capable of generating high-quality data for both model fitting and validation.

High-Resolution 13C-MFA Protocol

The following protocol, adapted from Antoniewicz (2019), is designed to achieve high-precision flux estimates [6].

Table 2: Step-by-Step Protocol for High-Resolution 13C-MFA

| Step | Procedure | Critical Parameters | Purpose |
|---|---|---|---|
| 1. Experimental Design | Use parallel labeling with multiple glucose tracers (e.g., [1-13C], [U-13C]). | Tracer combination with high "precision" and "synergy" scores [6]. | Maximizes information content for high flux precision. |
| 2. Cell Cultivation | Grow cells in parallel cultures with the chosen tracers. Ensure metabolic steady-state. | Constant metabolite levels and growth rate [5]. | Foundation for steady-state MFA. |
| 3. Harvesting | Collect culture medium for extracellular flux analysis. Quench cells to stop metabolism. | Rapid filtration and cold methanol quenching [6]. | Accurately capture metabolic state. |
| 4. Mass Spectrometry | Derivatize and analyze proteinogenic amino acids and other polymers via GC-MS. | Measure MIDs for 20-25 amino acids [6]. | Generate rich labeling dataset for flux constraints. |
| 5. Flux Estimation | Use software (e.g., Metran) to fit the network model to the combined MID dataset. | Minimize the SSR between measured and simulated MIDs [6]. | Obtain the most likely flux map. |
| 6. GOF & Statistical Analysis | Perform χ²-test on the best-fit model. Calculate confidence intervals for all fluxes. | Report goodness-of-fit p-value and flux confidence intervals [6]. | Validate the model and quantify flux uncertainty. |

Generating Data for Validation-Based Selection

To implement validation-based model selection, the experimental design must incorporate an independent validation dataset from the outset [2]. A practical strategy is to:

- Design Multiple Tracer Experiments: Plan two or more parallel labeling experiments using different isotopic tracers (e.g., [1,2-13C]glucose and [U-13C]glutamine) [2].

- Split Data for Training and Validation: Use the labeling data from one subset of tracers to fit the model (training data). Withhold the data from the other tracer(s) to be used exclusively for validation [2].

- Assess Predictive Power: Fit candidate model structures to the training data. Then, use each fitted model to predict the MIDs of the withheld validation data. The model that predicts the validation data with the lowest SSR is selected [2].
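The final selection step can be sketched as follows, assuming each candidate model has already been fitted to the training data and used to predict the withheld MIDs. The model names, the helper `select_by_validation`, and all numbers are hypothetical.

```python
def ssr(predicted, observed):
    """Unweighted sum of squared residuals between two equally long sequences."""
    return sum((p - o) ** 2 for p, o in zip(predicted, observed))


def select_by_validation(predictions, validation_data):
    """Return the model with the lowest validation SSR, plus all scores.

    predictions: dict mapping model name -> predicted validation MID values.
    """
    scores = {name: ssr(pred, validation_data) for name, pred in predictions.items()}
    best = min(scores, key=scores.get)
    return best, scores


# Hypothetical withheld MID and the predictions of two fitted model structures.
validation_mid = [0.40, 0.35, 0.20, 0.05]
predictions = {
    "without_PC": [0.48, 0.30, 0.17, 0.05],
    "with_PC": [0.41, 0.34, 0.20, 0.05],
}

best, scores = select_by_validation(predictions, validation_mid)
print(best, scores)
```

With these made-up numbers the structure labeled `with_PC` predicts the withheld data more closely and is selected. The comparison uses no error estimate for the validation data, which is the property the text above relies on.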

The relationship between the experimental workflow and the data flow for model validation is depicted below.

Comparative Performance Data

The choice of GOF method has a direct and measurable impact on the accuracy of resulting flux estimates. The table below synthesizes key findings from simulation studies and experimental analyses.

Table 3: Impact of GOF Method on Flux Estimation Outcomes

| Study Type | GOF Method | Key Finding | Impact on Flux Estimates |
|---|---|---|---|
| Simulation Study [2] | χ²-test | Model selection outcome was highly sensitive to the assumed magnitude of measurement error (σ). | Led to selection of incorrect model structures when σ was mis-specified, resulting in biased fluxes. |
| Simulation Study [2] | Validation-based | Consistently selected the correct model structure regardless of errors in the assumed σ. | Produced accurate and robust flux estimates by ensuring the correct model was used. |
| Experimental (Human Mammary Epithelial Cells) [2] | χ²-test | Informally used in iterative model development. | The final model was dependent on the iterative process. |
| Experimental (Human Mammary Epithelial Cells) [2] | Validation-based | Identified pyruvate carboxylase as a key, statistically supported model component. | Provided robust, data-driven evidence for including a specific metabolic reaction in the network. |
| High-Resolution Protocol [6] | χ²-test & Confidence Intervals | Applied together with optimal tracer design and GC-MS measurements of proteinogenic amino acids. | Achieved a standard deviation of ≤2% for core metabolic fluxes in E. coli. |

The Scientist's Toolkit

Implementing 13C-MFA and associated GOF tests requires a suite of specialized software and reagents.

Table 4: Essential Research Reagent Solutions for 13C-MFA

| Category | Item | Specific Example / Vendor | Function in 13C-MFA/GOF |
|---|---|---|---|
| Software | 13C-MFA Flux Estimation | METRAN [7], INCA [5], 13CFLUX2 [8] | Performs computational flux estimation and provides core GOF statistics (SSR, χ²-test). |
| Software | Flux Uncertainty Analysis | Built into METRAN [6] and INCA. | Calculates confidence intervals for estimated fluxes. |
| Software | General Constraint-Based Modeling | COBRA Toolbox [3] | Provides a framework for model reconstruction and analysis, useful for preliminary FBA. |
| Isotopic Tracers | 13C-Labeled Substrates | Cambridge Isotope Laboratories; Sigma-Aldrich [8] | Source of 13C-glucose, 13C-glutamine, etc., for generating MID data. |
| Mass Spectrometry | GC-MS | Standard instrumentation for analyzing derivatized amino acids [6]. | Primary tool for measuring Mass Isotopomer Distributions (MIDs) of protein-bound amino acids. |
| Mass Spectrometry | LC-MS | Used for non-targeted analysis and polar metabolites [9]. | Measures a broader range of metabolites, useful for global 13C tracing [9]. |
| Cell Culture | Defined Media Kits | Commercially available custom media kits. | Ensures a chemically defined environment for accurate measurement of external rates [5]. |

In 13C Metabolic Flux Analysis (13C-MFA), the χ²-test has traditionally been the cornerstone for determining whether a metabolic model provides an acceptable fit to experimental isotope labeling data. This article compares the performance, application, and limitations of this traditional method against emerging validation-based approaches. We summarize quantitative data on their performance, provide detailed experimental protocols, and outline the essential toolkit for researchers in the field.

13C Metabolic Flux Analysis (13C-MFA) is a powerful technique used to quantify intracellular metabolic reaction rates (fluxes) in living cells [10]. By feeding cells with 13C-labeled substrates (e.g., glucose) and measuring the resulting mass isotopomer distributions (MIDs) of intracellular metabolites, researchers can infer metabolic pathway activities [11] [2]. The process involves fitting a computational model of the metabolic network to the experimental MID data. A critical step in this process is model selection—determining which model structure, from a set of candidates, best represents the true underlying metabolic system [3]. For decades, the χ²-test of goodness-of-fit has been the traditional and most widely used method for this purpose [11] [2].
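To make the notion of a MID concrete, the sketch below converts raw ion intensities for the M+0, M+1, ... peaks of one fragment into fractional abundances. The function name `to_mid` and the counts are hypothetical, and correction for natural isotope abundance, which real workflows typically apply, is deliberately left out.

```python
def to_mid(intensities):
    """Convert raw ion intensities for M+0, M+1, ... into fractional abundances."""
    total = sum(intensities)
    if total <= 0:
        raise ValueError("intensities must sum to a positive value")
    return [x / total for x in intensities]


# Hypothetical integrated peak areas for a three-carbon fragment (M+0 .. M+3).
raw_counts = [52000, 31000, 12000, 5000]
mid = to_mid(raw_counts)
print(mid)
```

The resulting fractions sum to one; these normalized values are what the model-simulated MIDs are compared against during fitting.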

The Traditional Workflow and the χ²-Test

In the conventional 13C-MFA workflow, model development is an iterative process. A researcher proposes a model structure (a set of metabolic reactions), fits it to the MID data, and evaluates the fit. The χ²-test is the standard statistical tool for this evaluation.

The Protocol for the Traditional χ²-Test Approach

The following protocol outlines the key steps for model acceptance using the χ²-test [11] [2]:

- Model Fitting: For a candidate model, find the parameter set (flux values) that minimizes the weighted sum of squared residuals (SSR) between the simulated and measured MIDs.

- Goodness-of-Fit Calculation: Calculate the χ² value. This value is the achieved SSR from the model fitting step.

- Statistical Evaluation: Compare the χ² value to a χ²-distribution. The degrees of freedom (df) for this distribution are calculated as the number of data points minus the number of independently identifiable parameters in the model.

- Model Acceptance/Rejection: If the χ² value falls below a critical threshold (typically at a 5% significance level), the model is not rejected and is considered statistically acceptable. If the value is above the threshold, the model is rejected.
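The four steps above can be sketched in plain Python. This is an illustrative implementation under stated assumptions: the upper-tail probability is computed with a textbook series for the regularized incomplete gamma function rather than with any particular 13C-MFA package, the function names are hypothetical, and the example counts (70 measurements, 20 free fluxes) are made up.

```python
import math


def chi2_sf(x, df):
    """Upper-tail probability P(X > x) for a chi-square variable with df degrees of freedom."""
    if x <= 0:
        return 1.0
    a, z = df / 2.0, x / 2.0
    # Series expansion of the regularized lower incomplete gamma function P(a, z).
    term = 1.0 / a
    total = term
    n = 1
    while term > 1e-16 * total:
        term *= z / (a + n)
        total += term
        n += 1
    lower = total * math.exp(a * math.log(z) - z - math.lgamma(a))
    return max(0.0, 1.0 - lower)


def chi2_gof(ssr, n_data, n_params, alpha=0.05):
    """Return (degrees of freedom, p-value, not_rejected) for a fitted model."""
    df = n_data - n_params
    if df <= 0:
        raise ValueError("need more data points than identifiable parameters")
    p_value = chi2_sf(ssr, df)
    return df, p_value, p_value >= alpha


# Hypothetical fit: 70 measured values, 20 identifiable free fluxes, minimized SSR of 62.
df, p_value, not_rejected = chi2_gof(ssr=62.0, n_data=70, n_params=20)
print(df, p_value, not_rejected)
```

With 50 degrees of freedom the 5% critical value is roughly 67.5, so an SSR of 62 is not rejected while an SSR of 80 would be. The series is adequate for illustration; a production analysis would normally rely on a tested statistics library.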

Table 1: Key Components of the Traditional χ²-Test Workflow

| Component | Description | Role in Model Acceptance |
|---|---|---|
| Mass Isotopomer Distribution (MID) | Measured relative abundances of different isotopomers for a metabolite [2]. | Primary experimental data used for model fitting. |
| Sum of Squared Residuals (SSR) | The weighted sum of squared differences between simulated and measured MIDs [11]. | The objective function for model fitting; becomes the χ² statistic. |
| Degrees of Freedom (df) | Number of independent data points minus the number of identifiable model parameters [11]. | Adjusts the χ²-test critical threshold to account for model complexity. |
| Significance Level | The probability threshold for rejecting a model (commonly 0.05) [2]. | Determines the critical value for the χ²-test. |

Limitations of the χ²-Test in Practice

Despite its widespread use, reliance on the χ²-test for model selection presents several challenges [11] [2]:

- Dependence on Accurate Error Estimates: The test's validity hinges on knowing the true measurement errors (σ). In practice, these errors are estimated from biological replicates, but such estimates can severely understate the actual errors because they miss instrumental bias and unaccounted experimental noise [2].

- Sensitivity to Error Magnitude: The outcome of model selection is highly sensitive to the assumed measurement uncertainty. If errors are underestimated, overly complex models may be selected (overfitting); if errors are overestimated, the selected models may be too simple (underfitting) [11].

- Difficulty in Determining Identifiable Parameters: Correctly calculating the degrees of freedom requires knowing the number of identifiable parameters, which is difficult to determine for non-linear models like those used in 13C-MFA [11].

Beyond the χ²-Test: The Rise of Validation-Based Model Selection

To address the limitations of the χ²-test, validation-based model selection has been proposed as a robust alternative [11]. This method leverages independent data to assess a model's predictive power.

Protocol for Validation-Based Model Selection

The protocol for this modern approach is as follows [11]:

- Data Splitting: Divide the experimental dataset into two parts: the estimation data (Dest) and the validation data (Dval). The validation data should come from a distinct tracer experiment to ensure it provides qualitatively new information.

- Model Fitting: Fit each candidate model exclusively to the estimation data (Dest) to obtain its parameter estimates.

- Model Evaluation: Test the predictive power of each fitted model by calculating its SSR against the independent validation data (Dval).

- Model Selection: Select the model that achieves the smallest SSR on the validation data. This model demonstrates the best predictive performance.
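The whole protocol can be sketched end to end on a deliberately tiny toy problem. Everything here is an assumption made for illustration: the product's labeled fraction is modeled as a mixture of two pathways, with `f` the fraction of flux through pathway 1, and the two candidate structures differ only in whether pathway 2 exists. Real 13C-MFA models are far larger and are fitted with dedicated software.

```python
def predict(f, a, b):
    """Labeled fraction of the product when a fraction f of its flux comes from pathway 1."""
    return f * a + (1.0 - f) * b


def fit_one_pathway(data):
    """Structure without pathway 2: f is fixed at 1, nothing to estimate."""
    return 1.0


def fit_two_pathways(data):
    """Closed-form least squares for f in the relation y - b = f * (a - b)."""
    num = sum((a - b) * (y - b) for a, b, y in data)
    den = sum((a - b) ** 2 for a, b, y in data)
    return num / den


def ssr(f, data):
    return sum((predict(f, a, b) - y) ** 2 for a, b, y in data)


# Each tuple: (labeling via pathway 1, labeling via pathway 2, measured labeling).
# All values are hypothetical.
d_est = [(0.9, 0.1, 0.65), (0.5, 0.2, 0.42)]  # tracer experiments used for fitting
d_val = [(0.2, 0.8, 0.38)]                    # withheld tracer experiment

candidates = {"one_pathway": fit_one_pathway, "two_pathways": fit_two_pathways}
fitted = {name: fit(d_est) for name, fit in candidates.items()}      # model fitting
scores = {name: ssr(f, d_val) for name, f in fitted.items()}         # model evaluation
selected = min(scores, key=scores.get)                               # model selection
print(fitted, scores, selected)
```

In this toy case the two-pathway structure estimates `f` near 0.69 and predicts the withheld experiment far better, so it is selected. The validation tracer was chosen to label the two pathways differently from the estimation tracers, mirroring the requirement that Dval carry qualitatively new information.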

Table 2: Comparison of Model Selection Methods in 13C-MFA

| Method | Core Principle | Key Advantage | Key Disadvantage | Performance with Uncertain Measurement Errors |
|---|---|---|---|---|
| χ²-Test (First) | Selects the simplest model that passes the χ²-test [11]. | Parsimonious; avoids unnecessary complexity. | Highly sensitive to the assumed measurement uncertainty; can lead to underfitting [11]. | Poor; model selection changes with error estimates [11]. |
| χ²-Test (Best) | Selects the model that passes the χ²-test with the greatest margin [11]. | Selects a well-fitting model. | Prone to overfitting if measurement errors are underestimated [11]. | Poor; model selection changes with error estimates [11]. |
| AIC / BIC | Selects the model that minimizes an information criterion, balancing fit and complexity [11]. | Provides a formal trade-off between goodness-of-fit and model simplicity. | Performance can degrade if the error model is incorrect [11]. | Varies; depends on the specific criterion and context. |
| Validation-Based | Selects the model with the best performance on an independent validation dataset [11]. | Robust to uncertainties in measurement errors; directly tests predictive power [11]. | Requires additional experimental effort to generate a suitable validation dataset [11]. | Excellent; consistently selects the correct model independently of error estimates [11]. |
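For readers unfamiliar with the information criteria in the table, their standard textbook forms are given here under the assumption of independent Gaussian errors with known σ; the cited work may use a different variant. With \(p\) free parameters, \(n\) data points, and \(\chi^2_{\min}\) the minimized weighted SSR, the log-likelihood satisfies \(-2 \ln L = \chi^2_{\min} + \text{const}\), so up to a constant shared by all candidate models:

\[ \mathrm{AIC} = \chi^2_{\min} + 2p, \qquad \mathrm{BIC} = \chi^2_{\min} + p \ln n \]

Both criteria inherit the dependence on σ through \(\chi^2_{\min}\), which is consistent with the table's remark that their performance can degrade when the error model is wrong.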

The diagram below illustrates the logical workflow and key difference between the traditional and validation-based approaches.

Workflow Comparison: Traditional vs. Validation-Based Model Selection

The Scientist's Toolkit: Essential Reagents and Software

Successful 13C-MFA, regardless of the model selection method, relies on a suite of specialized reagents and computational tools.

Table 3: Key Research Reagent Solutions for 13C-MFA

| Item | Function in 13C-MFA | Example Application |
|---|---|---|
| 13C-Labeled Tracers | Carbon sources with specific 13C labeling patterns (e.g., [U-13C]glucose, [1-13C]glucose) fed to cells to trace metabolic pathways [12]. | A mixture of 28% [U-13C6]glucose, 20% [1-13C]glucose, and 52% [1,2-13C2]glucose was used to study Myc-induced metabolic reprogramming in B-cells [12]. |
| Gas Chromatography-Mass Spectrometry (GC-MS) | Analytical platform for measuring the Mass Isotopomer Distribution (MID) of metabolites derived from 13C tracers [13]. | Used for high-resolution isotopic labeling measurements of protein-bound amino acids and RNA-bound ribose [13]. |
| Metabolic Modeling Software | Computational tools to simulate isotope labeling and estimate metabolic fluxes. | Software like 13CFLUX provides high-performance simulation for both stationary and non-stationary 13C-MFA [14]. Metran is another academic software used for flux estimation [13]. |
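As a small worked example of what the tracer mixture in the table implies for the substrate, the sketch below computes the mass isotopomer distribution of the glucose feed and its mean fractional 13C enrichment. It assumes 100% isotopic purity of each tracer and ignores natural 13C abundance at unlabeled positions, so the numbers are idealized.

```python
# (tracer name, fraction of the mixture, number of 13C atoms per glucose molecule)
mixture = [
    ("[U-13C6]glucose", 0.28, 6),
    ("[1-13C]glucose", 0.20, 1),
    ("[1,2-13C2]glucose", 0.52, 2),
]

# Substrate MID: index k holds the fraction of glucose molecules carrying k labeled carbons.
substrate_mid = [0.0] * 7
for name, fraction, n_labeled in mixture:
    substrate_mid[n_labeled] += fraction

# Mean fraction of carbon atoms that are 13C, averaged over the six glucose carbons.
mean_enrichment = sum(k * x for k, x in enumerate(substrate_mid)) / 6
print(substrate_mid, round(mean_enrichment, 4))
```

Under these idealized assumptions the feed consists of 20% M+1, 52% M+2 and 28% M+6 glucose, and just under half of all glucose carbons (about 0.487) are labeled.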

The χ²-test has served as the traditional cornerstone for model acceptance in 13C-MFA, providing a statistically grounded framework for evaluating model fit. However, its dependence on accurately known measurement errors is a significant vulnerability in practice. As the field advances, validation-based model selection emerges as a powerful, robust alternative that prioritizes a model's predictive power over its fit to a single dataset. This paradigm shift enhances the reliability of flux estimates, which is crucial for applications in metabolic engineering and drug development.

In 13C Metabolic Flux Analysis (13C-MFA), the accuracy of intracellular flux estimates is entirely dependent on the proper fit between the mathematical model, experimental data, and the underlying metabolic network [15] [2]. Model selection represents a critical step where researchers choose which compartments, metabolites, and reactions to include in their metabolic network model [16] [2]. When this process is conducted informally using the same dataset for both model fitting and selection, it often leads to statistical distortions that compromise flux reliability [2]. The consequences of poor model fit manifest primarily as overfitting (incorporating excessive complexity) or underfitting (oversimplifying the network), both generating misleading biological conclusions that can impede scientific progress and therapeutic development [16] [2] [3].

The challenge of achieving proper fit is particularly acute in 13C-MFA because, unlike other omics technologies, it does not directly measure fluxes but infers them indirectly through mathematical modeling of isotopic labeling patterns [15] [17]. This multi-step process involves growing cells on 13C-labeled substrates, measuring resulting mass isotopomer distributions (MIDs) of intracellular metabolites, and estimating fluxes through iterative computational fitting [13] [17]. Each stage introduces potential sources of error that can propagate to the final flux estimates, making robust model validation essential for producing reliable results [15] [3].

Quantitative Impacts of Poor Model Fit

Statistical and Biological Consequences

The statistical implications of poor model fit extend beyond mathematical imperfection to fundamentally flawed biological interpretations. The table below summarizes the primary consequences of overfitting and underfitting in 13C-MFA:

Table 1: Consequences of overfitting and underfitting in 13C-MFA

| Aspect | Overfitting | Underfitting |
|---|---|---|
| Model Complexity | Excess reactions/compartments [2] | Missing key pathways/compartments [2] |
| Statistical Power | Falsely precise flux estimates [2] | Reduced ability to resolve parallel pathways [15] |
| Flux Reliability | Poor reproducibility between studies [15] | Systematic bias in flux estimates [2] |
| Biological Interpretation | Identification of non-existent pathways [2] | Failure to detect active pathways [18] |
| χ²-test Performance | May pass despite incorrect structure [2] [3] | May be rejected despite correct core structure [3] |

The fundamental challenge in model selection lies in balancing model complexity with explanatory power. Overfitting occurs when models contain unnecessary reactions or compartments that artificially improve fitting metrics without reflecting biological reality [2]. These overly complex models often produce falsely precise flux estimates that appear statistically sound but fail validation when tested against independent datasets [2] [3]. Conversely, underfitted models omit crucial metabolic functions, leading to systematic biases in flux estimates [2]. For example, simplified non-compartmented models have proven insufficient for describing mammalian cell metabolism, particularly for understanding compartment-specific processes like NADPH generation and shuttle systems [19].

Empirical Evidence of Poor Fit Consequences

Case studies demonstrate how poor model fit directly impacts biological conclusions. In one isotope tracing study on human mammary epithelial cells, conventional model selection approaches failed to identify pyruvate carboxylase as a key model component, while validation-based methods correctly highlighted its metabolic importance [2]. This enzyme plays critical roles in anaplerosis and gluconeogenesis, and its omission would significantly distort understanding of central carbon metabolism.

In studies of immune cell metabolism, oversimplified models failed to detect important metabolic rewiring during neutrophil differentiation and activation [18]. Only with appropriately complex models could researchers observe that lipopolysaccharide (LPS) activation of HL-60 neutrophil-like cells upregulated fluxes through the oxidative pentose phosphate pathway and lipid degradation pathways – findings with potential implications for targeting immunometabolism in therapeutic development [18].

The reproducibility crisis in 13C-MFA further underscores the consequences of poor fit. A comprehensive review of 13C-MFA publications revealed that only approximately 30% of studies provided sufficient information to reproduce the reported flux results [15]. This deficiency stems largely from incomplete model documentation and informal selection procedures, making it difficult to reconcile conflicting results between studies and hindering scientific progress [15] [20].

Model Validation and Selection Methodologies

Established and Emerging Approaches

Robust model validation requires specialized methodologies to discriminate between alternative model structures. The table below compares the primary validation approaches used in 13C-MFA:

Table 2: Model validation and selection methods in 13C-MFA

| Method | Principle | Advantages | Limitations |
|---|---|---|---|
| χ²-test of Goodness-of-Fit [2] [3] | Tests if model-predicted MIDs match measured data within expected error | Well-established theoretical foundation; Widely implemented in software | Sensitive to inaccurate error estimates; Does not directly compare models [2] |
| Validation-Based Model Selection [16] [2] | Uses independent validation data to test model predictions | Robust to measurement error uncertainty; Consistently selects correct model in simulations [2] | Requires additional experimental work; More complex implementation [2] |
| Flux Confidence Intervals [15] [3] | Statistical assessment of flux estimate precision | Quantifies reliability of individual flux values; Identifies poorly constrained fluxes [15] | Computationally intensive; Does not validate model structure [3] |
| Metabolite Pool Size Validation [3] | Incorporates independent pool size measurements | Additional constraints improve flux identifiability; Tests metabolic steady-state assumption | Experimentally challenging to measure accurately [3] |

The traditional approach to model selection relies heavily on the χ²-test for goodness-of-fit, where models are iteratively modified until they are not statistically rejected [2]. However, this method proves problematic in practice because it requires accurate knowledge of the measurement uncertainties, which is often unavailable for mass spectrometry data, where error models may not capture all sources of bias [2]. Furthermore, the correctness of the χ²-test depends on properly determining the number of identifiable parameters, which is challenging for nonlinear models [2] [3].

Validation-Based Model Selection Protocol

Validation-based model selection has emerged as a robust alternative that addresses key limitations of traditional methods [16] [2]. The protocol involves:

- Independent Dataset Creation: Splitting experimental data into estimation and validation sets, or collecting completely separate validation data [2].

- Model Training: Fitting candidate model structures to the estimation data.

- Prediction Testing: Evaluating how well each fitted model predicts the independent validation data.

- Model Selection: Choosing the model that demonstrates superior predictive performance for the validation data [2].
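The first step of this protocol, creating the independent dataset, can be sketched as a split by tracer experiment. The record layout (a list of dictionaries with a `tracer` key), the helper name `split_by_tracer`, and the MID values are hypothetical choices made for this example.

```python
def split_by_tracer(measurements, validation_tracers):
    """Partition measurement records into estimation and validation sets by tracer."""
    held_out = set(validation_tracers)
    estimation = [m for m in measurements if m["tracer"] not in held_out]
    validation = [m for m in measurements if m["tracer"] in held_out]
    if not estimation or not validation:
        raise ValueError("both the estimation and the validation set must be non-empty")
    return estimation, validation


# Hypothetical records from two parallel labeling experiments.
measurements = [
    {"tracer": "[1,2-13C]glucose", "metabolite": "alanine",
     "mid": [0.60, 0.10, 0.30, 0.00]},
    {"tracer": "[1,2-13C]glucose", "metabolite": "glutamate",
     "mid": [0.45, 0.20, 0.25, 0.05, 0.04, 0.01]},
    {"tracer": "[U-13C]glutamine", "metabolite": "glutamate",
     "mid": [0.30, 0.02, 0.03, 0.05, 0.10, 0.50]},
]

d_est, d_val = split_by_tracer(measurements, ["[U-13C]glutamine"])
print(len(d_est), len(d_val))
```

Holding out an entire tracer experiment, rather than random individual measurements, keeps the validation data independent of the data used for fitting.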

This method includes an additional innovation for quantifying prediction uncertainty of mass isotopomer distributions in new labeling experiments, helping researchers identify validation data with appropriate novelty – neither too similar nor too dissimilar to the original training data [2]. Simulation studies demonstrate that this approach consistently selects the correct model structure in a way that remains independent of errors in measurement uncertainty estimates, providing a significant advantage over χ²-test based methods [2].

Diagram 1: Validation-based model selection workflow for 13C-MFA

Best Practices for Achieving Optimal Fit

Experimental Design and Model Specification

Achieving optimal model fit begins with proper experimental design rather than post-hoc analysis. Parallel labeling experiments, in which separate cultures are each grown on a different 13C-labeled tracer and the resulting datasets are fitted together, significantly improve flux precision and resolution compared to single-tracer designs [13]. For instance, using both [1,2-13C]glucose and [U-13C]glutamine tracers in parallel helps resolve fluxes in the pentose phosphate pathway and TCA cycle more effectively than sequential experiments [13] [17].

Comprehensive model specification requires complete documentation of several components. The metabolic network must include atom transitions for all reactions, particularly less common ones, as these dictate carbon atom rearrangements that generate specific isotopomer patterns [15]. The FluxML language has been developed as a universal modeling language to unambiguously express and conserve all necessary information for model re-use, exchange, and comparison [20]. This standardized format helps address the current reproducibility crisis by ensuring implicit assumptions made during modeling are properly documented [20].

Comprehensive Reporting Standards

Complete reporting should encompass seven key categories, as outlined in Table 3 below.

Table 3: Minimum information standards for publishing 13C-MFA studies

| Category | Minimum Information Requirements |
|---|---|
| Experiment Description | Source of cells, medium, isotopic tracers; Culture conditions; Sampling times [15] |
| Metabolic Network Model | Complete reaction network; Atom transitions; Number of reactions/fluxes; Balanced metabolites [15] |
| External Flux Data | Growth rates; Nutrient uptake/secretion rates; Metabolite concentrations [15] [17] |
| Isotopic Labeling Data | Uncorrected mass isotopomer distributions; Standard deviations; Tracer labeling purity [15] |
| Flux Estimation | Software used; Fitting algorithm; Optimization method; Statistical criteria [15] |
| Goodness-of-Fit | χ²-value; Measurement residuals; Degrees of freedom; p-value [15] [3] |
| Flux Confidence Intervals | Statistical method; Confidence levels; Flux ranges; Best-fit values [15] [3] |

Adherence to these reporting standards enables proper evaluation of model fit quality and facilitates comparison across studies [15]. This is particularly important when reconciling conflicting results, as incomplete information often prevents identifying the root causes of discrepancies between studies [15].

Implementing robust 13C-MFA requires specialized tools and resources. The table below outlines key solutions available to researchers.

Table 4: Essential research reagent solutions for 13C-MFA

| Tool/Resource | Primary Function | Key Applications |
|---|---|---|
| Metran Software [13] | 13C-MFA flux estimation | Flux calculation from labeling data; Statistical analysis; Confidence interval determination |
| FluxML Format [20] | Standardized model specification | Model exchange between tools; Reproducible model documentation; Community sharing |
| ISODYE Tracers | 13C-labeled substrates | Tracing carbon fate; Metabolic pathway elucidation; Flux determination |
| GC-MS Platforms [13] [19] | Isotopic labeling measurement | Mass isotopomer distribution analysis; Metabolic flux experimental data generation |
| COBRA Toolbox [3] [21] | Constraint-based modeling | Flux Balance Analysis (FBA); Model quality control; Growth phenotype prediction |
| MEMOTE Suite [3] | Model quality assessment | Stoichiometric consistency testing; Metabolic functionality validation |

Specialized software like Metran implements the elementary metabolite units (EMU) framework, which enables efficient simulation of isotopic labeling in large biochemical networks [13]. This framework dramatically reduces computational complexity while maintaining accuracy, making 13C-MFA accessible to non-specialists [13] [17]. For standardized model sharing, the FluxML language provides an implementation-independent format that separates model specification from software tools, enhancing reproducibility and enabling model re-use across different computational platforms [20].

The consequences of poor model fit in 13C-MFA go beyond statistical imperfection: a poorly fitting model yields unreliable biological conclusions that can misdirect research and drug development efforts. Overfitting produces models that appear precise but lack predictive power, while underfitting overlooks crucial metabolic functions, yielding systematically biased flux estimates [2]. The transition from traditional χ²-test based model selection to validation-based approaches represents significant methodological progress, offering robustness against measurement error uncertainty and consistently identifying correct model structures in simulation studies [2].

Future directions for improving model fit include broader adoption of parallel labeling experiments, development of universal model exchange standards like FluxML, and implementation of comprehensive reporting guidelines that enable proper evaluation of model quality [15] [13] [20]. As 13C-MFA continues to expand into new research areas including cancer metabolism, immunometabolism, and neurodegenerative diseases, rigorous model validation and selection practices will be essential for building accurate, reliable understanding of metabolic rewiring in health and disease [17] [18] [3].

The Urgent Need for Reproducibility and Minimum Reporting Standards

13C Metabolic Flux Analysis (13C-MFA) has emerged as the gold standard technique for quantifying intracellular metabolic fluxes in living cells, with critical applications across metabolic engineering, biotechnology, and biomedical research including cancer biology [17] [22]. As the methodology has gained widespread adoption beyond expert groups, the field has faced growing challenges in maintaining research quality and reproducibility. Currently, only approximately 30% of published 13C-MFA studies provide sufficient information to be considered reproducible, creating confusion and hindering scientific progress [15] [22]. This reproducibility crisis stems from inconsistent reporting practices, undocumented model assumptions, and insufficient methodological details in publications. The establishment and universal adoption of minimum reporting standards represents an urgent priority for ensuring the rigor, transparency, and cumulative advancement of 13C-MFA research.

The State of Reproducibility in 13C-MFA Research

Current Challenges and Consequences

The complex, multi-step nature of 13C-MFA makes it particularly vulnerable to reproducibility failures. Unlike other omics technologies, 13C-MFA requires both experimental measurements and sophisticated computational modeling to infer fluxes from isotopic labeling data [15] [2]. This dependency creates multiple potential failure points in study reproducibility. A fundamental challenge lies in the diversity of model implementations—even for well-studied organisms like E. coli, different research groups employ slightly different metabolic network models, and these models are continually updated and refined [15]. Without complete documentation of the specific model used, reaction atom mappings, and computational parameters, independent verification of reported fluxes becomes impossible.

The consequences of poor reproducibility are severe. Conflicting results between studies cannot be reconciled without understanding the methodological differences that might account for discrepancies [15] [22]. In one documented case, researchers attempting to follow published protocols discovered that key operational decisions and parameter specifications were omitted, making exact replication impossible [23]. Such omissions are particularly problematic in 13C-MFA because subtle differences in model structure or data processing can significantly impact flux estimates [2].

Root Causes of Irreproducibility

Several interconnected factors contribute to the reproducibility crisis in 13C-MFA research. The field has transitioned from a small community of experts to a widely used technology adopted by researchers with diverse backgrounds [15] [22]. This expansion has occurred without standardized reporting frameworks. Furthermore, the computational complexity of 13C-MFA means that complete methodological details cannot be adequately described in traditional results and methods sections [20]. Critical information about network stoichiometry, atom mappings, and fitting algorithms is often buried in supplementary materials or omitted entirely.

The problem is compounded by the absence of consensus standards among researchers and journal editors regarding what minimum information should be required for publication [15] [22]. Unlike genomics, where established repositories and data standards have facilitated reproducibility, 13C-MFA lacks universal standards for depositing models, isotopic labeling data, and flux results [15]. Additionally, traditional model selection approaches that rely solely on χ²-testing can select different model structures depending on the assumed measurement uncertainty, potentially leading to overfitting or underfitting [2].

Minimum Reporting Standards Framework for 13C-MFA

Core Components of Reporting Standards

Based on extensive analysis of reporting deficiencies in the 13C-MFA literature, a comprehensive framework for minimum reporting standards has been developed, encompassing seven essential categories [15] [22]. These standards are designed to ensure that flux analysis results can be independently verified and critically evaluated. The table below summarizes the critical elements required for each category.

Table 1: Minimum Reporting Standards for 13C-MFA Studies

| Category | Minimum Information Required | Purpose |
|---|---|---|
| Experiment Description | Source of cells, medium composition, isotopic tracers, culture conditions, sampling times | Enables experimental replication and identifies potential confounding variables |
| Metabolic Network Model | Complete reaction network with stoichiometry, atom transitions for all reactions, list of balanced metabolites | Allows verification of network completeness and correctness of atom mapping |
| External Flux Data | Measured growth rates, substrate uptake rates, product secretion rates in tabular form | Provides constraint validation and carbon balancing verification |
| Isotopic Labeling Data | Raw mass isotopomer distributions or NMR fractional enrichments with standard deviations | Enables data quality assessment and independent flux fitting |
| Flux Estimation | Software tool used, fitting algorithm, statistical weighting method, goodness-of-fit measures | Permits evaluation of computational approach and fitting quality |
| Goodness-of-Fit | Residual sum of squares (SSR), χ²-test results, degrees of freedom | Provides statistical validation of model fit to experimental data |
| Flux Confidence Intervals | Confidence intervals for all estimated fluxes, parameter covariance matrix | Enables assessment of flux precision and identifiability |
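The seven categories lend themselves to a simple machine-readable checklist. The sketch below is only an illustration: the category names come from the table above, while the report dictionary, its entries, and the file names are hypothetical and do not correspond to any existing submission system.

```python
# Category names follow the reporting table; everything else is hypothetical.
REQUIRED_CATEGORIES = (
    "Experiment Description",
    "Metabolic Network Model",
    "External Flux Data",
    "Isotopic Labeling Data",
    "Flux Estimation",
    "Goodness-of-Fit",
    "Flux Confidence Intervals",
)


def missing_categories(report):
    """Return the required categories that are absent or empty in a report dict."""
    return [name for name in REQUIRED_CATEGORIES if not report.get(name)]


# Hypothetical draft in which several categories are missing or left blank.
draft_report = {
    "Experiment Description": "E. coli, [1,2-13C]glucose, batch culture",
    "Metabolic Network Model": "network.fml",
    "Isotopic Labeling Data": "mids.csv",
    "Flux Estimation": "",
}

print(missing_categories(draft_report))
```

A check of this kind only confirms that each category has been filled in; it cannot judge whether the information supplied is sufficient for replication.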

Implementation and Adoption Considerations

Successful implementation of these reporting standards requires both cultural and technical adoption across the research community. Journals and editors play a critical role in enforcing compliance through checklist requirements during manuscript submission [15] [22]. The standardization of reporting must balance comprehensiveness with practicality—requiring sufficient detail for reproducibility without creating prohibitive barriers to publication.

Technical infrastructure represents another crucial component. The development of specialized modeling languages like FluxML provides a standardized, computer-readable format for encoding all essential model components, including atom mappings, constraints, and data configurations [20]. This approach addresses the limitation of natural language descriptions in traditional publications. Furthermore, the creation of public repositories for 13C-MFA models and datasets would facilitate model sharing, comparison, and reuse across the scientific community [15] [20].

Experimental Design and Methodological Standards

Tracer Selection and Experimental Protocol

The foundation of reproducible 13C-MFA begins with rigorous experimental design. Tracer selection profoundly influences flux resolution, with studies demonstrating that rational tracer design approaches based on Elementary Metabolite Unit (EMU) decomposition can identify optimal tracers that significantly outperform conventional choices [24]. For mammalian systems, [2,3,4,5,6-¹³C]glucose has been identified as optimal for elucidating oxidative pentose phosphate pathway flux, while [3,4-¹³C]glucose provides superior resolution for pyruvate carboxylase flux [24].

The experimental workflow for 13C-MFA consists of five critical stages that must be thoroughly documented to ensure reproducibility [25]:

- Tracer Selection and Experimental Design: Specification of isotopic tracer(s), labeling strategy (single tracer vs. mixtures), and cultivation system.

- Steady-State Culture and Sampling: Maintenance of metabolic and isotopic steady-state through appropriate cultivation duration (typically >5 residence times) with careful monitoring of growth rates and metabolic stability [25].

- Analytical Measurements: Precise quantification of extracellular fluxes (substrate consumption and product formation rates) and intracellular isotopic labeling patterns using mass spectrometry (GC-MS, LC-MS/MS) or NMR [17] [25].

- Flux Estimation: Computational fitting of metabolic network model to experimental data using nonlinear regression algorithms implemented in specialized software platforms (INCA, Metran, OpenFLUX) [17] [25].

- Statistical Validation: Comprehensive assessment of model fit, flux confidence intervals, and residual analysis to ensure statistical reliability of flux estimates [15] [25].
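One way to see why a threshold such as five residence times is used for the steady-state stage is to assume an ideal, well-mixed continuous culture in which pre-existing unlabeled material is washed out exponentially. Under that assumption (which is a simplification and ignores intracellular pool turnover), the fraction of material replaced after n residence times is 1 − e^(−n). The function name below is illustrative.

```python
import math


def fraction_replaced(n_residence_times):
    """Fraction of initial material washed out of an ideal well-mixed vessel."""
    return 1.0 - math.exp(-n_residence_times)


for n in (1, 3, 5):
    print(f"{n} residence times: {fraction_replaced(n):.4f} replaced")
```

Under this idealized model, five residence times leave less than 1% of the original material in the vessel, which is why labeling measured at that point is commonly treated as representative of isotopic steady state.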

Table 2: Essential Research Reagents and Computational Tools for 13C-MFA

| Category | Specific Examples | Function/Purpose |
|---|---|---|
| Isotopic Tracers | [1,2-¹³C]glucose, [U-¹³C]glutamine, [3,4-¹³C]glucose | Carbon source with specific labeling patterns to probe pathway activities |
| Analytical Instruments | GC-MS, LC-MS/MS, NMR spectroscopy | Measurement of mass isotopomer distributions or fractional enrichments in metabolites |
| Cell Culture Components | Defined media formulations, serum lots, supplements | Controlled cellular environment with specified carbon sources |
| Computational Tools | INCA, Metran, OpenFLUX, 13CFLUX2 | Software platforms for flux estimation using EMU or isotopomer balancing methods |
| Modeling Standards | FluxML, SBML | Standardized formats for encoding and sharing metabolic network models |

Model Selection and Validation Protocols

Robust model selection represents a critical methodological challenge in 13C-MFA. Traditional approaches that rely exclusively on χ²-testing are vulnerable to errors, particularly when measurement uncertainties are inaccurately estimated [2]. Validation-based model selection approaches have been developed that utilize independent validation data rather than relying solely on goodness-of-fit to estimation data [2]. This method demonstrates superior performance in selecting the correct model structure while remaining robust to uncertainties in measurement error estimates.

The model selection and validation process requires careful implementation [2]:

- Model Candidate Development: Generation of alternative model structures representing different metabolic hypotheses (e.g., with/without specific pathway activities, different compartmentation).

- Parameter Estimation: Fitting each candidate model to the estimation dataset using nonlinear regression.

- Validation Experiment: Design of independent labeling experiments that provide distinct information from the estimation data.

- Prediction Testing: Evaluation of each fitted model's ability to predict the validation data, with selection of the model demonstrating superior predictive performance.

- Uncertainty Quantification: Assessment of prediction uncertainty to ensure validation data provides meaningful discrimination between model candidates.
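The prediction-testing step can be sketched as follows. This is a minimal illustration rather than the published procedure of [2]: it assumes each candidate model has already been fitted to the estimation data and has produced a predicted labeling pattern for the validation experiment. The model names, predicted values, and standard deviations are all hypothetical.

```python
def weighted_ssr(measured, predicted, sigma):
    """Sum of squared residuals, each scaled by its measurement standard deviation."""
    return sum(((m - p) / s) ** 2 for m, p, s in zip(measured, predicted, sigma))


def select_by_validation(predictions, validation_data, sigma):
    """Score each candidate on the validation data and return the best one."""
    scores = {
        name: weighted_ssr(validation_data, predicted, sigma)
        for name, predicted in predictions.items()
    }
    best = min(scores, key=scores.get)
    return best, scores


# Hypothetical validation measurement (one metabolite, m0..m2) and its errors.
validation_mid = [0.30, 0.45, 0.25]
sigma = [0.01, 0.01, 0.01]

# Hypothetical predictions from two candidate models fitted to estimation data.
predictions = {
    "without_pathway": [0.36, 0.41, 0.23],
    "with_pathway": [0.31, 0.44, 0.25],
}

best, scores = select_by_validation(predictions, validation_mid, sigma)
print(best, scores)
```

Because the candidates are ranked against each other on the same validation data, a common scaling error in the assumed standard deviations changes every score by the same factor and leaves the ranking unchanged.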

Figure: Workflow of the key stages in 13C-MFA experimentation and analysis, highlighting critical decision points that must be documented for reproducibility.

Comparative Analysis of Goodness-of-Fit Testing Approaches

Traditional χ²-Test Methods

The χ²-test has served as the cornerstone for statistical validation in 13C-MFA, providing a quantitative measure of how well the model-predicted labeling patterns match experimental measurements [2]. The test is based on the variance-weighted residual sum of squares (SSR): each difference between a measured and a simulated data point is divided by the measurement standard deviation, squared, and summed. When the model correctly describes the system and measurement errors are accurately estimated, this SSR follows a χ² distribution with degrees of freedom equal to the number of independent measurements minus the number of estimated free parameters [25]. The traditional model development cycle involves iteratively modifying the model structure until it passes the χ²-test at a specified significance level (typically α = 0.05) [2].
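As a minimal sketch of the quantities involved, the snippet below computes a variance-weighted SSR and the corresponding degrees of freedom, taken here as the number of independent measurements minus the number of free parameters. The standard deviation of 0.005, the parameter count, and the function names are assumptions chosen for illustration only.

```python
def weighted_ssr(measured, simulated, sigma):
    """Variance-weighted residual sum of squares."""
    if not (len(measured) == len(simulated) == len(sigma)):
        raise ValueError("all inputs must have the same length")
    return sum(((m - s) / sd) ** 2 for m, s, sd in zip(measured, simulated, sigma))


def degrees_of_freedom(n_measurements, n_free_parameters):
    """Independent measurements minus estimated free parameters."""
    return n_measurements - n_free_parameters


# Hypothetical measured and simulated abundances for one metabolite (m0..m3).
measured = [0.455, 0.321, 0.142, 0.082]
simulated = [0.462, 0.315, 0.138, 0.085]
sigma = [0.005] * 4  # assumed measurement standard deviation

ssr = weighted_ssr(measured, simulated, sigma)
print(f"SSR = {ssr:.2f}")
print("df  =", degrees_of_freedom(n_measurements=20, n_free_parameters=10))
```

In a real study the sum runs over every labeling and external flux measurement used in the fit, not over a single metabolite.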

Despite its widespread use, the χ²-test approach suffers from significant limitations. Correct application requires accurate knowledge of the number of identifiable parameters, which can be difficult to determine for nonlinear models [2]. More fundamentally, the test depends critically on accurate estimation of measurement uncertainties, which often reflect only analytical variability without accounting for potential systematic errors or deviations from metabolic steady-state [2]. When uncertainty estimates are inaccurate, the χ²-test can lead to selection of either overly complex models (overfitting) or overly simple ones (underfitting), both resulting in poor flux estimation.
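The dependence on the assumed measurement uncertainty can be made concrete: because every residual is divided by its standard deviation, scaling all standard deviations by a factor c scales the SSR by 1/c². The residuals and the baseline standard deviation below are hypothetical.

```python
def ssr_for_sigma(residuals, sigma):
    """Variance-weighted SSR when every measurement shares one standard deviation."""
    return sum((r / sigma) ** 2 for r in residuals)


residuals = [-0.007, 0.006, 0.004, -0.003]  # hypothetical measured minus simulated
baseline_sigma = 0.005                      # hypothetical assumed standard deviation

ssr_by_scale = {}
for scale in (0.5, 1.0, 2.0):
    ssr_by_scale[scale] = ssr_for_sigma(residuals, baseline_sigma * scale)
    print(f"sigma x {scale}: SSR = {ssr_by_scale[scale]:.2f}")
```

Halving the assumed standard deviation quadruples the SSR for exactly the same residuals, so the same fit can pass or fail the test depending only on the error model.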

Emerging Validation-Based Approaches

Recent methodological advances have introduced validation-based model selection as a robust alternative to traditional χ²-test approaches [2]. This method selects among candidate model structures based on their ability to predict independent validation data rather than their goodness-of-fit to the estimation data alone. The fundamental strength of this approach lies in its reduced sensitivity to inaccuracies in measurement uncertainty estimates [2]. Simulation studies demonstrate that validation-based methods consistently select the correct model structure even when uncertainty estimates are substantially inaccurate, whereas χ²-test performance degrades significantly under the same conditions.

The implementation of validation-based selection requires careful design of validation experiments that provide meaningful discriminatory power between model candidates. The validation data should be sufficiently distinct from the estimation data to exercise different aspects of model behavior, yet not so different that it ventures into untested regions of model extrapolation [2]. Methods have been developed to quantify the prediction uncertainty of mass isotopomer distributions in potential validation experiments, helping researchers identify experiments with appropriate novelty relative to existing data [2].

Table 3: Comparison of Model Selection Methods in 13C-MFA

| Selection Method | Statistical Foundation | Key Advantages | Key Limitations |
|---|---|---|---|
| χ²-Test Based | Residual sum of squares relative to χ² distribution | Well-established, computationally straightforward, provides clear threshold criteria | Sensitive to measurement error miscalibration, difficult to determine identifiable parameters |
| Validation-Based | Predictive performance on independent data | Robust to measurement error uncertainty, directly tests model generalizability | Requires additional experimental data, more computationally intensive |
| Information Criteria (AIC/BIC) | Likelihood-based with parameter penalty | Balances model fit against complexity, applicable to non-nested models | Still sensitive to measurement error misspecification, may require modification for 13C-MFA |
| Likelihood Ratio Test | Nested model comparison | Formal statistical framework for comparing related models | Only applicable to nested models, requires proper degrees of freedom determination |
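For the information criteria row, a short sketch shows how the penalty terms enter. It assumes Gaussian measurement errors with known standard deviations, in which case −2 ln L equals the variance-weighted SSR plus a constant that is the same for every model, so AIC and BIC can be compared using SSR + 2p and SSR + p ln n. The SSR values, parameter counts, and number of measurements are hypothetical.

```python
import math


def aic_from_ssr(ssr, n_params):
    """AIC up to a model-independent constant, for known Gaussian errors."""
    return ssr + 2 * n_params


def bic_from_ssr(ssr, n_params, n_measurements):
    """BIC up to a model-independent constant, for known Gaussian errors."""
    return ssr + n_params * math.log(n_measurements)


n_measurements = 30
candidates = {
    "simple": {"ssr": 25.0, "n_params": 8},
    "complex": {"ssr": 19.0, "n_params": 10},
}

for name, model in candidates.items():
    aic = aic_from_ssr(model["ssr"], model["n_params"])
    bic = bic_from_ssr(model["ssr"], model["n_params"], n_measurements)
    print(f"{name}: AIC = {aic:.2f}, BIC = {bic:.2f}")
```

With these illustrative numbers AIC favors the larger model while BIC favors the smaller one, because BIC's penalty per parameter grows with the number of measurements. Both criteria inherit the SSR's sensitivity to the assumed measurement errors.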

Implementation Pathways and Future Directions

Community Adoption and Tool Development

Widespread adoption of reproducibility standards in 13C-MFA requires coordinated effort across multiple stakeholders. Research communities should develop domain-specific extensions of general reproducibility guidelines to address the unique methodological aspects of flux analysis [23] [26]. Journal editors and funding agencies can accelerate this process by mandating adherence to minimum reporting standards and providing structured checklists for authors and applicants [15] [26].

Critical technical infrastructure needs include the development of centralized repositories for 13C-MFA models, datasets, and flux results [15] [22]. These repositories should leverage standardized formats like FluxML to ensure long-term interpretability and reusability of computational models [20]. The continued development of open-source software tools that both implement advanced analytical methods and enforce complete documentation of model assumptions and parameters will further enhance reproducible research practices.

Integration with Broader Scientific Reporting Standards

The movement toward improved reproducibility in 13C-MFA aligns with broader initiatives across scientific disciplines to enhance research transparency and rigor [23] [27]. The FAIR Data Principles (Findable, Accessible, Interoperable, Reusable) provide a framework for developing data and model sharing practices in flux analysis [20]. Similarly, the establishment of minimum reporting standards for 13C-MFA mirrors successful efforts in other specialized methodological domains where complex analytical pipelines require detailed documentation to ensure interpretability and reproducibility [26].

Future methodological developments should focus on enhancing the efficiency and accessibility of reproducible research practices. This includes creating user-friendly tools for model annotation and validation, developing educational resources for proper experimental design and data analysis, and establishing certification processes for 13C-MFA software tools to ensure they implement current best practices for statistical validation and uncertainty quantification [2]. Through these coordinated efforts, the field can transform minimum reporting standards from an additional burden into an integral component of the research process that enhances scientific reliability and accelerates discovery.

From Theory to Practice: Implementing Robust Goodness of Fit and Model Selection Methods

This guide provides a detailed protocol for applying the Chi-Square Goodness of Fit Test within the specialized context of 13C Metabolic Flux Analysis (13C-MFA). The χ²-test serves as a critical statistical tool for validating metabolic models by comparing experimentally observed isotopic labeling distributions with computationally expected patterns. We present a rigorous, step-by-step methodology encompassing hypothesis formulation, test statistic calculation, and result interpretation, aligned with established good practices in fluxomics. The procedures outlined herein enable researchers to quantitatively assess model fit, thereby ensuring the reliability of inferred intracellular metabolic fluxes in metabolic engineering and cancer biology research.

13C Metabolic Flux Analysis (13C-MFA) has emerged as the premier technique for quantifying intracellular metabolic fluxes in living cells, with profound applications in metabolic engineering, systems biology, and cancer research [15] [17]. At its core, 13C-MFA is a model-based analysis that interprets stable isotope labeling patterns to infer metabolic pathway activities. The technique involves introducing 13C-labeled substrates (e.g., glucose or glutamine) to cells, measuring the resulting isotopic enrichment in downstream metabolites, and using computational models to estimate flux values that best explain the observed labeling data [17].

The Chi-Square Goodness of Fit Test provides an essential statistical framework for validating 13C-MFA models. As a hypothesis test, it determines whether the discrepancies between observed isotopic labeling measurements and model-predicted values are small enough to support the model's validity, or whether the model should be rejected [28] [29]. In 13C-MFA studies, goodness-of-fit testing answers a critical question: Is our metabolic network model consistent with the experimental isotopic labeling data? This validation step is crucial before drawing biological conclusions about metabolic flux distributions [15].

The χ²-test maps naturally onto the mass isotopomer distributions (MIDs) frequently measured in tracer experiments. Each mass isotopomer (m0, m1, m2, etc.) is treated as a distinct category, and the test evaluates whether the observed abundances of these categories match the expected abundances predicted by the metabolic model [28] [30].

Theoretical Foundations of the χ²-Test

Statistical Hypotheses

The Chi-Square Goodness of Fit Test evaluates two mutually exclusive hypotheses [28]:

- Null Hypothesis (H₀): The population follows the specified distribution. In 13C-MFA context, this means the metabolic network model adequately explains the observed isotopic labeling data.

- Alternative Hypothesis (Hₐ): The population does not follow the specified distribution, indicating the metabolic model is insufficient to explain the experimental measurements.

For 13C-MFA, we specifically test whether the deviations between measured and simulated mass isotopomer distributions can be attributed to random sampling error rather than fundamental model inadequacy.

Test Statistic and Distribution

The Pearson's Chi-Square test statistic is calculated as [28] [30] [31]:

$$X^2 = \sum\frac{(O - E)^2}{E}$$

Where:

- X² = Chi-square test statistic

- O = Observed frequency (measured isotopic labeling)

- E = Expected frequency (model-simulated isotopic labeling)

- Σ = Summation over all categories (mass isotopomers)

Under H₀, and provided the expected counts are sufficiently large, this test statistic approximately follows a Chi-Square distribution with k - 1 degrees of freedom, where k represents the number of categories (mass isotopomers) being compared [31]. When p parameters are also estimated from the data, the degrees of freedom are reduced accordingly to k - 1 - p.
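It may help to set the Pearson form beside the variance-weighted residual sum of squares (SSR) that 13C-MFA software typically minimizes. The symbols below are notation introduced here for illustration: $x_i^{\text{meas}}$ and $x_i^{\text{sim}}$ are the measured and simulated values of measurement $i$, $\sigma_i$ is its standard deviation, and $n$ is the number of measurements.

$$\mathrm{SSR} = \sum_{i=1}^{n}\frac{\left(x_i^{\text{meas}} - x_i^{\text{sim}}\right)^2}{\sigma_i^2}$$

Pearson's statistic has the same structure with $O$ in place of $x_i^{\text{meas}}$ and $E$ in place of $x_i^{\text{sim}}$, and with the variance of each term approximated by $E$. That approximation is appropriate for counts. For fractional abundances, the measured standard deviations $\sigma_i$ are the relevant scale.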

Assumptions and Validity Conditions

For valid application of the χ²-test, three key conditions must be satisfied [28] [29]:

- Random Sampling: Data must come from a random sample of the population

- Categorical Data: Variables must be categorical or nominal (satisfied by mass isotopomer distributions)

- Adequate Sample Size: Minimum of 5 expected observations per category

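In 13C-MFA, the expected frequencies correspond to the model-predicted mass isotopomer abundances. Because MIDs are usually reported as fractional abundances rather than counts, the minimum-count condition cannot be checked on the fractions themselves; in practice, statistical validity rests on weighting each residual by a realistic measurement standard deviation.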

Computational Workflow for χ²-Test in 13C-MFA

Figure: Computational workflow for conducting the χ²-test within the context of 13C-MFA.

Step-by-Step Experimental Protocol

Step 1: Formulate Hypotheses for 13C-MFA Model Validation

Establish clear statistical hypotheses specific to your metabolic model:

- H₀: The metabolic network model adequately fits the observed mass isotopomer distribution data. Any deviations between observed and simulated labeling patterns are due to random experimental error.

- Hₐ: The metabolic network model does not adequately fit the observed data. Systematic deviations indicate model misspecification, such as missing reactions, incorrect atom transitions, or incomplete pathway representation [15].

Step 2: Collect and Prepare Isotopic Labeling Data

Gather mass isotopomer distributions (MIDs) from your 13C-tracer experiment:

- Measurement Technique: Utilize GC-MS or LC-MS for precise quantification of mass isotopomer abundances [15] [17]

- Data Formatting: Organize measurements in a structured table with observed frequencies for each mass isotopomer

- Data Quality: Ensure measurements meet quality control standards, including appropriate signal-to-noise ratios and minimal natural isotope interference

Table 1: Example Format for Mass Isotopomer Data Collection

| Metabolite | m0 (Observed) | m1 (Observed) | m2 (Observed) | m3 (Observed) |
|---|---|---|---|---|
| Alanine | 0.455 | 0.321 | 0.142 | 0.082 |
| Lactate | 0.512 | 0.288 | 0.126 | 0.074 |
| Citrate | 0.234 | 0.415 | 0.251 | 0.100 |

Step 3: Generate Expected Frequencies from Metabolic Model

Simulate the expected mass isotopomer distributions using your 13C-MFA model:

- Software Tools: Employ specialized 13C-MFA software such as INCA, Metran, or 13C-FLUX [15]

- Flux Estimation: Use least-squares regression to find flux values that minimize the difference between simulated and measured labeling data [15] [17]

- Simulation Output: Extract the model-predicted mass isotopomer abundances for comparison with experimental data

Table 2: Example Format for Expected Mass Isotopomer Distributions

| Metabolite | m0 (Expected) | m1 (Expected) | m2 (Expected) | m3 (Expected) |
|---|---|---|---|---|
| Alanine | 0.462 | 0.315 | 0.138 | 0.085 |
| Lactate | 0.508 | 0.295 | 0.123 | 0.074 |
| Citrate | 0.241 | 0.408 | 0.259 | 0.092 |

Step 4: Calculate the Chi-Square Test Statistic

Compute the test statistic using the step-by-step calculation method:

Table 3: Chi-Square Test Statistic Calculation Worksheet

| Mass Isotopomer | Observed (O) | Expected (E) | O - E | (O - E)² | (O - E)²/E |
|---|---|---|---|---|---|
| Alanine_m0 | 0.455 | 0.462 | -0.007 | 0.000049 | 0.000106 |
| Alanine_m1 | 0.321 | 0.315 | 0.006 | 0.000036 | 0.000114 |
| Alanine_m2 | 0.142 | 0.138 | 0.004 | 0.000016 | 0.000116 |
| ... | ... | ... | ... | ... | ... |
| Total | - | - | - | - | Σ = 12.85 |

The final test statistic is the sum of all values in the last column: X² = 12.85
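The per-row arithmetic in the worksheet can be reproduced with a few lines of code. The sketch below uses only the three alanine rows shown explicitly in the worksheet; the dictionary layout and function name are illustrative choices.

```python
# Observed and expected abundances for the three worksheet rows shown above.
observed = {"Alanine_m0": 0.455, "Alanine_m1": 0.321, "Alanine_m2": 0.142}
expected = {"Alanine_m0": 0.462, "Alanine_m1": 0.315, "Alanine_m2": 0.138}


def pearson_terms(observed, expected):
    """Per-category contributions (O - E)^2 / E to the Pearson statistic."""
    return {key: (observed[key] - expected[key]) ** 2 / expected[key] for key in observed}


terms = pearson_terms(observed, expected)
for name, value in terms.items():
    print(f"{name}: {value:.6f}")

partial_sum = sum(terms.values())
print(f"sum of the rows shown: {partial_sum:.6f}")
```

Each term computed from fractional abundances is of order 10⁻⁴. A statistic only reaches the scale of χ² critical values when O and E are counts, or when each squared residual is divided by its measurement variance rather than by E.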

Step 5: Determine Degrees of Freedom

Calculate the appropriate degrees of freedom for your test:

- Formula: df = k - 1 - p

- k: Number of independent mass isotopomer measurements

- p: Number of parameters estimated from the data (flux values)

For typical 13C-MFA applications with 20 independent mass isotopomer measurements and 10 estimated flux parameters: df = 20 - 1 - 10 = 9 degrees of freedom.

Step 6: Find the Critical Chi-Square Value

Consult a Chi-Square distribution table or use statistical software to determine the critical value:

- Significance Level: Conventionally α = 0.05 (5% probability of rejecting H₀ when it is true)

- Distribution Table: Locate the value at the intersection of your degrees of freedom and significance level

- Example: For df = 9 and α = 0.05, the critical value is 16.92 [32]

Step 7: Compare and Make Statistical Decision

Apply the decision rule to interpret your results:

- If X² > critical value: Reject the null hypothesis (model does not fit the data)

- If X² ≤ critical value: Fail to reject the null hypothesis (model adequately fits the data)

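In our example: 12.85 < 16.92, so we fail to reject H₀, indicating the metabolic model provides an adequate fit to the experimental data. Failing to reject H₀ means the data are statistically consistent with the model at the chosen significance level; it does not prove that the model is correct.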
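The critical value and decision in Steps 6 and 7 can also be computed rather than read from a table. The sketch below uses only the Python standard library: the χ² cumulative distribution is evaluated through the power series of the regularized incomplete gamma function, and the critical value is found by bisection. The function names are illustrative; a statistics package would normally be used instead.

```python
import math


def chi2_cdf(x, df):
    """Chi-square CDF via the series for the regularized lower incomplete gamma."""
    if x <= 0:
        return 0.0
    a, z = df / 2.0, x / 2.0
    term = 1.0 / a
    total = term
    for n in range(1, 10000):
        term *= z / (a + n)
        total += term
        if term < 1e-16 * total:
            break
    return total * math.exp(a * math.log(z) - z - math.lgamma(a))


def chi2_critical(alpha, df):
    """Upper-tail critical value, located by bisection on the CDF."""
    lo, hi = 0.0, float(df) + 10.0
    while chi2_cdf(hi, df) < 1.0 - alpha:
        hi *= 2.0
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if chi2_cdf(mid, df) < 1.0 - alpha:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


x2, df, alpha = 12.85, 9, 0.05  # values from the worked example
critical = chi2_critical(alpha, df)
p_value = 1.0 - chi2_cdf(x2, df)
decision = "reject H0" if x2 > critical else "fail to reject H0"
print(f"critical = {critical:.2f}, p = {p_value:.3f}, decision: {decision}")
```

Reporting the p-value alongside the decision shows how close the statistic lies to the rejection threshold, which a bare pass/fail statement does not.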

Step 8: Interpret Biological Significance

Translate statistical conclusions into biological insights:

- Adequate Fit: Proceed with confidence in your flux estimates and biological interpretations

- Poor Fit: Investigate potential causes including missing pathways, incorrect atom mappings, or inadequate model scope [15]

- Reporting: Include the test statistic, degrees of freedom, and p-value in publications: X²(df, N = sample size) = value, p = value [30]

Research Reagent Solutions for 13C-MFA Studies

Table 4: Essential Research Reagents for 13C Metabolic Flux Analysis

| Reagent / Material | Function in 13C-MFA | Example Specifications |
|---|---|---|
| 13C-Labeled Substrates | Carbon sources for tracing metabolic pathways; enable quantification of intracellular fluxes | [1,2-13C]glucose, [U-13C]glutamine, isotopic purity >99% |
| Mass Spectrometry Instrumentation | Analytical platform for measuring mass isotopomer distributions in metabolic intermediates | GC-MS or LC-MS systems with high mass resolution and precision |
| Cell Culture Media | Defined chemical environment for maintaining cells during tracer experiments | Custom formulations without unlabeled carbon sources that would dilute the tracer |
| Metabolic Modeling Software | Computational tools for simulating isotopic labeling and estimating flux parameters | INCA, Metran, 13C-FLUX with support for EMU modeling |
| Isotopic Standard Compounds | Reference materials for validating mass isotopomer measurements and correcting for natural isotope abundance | Certified 13C-labeled amino acids, organic acids, and other metabolites |

Data Presentation Standards in 13C-MFA Publications

Proper documentation and presentation of 13C-MFA results are essential for reproducibility and scientific rigor. The following table outlines minimum data standards for publications involving goodness-of-fit testing:

Table 5: Minimum Data Standards for Publishing 13C-MFA Studies with Goodness-of-Fit Tests

| Category | Minimum Information Required | Goodness-of-Fit Specific Requirements |
|---|---|---|
| Experimental Description | Source of cells, isotopic tracers, culture conditions, sampling times | Rationale for tracer selection and experimental design |
| Metabolic Network Model | Complete reaction network with atom transitions for all reactions | List of balanced metabolites, free fluxes, and model constraints |
| Isotopic Labeling Data | Uncorrected mass isotopomer distributions in tabular form | Standard deviations for all measurements, description of measurement techniques |
| Flux Estimation | Description of software and algorithms used for parameter estimation | Goodness-of-fit statistics (X² value, degrees of freedom, p-value) |
| Statistical Evaluation | Confidence intervals for key flux values | Results of chi-square goodness-of-fit test and residual analysis |

Troubleshooting Common Issues in Goodness-of-Fit Testing

Poor Model Fit (Significant X² Value)

When your metabolic model shows statistically significant lack of fit:

- Investigate Model Completeness: Ensure all active metabolic pathways are included, particularly around reversibility, parallel pathways, and compartmentation [15]

- Verify Atom Transitions: Confirm correct carbon atom mappings for all reactions, especially for complex reactions in the TCA cycle and the pentose phosphate pathway

- Check Measurement Quality: Assess potential technical artifacts in mass isotopomer measurements, including background correction and natural isotope effects
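
As a minimal sketch of the test behind a "significant χ² value", the snippet below computes the variance-weighted SSR and its upper-tail p-value. It assumes independent Gaussian measurement errors with known standard deviations and an integer number of degrees of freedom (measurements minus free fluxes). The function names and toy numbers are illustrative and are not taken from any 13C-MFA package; some workflows check a two-sided acceptance range for the SSR instead of the one-sided tail used here.

```python
import math


def chi2_sf(x, dof):
    """Upper-tail probability P(X >= x) for a chi-square variable (integer dof >= 1)."""
    if x <= 0:
        return 1.0
    half = x / 2.0
    # Closed-form starting values: dof = 2 for even dof, dof = 1 for odd dof.
    if dof % 2 == 0:
        k, q = 2, math.exp(-half)
    else:
        k, q = 1, math.erfc(math.sqrt(half))
    # Recurrence: Q(k + 2) = Q(k) + half**(k/2) * exp(-half) / Gamma(k/2 + 1).
    while k < dof:
        q += math.exp((k / 2.0) * math.log(half) - half - math.lgamma(k / 2.0 + 1.0))
        k += 2
    return q


def weighted_ssr(measured, simulated, sd):
    """Variance-weighted sum of squared residuals."""
    return sum(((m - s) / e) ** 2 for m, s, e in zip(measured, simulated, sd))


def gof_test(measured, simulated, sd, n_free_fluxes, alpha=0.05):
    """Return (ssr, dof, p_value, fit_accepted) for a one-sided chi-square test."""
    dof = len(measured) - n_free_fluxes
    if dof < 1:
        raise ValueError("need more measurements than free fluxes")
    ssr = weighted_ssr(measured, simulated, sd)
    p_value = chi2_sf(ssr, dof)
    return ssr, dof, p_value, p_value >= alpha


# Toy example (hypothetical numbers): six MID measurements, two free fluxes.
measured = [0.62, 0.25, 0.13, 0.48, 0.35, 0.17]
simulated = [0.60, 0.27, 0.13, 0.50, 0.33, 0.17]
sd = [0.01] * 6

ssr, dof, p_value, accepted = gof_test(measured, simulated, sd, n_free_fluxes=2)
print(f"SSR = {ssr:.2f}, dof = {dof}, p = {p_value:.4f}, accepted = {accepted}")
```

In this toy case four residuals are two standard deviations wide, so the SSR is about 16 on 4 degrees of freedom and the fit is rejected at the 5% level.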

Inadequate Sample Size

Addressing violations of the minimum expected frequency assumption, a rule of thumb inherited from the categorical (count-based) form of the chi-square test:

- Pool Data: Combine measurements from multiple experimental replicates

- Reduce Categories: Aggregate low-frequency mass isotopomers when biologically justified

- Increase Sample Size: Design tracer experiments with sufficient biological replicates and measurement precision

Multiple Testing Considerations

Managing Type I error inflation when testing multiple model configurations:

- Adjust Significance Level: Apply Bonferroni or similar corrections when evaluating multiple competing models

- Use Nested Models: Compare hierarchical models using likelihood ratio tests with appropriate chi-square distributions
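
The two recommendations above can be combined in a short sketch. It assumes independent Gaussian errors with known standard deviations, so that the drop in weighted SSR between nested models is asymptotically chi-square distributed, and it is restricted to extensions that add exactly one free flux, where the tail probability has a closed form. The function names, reaction labels, and SSR values are hypothetical.

```python
import math


def lrt_p_value_one_extra_flux(ssr_simple, ssr_extended):
    """p-value for H0 'the simpler model is adequate' when one free flux is added."""
    delta = ssr_simple - ssr_extended
    if delta <= 0:
        return 1.0  # the extension did not improve the fit
    # Chi-square upper tail with 1 degree of freedom: erfc(sqrt(x / 2)).
    return math.erfc(math.sqrt(delta / 2.0))


def bonferroni_flags(p_values, alpha=0.05):
    """Return the corrected threshold and a significance flag per comparison."""
    threshold = alpha / len(p_values)
    return threshold, [p < threshold for p in p_values]


# Hypothetical SSR values: one base model and three single-reaction extensions.
ssr_base = 58.0
ssr_extended = {"+ reaction A": 41.2, "+ reaction B": 55.1, "+ reaction C": 57.6}

p_values = [lrt_p_value_one_extra_flux(ssr_base, s) for s in ssr_extended.values()]
threshold, significant = bonferroni_flags(p_values)

for name, p, flag in zip(ssr_extended, p_values, significant):
    print(f"{name}: p = {p:.4g}, significant after correction: {flag}")
```

With three comparisons the per-test threshold drops from 0.05 to about 0.0167, so only the first extension, which lowers the SSR by 16.8, remains significant.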

The Chi-Square Goodness of Fit Test provides an essential statistical foundation for validating metabolic models in 13C-MFA studies. By systematically applying the step-by-step protocol outlined in this guide, researchers can rigorously assess model adequacy, identify potential model deficiencies, and ensure the biological reliability of inferred metabolic fluxes. Proper implementation of goodness-of-fit testing, coupled with adherence to data presentation standards, enhances the reproducibility and impact of 13C-MFA research in metabolic engineering, cancer biology, and drug development. As 13C-MFA continues to evolve with increasingly complex models and measurement technologies, robust statistical validation through goodness-of-fit testing remains paramount for generating biologically meaningful insights into cellular metabolism.

Model selection is a critical step in 13C metabolic flux analysis (13C-MFA): an inappropriate metabolic network model leads to either overfitting or underfitting and ultimately compromises flux estimation accuracy. Although the χ2-test has traditionally been used for this purpose, its reliability is often hampered by the difficulty of quantifying measurement errors accurately. This guide compares the performance of two prominent information criteria, the Akaike Information Criterion (AIC) and the Bayesian Information Criterion (BIC), against traditional methods and an emerging validation-based approach. Through a systematic evaluation of their theoretical foundations, penalty structures, and application to simulated and experimental data, we demonstrate that information criteria provide a robust framework for model comparison, particularly when measurement errors are poorly characterized. However, validation-based model selection identifies the correct model structure more consistently and independently of error magnitude, which supports its adoption as a best practice in fluxomics research.

In 13C metabolic flux analysis, intracellular metabolic fluxes are estimated indirectly by fitting a mathematical model of the metabolic network to mass isotopomer distribution (MID) data obtained from isotope labeling experiments [11] [2]. The model selection process—choosing which compartments, metabolites, and reactions to include in the metabolic network model—profoundly impacts the accuracy and biological relevance of the resulting flux estimates [11]. Traditionally, model selection in 13C-MFA has been conducted informally during an iterative modeling process, where models are successively modified and evaluated against the same dataset until one passes the χ2-test for goodness-of-fit [11] [2].

This conventional approach presents several significant limitations. The χ2-test's correctness depends on accurately knowing the number of identifiable parameters, which can be challenging to determine for nonlinear models [11]. Furthermore, the test's reliability is compromised when the underlying error model is inaccurate, a common scenario given that standard deviations from biological replicates may not capture all error sources, such as instrumental bias in mass spectrometry or deviations from metabolic steady-state in batch cultures [2]. Consequently, researchers face a dilemma: either arbitrarily inflate error estimates to pass the χ2-test (potentially increasing flux uncertainty) or introduce additional fluxes that may lead to overfitting [11].

Information criteria like AIC and BIC offer a principled alternative by balancing model fit against complexity, thereby addressing the fundamental trade-off between underfitting and overfitting [33] [34]. This review provides a comprehensive comparison of these information criteria against traditional and emerging model selection methods, with specific application to 13C-MFA, enabling researchers to make more informed decisions in their flux analysis workflows.

Theoretical Foundations of Model Selection Criteria

Akaike Information Criterion (AIC)

The Akaike Information Criterion (AIC) is an estimator of prediction error derived from information theory principles. Developed by Hirotugu Akaike, AIC estimates the relative amount of information lost when a given model is used to represent the data-generating process [34]. The criterion is founded on the concept of Kullback-Leibler divergence, measuring the distance between the true model and candidate approximations.

The AIC formula is expressed as: AIC = 2k - 2ln(L̂) where k represents the number of estimated parameters in the model, and L̂ is the maximum value of the likelihood function for the model [34]. The first term (2k) penalizes model complexity, while the second term (-2ln(L̂)) rewards goodness of fit. When comparing multiple candidate models, the one with the lowest AIC value is preferred [33] [34].

In practical terms, AIC is designed to select a model that performs well in predicting new data while avoiding excessive complexity. It is particularly useful when the goal is to find an approximating model that captures the essential features of the data without overfitting [35].

Bayesian Information Criterion (BIC)

The Bayesian Information Criterion (BIC), also known as the Schwarz Information Criterion, derives from a Bayesian probability framework. It provides an asymptotic approximation to the marginal likelihood of a model, particularly suitable for situations where the true model is among the candidates [35].

The BIC formula is given by: BIC = -2ln(L̂) + k·ln(n) where L̂ is the maximized likelihood value, k is the number of parameters, and n is the sample size [33] [35]. Similar to AIC, models with lower BIC values are preferred.

While both AIC and BIC balance fit and complexity, BIC imposes a stronger penalty per additional parameter whenever ln(n) > 2, that is, for n ≥ 8, and the gap widens as the sample size increases. This heavier penalty makes BIC more conservative: it tends to select simpler models than AIC, particularly with larger datasets [33] [35].
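
A minimal sketch of how both criteria could be computed from a weighted least-squares fit, assuming independent Gaussian measurement errors with known standard deviations. Under that assumption -2ln(L̂) equals the weighted SSR plus a constant that depends only on the data, so models fitted to the same measurements can be ranked directly. The function names, model labels, and SSR values are hypothetical.

```python
import math


def neg2_log_likelihood(ssr, sd):
    """-2 ln(L) at the optimum for independent Gaussian errors with known sd."""
    return ssr + sum(math.log(2.0 * math.pi * s * s) for s in sd)


def aic(ssr, k, sd):
    """AIC = 2k - 2 ln(L)."""
    return 2 * k + neg2_log_likelihood(ssr, sd)


def bic(ssr, k, sd):
    """BIC = -2 ln(L) + k ln(n), with n the number of measurements."""
    n = len(sd)
    return k * math.log(n) + neg2_log_likelihood(ssr, sd)


# Hypothetical fits of two nested models to the same 40 measurements.
sd = [0.01] * 40
fits = {
    "base": {"k": 8, "ssr": 61.0},
    "extended": {"k": 9, "ssr": 57.5},
}

scores = {
    name: (aic(f["ssr"], f["k"], sd), bic(f["ssr"], f["k"], sd))
    for name, f in fits.items()
}
best_aic = min(scores, key=lambda name: scores[name][0])
best_bic = min(scores, key=lambda name: scores[name][1])
print("AIC prefers:", best_aic)
print("BIC prefers:", best_bic)
```

Here the extra flux lowers the SSR by 3.5, which exceeds the AIC penalty of 2 but not the BIC penalty of ln(40) ≈ 3.69, so AIC prefers the extended model while BIC keeps the base model.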

Traditional and Emerging Alternatives

Beyond information criteria, several other approaches exist for model selection in 13C-MFA:

- χ2-test Based Methods: The "First χ2" method selects the simplest model that passes a χ2-test, while "Best χ2" selects the model passing with the greatest margin [11]. Both depend heavily on accurate error estimation.

- Sum of Squared Residuals (SSR): Selects the model with the lowest weighted sum of squared residuals, but risks overfitting as it doesn't penalize complexity [11].

- Validation-Based Method: An emerging approach that partitions data into estimation and validation sets, selecting the model that performs best on the independent validation data [11] [2]. This method has demonstrated robustness to uncertainties in measurement errors.
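
The contrast between the SSR strategy and the validation-based strategy can be sketched as follows. The candidate models are assumed to be already fitted to the estimation data, and each one supplies a predicted MID for a validation measurement from a different tracer. All names and numbers are hypothetical ("PC" and "ME" are placeholder labels for added reactions).

```python
def weighted_ssr(measured, simulated, sd):
    """Variance-weighted sum of squared residuals."""
    return sum(((m - s) / e) ** 2 for m, s, e in zip(measured, simulated, sd))


def select_by_estimation_ssr(candidates):
    """'SSR' strategy: lowest SSR on the estimation data (ignores complexity)."""
    return min(candidates, key=lambda c: c["ssr_estimation"])


def select_by_validation(candidates, measured_val, sd_val):
    """Validation strategy: lowest SSR on data that was not used for fitting."""
    return min(
        candidates,
        key=lambda c: weighted_ssr(measured_val, c["predicted_val"], sd_val),
    )


# Hypothetical validation MID and its standard deviations.
measured_val = [0.40, 0.35, 0.25]
sd_val = [0.01, 0.01, 0.01]

# Hypothetical candidates of increasing complexity.
candidates = [
    {"name": "base", "ssr_estimation": 48.0, "predicted_val": [0.46, 0.31, 0.23]},
    {"name": "base + PC", "ssr_estimation": 21.0, "predicted_val": [0.41, 0.34, 0.25]},
    {"name": "base + PC + ME", "ssr_estimation": 20.4, "predicted_val": [0.43, 0.33, 0.24]},
]

print("Lowest estimation SSR:", select_by_estimation_ssr(candidates)["name"])
print("Lowest validation SSR:", select_by_validation(candidates, measured_val, sd_val)["name"])
```

In this toy case the most complex model has the lowest SSR on the estimation data, but the intermediate model predicts the held-out data best, which is the pattern expected when the last added reaction only fits noise.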

Table 1: Comparison of Model Selection Criteria Theoretical Foundations

| Criterion | Theoretical Basis | Key Formula | Complexity Penalty | Optimality Principle |
|---|---|---|---|---|
| AIC | Information Theory (Kullback-Leibler divergence) | AIC = 2k - 2ln(L̂) | 2k | Predictive accuracy |
| BIC | Bayesian Probability | BIC = -2ln(L̂) + k·ln(n) | k·ln(n) | Consistency (finding true model) |
| χ2-test | Frequentist Hypothesis Testing | χ2 = Σ[(observed - expected)²/variance] | Implicit via degrees of freedom | Statistical significance |
| Validation | Empirical Prediction Error | SSR_val = Σ(y - ŷ)² | Implicit via performance on new data | Generalization ability |

Comparative Performance in 13C-MFA

Simulation Studies with Known Ground Truth

Simulation studies where the true model structure is known provide the most reliable assessment of model selection criteria performance. In such controlled settings, validation-based approaches have demonstrated remarkable consistency in selecting the correct metabolic network model, regardless of uncertainties in measurement error magnitude [11]. This independence from error estimation is particularly valuable in 13C-MFA, where determining the true magnitude of measurement errors can be challenging due to potential biases in mass isotopomer measurements and deviations from steady-state assumptions [2].

Information criteria show variable performance under these conditions. AIC tends to favor more complex models than BIC, making it potentially more suitable when the risk of underfitting is a greater concern than overfitting [33]. BIC's stronger penalty for complexity makes it more conservative, particularly with larger datasets, which can be advantageous when seeking the most parsimonious adequate model [35].

Traditional χ2-test based methods exhibit significant limitations in these studies. Their model selection is highly sensitive to the assumed measurement uncertainty: different error estimates lead to the selection of different model structures [11]. This dependency poses practical challenges, as researchers may consciously or unconsciously adjust error estimates to achieve desired model characteristics.
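
To make this dependency concrete, consider scaling every assumed standard deviation by a common factor c. This is an illustrative assumption; real error models may change non-uniformly. The symbols y_i, ŷ_i, and σ_i denote the measured value, the simulated value, and the assumed standard deviation of measurement i. Because uniform scaling does not move the best-fit fluxes, the minimized weighted SSR rescales exactly:

```latex
\mathrm{SSR}(c\,\sigma)
  = \sum_{i} \frac{(y_i - \hat{y}_i)^2}{(c\,\sigma_i)^2}
  = \frac{\mathrm{SSR}(\sigma)}{c^{2}}
```

For example, with 20 degrees of freedom the 95% critical value of the χ2 distribution is about 31.4. A hypothetical model with SSR = 60 fails the test, but doubling the assumed standard deviations (c = 2) gives SSR = 15, and the same model passes without any change to the fitted fluxes.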

Application to Experimental Data

In real-world applications to isotope tracing studies, such as those conducted on human mammary epithelial cells, validation-based model selection has successfully identified biologically relevant model components, including pyruvate carboxylase as a key reaction [11] [2]. This demonstrates the method's capacity to recover physiologically meaningful network structures from experimental data.

The performance of information criteria in experimental settings depends on appropriate likelihood specification. For 13C-MFA, this typically involves assumptions about the distribution of residuals between measured and simulated MIDs. When these assumptions are reasonable, both AIC and BIC provide viable model selection, with their relative performance influenced by sample size and the true complexity of the underlying metabolic system [34] [35].

Table 2: Performance Comparison in Simulated and Experimental Settings

| Criterion | Accuracy in Simulation Studies | Sensitivity to Error Estimation | Performance with Limited Data | Tendency in Model Selection |
|---|---|---|---|---|
| AIC | Moderate to High | Low | Good, but may overfit | Favors more complex models |
| BIC | Moderate to High | Low | Good with sufficient samples | Favors simpler models |
| First χ2 | Variable | High | Poor with inaccurate errors | Stops at simplest adequate model |
| Best χ2 | Variable | High | Poor with inaccurate errors | May select overly complex models |
| Validation | High | Very Low | Requires data splitting | Balanced, based on prediction |

Practical Implementation Considerations

From a practical standpoint, information criteria offer computational advantages as they can be calculated from the same likelihood evaluation used for parameter estimation, without requiring additional experiments or data partitioning [34] [35]. However, they do necessitate determining the effective number of parameters, which can be challenging for nonlinear models with parameter correlations [11].

Validation-based approaches address this limitation but require careful experimental design to ensure the validation data provides sufficiently novel information compared to the estimation data [11]. Recent methodological advances include approaches to quantify prediction uncertainty of mass isotopomer distributions in new labeling experiments, helping researchers avoid situations where validation data is either too similar or too dissimilar to estimation data [2].

Experimental Protocols for Model Comparison

Workflow for Systematic Model Evaluation

Implementing a rigorous model comparison protocol requires a structured workflow that minimizes bias and ensures comprehensive evaluation. The following diagram illustrates the key decision points in this process:

Diagram Title: Model Selection and Validation Workflow

Step-by-Step Protocol for 13C-MFA Model Comparison

Candidate Model Specification

- Define a set of candidate metabolic network models with increasing complexity (additional reactions, compartments, or metabolites)

- Document all model components including atom transitions for each reaction [15]

- Ensure proper stoichiometric balancing for all models

Experimental Design and Data Collection

- Design isotope labeling experiments using distinct tracer inputs for estimation and validation

- For the validation-based approach: Partition data into estimation (D_est) and validation (D_val) sets, ensuring D_val comes from different tracer experiments [11]