Bridging Knowledge Gaps: How KEGG and Universal Databases Power Metabolic Network Reconstruction


Abstract

This article explores the critical role of universal biochemical databases, with a focus on the Kyoto Encyclopedia of Genes and Genomes (KEGG), in addressing knowledge gaps in genome-scale metabolic models (GEMs). Gaps arising from incomplete genomic annotations hinder accurate predictions in biotechnology and biomedical research. We detail the foundational principles of databases like KEGG that serve as knowledge repositories for gap-filling algorithms. The article further examines a spectrum of computational methodologies, from established tools like fastGapFill to emerging machine learning techniques such as CHESHIRE and workflows like NICEgame. We also address common challenges in gap-filling, strategies for solution optimization, and provide a comparative analysis of tool performance in predicting metabolic phenotypes. This resource is tailored for researchers, scientists, and drug development professionals seeking to enhance the accuracy of metabolic models for applications in metabolic engineering and drug discovery.

The Bedrock of Knowledge: Understanding KEGG and Universal Biochemical Databases

Defining the Gap-Filling Problem in Genome-Scale Metabolic Models (GEMs)

Genome-scale metabolic models (GEMs) are mathematical representations of the metabolic network of an organism, connecting genomic information to cellular physiology [1]. The reconstruction of GEMs from an organism's genome sequence involves mapping annotated genes to the biochemical reactions they encode. However, imperfect genome annotations and incomplete biochemical knowledge mean that these draft models frequently contain metabolic gaps—disconnections in the metabolic network that prevent the synthesis of essential biomass components from available nutrients [2] [3].

The core of the gap-filling problem lies in identifying and resolving these disconnections by proposing a set of missing biochemical reactions that, when added to the model, restore metabolic functionality and enable the production of all required metabolites. This process is computationally challenging due to the vast space of possible reactions to consider from universal biochemical databases and the need to propose biologically plausible solutions [1] [3]. Gap-filling has evolved from simply enabling biomass production to incorporating multiple data types and addressing different types of network inconsistencies, making it a crucial step in creating predictive metabolic models.

The Critical Need for Gap-Filling in Metabolic Modeling

Origins and Impact of Metabolic Gaps

Metabolic gaps arise from several fundamental limitations in our knowledge and methodologies. Incomplete genome annotation fails to assign functions to many genes, while existing annotations may be incorrect [2]. Furthermore, biochemical databases themselves contain inconsistencies and incomplete information, propagating errors into metabolic reconstructions [4]. The consequences of these gaps are profound—gapped models cannot accurately predict cellular growth, essentiality, or metabolic phenotypes, limiting their utility in biotechnology and biomedical applications [2] [5].

The practical impact of unresolved gaps became evident in a comparative study of automated versus manual gap-filling for Bifidobacterium longum, where the automated solution achieved only 61.5% recall and 66.6% precision compared to manual curation [2]. This performance gap highlights the complexity of the problem and the continued need for expert biological knowledge in the curation process, particularly for reconciling multiple possible solutions that are mathematically equivalent but biologically distinct [2].
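The recall and precision figures follow from the counts reported for this study (8 of 13 manually curated reactions recovered, with 4 false positives). A minimal sketch of the arithmetic, with hypothetical function and variable names:

```python
def recall_precision(true_positives: int, reference_total: int, false_positives: int) -> tuple[float, float]:
    """Return (recall, precision) as fractions between 0 and 1."""
    recall = true_positives / reference_total
    precision = true_positives / (true_positives + false_positives)
    return recall, precision


# Counts taken from the B. longum comparison cited in the text.
recall, precision = recall_precision(true_positives=8, reference_total=13, false_positives=4)
print(f"recall = {recall:.1%}, precision = {precision:.1%}")
```

Rounded to one decimal place, the precision is 66.7%; the 66.6% quoted above appears to be the same value truncated rather than rounded.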

Implications for Drug Discovery and Microbial Community Modeling

The accuracy of gap-filling has direct implications for drug target identification. For pathogens like Vibrio parahaemolyticus, gap-filled GEMs enable the identification of essential metabolites critical for bacterial survival that may serve as targets for novel antimicrobial strategies [5]. In microbial community modeling, gap-filling individual organism models affects the prediction of cross-feeding interactions and community dynamics, as the metabolic secretions of one organism depend on a complete and accurate network reconstruction [3] [4].

Table 1: Quantitative Assessment of Gap-Filling Performance Across Studies

| Organism/Context | Gap-Filling Method | Performance Metrics | Key Findings |
|---|---|---|---|
| Bifidobacterium longum | GenDev (Pathway Tools) | Recall: 61.5%, Precision: 66.6% | 8 of 13 manually curated reactions correctly identified; 4 false positives [2] |
| 926 GEMs (BiGG & AGORA) | CHESHIRE | Superior AUROC vs. NHP, C3MM, NVM | Outperformed other topology-based methods in recovering artificially removed reactions [1] |
| Bacterial phenotypes (10,538 tests) | gapseq | False negative rate: 6% | Outperformed CarveMe (32%) and ModelSEED (28%) in enzyme activity prediction [4] |
| Microbial communities | Community gap-filling | Enabled prediction of metabolic interactions | Resolved gaps while accounting for species interdependencies [3] |

Universal Biochemical Databases as the Foundation for Gap-Filling

Universal biochemical databases serve as the reaction pools from which candidate reactions are drawn during gap-filling. These databases provide the essential chemical and taxonomic information needed to evaluate potential reactions for inclusion in a model [2] [5].

The Kyoto Encyclopedia of Genes and Genomes (KEGG) is frequently utilized in reconstruction pipelines. During the reconstruction of the VPA2061 model for Vibrio parahaemolyticus, KEGG provided the foundational metabolic data, including genes, reactions, enzymes, metabolites, and pathways for five bacterial subtypes [5]. The pathway-prioritized screening approach employed in this reconstruction preferentially selected gap-filling reactions from the same KEGG pathways as reactions flanking the metabolic gap, balancing biological interpretability with network connectivity [5].
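One way to picture pathway-prioritized screening is as a ranking of candidate reactions by how many KEGG pathways they share with the reactions flanking a gap. The sketch below is only an illustration of that idea, not the procedure used for VPA2061; the reaction names and their pathway assignments are invented.

```python
def rank_candidates(candidates: dict[str, set[str]], flanking_pathways: set[str]) -> list[str]:
    """Order candidate reaction IDs by the number of pathways shared with the gap's flanking reactions.

    Ties are broken alphabetically so the result is deterministic.
    """
    def shared(reaction_id: str) -> int:
        return len(candidates[reaction_id] & flanking_pathways)

    return sorted(candidates, key=lambda rid: (-shared(rid), rid))


# Hypothetical candidates and pathway memberships (assumed for illustration only).
candidates = {
    "cand_1": {"map00010"},
    "cand_2": {"map00010", "map00620"},
    "cand_3": {"map00330"},
}
flanking_pathways = {"map00010", "map00620"}

ranking = rank_candidates(candidates, flanking_pathways)
print(ranking)
```

A real pipeline would also have to check that the top-ranked reaction actually reconnects the network, which this ranking alone does not guarantee.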

Other essential databases include MetaCyc, which stores taxonomic range and reaction directionality information used by tools like the GenDev gap-filler in Pathway Tools [2], and the BiGG Models database, which provides curated metabolic reconstructions for benchmarking gap-filling algorithms [1]. The ModelSEED biochemistry database forms the basis for many automated reconstruction pipelines, though it often requires extensive curation to remove thermodynamic inconsistencies [4].

Methodological Approaches to Gap-Filling

Optimization-Based Gap-Filling Methods

Traditional gap-filling methods are primarily optimization-based, formulating the problem as a linear programming (LP) or mixed-integer linear programming (MILP) problem to find the minimal set of reactions that enable metabolic functionality [2] [3] [4]. The classic GapFill algorithm identified dead-end metabolites and added reactions from MetaCyc to resolve network gaps [3]. These methods typically require phenotypic data, such as known growth capabilities or nutrient utilization profiles, as input to identify inconsistencies between model predictions and experimental observations [1].
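The "minimal set of reactions" objective can be illustrated on a toy network. The sketch below deliberately swaps the LP/MILP formulation for an exhaustive search over subsets, and swaps flux-based feasibility for simple reachability (network expansion), so it shows only the objective, not how tools such as GapFill solve it. All reaction and metabolite names are hypothetical.

```python
from itertools import combinations


def producible(reactions, seeds):
    """Network expansion: fire any reaction whose substrates are all available until nothing changes."""
    available = set(seeds)
    changed = True
    while changed:
        changed = False
        for substrates, products in reactions:
            if set(substrates) <= available and not set(products) <= available:
                available |= set(products)
                changed = True
    return available


def minimal_gap_fill(model, universal, seeds, target):
    """Return the smallest set of universal reaction IDs that makes `target` reachable, or None."""
    names = sorted(universal)
    for size in range(len(names) + 1):          # try smaller sets first
        for subset in combinations(names, size):
            reactions = list(model.values()) + [universal[n] for n in subset]
            if target in producible(reactions, seeds):
                return set(subset)
    return None


# Toy draft model with a gap between g6p and pyr; each reaction is (substrates, products).
model = {"r1": (("glc",), ("g6p",)), "r3": (("pyr",), ("biomass",))}
universal = {
    "u1": (("g6p",), ("pyr",)),
    "u2": (("g6p",), ("x",)),
    "u3": (("x",), ("pyr",)),
    "u4": (("lac",), ("pyr",)),
}
solution = minimal_gap_fill(model, universal, seeds={"glc"}, target="biomass")
print(solution)
```

The exhaustive search grows exponentially with the size of the reaction pool, which is why practical methods rely on LP or MILP solvers instead.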

More advanced implementations like gapseq use LP-based gap-filling to enable biomass formation on a given medium while additionally filling gaps in metabolic functions supported by sequence homology evidence [4]. This approach reduces medium-specific biases in the resulting network structure. The community gap-filling algorithm extends this concept to microbial communities, resolving metabolic gaps across multiple organisms while accounting for their metabolic interactions [3].

Topology-Based and Machine Learning Approaches

Topology-based methods represent an alternative approach that uses only the network structure of the metabolic model without requiring phenotypic data. Methods like GapFind/GapFill and FastGapFill restore network connectivity based on flux consistency [1].
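A purely structural gap check can be sketched as a search for dead-end metabolites, that is, metabolites that are never produced or never consumed inside the network. This is a simplified illustration in the spirit of gap-finding, not the GapFind or FastGapFill algorithm: it treats every reaction as irreversible and ignores flux consistency. Names are hypothetical.

```python
def dead_end_metabolites(reactions, boundary):
    """Return (never_produced, never_consumed) metabolite sets.

    `reactions` is a list of (substrates, products) tuples, all treated as irreversible.
    `boundary` holds metabolites exchanged with the environment, which are exempt.
    """
    produced, consumed = set(), set()
    for substrates, products in reactions:
        consumed.update(substrates)
        produced.update(products)
    all_metabolites = produced | consumed
    never_produced = {m for m in all_metabolites if m not in produced and m not in boundary}
    never_consumed = {m for m in all_metabolites if m not in consumed and m not in boundary}
    return never_produced, never_consumed


# Toy network: pyr has no producing reaction and f6p has no consuming reaction.
reactions = [
    (("glc",), ("g6p",)),
    (("g6p",), ("f6p",)),
    (("pyr",), ("accoa",)),
]
never_produced, never_consumed = dead_end_metabolites(reactions, boundary={"glc", "accoa"})
print(never_produced, never_consumed)
```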

Recent advances apply machine learning to frame gap-filling as a hyperlink prediction problem on hypergraphs, where reactions are represented as hyperlinks connecting multiple metabolite nodes [1]. The CHESHIRE method uses a deep learning architecture with Chebyshev spectral graph convolutional networks to refine metabolite feature vectors and predict missing reactions purely from metabolic network topology [1]. This approach has demonstrated superior performance in recovering artificially removed reactions across hundreds of GEMs compared to earlier machine learning methods like Neural Hyperlink Predictor and C3MM [1].

Integrated Approaches Using Genomic Evidence

State-of-the-art tools like gapseq integrate genomic evidence with network topology to make more biologically informed gap-filling decisions. Unlike methods that rely solely on network connectivity or phenotypic data, gapseq uses sequence homology to reference proteins to identify and fill gaps in metabolic functions that are genomically supported but missing from the network [4]. This approach results in more versatile models that perform better under diverse environmental conditions and shows significantly lower false negative rates (6%) in predicting enzyme activities compared to other automated tools [4].

Experimental Protocols and Workflows

Standard Gap-Filling Workflow

The generalized gap-filling workflow involves multiple stages that can be adapted based on available data and tools. The process begins with draft network reconstruction from genomic data, followed by identification of network gaps such as dead-end metabolites or blocked reactions. Researchers then select an appropriate reaction database (KEGG, MetaCyc, ModelSEED, or BiGG) as the source for candidate reactions. The core gap-filling step applies computational algorithms (optimization-based, topology-based, or machine learning) to propose reaction additions. Finally, the proposed reactions undergo manual curation using biological knowledge to refine the solutions [2] [5] [4].

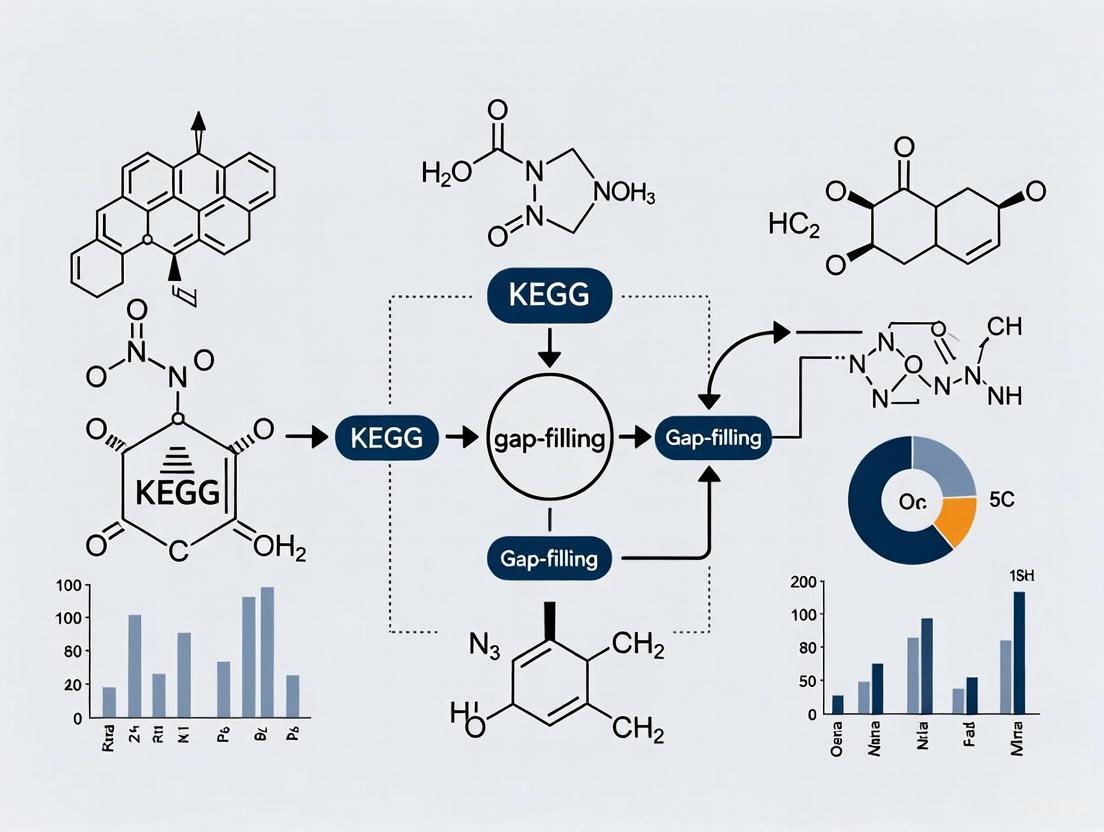

Diagram 1: Generalized Gap-Filling Workflow

Community Gap-Filling Protocol

For microbial communities, the gap-filling protocol must account for metabolic interactions between species. The community gap-filling algorithm involves compartmentalizing individual metabolic models to create a community model, identifying gaps that prevent community growth, and adding a minimal set of reactions from a reference database that restore growth while considering potential cross-feeding [3]. This approach was successfully applied to a synthetic community of auxotrophic E. coli strains and more complex communities of gut microbiota species [3].
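The compartmentalization step can be sketched as a renaming exercise: each species keeps a private copy of its intracellular metabolites, while extracellular metabolites are shared so that one species' secretion can feed another. This is a minimal illustration rather than the published algorithm; the `_e` suffix for extracellular metabolites and the `species:reaction` naming are assumptions.

```python
def build_community(models, shared_suffix="_e"):
    """Merge per-species models into one community model.

    Intracellular metabolites are tagged with the species name; metabolites ending in
    `shared_suffix` are left untouched so that they form a common extracellular pool.
    """
    def rename(metabolite, species):
        if metabolite.endswith(shared_suffix):
            return metabolite
        return f"{metabolite}[{species}]"

    community = {}
    for species, reactions in models.items():
        for reaction_id, (substrates, products) in reactions.items():
            community[f"{species}:{reaction_id}"] = (
                tuple(rename(m, species) for m in substrates),
                tuple(rename(m, species) for m in products),
            )
    return community


# Hypothetical two-species example: sp1 secretes pyruvate, sp2 takes it up.
models = {
    "sp1": {"export": (("pyr",), ("pyr_e",))},
    "sp2": {"uptake": (("pyr_e",), ("pyr",))},
}
community = build_community(models)
print(community)
```

Gap-filling would then run on the merged model, so a missing reaction in one species can be compensated by cross-feeding instead of an added reaction.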

Machine Learning-Based Gap-Filling with CHESHIRE

The CHESHIRE method implements a specialized workflow for topology-based gap-filling: (1) Hypergraph construction representing metabolites as nodes and reactions as hyperlinks; (2) Feature initialization using an encoder-based neural network to generate initial metabolite feature vectors; (3) Feature refinement with Chebyshev spectral graph convolutional networks to capture metabolite-metabolite interactions; (4) Pooling operations to integrate metabolite features into reaction-level representations; and (5) Scoring using a neural network to produce confidence scores for candidate reactions [1]. This method demonstrates that topological features alone contain significant information for predicting missing reactions.
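Step (1) can be made concrete with a small incidence matrix, in which rows are metabolites, columns are reactions, and an entry of 1 means the metabolite takes part in the reaction. The sketch below covers only this hypergraph construction; the learned steps (2) to (5) require a trained network and are not shown. The function name and the toy reactions are assumptions.

```python
def incidence_matrix(reactions):
    """Build a metabolite-by-reaction incidence matrix for a hypergraph.

    `reactions` maps a reaction ID to the set of metabolites it connects (its hyperlink).
    Returns (metabolite_order, reaction_order, matrix) with matrix[i][j] in {0, 1}.
    """
    metabolites = sorted({m for members in reactions.values() for m in members})
    reaction_ids = sorted(reactions)
    row_of = {m: i for i, m in enumerate(metabolites)}
    matrix = [[0] * len(reaction_ids) for _ in metabolites]
    for column, reaction_id in enumerate(reaction_ids):
        for metabolite in reactions[reaction_id]:
            matrix[row_of[metabolite]][column] = 1
    return metabolites, reaction_ids, matrix


# Toy hypergraph with two reactions sharing the metabolite g6p.
reactions = {
    "r1": {"glc", "atp", "g6p", "adp"},
    "r2": {"g6p", "f6p"},
}
metabolites, reaction_ids, matrix = incidence_matrix(reactions)
for name, row in zip(metabolites, matrix):
    print(name, row)
```

Unlike an ordinary graph edge, each column can connect any number of metabolites, which is what makes the hyperlink-prediction framing a natural fit for reactions.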

Comparative Analysis of Gap-Filling Tools and Algorithms

Table 2: Methodological Comparison of Gap-Filling Approaches

| Method | Underlying Approach | Data Requirements | Key Features | Performance Highlights |
|---|---|---|---|---|
| GenDev (Pathway Tools) | MILP optimization | Phenotypic data (growth conditions) | Taxonomic range and directionality constraints | 61.5% recall, 66.6% precision vs. manual curation [2] |
| CHESHIRE | Deep learning on hypergraphs | Only network topology | Chebyshev spectral graph convolutional networks | Superior AUROC across 926 GEMs [1] |
| Community Gap-Filling | LP/MILP optimization | Community growth data | Resolves gaps at community level; predicts interactions | Enabled prediction of cross-feeding in gut microbiota [3] |
| gapseq | LP optimization with genomic evidence | Genomic sequence; optional phenotypic data | Integrates sequence homology; reduces medium bias | 6% false negative rate for enzyme activity prediction [4] |
| FastGapFill | Flux consistency analysis | Network topology only | Fast identification of connectivity gaps | Early topology-based method [1] |

Diagram 2: Gap-Filling Methods and Data Requirements

Table 3: Key Research Reagents and Computational Tools for Gap-Filling

| Resource Type | Specific Tools/Databases | Function in Gap-Filling Research |
|---|---|---|
| Biochemical Databases | KEGG, MetaCyc, ModelSEED, BiGG | Provide reference reaction pools for candidate reaction selection [5] [3] |
| Reconstruction Software | Pathway Tools, CarveMe, ModelSEED, gapseq | Automated pipeline for draft model creation and gap-filling [2] [4] |
| Gap-Filling Algorithms | GenDev, CHESHIRE, Community Gap-Filling, FastGapFill | Core computational methods for identifying missing reactions [2] [1] [3] |
| Simulation Environments | COBRA Toolbox, SBMLsimulator, COMETS | Validate gap-filled models through flux simulation and phenotypic prediction [6] [3] |
| Model Validation Data | BacDive, phenotypic microarrays, mutant libraries | Experimental data for assessing gap-filling accuracy [4] |

Gap-filling remains an essential but challenging step in metabolic model reconstruction, with significant implications for model accuracy and predictive capability. The integration of multiple evidence types—genomic, topological, and phenotypic—represents the most promising path forward for improving gap-filling accuracy [4]. As universal biochemical databases continue to expand and improve in quality, they will provide an increasingly solid foundation for gap-filling algorithms.

Future methodological developments will likely focus on machine learning approaches that can leverage the growing repository of curated metabolic models [1], while community-aware gap-filling will become increasingly important for modeling complex microbial ecosystems [3]. The ultimate goal remains the development of fully automated, highly accurate gap-filling methods that minimize the need for labor-intensive manual curation while producing models that faithfully capture an organism's metabolic capabilities.

The Kyoto Encyclopedia of Genes and Genomes (KEGG) is a comprehensive knowledge base that integrates genomic, chemical, and systemic functional information to enable biological data interpretation in the context of cellular processes and organismal behaviors. Under development since 1995, KEGG has evolved into a foundational resource for researchers exploring high-level functions of biological systems using molecular-level datasets generated through genome sequencing and high-throughput experimental technologies [7] [8]. The resource is structured around three principal pillars: pathway maps that diagram molecular interaction networks, ortholog groups that define conserved functional units across species, and reaction networks that describe chemical structure transformations. Together, these components provide a framework for linking genomic information to higher-order biological functions, making KEGG particularly valuable for metabolic reconstruction, pathway analysis, and gap-filling research in incomplete genomic datasets [9]. Integrating these elements lets researchers move beyond simple gene catalogs toward an understanding of systemic functions, enabling predictions about metabolic capabilities even when genomic information remains partial or fragmented.

KEGG Pathway Maps: Molecular Interaction and Reaction Networks

Architecture and Classification System

KEGG PATHWAY serves as a centralized repository of manually drawn pathway maps representing current knowledge on molecular interaction, reaction, and relation networks [10]. These pathway maps are systematically organized into a hierarchical structure encompassing seven major categories: Metabolism, Genetic Information Processing, Environmental Information Processing, Cellular Processes, Organismal Systems, Human Diseases, and Drug Development [10] [8]. Each pathway map is identified by a unique identifier combining a 2-4 letter prefix code with a 5-digit number, where the prefix denotes the pathway type and the number indicates its specific classification within the KEGG system [10]. The pathway classification system enables precise navigation through biological processes, with metabolism pathways further subdivided into global/overview maps and specific metabolic pathways covering processes like phenylpropanoid biosynthesis, flavonoid biosynthesis, and various antibiotic synthesis pathways [10].
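The identifier scheme described above (a 2-4 letter prefix followed by a 5-digit number) is simple enough to validate with a regular expression. The sketch below assumes lowercase prefixes, as in `map`, `ko`, and organism codes such as `hsa`; the function name is hypothetical.

```python
import re

# 2-4 lowercase letters followed by exactly five digits, e.g. "map00010".
PATHWAY_ID = re.compile(r"^([a-z]{2,4})(\d{5})$")


def parse_pathway_id(identifier: str) -> tuple[str, str]:
    """Split a KEGG pathway identifier into (prefix, number); raise ValueError if malformed."""
    match = PATHWAY_ID.match(identifier)
    if match is None:
        raise ValueError(f"not a KEGG pathway identifier: {identifier!r}")
    return match.group(1), match.group(2)


print(parse_pathway_id("map00010"))
print(parse_pathway_id("hsa00010"))
```

Keeping the number as a string preserves the leading zeros, so the same 5-digit code can be recombined with a different prefix to move between the reference map and its KO, EC, reaction, or organism-specific variants.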

Table 1: KEGG Pathway Identifier Prefixes and Their Meanings

| Prefix | Pathway Type | Description |
|---|---|---|
| map | Reference pathway | Manually drawn reference pathway |
| ko | Reference pathway | Highlights KEGG Orthology (KO) groups |
| ec | Reference metabolic pathway | Highlights Enzyme Commission (EC) numbers |
| rn | Reference metabolic pathway | Highlights reactions |
| (organism code, e.g., hsa) | Organism-specific pathway | Generated by converting KOs to organism-specific gene identifiers |
| vg | Viruses pathway | Viruses pathway generated by converting KOs to geneIDs |
| vx | Viruses extended pathway | Includes synteny analysis data |

Visualization and Interpretation

The KEGG pathway maps employ consistent visualization conventions where rectangular boxes typically represent enzymes or gene products, and circles represent metabolites or chemical substances [8]. These graphical representations are interactive, allowing researchers to click on elements to access detailed information about genes, enzymes, and metabolites. In experimental data visualization, color coding is frequently employed to represent differential expression or abundance, with red commonly indicating up-regulation and green indicating down-regulation [8]. The KEGG Mapper tool suite provides computational resources for mapping user data onto these pathway maps, enabling researchers to interpret their genomic, transcriptomic, or metabolomic datasets in the context of known biological pathways [7] [11]. This visualization capability is particularly valuable for identifying activated pathways, understanding metabolic regulation in disease states, and detecting functional modules within large-scale omics data.

KEGG Orthology (KO): Bridging Genomes and Biological Systems

Functional Orthologs and Molecular Networks

The KEGG Orthology (KO) database serves as a critical bridge connecting genomic information with higher-order biological systems through the concept of functional orthologs [12]. A KO entry represents a group of homologous proteins that share conserved functional characteristics, manually defined within the context of KEGG molecular networks including pathway maps, BRITE hierarchies, and KEGG modules. Each ortholog group is assigned a unique K number identifier (e.g., K00973), which serves as the fundamental unit for linking gene products to their functional roles across species [12]. The KO system employs a hierarchical classification structure organized into six top-level categories (09100 to 09160) for KEGG pathway maps and one top category (09180) for BRITE hierarchies, facilitating systematic functional annotation [12]. This orthology-based approach allows for consistent functional prediction and annotation transfer from experimentally characterized proteins to uncharacterized homologs across diverse organisms.

Genome Annotation and KO Assignment

KEGG provides sophisticated tools for genome annotation through KO assignment, which involves identifying appropriate K numbers for genes within a genome rather than providing simple text descriptions of functions [12]. The primary tools for this purpose include:

- KOALA (KEGG Orthology And Links Annotation): An internal annotation algorithm that uses a modified identity score considering both sequence identity and alignment length to assign K numbers [12].

- BlastKOALA: A web service utilizing BLAST searches against a curated set of KEGG GENES data followed by KO assignment using the newkoala algorithm [12].

- GhostKOALA: A similar service using GHOSTX for faster sequence comparison against KEGG GENES [12].

These annotation tools enable automatic reconstruction of KEGG pathways through the process of KEGG mapping, where a gene set is converted to a K number set and mapped onto pathway representations [12]. This approach facilitates the interpretation of high-level biological functions directly from genomic sequences, making it particularly valuable for analyzing newly sequenced organisms or metagenomic assemblies.
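The KEGG mapping step can be pictured as two lookups: genes are converted to K numbers, and the resulting K number set is intersected with the KOs assigned to each pathway. The sketch below is a minimal illustration of that set logic, not the KEGG Mapper implementation; the gene names, K numbers, and pathway memberships are placeholders.

```python
def map_genes_to_pathways(gene_to_ko, pathway_to_kos):
    """Convert a gene set to a K number set and report which pathways it touches.

    `gene_to_ko` maps gene IDs to a K number, or None when no KO was assigned.
    `pathway_to_kos` maps pathway IDs to the set of K numbers on that map.
    Returns {pathway_id: sorted list of matched K numbers}, omitting pathways with no hits.
    """
    ko_set = {ko for ko in gene_to_ko.values() if ko is not None}
    hits = {}
    for pathway_id, kos in pathway_to_kos.items():
        matched = ko_set & kos
        if matched:
            hits[pathway_id] = sorted(matched)
    return hits


# Placeholder annotation result and pathway definitions (assumed for illustration only).
gene_to_ko = {"geneA": "K00001", "geneB": "K00002", "geneC": None}
pathway_to_kos = {
    "ko00010": {"K00001", "K00003"},
    "ko00020": {"K00004"},
}
hits = map_genes_to_pathways(gene_to_ko, pathway_to_kos)
print(hits)
```

K numbers expected on a pathway but absent from the genome's set (here `K00003`) are the natural starting points for gap-filling.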

Reaction Modules and Chemical Network Analysis

Reaction Classes and Modular Organization

KEGG Reaction Modules (RModules) represent conserved sequences of chemical structure transformation patterns defined by sets of Reaction Class identifiers (RC numbers) [13]. Unlike KEGG modules defined by gene orthologs, reaction modules are derived purely from chemical structure transformation patterns along metabolic pathways without incorporating enzyme data [13]. This chemical-centric approach allows for the identification of conserved biochemical transformation motifs across diverse metabolic pathways. Reaction classes function as "reaction orthologs" that accommodate global structural differences between metabolites while preserving core chemical transformation patterns. Examples of these modules include RM001 (2-Oxocarboxylic acid chain extension by tricarboxylic acid pathway) and RM018 (Beta oxidation in acyl-CoA degradation), which represent fundamental biochemical transformation units [13].

Table 2: Representative KEGG Reaction Modules and Their Functions

| Reaction Module ID | Name | Functional Role |
|---|---|---|
| RM001 | 2-Oxocarboxylic acid chain extension by tricarboxylic acid pathway | Chain elongation in carboxylic acid metabolism |
| RM002 | Carboxyl to amino conversion using protective N-acetyl group | Basic amino acid synthesis |
| RM018 | Beta oxidation in acyl-CoA degradation | Fatty acid degradation |
| RM020 | Fatty acid synthesis using acetyl-CoA | Lipid biosynthesis (reversal of RM018) |
| RM022 | Nucleotide sugar biosynthesis, type 1 | Sugar activation and nucleotide sugar formation |
| RM008 | Ortho-cleavage of dihydroxylated aromatic ring | Aromatic compound degradation (beta-ketoadipate pathway) |
| RM009 | Meta-cleavage of dihydroxylated aromatic ring | Alternative aromatic compound degradation pathway |

Integration with KEGG Modules

The relationship between reaction modules and KEGG modules reveals the fundamental architecture of metabolic networks. KEGG modules (M numbers) represent functional units defined by sets of KO identifiers for the enzymes involved, while reaction modules (RM numbers) describe the underlying chemical transformations [13]. The overview maps in KEGG illustrate the correspondence between these two perspectives, demonstrating how genetic and chemical networks align in metabolic pathways. For instance, the degradation capacity for aromatic compounds like benzene, toluene, and xylene can be traced through both module types: benzene is converted to catechol via M00548 (enzymatic module) or RM006 (reaction module), followed by ring cleavage through M00569/RM009 (meta-cleavage) or M00568/RM008 (ortho-cleavage) [13]. This dual representation enables researchers to analyze metabolic capabilities from both genetic and biochemical perspectives, enhancing gap-filling approaches in metabolic reconstruction.

KEGG in Gap-Filling Research: Methodologies and Applications

Computational Frameworks for Pathway Completion

KEGG's structured representation of biological knowledge enables sophisticated gap-filling methodologies that predict missing metabolic functions in incomplete genomic datasets. Gap-filling addresses the challenge that metabolic networks reconstructed from environmental genomes often contain gaps due to sequencing biases, novel protein families, and incomplete annotation databases [9]. Traditional approaches include network topology-based methods like gapseq and rule-based methods using predefined KEGG module completeness cutoffs, as implemented in METABOLIC [9]. However, these methods often underestimate pathways in highly incomplete genomes. More advanced machine learning approaches have emerged, notably MetaPathPredict, which employs deep learning models trained on gene annotation features from high-quality genomes to predict the presence of KEGG metabolic modules even when annotation support is incomplete [9]. This tool demonstrates that robust predictions can be achieved with genomes as incomplete as 30%, significantly advancing gap-filling capabilities.
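The rule-based approach can be sketched as a completeness fraction compared against a cutoff. Real KEGG module definitions are nested logical expressions; the sketch below simplifies a module to a list of steps, each satisfied by any one of several alternative KOs. The 0.75 cutoff and all K numbers are assumptions, not values taken from METABOLIC.

```python
def module_completeness(steps, present_kos):
    """Fraction of module steps for which at least one alternative KO is present."""
    satisfied = sum(1 for alternatives in steps if alternatives & present_kos)
    return satisfied / len(steps)


def module_present(steps, present_kos, cutoff=0.75):
    """Rule-based call: the module counts as present if completeness reaches the cutoff."""
    return module_completeness(steps, present_kos) >= cutoff


# Hypothetical four-step module; each set lists interchangeable KOs for one step.
steps = [{"K1", "K2"}, {"K3"}, {"K4"}, {"K5", "K6"}]

near_complete = {"K2", "K3", "K6"}   # 3 of 4 steps covered
fragmented = {"K2"}                  # 1 of 4 steps covered

print(module_completeness(steps, near_complete), module_present(steps, near_complete))
print(module_completeness(steps, fragmented), module_present(steps, fragmented))
```

The second call shows why fixed cutoffs underestimate pathways in fragmented genomes: the module may well be encoded, but too few of its genes were recovered to pass the threshold.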

Diagram 1: MetaPathPredict workflow for KEGG module prediction

Genome-Scale Metabolic Network Reconstruction

The reconstruction of Genome-Scale Metabolic Network (GSMN) models represents a powerful systems biology approach for identifying potential drug targets and understanding pathogen physiology [5]. The standard workflow for GSMN reconstruction involves three main stages: (1) preliminary reconstruction using genomic data from KEGG, (2) manual curation including gap filling and standardization, and (3) simulation-based refinement to assess biomass synthesis capability [5]. A key application of this approach is demonstrated in the VPA2061 model for Vibrio parahaemolyticus, which comprises 2061 reactions and 1812 metabolites [5]. Through essential metabolite analysis and pathogen-host association screening, this model identified 10 essential metabolites critical for bacterial survival that serve as candidate targets for novel antimicrobial strategies [5]. The subsequent identification of 39 structural analogs for these essential metabolites further enables targeted drug design, demonstrating how KEGG-based metabolic models bridge genomic information and therapeutic development.

Table 3: Key Reagent Solutions for KEGG-Based Metabolic Reconstruction

| Research Reagent/Resource | Type | Function in Analysis |
|---|---|---|
| KEGG PATHWAY Database | Database | Reference pathway maps for manual curation and validation |
| KEGG ORTHOLOGY (KO) Database | Database | Functional ortholog definitions for gene annotation |
| KEGG MODULE Database | Database | Predefined functional units for pathway completeness assessment |
| KEGG Compound Database | Database | Metabolic reactant and product structures for reaction balancing |
| BlastKOALA | Tool | Automated K number assignment for gene products |
| KEGG Mapper Color Tool | Tool | Visualization of user data on KEGG pathway maps |
| MetaPathPredict | Tool | Machine learning prediction of KEGG module presence in incomplete genomes |
| Structural Analog Databases (ChemSpider, PubChem, ChEBI, DrugBank) | Database | Identification of compound analogs for drug target development |

Experimental Protocol for Drug Target Identification Using KEGG

The following methodology outlines a proven protocol for identifying potential drug targets through KEGG-based metabolic network reconstruction, adapted from successful applications in bacterial pathogens [5]:

Data Acquisition and Preliminary Reconstruction

- Retrieve metabolic data for target organism subtypes from KEGG database, including genes, metabolic reactions, enzymes, metabolites, and pathways.

- Systematically organize and integrate datasets to preliminarily reconstruct the GSMN.

- Compile reaction information including IDs, names, reaction equations, directionality, and stoichiometric balance.

Manual Model Curation and Refinement

- Supplement missing network information using KEGG pathway maps and RCLASS data.

- Standardize metabolite chirality to biologically predominant forms (e.g., convert D-Glucose C00031 to alpha-D-Glucose C00267).

- Remove redundant reactions using the following criteria: for multi-step reactions, retain only the overall reaction when no branching occurs; remove general (class-based) reactions; remove incomplete reactions with undefined coefficients; remove macromolecular reactions that contain R-groups; and remove duplicates.

- Perform gap filling at pathway and global levels using KEGG-derived reactions, prioritizing reactions sharing pathways with gap-flanking reactions.
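Of the curation criteria above, duplicate removal is the easiest to automate. The sketch below identifies duplicates by comparing stoichiometry irrespective of direction, which is one possible definition of "duplicate" and an assumption here; the other criteria (multi-step, class-based, and R-group reactions) need curated annotations and are not covered. All identifiers are placeholders.

```python
def reaction_key(substrates, products):
    """Direction-independent fingerprint of a reaction's stoichiometry."""
    left = frozenset(substrates.items())
    right = frozenset(products.items())
    return frozenset({left, right})


def remove_duplicates(reactions):
    """Keep the first reaction seen for each stoichiometric fingerprint."""
    seen = set()
    kept = {}
    for reaction_id, (substrates, products) in reactions.items():
        key = reaction_key(substrates, products)
        if key in seen:
            continue
        seen.add(key)
        kept[reaction_id] = (substrates, products)
    return kept


# Placeholder reactions: rB is rA written in the opposite direction.
reactions = {
    "rA": ({"C1": 1, "C2": 1}, {"C3": 1}),
    "rB": ({"C3": 1}, {"C1": 1, "C2": 1}),
    "rC": ({"C1": 2}, {"C4": 1}),
}
curated = remove_duplicates(reactions)
print(sorted(curated))
```

Whether a reversed copy should really be dropped depends on how directionality is recorded in the model, so a curator would normally review the discarded entries.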

Network Validation and Simulation

- Add transport and exchange reactions based on phylogenetically related organisms with characterized models.

- Assess biomass synthesis capability through flux balance analysis.

- Iteratively refine model until accurate simulation of biomass production is achieved.

Essentiality Analysis and Target Identification

- Conduct essential metabolite analysis to identify compounds critical for pathogen survival.

- Perform pathogen-host association screening to filter out metabolites common to host metabolism.

- Identify structural analogs for essential metabolites using chemical databases (ChemSpider, PubChem, ChEBI, DrugBank).

- Validate target potential through molecular docking analysis of essential metabolites and their structural analogs.

Diagram 2: KEGG components in metabolic reconstruction

KEGG's integrated framework of pathway maps, ortholog groups, and reaction modules provides an indispensable foundation for modern biological research, particularly in addressing the challenge of metabolic network gap-filling in incomplete genomic datasets. The structured representation of biological knowledge in KEGG enables both traditional homology-based approaches and advanced machine learning methods like MetaPathPredict to predict metabolic capabilities and identify potential therapeutic targets. As genomic sequencing continues to generate increasingly complex and fragmented datasets, KEGG's role as a central repository of curated biological knowledge becomes ever more critical. The continued development of computational tools that leverage KEGG's resources promises to enhance our ability to infer complete metabolic networks from partial genomic information, advancing both fundamental understanding of biological systems and applied drug discovery efforts.

In the field of systems biology, a primary challenge is the interpretation of genomic data to understand high-level cellular and organismal functions. The Kyoto Encyclopedia of Genes and Genomes (KEGG) was initiated in 1995 to address this challenge by providing a reference knowledge base for biological interpretation of genome sequences [14]. For gap-filling research—the process of identifying and filling missing components in metabolic pathways—KEGG serves as an indispensable resource. Its value lies in the integrated nature of its databases, which link genomic information with chemical reactions, metabolic pathways, and functional orthologs. This integration enables researchers to predict metabolic capabilities of organisms based on genomic data, even when those capabilities are not immediately evident from sequence alone. By representing biological systems as molecular interaction and reaction networks, KEGG provides the conceptual framework and data infrastructure necessary for computational prediction of missing enzymatic functions and pathway components [15] [14].

Core Components of the KEGG Database

The Chemical Foundation: Reactions and Metabolites

The chemical infrastructure of KEGG is built upon several interconnected databases that document the molecular components and transformations of biological systems. KEGG REACTION is a comprehensive database of biochemical reactions, primarily enzymatic reactions, containing all reactions present in KEGG metabolic pathway maps along with additional reactions from the Enzyme Nomenclature [15]. Each reaction is assigned a unique R number identifier (e.g., R00259 for the acetylation of L-glutamate), enabling precise tracking of chemical transformations across different biological contexts.

The KEGG COMPOUND and KEGG GLYCAN databases document metabolites and other small molecules, as well as glycans, respectively. These databases provide chemical structures, formulas, molecular weights, and links to the reactions and pathways in which these molecules participate. The integration of these chemical databases enables researchers to track molecular transformations across entire metabolic networks, a crucial capability for identifying gaps in metabolic pathways.

Table 1: Core Chemical Databases in KEGG LIGAND

| Database Name | Identifier Prefix | Content Description | Primary Use in Gap-Filling |
|---|---|---|---|
| KEGG REACTION | R number | Biochemical reactions, mostly enzymatic | Identifying missing transformations in pathways |
| KEGG COMPOUND | C number | Metabolites and other small molecules | Identifying missing metabolites in pathways |
| KEGG GLYCAN | G number | Glycans | Tracing glycan biosynthesis pathways |
| KEGG RCLASS | RC number | Reaction classes based on transformation patterns | Grouping similar reactions for pattern recognition |

A critical innovation in KEGG is the Reaction Class (RCLASS) system, which classifies reactions based on chemical structure transformation patterns of substrate-product pairs [15]. This classification uses KEGG atom types—68 classifications of C, N, O, S, and P atomic species that distinguish functional groups and atomic microenvironments. The RCLASS represents a form of "reaction orthology" that accommodates global structural differences of metabolites while focusing on the core chemical transformation, making it particularly valuable for identifying functionally similar enzymes that might fill gaps in metabolic pathways [15].

The Enzyme Coding System: From EC Numbers to K Numbers

The KEGG ENZYME database implements the Enzyme Nomenclature (EC number system) established by the IUBMB/IUPAC Biochemical Nomenclature Committee [16]. This database provides systematic information about enzymatic functions, including accepted names, systematic names, catalytic activities, and links to relevant literature. However, KEGG has evolved beyond relying solely on EC numbers as primary identifiers.

In the current KEGG framework, KEGG Orthology (KO) identifiers serve as the central hub linking genomic information to functional knowledge. Each K number represents an ortholog group that shares conserved functional characteristics [14]. This shift from EC numbers to K numbers addressed a fundamental limitation: while EC numbers represent experimentally characterized enzymatic activities, they do not inherently contain sequence information. The KO system connects these functional definitions with sequence data, enabling more reliable transfer of functional annotations across organisms [16] [14].

Table 2: Enzyme and Orthology Representation in KEGG

| Identifier Type | Format | Source/Basis | Role in Pathway Reconstruction |
|---|---|---|---|
| EC number | 1.1.1.1 | IUBMB/IUPAC Enzyme Nomenclature | Standardized reaction classification |
| K number (KO) | K00001 | Ortholog groups defined by sequence similarity and function | Linking genes to pathway modules |
| R number | R00259 | Biochemical reactions in KEGG | Representing specific chemical transformations |
| RC number | RC00064 | Reaction classes based on transformation patterns | Identifying conserved reaction patterns |

The manual curation process for KO records includes associating them with protein sequence data from functional characterization experiments and relevant reference literature [14]. As of September 2015, references (PubMed links) and sequence data (GENES links) were included in 76% and 45%, respectively, of approximately 19,000 KO entries, establishing a solid foundation for reliable annotation transfer in gap-filling exercises [14].

Integrated Pathway Mapping

The KEGG PATHWAY database provides manually drawn pathway maps that represent molecular interaction, reaction, and relation networks [10]. These maps serve as reference frameworks against which researchers can compare their genomic data to identify missing components. Each pathway map is identified by a combination of a 2-4 letter prefix code and a 5-digit number, with prefixes indicating the type of representation:

- map: Manually drawn reference pathway

- ko: Reference pathway highlighting KOs

- ec: Reference metabolic pathway highlighting EC numbers

- rn: Reference metabolic pathway highlighting reactions

- <org>: Organism-specific pathway generated by converting KOs to gene IDs [10] [17]

This multi-layered representation allows researchers to view metabolic networks from different perspectives—focusing on chemical transformations (rn), enzymatic functions (ec), or evolutionary conserved ortholog groups (ko)—depending on the specific gap-filling task at hand.
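The identifier convention described above (a short prefix followed by a 5-digit number) can be checked mechanically. The following is a minimal sketch, assuming lowercase prefixes of 2 to 4 letters and treating any prefix other than the four reference prefixes as an organism code; the function name `parse_pathway_id` is hypothetical.

```python
import re

# Reference prefixes listed above; any other prefix is assumed to be an organism code.
REFERENCE_PREFIXES = {"map", "ko", "ec", "rn"}
PATHWAY_ID = re.compile(r"^([a-z]{2,4})(\d{5})$")


def parse_pathway_id(pathway_id):
    """Split a pathway identifier into (prefix, number, kind)."""
    match = PATHWAY_ID.match(pathway_id)
    if match is None:
        raise ValueError(f"not a pathway identifier: {pathway_id!r}")
    prefix, number = match.groups()
    kind = "reference" if prefix in REFERENCE_PREFIXES else "organism"
    return prefix, number, kind


reference_view = parse_pathway_id("map00010")
organism_view = parse_pathway_id("eco00010")
```

Keeping the number separate from the prefix makes it easy to switch between the map, ko, ec, rn, and organism-specific views of the same pathway.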

Database Integration: Linking Reactions, Metabolites and Enzymes

The power of KEGG for gap-filling research emerges from the sophisticated integration of its component databases. This integration creates a network of knowledge where information can be traversed seamlessly from genomic sequences to metabolic functions.

The KEGG Orthology System as an Integration Hub

The KO system serves as the central integration point in KEGG, connecting genomic information with functional knowledge. K numbers are associated with ortholog groups defined by sequence similarity and functional conservation [14]. Each KO entry contains:

- Symbol and name identifying the functional ortholog group

- Pathway information linking the KO to relevant metabolic pathways

- Module information connecting the KO to functional modules

- BRITE hierarchies classifying the KO within functional categories

- Gene lists from various organisms that belong to the ortholog group

- Sequence data representing the ortholog group [18]

This organization enables a systematic approach to gap-filling: when a gene is annotated with a K number, it automatically inherits the functional context of that ortholog group, including its position in metabolic pathways and association with specific biochemical reactions.

Reaction Modules as Functional Units

Reaction modules represent conserved sequences of chemical structure transformation patterns defined by sets of Reaction Class identifiers (RC numbers) [13]. Unlike KEGG modules (defined by K numbers for enzymes), reaction modules are derived purely from chemical data without incorporating enzyme information, based on the analysis of chemical structure transformation patterns along metabolic pathways [13]. This dual perspective—gene-centric modules and chemistry-centric modules—provides complementary evidence for gap-filling.

Examples of reaction modules include:

- RM001: 2-Oxocarboxylic acid chain extension by tricarboxylic acid pathway

- RM018: Beta oxidation in acyl-CoA degradation

- RM020: Fatty acid synthesis using acetyl-CoA (reversal of RM018) [13]

The correspondence between gene-defined modules (M numbers) and reaction modules (RM numbers) reveals the evolutionary conservation of chemical transformation patterns across different organisms and enzyme systems. For instance, the BTX (benzene, toluene, xylene) degradation pathway can be represented both in terms of gene modules (M00548, M00538, etc.) and reaction modules (RM006, RM003, etc.), providing orthogonal evidence for pathway completeness [13].

Methodologies for Gap-Filling Research Using KEGG

Pathway Reconstruction and Completion

The standard methodology for gap-filling using KEGG involves systematic reconstruction of metabolic pathways from genomic data, followed by identification and prediction of missing components. The KEGG Mapper tool suite provides essential functionality for this process:

Genome Annotation: Assign K numbers to genes in the target genome using BlastKOALA or GhostKOALA annotation servers, which utilize non-redundant pangenome data sets generated from the KEGG GENES database [14].

Pathway Mapping: Map the annotated K numbers to KEGG pathway maps using the KEGG Mapper - Search Pathway tool to visualize present and missing pathway components.

Gap Identification: Identify reactions in target pathways that lack corresponding gene annotations in the query genome.

Candidate Gene Identification: Search for candidate genes that might fill the identified gaps using:

- Sequence similarity against KOs known to catalyze the missing reactions

- Genomic context analysis of neighboring genes

- Reaction class analysis to identify isofunctional enzymes with divergent sequences

Experimental Validation Design: Design experiments to verify predicted functions of candidate genes based on metabolic profiling and enzyme activity assays.
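The gap identification step in this workflow reduces to a set comparison between the KOs a pathway needs and the KOs assigned to the genome. The sketch below assumes the pathway has already been expressed as a mapping from step labels to acceptable KOs; the step labels and the toy data are hypothetical placeholders, not real pathway definitions.

```python
def find_pathway_gaps(required_kos_by_step, annotated_kos):
    """Return the steps for which none of the acceptable KOs was annotated."""
    gaps = {}
    for step, acceptable in required_kos_by_step.items():
        # A step is covered if at least one acceptable KO is present in the genome.
        if not acceptable & annotated_kos:
            gaps[step] = sorted(acceptable)
    return gaps


# Hypothetical toy pathway: step label -> KOs that could catalyze the step.
toy_pathway = {
    "step1": {"K00001", "K00002"},
    "step2": {"K00003"},
    "step3": {"K00004"},
}
toy_annotation = {"K00002", "K00004"}
toy_gaps = find_pathway_gaps(toy_pathway, toy_annotation)
```

Each reported gap lists the KOs worth searching for by sequence similarity, which is the starting point for the candidate gene identification step.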

Pathway Prediction Tools

KEGG provides specialized tools for predicting metabolic pathways, particularly for biodegradation and biosynthesis of compounds:

PathPred: Predicts biodegradation/biosynthetic pathways for given compounds based on reaction module patterns and known pathway templates [15].

E-zyme: Automatically assigns EC numbers to substrate-product pairs based on chemical transformation patterns, enabling functional prediction of uncharacterized enzymes [15].

The experimental protocol for using these tools involves:

Input Preparation:

- For PathPred: Provide the compound identifier (C number) or chemical structure of the target compound

- For E-zyme: Provide substrate and product structures or identifiers

Pathway Analysis:

- PathPred returns possible pathway routes with similarity scores to known pathways

- E-zyme returns possible EC numbers with confidence measures

Result Interpretation:

- Evaluate pathway completeness based on presence of required enzymes in the target genome

- Identify missing steps that require gap-filling candidates

- Consider alternative pathways with different enzyme requirements

Reaction Module Analysis for Pathway Evolution

The analysis of reaction modules provides a methodology for understanding pathway evolution and identifying alternative enzymes that can fill functional roles:

Identify Reaction Modules: Decompose target pathways into their constituent reaction modules using the KEGG MODULE database and RM numbers [13].

Compare Module Conservation: Examine the conservation of reaction modules across different taxonomic groups to identify evolutionarily stable functional units.

Search for Isofunctional Modules: Identify different gene modules (M numbers) that implement the same reaction module (RM number), revealing evolutionary solutions to the same chemical transformation.

Predict Alternative Pathway Completions: Based on conserved reaction modules, predict possible alternative implementations of missing pathway steps using different enzyme combinations.

Table 3: Essential Research Reagent Solutions in KEGG Gap-Filling

| Resource Type | Specific Examples | Function in Gap-Filling Research |
|---|---|---|
| Annotation Servers | BlastKOALA, GhostKOALA | High-throughput K number assignment for genome annotation |
| Pathway Mapping Tools | KEGG Mapper, Search Pathway | Visualization of present and missing pathway components |
| Prediction Tools | PathPred, E-zyme | Prediction of metabolic pathways and enzyme functions |
| API Access | KEGG REST API | Programmatic access for large-scale analyses |
| Modular Resources | KEGG MODULE, Reaction Modules | Identification of conserved functional units |
| Chemical Tools | RCLASS, RPAIR | Analysis of chemical transformation patterns |

Accessing KEGG: Programmatic Methods and Data Retrieval

For large-scale gap-filling analyses, programmatic access to KEGG is essential. The KEGG API provides a REST-style interface for retrieving data from all KEGG databases [19]. The basic URL format is:

Essential operations include:

- info: Get database statistics and release information

- list: Obtain lists of entry identifiers and names

- find: Search for entries matching query keywords

- get: Retrieve database entries in flat file format

- conv: Convert identifiers between KEGG and external databases

- link: Create links between KEGG databases [19]

Example usage for gap-filling research:

Retrieve all reactions for a pathway:

Find enzymes for a specific reaction:

Get orthologs for an enzyme:

Retrieve organism-specific genes for a KO:
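As a hedged illustration of the four tasks above, the sketch below composes request URLs with the `link` operation and parses the tab-separated output that operation is expected to return. The base URL `https://rest.kegg.jp`, the database names, and the argument order are assumptions based on the public KEGG API and should be checked against the KEGG API manual; the helper names `kegg_url` and `parse_link_table` are hypothetical. No network request is made.

```python
from urllib.parse import quote

KEGG_REST_BASE = "https://rest.kegg.jp"  # assumed public endpoint
OPERATIONS = {"info", "list", "find", "get", "conv", "link"}


def kegg_url(operation, *arguments):
    """Compose <base>/<operation>/<argument>/... for the operations listed above."""
    if operation not in OPERATIONS:
        raise ValueError(f"unsupported operation: {operation!r}")
    parts = [quote(argument, safe=":+") for argument in arguments]
    return "/".join([KEGG_REST_BASE, operation, *parts])


def parse_link_table(text):
    """Parse two-column, tab-separated output into (source, target) pairs."""
    pairs = []
    for line in text.splitlines():
        if "\t" not in line:
            continue  # skip blank or malformed lines
        source, target = line.split("\t")[:2]
        pairs.append((source, target))
    return pairs


# Assumed argument layouts for the four tasks; identifiers are examples only.
reactions_for_pathway = kegg_url("link", "reaction", "map00010")
enzymes_for_reaction = kegg_url("link", "enzyme", "rn:R00259")
kos_for_enzyme = kegg_url("link", "ko", "ec:1.1.1.1")
genes_for_ko = kegg_url("link", "eco", "ko:K00001")
```

The resulting URLs can be passed to any HTTP client, and the response body handed to `parse_link_table` to obtain identifier pairs for downstream gap analysis.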

The KGML (KEGG Markup Language) format provides computational access to pathway structure and topology, enabling advanced analyses of pathway connectivity and gap identification [17]. KGML files can be obtained through the KEGG API or via "Download KGML" links on pathway pages, supporting computational modeling of metabolic networks and systematic identification of missing components.
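To show how KGML can support gap identification, the sketch below reads ortholog entries from a small embedded fragment and reports reactions for which no annotated KO is available. It assumes that ortholog `entry` elements carry `name` (space-separated `ko:` identifiers) and `reaction` attributes; the fragment is a hand-written illustration, not a downloaded file, and `uncovered_reactions` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET

# Hand-written fragment in the style of a KGML file (illustrative content only).
SAMPLE_KGML = """<pathway name="path:ko00010" org="ko" number="00010">
  <entry id="1" name="ko:K00001" type="ortholog" reaction="rn:R00754"/>
  <entry id="2" name="ko:K00002" type="ortholog" reaction="rn:R00746"/>
  <entry id="3" name="cpd:C00084" type="compound"/>
</pathway>"""


def uncovered_reactions(kgml_text, annotated_kos):
    """Return reactions whose ortholog entries contain no annotated KO."""
    root = ET.fromstring(kgml_text)
    all_reactions, covered = set(), set()
    for entry in root.iter("entry"):
        reactions = entry.get("reaction", "").split()
        if entry.get("type") != "ortholog" or not reactions:
            continue
        all_reactions.update(reactions)
        kos = {name.split(":", 1)[-1] for name in entry.get("name", "").split()}
        if kos & annotated_kos:
            covered.update(reactions)
    # A reaction counts as covered if any entry that lists it has an annotated KO.
    return sorted(all_reactions - covered)


missing = uncovered_reactions(SAMPLE_KGML, {"K00001"})
```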

KEGG represents a comprehensive framework for understanding and analyzing biological systems through its integrated representation of reactions, metabolites, and enzyme codes. For gap-filling research, KEGG provides both the reference knowledge and computational tools necessary to identify missing components in metabolic pathways and predict candidate genes to fill these gaps. The power of KEGG lies in its multi-layered integration—connecting genomic sequences through KO groups to biochemical reactions and metabolic pathways, while maintaining complementary perspectives through gene-centric modules and chemistry-centric reaction modules.

As genomic data continues to grow exponentially, the role of integrated databases like KEGG in gap-filling research becomes increasingly critical. The structured organization of chemical, genomic, and systems information enables researchers to move beyond simple sequence annotation to meaningful functional prediction and pathway reconstruction. Future developments in KEGG will likely enhance these capabilities through expanded coverage of enzyme functions, improved integration of chemical knowledge, and more sophisticated prediction algorithms—further solidifying its role as a universal database for bridging gaps in our understanding of biological systems.

Metabolism is crucial for all living cells as it provides energy and molecular building blocks for all biological functions. Systematically understanding metabolism is therefore critically important in both medical research and synthetic biology for engineering cells [20]. Over the last decade, researchers have built genome-scale metabolic models (GEMs) to simulate the complete known metabolism of organisms of interest. However, these models contain significant knowledge gaps stemming from unannotated and misannotated genes, promiscuous enzymes, unknown reactions and pathways, and underground metabolism [20]. A detailed understanding of these cellular functions drives biomedical applications such as drug-targeting strategies and enables the efficient design of cell factories for producing valuable chemicals and pharmaceuticals [20].

The function of a considerable portion of each genome remains undefined: even a well-characterized organism such as Escherichia coli lacks annotation for approximately 35% of its genes [21]. Universal biochemical databases like KEGG play a pivotal role in gap-filling research by providing curated repositories of known biochemical knowledge that serve as reference points for identifying and reconciling these metabolic gaps, though they are limited to known biochemistry.

Metabolic gaps in GEMs primarily originate from two fundamental sources: missing gene annotations and incomplete biochemistry.

Missing Gene Annotations

Missing gene annotations occur when genes within a genome have not been assigned a specific biochemical function. This represents a significant challenge for constructing accurate GEMs, which rely on gene-protein-reaction (GPR) associations to simulate metabolic capabilities [21]. In the context of GEMs, this manifests as:

- False essentiality predictions: Cases where models incorrectly predict that a gene is essential for growth despite experimental evidence showing the organism can survive without it [21].

- Incomplete network connectivity: Metabolic networks that contain dead-end metabolites or interrupted pathways due to missing enzyme functions.
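Dead-end metabolites of the kind mentioned above can be found directly from reaction stoichiometry. The sketch below is a simplified illustration, assuming each reaction is given as a stoichiometry dictionary (negative coefficients consumed, positive produced) plus a reversibility flag; the toy network and the function name are hypothetical.

```python
def dead_end_metabolites(reactions):
    """Return metabolites that are only produced or only consumed.

    reactions maps a reaction id to (stoichiometry, reversible), where
    stoichiometry maps metabolite -> coefficient (negative = consumed).
    """
    produced, consumed = set(), set()
    for stoichiometry, reversible in reactions.values():
        for metabolite, coefficient in stoichiometry.items():
            if coefficient == 0:
                continue
            # A reversible reaction can both produce and consume its participants.
            if coefficient > 0 or reversible:
                produced.add(metabolite)
            if coefficient < 0 or reversible:
                consumed.add(metabolite)
    return sorted(produced ^ consumed)


# Toy network: uptake of A, A -> B, B -> C, and nothing consumes C.
toy_network = {
    "EX_A": ({"A": 1}, False),
    "R1": ({"A": -1, "B": 1}, False),
    "R2": ({"B": -1, "C": 1}, False),
}
dead_ends = dead_end_metabolites(toy_network)
```

Reactions that touch a dead-end metabolite cannot carry steady-state flux, which is why such metabolites are a common first indicator of a missing enzyme function.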

Incomplete Biochemistry

Incomplete biochemistry refers to the limitation of existing biochemical databases to only include previously observed and characterized reactions, potentially missing:

- Underground metabolism: Native promiscuous activities of enzymes that are not their primary function [20].

- Novel enzyme functions: Biochemical capabilities that exist in nature but have not yet been discovered or characterized [20].

- Hypothetical reactions: Thermochemically feasible transformations between known metabolites that have not been experimentally verified [20].

Gap-filling methods that rely solely on databases of known biochemistry, such as KEGG, can propose only a narrow range of solutions. In a case study of E. coli, the average number of solutions per rescued reaction was only 2.3 when using KEGG, compared to 252.5 when using the ATLAS database of known and hypothetical reactions [20].

Table 1: Quantitative Comparison of Gap-Filling Reaction Databases

| Database | Type of Content | Number of Reactions | Average Solutions per Rescued Reaction | Gaps Rescued in E. coli iML1515 |
|---|---|---|---|---|
| KEGG | Known biochemical reactions | Limited to characterized reactions | 2.3 | 53/152 (35%) |
| ATLAS of Biochemistry | Known + hypothetical reactions | ~150,000 putative reactions | 252.5 | 93/152 (61%) |

Methodologies for Identification and Resolution

The NICEgame Workflow for Gap Identification and Curation

Network Integrated Computational Explorer for Gap Annotation of Metabolism (NICEgame) is a computational workflow specifically designed to characterize and curate metabolic gaps using both known and hypothetical reactions [21]. This workflow represents a significant advancement over traditional methods by systematically exploring beyond known biochemistry.

The NICEgame workflow involves seven main steps [21]:

- Harmonization of metabolite annotations with the ATLAS of Biochemistry to ensure proper connectivity.

- Preprocessing of the GEM and identification of metabolic gaps by comparing in silico and in vitro gene knockout experiments.

- Merging the GEM with the ATLAS of Biochemistry to create an ATLAS-merged GEM.

- Comparative essentiality analysis to identify reactions or genes that are essential in the original GEM but dispensable in the ATLAS-merged GEM (designated as "rescued").

- Systematic identification of alternative biochemistry for the rescued reactions or genes.

- Evaluation and ranking of all alternative biochemistry based on multiple criteria.

- Identification of potential genes using the BridgIT tool to catalyze the top-ranked suggested biochemistry.

Diagram 1: The NICEgame workflow for metabolic gap identification and resolution.
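The comparative essentiality step can be read as a set difference: a reaction is "rescued" if it is essential in the original GEM but not in the ATLAS-merged GEM. The sketch below illustrates that reading with hypothetical reaction labels and also recomputes the rescue fractions reported earlier for iML1515 (53 and 93 of 152 gaps); it does not reproduce the NICEgame implementation.

```python
def rescued_reactions(essential_original, essential_merged):
    """Reactions essential in the original model but dispensable after merging."""
    return sorted(set(essential_original) - set(essential_merged))


def rescue_rate(n_rescued, n_gaps):
    """Percentage of gaps rescued, rounded to the nearest integer."""
    return round(100 * n_rescued / n_gaps)


# Hypothetical labels for illustration.
toy_rescued = rescued_reactions({"R1", "R2", "R3"}, {"R2"})

# Counts taken from the database comparison table.
kegg_rate = rescue_rate(53, 152)
atlas_rate = rescue_rate(93, 152)
```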

Community-Level Gap-Filling Algorithm

For microbial communities, a specialized gap-filling approach considers metabolic interactions between species that coexist. This method resolves metabolic gaps in individual metabolic reconstructions while considering potential metabolic cross-feeding and other interactions in the community [22]. This approach is particularly valuable for organisms that cannot be easily cultivated in isolation due to complex metabolic interdependencies.

The community gap-filling algorithm:

- Combines incomplete metabolic reconstructions of microorganisms known to coexist.

- Permits metabolic interactions during the gap-filling process.

- Identifies non-intuitive metabolic interdependencies that are difficult to discover experimentally [22].

- Restores growth in metabolic models while predicting both cooperative and competitive metabolic interactions.

This method has been successfully applied to a synthetic community of auxotrophic E. coli strains, a community of Bifidobacterium adolescentis and Faecalibacterium prausnitzii from the human gut microbiota, and a community of Dehalobacter and Bacteroidales species [22].

Experimental Protocol: Essentiality Analysis for Gap Identification

A critical component of metabolic gap identification involves comparing computational predictions with experimental data to pinpoint discrepancies indicating missing metabolism.

Materials and Experimental Setup:

- Organism: Escherichia coli strain MG1655

- Growth Medium: Glucose minimal media

- Genetic Material: Single-gene knockout library

- Analysis Tools: Genome-scale metabolic model iML1515

Methodology:

- Perform in silico essentiality analysis using the GEM under defined conditions (e.g., glucose minimal media) [21].

- Compare computational predictions with experimental phenotype data from single-gene knockout studies [21].

- Identify false essentiality predictions: genes that the model predicts to be essential but that experiments show to be non-essential [21].

- Map these false essentiality predictions to the corresponding reactions in the metabolic network [21].

In the application of NICEgame to E. coli GEM iML1515, this process identified 148 false-negative genes corresponding to 152 false-negative essential reactions [21]. These represent metabolic gaps where the model lacks biochemistry that clearly exists in the actual organism.
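A minimal sketch of the comparison in this protocol, assuming the in silico and experimental results are each available as a set of essential genes. The gene and reaction labels are hypothetical, and the category names are chosen for this illustration only.

```python
def compare_essentiality(predicted_essential, observed_essential, genes):
    """Sort genes into agreement and the two kinds of disagreement."""
    result = {"agree": [], "false_essential": [], "false_nonessential": []}
    for gene in sorted(genes):
        predicted = gene in predicted_essential
        observed = gene in observed_essential
        if predicted == observed:
            result["agree"].append(gene)
        elif predicted:
            # Model says essential, knockout strain grows: a candidate metabolic gap.
            result["false_essential"].append(gene)
        else:
            result["false_nonessential"].append(gene)
    return result


def reactions_for_genes(genes, gene_to_reactions):
    """Map a list of genes to the reactions they are associated with."""
    return sorted({r for g in genes for r in gene_to_reactions.get(g, ())})


toy_result = compare_essentiality(
    predicted_essential={"g1", "g2"},
    observed_essential={"g2", "g3"},
    genes={"g1", "g2", "g3", "g4"},
)
gap_reactions = reactions_for_genes(
    toy_result["false_essential"], {"g1": ["R1", "R2"], "g2": ["R3"]}
)
```

One gene can map to several reactions, which is consistent with the gene and reaction counts reported above differing.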

Table 2: Key Research Reagents and Computational Tools for Metabolic Gap-Filling

| Resource Name | Type | Primary Function | Application in Gap-Filling |
|---|---|---|---|
| KEGG Database | Biochemical Database | Repository of known biochemical pathways and reactions | Reference database of known biochemistry for traditional gap-filling [20] |
| ATLAS of Biochemistry | Expanded Reaction Database | Database of ~150,000 known and hypothetical biochemical reactions | Provides hypothetical reactions to explore biochemical space beyond known reactions [20] [21] |
| BridgIT | Computational Tool | Maps biochemical reactions to potential enzyme-coding genes | Identifies candidate genes for catalyzing proposed gap-filling reactions [20] [21] |
| Gene Knockout Libraries | Experimental Resource | Collections of strains with individual genes inactivated | Provides phenotypic data for validating and refining model predictions [21] |
| iML1515 | Genome-Scale Model | Comprehensive metabolic reconstruction of E. coli | Reference model for testing gap-filling methodologies [21] |

Validation and Implementation

Case Study: Enhanced E. coli Metabolic Model

The application of NICEgame to the E. coli GEM iML1515 demonstrated substantial improvements in model accuracy and predictive power:

- 77 new biochemical reactions associated with 35 E. coli genes were proposed to extend the model [20].

- These additions reconciled 47% of the 148 identified false essential gene predictions [20] [21].

- Among the 35 genes, 33 were present in the original GEM iML1515, demonstrating substrate or mechanism promiscuity, while two new genes (ArcA and LacA) were added to the model [20].

- The extended GEM, designated iEcoMG1655, showed a 23.6% accuracy increase in gene essentiality predictions across 15 carbon sources compared to the original iML1515 [20].

Diagram 2: Results of applying NICEgame to E. coli metabolic model iML1515.

Assessment of Hypothetical Biochemistry

A critical consideration in implementing gap-filling solutions is the evaluation and prioritization of proposed hypothetical reactions. NICEgame employs a multi-criteria scoring system to rank potential solution sets [20]:

- Thermodynamic feasibility: Reactions must be thermodynamically plausible.

- Minimal network impact: Solutions with minimal effect on model structure are preferred.

- Pathway length: Shorter pathways are favored due to lower cellular protein production costs.

- Gene association confidence: Reactions annotated with enzymes having higher BridgIT confidence scores are prioritized [20].

- Biological consistency: Solutions that expand metabolome or enzymatic capabilities are ranked lower to maintain biological relevance.

This systematic approach ensures that gap-filling solutions are not only computationally efficient but also biologically plausible, enhancing the model's predictive accuracy without introducing unrealistic metabolic capabilities.
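One simple way to combine criteria like these is a filter followed by a lexicographic sort. The sketch below is an illustration only: the field names, the order in which criteria are applied, and the example values are all assumptions, and the actual NICEgame scoring is not reproduced here.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    thermo_feasible: bool   # thermodynamic feasibility
    reactions_added: int    # proxy for pathway length and network impact
    new_metabolites: int    # expansion of the metabolome
    bridgit_score: float    # confidence of the gene association


def rank_candidates(candidates):
    """Drop infeasible candidates, then prefer short, conservative, well-supported ones."""
    feasible = [c for c in candidates if c.thermo_feasible]
    return sorted(
        feasible,
        key=lambda c: (c.reactions_added, c.new_metabolites, -c.bridgit_score),
    )


toy_candidates = [
    Candidate("A", True, 2, 0, 0.9),
    Candidate("B", True, 1, 1, 0.5),
    Candidate("C", False, 1, 0, 0.99),
    Candidate("D", True, 1, 1, 0.8),
]
ranking = [c.name for c in rank_candidates(toy_candidates)]
```

A lexicographic key makes the priority between criteria explicit; a weighted sum would be an alternative when trade-offs between criteria are intended.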

Metabolic gaps arising from missing annotations and incomplete biochemistry represent significant challenges in systems biology. While universal databases like KEGG provide essential foundational knowledge for gap-filling research, their limitation to known biochemistry constrains their ability to fully resolve metabolic gaps. Advanced computational workflows like NICEgame that incorporate hypothetical reactions from expanded databases like ATLAS of Biochemistry demonstrate substantially improved capability to identify and reconcile metabolic gaps.

The integration of these approaches with experimental validation and community-aware gap-filling algorithms provides a powerful framework for enhancing genome-scale metabolic models. These advances directly impact drug development and biotechnology by enabling more accurate predictions of cellular behavior, identification of novel drug targets, and design of efficient microbial cell factories. As high-throughput phenotyping technologies continue to advance, these gap-filling workflows will generate increasingly robust hypotheses to systematically characterize the unexplored metabolic capabilities of organisms central to biomedical research and industrial applications.

From Theory to Practice: Computational Strategies for Gap-Filling with KEGG

Genome-scale metabolic reconstructions are powerful tools for summarizing biochemical knowledge and predicting cellular phenotypes. However, these reconstructions often contain gaps, that is, missing metabolic functions that reduce their predictive accuracy and biochemical fidelity. This section examines optimization-based algorithms for gap filling, with a specific focus on the fastGapFill algorithm and its core principle of metabolic flux consistency. We explore how this method leverages universal biochemical databases like KEGG to efficiently identify candidate missing reactions in compartmentalized metabolic networks, enabling more accurate metabolic model reconstruction for biomedical and biotechnological applications.

Metabolic network reconstructions systematically represent biochemical, physiological, and genomic knowledge in a structured, computable format [23]. When converted to computational models, these reconstructions can predict phenotypes with valuable applications in drug discovery, microbial strain improvement, and understanding human disease mechanisms [24] [4]. The predictive capacity of these models directly depends on the comprehensiveness and biochemical accuracy of the underlying reconstruction.

Network gaps—metabolic functions that are present in the target organism but missing from the reconstruction—manifest as blocked reactions that cannot carry flux in steady-state simulations [23]. These gaps arise from incomplete biochemical knowledge or limitations in genomic annotation. Gap-filling algorithms address this problem by algorithmically identifying missing metabolic functions from universal biochemical databases, thereby improving model functionality and predictive power [23] [9].

The development of fastGapFill represented a significant advancement in the field, as it was the first scalable algorithm capable of efficiently handling compartmentalized genome-scale models without requiring decompartmentalization, which previously led to underestimating missing information [23].

The fastGapFill Algorithm: Core Principles and Methodologies

Fundamental Problem Formulation

The metabolic gap-filling problem begins with a computational metabolic model (M) that contains blocked reactions—reactions that cannot carry flux under steady-state conditions despite being biologically required [23]. The algorithm searches a universal biochemical database (such as KEGG) to find minimal sets of reactions that, when added to model M, enable previously blocked reactions to carry flux [23].
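This problem can be written down compactly. The formulation below is a generic sketch of flux-consistency-based gap filling, not the exact program solved in [23]; the symbols $S_M$ (stoichiometric matrix of model M), $S_A$ (stoichiometric columns of an added reaction set $A$ drawn from the universal database $U$), flux bounds $l$ and $u$, the set of solvable blocked reactions $B_s$, and the threshold $\varepsilon$ are notation assumed for this illustration.

```latex
% Reaction j is blocked in M if it carries no flux in any steady state.
\[
  j \text{ is blocked in } M
  \iff
  v_j = 0 \quad \forall\, v \text{ such that } S_M v = 0,\; l \le v \le u .
\]

% Gap filling: find a smallest reaction set A from U that unblocks every
% solvable blocked reaction. Each reaction may use its own flux vector v.
\[
  \min_{A \subseteq U} \; |A|
  \quad \text{s.t.} \quad
  \forall\, j \in B_s \;\; \exists\, v :\;
  \begin{bmatrix} S_M & S_A \end{bmatrix} v = 0, \quad
  l \le v \le u, \quad
  |v_j| \ge \varepsilon .
\]
```

Minimizing the cardinality $|A|$ exactly is combinatorial, which is why an approximation of cardinality minimization is used in practice.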

Algorithmic Workflow and Implementation

fastGapFill extends the fastcore algorithm, which approximates cardinality minimization to identify compact flux-consistent models [23]. The implementation involves several key phases:

Phase 1: Preprocessing and Global Model Generation

- The compartmentalized metabolic model with its blocked reactions (B) removed, denoted S, is expanded using a universal metabolic database (U)

- A copy of U is placed in each cellular compartment of S, including the extracellular space, to generate SU

- For metabolites in non-cytosolic compartments, reversible intercompartmental transport reactions are added

- For extracellular metabolites, exchange reactions are added

- These additional reaction sets (X) are added to SU to generate a global model

- Solvable blocked reactions (Bs) that become flux-consistent when added to the global model are identified and added to create the extended global model (SUX) [23]

Phase 2: Computing a Compact Flux-Consistent Subnetwork

- fastGapFill computes a subnetwork of SUX containing all core reactions plus a minimal number of reactions from UX

- This ensures all reactions in the resulting compact subnetwork are flux-consistent

- A modified version of fastcore employs linear weightings to prioritize addition of specific reaction types from UX [23]

Phase 3: Optional Analysis and Validation

- Flux vectors can be computed that maximize flux through each previously blocked reaction while minimizing Euclidean norm of flux through the gap-filled subnetwork

- Stoichiometric consistency can be verified using approaches for approximate cardinality maximization to identify metabolites involved in mass-conserving reactions [23]
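The preprocessing in Phase 1 can be sketched as follows to show why the global model grows with the number of compartments. This is an illustration under stated assumptions, not the fastGapFill implementation: reactions are reduced to lists of metabolite names, each non-cytosolic compartment is assumed to exchange metabolites with the cytosol, and the identifier naming scheme is hypothetical.

```python
def expand_universal(universal, compartments, cytosol="c", extracellular="e"):
    """Copy U into every compartment and collect transport and exchange reactions."""
    copies, transports, exchanges = {}, set(), set()
    for compartment in compartments:
        for reaction_id, metabolites in universal.items():
            # One copy of every universal reaction per compartment.
            copies[f"{reaction_id}[{compartment}]"] = [
                f"{m}[{compartment}]" for m in metabolites
            ]
            for m in metabolites:
                if compartment != cytosol:
                    # Reversible transport to the cytosol (assumed pairing).
                    transports.add(f"TR_{m}[{compartment}]<->[{cytosol}]")
                if compartment == extracellular:
                    exchanges.add(f"EX_{m}[{extracellular}]")
    return copies, sorted(transports), sorted(exchanges)


# Hypothetical two-reaction universal database and two compartments.
toy_universal = {"U1": ["A", "B"], "U2": ["B", "C"]}
toy_copies, toy_transports, toy_exchanges = expand_universal(
    toy_universal, ["c", "e"]
)
```

Because every compartment receives its own copy of U plus transport reactions, the candidate reaction pool scales roughly with the compartment count, in line with the larger SUX sizes reported for models with more compartments.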

Table 1: fastGapFill Performance Across Metabolic Models [23]

| Model Name | Reactions in S | Reactions in SUX | Compartments | Blocked Reactions (B) | Solvable Blocked Reactions (Bs) | Gap-Filling Reactions Added |
|---|---|---|---|---|---|---|
| Thermotoga maritima | 535 | 31,566 | 2 | 116 | 84 | 87 |
| Escherichia coli | 2,232 | 49,355 | 3 | 196 | 159 | 138 |
| Synechocystis sp. | 731 | 62,866 | 4 | 132 | 100 | 172 |
| sIEC | 1,260 | 109,522 | 7 | 22 | 17 | 14 |
| Recon 2 | 5,837 | 132,622 | 8 | 1,603 | 490 | 400 |

fastGapFill Algorithm Workflow

The Role of KEGG and Universal Biochemical Databases

Universal biochemical databases serve as knowledge repositories for gap-filling algorithms. The Kyoto Encyclopedia of Genes and Genomes (KEGG) provides a comprehensive collection of pathway maps representing molecular interaction, reaction, and relation networks [10]. KEGG modules are functional units of metabolic pathways composed of sets of ordered reaction steps that cover essential metabolic processes including carbon fixation pathways, nitrification, biosynthesis of vitamins, and transport systems [9].

For gap-filling approaches, KEGG provides:

- Stoichiometrically balanced biochemical reactions that can be integrated into metabolic models

- Structural pathway information that helps validate proposed gap-filling solutions

- Taxonomic-specific pathway variants that enable organism-specific reconstruction

- Reaction modules that maintain biochemical consistency when adding multiple reactions [23] [10] [9]

The integration of KEGG resources with optimization algorithms like fastGapFill enables systematic hypothesis generation about missing metabolic functions, though these computational predictions ultimately require experimental validation [23].

Advanced Methodologies and Comparative Analysis

Experimental Protocol for fastGapFill Implementation

Researchers can implement fastGapFill using the following detailed protocol:

Step 1: Environment Setup and Dependency Installation

- Install MATLAB and the COBRA Toolbox

- Download fastGapFill from http://thielelab.eu

- Configure the universal reaction database (KEGG provided with fastGapFill)

Step 2: Input Data Preparation

- Load the target metabolic reconstruction in MATLAB format

- Verify stoichiometric consistency of the initial model

- Identify blocked reactions using flux variability analysis

Step 3: Algorithm Execution

- Run preprocessing to generate the SUX model

- Set weighting factors to prioritize metabolic over transport reactions

- Execute the core gap-filling algorithm

- Validate stoichiometric consistency of the solution
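
The effect of the weighting factors in Step 3 can be illustrated on a toy network. The sketch below is not the fastGapFill algorithm: it replaces the linear-programming formulation with a brute-force search over candidate subsets and a simple reachability test, which is only feasible for a handful of reactions. All reaction names, metabolites, and weights are hypothetical.

```python
from itertools import combinations


def reachable(reactions, seeds):
    """Network expansion: all metabolites producible from the seed set."""
    found = set(seeds)
    changed = True
    while changed:
        changed = False
        for substrates, products in reactions:
            if substrates <= found and not products <= found:
                found |= products
                changed = True
    return found


def toy_gapfill(model, candidates, weights, seeds, target):
    """Return the cheapest candidate subset that makes `target` producible."""
    best, best_cost = None, float("inf")
    names = sorted(candidates)
    for size in range(len(names) + 1):
        for subset in combinations(names, size):
            cost = sum(weights[name] for name in subset)
            if cost >= best_cost:
                continue  # cannot improve on the current best solution
            reactions = list(model.values()) + [candidates[n] for n in subset]
            if target in reachable(reactions, seeds):
                best, best_cost = set(subset), cost
    return best, best_cost


# Draft model: A -> B. The target metabolite D cannot be produced yet.
MODEL = {"R1": (frozenset({"A"}), frozenset({"B"}))}
# Candidates from a hypothetical universal database.
CANDIDATES = {
    "M1": (frozenset({"B"}), frozenset({"C"})),      # metabolic
    "M2": (frozenset({"C"}), frozenset({"D"})),      # metabolic
    "T1": (frozenset({"D_ext"}), frozenset({"D"})),  # transport
}
SEEDS = {"A", "D_ext"}
PREFER_METABOLIC = {"M1": 1, "M2": 1, "T1": 5}
UNIFORM = {"M1": 1, "M2": 1, "T1": 1}

if __name__ == "__main__":
    print(toy_gapfill(MODEL, CANDIDATES, PREFER_METABOLIC, SEEDS, "D"))
    print(toy_gapfill(MODEL, CANDIDATES, UNIFORM, SEEDS, "D"))
```

With uniform weights the single transport reaction is the cheapest fix, whereas penalizing transport makes the two-step metabolic route the preferred solution. This is the kind of trade-off the weighting factors are meant to control.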

Step 4: Output Analysis and Interpretation

- Examine the proposed gap-filling reactions

- Map solutions to KEGG pathways for biological context

- Generate flux maps visualizing the resolved network connectivity [23]

Comparative Analysis of Gap-Filling Approaches

Table 2: Comparison of Metabolic Gap-Filling and Pathway Prediction Tools

| Tool | Approach | Key Features | Limitations |

|---|---|---|---|

| fastGapFill | Optimization-based (LP) | Handles compartmentalized models; Ensures flux consistency; Scalable to genome-scale models | Requires MATLAB/COBRA; Solution may not be unique |

| gapseq | Homology & LP-based | Uses curated reaction database; Reduced false negatives in enzyme activity prediction; Automates reconstruction | Focused on bacterial metabolism |

| MetaPathPredict | Machine learning (Deep Learning) | Predicts KEGG modules in incomplete genomes; Works with genomes as little as 30% complete | Requires gene annotations as KEGG orthologs |

| KEMET | Taxonomy-informed HMMs | Fills gaps using taxonomic constraints | Limited by genome taxonomies in KEGG |

| MinPath | Parsimony-based | Conservative approach; Minimizes additions | Tends to underestimate pathway presence |

Applications in Biotechnology and Drug Development

Metabolic flux analysis, enhanced by comprehensive gap-filled models, has become fundamental for metabolic engineering and biotechnology [25] [26]. The accurate prediction of metabolic states enables researchers to optimize microbial strains for industrial production and identify potential drug targets in pathogens [4].

Biotechnology Applications:

- Microbial strain optimization for biochemical production

- Prediction of substrate utilization and fermentation products

- Identification of metabolic bottlenecks in production pathways

- Design of co-culture systems with complementary metabolic capabilities [24] [4]

Drug Development Applications:

- Identification of essential metabolic functions in pathogens

- Prediction of antimicrobial targets through gene essentiality analysis

- Understanding host-microbiome interactions in disease states

- Personalized medicine approaches through modeling human metabolic variations [4]

Diagram: Applications of gap-filled metabolic models.

Table 3: Key Research Reagents and Computational Tools for Metabolic Gap-Filling

| Resource | Type | Function | Relevance to Gap-Filling |

|---|---|---|---|

| KEGG Database | Biochemical Database | Provides reference metabolic pathways and reactions | Source of candidate reactions for gap-filling |

| COBRA Toolbox | Software Platform | MATLAB suite for constraint-based reconstruction and analysis | Implementation framework for fastGapFill |

| ModelSEED Biochemistry | Biochemical Database | Comprehensive reaction database with stoichiometrically balanced reactions | Alternative universal database for gap-filling |

| CarveMe | Software Tool | Automated metabolic model reconstruction | Comparative approach for model building |

| MetaPathPredict | Machine Learning Tool | Deep learning prediction of KEGG modules | Complementary approach for pathway completion |

The integration of optimization-based gap-filling with machine learning approaches is a promising direction for metabolic network reconstruction. Tools like MetaPathPredict demonstrate how deep learning can predict pathway presence in highly incomplete genomes, potentially complementing optimization-based methods like fastGapFill [9]. Similarly, MotifMol3D shows how neural networks can leverage molecular structural features to predict metabolic pathway categories, offering another dimension for validating gap-filling solutions [27].

Future advancements will likely focus on:

- Multi-omics integration combining genomic, transcriptomic, and metabolomic data

- Condition-specific model reconstruction reflecting metabolic adaptations

- Automated validation frameworks for computational predictions

- Improved database curation to reduce stoichiometric inconsistencies

- Taxonomy-specific pathway databases enhancing organism-specific reconstructions [4] [9] [27]

In conclusion, fastGapFill provides an efficient, scalable solution for identifying missing metabolic functions in genome-scale models by leveraging the biochemical knowledge contained in universal databases like KEGG. The principle of metabolic flux consistency ensures biologically relevant solutions that enhance our understanding of cellular metabolism and enable more accurate prediction of metabolic phenotypes for biotechnological and biomedical applications.

Leveraging KEGG Mapper for Pathway Reconstruction and Visualization

The Kyoto Encyclopedia of Genes and Genomes (KEGG) is an integrated database resource, under development since 1995, for linking genomic and molecular data to higher-level biological functions such as pathways and diseases [28] [29]. Its core strength lies in manually curated models of biological systems, most notably KEGG pathway maps, which capture knowledge from published literature [28]. KEGG Mapper is a suite of computational tools designed to project user data onto these reference knowledge bases, a process termed KEGG mapping, enabling the biological interpretation of large-scale molecular datasets like genome and metagenome sequences [30] [29]. Within the context of gap-filling research, which aims to identify and predict missing metabolic functions in biological networks, KEGG Mapper provides a framework for reconstructing organism-specific pathways from genomic data and visualizing functional capabilities [31].

The KEGG database is organized into four main categories, encompassing 16 databases as shown in Table 1. This integrated structure allows for the systematic linking of genomic information with systems-level and chemical information [28].

Table 1: Core Databases within the KEGG Resource

| Category | Database | Core Content and Purpose |

|---|---|---|

| Systems Information | PATHWAY | Manually drawn KEGG pathway maps [32]. |

| | BRITE | Hierarchical classifications of biological entities [28]. |

| | MODULE | Functional units called KEGG modules [28]. |

| Genomic Information | KO (KEGG Orthology) | Groups of functional orthologs (K numbers) [28] [32]. |

| | GENES | Catalog of genes and proteins from complete genomes [28]. |

| | GENOME | Collection of KEGG organisms and viruses [28]. |

| Chemical Information | COMPOUND, GLYCAN | Metabolites and other small molecules, glycans [28]. |

| | REACTION, RCLASS | Biochemical reactions and reaction classes [28]. |

| | ENZYME | Enzyme nomenclature [28]. |

| Health Information | DISEASE, DRUG | Human diseases and drugs [28]. |

| | NETWORK, VARIANT | Disease-related network elements and human gene variants [28]. |

Core KEGG Mapper Tools and Their Applications in Gap-Filling

KEGG Mapper consists of several tools, each designed for specific mapping tasks. For pathway reconstruction and gap-filling, the Reconstruct and Color tools are particularly critical [30].

The Reconstruct Tool

The Reconstruct tool is the primary method for KO-based mapping, which is fundamental for gap-filling analysis [33]. It takes a set of K numbers (KEGG Orthology identifiers) assigned to a genome and reconstructs organism-specific pathways, BRITE hierarchies, and KEGG modules. The tool performs completeness checks on KEGG modules, which are defined functional units, thereby directly identifying potential gaps in a metabolic network [28] [33]. The input for this tool is typically a two-column file where the second column contains K numbers, consistent with the output format of KEGG's automatic annotation servers like BlastKOALA and KofamKOALA [33] [31].
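
As an illustration of the input format described above, the following sketch reads a two-column annotation listing into a gene-to-KO mapping and collects genes that received no K number. It assumes whitespace-separated columns and that unannotated genes appear as lines containing only the gene identifier; the sample identifiers are made up for the example.

```python
import re
from collections import defaultdict

# K numbers consist of the letter K followed by five digits.
K_NUMBER = re.compile(r"^K\d{5}$")


def parse_ko_annotations(text):
    """Return ({gene: [K numbers]}, [genes without a K number])."""
    gene_to_kos = defaultdict(list)
    unannotated = []
    for line in text.splitlines():
        fields = line.split()
        if not fields:
            continue  # skip blank lines
        gene = fields[0]
        kos = [field for field in fields[1:] if K_NUMBER.match(field)]
        if kos:
            gene_to_kos[gene].extend(kos)
        else:
            unannotated.append(gene)
    return dict(gene_to_kos), unannotated


# Hypothetical annotation output for four genes.
SAMPLE = "gene001\tK00001\ngene002\ngene003\tK00844\ngene004\tK00844\n"

GENE_TO_KOS, UNANNOTATED = parse_ko_annotations(SAMPLE)
# The distinct K numbers are what module and pathway reconstruction operates on.
KO_SET = {ko for kos in GENE_TO_KOS.values() for ko in kos}

if __name__ == "__main__":
    print(sorted(KO_SET), UNANNOTATED)
```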

The Search and Color Tools

The Search tool is used to find and mark user-supplied KEGG identifiers (e.g., K numbers, compound numbers) in red on pathway maps or BRITE hierarchies [30]. The more advanced Color tool allows mapping of various objects (genes, metabolites, drugs) to pathway maps and marking them with any combination of background and foreground colors specified by the user [11]. This is invaluable for visualizing complex data, such as overlaying gene expression data (up-/down-regulated in red/green) onto a pathway to interpret metabolic activity and pinpoint inactive pathway branches [8] [11].
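
A sketch of how expression data might be converted into such a color listing is given below. It follows the red/green convention mentioned above and writes one identifier and one color per line. The threshold, the K numbers, and the fold-change values are invented for illustration, and the exact color syntax accepted by the Color tool should be checked against the KEGG Mapper documentation.

```python
def expression_to_color_lines(log2_fold_changes, threshold=1.0):
    """Map log2 fold changes to 'identifier<TAB>color' lines.

    Up-regulated entries are colored red, down-regulated entries green,
    and entries within the threshold are left out (uncolored).
    """
    lines = []
    for identifier, change in sorted(log2_fold_changes.items()):
        if change >= threshold:
            color = "red"
        elif change <= -threshold:
            color = "green"
        else:
            continue
        lines.append(f"{identifier}\t{color}")
    return lines


# Hypothetical log2 fold changes keyed by K number.
EXAMPLE = {"K00844": 2.3, "K01810": -1.5, "K00850": 0.2}

if __name__ == "__main__":
    print("\n".join(expression_to_color_lines(EXAMPLE)))
```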

The Join Tool and MWsearch

The Join tool combines a BRITE hierarchy file with a binary relation file, effectively adding a new column of attributes to the hierarchy [30]. The MWsearch tool is a specialized variant that converts mass spectrometry data (molecular masses or formulas) into KEGG compound identifiers (C numbers), facilitating the mapping of metabolomics data onto pathways [30].
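
The idea behind mass-based lookup can be sketched in a few lines. The example below matches an observed monoisotopic mass against a small hand-made table of three KEGG compounds within a tolerance; it is not the MWsearch implementation, and the table, tolerance, and function name are assumptions made for illustration.

```python
# Hypothetical mini lookup table: KEGG compound ID -> (name, monoisotopic mass in Da).
COMPOUNDS = {
    "C00031": ("D-Glucose", 180.0634),
    "C00022": ("Pyruvate", 88.0160),
    "C00158": ("Citrate", 192.0270),
}


def match_mass(observed_mass, compounds, tolerance=0.005):
    """Return compound IDs within `tolerance` Da of the mass, closest first."""
    hits = []
    for compound_id, (_name, mass) in compounds.items():
        error = abs(mass - observed_mass)
        if error <= tolerance:
            hits.append((error, compound_id))
    return [compound_id for _error, compound_id in sorted(hits)]


if __name__ == "__main__":
    print(match_mass(180.063, COMPOUNDS))
```

In a full database several isomers share the same formula and mass, so a mass match on its own typically yields a list of candidates rather than a single compound.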

Table 2: KEGG Mapper Tools for Different Research Applications

| Tool Name | Primary Input | Target Database | Key Application in Gap-Filling |

|---|---|---|---|

| Reconstruct | K numbers (KO identifiers) [33] | PATHWAY, BRITE, MODULE [33] | Reconstruction of pathways and module completeness checks from genomic data. |

| Search | K numbers, EC numbers, Compound numbers, etc. [30] | PATHWAY, BRITE, MODULE [30] | Quick identification of present genes/compounds in reference pathways. |

| Color | KEGG IDs with color specs [11] | PATHWAY (reference & organism-specific) [11] | Visualizing multi-omics data (e.g., gene expression, metabolomics) on pathways. |

| Join | K numbers, Compound numbers, etc. [30] | BRITE hierarchies and tables [30] | Adding custom attributes or experimental data to functional classifications. |

| MWsearch | Molecular formulas or exact masses [30] | PATHWAY [30] | Mapping metabolomics data from mass spectrometry to pathways. |

Technical Protocols for Pathway Reconstruction and Visualization

Protocol 1: Metabolic Reconstruction from Protein Sequences

This protocol details the process of reconstructing metabolic pathways from a set of protein sequences, a cornerstone of gap-filling analysis.

Step 1: Functional Annotation with KO Identifiers

- Input: A FASTA file of amino acid sequences.

- Procedure: Use an automatic annotation service such as BlastKOALA [31], GhostKOALA, or KofamKOALA available on the KEGG website. These tools compare your sequences against KEGG's internal database of KOs and assign the best-matching K number to each query sequence.

- Output: A two-column annotation file, where the first column contains the user's gene identifier and the second column contains the assigned K number (e.g., gene001 K00001) [33].

Step 2: Pathway Reconstruction with KEGG Mapper

- Input: The annotation file generated in Step 1.

- Procedure:

  - Navigate to the KEGG Mapper Reconstruct tool [33].

  - Upload your annotation file or paste its contents.

  - Execute the search. The tool will process the K numbers against the PATHWAY, BRITE, and MODULE databases.

- Output: The result is presented in multiple tabs (Pathway, Brite, Module). The Pathway tab lists KEGG pathway maps that contain one or more of your query K numbers. The Module tab lists KEGG modules and automatically evaluates their completeness (complete, partially complete, etc.) based on the presence of required K numbers, directly highlighting metabolic gaps [28] [33].
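
The completeness check can be approximated offline. The sketch below evaluates a simplified module definition in which spaces separate required steps (AND), commas separate alternatives (OR), and parentheses group sub-expressions, then reports which steps are missing. It deliberately ignores other notation that real KEGG module definitions may contain, such as "+" and "-", and assumes single spaces between steps. The definition string is a hypothetical example, not a real KEGG module.

```python
import re

TOKEN = re.compile(r"K\d{5}|[(), ]")


def _parse_or(tokens, pos, present):
    """Comma-separated alternatives: true if any alternative is satisfied."""
    value, pos = _parse_and(tokens, pos, present)
    while pos < len(tokens) and tokens[pos] == ",":
        rhs, pos = _parse_and(tokens, pos + 1, present)
        value = value or rhs
    return value, pos


def _parse_and(tokens, pos, present):
    """Space-separated items: true only if every item is satisfied."""
    value, pos = _parse_atom(tokens, pos, present)
    while pos < len(tokens) and tokens[pos] == " ":
        rhs, pos = _parse_atom(tokens, pos + 1, present)
        value = value and rhs
    return value, pos


def _parse_atom(tokens, pos, present):
    if tokens[pos] == "(":
        value, pos = _parse_or(tokens, pos + 1, present)
        return value, pos + 1  # skip the closing parenthesis
    return tokens[pos] in present, pos + 1


def split_steps(definition):
    """Split a definition into top-level steps at spaces outside parentheses."""
    steps, depth, current = [], 0, ""
    for char in definition.strip():
        if char == "(":
            depth += 1
        elif char == ")":
            depth -= 1
        if char == " " and depth == 0:
            steps.append(current)
            current = ""
        else:
            current += char
    steps.append(current)
    return [step for step in steps if step]


def step_present(step, present):
    value, _ = _parse_or(TOKEN.findall(step), 0, present)
    return value


def missing_steps(definition, present):
    """Return the top-level steps not covered by the K numbers in `present`."""
    return [s for s in split_steps(definition) if not step_present(s, present)]


# Hypothetical four-step module definition.
DEFINITION = "(K00844,K00845) K01810 (K00850,K16370) K01623"

if __name__ == "__main__":
    print(missing_steps(DEFINITION, {"K00844", "K01810", "K01623"}))
```

An empty result corresponds to a complete module; each entry in the returned list is a step for which no annotated gene was found, and therefore a candidate gap.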

Step 3: Visualization and Interpretation

- Procedure: Click on any pathway entry in the results to open it in the KEGG pathway map viewer. Genes (or more precisely, their associated KOs) present in your input data will be highlighted in green on the reference pathway map. Missing components will remain uncolored, providing a direct visual guide to gaps in the network [28] [33].

- Advanced Option: For a higher-level view, examine the global metabolic map (map01100) in the "module mode," which treats the map as a collection of functional modules rather than individual genes, offering a coarser but more functionally oriented perspective on network completeness [28].

Diagram: Workflow for metabolic reconstruction from sequences leading to gap identification.

Protocol 2: Visualizing Multi-Omics Data on Pathways

This protocol allows for the color-based visualization of experimental data, such as transcriptomics or metabolomics, directly on KEGG pathways to contextualize findings.

Step 1: Data Preparation

- Input: A list of KEGG identifiers and corresponding color specifications.

- Procedure: Create a two-column, tab- or space-separated file. The first column contains a KEGG identifier (e.g., a K number, EC number, C number, or organism-specific gene ID like