Cofactor Balance in Drug Discovery: Bridging In Silico Predictions and Experimental Validation

Abstract

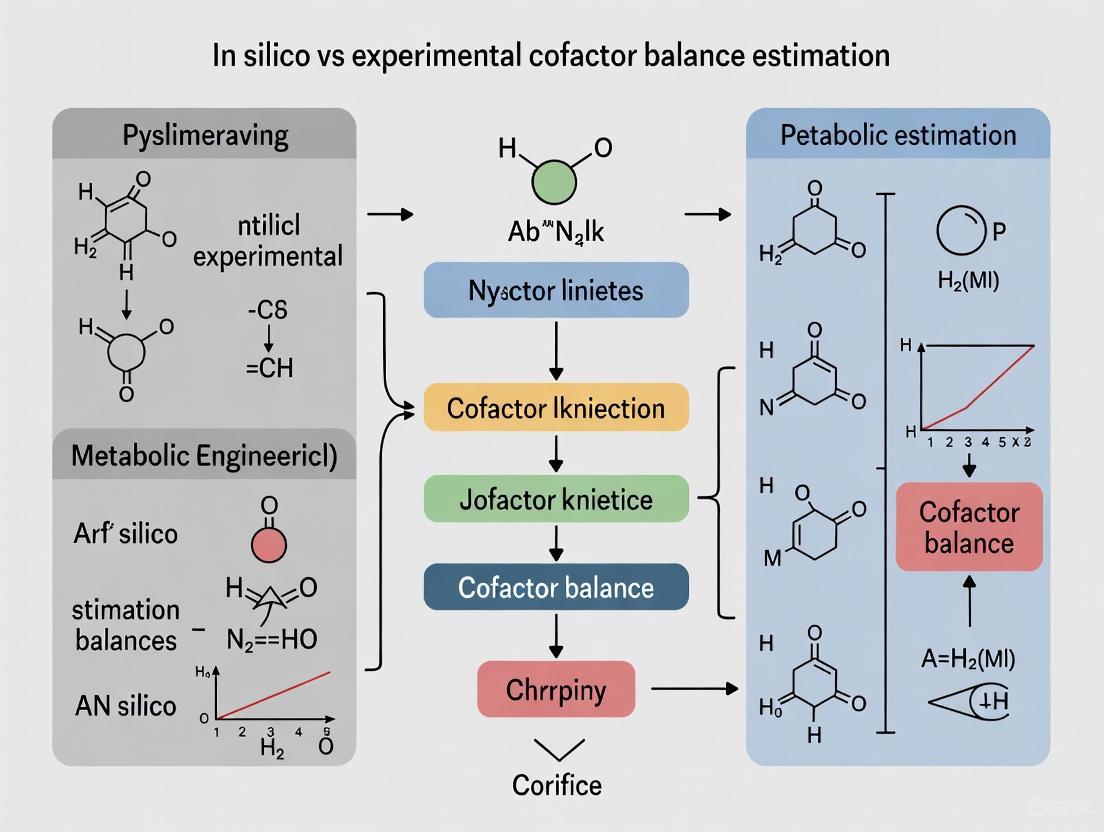

Cofactor balance is a critical determinant of success in metabolic engineering and drug discovery, influencing everything from cellular viability to product yield. This article provides a comprehensive analysis for researchers and drug development professionals, exploring the foundational principles of key cofactors like NAD(P)H and ATP. It delves into the primary computational methodologies, such as Constraint-Based Modeling and Cofactor Balance Assessment algorithms, and contrasts them with experimental techniques like 13C-Metabolic Flux Analysis. The content further addresses common pitfalls in both approaches, offers strategies for model validation and troubleshooting, and synthesizes how the synergistic use of in silico and experimental methods can de-risk the drug development pipeline, reduce costs, and accelerate the creation of efficient microbial cell factories and therapeutic candidates.

The Critical Role of Cofactors: Understanding NAD(P)H, ATP, and Acetyl-CoA in Cellular Metabolism

Cofactors are non-protein chemical compounds that are essential for the catalytic activity of enzymes, acting as the fundamental "currency" for energy conversion and electron transfer within all living organisms. These molecules, which include adenosine nucleotides, nicotinamide adenine dinucleotides, and flavin cofactors, play pivotal roles in every core metabolic pathway by helping proteins catalyze reactions that would otherwise be challenging for the limited chemical toolbox provided by amino acids alone [1] [2]. In eukaryotic mitochondria, the electron transport chain relies on a sophisticated array of cofactors, including flavins, iron-sulfur centers, heme groups, and copper, to divide the redox change from reduced nicotinamide adenine dinucleotide (NADH) at -320 mV to oxygen at +800 mV into manageable steps [3]. This precise arrangement allows for the conversion and conservation of energy released during electron transfer, ultimately driving the synthesis of adenosine triphosphate (ATP), the universal energy currency of the cell.
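To put the quoted redox span in energetic terms, the sketch below converts the potential difference between NADH (-320 mV) and oxygen (+800 mV) into a free energy change using ΔG = -nFΔE. The function name is illustrative, and the two-electron transfer per NADH is a standard textbook assumption rather than a figure stated in the text.

```python
FARADAY = 96485.0  # Faraday constant, C per mol of electrons


def redox_free_energy_kj(e_donor_mv, e_acceptor_mv, n_electrons=2):
    """Free energy change (kJ/mol) for moving n electrons from donor to acceptor.

    Potentials are midpoint potentials in millivolts; n_electrons=2 is the
    assumed number of electrons carried per NADH.
    """
    delta_e_volts = (e_acceptor_mv - e_donor_mv) / 1000.0
    return -n_electrons * FARADAY * delta_e_volts / 1000.0


# Span quoted in the text: NADH at -320 mV, oxygen at +800 mV.
dg_total = redox_free_energy_kj(-320.0, 800.0)
print(f"NADH -> O2: {dg_total:.1f} kJ/mol")
```

Under these assumptions the full span corresponds to roughly -216 kJ per mole of NADH, which illustrates why the chain divides the drop into several smaller steps.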

The balance of these cofactors is crucial for cellular homeostasis, as they function as interconnected mediators of energy transfer. Living organisms maintain adequate levels of cofactors to preserve metabolic equilibrium or facilitate reproduction, with imbalances leading to significant phenotypic changes [2]. In metabolic engineering, where microorganisms are engineered to function as bio-factories for chemical production, cofactor balance directly influences biotechnological performance [4]. Understanding the precise quantification and interplay of these molecules has become a critical focus in both basic research and applied biotechnology, driving the development of increasingly sophisticated analytical and computational methods for their study.

Cofactor Classification and Core Functions

Cofactors can be systematically categorized based on their primary biochemical functions, which center around energy transfer, redox reactions, and group transfer processes. Each class possesses distinct structural features and thermodynamic properties that enable their specific roles in cellular metabolism.

Energy Currency Cofactors

The adenosine phosphate series, including adenosine monophosphate (AMP), adenosine diphosphate (ADP), and adenosine triphosphate (ATP), serves as the primary energy currency in biological systems. These molecules store and transfer chemical energy through their phosphoryl bonds, with the ATP/ADP couple representing the most commonly used coenzyme in reconstructions of the last universal common ancestor's biochemistry [1] [3]. The free energy released during ATP hydrolysis drives countless cellular processes, from biosynthesis to muscle contraction and active transport across membranes. The structure of ATP features a ribose sugar, an adenine base, and three phosphate groups; hydrolysis of the terminal phosphoanhydride bond between the beta and gamma phosphates releases approximately 30.5 kJ/mol under standard biochemical conditions.
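The 30.5 kJ/mol figure is a standard-state value; the free energy actually available in a cell also depends on the prevailing concentrations through ΔG = ΔG°' + RT ln([ADP][Pi]/[ATP]). The sketch below is a minimal illustration of that relation. The concentrations and temperature are hypothetical placeholders, not measurements from the cited studies.

```python
import math

R_KJ = 8.314e-3  # gas constant, kJ/(mol*K)


def atp_hydrolysis_dg(atp_m, adp_m, pi_m, temp_k=310.15, dg_standard=-30.5):
    """Free energy of ATP -> ADP + Pi (kJ/mol) at the given molar concentrations."""
    mass_action_ratio = (adp_m * pi_m) / atp_m
    return dg_standard + R_KJ * temp_k * math.log(mass_action_ratio)


# Hypothetical intracellular concentrations (mol/L), chosen only for illustration.
dg_cell = atp_hydrolysis_dg(atp_m=5e-3, adp_m=0.5e-3, pi_m=5e-3)
print(f"Estimated cellular dG: {dg_cell:.1f} kJ/mol")
```

With these assumed inputs the result is about -50 kJ/mol, noticeably more negative than the standard value, because the ATP/ADP ratio is held far from equilibrium.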

Electron Carriers

Electron transfer cofactors function as essential redox mediators, shuttling reducing equivalents between metabolic pathways. The major classes include:

- Nicotinamide adenine dinucleotides (NAD⁺/NADH and NADP⁺/NADPH): Life's premier redox coenzymes, these modified ribonucleotides exist in reduced and oxidized forms and are believed to have been essential components since the last universal common ancestor [1]. NAD⁺ primarily functions in catabolic processes, accepting electrons to become NADH, while NADPH serves as the dominant reducing agent in anabolic biosynthesis.

- Flavin cofactors (FMN and FAD): Derived from riboflavin (vitamin B₂), these cofactors can be reduced by one electron to form flavosemiquinones or by two electrons to form flavohydroquinones, making them versatile redox mediators with midpoint potentials typically around -0.2 V [3].

- Iron-sulfur clusters: These inorganic cofactors contain two, three, or four iron atoms bridged by sulfur atoms and liganded to proteins via cysteine residues. Each cluster transfers single electrons, with the charge distributed unevenly across the metal atoms in mixed valence states [3].

- Quinones (Ubiquinone): Lipid-soluble benzoquinones that shuttle electrons between complexes in the mitochondrial electron transport chain, with the ability to carry both electrons and protons.

Group Transfer Cofactors

- Coenzyme A (CoA) and its derivatives: These cofactors function as carriers and activators of acyl groups in numerous metabolic transformations, including critical steps in the citric acid cycle and fatty acid metabolism [2].

- Pyridoxal phosphate (PLP): The active form of vitamin B₆, PLP is involved in the metabolism of all amino acids and represents one of the coenzymes with the largest impact on putative prebiotic networks [1].

Table 1: Major Cofactor Classes and Their Primary Functions

| Cofactor Class | Specific Examples | Primary Metabolic Function | Key Structural Features |
|---|---|---|---|
| Energy Currency | ATP, ADP, AMP | Energy transfer and storage | Adenine, ribose, phosphate groups (1-3) |
| Electron Carriers | NAD⁺/NADH, NADP⁺/NADPH | Redox reactions; electron transfer | Nicotinamide ring, adenine, ribose moieties |
| Electron Carriers | FAD/FADH₂, FMN/FMNH₂ | Redox reactions; 1 or 2 electron transfer | Isoalloxazine ring system |
| Electron Carriers | Ubiquinone, Iron-sulfur clusters | Electron transport in membranes | Benzoquinone head; Fe-S inorganic clusters |
| Group Transfer | Coenzyme A, Acetyl-CoA | Acyl group transfer | Pantothenate, β-mercaptoethylamine, ADP |
| Group Transfer | Pyridoxal phosphate | Amino group transfer | Pyridine derivative, aldehyde functional group |

Quantitative Analysis of Cofactors: Experimental Methodologies

Accurate quantification of cellular cofactor levels is essential for understanding metabolic status, identifying bottleneck reactions in engineered pathways, and diagnosing disease states. Liquid chromatography/mass spectrometry (LC/MS) has emerged as the most powerful analytical platform for cofactor analysis due to its high sensitivity, specificity, and ability to simultaneously quantify multiple cofactor classes [2].

Optimized LC/MS Protocols for Cofactor Analysis

Comprehensive methodological comparisons have identified optimal conditions for cofactor analysis using LC/MS in negative mode without ion-pairing agents, which can cause ion suppression and instrument contamination [2]. Systematic evaluation of chromatographic columns revealed that a Hypercarb column with reverse elution provides superior performance for simultaneous analysis of 15 cofactors, including adenosine nucleotides, nicotinamide adenine dinucleotides, and various acyl-CoAs. The optimal mobile phase consists of 15 mM ammonium acetate buffer at various pH levels (pH 5.0, 7.0, and 9.0) with a gradient of acetonitrile, which effectively minimizes cofactor degradation during analysis [2].

The optimized method demonstrates exceptional sensitivity, with limits of detection (LoD) ranging from 0.09-2.45 ng mL⁻¹ and limits of quantification (LoQ) ranging from 0.29-7.42 ng mL⁻¹ across the 15 cofactors analyzed. This sensitivity enables researchers to detect subtle changes in cofactor pools in response to genetic or environmental perturbations, providing crucial insights into metabolic regulation [2].
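The source does not state how the LoD and LoQ values were derived. One widely used convention estimates them from a linear calibration curve as 3.3σ/slope and 10σ/slope, where σ is the residual standard deviation of the fit. The sketch below applies that convention to hypothetical calibration data; the function names and all numbers are assumptions for illustration only.

```python
import math


def fit_line(x, y):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def lod_loq(conc, signal):
    """Estimate (LoD, LoQ) as 3.3*sigma/slope and 10*sigma/slope."""
    slope, intercept = fit_line(conc, signal)
    residuals = [s - (slope * c + intercept) for c, s in zip(conc, signal)]
    # Residual standard deviation with n - 2 degrees of freedom.
    sigma = math.sqrt(sum(r * r for r in residuals) / (len(conc) - 2))
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical calibration standards (ng/mL) and peak areas (arbitrary units).
conc = [1.0, 2.0, 5.0, 10.0, 20.0, 50.0]
area = [105.0, 198.0, 510.0, 1003.0, 1990.0, 5012.0]
lod, loq = lod_loq(conc, area)
print(f"LoD = {lod:.2f} ng/mL, LoQ = {loq:.2f} ng/mL")
```

Under this convention the LoQ is always about three times the LoD, which is broadly in line with the ranges reported above, although that alone does not confirm which method the authors used.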

Extraction and Quenching Methods for Saccharomyces cerevisiae

For the model organism Saccharomyces cerevisiae, a systematic comparison of extraction methods revealed that fast filtration outperforms conventional cold methanol quenching, which causes membrane damage and metabolite leakage [2]. The optimal extraction solvent was identified as acetonitrile:methanol:water (4:4:2, v/v/v) with 15 mM ammonium acetate buffer, which maximizes cofactor recovery while maintaining stability. This optimized protocol represents a significant advancement over traditional approaches and can serve as a standard for reliable cofactor quantification in yeast-based metabolic engineering studies [2].

Diagram 1: Experimental workflow for LC/MS-based cofactor analysis, highlighting optimal methods at each step. The diagram contrasts superior approaches (green) with suboptimal traditional methods (red).

In Silico Approaches for Cofactor Balance Estimation

Computational methods for predicting cofactor balance have become indispensable tools in metabolic engineering, enabling researchers to evaluate and optimize pathway performance before experimental implementation. Constraint-based modeling approaches, particularly Flux Balance Analysis (FBA), provide a powerful framework for assessing the network-wide effects of engineered pathways on cellular energy and redox states [4].

Cofactor Balance Assessment (CBA) Algorithm

The CBA protocol uses stoichiometric modeling (FBA, pFBA, FVA, and MOMA) with the Escherichia coli core stoichiometric model to investigate how synthetic pathways with differing energy and electron demands affect product yield [4]. This algorithm systematically tracks and categorizes how ATP and NAD(P)H pools are affected by introduced pathways, distributing cofactor fluxes across five core categories: (1) cofactor production, (2) biomass production, (3) waste release, (4) cellular maintenance, and (5) target production [4] [5].
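The bookkeeping idea behind this categorization can be sketched in a few lines: multiply each flux by its cofactor stoichiometry and sum the result per category. This is not the published CBA implementation. The lumped reactions, fluxes, and coefficients below are hypothetical, and only the five category names come from the text.

```python
from collections import defaultdict

# (category, flux, ATP produced per unit flux, NAD(P)H produced per unit flux)
# All numbers are hypothetical and chosen so that both pools balance.
LUMPED_FLUXES = [
    ("cofactor production", 10.0, 2.0, 2.0),
    ("biomass production", 0.5, -20.0, -10.0),
    ("waste release", 2.0, 0.0, -1.5),
    ("cellular maintenance", 4.0, -1.0, 0.0),
    ("target production", 3.0, -2.0, -4.0),
]


def tally_cofactors(lumped_fluxes):
    """Sum net ATP and NAD(P)H production (positive) or use (negative) per category."""
    totals = defaultdict(lambda: {"ATP": 0.0, "NAD(P)H": 0.0})
    for category, flux, atp_coeff, nadph_coeff in lumped_fluxes:
        totals[category]["ATP"] += flux * atp_coeff
        totals[category]["NAD(P)H"] += flux * nadph_coeff
    return dict(totals)


tally = tally_cofactors(LUMPED_FLUXES)
net_atp = sum(entry["ATP"] for entry in tally.values())
net_nadph = sum(entry["NAD(P)H"] for entry in tally.values())
for category, entry in tally.items():
    print(f"{category:22s} ATP {entry['ATP']:+6.1f}  NAD(P)H {entry['NAD(P)H']:+6.1f}")
```

At steady state the net totals are zero, so any surplus generated by the production category has to be absorbed by one of the other four. Shifts between those categories are what a CBA-style analysis looks for when a new pathway is introduced.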

A significant challenge identified through CBA is the underdeterminacy of FBA solutions, which manifests as unrealistic futile cofactor cycles with excessive energy dissipation [4]. For example, when modeling eight different butanol production pathways in E. coli, solutions with minimal futile cycling diverted surplus energy and electrons toward biomass formation rather than target compound production. Manual constraint of the models or the use of loopless FBA was required to obtain biologically realistic flux distributions [4].

Comparison of Computational and Experimental Approaches

Table 2: Comparison of Methodologies for Cofactor Analysis and Balance Estimation

| Parameter | In Silico CBA Approach | Experimental LC/MS Approach |
|---|---|---|
| Primary Objective | Predict theoretical yield and cofactor demands of engineered pathways | Quantify actual intracellular cofactor concentrations |
| Throughput | High (rapid evaluation of multiple pathway designs) | Medium (sample preparation and analysis required) |
| Key Inputs | Stoichiometric model, reaction network, objective function | Cell extracts, analytical standards, optimized solvents |
| Key Outputs | Theoretical yield, flux distributions, cofactor balance | Absolute concentrations, concentration ratios, pool sizes |
| Major Limitations | Futile cycling in solutions, requires manual constraints | Metabolite leakage during extraction, analyte degradation |
| Experimental Validation | Required to confirm predictions | Direct measurement of cofactor levels |
| Best Applications | Pathway selection, strain design, identifying imbalances | Diagnostic applications, understanding metabolic status |

Case Study: Cofactor Balance in Butanol Production Pathways

The practical implications of cofactor balance are vividly illustrated by a case study comparing eight synthetic pathways for butanol and butanol precursor production in E. coli, which exhibit distinct energy and redox requirements [4]. Each pathway variant was introduced into the E. coli Core stoichiometric model, resulting in eight distinct models (BuOH-0, BuOH-1, tpcBuOH, BuOH-2, fasBuOH, CROT, BUTYR, BUTAL) with different ATP and NAD(P)H demands [4].

The CBA protocol revealed that pathways with better cofactor balance achieved higher theoretical yields, with excessive ATP or NAD(P)H surplus leading to diversion of carbon toward biomass formation or dissipation through futile cycles [4]. Both FBA-based CBA and the independent calculation method developed by Dugar and Stephanopoulos identified the same pathway as the highest-yielding option, despite differences in how they adjusted for cofactor imbalances [4]. This convergence strengthens confidence in computational predictions while highlighting the importance of cofactor balance as a design principle in metabolic engineering.

Diagram 2: Cofactor balance analysis workflow for butanol production pathways. The diagram illustrates how pathway variants are evaluated through CBA, with balanced pathways (green) achieving higher theoretical yields than imbalanced ones (red).

Essential Research Tools and Reagent Solutions

Advancing research in cofactor analysis requires specialized reagents, tools, and computational resources. The following table summarizes key solutions for experimental and computational approaches to cofactor studies.

Table 3: Essential Research Reagent Solutions for Cofactor Analysis

| Research Tool | Specific Examples/Suppliers | Primary Function | Application Notes |
|---|---|---|---|
| Analytical Columns | Hypercarb, ACQUITY BEH Amide, ZIC-pHILIC | Chromatographic separation of cofactors | Hypercarb with reverse elution optimal for simultaneous analysis of 15 cofactors [2] |
| Extraction Solvents | Acetonitrile:methanol:water (4:4:2; v/v/v) with 15 mM ammonium acetate | Metabolite extraction with stability preservation | Optimal for cofactors from S. cerevisiae; minimizes degradation [2] |
| Stoichiometric Models | E. coli Core Model, Genome-scale models | Constraint-based modeling of cofactor balance | Enables FBA, pFBA, FVA, MOMA simulations [4] |
| Cofactor Standards | Sigma-Aldrich (purity >85%) | Quantification reference standards | Includes AMP, ADP, ATP, NAD⁺, NADH, NADP⁺, NADPH, various acyl-CoAs [2] |
| Software Platforms | Python with COBRApy, MATLAB | Implementation of CBA algorithms | Customizable flux balance analysis and pathway simulation [4] |

The comprehensive analysis of cofactors—from their fundamental roles as electron carriers and energy currency to their quantitative assessment through experimental and computational methods—reveals the critical importance of these molecules in cellular metabolism and biotechnological applications. Experimental LC/MS approaches provide precise quantification of cofactor concentrations with impressive sensitivity (LoD: 0.09-2.45 ng mL⁻¹), while in silico CBA algorithms enable predictive assessment of cofactor demands in engineered pathways [4] [2].

The most powerful research strategies integrate both methodologies, using computational predictions to guide strain design and experimental validation to verify intracellular cofactor states and identify unanticipated metabolic adaptations. This synergistic approach is particularly valuable in metabolic engineering, where balanced cofactor metabolism is essential for maximizing product yields. As research continues to unveil the sophisticated roles of cofactors in quantum biological processes and pre-enzymatic metabolism, the methodologies reviewed here will provide the foundation for new discoveries and applications across biochemistry, synthetic biology, and biomedical research [6] [1].

Physiological Functions of NAD(P)H/NAD(P)+, ATP/ADP, and Acetyl-CoA

Cellular metabolism relies on a network of universal cofactors and metabolic intermediates that govern energy transfer, redox balance, and biosynthetic processes. Among these, the NAD(P)H/NAD(P)+ redox couples, ATP/ADP system, and acetyl-CoA represent three cornerstone components that enable fundamental biochemical transformations. Within the context of in silico versus experimental cofactor balance estimation, understanding the precise physiological functions and quantitative dynamics of these molecules becomes paramount. Computational models predict metabolic fluxes and cofactor utilization, but these predictions require validation through rigorous experimental measurement of concentrations, turnover rates, and binding constants. This guide objectively compares the roles of these essential metabolites, supported by experimental data and methodologies relevant to researchers investigating metabolic engineering, drug development, and systems biology.

Quantitative Comparison of Core Cofactors

Table 1: Key Functional and Quantitative Attributes of Core Cofactors

| Cofactor Pair / Molecule | Primary Physiological Functions | Key Regulatory Enzymes | Reported Intracellular Concentrations | Free Energy of Hydrolysis/Redox Potential |
|---|---|---|---|---|
| NAD+/NADH | Cellular energy metabolism; substrate for NAD+-consuming enzymes (SIRTs, PARPs) [7]. | NAD+ kinases, Dehydrogenases | Compartment-specific pools maintained by biosynthesis and salvage pathways [7]. | Redox potential governs electron transfer in catabolism. |
| NADP+/NADPH | Anabolic biosynthesis; redox homeostasis; antioxidant defense [8] [7]. | Glucose-6-phosphate dehydrogenase, NADP+-linked malic enzyme, NAD+ kinase [9]. | Distinct from NAD(H) pools; maintained in more reduced state [7]. | Critical for reductive biosynthesis (e.g., fatty acids, cholesterol) [9]. |
| ATP/ADP | Universal "energy currency"; phosphorylation; signaling [10]. | ATP synthase, Phosphofructokinase-1 (PFK1), Pyruvate kinase [10]. | 1 to 10 mM; ATP/ADP ratio maintained ~10 orders of magnitude from equilibrium [10] [11]. | ΔG°' = -30.5 kJ/mol (ATP → ADP + Pi) [11]. |
| Acetyl-CoA | Central metabolic hub: delivers acetyl group to TCA cycle; precursor for lipid synthesis; substrate for protein acetylation [12] [13] [14]. | Pyruvate dehydrogenase, ATP-citrate lyase (ACLY), Acetyl-CoA synthetase (ACSS2) [13] [14]. | Varies by compartment; mitochondrial, cytosolic, and nuclear pools (e.g., ~20–200 μM in some contexts) [14]. | Thioester bond hydrolysis is exergonic (ΔG°' = -31.5 kJ/mol) [13]. |

Table 2: Experimental Data from Mitochondrial Studies in Different Tissues

| Experimental Model | Krebs Cycle Flux Control | Notable Enzyme Activity (Vmax) Findings | Sensitivity to Rotenone (Complex I Inhibition) | Key Metabolic Features |
|---|---|---|---|---|
| AS-30D Rat Hepatoma (HepM) | High flux control by NADH consumption (Complex I) [15]. | Higher enzyme Vmax values than liver, lower than heart [15]. | High sensitivity; cancer cell proliferation more affected [15]. | Krebs cycle functional but citrate may be diverted for biosynthesis [15]. |
| Rat Liver Mitochondria (RLM) | Lower flux control by Complex I [15]. | Lower Vmax values for KC enzymes [15]. | Lower sensitivity compared to hepatoma [15]. | |
| Rat Heart Mitochondria (RHM) | | Highest Vmax order: RHM > HepM > RLM [15]. | | High energy demand for contraction [10]. |

Detailed Functional Analysis and Experimental Evidence

NAD(H) and NADP(H) Redox Couples

The NAD+/NADH and NADP+/NADPH redox couples are essential for maintaining cellular redox homeostasis and have distinct, non-overlapping physiological roles.

Cellular Functions and Compartmentalization: The NAD+/NADH ratio is primarily tuned for catabolic processes, acting as a universal electron acceptor in pathways like glycolysis and the Krebs cycle to facilitate ATP generation [7]. In contrast, the NADP+/NADPH system is maintained in a more reduced state and dedicated to anabolic processes and defense against oxidative stress [8] [7]. NADPH serves as the unique electron donor for regenerating reduced glutathione, a critical cellular antioxidant [8] [9]. Furthermore, both cofactors act as substrates for signaling enzymes; NAD+ is a substrate for sirtuins and PARPs, while NADPH is a substrate for NADPH oxidases (NOX enzymes) that generate reactive oxygen species for immune defense and signaling [8] [7].

Biosynthesis and Homeostasis: Cellular levels of these cofactors are tightly regulated through biosynthesis and salvage pathways. NAD+ is synthesized de novo from tryptophan or from other precursors like nicotinic acid (NA), nicotinamide (NAM), and nicotinamide riboside (NR) via the Preiss-Handler and salvage pathways [7]. NAD+ kinase (NADK) is the sole enzyme responsible for phosphorylating NAD+ to generate NADP+ [7] [9]. The NADPH pool is primarily generated by the pentose phosphate pathway, with contributions from NADP+-dependent isoforms of isocitrate dehydrogenase (IDH) and malic enzyme [9]. The concept of "redox stress", both oxidative and reductive, is increasingly recognized as critical in pathological disorders, reflecting imbalances in these redox couples [7].

ATP/ADP: The Cellular Energy Currency

Adenosine triphosphate (ATP) serves as the universal energy currency of the cell, coupling energy-releasing and energy-requiring processes.

Energy Transfer and Hydrolysis: The structure of ATP, featuring three phosphate groups, contains high-energy phosphoanhydride bonds. Hydrolysis of ATP to ADP and inorganic phosphate (Pi) releases a significant amount of free energy (ΔG°' = -30.5 kJ/mol), which drives diverse cellular functions [10] [11]. This energy release is harnessed for active transport (e.g., Na+/K+ ATPase), muscle contraction, nerve impulse propagation, and biosynthesis of macromolecules [10].

Metabolic Regulation and Production: ATP levels are maintained far from equilibrium, and the cell uses feedback mechanisms to regulate its production. For instance, high [ATP] allosterically inhibits key glycolytic enzymes like phosphofructokinase-1 (PFK1), while high [AMP/ADP] activates them, ensuring ATP synthesis matches energetic demand [10]. The majority of ATP is produced through oxidative phosphorylation in the mitochondria, which generates approximately 30 ATP molecules per glucose oxidized [10]. Glycolysis contributes a smaller net yield of 2 ATP per glucose but can proceed anaerobically [11]. Emerging research using techniques like monitoring "mitochondrial flashes" reveals real-time dynamics of ATP production inhibition, demonstrating sophisticated feedback control during low energy demand [10].

Acetyl-CoA: A Multifaceted Metabolic Hub

Acetyl-coenzyme A (Acetyl-CoA) is a pivotal metabolite at the crossroads of carbohydrate, fat, and protein metabolism, with expanding roles in epigenetic regulation.

Metabolic Integration and Biosynthesis: Acetyl-CoA's primary function is to deliver the acetyl group to the Krebs cycle (TCA cycle) for oxidation and energy production [13]. It is produced from various sources: through glycolysis followed by pyruvate dehydrogenase activity, from fatty acid β-oxidation, and from the catabolism of certain amino acids [13]. When energy is abundant, mitochondrial citrate can be exported to the cytosol and cleaved by ATP-citrate lyase (ACLY) to generate cytosolic acetyl-CoA, which serves as the fundamental building block for fatty acid and cholesterol synthesis [13] [14]. This makes acetyl-CoA a key indicator of the cell's metabolic state.

Signaling and Epigenetic Regulation: Beyond its metabolic functions, acetyl-CoA is the sole donor of acetyl groups for protein acetylation, a major post-translational modification [12] [14]. This is particularly significant in the nucleus, where acetyl-CoA levels directly influence histone acetylation. Histone acetyltransferases (HATs) have a Km for acetyl-CoA within the physiological concentration range, meaning fluctuations in nuclear acetyl-CoA can directly alter gene expression patterns linked to cell growth, proliferation, and metabolism [14]. This establishes acetyl-CoA as a critical nutrient rheostat, linking metabolic status to transcriptional regulation [14].
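The point about Km can be illustrated with the Michaelis-Menten saturation term, v/Vmax = [S]/(Km + [S]). An enzyme operating near its Km responds strongly to substrate changes, whereas one far above its Km is nearly saturated. The Km and concentration values in this sketch are hypothetical and are not taken from the cited work.

```python
def fractional_rate(substrate, km):
    """Michaelis-Menten saturation: fraction of Vmax at a given substrate level."""
    return substrate / (km + substrate)


KM_UM = 10.0  # hypothetical Km for acetyl-CoA, in micromolar

# Doubling substrate near Km versus far above Km.
near_low, near_high = fractional_rate(10.0, KM_UM), fractional_rate(20.0, KM_UM)
far_low, far_high = fractional_rate(500.0, KM_UM), fractional_rate(1000.0, KM_UM)

print(f"Near Km:  {near_low:.3f} -> {near_high:.3f} "
      f"({100 * (near_high / near_low - 1):.0f}% increase)")
print(f"Far above Km: {far_low:.3f} -> {far_high:.3f} "
      f"({100 * (far_high / far_low - 1):.0f}% increase)")
```

In this toy case a twofold rise in substrate near Km raises the rate by about a third, while the same twofold rise at 50 times Km changes it by about one percent. This is the sense in which a Km within the physiological range makes acetylation rates sensitive to acetyl-CoA availability.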

Experimental Protocols for Cofactor Analysis

Validating in silico cofactor balance predictions requires precise experimental methodologies. This section details protocols for assessing cofactor function and metabolism.

This protocol determines flux control coefficients in the Krebs cycle, crucial for understanding energy metabolism differences in normal versus cancer cells.

Mitochondria Isolation: Rat liver (RLM), heart (RHM), and AS-30D hepatoma (HepM) mitochondria are isolated via differential centrifugation. Mitochondrial fractions are resuspended in SHE buffer (250 mM sucrose, 10 mM HEPES, 1 mM EGTA, pH 7.3) and centrifuged at 12,857 x g for 10 min at 4°C; this wash process is repeated three times to minimize cytosolic contamination. Final pellets are resuspended in SHE buffer supplemented with 1 mM PMSF, 1 mM EDTA, and 5 mM DTT, with protein concentrations adjusted to 30-80 mg/mL, and stored at -70°C [15].

Enzyme Activity (Vmax) and Kinetic Parameter (Km) Determination: Enzyme activities for Krebs cycle enzymes (e.g., citrate synthase, isocitrate dehydrogenase, 2-oxoglutarate dehydrogenase, succinate dehydrogenase, malate dehydrogenase) are assayed in mitochondrial preparations. Activities are measured spectrophotometrically by monitoring NADH or NADPH production/consumption at 340 nm. Vmax and Km values are calculated from the resulting kinetic data [15].
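Converting an absorbance slope at 340 nm into an enzyme rate relies on the Beer-Lambert law. The sketch below assumes the commonly used millimolar extinction coefficient for NADH of 6.22 mM⁻¹ cm⁻¹, which is a textbook value and not one stated in the source, together with a 1 cm path length. The assay numbers are hypothetical.

```python
EPSILON_NADH_340 = 6.22  # mM^-1 cm^-1, commonly used value (assumption)


def specific_activity(delta_a_per_min, assay_volume_ml, protein_mg, path_cm=1.0):
    """Specific activity in umol NADH per min per mg protein.

    delta_a_per_min / (epsilon * path) gives mM/min, i.e. umol/mL/min;
    multiplying by the assay volume gives umol/min in the cuvette.
    """
    rate_mm_per_min = delta_a_per_min / (EPSILON_NADH_340 * path_cm)
    return rate_mm_per_min * assay_volume_ml / protein_mg


# Hypothetical assay: 0.0622 absorbance units per minute in 1 mL with 0.05 mg protein.
activity = specific_activity(delta_a_per_min=0.0622, assay_volume_ml=1.0, protein_mg=0.05)
print(f"Specific activity: {activity * 1000:.0f} nmol/min/mg")
```

Rates obtained this way at several substrate concentrations are the raw input for fitting Vmax and Km.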

Kinetic Modeling and Metabolic Control Analysis (MCA): A kinetic model of the Krebs cycle is constructed using the experimentally determined Vmax and Km values. Flux control coefficients ($C^{J}_{E_i}$) are calculated for each enzyme. A flux control coefficient quantifies the fractional change in pathway flux in response to an infinitesimal change in the activity of a specific enzyme. This identifies which enzymes exert the most significant control over the Krebs cycle flux (e.g., Complex I in hepatoma) [15].
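As a minimal illustration of what a flux control coefficient measures, the sketch below estimates d ln J / d ln E numerically for a toy two-enzyme pathway with linear kinetics. The pathway, rate laws, and parameter values are hypothetical and far simpler than the Krebs cycle model described in [15].

```python
import math


def steady_state_flux(e1, e2, s=1.0, k1=1.0, k2=1.0, q1=2.0):
    """Toy pathway S <-> X -> P with linear kinetics.

    v1 = e1*k1*(s - x/q1) and v2 = e2*k2*x; setting v1 == v2 gives x analytically.
    """
    x = e1 * k1 * s / (e1 * k1 / q1 + e2 * k2)
    return e2 * k2 * x


def flux_control_coefficient(flux_fn, enzymes, index, rel_step=1e-6):
    """Central-difference estimate of d ln(J) / d ln(E_index)."""
    up, down = list(enzymes), list(enzymes)
    up[index] *= 1.0 + rel_step
    down[index] *= 1.0 - rel_step
    d_ln_flux = math.log(flux_fn(*up)) - math.log(flux_fn(*down))
    d_ln_enzyme = math.log(1.0 + rel_step) - math.log(1.0 - rel_step)
    return d_ln_flux / d_ln_enzyme


enzymes = [1.0, 1.0]
c1 = flux_control_coefficient(steady_state_flux, enzymes, 0)
c2 = flux_control_coefficient(steady_state_flux, enzymes, 1)
print(f"C1 = {c1:.3f}, C2 = {c2:.3f}, sum = {c1 + c2:.3f}")
```

The coefficients sum to one, as the summation theorem of metabolic control analysis requires. In this toy case the first enzyme carries about two thirds of the control, showing that control is shared rather than assigned to a single "rate-limiting" step.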

Functional Validation with Inhibitors: The model's predictions are tested by applying specific metabolic inhibitors and measuring the impact on cell proliferation. For example, the predicted high sensitivity of hepatoma cells to rotenone (a Complex I inhibitor) was confirmed by treating AS-30D cancer cells, rat heart cells, and non-cancer cells with rotenone and observing greater inhibition of proliferation in the cancer cells [15].

This protocol examines the link between metabolic status and epigenetic regulation via acetyl-CoA.

Cell Culture under Nutrient-Modified Conditions: Cells are subjected to glucose deprivation, serum starvation, or treatment with specific pharmacological agents (e.g., ACLY or ACSS2 inhibitors) to manipulate intracellular acetyl-CoA levels [14].

Acetyl-CoA and Acyl-CoA Measurement: Cells are harvested, and metabolites are extracted. Liquid chromatography-tandem mass spectrometry (LC-MS/MS) is used for precise quantification of acetyl-CoA and other acyl-CoAs. The inherent instability of the thioester bond necessitates rapid processing, use of internal standards, and proper quality controls [14].

Analysis of Histone Acetylation Status: Histones are acid-extracted from cell nuclei. Global histone acetylation or acetylation at specific lysine residues is analyzed via Western blotting using pan-specific or site-specific anti-acetyl-lysine antibodies. Alternatively, mass spectrometry-based proteomics provides a comprehensive, quantitative map of histone modification sites [14].

Correlation and Gene Expression Analysis: Changes in acetyl-CoA levels are correlated with the degree of histone acetylation. Subsequent effects on gene expression are assessed by RNA sequencing (RNA-Seq) or quantitative RT-PCR, focusing on genes related to cell growth and metabolism [14].

Visualization of Metabolic Integration and Experimental Workflows

The following diagrams illustrate the interconnected roles of the cofactors in central metabolism and the key experimental workflows for their study.

Cofactor Integration in Central Metabolism

Workflow for Krebs Cycle Flux Control Analysis

The Scientist's Toolkit: Key Research Reagents and Materials

Table 3: Essential Reagents for Studying Cofactor Metabolism

| Reagent / Material | Function in Experimental Protocols | Example Application |
|---|---|---|
| HEPES-EGTA-Sucrose (SHE) Buffer | Isotonic preservation medium for mitochondrial isolation and storage. Maintains structural and functional integrity of mitochondria during preparation [15]. | Mitochondria isolation from liver, heart, and hepatoma tissues [15]. |
| Specific Metabolic Inhibitors (e.g., Rotenone, Malonate) | Chemically probe the contribution of specific enzymes/pathways to overall metabolic flux. Rotenone inhibits Complex I; malonate inhibits succinate dehydrogenase [15]. | Validation of flux control coefficients predicted by kinetic modeling [15]. |
| Antibodies for Metabolic Enzymes & Histone Modifications | Detection and quantification of protein expression (Western blot) and specific post-translational modifications. | Analysis of Krebs cycle enzyme levels (e.g., anti-IDH2, anti-SDH) [15] and histone acetylation status (anti-acetyl-lysine) [14]. |
| NAD+, NADH, NADP+, NADPH, Acetyl-CoA Standards | Calibration standards for accurate quantification of metabolite concentrations in complex biological samples using LC-MS/MS or enzymatic assays [14]. | Absolute quantification of cofactor levels in cell or tissue extracts [14]. |
| LC-MS/MS System | High-precision analytical platform for the separation and quantification of metabolites, including unstable acyl-CoA thioesters, based on mass-to-charge ratio [14]. | Targeted measurement of acetyl-CoA and other acyl-CoAs with high specificity and sensitivity [14]. |

In microbial cell factories, cofactors such as ATP and NAD(P)H serve as the fundamental currency of energy and reducing power, driving the vast network of biochemical reactions essential for both cell survival and product synthesis [4]. Cofactor balance refers to the precise homeostasis between the generation and consumption of these metabolites, a state that is frequently disrupted when engineered pathways are introduced into host organisms [16]. This imbalance can trigger metabolic bottlenecks, reduce carbon efficiency, and ultimately diminish the yield of target compounds, posing a significant challenge for industrial bioprocesses [17]. The central thesis of this guide explores the dichotomy in how this critical balance is quantified—contrasting the predictive power of in silico modeling with the empirical validation provided by experimental analysis. For researchers and drug development professionals, understanding the capabilities and limitations of each approach is paramount for designing robust microbial systems for chemical and therapeutic production.

Methodologies for Cofactor Balance Estimation: A Comparative Guide

In Silico Constraint-Based Modeling

In silico methods rely on genome-scale metabolic models and computational simulations to predict metabolic behavior and cofactor demands before any wet-lab experimentation.

- Flux Balance Analysis (FBA): This is a foundational constraint-based method that uses stoichiometric models of metabolism to calculate the flow of metabolites through a network. It predicts optimal metabolic fluxes, including cofactor production and consumption rates, under steady-state assumptions [4] [18].

- Cofactor Balance Assessment (CBA) Algorithm: An extension of FBA, the CBA algorithm was developed to systematically track and categorize how ATP and NAD(P)H pools are affected by the introduction of a new production pathway, helping to identify the source of cofactor imbalances [4].

- Cofactor Modification Analysis (CMA): This optimization procedure uses Mixed-Integer Linear Programming (MILP) to identify optimal cofactor-specificity "swaps" for oxidoreductase enzymes in genome-scale models. It aims to increase the theoretical yield of target products by modifying the cofactor specificity of key enzymes like glyceraldehyde-3-phosphate dehydrogenase (GAPD) [18].

Experimental & Analytical Techniques

Experimental approaches provide direct, empirical measurements of metabolic fluxes and intracellular cofactor levels, offering validation for computational predictions.

- 13C-Metabolic Flux Analysis (13C-MFA): This technique is the gold standard for experimentally quantifying intracellular metabolic fluxes. It involves feeding cells with 13C-labeled substrates (e.g., glucose) and using Mass Spectrometry (MS) to trace the isotopomer patterns in intracellular metabolites. The data is used to constrain and compute precise metabolic reaction rates, including those governing cofactor regeneration [4] [19].

- Quantitative Metabolomics for Cofactor Profiling: Liquid Chromatography-Mass Spectrometry (LC-MS) is used to measure absolute intracellular concentrations of metabolites, including ATP, ADP, AMP, NADPH, and NADH. These measurements allow the energy charge and redox state to be calculated, providing a direct snapshot of the cell's cofactor balance under different genetic or environmental conditions [19].

- Whole-Cell Proteomics: High-resolution proteomics, typically via LC-MS/MS, quantifies protein expression levels. This helps confirm the presence and relative abundance of key enzymes involved in cofactor metabolism, such as those in the Pentose Phosphate Pathway (PPP) or transhydrogenases, linking gene expression to functional metabolic outcomes [16].
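
The energy charge and redox state mentioned in the metabolomics step above can be computed directly from measured concentrations. The following is a minimal sketch, assuming the standard adenylate energy charge definition, (ATP + 0.5 ADP) / (ATP + ADP + AMP), and assuming that the oxidized forms NAD+ and NADP+ were measured alongside the reduced forms. All concentrations and function names are hypothetical and only illustrate the arithmetic.

```python
def adenylate_energy_charge(atp, adp, amp):
    """Adenylate energy charge: (ATP + 0.5 * ADP) / (ATP + ADP + AMP)."""
    total = atp + adp + amp
    if total <= 0:
        raise ValueError("total adenylate pool must be positive")
    return (atp + 0.5 * adp) / total


def reduced_fraction(reduced, oxidized):
    """Fraction of a redox pair in reduced form, e.g. NADPH / (NADPH + NADP+)."""
    total = reduced + oxidized
    if total <= 0:
        raise ValueError("total redox pool must be positive")
    return reduced / total


# Hypothetical intracellular concentrations in mM (illustrative values only).
sample = {
    "ATP": 3.0, "ADP": 1.0, "AMP": 0.2,
    "NADPH": 0.12, "NADP+": 0.04,
    "NADH": 0.08, "NAD+": 0.80,
}

energy_charge = adenylate_energy_charge(sample["ATP"], sample["ADP"], sample["AMP"])
nadph_fraction = reduced_fraction(sample["NADPH"], sample["NADP+"])
nadh_fraction = reduced_fraction(sample["NADH"], sample["NAD+"])

print(f"energy charge: {energy_charge:.3f}")
print(f"NADPH fraction: {nadph_fraction:.3f}")
print(f"NADH fraction: {nadh_fraction:.3f}")
```

Comparing these ratios between a parent and an engineered strain is one simple way to see whether a new pathway has shifted the energy or redox state.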

Comparative Analysis: In Silico vs. Experimental Approaches

Table 1: A direct comparison of key methodologies for cofactor balance analysis.

| Feature | In Silico Methods (e.g., FBA, CBA) | Experimental Methods (e.g., 13C-MFA, Metabolomics) |

|---|---|---|

| Primary Objective | Predict theoretical maximum yields and identify potential network bottlenecks [4] [18]. | Provide quantitative, empirical validation of fluxes and cofactor levels in vivo [4] [19]. |

| Key Outputs | Predicted flux distributions, theoretical product yields, identification of optimal gene knockouts/swaps [18]. | Absolute intracellular flux maps, measured metabolite concentrations, energy charge [19]. |

| Throughput & Cost | High throughput; low cost once a model is established. | Low to medium throughput; requires significant time and resource investment. |

| Key Limitations | May predict unrealistic futile cycles; relies on accurate model reconstructions and constraints [4]. | Captures a snapshot in time; requires sophisticated instrumentation and data analysis. |

| Data Used as Constraint | Growth rate, substrate uptake rate, reaction stoichiometry, gene essentiality data. | Measured extracellular fluxes, 13C-labeling patterns, quantitative metabolite concentrations [19]. |

Table 2: Summary of key findings from cofactor engineering case studies.

| Organism | Target Product | Engineering Strategy | Key Cofactor(s) Addressed | Outcome | Validation Method |

|---|---|---|---|---|---|

| E. coli [16] | D-Pantothenic Acid (D-PA) | Multi-module engineering: Flux redistribution via EMP/PPP/ED pathways; heterologous transhydrogenase; optimized serine-glycine system. | NADPH, ATP, 5,10-MTHF | Record titer: 124.3 g/L; Yield: 0.78 g/g glucose [16]. | Fed-batch fermentation, Fluxomics |

| E. coli [4] | n-Butanol | In silico CBA of eight different pathway variants with distinct energy/redox demands. | ATP, NAD(P)H | Identified the highest-yielding pathway; highlighted issue of futile cycles in models [4]. | FBA, pFBA, MOMA |

| P. putida [19] | Lignin-derived Aromatics Utilization | Native metabolic network analysis using 13C-fluxomics to understand cofactor coupling during growth on phenolic acids. | NADPH, NADH, ATP | Revealed TCA cycle remodeling generates 50-60% of NADPH via anaplerotic carbon recycling [19]. | 13C-Fluxomics, Proteomics |

| E. coli & S. cerevisiae [18] | Various (e.g., 1,3-PDO, Amino Acids) | Computational identification of optimal cofactor specificity swaps (e.g., GAPD, ALCD2x) using an MILP framework. | NADH vs. NADPH | Increased theoretical yields for numerous native and non-native products [18]. | FBA, pFBA |

Experimental Protocols for Integrated Cofactor Analysis

Protocol 1: In Silico Cofactor Balance Assessment (CBA)

This protocol is adapted from the methodology used to analyze butanol production pathways in E. coli [4].

- Model Selection and Modification: Select a genome-scale metabolic model (e.g., E. coli Core model). Introduce the stoichiometric reactions for the heterologous production pathway of interest.

- Define Objective Function: Set the model's objective function to maximize the production rate of the target chemical (e.g., butanol).

- Simulation and Flux Prediction: Perform Flux Balance Analysis (FBA) under defined environmental constraints (e.g., glucose uptake rate) to obtain a flux distribution.

- Cofactor Tracking: Implement the CBA algorithm to parse the flux solution. Categorize and sum the fluxes of all reactions producing and consuming key cofactors (ATP, NADH, NADPH).

- Identify Imbalance: Calculate the net balance for each cofactor pool. A significant surplus or deficit indicates a cofactor imbalance that may limit theoretical yield.

- Iterative Constraining: To mitigate unrealistic flux solutions (e.g., high-flux futile cycles), apply additional constraints based on experimental data, such as flux ranges from 13C-MFA, or use loopless FBA.
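
The cofactor tracking and imbalance steps above can be sketched as a simple ledger over a flux solution. This is not the published CBA algorithm, only an illustration of the bookkeeping idea: each reaction carries a category label, cofactor coefficients, and a flux, and the ledger sums production and consumption per category. The reaction names, categories, coefficients, and fluxes are all hypothetical. For a complete steady-state solution the totals cancel by construction, so the informative output is where the turnover happens, for example how much ATP is burned in dissipative reactions.

```python
from collections import defaultdict

# (reaction name, category, {cofactor: net stoichiometric coefficient}, flux)
# Every entry here is hypothetical and chosen only to make the arithmetic easy.
FLUX_SOLUTION = [
    ("glycolysis_lumped", "central", {"ATP": 2, "NADH": 2}, 10.0),
    ("butanol_pathway", "product", {"NADH": -4}, 4.0),
    ("maintenance", "maintenance", {"ATP": -1}, 8.0),
    ("atp_futile_cycle", "dissipation", {"ATP": -1}, 12.0),
    ("nadh_overflow", "dissipation", {"NADH": -1}, 4.0),
]


def cofactor_ledger(solution):
    """Return {cofactor: {category: (produced, consumed)}} for a flux solution."""
    ledger = defaultdict(lambda: defaultdict(lambda: [0.0, 0.0]))
    for _name, category, coefficients, flux in solution:
        for cofactor, coefficient in coefficients.items():
            turnover = coefficient * flux  # sign also handles reversed fluxes
            if turnover >= 0:
                ledger[cofactor][category][0] += turnover
            else:
                ledger[cofactor][category][1] += -turnover
    return {
        cofactor: {cat: tuple(pair) for cat, pair in categories.items()}
        for cofactor, categories in ledger.items()
    }


def net_balance(ledger, cofactor):
    """Total production minus total consumption for one cofactor."""
    return sum(produced - consumed for produced, consumed in ledger[cofactor].values())


ledger = cofactor_ledger(FLUX_SOLUTION)
atp_produced = sum(produced for produced, _ in ledger["ATP"].values())
atp_dissipated_share = ledger["ATP"]["dissipation"][1] / atp_produced

for cofactor, categories in ledger.items():
    print(cofactor, categories, "net:", net_balance(ledger, cofactor))
print(f"share of ATP dissipated: {atp_dissipated_share:.2f}")
```

In this toy case 60% of the ATP produced ends up in the dissipation category, which is the kind of signal that would prompt the additional constraints described in the last protocol step.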

Protocol 2: Experimental 13C-Fluxomics for Cofactor Production Rates

This protocol is based on the workflow used to decode carbon and energy metabolism in P. putida [19].

- Cultivation and Isotope Labeling: Grow the engineered strain in a bioreactor with a defined medium where the sole carbon source (e.g., glucose or a phenolic acid) is replaced with its 13C-labeled equivalent (e.g., [1-13C]-glucose).

- Metabolite Sampling and Quenching: During mid-exponential growth, rapidly sample the culture and quench metabolism immediately (e.g., using cold methanol). This preserves the in vivo metabolic state.

- Metabolite Extraction: Extract intracellular metabolites from the cell pellet.

- Mass Spectrometry (MS) Analysis: Analyze the metabolite extracts using Gas Chromatography- or Liquid Chromatography-Mass Spectrometry (GC-MS/LC-MS) to measure the mass isotopomer distributions of key intermediate metabolites.

- Flux Calculation: Use specialized software (e.g., INCA, 13C-FLUX) to integrate the measured MS data, extracellular uptake/secretion rates, and biomass composition into a stoichiometric model. The software performs a non-linear optimization to compute the most probable intracellular flux map.

- Cofactor Flux Determination: From the estimated flux map, extract the fluxes of reactions that generate or consume cofactors (e.g., flux through G6PDH in PPP for NADPH, flux through malic enzyme for NADPH, flux through ATP synthase for ATP). This provides a quantitative picture of cofactor metabolism.
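
Before flux fitting, the mass isotopomer distributions measured in the MS step are usually normalized and summarized. The sketch below shows two common summary calculations: normalizing raw peak intensities into a mass isotopomer distribution (MID) and computing the mean 13C enrichment as the sum of i times m_i divided by the number of carbons. It assumes the intensities are already corrected for natural isotope abundance, which dedicated tools handle in practice. The intensities are hypothetical.

```python
def normalize_mid(intensities):
    """Convert raw M+0..M+n peak intensities into fractions that sum to 1."""
    total = sum(intensities)
    if total <= 0:
        raise ValueError("intensities must sum to a positive value")
    return [value / total for value in intensities]


def mean_enrichment(mid):
    """Average fraction of labeled carbons: sum(i * m_i) / n for M+0..M+n."""
    n_carbons = len(mid) - 1
    if n_carbons < 1:
        raise ValueError("MID needs at least M+0 and M+1")
    return sum(i * fraction for i, fraction in enumerate(mid)) / n_carbons


# Hypothetical intensities for a three-carbon metabolite (M+0, M+1, M+2, M+3).
raw_intensities = [600.0, 250.0, 100.0, 50.0]
mid = normalize_mid(raw_intensities)
enrichment = mean_enrichment(mid)

print("MID:", [round(x, 3) for x in mid])
print(f"mean 13C enrichment: {enrichment:.3f}")
```

These summaries are only a sanity check on labeling data. The flux map itself still comes from the non-linear fitting described in the flux calculation step.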

Visualization of Metabolic Pathways and Workflows

Diagram 1: Cofactor Nodes in a Metabolic Network. This map highlights key nodes in central metabolism (yellow, blue) where major cofactor transactions (red for ATP, green for NAD(P)H) occur, feeding into an engineered product pathway.

Diagram 2: Integrated Cofactor Analysis Workflow. This workflow illustrates the cyclical process of using in silico predictions to guide experimental design, with experimental results then being used to refine the computational models.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key reagents and materials for conducting cofactor balance research.

| Reagent / Material | Function / Application | Example Use Case |

|---|---|---|

| 13C-Labeled Substrates (e.g., [1-13C]-Glucose, [U-13C]-Glucose) | Serves as a tracer for 13C-MFA, enabling the experimental determination of intracellular metabolic fluxes [19]. | Quantifying flux through the Pentose Phosphate Pathway versus Glycolysis. |

| Quenching Solution (e.g., Cold Methanol Buffers) | Rapidly halts all metabolic activity to capture an accurate snapshot of the intracellular metabolome at the time of sampling. | Preserving in vivo metabolite concentrations for subsequent LC-MS analysis. |

| Genome-Scale Metabolic Model (e.g., iJO1366 for E. coli) | A computational representation of an organism's metabolism, used for in silico simulation and prediction of metabolic behavior [18]. | Performing FBA to predict theoretical yields and identify cofactor imbalances in engineered strains. |

| LC-MS / GC-MS Instrumentation | The core analytical platform for identifying and quantifying metabolites (metabolomics) and analyzing 13C-isotopomer distributions. | Measuring absolute concentrations of ATP/ADP/AMP and NADPH/NADP+; determining labeling patterns for 13C-MFA. |

| Cloning & Genetic Engineering Kits | Tools for constructing plasmids and engineering microbial genomes to implement proposed metabolic modifications. | Overexpressing a transhydrogenase or swapping the cofactor specificity of a key oxidoreductase [16] [18]. |

In the rigorous process of drug development, the inability to accurately predict and control molecular interactions leads directly to clinical failure. Roughly 40–50% of clinical failures are attributed to a lack of clinical efficacy, and approximately 30% to unmanageable toxicity [20]. These failures often stem from a common root: a critical imbalance between a drug's intended design and its actual behavior in a biological system. This imbalance manifests as poor binding to the intended target or damaging off-target effects, ultimately derailing promising therapies. This article examines these high-stakes imbalances through the lens of a parallel challenge in bioengineering: predicting cofactor balance in metabolic pathways, where the gap between in silico models and experimental reality also dictates success or failure.

The Drug Development Imbalance: Efficacy vs. Toxicity

The journey from a drug candidate to an approved therapy is fraught with risk, with data showing that over 90% of candidates that enter clinical trials ultimately fail [20]. The primary reasons for this high attrition rate are a direct reflection of the fundamental imbalances in drug design.

Table 1: Primary Reasons for Clinical Drug Development Failure

| Reason for Failure | Proportion of Failures | Root Cause (Imbalance) |

|---|---|---|

| Lack of Clinical Efficacy | 40%–50% | Poor Binding & Engagement: The drug does not effectively interact with its intended target at the required concentration or duration [20] [21]. |

| Unmanageable Toxicity | ~30% | Off-Target Effects: The drug interacts with unintended targets or healthy tissues, causing adverse effects [20]. |

| Poor Drug-Like Properties | 10%–15% | Pharmacokinetic Imbalance: The drug's absorption, distribution, metabolism, or excretion (ADME) properties prevent it from reaching the target site effectively [20]. |

A leading cause of efficacy failure is a lack of target engagement—the failure of a drug molecule to interact sufficiently with its intended biological target to elicit the desired therapeutic effect [21]. This can occur due to:

- Insufficient drug concentrations at the target site, often due to poor pharmacokinetic properties or inadequate dosing [21].

- Low binding affinity or selectivity, where the drug does not bind strongly or specifically enough to its target [21].

- An inadequate understanding of the target's biology, including its complex interactions, isoforms, and dynamics [21].

Conversely, toxicity failures often arise from off-target effects. A prominent example is found in Antibody-Drug Conjugates (ADCs), designed to be "magic bullets" that deliver potent cytotoxic agents directly to cancer cells. However, off-site, off-target toxicity remains a major cause of ADC failure, occurring when the cytotoxic payload is released prematurely in the bloodstream or delivered to healthy cells, damaging vital organs and bone marrow [22]. This has led to the failure of numerous clinical trials, such as vadastuximab talirine and rovalpituzumab tesirine, due to intolerable toxicity or fatal adverse events [22].

In Silico Predictions: The Promise and Peril of Modeling

The field of metabolic engineering faces a strikingly similar challenge: predicting and managing the balance of cellular cofactors. In silico models are indispensable tools for designing microbial "bio-factories," but their predictions can be misleading if they fail to capture biological complexity.

The Cofactor Balance Challenge

Microorganisms require energy and electrons, supplied by cofactors like ATP and NAD(P)H, to grow and produce chemicals. A synthetic production pathway introduced into a host cell can disrupt the homeostasis of these cofactors, creating an imbalance [23] [4]. If the model does not accurately predict this imbalance, the engineered strain will divert resources inefficiently, leading to low product yields and high byproduct formation.

Limitations of Constraint-Based Modeling

A primary computational method is Constraint-Based Modeling (CBM), which includes Flux Balance Analysis (FBA). While useful, these steady-state models are often underdetermined, meaning they have multiple mathematically valid solutions [23] [4]. This can lead to predictions that include unrealistic futile cofactor cycles, that is, energy-wasting loops that are tightly regulated in real cells [23] [4]. Consequently, models may overestimate production yields by assuming the cell will optimize for the engineer's goal, whereas in reality the cell's native regulatory and kinetic constraints dominate.
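
A toy example makes the underdetermination concrete. In the sketch below, a hypothetical five-reaction network contains a phosphofructokinase/fructose-bisphosphatase loop whose only net effect is to hydrolyze ATP. Adding any multiple of that loop to a valid flux distribution leaves the steady state and the product flux unchanged, so the model alone cannot tell the solutions apart. Choosing the candidate with the smallest total flux, which is the idea behind parsimonious FBA (pFBA), removes the loop. The network, the flux values, and the lumped ATP regeneration reaction are assumptions made for illustration.

```python
# Columns: uptake, PFK, FBPase, product_export, ATP_regen (all hypothetical).
REACTIONS = ["uptake", "PFK", "FBPase", "product_export", "ATP_regen"]
S = [
    [1, -1,  1,  0,  0],  # F6P
    [0,  1, -1, -1,  0],  # FBP
    [0, -1,  0,  0,  1],  # ATP
    [0,  1,  0,  0, -1],  # ADP
]

BASE = [10.0, 10.0, 0.0, 10.0, 10.0]  # a steady-state solution without the loop
CYCLE = [0.0, 1.0, 1.0, 0.0, 1.0]     # PFK + FBPase + ATP_regen: net ATP burn


def mat_vec(matrix, vector):
    """Multiply a list-of-rows matrix by a vector."""
    return [sum(a * x for a, x in zip(row, vector)) for row in matrix]


def is_steady_state(fluxes, tol=1e-9):
    """True if S . v = 0 for every metabolite."""
    return all(abs(x) < tol for x in mat_vec(S, fluxes))


def with_cycle(amount):
    """Add `amount` units of futile-cycle flux to the base solution."""
    return [b + amount * c for b, c in zip(BASE, CYCLE)]


candidates = [with_cycle(amount) for amount in (0.0, 5.0, 50.0)]
for fluxes in candidates:
    product = fluxes[REACTIONS.index("product_export")]
    print(is_steady_state(fluxes), "product:", product, "total flux:", sum(map(abs, fluxes)))

# pFBA-style tie-break: among equally productive solutions, keep the leanest one.
parsimonious = min(candidates, key=lambda v: sum(abs(x) for x in v))
print("selected:", dict(zip(REACTIONS, parsimonious)))
```

All three candidates export the same amount of product, but only the parsimonious one avoids routing ATP through the loop.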

The Advent of Advanced Kinetic Models

To address these shortcomings, researchers are turning to kinetic modeling, which simulates the dynamic behavior of metabolic networks. A 2025 study used perturbation-response simulations on kinetic models of E. coli's central carbon metabolism and found that metabolic systems exhibit "hard-coded responsiveness" [24]. The study demonstrated that minor initial perturbations in metabolite concentrations can amplify over time, leading to significant deviations from the desired state. Furthermore, it identified adenyl cofactors (ATP/ADP) as consistently critical in governing the system's responsiveness to change [24]. This highlights a key weakness of simpler models: their inability to capture the dynamic, non-linear sensitivities that are inherent to living systems.

The following diagram illustrates the workflow of such a perturbation-response analysis, revealing how small imbalances can be amplified.

Bridging the Gap: Experimental Validation and Advanced Tools

The gap between prediction and reality can only be closed by robust experimental validation and the development of more sophisticated tools.

Experimental Protocols for Validation

- CETSA (Cellular Thermal Shift Assay): This method allows researchers to measure target engagement directly in intact cells and tissues under physiological conditions. By quantifying how a drug binding stabilizes a target protein against heat denaturation, CETSA provides a label-free, unbiased assessment of whether a drug is effectively engaging its intended target in situ [21].

- Advanced Preclinical Models: Traditional 2D cell cultures often fail to predict human clinical outcomes. Advanced models like Patient-Derived Xenografts (PDXs) and 3D organoids are becoming the gold standard. PDXs retain the original tumor's architecture and heterogeneity, providing a highly clinically relevant platform to test ADC efficacy and toxicity [22]. Organoids offer a controlled, yet physiologically relevant, 3D environment to isolate and analyze specific mechanisms of toxicity [22].
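
The CETSA readout described above is typically summarized as a shift in apparent melting temperature between vehicle-treated and drug-treated samples. The sketch below estimates the temperature at which half of the target protein remains soluble by linear interpolation between neighboring data points, then reports the shift. Real analyses usually fit a sigmoidal curve. The interpolation, the function name, and the data are simplifying assumptions for illustration.

```python
def melting_temperature(temperatures, soluble_fraction, threshold=0.5):
    """Interpolate the temperature where the soluble fraction falls to `threshold`."""
    points = list(zip(temperatures, soluble_fraction))
    for (t_low, f_low), (t_high, f_high) in zip(points, points[1:]):
        if f_low >= threshold > f_high:
            return t_low + (f_low - threshold) / (f_low - f_high) * (t_high - t_low)
    raise ValueError("melting curve does not cross the threshold")


# Hypothetical soluble fractions (normalized to the lowest temperature).
temperatures_c = [40, 44, 48, 52, 56, 60]
vehicle = [1.00, 0.95, 0.70, 0.30, 0.08, 0.02]
drug_treated = [1.00, 0.98, 0.90, 0.65, 0.25, 0.05]

tm_vehicle = melting_temperature(temperatures_c, vehicle)
tm_drug = melting_temperature(temperatures_c, drug_treated)
delta_tm = tm_drug - tm_vehicle

print(f"Tm vehicle: {tm_vehicle:.1f} C, Tm drug: {tm_drug:.1f} C, shift: {delta_tm:.1f} C")
```

A positive shift is consistent with ligand-induced stabilization. Whether a given shift is meaningful depends on replicate variability, which this sketch does not model.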

The Evolving Role of In Silico Tools

While in silico tools have limitations, they are rapidly evolving. AlphaFold 2 has revolutionized protein structure prediction, yet systematic evaluations reveal its limitations in capturing the full spectrum of biologically relevant states [25]. For nuclear receptors, a key drug target family, AlphaFold 2 shows high accuracy for stable conformations but systematically underestimates ligand-binding pocket volumes and misses functionally important asymmetric conformations in homodimeric receptors [25]. This underscores that while computational tools are powerful, their predictions, especially regarding flexible regions and cofactor interactions, must be validated experimentally.

Table 2: Comparison of In Silico & Experimental Methodologies

| Methodology | Key Application | Strengths | Limitations & Data Requirements |

|---|---|---|---|

| Constraint-Based Modelling (FBA) [23] [4] [26] | Predicting flux in metabolic networks at steady state. | Fast; applicable to genome-scale models; requires only stoichiometric network. | Underdetermined; predicts unrealistic futile cycles; lacks regulatory/kinetic details. |

| Kinetic Modelling & Perturbation-Response [24] | Simulating dynamic metabolic responses and stability. | Captures non-linear dynamics and system responsiveness; more biologically realistic. | Computationally heavy; requires extensive kinetic parameters; model-specific. |

| CETSA [21] | Measuring drug-target engagement in physiological conditions. | Label-free; uses intact cells; confirms on-target binding. | Does not confirm functional efficacy; requires a specific assay for each target. |

| Advanced Preclinical Models (PDXs, Organoids) [22] | Predicting clinical efficacy and toxicity. | High clinical translatability; retains tumor heterogeneity and microenvironment. | Costly and time-consuming to establish; not all tumor types grow readily. |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions

| Research Tool | Function in Addressing Imbalance |

|---|---|

| Genome-Scale Metabolic Models (GEMs) [26] | Provide a stoichiometric blueprint of an organism's metabolism to simulate product yield and identify engineering targets. |

| Site-Specific Conjugation Kits [22] | Improve the homogeneity and stability of Antibody-Drug Conjugates (ADCs), reducing off-target payload release and toxicity. |

| Patient-Derived Xenograft (PDX) Libraries [22] | Offer highly translational in vivo models for evaluating ADC efficacy and toxicity, reflecting human patient responses. |

| CETSA Kits [21] | Enable quantitative measurement of target engagement in cells and tissues, validating a drug's ability to bind its intended target. |

| Structured Biomarker Panels [21] | Monitor pharmacodynamic responses and off-target effects in clinical trials, linking target engagement to clinical outcome. |

The high stakes of imbalance in drug development and metabolic engineering are clear: failed trials and inefficient processes. The central thesis unifying these fields is that over-reliance on simplified in silico models, which neglect biological complexity and dynamics, leads to predictions that do not hold up in experimental or clinical settings. The path forward requires a more integrated approach. For drug developers, this means employing tools like CETSA for early target engagement validation and using advanced preclinical models to de-risk toxicity. For metabolic engineers, it involves moving beyond simple constraint-based models to incorporate kinetic and thermodynamic constraints. In both fields, success hinges on closing the loop between computational prediction and rigorous experimental validation, ensuring that designs are not just theoretically sound, but biologically balanced.

Linking Cofactor Dynamics to Broader Drug Discovery Challenges

In the intricate landscape of drug discovery, cofactors—essential non-protein chemical compounds—orchestrate a vast array of enzymatic reactions crucial to cellular function. The dynamics of these cofactors, particularly their production, consumption, and regeneration (collectively termed "cofactor balance"), fundamentally influence metabolic pathways, protein function, and ultimately, drug efficacy and safety [4]. Accurately estimating this balance has emerged as a critical challenge, giving rise to two distinct methodological paradigms: experimental estimation, which measures cofactor dynamics in biological systems, and in silico estimation, which uses computational models to predict these relationships [4] [27]. This guide provides a comparative analysis of these approaches, examining their performance, applications, and limitations within modern drug development workflows. The strategic selection between these methods can significantly impact the efficiency of developing microbial cell factories for biomanufacturing, the accuracy of predicting off-target drug effects, and the successful targeting of complex protein-cofactor interactions [28] [27].

Methodological Comparison: Experimental vs. In Silico Approaches

The following section details the core protocols for the leading techniques in both experimental and in silico cofactor analysis.

Experimental Protocol: Structural Dynamics Response (SDR) Assay

The SDR assay is an innovative experimental technique that leverages the natural vibrations of proteins to detect ligand binding without the need for target-specific reagents [29].

Detailed Workflow:

- Sensor-Target Fusion: The target protein is genetically fused to a small fragment of the NanoLuc luciferase (NLuc) sensor protein.

- Ligand Incubation: The fused protein is incubated with a library of potential drug ligands.

- Sensor Reconstitution: The larger, missing fragment of the NLuc protein is added to the mixture. The intact sensor protein reforms, but its light output is modulated by the vibrational dynamics of the attached target protein.

- Ligand Binding Detection: Binding of a ligand to the target protein alters the protein's structural dynamics. This change is transmitted to the NLuc sensor, resulting in a measurable increase or decrease in luminescence intensity.

- Quantitative High-Throughput Screening (qHTS): The entire process is automated to rapidly test thousands of drug molecules simultaneously across different dosages, enabling the assessment of binding affinity and the detection of allosteric binders [29].

Experimental Protocol: SELEX-seq for Cofactor-Induced Specificity

SELEX-seq is used to determine how cofactors alter the DNA-binding specificity of transcription factors, revealing latent specificities not observable with the transcription factor alone [30].

Detailed Workflow:

- Complex Formation: The transcription factor of interest is purified in complex with its cofactor (e.g., the Hox protein with Exd/Homothorax).

- In Vitro Selection with Random DNA Library: The protein complex is incubated with a vast pool of double-stranded DNA oligonucleotides containing random sequences.

- EMSA Enrichment: Protein-DNA complexes are isolated from unbound DNA using an Electrophoretic Mobility Shift Assay (EMSA).

- PCR Amplification: The bound DNA sequences are recovered and amplified via Polymerase Chain Reaction (PCR).

- Iterative Selection: The enriched DNA pool is used as input for subsequent rounds of selection (typically 3-4 rounds) to refine high-affinity binders.

- Massively Parallel Sequencing: The selected DNA pools from each round are sequenced using high-throughput platforms (e.g., Illumina).

- Computational Analysis: A biophysical model is applied to the sequencing data to calculate the relative affinity of the protein-cofactor complex for every possible DNA sequence, generating a unique binding fingerprint [30].
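
The final analysis step can be illustrated with a heavily simplified enrichment calculation. The sketch assumes that the relative affinity of a k-mer can be approximated by the ratio of its frequency after selection to its frequency in the initial library, raised to the power 1/r for r rounds, and scaled so the best k-mer equals 1. Full analysis pipelines additionally model the initial library statistically and treat reverse complements, which this sketch ignores. The reads and function names are hypothetical.

```python
from collections import Counter


def kmer_frequencies(reads, k):
    """Frequency of every k-mer observed across a list of reads."""
    counts = Counter()
    for read in reads:
        for start in range(len(read) - k + 1):
            counts[read[start:start + k]] += 1
    total = sum(counts.values())
    return {kmer: count / total for kmer, count in counts.items()}


def relative_affinities(initial_reads, selected_reads, k, n_rounds):
    """Per-round enrichment of each k-mer, normalized to the most enriched one."""
    initial = kmer_frequencies(initial_reads, k)
    selected = kmer_frequencies(selected_reads, k)
    enrichment = {
        kmer: (selected[kmer] / initial[kmer]) ** (1.0 / n_rounds)
        for kmer in selected
        if kmer in initial  # k-mers unseen in the initial library are skipped
    }
    if not enrichment:
        return {}
    best = max(enrichment.values())
    return {kmer: value / best for kmer, value in enrichment.items()}


# Tiny hypothetical read sets; real libraries contain millions of reads.
round0 = ["ACGT", "TTTT", "ACGA", "GGGG"]
round1 = ["ACGT", "ACGT", "ACGA", "TTTT"]

affinities = relative_affinities(round0, round1, k=4, n_rounds=1)
for kmer, value in sorted(affinities.items(), key=lambda item: -item[1]):
    print(kmer, round(value, 2))
```

Taking the r-th root expresses enrichment per round, so pools from different rounds can be compared on the same scale.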

In Silico Protocol: Cofactor Balance Assessment (CBA)

CBA is a constraint-based modeling approach used to quantify the impact of synthetic metabolic pathways on cellular cofactor pools [4].

Detailed Workflow:

- Model Construction: A Genome-scale Metabolic Model (GEM) of the host organism (e.g., E. coli), representing all known gene-protein-reaction associations, serves as the base.

- Pathway Integration: Heterologous reactions for the desired biosynthetic pathway (e.g., for butanol production) are introduced into the model.

- Constraint Definition: Physiological constraints are applied, such as substrate uptake rate, non-growth-associated maintenance energy (NGAM), and a minimum growth rate.

- Simulation: Computational techniques like Flux Balance Analysis (FBA) are used to simulate metabolism and predict metabolic fluxes.

- Balance Calculation: The algorithm tracks the consumption and production of key cofactors (e.g., ATP, NADH, NADPH) across the entire network in the presence of the new pathway.

- Identification of Imbalance: The analysis identifies futile cofactor cycles and quantifies cofactor imbalance, which can compromise theoretical product yield. This helps in selecting optimal pathways and hosts [4].

In Silico Protocol: Molecular Docking and Dynamics for Cofactor Binding

These computational methods predict how small molecules, including drugs, interact with the cofactor-binding sites of enzymes [28] [31].

Detailed Workflow:

- Protein Preparation: The 3D structure of the target protein (e.g., human Thiopurine S-methyltransferase, TPMT) is obtained from the Protein Data Bank (PDB). Water molecules and non-essential ligands are removed, and polar hydrogens and charges are added.

- Ligand Preparation: The 3D structure of the drug candidate (e.g., Telmisartan) is energy-minimized.

- Molecular Docking: The ligand is computationally posed into the defined binding site of the protein (e.g., the SAM/SAH cofactor-binding site). Hundreds to thousands of poses are generated and ranked by a scoring function.

- Validation (Redocking): The docking protocol is validated by redocking a native ligand (e.g., S-adenosyl-L-homocysteine, SAH) and calculating the Root-Mean-Square Deviation (RMSD) from the crystallized pose. An RMSD of <2.0 Å is generally acceptable [28].

- Molecular Dynamics (MD) Simulation (Optional): The top-ranked docked complex is subjected to an MD simulation. The system is solvated in a water box, ions are added, and Newton's equations of motion are solved over nanoseconds to microseconds to observe the stability of the binding interaction and conformational changes [31].
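
The redocking check in the validation step reduces to an RMSD between matched atoms of the crystallized and redocked ligand poses. The sketch below computes it without any superposition, which is appropriate when both poses share the receptor's coordinate frame, and it assumes the atoms are listed in the same order in both poses. The coordinates are hypothetical. The 2.0 Å cutoff is the acceptance threshold quoted above.

```python
import math


def rmsd(reference, pose):
    """Root-mean-square deviation between two equally ordered coordinate lists."""
    if not reference or len(reference) != len(pose):
        raise ValueError("poses must be non-empty and contain the same atoms")
    squared = sum(
        (a - b) ** 2
        for ref_atom, pose_atom in zip(reference, pose)
        for a, b in zip(ref_atom, pose_atom)
    )
    return math.sqrt(squared / len(reference))


# Hypothetical heavy-atom coordinates in angstroms (x, y, z).
crystal_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
redocked_pose = [(0.3, 0.0, 0.0), (1.5, 0.4, 0.0), (1.5, 1.5, 0.5)]

redock_rmsd = rmsd(crystal_pose, redocked_pose)
protocol_ok = redock_rmsd < 2.0  # acceptance threshold from the validation step

print(f"redocking RMSD: {redock_rmsd:.2f} A, acceptable: {protocol_ok}")
```

For symmetric ligands, a symmetry-aware atom matching would be needed before this calculation. That step is outside the scope of the sketch.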

Performance Data Comparison

The table below summarizes quantitative and qualitative performance data for the featured methodologies, illustrating the trade-offs between experimental and in silico paradigms.

Table 1: Performance Comparison of Cofactor Analysis Methods

| Method | Key Performance Metrics | Throughput | Resource Requirements | Key Advantages | Primary Limitations |

|---|---|---|---|---|---|

| SDR Assay [29] | Detects allosteric binders missed by standard kinase assays; Requires minimal protein (fraction of standard tests). | High (qHTS of 1000s of compounds) | Moderate (requires protein purification & HTS instrumentation) | Universal platform; label-free; no need for protein function knowledge. | Limited to binding events that alter protein dynamics. |

| SELEX-seq [30] | Generates a comprehensive binding fingerprint (relative affinity for any DNA sequence). | Medium (requires multiple selection rounds & sequencing) | High (specialized protein purification & NGS) | Reveals latent specificities only apparent in protein-cofactor complexes. | Purely in vitro; may not capture full in vivo chromatin context. |

| CBA (FBA) [4] | Predicts Maximum Theoretical Yield (YT) and Achievable Yield (YA); e.g., YT of L-lysine in S. cerevisiae: 0.8571 mol/mol glucose [27]. | Very High (system-wide simulations) | Low (computational resources) | Genome-scale perspective; enables host strain selection & pathway design. | Predictions can be compromised by unrealistic futile cycles; requires manual constraint tuning. |

| Molecular Docking [28] | Binding affinity score (e.g., Vina score for Telmisartan with TPMT: -11.2 kcal/mol); RMSD for validation (<1.0 Å is excellent). | High (1000s of compounds virtually screened) | Low to Moderate | Rapid screening of large compound libraries; atomic-level insight. | Scoring functions can overestimate affinity; limited conformational sampling. |

| MD Simulations [31] | Simulation time (ns to µs); system size (10,000s to millions of atoms); RMSD and RMSF of protein-ligand complex. | Low (computationally intensive, limited timescales) | Very High (HPC clusters) | Provides dynamic view of binding; assesses complex stability. | High computational cost; force field inaccuracies; limited sampling of rare events. |

Research Reagent Solutions

The table below lists essential reagents and tools for implementing the described methodologies.

Table 2: Essential Research Reagents and Tools

| Reagent / Tool | Function / Application | Method Category |

|---|---|---|

| NanoLuc Luciferase (NLuc) | Sensor protein whose light output is modulated by the dynamics of an attached target protein to detect ligand binding. | Experimental (SDR Assay) [29] |

| Random DNA Oligomer Library | A diverse pool of DNA sequences used as a starting point for selecting high-affinity binding sites for a protein-cofactor complex. | Experimental (SELEX-seq) [30] |

| Genome-Scale Metabolic Model (GEM) | A mathematical representation of an organism's metabolism, used as a foundation for simulating cofactor usage and production yields. | In Silico (CBA) [4] [27] |

| Force Fields (e.g., AMBER, CHARMM) | Empirical potentials describing interatomic interactions, essential for energy calculations in molecular docking and dynamics simulations. | In Silico (Docking/MD) [31] |

| Functionalized Cofactor Mimics | Synthetic cofactors with clickable handles (e.g., alkynes, azides) or photoaffinity labels for profiling cofactor interactomes and PTMs. | Hybrid / Chemical Proteomics [32] |

Visualizing Workflows and Relationships

The following diagrams illustrate the core workflows and conceptual relationships discussed in this guide.

[Diagrams not reproduced: SDR Assay Workflow; SELEX-seq Workflow; Cofactor Balance In Silico Analysis; Method Selection Strategy]

From Virtual Models to Bench Techniques: A Toolkit for Cofactor Analysis

For researchers in metabolic engineering and drug development, predicting cellular metabolism in silico is crucial for accelerating strain design and identifying therapeutic targets. This guide compares two foundational approaches in this domain: the well-established Flux Balance Analysis (FBA) and the concept of Cofactor Balance Assessment (CBA), framing them within the critical research context of in silico versus experimental cofactor balance estimation.

Constraint-based metabolic modeling provides a computational framework to analyze metabolic networks at the genome scale without requiring detailed kinetic parameters. These methods rely on the stoichiometry of biochemical reactions to predict systemic metabolic capabilities. The core principle is the mass-balance constraint: for each metabolite in the network, the rate of production must equal the rate of consumption under steady-state assumptions [33]. This approach allows researchers to simulate how microbial or human cells utilize nutrients to grow, produce energy, or synthesize products of interest, making it invaluable for both fundamental research and industrial applications.

Flux Balance Analysis (FBA): Concept and Workflow

Core Principles and Mathematical Formulation

Flux Balance Analysis is a mathematical method for simulating metabolism in cells using genome-scale metabolic reconstructions [33]. FBA operates on two key assumptions: the system is at steady-state, meaning metabolite concentrations do not change over time, and the organism has been optimized through evolution for a biological objective, such as maximizing growth or ATP production [33].

Mathematically, this is represented as:

- Steady-State Constraint: \( S \cdot v = 0 \), where \( S \) is the stoichiometric matrix and \( v \) is the vector of metabolic fluxes [33].

- Objective Function: \( Z = c^{T}v \), where \( c \) is a vector of weights indicating how much each reaction contributes to the objective [33].

The system is solved using linear programming to find a flux distribution that maximizes the objective function while satisfying the steady-state constraint and the flux capacity constraints, that is, the lower and upper bounds imposed on each reaction flux [33].
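To make the formulation concrete, the following is a minimal sketch of the linear program on a toy network. The five-reaction network, its bounds, and the function name `run_fba` are illustrative assumptions and do not come from any cited model; SciPy's `linprog` stands in for the solvers used by dedicated FBA packages.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network (illustration only, not from the cited studies):
#   R1: -> A      substrate uptake, capped at 10 flux units
#   R2: A -> B
#   R3: A -> C
#   R4: B ->      stand-in "biomass" drain (the objective)
#   R5: C ->      by-product secretion
# Rows are metabolites A, B, C; columns are reactions R1..R5.
S = np.array([
    [1.0, -1.0, -1.0,  0.0,  0.0],  # A
    [0.0,  1.0,  0.0, -1.0,  0.0],  # B
    [0.0,  0.0,  1.0,  0.0, -1.0],  # C
])

# Objective weights c: only R4 contributes to Z = c^T v.
c = np.array([0.0, 0.0, 0.0, 1.0, 0.0])

# Flux capacity constraints (lower, upper) for each reaction.
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000), (0, 1000)]


def run_fba(S, c, bounds):
    """Maximize c^T v subject to S v = 0 and lb <= v <= ub."""
    # linprog minimizes, so the objective is negated to maximize.
    res = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                  bounds=bounds, method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    return res.x, float(c @ res.x)


fluxes, objective = run_fba(S, c, bounds)
print("optimal objective:", objective)
print("flux distribution:", fluxes)
```

In this toy case the uptake bound on R1 is the only active capacity constraint, so the optimum routes all substrate through R2 and R4. Genome-scale models follow the same pattern with thousands of rows and columns.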

Standard FBA Workflow

The following diagram illustrates the standard workflow for performing a Flux Balance Analysis.

Advanced FBA Frameworks and Cofactor Balancing

Recent advancements have led to more sophisticated FBA frameworks that better integrate experimental data and pathway analysis. The TIObjFind framework, for instance, integrates Metabolic Pathway Analysis (MPA) with FBA to identify context-specific metabolic objective functions by calculating Coefficients of Importance (CoIs) for reactions [34]. This helps align model predictions with experimental flux data and reveals shifting metabolic priorities under different environmental conditions [34].

For dynamic processes like batch cultures, Dynamic FBA (dFBA) simulates time-varying metabolism. One approach uses experimental time-course data (e.g., glucose and biomass concentrations) to approximate specific uptake and growth rates, which are then used as constraints in sequential FBA simulations [35]. This method has demonstrated that high-producing experimental strains can achieve up to 84% of the theoretical maximum production simulated by dFBA [35].

Cofactor Balance Assessment (CBA): Concept and Workflow

The Role of Cofactors in Metabolic Models

Cofactors, such as ATP/ADP, NADH/NAD+, and NADPH/NADP+, are essential molecules in cellular metabolism, transferring chemical groups, electrons, and energy between reactions. Assessing their balance is critical because an imbalanced cofactor pool can halt metabolic flux, making predictions biologically irrelevant. Cofactor Balance Assessment is not a standalone method like FBA but is a fundamental constraint embedded within models like FBA to ensure thermodynamic feasibility.

Integrating CBA into Metabolic Models

In practice, CBA is implemented by ensuring that the production and consumption of each cofactor are balanced across the entire network at steady state. This is inherently part of the stoichiometric matrix \( S \) in FBA. The following workflow illustrates how CBA is integrated into a larger metabolic modeling process to ensure thermodynamically feasible predictions.
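As a numerical illustration of this balance, the sketch below sums the producing and consuming fluxes of one cofactor from its row of the stoichiometric matrix. The function name `cofactor_turnover`, the two-reaction example, and the metabolite labels are hypothetical and chosen only for demonstration.

```python
import numpy as np


def cofactor_turnover(S, v, metabolite_ids, cofactor):
    """Return (produced, consumed, net) flux for one cofactor.

    S is the stoichiometric matrix (metabolites x reactions), v the flux
    vector, and metabolite_ids the row labels of S.
    """
    row = np.asarray(S, dtype=float)[metabolite_ids.index(cofactor)]
    contributions = row * np.asarray(v, dtype=float)
    produced = float(contributions[contributions > 0].sum())
    consumed = float(-contributions[contributions < 0].sum())
    return produced, consumed, produced - consumed


# Hypothetical example: R1 reduces NAD+ to NADH, R2 re-oxidizes NADH.
metabolite_ids = ["NADH", "NAD+"]
S = np.array([
    [ 1.0, -1.0],  # NADH
    [-1.0,  1.0],  # NAD+
])

balanced = cofactor_turnover(S, [5.0, 5.0], metabolite_ids, "NADH")
imbalanced = cofactor_turnover(S, [5.0, 3.0], metabolite_ids, "NADH")
print("balanced (produced, consumed, net):", balanced)
print("imbalanced (produced, consumed, net):", imbalanced)
```

A steady-state FBA solution always returns a net of zero for every internal cofactor. The produced and consumed totals are the informative part, since they show how much turnover a pathway design demands; a nonzero net only appears for flux vectors that violate the steady-state constraint.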

Comparative Analysis: FBA vs. CBA in Practice

The table below summarizes the core characteristics of FBA and how CBA is integrated as a critical component within such modeling frameworks.

Table 1: Comparative Analysis of FBA and Integrated CBA

| Feature | Flux Balance Analysis (FBA) | Cofactor Balance Assessment (CBA) |
|---|---|---|
| Primary Objective | Predict steady-state flux distributions that maximize/minimize a biological objective (e.g., growth) [33]. | Ensure thermodynamic feasibility and redox/energy balance within the metabolic network. |
| Methodological Approach | Linear Programming applied to a stoichiometrically-balanced network [33]. | A set of mass-balance constraints embedded within a larger model like FBA. |
| Key Input Requirements | Stoichiometric matrix, flux boundaries, objective function [33]. | Definition of cofactor pairs and their stoichiometric coefficients in all reactions. |
| Typical Outputs | Growth rate, product yield, full flux map for all reactions [36]. | Net flux through cofactor cycles, identification of cofactor bottlenecks. |
| Role in In Silico vs. Experimental Validation | Predicts phenotypes; validated by comparing predicted vs. measured growth rates or product secretion [35]. | A model-internal sanity check; validated by direct measurement of cofactor pools (e.g., via HPLC) or fluxomics. |
| Strengths | Computationally inexpensive, genome-scale applicability, no need for kinetic parameters [33]. | Ensures model predictions are thermodynamically feasible and identifies energy/redox inefficiencies. |
| Limitations | Relies on correct objective function; steady-state assumption may not reflect all conditions [34]. | Does not directly predict phenotype; is a component of a larger modeling strategy. |

Experimental Data and Validation

Case Study: Validating dFBA for Shikimic Acid Production

A 2024 study applied dFBA to evaluate the performance of an engineered E. coli strain for shikimic acid production [35]. The methodology and results provide a clear example of in silico and experimental data integration.

Experimental Protocol:

- Data Acquisition: Time-course data for glucose and biomass concentrations were manually extracted from literature and approximated using fifth-order polynomial regression [35].

- Constraint Calculation: The polynomial equations were differentiated and divided by the cell concentration to obtain time-dependent specific glucose uptake and growth rates for dFBA constraints [35].

- Simulation: A bi-level FBA optimization was performed, sequentially maximizing both growth and shikimic acid production under the calculated constraints [35].
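The constraint-calculation step can be sketched as follows. This is a minimal illustration assuming NumPy's `Polynomial.fit`; the function name `specific_rates` and the synthetic time course (exponential growth at 0.25 1/h with a biomass yield of 0.5 g/g) are hypothetical and are not the data or code of the cited study.

```python
import numpy as np
from numpy.polynomial import Polynomial


def specific_rates(t, glucose, biomass, degree=5):
    """Fit polynomials to time-course data and return a rate function.

    The returned function maps a time point to
    (specific glucose uptake rate, specific growth rate).
    """
    g_fit = Polynomial.fit(t, glucose, degree)
    x_fit = Polynomial.fit(t, biomass, degree)
    dg_dt = g_fit.deriv()  # d[glucose]/dt, negative while glucose is consumed
    dx_dt = x_fit.deriv()  # d[biomass]/dt

    def rates(time):
        x = x_fit(time)
        uptake = -dg_dt(time) / x  # substrate consumed per unit biomass per time
        growth = dx_dt(time) / x   # specific growth rate
        return uptake, growth

    return rates


# Synthetic data (assumption, for demonstration only).
t = np.linspace(0.0, 8.0, 20)          # time, h
biomass = 0.1 * np.exp(0.25 * t)       # biomass, g/L
glucose = 20.0 - (biomass - 0.1) / 0.5 # glucose, g/L

rates = specific_rates(t, glucose, biomass)
uptake_4h, growth_4h = rates(4.0)
print("specific uptake at 4 h:", uptake_4h)
print("specific growth at 4 h:", growth_4h)
```

Each pair of rates would then be applied as flux bounds for the FBA problem solved at that time point. High-order polynomials can oscillate near the ends of the sampled interval, so rates taken close to the first and last time points deserve extra scrutiny.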

Results and Validation: The dFBA simulation provided a theoretical maximum for shikimic acid concentration under the experimental constraints of substrate consumption and bacterial growth. Comparison with actual experimental data showed that the high-producing strain constructed in the lab achieved a concentration that was 84% of the simulated maximum, providing a clear metric for the strain's performance and highlighting room for improvement [35].

Case Study: The TIObjFind Framework

The novel TIObjFind framework addresses the challenge of selecting an appropriate objective function in FBA, which is critical for accurate predictions [34].

Methodology:

- Optimization Problem: Reformulates objective function selection as a problem that minimizes the difference between predicted and experimental fluxes.

- Pathway Analysis: Maps FBA solutions onto a Mass Flow Graph (MFG) for pathway-based interpretation.

- Coefficient Assignment: Determines Coefficients of Importance (CoIs) that quantify each reaction's contribution to an objective function that best aligns with the data [34].

Application: This framework was successfully applied to a multi-species system for isopropanol-butanol-ethanol (IBE) production, demonstrating a good match with experimental data and an ability to capture stage-specific metabolic objectives [34].

Essential Research Reagents and Tools

The table below lists key resources, including software and databases, essential for conducting FBA and related metabolic modeling studies.

Table 2: Key Research Tools and Resources for Metabolic Modeling

| Tool/Resource Name | Type | Primary Function in Research |
|---|---|---|
| COBRA Toolbox [35] | Software Toolbox | Provides a suite of functions for constraint-based reconstruction and analysis; includes implementations for dFBA. |
| KBase (KnowledgeBase) [36] | Online Platform | An integrated platform that includes apps for building models, running FBA, and comparing FBA solutions side-by-side. |
| GitHub Repository [34] | Code Repository | Hosts custom scripts and case study data for advanced frameworks like TIObjFind. |
| EcoCyc / KEGG [34] | Biological Database | Foundational databases for metabolic pathway information and stoichiometric data used in network reconstruction. |
| AlphaFold [37] | Protein Structure DB | Provides predicted 3D protein structures for analyzing enzyme active sites, though not directly for FBA. |
| UniProt [37] | Protein Sequence DB | Provides amino acid sequences for metabolic enzymes, useful for model refinement and validation. |

Flux Balance Analysis stands as a powerful, scalable in silico method for predicting metabolic phenotypes, with its accuracy continually enhanced by frameworks like TIObjFind and dFBA that better integrate experimental data. Cofactor Balance Assessment, while not a standalone predictive tool, is an indispensable component of model validation, ensuring thermodynamic feasibility. Combining in silico simulations, which can evaluate strain performance against a theoretical maximum, with experimental validation data creates a powerful feedback loop. This synergy is pivotal for advancing metabolic engineering and drug development, guiding efficient strain design and the identification of critical enzyme targets in pathogens.

In the modern drug discovery pipeline, the validation of a biological target is a critical first step, ensuring that therapeutic modulation will yield a desired clinical effect. For enzyme targets in particular, this process is closely tied to understanding the role of essential cofactors, such as NAD(P)H, glutathione (GSH), or ATP, which are small molecules that facilitate catalysis. The integration of computational structure-based methods provides a powerful strategy for probing these cofactor-driven mechanisms. Molecular docking and molecular dynamics (MD) simulations have emerged as indispensable tools for validating drug targets by offering atomic-level insights into the stability, dynamics, and druggability of cofactor-binding sites. This guide compares the performance of these in silico methodologies against traditional experimental approaches, framing the discussion within the broader thesis of balancing computational predictions with experimental validation in early-stage drug discovery.

Performance Comparison of Computational Methods

The efficacy of structure-based drug design (SBDD) hinges on selecting the appropriate computational tool for the task at hand. The following comparisons outline the performance of various molecular docking paradigms and the critical contribution of MD simulations.

Comparative Performance of Docking Methodologies

A comprehensive multi-dimensional evaluation of docking methods reveals distinct performance tiers across key metrics, including pose prediction accuracy and physical plausibility [38].

Table 1: Performance Comparison of Docking Methods Across Benchmark Datasets

| Method Category | Specific Method | Pose Accuracy (RMSD ≤ 2 Å) | Physical Validity (PB-Valid Rate) | Combined Success Rate | Key Characteristics and Limitations |
|---|---|---|---|---|---|
| Traditional Physics-Based | Glide SP | ~70% (Astex) | >94% (All Datasets) | ~70% (Astex) | High physical validity; computationally intensive [38]. |
| Traditional Physics-Based | AutoDock Vina | ~70% (Astex) | >80% (All Datasets) | ~60% (Astex) | Good balance of speed and accuracy; widely used [38]. |
| Generative Diffusion Models | SurfDock | >75% (All Datasets) | 40-64% | 33-61% | Superior pose accuracy; often produces physically invalid poses [38]. |
| Regression-Based Models | KarmaDock, QuickBind | Low | Very Low | Low | Often fail to produce physically valid poses; high steric tolerance [38]. |
| Hybrid Methods | Interformer | Moderate | High | Best Balance | Integrates AI scoring with traditional search; offers a balanced approach [38]. |

The data indicates a performance trade-off: while generative AI models like SurfDock excel in raw pose prediction accuracy, they frequently generate structures with physical imperfections such as incorrect bond lengths or steric clashes [38]. Conversely, traditional methods like Glide SP, while less flashy, consistently produce physically plausible results, making them more reliable for applications where molecular realism is critical. A significant challenge for most deep learning methods is generalization, with performance often declining when encountering novel protein binding pockets not represented in training data [38].
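The metrics in the table above can be illustrated with a short sketch. It assumes that predicted and reference ligand coordinates share the same atom ordering and receptor frame (no alignment or symmetry correction), and that the combined success rate counts poses that are both within 2 Å RMSD and flagged physically valid; the function names and the example values are hypothetical, and the validity flags would come from an external checker.

```python
import numpy as np


def ligand_rmsd(pred_coords, ref_coords):
    """Heavy-atom RMSD in the units of the input coordinates (e.g., Å).

    Assumes identical atom ordering and a shared receptor frame.
    """
    pred = np.asarray(pred_coords, dtype=float)
    ref = np.asarray(ref_coords, dtype=float)
    return float(np.sqrt(((pred - ref) ** 2).sum(axis=1).mean()))


def success_rates(rmsds, physically_valid, cutoff=2.0):
    """Fraction of poses that are accurate, valid, and both."""
    accurate = np.asarray(rmsds, dtype=float) <= cutoff
    valid = np.asarray(physically_valid, dtype=bool)
    return {
        "pose_accuracy": float(accurate.mean()),
        "valid_rate": float(valid.mean()),
        "combined": float((accurate & valid).mean()),
    }


# Hypothetical results for four docked complexes.
rmsds = [1.0, 1.5, 3.0, 0.5]
physically_valid = [True, False, True, True]
summary = success_rates(rmsds, physically_valid)
print(summary)
```

Reporting the combined rate alongside the two separate rates makes the trade-off visible: a method can score well on pose accuracy while losing a large share of those poses to validity failures.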

The Role of Molecular Dynamics in Validating Docking Results

Molecular docking provides a static snapshot of binding, but MD simulations are crucial for assessing the stability and dynamics of the predicted ligand-receptor-cofactor complexes under biologically relevant conditions.

Table 2: Application of Molecular Dynamics Simulations in Drug Discovery

| Application Area | Specific Use Case | Typical Simulation Scale | Key Insights Provided |
|---|---|---|---|
| Target Validation & Dynamics | Study of cofactor role in mPGES-1 stability [39] | 100 ns - 10 µs | Revealed GSH's structural role in packing protein chains at monomer interfaces [39]. |
| Binding Energetics & Kinetics | Free Energy Perturbation (FEP) calculations [31] | >100 ns | Estimates binding affinities (ΔG⊖) and kinetics, guiding lead optimization [31]. |
| Membrane Protein Systems | GPCRs, Ion Channels, Cytochrome P450s [31] | Varies by system size | Essential for studying proteins in a realistic lipid bilayer environment [31]. |
| Formulation Development | Stability of amorphous solids & nanoparticles [31] | Varies by system | Informs drug delivery strategies by simulating drug-polymer interactions [31]. |

MD simulations bridge a critical gap left by docking, as the "lack of a proper description of systems’ true dynamics is one of the biggest caveats of docking" [31]. For example, a study on microsomal prostaglandin E2 synthase‐1 (mPGES-1) used MD simulations to validate that the glutathione (GSH) cofactor is tightly bound and unlikely to be displaced, informing the strategy for designing competitive inhibitors [39]. Furthermore, MD can investigate the role of specific residues, such as R73 in mPGES-1, in solvent exchange and gatekeeping between the active site and adjacent cavities [39].

Experimental Protocols for Cofactor-Driven Target Validation