Consensus vs. Single-Tool Metabolic Models: A Comparative Guide for Enhanced Predictive Biology


Abstract

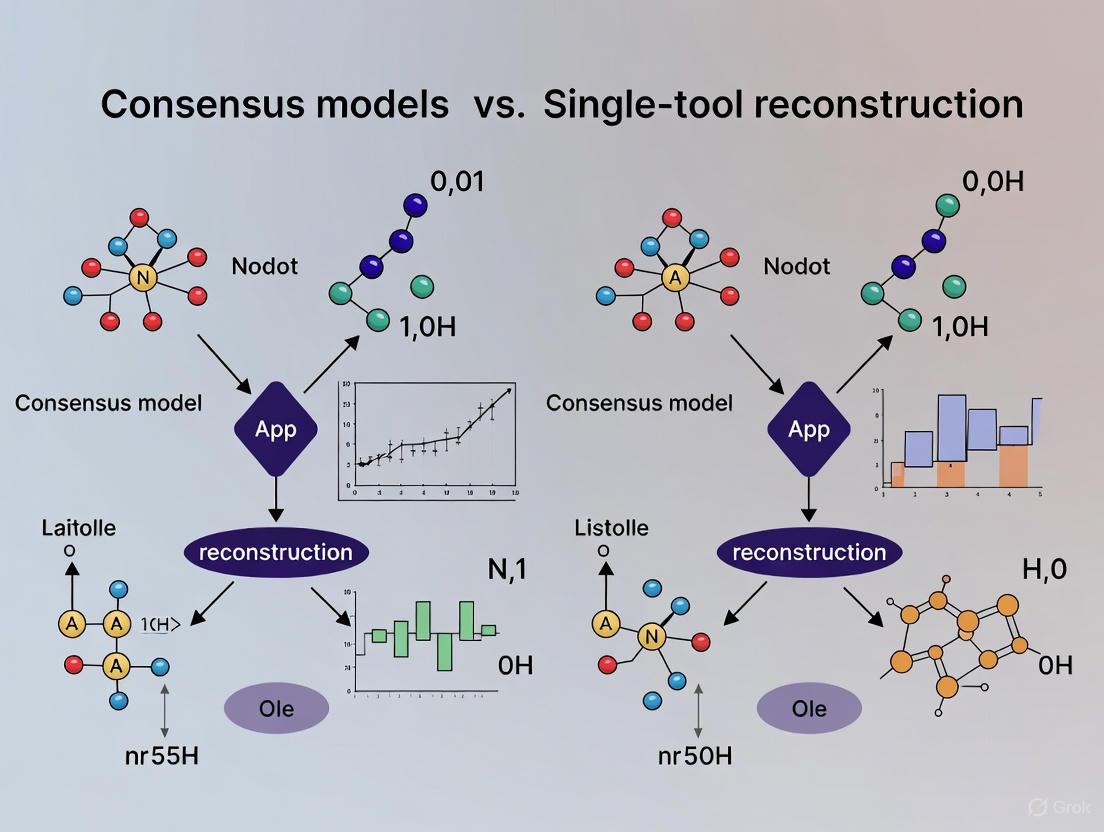

This article provides a comprehensive analysis for researchers and drug development professionals on the critical comparison between consensus genome-scale metabolic models (GEMs) and single-tool reconstructions. We explore the foundational principles driving the need for consensus approaches, detailing the methodologies and tools like GEMsembler and COMMGEN that enable their assembly. The content delves into troubleshooting model inconsistencies and optimizing gene-protein-reaction rules, followed by a rigorous validation and comparative assessment of model performance in predicting auxotrophy and gene essentiality. Evidence demonstrates that consensus models, by integrating diverse reconstructions, reduce network gaps, enhance predictive accuracy, and offer a more reliable foundation for systems biology and drug discovery applications than any single model alone.

The Case for Consensus: Overcoming Single-Model Limitations in Metabolic Reconstruction

The Inherent Challenges of Automated GEM Reconstruction

Genome-scale metabolic models (GEMs) serve as powerful computational frameworks that mathematically represent the metabolic network of an organism, connecting genetic information to metabolic phenotypes through gene-protein-reaction (GPR) associations [1] [2]. The reconstruction of high-quality GEMs has become fundamental to systems biology, enabling the prediction of cellular behavior under various genetic and environmental conditions [2]. While manual reconstruction remains the gold standard, producing highly curated models like iML1515 for Escherichia coli and Yeast7 for Saccharomyces cerevisiae, the labor-intensive nature of this process has spurred the development of automated reconstruction tools [2]. These automated methods promise to broaden the application of GEMs to non-model organisms and complex microbial communities, yet they introduce significant challenges related to consistency, accuracy, and functional predictability [3] [4].

The core challenge stems from the fact that different automated tools, despite using the same genomic starting point, can produce markedly different metabolic networks [3]. This variability arises from differences in underlying biochemical databases, algorithmic approaches, and inherent assumptions about network connectivity and functionality. As the field moves toward more complex modeling scenarios—including microbial communities, host-pathogen interactions, and less-annotated species—understanding and addressing these challenges becomes paramount for generating reliable biological insights [1] [3]. This review examines the inherent limitations of single-tool automated reconstruction and evaluates the emerging paradigm of consensus modeling as a strategy to overcome these challenges.

Methodological Divergence in Automated Reconstruction Tools

Fundamental Reconstruction Approaches

Automated GEM reconstruction tools primarily follow one of two philosophical approaches: bottom-up or top-down reconstruction. Bottom-up approaches, implemented in tools like gapseq and KBase, construct draft models by mapping annotated genomic sequences to metabolic reactions, progressively building the network from individual components [3] [5]. This method begins with genome annotation, retrieves corresponding biochemical reactions from databases, assembles a draft metabolic network, and then undergoes manual curation to resolve network gaps and inconsistencies [4]. In contrast, top-down approaches, exemplified by CarveMe, begin with a manually curated universal metabolic model containing reactions from databases like BiGG, which is then "carved" into an organism-specific model by removing reactions without genetic evidence in the target organism [4]. This approach preserves the structural integrity and manual curation of the original universal model while adapting it to specific genomic evidence.

A comparative analysis of these approaches reveals that each entails different trade-offs. Bottom-up methods may better capture organism-specific pathways but suffer from more network gaps, while top-down approaches produce more connected networks but might include reactions not genuinely present in the target organism [4].

Database Dependencies and Annotation Biases

The reconstruction process is heavily dependent on the underlying biochemical databases that provide the reaction templates and metabolic rules. Different tools utilize different databases—CarveMe relies on BiGG, gapseq and KBase use ModelSEED, and RAVEN can leverage both KEGG and MetaCyc [1] [3] [4]. This dependency introduces significant variability because these databases differ in their coverage of metabolic functions, namespace conventions, and quality of curation. A recent comparative analysis demonstrated that the choice of reconstruction tool—and by extension its underlying database—significantly influenced the resulting model structure, with gapseq models generally containing more reactions and metabolites, while CarveMe models included more genes [3].

The impact of database choice extends beyond mere reaction counts to functional capabilities. For instance, when models were reconstructed from the same metagenome-assembled genomes (MAGs) using different tools, the resulting GEMs showed remarkably low similarity in their reaction sets, with Jaccard similarity indices as low as 0.23-0.24 between gapseq and KBase models, despite using the same genomic input [3]. This suggests that the database dependency introduces substantial uncertainty in metabolic network structure.
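The Jaccard index used in such comparisons is the size of the intersection of two sets divided by the size of their union. The following is a minimal sketch, not the cited study's code: the reaction identifiers are hypothetical and are assumed to have already been mapped to a shared namespace, since raw BiGG and ModelSEED identifiers would not overlap even for identical reactions.

```python
def jaccard(a, b):
    """Jaccard index |A & B| / |A | B| for two collections of identifiers."""
    a, b = set(a), set(b)
    union = a | b
    if not union:
        # Convention chosen for this sketch: two empty sets count as identical.
        return 1.0
    return len(a & b) / len(union)


# Hypothetical reaction sets for one genome, already in a common namespace.
gapseq_rxns = {"R1", "R2", "R3", "R4", "R5"}
kbase_rxns = {"R4", "R5", "R6", "R7"}

if __name__ == "__main__":
    print(f"Jaccard similarity: {jaccard(gapseq_rxns, kbase_rxns):.3f}")
```

The same function applies unchanged to metabolite or gene sets, which makes it straightforward to report all three overlaps side by side.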

Quantitative Comparison of Reconstruction Tool Performance

Structural and Functional Discrepancies

A systematic comparison of models reconstructed from the same bacterial genomes using CarveMe, gapseq, KBase, and consensus approaches reveals substantial structural differences that inevitably affect functional predictions. The table below summarizes key structural metrics from a study analyzing 105 marine bacterial MAGs:

Table 1: Structural comparison of GEMs from different reconstruction approaches

| Reconstruction Approach | Number of Genes | Number of Reactions | Number of Metabolites | Dead-end Metabolites |
|---|---|---|---|---|
| CarveMe | Highest | Medium | Medium | Fewest |
| gapseq | Lowest | Highest | Highest | Most |
| KBase | Medium | Medium | Medium | Medium |
| Consensus | High | Highest | Highest | Few |

Source: Adapted from comparative analysis of coral-associated and seawater bacterial communities [3]

The structural differences translate directly to functional variations. gapseq models, despite having the most reactions and metabolites, also contained the highest number of dead-end metabolites, which can compromise network functionality and lead to incorrect phenotype predictions [3]. CarveMe models contained the highest number of genes but fewer reactions, suggesting differences in how GPR associations are mapped across tools. Importantly, the consensus approach successfully combined the strengths of individual tools, incorporating a comprehensive set of reactions while minimizing dead-end metabolites that create network gaps [3].
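To make the notion of a dead-end metabolite concrete, the sketch below uses a simplified topological definition: a metabolite is flagged if the network can only produce it or only consume it, with reversible reactions counted as doing both. This is an illustrative assumption, not the counting method of the cited study, and the toy network and its identifiers are hypothetical.

```python
def dead_end_metabolites(reactions):
    """Return metabolites that can only be produced or only be consumed.

    reactions: dict mapping reaction ID -> (stoichiometry, reversible), where
    stoichiometry maps metabolite ID -> coefficient (negative = consumed).
    """
    producible, consumable = set(), set()
    for stoich, reversible in reactions.values():
        for met, coeff in stoich.items():
            if coeff > 0 or reversible:
                producible.add(met)
            if coeff < 0 or reversible:
                consumable.add(met)
    # Symmetric difference: metabolites present in exactly one of the two sets.
    return sorted(producible ^ consumable)


# Hypothetical toy network: uptake of glc, two conversions, then a gap.
toy_network = {
    "EX_glc": ({"glc": 1.0}, False),               # uptake supplies glc
    "R1": ({"glc": -1.0, "g6p": 1.0}, False),
    "R2": ({"g6p": -1.0, "f6p": 1.0}, True),
    "R3": ({"f6p": -1.0, "orphan": 1.0}, False),   # nothing consumes "orphan"
}

if __name__ == "__main__":
    print(dead_end_metabolites(toy_network))
```

A stricter analysis would also test whether each reaction can carry flux at steady state, which catches blocked reactions that this purely topological check misses.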

Impact on Biological Predictions

The consequences of these structural differences extend to critical biological predictions, including gene essentiality, substrate utilization, and metabolic capabilities. Comparative studies have demonstrated that different reconstruction tools can produce conflicting predictions about an organism's ability to utilize specific carbon sources or survive gene knockouts [3] [6]. These discrepancies stem from several sources:

- Varying gap-filling solutions during reconstruction that introduce different metabolic routes

- Different essentiality annotations for the same genes across tools

- Alternative biomass compositions that affect growth predictions

- Inconsistent reaction directionality constraints based on thermodynamic considerations

The fundamental challenge is that each reconstruction tool captures a different aspect of the metabolic network, with no single tool consistently outperforming others across all prediction tasks [7]. This has led to the emergence of consensus approaches that aim to leverage the complementary strengths of multiple tools while mitigating their individual weaknesses.

Consensus Modeling: A Path Toward Robust Reconstruction

Theoretical Framework and Implementation

Consensus modeling represents a paradigm shift in automated GEM reconstruction, addressing tool-specific biases by integrating multiple reconstructions into a unified model. The core premise is that reactions supported by multiple independent reconstruction approaches are more likely to be biologically valid than those identified by a single tool [3] [7] [6]. This approach follows the same philosophical principle as consensus methods in other bioinformatics domains, where integrating multiple predictions improves overall accuracy and reliability.

Several methodologies have been developed for consensus model generation:

- COMMGEN: Identifies similarities, dissimilarities, and complements between models and provides semi-automatic resolution of inconsistencies related to metabolites, reactions, and compartments [6].

- GEMsembler: A Python package that compares cross-tool GEMs, tracks the origin of model features, and builds consensus models containing any subset of the input models [7].

- COMMIT: Specifically designed for gap-filling community models using an iterative approach based on MAG abundance [3].

These tools address critical challenges in model integration, including namespace reconciliation, resolution of different pathway granularities (lumped vs. detailed reactions), and standardization of compartmentalization [6]. By systematically resolving these inconsistencies, consensus methods produce metabolic networks that more comprehensively represent an organism's metabolic capabilities.
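The idea of tracking feature origin and keeping only reactions with sufficient support can be sketched in a few lines. This is not the GEMsembler or COMMGEN API. The function names, the `min_support` parameter, and the reaction identifiers are hypothetical, and the inputs are assumed to be already converted to a common namespace.

```python
from collections import defaultdict


def reaction_support(models):
    """Map each reaction ID to the sorted list of tools whose model contains it."""
    support = defaultdict(set)
    for tool, reactions in models.items():
        for rxn in reactions:
            support[rxn].add(tool)
    return {rxn: sorted(tools) for rxn, tools in support.items()}


def consensus_reactions(models, min_support):
    """Keep reactions found in at least `min_support` of the input models."""
    support = reaction_support(models)
    return {rxn for rxn, tools in support.items() if len(tools) >= min_support}


# Hypothetical reaction sets from three tools for the same genome.
models = {
    "carveme": {"R1", "R2", "R3"},
    "gapseq": {"R2", "R3", "R4"},
    "kbase": {"R3", "R4", "R5"},
}

if __name__ == "__main__":
    for n in (1, 2, 3):
        print(n, sorted(consensus_reactions(models, n)))
```

Setting `min_support` to 1 yields the union of all inputs, while setting it to the number of models yields their intersection. Intermediate values trade coverage against confidence.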

Performance Advantages of Consensus Approaches

Empirical evidence demonstrates that consensus models consistently outperform individual reconstructions in both structural completeness and functional predictions. A recent evaluation of consensus models for Lactiplantibacillus plantarum and Escherichia coli showed that they outperformed gold-standard models in auxotrophy and gene essentiality predictions [7]. Additionally, optimizing GPR combinations from consensus models improved gene essentiality predictions, even in manually curated gold-standard models.

Table 2: Performance comparison of consensus vs. single-tool reconstruction

| Performance Metric | Single-Tool Models | Consensus Models |
|---|---|---|
| Reaction Coverage | Variable, tool-dependent | Highest |
| Gene Essentiality Prediction | Moderate accuracy | Highest accuracy |
| Auxotrophy Prediction | Variable accuracy | Highest accuracy |
| Dead-end Metabolites | Tool-dependent | Reduced |
| Functional Capabilities | Limited to tool-specific database | Comprehensive |

The structural advantages of consensus models directly translate to improved predictive performance. By incorporating reactions from multiple sources, consensus models reduce network gaps and expand metabolic capabilities, leading to more accurate phenotype predictions [3] [7]. Furthermore, the process of generating consensus models helps identify and resolve inconsistencies between reconstructions, resulting in more robust and reliable metabolic networks.

Experimental Protocols for Reconstruction Validation

Standardized Workflow for Reconstruction Comparison

To objectively evaluate and compare different reconstruction approaches, researchers should implement a standardized validation protocol. The following workflow outlines key steps for systematic comparison:

[Figure: GEM reconstruction validation workflow]

This workflow begins with multi-tool reconstruction using at least three different automated tools (e.g., CarveMe, gapseq, and KBase) from the same genomic input. The resulting models then undergo structural analysis comparing metrics such as reaction counts, metabolite counts, gene coverage, and dead-end metabolites. Functional assessment evaluates the models' ability to simulate growth on different substrates, predict gene essentiality, and produce biologically feasible flux distributions. Based on this analysis, consensus generation integrates the models using tools like GEMsembler or COMMGEN. Finally, model validation compares predictions against experimental data, followed by performance benchmarking to quantify improvements.

Key Experimental Metrics and Validation Data

Rigorous validation of metabolic reconstructions requires both computational metrics and experimental comparisons. The table below outlines essential validation metrics and their biological significance:

Table 3: Essential validation metrics for GEM reconstruction

| Validation Category | Specific Metrics | Biological Significance |
|---|---|---|
| Structural Quality | Number of blocked reactions | Indicates network connectivity and functionality |
| Structural Quality | Dead-end metabolites | Highlights gaps in pathway knowledge |
| Structural Quality | Mass and charge balance | Ensures biochemical realism |
| Functional Accuracy | Gene essentiality prediction accuracy | Tests model's ability to recapitulate genetic constraints |
| Functional Accuracy | Substrate utilization range | Validates catabolic pathway completeness |
| Functional Accuracy | Biomass precursor production | Confirms anabolic capability |
| Predictive Performance | Growth rate correlation with experiments | Quantifies phenotypic prediction accuracy |
| Predictive Performance | Metabolic flux distributions | Compares internal network activity with experimental data |
| Predictive Performance | Essential nutrient identification | Tests auxotrophy prediction capability |

Experimental validation should leverage available omics data, including transcriptomics, proteomics, and metabolomics measurements, to contextualize and verify model predictions [8]. For well-characterized organisms, comparison with manually curated gold-standard models provides an additional benchmark for assessing reconstruction quality.
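Gene essentiality prediction accuracy is typically summarized from a confusion matrix of predicted versus experimentally observed calls. The sketch below is a minimal illustration with hypothetical gene labels. It assumes that both inputs are dictionaries keyed by gene ID with `True` meaning essential, and that genes missing from either set are ignored.

```python
def confusion_counts(predicted, observed):
    """Count TP, FP, TN, FN over genes present in both dictionaries."""
    tp = fp = tn = fn = 0
    for gene in predicted.keys() & observed.keys():
        if predicted[gene] and observed[gene]:
            tp += 1
        elif predicted[gene] and not observed[gene]:
            fp += 1
        elif not predicted[gene] and not observed[gene]:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


def summary_metrics(tp, fp, tn, fn):
    """Accuracy, precision, and recall; NaN where a denominator is zero."""
    nan = float("nan")
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total if total else nan,
        "precision": tp / (tp + fp) if (tp + fp) else nan,
        "recall": tp / (tp + fn) if (tp + fn) else nan,
    }


# Hypothetical calls: True = essential.
predicted = {"g1": True, "g2": True, "g3": False, "g4": False, "g5": True}
observed = {"g1": True, "g2": False, "g3": False, "g4": True, "g5": True}

if __name__ == "__main__":
    print(summary_metrics(*confusion_counts(predicted, observed)))
```

Because essential genes are usually a minority, accuracy alone can look high for a model that predicts almost nothing as essential, so reporting precision and recall alongside it gives a fairer comparison between reconstructions.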

Research Reagent Solutions: Essential Tools for GEM Reconstruction

The field of automated metabolic reconstruction has developed a suite of computational tools and resources that serve as essential "research reagents" for model generation and validation. The table below catalogues key resources and their specific functions:

Table 4: Essential research reagents for GEM reconstruction and analysis

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| CarveMe | Software | Top-down model reconstruction | High-throughput model generation for diverse organisms |
| gapseq | Software | Bottom-up model reconstruction | Detailed pathway-based reconstruction |
| RAVEN | Software | Semi-automated reconstruction | Eukaryotic and non-model organism reconstruction |
| GEMsembler | Software | Consensus model generation | Integrating multiple reconstructions |
| COMMGEN | Software | Consensus model generation | Resolving inconsistencies between models |
| BiGG | Database | Curated metabolic reactions | Reference database for reaction information |
| ModelSEED | Database | Biochemical database | Reaction templates and pathway mapping |
| KEGG | Database | Pathway database | Pathway inference and annotation |
| BRENDA | Database | Enzyme kinetics | Kinetic parameter incorporation [8] |
| GECKO | Software | Enzyme constraint modeling | Incorporating proteomic constraints [8] |

These tools collectively enable the complete reconstruction pipeline, from initial genome annotation to functional model simulation. Researchers should select tools based on their specific organism of interest, available data resources, and intended applications, recognizing that different tools may be optimal for different scenarios.

The inherent challenges of automated GEM reconstruction stem from methodological differences, database dependencies, and the complex nature of metabolic networks themselves. While individual reconstruction tools each have strengths and weaknesses, consensus approaches represent a promising path forward by integrating multiple evidence sources to create more robust and predictive models. The field continues to evolve with new methods like pan-Draft that leverage genomic redundancy across multiple strains or MAGs to improve reconstruction quality [5], and enzyme-constrained modeling through tools like GECKO that incorporate kinetic and proteomic constraints [8].

For researchers navigating this complex landscape, a pragmatic approach involves using multiple reconstruction tools followed by consensus generation and rigorous validation against experimental data. As the field moves toward more complex modeling scenarios—including microbial communities, host-microbe interactions, and personalized medicine applications—addressing these reconstruction challenges will be essential for generating biologically meaningful insights. The development of standardized benchmarking frameworks, improved biochemical databases, and more sophisticated integration algorithms will further enhance the reliability of automated reconstruction, ultimately expanding the scope and impact of metabolic modeling across biological research and biotechnology.

Genome-scale metabolic models (GEMs) are powerful computational frameworks that link an organism's genotype to its metabolic phenotype. They have become indispensable tools in systems biology, with applications ranging from predicting microbial growth and gene essentiality to elucidating metabolic interactions in complex microbial communities. The construction of high-quality GEMs, however, remains a complex process that has been greatly accelerated by the development of automated reconstruction tools. Among these, CarveMe, gapseq, and ModelSEED (often implemented through the KBase platform) are widely used for their ability to generate "ready-to-use" models directly from genome sequences that can immediately be utilized for flux balance analysis (FBA) [9] [10].
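For readers less familiar with FBA, its standard formulation is a linear program. The notation below is chosen for this guide rather than taken from the cited sources: $S$ is the stoichiometric matrix (metabolites by reactions), $v$ the vector of reaction fluxes, $c$ a vector of objective weights (commonly selecting the biomass reaction), and $v^{\mathrm{lb}}$, $v^{\mathrm{ub}}$ the lower and upper flux bounds.

$$
\begin{aligned}
\max_{v}\quad & c^{\top} v \\
\text{subject to}\quad & S v = 0, \\
& v^{\mathrm{lb}} \le v \le v^{\mathrm{ub}}
\end{aligned}
$$

The steady-state constraint $S v = 0$ explains why structural differences between reconstructions matter: a metabolite that can only be produced or only consumed forces every reaction touching it to carry zero flux, so missing or extra reactions directly change which growth phenotypes are feasible.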

Despite being applied to the same genomic starting material, these tools frequently produce models with divergent structural and functional properties. This divergence stems from their fundamentally different reconstruction philosophies, underlying biochemical databases, and algorithmic approaches. A comprehensive comparative analysis revealed that "these reconstruction approaches, while based on the same genomes, resulted in GEMs with varying numbers of genes and reactions as well as metabolic functionalities" [9]. Such discrepancies introduce uncertainty into predictions derived from constraint-based modeling and can significantly impact biological interpretations, particularly when studying metabolic interactions within microbial communities.

This guide objectively compares the performance of CarveMe, gapseq, and ModelSEED/KBase in reconstructing metabolic models, with a specific focus on how their methodological differences manifest in final model properties. We frame this comparison within the emerging research paradigm that advocates for consensus models—integrated reconstructions that combine outputs from multiple tools—as a strategy to mitigate individual tool biases and create more comprehensive metabolic networks.

Methodological Foundations: How the Tools Work

Reconstruction Philosophies and Algorithms

The three tools employ distinct methodological approaches to reconstruct metabolic networks from genomic data:

CarveMe utilizes a top-down approach, beginning with a manually curated universal metabolic model containing reactions from major biochemical databases. The algorithm subsequently "carves out" reactions not supported by genomic evidence, resulting in an organism-specific model. This method prioritizes network functionality and thermodynamic consistency through its curated template [9] [11].

gapseq implements a bottom-up approach combined with informed pathway prediction. It constructs models by mapping annotated genomic sequences to a custom-curated reaction database derived from ModelSEED but extensively refined. A distinctive feature is its Linear Programming (LP)-based gap-filling algorithm that incorporates both network topology and sequence homology to reference proteins to identify and resolve metabolic gaps, reducing medium-specific biases during reconstruction [10].

ModelSEED/KBase also follows a bottom-up paradigm but relies primarily on the ModelSEED biochemistry database and automated annotation pipelines. The reconstruction process involves generating draft models from genome annotations followed by gap-filling to enable biomass production on a specified growth medium. The KBase platform integrates these capabilities within a broader bioinformatics workflow environment [9] [12].

Biochemical Databases and Knowledge Bases

The biochemical databases underlying each tool significantly influence which reactions and metabolites are included in reconstructed models:

CarveMe draws from a manually curated universal model that integrates data from multiple biochemical sources, emphasizing thermodynamic consistency and removing energy-generating futile cycles [11].

gapseq utilizes a custom-curated metabolism database comprising approximately 15,150 reactions (including transporters) and 8,446 metabolites, derived from ModelSEED but extensively refined. This database is regularly updated using the latest UniProt and TCDB releases [10].

ModelSEED/KBase relies on the ModelSEED Biochemistry database, a comprehensive resource that harmonizes identifiers and properties from multiple reference databases. This database is publicly available and can be set up independently for use in various metabolic modeling workflows [13] [12].

Table 1: Foundational Characteristics of Automated Reconstruction Tools

| Feature | CarveMe | gapseq | ModelSEED/KBase |
|---|---|---|---|
| Reconstruction approach | Top-down | Bottom-up | Bottom-up |
| Core database | Curated universal model | Custom-curated database derived from ModelSEED | ModelSEED Biochemistry database |
| Gap-filling strategy | Medium-specific using genomic evidence | LP-based using topology and homology | Medium-specific to enable biomass production |
| Key advantage | Speed, thermodynamic consistency | Comprehensive pathway prediction, reduced medium bias | Integration with KBase platform workflows |

The following diagram illustrates the fundamental reconstruction workflows employed by these tools:

Structural and Functional Divergence in Reconstructed Models

Comparative Analysis of Model Structures

When reconstructed from the same set of metagenome-assembled genomes (MAGs), the three tools produce models with markedly different structural characteristics. A systematic comparison using 105 high-quality MAGs from marine bacterial communities revealed substantial variations in model components [9]:

gapseq models generally encompassed the highest number of reactions and metabolites, suggesting comprehensive network coverage. However, this expansiveness came with a trade-off: gapseq models also exhibited the largest number of dead-end metabolites, which can limit pathway connectivity and functionality [9].

CarveMe models contained the highest number of genes associated with metabolic reactions, yet featured fewer overall reactions and metabolites compared to gapseq models. This pattern reflects CarveMe's curated template approach, which may exclude reactions without strong genomic evidence or those not fitting network context [9].

KBase/ModelSEED models occupied an intermediate position in terms of reaction and metabolite counts, but showed distinct gene content compared to the other tools [9].

Table 2: Quantitative Structural Comparison of Models Reconstructed from identical MAGs

| Structural Metric | CarveMe | gapseq | KBase/ModelSEED |
|---|---|---|---|
| Number of genes | Highest | Lowest | Intermediate |
| Number of reactions | Lower | Highest | Intermediate |
| Number of metabolites | Lower | Highest | Intermediate |
| Dead-end metabolites | Fewer | Most | Intermediate |
| Jaccard similarity of reactions | Low vs. others (≈0.24) | Higher with ModelSEED (≈0.24) | Higher with gapseq (≈0.24) |

Performance in Phenotypic Predictions

The accuracy of metabolic models is ultimately judged by their ability to predict experimentally observed phenotypes. Large-scale validation using enzymatic data from the Bacterial Diversity Metadatabase (BacDive), encompassing 10,538 enzyme activities across 3,017 organisms and 30 unique enzymes, demonstrated significant performance differences [10]:

gapseq achieved the highest true positive rate (53%) and lowest false negative rate (6%) in predicting enzyme activities, indicating superior sensitivity in capturing known metabolic capabilities [10].

CarveMe and ModelSEED showed substantially higher false negative rates (32% and 28%, respectively) and lower true positive rates (27% and 30%, respectively), suggesting they more frequently miss experimentally verified enzymatic functions [10].

These performance differences likely stem from gapseq's comprehensive pathway prediction algorithm and its use of multiple evidence sources beyond simple genomic annotation, including sequence homology to reference proteins and network topology considerations during gap-filling [10].

The Consensus Approach: Integrating Multiple Reconstructions

Methodology for Building Consensus Models

The documented divergences between individual reconstruction tools have prompted the development of consensus approaches that integrate models from multiple tools. The consensus method involves:

- Draft Model Generation: Reconstructing metabolic models for the same genome using CarveMe, gapseq, and KBase/ModelSEED.

- Model Merging: Combining these draft models into a unified draft consensus model that incorporates reactions, metabolites, and genes from all individual reconstructions.

- Gap-Filling: Applying community-scale gap-filling algorithms such as COMMIT, which uses an iterative approach based on MAG abundance to predict permeable metabolites and augment the medium for subsequent reconstructions [9].

This process results in a consensus model that aims to capture the metabolic capabilities supported by any of the reconstruction tools, while mitigating tool-specific biases and omissions.

Advantages of Consensus Models

Research comparing consensus models with single-tool reconstructions has demonstrated several key advantages:

Enhanced Network Coverage: Consensus models encompass a larger number of reactions and metabolites than any single tool, successfully integrating the unique contributions from each reconstruction approach [9].

Reduced Metabolic Gaps: Consensus models exhibit fewer dead-end metabolites, indicating improved network connectivity and functionality. This addresses a significant limitation observed particularly in gapseq models [9].

Stronger Genomic Evidence Support: By incorporating a greater number of genes from the individual reconstructions, consensus models benefit from stronger genomic evidence for included reactions [9].

Mitigation of Tool-Specific Bias: The consensus approach reduces reliance on any single tool's biochemical database or reconstruction algorithm, potentially leading to more balanced and comprehensive metabolic networks [9].

Interestingly, the iterative order of MAG inclusion during gap-filling showed only negligible correlation (r = 0-0.3) with the number of added reactions, suggesting that consensus model generation is robust to processing order variations [9].
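A check of this kind can be reproduced with a plain correlation coefficient. The sketch below assumes that r denotes the Pearson coefficient, which the source does not state explicitly, and the order positions and reaction counts are made-up illustrative values rather than data from the study.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical data: position of each MAG in the gap-filling order and the
# number of reactions added to its model during gap-filling.
inclusion_position = [1, 2, 3, 4, 5, 6]
reactions_added = [12, 9, 14, 10, 13, 11]

if __name__ == "__main__":
    print(f"r = {pearson_r(inclusion_position, reactions_added):.2f}")
```

Repeating the calculation over several random inclusion orders would show whether a weak correlation holds in general or only for one particular ordering.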

Experimental Protocols for Tool Comparison

Standardized Evaluation Framework

To objectively compare reconstruction tools, researchers should implement a standardized evaluation protocol:

Input Standardization: Use identical, high-quality genome sequences (complete genomes or MAGs) as input for all tools to ensure comparisons are based on identical genetic starting material.

Model Reconstruction: Process genomes through each tool using default parameters, while documenting any tool-specific settings that might affect output.

Structural Analysis: Quantify model components including genes, reactions, metabolites, and dead-end metabolites using standardized counting methods.

Functional Validation: Compare model predictions against experimentally verified phenotypic data, such as:

- Enzyme activity assays from databases like BacDive

- Carbon source utilization data

- Fermentation products

- Gene essentiality measurements

Similarity Assessment: Calculate Jaccard similarity coefficients for reaction, metabolite, and gene sets to quantify overlap between tools.

Community Modeling: For microbial communities, analyze predicted metabolite exchanges and cross-feeding interactions to identify tool-specific patterns in interaction prediction.

Table 3: Key Resources for Metabolic Reconstruction and Validation

| Resource Name | Type | Function in Analysis | Access |
|---|---|---|---|
| BacDive Database | Phenotypic database | Provides experimental enzyme activity data for model validation | Publicly available |
| ModelSEED Biochemistry | Biochemical database | Standardized reaction and metabolite information for reconstruction | Publicly available |
| UniProt | Protein sequence database | Reference sequences for functional annotation | Publicly available |
| TCDB | Transporter database | Reference information for transporter prediction | Publicly available |
| COMMIT | Algorithm | Community-scale gap-filling for consensus model generation | Implementation described in literature |

Implications for Microbial Community Modeling

The choice of reconstruction tool has particularly profound implications for studying microbial communities, where metabolic interactions between species shape community structure and function. Research has revealed that "the set of exchanged metabolites was more influenced by the reconstruction approach rather than the specific bacterial community investigated" [9]. This finding indicates a potential bias in predicting metabolite interactions using community-scale metabolic models, as the tool selection may artificially emphasize or minimize certain metabolic exchange processes.

The consensus approach offers a promising path forward for community modeling by integrating the strengths of multiple reconstruction tools while minimizing individual biases. This is especially valuable when working with metagenome-assembled genomes, where incomplete genomic information amplifies the limitations of any single reconstruction method.

Automated reconstruction tools have democratized access to genome-scale metabolic modeling, but their divergent approaches lead to substantially different models from the same genomic input. CarveMe, gapseq, and ModelSEED/KBase each offer distinct strengths: CarveMe provides rapid, thermodynamically consistent models; gapseq delivers comprehensive pathway coverage with superior phenotypic prediction accuracy; and ModelSEED/KBase offers integration within a broader bioinformatics platform.

The emerging consensus paradigm—building integrated models from multiple reconstruction tools—addresses the limitations of individual approaches by creating more comprehensive networks with fewer gaps and reduced tool-specific bias. As metabolic modeling continues to expand into complex microbial communities and non-model organisms, this consensus framework promises to enhance prediction reliability and biological insight, ultimately strengthening the bridge between genomic potential and metabolic phenotype.

Understanding Top-Down vs. Bottom-Up Reconstruction Approaches

In computational biology, particularly in the construction of genome-scale metabolic models (GEMs), top-down and bottom-up approaches represent two fundamentally different philosophies for reconstructing metabolic networks from genomic data. These approaches are central to systems biology, where the goal is to understand complex biological systems by integrating computational and experimental data [14]. Top-down approaches begin with a universal, well-curated template model and "carve out" a species-specific model by removing reactions without genomic evidence, while bottom-up strategies construct draft models from scratch by mapping annotated genomic sequences to biochemical reactions [3]. The choice between these approaches significantly impacts the structure, functional capabilities, and predictive accuracy of the resulting metabolic models, which are crucial for applications in drug discovery, metabolic engineering, and understanding microbial communities [3] [6].

The increasing availability of multiple reconstruction tools, each employing different approaches and databases, has created a challenge for researchers who must select appropriate methodologies for their specific applications. This has led to the emergence of consensus models that integrate predictions from multiple reconstruction approaches to create more comprehensive and accurate metabolic networks [3] [6]. This guide provides a systematic comparison of top-down and bottom-up reconstruction methodologies, supported by experimental data and detailed protocols, to inform researchers and drug development professionals in selecting appropriate strategies for their work.

Core Conceptual Differences Between Approaches

Top-down and bottom-up approaches differ fundamentally in their starting points, underlying principles, and reconstruction processes. A top-down approach begins with a broad overview of the system and progressively refines it into smaller subsystems until the entire specification is reduced to base elements [15]. In metabolic reconstruction, this translates to starting with a universal metabolic template containing known biochemical reactions from multiple organisms, then removing elements that lack support from the target organism's genomic evidence [3]. This method prioritizes network functionality from the outset, as the initial template is already a coherent metabolic network.

In contrast, a bottom-up approach pieces together systems from their basic components to give rise to more complex systems [15]. For GEM reconstruction, this means building metabolic networks entirely from the target organism's genomic annotations, typically by identifying enzyme-encoding genes and assembling their associated reactions into a network [3]. This method emphasizes the individual components first, potentially growing in complexity and completeness like a "seed" model, but may result in subsystems developed in isolation without guaranteeing global network functionality.

The conceptual differences extend beyond metabolic modeling to other scientific domains. In neuroscience, top-down processing is characterized by high-level direction of sensory processing by cognitive factors like goals or targets, while bottom-up processing is driven primarily by incoming sensory data without higher-level direction [15] [16]. In image processing, top-down approaches first identify objects of interest (e.g., humans in an image) then analyze their components (e.g., body joints), while bottom-up approaches recognize components first (e.g., all body joints in an image) then assemble them into objects [17]. These cross-domain parallels highlight the fundamental nature of these complementary approaches to complex system analysis.

Table 1: Conceptual Comparison of Reconstruction Approaches

| Feature | Top-Down Approach | Bottom-Up Approach |

|---|---|---|

| Starting Point | Universal template model [3] | Genomic annotations of target organism [3] |

| Process | Stepwise refinement by removing unsupported reactions [15] | Assembly of components into increasingly complex systems [15] |

| Primary Focus | Global network functionality and coherence | Individual components and their properties |

| Implementation in GEMs | CarveMe [3] | gapseq, KBase [3] |

| Information Flow | Hypothesis-driven [18] | Data-driven [18] |

Methodological Comparison in Metabolic Reconstruction

Reconstruction Protocols and Tools

The top-down reconstruction protocol typically employs tools like CarveMe, which starts from a curated universal model [3]. The reconstruction process follows these steps:

- Template selection: A curated universal metabolic template is selected

- Genome integration: The target organism's genome annotation is mapped to the template reactions

- Network carving: Reactions without genomic evidence are removed while maintaining network connectivity

- Gap-filling: Minimal reactions are added to restore biomass production and metabolic functionality

- Quality control: The model is checked for blocked reactions, energy-generating cycles, and growth capabilities

The bottom-up reconstruction protocol, implemented in tools like gapseq or KBase, follows a different sequence:

- Genome annotation: The target organism's genome is annotated to identify metabolic genes

- Reaction assembly: Biochemical reactions associated with the identified genes are retrieved from databases

- Compartmentalization: Reactions are assigned to appropriate cellular compartments

- Network validation: The draft network is checked for connectivity and functionality

- Gap-filling: Missing reactions are added to complete essential metabolic pathways

- Biomass definition: Organism-specific biomass composition is defined based on literature or experimental data

Workflow Visualization

The following diagram illustrates the conceptual workflow differences between top-down and bottom-up approaches to metabolic reconstruction:

Performance Comparison: Experimental Data

Structural and Functional Differences

Comparative analysis of metabolic models reconstructed from the same metagenome-assembled genomes (MAGs) using different approaches reveals significant structural differences. A study comparing CarveMe (top-down), gapseq, and KBase (both bottom-up) on 105 high-quality MAGs from coral-associated and seawater bacterial communities demonstrated that each approach produces models with distinct characteristics [3].

Table 2: Structural Comparison of Models from Different Approaches (Adapted from [3])

| Reconstruction Approach | Number of Genes | Number of Reactions | Number of Metabolites | Dead-End Metabolites |

|---|---|---|---|---|

| CarveMe (Top-Down) | Highest | Intermediate | Intermediate | Lowest |

| gapseq (Bottom-Up) | Lowest | Highest | Highest | Highest |

| KBase (Bottom-Up) | Intermediate | Intermediate | Intermediate | Intermediate |

The analysis revealed remarkably low similarity between models reconstructed from the same MAGs using different approaches. The Jaccard similarity for reaction sets between gapseq and KBase models was only 0.23-0.24, despite both being bottom-up approaches [3]. This suggests that the choice of biochemical database and implementation details significantly impact the resulting models, potentially introducing biases in predicted metabolic capabilities and metabolite exchange profiles.
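To make the similarity figure concrete, the following minimal sketch computes the Jaccard similarity between two reaction sets. The reaction IDs and set contents are hypothetical, and the sketch assumes both models have already been mapped to a common namespace; it is not the analysis code used in [3].

```python
def jaccard(a, b):
    """Jaccard similarity |A ∩ B| / |A ∪ B| of two ID collections."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0  # convention: two empty sets are treated as identical
    return len(a & b) / len(a | b)


# Hypothetical reaction IDs, assumed to share one namespace.
gapseq_rxns = {"PGI", "PFK", "FBA", "TPI", "GAPD"}
kbase_rxns = {"PGI", "PFK", "PYK", "ENO"}

similarity = jaccard(gapseq_rxns, kbase_rxns)
print(f"Jaccard similarity: {similarity:.2f}")
```

The same function applies unchanged to metabolite or gene sets, which is how the per-component comparisons in a study of this kind could be organized.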

Consensus Models: Integrating Multiple Approaches

Consensus reconstruction approaches have emerged to mitigate the limitations of individual reconstruction tools. These methods integrate models from multiple approaches to create more comprehensive and accurate metabolic networks. The COMMGEN tool, for instance, automatically identifies inconsistencies between models and semi-automatically resolves them, contributing to consolidated knowledge of metabolic function [6].

Experimental evidence demonstrates that consensus models retain the majority of unique reactions and metabolites from the original models while reducing the presence of dead-end metabolites [3]. They also incorporate a greater number of genes, indicating stronger genomic evidence support for the included reactions. When applied to microbial community modeling, consensus approaches have been shown to enhance functional capability and provide more comprehensive metabolic network coverage [3].

Table 3: Performance Advantages of Consensus Models

| Performance Metric | Advantage of Consensus Models | Experimental Support |

|---|---|---|

| Reaction Coverage | Includes majority of unique reactions from individual models | Jaccard similarity analysis [3] |

| Dead-End Metabolites | Reduced number compared to individual bottom-up models | Structural analysis of GEMs [3] |

| Genomic Evidence | Stronger support through incorporation of more genes | Gene set analysis [3] |

| Predictive Capability | Retains or improves on initial models' predictive capabilities | Growth simulation studies [6] |

Experimental Protocols for Comparative Analysis

Protocol for Comparing Reconstruction Approaches

To systematically compare top-down and bottom-up reconstruction approaches, researchers can implement the following experimental protocol, adapted from published comparative studies [3]:

1. Input Data Preparation

- Select high-quality genomes or metagenome-assembled genomes (MAGs)

- Use standardized genome annotation pipelines (e.g., PROKKA, RAST) for consistent gene calling

- Create a minimal medium composition consistent across all reconstructions

2. Model Reconstruction

- Apply at least one top-down tool (CarveMe recommended) and two bottom-up tools (gapseq and KBase recommended)

- Use standardized namespace for metabolites and reactions to enable comparison

- Apply consistent gap-filling parameters across all approaches

3. Model Analysis

- Extract key model statistics: genes, reactions, metabolites, dead-end metabolites

- Calculate Jaccard similarities for model components between approaches

- Assess functional capabilities through flux balance analysis under standardized conditions

- Evaluate metabolite exchange profiles for community models

4. Consensus Model Generation

- Apply consensus-building tools (COMMGEN or custom pipelines)

- Resolve namespace inconsistencies and reaction duplicates

- Implement iterative gap-filling based on organism abundance (for communities)
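One of the structural statistics in step 3, the count of dead-end metabolites, can be sketched with plain Python. The toy network, its reaction and metabolite names, and the `(stoichiometry, reversible)` data layout are all assumptions made for illustration. The check is purely structural: it flags metabolites that can only be produced or only be consumed, and does not replace a flux-based search for blocked reactions.

```python
def dead_end_metabolites(reactions):
    """Return metabolites that can only be produced or only be consumed.

    `reactions` maps a reaction ID to (stoichiometry, reversible), where
    stoichiometry maps metabolite ID -> coefficient (negative = consumed).
    """
    produced, consumed, seen = set(), set(), set()
    for stoich, reversible in reactions.values():
        for met, coeff in stoich.items():
            seen.add(met)
            if coeff > 0 or reversible:
                produced.add(met)
            if coeff < 0 or reversible:
                consumed.add(met)
    # A metabolite is connected only if it can be both made and used.
    return sorted(seen - (produced & consumed))


# Hypothetical toy network.
toy_model = {
    "EX_glc": ({"glc": -1}, True),            # exchange: uptake or secretion
    "R1": ({"glc": -1, "g6p": 1}, False),
    "R2": ({"g6p": -1, "f6p": 1}, True),
    "R3": ({"f6p": -1, "x": 1}, False),       # x is produced but never consumed
}

print(dead_end_metabolites(toy_model))
```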

Protocol for Validating Model Predictions

Experimental validation of metabolic models is essential for assessing their predictive accuracy. The following protocol outlines key validation steps:

1. Growth Capability Assessment

- Simulate growth on different carbon sources

- Compare predictions with experimental growth data (if available)

- Calculate accuracy, precision, and recall for growth predictions

2. Gene Essentiality Analysis

- Perform single-gene deletion studies in silico

- Compare essentiality predictions with experimental knockout data

- Calculate statistical measures of prediction accuracy

3. Metabolic Flux Validation

- Compare predicted flux distributions with 13C-flux analysis data

- Assess correlation between predicted and measured exchange rates

- Validate predicted maximum yields on different substrates
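The accuracy, precision, and recall calculations named in steps 1 and 2 can be sketched as follows. The gene names and the essentiality calls are invented for illustration; in practice the predicted values would come from in silico deletions and the observed values from knockout screens.

```python
def confusion_metrics(predicted, observed):
    """Accuracy, precision, and recall for two dicts mapping item -> bool."""
    shared = predicted.keys() & observed.keys()
    tp = sum(predicted[k] and observed[k] for k in shared)
    tn = sum(not predicted[k] and not observed[k] for k in shared)
    fp = sum(predicted[k] and not observed[k] for k in shared)
    fn = sum(not predicted[k] and observed[k] for k in shared)
    nan = float("nan")
    return {
        "accuracy": (tp + tn) / len(shared) if shared else nan,
        "precision": tp / (tp + fp) if tp + fp else nan,
        "recall": tp / (tp + fn) if tp + fn else nan,
    }


# Hypothetical essentiality calls (True = essential).
predicted = {"geneA": True, "geneB": True, "geneC": False, "geneD": False, "geneE": True}
observed = {"geneA": True, "geneB": False, "geneC": False, "geneD": True, "geneE": True}

metrics = confusion_metrics(predicted, observed)
print(metrics)
```

Only genes present in both dictionaries are scored, which matters when a model lacks some genes covered by the experimental screen.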

The following diagram illustrates the experimental workflow for comparing reconstruction approaches and building consensus models:

Table 4: Essential Tools and Databases for Metabolic Reconstruction

| Tool/Resource | Type | Function | Approach |

|---|---|---|---|

| CarveMe [3] | Software Tool | Automated metabolic reconstruction from genome annotations | Top-Down |

| gapseq [3] | Software Tool | Automated metabolic reconstruction and pathway prediction | Bottom-Up |

| KBase [3] | Platform | Integrated environment for reconstruction and analysis | Bottom-Up |

| COMMGEN [6] | Software Tool | Consensus model generation from multiple reconstructions | Hybrid |

| COMMIT [3] | Software Tool | Gap-filling and constraint-based modeling of community models | Model Refinement |

| ModelSEED [3] | Database | Biochemical database for reaction and metabolite information | Bottom-Up |

| AGORA [3] | Resource | Curated genome-scale reconstructions of human gut microbes | Reference |

| MetaCyc [14] | Database | Curated database of metabolic pathways and enzymes | Reference |

| BiGG Models [14] | Database | Knowledgebase of genome-scale metabolic models | Reference |

| COBRA Toolbox [14] | Software | MATLAB toolbox for constraint-based reconstruction and analysis | Analysis |

The comparative analysis of top-down and bottom-up reconstruction approaches reveals that neither method is universally superior; each has distinct strengths and limitations that suit it to different research scenarios. Top-down approaches like CarveMe typically produce more compact models with fewer dead-end metabolites, while bottom-up approaches like gapseq often generate more comprehensive reaction networks at the cost of potential network gaps [3]. The choice between approaches should be guided by research objectives: top-down methods may be preferable for high-throughput applications and consistent model generation across multiple organisms, while bottom-up approaches may be better suited for detailed investigation of specific metabolic capabilities.

For critical applications in drug development and metabolic engineering, where model accuracy significantly impacts decision-making, consensus approaches that integrate multiple reconstruction methods offer substantial advantages. Experimental evidence demonstrates that consensus models retain more biological information from individual reconstructions while reducing artifacts specific to any single approach [3] [6]. The research community would benefit from standardized protocols for model comparison and consensus building, particularly as metabolic modeling expands to complex microbial communities and host-pathogen interactions where comprehensive metabolic coverage is essential for accurate predictions.

The pursuit of reliable artificial intelligence models in biomedical research hinges on effectively quantifying and managing uncertainty. This guide objectively compares the performance of consensus models against single-tool reconstructions, demonstrating how strategic database selection and annotation quality directly impact model structure, function, and predictive reliability. Supported by experimental data, we provide methodologies and metrics for researchers to make informed decisions in model development, particularly for critical applications in drug discovery and development.

In biomedical research, the choice between using a single, powerful model or an ensemble of models (a consensus) is more than a technicality; it is a fundamental decision that influences the reliability and interpretability of outcomes. The rapid proliferation of foundation models, with more than 30 models each for biomedical text and images, has created a fragmented ecosystem, making model selection challenging [19]. This fragmentation introduces significant epistemic uncertainty: uncertainty stemming from incomplete knowledge of the best model for a task.

Furthermore, the integrity of any model is built upon its training data. The principle of "Garbage In, Garbage Out" (GIGO) is paramount; a model's ability to generalize is contingent on the quality and breadth of its annotations and database sources [20]. This guide directly compares consensus and single-tool approaches, quantifying how data-driven choices mitigate uncertainty and enhance model performance for scientific and drug development applications.

Theoretical Foundation: Uncertainty in Machine Learning

Uncertainty in machine learning is broadly categorized into two types: aleatoric and epistemic. Aleatoric uncertainty is inherent to the data, such as random noise or stochastic processes, and is generally irreducible. Epistemic uncertainty, on the other hand, stems from a lack of knowledge or incomplete information, which can be reduced by gathering more data or improving models [21].

- Data-Driven Uncertainty: Arises from noise, biases, or inconsistencies in the training data. High-quality, well-annotated data is essential to minimize this uncertainty [20].

- Model-Driven Uncertainty: Relates to the model's architecture and parameters. Ensemble or consensus methods are particularly effective at quantifying and reducing this form of uncertainty [21].

Quantifying this uncertainty is not merely an academic exercise. It provides a measure of confidence in predictions, which is crucial for decision-making in high-stakes fields like healthcare. As noted in research, the accuracy of ML models tends to fall when used on data that are statistically different from their training data (out-of-distribution data) [22]. Uncertainty Quantification (UQ) methods help estimate this expected drop in performance and provide an uncertainty band for the estimates [22].

Performance Comparison: Consensus Models vs. Single-Tool Reconstructions

The core of the model selection dilemma lies in the trade-off between the robust uncertainty estimates offered by consensus models and the computational simplicity of single-tool approaches. The performance divergence becomes especially pronounced when handling complex, noisy, or out-of-distribution data.

Table 1: Comparative Analysis of Single-Tool vs. Consensus Model Approaches

| Feature | Single-Tool Reconstructions | Consensus Models (Ensembles) |

|---|---|---|

| Core Principle | A single model architecture or algorithm is used for prediction. | Predictions are aggregated from multiple, diverse models. |

| Uncertainty Quantification | Often limited; may require specific techniques like Monte Carlo Dropout [21]. | Inherent; measured by the variance or disagreement among model predictions [21]. |

| Typical Accuracy | Can be high on in-distribution data but may degrade significantly on out-of-distribution data [22]. | Generally more robust and maintain higher accuracy on diverse data types due to aggregation. |

| Computational Cost | Lower cost for training and inference. | Higher cost, as it requires training and running multiple models [21]. |

| Resistance to Data Noise & Bias | Vulnerable to biases and noise present in its specific training set. | Mitigates individual model biases and noise through averaging, leading to more reliable insights [20]. |

| Interpretability | Can be simpler to interpret. | The aggregation mechanism can add a layer of complexity. |

| Ideal Use Case | Resource-constrained environments, well-defined problems with stable data distributions. | Safety-critical applications (e.g., medical diagnostics), and scenarios with complex or shifting data landscapes. |

The variance of predictions within an ensemble serves as a direct measure of uncertainty. Mathematically, for an ensemble with N members, the uncertainty for an input x can be quantified as:

$$\mathrm{Var}[f(x)] = \frac{1}{N} \sum_{i=1}^{N} \left( f_i(x) - \bar{f}(x) \right)^2$$ [21]

where $f_i(x)$ is the prediction of the $i$-th model and $\bar{f}(x)$ is the mean prediction. A larger variance indicates higher uncertainty, flagging predictions that require closer human inspection.
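The variance formula above translates directly into a few lines of Python. This is a minimal sketch: the sample names, the member predictions, and the `REVIEW_THRESHOLD` cut-off are hypothetical, and a real threshold would need to be chosen for the task at hand.

```python
from statistics import fmean, pvariance


def ensemble_uncertainty(predictions):
    """Return (mean, population variance) of ensemble member predictions."""
    mean = fmean(predictions)
    # pvariance divides by N, matching the 1/N factor in the formula.
    return mean, pvariance(predictions, mu=mean)


REVIEW_THRESHOLD = 0.01  # hypothetical cut-off for flagging a prediction

# Hypothetical outputs of a four-member ensemble for two inputs.
ensemble_outputs = {
    "sample_1": [0.81, 0.79, 0.80, 0.82],
    "sample_2": [0.20, 0.90, 0.50, 0.70],
}

for sample, preds in ensemble_outputs.items():
    mean, var = ensemble_uncertainty(preds)
    flag = "review" if var > REVIEW_THRESHOLD else "ok"
    print(f"{sample}: mean={mean:.3f} variance={var:.4f} -> {flag}")
```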

Experimental Protocols for Model Evaluation

A rigorous, statistically-sound evaluation protocol is essential for objectively comparing model performance. The following methodology outlines key steps, from data preparation to statistical testing.

Data Preparation and Annotation

- Data Sourcing: Utilize diverse and representative databases. In healthcare, this includes leveraging multi-modal data from biobanks, public repositories (e.g., JUMP-CP consortium), and internal organizational records [23].

- Annotation Protocol: Implement a structured annotation workflow.

  - Define Guidelines: Create clear, documented annotation guidelines with examples and edge cases [20].

  - Redundancy and Consensus: Use multiple annotators per task and establish a consensus mechanism to reduce errors [20].

  - Quality Control (QC): Employ built-in QC checks like Ground Truth jobs and "honeypots" (hidden validation frames) to monitor annotator accuracy in real-time [20].

- Data Splitting: Split data into three sets: a training set for model development, a calibration set for tuning conformal prediction, and a test set for final evaluation [21].
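The role of the calibration set can be illustrated with a minimal split conformal prediction sketch for classification. It assumes a nonconformity score of one minus the probability assigned to the true class, which is one common choice rather than the only one. The class labels, the probabilities, and the miscoverage level `alpha` are hypothetical.

```python
import math


def conformal_threshold(calibration, alpha=0.1):
    """Score threshold from (class_probabilities, true_label) pairs."""
    scores = sorted(1.0 - probs[label] for probs, label in calibration)
    n = len(scores)
    rank = math.ceil((n + 1) * (1 - alpha))  # 1-based rank of the quantile
    if rank > n:
        return float("inf")  # too few calibration points: keep every class
    return scores[rank - 1]


def prediction_set(probs, threshold):
    """All labels whose nonconformity score is within the threshold."""
    return {label for label, p in probs.items() if 1.0 - p <= threshold}


# Hypothetical calibration data: model probabilities and the true label.
calibration = [
    ({"growth": 0.90, "no_growth": 0.10}, "growth"),
    ({"growth": 0.20, "no_growth": 0.80}, "no_growth"),
    ({"growth": 0.95, "no_growth": 0.05}, "growth"),
    ({"growth": 0.70, "no_growth": 0.30}, "growth"),
    ({"growth": 0.40, "no_growth": 0.60}, "no_growth"),
    ({"growth": 0.85, "no_growth": 0.15}, "growth"),
    ({"growth": 0.45, "no_growth": 0.55}, "no_growth"),
    ({"growth": 0.75, "no_growth": 0.25}, "growth"),
    ({"growth": 0.65, "no_growth": 0.35}, "growth"),
]

threshold = conformal_threshold(calibration, alpha=0.2)
new_case = {"growth": 0.70, "no_growth": 0.30}
print(threshold, prediction_set(new_case, threshold))
```

Keeping the calibration pairs separate from the training data is what the coverage guarantee relies on, which is why the split above has three parts instead of two.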

Evaluation Metrics and Statistical Testing

For a comprehensive evaluation, move beyond single metrics. The table below summarizes key metrics for different ML tasks.

Table 2: Selection of Evaluation Metrics for Supervised Machine Learning Tasks

| ML Task | Key Evaluation Metrics | Brief Description |

|---|---|---|

| Binary Classification | Sensitivity (Recall), Specificity, Precision, F1-score, AUC-ROC [24] | Metrics derived from the confusion matrix (TP, TN, FP, FN) and ROC curve. |

| Multi-class Classification | Macro/Micro-averaged Precision, Recall, F1-score [24] | Extends binary metrics by computing them per-class and then averaging. |

| Regression | Mean Absolute Error (MAE), Mean Squared Error (MSE), Root MSE (RMSE) [25] | Measures the average magnitude of prediction errors. |

| Model Calibration | Conformal Prediction Sets [21] | Provides prediction sets with guaranteed coverage (e.g., 95% of sets contain the true label). |

After obtaining multiple metric values (e.g., via cross-validation), use statistical tests to determine if performance differences are significant. Avoid the commonly misused paired t-test if its assumptions (like normality of differences) are violated [24]. Use non-parametric tests like the Wilcoxon signed-rank test for comparing two models or the Friedman test for comparing multiple models across multiple datasets [24].
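For small numbers of paired scores, the Wilcoxon signed-rank test can be computed exactly without external libraries. The sketch below is an illustrative implementation under stated assumptions: zero differences are dropped, tied absolute differences share their average rank, and the p-value comes from enumerating every sign pattern, so it is only practical for roughly 20 pairs or fewer. The per-fold accuracy values are invented.

```python
from itertools import product


def wilcoxon_signed_rank(x, y):
    """Exact two-sided Wilcoxon signed-rank test; returns (W_plus, p_value)."""
    diffs = [a - b for a, b in zip(x, y) if a != b]  # drop zero differences
    order = sorted(range(len(diffs)), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * len(diffs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1  # mean of 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    centre = sum(ranks) / 2
    observed = abs(w_plus - centre)
    # Under the null hypothesis every sign pattern is equally likely.
    extreme = sum(
        abs(sum(r for r, s in zip(ranks, signs) if s) - centre) >= observed - 1e-12
        for signs in product((0, 1), repeat=len(ranks))
    )
    return w_plus, extreme / 2 ** len(ranks)


# Hypothetical per-fold accuracies (percent) for two models.
consensus = [86, 90, 79, 88, 92, 84]
single_tool = [81, 85, 80, 82, 85, 81]

w_plus, p_value = wilcoxon_signed_rank(consensus, single_tool)
print(f"W+ = {w_plus}, exact two-sided p = {p_value}")
```

With only six folds the smallest attainable two-sided p-value is 2/64, which is a reminder that the number of paired measurements limits what any such test can show.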

Quantifying the Impact of Data Dissimilarity

A novel approach to UQ involves measuring the dissimilarity between training and test datasets. The Anomaly-based Dataset Dissimilarity (ADD) measure is computed from the activation values of a neural network when fed the datasets. This dissimilarity measure can then be used to estimate classifier accuracy on unseen, out-of-distribution data and assign an uncertainty band to those estimates [22]. The amplitude of this uncertainty band tends to increase with data dissimilarity, providing a quantifiable warning of potential performance degradation [22].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Building and evaluating reliable models requires a suite of tools and methodologies. The following table details key solutions for managing data, annotation, and model uncertainty.

Table 3: Key Research Reagent Solutions for Robust Model Development

| Tool / Solution | Category | Primary Function |

|---|---|---|

| CIDOC Conceptual Reference Model (CRM) [26] | Documentation Standard | An ontology for semantically linking 3D models, sources, and decision-making processes, ensuring documentation interoperability. |

| IDOVIR Platform [26] | Documentation Platform | A user-friendly, web-based tool designed specifically for documenting the sources and paradata (reasoning) behind digital architectural reconstructions. |

| CVAT (Computer Vision Annotation Tool) [20] | Data Annotation | An open-source tool for labeling images and videos. Supports quality control features like consensus labeling, audit trails, and honeypots. |

| Conformal Prediction [21] | Uncertainty Quantification | A model-agnostic framework for creating prediction sets/intervals with guaranteed coverage (e.g., 95%), quantifying uncertainty for any black-box model. |

| Monte Carlo Dropout [21] | Uncertainty Quantification | A technique where dropout is kept active during prediction. Multiple forward passes create a distribution, quantifying model uncertainty efficiently. |

| WissKI [26] | Documentation System | A system using Semantic Web technologies (e.g., CIDOC CRM) to build virtual research environments for documenting cultural heritage and 3D reconstructions. |

| Anomaly-based Dataset Dissimilarity (ADD) [22] | Data Dissimilarity Measure | A novel measure to quantify the statistical divergence between two datasets, used to predict model performance and uncertainty on out-of-distribution data. |

The choice between consensus models and single-tool reconstructions is not about finding a universal winner, but about aligning methodology with project goals and constraints. Consensus models excel in scenarios demanding high reliability, robust uncertainty estimates, and resilience against data variability, making them suited for critical applications in drug development and healthcare. Single-tool approaches offer a computationally efficient alternative for well-defined problems with stable data distributions.

The empirical data and protocols presented confirm that uncertainty is not an abstract concept but a quantifiable property. The foundational element influencing this uncertainty is, unequivocally, the quality of data annotation and the strategic selection of source databases. By adopting the rigorous evaluation frameworks and tools outlined, researchers and drug development professionals can make informed decisions, build more trustworthy models, and ultimately accelerate the translation of AI research into real-world impact.

What is a Consensus Model? Defining the 'CoreX' and 'Assembly' Concepts

In the field of systems biology, a consensus model is a unified genome-scale metabolic model (GEM) created by integrating the reconstructions of the same organism generated by multiple automated tools [27]. This approach synthesizes models built from different biochemical databases and algorithms to form a single, more reliable network. The terms "Assembly" and "CoreX" describe specific types of consensus models, differentiated by the level of agreement required for including metabolic features [27].

- Assembly Model: This is the broadest type of consensus model, representing the union of all input models. It contains every metabolite, reaction, and gene present in at least one of the original reconstructions [27].

- CoreX Model: This is a more conservative consensus model that includes only the metabolic features present in at least X number of the input models. For example, a "Core2" model contains features found in at least two tools, while a "Core4" model contains only those found in four or more [27]. A higher "X" value indicates a higher confidence level for the included features.

The primary goal of consensus modeling is to mitigate the uncertainty and tool-specific biases inherent in single-tool reconstructions, ultimately leading to more accurate and biologically realistic predictions of metabolic behavior [9].
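The Assembly and CoreX definitions amount to counting how many input models contain each feature and applying a threshold. The sketch below shows that logic on reaction sets only; the reaction IDs and their assignment to tools are hypothetical, and this is not the GEMsembler implementation.

```python
from collections import Counter


def core_x(models, x):
    """Features present in at least `x` of the input models."""
    counts = Counter(
        feature for features in models.values() for feature in set(features)
    )
    return {feature for feature, n in counts.items() if n >= x}


# Hypothetical reaction sets, assumed to share one namespace.
models = {
    "CarveMe": {"PGI", "PFK", "FBA", "PYK"},
    "gapseq": {"PGI", "PFK", "FBA", "TKT1"},
    "KBase": {"PGI", "PFK", "ENO"},
}

assembly = core_x(models, 1)  # union of all inputs
core2 = core_x(models, 2)
core3 = core_x(models, 3)
print(sorted(assembly), sorted(core2), sorted(core3))
```

Raising X shrinks the model toward the features every tool agrees on, which is the trade-off between coverage and confidence described above.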

Table 1: Experimental Performance: Consensus vs. Single-Tool Models

The following table summarizes key experimental findings from studies that compared the performance of consensus models against individual reconstruction tools.

| Study Organism / Context | Performance Metric | Consensus Model Performance | Single-Tool Model Performance | Key Finding |

|---|---|---|---|---|

| E. coli & L. plantarum [27] | Auxotrophy Prediction | Outperformed gold-standard manual models | Varies by tool; consensus was superior | Consensus models better predict nutrient requirements. |

| E. coli & L. plantarum [27] | Gene Essentiality Prediction | Outperformed gold-standard models | Varies by tool; consensus was superior | Optimizing GPR rules in consensus models improves gene essentiality predictions. |

| Marine Bacterial Communities [9] | Network Coverage | Higher number of reactions and metabolites | Fewer reactions and metabolites (gapseq had most) | Consensus models retain unique features from individual tools, creating a more comprehensive network. |

| Marine Bacterial Communities [9] | Network Quality | Fewer dead-end metabolites | More dead-end metabolites (highest in gapseq) | Consensus approach reduces network gaps, improving functional utility. |

| Marine Bacterial Communities [9] | Gene Support | Incorporated more genes | Fewer genes (CarveMe had the most) | Consensus models have stronger genomic evidence for reactions. |

Experimental Protocols in Consensus Modeling

The development and validation of consensus models follow a structured workflow. The diagram below illustrates the key stages of the GEMsembler pipeline, a dedicated framework for building consensus metabolic models [27].

Diagram 1: The GEMsembler consensus model creation workflow.

Detailed Methodology

The process can be broken down into the following detailed steps, as implemented in the GEMsembler pipeline [27]:

Feature Conversion to Common Nomenclature:

- Objective: To enable a direct comparison of models built using different biochemical databases (e.g., ModelSEED, MetaCyc, BiGG).

- Protocol: All metabolite and reaction identifiers from the input models are programmatically mapped to a standardized namespace, such as BiGG IDs. Gene identifiers are converted using BLAST against a selected reference genome to ensure consistent locus tags across all models.

Supermodel Creation:

- Objective: To create a single object containing all metabolic features from all input models.

- Protocol: The converted models are merged into a "supermodel." This supermodel is a union of all metabolites, reactions, and genes, with metadata tracking the original source of each feature.
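The first two steps, identifier conversion and merging with source tracking, can be sketched as a single pass over the input models. Everything named here is hypothetical: the tool-specific IDs, the `id_maps` lookup tables, and the dictionary layout of the supermodel are illustrative choices, not GEMsembler's data structures.

```python
def build_supermodel(models, id_maps):
    """Merge models into {common_id: set of source tools}.

    Features without an entry in the tool's mapping table are collected
    separately so they can be reviewed rather than silently dropped.
    """
    supermodel, unmapped = {}, {}
    for tool, features in models.items():
        mapping = id_maps.get(tool, {})
        for original_id in features:
            common_id = mapping.get(original_id)
            if common_id is None:
                unmapped.setdefault(tool, set()).add(original_id)
                continue
            supermodel.setdefault(common_id, set()).add(tool)
    return supermodel, unmapped


# Hypothetical tool-specific reaction IDs and mapping tables.
models = {
    "gapseq": ["rxnA", "rxnB", "rxnC"],
    "CarveMe": ["PGI", "PYK"],
}
id_maps = {
    "gapseq": {"rxnA": "PGI", "rxnB": "PFK"},
    "CarveMe": {"PGI": "PGI", "PYK": "PYK"},
}

supermodel, unmapped = build_supermodel(models, id_maps)
print(supermodel)
print(unmapped)
```

Recording the source tools per feature is what later allows agreement thresholds to be applied without revisiting the original models.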

Consensus Model Generation:

- Objective: To create specific consensus models (Assembly and CoreX) based on the level of agreement between tools.

- Protocol: The pipeline generates different model combinations from the supermodel.

- The Assembly model is equivalent to a "Core1" model, containing every feature present in at least one tool.

- CoreX models are generated by applying an agreement threshold. For instance, a reaction must be present in at least X of the original models to be included in the CoreX model. Gene-Protein-Reaction (GPR) rules are also logically combined based on this agreement principle.

Model Analysis, Curation, and Validation:

- Objective: To evaluate the functional performance of the consensus models and compare them against single-tool and gold-standard models.

- Protocol: The generated consensus models are subjected to functional tests using constraint-based methods like Flux Balance Analysis (FBA). Key validation protocols include [27]:

- Auxotrophy Prediction: Testing the model's ability to correctly predict which nutrients the organism requires to grow in a minimal medium.

- Gene Essentiality Prediction: Systematically knocking out each gene in the model and predicting whether the knockout would prevent growth, then comparing these predictions to experimental data.

- Tools: Frameworks like COMMIT can be used for gap-filling community consensus models to ensure metabolic functionality [9].
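The first half of a gene essentiality test, deciding which reactions are lost when a gene is deleted, depends on evaluating GPR rules. The sketch below parses boolean rules with Python's `ast` module, which assumes gene IDs are valid Python identifiers and rules use lowercase `and`/`or`. The rules and gene names are hypothetical. Predicting essentiality would additionally require constraining the lost reactions to zero flux and re-running FBA, which is not shown.

```python
import ast


def gpr_is_active(rule, knocked_out):
    """Evaluate a boolean GPR rule with the given genes deleted."""
    if not rule.strip():
        return True  # no gene association: unaffected by knockouts

    def evaluate(node):
        if isinstance(node, ast.BoolOp):
            values = [evaluate(v) for v in node.values]
            return all(values) if isinstance(node.op, ast.And) else any(values)
        if isinstance(node, ast.Name):
            return node.id not in knocked_out
        raise ValueError(f"unsupported GPR syntax: {ast.dump(node)}")

    return evaluate(ast.parse(rule.strip(), mode="eval").body)


# Hypothetical GPR rules: 'and' = enzyme complex, 'or' = isozymes.
gprs = {"R1": "g1 and g2", "R2": "g3 or g4", "R3": ""}
genes = ["g1", "g2", "g3", "g4"]

lost_reactions = {
    gene: sorted(r for r, rule in gprs.items() if not gpr_is_active(rule, {gene}))
    for gene in genes
}
print(lost_reactions)
```

The example shows why GPR structure matters for essentiality predictions: deleting one subunit of a complex removes the reaction, while deleting one of two isozymes does not.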

The Scientist's Toolkit: Essential Research Reagents & Solutions

The table below lists key software tools, databases, and resources essential for conducting consensus model research.

| Item Name | Type | Primary Function in Research |
|---|---|---|
| GEMsembler [27] | Software Package | A Python package specifically designed to compare, combine, and analyze GEMs from different tools to build consensus models. |
| CarveMe [9] | Reconstruction Tool | An automated tool using a top-down approach, starting with a universal model and removing unnecessary reactions. |
| gapseq [9] | Reconstruction Tool | An automated tool using a bottom-up approach, mapping enzyme genes to reactions and performing extensive gap-filling. |
| KBase [9] | Reconstruction Tool & Platform | An automated tool using a bottom-up approach and the ModelSEED database for reconstruction. |
| BiGG Database [27] | Biochemical Database | A knowledgebase of curated, non-redundant metabolic reactions; often used as a standard namespace for model integration. |
| COBRApy [27] | Software Toolbox | A fundamental Python library for Constraint-Based Reconstruction and Analysis, used for simulating and analyzing metabolic models. |
| COMMIT [9] | Software Toolbox | A tool used for the gap-filling of community metabolic models, which can be applied in the consensus model workflow. |
| MetaNetX [27] | Online Platform | A resource that maps metabolite and reaction identifiers across different biochemical databases, facilitating model comparison. |

Consensus models, defined by their "Assembly" and "CoreX" constructions, represent a powerful strategy to overcome the limitations of single-tool metabolic reconstructions. By integrating multiple reconstructions of the same organism, they create more comprehensive and reliable metabolic networks. In the cited study, curated consensus models for Escherichia coli and Lactiplantibacillus plantarum outperformed the manually curated gold-standard models in auxotrophy and gene essentiality prediction [27]. For researchers in drug development and systems biology, adopting a consensus approach provides a more robust foundation for predicting metabolic interactions, identifying drug targets, and understanding cellular physiology.

Building Better Models: Tools and Techniques for Assembling Consensus Metabolic Networks

Consensus approaches in computational biology and enterprise systems aim to synthesize multiple, often divergent, inputs to produce a more accurate and reliable output. This guide explores two distinct frameworks that embody this principle: GEMsembler, used in systems biology for building genome-scale metabolic models, and COMMGEN (Communication Generation), an enterprise process within the PeopleSoft Campus Community for generating communications. While operating in vastly different domains, both leverage consensus to overcome the limitations of single-source constructions.

The following table highlights the core differences in the application of the consensus principle between the two frameworks.

| Feature | GEMsembler | COMMGEN (PeopleSoft) |
|---|---|---|
| Domain | Systems Biology / Metabolic Modeling | Enterprise Resource Planning (ERP) / Communications Management |
| Core Consensus Function | Assembles a unified metabolic model from multiple, automatically-reconstructed input models. [7] [27] | Generates personalized communications (letters/emails) by merging recipient data from the database with predefined templates. [28] [29] |
| Primary Inputs | GEMs from tools like CarveMe, ModelSEED, and gapseq. [27] [30] | Recipient IDs, a Letter Code, data source definitions, and BI Publisher templates. [28] |
| Primary Outputs | A consensus "supermodel" or curated core model with improved predictive performance. [7] [27] | Finalized, personalized communications (PDFs or emails) sent to recipients. [28] [29] |
| Key Performance Advantage | Outperforms single-tool and manually curated gold-standard models in predicting auxotrophy and gene essentiality. [27] | Supports multi-language and multi-method (email/print) communications based on recipient preferences, unlike simpler Letter Generation. [28] [29] |

GEMsembler: Consensus in Metabolic Model Reconstruction

GEMsembler is a Python package designed to address a key challenge in systems biology: different automated tools for reconstructing Genome-scale Metabolic Models (GEMs) for the same organism produce models with varying structures and predictive capabilities. [27] GEMsembler does not build models from scratch but operates on models generated by other tools, comparing them and assembling consensus models that harness the strengths of each approach. [7] [27]

Experimental Workflow and Protocol

The process of generating a consensus model with GEMsembler follows a structured, multi-stage workflow.

Detailed Experimental Protocol:

- Input Model Preparation: Gather GEMs for the same organism that have been reconstructed using different tools such as CarveMe, ModelSEED, and gapseq. These models must be in a COBRApy-readable format (e.g., SBML). [30] [31]

- Nomenclature Unification: Run the GEMsembler conversion process to map all metabolites and reactions from the input models to a common namespace (BiGG IDs). This step is critical for a structurally accurate comparison. GEMsembler uses BLAST to convert gene identifiers to a unified set of locus tags if genome sequences are provided. [27]

- Supermodel Creation: Assemble the converted models into a single "supermodel" object. This object contains the union of all metabolic features (metabolites, reactions, genes) and tracks the origin of each feature from the input models. [27]

- Consensus Generation: Generate specific consensus models from the supermodel. A common approach is to create "coreX" models, which contain only the metabolic features (reactions, metabolites) present in at least X of the input models. This filters out uncertain features and increases the confidence level of the resulting network. [27]

- Functional Validation: Analyze the performance of the consensus models using standard metabolic modeling assessments, such as predicting growth on different nutrient sources (auxotrophy) and identifying genes essential for growth under specific conditions. Compare these predictions against experimental data and the performance of the original, single-tool models. [27]
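The "coreX" filtering described in the Consensus Generation step can be sketched in plain Python. This is an illustration of the idea only and does not use the GEMsembler API: the function `core_x`, the provenance dictionary, and the toy reaction and model names are assumptions made for the example.

```python
def core_x(feature_sources, x):
    """Return the features found in at least x input models, sorted by ID."""
    if x < 1:
        raise ValueError("x must be at least 1")
    return sorted(
        feature for feature, sources in feature_sources.items()
        if len(sources) >= x
    )


# Toy provenance table: reaction ID -> input models that contain it.
# Model names and memberships are invented for illustration.
reaction_sources = {
    "PGI": {"carveme", "gapseq", "modelseed"},
    "PFK": {"carveme", "gapseq", "modelseed"},
    "FBA": {"carveme", "gapseq"},
    "TPI": {"carveme"},
    "PYK": {"modelseed"},
}

assembly = core_x(reaction_sources, 1)  # union of all input models
core2 = core_x(reaction_sources, 2)     # present in at least two models
core3 = core_x(reaction_sources, 3)     # present in all three models
print(assembly, core2, core3)
```

Raising X trades coverage for confidence: the X = 1 set is the full union, while the highest X keeps only the features on which every input model agrees.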

Performance and Experimental Data

Research demonstrates the tangible benefits of the GEMsembler consensus approach. In a study on Escherichia coli and Lactiplantibacillus plantarum, GEMsembler-curated consensus models, built from four automatically reconstructed models, were shown to outperform the manually curated gold-standard models in both auxotrophy and gene essentiality predictions. [27] Furthermore, by optimizing gene-protein-reaction (GPR) rules based on the consensus, GEMsembler even improved gene essentiality predictions in the gold-standard models, highlighting its power for model refinement. [27]

Essential Research Toolkit for GEMsembler

| Research Reagent / Tool | Function in the Workflow |
|---|---|
| CarveMe, ModelSEED, gapseq | Automated GEM reconstruction tools that generate the diverse input models for GEMsembler. [27] |
| COBRApy | A fundamental Python library for constraint-based modeling. GEMsembler's supermodel structure is based on its classes. [27] |
| BiGG Database | A knowledgebase of curated metabolic reactions and metabolites. Serves as the target nomenclature for unifying model components. [27] |
| BLAST | Used internally by GEMsembler for converting gene identifiers from different input models to a common set of locus tags. [27] [30] |
| MetaNetX | A platform that can be used to map metabolite and reaction identifiers from different databases, assisting in the unification process. [27] |

COMMGEN: Consensus in Enterprise Communications

The Communication Generation (COMMGEN) process, known as SCC_COMMGEN, is an application engine process within the PeopleSoft Campus Community suite. It is designed to generate personalized outgoing communications (letters or emails) for individuals or organizations. [28] [29] Its "consensus" logic lies in its ability to merge specific data extracted from the system's database with pre-defined, rule-based templates to produce a final, coherent document. This ensures that the output communication represents an agreed-upon, institution-standard format that is consistently applied across all recipients.

System Workflow and Configuration

The process of generating a communication via COMMGEN is a multi-step sequence involving both pre-launch configuration and execution.

Detailed Configuration and Execution Protocol:

Prerequisite Setup:

- Letter Code Configuration: A standard letter code must be set up in the system. This code is linked to an Oracle BI Publisher report definition and its associated data source and templates (e.g., for PDF or email output). [28]

- Communication Assignment: The communication must be assigned to the target individuals or organizations. This can be done manually or automatically via the 3C engine (Population Selection, Trigger Event). The assignment records the letter code and context. [28] [29]

Process Execution:

- Enter Selection Parameters: Specify the recipient IDs (e.g., one person, all persons, or a population selection), the letter code, and the specific report/template to use. The process can respect the recipient's preferred communication method (email/letter) and language. [28]

- Enter Process and Email Parameters: Define data usages (e.g., for names and addresses), handle missing data rules, and for emails, provide the sender, subject line, and other email headers. [28]

- Run the Process: Execute the SCC_COMMGEN process. It will extract the required variable data for the specified recipients, merge it with the selected BI Publisher template, and generate the final output. [28]

Performance and Functional Advantages

COMMGEN's primary performance advantage over simpler alternatives like the legacy Letter Generation process is its deep integration with PeopleSoft and use of modern templating. The key differentiator is its support for generating communications based on recipient preferences for language and method (email or print). [28] Furthermore, it supports advanced features like joint communications (e.g., a single letter to a couple at a shared address), enclosures, and checklist status updates, making it a more robust and flexible consensus framework for enterprise communication needs. [29]

Essential Research Toolkit for PeopleSoft COMMGEN

| Research Reagent / Tool | Function in the Workflow |
|---|---|
| Oracle BI Publisher | The core reporting engine used to design and process the communication templates, merging them with the XML data from COMMGEN. [28] |
| Standard Letter Table | The PeopleSoft table where letter codes are defined and linked to their corresponding BI Publisher report definitions. [28] |
| 3C Engine (Communications, Checklists, Comments) | An automation engine within PeopleSoft that can be used to assign communications to recipients based on predefined rules and conditions, feeding into COMMGEN. [28] [29] |
| Population Selection | A method, often using PS Query or an external file, to identify a group of IDs for processing, which can be used as the input for COMMGEN. [28] |

Genome-scale metabolic models (GEMs) are fundamental tools in systems biology for predicting cellular metabolism and perturbation responses. However, automated GEM reconstruction tools—such as CarveMe, gapseq, and KBase—each utilize different biochemical databases and algorithms, resulting in models with varying structural and functional properties for the same organism [27] [9]. This variability introduces significant uncertainty in model predictions, as no single tool consistently outperforms others across all biological contexts [9].

Consensus modeling addresses this limitation by synthesizing multiple individual reconstructions into a unified "supermodel." This approach harnesses model diversity to create a new, higher-dimensional system that benefits from each component model, compensating for individual biases and errors [32] [27]. The resulting consensus models demonstrate enhanced performance in predicting auxotrophy and gene essentiality, sometimes even surpassing manually curated gold-standard models [27]. This guide provides a comprehensive workflow for creating these consensus models, from initial data preparation to final validation.

The process of building a consensus model follows a structured pathway that transforms multiple individual models into a unified, high-confidence reconstruction. The overall workflow encompasses model conversion, unification, and consensus building, as visualized below.

Step-by-Step Experimental Protocol

Model Conversion and Nomenclature Unification

The initial phase focuses on standardizing the heterogeneous input from various reconstruction tools into a common namespace to enable meaningful comparison and integration.

Step 1: Metabolite ID Conversion - Convert all metabolite identifiers from source databases (ModelSEED, MetaCyc, etc.) to a standardized namespace, preferably BiGG IDs, using cross-reference databases such as MetaNetX [27] [9]. This step is crucial for identifying equivalent metabolites across models that may use different naming conventions.

Step 2: Reaction ID Conversion - Map reaction identifiers to the target namespace using reaction equations to verify consistency and maintain proper network topology during conversion [27]. This equation-based approach ensures that the stoichiometry and directionality of reactions are preserved regardless of identifier differences.
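Steps 1 and 2 can be sketched together: metabolite IDs are translated through a cross-reference table, and the translated equation is then compared with target-namespace equations to confirm the reaction match and detect reversed notation. This is a simplified sketch, not GEMsembler's conversion code. The source-side IDs (`src_*`), the mapping table, and the helper names are hypothetical; in practice the mapping would come from a resource such as MetaNetX.

```python
# Hypothetical cross-reference table: source-namespace ID -> BiGG-style ID.
METABOLITE_XREF = {
    "src_glc": "glc__D_c",
    "src_atp": "atp_c",
    "src_g6p": "g6p_c",
    "src_adp": "adp_c",
    "src_h": "h_c",
}

# Target-namespace reactions as {metabolite: coefficient}; negative = consumed.
TARGET_REACTIONS = {
    "HEX1": {"glc__D_c": -1, "atp_c": -1, "g6p_c": 1, "adp_c": 1, "h_c": 1},
}


def convert_equation(stoich, xref):
    """Translate metabolite IDs; return (converted equation, unmapped IDs)."""
    converted, unmapped = {}, []
    for met, coeff in stoich.items():
        if met in xref:
            converted[xref[met]] = coeff
        else:
            unmapped.append(met)
    return converted, unmapped


def match_reaction(stoich, target_reactions):
    """Find a target reaction with the same equation and report its orientation."""
    forward = frozenset(stoich.items())
    reverse = frozenset((met, -coeff) for met, coeff in stoich.items())
    for target_id, target_stoich in target_reactions.items():
        key = frozenset(target_stoich.items())
        if key == forward:
            return target_id, "same"
        if key == reverse:
            return target_id, "reversed"
    return None


source_reaction = {"src_glc": -1, "src_atp": -1, "src_g6p": 1, "src_adp": 1, "src_h": 1}
converted, unmapped = convert_equation(source_reaction, METABOLITE_XREF)
# Only attempt a match when every metabolite was converted.
match = match_reaction(converted, TARGET_REACTIONS) if not unmapped else None
print(match)  # ('HEX1', 'same')
```

Reporting the orientation separately, rather than silently accepting a reversed equation, keeps directionality information available when flux bounds are merged later.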

Step 3: Gene ID Conversion - If genome sequences are provided with input models, convert gene identifiers to a standardized locus tag system using BLAST analysis for cross-referencing [27]. This genetic unification enables consistent gene-protein-reaction (GPR) rule mapping across the consensus model.
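A BLAST-based gene ID conversion can be illustrated by selecting the best hit per query gene from BLAST tabular output (`-outfmt 6`, whose default columns are query ID, subject ID, percent identity, alignment length, mismatches, gap opens, query start/end, subject start/end, e-value, and bit score). The sketch below is an assumption-laden example, not GEMsembler's internal procedure: the identity and e-value thresholds, the helper names, and the gene IDs are invented for illustration.

```python
import re


def best_hits(blast_lines, min_identity=90.0, max_evalue=1e-10):
    """Pick the highest-bitscore hit per query from BLAST tabular (outfmt 6) lines."""
    best = {}
    for line in blast_lines:
        if not line.strip() or line.startswith("#"):
            continue
        fields = line.rstrip("\n").split("\t")
        query, subject = fields[0], fields[1]
        identity = float(fields[2])
        evalue = float(fields[10])
        bitscore = float(fields[11])
        if identity < min_identity or evalue > max_evalue:
            continue  # hit too weak to trust as the same gene
        if query not in best or bitscore > best[query][1]:
            best[query] = (subject, bitscore)
    return {query: subject for query, (subject, _) in best.items()}


def rename_genes_in_gpr(gpr, mapping):
    """Replace gene IDs in a GPR rule string; unmapped tokens are left unchanged."""
    return re.sub(r"[A-Za-z0-9_.\-]+",
                  lambda m: mapping.get(m.group(0), m.group(0)), gpr)


# Toy BLAST output with hypothetical query genes and locus tags.
lines = [
    "geneA\tb0001\t99.5\t300\t1\t0\t1\t300\t1\t300\t1e-150\t550",
    "geneA\tb0002\t91.0\t290\t26\t0\t1\t290\t1\t290\t1e-90\t400",
    "geneB\tb0003\t45.0\t120\t66\t2\t1\t120\t5\t124\t1e-5\t60",
]
mapping = best_hits(lines)
new_rule = rename_genes_in_gpr("(geneA and geneB) or geneC", mapping)
print(mapping, new_rule)
```

Genes without a confident hit (here `geneB` and `geneC`) keep their original IDs, so they remain visible for manual inspection instead of being mapped to a weak match.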

Supermodel Assembly and Consensus Generation

After successful unification, the converted models are assembled into a supermodel structure that tracks the origin of all metabolic features.

Step 4: Supermodel Creation - Assemble all converted models into a unified "supermodel" object that maintains the COBRApy structure while adding fields to store provenance information for each feature (metabolites, reactions, genes) [27]. Features that could not be converted are stored in a separate "not_converted" field for manual inspection.
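The provenance tracking described in Step 4 can be pictured with a small data structure. This is a plain-Python analogue written for illustration; it is not the GEMsembler supermodel class and does not build on COBRApy objects. The class name `Supermodel`, its `add_model` method, and the toy feature IDs are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Supermodel:
    """Union of converted features, each with the set of models it came from."""
    # feature type -> feature ID -> set of source model names
    sources: dict = field(
        default_factory=lambda: {"metabolites": {}, "reactions": {}, "genes": {}}
    )
    # model name -> feature type -> sorted IDs that could not be converted
    not_converted: dict = field(default_factory=dict)

    def add_model(self, name, converted, unconverted=None):
        """Merge one converted model and record its unconverted features."""
        for feature_type, ids in converted.items():
            for feature_id in ids:
                self.sources[feature_type].setdefault(feature_id, set()).add(name)
        self.not_converted[name] = {
            feature_type: sorted(ids)
            for feature_type, ids in (unconverted or {}).items()
        }


# Toy input models with invented contents.
supermodel = Supermodel()
supermodel.add_model(
    "carveme",
    {"reactions": {"PGI", "PFK"}, "metabolites": {"g6p_c", "f6p_c"}, "genes": {"gene_1"}},
)
supermodel.add_model(
    "gapseq",
    {"reactions": {"PGI"}, "metabolites": {"g6p_c", "f6p_c"}, "genes": {"gene_1"}},
    unconverted={"reactions": {"rxn_unmapped_1"}},
)
print(supermodel.sources["reactions"])
print(supermodel.not_converted)
```

Keeping unconverted features in a separate field, rather than discarding them, preserves them for manual inspection without letting unmapped identifiers inflate the apparent disagreement between models.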