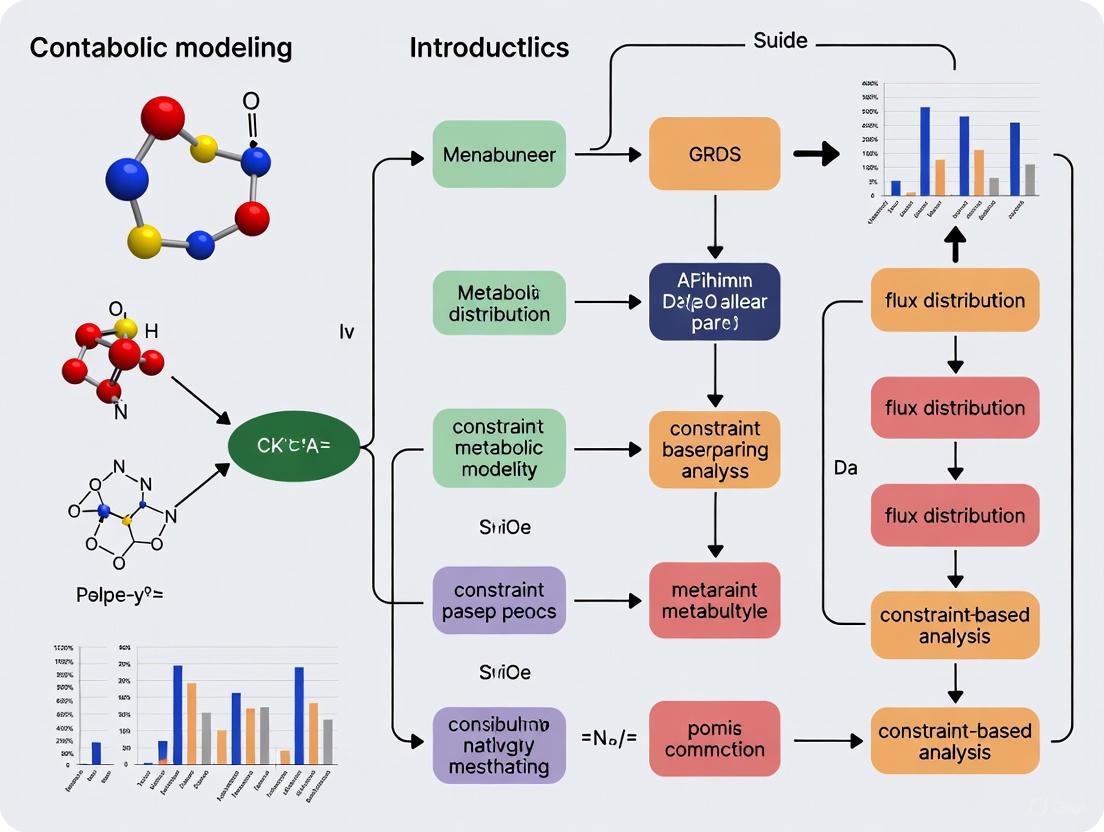

Constraint-Based Metabolic Modeling: A Foundational Guide for Biomedical Research and Drug Development

Abstract

This guide provides a comprehensive introduction to constraint-based metabolic modeling (CBM), a powerful computational framework for simulating metabolism at the genome-scale. Tailored for researchers, scientists, and drug development professionals, it covers foundational principles, core methodologies like Flux Balance Analysis (FBA), and practical applications in biotechnology and biomedicine, such as investigating drug-induced metabolic changes in cancer. The content also addresses crucial steps for troubleshooting, optimizing, and validating models to ensure predictive reliability, and explores advanced frameworks integrating proteome constraints. By synthesizing current tools, best practices, and real-world case studies, this article serves as an essential resource for leveraging CBM to uncover therapeutic vulnerabilities and drive innovation in biomedical research.

Core Principles: What is Constraint-Based Modeling and Why is it Essential?

Defining Constraint-Based Modeling and Genome-Scale Metabolic Models (GEMs)

Constraint-Based Modeling (CBM) is a mathematical framework used to analyze and predict the behavior of biological systems, particularly cellular metabolism. Unlike kinetic modeling, which requires extensive parameter data, CBM relies on physicochemical constraints to define the space of possible metabolic behaviors, making it particularly valuable for studying systems where comprehensive kinetic data are unavailable [1]. The most common application of CBM is Flux Balance Analysis (FBA), which uses linear programming to predict the flow of metabolites through a metabolic network under steady-state conditions [2].

Genome-Scale Metabolic Models (GEMs) are computational representations of the complete metabolic network of an organism, contextualizing different types of "Big Data" such as genomics, metabolomics, and transcriptomics [3]. A GEM quantitatively defines the relationship between genotype and phenotype by containing all known metabolic reactions, associated genes, enzymes, gene-protein-reaction (GPR) rules, and metabolites [3]. The first GEM for Haemophilus influenzae was reported in 1999, and since then, advances have led to the development of GEMs for an increasing number of organisms across bacteria, archaea, and eukarya [2]. As of February 2019, GEMs had been reconstructed for 6,239 organisms (5,897 bacteria, 127 archaea, and 215 eukaryotes), with 183 organisms subjected to manual GEM reconstruction [2].

Table: Key Statistics on GEM Reconstructions (as of February 2019)

| Category | Number of Organisms | Manually Reconstructed |
|---|---|---|
| Bacteria | 5,897 | 113 |
| Archaea | 127 | 10 |
| Eukaryotes | 215 | 60 |
| Total | 6,239 | 183 |

Core Principles of Constraint-Based Modeling

Fundamental Concepts and Mathematical Formalism

The core principle of CBM is the use of constraints to limit the possible metabolic behaviors of a system. These constraints include:

- Stoichiometric constraints: Based on the conservation of mass in metabolic networks

- Thermodynamic constraints: Defining reaction directionality based on energy considerations

- Enzyme capacity constraints: Limiting flux rates based on enzyme availability

- Regulatory constraints: Incorporating transcriptional and translational regulation

The mathematical foundation of CBM is the stoichiometric matrix S, where rows represent metabolites and columns represent reactions [1]. The system is described by the equation:

S · v = 0

where v is the vector of metabolic fluxes. This equation represents the steady-state assumption, where metabolite concentrations remain constant over time [1]. Additional constraints are added as inequalities:

α ≤ v ≤ β

where α and β represent lower and upper bounds for each reaction flux [4].

Types of Constraints in Metabolic Models

Table: Constraint Types in Metabolic Modeling

| Constraint Type | Description | Application Examples |
|---|---|---|
| Stoichiometric | Mass balance for each metabolite in the network | Fundamental to all FBA simulations |
| Thermodynamic | Reaction directionality based on energy feasibility | Eliminating thermodynamically infeasible cycles [5] |
| Enzyme | Limits based on enzyme kinetics and capacity | GECKO models incorporating kcat values [6] |
| Dynamic | Time-dependent changes in metabolites and biomass | dFBA simulating batch cultures [1] |
| Transcriptional | Incorporation of gene expression data | Context-specific model reconstruction [7] |

Reconstruction and Simulation of GEMs

GEM Reconstruction Process

The reconstruction of high-quality GEMs involves multiple stages of refinement and validation. The technological process of model construction primarily follows four key steps [4]:

- Draft Model Construction: Automated reconstruction using genomic data and database resources

- Manual Refinement: Curating gene-protein-reaction associations and verifying reaction directionality

- Model Conversion: Translating the biochemical network into a mathematical format

- Model Validation: Testing model predictions against experimental data

Figure: GEM Reconstruction and Validation Workflow

Three main methods exist for GEM construction: manual, automatic, and semi-automatic [4]. Semi-automatic construction has emerged as the preferred approach, combining the accuracy of manual curation with the efficiency of automated tools. This method involves obtaining an initial GEM using automated build tools followed by extensive manual refinement to produce a more accurate model [4].

Simulation Methods and Algorithms

Flux Balance Analysis (FBA) is the most widely used algorithm for simulating GEMs. FBA uses linear programming to find a flux distribution that maximizes or minimizes an objective function (typically biomass growth) under the applied constraints [2]. The standard FBA formulation is:

Maximize: Z = cᵀv

Subject to: S · v = 0

and: α ≤ v ≤ β

where Z is the objective function and c is a vector indicating which reactions contribute to the objective.

Several extensions to FBA have been developed to address specific research questions:

- Dynamic FBA (dFBA): Extends FBA to dynamic systems by calculating successive quasi-steady states over time [1]

- 13C Metabolic Flux Analysis (13C MFA): Uses isotopic tracer data to determine intracellular metabolic fluxes [3]

- Regulatory FBA (rFBA): Incorporates transcriptional regulatory constraints

- Thermodynamic-based FBA (tFBA): Includes thermodynamic constraints to eliminate infeasible cycles

Figure: Flux Balance Analysis Methodology

Advanced Applications of GEMs

Biomedical and Industrial Applications

GEMs have become indispensable tools in both basic research and applied biotechnology, with several key application areas:

- Strain development for chemical production: Engineering microorganisms to produce valuable chemicals and materials [2]

- Drug target identification: Identifying potential therapeutic targets in pathogens [3] [2]

- Understanding human diseases: Studying metabolic aspects of cancer and other diseases [7] [2]

- Pan-reactome analysis: Comparing metabolic capabilities across multiple strains or species [2]

- Modeling host-microbe interactions: Studying metabolic interactions in microbial communities and between hosts and their microbiomes [3]

In cancer research, GEMs have been used to investigate drug-induced metabolic changes. For example, a 2025 study used GEMs to analyze the metabolic effects of kinase inhibitors in gastric cancer cells, revealing "widespread down-regulation of biosynthetic pathways, particularly in amino acid and nucleotide metabolism" [7]. The study applied the Tasks Inferred from Differential Expression (TIDE) algorithm to infer pathway activity changes following drug treatments [7].

Multi-Strain and Community Modeling

Advances in GEM methodologies have enabled the construction of multi-strain models and microbial community models. Multi-strain GEMs capture metabolic diversity across different strains of the same species. For example, Monk et al. created a multi-strain GEM from 55 individual E. coli GEMs, comprising both a "core" model (intersection of all models) and a "pan" model (union of all models) [3].
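
The "core" and "pan" definitions above map directly onto set intersection and union. The following is a minimal sketch of that idea, assuming each strain model is reduced to a set of reaction identifiers; the strain names and reaction IDs are hypothetical placeholders, not taken from the cited study.

```python
# Hypothetical strain models, each reduced to a set of reaction identifiers.
strain_reactions = {
    "strain_1": {"PGI", "PFK", "FBA", "LACZ"},
    "strain_2": {"PGI", "PFK", "FBA", "XYLA"},
    "strain_3": {"PGI", "PFK", "FBA", "LACZ", "XYLA"},
}


def core_and_pan(models):
    """Return (core, pan): reactions shared by all strains, and reactions in any strain."""
    reaction_sets = list(models.values())
    core = set.intersection(*reaction_sets)  # present in every strain
    pan = set.union(*reaction_sets)          # present in at least one strain
    return core, pan


core, pan = core_and_pan(strain_reactions)
accessory = pan - core  # strain-specific (non-core) reactions
print("core:", sorted(core))
print("accessory:", sorted(accessory))
```

In a real multi-strain reconstruction the comparison would also cover genes and metabolites, but the set logic stays the same.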

Microbial community modeling extends CBM to simulate interactions between multiple organisms. Tools like MICOM (Microbial Community) enable metabolic modeling at the community level by accounting for species abundances and cross-feeding interactions [1]. These approaches allow researchers to study complex systems such as the human gut microbiome and environmental microbial communities.

Essential Software and Platforms

The GEM research community has developed numerous software tools to facilitate model reconstruction, simulation, and analysis. These tools have made GEM methodology accessible to researchers without extensive programming backgrounds.

Table: Key Computational Tools for GEM Reconstruction and Analysis

| Tool Name | Primary Function | Key Features |
|---|---|---|
| GECKO | Enhancement of GEMs with enzymatic constraints | Automated retrieval of kinetic parameters from BRENDA [6] |
| Fluxer | Web application for flux computation and visualization | Interactive visualization of genome-scale metabolic networks [8] |
| COBRA Toolbox | MATLAB package for constraint-based modeling | Comprehensive suite of simulation algorithms [4] |
| Model SEED | Automated reconstruction of draft GEMs | Rapid generation of initial metabolic models [4] |
| MicroLabVR | VR visualization of spatiotemporal microbiome data | 3D immersive exploration of microbial community simulations [1] |
| MTEApy | Python implementation of TIDE algorithm | Analysis of metabolic task changes from transcriptomic data [7] |

Table: Essential Resources for GEM Research

| Resource Type | Examples | Function in GEM Research |
|---|---|---|
| Knowledgebases | BRENDA, KEGG, BiGG Models | Source of kinetic parameters and reaction databases [6] |
| Proteomics Data | Mass spectrometry datasets | Constraining enzyme abundance in ecGEMs [6] |
| Transcriptomics Data | RNA-seq datasets | Constructing context-specific models [7] |
| Metabolomics Data | LC/MS, GC/MS datasets | Validating flux predictions [3] |
| Kinetic Parameters | kcat values from BRENDA | Enabling enzyme-constrained modeling [6] |
| Genome Annotations | NCBI, UniProt | Foundation for initial model reconstruction [4] |

Current Challenges and Future Directions

Despite significant advances, several challenges remain in constraint-based modeling and GEM development:

- Methodological inconsistencies: Lack of consensus on best practices for constructing context-specific GEMs [7]

- Limited kinetic parameter availability: Sparse coverage for non-model organisms in databases like BRENDA [6]

- Integration of multi-omics data: Challenges in effectively combining transcriptomic, proteomic, and metabolomic data

- Spatiotemporal considerations: Most models assume well-mixed systems, neglecting spatial organization [1]

- Regulatory constraints: Difficulties in incorporating transcriptional and translational regulation

Future development areas include improved integration of machine learning approaches, expansion of enzyme-constrained models through tools like GECKO 2.0 [6], enhanced community modeling techniques, and development of more sophisticated multi-scale models that incorporate spatial and temporal dynamics [1]. As the field continues to evolve, GEMs are expected to play an increasingly important role in systems biology, metabolic engineering, and biomedical research.

Constraint-based modeling provides a powerful mathematical framework for analyzing metabolic networks at the genome scale, enabling researchers to predict organism behavior, simulate genetic manipulations, and understand system-level physiology. This approach fundamentally differs from kinetic modeling as it focuses on defining the space of possible network states based on physical and biochemical constraints rather than predicting precise, dynamic transients. The core principle is that the stoichiometry of metabolic reactions imposes mass conservation constraints that systematically limit the possible metabolic flux distributions. By applying these constraints together with flux bounds, one can define a bounded solution space containing all allowable flux states of the network, then use optimization methods to identify particular flux distributions that optimize biological objectives such as growth rate or metabolite production [9].

This mathematical foundation has found diverse applications in physiological studies, gap-filling efforts, and genome-scale synthetic biology. Researchers routinely employ constraint-based reconstruction and analysis (COBRA) methods to simulate growth on different media, predict the effects of gene knockouts, calculate yields of important cofactors like ATP, NADH, or NADPH, and even design microbial strains for metabolic engineering. The ability to make these predictions without requiring difficult-to-measure kinetic parameters makes constraint-based modeling particularly valuable for analyzing large-scale metabolic networks, including those representing human microbiome metabolism and its role in health and disease [9] [10].

Stoichiometric Matrices: Representing Metabolic Networks

Mathematical Representation of Metabolic Reactions

The stoichiometric matrix forms the mathematical cornerstone of constraint-based modeling, providing a complete numerical representation of the metabolic network. This matrix, typically denoted as S, tabulates all stoichiometric coefficients for each metabolic reaction in the system. Every row in this matrix represents one unique metabolite (for a system with m compounds), while every column represents one biochemical reaction (for a system with n reactions). The entries in each column are the stoichiometric coefficients of the metabolites participating in the corresponding reaction [9].

The conventions for populating the stoichiometric matrix follow consistent biochemical principles: negative coefficients indicate metabolites consumed (reactants) in a reaction, positive coefficients indicate metabolites produced (products), and a zero coefficient is used for every metabolite that does not participate in a particular reaction. Since most biochemical reactions involve only a few metabolites, the stoichiometric matrix S is typically a sparse matrix with mostly zero entries. This structured representation captures the complete connectivity of the metabolic network, enabling subsequent mathematical analysis [9].

Table 1: Interpretation of Stoichiometric Matrix Entries

| Coefficient Value | Metabolic Role | Directionality |
|---|---|---|
| Negative (< 0) | Reactant/Substrate | Consumed |
| Positive (> 0) | Product | Produced |
| Zero (0) | Non-participant | Uninvolved |

Worked Example: Constructing a Stoichiometric Matrix

Consider a simplified metabolic network comprising four metabolites (A, B, C, D) and three reactions:

- Reaction 1: A → B

- Reaction 2: B → C + D

- Reaction 3: C → D

The stoichiometric matrix S for this system would be constructed as follows:

Table 2: Example Stoichiometric Matrix

| Metabolite | Reaction 1 | Reaction 2 | Reaction 3 |
|---|---|---|---|
| A | -1 | 0 | 0 |
| B | +1 | -1 | 0 |
| C | 0 | +1 | -1 |
| D | 0 | +1 | +1 |

This 4×3 matrix completely represents the metabolic network, capturing all substrate consumption and product formation relationships. In practice, genome-scale metabolic models can contain thousands of metabolites and reactions, making computational tools essential for their construction and analysis [9].
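
The same matrix can be assembled programmatically from per-reaction coefficient maps. This is a small pure-Python sketch of the sign convention described above; the reaction labels `R1` to `R3` and the helper name `build_stoichiometric_matrix` are illustrative choices, not part of any particular toolbox.

```python
# Worked example network: negative = consumed, positive = produced.
metabolites = ["A", "B", "C", "D"]
reactions = {
    "R1": {"A": -1, "B": 1},          # A -> B
    "R2": {"B": -1, "C": 1, "D": 1},  # B -> C + D
    "R3": {"C": -1, "D": 1},          # C -> D
}


def build_stoichiometric_matrix(metabolites, reactions):
    """Rows follow `metabolites`, columns follow the order of `reactions`."""
    return [
        [coeffs.get(met, 0) for coeffs in reactions.values()]  # 0 = non-participant
        for met in metabolites
    ]


S = build_stoichiometric_matrix(metabolites, reactions)
for met, row in zip(metabolites, S):
    print(met, row)
```

Storing reactions as sparse coefficient maps and expanding to a dense matrix only when needed mirrors the sparsity of real genome-scale networks.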

Figure 1: Structure of a Stoichiometric Matrix

Mass Balance Equations and Steady-State Assumption

The Steady-State Mass Balance Equation

The fundamental equation governing constraint-based models is the mass balance equation, which at steady state is expressed as Sv = 0, where S is the stoichiometric matrix and v is the flux vector containing the reaction rates. This equation represents the system of mass balance constraints for all metabolites in the network, ensuring that for each compound, the total production flux exactly equals the total consumption flux. Mathematically, this steady-state condition assumes that metabolite concentrations do not change over time (dx/dt = 0), meaning the network is in a homeostatic state [9].

The steady-state assumption is biologically justified for many systems, particularly when modeling microorganisms growing in exponential phase or when considering cellular processes that maintain homeostasis. This constraint dramatically reduces the solution space by eliminating flux distributions that would lead to metabolite accumulation or depletion. The vector x represents the concentrations of all metabolites (with length m), while the vector v represents the fluxes through all reactions (with length n). In any realistic large-scale metabolic model, there are more reactions than metabolites (n > m), resulting in an underdetermined system with more unknown variables than equations, and therefore no unique solution to this system [9].

Experimental Protocol: Implementing Mass Balance Constraints

Implementing mass balance constraints requires careful formulation of the stoichiometric matrix and appropriate boundary conditions:

Reconstruction of the Metabolic Network: Compile all known metabolic reactions for the target organism from databases and literature sources, ensuring correct stoichiometries and reaction directions.

Stoichiometric Matrix Assembly: Construct the S matrix where each column corresponds to a biochemical reaction and each row to a metabolite, following the signing convention (negative for substrates, positive for products).

Flux Vector Definition: Define the flux vector v containing the net reaction rates for all internal reactions, as well as exchange reactions that represent metabolite uptake or secretion.

Equation System Formulation: Set up the system of linear equations Sv = 0, which typically contains thousands of reactions and metabolites for genome-scale models.

Steady-State Validation: Verify that the resulting system accurately represents biological reality by checking that known metabolic pathways are correctly captured and can carry flux.

Any flux vector v that satisfies the equation Sv = 0 lies in the null space of S and represents a mass-balanced (stoichiometrically feasible) flux distribution. Mass balance alone does not guarantee thermodynamic feasibility, which requires additional constraints on reaction direction. The underdetermined nature of this system means that there is typically a multidimensional space of possible flux distributions rather than a single unique solution [9].
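
A steady-state check amounts to computing the residual Sv for a candidate flux vector. The sketch below assumes a toy network built for illustration: the three-reaction example (A → B, B → C + D, C → D) extended with a hypothetical uptake reaction for A and a hypothetical secretion reaction for D, since a closed network admits only the trivial solution v = 0.

```python
# Columns: R1 (A -> B), R2 (B -> C + D), R3 (C -> D), EX_A (-> A), EX_D (D ->)
S = [
    [-1, 0, 0, 1, 0],   # A
    [1, -1, 0, 0, 0],   # B
    [0, 1, -1, 0, 0],   # C
    [0, 1, 1, 0, -1],   # D
]


def mass_balance_residuals(S, v):
    """Return S.v, the net production rate of each metabolite."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]


def is_steady_state(S, v, tol=1e-9):
    """True when every metabolite is produced and consumed at equal rates."""
    return all(abs(r) <= tol for r in mass_balance_residuals(S, v))


balanced = [1, 1, 1, 1, 2]    # D is made twice per A, so secretion must be 2
unbalanced = [1, 1, 1, 1, 1]  # D would accumulate at rate 1

print(is_steady_state(S, balanced))
print(mass_balance_residuals(S, unbalanced))
```

A nonzero residual identifies which metabolite would accumulate or deplete, which is often the quickest way to locate a stoichiometry error in a reconstruction.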

Flux Constraints and Boundary Conditions

Types of Flux Constraints

While the mass balance equations Sv = 0 define the fundamental constraints based on reaction stoichiometries, additional flux constraints are required to further restrict the solution space to biologically meaningful regions. These constraints are primarily implemented as inequality constraints that define upper and lower bounds on reaction fluxes:

Lower bounds (lbᵢ): Represent the minimum allowable flux for reaction i, typically set to 0 for irreversible reactions or a negative value for reversible reactions operating in reverse.

Upper bounds (ubᵢ): Represent the maximum allowable flux for reaction i, often determined by enzyme capacity, substrate availability, or other physiological limitations.

These bounds collectively define the allowable flux through each reaction in the network: lbᵢ ≤ vᵢ ≤ ubᵢ. Together with the mass balance constraints, they define the space of all allowable flux distributions of the system—that is, the rates at which every metabolite is consumed or produced by each reaction [9].

Table 3: Types of Flux Constraints in Metabolic Models

| Constraint Type | Mathematical Form | Biological Interpretation |
|---|---|---|
| Mass Balance | Sv = 0 | Mass conservation for all metabolites at steady state |
| Reaction Irreversibility | vᵢ ≥ 0 | Thermodynamic constraints on reaction direction |
| Capacity Constraints | vᵢ ≤ ubᵢ | Enzyme capacity, catalytic rate limitations |
| Uptake Constraints | vₑₓ ≤ ubₑₓ | Nutrient availability in environment |
| Secretion Constraints | vₑₓ ≥ lbₑₓ | Byproduct secretion requirements |

Experimental Protocol: Setting Flux Boundaries

Setting appropriate flux bounds is critical for generating biologically realistic predictions:

Determine Reaction Reversibility: Based on thermodynamic data and biochemical literature, assign directionality constraints. For irreversible reactions, set lower bound = 0.

Define Exchange Reaction Bounds: For reactions representing metabolite uptake from the environment:

- Set upper bound = 0 for metabolites not available in the environment

- Set upper bound to measured uptake rates when available

- Set lower bound = 0 or negative values for secretion

Apply Capacity Constraints: When available, use experimental measurements of maximum metabolic fluxes (e.g., from enzyme assays or metabolic flux analysis) to set upper bounds.

Implement Condition-Specific Constraints: Adjust bounds to reflect specific experimental conditions, such as:

- Aerobic conditions: Set oxygen uptake upper bound to measured value

- Anaerobic conditions: Set oxygen uptake upper bound to 0

- Nutrient limitation: Set specific nutrient uptake to limiting values

For example, when modeling Escherichia coli growth, one might cap the maximum glucose uptake rate at 18.5 mmol glucose gDW⁻¹ hr⁻¹ (a physiologically realistic level) while setting oxygen uptake to a high value under aerobic conditions or to zero under anaerobic conditions [9].
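
The protocol can be sketched as a function that returns (lower, upper) bounds per reaction for a given condition. This is an illustrative sketch only: the reaction names are hypothetical, the value 1000 is an arbitrary stand-in for "effectively unconstrained", and uptake is written as a positive flux limited by an upper bound, following the convention used in this section. Many COBRA-style tools instead write exchange reactions so that uptake is a negative flux limited by the lower bound.

```python
UNCONSTRAINED = 1000.0  # arbitrary large magnitude, an assumption of this sketch


def condition_bounds(aerobic, glucose_uptake_max=18.5):
    """Return {reaction: (lower, upper)} in mmol gDW^-1 hr^-1 for one condition."""
    return {
        # Uptake reactions: positive flux = uptake, capped by the upper bound.
        "glucose_uptake": (0.0, glucose_uptake_max),
        "oxygen_uptake": (0.0, UNCONSTRAINED if aerobic else 0.0),
        # Internal reactions: irreversible ones cannot run backwards.
        "irreversible_internal": (0.0, UNCONSTRAINED),
        "reversible_internal": (-UNCONSTRAINED, UNCONSTRAINED),
    }


def within_bounds(fluxes, bounds):
    """Check lb <= v <= ub for every reaction in `fluxes`."""
    return all(bounds[rxn][0] <= v <= bounds[rxn][1] for rxn, v in fluxes.items())


aerobic = condition_bounds(aerobic=True)
anaerobic = condition_bounds(aerobic=False)
candidate = {"glucose_uptake": 10.0, "oxygen_uptake": 15.0}
print(within_bounds(candidate, aerobic))
print(within_bounds(candidate, anaerobic))
```

Keeping condition-specific bounds in a function rather than editing the model in place makes it easier to compare simulations across media.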

Flux Balance Analysis: From Constraints to Optimal Phenotypes

The Optimization Framework

Flux Balance Analysis (FBA) is the primary method for identifying particular flux distributions within the constrained solution space. While constraints define the range of possible solutions, FBA identifies specific points within this space that optimize biological functions. FBA seeks to maximize or minimize an objective function Z = cᵀv, which represents a linear combination of fluxes, where c is a vector of weights indicating how much each reaction contributes to the objective [9].

In practice, when only one reaction is desired for maximization or minimization, c is a vector of zeros with a one at the position of the reaction of interest. The most common biological objective is biomass production, which represents the conversion of metabolic precursors into cellular constituents. A biomass reaction is included in the model that drains precursor metabolites from the system at their relative biomass stoichiometries. This reaction is scaled so that the flux through it equals the exponential growth rate (μ) of the organism. Optimization of this system is accomplished using linear programming, which can rapidly identify optimal solutions even for large-scale networks [9].

Figure 2: Flux Balance Analysis Workflow

Experimental Protocol: Performing Flux Balance Analysis

A standard FBA protocol involves these key methodological steps:

Objective Function Definition:

- For growth prediction: Define c as a vector with weight 1 for the biomass reaction and 0 for all others

- For metabolite production: Define c to maximize the secretion flux of the target compound

Linear Programming Formulation:

- Objective: Maximize Z = cᵀv

- Constraints:

  - Sv = 0 (mass balance)

  - lb ≤ v ≤ ub (flux bounds)

Numerical Solution:

- Apply simplex or interior-point algorithms to solve the linear programming problem

- Extract the optimal flux distribution v* that maximizes the objective function

Result Interpretation:

- The value of Z* at optimum represents the maximum growth rate or metabolite yield

- The flux vector v* provides the complete distribution of reaction fluxes

For example, when FBA is applied to predict aerobic growth of E. coli with glucose uptake constrained to 18.5 mmol/gDW/h, it yields a predicted growth rate of 1.65 hr⁻¹, while anaerobic growth with oxygen uptake constrained to zero yields a predicted growth rate of 0.47 hr⁻¹, both consistent with experimental measurements [9].
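
The protocol can be illustrated on a toy network with a general-purpose LP solver. The sketch below uses `scipy.optimize.linprog` rather than a dedicated COBRA package; the five-reaction network, the uptake limit of 10, and the use of a simple drain on D as a stand-in "biomass" reaction are all assumptions made for illustration and are unrelated to the E. coli results quoted above.

```python
import numpy as np
from scipy.optimize import linprog

# Columns: EX_A (-> A), R1 (A -> B), R2 (B -> C + D), R3 (C -> D), BIOMASS (D ->)
S = np.array([
    [1, -1, 0, 0, 0],   # A
    [0, 1, -1, 0, 0],   # B
    [0, 0, 1, -1, 0],   # C
    [0, 0, 1, 1, -1],   # D
])

# Flux bounds (lb, ub): uptake of A is capped at 10, all reactions irreversible.
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000), (0, 1000)]

# Objective vector c: weight 1 on the biomass reaction, 0 elsewhere.
c = np.zeros(S.shape[1])
c[4] = 1.0

# linprog minimizes, so maximize c.v by minimizing -c.v subject to S.v = 0.
result = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")

z_opt = -result.fun  # optimal objective value Z*
v_opt = result.x     # optimal flux distribution v*
print("Z* =", z_opt)
print("v* =", v_opt)
```

Because each unit of A yields two units of D in this toy network, the optimum is limited only by the uptake bound and the biomass flux reaches 20.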

Software Implementations

Several computational tools are available for implementing constraint-based models and performing FBA:

Table 4: Software Tools for Constraint-Based Modeling

| Tool Name | Platform | Key Features | Applications |
|---|---|---|---|
| COBRA Toolbox | MATLAB | Comprehensive FBA and network analysis methods | Metabolic engineering, phenotype prediction [9] |
| COBRApy | Python | Python implementation of COBRA methods | Scriptable, large-scale analysis [11] |
| CellNetAnalyzer | MATLAB | Metabolic flux analysis, pathway analysis | Network robustness, metabolic design [12] |
| CNApy | Python | Graphical interface for constraint-based modeling | Educational use, network visualization [12] |
| SBMLsimulator | Multi-platform | Dynamic visualization of metabolic networks | Time-course data animation [13] |

Research Reagent Solutions

Table 5: Essential Resources for Constraint-Based Modeling Research

| Resource Type | Specific Examples | Function/Purpose |
|---|---|---|
| Metabolic Network Databases | Virtual Metabolic Human (VMH), BiGG Models, BioModels | Provide curated metabolic reconstructions for various organisms [9] [10] |
| Model Visualization Tools | ReconMap, MicroMap, Escher | Interactive exploration of metabolic networks and simulation results [13] [10] |
| Standards and Formats | Systems Biology Markup Language (SBML) | Enable model sharing, reproducibility, and tool interoperability [9] |
| Organism-Specific Models | AGORA2 (microbiome), Recon3D (human) | Provide curated metabolic reconstructions for specific biological systems [10] |

Advanced Applications and Extensions

The basic framework of stoichiometric matrices, mass balance, and flux constraints serves as a foundation for numerous advanced computational methods. Flux Variability Analysis (FVA) identifies the range of possible fluxes for each reaction while maintaining optimal objective function value, revealing alternative optimal solutions [9]. Robustness analysis examines how the objective function changes when varying a particular reaction flux, while phenotypic phase planes analyze simultaneous variation of two fluxes [9].
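
Flux Variability Analysis can be sketched as two extra linear programs per reaction, solved after fixing the objective at (or near) its optimum. The example below is a minimal illustration with `scipy.optimize.linprog`; the toy network with two parallel routes from A to B, the function name `flux_variability`, and the small numerical `slack` are assumptions of this sketch rather than any toolbox's implementation.

```python
import numpy as np
from scipy.optimize import linprog


def flux_variability(S, bounds, c, fraction=1.0, slack=1e-9):
    """Return (z_opt, ranges), where ranges[i] = (min v_i, max v_i) at the required objective."""
    n_mets, n_rxns = S.shape
    b_eq = np.zeros(n_mets)
    # Step 1: ordinary FBA to find the optimal objective value.
    fba = linprog(-c, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs")
    z_opt = -fba.fun
    # Step 2: require c.v >= fraction * z_opt, written as -c.v <= -(fraction * z_opt - slack).
    A_ub = -c.reshape(1, -1)
    b_ub = np.array([-(fraction * z_opt - slack)])
    ranges = []
    for i in range(n_rxns):
        e = np.zeros(n_rxns)
        e[i] = 1.0
        lo = linprog(e, A_ub=A_ub, b_ub=b_ub, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs")
        hi = linprog(-e, A_ub=A_ub, b_ub=b_ub, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs")
        ranges.append((lo.fun, -hi.fun))
    return z_opt, ranges


# Columns: EX_A (-> A), R1 (A -> B), R2 (A -> B, alternative route), BIOMASS (B ->)
S = np.array([
    [1, -1, -1, 0],   # A
    [0, 1, 1, -1],    # B
])
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000)]
c = np.array([0.0, 0.0, 0.0, 1.0])

z_opt, ranges = flux_variability(S, bounds, c)
for name, (vmin, vmax) in zip(["EX_A", "R1", "R2", "BIOMASS"], ranges):
    print(f"{name}: [{vmin:.3f}, {vmax:.3f}]")
```

Here the two parallel routes can each carry anywhere between 0 and 10 units while the objective stays optimal, which is exactly the kind of alternative optimum that a single FBA solution hides.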

Metabolic engineering applications include algorithms like OptKnock, which identifies gene knockout strategies that couple cellular growth with production of desirable compounds [9]. For drug discovery and human health applications, constraint-based models enable studying host-microbiome interactions and drug metabolism. The recently developed MicroMap resource provides visualization of microbiome metabolism, capturing 5,064 unique reactions and 3,499 unique metabolites from more than 250,000 microbial metabolic reconstructions, including the metabolism of 98 commonly prescribed drugs [10].

Dynamic extensions of FBA incorporate time-varying constraints to model metabolic shifts, while integration with other omics data types (transcriptomics, proteomics) enables context-specific model construction. These advanced applications demonstrate how the fundamental mathematical foundation of stoichiometric matrices and flux constraints continues to enable new biological insights across biotechnology, biomedical research, and systems biology [9] [11] [10].

Living systems are inherently non-equilibrium, consuming energy to maintain organized states against the tendency toward increasing entropy [14]. Constraint-based modeling of metabolic networks provides a powerful framework for analyzing biochemical systems, but its predictive power relies heavily on properly incorporating thermodynamic principles. Thermodynamic constraints determine the fundamental feasibility and directionality of metabolic reactions, shaping the possible flux distributions in metabolic networks [15] [16]. The integration of thermodynamics into metabolic models represents a crucial advancement over traditional stoichiometric approaches alone, as it ensures that predicted metabolic states comply with the laws of physics while significantly narrowing the solution space of possible flux distributions [17] [16].

Understanding thermodynamic constraints is particularly vital for applications in metabolic engineering and drug development, where accurate prediction of metabolic behavior can guide intervention strategies. This technical guide examines the core thermodynamic principles that govern metabolic networks, the methodologies for implementing these constraints computationally, and the practical implications for research and development.

Theoretical Foundations of Metabolic Thermodynamics

Basic Thermodynamic Principles in Biochemical Systems

The operation of metabolic networks is fundamentally constrained by thermodynamics, which determines the direction and capacity of metabolic fluxes through Gibbs free energy relationships. For any biochemical reaction, the Gibbs free energy change (ΔG) depends on both the standard Gibbs free energy (ΔG°) and the metabolite concentrations [15]:

ΔG = ΔG° + RT ln(Q)

where R is the universal gas constant, T is temperature, and Q is the reaction quotient. A reaction can only proceed spontaneously when ΔG < 0, providing the thermodynamic driving force for metabolic transformations [15] [18].
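
The relationship is easy to evaluate numerically. The following sketch computes ΔG from ΔG° and Q; the function name and the numeric inputs are illustrative assumptions (the values are not measured data), and energies are expressed in kJ/mol.

```python
import math

R_KJ = 8.314462618e-3  # universal gas constant in kJ mol^-1 K^-1


def reaction_gibbs_energy(dg0, q, temperature=298.15):
    """Return dG = dG0 + R*T*ln(Q) in kJ/mol; Q must be positive."""
    if q <= 0:
        raise ValueError("reaction quotient Q must be positive")
    return dg0 + R_KJ * temperature * math.log(q)


# Hypothetical reaction with an unfavorable standard energy (+5 kJ/mol):
dg_at_unity = reaction_gibbs_energy(5.0, 1.0)    # Q = 1, so dG equals dG0
dg_low_q = reaction_gibbs_energy(5.0, 1e-3)      # products kept 1000-fold below substrates
print(dg_at_unity, dg_low_q)
```

The second call shows why concentrations matter: a reaction with positive ΔG° can still proceed forward when the cell keeps Q sufficiently small.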

The standard Gibbs free energy can be further decomposed into contributions from the standard Gibbs free energy of formation (ΔfG°) of each metabolite involved:

ΔG°ⱼ = Σᵢ sᵢⱼ · ΔfG°ᵢ

where sᵢⱼ represents the stoichiometric coefficient of metabolite i in reaction j and the sum runs over all metabolites i [17]. This relationship highlights how the thermodynamic properties of individual metabolites collectively determine reaction thermodynamics.
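
In matrix terms, the vector of standard reaction energies is the transpose of the stoichiometric matrix applied to the vector of formation energies. The sketch below applies this to a small four-metabolite, three-reaction network (A → B, B → C + D, C → D); the formation energies are hypothetical numbers chosen only to make the arithmetic easy to follow.

```python
# Rows: metabolites A, B, C, D; columns: A -> B, B -> C + D, C -> D
S = [
    [-1, 0, 0],
    [1, -1, 0],
    [0, 1, -1],
    [0, 1, 1],
]

# Hypothetical standard formation energies (kJ/mol) for A, B, C, D.
formation_energies = [-50.0, -60.0, -30.0, -45.0]


def standard_reaction_energies(S, formation_energies):
    """Return dG0 for each reaction j as the sum over metabolites i of s_ij * dfG0_i."""
    n_mets, n_rxns = len(S), len(S[0])
    return [
        sum(S[i][j] * formation_energies[i] for i in range(n_mets))
        for j in range(n_rxns)
    ]


dg0 = standard_reaction_energies(S, formation_energies)
print(dg0)
```

A missing formation energy for any participating metabolite leaves the corresponding reaction energy undefined, which is the practical obstacle that motivates estimation and lumping methods.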

Energy Coupling and Metabolic Driving Forces

Non-equilibrium conditions in metabolic networks are maintained through coupling between energy-releasing and energy-consuming processes. Reactions that are far from equilibrium, typically catalyzed by highly regulated enzymes, often serve as key control points in metabolic pathways [15]. The deviation from equilibrium at individual reaction steps influences the distribution of flux control throughout pathways, with upstream enzymes typically exerting greater control in pathways operating far from equilibrium [15].

Table 1: Key Thermodynamic Parameters in Metabolic Modeling

| Parameter | Symbol | Description | Application in Modeling |
|---|---|---|---|
| Gibbs Free Energy Change | ΔG | Determines reaction directionality | Constrains flux direction; ΔG < 0 for feasible forward flux |
| Standard Gibbs Free Energy | ΔG° | Property at standard conditions | Calculated from formation energies |
| Gibbs Free Energy of Formation | ΔfG° | Formation energy from elements | Fundamental thermodynamic property |
| Reaction Quotient | Q | Ratio of product to substrate activities | Connects concentrations to thermodynamics |
| Equilibrium Constant | K | Ratio at equilibrium | Related to ΔG° by ΔG° = -RT ln K |
| Mass Action Ratio | Γ | Actual concentration ratio | Γ = Q; the ratio Γ/K measures displacement from equilibrium |
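
The link between the mass action ratio and displacement from equilibrium follows from combining two of the relations in the table. A short derivation, using only the symbols defined above, may help make this explicit:

$$
\begin{aligned}
\text{At equilibrium:}\quad & \Delta G = 0,\; Q = K \;\Rightarrow\; 0 = \Delta G^\circ + RT \ln K \;\Rightarrow\; \Delta G^\circ = -RT \ln K \\
\text{In general:}\quad & \Delta G = \Delta G^\circ + RT \ln Q = -RT \ln K + RT \ln \Gamma = RT \ln\!\left(\frac{\Gamma}{K}\right)
\end{aligned}
$$

So forward flux is feasible (ΔG < 0) exactly when Γ < K, and the further Γ/K lies below 1, the larger the thermodynamic driving force.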

Methodological Approaches for Implementing Thermodynamic Constraints

Thermodynamic Metabolic Flux Analysis (TMFA)

Thermodynamic Metabolic Flux Analysis (TMFA) represents a significant advancement over traditional Flux Balance Analysis by incorporating thermodynamic constraints to ensure predicted flux distributions are energetically feasible [17]. TMFA introduces additional constraints that restrict flux through a reaction to occur only if the associated Gibbs free energy change is negative, thereby enforcing the second law of thermodynamics [17] [16].
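
The rule that flux may only run in the direction of negative ΔG can be expressed as a simple consistency check on a given flux distribution. The sketch below is a post hoc check, not the TMFA optimization itself (which embeds this rule as constraints inside the optimization problem); the reaction names and values are hypothetical.

```python
def thermodynamic_violations(fluxes, delta_g, tol=1e-9):
    """Return reactions whose flux direction conflicts with the sign of dG.

    Forward flux (v > 0) requires dG < 0; reverse flux (v < 0) requires dG > 0.
    Reactions carrying no flux are always consistent.
    """
    violations = []
    for rxn, v in fluxes.items():
        dg = delta_g[rxn]
        if v > tol and dg >= 0:
            violations.append(rxn)
        elif v < -tol and dg <= 0:
            violations.append(rxn)
    return violations


# Hypothetical flux distribution and reaction energies (kJ/mol).
fluxes = {"R1": 2.0, "R2": -1.0, "R3": 0.0, "R4": 1.0}
delta_g = {"R1": -5.0, "R2": 3.0, "R3": 4.0, "R4": 2.0}

print(thermodynamic_violations(fluxes, delta_g))
```

Only R4 is flagged here: it carries forward flux despite a positive ΔG, whereas R2 runs in reverse, which is consistent with its positive ΔG.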

The implementation requires knowledge of standard Gibbs free energies for reactions, which is often hampered by missing ΔfG° values for many metabolites [17]. To address this challenge, reaction lumping has been developed as a computational strategy that eliminates metabolites with unknown ΔfG° through linear combinations of reactions, resulting in lumped reactions with fully specified ΔG° values [17].
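
The idea behind reaction lumping can be sketched on a toy example. The snippet assumes two hypothetical reactions, A → X and X → B, where X has no known ΔfG°; summing them cancels X and yields a lumped reaction whose ΔG° is fully specified. The combination weights are chosen by hand here, whereas the method in [17] determines suitable combinations systematically.

```python
def lump(reactions, weights):
    """Linear combination of reactions given as metabolite -> coefficient."""
    combined = {}
    for name, w in weights.items():
        for met, coeff in reactions[name].items():
            combined[met] = combined.get(met, 0) + w * coeff
    # Drop metabolites that cancel out exactly
    return {m: c for m, c in combined.items() if c != 0}

def standard_delta_g(stoich, formation_energy):
    """dG0 = sum_i s_i * dfG0_i; raises KeyError if a value is missing."""
    return sum(c * formation_energy[m] for m, c in stoich.items())

# Hypothetical network: X has no known formation energy
reactions = {
    "R1": {"A": -1, "X": 1},
    "R2": {"X": -1, "B": 1},
}
formation_energy = {"A": -100.0, "B": -120.0}  # kJ/mol, illustrative

lumped = lump(reactions, {"R1": 1, "R2": 1})
print(lumped, standard_delta_g(lumped, formation_energy))
```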

Figure 1: Workflow for Implementing Thermodynamic Constraints in Metabolic Models

Thermodynamics-based Metabolite Sensitivity Analysis (TMSA)

Thermodynamics-based Metabolite Sensitivity Analysis (TMSA) combines constraint-based modeling, Design of Experiments (DoE), and Global Sensitivity Analysis (GSA) to rank metabolites based on their ability to reduce thermodynamic uncertainty in metabolic networks [16]. This method identifies which metabolite concentration measurements would most significantly constrain the solution space, providing guidance for targeted experimental design.

The TMSA workflow involves: (1) constructing the thermodynamically constrained solution space, (2) systematically varying metabolite concentrations within physiological ranges, (3) quantifying the resulting uncertainty in reaction thermodynamics, and (4) ranking metabolites by their influence on overall thermodynamic uncertainty [16]. This approach is modular and can be applied to individual reactions, pathways, or entire metabolic networks.
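
The ranking step can be illustrated with a deliberately crude proxy. The sketch below is not the DoE/GSA procedure of [16]; it assumes that each metabolite contributes RT·|s|·ln(c_max/c_min) to the ΔG uncertainty of every reaction it participates in, and ranks metabolites by the total uncertainty that an exact measurement would remove. The network and concentration ranges are hypothetical.

```python
import math

R = 8.314462618e-3  # kJ/(mol*K)
T = 298.15          # K (assumed)

def rank_metabolites(reactions, ranges):
    """Rank metabolites by their summed contribution to dG uncertainty.

    reactions: reaction id -> {metabolite: stoichiometric coefficient}
    ranges:    metabolite -> (min concentration, max concentration)
    """
    scores = {}
    for stoich in reactions.values():
        for met, s in stoich.items():
            c_min, c_max = ranges[met]
            width = R * T * abs(s) * math.log(c_max / c_min)
            scores[met] = scores.get(met, 0.0) + width
    ranking = sorted(scores, key=scores.get, reverse=True)
    return ranking, scores

# Hypothetical linear pathway A -> B -> C
reactions = {"R1": {"A": -1, "B": 1}, "R2": {"B": -1, "C": 1}}
ranges = {"A": (1e-4, 1e-3), "B": (1e-6, 1e-2), "C": (1e-4, 2e-4)}

ranking, scores = rank_metabolites(reactions, ranges)
print(ranking)
```

In this toy case B ranks first because it has the widest concentration range and appears in both reactions, so measuring it would narrow the ΔG estimates the most.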

Systematic Assignment of Reaction Directions

An algorithm for systematic reaction direction assignment combines thermodynamic data with network topology analysis to determine irreversible reactions in metabolic networks [18]. The approach follows a three-tiered strategy:

First, it uses experimentally derived Gibbs energies of formation to identify reactions that are thermodynamically irreversible under physiological conditions [18]. Second, where thermodynamic data are incomplete, it applies network topology considerations, and third, biochemistry-based heuristic rules, to identify reactions that must be irreversible to prevent thermodynamically infeasible energy production cycles [18].
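
The thermodynamic tier of this strategy can be sketched as follows: compute the minimum and maximum ΔG attainable over assumed physiological concentration ranges and call a reaction irreversible only if the sign of ΔG cannot change. The topology and heuristic tiers of [18] are not covered here, and the reaction, ΔG° values, and ranges are hypothetical.

```python
import math

R = 8.314462618e-3  # kJ/(mol*K)
T = 298.15          # K (assumed)

def delta_g_bounds(dg0, stoich, ranges):
    """Minimum and maximum dG over a box of metabolite concentrations."""
    low = high = dg0
    for met, s in stoich.items():
        c_min, c_max = ranges[met]
        a = R * T * s * math.log(c_min)
        b = R * T * s * math.log(c_max)
        low += min(a, b)
        high += max(a, b)
    return low, high

def assign_direction(dg0, stoich, ranges):
    """Classify a reaction by the sign of dG across the concentration box."""
    low, high = delta_g_bounds(dg0, stoich, ranges)
    if high < 0:
        return "forward"      # dG negative everywhere
    if low > 0:
        return "reverse"      # dG positive everywhere
    return "reversible"       # sign depends on concentrations

stoich = {"A": -1, "B": 1}                     # hypothetical A -> B
ranges = {"A": (1e-5, 1e-2), "B": (1e-5, 1e-2)}  # mol/L, assumed

for dg0 in (-30.0, -5.0, 30.0):
    print(dg0, assign_direction(dg0, stoich, ranges))
```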

Table 2: Computational Methods for Implementing Thermodynamic Constraints

| Method | Key Features | Data Requirements | Applications |
|---|---|---|---|
| Thermodynamic Metabolic Flux Analysis (TMFA) | Ensures flux solutions obey ΔG < 0; Reduces solution space | Stoichiometry, ΔfG° values, Concentration ranges | Genome-scale flux prediction [17] |
| Reaction Lumping | Eliminates metabolites with unknown ΔfG°; Enables TMFA | Stoichiometric matrix, Known ΔfG° subset | Model refinement [17] |
| Thermodynamics-based Metabolite Sensitivity Analysis (TMSA) | Ranks metabolites by uncertainty reduction; Guides experiments | Stoichiometry, ΔG° estimates, Concentration ranges | Experimental design [16] |
| Systematic Direction Assignment | Identifies irreversible reactions; Uses heuristics when data limited | Stoichiometry, Available ΔfG° data, Network topology | Model reconstruction [18] |

Computational Tools and Implementation

Software Platforms for Thermodynamic Modeling

Several software platforms facilitate the implementation of thermodynamic constraints in metabolic models:

- Pathway Tools with its MetaFlux component supports development and execution of metabolic flux models, including thermodynamic considerations [19].

- CellNetAnalyzer (CNA) and CNApy provide comprehensive constraint-based modeling capabilities, including methods for incorporating thermodynamic constraints [12].

- COBRApy enables constraint-based reconstruction and analysis, with tutorials available for implementing advanced methods including thermodynamic constraints [11].

These tools enable researchers to apply thermodynamic principles to metabolic networks ranging from small pathways to genome-scale models.

Table 3: Key Research Reagents and Computational Resources for Thermodynamic Modeling

| Resource | Type | Function/Purpose | Example Sources |
|---|---|---|---|
| Thermodynamic Database | Data Resource | Provides ΔfG° values for metabolites | Experimental literature, Group contribution methods [18] |
| Metabolite Standards | Wet Lab Reagent | Enables concentration measurement calibration | Commercial suppliers (e.g., Sigma) |
| QC Pool Samples | Wet Lab Reagent | Normalizes metabolomic data sets | SERRF methodology [20] |
| Group Contribution Method | Computational Tool | Estimates unknown ΔfG° values | Implementation in [18] |
| Mass Spectrometry Platforms | Analytical Instrument | Measures metabolite concentrations | Various vendors |
| Pathway Analysis Software | Computational Tool | Visualizes metabolic pathways and integrates data | WikiPathways, Metabolon Platform [21] |

Applications and Case Studies

Thermodynamic Constraints in Metabolic Engineering

Thermodynamic analysis has proven valuable in metabolic engineering for identifying bottlenecks and predicting feasible metabolic states. For example, TMFA applied to models with lumped reactions has demonstrated improved precision in predicting flux distributions compared to original models [17]. By eliminating thermodynamically infeasible solution regions, thermodynamic constraints enable more reliable identification of engineering targets for strain improvement.

In linear metabolic pathways, thermodynamic analysis reveals that flux control tends to be dominated by upstream enzymes when pathways operate far from equilibrium, providing guidance for enzyme engineering strategies [15]. This relationship between thermodynamic driving force and flux control enables rational prioritization of enzyme modulation targets.

Thermodynamic Analysis of Glycolysis

Studies of glycolysis as a model pathway have demonstrated how thermodynamic constraints shape metabolic control. Computational analyses using metabolic control analysis have established quantitative relationships between reaction free energies and flux control coefficients [15]. These studies confirm that steps with large negative free energy changes (far from equilibrium) often exhibit high flux control, validating their status as potential regulation points.

Figure 2: Thermodynamic Constraints in Glycolytic Pathway with Key Regulation Points

Emerging Methodological Developments

Recent theoretical advances include the formalization of the "thermodynamic space" concept, which describes the accessible region of species concentrations in chemical reaction networks as a function of energy budget [14]. This framework provides universal thermodynamic bounds on concentration spaces and reaction affinities, offering a more fundamental understanding of how global thermodynamic properties constrain local non-equilibrium behaviors.

The integration of multi-omics data with thermodynamic models represents another advancing frontier. Methods for incorporating metabolomics data into constraint-based models continue to improve, with new approaches for handling uncertainty and variability in concentration measurements [16] [20].

Thermodynamic constraints provide essential physical bounds that shape metabolic network behavior and regulation. The integration of these constraints into computational models significantly enhances their predictive capability and biological relevance. As methodologies for implementing thermodynamic constraints continue to mature, they offer increasingly powerful approaches for understanding metabolic regulation, guiding metabolic engineering, and informing drug discovery efforts aimed at metabolic pathways.

The continuing development of computational tools, thermodynamic databases, and experimental methods for metabolite quantification promises to further strengthen the integration of thermodynamic principles into metabolic modeling, enabling more accurate and reliable predictions of cellular metabolism in health and disease.

Gene-Protein-Reaction (GPR) associations provide the critical linkage between genomic information and metabolic functions in constraint-based metabolic models. These Boolean logical rules define how genes encode proteins that catalyze metabolic reactions, forming the mechanistic bridge that enables genotype-to-phenotype predictions. This technical guide examines GPR fundamentals, reconstruction methodologies, and applications across biomedical and biotechnological domains, highlighting current computational tools and emerging research directions that are advancing systems biology research.

Constraint-based metabolic modeling has emerged as a powerful framework for predicting organism phenotypes from genomic information. At the heart of these models lie Gene-Protein-Reaction (GPR) associations, which create mechanistic links between an organism's genotype and its metabolic capabilities. Unlike statistical approaches such as genome-wide association studies (GWAS), GPR-based models provide causal relationships that predict cellular responses to genetic and environmental perturbations [22]. GPR rules use Boolean logic to describe the complex relationships between genes, their protein products, and the metabolic reactions they catalyze, effectively encoding the catalytic mechanisms of enzymes within metabolic networks [23].

The reconstruction of GPR rules remains a significant bottleneck in metabolic model development. Despite advances in automating network reconstruction, GPR assignment has traditionally required extensive manual curation from literature and biological databases [23]. This process is essential for enabling key applications such as gene deletion simulations and the integration of omics data, which depend on accurate gene-reaction relationships [23]. The growing importance of GPRs is reflected in their central role in contextualizing genome-scale models for specific biological conditions, from microbial engineering to human disease states.

The Fundamental Logic of GPR Associations

Boolean Representation of Catalytic Mechanisms

GPR associations employ Boolean logic operators (AND, OR) to represent the structural and functional relationships between genes and their catalytic products. These logical expressions describe how gene products combine to form functional enzymes that catalyze metabolic reactions [23]. The Boolean representation follows specific biological principles:

- AND operator: Joins genes encoding different subunits of the same enzyme complex, indicating that all specified gene products are required for functional activity

- OR operator: Joins genes encoding distinct protein isoforms or isozymes that can alternatively catalyze the same reaction

This logical framework can represent increasingly complex biological scenarios. For instance, multiple oligomeric enzymes may behave as isoforms because they share common subunits while each also has distinctive subunits of its own [23]. As a simple example, a reaction that can be catalyzed by either a monomeric enzyme (GeneA) or a heterodimeric complex (GeneB and GeneC) has the GPR rule GeneA OR (GeneB AND GeneC).
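
To make the Boolean semantics concrete, the sketch below evaluates a GPR rule against a set of knocked-out genes. It is a minimal illustration using Python's standard `ast` module, not the implementation of any particular modeling package; it assumes gene identifiers are valid Python names (for example GeneA), and the function name `gpr_is_active` is hypothetical.

```python
import ast
import re

def gpr_is_active(rule, knocked_out=frozenset()):
    """Return True if the reaction can still be catalyzed.

    rule:        GPR string using AND / OR and parentheses
    knocked_out: set of gene identifiers that are deleted
    An empty rule (no gene association) is treated as always active.
    """
    if not rule.strip():
        return True
    # Map the GPR operators onto Python's boolean keywords
    expr = re.sub(r"\bAND\b", "and", rule)
    expr = re.sub(r"\bOR\b", "or", expr)
    tree = ast.parse(expr, mode="eval")

    def evaluate(node):
        if isinstance(node, ast.BoolOp):
            values = [evaluate(v) for v in node.values]
            return all(values) if isinstance(node.op, ast.And) else any(values)
        if isinstance(node, ast.Name):
            return node.id not in knocked_out  # gene present unless deleted
        raise ValueError("unsupported element in GPR rule")

    return evaluate(tree.body)

rule = "GeneA OR (GeneB AND GeneC)"
print(gpr_is_active(rule, {"GeneB"}))           # isozyme GeneA remains
print(gpr_is_active(rule, {"GeneA", "GeneB"}))  # both routes broken
```

Deleting GeneB alone leaves the reaction active through GeneA, whereas deleting GeneA and GeneB removes both the monomeric enzyme and the complex.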

Classification of Enzymatic Reactions

From a GPR perspective, metabolic reactions are classified based on their catalytic requirements:

- Non-enzymatic reactions: Occur spontaneously or catalyzed by small molecules, requiring no gene associations

- Monomeric enzyme reactions: Catalyzed by a single subunit encoded by one gene

- Oligomeric enzyme reactions: Catalyzed by protein complexes requiring multiple subunits (AND logic)

- Isozyme-catalyzed reactions: Multiple alternative enzymes that catalyze the same reaction (OR logic) [23]

This classification system enables accurate representation of the genetic basis for metabolic functionality within computational models.
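
The four classes above can be read directly from the top-level structure of a rule. The following sketch shows one possible classifier, assuming gene identifiers are valid Python names; rules that mix AND and OR are labeled by their outermost operator, which is a simplification.

```python
import ast
import re

def classify_gpr(rule):
    """Classify a reaction by the top-level structure of its GPR rule."""
    if not rule.strip():
        return "non-enzymatic"
    expr = re.sub(r"\bAND\b", "and", rule)
    expr = re.sub(r"\bOR\b", "or", expr)
    node = ast.parse(expr, mode="eval").body
    if isinstance(node, ast.Name):
        return "monomeric"            # a single gene
    if isinstance(node, ast.BoolOp):
        if isinstance(node.op, ast.Or):
            return "isozyme"          # alternatives joined by OR
        return "oligomeric"           # subunits joined by AND
    raise ValueError("unsupported element in GPR rule")

for rule in ("", "GeneA", "GeneB AND GeneC", "GeneA OR (GeneB AND GeneC)"):
    print(repr(rule), "->", classify_gpr(rule))
```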

Figure 1: GPR Boolean Logic Representation. This diagram illustrates the relationship between genes, proteins, and metabolic reactions using Boolean operators (AND, OR).

Computational Methods for GPR Reconstruction

Automated GPR reconstruction leverages information from multiple biological databases to establish comprehensive gene-reaction relationships. Current tools integrate data from nine different biological databases, including:

Table 1: Key Biological Databases for GPR Reconstruction

| Database | Primary Use in GPR | Data Type | Reference |
|---|---|---|---|
| UniProt | Protein function and complex information | Protein composition, function | [23] [24] |
| KEGG | Reaction-enzyme associations | ORTHOLOGY, BRITE databases | [23] |
| MetaCyc | Enzyme and pathway information | Curated enzyme data | [23] [25] |
| Complex Portal | Protein-protein interactions | Macromolecular complexes | [23] |
| Rhea | Biochemical reactions | Reaction database | [23] |
| TCDB | Transporter classification | Transporter information | [23] |

GPRuler, an open-source Python-based framework, represents a significant advancement in automated GPR reconstruction by mining text and data from these multiple biological databases [23]. The tool can reconstruct GPRs starting from either just the name of a target organism or an existing metabolic model, addressing a critical bottleneck in metabolic network reconstruction.

Reconstruction Workflows and Algorithms

The GPR reconstruction pipeline follows two primary approaches:

- Organism-centric reconstruction: Begins with the organism name and reconstructs GPRs by identifying metabolic genes and their relationships through database mining

- Model-centric reconstruction: Starts with an existing SBML model or reaction list and enhances it with GPR associations

A novel algorithm for assembling GPR associations integrates genome annotation data with protein composition and function information from the UniProt database [24]. This approach has been validated against state-of-the-art models for E. coli and S. cerevisiae, with competitive results.

Recent research has also implemented a model transformation that generates a stoichiometric representation of GPR associations, enabling constraint-based methods to be applied at the gene level by explicitly accounting for individual fluxes of enzymes encoded by each gene [22]. This transformation changes the Boolean representation of gene states to a real-valued representation, where enzymes become species in the model.
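
A simplified sketch in the spirit of this transformation is shown below; it is not the exact procedure of [22]. It assumes the GPR is already in disjunctive form (a list of alternative complexes, each a list of genes), creates one enzyme pseudo-species and one usage reaction per gene, and splits the reaction into one copy per alternative complex. The prefixes `u_`, `enz_`, and `_iso` are hypothetical naming choices, and the decomposition of reversible reactions is omitted.

```python
def expand_gpr(rxn_id, stoich, complexes):
    """Build gene-level pseudo-reactions for one reaction.

    rxn_id:    identifier of the original reaction
    stoich:    metabolite -> coefficient of the original reaction
    complexes: list of alternative enzyme complexes, each a list of genes
    Returns a dict: new reaction id -> stoichiometry.
    """
    new_reactions = {}
    genes = sorted({g for cplx in complexes for g in cplx})
    # One usage reaction per gene supplies its enzyme pseudo-species
    for g in genes:
        new_reactions[f"u_{g}"] = {f"enz_{g}": 1}
    # One copy of the reaction per alternative complex, consuming
    # the pseudo-species of every subunit it needs
    for k, cplx in enumerate(complexes, start=1):
        s = dict(stoich)
        for g in cplx:
            s[f"enz_{g}"] = -1
        new_reactions[f"{rxn_id}_iso{k}"] = s
    return new_reactions

expanded = expand_gpr("R1", {"A": -1, "B": 1},
                      [["GeneA"], ["GeneB", "GeneC"]])
for name, s in expanded.items():
    print(name, s)
```

At steady state each enzyme pseudo-species must balance, so the flux of a usage reaction equals the total flux carried by the reactions that depend on that gene, which is what makes gene-level objectives and constraints possible.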

Figure 2: GPR Reconstruction Workflow. Computational pipeline for reconstructing GPR rules from either organism names or existing metabolic models.

Experimental and Computational Protocols

Protocol 1: Automated GPR Reconstruction with GPRuler

Purpose: To automatically reconstruct GPR rules for any organism using the GPRuler framework.

Input Requirements:

- Option A: Name of target organism

- Option B: Existing SBML model or reaction list lacking GPR rules

Methodology:

Data Retrieval Phase:

- Query biological databases (MetaCyc, KEGG, Rhea, ChEBI, TCDB) for target organism data

- Download most recent database versions to ensure data currency

- Extract gene-protein and protein-reaction relationships

Gene Identification Phase:

- For organism-name input: Identify metabolic genes through genome annotation

- For existing model input: Map reactions to catalyzing enzymes and encoding genes

Rule Assembly Phase:

- Apply Boolean logic based on protein complex information from Complex Portal

- Assign AND operators for enzyme complex subunits

- Assign OR operators for isozymes and alternative enzyme subunits

Validation Phase:

- Compare reconstructed GPRs with manually curated rules when available

- Assess accuracy through statistical measures (precision, recall)

- Perform manual investigation of mismatches to identify potential improvements

Output: Boolean GPR rules in standard SBML format for integration into metabolic models.
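
The validation step can be sketched by scoring reconstructed rules against curated ones. The example below makes a simplifying assumption that is not taken from the GPRuler publication: it compares only the gene-reaction pairs implied by each rule and ignores the Boolean structure. The data are hypothetical.

```python
def gene_reaction_pairs(gprs):
    """Flatten reaction -> set of genes into a set of (reaction, gene) pairs."""
    return {(rxn, gene) for rxn, genes in gprs.items() for gene in genes}

def precision_recall(predicted, curated):
    """Precision and recall of predicted gene-reaction pairs."""
    pred = gene_reaction_pairs(predicted)
    ref = gene_reaction_pairs(curated)
    true_pos = len(pred & ref)
    precision = true_pos / len(pred) if pred else 0.0
    recall = true_pos / len(ref) if ref else 0.0
    return precision, recall

# Hypothetical reconstructed and manually curated associations
predicted = {"R1": {"g1", "g2"}, "R2": {"g3"}}
curated = {"R1": {"g1"}, "R2": {"g3", "g4"}}

precision, recall = precision_recall(predicted, curated)
print(f"precision = {precision:.2f}, recall = {recall:.2f}")
```

Pairs that appear only in the prediction or only in the curated set are the natural starting point for the manual investigation of mismatches.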

Protocol 2: Gene-Level Constraint-Based Analysis

Purpose: To perform constraint-based analysis at the gene level using stoichiometric representation of GPRs.

Methodology:

Model Transformation:

- Convert Boolean GPR rules to stoichiometric representation

- Introduce enzyme usage reactions for each gene

- Decompose reversible reactions and isozyme-catalyzed reactions

- Represent enzymes and enzyme subunits as pseudo-species in the model

Gene-Level Simulation:

- Apply standard constraint-based methods (FBA, pFBA) to transformed model

- Utilize enzyme usage variables to determine flux carried by each enzyme

- Formulate gene-wise objective functions when appropriate

Analysis Applications:

- Flux distribution prediction: Identify gene contributions to metabolic phenotypes

- Gene essentiality analysis: Determine critical genes under specific conditions

- Strain design: Develop gene-based rather than reaction-based modification strategies

- Transcriptomics integration: Incorporate gene expression data directly into models

Validation: Compare predictions with experimental 13C-flux data and essentiality datasets to verify improved accuracy over reaction-level models [22].

Applications in Metabolic Research and Biotechnology

Drug Discovery and Development

GPR-enabled metabolic models have become valuable tools in pharmaceutical research, particularly in oncology. Recent studies have investigated drug-induced metabolic changes in cancer cell lines using GPR-integrated models. For example, research on gastric cancer cells (AGS) treated with kinase inhibitors utilized GPR associations to connect transcriptomic profiling with metabolic alterations [7]. This approach revealed widespread down-regulation of biosynthetic pathways, particularly in amino acid and nucleotide metabolism, following drug treatment.

The integration of GPR rules enables more accurate prediction of drug synergy mechanisms by modeling how combination treatments affect metabolic tasks at the gene level. In the AGS study, combinatorial treatments induced condition-specific metabolic alterations, including strong synergistic effects in the PI3Ki–MEKi condition affecting ornithine and polyamine biosynthesis [7]. These insights provide mechanistic understanding of drug synergy and highlight potential therapeutic vulnerabilities.

Metabolic Engineering and Strain Design

GPR associations are crucial for rational metabolic engineering, enabling the design of microbial cell factories for chemical production. Traditional strain design methods identify reaction modifications that must be translated to gene-level interventions, often with suboptimal results due to GPR complexity [22]. Gene-level strain design using explicit GPR representation overcomes these limitations:

- Prevents infeasible designs resulting from promiscuous enzymes

- Accounts for enzyme complex requirements in flux constraints

- Enables direct genetic implementation without loss of optimality

Studies have demonstrated that a significant fraction of reaction-based designs (up to 30%) are genetically infeasible due to GPR complexities, highlighting the importance of gene-aware approaches [22].
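
The problem with translating reaction knockouts to genes can be illustrated with a small check. The sketch assumes GPRs in disjunctive form and uses a naive strategy (delete every gene associated with the target reactions), then reports which non-target reactions are lost as a side effect. The network is hypothetical, and real strain design methods search for better gene sets than this.

```python
def reactions_lost(deleted_genes, gprs):
    """Reactions for which every alternative complex contains a deleted gene."""
    return {
        rxn
        for rxn, complexes in gprs.items()
        if complexes  # reactions without genes cannot be knocked out
        and all(any(g in deleted_genes for g in cplx) for cplx in complexes)
    }

def naive_gene_design(targets, gprs):
    """Delete all genes of the target reactions; return genes and collateral."""
    deleted = {g for rxn in targets for cplx in gprs[rxn] for g in cplx}
    collateral = reactions_lost(deleted, gprs) - set(targets)
    return deleted, collateral

# Hypothetical GPRs: g1 is promiscuous and also a subunit of R3's complex
gprs = {
    "R1": [["g1"]],
    "R2": [["g1"], ["g2"]],
    "R3": [["g1", "g3"]],
    "R4": [],
}

deleted, collateral = naive_gene_design({"R1"}, gprs)
print(deleted, collateral)
```

Knocking out R1 requires deleting g1, which also disables R3 because its only complex needs g1, while R2 survives through the isozyme g2.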

Context-Specific Model Reconstruction

GPR rules enable the development of context-specific metabolic models from omics data, representing the active metabolic network under particular conditions. By integrating transcriptomic, proteomic, and metabolomic data through GPR associations, researchers can create cell-type, tissue, or condition-specific models [26] [27]. These contextualized models have applications in:

- Human health: Tissue-specific models for understanding metabolic aspects of diseases

- Microbiome research: Multi-species models of microbial communities

- Biotechnology: Condition-specific models for optimizing industrial processes
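
A common convention for passing expression data through GPR rules, assumed here rather than taken from the cited studies, is to take the minimum over the subunits of a complex (AND) and the maximum over alternative enzymes (OR). The sketch below applies that convention to a rule in disjunctive form with hypothetical expression values.

```python
def reaction_expression(complexes, expression):
    """Map gene expression to a reaction-level score.

    complexes:  list of alternative complexes, each a list of genes
    expression: gene -> expression value
    AND (within a complex) -> minimum; OR (between complexes) -> maximum.
    Returns None for reactions without gene associations.
    """
    if not complexes:
        return None
    return max(min(expression[g] for g in cplx) for cplx in complexes)

# GeneA OR (GeneB AND GeneC), with hypothetical expression values
complexes = [["GeneA"], ["GeneB", "GeneC"]]
expression = {"GeneA": 2.0, "GeneB": 8.0, "GeneC": 5.0}

score = reaction_expression(complexes, expression)
print(score)
```

The complex is limited by its least expressed subunit (GeneC), and the reaction takes the higher of the two alternatives; the resulting scores can then be thresholded or used as weights when extracting a context-specific model.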

Table 2: GPR Applications Across Biological Domains

| Application Domain | Key Use Case | Benefit of GPR Integration |
|---|---|---|
| Drug Discovery | Mechanism of action analysis | Connects gene expression changes to metabolic alterations |
| Metabolic Engineering | Strain optimization | Ensures genetic feasibility of designed modifications |
| Biomedical Research | Disease modeling | Enables tissue-specific model reconstruction |
| Microbial Ecology | Community modeling | Links metagenomic data to community metabolic functions |
| Biotechnology | Process optimization | Predicts metabolic behavior under industrial conditions |

Advanced Research and Future Directions

Integration with Machine Learning Approaches

Recent research has explored hybrid neural-mechanistic approaches that combine machine learning with constraint-based modeling to improve predictive power. These approaches embed metabolic models within artificial neural networks, creating architectures that can learn from flux data while respecting GPR-derived constraints [28]. The neural-mechanistic hybrid models:

- Require training sets orders of magnitude smaller than those needed by classical machine learning

- Systematically outperform traditional constraint-based models in prediction accuracy

- Can capture regulatory effects and gene knockout phenotypes

- Enable more accurate conversion from extracellular concentrations to uptake fluxes

This integration addresses a fundamental limitation in traditional FBA: the lack of a direct relationship between extracellular concentrations and uptake flux bounds [28].

Multi-Tissue and Multi-Cellular Modeling

GPR associations are extending constraint-based modeling to multi-cellular systems, including microbial communities and multi-tissue models of higher organisms. These advanced applications leverage GPR rules to connect genomic information with metabolic functions across different cell types and species [27]. Current research directions include:

- Host-microbe interaction models: Combining human metabolic models with microbial community models

- Multi-tissue human models: Integrating tissue-specific models through transport reactions

- Spatially-resolved modeling: Incorporating spatial organization into metabolic simulations

These developments require sophisticated GPR management to maintain consistent gene-reaction relationships across different cellular contexts and organizational levels.

Table 3: Key Research Reagents and Computational Tools for GPR Research

| Resource | Type | Function | Application Context |
|---|---|---|---|
| GPRuler | Software Tool | Automated GPR reconstruction | Draft model development, GPR gap-filling |
| UniProt Knowledgebase | Database | Protein complex information | GPR rule validation and refinement |
| Complex Portal | Database | Protein-protein interactions | Enzyme complex structure determination |
| RAVEN Toolbox | Software Suite | Semi-automated GPR assignment | Template-based model reconstruction |
| COBRA Toolbox | Software Suite | Constraint-based analysis | Simulation with GPR-integrated models |
| MetaCyc | Database | Enzyme and pathway data | Reaction-gene association evidence |
| BiGG Models | Database | Curated metabolic models | GPR rule comparison and validation |

Gene-Protein-Reaction associations represent a foundational component of modern metabolic modeling, creating an essential bridge between genomic information and metabolic phenotype. The accurate reconstruction of GPR rules through automated tools and multi-database integration has significantly accelerated metabolic network development. As constraint-based modeling expands to include multi-cellular systems, integrates with machine learning approaches, and tackles increasingly complex biological questions, the role of GPR associations becomes ever more critical. Future methodological advances will likely focus on enhancing the automation of GPR reconstruction, improving the representation of regulatory constraints, and developing more sophisticated approaches for contextualizing GPR rules based on multi-omics data. These developments will further strengthen the mechanistic link between genotype and metabolic phenotype across diverse biological applications.

Major Applications in Biomedicine and Drug Discovery

Constraint-Based Reconstruction and Analysis (COBRA) has emerged as a powerful computational framework for modeling metabolic networks in biomedical research and drug discovery. By leveraging genome-scale metabolic models (GEMs), researchers can simulate cellular metabolism and predict how perturbations—such as genetic mutations or drug treatments—alter metabolic flux states [7]. This approach is particularly valuable in oncology, where cancer cells extensively reprogram their metabolism to support rapid proliferation and survival [7] [29]. The fundamental principle underlying constraint-based modeling is the imposition of physicochemical constraints on metabolic networks, including mass balance, energy conservation, and reaction directionality, to predict feasible metabolic behaviors under specific conditions.

The biomedical applications of constraint-based modeling are extensive and growing. Researchers now employ these methods to identify metabolic vulnerabilities in cancer cells, understand drug mechanisms of action, predict synergistic drug combinations, and discover novel therapeutic targets [7]. The integration of transcriptomic, proteomic, and metabolomic data with metabolic models has enhanced their predictive accuracy and biological relevance, enabling more precise investigation of disease-specific metabolic alterations [7]. This whitepaper examines key applications of constraint-based modeling in biomedicine and drug discovery, with particular emphasis on recent methodological advances and their implementation in current research paradigms.

Drug Mechanism Elucidation and Synergy Prediction

Investigating Kinase Inhibitor-Induced Metabolic Reprogramming

A recent study demonstrates the power of constraint-based modeling for elucidating drug-induced metabolic changes in cancer cells [7]. Researchers investigated the metabolic effects of three kinase inhibitors (TAKi, MEKi, PI3Ki) and their synergistic combinations in AGS gastric cancer cells using genome-scale metabolic models and transcriptomic profiling. The study applied the Tasks Inferred from Differential Expression (TIDE) algorithm to infer pathway activity changes across treatment conditions [7]. The experimental workflow integrated multiple data types and analytical approaches to comprehensively characterize metabolic responses to targeted therapies.

Table 1: Key Findings from Kinase Inhibitor Metabolic Profiling Study

| Treatment Condition | Total DEGs | Metabolic DEGs | Key Metabolic Pathways Affected | Synergistic Effects |
|---|---|---|---|---|
| TAKi | ~2000 | ~700 | Lipid metabolism | Moderate |
| MEKi | ~2000 | ~700 | Mitochondrial processes | Moderate |
| PI3Ki | ~2000 | ~700 | Nervous system development | Moderate |
| PI3Ki–TAKi | ~2000 | ~700 | Keratinization, mRNA metabolism | Additive |
| PI3Ki–MEKi | >2000 | >700 | Ornithine/polyamine biosynthesis | Strong synergy |

The transcriptomic analysis revealed that all treatment conditions resulted in approximately 2000 differentially expressed genes (DEGs), with a consistent pattern of more up-regulated than down-regulated genes [7]. However, the PI3Ki–MEKi combination demonstrated particularly strong synergistic effects, with a higher number of DEGs and approximately 25% unique DEGs not observed in individual treatments [7]. This unique transcriptional signature suggested distinct metabolic rewiring induced by the drug combination.

Experimental Protocol for Drug Synergy Investigation

The methodology for investigating drug-induced metabolic changes involves a multi-step process integrating experimental and computational approaches [7]:

Cell Treatment and Transcriptomic Profiling: AGS gastric cancer cells are treated with individual kinase inhibitors (TAKi, MEKi, PI3Ki) and their combinations (PI3Ki–TAKi, PI3Ki–MEKi), followed by RNA sequencing to quantify gene expression changes.

Differential Expression Analysis: Process raw transcriptomic data using standard pipelines with the DESeq2 package to identify statistically significant DEGs for each treatment condition compared to controls.

Pathway Enrichment Analysis: Perform Gene Ontology (GO) and KEGG pathway enrichment analyses to identify biological processes and pathways significantly altered by drug treatments.

Constraint-Based Modeling Implementation: Apply the TIDE algorithm or its variant TIDE-essential to infer changes in metabolic pathway activity from transcriptomic data. TIDE-essential focuses on task-essential genes without relying on flux assumptions, providing a complementary perspective to the original algorithm.

Synergy Quantification: Calculate metabolic synergy scores by comparing the effects of combination treatments with individual drugs to identify metabolic processes specifically altered by drug synergies.

Validation and Tool Development: Implement both TIDE frameworks in an open-source Python package (MTEApy) to support reproducibility and broader application.
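
The synergy quantification step can be illustrated with a small sketch. The scoring rule below is a hypothetical simplification and not the definition used in [7] or in MTEApy: for each metabolic task it subtracts the strongest single-drug effect from the combination effect, so a nonzero value indicates a change beyond what either drug achieves alone. The task names and scores are made up.

```python
def synergy_scores(combo, single_a, single_b):
    """Per-task difference between a combination and its strongest single drug.

    combo, single_a, single_b: task -> pathway activity score
    (negative = down-regulated). Hypothetical scoring rule.
    """
    scores = {}
    for task, combo_score in combo.items():
        strongest = max((single_a[task], single_b[task]), key=abs)
        scores[task] = combo_score - strongest
    return scores

# Hypothetical task scores for two single treatments and their combination
pi3ki = {"polyamine biosynthesis": -1.0, "lipid metabolism": -1.0}
meki = {"polyamine biosynthesis": -0.5, "lipid metabolism": 0.25}
combination = {"polyamine biosynthesis": -3.0, "lipid metabolism": -1.0}

scores = synergy_scores(combination, pi3ki, meki)
print(scores)
```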

Figure 1: Experimental workflow for investigating drug-induced metabolic changes using constraint-based modeling

Metabolic Network Reconstruction and Analysis Tools

Computational Frameworks for Metabolic Analysis

The advancement of constraint-based modeling applications in biomedicine relies heavily on specialized computational tools and platforms. Recent developments have produced sophisticated software solutions that streamline metabolic network reconstruction, simulation, and analysis.

Table 2: Key Platforms for Metabolic Modeling and Analysis

| Tool/Platform | Primary Function | Data Sources | Key Applications | Access |
|---|---|---|---|---|
| MTEApy | Python implementation of TIDE algorithms | Transcriptomic data | Inferring metabolic task changes from gene expression | Open-source Python package [7] |
| MetaDAG | Metabolic network reconstruction and analysis | KEGG database | Visualizing metabolic interactions, comparative analysis | Web-based tool [30] |
| KBase | Metabolic model reconstruction and gap-filling | RAST annotations, ModelSEED biochemistry | Draft model reconstruction, predicting growth conditions | Web-based platform [31] |
| ModelSEED | Genome-scale metabolic model construction | RAST functional roles | Generating metabolic models from genomic data | Integrated with KBase [31] |

MetaDAG represents a significant innovation in metabolic network analysis, constructing two complementary models: a reaction graph where nodes represent reactions and edges represent metabolite flow, and a metabolic directed acyclic graph (m-DAG) that collapses strongly connected components into metabolic building blocks [30]. This approach substantially reduces network complexity while maintaining connectivity, enabling more intuitive topological analysis of reconstructed metabolic networks. The tool can generate metabolic networks from various inputs, including specific organisms, sets of organisms, reactions, enzymes, or KEGG Orthology identifiers, making it applicable to diverse research scenarios from individual microbial samples to complex metagenomic communities [30].

Protocol for Metabolic Network Reconstruction

The reconstruction and analysis of metabolic networks using tools like MetaDAG follows a systematic process [30]:

Input Specification: Define the scope of reconstruction using KEGG organism codes, specific reactions, enzymes, or KEGG Orthology identifiers.

Data Retrieval: Query the KEGG database to retrieve all reactions associated with the input parameters, establishing the foundational reaction set for network construction.

Reaction Graph Generation: Construct a directed graph where nodes represent individual reactions and edges connect reactions that share metabolites (product of one reaction serves as substrate for another).

m-DAG Computation: Identify strongly connected components within the reaction graph and collapse them into single nodes called Metabolic Building Blocks (MBBs), creating a simplified directed acyclic graph representation.

Network Analysis and Visualization: Compute topological properties, identify key metabolic pathways, and generate interactive visualizations of both reaction graphs and m-DAGs.

Comparative Analysis: When analyzing multiple groups or conditions, calculate core and pan metabolism for each group and perform comparative analysis of their m-DAGs to identify shared and unique metabolic features.

Figure 2: Metabolic network reconstruction and analysis workflow using MetaDAG
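The reaction-graph and m-DAG steps above can be sketched in a few lines of standard-library Python. This is only an illustration under stated assumptions, not MetaDAG's actual implementation: the four toy reactions, the metabolite names, and the helper functions `reaction_graph` and `collapse_to_dag` are hypothetical, and the core/pan calculation assumes that core metabolism is the intersection and pan metabolism the union of the reaction sets in a group.

```python
from collections import defaultdict

# Hypothetical toy input: reaction -> (substrates, products).
reactions = {
    "R1": ({"glc"}, {"g6p"}),
    "R2": ({"g6p"}, {"f6p"}),
    "R3": ({"f6p"}, {"g6p"}),  # closes a cycle with R2
    "R4": ({"f6p"}, {"pyr"}),
}

def reaction_graph(rxns):
    """Directed edge r1 -> r2 when a product of r1 is a substrate of r2."""
    graph = {r: set() for r in rxns}
    for r1, (_, products) in rxns.items():
        for r2, (substrates, _) in rxns.items():
            if r1 != r2 and products & substrates:
                graph[r1].add(r2)
    return graph

def strongly_connected_components(graph):
    """Kosaraju's algorithm (recursive, fine for small graphs): node -> component label."""
    order, seen = [], set()

    def visit(node):
        seen.add(node)
        for nxt in graph[node]:
            if nxt not in seen:
                visit(nxt)
        order.append(node)  # record finishing order

    for node in graph:
        if node not in seen:
            visit(node)

    reverse = defaultdict(set)
    for node, targets in graph.items():
        for target in targets:
            reverse[target].add(node)

    component = {}

    def assign(node, label):
        component[node] = label
        for prev in reverse[node]:
            if prev not in component:
                assign(prev, label)

    for node in reversed(order):
        if node not in component:
            assign(node, node)  # label each component by its root reaction
    return component

def collapse_to_dag(graph):
    """Collapse each strongly connected component into one building block."""
    comp = strongly_connected_components(graph)
    members = defaultdict(set)
    for node, label in comp.items():
        members[label].add(node)
    dag_edges = {(comp[u], comp[v])
                 for u in graph for v in graph[u] if comp[u] != comp[v]}
    return {frozenset(m) for m in members.values()}, dag_edges

blocks, dag_edges = collapse_to_dag(reaction_graph(reactions))

# Assumed definitions for comparing a group of reconstructions.
group = {"org1": {"R1", "R2", "R3"}, "org2": {"R2", "R3", "R4"}}
core = set.intersection(*group.values())
pan = set.union(*group.values())
```

In this toy case R2 and R3 form a cycle, so they collapse into a single building block and the four-node reaction graph becomes a three-node acyclic graph. For genome-scale graphs an iterative component search would be preferable to the recursive one used here.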

Target Identification and Therapeutic Vulnerability Mapping

Identifying Metabolic Vulnerabilities in Cancer Cells

Constraint-based modeling enables systematic identification of metabolic dependencies in cancer cells that can be exploited therapeutically. The study of kinase inhibitors in AGS cells revealed widespread down-regulation of biosynthetic pathways, particularly affecting amino acid and nucleotide metabolism [7]. These pathways represent potential therapeutic targets, as their inhibition could further compromise cancer cell viability already stressed by kinase inhibition.

The TIDE algorithm analysis specifically identified strong synergistic effects in the PI3Ki–MEKi combination affecting ornithine and polyamine biosynthesis [7]. This finding highlights how constraint-based modeling can uncover non-obvious metabolic vulnerabilities that emerge from drug combinations rather than single agents. Polyamine metabolism is crucial for cancer cell proliferation, and its targeted inhibition represents a promising therapeutic strategy currently under investigation.

Gene set enrichment analysis further complemented these findings by revealing consistent down-regulation of rRNA and ncRNA ribonucleotide biogenesis, rRNA-protein complex organization, and tRNA aminoacylation across all treatment conditions [7]. This consistent pattern suggests a global suppression of protein synthesis machinery in response to kinase inhibition, identifying another potential vulnerability that could be exploited therapeutically.

Protocol for Metabolic Vulnerability Assessment

The methodology for identifying metabolic vulnerabilities using constraint-based approaches involves [7] [31]:

Context-Specific Model Reconstruction: Generate condition-specific metabolic models by integrating transcriptomic data with global metabolic reconstructions using tools like RAVEN or COBRA Toolbox.

Essentiality Analysis: Perform systematic in silico gene knockout simulations to identify metabolic genes essential for growth under specific nutrient conditions.

Reaction Flux Analysis: Compare flux distributions between normal and perturbed states to identify reactions with significantly altered metabolic flux.

Synthetic Lethality Screening: Identify pairs of non-essential reactions whose simultaneous inhibition compromises cell viability, revealing potential combination therapy targets.

Drug Target Prioritization: Rank potential targets based on multiple criteria including essentiality, flux control, and expression in target tissues.
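The essentiality and synthetic lethality steps can be illustrated on a toy network. The sketch below is a minimal example rather than the workflow used in the cited studies: the four-reaction network, the reaction names, the 1% growth cutoff, and the helper `max_growth` are all assumptions made for illustration, and the linear program is solved with SciPy's `linprog` instead of a dedicated COBRA package.

```python
from itertools import combinations

import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network: rows are metabolites (A, B), columns are reactions.
rxn_names = ["uptake", "route1", "route2", "biomass"]
S = np.array([
    [1, -1, -1,  0],  # A: produced by uptake, consumed by either route
    [0,  1,  1, -1],  # B: produced by either route, drained by biomass
], dtype=float)
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000)]
objective = rxn_names.index("biomass")

def max_growth(knockouts=()):
    """Maximal biomass flux with the listed reactions forced to zero flux."""
    ko_bounds = [(0, 0) if name in knockouts else b
                 for name, b in zip(rxn_names, bounds)]
    c = np.zeros(len(rxn_names))
    c[objective] = -1.0  # linprog minimizes, so negate to maximize
    res = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                  bounds=ko_bounds, method="highs")
    return -res.fun if res.status == 0 else 0.0

wild_type = max_growth()
threshold = 0.01 * wild_type  # assumed cutoff for "no growth"

candidates = [r for r in rxn_names if r != "biomass"]
essential = [r for r in candidates if max_growth({r}) < threshold]
nonessential = [r for r in candidates if r not in essential]

# Pairs of individually dispensable reactions that are lethal together.
synthetic_lethal = [pair for pair in combinations(nonessential, 2)
                    if max_growth(set(pair)) < threshold]
```

Here the two parallel routes are each dispensable, but removing both blocks biomass production, which is the pattern a synthetic lethality screen looks for. At genome scale the number of pairs grows quadratically, so screens are usually restricted to reactions that carry flux in the reference state.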

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of constraint-based modeling in biomedical research requires specialized computational tools and resources. The following table summarizes essential components of the metabolic modeler's toolkit.

Table 3: Essential Research Reagents and Computational Tools for Constraint-Based Modeling

| Resource Type | Specific Tool/Resource | Function/Purpose | Application Context |
|---|---|---|---|
| Software Packages | MTEApy | Implements TIDE and TIDE-essential algorithms for inferring metabolic tasks from transcriptomic data | Analysis of drug-induced metabolic changes [7] |
| Web Tools | MetaDAG | Reconstruction, visualization, and analysis of metabolic networks from KEGG data | Metabolic network comparison across conditions or species [30] |
| Modeling Platforms | KBase with ModelSEED | Reconstruction, gap-filling, and simulation of genome-scale metabolic models | Draft model generation from genomic data [31] |
| Biochemistry Databases | KEGG, BioCyc, MetaCyc | Curated metabolic pathway information and reaction databases | Metabolic network reconstruction and annotation [30] |
| Solvers | GLPK, SCIP | Linear and mixed-integer programming solvers for flux balance analysis | Constraint-based optimization and gap-filling [31] |

Future Directions and Implementation Considerations

As constraint-based modeling continues to evolve, several emerging trends promise to expand its applications in biomedicine and drug discovery. The integration of multi-omics data—including transcriptomic, proteomic, and metabolomic profiles—will enhance the predictive accuracy of metabolic models [7]. Additionally, the development of more sophisticated algorithms for mapping transcriptional changes to metabolic flux states, such as the TIDE-essential variant, provides complementary perspectives for analyzing metabolic adaptations [7].

The implementation of these approaches in broader biomedical contexts faces both computational and methodological challenges. Large-scale metabolic reconstructions, such as those encompassing complete sets of available eukaryotes and prokaryotes (8,935 species in MetaDAG), can require substantial computational resources—exceeding 40 hours processing time and 70 GB storage space [30]. These requirements highlight the need for efficient algorithms and high-performance computing infrastructure as the field progresses toward more comprehensive metabolic network analysis.

For researchers implementing constraint-based modeling approaches, careful consideration of several factors is essential: (1) selection of appropriate media conditions that reflect the physiological environment, (2) validation of model predictions through experimental follow-up, and (3) utilization of multiple complementary algorithms to cross-validate findings [7] [31]. The continued development of open-source tools like MTEApy and accessible web platforms like MetaDAG will further democratize access to these powerful methods, enabling broader application across the biomedical research community [7] [30].

As constraint-based modeling matures, its integration with other computational approaches—including machine learning and kinetic modeling—will likely create new opportunities for understanding complex metabolic adaptations in disease and identifying novel therapeutic interventions. The methodologies and applications described in this whitepaper provide a foundation for researchers to leverage these powerful approaches in their own biomedical investigations.

From Theory to Practice: Core Algorithms, Tools, and Biomedical Applications

Flux Balance Analysis (FBA) is a mathematical approach for finding an optimal net flow of mass through a metabolic network, subject to a set of constraints defined by the user [32]. This computational method enables researchers to simulate metabolic phenotypes and predict the behavior of biological systems under specific conditions. As a cornerstone of constraint-based metabolic modeling, FBA operates on the principle that metabolic networks are governed by physico-chemical constraints, including mass balance, energy conservation, and reaction thermodynamics. By leveraging these constraints, FBA can predict flux distributions through genome-scale metabolic models (GSMMs), providing insights for metabolic engineering, drug target identification, and systems biology research.

The methodology has proven particularly valuable in microbial and algal research, as demonstrated by its application in reconstructing an enzyme-constrained, genome-scale metabolic model of Chlorella ohadii – the fastest-growing green alga reported to date [33]. This application validated growth predictions across three distinct growth conditions and facilitated the identification of potential targets for growth improvement through extensive flux-based comparative analyses.

Mathematical Foundations of FBA

Core Mathematical Framework

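The mathematical foundation of FBA is built upon the stoichiometric matrix S, an m × n matrix whose m rows correspond to metabolites and whose n columns correspond to reactions. Each entry is the stoichiometric coefficient of a metabolite in a reaction: negative if the metabolite is consumed, positive if it is produced. The fundamental equation governing metabolic fluxes is: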

S · v = 0

Here v is the n-dimensional vector of reaction fluxes. This equation represents the mass balance constraint: for each internal metabolite, the rate of production equals the rate of consumption under the steady-state assumption.
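A small numerical check may make the mass balance constraint more concrete. The network below (two metabolites, four reactions) and both flux vectors are hypothetical values chosen for illustration; the only operation involved is the matrix-vector product S · v.

```python
import numpy as np

# Hypothetical toy network.
# Columns: uptake (-> A), convert (A -> B), biomass (B ->), secretion (A ->)
S = np.array([
    [1, -1,  0, -1],  # metabolite A
    [0,  1, -1,  0],  # metabolite B
], dtype=float)

def net_production(S, v):
    """Net production rate of each metabolite for flux vector v."""
    return S @ v

v_balanced = np.array([10.0, 6.0, 6.0, 4.0])
v_unbalanced = np.array([10.0, 6.0, 5.0, 4.0])

balanced_rates = net_production(S, v_balanced)      # all zeros: steady state
unbalanced_rates = net_production(S, v_unbalanced)  # B accumulates
```

The first flux vector satisfies S · v = 0, so it lies in the feasible space. The second produces B faster than it is consumed, which violates the steady-state assumption and would be excluded by FBA.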

Constraints and Objective Functions

FBA incorporates additional constraints to define the solution space:

- Capacity constraints: vmin ≤ v ≤ vmax

- Objective function: Typically biomass maximization, denoted as Z = cᵀv

The optimization problem is formulated as:

Maximize Z = cᵀv

Subject to:

S · v = 0

vmin ≤ v ≤ vmax

Where c is a vector indicating which reactions contribute to the cellular objective, typically biomass production.
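The optimization problem above maps directly onto a standard linear program. The following sketch solves it for a hypothetical four-reaction network using SciPy's `linprog`; the network, reaction names, and bound values are assumptions made for illustration, and a genome-scale analysis would normally use a dedicated package such as the COBRA Toolbox.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network.
# Columns: uptake (-> A), convert (A -> B), biomass (B ->), secretion (A ->)
rxn_names = ["uptake", "convert", "biomass", "secretion"]
S = np.array([
    [1, -1,  0, -1],  # metabolite A
    [0,  1, -1,  0],  # metabolite B
], dtype=float)

v_min = np.array([0.0, 0.0, 0.0, 0.0])
v_max = np.array([10.0, 1000.0, 1000.0, 1000.0])  # uptake capped at 10
c = np.array([0.0, 0.0, 1.0, 0.0])                # objective: biomass flux

# linprog minimizes, so maximize c^T v by minimizing -c^T v.
res = linprog(-c,
              A_eq=S, b_eq=np.zeros(S.shape[0]),  # S . v = 0
              bounds=list(zip(v_min, v_max)),     # vmin <= v <= vmax
              method="highs")

fluxes = dict(zip(rxn_names, res.x))
Z = float(c @ res.x)  # optimal objective value
```

In this example the uptake bound is the only active capacity constraint, so the optimal biomass flux equals the uptake limit and no substrate is diverted to secretion.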

Table 1: Key Components of the FBA Mathematical Framework

| Component | Symbol | Description | Role in FBA |
|---|---|---|---|
| Stoichiometric Matrix | S | m × n matrix defining metabolic network structure | Encodes metabolite relationships in reactions |
| Flux Vector | v | n-dimensional vector of reaction rates | Variables to be optimized |
| Objective Vector | c | n-dimensional vector defining cellular objective | Weights contributions to fitness function |
| Capacity Constraints | vmin, vmax | Lower and upper bounds on flux values | Defines feasible flux space |

Computational Implementation and Workflow

FBA Protocol Steps

The implementation of FBA follows a systematic workflow:

- Model Construction: Reconstruction of a genome-scale metabolic network from genomic, biochemical, and physiological data

- Constraint Definition: Specification of environmental conditions and physiological constraints

- Objective Selection: Definition of appropriate biological objective functions

- Optimization: Solving the linear programming problem to obtain flux distributions

- Validation: Comparison of predictions with experimental data

- Analysis: Interpretation of results and generation of testable hypotheses

For Chlorella ohadii, researchers developed a semiautomated platform for de novo generation of genome-scale algal metabolic models, which was then deployed to reconstruct an enzyme-constrained model validated under multiple growth conditions [33].

Advanced FBA Variants

Several sophisticated extensions to basic FBA have been developed to enhance predictive capabilities:

Table 2: Advanced Constraint-Based Modeling Approaches

| Method | Key Features | Applications | Constraints Added |
|---|---|---|---|
| Dynamic FBA | Incorporates time-varying extracellular metabolites | Bioprocess optimization, fed-batch cultures | Dynamic mass balances |
| Regulatory FBA | Includes transcriptional regulatory rules | Cell fate predictions, differentiation studies | Boolean regulatory constraints |
| Metabolic Flux Analysis (MFA) | Uses isotopic tracer experiments | Quantification of intracellular fluxes | Experimental flux measurements |
| Enzyme-Constrained FBA | Accounts for enzyme kinetics and allocation | Proteome-limited growth predictions | Enzyme capacity constraints |
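As an illustration of the dynamic FBA entry in the table, the sketch below couples a one-metabolite FBA problem to explicit Euler updates of biomass and substrate in a batch culture. Everything specific here is an assumption: the Michaelis-Menten uptake parameters `V_MAX` and `K_M`, the requirement of 20 mmol substrate per gram of biomass, the step size, and the helper names `fba_step` and `simulate`. It shows the static-optimization idea only and is not a reference implementation.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical model with one internal metabolite A.
# Columns: uptake (-> A), growth (20 A -> 1 unit of biomass)
S = np.array([[1.0, -20.0]])
V_MAX, K_M = 10.0, 0.5  # assumed uptake kinetics (mmol/gDW/h, mmol/L)

def fba_step(uptake_limit):
    """Maximize growth subject to S . v = 0 and the current uptake bound."""
    res = linprog([0.0, -1.0], A_eq=S, b_eq=[0.0],
                  bounds=[(0, uptake_limit), (0, None)], method="highs")
    return res.x  # [uptake flux, growth rate]

def simulate(biomass=0.05, substrate=10.0, dt=0.1, hours=12.0):
    """Explicit Euler integration of the batch-culture mass balances."""
    trajectory = [(0.0, biomass, substrate)]
    for step in range(1, int(round(hours / dt)) + 1):
        kinetic = V_MAX * substrate / (K_M + substrate)
        available = substrate / (biomass * dt)  # cannot consume more than is left
        uptake, growth = fba_step(min(kinetic, available))
        substrate = max(substrate - uptake * biomass * dt, 0.0)
        biomass += growth * biomass * dt
        trajectory.append((step * dt, biomass, substrate))
    return trajectory

trajectory = simulate()
_, final_biomass, final_substrate = trajectory[-1]
```

At each time step the extracellular substrate concentration sets the uptake bound, FBA returns the growth rate for that bound, and the dynamic mass balances are advanced by one step. Growth stops once the substrate is exhausted.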

Essential Research Tools and Reagents

Successful implementation of FBA requires both computational tools and experimental validation methods. The following table outlines key components of the FBA research toolkit.

Table 3: Research Reagent Solutions for FBA and Experimental Validation

| Item Name | Function/Application | Technical Specifications |
|---|---|---|
| Genome-Scale Metabolic Model | In silico representation of metabolic network | Species-specific reconstruction; typically in SBML format |
| Constraint-Based Reconstruction and Analysis (COBRA) | Software platform for FBA implementation | MATLAB/Python toolbox; open-source |
| Linear Programming Solver | Numerical optimization of objective function | CPLEX, Gurobi, or open-source alternatives |
| Gas Chromatography-Mass Spectrometry (GC-MS) | Experimental flux validation using isotopic tracers | ¹³C labeling patterns analysis |
| Defined Growth Media | Controlled nutrient availability for model constraints | Specific carbon, nitrogen sources |
| Biomass Composition Data | Definition of biomass objective function | Experimentally measured macromolecular percentages |

Workflow Visualization

The following diagram illustrates the comprehensive FBA workflow from model construction to experimental validation:

FBA Workflow: From Data to Predictions

Applications in Metabolic Research and Drug Development

FBA has become an indispensable tool in metabolic engineering and pharmaceutical research. The methodology enables researchers to identify essential genes and reactions that represent potential drug targets, particularly in pathogenic microorganisms. By simulating gene knockout experiments in silico, FBA can predict lethal mutations and identify pathway bottlenecks.
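An in silico gene knockout can be sketched by mapping each deleted gene onto the reactions it supports and re-solving the FBA problem. The example below is a minimal illustration with assumed content: the three-reaction network, the gene names `g1` to `g4`, the gene-reaction rules, and the 1% lethality cutoff are hypothetical. Rules are written as lists of gene sets, where each set is an enzyme complex (all genes required) and alternative sets are isozymes.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network: uptake (-> A), convert (A -> B), biomass (B ->)
rxn_names = ["uptake", "convert", "biomass"]
S = np.array([
    [1, -1,  0],  # metabolite A
    [0,  1, -1],  # metabolite B
], dtype=float)
bounds = [(0, 10), (0, 1000), (0, 1000)]

# Assumed gene-reaction rules: any one complete gene set enables the reaction.
gpr = {
    "uptake": [{"g1", "g4"}],     # complex: g1 AND g4
    "convert": [{"g2"}, {"g3"}],  # isozymes: g2 OR g3
    "biomass": [],                # no gene association, always active
}

def is_active(rule, deleted):
    """A reaction stays active if it has no rule or one intact gene set."""
    return not rule or any(not (genes & deleted) for genes in rule)

def growth_after_deletion(deleted=frozenset()):
    """Maximal biomass flux after closing reactions that lost their genes."""
    ko_bounds = [b if is_active(gpr[r], deleted) else (0, 0)
                 for r, b in zip(rxn_names, bounds)]
    c = np.zeros(len(rxn_names))
    c[rxn_names.index("biomass")] = -1.0  # linprog minimizes
    res = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                  bounds=ko_bounds, method="highs")
    return -res.fun if res.status == 0 else 0.0

wild_type = growth_after_deletion()
genes = sorted(set().union(*(gs for rule in gpr.values() for gs in rule)))
lethal = [g for g in genes
          if growth_after_deletion({g}) < 0.01 * wild_type]
```

Deleting either subunit of the uptake complex abolishes growth, while deleting a single isozyme does not, because the remaining isozyme keeps the conversion step active. Predicted lethal genes of this kind are the candidates that would then be checked against experimental essentiality data.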