Constraint-Based Reconstruction and Analysis (COBRA): A Comprehensive Guide for Biomedical Researchers

Abstract

This article provides a comprehensive introduction to Constraint-Based Reconstruction and Analysis (COBRA), a powerful computational framework for modeling metabolic networks. Tailored for researchers, scientists, and drug development professionals, we explore the foundational principles of COBRA, detail the essential methodologies and tools like the COBRA Toolbox and COBRApy, and guide you through practical application and troubleshooting. The content also covers advanced model validation techniques and a comparative analysis of different COBRA approaches, empowering you to leverage genome-scale models for predicting phenotypic states and driving innovation in biomedicine and biotechnology.

Understanding COBRA: The Core Principles and Ecosystem for Metabolic Modeling

What is COBRA? Defining Constraint-Based Reconstruction and Analysis

Constraint-Based Reconstruction and Analysis (COBRA) is a mechanistic, computational framework for the analysis of biochemical networks, particularly metabolism, at the genome scale [1] [2]. This approach provides a molecular mechanistic framework for the integrative analysis of experimental molecular systems biology data and the quantitative prediction of physicochemically and biochemically feasible phenotypic states [1]. The core principle of COBRA methods is to mathematically model the constraints that limit the possible behaviors of a biochemical system, including physicochemical laws, genetic capabilities, and environmental conditions [1].

COBRA has evolved from primarily analyzing metabolic networks to modeling increasingly complex biological processes, including integrated models of metabolism and macromolecular expression (ME-models) [3] [4]. The popularity of constraint-based approaches stems from their ability to analyze biological systems without requiring a comprehensive set of kinetic parameters, instead focusing on data-driven physicochemical and biological constraints to enumerate the set of feasible phenotypic states of a reconstructed biological network [3]. These methods have found widespread application in biology, biomedicine, and biotechnology, enabling researchers to predict metabolic behaviors, identify drug targets, design microbial strains for biotechnology, and investigate host-microbe interactions [1] [2] [5].

Theoretical Foundations and Mathematical Framework

Core Mathematical Principles

COBRA methods are built upon stoichiometric representations of biochemical reaction networks. The core mathematical framework involves representing a metabolic network as a stoichiometric matrix S of size m × n, where m represents the number of metabolites and n represents the number of reactions [2] [4]. The flux through all reactions is represented by a vector v of length n. The system is assumed to operate at steady state, where the production and consumption of metabolites are balanced, leading to the fundamental mass balance equation:

S · v = 0

This equation defines the solution space of all possible flux distributions that do not violate mass conservation. To further constrain the solution space, bounds are applied to individual reaction fluxes:

v_lb ≤ v ≤ v_ub

where v_lb and v_ub represent lower and upper bounds on reaction fluxes, respectively [2]. These bounds can incorporate thermodynamic constraints (setting irreversible reactions to have non-negative fluxes), enzyme capacity constraints, and measured uptake/secretion rates.
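As a minimal sketch of these two constraint types, the following checks whether a candidate flux vector satisfies S · v = 0 and the flux bounds. The three-reaction network, its bounds, and the helper name `is_feasible` are illustrative assumptions, not taken from any published model.

```python
# Hypothetical toy network: R1 imports A, R2 converts A to B, R3 exports B.
# Rows are metabolites (A, B); columns are reactions (R1, R2, R3).
S = [
    [1, -1, 0],   # A: produced by R1, consumed by R2
    [0, 1, -1],   # B: produced by R2, consumed by R3
]
lower = [0.0, 0.0, 0.0]          # all reactions treated as irreversible
upper = [10.0, 1000.0, 1000.0]   # R1 is limited by an assumed uptake rate


def is_feasible(S, v, lower, upper, tol=1e-9):
    """Return True if v satisfies S·v = 0 and lower <= v <= upper."""
    balanced = all(
        abs(sum(s_ij * v_j for s_ij, v_j in zip(row, v))) <= tol for row in S
    )
    bounded = all(
        lo - tol <= v_j <= hi + tol for v_j, lo, hi in zip(v, lower, upper)
    )
    return balanced and bounded


steady = is_feasible(S, [5.0, 5.0, 5.0], lower, upper)            # balanced, within bounds
accumulating = is_feasible(S, [5.0, 3.0, 3.0], lower, upper)      # A would accumulate
over_capacity = is_feasible(S, [20.0, 20.0, 20.0], lower, upper)  # R1 exceeds its upper bound
```

Only the first vector lies in the feasible space: the second violates mass balance for A, and the third is balanced but breaks the capacity bound on R1.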

Solution Space and Optimization

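Together, the steady-state condition and the flux bounds define a convex space of feasible metabolic states (a flux cone when only sign constraints are imposed, a bounded polytope when all bounds are finite). Within this space, COBRA methods typically use optimization to find flux distributions that optimize a biological objective, formulated as a linear combination of fluxes: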

Z = c^T · v

where c is a vector of weights indicating which reactions contribute to the biological objective [2]. A common objective is the maximization of biomass production, which simulates cellular growth [3].
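To make the optimization concrete, here is a minimal sketch that maximizes Z = c^T · v on a hypothetical four-reaction network. In place of a real linear programming solver it uses brute-force vertex enumeration with exact rational arithmetic, a substitute that is only workable for tiny, bounded problems whose stoichiometric matrix has full row rank. The network, bounds, and function names are all assumptions made for illustration.

```python
from fractions import Fraction
from itertools import combinations, product


def solve_square(A, b):
    """Solve A x = b by Gauss-Jordan elimination; return None if A is singular."""
    n = len(A)
    M = [[Fraction(x) for x in row] + [Fraction(bi)] for row, bi in zip(A, b)]
    for col in range(n):
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            return None
        M[col], M[pivot] = M[pivot], M[col]
        pivot_value = M[col][col]
        M[col] = [x / pivot_value for x in M[col]]
        for r in range(n):
            if r != col and M[r][col] != 0:
                factor = M[r][col]
                M[r] = [x - factor * y for x, y in zip(M[r], M[col])]
    return [M[r][n] for r in range(n)]


def fba_by_vertex_enumeration(S, lb, ub, c):
    """Maximize c·v subject to S·v = 0 and lb <= v <= ub (tiny problems only)."""
    m, n = len(S), len(S[0])
    best_value, best_flux = None, None
    for free in combinations(range(n), m):
        fixed = [j for j in range(n) if j not in free]
        # At a vertex, every non-basic (fixed) flux sits at one of its bounds.
        for choice in product((0, 1), repeat=len(fixed)):
            v = [None] * n
            for j, at_upper in zip(fixed, choice):
                v[j] = Fraction(ub[j] if at_upper else lb[j])
            A = [[S[i][j] for j in free] for i in range(m)]
            b = [-sum(S[i][j] * v[j] for j in fixed) for i in range(m)]
            solution = solve_square(A, b)
            if solution is None:
                continue
            for j, x in zip(free, solution):
                v[j] = x
            if all(lb[j] <= v[j] <= ub[j] for j in range(n)):
                value = sum(cj * vj for cj, vj in zip(c, v))
                if best_value is None or value > best_value:
                    best_value, best_flux = value, v
    return best_value, best_flux


# Hypothetical network: R1 uptake -> A, R2 A -> B, R3 B -> biomass, R4 A -> byproduct
S = [
    [1, -1, 0, -1],  # A
    [0, 1, -1, 0],   # B
]
lb = [0, 0, 0, 0]
ub = [10, 1000, 1000, 1000]
c = [0, 0, 1, 0]     # objective: flux through the biomass reaction R3

best_value, best_flux = fba_by_vertex_enumeration(S, lb, ub, c)
```

In this toy case the uptake bound on R1 limits growth, so the optimum routes all 10 units of uptake to biomass and none to the byproduct. Genome-scale models require a dedicated LP solver instead of enumeration.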

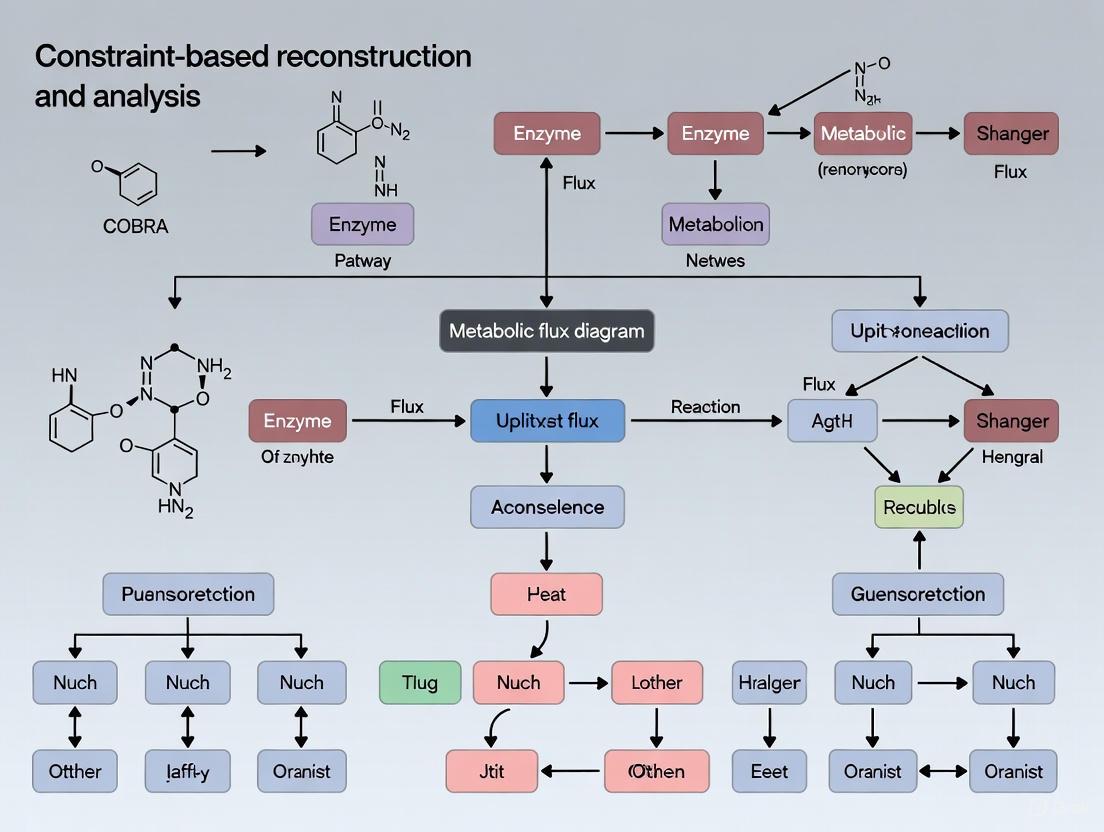

Figure 1: The COBRA Mathematical Framework. This diagram illustrates the logical flow from biological data to the prediction of metabolic fluxes through constraint-based optimization.

Core COBRA Methodologies and Algorithms

Fundamental Algorithms

COBRA encompasses a suite of computational methods for analyzing metabolic networks:

Flux Balance Analysis (FBA): FBA is the foundational COBRA method that calculates the flow of metabolites through a metabolic network by optimizing a specified objective function (e.g., biomass production) subject to stoichiometric and capacity constraints [1] [3]. It identifies a single flux distribution that achieves optimal performance of the biological objective.

Flux Variability Analysis (FVA): FVA determines the minimum and maximum possible flux through each reaction while maintaining optimal or near-optimal objective function value [3] [2]. This is important because alternative optimal solutions may exist, and reactions may carry different flux ranges while supporting the same objective value.

Gene Deletion Analysis: This method predicts the effect of single or double gene knockouts on network function, typically simulated by constraining the flux through reactions associated with the deleted gene to zero [3]. It helps identify essential genes and synthetic lethal gene pairs.

Gap Filling: An algorithm that identifies and proposes additions to the model (e.g., missing reactions) to restore functionality and improve consistency with experimental data [1].
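The gene deletion step described above can be sketched as follows: gene-protein-reaction rules are evaluated for a given set of deleted genes, and any reaction whose rule evaluates to false has its flux bounds set to zero. The gene names, rules, and bounds are hypothetical, and `eval` is used only for brevity on trusted, hand-written rule strings.

```python
# Hypothetical GPR rules expressed as Boolean expressions over gene names.
gpr_rules = {
    "R1": "g1",          # single gene
    "R2": "g2 or g3",    # isozymes: either gene is sufficient
    "R3": "g4 and g5",   # enzyme complex: both genes are required
}
genes = ["g1", "g2", "g3", "g4", "g5"]
bounds = {"R1": (0.0, 10.0), "R2": (-1000.0, 1000.0), "R3": (0.0, 1000.0)}


def knock_out(deleted, gpr_rules, bounds, genes):
    """Return new flux bounds with reactions disabled by the deleted genes set to zero."""
    state = {gene: gene not in deleted for gene in genes}
    new_bounds = dict(bounds)
    for reaction, rule in gpr_rules.items():
        # Evaluate the rule with builtins disabled; gene names resolve from `state`.
        active = eval(rule, {"__builtins__": {}}, state)
        if not active:
            new_bounds[reaction] = (0.0, 0.0)
    return new_bounds


single = knock_out({"g2"}, gpr_rules, bounds, genes)          # R2 survives through g3
double = knock_out({"g2", "g3"}, gpr_rules, bounds, genes)    # R2 is disabled
complex_ko = knock_out({"g4"}, gpr_rules, bounds, genes)      # R3 is disabled
```

The resulting bounds would then be passed to FBA; a knockout is typically called lethal when the optimized biomass flux falls to zero or below a chosen threshold.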

Advanced and Specialized Methods

As COBRA methods have evolved, more advanced techniques have been developed:

Thermodynamic Constraint Integration: New algorithms incorporate thermodynamic data to estimate reaction directionality and constrain feasible kinetic parameters in multi-compartmental, genome-scale metabolic models [1].

Uniform Sampling: Advanced algorithms sample the solution space to generate a uniform distribution of feasible flux states, providing a comprehensive view of network capabilities [1].

Minimal Cut Sets: This approach identifies minimal sets of reactions or genes whose removal disrupts network functionality, with applications in drug target identification [1].

Strain Design Algorithms: Methods such as OptForce identify genetic modifications that optimize the production of desired compounds in metabolic engineering applications [1].

Implementation and Software Ecosystem

Software Tools and Platforms

The COBRA methodology is implemented in several software packages, each with distinct capabilities and requirements:

Table 1: Major COBRA Software Implementations

| Software | Language | License | Key Features | Applications |
|---|---|---|---|---|
| COBRA Toolbox [1] | MATLAB | Open Source | Comprehensive desktop suite, extensive reconstruction & analysis methods | General metabolic modeling, multi-omics integration |
| COBRApy [3] [2] | Python | Open Source | Object-oriented design, parallel processing, ME-model support | Large-scale modeling, high-density omics data |
| CellNetAnalyzer [3] | MATLAB | Proprietary | Rich functionality for signaling networks | Metabolic & signaling networks |
| Raven Toolbox [3] | MATLAB | Open Source | Genome-scale reconstruction | Metabolic model reconstruction |

Computational Considerations

Solving COBRA models, particularly large-scale ME-models, presents significant computational challenges due to the multiscale nature of biochemical networks where flux values span many orders of magnitude [4]. Standard double-precision solvers may return inaccurate solutions, leading to the development of specialized solution procedures:

- Quadruple-Precision Solvers: Quadruple-precision versions of optimization solvers (e.g., Quad MINOS) improve solution accuracy for multiscale problems [4].

- DQQ Procedure: A three-step procedure (Double-Quad-Quad) that combines double and quadruple-precision solvers to achieve both computational efficiency and solution accuracy [4].

- Exact Simplex Solvers: Solvers based on rational arithmetic (e.g., QSopt_ex) provide exact solutions but require substantially more computation time [4].
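A small illustration of why multiscale values strain double precision, and why rational arithmetic avoids the problem: when two quantities differ by about sixteen orders of magnitude, the smaller one is lost in a double-precision sum. The numbers below are arbitrary and chosen only to expose the effect.

```python
from fractions import Fraction

large, small = 1e16, 1.0

# Double precision: 1.0 is below the spacing between doubles near 1e16, so it is lost.
double_result = (large + small) - large

# Exact rational arithmetic keeps every digit, at a higher computational cost.
exact_result = (Fraction(large) + Fraction(small)) - Fraction(large)
```

Here `double_result` is 0.0 while `exact_result` is exactly 1, the same trade-off between speed and accuracy that separates standard solvers from exact ones.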

Table 2: Computational Approaches for Solving COBRA Models

| Solution Approach | Precision | Accuracy | Speed | Best Suited For |
|---|---|---|---|---|
| Standard Double-Precision | 16 digits | Moderate | Fast | Most M-models |
| DQQ Procedure [4] | Up to 34 digits | High | Moderate | ME-models, large multiscale models |
| Exact Solvers [4] | Exact arithmetic | Highest | Very slow | Small to medium models where exact solution is critical |

Applications in Biological Research and Biotechnology

Biomedical Applications

COBRA methods have found significant application in biomedical research, particularly in cancer metabolism. The dysregulated metabolic systems in cancer interact heavily with the surrounding environment, and metabolic flux analysis provides a beneficial approach to modeling these systems [2] [6]. Key applications include:

- Identification of Cancer-Specific Metabolic Dependencies: GEMs of cancer cells can predict essential metabolic genes and pathways that represent potential therapeutic targets [2].

- Drug Target Discovery: Constraint-based models can identify reactions whose inhibition would selectively impair cancer cell growth while sparing normal cells [2].

- Integration with Multi-Omics Data: Cancer genomic, transcriptomic, proteomic, and metabolomic data can be integrated into COBRA models to build context-specific models of tumor metabolism [2].

Microbial Communities and Host-Microbe Interactions

Constraint-based modeling has been extended to microbial communities, enabling the study of metabolic interactions between different species:

- Interrogation of Microbiome Interactions: Multispecies COBRA models allow mechanistic prediction of metabolic fluxes in microbial communities, spanning hundreds of organisms [5].

- Host-Microbe Metabolic Interactions: Integrated models of host and microbial metabolism can predict how gut microbiota influence host metabolic states [5].

- Ecological and Industrial Applications: COBRA modeling of microbial communities has applications extending from ecology and environmental conservation to industrial biotechnology [5].

Experimental Protocols and Methodologies

Workflow for Constraint-Based Modeling

A typical COBRA modeling protocol involves several key steps that integrate computational and experimental approaches:

Figure 2: COBRA Modeling Workflow. The iterative process of reconstruction, simulation, and validation that characterizes constraint-based modeling.

Table 3: Essential Research Reagents and Computational Tools for COBRA Modeling

| Resource Type | Specific Examples | Function/Purpose |
|---|---|---|
| Model Databases | BiGG Database [3] [4], BioModels [3], Model SEED [3] | Provide curated, published metabolic models for various organisms |
| Modeling Software | COBRA Toolbox [1], COBRApy [3], MEMOTE [2] | Implement constraint-based reconstruction and analysis methods |
| Optimization Solvers | CPLEX [4], MINOS [4], Gurobi | Solve linear optimization problems in FBA and related methods |
| Data Standards | SBML with FBC Package [3] [2] | Standardized format for model exchange and reproducibility |
| Quality Control Tools | MEMOTE [2] | Test suite for assessing metabolic model quality |

The COBRA field continues to evolve with several emerging trends. There is increasing development of open-source Python tools to enhance accessibility and handle complex datasets, moving beyond the traditional MATLAB-based implementations [2]. Methods for single-cell metabolic modeling are emerging to capitalize on the growing availability of single-cell omics data [2]. Integration of additional biological layers beyond metabolism, including transcriptional regulation and signaling networks, is expanding the scope of constraint-based modeling [3]. Additionally, new computational approaches are being developed to address the challenges of multi-scale modeling, particularly for integrated models of metabolism and macromolecular expression [4].

In conclusion, Constraint-Based Reconstruction and Analysis provides a powerful, mechanistic framework for studying biochemical networks. By integrating biochemical knowledge with mathematical constraints, COBRA methods enable quantitative prediction of cellular phenotypes and have demonstrated valuable applications across basic biology, biomedical research, and biotechnology. The continued development of computational tools and methodologies promises to further expand the capabilities and applications of constraint-based modeling in systems biology.

Constraint-Based Reconstruction and Analysis (COBRA) represents a paradigm shift in systems biology, enabling researchers to predict metabolic phenotypes without requiring detailed, difficult-to-measure kinetic parameters. This whitepaper details the core philosophy, mathematical foundations, and practical methodologies that make this possible, focusing on the principles of chemical organization theory and phenotype-centric modeling. By leveraging stoichiometric models and applying physicochemical constraints, COBRA methods can systematically characterize a network’s biochemical potential, offering a powerful framework for applications in metabolic engineering and drug development [7] [8].

The central challenge in building mechanistic models of metabolism is the scarcity of accurate kinetic data for all enzymatic reactions. The COBRA framework circumvents this limitation by shifting the focus from kinetic details to network structure and mass-balance constraints.

- Principle of Boundedness: Instead of predicting precise metabolite concentrations and fluxes, COBRA defines the possible space in which all functional states of a metabolic network must exist. This space is bounded by governing physicochemical constraints, such as mass conservation, reaction directionality, and enzyme capacity [9].

- From Kinetics to Potential: The question changes from "What will the system do?" to "What can the system do?". This approach identifies all potential biochemical phenotypes—such as growth rates or production yields—that a network can theoretically support, which can then be filtered and tested against experimental data [8].

This philosophy is powerfully embodied in Chemical Organization Theory (OT), which rigorously relates the structure of a network to its potential dynamics, predicting persistent species sets and the phenotypes they represent without kinetic parameters [7].

Mathematical Foundations: Chemical Organization Theory

Chemical Organization Theory (OT) provides a formal framework for analyzing reaction networks based solely on their stoichiometry. It predicts which sets of molecular species ("organizations") can persist together in a steady state or a growth regime over the long term [7].

Core Definitions

A reaction network is defined as a pair \( \langle M, R \rangle \), where \( M \) is the set of molecular species and \( R \) is the set of reactions. An Organization is a set of species \( S \subseteq M \) that is both closed and self-maintaining [7].

Table 1: Core Mathematical Concepts of Chemical Organization Theory

| Concept | Formal Definition | Biological Interpretation |
|---|---|---|
| Reaction Network | \( \langle M, R \rangle \) | The full set of metabolites and metabolic reactions in a system. |
| Closed Set | For all reactions \( (A \rightarrow B) \in R \) with \( A \in P_M(S) \), it holds that \( B \in P_M(S) \). | No reaction within the set can produce a new species outside the set. The network is functionally contained. |
| Self-Maintaining Set | A flux vector \( v \) exists such that net production is non-negative, \( S \cdot v \geq 0 \) (with \( S \) here the stoichiometric matrix), for every species in the set, and fluxes are positive only for reactions whose educts lie in the set. | Every species that is consumed within the set can be replenished by reactions from within the set. The set is sustainable. |
| Organization | A set of species that is both closed and self-maintaining. | A theoretically stable, persistent biochemical phenotype. |

The critical link to dynamics is provided by the theorem that all steady states of the system are instances of organizations. This means the set of species with positive concentration in a steady state will always form an organization [7].
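The closure half of this definition is simple to check directly. The sketch below tests whether a species set is closed for a hypothetical three-reaction network. It ignores stoichiometric multiplicities (educts and products are plain sets) and does not test self-maintenance, which requires solving a linear feasibility problem.

```python
# Hypothetical toy network: each reaction is a pair (educts, products).
reactions = [
    ({"a"}, {"b"}),        # a -> b
    ({"b"}, {"a"}),        # b -> a
    ({"a", "b"}, {"c"}),   # a + b -> c
]


def is_closed(species, reactions):
    """A set is closed if every reaction it can run yields only species in the set."""
    return all(
        products <= species
        for educts, products in reactions
        if educts <= species   # the reaction can fire using only species in the set
    )


closed_a = is_closed({"a"}, reactions)              # a -> b leaves the set
closed_ab = is_closed({"a", "b"}, reactions)        # a + b -> c leaves the set
closed_abc = is_closed({"a", "b", "c"}, reactions)  # nothing new can be produced
```

Only the last set passes. A candidate organization must also pass the self-maintenance test before it can be interpreted as a persistent phenotype.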

Incorporating Regulatory Constraints

A key advancement is the integration of regulatory constraints (e.g., gene knockouts) into OT. This is achieved by mapping regulatory rules to pseudo-reactions and introducing pseudo-species that represent the absence of a molecule or the direction of a flux. This allows the metabolic network and its regulation to be analyzed as one unified reaction network, enabling accurate prediction of lethal knockouts and condition-specific phenotypes [7].

The following diagram illustrates the logical relationship between network structure, organizations, and phenotypes.

Key Methodologies and Protocols

This section outlines the primary computational protocols for applying the COBRA philosophy, from model reconstruction to phenotype prediction.

Protocol 1: Genome-Scale Metabolic Model Reconstruction

Objective: Build a stoichiometric model of an organism's metabolism.

- Genome Annotation: Identify all metabolic genes and map them to the reactions they encode.

- Reaction Assembly: Compile the full set of biochemical reactions into a stoichiometric matrix \( S \), where rows represent metabolites and columns represent reactions.

- Define Constraints: Apply constraints including:

  - Mass Balance: \( S \cdot v = 0 \) for steady-state analysis.

  - Capacity Constraints: \( \alpha_i \leq v_i \leq \beta_i \), defining reaction reversibility and maximum fluxes.

- Model Validation: Compare model predictions (e.g., essential genes, growth capabilities) with experimental data to assess and refine the reconstruction [9].
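The reaction assembly step can be sketched as follows: a dictionary of reactions, each mapping metabolites to signed stoichiometric coefficients, is converted into a matrix with metabolites as rows and reactions as columns. The reaction and metabolite names are placeholders, not entries from a real reconstruction.

```python
# Hypothetical reaction list: negative coefficients are consumed, positive are produced.
reactions = {
    "R_uptake": {"A": 1},            # -> A
    "R_convert": {"A": -1, "B": 1},  # A -> B
    "R_drain": {"B": -1},            # B ->
}


def build_stoichiometric_matrix(reactions):
    """Return (metabolite ids, reaction ids, S) with S[i][j] for metabolite i, reaction j."""
    metabolites = sorted({met for stoich in reactions.values() for met in stoich})
    reaction_ids = list(reactions)
    S = [
        [reactions[rxn].get(met, 0) for rxn in reaction_ids]
        for met in metabolites
    ]
    return metabolites, reaction_ids, S


metabolites, reaction_ids, S = build_stoichiometric_matrix(reactions)
```

Each column of `S` is one reaction, so adding a reaction from a new genome annotation only appends a column (and possibly new rows for previously unseen metabolites).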

Protocol 2: Phenotype Prediction via Chemical Organization Analysis

Objective: Identify all potential persistent metabolic phenotypes of a network.

- Network Definition: Represent the metabolic network as \( \langle M, R \rangle \), incorporating regulatory rules as pseudo-reactions if needed [7].

- Compute Organizations: Algorithmically identify all species sets that are closed and self-maintaining.

  - This involves finding the extreme rays of the convex polyhedral cone defined by \( v \geq 0 \) and \( S \cdot v \geq 0 \) [7].

- Map to Phenotypes: Interpret each organization as a potential phenotype (e.g., growth on a specific substrate, lethality of a knockout).

- Filter and Validate: Filter the set of organizations based on environmental conditions (e.g., available nutrients) and compare the resulting predictions to experimental phenotype data [7] [8].

Protocol 3: Dynamic FBA and Data Integration

Objective: Simulate time-course behaviors and incorporate omics data.

- Dynamic Flux Balance Analysis (dFBA): Use FBA to compute fluxes at a time point, update the extracellular environment, and iterate through time to simulate dynamics [9].

- Omics Data Integration: Contextualize models by integrating transcriptomic or proteomic data to constrain the model to only include reactions active under a specific condition [9].
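The dFBA loop can be sketched on a one-substrate toy model. The assumptions are significant: substrate uptake is bounded by Michaelis-Menten kinetics, and because the toy network has a single uptake reaction feeding biomass, the FBA optimum is simply "use the full uptake bound", so no LP solver is called. All parameter values and the function name are hypothetical.

```python
def simulate_dfba(biomass0, substrate0, v_max, k_m, yield_coeff, dt, steps):
    """Static-optimization dFBA with explicit Euler updates on a one-substrate toy model.

    Units (assumed): biomass in gDW/L, substrate in mmol/L,
    uptake in mmol/gDW/h, yield in gDW/mmol, time in h.
    """
    biomass, substrate = biomass0, substrate0
    trajectory = [(0.0, biomass, substrate)]
    for step in range(1, steps + 1):
        # 1. Derive the uptake bound from the current extracellular concentration.
        uptake_bound = v_max * substrate / (k_m + substrate) if substrate > 0 else 0.0
        if biomass > 0:
            # Never consume more substrate than is available during this step.
            uptake_bound = min(uptake_bound, substrate / (biomass * dt))
        # 2. FBA step: in this toy network the optimum uses the full uptake bound.
        uptake = uptake_bound
        growth_rate = yield_coeff * uptake
        # 3. Update the environment and biomass, then move to the next time point.
        substrate = max(substrate - uptake * biomass * dt, 0.0)
        biomass = biomass + growth_rate * biomass * dt
        trajectory.append((step * dt, biomass, substrate))
    return trajectory


trajectory = simulate_dfba(
    biomass0=0.1, substrate0=10.0, v_max=10.0, k_m=0.5,
    yield_coeff=0.05, dt=0.1, steps=100,
)
final_time, final_biomass, final_substrate = trajectory[-1]
```

With a genome-scale model, step 2 would be a full FBA solve with the uptake bound applied to the relevant exchange reaction, and the exchange fluxes from the solution would drive step 3.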

The following workflow diagram outlines the process from model creation to phenotype prediction.

Case Study: Predicting Lethal Knockouts in E. coli

To demonstrate the power of this philosophy, we examine the application of OT to a model of central metabolism in E. coli that incorporates the regulation of all involved genes [7].

Experimental Setup

- Model: The Covert and Palsson model of E. coli central metabolism.

- Method: Chemical Organization Theory with an integrated approach to handle inhibitory regulation.

- Comparison: Predictions were benchmarked against the established regulatory FBA (rFBA) method and known experimental outcomes.

Table 2: Key Reagent and Computational Solutions for COBRA Analysis

| Item | Function in Analysis |
|---|---|
| Genome-Scale Metabolic Model (GEM) | A stoichiometric database of all metabolic reactions in an organism; the core substrate for analysis. |
| COBRApy Package | An open-source Python toolkit for performing constraint-based modeling of metabolic networks [9]. |
| Stoichiometric Matrix (S) | A mathematical representation of the metabolic network where element \( S_{ij} \) is the stoichiometric coefficient of metabolite \( i \) in reaction \( j \). |
| Chemical Organization Theory Algorithm | Software to compute closed and self-maintaining sets of species from the reaction network [7]. |
| Omics Data (Transcriptomics/Proteomics) | Experimental data used to contextually constrain the model to a specific biological condition. |

Results and Performance

The OT-based method successfully predicted the known growth phenotypes of E. coli on 16 different substrates. For gene knockout experiments, the analysis yielded the following results [7]:

Table 3: Performance of OT in Predicting Lethal Knockouts

| Condition | Correct Predictions | Total Cases | Success Rate |
|---|---|---|---|
| Without model-specific assumptions | 101 | 116 | 87.1% |
| With the same assumptions as rFBA* | 106 | 116 | 91.4% |

*Assumptions: 1) Secreted molecules do not influence regulation. 2) Metabolites with increasing concentrations indicate a lethal state.

This performance was identical to that achieved by the rFBA method, demonstrating that OT is a powerful and universal technique for studying the potential behaviors of biological network models without kinetic parameters [7].
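The success rates in the table follow directly from the counts of correct predictions, as this short check shows (the dictionary keys are shorthand labels introduced here):

```python
# Correct predictions and total cases as reported in the table above.
results = {
    "without assumptions": (101, 116),
    "with rFBA assumptions": (106, 116),
}

# Success rate in percent, rounded to one decimal place.
rates = {
    label: round(100 * correct / total, 1)
    for label, (correct, total) in results.items()
}
```

The five additional correct predictions under the rFBA assumptions account for the difference between the two rates.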

Discussion and Future Directions

The phenotype-centric approach of COBRA, exemplified by Chemical Organization Theory, represents a significant advancement for rational metabolic engineering and systems biomedicine.

- Landscape of Design Strategies: Phenotype-centric modeling allows for the identification of the global landscape of possible intervention strategies for a given network, providing a structured analysis of system design in the parameter space [8].

- Multi-Scale Modeling: The field is rapidly evolving toward more complex applications, including dynamic modeling of microbial communities and host-pathogen interactions, enabled by open-source software and model-sharing standards [9] [10].

- AI/ML Integration: A key future direction is the fusion of COBRA with artificial intelligence and machine learning (AI/ML) to create new, data-driven paradigms for synthetic biology and predictive modeling [10].

The core philosophy of predicting phenotypes without kinetic parameters, grounded in constraint-based modeling and chemical organization theory, provides a robust and scalable framework for understanding cellular metabolism. By focusing on network structure and physicochemical constraints, COBRA methods unlock the ability to predict metabolic capabilities, design efficient cell factories, and identify novel drug targets, all while circumventing the grand challenge of kinetic parameter uncertainty. This makes COBRA an indispensable tool in the modern researcher's toolkit.

Constraint-Based Reconstruction and Analysis (COBRA) is a systems biology framework that uses mathematical representations of biochemical networks to compute feasible metabolic phenotypes. The core principle of COBRA is that physicochemical constraints limit the system's possible behaviors, and applying these constraints allows for the prediction of metabolic fluxes. This methodology has become indispensable for modeling genome-scale metabolic networks, with applications ranging from metabolic engineering to drug target discovery [11].

The COBRA approach rests on a foundation of several key constraints, the most fundamental of which are mass conservation, thermodynamics, and stoichiometry. These constraints enable the construction of a "solution space" that contains all possible metabolic flux distributions a cell can theoretically display. By integrating these constraints with computational optimization, COBRA methods provide a mechanistic bridge between the cellular genotype and its metabolic phenotype, enabling quantitative prediction of cellular behavior under various genetic and environmental conditions [2] [11].

The Foundational Constraints of Metabolic Networks

Stoichiometry and Mass Conservation

Stoichiometry and mass conservation form the most fundamental constraint in COBRA modeling. The law of mass conservation requires that the total mass of reactants equals the total mass of products in any biochemical reaction. In the context of a metabolic network, this translates to the requirement that for each internal metabolite, the rate of production must equal the rate of consumption when the system is at steady state [11] [12].

This principle is mathematically encoded using the stoichiometric matrix S, where rows represent metabolites and columns represent reactions. The entries in the matrix are the stoichiometric coefficients of each metabolite in each reaction. The steady-state assumption, which implies that metabolite concentrations do not change over time, is expressed by the equation:

S · v = 0

where v is the vector of reaction fluxes [12]. This system of linear equations defines the core structure of any constraint-based model, ensuring that mass is balanced throughout the network.

Table 1: Components of the Stoichiometric Matrix Framework

| Component | Mathematical Representation | Biological Significance |
|---|---|---|
| Stoichiometric Matrix (S) | m × n matrix (m metabolites, n reactions) | Encodes network connectivity and mass balance |
| Flux Vector (v) | n × 1 vector of reaction rates | Represents metabolic flux through each reaction |
| Steady-State Condition | S · v = 0 | Ensures mass conservation for all internal metabolites |
| Exchange Reactions | Pseudo-reactions in S | Model metabolite uptake and secretion |

Thermodynamic Constraints

Thermodynamic constraints implement the second law of thermodynamics in metabolic networks, which dictates that reactions must proceed in the direction of negative Gibbs free energy (ΔG < 0). This principle is crucial for determining reaction directionality and eliminating thermodynamically infeasible flux cycles [13] [11].

The transformed reaction Gibbs energy is calculated using:

ΔG = ΔG°' + RT · ln(Q)

where ΔG°' is the standard transformed Gibbs energy of reaction, R is the gas constant, T is temperature, and Q is the reaction quotient. The directionality constraint is implemented by setting appropriate lower and upper bounds on reaction fluxes (v_lb and v_ub) based on the calculated ΔG [13]. For irreversible reactions, these bounds constrain fluxes to either non-negative or non-positive values.
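As a worked sketch of this relationship, the following computes ΔG from ΔG°' and Q and translates its sign into flux bounds. The numerical inputs (ΔG°' of -5 kJ/mol, the two reaction quotients, T = 310.15 K) and the default maximum flux of 1000 are illustrative assumptions.

```python
import math

R_KJ = 8.314462618e-3  # gas constant in kJ/(mol·K)


def reaction_gibbs_energy(dg0_prime, reaction_quotient, temperature=310.15):
    """ΔG = ΔG°' + RT·ln(Q), in kJ/mol."""
    return dg0_prime + R_KJ * temperature * math.log(reaction_quotient)


def directional_bounds(dg, max_flux=1000.0):
    """Translate the sign of ΔG into (lower, upper) flux bounds."""
    if dg < 0:
        return (0.0, max_flux)    # forward direction only
    if dg > 0:
        return (-max_flux, 0.0)   # reverse direction only
    return (0.0, 0.0)             # at equilibrium there is no net flux


# Products scarce relative to substrates (Q < 1) makes ΔG more negative.
dg_forward = reaction_gibbs_energy(dg0_prime=-5.0, reaction_quotient=0.1)
# A large excess of products (Q >> 1) can flip the sign of ΔG.
dg_reverse = reaction_gibbs_energy(dg0_prime=-5.0, reaction_quotient=1000.0)

bounds_forward = directional_bounds(dg_forward)
bounds_reverse = directional_bounds(dg_reverse)
```

The same ΔG°' therefore yields opposite directionality assignments depending on metabolite concentrations, which is why concentration ranges matter when bounds are derived from thermodynamics.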

Advanced implementations, such as the von Bertalanffy extension to the COBRA Toolbox, automate the assignment of reaction directionality by integrating experimental or computationally estimated standard metabolite Gibbs energies with compartment-specific metabolite concentrations, pH, temperature, ionic strength, and electrical potential [13].

Table 2: Thermodynamic Parameters for Reaction Directionality Assignment

| Parameter | Description | Typical Range/Values |
|---|---|---|
| Standard Metabolite Gibbs Energy (ΔfG°') | Experimentally derived or group contribution estimated | Alberty (2003, 2006) tables; Jankowski et al. (2008) estimates |
| Temperature | Physiological temperature range | 273–313 K |
| pH | Compartment-specific pH | 5 to 9 |
| Ionic Strength | Affects metabolite activity | 0–0.35 M |
| Electrical Potential | Membrane potential for transport reactions | Compartment-dependent (mV) |

Integration of Constraints in COBRA Framework

The integration of stoichiometric, mass conservation, and thermodynamic constraints creates a bounded solution space within which biologically relevant flux distributions must reside. Additional constraints further refine this space, including:

- Flux capacity constraints: Limit reaction rates based on enzyme capacity and availability

- Gene-protein-reaction (GPR) associations: Link reaction activity to gene presence and expression through Boolean logic

- Environmental constraints: Define nutrient availability and byproduct secretion rates [2] [11]

The complete constrained system is typically represented as:

Maximize: c^T · v

Subject to: S · v = 0 and v_lb ≤ v ≤ v_ub

where c is a vector defining the biological objective function, such as biomass maximization or ATP production [12].

Diagram 1: COBRA constraint integration and analysis workflow

Experimental Protocols and Methodologies

Protocol for Quantitative Assignment of Reaction Directionality

The thermodynamic pipeline for assigning reaction directionality in multi-compartmental models involves several methodical steps [13]:

Prerequisite Data Collection

- Obtain standard metabolite Gibbs energies (ΔfG°') from experimental sources (e.g., Alberty tables) or group contribution estimates (e.g., Jankowski et al.)

- Gather compartment-specific physiological parameters: pH, ionic strength, electrical potential, and temperature

- Acquire metabolite concentration ranges when available

Model Quality Checks

- Verify mass and charge balance of all reactions

- Identify reactions that exchange metabolites with the environment

- Adjust reaction stoichiometry for thermodynamic consistency (e.g., represent dissolved CO2 as H2CO3 with H2O added appropriately)

Transformation to Physiological Conditions

- Adjust standard Gibbs energies from 298.15 K to physiological temperature (298-313 K) using the van't Hoff equation

- Account for ionic strength effects using the extended Debye-Hückel equation

- Perform Legendre transformations of metabolite species standard Gibbs energy for specified pH and electrical potential

- Calculate the average number of hydrogen ions bound by each reactant for H+ stoichiometric adjustment

Reaction Directionality Assignment

- Calculate transformed reaction Gibbs energy using standard transformed Gibbs energy and metabolomic data

- Compute cumulative probability that a reaction is irreversible based on uncertainty distributions

- Set irreversible directions for reactions with confidence above a specified cutoff

- Generate directionality reports highlighting conflicts with reconstruction directions
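The probabilistic assignment step can be sketched as below, under the assumption that the uncertainty in the transformed reaction Gibbs energy is Gaussian with a known mean and standard deviation. The 0.95 cutoff, the example values, and the function name are hypothetical choices, not those of any specific pipeline.

```python
from statistics import NormalDist


def assign_direction(dg_mean, dg_sd, cutoff=0.95):
    """Assign directionality from the probability that ΔG is negative or positive.

    Assumes ΔG ~ Normal(dg_mean, dg_sd), in kJ/mol, with dg_sd > 0.
    """
    p_forward = NormalDist(dg_mean, dg_sd).cdf(0.0)  # P(ΔG < 0)
    p_reverse = 1.0 - p_forward                      # P(ΔG > 0)
    if p_forward >= cutoff:
        return "forward", p_forward
    if p_reverse >= cutoff:
        return "reverse", p_reverse
    return "reversible", max(p_forward, p_reverse)


clearly_forward = assign_direction(dg_mean=-20.0, dg_sd=5.0)
clearly_reverse = assign_direction(dg_mean=20.0, dg_sd=5.0)
uncertain = assign_direction(dg_mean=-2.0, dg_sd=5.0)
```

Reactions whose estimated ΔG lies within the uncertainty band around zero remain reversible, which keeps the model from ruling out a direction on weak thermodynamic evidence.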

Protocol for Flux Balance Analysis with Thermodynamic Constraints

Integrating thermodynamic constraints into standard FBA involves these key methodological steps [13] [12]:

Model Constraint Definition

- Apply stoichiometric constraints: S · v = 0

- Set flux bounds based on thermodynamic feasibility: v_lb ≤ v ≤ v_ub

- Define environmental constraints based on growth conditions

Objective Function Specification

- Define biological objective (e.g., biomass maximization, ATP production, or metabolite synthesis)

- Formulate objective as linear combination: Z = c^T · v

Linear Programming Solution

- Apply optimization algorithm to maximize objective function

- Extract optimal flux distribution from solution

Validation and Analysis

- Compare predicted fluxes with experimental data when available

- Perform flux variability analysis to identify alternative optimal solutions

- Test essentiality of reactions through in silico deletion studies

Diagram 2: Thermodynamic constraint implementation workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software Tools for COBRA Research

| Tool Name | Programming Language | Key Functions | Application in Constraint Implementation |
|---|---|---|---|
| COBRA Toolbox | MATLAB | Core FBA, flux variability analysis, gene deletion | Thermodynamic constraint integration via von Bertalanffy extension [13] |
| COBRApy | Python | Object-oriented model representation, FBA, parallel FVA | Mass conservation via SBML with FBC package; thermodynamic constraint integration [3] [2] |
| COBRA.jl | Julia | Distributed FBA, high-performance flux computations | Implementation of stoichiometric and flux bound constraints [14] |
| libSBML | C/C++/Python/Java | Read/write SBML models with FBC support | Exchange of models with stoichiometric and constraint information [13] |
| MEMOTE | Python | Model quality testing and validation | Checking mass and charge balance of stoichiometric models [2] |

Table 4: Key Databases for Constraint Parameters

| Database/Resource | Content Type | Application in Constraint Implementation |

|---|---|---|

| Alberty's Thermodynamic Tables | Experimental ΔfG°' and ΔfH° for 135 reactants | Thermodynamic directionality constraints [13] |

| Group Contribution Method | Estimated ΔfG°' for metabolite structures | Augmenting experimental thermodynamic data [13] |

| BiGG Models | Curated genome-scale metabolic models | Source of stoichiometrically balanced models [2] |

| KEGG/EcoCyc | Biochemical pathways and reaction information | Source of stoichiometric information for network reconstruction [15] [16] |

| BioModels Database | SBML-formatted computational models | Source of constraint-based models for analysis [3] |

Advanced Topics and Future Directions

Emerging Computational Approaches

Recent advances in COBRA methodology have introduced sophisticated frameworks that build upon the foundational constraints. The TIObjFind framework integrates Metabolic Pathway Analysis (MPA) with FBA to identify context-specific objective functions by calculating Coefficients of Importance (CoIs) for reactions. This approach addresses the challenge of selecting appropriate objective functions that align with experimental flux data under different conditions [15] [16].

Quantum computing approaches are also being explored for solving core metabolic modeling problems. Recent research has demonstrated that quantum interior-point methods using quantum singular value transformation can reproduce classical FBA results for key cellular pathways. This approach may accelerate metabolic simulations as models scale to whole cells or microbial communities, though current implementations remain limited to simulations on classical hardware [17].

Application in Therapeutic Development

The rigorous application of mass conservation, thermodynamic, and stoichiometric constraints has proven particularly valuable in cancer metabolism research. COBRA methods enable the identification of metabolic vulnerabilities in cancer cells by predicting essential genes and reactions under different metabolic environments. The creation of cell type-specific models through the integration of transcriptomic, proteomic, and metabolomic data with the fundamental constraints has facilitated the discovery of potential drug targets in various cancer types [2].

Similar approaches have been applied to pathogen metabolism, where constraint-based models identify enzymes essential for survival but absent in the host, representing promising antibiotic targets. The reliability of these predictions hinges on the accurate implementation of thermodynamic and stoichiometric constraints to ensure biological feasibility of the predicted essential reactions [11] [12].

The COnstraint-Based Reconstruction and Analysis (COBRA) approach represents a cornerstone methodology in systems biology that addresses a fundamental challenge: the frequent absence of sufficiently detailed parameter data required for precise biophysical modeling of organisms at genome-scale [18]. Instead of attempting to define unique metabolic states, COBRA methods leverage omics data to define sets of feasible states for biological networks under specific conditions by applying known constraints including compartmentalization, mass conservation, molecular crowding, and thermodynamic directionality [18]. This mechanistic framework mathematically and computationally models the constraints imposed on biochemical system phenotypes by physicochemical laws, genetics, and environmental factors [1]. The COBRA approach has demonstrated remarkable versatility across biological research, biomedicine, and biotechnology, with applications ranging from metabolic engineering to drug discovery [1].

The openCOBRA project has emerged as a community-driven initiative to provide researchers with standardized, accessible implementations of core COBRA methodologies. Initially developed with tools for MATLAB, the project has expanded to include Python and Julia-based modules designed to handle the complex relationships in next-generation COBRA models [18]. This ecosystem is currently led by researchers from the National University of Ireland Galway, Technical University of Denmark, and UCSD [18]. The openCOBRA project embodies a collaborative approach to scientific software development, proving that "knowledge integration and collaboration by large numbers of scientists can lead to cooperative advances impossible to achieve by a single scientist or research group alone" [1].

Core Concepts of Constraint-Based Modeling

Constraint-based modeling operates on several fundamental principles that distinguish it from other systems biology approaches. The methodology acknowledges the inherent incompleteness of mechanistic information in biological systems while still providing a framework for quantitative prediction of physicochemically and biochemically feasible phenotypic states [1]. At its core, COBRA modeling involves:

Stoichiometric Constraints: These enforce mass conservation by requiring that the production and consumption of each metabolite must balance at steady state, represented mathematically by the equation S·v = 0, where S is the stoichiometric matrix and v is the flux vector [1].

Capacity Constraints: These define upper and lower bounds (vmin and vmax) on reaction fluxes based on enzyme capacity, thermodynamic feasibility, and other physiological limitations [1].

Environmental Constraints: These represent nutrient availability and other extracellular conditions that influence metabolic capabilities [1].

The solution space defined by these constraints contains all possible metabolic flux distributions that satisfy the imposed conditions. COBRA methods then interrogate this solution space to predict phenotypic behaviors, identify potential drug targets, and guide metabolic engineering strategies. The Flux Balance Analysis (FBA) approach, which optimizes an objective function (often biomass production) within these constraints, has become particularly widely adopted for predicting growth rates, nutrient uptake, and byproduct secretion [19] [1].

The openCOBRA Software Ecosystem

The openCOBRA project provides interoperable software tools across multiple programming languages, each designed to leverage the unique strengths of its respective platform while maintaining consistency in core COBRA functionality.

Table 1: Core Components of the openCOBRA Ecosystem

| Package | Language | Key Features | Installation | License |

|---|---|---|---|---|

| COBRA Toolbox | MATLAB | Comprehensive desktop suite with extensive tutorials & methods [19] | MATLAB environment | Not specified |

| COBRApy | Python | Simple interface, object-oriented model management [20] [21] | pip install cobra or conda install -c conda-forge cobra | GPL/LGPL v2+ |

| COBRA.jl | Julia | High-performance computing, distributedFBA [22] [23] | Pkg.add("COBRA") in Julia | Not specified |

COBRA Toolbox for MATLAB

The COBRA Toolbox represents the original and most comprehensive implementation of COBRA methods. As a MATLAB-based suite, it provides "an unparalleled depth of constraint-based reconstruction and analysis methods" [1]. Version 3.0 of the toolbox includes extensive new capabilities for "quality controlled reconstruction, modelling, topological analysis, strain and experimental design, network visualisation as well as network integration of chemoinformatic, metabolomic, transcriptomic, proteomic, and thermochemical data" [1]. The toolbox supports multi-scale, multi-cellular, and reaction kinetic modeling through integration with high-precision and nonlinear numerical optimization solvers [1].

The COBRA Toolbox is also noted for its extensive educational resources, including dozens of specialized tutorials covering topics from basic Flux Balance Analysis to advanced techniques like thermodynamically constrained modeling and context-specific model extraction [19]. These resources make it accessible to researchers new to constraint-based modeling.

COBRApy for Python

COBRApy is designed as a Python package that provides "a simple interface to metabolic constraint-based reconstruction and analysis" [20]. Its development was motivated by the need to accommodate "the biological complexity of the next generation of COBRA models" while providing "useful, efficient infrastructure" for creating and managing metabolic models, accessing solvers, and performing common analyses [21]. The package features simple, object-oriented interfaces for model construction and implements commonly used COBRA methods including flux balance analysis, flux variability analysis, and gene deletion analyses [20].

A key design goal for COBRApy is to serve as foundational infrastructure for developers building new COBRA-related Python packages for visualization, strain design, and data-driven analysis [21]. This modular approach encourages community development and extension of the core functionality. COBRApy supports reading and writing models in multiple formats including SBML, MATLAB, and JSON [20].

COBRA.jl for Julia

COBRA.jl leverages the Julia programming language's strengths in high-performance computing to implement efficient COBRA analyses such as Flux Balance Analysis (FBA), Flux Variability Analysis (FVA), and distributed variants of these methods [22] [23]. The package is designed for high-performance flux balance analysis, particularly benefiting from Julia's just-in-time compilation and distributed computing capabilities [22]. A notable feature is distributedFBA.jl, which implements high-level, high-performance flux balance analysis capable of leveraging parallel computing resources [22] [23].

COBRA.jl can be used with any LP problem defined in a .mat file following the format outlined in the COBRA Toolbox, and it supports all solvers compatible with MathProgBase.jl [22]. A significant advantage is that loading COBRA models from .mat format using the MAT.jl package does not require a MATLAB license, reducing software dependencies and costs [22].

Essential Computational Protocols

The following section provides detailed methodologies for key analyses across the openCOBRA ecosystem, highlighting both common principles and implementation-specific details.

Flux Balance Analysis (FBA)

Flux Balance Analysis is the cornerstone method of constraint-based modeling, optimizing for an objective function (typically biomass production) within physicochemical constraints [19] [1].

COBRA Toolbox Protocol:

COBRApy Protocol:

COBRA.jl Protocol:

Flux Variability Analysis (FVA)

Flux Variability Analysis determines the minimum and maximum possible flux through each reaction in a network while maintaining the optimal objective function value [19] [14].

COBRA.jl Distributed FVA Protocol:

COBRApy FVA Protocol:

Model Reconstruction and Curation

The openCOBRA ecosystem provides extensive tools for building and curating metabolic reconstructions.

COBRA Toolbox Reconstruction Protocol:

Visualization of COBRA Workflows

The following diagram illustrates the typical workflow for constraint-based reconstruction and analysis using openCOBRA tools, highlighting the iterative nature of model building and validation.

Successful implementation of COBRA methods requires both computational tools and biological data resources. The following table details essential components of the COBRA research toolkit.

Table 2: Essential Research Reagents and Resources for COBRA Studies

| Resource | Type | Function | Example Sources/Formats |

|---|---|---|---|

| Genome-Scale Metabolic Models | Data | Foundation for constraint-based simulations | SBML, .mat, JSON formats [20] [1] |

| Optimization Solvers | Software | Solve LP problems for FBA/FVA | Gurobi, CPLEX, GLPK, CLP, Mosek [22] [23] [14] |

| Omics Data Integration Tools | Software | Create context-specific models | MetaboAnnotator, XomicsToModel [19] [1] |

| Stoichiometric Matrix (S) | Data | Mathematical representation of metabolic network | .mat files with model structure [22] [23] |

| Thermochemical Data | Data | Estimate reaction directionality & energy constraints | Component contribution method [1] |

| Atom Mapping Data | Data | Enable atom-level resolution of metabolic networks | Molecular structures and transition networks [1] |

| Community Standards | Documentation | Ensure reproducibility & interoperability | SBML FBC standards, tutorial protocols [19] [1] |

Advanced Applications and Future Directions

The openCOBRA ecosystem continues to evolve with advancements in several key areas:

Multi-Scale Modeling: Recent developments enable "multi-scale, multi-cellular and reaction kinetic modelling" through high-precision and nonlinear numerical optimization solvers [1]. This allows researchers to bridge molecular and physiological scales in their analyses.

Thermodynamic Constraining: New algorithms allow estimation of thermochemical parameters in multi-compartmental, genome-scale metabolic models [1], enhancing the biological realism of predictions.

Kinetic Modeling: The incorporation of "variational kinetic modelling" approaches provides "new algorithms and methods for genome-scale kinetic modelling" [1], moving beyond traditional steady-state assumptions.

Visualization Advances: Tools such as ReconMap provide a "new method for genome-scale metabolic network visualisation" [1], making complex network relationships more interpretable.

Community-Driven Development: The openCOBRA project exemplifies collaborative scientific software development, with community contributions facilitated by tools like MATLAB.devTools, which enables "contributions by those unfamiliar with version control software" [1]. The project maintains an active forum with "more than 800 posted questions with supportive replies connecting problems and solutions" [1].

As constraint-based modeling continues to expand its applications in biomedical research, metabolic engineering, and drug development, the openCOBRA ecosystem provides a robust, interoperable framework that adapts to increasingly complex research questions while maintaining core functionality and accessibility across multiple programming paradigms.

The Role of Genome-Scale Metabolic Models (GEMs) in Systems Biology

Genome-scale metabolic models (GEMs) are formal, mathematical representations of the metabolic network of an organism, constructed from its annotated genome sequence [24]. They serve as organized knowledge-bases that convert genomic information into a biochemical network capable of simulating metabolic phenotypes. By mapping the annotated genome to metabolic databases such as the Kyoto Encyclopedia of Genes and Genomes (KEGG), researchers can reconstruct a network comprising all known metabolic reactions for a target organism [24]. The core mathematical foundation of a GEM is the stoichiometric matrix (S matrix), where columns represent reactions, rows represent metabolites, and entries contain stoichiometric coefficients [24]. This structured framework enables GEMs to serve as powerful platforms for interpreting and predicting phenotypic states resulting from environmental and genetic perturbations, making them indispensable tools in the constraint-based reconstruction and analysis (COBRA) research ecosystem.
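The layout of the S matrix described above can be made concrete with a small sketch in plain Python. The three-reaction network and the function names are hypothetical; real reconstructions contain thousands of reactions and are usually stored as sparse matrices.

```python
# Each reaction maps metabolite IDs to stoichiometric coefficients
# (negative for consumed, positive for produced).
reactions = {
    "R_uptake": {"A": 1},
    "R_convert": {"A": -1, "B": 1},
    "R_biomass": {"B": -1},
}


def build_stoichiometric_matrix(reactions):
    """Return metabolite IDs (rows), reaction IDs (columns), and the matrix."""
    metabolites = sorted({met for stoich in reactions.values() for met in stoich})
    reaction_ids = list(reactions)
    matrix = [[reactions[rxn].get(met, 0) for rxn in reaction_ids]
              for met in metabolites]
    return metabolites, reaction_ids, matrix


def net_production(matrix, fluxes):
    """Compute S * v: the net production rate of every metabolite."""
    return [sum(coef * flux for coef, flux in zip(row, fluxes)) for row in matrix]


metabolites, reaction_ids, matrix = build_stoichiometric_matrix(reactions)
for met, row in zip(metabolites, matrix):
    print(met, row)

# A flux vector is at steady state when every entry of S * v is zero.
print(net_production(matrix, [5, 5, 5]))
print(net_production(matrix, [5, 3, 3]))
```

The second flux vector leaves a net accumulation of A, so it violates the steady-state condition and would be excluded from the feasible space.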

Construction and Reconstruction of GEMs

Model Reconstruction Workflow

The construction of a high-quality GEM follows a systematic, iterative process that integrates genomic, biochemical, and physiological data. The standard workflow begins with genome annotation using tools like RAST (Rapid Annotation using Subsystem Technology) to identify metabolic genes [25]. These annotations are then processed through automated model-building platforms such as ModelSEED to generate a draft reconstruction [25]. However, automated drafts require substantial manual curation to reach high quality standards.

Manual refinement involves several critical steps: 1) Homology-based gap filling using sequence similarity searches (BLAST) against template models of related organisms; 2) Integration of Gene-Protein-Reaction (GPR) associations using standardized Boolean rules; 3) Mass and charge balancing of all biochemical reactions; and 4) Incorporation of organism-specific physiological and biochemical data from literature [25]. The recently developed MACAW (Metabolic Accuracy Check and Analysis Workflow) suite provides algorithms for semi-automatic detection of errors in GEMs, including tests for dead-end metabolites, dilution errors, duplicate reactions, and thermodynamically infeasible loops [26].
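The mass and charge balancing step can be illustrated with a short sketch. It assumes simple formulas without brackets and uses a hexokinase-like reaction with formulas and charges in the protonation states commonly found in BiGG-style models; the function names and metabolite IDs are hypothetical, and this is not the implementation used by MACAW or the COBRA Toolbox.

```python
import re
from collections import defaultdict


def parse_formula(formula):
    """Count atoms in a simple formula such as 'C6H12O6' (no brackets)."""
    counts = defaultdict(int)
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] += int(number) if number else 1
    return dict(counts)


def imbalance(stoichiometry, formulas, charges):
    """Net element and charge imbalance (products minus reactants)."""
    net = defaultdict(int)
    for metabolite, coefficient in stoichiometry.items():
        for element, count in parse_formula(formulas[metabolite]).items():
            net[element] += coefficient * count
        net["charge"] += coefficient * charges[metabolite]
    return {key: value for key, value in net.items() if value != 0}


formulas = {"glc": "C6H12O6", "atp": "C10H12N5O13P3", "g6p": "C6H11O9P",
            "adp": "C10H12N5O10P2", "h": "H"}
charges = {"glc": 0, "atp": -4, "g6p": -2, "adp": -3, "h": 1}

# glucose + ATP -> glucose 6-phosphate + ADP + H+
hexokinase = {"glc": -1, "atp": -1, "g6p": 1, "adp": 1, "h": 1}
# Same reaction with the proton forgotten, a common curation error.
missing_proton = {"glc": -1, "atp": -1, "g6p": 1, "adp": 1}

print(imbalance(hexokinase, formulas, charges))
print(imbalance(missing_proton, formulas, charges))
```

An empty result means the reaction is balanced; otherwise the returned entries point to the elements or charge that need correction.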

Biomass Composition Estimation

A critical component of GEM construction is defining an accurate biomass objective function that represents the metabolic requirements for cellular growth. This involves quantifying the macromolecular composition of the cell, including proteins, DNA, RNA, lipids, and other essential components. For example, in the Streptococcus suis model iNX525, the biomass composition was adapted from phylogenetically related organisms (Lactococcus lactis and Streptococcus pyogenes) and included detailed measurements of: proteins (46%), DNA (2.3%), RNA (10.7%), lipids (3.4%), lipoteichoic acids (8%), peptidoglycan (11.8%), capsular polysaccharides (12%), and cofactors (5.8%) [25].
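A quick sanity check on a biomass composition is that the mass fractions add up to 100%. The sketch below uses the percentages quoted above for iNX525; the dictionary keys and variable names are chosen for this example only.

```python
import math

# Macromolecular composition in percent of dry weight, as quoted in the text.
biomass_percent = {
    "proteins": 46.0,
    "DNA": 2.3,
    "RNA": 10.7,
    "lipids": 3.4,
    "lipoteichoic_acids": 8.0,
    "peptidoglycan": 11.8,
    "capsular_polysaccharides": 12.0,
    "cofactors": 5.8,
}

total = sum(biomass_percent.values())
is_complete = math.isclose(total, 100.0, abs_tol=1e-6)

# Convert to gram per gram dry weight, the usual basis for biomass coefficients.
biomass_fraction = {name: value / 100.0 for name, value in biomass_percent.items()}

print(round(total, 6), is_complete)
```

If the total deviates from 100%, the fractions are usually rescaled or the missing component is identified before the biomass reaction is written.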

Quality Assessment and Validation

Rigorous quality assessment is essential before deploying GEMs for predictions. The MEMOTE (Metabolic Model Testing) suite provides a standardized benchmark for evaluating model quality, calculating metrics such as reaction network connectivity, metabolite mass and charge balance, and GPR consistency [27] [25]. For instance, the manually curated Streptococcus suis model iNX525 achieved a 74% overall MEMOTE score, indicating good quality despite remaining gaps [27] [25]. Model validation typically involves comparing simulation results against experimental growth phenotypes under different nutrient conditions and gene essentiality data from mutant screens [25].

Core Analytical Methods: Flux Balance Analysis and Beyond

Fundamentals of Flux Balance Analysis (FBA)

Flux Balance Analysis (FBA) is the most widely used method for simulating metabolic fluxes in GEMs [24]. FBA formulates metabolism as a linear programming problem that optimizes an objective function (typically biomass production) subject to constraints representing mass conservation, reaction capacities, and nutrient availability [24]. Mathematically, this is represented as:

Maximize: Z = c^T · v

Subject to: S · v = 0 and vmin ≤ v ≤ vmax

Where S is the stoichiometric matrix, v is the flux vector, and c is a vector defining the objective function [24]. The solution space defined by these constraints forms a convex polyhedron representing all feasible metabolic states [28]. FBA and related constraint-based methods are implemented in computational tools such as the COBRA Toolbox for MATLAB and COBRApy for Python, which provide standardized frameworks for GEM analysis [24].

Advanced Sampling and Context-Specific Modeling

While FBA identifies optimal flux states, many biological applications require understanding the complete space of possible metabolic behaviors. Flux sampling approaches address this need by generating probability distributions of feasible fluxes, enabling analysis of phenotypic diversity and metabolic robustness [28]. This is particularly valuable for modeling human tissues for drug development and complex microbial communities where single optimum solutions may be insufficient [28].

Context-specific GEMs extend this further by integrating omics data (transcriptomics, proteomics) to extract tissue-specific, disease-specific, or patient-specific metabolic networks [28]. Methods such as iMAT (Integrative Metabolic Analysis Tool) use gene expression data to create context-specific models by categorizing reactions into highly, moderately, and lowly expressed groups, then constraining the model to reflect these expression patterns [29]. For instance, in a lung cancer study, researchers used iMAT with RNA-seq data to reconstruct GEMs for both healthy and cancerous lung tissues, enabling identification of metabolic reprogramming in tumors [29].

Table 1: Key Analytical Methods for GEMs

| Method | Principle | Applications | Tools |

|---|---|---|---|

| Flux Balance Analysis (FBA) | Linear programming to optimize objective function | Predict growth rates, nutrient uptake, byproduct secretion | COBRA Toolbox, COBRApy |

| Flux Variability Analysis (FVA) | Identifies the feasible range of fluxes for each reaction | Determine essential reactions, robustness analysis | COBRA Toolbox |

| Gene Deletion Analysis | Simulates knockout of specific genes | Predict essential genes, synthetic lethality | COBRA Toolbox |

| Flux Sampling | Random sampling of feasible flux space | Analyze phenotypic diversity, network flexibility | optGpSampler, MATLAB |

| Context-Specific Modeling | Integration of omics data to extract tissue-specific models | Patient-specific analysis, disease modeling | iMAT, mCADRE, tINIT |

Thermodynamic and Kinetic Constraints

Recent advances incorporate thermodynamic and kinetic constraints to improve prediction accuracy. Metabolic Thermodynamic Sensitivity Analysis (MTSA) represents a novel approach that analyzes temperature-dependent metabolic vulnerabilities by integrating Michaelis-Menten kinetics with FBA [29]. This method assumes enzymatic reaction rates follow Michaelis-Menten equations and that reactions operate at maximum driving force under pseudo-steady state conditions [29]. Such approaches help identify thermodynamic bottlenecks and energy inefficiencies in metabolic networks.

Applications in Biomedical Research and Biotechnology

Drug Target Identification and Virulence Analysis

GEMs have proven particularly valuable for identifying novel antibacterial drug targets by analyzing metabolic pathways essential for both growth and virulence factor production. The Streptococcus suis model iNX525 exemplifies this approach, where researchers identified 131 virulence-linked genes through comparison with virulence factor databases [27] [25]. Among these, 79 genes were associated with 167 metabolic reactions in the model, with 101 metabolic genes predicted to affect the formation of nine virulence-linked small molecules [27] [25]. Most significantly, 26 genes were found to be essential for both cell growth and virulence factor production, with eight enzymes and metabolites in the biosynthesis pathways of capsular polysaccharides and peptidoglycans identified as promising antibacterial drug targets [27] [25].

Table 2: GEM Applications in Drug Discovery and Biotechnology

| Application Area | Specific Use Case | Representative Example |

|---|---|---|

| Infectious Disease | Identification of antimicrobial targets | Streptococcus suis model iNX525 identified 8 targets in capsule and peptidoglycan biosynthesis [27] |

| Cancer Metabolism | Analysis of metabolic reprogramming | Lung cancer GEMs revealed upregulated amino acid metabolism in tumor cells [29] |

| Live Biotherapeutic Products (LBPs) | Strain selection and safety assessment | AGORA2 framework for evaluating probiotic strains and their metabolic interactions [30] |

| Host-Microbe Interactions | Modeling gut microbiome metabolism | GEMs of Akkermansia muciniphila and Faecalibacterium prausnitzii for LBP development [30] |

| Toxicology | Prediction of drug-microbiome interactions | Curated reactions for degradation of 98 drugs to assess potential biotransformation [30] |

Live Biotherapeutic Products (LBP) Development

GEMs provide a powerful framework for the systematic development of Live Biotherapeutic Products (LBPs), which are microbiome-based therapeutics designed to restore microbial homeostasis. The AGORA2 resource, which contains curated strain-level GEMs for 7,302 gut microbes, enables in silico screening of LBP candidates through both top-down and bottom-up approaches [30]. In top-down screening, microbes are isolated from healthy donor microbiomes and their GEMs are analyzed to identify therapeutic functions, such as promoting growth of beneficial species or suppressing pathogens [30]. For example, pairwise growth simulations identified Bifidobacterium breve and Bifidobacterium animalis as antagonistic to pathogenic Escherichia coli, suggesting their potential for colitis alleviation [30].

In bottom-up approaches, therapeutic objectives are predefined based on omics analysis, followed by systematic screening of GEMs to identify strains with desired metabolic outputs [30]. GEMs also facilitate safety evaluation by predicting potential antibiotic resistance mechanisms, drug interactions, and toxic metabolite production [30]. Furthermore, media optimization through GEM-based prediction of essential nutrients helps address the challenge of cultivating fastidious gut microbes, accelerating LBP development [30].

Host-Microbe Interactions and Community Modeling

GEMs enable investigation of metabolic interactions between hosts and microbes at a systems level, revealing reciprocal metabolic influences and cross-feeding relationships [31]. By simulating metabolic fluxes and nutrient exchanges, GEMs can predict how microbial communities influence host metabolism and vice versa [31]. These approaches are particularly valuable for understanding the role of specific immune cells in disease contexts. For instance, GEMs of mast cells in lung cancer revealed enhanced histamine transport and increased glutamine consumption, indicating a shift toward immunosuppressive activity in the tumor microenvironment [29]. The novel Metabolic Thermodynamic Sensitivity Analysis (MTSA) method applied to these models identified impaired biomass production in cancerous mast cells across physiological temperatures (36-40°C), suggesting temperature-dependent metabolic vulnerabilities [29].

Experimental Protocols and Methodologies

Protocol 1: Construction and Validation of a GEM

Objective: Reconstruct a genome-scale metabolic model for a target organism and validate its predictive accuracy.

Materials:

- Genome sequence of target organism

- Template GEMs from phylogenetically related organisms

- Biochemical databases (KEGG, MetaCyc, UniProtKB/Swiss-Prot)

- Computational tools: RAST, ModelSEED, COBRA Toolbox, MEMOTE, MACAW

Procedure:

- Genome Annotation: Annotate the genome using RAST to identify protein-coding genes and their functional roles [25].

- Draft Reconstruction: Generate a draft model using ModelSEED or similar automated pipeline [25].

- Homology-Based Refinement: Use BLAST to identify homologous genes in template organisms (e.g., Bacillus subtilis, Staphylococcus aureus) with identity ≥40% and match lengths ≥70% [25].

- GPR Association: Integrate gene-protein-reaction associations from reference models and manual curation [25].

- Gap Filling: Identify and fill metabolic gaps using gapAnalysis in the COBRA Toolbox, adding relevant reactions based on biochemical literature and database searches [25].

- Mass and Charge Balance: Check and correct unbalanced reactions using the checkMassChargeBalance function [25].

- Biomass Formulation: Compose the biomass equation based on experimental measurements or phylogenetic approximation [25].

- Quality Assessment: Evaluate model quality using the MEMOTE test suite and detect errors with MACAW [26].

- Validation: Compare model predictions with experimental growth phenotypes under different nutrient conditions and gene essentiality data from mutant screens [25].
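The homology thresholds in the refinement step can be applied with a simple filter. The sketch below interprets "match length" as the fraction of the query covered by the alignment, which is an assumption; the `BlastHit` record, the gene IDs, and the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BlastHit:
    query: str
    subject: str
    percent_identity: float
    alignment_length: int
    query_length: int


def passes_thresholds(hit, min_identity=40.0, min_coverage=0.70):
    """Keep hits with identity >= 40% and alignment coverage >= 70%."""
    coverage = hit.alignment_length / hit.query_length
    return hit.percent_identity >= min_identity and coverage >= min_coverage


hits = [
    BlastHit("gene_001", "template_A", 62.5, 280, 300),  # passes both thresholds
    BlastHit("gene_002", "template_B", 35.0, 290, 300),  # identity too low
    BlastHit("gene_003", "template_C", 55.0, 150, 300),  # coverage too low
]

accepted = [hit.query for hit in hits if passes_thresholds(hit)]
print(accepted)
```

Genes that pass the filter become candidates for transferring GPR associations from the template model, subject to manual review.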

Protocol 2: Context-Specific Modeling with Transcriptomic Data

Objective: Generate a context-specific GEM using transcriptomic data and identify condition-specific metabolic features.

Materials:

- Generic GEM of target organism (e.g., Human1 for human cells)

- Transcriptomic data (RNA-seq or microarray) from specific condition

- Computational tools: COBRA Toolbox, iMAT algorithm, CIBERSORTx (for tissue samples)

Procedure:

- Data Preprocessing: Map gene expression data to genes in the GEM using gene-protein-reaction associations [29].

- Reaction Expression Calculation: Calculate reaction expression levels based on GPR rules and gene expression values [29].

- Expression Categorization: Categorize reactions as lowly, moderately, or highly expressed using thresholds (e.g., mean ± 0.5*standard deviation) [29].

- Model Extraction: Use iMAT to generate context-specific models by including highly expressed reactions and excluding lowly expressed reactions with high variability [29].

- Flux Analysis: Perform FBA to obtain reaction flux distributions for each sample [29].

- Machine Learning Integration: Employ Random Forest classifiers with reaction fluxes as features to distinguish between conditions (e.g., healthy vs. cancerous) [29].

- Feature Importance Analysis: Identify discriminatory reactions using feature importance scores from machine learning models [29].
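The reaction expression and categorization steps can be sketched as follows. This illustration assumes the common convention that an AND in a GPR rule takes the minimum expression of its genes and an OR takes the maximum, and it uses mean ± 0.5 standard deviations as thresholds. Gene IDs must be valid Python identifiers for this simple parser; all names and values are hypothetical.

```python
import ast
import statistics


def reaction_expression(rule, expression):
    """Evaluate a GPR rule: AND -> minimum, OR -> maximum of gene expression."""
    def evaluate(node):
        if isinstance(node, ast.BoolOp):
            values = [evaluate(child) for child in node.values]
            return min(values) if isinstance(node.op, ast.And) else max(values)
        if isinstance(node, ast.Name):
            return expression[node.id]
        raise ValueError("unsupported element in GPR rule")
    return evaluate(ast.parse(rule, mode="eval").body)


def categorize(values, k=0.5):
    """Label each reaction as low, moderate, or high relative to mean +/- k*SD."""
    mean = statistics.mean(values.values())
    sd = statistics.stdev(values.values())
    low, high = mean - k * sd, mean + k * sd
    return {rxn: "high" if v > high else "low" if v < low else "moderate"
            for rxn, v in values.items()}


gene_expression = {"g1": 10.0, "g2": 2.0, "g3": 8.0, "g4": 5.0}
gpr_rules = {
    "R1": "g1 and (g2 or g3)",
    "R2": "g2",
    "R3": "g1",
    "R4": "g2 and g3",
    "R5": "g4",
}

reaction_values = {rxn: reaction_expression(rule, gene_expression)
                   for rxn, rule in gpr_rules.items()}
categories = categorize(reaction_values)
print(reaction_values)
print(categories)
```

The resulting labels are the kind of input that expression-based extraction methods use to decide which reactions to favor or penalize.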

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for GEM Research

| Resource Category | Specific Tool/Resource | Function and Application |

|---|---|---|

| Annotation Tools | RAST (Rapid Annotation using Subsystem Technology) | Genome annotation and initial metabolic reconstruction [25] |

| Model Construction | ModelSEED | Automated pipeline for draft GEM generation [25] |

| Model Curation | MACAW (Metabolic Accuracy Check and Analysis Workflow) | Suite of algorithms for detecting errors in GEMs [26] |

| Quality Assessment | MEMOTE (Metabolic Model Testing) | Standardized testing suite for GEM quality metrics [27] |

| Simulation Environments | COBRA Toolbox (MATLAB) | Comprehensive toolbox for constraint-based metabolic analysis [25] |

| Simulation Environments | COBRApy (Python) | Python implementation of COBRA methods for GEM simulation [24] |

| Reference Databases | AGORA2 (Assembly of Gut Organisms through Reconstruction and Analysis) | Curated collection of 7,302 gut microbial GEMs [30] |

| Context-Specific Modeling | iMAT (Integrative Metabolic Analysis Tool) | Algorithm for building context-specific models using transcriptomic data [29] |

| Cell Type Deconvolution | CIBERSORTx | Machine learning tool for estimating cell type abundance from bulk data [29] |

Current Challenges and Future Directions

Despite significant advances, several challenges remain in GEM development and application. Data integration represents a major hurdle, as methods for incorporating transcriptomic, proteomic, and metabolomic data into GEMs continue to evolve [28]. Current approaches often struggle with accurately predicting enzyme catalytic rates and accounting for post-translational modifications that affect metabolic activity [28]. Predicting distributions of possible fluxes, rather than single optimal states, remains computationally demanding but essential for capturing phenotypic diversity and metabolic flexibility [28].

The predictive accuracy of GEMs is limited by incomplete biochemical knowledge, particularly for less-studied organisms and secondary metabolism [26]. Error detection tools like MACAW help identify inaccuracies, but manual curation is still required to resolve them [26]. Future methodological developments will need to address these limitations while improving the accessibility of GEM tools for non-expert users.

Emerging applications in personalized medicine and microbiome engineering are driving innovation in multi-scale modeling approaches that integrate GEMs with other modeling frameworks [30]. The combination of GEMs with machine learning techniques shows particular promise for identifying complex metabolic patterns and predicting therapeutic outcomes [29]. As the field progresses, GEMs will continue to serve as fundamental platforms for understanding cellular metabolism and bridging genomic information with physiological phenotypes.

A Brief History and Evolution of the COBRA Approach

Constraint-Based Reconstruction and Analysis (COBRA) has emerged as a cornerstone mathematical and computational framework for systems biology. This approach enables researchers to build mechanistic, genome-scale models of metabolic networks and to predict physiologically and biochemically feasible phenotypic states [1]. The core principle of COBRA methods is the use of physicochemical, genetic, and environmental constraints to define the set of possible metabolic behaviors of a biological system, without requiring comprehensive kinetic parameter data [3] [1]. This section provides an in-depth technical guide to the historical development, methodological evolution, and current applications of the COBRA approach, framed within the context of its growing impact on biomedical and biotechnological research.

Historical Development and Evolution

The COBRA approach has matured through several distinct generations of methodological development, each expanding its capabilities and applications.

Foundational Years and Early Toolboxes

The theoretical foundations of constraint-based modeling were established through early work on stoichiometric modeling and flux balance analysis (FBA). The first major collaborative software implementation emerged with the COBRA Toolbox for MATLAB, initially released as an open-source package to facilitate quantitative prediction of metabolic phenotypes [1]. This version (1.0) provided the community with a standardized set of tools for basic constraint-based operations, addressing the need for reproducibility and method reuse. The recognition that COBRA methods could mechanistically represent genotype-phenotype relationships even with incomplete mechanistic information drove its initial adoption [1].

Expansion and Community Growth

The release of the COBRA Toolbox 2.0 represented a significant milestone, featuring an enhanced range of methods to simulate, analyze, and predict diverse phenotypes using genome-scale metabolic reconstructions [1]. This expansion coincided with the growing phylogeny of COBRA methods and an expanding user community. During this period, the framework saw application in microbial metabolic engineering and, to a lesser extent, in modeling transcriptional and signaling networks [3]. The establishment of the openCOBRA Project formalized the community-driven development model, promoting constraint-based research through freely available software tools [3].

Current State: Multi-Scale Integration

The current iteration, COBRA Toolbox 3.0, represents a substantial evolution in scope and capability. It incorporates new methods for quality-controlled reconstruction, modeling, topological analysis, strain and experimental design, network visualization, and multi-omics data integration [1]. A significant parallel development has been the introduction of COBRApy, a Python-based implementation that provides an object-oriented framework designed to represent the complexity of integrated biological processes beyond metabolism [3]. COBRApy supports more complex modeling scenarios, including multi-cellular systems and integrated models of metabolism and gene expression (ME-Models) [3].

Table: Historical Evolution of COBRA Software Implementations

| Toolbox Version | Release Environment | Key Innovations | Primary Applications |

|---|---|---|---|

| COBRA Toolbox 1.0 | MATLAB | Standardized basic COBRA methods; Flux Balance Analysis (FBA) | Metabolic phenotype prediction |

| COBRA Toolbox 2.0 | MATLAB | Expanded method repertoire; Community amalgamation | Diverse phenotypic simulations |

| COBRA Toolbox 3.0 | MATLAB | Multi-omics integration; Thermodynamic constraints; Kinetic modeling | Context-specific models; Strain design |

| COBRApy | Python | Object-oriented design; ME-Models; Parallel processing | Next-generation models; High-density omics data |

Core Methodology and Principles

The COBRA approach is built upon a mathematical foundation that leverages constraints to reduce the solution space of possible metabolic states.

Mathematical Foundation

At the core of any COBRA model is the stoichiometric matrix S, where each element Sᵢⱼ represents the stoichiometric coefficient of metabolite i in reaction j. Under the assumption of steady-state metabolite concentrations, the system is described by:

S · v = 0

where v is the vector of metabolic reaction fluxes. This equation represents mass conservation constraints. The solution space is further constrained by lower and upper bounds on reaction fluxes:

α ≤ v ≤ β

Together, these constraints define a feasible solution space: the set of all metabolic flux distributions that satisfy mass balance and the flux bounds α and β, which encode reaction directionality (thermodynamics) and capacity limits [1].
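The two conditions above can be checked directly for any candidate flux vector. The following is a minimal sketch in plain Python, assuming a hypothetical three-reaction chain (uptake of A, conversion of A to B, export of B); the helper names `mat_vec` and `is_feasible` are illustrative and not part of any COBRA package.

```python
def mat_vec(S, v):
    """Return the product S·v for a matrix stored as a list of rows."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]


def is_feasible(S, v, lower, upper, tol=1e-9):
    """Check steady state (S·v = 0) and flux bounds (lower <= v <= upper)."""
    steady = all(abs(x) <= tol for x in mat_vec(S, v))
    bounded = all(lo - tol <= v_j <= hi + tol
                  for lo, v_j, hi in zip(lower, v, upper))
    return steady and bounded


# Hypothetical toy network: rows are metabolites A and B,
# columns are reactions: uptake (-> A), conversion (A -> B), export (B ->).
S = [
    [1, -1,  0],   # A
    [0,  1, -1],   # B
]
lower = [0, 0, 0]            # alpha: all reactions irreversible
upper = [10, 1000, 1000]     # beta: uptake capped at 10

print(is_feasible(S, [5, 5, 5], lower, upper))     # True
print(is_feasible(S, [5, 3, 3], lower, upper))     # False: A would accumulate
print(is_feasible(S, [20, 20, 20], lower, upper))  # False: uptake exceeds its bound
```

The second vector fails because S · v = [2, 0], so metabolite A is not at steady state; the third is mass-balanced but violates the upper bound on uptake.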

Flux Balance Analysis (FBA)

Flux Balance Analysis is the most widely used COBRA method for predicting metabolic behavior. FBA formulates the identification of an optimal flux distribution as a linear programming problem:

Maximize cᵀv

subject to S · v = 0 and α ≤ v ≤ β

where c is a vector defining the linear objective function, typically representing biomass production or ATP synthesis [19] [1]. FBA has enjoyed substantial success in qualitative analyses of gene essentiality and metabolic capabilities [3].
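As an illustration of this linear program, the sketch below solves FBA for a small hypothetical network using SciPy's general-purpose `linprog` solver rather than a COBRA package. The network, bounds, and the function name `fba` are assumptions made for the example; in practice the COBRA Toolbox or COBRApy would build and solve the problem from a curated model.

```python
import numpy as np
from scipy.optimize import linprog


def fba(S, lower, upper, c):
    """Maximize c·v subject to S·v = 0 and lower <= v <= upper."""
    S = np.asarray(S, dtype=float)
    c = np.asarray(c, dtype=float)
    result = linprog(
        -c,                              # linprog minimizes, so negate the objective
        A_eq=S,
        b_eq=np.zeros(S.shape[0]),       # steady state: S·v = 0
        bounds=list(zip(lower, upper)),  # alpha <= v <= beta
        method="highs",
    )
    if not result.success:
        raise ValueError(f"FBA failed: {result.message}")
    return -result.fun, result.x


# Hypothetical toy network. Columns (reactions):
#   uptake (-> A), A -> B, A -> C, biomass (B + C ->), export (C ->)
S = [
    [1, -1, -1,  0,  0],   # A
    [0,  1,  0, -1,  0],   # B
    [0,  0,  1, -1, -1],   # C
]
lower = [0, 0, 0, 0, 0]
upper = [10, 1000, 1000, 1000, 1000]
c = [0, 0, 0, 1, 0]        # objective: flux through the biomass reaction

growth, fluxes = fba(S, lower, upper, c)
print("objective value:", round(growth, 6))
print("flux distribution:", np.round(fluxes, 6))
```

In this toy case the biomass reaction needs one unit each of B and C, so the uptake limit of 10 caps the biomass flux at 5, with no flux through the export reaction.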

Figure: The core workflow of Flux Balance Analysis (FBA), beginning with model reconstruction and culminating in experimental validation of predictions.

Advanced COBRA Methods

Beyond basic FBA, the COBRA Toolbox has expanded to include numerous advanced algorithms:

- Flux Variability Analysis (FVA): Determines the minimum and maximum possible flux through each reaction while maintaining the optimal (or a specified near-optimal) objective value [3] [19]. This reveals alternative optimal solutions and network "pinch-points."

- Parsimonious FBA (pFBA): Finds the flux distribution that achieves optimal growth while minimizing the total flux through the network, a proxy for total enzyme usage [19].

- Thermodynamic Constraint Integration: Incorporates estimated Gibbs free energy values to determine reaction directionality [1].

- Gene Deletion Analysis: Predicts the effect of single or double gene knockouts on metabolic function [3].
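Of the methods listed above, FVA is the most direct extension of the FBA linear program: the objective is first maximized, then held at (a fraction of) its optimum while each flux is minimized and maximized in turn. The sketch below shows this on a hypothetical network with two parallel routes from A to B, again using SciPy's `linprog`; the function name `fva` and the `fraction` parameter are illustrative assumptions, not the COBRA Toolbox or COBRApy API.

```python
import numpy as np
from scipy.optimize import linprog


def fva(S, lower, upper, c, fraction=1.0):
    """Return (min, max) flux per reaction with c·v >= fraction * optimum."""
    S = np.asarray(S, dtype=float)
    c = np.asarray(c, dtype=float)
    n_mets, n_rxns = S.shape
    b_eq = np.zeros(n_mets)
    bounds = list(zip(lower, upper))

    # Step 1: ordinary FBA to find the optimal objective value.
    best = linprog(-c, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs")
    if not best.success:
        raise ValueError(f"FBA failed: {best.message}")
    z_opt = -best.fun

    # Step 2: keep the objective at the required level:
    # c·v >= fraction * z_opt  is written as  -c·v <= -fraction * z_opt.
    A_ub = -c.reshape(1, -1)
    b_ub = [-fraction * z_opt]

    ranges = []
    for j in range(n_rxns):
        e_j = np.zeros(n_rxns)
        e_j[j] = 1.0
        lo = linprog(e_j, A_ub=A_ub, b_ub=b_ub, A_eq=S, b_eq=b_eq,
                     bounds=bounds, method="highs")
        hi = linprog(-e_j, A_ub=A_ub, b_ub=b_ub, A_eq=S, b_eq=b_eq,
                     bounds=bounds, method="highs")
        ranges.append((lo.fun, -hi.fun))
    return ranges


# Hypothetical network. Columns (reactions):
#   uptake (-> A), route 1 (A -> B), route 2 (A -> B, capped at 4), biomass (B ->)
S = [
    [1, -1, -1,  0],   # A
    [0,  1,  1, -1],   # B
]
lower = [0, 0, 0, 0]
upper = [10, 1000, 4, 1000]
c = [0, 0, 0, 1]

names = ["uptake", "route_1", "route_2", "biomass"]
for name, (v_min, v_max) in zip(names, fva(S, lower, upper, c)):
    print(f"{name}: min={v_min:.3f} max={v_max:.3f}")
```

At the optimum, uptake and biomass are each fixed at a single value and act as pinch-points, whereas the two parallel routes can trade flux against each other, which is exactly the kind of alternative optimum FVA is meant to expose.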

Implementation and Workflow

The practical implementation of COBRA methods involves a structured workflow from biochemical network reconstruction to model simulation and validation.

Biochemical Network Reconstruction

The foundation of any constraint-based model is a high-quality, genome-scale metabolic reconstruction. This biochemical network is assembled through:

- Genome Annotation: Identifying metabolic genes and their associated reactions [1].

- Stoichiometric Matrix Construction: Documenting metabolite-reaction relationships [1].

- Compartmentalization: Assigning metabolites and reactions to appropriate subcellular locations [1].

- Gap Filling: Identifying and adding missing metabolic functions to ensure network connectivity [1].

The COBRA Toolbox 3.0 includes enhanced methods for quality-controlled reconstruction, maintenance of internal model consistency, and identification of stoichiometrically balanced cycles [1].
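To make the matrix-construction and gap-identification steps more concrete, here is a minimal sketch in plain Python. It assembles a stoichiometric matrix from a dictionary of reactions and flags dead-end metabolites, which are typical candidates for gap filling. The reaction and metabolite identifiers are hypothetical, all reactions are assumed irreversible, and the helper functions are illustrative rather than taken from the COBRA Toolbox.

```python
def build_stoichiometric_matrix(reactions):
    """Return (metabolite ids, reaction ids, S) with S[i][j] = coefficient
    of metabolite i in reaction j (negative = consumed, positive = produced)."""
    rxn_ids = list(reactions)
    met_ids = sorted({met for stoich in reactions.values() for met in stoich})
    S = [[reactions[rxn].get(met, 0) for rxn in rxn_ids] for met in met_ids]
    return met_ids, rxn_ids, S


def dead_end_metabolites(met_ids, S):
    """Metabolites that are only produced or only consumed.

    Assumes every reaction is irreversible, so the sign of a coefficient
    tells us whether the metabolite is produced or consumed.
    """
    dead = []
    for met, row in zip(met_ids, S):
        produced = any(coef > 0 for coef in row)
        consumed = any(coef < 0 for coef in row)
        if not (produced and consumed):
            dead.append(met)
    return dead


# Hypothetical draft reconstruction (identifiers are illustrative only).
reactions = {
    "EX_glc":  {"glc": 1},                # uptake of glucose
    "HEX":     {"glc": -1, "g6p": 1},
    "PGI":     {"g6p": -1, "f6p": 1},
    "BIOMASS": {"f6p": -1},               # drain into biomass
    "SIDE":    {"g6p": -1, "x": 1},       # produces x, which nothing consumes
}

met_ids, rxn_ids, S = build_stoichiometric_matrix(reactions)
for met, row in zip(met_ids, S):
    print(met, row)
print("dead-end metabolites:", dead_end_metabolites(met_ids, S))  # ['x']
```

A dead-end metabolite such as `x` forces zero flux through every reaction that touches it at steady state, so a curator would either add a missing consuming or export reaction or remove the unsupported producing reaction.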

Data Integration and Context-Specific Modeling

A powerful capability of modern COBRA methods is the integration of multi-omics data to generate context-specific models:

- Transcriptomic and Proteomic Data: Used to create tissue- or condition-specific models through algorithms that constrain the model to reactions supported by molecular evidence [1].

- Metabolomic Data: Integrated for analysis of metabolic fluxes in a network context [1].

- Thermochemical Data: Enables estimation of reaction thermodynamics and directionality constraints [1].
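One simple way to picture expression-based constraining is a thresholding rule: score each reaction from the expression of its associated genes and close reactions that lack support. The sketch below is a deliberately simplified illustration under stated assumptions (AND rules take the minimum, OR rules take the maximum, unmeasured genes count as zero); it is not an implementation of iMAT or of any specific published algorithm, and all gene names, values, and the threshold are hypothetical.

```python
def gpr_score(rule, expression):
    """Score a gene-protein-reaction rule from gene expression values.

    A rule is either a gene id (str) or a tuple ("and" | "or", sub-rule, ...).
    Assumption: AND (enzyme complex) -> minimum, OR (isozymes) -> maximum.
    Genes without a measurement are treated as not expressed (0.0).
    """
    if isinstance(rule, str):
        return expression.get(rule, 0.0)
    operator, *operands = rule
    scores = [gpr_score(operand, expression) for operand in operands]
    if operator == "and":
        return min(scores)
    if operator == "or":
        return max(scores)
    raise ValueError(f"unknown GPR operator: {operator!r}")


def constrain_by_expression(bounds, gprs, expression, threshold):
    """Return new bounds with weakly supported reactions closed to (0, 0).

    Reactions without a GPR rule are left unchanged.
    """
    new_bounds = dict(bounds)
    for rxn, rule in gprs.items():
        if gpr_score(rule, expression) < threshold:
            new_bounds[rxn] = (0.0, 0.0)
    return new_bounds


# Hypothetical inputs for illustration.
bounds = {"R1": (0.0, 1000.0), "R2": (-1000.0, 1000.0), "R3": (0.0, 1000.0)}
gprs = {
    "R1": ("and", "gA", "gB"),   # complex: both subunits required
    "R2": ("or", "gC", "gD"),    # isozymes: either gene suffices
}                                # R3 has no gene association
expression = {"gA": 12.0, "gB": 0.5, "gC": 0.2, "gD": 8.0}

context_bounds = constrain_by_expression(bounds, gprs, expression, threshold=1.0)
print(context_bounds)
```

Here R1 is closed because one subunit of its complex falls below the threshold, R2 stays open because one isozyme is well expressed, and R3 is untouched. Hard thresholding of this kind can easily block essential reactions, which is one reason published algorithms treat expression as soft evidence rather than a strict on/off switch.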

Table: Essential Research Reagents and Computational Tools for COBRA

| Resource Type | Specific Tool/Model | Function and Application |

|---|---|---|

| Software Environment | COBRA Toolbox (MATLAB) | Comprehensive desktop suite of interoperable COBRA methods [1] |

| Software Environment | COBRApy (Python) | Object-oriented framework for next-generation models [3] |

| Model Format | Systems Biology Markup Language (SBML) | Standard format for model representation and exchange [3] [1] |

| Reference Metabolic Model | E. coli K-12 MG1655 | Well-curated model for gram-negative bacterial metabolism [3] |

| Reference Metabolic Model | Recon (Human Metabolic Reconstruction) | Community-driven human metabolic network [1] |

| Optimization Solver | Linear and Nonlinear Programming Solvers | Computational engines for flux optimization [1] |

Simulation and Analysis Workflow

A typical COBRA analysis protocol involves multiple interconnected steps:

Figure: The comprehensive COBRA workflow, highlighting how omics data integration (red arrow) informs constraint definition during model development.

Applications and Impact

COBRA methods have found diverse applications across biology, biomedicine, and biotechnology.

Metabolic Engineering and Strain Design

COBRA approaches are widely used in microbial metabolic engineering for identifying gene knockout and overexpression targets that optimize production of desired compounds [3] [1]. Methods such as OptKnock and OptForce leverage constraint-based models to predict genetic interventions that couple cell growth with chemical production [19].

Drug Discovery and Disease Mechanism Elucidation

In biomedical research, COBRA models of human and pathogen metabolism have been used to:

- Identify drug targets by predicting essential metabolic genes in pathogens [3].

- Understand metabolic alterations in cancer and other diseases [1].

- Predict host-microbe metabolic interactions in the gut microbiome [19] [1].

Biological Discovery and Systems Biology

Beyond these applied uses, COBRA methods serve as fundamental tools for basic biological research:

- Generating testable hypotheses about metabolic network functions [1].

- Interpreting high-throughput omics data in a mechanistic metabolic context [1].

- Studying the evolution of metabolic networks across species [1].

Future Directions

The COBRA field continues to evolve with several emerging frontiers:

- Multi-Cellular and Multi-Tissue Modeling: Extending constraint-based approaches to model metabolic interactions between different cell types and tissues [1].

- Integrated Metabolism and Expression Models (ME-Models): Incorporating gene expression constraints directly into metabolic models [3].

- Kinetic Modeling Integration: Combining constraint-based approaches with kinetic parameters for more dynamic simulations [1].

- High-Performance Computing: Leveraging parallel processing and advanced algorithms to handle increasingly complex models [3].

The ongoing development of COBRA methods ensures that this framework will continue to provide powerful tools for deciphering the complex biochemistry of living systems and engineering biological capabilities for biomedical and biotechnological applications.

A Practical Workflow: From Model Reconstruction to Advanced Analysis

Constraint-Based Reconstruction and Analysis (COBRA) is a molecular mechanistic framework that enables the integrative analysis of experimental molecular systems biology data and the quantitative prediction of physicochemically and biochemically feasible phenotypic states [32]. This methodology provides a scalable approach for studying genome-scale metabolic models of microbes, human cells in health and disease, and even multi-cellular systems like microbiota [9]. The COBRA framework has found widespread application in biology, biomedicine, and biotechnology because its functions can be flexibly combined to implement tailored protocols for any biochemical network [32].

The core workflow of COBRA research follows three fundamental phases: Reconstruction of genome-scale metabolic networks from genomic and biochemical data; Simulation of metabolic phenotypes using mathematical constraints; and Interpretation of results in biological contexts. This workflow enables researchers to formulate testable hypotheses about metabolic functions and to identify potential therapeutic targets in drug development [9]. The field continues to evolve with new methods for quality-controlled reconstruction, modeling, topological analysis, and multi-omics data integration [32].

Phase 1: Network Reconstruction

Network reconstruction represents the foundational first step in the COBRA workflow, where a biochemical network is systematically assembled from genomic, biochemical, and physiological data.

Reconstruction Methodology

The reconstruction process follows a standardized protocol:

- Draft Reconstruction: Generate an initial model from genome annotation using automated tools. This draft compilation identifies candidate metabolic reactions based on gene-protein-reaction (GPR) associations.

- Curation and Gap-Filling: Manually curate the draft model using biochemical literature and databases. The