Dead-End Metabolites: A Comprehensive Guide for Researchers from Detection to Clinical Impact



Abstract

This guide provides a systematic overview of dead-end metabolites (DEMs) in metabolic networks, crucial yet often overlooked components that signify gaps in metabolic knowledge. Tailored for researchers, scientists, and drug development professionals, it covers the foundational definition and biological significance of DEMs, explores advanced computational tools and methodologies for their detection and analysis, offers practical strategies for troubleshooting and network optimization, and validates approaches through comparative analysis of current methods. By synthesizing insights from the latest research, this article serves as a vital resource for improving the accuracy of genome-scale metabolic models (GEMs), advancing metabolic engineering, and informing drug discovery efforts.

What Are Dead-End Metabolites? Defining the Known Unknowns in Cellular Metabolism

In the computational analysis of metabolic networks, dead-end metabolites (DEMs) represent a critical class of compounds that reveal fundamental gaps in our understanding of cellular biochemistry. Formally, a dead-end metabolite is a compound that is either only produced (Root-Non-Consumed, RNC) or only consumed (Root-Non-Produced, RNP) by the reactions within a given cellular compartment, including transport reactions [1] [2]. Such metabolites are isolated within the metabolic network. At steady state the only admissible flux through them is the trivial zero solution, so every reaction in which they participate is blocked [3]. The presence of DEMs typically reflects either a deficit in how a metabolic database represents knowledge from the scientific literature or a genuine gap in our current understanding of an organism's metabolism [1]. Identifying them is therefore a powerful systems biology approach that alerts researchers to areas where more experimental work is required, effectively acting as signposts to the 'known unknowns' of metabolism [1].

The systematic identification of dead-end metabolites has become increasingly important with the rise of genome-scale metabolic models (GEMs), which provide mathematical representations of an organism's metabolism [3]. When constraint-based modeling (CBM) is applied to these models, dead-end metabolites create inconsistencies. Because a DEM cannot be balanced at steady state, any reaction that involves it is forced to carry zero flux [3]. The absence of flow through RNP metabolites can propagate downstream, creating Downstream-Non-Produced (DNP) metabolites, while RNC metabolites can create Upstream-Non-Consumed (UNC) metabolites upstream [3]. This propagation can block large parts of the reaction network. Identifying and resolving DEMs is therefore essential for building accurate metabolic models that can predict metabolic capabilities, growth rates, and system responses to environmental or genetic perturbations [3].

Classification and Quantitative Analysis

Formal Classification of Gap Metabolites

Dead-end metabolites are systematically classified based on their position and role within the metabolic network. The classification hierarchy extends beyond the basic producer-only and consumer-only categories to account for propagation effects throughout the network [3]:

- Root-Non-Produced (RNP) Metabolites: These metabolites are only consumed by the system's reactions and lack any known production pathways within the network. They represent the fundamental starting points for metabolic gaps [3].

- Root-Non-Consumed (RNC) Metabolites: These metabolites are only produced by the network but never consumed, creating terminal points in metabolic pathways [3].

- Downstream-Non-Produced (DNP) Metabolites: These metabolites become gaps as a direct consequence of upstream RNP metabolites. The absence of flow from RNP metabolites propagates downstream, blocking these subsequent metabolites [3].

- Upstream-Non-Consumed (UNC) Metabolites: These metabolites become gaps due to downstream RNC metabolites. The inability to consume metabolites at the end of pathways creates a blocking effect that propagates upstream [3].

Quantitative Analysis of DEMs in Model Organisms

Analysis of the EcoCyc database (version 17.0) for Escherichia coli K-12 MG1655 provides a representative case study of DEM prevalence in a well-characterized model organism. From 995 compounds directly involved in reactions within the metabolic network, 127 dead-end metabolites were identified [1]. Further categorization revealed distinct subgroups with different biological implications, as summarized in Table 1.

Table 1: Classification and Resolution of Dead-End Metabolites in E. coli K-12

| Category | Count | Resolution Approach / Notes | Examples |
|---|---|---|---|
| Total DEMs identified | 127 | Analysis of the 995 compounds directly involved in reactions | 32 from pathways, 95 from isolated reactions |
| Pathway DEMs | 32 | Prioritized, as they are more likely to be physiologically relevant | Curcumin, tetrahydrocurcumin |
| Non-physiological DEMs | 39 | Identified as non-physiological artifacts | In vitro enzyme activities not occurring in vivo |
| Transport-related curation | 38 transport reactions added | Addition of transport reactions; reclassification linking compounds to existing transporters | Methylphosphonate (recognized as a phosphonate ABC transporter substrate after reclassification) |
| Pathway repair | 3 metabolic reactions added | Addition of metabolic reactions | Vitamin B12 salvage pathway |
| True knowledge gaps | Remaining DEMs | Represent deficiencies in knowledge of E. coli metabolism | Various uncharacterized compounds |

The analysis further revealed that among the 127 dead-end metabolites, 32 were derived from within defined metabolic pathways, while the majority came from isolated reactions not contained within pathways [1]. Pathway DEMs are considered particularly significant because their participation in established metabolic pathways suggests they have greater physiological relevance despite their dead-end status [1]. Through extensive literature searches and manual curation, researchers were able to resolve many of these DEMs by adding 38 transport reactions and 3 metabolic reactions to the database, significantly improving the network representation [1].

Table 2: Common Dead-End Metabolites in E. coli and Their Functional Categories

| Metabolite Name | Type | Functional Category | Status |
|---|---|---|---|
| (2R,4S)-2-methyl-2,3,3,4-tetrahydroxytetrahydrofuran (AI-2) | Pathway DEM | Quorum-sensing signaling molecule | Knowledge gap |
| Allantoin | Pathway DEM | Purine metabolism | Knowledge gap |
| Cis-vaccenate | Pathway DEM | Fatty acid biosynthesis | Knowledge gap |
| Cobinamide | Pathway DEM | Vitamin B12 metabolism | Partially resolved |
| Ethanolamine | Pathway DEM | Lipid metabolism | Knowledge gap |
| S-adenosyl-4-methylthio-2-oxobutanoate | Pathway DEM | Methionine metabolism | Knowledge gap |
| 1-chloro-2,4-dinitrobenzene (CDNB) | Non-pathway DEM | Xenobiotic metabolism | Artifact |
| N-ethylmaleimide | Non-pathway DEM | Chemical inhibitor | Artifact |

Methodologies for Identification and Resolution

Computational Identification Protocols

The identification of dead-end metabolites employs sophisticated computational algorithms that analyze the stoichiometric matrix representation of metabolic networks. The foundational methodology involves scanning the rows of the stoichiometric matrix (N) to identify metabolites that lack either production or consumption reactions [3]. The basic algorithm can be summarized as follows:

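Stoichiometric Matrix Analysis: Consider a metabolic network with m metabolites and n reactions, represented by an m × n stoichiometric matrix N. Each element N(i,j) is the stoichiometric coefficient of metabolite i in reaction j: negative when reaction j consumes i and positive when it produces i [3].

RNP Metabolite Identification: A metabolite i is classified as RNP (never produced) if N(i,j) ≤ 0 for all reactions j in the network, with at least one reaction where N(i,j) < 0. In other words, it is only consumed or not involved [3]. This condition applies only when none of the reactions involving i is reversible. A reversible reaction can run in either direction, so any metabolite it involves can be both produced and consumed.

RNC Metabolite Identification: A metabolite i is classified as RNC (never consumed) if N(i,j) ≥ 0 for all reactions j in the network, with at least one reaction where N(i,j) > 0. In other words, it is only produced or not involved [3]. The same caveat about reversible reactions applies.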

Propagation Analysis: The algorithm subsequently identifies DNP and UNC metabolites by analyzing the network connectivity and flux propagation patterns from the root RNP and RNC metabolites [3].

Compartmental Considerations: The analysis must account for cellular compartments, including transport reactions between compartments, to avoid false identification of DEMs [2].
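The identification steps above can be sketched in a few lines of Python. This is a minimal topological sketch, not the algorithm of [3] or of Pathway Tools. It makes the following assumptions:

- negative coefficients mark consumption;
- each reaction carries a reversibility flag;
- compartment-specific metabolites are stored as separate rows, so transport reactions are ordinary columns.

Propagation is approximated by repeatedly blocking every reaction that touches a gap metabolite. Flux-variability-based tools may classify borderline cases differently. The function name and toy network are hypothetical.

```python
from typing import Dict, List


def classify_gap_metabolites(
    N: List[List[float]], reversible: List[bool], names: List[str]
) -> Dict[str, str]:
    """Label gap metabolites as RNP, RNC, DNP or UNC (topological sketch).

    Assumed sign convention: N[i][j] < 0 means metabolite i is consumed by
    reaction j, N[i][j] > 0 means it is produced.
    """
    m, n = len(N), len(N[0])
    active = set(range(n))            # reactions not (yet) known to be blocked
    labels: Dict[str, str] = {}       # metabolite name -> gap class
    root_round = True                 # the first pass finds root gaps only
    while True:
        found: Dict[int, str] = {}
        for i in range(m):
            if names[i] in labels or all(c == 0 for c in N[i]):
                continue              # already classified, or in no reaction
            # A reversible reaction can both produce and consume metabolite i.
            produced = any(N[i][j] > 0 or (reversible[j] and N[i][j] < 0) for j in active)
            consumed = any(N[i][j] < 0 or (reversible[j] and N[i][j] > 0) for j in active)
            if not produced:
                found[i] = "RNP" if root_round else "DNP"
            elif not consumed:
                found[i] = "RNC" if root_round else "UNC"
        if not found:
            return labels
        for i, label in found.items():
            labels[names[i]] = label
            # Every reaction touching a gap metabolite is blocked at steady state.
            active -= {j for j in range(n) if N[i][j] != 0}
        root_round = False


# Hypothetical toy network: A -> B -> C -> (export); (uptake) -> D -> E -> F
names = ["A", "B", "C", "D", "E", "F"]
N = [
    [-1, 0, 0, 0, 0, 0],   # A: only consumed
    [1, -1, 0, 0, 0, 0],   # B
    [0, 1, -1, 0, 0, 0],   # C
    [0, 0, 0, 1, -1, 0],   # D
    [0, 0, 0, 0, 1, -1],   # E
    [0, 0, 0, 0, 0, 1],    # F: only produced
]
print(classify_gap_metabolites(N, [False] * 6, names))
# -> {'A': 'RNP', 'F': 'RNC', 'B': 'DNP', 'E': 'UNC', 'C': 'DNP', 'D': 'UNC'}
```

Checking "non-produced" before "non-consumed" is a design choice of this sketch. As a result, a metabolite whose reactions are all blocked is reported as DNP. Making the first reaction reversible removes A, B and C from the result, which illustrates why reversibility must be part of the test.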

The Pathway Tools software, which underpins databases like EcoCyc, incorporates a Dead-End Metabolite Finder tool that implements these algorithms and allows researchers to customize searches based on various parameters [1] [2]. This tool can be configured to identify only those compounds existing within metabolic pathways (pathway DEMs) or to include DEMs from reactions occurring outside pathways (non-pathway DEMs) [1]. Additional options include limiting searches to small molecules, including non-pathway reactions, and handling reactions with unknown directionality [2].

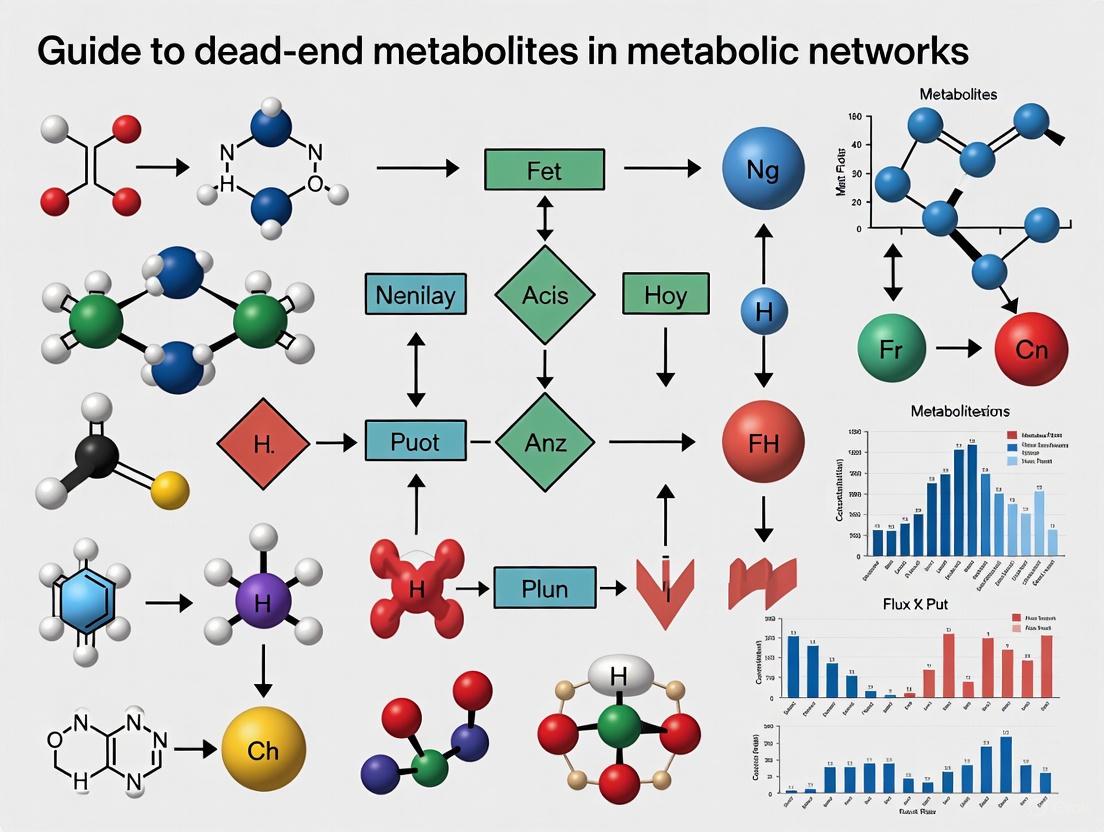

Diagram 1: DEM Identification Workflow - This flowchart illustrates the computational process for identifying different classes of dead-end metabolites in metabolic networks.

Gap-Filling and Resolution Strategies

Once dead-end metabolites are identified, the process of gap-filling aims to resolve these inconsistencies by adding appropriate metabolic or transport reactions. The gap-filling process follows a systematic protocol [1] [3]:

Literature Validation: Conduct extensive literature searches to identify potentially missing reactions that could resolve the DEM status. This involves reviewing biochemical studies, enzyme characterization papers, and comparative genomics analyses.

Transport Reaction Addition: Determine if the DEM lacks transport reactions that would account for its import or export from the cell. For example, in the EcoCyc database, correct classification of "methylphosphonate" as a child of the class "alkylphosphonates" allowed the software to recognize it as a substrate of the phosphonate ABC transporter, resolving its dead-end status [1].

Metabolic Pathway Completion: Identify missing metabolic reactions that would connect the DEM to the broader metabolic network. In the EcoCyc analysis, this approach led to an improved representation of the pathway for Vitamin B12 salvage [1].

Physiological Relevance Assessment: Evaluate whether the reactions producing or consuming the DEM are physiologically relevant. In the E. coli analysis, 39 dead-end metabolites were identified as components of reactions that are not physiologically relevant - these represent properties of purified enzymes in vitro that would not be expected to occur in vivo [1].

Database Curation: Implement corrections to the database representation based on findings. This may include reclassifying compounds, adding missing reactions, or correcting erroneous pathway assignments.

Experimental Validation: Design follow-up experiments to verify computational predictions, particularly for DEMs that represent potential novel metabolic capabilities.

The gap-filling process can be enhanced using optimization-based methods that identify the minimum number of reactions needed to resolve all dead-end metabolites in a network. These methods typically use Mixed Integer Linear Programming (MILP) combined with universal reaction databases such as KEGG, BiGG, or MetaCyc [3].
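The minimal-reaction objective can be illustrated without a solver. The sketch below makes two simplifications, both assumptions made for readability:

- It swaps the MILP for exhaustive enumeration over a small, hypothetical universal reaction set.
- It uses a purely topological success criterion instead of flux constraints: no root dead ends may remain, including any introduced by the added reactions.

Real gap-fillers solve the MILP because enumeration grows combinatorially with the size of the universal database.

```python
from itertools import combinations
from typing import Dict, List, Optional, Set, Tuple

# Hypothetical representation: reaction id -> (stoichiometry, reversible)
Reaction = Tuple[Dict[str, float], bool]


def dead_ends(reactions: Dict[str, Reaction]) -> Set[str]:
    """Root dead ends: metabolites that are produced or consumed, but not both."""
    produced: Set[str] = set()
    consumed: Set[str] = set()
    for stoich, reversible in reactions.values():
        for met, coeff in stoich.items():
            if coeff > 0 or reversible:
                produced.add(met)
            if coeff < 0 or reversible:
                consumed.add(met)
    return produced ^ consumed


def minimal_gap_fill(
    model: Dict[str, Reaction], universal: Dict[str, Reaction], max_size: int = 3
) -> Optional[List[str]]:
    """Smallest set of universal reactions leaving no dead ends (exhaustive search)."""
    for k in range(max_size + 1):                      # try smaller sets first
        for combo in combinations(sorted(universal), k):
            trial = {**model, **{r: universal[r] for r in combo}}
            if not dead_ends(trial):                   # all DEMs resolved, none added
                return list(combo)
    return None                                        # nothing within max_size works


model = {
    "EX_a": ({"a": 1}, False),          # uptake of a
    "R1": ({"a": -1, "b": 1}, False),
    "R2": ({"b": -1, "c": 1}, False),   # c is only produced
    "EX_d": ({"d": -1}, False),         # d is only consumed
}
universal = {
    "U1": ({"c": -1, "d": 1}, False),   # links c to d
    "U2": ({"c": -1, "e": 1}, False),   # fixes c but strands e
    "U3": ({"x": -1, "d": 1}, False),   # fixes d but strands x
    "U4": ({"c": -1}, False),           # secretes c
}
print(sorted(dead_ends(model)))            # -> ['c', 'd']
print(minimal_gap_fill(model, universal))  # -> ['U1']
```

If U1 is removed from the universal set, no combination of the remaining candidates clears every dead end, and the function returns None.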

Essential Research Reagent Solutions

Table 3: Research Reagent Solutions for Dead-End Metabolite Analysis

| Research Tool | Function | Application Context |
|---|---|---|
| EcoCyc Database | Encyclopedia of E. coli genes, metabolism, and regulatory networks | Primary resource for metabolic network reconstruction and DEM identification in E. coli K-12 [1] |
| Pathway Tools Software | Bioinformatics software environment including DEM finder tool | DEM identification, pathway analysis, and metabolic network visualization [1] [2] |
| COBRA Toolbox | MATLAB toolbox for constraint-based reconstruction and analysis | Flux balance analysis, gap-filling, and metabolic model simulation [3] |
| Recon2 | Community-driven generic human metabolic reconstruction | Human metabolic network modeling, containing 2191 genes, 5063 metabolites, and 7440 reactions [4] |
| MetaCyc Database | Database of nonredundant, experimentally elucidated metabolic pathways | Reference database for gap-filling reactions across multiple organisms [3] |
| Mixed Integer Linear Programming (MILP) | Optimization method for gap-filling | Identification of minimum reaction sets to resolve DEMs [3] |

The systematic analysis of dead-end metabolites relies on specialized databases and computational frameworks that provide the necessary infrastructure for metabolic network reconstruction and analysis. The EcoCyc database, for instance, provides an integrated view of the metabolic and regulatory network of Escherichia coli K-12, facilitating computational exploration of this model organism [1]. As of version 17.0, it contained 1497 metabolic enzymes and 268 transporters catalyzing a total of 2175 reactions, with 2392 compounds of which 995 are directly involved in reactions [1]. This comprehensive coverage makes it an invaluable resource for identifying and analyzing DEMs.

For human metabolic studies and drug development applications, Recon2 provides a generic human metabolic reconstruction. It comprises 2191 genes linked to reactions through Gene-Protein-Reaction (GPR) rules, 5063 metabolites, and 7440 reactions [4]. This reconstruction enables researchers to build context-specific metabolic models for human tissues and diseases, with particular relevance to cancer metabolism and drug discovery [4]. Integrating transcriptomics data with these metabolic models through GPR rules allows researchers to construct condition-specific models that can predict metabolic vulnerabilities and drug targets [4].
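GPR-based integration of transcriptomics is commonly done by evaluating each rule with two operators. An "and" takes the min, since all subunits of a complex are required; an "or" takes the max, since any isozyme suffices. The sketch below assumes that convention and treats genes without measurements as 0.0. Other mappings exist, and this is not necessarily the procedure used in [4]. The gene identifiers in the example are hypothetical.

```python
import re
from typing import Dict, List


def gpr_score(rule: str, expression: Dict[str, float]) -> float:
    """Map gene expression onto a reaction through its GPR rule.

    Assumed convention: 'and' (enzyme complex) -> min, 'or' (isozymes) -> max;
    genes missing from `expression` count as 0.0. 'and' binds tighter than 'or'.
    """
    tokens: List[str] = re.findall(r"\(|\)|[^\s()]+", rule)
    pos = 0

    def peek() -> str:
        return tokens[pos].lower() if pos < len(tokens) else ""

    def parse_or() -> float:
        nonlocal pos
        value = parse_and()
        while peek() == "or":
            pos += 1
            value = max(value, parse_and())
        return value

    def parse_and() -> float:
        nonlocal pos
        value = parse_atom()
        while peek() == "and":
            pos += 1
            value = min(value, parse_atom())
        return value

    def parse_atom() -> float:
        nonlocal pos
        token = tokens[pos]
        pos += 1
        if token == "(":
            value = parse_or()
            if peek() != ")":
                raise ValueError(f"unbalanced parentheses in {rule!r}")
            pos += 1                      # consume ')'
            return value
        return expression.get(token, 0.0)

    result = parse_or()
    if pos != len(tokens):
        raise ValueError(f"unexpected token in {rule!r}")
    return result


expression = {"g1": 5.0, "g2": 2.0, "g3": 1.0}      # hypothetical expression levels
print(gpr_score("(g1 and g2) or g3", expression))   # -> 2.0
print(gpr_score("g1 or g2 and g3", expression))     # -> 5.0
```

The resulting per-reaction scores can then be used, for example, to rank or constrain reactions when building a context-specific model.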

Diagram 2: DEM Resolution Pathways - This diagram shows the primary strategies for resolving dead-end metabolites through database curation and experimental validation.

Applications in Drug Discovery and Development

The analysis of dead-end metabolites has significant implications for pharmaceutical research and development, particularly in understanding drug metabolism and identifying novel therapeutic targets. In drug discovery, metabolites from pharmaceutical compounds form as part of the natural biochemical process of degrading and eliminating these compounds [5]. The rate of degradation of a compound is an important determinant of the duration and intensity of its action, and understanding how pharmaceutical compounds are metabolized - along with the potential side effects of their metabolites - is an essential part of drug discovery [5].

Metabolomics approaches that integrate metabolic network analysis with experimental data have demonstrated particular utility in oncology research. For example, probabilistic graphical models and flux balance analysis applied to metabolomics and gene expression data from breast cancer tumor samples have highlighted the importance of glutamine metabolism in breast cancer [4]. Cell experiments confirmed that treating breast cancer cells with drugs targeting glutamine metabolism significantly affects cell viability, validating the computational predictions [4]. This integrated approach allows researchers to associate metabolomics data with patient clinical outcomes, potentially identifying new biomarkers and therapeutic targets.

The identification of dead-end metabolites in human metabolic networks can reveal potential drug targets by highlighting metabolic vulnerabilities in specific disease states. For instance, if a cancer cell line shows accumulation of a particular dead-end metabolite, it may indicate a blocked metabolic pathway that could be exploited therapeutically. Furthermore, the analysis of DEM patterns across different tissue types or disease states can reveal context-specific metabolic deficiencies that may serve as biomarkers for disease detection or monitoring.

Dead-end metabolites (DEMs) are biochemical species within a metabolic network that can be produced but not consumed, or consumed but not produced. They represent critical breaks in metabolic pathways. In genome-scale metabolic models (GEMs), which are mathematical representations of an organism's metabolism, DEMs signify knowledge gaps stemming from incomplete genomic annotations, uncharacterized enzymes, or undiscovered biochemical pathways [6] [7]. Identifying and resolving DEMs through a process called gap-filling is a fundamental step in curating high-quality, predictive metabolic networks [8] [7]. This process is not merely technical model refinement. It drives biological discovery by pinpointing exactly where metabolic knowledge is incomplete, thereby guiding subsequent experimental research [7].

The Impact of DEMs on Metabolic Model Quality and Utility

DEMs disrupt the functional connectivity of metabolic networks, leading to erroneous predictions of cellular capabilities. A model with unresolved DEMs can incorrectly predict that an organism is unable to synthesize essential biomass components, such as amino acids, nucleotides, or lipids, even when experimental evidence shows otherwise [8] [7]. This directly compromises the model's utility in vital applications. For instance, in metabolic engineering, an incomplete model can mislead efforts to design microbial cell factories for chemical production [8]. In biomedical research, gaps in human metabolic models can obscure the understanding of disease mechanisms or drug interactions [7]. The presence of DEMs often reveals a deeper issue: even highly curated GEMs for well-studied model organisms can lack biochemistry that is integral to biomass precursor formation [8]. Consequently, rigorous DEM analysis and resolution are prerequisites for generating reliable biological hypotheses and predictions from in silico models.

Methodologies for Identifying and Resolving DEMs

Detection and Gap-Filling Algorithms

Computational methods for DEM handling typically follow a structured pipeline involving detection, solution suggestion, and gene assignment.

Table 4: Key Types of Gap-Filling Algorithms

| Algorithm Type | Core Principle | Example Methods |
|---|---|---|
| Optimization-Based | Uses linear programming to find a minimal set of reactions to add, making biomass production feasible [7]. | FASTGAPFILL [7], GLOBALFIT [7] |
| Topology-Based | Leverages network structure to predict missing links without requiring phenotypic data [6]. | Meneco [7], CHESHIRE [6] |
| Likelihood-Based | Incorporates genomic evidence (e.g., sequence similarity) to prioritize biologically plausible reactions [8]. | BLAST-weighted Linear Programming [8] |
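The likelihood-based approach can be illustrated by scoring alternative gap-filling solutions. In this sketch, each candidate reaction carries an assumed probability of being present, for example one derived from BLAST similarity. A solution's cost is the summed negative log-likelihood of its reactions. The BLAST-weighted method cited in [8] embeds weights in a linear program rather than ranking precomputed solutions. The reaction ids and probabilities here are hypothetical.

```python
import math
from typing import Dict, List, Sequence


def solution_cost(solution: Sequence[str], evidence: Dict[str, float],
                  floor: float = 1e-3) -> float:
    """Summed negative log-likelihood of the reactions a solution adds.

    `evidence` maps reaction id -> assumed probability that the organism can
    catalyze it; reactions without support fall back to a small floor value.
    """
    return sum(-math.log(max(evidence.get(r, 0.0), floor)) for r in solution)


def rank_solutions(solutions: List[List[str]], evidence: Dict[str, float]) -> List[List[str]]:
    """Most plausible solution first; ties broken by the number of added reactions."""
    return sorted(solutions, key=lambda s: (solution_cost(s, evidence), len(s)))


# Two hypothetical alternative solutions that resolve the same dead end.
solutions = [["R_x"], ["R_y", "R_z"]]
evidence = {"R_x": 0.05, "R_y": 0.9, "R_z": 0.8}
print(rank_solutions(solutions, evidence))  # -> [['R_y', 'R_z'], ['R_x']]
```

A pure minimality objective would pick the single weakly supported reaction R_x. The likelihood weighting instead prefers two well-supported reactions (cost about 0.33 versus about 3.0).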

The following workflow diagram illustrates the standard process for identifying and resolving DEMs.

Advanced Computational Techniques: The Case of CHESHIRE

Recent advances leverage machine learning to predict missing reactions purely from network topology. CHESHIRE (CHEbyshev Spectral HyperlInk pREdictor) is one such method that models metabolic networks as hypergraphs, where each reaction is a hyperlink connecting all participating metabolites [6]. Its deep learning architecture includes:

- Feature Initialization: A neural network encoder generates initial feature vectors for each metabolite from the network's incidence matrix [6].

- Feature Refinement: A Chebyshev spectral graph convolutional network (CSGCN) refines these features by incorporating information from metabolites involved in the same reaction [6].

- Pooling and Scoring: Features are pooled to the reaction level and scored to predict the likelihood of a reaction's existence [6].
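To make the three stages concrete, here is a toy, untrained forward pass in plain Python. It is not CHESHIRE's implementation. It makes these assumptions:

- a clique expansion of the hypergraph;
- the common lambda_max ≈ 2 approximation for the scaled Laplacian;
- incidence-matrix rows as initial features, in place of the learned encoder;
- scalar Chebyshev coefficients, in place of weight matrices;
- max-min pooling with a fixed logistic scorer.

Only the Chebyshev recurrence T_k = 2 L̃ T_{k-1} - T_{k-2} is the standard construction.

```python
import math
from typing import List, Sequence

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def scaled_laplacian(incidence: Matrix) -> Matrix:
    """Clique-expand the hypergraph, then return L~ = L - I = -D^-1/2 A D^-1/2.

    Uses the common approximation lambda_max ~= 2 for the normalized Laplacian L.
    """
    m, n = len(incidence), len(incidence[0])
    adj = [[0.0] * m for _ in range(m)]
    for j in range(n):                      # connect all metabolites of reaction j
        members = [i for i in range(m) if incidence[i][j]]
        for a in members:
            for b in members:
                if a != b:
                    adj[a][b] = 1.0
    deg = [sum(row) for row in adj]
    return [[-adj[a][b] / math.sqrt(deg[a] * deg[b]) if adj[a][b] else 0.0
             for b in range(m)] for a in range(m)]


def chebyshev_conv(lt: Matrix, x: Matrix, thetas: Sequence[float]) -> Matrix:
    """Compute sum_k theta_k * T_k(L~) X via T_k = 2 L~ T_{k-1} - T_{k-2}."""
    t_prev, t_curr = x, matmul(lt, x)       # T_0 X and T_1 X
    out = [[thetas[0] * v for v in row] for row in x]
    for k in range(1, len(thetas)):
        if k > 1:
            t_next = [[2 * p - q for p, q in zip(rp, rq)]
                      for rp, rq in zip(matmul(lt, t_curr), t_prev)]
            t_prev, t_curr = t_curr, t_next
        out = [[o + thetas[k] * t for o, t in zip(ro, rt)] for ro, rt in zip(out, t_curr)]
    return out


def score_reaction(features: Matrix, members: Sequence[int], w: Sequence[float]) -> float:
    """Max-min pooling over a candidate reaction's metabolites, then a logistic score."""
    columns = zip(*(features[i] for i in members))
    pooled = [max(col) - min(col) for col in columns]
    return 1.0 / (1.0 + math.exp(-sum(wi * p for wi, p in zip(w, pooled))))


# Toy hypergraph: 4 metabolites (rows) x 3 reactions (columns).
H = [[1, 0, 0], [1, 1, 0], [0, 1, 1], [0, 0, 1]]
lt = scaled_laplacian(H)
refined = chebyshev_conv(lt, H, thetas=[0.5, 0.3, 0.2])   # untrained coefficients
print(round(score_reaction(refined, members=[0, 3], w=[1.0, 1.0, 1.0]), 3))
```

In the real method, the encoder, convolution and scoring weights are trained to distinguish known reactions from artificially generated negative ones. In this sketch they are fixed, so the score only demonstrates the data flow.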

CHESHIRE has demonstrated superior performance in recovering artificially removed reactions and, crucially, in improving phenotypic predictions for draft GEMs, such as the secretion of fermentation products and amino acids [6].

Experimental Protocols for Validation

In silico gap-filling predictions require experimental validation. A core protocol involves comparing computational predictions with high-throughput phenotypic data.

- Objective: To test and refine a metabolic model by resolving discrepancies between its predictions and experimental growth data.

- Procedure:

- In Silico Prediction: Perform Flux Balance Analysis (FBA) to predict growth capabilities of gene knockout mutants under defined medium conditions [7].

- Experimental Phenotyping: Conduct high-throughput growth assays for the same set of knockout mutants, measuring growth rates or yields [7].

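- Identify Inconsistencies: Compare in silico predictions with experimental data to flag two types of errors. False negatives occur when the model predicts no growth but the mutant grows. False positives occur when the model predicts growth but the mutant fails to grow.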

- Iterative Gap-Filling: Use an algorithm to add reactions from a universal database (e.g., Model SEED) to the model to resolve the false negatives, ensuring the production of previously dead-end metabolites [8] [7].

- Validate Gene Associations: For added reactions, use sequence similarity tools (BLAST) against the organism's genome to identify candidate genes, providing genomic support for the gap-filling solution [8].
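The comparison step can be sketched as a simple binning of knockouts. The sketch assumes growth calls have already been thresholded to True/False, and the knockout names are hypothetical. False negatives (the mutant grows, the model does not) are the cases that iterative gap-filling targets.

```python
from typing import Dict, List


def compare_growth(predicted: Dict[str, bool], observed: Dict[str, bool]) -> Dict[str, List[str]]:
    """Bin knockouts by agreement between in silico and experimental growth calls.

    'Positive' means growth; both inputs are assumed to be thresholded already.
    """
    bins: Dict[str, List[str]] = {"TP": [], "TN": [], "FP": [], "FN": []}
    for ko in sorted(predicted.keys() & observed.keys()):
        p, o = predicted[ko], observed[ko]
        if p and o:
            bins["TP"].append(ko)
        elif not p and not o:
            bins["TN"].append(ko)
        elif p:
            bins["FP"].append(ko)   # model grows, mutant does not
        else:
            bins["FN"].append(ko)   # mutant grows, model does not: gap-filling target
    return bins


def accuracy(bins: Dict[str, List[str]]) -> float:
    total = sum(len(v) for v in bins.values())
    return (len(bins["TP"]) + len(bins["TN"])) / total if total else 0.0


predicted = {"ko_A": True, "ko_B": False, "ko_C": False, "ko_D": True}
observed = {"ko_A": True, "ko_B": True, "ko_C": False, "ko_D": False}
bins = compare_growth(predicted, observed)
print(bins["FN"], accuracy(bins))   # -> ['ko_B'] 0.5
```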

Table 5: Key Reagents and Resources for DEM Research

| Resource Category | Specific Tool / Reagent | Function in DEM Research |
|---|---|---|
| Metabolic Databases | Model SEED Biochemistry Database [8] | A comprehensive repository of metabolic reactions, metabolites, and associated genes used as a source for gap-filling reactions. |
| Software & Platforms | CHESHIRE [6] | A deep learning-based tool for predicting missing reactions in GEMs using only network topology. |
| Software & Platforms | FASTGAPFILL [7] | An efficient algorithm for computing a near-minimal set of reactions to add to a compartmentalized model. |
| Genomic Tools | BLAST (Basic Local Alignment Search Tool) [8] | Used to find sequence similarities between known enzyme sequences and a target genome, providing evidence for adding a reaction. |
| Experimental Assays | High-Throughput Growth Profiling [7] | Phenotypic microarrays or growth assays to generate experimental data on gene essentiality and metabolic capabilities for model validation. |

Dead-end metabolites are far more than model artifacts; they are precise indicators of the boundaries of our metabolic knowledge. A systematic approach to DEMs—combining robust topological detection, advanced gap-filling algorithms, and iterative experimental validation—is fundamental to building high-fidelity metabolic models [6] [7]. This process directly fuels scientific discovery, leading to the identification of previously unknown enzymes, the characterization of underground metabolic pathways, and the correction of misannotated genes [8] [7]. As computational methods like hypergraph learning continue to evolve [6], DEMs will remain indispensable beacons, guiding researchers toward a more complete and accurate understanding of the biochemical machinery of life.

Dead-end metabolites (DEMs) represent critical "known unknowns" in metabolic network research. In the context of Escherichia coli K-12 metabolism, a DEM is formally defined as a metabolite that is produced by the known metabolic reactions of an organism and has no reactions consuming it, or that is consumed by the metabolic reactions and has no known reactions producing it, and in both cases has no identified transporter [1]. These metabolites effectively form isolated compounds within the interconnected metabolic network, creating discontinuities that may represent gaps in our scientific knowledge or database representation [9]. The systematic identification and analysis of DEMs serves as a powerful approach to pinpointing specific areas where metabolic understanding remains incomplete, thus guiding future research efforts toward resolving these biochemical ambiguities.

The EcoCyc database (EcoCyc.org) provides an integrated view of the metabolic and regulatory network of the model bacterium Escherichia coli K-12 MG1655, combining computable representations of biological features with detailed summaries from manual literature curation [10]. In release version 17.0, EcoCyc contained 1,497 metabolic enzymes and 268 transporters catalyzing 2,175 reactions, with 2,392 compounds of which 995 are directly involved in reactions [1]. Within this extensive network, analysis identified 127 dead-end metabolites from the 995 compounds directly involved in metabolic reactions, highlighting significant opportunities for improving our understanding of E. coli metabolism [1] [9].

Methodology for DEM Identification

DEM Finder Tool Implementation

The identification of DEMs within the EcoCyc database is performed using the built-in DEM finder tool, accessible through the EcoCyc website command "Tools → Dead-end metabolites" [1]. This computational tool systematically scans the entire metabolic network to identify compounds that lack either producing or consuming reactions, including transport reactions. The tool offers customization options that allow researchers to focus on different aspects of the metabolic network: investigators can identify only those compounds existing within defined metabolic pathways (pathway DEMs) or include DEMs originating from reactions occurring outside established pathways (non-pathway DEMs) [9]. This distinction is biologically significant because participation in metabolic pathways may make pathway DEMs relatively rare but considerably more likely to be physiologically relevant to the organism's core metabolism.

The DEM identification algorithm operates on logical principles that examine the connectivity of each metabolite within the network. A metabolite is classified as a dead end if it meets one of two conditions: (1) it is produced by reactions within the network but has no consuming reactions or transporters, or (2) it is consumed by reactions but has no producing reactions or transporters [1]. The tool can be further refined to limit searches to specific cellular compartments, include or exclude non-pathway reactions, and focus on small molecules, providing flexibility for targeted investigations of particular metabolic subsystems [2].
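The two-condition rule, together with the compartment and pathway options, can be emulated as follows. This is a hypothetical re-implementation for illustration, not the Pathway Tools code. It makes these assumptions:

- Metabolites are (compound, compartment) pairs, so transport reactions need no special handling.
- Connectivity is computed over all reactions, and the pathway-only option merely filters the report.
- The search defaults to a cytosol compartment, because an outer compartment whose compounds nothing in the model produces would otherwise be reported.

All compound, compartment, and pathway names are made up.

```python
from dataclasses import dataclass
from typing import List, Optional, Set, Tuple

Species = Tuple[str, str]  # (compound, compartment)


@dataclass
class Reaction:
    left: List[Species]
    right: List[Species]
    reversible: bool = False
    pathway: Optional[str] = None   # None: reaction outside any pathway


def find_dead_ends(reactions: List[Reaction], compartment: str = "cytosol",
                   pathway_only: bool = False) -> Set[str]:
    """Compounds in `compartment` that are only produced or only consumed."""
    produced: Set[Species] = set()
    consumed: Set[Species] = set()
    in_pathway: Set[Species] = set()
    for rxn in reactions:
        # Transport needs no special case: the same compound simply appears
        # in a different compartment on each side of the reaction.
        produced.update(rxn.right)
        consumed.update(rxn.left)
        if rxn.reversible:
            produced.update(rxn.left)
            consumed.update(rxn.right)
        if rxn.pathway is not None:
            in_pathway.update(rxn.left + rxn.right)
    dead = produced ^ consumed      # present on exactly one side overall
    return {cpd for cpd, comp in dead
            if comp == compartment and (not pathway_only or (cpd, comp) in in_pathway)}


reactions = [
    Reaction([("cpdA", "cytosol")], [("cpdB", "cytosol")], pathway="P1"),
    Reaction([("cpdB", "cytosol")], [("cpdC", "cytosol")], pathway="P1"),
    Reaction([("cpdA", "periplasm")], [("cpdA", "cytosol")]),   # transporter
    Reaction([("cpdD", "cytosol")], [("cpdE", "cytosol")]),     # non-pathway
]
print(sorted(find_dead_ends(reactions)))                     # -> ['cpdC', 'cpdD', 'cpdE']
print(sorted(find_dead_ends(reactions, pathway_only=True)))  # -> ['cpdC']
```

Without the transporter, cytosolic cpdA would also be reported, because nothing in the network would produce it.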

Experimental Workflow for DEM Analysis

The following diagram illustrates the comprehensive workflow for identifying, classifying, and resolving dead-end metabolites in the EcoCyc database:

Research Reagent Solutions for DEM Analysis

Table 6: Essential Research Tools and Resources for DEM Investigation

| Resource | Type | Primary Function in DEM Analysis |
|---|---|---|
| EcoCyc Database | Metabolic Database | Provides comprehensive, computable representations of E. coli K-12 metabolism with manually curated knowledge [1] [10] |
| DEM Finder Tool | Software Utility | Automates identification of dead-end metabolites within the metabolic network through connectivity analysis [2] [1] |
| BRENDA Database | Enzyme Database | Provides enzyme kinetic parameters and functional information indexed by Enzyme Commission (EC) numbers [11] |
| Pathway Tools Software | Bioinformatics Platform | Supports creation, curation, and analysis of Pathway/Genome Databases (PGDBs) including DEM analysis capabilities [1] |
| Literature Compilation | Knowledge Base | 24,391 publications cited in EcoCyc v17.0 provide foundational knowledge for resolving DEMs through manual curation [1] |

Comprehensive DEM Analysis Results

DEM Classification and Distribution

The DEM analysis of the EcoCyc database arrived at 127 dead-end metabolites among the 995 compounds directly involved in metabolic reactions [1] [9]. These DEMs were not uniformly distributed throughout the metabolic network but rather clustered in specific functional areas. A search of 271 metabolic pathways yielded 32 pathway DEMs, while a search of 393 isolated reactions returned 123 compounds [1]. Of the compounds returned by these searches, 28 were then resolved through improved classification within the EcoCyc compound hierarchy. This shows that proper classification directly affects DEM identification [1]. For example, correctly classifying "methylphosphonate" as a child of the class "alkylphosphonates" enabled the EcoCyc software to recognize it as a substrate of the phosphonate ABC transporter, resolving its dead-end status [1].
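Suppose the pathway and isolated-reaction searches return disjoint sets of compounds. This is an assumption, since the text does not say so explicitly. Under it, the counts reported here reconcile with the final total, and the DEMs make up roughly one eighth of the compounds that participate in reactions:

$$
\underbrace{32}_{\text{pathway search}} + \underbrace{123}_{\text{isolated reactions}} - \underbrace{28}_{\text{reclassified}} = 127,
\qquad \frac{127}{995} \approx 12.8\%
$$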

The remaining 127 DEMs were subjected to extensive literature searches and manual curation, resulting in the addition of 38 transport reactions and 3 metabolic reactions to the database [1]. This curation effort led specifically to an improved representation of the pathway for Vitamin B12 salvage, demonstrating how DEM analysis drives tangible improvements in metabolic pathway knowledge. Further investigation identified that 39 DEMs were components of reactions not physiologically relevant to E. coli K-12, representing properties of purified enzymes in vitro that would not be expected to occur in vivo [1]. This distinction between true metabolic gaps and database artifacts is crucial for prioritizing research efforts.

Categorization of Dead-End Metabolites

Table 7: Classification and Resolution of DEMs in EcoCyc Database

| DEM Category | Count | Resolution Approach | Examples |
|---|---|---|---|
| Pathway DEMs | 32 | Add missing pathway reactions or transporters | allantoin, methanol, oxalate [1] |
| Non-Pathway DEMs | 123 | Add isolated reactions or correct classification | methyl red, N-ethylmaleimide, nicotinamide riboside [1] |
| Classification Issues | 28 | Correct compound classification in database | methylphosphonate (resolved) [1] |
| Non-Physiological Reactions | 39 | Annotate as in vitro only | Various enzyme assay artifacts [1] |
| True Knowledge Gaps | Remaining DEMs | Targeted experimental research | Unknown C3 fragment, queuine [1] |

The 127 DEMs identified in the EcoCyc database represent diverse chemical classes and metabolic origins. Notable examples include signaling molecules such as (2R,4S)-2-methyl-2,3,3,4-tetrahydroxytetrahydrofuran (AI-2), biosynthetic intermediates like cobinamide, and secondary metabolites including curcumin and tetrahydrocurcumin [1]. The presence of DEMs in the latter category is particularly informative. Curcumin and tetrahydrocurcumin are both considered pathway DEMs because the database contains no other reactions for these molecules: it describes neither the production or transport of curcumin nor the metabolic fate of tetrahydrocurcumin [1]. Similarly, the compound 3α,12α-dihydroxy-7-oxo-5β-cholan-24-oate, a product of the E. coli 7α-hydroxysteroid dehydrogenase HdhA, is a DEM with no further consuming reactions or transporters documented in the database [1].

Experimental Protocols for DEM Resolution

Literature-Based Curation Methodology

The resolution of dead-end metabolites requires systematic investigation combining computational analysis with manual literature curation. The following protocol outlines the comprehensive approach used to resolve DEMs in the EcoCyc database:

DEM Identification and Classification: Run the DEM Finder tool with default parameters to identify all metabolites lacking either producing or consuming reactions. Classify results into pathway and non-pathway DEMs based on their inclusion in defined metabolic pathways [1].

Literature Mining: Conduct extensive searches of scientific literature using the DEM compound names and associated reactions as primary search terms. Focus on identifying documented transport capabilities or additional metabolic transformations not currently represented in the database [1].

Taxonomic Validation: Verify the physiological relevance of each reaction to E. coli K-12 specifically. Remove or annotate reactions that represent properties of purified enzymes in vitro but are not physiologically relevant in vivo [1].

Hierarchical Classification Check: Ensure proper classification of compounds within the EcoCyc ontology, as correct classification may automatically resolve some DEMs by connecting them to existing transport systems [1].

Database Enhancement: Add missing metabolic or transport reactions based on literature evidence, ensuring proper annotation of associated genes, enzymes, and regulatory elements [1].

Validation and Quality Control: Re-run the DEM Finder tool to verify resolution of targeted DEMs and ensure new additions do not create additional dead-end metabolites elsewhere in the network.
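The identification step and the re-check step of this protocol can be illustrated with a small sketch. It assumes a toy in-memory representation in which each reaction is a set of substrates, a set of products, and a reversibility flag. The function names `find_dead_ends` and `curation_report` are hypothetical and are not part of the DEM Finder or Pathway Tools. The report separates DEMs resolved by a curation round from DEMs newly introduced by it, which is the check the validation step calls for.

```python
from typing import Dict, Set, Tuple

# Toy representation (an assumption, not the EcoCyc/Pathway Tools data model):
# reaction ID -> (substrates, products, reversible). Compartment suffixes such
# as "_c" and "_e" are part of the metabolite ID, so transport reactions
# connect two distinct metabolites.
Reaction = Tuple[Set[str], Set[str], bool]


def find_dead_ends(reactions: Dict[str, Reaction]) -> Set[str]:
    """Return metabolites that are only produced or only consumed."""
    produced: Set[str] = set()
    consumed: Set[str] = set()
    for substrates, products, reversible in reactions.values():
        consumed |= substrates
        produced |= products
        if reversible:
            # A reversible reaction can run in either direction, so every
            # participant is both producible and consumable.
            produced |= substrates
            consumed |= products
    # Symmetric difference: metabolites seen on exactly one side.
    return produced ^ consumed


def curation_report(before: Dict[str, Reaction],
                    after: Dict[str, Reaction]) -> Dict[str, Set[str]]:
    """Compare the DEM sets before and after one curation round."""
    old, new = find_dead_ends(before), find_dead_ends(after)
    return {
        "resolved": old - new,    # DEMs fixed by the added reactions
        "introduced": new - old,  # DEMs created elsewhere by the additions
        "remaining": old & new,   # DEMs still awaiting resolution
    }
```

For example, adding an export reaction B_c → B_e to a network in which B_c was only produced resolves B_c but introduces B_e as a new dead end, unless an exchange reaction for B_e is also present.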

This protocol resulted in the addition of 38 transport reactions and 3 metabolic reactions to the EcoCyc database, significantly improving the representation of E. coli metabolic connectivity [1]. The iterative nature of this process is crucial, as each refinement may reveal additional connections or inconsistencies requiring resolution.

Pathway Visualization with Regulatory Interactions

For metabolites that remain as true DEMs after comprehensive curation, advanced visualization techniques can help researchers interpret regulatory interactions within metabolic networks. The concept of Regulatory Strength (RS) provides a quantitative measure for the strength of up- or down-regulation of a reaction step compared with the completely non-inhibited or non-activated state [12]. This approach enables intuitive interpretation of simulation data on a percentage scale where 100% means maximal possible inhibition or activation, and 0% means absence of regulatory interaction [12]. When many effectors influence a reaction step, RS percentages indicate the proportion different effectors contribute to the total regulation, providing crucial insights into metabolic control mechanisms that may explain dead-end metabolite accumulation [12].

The following diagram illustrates the relationship between DEMs and the broader metabolic network, highlighting potential regulatory interactions:

Significance and Research Applications

The systematic identification and resolution of 127 DEMs in the EcoCyc database represents more than just a data curation exercise—it provides fundamental insights into the known unknowns of E. coli metabolism. This analysis has led to direct improvements in both the software that underpins the database and the program that finds dead-end metabolites within EcoCyc [1]. More importantly, it has helped define the boundaries of our current understanding of E. coli metabolic capabilities, highlighting specific areas where further experimental research is needed to complete our knowledge of this model organism's metabolic network.

For researchers in metabolic engineering and synthetic biology, DEM analysis offers practical applications in strain optimization and pathway design. Unexplained dead-end metabolites may indicate the presence of undocumented detoxification pathways, storage mechanisms, or metabolic sinks that could impact the efficiency of engineered pathways. Similarly, for drug discovery professionals, DEMs may represent potential drug targets, particularly in pathogenic bacteria where essential metabolic pathways might contain undocumented reactions critical for survival in host environments. The DEM analysis framework established for E. coli can be extended to other organisms, providing a systematic approach to mapping the complete metabolic capabilities of both model organisms and emerging pathogens of clinical importance.

The remaining dead-end metabolites in the EcoCyc database after extensive curation likely represent genuine deficiencies in our knowledge of E. coli metabolism rather than database errors [1]. These DEMs serve as signposts to the "known unknowns" of metabolism, directing research attention to specific biochemical gaps that, when filled, will advance our fundamental understanding of bacterial metabolism and provide new opportunities for biomedical and biotechnological innovation.

In metabolic network reconstruction, dead-end metabolites (DEMs)—compounds produced or consumed without known subsequent or preceding reactions—signify critical gaps in knowledge. However, these gaps can represent either genuine biological unknowns ("real gaps") or methodological artifacts. This guide provides a systematic framework for researchers to distinguish between these possibilities, focusing on the crucial differentiation between in vivo physiological processes and in vitro enzymatic properties. Through curated databases, targeted experimental protocols, and advanced visualization tools, scientists can prioritize DEMs for further investigation, thereby refining metabolic models and identifying novel metabolic functions.

A dead-end metabolite (DEM) is formally defined as a metabolite that is produced by the known metabolic reactions of an organism but has no reactions consuming it, or that is consumed but has no known reactions producing it, and in both cases lacks an identified transporter [1] [9]. DEMs act as signposts to the 'known unknowns' of metabolism. Their presence in a network reconstruction can stem from two primary sources:

- Real Gaps: Genuine deficits in biological knowledge, indicating metabolic pathways or transport systems that exist in the organism but are yet to be discovered or characterized.

- Artifacts: Errors or misrepresentations arising from the research process itself, including the incorporation of non-physiological in vitro enzyme activities, incomplete database curation, or analytical artifacts introduced during metabolomic sample preparation.

The core challenge, and the focus of this guide, is to develop robust strategies to differentiate between these possibilities. This distinction is vital for efficiently directing research efforts towards biologically relevant questions rather than pursuing methodological phantoms.

Defining the Problem: Known Unknowns vs. Methodological Artifacts

The Nature of "Real Gaps"

Real gaps represent true deficiencies in our understanding of an organism's metabolic capacity. In an analysis of the Escherichia coli K-12 metabolic network in the EcoCyc database, 127 DEMs were identified from 995 metabolic compounds [1] [9]. A systematic curation effort resolved many of these by adding missing transport or metabolic reactions, but a significant number remained, likely representing authentic gaps in the knowledge of E. coli metabolism. These are the "known unknowns" that can guide hypothesis-driven research into novel pathway discovery and gene function annotation.

Artifacts confound the interpretation of DEMs and arise from several stages of research:

Non-Physiological In Vitro Reactions: A primary source of artifacts is the incorporation of reactions that are properties of purified enzymes in vitro but do not occur in vivo under physiological conditions. In the EcoCyc study, 39 DEMs (over 30% of the total identified) were attributed to such reactions [1]. These often result from enzymes exhibiting low-specificity activity on non-native substrates in artificial laboratory conditions.

Analytical Artifacts in Metabolomics: The process of extracting and analyzing metabolites can generate artifactual compounds not present in the intact metabolome [13]. Common issues include:

- Esterification: Reaction of analyte carboxyl groups with alcohols (e.g., methanol, ethanol) used in extraction protocols [13].

- Trans-esterification: Intramolecular rearrangements, such as the isomerization of chlorogenic acid in aqueous solution [13].

- Oxidation/Dehydration: Degradation of labile compounds, particularly when using Soxhlet extraction or halogenated solvents like chloroform [13].

- Acetal/Hemiacetal Formation: Reactions involving aldehydes or ketones with alcohols [13].

Network Reconstruction and Curation Errors: Discrepancies between the network and its null model during computational analysis can generate artefactual motif signatures, misleading functional interpretation [14]. Furthermore, simple database errors, such as an incomplete ontological classification of a compound, can falsely render it a dead-end [1].

Table 1: Categorization and Resolution of Dead-End Metabolites (DEMs) Based on an E. coli K-12 Study [1] [9]

| DEM Category | Description | Example from E. coli Analysis | Primary Resolution Method |
|---|---|---|---|
| Non-Physiological DEMs | Metabolites from enzyme activities observed only in vitro, not relevant in vivo. | 39 DEMs identified as components of non-physiological reactions. | Removal from the organism-specific network model. |
| Curation-Based DEMs | DEMs resulting from incomplete or incorrect database representation. | 28 DEMs resolved by correcting compound classification. | Improved database curation and ontology. |
| Gap-Fill DEMs | DEMs resolved by adding missing reactions supported by literature evidence. | Addition of 38 transport and 3 metabolic reactions. | Extensive literature search and manual curation. |
| True Knowledge Gaps | DEMs that remain after curation and likely represent genuine biological unknowns. | Remaining DEMs after analysis. | Targeted experimental investigation. |

A Framework for Distinguishing Real Gaps from Artifacts

A multi-pronged approach is necessary to effectively classify DEMs.

Computational and Database Curation Checks

The first line of investigation involves computational and bioinformatic tools.

Database Cross-Referencing: Tools like MetaDAG can reconstruct metabolic networks from KEGG data, generating reaction graphs and metabolic Directed Acyclic Graphs (m-DAGs) to analyze connectivity and identify potential gaps in a comparative context across organisms [15]. Check for the DEM in metabolic databases for other organisms (e.g., MetaCyc). Its presence in a confirmed pathway elsewhere suggests a potential real gap.

Null Model Analysis: When performing network motif analysis, ensure the pool of random graphs used as a null model accurately reflects the topological constraints of the metabolic network to avoid artefactual motif signatures [14].
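One common way to build such a null model is degree-preserving rewiring: the targets of two directed edges are swapped repeatedly so that every node keeps its in-degree and out-degree. The sketch below is a minimal pure-Python version under that assumption; it is not presented as the procedure used in [14]. The optional `node_type` map restricts swaps so that a bipartite metabolite-reaction graph remains bipartite, which is one example of a topological constraint worth preserving.

```python
import random
from typing import Dict, List, Optional, Set, Tuple

Edge = Tuple[str, str]


def degree_preserving_swaps(edges: List[Edge], n_swaps: int,
                            node_type: Optional[Dict[str, str]] = None,
                            seed: int = 0,
                            max_tries: int = 100_000) -> List[Edge]:
    """Randomize a directed graph while keeping every node's in/out-degree.

    Each accepted swap turns a->b, c->d into a->d, c->b. If node_type is
    given (e.g. "metabolite" / "reaction"), only edges whose endpoints have
    matching types are swapped, so a bipartite graph stays bipartite.
    Assumes the input contains no duplicate edges.
    """
    if len(edges) < 2:
        return list(edges)
    rng = random.Random(seed)
    edges = list(edges)
    present: Set[Edge] = set(edges)
    done = tries = 0
    while done < n_swaps and tries < max_tries:
        tries += 1
        i, j = rng.sample(range(len(edges)), 2)
        (a, b), (c, d) = edges[i], edges[j]
        if node_type is not None and (node_type[a] != node_type[c]
                                      or node_type[b] != node_type[d]):
            continue  # swap would mix edge types
        new1, new2 = (a, d), (c, b)
        if a == d or c == b or new1 in present or new2 in present:
            continue  # reject self-loops and duplicate edges
        present -= {edges[i], edges[j]}
        present |= {new1, new2}
        edges[i], edges[j] = new1, new2
        done += 1
    return edges
```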

Classification and Ontology Verification: As demonstrated in the EcoCyc study, a DEM might be resolved simply by correctly classifying it. For example, classifying "methylphosphonate" under "alkylphosphonates" allowed the software to recognize it as a substrate for an existing transporter [1].
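The effect of classification can be mimicked with a toy ontology in which each compound points to a parent class and a transporter is annotated with a class rather than a specific compound. The hierarchy, class names, and helper functions below are illustrative assumptions, and real ontologies such as EcoCyc's allow multiple parents per compound. The point is only that a compound is recognized as transportable once any of its ancestor classes is a known transporter substrate.

```python
from typing import Dict, List, Set


def ancestors(compound: str, parent: Dict[str, str]) -> List[str]:
    """Walk child -> parent links up to the root (cycle-safe, single parent)."""
    chain: List[str] = []
    node = parent.get(compound)
    while node is not None and node not in chain:
        chain.append(node)
        node = parent.get(node)
    return chain


def has_transporter(compound: str, parent: Dict[str, str],
                    transported_classes: Set[str]) -> bool:
    """True if the compound, or any class it belongs to, is a known substrate."""
    return compound in transported_classes or any(
        cls in transported_classes for cls in ancestors(compound, parent))
```

With no parent link, "methylphosphonate" is not matched to a transporter annotated with "alkylphosphonates"; adding the link methylphosphonate → alkylphosphonates makes the match succeed without adding any reaction.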

Experimental Validation and Protocol Design

Computational hypotheses must be tested experimentally with carefully designed protocols to minimize artifacts.

In Vivo Validation: Techniques like isotope tracing (e.g., using ¹³C-labeled precursors) can confirm the flow of carbon through a proposed pathway in living cells. The metabolite in question should be tracked over time to see whether it is consumed or produced.

Artifact-Control in Metabolomics: Sample preparation protocols must be optimized and validated to prevent the introduction of artifacts [13].

- Solvent Selection: Avoid methanol and ethanol for compounds prone to esterification; consider acetonitrile or water-based extractions for sensitive analytes.

- Temperature Control: Perform extractions at lower temperatures (e.g., 4°C) to minimize thermal degradation. Avoid Soxhlet extraction for labile metabolites [13].

- pH and Light: Control pH to stabilize compounds and perform procedures in the dark to prevent photo-degradation.

- Stability Testing: Incubate pure standards in the proposed extraction solvent and conditions, then re-analyze to check for decomposition or adduct formation.
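For stability testing in alcoholic solvents, one simple screen is to look for features whose neutral monoisotopic mass equals the standard's mass plus the net shift of esterification: +CH2 (14.01565 Da) for a methyl ester formed with methanol, and +C2H4 (28.0313 Da) for an ethyl ester formed with ethanol. The sketch below assumes that features have already been converted to neutral masses and uses an arbitrary 5 ppm tolerance; it also reports the peak-area recovery of the intact standard. It is a screening aid, not a substitute for confirmation with labeled solvents or authentic standards.

```python
from typing import Dict, List, Sequence, Tuple

# Monoisotopic atomic masses (Da).
C12 = 12.0
H1 = 1.00782503207

# Net mass added when a carboxylic acid is esterified by the extraction alcohol:
# R-COOH + CH3OH -> R-COOCH3 + H2O adds CH2; ethanol adds C2H4.
ESTER_SHIFTS: Dict[str, float] = {
    "methyl ester (methanol)": C12 + 2 * H1,
    "ethyl ester (ethanol)": 2 * C12 + 4 * H1,
}


def ppm_error(observed: float, expected: float) -> float:
    return (observed - expected) / expected * 1e6


def flag_ester_artifacts(parent_mass: float, observed_masses: Sequence[float],
                         tol_ppm: float = 5.0) -> List[Tuple[str, float]]:
    """Return (artifact type, mass) for features matching an ester shift."""
    hits: List[Tuple[str, float]] = []
    for label, shift in ESTER_SHIFTS.items():
        target = parent_mass + shift
        for mass in observed_masses:
            if abs(ppm_error(mass, target)) <= tol_ppm:
                hits.append((label, mass))
    return hits


def percent_recovery(area_before: float, area_after: float) -> float:
    """Peak area of the intact standard after incubation, as % of baseline."""
    if area_before <= 0:
        raise ValueError("baseline peak area must be positive")
    return 100.0 * area_after / area_before
```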

Table 2: Research Reagent Solutions for Artifact Mitigation in Metabolic Studies [13]

| Reagent / Material | Function | Risk of Artifact Formation | Recommended Mitigation Strategy |
|---|---|---|---|
| Methanol / Ethanol | Common solvents for metabolite extraction. | High risk of esterification with fatty acids and other carboxylic acid-containing metabolites. | Use stable isotope-labeled solvents (e.g., deuterated methanol) to track artifacts; replace with acetonitrile where possible. |
| Chloroform | Solvent for lipid extraction. | Can contain phosgene; causes oxidation of labile compounds like reserpine. | Use fresh, stabilized grades; test analyte stability; prefer methyl-tert-butyl ether (MTBE) for lipids. |
| Diethyl Ether | Solvent for extraction. | Often contains peroxides and aldehydes that can react with analytes. | Test for peroxides; use high-purity, inhibitor-free grades. |
| Silica-based Phases | Stationary phase for chromatography. | Can catalyze the oxidation of compounds with prenyl groups. | Use end-capped silica or alternative stationary phases; keep samples on the column for minimal time. |

Visualization of Dynamic Data

Static network maps can obscure the dynamic behavior of metabolites. Tools like GEM-Vis enable the visualization of time-course metabolomic data within the context of metabolic networks through animation, mapping quantitative data to node fill levels [16]. This can help distinguish a true dead-end (which does not change or accumulates monotonically) from a transiently accumulating metabolic intermediate.
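This distinction can be approximated with a simple trend heuristic applied to one metabolite's time course. The sketch below is not part of GEM-Vis; the labels and the 5% relative tolerance are assumptions chosen for illustration. Following the text, a "flat" or "accumulating" profile is consistent with a dead end, whereas a "transient" rise and fall points to an intermediate that is consumed downstream.

```python
from typing import Sequence


def classify_trajectory(conc: Sequence[float], rel_tol: float = 0.05) -> str:
    """Label a concentration time course as 'flat', 'accumulating',
    'depleting' or 'transient' (rises and falls, or fluctuates)."""
    if len(conc) < 3:
        raise ValueError("need at least three time points")
    lo, hi = min(conc), max(conc)
    tol = rel_tol * max(abs(lo), abs(hi), 1e-12)  # tolerance scaled to the data
    if hi - lo <= tol:
        return "flat"
    steps = [b - a for a, b in zip(conc, conc[1:])]
    if all(s >= -tol for s in steps):
        return "accumulating"
    if all(s <= tol for s in steps):
        return "depleting"
    return "transient"
```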

The following workflow diagram summarizes the key steps in the systematic identification and classification of dead-end metabolites:

Distinguishing real metabolic gaps from artifacts is a critical, multi-disciplinary challenge in metabolic network research. A systematic approach combining rigorous database curation, awareness of common analytical pitfalls, and targeted experimental validation is essential. By adopting the framework outlined in this guide—leveraging computational tools, mitigating reagent-induced artifacts, and employing dynamic visualization—researchers can more effectively pinpoint genuine "known unknowns." This focused approach accelerates the discovery of new metabolic pathways, improves the accuracy of genome-scale models, and drives innovation in fields ranging from fundamental microbiology to drug development.

A Digital Elevation Model (DEM) is a three-dimensional digital representation of the Earth's bare ground surface, stripped of any objects like trees or buildings [17] [18]. Each point in a DEM grid contains an elevation value, providing fundamental terrain information critical for numerous scientific and engineering applications. DEMs serve as primary spatial inputs for a wide range of environmental and hydrological applications, forming the foundational dataset upon which numerous derived analyses are built [19]. The quality and characteristics of DEMs significantly influence the accuracy and reliability of predictive models across disciplines, from geomorphology to systems biology.

The creation of DEMs involves several advanced remote sensing technologies. Aerial photogrammetry captures overlapping images from aerial platforms to calculate elevation, while Synthetic Aperture Radar (SAR) uses radar waves capable of penetrating clouds for day-and-night data collection [18]. Light Detection and Ranging (LiDAR) technology employs laser pulses reflected from the ground to deliver high-resolution elevation data, particularly effective in complex landscapes with dense vegetation [18]. The choice of data collection method directly impacts the resulting DEM's accuracy, resolution, and suitability for specific applications.

DEM Selection and Performance Evaluation

Comparative Performance of Open-Access DEMs

DEM availability from multiple sources at various spatial resolutions presents significant challenges for watershed modeling and terrain analysis, as their characteristics directly influence feature delineation and model simulations [19]. Recent evaluations have revealed substantial performance variations among commonly used DEMs.

Table 1: Performance Comparison of Open-Access DEMs in Hydrological Modeling

| DEM Name | Key Characteristics | Performance Ranking | Optimal Use Environments |
|---|---|---|---|
| AW3D30 | Global 30m resolution | Top performer | Diverse terrain, general applications |
| COP30 (Copernicus GLO-30) | Global 30m resolution | Top performer | General terrain analysis |
| MERIT | Global 90m resolution | Good performance | Large-scale hydrological studies |
| HydroSHEDS | Hydrologically corrected | Poorer performance | Not recommended based on evaluation |
| TanDEM-X | Radar-based | Poorer performance | Specialized applications only |

Performance evaluation metrics including Willmott's index of agreement and nRMSE have demonstrated that AW3D30 and COP30 deliver superior accuracy in stream and catchment delineation, while TanDEM-X and HydroSHEDS exhibit notably poorer performance across multiple test regions [19]. Importantly, all DEMs show better accuracy in mountainous and larger catchments compared to smaller, flatter catchments, with forest cover significantly influencing accuracy, particularly through its interaction with steep slopes [19].
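Both metrics have standard closed forms. Willmott's index of agreement is d = 1 − Σ(Pᵢ − Oᵢ)² / Σ(|Pᵢ − Ō| + |Oᵢ − Ō|)², where O are observed (reference) values, P are predicted (DEM-derived or simulated) values, and Ō is the observed mean. nRMSE is normalized in several ways in the literature; the sketch below divides the RMSE by the observed range, which is an assumption rather than the normalization used in [19].

```python
import math
from typing import Sequence


def willmott_d(obs: Sequence[float], pred: Sequence[float]) -> float:
    """Willmott's index of agreement d (1 = perfect agreement, 0 = none)."""
    if len(obs) != len(pred) or not obs:
        raise ValueError("need two non-empty series of equal length")
    mean_o = sum(obs) / len(obs)
    num = sum((p - o) ** 2 for o, p in zip(obs, pred))
    den = sum((abs(p - mean_o) + abs(o - mean_o)) ** 2 for o, p in zip(obs, pred))
    return 1.0 if den == 0 else 1.0 - num / den


def nrmse(obs: Sequence[float], pred: Sequence[float]) -> float:
    """RMSE divided by the observed range (one of several conventions)."""
    if len(obs) != len(pred) or not obs:
        raise ValueError("need two non-empty series of equal length")
    rmse = math.sqrt(sum((p - o) ** 2 for o, p in zip(obs, pred)) / len(obs))
    spread = max(obs) - min(obs)
    if spread == 0:
        raise ValueError("observed values have zero range")
    return rmse / spread
```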

Resolution Versus Accuracy Considerations

The assumption that higher spatial resolution always yields better results requires careful examination. Recent research demonstrates that higher resolution does not automatically guarantee superior performance for all applications [20]. In landslide prediction studies, TINITALY (a national Italian dataset) resampled to 30m resolution outperformed both global DEMs and higher-resolution alternatives for representing fine-scale morphology and delineating slope units, despite not having the finest native resolution [20].

The propagation of errors within DEMs significantly impacts derived topographic attributes. Even small elevation errors amplify in derivatives such as slope gradient, curvature, and topographic wetness indices, critically affecting their predictive capacity in geomorphological and hydrological applications [20]. Consequently, selecting an appropriate DEM has proven more important than the number of DEM-derived factors used in landslide assessment [20].
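A small synthetic experiment shows how vertical error propagates into slope. The sketch below tilts a plane at a 10% grade, adds Gaussian elevation noise, and computes slope with central differences (`numpy.gradient`); the grade, noise level, and grid sizes are arbitrary assumptions. Because slope divides elevation differences by the cell spacing, the same vertical noise produces larger slope errors on a finer grid, which is one mechanism consistent with the observation that higher resolution does not automatically yield better derivatives.

```python
import numpy as np


def slope_degrees(dem: np.ndarray, cell_size: float) -> np.ndarray:
    """Slope in degrees from central differences of a gridded DEM."""
    dz_dy, dz_dx = np.gradient(dem, cell_size)  # derivatives along rows, columns
    return np.degrees(np.arctan(np.hypot(dz_dx, dz_dy)))


def slope_rmse_from_noise(cell_size: float, sigma: float, n: int = 200,
                          grade: float = 0.1, seed: int = 0) -> float:
    """RMS slope error after adding Gaussian elevation noise to a tilted plane."""
    rng = np.random.default_rng(seed)
    x = np.arange(n) * cell_size
    plane = np.tile(grade * x, (n, 1))  # elevation rises along the x axis
    true_slope = np.degrees(np.arctan(grade))
    noisy = plane + rng.normal(0.0, sigma, plane.shape)
    err = slope_degrees(noisy, cell_size) - true_slope
    return float(np.sqrt(np.mean(err ** 2)))
```

Comparing `slope_rmse_from_noise(5.0, 0.5)` with `slope_rmse_from_noise(30.0, 0.5)` shows the finer grid carrying the larger slope error for identical 0.5 m vertical noise.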

DEMs in Hydrological Predictive Modeling

Impact on Hydrological Feature Delineation and Streamflow Simulation

DEM choice substantially affects the accuracy of stream and catchment delineation, whereas its influence on streamflow simulation within the same catchment is relatively minor [19]. This differential impact highlights the complex relationship between terrain representation and hydrological processes: correct identification of catchment boundaries is more sensitive to elevation accuracy than the subsequent rainfall-runoff transformation.

The errors are most pronounced in forested and flat catchments, where vegetation interference with remote sensing measurements and limited topographic expression present particular challenges for DEM accuracy [19]. Furthermore, the removal of surface objects (forests and buildings) from Digital Surface Models (DSMs) to create bare-earth DEMs introduces additional uncertainty, necessitating specialized correction approaches in these environments [21].

Methodologies for Deriving Hydrological Factors from DEMs

Digital Elevation Models processed through Geographic Information Systems (GIS) enable the derivation of critical hydrological factors widely used in predictive modeling [22]. The standard methodology involves a structured analytical workflow:

Table 2: Key Hydrological Factors Derived from DEM Analysis

| Hydrological Factor | Mathematical Formula | Primary Application | Interpretation |
|---|---|---|---|
| Topographic Wetness Index (TWI) | TWI = ln(α/tanβ) | Soil moisture prediction, runoff generation areas | Higher values indicate greater saturation potential |
| Stream Power Index (SPI) | SPI = α * tanβ | Erosion potential, sediment transport | Higher values indicate greater erosive power |
| Sediment Transport Index (STI) | STI = (α/22.13)^0.6 * (sinβ/0.0896)^1.3 | Sediment erosion and deposition patterns | Estimates sediment transport capacity |
| Topographic Roughness Index (TRI) | TRI = √(∑(Zi - Zmean)²) | Terrain complexity, geomorphological analysis | Higher values indicate more rugged terrain |
| Topographic Position Index (TPI) | TPI = Z0 - Zmean | Landscape position classification | Positive: ridges; Negative: valleys |

Here α is the specific catchment area (upslope contributing area per unit contour width), β is the local slope angle, Zi are the elevations of the cells in the analysis neighborhood, Z0 is the elevation of the central cell, and Zmean is the mean neighborhood elevation.

The calculation process in ArcMap involves specific computational steps to avoid mathematical errors [22]. For TWI derivation:

- Slope is converted from degrees to radians: Radian_slope = ("Slope.tif" * 1.570796) / 90, since 1.570796/90 ≈ π/180.
- The tangent of the slope is computed with a conditional statement that substitutes 0.001 for zero slopes to prevent division by zero: Tan_slope = Con("Radian_slope.tif" > 0, Tan("Radian_slope.tif"), 0.001) [22].
- Flow accumulation is rescaled so that no cell has a value of zero: Rescaled_flow_accumulation = ("FlowAccu.tif" + 1) * 30, where the factor 30 corresponds to the 30 m cell size [22].

The final TWI is then the natural logarithm of the rescaled flow accumulation divided by the tangent slope, which is well defined because neither term can be zero.
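The same steps, together with the SPI and STI formulas from Table 2, can be written with NumPy arrays in place of ArcMap rasters. This sketch assumes slope in degrees and a flow accumulation grid counted in cells, with the 30 m cell size and the 0.001 floor for flat cells taken from the text; `hydrological_indices` is a hypothetical helper, not an ArcMap function. SPI here reuses the floored tangent, so flat cells receive a small positive SPI rather than zero.

```python
import numpy as np


def hydrological_indices(slope_deg, flow_acc, cell_size: float = 30.0,
                         min_tan: float = 0.001):
    """Return (TWI, SPI, STI) arrays from slope (degrees) and flow accumulation."""
    # Degrees to radians; equivalent to * 1.570796 / 90 in the ArcMap step.
    slope_rad = np.deg2rad(np.asarray(slope_deg, dtype=float))
    # Flat cells get a small tangent so ln(alpha / tan(beta)) stays finite
    # (the Con(...) step).
    tan_b = np.where(slope_rad > 0, np.tan(slope_rad), min_tan)
    # Specific catchment area: (upstream cells + the cell itself) * cell width.
    alpha = (np.asarray(flow_acc, dtype=float) + 1.0) * cell_size
    twi = np.log(alpha / tan_b)
    spi = alpha * tan_b
    sti = (alpha / 22.13) ** 0.6 * (np.sin(slope_rad) / 0.0896) ** 1.3
    return twi, spi, sti
```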

Figure 1: Workflow for Deriving Hydrological Factors from DEMs

Flux Analysis Fundamentals and Methodologies

Principles of Flux Balance Analysis

Flux Balance Analysis (FBA) is a constraint-based mathematical approach for analyzing the flow of metabolites through metabolic networks, particularly genome-scale metabolic reconstructions [23]. It enables predictions of organism growth rates or of the production rates of biotechnologically important metabolites [23]. Unlike kinetic models, which require numerous difficult-to-measure parameters, FBA relies only on stoichiometric constraints and optimization principles.

The mathematical foundation of FBA begins with representing metabolic reactions as a stoichiometric matrix (S) of size m×n, where m represents unique compounds and n represents reactions [23]. Each column contains stoichiometric coefficients for metabolites participating in a reaction (negative for consumed metabolites, positive for produced metabolites). At steady state, the system follows the mass balance equation: Sv = 0, where v is the flux vector representing reaction rates [23].
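A three-metabolite toy network makes the sign convention and its link to dead ends concrete. In the sketch below (the network and names are illustrative), metabolite C is produced by R4 but consumed by nothing, so its row of S has a single positive entry. With irreversible fluxes (v ≥ 0), the steady-state condition Sv = 0 then forces the flux through R4 to zero, which is why a dead-end metabolite blocks every reaction it participates in.

```python
from typing import List, Sequence

import numpy as np

# Toy network (illustrative): rows = metabolites, columns = reactions.
# Sign convention from the text: negative = consumed, positive = produced.
METABOLITES = ["A", "B", "C"]
REACTIONS = ["R1_uptake_A", "R2_A_to_B", "R3_export_B", "R4_A_to_C"]
S = np.array([
    [1, -1,  0, -1],   # A: made by R1, used by R2 and R4
    [0,  1, -1,  0],   # B: made by R2, used by R3
    [0,  0,  0,  1],   # C: made by R4, never consumed -> dead end
], dtype=float)


def is_steady_state(S: np.ndarray, v: Sequence[float], tol: float = 1e-9) -> bool:
    """Check the mass balance S v = 0."""
    return bool(np.allclose(S @ np.asarray(v, dtype=float), 0.0, atol=tol))


def single_sign_metabolites(S: np.ndarray, reversible: Sequence[bool],
                            names: Sequence[str]) -> List[str]:
    """Metabolites touched only by irreversible reactions that all produce
    (or all consume) them. With v >= 0, S v = 0 forces every flux through
    those reactions to zero, i.e. the reactions are blocked."""
    rev = np.asarray(reversible, dtype=bool)
    out: List[str] = []
    for name, row in zip(names, S):
        touching = row != 0
        if not touching.any() or (touching & rev).any():
            continue  # unused, or a reversible reaction can both make and use it
        coeffs = row[touching]
        if (coeffs > 0).all() or (coeffs < 0).all():
            out.append(name)
    return out
```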

Computational Framework for FBA

Flux Balance Analysis utilizes linear programming to identify optimal flux distributions within the constrained solution space. The optimization process maximizes or minimizes an objective function Z = cᵀv, where c is a weight vector indicating each reaction's contribution to the biological objective [23]. For microbial growth prediction, this typically involves maximizing the biomass reaction, simulating the conversion of metabolic precursors into cellular constituents.

The computational implementation involves defining constraints in two forms: (1) equality constraints that balance reaction inputs and outputs through the stoichiometric matrix, and (2) inequality constraints that impose bounds on system fluxes [23]. These constraints collectively define the allowable flux distributions of the system. The COBRA Toolbox provides a standardized implementation platform for these calculations, using models formatted in Systems Biology Markup Language (SBML) [23].
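The linear program can be sketched with SciPy's `linprog` (HiGHS solver) instead of the COBRA Toolbox. The three-reaction network, its bounds, and the `fba` helper are illustrative assumptions: an uptake reaction supplies A, A is converted to B, and a "biomass" reaction drains B. The equality constraints are Sv = 0 and the inequality constraints are the per-reaction lower and upper bounds.

```python
from typing import Sequence, Tuple

import numpy as np
from scipy.optimize import linprog


def fba(S, lower: Sequence[float], upper: Sequence[float],
        c: Sequence[float]) -> Tuple[float, np.ndarray]:
    """Maximize Z = c^T v subject to S v = 0 and lower <= v <= upper."""
    S = np.asarray(S, dtype=float)
    c = np.asarray(c, dtype=float)
    res = linprog(-c,  # linprog minimizes, so negate the objective to maximize
                  A_eq=S, b_eq=np.zeros(S.shape[0]),
                  bounds=list(zip(lower, upper)), method="highs")
    if not res.success:
        raise RuntimeError(f"LP failed: {res.message}")
    return -res.fun, res.x


# Rows: A, B. Columns: EX_A (-> A), R_AB (A -> B), BIOMASS (B ->).
S_toy = np.array([[1, -1, 0],
                  [0, 1, -1]], dtype=float)
c_toy = [0.0, 0.0, 1.0]  # objective: the biomass reaction
```

With uptake capped at 10 units, `fba(S_toy, [0, 0, 0], [10, 1000, 1000], c_toy)` gives Z = 10; tightening the cap to 5 (the role `changeRxnBounds` plays in the COBRA Toolbox) gives Z = 5, because all biomass flux must pass through the single uptake reaction.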

Figure 2: Flux Balance Analysis Computational Framework

Researchers have access to numerous authoritative platforms for acquiring DEM data. OpenTopography provides centralized access to diverse topographic data collections from multiple sources including the USGS 3D Elevation Program (3DEP), NOAA Coastal Lidar, Natural Resources Canada, and the Polar Geospatial Center [24]. The platform offers value-added services enabling users to subset, grid, download, and visualize portions of extensive collections, with specialized access policies for academic and commercial users [24].

NASA Earthdata provides DEM datasets from missions including the Space Shuttle Radar Topography Mission (SRTM), Advanced Spaceborne Thermal Emission and Reflection Radiometer (ASTER), and Global Ecosystem Dynamics Investigation (GEDI) [17]. The NOAA Data Access Viewer offers authoritative land cover, imagery, and lidar data through its Digital Coast platform [25]. These resources collectively provide comprehensive, freely available elevation data for research applications.

Computational Tools for Flux Analysis

The COBRA Toolbox represents the standard computational environment for implementing Flux Balance Analysis and related constraint-based reconstruction and analysis methods [23]. This MATLAB-based toolbox includes functions for reading metabolic models in SBML format (readCbModel), performing FBA (optimizeCbModel), and modifying reaction bounds (changeRxnBounds). For biochemical network simulations including flux analysis, Copasi provides specialized simulation capabilities with support for time-dependent concentration calculations [26].

Table 3: Essential Research Reagents and Computational Tools

| Resource Type | Specific Tools/Datasets | Primary Function | Application Context |
|---|---|---|---|
| DEM Data Platforms | OpenTopography, USGS 3DEP, NASA Earthdata | High-resolution elevation data access | Terrain analysis, hydrological modeling |
| Global DEM Datasets | AW3D30, COP30, MERIT, FABDEM | Regional to global scale terrain representation | Cross-comparison studies, global analyses |
| Flux Analysis Software | COBRA Toolbox, Copasi | Metabolic network simulation and analysis | Metabolic engineering, systems biology |
| Spatial Analysis Tools | ArcMap, QGIS | Geospatial data processing and visualization | Hydrological factor derivation, spatial modeling |
| Specialized DEMs | FABDEM (forest/building removed) | Urban and vegetated environment analysis | Flood risk assessment, urban hydrology |

Integrated Applications and Future Directions

DEM Error Correction Using Machine Learning

Machine learning approaches are increasingly employed to correct vertical biases in global DEMs caused by vegetation and buildings [21]. Recent research has trained separate models for different land cover environments to correct biases in the Copernicus DEM, explaining them using SHapley Additive exPlanation (SHAP) values [21]. This specialized approach has demonstrated that variable importance is highly dependent on training environment, suggesting that ensembles of land cover-specific models likely outperform single general models.

The most important input variables for these error prediction models include topographical derivatives and neighborhood statistics, though specific selected variables vary significantly across land cover types [21]. This tailored approach to DEM correction represents a significant advancement over one-size-fits-all methods, particularly for applications requiring bare-earth elevations in complex environments.

Synergistic Applications in Environmental and Metabolic Modeling

The integration of DEM-based environmental factors with metabolic modeling approaches opens new possibilities for understanding biological systems in their environmental context. DEM-derived topographic controls influence soil moisture distribution, nutrient transport, and microclimate conditions—all factors that significantly impact metabolic processes in plants, soil microbiota, and ecosystem functioning [22]. This integration enables more predictive models of ecosystem metabolism and biogeochemical cycling.

Future directions include developing tighter couplings between spatial environmental data and metabolic network models, potentially enhancing predictions of biologically mediated environmental processes. Such integrative approaches could prove particularly valuable in addressing complex challenges at the environment-biology interface, including climate change impacts, ecosystem resilience, and sustainable bioengineering applications.

Tools and Techniques: How to Detect and Analyze Dead-End Metabolites

Dead-end metabolites (DEMs) are critical concepts in metabolic network analysis, defined as metabolites that are either only consumed or only produced by the reactions within a given cellular compartment, including transport reactions [27]. While some DEMs represent biologically accurate network terminations, their presence frequently aids in identifying incomplete or incorrect curation of Pathway/Genome Databases (PGDBs) [27]. The identification and resolution of these metabolic gaps are essential for constructing high-quality genome-scale metabolic models (GSMMs), which are powerful tools for predicting metabolic fluxes in living organisms with applications ranging from drug target identification to metabolic engineering [28].

The MetaCyc database serves as a foundational resource for metabolic pathways and enzymes, containing experimentally elucidated metabolic pathways from all domains of life [29] [30]. As a manually curated database, MetaCyc provides a comprehensive reference for metabolic network prediction, analysis, and refinement [31] [32]. Within this ecosystem, the MetaCyc Dead-End Metabolite Finder emerges as a specialized tool designed to systematically identify these metabolic gaps, thereby supporting the refinement of metabolic network reconstructions and enhancing the accuracy of subsequent computational analyses.

Core Functionality and Operational Parameters

The MetaCyc Dead-End Metabolite Finder is a web-based tool that analyzes metabolic networks to identify metabolites that function as dead-ends. The tool provides researchers with several configurable parameters to refine their analysis based on specific research requirements and biological contexts [27].

Table 1: Configurable Parameters in the MetaCyc Dead-End Metabolite Finder

| Parameter | Functionality | Impact on Analysis |
|---|---|---|
| Small Molecule Limitation | Limits DEM search to small molecules | Focuses analysis on core metabolic intermediates; excludes macromolecules |
| Non-Pathway Reactions Inclusion | Includes or excludes non-pathway reactions in search | Broadens scope to all network reactions or focuses on curated pathways only |
| Pathway-Only Limitation | Limits search to reactions found in pathways | Restricts analysis to formally defined metabolic pathways |
| Unknown Direction Handling | Treats reactions with unknown direction as bidirectional or excludes them | Affects connectivity assessment based on directionality assumptions |
| Compartment Specification | Limits search to specific cellular compartments | Enables compartment-specific gap analysis in eukaryotic systems |

The tool's operational definition of dead-end metabolites encompasses two primary scenarios: (1) metabolites that have producing reactions but lack consuming reactions within the network ("root no-consumption metabolites"), and (2) metabolites that have consuming reactions but lack producing reactions ("root no-production metabolites") [27] [33]. This classification helps researchers pinpoint the exact nature of metabolic gaps and prioritize their resolution strategies accordingly.
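The parameters in Table 1 can be mirrored in a compact sketch to show how each choice changes the two DEM sets. This is not the MetaCyc implementation: the `Rxn` record, the keyword names, and the convention that metabolites are (ID, compartment) pairs are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, Optional, Set, Tuple

Met = Tuple[str, str]  # (metabolite ID, compartment ID)


@dataclass(frozen=True)
class Rxn:
    substrates: FrozenSet[Met]
    products: FrozenSet[Met]
    direction: str = "forward"  # "forward", "reversible" or "unknown"
    in_pathway: bool = True


def find_dems(rxns: Iterable[Rxn], *, include_non_pathway: bool = True,
              unknown_as_bidirectional: bool = True,
              compartment: Optional[str] = None,
              small_molecules: Optional[Set[str]] = None) -> Tuple[Set[Met], Set[Met]]:
    """Return (root no-consumption, root no-production) metabolites."""
    produced: Set[Met] = set()
    consumed: Set[Met] = set()
    for r in rxns:
        if r.direction not in ("forward", "reversible", "unknown"):
            raise ValueError(f"unexpected direction {r.direction!r}")
        if not include_non_pathway and not r.in_pathway:
            continue  # pathway-only search
        if r.direction == "unknown" and not unknown_as_bidirectional:
            continue  # exclude reactions of unknown direction entirely
        consumed |= r.substrates
        produced |= r.products
        if r.direction != "forward":  # reversible, or unknown treated as bidirectional
            produced |= r.substrates
            consumed |= r.products

    def keep(m: Met) -> bool:
        name, comp = m
        return ((compartment is None or comp == compartment)
                and (small_molecules is None or name in small_molecules))

    rnc = {m for m in produced - consumed if keep(m)}
    rnp = {m for m in consumed - produced if keep(m)}
    return rnc, rnp
```

Running the same network under different settings is informative in itself: excluding non-pathway reactions, for instance, can make a metabolite imported by a transport step that belongs to no pathway appear as a root no-production metabolite, which is an artifact of the search scope rather than a gap in the network.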

Workflow and Integration with Metabolic Analysis

The Dead-End Metabolite Finder operates within the broader MetaCyc platform, which interrelates pathway information with genes, enzymes, and reactions [30]. This integration enables researchers to navigate seamlessly from identified dead-end metabolites to associated pathways, enzymes, and genetic information, providing essential biological context for gap resolution.

The following diagram illustrates the logical relationship between dead-end metabolite identification and subsequent metabolic network refinement processes:

DEM Analysis Workflow

The process begins with metabolic network input, proceeds through DEM identification and classification, and culminates in targeted refinement strategies based on gap type.

Methodological Framework for Dead-End Metabolite Analysis

Experimental Protocol for DEM Identification and Resolution

A comprehensive approach to dead-end metabolite analysis involves both computational identification and experimental resolution, forming an iterative refinement cycle for metabolic networks.

Table 2: Methodological Framework for Dead-End Metabolite Analysis

| Phase | Procedure | Technical Considerations |
|---|---|---|
| Network Preparation | Export metabolic network from target PGDB in SBML format | Ensure proper compartmentalization and reaction balancing |
| DEM Identification | Configure and run MetaCyc Dead-End Metabolite Finder with appropriate parameters | Use compartment-specific analysis for eukaryotic organisms |
| Gap Classification | Categorize identified DEMs as knowledge, biological, or scope gaps | Assess phylogenetic conservation of pathways to prioritize targets |
| Candidate Reaction Identification | Query reference databases (MetaCyc, KEGG) for candidate gap-filling reactions | Consider enzyme commission number and taxonomic distribution |
| Experimental Validation | Design growth phenotyping or gene essentiality experiments | Use knockout strains to test metabolic capabilities |

The initial step involves exporting the metabolic network from the target Pathway/Genome Database (PGDB) in Systems Biology Markup Language (SBML) format, ensuring proper annotation of cellular compartments and reaction stoichiometry [32]. The Dead-End Metabolite Finder is then configured with parameters appropriate to the research context. For an initial network assessment, including all reaction types and treating directionally ambiguous reactions as bidirectional provides the most comprehensive gap identification [27].
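The preparation checks can be sketched roughly as follows. This assumes the exported file is SBML Level 3 Version 1 core and is parsed with Python's standard `xml.etree.ElementTree`. An actual export may use another level or version, or package namespaces such as `fbc`, and this sketch ignores those. The code collects each species' compartment and each reaction's signed stoichiometry and reversibility. It then lists species that lack a compartment and reactions that reference undeclared species. The function names are hypothetical. Element-balance checking is left out because it requires chemical formulas.

```python
import xml.etree.ElementTree as ET

# SBML Level 3 Version 1 core namespace; other levels/versions use different URIs.
NS = {"sbml": "http://www.sbml.org/sbml/level3/version1/core"}


def load_sbml(sbml_text):
    """Return ({species_id: compartment}, {reaction_id: (stoich, reversible)})."""
    model = ET.fromstring(sbml_text).find("sbml:model", NS)
    species = {
        sp.get("id"): sp.get("compartment")
        for sp in model.iterfind("sbml:listOfSpecies/sbml:species", NS)
    }
    reactions = {}
    for rxn in model.iterfind("sbml:listOfReactions/sbml:reaction", NS):
        stoich = {}
        for tag, sign in (("listOfReactants", -1.0), ("listOfProducts", 1.0)):
            for ref in rxn.iterfind(f"sbml:{tag}/sbml:speciesReference", NS):
                met = ref.get("species")
                # Assumption: a missing stoichiometry attribute is read as 1.
                coeff = float(ref.get("stoichiometry", "1"))
                stoich[met] = stoich.get(met, 0.0) + sign * coeff
        reactions[rxn.get("id")] = (stoich, rxn.get("reversible") == "true")
    return species, reactions


def preparation_issues(species, reactions):
    """List species without a compartment and reactions using undeclared species."""
    issues = [f"species {s} has no compartment" for s, c in species.items() if not c]
    for rid, (stoich, _) in reactions.items():
        issues += [f"reaction {rid} uses undeclared species {m}"
                   for m in stoich if m not in species]
    return issues


# Abbreviated toy document: several attributes required by SBML L3 are omitted.
TOY_SBML = """<sbml xmlns="http://www.sbml.org/sbml/level3/version1/core" level="3" version="1">
  <model id="toy">
    <listOfSpecies>
      <species id="glc_c" compartment="c"/>
      <species id="g6p_c" compartment="c"/>
      <species id="x"/>
    </listOfSpecies>
    <listOfReactions>
      <reaction id="R1" reversible="false">
        <listOfReactants><speciesReference species="glc_c" stoichiometry="1"/></listOfReactants>
        <listOfProducts><speciesReference species="g6p_c" stoichiometry="1"/></listOfProducts>
      </reaction>
      <reaction id="R2" reversible="true">
        <listOfReactants><speciesReference species="g6p_c"/></listOfReactants>
        <listOfProducts>
          <speciesReference species="x"/>
          <speciesReference species="y" stoichiometry="2"/>
        </listOfProducts>
      </reaction>
    </listOfReactions>
  </model>
</sbml>"""

species, reactions = load_sbml(TOY_SBML)
for issue in preparation_issues(species, reactions):
    print(issue)
# species x has no compartment
# reaction R2 uses undeclared species y
```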

Following DEM identification, each dead-end metabolite must be classified according to gap type. Knowledge gaps represent missing biochemical information and are prime targets for discovery research [33]. Biological gaps reflect genuine absences in the organism's metabolic capabilities, while scope gaps arise from model boundary limitations [33]. This classification directly informs resolution strategy selection, with knowledge gaps being candidates for computational gap-filling followed by experimental validation.

Advanced Computational Methodologies

Beyond the core DEM identification capabilities of MetaCyc, several advanced computational approaches have been developed to address metabolic network gaps. The MACAW (Metabolic Accuracy Check and Analysis Workflow) suite incorporates a dead-end test alongside complementary analyses including dilution, duplicate, and loop tests to provide comprehensive network validation [28]. This multi-faceted approach helps researchers distinguish between different types of network deficiencies that may coexist in metabolic reconstructions.

Machine learning approaches such as CHESHIRE (CHEbyshev Spectral HyperlInk pREdictor) represent cutting-edge methodologies for predicting missing reactions in metabolic networks purely from topological features [6]. By framing the problem as a hyperlink prediction task on metabolic hypergraphs, where reactions connect multiple metabolites simultaneously, these methods can propose biologically plausible gap-filling reactions without requiring experimental data as input [6]. This is particularly valuable for non-model organisms where extensive phenotyping data may be unavailable.
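The sketch below illustrates only the hyperlink framing. Each reaction becomes a hyperedge, which is a set of metabolites. A candidate reaction from a reference database is then scored using nothing but the existing network topology. The scoring is a naive common-neighbours baseline chosen for illustration. CHESHIRE itself learns metabolite representations with Chebyshev spectral graph convolutions, and that model is not reproduced here. The metabolite names and candidates are toy examples.

```python
from collections import defaultdict
from itertools import combinations


def neighbourhoods(hyperedges):
    """Map each metabolite to the metabolites it shares at least one reaction with."""
    nbrs = defaultdict(set)
    for edge in hyperedges:
        for met in edge:
            nbrs[met] |= set(edge) - {met}
    return dict(nbrs)


def hyperlink_score(candidate, nbrs):
    """Mean common-neighbour count over all metabolite pairs of a candidate reaction.

    Naive topological baseline for illustration only; NOT CHESHIRE's model.
    """
    pairs = list(combinations(sorted(candidate), 2))
    if not pairs:
        return 0.0
    empty = set()
    return sum(len(nbrs.get(a, empty) & nbrs.get(b, empty)) for a, b in pairs) / len(pairs)


# Known reactions as hyperedges (direction and stoichiometry ignored).
known = [
    {"atp", "adp", "glc", "g6p"},
    {"g6p", "f6p"},
    {"atp", "adp", "f6p", "fdp"},
]
nbrs = neighbourhoods(known)

# Hypothetical candidate reactions drawn from a reference database.
candidates = [frozenset({"glc", "fdp"}), frozenset({"glc", "f6p"})]
for cand in sorted(candidates, key=lambda c: hyperlink_score(c, nbrs), reverse=True):
    print(sorted(cand), hyperlink_score(cand, nbrs))
# ['f6p', 'glc'] 3.0
# ['fdp', 'glc'] 2.0
```

In this toy example, hub metabolites such as ATP and ADP dominate the shared-neighbour counts. A practical scorer would need to down-weight them.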

Thermodynamic considerations further refine gap analysis through tools like ThermOptCobra, which addresses thermodynamically infeasible cycles (TICs) that can compromise metabolic model predictions [34]. Integrating thermodynamic constraints helps distinguish stoichiometrically possible from thermodynamically feasible gap-filling solutions, increasing the biological relevance of proposed network refinements.
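A common way to screen for reactions that take part in internal cycles is flux variability analysis with every exchange reaction closed. With no uptake or secretion, any reaction that can still carry non-zero steady-state flux must be circulating in a loop. The sketch below applies that screen using `scipy.optimize.linprog`. It is a generic check, not ThermOptCobra's algorithm. It assumes finite flux bounds that include zero for every internal reaction. The network is a hypothetical three-reaction cycle A → B → C → A with one outgoing branch.

```python
import numpy as np
from scipy.optimize import linprog


def loop_reactions(S, bounds, exchange_idx, tol=1e-9):
    """Indices of internal reactions that can carry flux with all exchanges closed.

    With uptake and secretion fixed to zero, any non-zero steady-state flux
    (S v = 0) must circulate in an internal cycle, so these reactions are
    candidates for thermodynamically infeasible cycles.
    Assumes finite bounds that include zero for every internal reaction.
    """
    S = np.asarray(S, dtype=float)
    n_rxn = S.shape[1]
    closed = [(0.0, 0.0) if j in exchange_idx else bounds[j] for j in range(n_rxn)]
    b_eq = np.zeros(S.shape[0])
    looping = []
    for j in range(n_rxn):
        if j in exchange_idx:
            continue
        extremes = []
        for sense in (1.0, -1.0):  # minimise v_j, then minimise -v_j (= maximise v_j)
            c = np.zeros(n_rxn)
            c[j] = sense
            res = linprog(c, A_eq=S, b_eq=b_eq, bounds=closed, method="highs")
            if res.status != 0:
                raise RuntimeError(res.message)
            extremes.append(sense * res.fun)  # min v_j, then max v_j
        if max(abs(v) for v in extremes) > tol:
            looping.append(j)
    return looping


# Toy network (rows A, B, C, D; columns EX_A, R1, R2, R3, R4, EX_D):
# R1: A -> B, R2: B <-> C, R3: C -> A form a cycle; R4: B -> D is a branch.
S = [
    [1, -1, 0, 1, 0, 0],    # A
    [0, 1, -1, 0, -1, 0],   # B
    [0, 0, 1, -1, 0, 0],    # C
    [0, 0, 0, 0, 1, -1],    # D
]
bounds = [(-10, 10), (0, 1000), (-1000, 1000), (0, 1000), (0, 1000), (0, 1000)]
print(loop_reactions(S, bounds, exchange_idx={0, 5}))  # [1, 2, 3]
```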

Integration with Broader Metabolic Research Workflows

The Researcher's Toolkit for DEM Analysis

Effective dead-end metabolite research requires specialized computational resources and databases that form an integrated toolkit for metabolic network refinement.

Table 3: Essential Research Resources for Dead-End Metabolite Analysis

| Resource | Type | Primary Function in DEM Research |
|---|---|---|
| MetaCyc Database | Curated Metabolic Database | Reference database for experimentally validated pathways and enzymes [31] [32] |
| Dead-End Metabolite Finder | Analysis Tool | Identification of metabolic gaps in network reconstructions [27] |
| Pathway Tools | Software Platform | PGDB creation, curation, and visualization [30] [32] |
| MACAW | Algorithm Suite | Comprehensive metabolic network validation including dead-end tests [28] |
| CHESHIRE | Machine Learning Tool | Topology-based prediction of missing reactions [6] |
| ThermOptCobra | Thermodynamic Analysis Tool | Identification and resolution of thermodynamically infeasible cycles [34] |
| SMILEY | Gap-Filling Algorithm | Growth phenotype-based gap-filling using reaction databases [33] |

This toolkit enables researchers to progress from initial dead-end metabolite identification through to biologically plausible hypothesis generation for experimental testing. The resources span multiple methodological approaches, from manual curation-supported tools to fully automated algorithms, accommodating varying levels of research expertise and availability of organism-specific data.

Applications in Pharmaceutical and Biotechnology Development

The identification and resolution of dead-end metabolites have significant practical implications in pharmaceutical development and biotechnology. In drug discovery, complete metabolic networks enable more accurate prediction of drug metabolism and identification of potential toxicity issues [35]. For antibiotic development, understanding a pathogen's complete metabolic network allows identification of essential genes and pathways that represent promising drug targets [28] [33].

In cancer research, constraint-based modeling of drug-induced metabolic changes relies on high-quality metabolic networks without spurious gaps [35]. For instance, studies of kinase inhibitors in gastric cancer cell lines have revealed widespread down-regulation of biosynthetic pathways, particularly in amino acid and nucleotide metabolism [35]. Accurate network models are essential for distinguishing direct drug effects from secondary consequences of metabolic gaps.

Metabolic engineering applications particularly benefit from dead-end metabolite resolution, as production pathways for valuable compounds must be connected to central metabolism without interruptions [6] [32]. The MetaCyc database serves as an encyclopedia of metabolic pathways that engineers can draw upon to fill gaps in industrial production strains, connecting heterologously expressed pathways to native metabolism [30] [31].

The following diagram illustrates how DEM analysis integrates with broader metabolic network reconstruction and validation workflows:

DEMs in Metabolic Reconstruction

The workflow shows how DEM analysis functions as a critical quality control checkpoint in the metabolic network reconstruction pipeline, enabling iterative model refinement.

The MetaCyc Dead-End Metabolite Finder represents an essential component in the metabolic researcher's toolkit, providing a specialized interface for identifying network gaps that compromise the predictive accuracy of genome-scale metabolic models. When integrated with complementary validation tools and gap-filling methodologies, it supports an iterative refinement cycle that progressively enhances metabolic network quality and biological accuracy.

As metabolic modeling continues to expand into new research areas including personalized medicine, microbiome studies, and industrial biotechnology, the importance of high-quality, gap-free network reconstructions will only increase. The Dead-End Metabolite Finder, embedded within the comprehensive MetaCyc knowledge base, provides a critical foundation for these advancing research domains, connecting computational predictions with biochemical reality through systematic identification and resolution of metabolic network deficiencies.

The Metabolic Accuracy Check and Analysis Workflow (MACAW) represents a significant advancement in the domain of genome-scale metabolic model (GSMM) validation. MACAW is a suite of algorithms designed to semi-automatically detect and visualize errors within densely interconnected metabolic networks [28]. Its development addresses a critical limitation in metabolic network research: the presence of erroneous or missing reactions that hinder the predictive accuracy of GSMMs. These models are fundamental for predicting metabolic fluxes, with applications spanning from the identification of novel drug targets to the engineering of microbial metabolism. Unlike earlier tools that often focus on individual reactions, MACAW specializes in identifying and contextualizing errors at the level of connected pathways. This pathway-level perspective is crucial for researchers and drug development professionals who rely on accurate metabolic simulations to study diseases, characterize cellular differences, and design therapeutic interventions. By highlighting systematic issues and inaccuracies of varying severity, MACAW provides a powerful diagnostic toolkit for improving the reliability of metabolic models in foundational research.

The Critical Need for Diagnostic Tools in Metabolic Modeling

Genome-scale metabolic models are formal mathematical representations of cellular metabolism, typically structured as stoichiometric matrices where rows correspond to metabolites and columns represent reactions [28]. Despite their widespread use and importance, the accuracy and completeness of even extensively curated GSMMs remain highly variable. These models are prone to several types of errors, including reactions with inaccurate stoichiometric coefficients or reversibilities, incorrect gene-reaction associations, duplicate reactions, reactions incapable of sustaining steady-state fluxes (dead-ends), and reactions capable of infinite or thermodynamically implausible fluxes [28].
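The following is a minimal plain-Python sketch of this representation. It assumes reactions are supplied as `{reaction_id: {metabolite_id: coefficient}}`, which is a hypothetical input format. Rows are metabolites and columns are reactions. A flux vector v is at steady state when S·v = 0, meaning no metabolite accumulates or is depleted.

```python
def build_stoichiometric_matrix(reactions):
    """Return (metabolite_ids, reaction_ids, S) with S[i][j] = coefficient of
    metabolite i in reaction j (negative = consumed, positive = produced)."""
    rxn_ids = list(reactions)
    met_ids = sorted({met for stoich in reactions.values() for met in stoich})
    row_of = {met: i for i, met in enumerate(met_ids)}
    S = [[0.0] * len(rxn_ids) for _ in met_ids]
    for j, rid in enumerate(rxn_ids):
        for met, coeff in reactions[rid].items():
            S[row_of[met]][j] = float(coeff)
    return met_ids, rxn_ids, S


def is_steady_state(S, v, tol=1e-9):
    """True if S v = 0 within tolerance, i.e. every metabolite is balanced."""
    return all(abs(sum(s * flux for s, flux in zip(row, v))) <= tol for row in S)


# Toy network: uptake of A, conversion A -> B, secretion of B.
toy = {"EX_A": {"A": 1}, "R1": {"A": -1, "B": 1}, "EX_B": {"B": -1}}
mets, rxns, S = build_stoichiometric_matrix(toy)
print(mets, S)                        # ['A', 'B'] [[1.0, -1.0, 0.0], [0.0, 1.0, -1.0]]
print(is_steady_state(S, [2, 2, 2]))  # True
print(is_steady_state(S, [2, 1, 1]))  # False: A accumulates
```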

The presence of these errors has tangible consequences for the application of GSMMs in drug discovery and development. For instance, an incomplete or erroneous model could lead to incorrect predictions about a drug's metabolic fate or the identification of an ineffective drug target. Existing gap-filling algorithms, such as Meneco and fastGapFill, focus on connecting dead-end metabolites but are often prone to introducing new errors while attempting to resolve network gaps [28]. Other tools that address thermodynamically infeasible loops can sometimes block fluxes through critical pathways like electron transport chains and ATP synthases, thereby reducing a model's ability to simulate realistic metabolic phenotypes [28]. MACAW's diagnostic approach, which prioritizes visualization and pathway-level error identification without automatically applying often-imperfect fixes, provides a more reliable foundation for model refinement.

Core Diagnostic Tests of MACAW

MACAW incorporates four independent but complementary tests, each designed to identify specific categories of potential inaccuracies within a GSMM. The following sections detail the principles and methodologies behind each test.

Dead-End Test

Principles and Methodology: The dead-end test identifies metabolites that can only be produced or only consumed within the metabolic network, making them "dead-ends" [28]. These metabolites are incapable of sustaining steady-state fluxes, as they lack either an input or an output pathway under the model's current constraints. The test works by analyzing the network topology to pinpoint metabolites that are not properly integrated into the metabolic system. From a research perspective, dead-end metabolites often indicate missing reactions, incomplete pathway annotations, or context-specific gaps in metabolic functionality. Resolving these dead-ends is a fundamental step in model curation, as they can block flux through entire pathways and lead to false-negative predictions in simulations.

Protocol for Identification:

- Network Compartmentalization: Define the system boundaries and internal compartments of the model.

- Metabolite Exchange Analysis: For each metabolite in the model, analyze all associated reactions to determine if it can be transported across system boundaries (e.g., via exchange, demand, or sink reactions).

- Consumption/Production Capability Assessment: Systematically evaluate each internal metabolite to determine if it has at least one reaction that consumes it and one reaction that produces it within the network. Metabolites lacking either consumption or production pathways are flagged as dead-ends.

- Result Visualization: The flagged reactions associated with dead-end metabolites are grouped into networks to help users visualize pathway-level errors rather than isolated issues.
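The consumption/production assessment and the grouping step can be sketched as follows. This is not MACAW's code. It assumes reactions are given as `{reaction_id: (stoichiometry, reversible)}`, with boundary reactions listed alongside internal ones. Flagged reactions are linked into one network whenever they share a metabolite. An optional `currency` set excludes hypothetical hub metabolites, such as cofactors, from this linking.

```python
from collections import defaultdict


def dead_end_metabolites(reactions):
    """Metabolites that are only produced or only consumed.

    reactions: {rxn_id: (stoich, reversible)}, negative coefficients = consumed.
    Boundary reactions (exchange, demand, sink) are listed like any other
    reaction, so a metabolite with a reversible exchange counts as both.
    """
    produced, consumed = set(), set()
    for stoich, reversible in reactions.values():
        for met, coeff in stoich.items():
            if coeff > 0 or reversible:
                produced.add(met)
            if coeff < 0 or reversible:
                consumed.add(met)
    return produced ^ consumed  # in exactly one of the two sets


def group_flagged_reactions(reactions, dead_ends, currency=frozenset()):
    """Group reactions that touch a dead-end metabolite into connected networks.

    Flagged reactions are linked when they share a metabolite; metabolites in
    `currency` are ignored for linking so hubs do not merge unrelated gaps.
    """
    flagged = [rid for rid, (stoich, _) in reactions.items()
               if not dead_ends.isdisjoint(stoich)]
    by_met = defaultdict(list)
    for rid in flagged:
        for met in reactions[rid][0]:
            if met not in currency:
                by_met[met].append(rid)
    seen, groups = set(), []
    for start in flagged:
        if start in seen:
            continue
        seen.add(start)
        stack, group = [start], []
        while stack:  # depth-first search over shared metabolites
            rid = stack.pop()
            group.append(rid)
            for met in reactions[rid][0]:
                for nxt in by_met.get(met, []):
                    if nxt not in seen:
                        seen.add(nxt)
                        stack.append(nxt)
        groups.append(sorted(group))
    return groups


toy = {
    "EX_glc": ({"glc": 1}, True),          # reversible exchange
    "R1": ({"glc": -1, "g6p": 1}, False),
    "R2": ({"g6p": -1, "x": 1}, False),
    "R3": ({"x": -1, "y": 1}, False),      # y is never consumed
    "R4": ({"z": -1, "glc": 1}, False),    # z is never produced
    "R5": ({"g6p": -1, "y": 1}, False),
}
dead = dead_end_metabolites(toy)
print(sorted(dead))                          # ['y', 'z']
print(group_flagged_reactions(toy, dead))    # [['R3', 'R5'], ['R4']]
```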

Dilution Test

Principles and Methodology: The dilution test is one of MACAW's more innovative components. It addresses a subtle but critical issue in metabolic modeling: the inability of a network to sustain the net production of certain metabolites, particularly cofactors [28]. While many metabolites like ATP/ADP are continuously recycled, cells must have biosynthetic or uptake pathways to counter dilution effects caused by cellular growth, division, or loss to side reactions. A GSMM might be capable of recycling a cofactor but incapable of its de novo synthesis, which would become apparent only under conditions of net growth or dilution. This test identifies such flaws by checking if the model can sustain net production of each metabolite through a simulated "dilution reaction" that consumes the metabolite without producing anything else [28].
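The dilution check can be posed as a small linear program. The sketch below appends a dilution column to the stoichiometric matrix, with -1 in the target metabolite's row and zero elsewhere. It then maximises that column's flux subject to S·v = 0, using `scipy.optimize.linprog`. This follows the description above rather than MACAW's own implementation. The toy network, in which NAD is only recycled and never synthesised, is a hypothetical example, as is the 0 to 1000 bound on the dilution reaction.

```python
import numpy as np
from scipy.optimize import linprog


def dilution_test(S, bounds, met_index, max_dilution=1000.0, tol=1e-9):
    """True if metabolite `met_index` can be net-produced at steady state.

    Appends a dilution reaction M -> (consumes M, produces nothing) and
    maximises its flux subject to S_aug v = 0 and the existing flux bounds,
    including the usual exchange bounds.
    """
    S = np.asarray(S, dtype=float)
    dilution = np.zeros((S.shape[0], 1))
    dilution[met_index, 0] = -1.0
    S_aug = np.hstack([S, dilution])
    c = np.zeros(S_aug.shape[1])
    c[-1] = -1.0  # linprog minimises, so minimise -v_dilution
    res = linprog(c, A_eq=S_aug, b_eq=np.zeros(S.shape[0]),
                  bounds=list(bounds) + [(0.0, max_dilution)], method="highs")
    if res.status != 0:
        raise RuntimeError(res.message)
    return bool(-res.fun > tol)


# Toy network, rows glc, pyr, nad, nadh; columns EX_glc, R1, R2, EX_pyr.
# R1: glc + nad -> pyr + nadh; R2: nadh -> nad (NAD is recycled, never made).
S = [
    [1, -1, 0, 0],    # glc
    [0, 1, 0, -1],    # pyr
    [0, -1, 1, 0],    # nad
    [0, 1, -1, 0],    # nadh
]
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000)]  # glucose uptake <= 10
print(dilution_test(S, bounds, met_index=1))  # True: pyruvate can be net-produced
print(dilution_test(S, bounds, met_index=2))  # False: NAD can only be recycled

# Adding a hypothetical NAD synthesis reaction glc -> nad removes the flaw.
S_fixed = [row + [extra] for row, extra in zip(S, [-1, 0, 1, 0])]
print(dilution_test(S_fixed, bounds + [(0, 1000)], met_index=2))  # True
```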

Protocol for Identification:

- Define Dilution Reaction: For a target metabolite M, a dilution reaction is formulated as M → ∅ (a reaction that consumes M and produces nothing).

- Flux Balance Analysis (FBA) Setup: Constrain all standard exchange reactions (metabolite uptake and secretion) to their typical bounds.

- Objective Function Definition: Set the objective of the FBA to maximize the flux through the dilution reaction for metabolite M.

- Feasibility Check: Solve the linear programming problem. If a non-zero flux through the dilution reaction is possible, the metabolite