Decoding Cellular Control: How Kinetic Models Uncover Enzyme Regulation Mechanisms

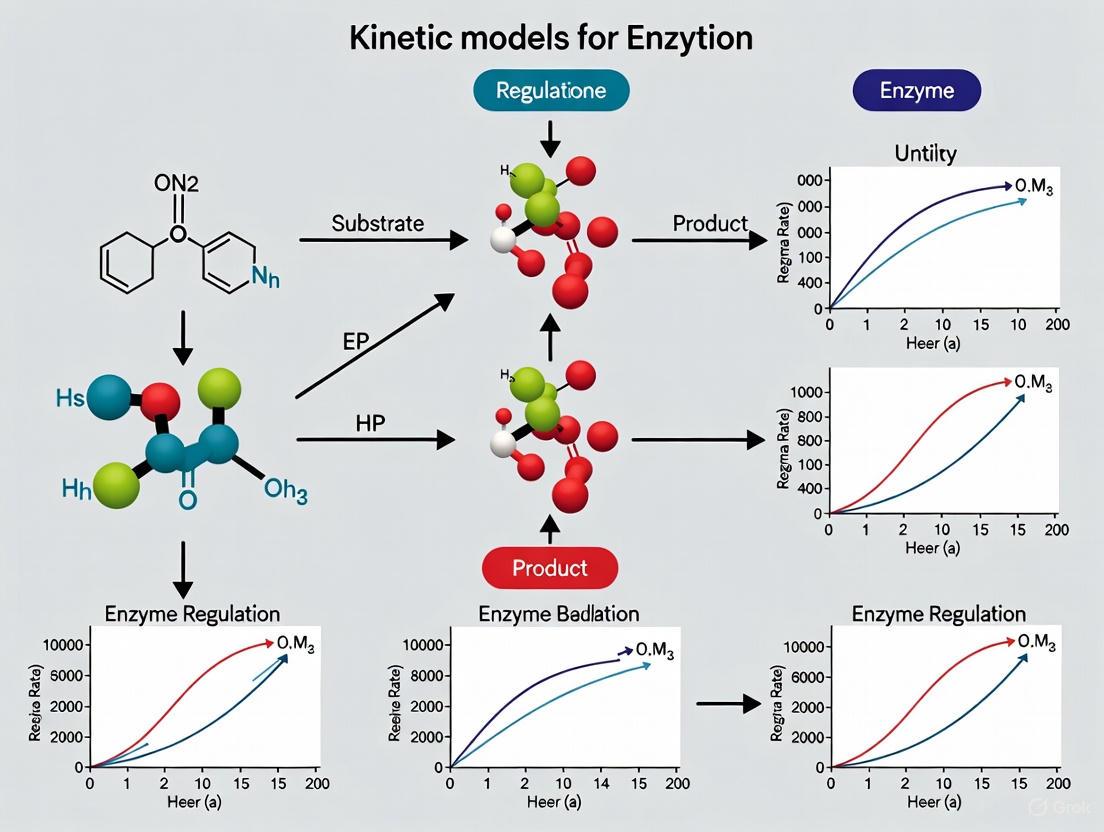


Abstract

This article provides a comprehensive exploration of how kinetic models serve as essential tools for capturing the complex mechanisms of enzyme regulation. Tailored for researchers, scientists, and drug development professionals, it bridges foundational theories with cutting-edge applications. The scope spans from fundamental principles like Michaelis-Menten and allosteric kinetics to advanced methodological frameworks including computational QM/MM simulations and machine-learning-guided engineering. It further addresses practical challenges in model troubleshooting, optimization for industrial and therapeutic use, and the critical validation of models against experimental data. By integrating these perspectives, the article offers a holistic resource for leveraging kinetic modeling to decipher enzyme behavior, optimize biocatalysts, and accelerate the development of novel therapeutics.

The Principles of Enzyme Kinetics: From Michaelis-Menten to Allosteric Regulation

Enzyme kinetics is the study of the rates of enzyme-catalyzed reactions and the conditions that affect them. The mathematical modeling of these rates is fundamental to understanding how enzymes behave in living organisms, with applications ranging from basic cellular biochemistry to drug discovery and metabolic engineering. Kinetic models provide a quantitative framework for deciphering cellular processes, integrating disparate datasets, and predicting biological responses to perturbations [1] [2]. At the heart of this field lies a set of core parameters—reaction rate (V), maximum reaction rate (Vmax), Michaelis constant (Km), and the dynamics of the enzyme-substrate (ES) complex—which together describe the efficiency and behavior of enzymes. These parameters are not merely static numbers; they are the variables in mathematical models that allow researchers to simulate metabolic states, characterize intracellular processes, and probe disease mechanisms [1] [3] [2]. This guide details these core concepts, the experimental methods used to determine them, and their critical role in advanced kinetic modeling for enzyme regulation research.

Foundational Principles and Key Parameters

The Enzyme-Substrate Complex and Reaction Stages

The catalytic cycle begins with the reversible binding of an enzyme (E) and a substrate (S) to form an enzyme-substrate complex (ES). This complex then undergoes a chemical transformation to produce a product (P) and release the free enzyme. The general reaction scheme is represented as: \[ E + S \underset{k_{-1}}{\overset{k_{+1}}{\rightleftharpoons}} ES \xrightarrow{k_{cat}} E + P \] where \( k_{+1} \) and \( k_{-1} \) are the rate constants for the formation and dissociation of the ES complex, and \( k_{cat} \) (the catalytic rate constant) is the rate constant for the product-forming step [4] [5].

The formation of the ES complex is a key feature of enzyme catalysis. The active site of the enzyme, often described as complementary to the substrate's transition state, stabilizes this high-energy intermediate, thereby lowering the activation energy (Ea) required for the reaction to proceed [6].

When an enzyme is mixed with a substrate, the reaction progresses through three distinct kinetic phases [6]:

- Pre-steady state: A rapid, initial burst of ES complex formation. Product formation lags during this phase because the ES complex must accumulate before catalysis can proceed at its full rate.

- Steady-state: The concentration of the ES complex remains relatively constant because it is formed as quickly as it breaks down. The rate of product formation reaches a constant, faster rate. Michaelis-Menten kinetics analyzes the reaction in this phase.

- Post-steady state: The substrate becomes depleted, leading to a decrease in ES complex formation and a subsequent slowing of the reaction rate.
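The three phases can be made visible by integrating the mass-action equations of the reaction scheme directly. The sketch below is illustrative only: the rate constants and concentrations are hypothetical values chosen so that Km works out to 10 µM, and the fixed-step RK4 integrator is a convenience rather than a method taken from the cited sources.

```python
def simulate_mass_action(k_on, k_off, k_cat, e_total, s0, dt, n_steps):
    """Integrate E + S <-> ES -> E + P with fixed-step RK4.

    Returns a list of (time, [S], [ES], [P]) tuples, one per step.
    """
    def deriv(state):
        s, es, _p = state
        e_free = e_total - es            # enzyme conservation
        binding = k_on * e_free * s      # E + S -> ES
        release = k_off * es             # ES -> E + S
        catalysis = k_cat * es           # ES -> E + P
        return (release - binding, binding - release - catalysis, catalysis)

    def shifted(state, slope, h):
        return tuple(x + h * k for x, k in zip(state, slope))

    state = (s0, 0.0, 0.0)
    trajectory = [(0.0, *state)]
    for step in range(1, n_steps + 1):
        k1 = deriv(state)
        k2 = deriv(shifted(state, k1, dt / 2))
        k3 = deriv(shifted(state, k2, dt / 2))
        k4 = deriv(shifted(state, k3, dt))
        state = tuple(
            x + dt / 6 * (a + 2 * b + 2 * c + d)
            for x, a, b, c, d in zip(state, k1, k2, k3, k4)
        )
        trajectory.append((step * dt, *state))
    return trajectory


# Hypothetical constants (concentrations in uM, time in s); Km = (50 + 50) / 10 = 10 uM.
traj = simulate_mass_action(k_on=10.0, k_off=50.0, k_cat=50.0,
                            e_total=0.1, s0=100.0, dt=1e-4, n_steps=500)
for t, s, es, p in (traj[1], traj[10], traj[-1]):
    print(f"t={t:.4f} s  [S]={s:.3f}  [ES]={es:.5f}  [P]={p:.5f}")
```

In this run [ES] starts far below its plateau (pre-steady state) and then tracks [Et][S]/(Km + [S]) closely (steady state). Extending `n_steps` until the substrate is depleted would show the post-steady-state decline.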

Defining the Core Kinetic Parameters

The following parameters are essential for characterizing enzyme activity and are the foundational outputs of kinetic experiments.

- Reaction Rate (V): The velocity or rate of catalysis of an enzyme, defined as the number of moles of product formed per unit time. It is directly proportional to the concentration of the ES complex [4] [6].

- Maximum Reaction Rate (Vmax): The maximum rate of reaction achievable when all of the enzyme's active sites are saturated with substrate. At this point, the reaction rate is zero-order with respect to substrate concentration, meaning further increases in substrate do not increase the rate. Vmax is mathematically defined as \( V_{max} = k_{cat}[E_t] \), where \( [E_t] \) is the total enzyme concentration [6] [5].

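- Michaelis Constant (Km): The substrate concentration at which the reaction rate is half of Vmax. It is commonly read as an inverse measure of the enzyme's apparent affinity for its substrate; a lower Km value indicates a higher apparent affinity, as the enzyme requires less substrate to become half-saturated [6] [5]. In terms of the rate constants of the reaction scheme, \( K_m = (k_{-1} + k_{cat})/k_{+1} \), so Km equals the true dissociation constant of the ES complex only when \( k_{cat} \) is much smaller than \( k_{-1} \).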

- Catalytic Constant (kcat): Also known as the turnover number, this is the maximum number of substrate molecules converted to product per enzyme active site per unit time. It is the effective rate constant of the catalytic cycle when the enzyme is fully saturated, and is therefore set by the slowest step or steps after substrate binding [5].

- Specificity Constant (kcat/Km): A measure of catalytic efficiency that combines both affinity and turnover rate. It defines how efficiently an enzyme converts a substrate at low substrate concentrations. A higher \( k_{cat}/K_m \) indicates greater efficiency [5].

The relationship between the initial reaction velocity (v) and the initial substrate concentration ([S]) is described by the Michaelis-Menten equation: \[ v = \frac{V_{max} [S]}{K_m + [S]} \] This equation produces a rectangular hyperbola when reaction rate is plotted against substrate concentration [4] [6] [5].
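As a quick numerical illustration of the equation, the sketch below evaluates the rate at several substrate concentrations. The enzyme parameters are hypothetical and the function names are chosen here for illustration.

```python
def michaelis_menten_rate(s, vmax, km):
    """Initial velocity v at substrate concentration s (same units as km)."""
    return vmax * s / (km + s)


def vmax_from_kcat(kcat, e_total):
    """Vmax = kcat * [Et]."""
    return kcat * e_total


# Hypothetical enzyme: kcat = 100 s^-1, [Et] = 0.01 uM, Km = 5 uM.
vmax = vmax_from_kcat(kcat=100.0, e_total=0.01)  # 1.0 uM/s
for s in (0.5, 5.0, 50.0, 500.0):
    v = michaelis_menten_rate(s, vmax, km=5.0)
    print(f"[S] = {s:6.1f} uM  ->  v = {v:.3f} uM/s ({v / vmax:.0%} of Vmax)")
```

At [S] = Km the rate is exactly half of Vmax, and at [S] = 100 × Km it is within about 1% of Vmax, which is the saturation behavior behind the hyperbolic curve.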

Table 1: Key Parameters in Michaelis-Menten Kinetics

| Parameter | Symbol | Definition | Interpretation |
|---|---|---|---|
| Reaction Rate | v | Moles of product formed per unit time (\( dP/dt \)) | The instantaneous velocity of the catalyzed reaction. |
| Maximum Velocity | Vmax | The rate of reaction when enzyme is saturated with substrate (\( k_{cat}[E_t] \)) | Defines the enzyme's maximum catalytic capacity. |
| Michaelis Constant | Km | Substrate concentration at which \( v = V_{max}/2 \) | An inverse measure of the enzyme's affinity for the substrate. |
| Catalytic Constant | kcat | Turnover number (\( V_{max}/[E_t] \)) | The rate constant for the product-forming step. |
| Specificity Constant | kcat/Km | Ratio of kcat to Km (units: M⁻¹s⁻¹) | A measure of the enzyme's catalytic efficiency for a substrate. |

Table 2: Example Kinetic Parameters for Various Enzymes [5]

| Enzyme | Km (M) | kcat (s⁻¹) | kcat/Km (M⁻¹s⁻¹) |
|---|---|---|---|
| Chymotrypsin | \( 1.5 \times 10^{-2} \) | 0.14 | 9.3 |
| Pepsin | \( 3.0 \times 10^{-4} \) | 0.50 | \( 1.7 \times 10^{3} \) |
| tRNA synthetase | \( 9.0 \times 10^{-4} \) | 7.6 | \( 8.4 \times 10^{3} \) |
| Ribonuclease | \( 7.9 \times 10^{-3} \) | \( 7.9 \times 10^{2} \) | \( 1.0 \times 10^{5} \) |
| Carbonic anhydrase | \( 2.6 \times 10^{-2} \) | \( 4.0 \times 10^{5} \) | \( 1.5 \times 10^{7} \) |
| Fumarase | \( 5.0 \times 10^{-6} \) | \( 8.0 \times 10^{2} \) | \( 1.6 \times 10^{8} \) |
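The last column of Table 2 follows directly from the other two. A minimal sketch that recomputes kcat/Km from the tabulated Km and kcat values (the dictionary and function names are illustrative):

```python
# (Km in M, kcat in s^-1), copied from Table 2.
TABLE_2 = {
    "Chymotrypsin": (1.5e-2, 0.14),
    "Pepsin": (3.0e-4, 0.50),
    "tRNA synthetase": (9.0e-4, 7.6),
    "Ribonuclease": (7.9e-3, 7.9e2),
    "Carbonic anhydrase": (2.6e-2, 4.0e5),
    "Fumarase": (5.0e-6, 8.0e2),
}


def specificity_constant(km, kcat):
    """Catalytic efficiency kcat/Km in M^-1 s^-1."""
    return kcat / km


for name, (km, kcat) in TABLE_2.items():
    print(f"{name:<20} kcat/Km = {specificity_constant(km, kcat):.1e} M^-1 s^-1")
```

The spread of roughly seven orders of magnitude between chymotrypsin and fumarase comes from differences in both Km and kcat, which is why the ratio is more informative than either constant alone.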

Diagram 1: Enzyme kinetics reaction mechanism. The core catalytic cycle involves reversible enzyme-substrate complex formation followed by product release.

Experimental Determination of Kinetic Parameters

Standard Spectrophotometric Assay Protocol

The most common method for determining kinetic parameters involves continuously monitoring the consumption of substrate or the generation of a product using spectroscopic techniques [7].

Workflow for a Coupled Enzyme Assay (e.g., Pyruvate Decarboxylase, PDC):

Reaction Mixture Preparation: In a microtiter plate well, combine:

- 100 µL of crude enzyme extract

- 90 µL of 1 M MES buffer (pH 6.5)

- 10 µL of 5 mM thiamine pyrophosphate (cofactor)

- 10 µL of magnesium chloride (cofactor)

- 5 µL of commercial ADH solution (coupling enzyme, ≥50 units)

- 25 µL of 500 mM sodium pyruvate (substrate)

- 10 µL of 10 mM NADH (reporter molecule)

Reaction Initiation: The reaction is initiated by adding the reaction buffer containing the substrates and cofactors. The total reaction volume is brought to 250 µL.

Data Acquisition: The oxidation of NADH to NAD⁺ is monitored by continuously recording the decrease in absorbance at 360 nm (close to the NADH absorbance maximum near 340 nm) using a spectrophotometer until a steady baseline is reached.

Data Conversion: The absorbance readings are converted to NADH concentration using a pre-established calibration curve. The rate of NADH consumption is directly proportional to the rate of the PDC-catalyzed reaction [7].
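The data-conversion step can be sketched as two straight-line fits: one for the calibration curve and one for the linear part of the progress curve. All numbers below are hypothetical placeholders, not values from the cited protocol, and the helper names are chosen for illustration.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares line y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical calibration standards: NADH concentration (mM) vs absorbance.
std_conc = [0.0, 0.1, 0.2, 0.3, 0.4]
std_abs = [0.05, 0.25, 0.45, 0.65, 0.85]
cal_slope, cal_intercept = linear_fit(std_conc, std_abs)


def absorbance_to_nadh(absorbance):
    """Invert the calibration line to get NADH concentration in mM."""
    return (absorbance - cal_intercept) / cal_slope


# Hypothetical readings from the linear part of one progress curve.
times_s = [0.0, 10.0, 20.0, 30.0]
readings = [0.85, 0.83, 0.81, 0.79]
nadh_mM = [absorbance_to_nadh(a) for a in readings]

progress_slope, _ = linear_fit(times_s, nadh_mM)
nadh_consumption_rate = -progress_slope  # mM/s, positive for consumption
print(f"NADH consumption rate: {nadh_consumption_rate:.4f} mM/s")
```

Because the coupling enzyme is supplied in excess, this NADH consumption rate is taken as the rate of the PDC-catalyzed step.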

Data Analysis and the Lineweaver-Burk Plot

The initial velocity (v) is determined from the steepest linear portion of the progress curve (product concentration vs. time). A series of initial velocities is measured at different initial substrate concentrations ([S]) [7]. The resulting v versus [S] data are then fitted to the Michaelis-Menten equation to determine Vmax and Km directly.

A linearized representation is the Lineweaver-Burk plot, a double-reciprocal plot of \( 1/v \) versus \( 1/[S] \). Taking reciprocals of both sides of the Michaelis-Menten equation gives: \[ \frac{1}{v} = \frac{K_m}{V_{max}} \cdot \frac{1}{[S]} + \frac{1}{V_{max}} \] From this linear plot:

- The y-intercept is \( 1/V_{max} \)

- The x-intercept is \( -1/K_m \)

- The slope is \( K_m/V_{max} \) [6]

This plot is also particularly useful for visually diagnosing the mechanism of enzyme inhibition [6].
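The double-reciprocal analysis amounts to a straight-line fit followed by two conversions. The sketch below uses noise-free synthetic data generated from assumed values (Vmax = 10, Km = 2, arbitrary units) so that the recovered parameters can be checked against the inputs. Real measurements would scatter around the line.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares line y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def lineweaver_burk(s_values, v_values):
    """Estimate (Vmax, Km) from a fit of 1/v against 1/[S]."""
    inv_s = [1.0 / s for s in s_values]
    inv_v = [1.0 / v for v in v_values]
    slope, intercept = linear_fit(inv_s, inv_v)
    vmax = 1.0 / intercept   # y-intercept is 1/Vmax
    km = slope * vmax        # slope is Km/Vmax
    return vmax, km


# Synthetic, noise-free data from assumed "true" parameters.
TRUE_VMAX, TRUE_KM = 10.0, 2.0
substrate = [0.5, 1.0, 2.0, 5.0, 10.0, 20.0]
velocity = [TRUE_VMAX * s / (TRUE_KM + s) for s in substrate]

vmax_est, km_est = lineweaver_burk(substrate, velocity)
print(f"Vmax = {vmax_est:.3f}, Km = {km_est:.3f}")
```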

Table 3: The Scientist's Toolkit - Key Research Reagents for Kinetic Assays [7]

| Reagent / Material | Function in the Experiment |
|---|---|
| MES Buffer | Maintains a constant pH (e.g., 6.5) optimal for enzyme activity. |
| Dithiothreitol (DTT) | A reducing agent added to the extraction buffer to prevent oxidation of cysteine residues in the enzyme, preserving activity. |
| Polyvinylpyrrolidone (PVP) | Added during extraction to bind and remove phenolic compounds that can inhibit enzymes. |
| Triton X-100 | A non-ionic detergent used to disrupt cellular membranes during homogenization, aiding in enzyme extraction. |
| NADH (β-nicotinamide adenine dinucleotide) | A reporter molecule; its oxidation (decrease in absorbance at 360 nm) is used to monitor the reaction rate. |
| Thiamine Pyrophosphate | A coenzyme (vitamin B1 derivative) required for the catalytic activity of pyruvate decarboxylase. |
| Commercial Coupling Enzyme (e.g., ADH) | Used in coupled assays to convert the product of the reaction of interest into a secondary, easily measurable product. |
| Microtiter Plate | A flat-bottom 96-well plate used as a vessel for high-throughput spectrophotometric measurements. |

Advanced Kinetic Models in Enzyme Regulation Research

Moving Beyond Michaelis-Menten: tQSSA and dQSSA

The classical Michaelis-Menten model assumes low enzyme concentration and irreversible product formation, which may not be valid in crowded intracellular environments [1]. This has led to the development of more advanced models:

- Total Quasi-Steady-State Assumption (tQSSA): This model eliminates the low-enzyme concentration assumption, making it more applicable to in vivo conditions. However, it comes at the cost of increased mathematical complexity [1].

- Differential Quasi-Steady-State Approximation (dQSSA): A recently proposed model that expresses differential equations as a linear algebraic equation. It eliminates the restrictive assumptions of the Michaelis-Menten model without increasing model dimensionality, making it suitable for modeling complex enzyme-mediated biochemical systems, including reversible reactions and those with coenzyme inhibition [1].
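To see why the low-enzyme assumption matters, the sketch below compares the Michaelis-Menten rate with the widely used closed-form tQSSA rate for an irreversible single-substrate reaction. The tQSSA expression is stated here from general knowledge of the approximation rather than from the article, the parameter values are hypothetical, and the dQSSA is not sketched because the text does not give its equations.

```python
import math


def mm_rate(s_total, e_total, kcat, km):
    """Classical Michaelis-Menten rate, treating free substrate as total substrate."""
    return kcat * e_total * s_total / (km + s_total)


def tqssa_rate(s_total, e_total, kcat, km):
    """tQSSA rate: kcat * C, where C is the quasi-steady-state complex
    concentration solving C**2 - (Et + St + Km) * C + Et * St = 0."""
    b = e_total + s_total + km
    # Numerically stable form of (b - sqrt(b^2 - 4 Et St)) / 2.
    complex_conc = 2.0 * e_total * s_total / (b + math.sqrt(b * b - 4.0 * e_total * s_total))
    return kcat * complex_conc


# Hypothetical values in uM and s^-1; only the enzyme level changes.
for e_total in (0.01, 10.0):
    mm = mm_rate(10.0, e_total, kcat=1.0, km=5.0)
    tq = tqssa_rate(10.0, e_total, kcat=1.0, km=5.0)
    print(f"[Et] = {e_total:5.2f} uM  MM = {mm:.4f}  tQSSA = {tq:.4f} uM/s")
```

With enzyme far below substrate the two rates nearly coincide. When enzyme and substrate are comparable, the Michaelis-Menten form overestimates the rate because it ignores the substrate sequestered in the complex.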

Machine Learning and Circuit Modeling in Kinetics

Cutting-edge approaches are now revolutionizing how kinetic models are built and applied:

- Generative Machine Learning (RENAISSANCE): Frameworks like RENAISSANCE (REconstruction of dyNAmIc models through Stratified Sampling using Artificial Neural networks and Concepts of Evolution strategies) use generative machine learning to efficiently parameterize large-scale kinetic models. This approach integrates diverse omics data (metabolomics, fluxomics, proteomics) to accurately characterize intracellular metabolic states in organisms like E. coli, substantially reducing parameter uncertainty and improving predictive accuracy for studies in health and biotechnology [2].

- Electronic Circuit Modeling: The mathematics of biochemical kinetics can be exactly mapped to the dynamics of electronic circuits, where voltages represent molecular concentrations and currents represent molecular fluxes. This allows researchers to model complex reaction networks—including various inhibition types, reversible reactions, and multi-substrate reactions—using intuitive circuit schematics and simulation software, bypassing the need to derive tedious differential equations [8].

Diagram 2: Evolution of enzyme kinetic modeling approaches, from classic equations to modern computational methods.

Stability, Dynamics, and Inhibition Analysis

Understanding the dynamic behavior of enzymatic reactions is crucial, especially in complex biological systems:

- Stability Analysis: Mathematical models based on systems of nonlinear differential equations can be analyzed for stability. Using methods like the Next Generation Matrix to calculate a basic reproduction number (R₀), researchers can determine if a system will return to a steady state after a perturbation, which is a key property of robust biological systems [9].

- Time-Delayed Effects: Incorporating time delays into differential equations (Delay Differential Equations) can model the lag between changes in reactant concentrations and product responses. Analysis of these models reveals that time delays can significantly influence system stability, leading to oscillations in chemical reactions [10].

- Empirical Inhibition Modeling: Traditional models of enzyme inhibition (competitive, non-competitive, uncompetitive) have been criticized as overly complex and as resting on questionable assumptions [3]. A proposed empirical alternative directly links the mass binding of the inhibitor to the enzyme population with the resulting effect on kinetic parameters (K₁ and V₁), with the aim of providing a simpler and more broadly applicable framework for analyzing drug interactions [3].

The core concepts of reaction rate, Vmax, Km, and enzyme-substrate complex dynamics form the foundation of a sophisticated modeling ecosystem. While the Michaelis-Menten equation remains a vital tool for initial characterization, the field of enzyme regulation research is increasingly driven by models that more accurately reflect intracellular conditions, such as the dQSSA, and powered by novel computational approaches like generative machine learning and electronic circuit simulation. The accurate determination of core kinetic parameters through rigorous experimental protocols provides the essential data required to parameterize these advanced models. As the integration of multi-omics data becomes routine, these evolving kinetic models will continue to enhance our ability to predict and manipulate metabolic behavior, ultimately accelerating discovery in therapeutic development and biotechnology.

Within the broader inquiry into how kinetic models capture enzyme regulation, the Michaelis-Menten model stands as a foundational pillar. This framework provides a quantitative language for describing how reaction rates depend on enzyme and substrate concentration, offering critical parameters that illuminate enzyme function and control within biological systems. This whitepaper details the model's fundamental principles, its mathematical derivation, the critical assumptions underlying its application, and the standard experimental protocols for its determination. By framing these concepts for researchers and drug development professionals, we aim to reinforce the model's indispensable role in elucidating enzymatic regulation, from basic biochemical research to the development of therapeutic inhibitors.

Enzyme kinetics is the study of the rates of enzyme-catalyzed reactions, a field central to understanding metabolic control, cellular signaling, and pharmacodynamics [6] [11]. The model introduced by Leonor Michaelis and Maud Menten in 1913 provides the simplest and most widely applied kinetic framework for reactions involving a single substrate [5] [12]. Its primary achievement was the formalization of the hypothesis that enzyme catalysis proceeds via the formation of an enzyme-substrate (ES) complex [12]. The resulting mathematical model successfully describes the observed hyperbolic relationship between substrate concentration and the initial reaction rate, allowing researchers to quantify catalytic efficiency and substrate affinity [13] [6]. This capability to distill complex enzymatic behavior into defined, measurable constants makes the model an essential tool for capturing the mechanistic basis of enzyme regulation.

Foundational Principles and the Kinetic Model

The Reaction Model

The Michaelis-Menten model for a single-substrate, irreversible reaction is represented by the following scheme [5] [4]:

E + S ⇌ ES → E + P

In this model, the enzyme (E) reversibly binds the substrate (S) to form the enzyme-substrate complex (ES). This complex can then dissociate back to E and S or undergo catalysis to yield the product (P) and regenerate the free enzyme [13] [11]. The rate constants k₁ (or kON) and k₋₁ (or kOFF) govern the association and dissociation steps for the ES complex, while kcat (often denoted k₂ or k₃ in simpler models) is the catalytic rate constant for the formation of product [13] [5].

The Michaelis-Menten Equation

From the reaction model, Michaelis and Menten derived the following equation that describes the initial velocity (V₀) of the reaction [13] [5]:

V₀ = (Vmax × [S]) / (KM + [S])

- V₀: Initial velocity of the reaction.

- [S]: Substrate concentration.

- Vmax: The maximum reaction velocity, achieved when all enzyme active sites are saturated with substrate.

- KM: The Michaelis constant, defined as the substrate concentration at which the reaction velocity is half of Vmax [6].

This equation produces the characteristic hyperbolic saturation curve when V₀ is plotted against [S]. At low substrate concentrations ([S] << KM), the rate increases nearly linearly with [S] (approximately first-order kinetics). At high substrate concentrations ([S] >> KM), the rate approaches Vmax and becomes independent of [S] (zero-order kinetics) [5] [6].
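The two regimes, together with the half-saturation point, follow from the equation by comparing the sizes of [S] and KM in the denominator:

```latex
\[
\begin{aligned}
&[S] \ll K_M: & V_0 &\approx \frac{V_{max}}{K_M}\,[S] = \frac{k_{cat}}{K_M}\,[E]_{total}\,[S] \\
&[S] = K_M: & V_0 &= \frac{V_{max}}{2} \\
&[S] \gg K_M: & V_0 &\approx V_{max} = k_{cat}\,[E]_{total}
\end{aligned}
\]
```

The first line shows why kcat/KM acts as an apparent second-order rate constant at low substrate concentration.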

Key Kinetic Parameters and Their Significance

The Michaelis-Menten equation yields two fundamental parameters that are instrumental for comparing and regulating enzymes.

Table 1: Key Parameters in Michaelis-Menten Kinetics

| Parameter | Symbol | Definition | Interpretation |
|---|---|---|---|
| Michaelis Constant | KM | Substrate concentration at half Vmax | An inverse measure of the enzyme's apparent affinity for the substrate. A lower KM indicates higher affinity [13] [6]. |
| Maximum Velocity | Vmax | Maximum rate achieved at saturating [S] | Vmax = kcat × [E]total. It defines the enzyme's turnover capacity when fully saturated [13] [5]. |
| Catalytic Constant | kcat | Vmax / [E]total | Also called the turnover number, it is the maximum number of substrate molecules converted to product per active site per unit time [5]. |
| Specificity Constant | kcat / KM | The second-order rate constant for the reaction of free enzyme with substrate | A measure of catalytic efficiency. It determines the rate of the reaction at low substrate concentrations [5]. |

The following diagram illustrates the core reaction pathway and the resulting kinetic curve:

The Underlying Assumptions of the Model

The derivation of the Michaelis-Menten equation relies on several key assumptions that define its scope and validity [13].

Initial Velocity: The equation is strictly valid only for the initial rate of the reaction (V₀), denoted by the subscript '0'. This is measured before the substrate concentration has decreased significantly and before product, which may act as an inhibitor, has accumulated [13] [12]. Under these conditions the reverse reaction is negligible.

Steady-State Approximation: The concentration of the ES complex remains constant over the measured period of the reaction. The rate of ES complex formation equals the rate of its breakdown (to E + S and E + P) [13].

Free Ligand Approximation: The total substrate concentration ([S]total) is much greater than the total enzyme concentration ([E]total). This justifies the approximation that the concentration of free substrate is approximately equal to [S]total, as the amount bound in the ES complex is negligible [13].

Single Substrate and Irreversible Product Formation: The model, in its basic form, applies to reactions with one substrate. The catalytic step (ES → E + P) is assumed to be irreversible, meaning product conversion back to substrate is not considered [13] [11].

A final assumption, which was part of the original derivation but was later relaxed by Briggs and Haldane, is the Rapid Equilibrium assumption. Michaelis and Menten assumed that the first step (E + S ⇌ ES) is rapidly reversible and remains at equilibrium throughout the reaction. The modern derivation uses the more general steady-state approximation, which does not require this equilibrium assumption [13] [12].
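For reference, the steady-state (Briggs-Haldane) route to the equation can be written out in a few lines, using the rate constants of the reaction scheme and the free ligand approximation for [S]:

```latex
\[
\frac{d[ES]}{dt} = k_1 [E][S] - (k_{-1} + k_{cat})[ES] = 0,
\qquad [E] = [E]_{total} - [ES]
\]
\[
[ES] = \frac{[E]_{total}\,[S]}{K_M + [S]},
\qquad K_M = \frac{k_{-1} + k_{cat}}{k_1}
\]
\[
V_0 = k_{cat}\,[ES] = \frac{V_{max}\,[S]}{K_M + [S]},
\qquad V_{max} = k_{cat}\,[E]_{total}
\]
```

Under the rapid equilibrium assumption the same algebra gives the dissociation constant \( K_S = k_{-1}/k_1 \) in place of \( K_M \), which is the limit of the steady-state result when \( k_{cat} \ll k_{-1} \).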

Experimental Protocols for Determining KM and Vmax

Classic Initial Rate Determination

The standard methodology for determining KM and Vmax involves measuring the initial velocity of the reaction at a series of substrate concentrations while keeping other conditions (pH, temperature, enzyme concentration) constant [14] [12].

Research Reagent Solutions & Essential Materials:

Table 2: Key Reagents and Materials for Michaelis-Menten Experiments

| Item | Function / Explanation |
|---|---|
| Purified Enzyme | The enzyme of interest, prepared and purified to a known concentration or activity. Source and purity are critical for reproducibility. |
| Substrate Solution | A stock solution of the specific substrate. Diluted to create a range of concentrations for the assay. |
| Reaction Buffer | Maintains constant pH and ionic strength, providing optimal and stable conditions for the enzyme. |
| Cofactors / Cations | Any required metal ions (e.g., Mg²⁺) or coenzymes (e.g., NADH) essential for catalytic activity. |
| Detection System | Method to monitor product formation or substrate depletion over time (e.g., spectrophotometer, fluorometer, pH-stat). |

Step-by-Step Workflow:

- Preparation: Prepare a concentrated stock solution of the substrate. Prepare a dilution series covering a broad range, ideally from a concentration well below the estimated KM to well above it [14].

- Reaction Initiation: In separate reaction vessels, combine buffer, cofactors, and a fixed, known amount of enzyme. Initiate the reaction by adding a specific volume from each substrate dilution tube. Alternatively, buffer, cofactors, and substrate are premixed and the enzyme is added last, which is often the preferred order; in either case the final component added starts the reaction [14].

- Initial Rate Measurement: Immediately after mixing, monitor the formation of product or the disappearance of substrate for a short initial period. This can be done via continuous assay (e.g., spectrophotometrically if NADH is involved) or by taking discrete time points and quenching the reaction [14] [12].

- Data Collection: The initial velocity (V₀) is calculated from the slope of the linear portion of the progress curve (concentration vs. time). This is repeated for every substrate concentration in the series [6].

- Curve Fitting: The resulting values of V₀ and [S] are plotted. The parameters Vmax and KM are obtained by fitting the data to the Michaelis-Menten equation using non-linear regression analysis, which is the most direct and accurate method [14].

The following diagram outlines this general experimental workflow:

Data Analysis and Linear Transformations

Before non-linear regression software was widely available, linear transformations of the Michaelis-Menten equation were used to graphically determine KM and Vmax. The most common of these is the Lineweaver-Burk (Double-Reciprocal) Plot [6].

The Michaelis-Menten equation is transformed into: 1/V₀ = (KM / Vmax) × (1/[S]) + 1/Vmax

A plot of 1/V₀ versus 1/[S] yields a straight line. The y-intercept is equal to 1/Vmax, the x-intercept is equal to -1/KM, and the slope is KM/Vmax [6]. While useful for visualization and for determining the type of enzyme inhibition (e.g., competitive, non-competitive), the Lineweaver-Burk plot can distort experimental error and is less reliable for parameter estimation than non-linear fitting of the original data [6].
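A direct non-linear least-squares fit can be sketched without any external library. The approach below is one possible implementation, not the method of the cited sources: for each trial KM the best Vmax has a closed form (the model is linear in Vmax), and a golden-section search over log(KM) minimizes the remaining sum of squared errors. It assumes that this error profile has a single minimum within the search bounds. The data are synthetic and noise-free, generated from assumed values Vmax = 10 and KM = 2.

```python
import math


def fit_michaelis_menten(s_values, v_values, iterations=100):
    """Least-squares estimate of (Vmax, KM) on untransformed (s, v) data."""
    def best_vmax(km):
        # For fixed KM, v = Vmax * f(s) is linear in Vmax.
        f = [s / (km + s) for s in s_values]
        return sum(v * fi for v, fi in zip(v_values, f)) / sum(fi * fi for fi in f)

    def sse(log_km):
        km = math.exp(log_km)
        vmax = best_vmax(km)
        return sum((v - vmax * s / (km + s)) ** 2 for s, v in zip(s_values, v_values))

    # Search KM from 1/100 of the lowest to 100x the highest substrate level.
    lo = math.log(min(s_values) / 100.0)
    hi = math.log(max(s_values) * 100.0)
    ratio = (math.sqrt(5.0) - 1.0) / 2.0
    for _ in range(iterations):
        left = hi - ratio * (hi - lo)
        right = lo + ratio * (hi - lo)
        if sse(left) < sse(right):
            hi = right   # minimum lies in [lo, right]
        else:
            lo = left    # minimum lies in [left, hi]
    km = math.exp((lo + hi) / 2.0)
    return best_vmax(km), km


# Synthetic, noise-free data from assumed "true" parameters.
substrate = [0.5, 1.0, 2.0, 5.0, 10.0, 20.0]
velocity = [10.0 * s / (2.0 + s) for s in substrate]

vmax_fit, km_fit = fit_michaelis_menten(substrate, velocity)
print(f"Vmax = {vmax_fit:.4f}, KM = {km_fit:.4f}")
```

Fitting on the untransformed data weights each measurement equally, which avoids the inflation of errors at low [S] that the double-reciprocal transform introduces. In practice a general-purpose non-linear regression routine would normally be used instead of this hand-written search.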

The Model in a Broader Research Context

Enzyme Regulation and Inhibition Kinetics

The Michaelis-Menten model provides the baseline for quantifying how enzymes are regulated. Inhibitors are a primary mode of regulation, and their effects are kinetically defined by how they alter KM and Vmax [14].

- Competitive Inhibition: The inhibitor competes with the substrate for the active site. This increases the apparent KM (lowers apparent affinity), while Vmax remains unchanged because sufficient substrate can outcompete the inhibitor [14].

- Non-Competitive Inhibition: The inhibitor binds to a site other than the active site, impairing catalysis without affecting substrate binding. This decreases Vmax, but the apparent KM remains unchanged [14].

These predictable changes in the kinetic parameters allow researchers to identify an inhibitor's mechanism of action, which is crucial for rational drug design [14].
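The two inhibition patterns described above can be expressed as small modifications of the Michaelis-Menten rate law. The sketch below uses the standard textbook forms with a single inhibition constant Ki; the parameter values are hypothetical and the function names are chosen for illustration.

```python
def competitive_rate(s, i, vmax, km, ki):
    """Competitive inhibition: apparent KM rises by (1 + [I]/Ki); Vmax is unchanged."""
    km_app = km * (1.0 + i / ki)
    return vmax * s / (km_app + s)


def noncompetitive_rate(s, i, vmax, km, ki):
    """Pure non-competitive inhibition: apparent Vmax falls by (1 + [I]/Ki); KM is unchanged."""
    vmax_app = vmax / (1.0 + i / ki)
    return vmax_app * s / (km + s)


# Hypothetical values: Vmax = 10, KM = 2, Ki = 1, [I] = 3, so (1 + [I]/Ki) = 4.
VMAX, KM, KI, INHIBITOR = 10.0, 2.0, 1.0, 3.0
for s in (2.0, 8.0, 1e6):
    vc = competitive_rate(s, INHIBITOR, VMAX, KM, KI)
    vn = noncompetitive_rate(s, INHIBITOR, VMAX, KM, KI)
    print(f"[S] = {s:>9.1f}  competitive = {vc:.3f}  non-competitive = {vn:.3f}")
```

At very high substrate the competitive rate recovers to nearly Vmax, while the non-competitive rate stays capped at Vmax/4. This difference is the diagnostic used to distinguish the two mechanisms.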

Clinical and Pharmaceutical Applications

The principles of Michaelis-Menten kinetics are directly applied in drug discovery and diagnostics.

- Drug Design and Optimization: Many therapeutic agents are enzyme inhibitors. Determining the KM for a natural substrate and the inhibition constant (Ki) for a drug candidate helps optimize its potency and specificity [14]. The model allows for the prediction of drug efficacy under varying cellular substrate concentrations.

- Plasma Enzyme Assays: Clinical diagnostics often measure the levels of specific enzymes in blood plasma as markers of tissue damage or disease. For example, elevated levels of creatine kinase MB isoenzyme indicate myocardial infarction, while elevated lactate dehydrogenase can indicate various tissue injuries. These assays often rely on kinetic measurements of enzyme activity under substrate-saturating conditions (near Vmax) to quantify enzyme concentration in plasma [6].

The Michaelis-Menten model remains a cornerstone of biochemical research, providing an elegant and powerful framework to quantify enzyme activity. Its parameters, KM, Vmax, and kcat/KM, offer a precise language to discuss substrate affinity, catalytic capacity, and overall efficiency. While its assumptions define its limitations, its core principles form the basis for understanding more complex enzymatic behaviors, including cooperativity and allosteric regulation. For researchers and drug development professionals, mastery of this classical framework is not merely historical; it is a fundamental and practical necessity for capturing, interpreting, and manipulating the kinetic basis of enzyme regulation.

Kinetic models have emerged as a powerful framework for capturing the dynamic and regulatory complexities of enzyme behavior that steady-state models cannot address. Unlike genome-scale metabolic models (GEMs) and Resource Allocation Models (RAMs), which operate under steady-state assumptions and omit enzyme kinetics, kinetic models formulated as systems of ordinary differential equations (ODEs) simultaneously link enzyme levels, metabolite concentrations, and metabolic fluxes [15]. This capability is particularly crucial for modeling multi-substrate reactions and cooperativity, as these phenomena involve transient states, allosteric regulation, and feedback mechanisms that operate under continuously changing cellular conditions. The ability to capture how metabolic responses to diverse perturbations change over time enables researchers to study dynamic regulatory effects and complex interactions with other cellular processes, making kinetic modeling an indispensable tool in systems biology, metabolic engineering, and drug development [15].

Recent advancements are transforming this field, addressing previous limitations through the integration of machine learning with mechanistic models, novel kinetic parameter databases, and tailor-made parametrization strategies [15]. These developments are particularly relevant for modeling complex enzyme kinetics, as they enhance the speed, accuracy, and scope of kinetic models, bringing genome-scale kinetic modeling within reach. For drug development professionals, these models offer unprecedented capabilities for predicting enzymatic responses to allosteric modulators and designing targeted therapeutic interventions that exploit regulatory mechanisms [16].

Theoretical Foundations: From Michaelis-Menten to Complex Systems

Limitations of Classical Approaches for Complex Enzymatic Mechanisms

Traditional Michaelis-Menten kinetics, while foundational to enzymology, provides an inadequate framework for understanding multi-substrate reactions and cooperative systems. This classical approach assumes: (1) a single substrate binding site, (2) no interactions between distinct binding sites, and (3) instantaneous equilibrium conditions that ignore memory effects and temporal dynamics [15] [17]. These assumptions break down when modeling real enzymatic systems where allosteric regulation, multi-substrate binding, and time-dependent phenomena fundamentally influence catalytic behavior.

The critical limitation of conventional models is their inability to capture non-local and history-dependent effects in enzymatic processes. Recent research has demonstrated that enzyme binding sites and reaction interfaces often exhibit fractal-like geometries whose irregular structures significantly affect reaction rates [17]. This structural complexity, combined with the time delays inherent in processes such as conformational changes and intermediate complex formation, necessitates advanced mathematical frameworks that can represent these sophisticated regulatory mechanisms [17].

Mathematical Frameworks for Complex Enzyme Kinetics

Advanced kinetic modeling employs several sophisticated mathematical approaches to overcome the limitations of classical enzyme kinetics:

Deterministic ODE Systems with Canonical Rate Laws: These models depict the balance between production and consumption of metabolites within networks, linking enzyme levels, metabolite concentrations, and metabolic fluxes simultaneously. They use approximative rate laws that specify how reaction rates depend on substrate concentrations, enzyme activity, and regulatory effects without depicting intermediate species, providing intuitive biochemical interpretations of parameters [15].

Variable-Order Fractional Derivative Models: This emerging framework incorporates memory effects and non-local behavior more accurately than integer-order models. The variable-order Caputo fractional derivative is particularly valuable as it allows the use of standard initial conditions expressed in terms of integer-order derivatives, such as experimentally measurable initial concentrations of substrate and enzyme [17]. This approach captures how the "memory strength" evolves over time, reflecting phenomena like enzyme saturation, inhibition, or activation phases.

Delay Differential Equation Frameworks: These models incorporate constant time delays to account for biochemical reaction steps that do not occur instantaneously, such as conformational changes in enzymes or intermediate complex formation [17]. This is particularly relevant for allosteric enzymes like phosphofructokinase that demonstrate time lags through cooperative binding mechanisms.

Table 1: Mathematical Frameworks for Modeling Complex Enzyme Kinetics

| Framework | Key Features | Advantages | Best-Suited Applications |
|---|---|---|---|
| ODE Systems with Approximate Rate Laws | Models reactions without intermediate species; uses Michaelis and inhibition constants | Intuitive biochemical parameters; fewer parameters than mechanistic approaches | Multi-substrate reactions with known regulatory effects |
| Elementary Reaction Mass-Action | Models enzymatic reactions as a sequence of elementary steps | High mechanistic fidelity; detailed regulatory interactions | Single-enzyme mechanistic studies |
| Variable-Order Fractional Derivatives | Captures time-varying memory effects; power-law memory | Reflects adaptive enzyme behavior; fractal geometry correlation | Systems with evolving kinetic parameters; heterogeneous structures |
| Delay Differential Equations | Incorporates time delays for non-instantaneous steps | Accounts for conformational changes; channeling effects | Allosteric enzymes; multi-enzyme complexes |

Current Methodologies and Computational Tools

High-Throughput Kinetic Modeling Frameworks

The development of sophisticated computational tools has dramatically accelerated the construction and parameterization of kinetic models for complex enzymatic systems:

SKiMpy: This semiautomated workflow constructs and parametrizes models using stoichiometric models as a scaffold and assigns kinetic rate laws from a built-in library. It samples kinetic parameter sets consistent with thermodynamic constraints and experimental data, pruning them based on physiologically relevant time scales. SKiMpy also provides robust numerical integration across scales, from single-cell dynamics to bioreactor simulations [15].

MASSpy: Built on COBRApy, this framework uses mass-action rate laws by default but allows custom mechanisms for individual reactions. It integrates the strengths of constraint-based metabolic modeling, enabling efficient sampling of steady-state fluxes and metabolite concentrations [15].

Tellurium: A versatile kinetic modeling tool designed for applications in systems and synthetic biology that supports various standardized model formulations and integrates external packages for ODE simulation, parameter estimation, and visualization [15].

These tools have achieved model construction speeds one to several orders of magnitude faster than their predecessors, making high-throughput kinetic modeling a reality [15]. Their development reflects a broader trend in the field toward automating the labor-intensive process of model building while ensuring thermodynamic consistency and physiological relevance.

Machine Learning and Parameter Estimation Advances

Generative machine learning approaches are reshaping kinetic parameter estimation by efficiently exploring parameter spaces and identifying feasible parameter sets that satisfy multiple constraints [15]. These methods are particularly valuable for modeling cooperativity, where parameter landscapes are often complex and multidimensional. Bayesian statistical inference frameworks, such as Maud, efficiently quantify the uncertainty of parameter value predictions, though they can be computationally intensive for large-scale kinetic models [15].

Structural identification techniques analytically derive parameter values from a minimal set of experiments, while tools like pyPESTO enable researchers to test different parametrization techniques on the same kinetic model [15]. The integration of these computational approaches with novel kinetic parameter databases has significantly improved the predictive capabilities of kinetic models, providing higher accuracy and enabling simulations that reliably mimic real-world experimental conditions [15].

Table 2: Computational Frameworks for Kinetic Modeling of Enzyme Regulation

| Method/Tool | Parameter Determination | Requirements | Advantages | Limitations |
|---|---|---|---|---|
| SKiMpy | Sampling | Steady-state fluxes, concentrations, thermodynamics | Efficient, parallelizable; ensures physiologically relevant time scales | No explicit time-resolved data fitting |
| MASSpy | Sampling | Steady-state fluxes and concentrations | Well-integrated with constraint-based modeling tools; computationally efficient | Only mass-action rate law implemented by default |
| Tellurium | Fitting | Time-resolved metabolomics data | Integrates many tools and standardized model structures | Limited parameter estimation capabilities |
| KETCHUP | Fitting | Experimental steady-state data from wild-type and mutant strains | Efficient parametrization with good fitting; parallelizable and scalable | Requires extensive perturbation experiment data |
| Maud | Bayesian statistical inference | Various omics datasets | Efficiently quantifies parameter uncertainty | Computationally intensive; not yet for large-scale models |

Experimental Protocols and Data Integration

Data Requirements and Parameterization Strategies

Building accurate kinetic models for multi-substrate reactions and cooperativity requires specific types of experimental data and rigorous parameterization approaches:

Thermodynamic Consistency Enforcement: The second law of thermodynamics allows coupling reaction directionality with metabolite concentrations, as reactions can only proceed in the direction of negative Gibbs free energy difference. Thermodynamic properties of reactions are estimated using computational techniques such as group contribution and component contribution methods when experimental data is unavailable [15].

Multi-Omics Data Integration: Kinetic models enable direct integration and reconciliation of multi-omics data by explicitly representing metabolic fluxes, metabolite concentrations, protein concentrations, and thermodynamic properties in the same system of ODEs. Proteomics data is directly incorporated by explicitly modeling enzyme kinetics, unlike steady-state models where enzyme amounts merely set upper bounds of metabolic fluxes [15].

Validation Through Dynamic Measurements: Model validation and refinement compare time-course and steady-state predictions against experimental data. Useful data include quantitative measurements of metabolite concentrations and metabolic fluxes over time for a single strain and physiological condition, as well as responses measured across multiple strains or conditions [15].
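The thermodynamic consistency check described above can be sketched in a few lines. The example below assumes the standard relation ΔG = ΔG° + RT ln Q, with activities approximated by molar concentrations; the function names, the standard Gibbs energy of +5 kJ/mol, and the concentrations are hypothetical values chosen only to show how metabolite levels can reverse the feasible direction of a reaction.

```python
import math

R_KJ = 8.314462618e-3  # gas constant in kJ/(mol*K)


def reaction_gibbs(dg0_kj, substrate_concs, product_concs, temperature=298.15):
    """Gibbs free energy of reaction (kJ/mol) at the given concentrations (M).

    Uses dG = dG0 + R*T*ln(Q), where Q is the product of product
    concentrations divided by the product of substrate concentrations.
    """
    q = math.prod(product_concs) / math.prod(substrate_concs)
    return dg0_kj + R_KJ * temperature * math.log(q)


def forward_is_feasible(dg_kj):
    """A reaction can carry net forward flux only if dG is negative."""
    return dg_kj < 0.0


# Hypothetical reaction S -> P with an unfavorable standard Gibbs energy.
dg0 = 5.0
dg_low_product = reaction_gibbs(dg0, [1e-3], [1e-5])   # product kept low
dg_high_product = reaction_gibbs(dg0, [1e-3], [1e-2])  # product accumulated

for label, dg in [("low product", dg_low_product), ("high product", dg_high_product)]:
    print(f"{label}: dG = {dg:.2f} kJ/mol, forward feasible = {forward_is_feasible(dg)}")
```

In a sampling workflow, a check of this kind would typically be applied to every reaction so that sampled fluxes and concentrations never imply flux against a positive Gibbs free energy difference.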

Protocol for Modeling Multi-Substrate Enzyme Kinetics

A robust protocol for developing kinetic models of multi-substrate enzyme systems involves these critical steps:

Stoichiometric Network Reconstruction: Define all substrates, products, and potential intermediates using genome-scale metabolic models as a structural scaffold [15].

Rate Law Selection: Assign appropriate kinetic mechanisms from built-in libraries or define custom mechanisms for specific reactions. For multi-substrate reactions, this may involve ordered-sequential, random-sequential, or ping-pong mechanisms [15].

Parameter Sampling: Sample kinetic parameter sets consistent with thermodynamic constraints and available experimental data using algorithms that ensure thermodynamic feasibility [15].

Time-Scale Pruning: Prune parameter sets based on physiologically relevant time scales to eliminate dynamically infeasible solutions [15].

Model Validation: Compare model predictions with experimental data not used in parameterization, including dynamic responses to perturbations and steady-state fluxes under various conditions [15].
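The sampling and pruning steps of this protocol can be illustrated with a deliberately small toy. The sketch below is not the SKiMpy API or its algorithm; it assumes a single Michaelis-Menten reaction, samples Km log-uniformly, back-calculates Vmax so that every parameter set reproduces a reference steady-state flux, and then discards sets whose linearized relaxation time exceeds a chosen bound. All names, ranges, and reference values are hypothetical, and thermodynamic constraints are omitted for brevity.

```python
import random


def sample_parameter_sets(n_sets, s_ref, v_ref, seed=0):
    """Sample Km log-uniformly and fix Vmax so that v(s_ref) equals v_ref."""
    rng = random.Random(seed)
    sets = []
    for _ in range(n_sets):
        km = 10.0 ** rng.uniform(-3.0, 1.0)      # spans 1e-3 to 1e1 (arbitrary units)
        vmax = v_ref * (km + s_ref) / s_ref      # consistency with the reference flux
        sets.append({"km": km, "vmax": vmax})
    return sets


def time_constant(params, s_ref):
    """Relaxation time of dS/dt = -v(S) linearized around s_ref."""
    slope = params["vmax"] * params["km"] / (params["km"] + s_ref) ** 2
    return 1.0 / slope


def prune_by_time_scale(sets, s_ref, max_time):
    """Keep only parameter sets that relax faster than max_time."""
    return [p for p in sets if time_constant(p, s_ref) <= max_time]


s_ref, v_ref = 0.1, 1.0  # hypothetical steady-state concentration and flux
candidates = sample_parameter_sets(200, s_ref, v_ref)
kept = prune_by_time_scale(candidates, s_ref, max_time=1.0)
print(f"kept {len(kept)} of {len(candidates)} sampled parameter sets")
```

Back-calculating Vmax from the sampled Km is one simple way to guarantee agreement with steady-state data; the surviving sets would then go forward to validation against data not used here.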

Visualizing Complex Enzyme Kinetics

The following diagrams illustrate key concepts and workflows in the kinetic modeling of multi-substrate reactions and cooperativity.

Multi-Substrate Reaction Mechanisms

Multi-Substrate Sequential Mechanism - This diagram visualizes an ordered sequential mechanism where substrates S1 and S2 bind in a specific sequence before products P1 and P2 are released.

Allosteric Cooperativity Modeling

Allosteric Cooperativity Mechanism - This diagram shows the Monod-Wyman-Changeux (MWC) model of allosteric regulation, depicting the equilibrium between tense (T) and relaxed (R) states modulated by substrates, activators, and inhibitors.

Kinetic Model Construction Workflow

Kinetic Model Construction Workflow - This workflow diagram illustrates the iterative process of building, validating, and refining kinetic models of enzyme systems with multi-substrate reactions and cooperativity.

Research Reagent Solutions for Kinetic Studies

Table 3: Essential Research Reagents and Computational Tools for Enzyme Kinetic Studies

| Reagent/Tool | Function | Application in Kinetic Modeling |
|---|---|---|
| SKiMpy Software | Semiautomated kinetic model construction | Uses stoichiometric network as scaffold; assigns rate laws; samples kinetic parameters [15] |
| Tellurium Platform | Kinetic modeling and simulation | Supports standardized model formulations; integrates ODE simulation and parameter estimation [15] |
| MASSpy Framework | Constraint-based modeling integration | Enables sampling of steady-state fluxes and concentrations; mass-action kinetics [15] |
| KETCHUP Tool | Kinetic model parametrization | Efficient fitting using steady-state data from wild-type and mutant strains [15] |
| Thermodynamic Databases | Reaction Gibbs free energy estimation | Provides essential parameters for ensuring thermodynamic consistency [15] |
| Time-Resolved Metabolomics | Dynamic metabolite concentration measurement | Enables model validation against experimental time-course data [15] |
| Proteomics Datasets | Enzyme abundance quantification | Direct incorporation into kinetic models as enzyme concentration variables [15] |

Applications in Drug Development and Biotechnology

Kinetic models of multi-substrate reactions and cooperativity support drug development by enabling targeted intervention in enzymatic pathways through allosteric modulation [16]. The pharmaceutical industry increasingly uses these models to identify and exploit allosteric sites, designing therapeutics that rely on distal regulation to enhance specificity and overcome resistance [16]. Computational frameworks that integrate evolutionary, structural, and dynamic features with machine learning models are particularly valuable for predicting the effects of allosteric modulators on complex enzymatic systems [16].

In biotechnology, these models support the optimization of enzyme-catalyzed processes in pharmaceutical manufacturing and food technology, where enzyme efficiency changes gradually as substrates deplete and products accumulate [17]. Variable-order fractional models provide superior predictive capabilities by capturing how enzymatic activity adapts to changing biochemical environments, allowing for better control strategies in industrial applications [17]. The capability to simulate dynamic responses to genetic manipulations, environmental conditions, and substrate availability makes kinetic modeling an essential tool for metabolic engineering and bioprocess optimization [15].

The field of kinetic modeling for multi-substrate reactions and cooperativity is advancing rapidly along three critical axes: speed, accuracy, and scope [15]. Methodologies based on generative machine learning and novel nonlinear optimization formulations now enable rapid construction of models and analysis of phenotypes, drastically reducing the time required to obtain metabolic responses [15]. The development of novel databases of enzyme properties and kinetic parameters, combined with increased access to high-performance computational resources, has significantly improved predictive capabilities [15].

Current modeling efforts focus on developing large kinetic models that encompass a broad range of organisms and physiological conditions, with genome-scale kinetic models on the horizon [15]. These advances promise to provide unique insights into metabolic processes and enable robust identification of optimal genetic and environmental interventions. The integration of perturbation-based simulations, network analyses, and deep mutational data is reshaping our understanding of allosteric regulation, revealing the growing utility of allostery in drug design and underscoring its potential to expand the therapeutic target space beyond conventional binding sites [16].

As these computational frameworks continue to evolve, they will increasingly bridge the gap between theoretical enzymology and practical applications in medicine and biotechnology, offering powerful tools for understanding and manipulating complex enzymatic systems with unprecedented precision.

Enzyme kinetics provides the fundamental framework for understanding how biological catalysts accelerate chemical reactions, central to cellular metabolism, signaling, and regulation. For researchers and drug development professionals, quantitative kinetic models serve as indispensable tools for predicting metabolic behaviors, identifying therapeutic targets, and elucidating mechanisms of drug action. The prevailing paradigm explaining enzymatic rate enhancement historically centered on transition state (TS) stabilization, where enzymes bind more tightly to the high-energy transition state than to the ground state (GS) substrate, thereby lowering the activation energy barrier [18]. However, emerging experimental and computational evidence reveals a more nuanced picture, wherein reactant destabilization (or GS destabilization) contributes significantly to catalytic efficiency through distinct yet complementary physical mechanisms [19]. This technical guide examines the physical principles underlying both mechanisms, their representation in kinetic models, and experimental approaches for their discrimination and quantification.

Theoretical Foundations of Catalytic Mechanisms

Transition State Stabilization

The transition state stabilization model, originally postulated by Linus Pauling, posits that enzymes are complementary in structure to the transition state of the reaction they catalyze rather than to the substrate itself [18]. This complementarity results in tighter binding of the transition state compared to the substrate.

- Molecular Principle: An enzyme catalyzes a reaction by binding the transition state more tightly than the substrate [18].

- Energetic Consequence: The free energy difference between enzyme-bound transition state and enzyme-bound substrate is smaller than the corresponding difference in the uncatalyzed reaction, resulting in a lower activation energy barrier [18].

- Structural Implications: Enzymes achieve transition state stabilization through precise positioning of catalytic residues, cofactors, and metal ions that form favorable electrostatic and hydrogen-bonding interactions with the transient structure of the transition state.

Reactant Destabilization

The reactant destabilization mechanism proposes that enzymes can also accelerate reactions by selectively destabilizing the ground state substrate through various physical means.

- Molecular Principle: Enzymes catalyze reactions by raising the free energy of the enzyme-substrate complex through desolvation effects, steric strain, or unfavorable electrostatic interactions [19].

- Energetic Consequence: The destabilized ground state sits closer in energy to the transition state, effectively reducing the activation barrier without necessarily stabilizing the transition state itself [19].

- Structural Implications: Active site environments that exclude water or position charged groups unfavorably relative to the substrate can produce significant ground state destabilization effects.
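The energetic equivalence of the two routes can be checked numerically. The sketch below assumes the Eyring equation from transition state theory with a transmission coefficient of 1, and uses hypothetical free energy levels (a 90 kJ/mol uncatalyzed barrier lowered by 20 kJ/mol) that are not taken from the cited studies. It shows that stabilizing the transition state and destabilizing the ground state by the same amount produce the same rate enhancement, since only the barrier height enters the rate constant.

```python
import math

K_B = 1.380649e-23    # Boltzmann constant, J/K
H = 6.62607015e-34    # Planck constant, J*s
R = 8.314462618       # gas constant, J/(mol*K)


def eyring_rate(barrier_kj, temperature=298.15):
    """First-order rate constant (1/s) from an activation free energy in kJ/mol."""
    return (K_B * temperature / H) * math.exp(-barrier_kj * 1000.0 / (R * temperature))


def barrier(levels):
    """Activation free energy as the gap between transition and ground state."""
    return levels["ts"] - levels["gs"]


# Hypothetical free energy levels in kJ/mol.
uncatalyzed = {"gs": 0.0, "ts": 90.0}
ts_stabilized = {"gs": 0.0, "ts": 70.0}     # transition state lowered by 20
gs_destabilized = {"gs": 20.0, "ts": 90.0}  # ground state raised by 20

k_ref = eyring_rate(barrier(uncatalyzed))
fold_ts = eyring_rate(barrier(ts_stabilized)) / k_ref
fold_gs = eyring_rate(barrier(gs_destabilized)) / k_ref

print(f"TS stabilization:   {fold_ts:.3g}-fold faster")
print(f"GS destabilization: {fold_gs:.3g}-fold faster")
```

Because the rate depends exponentially on the barrier, a 20 kJ/mol change at 298 K corresponds to a rate enhancement on the order of 10³ by either route, which is why kinetic measurements alone cannot distinguish the two mechanisms.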

Unified Molecular Mechanism

Recent computational studies reveal that despite their apparent differences, transition state stabilization and reactant destabilization share a common molecular mechanism—both enhance the charge densities of catalytic atoms that experience charge reduction between ground and transition states [19]. The key distinction lies in the timing of this enhancement:

- In TS stabilization, the charge density enhancement occurs prior to enzyme-substrate binding through evolutionary optimization of active site complementarity.

- In GS destabilization, the charge density enhancement occurs during enzyme-substrate binding through strategic placement of destabilizing interactions.

Table 1: Comparative Analysis of Catalytic Mechanisms

| Feature | Transition State Stabilization | Reactant Destabilization |
|---|---|---|
| Primary mechanism | Tight binding to transition state | Weaker binding to ground state |
| Effect on ΔG‡ | Lowers activation energy | Lowers activation energy |
| Charge density effects | Enhanced prior to binding | Enhanced during binding |
| Experimental evidence | Transition state analog inhibition | Desolvation/steric effects |
| Theoretical support | Pauling hypothesis, abzyme studies | Computational studies of KSI |

Kinetic Modeling of Catalytic Mechanisms

Traditional Enzyme Kinetic Models

Kinetic models of enzyme catalysis provide the mathematical framework for quantifying catalytic efficiency and parameterizing the effects of transition state stabilization and reactant destabilization.

- Michaelis-Menten Kinetics: The classical model describes enzyme kinetics using two fundamental parameters: KM (Michaelis constant) and Vmax (maximum reaction rate) [20]. While useful for simple systems, its assumptions of low enzyme concentration and irreversibility limit applicability to in vivo conditions [1].

- Quasi-Steady-State Approximations: Advanced models including the total quasi-steady state assumption (tQSSA) and differential quasi-steady state approximation (dQSSA) address limitations of Michaelis-Menten kinetics under physiological enzyme concentrations [1]. The dQSSA expresses differential equations as linear algebraic equations, eliminating reactant stationary assumptions without increasing model dimensionality [1].
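The practical difference between these approximations shows up when enzyme concentration is not small relative to substrate. The sketch below compares the classical Michaelis-Menten rate with the tQSSA rate, using the standard quadratic expression for the enzyme-substrate complex in terms of total enzyme and total substrate. The function names and all numerical values are illustrative assumptions, and the Michaelis-Menten call deliberately substitutes total substrate for free substrate, as is commonly done in practice.

```python
import math


def rate_michaelis_menten(s, e_total, kcat, km):
    """Classical rate law; valid when enzyme is scarce relative to substrate."""
    return kcat * e_total * s / (km + s)


def rate_tqssa(s_total, e_total, kcat, km):
    """tQSSA rate: complex concentration from the quadratic mass balance."""
    b = e_total + s_total + km
    complex_conc = (b - math.sqrt(b * b - 4.0 * e_total * s_total)) / 2.0
    return kcat * complex_conc


kcat, km, s_total = 1.0, 1.0, 1.0  # illustrative values in arbitrary units

for e_total in (1e-3, 10.0):
    mm = rate_michaelis_menten(s_total, e_total, kcat, km)
    tq = rate_tqssa(s_total, e_total, kcat, km)
    print(f"E_total = {e_total:g}: MM rate = {mm:.4g}, tQSSA rate = {tq:.4g}")
```

At low enzyme concentration the two rates nearly coincide. At high enzyme concentration the Michaelis-Menten expression implies more complex than there is substrate, whereas the tQSSA value stays bounded by the smaller of the two totals.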

Incorporating Catalytic Mechanisms into Kinetic Models

Modern kinetic models explicitly incorporate parameters that reflect the physical basis of catalysis:

- Reversible Kinetic Models: Mass action models of reversible enzyme kinetics require six kinetic parameters to fully describe association, dissociation, and catalytic rates in both forward and reverse directions [1].

- Parameter-Reduced Models: The dQSSA approach reduces parameter dimensionality while maintaining accuracy, successfully predicting coenzyme inhibition in lactate dehydrogenase where Michaelis-Menten models fail [1].

- Machine Learning Approaches: Frameworks like RENAISSANCE use generative machine learning to parameterize large-scale kinetic models, integrating diverse omics data to characterize intracellular metabolic states [2].

Diagram Title: Enzyme Catalysis Energy Landscape

Experimental Methodologies and Protocols

Quantifying Transition State Stabilization

Transition State Analog Design and Characterization

Principle: Stable molecules that structurally and electronically mimic the transition state bind tightly to enzymes and serve as potent inhibitors [18].

Protocol:

- Analog Design: Synthesize phosphonate esters or phosphonamides that mimic the tetrahedral transition state of ester hydrolysis reactions [18]. These compounds feature sp³ hybridized phosphorus atoms with negatively charged oxygen atoms that resemble the oxyanion transition state.

- Immunization: Conjugate the transition state analog to a carrier protein and inject into host animals to generate catalytic antibodies (abzymes) [18].

- Kinetic Analysis: Measure kcat and KM values for the abzyme-catalyzed reaction using spectrophotometric assays [18].

- Site-Directed Mutagenesis: Systematically modify abzyme structure to enhance catalytic efficiency.

Validation: Successful catalysis of ester hydrolysis by antibodies raised against phosphonate transition state analogs confirms transition state stabilization as a sufficient mechanism for catalysis [18].

Computational Analysis of Charge Density Changes

Principle: Transition state stabilization enhances charge densities of catalytic atoms involved in bond rearrangement [19].

Protocol:

- Quantum Mechanical Calculations: Perform density functional theory (DFT) calculations on substrate and transition state structures in enzymatic and reference solution environments.

- Charge Analysis: Calculate atomic charge distributions using natural population analysis (NPA) or electrostatic potential (ESP) derived charges.

- H-Bonding Capability Assessment: Determine hydrogen-bonding capabilities of catalytic atoms from water to nonpolar solvent phase transfer free energies [19].

- Correlation Analysis: Establish relationship between charge density enhancement and reduction in activation energy barrier.

Measuring Reactant Destabilization

Desolvation Energy Measurements

Principle: Moving charged atoms from aqueous solution to nonpolar enzyme active sites is thermodynamically unfavorable and destabilizes the ground state [19].

Protocol:

- Binding Affinity Studies: Compare binding affinities of ground state analogs to wild-type enzymes versus mutants with altered active site polarity.

- Calorimetric Measurements: Use isothermal titration calorimetry (ITC) to quantify binding thermodynamics and desolvation penalties.

- Computational Estimation: Calculate desolvation free energies using molecular dynamics simulations with explicit solvent models.

Table 2: Experimental Techniques for Catalytic Mechanism Analysis

| Technique | Measured Parameters | Catalytic Mechanism | Applications |
|---|---|---|---|
| Transition state analog studies | Inhibition constants, Ki | TS stabilization | Abzyme production, inhibitor design |
| Site-directed mutagenesis | ΔΔG‡, kcat/KM | Both mechanisms | Active site residue function |
| Computational chemistry | Atomic charge densities, ΔG‡ | Both mechanisms | Mechanism elucidation, catalyst design |
| Isothermal titration calorimetry | ΔH, ΔS, ΔG of binding | GS destabilization | Desolvation energy quantification |
| Kinetic isotope effects | KIE values | TS stabilization | TS structure characterization |

Kinetic Analysis of Ketosteroid Isomerase (KSI)

Case Study: The isomerization of 5-androstene-3,17-dione (5-AND) by KSI provides compelling evidence for both transition state stabilization and ground state destabilization mechanisms [19].

Protocol:

- Wild-type vs Mutant Enzymes: Compare catalytic efficiency of KSI with wild-type anionic Asp40 general base versus uncharged Asn and Ala mutants.

- Binding Measurements: Determine binding affinities of ground state analogs to assess destabilization effects.

- Electrostatic Analysis: Compute interaction energies between substrate oxygen atoms and active site residues using quantum mechanical/molecular mechanical (QM/MM) methods.

- Desolvation Quantification: Estimate free energy penalties associated with moving charged groups from solution to enzyme active site.

Key Finding: Desolvation of the wild-type anionic Asp40 general base decreases binding affinity of ground state analogues, demonstrating ground state destabilization, while simultaneous electrostatic interactions with the transition state provide stabilization [19].

Integration with Kinetic Models of Enzyme Regulation

Advanced Kinetic Frameworks

Modern kinetic modeling approaches capture the complexities of enzyme regulation in physiological contexts:

- dQSSA for Complex Networks: The differential quasi-steady state approximation adapts readily to reversible enzyme kinetic systems with complex topologies, predicting behavior consistent with mass action kinetics while reducing parameter dimensionality [1].

- Machine Learning Parameterization: The RENAISSANCE framework uses generative machine learning with natural evolution strategies to efficiently parameterize large-scale kinetic models, integrating metabolomics, fluxomics, and proteomics data [2].

- Thermodynamically Consistent Models: Kinetic models for open cellular systems account for continual energy consumption through coenzymes like ATP, enabling simulation of cyclic reactions that maintain homeostatic equilibrium [1].

Diagram Title: Machine Learning Kinetic Model Parameterization

Applications in Metabolic Engineering and Drug Discovery

Kinetic models incorporating physical catalytic principles enable:

- Metabolic State Characterization: Accurate estimation of intracellular metabolic states in E. coli using RENAISSANCE-generated models that match experimentally observed doubling times and dynamic responses [2].

- Enzyme Inhibition Analysis: Prediction of coenzyme inhibition patterns in lactate dehydrogenase using dQSSA models, surpassing capabilities of traditional Michaelis-Menten approaches [1].

- Drug Target Identification: Quantitative comparison of catalytic mechanisms facilitates rational design of transition state analog inhibitors for therapeutic applications.

Research Reagent Solutions

Table 3: Essential Research Reagents for Catalytic Mechanism Studies

| Reagent/Category | Function/Application | Specific Examples |
|---|---|---|
| Transition state analogs | Enzyme inhibition, abzyme production | Phosphonate esters, phosphonamides [18] |
| Site-directed mutagenesis kits | Active site modification | KSI mutants (Asp40→Asn/Ala) [19] |
| Computational software | Quantum mechanical calculations, MD simulations | DFT codes, QM/MM packages [19] |
| Kinetic assay systems | Reaction rate measurement | Spectrophotometric assays, radiometric assays [20] |
| Catalytic antibody reagents | Abzyme production and characterization | Phosphonate-carrier protein conjugates [18] |
| Stable isotopically labeled substrates | Kinetic isotope effect studies | ²H, ¹³C, ¹⁵N-labeled compounds |
| Calorimetry systems | Binding thermodynamics | Isothermal titration calorimetry [19] |

Allosteric regulation is a fundamental mechanism through which cells dynamically modulate enzyme activity in response to environmental changes and metabolic demands. Unlike orthosteric regulation, where effectors bind directly to the active site, allosteric regulation involves binding at distinct sites, inducing conformational changes that alter enzyme function from a distance [21] [22]. This form of regulation is critical for maintaining cellular homeostasis, coordinating complex biological functions, and enabling sophisticated feedback loops in metabolic pathways [23]. Kinetic analysis of allosteric enzymes reveals distinctive sigmoidal rate-versus-substrate curves rather than the hyperbolic curves characteristic of Michaelis-Menten kinetics, indicating cooperative interactions between multiple binding sites [23].

Theoretical models developed over the past half-century, particularly the Monod-Wyman-Changeux (MWC) concerted model, provide a mathematical framework for quantifying and interpreting these cooperative effects [24] [22]. The integration of the Hill equation with the MWC model offers researchers a powerful toolkit for extracting meaningful parameters from experimental data, connecting observable kinetic behavior to underlying molecular mechanisms [25]. For drug development professionals, understanding these models is increasingly valuable as allosteric modulators offer unique advantages over traditional orthosteric drugs, including enhanced specificity, reduced off-target effects, and the potential to target previously "undruggable" proteins [26]. This technical guide explores the theoretical foundations, experimental methodologies, and practical applications of these essential kinetic models in contemporary enzyme research.

Theoretical Foundations of Allosteric Models

The Hill Equation and Coefficient

The Hill equation provides a phenomenological description of cooperativity in ligand binding. It characterizes the sigmoidal relationship between ligand concentration and fractional saturation, serving as a valuable tool for quantifying the degree of cooperativity without necessarily specifying the molecular mechanism. The Hill coefficient (nH) quantitatively expresses the steepness of the sigmoidal curve and thus the degree of cooperativity [24]. A coefficient of 1.0 indicates non-cooperative binding, values greater than 1.0 suggest positive cooperativity, and values less than 1.0 imply negative cooperativity [23]. Although the Hill coefficient does not directly equal the number of binding sites, it provides a lower-bound estimate of this number and serves as a useful empirical measure of cooperative interactions [27].
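A short sketch can make the role of the Hill coefficient explicit. The code below assumes the usual form of the Hill equation, Y = xⁿ / (Kⁿ + xⁿ), and recovers nH as the slope of the Hill plot, log[Y/(1−Y)] against log[x]. The function names and the chosen values (half-saturation constant 2.0, nH of 2.5) are hypothetical and serve only as an illustration.

```python
import math


def hill_saturation(x, k_half, n_h):
    """Fractional saturation from the Hill equation."""
    return x ** n_h / (k_half ** n_h + x ** n_h)


def hill_plot_slope(saturation, x_low, x_high):
    """Slope of log10[Y/(1-Y)] versus log10[x] between two concentrations."""

    def logit10(x):
        y = saturation(x)
        return math.log10(y / (1.0 - y))

    return (logit10(x_high) - logit10(x_low)) / (math.log10(x_high) - math.log10(x_low))


def cooperative_binding(x):
    """Hypothetical positively cooperative system: K_half = 2.0, n_H = 2.5."""
    return hill_saturation(x, k_half=2.0, n_h=2.5)


def independent_binding(x):
    """Hypothetical non-cooperative system: K_half = 2.0, n_H = 1.0."""
    return hill_saturation(x, k_half=2.0, n_h=1.0)


print("cooperative slope:", hill_plot_slope(cooperative_binding, 0.5, 8.0))
print("non-cooperative slope:", hill_plot_slope(independent_binding, 0.5, 8.0))
```

For data that follow the Hill equation exactly, the slope is constant and equal to nH. For real allosteric enzymes the Hill plot is curved, so the coefficient is usually reported near half-saturation.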

The Monod-Wyman-Changeux (MWC) Model

The MWC model proposes a concerted transition mechanism between two primary conformational states: the tense (T) state with lower ligand affinity and the relaxed (R) state with higher ligand affinity [24] [22]. The model posits three fundamental parameters: L, the equilibrium constant between T and R states in the absence of ligand; KR, the dissociation constant for ligand binding to the R state; and KT, the dissociation constant for ligand binding to the T state. The ratio c = KR/KT defines the relative affinity difference between the two states, with c < 1 indicating higher affinity for the R state [24]. A key feature of the MWC model is its distinction between the binding function (Ȳ, fraction of sites occupied) and the state function (R̄, fraction of molecules in the R state), each exhibiting different cooperative properties [24].

Table 1: Key Parameters in the MWC Allosteric Model

| Parameter | Symbol | Definition | Biological Significance |
|---|---|---|---|
| Allosteric Constant | L | L = [T]/[R] (no ligand) | Intrinsic stability of T state relative to R state |
| Dissociation Constant (R state) | KR | KR = [R][ligand]/[R-ligand] | Ligand affinity for active conformation |
| Dissociation Constant (T state) | KT | KT = [T][ligand]/[T-ligand] | Ligand affinity for inactive conformation |
| Affinity Ratio | c | c = KR/KT | Relative ligand preference for R vs T state |
| Hill Coefficient | nH | nH = dlog[Ȳ/(1-Ȳ)]/dlog[ligand] | Measure of observed cooperativity |

Figure 1: MWC Allosteric Model Schematic. The model depicts the concerted transition between T and R states governed by equilibrium constant L, with differential ligand binding affinities KT and KR.

Relating the Hill Coefficient to MWC Parameters

A significant advancement in allosteric theory came with the derivation of a simple analytical relationship between the Hill coefficient and the parameters of the MWC model. For the state function R̄, the Hill coefficient (n′H) can be expressed as:

n′H = n(1 - c)/(1 + cα) × α/(1 + α) [24]

where n represents the number of subunits, c = KR/KT, and α = [ligand]/KR. This relationship reveals that the cooperativity of R̄ depends solely on the relative affinities of the two states (c) and not on their relative intrinsic stabilities (L) [24]. The maximum value of n′H occurs at α = 1/√c and simplifies to:

n′H,max = n(1 - √c)/(1 + √c) [24]

This mathematical relationship provides a powerful tool for interpreting experimental data, as it allows researchers to connect the observable Hill coefficient to fundamental molecular parameters of the MWC model, facilitating more accurate analysis of allosteric systems [25].
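These relationships can be verified numerically. The sketch below assumes the standard MWC state function, R̄ = (1+α)ⁿ / [(1+α)ⁿ + L(1+cα)ⁿ], which is not written out in the text above but follows from the definitions of L, c, and α given there. It compares a numerical Hill coefficient for R̄ with the analytical expression, and checks both the independence from L and the maximum at α = 1/√c. The parameter values (n = 4, c = 0.01) are illustrative.

```python
import math


def r_bar(alpha, n, L, c):
    """MWC state function: fraction of molecules in the R state."""
    r_term = (1.0 + alpha) ** n
    return r_term / (r_term + L * (1.0 + c * alpha) ** n)


def hill_analytic(alpha, n, c):
    """Analytical Hill coefficient of the state function."""
    return n * (1.0 - c) / (1.0 + c * alpha) * alpha / (1.0 + alpha)


def hill_numeric(alpha, n, L, c, h=1e-5):
    """Central-difference estimate of d ln[R/(1-R)] / d ln(alpha)."""

    def logit(a):
        r = r_bar(a, n, L, c)
        return math.log(r / (1.0 - r))

    return (logit(alpha * math.exp(h)) - logit(alpha * math.exp(-h))) / (2.0 * h)


n, c = 4, 0.01                     # illustrative tetramer with a 100-fold affinity ratio
alpha_max = 1.0 / math.sqrt(c)     # predicted position of maximal cooperativity
n_max = n * (1.0 - math.sqrt(c)) / (1.0 + math.sqrt(c))

for L in (10.0, 1000.0):
    print(f"L = {L:g}: numeric n'H at alpha_max = {hill_numeric(alpha_max, n, L, c):.6f}")
print(f"analytic n'H at alpha_max = {hill_analytic(alpha_max, n, c):.6f}, n'H,max = {n_max:.6f}")
```

Changing L shifts the ligand concentration at which the transition occurs but leaves the Hill coefficient of R̄ at a given α unchanged, as the analytical expression predicts.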

Experimental Methodologies for Allosteric Enzyme Analysis

Enzyme Activity Assays and Progress Curve Analysis

The accurate determination of enzyme activity forms the foundation of kinetic analysis. Continuous spectrophotometric assays that monitor substrate consumption or product formation in real-time provide the most comprehensive data, capturing the complete progress curve from reaction initiation to completion [7]. For allosteric enzymes exhibiting sigmoidal kinetics, it is particularly important to collect data across a wide range of substrate concentrations to fully define the characteristic S-shaped curve [27]. The reaction typically proceeds until a steady base level is reached, providing information about both the initial velocity and the approach to equilibrium [7].

A critical consideration in experimental design is ensuring that enzyme saturation is maintained throughout the measurement period. Substrate depletion can lead to non-linear progress curves even in the initial phase, complicating data interpretation [7]. While traditional analysis often focuses on the linear portion of the progress curve, a more robust approach involves kinetic modeling that accounts for the entire curve, including non-linear regions [7]. This integrated analysis provides more reliable estimates of enzyme activity, especially under conditions where substrate saturation cannot be guaranteed throughout the assay duration.
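The effect of substrate depletion on a linear read-out can be illustrated with a small simulation. The sketch below assumes simple irreversible Michaelis-Menten kinetics (not an allosteric rate law) and hypothetical values for Vmax, Km, and the measurement window; it compares the apparent rate over a fixed window with the true initial velocity.

```python
def product_formed(vmax, km, s0, t_end, steps=2000):
    """Integrate dS/dt = -vmax*S/(km + S) with fixed-step RK4; return product at t_end."""
    def rate(s):
        return -vmax * s / (km + s)

    s = s0
    dt = t_end / steps
    for _ in range(steps):
        k1 = rate(s)
        k2 = rate(s + 0.5 * dt * k1)
        k3 = rate(s + 0.5 * dt * k2)
        k4 = rate(s + dt * k3)
        s += dt * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0
    return s0 - s


# Hypothetical assay parameters (uM/s, uM, s); chosen only for illustration.
vmax, km = 1.0, 50.0
window = 30.0


def apparent_to_true_ratio(s0):
    """Ratio of the window-averaged rate to the true initial velocity."""
    true_v0 = vmax * s0 / (km + s0)
    apparent = product_formed(vmax, km, s0, window) / window
    return apparent / true_v0


ratio_subsaturating = apparent_to_true_ratio(20.0)    # S0 below Km
ratio_saturating = apparent_to_true_ratio(5000.0)     # S0 far above Km

print(f"sub-saturating S0: apparent/true = {ratio_subsaturating:.3f}")
print(f"saturating S0:     apparent/true = {ratio_saturating:.3f}")
```

Under these assumptions the window-averaged rate falls noticeably below the true initial velocity when the substrate is not saturating, which is why fitting the whole curve with a kinetic model is the more robust option.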

Table 2: Essential Research Reagents for Allosteric Enzyme Studies

| Reagent/Category | Function/Application | Example Specifics |
|---|---|---|
| Extraction Buffers | Isolation of native enzymes from tissue/cells | MES buffer (pH 7.5), dithiothreitol (reducing agent), polyvinylpyrrolidone (phenol binder), Triton X-100 (detergent) [7] |
| Cofactors | Enable or enhance enzymatic reactions | Thiamine pyrophosphate (e.g., for pyruvate decarboxylase), Mg²⁺ ions [7] |
| Spectroscopic Probes | Monitor reaction progress | NADH (absorbance at 340 nm), 4-methylumbelliferyl butyrate (MUB; fluorogenic substrate for lipases) [7] [28] |
| Allosteric Effectors | Investigate modulation patterns | Specific inhibitors/activators for target enzyme (e.g., ATP for phosphofructokinase) [23] |
| Phase-Separation Components | Study condensation effects | RGG domains (e.g., from Laf1 protein) to create enzymatic condensates [28] |

Data Fitting and Model Selection

The distinction between Michaelis-Menten kinetics and allosteric sigmoidal kinetics requires careful statistical comparison of model fits. Software tools such as GraphPad Prism facilitate this process through built-in algorithms that compare the goodness-of-fit between different models using methods like the extra sum-of-squares F-test [27]. A significant P value (typically < 0.05) indicates that the more complex allosteric model provides a statistically better fit to the data than the simpler Michaelis-Menten equation [27].
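The F ratio behind this comparison is simple to compute by hand. The sketch below uses the standard extra sum-of-squares formula for nested models; the residual sums of squares and the number of data points are made-up illustrative values, and the function name is hypothetical rather than part of any particular software package.

```python
def extra_sum_of_squares_f(ss_simple, df_simple, ss_complex, df_complex):
    """F ratio for two nested fits.

    ss_* are residual sums of squares; df_* are degrees of freedom
    (number of data points minus number of fitted parameters).
    """
    if df_complex >= df_simple:
        raise ValueError("the more complex model must have fewer degrees of freedom")
    if ss_complex <= 0.0:
        raise ValueError("residual sum of squares of the complex model must be positive")
    numerator = (ss_simple - ss_complex) / (df_simple - df_complex)
    denominator = ss_complex / df_complex
    return numerator / denominator


# Hypothetical fit results for 12 data points.
n_points = 12
ss_michaelis_menten = 48.0   # 2 fitted parameters (Vmax, Km)
ss_sigmoidal = 12.0          # 3 fitted parameters (Vmax, K_half, Hill slope)

f_ratio = extra_sum_of_squares_f(
    ss_michaelis_menten, n_points - 2,
    ss_sigmoidal, n_points - 3,
)
print(f"F = {f_ratio:.2f} with (1, {n_points - 3}) degrees of freedom")
```

The P value is the upper tail of the F distribution at this ratio with (df_simple − df_complex, df_complex) degrees of freedom; it can be read from an F table or, if SciPy is available, obtained with `scipy.stats.f.sf`. The test applies here because the Michaelis-Menten equation is the special case of the sigmoidal model with a Hill slope of 1.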

When working with the MWC model, parameter correlation presents a common challenge, as different combinations of L, KR, and KT can sometimes produce similar theoretical curves [25]. The recently derived relationship between the Hill coefficient and MWC parameters helps constrain these values, enabling researchers to select the most physiologically relevant parameter combination from multiple mathematically possible solutions [24] [25]. For the GroEL chaperonin, this approach has provided insights into the thermodynamic driving forces behind its allosteric transitions, demonstrating the practical utility of integrated Hill-MWC analysis [25].

Figure 2: Experimental Workflow for Allosteric Enzyme Kinetics. The process encompasses enzyme preparation, assay development, data collection, and computational analysis to distinguish kinetic mechanisms and estimate parameters.

Advanced Methodologies: Biomolecular Condensates

Recent advances in enzyme kinetics have revealed the significant impact of biomolecular condensates on enzymatic activity. These membraneless organelles can enhance reaction rates through multiple mechanisms, including local concentration effects and modulation of the enzyme's microenvironment [28]. For Bacillus thermocatenulatus lipase 2 (BTL2), incorporation into biomolecular condensates produced a 3-fold increase in enzymatic activity, comparable to the enhancement observed on addition of 10% isopropanol [28]. This effect stems from the more apolar environment within condensates, which stabilizes the open, active conformation of the enzyme [28].

Furthermore, condensates can function as local pH buffers, maintaining optimal conditions for enzymatic activity even when the bulk solution pH is suboptimal [28]. This property enables cascade reactions involving multiple enzymes with different pH optima that would otherwise be incompatible in a homogeneous solution [28]. For researchers studying allosteric enzymes, these findings highlight the importance of considering supramolecular organization and local microenvironment effects when interpreting kinetic data in both in vitro and cellular contexts.

Computational and Mathematical Frameworks

Mathematical Formulations of Allosteric Models

The MWC model provides distinct mathematical expressions for the binding function (Ȳ) and state function (R̄). For an oligomeric protein with n identical subunits, the fractional saturation (binding function) is given by:

Ȳ = [α(1 + α)^(n-1) + Lcα(1 + cα)^(n-1)] / [(1 + α)^n + L(1 + cα)^n] [24]

where α = [ligand]/KR, L = [T]/[R] (in absence of ligand), and c = KR/KT. The state function, representing the fraction of molecules in the R state, is described by:

R̄ = (1 + α)^n / [(1 + α)^n + L(1 + cα)^n] [24]

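The allosteric range (Q) quantifies how far ligand binding can shift the conformational equilibrium. It is defined as Q = R̄max - R̄min, where R̄min = 1/(1 + L) is the R-state fraction in the absence of ligand and R̄max = 1/(1 + Lc^n) is the fraction at saturating ligand [24]. Systems with low L values and high c values (approaching 1) exhibit small allosteric ranges (Q ≪ 1), indicating limited regulatory capacity: a low L means most molecules already occupy the R state without ligand, and a c near 1 means binding barely discriminates between the two states. Large allosteric ranges correspond to more robust switching between inactive and active states.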
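The binding function, state function, and allosteric range defined in this section translate directly into code. The following is a minimal sketch; the function names and the two parameter sets are illustrative assumptions chosen to contrast a strongly switching system with a weakly regulated one.

```python
def mwc_saturation(alpha, n, L, c):
    """Fractional saturation (binding function) of the MWC model."""
    numerator = alpha * (1.0 + alpha) ** (n - 1) + L * c * alpha * (1.0 + c * alpha) ** (n - 1)
    denominator = (1.0 + alpha) ** n + L * (1.0 + c * alpha) ** n
    return numerator / denominator


def mwc_state(alpha, n, L, c):
    """Fraction of molecules in the R state (state function)."""
    r_term = (1.0 + alpha) ** n
    return r_term / (r_term + L * (1.0 + c * alpha) ** n)


def allosteric_range(n, L, c):
    """Q = R_max - R_min, the span of the state function from zero to saturating ligand."""
    r_min = 1.0 / (1.0 + L)
    r_max = 1.0 / (1.0 + L * c ** n)
    return r_max - r_min


# Assumed parameter sets for a tetramer (n = 4).
q_switch = allosteric_range(4, 1000.0, 0.01)   # T state strongly favored, large affinity gap
q_weak = allosteric_range(4, 0.1, 0.5)         # R state already favored, small affinity gap

print(f"Q (L=1000, c=0.01): {q_switch:.3f}")
print(f"Q (L=0.1,  c=0.5):  {q_weak:.3f}")
for alpha in (0.1, 1.0, 10.0, 100.0):
    y = mwc_saturation(alpha, 4, 1000.0, 0.01)
    r = mwc_state(alpha, 4, 1000.0, 0.01)
    print(f"alpha={alpha:6.1f}  Y={y:.4f}  R={r:.4f}")
```

Printing Ȳ and R̄ side by side makes it easy to see that the two functions need not coincide: the conformational switch and ligand occupancy can respond over different ranges of α.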

Computational Approaches for Allosteric Site Identification

Computational methods have become indispensable tools for identifying and characterizing allosteric sites, complementing experimental approaches. Molecular dynamics (MD) simulations track atomic movements over time, revealing conformational changes and transient pockets that may not be visible in static crystal structures [21]. For example, MD simulations of branched-chain α-ketoacid dehydrogenase kinase (BCKDK) uncovered cryptic allosteric sites that were not detected by X-ray crystallography alone [21].

Enhanced sampling techniques, such as metadynamics and umbrella sampling, accelerate the exploration of conformational space by overcoming energy barriers, facilitating the identification of rare conformational states relevant to allosteric regulation [21]. These methods can be combined with machine learning approaches that leverage evolutionary information, as residues involved in allosteric communication often exhibit co-evolution patterns [26]. Tools like PASSer, AlloReverse, and AlphaFold-enhanced analyses are increasingly employed to predict allosteric sites and mechanisms, providing valuable starting points for experimental validation [21] [26].

Applications in Drug Discovery and Therapeutic Development

The unique properties of allosteric modulators offer distinct advantages for therapeutic intervention. Allosteric drugs typically exhibit greater specificity than orthosteric compounds because they target less-conserved regions of proteins, reducing the risk of off-target effects [26]. Additionally, allosteric modulators can fine-tune enzyme activity rather than completely inhibiting it, allowing for more subtle pharmacological control [22]. This property is particularly valuable for essential enzymes where complete inhibition would be toxic.

Several FDA-approved drugs exemplify the therapeutic potential of allosteric enzyme modulation. Trametinib, an allosteric inhibitor of MEK kinases, demonstrates significantly greater potency than orthosteric alternatives, achieving enhanced target inhibition at lower concentrations [26]. Similarly, the allosteric ABL kinase inhibitor asciminib showed superior efficacy compared to the orthosteric inhibitor bosutinib in treating chronic myeloid leukemia, with significantly higher molecular response rates [26]. These clinical successes underscore the translational relevance of understanding allosteric mechanisms and developing drugs that target allosteric sites.

The "ceiling effect" represents another advantageous property of many allosteric modulators, where their effect plateaus at higher concentrations, potentially reducing toxicity risks associated with overdosing [26]. Furthermore, allosteric drugs can be used in combination with orthosteric agents to overcome drug resistance, as demonstrated by the synergistic interaction between GNF-2 and imatinib in ABL kinase inhibition [26]. For drug development professionals, these characteristics make allosteric enzymes attractive targets for next-generation therapeutics across diverse disease areas, from cancer to metabolic disorders.

Kinetic models centered on the Hill equation and MWC framework provide indispensable tools for quantifying and interpreting the complex behavior of allosteric enzymes. The integration of these mathematical approaches with robust experimental methodologies enables researchers to connect macroscopic kinetic measurements to microscopic molecular mechanisms, offering insights into the fundamental principles of enzyme regulation. As computational methods advance and our understanding of allosteric landscapes deepens, these kinetic models continue to evolve, incorporating new dimensions such as biomolecular condensates and dynamic allosteric networks. For scientists and drug development professionals, mastery of these concepts and techniques remains essential for exploiting allosteric mechanisms in basic research and therapeutic innovation, ultimately expanding the druggable target space and enabling more precise control of biological systems.

Advanced Modeling Frameworks and Their Applications in Drug Discovery and Metabolic Engineering

The mathematical modeling of enzyme kinetics is a cornerstone of systems biology, providing a framework to understand the dynamic regulation of biochemical networks. For decades, the Michaelis-Menten model and its associated standard Quasi-Steady-State Assumption (sQSSA) have dominated enzyme kinetics, particularly for in vitro studies. However, a significant limitation of this traditional approach is its inherent assumption of low enzyme concentrations, a condition often violated in in vivo environments where enzyme concentrations can be high [29]. This validity gap can lead to unrealistic conclusions when modeling cellular systems [1]. The Total QSSA (tQSSA) and the Differential QSSA (dQSSA) represent sophisticated advancements that overcome these limitations. These generalized kinetic models maintain accuracy across a wider range of biological conditions, including high enzyme concentrations and complex reaction topologies, thereby providing more reliable tools for research in drug development and metabolic engineering [1] [29] [30].

Theoretical Foundations: From Michaelis-Menten to Modern Approximations

The Standard Quasi-Steady-State Assumption (sQSSA) and Its Limits

The canonical enzyme kinetic model describes the transformation of a substrate (S) into a product (P) catalyzed by an enzyme (E) via the formation of an enzyme-substrate complex (C). This is represented by the fundamental scheme: E + S ⇌ C → E + P [29]. The sQSSA, which leads to the classic Michaelis-Menten equation, is derived by assuming that the complex concentration remains approximately constant (dC/dt ≈ 0) after a brief initial transient. The approximation is valid only when the enzyme concentration is sufficiently low relative to the substrate concentration and the Michaelis constant (KM) [29]. While this condition often holds for purified in vitro experiments, it frequently breaks down in crowded cellular environments, limiting the sQSSA's applicability for physiological modeling [29].

The Total QSSA (tQSSA): A Broader Validity Domain

The tQSSA addresses the sQSSA's limitations through a change of variables. Instead of tracking free substrate (S), it tracks the total substrate concentration, ST = S + C [30]. This shift yields a kinetic model that remains valid at both low and high enzyme concentrations [30]. The core differential equation becomes:

d(ST)/dt = -k₂C [30]

where the complex concentration C is defined implicitly as a function of ST by the quadratic equation that follows from the conservation laws, with ET denoting the total enzyme concentration:

C² - (ET + KM + ST)C + ET·ST = 0 [30]

The physically meaningful solution is the smaller root, which keeps C below both ET and ST. This formulation does not require the low-enzyme assumption, making it valid across a much wider parameter space [29] [30]. Its application has been successfully extended to complex reaction schemes, including fully competitive reactions and phosphorylation cycles [29].
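To make the tQSSA concrete, the sketch below solves the quadratic for C (taking the smaller root, the one that satisfies C ≤ ET and C ≤ ST) and integrates d(ST)/dt = -k₂C with a fixed-step Runge-Kutta scheme. The rate constants and concentrations are hypothetical and were picked so that the enzyme is in excess; the function names are likewise illustrative.

```python
import math


def complex_tqssa(s_total, e_total, km):
    """Smaller root of C^2 - (ET + KM + ST)*C + ET*ST = 0."""
    b = e_total + km + s_total
    disc = math.sqrt(b * b - 4.0 * e_total * s_total)
    # Algebraically equal to (b - disc) / 2, but avoids cancellation when ET*ST << b^2.
    return 2.0 * e_total * s_total / (b + disc)


def simulate_total_substrate(s0, e_total, km, k2, t_end, steps=1000):
    """Integrate d(ST)/dt = -k2 * C(ST) with fixed-step RK4; return ST at t_end."""
    def rate(st):
        return -k2 * complex_tqssa(st, e_total, km)

    st = s0
    dt = t_end / steps
    for _ in range(steps):
        k1 = rate(st)
        k2_ = rate(st + 0.5 * dt * k1)
        k3 = rate(st + 0.5 * dt * k2_)
        k4 = rate(st + dt * k3)
        st += dt * (k1 + 2.0 * k2_ + 2.0 * k3 + k4) / 6.0
    return st


# Hypothetical high-enzyme scenario (arbitrary concentration and time units).
k2, km, e_total, s0 = 1.0, 1.0, 10.0, 1.0

c_tqssa = complex_tqssa(s0, e_total, km)
c_sqssa = e_total * s0 / (km + s0)   # Michaelis-Menten expression with S set to S0
st_after_1 = simulate_total_substrate(s0, e_total, km, k2, t_end=1.0)

print(f"complex from tQSSA: {c_tqssa:.3f} (cannot exceed ST = {s0})")
print(f"complex from sQSSA: {c_sqssa:.3f} (exceeds the substrate available)")
print(f"total substrate after 1 time unit (tQSSA): {st_after_1:.3f}")
```

With enzyme in tenfold excess, the Michaelis-Menten expression implies more complex than there is substrate, whereas the tQSSA root stays within the conservation limits. At low enzyme concentration the two expressions converge, consistent with the sQSSA being a special case.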

The Differential QSSA (dQSSA): Linear Algebraic Formulation

The dQSSA is another generalized model designed to eliminate the restrictive assumptions of the sQSSA without increasing model dimensionality. It expresses the system of differential equations as a linear algebraic equation, significantly simplifying the mathematical analysis [1]. A key advantage of the dQSSA is its ease of adaptation to reversible enzyme kinetic systems with complex topologies. It has been demonstrated to predict behavior consistent with mass action kinetics in silico and can capture nuanced regulatory phenomena, such as coenzyme inhibition in reversible lactate dehydrogenase (LDH), which the classical Michaelis-Menten model fails to reproduce [1]. Furthermore, by reducing the number of parameters, the dQSSA simplifies the optimization process during model fitting [1].

Table 1: Comparative Analysis of Quasi-Steady-State Approximations in Enzyme Kinetics

| Feature | sQSSA (Michaelis-Menten) | tQSSA | dQSSA |

|---|---|---|---|

| Core Assumption | dC/dt ≈ 0; Low [Enzyme] | dC/dt ≈ 0; Uses total substrate variable | Linear algebraic formulation of ODEs |