Flux Consistency in Metabolic Models: A Guide to Reliable Predictions for Biomedical Research

Abstract

This article provides a comprehensive overview of flux consistency in genome-scale metabolic models (GEMs), a critical factor for generating reliable predictions in biomedical and biotechnological applications. Aimed at researchers, scientists, and drug development professionals, it covers foundational concepts, key methodologies like Flux Balance Analysis (FBA) and 13C-Metabolic Flux Analysis (13C-MFA), and advanced techniques such as flux sampling. The scope extends to practical applications in bioprocess optimization and drug discovery, addresses common challenges in model troubleshooting and validation, and explores emerging methods like machine learning for improving predictive accuracy. The goal is to equip practitioners with the knowledge to build, validate, and apply robust metabolic models.

Demystifying Metabolic Flux: Core Concepts and the Importance of Consistency

What is Metabolic Flux? Defining Reaction Rates in Cellular Networks

Metabolic flux, defined as the rate of metabolite turnover through biochemical pathways, represents the functional phenotype of cellular metabolic networks [1] [2]. This quantitative measure of reaction rates provides crucial insights into cellular physiology, from fundamental biological processes to disease mechanisms like cancer [3] [4]. As a direct functional readout of cell metabolism, flux analysis bridges the gap between genetic potential and metabolic function, enabling researchers to understand how metabolic pathways are regulated under different physiological conditions [2] [4]. This technical guide explores the fundamental principles, measurement methodologies, and computational frameworks for analyzing metabolic fluxes, with particular emphasis on flux consistency in metabolic reconstructions, a critical consideration for developing predictive biological models in pharmaceutical and biotechnology applications [5] [6].

Core Principles of Metabolic Flux

Fundamental Definition and Biochemical Basis

In biochemical terms, metabolic flux refers to the rate of turnover of molecules through a metabolic pathway [1]. It is mathematically represented as the net rate of a metabolic reaction, calculated as the difference between the forward ($V_f$) and reverse ($V_r$) reaction rates:

$$J = V_f - V_r$$

where $J$ represents the flux through a given reaction [1]. At equilibrium, where forward and reverse rates are equal, no net flux occurs. Metabolic flux is not a static property but a dynamic measure that quantifies the flow of metabolites through interconnected metabolic networks [1] [2].

The control of metabolic flux represents a systemic property that depends on all interactions within the metabolic network [1]. This regulation is quantified by the flux control coefficient, which measures the degree to which individual enzymatic steps influence pathway flux [1]. In linear reaction chains, this coefficient ranges between zero and one, where zero indicates no influence over steady-state flux and one signifies complete control [1].
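To make the definition concrete, the sketch below computes flux control coefficients, $C^J_{E_i} = \partial \ln J / \partial \ln E_i$, for a hypothetical two-step chain S → X → P in which the first step is reversible and the second irreversible. The rate laws and all parameter values are assumptions chosen for illustration. For such a chain the coefficients fall between zero and one, and they sum to one, as required by the summation theorem of metabolic control analysis.

```python
import math

def steady_state_flux(e1, e2, k1=2.0, km1=1.0, k2=1.5, s=1.0):
    """Steady-state flux J for the hypothetical chain S -> X -> P.

    Assumed toy rate laws (illustrative parameter values):
      v1 = e1 * (k1*s - km1*x)   reversible first step, substrate s held fixed
      v2 = e2 * k2 * x           irreversible second step
    Setting v1 = v2 gives x = e1*k1*s / (e1*km1 + e2*k2).
    """
    x = e1 * k1 * s / (e1 * km1 + e2 * k2)
    return e2 * k2 * x

def control_coefficient(which, e1=1.0, e2=1.0, h=1e-6):
    """C^J_Ei = d ln J / d ln Ei, estimated by a central difference in log space."""
    def log_j(log_e):
        args = [e1, e2]
        args[which] = math.exp(log_e)  # perturb only the chosen enzyme level
        return math.log(steady_state_flux(*args))
    log_e0 = math.log([e1, e2][which])
    return (log_j(log_e0 + h) - log_j(log_e0 - h)) / (2 * h)

c1 = control_coefficient(0)
c2 = control_coefficient(1)
print(f"C1 = {c1:.4f}, C2 = {c2:.4f}, sum = {c1 + c2:.4f}")
```

With the default values the analytic coefficients are $C_1 = e_2 k_2 / (e_1 k_{-1} + e_2 k_2) = 0.6$ and $C_2 = 0.4$, so the numerical estimates should match them and sum to one.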

Metabolic Flux in Network Context

Cellular metabolism functions as an integrated network of chemical reactions rather than isolated pathways [1]. These networks are interconnected through shared metabolites and cofactors, with metabolic flux representing the movement of matter through this complex system [1]. The regulation of flux through these networks occurs primarily at enzymatic steps catalyzing irreversible reactions, while reversible steps are governed by simple chemical equilibria based on reactant and product concentrations [1].

Metabolic flux provides a quantitative readout of cellular function that reflects the integration of gene expression, translation, post-translational modifications, and protein-metabolite interactions [1] [4]. As such, flux distributions represent the ultimate expression of cellular phenotype under specific conditions, making them particularly valuable for understanding metabolic adaptations in diseases like cancer, where tumor cells exhibit dramatically altered glucose metabolism compared to normal cells [1] [3].

Table 1: Key Characteristics of Metabolic Flux in Cellular Systems

| Property | Description | Biological Significance |
|---|---|---|
| Dynamic Nature | Rate of metabolite flow through pathways | Represents real-time metabolic activity rather than metabolic potential |
| Systemic Regulation | Controlled by multiple enzymatic steps simultaneously | Explains how perturbations at one network node affect the entire system |
| Connectivity | Links different metabolic networks through common cofactors | Enables coordination between carbohydrate, lipid, and amino acid metabolism |
| Condition Dependency | Varies with genetic background and environment | Explains metabolic adaptations in disease and stress responses |

Methodological Approaches for Flux Analysis

Experimental Measurement Techniques

Measuring metabolic fluxes presents unique challenges since fluxes cannot be directly measured but must be inferred from other observables [2]. The most informative approaches utilize stable isotope labeling and tracking techniques, with $^{13}$C-based metabolic flux analysis ($^{13}$C-MFA) emerging as the most advanced and widely applicable method [7].

The $^{13}$C-MFA protocol involves several critical steps [7]:

- Preparation of cell cultures with labelled tracers, typically using $^{13}$C-labeled substrates (e.g., [1,2-$^{13}$C]glucose; [U-$^{13}$C]glucose)

- Cell cultivation until isotopic steady state is reached, that is, until the labeling patterns of metabolites no longer change over time

- Extraction of intra- and extracellular metabolites

- Analysis using targeted mass spectrometry (MS) or nuclear magnetic resonance (NMR) spectroscopy

- Data processing and computational modeling to evaluate and predict cellular fluxes
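The core inference behind the $^{13}$C-MFA workflow above can be illustrated with a two-route mixing calculation. At isotopic steady state, the mass isotopomer distribution (MID) of a metabolite produced by two converging reactions is the flux-weighted average of the MIDs those reactions deliver, so the measured MID constrains the flux split. All MID values below are hypothetical and natural-abundance correction is omitted; full $^{13}$C-MFA instead fits a network-wide labeling model with software such as INCA or 13CFLUX2. This is only a minimal flux-ratio sketch.

```python
import numpy as np

# Hypothetical MIDs (fractions of M+0, M+1, M+2) of one product metabolite
# as delivered by two converging routes.
mid_route_a = np.array([0.10, 0.05, 0.85])    # route A keeps both labeled carbons
mid_route_b = np.array([0.15, 0.80, 0.05])    # route B loses one labeled carbon
mid_observed = np.array([0.125, 0.425, 0.45]) # hypothetical measurement

# At isotopic steady state the product MID is a flux-weighted average:
#   mid_obs = f * mid_a + (1 - f) * mid_b,  with f = v_a / (v_a + v_b)
# Rearranged: mid_obs - mid_b = f * (mid_a - mid_b); solve for f by least squares.
d = mid_route_a - mid_route_b
f = float(np.dot(d, mid_observed - mid_route_b) / np.dot(d, d))
print(f"fraction of product flux via route A: {f:.2f}")
```

Because the hypothetical measurement was constructed as an equal mixture, the estimated split is 0.50; with real data, the residual of this fit also indicates whether a two-route model is adequate.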

For mammalian cells, a significant limitation of traditional $^{13}$C-MFA is the extended time required to reach isotopic steady state (often 4 hours to a full day) [7]. To address this, isotopically nonstationary $^{13}$C-MFA (INST-MFA) monitors the transient accumulation of labeled metabolites over time, before the system reaches isotopic steady state, while the system remains at metabolic steady state [7]. This approach yields results from shorter labeling experiments but requires more complex computational modeling, because the labeling dynamics must be simulated over time; the elementary metabolite unit (EMU) framework is used to reduce this computational burden [7].
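INST-MFA can work before isotopic steady state because the speed of labeling carries flux information once pool sizes are known. The sketch below assumes a single well-mixed metabolite pool of size $X$, fed at constant flux $v$ by a fully labeled substrate (metabolic steady state), so its labeled fraction follows $L(t) = 1 - e^{-(v/X)t}$. The pool size, time points and noise level are hypothetical, and the example does not implement the EMU framework; it only recovers $v$ from a simulated labeling time course.

```python
import numpy as np

# Hypothetical single pool of size X fed only by fully labeled substrate at
# constant flux v. Unlabeled material is diluted out, so the labeled fraction obeys
#   dL/dt = (v / X) * (1 - L),  L(0) = 0  =>  L(t) = 1 - exp(-(v / X) * t)
v_true, pool_size = 2.0, 10.0
t = np.array([0.5, 1.0, 2.0, 4.0, 8.0])        # sampling times (hypothetical units)
rng = np.random.default_rng(0)
labeled = 1.0 - np.exp(-(v_true / pool_size) * t)
labeled = labeled + rng.normal(0.0, 0.005, t.size)  # small simulated measurement noise

# Linearize: -ln(1 - L) = (v / X) * t, then fit the slope through the origin.
y = -np.log(1.0 - labeled)
turnover_rate = float(np.dot(t, y) / np.dot(t, t))
v_est = turnover_rate * pool_size  # converting turnover to flux needs the pool size
print(f"estimated flux: {v_est:.2f} (true {v_true})")
```

The slope only gives the turnover rate $v/X$; this is why INST-MFA requires (or co-estimates) metabolite pool sizes, which steady-state $^{13}$C-MFA does not.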

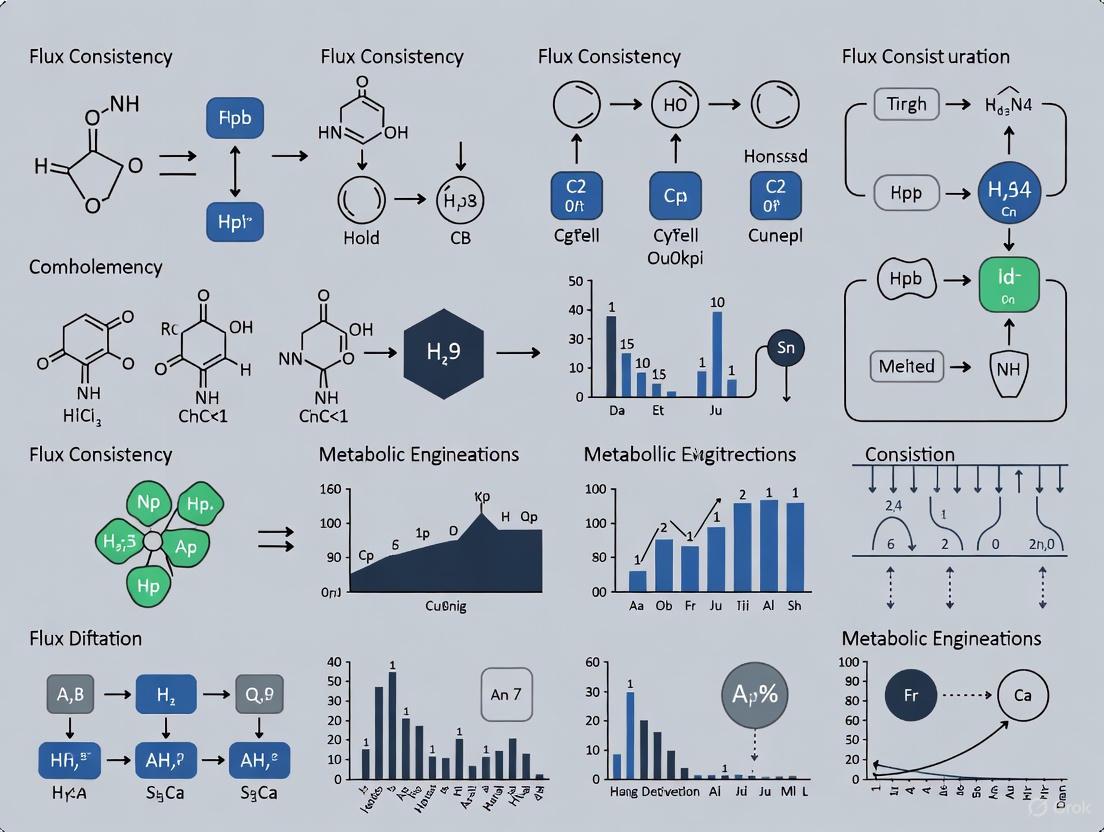

Figure 1: Experimental workflow for 13C-Metabolic Flux Analysis (13C-MFA)

Computational Modeling Approaches

Computational methods for flux analysis have evolved significantly, with several distinct techniques now available [7]:

Table 2: Comparison of Major Metabolic Flux Analysis Techniques

| Method | Abbreviation | Labeled Tracers | Metabolic Steady State | Isotopic Steady State | Primary Applications |
|---|---|---|---|---|---|
| Flux Balance Analysis | FBA | Not Required | Required | Not Required | Genome-scale prediction of metabolic capabilities |
| Metabolic Flux Analysis | MFA | Not Required | Required | Not Required | Central carbon metabolism studies |
| 13C-Metabolic Flux Analysis | 13C-MFA | Required | Required | Required | Detailed resolution of intracellular fluxes |
| Isotopic Nonstationary 13C-MFA | 13C-INST-MFA | Required | Required | Not Required | Systems with slow isotope labeling |
| Dynamic Metabolic Flux Analysis | DMFA | Not Required | Not Required | Not Required | Transient culture conditions |
| 13C-Dynamic MFA | 13C-DMFA | Required | Not Required | Not Required | Comprehensive dynamic flux mapping |

Flux Balance Analysis (FBA) represents the foundational computational approach, using large-scale metabolic models and assuming steady-state conditions within the metabolic network [7]. FBA treats the cell as a network of reactions constrained by mass balance laws, seeking to find reaction rates (fluxes) that satisfy these constraints while maximizing a biological objective such as growth or ATP production [8].
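A minimal FBA problem can be written directly as a linear program. The sketch below assumes a hypothetical five-reaction network with an uptake limit of 10 flux units and uses SciPy's `linprog` (HiGHS solver) rather than a dedicated COBRA package. Genome-scale models are normally handled with COBRA software, but the optimization problem has the same form: maximize the objective flux subject to S·v = 0 and lower and upper flux bounds.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network (rows: metabolites A, B, C; columns: reactions)
#   EX_A: -> A      R1: A -> B      R2: A -> C
#   R3:   B -> C    BIOMASS: B + C ->
reactions = ["EX_A", "R1", "R2", "R3", "BIOMASS"]
S = np.array([
    [1, -1, -1,  0,  0],   # A
    [0,  1,  0, -1, -1],   # B
    [0,  0,  1,  1, -1],   # C
], dtype=float)
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 3), (0, 1000)]  # uptake capped at 10

# FBA: maximize the biomass flux subject to S v = 0 and the flux bounds.
# linprog minimizes, so the objective coefficient is negated.
c = np.zeros(len(reactions))
c[reactions.index("BIOMASS")] = -1.0
res = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")
assert res.success, res.message
growth = -res.fun
print(f"max biomass flux: {growth:.2f}")
for name, flux in zip(reactions, res.x):
    print(f"  {name:8s} {flux:7.2f}")
```

Here the optimum is 5 biomass units, but R3 can take any value between 0 and 3 at that optimum, so the printed flux vector is only one of several optimal distributions.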

More advanced techniques like 13C-MFA integrate experimental labeling data with computational models to resolve intracellular fluxes with greater accuracy [7]. This method assumes both metabolic steady state (constant metabolic fluxes over time) and isotopic steady state (labeling patterns that no longer change over time) [7].

Flux Consistency in Metabolic Reconstructions

Concept and Importance in Metabolic Modeling

Flux consistency represents a critical quality metric for metabolic reconstructions, referring to the capability of reactions within a network to carry non-zero flux under given physiological conditions [5]. The presence of flux-inconsistent reactions indicates gaps, errors, or thermodynamic impossibilities in metabolic reconstructions that undermine their predictive accuracy [5].

In genome-scale metabolic reconstructions, flux consistency is evaluated through flux variability analysis and related algorithms that identify reactions which cannot carry flux in any valid network state [5]. The percentage of flux-consistent reactions serves as a key indicator of reconstruction quality, with higher percentages generally reflecting better-curated models [5]. For instance, in the AGORA2 resource of human microbiome reconstructions, flux consistency was a primary validation metric, with the reconstructions demonstrating significantly higher flux consistency than automatically generated drafts [5].
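The consistency check described above amounts to asking, for each reaction, whether any steady-state flux vector allows it to carry nonzero flux. The sketch below does this by minimizing and maximizing each flux with no biological objective, on a hypothetical network containing a dead-end metabolite (D) and an orphan metabolite (E). It is a brute-force illustration with two linear programs per reaction; COBRA tools use faster dedicated algorithms (for example FASTCC) on genome-scale models.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical network with two deliberate gaps:
#   D is produced (R4) but never consumed -> R4 is blocked (dead end)
#   E is consumed (R5) but never produced -> R5 is blocked
reactions = ["EX_A", "R1", "R4", "R5", "EX_B"]
S = np.array([
    # EX_A  R1  R4  R5  EX_B
    [1,   -1, -1,  0,  0],   # A
    [0,    1,  0,  1, -1],   # B
    [0,    0,  1,  0,  0],   # D  (dead end: only produced)
    [0,    0,  0, -1,  0],   # E  (orphan: only consumed)
], dtype=float)
bounds = [(0, 10), (-1000, 1000), (0, 1000), (0, 1000), (0, 1000)]
b_eq = np.zeros(S.shape[0])

def flux_range(j):
    """Min and max feasible flux through reaction j (no biological objective)."""
    c = np.zeros(S.shape[1])
    c[j] = 1.0
    lo = linprog(c, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs")
    hi = linprog(-c, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs")
    return lo.fun, -hi.fun

tol = 1e-9
blocked = []
for j, name in enumerate(reactions):
    vmin, vmax = flux_range(j)
    if abs(vmin) < tol and abs(vmax) < tol:   # cannot carry flux in any state
        blocked.append(name)
consistent = 1 - len(blocked) / len(reactions)
print("blocked reactions:", blocked)
print(f"flux-consistent fraction: {consistent:.0%}")
```

R4 and R5 are reported as blocked, so 3 of 5 reactions (60%) are flux consistent; in a real reconstruction each blocked reaction points to a gap or error to be curated.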

Methodologies for Ensuring Flux Consistency

Multiple computational approaches have been developed to evaluate and improve flux consistency in metabolic reconstructions:

Constraint-Based Reconstruction and Analysis (COBRA) methods provide the mathematical foundation for evaluating flux consistency [5]. These approaches apply mass balance, thermodynamic, and enzymatic capacity constraints to define the feasible flux space, then identify reactions that cannot carry flux under these constraints [5].

DEMETER (Data-drivEn METabolic nEtwork Refinement) represents an advanced pipeline for reconstruction refinement that systematically improves flux consistency [5]. This workflow integrates data collection, draft reconstruction generation, and simultaneous iterative refinement, gap-filling, and debugging [5]. The process includes manual validation of gene functions across metabolic subsystems and extensive literature curation to ensure biological accuracy [5].

Recent advances include flux sampling techniques that characterize distributions of all possible fluxes rather than single optimal states [6]. This approach is particularly valuable for capturing phenotypic diversity and incorporating uncertainty into flux predictions, especially for applications in personalized medicine and microbial community modeling [6].
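Flux sampling can be illustrated with a basic hit-and-run sampler: starting from a feasible point, it repeatedly picks a random direction inside the null space of S (so mass balance is preserved) and moves to a uniformly random point along that line within the flux bounds. The branch-point network, bounds and starting point below are hypothetical, and production samplers (e.g., ACHR, OptGP or CHRR) add rounding, warm-up and thinning that this sketch omits.

```python
import numpy as np

# Hypothetical branch point: v1 = v2 + v3 (one metabolite, three reactions)
S = np.array([[1.0, -1.0, -1.0]])
lb = np.array([0.0, 0.0, 0.0])
ub = np.array([10.0, 6.0, 6.0])

# Orthonormal basis of the null space of S: any direction in it keeps S v = 0.
_, sing, vt = np.linalg.svd(S)
rank = int(np.sum(sing > 1e-10))
null_basis = vt[rank:].T            # shape (n_reactions, n_reactions - rank)

def hit_and_run(v_start, n_samples, rng):
    """Basic hit-and-run sampler on {v : S v = 0, lb <= v <= ub}."""
    v = v_start.copy()
    samples = []
    for _ in range(n_samples):
        d = null_basis @ rng.normal(size=null_basis.shape[1])  # random feasible direction
        # Step interval [t_lo, t_hi] that keeps lb <= v + t*d <= ub.
        with np.errstate(divide="ignore", invalid="ignore"):
            t1 = (lb - v) / d
            t2 = (ub - v) / d
        moving = np.abs(d) > 1e-12   # ignore coordinates the direction does not change
        t_lo = np.max(np.minimum(t1, t2)[moving])
        t_hi = np.min(np.maximum(t1, t2)[moving])
        v = v + rng.uniform(t_lo, t_hi) * d
        samples.append(v.copy())
    return np.array(samples)

rng = np.random.default_rng(42)
v0 = np.array([4.0, 2.0, 2.0])       # an interior feasible point (assumed known)
samples = hit_and_run(v0, 5000, rng)
print("mean flux per reaction:", samples.mean(axis=0).round(2))
```

Each sample is a full steady-state flux vector, so per-reaction histograms or correlations between reactions can be read directly from `samples` instead of relying on a single optimal solution.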

Table 3: Research Reagent Solutions for Metabolic Flux Analysis

| Reagent/Resource | Function | Application Context |
|---|---|---|
| 13C-labeled substrates (e.g., [U-13C] glucose) | Carbon source for tracing metabolic pathways | 13C-MFA experiments to determine intracellular flux distributions |
| AGORA2 reconstructions | Genome-scale metabolic models of human microorganisms | Personalized modeling of host-microbiome interactions in drug metabolism |
| Flux analysis software (INCA, OpenFLUX, 13CFLUX2) | Computational modeling of flux distributions | Data processing and flux calculation from isotopic labeling patterns |
| Analytical platforms (GC-MS, LC-MS, NMR) | Detection and quantification of isotopic labeling | Measurement of isotope incorporation in metabolic intermediates |

Applications and Current Research Directions

Biomedical and Biotechnology Applications

Metabolic flux analysis has become indispensable across multiple research domains, with significant implications for human health and disease:

Cancer research has particularly benefited from flux analysis, as tumor cells exhibit characteristic metabolic reprogramming known as the Warburg effect—enhanced glucose uptake and lactate production even under aerobic conditions [1] [3]. In glioblastoma multiforme (GBM), one of the most aggressive malignant brain tumors, flux analysis of GBM-specific metabolic models has predicted major sources of acetyl-CoA and oxaloacetic acid pools in the TCA cycle, revealing that pyruvate dehydrogenase from glycolysis and anaplerotic flux from glutaminolysis serve as primary contributors [3].

Metabolic engineering represents another major application area, where flux analysis guides the rational redesign of microbial strains for industrial biotechnology [4]. By identifying metabolic limitations and bottlenecks, researchers can implement targeted genetic modifications to redirect flux toward desired products [4]. For example, flux analysis revealed limitations in redox cofactor regeneration rates of NADH and NADPH in various microbial hosts, leading to engineering strategies that overcome these constraints [4].

Emerging Technologies and Future Perspectives

The field of metabolic flux analysis continues to evolve with several emerging technologies poised to expand capabilities:

Quantum computing algorithms represent a frontier in flux analysis, with recent demonstrations showing that quantum interior-point methods can solve core metabolic-modeling problems like flux balance analysis [8]. Though currently limited to simulations, this approach suggests a potential route to accelerate metabolic simulations as models scale to whole cells or microbial communities [8]. Japanese researchers have successfully adapted quantum singular value transformation to map how cells use energy and resources, recovering correct solutions for test cases involving glycolysis and the TCA cycle [8].

Personalized medicine applications are advancing through resources like AGORA2, which provides 7,302 genome-scale metabolic reconstructions of human microorganisms [5]. This enables strain-resolved modeling of individual gut microbiomes and their drug transformation potentials, which vary considerably between individuals and correlate with age, sex, body mass index, and disease stages [5].

Single-cell flux analysis represents another emerging frontier, though current methods primarily focus on transcriptomic and proteomic profiling at single-cell resolution [6]. The development of true single-cell flux measurements would revolutionize our understanding of cellular heterogeneity in metabolic responses.

Metabolic flux represents the dynamic flow of metabolites through biochemical pathways, providing a quantitative measure of cellular metabolic activity that reflects the integration of genetic potential, environmental constraints, and regulatory mechanisms. The analysis of metabolic fluxes has evolved from simple material balancing to sophisticated approaches integrating stable isotope tracing, advanced analytics, and computational modeling. Flux consistency has emerged as a critical consideration in metabolic reconstructions, serving as both a quality metric and a practical target for model refinement. As technologies advance—particularly in quantum computing, personalized modeling, and single-cell analysis—the resolution and predictive power of flux analysis will continue to improve, offering new insights into fundamental biological processes and unlocking novel therapeutic strategies for human diseases.

In the field of metabolic engineering and systems biology, researchers frequently encounter a fundamental mathematical challenge: underdetermined systems. These systems arise when the number of unknown metabolic fluxes exceeds the number of independent constraining equations derived from mass balances and experimental measurements [9]. The core of the problem lies in the stoichiometric matrix S, where the steady-state mass balances are written as S·v = 0, with v the flux vector. When the number of fluxes (n) exceeds the rank of S (which is at most the number of metabolites, m), the system has infinitely many solutions [9] [10]. This underdetermination is not merely a theoretical concern but a practical limitation that affects the predictability and precision of metabolic models. Genome-scale metabolic reconstructions typically contain hundreds to thousands of reactions but considerably fewer independent metabolite balance equations, making them inherently underdetermined [11]. Consequently, researchers face significant challenges in determining unique flux distributions, necessitating specialized approaches to narrow the solution space.

The Mathematical Basis of Underdetermination

Fundamental Concepts and Stoichiometric Constraints

The mathematical formulation of metabolic networks begins with mass balance equations. For each metabolite $X_i$ in the system, the rate of change is described by the differential equation:

$$\frac{dX_i}{dt} = \sum \text{Influxes} - \sum \text{Effluxes}$$

At steady state, where $\frac{dX_i}{dt} = 0$, this simplifies to $\sum \text{Influxes} = \sum \text{Effluxes}$ [10]. These balances form a system of linear equations represented in matrix form as S·v = 0, where S is the m×n stoichiometric matrix (m metabolites, n reactions), and v is the n×1 flux vector. The difference between the number of reactions (n) and the rank of S gives the system's degrees of freedom, that is, the dimension of the null space of S. A positive value indicates an underdetermined system: the null space of S is nontrivial, so infinitely many flux vectors satisfy the mass balance constraints [9] [10].

A Simplified Illustrative Example

Consider the simplified metabolic network iSIM, which captures central energy metabolism with just nine metabolic reactions [11]. Even in this minimal reconstruction, if the number of measurable fluxes is insufficient, the system may remain underdetermined. For instance, a branching point with three fluxes (v1, v2, v3) and only one mass balance equation (v1 = v2 + v3) has three unknowns but only one equation, resulting in infinitely many solutions satisfying the constraint.
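The branch-point example can be checked numerically: the degrees of freedom equal the number of fluxes minus the rank of S, and each independent flux measurement removes one of them. The sketch below uses NumPy with the same three fluxes; the flux values are arbitrary illustrative numbers.

```python
import numpy as np

# Branch point from the text: one balanced metabolite, three fluxes, v1 = v2 + v3
S = np.array([[1.0, -1.0, -1.0]])       # row: metabolite; columns: v1, v2, v3
n_reactions = S.shape[1]
rank = np.linalg.matrix_rank(S)
dof = n_reactions - rank
print(f"rank(S) = {rank}, degrees of freedom = {dof}")

# Two different flux vectors that both satisfy S v = 0:
for v in (np.array([5.0, 3.0, 2.0]), np.array([5.0, 1.0, 4.0])):
    print(v, "->", S @ v)

# Measuring v1 alone still leaves one degree of freedom;
# measuring v2 as well makes the system determined.
A = np.vstack([S, [1.0, 0.0, 0.0], [0.0, 1.0, 0.0]])
b = np.array([0.0, 5.0, 3.0])           # balance, measured v1, measured v2
v = np.linalg.solve(A, b)
print("unique solution with v1 and v2 measured:", v)
```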

Experimental and Computational Methodologies for Resolving Underdetermined Systems

Constraint-Based Modeling and Optimization Approaches

To address underdetermination, researchers employ constraint-based modeling approaches that incorporate additional biological constraints:

- Flux Balance Analysis (FBA): FBA resolves underdetermination by imposing an objective function (e.g., biomass maximization) and solving for a flux distribution that optimizes this function [10] [12]. The solution space is further constrained by incorporating physiological bounds on reaction fluxes ($\alpha_i \leq v_i \leq \beta_i$).

- Dynamic Flux Estimation (DFE): This model-free approach uses time-series metabolite concentration data to estimate derivatives ($dX_i/dt$) [10]. The system is decoupled into algebraic equations linear in the fluxes. However, DFE requires the system to be determined (number of independent fluxes = number of metabolites) or overdetermined to compute a unique solution [10].

- Most Accurate Fluxes (MAF) Algorithm: This systematic approach iteratively determines fluxes based on accuracy measures, progressively resolving fluxes until the system becomes determined [9].

- REKINDLE Framework: A deep-learning-based method using Generative Adversarial Networks (GANs) to efficiently generate kinetic models with tailored dynamic properties, significantly reducing computational resources compared to traditional sampling methods [13].

Table 1: Computational Methods for Resolving Underdetermined Systems

| Method | Core Principle | Data Requirements | Key Advantages |
|---|---|---|---|
| Flux Balance Analysis (FBA) | Optimization of biological objective function | Network stoichiometry, exchange fluxes | Computationally efficient, widely applicable |
| Dynamic Flux Estimation (DFE) | Decoupling ODEs using time-series data | Dense metabolite time-course data | Model-free, provides dynamic flux profiles |
| Most Accurate Fluxes (MAF) | Iterative flux resolution based on accuracy ranking | Partial flux measurements | Systematic, computationally efficient |
| REKINDLE | Deep learning generation of kinetic parameters | Training data from traditional sampling | High efficiency in generating viable models |
| Monte Carlo Sampling | Random sampling of feasible parameter space | Physicochemical constraints | Characterizes solution space diversity |

Protocol for Dynamic Flux Estimation (DFE)

The DFE methodology provides a representative protocol for addressing underdetermination [10]:

- Time-Series Data Collection: Conduct high-throughput experiments to measure metabolite concentrations ($X_i$) at multiple time points (K) under specific physiological conditions. Data should characterize the system's full dynamic response to perturbations.

- Data Smoothing and Derivative Estimation: Apply smoothing algorithms (e.g., spline interpolation) to the time-series data to obtain continuous concentration profiles. Estimate the derivatives ($dX_i/dt$) at each time point numerically.

- System Decoupling: Substitute the estimated derivatives into the mass balance equations, transforming the system of N differential equations into N sets of K algebraic equations: $\frac{dX_i}{dt} \approx \sum \text{Influxes} - \sum \text{Effluxes}$.

- Flux Calculation: If the system has full rank (number of independent fluxes = number of metabolites), solve the linear algebraic system at each time point to obtain numerical flux values as functions of time.

- Flux Representation: Plot numerical flux values against metabolite concentrations and potential modulators. Use these plots to identify appropriate mathematical representations (e.g., Michaelis-Menten, Hill functions) for each flux.

- Parameter Estimation: Determine parameter values for the identified rate laws using regression techniques, now simplified as each flux is handled individually.
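The DFE steps above can be sketched on a hypothetical two-metabolite pathway (input → X1 → X2 → output) in which the input flux is assumed known, so two balances determine the two unknown fluxes. Synthetic, noise-free time courses are generated from assumed mass-action kinetics, interpolated with a cubic spline, differentiated, converted to flux time courses, and finally fitted with a mass-action rate law. Real, noisy data would call for a smoothing spline or another noise-tolerant smoother rather than exact interpolation.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.interpolate import CubicSpline

# Hypothetical pathway: input -> X1 -> X2 -> output, with input flux v0 known.
#   dX1/dt = v0 - v1,   dX2/dt = v1 - v2
# Two balances and two unknown fluxes (v1, v2): the system is determined.
# "True" rate laws, used only to generate synthetic data: v1 = k1*X1, v2 = k2*X2.
v0, k1_true, k2_true = 1.0, 0.5, 0.2

def rhs(t, x):
    return [v0 - k1_true * x[0], k1_true * x[0] - k2_true * x[1]]

# Step 1: time-series "measurements" (noise-free here for clarity).
t = np.linspace(0.0, 10.0, 41)
sol = solve_ivp(rhs, (0.0, 10.0), [0.0, 0.0], t_eval=t, rtol=1e-10, atol=1e-12)
x1, x2 = sol.y

# Step 2: spline each profile and evaluate its first derivative at the samples.
dx1 = CubicSpline(t, x1)(t, 1)
dx2 = CubicSpline(t, x2)(t, 1)

# Steps 3-4: decoupled algebraic balances, solved at every time point.
v1 = v0 - dx1
v2 = v1 - dx2

# Steps 5-6: relate each flux to its substrate and fit a rate law per flux.
# A mass-action form v = k*X is assumed here and fitted through the origin.
k1_est = float(np.dot(v1, x1) / np.dot(x1, x1))
k2_est = float(np.dot(v2, x2) / np.dot(x2, x2))
print(f"k1: {k1_est:.3f} (true {k1_true}), k2: {k2_est:.3f} (true {k2_true})")
```

Because each flux is fitted on its own, the final regression involves one parameter at a time, which is the simplification the protocol describes.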

Protocol for the Most Accurate Fluxes (MAF) Algorithm

For steady-state systems, the MAF algorithm provides an alternative systematic approach [9]:

- Problem Formulation: Define the underdetermined metabolic network with stoichiometric matrix S and flux vector v, subject to constraints S·v = 0 and $\alpha_i \leq v_i \leq \beta_i$.

- Accuracy Assessment: Calculate an accuracy measure for each flux based on experimental reliability (e.g., measurement precision, technical replicates).

- Flux Ranking: Rank all fluxes from highest to lowest accuracy.

- Iterative Resolution: Select the flux with the highest accuracy from the unresolved set. If this flux is measurable, use its measured value. Otherwise, apply an appropriate objective function (e.g., maximization/minimization) to determine its value.

- System Reduction: Incorporate the resolved flux value into the system, effectively reducing the degrees of freedom by one.

- Iteration: Repeat the iterative resolution and system reduction steps until all fluxes are determined. The solution corresponds to the flux distribution that satisfies all constraints while maintaining maximal accuracy for the best-known fluxes.
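The MAF steps above can be sketched as a loop over fluxes ranked by accuracy. This is a simplified interpretation, not the published implementation [9]: the accuracy scores are hypothetical inputs, unmeasured fluxes are fixed by maximizing them with SciPy's `linprog` given the fluxes already fixed, and the function name `most_accurate_fluxes` is illustrative.

```python
import numpy as np
from scipy.optimize import linprog

def most_accurate_fluxes(S, bounds, accuracy, measured):
    """Simplified sketch of the iterative procedure (hypothetical helper).

    accuracy: one reliability score per flux (higher = more reliable)
    measured: dict {flux index: measured value}
    Unmeasured fluxes are fixed by maximizing them given the fluxes already fixed.
    """
    S = np.asarray(S, dtype=float)
    bounds = list(bounds)
    order = np.argsort(accuracy)[::-1]          # rank fluxes, most accurate first
    for j in map(int, order):                   # take the next unresolved flux
        if j in measured:
            value = measured[j]
        else:
            c = np.zeros(S.shape[1])
            c[j] = -1.0                          # objective: maximize v_j
            res = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                          bounds=bounds, method="highs")
            if not res.success:
                raise ValueError(f"fixed fluxes are inconsistent at flux {j}")
            value = res.x[j]
        bounds[j] = (value, value)               # remove one degree of freedom
    v = np.array([b[0] for b in bounds])
    if not np.allclose(S @ v, 0.0, atol=1e-8):
        raise ValueError("resolved fluxes violate the mass balances")
    return v

# Branch point v1 = v2 + v3; v1 and v2 are measured, v3 is not.
S = [[1.0, -1.0, -1.0]]
bounds = [(0, 10), (0, 10), (0, 10)]
accuracy = [0.9, 0.5, 0.1]                       # hypothetical reliability scores
v = most_accurate_fluxes(S, bounds, accuracy, measured={0: 6.0, 1: 4.0})
print("resolved fluxes:", v)
```

With both branch inputs measured, the remaining flux is forced to 2, so the resolved distribution is [6, 4, 2].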

Consequences and Research Implications

Impact on Predictive Modeling and Experimental Design

The underdetermined nature of metabolic networks has profound implications for biological research and biotechnology development:

- Alternative Optimal Solutions: Different flux distributions may equally satisfy all constraints, leading to non-unique solutions that complicate biological interpretation [9].

- Computational Challenges: Traditional Monte Carlo sampling methods may generate large subpopulations of kinetic models inconsistent with observed physiology, resulting in considerable computational inefficiency [13]. For example, the generation rate of locally stable large-scale kinetic models can be lower than 1% [13].

- Data Integration Imperative: Resolving underdetermination requires integration of diverse datasets, including transcriptomic data [12], metabolomic profiles [14] [10], and enzyme kinetic parameters [13].

- Therapeutic Targeting Difficulties: In disease research, such as inflammatory bowel disease (IBD), underdetermination complicates identification of metabolic drivers versus consequential adaptations [14].

Table 2: Key Research Reagents and Computational Tools for Flux Analysis

| Reagent/Tool | Type | Primary Function | Application Example |
|---|---|---|---|
| Genome-Scale Reconstruction (GENRE) | Computational Model | Mathematical description of metabolic reactions | iSIM (Simplified), iSyn669 (Cyanobacterium) [11] [12] |
| Constraint-Based Reconstruction and Analysis (COBRA) | Software Toolbox | Implement FBA and related algorithms | Simulation of gene deletions, flux variability analysis [11] |
| REKINDLE | Deep Learning Framework | Generate kinetic models with desired properties | Navigate physiological states with limited data [13] |
| SKiMpy Toolbox | Software Toolbox | Implement ORACLE kinetic modeling framework | Generate training datasets for kinetic parameters [13] |
| 13C-Labeled Substrates | Experimental Reagent | Enable experimental flux measurement via isotopic tracing | Resolve fluxes in central carbon metabolism |
| Time-Course Metabolomics | Experimental Data | Provide dynamic concentration profiles | Derivative estimation in DFE [10] |

The challenge of underdetermined systems represents a fundamental aspect of metabolic network analysis that continues to shape research methodologies in systems biology and metabolic engineering. While these systems inherently possess multiple flux solutions, the scientific community has developed sophisticated constraint-based, data integration, and computational approaches to extract biologically meaningful insights. The resolution of underdetermined systems requires careful consideration of physiological constraints, integration of high-quality experimental data, and application of appropriate computational frameworks. As the field advances, emerging technologies like machine learning and comprehensive multi-omics data generation promise to further constrain the solution space, ultimately enhancing our ability to predict metabolic behavior and engineer biological systems for therapeutic and biotechnological applications.

Core Concepts: GEMs as the Foundation for Digital Twins

A Genome-Scale Metabolic Model (GEM) is a computational reconstruction of the complete metabolic network of an organism, mathematically representing all known biochemical reactions, metabolites, and their associations with genes and proteins [15] [16]. These models are built from systematized knowledge bases and can be converted into a mathematical format—typically a stoichiometric matrix (S matrix)—where rows represent metabolites and columns represent reactions [17]. When contextualized with specific data to represent a particular physiological or bioprocess state, GEMs transform into digital twins, serving as virtual counterparts to physical biological systems for in silico analysis and prediction [18] [19].
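The conversion from a reaction list to an S matrix (rows = metabolites, columns = reactions) is mechanical. The sketch below assumes three simplified upper-glycolysis reactions written as metabolite-coefficient dictionaries; protons and compartments are omitted, and the identifiers are only illustrative.

```python
import numpy as np

# Hypothetical reactions as {metabolite: stoichiometric coefficient};
# negative = consumed, positive = produced.
reactions = {
    "HEX1": {"glc": -1, "atp": -1, "g6p": 1, "adp": 1},
    "PGI":  {"g6p": -1, "f6p": 1},
    "PFK":  {"f6p": -1, "atp": -1, "fdp": 1, "adp": 1},
}

metabolites = sorted({m for stoich in reactions.values() for m in stoich})
rxn_ids = list(reactions)
S = np.zeros((len(metabolites), len(rxn_ids)))   # rows: metabolites, columns: reactions
for j, rxn in enumerate(rxn_ids):
    for met, coeff in reactions[rxn].items():
        S[metabolites.index(met), j] = coeff

print(metabolites)
print(S)
```

Every constraint-based method in this section operates on a matrix of this shape, with GPR rules kept alongside to map genes onto the columns.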

The core structure of a GEM encompasses Gene-Protein-Reaction (GPR) associations, which explicitly link genomic information to metabolic capabilities [15] [16]. This foundational framework enables researchers to simulate metabolic behavior under various genetic and environmental conditions, providing a powerful platform for analyzing complex biological systems. As digital twins, GEMs integrate multi-omics data to create condition-specific models that can predict system responses to perturbations, optimize bioprocesses, and identify critical metabolic bottlenecks [18] [19] [20].

Mathematical Frameworks and Flux Analysis

Constraint-Based Reconstruction and Analysis (COBRA)

The primary mathematical framework for simulating GEMs is Constraint-Based Reconstruction and Analysis (COBRA). COBRA methods utilize physicochemical constraints—such as stoichiometry, thermodynamics, and enzyme capacities—to define the space of possible metabolic behaviors [17]. The most fundamental constraint is the steady-state assumption, which posits that the production and consumption of internal metabolites must balance. This is represented mathematically as:

S · v = 0

where S is the stoichiometric matrix and v is the flux vector of all reaction rates in the network [21] [17]. Additional constraints based on measured uptake rates, enzyme capacities, and omics data further refine the solution space to physiologically relevant flux distributions.

Flux Prediction Techniques

Several computational techniques have been developed to predict metabolic fluxes within GEMs:

Table 1: Key Flux Analysis Methods for GEMs

| Method | Principle | Applications | References |
|---|---|---|---|
| Flux Balance Analysis (FBA) | Linear programming to optimize an objective function (e.g., biomass growth) under stoichiometric constraints | Predicting growth rates, substrate uptake, byproduct secretion | [18] [16] [17] |
| 13C Metabolic Flux Analysis (13C MFA) | Uses stable isotope labeling (13C) and mass spectrometry to determine intracellular fluxes at metabolic branch points | Quantitative mapping of central carbon metabolism, validation of FBA predictions | [21] |
| Isotopically Non-Stationary MFA (INST-MFA) | Extends 13C MFA to isotopically non-stationary conditions (metabolic steady state is still assumed), using ordinary differential equations to model isotopomer dynamics | Short labeling experiments; systems that reach isotopic steady state slowly | [21] |
| Dynamic FBA (dFBA) | Extends FBA to dynamic conditions by incorporating time-dependent changes in extracellular metabolites | Simulating batch/fed-batch cultures, microbial community dynamics | [15] |
| Flux Variability Analysis (FVA) | Determines the minimum and maximum possible flux through each reaction while keeping the objective function at (or near) its optimal value | Identifying alternative optimal solutions, essential reactions | [11] |
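FVA can be sketched in two stages: an FBA solve to find the optimal objective value, then a minimization and maximization of every reaction with the objective held at that optimum. The hypothetical network below has a redundant branch (R3), so several reactions have nonzero ranges at the optimum, which is exactly the alternative-optima information FVA exposes. SciPy's `linprog` stands in for a COBRA solver, and a small tolerance (1e-9) is subtracted from the optimum to avoid spurious infeasibility from solver round-off.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network:
#   EX_A: -> A   R1: A -> B   R2: A -> C   R3: B -> C   BIOMASS: B + C ->
reactions = ["EX_A", "R1", "R2", "R3", "BIOMASS"]
S = np.array([[1, -1, -1, 0, 0],
              [0, 1, 0, -1, -1],
              [0, 0, 1, 1, -1]], dtype=float)
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 3), (0, 1000)]
b_eq = np.zeros(S.shape[0])
obj = reactions.index("BIOMASS")

def minimize(c, bnds):
    res = linprog(c, A_eq=S, b_eq=b_eq, bounds=bnds, method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    return res.fun

# 1) FBA optimum of the biomass reaction.
c = np.zeros(len(reactions)); c[obj] = -1.0
v_opt = -minimize(c, bounds)

# 2) FVA: hold biomass at >= fraction * optimum, then min/max every reaction.
fraction = 1.0
fva_bounds = list(bounds)
fva_bounds[obj] = (fraction * v_opt - 1e-9, bounds[obj][1])
ranges = {}
for j, name in enumerate(reactions):
    c = np.zeros(len(reactions)); c[j] = 1.0
    ranges[name] = (minimize(c, fva_bounds), -minimize(-c, fva_bounds))
for name, (lo, hi) in ranges.items():
    print(f"{name:8s} [{lo:6.2f}, {hi:6.2f}]")
```

For this network EX_A and BIOMASS are fixed at 10 and 5, while R1, R2 and R3 span [5, 8], [2, 5] and [0, 3]; lowering `fraction` widens these ranges to describe suboptimal states.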

Diagram 1: GEM Development Workflow from Data to Digital Twin

Methodologies for GEM Reconstruction and Simulation

GEM Reconstruction Protocols

The reconstruction of high-quality GEMs follows a systematic workflow:

- Draft Reconstruction: Automatic generation of an initial model from genome annotation using tools that map genes to reactions via databases like KEGG and ModelSEED [15] [16].

- Network Gap Filling: Identification and addition of missing metabolic functions to ensure network connectivity and functionality [16].

- Biomass Objective Function (BOF) Formulation: Definition of the biomass composition (amino acids, nucleotides, lipids, cofactors) required for cellular growth, which serves as the primary objective function in FBA simulations [19] [20].

- Manual Curation and Experimental Validation: Iterative refinement of the model using experimental data on growth capabilities, gene essentiality, and substrate utilization [16].
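Gap filling can be illustrated as a search for the smallest set of database reactions that restores a nonzero biomass flux. The draft model and candidate reactions below are hypothetical, and the search is brute force over subsets, which only works for a handful of candidates; practical gap-filling tools solve a mixed-integer linear program over large reaction databases instead.

```python
import itertools
import numpy as np
from scipy.optimize import linprog

# Hypothetical draft model: A is taken up, biomass needs C, but no route A -> C.
#   EX_A: -> A    R1: A -> B    BIOMASS: C ->
# Hypothetical candidate reactions from a reference database:
#   DB1: B -> C   DB2: A -> D   DB3: D -> C
metabolites = ["A", "B", "C", "D"]
all_rxns = {
    "EX_A":    {"A": 1},
    "R1":      {"A": -1, "B": 1},
    "BIOMASS": {"C": -1},
    "DB1":     {"B": -1, "C": 1},
    "DB2":     {"A": -1, "D": 1},
    "DB3":     {"D": -1, "C": 1},
}
draft = ["EX_A", "R1", "BIOMASS"]
candidates = ["DB1", "DB2", "DB3"]

def max_biomass(rxn_ids):
    """FBA on the model made of rxn_ids; returns the maximal biomass flux."""
    S = np.array([[all_rxns[r].get(m, 0) for r in rxn_ids] for m in metabolites], float)
    bounds = [(0, 10)] * len(rxn_ids)
    c = np.zeros(len(rxn_ids)); c[rxn_ids.index("BIOMASS")] = -1.0
    res = linprog(c, A_eq=S, b_eq=np.zeros(len(metabolites)), bounds=bounds, method="highs")
    return max(0.0, -res.fun) if res.success else 0.0

def gap_fill(min_growth=1e-6):
    """Smallest set of candidate reactions that lets the draft grow (brute force)."""
    for k in range(len(candidates) + 1):
        for added in itertools.combinations(candidates, k):
            if max_biomass(draft + list(added)) > min_growth:
                return list(added)
    return None

print("draft growth:", max_biomass(draft))
print("reactions to add:", gap_fill())
```

The draft cannot grow, and adding DB1 alone closes the gap; as the protocol notes, any such addition still needs genetic or experimental support during manual curation.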

For mammalian cells, particularly Chinese hamster ovary (CHO) cells used in biomanufacturing, the biomass reaction must be carefully defined to include appropriate macromolecular composition representative of the specific cell type [19] [20].

Context-Specific Model Generation

A critical advancement in GEM applications is the generation of context-specific models from global reconstructions using omics data. Multiple algorithms have been developed for this purpose:

Table 2: Algorithms for Context-Specific GEM Reconstruction

| Algorithm | Approach | Data Requirements | References |
|---|---|---|---|
| iMAT | Maximizes the number of high-expression reactions with high flux and low-expression reactions with zero flux | Transcriptomics or Proteomics | [20] |
| GIMME | Minimizes flux through reactions associated with low-expression genes while maintaining metabolic objectives | Transcriptomics | [22] [20] |
| mCADRE | Uses expression data and network topology to remove lowly expressed reactions while maintaining network connectivity | Transcriptomics | [20] |
| INIT | Integrates quantitative proteomics data to build tissue-specific models | Proteomics | [20] |
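iMAT, GIMME, and related methods first need a per-reaction expression value. A common convention, assumed here rather than taken from any one of the cited tools, evaluates gene-protein-reaction (GPR) rules in two ways. It takes the minimum over AND, because all subunits of a complex are required. It takes the maximum over OR, because isozymes can substitute for each other. The gene IDs, expression values, and thresholds below are hypothetical. The sketch stops at the high/low classification that iMAT-style methods then feed into their optimization problem.

```python
import re

def gpr_score(rule, expression):
    """Evaluate a GPR rule: AND -> min (complex subunits), OR -> max (isozymes)."""
    tokens = re.findall(r"\(|\)|[^\s()]+", rule)
    pos = 0

    def parse_or():
        nonlocal pos
        value = parse_and()
        while pos < len(tokens) and tokens[pos].lower() == "or":
            pos += 1
            value = max(value, parse_and())
        return value

    def parse_and():
        nonlocal pos
        value = parse_atom()
        while pos < len(tokens) and tokens[pos].lower() == "and":
            pos += 1
            value = min(value, parse_atom())
        return value

    def parse_atom():
        nonlocal pos
        token = tokens[pos]
        pos += 1
        if token == "(":
            value = parse_or()
            pos += 1  # consume the matching ")"
            return value
        return expression[token]

    return parse_or()

def classify_reactions(gprs, expression, low, high):
    """Split gene-associated reactions into high- and low-expression sets."""
    high_set, low_set = set(), set()
    for rxn, rule in gprs.items():
        if not rule:
            continue  # no gene association: leave unclassified
        score = gpr_score(rule, expression)
        if score >= high:
            high_set.add(rxn)
        elif score <= low:
            low_set.add(rxn)
    return high_set, low_set

# Hypothetical expression values (e.g., TPM) and GPR rules.
expression = {"g1": 12.0, "g2": 3.0, "g3": 8.0, "g4": 0.5}
gprs = {"R1": "g1 and g2", "R2": "g1 or g4", "R3": "(g3 and g1) or g4", "R4": "g4", "R5": ""}
high_set, low_set = classify_reactions(gprs, expression, low=4.0, high=10.0)
print(sorted(high_set), sorted(low_set))  # ['R2'] ['R1', 'R4']
```

R3 falls between the two thresholds and stays unclassified. In iMAT-style formulations, such reactions are left free rather than pushed toward high or zero flux.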

Diagram 2: Relationship Between Flux Analysis Methods and Applications

Applications in Research and Industry

Biopharmaceutical Manufacturing

GEMs used as digital twins are increasingly applied in bioprocess development for therapeutic protein production. CHO cell GEMs have been successfully deployed to:

- Optimize feed media composition to reduce byproduct accumulation [19]

- Identify metabolic engineering targets to enhance productivity and growth [19]

- Predict metabolic responses to process perturbations like oxygen limitation [19]

- Guide cell line development through in silico screening of metabolic phenotypes [18] [19]

The integration of CHO-GEMs with artificial intelligence and systems engineering algorithms enables increasingly digitized and dynamically controlled bioprocessing pipelines, paving the way for autonomous bioreactor management [18] [19].

Biomedical Research and Drug Discovery

In biomedical contexts, GEMs serve as digital twins of pathological states to:

- Identify potential drug targets in pathogens by determining essential metabolic functions [15] [16] [22]

- Elucidate metabolic reprogramming in cancer cells, including glioblastoma [20]

- Understand host-pathogen interactions by integrating GEMs of pathogens with human metabolism [16]

- Investigate synthetic lethality (pairs of reactions whose simultaneous inhibition abrogates growth, although inhibiting either one alone does not) for combination therapy development [22]

For glioblastoma, GBM-specific metabolic models reconstructed from gene expression data have successfully predicted flux-level metabolic reprogramming, including enhanced aerobic glycolysis and glutaminolysis [20].

Metabolic Engineering and Strain Development

GEMs enable rational design of microbial cell factories for industrial biochemical production by:

- Predicting gene knockout strategies to enhance product yields [16] [11]

- Identifying novel metabolic pathways for biosynthesis of non-native compounds [15] [16]

- Optimizing co-factor utilization and redox balance [16]

- Guiding adaptive laboratory evolution through selection of desired metabolic phenotypes [16]

Table 3: Experimentally Validated GEM Predictions Across Organisms

| Organism | GEM Application | Validation Outcome | References |
|---|---|---|---|
| E. coli | Prediction of gene essentiality in minimal media | 93.4% accuracy with iML1515 model | [16] |
| S. cerevisiae | Consensus model Yeast 7 | Improved prediction of metabolic capabilities across conditions | [16] |
| CHO cells | Media optimization for fed-batch processes | Reduced byproduct secretion, improved cell growth | [19] |
| M. tuberculosis | Drug target identification under hypoxic conditions | Insights into pathogen metabolism in dormant state | [16] |
| Glioblastoma | Prediction of metabolic dependencies | Confirmed glutaminolysis and glycolytic flux patterns | [20] |
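Accuracy figures such as the 93.4% reported for iML1515 come from comparing predicted essential genes with experimentally observed ones. A minimal sketch of that bookkeeping follows, using hypothetical gene labels. Essential genes are usually a minority, so the sketch also reports the Matthews correlation coefficient (MCC), which is less sensitive to class imbalance than raw accuracy.

```python
import math

def essentiality_metrics(predicted, observed):
    """Compare predicted vs. observed essentiality (gene -> True if essential)."""
    genes = sorted(predicted.keys() & observed.keys())
    tp = sum(1 for g in genes if predicted[g] and observed[g])
    tn = sum(1 for g in genes if not predicted[g] and not observed[g])
    fp = sum(1 for g in genes if predicted[g] and not observed[g])
    fn = sum(1 for g in genes if not predicted[g] and observed[g])
    accuracy = (tp + tn) / len(genes)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"tp": tp, "tn": tn, "fp": fp, "fn": fn, "accuracy": accuracy, "mcc": mcc}

# Hypothetical labels: 10 genes, g1-g3 observed essential, g1, g2, g4 predicted essential.
observed = {f"g{i}": i in (1, 2, 3) for i in range(1, 11)}
predicted = {f"g{i}": i in (1, 2, 4) for i in range(1, 11)}
metrics = essentiality_metrics(predicted, observed)
print(metrics)  # accuracy 0.8, MCC 11/21 (about 0.524)
```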

Research Reagent Solutions

Table 4: Essential Research Tools for GEM Development and Validation

| Reagent/Tool | Function | Application Examples |
|---|---|---|
| COBRA Toolbox | MATLAB-based suite for constraint-based modeling | FBA, FVA, gene deletion analyses [17] |
| COBRApy | Python implementation of COBRA methods | Building, simulating, and analyzing GEMs [17] |
| 13C-labeled substrates | Tracers for experimental flux determination | Validation of model predictions via 13C MFA [21] |
| Gene expression microarrays/RNA-seq | Transcriptome profiling for context-specific model reconstruction | Generating tissue- or condition-specific models [20] |
| Mass spectrometry | Measurement of metabolite concentrations and labeling patterns | Determination of exchange fluxes and isotopomer distributions [21] |
| Biochemical databases (KEGG, ModelSEED) | Curated reaction databases for draft reconstruction | Automatic generation of initial metabolic networks [17] |

Future Perspectives and Integration with Emerging Technologies

The future development of GEMs as digital twins is advancing along several frontiers. The integration of machine learning and artificial intelligence with GEMs is creating powerful hybrid modeling frameworks that can leverage both mechanistic knowledge and data-driven patterns [18]. Additionally, next-generation GEMs are expanding beyond metabolism to incorporate macromolecular expression (ME-models) that account for proteomic and transcriptional constraints [15].

There is also a growing emphasis on multi-scale models that integrate GEMs with kinetic parameters, regulatory networks, and systemic physiology [19] [23]. For mammalian systems, the incorporation of cellular functions beyond metabolism—such as signaling and gene regulation—will enhance the predictive accuracy of digital twins for both biomanufacturing and biomedical applications [23].

As the field progresses, community-driven efforts to standardize, curate, and validate GEMs will be crucial for establishing these digital twins as reliable tools for research and industry. The continued expansion of biological databases and advancement of computational methods will further enhance the resolution and predictive power of GEMs, solidifying their role as indispensable digital counterparts to biological systems.

Flux consistency represents a cornerstone concept in constraint-based metabolic modeling, determining the space of possible, or feasible, metabolic flux distributions that a biochemical network can achieve under steady-state and capacity constraints. This technical guide delves into the mathematical definition of flux consistency, its calculation via network-wide pathway analysis, and its critical role in bridging the gap between in silico predictions and biological reality. We explore advanced algorithms that enhance predictive accuracy by integrating multi-omics data, thereby refining the feasible flux space into a biologically relevant subset. Designed for researchers and drug development professionals, this whitepaper provides a detailed examination of current methodologies, quantitative benchmarks, and practical experimental protocols, framed within the broader imperative of understanding flux consistency in metabolic reconstructions research.

In genome-scale metabolic models (GEMs), a flux vector represents the rates of all biochemical reactions within a cellular system. The principle of flux consistency governs whether a given flux vector is possible within the defined constraints of the model. The foundational constraints are derived from mass conservation and thermodynamics. The steady-state assumption, formalized by the stoichiometric matrix ( S ), dictates that ( S \cdot \vec{v} = 0 ), meaning the production and consumption of every internal metabolite must be balanced [24] [25]. Further constraints bound reaction fluxes, such that ( \alpha_i \leq v_i \leq \beta_i ), where ( \alpha_i ) and ( \beta_i ) represent lower and upper flux limits, respectively. A flux vector ( \vec{v} ) is deemed flux consistent if it satisfies all these constraints, thereby residing within the feasible solution space. The primary challenge in systems biology is that this feasible space is vast; the core task of flux analysis is to shrink this space by integrating biological data, transforming abstract mathematical solutions into predictions that reflect physiological states.
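Checking whether a given vector is flux consistent in this sense is a direct test of the two constraint families. Below is a minimal sketch in plain Python. It assumes a hypothetical four-reaction toy network and a small numerical tolerance for floating-point fluxes.

```python
def is_flux_consistent(S, v, alpha, beta, tol=1e-9):
    """True if S.v = 0 (within tol) and alpha_i <= v_i <= beta_i for every reaction."""
    # Steady state: every row of S (one internal metabolite) must balance.
    for row in S:
        if abs(sum(s_ij * v_j for s_ij, v_j in zip(row, v))) > tol:
            return False
    # Capacity and reversibility bounds.
    return all(a - tol <= v_j <= b + tol for v_j, a, b in zip(v, alpha, beta))

# Toy network: R1: -> A, R2: A -> B, R3: A -> B (capacity-limited), R4: B ->
S = [[1, -1, -1, 0],   # metabolite A
     [0, 1, 1, -1]]    # metabolite B
alpha = [0, 0, 0, 0]
beta = [10, 1000, 5, 1000]

print(is_flux_consistent(S, [10, 6, 4, 10], alpha, beta))  # True: balanced, within bounds
print(is_flux_consistent(S, [10, 2, 8, 10], alpha, beta))  # False: R3 exceeds its upper bound
print(is_flux_consistent(S, [10, 6, 4, 9], alpha, beta))   # False: B accumulates
```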

The concept extends beyond single reactions to the coupling between different parts of the network. For instance, Flux Coupling Analysis (FCA) identifies dependent reactions whose fluxes are inextricably linked [24]. Similarly, the recently introduced Flux-Sum Coupling Analysis (FSCA) applies this principle to metabolites, defining coupling relationships based on the flux-sum of a metabolite—the total flux through all reactions consuming or producing it [24]. A non-zero flux-sum for one metabolite may imply a non-zero flux-sum for another, creating directional, partial, or full coupling relationships. Understanding these interdependencies is crucial for predicting how perturbations, such as gene knockouts or drug treatments, propagate through the metabolic network, affecting overall biochemical function and cellular phenotype.

Quantitative Landscape of Flux Coupling

The application of flux coupling analysis to metabolic models of diverse organisms reveals a distinct quantitative landscape of metabolite interdependencies. The following table summarizes the prevalence of different flux-sum coupling types in three well-established models: Escherichia coli (iML1515), Saccharomyces cerevisiae (iMM904), and Arabidopsis thaliana (AraCore) [24].

Table 1: Prevalence of Flux-Sum Coupling Types in Different Metabolic Models

| Organism | Model Name | Full Coupling | Partial Coupling | Directional Coupling |
|---|---|---|---|---|
| Escherichia coli | iML1515 | 0.007% | 0.063% | 16.56% |
| Saccharomyces cerevisiae | iMM904 | 0.010% | 0.036% | 3.97% |
| Arabidopsis thaliana | AraCore | 0.12% | 2.94% | 80.66% |

Data adapted from Seyis et al. (2025) [24].

The data show that directional coupling is the most prevalent type in all three models. For most coupled metabolite pairs, a non-zero flux-sum of one metabolite implies a non-zero flux-sum of the other, but not vice versa. Full coupling, where the two flux-sums stay in a fixed ratio, is the rarest. This is consistent with a highly interconnected network in which alternative routes usually prevent such rigid, one-to-one relationships. The significant variation in coupling profiles, particularly the high percentage of directional coupling in the AraCore plant model, underscores the organism-specific topology of metabolic networks and their associated functional constraints.

The performance of algorithms that predict flux states can be quantitatively benchmarked. The following table compares the performance of enhanced Flux Potential Analysis (eFPA) against other methods for predicting relative flux levels in Saccharomyces cerevisiae using proteomic data, demonstrating its superior predictive power [25].

Table 2: Performance Comparison of Flux Prediction Methods in S. cerevisiae

| Prediction Method | Level of Expression Data Integration | Key Performance Metric |
|---|---|---|
| Enhanced Flux Potential Analysis (eFPA) | Pathway-level | Optimal prediction of relative flux levels; handles data sparsity and noisiness effectively |
| Flux Potential Analysis (FPA) | Adjustable network neighborhood | Suboptimal, requires flux data for parameter optimization |
| Compass | Whole-network | Valuable for identifying metabolic switches, but less accurate for flux prediction |
| Individual Reaction Analysis | Single reaction | Weak correlation between enzyme expression and flux |

Data synthesized from Yilmaz et al. (2025) [25].

The benchmark establishes that integrating expression data at the pathway level, as done by eFPA, achieves an optimal balance. It surpasses methods focused solely on the cognate reaction, which miss network effects, and those that integrate across the entire network, which can dilute critical local information. This pathway-level approach provides a more accurate reflection of biological reality, where functional metabolic modules operate in a coordinated fashion.

Methodological Protocols for Flux Analysis

Protocol 1: Flux-Sum Coupling Analysis (FSCA)

Flux-Sum Coupling Analysis (FSCA) is a constraint-based approach to categorize interdependencies between metabolite pairs based on their flux-sums [24].

Define the Metabolic Model and Flux-Sum: Begin with a stoichiometric matrix ( S ) for the metabolic network. For a metabolite ( m ), the flux-sum ( \Phi_m ) is defined as ( \Phi_m = \sum_j |S_{m,j}| \cdot |v_j| ), where ( S_{m,j} ) is the stoichiometric coefficient of metabolite ( m ) in reaction ( j ), and ( v_j ) is the flux of reaction ( j ).

Formulate Linear Programming (LP) Problems: For a pair of metabolites ( A ) and ( B ), the coupling is determined by solving two linear fractional programming problems to find the minimum ( \rho_{\min} ) and maximum ( \rho_{\max} ) possible values of the ratio ( \Phi_A / \Phi_B ) under the steady-state constraint ( S \cdot \vec{v} = 0 ) and relevant flux bounds.

Classify the Coupling Type: The values of ( \rho_{\min} ) and ( \rho_{\max} ) define the coupling relationship:

- Fully Coupled ( A \Leftrightarrow B ): ( \rho_{\min} = \rho_{\max} ), a finite, non-zero constant.

- Partially Coupled ( A \leftrightarrow B ): ( \rho_{\min} ) and ( \rho_{\max} ) are positive and finite but unequal.

- Directionally Coupled ( A \rightarrow B ): ( \rho_{\min} = 0 ) and ( \rho_{\max} ) is finite; conversely, ( \rho_{\min} > 0 ) with ( \rho_{\max} ) unbounded indicates ( B \rightarrow A ).

- Uncoupled: ( \rho_{\min} = 0 ) and ( \rho_{\max} ) is unbounded.
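The protocol relies on two pieces that are easy to express directly. The first is the flux-sum of each metabolite. The second is the rule that maps ( \rho_{\min} ) and ( \rho_{\max} ) to a coupling type. The sketch below follows the flux-sum definition in the first protocol step. Some publications add a factor of 1/2 so that the flux-sum equals total production at steady state; that factor cancels in the ratio. The sketch does not solve the linear fractional programs themselves. The toy network, the ( \rho ) bounds passed to the classifier, and the tolerance are all hypothetical.

```python
import math

def flux_sums(S, v, metabolites):
    """Phi_m = sum_j |S[m][j]| * |v_j| for each metabolite (one row of S each)."""
    return {m: sum(abs(s) * abs(vj) for s, vj in zip(row, v))
            for m, row in zip(metabolites, S)}

def classify_coupling(rho_min, rho_max, tol=1e-9):
    """Map the bounds of Phi_A / Phi_B onto the FSCA coupling categories."""
    if rho_max <= tol:
        return "blocked (Phi_A is always zero)"
    min_is_zero = rho_min <= tol
    max_unbounded = math.isinf(rho_max)
    if min_is_zero and max_unbounded:
        return "uncoupled"
    if min_is_zero:
        return "directionally coupled (A -> B)"
    if max_unbounded:
        return "directionally coupled (B -> A)"
    if abs(rho_max - rho_min) <= tol * max(1.0, rho_max):
        return "fully coupled"
    return "partially coupled"

# Toy network: R1: -> A, R2: A -> 2 B, R3: A -> B, R4: B ->
S = [[1, -1, -1, 0],   # metabolite A
     [0, 2, 1, -1]]    # metabolite B
v = [10, 6, 4, 16]     # a steady-state flux vector for this network
phi = flux_sums(S, v, ["A", "B"])
print(phi, phi["A"] / phi["B"])  # {'A': 20, 'B': 32} 0.625

# Hypothetical (rho_min, rho_max) pairs, as the fractional programs might return.
for bounds in [(2.0, 2.0), (0.5, 3.0), (0.0, 4.0), (1.5, math.inf), (0.0, math.inf)]:
    print(bounds, classify_coupling(*bounds))
```

The ratio 0.625 printed above comes from one flux vector only. FSCA obtains ( \rho_{\min} ) and ( \rho_{\max} ) by optimizing the ratio over every flux vector satisfying the constraints.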

Diagram 1: FSCA computational workflow for classifying metabolite coupling.

Protocol 2: Enhanced Flux Potential Analysis (eFPA)

Enhanced Flux Potential Analysis (eFPA) predicts relative flux changes by integrating omics data at the pathway level, outperforming reaction-level or whole-network approaches [25].

Data Acquisition and Preprocessing: Obtain proteomic or transcriptomic data for the conditions under study and normalize them appropriately. If flux data are used for validation or parameter optimization, divide absolute fluxes by the specific growth rate to obtain relative, growth-rate-independent values.

Define the Pathway-Level Influence: For a Reaction of Interest (ROI), eFPA integrates expression data from the ROI's enzyme and enzymes catalyzing nearby reactions within the network. A distance factor ( d ) controls the size of the network neighborhood, with the influence of other reactions weighted based on their network proximity to the ROI.

Calculate the Flux Potential: The flux potential ( P_{ROI} ) for the reaction is computed as a weighted sum of the expression levels ( E_i ) of all relevant enzymes ( i ) within the effective pathway neighborhood: ( P_{ROI} = \sum_i w(d_i) \cdot E_i ), where ( w(d_i) ) is a distance-dependent weighting function.

Optimization and Validation: Using a training dataset with paired flux and expression measurements (e.g., the yeast dataset from Hackett et al.), systematically optimize the distance parameter ( d ) to maximize the correlation between predicted flux potential and experimentally determined relative fluxes. The optimized eFPA model can then be applied to predict fluxes in new contexts using only expression data.
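The steps above can be sketched end to end on a toy network. This is not the published eFPA implementation. Several choices here are assumptions made for illustration:

- Reactions sharing a metabolite are treated as neighbours in an undirected graph.
- The weighting is w(d) = 0.5^d.
- Expression is already mapped to reactions.
- The distance parameter is the maximum neighbourhood radius.
- Calibration correlates potentials with growth-normalized flux across conditions for a single reaction of interest (a real calibration would pool many reactions).

All data are hypothetical.

```python
import math
from collections import deque

def reaction_distances(adjacency, roi):
    """Breadth-first search distances (in reaction steps) from the ROI."""
    dist = {roi: 0}
    queue = deque([roi])
    while queue:
        r = queue.popleft()
        for neighbour in adjacency.get(r, ()):
            if neighbour not in dist:
                dist[neighbour] = dist[r] + 1
                queue.append(neighbour)
    return dist

def flux_potential(dist, expression, max_distance, decay=0.5):
    """P_ROI = sum_i w(d_i) * E_i, with the assumed weighting w(d) = decay ** d."""
    return sum(decay ** d * expression[r]
               for r, d in dist.items() if d <= max_distance and r in expression)

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0  # undefined correlation -> 0

def select_distance(adjacency, roi, expression_by_condition, fluxes, growth_rates, candidates):
    """Choose the neighbourhood radius whose potentials best track relative flux."""
    dist = reaction_distances(adjacency, roi)
    relative = [v / mu for v, mu in zip(fluxes, growth_rates)]  # growth-normalized flux
    scores = {}
    for d in candidates:
        potentials = [flux_potential(dist, expr, d) for expr in expression_by_condition]
        scores[d] = pearson(potentials, relative)
    return max(scores, key=scores.get), scores

# Hypothetical reaction graph (reactions sharing a metabolite are neighbours).
adjacency = {"R1": ["R2"], "R2": ["R1", "R3", "R5"], "R3": ["R2", "R4"],
             "R4": ["R3"], "R5": ["R2"]}
# Hypothetical per-reaction expression in four conditions.
table = {"R1": [1, 2, 3, 4], "R2": [2, 3, 2, 3], "R3": [1, 3, 5, 7],
         "R4": [8, 1, 1, 8], "R5": [2, 2, 2, 2]}
expression_by_condition = [{r: vals[k] for r, vals in table.items()} for k in range(4)]
fluxes = [0.42, 1.26, 1.775, 4.7]      # measured ROI flux per condition
growth_rates = [0.1, 0.2, 0.25, 0.5]   # specific growth rate per condition

best, scores = select_distance(adjacency, "R2", expression_by_condition,
                               fluxes, growth_rates, candidates=[0, 1, 2])
print(best, {d: round(r, 3) for d, r in scores.items()})
```

In this toy data the ROI's own expression (radius 0) tracks flux poorly. Radius 2 pulls in a noisy distant reaction, so the intermediate radius wins. This mirrors the pathway-level argument made for eFPA.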

Diagram 2: eFPA workflow for predicting flux from expression data.

Successful implementation of flux consistency research relies on a suite of computational and biological resources. The following table details essential components of the research toolkit.

Table 3: Key Reagents and Resources for Flux Consistency Research

| Item Name | Type | Function and Application in Research |
|---|---|---|
| Genome-Scale Metabolic Model (GEM) | Computational Resource | A mathematical representation of a target organism's metabolism (e.g., iML1515, iMM904, AraCore). Serves as the scaffold for constraint-based analysis and flux simulation [24] [26]. |
| AGORA Reconstructions | Computational Resource | A resource of 773 genome-scale metabolic reconstructions for human gut bacteria. Enables studies of host-microbiome interactions and community metabolism [26]. |
| COBRA Toolbox | Software | A MATLAB toolbox for Constraint-Based Reconstruction and Analysis (COBRApy provides a Python counterpart). Used to implement FBA, FVA, and other flux consistency algorithms [26]. |
| Fluxomic Dataset | Experimental Data | Quantitative measurements of intracellular metabolic fluxes, often via isotope tracing. Serves as the ground truth for validating and parameterizing prediction models like eFPA [25]. |
| Proteomic/Transcriptomic Dataset | Experimental Data | Measurements of enzyme abundance (protein or mRNA) across different conditions. Used as input for algorithms like eFPA and REMI to predict context-specific flux states [25] [27]. |
| REMI Algorithm | Computational Method | Integrates relative gene expression and relative metabolite abundance into thermodynamically consistent models to predict differential flux profiles between two conditions [27]. |

Flux consistency provides the fundamental link between the mathematical feasibility of metabolic fluxes and their biological realizability. Moving from the vast, abstract feasible space defined solely by stoichiometry requires the integration of increasingly sophisticated data types and algorithms. Techniques like FSCA reveal the inherent topological constraints and couplings within the network, while methods like eFPA and REMI successfully incorporate quantitative omics data to generate biologically contextualized flux predictions. The demonstrated superiority of pathway-level integration in eFPA offers a crucial principle for the field: biological regulation operates through coordinated modules. For drug development professionals, these advanced models are indispensable for identifying critical metabolic vulnerabilities in pathogens or cancer cells, and for predicting off-target effects on human metabolism. As metabolic reconstructions continue to expand in scope and accuracy, the precise definition and application of flux consistency will remain central to translating genomic information into a mechanistic understanding of life.

Metabolic flux is the rate of turnover of molecules through a metabolic pathway, ultimately regulating cellular physiology and manifesting the phenotype of an organism [28]. The field of fluxomics aims to quantify and model these fluxes throughout the entire metabolic network. Reliable prediction of metabolic fluxes is crucial because they represent the functional outcome of cellular processes, integrating information from genomics, transcriptomics, and proteomics [7] [29]. Flux Balance Analysis (FBA) is the most widely used computational method for this purpose. It leverages genome-scale metabolic models (GEMs) to predict metabolic phenotypes by combining stoichiometric constraints with an optimality principle, typically biomass maximization [30] [7]. However, new methods are emerging that overcome FBA's need for a predefined cellular objective. This requirement is particularly problematic for complex organisms like humans, where the objective is often unknown or context-specific [30] [31].

Table 1: Key Computational Methods for Metabolic Flux Prediction

| Method | Primary Approach | Key Inputs | Major Applications |
|---|---|---|---|
| Flux Balance Analysis (FBA) | Optimization principle with stoichiometric constraints | GEM, Growth objective | Prediction of gene essentiality, growth capabilities [30] [7] |
| Flux Cone Learning (FCL) | Monte Carlo sampling + supervised learning | GEM, Experimental fitness data | Gene essentiality prediction, small molecule production [30] |
| REMI | Integration of relative expression & metabolomic data | GEM, Transcriptomic, Metabolomic, Thermodynamic data | Analysis of altered physiology under perturbations [29] |
| ΔFBA | Direct prediction of flux differences | GEM, Differential gene expression | Metabolic alterations in disease, environmental perturbations [31] |
| 13C-MFA | Stable isotope tracing + computational modeling | 13C-labeled substrates, MS/NMR data | Central metabolism studies, metabolic engineering [7] [28] |

Flux Predictions in Human Disease and Drug Development

Predicting Drug Metabolism via the Human Microbiome

The human microbiome significantly influences the efficacy and safety of commonly prescribed drugs, with gut microorganisms capable of metabolizing 176 of 271 tested drugs [5]. The AGORA2 resource enables strain-resolved modeling of personalized drug metabolism by providing genome-scale metabolic reconstructions of 7,302 human microorganisms with detailed drug degradation and biotransformation capabilities. When applied to 616 patients with colorectal cancer and controls, AGORA2 revealed that drug conversion potential of gut microbiomes varied substantially between individuals and correlated with age, sex, body mass index, and disease stages [5]. This demonstrates how flux prediction can pave the way for personalized medicine approaches that account for individual variations in microbiome composition.

Uncovering Metabolic Alterations in Complex Diseases

The ΔFBA method enables direct prediction of metabolic flux alterations between healthy and diseased states by integrating differential gene expression data with GEMs, without requiring specification of a cellular objective [31]. When applied to type-2 diabetes in human muscle, ΔFBA successfully predicted metabolic alterations characteristic of the disease state. This approach identified critical flux changes in energy metabolism pathways that contribute to the pathological phenotype, providing insights into potential therapeutic targets [31]. Methods like ΔFBA are particularly valuable for understanding metabolic hallmarks of observable phenotypes in complex diseases where the underlying metabolic objectives are not well defined.

Table 2: Experimental Platforms for Flux Validation

| Experimental Method | Key Features | Data Output | Limitations |
|---|---|---|---|
| 13C-MFA | Uses 13C-labeled substrates; metabolic & isotopic steady state | Quantitative flux maps of central metabolism | Slow isotopic steady state in mammalian cells [7] |
| 13C-INST-MFA | Transient 13C-labelling; metabolic steady state only | Dynamic flux information | Computational complexity [7] |
| 13C-DMFA | Multiple time intervals; non-steady state | Comprehensive flux transients | Huge data requirements, model complexity [7] |
| FRET Nanosensors | Protein conformational changes upon ligand binding | Cellular & subcellular metabolite dynamics | Limited to single compounds [28] |

Flux Predictions in Biotechnology and Metabolic Engineering

Predicting Gene Essentiality and Small Molecule Production

Flux Cone Learning (FCL) represents a breakthrough in predicting metabolic gene essentiality, reaching 95% accuracy in E. coli across various carbon sources and outperforming traditional FBA [30]. FCL uses Monte Carlo sampling to capture the shape of the metabolic flux cone for each gene deletion, then applies supervised learning to correlate these geometric changes with experimental fitness data. This approach demonstrates best-in-class accuracy for predicting metabolic gene essentiality in organisms of varied complexity, including Escherichia coli, Saccharomyces cerevisiae, and Chinese Hamster Ovary cells [30]. Beyond essentiality prediction, FCL has been successfully trained to predict small molecule production using data from large deletion screens, enabling more efficient design of microbial cell factories for producing high-value compounds in the food, energy, and pharmaceutical sectors [30].

Enhancing Bioproduction through Multi-Omics Integration

The REMI method exemplifies how integrating multiple data layers enhances flux predictions for biotechnology applications. REMI incorporates relative gene expression, metabolite abundance, and thermodynamic constraints into genome-scale models, significantly reducing the solution space of feasible fluxes [29]. When applied to E. coli under various genetic and environmental perturbations, REMI achieved a 32% higher Pearson correlation coefficient (r = 0.79) with experimental fluxomic data compared to similar methods [29]. This improved predictive power allows for more precise metabolic engineering strategies, enabling researchers to optimize microbial chassis for bio-production by identifying key flux alterations that maximize product yield while maintaining cellular fitness.

Experimental Methodologies and Validation

Protocol for 13C-Metabolic Flux Analysis

13C-MFA Protocol:

- Cell Cultivation: Grow cells in metabolic steady state, then replace medium with 13C-labeled substrate (e.g., [1,2-13C] glucose, [U-13C] glucose) [7].

- Isotope Steady State: Continue cultivation until isotopic steady state is reached (varies from hours to days depending on cell type) [7].

- Metabolite Extraction: Quench metabolism and extract intracellular and extracellular metabolites.

- Mass Spectrometry/NMR Analysis: Analyze labeling patterns in metabolites using targeted MS or NMR spectroscopy [7].

- Computational Modeling: Use software tools (e.g., METRAN, INCA, OpenFLUX) to calculate metabolic fluxes that best fit the measured isotope distributions and physiological fluxes [7].
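The computational modeling step fits fluxes so that simulated labeling matches the measured mass isotopomer distributions (MIDs). Packages such as INCA, METRAN, and OpenFLUX do this for whole networks with variance-weighted, generally nonlinear least squares. The toy below shrinks the idea to a single convergence node, using hypothetical MIDs. Metabolite C is made either from a labeled precursor A or an unlabeled precursor B. Assuming C inherits its full carbon backbone from either precursor, C's MID is a flux-weighted mix of the two. The fitted mixing fraction is therefore the share of C's production flux that comes from A.

```python
def fit_mixing_fraction(mid_c, mid_a, mid_b, sigma):
    """Weighted least-squares estimate of f in MID_C = f*MID_A + (1 - f)*MID_B.

    Each mass isotopomer is weighted by 1/sigma^2. Because the model is
    linear in f, the optimum has a closed form; it is clipped to [0, 1].
    """
    w = [1.0 / s ** 2 for s in sigma]
    d = [a - b for a, b in zip(mid_a, mid_b)]   # sensitivity of the MID to f
    r = [c - b for c, b in zip(mid_c, mid_b)]
    denom = sum(wi * di * di for wi, di in zip(w, d))
    if denom == 0:
        raise ValueError("the two routes give identical labeling; f is not identifiable")
    f = sum(wi * di * ri for wi, di, ri in zip(w, d, r)) / denom
    f = min(1.0, max(0.0, f))
    ssr = sum(wi * (c - (f * a + (1 - f) * b)) ** 2
              for wi, c, a, b in zip(w, mid_c, mid_a, mid_b))
    return f, ssr

# Hypothetical mass isotopomer distributions (M+0, M+1, M+2).
mid_a = [0.05, 0.05, 0.90]   # product of the labeled route (A -> C)
mid_b = [1.00, 0.00, 0.00]   # product of the unlabeled route (B -> C)
mid_c = [0.44, 0.03, 0.53]   # measured MID of the shared product C
sigma = [0.01, 0.01, 0.01]   # measurement standard deviations

f, ssr = fit_mixing_fraction(mid_c, mid_a, mid_b, sigma)
total_flux_to_c = 100.0      # hypothetical measured production rate of C
print(f"fraction via A: {f:.3f}, flux A->C: {f * total_flux_to_c:.1f}, SSR: {ssr:.4f}")
```

The identifiability check mirrors a practical point of tracer selection. If both routes produce the same labeling pattern, no amount of measurement resolves the flux split.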

Workflow for Integrating Omics Data with Flux Predictions

REMI Experimental Workflow:

- Data Pre-processing: Convert the FBA model into a thermodynamics-based flux analysis (TFA) model incorporating the Gibbs free energies of metabolites and reactions [29].

- Ratio Conversion: Systematically convert gene-expression and metabolite-level ratios into reaction ratios for integration.

- Constraint Integration: Apply thermodynamic, gene-expression, and metabolomic constraints to the model based on available data (REMI-TGex, REMI-TM, or REMI-TGexM) [29].

- Flux Prediction: Use optimization principles to maximize consistency between differential gene-expression/metabolite data and predicted differential fluxes.

- Solution Enumeration: Generate multiple alternative flux profiles using mixed-integer linear programming to identify commonly regulated genes across conditions [29].

Diagram 1: Experimental workflow for metabolic flux analysis.

Essential Research Reagents and Tools

Table 3: Research Reagent Solutions for Flux Analysis

| Reagent/Tool | Function | Application Context |
|---|---|---|
| Genome-Scale Metabolic Models (GEMs) | Provide stoichiometric representation of metabolism | Constraint-based modeling, FBA, FCL [30] [5] |
| 13C-labeled Substrates | Trace metabolic pathways through isotope incorporation | 13C-MFA, 13C-INST-MFA [7] |
| Mass Spectrometry | Measure isotope labeling patterns in metabolites | 13C-MFA, metabolomics [7] [28] |
| FRET Nanosensors | Monitor metabolite dynamics with cellular resolution | Live-cell imaging, subcellular flux analysis [28] |
| AGORA2 Resource | Strain-resolved metabolic reconstructions of human microbiome | Personalized drug metabolism prediction [5] |
| COBRA Toolbox | MATLAB toolbox for constraint-based modeling | Implementation of FBA, ΔFBA [31] |

Diagram 2: Workflow for personalized drug metabolism prediction using AGORA2.

From Theory to Practice: Key Methods for Flux Analysis and Their Biotechnological Applications

Flux Balance Analysis (FBA) stands as a cornerstone constraint-based modeling approach within systems biology for analyzing metabolic networks. By leveraging stoichiometric models and optimization principles, FBA enables the prediction of steady-state metabolic fluxes, facilitating the study of cellular phenotypes without requiring extensive kinetic parameter data. This technical guide details FBA's mathematical foundations, computational efficiency, and practical applications while critically examining its limitations, including its reliance on predefined objective functions and challenges in capturing dynamic metabolic adaptations. Furthermore, we explore recent methodological advances, such as the TIObjFind framework, which integrates metabolic pathway analysis with FBA to enhance interpretability and align model predictions with experimental data. Designed for researchers and drug development professionals, this review frames FBA within the broader context of understanding flux consistency in metabolic reconstructions research.

Flux Balance Analysis is a mathematical computational method for simulating the metabolism of cells or entire unicellular organisms using genome-scale metabolic reconstructions [32]. These reconstructions provide a stoichiometrically balanced representation of all known biochemical reactions within an organism, mapping the interactions between metabolites and linking reactions to associated genes [32]. FBA has revolutionized systems biology by enabling researchers to predict metabolic behavior under various genetic and environmental conditions, with applications spanning microbial strain engineering, drug target identification, and analysis of human metabolic diseases [32].

The power of FBA lies in its ability to overcome the common data limitations in biological research. Traditional kinetic modeling approaches require detailed knowledge of enzyme kinetic parameters and metabolite concentrations, which are often unavailable for entire metabolic networks [32]. FBA circumvents this requirement through two fundamental assumptions: steady-state metabolism, where metabolite concentrations remain constant over time as production and consumption fluxes balance, and evolutionary optimality, where the network is optimized for a specific biological objective such as biomass production or ATP synthesis [32]. This combination of constraints and optimization enables quantitative prediction of metabolic flux distributions that can be validated experimentally.

Within the context of flux consistency research, FBA provides a framework for determining whether postulated metabolic networks can maintain stoichiometric balance while achieving biologically relevant objectives. The consistency between predicted fluxes and experimental measurements serves as a critical validation metric for metabolic reconstructions, helping identify gaps in metabolic knowledge and refine genome annotations.

Core Mathematical Principles

Stoichiometric Matrix and Mass Balance

The fundamental mathematical framework of FBA centers on the stoichiometric matrix S, where each element Sₙₘ represents the stoichiometric coefficient of metabolite n in reaction m. The matrix has one row per metabolite and one column per reaction, so a network with n metabolites and m reactions yields an n×m matrix that encapsulates the entire network structure [32]. The system dynamics are described by the differential equation:

dX/dt = S · v - μX

where X is the metabolite concentration vector, v is the flux vector through each reaction, and μ is the specific growth rate [32]. The critical steady-state assumption reduces this to:

S · v = 0

This equation represents the mass balance constraint, ensuring that for each metabolite, the combined rate of production equals the combined rate of consumption, with no net accumulation or depletion [32].

Solution Space and Constraints

The equation S · v = 0 defines a solution space containing all feasible flux distributions that satisfy mass balance. Since metabolic networks typically contain more reactions than metabolites (m > n), the system is underdetermined, with infinitely many solutions [32]. To reduce the solution space, FBA incorporates additional constraints:

- Enzyme capacity constraints: vᵢ ≤ vᵢₘₐₓ

- Thermodynamic constraints: reversibility/irreversibility of reactions

- Nutrient uptake rates: constraints on substrate consumption

- Gene deletion constraints: vᵢ = 0 for knocked-out reactions

These constraints define a bounded convex solution space within which optimal solutions are sought.
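The underdetermination of S · v = 0 can be quantified. The number of independent flux directions equals the number of reactions minus the rank of S, which is the dimension of its null space. Flux bounds do not change this number; they only cut the null space down to a bounded region. Below is a small sketch using exact rational Gaussian elimination on a hypothetical two-metabolite, four-reaction network.

```python
from fractions import Fraction

def matrix_rank(S):
    """Rank of S by Gaussian elimination in exact rational arithmetic."""
    rows = [[Fraction(x) for x in row] for row in S]
    rank = 0
    n_cols = len(rows[0]) if rows else 0
    for col in range(n_cols):
        pivot = next((r for r in range(rank, len(rows)) if rows[r][col] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        rows[rank], rows[pivot] = rows[pivot], rows[rank]
        for r in range(len(rows)):
            if r != rank and rows[r][col] != 0:
                factor = rows[r][col] / rows[rank][col]
                rows[r] = [a - factor * b for a, b in zip(rows[r], rows[rank])]
        rank += 1
        if rank == len(rows):
            break
    return rank

S = [[1, -1, -1, 0],   # metabolite A  (R1: -> A, R2/R3: A -> B, R4: B ->)
     [0, 1, 1, -1]]    # metabolite B
n_reactions = len(S[0])
print("rank:", matrix_rank(S), "free flux directions:", n_reactions - matrix_rank(S))

# A linearly dependent row (here the sum of the two above) adds no new constraint.
S_dependent = S + [[1, 0, 0, -1]]
print("rank with dependent row:", matrix_rank(S_dependent))
```

The two free directions in this toy correspond to overall throughput and to the split between the parallel reactions R2 and R3. These are exactly the degrees of freedom that bounds and an objective function must then pin down.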

Objective Function and Linear Programming

FBA identifies a single flux distribution from the feasible space by assuming the cell optimizes for a specific biological objective, formulated as a linear objective function:

Z = cᵀv

where c is a vector of weights indicating how much each reaction contributes to the biological objective [32]. Common objectives include:

- Biomass maximization

- ATP production

- Synthesis of specific metabolites

- Nutrient uptake efficiency

The complete FBA problem is formulated as a linear program:

maximize Z = cᵀv
subject to S · v = 0
v_lb ≤ v ≤ v_ub

where v_lb and v_ub represent lower and upper bounds on reaction fluxes, respectively [32]. This optimization problem can be solved efficiently using linear programming algorithms even for genome-scale models with thousands of reactions.
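The linear program above can be illustrated without any solver library on a hypothetical four-reaction network. The optimum of a bounded LP lies at a vertex of the feasible polytope. The sketch below therefore enumerates vertices, i.e. basic solutions in which all but rank(S) fluxes sit at a bound and the rest are solved from S · v = 0. This brute-force approach is chosen only for self-containment and scales combinatorially. Tools such as the COBRA Toolbox and COBRApy hand the problem to dedicated LP solvers instead. Knockouts are imposed as v_i = 0 bounds, mirroring the gene deletion constraints listed earlier.

```python
from fractions import Fraction
from itertools import combinations, product

def solve(A, b):
    """Solve the square system A x = b exactly; return None if A is singular."""
    n = len(A)
    M = [[Fraction(x) for x in row] + [Fraction(y)] for row, y in zip(A, b)]
    for col in range(n):
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            return None
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(n):
            if r != col and M[r][col] != 0:
                factor = M[r][col] / M[col][col]
                M[r] = [a - factor * p for a, p in zip(M[r], M[col])]
    return [M[r][n] / M[r][r] for r in range(n)]

def fba(S, lb, ub, c):
    """Maximize c.v s.t. S v = 0 and lb <= v <= ub by enumerating basic solutions.

    Assumes S has full row rank and all bounds are finite (toy models only).
    """
    n_met, n_rxn = len(S), len(S[0])
    best_val, best_v = None, None
    for basis in combinations(range(n_rxn), n_met):
        nonbasic = [j for j in range(n_rxn) if j not in basis]
        A = [[S[i][j] for j in basis] for i in range(n_met)]
        for at_upper in product((False, True), repeat=len(nonbasic)):
            v = [Fraction(0)] * n_rxn
            for j, up in zip(nonbasic, at_upper):
                v[j] = Fraction(ub[j] if up else lb[j])  # nonbasic flux sits at a bound
            rhs = [-sum(S[i][j] * v[j] for j in nonbasic) for i in range(n_met)]
            x = solve(A, rhs)
            if x is None:
                break  # singular basis: try the next one
            for j, xj in zip(basis, x):
                v[j] = xj
            if all(lb[j] <= v[j] <= ub[j] for j in range(n_rxn)):
                value = sum(cj * vj for cj, vj in zip(c, v))
                if best_val is None or value > best_val:
                    best_val, best_v = value, v
    return best_val, best_v

# Hypothetical toy network: EX_A: -> A, R1: A -> B, R2: 2 A -> B, BIO: B ->
reactions = ["EX_A", "R1", "R2", "BIO"]
S = [[1, -1, -2, 0],   # metabolite A
     [0, 1, 1, -1]]    # metabolite B
lb = [0, 0, 0, 0]
ub = [10, 6, 100, 100]  # uptake limited to 10, R1 capacity-limited to 6
c = [0, 0, 0, 1]        # objective: maximize BIO

def fba_with_knockouts(knocked_out):
    """Apply gene-deletion constraints v_i = 0, then re-optimize."""
    lo = [0 if r in knocked_out else b for r, b in zip(reactions, lb)]
    hi = [0 if r in knocked_out else b for r, b in zip(reactions, ub)]
    return fba(S, lo, hi, c)[0]

for ko in [(), ("R1",), ("R2",), ("R1", "R2")]:
    print(ko or "wild type", fba_with_knockouts(ko))  # BIO: 8, 5, 6, 0 respectively
```

In this toy network, deleting R1 or R2 alone only lowers the optimum, while deleting both drives it to zero. That is the pattern a double-deletion screen looks for.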

Figure 1: Flux Balance Analysis Computational Workflow. The diagram illustrates the core mathematical components of FBA and their relationships, from network representation through constraint application to solution generation.

Key Strengths of FBA

Computational Efficiency and Scalability

A significant advantage of FBA is its computational efficiency, enabling rapid analysis of genome-scale metabolic networks. Simulations for models containing over 10,000 reactions typically complete in seconds on modern personal computers [32]. This efficiency stems from the linear programming foundation, for which highly optimized solvers exist. The low computational cost enables extensive perturbation analyses, including single and double reaction deletions, and gene essentiality screens across multiple environmental conditions.

Minimal Parameter Requirements

Unlike kinetic modeling approaches that require extensive parameterization of enzyme mechanisms and kinetic constants, FBA requires only the network stoichiometry and flux constraints [32]. This parameter-sparse approach makes FBA particularly valuable for studying poorly characterized systems or organisms where comprehensive kinetic data are unavailable.

Versatile Applications

FBA has demonstrated utility across diverse biological domains:

- Bioprocess Engineering: Systematic identification of metabolic modifications in industrial microbes to improve yields of commercially important chemicals like ethanol and succinic acid [32]

- Drug Target Discovery: Identification of essential metabolic reactions in pathogens and cancer cells that represent potential therapeutic targets [32]

- Host-Pathogen Interactions: Modeling metabolic interactions between hosts and pathogens to identify vulnerable points in infection processes [32]

- Culture Media Optimization: Designing optimal growth media using Phenotypic Phase Plane (PhPP) analysis to enhance growth rates or desired metabolite production [32]

Table 1: Quantitative Analysis of FBA Applications in Metabolic Engineering

| Application Domain | Typical Model Size (Reactions) | Key Objective Function | Prediction Accuracy vs. Experimental Data |
|---|---|---|---|
| Microbial Strain Engineering | 1,000-2,500 | Biomass Maximization | 70-85% [32] |
| Drug Target Identification | 500-1,500 | ATP Production | 75-90% [32] |
| Nutrient Utilization Studies | 800-2,000 | Substrate Uptake Efficiency | 80-88% [32] |
| Byproduct Secretion Analysis | 1,200-2,500 | Metabolite Production | 65-80% [32] |

Limitations and Methodological Challenges

Objective Function Selection

The choice of an appropriate objective function represents a fundamental challenge in FBA. While biomass maximization successfully predicts growth phenotypes for many microorganisms, it may not accurately represent metabolic states in all biological contexts [33]. Cells likely employ multiple, condition-specific objectives that change throughout growth phases or in response to environmental stimuli. Static objective functions fail to capture these adaptive metabolic shifts, limiting prediction accuracy in dynamic environments [33].

Steady-State Assumption

The core steady-state assumption enables mathematical tractability but restricts FBA's ability to model transient metabolic states, dynamic responses to perturbations, or metabolic oscillations [32]. This limitation is particularly significant when studying:

- Rapid environmental changes

- Dynamic metabolic regulation

- Cellular differentiation processes

- Metabolic transitions between growth phases

Absence of Regulatory Information

Traditional FBA does not incorporate metabolic regulation, including:

- Allosteric regulation of enzymes

- Transcriptional control of metabolic genes

- Post-translational modifications

- Metabolic channeling

The absence of these regulatory constraints can lead to predictions of flux distributions that are stoichiometrically feasible but biologically unrealized due to regulatory restrictions.

Table 2: Comprehensive Analysis of FBA Limitations and Current Mitigation Approaches

| Limitation Category | Specific Challenge | Current Mitigation Strategies | Impact on Prediction Accuracy |
|---|---|---|---|
| Objective Function | Single objective may not reflect biological priorities | Multi-objective optimization, Pareto optimality [33] | Medium-High |
| Network Coverage | Gaps in pathway annotations | Genome-scale model curation, gap-filling algorithms | Medium |
| Constraint Definition | Inaccurate flux bounds | Integration of omics data (transcriptomics, proteomics) | Medium |
| Regulatory Oversight | Lack of regulatory constraints | rFBA, integration of Boolean regulatory rules [33] | High |
| Dynamic Modeling | Steady-state assumption | dFBA, dynamic extension of FBA [33] | High |
| Spatial Compartmentalization | Non-compartmentalized models | Compartment-specific models | Low-Medium |

Recent Methodological Advances

TIObjFind Framework

The TIObjFind framework represents a significant advancement addressing FBA's limitation in objective function selection. This approach integrates Metabolic Pathway Analysis (MPA) with traditional FBA to systematically infer metabolic objectives from experimental data [33]. The framework operates through three key steps:

- Optimization Problem Formulation: Reformulates objective function selection as an optimization problem that minimizes the difference between predicted and experimental fluxes while maximizing an inferred metabolic goal [33]

- Mass Flow Graph Construction: Maps FBA solutions onto a Mass Flow Graph (MFG), enabling pathway-based interpretation of metabolic flux distributions [33]

- Pathway Analysis: Applies a minimum-cut algorithm (e.g., Boykov-Kolmogorov) to extract critical pathways and compute Coefficients of Importance (CoIs), which serve as pathway-specific weights in optimization [33]

This framework enhances interpretability by distributing importance across metabolic pathways rather than focusing on single reactions, better capturing the distributed nature of metabolic regulation [33].
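The minimum-cut step can be illustrated on a small, hypothetical mass flow graph in which edge capacities stand in for the fluxes carried between reactions. This sketch does not reproduce TIObjFind: the graph construction, node names, and capacities are assumptions, and it uses the Edmonds-Karp max-flow algorithm in plain Python rather than the Boykov-Kolmogorov algorithm named above. By the max-flow/min-cut theorem, any exact max-flow algorithm yields the same minimum cut value, although the specific cut returned can differ when several minimum cuts exist.

```python
from collections import deque


def min_cut(capacity, source, sink):
    """Edmonds-Karp max-flow; returns (cut_value, source_side, cut_edges).

    capacity: dict mapping directed edge (u, w) -> non-negative capacity.
    """
    residual, adj = {}, {}
    for (u, w), cap in capacity.items():
        residual[(u, w)] = residual.get((u, w), 0.0) + cap
        residual.setdefault((w, u), 0.0)          # reverse residual edge
        adj.setdefault(u, set()).add(w)
        adj.setdefault(w, set()).add(u)

    flow = 0.0
    while True:
        # Breadth-first search for the shortest augmenting path
        parent = {source: None}
        queue = deque([source])
        while queue and sink not in parent:
            u = queue.popleft()
            for w in sorted(adj.get(u, ())):
                if w not in parent and residual[(u, w)] > 1e-12:
                    parent[w] = u
                    queue.append(w)
        if sink not in parent:
            break  # no augmenting path left: parent now holds all reachable nodes
        # Bottleneck capacity along the path, then push that much flow
        bottleneck, w = float("inf"), sink
        while parent[w] is not None:
            bottleneck = min(bottleneck, residual[(parent[w], w)])
            w = parent[w]
        w = sink
        while parent[w] is not None:
            residual[(parent[w], w)] -= bottleneck
            residual[(w, parent[w])] += bottleneck
            w = parent[w]
        flow += bottleneck

    # Nodes still reachable from the source form the source side of the cut
    source_side = set(parent)
    cut_edges = sorted(e for e in capacity
                       if e[0] in source_side and e[1] not in source_side)
    return flow, source_side, cut_edges


# Hypothetical mass flow graph; capacities play the role of reaction fluxes
capacity = {
    ("uptake", "r_glyc"): 10, ("uptake", "r_ppp"): 4,
    ("r_glyc", "r_lower"): 6, ("r_ppp", "r_lower"): 4,
    ("r_lower", "product"): 7, ("r_glyc", "product"): 2,
}
flow, side, cut_edges = min_cut(capacity, "uptake", "product")
print(flow, cut_edges)  # 9.0 [('r_glyc', 'product'), ('r_lower', 'product')]
```

In this toy graph the cut isolates the two edges feeding the product, identifying them as the bottleneck between substrate uptake and product formation; no Coefficients of Importance are computed here.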

Multi-Dimensional Extensions

Recent work has extended FBA principles to new domains, including Constraint-Based Multi-Dimensional Flux Balance Analysis (CBMDFBA) for optimizing resource allocation in mobile edge computing networks [34]. This cross-disciplinary application demonstrates the versatility of the flux balance approach beyond traditional metabolic modeling.

Figure 2: TIObjFind Framework Workflow. This topology-informed method integrates metabolic pathway analysis with FBA to determine pathway-specific weighting factors that align model predictions with experimental data.

Experimental Protocols and Methodologies

Standard FBA Implementation Protocol

Objective: Predict metabolic flux distribution for a genome-scale metabolic model under specific environmental conditions.

Required Inputs:

- Stoichiometric matrix (S)

- Objective function vector (c)

- Flux constraints (lower and upper bounds)

- Nutrient availability constraints

Procedure:

- Formulate the linear programming problem: maximize Z = cᵀv subject to S · v = 0, with each flux vᵢ held between its lower and upper bound

- Apply appropriate flux constraints based on environmental conditions:

  - Set upper bounds on substrate uptake rates

  - Set the oxygen uptake bound to zero to simulate anaerobic conditions

  - Set the non-growth-associated maintenance (NGAM) ATP requirement, typically as a fixed lower bound on an ATP maintenance reaction

- Solve the linear programming problem with an appropriate algorithm (e.g., simplex or interior-point)

- Extract and analyze the optimal flux distribution:

  - Identify active pathways

  - Calculate yields of interest

  - Compare with experimental data

- Validate predictions through:

  - Growth rate comparisons

  - Substrate uptake measurements

  - Byproduct secretion profiles
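The protocol steps can be sketched end to end with SciPy's `linprog` (HiGHS solver) on a hypothetical seven-reaction model. All reaction names (EX_glc, EX_o2, GLYC, RESP, FERM, ATPM, BIOM), stoichiometries, and bound values are invented for illustration; they are not taken from a curated reconstruction.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy model (stoichiometries invented for illustration)
mets = ["G", "O2", "P", "ATP"]
rxns = ["EX_glc", "EX_o2", "GLYC", "RESP", "FERM", "ATPM", "BIOM"]
S = np.array([
    # EX_glc EX_o2 GLYC RESP FERM ATPM BIOM
    [1,  0, -1,  0,  0,  0,  0],   # G: glucose
    [0,  1,  0, -1,  0,  0,  0],   # O2: oxygen
    [0,  0,  2, -1, -1,  0, -1],   # P: pyruvate
    [0,  0,  2,  5,  0, -1, -5],   # ATP
], dtype=float)


def condition_bounds(glc_max=10.0, o2_max=20.0, ngam=1.0):
    """Translate environmental conditions into flux bounds."""
    lb = np.zeros(len(rxns))
    ub = np.full(len(rxns), 1000.0)
    ub[rxns.index("EX_glc")] = glc_max   # substrate uptake limit
    ub[rxns.index("EX_o2")] = o2_max     # 0 simulates anaerobic conditions
    lb[rxns.index("ATPM")] = ngam        # non-growth-associated maintenance ATP
    return lb, ub


def solve_fba(lb, ub, objective="BIOM"):
    """Solve the LP; linprog minimizes, so the objective is negated."""
    c = np.zeros(len(rxns))
    c[rxns.index(objective)] = 1.0
    res = linprog(-c, A_eq=S, b_eq=np.zeros(len(mets)),
                  bounds=list(zip(lb, ub)), method="highs")
    if res.status != 0:
        raise RuntimeError(res.message)
    return dict(zip(rxns, res.x))


# Extract fluxes and compute a biomass yield per unit glucose for each condition
for label, o2 in [("aerobic", 20.0), ("anaerobic", 0.0)]:
    v = solve_fba(*condition_bounds(o2_max=o2))
    print(label, round(v["BIOM"], 3), round(v["FERM"], 3),
          round(v["BIOM"] / v["EX_glc"], 3))
```

Under these assumptions the aerobic optimum gives a biomass flux of 11.9 with no fermentation, while setting the oxygen bound to zero drops biomass to 3.8 and diverts 16.2 units of pyruvate to fermentation, the kind of byproduct secretion profile that the validation step would compare against measurements.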

Gene Deletion Analysis Protocol

Objective: Identify essential genes/reactions for a specific metabolic function.

Procedure:

- Run a wild-type FBA simulation to establish the baseline flux distribution and objective value

- For each gene/reaction in the model:

  - Constrain the corresponding reaction flux to zero

  - Re-solve the FBA problem

  - Record the objective function value (e.g., growth rate)

- Classify gene/reaction essentiality by the reduction in the objective function relative to wild type (typically, a reduction of more than 90% indicates essentiality)

- For pairwise deletion studies (synthetic lethality analysis):

  - Simultaneously constrain each pair of non-essential reactions to zero

  - Identify combinations that eliminate metabolic function (i.e., fall below the essentiality threshold)
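A minimal sketch of single and pairwise reaction deletion screens, assuming SciPy's `linprog` and a hypothetical five-reaction network with two parallel routes from A to B. It works at the reaction level only (no gene-to-reaction mapping) and applies the >90% growth-reduction threshold quoted above; the network and bounds are illustrative.

```python
import itertools

import numpy as np
from scipy.optimize import linprog

# Hypothetical network: R_up: -> A, R1: A -> B, R2a: A -> C, R2b: C -> B, R_bio: B -> biomass
rxns = ["R_up", "R1", "R2a", "R2b", "R_bio"]
S = np.array([
    [1, -1, -1,  0,  0],   # A
    [0,  1,  0,  1, -1],   # B
    [0,  0,  1, -1,  0],   # C
], dtype=float)
LB = np.zeros(len(rxns))
UB = np.array([10.0, 6.0, 1000.0, 1000.0, 1000.0])   # uptake <= 10, R1 capacity <= 6
BIO = rxns.index("R_bio")


def max_growth(knockouts=()):
    """FBA with the listed reactions constrained to zero flux."""
    lb, ub = LB.copy(), UB.copy()
    for r in knockouts:
        j = rxns.index(r)
        lb[j] = ub[j] = 0.0
    c = np.zeros(len(rxns))
    c[BIO] = 1.0
    res = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                  bounds=list(zip(lb, ub)), method="highs")
    return -res.fun if res.status == 0 else 0.0


wt = max_growth()
threshold = 0.1 * wt   # a >90% drop in growth counts as lethal

essential = [r for r in rxns if max_growth((r,)) < threshold]
nonessential = [r for r in rxns if r not in essential]
synthetic_lethal = [pair for pair in itertools.combinations(nonessential, 2)
                    if max_growth(pair) < threshold]

print(round(wt, 6))       # 10.0
print(essential)          # ['R_up', 'R_bio']
print(synthetic_lethal)   # [('R1', 'R2a'), ('R1', 'R2b')]
```

Neither R1 nor the R2a/R2b route is essential on its own, but deleting R1 together with either step of the alternative route abolishes growth, which is the pattern a synthetic lethality screen looks for.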

TIObjFind Implementation Protocol

Objective: Determine Coefficients of Importance that align FBA predictions with experimental flux data.

Procedure:

- Perform initial FBA using candidate objective functions

- Construct Mass Flow Graph from FBA solutions

- Apply minimum-cut algorithm to identify critical pathways between source (e.g., substrate uptake) and target (e.g., product formation) reactions

- Calculate Coefficients of Importance (CoIs) quantifying each reaction's contribution to the objective function

- Reformulate objective function as weighted sum of fluxes using CoIs

- Iterate until optimal alignment with experimental data is achieved [33]

Essential Research Reagents and Computational Tools

Table 3: Research Reagent Solutions for FBA Implementation

| Tool/Category | Specific Examples | Function/Purpose | Implementation Platform |
|---|---|---|---|
| Metabolic Network Databases | KEGG, EcoCyc [33] | Foundational databases for pathway information and network reconstruction | Web-based, API access |
| Constraint-Based Modeling Software | COBRA Toolbox, FlexFlux [33] | Software implementations of FBA and related constraint-based methods | MATLAB, Python, Java |
| Linear Programming Solvers | Gurobi, CPLEX, GLPK | Algorithms for solving the optimization problem | Various platforms |
| Pathway Analysis Tools | CellNetAnalyzer, Pathway Tools | Metabolic pathway analysis and visualization | Various platforms |
| Model Curation Tools | MEMOTE, ModelSEED | Quality assessment and gap-filling for metabolic models | Web-based, Python |
| Data Integration Tools | rFBA, integrated FBA | Incorporation of regulatory constraints | MATLAB, Python |
| Dynamic FBA Implementations | dFBA, DyMMM | Dynamic extension of FBA for non-steady-state conditions | MATLAB, Python |

Flux Balance Analysis remains an indispensable tool in systems biology, providing a mathematically robust framework for predicting metabolic behavior from stoichiometric constraints. While its limitations in objective function selection, missing regulatory information, and dynamic modeling present ongoing challenges, methodological advances like the TIObjFind framework demonstrate promising approaches for enhancing prediction accuracy and biological relevance. The continued development of multi-objective optimization techniques, integration of regulatory constraints, and dynamic extensions will further solidify FBA's role in metabolic engineering, drug discovery, and fundamental biological research. For researchers investigating flux consistency in metabolic reconstructions, FBA provides both a validation framework and a predictive tool for probing metabolic capabilities across diverse biological systems and environmental conditions.

13C-Metabolic Flux Analysis (13C-MFA) has emerged as the gold-standard technique for quantifying intracellular metabolic fluxes in living cells under metabolic quasi-steady-state conditions [35] [36]. Unlike other omics technologies, which provide static snapshots of cellular components, 13C-MFA delivers dynamic information on the functional phenotype by tracing the flow of carbon through metabolic networks [37]. This capability is particularly valuable for flux consistency studies in metabolic reconstruction, where it serves as a critical validation tool for genome-scale metabolic models [3] [5]. By integrating isotopic labeling data with mathematical modeling, 13C-MFA enables researchers to move beyond theoretical network reconstructions to experimentally verified quantification of pathway activities, thereby bridging the gap between genetic potential and metabolic function [35] [3].

The fundamental principle underlying 13C-MFA is that different flux distributions through metabolic pathways result in distinct isotopic labeling patterns in intracellular metabolites [36]. When cells are fed with 13C-labeled substrates, the carbon atoms are distributed through metabolic pathways in patterns that reflect the activities of those pathways. By measuring these labeling patterns and applying computational models that simulate the atom transitions through biochemical reactions, researchers can infer the in vivo reaction rates with remarkable precision [38] [36].
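To make the inverse-problem idea concrete, the sketch below (assuming NumPy) considers a product that can be made by two hypothetical pathways, each of which would leave a distinct mass isotopomer distribution (MID). The measured MID is modeled as the flux-weighted mixture of the two, and the pathway split is recovered by least squares. Real 13C-MFA simulates atom transitions through the whole network and fits many fluxes at once; the two MIDs and the noise level here are invented for illustration.

```python
import numpy as np

# Hypothetical MIDs (fractions of M+0, M+1, M+2, M+3) the product would carry
# if it were made exclusively by pathway A or exclusively by pathway B
mid_A = np.array([0.5, 0.0, 0.5, 0.0])
mid_B = np.array([0.5, 0.5, 0.0, 0.0])


def simulate_mid(f):
    """Forward model: flux-weighted mixture, with fraction f made via pathway A."""
    return f * mid_A + (1.0 - f) * mid_B


def estimate_split(mid_obs):
    """Least-squares inverse: minimize ||simulate_mid(f) - mid_obs||^2, f in [0, 1]."""
    d = mid_A - mid_B
    f = float(np.dot(d, mid_obs - mid_B) / np.dot(d, d))
    return min(max(f, 0.0), 1.0)


rng = np.random.default_rng(0)
obs = simulate_mid(0.7) + rng.normal(0.0, 0.01, size=4)  # synthetic noisy "measurement"
print(round(estimate_split(obs), 2))  # close to the true split of 0.7
```

Because the forward model is linear in f here, the fit has a closed form; in practice the labeling model is nonlinear in the fluxes and is fitted iteratively.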

Fundamental Principles and Workflow

Core Principles of Isotopic Tracer Analysis

The theoretical foundation of 13C-MFA rests on several key principles. First, stable isotopes such as 13C act as non-radioactive tracers that can be followed through metabolic conversions without perturbing the biological system [39]. Second, according to the isotopic dilution principle, the isotopic enrichment of a metabolite pool reflects the proportion of labeled to unlabeled material entering it, which allows fluxes to be calculated quantitatively [39]. Third, the method assumes that isotopic mass effects are negligible, meaning that the labeling state of a metabolite does not influence its enzymatic conversion rate [40].
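For a single well-mixed pool at isotopic steady state, the dilution principle can be written as a flux-weighted average: a tracer entering at flux F with enrichment E_tracer is diluted by an unlabeled inflow R, so the pool enrichment is E_pool = F · E_tracer / (F + R). Rearranging gives R = F · (E_tracer / E_pool − 1). The sketch below assumes one pool, an unlabeled inflow with zero enrichment (natural abundance ignored), and no label recycling; the numbers are hypothetical.

```python
def pool_enrichment(tracer_flux, tracer_enrichment, unlabeled_flux):
    """Forward direction: pool enrichment is the flux-weighted average of its inputs."""
    return tracer_flux * tracer_enrichment / (tracer_flux + unlabeled_flux)


def unlabeled_inflow(tracer_flux, tracer_enrichment, pool_enrichment):
    """Inverse direction: unlabeled inflow R = F * (E_tracer / E_pool - 1)."""
    if not 0 < pool_enrichment <= tracer_enrichment:
        raise ValueError("pool enrichment must be positive and not exceed tracer enrichment")
    return tracer_flux * (tracer_enrichment / pool_enrichment - 1.0)


# Hypothetical: fully labeled tracer supplied at 2 flux units; pool measured at 25% enrichment
print(unlabeled_inflow(2.0, 1.0, 0.25))   # 6.0
print(pool_enrichment(2.0, 1.0, 6.0))     # 0.25
```

The two functions are inverses of each other, so a measured enrichment can be converted back into the rate at which unlabeled carbon enters the pool.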

A typical 13C-MFA workflow consists of five integrated steps that combine experimental and computational approaches [36] [37]:

- Experimental Design: Selection of appropriate isotopic tracers and labeling strategies

- Tracer Experiment: Culturing cells with 13C-labeled substrates at metabolic steady state

- Isotopic Labeling Measurement: Analyzing labeling patterns in intracellular metabolites

- Flux Estimation: Computational inference of fluxes through iterative model fitting