From Microplate to Manufacturing: A Strategic Guide to Validating High-Throughput Screening in Bioreactor Scale-Up

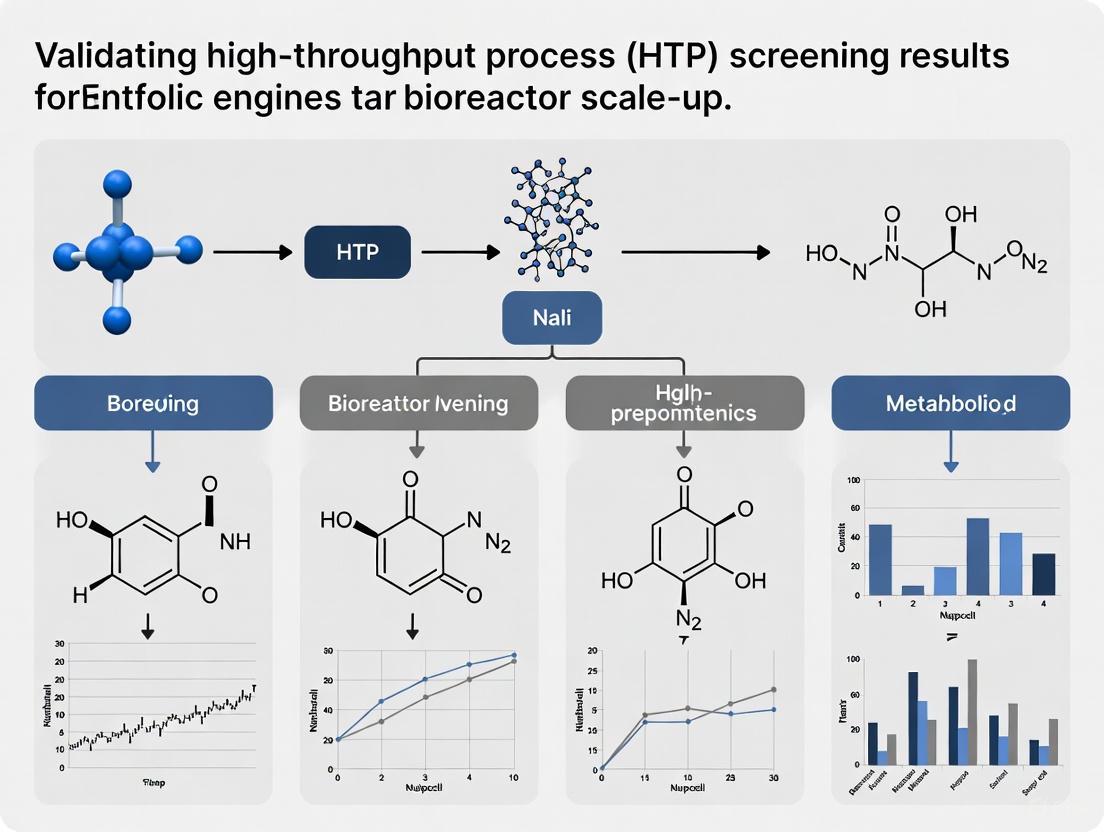



Abstract

This article provides a comprehensive framework for researchers and drug development professionals to bridge the critical gap between high-throughput screening (HTS) results and successful large-scale bioprocessing. It covers the foundational principles of bioreactor scaling, modern HTS and scale-down methodologies, strategies for troubleshooting common scale-up discrepancies, and robust techniques for validating that small-scale data accurately predicts manufacturing performance. By integrating the latest advances in scale-down modeling, multivariate data analysis, and digital twins, this guide aims to de-risk scale-up, accelerate process development, and ensure the consistent production of high-quality biologics.

The Scale-Up Challenge: Bridging the Gap Between High-Throughput Data and Industrial Bioreactors

Understanding the Core Principles and Inevitable Hurdles of Bioreactor Scale-Up

In the biopharmaceutical industry, the journey from laboratory discovery to commercial production hinges on the successful scale-up of bioreactor processes. This transition is not merely a matter of increasing volume but represents one of the most complex challenges in bioprocessing, where maintaining identical cellular environments across scales determines technical and commercial success [1] [2]. The stakes are extraordinarily high, as failed scale-ups can result in reduced product yields, altered product quality profiles, and significant financial losses [2]. This guide examines the core principles of bioreactor scale-up within the critical context of validating high-throughput screening (HTS) results, providing researchers with a framework for navigating the inevitable hurdles encountered when transitioning from micro-scale screening to production-scale bioreactors.

The fundamental challenge lies in the fact that cells respond to their immediate local environment, not the vessel's average conditions [2]. While high-throughput screening technologies enable rapid testing of thousands of conditions in miniaturized bioreactors, the scale-dependent parameters that dominate large-scale performance often remain unaddressed in these systems [3] [4]. Understanding the disconnect between HTS results and production-scale outcomes requires a thorough grasp of how physical, chemical, and biological factors interact across scales, and how scale-down models can bridge this validation gap [5].

Core Physical Principles Governing Bioreactor Scale-Up

The Fundamental Challenge of Geometric Similarity

The principle of geometric similarity, which maintains similar height-to-tank diameter (H/T) and impeller-to-tank diameter (D/T) ratios across scales, is often a starting point for scale-up exercises [1]. However, this approach introduces unavoidable physical consequences that create scale-up hurdles. When H/T ratios are held constant during scale-up, the surface-area-to-volume ratio (SA/V) decreases dramatically with increasing bioreactor size [1]: SA/V scales inversely with the vessel's linear dimension, so a 125-fold increase in volume reduces SA/V about fivefold. This reduction in SA/V creates significant challenges for heat removal in large-scale microbial fermenters and complicates CO₂ stripping in animal cell culture bioreactors due to increased liquid height and added head pressure [1].

The following table illustrates how key parameters change disproportionately during scale-up when different scaling criteria are applied:

Table 1: Interdependence of Scale-Up Parameters (Scale-up factor: 125)

| Scale-Up Criterion | Impeller Speed (N₂/N₁) | Power/Volume (P/V)₂/(P/V)₁ | Tip Speed (u₂/u₁) | Circulation Time (t₂/t₁) | kLa (kLa₂/kLa₁) |
|---|---|---|---|---|---|
| Equal P/V | 0.34 | 1.00 | 1.70 | 2.93 | 1.20 |
| Equal Tip Speed | 0.20 | 0.20 | 1.00 | 5.00 | 0.86 |
| Equal N | 1.00 | 25.00 | 5.00 | 1.00 | 2.28 |
| Equal Re | 0.04 | 0.0016 | 0.20 | 25.00 | 0.40 |
| Equal kLa | 0.63 | 4.00 | 2.80 | 1.59 | 1.00 |

Source: Adapted from Lara et al. [1]

The data reveals a critical insight: no single scaling parameter can be maintained constant across scales without causing significant deviations in other parameters [1]. For instance, scale-up based on equal power per unit volume (P/V) results in lower impeller speeds but higher tip speeds, longer circulation times, and greater kLa values. This interdependence forces process scientists to make strategic trade-offs during scale-up rather than seeking perfect parameter matching [1] [2].
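The interdependence can be made concrete with a short calculation. The sketch below is illustrative only and rests on assumptions not stated in the text: geometrically similar vessels, fully turbulent flow with a constant impeller power number (so P ∝ N³D⁵), impeller pumping capacity Q ∝ ND³, and circulation time ∝ V/Q. The function name `scaling_ratios` and the criterion labels are hypothetical. The kLa column is not computed because it depends on an aeration correlation specific to each vessel.

```python
# Ratios (large scale / small scale) for geometrically similar stirred tanks.
# Assumes fully turbulent flow and a constant impeller power number.

def scaling_ratios(volume_factor: float, criterion: str) -> dict:
    """Return large/small ratios of key parameters for one scale-up criterion."""
    d = volume_factor ** (1.0 / 3.0)  # impeller diameter ratio D2/D1

    # Impeller speed ratio N2/N1 implied by each criterion
    speed_ratio = {
        "equal_P/V": d ** (-2.0 / 3.0),   # P/V ~ N^3 * D^2 held constant
        "equal_tip_speed": d ** -1.0,     # tip speed ~ N * D held constant
        "equal_N": 1.0,                   # impeller speed held constant
        "equal_Re": d ** -2.0,            # Re ~ N * D^2 held constant
    }[criterion]

    n = speed_ratio
    return {
        "N": n,
        "P/V": n ** 3 * d ** 2,
        "tip_speed": n * d,
        "Re": n * d ** 2,
        "circulation_time": 1.0 / n,      # t_c ~ V / Q with Q ~ N * D^3
    }


for name in ("equal_P/V", "equal_tip_speed", "equal_N", "equal_Re"):
    ratios = scaling_ratios(125.0, name)
    print(name, {key: round(value, 4) for key, value in ratios.items()})
```

Running the loop for a volume factor of 125 shows that whichever ratio is pinned to 1, the remaining ratios move away from 1, which is the trade-off described above.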

Scaling Parameters and Their Biological Implications

Each scaling parameter affects different aspects of cell physiology and culture performance, making parameter selection process-dependent:

Constant Power per Unit Volume (P/V): This common approach aims to maintain similar energy input for mixing relative to volume. However, it increases impeller tip speed and circulation time, potentially exposing cells to higher shear forces and longer periods in substrate gradients [1] [2].

Constant Impeller Tip Speed: Often used for shear-sensitive cells like mammalian cell lines, this approach minimizes potential damage from high fluid velocities. However, it substantially reduces P/V and can lead to inadequate mixing and longer mixing times in larger vessels [1].

Constant Volumetric Mass Transfer Coefficient (kLa): Essential for aerobic processes where oxygen transfer is limiting, maintaining kLa ensures similar oxygen transfer capacity. However, this may require operating conditions that create undesirable CO₂ accumulation or excessive shear stress [1] [6].

Constant Mixing Time: While theoretically ideal for maintaining homogeneity, scaling with constant mixing time means holding impeller speed constant, which for the 125-fold scale-up in Table 1 results in a 25-fold increase in P/V. This is mechanically infeasible and would generate destructive shear forces [1].

The evolution from experience-based to science-based scaling represents a paradigm shift in bioprocessing. Traditional approaches relied heavily on empirical correlations and trial-and-error, while modern methodologies emphasize mechanistic understanding of the underlying physics and cellular responses [2].

Scale-Dependent Hurdles in Bioreactor Operations

Gradient Formation and Heterogeneity

In large-scale bioreactors, inadequate mixing leads to the formation of spatial and temporal gradients in critical process parameters, creating distinct microenvironments that cells experience as they circulate [5]. These gradients develop when the characteristic time of substrate consumption (τc) is equal to or lower than the characteristic mixing time [5]. The likelihood of gradients increases with higher biomass concentrations and specific substrate consumption rates according to the formula:

τc = cS / (qS · cX)

Where cS is mean substrate concentration, qS is biomass-specific substrate consumption rate, and cX is biomass concentration [5].
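As a rough illustration of how this criterion is applied, the sketch below evaluates τc and compares it with a mixing time. The numerical inputs are hypothetical fed-batch values chosen for illustration, not data from the cited study, and the function names are assumptions.

```python
def consumption_time_s(c_s_g_per_l: float, q_s_g_per_g_h: float, c_x_g_per_l: float) -> float:
    """Characteristic substrate consumption time tau_c = cS / (qS * cX), in seconds."""
    tau_c_hours = c_s_g_per_l / (q_s_g_per_g_h * c_x_g_per_l)
    return tau_c_hours * 3600.0


def gradients_likely(tau_c_s: float, mixing_time_s: float) -> bool:
    """Gradients are expected when tau_c is equal to or lower than the mixing time."""
    return tau_c_s <= mixing_time_s


# Hypothetical values: 0.02 g/L residual substrate, qS = 0.5 g/(g h), 30 g/L biomass
tau_c = consumption_time_s(c_s_g_per_l=0.02, q_s_g_per_g_h=0.5, c_x_g_per_l=30.0)

print(f"tau_c = {tau_c:.1f} s")
print("lab scale, 2 s mixing time:", gradients_likely(tau_c, 2.0))
print("large scale, 100 s mixing time:", gradients_likely(tau_c, 100.0))
```

With these illustrative inputs the same culture is gradient-free at a mixing time of a few seconds but gradient-prone once mixing takes on the order of a hundred seconds.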

Table 2: Common Gradients in Large-Scale Bioreactors and Their Impacts

| Gradient Type | Causes | Impact on Cells | Consequences |
|---|---|---|---|
| Substrate Concentration | Localized feeding, inadequate mixing | Alternating feast-famine conditions | Overflow metabolism, reduced yield, byproduct formation |
| Dissolved Oxygen | Limited O₂ transfer, high consumption | Oxygen limitation in zones | Metabolic shifts, reduced productivity |
| pH | Inadequate base/acid distribution | Cellular stress | Altered metabolism, viability loss |
| Carbon Dioxide | Poor stripping efficiency | Dissolved CO₂ accumulation | Inhibition of growth, product formation |

Substrate concentration gradients can be particularly dramatic. In one study with Saccharomyces cerevisiae in a 30 m³ stirred-tank bioreactor, glucose concentration reached 40.7 mg/L near the feed port at the top of the bioreactor but only 4.3 mg/L at the bottom, a nearly tenfold difference [5]. As cells travel through these varying environments, they experience continually changing conditions that can alter overall culture performance; one reported consequence is a 20% reduction in biomass yield, together with increased byproduct formation, when scaling from 3 L to 9,000 L [5].

Mass Transfer Limitations

Oxygen transfer and carbon dioxide removal present complementary challenges at large scale. Oxygen transfer is governed by the equation:

OTR = kLa × (C* - CL)

Where OTR is oxygen transfer rate, kLa is volumetric mass transfer coefficient, C* is saturated oxygen concentration, and CL is actual dissolved oxygen concentration [2]. The oxygen consumption rate (OCR) must be balanced against OTR and is calculated as:

OCR = VCD × qO₂

Where VCD is viable cell density and qO₂ is specific oxygen consumption rate [2].
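The two balances can be combined to estimate the kLa a vessel must deliver at a given cell density. The sketch below is a minimal illustration; the units (kLa in 1/h, concentrations in mmol/L, VCD in cells/L, qO₂ in mmol per cell per hour) and all numerical inputs are assumptions chosen for the example, not values from the cited sources.

```python
def oxygen_transfer_rate(kla_per_h: float, c_star: float, c_l: float) -> float:
    """OTR = kLa * (C* - CL), in mmol/(L h) for kLa in 1/h and concentrations in mmol/L."""
    return kla_per_h * (c_star - c_l)


def oxygen_consumption_rate(vcd_cells_per_l: float, q_o2_mmol_per_cell_h: float) -> float:
    """OCR = VCD * qO2, in mmol/(L h)."""
    return vcd_cells_per_l * q_o2_mmol_per_cell_h


def required_kla(ocr: float, c_star: float, c_l: float) -> float:
    """kLa at which OTR equals OCR for a given dissolved oxygen setpoint."""
    driving_force = c_star - c_l
    if driving_force <= 0:
        raise ValueError("C* must exceed CL for oxygen to transfer into the liquid")
    return ocr / driving_force


# Hypothetical inputs: 1e7 cells/mL (1e10 cells/L), qO2 = 3e-10 mmol/(cell h),
# C* = 0.21 mmol/L, DO setpoint at 40% of saturation.
ocr = oxygen_consumption_rate(1e10, 3e-10)
kla_needed = required_kla(ocr, c_star=0.21, c_l=0.4 * 0.21)

print(f"OCR = {ocr:.2f} mmol/(L h)")
print(f"required kLa = {kla_needed:.1f} 1/h")
```

Repeating the calculation at the peak cell density expected in production indicates whether a candidate operating point leaves any oxygen transfer margin.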

While oxygen transfer typically becomes more efficient with scale due to increased hydrostatic pressure, CO₂ removal becomes progressively more challenging [1] [2]. In large tanks, the increased fluid height creates greater backpressure that reduces CO₂ stripping efficiency, potentially leading to inhibitory dissolved CO₂ levels [2]. This is particularly problematic for cell culture processes where CO₂ accumulation can inhibit cell growth and productivity.

A recent study highlighted how aeration pore size significantly impacts mass transfer efficiency in single-use bioreactors, creating substantial challenges for technology transfer between different manufacturer systems [6]. The research established a quantitative relationship between aeration pore size and initial aeration vvm in the P/V range of 20 ± 5 W/m³, with optimal initial aeration between 0.01 and 0.005 m³/min for pore sizes ranging from 1 to 0.3 mm [6].

The High-Throughput Screening Validation Gap

Limitations of Miniaturized Systems

High-throughput screening systems have become indispensable tools for rapid bioprocess development, with the global HTS market projected to grow from USD 26.12 billion in 2025 to USD 53.21 billion by 2032, reflecting increasing adoption across pharmaceutical and biotechnology industries [3]. These systems enable researchers to test thousands of culture conditions in parallel using dramatically reduced volumes, but they introduce significant scale-down challenges when used to predict large-scale performance [4].

The extreme scaling factor (>10⁵) between miniaturized stirred bioreactors (MSBRs) and industrial-scale processes creates physical limitations that complicate accurate scale-down [4]. Miniaturized systems typically operate in laminar or transitional flow regimes compared to the fully turbulent flow in production bioreactors, resulting in different mixing characteristics and energy dissipation patterns [4]. Practical challenges include:

- Vortex formation at high agitation rates

- Wall growth in small vessels

- Altered volume dynamics due to evaporation

- Limited integration of advanced process controls

- Different gas-liquid mass transfer mechanisms [4]

The table below compares key characteristics of different bioreactor scales:

Table 3: Comparison of Bioreactor Scale Characteristics

| Parameter | Microscale (<100 mL) | Lab Scale (1-10 L) | Pilot Scale (200-1000 L) | Production Scale (2000-5000 L) |
|---|---|---|---|---|
| Mixing Time | Very short | Seconds (<5 s) | Tens of seconds | Hundreds of seconds |
| Surface Area/Volume | Extremely high | High | Medium | Very low |
| Heat Transfer | Highly efficient | Efficient | Challenging | Significant limitation |
| Gradient Formation | None | Minimal | Moderate | Significant |
| Flow Regime | Laminar | Transitional | Turbulent | Fully turbulent |

Scale-Down Methodology for HTS Validation

To bridge the validation gap between HTS results and manufacturing scale performance, researchers employ scale-down models that mimic large-scale heterogeneity at laboratory scale [5]. These systems typically use multi-compartment bioreactors or specially configured single vessels with controlled feeding regimes to replicate the gradients encountered in production bioreactors [5].

The following experimental workflow illustrates a systematic approach to validating HTS results across scales:

[Figure: Scale-Down Validation Workflow for HTS Results]

This systematic approach to scale-down modeling allows researchers to identify potential scale-up issues early in process development and design more robust processes capable of maintaining performance across scales [5] [2]. Computational Fluid Dynamics (CFD) plays an increasingly important role in these efforts by providing insights into the complex fluid dynamics and mass transfer phenomena that drive gradient formation, though challenges remain in accurately describing biological responses to dynamically changing conditions [5].

Experimental Approaches and Research Toolkit

Key Experimental Protocols for Scale-Up Studies

Protocol 1: Scale-Down Bioreactor Operation for Gradient Simulation

Purpose: To mimic large-scale substrate gradients in a laboratory-scale system [5].

Methodology:

- Utilize a multi-compartment bioreactor system consisting of interconnected, ideally mixed zones

- Establish circulation between zones using peristaltic pumps to simulate large-scale mixing times

- Implement pulsed feeding in one compartment to create substrate concentration gradients

- Monitor gradient formation using inline glucose sensors in each compartment

- Sample from each compartment to analyze cellular responses to fluctuating conditions

Key Parameters:

- Circulation time: 30-120 seconds (simulating large-scale mixing)

- Glucose concentration gradient: 5-50 mM between compartments

- Sampling frequency: Every 2-4 hours for metabolite analysis

Validation: Compare metabolic byproducts (e.g., lactate, acetate) with those observed in large-scale runs [5].
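Setting the pump rate for a target circulation time is a simple volume balance. The sketch below assumes a closed loop between two ideally mixed compartments and defines circulation time as total liquid volume divided by the recirculation flow; that definition, the compartment volumes, and the function names are assumptions made for illustration.

```python
def loop_flow_l_per_min(v_mixed_l: float, v_gradient_l: float, circulation_time_s: float) -> float:
    """Recirculation flow (L/min) giving the requested mean circulation time."""
    total_volume_l = v_mixed_l + v_gradient_l
    return total_volume_l / circulation_time_s * 60.0


def residence_time_s(volume_l: float, flow_l_per_min: float) -> float:
    """Mean residence time (s) of liquid in one compartment at a given loop flow."""
    return volume_l / flow_l_per_min * 60.0


# Hypothetical setup: 1.6 L well-mixed vessel, 0.4 L feed (gradient) compartment,
# 60 s target circulation time (within the 30-120 s range listed above).
flow = loop_flow_l_per_min(1.6, 0.4, 60.0)
exposure = residence_time_s(0.4, flow)

print(f"pump rate = {flow:.2f} L/min")
print(f"mean exposure per pass in the feed compartment = {exposure:.1f} s")
```

The second number is the average time a cell spends in the high-substrate zone on each pass, which is the quantity to compare against the exposure expected near a large-scale feed point.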

Protocol 2: kLa Determination Using the Dynamic Gassing-Out Method

Purpose: To characterize oxygen mass transfer capacity across scales [2].

Methodology:

- Equilibrate bioreactor with nitrogen to deplete dissolved oxygen

- Switch to air sparging while monitoring DO with fast-responding electrode

- Record DO increase from 10% to 80% saturation

- Calculate kLa as the negative slope of ln(1 - CL/C*) versus time, where CL is the measured dissolved oxygen concentration and C* is the saturation value

Key Parameters:

- Agitation rate: Varied from 50% to 150% of baseline

- Gas flow rate: 0.01-0.1 vvm

- Temperature: Controlled at process setpoint

- Working volume: 30-70% of total volume

Application: Establish correlation between kLa, power input, and aeration rate for scaling calculations [2].
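The slope calculation in this protocol can be sketched as a least-squares fit. The example below is a minimal illustration that assumes DO is logged as percent of saturation, ignores probe response time, and uses a synthetic trace rather than measured data; the function name and defaults are hypothetical.

```python
import math


def kla_from_gassing_out(times_s, do_percent, do_sat_percent=100.0, lo=10.0, hi=80.0):
    """Estimate kLa (1/s) as the negative slope of ln(1 - C/C*) versus time.

    Only samples between `lo` and `hi` percent saturation are used,
    matching the 10% to 80% window in the protocol.
    """
    points = [
        (t, math.log(1.0 - c / do_sat_percent))
        for t, c in zip(times_s, do_percent)
        if lo <= c <= hi
    ]
    if len(points) < 2:
        raise ValueError("need at least two samples inside the fitting window")

    n = len(points)
    mean_t = sum(t for t, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    s_tt = sum((t - mean_t) ** 2 for t, _ in points)
    s_ty = sum((t - mean_t) * (y - mean_y) for t, y in points)
    return -s_ty / s_tt  # ordinary least-squares slope, sign flipped


# Synthetic trace generated with a known kLa of 0.01 1/s (illustration only)
true_kla = 0.01
times = list(range(0, 301, 10))
do_trace = [100.0 * (1.0 - math.exp(-true_kla * t)) for t in times]

kla = kla_from_gassing_out(times, do_trace)
print(f"kLa = {kla:.4f} 1/s ({kla * 3600:.1f} 1/h)")
```

With real probes the sensor response time can bias the estimate when it is not small compared with 1/kLa, so a correction or a fast-responding electrode is needed, as the protocol notes.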

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Research Reagents and Equipment for Scale-Up Studies

| Category | Specific Examples | Function in Scale-Up Studies |
|---|---|---|
| Cell Culture Media | QuaCell CHO CD04 Basal Medium, QuaCell CHO Feed02 | Consistent nutrient supply across scales; formulation impacts gradient formation [6] |
| Process Analytical Technology | Dissolved oxygen probes, pH sensors, Biocapacitance probes | Monitor critical process parameters; detect gradient formation [7] |
| Single-Use Bioreactors | Ambr systems, Parallel bioreactor systems (CloudReady) | High-throughput process development; scale-down modeling [3] [6] |
| Cell Lines | CHO cell lines (e.g., Dartsbio DS003), Microbial strains (E. coli, S. cerevisiae) | Consistent biological system across scales; cell-specific responses to gradients [5] [6] |
| Mass Transfer Tools | Drilled-hole spargers (0.3-1 mm), Gas blending systems | Control oxygen transfer and CO₂ stripping; impact gradient formation [6] |

Case Study: Scaling with Aeration Parameter Integration

A 2024 study demonstrates a modern approach to addressing scale-up challenges by systematically integrating aeration pore size into scale-up calculations [6]. The research addressed the critical issue of inconsistent aeration pore sizes across different manufacturers' single-use bioreactors, which poses significant challenges for technology transfer.

Experimental Design: Researchers employed an orthogonal test method to examine the combined effects of different P/V (8.8-28.8 W/m³), vvm (0.003-0.012 m³/min), and aeration pore size (0.3-1 mm) conditions on culture results using parallel bioreactors with 300 mL working volume [6].

Key Finding: The study established a quantitative relationship between aeration pore size and initial aeration vvm in the P/V range of 20 ± 5 W/m³. The appropriate initial aeration was between 0.01 and 0.005 m³/min for aeration pore sizes ranging from 1 to 0.3 mm [6].

Validation: The model was successfully validated in a 15 L glass bioreactor and a 500 L single-use bioreactor, with results consistent with predictions [6]. This approach demonstrates how traditional scaling parameters must be modified to account for equipment-specific characteristics like sparger design, moving beyond the conventional constant P/V or constant kLa approaches that fail to consider mass transfer efficiency variations across different aeration systems.

Bioreactor scale-up remains a complex endeavor requiring balanced consideration of multiple, often competing, parameters. The core principles of geometric similarity, power input, mass transfer, and mixing must be applied with recognition of their inherent limitations and interactions. The inevitable hurdles of gradient formation, heterogeneity, and mass transfer limitations can be mitigated through systematic scale-down approaches that realistically mimic large-scale conditions.

The validation of high-throughput screening results requires careful attention to the fundamental differences between microscale and production-scale environments. By employing scale-down models that replicate the gradients and dynamics of large bioreactors, researchers can bridge the validation gap and develop more robust processes. The integration of computational tools like CFD with experimental scale-down systems represents the most promising path toward predictive scale-up.

As the biopharmaceutical industry continues to evolve with increasingly complex modalities including cell and gene therapies, the importance of scientifically rigorous scale-up methodologies will only increase [8]. Success will depend on continued advancement in scale-down techniques, sensor technologies, and fundamental understanding of cellular responses to the fluctuating environments they experience in large-scale bioreactors.

Key Scale-Dependent vs. Scale-Independent Parameters in Process Translation

Successful bioprocess scale-up is a critical activity for transferring laboratory discoveries to commercial manufacturing, ensuring timely startup and consistent processing from pilot to clinical and commercial scales [9]. The core challenge lies in replicating the cellular environment across different bioreactor sizes, a task complicated by the fundamental differences in how parameters behave when volume changes [1] [10]. The concept of Scale-Independent Parameters and Scale-Dependent Parameters provides the essential framework for addressing this challenge. Scale-independent parameters are those that can be optimized in small-scale bioreactors and kept constant during scale-up, as they are not significantly influenced by the bioreactor's size. In contrast, scale-dependent parameters are inherently affected by a bioreactor's geometric configuration and operating conditions, requiring careful adjustment and optimization at each new scale [1]. Understanding and managing the interplay between these two parameter classes is the cornerstone of validating High-Throughput Process Development (HTPD) screening results and achieving a robust, reproducible manufacturing process.

Comparative Analysis: Scale-Independent vs. Scale-Dependent Parameters

The table below summarizes the core characteristics, examples, and roles of scale-independent and scale-dependent parameters in bioprocess translation.

Table 1: Fundamental Characteristics of Scale-Independent and Scale-Dependent Parameters

| Feature | Scale-Independent Parameters | Scale-Dependent Parameters |
|---|---|---|
| Core Definition | Parameters not significantly influenced by bioreactor size or geometry [1]. | Parameters directly affected by bioreactor geometric configuration and operating parameters [1]. |
| Primary Role | Define the biochemical and biological environment for the cells [1]. | Govern the physical environment, including fluid dynamics and mass transfer [1] [10]. |
| Dependency | Independent of scale; represent the "set points" for the process. | Dependent on scale; represent the "knobs" to be adjusted during scale-up. |
| Typical Examples | pH [1]; temperature [1]; dissolved oxygen (DO) concentration [1]; media composition and osmolality [1] | Impeller rotational speed (N) [1]; power per unit volume (P/V) [1] [11]; volumetric gas flow rate (vvm) [1]; mixing time [1] [10] |
| Experimental Optimization | Typically tested and optimized in small-scale bioreactors [1]. | Must be optimized for the specific large-scale bioreactor [1]. |
| Impact of Scaling | Can be held constant across scales in theory, though gradients may form in large-scale equipment [5]. | Cannot be held constant; follow scaling rules (e.g., constant kLa, P/V) to maintain a similar environment [1] [11]. |

The relationship between these parameters and their impact on the bioreactor environment can be visualized as a flow of dependencies.

Figure 1: Parameter Interdependence in Bioreactor Scale-Up. Scale-independent parameters define the biochemical environment, while scale-dependent parameters control the physical environment. Both must be managed to maintain a consistent cellular environment across scales.

The Scaling Toolkit: Key Parameters and Their Quantitative Interdependence

In-Depth Look at Key Parameters

Scale-Independent Parameters form the foundation of the process. Parameters like pH and temperature are fundamental as they directly influence enzyme activity, cell growth, and metabolic pathways [12]. Media composition, including the concentrations of nutrients, vitamins, and minerals, provides the essential building blocks for cell development and product synthesis [12]. While the dissolved oxygen (DO) setpoint is a scale-independent variable crucial for aerobic metabolism, the method to achieve this setpoint becomes a scale-dependent challenge [1] [12].

Scale-Dependent Parameters are the levers engineers adjust to physically realize the scale-independent conditions in a larger volume. Power per unit volume (P/V), the energy transferred from the stirrer to the liquid, is a critical scaling criterion as it influences mixing, shear stress, and mass transfer [10] [11]. The volumetric mass transfer coefficient (kLa) quantifies the efficiency of oxygen transfer from gas to liquid and is often kept constant across scales to ensure adequate oxygen supply [1] [9]. Mixing time, the time required to achieve homogeneity, increases with scale and can lead to gradients in substrates, pH, and dissolved gases if not properly accounted for [1] [5]. Impeller tip speed is sometimes used as a scaling criterion, as it relates to the shear forces experienced by cells [1] [10].

Quantitative Interdependence of Scaling Parameters

The complexity of scale-up arises because these key parameters are intrinsically linked. Changing one parameter to match a scaling criterion will inevitably change others. The table below illustrates this interdependence for a scale-up factor of 125, demonstrating that it is impossible to keep all scale-dependent parameters constant simultaneously.

Table 2: Interdependence of Scale-Dependent Parameters for a Scale-Up Factor of 125 (adapted from [1])

| Scale-Up Criterion (Held Constant) | Impeller Speed (N) | Power per Volume (P/V) | Impeller Tip Speed | Reynolds Number (Re) | Mixing/Circulation Time | kLa (Oxygen Mass Transfer) |
|---|---|---|---|---|---|---|
| Impeller Speed (N) | 1 | 25 | 5 | 25 | 1 | 2.28 |
| Power per Volume (P/V) | 0.34 | 1 | 1.7 | 8.5 | 2.93 | 1.20 |
| Impeller Tip Speed | 0.2 | 0.2 | 1 | 5 | 5 | 0.86 |
| Reynolds Number (Re) | 0.04 | 0.0016 | 0.2 | 1 | 25 | 0.40 |
| Mixing Time | 1 | 25 | 5 | 25 | 1 | 2.28 |

Note: The table shows the ratio of each large-scale value to its small-scale value when a specific criterion is held constant during scale-up. The values assume geometrically similar vessels in turbulent flow (P/V ∝ N³D², tip speed ∝ ND, Re ∝ ND², circulation time ∝ 1/N); the kLa ratios follow the interdependence table presented earlier in this guide. For example, scaling up with constant P/V lowers impeller speed to about one third, raises tip speed by a factor of about 1.7, roughly triples circulation time, and increases kLa by about 20%.

Experimental Protocols for Parameter Translation

A systematic, risk-based approach is essential for successful process translation. The following workflow outlines a modern methodology for scaling bioprocess parameters, moving beyond traditional single-parameter rules.

Figure 2: A Modern Workflow for De-risking Parameter Translation. This integrated approach uses high-throughput screening, multi-parameter scaling, and predictive tools to ensure a robust scale-up.

Protocol 1: Validating Mixing and Mass Transfer in Scale-Down Models

Objective: To ensure that mass transfer (kLa) and mixing efficiency are adequately replicated in the scale-down model to avoid gradients present at production scale.

Background: In large-scale bioreactors (e.g., 10,000 L), mixing times can be on the order of 100 seconds or more, leading to significant gradients in substrate, pH, and dissolved oxygen [5]. Cells circulating through the bioreactor are exposed to these fluctuating conditions, which can alter metabolism and reduce product yield [5]. This protocol uses a two-compartment scale-down system to mimic these gradients.

Methodology:

- System Setup: Configure a two-compartment bioreactor system. One compartment represents the "well-mixed" zone with high oxygen and substrate (simulating the impeller region). The other represents the "stagnant" or "gradient" zone with lower mixing and oxygen (simulating distant parts of a large tank) [5].

- Parameter Calculation:

  - kLa Determination: Calculate the kLa in both compartments using the gassing-out method. The kLa in the well-mixed zone should match the target kLa for the production scale.

  - Circulation Time: Set the medium circulation rate between the two compartments to match the calculated circulation time of the large-scale bioreactor, which can be estimated from computational fluid dynamics (CFD) or correlations [5].

- Cultivation & Sampling: Run a fed-batch culture with the same cell line and media used in HTPD studies. Implement feeding in the well-mixed zone to mimic a large-scale feed point.

- Analysis:

  - Physiological Response: Measure cell viability, productivity, and metabolic byproducts (e.g., lactate). Compare these results to data from both homogeneous small-scale bioreactors and the target large scale.

  - Gradient Measurement: Periodically sample from both compartments to directly measure the concentration gradients of key metabolites (e.g., glucose, glutamate) and dissolved gases (O₂, CO₂).

Expected Outcome: A successful scale-down model will exhibit cell physiology and product quality profiles that more accurately predict large-scale performance than a standard, well-mixed lab-scale bioreactor, thereby validating the HTPD results under more industrially relevant conditions.

Protocol 2: Establishing a Scaling Rule Based on Constant kLa

Objective: To translate process conditions from a small-scale (e.g., 2 L) to a pilot-scale (e.g., 200 L) bioreactor by maintaining a constant volumetric mass transfer coefficient (kLa) for oxygen.

Background: kLa is a composite parameter that determines the rate at which oxygen is transferred from gas bubbles to the liquid medium. Keeping it constant across scales helps ensure cells receive sufficient oxygen, a common bottleneck in aerobic bioreactors [1] [9]. The kLa is influenced by agitation speed and gas flow rate.

Methodology:

- Baseline Characterization: At the small scale (2 L), determine the kLa value under the optimized process conditions (specific agitation speed and gas flow rates) that yielded successful HTPD results. This can be done using the dynamic gassing-out method.

- Large-Scale Projection:

  - Use a mathematical model or supplier-provided data for the pilot-scale bioreactor that correlates kLa with agitation speed (N) and gas flow rate (specifically, superficial gas velocity, Vₛ) [10] [9]. A common correlation is: kLa = K · (Pg/V)^x · (Vₛ)^y, where Pg/V is the gassed power per unit volume and K, x, and y are empirically fitted constants.

  - Solve the correlation to find the combination of N and Vₛ at the 200 L scale that gives the same kLa value as the 2 L scale.

- Secondary Parameter Check: Calculate other key parameters (e.g., P/V, tip speed) at the proposed 200 L operating conditions using the scaling relationships summarized in Table 2. Ensure they remain within acceptable ranges to avoid excessive shear stress or inadequate mixing.

- Verification Run: Perform a verification run at the 200 L scale using the calculated parameters. Continuously monitor dissolved oxygen, pH, and other critical variables to confirm the environment matches the small-scale model.

Expected Outcome: The pilot-scale bioreactor should demonstrate similar cell growth profiles, viability, and product titer as the small-scale model, confirming that the oxygen transfer environment has been successfully replicated.
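The large-scale projection step can be sketched numerically. The example below is an illustration under several assumptions: the constants K, x, and y are placeholders that would have to be fitted for each vessel, the impeller power number and gassed-to-ungassed power factor are hypothetical, power draw follows P = Np · ρ · N³ · D⁵ (turbulent regime), and the vessel dimensions are invented for the example.

```python
import math


def kla_correlation(pg_per_v, v_s, K, x, y):
    """kLa = K * (Pg/V)^x * (Vs)^y with Pg/V in W/m^3 and Vs in m/s."""
    return K * pg_per_v ** x * v_s ** y


def required_power_per_volume(kla_target, v_s, K, x, y):
    """Invert the correlation for Pg/V at a chosen superficial gas velocity."""
    return (kla_target / (K * v_s ** y)) ** (1.0 / x)


def impeller_speed_rps(pg_per_v, volume_m3, impeller_d_m, power_number,
                       density_kg_m3=1000.0, gassed_factor=1.0):
    """Solve Pg = gassed_factor * Np * rho * N^3 * D^5 for N (revolutions per second)."""
    power_w = pg_per_v * volume_m3
    return (power_w / (gassed_factor * power_number * density_kg_m3
                       * impeller_d_m ** 5)) ** (1.0 / 3.0)


# Placeholder correlation constants (must be fitted per vessel)
K, x, y = 0.026, 0.4, 0.5

# Hypothetical small-scale operating point: 20 W/m^3 and Vs = 5e-4 m/s
kla_small = kla_correlation(20.0, 5e-4, K, x, y)

# Hypothetical 200 L vessel: Vs = 1e-3 m/s, 0.2 m impeller, power number 1.5
pg_v_large = required_power_per_volume(kla_small, 1e-3, K, x, y)
n_large = impeller_speed_rps(pg_v_large, volume_m3=0.2, impeller_d_m=0.2, power_number=1.5)
tip_speed = math.pi * n_large * 0.2  # secondary check, m/s

print(f"target kLa = {kla_small * 3600:.1f} 1/h")
print(f"required Pg/V at 200 L = {pg_v_large:.1f} W/m^3")
print(f"impeller speed = {n_large * 60:.0f} rpm, tip speed = {tip_speed:.2f} m/s")
```

Because the correlation has two free operating variables, many (N, Vₛ) pairs give the same kLa; the secondary parameter check is what narrows the choice.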

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Tools and Reagents for Bioreactor Scale-Up Studies

| Tool / Solution | Function in Scale-Up Research |
|---|---|
| Multi-Parallel Micro-Bioreactors (e.g., Ambr systems) | High-throughput systems (e.g., 24–48 vessels) for rapid screening of scale-independent parameters and early process development, drastically cutting development timelines [13]. |
| Single-Use Bioreactors | Disposable vessels that eliminate cross-contamination risk and cleaning validation, compress changeover time, and are available in a "family" of geometrically similar designs from development to production scales [1] [13]. |
| Advanced Inline Sensors (DO, pH, Biomass) | Provide real-time, continuous data on critical process parameters (CPPs). Optical DO sensors and capacitance-based biomass probes are essential for accurate scale-up/scale-down studies [12] [13]. |
| Scale-Down Bioreactor Systems | Lab-scale systems (single- or multi-compartment) designed to mimic the inhomogeneous conditions (gradients) of large-scale production bioreactors, allowing for the study of their impact on cell physiology [5]. |
| Bioprocess Modeling Software (e.g., BioPAT Insights) | Data-driven software applications that use characterized bioreactor data to facilitate scaling using multiple parameters simultaneously, simplifying the process and reducing risk for non-expert users [10]. |
| Chemically Defined Media | Media with precisely known compositions that ensure consistency and reproducibility across scales, eliminating the variability introduced by complex raw materials like hydrolysates. |

The rigorous classification and management of scale-dependent and scale-independent parameters are fundamental to translating HTPD screening results into successful manufacturing processes. While scale-independent parameters like pH and temperature define the fundamental biological environment, scale-dependent parameters such as P/V and kLa must be strategically adjusted using established scaling rules and a clear understanding of their inherent trade-offs. Modern approaches that leverage high-throughput tools, predictive scaling software, and representative scale-down models are de-risking this complex journey. By systematically applying this comparative framework, scientists and drug development professionals can ensure that promising laboratory results are faithfully reproduced at commercial scale, bringing critical biologic therapies to patients efficiently and reliably.

In the realm of industrial bioprocessing, the transition from laboratory-scale bioreactors to large-scale production vessels presents a fundamental paradox: while small-scale bioreactors offer homogeneous conditions ideal for process development, their scaled-up counterparts invariably develop significant heterogeneities that profoundly impact cell physiology and process performance. This challenge is particularly relevant in the context of validating high-throughput screening (HTS) results, where microscale and lab-scale data must reliably predict performance at manufacturing scale. Gradients in substrate concentration, dissolved oxygen (DO), pH, and other critical parameters develop in large-scale bioreactors due to inadequate mixing, creating a dynamic environment to which cells must continually adapt [5] [1]. The core issue stems from dramatically increased mixing times—from seconds in lab-scale systems to minutes in production bioreactors—which frequently exceed relevant cellular reaction times, creating multiple distinct microenvironments that cells navigate as they circulate [5].

Understanding the interplay between fluid dynamics and cell physiology has thus become paramount for successful scale-up. This article examines how mixing time and fluid dynamics impact cellular physiology at scale and explores advanced scale-down models and high-throughput tools that enable more reliable translation of HTS results to manufacturing scale.

Gradient Formation in Large-Scale Bioreactors

Physical Origins of Gradients

In large-scale bioreactors, the physical origins of gradients can be traced to fundamental changes in geometric and hydrodynamic parameters. As bioreactor volume increases, the surface-area-to-volume ratio (SA/V) decreases dramatically, reducing the efficiency of heat transfer and gas exchange [1]. Simultaneously, mixing time—defined as the duration required to homogenize the liquid to 95% of final concentration after adding a tracer—increases significantly, from seconds in laboratory bioreactors to tens or even hundreds of seconds in production-scale systems [5]. This increased mixing time creates conditions where substrate consumption rates outpace mixing efficiency, establishing persistent gradients throughout the vessel.

The formation of substrate gradients follows predictable patterns, particularly in fed-batch processes where concentrated feed solutions are added at a single point. Computational Fluid Dynamics (CFD) simulations of a 22 m³ E. coli fermentation revealed nearly tenfold higher glucose concentrations near the feed port compared to the bottom of the bioreactor [5] [14]. Similarly, dissolved oxygen gradients emerge when oxygen consumption rates exceed oxygen transfer rates in regions distant from the sparging location, creating transient oxygen limitation zones [14].

Quantifying Gradient Severity

The likelihood and severity of gradient formation can be estimated by comparing characteristic timescales. The characteristic time of substrate consumption (τC) can be calculated by dividing the mean substrate concentration (cS) by the mean substrate consumption rate (QS = qS · cX), where qS is the biomass-specific substrate consumption rate and cX is the biomass concentration [5]:

τC = cS / (qS · cX)

When τC is equal to or lower than the mixing time, significant gradients are likely to form. This scenario becomes increasingly probable at high cell densities and high specific consumption rates, conditions typical of industrial bioprocesses [5]. Literature suggests that approximately 10% of the volume of a large-scale aerobic bioreactor can be substrate-rich and oxygen-limited at the same time, implying that cells may spend at least 10% of their circulation time in these challenging environments [5].
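As a worked illustration of the timescale comparison, the sketch below evaluates τC = cS / (qS · cX) and checks it against a mixing time. The function names and all numerical values (residual glucose, specific uptake rate, biomass concentration, mixing times) are illustrative assumptions, not data from the cited studies.

```python
def consumption_time_s(c_s_g_per_l, q_s_g_per_g_h, c_x_g_per_l):
    """Characteristic substrate consumption time tau_C = cS / (qS * cX), in seconds."""
    volumetric_rate_g_per_l_h = q_s_g_per_g_h * c_x_g_per_l  # QS = qS * cX
    return 3600.0 * c_s_g_per_l / volumetric_rate_g_per_l_h


def gradients_likely(tau_c_s, mixing_time_s):
    """Rule of thumb from the text: gradients are likely when tau_C <= mixing time."""
    return tau_c_s <= mixing_time_s


# Assumed example: 0.05 g/L residual glucose, qS = 0.3 g/(g*h), 40 g/L biomass.
tau_c = consumption_time_s(c_s_g_per_l=0.05, q_s_g_per_g_h=0.3, c_x_g_per_l=40.0)
print(f"tau_C = {tau_c:.1f} s")
print("5 s mixing time (lab scale), gradients likely:", gradients_likely(tau_c, 5.0))
print("120 s mixing time (production scale), gradients likely:", gradients_likely(tau_c, 120.0))
```

With these assumed numbers the consumption time falls between the two mixing times, so the same culture would be effectively homogeneous in the small vessel and gradient-prone in the large one.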

Impact of Gradients on Cellular Physiology

Metabolic Responses and Byproduct Formation

The fluctuating environments created by poor mixing trigger significant metabolic adaptations in microbial and mammalian cells. When E. coli cells circulate between glucose-rich and glucose-limited zones, they exhibit metabolic overflow, leading to acetate formation in aerobic conditions [14]. Similarly, Saccharomyces cerevisiae produces ethanol under fluctuating glucose concentrations, reducing biomass yield and process efficiency [5]. The physiological basis for this response lies in the different timescales of metabolic regulation: while substrate uptake and central metabolic reactions occur within seconds, regulatory responses on transcriptional and translational levels require minutes to hours [14]. This mismatch prevents cells from optimally adjusting to rapidly changing conditions.

In large-scale fed-batch bioreactors, these metabolic inefficiencies manifest as reduced biomass yields. Scale-up of an E. coli process from 3 L to 9 m³ resulted in a 20% reduction in biomass yield, while a baker's yeast process showed a 7% increase in final biomass concentration when scaled down from 120 m³ to 10 L, highlighting the significant impact of gradients on process performance [5] [14].

Stress Response Activation

The dynamic environment in large-scale bioreactors triggers multiple stress response pathways as cells transition between different microenvironments. Studies with E. coli in scale-down reactors have demonstrated rapid induction/relaxation of stress responses as cells pass through high-glucose, oxygen-limited zones [14]. Transcriptional analysis revealed increased mRNA levels of stress-induced genes, including those associated with oxygen limitation (pfl, fnr), heat shock (groEL, dps), and acid stress (aspA) [14].

The diagram below illustrates the cellular stress response pathways activated by gradients in large-scale bioreactors:

Figure 1: Cellular Stress Pathways Activated by Bioreactor Gradients

Notably, these stress responses are transient, relaxing when cells return to more favorable conditions. However, the repeated induction and relaxation of stress responses throughout the fermentation contributes to altered physiological properties and may explain population heterogeneity observed in large-scale bioprocesses [14].

Population Heterogeneity

Gradients promote phenotypic population heterogeneity, where isogenic cultures exhibit diverse physiological states. This heterogeneity arises because individual cells experience different histories as they circulate through varying microenvironments, leading to subpopulations with distinct metabolic activities, stress resistance, and productivity [5]. While this diversity can enhance population robustness, it often reduces overall process performance and complicates process control. Flow cytometric analysis of E. coli cultures from large-scale bioreactors and scale-down simulators revealed reduced damage to cytoplasmic membrane potential and integrity compared to laboratory-scale cultures, suggesting that the dynamic environment selects for or induces adaptations that improve resilience to stress [14].

Experimental Evidence: Quantitative Data on Gradient Impacts

The table below summarizes key experimental findings from scale-down studies that quantify the impact of gradients on process performance:

Table 1: Experimental Evidence of Gradient Impacts on Bioprocess Performance

| Organism | Scale/System | Gradient Type | Physiological Impact | Performance Effect | Source |
|---|---|---|---|---|---|
| E. coli | 22 m³ production bioreactor | Substrate (glucose), dissolved oxygen | Mixed acid fermentation, formate accumulation | 20% reduction in biomass yield | [14] |
| S. cerevisiae | 30 m³ STR, 19.8-22.3 m³ working volume | Substrate (glucose) | Overflow metabolism, ethanol production | Reduced biomass yield | [5] |
| E. coli | Scale-down reactor (STR-PFR system) | Oscillating glucose (56 s cycle) | Transient stress response activation | Altered product quality | [14] |
| Baker's yeast | Scale-down from 120 m³ to 10 L | Multiple gradients | Altered physiological properties | 7% increase in biomass, reduced gassing power | [5] [14] |

Scale-Down Approaches to Mimic Large-Scale Gradients

Scale-Down Bioreactor Configurations

To study large-scale gradient effects at laboratory scale, researchers have developed various scale-down bioreactor configurations that mimic the fluctuating conditions encountered in production bioreactors. These systems typically comprise multiple compartments with different environmental conditions through which cells circulate. The most common configurations include:

Table 2: Scale-Down Bioreactor Configurations for Gradient Studies

| Configuration | Description | Applications | Advantages | Limitations |
|---|---|---|---|---|
| Stirred Tank - Plug Flow Reactor (STR-PFR) | Recirculating system combining well-mixed STR with PFR creating substrate gradient | Study of E. coli and S. cerevisiae response to glucose oscillations | Controlled residence time in gradient zone | Requires custom equipment, complex operation |
| Multi-Compartment Bioreactors | Interconnected vessels with different environmental conditions | Mimicking regional variations in large tanks | Decouples different gradient effects | Limited number of compartments |
| Single STR with Controlled Feeding | Modified feeding strategies to create temporal oscillations | Mammalian cell culture, microbial systems | Uses standard equipment | Limited spatial heterogeneity |
| Miniaturized and Microfluidic Systems | Microbioreactors with microfluidic control | HTS for clone selection, media optimization | High parallelization, low cost | Challenges in scaling down hydrodynamics |

The STR-PFR system has been particularly valuable for elucidating dynamic cellular responses. In one experimental setup, E. coli cells were circulated between a stirred tank reactor (STR) maintained under glucose-limited conditions and a plug flow reactor (PFR) with high glucose concentration, with a total circulation time of 56 seconds mimicking large-scale mixing times [14]. This system demonstrated that short-term exposures (seconds) to high glucose concentrations were sufficient to induce acetate formation and stress responses.

High-Throughput Screening Platforms

Recent advances in high-throughput screening have yielded sophisticated tools for evaluating gradient effects at microscale. The BioLector µ-bioreactor system enables online monitoring of cell growth, dissolved oxygen, and pH in microtiter plates, while enzymatic glucose release systems simulate fed-batch conditions [15]. Similarly, the ambr 15 and ambr 250 systems offer automated, parallel bioreactor operations with enhanced control capabilities [16] [15].

For perfusion processes, novel high-throughput membrane bioreactors (HT-MBR) have been developed with six parallel filtration membrane modules, allowing simultaneous evaluation of multiple membrane types under identical conditions [17]. These systems enable researchers to generate statistically validated data that normally cannot be obtained with conventional MBR testing systems due to time, cost, and technical limitations [17].

A particularly innovative approach combines the ambr 15 system with offline centrifugation for cell retention, creating a high-throughput perfusion mimic. This system employs a variable volume model to maintain high-density cultures matching the cellular microenvironment of true perfusion systems, demonstrating excellent cell culture performance fidelity across scales [16].

The Scientist's Toolkit: Essential Research Reagent Solutions

The table below outlines key technologies and reagents essential for investigating gradient effects and developing robust scale-down models:

Table 3: Essential Research Tools for Gradient Studies and Scale-Down Modeling

| Tool/Reagent | Function | Application Examples | Key Features |
|---|---|---|---|
| Enzymatic Glucose Release Systems | Enable fed-batch conditions in microtiter plates | Clone screening, media optimization in BioLector | Controlled glucose release via enzyme concentration |
| Compartment Modeling Software | Predict large-scale gradient formation from CFD | Feed point optimization, scale-down reactor design | Reduces computational load vs. full CFD |
| CFD-CRK Integration Platforms | Couple hydrodynamic models with cellular kinetics | Predictive scale-up, digital twin development | Links fluid dynamics to metabolic responses |
| Multi-Parameter Flow Cytometry | Assess population heterogeneity | Membrane potential, cell integrity measurements | Single-cell resolution of physiological states |
| mRNA Extraction & Analysis Kits | Quantify stress response induction | Transcriptional analysis of stress genes | Rapid sampling compatible with scale-down studies |
| Parallel Microbioreactor Systems | High-throughput process development | ambr systems, BioLector, DASGIP | Multiple controlled bioreactors in small footprint |

Gradient Mitigation Strategies for Improved Scale-Up

Operational and Design Approaches

Several strategies have proven effective for mitigating gradients in large-scale bioreactors. Optimizing feed point placement represents one of the most powerful approaches. Computational studies have demonstrated that dividing a vessel axially into equal-sized compartments with symmetrical feed point placement can reduce mixing time by more than a minute and significantly mitigate substrate, oxygen, and pH gradients [18]. In some cases, this approach restored large-scale bioreactor performance to ideal, homogeneous reactor conditions, recovering oxygen consumption and biomass yield while diminishing phenotypical heterogeneity [18].

Other effective strategies include:

- Multiple feed points: Distributing substrate feed across multiple locations rather than a single point

- Impeller optimization: Selecting impeller types and configurations that improve overall mixing efficiency

- Sparger design: Optimizing gas distribution to minimize oxygen gradients

- Operating parameter adjustment: Modifying agitation rates, gas flow rates, and feeding strategies to balance mixing efficiency with shear sensitivity

Advanced Modeling and Monitoring Approaches

The integration of Computational Fluid Dynamics with Cell Reaction Kinetics (CFD-CRK) represents a paradigm shift in bioprocess scale-up. These integrated models simulate how cells respond to spatiotemporal variations in their environment, creating digital twins of bioprocesses that enable in silico scale-up and optimization [19]. While Eulerian approaches (using concentration fields) are computationally efficient for transient simulations, Lagrangian methods (tracking virtual particles) provide more detailed insights into individual cell experiences but require greater computational resources [19].
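To give a feel for the Lagrangian viewpoint, the toy sketch below follows a single virtual cell as it hops between a well-mixed bulk and a feed zone and records each exposure it experiences. It is not a CFD-CRK model: the two-zone layout, the mean residence times (54 s and 6 s, chosen so the feed zone accounts for about 10% of the time), the time step, and the function name `track_cell` are all assumptions made for illustration.

```python
import random


def track_cell(total_time_s, mean_bulk_s=54.0, mean_feed_zone_s=6.0, dt=1.0, seed=0):
    """Follow one virtual cell hopping between a well-mixed bulk and a feed zone.

    In every time step the cell leaves its current zone with probability
    dt / (mean residence time of that zone). Returns the fraction of time
    spent in the feed zone and the list of individual exposure durations (s).
    """
    rng = random.Random(seed)  # seeded so the trajectory is reproducible
    in_feed_zone = False
    time_in_feed = 0.0
    exposures, current = [], 0.0
    for _ in range(int(total_time_s / dt)):
        if in_feed_zone:
            time_in_feed += dt
            current += dt
            if rng.random() < dt / mean_feed_zone_s:
                in_feed_zone = False
                exposures.append(current)  # one completed exposure event
                current = 0.0
        elif rng.random() < dt / mean_bulk_s:
            in_feed_zone = True
    return time_in_feed / total_time_s, exposures


fraction, exposures = track_cell(total_time_s=200_000)
print(f"fraction of time in feed zone: {fraction:.3f}")
print(f"number of exposures: {len(exposures)}")
print(f"mean exposure duration: {sum(exposures) / len(exposures):.1f} s")
```

An Eulerian description would report only the average zone concentrations. The per-cell exposure list is the additional information a Lagrangian approach provides, and it is what a kinetic model of the cell would take as input.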

For dissolved CO₂ accumulation—a particularly challenging gradient in large-scale mammalian cell culture—compact mathematical models have been developed that incorporate CO₂ production, removal, and pH-dependent equilibrium reactions. These models help design efficient stripping strategies by accounting for factors such as gas residence time, bubble saturation, and the reduced surface-area-to-volume ratio in large tanks [20].

The following diagram illustrates an integrated experimental-computational workflow for gradient analysis and mitigation:

Figure 2: Integrated Workflow for Gradient Analysis and Mitigation

The presence of gradients in large-scale bioreactors represents a critical factor in the translation of high-throughput screening results to manufacturing performance. While HTS platforms like the BioLector and ambr systems provide valuable data for clone selection and media optimization, their predictive power is limited unless they account for the gradient effects inherent in production-scale systems. The integration of scale-down approaches that mimic large-scale heterogeneities, combined with advanced computational models linking fluid dynamics to cellular physiology, offers a path toward more reliable scale-up. By incorporating gradient considerations early in process development—from clone selection through process optimization—researchers can significantly improve the efficiency and success of bioprocess scale-up, ultimately reducing development timelines and enhancing manufacturing robustness.

The transition from high-throughput screening (HTS) to successful biomanufacturing represents a critical juncture in therapeutic development. While HTS enables rapid identification of promising candidates, the true validation of these outputs occurs during bioreactor scale-up, where cellular phenotypes must translate into predictable process performance. This guide examines the critical relationship between HTS-derived parameters and the Process Performance Indicators (PPIs) that determine scaling success. The industry's progression toward intensified processes and higher cell densities has made this correlation increasingly significant, as scale-dependent factors like mixing time, mass transfer efficiency, and gradient formation can dramatically alter cellular behavior compared to microtiter plate environments [1] [21].

Fundamentally, the scaling challenge arises from physical disparities between systems. As bioreactor volume increases, the surface-area-to-volume ratio decreases significantly, affecting gas exchange and heat transfer. Simultaneously, mixing times increase, creating heterogeneous zones with varying substrate, pH, and dissolved oxygen concentrations [1]. These physical changes can trigger physiological responses in production cells that were not observable during HTS, potentially altering critical quality attributes (CQAs) of the product. Understanding and anticipating these scale-dependent effects through strategic PPI monitoring forms the core of successful technology transfer from screening to manufacturing scale.

HTS Outputs and Corresponding Bioreactor Performance Indicators

Quantitative Correlation Between Screening and Production Metrics

Table 1: Correlation of HTS Outputs with Bioreactor Process Performance Indicators

| HTS Output / Screening Parameter | Corresponding Bioreactor PPI | Impact on Scaling Success | Experimental Evidence |
|---|---|---|---|
| Oxygen Uptake Rate (OUR) | Volumetric Mass Transfer Coefficient (kLa) | Determines maximum viable cell density; Directly impacts productivity [21] | kLa maintained at 10-20 h⁻¹ across scales enabled consistent VCD >40 million cells/mL [21] |
| Specific Productivity (qp) | Metabolic Waste Profiles (Lactate, Ammonia) | Elevated lactate indicates poor pH control; Affects growth, productivity, product quality [22] | PID tuning reduced lactate by >30% and increased protein productivity [22] |
| Cell Growth Rate (μ) | Mixing Time & Homogeneity | Longer mixing times create substrate gradients; Causes metabolic shifts at large scale [1] [21] | Mixing time increase from 20 s (lab) to 120 s (production) altered metabolism without proper control [1] |
| Nutrient Consumption Kinetics | Gradient Formation (Substrate, pH) | Cells experience cycling between feast/famine conditions; Impacts product quality consistency [1] | Substrate gradients >30% of setpoint observed in large-scale bioreactors [1] |
| Cell Line Stability | Shear Stress & Microenvironment | Varying energy dissipation rates affect cell growth, productivity, and genetic stability [1] [23] | Tip speed maintained at 1-2 m/s across scales preserved cell viability and productivity [1] |

Advanced HTS Approaches for Predictive Scaling

Contemporary HTS methodologies have evolved beyond simple potency assessment to incorporate scale-relevant parameters early in candidate selection. Ultra-high-throughput virtual screening (uHTVS) of synthetically accessible libraries, leveraging AI-assisted workflows like Deep Docking, can evaluate billions of compounds while prioritizing those with favorable developmental characteristics [24]. For bioprocess applications, advanced micro-bioreactor systems (e.g., ambr250) function as high-throughput scale-down models that generate multivariate data on cell growth, metabolism, and productivity under controlled conditions [22] [10].

Screening for inhibitors of protein-protein interactions (also abbreviated PPI in the assay literature, and unrelated to the process performance indicators discussed above) has proven particularly valuable in early discovery for identifying candidates with specific mechanistic actions. In one case study targeting adenylyl cyclase 1 (AC1), researchers developed a fluorescence polarization assay to identify inhibitors of the AC1-calmodulin (CaM) interaction, followed by validation in cellular NanoBiT and cAMP accumulation assays [25]. This multi-tiered approach ensured that selected hits maintained efficacy in progressively complex biological environments, de-risking later scaling activities. Similarly, TR-FRET assays for inhibitors of the FAK-paxillin interaction incorporated counter-screening and surface plasmon resonance validation, yielding four confirmed hits from a 31,636-compound library [26].

Experimental Protocols for PPI Validation During Scale-Up

Protocol 1: Mass Transfer Characterization (kLa Measurement)

Purpose: To determine the volumetric mass transfer coefficient (kLa) for oxygen, a critical PPI for ensuring consistent cell culture performance across scales [21].

Materials:

- Bioreactor systems at relevant scales (e.g., 200L, 2000L, 15,000L)

- FDO925 dissolved oxygen probe (WTW, Weilheim, Germany) or equivalent

- Nitrogen and air supply systems

- Model medium: 1× PBS with 1 g/L Kolliphor (BASF SE)

- Temperature control system set to 37°C

Procedure:

- Equilibrate the bioreactor with model medium at the target temperature (37°C).

- Sparge the liquid with nitrogen to strip oxygen concentration to below 20% air saturation.

- Initiate aeration with pressurized air at the desired gas flow rate while maintaining target agitation speed.

- Record the dissolved oxygen concentration continuously until it reaches >80% air saturation.

- Calculate kLa from the time constant of the dissolved oxygen concentration curve using the equation $OTR = \frac{dc_{O_2}}{dt} = k_La \cdot (c_{O_2}^* - c_{O_2})$, where $c_{O_2}^*$ is the saturation concentration and $c_{O_2}$ is the transient concentration [21].

- Repeat for various aeration rates and agitation speeds to establish operating envelopes.

Validation: Compare measured kLa values against predictions from established correlations (e.g., van't Riet: $k_La = C \cdot (P/V)^{\alpha} \cdot v_S^{\beta}$) to identify scale-dependent deviations [21].
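The calculation step of this protocol can be sketched as follows. Integrating the oxygen balance from the procedure gives ln(c* − c) = −kLa · t + const, so kLa is the negative slope of a straight-line fit. The function name, the 20-80% evaluation window (taken from the stripping and recording limits above), and the synthetic trace are assumptions for illustration, and probe response time is neglected.

```python
import math


def estimate_kla_per_h(times_s, do_percent, do_sat_percent=100.0, lower=20.0, upper=80.0):
    """Estimate kLa (1/h) from a gassing-out curve by log-linear regression.

    Only readings between `lower` and `upper` % air saturation are used.
    Probe response time is neglected in this sketch.
    """
    pts = [(t, math.log(do_sat_percent - c))
           for t, c in zip(times_s, do_percent) if lower <= c <= upper]
    if len(pts) < 2:
        raise ValueError("need at least two readings inside the evaluation window")
    n = len(pts)
    mean_t = sum(t for t, _ in pts) / n
    mean_y = sum(y for _, y in pts) / n
    sxx = sum((t - mean_t) ** 2 for t, _ in pts)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in pts)
    slope_per_s = sxy / sxx          # slope of ln(c* - c) versus time
    return -slope_per_s * 3600.0     # convert 1/s to 1/h


# Synthetic, noise-free trace generated with an assumed kLa of 15 1/h,
# starting from 10% air saturation and sampled every 10 s.
assumed_kla_per_h = 15.0
times = list(range(0, 601, 10))
trace = [100.0 - 90.0 * math.exp(-assumed_kla_per_h / 3600.0 * t) for t in times]
print(f"estimated kLa = {estimate_kla_per_h(times, trace):.2f} 1/h")
```

On real data the fit residuals are worth inspecting: systematic curvature in ln(c* − c) usually means the single-exponential assumption (ideal mixing, fast probe) does not hold for that operating point.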

Protocol 2: Mixing Time Characterization

Purpose: To assess mixing efficiency across scales, a critical factor in preventing gradients that impact cell physiology [21].

Materials:

- Transparent bioreactor replicas (acrylic glass)

- pH-sensitive tracer: 50 mmol/L bromothymol blue-ethanol solution

- Neutralization agents: 2 M NaOH and 2 M HCl solutions

- Digital camera with video capability (e.g., Nikon D7500)

- Image analysis software

Procedure:

- Establish operating conditions (agitation speed, gassing rate, working volume) in the bioreactor.

- Add bromothymol blue solution to achieve 3 μmol/L concentration throughout the reactor.

- Add NaOH (0.02‰ of reactor volume) to raise pH into basic range, creating uniform blue coloration.

- Mix for 5 minutes at target operating parameters to ensure homogeneity.

- Add HCl (0.04‰ of reactor volume) to the liquid surface.

- Record decolorization process at high temporal resolution until 95% homogeneity is achieved.

- Analyze video data to determine mixing time (t₉₅) based on the 95% criterion [21].

Validation: Compare mixing times across scales using dimensionless numbers (Reynolds, Power number) to identify potential heterogeneity zones.
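The image-analysis step can be reduced to a simple rule once the video has been converted into a single homogeneity signal over time (for example, mean color intensity per frame). The sketch below assumes such a signal already exists; the function name and the sample trace are hypothetical, and the criterion used is the last entry of the normalized signal into a ±5% band around its final value.

```python
def mixing_time_t95(times_s, signal, tolerance=0.05):
    """Return the time at which the normalized signal enters, and then stays
    within, +/- tolerance of its final value (t95 for tolerance = 0.05)."""
    start, final = signal[0], signal[-1]
    span = final - start
    if span == 0:
        raise ValueError("signal does not change; cannot normalize")
    normalized = [(s - start) / span for s in signal]  # 0 at start, 1 when mixed
    entry_time = None
    for t, h in zip(times_s, normalized):
        if abs(h - 1.0) > tolerance:
            entry_time = None      # left the band again, discard earlier entry
        elif entry_time is None:
            entry_time = t         # first sample of the current in-band run
    return entry_time


# Hypothetical trace with an overshoot and one late excursion out of the band.
times = [0, 5, 10, 15, 20, 25, 30, 35, 40]
signal = [0.0, 0.40, 0.80, 1.10, 0.97, 0.93, 0.98, 1.01, 1.00]
print("t95 =", mixing_time_t95(times, signal), "s")
```

Using the last entry into the band, rather than the first crossing, avoids underestimating the mixing time when the signal overshoots or oscillates before settling.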

Protocol 3: PID Control Optimization for Consistent Environment

Purpose: To tune proportional-integral-derivative (PID) controllers for maintaining critical environmental parameters (pH, DO) during scale-up [22].

Materials:

- Bioreactor system with adjustable PID gains (e.g., ambr250)

- pH and dissolved oxygen sensors

- Acid/base addition pumps, CO₂ sparging system, oxygen sparging

- Standardized cell culture (e.g., CHO cells)

Procedure:

- Begin with manufacturer-default PID settings for pH and DO control.

- Monitor system response to planned disturbances (feed additions, antifoam additions).

- For pH control: Adjust proportional gain (kP) and integral gain to minimize deviation from setpoint while preventing oscillation.

- For DO control: Implement cascade control with multiple control levels, tuning each independently.

- Evaluate control performance through multiple generations to ensure robust response across culture phases.

- Document final PID settings, including gain values and manipulated variable ranges [22].

Validation: Assess culture performance (growth, productivity, metabolite profiles) compared to previous settings; successful tuning typically shows reduced lactate accumulation and improved viability [22].
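The tuning loop in this protocol can be rehearsed offline before touching a culture. The sketch below is a textbook discrete PID with output clamping, run against an assumed first-order process with a step disturbance standing in for a feed addition. The process model, time constant, gain values, and output limits are illustrative assumptions and do not represent the ambr250 controller or its defaults.

```python
class PID:
    """Textbook discrete PID with output clamping and simple anti-windup."""

    def __init__(self, kp, ki, kd=0.0, out_min=-10.0, out_max=10.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_measurement = None

    def update(self, setpoint, measurement, dt):
        error = setpoint - measurement
        # Derivative acts on the measurement to avoid a kick on setpoint changes.
        if self.prev_measurement is None:
            derivative = 0.0
        else:
            derivative = -(measurement - self.prev_measurement) / dt
        self.prev_measurement = measurement
        candidate_integral = self.integral + error * dt
        output = self.kp * error + self.ki * candidate_integral + self.kd * derivative
        clamped = min(self.out_max, max(self.out_min, output))
        if clamped == output:
            self.integral = candidate_integral  # integrate only when not saturated
        return clamped


def simulate(kp, ki, steps=1500, dt=1.0, tau=60.0, setpoint=1.0):
    """Closed loop on an assumed first-order process with a disturbance at t = 500 s.

    Returns (final process value, integrated absolute error).
    """
    pid = PID(kp, ki)
    y, iae = 0.0, 0.0
    for k in range(steps):
        disturbance = -0.5 if k * dt >= 500.0 else 0.0
        u = pid.update(setpoint, y, dt)
        y += dt * (-y + u + disturbance) / tau  # explicit Euler step
        iae += abs(setpoint - y) * dt
    return y, iae


for kp, ki in [(0.5, 0.01), (2.0, 0.05)]:
    final, iae = simulate(kp, ki)
    print(f"kp={kp}, ki={ki}: final={final:.3f}, IAE={iae:.1f}")
```

The integrated absolute error (IAE) gives a single number for comparing candidate settings under the same disturbance, which mirrors the protocol's step of monitoring the response to planned feed and antifoam additions.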

Visualization of HTS to Production Workflow

Diagram 1: Integrated workflow from HTS to production, highlighting critical validation points.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Research Reagents and Materials for HTS and Scale-Up Validation

| Reagent / Material | Function in HTS-to-Scale-Up Workflow | Application Example |
|---|---|---|
| Bromothymol Blue pH Tracer | Visual determination of mixing efficiency in bioreactors | Global mixing time measurement via decolorization method [21] |
| Kolliphor Poloxamer | Model medium additive to mimic cell culture media surface tension | kLa measurement standardization in PBS/Kolliphor solution [21] |
| Biotin-PEG-1907 Stapled Peptide | Protein-protein interaction (PPI) probe for TR-FRET screening assays | Mimics paxillin in FAK FAT domain interaction studies [26] |
| Terbium Cryptate Donor | TR-FRET donor for protein-protein interaction assays | FAK-paxillin PPI screening in 384-well low volume format [26] |
| NanoBiT Vectors (Promega) | Cellular protein-protein interaction assessment | Validation of AC1-CaM PPI inhibition in full-length protein context [25] |
| CaM Bacterial Expression Vector | Production of recombinant calmodulin for PPI screening | AC1-CaM interaction studies with N-terminal 6X-His-GST tag [25] |
| FDO925 Dissolved Oxygen Probe | Oxygen concentration monitoring during kLa determination | Gassing-out method for determining the volumetric mass transfer coefficient [21] |
| Enamine REAL Library | Ultra-large compound library for virtual screening | AI-assisted uHTVS with 5.51 billion synthetically accessible compounds [24] |

Comparative Analysis of Scale-Up Methodologies

Performance of Scaling Approaches

Table 3: Comparison of Bioreactor Scale-Up Methodologies and Performance Outcomes

| Scale-Up Methodology | Key Principles | Performance Advantages | Limitations | Supporting Data |
|---|---|---|---|---|
| Constant Power/Volume (P/V) | Maintains consistent energy input per unit volume across scales | Simplicity; Reasonable prediction of hydrodynamic environment | Neglects mixing time increases; Can oversimplify mass transfer | 3× longer mixing times despite constant P/V [1] |
| Constant kLa | Maintains oxygen mass transfer capability across scales | Direct addressing of oxygen transfer limitations | Difficult to achieve without compromising other parameters; CO₂ accumulation possible | van't Riet correlation enabled robust kLa prediction (R²=0.94) [21] |
| Constant Tip Speed | Maintains shear conditions at impeller tip across scales | Controls maximum shear stress; Protects sensitive cell lines | Significant reduction in P/V at large scale; Can impair mixing | Optimal range: 1-2 m/s for mammalian cells [1] |
| Simultaneous Multi-Parameter | Uses characterized data and modeling to balance multiple factors | Risk-based approach; Visualizes trade-offs; More robust outcomes | Requires specialized software (BioPAT Insights); Extensive characterization needed | Reduced scale-up deviations by >40% vs. single-parameter methods [10] |
| Geometric Similarity | Maintains consistent H/T and D/T ratios across scales | Establishes foundation for other scaling methods; Reduces variables | Alone insufficient; Must be combined with other methods | H/T 1.5-2.0 and D/T 1/3-1/2 typical for mammalian systems [9] |
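The trade-offs in the table above can be made concrete with a short calculation. The sketch below assumes geometric similarity and fully turbulent flow with a constant power number, so that P/V scales with N³D² and tip speed with ND. The function name and the 200 L to 15,000 L example are illustrative choices; real vessels, especially aerated ones, will deviate from these idealized ratios.

```python
def scale_up(volume_ratio, rule):
    """Return (N2/N1, tip-speed ratio, P/V ratio) for geometrically similar vessels.

    Assumes turbulent flow with constant power number:
    P/V ~ N**3 * D**2 and tip speed ~ N * D, with V ~ D**3.
    """
    d_ratio = volume_ratio ** (1.0 / 3.0)        # impeller diameter ratio D2/D1
    if rule == "constant_pv":
        n_ratio = d_ratio ** (-2.0 / 3.0)        # keeps N**3 * D**2 unchanged
    elif rule == "constant_tip_speed":
        n_ratio = 1.0 / d_ratio                  # keeps N * D unchanged
    else:
        raise ValueError(f"unknown rule: {rule}")
    tip_ratio = n_ratio * d_ratio
    pv_ratio = n_ratio ** 3 * d_ratio ** 2
    return n_ratio, tip_ratio, pv_ratio


for rule in ("constant_pv", "constant_tip_speed"):
    n, tip, pv = scale_up(15000.0 / 200.0, rule)
    print(f"{rule}: N2/N1 = {n:.3f}, tip speed x{tip:.2f}, P/V x{pv:.2f}")
```

Under these assumptions, holding P/V constant raises tip speed with scale, while holding tip speed constant lowers P/V in inverse proportion to the diameter ratio. This is the reduction in P/V at large scale listed as a limitation of the constant tip speed approach.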

Case Study: Successful Multi-Scale Process Transfer

A comprehensive study demonstrated successful process transfer between single-use bioreactors (200L and 2000L SUBs) and a conventional 15,000L stainless steel stirred-tank bioreactor. The strategy employed a modified van't Riet correlation that incorporated stirrer tip speed (utip), volumetric aeration rate (vvm), and reactor volume (V) as parameters:

$$ k_La_{mod} = C \cdot (u_{tip})^{\alpha} \cdot (vvm)^{\beta} \cdot V^{\gamma} $$

This approach enabled robust prediction of mass transfer coefficients across scales for a wide range of operating conditions, confirming that process transfer between different bioreactor technologies (single-use to stainless steel) poses no fundamental difficulty when appropriate engineering parameters are maintained [21]. The study highlighted that while geometric similarity helps establish a scaling foundation, the key to success lies in maintaining physiological consistency through careful control of the cellular microenvironment across scales.
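A power-law correlation of this form becomes linear after taking logarithms, ln kLa = ln C + α ln u_tip + β ln vvm + γ ln V, so its parameters can be estimated by ordinary least squares. The sketch below shows one way to do this with the standard library only. The function names, units, parameter values, and synthetic data grid are assumptions for illustration and are not the fitted values from the cited study.

```python
import math
from itertools import product


def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def fit_modified_vant_riet(u_tip, vvm, volume, kla):
    """Least-squares fit of ln kLa = ln C + alpha ln u_tip + beta ln vvm + gamma ln V."""
    rows = [[1.0, math.log(u), math.log(q), math.log(v)]
            for u, q, v in zip(u_tip, vvm, volume)]
    y = [math.log(k) for k in kla]
    # Normal equations (A^T A) x = A^T y for the four unknowns.
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(4)] for i in range(4)]
    aty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(4)]
    ln_c, alpha, beta, gamma = solve_linear(ata, aty)
    return math.exp(ln_c), alpha, beta, gamma


# Synthetic, noise-free data from assumed parameters (u_tip in m/s, vvm in 1/min, V in L).
grid = list(product([1.0, 1.5, 2.0], [0.01, 0.05, 0.10], [200.0, 2000.0, 15000.0]))
u_tip, vvm, volume = zip(*grid)
kla = [50.0 * u ** 1.2 * q ** 0.6 * v ** -0.1 for u, q, v in grid]
c, alpha, beta, gamma = fit_modified_vant_riet(u_tip, vvm, volume, kla)
print(f"C={c:.3f}, alpha={alpha:.3f}, beta={beta:.3f}, gamma={gamma:.3f}")
```

The fit is only identifiable when tip speed, aeration rate, and volume are varied independently in the characterization runs. If two of them always change together, their exponents cannot be separated.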

The correlation between HTS outputs and bioreactor PPIs provides a critical framework for de-risking bioprocess scale-up. Successful technology transfer requires understanding how small-scale observations translate to production environments, particularly for sensitive parameters like mixing efficiency, mass transfer capability, and environmental control. The methodologies and data presented herein demonstrate that a systematic approach—incorporating scale-down modeling, multi-parameter control strategies, and advanced monitoring techniques—can bridge the gap between HTS candidate selection and manufacturing success.

Modern tools including characterized scale-down models, predictive software platforms, and advanced sensor technologies enable more reliable prediction of scale-up outcomes. By establishing quantitative relationships between HTS-derived parameters and process performance indicators early in development, organizations can significantly reduce technical risks, accelerate timelines, and ensure consistent product quality throughout the product lifecycle. The continued integration of HTS with engineering fundamentals represents the future of robust, predictable bioprocess development.

Building Predictive Power: HTS Platforms and Advanced Scale-Down Modeling

Selecting and Implementing High-Throughput Micro-Bioreactor Systems for Scalable Data

High-throughput micro-bioreactor systems have emerged as transformative tools in bioprocess development, addressing the critical need for rapid, data-rich screening of cell lines and process parameters. These systems, typically operating at volumes between 10 mL and 1,000 mL, enable researchers to execute dozens of parallel experiments under tightly controlled conditions, generating statistically significant datasets essential for predictable scale-up [27]. Within the framework of validating high-throughput screening results for bioreactor scale-up, micro-bioreactors serve as the crucial link between initial discovery work and pilot-scale production, providing a controlled, scalable environment for evaluating how well small-scale performance predicts larger-scale outcomes.

The adoption of these systems is accelerating across the biotechnology sector, with more than 62% of new bioprocess development labs now integrating micro-scale bioreactor systems [27]. This widespread adoption is driven by the pressing need to accelerate upstream optimization workflows, with approximately 74% of biologics developers citing micro-scale throughput as essential for their operations [27]. For researchers and drug development professionals, implementing the right micro-bioreactor system is not merely a convenience but a strategic imperative for maintaining competitive advantage in an increasingly crowded and regulated marketplace.

Comparative Analysis of Micro-Bioreactor Systems

System Types and Configurations

Micro-bioreactor systems are primarily categorized by their parallel-processing capabilities, which directly determine experimental throughput and application suitability. The market offers several configurations designed to balance experimental scale with data generation requirements.

Parallel System Configurations: The most common configurations include 24-parallel and 48-parallel systems, which collectively account for approximately 60% of global installations [27]. Twenty-four-parallel systems represent about 33% of global installations and are extensively used in upstream process development, supporting working volumes between 20 mL and 1,000 mL [27]. These systems strike an optimal balance between throughput and cost efficiency, with more than 49% of early-stage biologics programs utilizing them for screening activities [27]. Forty-eight-parallel systems hold approximately 27% of total global system utilization and offer enhanced throughput with up to 48 simultaneous cultivations ranging from 10 mL to 500 mL volumes [27]. These high-capacity systems are particularly valuable for media optimization experiments requiring more than 300 conditions, with approximately 41% of bioprocess development teams preferring this format for such applications [27].

Beyond these standard configurations, "other" systems—including 12-parallel, 8-parallel, and single-use bench-top micro-bioreactors—account for approximately 19% of global utilization [27]. These systems serve niche applications such as specialty enzyme screening and biosensor-testing workflows, with single-vessel micro-bioreactors representing more than 7% of this segment [27].

Table 1: Micro-Bioreactor System Configurations and Applications

| System Type | Global Installation Share | Working Volume Range | Primary Applications | User Preference Data |
|---|---|---|---|---|
| 48-Parallel | 27% | 10 mL - 500 mL | High-throughput screening, media optimization | 41% of bioprocess teams prefer for media optimization with >300 conditions [27] |
| 24-Parallel | 33% | 20 mL - 1,000 mL | Upstream process development, clone selection | 49% of early-stage biologics programs use for screening [27] |
| Other Systems (12-parallel, 8-parallel, single-vessel) | 19% | 5 mL - 200 mL | Exploratory R&D, specialty enzyme screening | 22% of academic bioengineering labs depend on low-parallel systems [27] |

Key Performance Metrics and Comparative Data

When evaluating micro-bioreactor systems, researchers must consider multiple performance dimensions, including sensing capabilities, automation features, and scalability correlations. These factors collectively determine a system's effectiveness in generating predictive data for scale-up activities.

Integrated Sensing and Monitoring Capabilities: Modern micro-bioreactor systems increasingly feature advanced sensor technologies for real-time process monitoring. Recent developments show that approximately 45% of newly launched systems integrate optical sensors, while 44% of micro-bioreactor units are now equipped with multi-parametric sensing systems for real-time bioprocess analytics [27]. These integrated sensors provide continuous measurements of critical process parameters like optical density, pH, and dissolved oxygen, enabling comprehensive process characterization [28]. The trend toward enhanced sensor integration addresses the longstanding challenge of sensor miniaturization, which affects nearly 18% of micro-bioreactor experiments and can contribute to irregular pH or dissolved oxygen readings across parallel runs [27].

Automation and Data Management Features: Automation represents another critical differentiator among micro-bioreactor systems. Market analysis indicates that approximately 52% of new systems feature integrated analytics, while 47% utilize AI-enhanced process monitoring [27]. The integration of automated data management platforms, such as Genedata Bioprocess, supports automated workflows and provides the foundation for increased throughput in cell line and process development [29]. These systems enable automatic processing, aggregation, and visualization of all online, at-line, and offline data, facilitating multi-parametric assessment of any type of bioreactor data in the context of experimental settings [29].

Scale-Up Predictive Accuracy: Perhaps the most critical performance metric for micro-bioreactor systems is their ability to predict performance at larger scales. Current data indicates that approximately 27% of bioprocess engineers report challenges correlating micro-scale performance to pilot-scale bioreactors larger than 50 L [27]. Furthermore, more than 23% of end users indicate that micro-bioreactor mixing patterns differ from larger stirred-tank systems, impacting scale-up predictability [27]. These limitations highlight the importance of selecting systems that most closely mimic the conditions expected at production scale, particularly with respect to mixing, oxygen transfer, and shear forces.

Table 2: Micro-Bioreactor Performance Features and Adoption Trends

| Performance Feature | Current Adoption/Implementation Rate | Impact on Development Workflows | Reported Challenges |
|---|---|---|---|
| Integrated Optical Sensors | 45% of newly launched systems [27] | Enables real-time monitoring of critical process parameters | 18% of users report issues with sensor calibration at volumes <100 mL [27] |
| Automated Data Analytics | 52% of new systems [27] | Reduces data structuring and curation bottlenecks | 17% of R&D teams report limited integration with downstream analytics [27] |
| AI-Enhanced Process Monitoring | 47% of new systems [27] | Improves predictive capabilities and control optimization | 29% of end users face workflow bottlenecks due to insufficient automation in older systems [27] |
| Single-Use Components | 61% of newly procured systems [27] | Reduces cleaning time by >72% compared to stainless steel [27] | 36% of research facilities cite limited availability of compatible consumables [27] |

Experimental Protocols for System Validation

Protocol 1: Evaluating Aeration and Mixing Parameters

The interaction between aeration pore size, mixing efficiency, and oxygen transfer represents a critical factor in bioreactor performance and scalability. This protocol outlines a systematic approach to characterizing these parameters in micro-bioreactor systems.

Experimental Design and Setup: Researchers should employ a Design of Experiments (DoE) approach to efficiently evaluate the combined effects of power input per unit volume (P/V), aeration rate expressed as gas volume per liquid volume per minute (vvm), and aeration pore size on culture results [6]. The experimental setup should encompass relevant ranges for each parameter: P/V from 8.8 to 28.8 W/m³, vvm from 0.003 to 0.012 m³/min, and aeration pore sizes from 0.3 to 1.0 mm [6]. These ranges overlap most of the operating conditions encountered in single-use bioreactors from major suppliers, whose aeration pore sizes vary from 0.178 mm to 0.582 mm across different scales [6]; pore sizes below 0.3 mm fall outside the tested range.
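As a minimal sketch of how such a design could be enumerated, the snippet below builds a three-level full-factorial grid over the three factors. The end points are the ranges quoted above; the centre levels are simple midpoints, and the choice of a full-factorial layout is an assumption made for illustration, since the design type used in [6] is not stated here. The names `FACTOR_LEVELS` and `full_factorial` are hypothetical.

```python
from itertools import product

# Low / centre / high levels for each factor.
# End points are the ranges quoted in the text; centre points are
# illustrative midpoints (an assumption, not taken from the source).
FACTOR_LEVELS = {
    "p_per_v_w_m3": [8.8, 18.8, 28.8],
    "aeration_vvm": [0.003, 0.0075, 0.012],
    "pore_size_mm": [0.3, 0.65, 1.0],
}


def full_factorial(levels):
    """Return one dict per run, covering every combination of factor levels."""
    names = list(levels)
    combos = product(*(levels[name] for name in names))
    return [dict(zip(names, combo)) for combo in combos]


runs = full_factorial(FACTOR_LEVELS)
print(f"{len(runs)} runs in the design")
for run_id, run in enumerate(runs[:3], start=1):
    print(run_id, run)
```

A 3 x 3 x 3 grid yields 27 runs, which fits within a single 48-parallel campaign; a fractional or response-surface design would reduce the run count further at the cost of resolving fewer interactions.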

Methodology Execution: Using parallel bioreactor systems with working volumes of 300 mL, researchers should implement the predetermined experimental conditions while maintaining constant temperature, pH, dissolved oxygen, and feeding strategies [6]. The marine-type impeller with a diameter of 28 mm in a 70 mm diameter vessel provides a geometrically consistent environment for evaluating the impact of each parameter [6]. Throughout the experiments, continuous monitoring of cell density, viability, metabolite concentrations, and product titer enables comprehensive assessment of culture performance under each condition.
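To translate a target P/V into an agitation setpoint for this geometry, one common approach is the turbulent-regime power relation P = Np · ρ · N³ · D⁵. The sketch below applies it to the 28 mm impeller and 300 mL working volume described above. The power number `ASSUMED_NP`, the water-like density, and the assumption of fully turbulent flow are placeholders for illustration only; the actual power number must be measured or taken from the vessel supplier.

```python
def agitation_rpm(p_per_v, volume_m3, impeller_d_m, power_number, density=1000.0):
    """Solve P = Np * rho * N^3 * D^5 for N and return the speed in rpm."""
    power_w = p_per_v * volume_m3
    n_rps = (power_w / (power_number * density * impeller_d_m ** 5)) ** (1.0 / 3.0)
    return n_rps * 60.0


def power_per_volume(rpm, volume_m3, impeller_d_m, power_number, density=1000.0):
    """Inverse calculation: P/V in W/m^3 for a given agitation speed."""
    n_rps = rpm / 60.0
    return power_number * density * n_rps ** 3 * impeller_d_m ** 5 / volume_m3


ASSUMED_NP = 0.3          # hypothetical power number, NOT from the source
WORKING_VOLUME_M3 = 300e-6  # 300 mL working volume
IMPELLER_D_M = 0.028        # 28 mm impeller
VESSEL_D_M = 0.070          # 70 mm vessel

print(f"D/T ratio = {IMPELLER_D_M / VESSEL_D_M:.2f}")
for target in (8.8, 20.0, 28.8):
    rpm = agitation_rpm(target, WORKING_VOLUME_M3, IMPELLER_D_M, ASSUMED_NP)
    print(f"P/V = {target:5.1f} W/m^3 -> about {rpm:.0f} rpm")
```

Because speed scales with the cube root of power, an error in the assumed power number shifts the calculated rpm only modestly, but the value should still be confirmed experimentally before it is used to set DoE levels.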

Data Analysis and Interpretation: Analysis of results should focus on identifying quantitative relationships between aeration pore size and optimal initial aeration vvm within specific P/V ranges. Research indicates that in the P/V range of 20 ± 5 W/m³, the appropriate initial aeration rises from 0.005 to 0.01 m³/min as the aeration pore size increases from 0.3 to 1 mm [6]. This relationship provides practical guidance for choosing the initial aeration rate and agitation speed when a process is transferred between bioreactor systems with different aeration pore sizes.
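One way to turn the reported end points into a starting value for an intermediate pore size is simple linear interpolation, sketched below. The linear form and the pairing of 0.3 mm with 0.005 and 1.0 mm with 0.01 are assumptions drawn from the ordering of the quoted ranges; the source does not state the shape of the relationship, and the function name is hypothetical. The estimate applies only within the P/V window of 20 ± 5 W/m³.

```python
def initial_vvm_for_pore_size(pore_mm, low=(0.3, 0.005), high=(1.0, 0.01)):
    """Linearly interpolate an initial aeration rate for a given pore size.

    `low` and `high` are (pore size in mm, initial aeration) end points.
    Values outside the characterized range are rejected rather than
    extrapolated.
    """
    (x0, y0), (x1, y1) = low, high
    if not x0 <= pore_mm <= x1:
        raise ValueError(
            f"pore size {pore_mm} mm is outside the characterized {x0}-{x1} mm range"
        )
    return y0 + (y1 - y0) * (pore_mm - x0) / (x1 - x0)


for pore in (0.3, 0.582, 1.0):
    print(f"{pore} mm -> initial aeration {initial_vvm_for_pore_size(pore):.4f}")
```

Refusing to extrapolate is deliberate: a 0.178 mm sparger, for example, lies below the tested range, so its starting aeration should be established experimentally rather than read off this line.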

Protocol 2: Assessing Scalability and Predictive Accuracy

Validating the predictive accuracy of micro-bioreactor systems for larger-scale operations requires systematic comparison across multiple scales using defined performance metrics.

Scale-Down Model Qualification: Researchers should begin by establishing a qualified scale-down model that accurately represents the process performance at manufacturing scale. This involves identifying both scale-dependent parameters (e.g., mixing time, oxygen transfer rate, power input) and scale-independent parameters (e.g., pH, temperature, dissolved oxygen concentration) [1]. While scale-independent parameters can typically be transferred directly across scales, scale-dependent parameters require careful optimization to account for differences in bioreactor geometric configuration and operating parameters [1].
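The trade-off between scale-dependent criteria can be illustrated with two textbook scaling rules for geometrically similar stirred tanks in the turbulent regime, where P/V is proportional to N³D² and tip speed to N·D. The sketch below compares holding P/V constant against holding tip speed constant. The 600 rpm starting speed and the tenfold larger impeller are hypothetical values chosen for illustration, and constant power number across scales is assumed.

```python
import math


def scale_speed(n1_rpm, d1_m, d2_m, criterion):
    """Agitation speed at impeller diameter d2 under a given scaling criterion."""
    ratio = d1_m / d2_m
    if criterion == "constant_p_per_v":
        return n1_rpm * ratio ** (2.0 / 3.0)  # from P/V ~ N^3 * D^2
    if criterion == "constant_tip_speed":
        return n1_rpm * ratio                 # from v_tip ~ N * D
    raise ValueError(f"unknown criterion: {criterion}")


def tip_speed(rpm, d_m):
    """Impeller tip speed in m/s."""
    return math.pi * d_m * rpm / 60.0


def relative_p_per_v(n1_rpm, d1_m, n2_rpm, d2_m):
    """Ratio of P/V at scale 2 to P/V at scale 1 (same Np and density)."""
    return (n2_rpm / n1_rpm) ** 3 * (d2_m / d1_m) ** 2


N1_RPM, D1_M, D2_M = 600.0, 0.028, 0.28  # hypothetical example values

for criterion in ("constant_p_per_v", "constant_tip_speed"):
    n2 = scale_speed(N1_RPM, D1_M, D2_M, criterion)
    print(
        f"{criterion}: N2 = {n2:.1f} rpm, "
        f"tip speed x{tip_speed(n2, D2_M) / tip_speed(N1_RPM, D1_M):.2f}, "
        f"P/V x{relative_p_per_v(N1_RPM, D1_M, n2, D2_M):.2f}"
    )
```

Holding one criterion fixed necessarily changes the other, which is why scale-dependent parameters cannot all be matched at once and must be prioritized according to what the cell line is most sensitive to.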

Parallel Operation Across Scales: The validation protocol should include parallel operation of the micro-bioreactor system alongside bench-scale (1-10 L) and pilot-scale (200-5,000 L) bioreactors using the same cell line, media, and process control strategies [1]. Key performance indicators, including cell growth kinetics, metabolic profiles, productivity, and product quality attributes (e.g., aggregation, glycosylation patterns), should be monitored and compared across scales [29]. Scale-up based on this approach has been demonstrated from 15 L glass bioreactors to 500 L single-use bioreactors, with results consistent with expectations [6].

Statistical Analysis of Correlation: Comprehensive statistical analysis should be performed to quantify the degree of correlation between micro-bioreactor and larger-scale performance. This includes evaluating critical quality attributes and key performance indicators to ensure they fall within predetermined equivalence margins [29]. Modern data management systems facilitate this analysis by enabling the correlation of process parameters with key performance indicators and product quality attributes across scales [29].
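A check against predetermined equivalence margins can be sketched as follows: compute a two-sided confidence interval for the difference in means between scales and declare equivalence only if the whole interval lies inside the margin. This mirrors the logic of a two one-sided tests (TOST) procedure, but it uses a normal approximation for the critical value to stay within the Python standard library; with the small run counts typical of bioreactor studies, a t-distribution would be more appropriate. The titer values and the margin are invented for illustration.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def equivalence_check(small_scale, large_scale, margin, alpha=0.05):
    """Return (lower, upper, is_equivalent) for the difference in means.

    Equivalence is declared when the (1 - 2*alpha) confidence interval of
    mean(small_scale) - mean(large_scale) lies entirely within +/- margin.
    Uses a normal approximation (an assumption; prefer a t-based interval
    for small sample sizes).
    """
    diff = mean(small_scale) - mean(large_scale)
    std_err = sqrt(
        stdev(small_scale) ** 2 / len(small_scale)
        + stdev(large_scale) ** 2 / len(large_scale)
    )
    z_crit = NormalDist().inv_cdf(1.0 - alpha)
    lower, upper = diff - z_crit * std_err, diff + z_crit * std_err
    return lower, upper, (-margin < lower and upper < margin)


# Hypothetical final titers in g/L (illustrative numbers only).
micro_titer = [5.0, 5.1, 4.9, 5.0]
pilot_titer = [5.05, 4.95, 5.1, 5.0]

low, high, ok = equivalence_check(micro_titer, pilot_titer, margin=0.5)
print(f"90% CI for difference: [{low:.3f}, {high:.3f}] g/L, equivalent: {ok}")
```

The margin itself should be fixed before the data are collected and justified from process knowledge, for example from the historical variability of the attribute at manufacturing scale.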

Visualization of Experimental Workflows

Micro-Bioreactor Scale-Up Validation Workflow

Diagram: Micro-Bioreactor Scale-Up Validation Workflow (the workflow for validating micro-bioreactor systems for scale-up predictions, integrating both experimental and computational approaches).

Aeration Parameter Optimization Logic

Diagram: Aeration Parameter Optimization Logic (the decision process for optimizing aeration parameters across different bioreactor scales and configurations).

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of high-throughput micro-bioreactor systems requires careful selection of complementary reagents and materials that ensure experimental consistency and reproducibility.

Table 3: Essential Research Reagents and Materials for Micro-Bioreactor Experiments

| Item | Function | Application Notes |
|---|---|---|
| Specialized Cell Culture Media (e.g., QuaCell CHO CD04) | Provides essential nutrients for cell growth and productivity | Used as basal medium in controlled bioreactor experiments; formulation consistency critical for reproducibility [6] |
| Feed Media Supplements (e.g., QuaCell CHO Feed02) | Supports extended culture durations and high cell density | Fed in precise percentages (1-2.5% daily) to maintain metabolic activity and productivity [6] |
| Hydrogel Scaffolds (e.g., GelMA, Agarose) | Provides 3D environment for cell growth in tissue engineering applications | GelMA porosity of 0.8 with permeability of 1×10⁻¹⁶ m²; Agarose porosity of 0.985 with permeability of 6.16×10⁻¹⁶ m² [30] |
| Photopolymerization Initiators (e.g., LAP) | Enables UV crosslinking of hydrogel scaffolds | Used at 0.15% concentration with 10% GelMA solution; cured with 390-395 nm UV light for 1.5 minutes [30] |
| Sensor Calibration Solutions | Ensures accuracy of pH, DO, and other online sensors | Critical for miniaturized sensors where calibration challenges affect 33% of users at volumes below 100 mL [27] |
| Single-Use Bioreactor Vessels | Provides sterile culture environment while eliminating cleaning validation | Used in 61% of newly procured systems; reduce changeover time by >72% compared to stainless steel [27] |

The selection and implementation of high-throughput micro-bioreactor systems represent a critical strategic decision for bioprocess development organizations. The comparative data presented in this guide indicate that while 48-parallel systems offer the highest throughput for screening applications, 24-parallel systems provide a practical balance of throughput and operational cost for many development workflows. The integration of advanced sensing technologies and automated data management platforms has substantially improved the predictive capabilities of these systems, though challenges remain in correlating micro-scale results with performance at larger scales.