From Prediction to Practice: A Comprehensive Framework for Validating Gap-Filled Models with Experimental Growth Data

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide for rigorously validating computational models that have been gap-filled against experimental growth data. It explores the foundational importance of validation in computational science, details methodological approaches for integrating in silico predictions with in vitro assays, addresses common troubleshooting and optimization challenges, and presents comparative frameworks for evaluating model performance. By synthesizing current best practices, this resource aims to enhance the credibility and predictive power of models in biomedical research and development.

The Critical Role of Validation in Computational Science and Biomedical Research

The integration of Model-Informed Drug Development (MIDD) has revolutionized pharmaceutical research by providing quantitative frameworks that accelerate hypothesis testing and reduce late-stage failures. However, the transformative potential of these models hinges entirely on one critical factor: robust validation. This review examines the methodological frameworks, regulatory requirements, and practical applications of validation across the drug development lifecycle. We demonstrate how proper validation transforms computational models from speculative tools into decisive assets for regulatory decision-making, highlighting case studies, quantitative performance metrics, and specific regulatory pathways that ensure model credibility.

In contemporary drug development, MIDD represents an essential framework for advancing therapeutic candidates and supporting regulatory decisions. These approaches leverage quantitative models to predict drug behavior, optimize clinical trials, and extrapolate efficacy across populations. Evidence demonstrates that well-implemented MIDD can significantly shorten development cycle timelines and improve quantitative risk estimates [1]. The validation gap—the disconnect between model creation and rigorous testing—represents a fundamental challenge limiting the utility of these powerful approaches. Recent mechanistic analyses reveal that even sophisticated models can struggle with basic validation tasks, such as self-correction and error detection [2].

The U.S. Food and Drug Administration (FDA) and other global regulatory authorities have increasingly emphasized validation through guidance documents, including the Process Validation Guidance (2011) and the recent Q-Submission Program guidance (2025) [3] [4]. These documents establish a crucial principle: validation is not a single event but an ongoing process spanning the entire product lifecycle. This review systematically examines validation methodologies across discovery, preclinical, clinical, and regulatory stages, providing researchers with practical frameworks for establishing model credibility.

The Validation Imperative in Model-Informed Drug Development

Defining Validation in the MIDD Context

Within MIDD, validation encompasses the comprehensive evaluation of a model's ability to reliably address its intended Context of Use (COU) and Questions of Interest (QOI). A "fit-for-purpose" approach ensures model complexity aligns with the specific decision-making needs at each development stage [1]. For example:

- Early Discovery: Validation focuses on predictive accuracy for target engagement and compound prioritization

- Clinical Development: Validation emphasizes quantitative forecasting of human pharmacokinetics and dose-response relationships

- Regulatory Submissions: Validation requires documented credibility for specific regulatory decisions

The consequences of inadequate validation are substantial. Recent analysis indicates that proper MIDD implementation yields "annualized average savings of approximately 10 months of cycle time and $5 million per program" [5]. Conversely, models lacking rigorous validation can misdirect resources and compromise regulatory confidence.

The Mechanistic Basis of Validation Failure

Fundamental research into why models fail validation reveals structural challenges in their architecture. A mechanistic analysis of language models identified a "validation gap" where models perform computations but fail to validate them internally [2]. This research demonstrated that:

- Structural dissociation: Arithmetic computation primarily occurs in higher network layers, while validation takes place in middle layers, before results are fully encoded

- Consistency reliance: Models heavily depend on "consistency heads" that assess surface-level alignment rather than underlying computational correctness

- Architectural limitations: The separation between computation and validation pathways creates inherent vulnerabilities

Although these findings come from language models, they suggest a broader lesson for computational approaches in drug discovery: validation is more dependable when it is designed into the modeling workflow than when it is treated as an external verification step added afterward.

Table 1: Key MIDD Tools and Their Validation Requirements

| MIDD Tool | Primary Applications | Critical Validation Components |
|---|---|---|
| Quantitative Structure-Activity Relationship (QSAR) | Predicting biological activity from chemical structure | External predictivity, applicability domain, mechanistic interpretability [1] |
| Physiologically Based Pharmacokinetic (PBPK) | Predicting drug-drug interactions, special populations | Prospective validation in clinical settings, system parameters verification [1] |
| Population Pharmacokinetics (PPK) | Characterizing variability in drug exposure | Covariate model evaluation, visual predictive checks, bootstrap validation [1] |
| Exposure-Response (ER) | Establishing dosing rationale | Model stability testing, predictive performance, causal inference [1] |
| Quantitative Systems Pharmacology (QSP) | Mechanistic disease modeling, clinical trial simulation | Qualitative validation, biological plausibility, multiscale consistency [1] |

Validation Methodologies: From Statistical Approaches to Regulatory Frameworks

Technical Validation Approaches

Technical validation ensures computational models generate mathematically sound predictions. For gap-filling models—which address missing data in experimental datasets—comprehensive evaluation requires multiple validation strategies:

- Internal Validation: Cross-validation techniques assess model stability using only training data, with spatially-aware block cross-validation preventing overoptimistic performance estimates [6]

- External Validation: Performance assessment on completely held-out datasets provides realistic estimates of real-world performance [7]

- Comparative Benchmarking: Hierarchical evaluation across multiple method classes (from statistical imputation to advanced neural networks) establishes performance baselines [7]
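The internal-validation idea in the list above can be sketched in a few lines. This is a minimal illustration, not the procedure used in [6]: it assumes the samples are ordered along a single axis (time or a spatial transect) and holds out one contiguous block at a time, so that neighbouring, autocorrelated samples do not end up on both sides of the split. The function name `block_kfold_indices` is hypothetical.

```python
def block_kfold_indices(n_samples, n_blocks):
    """Return (train, test) index lists where each test set is one contiguous block.

    Assumption: samples are ordered along one axis (e.g. time), so a
    contiguous block keeps correlated neighbours out of the training set.
    """
    if not 1 < n_blocks <= n_samples:
        raise ValueError("n_blocks must be between 2 and n_samples")
    base, extra = divmod(n_samples, n_blocks)
    folds = []
    start = 0
    for block in range(n_blocks):
        # The first `extra` blocks get one additional sample each.
        size = base + (1 if block < extra else 0)
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        folds.append((train, test))
        start += size
    return folds


folds = block_kfold_indices(n_samples=10, n_blocks=3)
for train, test in folds:
    print("held-out block:", test)
```

A random shuffle before splitting would defeat the purpose here, which is why the sketch never permutes the indices.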

Advanced implementations now employ bidirectional sequence-to-sequence architectures with tree-based models (XGB Seq2Seq), achieving performance improvements up to 63% over basic statistical methods for environmental data gap-filling [7]. While these methodologies were developed for environmental applications, their structured approach to validation directly translates to pharmacological contexts.

Experimental Validation Against Biological Data

Experimental validation establishes whether computational predictions correspond to biological reality. The Cellular Thermal Shift Assay (CETSA) platform exemplifies rigorous experimental validation by quantitatively measuring drug-target engagement in intact cells and tissues [8]. Recent applications demonstrated:

- Dose-dependent stabilization: Confirmation of target engagement across therapeutic concentration ranges

- Tissue penetration assessment: Verification of drug exposure and target binding in relevant physiological environments

- Mechanistic confirmation: Correlation between cellular binding and functional pharmacological effects

This methodology closes the critical gap between biochemical potency and cellular efficacy, providing essential validation for predictions generated by computational approaches [8].

Table 2: Experimental Validation Platforms for Drug Discovery

| Platform/Technology | Validation Function | Key Output Metrics |
|---|---|---|
| CETSA (Cellular Thermal Shift Assay) | Direct measurement of target engagement in physiologically relevant environments | Thermal stabilization, dose-response curves, target occupancy [8] |
| AI-Guided Retrosynthesis | Validation of synthetic accessibility for predicted compounds | Synthesis success rate, compound purity, yield optimization [8] |
| High-Throughput Experimentation (HTE) | Empirical confirmation of AI-predicted compound properties | Potency measurements, selectivity profiles, ADMET properties [8] |
| Organ-on-a-Chip Systems | Functional validation of physiological responses | Efficacy readouts, toxicity markers, mechanism-of-action confirmation [5] |

Regulatory Validation Frameworks

Regulatory validation establishes whether a model is credible enough to support a regulatory decision. The FDA's Process Validation Guidance, which is written for manufacturing processes, formalizes a comparable lifecycle approach in three stages [4]:

- Stage 1 - Process Design: Establishing scientific evidence that the manufacturing process can consistently deliver quality products

- Stage 2 - Process Qualification: Evaluating the designed process to ensure it is capable of reproducible commercial manufacturing

- Stage 3 - Continued Process Verification: Ongoing monitoring to ensure the process remains in a state of control

This lifecycle approach aligns with the FDA's Q-Submission Program, which provides pathways for early feedback on validation strategies [3]. The program encourages sponsors to submit focused questions (typically 7-10 questions across no more than 4 substantive topics) to obtain agency feedback before formal submissions [3]. For complex technologies, FDA encourages multiple Q-Submission interactions throughout development to confirm validation approaches remain aligned with evolving expectations [3].

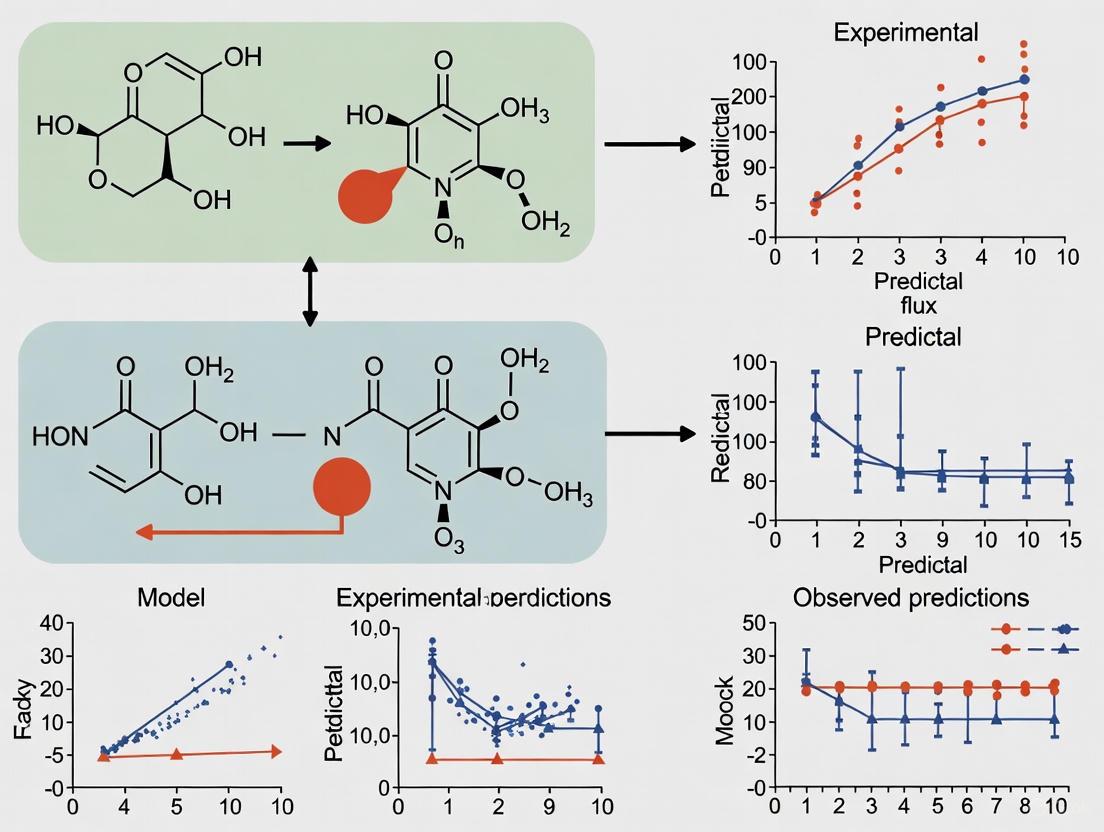

Regulatory Validation Pathway: This diagram illustrates the integrated process for achieving regulatory acceptance of models and manufacturing processes, highlighting critical feedback points through the Q-Submission Program [3] [4].

Comparative Analysis of Validation Approaches

Performance Metrics Across Methodologies

Quantitative assessment of validation performance reveals significant differences across methodological approaches. Comprehensive evaluations of gap-filling methods demonstrate that:

- Advanced machine learning (XGB Seq2Seq) reduces mean absolute error by 63% compared to basic statistical methods for 12-hour gaps [7]

- Multivariate approaches incorporating additional parameters (meteorological data, related biomarkers) show increasing advantage with gap length (2-3% improvement for short gaps versus 16-18% for extended gaps) [7]

- Dynamic models capable of processing variable-length gaps demonstrate remarkable operational flexibility, successfully handling real-world gaps ranging from 1 to 191 hours despite training on maximum lengths of 72 hours [7]

These performance characteristics translate directly to pharmacological applications, where missing data imputation, clinical trial simulation, and exposure prediction present similar methodological challenges.

Table 3: Quantitative Performance Comparison of Validation Approaches

| Validation Context | Performance Metrics | Superior Approach | Performance Advantage |
|---|---|---|---|
| PM2.5 Gap-Filling (Environmental) | Mean Absolute Error (μg/m³) | XGB Seq2Seq | 5.231 ± 0.292 vs. 14.2 for statistical methods [7] |
| MIDD Implementation | Timeline Reduction | Integrated MIDD | ~10 months cycle time reduction [5] |
| MIDD Implementation | Cost Savings | Integrated MIDD | ~$5 million per program [5] |
| AI-Enhanced Screening | Hit Enrichment | Pharmacophore + Protein-Ligand ML | >50-fold improvement [8] |
| Hit-to-Lead Optimization | Potency Improvement | Deep Graph Networks | 4,500-fold improvement to sub-nanomolar [8] |
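As a quick consistency check, the 63% figure quoted for XGB Seq2Seq can be recovered from the two mean absolute errors in the first row of the table. The helper name below is an illustrative assumption; only the two MAE values come from the text.

```python
def relative_reduction(baseline, improved):
    """Fractional reduction of an error metric relative to a baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - improved) / baseline


# MAE values in ug/m^3 as quoted in the table (statistical baseline vs. XGB Seq2Seq).
reduction = relative_reduction(baseline=14.2, improved=5.231)
print(f"MAE reduction: {reduction:.1%}")
```

The result is about 63.2%, which matches the rounded figure given in the text.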

Regulatory Pathways and Validation Requirements

Different regulatory submission pathways demand distinct validation approaches. Understanding these requirements is essential for efficient regulatory strategy:

- Investigational New Drug (IND) Applications: Validation focuses on scientific rationale and preclinical evidence supporting first-in-human trials [9]

- New Drug Applications (NDA): Comprehensive validation of efficacy claims, safety profiles, and manufacturing consistency [9]

- Abbreviated New Drug Applications (ANDA): Validation centered on bioequivalence demonstration rather than full efficacy re-establishment [9]

- Biologics License Applications (BLA): Additional validation requirements for manufacturing consistency and impurity profiles [9]

The Q-Submission Program provides a mechanism to obtain FDA feedback on validation strategies before formal submission, potentially reducing review times and improving submission quality [3]. FDA now mandates electronic submission of these requests using the eSTAR system, with technical screening conducted within 15 days of submission [3].

The Scientist's Toolkit: Essential Research Reagents and Platforms

Implementing robust validation requires specialized research tools and platforms. The following table details essential solutions for comprehensive validation workflows:

Table 4: Research Reagent Solutions for Validation Workflows

| Research Solution | Function in Validation | Application Context |
|---|---|---|
| CETSA Platform | Confirms target engagement in physiologically relevant environments | Translational validation bridging biochemical and cellular assays [8] |
| AutoDock & SwissADME | Computational prediction of binding potential and drug-likeness | In silico screening validation prior to synthesis [8] |
| PBPK Modeling Platforms | Mechanistic simulation of pharmacokinetics across populations | Clinical trial design, dose selection, special populations [1] [5] |
| QSP Software Suites | Integrative modeling of drug effects across biological scales | Mechanism-based efficacy and toxicity prediction [1] |
| eCTD Submission Systems | Standardized format for regulatory application submission | Ensures technical compliance for FDA and EMA filings [9] |
| eSTAR Template | Electronic submission template for Q-Submission requests | Facilitates efficient FDA interaction and feedback [3] |

Validation represents the critical bridge between computational prediction and regulatory acceptance in modern drug development. The methodological frameworks, performance metrics, and regulatory pathways examined in this review demonstrate that comprehensive validation is not merely a technical requirement but a strategic imperative. As MIDD approaches continue to expand their influence across the drug development lifecycle, robust validation protocols ensure these powerful tools deliver on their promise to accelerate therapeutic innovation.

The evolving regulatory landscape, exemplified by the FDA's Q-Submission Program and Process Validation Guidance, emphasizes early and continuous validation throughout the product lifecycle. By adopting the fit-for-purpose validation strategies outlined here—integrating technical, experimental, and regulatory perspectives—research teams can transform validation from a compliance exercise into a competitive advantage, ultimately accelerating the delivery of transformative therapies to patients in need.

Gap-filling constitutes a critical computational technique for addressing missing or incomplete data across scientific disciplines. In essence, gap-filling algorithms propose additions of estimated values to incomplete datasets, enabling accurate analysis and modeling. These methods are particularly indispensable for genome-scale metabolic models (GSMMs), which are often derived from annotated genomes where not all enzymes have been identified, resulting in metabolic networks with significant gaps [10] [11]. The fundamental challenge arises because genome annotations are frequently fragmented and contain misannotated genes, while databases of enzyme functions and biochemical reactions remain incompletely curated [10]. Without gap-filling, these incomplete models cannot simulate biological functions such as cellular growth, severely limiting their predictive utility in research and drug development.

The core principle of gap-filling involves algorithmically identifying missing connections and proposing data points—whether biochemical reactions, environmental measurements, or other parameters—to restore functional continuity. In metabolic modeling, this enables the production of all biomass metabolites from supplied nutrients, creating a biologically viable network [11]. As research increasingly focuses on complex microbial communities for biomedical applications, the accuracy and biological relevance of gap-filling methods have become paramount for generating reliable models that can predict metabolic interactions and potential therapeutic targets [10].

Gap-Filling Methodologies and Algorithms

Computational Foundations

Gap-filling methodologies are predominantly formulated as optimization problems, typically employing Mixed Integer Linear Programming (MILP) or Linear Programming (LP) to identify minimal sets of additions that restore model functionality [10] [11]. The earliest published algorithm, GapFill, established this approach by identifying dead-end metabolites and adding reactions from reference databases like MetaCyc to complete metabolic networks [10]. Parsimony-based principles guide most contemporary gap-fillers, which seek minimum-cost solutions to restore network functionality, though numerical imprecision in solvers can sometimes yield non-minimal solutions requiring manual refinement [11].
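To make the parsimony idea concrete, here is a toy sketch of minimum-cost gap-filling. It is deliberately simplified and rests on two stated assumptions: producibility is checked by network expansion (a reaction fires once all its substrates are available) instead of flux balance analysis, and the cheapest reaction set is found by exhaustive search instead of MILP or LP. All metabolite and reaction names are hypothetical.

```python
from itertools import combinations


def producible(reactions, nutrients):
    """Network expansion: every metabolite reachable from the nutrients."""
    available = set(nutrients)
    changed = True
    while changed:
        changed = False
        for substrates, products in reactions:
            if substrates <= available and not products <= available:
                available |= products
                changed = True
    return available


def min_cost_gapfill(model, candidates, nutrients, targets):
    """Cheapest subset of candidate reactions that makes all targets producible.

    candidates maps a reaction name to (cost, (substrates, products)).
    Brute force stands in for the MILP/LP formulation; toy sizes only.
    """
    best = None
    names = sorted(candidates)
    for k in range(len(names) + 1):
        for subset in combinations(names, k):
            reactions = list(model) + [candidates[n][1] for n in subset]
            if targets <= producible(reactions, nutrients):
                cost = sum(candidates[n][0] for n in subset)
                if best is None or cost < best[0]:
                    best = (cost, subset)
    return best


# Gapped toy model: nothing converts g6p into pyr, so biomass is blocked.
model = [({"glc"}, {"g6p"}), ({"pyr"}, {"biomass_precursor"})]
candidates = {
    "R_direct": (1.0, ({"g6p"}, {"pyr"})),
    "R_detour_a": (1.0, ({"g6p"}, {"x"})),
    "R_detour_b": (1.0, ({"x"}, {"pyr"})),
}
solution = min_cost_gapfill(model, candidates, {"glc"}, {"biomass_precursor"})
print(solution)
```

Both the one-step route and the two-step detour restore producibility; the search keeps the cheaper one. When several candidates tie on cost, the winner depends only on enumeration order, which mirrors the arbitrary choices among equal-cost reactions discussed later in this article.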

Advanced gap-filling frameworks have evolved to incorporate multiple data types and constraints. Community-level gap-filling represents a significant methodological advancement that resolves metabolic gaps while considering metabolic interactions between species that coexist in microbial communities [10]. This approach combines incomplete metabolic reconstructions of coexisting microorganisms and permits them to interact metabolically during the gap-filling process, enabling prediction of non-intuitive metabolic interdependencies [10]. For environmental data, multivariate approaches like CLIMFILL combine kriging interpolation with statistical methods to account for dependencies across multiple gappy variables, creating coherent datasets from fragmented observations [12].

Specialized Gap-Filling Frameworks

Table 1: Classification of Gap-Filling Approaches Across Disciplines

| Field | Representative Methods | Core Approach | Reference Database |
|---|---|---|---|
| Metabolic Modeling | GapFill, GenDev, Community Gap-Filling | MILP/LP optimization to add reactions | MetaCyc, ModelSEED, KEGG, BiGG [10] |
| Environmental Science | CLIMFILL, Marginal Distribution Sampling | Multivariate statistics & kriging | Reanalysis data (ERA-5), remote sensing data [12] [13] |
| Flux Data Analysis | Artificial Neural Networks, Data-driven approaches | Machine learning with remote-sensing/reanalysis data | EC measurements, meteorological data [13] |
| Remote Sensing | U-Net based models, Spatial interpolation | Deep learning, spatial/temporal interpolation | Satellite observations (e.g., SMAP) [14] |

Different scientific domains have developed specialized gap-filling strategies tailored to their data characteristics and research objectives. In metabolic engineering, tools like gapseq and AMMEDEUS implement computationally efficient gap-filling formulated as LP problems, while others like CarveMe incorporate genomic or taxonomic information to guide reaction selection [10]. For environmental and flux data, machine learning approaches have gained prominence, using algorithms like U-Net for spatial gap-filling of satellite data or artificial neural networks for estimating terrestrial CO₂/H₂O fluxes [14] [13]. These data-driven approaches effectively interpolate/extrapolate measurements across temporal and spatial domains, enabling reconstruction of complete datasets from fragmented observations [13].

Experimental Validation of Gap-Filling Accuracy

Validation Methodologies

Rigorous validation is essential to establish the reliability of gap-filled models. The most direct approach compares automatically gap-filled models against manually curated solutions, quantifying accuracy through metrics like precision and recall [11]. In one comprehensive study, researchers compared the output of the automated GenDev gap filler in the Pathway Tools software with manual gap-filling of the same metabolic model for Bifidobacterium longum subsp. longum JCM 1217 [11]. Both exercises began with identical genome-derived qualitative metabolic reconstructions and the same modeling conditions: anaerobic growth on four nutrients, producing 53 biomass metabolites [11].

Experimental validation typically follows a standardized workflow: (1) begin with identical gapped models derived from genome annotations; (2) apply both automated and manual gap-filling procedures; (3) compare the resulting reaction sets using defined metrics; and (4) validate model predictions against experimental growth data where available [11]. For environmental data, "perfect dataset" approaches mask complete datasets (e.g., ERA-5 reanalysis) where values are known, apply gap-filling methodologies, and then evaluate performance by comparing gap-filled values against the original data [12].
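The "perfect dataset" procedure for environmental data can be sketched as follows. This is an illustration under stated assumptions, not the CLIMFILL method: the complete series is synthetic, the gap is chosen by hand, and plain linear interpolation stands in for the gap-filling method under test.

```python
import math


def linear_fill(values):
    """Fill None entries by linear interpolation between the nearest known
    neighbours; gaps at either end copy the nearest known value."""
    known = [i for i, v in enumerate(values) if v is not None]
    if not known:
        raise ValueError("at least one known value is required")
    filled = list(values)
    for i, v in enumerate(values):
        if v is not None:
            continue
        left = max((k for k in known if k < i), default=None)
        right = min((k for k in known if k > i), default=None)
        if left is None:
            filled[i] = values[right]
        elif right is None:
            filled[i] = values[left]
        else:
            weight = (i - left) / (right - left)
            filled[i] = values[left] + weight * (values[right] - values[left])
    return filled


def rmse(truth, estimate, indices):
    """Root-mean-square error evaluated only at the masked positions."""
    return math.sqrt(sum((truth[i] - estimate[i]) ** 2 for i in indices) / len(indices))


truth = [float(t * t) for t in range(8)]      # complete synthetic series
masked_idx = [3, 4]                           # artificial gap
masked = [None if i in masked_idx else v for i, v in enumerate(truth)]
filled = linear_fill(masked)
error = rmse(truth, filled, masked_idx)
print(f"RMSE on masked points: {error:.3f}")
```

Scoring only the masked positions matters: including the untouched points would dilute the error and make any method look better than it is.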

Quantitative Accuracy Assessment

Table 2: Performance Comparison of Gap-Filling Methods

| Method | Application Context | Recall | Precision | Key Limitations |
|---|---|---|---|---|
| GenDev (Auto) | B. longum Metabolic Model | 61.5% | 66.6% | Non-minimal solutions due to numerical imprecision [11] |
| Manual Curation | B. longum Metabolic Model | 100% | 100% | Time-intensive, requires expert knowledge [11] |
| Community Gap-Filling | Microbial Consortia | Not quantified | Not quantified | Depends on quality of community metabolic models [10] |
| U-Net with GBRT | Sea Surface Salinity | RMSE: 0.237-0.241 psu | Not applicable | Performance varies with region/conditions [14] |
| CLIMFILL | Earth Observations | High correlation in most regions | Not applicable | Artifacts in large gaps during winter [12] |

The quantitative comparison between automated and manual gap-filling reveals both capabilities and limitations of current computational methods. In the B. longum case study, the automated GenDev solution contained 12 reactions, but closer examination showed this set was not minimal—two reactions could be removed while maintaining model growth [11]. The manually curated solution contained 13 reactions, with eight shared with the computational solution, resulting in a recall of 61.5% and precision of 66.6% [11]. These findings indicate that automated gap-fillers populate metabolic models with significant numbers of correct reactions, but the models also contain substantial incorrect additions, necessitating manual curation for high-accuracy applications [11].
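The recall and precision figures follow directly from the three counts given above (12 automated reactions, 13 manual reactions, 8 shared). The sketch below reproduces the arithmetic with placeholder reaction identifiers; the actual reaction names are not given in this article, so the identifiers are hypothetical.

```python
def precision_recall(predicted, reference):
    """Precision and recall of a predicted reaction set against a reference set."""
    true_pos = len(predicted & reference)
    false_pos = len(predicted - reference)
    false_neg = len(reference - predicted)
    precision = true_pos / (true_pos + false_pos) if predicted else float("nan")
    recall = true_pos / (true_pos + false_neg) if reference else float("nan")
    return precision, recall


# Placeholder identifiers; only the set sizes (12, 13, 8 shared) come from the text.
shared = {f"shared_{i}" for i in range(8)}
automated = shared | {f"auto_only_{i}" for i in range(4)}
manual = shared | {f"manual_only_{i}" for i in range(5)}

precision, recall = precision_recall(automated, manual)
print(f"precision = {precision:.4f}, recall = {recall:.4f}")
```

Recall is 8/13 ≈ 0.615 and precision is 8/12 ≈ 0.667; the 66.6% quoted above is the same ratio truncated instead of rounded.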

Discrepancies between automated and manual solutions often arise from biological nuances that computational methods may overlook. In the B. longum comparison, some differences resulted from reactions with equal cost that the gap-filler selected randomly, while others reflected alternative biochemical pathways that required expert knowledge to resolve [11]. For instance, both dedicated NDP kinase and pyruvate kinase activities can theoretically phosphorylate GDP, but the former is biologically preferred for nucleotide pool balance regulation—a nuance automated methods might miss [11].

Experimental Protocols for Gap-Filling Validation

Workflow for Metabolic Model Gap-Filling

The experimental validation of metabolic model gap-filling follows a systematic protocol to ensure reproducible comparisons between automated and manual approaches [11]. The process begins with genome annotation using standardized platforms like KBase to create a Pathway/Genome Database (PGDB) containing the predicted reactome and metabolic pathways [11]. This gapped PGDB serves as the common input for both automated and manual gap-filling procedures. The automated gap-filling employs tools like the GenDev gap filler within Pathway Tools' MetaFlux component, which computes a minimum-cost solution to enable biomass production [11]. Simultaneously, experienced model builders perform manual gap-filling using biochemical knowledge and organism-specific literature.

Validation requires quantifying model performance before and after gap-filling. The initial gapped network's capability is assessed by determining what subset of biomass metabolites can be produced from defined nutrient compounds using flux balance analysis [11]. Following reaction additions, the completed model must produce all biomass metabolites via reactions carrying non-zero flux. Researchers then compare the reaction sets added by each method, categorizing them as true positives, false positives, and false negatives to calculate precision and recall [11]. For community models, additional validation involves testing predicted metabolic interactions against experimental coculture data [10].

Case Study Protocol: Microbial Community Gap-Filling

The community gap-filling method employs a distinct protocol to resolve metabolic gaps while predicting metabolic interactions [10]. The process begins with assembling individual incomplete metabolic reconstructions for community members, typically derived from their annotated genomes [10]. Researchers then construct a compartmentalized metabolic model of the microbial community, allowing metabolite exchange between species through a shared extracellular space [10]. The gap-filling algorithm simultaneously considers all community members, adding reactions from reference databases to enable growth of the community as a whole rather than optimizing individual organisms in isolation.

Validation of community gap-filling involves several stages. First, the method is tested on synthetic communities with known interactions, such as auxotrophic Escherichia coli strains with obligatory cross-feeding relationships [10]. Successfully predicting these expected interactions validates the algorithm's core functionality. Next, researchers apply the method to real microbial communities with documented metabolic dependencies, such as Bifidobacterium adolescentis and Faecalibacterium prausnitzii in the human gut microbiota [10]. Predictions are compared against experimental coculture data measuring growth and metabolite exchange. The accuracy is quantified by the algorithm's ability to recapitulate known interactions while proposing biologically plausible new ones, with final validation through targeted experiments testing predicted metabolic dependencies [10].
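The value of a shared extracellular compartment can be illustrated with a toy pair of auxotrophs. The sketch assumes simple network expansion in place of a real community optimization, and the two strains, their reactions, and the `_e` suffix for extracellular metabolites are all hypothetical conventions chosen for this example.

```python
def expand(reactions, seed):
    """Network expansion: metabolites reachable from the seed set."""
    available = set(seed)
    changed = True
    while changed:
        changed = False
        for substrates, products in reactions:
            if substrates <= available and not products <= available:
                available |= products
                changed = True
    return available


def compartmentalize(reactions, species):
    """Prefix intracellular metabolites with the species name; metabolites
    ending in '_e' stay untagged and form the shared extracellular space."""
    def tag(met):
        return met if met.endswith("_e") else f"{species}:{met}"
    return [({tag(m) for m in subs}, {tag(m) for m in prods}) for subs, prods in reactions]


# Strain A makes and secretes leucine but must import arginine; strain B is the reverse.
strain_a = [({"glc_e"}, {"glc"}), ({"glc"}, {"leu"}), ({"leu"}, {"leu_e"}),
            ({"arg_e"}, {"arg"}), ({"leu", "arg"}, {"biomass"})]
strain_b = [({"glc_e"}, {"glc"}), ({"glc"}, {"arg"}), ({"arg"}, {"arg_e"}),
            ({"leu_e"}, {"leu"}), ({"leu", "arg"}, {"biomass"})]
medium = {"glc_e"}

a_alone = "A:biomass" in expand(compartmentalize(strain_a, "A"), medium)
b_alone = "B:biomass" in expand(compartmentalize(strain_b, "B"), medium)
community = expand(compartmentalize(strain_a, "A") + compartmentalize(strain_b, "B"), medium)
both_grow = {"A:biomass", "B:biomass"} <= community
print(a_alone, b_alone, both_grow)
```

Gap-filling each strain in isolation would have to add an amino acid synthesis route to each model, whereas the joint model grows without any additions. That difference is the kind of cross-feeding dependency a community-level method is meant to preserve.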

Table 3: Essential Resources for Gap-Filling Research

| Resource Category | Specific Tools/Databases | Primary Function | Application Context |
|---|---|---|---|
| Reference Databases | MetaCyc, ModelSEED, KEGG, BiGG | Source of biochemical reactions for gap-filling | Metabolic model reconstruction & gap-filling [10] |
| Metabolic Modeling Software | Pathway Tools, CarveMe, gapseq | Genome-scale metabolic model reconstruction & analysis | Creating and curating metabolic networks [10] [11] |
| Gap-Filling Algorithms | GenDev, Community Gap-Filling, GrowMatch | Computational addition of reactions to models | Resolving metabolic gaps in GSMMs [10] [11] |
| Flux Analysis Tools | COMETS, SteadyCom, OptCom | Modeling metabolic interactions in communities | Studying microbial consortia [10] |
| Environmental Data Sources | ERA-5 reanalysis, SMAP satellite data | Provide complete datasets for method validation | Environmental science gap-filling [14] [12] |

Successful gap-filling research requires specialized computational tools and biological resources. Reference biochemical databases form the foundation of metabolic gap-filling, with MetaCyc, ModelSEED, KEGG, and BiGG serving as primary sources for candidate reactions [10]. These databases vary in size, quality, and taxonomic coverage, significantly influencing gap-filling results [11]. Metabolic modeling platforms like Pathway Tools provide integrated environments for model construction, gap-filling, and simulation, with tools like MetaFlux enabling flux balance analysis to validate model functionality [11]. For microbial community studies, multispecies modeling frameworks like COMETS (Computation Of Microbial Ecosystems in Time and Space) simulate metabolic interactions across species [10].

Experimental validation requires cultured microbial strains with well-characterized metabolic capabilities, such as auxotrophic E. coli strains for synthetic communities or human gut symbionts like Bifidobacterium adolescentis and Faecalibacterium prausnitzii for studying realistic metabolic interactions [10]. Analytical instruments for metabolite quantification—including mass spectrometry for extracellular metabolites and HPLC for short-chain fatty acids—provide essential experimental data to verify model predictions [10]. For environmental applications, eddy covariance flux towers and remote sensing platforms like the Soil Moisture Active Passive (SMAP) satellite generate the fragmented observational data that necessitate gap-filling methodologies [14] [13].

Comparative Analysis of Gap-Filling Performance

Cross-Domain Performance Evaluation

The performance of gap-filling methods varies significantly across applications, with each domain facing unique challenges. In metabolic modeling, automated gap-filling recovered roughly 60-70% of the manually curated reactions in the B. longum comparison (recall 61.5%, precision 66.6%), so expert refinement is still needed to reach biological fidelity [11]. Environmental data gap-filling is evaluated with continuous error metrics and often performs well by that measure: U-Net with GBRT correction reaches an RMSE of 0.237 psu for sea surface salinity against validation data, compared with 0.456 psu for the standard SMAP Level 3 8-day SSS product [14]. The CLIMFILL framework successfully recovers dependence structures among variables across most land cover types, though it shows artifacts in large gaps during winter in high-latitude regions [12].

A critical finding across domains is that method performance degrades significantly with increasing gap size and complexity. For flux data, artificial-neural-network-based techniques generally outperform other methods for long gaps (e.g., 12 days), but all methods struggle with periods exceeding 30 days where ecosystem states may change [13]. Similarly, in metabolic models, gap-fillers may randomly select among biochemically equivalent reactions when multiple options exist, potentially missing the biologically relevant choice [11]. This underscores the universal need for method selection appropriate to gap characteristics and the importance of domain knowledge in refining automated results.

Strategic Recommendations for Method Selection

Selection of appropriate gap-filling strategies depends on data type, gap characteristics, and research objectives. For metabolic models, automated gap-filling provides efficient first-pass solutions, but manual curation remains essential for high-accuracy models, particularly for organisms with specialized physiologies like anaerobes [11]. Community-level gap-filling offers significant advantages when studying microbial interactions, as it resolves gaps while predicting metabolic cross-feeding that single-organism approaches would miss [10]. For environmental data, short gaps (<30 days) respond well to statistical interpolation, while longer gaps require machine learning approaches trained on data from other time periods or similar locations [13].

The most effective gap-filling strategies often combine multiple approaches. Initial automated processing efficiently handles straightforward cases, followed by expert refinement of problematic areas [11]. For multivariate datasets, methods like CLIMFILL that combine spatial interpolation with dependence recovery across variables outperform univariate approaches [12]. Regardless of methodology, all gap-filled datasets should include uncertainty estimates, particularly for long gaps where ecosystem state changes may alter fundamental relationships between variables [13]. This layered approach ensures both computational efficiency and biological plausibility in the final models.

In scientific research and industrial development, particularly in drug development and environmental modeling, the credibility of computational models is paramount. Validation provides the critical link between theoretical predictions and real-world behavior, ensuring that models are not just mathematically sound but also scientifically meaningful. As computational models grow in complexity and are increasingly used for high-stakes decisions—from drug candidate screening to environmental health risk assessment—rigorous validation frameworks become indispensable. These frameworks systematically compare model outputs with experimental data, quantifying agreement and building confidence in predictive capabilities.

The process of validation is distinct from verification; while verification asks "Are we building the model correctly?" (checking for code errors and numerical accuracy), validation addresses "Are we building the right model?" (assessing how well the model represents reality) [15]. This guide examines the three pillars of model assessment—computational, experimental, and analytical validation—through the specific lens of validating gap-filled models against experimental growth data. We objectively compare the performance of these frameworks, supported by experimental data and detailed methodologies, to provide researchers with a clear understanding of their respective strengths and applications.

Computational Validation

Core Principles and Methodologies

Computational validation focuses on quantifying the agreement between model predictions and experimental measurements using statistical metrics and procedures. Its goal is to provide a quantitative, rather than qualitative, assessment of a model's accuracy [15]. A key concept is the validation metric, a computable measure that compares computational results and experimental data for a specific System Response Quantity (SRQ) of interest [15]. Effective validation metrics should explicitly account for numerical errors in the simulation and the statistical character of experimental uncertainty [15].

Confidence interval-based approaches offer a robust foundation for validation metrics. One method involves constructing an interpolation function through densely measured experimental data points over a range of an input variable. The computational model's accuracy is then assessed by how closely its prediction band aligns with the experimental confidence interval across the entire parameter space [15]. For sparser experimental data, regression functions (curve fits) represent the estimated mean response, and the validation metric evaluates the distance between the computational result and this regression function, normalized by the standard error of the regression [15].
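As a minimal sketch of the regression-based variant for sparse data, the example below assumes a straight-line fit as the regression function; the source does not prescribe a functional form. The helper names `fit_line` and `normalized_distance` and the sample measurements are hypothetical and serve only to illustrate the normalization by the standard error of the regression.

```python
import math

def fit_line(x, y):
    """Ordinary least-squares line y = a + b*x.

    Returns (a, b, s), where s is the standard error of the regression
    (residual standard deviation with n - 2 degrees of freedom).
    """
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    b = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    a = my - b * mx
    sse = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    s = math.sqrt(sse / (n - 2))
    return a, b, s

def normalized_distance(model, x_eval, a, b, s):
    """Distance between model output and the regression mean, in units of s."""
    return [abs(model(xi) - (a + b * xi)) / s for xi in x_eval]

# Hypothetical sparse measurements of a response quantity (illustrative only).
x_exp = [0.0, 1.0, 2.0, 3.0, 4.0]
y_exp = [0.1, 0.9, 2.1, 2.9, 4.0]
a, b, s = fit_line(x_exp, y_exp)

# Hypothetical computational model under test: predicts y = x.
distances = normalized_distance(lambda xi: xi, x_exp, a, b, s)
```

Values of `distances` well below 1 indicate that the model stays within roughly one standard error of the estimated mean response; how large a value is acceptable remains a judgment tied to the intended use of the model.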

Application in Gap-Filling and Growth Models

In the context of gap-filling and growth models, computational validation has been successfully applied to diverse domains, from nanomaterials to environmental science. For instance, in the growth of tin(II) sulfide (SnS) nanoplates, a Gaussian Process Regression (GPR) model was trained on experimental chemical vapor deposition (CVD) growth data. The model's hyperparameters were fine-tuned using a Bayesian optimization algorithm (BOA) with 10-fold cross-validation [16]. When this computationally validated model was tested against previously unexplored experimental parameter sets, it achieved remarkably high predictive accuracy, with relative errors below 8.3% between predictions and actual measurements [16].
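The cross-validation step can be sketched in a few lines. This is an illustrative outline only: a straight-line fit stands in for the GPR model used in the cited study, the data are synthetic, and the helper names (`k_fold_indices`, `cross_validate`, `fit_line`, `predict_line`) are hypothetical. Folds are contiguous here; in practice the data would usually be shuffled first.

```python
def k_fold_indices(n, k):
    """Split indices 0..n-1 into k contiguous folds whose sizes differ by at most one."""
    sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in sizes:
        folds.append(list(range(start, start + size)))
        start += size
    return folds

def cross_validate(x, y, k, fit, predict):
    """Mean absolute relative error (%) over all held-out points."""
    errors = []
    for fold in k_fold_indices(len(x), k):
        held_out = set(fold)
        train = [i for i in range(len(x)) if i not in held_out]
        model = fit([x[i] for i in train], [y[i] for i in train])
        for i in fold:
            errors.append(abs(predict(model, x[i]) - y[i]) / abs(y[i]) * 100)
    return sum(errors) / len(errors)

def fit_line(xs, ys):
    """Stand-in model (assumption): least-squares straight line."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((xi - mx) ** 2 for xi in xs)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(xs, ys)) / sxx
    return my - slope * mx, slope

def predict_line(model, xi):
    intercept, slope = model
    return intercept + slope * xi

# Synthetic, noise-free data used purely to exercise the procedure.
x = [float(i) for i in range(1, 21)]
y = [1.0 + 2.0 * xi for xi in x]
folds = k_fold_indices(len(x), 10)
cv_error = cross_validate(x, y, 10, fit_line, predict_line)
```

Because every point is predicted by a model that never saw it, the averaged relative error gives a more honest estimate of performance on unexplored parameter sets than the training error does.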

Similarly, for filling gaps in PM2.5 air quality time series data, researchers developed a hierarchy of 46 gap-filling methods and evaluated them across five representative gap lengths (5–72 hours) [17]. The performance of these computational models was validated using metrics like Mean Absolute Error (MAE). The study found that tree-based models with bidirectional sequence-to-sequence architectures delivered superior performance, with XGB Seq2Seq achieving an MAE of 5.231 ± 0.292 μg/m³ for 12-hour gaps—representing a 63% improvement over basic statistical methods [17]. The advantage of multivariate models incorporating meteorological variables increased substantially with gap length, from modest improvements of 2–3% for 5-hour gaps to significant enhancements of 16–18% for 48–72 hour gaps [17].

Table 1: Performance Comparison of Computational Gap-Filling Models

| Model Type | Application Domain | Validation Metric | Performance Result | Key Advantage |
|---|---|---|---|---|
| Gaussian Process Regression (GPR) | SnS nanoplate growth [16] | Relative Error | < 8.3% error on test parameters | High predictive accuracy across diverse growth conditions |
| XGB Seq2Seq | PM2.5 time series gap-filling [17] | Mean Absolute Error (MAE) | 5.231 ± 0.292 μg/m³ for 12-hour gaps | 63% improvement over statistical methods |
| Dynamic Multivariate Models | PM2.5 time series gap-filling [17] | MAE Improvement | 16-18% improvement for 48-72 hour gaps | Effective for long gaps using meteorological data |
| Bidirectional Sequence-to-Sequence | PM2.5 time series gap-filling [17] | Operational Flexibility | Successfully processed 1-191 hour gaps | Adaptable to variable gap lengths beyond training range |
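Gap-filling methods such as those compared above are typically scored by hiding observed values and measuring how well they are recovered. The sketch below illustrates that idea with linear interpolation as a simple baseline filler; it is not the evaluation code of the cited study, and the function names and the short series are hypothetical.

```python
def fill_linear(series):
    """Fill runs of None by linear interpolation between observed neighbours.

    Gaps that touch either end of the series are left unfilled.
    """
    filled = list(series)
    i, n = 0, len(series)
    while i < n:
        if filled[i] is None:
            j = i
            while j < n and filled[j] is None:
                j += 1  # j is the first observed index after the gap
            if i > 0 and j < n:
                left, right = filled[i - 1], filled[j]
                span = j - (i - 1)
                for k in range(i, j):
                    filled[k] = left + (right - left) * (k - (i - 1)) / span
            i = j
        else:
            i += 1
    return filled

def mae_on_artificial_gap(truth, start, length, filler):
    """Mask truth[start:start+length], refill it, and return the MAE on the masked points."""
    masked = list(truth)
    for k in range(start, start + length):
        masked[k] = None
    filled = filler(masked)
    errors = [abs(filled[k] - truth[k]) for k in range(start, start + length)]
    return sum(errors) / len(errors)

# Hypothetical hourly concentrations (illustrative values only).
smooth = [10.0, 12.0, 14.0, 16.0, 18.0, 20.0]
spiky = [10.0, 12.0, 20.0, 16.0, 18.0]

mae_smooth = mae_on_artificial_gap(smooth, start=2, length=2, filler=fill_linear)
mae_spiky = mae_on_artificial_gap(spiky, start=2, length=1, filler=fill_linear)
```

Repeating the masking for several gap lengths and positions gives the kind of per-gap-length MAE summary reported in Table 1; interpolation recovers the smooth series but misses the spike, which is the behaviour more expressive models aim to improve on.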

Experimental Validation

The Role of Experimental Data

Experimental validation serves as the fundamental reality check for computational models, providing empirical evidence to verify predictions and demonstrate practical usefulness [18]. While experimental and computational research work hand-in-hand across many disciplines, experimental validation is particularly crucial when models make claims about real-world performance or when the consequences of model inaccuracy are significant [18]. In fields like chemistry and materials science, there may be an expectation from the scientific community that computational work is paired with experimental components to confirm synthesizability, validity, and performance [18].

The importance of experimental validation extends to gap-filled models in growth applications. For example, in microbial growth modeling, a study investigated the impact of bacterial growth on the pH of culture media using artificial intelligence approaches [19]. The researchers compiled a dataset of 379 experimental data points, with 80% (303 points) used for training models and 20% (76 points) reserved for testing [19]. This experimental data covered three bacterial strains (Pseudomonas pseudoalcaligenes CECT 5344, Pseudomonas putida KT2440, and Escherichia coli ATCC 25922) cultured in Luria Bertani (LB) and M63 media across varying initial pH levels, time intervals, and bacterial cell concentrations (OD600) [19].

Protocols for Experimental Validation

A rigorous experimental protocol for validating growth models should encompass several critical components. The study on bacterial growth and pH dynamics provides an exemplary methodology [19]:

Strain Selection and Culture Conditions: Three distinct bacterial strains with different metabolic characteristics and pH preferences were selected to test model generalizability. Strains were cultured in two different media (LB and M63) to account for medium-specific effects [19].

Controlled Parameter Variation: Initial pH levels were systematically varied: pH 6, 7, and 8 for E. coli and P. putida; pH 7.5, 8.25, and 9 for P. pseudoalcaligenes to match their optimal growth ranges [19].

Temporal Monitoring: pH measurements were taken at regular time intervals throughout the growth cycle to capture dynamics across lag, exponential, and stationary phases [19].

Cell Concentration Correlation: Bacterial cell concentration (measured as OD600) was recorded concurrently with pH measurements to establish relationships between growth phase and environmental changes [19].

Model Performance Assessment: The experimentally measured pH values served as ground truth for evaluating predictive models. The 1D-CNN model demonstrated the highest predictive precision, attaining the lowest Root Mean Square Error (RMSE) and Mean Absolute Percentage Error (MAPE) and the highest R² values in both training and testing phases [19].
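The three metrics named in the last protocol step follow their standard definitions and can be computed as shown in this short sketch. The pH values are hypothetical placeholders, not data from the cited study.

```python
import math

def rmse(observed, predicted):
    """Root mean square error."""
    n = len(observed)
    return math.sqrt(sum((o - p) ** 2 for o, p in zip(observed, predicted)) / n)

def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

def mape(observed, predicted):
    """Mean absolute percentage error (observed values must be non-zero)."""
    n = len(observed)
    return 100.0 * sum(abs((o - p) / o) for o, p in zip(observed, predicted)) / n

# Hypothetical measured and predicted pH values (illustrative only).
ph_measured = [7.0, 7.5, 8.0, 8.5]
ph_predicted = [7.1, 7.4, 8.1, 8.4]

scores = {
    "rmse": rmse(ph_measured, ph_predicted),
    "r2": r_squared(ph_measured, ph_predicted),
    "mape": mape(ph_measured, ph_predicted),
}
```

Lower RMSE and MAPE and higher R² indicate better agreement; reporting all three on both the training and the held-out test split helps reveal overfitting.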

Sensitivity analysis using Monte Carlo simulations on the experimental data revealed that bacterial cell concentration was the most influential factor on pH, followed by time, culture medium type, initial pH, and bacterial type [19]. This finding underscores how experimental validation not only tests model accuracy but also provides insights into the relative importance of different input parameters.

Analytical Validation

Mathematical and Statistical Foundations

Analytical validation provides the formal mathematical framework for assessing model correctness and reliability through rigorous reasoning, statistical methods, and combinatorial approaches. Unlike purely computational validation which often relies on numerical methods, analytical validation seeks to establish fundamental mathematical truths about model behavior and properties. This approach is particularly valuable in data-sparse environments where empirical validation may be limited by practical constraints [20].

In geological fault modeling, for instance, researchers have employed analytical validation to understand geometrical properties of displaced horizons using triangulations [20]. Through formal mathematical reasoning, the study introduced four propositions of increasing generality that demonstrated how triangular surface data can reveal geometric characteristics of dip-slip faults [20]. In the absence of elevation errors, the analysis proved that duplicate elevation values lead to identical dip directions, while for scenarios with elevation uncertainties, the expected dip direction remains consistent with the error-free case [20]. These propositions were further validated through computational experiments using a combinatorial algorithm that generates all possible three-element subsets from a given set of points [20].

Application to Sparse Data Environments

Analytical frameworks excel in situations where data is limited, as they can formally characterize uncertainty and provide bounds on model behavior. The combinatorial approach mentioned represents a powerful method for reducing epistemic uncertainty (uncertainty arising from lack of knowledge) in sparse geological environments [20]. By systematically generating all possible three-element subsets (triangles) from an n-element set of borehole locations, the algorithm enables comprehensive geometric analysis even with limited data points [20].
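A minimal sketch of the combinatorial idea follows, assuming each borehole is given as (east, north, elevation) coordinates and that dip direction is the azimuth, measured clockwise from north, of the horizontal component of the upward-pointing normal. The function names and the four sample points are hypothetical; this is not the implementation from [20].

```python
import math
from itertools import combinations

def upward_normal(p1, p2, p3):
    """Unit normal of the triangle p1-p2-p3, oriented upward; None if degenerate."""
    ux, uy, uz = (p2[i] - p1[i] for i in range(3))
    vx, vy, vz = (p3[i] - p1[i] for i in range(3))
    n = (uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx)
    length = math.sqrt(sum(c * c for c in n))
    if length == 0:
        return None  # collinear points do not define a plane
    sign = -1.0 if n[2] < 0 else 1.0
    return tuple(sign * c / length for c in n)

def dip_direction_and_angle(normal):
    """Convert an upward unit normal to (dip direction, dip angle) in degrees."""
    nx, ny, nz = normal
    direction = math.degrees(math.atan2(nx, ny)) % 360.0
    angle = math.degrees(math.acos(min(1.0, nz)))
    return direction, angle

# Hypothetical boreholes on a horizon dipping east at 45 degrees (z = -x).
boreholes = [(0.0, 0.0, 0.0), (1.0, 0.0, -1.0), (0.0, 1.0, 0.0), (1.0, 1.0, -1.0)]

orientations = []
for triangle in combinations(boreholes, 3):  # all three-element subsets
    normal = upward_normal(*triangle)
    if normal is not None:
        orientations.append(dip_direction_and_angle(normal))
```

For n boreholes the loop visits n(n-1)(n-2)/6 triangles, so the number of orientation estimates grows quickly even when only a handful of points is available.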

The statistical component of analytical validation often involves specialized methods for handling directional data. When analyzing normal vectors from triangulated surfaces as 3D directional data, researchers calculate the mean of groups of these vectors by averaging their Cartesian coordinates [20]. The resultant vector can then be converted to dip direction and dip angle pairs. For 2D unit vectors corresponding to initially collected 3D unit normal vectors of triangles, the mean direction is defined as the direction of the resultant vector, with calculations accounting for the circular nature of directional data [20].
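The resultant-vector calculation can be illustrated with the short sketch below. It shows the standard circular-statistics approach and is written under the assumption that directions are supplied as azimuths in degrees; the helper names are hypothetical.

```python
import math

def mean_direction(azimuths_deg):
    """Direction (degrees, 0-360) of the resultant of 2D unit vectors."""
    s = sum(math.sin(math.radians(a)) for a in azimuths_deg)
    c = sum(math.cos(math.radians(a)) for a in azimuths_deg)
    return math.degrees(math.atan2(s, c)) % 360.0

def mean_normal(normals):
    """Average 3D unit vectors by their Cartesian coordinates and renormalise."""
    n = len(normals)
    mx = sum(v[0] for v in normals) / n
    my = sum(v[1] for v in normals) / n
    mz = sum(v[2] for v in normals) / n
    length = math.sqrt(mx * mx + my * my + mz * mz)
    return (mx / length, my / length, mz / length)

east_ish = mean_direction([80.0, 100.0])     # close to 90 degrees
north_wrap = mean_direction([350.0, 10.0])   # close to 0 (equivalently 360), not 180
avg_normal = mean_normal([(0.0, 0.6, 0.8), (0.0, -0.6, 0.8)])
```

The second call shows why an arithmetic mean is unsuitable for directional data: averaging 350 and 10 naively gives 180, the opposite of the true mean direction.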

Table 2: Analytical Validation Techniques Across Disciplines

| Analytical Method | Application Domain | Key Function | Data Requirements |
|---|---|---|---|
| Combinatorial Algorithms | Geological fault analysis [20] | Reduces epistemic uncertainty in sparse data | Limited borehole data or surface observations |
| Formal Mathematical Propositions | Fault geometry [20] | Proves geometric characteristics under ideal conditions | Perfect or rounded elevation data |
| Directional Statistics | Triangulated surface analysis [20] | Analyzes mean direction of 3D normal vectors | Sets of normal vectors from triangulations |
| Confidence Interval-Based Metrics | Engineering and physics [15] | Quantifies agreement between computation and experiment | Experimental data over range of input variables |

Comparative Analysis of Validation Frameworks

Performance Across Applications

Each validation framework offers distinct advantages and limitations that make them suitable for different research scenarios and applications. The choice of validation strategy depends on multiple factors, including data availability, domain-specific requirements, computational resources, and the intended use of the model.

Computational validation excels when large datasets are available for training and testing, and when the relationship between inputs and outputs is complex and nonlinear. The success of machine learning models like 1D-CNN in predicting bacterial growth effects on pH (achieving minimal RMSE and maximum R² values) demonstrates the power of computational approaches when sufficient training data exists [19]. Similarly, the performance of tree-based models and sequence-to-sequence architectures in PM2.5 gap-filling highlights how computational validation can handle complex temporal patterns and multivariate relationships [17].

Experimental validation remains the gold standard for verifying real-world performance and establishing model credibility, particularly in high-stakes applications like drug development and medical devices [18]. The growing availability of experimental data through repositories like the Cancer Genome Atlas, National Library of Medicine, High Throughput Experimental Materials Database, and Materials Genome Initiative has made experimental validation more accessible to computational scientists [18].

Analytical validation provides crucial mathematical foundations, especially in data-sparse environments where empirical approaches face limitations. The ability of combinatorial algorithms to systematically explore all possible geometric configurations from limited borehole data demonstrates how analytical methods can extract maximum insight from minimal information [20]. Similarly, formal mathematical propositions can establish fundamental truths about system behavior that hold regardless of specific parameter values.

Integrated Validation Approaches

The most robust validation strategies often combine multiple frameworks to leverage their complementary strengths. For example, a comprehensive validation approach might begin with analytical validation to establish fundamental mathematical properties, proceed to computational validation against historical datasets, and culminate in experimental validation through targeted laboratory studies.

The field of Verification, Validation, and Uncertainty Quantification (VVUQ) has emerged to formalize these integrated approaches, with dedicated symposia and conferences bringing together experts from across disciplines [21]. These efforts recognize that as computational models grow more sophisticated and impactful, rigorous validation becomes increasingly essential for responsible scientific advancement and engineering application.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful validation, particularly in growth-related studies, requires specific research reagents and materials carefully selected for their intended function. The following table compiles key solutions and materials used in the experimental studies cited throughout this guide, along with their critical functions in validation workflows.

Table 3: Essential Research Reagents and Materials for Growth Model Validation

| Reagent/Material | Function in Validation | Application Example |
|---|---|---|
| Luria Bertani (LB) Medium | Supports bacterial growth for experimental validation of pH models [19] | Culturing E. coli and Pseudomonas strains |
| M63 Medium | Defined minimal medium for controlled growth studies [19] | Investigating pH dynamics with specific carbon sources |
| Escherichia coli ATCC 25922 | Model organism for microbial growth studies [19] | Experimental validation of growth-pH relationships |
| Pseudomonas putida KT2440 | Bacterial strain with specific metabolic characteristics [19] | Testing model generalizability across strains |
| Pseudomonas pseudoalcaligenes CECT 5344 | Alkaliphilic strain for specialized pH range studies [19] | Validating models under alkaline conditions |
| Chemical Vapor Deposition System | Enables controlled nanomaterial growth [16] | Experimental synthesis of SnS nanoplates |
| PM2.5 Monitoring Equipment | Provides ground truth air quality measurements [17] | Validating gap-filling models for environmental data |
| Borehole Sampling Equipment | Collects subsurface data for geological modeling [20] | Generating sparse data for combinatorial approaches |

Workflow and Signaling Pathways

The process of validating gap-filled models against experimental growth data follows a systematic workflow that integrates computational, experimental, and analytical elements. The diagram below illustrates the key stages and decision points in this comprehensive validation framework.

[Figure: Comprehensive Model Validation Workflow]

This workflow demonstrates the iterative nature of model validation, where unsatisfactory performance at the decision point requires returning to model development and parameter calibration. The integration of all three validation frameworks provides the most robust assessment of model credibility.

The validation of gap-filled models against experimental growth data requires a multifaceted approach that leverages computational, experimental, and analytical frameworks in concert. Computational validation provides quantitative metrics of model accuracy and enables the handling of complex, multivariate relationships. Experimental validation serves as the essential reality check, grounding model predictions in empirical measurements and confirming practical utility. Analytical validation offers mathematical rigor, particularly valuable in data-sparse environments where statistical significance is challenging to establish.

The comparative analysis presented in this guide demonstrates that each framework possesses distinct strengths and limitations, making them complementary rather than competitive approaches. Computational methods excel at handling complex patterns in data-rich environments, experimental approaches provide irreplaceable empirical verification, and analytical techniques establish fundamental mathematical truths. The most credible models emerge from research programs that strategically integrate all three validation paradigms, iteratively refining models through cycles of prediction, testing, and mathematical analysis.

As computational models continue to grow in complexity and impact across scientific disciplines—from environmental monitoring to drug development—rigorous validation remains the cornerstone of responsible innovation. By applying the frameworks, protocols, and metrics detailed in this guide, researchers can build greater confidence in their models and ensure that computational predictions translate reliably to real-world applications.

In the pursuit of enhancing drug bioavailability, the pharmaceutical industry increasingly relies on computational models to predict the solubility of poorly soluble active pharmaceutical ingredients (APIs). Accurate solubility prediction is crucial for the efficient design of processes like particle engineering and supercritical fluid-based extraction [22]. This case study examines the critical importance of robust validation practices in drug solubility modeling, demonstrating how inadequate validation can compromise model reliability and lead to significant errors in pharmaceutical development. Within the broader thesis on validation of gap-filled models against experimental data, this analysis reveals that the consequences of validation failures extend beyond statistical metrics to impact real-world drug formulation outcomes.

Quantitative Comparison of Modeling Approaches

Performance Metrics of Solubility Prediction Models

Table 1: Performance comparison of machine learning models for drug solubility prediction

| Model | Drug Example | R² Score | RMSE | AARD% | Validation Approach | Reference |
|---|---|---|---|---|---|---|
| XGBoost | 68 various drugs | 0.9984 | 0.0605 | N/A | 10-fold cross-validation, applicability domain analysis | [22] |
| Ensemble Voting (MLP+GPR) | Clobetasol Propionate | High (exact value not reported) | N/A | N/A | Train-test split with GWO optimization | [23] |
| Gaussian Process Regression (GPR) | Raloxifene | 0.97755 | 0.33221 | 7.08009 | Train-test split with GWO optimization | [24] [25] |
| Extremely Randomized Trees (ET) | Exemestane | 0.993 | 1.522 | 0.2113 (MAPE) | Train-test split with GEOA optimization | [26] |
| Support Vector Machine (SVM) | Busulfan | >0.99 | N/A | N/A | Comparison with experimental data | [27] |
| Elastic Net Regression (ENR) | Raloxifene | 0.89062 | N/A | N/A | Train-test split with GWO optimization | [24] [25] |

Consequences of Inadequate Validation Practices

Table 2: Impact of validation methodologies on model reliability and application

| Validation Shortcoming | Potential Consequence | Documented Evidence | Recommended Mitigation |
|---|---|---|---|
| No real-world benchmark validation | Reduced performance on actual pharmaceutical processes | Synthetic data alone may lack subtle real-world patterns [28] | Always validate against hold-out real experimental data [28] |
| Limited applicability domain analysis | Poor extrapolation beyond training conditions | 97.68% of points within applicability domain for properly validated XGBoost model [22] | Define applicability domain using a Williams plot and coverage metrics [22] |
| Insufficient dataset diversity | Bias amplification and fairness issues | Underrepresentation of certain demographics in synthetic data affects model generalizability [28] | Blend synthetic with real data, ensuring coverage of edge cases [28] |
| Inadequate error distribution analysis | Unrecognized systematic prediction errors | Reported relative errors range from 0.2113 (MAPE, Exemestane) to 7.08009 (AARD%, Raloxifene) across studies [24] [26] | Employ multiple error metrics (RMSE, AARD%, R²) with distribution analysis |
| Ignoring cross-over pressure phenomena | Fundamental solubility relationship errors | Novel approach needed to address cross-over pressure point in Clobetasol Propionate solubility [23] | Integrate the physical phenomenon into model validation |

Experimental Protocols and Methodologies

Data Collection and Preprocessing Standards

Reliable solubility modeling begins with rigorous data collection. In supercritical CO₂ processing, datasets typically include temperature (K), pressure (MPa or bar), and the resulting drug solubility (g/L or mole fraction) as core parameters [23] [24]. For example, in the Clobetasol Propionate study, researchers collected 45 data points across temperatures of 308-348 K and pressures of 12.2-35.5 MPa, ensuring the solvent remained in the supercritical state throughout the experiments (the critical point of CO₂ lies at 7.38 MPa and 304 K) [23]. The Raloxifene study incorporated supercritical CO₂ density as an additional parameter, recognizing that density changes significantly affect drug solubility in compressible supercritical solvents [24].
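A simple sanity check on collected operating conditions can be sketched as follows. The critical constants are the values quoted in the text; the function name and the list of (temperature, pressure) pairs are hypothetical, with the two valid pairs taken from the corners of the range reported for the Clobetasol Propionate study.

```python
CO2_CRITICAL_TEMPERATURE_K = 304.0   # value quoted in the text
CO2_CRITICAL_PRESSURE_MPA = 7.38     # value quoted in the text

def is_supercritical(temperature_k, pressure_mpa):
    """True if both temperature and pressure exceed the critical point of CO2."""
    return (temperature_k > CO2_CRITICAL_TEMPERATURE_K
            and pressure_mpa > CO2_CRITICAL_PRESSURE_MPA)

# Hypothetical (temperature in K, pressure in MPa) records to screen.
conditions = [
    (308.0, 12.2),   # lower corner of the reported range
    (348.0, 35.5),   # upper corner of the reported range
    (300.0, 12.2),   # temperature below the critical point
    (308.0, 7.0),    # pressure below the critical point
]

valid = [c for c in conditions if is_supercritical(*c)]
rejected = [c for c in conditions if not is_supercritical(*c)]
```

Screening records this way before model fitting keeps subcritical measurements, where the solvent behaves differently, out of the training and validation sets.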

Data preprocessing follows collection, involving normalization and outlier detection procedures [26]. For models incorporating molecular descriptors, critical drug-specific properties including critical temperature (Tc), critical pressure (Pc), acentric factor (ω), molecular weight (MW), and melting point (T_m) are incorporated alongside state variables [22]. This comprehensive approach ensures the model captures nuanced relationships influencing solubility beyond simple temperature and pressure correlations.
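The cited studies do not specify which normalization or outlier rule they used, so the following sketch assumes two common choices: min-max scaling to the unit interval and flagging points whose z-score exceeds a threshold. All names and sample values are hypothetical.

```python
import statistics

def min_max_scale(values):
    """Rescale values linearly to the interval [0, 1]."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

def flag_outliers(values, z_threshold=3.0):
    """Return indices whose absolute z-score (sample standard deviation) exceeds the threshold."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    return [i for i, v in enumerate(values) if abs(v - mean) / sd > z_threshold]

# Hypothetical temperature grid (K) and solubility readings (arbitrary units).
temperatures = [308.0, 318.0, 328.0, 338.0, 348.0]
readings = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 1.1, 0.9, 1.0, 1.0, 5.0]

scaled_temperatures = min_max_scale(temperatures)
suspect_indices = flag_outliers(readings)
```

Flagged points are candidates for review rather than automatic deletion; a genuine measurement near a cross-over pressure, for example, may look like an outlier to a purely statistical rule.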

Machine Learning Model Implementation

Advanced machine learning approaches for solubility prediction typically follow a structured workflow:

Model Selection: Researchers choose appropriate algorithms based on dataset characteristics. Tree-based ensemble methods like Random Forest (RF), Extremely Randomized Trees (ET), and Gradient Boosting (GB) have demonstrated strong performance for solubility prediction [26]. Gaussian Process Regression (GPR) offers the advantage of providing not only point predictions but also a measure of uncertainty by estimating the conditional probability distribution [24]. Support Vector Machines (SVM) with polynomial kernel functions have also shown exceptional accuracy with R² > 0.99 for drugs like Busulfan [27].

Hyperparameter Optimization: Model performance is enhanced through metaheuristic optimization algorithms. Grey Wolf Optimization (GWO) simulates grey wolf leadership and hunting behaviors to optimally position parameters within the search space [23] [24]. Similarly, Golden Eagle Optimizer (GEOA) has been employed for tuning tree-based ensemble methods [26]. These optimization techniques systematically explore hyperparameter combinations to minimize prediction errors.

Validation Framework: Robust validation employs k-fold cross-validation (e.g., 10-fold) [22], train-test splits [26], and rigorous statistical metrics including R², RMSE, and AARD% to quantify performance. The most reliable studies supplement these with applicability domain analysis using a Williams plot to identify outliers and define model boundaries [22].
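Two elements of the validation step can be illustrated compactly: the AARD% metric and the leverage axis of a Williams plot. The sketch below makes several assumptions that are not taken from the cited studies: a single descriptor with an intercept (so leverages have a closed form), and the commonly used warning threshold h* = 3(p + 1)/n, where p is the number of descriptors. All names and sample values are hypothetical.

```python
def aard_percent(experimental, predicted):
    """Average absolute relative deviation, in percent."""
    n = len(experimental)
    return 100.0 / n * sum(abs((p - e) / e) for e, p in zip(experimental, predicted))

def leverages(x):
    """Hat-matrix diagonal for a one-descriptor linear model with intercept."""
    n = len(x)
    mean_x = sum(x) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    return [1.0 / n + (xi - mean_x) ** 2 / sxx for xi in x]

def leverage_threshold(n_samples, n_descriptors):
    """Warning leverage h* = 3(p + 1)/n (a widely used convention, assumed here)."""
    return 3.0 * (n_descriptors + 1) / n_samples

# Hypothetical descriptor values; the last point lies far from the rest.
descriptor = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 30.0]
h = leverages(descriptor)
h_star = leverage_threshold(len(descriptor), n_descriptors=1)
outside_domain = [i for i, hi in enumerate(h) if hi > h_star]

# Hypothetical experimental and predicted solubilities.
aard = aard_percent([1.0, 2.0, 4.0], [1.1, 1.8, 4.0])
```

A full Williams plot also shows standardized residuals on the vertical axis, usually with bounds at ±3; points with high leverage fall outside the region where the model interpolates, so their predictions should be treated as extrapolations.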

Integration of Synthetic Data with Experimental Validation

The use of synthetic data has emerged as a strategy to address data scarcity in drug solubility modeling, but requires careful validation. Synthetic data can expand coverage of edge cases and rare scenarios that might be impractical or costly to capture experimentally [28]. However, best practices dictate that synthetic data should always be seeded from real-world datasets and validated against hold-out real experimental data [28]. The integration of Human-in-the-Loop (HITL) processes creates a feedback loop where human experts review, validate, and refine synthetic data, correcting errors and ensuring accurate representation of real-world phenomena [28].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential materials and computational tools for drug solubility modeling

| Category | Specific Tool/Technique | Function in Solubility Modeling | Validation Consideration |
|---|---|---|---|
| Supercritical Solvents | Carbon dioxide (CO₂) | Green solvent for pharmaceutical processing with tunable properties via pressure/temperature adjustment [22] | Must maintain supercritical state (T > 304 K, P > 7.38 MPa) throughout experiments [23] |
| Computational Frameworks | Python with scikit-learn | Implementation of ML models (GPR, ensemble methods, SVM) and statistical analysis [24] [25] | Requires rigorous cross-validation and hyperparameter tuning to prevent overfitting |
| Optimization Algorithms | Grey Wolf Optimization (GWO) | Metaheuristic hyperparameter tuning simulating wolf pack hunting behavior [23] [24] | Performance depends on proper parameter initialization and convergence criteria |
| Validation Metrics | R², RMSE, AARD% | Quantitative assessment of model prediction accuracy and reliability [26] [22] | Multiple complementary metrics provide comprehensive performance assessment |
| Applicability Domain Analysis | Williams Plot | Outlier detection and model boundary definition through leverage vs. residual visualization [22] | Critical for identifying reliable interpolation regions and dangerous extrapolation zones |
| Synthetic Data Generation | Generative AI Models | Addressing data scarcity by creating supplemental datasets for training [28] | Requires blending with real data and validation against experimental benchmarks |

This case study demonstrates that inadequate validation in drug solubility modeling carries significant consequences, including unreliable predictions, poor process design, and ultimately, compromised pharmaceutical product development. The evidence clearly shows that robust validation protocols—incorporating real experimental benchmarking, applicability domain analysis, and multiple statistical metrics—are not optional but essential components of trustworthy solubility models. The comparison of various modeling approaches reveals that even sophisticated algorithms like XGBoost and ensemble methods can produce misleading results without proper validation frameworks. As pharmaceutical manufacturing increasingly adopts continuous processing and quality-by-design paradigms, the commitment to comprehensive model validation becomes fundamental to ensuring drug efficacy, safety, and manufacturing efficiency. Future advances in synthetic data generation and hybrid modeling approaches offer promising pathways to enhanced prediction capabilities, but their success will fundamentally depend on maintaining rigorous validation against experimental growth data.

The accurate prediction of emergent properties—system-level behaviors that arise from the complex interactions of simpler components—represents a grand challenge across biological research and drug development. These properties are not apparent when examining any single component in isolation but become evident only when the system is viewed as a whole [29]. In pharmacology, for instance, drug efficacy and toxicity are themselves emergent properties resulting from interactions across multiple levels of biological organization, from molecular targets to entire physiological systems [29] [30]. The central thesis that validating gap-filled models against experimental data is crucial for predictive accuracy runs through modern computational biology, enabling researchers to bridge spatial and temporal scales from molecular interactions to population-level outcomes.

Multi-scale modeling has become increasingly vital in biomedical research because physiological processes and drug effects operate across widely divergent length and time scales [31]. A drug's action begins with molecular binding but manifests as cellular responses, tissue-level effects, organ-level physiology, and ultimately clinical outcomes in heterogeneous patient populations [29]. This review examines the methodologies, applications, and validation frameworks for predicting emergent properties across biological scales, with particular emphasis on how gap-filled models are rigorously tested against experimental growth data and clinical observations.

Methodological Frameworks for Multi-Scale Integration

Computational Approaches Across Scales

Multi-scale modeling integrates diverse computational techniques, each optimized for specific spatial and temporal domains. At the molecular and atomic scales, molecular dynamics (MD) simulations provide high-resolution insights into drug-target interactions, binding affinities, and conformational changes [32]. These methods employ empirical molecular mechanics force fields to simulate time-dependent phenomena and can predict binding affinities of pharmaceutical leads to their targets through rigorous free energy calculations [32]. For cellular-scale modeling, systems biology approaches integrate omics data (genomics, transcriptomics, proteomics, metabolomics) with mathematical representations of signaling pathways, gene regulatory networks, and metabolic processes [33] [34]. These models capture how molecular perturbations propagate through biochemical networks to influence cell fate decisions, proliferation, and death [29].

At the tissue and organ levels, continuum models and partial differential equations describe population behaviors of cells and their interactions with microenvironmental factors [31]. For excitable tissues like the heart, these models incorporate detailed electrophysiology to simulate emergent rhythm disturbances [31]. At the population scale, nonlinear mixed-effects models quantify inter-individual variability in drug exposure and response, enabling predictions of clinical outcomes across diverse patient populations [31]. These statistical approaches estimate means and variances of model parameters across populations, which is particularly valuable in clinical trials where individual patients may have sparse data sampling [31].

Bridging Scales through Model Integration

A key challenge in multi-scale modeling is establishing reliable connections between different biological scales. Hierarchical integration approaches pass key parameters across model scales, creating cohesive models that span from molecules to populations [32]. For example, molecular-level drug-channel interactions can be incorporated into cellular electrophysiology models, which then inform tissue-level simulations of cardiac conduction [31]. Alternatively, hybrid modeling strategies combine different mathematical formalisms within a single framework, using the most appropriate technique for each biological process [35]. Whole-cell models represent the most ambitious implementation of this approach, aiming to simulate the function of every gene, gene product, and metabolite in a cell [35].
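
As a minimal illustration of passing a parameter across scales, the sketch below maps a molecular-scale potency estimate (an IC50) onto the conductances of a cellular model. It assumes a simple Hill-type pore-block relation; the function name `conductance_scale`, the normalized conductances, and all numerical values are hypothetical and chosen for illustration only. The cited models may use more detailed, state-dependent drug-channel kinetics.

```python
def conductance_scale(drug_conc, ic50, hill=1.0):
    """Fraction of channel conductance remaining under a simple pore-block assumption.

    drug_conc and ic50 must share the same concentration units.
    """
    if drug_conc < 0 or ic50 <= 0:
        raise ValueError("drug_conc must be >= 0 and ic50 must be > 0")
    return 1.0 / (1.0 + (drug_conc / ic50) ** hill)


# Hypothetical, normalized baseline conductances of a cell-scale model.
baseline = {"g_Kr": 1.0, "g_Na": 1.0}

# Hypothetical IC50 values (same units as `conc`), standing in for estimates
# that would come from molecular-scale simulations or binding assays.
ic50s = {"g_Kr": 0.5, "g_Na": 5.0}

conc = 0.5  # hypothetical free drug concentration

# The molecular-scale parameter (IC50) becomes a cell-scale parameter change.
scaled = {name: g * conductance_scale(conc, ic50s[name]) for name, g in baseline.items()}

for name, value in scaled.items():
    print(f"{name}: {value:.3f} of baseline")
```

The scaled conductances would then be handed to the cellular model, whose outputs in turn parameterize the tissue-level simulation. Keeping the hand-off in one small function makes the cross-scale assumption explicit and easy to replace.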

The emerging fusion of simulation and data science leverages advanced computing architectures and rich datasets to bridge these scales [32]. Automated workflows, improved data sharing platforms, and enhanced analytics facilitate the integration of heterogeneous data types across spatiotemporal scales [32]. Furthermore, mechanistic machine learning has emerged as a powerful hybrid approach, embedding physiological constraints into data-driven models to improve their generalizability and biological plausibility [35].

Gap-Filling and Model Validation Strategies

A critical aspect of multi-scale modeling is addressing knowledge and data gaps through systematic model completion and validation. Gap-filling approaches include leveraging alternative data sources (in environmental monitoring, for example, satellite data are used to fill spatial gaps [36]) and employing transfer learning to extrapolate knowledge from well-characterized to poorly characterized biological contexts. Transformer-based deep learning methods with self-attention mechanisms have proven particularly effective at capturing local context in time-series data and filling temporal gaps [36].

Validation of these gap-filled models against experimental growth data follows a "learn and confirm" paradigm [29]. In the learning phase, modelers critically assess biological assumptions, pathway representations, parameter estimation methods, and implementation details. In the confirmation phase, the adapted model is tested against new data, use cases, or hypotheses [29]. Iterating between the two phases strengthens model credibility and shows whether the components added during gap-filling actually improve predictive accuracy.
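
The confirmation phase can be made concrete with a small scoring routine. The sketch below is a minimal example that assumes binary growth/no-growth calls on held-out conditions; the condition names and the predicted and observed values are hypothetical, and the choice of metrics (accuracy, sensitivity, specificity, Matthews correlation coefficient) is one reasonable option rather than a prescribed standard.

```python
from math import sqrt


def growth_confusion(predicted, observed):
    """Count TP/TN/FP/FN for binary growth calls keyed by condition name."""
    counts = {"TP": 0, "TN": 0, "FP": 0, "FN": 0}
    for condition, obs in observed.items():
        pred = predicted[condition]
        if pred and obs:
            counts["TP"] += 1
        elif not pred and not obs:
            counts["TN"] += 1
        elif pred and not obs:
            counts["FP"] += 1  # model predicts growth that was not observed
        else:
            counts["FN"] += 1  # model misses growth that was observed
    return counts


def summarize(counts):
    """Derive summary metrics from confusion counts."""
    tp, tn, fp, fn = (counts[k] for k in ("TP", "TN", "FP", "FN"))
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        "specificity": tn / (tn + fp) if tn + fp else float("nan"),
        "mcc": (tp * tn - fp * fn) / denom if denom else 0.0,
    }


# Hypothetical held-out conditions, not used during gap-filling.
predicted = {"glucose": True, "acetate": True, "citrate": False,
             "xylose": True, "lactate": False, "formate": False}
observed = {"glucose": True, "acetate": True, "citrate": False,
            "xylose": False, "lactate": True, "formate": False}

counts = growth_confusion(predicted, observed)
metrics = summarize(counts)
print(counts)
print({name: round(value, 3) for name, value in metrics.items()})
```

False positives and false negatives are worth reporting separately, because they suggest different fixes: predicted growth that is not observed may indicate an over-permissive gap-fill, while missed growth may indicate a gap that is still open.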

Table 1: Multi-Scale Modeling Techniques and Their Applications

| Biological Scale | Computational Methods | Key Outputs | Validation Approaches |
|---|---|---|---|
| Molecular/Atomic | Molecular Dynamics, Quantum Mechanics, Molecular Docking | Binding affinities, reaction mechanisms, drug-channel interactions | Experimental structures, binding assays, spectroscopic data |
| Cellular | Ordinary Differential Equations, Boolean Networks, Whole-Cell Models | Signaling pathway activity, metabolic fluxes, gene expression | Fluorescence imaging, flow cytometry, single-cell omics |
| Tissue/Organ | Partial Differential Equations, Agent-Based Models, Finite Element Analysis | Electrophysiological dynamics, tissue remodeling, mechanical properties | Medical imaging, electrophysiological mapping, histology |
| Population | Nonlinear Mixed-Effects Models, Systems Pharmacology, Machine Learning | Clinical outcomes, dose-exposure-response relationships, population variability | Clinical trial data, electronic health records, real-world evidence |

Experimental Protocols for Model Validation

Protocol 1: Validation of Cardiac Drug Safety Predictions

Purpose: To experimentally validate computational predictions of proarrhythmic risk emerging from drug interactions with cardiac ion channels.

Background: The Cardiac Arrhythmia Suppression Trial (CAST) and the Survival With Oral d-Sotalol (SWORD) trial demonstrated that common antiarrhythmic drugs could increase mortality and the risk of sudden cardiac death despite promising single-channel effects [31]. This protocol tests computational predictions of emergent cardiotoxicity through a tiered experimental approach.

Methodology:

In silico Drug Screening:

- Perform molecular dynamics simulations of drug interactions with cardiac ion channels (hERG, Nav1.5, Cav1.2) using high-resolution channel structures [31] [32]

- Incorporate drug-channel kinetic models into human ventricular myocyte models to predict action potential modifications

- Simulate drug effects in 1D, 2D, and 3D human ventricular tissue reconstructions to identify proarrhythmic substrates [31]

Cellular Validation:

- Express human cardiac ion channels in heterologous systems (HEK293, CHO cells)

- Measure concentration- and use-dependent block using patch-clamp electrophysiology

- Record action potentials from human stem cell-derived cardiomyocytes with and without drug exposure [31]

Tissue Validation:

- Utilize human ventricular wedge preparations or engineered heart tissues

- Map conduction velocity and action potential duration restitution with drug perfusion

- Determine arrhythmia inducibility using programmed electrical stimulation [31]

Validation Metrics:

- Quantify changes in action potential duration (APD90) and triangulation

- Measure conduction velocity restitution slope changes

- Document early afterdepolarizations and reentrant arrhythmia incidence [31]

Expected Outcomes: This protocol validates whether proarrhythmic risk predicted by multi-scale models manifests experimentally, improving prediction of clinical cardiotoxicity.
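
The first validation metric above can be computed directly from a recorded or simulated action potential trace. The sketch below is a minimal, standard-library illustration. It assumes a single beat that starts at rest, measures APD from the steepest point of the upstroke, and takes triangulation as APD90 minus APD30. These conventions, the function name `apd`, and the synthetic trace are assumptions made for illustration, since the protocol does not specify them.

```python
def apd(times, volts, percent):
    """Action potential duration (in the units of `times`) at `percent` repolarization.

    Assumes one beat, a trace that starts at rest, and activation defined as the
    start of the steepest rising sample interval before the peak.
    """
    rest = volts[0]
    peak_idx = max(range(len(volts)), key=lambda i: volts[i])
    amplitude = volts[peak_idx] - rest
    level = volts[peak_idx] - amplitude * percent / 100.0
    up_idx = max(
        range(peak_idx),
        key=lambda i: (volts[i + 1] - volts[i]) / (times[i + 1] - times[i]),
    )
    # First downward crossing of the repolarization level, linearly interpolated.
    for i in range(peak_idx, len(volts) - 1):
        v0, v1 = volts[i], volts[i + 1]
        if v0 >= level > v1:
            frac = (v0 - level) / (v0 - v1)
            return times[i] + frac * (times[i + 1] - times[i]) - times[up_idx]
    raise ValueError("trace does not repolarize to the requested level")


def synthetic_trace():
    """Piecewise-linear toy action potential sampled every 1 ms (illustrative only)."""
    times = [float(t) for t in range(401)]
    volts = []
    for t in times:
        if t < 10:
            volts.append(-85.0)                      # rest
        elif t <= 12:
            volts.append(-85.0 + 60.0 * (t - 10))    # upstroke to +35 mV
        elif t <= 312:
            volts.append(35.0 - 0.4 * (t - 12))      # linear repolarization
        else:
            volts.append(-85.0)                      # back at rest
    return times, volts


times, volts = synthetic_trace()
apd90 = apd(times, volts, 90)
apd30 = apd(times, volts, 30)
triangulation = apd90 - apd30
print(f"APD90 = {apd90:.1f} ms, APD30 = {apd30:.1f} ms, triangulation = {triangulation:.1f} ms")
```

Applying the same function to control and drug-exposed traces, whether simulated or recorded, gives directly comparable APD90 and triangulation changes for the model-versus-experiment comparison.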

Protocol 2: Multi-Omics Validation of Drug Response Predictions

Purpose: To experimentally validate patient-specific drug response predictions emerging from multi-scale models integrating genomic, transcriptomic, and proteomic data.

Background: Multi-omics approaches address the complexity of drug response phenotypes governed by intricate networks of genomic variants, epigenetic modifications, and metabolic pathways [33]. This protocol tests computational predictions of therapeutic efficacy through multi-omics profiling.

Methodology:

In silico Patient Stratification:

- Integrate genomic variants, gene expression, protein abundance, and metabolomic data using graph neural networks and variational autoencoders [33]

- Train models on large-scale pharmacogenomic datasets (e.g., Cancer Genome Atlas)

- Predict drug sensitivity and resistance mechanisms for individual patients [33]

Ex Vivo Validation:

- Establish patient-derived organoids or primary cell cultures

- Treat with predicted efficacious and non-efficacious drugs across concentration ranges

- Measure cell viability, apoptosis, and pathway modulation [33]

Molecular Profiling:

- Perform RNA sequencing and proteomic analysis on treated and untreated samples

- Assess predicted mechanism-of-action biomarkers through phosphoproteomics

- Validate metabolic predictions through targeted metabolomics [33]

Clinical Correlation:

- Compare predictions with actual clinical responses when available

- Analyze circulating tumor DNA or other minimally invasive biomarkers

- Refine models based on clinical discordances [33]

Expected Outcomes: This protocol determines the accuracy of multi-scale models in predicting individual drug responses, potentially improving patient stratification and treatment selection.
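
The comparison between predicted and measured drug response in this protocol can be sketched as follows. The example assumes that viability is reported as a percentage of untreated control, estimates IC50 by log-linear interpolation between the two concentrations that bracket 50% viability, and calls a sample sensitive when its IC50 is at or below a threshold. The sample names, the viability values, the 1 µM threshold, and both function names are hypothetical; a full dose-response curve fit would normally replace the interpolation step.

```python
from math import log10


def ic50_by_interpolation(concs, viability_pct):
    """Estimate IC50 where viability first drops below 50%, interpolating on log10(conc).

    Returns None when 50% inhibition is not reached in the tested range.
    """
    pairs = list(zip(concs, viability_pct))
    for (c0, v0), (c1, v1) in zip(pairs, pairs[1:]):
        if v0 >= 50.0 > v1:
            frac = (v0 - 50.0) / (v0 - v1)
            return 10 ** (log10(c0) + frac * (log10(c1) - log10(c0)))
    return None


def concordance(predicted_sensitive, measured_ic50, threshold):
    """Fraction of samples where the predicted call matches the measured call."""
    agree = 0
    for sample, pred in predicted_sensitive.items():
        ic50 = measured_ic50[sample]
        observed = ic50 is not None and ic50 <= threshold
        agree += int(pred == observed)
    return agree / len(predicted_sensitive)


# Hypothetical ex vivo data: concentrations in µM, viability in % of control.
concs_um = [0.01, 0.1, 1.0, 10.0]
viability = {
    "organoid_A": [98.0, 80.0, 20.0, 5.0],
    "organoid_B": [100.0, 97.0, 90.0, 70.0],
    "organoid_C": [95.0, 90.0, 60.0, 30.0],
}
# Hypothetical model output: True means predicted sensitive.
predicted = {"organoid_A": True, "organoid_B": False, "organoid_C": True}

measured = {name: ic50_by_interpolation(concs_um, v) for name, v in viability.items()}
agreement = concordance(predicted, measured, threshold=1.0)
print(measured)
print(f"concordance = {agreement:.2f}")
```

Samples where the calls disagree are the clinical and experimental discordances that the protocol feeds back into model refinement.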

Diagram 1: Multi-scale model integration and validation workflow. Information flows across biological scales to predict emergent properties, with experimental validation providing critical feedback for model refinement.

Case Studies in Multi-Scale Prediction

Cardiac Arrhythmia Drug Screening

The Clancy laboratory's multi-scale models for comparing antiarrhythmic drugs exemplify successful prediction of emergent properties [30]. These models perform virtual drug screening by simulating drug effects from atomic-scale ion channel interactions to tissue-level arrhythmia susceptibility. This screening eliminates candidate drugs that appear effective in single-cell systems but show emergent proarrhythmic properties in tissue contexts [30].

Key Findings:

- Flecainide simulations revealed mild depression of single-cell excitability suggesting therapeutic potential, but tissue-level simulations predicted slowed conduction promoting reentrant arrhythmias, explaining its complex clinical profile [31]

- Structural modeling of drug receptor sites within ion channels identified key interaction residues, enabling design of novel high-affinity subtype-selective drugs [31]

- Use-dependent block properties emerged in tissue simulations but were not predictable from single-channel studies alone [31]

Validation Approach: Model predictions were tested against optical mapping of cardiac tissue electrophysiology and clinical arrhythmia incidence, demonstrating accurate prediction of drug effects that could not be extrapolated from reduced-scale experiments [31] [30].

Cancer Drug Target Identification

Mechanistic computational models have successfully identified emergent vulnerabilities in cancer signaling networks that enable more effective therapeutic targeting [30].

Key Findings:

- Sensitivity analysis of detailed signaling network models identified key nodes whose inhibition produced synthetic lethality in specific genetic contexts [30]

- Multi-scale pharmacokinetic-pharmacodynamic (PK/PD) models predicted how drug properties and administration schedules influence tumor suppression and resistance emergence [30]

- Patient-specific models integrating multi-omics data successfully stratified responders from non-responders for targeted therapies [30] [33]

Validation Approach: Predictions were tested in patient-derived xenografts and organoids, with clinical correlation in biomarker-stratified trials confirming the emergent sensitivity patterns predicted by computational models [30] [33].

Table 2: Emergent Properties in Biological Systems and Prediction Approaches

| System | Component Behavior | Emergent Property | Prediction Method | Validation Outcome |
|---|---|---|---|---|
| Cardiac Ion Channels | Concentration-dependent block of individual channels | Proarrhythmic tissue substrate and reentrant circuits | Multi-scale cardiac electrophysiology models | 89% accuracy predicting clinical proarrhythmia risk [31] |
| Angiogenic Signaling | VEGF receptor binding and dimerization | Vascular network formation and maturation | Quantitative systems pharmacology models | Successful prediction of optimal anti-angiogenic dosing [30] |
| Metabolic Networks | Enzyme kinetics and metabolic fluxes | Cellular growth phenotypes and nutrient utilization | Constraint-based metabolic modeling | 92% accuracy predicting essential genes [35] |
| Gene Regulatory Networks | Transcription factor binding and regulation | Cell fate decisions and differentiation programs | Boolean network models | Correct prediction of reprogramming factors [34] |

Research Toolkit for Multi-Scale Modeling

Successful prediction of emergent properties requires specialized computational tools and experimental platforms that span biological scales. The table below details essential components of the multi-scale modeling toolkit.

Table 3: Research Toolkit for Multi-Scale Modeling and Validation

| Tool/Resource | Scale of Application | Function/Purpose | Example Implementations |
|---|---|---|---|
| Molecular Dynamics Software | Molecular/Atomic | Simulates atomistic interactions and dynamics | GROMACS, NAMD, AMBER, CHARMM [32] |
| Whole-Cell Modeling Platforms | Cellular | Integrates multiple cellular processes into unified models | WholeCellSim, E-Cell, Virtual Cell [35] |
| Quantitative Systems Pharmacology | Tissue/Organ to Population | Predicts drug effects incorporating physiological detail | PK-Sim, GI-Sim, Cardiac Electrophysiology Models [31] [29] |
| Multi-Omics Integration Tools | Cellular to Population | Harmonizes diverse molecular data types | MOViDA, MOICVAE, DeepDRA [33] |
| High-Content Screening | Cellular to Tissue | Provides quantitative phenotypic data for validation | Automated microscopy, image analysis, organoid screening [30] |
| Patient-Derived Models | Cellular to Tissue | Maintains patient-specific biology for testing predictions | Organoids, xenografts, explant cultures [30] [33] |

Diagram 2: Cardiac drug safety validation protocol. Computational prediction and experimental validation alternate iteratively to confirm emergent proarrhythmic risk.

Challenges and Future Directions