From Static Ratios to Dynamic Predictions: Deriving Kinetic Models from Stoichiometric Foundations

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on advancing from stoichiometric reduction principles to dynamic kinetic models. It explores the fundamental relationship between reaction stoichiometry and kinetic parameterization, details modern high-throughput and computational methodologies for model construction, addresses common challenges in parameter estimation and thermodynamic consistency, and establishes frameworks for model validation and comparative analysis. By synthesizing foundational theory with practical applications in metabolic engineering and drug development, this resource aims to equip scientists with strategies to enhance predictive capability in biomedical research, from enzyme engineering to therapeutic optimization.

Bridging Stoichiometry and Kinetics: The Fundamental Relationship

Principles of Stoichiometric Balancing and Mass Conservation

Stoichiometry, derived from the Greek words for "element" and "measure," is the branch of chemistry that deals with the quantitative relationships between reactants and products in chemical reactions [1]. This foundation enables researchers to predict the amounts of substances consumed and produced in chemical processes. The practice of stoichiometry is fundamentally rooted in the Law of Conservation of Mass, which states that matter cannot be created or destroyed in chemical reactions, only transformed from one form to another [2]. This principle, established by Antoine Lavoisier in 1789, dictates that the total mass of reactants must equal the total mass of products in any closed system [2].

For researchers in drug development, mastering stoichiometric principles is essential for optimizing reaction yields, minimizing waste, and developing efficient synthetic pathways for active pharmaceutical ingredients (APIs). The application of these principles extends to kinetic modeling, where simplified, stoichiometrically accurate models enable more efficient simulation and analysis of complex biochemical systems without sacrificing essential predictive capabilities [3].

Theoretical Framework

Fundamental Stoichiometric Concepts

Stoichiometric calculations rely on balanced chemical equations, in which the number of atoms of each element is identical on the reactant and product sides [4]. These balanced equations provide the mole ratios needed for quantitative predictions. The stoichiometric coefficient (the number written in front of an atom, ion, or molecule in a chemical equation) fixes the mole ratio between every pair of reactants and products [4].

The mathematical foundation of stoichiometry rests upon the principle of mass conservation, expressed as:

$$ \sum \text{mass of reactants} = \sum \text{mass of products} $$

This equation holds true provided the system is properly isolated and all inputs and outputs are accounted for [2]. In practical applications, this means that atoms present in the reactants are merely rearranged to form products, with no net change in the total quantity of matter [1].

Stoichiometry in Kinetic Model Reduction

Complex chemical kinetic models often contain numerous species engaging in large reaction mechanisms across varying timescales, making them computationally expensive to simulate [3]. Stoichiometric reduction methods leverage mass balance principles and stoichiometric ratios to decrease these computational demands while preserving essential model features.

The reduction process involves decoupling species of interest through mass balances and stoichiometric ratios, enabling researchers to solve for specific concentration profiles without simulating the entire system [3]. This approach maintains the fundamental constraints imposed by conservation laws while significantly reducing degrees of freedom in the model. Analytical results demonstrate that properly implemented stoichiometric reduction can achieve zero error at the ordinary differential equation level while substantially accelerating numerical convergence in many cases [3].
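The decoupling idea can be illustrated on a hypothetical bimolecular reaction A + B → C under mass action. Assuming C starts at zero, the mass balances A = A0 - C and B = B0 - C let a single ODE replace three coupled ones. The sketch below (pure Python, fixed-step RK4; the rate constant and concentrations are illustrative assumptions, not values from [3]) integrates both forms and compares them.

```python
"""Sketch: stoichiometric reduction of A + B -> C (mass action, rate constant k).

Assumptions (illustrative, not from the cited study): C(0) = 0, so the
conservation relations A = A0 - C and B = B0 - C hold for all t.
"""


def rk4(f, y0, t_end, h):
    """Fixed-step classical Runge-Kutta integration of dy/dt = f(y)."""
    y = list(y0)
    for _ in range(round(t_end / h)):
        k1 = f(y)
        k2 = f([yi + 0.5 * h * ki for yi, ki in zip(y, k1)])
        k3 = f([yi + 0.5 * h * ki for yi, ki in zip(y, k2)])
        k4 = f([yi + h * ki for yi, ki in zip(y, k3)])
        y = [yi + h / 6 * (a + 2 * b + 2 * c + d)
             for yi, a, b, c, d in zip(y, k1, k2, k3, k4)]
    return y


k, A0, B0 = 0.8, 1.0, 0.6  # hypothetical k (M^-1 s^-1) and initial concentrations (M)


def full_model(y):
    """Three coupled mass-action ODEs for A, B and C."""
    A, B, _ = y
    r = k * A * B
    return [-r, -r, r]


def reduced_model(y):
    """One ODE for C; A and B are recovered from the mass balances."""
    (C,) = y
    return [k * (A0 - C) * (B0 - C)]


A_full, B_full, C_full = rk4(full_model, [A0, B0, 0.0], t_end=10.0, h=0.01)
(C_red,) = rk4(reduced_model, [0.0], t_end=10.0, h=0.01)
A_red, B_red = A0 - C_red, B0 - C_red

print(f"full:    A={A_full:.6f} B={B_full:.6f} C={C_full:.6f}")
print(f"reduced: A={A_red:.6f} B={B_red:.6f} C={C_red:.6f}")
```

Because Runge-Kutta methods preserve linear invariants, the two trajectories agree to rounding error in this toy case, in line with the zero-error property described above, while the reduced model integrates one equation instead of three.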

Table 1: Key Concepts in Stoichiometric Balancing and Mass Conservation

| Concept | Description | Research Application |
|---|---|---|
| Stoichiometric Coefficients | Numeric multipliers in balanced equations indicating proportional relationships between species [4] | Determine mole ratios for reaction scaling and yield optimization |
| Law of Conservation of Mass | Total mass in isolated system remains constant regardless of chemical changes [2] | Foundation for mass balance calculations in reaction design |
| Mole Ratio | Proportional relationship between amounts of reactants and products derived from balanced equations [4] | Critical for predicting reagent requirements and theoretical yields |
| Stoichiometric Reduction | Method for decreasing model complexity while maintaining mass balance constraints [3] | Enables efficient simulation of complex reaction networks |

Experimental Protocols

Protocol 1: Validating Mass Conservation in a Precipitation Reaction

This protocol demonstrates mass conservation during a double displacement reaction that forms a precipitate, adapted for pharmaceutical research applications [5].

Materials and Reagents

- Magnesium sulfate (MgSO₄) solution, 0.1 M

- Sodium carbonate (Na₂CO₃) solution, 0.1 M

- Two sealed reaction vessels compatible with analytical balance

- Analytical balance (0.0001 g sensitivity)

- Volumetric pipettes and dispensers

Procedure

- Tare the sealed empty reaction vessel on the analytical balance.

- Add exactly 50.0 mL of MgSO₄ solution to the vessel and record the mass.

- In a separate tared vessel, add exactly 50.0 mL of Na₂CO₃ solution and record the mass.

- Calculate and record the combined mass of both solutions.

- Carefully combine the solutions within a sealed system to prevent evaporation.

- Observe the formation of magnesium carbonate precipitate.

- Measure the total mass of the reaction vessel containing the products.

- Compare the combined mass before and after the reaction.

Expected Results

The total mass should remain constant within measurement error (typically ±0.1 g), demonstrating mass conservation despite the formation of a new solid phase [5]. This validates that all atoms present in the reactants are accounted for in the products.
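The quantities in Protocol 1 can be checked with a short calculation. The sketch below assumes the reaction MgSO₄(aq) + Na₂CO₃(aq) → MgCO₃(s) + Na₂SO₄(aq) goes to completion, uses rounded standard atomic weights, and applies the ±0.1 g tolerance quoted above to hypothetical balance readings; `mass_conserved` is an illustrative helper, not part of the cited protocol.

```python
# Worked numbers for Protocol 1 (assumes complete precipitation of anhydrous MgCO3).
ATOMIC_MASS = {"Mg": 24.305, "C": 12.011, "O": 15.999}  # g/mol, rounded


def molar_mass(composition):
    """Molar mass (g/mol) from a dict of element counts."""
    return sum(ATOMIC_MASS[el] * n for el, n in composition.items())


vol_L, conc_M = 0.0500, 0.1                 # 50.0 mL of each 0.1 M solution
n_mgso4 = n_na2co3 = vol_L * conc_M         # 0.00500 mol of each reagent
n_mgco3 = min(n_mgso4, n_na2co3)            # 1:1:1 stoichiometry, limited by the smaller amount
m_mgco3 = n_mgco3 * molar_mass({"Mg": 1, "C": 1, "O": 3})


def mass_conserved(mass_before_g, mass_after_g, tolerance_g=0.1):
    """True if the before/after totals agree within the stated measurement tolerance."""
    return abs(mass_after_g - mass_before_g) <= tolerance_g


print(f"Theoretical MgCO3 precipitate: {m_mgco3:.4f} g")
print(mass_conserved(100.0000, 100.05))     # hypothetical balance readings
```

The precipitate mass is only a redistribution of the dissolved mass, so the closed-vessel total is unchanged even though roughly 0.42 g of solid appears.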

Protocol 2: Mass Conservation in Gas-Producing Reactions

This protocol addresses the technical challenges of demonstrating mass conservation in reactions that produce gaseous products, with specific application to pharmaceutical processes involving gas evolution [6].

Materials and Reagents

- Sodium bicarbonate (NaHCO₃), solid

- Hydrochloric acid (HCl) solution, 1.0 M

- Pressure-resistant sealed reaction vessel (e.g., modified PET bottle rated for pressure)

- Analytical balance (0.001 g sensitivity)

- Small vial or container capable of fitting within reaction vessel

Procedure

- Place 100 mL of 1.0 M HCl solution in the pressure-resistant vessel.

- Load a small vial containing approximately 2.4 g NaHCO₃ into the vessel without allowing contact between reactants.

- Seal the vessel securely and record the total mass.

- Agitate the vessel to mix reactants, initiating the reaction $\ce{HCl(aq) + NaHCO3(s) -> NaCl(aq) + H2O(l) + CO2(g)}$.

- After reaction completion, observe the pressure increase from CO₂ production.

- Measure the final mass of the entire sealed system.

- Slowly release gas and note the mass change upon gas escape.

Expected Results

The mass of the sealed system remains unchanged after reaction, confirming mass conservation despite gas production. When the vessel is opened, the escape of CO₂ demonstrates why open systems may appear to violate conservation laws [6].
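The expected mass change on opening follows from the quantities in the procedure. The sketch below assumes complete reaction, uses rounded standard atomic weights, and ignores CO₂ that stays dissolved in the aqueous phase (some does, so the observed loss may be somewhat smaller than computed).

```python
# Worked numbers for Protocol 2: limiting reagent and CO2 mass released on opening.
M_NAHCO3 = 22.990 + 1.008 + 12.011 + 3 * 15.999   # g/mol
M_CO2 = 12.011 + 2 * 15.999                        # g/mol

n_hcl = 0.100 * 1.0            # 100 mL of 1.0 M HCl, in mol
n_nahco3 = 2.4 / M_NAHCO3      # about 2.4 g NaHCO3, in mol

n_co2 = min(n_hcl, n_nahco3)   # 1:1:1 stoichiometry, so the smaller amount limits
limiting = "NaHCO3" if n_nahco3 < n_hcl else "HCl"
expected_mass_loss = n_co2 * M_CO2   # assumes all CO2 escapes once the vessel is opened

print(f"limiting reagent: {limiting}")
print(f"CO2 released: {n_co2:.4f} mol, expected mass loss on opening: {expected_mass_loss:.3f} g")
```

With these amounts HCl is in roughly threefold excess, so the CO₂ yield, and hence the mass lost on opening, is set by the bicarbonate charge.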

Protocol 3: Stoichiometric Analysis of Microbial DHA Production

This protocol outlines the stoichiometric analysis of docosahexaenoic acid (DHA) production by Crypthecodinium cohnii, demonstrating the application of stoichiometric principles in biopharmaceutical production [7].

Materials and Reagents

- Crypthecodinium cohnii culture

- Sterile growth media with controlled carbon sources (glucose, ethanol, glycerol)

- Fermentation system with environmental control

- FTIR spectroscopy system for fatty acid analysis

- Biomass quantification equipment

Procedure

- Inoculate C. cohnii into separate bioreactors containing standardized media with glucose, ethanol, or glycerol as carbon sources.

- Monitor biomass growth rates and substrate consumption under controlled conditions.

- Harvest samples at predetermined time points for FTIR analysis.

- Analyze PUFA content using FTIR spectroscopy, focusing on the characteristic 3014 cm⁻¹ absorption band for DHA [7].

- Calculate carbon transformation efficiency from substrate to biomass.

- Determine stoichiometric ratios between carbon source consumed and DHA produced.

- Compare experimental yields with theoretical predictions based on stoichiometric models.

Expected Results

Glycerol substrates typically show slower growth rates but higher PUFA fractions compared to glucose, with carbon transformation efficiencies approaching theoretical limits [7]. These stoichiometric relationships inform process optimization for microbial DHA production.
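The carbon transformation efficiency in step 5 of the procedure is a carbon balance: carbon recovered in biomass divided by carbon in the substrate consumed. The sketch below computes it from substrate formulas; the biomass carbon fraction and the example masses are hypothetical placeholders, not data from [7].

```python
# Sketch of the carbon bookkeeping in Protocol 3 (C-mol yield of biomass on substrate).
# Assumption: BIOMASS_C_FRACTION is a hypothetical placeholder; replace it with the
# elemental analysis of the actual C. cohnii biomass.
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "O": 15.999}
SUBSTRATES = {
    "glucose": {"C": 6, "H": 12, "O": 6},
    "ethanol": {"C": 2, "H": 6, "O": 1},
    "glycerol": {"C": 3, "H": 8, "O": 3},
}
BIOMASS_C_FRACTION = 0.50  # hypothetical g C per g dry biomass


def carbon_fraction(formula):
    """Mass fraction of carbon in a compound given its element counts."""
    total = sum(ATOMIC_MASS[el] * n for el, n in formula.items())
    return ATOMIC_MASS["C"] * formula["C"] / total


def carbon_transformation_efficiency(substrate, substrate_consumed_g, biomass_formed_g):
    """Fraction of consumed substrate carbon recovered as biomass carbon."""
    carbon_in = substrate_consumed_g * carbon_fraction(SUBSTRATES[substrate])
    carbon_out = biomass_formed_g * BIOMASS_C_FRACTION
    return carbon_out / carbon_in


# Illustrative inputs only (not measured data): 10 g substrate consumed, 4 g biomass formed.
for name, formula in SUBSTRATES.items():
    eff = carbon_transformation_efficiency(name, 10.0, 4.0)
    print(f"{name:8s} carbon fraction={carbon_fraction(formula):.3f} efficiency={eff:.3f}")
```

Working on a carbon basis rather than a mass basis matters here because ethanol is about 52% carbon by mass, whereas glucose and glycerol are both near 40%.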

Data Presentation and Analysis

Quantitative Relationships in Stoichiometric Balancing

Table 2: Stoichiometric Relationships in Balanced Chemical Equations

| Reaction Type | Balanced Equation Example | Mole Ratio | Mass Relationship |
|---|---|---|---|
| Synthesis | $\ce{2Na(s) + Cl2(g) -> 2NaCl(s)}$ | 2:1:2 | 45.98 g Na + 70.90 g Cl₂ = 116.88 g NaCl |
| Decomposition | $\ce{2H2O(l) -> 2H2(g) + O2(g)}$ | 2:2:1 | 36.04 g H₂O = 4.04 g H₂ + 32.00 g O₂ |
| Single Displacement | $\ce{Zn(s) + 2HCl(aq) -> ZnCl2(aq) + H2(g)}$ | 1:2:1:1 | 65.38 g Zn + 72.92 g HCl = 136.28 g ZnCl₂ + 2.02 g H₂ |
| Double Displacement | $\ce{AgNO3(aq) + NaCl(aq) -> AgCl(s) + NaNO3(aq)}$ | 1:1:1:1 | 169.87 g AgNO₃ + 58.44 g NaCl = 143.32 g AgCl + 84.99 g NaNO₃ |
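The mass column can be checked programmatically. The sketch below parses simple formulas (no parentheses or hydrates, an assumption of this helper), confirms that every element balances, and sums molar masses on each side with rounded standard atomic weights. Because the table uses two-decimal molar masses, last-digit differences (for example 36.03 g rather than 36.04 g for 2 H₂O) are expected.

```python
import re

# Rounded standard atomic weights (g/mol).
ATOMIC_MASS = {"H": 1.008, "O": 15.999, "N": 14.007, "Na": 22.990,
               "Cl": 35.45, "Zn": 65.38, "Ag": 107.87}


def parse(formula):
    """Element counts for simple formulas without parentheses, e.g. 'AgNO3'."""
    counts = {}
    for el, n in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[el] = counts.get(el, 0) + (int(n) if n else 1)
    return counts


def side_totals(side):
    """Total atoms per element and total mass (g) for one side of an equation."""
    atoms, mass = {}, 0.0
    for coeff, formula in side:
        for el, n in parse(formula).items():
            atoms[el] = atoms.get(el, 0) + coeff * n
            mass += coeff * n * ATOMIC_MASS[el]
    return atoms, mass


REACTIONS = {
    "synthesis": ([(2, "Na"), (1, "Cl2")], [(2, "NaCl")]),
    "decomposition": ([(2, "H2O")], [(2, "H2"), (1, "O2")]),
    "single displacement": ([(1, "Zn"), (2, "HCl")], [(1, "ZnCl2"), (1, "H2")]),
    "double displacement": ([(1, "AgNO3"), (1, "NaCl")], [(1, "AgCl"), (1, "NaNO3")]),
}

for name, (reactants, products) in REACTIONS.items():
    atoms_in, m_in = side_totals(reactants)
    atoms_out, m_out = side_totals(products)
    print(f"{name:20s} balanced={atoms_in == atoms_out}  {m_in:.2f} g -> {m_out:.2f} g")
```

Equal atom counts on both sides are what guarantee equal masses; the mass check is therefore a consequence of the element balance, not an independent test.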

Stoichiometric Analysis of Carbon Source Efficiency in DHA Production

Table 3: Performance Comparison of Carbon Sources for DHA Production by C. cohnii [7]

| Carbon Source | Growth Rate | PUFA Content | DHA Dominance | Carbon Transformation Efficiency |
|---|---|---|---|---|
| Glucose | High | Lower | Moderate | Below theoretical maximum |
| Ethanol | Moderate | High | High | Approaches theoretical maximum |
| Glycerol | Slower | Highest | Highest | Closest to theoretical maximum |

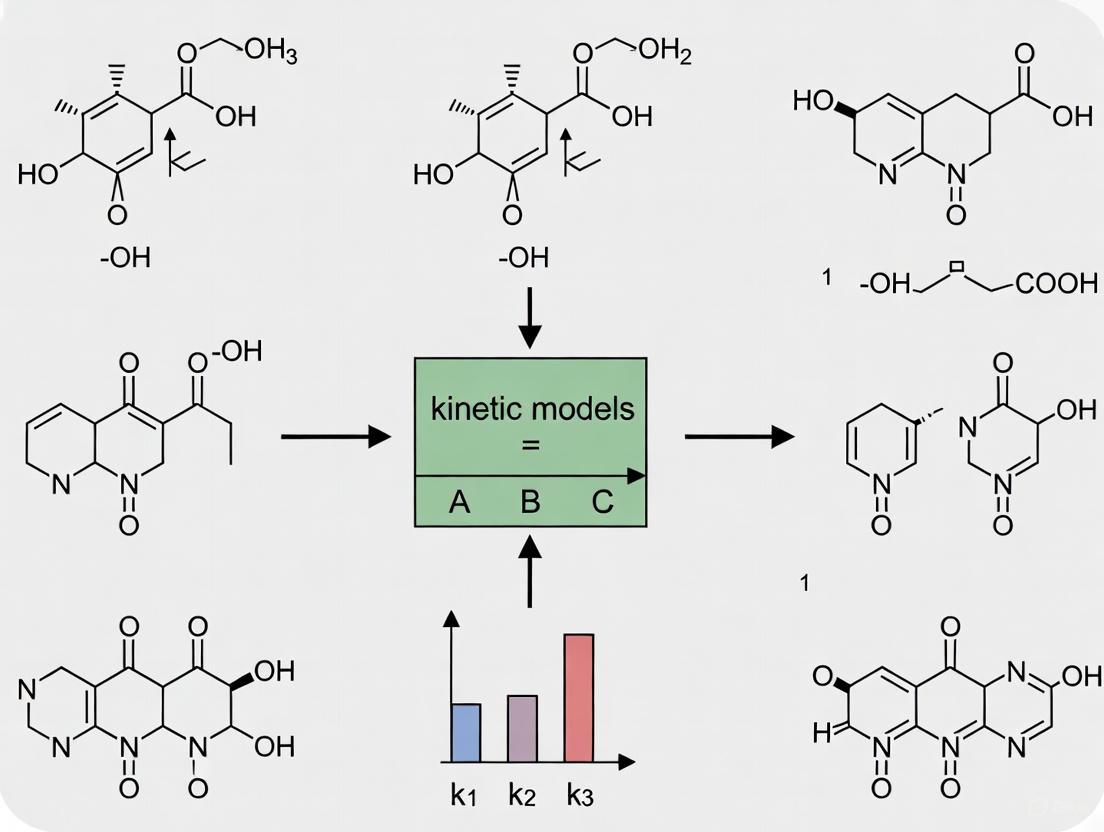

Visualization of Stoichiometry in Kinetic Modeling

Workflow for Stoichiometric Model Reduction

Mass Balance in Ecosystem Stoichiometry

Research Reagent Solutions

Table 4: Essential Research Reagents for Stoichiometric Analysis

| Reagent/Material | Specification | Research Function | Application Example |
|---|---|---|---|
| Analytical Balance | 0.0001 g sensitivity | Precise mass measurement for conservation validation [6] | Quantifying mass relationships in reactions |
| Sealed Reaction Vessels | Pressure-resistant, non-reactive | Containment for gas-producing reactions [6] | Mass conservation studies with gaseous products |
| FTIR Spectroscopy System | Spectral range 4000-400 cm⁻¹ | Rapid analysis of functional groups and compound identification [7] | Monitoring DHA production in microbial systems |
| Carbon Substrates | HPLC/spectroscopic grade | Controlled carbon sources for stoichiometric growth studies [7] | Microbial production of valuable compounds |
| Stoichiometric Modeling Software | MATLAB, Python with SciPy | Implementation of reduced stoichiometric models [3] | Kinetic model reduction and simulation |

The principles of stoichiometric balancing and mass conservation provide fundamental frameworks for quantitative analysis across chemical and biological systems. For drug development professionals, these principles enable precise control over reaction stoichiometry, yield optimization, and efficient process design. The integration of stoichiometric reduction methods with kinetic modeling represents a powerful approach for managing complexity in biochemical systems while maintaining predictive accuracy.

Experimental validation remains essential, as properly controlled demonstrations of mass conservation reinforce the theoretical foundation supporting all stoichiometric calculations. By applying these principles systematically, researchers can develop more efficient synthetic pathways, optimize bioproduction systems, and create more computationally tractable models of complex biological processes relevant to pharmaceutical development.

The transition from analyzing static molar ratios to understanding dynamic reaction rates represents a critical advancement in chemical research. This extension from reaction stoichiometry to kinetic modeling is pivotal for developing a complete mechanistic understanding of chemical processes, particularly in pharmaceutical development and materials science. Stoichiometric analysis, governed by the Law of Conservation of Mass (LCM), reveals the quantitative relationships between reactants and products [8]. However, it provides no information about the time scale or reaction pathway. Kinetic analysis addresses this gap by quantifying reaction rates and identifying intermediate steps, enabling researchers to predict reaction behavior under varying conditions and optimize processes for maximum efficiency and yield [9]. This Application Note details protocols for deriving comprehensive kinetic models from stoichiometric foundations, with specific applications in pharmaceutical chemistry and materials science.

Theoretical Foundation: Connecting Stoichiometry and Kinetics

Fundamental Principles

The connection between stoichiometry and kinetics begins with the fundamental definition of reaction rate. For a generalized reaction:

$$ aA + bB \longrightarrow cC + dD $$

the reaction rate can be expressed in terms of any reactant or product concentration [10]:

$$ \text{Rate} = -\frac{1}{a}\frac{d[A]}{dt} = -\frac{1}{b}\frac{d[B]}{dt} = \frac{1}{c}\frac{d[C]}{dt} = \frac{1}{d}\frac{d[D]}{dt} $$

This mathematical relationship demonstrates how stoichiometric coefficients (a, b, c, d) directly influence the calculation of reaction rates from concentration measurements. The negative signs for reactants account for their decreasing concentrations over time, ensuring the rate remains positive [10].
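The same relation can be written with signed stoichiometric coefficients $\nu_i$ (negative for reactants), so that Rate $= \frac{1}{\nu_i}\frac{d[i]}{dt}$ for every species. The sketch below uses the illustrative reaction 2 N₂O₅ → 4 NO₂ + O₂ and a hypothetical measured $d[\mathrm{N_2O_5}]/dt$ to obtain the reaction rate and the rates of change of the other species.

```python
# Minimal sketch: convert one measured d[X]/dt into the reaction rate using signed
# stoichiometric coefficients (negative for reactants), then predict the other species.
# Illustrative reaction: 2 N2O5 -> 4 NO2 + O2
NU = {"N2O5": -2, "NO2": 4, "O2": 1}


def reaction_rate(species, d_conc_dt):
    """Rate = (1/nu_i) * d[i]/dt, the same value for every species i."""
    return d_conc_dt / NU[species]


def species_rates(rate):
    """d[i]/dt = nu_i * rate for every species."""
    return {sp: nu * rate for sp, nu in NU.items()}


r = reaction_rate("N2O5", -0.020)   # hypothetical measured d[N2O5]/dt in M/s
print(r)                            # reaction rate in M/s
print(species_rates(r))             # predicted d[i]/dt for every species, M/s
```

Folding the sign into $\nu_i$ removes the need to track separate minus signs for reactants and keeps the rate positive for a reaction running forward.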

The Law of Conservation of Mass provides the foundational framework for all quantitative analysis in chemical reactions [8]. In kinetic studies, LCM ensures mass balance throughout the reaction progress, allowing researchers to account for all species, including intermediates that may not appear in the net stoichiometric equation.

From Equilibrium to Dynamics

While equilibrium constants provide information about the thermodynamic favorability of a reaction, kinetic rate constants reveal the pathway and speed of the reaction. For a binding reaction:

$$ A + B \rightleftharpoons AB $$

the association rate constant ($k_+$), dissociation rate constant ($k_-$), and equilibrium (association) constant ($K$) are fundamentally connected [9]:

$$ K = \frac{k_+}{k_-} $$

This relationship demonstrates how kinetic parameters contain more information than equilibrium constants alone, as kinetic experiments yield both thermodynamic and mechanistic insights [9]. Transient-state kinetics experiments, which observe how a system approaches equilibrium after a perturbation, are particularly valuable for determining these rate constants.

Experimental Protocols

Automated Kinetic Model Determination Using Flow Chemistry

This protocol describes an automated approach for simultaneous reaction model identification and kinetic parameter estimation, particularly suitable for pharmaceutical applications [11].

Materials and Equipment

Table 1: Research Reagent Solutions and Essential Materials

| Item Name | Function/Application |
|---|---|
| Automated Flow Chemistry Platform | Enables precise transient flow experiments and rapid reaction profiling |
| HPLC System with Detector | Provides quantitative concentration data for reaction species |
| Candidate Model Library | Computational database of possible reaction mechanisms based on mass balance |
| Mixed Integer Linear Programming (MILP) Algorithm | Computational method for model discrimination and parameter identification |
| Open-Source Optimization Code | Customizable framework for automated kinetic analysis |

Step-by-Step Procedure

Initial Species Input: Pre-define all known participants in the reaction process, including starting materials, suspected intermediates, and products [11].

Transient Flow-Ramp Experiments:

- Utilize the automated flow chemistry platform to conduct linear flow-ramp experiments

- Map the reaction profile using transient flow data

- Generate comprehensive, data-rich datasets from minimal experimental runs [11]

Model Library Generation:

- Compile all possible reaction model candidates based on mass balance assessment

- Include all chemically plausible mechanisms derived from stoichiometric analysis [11]

Parallel Computational Optimization:

- Implement the MILP approach to evaluate each candidate model

- Algorithmically adjust kinetic parameters for each model to achieve convergence between simulated and experimental kinetic curves [11]

Statistical Model Selection:

- Apply statistical analysis to determine the most probable reaction model

- Balance model simplicity with agreement to experimental data

- Select the model that best represents the underlying mechanism [11]
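The model-selection step can be sketched on a much smaller scale. The cited framework uses MILP-based optimisation [11]; the sketch below swaps in a simpler technique: two hypothetical candidate rate laws are fitted by least squares to synthetic concentration data and ranked by the Akaike information criterion (AIC), which penalises parameters and so balances simplicity against fit. The data are generated from an assumed first-order decay, not taken from any experiment.

```python
import math

# Hedged sketch: least-squares fitting plus AIC ranking (not the MILP method of [11]).
A0 = 1.0                                   # known initial concentration (M)
t = [float(i) for i in range(11)]          # sampling times (min)
# Synthetic data: first-order decay, k = 0.3 min^-1, with +/-1 % alternating error.
A = [A0 * math.exp(-0.3 * ti) * (1 + 0.01 * (-1) ** i) for i, ti in enumerate(t)]


def fit_through_origin(x, y):
    """Least-squares slope m for the model y = m * x."""
    return sum(xi * yi for xi, yi in zip(x, y)) / sum(xi * xi for xi in x)


CANDIDATES = {
    # name: (linearising transform of A, predicted A(t) for rate constant k)
    "first-order": (lambda a: -math.log(a / A0), lambda ti, k: A0 * math.exp(-k * ti)),
    "second-order": (lambda a: 1 / a - 1 / A0, lambda ti, k: A0 / (1 + k * A0 * ti)),
}


def aic(rss, n, n_params=1):
    """Akaike information criterion for least-squares fits (lower is better)."""
    return n * math.log(rss / n) + 2 * n_params


results = {}
for name, (transform, predict) in CANDIDATES.items():
    k = fit_through_origin(t, [transform(a) for a in A])
    rss = sum((a - predict(ti, k)) ** 2 for ti, a in zip(t, A))
    results[name] = (k, aic(rss, len(t)))

best = min(results, key=lambda m: results[m][1])
for name, (k, score) in results.items():
    print(f"{name:12s} k={k:.4f}  AIC={score:.1f}")
print("selected:", best)
```

Both candidates here have one parameter, so AIC reduces to comparing residuals; with nested candidates of different size, the $2p$ term is what keeps the simpler mechanism preferred unless the extra parameter clearly improves the fit.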

Data Analysis and Interpretation

The automated framework provides both the identified reaction model and optimized kinetic parameters. Validation should include comparison with manual determinations and assessment of predictive capability under conditions not included in the original dataset.

Stoichiometric Analysis in Metal Deposition Kinetics

This protocol examines the stoichiometry and kinetics of metal cation reduction on silicon surfaces, illustrating how detailed stoichiometric analysis informs kinetic modeling in materials science [12].

Materials and Equipment

Table 2: Research Reagent Solutions for Metal Deposition Studies

| Item Name | Function/Application |
|---|---|
| Multi-crystalline Silicon Wafers | Substrate for metal deposition reactions |
| Dilute Hydrofluoric Acid (HF) Matrix | Reaction medium enabling metal deposition |
| Metal Cation Solutions (Ag⁺, Cu²⁺, AuCl₄⁻, PtCl₆²⁻) | Reactants for reduction studies |
| Ultrapure Water (18 MΩ·cm resistivity) | Ensures reagent purity and consistent results |
| Analytical Equipment for Solution Analysis | Measures concentration changes for stoichiometric calculations |

Step-by-Step Procedure

Solution Preparation:

- Prepare solutions with varying concentrations of metal cations (Ag⁺, Cu²⁺, AuCl₄⁻, or PtCl₆²⁻) in dilute HF matrix [12]

- Use ultrapure water (18 MΩ·cm resistivity) as the solvent to maintain consistency

Batch Reaction Setup:

- Immerse multi-crystalline silicon wafers in prepared solutions

- Maintain consistent surface area to solution volume ratios across experiments [12]

Time-Based Sampling:

- Collect solution samples at consecutive time intervals

- Analyze metal cation concentration and dissolved silicon species [12]

Stoichiometric Calculation:

- Determine molar ratios of reduced metal to oxidized silicon

- Calculate stoichiometric ratios using mass balance principles [12]

Kinetic Analysis:

- Measure metal deposition rates as a function of cation and HF concentrations

- Correlate stoichiometric ratios with reaction mechanisms [12]

Data Analysis and Interpretation

The stoichiometric ratios between metal cation reduction and silicon oxidation provide critical insights into the operative reaction mechanism. Measured metal:silicon ratios between 1.5:1 and 2:1 point to different valence-transfer pathways for silicon oxidation (divalent versus tetravalent) [12]. These stoichiometric findings directly inform the development of kinetic models by constraining possible reaction pathways.
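One way to read such ratios is an electron balance. The sketch below assumes, for illustration only, that each oxidised Si atom releases either 2 electrons (divalent pathway) or 4 electrons (tetravalent pathway) and that all electrons reduce the metal species to the zero-valent metal; it then gives the single-pathway ratios and the fraction of Si following the tetravalent route for a hypothetical measured ratio.

```python
# Electron-balance sketch for interpreting metal:Si deposition ratios.
# Assumptions (hypothetical): Si is oxidised by a 2 e- or a 4 e- pathway, and every
# electron reduces the metal species to the zero-valent metal.
ELECTRONS_PER_METAL = {"Ag+": 1, "Cu2+": 2, "AuCl4-": 3, "PtCl6^2-": 4}


def expected_ratio(metal, si_electrons):
    """Moles of metal deposited per mole of Si oxidised for a single pathway."""
    return si_electrons / ELECTRONS_PER_METAL[metal]


def tetravalent_fraction(measured_ratio, metal):
    """Fraction f of Si on the 4 e- pathway, from ratio = (2(1 - f) + 4f) / n_metal."""
    return (measured_ratio * ELECTRONS_PER_METAL[metal] - 2) / 2


for metal in ELECTRONS_PER_METAL:
    print(metal, "divalent:", expected_ratio(metal, 2), "tetravalent:", expected_ratio(metal, 4))
print(tetravalent_fraction(1.5, "Cu2+"))   # hypothetical measured Cu:Si ratio
```

Under these assumptions the same numerical ratio means different things for different metals, so the electron count of the reduced species has to be fixed before a ratio is mapped onto a mechanism.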

Data Analysis and Computational Methods

Numerical Integration of Kinetic Equations

Accurate kinetic modeling requires robust numerical methods for integrating rate equations over time. The PHREEQC documentation describes two primary approaches [13]:

Runge-Kutta Method: An explicit integration method that estimates error and automatically adjusts time subintervals to maintain accuracy within specified tolerances. The method can be configured with different orders (1-6) of approximation, with higher orders providing greater accuracy for complex systems [13].

CVODE Method: An implicit stiff-equation solver based on backward differentiation formulas, particularly suitable for systems with widely varying reaction rates. This method is more robust and faster for stiff systems where reaction rates differ by several orders of magnitude [13].

The integration process requires careful attention to error tolerances, with the absolute difference between integration estimates typically maintained below 10⁻⁸ mol for chemical accuracy [13].
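Why stiffness favours implicit solvers can be seen on a hypothetical sequential reaction A → B → C with one fast step ($k_1 = 1000\ \mathrm{s^{-1}}$) and one slow step ($k_2 = 1\ \mathrm{s^{-1}}$). The sketch below is not PHREEQC's code: it compares explicit Euler with backward Euler, the simplest member of the backward differentiation formula (BDF) family on which CVODE's stiff mode is based, using the same fixed step.

```python
# Sketch (not PHREEQC's implementation): explicit vs implicit integration of a stiff
# hypothetical system A -> B -> C, k1 = 1000 s^-1 (fast), k2 = 1 s^-1 (slow),
# integrated to t = 1 s with fixed step h = 0.01 s.
k1, k2, h, n_steps = 1000.0, 1.0, 0.01, 100


def explicit_euler(A, B, C):
    """Forward Euler step; unstable for the fast mode because h*k1 = 10 > 2."""
    rA, rB = k1 * A, k2 * B
    return A - h * rA, B + h * (rA - rB), C + h * rB


def backward_euler(A, B, C):
    """Implicit (first-order BDF) step, solved in closed form for linear kinetics."""
    A_new = A / (1 + h * k1)
    B_new = (B + h * k1 * A_new) / (1 + h * k2)
    C_new = C + h * k2 * B_new
    return A_new, B_new, C_new


ex = im = (1.0, 0.0, 0.0)   # mol; all material starts as A
for _ in range(n_steps):
    ex = explicit_euler(*ex)
    im = backward_euler(*im)

print("explicit Euler:", ex)   # A is multiplied by (1 - h*k1) = -9 each step and diverges
print("backward Euler:", im, "total =", sum(im))
```

The implicit scheme stays positive and conserves total moles exactly while taking steps sized for the slow process; the explicit scheme would need $h < 2/k_1$ to remain stable, which is the practical reason stiff kinetic systems are handed to BDF-type solvers.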

Kinetic Parameter Determination

For binding reactions of the form:

$$ A + B \rightleftharpoons AB $$

the time course of association after mixing follows a predictable exponential approach to equilibrium [9]:

$$ \text{Signal}(t) = \text{Signal}_{\text{final}} + \left(\text{Signal}_{\text{initial}} - \text{Signal}_{\text{final}}\right) e^{-k_{\text{obs}} t} $$

where $k_{\text{obs}}$ is the observed rate constant. Under pseudo-first-order conditions ([B] in large excess over [A]), it depends on the association and dissociation rate constants as:

$$ k_{\text{obs}} = k_+ [B] + k_- $$

By measuring $k_{\text{obs}}$ at several concentrations of B, both $k_+$ and $k_-$ can be determined from the slope and intercept, respectively, of a linear plot of $k_{\text{obs}}$ against $[B]$ [9].
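The slope-and-intercept analysis is an ordinary linear regression. In the sketch below the $k_{\text{obs}}$ values are synthetic, generated from assumed constants $k_+ = 1.0 \times 10^{6}\ \mathrm{M^{-1}\,s^{-1}}$ and $k_- = 0.5\ \mathrm{s^{-1}}$, not experimental results; real data would carry scatter, and the uncertainty of the intercept deserves attention when $k_-$ is small.

```python
# Minimal sketch: recover k+ (slope) and k- (intercept) from k_obs measured at several
# excess concentrations of B. Synthetic data from assumed k+ = 1.0e6 M^-1 s^-1, k- = 0.5 s^-1.
B_conc = [1e-6, 2e-6, 5e-6, 10e-6]          # [B] in M (pseudo-first-order excess)
k_obs = [1.0e6 * b + 0.5 for b in B_conc]   # s^-1


def linear_fit(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


k_on, k_off = linear_fit(B_conc, k_obs)
K = k_on / k_off                             # association constant K = k+/k-, in M^-1
print(f"k+ = {k_on:.3e} M^-1 s^-1, k- = {k_off:.3f} s^-1, K = {K:.3e} M^-1")
```

Dividing the fitted slope by the fitted intercept then gives $K = k_+/k_-$, so a single titration of $k_{\text{obs}}$ yields both the kinetic and the equilibrium description of the binding step.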

Applications and Case Studies

Pharmaceutical Reaction Optimization

The automated kinetic modeling approach has demonstrated significant value in pharmaceutical development. In case studies involving API synthesis, the methodology achieved [11]:

- Reduction in experimental time by up to 80% compared to traditional sequential methods

- Comprehensive process understanding from a minimal number of data-rich experiments

- Identification of non-intuitive reaction pathways that would be missed by manual investigation

The open-source nature of the computational framework makes it particularly accessible for drug development applications, where understanding reaction mechanisms is critical for regulatory compliance and process control [11].

Materials Science Applications

In materials science, the study of metal deposition kinetics on silicon surfaces illustrates how stoichiometric analysis informs kinetic modeling. Key findings include [12]:

- Diffusion-limited kinetics for metal cation reduction, with diffusion to the silicon surface representing the rate-limiting step

- Stoichiometric ratios that vary with metal cation concentration, suggesting mechanism shifts between divalent and tetravalent pathways

- First-order kinetics for metal deposition after initial layer formation, following an initial linear growth phase

These insights enable precise control over metal deposition processes for applications in microelectronics, sensor technology, and nanostructure fabrication [12].

The kinetic extension from molar ratios to reaction rates represents a fundamental advancement in chemical analysis methodology. By integrating stoichiometric constraints with dynamic rate measurements, researchers can develop comprehensive kinetic models that provide both predictive power and mechanistic insight. The automated approaches described in this Application Note significantly reduce the time and resources required for full kinetic characterization while increasing the robustness of the resulting models. For pharmaceutical development, materials science, and numerous other fields, this kinetic extension enables deeper process understanding and more efficient optimization of chemical reactions.

Stoichiometric Networks as Scaffolds for Kinetic Model Construction

The construction of predictive kinetic models is fundamental to understanding and engineering cellular processes for therapeutic intervention. However, traditional kinetic modeling faces significant challenges, including the limited availability of kinetic constants and difficulties in scaling to large networks [14]. Stoichiometric networks, derived from genome-scale metabolic reconstructions, provide a structured scaffold that enables the integration of experimental data to build dynamic models without requiring full a priori knowledge of enzyme kinetics [14] [15]. This protocol details the application of Mass Action Stoichiometric Simulation (MASS) modeling, a method that maps metabolomic, fluxomic, and proteomic data onto stoichiometric models to generate kinetic networks capable of simulating dynamic biological states [14] [16]. This approach is positioned within a broader thesis that stoichiometric reduction research provides a principled pathway for deriving biologically realistic kinetic models, bridging the gap between constraint-based and dynamic simulation frameworks.

Key Concepts and Definitions

- Stoichiometric Matrix (N or S): A mathematical representation where rows correspond to metabolites and columns correspond to reactions. Each element $n_{ij}$ represents the net stoichiometric coefficient of metabolite $i$ in reaction $j$ [15].

- Mass Action Stoichiometric Simulation (MASS) Models: Dynamic network models constructed by mapping metabolomic data onto stoichiometric models and applying mass action kinetics, enabling the explicit representation of enzymes and their functional states [14] [16].

- Constraint-Based Reconstruction and Analysis (COBRA): A methodology for interrogating stoichiometric reconstructions of large networks, typically assuming steady-state conditions [14].

- Flux Balance Analysis (FBA): A constraint-based approach that computes steady-state reaction fluxes (J) in a metabolic network by assuming that the network optimizes an objective function (e.g., biomass production) [15].

- Gradient Matrix (G): The matrix of partial derivatives of reaction rates with respect to metabolite concentrations ($\partial v / \partial x$); its entries depend on the kinetic constants and steady-state concentrations [14].

- Chemical Moiety Conservation: Linear relationships between metabolite concentrations arising from the conservation of chemical groups (e.g., the adenosine moiety shared by ATP, ADP, and AMP), which reduce the number of independent degrees of freedom in the system [15] [3].
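Conservation relations can be read directly from the stoichiometric matrix: every vector $l$ with $l^{T} S = 0$ defines a pool $l \cdot x$ that stays constant. The sketch below computes this left null space exactly on a hypothetical three-reaction adenylate network (ATP use, ATP regeneration, and adenylate kinase). For genome-scale matrices a floating-point routine such as SciPy's `scipy.linalg.null_space` applied to $S^{T}$ would normally be used; exact fractions are used here only to keep the toy example free of rounding.

```python
from fractions import Fraction


def null_space(A):
    """Exact basis of {x : A x = 0}, computed from the reduced row echelon form."""
    rows = [[Fraction(v) for v in row] for row in A]
    n_cols = len(rows[0])
    pivots, r = [], 0
    for c in range(n_cols):
        p = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if p is None:
            continue                      # no pivot in this column: it is a free variable
        rows[r], rows[p] = rows[p], rows[r]
        pv = rows[r][c]
        rows[r] = [v / pv for v in rows[r]]
        for i in range(len(rows)):        # clear column c in every other row
            if i != r and rows[i][c] != 0:
                f = rows[i][c]
                rows[i] = [a - f * b for a, b in zip(rows[i], rows[r])]
        pivots.append(c)
        r += 1
        if r == len(rows):
            break
    basis = []
    for free in (c for c in range(n_cols) if c not in pivots):
        vec = [Fraction(0)] * n_cols
        vec[free] = Fraction(1)
        for row, pc in zip(rows, pivots):
            vec[pc] = -row[free]
        basis.append(vec)
    return basis


def conservation_relations(S):
    """Left null space of S: vectors l with l^T S = 0 (conserved pools l . x)."""
    return null_space([list(col) for col in zip(*S)])


METABOLITES = ["ATP", "ADP", "AMP"]
# Columns: ATP use (ATP -> ADP), regeneration (ADP -> ATP), adenylate kinase (2 ADP <-> ATP + AMP)
S = [
    [-1, 1, 1],    # ATP
    [1, -1, -2],   # ADP
    [0, 0, 1],     # AMP
]
for l in conservation_relations(S):
    print(" + ".join(f"{c}*{m}" for c, m in zip(l, METABOLITES) if c != 0), "= constant")
```

For this network the only relation is ATP + ADP + AMP = constant, the adenosine pool; the number of such relations equals the number of metabolites minus the rank of $S$.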

Application Notes: Protocol for Constructing MASS Models

This protocol describes the stepwise construction of a Mass Action Stoichiometric Simulation (MASS) model, from a core stoichiometric network to a dynamic model capable of simulation and analysis [14].

Experimental Workflow

The following diagram illustrates the logical workflow and data integration process for constructing a MASS model.

Step-by-Step Procedure

Step 1: Specification of Stoichiometric Network and Steady State

- Action: Begin with a validated stoichiometric network reconstruction (S). Define a particular steady-state flux distribution (J) that the model will satisfy [14].

- Rationale: The stoichiometric matrix forms the structural backbone. A defined flux state is necessary for parameterizing the kinetics.

Step 2: Integration of Experimental 'Omic' Data

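- Action: Map available quantitative data onto the network: metabolomic data as steady-state metabolite concentrations ($x$), fluxomic data as steady-state reaction fluxes ($J$), and, where available, proteomic data as enzyme concentrations [14] [16].

- Note: If experimental values are not available for every metabolite, reasonable estimates or approximations must be used to proceed [14].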

Step 3: Approximation of Thermodynamic Constants

- Action: Assign equilibrium constants ($K_{eq}$) for each reaction. These can be obtained from literature, databases, or group contribution methods [14] [17].

- Rationale: $K_{eq}$ relates the forward ($k^+$) and reverse ($k^-$) rate constants for a reaction, reducing the number of unknown parameters [14].

Step 4: Calculation of Mass Action Rate Constants

- Action: Solve for the unknown forward rate constants ($k^+$).

- For a reaction $2A \rightleftharpoons B$, the net mass action rate is $v = k^+ A^2 - k^- B$ and $K_{eq} = k^+/k^-$ [14].

- At steady state, $S \cdot v = 0$ [15]. Substitute the rate expressions and known values ($J$, $x$, $K_{eq}$) into the steady-state mass balances. This generates a system of linear equations that can be solved for the $k^+$ values [14].

- Alternative: If concentration data are incomplete, solve the m equations from $S \cdot v = 0$, which may yield multiple solutions (the k-cone) [14].
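When both fluxes and concentrations are known, each forward constant follows directly from its own rate law: $k^+ = J / (A^2 - B/K_{eq})$ for the $2A \rightleftharpoons B$ example. The sketch below applies this to a hypothetical three-reaction network (b_in: ∅ → A at a fixed flux, v1: 2A ⇌ B, b_out: B → ∅ with first-order mass action); all fluxes, concentrations, and the $K_{eq}$ value are illustrative placeholders, and the last step checks that the parameterized rates satisfy $S \cdot v = 0$.

```python
# Hedged sketch of Step 4 on a hypothetical network:
#   b_in: -> A (fixed input flux)   v1: 2A <-> B (mass action)   b_out: B -> (mass action)
# All numbers (J, x, Keq) are illustrative placeholders, not data from the cited work.
S = [
    # b_in  v1  b_out
    [1, -2, 0],    # A
    [0, 1, -1],    # B
]
J = {"b_in": 2.0, "v1": 1.0, "b_out": 1.0}   # steady-state fluxes (mM/h); S . J = 0
x = {"A": 1.0, "B": 0.5}                     # steady-state concentrations (mM)
Keq_v1 = 2.0                                 # equilibrium constant of v1 (mM^-1)

# v1 = k1f*A^2 - k1r*B with k1r = k1f/Keq  ->  k1f = J / (A^2 - B/Keq).
# The denominator is positive only if B/A^2 < Keq, i.e. the flux direction is
# thermodynamically feasible.
k1f = J["v1"] / (x["A"] ** 2 - x["B"] / Keq_v1)
k1r = k1f / Keq_v1
k_out = J["b_out"] / x["B"]                  # first-order outflow: v = k_out * B

v = [J["b_in"], k1f * x["A"] ** 2 - k1r * x["B"], k_out * x["B"]]
residual = [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]   # S . v
print(f"k1+ = {k1f:.4f}, k1- = {k1r:.4f}, k_out = {k_out:.4f}, S.v = {residual}")
```

Because every reaction is parameterized from its own flux, the steady-state mass balances are satisfied by construction; missing concentrations are what turn this direct calculation into the underdetermined k-cone problem.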

Step 5: Model Formulation and Dynamic Simulation

- Action: Construct the system of ordinary differential equations (ODEs) defining the model dynamics, $\frac{dx}{dt} = S \cdot v(k, x)$ [14]. Here, $v$ is the vector of mass action rate laws.

- Implementation: Use numerical integration software (e.g., Mathematica, MATLAB) to simulate the model. To manage numerical stiffness caused by large disparities in metabolite and enzyme concentrations, consider normalizing enzyme concentrations [14].
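Step 5 can be sketched on a hypothetical network (b_in: ∅ → A at a fixed input flux, v1: 2A ⇌ B, b_out: B → ∅) with illustrative mass action constants chosen so that the steady state is A = 1.0 mM, B = 0.5 mM ($k_1^+ = 4/3$, $k_1^- = 2/3$, hence $K_{eq} = 2$). The model is started 50% above the steady-state value of A and integrated with a hand-written fixed-step RK4 scheme; in practice the Mathematica, MATLAB, or SciPy integrators mentioned above would be used.

```python
# Hedged sketch of Step 5: integrate dx/dt = S . v(k, x) for a hypothetical network
#   b_in: -> A (fixed flux)   v1: 2A <-> B (mass action)   b_out: B -> (mass action).
# Parameters are illustrative and give the steady state A = 1.0 mM, B = 0.5 mM.
S = [[1, -2, 0],
     [0, 1, -1]]
J_IN, K1F, K1R, K_OUT = 2.0, 4.0 / 3.0, 2.0 / 3.0, 2.0


def rates(x):
    """Mass action rate laws v(k, x)."""
    A, B = x
    return [J_IN, K1F * A ** 2 - K1R * B, K_OUT * B]


def dxdt(x):
    """Right-hand side S . v(k, x)."""
    v = rates(x)
    return [sum(s * vj for s, vj in zip(row, v)) for row in S]


def rk4_step(x, dt):
    k1 = dxdt(x)
    k2 = dxdt([xi + 0.5 * dt * ki for xi, ki in zip(x, k1)])
    k3 = dxdt([xi + 0.5 * dt * ki for xi, ki in zip(x, k2)])
    k4 = dxdt([xi + dt * ki for xi, ki in zip(x, k3)])
    return [xi + dt / 6 * (a + 2 * b + 2 * c + d)
            for xi, a, b, c, d in zip(x, k1, k2, k3, k4)]


x = [1.5, 0.5]              # perturb A by +50 % away from the steady state (mM)
for _ in range(2000):       # dt = 0.01 h, t_end = 20 h
    x = rk4_step(x, 0.01)
print(f"A = {x[0]:.6f} mM, B = {x[1]:.6f} mM")   # relaxes back toward the steady state
```

The return to the parameterized steady state after a perturbation is a simple sanity check that the rate constants from Step 4 define a stable dynamic model, not just a consistent flux snapshot.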

Step 6: Incorporation of Regulation (Advanced)

- Action: Explicitly represent regulatory enzymes as nodes in the stoichiometric network. This includes different functional states of the enzyme (e.g., active, inactive, ligand-bound complexes) [14] [16].

- Rationale: This allows the model to capture how regulatory enzymes control network dynamics through their fractional saturation with metabolites [14].

Table 1: Key research reagents and computational tools used in the construction and analysis of MASS models.

| Item Name | Function/Application | Specification Notes |
|---|---|---|
| Stoichiometric Model | Scaffold for data integration and kinetic model construction. | Can be a genome-scale reconstruction or a focused subsystem model [14] [15]. |
| Metabolomic Data Set | Provides in vivo steady-state metabolite concentrations (x). | Critical for parameterizing rate constants; gaps may require estimation [14]. |
| Fluxomic Data Set | Provides steady-state reaction fluxes (J). | Used in conjunction with concentrations to solve for rate constants [14]. |
| Equilibrium Constant (Keq) Database | Source of thermodynamic data for biochemical reactions. | Can be sourced from literature or estimation techniques [14] [17]. |
| Numerical Computing Environment | Platform for model construction, simulation, and analysis. | e.g., Mathematica, MATLAB, or Python with SciPy [14]. |

Data Output and Analysis

The following quantitative data, derived from applications of the stoichiometric scaffolding approach, highlights its utility across different biological systems and objectives.

Table 2: Comparative analysis of stoichiometric modeling applications in different biological contexts.

| Application Context | Key Quantitative Results | Implications for Drug Development & Biotechnology |
|---|---|---|
| MASS Model Construction [14] | Dynamic models constructed in scalable manner; regulatory enzymes control network states via fractional saturation. | Enables prediction of metabolic dynamics in disease states and identification of therapeutic targets. |
| Stoichiometric Model Reduction [3] | Method reduced 4-6 degrees of freedom to 1; demonstrated zero reduction error at ODE level and significant CPU time reduction. | Provides a computationally efficient framework for high-fidelity simulation of complex biochemical pathways. |
| DHA Production in C. cohnii [18] | Glycerol-fed cultures showed highest PUFAs fraction; carbon transformation rate closest to theoretical upper limit. | Informs bioprocess optimization for production of nutraceuticals like DHA using alternative feedstocks. |
| Kinetic Modeling of E. coli [17] | Enzyme saturation extends feasible flux/metabolite concentration ranges; enzymes function at different saturation states. | Suggests robustness in microbial metabolism that must be overcome or exploited in antibiotic development. |

Visualization of Network Properties and Moiety Conservation

A key step in model construction is understanding the constrained relationships within the network. The following diagram illustrates the concept of chemical moiety conservation, a fundamental property that can be derived from the stoichiometric matrix.
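Conserved moieties can also be computed directly: they span the left null space of the stoichiometric matrix $S$, i.e., every vector $l$ with $l^\top S = 0$ defines a weighted sum of concentrations that no reaction in the network can change. The sketch below is illustrative only. It assumes a hypothetical two-reaction network (a hexokinase-like step plus ATP regeneration) and uses exact rational arithmetic from Python's standard library rather than any particular modeling package.

```python
from fractions import Fraction


def null_space(matrix):
    """Rational basis of {x : matrix @ x = 0}, computed from the reduced row echelon form."""
    rows = [[Fraction(v) for v in row] for row in matrix]
    n_rows, n_cols = len(rows), len(rows[0])
    pivots = []
    r = 0
    for c in range(n_cols):
        if r == n_rows:
            break
        # Find a row at or below r with a nonzero entry in column c.
        pivot = next((i for i in range(r, n_rows) if rows[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot: column c is a free variable
        rows[r], rows[pivot] = rows[pivot], rows[r]
        lead = rows[r][c]
        rows[r] = [v / lead for v in rows[r]]
        # Eliminate column c from every other row.
        for i in range(n_rows):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        pivots.append(c)
        r += 1
    basis = []
    for f in (c for c in range(n_cols) if c not in pivots):
        vec = [Fraction(0)] * n_cols
        vec[f] = Fraction(1)
        for row_idx, p in enumerate(pivots):
            vec[p] = -rows[row_idx][f]
        basis.append(vec)
    return basis


def conservation_pools(S):
    """Left null space of S (vectors l with l^T S = 0); each vector is a conserved pool."""
    S_T = [list(col) for col in zip(*S)]
    return null_space(S_T)


# Hypothetical toy network: v1: Glc + ATP -> G6P + ADP ;  v2: ADP -> ATP
metabolites = ["ATP", "ADP", "Glc", "G6P"]
S = [
    [-1, 1],   # ATP
    [1, -1],   # ADP
    [-1, 0],   # Glc
    [1, 0],    # G6P
]

pools = conservation_pools(S)
for pool in pools:
    terms = [f"{coef}*{name}" for coef, name in zip(pool, metabolites) if coef != 0]
    print(" + ".join(terms), "= constant")
```

For this network the two pools are the total adenylate (ATP + ADP) and the total hexose (Glc + G6P). In general, a network with $m$ metabolites and a stoichiometric matrix of rank $r$ has $m - r$ independent conserved pools.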

The use of stoichiometric networks as scaffolds provides a rigorous and practical methodology for constructing kinetic models of biochemical networks. The MASS framework directly leverages the growing availability of metabolomic and fluxomic data to parameterize mass action kinetics, bypassing the historical bottleneck of unknown enzyme kinetic parameters [14]. This approach, which can be viewed as a middle-out analysis process, results in dynamic models that retain a direct link to stoichiometry, thermodynamics, and physiological constraints [14] [17]. For researchers in drug development, this methodology offers a pathway to generate more predictive models of cellular metabolism, enabling the in silico testing of hypotheses about metabolic dysregulation in disease and the identification of potential targets for intervention.

The development of accurate kinetic models is a cornerstone of predictive research in chemical synthesis and drug development. A foundational step in this process is deriving a rate law, which quantifies the relationship between reactant concentrations and the reaction rate. A prevalent misconception is that the exponents in a rate law—the reaction orders—can be directly inferred from the stoichiometric coefficients of the balanced chemical equation. This application note clarifies the critical distinction between stoichiometry and kinetics, and provides detailed protocols for the experimental determination of the rate law and the subsequent extraction of the rate constant, a key parameter in mechanistic modeling [19] [20].

While the balanced equation for a reaction such as $aA + bB \rightarrow cC + dD$ is essential for stoichiometric calculations, the experimentally determined rate law often takes the power-law form $\text{rate} = k[A]^m[B]^n$ [20]. The exponents $m$ and $n$ are the reaction orders with respect to A and B, and the rate constant $k$ is the proportionality constant that makes this relationship exact. It is crucial to remember that $m$ and $n$ are not, in general, equal to the stoichiometric coefficients $a$ and $b$ and must be determined experimentally [20]; they are guaranteed to coincide only when the equation describes a single elementary step. The value of $k$ is characteristic of the reaction and the reaction conditions (e.g., temperature, pressure, solvent) but does not change as the reaction progresses under a given set of conditions [20].

Experimental Determination of the Rate Law

The only reliable method to establish the rate law and determine the rate constant is through experiment. The following protocol outlines a general methodology for determining the rate law of a solution-phase reaction via monitoring of concentration changes.

Materials and Equipment

Table 1: Essential Research Reagent Solutions and Equipment

| Item Name | Function/Description |
|---|---|
| Reactant Stock Solutions | Prepared at precise, known concentrations in an appropriate solvent. |
| Constant Temperature Bath | Maintains a consistent reaction temperature, as the value of $k$ is temperature-dependent [20]. |
| Spectrophotometer / Colorimeter | For monitoring concentration change of a colored reactant or product via Beer's law [21]. |
| Quenching Agent | A chemical additive (e.g., acid, base) to rapidly stop the reaction at specific time points for analysis, if needed [21]. |
| Data Logging Software | Records changes in the monitored physical property (e.g., absorbance) over time. |

Detailed Protocol: Method of Initial Rates

This method is ideal for determining the orders of reaction ($m$, $n$) with respect to each reactant.

- Preparation: Prepare multiple reaction mixtures with the same total volume but varying initial concentrations of the reactants.

- Initial Rate Measurement: a. For each run, start the reaction by mixing the pre-thermostatted reactant solutions. b. Immediately begin monitoring a physical property proportional to concentration (e.g., absorbance of a colored species) [21]. c. Record the change of this property over a short initial period where the reactant concentrations have changed only minimally (typically <5% conversion). d. The initial rate is proportional to the slope of the concentration versus time curve at t=0.

- Data Analysis to Find Reaction Orders: a. Compare runs where the concentration of one reactant (e.g., $[A]$) is changed while the concentrations of all others (e.g., $[B]$) are held constant. b. The order with respect to A, $m$, is found from the relationship $\frac{\text{rate}_2}{\text{rate}_1} \approx \left(\frac{[A]_2}{[A]_1}\right)^m$, so that $m = \ln(\text{rate}_2/\text{rate}_1) / \ln([A]_2/[A]_1)$. c. Repeat this analysis for each reactant to determine all exponents in the rate law, $\text{rate} = k[A]^m[B]^n$.
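The order calculation in step b, followed by back-calculation of $k$ from a single run, can be sketched as below. The three runs are hypothetical, chosen so that $[A]$ changes between runs 1 and 2 and $[B]$ changes between runs 1 and 3; rounding the orders to the nearest half-integer is an assumption that suits clean textbook data but not every real system.

```python
import math


def reaction_order(rate_1, rate_2, conc_1, conc_2):
    """Order m from rate_2/rate_1 = (conc_2/conc_1)**m, other reactants held fixed."""
    return math.log(rate_2 / rate_1) / math.log(conc_2 / conc_1)


# Hypothetical initial-rate data (concentrations in M, rates in M/s).
runs = [
    {"A": 0.10, "B": 0.10, "rate": 2.0e-4},
    {"A": 0.20, "B": 0.10, "rate": 8.0e-4},  # only [A] doubled
    {"A": 0.10, "B": 0.20, "rate": 4.0e-4},  # only [B] doubled
]

m = reaction_order(runs[0]["rate"], runs[1]["rate"], runs[0]["A"], runs[1]["A"])
n = reaction_order(runs[0]["rate"], runs[2]["rate"], runs[0]["B"], runs[2]["B"])

# Round to the nearest half-integer, then recover k from one run: k = rate / ([A]^m [B]^n).
m_r, n_r = round(m * 2) / 2, round(n * 2) / 2
k = runs[0]["rate"] / (runs[0]["A"] ** m_r * runs[0]["B"] ** n_r)

overall = m_r + n_r
print(f"m = {m_r}, n = {n_r}, k = {k:.3g} M^{1 - overall:g} s^-1")
```

In practice $k$ is usually averaged over all runs rather than taken from one, which dampens the effect of a single noisy measurement.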

Detailed Protocol: Integrated Rate Law Method

This method is used to confirm a hypothesized rate law and determine $k$ with high precision from a single concentration-time dataset.

- Data Collection: Initiate the reaction and monitor the concentration of a reactant or product over time until the reaction is complete or nearly complete.

- Hypothesis Testing: a. Assume a rate law (e.g., first-order in the monitored reactant, A). b. Linearize the data according to the corresponding integrated rate law (e.g., $\ln[A]_t = \ln[A]_0 - kt$ for a first-order reaction). c. If the plot (e.g., $\ln[A]$ vs. $t$) is linear, the hypothesized order is confirmed. The slope of the line gives the rate constant ($-k$ for this example). d. If the plot is not linear, test the integrated form for a different reaction order (e.g., $1/[A]$ vs. $t$ for a second-order reaction).
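The hypothesis-testing loop can be sketched as a comparison of linear fits. The data below are synthetic, generated from first-order decay with hypothetical values $[A]_0 = 1.0$ M and $k = 0.05\ \text{s}^{-1}$; the coefficient of determination $R^2$ is used here as a simple linearity criterion, although inspecting residuals is generally more informative with real, noisy data.

```python
import math


def linear_fit(x, y):
    """Ordinary least-squares fit y = intercept + slope*x; returns (slope, intercept, r_squared)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1 - ss_res / ss_tot


# Synthetic concentration-time data (hypothetical): first-order decay, [A]0 = 1.0 M, k = 0.05 s^-1.
times = [0, 10, 20, 30, 40, 50]
conc = [1.0 * math.exp(-0.05 * t) for t in times]

# First-order test: ln[A] vs t should be linear with slope -k.
slope1, _, r2_first = linear_fit(times, [math.log(c) for c in conc])
# Second-order test: 1/[A] vs t should be linear with slope +k.
slope2, _, r2_second = linear_fit(times, [1 / c for c in conc])

k_first = -slope1
print(f"first-order:  k = {k_first:.4f} s^-1, R^2 = {r2_first:.4f}")
print(f"second-order: R^2 = {r2_second:.4f}")
```

Only the first-order transform is linear for these data, so the first-order hypothesis is retained and $k$ is read from its slope.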

Data Presentation and Analysis

The following table summarizes the kinetic parameters that can be determined for a generic reaction $aA + bB \rightarrow \text{products}$ with a rate law of $\text{rate} = k[A]^m[B]^n$.

Table 2: Summary of Kinetic Parameters and Their Determination

| Parameter | Symbol | Definition | Method of Determination |
|---|---|---|---|
| Reaction Order (with respect to A) | $m$ | The exponent indicating the dependence of the rate on $[A]$. | Experimental (e.g., Method of Initial Rates). |
| Overall Reaction Order | $m+n+\dots$ | The sum of all exponents in the rate law. | Calculated from experimentally determined orders. |
| Rate Constant | $k$ | The proportionality constant in the rate law; specific to the reaction and conditions. | Slope from a linearized integrated rate law plot. |

Visualization of Concepts and Workflows

The following diagrams illustrate the critical conceptual relationship between stoichiometry and kinetics, and the standard workflow for experimental determination of the rate constant.

Stoichiometry vs. Kinetics in Reaction Analysis

Experimental Workflow for Rate Constant Determination

Stoichiometric reduction reactions of alkyl halides are fundamental transformations in organic synthesis, serving as a critical pathway for generating organometallic intermediates and complex molecular structures. Within the broader scope of deriving kinetic models from stoichiometric reduction research, these reactions provide a robust framework for understanding reaction mechanisms, rates, and selectivity patterns. The precise stoichiometric relationships in these transformations offer foundational data for building predictive models that can optimize synthetic routes in pharmaceutical development and fine chemical synthesis.

This case study examines specific stoichiometric reduction processes, with particular emphasis on the formation of organometallic reagents and their subsequent applications. We present detailed experimental protocols, quantitative data analysis, and visualization of key mechanistic pathways to provide researchers with practical tools for implementing these reactions in both discovery and development settings.

Key Stoichiometric Reduction Pathways

Alkyl halides undergo stoichiometric reduction with various metals to form organometallic compounds that serve as versatile intermediates in synthetic chemistry. The most strategically important transformations include:

Formation of Organolithium Reagents: Alkyl halides react with lithium metal in a 1:2 stoichiometry to yield alkyllithium compounds [22] [23]: R3C-X + 2Li → R3C-Li + LiX

Formation of Grignard Reagents: Alkyl halides react with magnesium metal in a 1:1 stoichiometry to produce Grignard reagents [22] [23]: R3C-X + Mg → R3C-MgX

Reductive Aldehyde Formation: Primary alkyl monohalides undergo stoichiometric reduction with electrogenerated nickel(I) salen to form aldehydes through an alkylnickel(II) intermediate [24].

Directed Hydroalkylation: Nickel-catalyzed reductive hydroalkylation of alkenes tethered to directing groups uses alkyl halides as both hydride and alkyl sources [25].

The reactivity of alkyl halides in these reductions follows the trend: I > Br > Cl, with fluorides generally being unreactive under standard conditions [22] [23]. These stoichiometric transformations provide the fundamental kinetic data necessary for modeling more complex catalytic cycles in pharmaceutical synthesis.

Experimental Protocols

General Procedure for Grignard and Organolithium Reagent Formation

Principle: This protocol describes the formation of Grignard and organolithium reagents from alkyl halides and their stoichiometric relationship, which provides essential data for kinetic modeling of organometallic formation rates [22] [23].

Materials:

- Alkyl halide (1.0 equiv)

- Lithium metal (2.0 equiv for organolithium) or Magnesium metal (1.0 equiv for Grignard)

- Anhydrous ethyl ether or THF (for Grignard); pentane, hexane, or ethyl ether (for organolithium)

- Nitrogen or argon atmosphere

Procedure:

- Prepare and flame-dry the reaction flask under an inert atmosphere.

- Add the solvent (typically enough to give a 0.1-0.5 M solution of the alkyl halide).

- Add finely divided metal (clean surface is critical for reproducible kinetics).

- Add the alkyl halide dropwise with efficient stirring at room temperature or with cooling if necessary.

- Monitor the reaction until the metal is consumed (disappearance of metallic sheen).

- Use the organometallic solution directly in subsequent reactions.
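The equivalents and concentration range above translate into reagent amounts with simple arithmetic. The sketch below uses the metal:halide ratios from the balanced equations ($2{:}1$ for Li, $1{:}1$ for Mg) with 1-bromohexane as a hypothetical substrate at a hypothetical 10.0 mmol scale and 0.25 M. These are stoichiometric minima; practical preparations often use some excess of metal.

```python
# Approximate molar masses (g/mol); 1-bromohexane (C6H13Br) is a hypothetical example substrate.
MOLAR_MASS = {"Li": 6.94, "Mg": 24.305, "1-bromohexane": 165.07}

# Metal equivalents per mole of alkyl halide, from the balanced equations
# R-X + 2 Li -> R-Li + LiX   and   R-X + Mg -> R-MgX.
METAL_EQUIV = {"organolithium": ("Li", 2.0), "grignard": ("Mg", 1.0)}


def plan_reagents(halide, halide_mmol, reagent_type, target_molarity):
    """Return halide mass (mg), metal charge (mmol, mg), and solvent volume (mL)."""
    metal, equiv = METAL_EQUIV[reagent_type]
    metal_mmol = halide_mmol * equiv
    return {
        "halide_mg": halide_mmol * MOLAR_MASS[halide],  # mmol x g/mol = mg
        "metal": metal,
        "metal_mmol": metal_mmol,
        "metal_mg": metal_mmol * MOLAR_MASS[metal],
        "solvent_mL": halide_mmol / target_molarity,    # mmol / (mol/L) = mL
    }


li_plan = plan_reagents("1-bromohexane", 10.0, "organolithium", target_molarity=0.25)
mg_plan = plan_reagents("1-bromohexane", 10.0, "grignard", target_molarity=0.25)
print("organolithium:", li_plan)
print("grignard:     ", mg_plan)
```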

Critical Parameters for Kinetic Modeling:

- Metal Surface Area: Finely divided metals provide maximum surface area for reproducible reaction rates [22] [23].

- Solvent Effects: Ethereal solvents are essential for Grignard formation; hydrocarbon solvents may be used for organolithium formation.

- Exclusion of Protic Impurities: Water, alcohols, or acidic protons quench the organometallic reagents and invalidate kinetic measurements.

Stoichiometric Reduction of Primary Alkyl Halides to Aldehydes Using Electrogenerated Nickel(I) Salen

Principle: This specialized protocol enables the conversion of primary alkyl bromides or iodides to aldehydes using stoichiometric nickel(I) salen, providing a unique system for studying the kinetics of alkylnickel intermediate formation and transformation [24].

Materials:

- Primary alkyl monohalide (1.0 equiv)

- Nickel(II) salen complex

- Dimethylformamide (DMF), anhydrous

- Tetramethylammonium tetrafluoroborate (TMABF4) (0.10 M as supporting electrolyte)

- Reticulated vitreous carbon cathode

- Water (stoichiometric additive)

- Xenon arc lamp for irradiation

- Oxygen source

Procedure:

- Prepare the electrochemical cell with a reticulated vitreous carbon cathode and appropriate counter electrode.

- Dissolve nickel(II) salen and TMABF4 in DMF to create an electrolyte solution (typically 2 mM nickel concentration).

- Pre-electrolyze the solution at -0.92 V vs. SCE to generate nickel(I) salen in situ.

- Add a stoichiometric amount of primary alkyl monohalide (1-bromoalkane or 1-iodoalkane) to the solution.

- Add water deliberately to the reaction mixture.

- Irradiate the reaction mixture with a xenon arc lamp while maintaining electrolysis.

- Expose the reaction mixture to oxygen (O2) to form the aldehyde product.

- Work up the reaction and purify the aldehyde by standard techniques.

Critical Parameters for Kinetic Modeling:

- Nickel(I) Concentration: Precisely controlled by charge passed during pre-electrolysis.

- Water Stoichiometry: Critical for aldehyde formation yield; must be optimized for each substrate.

- Light Irradiation: Required for efficient transformation of intermediates.

- Oxygen Exposure Timing: Determines product distribution between aldehyde and dimeric byproducts.
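Controlling the nickel(I) concentration through the charge passed is an application of Faraday's law, $Q = nFN$, where $n$ is the number of electrons per ion ($n = 1$ for Ni(II) to Ni(I)), $F$ is the Faraday constant, and $N$ is the amount in moles. The sketch below assumes 100% current efficiency and a hypothetical 50 mL cell volume at the 2 mM nickel concentration given in the procedure.

```python
FARADAY = 96485.332  # Faraday constant, C/mol


def charge_for_reduction(conc_mM, volume_mL, electrons=1):
    """Charge (C) needed to reduce all of a species: Q = n * F * N."""
    mol = conc_mM * 1e-3 * volume_mL * 1e-3
    return electrons * FARADAY * mol


def ni1_concentration_mM(charge_C, volume_mL, electrons=1):
    """Ni(I) concentration (mM) implied by the charge passed, assuming 100% current efficiency."""
    mol = charge_C / (electrons * FARADAY)
    return mol / (volume_mL * 1e-3) * 1e3


# Hypothetical cell: 2 mM Ni(II) salen in 50 mL DMF, one-electron reduction to Ni(I).
q_full = charge_for_reduction(2.0, 50.0)
print(f"charge for complete Ni(II) -> Ni(I) conversion: {q_full:.2f} C")

# Partial pre-electrolysis: half of that charge gives about 1 mM Ni(I).
print(f"Ni(I) after {q_full / 2:.2f} C: {ni1_concentration_mM(q_full / 2, 50.0):.2f} mM")
```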

Quantitative Data Analysis

Product Distribution in Nickel(I) Salen-Mediated Reduction

Table 1: Product distribution from stoichiometric reduction of primary alkyl halides with electrogenerated nickel(I) salen [24]

| Alkyl Halide | Aldehyde Yield (%) | Dimer Products (%) | Alkane Byproducts (%) | Alkene Byproducts (%) |
|---|---|---|---|---|
| 1-Bromohexane | 65-72 | 15-18 | 5-8 | 3-5 |
| 1-Iodohexane | 70-75 | 12-15 | 4-7 | 2-4 |
| 1-Bromooctane | 68-74 | 14-17 | 5-7 | 3-5 |
| 6-Bromo-1-hexene | 60-65* | 25-30* | 8-12* | 10-15* |

Note: Data adapted from controlled-potential electrolysis experiments in DMF containing 0.10 M TMABF4 with deliberately added water, followed by irradiation and oxygen exposure. *Product distribution differs for 6-bromo-1-hexene due to competing cyclization pathways [24].

Stoichiometric Relationships in Organometallic Reagent Formation

Table 2: Stoichiometric requirements for organometallic reagent formation from alkyl halides [22] [23]

| Reaction Type | Alkyl Halide | Metal | Stoichiometry (Metal:Halide) | Typical Yield (%) | Key Byproducts |
|---|---|---|---|---|---|
| Organolithium Formation | 1° alkyl bromide | Li | 2:1 | 85-95 | LiX, alkane (if protonated) |
| Organolithium Formation | 2° alkyl iodide | Li | 2:1 | 80-90 | LiX, alkene (if β-elimination) |
| Grignard Formation | 1° alkyl chloride | Mg | 1:1 | 70-85 | MgX2, dimer |
| Grignard Formation | 1° alkyl bromide | Mg | 1:1 | 85-95 | MgX2 |
| Grignard Formation | 2° alkyl bromide | Mg | 1:1 | 80-90 | MgX2, alkene |

Visualization of Pathways and Workflows

Nickel(I) Salen Stoichiometric Reduction Mechanism

Diagram 1: Mechanism of aldehyde formation via nickel(I) salen reduction

Experimental Workflow for Stoichiometric Reduction Studies

Diagram 2: Experimental workflow for stoichiometric reduction studies

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential research reagents for stoichiometric reduction studies [22] [24] [23]

| Reagent/Category | Specific Examples | Function in Stoichiometric Reduction |
|---|---|---|
| Reducing Metals | Lithium metal (finely divided), Magnesium turnings | Electron donors for carbon-halogen bond reduction; form organometallic intermediates |
| Transition Metal Catalysts | Nickel(II) salen, NiCl2(PPh3)2 | Mediate single-electron transfer processes; form key alkyl-metal intermediates |
| Solvents | Anhydrous THF, Diethyl ether, DMF, NMP | Solubilize reagents; stabilize organometallic intermediates; enable electron transfer |
| Supporting Electrolytes | Tetramethylammonium tetrafluoroborate (TMABF4) | Provide conductivity in electrochemical reductions; non-coordinating anions |
| Alkyl Halide Substrates | 1-Bromoalkanes, 1-Iodoalkanes, Secondary alkyl bromides | Substrates for reduction; structure affects reactivity and product distribution |
| Reductants | Manganese powder, Zinc dust | Stoichiometric reducing agents in catalytic systems; drive reaction completion |
| Directing Groups | 8-Aminoquinaldine | Control regioselectivity in hydroalkylation; stabilize reactive intermediates |
| Additives | Water (stoichiometric), Lithium halides | Participate in specific pathways; influence product selectivity |

Stoichiometric reduction reactions of alkyl halides provide invaluable data for building predictive kinetic models in organic synthesis. The case studies presented here—ranging from classical organometallic reagent formation to specialized nickel-mediated aldehyde synthesis—demonstrate how careful stoichiometric control enables precise product outcomes. The experimental protocols, quantitative datasets, and mechanistic visualizations offer researchers a comprehensive toolkit for implementing these transformations in drug development and mechanistic studies.

The integration of stoichiometric reduction research with kinetic modeling represents a powerful approach for optimizing synthetic methodologies in pharmaceutical development. By establishing clear stoichiometric relationships and understanding their impact on reaction rates and selectivity, scientists can design more efficient synthetic routes with predictable outcomes, ultimately accelerating the drug development process.

Advanced Methodologies for Kinetic Model Development and Application

High-Throughput Kinetic Parameter Determination with DOMEK and ASAP

The derivation of kinetic models from stoichiometric reduction research represents a significant advancement in systems and synthetic biology. Historically, the requirements for detailed parametrization and significant computational resources created barriers to the development and adoption of kinetic models for high-throughput studies [26]. However, recent methodological breakthroughs are overcoming these limitations. The DOMEK (mRNA-display-based one-shot measurement of enzymatic kinetics) platform enables the quantitative characterization of enzyme specificity across hundreds of thousands of substrates in a single experiment [27]. When integrated with high-throughput kinetic assessment technologies, these approaches provide unprecedented capability for mapping enzymatic activity landscapes, essential for engineering novel biocatalysts, understanding disease mechanisms, and accelerating therapeutic development [27] [28].

DOMEK (mRNA-display-based one-shot measurement of enzymatic kinetics)

DOMEK addresses the critical bottleneck in enzymology of comprehensively characterizing an enzyme's preferences across vast substrate spaces [27]. This innovative method combines mRNA display, which facilitates rapid preparation of immense substrate libraries, with next-generation sequencing to calculate specificity constants (kcat/KM) for each substrate in a massively parallel format [27].

Key Advantages:

- Operational Simplicity: Relies on standard molecular biology equipment without requiring specialized engineering expertise [27]

- Unprecedented Scalability: Successfully demonstrated with 285,000 distinct peptide substrates in a single experiment [27]

- Quantitative Rigor: Provides direct measurement of kcat/KM values with validation against traditional kinetic methods [27]

- Predictive Modeling: Large datasets enable statistical modeling to decipher enzyme substrate recognition principles [27]

High-Throughput Kinetic Assessment Platforms

Complementing the DOMEK approach, several technological platforms now enable high-throughput determination of enzyme kinetics and binding kinetics relevant to drug discovery:

Droplet-Based Microfluidics: A parallel droplet generation and absorbance detection platform achieves a 10-fold improvement in throughput compared to previous methods, generating approximately 8,640 data points per hour [29]. This system functions as a miniaturized spectrophotometer, capable of determining Michaelis-Menten kinetics across 7 orders of magnitude in kcat/KM [29].

TR-FRET-Based Binding Kinetics: The kinetic Probe Competition Assay (kPCA) utilizing time-resolved FRET detects binding events through energy transfer from a lanthanide-based donor fluorophore to an acceptor dye [30]. This approach has enabled the determination of association (kon) and dissociation (koff) rates for 270 kinase inhibitors across 40 drug targets, profiling 3,230 individual interactions [30].
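Whatever the measurement platform, Michaelis-Menten parameters are ultimately extracted by fitting initial rates $v$ against substrate concentration $[S]$. As a generic illustration (not the droplet platform's own analysis pipeline), the sketch below uses the Hanes-Woolf linearization $[S]/v = [S]/V_{max} + K_M/V_{max}$ on noise-free synthetic data generated with hypothetical values $k_{cat} = 50\ \text{s}^{-1}$, $K_M = 20\ \mu\text{M}$, and $[E] = 0.01\ \mu\text{M}$.

```python
def michaelis_menten_rate(s, vmax, km):
    """Initial rate v = Vmax [S] / (KM + [S])."""
    return vmax * s / (km + s)


def hanes_woolf_fit(substrate, rates):
    """Fit [S]/v = [S]/Vmax + KM/Vmax by least squares; returns (Vmax, KM)."""
    x = substrate
    y = [s / v for s, v in zip(substrate, rates)]
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    intercept = my - slope * mx
    vmax = 1.0 / slope          # slope = 1 / Vmax
    km = intercept * vmax       # intercept = KM / Vmax
    return vmax, km


# Synthetic, noise-free data (hypothetical parameters; concentrations in uM, rates in uM/s).
E_total = 0.01
substrate = [2, 5, 10, 20, 40, 80, 160]
rates = [michaelis_menten_rate(s, 50 * E_total, 20) for s in substrate]

vmax, km = hanes_woolf_fit(substrate, rates)
kcat = vmax / E_total
print(f"kcat = {kcat:.3g} s^-1, KM = {km:.3g} uM, kcat/KM = {kcat / km:.3g} uM^-1 s^-1")
```

With real, noisy measurements, direct nonlinear regression of the Michaelis-Menten equation is usually preferred, because linearizations distort the error structure of the data.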

Application Notes

Experimental Workflow: DOMEK Implementation

The following diagram illustrates the integrated workflow for high-throughput kinetic parameter determination using DOMEK technology:

Kinetic Parameter Determination Logic

The following diagram illustrates the computational pathway for deriving kinetic parameters from high-throughput screening data:

Research Reagent Solutions

Table 1: Essential research reagents and materials for high-throughput kinetic studies

| Reagent/Material | Function | Example Application |
|---|---|---|
| mRNA-substrate fusion libraries | Provides diverse substrate repertoire for enzymatic screening | DOMEK implementation for enzyme substrate-specificity profiling [27] |
| Lanthanide-based donor fluorophores | TR-FRET energy donor with long fluorescence lifetime | Kinetic Probe Competition Assays (kPCA) for kinase inhibitor binding [30] |
| Alexa 647-labeled tracers | Acceptor fluorophore for FRET-based detection | Competitive binding assays with unlabeled compounds [30] |
| Streptavidin-Terbium conjugate | Donor complex for target protein labeling | TR-FRET assays with biotinylated kinase targets [30] |
| Biotinylated kinase targets | Immobilization-ready enzymes for binding studies | High-throughput inhibitor screening across kinase families [30] |
| Microfluidic droplet generators | Compartmentalization of individual reactions | Parallel enzyme kinetics in water-in-oil emulsions [29] |

Table 2: Performance metrics of high-throughput kinetic determination platforms

| Platform | Throughput Capacity | Measured Parameters | Dynamic Range | Key Applications |
|---|---|---|---|---|
| DOMEK | 285,000 substrates in single experiment [27] | kcat/KM for each substrate [27] | Validated against traditional methods [27] | Enzyme engineering, therapeutic design, mechanism study [27] |
| Droplet Microfluidics | ~8,640 data points/hour [29] | Michaelis-Menten parameters [29] | 7 orders of magnitude in kcat/KM [29] | Enzyme characterization, directed evolution [29] |
| TR-FRET kPCA | 3,230 inhibitor-target interactions [30] | kon, koff, residence time [30] | Distinguishes clinical development stages [30] | Kinase inhibitor profiling, drug candidate selection [30] |

Detailed Experimental Protocols

Protocol 1: DOMEK for Enzyme Substrate Profiling

Principle: mRNA display enables the generation of immense peptide substrate libraries covalently linked to their encoding mRNA molecules. After enzymatic reactions, substrate conversion is quantified via next-generation sequencing to determine specificity constants [27].

Procedure:

Library Preparation:

- Generate DNA library encoding diverse peptide substrates with flanking sequences for mRNA transcription

- Transcribe to mRNA and purify using standard molecular biology techniques

- Ligate puromycin-linked oligonucleotide to 3' end of mRNA

- Perform in vitro translation to create mRNA-peptide fusions

- Purify fusion libraries via oligo(dT) chromatography

Enzymatic Reactions:

- Incubate enzyme of interest with mRNA-substrate library (typical concentration: 100-500 nM enzyme)

- Perform reactions in appropriate buffer system with time course sampling

- Quench reactions at predetermined time points (typically 0, 5, 15, 30, 60 minutes)

Sequence Analysis:

- Reverse transcribe mRNA from reacted libraries

- Prepare sequencing libraries with appropriate barcoding

- Perform high-throughput sequencing (Illumina platforms recommended)

- Calculate substrate enrichment/depletion ratios across time points

Kinetic Parameter Calculation:

- Convert sequence count data to relative substrate conversion rates

- Calculate kcat/KM for each substrate using internal standard methods

- Validate key results with traditional kinetic assays
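The protocol does not spell out how sequence counts become specificity constants. One common approach, assumed here, is to keep every substrate well below its $K_M$, so that each is depleted pseudo-first-order: the unreacted fraction is $f(t) = \exp(-(k_{cat}/K_M)[E]t)$. Counts are normalized to a hypothetical non-reactive reference sequence so that differences in sequencing depth cancel. The enzyme concentration and time points follow the ranges given above; the read counts are synthetic.

```python
import math


def fraction_remaining(count_t, count_0, ref_t, ref_0):
    """Unreacted fraction of one substrate, normalized to a non-reactive reference sequence."""
    return (count_t / ref_t) / (count_0 / ref_0)


def specificity_constant(times_s, fractions, enzyme_M):
    """kcat/KM (M^-1 s^-1) from ln f = -(kcat/KM) [E] t.

    Uses a least-squares slope through the origin, valid only when substrates stay
    well below KM so that depletion is pseudo-first-order.
    """
    num = sum(t * math.log(f) for t, f in zip(times_s, fractions))
    den = sum(t * t for t in times_s)
    k_obs = -num / den  # pseudo-first-order rate constant (s^-1)
    return k_obs / enzyme_M


# Synthetic counts at 0, 5, 15, 30, 60 min for one substrate and a reference sequence,
# generated with a hypothetical kcat/KM of 1e4 M^-1 s^-1 and 200 nM enzyme.
enzyme_M = 200e-9
times_s = [0, 300, 900, 1800, 3600]
ref_counts = [5000, 4800, 5200, 5100, 4900]
true_k_obs = 1e4 * enzyme_M
sub_counts = [1000 * math.exp(-true_k_obs * t) * r / ref_counts[0]
              for t, r in zip(times_s, ref_counts)]

fractions = [fraction_remaining(c, sub_counts[0], r, ref_counts[0])
             for c, r in zip(sub_counts, ref_counts)]
kcat_over_km = specificity_constant(times_s, fractions, enzyme_M)
print(f"kcat/KM = {kcat_over_km:.3g} M^-1 s^-1")
```

Applied to every sequence in the library, the same calculation yields one $k_{cat}/K_M$ per substrate, which is the quantity that traditional assays are then used to spot-check.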

Validation: The research team reliably monitored enzymatic kinetics for 285,000 distinct peptide substrates and validated the results with traditional methods [27].

Protocol 2: TR-FRET Kinetic Probe Competition Assay

Principle: This method detects competitive binding between fluorescent tracers and unlabeled compounds by monitoring time-resolved FRET signals. Binding kinetics are derived from signal changes over time [30].

Procedure:

Reagent Preparation:

- Prepare biotinylated kinase targets at working concentration (typically 1-5 nM)

- Complex streptavidin-terbium donor with biotinylated kinase (30 minutes, room temperature)

- Dilute Alexa 647-labeled tracer to appropriate concentration (EC80 recommended)

Assay Setup:

- Dispense 5 µL of tracer solution into black 384-well small volume microplates

- Add 5 µL of competitor compounds at various concentrations (typically 8-point dilution series)

- Include control wells without competitor for reference signal

Kinetic Measurement:

- Initiate reactions by adding 5 µL of terbium-labeled kinase using automated dispenser

- Immediately begin kinetic reading on PHERAstar FS plate reader

- Use TR-FRET detection with the following settings:

  - Excitation: Laser

  - Number of flashes: 5

  - Integration start: 100 µs

  - Integration time: 400 µs

  - Number of cycles: 41

  - Cycle time: 10 seconds

Data Analysis:

- Fit tracer-only data to determine tracer association and dissociation rates

- Analyze competition data using appropriate binding models

- Derive kon, koff, and residence time for each compound

- Quality control based on curve fitting statistics
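For the tracer-only step, a standard treatment under pseudo-first-order conditions (tracer in excess over kinase) is that each association trace gives an observed rate constant $k_{obs} = k_{on}[\text{tracer}] + k_{off}$. A linear fit of $k_{obs}$ against tracer concentration then yields $k_{on}$ (slope) and $k_{off}$ (intercept), from which residence time $\tau = 1/k_{off}$ and $K_D = k_{off}/k_{on}$ follow. The $k_{obs}$ values below are hypothetical, each assumed to come from a single-exponential fit of one trace. Competitor kinetics require a competition model such as the Motulsky-Mahan model, which is not shown.

```python
def linear_fit(x, y):
    """Ordinary least-squares line y = intercept + slope * x; returns (slope, intercept)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return slope, my - slope * mx


# Hypothetical observed rate constants (s^-1) at four tracer concentrations (nM).
tracer_nM = [5.0, 10.0, 20.0, 40.0]
k_obs = [0.015, 0.020, 0.030, 0.050]

# Pseudo-first-order binding: k_obs = kon * [tracer] + koff
kon_per_nM_s, koff_per_s = linear_fit(tracer_nM, k_obs)

kon_per_M_s = kon_per_nM_s * 1e9       # convert nM^-1 s^-1 to M^-1 s^-1
residence_time_s = 1.0 / koff_per_s    # tau = 1 / koff
kd_nM = koff_per_s / kon_per_nM_s      # KD = koff / kon

print(f"kon = {kon_per_M_s:.3g} M^-1 s^-1, koff = {koff_per_s:.3g} s^-1")
print(f"residence time = {residence_time_s:.3g} s, KD = {kd_nM:.3g} nM")
```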

Applications: This protocol has been used to determine binding kinetics of 270 kinase inhibitors against 40 drug targets, profiling 3,230 individual interactions and demonstrating correlation between slow dissociation rates and clinical success [30].

Integration with Stoichiometric Reduction Research

The integration of high-throughput kinetic data with stoichiometric models represents a transformative approach in metabolic engineering and systems biology. Kinetic parameters derived from DOMEK and related technologies provide critical constraints for refining genome-scale metabolic models (GEMs), enabling more accurate predictions of metabolic behaviors [26]. Recent methodologies including SKiMpy, MASSpy, and KETCHUP facilitate the incorporation of kinetic data into structural modeling frameworks, dramatically reducing the time required to construct predictive models [26].

This integration is particularly valuable for understanding metabolic responses under fluctuating conditions where regulatory mechanisms—enzyme inhibition, activation, feedback loops, and changes in enzyme efficiency—play critical roles that cannot be captured by steady-state models alone [26]. The combination of high-throughput kinetic parameter determination with advanced modeling approaches opens new possibilities for predicting optimal genetic and environmental interventions in metabolic engineering, pharmaceutical development, and biomedical research.

The derivation of kinetic models from stoichiometric reconstructions represents a critical step in systems biology, enabling researchers to move beyond static network representations to dynamic simulations of metabolic behavior. This transition is fundamental for predicting cellular responses to genetic perturbations or environmental changes, with significant implications for drug development and metabolic engineering. However, the construction of such models demands specialized computational tools that can efficiently handle parameter estimation, model simulation, and validation. Among the emerging solutions, three Python-based frameworks—SKiMpy, MASSpy, and Tellurium—have established themselves as powerful environments for addressing the distinct challenges of kinetic model development. These frameworks provide structured methodologies for converting stoichiometric models into dynamic kinetic representations, each employing different philosophical and technical approaches to balance model accuracy, computational efficiency, and practical usability [26].

The integration of these tools into a cohesive workflow allows researchers to leverage the strengths of each framework at different stages of the model development pipeline. This application note provides detailed protocols for utilizing these frameworks individually and in an integrated fashion, supported by comparative analyses, visualization workflows, and essential resource guidance to facilitate their adoption in research environments focused on drug discovery and systems biology.

Framework Comparison and Selection Guide

Selecting the appropriate framework depends on specific research objectives, data availability, and desired model characteristics. The table below provides a systematic comparison of SKiMpy, MASSpy, and Tellurium across multiple technical dimensions.

Table 1: Comparative Analysis of Kinetic Modeling Frameworks

| Feature | SKiMpy | MASSpy | Tellurium |
|---|---|---|---|
| Primary Approach | Sampling-based parametrization [26] | Mass-action kinetics & constraint-based integration [26] | Simulation of standardized model structures [26] |
| Parameter Determination | Sampling from steady-state fluxes & concentrations [26] | Sampling & Fitting [26] | Fitting to time-resolved data [26] |
| Core Requirements | Steady-state fluxes, thermodynamic data [26] | Steady-state fluxes & concentrations [26] | Time-resolved metabolomics data [26] |
| Key Advantages | Efficient parallel sampling; ensures physiological relevance; automatic rate law assignment [26] | Tight integration with COBRApy; computationally efficient [26] | Supports many standardized model structures; integrated toolset [26] |
| Notable Limitations | No explicit time-resolved data fitting [26] | Primarily implements mass-action kinetics [26] | Limited built-in parameter estimation capabilities [26] |
| Typical Workflow | Model scaffolding → Parameter sampling → Pruning & validation [26] | Model construction → Constraint integration → Simulation & analysis [26] | Model loading/specification → Simulation → Parameter scanning/estimation [26] |

Framework Selection Guidelines

Choose SKiMpy when working from a known stoichiometric model (e.g., from MetaNetX or BiGG Models) and needing to rapidly generate and screen many thermodynamically feasible kinetic parameter sets without immediate experimental time-course data. Its semi-automated pipeline is ideal for large-scale model generation and initial feasibility studies [26].

Choose MASSpy when the research goal involves tight coupling between constraint-based models (Flux Balance Analysis) and kinetic simulations, particularly for metabolic engineering applications. Its foundation on mass-action kinetics provides a direct link to thermodynamic principles, and its integration with COBRApy allows for flexible extensions [26].

Choose Tellurium when detailed, time-resolved experimental data (e.g., from LC-MS time courses) are available for model fitting and validation. Its strength lies in simulation, analysis, and standardization of models, making it well suited to prototyping and analyzing smaller, well-characterized systems [26].

Experimental Protocols

Protocol 1: Kinetic Model Construction with SKiMpy

This protocol describes the construction of a kinetic model using SKiMpy's sampling-based approach, which is highly efficient for large networks.

I. Prerequisite Data Preparation

- Stoichiometric Model: Import an SBML model or reconstruct from databases (e.g., BiGG, MetaNetX).

- Physiological Data: Collect or estimate steady-state metabolite concentrations ([S]) and metabolic fluxes (J) for the target condition.

- Thermodynamic Data: Compile standard Gibbs free energies of formation (ΔfG'°) for metabolites, estimated via group contribution methods if experimental values are unavailable [26].
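As an illustration of how formation energies feed into the thermodynamic constraints, the sketch below computes $\Delta_r G'^\circ = \sum_i \nu_i \Delta_f G'^\circ_i$, the corresponding $K_{eq} = \exp(-\Delta_r G'^\circ / RT)$, and the in vivo $\Delta_r G' = \Delta_r G'^\circ + RT \ln \Gamma$, whose sign fixes the feasible reaction direction. The reaction, formation energies, and concentrations are hypothetical placeholders, and these helper functions are not part of the SKiMpy API.

```python
import math

R = 8.314462618e-3  # gas constant, kJ/(mol K)


def reaction_gibbs_standard(stoich, dfG):
    """Delta_r G'deg = sum_i nu_i * Delta_f G'deg_i (kJ/mol)."""
    return sum(nu * dfG[m] for m, nu in stoich.items())


def keq_from_dG(dG_r0, T=298.15):
    """Equilibrium constant from the standard reaction Gibbs energy."""
    return math.exp(-dG_r0 / (R * T))


def reaction_gibbs(dG_r0, stoich, conc_M, T=298.15):
    """Delta_r G' = Delta_r G'deg + RT ln(Gamma), Gamma = mass-action ratio (1 M standard state)."""
    ln_gamma = sum(nu * math.log(conc_M[m]) for m, nu in stoich.items())
    return dG_r0 + R * T * ln_gamma


# Hypothetical reaction A -> B with placeholder formation energies (kJ/mol) and concentrations (M).
stoich = {"A": -1, "B": 1}
dfG = {"A": -100.0, "B": -105.0}
conc = {"A": 1e-3, "B": 1e-4}

dG0 = reaction_gibbs_standard(stoich, dfG)
keq = keq_from_dG(dG0)
dG = reaction_gibbs(dG0, stoich, conc)
print(f"dG'0 = {dG0:.2f} kJ/mol, Keq = {keq:.3g}, dG' = {dG:.2f} kJ/mol")
print("forward direction feasible" if dG < 0 else "forward direction infeasible")
```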

II. Model Scaffolding and Parametrization

- Load the stoichiometric matrix into SKiMpy.

- Assign appropriate kinetic rate laws (e.g., Michaelis-Menten, Hill) from the built-in library to each reaction. Custom mechanisms can be defined.

- Define the model's thermodynamic constraints using the provided data to ensure reaction directionality is consistent [26].
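Once rate laws are assigned, the model's dynamics follow dx/dt = N·v(x), where N is the stoichiometric matrix and v is the vector of rate expressions. The plain NumPy sketch below is not SKiMpy's API. It assumes a hypothetical two-metabolite chain (constant uptake → X1 → X2 → export) with one Michaelis-Menten and one Hill rate law, to show how the stoichiometric scaffold and the kinetic layer combine.

```python
import numpy as np

def michaelis_menten(s, vmax, km):
    """Irreversible Michaelis-Menten rate."""
    return vmax * s / (km + s)

def hill(s, vmax, k_half, n):
    """Irreversible Hill rate with cooperativity coefficient n."""
    return vmax * s**n / (k_half**n + s**n)

# Rows: metabolites X1, X2; columns: reactions v_in, v1 (X1 -> X2), v2 (X2 -> export)
N = np.array([[1, -1,  0],
              [0,  1, -1]])

# Hypothetical parameter values, for illustration only
params = {"v_in": 1.0, "vmax1": 2.0, "km1": 0.5, "vmax2": 3.0, "k2": 1.0, "n2": 2.0}

def rates(x, p=params):
    """Evaluate every rate law at metabolite concentrations x = (X1, X2)."""
    x1, x2 = x
    return np.array([
        p["v_in"],                                   # constant uptake flux
        michaelis_menten(x1, p["vmax1"], p["km1"]),  # X1 -> X2
        hill(x2, p["vmax2"], p["k2"], p["n2"]),      # X2 -> export
    ])

def dxdt(x, p=params):
    """Mass balances: stoichiometric matrix times the rate vector."""
    return N @ rates(x, p)
```

At x = (0.5, √0.5), both rate laws return the uptake flux of 1.0, so dxdt evaluates to zero and that point is the network's steady state.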

III. Parameter Sampling and Model Pruning

- Use the ORACLE framework within SKiMpy to sample millions of kinetic parameter sets (KM, Vmax) that satisfy the steady-state and thermodynamic constraints.

- Perform a time-scale separation analysis to prune the parameter sets, retaining only those that achieve steady-state within a physiologically realistic timeframe [26].

- The output is an ensemble of viable kinetic models ready for simulation and further validation.
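The core idea behind ORACLE-style sampling can be illustrated without SKiMpy. Instead of sampling K_M directly, the sketch samples each enzyme's saturation σ = S/(K_M + S) in (0, 1). It then back-calculates K_M and V_max so that the reference steady state (concentrations S and flux J) is reproduced exactly. The code does not call SKiMpy and rests on several assumptions: a hypothetical irreversible Michaelis-Menten chain (uptake → X1 → X2 → export), made-up reference values, and an illustrative cut-off on the slowest time constant taken from the Jacobian eigenvalues. ORACLE implementations typically also handle reversible rate laws and thermodynamic displacement.

```python
import numpy as np

rng = np.random.default_rng(0)

# Reference steady state (hypothetical values)
S_ref = np.array([0.5, 0.2])  # concentrations of X1, X2 (mM)
J_ref = 1.0                   # steady-state flux through the chain (mM/min)
TAU_MAX = 5.0                 # illustrative cut-off on the slowest time constant (min)

def sample_parameters(n_samples):
    """Sample saturations and back-calculate (Km, Vmax) for v = Vmax*S/(Km + S)."""
    sigma = rng.uniform(0.01, 0.99, size=(n_samples, 2))
    km = S_ref * (1.0 - sigma) / sigma  # from sigma = S/(Km + S)
    vmax = J_ref / sigma                # so that Vmax*sigma = J_ref
    return km, vmax

def jacobian(km, vmax):
    """Jacobian of dX/dt for uptake -> X1 -> X2 -> export at the reference state."""
    elasticity = vmax * km / (km + S_ref) ** 2  # dv_i/dS_i for each enzyme
    return np.array([[-elasticity[0], 0.0],
                     [ elasticity[0], -elasticity[1]]])

km_all, vmax_all = sample_parameters(1000)
kept = []
for km, vmax in zip(km_all, vmax_all):
    eigvals = np.linalg.eigvals(jacobian(km, vmax))
    stable = np.all(eigvals.real < 0)                   # local stability
    slowest_tau = 1.0 / np.min(np.abs(eigvals.real))    # slowest relaxation time
    if stable and slowest_tau < TAU_MAX:
        kept.append((km, vmax))
```

Every retained parameter set reproduces the reference flux by construction. Only the dynamics (here, the relaxation time) differ between ensemble members, which is why the pruning step is needed.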

Protocol 2: Integration of Stoichiometric and Kinetic Models with MASSpy

This protocol leverages MASSpy's integration with the COBRApy ecosystem to build kinetic models grounded in constraint-based analysis.

I. Model Initialization and Constraint Integration

- Initialize the model using an existing COBRApy model as a scaffold.

- Incorporate measured or estimated boundary metabolite concentrations.

- Define thermodynamic constraints by setting the logarithm of the mass-action ratio (ln(Γ)) for reactions and their equilibrium constants (Keq) [26].
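The mass-action ratio Γ is the product of the product concentrations divided by the product of the substrate concentrations, each raised to its stoichiometric coefficient. Comparing Γ with Keq gives the disequilibrium ratio ρ = Γ/Keq. If ρ < 1, the net flux can run forward; if ρ > 1, it must run in reverse; and ΔrG' = RT ln(Γ/Keq). The helper below is a hypothetical illustration, not a MASSpy function. The reaction A + B ⇌ C + D, its concentrations, and its Keq are made-up values.

```python
import math

R = 8.314e-3  # gas constant, kJ/(mol*K)
T = 310.15    # temperature, K

def mass_action_ratio(substrates, products):
    """Gamma = prod(product conc**nu) / prod(substrate conc**nu).

    substrates, products -- lists of (concentration, stoichiometric coefficient) pairs
    """
    numerator = math.prod(c ** nu for c, nu in products)
    denominator = math.prod(c ** nu for c, nu in substrates)
    return numerator / denominator

# Hypothetical reaction A + B <=> C + D (concentrations in M, so Gamma is dimensionless)
gamma = mass_action_ratio(substrates=[(2e-3, 1), (1e-3, 1)], products=[(1e-4, 1), (1e-4, 1)])
keq = 0.01
ln_gamma = math.log(gamma)   # ln(Γ), the quantity set as a constraint
rho = gamma / keq            # disequilibrium ratio
drG = R * T * math.log(rho)  # ΔrG' = RT ln(Γ/Keq), kJ/mol
direction = "forward" if rho < 1 else "reverse" if rho > 1 else "equilibrium"
```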

II. Construction and Simulation of the Dynamic Model

- By default, MASSpy will represent reactions using mass-action kinetics. Custom rate laws can be specified for specific reactions.

- Parameterize the model using the get_mass_action_kmax_values function to calculate apparent rate constants that are consistent with a reference flux distribution.

- Simulate the dynamic model by numerically integrating the system of Ordinary Differential Equations (ODEs) using the simulate method, which can predict metabolite concentration changes over time [26].
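For a single reversible mass-action reaction A ⇌ B, the net rate is v = kf([A] - [B]/Keq). Given a reference steady-state flux and concentrations, kf follows directly as kf = v_ref / ([A] - [B]/Keq). This is the kind of flux-consistent rate constant that the parameterization step computes. The sketch below uses SciPy rather than MASSpy. The three-reaction toy system (constant supply of A, A ⇌ B, first-order drain of B) and all numbers are hypothetical.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Reference steady state (hypothetical): flux, concentrations, equilibrium constant
v_ref = 1.0          # mM/min through supply -> A -> B -> drain
A_ref, B_ref = 2.0, 0.5
keq = 10.0

# Rate constants chosen so the reference state carries exactly v_ref
kf = v_ref / (A_ref - B_ref / keq)  # reversible A <=> B
k_drain = v_ref / B_ref             # first-order drain of B

def rhs(t, y, supply):
    """Mass-action ODEs for supply -> A <=> B -> drain."""
    a, b = y
    v_ab = kf * (a - b / keq)
    v_drain = k_drain * b
    return [supply - v_ab, v_ab - v_drain]

# Perturbation: double the supply flux and integrate the ODEs from the reference state
sol = solve_ivp(rhs, (0.0, 50.0), [A_ref, B_ref], args=(2.0 * v_ref,), rtol=1e-8, atol=1e-10)
A_end, B_end = sol.y[:, -1]
```

Because the toy system is linear, doubling the supply flux doubles the new steady state, with A approaching 4.0 and B approaching 1.0.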

Protocol 3: Model Simulation, Analysis, and Parameter Estimation with Tellurium

This protocol utilizes Tellurium's robust simulation environment to analyze an existing kinetic model and, if data is available, perform parameter estimation.

I. Model Simulation and Analysis

- Load an existing kinetic model in SBML or Antimony format.

- Simulate the model by performing numerical integration over a defined time course.

- Use Tellurium's built-in analysis tools, such as parameter scans, to investigate how changes in specific parameters affect system dynamics (e.g., oscillation periods, steady-state levels) [26].
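A parameter scan re-runs the simulation for each value of a chosen parameter and records a summary of the output. The sketch below shows that loop in SciPy so it runs without Tellurium. The single-metabolite model (constant uptake, Michaelis-Menten consumption) and its values are hypothetical. In Tellurium, the equivalent loop would typically reset the loaded model, set the parameter value, and call simulate() for each point of the scan.

```python
import numpy as np
from scipy.integrate import solve_ivp

V_IN, KM = 1.0, 0.5  # hypothetical uptake flux and Michaelis constant

def rhs(t, y, vmax):
    """dS/dt = v_in - Vmax*S/(Km + S)."""
    s = y[0]
    return [V_IN - vmax * s / (KM + s)]

def parameter_scan(vmax_values, t_end=100.0):
    """Simulate once per parameter value and return the final concentration."""
    finals = []
    for vmax in vmax_values:
        sol = solve_ivp(rhs, (0.0, t_end), [0.0], args=(vmax,), rtol=1e-8, atol=1e-10)
        finals.append(sol.y[0, -1])
    return np.array(finals)

vmax_grid = np.array([2.0, 3.0, 4.0, 5.0])
steady_levels = parameter_scan(vmax_grid)
analytic = KM * V_IN / (vmax_grid - V_IN)  # S* = Km*v_in/(Vmax - v_in)
```

Because this model has a closed-form steady state, the scanned end points can be checked against it. This kind of sanity test is worth running before scanning a larger model.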

II. Parameter Estimation (Using External Packages)

- While Tellurium's native estimation capabilities are limited, it can be integrated with external Python libraries for parameter fitting.

- Load experimental time-course data (e.g., metabolite concentrations over time).

- Define an objective function (e.g., sum of squared residuals) that quantifies the difference between model simulations and experimental data.

- Use an optimization library (e.g., pyomo, scipy.optimize) to adjust model parameters to minimize the objective function, thereby calibrating the model to the data.
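The estimation steps above can be sketched with scipy.optimize.least_squares. The sketch assumes a hypothetical substrate-depletion model, dS/dt = -Vmax·S/(Km + S), and uses synthetic noisy data in place of real LC-MS measurements. With Tellurium, the SciPy integrator inside the residual function would be replaced by a simulation of the loaded model with the candidate parameter values.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.optimize import least_squares

def simulate(params, t_eval, s0=5.0):
    """Substrate depletion by Michaelis-Menten kinetics: dS/dt = -Vmax*S/(Km + S)."""
    vmax, km = params
    sol = solve_ivp(lambda t, s: -vmax * s / (km + s), (t_eval[0], t_eval[-1]), [s0],
                    t_eval=t_eval, rtol=1e-10, atol=1e-12)
    return sol.y[0]

# Synthetic "experimental" time course from known parameters plus Gaussian noise
rng = np.random.default_rng(1)
t_obs = np.linspace(0.0, 10.0, 21)
true_params = np.array([1.2, 0.8])
s_obs = simulate(true_params, t_obs) + rng.normal(0.0, 0.02, t_obs.size)

def residuals(params):
    """Model minus data; least_squares minimizes half the sum of their squares."""
    return simulate(params, t_obs) - s_obs

# diff_step keeps finite-difference steps well above the integrator's tolerance
fit = least_squares(residuals, x0=[0.5, 2.0], bounds=([1e-6, 1e-6], [10.0, 10.0]),
                    diff_step=1e-3)
vmax_hat, km_hat = fit.x
sse = np.sum(fit.fun ** 2)  # sum of squared residuals at the optimum
```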

Workflow Visualization

The following diagram illustrates the logical relationships and typical workflow between the three frameworks, highlighting how they can be used complementarily.

Diagram 1: Kinetic modeling framework workflow.

Successful implementation of kinetic models requires both computational tools and contextual data. The table below lists key "research reagents" for this domain.

Table 2: Key Resources for Kinetic Modeling

| Resource Name | Type | Primary Function | Relevance to Frameworks |
|---|---|---|---|
| Stoichiometric Models (BiGG/MetaNetX) | Data | Provides the network scaffold of reactions, metabolites, and stoichiometry. | Foundational input for all three frameworks [26]. |
| Group Contribution Method | Computational Tool | Estimates standard Gibbs free energies of formation (ΔfG'°) for metabolites. | Critical in SKiMpy and MASSpy for enforcing thermodynamic constraints [26]. |
| Time-Course Metabolomics Data | Experimental Data | Provides measured concentrations of metabolites over time under a perturbation. | Used for model validation in SKiMpy/MASSpy and for parameter estimation in Tellurium [26]. |
| Turnover Numbers (kcat) | Kinetic Parameter | Defines the maximum catalytic rate of an enzyme. | Can be used to inform initial Vmax values during parametrization in all frameworks [26]. |
| Michaelis Constants (KM) | Kinetic Parameter | Defines the substrate concentration at half-maximal enzyme velocity. | Directly sampled in SKiMpy; target for estimation in Tellurium [26]. |
| COBRApy | Python Package | Provides tools for constraint-based reconstruction and analysis of metabolic models. | The foundation upon which MASSpy is built; enables seamless transition from FBA to kinetic models [26]. |
| Parameter Sampling Algorithms (ORACLE) | Computational Method | Generates kinetic parameter sets consistent with thermodynamic and steady-state constraints. | Core component of the SKiMpy workflow for high-throughput model generation [26]. |
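The kcat entry relates to Vmax through Vmax = kcat · [E]total. The small unit-aware sketch below assumes a hypothetical enzyme abundance expressed per gram dry weight (gDW), the flux unit commonly used in constraint-based models. The function name and values are illustrative.

```python
SECONDS_PER_HOUR = 3600.0

def vmax_from_kcat(kcat_per_s, enzyme_mmol_per_gdw):
    """Vmax (mmol/gDW/h) = kcat (1/s) * 3600 (s/h) * enzyme amount (mmol/gDW)."""
    return kcat_per_s * SECONDS_PER_HOUR * enzyme_mmol_per_gdw

# Hypothetical values: kcat = 50 1/s, enzyme abundance = 1e-5 mmol/gDW
vmax = vmax_from_kcat(50.0, 1e-5)  # approximately 1.8 mmol/gDW/h
```

Because measured kcat values and enzyme abundances are rarely available for every reaction, such estimates are best treated as starting points or bounds rather than fixed parameters.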

Integration of Machine Learning for Parameter Estimation and Prediction

The integration of Machine Learning (ML) methodologies has emerged as a transformative force for enhancing parameter estimation and prediction capabilities within complex scientific domains, including biological kinetic modeling and pharmaceutical development. These data-driven approaches address critical limitations of traditional methods, particularly in handling non-linear relationships, high-dimensional data, and limited datasets. This document provides detailed application notes and protocols for implementing ML strategies—such as Random Forest Regression, Bidirectional Long Short-Term Memory (BiLSTM) networks, and support vector regression (SVR)—to derive accurate, efficient, and generalizable kinetic models from stoichiometric reduction research. Framed within the context of a broader thesis on kinetic model derivation, these guidelines are designed for researchers, scientists, and drug development professionals seeking to leverage ML for advanced predictive analytics.

In scientific research, particularly in deriving kinetic models from stoichiometric foundations, parameter estimation is a cornerstone for building accurate predictive models. Traditional methods, including linear regression and mechanistic modeling, often struggle with the complex, non-linear relationships inherent in systems like metabolic networks and drug disposition processes [31] [32]. The advent of ML offers powerful alternatives that can learn intricate patterns from data, thereby enhancing predictive accuracy and computational efficiency.

The synergy between model-informed paradigms and AI is particularly potent. For instance, in drug development, Model-Informed Drug Development (MIDD) uses mathematical models to simulate drug behavior, and its integration with AI enables more accurate predictions and novel hypothesis generation from large, complex datasets [32]. Similarly, in biological reaction kinetic modeling, yield coefficients must be defined and correlated accurately, because misapplying them can lead to significant calculation errors [33]. Machine learning provides a robust framework for navigating these complexities, as demonstrated by its successful application to predicting fracture parameters in materials science [31] and estimating software design effort [34]. This document outlines the practical application of these ML techniques for parameter estimation and prediction.

Key Machine Learning Applications and Performance

In the studies summarized below, machine learning models matched or outperformed traditional statistical methods across several prediction tasks. The table below summarizes the quantitative performance data reported in those studies.

Table 1: Comparative Performance of Machine Learning Models in Predictive Tasks

| Field of Application | Machine Learning Model | Comparative Traditional Model | Key Performance Metrics (ML Model vs. Traditional) | Reference |
|---|---|---|---|---|
| Fracture Parameter Prediction | Random Forest Regression (RFR) | Multiple Linear Regression (MLR) | Validation R²: 0.93 (YI), 0.96 (YII), 0.99 (T*) vs. R² as low as 0.44 for MLR | [31] |
| Fracture Parameter Prediction | BiLSTM | Polynomial Regression (PR) | Validation R²: 0.99 (YI), 0.96 (YII), 0.99 (T*) vs. R² as low as 0.57 for PR | [31] |
| Software Design Effort Prediction | Support Vector Regression (SVR) | Statistical Regression Model (SRM) | Statistically superior performance on 5 of 7 datasets | [34] |
| Software Design Effort Prediction | Multi-layer Perceptron (MLP) | Statistical Regression Model (SRM) | Outperformed SRM on 3 datasets; equal performance on the other 4 | [34] |

Beyond the applications above, ML's value is evident in pharmaceutical development. AI and ML components in drug application submissions to the FDA's Center for Drug Evaluation and Research (CDER) have seen a significant increase, with over 100 submissions in 2021 and more than 500 reviewed between 2016 and 2023 [35]. These applications span target identification, toxicity prediction, patient stratification, and the analysis of real-world data, underscoring ML's versatility in parameter estimation and prediction across the development lifecycle [32] [36] [35].

Detailed Experimental Protocols

Protocol 1: ML-Based Estimation of Yield Coefficients in Kinetic Models

This protocol details the application of ML to correlate different forms of yield coefficients in biological reaction kinetic modeling, a critical task for accurate mass balance equations [33].

1. Problem Definition and Data Sourcing:

- Objective: Develop an ML model to predict accurately defined yield coefficients for cell and product formation, thereby preventing errors arising from the misuse of coefficients derived from overall metabolic reactions versus parallel reactions [33].

- Data Requirements: Gather structured datasets from experimental or simulated metabolic reactions. Key features should include:

  - Stoichiometric coefficients of substrates and products.

  - Thermodynamic properties of the reaction (e.g., Gibbs energy dissipation) [33].

  - Rates of substrate consumption, cell growth, and product formation.

  - Maintenance energy coefficients.
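The distinction between coefficient definitions matters because an observed yield mixes growth demand with maintenance demand. Under the Pirt relation q_S = μ / Y_max + m_S, the observed biomass yield Y_obs = μ / q_S falls below the true (maximum) yield, especially at low growth rates. The sketch below evaluates that relation for hypothetical values of Y_max and m_S. It illustrates the kind of relationship a yield-coefficient model has to respect; the numbers are illustrative.

```python
import numpy as np

Y_MAX = 0.5  # hypothetical true (maximum) biomass yield, gDW/g substrate
M_S = 0.05   # hypothetical maintenance coefficient, g substrate/gDW/h

def substrate_uptake(mu):
    """Pirt relation: q_S = mu / Y_max + m_S (g substrate/gDW/h)."""
    return mu / Y_MAX + M_S

def observed_yield(mu):
    """Observed yield Y_obs = mu / q_S, which is below Y_max whenever m_S > 0."""
    return mu / substrate_uptake(mu)

growth_rates = np.array([0.05, 0.1, 0.4])  # specific growth rates, 1/h
y_obs = observed_yield(growth_rates)
```

At μ = 0.05 h⁻¹ the observed yield is one third of a gram per gram, well below Y_max = 0.5. At μ = 0.4 h⁻¹ it rises to about 0.47. Using such an observed value as if it were the true yield is exactly the kind of misuse the protocol aims to prevent.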

2. Data Preprocessing and Feature Engineering:

- Clean the data by handling missing values and normalizing numerical features to a common scale.

- Perform feature engineering to create potential input variables, such as ratios of stoichiometric coefficients or thermodynamic efficiencies.

- Split the dataset into training, validation, and test sets (e.g., 70/15/15 ratio).
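The preprocessing steps above can be sketched in NumPy: shuffle, split 70/15/15, and z-score the features. The scaling statistics are taken from the training rows only, so that no information from the validation or test sets leaks into preprocessing. The feature matrix here is random and purely illustrative. scikit-learn's train_test_split and StandardScaler are common alternatives.

```python
import numpy as np

def split_and_scale(X, y, fractions=(0.70, 0.15, 0.15), seed=0):
    """Shuffle, split into train/validation/test, and z-score the features.

    Mean and standard deviation come from the training rows only.
    """
    rng = np.random.default_rng(seed)
    idx = rng.permutation(len(X))
    n_train = int(round(fractions[0] * len(X)))
    n_val = int(round(fractions[1] * len(X)))
    train, val, test = np.split(idx, [n_train, n_train + n_val])

    mean = X[train].mean(axis=0)
    std = X[train].std(axis=0)
    std[std == 0] = 1.0  # guard against constant features

    def scale(rows):
        return (X[rows] - mean) / std

    return (scale(train), y[train]), (scale(val), y[val]), (scale(test), y[test])

# Hypothetical feature matrix: 200 samples x 4 engineered features on different scales
rng = np.random.default_rng(42)
X = rng.normal(size=(200, 4)) * [1.0, 10.0, 0.1, 5.0]
y = rng.normal(size=200)
(train_X, train_y), (val_X, val_y), (test_X, test_y) = split_and_scale(X, y)
```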

3. Model Selection and Training:

- Initial Model Choice: Begin with tree-based models like Random Forest Regression (RFR) due to their high accuracy with non-linear data and inherent feature importance analysis [31].

- Advanced Model Exploration: For larger, sequential datasets, consider deep learning models like BiLSTM, which can capture complex temporal dependencies [31].

- Training Procedure: Train the selected model on the training set. Use the validation set for hyperparameter tuning (e.g., number of trees in RFR, learning rate in BiLSTM) to optimize performance and prevent overfitting.
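The training step can be sketched with scikit-learn's RandomForestRegressor, assuming the features have already been prepared. The data are synthetic: a non-linear function of two informative features plus one irrelevant feature. The hyperparameter grid is illustrative. The validation set, not the test set, selects the configuration.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import r2_score

# Synthetic non-linear data standing in for engineered yield-coefficient features
rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(300, 3))
y = np.sin(3.0 * X[:, 0]) + X[:, 1] ** 2 + 0.05 * rng.normal(size=300)  # X[:, 2] is irrelevant
X_train, y_train = X[:210], y[:210]
X_val, y_val = X[210:255], y[210:255]

best = None
for n_trees in (50, 100, 200):
    for max_depth in (None, 8):
        model = RandomForestRegressor(n_estimators=n_trees, max_depth=max_depth, random_state=0)
        model.fit(X_train, y_train)
        score = r2_score(y_val, model.predict(X_val))  # tune on validation data only
        if best is None or score > best[0]:
            best = (score, n_trees, max_depth, model)

best_r2, best_trees, best_depth, best_model = best
importances = best_model.feature_importances_  # impurity-based importances, sum to 1
```

The irrelevant third feature should receive the lowest importance. This is the kind of check that later supports the interpretation step.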

4. Model Validation and Interpretation:

- Evaluate the final model on the held-out test set using metrics such as R-squared (R²), Mean Absolute Error (MAE), and Root Mean Squared Error (RMSE).

- Analyze feature importance scores provided by models like RFR to gain insights into the most influential factors affecting yield coefficients, aligning model predictions with thermodynamic principles [31] [33].
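The three evaluation metrics can be computed directly with NumPy. The definitions below follow the usual conventions: R² = 1 - SS_res/SS_tot, MAE is the mean absolute residual, and RMSE is the square root of the mean squared residual. The input values are a toy example.

```python
import numpy as np

def regression_metrics(y_true, y_pred):
    """Return R², MAE and RMSE for held-out predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    residuals = y_true - y_pred
    ss_res = np.sum(residuals ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return {
        "R2": 1.0 - ss_res / ss_tot,
        "MAE": np.mean(np.abs(residuals)),
        "RMSE": np.sqrt(np.mean(residuals ** 2)),
    }

metrics = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
```

For this toy input, the function gives R² = 0.98, MAE = 0.15, and RMSE ≈ 0.158. R² is scale-free, while MAE and RMSE are in the units of the predicted coefficient, so reporting all three is informative.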

Protocol 2: Implementing a Virtual Screening Workflow with Federated Learning

This protocol outlines a privacy-preserving approach for multi-institutional collaboration in early drug discovery, leveraging federated learning for virtual screening and parameter prediction [36].

1. Collaborative Framework Setup:

- Objective: Train a unified ML model to predict molecular properties or binding affinities across multiple institutions without sharing raw data.

- Infrastructure: Establish a central parameter server and ensure all participating institutions have the necessary software and secure communication channels.

2. Model and Data Preparation:

- Model Architecture: Collaborators agree on a standard model architecture (e.g., a specific Convolutional Neural Network or Graph Neural Network for molecular data).

- Local Data Standardization: Each institution prepares its proprietary dataset of chemical structures and associated experimental parameters (e.g., IC50, solubility). Features must be consistent across sites.

3. Federated Learning Cycle:

- Step 1 - Initialization: The central server initializes a global model with random weights.