Genome-Scale Metabolic Model Reconstruction: A Comprehensive Guide from Foundations to Biomedical Applications

Abstract

Genome-scale metabolic models (GEMs) provide powerful computational frameworks for systems-level metabolic studies by describing gene-protein-reaction associations across an organism's entire complement of metabolic genes. This comprehensive overview explores the foundational principles, methodological approaches, applications, and current challenges in GEM reconstruction and analysis. We examine the evolution from early manually curated models to contemporary automated pipelines and consensus approaches that enhance predictive accuracy. The article highlights transformative applications in strain engineering for bioproduction, drug target identification in pathogens, and understanding human diseases. For researchers and drug development professionals, we detail troubleshooting strategies for common reconstruction uncertainties and validation frameworks for ensuring model reliability. By synthesizing recent advances and emerging methodologies, this resource equips scientists with the knowledge to leverage GEMs for advancing biomedical research and therapeutic development.

The Essential Foundations of Genome-Scale Metabolic Modeling

Genome-scale metabolic models (GEMs) are mathematical representations of the complete metabolic network of an organism, constructed from its genomic information [1] [2]. These computational frameworks quantitatively define the relationship between genotype and phenotype by integrating various types of biological data, including genomics, metabolomics, and transcriptomics [3]. GEMs encompass all known metabolic reactions within a cell, their associated genes, enzymes, and metabolites, providing a comprehensive platform for simulating metabolic fluxes and predicting phenotypic behaviors under different conditions [3] [4].

The reconstruction of GEMs represents a foundational methodology in systems biology, enabling researchers to move beyond studying individual metabolic components to understanding the system-level properties of cellular metabolism. By contextualizing different types of 'Big Data' within a structured network, GEMs serve as knowledgebases that organize and systematize biochemical information into testable computational frameworks [3] [4]. The development of these models has accelerated dramatically in recent years, with over 6,000 metabolic models now reconstructed across bacteria, archaea, and eukaryotes [3].

Core Components of GEMs

Genome-scale metabolic models are built upon several interconnected components that together form a comprehensive representation of an organism's metabolic capabilities. Each element plays a distinct role in defining the structure and functionality of the model.

Table 1: Core Components of Genome-Scale Metabolic Models

| Component | Description | Function in Model |
|---|---|---|
| Genes | DNA sequences encoding metabolic enzymes | Provide genetic basis for reactions via Gene-Protein-Reaction rules |
| Enzymes | Proteins catalyzing biochemical reactions | Connect gene information to reaction catalysis |
| Reactions | Biochemical transformations between metabolites | Form the edges of the metabolic network |
| Metabolites | Chemical compounds consumed/produced in reactions | Form the nodes of the metabolic network |
| Stoichiometric Matrix (S) | Mathematical representation of reaction stoichiometry | Enables quantitative flux calculations [4] |
| Gene-Protein-Reaction (GPR) Rules | Boolean relationships connecting genes to reactions | Define genotype-phenotype relationships [3] |
| Biomass Composition | Metabolites required for cellular growth | Serves as common objective function [1] |

The stoichiometric matrix (S) forms the mathematical foundation of a GEM, where rows represent metabolites, columns represent reactions, and entries correspond to stoichiometric coefficients [4]. This matrix defines the topological structure of the metabolic network and enables the application of constraint-based modeling approaches. The gene-protein-reaction associations establish direct connections between genomic content and metabolic capabilities, allowing researchers to simulate the metabolic consequences of genetic perturbations [3].

Table 2: Common Exchange Formats for Metabolic Models

| Format Name | Description | Primary Use Case |
|---|---|---|
| SBML | Systems Biology Markup Language | Model exchange and simulation [2] |
| SBGN | Systems Biology Graphical Notation | Standardized visual representation [2] |
| COBRA | Format for COnstraint-Based Reconstruction and Analysis | Constraint-based modeling simulations |

Methodologies for GEM Reconstruction and Analysis

Reconstruction Pipeline

The reconstruction of high-quality genome-scale metabolic models follows a systematic multi-step process that transforms genomic information into a predictive computational model [1]:

- Functional Genome Annotation: Identification of metabolic genes within the genome and assignment of enzyme functions

- Reaction Network Assembly: Compilation of biochemical reactions based on annotated genes, including determination of stoichiometry and reaction directionality

- Compartmentalization Assignment: Allocation of reactions to appropriate subcellular locations

- Biomass Composition Definition: Specification of metabolic requirements for cellular growth based on experimental data

- Energy Requirement Estimation: Determination of maintenance energy costs

- Model Validation and Gap Filling: Iterative refinement using experimental data to identify and fill metabolic gaps

This reconstruction process has been implemented through various automated and semi-automated tools that enable the development of organism-specific models [3]. However, manual curation remains essential for developing high-quality models capable of accurate phenotypic predictions.
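One simple check that supports the gap-filling step is a search for dead-end metabolites, that is, compounds the network can produce but never consume, or the reverse. The following is a minimal sketch of that idea, not the procedure of any particular tool. The toy network, its reaction and metabolite names, and the assumption that every reaction is irreversible are all hypothetical.

```python
from collections import defaultdict

# Hypothetical toy network: reaction -> {metabolite: stoichiometric coefficient}.
# Negative coefficients are consumed, positive are produced. All reactions are
# treated as irreversible to keep the sketch short.
reactions = {
    "EX_glc": {"glc": 1},            # boundary reaction supplying glucose
    "R1": {"glc": -1, "g6p": 1},
    "R2": {"g6p": -1, "pyr": 1},
    "R3": {"pyr": -1, "x": 1},       # "x" is produced but never consumed
    "BIOMASS": {"pyr": -1},          # drain of a biomass precursor
}


def find_dead_ends(reactions):
    """Return metabolites that are only produced or only consumed."""
    producers = defaultdict(set)
    consumers = defaultdict(set)
    for rxn, stoich in reactions.items():
        for met, coeff in stoich.items():
            if coeff > 0:
                producers[met].add(rxn)
            elif coeff < 0:
                consumers[met].add(rxn)
    mets = set(producers) | set(consumers)
    return {
        "only_produced": sorted(m for m in mets if not consumers[m]),
        "only_consumed": sorted(m for m in mets if not producers[m]),
    }


dead_ends = find_dead_ends(reactions)
print(dead_ends)
```

Each dead end is a candidate gap: it points either to a missing reaction or transporter, or to an annotation that should be revisited during manual curation.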

Constraint-Based Analysis Methods

Once reconstructed, GEMs can be analyzed using various constraint-based approaches that simulate metabolic behavior under different conditions:

Flux Balance Analysis (FBA)

Flux Balance Analysis is the most widely used method for analyzing GEMs [3] [4]. FBA operates under the steady-state assumption, where the production and consumption of internal metabolites are balanced. This approach calculates metabolic flux distributions by optimizing an objective function (typically biomass production) subject to constraints represented by:

- The stoichiometric matrix (S)

- Capacity constraints on reaction fluxes

- Nutrient uptake rates

The mathematical formulation of FBA can be represented as:

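$$
\begin{aligned}
\text{Maximize:} \quad & Z = c^{T} v && \text{(objective function, typically biomass production)} \\
\text{Subject to:} \quad & S \cdot v = 0 && \text{(mass balance constraints)} \\
& v_{\min} \le v \le v_{\max} && \text{(flux capacity constraints)}
\end{aligned}
$$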

Where v represents the flux vector, c is the vector of coefficients for the objective function, and S is the stoichiometric matrix [4].
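To make the formulation concrete, consider a small worked example. The network is hypothetical and chosen only so that the optimum can be found by hand: metabolites A and B, an uptake reaction v₁ (→ A), a conversion v₂ (A → B), a biomass drain v₃ (B →), and a byproduct secretion v₄ (A →).

$$
S = \begin{pmatrix} 1 & -1 & 0 & -1 \\ 0 & 1 & -1 & 0 \end{pmatrix}, \qquad
v = \begin{pmatrix} v_1 \\ v_2 \\ v_3 \\ v_4 \end{pmatrix}, \qquad
c = \begin{pmatrix} 0 \\ 0 \\ 1 \\ 0 \end{pmatrix}
$$

With bounds $0 \le v_1 \le 10$ and $0 \le v_2, v_3, v_4 \le 1000$, the mass balance $S \cdot v = 0$ gives

$$
v_1 - v_2 - v_4 = 0, \qquad v_2 - v_3 = 0
\quad \Longrightarrow \quad
Z = c^{T} v = v_3 = v_1 - v_4 \le 10 .
$$

The maximum $Z^{*} = 10$ is reached at $v^{*} = (10, 10, 10, 0)^{T}$: all substrate taken up is routed to biomass and none is secreted as byproduct. In a genome-scale model the same problem is solved numerically by a linear programming solver.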

Dynamic and Enzyme-Constrained Extensions

Dynamic FBA extends traditional FBA by incorporating time-dependent changes in extracellular metabolite concentrations and biomass concentration, enabling simulations of metabolic shifts over time [3]. The GECKO (Enzyme Constraints using Kinetic and Omics data) methodology further enhances GEMs by incorporating enzyme capacity constraints based on kinetic parameters and proteomic data [5]. This approach accounts for the limited intracellular space and protein allocation constraints, improving predictions of metabolic behavior under various conditions.
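The time-stepping logic of dynamic FBA can be sketched as a loop that converts the current extracellular concentration into an uptake bound, solves the steady-state problem, and then updates biomass and substrate. The sketch below is illustrative only. All parameter values are hypothetical, and the inner FBA problem is replaced by a closed-form stand-in (growth proportional to uptake) so that the example runs without an LP solver. A real implementation would solve the full linear program at that step.

```python
# Toy dynamic FBA using forward Euler time stepping.
# Assumption: the inner FBA problem is a single substrate-limited pathway, so
# its optimum has the closed form mu = YIELD * uptake. A genome-scale model
# would call an LP solver here instead.
V_MAX = 10.0   # maximum glucose uptake rate, mmol/gDW/h (hypothetical)
K_M = 0.5      # saturation constant, mmol/L (hypothetical)
YIELD = 0.09   # gDW biomass formed per mmol glucose (hypothetical)


def solve_toy_fba(uptake_bound):
    """Stand-in for FBA: growth is limited only by substrate uptake."""
    uptake = uptake_bound
    growth_rate = YIELD * uptake
    return growth_rate, uptake


def simulate_dfba(biomass, glucose, dt=0.1, t_end=10.0):
    """Return a list of (time, biomass, glucose) tuples."""
    trajectory = [(0.0, biomass, glucose)]
    steps = int(round(t_end / dt))
    for step in range(1, steps + 1):
        # 1. Translate the extracellular concentration into an uptake bound.
        bound = V_MAX * glucose / (K_M + glucose)
        # Never take up more substrate than is available in this time step.
        if biomass > 0:
            bound = min(bound, glucose / (biomass * dt))
        # 2. Solve the steady-state problem for the current bounds.
        mu, uptake = solve_toy_fba(bound)
        # 3. Update the extracellular state with the resulting fluxes.
        glucose = max(glucose - uptake * biomass * dt, 0.0)
        biomass = biomass + mu * biomass * dt
        trajectory.append((step * dt, biomass, glucose))
    return trajectory


trajectory = simulate_dfba(biomass=0.01, glucose=20.0)
final_time, final_biomass, final_glucose = trajectory[-1]
print(f"t={final_time:.1f} h  X={final_biomass:.4f} gDW/L  glc={final_glucose:.4f} mmol/L")
```

Because biomass is formed only from glucose in this toy system, the quantity biomass + YIELD × glucose stays constant over the simulation, which is a convenient sanity check on the update step.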

Advanced Applications of GEMs

Multi-Strain and Pan-Genome Analyses

The expansion of genomic data has enabled the development of multi-strain metabolic models that capture metabolic diversity across different isolates of the same species. This approach involves creating a "core" model containing metabolic reactions shared by all strains and a "pan" model incorporating the union of all metabolic capabilities [3]. Notable implementations include:

- 55 individual E. coli GEMs consolidated into a multi-strain framework [3]

- 410 Salmonella strain models predicting growth across 530 environments [3]

- 64 S. aureus GEMs analyzed under 300 growth conditions [3]

- 22 K. pneumoniae models simulating growth on various nutrient sources [3]

These multi-strain analyses provide insights into strain-specific metabolic capabilities and enable the identification of disease-associated traits across different isolates.
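At the level of reaction content, the core and pan models reduce to a set intersection and a set union over the strain-specific reconstructions. A minimal sketch, assuming each strain model has already been reduced to a set of reaction identifiers (the strain names and identifiers here are hypothetical):

```python
# Hypothetical strain -> reaction-ID sets; identifiers are illustrative only.
strain_reactions = {
    "strain_A": {"PGI", "PFK", "FBA", "LACZ"},
    "strain_B": {"PGI", "PFK", "FBA", "CITL"},
    "strain_C": {"PGI", "PFK", "FBA", "LACZ", "FUCI"},
}

# Core model: reactions shared by every strain.
core = set.intersection(*strain_reactions.values())
# Pan model: union of all metabolic capabilities.
pan = set.union(*strain_reactions.values())
# Accessory content: present in some strains but not all.
accessory = pan - core

# Reactions found in exactly one strain.
unique = {
    strain: {
        r for r in rxns
        if sum(r in other for other in strain_reactions.values()) == 1
    }
    for strain, rxns in strain_reactions.items()
}

print("core:", sorted(core))
print("accessory:", sorted(accessory))
print("unique:", {s: sorted(r) for s, r in unique.items()})
```

Strain-specific reactions in the accessory set are natural starting points when looking for metabolic traits that distinguish isolates.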

Metabolic Engineering and Drug Development

GEMs have become indispensable tools for metabolic engineering and drug target identification. In industrial biotechnology, GEMs facilitate the design of microbial cell factories for producing valuable chemicals by predicting genetic modifications that optimize product yield [3] [5]. In pharmaceutical research, GEMs enable the identification of essential metabolic reactions in pathogens that represent potential drug targets [3]. The ESKAPEE pathogens (Enterococcus faecium, Staphylococcus aureus, Klebsiella pneumoniae, Acinetobacter baumannii, Pseudomonas aeruginosa, Enterobacter spp., and Escherichia coli) have been particularly targeted using pan-genome analyses coupled with GEMs to identify novel antibiotic targets [3].

Integration with Machine Learning and Big Data

The increasing volume of biological data has driven the development of integration frameworks that combine GEMs with machine learning approaches [3]. GEMs provide structured biochemical context for interpreting high-dimensional omics data, enabling more accurate predictions of metabolic behavior. This integration is particularly valuable for studying complex systems such as:

- Host-microbiome interactions through integrated host-microbe models [3]

- Human diseases by contextualizing patient-specific omics data [3]

- Microbial community dynamics using multi-species metabolic models [3]

Table 3: Essential Research Tools and Databases for GEM Development

| Resource Name | Type | Primary Function | Key Features |
|---|---|---|---|
| BiGG Models | Knowledgebase | Curated GEM repository [6] | Standardized identifiers, 70+ models, cross-references |
| GECKO Toolbox | Software | Enzyme constraint integration [5] | Automated kcat retrieval, proteomics integration |
| COBRA Toolbox | Software | Constraint-based modeling [4] | FBA, dFBA, gap filling algorithms |
| COBRApy | Software | Python implementation of COBRA [4] | Python-based modeling, simulation, and analysis |
| Escher | Software | Pathway visualization [7] | Interactive metabolic maps, data visualization |
| BRENDA | Database | Enzyme kinetic parameters [5] | kcat values, kinetic information for parameterization |
| KEGG | Database | Metabolic pathways and reactions [4] | Reaction database, pathway maps |

Visualization Approaches for Metabolic Networks

The complexity of genome-scale metabolic models presents significant challenges for visualization and interpretation. Effective visualization strategies must address several network characteristics [2]:

- Scale-free topology with few highly connected hub metabolites (H₂O, ATP, NADH)

- Nested subcellular compartments (mitochondrion, cytoplasm, membranes)

- Recurring biochemical motifs (cycles, cascades, linear pathways)

Specialized tools have been developed to address these challenges, including Cytoscape for network analysis, CellDesigner for pathway mapping, and Escher for creating interactive metabolic maps [2] [7]. For dynamic visualization of time-course metabolomic data, GEM-Vis provides animation capabilities that represent metabolite concentrations through fill levels of node elements, enabling researchers to observe metabolic changes over time [7].

The field of genome-scale metabolic modeling continues to evolve rapidly, with several emerging trends shaping future development. The integration of enzyme constraints through tools like GECKO 2.0 represents a significant advancement in model predictive capability [5]. The expansion of multi-kingdom models that encompass host-microbe interactions provides new opportunities for understanding complex biological systems [3]. The development of standardized formats and databases ensures consistent model quality and facilitates collaborative development [6].

As the volume of biological data continues to grow, GEMs will play an increasingly important role in contextualizing and interpreting this information. The integration of machine learning approaches with constraint-based modeling frameworks promises to enhance both the reconstruction process and predictive capabilities [3]. Furthermore, the application of GEMs in biomedical research continues to expand, with growing use in drug discovery, disease mechanism elucidation, and personalized medicine approaches [3] [5].

In conclusion, genome-scale metabolic models represent a mature computational framework for understanding the relationship between genotype and phenotype. By systematically organizing metabolic knowledge into structured networks, GEMs enable quantitative prediction of cellular behavior across diverse organisms and conditions. As reconstruction methodologies continue to advance and integration with other data types improves, these models will remain essential tools for biological discovery and biotechnological innovation.

Genome-scale metabolic model (GEM) reconstruction has evolved from a manual, time-intensive process into a sophisticated computational framework integrating multi-omics data and enabling diverse applications in biotechnology, medicine, and fundamental research. This technical overview examines the historical progression of GEM development, from the first pioneering reconstructions to contemporary automated platforms that generate models for thousands of organisms. We document quantitative expansions in model content and capability, present standardized protocols for reconstruction and analysis, and visualize key workflows that enable researchers to simulate metabolic behavior under varying genetic and environmental conditions. The integration of GEMs with expression data and enzymatic constraints represents a paradigm shift in predictive systems biology, facilitating strain engineering, drug target identification, and understanding of host-microbe interactions.

Genome-scale metabolic models are mathematically structured knowledge bases that computationally represent the complete metabolic network of an organism. They explicitly define gene-protein-reaction associations (GPRs) based on genomic annotation and biochemical literature, creating a stoichiometry-based, mass-balanced representation of metabolism [8]. The core mathematical framework utilizes a stoichiometric matrix (S), where rows represent metabolites and columns represent biochemical reactions. Under the steady-state assumption, this framework allows computation of flux distributions through the equation S · v = 0, where v is the flux vector [9].

The evolution of GEM reconstruction has progressed through distinct phases: initial manual curation efforts, development of semi-automated tools, creation of model repositories and standards, and most recently, integration of multi-omics data and enzymatic constraints. This progression has transformed GEMs from specialized research projects for single organisms into scalable resources covering thousands of species across the phylogenetic tree [8].

Historical Timeline and Quantitative Expansion

The first genome-scale metabolic model was reconstructed for Haemophilus influenzae in 1999, comprising 296 genes and 488 reactions [10] [8]. This pioneering work established the fundamental paradigm of linking genomic information with metabolic capability. The subsequent two decades witnessed exponential growth in both model coverage and complexity, driven by advances in genome sequencing, computational power, and curation tools.

Table 1: Historical Progression of Representative Genome-Scale Metabolic Models

| Organism | Year | Genes in Model | Reactions | Metabolites | Significance |
|---|---|---|---|---|---|
| Haemophilus influenzae | 1999 | 296 | 488 | 343 | First GEM [10] |
| Escherichia coli | 2000 | 660 | 627 | 438 | Early bacterial model [10] |
| Saccharomyces cerevisiae | 2003 | 708 | 1,175 | 584 | First eukaryotic GEM [10] [8] |
| Homo sapiens | 2007 | 3,623 | 3,673 | - | First human metabolic model [10] |
| Escherichia coli (iML1515) | 2019 | 1,515 | 2,712 | 1,872 | High-quality curation [8] |
| Consensus Yeast 7 | 2017-2019 | - | - | - | International collaborative effort [8] |

By February 2019, GEMs had been reconstructed for 6,239 organisms (5,897 bacteria, 127 archaea, and 215 eukaryotes), with 183 undergoing manual curation to achieve high-quality standards [8]. This quantitative expansion has been matched by qualitative improvements in model content, including better coverage of GPR associations, integration of thermodynamic constraints, and representation of subcellular compartmentalization in eukaryotic systems.

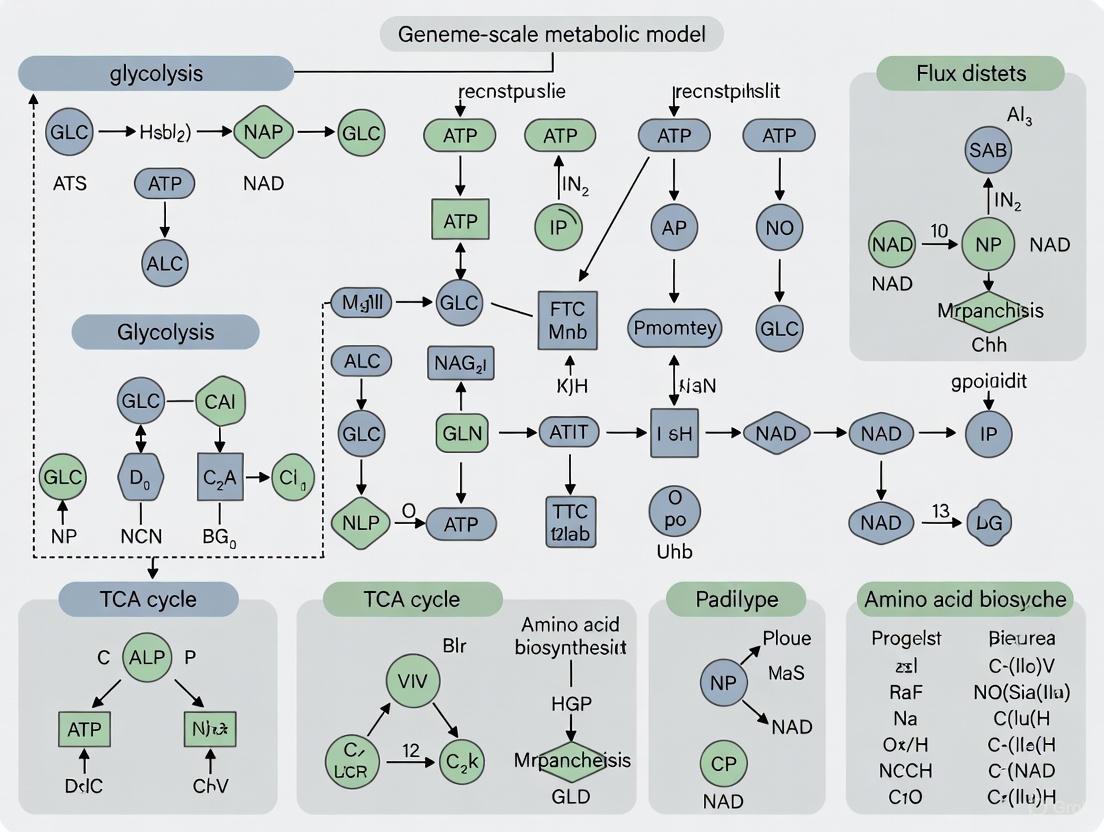

Figure 1: Historical Evolution of Genome-Scale Metabolic Modeling Approaches

Evolution of Reconstruction Methodologies

Early Manual Reconstruction Protocols

The initial phase of GEM development relied exclusively on manual curation, a labor-intensive process that could span from six months for well-studied bacteria to two years for complex eukaryotes like humans [11]. The standardized protocol involved four critical stages:

- Draft Reconstruction: Compiling an initial reaction list from genomic annotations using databases like KEGG and BioCyc [11] [10].

- Network Refinement: Manually evaluating each reaction for organism-specific evidence, including substrate specificity, cofactor utilization, and subcellular localization [11].

- Mathematical Representation: Converting the biochemical network into a stoichiometric matrix compatible with constraint-based analysis [11].

- Model Validation and Debugging: Testing network functionality against experimental growth data and known auxotrophies [11].

This process created high-quality knowledge bases but limited reconstruction to well-funded research groups studying model organisms. The E. coli reconstruction exemplifies this iterative refinement, having been expanded and refined over 19 years through multiple research iterations [11].

Semi-Automated and Automated Reconstruction Tools

The bottleneck of manual curation spurred development of computational reconstruction platforms. A 2019 systematic assessment identified twelve major reconstruction tools, each with distinct strengths and limitations [12]. These tools can be categorized by their underlying approach:

Table 2: Genome-Scale Metabolic Reconstruction Platforms

| Tool | Approach | Advantages | Limitations |
|---|---|---|---|
| CarveMe | Top-down from universal model | Fast generation (minutes); prioritizes genetic evidence | Template-dependent [12] |
| RAVEN | Template-based or de novo from KEGG/MetaCyc | Integration with COBRA Toolbox; comprehensive curation features | Requires MATLAB [12] |
| ModelSEED | Web-based automated pipeline | Integrated annotation and reconstruction; plant capabilities | Limited manual curation during process [12] |
| Pathway Tools | Interactive organism-specific database | Visualization capabilities; cellular overview diagrams | Steep learning curve [12] |
| AuReMe | Workspace with traceability | Good process tracking; Docker availability | Complex setup [12] |
| AutoKEGGRec | KEGG-based automation | Multiple organisms in single run | No biomass, transport, or exchange reactions [12] |

These tools significantly reduced reconstruction time from years to days or hours while increasing model consistency through standardized procedures. However, automated tools generally produce draft reconstructions requiring manual refinement to achieve high prediction accuracy [12].

Knowledge Bases and Standardization Initiatives

The proliferation of GEMs highlighted the need for standardized nomenclature and centralized repositories. BiGG Models emerged as a leading knowledge base, hosting over 75 high-quality, manually-curated models with consistent metabolite and reaction identifiers [13]. This standardization enables direct comparison of metabolic networks across different organisms and facilitates the development of general analysis tools.

Other critical resources include KEGG, BioCyc, and BRENDA, which provide essential biochemical information for reconstruction [10]. The Assembly of Gut Organisms through Reconstruction and Analysis (AGORA2) represents a specialized resource containing curated strain-level GEMs for 7,302 gut microbes, enabling community metabolic modeling [14].

Fundamental Analytical Frameworks

Flux Balance Analysis (FBA)

Flux Balance Analysis represents the core computational technique for simulating GEMs. FBA formulates metabolism as a linear programming problem that identifies flux distributions optimizing a cellular objective (typically biomass production) within physicochemical constraints [9] [8]. The mathematical formulation comprises:

- Objective Function: maximize Z = c · v

- Constraints: S · v = 0 (steady-state)

- Boundary Conditions: lb ≤ v ≤ ub (enzyme capacity, uptake rates)

where S is the stoichiometric matrix, v is the flux vector, and c defines the contribution of each reaction to the cellular objective [9]. FBA enables prediction of growth rates, nutrient uptake, byproduct secretion, and gene essentiality without requiring kinetic parameters.

Integration of Omics Data

The constraint-based framework readily accommodates additional constraints from experimental measurements. Transcriptomic data integration has been particularly advanced through several specialized algorithms:

Table 3: Algorithms for Integrating Expression Data into GEMs

| Method | Approach | Applications | Reference |
|---|---|---|---|
| GIMME | Reactions below expression threshold removed; minimally restored for functionality | Condition-specific model creation | [9] |
| iMAT | Maximizes fluxes of highly expressed reactions; minimizes lowly expressed | Tissue-specific metabolic activity | [9] |
| E-Flux | Converts expression levels into flux constraints | Pathogen drug target identification | [9] |
| MADE | Uses multiple datasets for differential expression without arbitrary thresholds | Comparative condition analysis | [9] |

These methods enhance model specificity by creating condition-specific metabolic networks that more accurately reflect the physiological state under investigation [9].
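The idea of converting expression levels into flux constraints can be illustrated with a short sketch in the spirit of E-Flux. This is not the published implementation. It assumes that gene expression has already been mapped to reactions, scales each reaction's bounds by its expression relative to the highest value, and leaves reactions without data unchanged. The function name, reaction identifiers, and values are hypothetical.

```python
def scale_bounds_by_expression(bounds, expression, default_level=1.0):
    """Scale each reaction's flux bounds by its relative expression level.

    bounds:      {reaction: (lower, upper)}
    expression:  {reaction: non-negative expression value, already mapped
                  from genes to reactions}
    Reactions without expression data keep their original bounds when
    default_level is 1.0.
    """
    max_expr = max(expression.values())
    if max_expr <= 0:
        raise ValueError("expression values must include a positive entry")
    scaled = {}
    for rxn, (lower, upper) in bounds.items():
        level = expression.get(rxn, default_level * max_expr) / max_expr
        scaled[rxn] = (lower * level, upper * level)
    return scaled


bounds = {
    "PGI": (-1000.0, 1000.0),   # reversible
    "PFK": (0.0, 1000.0),       # irreversible
    "EX_glc": (-10.0, 0.0),     # exchange reaction without expression data
}
expression = {"PGI": 50.0, "PFK": 200.0}

scaled_bounds = scale_bounds_by_expression(bounds, expression)
for rxn, (lower, upper) in scaled_bounds.items():
    print(rxn, lower, upper)
```

The scaled bounds would then replace the original lb and ub in the FBA problem. Threshold-based methods such as GIMME differ in that they remove or penalize reactions rather than rescaling their capacity.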

Figure 2: Genome-Scale Metabolic Model Reconstruction and Validation Workflow

Modern Advances and Applications

Enzyme-Constrained Models

Traditional FBA assumes infinite enzyme capacity, potentially predicting unrealistically high metabolic fluxes. The GECKO (Enzyme Constraints using Kinetic and Omics data) toolbox addresses this limitation by incorporating enzymatic constraints into GEMs [5]. GECKO expands metabolic models to include:

- Enzyme usage pseudo-reactions accounting for catalytic capacity

- kcat values from the BRENDA database

- Proteomics data as additional constraints

- Total protein pool allocation limits

The GECKO 2.0 update generalized the framework for application to any organism with a GEM reconstruction, enabling more accurate predictions of metabolic behavior under resource allocation constraints [5]. Enzyme-constrained models for S. cerevisiae, E. coli, and H. sapiens have demonstrated improved prediction of metabolic phenotypes, including the Crabtree effect in yeast [5].
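The core of an enzyme constraint is that the flux through a reaction cannot exceed the turnover number multiplied by the amount of enzyme available, v ≤ kcat · [E]. The sketch below shows the arithmetic with explicit units. It is not GECKO's code or API, and the enzyme names, kcat values, abundances, and molecular weights are hypothetical.

```python
SECONDS_PER_HOUR = 3600.0

# Hypothetical enzymes: kcat in 1/s, abundance in mmol/gDW,
# molecular weight in g/mmol (numerically equal to kDa).
enzymes = {
    "PFK": {"kcat": 100.0, "abundance": 2.0e-5, "mw": 35.0},
    "PYK": {"kcat": 250.0, "abundance": 1.0e-5, "mw": 51.0},
}


def enzyme_flux_cap(kcat_per_s, abundance_mmol_per_gdw):
    """Upper flux bound in mmol/gDW/h implied by v <= kcat * [E]."""
    return kcat_per_s * SECONDS_PER_HOUR * abundance_mmol_per_gdw


def protein_mass_used(fluxes, enzymes):
    """Protein mass (g/gDW) needed to carry the fluxes at full saturation."""
    return sum(
        enzymes[rxn]["mw"] * flux / (enzymes[rxn]["kcat"] * SECONDS_PER_HOUR)
        for rxn, flux in fluxes.items()
    )


flux_caps = {
    rxn: enzyme_flux_cap(e["kcat"], e["abundance"]) for rxn, e in enzymes.items()
}
mass_at_caps = protein_mass_used(flux_caps, enzymes)
print(flux_caps)
print(f"protein mass required at the caps: {mass_at_caps:.5f} g/gDW")
```

When proteomics data are unavailable, the second function reflects the alternative constraint: the summed protein mass required by all fluxes must stay below a total protein pool.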

Therapeutic Applications

GEMs have found valuable applications in drug development and therapeutic design. For Live Biotherapeutic Products (LBPs), GEMs guide strain selection and evaluation by predicting:

- Metabolic capabilities of candidate strains

- Production of therapeutic metabolites (e.g., short-chain fatty acids)

- Interactions with host microbiome and cells

- Adaptation to gastrointestinal conditions [14]

In pathogen research, GEMs of Mycobacterium tuberculosis have identified potential drug targets by simulating metabolism under infection conditions and predicting essential reactions for growth [8]. The integration of host-pathogen GEMs enables comprehensive modeling of infection metabolism and therapeutic interventions.

Metabolic Evolvability and Network Properties

Analysis of metabolic network structures has revealed fundamental principles governing their evolution. Computational exploration of metabolic genotype spaces demonstrates that viable metabolic networks are typically highly connected, allowing transformation between different viable networks through single reaction changes while preserving functionality [15]. This connectedness reduces the impact of historical contingency and enables evolutionary fine-tuning of metabolic properties such as robustness and biomass synthesis rate [15].

Table 4: Key Databases and Software for Metabolic Reconstruction

| Resource | Type | Function | Access |
|---|---|---|---|
| BiGG Models | Knowledge Base | Curated metabolic models | http://bigg.ucsd.edu [13] |
| KEGG | Database | Genes, pathways, reactions | www.genome.jp/kegg/ [10] |
| BRENDA | Database | Enzyme kinetic parameters | www.brenda-enzymes.info/ [10] |
| MetaCyc | Database | Metabolic pathways and enzymes | metacyc.org [10] |
| COBRA Toolbox | Software | MATLAB-based simulation | https://opencobra.github.io/ [12] |
| GECKO | Software | Enzyme constraint incorporation | https://github.com/SysBioChalmers/GECKO [5] |
| CarveMe | Software | Automated model reconstruction | https://github.com/cdanielmachado/carveme [12] |
| RAVEN | Software | Reconstruction and curation | https://github.com/SysBioChalmers/RAVEN [12] |

The historical evolution of genome-scale metabolic models has transformed them from specialized research projects into fundamental tools for systems biology. This progression from manual curation to automated reconstruction, enhanced by enzymatic constraints and multi-omics integration, has expanded their applications from basic metabolic studies to therapeutic development and biotechnology. Current frameworks support the investigation of metabolic evolvability, network properties, and organism interactions across all domains of life. As reconstruction methodologies continue to advance through machine learning and improved biochemical annotation, GEMs will play an increasingly central role in predicting and engineering biological systems.

Genome-scale metabolic models (GEMs) are computational representations of the metabolic network of an organism, enabling the prediction of its phenotypic behavior from its genotype. The utility of GEMs spans from strain engineering for biotechnology to drug target identification in pathogens [8]. The predictive power of these models hinges on three core structural elements: the stoichiometric matrix, which defines the network topology; gene-protein-reaction (GPR) associations, which link metabolic reactions to genetic information; and the biomass equation, which defines the metabolic requirements for cellular growth [16] [8] [17]. This technical guide provides an in-depth analysis of these elements, framed within the context of GEM reconstruction, and is tailored for researchers, scientists, and drug development professionals.

The Stoichiometric Matrix: Topology and Mathematical Foundation

The stoichiometric matrix, denoted as S, is the mathematical cornerstone of a genome-scale metabolic model. It quantitatively represents the connectivity of all metabolic reactions within a cell [4].

Structural Definition and Formulation

The stoichiometric matrix is an m × n matrix, where m is the number of metabolites and n is the number of reactions. Each element Sᵢⱼ represents the stoichiometric coefficient of metabolite i in reaction j. By convention, reactants (substrates) have negative coefficients and products have positive coefficients [4] [17]. For example, a simple reaction A → B contributes a column with -1 in the row for A, +1 in the row for B, and 0 in every other row.
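The sign convention can be made concrete by assembling S from a list of reactions. A minimal sketch using plain Python lists, with a hypothetical two-reaction network; production code would typically use a sparse matrix, since most entries are zero.

```python
def build_stoichiometric_matrix(reactions):
    """Return (metabolites, reaction_ids, S), with S as a list of rows.

    reactions: {reaction_id: {metabolite: coefficient}}, where substrates
    have negative coefficients and products have positive coefficients.
    """
    reaction_ids = list(reactions)
    metabolites = sorted({m for stoich in reactions.values() for m in stoich})
    row_of = {m: i for i, m in enumerate(metabolites)}
    S = [[0] * len(reaction_ids) for _ in metabolites]
    for j, rxn in enumerate(reaction_ids):
        for met, coeff in reactions[rxn].items():
            S[row_of[met]][j] = coeff
    return metabolites, reaction_ids, S


reactions = {
    "R1": {"A": -1, "B": 1},   # A -> B
    "R2": {"B": -1, "C": 2},   # B -> 2 C
}
metabolites, reaction_ids, S = build_stoichiometric_matrix(reactions)

for met, row in zip(metabolites, S):
    print(met, row)
```

Each column of the result is one reaction and each row one metabolite, so the column for R1 carries -1 for A and +1 for B.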

Constraint-Based Modeling and Flux Balance Analysis

The primary use of the stoichiometric matrix is in Flux Balance Analysis (FBA), a constraint-based optimization technique. FBA relies on the steady-state assumption, under which metabolite concentrations do not change over time. This is formulated as S · v = 0, where v is the vector of metabolic fluxes [4] [17]. Because this system is usually underdetermined, with more reactions than metabolites, many flux vectors satisfy it; to select a particular solution, FBA maximizes or minimizes an objective function (e.g., biomass production) subject to this and other constraints on reaction fluxes [17].
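Whether a candidate flux vector satisfies the steady-state condition can be checked by computing the residual S · v, which gives the net rate of change of each metabolite. A short sketch with a hypothetical three-reaction chain:

```python
def mass_balance_residual(S, v):
    """Return S . v as a list with one entry per metabolite (row)."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]


def is_steady_state(S, v, tol=1e-9):
    """True if every metabolite's net production is zero within tol."""
    return all(abs(r) <= tol for r in mass_balance_residual(S, v))


# Rows: A, B. Columns: uptake (-> A), conversion (A -> B), drain (B ->).
S = [
    [1, -1, 0],
    [0, 1, -1],
]
balanced = [5.0, 5.0, 5.0]
unbalanced = [5.0, 3.0, 3.0]   # A is taken up faster than it is converted

print(mass_balance_residual(S, balanced), is_steady_state(S, balanced))
print(mass_balance_residual(S, unbalanced), is_steady_state(S, unbalanced))
```

A non-zero residual identifies the metabolite that would accumulate or be depleted, which makes the check useful when debugging a reconstruction.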

The following diagram illustrates the workflow from a metabolic network to a computational model via the stoichiometric matrix.

GPR Associations: Linking Genes to Metabolic Phenotypes

GPR rules are logical Boolean statements that connect genes to reactions through the proteins they encode. They are crucial for simulating the metabolic consequences of genetic perturbations, such as gene knockouts, and for integrating transcriptomic data [18] [8].

Boolean Logic and Enzyme Structure

GPR rules use AND and OR Boolean operators to describe the relationship between genes [18]:

- AND operator (^): Joins genes encoding different subunits of an enzyme complex. All subunits are necessary for the complex's activity.

- OR operator (|): Joins genes encoding distinct enzyme isoforms that can catalyze the same reaction independently.
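The two operators are enough to predict whether a reaction survives a gene knockout. The sketch below evaluates a GPR rule against the set of genes that remain functional. The nested-tuple representation, gene identifiers, and helper names are hypothetical choices made for clarity. Model files usually store GPRs as strings that would first need parsing.

```python
def gpr_active(rule, active_genes):
    """Evaluate a GPR rule given the set of functional genes.

    A rule is either a gene ID (str) or a tuple of the form
    ("and" | "or", sub_rule, sub_rule, ...).
    """
    if isinstance(rule, str):
        return rule in active_genes
    operator, *operands = rule
    results = (gpr_active(operand, active_genes) for operand in operands)
    if operator == "and":
        return all(results)   # every subunit of the complex is required
    if operator == "or":
        return any(results)   # any one isozyme is sufficient
    raise ValueError(f"unknown operator: {operator!r}")


def knockout(genes, *deleted):
    """Return the gene set that remains after deleting the given genes."""
    return genes - set(deleted)


# Hypothetical reaction catalysed by a two-subunit complex (g1 AND g2)
# or, independently, by an isozyme encoded by g3.
rule = ("or", ("and", "g1", "g2"), "g3")
all_genes = {"g1", "g2", "g3"}

for deleted in [(), ("g1",), ("g3",), ("g1", "g3")]:
    status = gpr_active(rule, knockout(all_genes, *deleted))
    print(f"deleted={deleted or 'none'} -> reaction active: {status}")
```

In a simulation, a reaction whose rule evaluates to false has its flux bounds set to zero before FBA is run, which is how gene essentiality predictions are obtained.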

The following diagram visualizes the process of mapping genes to a metabolic reaction via a GPR association.

Automated Reconstruction of GPR Rules

The reconstruction of GPR rules has traditionally been a manual process. However, tools like GPRuler now aim to automate this by mining information from multiple biological databases, including KEGG, UniProt, STRING, MetaCyc, and the Complex Portal [18]. GPRuler can start from an organism's name or an existing model and uses the retrieved data on protein-protein interactions and complexes to infer the logical GPR associations [18].

Table 1: Key Data Sources for GPR Rule Reconstruction

| Database | Primary Use in GPR Reconstruction | Reference |
|---|---|---|
| KEGG | Information on protein complex modules and orthology. | [18] |
| UniProt | Detailed protein functional annotation. | [18] |
| STRING | Protein-protein interaction data. | [18] |
| MetaCyc | Curated metabolic pathways and enzymes. | [18] |
| Complex Portal | Information on protein macromolecular complexes. | [18] |

Biomass Equations: Quantifying Cellular Growth

The biomass objective function (BOF) is a pseudo-reaction that represents the drain of metabolic precursors and energy required to create all cellular components for a new cell. Maximizing the flux through this reaction is the most common objective function in FBA for simulating growth [16] [19].

Composition and Formulation

A biomass equation is a stoichiometrically balanced summation of all essential cellular constituents, typically including [16] [19]:

- Macromolecules: Proteins, RNA, DNA, lipids, carbohydrates.

- Building Blocks: Amino acids, nucleotides.

- Cofactors and Prosthetic Groups: Vitamins, coenzymes (e.g., Coenzyme A, NADH).

- Inorganic Ions: Phosphate, sulfate, potassium, etc.

The biomass composition is organism-specific and can be highly variable. An analysis of 71 manually curated prokaryotic GEMs revealed 551 unique metabolites used as biomass constituents, with over half appearing in only one model [16]. This highlights the current lack of standardization in biomass formulation.
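The kind of comparison behind these numbers can be sketched by counting how many models include each biomass constituent. The three constituent lists below are invented for illustration and are far smaller than real biomass equations.

```python
from collections import Counter

# Hypothetical biomass constituent sets for three models.
biomass_constituents = {
    "model_1": {"atp", "ala", "gly", "coa", "nad"},
    "model_2": {"atp", "ala", "gly", "thf"},
    "model_3": {"atp", "ala", "coa", "q8"},
}

# Number of models in which each constituent appears.
occurrences = Counter(
    met for mets in biomass_constituents.values() for met in mets
)

n_unique = len(occurrences)
singletons = sorted(m for m, n in occurrences.items() if n == 1)
universal = sorted(
    m for m, n in occurrences.items() if n == len(biomass_constituents)
)
fraction_single_model = len(singletons) / n_unique

print(f"{n_unique} unique constituents")
print(f"present in every model: {universal}")
print(f"present in only one model: {singletons} ({fraction_single_model:.0%})")
```

Constituents that appear in only one model deserve scrutiny: they may reflect genuine organism-specific biology or simply differences in how each biomass equation was formulated.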

Impact on Model Predictions

The qualitative composition of the biomass equation drastically impacts the predictive accuracy of a GEM, particularly for gene and reaction essentiality. Swapping the biomass equation between models of different organisms can lead to 2.74% to 32.8% of reactions changing their essentiality status (from essential to non-essential or vice versa) [16]. This underscores the critical need for accurate, well-validated biomass formulations.

Table 2: Classes of Universally Essential Prokaryotic Organic Cofactors for Biomass

| Essential Cofactor Class | Functional Role | Reference |

|---|---|---|

| Coenzyme A | Acyl group carrier in lipid metabolism. | [16] [19] |

| NAD(P)H | Central electron carriers in redox reactions. | [16] [19] |

| Tetrahydrofolate | One-carbon unit transfer in nucleotide synthesis. | [16] [19] |

| S-Adenosylmethionine | Methyl group donor. | [16] [19] |

| Ubiquinone | Electron transport in respiratory chains. | [16] [19] |

| Pyridoxal Phosphate | Cofactor for amino acid metabolism. | [16] [19] |

Integrated Workflow for GEM Reconstruction and Analysis

Building a functional GEM involves systematically integrating the three core elements: the stoichiometric matrix, GPR associations, and the biomass equation. The following workflow, which can be implemented using tools like PyFBA [17], outlines the key steps.

Detailed Experimental Protocol for GEM Construction

The following protocol, adapted from the PyFBA methodology, details the process of building a metabolic model from a genome sequence [17].

- Genome Annotation: The first step is to identify all metabolic genes in the organism using an annotation tool such as RAST or Prokka. The output is a list of functional roles assigned to genes, ideally including Enzyme Commission (EC) numbers.

- Convert Functional Roles to Reactions: Map the functional roles to the enzyme complexes they form and subsequently to the metabolic reactions they catalyze. This requires a knowledge base like the Model SEED to manage the many-to-many relationships between roles, complexes, and reactions.

- Reconstruct GPR Rules: For each reaction, a Boolean GPR rule is defined. This can be automated with GPRuler, which infers the logic by mining protein complex and interaction data from databases like KEGG, UniProt, and the Complex Portal [18].

- Assemble the Stoichiometric Matrix: Compile the list of reactions and their stoichiometries into the S matrix. This defines the system of linear equations for the model.

- Formulate the Biomass Equation: Define the biomass objective function based on experimental data for the target organism or by adapting a template from a related organism. Be sure to include universally essential cofactors [16] [19].

- Gap Filling and Curation: The initial draft model will likely have "gaps"—reactions that are necessary for the production of key biomass precursors but are missing from the network. These are identified and added iteratively by comparing model predictions (e.g., of growth on a specific medium) with experimental data.

- Model Validation and Simulation: Validate the model by testing its ability to predict known physiological behaviors, such as growth on different carbon sources or gene essentiality. Once validated, the model can be used for FBA simulations to predict metabolic fluxes under different genetic or environmental conditions.
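The matrix-assembly step in the protocol above can be illustrated in plain Python. This is a minimal sketch on an invented three-reaction network, not PyFBA's implementation. The function names and reaction identifiers are hypothetical, and the sign convention (negative for consumed, positive for produced) is the usual one for stoichiometric matrices.

```python
def build_stoichiometric_matrix(reactions):
    """Assemble S (rows = metabolites, columns = reactions) from reaction dicts.

    reactions: dict mapping reaction ID -> {metabolite: coefficient}, with
    negative coefficients for consumed and positive for produced metabolites.
    """
    metabolites = sorted({m for stoich in reactions.values() for m in stoich})
    reaction_ids = list(reactions)
    matrix = [[reactions[r].get(m, 0.0) for r in reaction_ids]
              for m in metabolites]
    return metabolites, reaction_ids, matrix


def steady_state_residual(matrix, fluxes):
    """Return S*v; every entry is zero when the flux vector is mass balanced."""
    return [sum(coeff * v for coeff, v in zip(row, fluxes)) for row in matrix]


# Hypothetical toy network: uptake -> conversion -> biomass drain.
toy_reactions = {
    "EX_glc": {"glc": 1.0},                # supplies glucose to the system
    "R1": {"glc": -1.0, "pyr": 2.0},       # glc -> 2 pyr
    "BIOMASS": {"pyr": -1.0},              # drains pyruvate
}
mets, rxns, S = build_stoichiometric_matrix(toy_reactions)
print(mets, rxns, S)
print(steady_state_residual(S, [1.0, 1.0, 2.0]))
```

Genome-scale models hold thousands of reactions, so real tools store S as a sparse matrix; the dense nested list here is only for readability.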

Table 3: Key Computational Tools and Databases for GEM Reconstruction

| Tool / Resource | Type | Function in GEM Reconstruction | Reference |

|---|---|---|---|

| GPRuler | Software | Automates the reconstruction of Gene-Protein-Reaction (GPR) rules by mining multiple databases. | [18] |

| PyFBA | Software | A Python-based library for building metabolic models and running Flux Balance Analysis. | [17] |

| COBRA Toolbox | Software | A MATLAB suite for constraint-based modeling and analysis of GEMs. | [4] [8] |

| Model SEED | Database & Platform | Provides a consistent framework for connecting functional annotations to biochemistry for model building. | [17] |

| RAST | Service | A genome annotation server that provides functional roles which can be used as input for tools like PyFBA. | [17] |

| KEGG / MetaCyc | Database | Curated knowledge bases of metabolic pathways, enzymes, and reactions used for evidence during reconstruction. | [18] |

| Complex Portal | Database | A resource of curated protein complexes, crucial for inferring the "AND" logic in GPR rules. | [18] |

The construction of predictive genome-scale metabolic models is a structured process reliant on three meticulously defined elements: the stoichiometric matrix for network topology, GPR associations for genotype-phenotype links, and the biomass equation for modeling growth. Advances in automated tools like GPRuler for GPR inference and comprehensive databases for biomass composition are continuously enhancing the accuracy and scope of GEMs. A rigorous, iterative process of reconstruction and validation is paramount for generating reliable models. These models, in turn, provide a powerful platform for driving discovery in metabolic engineering, drug target identification, and fundamental biological research.

Genome-scale metabolic models (GSMMs) are computational representations of the metabolic network of an organism, detailing the biochemical transformations that occur within a cell. They are built on gene-protein-reaction (GPR) associations, connecting genomic information to catalytic proteins and the metabolic reactions they facilitate [8]. These models serve as a platform for integrating multi-omics data and applying constraint-based reconstruction and analysis (COBRA) methods, such as Flux Balance Analysis (FBA), to predict organism-specific metabolic capabilities and physiological states [8] [20]. The first GSMM was reconstructed for Haemophilus influenzae in 1999, paving the way for models of scientifically and industrially significant organisms across bacteria, archaea, and eukarya [8]. This guide provides a detailed overview of the GSMMs for four key model organisms: Escherichia coli, Bacillus subtilis, Saccharomyces cerevisiae, and Mycobacterium tuberculosis, framing them within the context of GSMM reconstruction and their applications in biomedical research.

Genome-Scale Metabolic Models of Key Organisms

The following table summarizes the core quantitative data for the GSMMs of the four model organisms, highlighting their reconstruction progress and key applications.

Table 1: Overview of Genome-Scale Metabolic Models for Key Model Organisms

| Organism | Representative Model(s) | Reactions / Genes / Metabolites | Key Applications and Distinctive Features | Prediction Accuracy (Examples) |

|---|---|---|---|---|

| Escherichia coli (Gram-negative bacterium) | iML1515 [8] | Not fully specified in sources | Reference strain for bacterial genetics [8]; industrial biotechnology and metabolic engineering [8]; models tailored for specific studies (e.g., iML1515-ROS for antibiotic design) [8] | 93.4% accuracy for gene essentiality simulation under minimal media with 16 different carbon sources [8] |

| Bacillus subtilis (Gram-positive bacterium) | iBsu1144 [8] | Not fully specified in sources | Industrial enzyme and protein production [8]; model incorporates thermodynamic information to improve reaction reversibility accuracy [8] | Used to identify effects of oxygen transfer rates on protease and recombinant protein production [8] |

| Saccharomyces cerevisiae (Eukaryotic yeast) | Yeast 7 [8] | Not fully specified in sources | First eukaryotic model organism with a GSMM [8]; consensus network (Yeast) reconstructed via international collaboration [8]; foundation for bio-based chemical production [8] | Continuously improved to remove thermodynamically infeasible reactions [8] |

| Mycobacterium tuberculosis (Bacterial pathogen) | iEK1101 [8] | Not fully specified in sources | Drug target identification against tuberculosis [8]; study of metabolism under in vivo hypoxic conditions [8]; integrated with human GSMMs to study host-pathogen interactions [8] | Used to evaluate metabolic responses to antibiotic pressure [8] |

Core Methodologies in GSMM Reconstruction and Analysis

The reconstruction of a high-quality, predictive GSMM follows a standardized workflow. The subsequent diagram illustrates the primary steps from genome annotation to model simulation and validation.

Detailed Experimental Protocols

Protocol 1: Gene Knockout Analysis Using MOMA

This protocol is used to identify essential genes and potential drug targets by simulating the effect of gene deletions on cellular growth [21].

- Model Loading: Import the genome-scale metabolic model (e.g., in SBML format) into a computational environment like the COBRA Toolbox [21].

- Simulation of Wild-Type Growth: Calculate the wild-type growth rate using Flux Balance Analysis (FBA) with the biomass reaction set as the objective function.

- Single-Gene Knockout: For each gene in the model, computationally delete the gene by constraining the flux of all associated reactions to zero.

- Simulation of Mutant Growth: Use the Minimization of Metabolic Adjustment (MOMA) algorithm to predict the growth rate of the knockout mutant. MOMA is preferred for its ability to find a flux distribution close to the wild-type state, as cells often do not immediately reach a new optimal state after gene deletion [21].

- Calculate Fractional Cell Growth (FCG): Determine the FCG for each knockout as the ratio of the mutant growth rate to the wild-type growth rate.

- Rank and Identify Essential Genes: Rank genes based on their FCG. Genes with an FCG below a defined threshold (e.g., $10^{-6}$) are classified as essential for growth and are potential drug targets [21].
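The last two steps of this protocol reduce to simple arithmetic once the growth rates are available. The sketch below assumes the wild-type and mutant growth rates have already been computed elsewhere (for example by FBA and MOMA in a constraint-based modelling package). The function name `rank_knockouts`, the gene labels, and the numbers are hypothetical.

```python
def rank_knockouts(wild_type_growth, mutant_growth, threshold=1e-6):
    """Compute fractional cell growth (FCG) per knockout and flag essential genes.

    wild_type_growth: wild-type growth rate (must be positive).
    mutant_growth: dict mapping gene ID -> predicted mutant growth rate.
    threshold: FCG cut-off below which a gene is called essential.
    """
    if wild_type_growth <= 0:
        raise ValueError("wild-type growth rate must be positive")
    fcg = {gene: rate / wild_type_growth for gene, rate in mutant_growth.items()}
    # Most growth-reducing knockouts first.
    ranked = sorted(fcg.items(), key=lambda item: item[1])
    essential = [gene for gene, value in ranked if value < threshold]
    return ranked, essential


# Hypothetical growth rates (1/h); real values would come from the simulations.
ranked, essential = rank_knockouts(0.8, {"geneA": 0.0, "geneB": 0.4, "geneC": 0.8})
print(ranked)
print(essential)
```

The threshold is a modelling choice; the default here simply mirrors the example value given in the protocol.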

Protocol 2: Reconstruction of a Context-Specific Model with iMAT

This protocol generates tissue- or condition-specific models by integrating transcriptomic data into a generic GSMM [22].

- Data Acquisition and Preprocessing: Obtain transcriptomic data (e.g., RNA-seq) for the specific condition or cell type of interest. Map the expressed genes to their corresponding reactions in the generic model (e.g., the Human1 model) using Gene-Protein-Reaction (GPR) associations [22].

- Reaction Expression Categorization: Calculate a reaction expression level based on the associated gene expression values and GPR rules. Categorize each reaction as:

  - Highly expressed: Expression above a threshold (e.g., mean + 0.5 * standard deviation).

  - Moderately expressed: Expression between the two thresholds.

  - Lowly expressed: Expression below a threshold (e.g., mean - 0.5 * standard deviation) [22].

- Apply iMAT Algorithm: Use the Integrative Metabolic Analysis Tool (iMAT) to create a context-specific model. iMAT formulates a mixed-integer linear programming (MILP) problem to find a flux distribution that:

  - Maximizes the number of highly expressed reactions carrying flux.

  - Maximizes the number of lowly expressed reactions carrying no flux (i.e., minimizes their activity) [22].

- Model Extraction and Validation: Extract the consistent subnetwork as the context-specific model. Validate the model by testing its ability to perform known metabolic functions or by comparing simulated fluxes to experimental data.
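The categorization step of this protocol can be sketched as follows. Two points are assumptions rather than details from the cited study: gene expression is mapped to reactions with the common min-for-AND, max-for-OR convention, and the standard deviation is the sample standard deviation. The function names and all values are hypothetical. The MILP step of iMAT itself is not shown.

```python
from statistics import mean, stdev


def reaction_expression(isozyme_complexes, gene_expression):
    """Map gene expression onto one reaction.

    isozyme_complexes: list of complexes joined by OR; each complex is a list
    of gene IDs joined by AND. Assumed convention: AND -> min, OR -> max.
    """
    return max(min(gene_expression[g] for g in complex_genes)
               for complex_genes in isozyme_complexes)


def categorize_reactions(reaction_levels, k=0.5):
    """Label reactions 'high', 'moderate', or 'low' using mean +/- k * SD."""
    values = list(reaction_levels.values())
    mu, sd = mean(values), stdev(values)  # sample SD, an assumption
    upper, lower = mu + k * sd, mu - k * sd
    labels = {}
    for rxn, level in reaction_levels.items():
        if level > upper:
            labels[rxn] = "high"
        elif level < lower:
            labels[rxn] = "low"
        else:
            labels[rxn] = "moderate"
    return labels


# Hypothetical expression values.
genes = {"g1": 4.0, "g2": 2.0, "g3": 3.0}
print(reaction_expression([["g1", "g2"], ["g3"]], genes))
print(categorize_reactions({"R1": 10.0, "R2": 5.0, "R3": 0.0}))
```

The resulting labels are what an iMAT-style optimization would take as input when deciding which reactions should preferably carry flux.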

Table 2: Essential Research Reagents and Computational Tools for GSMM Work

| Item Name | Function / Application | Specific Examples / Notes |

|---|---|---|

| COBRA Toolbox [23] | A MATLAB-based software suite for constraint-based modeling. It is the standard tool for performing simulations like FBA, gene knockout analysis, and pathway analysis. | Used for performing pFBA and single-gene knockout studies [21]. |

| CIBERSORTx [22] | A machine learning tool for deconvoluting bulk tissue transcriptome data to estimate cell type-specific gene expression profiles. | Used to impute mast cell-specific gene expression from bulk lung tissue data [22]. |

| Kyoto Encyclopedia of Genes and Genomes (KEGG) [24] | A comprehensive database used for retrieving metabolic pathways, reactions, enzymes, and genes during the draft reconstruction of a GSMM. | Used as the primary data source for reconstructing the Vibrio parahaemolyticus model VPA2061 [24]. |

| Biomass Objective Function | A pseudo-reaction that represents the drain of biomass precursors (e.g., amino acids, nucleotides, lipids) required for cell growth. It serves as the objective for growth simulation in FBA. | Typically comprises ~43 metabolites in cancer cell-line models [21]. Critical for simulating cellular proliferation. |

| Human1 Model [22] | A consensus, comprehensive GSMM of human metabolism. Serves as a scaffold for building context-specific models of human cells and tissues. | Used as the base model for constructing lung tissue and mast cell-specific models [22]. |

| Parsimonious FBA (pFBA) [21] | An extension of FBA that finds the flux distribution that supports optimal growth while minimizing the total sum of absolute fluxes, representing an assumption of enzyme efficiency. | Used to classify genes into categories such as essential, pFBA optima, and metabolically less efficient (MLE) [21]. |

Application Workflow: From Gene Knockout to Drug Target Identification

The following diagram outlines a specific application of GSMMs in drug discovery, demonstrating how computational predictions are validated experimentally.

This workflow has been successfully implemented to identify and validate novel drug targets. For instance, a study using GSMMs of the NCI-60 cancer cell line panel performed single-gene knockout studies to rank metabolic genes by the growth reduction their deletion caused [21]. The top-ranked genes were further analyzed to ensure they were non-essential in normal cells, thus maximizing therapeutic potential. This computational approach was subsequently validated experimentally, demonstrating that the drugs mitotane and myxothiazol could inhibit the growth of at least four cell lines in the NCI-60 database [21]. This underscores the power of GSMMs to generate testable hypotheses for drug development.

Genome-scale metabolic reconstructions (GENREs) are structured knowledge bases that represent the biochemical reaction networks of an organism. Converting these reconstructions into computable genome-scale metabolic models (GEMs) enables the simulation of phenotypic states and the prediction of metabolic responses to genetic and environmental perturbations [25]. The field has matured significantly, moving from labor-intensive, manual efforts for single organisms to semi-automated, high-throughput pipelines capable of generating reconstructions for hundreds of thousands of microbes [11] [26]. This whitepaper provides a technical overview of the current statistical landscape of reconstructed organisms across the domains of life, detailing the methodologies that enabled this expansion and the resources required for such systems-level research.

Quantitative Landscape of Reconstructed Organisms

The scope of genome-scale metabolic reconstructions has expanded dramatically, driven by advancements in computational tools and the availability of genomic data. The table below summarizes key quantitative statistics.

| Domain of Life / Project | Reported Number of Reconstructions | Key Phyla or Groups Represented | Noteworthy Features |

|---|---|---|---|

| Human Gut Microbiome (APOLLO Resource) | 247,092 microbial reconstructions [26] | 19 phyla [26] | Includes >60% uncharacterized strains; spans 34 countries, all age groups, multiple body sites [26] |

| General Progress (as of 2020) | Reconstructions for >30 organisms published by 2010; the number has since increased rapidly [25] [11] | Bacteria, Archaea, Eukaryotes [25] | Enabled pan-genome analyses and strain-specific modeling [25] |

| Enzyme-Constrained Models (GECKO 2.0) | Generated for multiple key organisms [5] | S. cerevisiae, E. coli, Y. lipolytica, K. marxianus, H. sapiens [5] | Incorporates enzymatic constraints and proteomics data; uses automated update pipelines [5] |

Methodologies for Reconstruction and Analysis

The reconstruction of high-quality, genome-scale metabolic networks is a multi-stage process that integrates genomic, biochemical, and physiological data.

Core Reconstruction Workflow

The established protocol for building a metabolic network reconstruction involves four major stages [11]:

- Draft Reconstruction: Initiated by obtaining the genetic parts list from an annotated genome sequence. Genes are associated with metabolic functions using databases like KEGG and BRENDA, and the corresponding biochemical reactions are delineated to form Gene-Protein-Reaction (GPR) associations [11] [27].

- Manual Reconstruction Refinement and Curation: The draft network is manually refined. This critical step addresses organism-specific features such as substrate specificity, cofactor utilization, reaction directionality, and subcellular localization, which automated tools often miss [11] [27].

- Network Conversion to a Mathematical Model: The curated reconstruction is converted into a stoichiometric matrix (S), where rows represent metabolites and columns represent reactions. This model enables constraint-based computational analysis [11].

- Network Validation and Debugging: The functional capability of the model is tested by simulating known physiological functions, such as the production of all essential biomass precursors. Discrepancies between simulations and experimental data guide further network refinement [11].

The following diagram illustrates this multi-stage workflow and its iterative nature:

Advanced and High-Throughput Methodologies

To address the challenges of scale and prediction accuracy, several advanced methodologies have been developed:

- Enzyme-Constrained Modeling (GECKO): The GECKO toolbox enhances GEMs by incorporating enzymatic constraints using kinetic parameters (e.g., kcat values) from databases like BRENDA [5]. This allows for the integration of proteomics data and improves the prediction of metabolic behaviors, such as the Crabtree effect in yeast and overflow metabolism in bacteria, by accounting for the limited cellular protein pool [5].

- High-Throughput Reconstruction Pipelines: Projects like the APOLLO resource utilize optimized, parallelized pipelines to reconstruct metabolism for hundreds of thousands of metagenome-assembled genomes (MAGs) simultaneously [26]. This approach leverages machine learning to predict taxonomic assignments based on metabolic features and to build sample-specific community models, enabling the stratification of microbiomes by body site, age, and disease state [26].

- Community and Multi-Omics Integration: Metabolic reconstructions form the basis for modeling microbial communities. Methods like OptCom facilitate the metabolic modeling of interactions within communities [25]. Furthermore, reconstructions serve as a scaffold for integrating multi-omics data (e.g., transcriptomics, proteomics, metabolomics) to generate context-specific models for personalized analysis [25] [26].
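The idea behind enzyme constraints can be shown with a back-of-the-envelope calculation: each flux requires at least flux / kcat of enzyme, and the summed enzyme mass must fit within a limited protein pool. The snippet below is a simplified sketch of that bookkeeping, not the GECKO toolbox's implementation. It assumes one enzyme per reaction and full saturation, and the function names, kcat values, molecular weights, and pool size are all hypothetical.

```python
def enzyme_demand(fluxes, kcat_per_s, mw_g_per_mol):
    """Minimum enzyme mass (g/gDW) needed to carry each flux.

    fluxes: reaction ID -> flux in mmol/gDW/h.
    kcat_per_s: reaction ID -> turnover number in 1/s.
    mw_g_per_mol: reaction ID -> enzyme molecular weight in g/mol.
    """
    demand = {}
    for rxn, flux in fluxes.items():
        kcat_per_h = kcat_per_s[rxn] * 3600.0
        enzyme_mmol = abs(flux) / kcat_per_h             # mmol enzyme per gDW
        demand[rxn] = enzyme_mmol * mw_g_per_mol[rxn] / 1000.0
    return demand


def within_protein_pool(demand, pool_g_per_gdw):
    """Check the pooled constraint: total enzyme mass must not exceed the pool."""
    return sum(demand.values()) <= pool_g_per_gdw


# Hypothetical numbers for two reactions.
demand = enzyme_demand(
    fluxes={"R1": 36.0, "R2": 18.0},
    kcat_per_s={"R1": 10.0, "R2": 100.0},
    mw_g_per_mol={"R1": 50000.0, "R2": 40000.0},
)
print(demand)
print(within_protein_pool(demand, 0.1))
```

A slow enzyme (low kcat) carrying a high flux dominates the demand, which is the mechanism by which such constraints can make a less efficient but cheaper pathway preferable at high growth rates.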

The reconstruction and simulation of genome-scale metabolic models rely on a suite of key databases, software tools, and computational environments.

Table 2: Key Research Reagent Solutions for Metabolic Reconstruction

| Resource Name | Type | Primary Function in Reconstruction & Modeling |

|---|---|---|

| KEGG [11] [27] | Biochemical Database | Maps genes to metabolic pathways and reactions; provides EC number associations. |

| BRENDA [5] [11] [27] | Enzyme Kinetic Database | Source for enzyme kinetic parameters (e.g., kcat values); crucial for enzyme-constrained models. |

| MetaCyc / BioCyc [27] | Biochemical Database | Curated database of metabolic pathways and enzymes. |

| COBRA Toolbox [25] [11] | Software Package (MATLAB) | A suite of functions for constraint-based reconstruction and analysis (e.g., performing FBA). |

| COBRApy [25] | Software Package (Python) | Python implementation of constraint-based reconstruction and analysis methods. |

| GECKO Toolbox [5] | Software Package (MATLAB/Python) | Enhances GEMs with enzymatic constraints using kinetic and proteomics data. |

| Pathway Tools [27] | Software Package | Aids in automated generation of draft metabolic networks from a genome annotation. |

| OptKnock [25] | Computational Algorithm | A bilevel programming framework for identifying gene knockout strategies for strain optimization. |

| APOLLO Resource [26] | Model Repository | Provides access to a vast resource of pre-computed microbial metabolic reconstructions. |

| Biomass Objective Function [25] | Model Component | A pseudo-reaction that defines the drain of metabolites required for cellular growth; essential for simulating growth. |

Methodological Approaches and Transformative Applications in Biomedical Research

Genome-scale metabolic models (GEMs) provide a computational representation of the metabolic network of an organism, enabling the prediction of physiological properties from genomic information [28]. The reconstruction of high-quality GEMs is a critical step in systems biology, with applications ranging from metabolic engineering and drug discovery to the study of microbial ecology [29] [28]. Automated reconstruction tools have emerged to address the challenge of building these complex models from the vast amount of genomic data now available.

This technical guide compares prominent automated reconstruction tools: CarveMe, gapseq, and KBase, which implements the ModelSEED pipeline and is therefore treated together with ModelSEED below; Bactabolize is additionally included in the performance comparison. We examine their underlying methodologies, database dependencies, performance characteristics, and suitability for different research scenarios. Understanding the strengths and limitations of each tool is essential for researchers, scientists, and drug development professionals who rely on metabolic models to generate accurate biological insights.

Comparative Analysis of Reconstruction Tools

Fundamental Approaches and Database Dependencies

Automated reconstruction tools employ distinct strategies for constructing metabolic models, which significantly impact their output and applications.

Table 1: Core Characteristics of Automated Reconstruction Tools

| Tool | Reconstruction Approach | Primary Database Sources | Model Output | Key Features |

|---|---|---|---|---|

| CarveMe | Top-down (template-based) | BiGG universal model [30] | Ready-for-FBA models [30] | Fast reconstruction speed; Uses a universal model as template [30] |

| gapseq | Bottom-up (genome-driven) | Multiple sources including ModelSEED, manually curated database [29] | Ready-for-FBA models with comprehensive biochemistry [29] | Informed gap-filling; Superior enzyme activity prediction [29] |

| KBase/ModelSEED | Bottom-up (genome-driven) | ModelSEED biochemistry (integrates KEGG, MetaCyc, EcoCyc, Plant BioCyc) [31] | Draft models requiring optional gapfilling [31] | Integrated with RAST annotation; Web-based platform [32] [31] |

The reconstruction philosophy fundamentally differs between tools. CarveMe employs a top-down approach that begins with a universal metabolic network and "carves out" a species-specific model by removing reactions without genomic evidence [30]. In contrast, gapseq and KBase/ModelSEED utilize bottom-up approaches that build models by adding metabolic reactions based on annotated genomic sequences [30] [31].

Database dependencies significantly influence model content. gapseq leverages a manually curated database comprising 15,150 reactions and 8,446 metabolites, derived from ModelSEED but with additional curation [29]. KBase relies on the ModelSEED biochemistry database, which integrates multiple biochemical databases [31]. CarveMe uses the BiGG database as its foundation, though concerns have been raised about its ongoing maintenance [33].

Performance and Predictive Accuracy

Table 2: Performance Comparison of Reconstruction Tools

| Tool | Reconstruction Speed | Enzyme Activity Prediction (True Positive Rate) | Carbon Source Utilization Prediction | Gene Essentiality Prediction | Computational Requirements |

|---|---|---|---|---|---|

| CarveMe | Fast (20-31 seconds/model) [34] | 27% [29] | Moderate accuracy [33] | Moderate accuracy [33] | Command line; Dependent on commercial solvers (CPLEX) [33] |

| gapseq | Slow (4.55-6.28 hours/model without gap-filling) [34] | 53% [29] | High accuracy [29] [33] | High accuracy [29] | Command line; Comprehensive biochemical information [29] |

| KBase/ModelSEED | Moderate (2-5.6 minutes/model) [34] | 30% [29] | Moderate accuracy [33] | Moderate accuracy [33] | Web-based interface; Not suitable for high-throughput analysis [33] [34] |

| Bactabolize | Very Fast (<3 minutes/model) [33] | N/A | Highest accuracy among tools [33] | High accuracy [33] | Command line; Reference-based [33] |

Independent evaluations demonstrate significant variability in predictive performance across tools. gapseq shows superior performance in predicting enzyme activities, achieving a 53% true positive rate compared to 27% for CarveMe and 30% for ModelSEED [29]. This advantage extends to carbon source utilization and fermentation product prediction, where gapseq consistently outperforms other tools [29].

For high-throughput studies requiring rapid model generation, CarveMe and Bactabolize offer significant speed advantages. CarveMe can reconstruct models in 20-31 seconds, while Bactabolize requires under 3 minutes per genome [33] [34]. In contrast, gapseq requires several hours per model, making it less suitable for large-scale studies [34].

Structural Differences in Reconstructed Models

Comparative analysis of GEMs reconstructed from the same metagenome-assembled genomes (MAGs) reveals substantial structural differences depending on the reconstruction approach [30]. gapseq models typically encompass more reactions and metabolites compared to CarveMe and KBase models, though they also exhibit a larger number of dead-end metabolites [30]. CarveMe models generally contain the highest number of genes [30].
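Dead-end metabolites can be found by inspecting the network topology alone. The sketch below uses a simplified definition that is an assumption of this example: a metabolite is a dead end if no reaction can produce it, no reaction can consume it, or only a single reaction touches it. The function name and toy network are hypothetical.

```python
def dead_end_metabolites(reactions):
    """List metabolites that cannot be both produced and consumed.

    reactions: reaction ID -> (stoichiometry dict, reversible flag).
    Reversible reactions count as both producer and consumer.
    """
    producers, consumers = {}, {}
    for rxn_id, (stoich, reversible) in reactions.items():
        for met, coeff in stoich.items():
            if coeff == 0:
                continue
            if coeff > 0 or reversible:
                producers.setdefault(met, set()).add(rxn_id)
            if coeff < 0 or reversible:
                consumers.setdefault(met, set()).add(rxn_id)
    dead_ends = []
    for met in sorted(set(producers) | set(consumers)):
        made_by = producers.get(met, set())
        used_by = consumers.get(met, set())
        # A single reversible reaction cannot both make and drain a metabolite.
        if not made_by or not used_by or len(made_by | used_by) < 2:
            dead_ends.append(met)
    return dead_ends


# Hypothetical toy network.
toy = {
    "EX_glc": ({"glc": 1.0}, False),
    "R1": ({"glc": -1.0, "pyr": 1.0}, False),
    "R2": ({"pyr": -1.0, "lac": 1.0}, True),
    "R3": ({"pyr": -1.0, "x": 1.0}, False),
}
print(dead_end_metabolites(toy))
```

Counting the result for models built from the same genome by different tools gives the kind of comparison described above.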

The Jaccard similarity between reaction sets of models reconstructed from the same MAGs is relatively low (0.23-0.24 on average), indicating that different tools produce substantially different metabolic networks [30]. gapseq and KBase models show higher similarity to each other, likely due to their shared usage of the ModelSEED database [30].
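For reference, the Jaccard similarity of two reaction sets is the size of their intersection divided by the size of their union. A minimal sketch follows. The reaction identifiers are hypothetical and are assumed to have been mapped to a shared namespace beforehand, since different tools name reactions differently.

```python
def jaccard_similarity(reactions_a, reactions_b):
    """Jaccard index |A & B| / |A | B| of two collections of reaction IDs."""
    a, b = set(reactions_a), set(reactions_b)
    union = a | b
    # Two empty sets are treated as identical by convention in this sketch.
    return len(a & b) / len(union) if union else 1.0


# Hypothetical reaction sets from two tools for the same genome.
model_one = {"R1", "R2", "R3"}
model_two = {"R2", "R3", "R4", "R5"}
print(jaccard_similarity(model_one, model_two))
```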

Methodologies and Experimental Protocols

Reconstruction Workflows

The following diagram illustrates the generalized workflow for metabolic model reconstruction shared by most automated tools, with tool-specific variations noted:

Workflow Title: Generalized Metabolic Model Reconstruction Process

Genome Annotation

The initial step involves identifying protein-coding sequences and assigning functional annotations. KBase requires RAST (Rapid Annotation using Subsystem Technology) annotations, which use the SEED functional ontology linked directly to the ModelSEED biochemistry database [31]. gapseq generates its own annotations using a custom protein sequence database derived from UniProt and TCDB, comprising over 130,000 unique sequences [29]. CarveMe can work with various annotation formats but is optimized for use with the BiGG database [30].

Draft Model Construction

This step converts genomic annotations into a metabolic network. CarveMe employs a top-down approach, starting with a universal model containing all known metabolic reactions and removing those without genomic support [30]. gapseq and KBase/ModelSEED use bottom-up approaches, constructing models by adding reactions based on annotated genomic sequences [30] [31]. KBase constructs organism-specific biomass reactions based on template models that incorporate non-universal cofactors, lipids, and cell wall components [31].

Gap-Filling

Gap-filling identifies and adds missing reactions necessary for metabolic functionality. gapseq uses a novel Linear Programming (LP)-based algorithm that incorporates sequence homology to reference proteins to identify and resolve gaps [29]. This approach reduces medium-specific effects on network structure. KBase employs an optimization algorithm that identifies the minimal set of reactions from the ModelSEED biochemistry database needed to enable biomass production in specified conditions [31]. The COMMIT algorithm, used in consensus approaches, performs iterative gap-filling based on MAG abundance, progressively updating the medium with metabolites from previous gap-filling steps [30].
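The core idea of "add the fewest reactions that restore a target function" can be illustrated without an optimization solver. The sketch below deliberately swaps in a much simpler technique than the LP-based algorithms described above: it tests producibility with a topological network expansion and searches candidate sets by brute force in order of increasing size. It ignores stoichiometry, reversibility, and sequence evidence, and all names and reactions are hypothetical.

```python
from itertools import combinations


def producible(reactions, seed):
    """Network expansion: metabolites reachable from the seed (medium) set.

    reactions: reaction ID -> (set of substrates, set of products).
    """
    available = set(seed)
    changed = True
    while changed:
        changed = False
        for substrates, products in reactions.values():
            if substrates <= available and not products <= available:
                available |= products
                changed = True
    return available


def minimal_gapfill(draft, candidates, seed, targets, max_added=3):
    """Smallest set of candidate reactions that makes all targets producible."""
    targets = set(targets)
    for size in range(max_added + 1):
        for combo in combinations(sorted(candidates), size):
            trial = dict(draft)
            trial.update({rxn_id: candidates[rxn_id] for rxn_id in combo})
            if targets <= producible(trial, seed):
                return list(combo)
    return None  # no solution within max_added reactions


# Hypothetical draft model missing the g6p -> pyr step.
draft = {"R1": ({"glc"}, {"g6p"}), "R3": ({"pyr"}, {"ala"})}
candidates = {
    "C1": ({"g6p"}, {"pyr"}),
    "C2": ({"glc"}, {"xyl"}),
    "C3": ({"xyl"}, {"pyr"}),
}
print(minimal_gapfill(draft, candidates, seed={"glc"}, targets={"ala"}))
```

Brute force is only workable for a handful of candidates. With a universal database of thousands of reactions the search has to be posed as an LP or MILP, which is what the tools discussed here do.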

Model Validation

The final step involves assessing model quality and predictive accuracy. Common validation approaches include:

- Comparing predicted vs. experimental enzyme activities [29]

- Testing carbon source utilization predictions against phenotypic data [29] [33]

- Assessing gene essentiality predictions against experimental knockout studies [35] [33]

- Evaluating growth rates and yields under different conditions [32]
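Most of these checks come down to comparing binary predictions with binary observations. The sketch below computes a confusion matrix, the true positive rate, and overall accuracy for a set of growth/no-growth calls. The function names and the carbon-source data are hypothetical.

```python
def confusion_counts(predicted, observed):
    """Count TP, FP, TN, FN over the items present in both dictionaries."""
    tp = fp = tn = fn = 0
    for item in predicted.keys() & observed.keys():
        if predicted[item] and observed[item]:
            tp += 1
        elif predicted[item] and not observed[item]:
            fp += 1
        elif not predicted[item] and not observed[item]:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


def true_positive_rate(tp, fn):
    """Fraction of experimentally positive cases that the model predicts."""
    return tp / (tp + fn) if (tp + fn) else float("nan")


def accuracy(tp, fp, tn, fn):
    """Fraction of all compared cases that the model predicts correctly."""
    total = tp + fp + tn + fn
    return (tp + tn) / total if total else float("nan")


# Hypothetical growth predictions versus phenotype data.
predicted = {"glucose": True, "lactose": True, "citrate": False, "xylose": False}
observed = {"glucose": True, "lactose": False, "citrate": False, "xylose": True}
counts = confusion_counts(predicted, observed)
print(counts, true_positive_rate(counts[0], counts[3]), accuracy(*counts))
```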

Consensus Reconstruction Approach

Recent research has explored consensus reconstruction methods that combine outputs from multiple reconstruction tools. This approach addresses the inherent uncertainty in GEM reconstruction by integrating models from different tools [30]. The protocol involves:

- Draft Model Generation: Reconstruct models from the same genome using CarveMe, gapseq, and KBase

- Model Integration: Merge draft models into a consensus model containing reactions supported by multiple tools

- Gap-Filling: Use algorithms like COMMIT to fill remaining gaps in the consensus model [30]

Studies show that consensus models encompass more reactions and metabolites while reducing dead-end metabolites, potentially offering more comprehensive metabolic network coverage [30].
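The integration step can be sketched as a vote over reaction sets. In the snippet below, `min_support` is a hypothetical parameter introduced for illustration: a value of 1 keeps the union of all drafts, while 2 keeps only reactions proposed by at least two tools. The reaction identifiers are invented and are assumed to share one namespace. This is not the published merging procedure.

```python
from collections import Counter


def consensus_reactions(draft_models, min_support=1):
    """Merge reaction sets from several tools, recording support per reaction.

    draft_models: tool name -> iterable of reaction IDs (common namespace).
    Returns a dict reaction ID -> number of tools that proposed it.
    """
    support = Counter(rxn for reactions in draft_models.values()
                      for rxn in set(reactions))
    return {rxn: n for rxn, n in support.items() if n >= min_support}


# Hypothetical drafts for one genome.
drafts = {
    "carveme": {"R1", "R2"},
    "gapseq": {"R2", "R3"},
    "kbase": {"R1", "R2", "R4"},
}
print(sorted(consensus_reactions(drafts).items()))
print(sorted(consensus_reactions(drafts, min_support=2).items()))
```

Keeping the support count alongside each reaction gives a rough confidence score that later curation or gap-filling can use.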

Research Reagent Solutions

Table 3: Essential Research Reagents and Resources for Metabolic Reconstruction

| Resource Type | Specific Examples | Function in Reconstruction Process | Availability |

|---|---|---|---|

| Biochemical Databases | ModelSEED, BiGG, KEGG, MetaCyc, EcoCyc | Provide curated reaction information, stoichiometry, and metabolite identifiers [29] [31] | Publicly available |

| Protein Sequence Databases | UniProt, TCDB | Reference sequences for homology-based functional annotation [29] | Publicly available |

| Annotation Tools | RAST, Prodigal | Identify coding sequences and assign initial functional annotations [33] [31] | Open source |

| Solvers | CPLEX, Gurobi | Solve linear programming problems during gap-filling and flux balance analysis [33] | Commercial (academic licenses available) |

| Phenotype Data | BacDive, Biolog | Experimental data for model validation [29] [33] | Publicly available |

| Programming Frameworks | COBRApy, RAVEN Toolbox | Provide computational infrastructure for model manipulation and analysis [33] | Open source |

Uncertainty and Limitations in Metabolic Reconstruction

Despite advances in automated reconstruction, significant uncertainties remain throughout the process. These include:

- Annotation Uncertainty: Functional annotations based on sequence homology are inherently uncertain, with many genes annotated as hypothetical proteins of unknown function [28]. Different databases contain varying levels of misannotations, which propagate to the reconstructed models [28].

- Database Biases: Each reconstruction tool relies on different biochemical databases with inconsistent reaction and metabolite naming conventions, making model integration challenging [30]. The set of exchanged metabolites in community models is more influenced by the reconstruction approach than the specific bacterial community, suggesting a potential bias in predicting metabolite interactions [30].

- Gap-Filling Dependencies: Gap-filling algorithms are sensitive to the specified growth medium, potentially resulting in models that are optimized for specific conditions but lack versatility [29] [28]. The minimal reaction addition approach may not reflect biological reality.

- Transport Reaction Uncertainty: Annotation of transport reactions is particularly challenging, with substrate specificity often difficult to predict accurately [28]. Incorrect transport reactions can cause ATP-generating cycles that lead to prediction inaccuracies [28].

Probabilistic approaches and ensemble modeling have been proposed to address these uncertainties, providing a more formal characterization of the confidence in model predictions [28].

Automated reconstruction tools have dramatically accelerated the process of building genome-scale metabolic models, yet each approach presents distinct trade-offs. CarveMe offers speed advantages suitable for high-throughput studies, while gapseq provides superior predictive accuracy at the cost of longer computation times. KBase/ModelSEED offers an integrated web-based platform but is less suitable for large-scale analyses. The emerging consensus approach of combining multiple reconstruction tools shows promise for generating more comprehensive and robust metabolic models.

The choice of reconstruction tool should be guided by research objectives, with consideration of the required balance between speed, accuracy, and biological comprehensiveness. As the field advances, addressing uncertainties through probabilistic methods and improved integration of diverse data sources will further enhance the predictive power and utility of genome-scale metabolic models in basic research and drug development applications.

Genome-scale metabolic models (GEMs) are computational representations of the complete metabolic network of an organism, primarily reconstructed from genomic information and literature [1] [36]. These models contain all known metabolic reactions, the genes that encode each enzyme, and their stoichiometric relationships [37]. The process of reconstructing a GEM involves functional annotation of the genome, identification of associated reactions, determination of reaction stoichiometry, assignment of subcellular localization, determination of biomass composition, estimation of energy requirements, and definition of model constraints [1] [36]. This integrated information creates a stoichiometric model valuable for analyzing metabolic potential using constraint-based approaches.

GEMs mathematically define the relationship between genotype and phenotype by contextualizing different types of large-scale omics data, including genomic, metabolomic, and transcriptomic data [38]. The core structure of a GEM is the stoichiometric matrix (S), where rows represent metabolites and columns represent reactions. The entries in the matrix are the stoichiometric coefficients of metabolites in each reaction, with negative coefficients indicating consumption and positive coefficients indicating production [39]. This forms the foundation for all constraint-based analysis techniques, enabling quantitative simulation of metabolic fluxes under various physiological conditions.

Table 1: Key Components of Genome-Scale Metabolic Models

| Component | Description | Role in Constraint-Based Analysis |
|---|---|---|
| Stoichiometric Matrix (S) | Mathematical representation of metabolic network connectivity | Defines mass balance constraints for the system |
| Reaction Fluxes (v) | Vector of metabolic reaction rates | Variables to be determined in the analysis |
| Gene-Protein-Reaction (GPR) Rules | Boolean relationships connecting genes to enzymes and reactions | Links genotype to metabolic phenotype |
| Exchange Reactions | Reactions that simulate metabolite uptake and secretion | Define boundary conditions for the model |
| Biomass Objective Function | Reaction representing biomass composition | Often used as the objective function to maximize |

Fundamental Principles of Constraint-Based Analysis

Constraint-based modeling approaches enable the study of metabolic networks at steady state, where metabolite concentrations do not change over time [39]. This steady-state assumption is formalized mathematically as:

\[ S \cdot v = 0 \]

where \(S\) is the stoichiometric matrix and \(v\) is the vector of reaction fluxes [37] [39]. This equation ensures that for each metabolite, the sum of fluxes producing it equals the sum of fluxes consuming it, preventing accumulation or depletion of intracellular metabolites over time [39].
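As a minimal illustration of the steady-state condition, the sketch below builds the stoichiometric matrix for a hypothetical three-reaction chain and checks whether a flux vector satisfies the mass balance. The toy network, the reaction names, and the helper functions `mass_balance` and `is_steady_state` are assumptions made for illustration and do not come from any published model.

```python
# Toy network (hypothetical, for illustration only):
#   R1: -> A        (uptake)
#   R2: A -> B      (conversion)
#   R3: B ->        (secretion)
metabolites = ["A", "B"]
reactions = ["R1", "R2", "R3"]

# Rows are metabolites, columns are reactions.
# Negative entries mean consumption, positive entries mean production.
S = [
    [1, -1, 0],   # A: produced by R1, consumed by R2
    [0, 1, -1],   # B: produced by R2, consumed by R3
]


def mass_balance(S, v):
    """Return S . v, the net production rate of each metabolite."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]


def is_steady_state(S, v, tol=1e-9):
    """True if no metabolite accumulates or is depleted."""
    return all(abs(b) <= tol for b in mass_balance(S, v))


balanced = [5.0, 5.0, 5.0]      # every flux equal: nothing accumulates
unbalanced = [5.0, 3.0, 3.0]    # A is produced faster than it is consumed

print(is_steady_state(S, balanced))     # True
print(mass_balance(S, unbalanced))      # [2.0, 0.0]
```

The second flux vector leaves a net production of 2 units of A, so it violates the steady-state assumption and would be excluded from the feasible space.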

In addition to the mass balance equality constraints, other constraints are applied to limit the feasible solution space. These typically include inequality constraints that define lower and upper boundaries for reaction fluxes:

\[ \alpha_i \leq v_i \leq \beta_i \]

These boundaries can describe enzyme capacity, reversibility of reactions (where irreversible reactions have a lower bound of zero), or physiological limitations inferred from experimental data [37] [39]. The combination of these constraints defines a space of possible metabolic flux distributions that the cell can maintain, representing its metabolic capabilities.

The constraint-based framework does not require kinetic parameters or enzyme concentrations, making it particularly suitable for genome-scale models where such detailed information is often unavailable [37]. Instead, it relies on the network stoichiometry and applied constraints to determine possible metabolic behaviors. This approach has been successfully applied to bacteria, archaea, and eukaryotic organisms, with models continually being refined and expanded [38].

Figure 1: Conceptual workflow of constraint-based metabolic modeling, showing the transformation of biological data into a defined solution space of possible metabolic behaviors.

Flux Balance Analysis (FBA): Core Methodology and Applications

Flux Balance Analysis is a mathematical approach for analyzing the flow of metabolites through a metabolic network, particularly at the genome scale [37]. FBA estimates unknown fluxes using optimality principles, assuming that the flux vector \(v^0\) maximizes a given biological objective function [37]. The most common objective is the maximization of biomass production, representing cellular growth, though other objectives like ATP production or substrate uptake minimization are also used [39].

The FBA optimization problem is formally defined as:

\[ \begin{aligned} \max_{v} \quad & c^T \cdot v \\ \text{subject to} \quad & N \cdot v = 0 \\ & \alpha_i \leq v_i \leq \beta_i \end{aligned} \]

where \(c\) is a vector defining the linear objective function (typically zeros except for a 1 at the position of the biomass reaction), \(N\) is the stoichiometric matrix (written \(S\) above), and \(\alpha_i\) and \(\beta_i\) are lower and upper bounds for each flux \(v_i\) [37].

FBA is implemented as a linear programming (LP) problem, typically solved using algorithms like the simplex method [37]. The simplex algorithm begins at a starting vertex of the feasible region (polytope) defined by the constraints and moves along the edges of the polytope until it reaches the vertex representing the optimal solution [37]. Commonly used solvers include Gurobi, CPLEX, and the GNU Linear Programming Kit (GLPK) [37].
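The LP formulation can be tried out on a small example. The following is a minimal sketch, assuming SciPy is available and using its general-purpose `scipy.optimize.linprog` routine rather than any of the solvers named above. The five-reaction network, its bounds, and the choice of R4 as a stand-in "biomass" reaction are hypothetical.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network (not taken from any published model):
#   R1: -> A      (uptake, capped at 10 units)
#   R2: A -> B
#   R3: A -> C
#   R4: B ->      (stand-in "biomass" drain, the objective)
#   R5: C ->      (by-product secretion)
N = np.array([
    [1, -1, -1,  0,  0],   # A
    [0,  1,  0, -1,  0],   # B
    [0,  0,  1,  0, -1],   # C
])

# (alpha_i, beta_i) for each flux; all reactions are irreversible here.
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000), (0, 1000)]

# Objective vector c: zeros except for a 1 at the biomass reaction.
c = np.array([0, 0, 0, 1, 0])

# linprog minimizes, so the objective is negated to maximize c^T v.
res = linprog(-c, A_eq=N, b_eq=np.zeros(N.shape[0]), bounds=bounds)

optimal_value = -res.fun   # maximal flux through R4
fluxes = res.x             # one optimal flux distribution

print("status:", res.message)
print("objective:", optimal_value)
print("fluxes:", fluxes)
```

In this toy case the uptake bound on R1 is the only limiting constraint, so the optimum routes all 10 units through R2 into the biomass drain and leaves R3 and R5 at zero.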

Table 2: Common Objective Functions in FBA

| Objective Function | Mathematical Form | Biological Interpretation | Typical Applications |
|---|---|---|---|
| Biomass Maximization | \(\max v_{biomass}\) | Maximizes cellular growth rate | Simulation of wild-type cells in rich media |
| ATP Production | \(\max v_{ATP}\) | Maximizes energy production | Study of energy metabolism |
| Substrate Minimization | \(\min v_{substrate}\) | Minimizes nutrient uptake | Analysis of metabolic efficiency |
| Product Maximization | \(\max v_{product}\) | Maximizes synthesis of specific compound | Metabolic engineering applications |

A significant limitation of FBA is that the optimal solution is typically not unique—multiple flux distributions can achieve the same optimal objective value [37]. This degeneracy arises because metabolic networks often contain redundant pathways and cycles. While FBA identifies one optimal flux distribution, alternative optimal solutions may exist, necessitating additional methods like Flux Variability Analysis and Flux Sampling to fully characterize the solution space [37].

Advanced FBA Techniques: FVA, pFBA, and Geometric FBA

Flux Variability Analysis (FVA)

Flux Variability Analysis addresses the non-uniqueness of FBA solutions by determining the range of possible fluxes for each reaction while maintaining the objective function at a specified fraction of its optimal value [37] [39]. For each reaction \(i\), FVA solves two optimization problems:

\[ \begin{aligned} \min_{v} \; v_i \quad & \text{and} \quad \max_{v} \; v_i \\ \text{subject to} \quad & N \cdot v = 0 \\ & \alpha_i \leq v_i \leq \beta_i \\ & c^T \cdot v \geq Z \cdot v_{opt} \end{aligned} \]

where \(v_{opt}\) is the optimal objective value from FBA and \(Z\) is a fraction (typically 0.9-1.0) defining the acceptable optimality range [37]. This approach distinguishes reactions whose flux is tightly constrained (narrow ranges, potentially essential) from flexible reactions (wide ranges), providing insights into network flexibility and robustness.

Parsimonious FBA (pFBA)

Parsimonious FBA finds a flux distribution that achieves optimal growth while minimizing the total sum of absolute flux values [37]. This approach is based on the principle that cells may have evolved to minimize protein investment or metabolic burden. The pFBA optimization problem can be formulated as:

\[ \begin{aligned} \min_{v} \quad & \sum_i |v_i| \\ \text{subject to} \quad & N \cdot v = 0 \\ & \alpha_i \leq v_i \leq \beta_i \\ & c^T \cdot v = v_{opt} \end{aligned} \]

where \(v_{opt}\) is the optimal objective value from standard FBA [37]. pFBA has been shown to improve predictions for gene knockout mutants compared to standard FBA [37].
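The absolute values make this objective nonlinear as written. One standard way to recover a linear program, given here as a sketch of a common reformulation rather than as the specific implementation described in [37], is to split each flux into non-negative forward and reverse parts. The symbols \(v_i^{+}\) and \(v_i^{-}\) are introduced only for this illustration.

```latex
\[ v_i = v_i^{+} - v_i^{-}, \qquad v_i^{+} \geq 0, \quad v_i^{-} \geq 0 \]

\[ \begin{aligned}
\min_{v^{+},\, v^{-}} \quad & \sum_i \left( v_i^{+} + v_i^{-} \right) \\
\text{subject to} \quad & N \cdot (v^{+} - v^{-}) = 0 \\
& \alpha_i \leq v_i^{+} - v_i^{-} \leq \beta_i \\
& c^T \cdot (v^{+} - v^{-}) = v_{opt}
\end{aligned} \]
```

At the optimum at most one of \(v_i^{+}\) and \(v_i^{-}\) is nonzero for each reaction, because lowering both by the same amount leaves every constraint unchanged while reducing the objective. The minimized sum therefore equals \(\sum_i |v_i|\).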

Geometric FBA

Geometric FBA identifies a unique optimal flux distribution that is central to the range of possible fluxes [37]. This approach finds a solution that is geometrically centered within the feasible flux space at optimality, potentially representing a more biologically realistic distribution than edge cases typically found by standard FBA.

Figure 2: Relationship between different FBA variants, showing how they extend the basic FBA solution to address solution non-uniqueness.

Flux Sampling Techniques

Flux sampling addresses the limitations of FBA and FVA by generating a statistically representative set of flux distributions from the feasible solution space, rather than a single optimal solution (FBA) or per-reaction flux ranges (FVA) [37]. This approach is particularly valuable for studying metabolic networks with high degrees of freedom, where many alternative flux distributions can support the same physiological function.

The fundamental concept behind flux sampling is to randomly sample points from the feasible flux space defined by:

\[ \begin{aligned} & N \cdot v = 0 \\ & \alpha_i \leq v_i \leq \beta_i \end{aligned} \]

Advanced sampling algorithms like optGpSampler generate uniformly distributed samples from the solution space, enabling comprehensive analysis of metabolic capabilities [37]. These methods employ Markov Chain Monte Carlo (MCMC) approaches to efficiently explore high-dimensional solution spaces.
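To make the idea concrete, the sketch below implements a basic hit-and-run sampler, one simple member of the MCMC family, in pure Python. It is not optGpSampler and omits the refinements that production samplers use. The four-reaction network, the hand-derived null-space basis `k1`, `k2`, and the function name `hit_and_run` are assumptions for illustration.

```python
import random

# Hypothetical toy network with two parallel routes from A to B:
#   R1: -> A,   R2: A -> B,   R3: A -> B (alternative route),   R4: B ->
N = [
    [1, -1, -1,  0],   # A
    [0,  1,  1, -1],   # B
]
lower = [0.0, 0.0, 0.0, 0.0]
upper = [10.0, 10.0, 10.0, 10.0]

# Null-space basis of N, worked out by hand for this toy network:
# every steady-state flux vector can be written as a * k1 + b * k2.
k1 = [1.0, 1.0, 0.0, 1.0]   # all flux through R2
k2 = [1.0, 0.0, 1.0, 1.0]   # all flux through R3


def hit_and_run(start, n_samples, seed=0):
    """Draw flux vectors that satisfy N . v = 0 and the flux bounds."""
    rng = random.Random(seed)
    v = list(start)
    samples = []
    for _ in range(n_samples):
        # Random direction inside the null space, so N . v stays zero.
        a, b = rng.gauss(0, 1), rng.gauss(0, 1)
        d = [a * x + b * y for x, y in zip(k1, k2)]
        # Step interval [t_min, t_max] that keeps every bound satisfied.
        t_min, t_max = float("-inf"), float("inf")
        for vi, di, lo, hi in zip(v, d, lower, upper):
            if abs(di) < 1e-12:
                continue   # this flux does not change along d
            t1, t2 = (lo - vi) / di, (hi - vi) / di
            t_min = max(t_min, min(t1, t2))
            t_max = min(t_max, max(t1, t2))
        # Move to a uniformly chosen point on the feasible segment.
        t = rng.uniform(t_min, t_max)
        v = [vi + t * di for vi, di in zip(v, d)]
        samples.append(v)
    return samples


samples = hit_and_run(start=[5.0, 2.5, 2.5, 5.0], n_samples=1000)
mean_r2 = sum(s[1] for s in samples) / len(samples)
print("mean flux through R2:", mean_r2)
```

Each sample is a complete flux vector, so quantities such as the correlation between R2 and R3 can be computed directly from the collected points.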

Flux sampling provides several advantages over FBA and FVA alone:

- Reveals correlated reactions and pathway usage patterns

- Explores the range of feasible metabolic states, not just optimal ones

- Yields a distribution for each flux, from which statistical summaries of predictions can be derived

- Enables comprehensive analysis of network properties and robustness

Table 3: Comparison of Constraint-Based Analysis Techniques

| Method | Mathematical Approach | Output | Key Applications | Limitations |
|---|---|---|---|---|
| FBA | Linear programming | Single optimal flux distribution | Prediction of growth rates, nutrient requirements | Non-unique solutions, only optimal states |
| FVA | Two linear programs (min and max) per reaction | Flux range for each reaction at near-optimality | Identification of essential reactions, network flexibility | Does not provide correlation information |
| pFBA | Linear programming with L1-norm minimization | Minimal total flux distribution | Improved prediction of mutant phenotypes, enzyme usage | May not reflect true biological objectives |
| Flux Sampling | Markov Chain Monte Carlo sampling | Statistical ensemble of flux distributions | Analysis of pathway redundancy, network robustness | Computationally intensive for large networks |

Experimental Protocols and Practical Implementation

Protocol for Basic FBA

- Model Preparation: Obtain a genome-scale metabolic model in SBML format and load it with a modeling framework such as the COBRA Toolbox [37].

- Constraint Definition: Set environmental conditions by defining exchange reaction bounds (e.g., glucose uptake = 10 mmol/gDW/h, oxygen uptake = 20 mmol/gDW/h) [37] [39].

- Objective Selection: Define the objective function, typically biomass maximization for microbial growth simulations [37].

- Problem Formulation: Set up the linear programming problem using the stoichiometric matrix and constraints [37].

- Solution: Solve using an LP solver (e.g., Gurobi, CPLEX, GLPK) [37].

- Validation: Compare predicted growth rates and exchange fluxes with experimental data when available [39].

Protocol for Flux Variability Analysis

- Perform FBA: First run standard FBA to determine the optimal objective value (\(v_{opt}\)) [37].

- Set Optimality Fraction: Define the fraction of optimality for flux variability (typically Z = 0.9-1.0) [37].

- Loop Through Reactions: For each reaction in the model:

  - Minimize the flux subject to \(c^T \cdot v \geq Z \cdot v_{opt}\)

  - Maximize the flux subject to \(c^T \cdot v \geq Z \cdot v_{opt}\)

- Store Results: Record the minimum and maximum flux for each reaction [37].

- Analysis: Identify reactions with narrow flux ranges (potentially essential) and those with wide ranges (flexible) [37].
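The FVA steps above can be sketched on a toy problem as follows. This is an illustrative sketch that assumes SciPy is available and uses `scipy.optimize.linprog`. The four-reaction network with two interchangeable routes, the bounds, and the choice Z = 0.9 are hypothetical.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network with two parallel routes from A to B:
#   R1: -> A,   R2: A -> B,   R3: A -> B (alternative route),   R4: B -> (objective)
names = ["R1", "R2", "R3", "R4"]
N = np.array([
    [1, -1, -1,  0],   # A
    [0,  1,  1, -1],   # B
])
bounds = [(0, 10)] * 4
c = np.array([0, 0, 0, 1])
b_eq = np.zeros(N.shape[0])

# Step 1: standard FBA (linprog minimizes, so the objective is negated).
fba = linprog(-c, A_eq=N, b_eq=b_eq, bounds=bounds)
v_opt = -fba.fun

# Step 2: optimality fraction Z.
# The constraint c^T v >= Z * v_opt is rewritten as -c^T v <= -Z * v_opt.
Z = 0.9
A_ub = -c.reshape(1, -1)
b_ub = [-Z * v_opt]

# Steps 3 and 4: minimize and maximize each flux in turn, storing the range.
ranges = {}
for i, name in enumerate(names):
    e = np.zeros(len(names))
    e[i] = 1.0
    lo = linprog(e, A_ub=A_ub, b_ub=b_ub, A_eq=N, b_eq=b_eq, bounds=bounds)
    hi = linprog(-e, A_ub=A_ub, b_ub=b_ub, A_eq=N, b_eq=b_eq, bounds=bounds)
    ranges[name] = (lo.fun, -hi.fun)

# Step 5: inspect the width of each range.
for name, (vmin, vmax) in ranges.items():
    print(f"{name}: [{vmin:.2f}, {vmax:.2f}]")
```

In this toy network R1 and R4 are confined to the narrow range 9 to 10, while R2 and R3 can each vary from 0 to 10 because either route can carry the flux.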

Protocol for Gene Deletion Studies

- Gene-Reaction Mapping: Use Gene-Protein-Reaction (GPR) rules to identify reactions associated with target genes [37].