Genome-Scale Metabolic Model Reconstruction: A Comprehensive Guide from Fundamentals to Biomedical Applications

Abstract

This article provides a systematic guide to genome-scale metabolic model (GSMM) reconstruction, a cornerstone of systems biology that integrates genomic, biochemical, and physiological data to simulate an organism's complete metabolic network. Tailored for researchers, scientists, and drug development professionals, we explore the foundational principles of GSMMs, detail step-by-step methodologies and automated tools for reconstruction, and address critical challenges in model curation and gap-filling. A strong emphasis is placed on rigorous validation protocols and comparative analysis of reconstruction approaches to ensure model predictive accuracy. Finally, we highlight transformative applications in drug target discovery, live biotherapeutic development, and personalized medicine, providing a vital resource for leveraging metabolic models in biomedical research.

The Blueprint of Life: Understanding Genome-Scale Metabolic Models and Their Core Components

Genome-scale metabolic models (GEMs) are computational representations of the metabolic network of an organism, built on the foundation of its genome annotation [1]. They provide a comprehensive compilation of all known metabolic reactions within a cell or organism, systematically connecting these reactions to the genes that encode the enzymes which catalyze them [2]. This establishes a direct gene-protein-reaction (GPR) association, which is a fundamental feature of GEMs [2] [1]. The primary goal of a GEM is to enable the prediction of organism-wide metabolic flux distributions (the rates of metabolic reactions) under specific genetic and environmental conditions using mathematical optimization techniques [3] [1]. Since the first GEM for Haemophilus influenzae was reconstructed in 1999, the field has expanded dramatically, with models now available for thousands of organisms across bacteria, archaea, and eukarya, serving as vital tools in systems biology and metabolic engineering [1].

Core Components and Mathematical Framework

A GEM is structurally founded on a stoichiometric matrix (S), where each element Sij represents the stoichiometric coefficient of metabolite i in reaction j [2]. This matrix mathematically encapsulates the entire metabolic network. Under the assumption of a pseudo-steady state for internal metabolites—a reasonable approximation given the fast turnover of intracellular metabolites—the system is constrained by the mass balance equation: S ∙ v = 0, where v is the vector of metabolic reaction fluxes [3].

Table 1: Core Quantitative Components of a Genome-Scale Metabolic Model

| Component | Description | Role in the Model |
|---|---|---|
| Genes | DNA sequences encoding metabolic enzymes. | The genetic basis for the model; defines metabolic potential. |
| Proteins/Enzymes | Gene products that catalyze biochemical reactions. | Functional units linking genes to metabolic activities. |
| Reactions | Biochemical transformations between metabolites. | Represent the metabolic network's processes and capabilities. |
| Metabolites | Substrates, intermediates, and products of reactions. | The chemical species consumed or produced by the network. |
| Stoichiometric Matrix (S) | Mathematical representation of metabolite participation in reactions. | Enforces mass balance constraints in flux simulations. |
| Gene-Protein-Reaction (GPR) Rules | Boolean logic statements linking genes to reactions via enzymes. | Defines the genetic requirements for each metabolic reaction. |
| Biomass Objective Function | A pseudo-reaction representing the composition of cellular biomass. | Often used as the optimization target to simulate cell growth. |

To simulate metabolic behavior, GEMs primarily use Flux Balance Analysis (FBA). FBA is a constraint-based modeling approach that uses linear programming to find a flux distribution that maximizes or minimizes a particular objective function, such as the biomass reaction, which represents the synthesis of all necessary macromolecules for cell growth [2] [3] [1]. The model's predictions are refined by applying constraints that define the lower and upper bounds (v_j,min and v_j,max) for each reaction flux v_j, based on known nutrient availability, enzyme capacities, or other physiological data [3].

The GEM Reconstruction Process

The reconstruction of a high-quality GEM is a multi-step, iterative process that integrates genomic, biochemical, and physiological data.

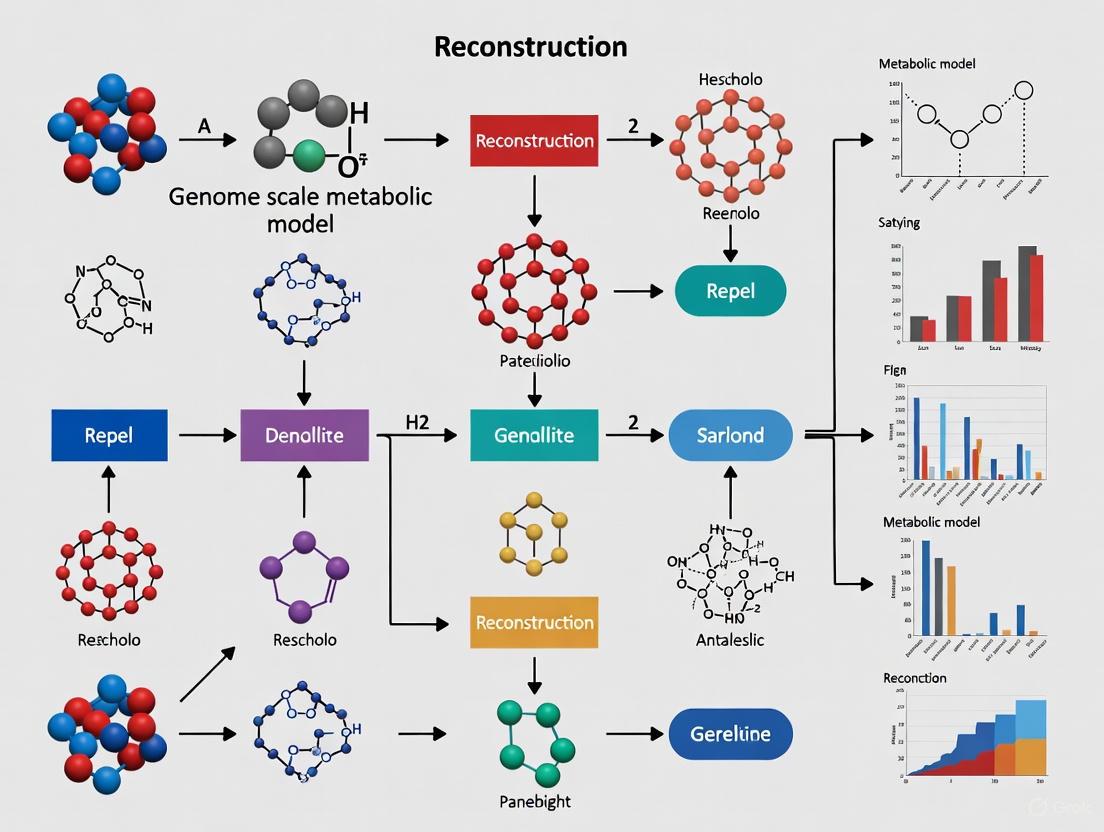

Diagram 1: GEM reconstruction workflow.

Detailed Reconstruction Methodology

- Genome Annotation and Draft Reconstruction: The process begins with the annotated genome of the target organism. Automated reconstruction pipelines like ModelSEED [3] use this annotation to generate a draft model by identifying genes that code for metabolic enzymes and proposing associated reactions.

- Manual Curation and Gap Filling: The draft model is meticulously refined through manual curation. This involves reconciling GPR associations from multiple sources, including homologous models from related organisms identified via sequence similarity searches (e.g., BLAST) [3]. A critical step is metabolic gap analysis, where missing reactions that prevent the synthesis of essential biomass components are identified and filled based on literature evidence, biochemical databases, and experimental physiology [3].

- Defining the Biomass Objective Function: A biomass equation is formulated to represent the drain of biomass precursors (amino acids, nucleotides, lipids, etc.) in their experimentally determined proportions to simulate growth [3]. For example, the biomass composition for Streptococcus suis model iNX525 was adapted from the closely related Lactococcus lactis, containing specific percentages of proteins, DNA, RNA, lipids, and other cellular components [3].

- Model Validation and Refinement: The final model is validated by testing its predictive accuracy against experimental data. Key validation experiments include:

  - Growth Phenotype Assays: Comparing in silico predicted growth capabilities under different nutrient conditions (e.g., leave-one-out experiments in chemically defined media) with optical density measured in vitro [3].

  - Gene Essentiality Tests: Simulating a gene knockout by setting the flux of the reactions that depend on that gene to zero and predicting the impact on growth, then comparing these predictions to results from mutant screens [3]. A gene is often called essential when grRatio, the ratio of the knockout growth rate to the wild-type growth rate, falls below 0.01.
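The essentiality threshold described above can be illustrated with a minimal sketch. It assumes the wild-type and knockout growth rates have already been obtained from FBA. The function name `classify_essentiality`, the gene identifiers, and the numbers are hypothetical placeholders, not values from the iNX525 study.

```python
def classify_essentiality(wild_type_growth, knockout_growth, threshold=0.01):
    """Return {gene: (grRatio, is_essential)} from simulated growth rates.

    grRatio is the knockout growth rate divided by the wild-type growth rate;
    a gene is flagged as essential when grRatio falls below the threshold.
    """
    if wild_type_growth <= 0:
        raise ValueError("wild-type growth rate must be positive")
    results = {}
    for gene, growth in knockout_growth.items():
        gr_ratio = growth / wild_type_growth
        results[gene] = (gr_ratio, gr_ratio < threshold)
    return results


# Hypothetical FBA outputs (1/h); gene names are placeholders.
wild_type = 0.80
knockouts = {"geneA": 0.0, "geneB": 0.79, "geneC": 0.004}

calls = classify_essentiality(wild_type, knockouts)
for gene, (ratio, essential) in calls.items():
    print(f"{gene}: grRatio={ratio:.3f} essential={essential}")
```

The 0.01 cutoff is exposed as a parameter because the text presents it as a common convention rather than a fixed rule.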

Key Research Reagents and Computational Tools

Table 2: Essential Research Reagents and Tools for GEM Reconstruction and Analysis

| Tool/Reagent | Type | Primary Function | Application Example |
|---|---|---|---|
| COBRA Toolbox [3] | Software Toolbox | Provides algorithms for constraint-based reconstruction and analysis of GEMs. | Performing flux balance analysis (FBA) and gap filling. |
| ModelSEED [3] | Automated Pipeline | Automates the reconstruction of draft GEMs from genome annotations. | Generating initial draft model from RAST annotation. |
| GUROBI Optimizer [3] | Mathematical Solver | Solves the linear programming problems in FBA. | Finding the optimal flux distribution that maximizes biomass. |
| AGORA2 [4] | Resource Database | Repository of 7,302 curated, strain-level GEMs of human gut microbes. | Building community models of the gut microbiome. |
| Chemically Defined Medium (CDM) [3] | Laboratory Reagent | A medium with a precisely known chemical composition. | Validating model predictions of growth under specific nutrient conditions. |
| RAST [3] | Annotation Service | Annotates microbial genomes and identifies protein-encoding genes. | Initial functional annotation of a genome prior to model building. |

Applications in Biomedical Research and Drug Development

The ability of GEMs to simulate metabolism in different contexts has made them powerful tools in biomedical research.

Drug Target Identification and Therapeutic Windows

GEMs can be used to identify potential drug targets in pathogens. For example, a GEM of Mycobacterium tuberculosis (iEK1101) was used to simulate the pathogen's metabolic state in vivo versus in vitro, helping to evaluate metabolic responses to antibiotics [1]. Furthermore, by comparing the metabolic vulnerabilities of cancer cells and healthy tissues, GEMs can help identify therapeutic windows. This approach was validated by predicting the differential effect of lipoamide analogs on breast cancer cell line MCF7 versus healthy airway smooth muscle cells, a prediction that was later confirmed experimentally [2].

Host-Microbiome Interactions and Live Biotherapeutic Development

Large-scale resources such as APOLLO, which contains 247,092 microbial GEMs, enable the construction of metagenomic sample-specific microbiome community models [5]. These models can stratify microbiomes by disease state, age, and body site. Similarly, the AGORA2 database is used to guide the development of Live Biotherapeutic Products (LBPs) by screening for candidate strains that produce therapeutic metabolites (e.g., short-chain fatty acids for inflammatory bowel disease) or inhibit pathogens [4]. GEMs help predict the outcomes of metabolic interactions between exogenous LBPs, resident microbes, and host cells.

Drug Repurposing and Mechanism Prediction

GEMs facilitate drug repurposing by integrating structural similarity analysis. Drugs with high structural similarity (e.g., Tanimoto score > 0.9) to human metabolites are significantly more likely to bind to enzymes that process those metabolites, acting as inhibitors [2]. When constrained by cell-specific data (e.g., RNA-seq), GEMs can predict the phenotypic effects of such inhibitions, identifying existing drugs with putative anticancer effects, such as those targeting the mevalonate pathway for cholesterol synthesis in cancer cells [2].
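As a rough illustration of the structural similarity screen, the Tanimoto score between two molecular fingerprints is the size of their shared feature set divided by the size of their combined feature set. The sketch below assumes fingerprints are given as sets of "on" bit indices. The fingerprints are invented for illustration and do not correspond to real compounds, and real studies would compute them with a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient of two fingerprints given as sets of 'on' bit indices."""
    a, b = set(fp_a), set(fp_b)
    union = a | b
    if not union:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(a & b) / len(union)


# Hypothetical fingerprints: the "drug" shares all 10 metabolite bits plus one extra.
metabolite_fp = {1, 4, 7, 9, 12, 15, 18, 21, 22, 30}
drug_fp = {1, 4, 7, 9, 12, 15, 18, 21, 22, 30, 31}

score = tanimoto(metabolite_fp, drug_fp)
print(f"Tanimoto score: {score:.3f}")
print("candidate inhibitor" if score > 0.9 else "below similarity cutoff")
```

Here 10 shared bits out of 11 total give a score of about 0.909, just above the 0.9 example cutoff quoted in the text.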

Workflow for a Constraint-Based Modeling Study

Diagram 2: Constraint-based modeling workflow.

A typical workflow for applying a GEM, as demonstrated in drug design studies [2], involves:

- Data Integration: Obtain context-specific biological data, such as RNA-sequencing (RNA-seq) data from the cell type of interest.

- Model Constraining: Use the expression data to set upper flux bounds for reactions in the model. A common method sets the upper bound of a reaction proportional to the abundance of its transcript [2].

- Intervention Simulation: Introduce a simulated intervention, such as a drug, by decreasing the flux bounds of the reaction(s) it targets. The level of decrease is defined as the relative inhibition (e.g., 0.9 means the reaction rate is reduced to 10% of its original value) [2].

- Phenotype Prediction: Calculate the relative growth rate—the ratio of the maximal growth rate after inhibition to the maximal growth rate before inhibition—using FBA. A value of 1 indicates no effect, while a value of 0 indicates complete growth arrest [2]. This metric allows for the quantitative comparison of a drug's effect across different cell types.
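The four steps above can be sketched on a toy network. This is a minimal sketch, not the cited study's pipeline: the network, reaction names, transcript abundances, and the scaling factor between abundance and flux bound are all hypothetical, and `scipy.optimize.linprog` stands in for a dedicated COBRA solver.

```python
from scipy.optimize import linprog

# Toy network (all names hypothetical):
#   R_uptake: -> A,  R1: A -> B,  R2: A -> B (alternative route),  R_biomass: B ->
reactions = ["R_uptake", "R1", "R2", "R_biomass"]
S = [
    [1, -1, -1, 0],  # metabolite A
    [0, 1, 1, -1],   # metabolite B
]


def max_growth(upper_bounds):
    """FBA: maximize the biomass flux subject to S v = 0 and 0 <= v <= upper bound."""
    c = [0, 0, 0, -1]  # linprog minimizes, so the biomass coefficient is negated
    bounds = [(0, upper_bounds[r]) for r in reactions]
    res = linprog(c, A_eq=S, b_eq=[0, 0], bounds=bounds)
    if not res.success:
        raise RuntimeError(res.message)
    return -res.fun


# Steps 1-2: upper bounds proportional to transcript abundance (assumed scale factor).
transcript_abundance = {"R1": 60.0, "R2": 20.0}
scale = 0.1
ub = {"R_uptake": 10.0, "R_biomass": 1000.0}
ub.update({r: scale * x for r, x in transcript_abundance.items()})

# Step 3: a drug targeting R1 with relative inhibition 0.9 leaves 10% of its bound.
inhibition = 0.9
ub_drug = dict(ub)
ub_drug["R1"] = ub["R1"] * (1 - inhibition)

# Step 4: relative growth rate = growth after inhibition / growth before inhibition.
growth_before = max_growth(ub)
growth_after = max_growth(ub_drug)
relative_growth = growth_after / growth_before
print(f"relative growth rate: {relative_growth:.3f}")
```

In this toy case growth drops from 8.0 to 2.6 flux units because the uninhibited route R2 cannot compensate, giving a relative growth rate of 0.325. Repeating the calculation with bounds derived from a different cell type's expression data would give the cross-cell-type comparison described in the text.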

Genome-Scale Metabolic Models (GEMs) are computational representations of the metabolic network of an organism, encompassing all known biochemical reactions and their associations with genes, proteins, and metabolites [1] [6]. The reconstruction of GEMs has been established as a fundamental modeling approach for systems-level metabolic studies, enabling the prediction of metabolic fluxes and the integration of various types of omics data [1]. At the heart of these models lie Gene-Protein-Reaction (GPR) associations, which provide the crucial mechanistic link between genotype and phenotype by describing how gene products collaborate to catalyze metabolic reactions [7] [8].

GPR rules use Boolean logic to define the relationships between genes, their protein products, and the metabolic reactions they enable [7]. This Boolean representation allows GEMs to predict metabolic capabilities from genomic information and simulate the metabolic consequences of genetic perturbations, making them invaluable tools in both basic research and applied biotechnology [1] [6]. The reliability of hypotheses formulated using GEMs strongly depends on the quality of their GPR rules, which must accurately represent the catalytic mechanisms of enzymes, including monomeric enzymes, oligomeric complexes, and isozymes [7].

Table: Fundamental Concepts in GPR Associations

| Concept | Description | Boolean Representation |
|---|---|---|
| Monomeric Enzyme | Enzyme consisting of a single subunit | GENE_A |
| Protein Complex | Multiple subunits required for enzymatic activity | GENE_A AND GENE_B |
| Isozymes | Multiple distinct enzymes catalyzing the same reaction | GENE_A OR GENE_B |
| Promiscuous Enzyme | Single enzyme catalyzing multiple different reactions | Multiple reactions reference the same gene |

The Structural Composition of GPR Associations

Biological Foundations of GPR Rules

The structural composition of GPR associations reflects the underlying biological reality of enzymatic catalysis. From a structural perspective, enzymes may be classified as either monomeric or oligomeric entities [7]. Monomeric enzymes consist of a single polypeptide chain, meaning a single gene is responsible for their catalytic function. In contrast, oligomeric enzymes are protein complexes comprising multiple subunits that are all necessarily required to allow the corresponding reaction to be catalyzed [7]. Additionally, enzymes differing in their biological activity, regulatory properties, intracellular location, or spatio-temporal expression may alternatively catalyze the same reaction; these are known as isoforms or isozymes [7].

The complexity of GPR associations in actual genome-scale metabolic networks is substantial. Statistical analysis of the iAF1260 genome-scale model for Escherichia coli reveals that over 16% of enzymes are protein complexes (with up to 13 subunits), about 31% of reactions are catalyzed by multiple isozymes (up to 7), and more than two-thirds of reactions (72%) are catalyzed by at least one promiscuous enzyme [8]. This complex topology necessitates a sophisticated representation scheme to accurately capture the relationships between genes, proteins, and reactions.

Boolean Logic in GPR Formulation

GPR rules employ Boolean operators to represent the relationships between gene products and their catalytic functions. The AND operator joins genes encoding different subunits of the same enzyme complex, indicating that all specified gene products are necessary for the reaction to occur [7]. The OR operator joins genes encoding distinct protein isoforms that can alternatively catalyze the same reaction [7]. These two operators can be combined to describe complex scenarios, such as multiple oligomeric enzymes behaving as isoforms due to sharing a common subunit while having distinctive subunits [7].
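To make the AND/OR semantics concrete, here is a small sketch of a GPR evaluator. It assumes rules are written with the keywords AND and OR plus parentheses, with AND binding more tightly than OR. The function `evaluate_gpr` and the gene names are illustrative and are not taken from any particular toolbox.

```python
import re


def evaluate_gpr(rule, active_genes):
    """Return True if the reaction can be catalyzed given the set of functional genes.

    Grammar: expr := term ('OR' term)* ; term := factor ('AND' factor)* ;
             factor := GENE | '(' expr ')'
    """
    tokens = re.findall(r"\(|\)|[^\s()]+", rule)
    pos = 0

    def parse_expr():
        nonlocal pos
        value = parse_term()
        while pos < len(tokens) and tokens[pos].upper() == "OR":
            pos += 1
            rhs = parse_term()  # always parse the right side to consume its tokens
            value = value or rhs
        return value

    def parse_term():
        nonlocal pos
        value = parse_factor()
        while pos < len(tokens) and tokens[pos].upper() == "AND":
            pos += 1
            rhs = parse_factor()
            value = value and rhs
        return value

    def parse_factor():
        nonlocal pos
        if pos >= len(tokens):
            raise ValueError("unexpected end of rule")
        token = tokens[pos]
        pos += 1
        if token == "(":
            value = parse_expr()
            if pos >= len(tokens) or tokens[pos] != ")":
                raise ValueError("missing closing parenthesis")
            pos += 1
            return value
        if token == ")" or token.upper() in ("AND", "OR"):
            raise ValueError("unexpected token: " + token)
        return token in active_genes  # a gene is True when it is functional

    result = parse_expr()
    if pos != len(tokens):
        raise ValueError("unexpected token: " + tokens[pos])
    return result


# Two complexes acting as isozymes: they share subunit GENE_A but differ in the second subunit.
rule = "(GENE_A AND GENE_B) OR (GENE_A AND GENE_C)"
all_genes = {"GENE_A", "GENE_B", "GENE_C"}

print(evaluate_gpr(rule, all_genes - {"GENE_B"}))  # second complex still works
print(evaluate_gpr(rule, all_genes - {"GENE_A"}))  # shared subunit lost
```

Deleting GENE_B leaves the reaction active through the second complex, while deleting the shared subunit GENE_A disables both complexes. This is the combined case described in the paragraph above.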

Diagram: Boolean Logic in GPR Associations. This diagram illustrates the fundamental Boolean relationships in GPR rules, showing both AND logic (protein complexes requiring multiple gene products) and OR logic (isozymes providing alternative catalytic paths).

Computational Representation and Transformation of GPR Rules

From Boolean Logic to Stoichiometric Representation

While GPR associations are typically represented as Boolean rules, for constraint-based modeling and simulation, they must be integrated into the stoichiometric matrix that forms the computational core of GEMs [8]. Machado et al. (2016) proposed a model transformation that generates a stoichiometric representation of GPR associations that can be directly integrated into the stoichiometric matrix [8]. This transformation changes the Boolean representation of gene states (on/off) to a real-valued representation, where the enzyme (or enzyme subunit) encoded by each gene becomes a species in the model [8].

The transformation involves several key steps: reversible reactions and reactions catalyzed by multiple isozymes are decomposed into individual reactions; the participation of an enzyme in a reaction is encoded by adding respective pseudo-species to the left-hand side of that reaction; and a set of artificial enzyme usage reactions (u) are added to account for the total amount of flux carried by each enzyme or enzyme subunit [8]. This approach enables existing constraint-based methods to be used at the gene level without modification, automatically leveraging phenotype analysis from reaction to gene level [8].
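A simplified sketch of this idea follows. It is not the authors' implementation. It assumes reactions are already irreversible and that each GPR has been rewritten as a list of alternative enzymes (isozymes), each of which is a list of subunit genes. The naming scheme (`_iso`, `enz_`, `u_`) is invented for illustration.

```python
def expand_with_enzymes(reactions):
    """Add enzyme pseudo-species and enzyme usage reactions to a toy network.

    reactions: {rxn_id: {"stoich": {metabolite: coeff},
                         "isozymes": [[gene, ...], ...]}}
    Returns {new_rxn_id: {species: coeff}}.
    """
    expanded = {}
    genes = set()
    for rxn_id, rxn in reactions.items():
        if not rxn["isozymes"]:
            # Reactions without a GPR (e.g. spontaneous) are kept unchanged.
            expanded[rxn_id] = dict(rxn["stoich"])
            continue
        # One copy of the reaction per isozyme.
        for k, subunits in enumerate(rxn["isozymes"], start=1):
            stoich = dict(rxn["stoich"])
            for gene in subunits:
                stoich["enz_" + gene] = -1  # enzyme pseudo-species on the left-hand side
                genes.add(gene)
            expanded[f"{rxn_id}_iso{k}"] = stoich
    # One usage reaction per gene supplies its enzyme pseudo-species, so at steady
    # state its flux equals the total flux carried by that enzyme or subunit.
    for gene in sorted(genes):
        expanded["u_" + gene] = {"enz_" + gene: 1}
    return expanded


# Toy example: R1 has a two-subunit complex (g1 AND g2) or the isozyme g3;
# g3 is promiscuous because it also catalyzes R2.
toy = {
    "R1": {"stoich": {"A": -1, "B": 1}, "isozymes": [["g1", "g2"], ["g3"]]},
    "R2": {"stoich": {"B": -1, "C": 1}, "isozymes": [["g3"]]},
}
expanded = expand_with_enzymes(toy)
for rxn_id, stoich in expanded.items():
    print(rxn_id, stoich)
```

In the expanded network, constraining `u_g3` to zero blocks both `R1_iso2` and `R2_iso1`, which is how a single gene-level constraint propagates to every reaction that depends on that gene.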

Diagram: GPR Representation Transformation. This workflow shows the conversion from Boolean GPR rules to a stoichiometric representation that can be integrated into metabolic models for computational analysis.

Constraint-Based Analysis at Gene Level

The transformation of GPR associations to a stoichiometric representation enables various constraint-based analysis methods to be applied at the gene level, including flux distribution prediction, gene essentiality analysis, random flux sampling, elementary mode analysis, transcriptomics data integration, and rational strain design [8]. This gene-level approach provides significant advantages: it allows the formulation of objectives and constraints at the gene/protein level, enables more accurate prediction of metabolic fluxes, and ensures that strain designs are feasible at the genetic level [8].

For instance, in rational strain design, traditional reaction-based approaches may propose interventions that are not actually feasible due to GPR complexities, such as promiscuous enzymes or protein complexes. The gene-level approach allows using the same optimization methods to obtain feasible gene-based designs [8]. Comparative studies with experimental 13C-flux data have shown that simple reformulations of simulation methods with gene-wise objective functions result in improved prediction accuracy [8].

GPR Reconstruction Methodologies and Tools

Automated Reconstruction with GPRuler

The reconstruction of GPR rules has traditionally been a largely manual and time-consuming process [7]. To address this challenge, automated tools like GPRuler have been developed to fully automate the reconstruction process of GPR rules for any organism [7]. GPRuler is an open-source Python-based framework that can reconstruct GPRs starting from either just the name of the target organism or from an existing metabolic model [7].

The tool works by mining text and data from nine different biological databases, including MetaCyc, KEGG, Rhea, ChEBI, TCDB, and the Complex Portal [7]. The inclusion of the Complex Portal database is particularly significant as it contains information about protein-protein interactions and protein macromolecular complexes established by given genes—a data source not yet exploited by previous state-of-the-art tools [7]. Performance evaluations demonstrate that GPRuler can reproduce original GPR rules with a high level of accuracy, and in many cases, it has been found to be more accurate than the original models after manual investigation of mismatches [7].

Table: Key Biological Databases for GPR Reconstruction

| Database | Primary Use in GPR Reconstruction | URL |
|---|---|---|
| KEGG | Metabolic pathways and orthology | https://www.genome.jp/kegg/ |
| MetaCyc | Metabolic pathways and enzymes | https://metacyc.org/ |
| UniProt | Protein sequence and functional information | https://www.uniprot.org/ |
| STRING | Protein-protein interactions | https://string-db.org/ |
| Complex Portal | Protein macromolecular complexes | https://www.ebi.ac.uk/complexportal/ |
| Rhea | Biochemical reactions | https://www.rhea-db.org/ |
| ChEBI | Chemical entities of biological interest | https://www.ebi.ac.uk/chebi/ |
| TCDB | Transporter classification | http://www.tcdb.org/ |

Integrated Reconstruction Workflow

The GPR reconstruction pipeline in GPRuler can start from either of two inputs: (1) an existing draft SBML model or a simple list of reactions lacking GPR rules, or (2) just the name of the organism of interest [7]. In both cases, the inputs are first processed to obtain the list of metabolic genes associated with each metabolic reaction in the target organism/model [7]. This intermediate output is then passed to the core pipeline, which returns the GPR rule for each metabolic reaction [7].

The reconstruction of metabolic networks more broadly involves four fundamental steps: (1) automated genome-based reconstruction, (2) manual curation and validation, (3) conversion to a mathematical format, and (4) functional testing using objective functions like biomass production [9]. The initial reconstruction begins with the annotated genome of a target organism, which provides unique identifiers and a list of metabolic enzymes, indicating how gene products interact as subunits, protein complexes, or isozymes to form active enzymes [9].

Experimental Protocols for GPR Validation and Integration

Gene Essentiality Analysis Protocol

Gene essentiality analysis is a fundamental method for validating GPR associations in genome-scale metabolic models. This protocol tests the model's ability to correctly predict which gene knockouts will prevent growth under specific conditions [1].

Procedure:

- In Silico Gene Deletion: For each gene in the model, simulate a knockout by constraining the flux through all reactions that require the deleted gene to zero, following the GPR rules.

- Growth Simulation: Perform flux balance analysis (FBA) with the biomass reaction as objective function to determine if the model can support growth without the deleted gene.

- Validation with Experimental Data: Compare predictions with experimental gene essentiality data from knockout libraries or essential gene databases.

- Reconciliation: Investigate discrepancies between predictions and experimental data to identify missing alternative pathways or incorrect GPR associations.

The most recent E. coli GEM, iML1515, shows 93.4% accuracy for gene essentiality simulation under minimal media containing 16 different carbon sources, demonstrating the high quality achievable with well-curated GPR associations [1].
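The validation and reconciliation steps of the protocol amount to comparing two sets of essentiality calls. The sketch below computes a confusion matrix and accuracy and lists the discrepant genes for follow-up. The gene names and calls are hypothetical and unrelated to iML1515.

```python
def compare_essentiality(predicted, observed):
    """Compare predicted and experimental essentiality calls.

    Both arguments map gene -> True if essential. Only genes present in both
    dictionaries are scored. Returns (counts, accuracy, mismatches).
    """
    shared = sorted(set(predicted) & set(observed))
    if not shared:
        raise ValueError("no genes in common between predictions and experiments")
    counts = {"TP": 0, "TN": 0, "FP": 0, "FN": 0}
    mismatches = []
    for gene in shared:
        pred, obs = predicted[gene], observed[gene]
        if pred and obs:
            counts["TP"] += 1
        elif not pred and not obs:
            counts["TN"] += 1
        elif pred and not obs:
            counts["FP"] += 1  # predicted essential, but the mutant grows
            mismatches.append(gene)
        else:
            counts["FN"] += 1  # predicted dispensable, but the mutant does not grow
            mismatches.append(gene)
    accuracy = (counts["TP"] + counts["TN"]) / len(shared)
    return counts, accuracy, mismatches


# Hypothetical calls; g5 has experimental data but is not in the model.
predicted = {"g1": True, "g2": False, "g3": True, "g4": False}
observed = {"g1": True, "g2": False, "g3": False, "g4": True, "g5": True}

counts, accuracy, mismatches = compare_essentiality(predicted, observed)
print(counts, f"accuracy={accuracy:.2f}", "to reconcile:", mismatches)
```

The mismatch list is the starting point for reconciliation. For example, a gene predicted essential but observed to be dispensable may point to a missing alternative pathway or an incomplete GPR rule.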

TIDE Algorithm for Metabolic Task Inference

The Tasks Inferred from Differential Expression (TIDE) algorithm is a constraint-based method for inferring pathway activity directly from gene expression data without needing to construct a full context-specific GEM [10]. This approach is particularly valuable for analyzing drug-induced metabolic changes, as demonstrated in studies of kinase inhibitors in gastric cancer cells [10].

Implementation Protocol:

- Differential Expression Analysis: Identify differentially expressed genes (DEGs) between treatment and control conditions using tools like DESeq2 [10].

- Metabolic Task Definition: Define metabolic tasks based on known biochemical pathways and functions.

- Flux Analysis: Apply constraint-based analysis to determine if metabolic tasks can be carried out given the expression changes.

- Synergy Scoring: For drug combination studies, introduce a scoring scheme that compares metabolic effects of combinations with individual drugs to identify synergistic alterations [10].

A variant called TIDE-essential focuses on essential genes without relying on flux assumptions, providing a complementary perspective to the original algorithm [10]. Both approaches have been implemented in open-source tools like MTEApy to facilitate broader application [10].

Research Reagent Solutions for GPR Studies

Table: Essential Research Reagents and Resources for GPR Studies

| Reagent/Resource | Function in GPR Research | Example Sources/Providers |
|---|---|---|
| Genome Annotations | Provides initial gene-function associations for draft reconstructions | EcoCyc, SGD, EntrezGene, IMG |
| Curated Metabolic Models | Reference models for comparative analysis and validation | BiGG Models, ModelSEED |
| Enzyme Databases | Source of EC numbers and enzyme characteristics | BRENDA, MetaCyc, Rhea |
| Protein Interaction Databases | Information on protein complexes and interactions | Complex Portal, STRING |
| Chemical Databases | Metabolite structures and identifiers | ChEBI, PubChem |
| Reconstruction Software | Tools for automated and manual model reconstruction | GPRuler, RAVEN, merlin, CarveMe |
| Constraint-Based Analysis Tools | Simulation and analysis of metabolic models | COBRA Toolbox, COBRApy |
| Omics Data Integration Tools | Methods for incorporating expression data into models | TIDE, CellFie |

Applications in Biotechnology and Biomedicine

Industrial Biotechnology and Strain Design

GPR associations play a crucial role in industrial biotechnology for the design of microbial cell factories. The accurate representation of gene-protein-reaction relationships enables metabolic engineers to predict which genetic modifications will enhance the production of target compounds [1] [6]. GEMs with well-curated GPR rules have been used to optimize production of biofuels, chemicals, and pharmaceuticals in various microorganisms, including Escherichia coli, Saccharomyces cerevisiae, and Bacillus subtilis [1].

The gene-level strain design approach made possible by proper GPR representation is particularly important because a large fraction of reaction-based designs obtained by traditional methods are not actually feasible when GPR complexities are considered [8]. By using the same optimization methods with gene-level constraints, researchers can obtain feasible genetic designs that maintain optimality of the predicted phenotype [8].

Biomedical Applications and Drug Development

In biomedical research, GPR-enhanced metabolic models have been applied to understand human diseases and identify potential drug targets [1] [10]. For pathogenic microorganisms, GEMs with accurate GPR associations can identify essential metabolic functions that serve as potential targets for antimicrobial drugs [1]. For example, GEMs of Mycobacterium tuberculosis have been used to study the pathogen's metabolic status under in vivo hypoxic conditions and in vitro drug-testing conditions, enabling evaluation of the pathogen's metabolic responses to antibiotic pressures [1].

In cancer research, context-specific GEMs reconstructed using GPR associations and transcriptomic data have been used to investigate metabolic reprogramming in cancer cells and identify potential therapeutic targets [10]. Studies of kinase inhibitors in gastric cancer cells have revealed how combinatorial treatments induce condition-specific metabolic alterations, including strong synergistic effects affecting specific biosynthetic pathways [10].

Future Directions and Challenges

The field of GPR reconstruction and utilization continues to evolve with several emerging trends. Multi-strain GEMs are being developed to understand metabolic diversity across different strains of the same species [6]. The reconstruction of GEMs for archaeal organisms is expanding our understanding of metabolism in extreme environments [1] [6]. The integration of machine learning approaches with constraint-based modeling promises to enhance the predictive capabilities of GEMs [6].

Significant challenges remain, particularly in the representation of transporter specificity, where annotations often lack sufficient detail to determine what substrates transporters actually move, even when the transport mechanism is known [9]. Additionally, the integration of regulatory information with metabolic GPR rules represents a frontier for more comprehensive cellular models [11]. As the number of available genome sequences continues to grow, methods for automated and accurate GPR reconstruction will become increasingly essential for leveraging this wealth of genetic information to understand and engineer biological systems.

In the field of systems biology, genome-scale metabolic models (GEMs) serve as comprehensive computational representations of the metabolic network of an organism. The conversion of biological knowledge into a mathematical framework enables the simulation and prediction of cellular phenotypes. The stoichiometric matrix (S matrix) is the fundamental component that structures these models, transforming the topological network of biochemical reactions into a quantitative, computable format [12] [13]. This matrix forms the mathematical bedrock for Constraint-Based Reconstruction and Analysis (COBRA) methods, allowing researchers to analyze metabolic capabilities and engineer organisms for biomedical and industrial applications without requiring extensive kinetic parameters [12] [14]. This whitepaper details the construction, analysis, and application of the stoichiometric matrix within the context of genome-scale metabolic model reconstruction, providing a technical guide for its use in research and drug development.

The Stoichiometric Matrix: Core Concepts and Mathematical Representation

Fundamental Definition and Structure

The stoichiometric matrix is a mathematical representation of a metabolic network where rows correspond to metabolites and columns correspond to biochemical reactions [12] [15]. Each entry Sij in the matrix represents the stoichiometric coefficient of metabolite i in reaction j. By convention, negative coefficients indicate substrate consumption, while positive coefficients indicate product formation [15] [16]. For a system comprising m metabolites and n reactions, the stoichiometric matrix S has dimensions m × n.

The power of this representation lies in its ability to describe the mass balance of the entire metabolic system through the equation Sv = 0, where v is an n-dimensional vector of reaction fluxes (rates) [15] [17]. This equation encapsulates the assumption of steady-state metabolism, in which metabolite concentrations remain constant over time because their production and consumption rates are balanced [18] [19].
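As a small worked example of these definitions, the sketch below builds S for a hypothetical four-reaction network (rows are metabolites, columns are reactions, negative entries are substrates) and checks whether a candidate flux vector satisfies Sv = 0. The reaction and metabolite names are invented for illustration.

```python
# Each reaction maps metabolite -> stoichiometric coefficient
# (negative = consumed, positive = produced).
reactions = {
    "R_uptake": {"A": 1},          # -> A
    "R1": {"A": -1, "B": 1},       # A -> B
    "R2": {"B": -1, "C": 2},       # B -> 2 C
    "R_export": {"C": -1},         # C ->
}

metabolites = sorted({m for stoich in reactions.values() for m in stoich})
rxn_ids = list(reactions)

# S has one row per metabolite (m = 3) and one column per reaction (n = 4).
S = [[reactions[r].get(m, 0) for r in rxn_ids] for m in metabolites]


def mass_balance(S, v):
    """Return S v, the net production rate of each metabolite."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]


v_steady = [1.0, 1.0, 1.0, 2.0]      # export must be twice R2 because B -> 2 C
v_unbalanced = [1.0, 1.0, 1.0, 1.0]  # C would accumulate

for row, met in zip(S, metabolites):
    print(met, row)
print("steady state residual:", mass_balance(S, v_steady))
print("unbalanced residual:  ", mass_balance(S, v_unbalanced))
```

A nonzero entry in the residual identifies the metabolite whose concentration would change over time, which is exactly what the steady-state assumption rules out.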

Mathematical Framework for Metabolic Analysis

The steady-state equation Sv = 0 defines the core constraints for metabolic flux analysis. However, this system is typically underdetermined, with more reactions than metabolites, leading to infinite possible flux solutions [12]. To resolve this, Flux Balance Analysis (FBA) applies linear programming to identify a single optimal flux distribution by imposing an objective function to be maximized or minimized (e.g., biomass production) subject to the stoichiometric constraints and additional flux boundaries [12] [20]:

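Maximize: cᵀv

Subject to: Sv = 0 and vₘᵢₙ ≤ v ≤ vₘₐₓ [12]

Here c is the vector of objective coefficients (for example, 1 for the biomass reaction and 0 for all other reactions), and vₘᵢₙ and vₘₐₓ are the vectors of lower and upper flux bounds.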
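The linear program can be written down directly for a toy network. This is a minimal sketch using `scipy.optimize.linprog` as a generic LP solver rather than a dedicated COBRA package. The network, bounds, and names are hypothetical. Because `linprog` minimizes, the objective vector is negated.

```python
from scipy.optimize import linprog

# Toy network with two routes of different yield (all names hypothetical):
#   R_uptake: -> A,  R_high: A -> 2 B,  R_low: A -> B,  R_biomass: B ->
reactions = ["R_uptake", "R_high", "R_low", "R_biomass"]
S = [
    [1, -1, -1, 0],  # metabolite A
    [0, 2, 1, -1],   # metabolite B
]
c = [0, 0, 0, 1]                 # objective: biomass flux only
v_min = [0, 0, 0, 0]
v_max = [10, 1000, 1000, 1000]   # uptake is the limiting constraint

res = linprog(
    [-ci for ci in c],           # maximize c^T v  ==  minimize -c^T v
    A_eq=S,
    b_eq=[0] * len(S),           # steady state: S v = 0
    bounds=list(zip(v_min, v_max)),
)
if not res.success:
    raise RuntimeError(res.message)

growth = -res.fun
fluxes = {r: round(float(x), 6) for r, x in zip(reactions, res.x)}
print("optimal biomass flux:", round(growth, 6))
print("flux distribution:", fluxes)
```

Without the objective, any split of the uptake flux between the two routes satisfies Sv = 0 and the bounds. Maximizing biomass selects the high-yield route, which illustrates how the objective resolves the underdetermined system.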

Table 1: Key Mathematical Properties of the Stoichiometric Matrix

| Property | Mathematical Representation | Biological Interpretation |
|---|---|---|
| Mass Balance | Sv = 0 | Metabolite concentrations remain constant at steady state [15] [18] |
| Network Topology | Non-zero entries in S | Connectivity and structure of the metabolic network [15] |
| Flux Vector | v = (v₁, v₂, ..., vₙ)ᵀ | Rates of metabolic reactions through each pathway [12] |
| Solution Space | {v : Sv = 0, vₘᵢₙ ≤ v ≤ vₘₐₓ} | All possible metabolic states satisfying physical constraints [12] [14] |

The Reconstruction Process: From Genome to Stoichiometric Model

The construction of a genome-scale metabolic model is a meticulous process that transforms genomic information into a functional stoichiometric matrix. This reconstruction pipeline integrates diverse data types to build an organism-specific metabolic network.

Diagram 1: Genome-Scale Metabolic Model Reconstruction Workflow

Genome Annotation and Draft Reconstruction

The reconstruction process begins with genome annotation to identify genes encoding metabolic enzymes [14]. Automated pipelines like Model SEED and the RAVEN Toolbox utilize databases such as KEGG and EcoCyc to generate draft metabolic networks by mapping annotated genes to biochemical reactions [12]. However, this automated process introduces uncertainty due to homology-based annotation errors, misannotations in databases, and the existence of "orphan" reactions without associated genes [14]. Advanced pipelines like ProbAnno incorporate probabilistic approaches to represent annotation uncertainty, assigning likelihood scores to reactions rather than binary presence/absence calls [14].
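The gene-to-reaction mapping step can be sketched as a simple lookup. The example below is a toy illustration, not the procedure used by any particular pipeline: the gene names, the annotation table, and the small EC-to-reaction dictionary are hypothetical, and genes that map to the same reaction are assumed to be isozymes.

```python
# Hypothetical annotation output: gene -> EC number.
annotation = {
    "geneA": "2.7.1.1",
    "geneB": "5.3.1.9",
    "geneC": "2.7.1.1",
    "geneD": "9.9.9.9",   # placeholder EC number with no database entry
}

# Hypothetical slice of a reaction database: EC number -> reaction IDs.
ec_to_reactions = {
    "2.7.1.1": ["HEX1"],
    "5.3.1.9": ["PGI"],
    "2.7.1.11": ["PFK"],
}

def draft_reconstruction(annotation, ec_to_reactions):
    """Map annotated genes to reactions.

    Returns (draft, unmapped): draft maps reaction ID -> list of genes,
    unmapped lists genes whose EC number has no reaction in the database.
    """
    draft, unmapped = {}, []
    for gene, ec in sorted(annotation.items()):
        reactions = ec_to_reactions.get(ec)
        if not reactions:
            unmapped.append(gene)
            continue
        for rxn in reactions:
            draft.setdefault(rxn, []).append(gene)
    return draft, unmapped

draft, unmapped = draft_reconstruction(annotation, ec_to_reactions)

# Reactions known to the database but supported by no gene are
# candidates for later gap-filling or manual review.
unsupported = sorted(
    rxn for rxns in ec_to_reactions.values() for rxn in rxns if rxn not in draft
)
print(draft, unmapped, unsupported)
```

Keeping the unmapped genes and unsupported reactions as explicit outputs makes the sources of annotation uncertainty visible for the curation steps that follow.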

Manual Curation and Gap Filling

Automated reconstructions require extensive manual curation to address network incompleteness and inaccuracies. A critical step is gap-filling, where biochemical knowledge and experimental data are used to add missing reactions necessary for network functionality [12] [18]. This process resolves "dead-end" metabolites that have only producing or consuming reactions, ensuring the network can simulate biologically feasible phenotypes like growth [18]. The curation process significantly enhances model predictive accuracy but remains a major source of structural uncertainty in the resulting stoichiometric matrix [14].
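Detecting dead-end metabolites is a straightforward bookkeeping exercise on the reaction list. The sketch below assumes all reactions are irreversible and uses a hypothetical five-reaction network; real gap-filling tools also handle reversibility, compartments, and the choice of which candidate reaction to add.

```python
def find_dead_ends(reactions):
    """Find metabolites that are only produced or only consumed.

    reactions: dict of reaction ID -> {metabolite: coefficient}, where a
    negative coefficient means consumption. All reactions are assumed
    irreversible (a simplifying assumption of this sketch).
    """
    produced, consumed = set(), set()
    for stoich in reactions.values():
        for met, coeff in stoich.items():
            if coeff > 0:
                produced.add(met)
            elif coeff < 0:
                consumed.add(met)
    return {
        "only_produced": sorted(produced - consumed),
        "only_consumed": sorted(consumed - produced),
    }

# Hypothetical draft network with a gap between C and D.
toy = {
    "EX_A": {"A": 1},             # uptake of A
    "R1": {"A": -1, "B": 1},
    "R2": {"B": -1, "C": 1},
    "R3": {"D": -1, "E": 1},
    "EX_E": {"E": -1},            # secretion of E
}
gaps_before = find_dead_ends(toy)

# Candidate gap-filling reaction (hypothetical): C -> D.
filled = dict(toy, R_fill={"C": -1, "D": 1})
gaps_after = find_dead_ends(filled)

print(gaps_before)
print(gaps_after)
```

In this toy case a single added reaction connects the two halves of the pathway; in practice the added reaction should be supported by biochemical evidence rather than chosen only because it restores connectivity.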

Model Validation and Contextualization

The final reconstruction steps involve model validation through comparison of simulated outcomes with experimental data, such as growth capabilities and nutrient utilization [18]. For eukaryotic organisms, particularly human, reconstructions are further refined into tissue-specific models by integrating transcriptomic, proteomic, and metabolomic data to remove reactions not expressed in particular cellular contexts [18] [14]. This produces specialized stoichiometric matrices that more accurately represent the metabolic capabilities of specific cell types, which is particularly valuable for drug development and disease modeling [18].
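One common convention for mapping expression data onto reactions is to score an enzyme complex by its least-expressed subunit and a set of isozymes by the best-expressed alternative. The sketch below applies that convention with a fixed threshold. The GPR rules, expression values, and threshold are hypothetical, and published context-specific methods typically add a step that keeps the pruned network functional, which is omitted here.

```python
def reaction_score(gpr, expression):
    """Score a reaction from gene expression.

    gpr is a list of enzyme complexes (alternatives); each complex is a
    list of genes that are all required. AND -> min, OR -> max.
    Genes missing from the expression table are treated as 0.0.
    """
    return max(min(expression.get(g, 0.0) for g in cplx) for cplx in gpr)

def prune_by_expression(gprs, expression, threshold):
    """Return reaction IDs kept in the context-specific model.

    Reactions without a GPR (e.g. spontaneous ones) are always kept.
    """
    kept = []
    for rxn, gpr in gprs.items():
        if not gpr or reaction_score(gpr, expression) >= threshold:
            kept.append(rxn)
    return kept

# Hypothetical GPR rules and expression values (arbitrary units).
gprs = {
    "R1": [["g1"]],           # single gene
    "R2": [["g2", "g3"]],     # complex: both subunits required
    "R3": [["g4"], ["g5"]],   # isozymes: either gene suffices
    "R4": [],                 # no gene association
}
expression = {"g1": 12.0, "g2": 8.0, "g3": 0.5, "g4": 0.2, "g5": 6.0}

kept = prune_by_expression(gprs, expression, threshold=1.0)
print(kept)
```

Here R2 is removed because one subunit of its complex falls below the threshold, while R3 is kept because one of its isozymes is expressed.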

Analysis Methods Built on the Stoichiometric Matrix

Core Constraint-Based Approaches

The stoichiometric matrix enables several powerful analysis techniques that predict metabolic behavior under different conditions:

- Flux Balance Analysis (FBA): The primary method for predicting flux distributions that optimize a biological objective (e.g., biomass maximization) given stoichiometric and capacity constraints [12] [13] [20].

- Flux Variability Analysis (FVA): Determines the minimum and maximum possible flux through each reaction while maintaining optimal objective value, identifying essential and flexible reactions [12].

- Gene Deletion Analysis: Predicts the phenotypic consequences of single or multiple gene knockouts by constraining associated reaction fluxes to zero [12] [20].
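The step that turns a gene knockout into reaction constraints can be sketched with the GPR rules alone. In the example below the GPR rules, gene names, and bounds are hypothetical; after the bounds are updated, FBA would be re-run on the constrained model to predict the growth phenotype, which this sketch does not do.

```python
# GPR rules in disjunctive form: a list of enzyme complexes (isozymes are
# alternatives); each complex is a list of genes that are all required.
gprs = {
    "R_single": [["g1"]],
    "R_isozymes": [["g2"], ["g3"]],
    "R_complex": [["g4", "g5"]],
    "R_spontaneous": [],
}
bounds = {rxn: (0.0, 1000.0) for rxn in gprs}

def reaction_is_active(gpr, deleted_genes):
    """A reaction stays active if at least one complex is fully intact."""
    if not gpr:  # no gene association: unaffected by gene deletions
        return True
    return any(all(g not in deleted_genes for g in cplx) for cplx in gpr)

def apply_knockout(bounds, gprs, deleted_genes):
    """Return new bounds with inactivated reactions constrained to zero."""
    new_bounds = dict(bounds)
    for rxn, gpr in gprs.items():
        if not reaction_is_active(gpr, deleted_genes):
            new_bounds[rxn] = (0.0, 0.0)
    return new_bounds

ko_isozyme = apply_knockout(bounds, gprs, {"g2"})        # g3 compensates
ko_subunit = apply_knockout(bounds, gprs, {"g5"})        # complex is lost
ko_double = apply_knockout(bounds, gprs, {"g2", "g3"})   # both isozymes lost

print(ko_isozyme["R_isozymes"], ko_subunit["R_complex"], ko_double["R_isozymes"])
```

The distinction between isozymes and complex subunits is why single-gene deletions do not always remove a reaction, and why double knockouts can reveal dependencies that single knockouts miss.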

Table 2: Key Computational Methods Utilizing the Stoichiometric Matrix

| Method | Primary Function | Applications in Research |

|---|---|---|

| Flux Balance Analysis (FBA) | Predicts optimal flux distribution for a given objective function [12] [13] | Strain design, prediction of growth rates [12] |

| Flux Variability Analysis (FVA) | Identifies range of possible fluxes through each reaction [12] | Determine robustness and flexibility of metabolic networks [12] |

| Metabolic Flux Analysis (MFA) | Estimates intracellular fluxes using isotopic tracer data [18] | Validation of model predictions, analysis of pathway engagement [18] |

| Gene Deletion Analysis | Simulates genetic manipulations by removing reactions [12] [20] | Identification of essential genes and drug targets [12] |

| Sampling Methods | Characterizes the entire space of possible flux states [12] [14] | Assessment of metabolic capabilities without predefined objective [14] |

Advanced Network Representations

Beyond traditional constraint-based analysis, the stoichiometric matrix enables construction of advanced network representations that capture directional flow and environmental context. The Mass Flow Graph (MFG) creates a directed graph where edges represent supplier-consumer relationships between reactions, with weights derived from FBA-calculated fluxes [17]. This representation naturally handles pool metabolites (e.g., ATP, water) that appear in many reactions and obfuscate connectivity in simpler graph constructions, while capturing environment-dependent metabolic connectivity [17].

Diagram 2: From Stoichiometric Matrix to Flux Prediction

Table 3: Key Research Reagents and Computational Tools for Stoichiometric Modeling

| Resource/Tool | Function/Purpose | Application Context |

|---|---|---|

| COBRA Toolbox [12] | MATLAB software suite for constraint-based reconstruction and analysis | Performing FBA, FVA, and gene deletion studies [12] |

| COBRApy [13] | Python implementation of COBRA methods | Programmatic access to constraint-based modeling in Python environments [13] |

| Model SEED [12] | Web-based resource for automated metabolic reconstruction | High-throughput generation of draft metabolic models from genome sequences [12] |

| RAVEN Toolbox [12] | MATLAB toolbox for semi-automated reconstruction | Network reconstruction, curation, and simulation [12] |

| CarveMe [14] | Python-based tool for organism-specific model building | "Carving" generic models into specific organism models using genome annotation [14] |

| BiGG Models [14] | Database of curated genome-scale metabolic models | Reference for reaction and metabolite information, model comparison [14] |

| ARCHNET [20] | Python package for artificial chemistry network analysis | Studying fundamental principles of metabolic network structure [20] |

Applications in Biomedical Research and Therapeutic Development

Stoichiometric modeling has become an invaluable tool in biomedical research, particularly through the development of human tissue-specific metabolic models. These models enable researchers to study human diseases in a mechanistic framework and identify potential therapeutic interventions.

Drug Target Identification and Validation

GEMs facilitate the identification of essential metabolic genes whose inhibition would selectively target diseased cells. By systematically simulating gene knockouts, researchers can pinpoint reactions that are critical for cancer cell proliferation but dispensable for normal cell function [12] [18]. This approach is particularly valuable for understanding metabolic dependencies in cancers with specific mutational backgrounds, enabling development of targeted therapies [18].

Understanding Metabolic Disorders

Stoichiometric models of human hepatocytes have been used to study primary hyperoxaluria type 1, a rare metabolic disorder [17]. By constructing context-specific models and comparing MFGs between wild-type and mutated metabolic states, researchers can identify the systemic rerouting of metabolic flows that characterizes the disease phenotype, suggesting potential compensatory pathways [17].

Multi-Omic Integration for Personalized Medicine

The integration of transcriptomic, proteomic, and metabolomic data with stoichiometric models enables the development of patient-specific metabolic models [18] [14]. This personalized approach helps identify metabolic vulnerabilities unique to individual patients or disease subtypes, paving the way for personalized therapeutic strategies [18]. The standardization of human metabolic models is an ongoing challenge critical for realizing this potential in biomedical applications [18].

Future Directions and Challenges

Despite significant advances, several challenges remain in the development and application of stoichiometric matrix-based models. Standardization of reconstruction methods, representation formats, and model repositories is needed to enable direct comparison between models and consistent integration with other omic data types [18]. Uncertainty quantification in model predictions requires improved methods, with probabilistic approaches and ensemble modeling showing promise for characterizing uncertainty from genome annotation, network reconstruction, and flux simulation [14]. The integration of regulatory information and kinetic constraints into stoichiometric frameworks will enhance model accuracy and expand predictive capabilities beyond steady-state metabolism [12] [19]. Finally, developing approaches to model microbial communities and host-pathogen interactions using multi-species stoichiometric models will open new frontiers in understanding complex biological systems [13] [14].

As these challenges are addressed, the stoichiometric matrix will maintain its position as the mathematical heart of constraint-based modeling, enabling increasingly sophisticated analysis and engineering of biological systems for biomedical and biotechnological applications.

Biomass equations are fundamental components in genome-scale metabolic models (GEMs), serving as mathematical representations of cellular growth and composition. These equations quantitatively define the metabolic requirements for producing essential cellular constituents, including proteins, lipids, carbohydrates, DNA, and RNA, thereby enabling the prediction of growth rates and phenotypic behaviors under various environmental and genetic conditions. Within the GEM reconstruction process, biomass equations provide a critical objective function for constraint-based modeling approaches like Flux Balance Analysis (FBA), translating metabolic network capabilities into measurable physiological outcomes. This technical guide examines the theoretical foundations, development methodologies, and applications of biomass equations in GEMs, providing researchers with comprehensive protocols for their construction and validation in diverse biological systems from microbes to mammalian cells.

Genome-scale metabolic models are structured knowledge-bases that represent the biochemical transformation network of an organism, comprising genes, enzymes, reactions, and metabolites [21]. The conversion of a reconstruction into a mathematical model enables myriad computational biological studies, including analysis of phenotypic characteristics and metabolic engineering [21]. Central to this computational framework is the biomass equation, which serves as the objective function in flux balance analysis by representing the metabolic requirements for cellular growth and replication.

A biomass equation quantitatively defines the drain of metabolic precursors and energy required to synthesize all essential macromolecular components of a cell. Unlike other reactions in a GEM that represent substrate conversions or transport processes, the biomass reaction represents a synthetic process that consumes numerous metabolites in specific proportions to produce one unit of cellular biomass. This equation effectively defines the relationship between metabolic flux distributions and cellular growth, enabling models to predict growth phenotypes under different environmental conditions or genetic perturbations [6] [21].

The critical role of biomass equations becomes evident in Flux Balance Analysis, a constraint-based modeling approach that predicts metabolic fluxes by optimizing a defined cellular objective, most commonly the maximization of biomass production [6]. FBA and related constraint-based methods have become predominant tools for integrating, quantifying, and predicting the spatial and temporal distribution of metabolic flows in biological systems [22]. By simulating the metabolic network at steady-state and using biomass production as the objective function, researchers can predict growth rates, nutrient uptake rates, and byproduct secretion, enabling in silico simulation of metabolic capabilities across different organisms and conditions.

Theoretical Framework and Mathematical Formulation

Fundamental Stoichiometric Representation

Biomass equations are mathematically represented as stoichiometric reactions that account for the conversion of metabolic precursors into biomass constituents. The general form of a biomass equation can be expressed as:

$$\sum_i a_i \, \text{Precursor}_i \;\longrightarrow\; 1 \, \text{Biomass} + \sum_j b_j \, \text{Byproduct}_j$$

where a_i represents the stoichiometric coefficient of precursor metabolite i consumed, and b_j represents the stoichiometric coefficient of byproduct j produced during biomass synthesis. The coefficients are typically normalized such that the production of 1 gram of dry cell weight (gDCW) of biomass requires the specified amounts of each component.

The biomass synthesis reaction is incorporated into the stoichiometric matrix S of the metabolic model, which forms the foundation for constraint-based analysis. The mathematical representation of the entire metabolic network at steady-state is:

$$S v = 0$$

where v is the vector of metabolic fluxes. The biomass reaction is typically assigned as the objective function to be maximized, subject to additional constraints on substrate uptake and metabolic capacities:

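$$\text{Maximize } c^{T} v \quad \text{subject to} \quad S v = 0, \quad v_{min} \le v \le v_{max}$$

where c is a vector with a value of 1 for the biomass reaction and 0 for all other reactions, and v_min and v_max represent lower and upper bounds on metabolic fluxes, respectively.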
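A small worked example may help make this formulation concrete. The network below is hypothetical and its numbers are arbitrary: a single internal metabolite A is taken up by v_1 (capped at 10 flux units), consumed by the biomass reaction v_2 at 2 units of A per unit of biomass, and optionally secreted as a byproduct by v_3.

```latex
% Hypothetical one-metabolite network; all values are illustrative.
% Columns of S: v_1 (uptake), v_2 (biomass), v_3 (byproduct secretion).
\[
S = \begin{pmatrix} 1 & -2 & -1 \end{pmatrix}, \qquad
c = \begin{pmatrix} 0 & 1 & 0 \end{pmatrix}^{T}, \qquad
0 \le v_1 \le 10, \quad v_2 \ge 0, \quad v_3 \ge 0
\]

% Steady state for metabolite A:
\[
S v = 0 \;\Longrightarrow\; v_1 - 2 v_2 - v_3 = 0
\;\Longrightarrow\; v_2 = \frac{v_1 - v_3}{2}
\]

% The objective c^T v = v_2 is largest when uptake is at its upper
% bound and nothing is diverted to the byproduct:
\[
v_1 = 10, \quad v_3 = 0 \;\Longrightarrow\; v_2^{\max} = \frac{10 - 0}{2} = 5
\]
```

The example shows how the biomass coefficient (here 2) and the uptake bound together set the maximum predicted growth flux, and why any flux diverted to a byproduct reduces it.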

Core Biomass Components and Their Metabolic Requirements

Table 1: Essential Biomass Components and Their Precursor Metabolites

| Biomass Category | Major Constituents | Key Precursor Metabolites | ATP Requirements |

|---|---|---|---|

| Amino Acids | 20 proteinogenic amino acids | α-Ketoglutarate, Oxaloacetate, Pyruvate, 3-Phosphoglycerate, Phosphoenolpyruvate, Erythrose-4-phosphate | High (activation, polymerization) |

| Lipids | Phospholipids, Neutral lipids, Sterols | Acetyl-CoA, Glycerol-3-phosphate, Fatty acyl-ACPs, S-Adenosylmethionine | Moderate (activation, elongation) |

| Carbohydrates | Glycogen, Structural polysaccharides | Glucose-6-phosphate, Fructose-6-phosphate, UDP-glucose | Low (activation, polymerization) |

| Nucleic Acids | DNA, RNA (rRNA, tRNA, mRNA) | Ribose-5-phosphate, Nucleotide triphosphates (ATP, GTP, CTP, UTP, dATP, dGTP, dCTP, dTTP) | High (polymerization) |

| Cofactors | Vitamins, Metal ions, Metabolic intermediates | Various pathway-specific precursors | Variable |

| Inorganic Ions | K+, Mg2+, Ca2+, Na+, Cl-, PO4^3- | Mineral uptake | None |

The accurate formulation of biomass equations requires detailed knowledge of cellular composition, which varies significantly across organisms, growth conditions, and physiological states. For example, microbial cells typically contain 50-60% protein, 10-20% RNA, 3-5% DNA, 10-15% lipids, and 5-10% carbohydrates, while mammalian cells exhibit different compositional profiles with higher lipid content and specialized metabolites [21]. These compositional differences must be reflected in organism-specific biomass equations to ensure accurate phenotypic predictions.

Quantitative Biomass Composition Across Biological Systems

Compositional Variation Across Organisms and Growth Conditions

Cellular biomass composition exhibits significant variation across biological kingdoms, growth environments, and physiological states. These differences reflect evolutionary adaptations, ecological niches, and metabolic strategies. The quantitative determination of these compositional differences is essential for developing accurate biomass equations that can reliably predict metabolic behaviors.

Table 2: Representative Biomass Composition Across Biological Systems (g/100g dry cell weight)

| Component | E. coli | S. cerevisiae | A. thaliana | Mammalian Cells |

|---|---|---|---|---|

| Protein | 52.4 | 38.2 | 26.5 | 60.8 |

| Carbohydrates | 16.6 | 38.5 | 55.3 | 12.4 |

| Lipids | 9.4 | 7.8 | 5.2 | 14.6 |

| RNA | 13.8 | 8.5 | 7.4 | 6.2 |

| DNA | 3.2 | 0.8 | 1.6 | 1.8 |

| Ash (Minerals) | 4.6 | 6.2 | 4.0 | 4.2 |

The biomass composition data presented in Table 2 highlight the fundamental differences between biological systems. Prokaryotic organisms like E. coli typically contain higher proportions of RNA to support rapid protein synthesis and growth, while plant cells contain substantial carbohydrate reserves in the form of starch and structural polysaccharides [22]. Eukaryotic microbial systems like S. cerevisiae exhibit intermediate characteristics, with significant carbohydrate storage consistent with their metabolic versatility. Mammalian cells display high protein content reflective of their complex regulatory and structural requirements.

Growth Rate-Dependent Composition Variations

Cellular biomass composition is not static but varies significantly with growth rate, a phenomenon particularly pronounced in microorganisms. Under rapid growth conditions, cells allocate more resources to ribosomes and other translational machinery to support high protein synthesis rates, resulting in increased RNA content. This growth rate-dependent variation has important implications for metabolic modeling, as biomass equations should ideally be tailored to specific growth conditions.

For E. coli, the RNA content can increase from approximately 13% at a growth rate of 0.2 h⁻¹ to over 20% at a growth rate of 0.8 h⁻¹, while protein content shows a corresponding decrease. Similar trends have been observed in other rapidly growing microorganisms. These physiological adaptations must be incorporated into condition-specific biomass equations to improve the accuracy of model predictions across different growth regimes. The development of such adaptive biomass formulations represents an active area of research in metabolic modeling.

Protocol for Biomass Equation Development and Integration

Stage 1: Draft Reconstruction and Biomass Component Identification

The reconstruction of high-quality genome-scale metabolic models follows a systematic protocol that includes biomass equation development as a critical component [21]. This process typically requires six months to two years depending on the target organism and available data.

Step 1: Genomic Data Compilation and Annotation

- Obtain annotated genome sequence from databases such as NCBI Entrez Gene, KEGG, or organism-specific resources [21]

- Identify genes encoding enzymes for synthesis of biomass precursors: amino acids, lipids, nucleotides, cofactors

- Compile transport reactions for inorganic ions and mineral requirements

- Document data sources and confidence levels for each annotated gene

Step 2: Experimental Composition Determination

- Cultivate target organism under reference conditions for compositional analysis

- Quantify macromolecular composition: protein (Lowry or Bradford assay), RNA/DNA (spectrophotometric methods), lipids (extraction and gravimetric analysis), carbohydrates (anthrone or phenol-sulfuric acid methods)

- Determine elemental composition (CHNOPS) through elemental analysis

- Analyze amino acid composition via acid hydrolysis and HPLC

- Quantify nucleotide composition through enzymatic digestion and HPLC separation

- Perform mineral analysis using atomic absorption spectroscopy or ICP-MS

Step 3: Biomass Precursor Stoichiometry Calculation

- Normalize all compositional data to g/g dry cell weight

- Calculate molar requirements for each biomass precursor based on molecular weights

- Account for polymerization costs (ATP hydrolysis for protein, nucleic acid, and carbohydrate synthesis)

- Include cofactor requirements (NAD, NADP, FAD, coenzyme A) for biosynthesis

- Incorporate inorganic ion requirements based on experimental mineral analysis
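The conversion from measured mass fractions to molar biomass coefficients in Step 3 can be sketched as follows. The two-monomer "polymer", its molecular weights, and the mass fraction are hypothetical round numbers chosen so the arithmetic is easy to follow; polymerization energy costs and cofactor terms are not included.

```python
def monomer_coefficients(mass_fraction, mole_fractions, residue_mw):
    """Convert a macromolecule mass fraction into monomer coefficients.

    mass_fraction:  g of the macromolecule per g dry cell weight (gDCW)
    mole_fractions: monomer -> mole fraction within the polymer (sums to 1)
    residue_mw:     monomer -> molecular weight of the polymerized
                    residue in g/mol
    Returns monomer -> mmol per gDCW.
    """
    avg_mw = sum(mole_fractions[m] * residue_mw[m] for m in mole_fractions)
    total_mmol = mass_fraction / avg_mw * 1000.0
    return {m: x * total_mmol for m, x in mole_fractions.items()}

def mass_from_coefficients(coefficients, residue_mw):
    """Back-calculate g per gDCW from mmol/gDCW coefficients (sanity check)."""
    return sum(coefficients[m] * residue_mw[m] for m in coefficients) / 1000.0

# Hypothetical polymer made of two monomers, X and Y.
mole_fractions = {"X": 0.5, "Y": 0.5}
residue_mw = {"X": 100.0, "Y": 200.0}   # g/mol, illustrative values
mass_fraction = 0.3                      # g polymer per gDCW, illustrative

coeffs = monomer_coefficients(mass_fraction, mole_fractions, residue_mw)
print(coeffs)
print(mass_from_coefficients(coeffs, residue_mw))
```

Back-calculating the mass from the coefficients is a useful check: summed over all biomass components, the result should come out close to 1 g per gDCW.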

Stage 2: Model Integration and Biomass Equation Validation

Step 4: Stoichiometric Matrix Integration

- Incorporate the biomass reaction into the stoichiometric matrix as a pseudo-reaction, i.e., an additional column that drains precursors in fixed proportions

- Verify mass and charge balance for the biomass equation

- Ensure connectivity between biomass precursors and central metabolic pathways

- Validate thermodynamic consistency of biosynthetic pathways

Step 5: Biomass Equation Validation and Refinement

- Test model prediction of growth on different carbon sources

- Compare predicted vs. experimental growth rates and yields

- Validate essential nutrient requirements through in silico gene knockout studies

- Refine biomass composition based on discrepancies between predictions and experimental data

- Implement condition-specific biomass equations for different growth environments
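The comparison of predicted and experimental growth in Step 5 is often summarized with simple classification metrics. The sketch below assumes growth has already been reduced to a yes/no call per condition; the substrate names and outcomes are invented for illustration and do not describe any real organism.

```python
def confusion_counts(predicted, observed):
    """Count agreement between predicted and observed growth calls.

    Both arguments map a condition name to True (growth) or False.
    """
    counts = {"tp": 0, "tn": 0, "fp": 0, "fn": 0}
    for condition, obs in observed.items():
        pred = predicted[condition]
        if pred and obs:
            counts["tp"] += 1
        elif not pred and not obs:
            counts["tn"] += 1
        elif pred and not obs:
            counts["fp"] += 1   # model grows, experiment does not
        else:
            counts["fn"] += 1   # experiment grows, model does not
    return counts

def accuracy(counts):
    """Fraction of conditions where prediction and observation agree."""
    total = sum(counts.values())
    return (counts["tp"] + counts["tn"]) / total

# Hypothetical growth calls on four carbon sources.
observed = {"substrate_A": True, "substrate_B": True,
            "substrate_C": False, "substrate_D": True}
predicted = {"substrate_A": True, "substrate_B": False,
             "substrate_C": False, "substrate_D": True}

counts = confusion_counts(predicted, observed)
print(counts, accuracy(counts))
```

False negatives usually point to missing reactions or an overly demanding biomass equation, while false positives often indicate missing regulation or reactions that should be removed, so keeping the four counts separate is more informative than accuracy alone.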

Workflow diagram: development and validation of biomass equations in genome-scale metabolic models.

Biomass Equations in Multi-Cellular and Community Systems

Plant-Specific Considerations in Biomass Formulation

Plant metabolic models present unique challenges for biomass equation development due to their multi-cellular organization, compartmentalization, and diverse metabolic capabilities. The first genome-scale metabolic network model for plants was developed in Arabidopsis to predict biomass component production in suspension cell culture [22]. Plant biomass equations must account for several specialized features:

Tissue-Specific Composition: Different plant tissues (leaves, roots, stems, seeds) exhibit distinct biochemical compositions. For example, leaf biomass emphasizes photosynthetic components including chlorophyll, carotenoids, and thylakoid membranes, while seed biomass emphasizes storage compounds such as proteins, oils, and starch.

Specialized Metabolism: Plant biomass equations must incorporate secondary metabolites that constitute significant portions of cellular mass in specific tissues. These include lignin in secondary cell walls, alkaloids in specialized tissues, and phenolic compounds in response to environmental stresses [22].

Compartmentalization: Plant cells contain multiple subcellular compartments (chloroplasts, mitochondria, peroxisomes, vacuoles) with distinct metabolite pools and biochemical functions. Biomass equations must account for the transport costs and metabolic contributions of these compartments.

Photosynthetic Efficiency: Biomass equations for photosynthetic tissues must accurately represent the metabolic costs and products of photosynthesis, including carbon fixation, light reactions, and photorespiration. Recent models have successfully described compartmentalized central metabolism and predicted biomass production in various plants [22].

Host-Microbe Interactions and Community Modeling

The application of GEMs has expanded from individual organisms to complex microbial communities and host-microbe interactions [6] [23]. In these multi-species systems, biomass equations play a critical role in simulating metabolic interactions and cross-feeding relationships.

Community Biomass Formulation: Microbial community models typically employ separate biomass equations for each species, connected through metabolite exchange processes. The overall community biomass may be represented as the sum of individual biomasses or as a weighted composite based on species abundance.

Metabolic Interaction Analysis: By simulating the growth of multiple organisms with shared nutrient resources, GEMs can predict synergistic and competitive interactions within microbial communities. These approaches have been applied to study host-associated microbiomes, including the human gut microbiome [23].

Integrated Host-Microbe Models: Advanced modeling frameworks integrate GEMs of host cells (e.g., human intestinal epithelial cells) with microbial models to simulate the metabolic consequences of host-microbe interactions. These integrated models require careful balancing of biomass objectives and metabolite exchange between systems [23] [4].

The AGORA2 (Assembly of Gut Organisms through Reconstruction and Analysis, version 2) framework exemplifies this approach, providing curated strain-level GEMs for 7,302 gut microbes that can be used to simulate community interactions and their impact on host health [4].
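The two community biomass formulations mentioned above (a plain sum and an abundance-weighted composite) can be sketched in a few lines. The species names, growth rates, and abundances below are hypothetical, and community modeling frameworks differ in how they define the community objective, so this is only one possible reading.

```python
def summed_growth(growth_rates):
    """Community objective as the plain sum of species growth rates."""
    return sum(growth_rates.values())

def weighted_growth(growth_rates, abundances):
    """Community objective as an abundance-weighted average growth rate.

    abundances may be raw counts or relative values; they are
    normalized to fractions internally.
    """
    total = sum(abundances.values())
    return sum(growth_rates[s] * abundances[s] / total for s in growth_rates)

# Hypothetical two-species community.
growth_rates = {"species_1": 0.4, "species_2": 0.2}   # per hour, illustrative
abundances = {"species_1": 3, "species_2": 1}          # relative abundance

print(summed_growth(growth_rates))
print(weighted_growth(growth_rates, abundances))
```

Weighting by abundance keeps a rare but fast-growing species from dominating the community objective, which is one reason a weighted composite may be preferred when abundance data are available.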

Essential Research Reagents and Computational Tools

The development and validation of biomass equations requires specialized reagents, analytical tools, and computational resources. The following table summarizes key solutions and their applications in biomass equation research.

Table 3: Research Reagent Solutions for Biomass Equation Development

| Reagent/Tool Category | Specific Examples | Application in Biomass Equation Development |

|---|---|---|

| Genomic Databases | NCBI Entrez Gene, KEGG, BRENDA, SEED database [21] | Gene annotation, metabolic pathway identification, enzyme function verification |

| Composition Analysis Kits | Bradford/Lowry protein assays, RNA/DNA extraction kits, lipid extraction reagents | Quantitative determination of macromolecular cellular components for biomass coefficients |

| Metabolic Modeling Software | COBRA Toolbox, CellNetAnalyzer, Simpheny [21] | Stoichiometric model construction, flux balance analysis, biomass equation implementation and testing |

| Isotope Tracing Reagents | U-13C labeled substrates (glucose, glutamine), 15N ammonium chloride | Experimental validation of metabolic fluxes predicted using biomass equations as objective function |

| Elemental Analysis Standards | CHNOS elemental standards, certified reference materials | Calibration for elemental composition analysis to ensure mass balance in biomass equations |

| Community Modeling Resources | AGORA2 framework, ModelSEED, CarveMe [4] | Standardized biomass equation formulation across multiple organisms in community models |

Applications in Biotechnology and Therapeutic Development

Metabolic Engineering and Bioprocess Optimization

Biomass equations serve as critical tools in metabolic engineering applications, enabling in silico prediction of genetic modifications that enhance product yield while maintaining cellular growth. By incorporating product formation alongside biomass production in the objective function, models can identify optimal metabolic strategies for strain improvement.

Case Study: Biofuel Production - Genome-scale models of eukaryotic microalgae have been developed to explore photosynthesis and biofuel production [22]. These models employ biomass equations that accurately represent photoautotrophic metabolism, enabling prediction of growth rates and lipid accumulation under different light and nutrient conditions. Model-guided engineering has led to improved strains with enhanced biofuel production capabilities.

Case Study: Amino Acid Production - Industrial amino acid production in Corynebacterium glutamicum relies on detailed biomass equations that account for the metabolic costs of overflow metabolism. Models incorporating condition-specific biomass compositions have successfully predicted genetic interventions that redirect carbon flux from biomass to product formation while maintaining minimal growth requirements.

Drug Discovery and Live Biotherapeutic Development

Biomass equations play an increasingly important role in pharmaceutical development, particularly in the emerging field of live biotherapeutic products (LBPs). GEMs with well-curated biomass equations enable in silico assessment of candidate strains for therapeutic applications [4].

Mechanism of Action Elucidation: Biomass equations in bacterial GEMs help identify essential metabolites and metabolic vulnerabilities that can be exploited as drug targets. For example, GEMs of Mycobacterium tuberculosis have identified isocitrate lyase as essential for in vivo growth, leading to targeted inhibitor development.

Live Biotherapeutic Development: The systematic evaluation of LBP candidates benefits from GEMs with accurate biomass equations [4]. These models can predict:

- Growth requirements and optimization of cultivation conditions

- Production of therapeutic metabolites (e.g., short-chain fatty acids for inflammatory bowel disease)

- Strain-strain interactions in multi-strain formulations

- Host-microbe metabolic interactions

Personalized Medicine Applications: AGORA2 and similar frameworks leverage biomass equations to simulate individual-specific microbial communities, enabling prediction of LBP efficacy based on personalized microbiome composition [4]. This approach facilitates the development of precision microbiome therapies tailored to an individual's metabolic landscape.

Emerging Methodologies and Future Perspectives

Condition-Specific and Dynamic Biomass Formulations

Traditional biomass equations represent cellular composition as static, but emerging approaches incorporate condition-dependent variations to improve predictive accuracy. These advanced formulations include:

Multi-Condition Biomass Equations: Development of growth rate-dependent biomass equations that account for changes in macromolecular composition across different physiological states. These formulations incorporate proteome allocation constraints that limit metabolic flexibility at high growth rates.

Dynamic Biomass Formulations: Integration of biomass equations with dynamic FBA (dFBA) methods to simulate time-dependent changes in cellular composition during batch cultivation, nutrient shifts, or environmental perturbations. These approaches have been particularly valuable for modeling plant metabolic responses to environmental stresses [22].

Spatially-Resolved Biomass Equations: For multi-cellular systems and tissue environments, spatially explicit biomass equations account for variations in cellular composition across different regions or cell types. In plant models, this approach has been used to simulate metabolic interactions between bundle sheath and mesophyll cells in C4 plants [22].

Integration with Multi-Omics Data and Machine Learning

The expanding availability of multi-omics datasets enables more refined approaches to biomass equation development and validation:

Proteomics-Informed Constraints: Integration of proteomic data to constrain enzyme capacity in metabolic models, creating more realistic biomass synthesis capabilities under different conditions.

Transcriptomic Refinement: Use of gene expression data to create context-specific biomass equations that reflect the metabolic state of cells in different tissues or disease states.

Machine Learning Applications: Emerging approaches combine GEMs with machine learning to predict biomass composition from genomic features or environmental parameters, potentially streamlining the model reconstruction process for non-model organisms.

As metabolic modeling continues to evolve, biomass equations will remain fundamental components that bridge genomic information with physiological outcomes, enabling increasingly accurate prediction of cellular behavior in health, disease, and biotechnological applications.

Genome-Scale Metabolic Models (GEMs) are powerful computational tools that integrate genes, proteins, and biochemical reactions to simulate an organism's metabolism. By representing metabolism as a stoichiometric matrix of reactions, GEMs enable the prediction of metabolic fluxes using constraint-based methods like Flux Balance Analysis (FBA). These models have become indispensable for systems-level metabolic studies, spanning applications from industrial biotechnology to infectious disease research. The reconstruction of high-quality GEMs for key organisms provides a foundation for understanding metabolic capabilities, predicting phenotypic behavior, and identifying strategic interventions. This technical guide examines the reconstruction process and applications through case studies of scientifically and medically significant organisms, including Escherichia coli, Bacillus subtilis, and the human pathogen Streptococcus suis.

Genome-Scale Metabolic Model Reconstruction Process

The reconstruction of a genome-scale metabolic model is a systematic process that transforms genomic annotation data into a mathematical representation of an organism's metabolism. The standard workflow begins with genome annotation using platforms like RAST, which feeds into automated draft model construction pipelines such as ModelSEED [3]. The draft model is then refined through extensive manual curation, a critical step that involves filling metabolic gaps by referencing biochemical databases, scientific literature, and template models from phylogenetically related organisms.

The manual curation process ensures accurate Gene-Protein-Reaction (GPR) associations, where metabolic reactions are correctly linked to the genes that encode the catalyzing enzymes. This stage also involves adding transport reactions based on databases like the Transporter Classification Database (TCDB) and ensuring reaction stoichiometry is mass- and charge-balanced [3]. An essential component of GEM reconstruction is defining the biomass objective function, which quantitatively represents the biosynthetic requirements for cell growth, including macromolecular composition (proteins, DNA, RNA, lipids) and essential cofactors [3].
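The mass- and charge-balance check mentioned above can be illustrated with a minimal Python sketch. It is not the procedure used in the cited studies. The function names, the metabolite identifiers, and the formulas and charges in `METABOLITES` are assumptions chosen for illustration, and the parser handles only flat formulas without brackets.

```python
import re
from collections import Counter


def parse_formula(formula):
    """Count atoms in a flat formula such as 'C6H12O6' (no brackets or hydrates)."""
    counts = Counter()
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] += int(number) if number else 1
    return counts


def imbalance(stoichiometry, metabolites):
    """Return (unbalanced atoms, net charge); ({}, 0) means the reaction is balanced.

    stoichiometry: metabolite id -> coefficient (negative = consumed).
    metabolites:   metabolite id -> (formula, charge).
    """
    atoms = Counter()
    net_charge = 0
    for met_id, coeff in stoichiometry.items():
        formula, charge = metabolites[met_id]
        for element, n in parse_formula(formula).items():
            atoms[element] += coeff * n
        net_charge += coeff * charge
    unbalanced = {el: n for el, n in atoms.items() if n != 0}
    return unbalanced, net_charge


# Illustrative metabolite table (assumed values): id -> (formula, charge).
METABOLITES = {
    "glc": ("C6H12O6", 0),
    "atp": ("C10H12N5O13P3", -4),
    "g6p": ("C6H11O9P", -2),
    "adp": ("C10H12N5O10P2", -3),
    "h": ("H", 1),
}

# Hexokinase written with and without the released proton.
HEXOKINASE = {"glc": -1, "atp": -1, "g6p": 1, "adp": 1, "h": 1}
MISSING_PROTON = {"glc": -1, "atp": -1, "g6p": 1, "adp": 1}

print(imbalance(HEXOKINASE, METABOLITES))      # ({}, 0)
print(imbalance(MISSING_PROTON, METABOLITES))  # ({'H': -1}, -1)
```

Running a check of this kind over every reaction in a draft model flags entries that need a proton, a water molecule, or a corrected formula before simulation.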

The completed model undergoes validation through simulation techniques, primarily Flux Balance Analysis (FBA), which optimizes for a biological objective (typically biomass production) to predict metabolic behavior under specified environmental conditions. Model predictions are tested against experimental growth phenotypes and gene essentiality data to assess predictive accuracy before application to specific research questions [3] [1].
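For orientation, the optimization problem that FBA solves is conventionally written as the linear program below. The symbols are the usual textbook notation and are assumptions here, not notation taken from the cited models: S is the m × n stoichiometric matrix, v the vector of n reaction fluxes, c the objective vector (typically 1 for the biomass reaction and 0 elsewhere), and the bounds encode reaction reversibility and medium composition.

```latex
\begin{aligned}
\max_{v} \quad & c^{\mathsf{T}} v \\
\text{subject to} \quad & S v = 0, \\
& v_j^{\min} \le v_j \le v_j^{\max}, \qquad j = 1, \dots, n
\end{aligned}
```

The equality constraint expresses the steady-state assumption that each internal metabolite is produced and consumed at equal rates. Environmental conditions enter through the bounds on exchange reactions, for example by setting the uptake bound of an absent nutrient to zero.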

Figure: Experimental Protocol: GEM Reconstruction & Validation Workflow

Case Study: Escherichia coli

Model Characteristics and Development

Escherichia coli stands as a paradigm for GEM development, with its metabolic models evolving alongside increasingly sophisticated genomic and biochemical knowledge. The first E. coli GEM, iJE660, was published in 2000 shortly after the genome sequence of E. coli K-12 MG1655 became available [1]. Through multiple iterations of expansion and refinement, the most recent version, iML1515, contains 1,515 genes—more than double the gene coverage of the original model. This high-quality model demonstrates 93.4% accuracy in predicting gene essentiality under minimal media with 16 different carbon sources [1]. The E. coli GEM has been tailored for specialized applications, including iML1515-ROS, which incorporates reactions for reactive oxygen species generation to facilitate antibiotics research, and iML976, which defines the core metabolic capabilities conserved across more than 1,000 E. coli strains [1].

Key Applications and Experimental Validation

The iML1515 model serves as a knowledgebase for predicting cellular metabolic states and engineering industrial strains. Model validation employs Flux Balance Analysis to simulate gene knockout experiments and growth under various nutrient conditions. Predictive accuracy is quantified by comparing in silico results with experimental growth phenotypes and gene essentiality data from mutant screens [1]. This validated model enables in silico design of microbial cell factories for chemical production and provides insights into the metabolic basis of pathogenicity in clinical isolates.
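As a rough illustration of how a gene knockout propagates to reactions through GPR rules, the Python sketch below evaluates Boolean rules for a set of deleted genes. The gene and reaction identifiers are hypothetical, and the rule syntax (gene names joined by `and`/`or` with parentheses) is an assumed convention. In a full workflow, the blocked reactions would typically have their flux bounds set to zero before FBA is re-run.

```python
import re


def reaction_active(gpr, knocked_out):
    """Evaluate a Boolean GPR rule; an empty rule means no gene requirement."""
    if not gpr.strip():
        return True
    expr = []
    for tok in re.findall(r"[()]|[^\s()]+", gpr):
        if tok in ("(", ")"):
            expr.append(tok)
        elif tok.lower() in ("and", "or"):
            expr.append(tok.lower())
        else:
            # Any other token is treated as a gene id: True if still present.
            expr.append(str(tok not in knocked_out))
    # The expression now holds only True/False, and/or, and parentheses.
    return bool(eval(" ".join(expr), {"__builtins__": {}}, {}))


def blocked_reactions(gprs, knocked_out):
    """List reactions whose GPR rule evaluates to False after the knockout."""
    return sorted(r for r, rule in gprs.items()
                  if not reaction_active(rule, knocked_out))


# Hypothetical GPR table: reaction id -> rule.
GPRS = {
    "R_complex": "geneA and geneB",          # enzyme complex: both subunits needed
    "R_iso": "geneC or geneD",               # isozymes: either gene suffices
    "R_mixed": "(geneA and geneB) or geneE",
    "R_spont": "",                           # spontaneous, no gene association
}

print(blocked_reactions(GPRS, {"geneA"}))           # ['R_complex']
print(blocked_reactions(GPRS, {"geneA", "geneE"}))  # ['R_complex', 'R_mixed']
print(blocked_reactions(GPRS, {"geneC"}))           # []
```

The isozyme case shows why deleting a single gene does not always disable a reaction, which is one reason simulated essentiality can differ from a simple gene-to-reaction lookup.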

Case Study: Bacillus subtilis

Model Characteristics and Development

Bacillus subtilis is a Gram-positive bacterium of significant industrial importance for enzyme and protein production. Multiple GEMs have been reconstructed for this organism, including iYO844, iBsu1103, iBsu1103V2, iBsu1147, and the most recent iBsu1144 [1]. The iBsu1144 model incorporates re-annotated genome information and integrates thermodynamic data, including standard molar Gibbs free energy changes for reactions, significantly improving the accuracy and consistency of predicting reaction reversibility [1]. This model represents the comprehensive metabolic network of B. subtilis, enabling reliable simulation of its industrial applications.

Key Applications and Experimental Validation

The iBsu1144 model has been applied to investigate the effects of oxygen transfer rates (low, medium, and high) on the production of industrial enzymes such as serine alkaline protease and recombinant proteins [1]. Simulation protocols involve constraining the model to reflect different aeration conditions and analyzing flux distributions to identify metabolic bottlenecks. Validation is achieved by comparing predicted product yields and growth rates with experimental bioreactor data. The B. subtilis GEMs serve as reference models for other Gram-positive bacteria, facilitating comparative metabolic studies and industrial strain optimization.

Case Study: Human Pathogens

Mycobacterium tuberculosis

Mycobacterium tuberculosis, the pathogen responsible for tuberculosis, has been extensively studied using GEMs to identify potential drug targets. The iEK1101 model of the H37Rv strain represents the most comprehensive reconstruction, integrating biological information from previous models [1]. This model has been used to simulate the pathogen's metabolic state under in vivo hypoxic conditions that mimic its pathogenic state and in vitro drug-testing conditions [1]. Comparative analysis of flux distributions between these states has revealed metabolic adaptations to antibiotic pressure. Additionally, the M. tuberculosis GEM has been integrated with a human alveolar macrophage model to study host-pathogen interactions and identify metabolic vulnerabilities [1].

Streptococcus suis

Streptococcus suis is an emerging zoonotic pathogen with increasing prevalence in human populations. The recently developed iNX525 model for S. suis SC19, a hypervirulent serotype 2 isolate, contains 525 genes, 708 metabolites, and 818 reactions, achieving a 74% overall MEMOTE score [3] [24]. The model demonstrated strong agreement with experimental growth phenotypes under different nutrient conditions, with predictions aligning with 71.6% to 79.6% of gene essentiality data from three mutant screens [3]. A significant application involved analyzing virulence factors, identifying 131 virulence-linked genes, 79 of which were associated with 167 metabolic reactions in the model [3]. Furthermore, 101 metabolic genes were predicted to influence the formation of nine virulence-linked small molecules [3].
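Agreement figures of this kind are usually obtained by comparing predicted and observed essential genes over the genes covered by both the model and the screen. The Python sketch below shows one way to compute such an agreement score. The gene identifiers and counts are invented for illustration and do not reproduce the iNX525 data, and the exact scoring used in [3] may differ.

```python
def essentiality_agreement(predicted_essential, observed_essential, tested_genes):
    """Compare in silico and experimental essentiality over the tested genes."""
    tested = set(tested_genes)
    predicted = set(predicted_essential) & tested
    observed = set(observed_essential) & tested
    tp = len(predicted & observed)   # essential in both
    fp = len(predicted - observed)   # predicted essential, observed dispensable
    fn = len(observed - predicted)   # predicted dispensable, observed essential
    tn = len(tested) - tp - fp - fn  # dispensable in both
    accuracy = (tp + tn) / len(tested) if tested else float("nan")
    return {"TP": tp, "FP": fp, "FN": fn, "TN": tn, "accuracy": accuracy}


# Hypothetical screen covering ten model genes.
tested = {f"g{i}" for i in range(1, 11)}
predicted = {"g1", "g2", "g3", "g4"}
observed = {"g1", "g2", "g5"}

result = essentiality_agreement(predicted, observed, tested)
print(result)  # {'TP': 2, 'FP': 2, 'FN': 1, 'TN': 5, 'accuracy': 0.7}
```

Reporting the four counts alongside the overall agreement helps distinguish a model that misses essential genes from one that over-predicts essentiality.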

Figure: Logical Relationship: S. suis Virulence & Metabolism

Comparative Analysis of GEM Characteristics

Table 1: Quantitative Comparison of Genome-Scale Metabolic Models

| Organism | Model Name | Genes | Reactions | Metabolites | Key Applications |
|---|---|---|---|---|---|
| Escherichia coli | iML1515 | 1,515 | Not specified | Not specified | Gene essentiality prediction (93.4% accuracy), strain engineering, antibiotics research [1] |
| Bacillus subtilis | iBsu1144 | Not specified | Not specified | Not specified | Enzyme production optimization, oxygen transfer effect analysis [1] |
| Mycobacterium tuberculosis | iEK1101 | 1,101 | Not specified | Not specified | Host-pathogen interaction study, drug target identification [1] |
| Streptococcus suis | iNX525 | 525 | 818 | 708 | Virulence factor analysis, antibacterial drug target identification [3] [24] |
| Saccharomyces cerevisiae | Yeast 7 | Not specified | Not specified | Not specified | Metabolic engineering, biotechnology applications [1] |

Table 2: Model Validation Methods and Outcomes

| Organism | Growth Condition Validation | Gene Essentiality Prediction Accuracy | Specialized Applications |
|---|---|---|---|
| Escherichia coli | Multiple carbon sources | 93.4% | ROS metabolism (iML1515-ROS), core metabolism across strains (iML976) [1] |
| Bacillus subtilis | Oxygen transfer conditions | Not specified | Recombinant protein production, thermodynamic validation [1] |
| Mycobacterium tuberculosis | In vivo hypoxic vs in vitro conditions | Not specified | Integrated host-pathogen modeling, antibiotic resistance studies [1] |
| Streptococcus suis | Chemically defined media with nutrient exclusions | 71.6%-79.6% (across three screens) | Virulence factor synthesis pathway analysis [3] |

Advanced GEM Applications and Future Directions

Multi-Strain and Pan-Genome Modeling

Advances in GEM methodology now enable the construction of multi-strain models that capture metabolic diversity within species. This approach involves creating a "core" model representing metabolic reactions common to all strains and a "pan" model encompassing the union of all metabolic capabilities across strains [6]. For example, researchers have developed a multi-strain E. coli GEM from 55 individual models, while similar efforts have produced models for 410 Salmonella strains and 64 Staphylococcus aureus strains [6]. These multi-strain models facilitate the identification of conserved and strain-specific metabolic traits, enhancing our understanding of metabolic adaptations in different environments and clinical contexts.
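The core and pan definitions above reduce to set intersection and union over the reaction content of the strain models. The short Python sketch below illustrates this with three hypothetical strains and made-up reaction identifiers. Published multi-strain workflows involve additional steps, such as orthology mapping, that are not shown.

```python
def core_and_pan(strain_reactions):
    """Return (core, pan): reactions shared by all strains and their union."""
    sets = [set(rxns) for rxns in strain_reactions.values()]
    if not sets:
        return set(), set()
    return set.intersection(*sets), set.union(*sets)


def strain_specific(strain_reactions):
    """Map each strain to the reactions found in no other strain."""
    unique = {}
    for name, rxns in strain_reactions.items():
        others = set()
        for other_name, other_rxns in strain_reactions.items():
            if other_name != name:
                others |= set(other_rxns)
        unique[name] = set(rxns) - others
    return unique


# Hypothetical reaction content of three strain-specific models.
STRAINS = {
    "strain_A": {"PGI", "PFK", "LACZ"},
    "strain_B": {"PGI", "PFK", "FUCI"},
    "strain_C": {"PGI", "PFK", "LACZ", "XYLA"},
}

core, pan = core_and_pan(STRAINS)
print(sorted(core))  # ['PFK', 'PGI']
print(sorted(pan))   # ['FUCI', 'LACZ', 'PFK', 'PGI', 'XYLA']
print({k: sorted(v) for k, v in strain_specific(STRAINS).items()})
# {'strain_A': [], 'strain_B': ['FUCI'], 'strain_C': ['XYLA']}
```

Reactions in the pan set but outside the core set are the accessory content, which is where strain-specific metabolic traits are found.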

Microbial Community Modeling

A frontier in metabolic modeling involves reconstructing GEMs for microbial communities, particularly the human microbiome. The APOLLO resource represents a landmark achievement in this domain, comprising 247,092 microbial genome-scale metabolic reconstructions spanning 19 phyla, more than 60% of which derive from uncharacterized strains [5]. This resource encompasses microbes from 34 countries, all age groups, and multiple body sites. Using this platform, researchers have constructed 14,451 metagenomic sample-specific microbiome community models, enabling systematic interrogation of community-level metabolic capabilities [5]. These models have demonstrated accurate stratification of microbiomes by body site, age, and disease state, opening new avenues for understanding host-microbiome interactions in health and disease.

Figure: Microbial Community Modeling Workflow

Machine Learning Integration

The integration of machine learning with GEMs represents a promising direction for enhancing predictive capabilities and model refinement. Machine learning approaches can predict taxonomic assignments based on metabolic features derived from GEMs with high accuracy [5]. Furthermore, these techniques can analyze complex patterns in metabolic flux distributions to identify non-intuitive relationships between genotype and phenotype, potentially accelerating drug target identification and metabolic engineering design.

Table 3: Key Research Reagents and Computational Tools for GEM Reconstruction

| Resource Name | Type | Function in GEM Reconstruction | Application Context |
|---|---|---|---|
| RAST | Bioinformatics Tool | Automated genome annotation to identify protein-coding genes and functional roles | Draft model construction from genomic data [3] |
| ModelSEED | Automated Pipeline | Generation of draft metabolic models from RAST annotations | Initial model creation before manual curation [3] |
| COBRA Toolbox | MATLAB Package | Simulation and analysis of GEMs using constraint-based reconstruction and analysis | Flux Balance Analysis, model validation [3] |
| GUROBI | Optimization Solver | Mathematical optimization for flux balance analysis | Solving linear programming problems in FBA [3] |
| MEMOTE | Assessment Tool | Quality assessment and validation of metabolic models | Evaluating model completeness and correctness [3] |
| TCDB (Transporter Classification Database) | Database | Reference for transporter proteins and reactions | Adding transport reactions to models [3] |
| UniProtKB/Swiss-Prot | Protein Database | Protein sequence and functional information | Validating and refining GPR associations [3] |
| Chemically Defined Medium (CDM) | Growth Medium | Controlled growth conditions for model validation | Experimental testing of model predictions [3] |

Genome-scale metabolic models have evolved from basic metabolic networks to sophisticated computational platforms that integrate genomic, biochemical, and physiological information. The case studies presented here demonstrate how GEM development for key organisms—from established models like E. coli and B. subtilis to human pathogens like M. tuberculosis and S. suis—has enabled diverse applications in basic science, biotechnology, and medicine. As reconstruction methodologies advance, incorporating multi-strain capabilities, community modeling, and machine learning integration, GEMs will play an increasingly central role in systematic investigations of metabolism, host-microbe interactions, and the development of novel therapeutic interventions. The continued refinement of these models promises to enhance our ability to predict cellular behavior and engineer biological systems with greater precision.

The Critical Link Between Metabolism and Phenotype in Systems Biology

The relationship between an organism's genetic blueprint (genotype) and its observable characteristics (phenotype) represents one of the most fundamental challenges in modern biology. While monogenic traits allow for straightforward genotype-phenotype mapping, most phenotypic traits involve complex interactions among multiple gene products, making this relationship difficult to reconstruct and understand [25]. The emergence of metabolic systems biology has provided a powerful framework for addressing this challenge through genome-scale metabolic reconstructions and their conversion into mathematical models. These models enable the computation of phenotypic traits based on an organism's genetic composition, establishing a mechanistic basis for the genotype-phenotype relationship for metabolic functions [25]. This technical guide explores the critical link between metabolism and phenotype through the lens of constraint-based reconstruction and analysis (COBRA), detailing the methodologies, applications, and research tools that enable researchers to decipher this complex relationship.