Genome-Scale Metabolic Models (GEMs): A Comprehensive Guide from Fundamentals to Biomedical Applications

Abstract

Genome-scale metabolic models (GEMs) are powerful computational frameworks that mathematically represent the entire metabolic network of an organism, connecting genotype to phenotype. This article provides a comprehensive overview of GEMs for researchers and drug development professionals, covering their fundamental principles, reconstruction methodologies, and diverse applications in biomedical research. We explore how GEMs integrate multi-omics data to predict metabolic fluxes using constraint-based approaches like Flux Balance Analysis (FBA), enable strain engineering for bioproduction, identify drug targets in pathogens, and model host-microbiome interactions. The content also addresses current challenges in model uncertainty, reconstruction consistency, and computational limitations, while highlighting emerging trends such as machine learning integration and community modeling standards that are shaping the future of metabolic systems biology.

What Are Genome-Scale Metabolic Models? Core Principles and Components

Genome-scale metabolic models (GEMs) represent a cornerstone of systems biology, providing computational frameworks for mathematically representing and simulating the entire metabolic network of an organism. By integrating genomic, biochemical, and physiological information, GEMs enable researchers to predict cellular phenotypes under diverse conditions, design metabolic engineering strategies, and investigate host-microbiome interactions. This technical guide examines the foundational principles, reconstruction methodologies, and constraint-based modeling approaches that underpin GEMs, highlighting their applications through contemporary case studies and emerging computational tools that are advancing predictive biology.

Genome-scale metabolic models (GEMs) are computational frameworks that mathematically represent the metabolic network of an organism. Unlike reductionist approaches that examine biological components in isolation, GEMs employ a systems biology perspective to illustrate biological phenomena through the net interactions of all cellular and biochemical components within a cell or organism [1]. At the core of GEMs lies the systematic reconstruction of metabolic networks from whole-genome sequences, integrating gene-protein-reaction (GPR) associations for nearly all metabolic genes within an organism [2] [1]. These models have the potential to incorporate comprehensive data on stoichiometry, compartmentalization, biomass composition, thermodynamics, and gene regulation [1].

For yeast, GEM development began with the first Saccharomyces cerevisiae model (iFF708) in 2003 [1]. Since then, continuous efforts by the scientific community have led to increasingly sophisticated models for various yeast species and other microorganisms. The primary framework for simulating GEMs is constraint-based modeling (CBM), which operates under a set of well-defined mathematical rules rather than requiring detailed kinetic parameters. This is a significant advantage given the scarcity of kinetic data for most cellular reactions [2]. By imposing systemic constraints on the entire metabolic network, GEMs enable researchers to predict cellular responses under diverse conditions, facilitating their applications in metabolic engineering, synthetic biology, and biomedical research [1].

Mathematical Foundations of GEMs

Stoichiometric Matrix Representation

The metabolic network in a GEM is represented as a stoichiometric matrix \(S\) of dimensions m×n, where m is the number of metabolites in the system and n is the number of metabolic reactions [2]. Each element \(S_{ij}\) in the matrix corresponds to the stoichiometric coefficient of metabolite i in reaction j. This mathematical representation captures the mass balance for each chemical species in the system.

The mass balance equation for each chemical species is expressed as:

\[ \frac{dC_i}{dt} = \sum_{j} S_{ij} v_j \]

Where \(C_i\) is the concentration of metabolite i, \(S_{ij}\) is the stoichiometric coefficient, and \(v_j\) is the flux through reaction j [2]. Under the steady-state assumption, which is fundamental to constraint-based modeling, there is no change in metabolite concentrations over time:

\[ \frac{dC_i}{dt} = 0 \]

This simplifies to the equation:

\[ S \cdot v = 0 \]

Where \(v\) is the vector of reaction fluxes [2].
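As a minimal sketch of the mass balance above, the following computes dC/dt = S · v for a three-reaction toy network. The network, the metabolite names, and the helper `concentration_rates` are hypothetical and chosen only for illustration.

```python
# Toy network (hypothetical): R1: -> A, R2: A -> B, R3: B ->
# Rows are metabolites (A, B); columns are reactions (R1, R2, R3).
S = [
    [1, -1, 0],   # A: produced by R1, consumed by R2
    [0, 1, -1],   # B: produced by R2, consumed by R3
]


def concentration_rates(S, v):
    """Return dC/dt = S . v as a list with one entry per metabolite."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]


# Equal fluxes through the chain satisfy the steady-state condition S . v = 0.
steady = concentration_rates(S, [2.0, 2.0, 2.0])

# If R2 and R3 run slower than R1, metabolite A accumulates (dC_A/dt > 0).
unbalanced = concentration_rates(S, [2.0, 1.0, 1.0])

print(steady)      # [0.0, 0.0]
print(unbalanced)  # [1.0, 0.0]
```

Only flux vectors of the first kind are admissible in constraint-based modeling; the second vector violates the steady-state assumption.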

Flux Balance Analysis

Flux Balance Analysis (FBA) formulates the metabolic network as a linear programming optimization problem. The objective is to find a flux distribution that maximizes or minimizes a specified biological objective function, subject to the constraints:

\[ \begin{aligned} \text{Maximize } & Z = c^T v \\ \text{Subject to } & S \cdot v = 0 \\ & LB_j \leq v_j \leq UB_j \end{aligned} \]

Where \(Z\) represents the objective function (typically biomass formation or product synthesis), \(c\) is a vector of weights indicating how much each reaction flux contributes to the objective, and \(LB_j\) and \(UB_j\) are lower and upper bounds reflecting the physical and thermodynamic limits of each reaction flux \(v_j\) [2].
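The linear program above can be sketched with a general-purpose LP solver. The example below uses `scipy.optimize.linprog` on a hypothetical four-reaction network; it is not the COBRA Toolbox workflow cited in this article, and the reaction names and bounds are assumptions made for illustration.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network:
#   R_uptake:  -> A          R_ab:      A -> B
#   R_ac:      A -> C        R_biomass: B + C ->
reactions = ["R_uptake", "R_ab", "R_ac", "R_biomass"]
S = np.array([
    [1.0, -1.0, -1.0,  0.0],   # A
    [0.0,  1.0,  0.0, -1.0],   # B
    [0.0,  0.0,  1.0, -1.0],   # C
])

# (LB_j, UB_j) for each reaction; uptake is capped at 10 flux units.
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000)]

# Objective weights c: only the biomass reaction contributes to Z.
c = np.array([0.0, 0.0, 0.0, 1.0])

# linprog minimizes, so pass -c to maximize Z = c^T v subject to S v = 0.
result = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                 bounds=bounds, method="highs")

fluxes = dict(zip(reactions, result.x))
max_objective = -result.fun

print(max_objective)
print(fluxes)
```

Because biomass needs one B and one C per unit, the uptake of 10 units of A is split evenly between the two branches, so the optimal biomass flux in this toy network is 5.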

Table 1: Key Components of the FBA Mathematical Framework

| Component | Symbol | Description | Typical Values/Examples |
|---|---|---|---|
| Stoichiometric Matrix | S | m×n matrix representing metabolic network | m metabolites × n reactions |
| Flux Vector | v | n×1 vector of reaction fluxes | v₁, v₂, ..., vₙ |
| Mass Balance | S·v = 0 | Steady-state assumption | No net accumulation of metabolites |
| Flux Constraints | LB ≤ v ≤ UB | Thermodynamic and capacity constraints | LB_substrate = -10 mmol/gDW/h |
| Objective Function | Z = cᵀv | Cellular objective to optimize | Biomass formation, ATP production |

GEM Reconstruction Methodologies

Genome-Scale Reconstruction Process

The reconstruction of GEMs follows a systematic workflow that transforms genomic information into a mathematical model. The process begins with genome annotation to identify genes encoding metabolic enzymes, followed by assignment of functions based on biochemical databases such as KEGG, and assembly of metabolic reactions into an interconnected network [2] [3]. For non-model organisms, computational tools like the RAVEN toolbox and CarveFungi facilitate automated reconstruction of draft GEMs by leveraging genomic and proteomic data [1].

The reconstruction process involves several critical steps:

- Draft Model Generation: Mapping genome sequences to knowledge databases or high-quality GEMs of closely related species to create a draft model encompassing all identified metabolic reactions.

- Manual Curation: Refining the draft model through manual curation to produce a high-quality model, including correction of mass and charge balances, refinement of gene associations, and inclusion of thermodynamic parameters [1].

- Gap Filling: Identifying and filling metabolic gaps where reactions are necessary to connect network components but lack genetic evidence.

- Biomass Composition: Defining the biomass objective function that represents the cellular composition required for growth.

Table 2: Computational Tools for GEM Reconstruction and Analysis

| Tool/Platform | Primary Function | Applicability | Key Features |
|---|---|---|---|
| COBRA Toolbox [2] | Constraint-based modeling | General purpose | FBA, model validation, simulation |
| RAVEN [1] | Automated model reconstruction | Yeast and other species | Draft model generation from genomes |
| CarveFungi [1] | Automated model reconstruction | Non-model yeasts | GEM construction for diverse fungi |
| MEMOTE [2] | Model testing | Quality assurance | Biochemical consistency checks |
| KAAS [2] | Genome annotation | Functional assignment | KEGG-based annotation |

Model Validation and Refinement

Model validation is essential to ensure predictive accuracy. This involves comparing simulation results with experimental data, such as growth rates, substrate uptake rates, and product secretion profiles under different conditions. The reduceModel function from the COBRA Toolbox is commonly used to remove blocked and unbounded reactions, simplify the network, and correct inconsistencies related to auxotrophies [2]. Additional validation tests include:

- Growth Simulation: Verifying the model can simulate growth on known carbon sources.

- Gene Essentiality: Comparing predicted essential genes with experimental knockout data.

- Metabolic Capabilities: Ensuring the model can produce known metabolic products.

Constraint-Based Modeling Approaches

Fundamental Constraints

Constraint-based modeling employs physicochemical constraints to narrow the space of possible metabolic behaviors. The primary constraints include:

- Stoichiometric Constraints: Enforce mass conservation through the stoichiometric matrix S.

- Thermodynamic Constraints: Define reaction reversibility/irreversibility through flux bounds (LB, UB).

- Capacity Constraints: Limit reaction fluxes based on enzyme capacity and resource availability.

These constraints define a solution space of feasible flux distributions that represent possible metabolic states of the organism under specified conditions.

Advanced Constraint-Based Techniques

Beyond basic FBA, several advanced constraint-based methods have been developed to improve predictive accuracy:

- Enzyme-constrained GEMs (ecGEMs): Incorporate enzyme kinetic parameters and abundance as additional constraints [1].

- Metabolic Expression (ME) Models: Integrate metabolic and gene expression pathways [1].

- Dynamic FBA: Extends FBA to simulate time-dependent metabolic changes.

- Flux Variability Analysis (FVA): Determines the range of possible fluxes for each reaction within the solution space.

These multiscale models incorporate additional constraints, such as enzymatic or kinetic constraints, or integrate omics data (transcriptomics, proteomics) to narrow the solution space of metabolic models, thereby overcoming limitations of classical GEMs [1].
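Of the techniques listed above, Flux Variability Analysis is the simplest to sketch: the objective is first maximized, then each flux is minimized and maximized in turn while the objective is held at its optimum. The example below uses `scipy.optimize.linprog` on a hypothetical network with two parallel routes; the function name `flux_variability`, the network, and the tolerance are assumptions for illustration.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network with two interchangeable routes from A to B:
#   R_uptake: -> A    R_route1: A -> B    R_route2: A -> B    R_biomass: B ->
reactions = ["R_uptake", "R_route1", "R_route2", "R_biomass"]
S = np.array([
    [1.0, -1.0, -1.0,  0.0],   # A
    [0.0,  1.0,  1.0, -1.0],   # B
])
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000)]
c = np.array([0.0, 0.0, 0.0, 1.0])


def flux_variability(S, bounds, c, names, fraction=1.0, tol=1e-9):
    """Return (optimal objective, {reaction: (min flux, max flux)})."""
    n_mets, n_rxns = S.shape
    b_eq = np.zeros(n_mets)
    best = linprog(-c, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs")
    z_opt = -best.fun

    # Hold the objective near its optimum: c^T v >= fraction * z_opt,
    # written as -c^T v <= -fraction * z_opt (plus a small tolerance).
    A_ub = -c.reshape(1, -1)
    b_ub = np.array([-fraction * z_opt + tol])

    ranges = {}
    for j, name in enumerate(names):
        e_j = np.zeros(n_rxns)
        e_j[j] = 1.0
        low = linprog(e_j, A_ub=A_ub, b_ub=b_ub, A_eq=S, b_eq=b_eq,
                      bounds=bounds, method="highs")
        high = linprog(-e_j, A_ub=A_ub, b_ub=b_ub, A_eq=S, b_eq=b_eq,
                       bounds=bounds, method="highs")
        ranges[name] = (low.fun, -high.fun)
    return z_opt, ranges


z_opt, ranges = flux_variability(S, bounds, c, reactions)
for name, (vmin, vmax) in ranges.items():
    print(f"{name}: [{vmin:.3f}, {vmax:.3f}]")
```

In this toy case the uptake and biomass fluxes are pinned at 10, while each parallel route can carry anything between 0 and 10. This illustrates why a single FBA solution is often not unique.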

Experimental Protocols for GEM Application

This protocol demonstrates a typical GEM application using the COBRA Toolbox in MATLAB to simulate the effects of different carbon sources on growth and product formation, based on a case study with Pichia pastoris [2].

Materials and Reagents:

- Metabolic Model: P. pastoris model iMT1026 v3 [2]

- Software: COBRA Toolbox in MATLAB R2023a [2]

- Carbon Sources: Glucose, glycerol, sorbitol, methanol, fructose

Methodology:

- Model Preparation:
  - Load the metabolic model (iMT1026 v3 for P. pastoris)
  - Remove blocked reactions to improve model consistency
  - Set all exchange flux upper bounds to 1000 to allow metabolite exchange
  - Assign neutral charge (0) to metabolites lacking annotated charge values
  - Delete dead-end metabolites according to MEMOTE test results
  - Verify model auxotrophy for biotin and oxidized glutathione

- FBA Configuration:
  - Set objective function to product export (e.g., Ex_scFVLR for single-chain product)
  - Fix internal biomass flux at 0.1 mmol·gDW⁻¹·h⁻¹ with retry optimization
  - Allow O₂ uptake and CO₂ secretion
  - Set carbon source lower bound to -10
  - Set biotin exchange (Ex_btn) lower bound to -4×10⁻⁵

- Simulation Execution:
  - For each carbon source, modify the corresponding exchange reaction bound
  - Perform FBA optimization for each condition
  - Extract flux distributions for key metabolic subsystems:
    - Glycolysis/Gluconeogenesis
    - Oxidative Phosphorylation
    - Pyruvate Metabolism
    - Citric Acid Cycle (TCA Cycle)
    - Pentose Phosphate Pathway (PPP)

- Data Analysis:
  - Calculate biomass yield (Yxs) and product yield (Yps) for each carbon source
  - Compare subsystem flux distributions across conditions
  - Identify key metabolic bottlenecks or limitations
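The carbon-source loop in this protocol can be sketched on a toy network. The example below is not the iMT1026 model or the COBRA Toolbox code from [2]: the network, the reaction names, the stoichiometries, and the definition of yield as product flux divided by substrate uptake are all assumptions made for illustration, and the numbers it produces are unrelated to Table 3. It follows the convention that a negative exchange flux means uptake.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical network: two carbon sources feed a shared precursor P,
# which is drained by a fixed biomass demand and by product export.
reactions = ["EX_glc", "EX_glyc", "R_glc", "R_glyc", "BIOMASS", "EX_prod"]
S = np.array([
    [-1.0,  0.0, -1.0,  0.0,  0.0,  0.0],   # glc
    [ 0.0, -1.0,  0.0, -1.0,  0.0,  0.0],   # glyc
    [ 0.0,  0.0,  2.0,  1.0, -1.0, -1.0],   # P (glc yields 2 P, glyc 1 P)
])
objective = np.array([0.0, 0.0, 0.0, 0.0, 0.0, 1.0])  # maximize product export


def simulate(carbon_exchange, uptake=10.0, biomass_flux=0.1):
    """Open one carbon exchange, fix biomass, and maximize product export."""
    bounds = {
        "EX_glc": (0.0, 1000.0),     # uptake closed by default (LB = 0)
        "EX_glyc": (0.0, 1000.0),
        "R_glc": (0.0, 1000.0),
        "R_glyc": (0.0, 1000.0),
        "BIOMASS": (biomass_flux, biomass_flux),
        "EX_prod": (0.0, 1000.0),
    }
    bounds[carbon_exchange] = (-uptake, 1000.0)  # LB = -10 allows uptake
    res = linprog(-objective, A_eq=S, b_eq=np.zeros(S.shape[0]),
                  bounds=[bounds[r] for r in reactions], method="highs")
    product_rate = -res.fun
    return {
        "product_rate": product_rate,
        "product_yield": product_rate / uptake,   # assumed yield definition
        "biomass_yield": biomass_flux / uptake,
    }


results = {source: simulate(source) for source in ("EX_glc", "EX_glyc")}
for source, values in results.items():
    print(source, values)
```

With a real model the same loop would change only the exchange bound of the active carbon source between runs, which keeps the conditions directly comparable.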

Table 3: Example Results from Carbon Source Simulation in P. pastoris [2]

| Carbon Source | Objective Rate | Biomass Yield (Yxs) | Product Yield (Yps) |
|---|---|---|---|
| Glucose (Ex_glc_D) | 0.6809 | 0.01429 | 0.09727 |
| Glycerol (Ex_glyc) | 0.3512 | 0.01429 | 0.05017 |
| Sorbitol (Ex_sbt_D) | 0.7318 | 0.01429 | 0.10454 |
| Methanol (Ex_meoh) | 0.0117 | 0.01429 | 0.00167 |
| Fructose (Ex_fru) | 0.6809 | 0.01429 | ~0.09727 |

Protocol: Visualizing Metabolic Capabilities with MicroMap

The MicroMap resource provides a network visualization platform for exploring microbiome metabolism, enabling comparison of metabolic capabilities across different microbes [4].

Materials and Reagents:

- MicroMap Files: CellDesigner .xml and .pdf formats

- Dataset: AGORA2 (7,302 reconstructions) or APOLLO (247,092 reconstructions)

- Software: CellDesigner, COBRA Toolbox

Methodology:

- Data Integration:
  - Download MicroMap files from MicroMap dataverse
  - Import strain-specific GEMs in SBML format
  - Map reactions and metabolites to MicroMap using VMH identifiers

- Visualization Generation:
  - Use provided COBRA Toolbox functions to generate reconstruction visualizations
  - Create heatmaps of relative reaction presence across microbial taxa
  - Visualize flux vectors from FBA simulations on the MicroMap network

- Comparative Analysis:
  - Identify metabolic capabilities unique to specific microbial taxa
  - Compare pathway completeness across different strains
  - Visualize flux differences under varying environmental conditions
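The quantity behind a reaction-presence heatmap can be sketched without any plotting code. The example below computes, for each taxon, the fraction of its reconstructions that contain each reaction, and then lists reactions found in only one taxon. It does not use the MicroMap or COBRA Toolbox functions; the taxa, the reaction identifiers, and the helper names are hypothetical.

```python
# Each taxon maps to a list of reconstructions; each reconstruction is the
# set of reaction identifiers it contains (hypothetical data).
taxa = {
    "Taxon_A": [{"PGI", "PFK", "LDH"}, {"PGI", "PFK"}],
    "Taxon_B": [{"PGI", "ACALD"}, {"PGI", "ACALD", "LDH"}],
}


def relative_presence(reconstructions):
    """Fraction of reconstructions in which each reaction occurs."""
    n = len(reconstructions)
    all_reactions = set().union(*reconstructions)
    return {
        rxn: sum(rxn in rec for rec in reconstructions) / n
        for rxn in sorted(all_reactions)
    }


def unique_reactions(presence, taxon):
    """Reactions present in `taxon` and absent from every other taxon."""
    others = set()
    for name, values in presence.items():
        if name != taxon:
            others.update(values)
    return set(presence[taxon]) - others


presence = {taxon: relative_presence(recs) for taxon, recs in taxa.items()}

print(presence["Taxon_A"])                    # one row of the heatmap
print(unique_reactions(presence, "Taxon_A"))  # capabilities unique to Taxon_A
```

Each inner dictionary corresponds to one row of the heatmap, with values between 0 (absent from all reconstructions) and 1 (present in all).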

Applications in Metabolic Engineering and Biotechnology

Strain Optimization for Bioproduction

GEMs have become indispensable tools for metabolic engineering, enabling rational design of microbial cell factories for improved production of valuable compounds. For example, GEMs of P. pastoris have been used to identify key reactions that divert precursors and reducing equivalents away from biomass synthesis toward recombinant protein production [2]. This insight has led to the identification of gene targets for overexpression or deletion to redirect metabolic fluxes and enhance product yields [2].

Specific applications include:

- Gene Knockout Prediction: Identifying non-essential genes whose deletion enhances product formation

- Pathway Optimization: Balancing cofactor utilization and energy metabolism

- Substrate Evaluation: Comparing carbon source efficiency for growth and production

Microbial Community Modeling

GEMs are increasingly applied to model microbial communities, investigating interactions between different species in complex environments like the human gut. The AGORA2 resource provides 7,302 human microbial strain-level metabolic reconstructions, while the APOLLO resource includes 247,092 microbial metabolic reconstructions derived from metagenome-assembled genomes [4]. These resources enable modeling of metabolic interactions within microbial communities and their impact on host health.

The MicroMap visualization tool captures 5,064 unique reactions and 3,499 unique metabolites from these resources, providing an intuitive platform for exploring microbiome metabolism and visualizing computational modeling results [4].

Emerging Frontiers and Challenges

Multi-Omics Integration

Recent advances in omics technologies have promoted the development of multiscale metabolic models that integrate transcriptomic, proteomic, and metabolomic data. Enzyme-constrained GEMs (ecGEMs) incorporate proteomic data to enhance predictive capabilities, while single-cell transcriptomics enables reconstruction of context-specific models under various physiological conditions [1]. The integration of rich and high-precision omics data is expected to further improve the accuracy and predictive capability of GEMs.

Pan-Genome Scale Modeling

Traditional GEMs based on reference genomes cannot account for genetic diversity across different strains. Pan-genome scale models address this limitation by incorporating accessory genes missing from reference genomes. For example, pan-GEMs-1807 was developed based on the pan-genome of 1,807 S. cerevisiae isolates, enabling generation of strain-specific GEMs that reveal metabolic differences among strains from distinct niches [1].

As the field expands, standardization of model reconstruction, curation, and testing becomes increasingly important. Community resources like the Virtual Metabolic Human (VMH) database, COBRA Toolbox, and the MicroMap visualization platform provide essential infrastructure for the expanding community of modelers [4]. However, challenges remain in developing universal standards, particularly for microbial community models [3].

Essential Research Reagent Solutions

Table 4: Key Research Reagents and Computational Tools for GEM Development

| Resource Category | Specific Tools/Platforms | Function/Purpose |
|---|---|---|
| Model Reconstruction | RAVEN Toolbox [1], CarveFungi [1] | Automated draft model generation from genomic data |
| Model Simulation & Analysis | COBRA Toolbox [2], MATLAB | Constraint-based modeling, FBA, flux variability analysis |
| Model Testing & Validation | MEMOTE [2] | Quality assurance, biochemical consistency testing |
| Genome Annotation | KAAS (KEGG Automatic Annotation Server) [2] | Functional assignment of metabolic genes |
| Metabolic Databases | KEGG, VMH (Virtual Metabolic Human) [4] | Biochemical reaction and metabolite information |
| Visualization Tools | MicroMap [4], CellDesigner | Network visualization of metabolic capabilities and flux distributions |
| Strain-Specific Resources | AGORA2 [4], APOLLO [4] | Microbial GEM resources for community modeling |

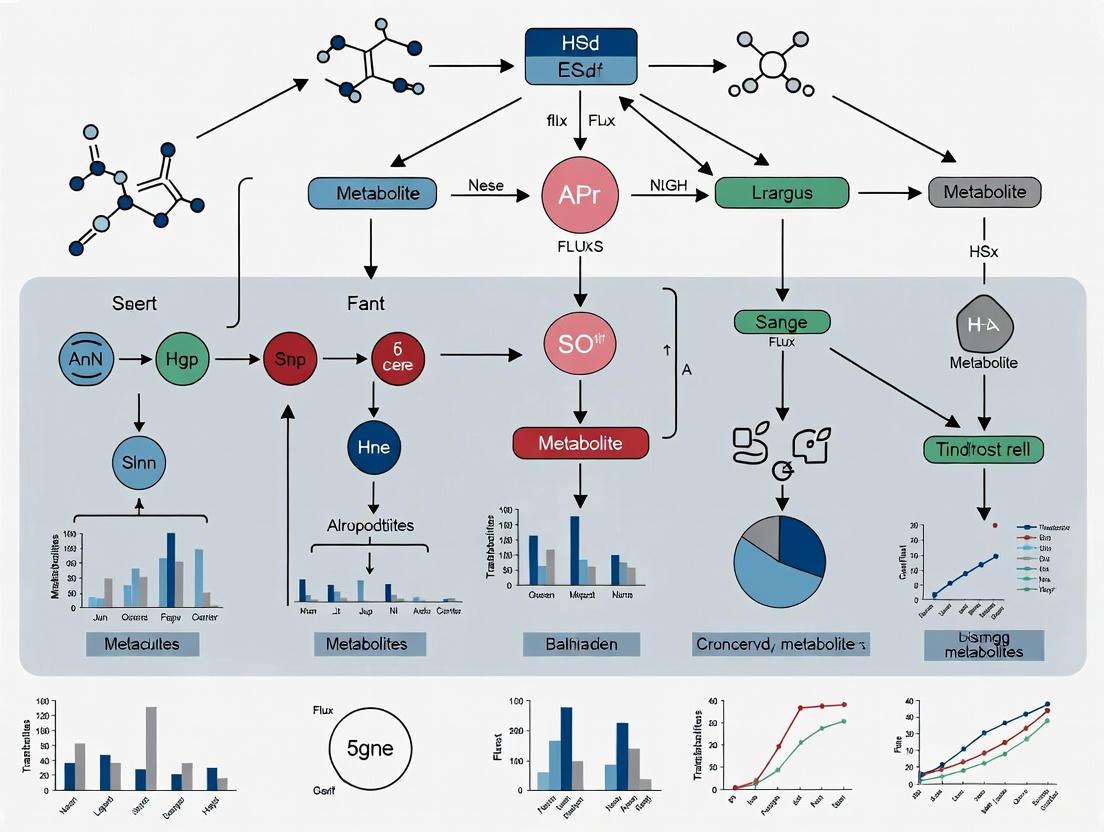

Visualizing GEM Workflows and Relationships

[Diagram: GEM Reconstruction and Application Workflow]

[Diagram: GEM Components and Mathematical Structure]

Genome-scale metabolic models (GEMs) are computational knowledge-bases that represent the intricate network of biochemical reactions within a cell, providing a systems-level framework for understanding metabolism. These models are built on a bottom-up approach that integrates genomic, biochemical, and physiological data to create a mechanistic link between an organism's genotype and its metabolic phenotype [5] [6]. The construction of GEMs begins with the annotation of an organism's genome, which enables the identification of metabolic genes and their associated enzymes. These enzymes catalyze specific biochemical transformations that are assembled into a network, resulting in a structured representation of cellular metabolism that can simulate metabolic capabilities under various genetic and environmental conditions [7] [6].

The true power of GEMs lies in their ability to serve as a scaffold for integrating multi-omics data and predicting metabolic behaviors using constraint-based modeling approaches. Unlike statistical methods that identify correlations, GEMs provide a causal framework for understanding how genetic perturbations or environmental changes affect metabolic flux and cellular phenotypes [5] [8]. This capability has positioned GEMs as valuable tools across diverse fields, from biomedical research investigating cancer metabolism, drug targets, and pathogenic microorganisms to industrial biotechnology focused on designing microbial cell factories [5] [9]. The continued refinement of GEMs through community-driven efforts has led to increasingly comprehensive models, such as the Human1 consensus model, which incorporates 13,417 reactions, 10,138 metabolites, and 3,625 genes [10].

Core Components of GEMs

The Stoichiometric Matrix (S)

The stoichiometric matrix (S) forms the mathematical foundation of every constraint-based metabolic model, encoding the structure and connectivity of the metabolic network. This m × n matrix, where m represents metabolites and n represents reactions, contains stoichiometric coefficients that define the quantitative relationships between reactants and products in each biochemical transformation [5]. The stoichiometric matrix enables the formulation of mass balance constraints under the assumption of steady-state metabolism, which is expressed mathematically as S · v = 0, where v is the vector of reaction fluxes [7] [11].

The structure of the stoichiometric matrix imposes fundamental constraints on the possible flux distributions that the metabolic network can achieve. Each column in the matrix corresponds to a specific biochemical reaction, with negative coefficients indicating consumed metabolites and positive coefficients indicating produced metabolites [6]. This representation allows researchers to define the solution space of all possible metabolic behaviors and then use optimization methods to predict specific flux distributions that optimize biological objectives, such as biomass production or ATP synthesis [7] [11].

Figure 1: The stoichiometric matrix forms the core mathematical structure of GEMs, connecting metabolites and reactions through mass balance constraints under steady-state assumptions.
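The column convention described in this section (negative coefficients for consumed metabolites, positive for produced ones) can be sketched by assembling S from reaction definitions. The two reactions and their short identifiers below are illustrative assumptions, and `build_stoichiometric_matrix` is a hypothetical helper rather than a function from any modeling package.

```python
# Each reaction maps metabolite -> stoichiometric coefficient.
# Negative values are consumed, positive values are produced.
reactions = {
    "HEX1": {"glc": -1, "atp": -1, "g6p": 1, "adp": 1, "h": 1},
    "PGI": {"g6p": -1, "f6p": 1},
}


def build_stoichiometric_matrix(reactions):
    """Return (metabolite ids, reaction ids, S) with S[i][j] as in the text."""
    metabolites = sorted({m for stoich in reactions.values() for m in stoich})
    reaction_ids = list(reactions)
    S = [
        [reactions[rxn].get(met, 0) for rxn in reaction_ids]
        for met in metabolites
    ]
    return metabolites, reaction_ids, S


metabolites, reaction_ids, S = build_stoichiometric_matrix(reactions)

# Print one row per metabolite; each column is one reaction.
for met, row in zip(metabolites, S):
    print(f"{met:>4}: {row}")
```

The row for g6p reads [1, -1]: it is produced by the first reaction and consumed by the second, which is how the matrix links the two reactions.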

Recent methodological advances have expanded the application of stoichiometric matrices to represent more complex biological relationships. For instance, researchers have developed model transformations that encode gene-protein-reaction (GPR) associations directly into extended stoichiometric matrices, enabling constraint-based methods to be applied at the gene level rather than just the reaction level [5]. This approach explicitly accounts for the individual fluxes of enzymes and subunits encoded by each gene, providing a more direct link between genetic information and metabolic phenotype.

Metabolic Reactions

Metabolic reactions in GEMs represent the biochemical transformations that convert substrates into products, facilitated by enzyme catalysts. These reactions are categorized based on their functional roles within the metabolic network:

- Transport reactions facilitate the movement of metabolites across cellular membranes and compartments

- Exchange reactions represent the input of nutrients and output of waste products between the organism and its environment

- Biochemical transformations encompass the core metabolic processes that interconvert metabolites

- Demand reactions simulate the consumption of metabolites for non-growth associated processes

- Sink reactions provide metabolites that cannot be synthesized by the network

- Biomass reaction aggregates all precursors required for cellular growth into a single objective function [7] [6]

Each reaction in a GEM is associated with thermodynamic constraints that define its reversibility or irreversibility under physiological conditions, as well as with flux bounds that limit its minimum and maximum rates [7]. Together, the reactions must enable the synthesis of all biomass constituents, including amino acids, nucleotides, lipids, and cofactors, to support cellular growth [7].

Table 1: Reaction Representation in Published GEMs

| Organism | Model Name | Number of Reactions | Reaction Types | Reference |
|---|---|---|---|---|
| Homo sapiens | Human1 | 13,417 | Metabolic, transport, exchange, biomass | [10] |
| Streptococcus suis | iNX525 | 818 | Biochemical, transport, demand | [9] [7] |
| Escherichia coli | iAF1260 | 1,532 | Metabolic, exchange, biomass | [5] |

Metabolites

Metabolites in GEMs represent the chemical species that participate in biochemical reactions, serving as reactants, products, or intermediates in metabolic pathways. Each metabolite is uniquely identified and annotated with standard biochemical databases to ensure consistent referencing across models [10]. Proper metabolite annotation includes:

- Chemical formula and charge to enable mass and charge balance calculations

- Compartmental localization to specify subcellular location (e.g., cytosol, mitochondria, nucleus)

- Database identifiers (e.g., KEGG, MetaCyc, ChEBI) for cross-referencing

- Systematic naming to prevent duplication and ambiguity [10] [6]

Mass and charge balance are critical quality metrics for GEMs, as imbalances violate conservation laws and lead to biologically impossible metabolic functions. The Human1 model demonstrates excellent balancing with 99.4% mass-balanced reactions and 98.2% charge-balanced reactions, a significant improvement over previous models [10]. This high standard of metabolite curation ensures more accurate simulation results and enhances model reliability for predicting metabolic behaviors.
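A mass and charge balance check of the kind described here can be sketched as follows. The helper names are hypothetical, and the formulas and charges for the hexokinase reaction are the protonation states commonly used in metabolic reconstructions, included here as an assumption rather than taken from the cited models.

```python
import re

# Metabolite id -> (chemical formula, charge); assumed example values.
metabolites = {
    "glc": ("C6H12O6", 0),
    "atp": ("C10H12N5O13P3", -4),
    "g6p": ("C6H11O9P", -2),
    "adp": ("C10H12N5O10P2", -3),
    "h": ("H", 1),
}


def parse_formula(formula):
    """Count atoms per element in a simple formula such as 'C6H12O6'."""
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + (int(number) if number else 1)
    return counts


def imbalance(stoichiometry, metabolites):
    """Return (element imbalances, charge imbalance) as products minus reactants."""
    elements, charge = {}, 0
    for met, coefficient in stoichiometry.items():
        formula, met_charge = metabolites[met]
        charge += coefficient * met_charge
        for element, count in parse_formula(formula).items():
            elements[element] = elements.get(element, 0) + coefficient * count
    return {e: n for e, n in elements.items() if n != 0}, charge


# glc + atp -> g6p + adp + h   (balanced)
balanced = imbalance({"glc": -1, "atp": -1, "g6p": 1, "adp": 1, "h": 1}, metabolites)

# The same reaction with the proton omitted is off by one H and one charge.
missing_proton = imbalance({"glc": -1, "atp": -1, "g6p": 1, "adp": 1}, metabolites)

print(balanced)        # ({}, 0)
print(missing_proton)  # ({'H': -1}, -1)
```

The second result shows the typical curation case: a reaction that becomes balanced once a missing proton is added to the product side.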

Table 2: Metabolite Representation in Published GEMs

| Organism | Model Name | Number of Metabolites | Compartments | Balancing Status | Reference |
|---|---|---|---|---|---|
| Homo sapiens | Human1 | 10,138 (4,164 unique) | Multiple | 99.4% mass-balanced, 98.2% charge-balanced | [10] |
| Streptococcus suis | iNX525 | 708 | Cytosol, extracellular | Manually curated | [7] |
| Escherichia coli | iAF1260 | 1,032 | Multiple | Not specified | [5] |

Gene-Protein-Reaction (GPR) Associations

Gene-Protein-Reaction (GPR) associations define the logical relationships between genes, the proteins they encode, and the metabolic reactions these proteins catalyze. These associations are typically represented as Boolean rules that specify the gene requirements for a reaction to be active [5] [12]. GPR rules capture three fundamental genetic mechanisms:

- Enzyme complexes (AND relationships): Multiple gene products required to form a functional enzyme

- Isozymes (OR relationships): Multiple enzymes that catalyze the same reaction

- Multi-functional enzymes: Single enzymes that catalyze multiple different reactions [5]

Statistical analysis of GPR associations in the iAF1260 E. coli model reveals the complexity of these relationships, with over 16% of enzymes formed by protein complexes (up to 13 subunits), 31% of reactions catalyzed by multiple isozymes (up to 7), and 72% catalyzed by at least one promiscuous enzyme [5]. This complexity highlights the importance of accurately representing GPR associations to understand the mechanistic link between genotype and phenotype.

Figure 2: GPR associations define the logical relationships between genes, proteins, and reactions, capturing enzyme complexes (AND logic), isozymes (OR logic), and promiscuous enzymes.

Recent advances in GPR formulation include the development of Stoichiometric GPRs (S-GPRs) that incorporate information about the transcript copy number required to produce all subunits of a fully functional catalytic unit [12]. This approach provides a more accurate representation of how gene expression modulates metabolic flux and has been shown to improve the prediction of metabolite consumption and production rates when integrating transcriptomic data [12].
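The Boolean logic of GPR rules described in this section can be sketched with Python's `ast` module: a gene evaluates to true unless it has been deleted, AND models enzyme complexes, and OR models isozymes. The gene identifiers, the rules, and the function `reaction_is_active` are hypothetical, and the sketch assumes gene identifiers that are valid Python names.

```python
import ast


def reaction_is_active(gpr_rule, deleted_genes):
    """Evaluate a Boolean GPR rule given a set of deleted gene ids."""

    def evaluate(node):
        if isinstance(node, ast.BoolOp):
            values = [evaluate(child) for child in node.values]
            # AND: every subunit is required; OR: any isozyme suffices.
            return all(values) if isinstance(node.op, ast.And) else any(values)
        if isinstance(node, ast.Name):
            return node.id not in deleted_genes
        raise ValueError("Unsupported element in GPR rule")

    return evaluate(ast.parse(gpr_rule, mode="eval").body)


gpr_rules = {
    "R_complex": "gA and gB",            # enzyme complex
    "R_isozymes": "gC or gD",            # isozymes
    "R_mixed": "(gA and gB) or gC",      # complex with a backup isozyme
}

# Knocking out gA disables the complex, but R_mixed survives through gC.
knockout = {"gA"}
status = {rxn: reaction_is_active(rule, knockout) for rxn, rule in gpr_rules.items()}
print(status)
# {'R_complex': False, 'R_isozymes': True, 'R_mixed': True}
```

Parsing the rule into a syntax tree avoids calling `eval` on model-supplied strings while still reusing Python's `and`/`or` grammar and parentheses.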

Experimental Protocols for GEM Construction and Validation

GEM Reconstruction Workflow

The construction of high-quality genome-scale metabolic models follows a systematic workflow that integrates automated and manual curation processes:

- Genome Annotation: Identify metabolic genes using platforms like RAST and retrieve corresponding protein sequences from RefSeq or UniProt [7].

- Draft Reconstruction: Generate an initial model using automated tools such as ModelSEED or RAVEN, supplemented by homology mapping to existing models of related organisms (BLAST threshold: ≥40% identity, ≥70% query coverage) [7] [6].

- Manual Curation and Gap Filling:

- Add missing biochemical reactions based on literature evidence and physiological data

- Balance reaction equations by adding H₂O or H⁺ as needed

- Fill metabolic gaps to ensure synthesis of all biomass precursors using gapFill or manual annotation [7]

- Biomass Composition Definition: Assemble biomass equation based on experimental measurements of cellular composition (proteins, DNA, RNA, lipids, etc.) or adopt from phylogenetically related organisms [7].

- Model Validation: Test model predictions against experimental growth phenotypes under different nutrient conditions and gene essentiality data [9] [7].

This workflow typically operates within computational environments like the COBRA Toolbox in MATLAB or COBRApy in Python, utilizing optimization solvers such as GUROBI to perform flux balance analysis [7] [6].
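The homology thresholds in the draft reconstruction step (at least 40% identity and at least 70% query coverage) amount to a simple filter over alignment hits. The sketch below assumes the hits have already been parsed into dictionaries; the field names, gene identifiers, and values are hypothetical.

```python
MIN_IDENTITY = 40.0         # percent identity threshold from the workflow
MIN_QUERY_COVERAGE = 70.0   # percent query coverage threshold

# Hypothetical, already-parsed alignment hits (one best hit per query gene).
hits = [
    {"query": "gene_001", "subject": "ref_A", "identity": 62.5, "query_coverage": 91.0},
    {"query": "gene_002", "subject": "ref_B", "identity": 38.0, "query_coverage": 95.0},
    {"query": "gene_003", "subject": "ref_C", "identity": 55.0, "query_coverage": 64.0},
    {"query": "gene_004", "subject": "ref_D", "identity": 40.0, "query_coverage": 70.0},
]


def passes_thresholds(hit):
    """Keep a hit only if it meets both thresholds (inclusive)."""
    return (hit["identity"] >= MIN_IDENTITY
            and hit["query_coverage"] >= MIN_QUERY_COVERAGE)


# Query gene -> homolog in the reference model, for hits that pass.
homologs = {hit["query"]: hit["subject"] for hit in hits if passes_thresholds(hit)}
print(homologs)
# {'gene_001': 'ref_A', 'gene_004': 'ref_D'}
```

Genes that pass would inherit the reactions of their homologs in the reference model, while the rest are left for manual curation.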

Context-Specific Model Construction with iMAT

The Integrative Metabolic Analysis Tool (iMAT) algorithm constructs context-specific models by integrating transcriptomic data with a generic GEM:

- Gene-to-Reaction Mapping: Map genes to reactions using GPR associations [11].

- Reaction Expression Categorization:

- Calculate reaction expression levels based on GPR rules and gene expression data

- Classify reactions as highly expressed (expression > mean + 0.5 × STD), moderately expressed, or lowly expressed (expression < mean - 0.5 × STD), where STD is the standard deviation of reaction expression [11]

- Model Construction: Apply iMAT to generate context-specific models that include highly expressed reactions while excluding lowly expressed reactions with high variability [11].

- Flux Balance Analysis: Simulate metabolic fluxes using FBA with biomass production as the objective function [11].

This approach has been successfully applied to build cell-type specific models, including models of lung cancer cells and lung-associated mast cells from human tissue samples [11].
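The expression categorization step that precedes iMAT can be sketched as follows. This is not the iMAT optimization itself. The example assumes a common convention that the source does not state: AND rules take the minimum expression of their genes and OR rules take the maximum. It also assumes the sample standard deviation. Gene names, rules, and expression values are hypothetical.

```python
from statistics import mean, stdev

gene_expression = {"g1": 10.0, "g2": 2.0, "g3": 6.0, "g4": 1.0}

# A rule is a gene id, or a tuple ("and" | "or", sub_rule, sub_rule, ...).
gpr_rules = {
    "R1": ("and", "g1", "g2"),   # complex: limited by the lowest subunit
    "R2": ("or", "g1", "g3"),    # isozymes: driven by the highest isozyme
    "R3": "g3",
    "R4": "g4",
}


def reaction_expression(rule, expression):
    """Map gene expression to a reaction level (assumed min/max convention)."""
    if isinstance(rule, str):
        return expression[rule]
    operator, *parts = rule
    values = [reaction_expression(part, expression) for part in parts]
    return min(values) if operator == "and" else max(values)


def classify(levels, width=0.5):
    """Label reactions using the mean +/- 0.5 x STD thresholds."""
    mu, sd = mean(levels.values()), stdev(levels.values())
    labels = {}
    for reaction, level in levels.items():
        if level > mu + width * sd:
            labels[reaction] = "high"
        elif level < mu - width * sd:
            labels[reaction] = "low"
        else:
            labels[reaction] = "moderate"
    return labels


levels = {rxn: reaction_expression(rule, gene_expression)
          for rxn, rule in gpr_rules.items()}
labels = classify(levels)
print(levels)
print(labels)
```

The resulting high and low sets are what a context-specific extraction method would then try to keep active or inactive, respectively.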

GEM Validation Against Experimental Data

Rigorous validation is essential to ensure GEM predictions reflect biological reality:

- Growth Phenotype Validation: Compare predicted growth capabilities with experimental measurements under different nutrient conditions (e.g., leave-one-out experiments in chemically defined media) [7].

- Gene Essentiality Analysis: Simulate each gene knockout by constraining to zero the fluxes of reactions that can no longer be catalyzed according to their GPR rules, and compare predicted essential genes with experimental mutant screens [9] [7].

- Quantitative Comparison: Classify a gene as essential when its growth ratio (grRatio, the knockout growth rate divided by the wild-type growth rate) falls below 0.01, and report the percentage agreement with experimental data (e.g., 71.6-79.6% for the iNX525 model) [7].

- Flux Validation: Compare predicted flux distributions with experimental ¹³C-flux measurements when available [5].

The Streptococcus suis iNX525 model demonstrates how comprehensive validation can establish model credibility, showing good agreement with growth phenotypes under different nutrient conditions and genetic disturbances [7].
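The essentiality comparison can be sketched once growth ratios are available from knockout simulations. In the example below the grRatio values and the experimental essential set are hypothetical, and agreement is computed as the fraction of genes whose predicted class matches the experimental one, which is an assumed definition.

```python
ESSENTIALITY_THRESHOLD = 0.01  # grRatio below this value counts as essential

# Hypothetical growth ratios (knockout growth rate / wild-type growth rate).
gr_ratios = {"gA": 0.0, "gB": 1.0, "gC": 0.005, "gD": 0.6, "gE": 0.0}

# Hypothetical essential genes from an experimental mutant screen.
experimental_essential = {"gA", "gC", "gD"}


def predict_essential(gr_ratios, threshold=ESSENTIALITY_THRESHOLD):
    """Return the set of genes predicted to be essential."""
    return {gene for gene, ratio in gr_ratios.items() if ratio < threshold}


def agreement(predicted, experimental, genes):
    """Fraction of genes classified the same way by model and experiment."""
    matches = sum((g in predicted) == (g in experimental) for g in genes)
    return matches / len(genes)


predicted_essential = predict_essential(gr_ratios)
accuracy = agreement(predicted_essential, experimental_essential, gr_ratios)

print(sorted(predicted_essential))  # ['gA', 'gC', 'gE']
print(accuracy)                     # 0.6
```

Here gD is a false negative and gE a false positive, so three of five genes agree. Inspecting such mismatches is usually where curation of GPR rules or biomass composition begins.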

Table 3: Key Computational Tools and Resources for GEM Research

| Tool/Resource | Function | Application in GEM Research |
|---|---|---|
| COBRA Toolbox | MATLAB package for constraint-based modeling | Perform flux balance analysis, gene knockout simulations, and pathway analysis [7] |
| ModelSEED | Automated pipeline for GEM reconstruction | Generate draft metabolic models from genome annotations [7] [6] |
| RAST | Genome annotation server | Annotate metabolic genes for model reconstruction [7] |
| MEMOTE | Test suite for GEM quality assessment | Evaluate model consistency, mass and charge balance, and annotation quality [10] [9] |
| Human-GEM | Consensus human metabolic model | Base model for constructing context-specific human cell models [10] [11] |
| MetaNetX | Resource for metabolic network reconciliation | Map metabolites and reactions to standard identifiers across databases [10] |
| GUROBI | Mathematical optimization solver | Solve linear programming problems in flux balance analysis [7] |
| CIBERSORTx | Machine learning tool for cell type deconvolution | Estimate cell type-specific gene expression from bulk transcriptomic data [11] |

Advanced Applications and Future Directions

The integration of GEMs with multi-omics data has opened new avenues for investigating complex biological systems. In cancer research, GEMs have been used to analyze metabolic reprogramming in lung cancer, revealing selective upregulation of specific amino acid metabolism pathways to support elevated energy demands [11]. Similarly, GEMs have elucidated metabolic alterations in prostate cancer cells chronically exposed to environmental toxicants like Aldrin, identifying important changes in carnitine shuttle and prostaglandin biosynthesis associated with malignant phenotypes [12].

In infectious disease research, GEMs of pathogens like Streptococcus suis have identified essential genes required for both growth and virulence factor production, highlighting potential antibacterial drug targets focused on biosynthetic pathways for capsular polysaccharides and peptidoglycans [9] [7]. The application of GEMs in drug discovery has also advanced through methods like the Task Inferred from Differential Expression (TIDE) algorithm, which infers pathway activity changes from transcriptomic data following drug treatments [8].

Future directions in GEM development include the creation of more sophisticated multi-scale models that integrate metabolic networks with other cellular processes, the development of standardized community-driven curation platforms, and the implementation of more advanced algorithms for extracting context-specific models from multi-omics datasets [10] [6]. The move toward version-controlled, open-source model development, as exemplified by the Human-GEM project, promises to enhance reproducibility and accelerate collaborative model improvement [10].

As GEMs continue to evolve in scope and accuracy, they will play an increasingly important role in systems biology, offering a mechanistic framework for understanding metabolic regulation in health and disease, and supporting the development of novel therapeutic interventions and biotechnological applications.

Genome-scale metabolic models (GEMs) represent a computational framework that mathematically defines the relationship between genetic makeup and observable metabolic phenotypes. By contextualizing multi-omics Big Data—including genomics, transcriptomics, proteomics, and metabolomics—GEMs enable quantitative simulation of metabolic capabilities across all domains of life [13]. This technical guide examines the core principles, reconstruction methodologies, and biotechnological applications of GEMs, with particular emphasis on their growing utility in drug target identification and pathogen vulnerability assessment [14]. We provide comprehensive protocols for model reconstruction, validation, and implementation, along with standardized visualization frameworks to support researchers in deploying these powerful systems biology tools.

Genome-scale metabolic models are network-based tools that collect all known metabolic information of a biological system, including genes, enzymes, reactions, associated gene-protein-reaction (GPR) rules, and metabolites [13]. These models computationally describe stoichiometry-based, mass-balanced metabolic reactions using GPR associations formulated from genome annotation data and experimental validation [14]. The fundamental structure of a GEM is a stoichiometric matrix (S matrix) where columns represent reactions, rows represent metabolites, and entries represent coefficients of metabolites in reactions [15].

GEMs have evolved from single-organism models to sophisticated frameworks capable of representing multi-strain bacterial populations, archaea with unique metabolic capabilities, eukaryotic systems, and host-pathogen interactions [13] [14]. Since the first GEM for Haemophilus influenzae was reported in 1999, the field has expanded to include models for over 6,000 organisms across bacteria, archaea, and eukarya [14]. This growth has been powered by technological advances generating biological Big Data in a cost-efficient and high-throughput manner [13].

Core Principles and Mathematical Foundations

Structural Components of GEMs

The architecture of a genome-scale metabolic model consists of several interconnected components that systematically represent cellular metabolism:

- Gene-Protein-Reaction (GPR) Associations: Boolean relationships that map genes to their protein products and subsequently to the metabolic reactions they catalyze, explicitly defining genotype-phenotype connections [14]

- Stoichiometric Matrix: A mathematical representation where each element Sij corresponds to the stoichiometric coefficient of metabolite i in reaction j [15]

- Metabolite Compartmentalization: Spatial organization of metabolites and reactions within cellular compartments (e.g., cytosol, mitochondria, peroxisomes) [14]

- Biomass Objective Function: A pseudo-reaction representing the drain of precursor metabolites and energy required for cellular growth and maintenance [15]
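
The first two components above can be made concrete with a small sketch. The reaction, metabolite, and gene names below are hypothetical, and the nested-tuple encoding of GPR rules is an illustrative choice rather than the format used by any particular toolbox.

```python
# Toy network (hypothetical names, for illustration only).
# Each reaction maps metabolite -> stoichiometric coefficient
# (negative = consumed, positive = produced).
reactions = {
    "R_uptake": {"A": 1},            # -> A
    "R_conv": {"A": -1, "B": 1},     # A -> B
    "R_biomass": {"B": -1},          # B ->
}

metabolites = sorted({m for stoich in reactions.values() for m in stoich})
rxn_ids = list(reactions)

# S[i][j] is the coefficient of metabolite i in reaction j.
S = [[reactions[r].get(m, 0) for r in rxn_ids] for m in metabolites]

# GPR rules as nested tuples: ("and", ...) for enzyme complexes,
# ("or", ...) for isozymes. Gene names are hypothetical.
gpr = {"R_conv": ("or", "g1", ("and", "g2", "g3"))}


def gpr_active(rule, present_genes):
    """Return True if the Boolean GPR rule is satisfied by the genes present."""
    if isinstance(rule, str):
        return rule in present_genes
    op, *args = rule
    results = [gpr_active(arg, present_genes) for arg in args]
    return all(results) if op == "and" else any(results)


# Knocking out g1 leaves the reaction active through the g2/g3 complex;
# additionally losing g3 disables it.
still_active = gpr_active(gpr["R_conv"], {"g2", "g3"})
disabled = not gpr_active(gpr["R_conv"], {"g2"})
```

Evaluating the rule against a reduced gene set is the basic step behind in silico gene knockouts: a reaction whose rule evaluates to false is constrained to zero flux before simulation.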

Constraint-Based Reconstruction and Analysis (COBRA)

The COBRA methodology provides the mathematical foundation for simulating GEMs through the imposition of physicochemical constraints:

Flux Balance Analysis (FBA) represents the most widely used approach for simulating GEMs [15]. FBA calculates the flow of metabolites through a metabolic network, enabling prediction of growth rates, nutrient uptake, and byproduct secretion by optimizing an objective function (typically biomass production) subject to constraints:

$$
\begin{aligned}
\text{Maximize:} \quad & Z = c^{T} v \\
\text{Subject to:} \quad & S v = 0 \\
& v_{lb} \le v \le v_{ub}
\end{aligned}
$$

Where $S$ is the stoichiometric matrix, $v$ is the flux vector, $c$ is the vector of objective coefficients, and $v_{lb}$ and $v_{ub}$ represent lower and upper flux bounds [15].
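
The formulation above is a linear program, so a toy instance can be solved with any LP solver. The sketch below assumes NumPy and SciPy are available and uses a hypothetical three-reaction network; dedicated packages such as COBRApy wrap the same optimization for full-size models.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network. Columns are reactions:
#   v[0] uptake (-> A), v[1] conversion (A -> B), v[2] biomass drain (B ->)
S = np.array([
    [1, -1, 0],   # metabolite A
    [0, 1, -1],   # metabolite B
])

c = np.array([0, 0, 1])                      # objective: maximize biomass flux
bounds = [(0, 10), (0, 1000), (0, 1000)]     # (lower, upper) bound per reaction

# linprog minimizes, so the objective vector is negated to maximize c^T v.
result = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds)

fluxes = result.x
objective_value = -result.fun
```

In this toy case the uptake bound of 10 is the only limiting constraint, so the steady-state condition forces every flux, and the objective, to that value.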

Advanced Simulation Techniques

Beyond standard FBA, several specialized extensions address specific biological scenarios:

- Dynamic FBA (dFBA): Extends FBA to simulate time-dependent changes in extracellular metabolites and biomass [13]

- 13C Metabolic Flux Analysis (13C MFA): Uses isotopic tracer experiments to determine intracellular metabolic fluxes with high resolution [13]

- Flux Sampling: Samples the space of feasible flux distributions rather than returning a single optimal solution, which is particularly valuable for capturing phenotypic diversity [16]

- Regulatory FBA: Incorporates transcriptional regulatory constraints to improve context-specific predictions [14]
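
As an illustration of the first extension in the list, dynamic FBA can be sketched as a loop that re-solves FBA at each time step with an uptake bound set by the current substrate concentration, then updates biomass and substrate. The example assumes NumPy and SciPy, a hypothetical one-metabolite model, Michaelis-Menten uptake kinetics, and forward Euler integration; all parameter values are invented for illustration.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy model: one internal metabolite A and two reactions.
# v[0] = substrate uptake (-> A), v[1] = growth, consuming 10 mmol A per gDW.
S = np.array([[1.0, -10.0]])


def solve_fba(max_uptake):
    """Maximize growth subject to S v = 0 and the current uptake bound."""
    res = linprog(c=[0.0, -1.0], A_eq=S, b_eq=[0.0],
                  bounds=[(0.0, max_uptake), (0.0, None)])
    return res.x[0], res.x[1]


biomass0, substrate0 = 0.01, 10.0   # gDW/L and mmol/L (illustrative values)
biomass, substrate = biomass0, substrate0
dt = 0.1                            # time step in hours
vmax, km = 10.0, 0.5                # assumed uptake kinetics

trajectory = [(0.0, biomass, substrate)]
for step in range(1, 101):
    # Uptake is limited by kinetics and by what is left in the medium.
    kinetic_limit = vmax * substrate / (km + substrate)
    supply_limit = substrate / (biomass * dt)
    v_uptake, mu = solve_fba(min(kinetic_limit, supply_limit))

    # Forward Euler update of the extracellular state.
    substrate = max(substrate - v_uptake * biomass * dt, 0.0)
    biomass = biomass + mu * biomass * dt
    trajectory.append((step * dt, biomass, substrate))
```

Because the biomass reaction consumes a fixed amount of substrate per unit of growth, the biomass gained over the simulation equals the substrate consumed divided by that yield coefficient, which is a convenient sanity check for any dFBA implementation.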

Quantitative Landscape of Reconstructed GEMs

Current Status of Metabolic Models Across Life Domains

Table 1: Distribution of Genome-Scale Metabolic Models Across Organisms

| Domain | Total Organisms | Manually Curated Models | Representative Models | Key Features |

|---|---|---|---|---|

| Bacteria | 5,897 | 113 | E. coli iML1515, B. subtilis iBsu1144, M. tuberculosis iEK1101 | 93.4% gene essentiality prediction accuracy in iML1515; Industrial enzyme production; Drug target identification |

| Archaea | 127 | 10 | M. acetivorans iMAC868, iST807 | Methanogenesis pathways; Extreme environment adaptation; Thermodynamic constraints |

| Eukarya | 215 | 60 | S. cerevisiae Yeast 7, Human Recon models | Consensus networks; Tissue-specific models; Multicellular systems |

As of 2019, GEMs have been reconstructed for 6,239 organisms (5,897 bacteria, 127 archaea, and 215 eukaryotes), with 183 organisms subjected to manual curation [14]. High-quality models for scientifically and industrially important organisms have undergone multiple iterations of refinement, with the E. coli GEM showing 93.4% accuracy for gene essentiality prediction across multiple carbon sources [14].

Model Quality Assessment Metrics

Table 2: GEM Quality Assessment and Validation Metrics

| Validation Type | Metric | Application | Benchmark Performance |

|---|---|---|---|

| Genetic | Gene essentiality prediction | Compare in silico knockouts with experimental data | 71.6-79.6% agreement in S. suis iNX525 [9] |

| Phenotypic | Growth capability prediction | Assess growth/no-growth in different nutrient conditions | 74% MEMOTE score for S. suis model [9] |

| Flux | Metabolic flux distribution | Compare predicted vs. measured fluxes using 13C MFA | Correlation coefficients >0.7 in core metabolism |

| Biochemical | Network connectivity | Ensure metabolite production/consumption balance | Balanced energy and redox cofactors |
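
The genetic validation metric in the table reduces to comparing two Boolean labels per gene. A minimal sketch, using hypothetical gene identifiers and invented labels, might compute the agreement as follows.

```python
def essentiality_agreement(predicted, observed):
    """Compare in silico and experimental essentiality calls.

    Both arguments map gene ID -> True (essential) / False (non-essential).
    Only genes present in both dictionaries are scored.
    """
    genes = predicted.keys() & observed.keys()
    tp = sum(predicted[g] and observed[g] for g in genes)
    tn = sum(not predicted[g] and not observed[g] for g in genes)
    fp = sum(predicted[g] and not observed[g] for g in genes)
    fn = sum(not predicted[g] and observed[g] for g in genes)
    return {
        "tp": tp, "tn": tn, "fp": fp, "fn": fn,
        "accuracy": (tp + tn) / len(genes),
    }


# Hypothetical data for illustration only.
predicted = {"g1": True, "g2": False, "g3": True, "g4": False}
observed = {"g1": True, "g2": False, "g3": False, "g4": False}

summary = essentiality_agreement(predicted, observed)
```

Reporting the full confusion counts alongside accuracy is useful because essential genes are usually a small minority, so accuracy alone can look high even when few essential genes are recovered.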

GEM Reconstruction Workflow: Methodologies and Protocols

Comprehensive Model Reconstruction Pipeline

Detailed Experimental Protocols

Protocol 1: Genome-Scale Metabolic Model Reconstruction

Purpose: To reconstruct an organism-specific metabolic model from genomic data

Materials:

- Annotated genome sequence

- Biochemical databases (KEGG, MetaCyc, BiGG)

- Reconstruction software (ModelSEED, RAVEN, CarveMe)

- Curation tools (MEMOTE for quality assessment)

Procedure:

- Genome Annotation Processing: Extract all metabolic genes from the annotated genome and map them to reactions through gene-protein-reaction (GPR) associations

- Draft Network Generation: Use automated reconstruction tools to generate initial reaction network

- Gap Analysis: Identify and fill metabolic gaps to ensure biomass precursor production

- Manual Curation: Refine model based on literature and experimental data

- Stoichiometric Matrix Formation: Convert metabolic network to mathematical representation

- Constraint Definition: Establish reaction directionality and capacity constraints

- Objective Function Formulation: Define biomass composition and maintenance requirements

Validation:

- Compare predicted vs. experimental growth phenotypes

- Assess gene essentiality predictions against knockout studies

- Validate nutrient utilization capabilities
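
One routine check during manual curation is that every reaction is elementally balanced. The sketch below is a simplified stand-in for the kind of test that quality-assessment suites perform; the formula parser handles only plain formulas such as C6H12O6 (no parentheses or charges), and the helper names are hypothetical.

```python
import re
from collections import Counter


def parse_formula(formula):
    """Count atoms in a simple chemical formula such as 'C6H12O6'."""
    counts = Counter()
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] += int(number) if number else 1
    return counts


def element_imbalance(stoichiometry, formulas):
    """Return the net atom count per element; an empty dict means balanced."""
    total = Counter()
    for metabolite, coefficient in stoichiometry.items():
        for element, n in parse_formula(formulas[metabolite]).items():
            total[element] += coefficient * n
    return {element: n for element, n in total.items() if n != 0}


formulas = {"glucose": "C6H12O6", "ethanol": "C2H6O", "co2": "CO2"}

# Balanced: glucose -> 2 ethanol + 2 CO2
balanced = element_imbalance(
    {"glucose": -1, "ethanol": 2, "co2": 2}, formulas)

# Unbalanced draft reaction with the CO2 product missing
unbalanced = element_imbalance({"glucose": -1, "ethanol": 2}, formulas)
```

A non-empty result points directly at the missing atoms, which often identifies the omitted cofactor or by-product.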

Protocol 2: Context-Specific Model Extraction Using Omics Data

Purpose: To generate tissue- or condition-specific metabolic models from multi-omics data

Materials:

- Transcriptomic, proteomic, or metabolomic datasets

- Context-specific extraction algorithms (mCADRE, INIT, iMAT)

- COBRA Toolbox or COBRApy

Procedure:

- Data Preprocessing: Normalize and scale omics data for integration

- Reaction Activity Scoring: Calculate likelihood of reaction activity based on evidence

- Model Extraction: Remove inactive reactions while maintaining network functionality

- Gap Filling: Ensure metabolic functionality of pruned network

- Validation: Compare predicted metabolic capabilities with known tissue functions

Applications:

- Host-pathogen interaction studies [13]

- Tissue-specific metabolic models for drug targeting [16]

- Strain-specific analysis of bacterial pathogens [13]
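
The reaction activity scoring step in the procedure above is often implemented by propagating gene expression through GPR rules. The sketch below assumes one common convention (minimum over AND for enzyme complexes, maximum over OR for isozymes); individual extraction algorithms define their own scoring schemes, and the gene names, expression values, and threshold here are hypothetical.

```python
def reaction_score(rule, expression):
    """Map gene expression onto a reaction through its GPR rule.

    Convention assumed here: AND -> minimum (complex limited by its
    least-expressed subunit), OR -> maximum (best-expressed isozyme).
    Genes without data are scored as 0.0.
    """
    if isinstance(rule, str):
        return expression.get(rule, 0.0)
    op, *args = rule
    scores = [reaction_score(arg, expression) for arg in args]
    return min(scores) if op == "and" else max(scores)


# Hypothetical normalized expression values and GPR rules.
expression = {"g1": 2.0, "g2": 8.0, "g3": 5.0}
gpr_rules = {
    "R1": ("or", "g1", ("and", "g2", "g3")),
    "R2": ("and", "g1", "g2"),
    "R3": "g4",
}

scores = {rxn: reaction_score(rule, expression) for rxn, rule in gpr_rules.items()}

threshold = 3.0  # illustrative cutoff
candidate_active = sorted(rxn for rxn, s in scores.items() if s >= threshold)
```

This covers only the scoring step. The extraction step itself requires an optimization that keeps the pruned network functional, which is what the listed algorithms provide.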

Applications in Drug Discovery and Pathogen Control

Target Identification in Pathogenic Organisms

GEMs enable systematic identification of potential drug targets in pathogenic organisms through in silico simulation of gene essentiality and metabolic vulnerabilities:

Table 3: Drug Target Identification Using GEMs in Pathogenic Bacteria

| Pathogen | GEM | Target Identification Approach | Potential Targets |

|---|---|---|---|

| Mycobacterium tuberculosis | iEK1101 | Comparison of hypoxic vs. aerobic metabolism; Integration with macrophage model | Enzymes in central carbon metabolism; Cofactor biosynthesis [14] |

| Streptococcus suis | iNX525 | Analysis of virulence-linked genes; Essentiality for growth and virulence factor production | 8 enzymes in capsular polysaccharide and peptidoglycan biosynthesis [9] |

| ESKAPEE Pathogens | Multi-strain models | Pan-genome analysis across clinical strains; Conservation analysis | Two-component system proteins; Strain-specific essential genes [13] |

In Streptococcus suis, GEM analysis identified 131 virulence-linked genes, with 79 mapping to metabolic reactions in model iNX525 [9]. Among these, 26 genes were essential for both cell growth and virulence factor production, with eight enzymes in capsular polysaccharide and peptidoglycan biosynthesis prioritized as antibacterial drug targets [9].
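
The prioritization logic described above amounts to a sequence of set intersections. The sketch below uses small hypothetical gene sets, not the actual S. suis gene lists, to show the filtering order.

```python
# Hypothetical gene sets for illustration only.
virulence_linked = {"gA", "gB", "gC", "gD", "gE"}
model_genes = {"gA", "gB", "gC", "gX", "gY"}

# Predicted by in silico knockouts (assumed results).
essential_for_growth = {"gA", "gX"}
essential_for_virulence_factors = {"gA", "gB"}

# Step 1: virulence-linked genes that map to reactions in the model.
mapped = virulence_linked & model_genes

# Step 2: of those, genes essential for both growth and virulence
# factor production are the candidate targets.
dual_essential = mapped & essential_for_growth & essential_for_virulence_factors
```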

Multi-Strain Analysis for Broad-Spectrum Therapeutics

Pan-genome-scale modeling enables identification of therapeutic targets that are effective across multiple strains of a pathogenic species.

This approach has been applied to Salmonella (410 strains), S. aureus (64 strains), and K. pneumoniae (22 strains), predicting growth capabilities across hundreds of environments and identifying conserved essential reactions [13].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools and Resources for GEM Reconstruction and Analysis

| Tool Category | Specific Tools | Function | Application Context |

|---|---|---|---|

| Reconstruction Platforms | ModelSEED, RAVEN, CarveMe | Automated draft reconstruction from genomes | Initial model generation; Large-scale modeling [13] |

| Simulation Environments | COBRA Toolbox (MATLAB), COBRApy (Python) | Flux balance analysis; Constraint-based modeling | Metabolic flux prediction; Gene knockout simulation [15] |

| Quality Assessment | MEMOTE | Model testing and validation | Quality assurance before publication [9] |

| Visualization | Escher, Shu, CNApy | Metabolic map visualization with data overlay | Multi-omics data integration; Publication-ready figures [17] |

| Context-Specific Modeling | mCADRE, INIT, iMAT | Tissue- and condition-specific model extraction | Host-pathogen interactions; Tissue-specific metabolism [16] |

| Databases | KEGG, MetaCyc, BiGG Models | Biochemical reaction databases | Reaction and metabolite information during curation [15] |

Emerging Frontiers and Future Directions

The field of genome-scale metabolic modeling continues to evolve with several emerging frontiers enhancing predictive capabilities and application scope:

- Machine Learning Integration: Combining GEM predictions with machine learning algorithms to improve context-specific flux predictions [13]

- Microbiome Community Modeling: Multi-species models to simulate metabolic interactions in microbial communities and host-associated microbiomes [13] [16]

- Kinetic Model Integration: Incorporating enzyme kinetics and thermodynamic constraints to improve predictive accuracy [17]

- Single-Cell Metabolic Modeling: Developing approaches to model metabolic heterogeneity at single-cell resolution [16]

- Automated Curation: Natural language processing and AI-assisted literature mining to accelerate manual curation processes [13]

Visualization tools like Shu are addressing the challenge of representing complex multi-omics data on metabolic maps, enabling simultaneous display of distributional data across multiple experimental conditions [17]. These advances position GEMs as increasingly central tools for connecting genotype to phenotype in basic research, biotechnology, and therapeutic development.

Genome-scale metabolic models (GEMs) represent comprehensive computational reconstructions of the metabolic network of an organism, detailing the biochemical reactions and gene-protein-reaction associations within a cell. These models have become indispensable tools in systems biology, enabling researchers to simulate metabolic fluxes, predict phenotypic behavior, and elucidate the molecular basis of diseases. The historical evolution of GEMs spans from early stoichiometric models of core metabolism to current sophisticated models encompassing thousands of reactions across diverse tissues and organisms. This evolution has paralleled advances in genomics, computational power, and experimental techniques, leading to their widespread adoption in basic research and drug development. For researchers and pharmaceutical professionals, understanding this trajectory provides critical insights into both the capabilities and limitations of contemporary GEM applications in target identification and therapeutic development.

The Early Foundations of GEMs (1990-2005)

The genesis of GEMs emerged from the convergence of genome sequencing projects and constraint-based modeling approaches. Early models focused on minimal metabolic networks and foundational algorithms that would later enable genome-scale reconstructions.

Key Conceptual and Technical Advancements:

- Stoichiometric Modeling: Early models utilized the stoichiometric matrix (S-matrix) to represent the interconnections between metabolites and reactions, providing a mathematical framework for analyzing metabolic network properties.

- Flux Balance Analysis (FBA): FBA emerged as a cornerstone computational technique, enabling the prediction of metabolic flux distributions by optimizing an objective function (e.g., biomass production) subject to stoichiometric and capacity constraints.

- First Genome-Scale Reconstructions: Haemophilus influenzae, sequenced in 1995, became the subject of one of the first published genome-scale metabolic reconstructions. This was quickly followed by models for Escherichia coli and Saccharomyces cerevisiae, which became benchmark organisms for method development.

Limitations of Early Models:

- Heavy reliance on in silico predictions with limited experimental validation.

- Incomplete gene annotations leading to gaps in metabolic networks.

- Lack of formalism for integrating omics data types.

- Minimal representation of regulatory constraints or multi-cellular interactions.

The Expansion and Refinement Era (2005-2015)

This period witnessed a dramatic expansion in the scope, quality, and application of GEMs. The number of reconstructed organisms grew exponentially, and methodologies advanced to create more context-specific models.

- Development of Semi-Automated Reconstruction Pipelines: Tools like ModelSEED and the RAVEN Toolbox began to streamline the labor-intensive process of draft model reconstruction, making the technology accessible to a broader scientific community.

- Integration of Omics Data: A critical transition occurred with the development of algorithms such as INIT (Integrative Network Inference for Tissues) and tINIT, which used transcriptomic, proteomic, and metabolomic data to extract tissue- or condition-specific models from a generic reference reconstruction like Human-GEM [18]. This enabled the investigation of human metabolism in a tissue-resolved manner.

- Key Model Properties During this Era:

- Shift from unicellular to multicellular organism models.

- Incorporation of thermodynamic constraints and enzyme kinetics.

- Development of community standards for model quality control and curation, such as those proposed by the community-wide metabolic model reconstruction effort for human metabolism.

Table 1: Evolution of Key GEM Properties Over Time

| Time Period | Representative Model | Typical Reaction Count | Key Technological Enabler | Primary Application Focus |

|---|---|---|---|---|

| 1990-2005 | H. influenzae Reconstruction | ~600 Reactions | Genome Sequencing, FBA | Prediction of Essential Genes |

| 2005-2015 | Recon 1 (Human) | ~3,300 Reactions | Transcriptomics, INIT/tINIT algorithms [18] | Tissue-Specific Metabolism |

| 2015-Present | Human-GEM, HMA | ~13,000 Reactions | Proteomics, Metabolomics, Machine Learning | Personalized Medicine, Drug Target ID |

Current Landscape and Widespread Adoption (2015-Present)

The contemporary landscape of GEM research is characterized by highly curated, multi-compartmental models and their integration into a wide array of biomedical applications. Widespread adoption is evident across academia and industry, particularly in drug development.

- Enhanced Comprehensiveness and Curation: Current reference models, such as Human-GEM, represent the metabolic functions of thousands of metabolic genes, their associated proteins, and the reactions they catalyze [18]. These models undergo continuous community-driven curation to minimize gaps and improve predictive accuracy.

- Integration with Multi-Omics and Clinical Data: GEMs now serve as scaffolds for integrating multi-omics data. This allows for the generation of patient-specific models to investigate the metabolic underpinnings of diseases like cancer, neurodegenerative disorders, and metabolic syndromes.

- Applications in Drug Development:

- Target Identification: GEMs can predict essential genes and reactions whose inhibition would selectively halt the growth of cancer cells or pathogenic organisms.

- Mechanism of Action Elucidation: Simulations can reveal how drug-induced perturbations cascade through metabolic networks, helping to explain therapeutic and off-target effects.

- Personalized Medicine: By constructing models from patient-derived data, researchers can identify personalized metabolic vulnerabilities and predict individual responses to treatment.

Experimental Protocols for GEM Comparison and Analysis

A critical methodology in contemporary GEM research involves the systematic comparison of multiple models, either from different tissues or different conditions, to uncover functionally relevant differences. The following protocol, utilizing the RAVEN Toolbox in MATLAB, provides a standardized workflow for such analyses [18].

Structural Comparison of Multiple GEMs

This protocol assesses the compositional differences between GEMs based on their reaction and subsystem content.

Model Preparation: Load the cell array of GEMs to be compared. Ensure all models were extracted from the same reference GEM to maintain namespace consistency (i.e., same reaction, metabolite, and gene ID types) [18].

Execute Comparison Function: Use the compareMultipleModels function to compute structural similarities. This function outputs a results structure containing:

- reactions.matrix: A binary matrix of reaction presence/absence in each GEM.

- subsystems.matrix: The number of reactions in each subsystem for each GEM.

- structComp: A matrix of Hamming similarity (1 - Hamming distance) for reaction content between each GEM pair [18].

Visualization and Interpretation:

- Clustergram: Generate a clustergram of the res.structComp matrix to visualize clustering of models based on reaction content similarity.

- t-SNE Projection: Perform dimensionality reduction on the binary reaction matrix using t-SNE with Hamming distance to project models into 2D space and identify natural groupings.

- Subsystem Analysis: Calculate and visualize the percent difference in subsystem coverage across a subset of models to identify which metabolic pathways differ most significantly [18].
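
To make the structural similarity measure concrete, the following sketch computes pairwise Hamming similarity on a small binary reaction matrix. It is a plain-Python illustration of the quantity, not the RAVEN implementation, and the model names and presence/absence vectors are hypothetical.

```python
def hamming_similarity(a, b):
    """Fraction of positions at which two binary vectors agree (1 - Hamming distance)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


# Rows: hypothetical tissue models. Columns: presence (1) or absence (0)
# of each reaction from the shared reference GEM.
reaction_matrix = {
    "liver": [1, 1, 0, 1],
    "muscle": [1, 0, 0, 1],
    "brain": [0, 0, 1, 1],
}

models = list(reaction_matrix)
similarity = {
    (m1, m2): hamming_similarity(reaction_matrix[m1], reaction_matrix[m2])
    for m1 in models
    for m2 in models
}
```

The comparison is only meaningful when every vector is indexed by the same reaction list, which is why the protocol requires all models to be extracted from one reference GEM.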

Functional Comparison Using Metabolic Tasks

Beyond structure, GEM function can be compared by testing their ability to simulate a predefined set of metabolic tasks, such as the production of essential biomass components or secretion of metabolites.

Task Definition: Prepare a list of metabolic tasks in a predefined format (e.g., an Excel file, metabolicTasks_Full.xlsx). Each task defines inputs, outputs, and conditions for a specific metabolic function.

Functional Evaluation: Re-run the compareMultipleModels function with the task list as an input. The function checks each GEM for the ability to perform each task, outputting a binary matrix (res_func.funcComp.matrix) of task success (1) or failure (0) [18].

Analysis of Functional Differences: Identify tasks that are not uniformly passed or failed across all GEMs (isDiff = ~all(...)). These differential tasks highlight functional metabolic differences between the tissues or conditions represented by the GEMs.
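
The filter for differential tasks can be sketched in a few lines. This is a Python illustration of the idea behind the isDiff expression, with hypothetical task names and results; a task is differential when it is neither passed by all models nor failed by all models.

```python
# Rows: metabolic tasks. Values: pass (1) / fail (0) for each model,
# in a fixed model order. Data are hypothetical.
task_results = {
    "ATP regeneration": [1, 1, 1],
    "Urea synthesis": [1, 0, 0],
    "Bile acid synthesis": [1, 0, 1],
    "Unsupported task": [0, 0, 0],
}


def is_differential(outcomes):
    """True when a task is neither passed by all models nor failed by all."""
    return any(outcomes) and not all(outcomes)


differential_tasks = [task for task, outcomes in task_results.items()
                      if is_differential(outcomes)]
```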

Successful GEM research and analysis rely on a suite of software tools, databases, and computational resources. The table below details key components of the modern metabolic modeler's toolkit.

Table 2: Key Research Reagent Solutions for GEM Analysis

| Item Name | Type | Primary Function | Relevance to Protocol |

|---|---|---|---|

| RAVEN Toolbox | Software Toolbox | Genome-scale metabolic model reconstruction, simulation, and analysis in MATLAB. | Core engine for running compareMultipleModels and related functions [18]. |

| Human-GEM | Reference Model | A comprehensive, community-driven GEM of human metabolism. | Serves as the reference model from which tissue-specific models are extracted [18]. |

| tINIT Algorithm | Algorithm | Integrative Network Inference for Tissues; generates functional tissue-specific models. | Protocol for generating the tissue GEMs that are compared in the analysis [18]. |

| MATLAB | Software Environment | High-level technical computing language and interactive environment. | The computational platform required to run the RAVEN Toolbox and execute the protocol. |

| Metabolic Task List | Curation (Excel File) | A defined set of metabolic functions to test model capability (e.g., synthesis of essential metabolites). | Used as input for the functional comparison of GEMs to assess their physiological relevance [18]. |

| GTEx RNA-Seq Data | Biological Dataset | Publicly available transcriptomic data from a wide range of human tissues. | Typical input data used by the tINIT algorithm to generate the context-specific GEMs. |

The historical evolution of genome-scale metabolic models from simple network representations to sophisticated, context-aware reconstructions mirrors the broader advances in genomics and systems biology. This journey has transformed GEMs from academic curiosities into fundamental tools for drug development, enabling the in silico prediction of metabolic vulnerabilities in diseases and the generation of testable hypotheses for therapeutic intervention. The establishment of standardized protocols for model comparison and analysis, as detailed herein, ensures rigor and reproducibility in the field. As the integration of single-cell multi-omics and complex metabolic imaging data becomes more seamless, the next evolutionary chapter of GEMs will likely focus on capturing spatiotemporal metabolic dynamics at an unprecedented resolution, further solidifying their role in personalized medicine and rational drug design.

Genome-scale metabolic models (GEMs) are computational representations of the metabolic network of an organism, encapsulating all known biochemical transformations within a cell. These models quantitatively define the relationship between genotype and phenotype by contextualizing various types of Big Data, including genomics, metabolomics, and transcriptomics [13]. A GEM computationally describes a whole set of stoichiometry-based, mass-balanced metabolic reactions using gene-protein-reaction (GPR) associations formulated from genome annotation data and experimentally obtained information [14]. The primary simulation technique for GEMs is Flux Balance Analysis (FBA), a constraint-based optimization method that predicts metabolic flux distributions by assuming steady-state metabolite concentrations and optimizing for a biological objective such as biomass production [19] [14]. GEMs have evolved into powerful platforms for systems-level metabolic studies, enabling researchers to predict phenotypic behaviors, identify drug targets, and guide metabolic engineering strategies across all domains of life.

Current Status of Reconstructed GEMs

The field of metabolic modeling has expanded dramatically since the first GEM for Haemophilus influenzae was reconstructed in 1999 [14]. As of February 2019, GEMs have been reconstructed for a total of 6,239 organisms, encompassing a diverse range of life forms [14]. The table below summarizes the distribution of these models across the major domains of life.

Table 1: Total Number of Reconstructed GEMs by Domain of Life (as of February 2019)

| Domain | Number of Organisms with GEMs | Manually Curated GEMs |

|---|---|---|

| Bacteria | 5,897 | 113 |

| Archaea | 127 | 10 |

| Eukarya | 215 | 60 |

| Total | 6,239 | 183 |

Manual reconstruction, while time-consuming, produces higher-quality models that serve as excellent knowledge bases and reference models for related organisms [14]. The following sections provide a detailed, domain-by-domain breakdown of these reconstructions, highlighting key model organisms and their applications.

Bacterial GEMs

Bacteria represent the most extensively modeled domain of life, with thousands of GEMs reconstructed. High-quality, manually curated models for scientifically, industrially, and medically important bacteria have been updated multiple times as new biological information becomes available [14].

Table 2: High-Quality Genome-Scale Metabolic Models for Representative Bacteria

| Organism | Example Model(s) | Key Features and Applications |

|---|---|---|

| Escherichia coli | iML1515 [14] | Most recent version contains 1,515 open reading frames; 93.4% accuracy for gene essentiality simulation; used as a template for pathotype-specific models (e.g., APEC) [20]. |

| Bacillus subtilis | iBsu1144 [14] | Incorporates thermodynamic information to improve flux prediction accuracy; used for production of enzymes and recombinant proteins. |

| Mycobacterium tuberculosis | iEK1101 [14] | Used to understand metabolic states under in vivo hypoxic conditions and for systematic drug target identification. |

| Avian Pathogenic E. coli (APEC) | Multi-strain model [20] | Pan-genome-based model of 114 isolates; identified a unique 3-hydroxyphenylacetate metabolism pathway in phylogroup C. |

A significant advancement in bacterial modeling is the move from single-strain to multi-strain GEMs. For example, a pan-genome analysis of E. coli led to the creation of a "core" model (intersection of all genes/reactions) and a "pan" model (union of all genes/reactions) from 55 individual GEMs [13]. Similar approaches have been applied to Salmonella (410 GEMs) and Klebsiella pneumoniae (22 GEMs) to simulate growth in hundreds of different environments and understand strain-specific metabolic traits [13].
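
The core and pan definitions above map directly onto set intersection and union. A minimal sketch with hypothetical strain and reaction identifiers:

```python
# Reaction content of three hypothetical strain-specific GEMs.
strain_reactions = {
    "strain_1": {"R1", "R2", "R3"},
    "strain_2": {"R1", "R2", "R4"},
    "strain_3": {"R1", "R2"},
}

# Core model: reactions shared by every strain (intersection).
core_reactions = set.intersection(*strain_reactions.values())

# Pan model: reactions found in at least one strain (union).
pan_reactions = set.union(*strain_reactions.values())

# Accessory content: reactions that distinguish strains from one another.
accessory_reactions = pan_reactions - core_reactions
```

The accessory set is usually the starting point for explaining strain-specific growth capabilities, since reactions in the core cannot differentiate strains.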

Archaeal GEMs

Archaea, single-celled organisms with molecular characteristics distinct from those of both bacteria and eukaryotes, are less represented in the GEM repository due to the challenges associated with their isolation and study, particularly from extreme environments [13] [14]. Despite this, GEMs have been reconstructed for several key archaeal species, with a focus on methanogens.

Table 3: Representative Genome-Scale Metabolic Models for Archaea

| Organism | Example Model(s) | Key Features and Applications |

|---|---|---|

| Methanosarcina acetivorans | iMAC868, iST807 [14] | Model methanogenesis pathways; iST807 incorporates tRNA-charging to characterize effects of differential tRNA gene expression on metabolic fluxes. |

| Methanobacterium formicicum | MFI model [13] | Methanogen present in the digestive system of humans and ruminants; implicated in gastrointestinal and metabolic disorders. |

These archaeal GEMs serve as valuable resources for metabolic studies of unusual characteristics, such as survival in extreme environments and the unique ability to produce biological methane [13] [14].

Eukaryotic GEMs

Eukaryotic GEMs cover a wide spectrum of organisms, including fungi, plants, and mammals. The increased complexity of eukaryotic cells, with their compartmentalized organelles, presents additional challenges for model reconstruction [14].

Table 4: High-Quality Genome-Scale Metabolic Models for Representative Eukaryotes

| Organism | Example Model(s) | Key Features and Applications |

|---|---|---|

| Saccharomyces cerevisiae | Yeast7 [21] [14] | S. cerevisiae was the first eukaryote with a published GEM; Yeast7 is a consensus model from an international collaboration and the foundation for enzyme-constrained models (ecModels) built with the GECKO toolbox. |

| Homo sapiens | Recon series [21] [13] | Used to understand human diseases, study cancer metabolism, and model host-pathogen interactions. |

The GEM for the budding yeast S. cerevisiae exemplifies the evolution of eukaryotic models. The GECKO toolbox (enhancement of GEMs with Enzymatic Constraints using Kinetic and Omics data) extends the Yeast7 model with detailed enzyme constraints, enabling more accurate studies of protein allocation and metabolic robustness under stress [21]. This approach has since been expanded to other eukaryotes like Yarrowia lipolytica and Kluyveromyces marxianus, as well as to Homo sapiens [21].

Methodologies and Experimental Protocols in GEM Research

Workflow for GEM Reconstruction and Validation

The process of building and utilizing GEMs involves a multi-step workflow that integrates genomic data and experimental validation. The protocols below describe a generalized workflow for multi-strain GEM reconstruction and analysis, as exemplified by the APEC study [20].

Detailed Experimental Protocols

Protocol 1: Reconstruction of a Multi-Strain Pathotype Model

This protocol is adapted from the construction of a pan-genome-scale metabolic model for Avian Pathogenic E. coli (APEC) [20].

Strain Selection and Genomic DNA Extraction:

- Select a comprehensive panel of isolates representing the genetic diversity of the pathotype (e.g., 114 APEC isolates from different clinical presentations).

- Culture isolates on standard agar plates (e.g., MacConkey, Nutrient, or LB agar) aerobically at 37°C.

- Extract high-molecular-weight genomic DNA using a commercial purification kit.

Genome Sequencing and Assembly:

- Sequence the genomic DNA on a next-generation sequencing platform (e.g., Illumina MiSeq, 150 nt paired-end reads).

- Perform de novo assembly of contigs using software like Shovill (which utilizes the SPAdes assembler) with minimum coverage and length thresholds.

Pan-Genome Analysis and Draft Reconstruction:

- Annotate all genomes and define the core (genes present in all isolates) and accessory (genes present in a subset) genomes.

- Use the pan-genome to predict a comprehensive set of metabolic reactions for the pathotype.

- Perform gap-filling and manual correction of the draft network to ensure metabolic functionality and connectivity.
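The core/accessory split and the pan-genome reaction set described above can be sketched with plain set operations. This is a minimal illustration that assumes genes have already been clustered into gene families and mapped to reactions; the isolate names, gene-family IDs, and reaction IDs are hypothetical, and gap-filling and manual curation are not represented.

```python
def split_pan_genome(gene_presence):
    """Split gene families into pan, core, and accessory sets.

    gene_presence maps isolate ID -> set of gene-family IDs found in it.
    """
    genomes = list(gene_presence.values())
    pan = set().union(*genomes)          # present in at least one isolate
    core = set.intersection(*genomes)    # present in every isolate
    accessory = pan - core               # present in only a subset
    return pan, core, accessory


def predict_pan_reactions(pan_genes, gene_to_reactions):
    """Collect every reaction supported by at least one pan-genome gene."""
    reactions = set()
    for gene in pan_genes:
        reactions.update(gene_to_reactions.get(gene, ()))
    return reactions


# Hypothetical presence/absence data for three isolates.
gene_presence = {
    "isolate_1": {"gA", "gB", "gC"},
    "isolate_2": {"gA", "gB", "gD"},
    "isolate_3": {"gA", "gB"},
}
# Hypothetical gene-to-reaction annotation; gD has no metabolic function.
gene_to_reactions = {"gA": {"R1"}, "gB": {"R2", "R3"}, "gC": {"R4"}}

pan, core, accessory = split_pan_genome(gene_presence)
pan_reactions = predict_pan_reactions(pan, gene_to_reactions)
print(sorted(core), sorted(accessory), sorted(pan_reactions))
```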

Generation of Phylogroup-Specific Sub-Models:

- From the generalized model, extract sub-models for major phylogenetic lineages (e.g., Phylogroups B2, C, and G for APEC) based on the presence of lineage-specific accessory reactions.
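One way to picture the sub-model extraction step is as a filter over the generalized model's reactions. The sketch below keeps a reaction for a lineage when at least one of its associated genes is found in a chosen fraction of that lineage's isolates. That retention rule, the `min_fraction` parameter, and all identifiers are assumptions made for illustration; the criterion used in the APEC study may differ.

```python
def lineage_submodel(reaction_genes, gene_presence, lineage, min_fraction=1.0):
    """Return the reactions of the generalized model supported in one lineage.

    reaction_genes: reaction ID -> set of associated genes (empty = none)
    gene_presence:  isolate ID -> set of genes present in that isolate
    lineage:        list of isolate IDs belonging to the lineage
    """
    if not lineage:
        raise ValueError("lineage must contain at least one isolate")
    kept = set()
    for rxn, genes in reaction_genes.items():
        if not genes:
            kept.add(rxn)  # reactions without gene association are retained
            continue
        # Count isolates carrying at least one gene for this reaction.
        carriers = sum(1 for iso in lineage if genes & gene_presence[iso])
        if carriers / len(lineage) >= min_fraction:
            kept.add(rxn)
    return kept


# Hypothetical data: two phylogroups sharing one core reaction.
gene_presence = {
    "iso1": {"gA", "gC"},
    "iso2": {"gA", "gC"},
    "iso3": {"gA", "gD"},
}
phylogroups = {"B2": ["iso1", "iso2"], "C": ["iso3"]}
reaction_genes = {
    "R_core": {"gA"},
    "R_b2": {"gC"},
    "R_c": {"gD"},
    "R_spont": set(),
}

submodels = {
    name: lineage_submodel(reaction_genes, gene_presence, isolates)
    for name, isolates in phylogroups.items()
}
print({name: sorted(rxns) for name, rxns in submodels.items()})
```

Treating any single associated gene as sufficient corresponds to an OR relationship; reactions that require a full enzyme complex would need a stricter rule.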

Protocol 2: Experimental Validation of Model Predictions

In silico predictions must be validated with experimental data. The following methods were used to validate the APEC GEM [20].

Phenotypic Microarray Assays (Biolog):

- Purpose: To test the metabolic capability of strains on hundreds of carbon, nitrogen, phosphorus, and sulfur sources.

- Procedure: a. Pre-culture selected isolates on R2A agar. b. Prepare cell suspensions as per the manufacturer's instructions. c. Inoculate the suspensions into Biolog PM1, PM2, and PM4A microplates. d. Incubate plates in an OmniLog instrument at 37°C for 24 hours, taking kinetic readings every 15 minutes.

- Outcome: The quantitative growth data is compared against the in silico growth predictions to assess model accuracy.
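Comparing measured growth against in silico predictions usually comes down to a confusion matrix. The sketch below binarizes a per-substrate growth signal with a cutoff and then computes accuracy, sensitivity, and specificity. The substrate names, signal values, and threshold are hypothetical, and the appropriate cutoff depends on the instrument and the negative-control wells.

```python
def binarize_growth(signal, threshold):
    """Call growth (True) when the measured signal reaches the threshold."""
    return {substrate: value >= threshold for substrate, value in signal.items()}


def confusion_counts(predicted, observed):
    """Count agreements and disagreements over substrates present in both."""
    counts = {"TP": 0, "FP": 0, "TN": 0, "FN": 0}
    for substrate in predicted.keys() & observed.keys():
        pred, obs = predicted[substrate], observed[substrate]
        if pred and obs:
            counts["TP"] += 1
        elif pred and not obs:
            counts["FP"] += 1
        elif not pred and not obs:
            counts["TN"] += 1
        else:
            counts["FN"] += 1
    return counts


def summarize(counts):
    """Derive accuracy, sensitivity, and specificity (None if undefined)."""
    total = sum(counts.values())
    positives = counts["TP"] + counts["FN"]
    negatives = counts["TN"] + counts["FP"]
    return {
        "accuracy": (counts["TP"] + counts["TN"]) / total if total else None,
        "sensitivity": counts["TP"] / positives if positives else None,
        "specificity": counts["TN"] / negatives if negatives else None,
    }


# Hypothetical model predictions and plate-reader signals.
predicted = {"glucose": True, "lactose": True, "citrate": False, "xylose": True}
signal = {"glucose": 250, "lactose": 30, "citrate": 20, "xylose": 180}

observed = binarize_growth(signal, threshold=100)
counts = confusion_counts(predicted, observed)
metrics = summarize(counts)
print(counts, metrics)
```

False positives (predicted growth, none observed) and false negatives point to different curation problems, so reporting them separately is more informative than accuracy alone.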

Defined Minimal Media Growth Assays:

- Purpose: To confirm specific metabolic capabilities predicted by the model, such as the utilization of a unique metabolite.

- Procedure: a. Use a defined minimal medium (e.g., M9 salts, supplemented with MgSO₄, CaCl₂, and a base carbon source like glucose if required). b. Supplement the medium with the target compound at various concentrations (e.g., 3-hydroxyphenylacetate at 0.5, 0.75, and 1.0 mM). c. Inoculate isolates in a 96-well plate and measure growth kinetics in a plate reader (e.g., Spark microplate reader) at 37°C for 24 hours, reading every 15 minutes.
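Growth kinetics from a plate reader are often summarized as a maximum specific growth rate. As one possible approach, the sketch below fits a straight line to ln(OD) over a sliding window and reports the steepest slope. The window size is an arbitrary choice, the data are synthetic, and the method assumes blank-corrected, strictly positive OD values; none of this is taken from the APEC study.

```python
import math


def linear_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx


def max_specific_growth_rate(times_h, od, window=5):
    """Largest slope of ln(OD) versus time over any window of readings (1/h)."""
    if len(times_h) != len(od) or len(od) < window or window < 2:
        raise ValueError("need matching series and a window of 2 or more points")
    log_od = [math.log(value) for value in od]  # requires OD > 0
    return max(
        linear_slope(times_h[i:i + window], log_od[i:i + window])
        for i in range(len(od) - window + 1)
    )


# Synthetic example: readings every 15 minutes for 24 hours,
# purely exponential growth at 0.2 per hour.
times_h = [0.25 * i for i in range(97)]
od = [0.01 * math.exp(0.2 * t) for t in times_h]

mu_max = max_specific_growth_rate(times_h, od)
print(round(mu_max, 3))
```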

Gene Essentiality Validation:

- Purpose: To test model predictions of gene essentiality.

- Procedure: a. Select genes predicted to be conditionally essential (e.g., lysA for lysine biosynthesis) and non-essential (e.g., potE). b. Generate knockout mutants for the target genes. c. Cultivate the mutants in minimal media with and without the essential metabolite (e.g., L-lysine or putrescine) to confirm the predicted auxotrophy.

Table 5: Essential Materials and Tools for GEM Reconstruction and Analysis

| Item Name | Function/Application | Specific Example/Description |
|---|---|---|
| GECKO Toolbox | Enhances GEMs with enzymatic constraints using kinetic and proteomics data. | An open-source MATLAB toolbox; enables creation of enzyme-constrained models (ecModels) for better prediction of protein allocation [21]. |
| COBRA Toolbox | Provides a suite of functions for constraint-based reconstruction and analysis of GEMs. | A fundamental software suite for simulating GEMs using FBA and related techniques [21]. |
| BRENDA Database | Primary source for enzyme kinetic parameters (e.g., kcat values). | Used by tools like GECKO to parameterize enzyme constraints; contains over 38,000 entries for unique E.C. numbers [21]. |
| Biolog Phenotype MicroArrays | High-throughput experimental validation of model-predicted growth capabilities. | Microplates (e.g., PM1, PM2, PM4A) pre-filled with different carbon, nitrogen, phosphorus, and sulfur sources to test metabolic capacity [20]. |
| Defined Minimal Media (M9) | Validates specific metabolic functions and gene essentiality predictions in vitro. | A chemically defined medium allowing precise control over nutrient availability to test auxotrophies and substrate utilization [20]. |

The scope and coverage of reconstructed GEMs now span all three domains of life, providing an unprecedented resource for understanding cellular physiology. The reconstruction of over 6,000 models marks a transition from building models for individual organisms to sophisticated multi-strain and pan-genome analyses that capture species-level metabolic diversity [13] [20]. Future directions in the field include the deeper integration of enzymatic constraints [21], the application of machine learning to improve predictions [19], and the continued expansion of high-quality models for non-model organisms, particularly in the underrepresented archaeal domain. These advances will further solidify the role of GEMs as indispensable tools in systems biology, metabolic engineering, and drug development.

Building and Applying GEMs: Reconstruction Tools and Biomedical Implementations

Genome-scale metabolic models (GEMs) are computational representations of the metabolic network of an organism, systematically encoding the relationship between genes, proteins, and reactions [14]. These models contain all known metabolic reactions and their associated genes, enabling mathematical simulation of metabolic capabilities through gene-protein-reaction (GPR) associations [13] [14]. The primary simulation technique for GEMs is Flux Balance Analysis (FBA), which uses linear programming to predict metabolic flux distributions under steady-state assumptions [22] [14]. GEMs have evolved from small-scale pathway representations to comprehensive networks that contextualize various types of 'Big Data' including genomics, transcriptomics, proteomics, and metabolomics data [13].
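For reference, the linear program solved in FBA can be written compactly as follows. The notation is chosen here for illustration: S is the stoichiometric matrix, v the vector of reaction fluxes, c a vector of objective weights (commonly selecting the biomass reaction), and v^lb and v^ub the lower and upper flux bounds.

```latex
\begin{aligned}
\max_{v} \quad & c^{\top} v \\
\text{subject to} \quad & S\,v = 0, \\
& v^{\mathrm{lb}} \le v \le v^{\mathrm{ub}}
\end{aligned}
```

The equality constraint encodes the steady-state assumption (no net accumulation of any internal metabolite), while the bounds encode reaction reversibility and nutrient availability.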

The reconstruction of high-quality GEMs enables quantitative exploration of genotype-phenotype relationships, supporting applications ranging from strain development for industrial biotechnology to drug target identification and understanding human diseases [13] [14]. As of 2019, GEMs had been reconstructed for over 6,000 organisms across bacteria, archaea, and eukarya, of which 183 had manually curated models [14]. The continuous refinement of these models, such as the iterative updates from Recon 1 to Recon3D for human metabolism, demonstrates how GEMs serve as dynamic knowledgebases that incorporate expanding biological information [22].

Foundational Concepts in GEM Reconstruction

Core Components and Structure

All genome-scale metabolic models share fundamental components that form their mathematical and biological foundation. The stoichiometric matrix (S-matrix) represents the core mathematical structure, where rows correspond to metabolites and columns represent reactions [22]. This matrix defines the stoichiometric coefficients of metabolites involved in each reaction, enforcing mass conservation constraints. GEMs also incorporate Gene-Protein-Reaction (GPR) rules, which are Boolean associations that link genes to the reactions they enable through encoded enzymes [22] [14]. These rules account for different types of enzymatic relationships, including isozymes (OR relationships) and enzyme complexes (AND relationships) [22].
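A toy example may help make the S-matrix concrete. The sketch below assembles a stoichiometric matrix (metabolites as rows, reactions as columns) for a hypothetical three-reaction network and checks whether a flux vector satisfies the mass-balance condition S·v = 0. The reaction IDs are invented, and for simplicity the exchange reactions are written in the direction of uptake or secretion, which is not the sign convention of every toolbox.

```python
# Hypothetical network: uptake of A, conversion A -> B, secretion of B.
# Negative coefficients are consumed, positive coefficients are produced.
reactions = {
    "EX_A": {"A": 1},
    "R1": {"A": -1, "B": 1},
    "EX_B": {"B": -1},
}


def build_stoichiometric_matrix(reactions):
    """Return (metabolite IDs, reaction IDs, S) with S[i][j] = coefficient."""
    metabolites = sorted({met for stoich in reactions.values() for met in stoich})
    reaction_ids = list(reactions)
    matrix = [
        [reactions[rxn].get(met, 0) for rxn in reaction_ids]
        for met in metabolites
    ]
    return metabolites, reaction_ids, matrix


def mass_balance(matrix, fluxes):
    """Compute S * v: net production rate of each metabolite."""
    return [sum(s * v for s, v in zip(row, fluxes)) for row in matrix]


metabolites, reaction_ids, S = build_stoichiometric_matrix(reactions)

balanced = mass_balance(S, [2, 2, 2])     # every metabolite nets to zero
unbalanced = mass_balance(S, [2, 1, 1])   # A accumulates
print(metabolites, reaction_ids, S)
print(balanced, unbalanced)
```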

The reconstruction process aims to create a network that is both genomically accurate and biochemically consistent. Additional essential components include exchange reactions that model metabolite uptake and secretion, a biomass reaction that represents biomass composition and the requirements for growth, and objective functions that define cellular goals for flux optimization [23]. The quality of a GEM is often evaluated through gene essentiality analysis, in which in silico knockout simulations are compared with experimental data to validate prediction accuracy [24].
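The first step of an in silico knockout is deciding which reactions a gene deletion disables, which follows directly from the Boolean GPR rules. The sketch below represents each rule as a nested tuple and evaluates it for a set of deleted genes. The tuple encoding, gene names, and reaction IDs are illustrative assumptions, and the subsequent step (re-running FBA with the disabled reactions blocked to test whether growth is still possible) is not shown.

```python
def gpr_active(rule, deleted_genes):
    """Evaluate a GPR rule given a set of deleted genes.

    A rule is either a gene ID (str) or a tuple ("and" | "or", operand, ...).
    """
    if isinstance(rule, str):
        return rule not in deleted_genes
    operator, *operands = rule
    results = [gpr_active(operand, deleted_genes) for operand in operands]
    if operator == "and":
        return all(results)   # enzyme complex: every subunit is required
    if operator == "or":
        return any(results)   # isozymes: one functional copy is enough
    raise ValueError(f"unknown operator: {operator}")


def disabled_reactions(gprs, deleted_genes):
    """List reactions whose GPR evaluates to False after the deletion."""
    return sorted(rxn for rxn, rule in gprs.items()
                  if not gpr_active(rule, deleted_genes))


# Hypothetical rules covering isozymes, a complex, and a single gene.
gprs = {
    "R_isozymes": ("or", "g1", "g2"),
    "R_complex": ("and", "g3", "g4"),
    "R_single": "g5",
}

ko_g1 = disabled_reactions(gprs, {"g1"})
ko_g3 = disabled_reactions(gprs, {"g3"})
ko_g1_g2 = disabled_reactions(gprs, {"g1", "g2"})
print(ko_g1, ko_g3, ko_g1_g2)
```

The isozyme case shows why single-gene deletions can be silent in both the model and the laboratory: the reaction is lost only when every alternative gene is removed.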

Reconstruction Approaches: Top-Down vs. Bottom-Up

Automated reconstruction tools generally follow one of two fundamental approaches: top-down or bottom-up strategies [25]. The top-down approach begins with a well-curated, universal template model and removes reactions without genomic evidence in the target organism. In contrast, the bottom-up approach constructs draft models by mapping annotated genomic sequences to biochemical reactions from reference databases [25]. This fundamental distinction influences the characteristics of the resulting models, with top-down methods typically generating more compact networks and bottom-up approaches potentially capturing more organism-specific metabolic capabilities.

Table 1: Comparison of Top-Down vs. Bottom-Up Reconstruction Approaches

| Feature | Top-Down Approach | Bottom-Up Approach |
|---|---|---|
| Foundation | Universal template model | Genomic sequence annotation |
| Process | Carve out non-supported reactions from template | Build network from mapped reactions |
| Speed | Typically faster | Can be more computationally intensive |
| Coverage | May miss species-specific pathways | Potentially broader reaction inclusion |
| Representative Tools | CarveMe | gapseq, KBase, RAVEN |
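The contrast between the two strategies can be reduced to a few lines of set logic. In the sketch below, the top-down function starts from a template and discards reactions lacking genomic evidence, while the bottom-up function starts from annotated genes and collects the reactions they map to. This is a deliberately simplified picture: real tools add gap-filling and optimization steps, and the template, reference mapping, and identifiers here are hypothetical.

```python
def carve_top_down(template, genome_genes):
    """Keep template reactions that have at least one supporting gene.

    template: reaction ID -> set of gene families that provide evidence
    """
    return {rxn for rxn, genes in template.items() if genes & genome_genes}


def build_bottom_up(genome_genes, gene_to_reactions):
    """Collect all reactions that annotated genes map to in a reference."""
    model = set()
    for gene in genome_genes:
        model |= gene_to_reactions.get(gene, set())
    return model


# Hypothetical curated template (does not contain reaction R9).
template = {"R1": {"k1"}, "R2": {"k2"}, "R3": {"k3"}}
# Hypothetical reference database mapping, which does include R9.
gene_to_reactions = {"k1": {"R1"}, "k2": {"R2"}, "k9": {"R9"}}
# Annotated genes of the target organism.
genome_genes = {"k1", "k2", "k9"}

top_down_model = carve_top_down(template, genome_genes)
bottom_up_model = build_bottom_up(genome_genes, gene_to_reactions)
print(sorted(top_down_model), sorted(bottom_up_model))
```

In this toy case the top-down result omits R9 because the template never contained it, mirroring the coverage row of Table 1, while the bottom-up result includes it but carries no guarantee of a connected, functional network.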

Comparative Analysis of Major Reconstruction Tools

The landscape of automated GEM reconstruction includes several established tools that employ distinct methodologies and database resources. CarveMe utilizes a top-down approach, starting with a curated universe model and removing reactions without genomic support [25]. It emphasizes speed and generates immediately functional models ready for constraint-based analysis [25]. gapseq implements a bottom-up strategy that leverages comprehensive biochemical information from multiple data sources during reconstruction [25]. It employs a novel pathway-based gap-filling algorithm and can predict metabolic pathways from genome annotations [25].

KBase (Systems Biology Knowledgebase) offers an integrated platform for GEM reconstruction through its narrative interface, combining multiple bioinformatics tools in a reproducible workflow environment [25]. It employs a bottom-up approach and shares the ModelSEED database with gapseq, resulting in some similarity in reaction sets [25]. The RAVEN (Reconstruction, Analysis, and Visualization of Metabolic Networks) toolbox is a MATLAB-based software suite that supports both draft reconstruction from KEGG and MetaCyc databases and manual curation efforts [24]. It is particularly valuable for creating models for less-studied organisms using homology-based approaches [24].

Structural and Functional Comparison

A comparative analysis of community models reconstructed from the same metagenomics data revealed significant differences in GEMs generated by different tools, despite using identical genomic inputs [25]. The research demonstrated that gapseq models generally encompassed more reactions and metabolites compared to CarveMe and KBase models, though they also exhibited a larger number of dead-end metabolites [25]. In contrast, CarveMe models consistently contained the highest number of genes associated with metabolic functions [25].
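Dead-end metabolites can be flagged with a simple pass over the network. The sketch below uses a simplified definition, assumed here for illustration: a metabolite is a dead end if no reaction can produce it or no reaction can consume it, with reversible reactions counted as able to do both. Stricter definitions exist, and the toy network is hypothetical.

```python
def dead_end_metabolites(reactions):
    """Return metabolites that cannot be both produced and consumed.

    reactions: reaction ID -> (stoichiometry dict, reversible flag)
    Negative coefficients are consumed, positive coefficients are produced.
    """
    produced, consumed = set(), set()
    for stoichiometry, reversible in reactions.values():
        for metabolite, coefficient in stoichiometry.items():
            if reversible or coefficient > 0:
                produced.add(metabolite)
            if reversible or coefficient < 0:
                consumed.add(metabolite)
    all_metabolites = produced | consumed
    return sorted(m for m in all_metabolites
                  if m not in produced or m not in consumed)


# Hypothetical network: C is made but never used, D is used but never made.
reactions = {
    "EX_A": ({"A": 1}, False),
    "R1": ({"A": -1, "B": 1}, False),
    "R2": ({"B": -1, "C": 1}, False),
    "R3": ({"D": -1, "B": 1}, False),
}

dead_ends = dead_end_metabolites(reactions)
print(dead_ends)
```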

The similarity between models varies substantially depending on the reconstruction approach. Analysis using Jaccard similarity coefficients showed that gapseq and KBase models exhibited higher similarity in reaction and metabolite sets (average Jaccard similarity of 0.23-0.24 for reactions, 0.37 for metabolites), likely attributable to their shared use of the ModelSEED database [25]. For gene composition, CarveMe and KBase models showed greater similarity (Jaccard similarity 0.42-0.45) than either did with gapseq models [25].
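The Jaccard similarity used in such comparisons is the size of the intersection of two sets divided by the size of their union. The sketch below computes it for every pair of tools on hypothetical reaction sets; it assumes all identifiers have already been translated into a common namespace, since otherwise identical reactions would be counted as different.

```python
from itertools import combinations


def jaccard(a, b):
    """Jaccard similarity |A ∩ B| / |A ∪ B| (defined as 1.0 for two empty sets)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


# Hypothetical reaction sets produced by three tools for the same genome.
reaction_sets = {
    "CarveMe": {"R1", "R2", "R3"},
    "gapseq": {"R2", "R3", "R4", "R5"},
    "KBase": {"R2", "R3", "R4"},
}

pairwise = {
    (tool_a, tool_b): jaccard(reaction_sets[tool_a], reaction_sets[tool_b])
    for tool_a, tool_b in combinations(reaction_sets, 2)
}
for pair, score in pairwise.items():
    print(pair, round(score, 2))
```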

Table 2: Relative Comparison of GEMs Reconstructed from Marine Bacterial Communities

| Reconstruction Tool | Number of Genes | Number of Reactions | Number of Metabolites | Dead-End Metabolites |
|---|---|---|---|---|
| CarveMe | Highest | Intermediate | Intermediate | Lower |
| gapseq | Lowest | Highest | Highest | Higher |
| KBase | Intermediate | Intermediate | Intermediate | Intermediate |
| Consensus | High (similar to CarveMe) | Highest | Highest | Lowest |

Database Dependencies and Namespace Challenges

A critical factor influencing reconstruction outcomes is the underlying biochemical database employed by each tool. Different tools rely on different reference databases (such as ModelSEED, KEGG, BiGG, and MetaCyc), each with its own curation standards, reaction coverage, and metabolite identifiers [25]. This database dependency introduces a fundamental source of variation, as the same genomic evidence may be mapped to different biochemical reactions depending on the reference data used [25].
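In practice, comparing or merging models built on different databases starts with translating identifiers into one namespace and recording what could not be mapped. The sketch below shows that step with a small hand-written cross-reference table. The table is illustrative only and should be checked against the databases themselves, the last input identifier is made up to demonstrate an unmapped entry, and the function name is hypothetical.

```python
# Illustrative cross-references (ModelSEED compound ID -> BiGG metabolite ID).
MODELSEED_TO_BIGG = {
    "cpd00027": "glc__D",  # D-glucose
    "cpd00020": "pyr",     # pyruvate
    "cpd00002": "atp",     # ATP
}


def translate_ids(identifiers, cross_references):
    """Translate identifiers and report those without a known mapping."""
    mapped = {}
    unmapped = []
    for identifier in identifiers:
        if identifier in cross_references:
            mapped[identifier] = cross_references[identifier]
        else:
            unmapped.append(identifier)
    return mapped, unmapped


# The last identifier is invented to show how unmapped entries surface.
modelseed_metabolites = ["cpd00027", "cpd00020", "cpd99999"]

mapped, unmapped = translate_ids(modelseed_metabolites, MODELSEED_TO_BIGG)
print(mapped)
print(unmapped)
```

Keeping the unmapped list explicit matters because silently dropped identifiers lower apparent similarity between models and can hide genuinely shared metabolism.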