High-Throughput Screening for Metabolic Network Optimization: Strategies and Breakthroughs for Accelerated Strain Engineering

Abstract

This article explores the integration of high-throughput screening (HTS) technologies with metabolic network optimization to overcome the formidable challenge of identifying high-performing microbial strains from vast genetic libraries. We examine foundational principles, including the critical bottlenecks in conventional metabolic engineering and the economic drivers propelling the HTS market. The discussion delves into cutting-edge methodological advances, from ultra-sensitive molecular sensors and intelligent biosensors to automated biofoundries. A practical troubleshooting framework addresses universal challenges in screening campaigns, such as cytotoxicity and assay robustness. Finally, we present rigorous validation through case studies and comparative technology analysis, providing researchers and drug development professionals with a comprehensive guide to leveraging HTS for efficient bioproduction and therapeutic discovery.

The Why and What: Foundational Principles and Market Drivers of HTS in Metabolic Engineering

The central challenge in modern metabolic engineering lies in navigating the vast combinatorial space of potential genetic modifications to construct efficient microbial cell factories. The field operates on a design–build–test–learn (DBTL) paradigm, where each cycle aims to incrementally improve production metrics such as yield, titer, and productivity [1]. However, a significant capability gap has emerged: while tools for designing pathways and building genetic constructs have advanced rapidly, the capacity to test the resulting strains has not kept pace. This disconnect creates a combinatorial bottleneck, where the number of potential strain variants exponentially outstrips our ability to characterize them [1]. Consequently, metabolic engineers often face the impractical task of identifying optimal producers from thousands of potential variants without adequate screening methods.

This bottleneck is particularly pronounced when engineering complex metabolic traits that require balanced expression of multiple pathway enzymes. For instance, in a pathway with just 10 genes, each with 5 potential expression levels, the number of possible combinations is 5^10, or nearly 10 million (9,765,625). Classical analytical techniques, while highly informative, are too low-throughput to navigate this complexity effectively. High-Throughput Screening (HTS) technologies therefore become not merely beneficial but essential for generating the actionable data required to inform subsequent engineering cycles and advance toward economically viable bioprocesses [1].
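The size of such a design space is the number of expression levels raised to the number of genes. The short sketch below checks that arithmetic and estimates how long an exhaustive screen would take. The two throughput figures are illustrative assumptions chosen only to contrast a low-throughput and a high-throughput assay; they are not taken from the cited work.

```python
def library_size(n_genes: int, n_levels: int) -> int:
    """Number of distinct designs when each gene takes one of n_levels expression levels."""
    return n_levels ** n_genes


def screening_days(n_variants: int, variants_per_day: int) -> float:
    """Days needed to test every variant once at a fixed daily throughput."""
    return n_variants / variants_per_day


size = library_size(n_genes=10, n_levels=5)
print(f"design space: {size:,} variants")

# Hypothetical throughputs, for illustration only.
for label, per_day in [("low-throughput assay", 1_000), ("high-throughput assay", 1_000_000)]:
    print(f"{label}: {screening_days(size, per_day):,.1f} days for full coverage")
```

Because the exponent is the number of genes, adding a single gene to the pathway multiplies the design space by the number of expression levels.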

The High-Throughput Screening Arsenal for Metabolic Engineering

High-Throughput Screening in metabolic engineering encompasses a suite of technologies designed to rapidly evaluate strain libraries. These methods balance throughput, flexibility, and informational depth, and can be broadly categorized as follows.

Table 1: Categories of High-Throughput Screening Assays

| Assay Category | Throughput | Key Feature | Primary Application | Example Technology |
|---|---|---|---|---|
| Biosensor-Based | Very High | Links metabolite concentration to measurable signal | Dynamic regulation; enrichment of high-producers | Transcription Factor-based, FRET-based [2] |
| Growth Selection | Highest | Directly couples production to survival | Optimization of essential metabolites or cofactors | Auxotrophies, antibiotic resistance [2] |
| Spectroscopic | High | Detects intrinsic chromophores/fluorophores | Screening for colored or fluorescent compounds | FACS, microplate readers [1] |
| Analytical Chemistry | Low | High-confidence identification & quantification | Validation of top hits; detailed pathway analysis | GC-MS, LC-MS [1] |

Genetically Encoded Biosensors: The Core of Modern HTS

Genetically encoded biosensors are sensory proteins or RNA elements that have been engineered to couple the concentration of a target metabolite to a measurable output, such as fluorescence or cell survival. They are among the most powerful tools for HTS because they operate at the single-cell level and are inherently compatible with ultra-high-throughput techniques like Fluorescence-Activated Cell Sorting (FACS) [2] [1].

1. Transcription Factor (TF)-Based Biosensors: These are the most widely applied class of biosensors. They utilize natural sensory proteins that, upon binding a specific effector molecule (e.g., a metabolic intermediate), undergo a conformational change that modulates transcription of a reporter gene [2].

- Mechanism: In their typical configuration, the TF binds to its operator sequence and represses transcription in the absence of the ligand. When the ligand is present, the TF dissociates, allowing expression of a reporter protein like GFP [2].

- Applications: TFs have been engineered to detect a wide range of molecules, including antibiotics, amino acids, vitamins, organic acids (e.g., succinate), and alcohols (e.g., butanol) [2].

2. FRET-Based Biosensors: Förster Resonance Energy Transfer (FRET) biosensors rely on a pair of fluorophores and a ligand-binding domain. Binding of the target metabolite induces a conformational change that alters the distance between the fluorophores, leading to a measurable change in the FRET signal [2].

- Advantages: They offer high temporal resolution and orthogonality.

- Disadvantages: They typically have a lower dynamic range and can only report on metabolite levels, not directly drive downstream regulatory responses for strain enrichment. They are often used for monitoring intracellular metabolic dynamics [2].

3. Riboswitches: These are structured RNA elements that sense metabolites and regulate gene expression at the transcriptional or translational level. Although they are not covered in detail here, they represent a third major category of genetically encoded biosensor [2].
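The input-output behaviour of a TF-based biosensor such as the one described in item 1 is commonly summarised with a Hill-type dose-response curve. The sketch below illustrates that assumption and is not a model taken from the cited work. The parameter names (`basal`, `maximal`, `k_half`, `hill_n`) and all numeric values are hypothetical.

```python
def reporter_output(ligand: float, basal: float, maximal: float,
                    k_half: float, hill_n: float) -> float:
    """Hill-type reporter signal for a ligand-inducible biosensor (assumed model)."""
    if ligand < 0:
        raise ValueError("ligand concentration must be non-negative")
    occupancy = ligand ** hill_n / (k_half ** hill_n + ligand ** hill_n)
    return basal + (maximal - basal) * occupancy


def dynamic_range(basal: float, maximal: float) -> float:
    """Fold change between fully induced and uninduced signal."""
    return maximal / basal


# Hypothetical sensor: 100 a.u. leak, 5000 a.u. saturated, half-maximal at 1 mM.
for conc_mm in (0.0, 0.5, 1.0, 5.0):
    signal = reporter_output(conc_mm, basal=100.0, maximal=5000.0, k_half=1.0, hill_n=2.0)
    print(f"{conc_mm:4.1f} mM -> {signal:7.1f} a.u.")
print(f"dynamic range: {dynamic_range(100.0, 5000.0):.0f}-fold")
```

In a model of this kind, the sensor separates producers most clearly when their intracellular metabolite levels fall near `k_half`; strains far above that level all saturate the reporter and become indistinguishable.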

Growth Selection and Spectroscopic Methods

Growth Selection represents the ultimate in screening throughput. By designing a system where production of the target compound is essential for survival under selective conditions (e.g., by complementing an auxotrophy or conferring antibiotic resistance), millions of clones can be evaluated simultaneously without specialized equipment [2]. This method is powerful but is generally only applicable to compounds that can be directly linked to growth.

Spectroscopic Methods, such as colorimetric assays or the detection of native fluorescence, provide a versatile platform for HTS in microtiter plates. Their applicability, however, is limited to target molecules that possess or can be derivatized to possess a suitable chromophore or fluorophore [1].

Case Study: Alleviating the Astaxanthin Biosynthesis Bottleneck

A prime example of successfully addressing a metabolic bottleneck through combinatorial engineering and HTS is the enhanced production of astaxanthin in Saccharomyces cerevisiae. Astaxanthin is a high-value carotenoid pigment, and its biosynthesis in yeast involves a lengthy pathway with multiple potential rate-limiting steps [3].

The Engineering Strategy

The research strategy involved a multi-pronged approach to optimize both precursor supply and downstream conversion efficiency [3]:

- Enhancing Precursor Supply: To increase the flux toward the key precursor, β-carotene, the team overexpressed a mutant GGPP synthase (CrtE03M) with improved activity, alongside other rate-limiting enzymes (tHMG1, CrtI, and CrtYB).

- Improving Catalytic Activity: A color-based screening system was developed for the directed evolution of β-carotene ketolase (BKT). As β-carotene (yellow) is converted to astaxanthin (red) via ketolation, colonies with higher BKT activity turn redder. This visual HTS allowed the identification of a triple mutant (OBKTM) with 2.4-fold improved activity [3].

- Balancing Gene Expression: The copy numbers of the pathway genes were carefully adjusted to balance metabolic flux and avoid the accumulation of cytotoxic intermediates or excessive cellular burden.

Quantitative Outcomes of Combinatorial Engineering

The impact of each successive engineering step was quantified, demonstrating the power of this iterative approach.

Table 2: Metabolic Engineering Outcomes for Astaxanthin Production in S. cerevisiae

| Engineering Intervention | Key Achievement | Resulting Astaxanthin Yield | Fold Improvement |
|---|---|---|---|
| Baseline Strain | Initial pathway introduction | Not explicitly stated | - |
| Precursor Enhancement | Overexpression of CrtE03M, tHMG1, CrtI, CrtYB | Increased β-carotene supply | Foundational |
| Enzyme Evolution | Directed evolution of OBKT | OBKTM mutant with 2.4x activity | Foundational |
| Combinatorial Optimization | Balancing expression levels & generating diploid strain | 8.10 mg/g DCW (47.18 mg/L) | Highest reported yield at the time [3] |

This case study underscores a critical principle: overcoming complex metabolic bottlenecks often requires a combinatorial strategy that integrates multiple engineering approaches, with HTS (in this case, a color-based screen) serving as the essential engine for discovering improved enzymatic components [3].

Experimental Protocols for Key HTS Methodologies

Protocol: Color-Based High-Throughput Screening for Directed Enzyme Evolution

This protocol is adapted from the astaxanthin case study for the discovery of improved β-carotene ketolase mutants [3].

- Library Construction: Generate a diverse mutant library of the target enzyme gene (e.g., β-carotene ketolase) via error-prone PCR or other gene mutagenesis techniques. Clone the library into an appropriate expression vector.

- Strain Transformation: Transform the mutant library into a microbial host (e.g., S. cerevisiae) that is engineered to produce the enzyme's substrate (e.g., β-carotene). The parent strain should ideally produce a visible background color.

- Plating and Cultivation: Plate the transformed cells on solid medium at a density that allows for the visual distinction of individual colonies. Incubate until colonies are fully formed.

- Visual Screening: Manually screen the plates for colonies exhibiting a color change indicative of higher product conversion (e.g., from yellow β-carotene to red astaxanthin/canthaxanthin).

- Hit Recovery and Validation: Pick the candidate colonies (hits) with the most intense target color and re-streak for purity. Validate improved production and enzyme activity using analytical methods like LC-MS or HPLC.

Protocol: Employing a Transcription Factor Biosensor for FACS-Based Screening

This protocol outlines the use of TF-based biosensors to isolate high-producing strains from a library [2] [1].

- Biosensor Integration: Construct a genetic circuit where a TF responsive to the target metabolite controls the expression of a fluorescent reporter protein (e.g., GFP). Integrate this biosensor into the host strain's genome or maintain it on a plasmid.

- Library Generation: Create a library of strain variants through methods such as MAGE (Multiplex Automated Genome Engineering), promoter library engineering, or genome shuffling.

- Cultivation: Grow the library of variants in a suitable liquid medium under inducing conditions.

- FACS Sorting: Harvest cells during the mid-to-late exponential growth phase. Use a Fluorescence-Activated Cell Sorter to isolate the top 1-5% of the population exhibiting the highest fluorescence intensity, indicating high intracellular concentration of the target metabolite.

- Recovery and Re-sorting: Collect the sorted cells, allow them to recover and proliferate, and repeat the sorting process for 2-3 rounds to enrich the population for high-producers.

- Clone Characterization: Plate the final enriched population to obtain single clones. Characterize individual clones for stable and high-level production of the target molecule.
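The gating step in the FACS protocol above amounts to keeping every event whose fluorescence lies above a chosen percentile of the population. The following sketch shows that calculation on simulated data. The function name `top_fraction_gate`, the log-normal fluorescence distribution, and its parameters are assumptions made for illustration; a real sorter applies the gate in its own acquisition software.

```python
import random


def top_fraction_gate(intensities, fraction):
    """Return (threshold, selected) keeping the brightest `fraction` of events.

    Events tied with the threshold are all kept, so the selection can be
    slightly larger than the requested fraction.
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    if not intensities:
        raise ValueError("no events to gate")
    ranked = sorted(intensities, reverse=True)
    n_keep = max(1, round(len(ranked) * fraction))
    threshold = ranked[n_keep - 1]
    selected = [x for x in intensities if x >= threshold]
    return threshold, selected


# Simulated single-cell fluorescence (arbitrary units), assumed log-normal.
random.seed(0)
events = [random.lognormvariate(6.0, 0.5) for _ in range(10_000)]

threshold, sorted_cells = top_fraction_gate(events, fraction=0.05)
print(f"gate set at {threshold:.1f} a.u.; kept {len(sorted_cells)} of {len(events)} events")
```

Repeating the gate on the regrown population, as in the recovery and re-sorting step, corresponds to calling the function again on the next round's measurements.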

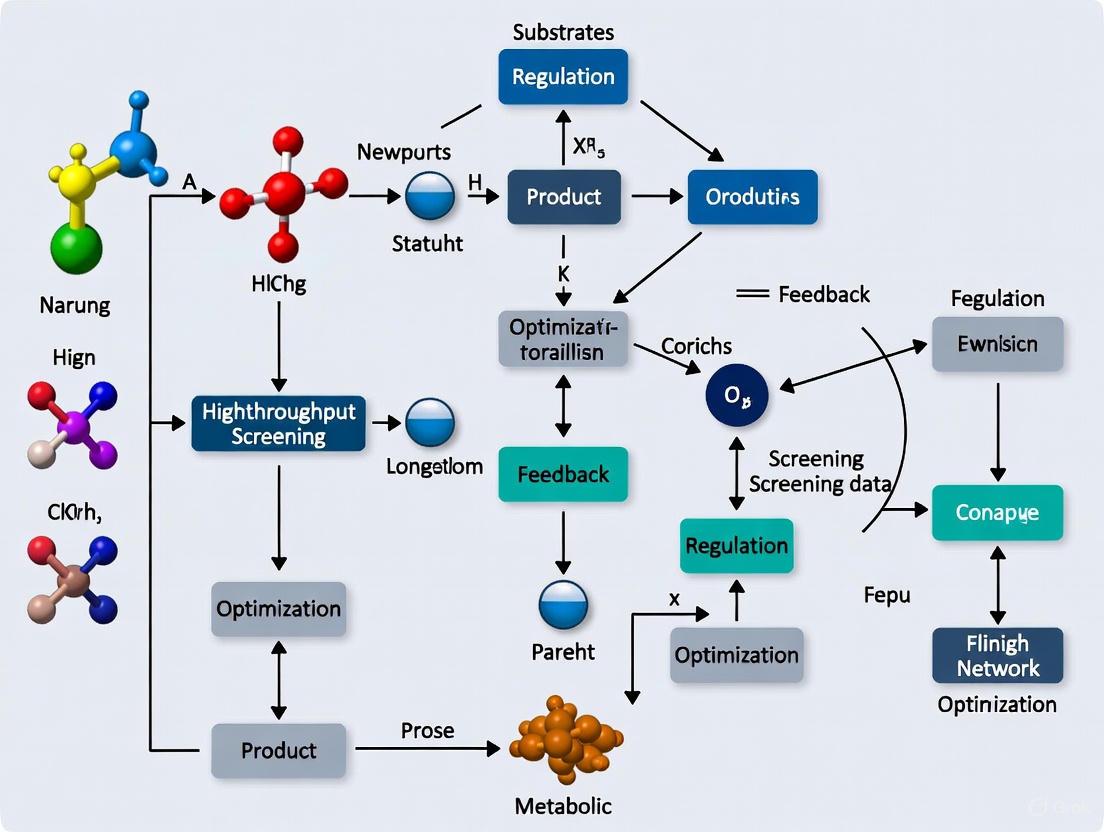

Visualizing the Workflow and Biosensor Mechanisms

The following diagrams, generated using Graphviz, illustrate the core concepts and workflows discussed in this whitepaper.

DBTL Cycle in Metabolic Engineering

Transcription Factor-Based Biosensor Mechanism

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Tools for HTS in Metabolic Engineering

| Tool / Reagent | Function | Specific Example / Note |
|---|---|---|
| Mutant Enzyme Libraries | Provides genetic diversity for directed evolution. | Error-prone PCR library of β-carotene ketolase (OBKT) [3]. |
| Transcription Factor Biosensors | Converts metabolite concentration into measurable fluorescence output. | TF-based circuits for sensing succinate, butanol, or malonyl-CoA [2]. |
| FRET Biosensors | Enables real-time monitoring of metabolite dynamics in live cells. | T6P sensor using TreR protein fused to eCFP/Venus [2]. |
| Fluorescent Reporters | Acts as the optical output for biosensors, enabling FACS. | Green Fluorescent Protein (GFP) [2] [1]. |
| Fluorescence-Activated Cell Sorter (FACS) | Physically enriches high-performing cells from large libraries. | Critical for screening TF-based biosensor libraries [1]. |
| Genome Editing Tools (e.g., CRISPR-Cas9) | Enables rapid and precise genomic integration of pathways and biosensors. | Facilitates the "Build" phase of the DBTL cycle [1]. |
| Promoter & RBS Libraries | Systematically varies gene expression levels to balance pathway flux. | Used in multivariate modular metabolic engineering [1]. |

The combinatorial bottleneck is a fundamental constraint in the rational design of microbial cell factories. As the case of astaxanthin production clearly demonstrates, overcoming this bottleneck requires the integration of combinatorial strain construction with rigorous High-Throughput Screening methodologies. Biosensors, particularly TF-based systems, are emerging as the linchpin of this strategy, providing the necessary link between intracellular metabolic flux and a scalable, measurable phenotype [2] [1].

Looking forward, the integration of HTS data with machine learning and computational modeling will further close the DBTL loop, transforming metabolic engineering from a largely empirical pursuit into a predictive science. The continued development of novel biosensors for a wider range of metabolites, coupled with advances in microfluidics and single-cell analytics, promises to deepen the resolution and broaden the scope of HTS. In this evolving landscape, proficiency in developing and applying HTS strategies will remain an imperative for researchers aiming to unlock the full potential of metabolic networks for the production of renewable chemicals and pharmaceuticals.

Metabolic networks and their dynamics represent a foundational framework for understanding cellular physiology. The integration of these networks with the concept of metabolic flux—the rate of metabolite turnover through biochemical pathways—provides a dynamic perspective on cellular function [4]. In modern drug discovery and metabolic engineering, the manipulation of these systems is accelerated by high-throughput screening (HTS), a method that enables the rapid execution of millions of chemical, genetic, or pharmacological tests [5]. Together, these elements form a critical knowledge base for researchers aiming to optimize metabolic networks for therapeutic intervention or bioproduction. This guide examines the core principles, methodologies, and tools that define this interdisciplinary field, providing a technical foundation for scientists and drug development professionals engaged in metabolic optimization research.

Metabolic Networks: Structure and Reconstruction

Definition and Biological Significance

A metabolic network is the complete set of metabolic and physical processes that determine the physiological and biochemical properties of a cell [6]. These networks comprise not only the chemical reactions of metabolism and metabolic pathways but also the regulatory interactions that guide these reactions [6]. From a systems biology perspective, cellular metabolism can be computationally represented by a large set of metabolites connected by biochemical reactions [7]. When a system includes all possible reactions performed by a cell, it is termed a genome-scale metabolic network [7].

Metabolic networks function as powerful tools for studying and modeling metabolism, with applications ranging from basic biological insight to clinical diagnostics [6] [7]. For instance, they can be used to detect comorbidity patterns in diseased patients, as the cascading effects of enzyme defects at one reaction can affect fluxes of subsequent reactions, coupling metabolic diseases associated with these connected pathways [6].

Reconstruction of Metabolic Networks

The process of metabolic network reconstruction, also known as metabolic pathway analysis, correlates the genome with molecular physiology by breaking down metabolic pathways into their respective reactions and enzymes [8]. The general process for building a reconstruction follows these key stages:

- Draft a reconstruction: Compile data from genomic and biochemical databases to identify metabolic genes and their associated reactions.

- Refine the model: Manually curate and verify the network using experimental data and literature evidence.

- Convert model into a mathematical/computational representation: Transform the biochemical network into a stoichiometric format amenable to simulation.

- Evaluate and debug model through experimentation: Validate and refine the model through comparison with experimental results [8].

Table 1: Key Databases for Metabolic Network Reconstruction

| Database | Scope | Primary Use |
|---|---|---|
| KEGG | Genes, proteins, reactions, pathways | Reference pathway maps and gene annotation [8] |
| BioCyc/EcoCyc | Enzymes, genes, reactions, pathways | Organism-specific metabolic databases [6] [8] |
| MetaCyc | Enzymes, reactions, pathways | Encyclopedia of experimentally defined metabolic pathways [8] |
| BRENDA | Enzymes, reactions | Comprehensive enzyme functional data [8] |
| BiGG | Reactions, metabolites, genes | Biochemically, genetically, and genomically structured models [8] |

Computational Representations

The mathematical foundation of metabolic network modeling centers on the stoichiometric matrix (S), which stores metabolite connectivity in terms of reaction stoichiometric coefficients [7]. For a network of n reactions and m metabolites, S has m rows and n columns, with one row per metabolite and one column per reaction. The dynamics of the metabolic network are described by the equation:

dC/dt = S·v

where C is the vector of metabolite concentrations, t is time, and v is the flux vector [7]. Under the steady-state assumption, which simplifies the problem by assuming that internal metabolites do not accumulate, this equation reduces to:

S·v = 0

This equation represents the internal mass balance of the network, where the sum of reaction fluxes producing any metabolite equals the sum of fluxes consuming it [7].
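A small numerical check makes the mass balance concrete. The sketch below uses a hypothetical three-reaction chain (uptake of A, conversion of A to B, export of B) with rows of S as metabolites and columns as reactions. The network and flux values are invented for illustration.

```python
def mat_vec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * x for a, x in zip(row, vector)) for row in matrix]


def is_steady_state(stoich, fluxes, tol=1e-9):
    """True if S·v = 0 within tolerance, i.e. no internal metabolite accumulates."""
    return all(abs(rate) <= tol for rate in mat_vec(stoich, fluxes))


# Rows: metabolites A, B. Columns: R1 (-> A), R2 (A -> B), R3 (B ->).
S = [
    [1, -1, 0],   # A is produced by R1 and consumed by R2
    [0, 1, -1],   # B is produced by R2 and consumed by R3
]

balanced = [2.0, 2.0, 2.0]
unbalanced = [2.0, 1.0, 1.0]

print(mat_vec(S, balanced), is_steady_state(S, balanced))
print(mat_vec(S, unbalanced), is_steady_state(S, unbalanced))
```

In the second flux vector, A is produced faster than it is consumed, so dC/dt for A is positive and the steady-state condition fails.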

Figure 1: Metabolic Network Reconstruction Workflow

Metabolic Flux: The Dynamic Dimension

Fundamental Principles

In biochemistry, metabolic flux refers to the rate of turnover of molecules through a metabolic pathway [4]. Flux is regulated by the enzymes involved in a pathway and is vital for regulating pathway activity under different conditions [4]. The flux of metabolites through each reaction (J) represents the rate of the forward reaction (Vf) less that of the reverse reaction (Vr):

J = Vf - Vr

At equilibrium, there is no flux, and throughout a steady-state pathway, the flux is determined to varying degrees by all steps in the pathway [4]. This concept can be understood by analogy to road networks: decreased flux at one point (e.g., a roadblock) can lead to increased flux through alternative routes, demonstrating how networks are interconnected and changes in one part may be transmitted throughout the system [9].

Control and Regulation of Flux

Control of flux through a metabolic pathway requires that the degree to which metabolic steps determine the metabolic flux varies based on the organism's metabolic needs, and that this change in flux is communicated throughout the metabolic pathway to maintain steady-state [4]. Key principles of flux control include:

- The control of flux is a systemic property, depending to varying degrees on all interactions in the system.

- The control of flux is measured by the flux control coefficient.

- In a linear chain of reactions, the flux control coefficient has values between zero and one, where zero indicates no influence and one indicates complete control [4].
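These properties can be illustrated numerically. The sketch below estimates flux control coefficients, defined as the fractional change in steady-state flux per fractional change in enzyme level, for a hypothetical two-step linear pathway S ⇌ X ⇌ P with reversible mass-action kinetics. The rate law, the rate constants, and the function names are assumptions chosen for illustration. In this toy pathway both coefficients fall between zero and one and add up to one, which is the summation theorem of metabolic control analysis.

```python
def steady_state_flux(e1, e2, s=10.0, p=1.0, k1f=1.0, k1r=0.5, k2f=2.0, k2r=0.1):
    """Steady-state flux through S <-> X <-> P (hypothetical kinetics).

    Step i has rate e_i * (kf * substrate - kr * product). Setting the two
    rates equal gives the intermediate concentration x in closed form.
    """
    x = (e1 * k1f * s + e2 * k2r * p) / (e1 * k1r + e2 * k2f)
    return e2 * (k2f * x - k2r * p)


def flux_control_coefficient(flux_fn, enzymes, index, rel_step=1e-4):
    """Central finite-difference estimate of (dJ/de_i) * (e_i / J)."""
    base_flux = flux_fn(*enzymes)
    up = list(enzymes)
    down = list(enzymes)
    up[index] *= 1 + rel_step
    down[index] *= 1 - rel_step
    d_flux = flux_fn(*up) - flux_fn(*down)
    d_enzyme = 2 * rel_step * enzymes[index]
    return (d_flux / d_enzyme) * (enzymes[index] / base_flux)


enzymes = (1.0, 1.0)
c1 = flux_control_coefficient(steady_state_flux, enzymes, 0)
c2 = flux_control_coefficient(steady_state_flux, enzymes, 1)
print(f"C1 = {c1:.3f}, C2 = {c2:.3f}, sum = {c1 + c2:.3f}")
```

With these parameters most of the control sits on the first step, yet the second step still carries a non-zero share, which is the sense in which control is a systemic property rather than the property of a single rate-limiting enzyme.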

Existing metabolic networks control molecular movement through enzymatic steps primarily by regulating enzymes that catalyze irreversible reactions [4]. The movement through reversible steps is generally regulated by concentration of products and reactants rather than direct enzyme regulation [4].

Relationship to Phenotype

Metabolic fluxes are often regarded as the ultimate representation of the cellular phenotype under a given set of conditions [4]. They are a function of gene expression, translation, post-translational protein modifications, and protein-metabolite interactions [4]. This relationship is particularly evident in:

- Regulation of mammalian cell growth: Rapidly growing cells show changes in metabolism, particularly glucose metabolism, as rate of metabolism controls signal transduction pathways that coordinate activation of transcription factors and cell-cycle progression [4].

- Cancer metabolism: Tumor cells exhibit enhanced glucose metabolism compared to normal cells, making understanding of flux alterations critical for therapeutic development [4].

Table 2: Methods for Measuring and Analyzing Metabolic Flux

| Method | Principle | Applications |
|---|---|---|
| Flux Balance Analysis (FBA) | Constraint-based optimization using stoichiometric models | Prediction of flux distributions in genome-scale networks [10] [7] |
| Nuclear Magnetic Resonance (NMR) | Detection of isotopic labeling patterns | Non-invasive flux determination in vivo [4] |
| Gas Chromatography-Mass Spectrometry (GC-MS) | Separation and identification of metabolite species | High-sensitivity flux ratio determination [4] |
| Metabolic Control Analysis | Quantification of flux control coefficients | Understanding distributed control in pathways [4] |
| ¹³C Metabolic Flux Analysis | Tracing of ¹³C-labeled substrates | Experimental determination of intracellular fluxes [4] |

High-Throughput Screening: Technological Acceleration

Principles and Methodologies

High-throughput screening (HTS) is a method for scientific discovery especially used in drug discovery and relevant to biology, materials science, and chemistry [5]. Using robotics, data processing/control software, liquid handling devices, and sensitive detectors, HTS allows researchers to quickly conduct millions of chemical, genetic, or pharmacological tests [5]. Through this process, researchers can rapidly identify active compounds, antibodies, or genes that modulate a particular biomolecular pathway.

The key labware for HTS is the microtiter plate, featuring a grid of small wells, with common formats including 96, 384, 1536, 3456, or 6144 wells [5]. A screening facility typically maintains a library of stock plates whose contents are carefully catalogued. Assay plates are created as needed by pipetting small amounts of liquid (often nanoliters) from stock plates to empty plates [5].

Automation and Workflow

Automation is essential to HTS utility, typically involving integrated robot systems that transport assay microplates between stations for sample and reagent addition, mixing, incubation, and final readout [5]. An HTS system can usually prepare, incubate, and analyze many plates simultaneously, dramatically accelerating data collection. Modern HTS robots can test up to 100,000 compounds per day, with systems capable of exceeding this throughput classified as ultra-high-throughput screening (uHTS) [5].

The general HTS workflow involves:

- Assay plate preparation: Transferring compounds from stock plates to assay plates.

- Biological entity introduction: Adding proteins, cells, or other biological material to wells.

- Incubation: Allowing time for biological interaction.

- Measurement: Detecting signals manually or with automated readers.

- Hit confirmation: Retesting promising compounds from initial screens [5].

Figure 2: High-Throughput Screening Workflow

Experimental Design and Data Analysis

The massive data generation capacity of HTS introduces fundamental challenges in extracting biochemical significance from results, requiring appropriate experimental designs and analytic methods for both quality control and hit selection [5]. Critical aspects include:

- Quality control (QC): Implementing good plate design, selecting effective positive and negative controls, and developing effective QC metrics to identify assays with inferior data quality [5].

- Hit selection: Applying statistical methods to identify compounds with desired effects, with approaches differing between primary screens (usually without replicates) and confirmatory screens (with replicates) [5].

Quality assessment measures include signal-to-background ratio, signal-to-noise ratio, signal window, assay variability ratio, Z-factor, and strictly standardized mean difference (SSMD) [5]. For hit selection in primary screens without replicates, methods include z-score, SSMD, robust z*-score, B-score, and quantile-based methods [5].
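Several of these metrics are simple functions of control-well statistics. The sketch below gives a minimal version using the standard textbook definitions: the Z-factor computed from positive and negative controls (often written Z'), SSMD, the plain z-score, and a robust z-score based on the median and the median absolute deviation. The plate readings are invented, and the function names are not taken from any particular HTS software package.

```python
from statistics import mean, median, stdev


def z_factor(positive, negative):
    """Z-factor from control wells: 1 - 3(sd_p + sd_n) / |mean_p - mean_n|."""
    return 1 - 3 * (stdev(positive) + stdev(negative)) / abs(mean(positive) - mean(negative))


def ssmd(positive, negative):
    """Strictly standardized mean difference between two groups of wells."""
    return (mean(positive) - mean(negative)) / (stdev(positive) ** 2 + stdev(negative) ** 2) ** 0.5


def z_scores(samples):
    """Classical z-score of each sample well relative to the plate."""
    mu, sd = mean(samples), stdev(samples)
    return [(x - mu) / sd for x in samples]


def robust_z_scores(samples):
    """z-score variant using median and scaled MAD, less sensitive to outliers."""
    med = median(samples)
    mad = median(abs(x - med) for x in samples)
    return [(x - med) / (1.4826 * mad) for x in samples]


# Hypothetical control readings (arbitrary units).
pos_ctrl = [100, 102, 98, 101, 99]
neg_ctrl = [10, 12, 8, 11, 9]
print(f"Z-factor = {z_factor(pos_ctrl, neg_ctrl):.3f}")
print(f"SSMD     = {ssmd(pos_ctrl, neg_ctrl):.2f}")

# Hypothetical sample wells with one strong responder.
wells = [1.0, 2.0, 3.0, 4.0, 100.0]
print([round(z, 2) for z in robust_z_scores(wells)])
```

The robust variant matters in primary screens because true hits are themselves outliers: they inflate the plate mean and standard deviation, which shrinks their own classical z-score.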

Integration for Metabolic Network Optimization

Optimization Strategies and Algorithms

Optimization of metabolic networks typically involves manipulating networks to improve desired characteristics of biochemical systems, such as maximizing the yield of a native product or redirecting flux toward products that are normally formed only in minor amounts [10]. Two primary modeling approaches are:

- Kinetic models: Describe complete network dynamics but require extensive parameter estimation.

- Stoichiometric models: Based on reaction stoichiometry, easier to obtain but less predictive of system dynamics [10].

Flux Balance Analysis (FBA) has emerged as a key constraint-based method for studying genome-scale metabolic networks [11] [7]. FBA determines optimal flux distribution through a network described by stoichiometry and reaction constraints [10]. The mathematical core of FBA is a linear programming problem where a system of mass-balanced equations and intake fluxes defines a constrained solution space, with an objective function selected to find an optimal solution within this space [7].
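The linear program at the core of FBA can be written in a few lines. The sketch below is a toy example, not a genome-scale model: it assumes a hypothetical four-reaction network (substrate uptake, conversion of A to B, a competing drain on A, and product export) and uses SciPy's general-purpose `linprog` solver. Dedicated constraint-based modeling packages exist for real models; the helper name `run_fba` and all bounds are illustrative.

```python
import numpy as np
from scipy.optimize import linprog


def run_fba(stoich, bounds, objective_index):
    """Maximize one flux subject to S·v = 0 and per-reaction bounds."""
    n_metabolites, n_reactions = stoich.shape
    cost = np.zeros(n_reactions)
    cost[objective_index] = -1.0  # linprog minimizes, so negate to maximize
    result = linprog(cost, A_eq=stoich, b_eq=np.zeros(n_metabolites),
                     bounds=bounds, method="highs")
    if not result.success:
        raise RuntimeError(result.message)
    return result.x


# Rows: metabolites A, B.
# Columns: v0 uptake (-> A), v1 (A -> B), v2 drain (A ->), v3 export (B ->).
S = np.array([
    [1.0, -1.0, -1.0, 0.0],
    [0.0, 1.0, 0.0, -1.0],
])
# Uptake capped at 10 flux units; other reactions irreversible and loosely bounded.
flux_bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000)]

fluxes = run_fba(S, flux_bounds, objective_index=3)
print("optimal fluxes:", np.round(fluxes, 3))
print("mass balance residual:", S @ fluxes)
```

Here the uptake bound is the only active constraint, so the optimum routes all substrate to the product and leaves the competing drain at zero. In larger networks the optimal objective value is well defined, but the flux distribution that achieves it is often not unique.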

Advanced Frameworks: Flux-Dependent Graphs

Recent advances include frameworks for constructing flux-based graphs that encode directionality of metabolic flows, with edges representing metabolite flow from source to target reactions [11]. This methodology can be applied:

- Without biological context: By modeling fluxes probabilistically (Normalised Flow Graph).

- With environmental context: By incorporating flux distributions from constraint-based approaches like FBA (Mass Flow Graph) [11].

These flux-dependent graphs address limitations of traditional metabolic graph constructions by incorporating directional information, naturally discounting over-representation of pool metabolites, and enabling analysis of context-specific metabolic responses at a system level [11].

Visualization of Network Dynamics

Visualization techniques are crucial for interpreting time-course metabolomic data within metabolic networks. GEM-Vis is one method that enables visualization of time-series data in the context of metabolic network maps through animation [12]. This approach uses node fill level to represent metabolite amounts at each time point, allowing intuitive estimation of quantities and tracking of changes across the network [12].

Table 3: Optimization Methods for Metabolic Networks

| Method | Principle | Advantages | Limitations |
|---|---|---|---|
| Flux Balance Analysis (FBA) | Linear programming optimization of flux distribution | No need for detailed kinetic parameters; genome-scale application [10] [7] | Relies on steady-state assumption; may predict non-unique solutions [7] |
| Elementary Modes | Analysis of minimal functional subnetworks | Identifies all possible routes through network [7] | Computationally intensive for large systems [7] |
| Minimal Cut Sets | Identification of essential reaction sets | Reveals metabolic bypasses; analyzes robustness [7] | Dual approach to elementary modes [7] |
| Bi-Level Optimization | Hierarchical optimization (e.g., OptKnock) | Identifies gene knockout strategies for strain design [10] | May require multiple objective functions [10] |
| Geometric Programming | Mathematical optimization for special function forms | Efficient solving of large-scale problems [10] | Requires problem formulation in specific form [10] |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents and Materials for Metabolic Network Studies with HTS

| Reagent/Material | Function | Application Context |
|---|---|---|
| Microtiter Plates | Multi-well platforms for parallel experimental testing | Core labware for HTS; available in 96, 384, 1536, and higher densities [5] |
| Dimethyl Sulfoxide (DMSO) | Solvent for chemical compound libraries | Maintaining compound solubility and stability in stock and assay plates [5] |
| ¹³C-Labeled Substrates | Isotopically labeled metabolic precursors | Tracing metabolic fluxes through NMR or GC-MS analysis [4] |
| Stoichiometric Models | Mathematical representations of metabolic networks | Constraint-based analysis including FBA [10] [7] |
| Enzyme Inhibitors/Activators | Chemical modulators of specific metabolic enzymes | Perturbation studies to analyze network robustness and flux control [7] |
| Robotic Liquid Handling Systems | Automated pipetting and reagent distribution | Enabling high-throughput screening of compound libraries [5] |
| Sensitive Detectors | Measurement of assay signals (fluorescence, luminescence) | Detection of biological responses in HTS campaigns [5] |
| Metabolite Standards | Reference compounds for identification and quantification | Calibration of analytical instruments for metabolomic studies [12] |

Figure 3: Integrated Workflow for Metabolic Network Optimization with HTS

High Throughput Screening (HTS) represents a cornerstone technology in modern drug discovery and systems biology, enabling the rapid experimental analysis of thousands of biological compounds against therapeutic targets. This technological paradigm has revolutionized pharmaceutical development by accelerating the identification of lead compounds and facilitating complex metabolic network optimization. The integration of HTS with computational systems biology approaches, particularly metabolic network analysis, has created powerful synergies for identifying critical drug targets and repurposing existing therapeutics. Metabolic network analysis provides a computational framework for interrogating pathogen systems and identifying essential genes and synthetic lethal combinations that serve as high-priority therapeutic targets [13]. The strategic relevance of HTS from 2024 to 2030 is underpinned by several converging macro forces—including technological advancements in automation and robotics, rising demand for precision medicine, and the urgent global need for accelerated drug discovery in light of emerging infectious diseases and non-communicable disorders [14].

This whitepaper provides a comprehensive analysis of the HTS market trajectory, examining both its commercial growth patterns and its pivotal role in advancing metabolic engineering and drug discovery pipelines. We explore how the combination of experimental HTS data with computational network analysis creates a powerful feedback loop for identifying critical pathway disruptions and optimizing therapeutic interventions.

Global HTS Market Analysis

Market Size and Growth Projections

The High Throughput Screening market demonstrates robust global expansion driven by increasing R&D investments in the pharmaceutical and biotechnology industries and the growing need for efficient drug discovery processes. Market analyses report consistent growth across multiple forecasting periods, with compound annual growth rate (CAGR) estimates ranging from 8% to 11.8% depending on the specific market segment and geographic region [15] [16] [17].

Table 1: Global HTS Market Size and Growth Projections

| Market Segment | Base-Year Value (USD Billion) | Projected Value (USD Billion) | CAGR | Source |
|---|---|---|---|---|
| Overall HTS Market | $21.4 (2024) | $35.2 (2030) | 8.5% | [14] |
| HTS Market | $32.0 (2025) | $82.9 (2035) | 10.0% | [16] |
| HTS Wire Market | $0.92 (2025) | $2.2 (2033) | 11.8% | [15] |
| HTS Market (Technavio) | - | $18.8 (2029) | 10.6% | [17] |

The variation in market size estimates across different reports can be attributed to differences in segmentation methodology, with some analyses focusing specifically on HTS instrumentation (HTS Wire Market) while others encompass the broader ecosystem including reagents, services, and data analytics solutions.
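As a quick consistency check on Table 1, the growth rate implied by each pair of base-year and projected values can be recomputed from the standard CAGR formula. The sketch below assumes the forecast horizon is simply the difference between the two years shown in the table; the underlying reports may define their compounding periods differently, so small deviations from the reported figures are expected.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.085 means 8.5%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0

# (label, base value, base year, projected value, projection year, reported CAGR)
# Values are copied from Table 1; the horizon (end year - base year) is an assumption.
rows = [
    ("Overall HTS Market [14]", 21.4, 2024, 35.2, 2030, 0.085),
    ("HTS Market [16]", 32.0, 2025, 82.9, 2035, 0.100),
    ("HTS Wire Market [15]", 0.92, 2025, 2.2, 2033, 0.118),
]

implied = {label: cagr(v0, v1, y1 - y0) for label, v0, y0, v1, y1, _ in rows}

for label, _, _, _, _, reported in rows:
    print(f"{label}: implied {implied[label]:.1%}, reported {reported:.1%}")
```

The Technavio row is omitted because no base-year value is given for it.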

Regional Market Distribution

The global HTS market demonstrates distinct regional patterns, with North America maintaining dominance while the Asia-Pacific region emerges as the fastest-growing market.

Table 2: HTS Market Regional Analysis (2024-2030)

| Region | 2024 Market Value (USD Billion) | 2030 Projection (USD Billion) | CAGR | Market Share % (2024) | Key Growth Drivers |
|---|---|---|---|---|---|
| North America | $8.8 | $13.93 | 7.9% | ~48% | Strong research infrastructure, substantial R&D investments, NIH/NCATS funding ($926.1M requested FY2025) [14] |
| Europe | $5.44 | $8.05 | 6.8% | ~25% | EU consortia (e.g., European Lead Factory), Horizon Europe funding programs [14] |
| Asia-Pacific | $3.81 | $6.47 | 9.2% | ~18% | Expanding biopharmaceutical sector, government initiatives, increasing outsourcing to CROs [14] |
| Rest of World | ~$3.35 | ~$6.75 | ~10.5% | ~9% | Gradual infrastructure development, foreign investments [15] |

North America's leadership position stems from its well-established research infrastructure, presence of major pharmaceutical companies, and substantial public and private R&D investments. The region benefits from initiatives such as the NCATS (National Center for Advancing Translational Sciences) with a FY2025 budget request of approximately $926.1 million supporting automation, compound management, and translational screening [14]. Europe maintains a strong position driven by collaborative consortia such as the European Lead Factory (ELF), which has executed campaigns across approximately 270 targets and 15 phenotypic assays, demonstrating continental collaboration and shared infrastructure [14].

The Asia-Pacific region represents the most dynamic growth market, fueled by expanding biopharmaceutical sectors in China, India, and Japan, along with increasing government support for precision medicine initiatives. Japan and South Korea lead in robotic automation and high-content screening adoption, while China scales state-supported HTS nodes with large, local compound libraries [18] [14]. Specific country CAGRs highlight this rapid expansion: China (13.1%), Japan (13.7%), and South Korea (14.9%) [16].

Market Segmentation Analysis

Technology Segment

Cell-based assays dominate the technology segment, holding 39.4% market share in 2025 [16]. This segment's leadership position is attributed to the ability of cell-based assays to deliver physiologically relevant data and predictive accuracy in early drug discovery. The adoption has been supported by technological improvements in live-cell imaging, fluorescence assays, and multiplexed platforms that enable simultaneous analysis of multiple targets [16]. Ultra-high-throughput screening (uHTS) represents the fastest-growing technology segment with a projected CAGR of 12% through 2035, driven by its unprecedented ability to screen millions of compounds quickly using 1536-well and emerging 3456-well formats [16] [14].

Application Segment

Primary screening leads the application segment with 42.7% market share in 2025, maintaining its essential role in identifying active compounds from large chemical libraries at the initial phase of drug discovery [16]. The target identification segment demonstrates the strongest growth trajectory with a projected CAGR of 12% through 2035, driven by its capacity to rapidly assess vast chemical libraries against diverse biological targets [16]. This segment's importance is further amplified by the increasing prevalence of chronic diseases and the need for more effective treatments requiring accurate target identification and validation [17].

End-User Segment

Pharmaceutical and biotechnology companies constitute the largest end-user segment, leveraging HTS for internal drug discovery programs and increasingly adopting high-content screening (HCS) and label-free technologies for complex biologics workflows [14]. Contract research organizations (CROs) represent the fastest-growing segment, demonstrating double-digit growth as pharmaceutical companies increasingly outsource primary screens to conserve capital and access specialized expertise [14]. Academic and research institutes maintain a significant presence, often operating shared HTS facilities that leverage public compound libraries and training resources [16].

Metabolic Network Optimization: Integration with HTS

Fundamental Principles

Metabolic network analysis and optimization provides a computational framework for interrogating pathogenic systems and identifying essential genes and synthetic lethal combinations that serve as high-priority therapeutic targets. The integration of HTS with metabolic network analysis creates a powerful synergy between experimental screening and computational prediction, enhancing the efficiency of drug discovery pipelines. Metabolic network models are typically constructed from annotated genomes and biochemical resources, providing a structured representation of metabolic pathways and flux distributions [13].

Constraint-based modeling techniques, particularly Flux Balance Analysis (FBA), enable the prediction of metabolic flux distributions under different genetic and environmental conditions. FBA computes flow rates through metabolic networks that maximize or minimize specific cellular objectives (typically biomass production) under steady-state constraints [10]. The mathematical formulation of FBA can be represented as:

Maximize: \( Z = c^T v \)

Subject to: \( S \cdot v = 0 \) and \( v_{min} \leq v \leq v_{max} \)

Where \( S \) is the stoichiometric matrix, \( v \) is the flux vector, and \( c \) is a vector weighting metabolic fluxes to form the cellular objective.
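To make the formulation concrete, the following worked example applies it to a deliberately tiny network. The network itself (one internal metabolite A, an uptake reaction, a biomass reaction, and a byproduct reaction) and the uptake bound of 10 are illustrative assumptions, not taken from [10].

```latex
% Hypothetical toy network:
%   v_1: (uptake) -> A      v_2: A -> biomass      v_3: A -> byproduct
\[
S = \begin{pmatrix} 1 & -1 & -1 \end{pmatrix}, \quad
v = \begin{pmatrix} v_1 \\ v_2 \\ v_3 \end{pmatrix}, \quad
c = \begin{pmatrix} 0 \\ 1 \\ 0 \end{pmatrix}
\]
\[
\max_{v} \; Z = c^T v = v_2
\quad \text{subject to} \quad
S \cdot v = v_1 - v_2 - v_3 = 0, \quad
0 \leq v_1 \leq 10, \quad v_2 \geq 0, \quad v_3 \geq 0
\]
% The steady-state constraint gives v_2 = v_1 - v_3, so Z is largest
% when uptake is at its upper bound and no flux is lost to the byproduct:
\[
v^{*} = (10,\; 10,\; 0)^T, \qquad Z^{*} = 10
\]
```

Genome-scale models follow the same pattern, with thousands of rows and columns in \( S \) and a linear programming solver in place of the substitution step.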

Optimization Methodologies for Metabolic Networks

Three primary optimization strategies with different levels of complexity have been developed for metabolic network analysis and integration with HTS data:

Direct Optimization: This approach assumes complete knowledge of the metabolic network and its kinetic parameters. Using Pontryagin's Maximum Principle, it has been demonstrated that optimal control for a class of metabolic networks, where the product favoring cell growth competes with the desired product yield, can only assume values on the extremes of the interval of its possible values [10]. For a prototype network where a control variable u redirects flux between biomass production and desired product formation, the optimal control profile involves a single switch from u=0 (maximizing growth) to u=1 (maximizing product yield) at a precisely determined switching time (t_reg) [10].

Bi-Level Optimization: This methodology addresses the common limitation of incomplete information in metabolic network models by combining kinetic and stoichiometric models. Bi-level optimization frameworks, such as OptKnock, implement a nested structure in which the upper level optimizes an engineering objective (e.g., biochemical production) while the lower level models cellular metabolism using FBA [10]. This approach has been shown to provide a good approximation of the optimum attainable with full information on the original network.

Geometric Programming (GP): GP represents a powerful mathematical optimization tool that can be applied to problems where the objective and constraint functions have a special form. GP is particularly valuable for metabolic network optimization because it can solve large-scale problems with extreme efficiency and reliability. Metabolic networks formulated as S-Systems (a specific type of power-law representation) can be solved with GP after minimal adaptation [10].
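The single-switch behaviour described under Direct Optimization can be illustrated numerically. The sketch below uses a minimal two-state toy model that is an assumption made here for illustration (biomass grows exponentially while u=0, product accumulates in proportion to biomass while u=1); it is not the prototype network of [10], and the parameter values are hypothetical. It only compares single-switch policies against each other and does not prove that such a policy is optimal.

```python
import math

def final_product(t_switch: float, t_end: float, mu: float, k: float, x0: float) -> float:
    """Product at t_end when u=0 (growth only) until t_switch, then u=1 (production only).

    Assumed toy dynamics: dx/dt = (1 - u) * mu * x,  dp/dt = u * k * x.
    """
    biomass_at_switch = x0 * math.exp(mu * t_switch)   # exponential growth phase
    return k * biomass_at_switch * (t_end - t_switch)  # constant biomass, linear production

def best_switch_time(t_end: float, mu: float, k: float = 1.0,
                     x0: float = 1.0, steps: int = 10_000) -> float:
    """Grid search over candidate switching times in [0, t_end]."""
    candidates = [t_end * i / steps for i in range(steps + 1)]
    return max(candidates, key=lambda ts: final_product(ts, t_end, mu, k, x0))

T_END, MU = 10.0, 0.5   # hypothetical batch length (h) and specific growth rate (1/h)

t_reg_numeric = best_switch_time(T_END, MU)
# Setting d/dt_s [exp(mu * t_s) * (T - t_s)] = 0 gives t_s = T - 1/mu (valid when T > 1/mu).
t_reg_analytic = T_END - 1.0 / MU

print(f"numeric t_reg = {t_reg_numeric:.3f}, analytic t_reg = {t_reg_analytic:.3f}")
```

In this toy model, switching too early leaves too little biomass to produce with, and switching too late leaves too little time to produce, which is why an interior switching time emerges.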

Figure 1: HTS and Metabolic Network Analysis Workflow

MetDP: Metabolic Network-Guided Drug Pipeline

The MetDP framework provides a systematic methodology for integrating metabolic network analysis with HTS to prioritize drug targets and repurpose existing therapeutics [13]. This approach has been successfully applied to neglected tropical diseases such as leishmaniasis, demonstrating the potential for rapid identification of novel therapeutic applications for existing FDA-approved drugs.

The MetDP pipeline implements sequential filtering criteria:

- Target Identification: Mapping metabolic genes to known drug targets using sequence similarity and database mining (e.g., DrugBank, STITCH)

- Druggability Assessment: Applying druggability indices (0-1 scale) from resources like TDR Targets database

- Essentiality Analysis: Using FBA to identify genes whose deletion causes significant growth defects (>30% reduction)

- Flux Variability Analysis: Identifying reactions with limited flux flexibility as high-priority targets

- Synthetic Lethality Screening: Detecting non-trivial lethal gene combinations where neither single deletion is lethal but the double deletion is lethal

- Toxicity Filtering: Applying toxicity ratings based on the Hodge and Sterner scale to prioritize safer compounds
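The sequential filtering logic above can be sketched as a chain of predicates applied in order. This is a simplified illustration rather than the MetDP implementation: the record fields, gene and drug names, and the druggability and toxicity cutoffs are hypothetical, the flux variability and synthetic lethality stages are omitted, and only the 30% growth-reduction criterion comes from the list above.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Candidate:
    gene: str
    drug: Optional[str]            # matched known drug, or None if no match was found
    druggability: float            # druggability index on a 0-1 scale
    growth_reduction: float        # predicted fractional growth loss after deletion
    toxicity_class: Optional[int]  # Hodge and Sterner class (1 = most toxic, 6 = least)

MIN_DRUGGABILITY = 0.5       # assumed cutoff, not taken from the source
MIN_GROWTH_REDUCTION = 0.30  # ">30% reduction" criterion from the list above
MIN_TOXICITY_CLASS = 3       # assumed cutoff, not taken from the source

STAGES = [
    ("target identification", lambda c: c.drug is not None),
    ("druggability", lambda c: c.druggability >= MIN_DRUGGABILITY),
    ("essentiality", lambda c: c.growth_reduction > MIN_GROWTH_REDUCTION),
    ("toxicity", lambda c: c.toxicity_class is not None
                           and c.toxicity_class >= MIN_TOXICITY_CLASS),
]

def run_filters(candidates):
    """Apply each stage in order; return survivors and the count left after each stage."""
    remaining = list(candidates)
    counts = []
    for name, keep in STAGES:
        remaining = [c for c in remaining if keep(c)]
        counts.append((name, len(remaining)))
    return remaining, counts

# Hypothetical gene-drug records for illustration only
candidates = [
    Candidate("geneA", "drugX", 0.8, 0.95, 4),
    Candidate("geneB", None, 0.9, 1.00, None),   # no known drug match
    Candidate("geneC", "drugY", 0.2, 0.60, 5),   # poorly druggable
    Candidate("geneD", "drugZ", 0.7, 0.10, 4),   # deletion barely affects growth
    Candidate("geneE", "drugW", 0.6, 0.40, 1),   # drug too toxic
]

shortlisted, stage_counts = run_filters(candidates)
for name, n in stage_counts:
    print(f"after {name}: {n} candidates")
print("shortlisted:", [c.gene for c in shortlisted])
```

Recording the count after each stage makes it easy to see which criterion removes the most candidates.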

Application of MetDP to Leishmania major identified 15 high-priority target genes and 8 synthetic lethal pairs from a metabolic reconstruction of 560 genes, ultimately yielding 254 FDA-approved drugs with potential antileishmanial activity [13]. Experimental validation confirmed the antileishmanial activity of halofantrine (an antimalarial) and identified superadditive drug combinations involving disulfiram, demonstrating the practical utility of this integrated approach.

Experimental Protocols and Methodologies

High Throughput Screening Workflow

A standardized HTS workflow incorporates multiple stages from assay development to hit validation:

Assay Design and Development: Design biologically relevant assay systems with appropriate controls. Cell-based assays should incorporate physiologically relevant models, including 3D culture systems and organoids where appropriate [14]. Implement robust positive controls and determine Z-factor values to quantify assay quality (values above 0.5 indicate an excellent assay) [17].

Compound Library Management: Prepare compound libraries in appropriate solvent systems (typically DMSO). Implement quality control measures including compound purity verification and concentration normalization. Modern HTS facilities manage libraries exceeding 1 million compounds with automated storage and retrieval systems [14].

Automated Screening Execution: Transfer assays to microtiter plates (96, 384, 1536-well formats) using automated liquid handling systems. For uHTS, 1536-well and emerging 3456-well formats are employed to minimize reagent consumption and increase throughput [14]. Incubate plates under appropriate environmental conditions.

Signal Detection and Data Acquisition: Measure assay endpoints using appropriate detection methods (absorbance, fluorescence, luminescence, label-free technologies). High-content screening incorporates automated microscopy and image analysis to extract multiparameter data from each well [17].

Hit Identification and Validation: Apply statistical thresholds to identify primary hits (typically signals more than 3 standard deviations from the mean). Confirm hits through dose-response studies (IC50 determination) and counter-screens to eliminate artifacts [17].
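Two of the statistical steps in this workflow, the assay-quality check and the primary hit threshold, can be sketched with the Python standard library. The example computes the control-based form of the Z-factor (commonly written Z'), defined as 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|, and flags wells more than three standard deviations from a reference mean. Using the negative-control wells as that reference is an assumption made here, and all signal values are hypothetical.

```python
from statistics import mean, stdev

def z_prime(positive_controls, negative_controls):
    """Control-based Z-factor: 1 - 3*(sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    separation = abs(mean(positive_controls) - mean(negative_controls))
    if separation == 0:
        raise ValueError("control means are identical; the Z-factor is undefined")
    spread = stdev(positive_controls) + stdev(negative_controls)
    return 1.0 - 3.0 * spread / separation

def call_hits(sample_signals, reference_signals, n_sd=3.0):
    """Indices of wells whose signal lies more than n_sd SDs from the reference mean."""
    mu, sd = mean(reference_signals), stdev(reference_signals)
    return [i for i, s in enumerate(sample_signals) if abs(s - mu) > n_sd * sd]

# Hypothetical plate-reader signals (arbitrary units) for an inhibition assay
neg_controls = [100, 102, 98, 101, 99, 100]   # uninhibited signal
pos_controls = [20, 22, 18, 21, 19, 20]       # fully inhibited signal
samples = [99, 101, 55, 100, 97, 23]          # test compound wells

assay_quality = z_prime(pos_controls, neg_controls)
hits = call_hits(samples, neg_controls)

print(f"Z-factor = {assay_quality:.3f}")
print("hit well indices:", hits)
```

In practice the threshold would typically be computed per plate, and flagged wells would proceed to the dose-response and counter-screen steps described above.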

Figure 2: HTS Experimental Workflow

Metabolic Network Analysis Protocol

The integration of HTS data with metabolic network analysis follows a structured computational protocol:

Network Reconstruction:

- Compile genome-scale metabolic reconstruction from annotated genomes and biochemical databases

- Define stoichiometric matrix (S) representing all metabolic reactions

- Establish biomass composition equation reflecting cellular growth requirements

- Define exchange reactions representing metabolite uptake and secretion

Constraint-Based Modeling:

- Apply mass balance constraints: \( S \cdot v = 0 \)

- Define flux capacity constraints: \( v_{min} \leq v \leq v_{max} \)

- Set environmental constraints (nutrient availability) based on experimental conditions

- Implement FBA to predict growth rates and flux distributions

Gene Essentiality Analysis:

- Simulate single gene knockouts by constraining associated reaction fluxes to zero

- Compare predicted growth rates to wild-type

- Identify essential genes (growth rate <5% of wild-type) and growth-defective genes (growth rate <30% of wild-type)

Synthetic Lethality Screening:

- Perform double gene deletion simulations for all non-essential gene pairs

- Identify synthetic lethal pairs where double deletion is lethal but individual deletions are not

- Filter results based on druggability indices and functional associations

Integration with HTS Data:

- Map HTS hit compounds to their protein targets

- Cross-reference with essential genes and synthetic lethal pairs from metabolic analysis

- Prioritize compounds targeting metabolic vulnerabilities identified in silico
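The post-simulation steps of this protocol (classifying knockouts, finding synthetic lethal pairs, and cross-referencing HTS hits) reduce to simple bookkeeping once growth predictions are available. The sketch below assumes those predictions have already been produced by an FBA solver and are expressed as fractions of wild-type growth; the gene names, compound names, and growth values are hypothetical, while the 5% and 30% cutoffs follow the protocol above.

```python
ESSENTIAL_CUTOFF = 0.05   # growth below 5% of wild-type: essential
DEFECT_CUTOFF = 0.30      # growth below 30% of wild-type: growth-defective

def classify(growth_ratio: float) -> str:
    """Label a single knockout from its predicted growth relative to wild-type."""
    if growth_ratio < ESSENTIAL_CUTOFF:
        return "essential"
    if growth_ratio < DEFECT_CUTOFF:
        return "growth-defective"
    return "non-essential"

def synthetic_lethal_pairs(single_ko, double_ko):
    """Pairs whose double knockout is lethal although neither single knockout is."""
    pairs = []
    for (g1, g2), ratio in double_ko.items():
        singles_viable = (single_ko[g1] >= ESSENTIAL_CUTOFF
                          and single_ko[g2] >= ESSENTIAL_CUTOFF)
        if singles_viable and ratio < ESSENTIAL_CUTOFF:
            pairs.append(tuple(sorted((g1, g2))))
    return sorted(pairs)

# Hypothetical predicted growth ratios (knockout growth / wild-type growth)
single_ko = {"g1": 0.00, "g2": 0.95, "g3": 0.90, "g4": 0.20, "g5": 1.00}
double_ko = {("g2", "g3"): 0.01, ("g2", "g5"): 0.93, ("g3", "g5"): 0.88}

# Hypothetical mapping of HTS hit compounds to their protein-coding target genes
hit_targets = {"cmpd_A": "g1", "cmpd_B": "g3", "cmpd_C": "g5"}

classes = {gene: classify(ratio) for gene, ratio in single_ko.items()}
sl_pairs = synthetic_lethal_pairs(single_ko, double_ko)

# A gene is a metabolic vulnerability if it is essential or part of a synthetic lethal pair
vulnerable = {g for g, label in classes.items() if label == "essential"}
vulnerable |= {g for pair in sl_pairs for g in pair}

prioritized = sorted(c for c, g in hit_targets.items() if g in vulnerable)
print("classes:", classes)
print("synthetic lethal pairs:", sl_pairs)
print("prioritized compounds:", prioritized)
```

A compound that hits only one member of a synthetic lethal pair, such as the hypothetical cmpd_B here, would be a candidate for combination screening rather than single-agent follow-up.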

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for HTS and Metabolic Analysis

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Cell-based Assay Kits | Functional assessment of compound effects in biological systems | Provide physiologically relevant data; optimized for 2D/3D culture models [16] |
| Label-free Detection Reagents | Enable real-time monitoring of binding events without fluorescent tags | Reduce artifacts; valuable for GPCR/kinase and biologics screening [14] |
| High-content Screening Reagents | Multiplexed analysis of multiple cellular parameters | Combine with automated imaging for phenotypic screening [17] |
| Metabolic Profiling Kits | Quantification of metabolite levels and flux measurements | Validate computational predictions of metabolic flux [13] |
| Compound Libraries | Collections of chemical compounds for screening | Include FDA-approved drugs for repurposing campaigns [13] |
| CRISPR/Cas9 Screening Libraries | Genome-wide gene knockout for functional genomics | Identify essential genes and synthetic lethal interactions [14] |
| Liquid Handling Reagents | Optimized solutions for automated pipetting systems | Minimize viscosity and surface tension for nanoliter dispensing [14] |

Emerging Trends and Future Outlook

Technological Innovations

The HTS landscape is being transformed by several converging technological innovations that are reshaping screening paradigms and expanding applications:

Artificial Intelligence and Machine Learning: AI/ML algorithms are being integrated throughout the HTS workflow, from assay design and virtual screening to hit triage and lead optimization. Machine learning models predict hit likelihood, optimize library selection, and prioritize follow-up compounds, significantly compressing false-positive cascades and reducing reagent costs [19] [14]. The integration of AI has demonstrated potential to improve forecast accuracy by up to 18% in materials science applications and is now being adapted to biological screening [17].

Advanced Cellular Models: The transition from 2D monocultures to 3D organoid and microphysiological systems (MPS) represents a fundamental shift in HTS approaches. These advanced models provide more physiologically relevant microenvironments that improve clinical signal fidelity and re-rank chemical matter earlier in the discovery process—significantly impacting kill/continue decisions and portfolio ROI [14]. Organoid/MPS-based HTS is particularly valuable for complex disease areas such as oncology and neurological disorders where tissue context is critical.

Miniaturization and Ultra-High-Throughput Screening: Routine implementation of 1536-well formats and emerging 3456-well platforms continues to drive down per-data-point costs while increasing screening capacity. This miniaturization trend is enabled by advances in low-volume liquid handling, particularly acoustic dispensing technologies that enable precise nanoliter-volume transfers [14]. These developments support million-well campaigns that were previously impractical due to resource constraints.

Label-Free and Kinetic Analytics: Surface plasmon resonance (SPR), bio-layer interferometry (BLI), and impedance-based platforms are scaling into high-throughput modes, enabling real-time binding kinetics without labeling artifacts. These technologies provide valuable mechanistic insights for challenging target classes such as GPCRs, ion channels, and protein-protein interactions [14].

Economic Impact and Strategic Implications

The growing adoption of HTS technologies is generating significant economic impacts across the pharmaceutical and biotechnology sectors:

Accelerated Discovery Timelines: Implementation of HTS has reduced drug discovery timelines by approximately 30%, enabling faster market entry for new therapeutics [17]. The throughput capacity of modern HTS platforms has been amplified to screen thousands of compounds in short timeframes, translating to substantial savings as labor and material costs associated with traditional screening methods are minimized [17].

Cost Efficiency and Resource Optimization: HTS technologies have demonstrated potential to lower operational costs by up to 15% while improving forecast accuracy by approximately 20% [17]. The ability to perform parallel assays and automate processes leads to more streamlined workflows, allowing for faster time-to-market and improved resource allocation [17].

Democratization of Drug Discovery: The expansion of CRO-based HTS services and the availability of public compound libraries (e.g., ChEMBL with ~2.8M distinct compounds across ~17.8k targets) are democratizing access to high-throughput screening capabilities [14]. This trend enables smaller biotech firms and academic researchers to access advanced screening technologies without large capital investments, potentially increasing innovation diversity [18].

Shift in Business Models: Pharmaceutical companies are increasingly consolidating capital-intensive robotics in fewer, higher-utilization hubs while leveraging CRO networks for flexible capacity [14]. This strategic shift optimizes capital allocation while maintaining access to state-of-the-art screening capabilities as needed throughout the drug discovery pipeline.

The continued evolution of HTS technologies and their integration with computational approaches like metabolic network analysis promises to further transform drug discovery efficiency and success rates. As these technologies mature, we anticipate increased convergence between experimental and computational screening approaches, creating more predictive and physiologically relevant discovery platforms that significantly reduce late-stage attrition rates—the single greatest cost driver in pharmaceutical R&D.

The High Throughput Screening sector demonstrates a robust growth trajectory and expanding economic impact, driven by technological innovations and increasing integration with computational approaches such as metabolic network analysis. The market is projected to grow at a compound annual growth rate of 8-11.8%, reaching $35-83 billion by 2030-2035 depending on segment definitions [15] [16] [14]. This growth is underpinned by the critical role of HTS in addressing fundamental challenges in drug discovery, particularly the need to improve productivity and reduce late-stage attrition.

The integration of HTS with metabolic network analysis represents a particularly promising frontier, creating powerful synergies between experimental screening and computational prediction. Frameworks such as MetDP demonstrate how this integration can systematically prioritize drug targets and repurpose existing therapeutics, potentially accelerating the discovery of treatments for neglected and emerging diseases [13]. As AI-guided screening, advanced cellular models, and label-free technologies continue to mature, we anticipate further transformation of HTS capabilities and applications.

For researchers and drug development professionals, the evolving HTS landscape presents both opportunities and challenges. Success will require multidisciplinary expertise spanning experimental biology, automation engineering, data science, and metabolic modeling. Organizations that effectively integrate these capabilities and leverage the growing ecosystem of CRO services and public resources will be best positioned to capitalize on the continuing evolution of high-throughput screening and its applications in metabolic network optimization and drug discovery.

The integration of metabolic network optimization and high-throughput screening (HTS) is revolutionizing the development of microbial cell factories for pharmaceutical production. This whitepaper provides an in-depth technical guide on core application areas, detailing how advanced computational tools and experimental protocols enable the efficient bioproduction of drugs, biofuels, and complex chemicals. Framed within a broader thesis on metabolic engineering, this document explores the synergistic relationship between in silico pathway design and rapid experimental validation, offering researchers and drug development professionals a roadmap for accelerating the creation of sustainable, high-yield biomanufacturing processes.

The pharmaceutical and chemical industries are undergoing a significant transformation, moving away from traditional fossil-fuel-based linear economies toward a sustainable bio-based circular economy. Central to this shift are microbial cell factories—engineered microorganisms that convert renewable biological resources into value-added chemicals and pharmaceuticals. The establishment of a true bioeconomy has the potential to address global challenges, including climate change, resource depletion, and public health [20] [21]. However, the complexity of biochemicals often limits their industrial scalability, with engineering strategies previously limited to relatively simple compounds. The key to unlocking the production of more complex molecules lies in combining advanced computational pathway design with sophisticated high-throughput screening methodologies to optimize metabolic networks with unprecedented speed and precision.

Computational Pathway Design: From Linear to Balanced Networks

Algorithmic Approaches to Metabolic Engineering

Computational pathway design has emerged as a groundbreaking methodology that diminishes reliance on expensive trial-and-error approaches. Strategies for biosynthetic pathway reconstruction depend on the types of chemicals and host strains: whether the pathway is native, non-native but existing, or completely novel and created through engineering [20].

Graph-based approaches use graph-search algorithms to find pathways through large biochemical networks, while stoichiometric approaches employ constraint-based optimization to ensure pathways are feasible within the host's metabolic context. A newer class of tools, retrobiosynthesis approaches, uses algebraic operations to propose novel reactions not observed in nature [22]. Each method has distinct advantages and limitations in handling pathway linearity, stoichiometric feasibility, and network size.
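The graph-based idea can be illustrated with a breadth-first search over a small reaction graph. This is a minimal sketch on a hypothetical network with abstract compound names, not the search used by any specific tool in [20] or [22]. It also shows the limitation noted above: each edge records only a main substrate and product, so the returned route says nothing about cosubstrates or stoichiometric balance.

```python
from collections import deque

# Hypothetical toy network: each key is a substrate, each value lists the
# products reachable from it through one reaction (cofactors are ignored).
NETWORK = {
    "precursor": ["A", "B"],
    "A": ["C"],
    "B": ["target"],
    "C": ["target"],
}

def shortest_pathway(network, source, target):
    """Breadth-first search; returns the shortest compound path or None if unreachable."""
    queue = deque([[source]])
    visited = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for product in network.get(node, []):
            if product not in visited:
                visited.add(product)
                queue.append(path + [product])
    return None

route = shortest_pathway(NETWORK, "precursor", "target")
print(" -> ".join(route))
```

A stoichiometric method would take a candidate route like this one and test whether it can carry flux once all cosubstrates and byproducts are balanced within the host model.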

The SubNetX Pipeline for Complex Pathway Design

The SubNetX algorithm addresses limitations in existing pathway-design tools by combining the strengths of constraint-based and retrobiosynthesis methods. This pipeline assembles a hypergraph-like network as an intermediate step in pathway design, creating a feasible solution space that connects a target molecule to the native metabolism of the host organism while incorporating mechanistic details like thermodynamics and kinetics [22].

The SubNetX workflow consists of five main steps:

- Reaction network preparation where databases of balanced reactions, target compounds, and precursors are defined

- Graph search of linear core pathways from precursors to targets

- Expansion and extraction of a balanced subnetwork where cosubstrates and byproducts are linked to native metabolism

- Integration of the subnetwork into the host metabolic model

- Ranking of feasible pathways based on yield, enzyme specificity, and thermodynamic feasibility [22]

Table: Comparison of Computational Pathway Design Approaches

| Method Type | Key Features | Advantages | Limitations |
|---|---|---|---|
| Graph-Based | Uses graph-search algorithms | Can navigate large reaction networks | Pathways may lack stoichiometric feasibility |
| Stoichiometric | Constraint-based optimization | Ensures metabolic feasibility | Limited by computational power with large networks |
| Retrobiosynthesis | Proposes novel reactions using algebraic operations | Accesses innovative biochemical routes | May propose biologically challenging reactions |
| SubNetX (Hybrid) | Combines constraint-based and retrobiosynthesis | Balances feasibility with innovation | Complex implementation and parameterization |

Figure 1: SubNetX Workflow for Balanced Pathway Design

Deep Learning in Metabolic Pathway Prediction

Recent advancements in deep learning have ushered in a transformative approach to retrosynthesis. These methods discern key features and intricate patterns of synthetic pathways within vast datasets. Deep learning models for metabolic pathway design utilize embedded data of enzymatic reactions, described using molecular structures of substrate-product pairs, along with enzymatic data represented as amino acid sequences or EC numbers [20].

By integrating embedded enzymatic reaction data with molecular structures, these models can predict single-step enzymatic reactions and multi-step pathways, significantly accelerating the design-build-test-learn (DBTL) cycle in metabolic engineering. The application of architectures such as molecular transformers and reinforcement learning has demonstrated particular promise in navigating the complex chemical and metabolic spaces required for pathway prediction [20].

High-Throughput Screening Platforms for Strain Development

Evolution from Traditional Methods to Automated Workflows

Traditional methods of strain engineering are time-consuming and can limit optimization of strain yield and productivity. The design-build-test-learn (DBTL) cycle, essential for optimizing these processes, has traditionally been lengthy and prone to human error. Addressing challenges in the build phase using automation allows researchers to accelerate the cycle and decrease development costs and time [23].

Advanced robotic systems like the BioXp system exemplify this trend toward automation, enabling rapid construction of genetic variants and pathway libraries with minimal manual intervention. This approach is particularly valuable for exploring the vast sequence space required for effective enzyme engineering and metabolic optimization [23].

Advanced Molecular Screening Technologies

Molecular Sensors on Mother Yeast Cells (MOMS)

The MOMS platform represents a breakthrough in high-throughput screening for extracellular metabolite secretion. This technology utilizes aptamers selectively anchored to mother yeast cells that remain confined during cell division, enabling high-sensitivity detection, high-throughput screening, and rapid single-yeast assays [24].

Key performance metrics of the MOMS platform:

- Detection limit: 100 nM

- Screening throughput: Over 10⁷ single cells per run

- Processing speed: 3.0 × 10³ cells/second, enabling screening of 2.2 × 10⁶ variants in just 12 minutes

- Speed advantage: >30-fold speed boost compared to conventional droplet-based screening [24]
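The throughput figures above can be checked with simple arithmetic. The sketch below multiplies the reported processing speed by the reported run time, then estimates how long the same library would take at the lower sorting rates listed for droplet-based platforms in the comparison table that follows. The function names are illustrative, and the estimate ignores encapsulation and setup overheads.

```python
def cells_screened(rate_per_second: float, minutes: float) -> float:
    """Number of cells processed at a constant rate over a run of given length."""
    return rate_per_second * minutes * 60

def minutes_to_screen(n_cells: float, rate_per_second: float) -> float:
    """Run time in minutes needed to process n_cells at a constant rate."""
    return n_cells / rate_per_second / 60

MOMS_RATE = 3.0e3      # cells/second, as reported for MOMS [24]
RUN_MINUTES = 12       # minutes, as reported for MOMS [24]
LIBRARY_SIZE = 2.2e6   # variants, as reported for MOMS [24]

moms_total = cells_screened(MOMS_RATE, RUN_MINUTES)
print(f"MOMS: {moms_total:.2e} cells in {RUN_MINUTES} min")

# Droplet-sorting rates from the comparison table (upper and lower ends of the range)
for label, rate in [("droplet sorting at 200 cells/s", 200.0),
                    ("droplet sorting at 10 cells/s", 10.0)]:
    hours = minutes_to_screen(LIBRARY_SIZE, rate) / 60
    print(f"{label}: {hours:.1f} h for the same library")
```

The product of the reported rate and run time is 2.16 × 10⁶ cells, which rounds to the 2.2 × 10⁶ variants quoted above.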

Table: Performance Comparison of High-Throughput Screening Platforms

| Screening Platform | Detection Limit | Throughput (cells) | Processing Speed | Key Applications |
|---|---|---|---|---|
| MOMS | 100 nM | >10⁷ per run | 3.0 × 10³ cells/sec | Extracellular secretion analysis |
| FADS | ~10 µM | Limited by encapsulation rate | 10-200 cells/sec | Intracellular molecule analysis |
| RAPID | ~260 µM | Limited by encapsulation rate | ~10 cells/sec | Extracellular secretion with aptamers |
| FACS | Varies | 10³-10⁴ per second | 10³-10⁴ cells/sec | Surface protein and intracellular molecule analysis |

In Vitro Detection for Antibiotic Production

High-throughput screening has been successfully applied to accelerate the breeding of mutated strains for antibiotic production. For spinosad production in Saccharopolyspora spinosa, researchers established an in vitro detection method using a broad substrate promiscuity glycosyltransferase (OleD) from Streptomyces antibioticus for colorimetric detection of pseudoaglycone, the precursor compound for spinosad [25].

Experimental Protocol: Spinosad High-Throughput Screening

- Library Generation: Create mutant libraries of S. spinosa through random mutagenesis

- Reaction Setup: Incubate cell extracts with OleD enzyme and appropriate substrates

- Colorimetric Detection: Monitor color development indicating pseudoaglycone concentration

- Strain Selection: Identify high-producing mutants based on signal intensity

- Validation: Combine with genetic engineering to further enhance production

This approach enabled the selection of mutant strain DUA15, which showed a 0.80-fold increase in spinosad production compared to the original strain. Subsequent genetic engineering yielded strain D15-102 with a 2.9-fold increase in spinosad production [25].

Figure 2: High-Throughput Screening Workflow

Metabolic Engineering for Pharmaceutical Applications

Biopharmaceuticals from Engineered Microbes

In the pharmaceutical industry, antibiotics and vaccines are increasingly produced through engineered microorganisms. Antibiotics are produced by bacteria such as various Streptomyces species, Bacillus brevis, and Pseudomonas aurantiaca, as well as by fungi such as Aspergillus terreus and Penicillium species [26]. Vaccines provide defense against disease-causing organisms by boosting immunity and are developed using bacteria including Clostridium tetani, Corynebacterium diphtheriae, and Bacillus anthracis [26].

The human microbiome has been shown to play a significant role in drug metabolism, efficacy, and safety, influencing individual responses to therapy. Advances in pharmacomicrobiomics—the study of drug-microbiota interactions—are playing a key role in the future of personalized medicine through microbiome-based diagnostics, understanding drug-microbiota interactions, and developing precision probiotics and prebiotics [27].

Case Study: Vanillin Production Optimization

The MOMS platform has been successfully applied to directed evolution for vanillin production. Using aptamer sensors specific to vanillin, researchers identified yeast strains optimized for vanillin secretion, achieving over 2.7 times higher secretion rates than their parental strains [24]. This demonstration highlights the power of combining specific molecular sensors with high-throughput screening for pharmaceutical and flavor compound production.

Research Reagent Solutions for Metabolic Engineering

| Reagent/Category | Function | Example Applications |
|---|---|---|
| Molecular Sensors (MOMS) | Detect extracellular metabolites | Vanillin, ATP, glucose detection |
| Glycosyltransferases (OleD) | Enzyme-coupled detection | Spinosad precursor screening |
| Aptamer Sequences | Target-specific molecular recognition | Customizable for various metabolites |
| Biotin-Streptavidin System | Surface anchoring of sensors | MOMS fabrication on cell walls |
| Fluorescence-Activated Cell Sorting (FACS) | High-speed cell separation | Population enrichment based on surface markers |
| Error-Prone PCR Kits | Random mutagenesis | Library generation for directed evolution |
| Microfluidic Droplet Systems | Single-cell encapsulation | Fluorescence-activated droplet sorting |

Emerging Technologies and Future Perspectives

AI and Machine Learning in Bioprocessing

The integration of artificial intelligence (AI) and machine learning (ML) has revolutionized pharmaceutical microbiology by enabling faster, more accurate microbial detection and data analysis. AI-driven technologies are now used to automate routine testing tasks, reduce human error, and optimize laboratory workflows [27].

Specific applications include:

- Predictive Analytics: AI models can forecast contamination risks and enable proactive interventions

- Antimicrobial Resistance Prediction: Machine learning algorithms analyze large genomic datasets to predict resistance patterns

- Automation of Quality Control: AI tools streamline the review of complex data, improving efficiency and ensuring rigorous quality standards [27]

Model-Informed Drug Development (MIDD)

Model-Informed Drug Development is an essential framework for advancing drug development and supporting regulatory decision-making. MIDD provides quantitative predictions and data-driven insights that accelerate hypothesis testing, assess potential drug candidates more efficiently, reduce costly late-stage failures, and accelerate market access for patients [28].

The "fit-for-purpose" approach in MIDD strategically aligns modeling tools with key questions of interest and context of use across all stages of drug development—from early discovery to post-market lifecycle management. Successful applications include dose-finding and patient drop-out predictions across multiple disease areas [28].

The convergence of computational pathway design, high-throughput screening technologies, and advanced analytics is creating unprecedented opportunities for optimizing metabolic networks in pharmaceutical bioproduction. Tools like SubNetX for balanced pathway design and platforms like MOMS for ultra-high-throughput screening represent the cutting edge of this convergence, enabling researchers to move beyond simple linear pathways to complex, balanced metabolic networks that maximize yield while maintaining cellular viability.

As deep learning algorithms become more sophisticated and screening technologies continue to improve in sensitivity and throughput, the DBTL cycle in metabolic engineering will further accelerate. This progress promises to expand the range of pharmaceuticals and complex chemicals that can be economically produced through microbial fermentation, ultimately contributing to a more sustainable, bio-based economy that addresses pressing global challenges in healthcare and environmental sustainability.

The How: Advanced HTS Platforms, Biosensors, and Automated Workflows in Action

Understanding and optimizing metabolic networks relies on the ability to conduct high-throughput, high-sensitivity analysis of cellular processes. This section details two technological platforms that are reshaping the field: Molecular Sensors on the Membrane surface of Mother yeast cells (MOMS) and droplet-based microfluidic systems. MOMS is a biosensing approach that enables ultra-sensitive, high-speed analysis of extracellular metabolites from single cells. In parallel, droplet microfluidics provides a framework for compartmentalizing biological assays into picoliter to nanoliter volumes, facilitating ultra-high-throughput screening. Both platforms offer distinct advantages for metabolic flux analysis, strain selection, and the generation of high-quality data for constraining and validating computational models, including Flux Balance Analysis (FBA). Their integration offers a promising route to closing the loop between high-throughput experimental data generation and computational model prediction, thereby accelerating research in systems biology, metabolic engineering, and drug development.

Molecular Sensors on Mother Yeast Cells (MOMS)

The MOMS platform is an innovative biosensing system designed for the large-scale, high-sensitivity analysis of extracellular secretions from yeast cells. Its core innovation lies in the selective and dense anchoring of molecular sensors, specifically DNA aptamers, exclusively to the cell wall of mother yeast cells during the budding process [24].

This selective anchoring is achieved through a multi-step functionalization process. First, yeast cells are treated with a membrane-impermeant biotinylating reagent (sulfo-NHS-LC-biotin) that selectively labels surface proteins. Subsequently, streptavidin is attached, followed by biotin-bearing DNA aptamers. During cell division, this engineered coating remains confined to the original mother cell, as daughter cells bud with newly synthesized membranes. This results in a high-density sensor coating (approximately 1.4 × 10^7 sensors per cell) that is not diluted over generations, enabling precise and sustained tracking of secreted molecules from individual mother cells [24].

Performance Metrics and Comparative Advantage

The MOMS platform achieves a performance profile that surpasses existing technologies like Fluorescence-Activated Droplet Sorting (FADS) in several key metrics, as summarized in Table 1.

Table 1: Quantitative Performance Metrics of the MOMS Platform [24]

| Performance Parameter | Metric | Comparative Advantage |
|---|---|---|
| Sensitivity (Limit of Detection) | 100 nM | >10-fold increase over conventional droplet screening |
| Screening Throughput | >10^7 single cells per run | >2-fold improvement over state-of-the-art |
| Processing Speed | 3.0 × 10^3 cells/second | >30-fold speed boost compared to conventional methods |
| Rare Strain Isolation | Identifies top 0.05% of secretory strains from 2.2 × 10^6 variants in 12 minutes | Enables rapid screening of vast mutant libraries |

This combination of high sensitivity, throughput, and speed allows researchers to rapidly interrogate massive populations of yeast variants to identify rare, high-performing strains for metabolic engineering applications, such as the production of valuable pharmaceuticals and chemicals [24].
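The figures in Table 1 can be cross-checked with a short calculation. The sketch below uses only the reported processing speed, library size, and top fraction. The function names are illustrative, and the calculation ignores instrument dead time and sample handling.

```python
def screening_time_minutes(n_cells, cells_per_second):
    """Time needed to pass n_cells through the sorter at a constant rate."""
    return n_cells / cells_per_second / 60.0


def cells_in_top_fraction(n_cells, top_percent):
    """Number of cells that fall in the top `top_percent` of the library."""
    return round(n_cells * top_percent / 100.0)


library_size = 2.2e6  # variants screened (Table 1)
rate = 3.0e3          # cells per second (Table 1)

minutes = screening_time_minutes(library_size, rate)
top_cells = cells_in_top_fraction(library_size, 0.05)
print(f"{minutes:.1f} min to screen, {top_cells} cells in the top 0.05%")
```

At the stated rate, the 2.2 × 10^6-variant library takes roughly 12 minutes, consistent with the table, and the top 0.05% corresponds to about 1,100 cells.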

Experimental Protocol: Fabrication and Screening with MOMS

The following protocol details the key steps for implementing the MOMS platform for metabolic secretion analysis.

- Cell Surface Biotinylation: Harvest yeast cells from a log-phase culture and wash them with an appropriate buffer (e.g., PBS, pH 7.4). Resuspend the cell pellet in a solution of sulfo-NHS-LC-biotin (e.g., 0.5-1.0 mg/mL) and incubate for 30 minutes at room temperature with gentle agitation. This step covalently attaches biotin to amine groups on the cell surface proteins [24].

- Streptavidin Coupling: Wash the biotinylated cells thoroughly to remove excess reagent. Incubate the cells with a solution of streptavidin (e.g., 10-50 µg/mL) for 20-30 minutes on ice. Wash again to remove unbound streptavidin.

- Aptamer Functionalization: Incubate the streptavidin-coated cells with biotinylated DNA aptamers, which are selected to bind the target metabolite (e.g., vanillin, ATP, glucose). Use an aptamer concentration sufficient to achieve a high-density coating (determined via flow cytometry calibration). After incubation, wash the cells to remove unbound aptamers. The resulting cells are now functionalized MOMS sensors [24].

- Secretion Assay and Screening: Resuspend the MOMS-functionalized cells in a suitable growth or assay medium. As the cells metabolize and secrete the target compound, the aptamers on the mother cell surface will capture the molecules directly at the source. The binding event can be transduced into a fluorescent signal via various methods (e.g., using a labeled complementary strand in a displacement assay). The fluorescently labeled mother cells are then analyzed and sorted at high speed using a standard flow cytometer or a specialized microfluidic sorter [24].

- Validation and Downstream Analysis: Sorted cells of interest can be collected and plated for viability checks and proliferation. The metabolic output of selected strains must be validated using gold-standard methods like HPLC-MS or GC-MS to confirm the enhanced secretion phenotype [24].
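For the sorting step, one common way to isolate a small top fraction is to set the sort gate at a rank-based intensity cutoff. The sketch below is a hedged illustration of that idea, not the gating procedure from [24]: the fluorescence values are synthetic (drawn from a log-normal distribution as a stand-in for real cytometry data), and `gate_threshold` is a hypothetical helper.

```python
import random


def gate_threshold(intensities, top_fraction):
    """Intensity cutoff that keeps roughly the top `top_fraction` of events."""
    ranked = sorted(intensities, reverse=True)
    n_keep = max(1, round(len(ranked) * top_fraction))
    return ranked[n_keep - 1]


# Synthetic single-cell fluorescence values (assumption: log-normal spread)
random.seed(0)
events = [random.lognormvariate(0.0, 0.5) for _ in range(20000)]

# Gate on the top 0.05% of events, mirroring the fraction quoted in Table 1
cutoff = gate_threshold(events, top_fraction=0.0005)
sorted_events = [x for x in events if x >= cutoff]
print(f"cutoff = {cutoff:.2f}, events passing gate = {len(sorted_events)}")
```

In practice the gate would usually be set on a calibration sample and then applied to the full library, since ranking every event requires the data to be acquired before any sorting decision is made.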

MOMS Experimental Workflow: From cell preparation to validation.

Droplet-Based Microfluidic Systems

Droplet microfluidics is a powerful technology that involves the discretization of a bulk aqueous sample into thousands to millions of monodisperse, picoliter to nanoliter volume droplets, encapsulated by an immiscible oil phase [29] [30]. Each droplet functions as an isolated micro-reactor, providing a confined environment for chemical or biological assays.

The core operations of droplet-based screening, which emulate and exceed the capabilities of traditional well-plate workflows, are illustrated in Figure 1 and include [29]:

- Droplet Generation: Typically achieved using flow-focusing or T-junction geometries at frequencies exceeding 1 kHz.

- Droplet Incubation: Droplets can be stored off-chip or incubated on-chip in delay lines for extended reaction times.

- Droplet Manipulation: Includes pico-injection for adding reagents, droplet splitting for sampling, and droplet merging for combinatorial screening.

- Droplet Sorting: Based on a fluorescent readout, desired droplets are selectively deflected using techniques such as dielectrophoresis (DEP), acoustic actuation, or magnetic actuation.

This platform is particularly suited to high-throughput screening (HTS) applications because it offers a 10^3 to 10^6-fold reduction in assay volume compared to bulk workflows, drastically reducing reagent costs and consumable use while enabling ultra-high throughput [29].
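The scale of this volume reduction follows from droplet geometry. As a rough sketch, the droplet diameter (50 µm) and the bulk assay volume (10 µL) below are illustrative assumptions rather than values from [29], and the droplet is treated as a perfect sphere.

```python
import math


def droplet_volume_pl(diameter_um):
    """Volume of a spherical droplet in picoliters, given its diameter in µm."""
    radius_um = diameter_um / 2.0
    volume_um3 = (4.0 / 3.0) * math.pi * radius_um ** 3
    return volume_um3 / 1000.0  # 1 pL = 1000 µm^3


def fold_reduction(bulk_volume_ul, droplet_pl):
    """How many times smaller the droplet is than a bulk assay volume."""
    return bulk_volume_ul * 1e6 / droplet_pl  # 1 µL = 1e6 pL


volume = droplet_volume_pl(50.0)    # assumed 50 µm droplet
fold = fold_reduction(10.0, volume)  # assumed 10 µL bulk assay
print(f"droplet volume = {volume:.1f} pL, fold reduction = {fold:.2e}")
```

Under these assumptions a single droplet holds about 65 pL, giving a reduction on the order of 10^5, which sits within the 10^3 to 10^6 range quoted above.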

Performance Metrics and Applications

Droplet microfluidics excels in processing vast numbers of samples. While its absolute sensitivity can be assay-dependent, the massive volume reduction significantly increases the local concentration of target molecules, leading to enhanced signal-to-noise ratios and enabling the detection of rare events [30].

Table 2: Key Characteristics and Applications of Droplet Microfluidics [29] [30]

| Characteristic | Specification / Impact | Application in Metabolic Research |
|---|---|---|
| Droplet Volume | Femtoliters to Nanoliters | Massive reduction in reagent cost and sample consumption. |
| Throughput | >500 Hz generation; 10^3 - 10^4 droplets/sec for sorting | Ultra-high-throughput screening of microbial libraries (>10^5 samples/day). |
| Key Operations | Injection, merging, splitting, incubation | Enables multi-step assays, combinatorial screening, and sample cleanup. |
| Compartmentalization | Creates isolated micro-reactors | Prevents cross-contamination, allows single-cell analysis, links genotype to phenotype. |
| Rare Event Recovery | Reliable sorting and dispensing into microwells [31] | Isolation of rare, high-producing metabolic strains for further cultivation. |

A primary application in metabolic network research is the screening of mutant libraries for strains with enhanced production of a target metabolite. This is often done using enzyme-coupled assays that generate a fluorescent product inside the droplet, allowing for the sorting of high-producing cells [29] [24].
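Single-cell analysis in droplets depends on how cells are distributed during encapsulation. A common modeling assumption, not stated in the cited sources, is that cell loading follows a Poisson distribution with mean occupancy λ (average cells per droplet). The sketch below shows the resulting trade-off between empty droplets and droplets holding more than one cell. The λ values are illustrative.

```python
import math


def poisson_pmf(k, lam):
    """Probability that a droplet contains exactly k cells at mean occupancy lam."""
    return math.exp(-lam) * lam ** k / math.factorial(k)


def loading_summary(lam):
    """Fractions of empty, single-cell, and multi-cell droplets."""
    p_empty = poisson_pmf(0, lam)
    p_single = poisson_pmf(1, lam)
    p_multi = 1.0 - p_empty - p_single
    # Share of occupied droplets that hold exactly one cell
    single_among_occupied = p_single / (1.0 - p_empty)
    return p_empty, p_single, p_multi, single_among_occupied


for lam in (0.1, 0.3, 1.0):  # assumed mean cells per droplet
    p_empty, p_single, p_multi, purity = loading_summary(lam)
    print(f"lambda={lam}: empty={p_empty:.3f}, single={p_single:.3f}, "
          f"multi={p_multi:.3f}, single among occupied={purity:.3f}")
```

Lower λ improves the link between one genotype and one droplet but leaves most droplets empty, so the number of cells actually screened is smaller than the raw droplet sorting rate suggests.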

Experimental Protocol: Metabolite Screening via FADS

The following outlines a standard protocol for Fluorescence-Activated Droplet Sorting (FADS) to screen for microbial variants based on extracellular metabolite secretion.

- Droplet Generation and Cell Encapsulation: A microfluidic droplet generator is used to create a stable water-in-oil emulsion. The aqueous phase contains a suspension of single microbial cells (e.g., yeast or bacteria) and a fluorescent sensor system for the target metabolite. The sensor can be an enzyme-coupled assay, an RNA aptamer, or a co-encapsulated biosensor cell [29] [24] [30].

- Droplet Incubation: The generated droplets are collected in a capillary tube or stored in an off-chip reservoir to allow time for the cells to grow and secrete the metabolite. Alternatively, a large-volume on-chip delay line can be used to extend incubation time without removing the droplets from the device [29].

- Signal Generation: The secreted metabolite diffuses within the droplet and interacts with the sensor. For an enzyme-coupled assay, this interaction produces a fluorescent product. The fluorescence intensity is proportional to the metabolite concentration [29] [24].