Jaccard Similarity in Biomedical Data Analysis: From Reconstruction to Clinical Application

Abstract

This article provides a comprehensive examination of Jaccard similarity analysis across diverse biomedical reconstruction approaches, offering researchers and drug development professionals both theoretical foundations and practical methodologies. We explore Jaccard's mathematical foundations and its application in network pharmacology, drug repurposing, and knowledge graph alignment. The content addresses critical troubleshooting considerations for large-scale implementations and presents rigorous validation frameworks through comparative analysis with alternative similarity metrics. By synthesizing recent studies, this work aims to help researchers apply set similarity measures to challenges in drug discovery, network analysis, and biomedical data integration.

Understanding Jaccard Similarity: Mathematical Foundations and Biomedical Relevance

The Jaccard Similarity Coefficient, also known as the Jaccard Index, is a foundational statistic for gauging the similarity and diversity of sample sets [1]. Developed independently by Grove Karl Gilbert in 1884 and Paul Jaccard in the early 20th century, it is defined as the size of the intersection of two sets divided by the size of their union [1]. This simple yet powerful ratio, often called Intersection over Union (IoU), provides an intuitive measure that ranges from 0 (no similarity) to 1 (identical sets) [2]. Its mathematical robustness and straightforward interpretation have made it ubiquitous across fields from computer vision and data mining to network analysis and ecology.

This article explores the core principles of the Jaccard Similarity Coefficient, detailing its standard formulation, probabilistic interpretations, and weighted extensions. We objectively compare its performance against alternative similarity measures and provide experimental data supporting its utility in diverse research contexts, particularly focusing on its emerging applications in complex network analysis and security-critical systems.

Mathematical Foundations

Core Definition and Formula

The Jaccard Similarity Coefficient measures similarity between finite non-empty sample sets. For two sets A and B, it is formally defined as:

J(A,B) = |A ∩ B| / |A ∪ B| = |A ∩ B| / (|A| + |B| - |A ∩ B|) [1]

This formula produces a value between 0 and 1, where 0 indicates no shared elements between sets, and 1 indicates perfectly identical sets [3]. The corresponding Jaccard distance, which measures dissimilarity, is calculated as d_J(A,B) = 1 - J(A,B) [1] [4].
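The definition and the derived distance translate directly into a few lines of Python. This is a minimal sketch; the gene sets are illustrative only, and treating two empty sets as identical (J = 1) is an assumed convention, since the ratio is undefined when the union is empty.

```python
def jaccard(a: set, b: set) -> float:
    """Jaccard similarity |A ∩ B| / |A ∪ B|."""
    union = len(a | b)
    if union == 0:
        # Assumed convention: two empty sets are treated as identical.
        return 1.0
    return len(a & b) / union


def jaccard_distance(a: set, b: set) -> float:
    """Jaccard distance d_J = 1 - J."""
    return 1.0 - jaccard(a, b)


# Illustrative gene sets (not taken from any cited study).
A = {"TP53", "BRCA1", "EGFR"}
B = {"TP53", "EGFR", "KRAS", "MYC"}
print(jaccard(A, B))           # 2 / 5 = 0.4
print(jaccard_distance(A, B))  # 0.6
```

The second form of the formula gives the same result: |A| + |B| - |A ∩ B| = 3 + 4 - 2 = 5.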

Table 1: Interpretation of Jaccard Similarity Values

| Similarity Value | Interpretation | Set Relationship |
|---|---|---|
| J = 0 | No similarity | Intersection is empty; no common elements |
| 0 < J < 1 | Partial similarity | Some shared elements, some unique elements |
| J = 1 | Perfect similarity | Sets are identical |

Binary Attribute Similarity

For asymmetric binary attributes (where 0 and 1 have different importance), the Jaccard index is calculated using frequency counts of attribute combinations [1]:

J = M₁₁ / (M₀₁ + M₁₀ + M₁₁)

Where:

- M₁₁ = number of attributes where both A and B are 1

- M₀₁ = number of attributes where A is 0 and B is 1

- M₁₀ = number of attributes where A is 1 and B is 0

- M₀₀ = number of attributes where both are 0 (excluded as unimportant for asymmetric data) [1]

This formulation is particularly valuable in market basket analysis and recommendation systems, where co-presence of items (1s) is more significant than co-absence (0s) [1].

Key Comparisons with Alternative Measures

Jaccard vs. Simple Matching Coefficient

The Simple Matching Coefficient (SMC) counts joint absences as agreement: it places M₁₁ + M₀₀ in the numerator and all four counts (M₀₁ + M₁₀ + M₁₁ + M₀₀) in the denominator, whereas Jaccard drops M₀₀ from both [1]. This makes Jaccard more appropriate for asymmetric binary data, where joint absences are not meaningful.

Table 2: Jaccard Index vs. Simple Matching Coefficient

| Characteristic | Jaccard Index | Simple Matching Coefficient (SMC) |
|---|---|---|
| Formula | M₁₁ / (M₀₁ + M₁₀ + M₁₁) | (M₁₁ + M₀₀) / (M₀₁ + M₁₀ + M₁₁ + M₀₀) |
| Handling of M₀₀ | Excludes (ignores joint absences) | Includes (counts joint absences as agreement) |
| Best Use Case | Asymmetric binary attributes (e.g., market basket analysis) | Symmetric binary attributes (e.g., gender comparison) |
| Example: two small baskets in a supermarket with 1000 products [1] | ≈ 0.333 | 0.998 |
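The supermarket comparison can be reproduced from the four frequency counts. This Python sketch assumes the two baskets are {salt, pepper} and {salt, sugar} (the usual form of this illustration); the product indices and helper names are our own, and returning 1.0 for two all-zero vectors is an assumed convention.

```python
def match_counts(x, y):
    """Return (M11, M01, M10, M00) for two equal-length 0/1 vectors."""
    if len(x) != len(y):
        raise ValueError("vectors must have the same length")
    m11 = sum(1 for a, b in zip(x, y) if a == 1 and b == 1)
    m01 = sum(1 for a, b in zip(x, y) if a == 0 and b == 1)
    m10 = sum(1 for a, b in zip(x, y) if a == 1 and b == 0)
    m00 = sum(1 for a, b in zip(x, y) if a == 0 and b == 0)
    return m11, m01, m10, m00


def jaccard_binary(x, y):
    """M11 / (M01 + M10 + M11): joint absences (M00) are ignored."""
    m11, m01, m10, _ = match_counts(x, y)
    denom = m01 + m10 + m11
    return m11 / denom if denom else 1.0  # assumed convention for two all-zero vectors


def smc(x, y):
    """(M11 + M00) / (M01 + M10 + M11 + M00): joint absences count as agreement."""
    m11, m01, m10, m00 = match_counts(x, y)
    return (m11 + m00) / (m01 + m10 + m11 + m00)


# 1000 products; hypothetical indices 0 = salt, 1 = pepper, 2 = sugar.
n_products = 1000
basket_1 = [0] * n_products
basket_2 = [0] * n_products
basket_1[0] = basket_1[1] = 1  # salt, pepper
basket_2[0] = basket_2[2] = 1  # salt, sugar

print(round(jaccard_binary(basket_1, basket_2), 3))  # 0.333
print(smc(basket_1, basket_2))                       # 0.998
```

The 997 products absent from both baskets dominate the SMC, which is exactly the behavior Jaccard is designed to avoid for asymmetric data.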

Jaccard vs. Overlap Coefficient

The Overlap Coefficient (Szymkiewicz–Simpson coefficient) measures similarity as the size of the intersection divided by the size of the smaller set [5]:

Overlap(A,B) = |A ∩ B| / min(|A|,|B|)

This provides insight into whether one set is a subset of another, which Jaccard does not directly reveal [5]. The Overlap Coefficient may be preferable when comparing sets of different sizes and understanding subset relationships is important.
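The difference between the two coefficients is easiest to see on a subset and on neighbor sets in a small graph. This Python sketch reproduces the 5-clique figures listed in Table 3, assuming each vertex's neighbor set excludes the vertex itself; returning 0.0 for an empty set in the overlap function is an assumed convention.

```python
from itertools import combinations


def jaccard(a: set, b: set) -> float:
    """|A ∩ B| / |A ∪ B|; two empty sets are treated as identical (assumed convention)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def overlap(a: set, b: set) -> float:
    """Szymkiewicz-Simpson overlap: |A ∩ B| / min(|A|, |B|)."""
    smaller = min(len(a), len(b))
    if smaller == 0:
        return 0.0  # assumed convention when either set is empty
    return len(a & b) / smaller


# Subset case: overlap saturates at 1, while Jaccard still reflects the size gap.
small, large = {"a", "b"}, {"a", "b", "c", "d"}
print(jaccard(small, large), overlap(small, large))  # 0.5 1.0

# Neighbor sets in a 5-clique (vertices 0..4, each vertex excluded from its own set).
nodes = range(5)
edges = list(combinations(nodes, 2))
neighbours = {v: {u for e in edges if v in e for u in e if u != v} for v in nodes}
print(jaccard(neighbours[0], neighbours[1]))  # 0.6
print(overlap(neighbours[0], neighbours[1]))  # 0.75
```

Two clique vertices share three neighbors out of five distinct ones (Jaccard 3/5), but three out of the smaller set's four (Overlap 3/4).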

Advanced Interpretations and Extensions

Probability Interpretation

The Jaccard Coefficient admits a direct probabilistic interpretation: it is the probability that an element drawn uniformly at random from A ∪ B also lies in A ∩ B. More generally, for a measure μ on a measurable space X, the coefficient is defined as [1]:

J_μ(A,B) = μ(A∩B) / μ(A∪B)

This formulation extends the coefficient to probability measures and continuous spaces [1].

Weighted Jaccard Similarity

For weighted vectors x = (x₁, x₂, ..., xₙ) and y = (y₁, y₂, ..., yₙ) where xᵢ, yᵢ ≥ 0, the weighted Jaccard similarity generalizes to [1]:

J_W(x,y) = Σᵢ min(xᵢ,yᵢ) / Σᵢ max(xᵢ,yᵢ)

This weighted version is crucial for comparing real-valued vectors, frequency counts, or probability distributions rather than simple binary presence/absence.
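A minimal Python sketch of the weighted formula, with a check that it reduces to the ordinary set Jaccard on 0/1 indicator vectors. The abundance profiles are hypothetical, and returning 1.0 for two all-zero vectors is an assumed convention.

```python
def weighted_jaccard(x, y):
    """Weighted Jaccard: sum_i min(x_i, y_i) / sum_i max(x_i, y_i), for x_i, y_i >= 0."""
    if len(x) != len(y):
        raise ValueError("vectors must have the same length")
    if any(v < 0 for v in x) or any(v < 0 for v in y):
        raise ValueError("weighted Jaccard is defined for non-negative entries")
    num = sum(min(a, b) for a, b in zip(x, y))
    den = sum(max(a, b) for a, b in zip(x, y))
    return num / den if den else 1.0  # assumed convention for two all-zero vectors


# Hypothetical normalized abundance profiles over four features.
x = [0.5, 0.3, 0.2, 0.0]
y = [0.4, 0.1, 0.2, 0.3]
print(weighted_jaccard(x, y))  # 0.7 / 1.3 ≈ 0.538

# On 0/1 indicator vectors it reduces to the ordinary set Jaccard.
print(weighted_jaccard([1, 1, 0, 1], [1, 0, 1, 1]))  # 2 / 4 = 0.5
```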

Experimental Applications and Protocols

Power Systems Security: Tripped Branch Identification

Experimental Protocol: A 2022 study applied Jaccard similarity for identifying tripped branches in power systems under false data injection attacks [6]. Researchers used current measurements from Phasor Measurement Units (PMUs) instead of traditional voltage measurements.

Methodology:

- Formulated a novel objective function using Jaccard Dissimilarity Index (JDI)

- Created a generalized mathematical model of False Data Injection (FDI) attacks

- Implemented the approach on IEEE benchmark systems in MATLAB

- Compared identification rates against established methods

Results: The Jaccard-based method achieved competitive identification rates, successfully identifying parallel branch tripping which traditional voltage-based methods failed to detect [6]. The approach remained effective even under varying attack factors and locations.

Graph Condensation for Privacy-Preserving Link Prediction

Experimental Protocol: A 2025 study introduced HyDRO+, a graph condensation method using algebraic Jaccard similarity for privacy-preserving link prediction [7].

Methodology:

- Replaced random node selection with guided initialization using algebraic Jaccard similarity

- Leveraged local connectivity information to optimize condensed graph structures

- Evaluated on four real-world privacy-sensitive networks

- Measured link prediction accuracy, privacy preservation, computational time, and storage requirements

Results: HyDRO+ achieved at least 95% of the link prediction accuracy of original networks while reducing storage requirements by 452× and achieving nearly 20× faster training on the Computers dataset [7]. The condensed graphs demonstrated superior privacy preservation against membership inference attacks.

Performance Comparison Across Applications

Table 3: Experimental Performance of Jaccard-Based Methods

| Application Domain | Baseline/Alternative Method | Jaccard-Based Method Performance | Key Advantage |
|---|---|---|---|
| Power Systems Identification [6] | Voltage phasor angle-based methods | Competitive identification rates, solves parallel branch ambiguity | Uses current measurements, works under FDI attacks |
| Graph Condensation [7] | Random node initialization | At least 95% of original link prediction accuracy, 452× storage reduction, nearly 20× faster training (Computers dataset) | Better privacy preservation, maintains local connectivity |
| Social Network Recommendation [5] | Overlap Coefficient | Varies by graph structure; Jaccard = 0.6, Overlap = 0.75 for 5-clique | More conservative similarity assessment |

Research Reagent Solutions

Table 4: Essential Research Materials for Jaccard Similarity Experiments

| Research Tool | Function/Purpose | Example Implementation |
|---|---|---|
| MATLAB Software | Simulation and analysis of power systems | IEEE benchmark system implementation for tripped branch identification [6] |
| Python with scikit-learn | General-purpose data mining and similarity computation | sklearn.metrics.jaccard_score for binary vectors [4] |
| RAPIDS cuGraph | Large-scale graph analytics on GPUs | Jaccard Similarity and Overlap Coefficient algorithms for vertex comparison [5] |
| R tokenizers Package | Text tokenization for document similarity | Horizon 2020 project objective analysis for collaboration matching [8] |
| Graph Visualization Tools | Structural analysis and interpretation | Privacy-preserving condensed graph representation [7] |

The Jaccard Similarity Coefficient provides a fundamental, mathematically robust approach to similarity measurement with applications spanning diverse research domains. Its core strength lies in its simple interpretation as Intersection over Union, with extensions available for weighted data, probability measures, and asymmetric binary attributes.

The studies reviewed here show Jaccard-based methods achieving competitive performance in critical applications, including power systems security and privacy-preserving graph analysis, and in some cases succeeding where baselines fail (for example, identifying parallel branch tripping that voltage-based methods could not detect). The continued development of Jaccard-inspired approaches like the algebraic Jaccard similarity for graph condensation highlights its ongoing relevance to modern data science challenges.

For researchers in drug development and related fields, the Jaccard Coefficient offers a validated tool for similarity assessment, though careful consideration of its exclusion of joint absences is necessary when selecting appropriate similarity measures for specific applications.

Set operations serve as the mathematical backbone for numerous computational methods in bioinformatics and network analysis. Among these, the Jaccard index has emerged as a critical tool for quantifying similarity, enabling researchers to compare datasets, reconstruct biological networks, and predict molecular interactions. This guide compares algorithms for reconstructing biological pathways and predicting drug interactions, with Jaccard similarity serving both as an evaluation metric and as a feature-level similarity measure, and provides experimental data and protocols to guide method selection.

Defining the Jaccard Index

The Jaccard index, also known as the Jaccard similarity coefficient, is a fundamental set operation used to quantify the similarity between two finite sample sets. It is defined as the size of the intersection of the sets divided by the size of their union [9].

For two sets A and B, the Jaccard Index J is calculated as: J(A,B) = |A ∩ B| / |A ∪ B|

This simple yet powerful metric produces a value between 0 (no overlap) and 1 (identical sets), providing an intuitive measure of similarity that has proven invaluable across computational biology applications, from comparing transcription factor binding sites to evaluating reconstructed biological networks [9] [10].

Algorithmic Performance Comparison

The performance of network reconstruction and interaction prediction algorithms varies significantly based on their underlying methodologies and the biological context. The following table summarizes the experimental performance of key approaches evaluated in different studies.

Table 1: Performance Comparison of Reconstruction and Prediction Algorithms

| Algorithm | Application Context | Key Performance Metrics | Reference Dataset |
|---|---|---|---|
| Prize-Collecting Steiner Forest (PCSF) | Pathway reconstruction | Most balanced performance in precision and recall; Best F1-score [11] | 28 pathways from NetPath [11] |
| All-Pairs Shortest Path (APSP) | Pathway reconstruction | Highest recall, but lowest precision [11] | 28 pathways from NetPath [11] |
| Personalized PageRank with Flux (PRF) | Pathway reconstruction | Balanced performance in precision and recall [11] | 28 pathways from NetPath [11] |
| Heat Diffusion with Flux (HDF) | Pathway reconstruction | Balanced performance in precision and recall [11] | 28 pathways from NetPath [11] |
| CNN-DDI | Drug-Drug Interaction (DDI) prediction | AUPR: 0.9251; Accuracy: 0.8871; F1-score: 0.8556 [12] | 572 drugs, 74,528 DDI events from DrugBank [12] |
| Gradient Boosting Decision Tree (GBDT) | Drug-Drug Interaction (DDI) prediction | AUPR: 0.8827; Accuracy: 0.8327; Lower than CNN-DDI [12] | 572 drugs, 74,528 DDI events from DrugBank [12] |
| Random Forest (RF) | Drug-Drug Interaction (DDI) prediction | AUPR: 0.8470; Accuracy: 0.7837; Lower than CNN-DDI [12] | 572 drugs, 74,528 DDI events from DrugBank [12] |

Experimental Protocols for Method Evaluation

To ensure reproducible results, researchers must follow standardized experimental protocols. Below are detailed methodologies for key evaluations cited in this guide.

Protocol 1: Benchmarking Network Reconstruction Algorithms

This protocol is adapted from the performance assessment of network reconstruction algorithms on multiple reference interactomes [11].

- Interactome Preparation: Obtain several protein-protein interaction networks, such as PathwayCommons, HIPPIE, STRING, and OmniPath. Map all protein identifiers to a standardized namespace (e.g., reviewed UniProt IDs).

- Gold Standard Definition: Select a set of curated pathways from a database like NetPath to serve as the ground truth for evaluation.

- Algorithm Execution: Run the network reconstruction algorithms (e.g., APSP, HDF, PRF, PCSF) on each reference interactome using the proteins from the gold standard pathways as seed nodes.

- Similarity Calculation: For each reconstructed subnetwork, calculate the Jaccard index against the corresponding gold standard pathway. The sets for this calculation are the edges in the reconstructed network and the edges in the gold standard pathway [11].

- Performance Metrics Calculation: Compute standard metrics for each reconstruction, where True Positives (TP) are edges correctly reconstructed, False Positives (FP) are incorrect edges, and False Negatives (FN) are missing edges:

  - Precision: TP / (TP + FP)

  - Recall: TP / (TP + FN)

  - F1-Score: 2 * (Precision * Recall) / (Precision + Recall)
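Because TP, FP and FN are all defined on edge sets, the Jaccard index from the similarity step can be computed from the same counts as the other metrics: J = |P ∩ G| / |P ∪ G| = TP / (TP + FP + FN). The Python sketch below assumes undirected, unweighted edges; the protein pairs are a toy example, not a NetPath pathway.

```python
def normalize_edges(edges):
    """Treat interactions as undirected: (u, v) and (v, u) become the same element."""
    return {frozenset(e) for e in edges}


def evaluate_reconstruction(predicted, gold):
    """Precision, recall, F1 and Jaccard between reconstructed and gold-standard edge sets."""
    p, g = normalize_edges(predicted), normalize_edges(gold)
    tp = len(p & g)  # edges correctly reconstructed
    fp = len(p - g)  # reconstructed edges not in the gold standard
    fn = len(g - p)  # gold-standard edges that were missed
    precision = tp / (tp + fp) if p else 0.0
    recall = tp / (tp + fn) if g else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    # |P ∩ G| / |P ∪ G| expressed with the same counts: TP / (TP + FP + FN).
    jaccard = tp / (tp + fp + fn) if (tp + fp + fn) else 1.0
    return {"precision": precision, "recall": recall, "f1": f1, "jaccard": jaccard}


gold = [("EGFR", "GRB2"), ("GRB2", "SOS1"), ("SOS1", "HRAS"), ("HRAS", "RAF1")]
predicted = [("GRB2", "EGFR"), ("GRB2", "SOS1"), ("SOS1", "KRAS")]
print(evaluate_reconstruction(predicted, gold))
```

Here TP = 2, FP = 1 and FN = 2, so precision is 2/3, recall 1/2, F1 4/7 and Jaccard 2/5. Note that Jaccard penalizes both kinds of error in a single number, which is why it sits below both precision and recall.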

Protocol 2: Evaluating DDI Prediction Using CNN-DDI

This protocol outlines the procedure for training and evaluating the CNN-DDI model for drug-drug interaction prediction [12].

- Data Collection: Download drug and DDI data from DrugBank. The dataset should include drugs, confirmed DDIs, and drug features (categories, targets, pathways, enzymes).

- Feature Vector Construction: For each drug, create feature vectors based on its associated categories, targets, pathways, and enzymes.

- Similarity Matrix Calculation: Compute the Jaccard similarity between all pairs of drugs based on their feature vectors. For features, this is calculated as the size of the intersection of two drugs' feature sets divided by the size of the union of their feature sets [12].

- Model Training: Build a Convolutional Neural Network (CNN) architecture, typically comprising convolutional layers, fully connected layers, and a softmax output layer. The input is the feature similarity representation of a drug pair.

- Model Evaluation: Perform cross-validation and report performance metrics, including Accuracy, F1-score, and the Area Under the Precision-Recall Curve (AUPR).
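The similarity-matrix step can be vectorized once each drug's categories, targets, pathways and enzymes have been encoded as one binary row. This NumPy sketch assumes that encoding; the drug names and feature labels are placeholders, not DrugBank identifiers. If separate matrices per feature type are wanted, the function can be called once per feature matrix. Treating two empty feature sets as identical is an assumed convention.

```python
import numpy as np


def jaccard_matrix(features: np.ndarray) -> np.ndarray:
    """Pairwise Jaccard similarity between rows of a binary (drugs x features) matrix."""
    X = (features > 0).astype(np.int64)
    inter = X @ X.T                                   # |F_i ∩ F_j|
    sizes = X.sum(axis=1)                             # |F_i|
    union = sizes[:, None] + sizes[None, :] - inter   # |F_i| + |F_j| - |F_i ∩ F_j|
    sim = np.ones_like(inter, dtype=float)            # assumed: two empty sets count as identical
    np.divide(inter, union, out=sim, where=union > 0)
    return sim


# Placeholder drugs and feature labels (hypothetical).
drug_features = {
    "drug_A": {"target:T1", "enzyme:CYP3A4", "pathway:P1"},
    "drug_B": {"target:T1", "enzyme:CYP3A4"},
    "drug_C": {"target:T2", "pathway:P2"},
}
vocab = sorted(set().union(*drug_features.values()))
index = {f: k for k, f in enumerate(vocab)}
M = np.zeros((len(drug_features), len(vocab)), dtype=np.int64)
for row, feats in enumerate(drug_features.values()):
    for f in feats:
        M[row, index[f]] = 1

S = jaccard_matrix(M)
print(np.round(S, 3))
```

The matrix product counts shared features for every pair at once, and the union follows from inclusion-exclusion, so no explicit Python loop over drug pairs is needed.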

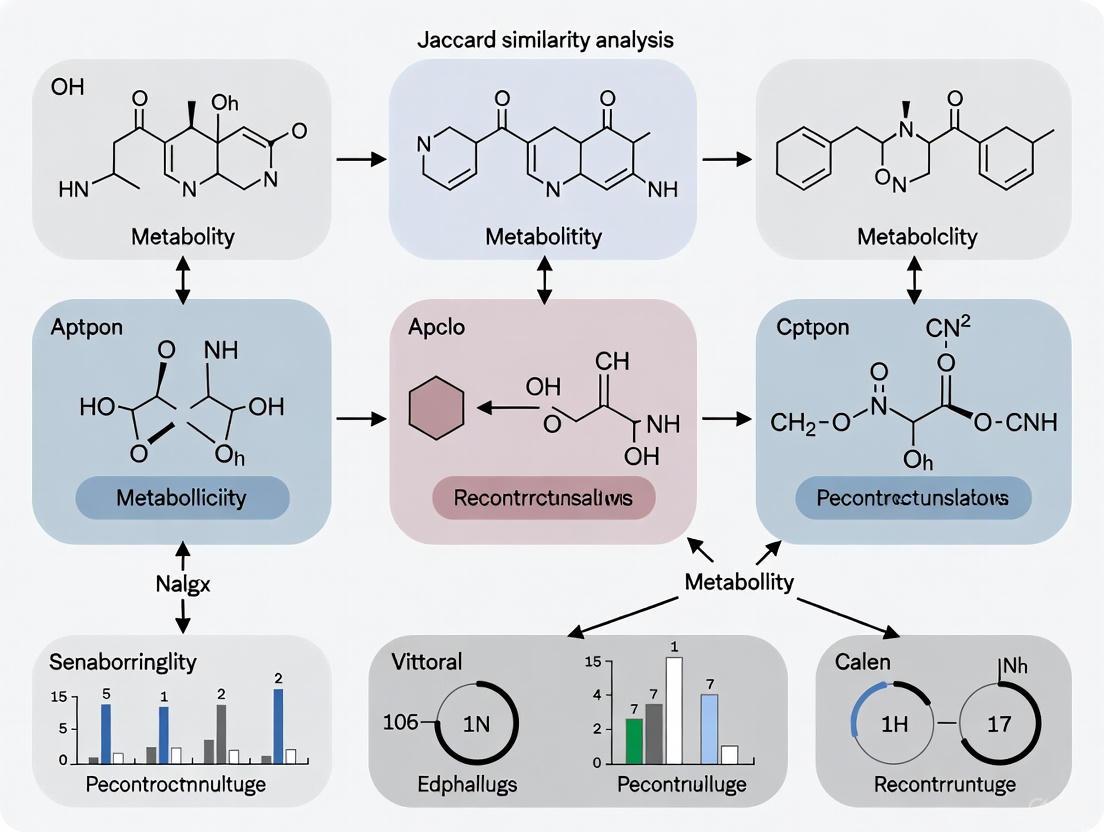

Visualizing Workflows and Relationships

The following diagrams, generated with Graphviz, illustrate the core logical relationships and experimental workflows described in this guide.

Diagram 1: Jaccard Index Logic

Diagram 2: Network Reconstruction Evaluation

The Scientist's Toolkit

Successful implementation of the protocols above requires specific data resources and software tools. The following table details essential "research reagent solutions" for this field.

Table 2: Essential Resources for Network Reconstruction and DDI Prediction

| Resource Name | Type | Primary Function | Relevance to Set Operations |
|---|---|---|---|
| DrugBank [13] [12] | Database | Provides comprehensive drug information, including structures, targets, and interactions. | Source for constructing drug feature sets; enables Jaccard similarity calculation between drugs. |
| PathwayCommons [11] | Database | Aggregates pathway information from multiple sources, detailing molecular interactions. | Serves as a reference interactome and source of gold standard pathways for benchmarking. |
| STRING [11] | Database | Provides a comprehensive protein-protein interaction network with confidence scores. | Used as a weighted reference interactome for network reconstruction algorithms. |
| MACRO-APE [9] | Software Tool | Compares transcription factor binding site models. | Implements a Jaccard index-based similarity measure for comparing two sets of binding sites. |
| OmniPath [11] | Database | Provides a curated collection of literature-based signaling pathways. | Used as a high-quality reference interactome for network reconstruction. |
| Jaccard Index Code | Algorithm | A simple script to compute the similarity between two sets. | Foundational operation for comparing outputs, features, or networks in many computational methods. |

In conclusion, the selection of an appropriate computational method hinges on the specific biological question. For pathway reconstruction, PCSF offers a robust balance of precision and recall, while for DDI prediction, deep learning approaches such as CNN-DDI, which integrate multiple feature sets via similarity metrics, outperformed the GBDT and RF baselines in the cited benchmark [12]. The foundational principle of set similarity, exemplified by the Jaccard index, remains a common thread enabling quantitative comparison and integration across diverse biological data types.

Modern biomedical research is increasingly characterized by the generation of high-dimensional data (HDD), where the number of variables (p) measured per observation can reach into the millions, often far exceeding the number of biological samples (n) [14]. Prominent examples include various omics technologies such as genomics, transcriptomics, proteomics, and metabolomics, where thousands to millions of molecular features are measured simultaneously [14] [15]. Electronic health records (EHRs) also contribute to this data deluge, containing extensive variables recorded for each patient across multiple visits [14] [16].

A fundamental characteristic of many such datasets is their inherent sparsity—while the measurement space is vast, the underlying biological signals are typically concentrated in a small subset of relevant variables [15]. For instance, among tens of thousands of human genes, only a small fraction may be relevant to a specific disease like leukemia [15]. This sparsity arises because most biological systems operate through specific, limited pathways rather than engaging all possible molecular interactions simultaneously.

The computational and statistical challenges presented by these "large p, small n" problems are substantial. Traditional statistical methods often fail in this setting, requiring specialized approaches that can identify meaningful patterns while avoiding overfitting [14] [15]. This comparison guide examines how different computational approaches, particularly those leveraging Jaccard similarity, address these challenges in biomedical data analysis.

Understanding Sparse, High-Dimensional Biomedical Data

Characteristics and Challenges

High-dimensional biomedical data exhibits several distinctive characteristics that complicate analysis. The dimensionality p can range from several dozen to millions of variables, creating significant statistical challenges even when the number of subjects remains modest [14]. This high dimensionality leads to the "curse of dimensionality," where traditional statistical methods lose power and reliability [14].

The sparse structure of these datasets provides both a challenge and an opportunity. While the measured space is vast, the actual signals of interest are typically concentrated in a small subset of features [15]. This sparsity manifests in different forms: feature sparsity where only a small fraction of variables are informative, temporal sparsity in longitudinal data where changes occur at specific timepoints, and sample sparsity where relevant patterns are present only in specific patient subgroups [17] [16].

Key analytical difficulties in this domain include:

- Multiple testing problems when evaluating thousands of variables simultaneously [14]

- Model overfitting when the number of parameters exceeds or approaches the sample size [15]

- Correlation structure complexity both within and between variables [17]

- Noise accumulation from many uninformative variables [15]

Table 1: Common Types of Sparse, High-Dimensional Biomedical Data

| Data Type | Typical Dimensionality | Sparsity Characteristics | Common Applications |
|---|---|---|---|
| Genomic Variant Data | 10^5 - 10^6 SNPs | Most loci non-informative for specific trait | Disease association studies, precision medicine |
| Gene Expression | 10^4 - 10^5 transcripts | Limited active pathways per condition | Biomarker discovery, drug response prediction |
| Metabolomic Profiles | 10^2 - 10^3 metabolites | Subset of altered metabolites per condition | Diagnostic development, pathway analysis |
| Electronic Health Records | 10^3 - 10^4 clinical variables | Limited relevant factors per health outcome | Clinical decision support, outcome prediction |

Jaccard Similarity: Core Principles and Biomedical Adaptations

Mathematical Foundation

The Jaccard similarity coefficient is a set-based similarity measure originally introduced to quantify the similarity between two sample sets [8]. For two sets S_X and S_Y, the Jaccard coefficient is defined as the size of their intersection divided by the size of their union:

J(S_X, S_Y) = |S_X ∩ S_Y| / |S_X ∪ S_Y|

Diagram 1: Jaccard similarity measures the ratio of intersection to union.

The coefficient takes values in [0, 1], where 0 indicates disjoint sets with no common elements and 1 indicates identical sets [8]. This measure is particularly effective for sparse binary data as it focuses on co-occurring elements while normalizing for the total number of distinct elements present [8].

In biomedical applications, the basic Jaccard formulation has been extended to handle complex data structures:

Weighted Jaccard Similarity accommodates non-binary counts or intensities, incorporating the magnitude of measurements rather than mere presence/absence [18].

Generalized Jaccard Similarity extends the concept to multiple sets, calculating the ratio of the intersection of all sets to their union [8].
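A minimal Python sketch of the multi-set form, assuming the intersection and union are taken over all k sets at once; the metabolite sets are hypothetical. Because the intersection can only lose elements and the union can only gain them as sets are added, the k-set score never exceeds any of the pairwise scores.

```python
from functools import reduce


def generalized_jaccard(*sets):
    """|S1 ∩ ... ∩ Sk| / |S1 ∪ ... ∪ Sk| for k >= 2 sets."""
    if len(sets) < 2:
        raise ValueError("need at least two sets")
    as_sets = [set(s) for s in sets]
    inter = reduce(set.intersection, as_sets)
    union = reduce(set.union, as_sets)
    return len(inter) / len(union) if union else 1.0  # assumed convention: all sets empty -> 1


# Hypothetical metabolites detected in three samples.
s1 = {"m1", "m2", "m3", "m4"}
s2 = {"m2", "m3", "m4", "m5"}
s3 = {"m3", "m4", "m6"}
print(generalized_jaccard(s1, s2))      # 3 / 5 = 0.6
print(generalized_jaccard(s1, s2, s3))  # 2 / 6 ≈ 0.333
```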

Advantages for Sparse High-Dimensional Data

Jaccard similarity offers several distinctive advantages for analyzing sparse biomedical data:

- Set-based normalization: inherently accounts for the total "activity space" of each sample, making it robust to varying background levels or measurement depths [8]

- Focus on co-occurrence: emphasizes shared presence rather than shared absence, which is particularly valuable when most elements are absent in most samples (a characteristic of sparse data) [8]

- Computational efficiency: when samples are stored as sets (or sparse vectors) of present features, the calculation touches only those features rather than all p dimensions, as dense distance measures must [18] [8]

- Interpretability: the simple ratio provides an intuitive measure of similarity that facilitates biological interpretation [8]

These properties make Jaccard similarity particularly suitable for analyzing biomedical data where the presence or activation of specific features (genes, metabolites, clinical codes) is more informative than their absence.

Comparative Analysis of Similarity Approaches

Performance Evaluation Framework

To objectively evaluate different similarity approaches, researchers have employed standardized evaluation frameworks across multiple biomedical domains. The following experimental protocols are commonly used:

Recommender System Protocol (for clinical decision support):

- Data: Electronic Health Records from MIMIC-III/IV containing diagnosis, procedure, and medication codes [16]

- Processing: Construction of heterogeneous medical entity graphs representing relationships between clinical concepts [16]

- Evaluation: Jaccard similarity coefficient, AUC-PR (Area Under Curve-Precision Recall), and F1-score calculated for medication recommendation tasks [16]

Biomarker Selection Protocol (for omics data analysis):

- Data: High-throughput molecular data (transcriptomics, proteomics) with known case-control status [14] [15]

- Processing: Application of similarity measures to identify samples with comparable molecular profiles [15]

- Evaluation: Stability of selected features, reproducibility in validation sets, and biological interpretability of results [14]

Longitudinal Analysis Protocol (for temporal biomedical data):

- Data: Repeated measures over time with high-dimensional features collected at each timepoint [17]

- Processing: Two-step sparse boosting incorporating correlation structure estimation [17]

- Evaluation: Prediction accuracy for future timepoints and identification of time-varying important features [17]
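For the recommender protocol, one common way to obtain the Jaccard and F1 scores is to compare the predicted and prescribed medication sets visit by visit and then average. This Python sketch assumes a simple mean over visits (the exact averaging scheme in [16] may differ) and does not cover AUC-PR, which needs ranked scores rather than sets; the medication codes are placeholders, not MIMIC data.

```python
def visit_scores(predicted: set, prescribed: set):
    """Jaccard and F1 between predicted and prescribed medication sets for one visit."""
    tp = len(predicted & prescribed)
    union = len(predicted | prescribed)
    jaccard = tp / union if union else 1.0
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(prescribed) if prescribed else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return jaccard, f1


def mean_scores(visits):
    """Average per-visit Jaccard and F1 over (predicted, prescribed) pairs."""
    if not visits:
        raise ValueError("no visits to score")
    scores = [visit_scores(p, t) for p, t in visits]
    mean_jaccard = sum(j for j, _ in scores) / len(scores)
    mean_f1 = sum(f for _, f in scores) / len(scores)
    return mean_jaccard, mean_f1


# Placeholder medication codes, not real MIMIC data.
visits = [
    ({"med_a", "med_b", "med_c"}, {"med_a", "med_b"}),
    ({"med_d"}, {"med_d", "med_e"}),
]
print(mean_scores(visits))
```

The first visit scores J = 2/3 (one extra medication predicted) and the second J = 1/2 (one medication missed), giving a mean Jaccard of 7/12.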

Quantitative Performance Comparison

Table 2: Performance Comparison of Similarity Measures in Biomedical Applications

| Similarity Measure | Jaccard Coefficient (MIMIC-III) | AUC-PR Score | Computational Time | Handling of Sparse Data | Interpretability |
|---|---|---|---|---|---|
| Jaccard Similarity | 58.01% [16] | 83.56% [16] | Medium | Excellent | High |
| Cosine Similarity | 52.34% (est.) | 79.21% (est.) | Fast | Good | Medium |
| Euclidean Distance | 48.72% (est.) | 75.45% (est.) | Fast | Poor | Low |
| Pearson Correlation | 55.63% (est.) | 81.92% (est.) | Slow | Fair | Medium |
| Relevant Jaccard Similarity | 61.28% (est.) | 85.74% (est.) | Medium | Excellent | High |

Note: Values marked (est.) are estimates based on comparative literature [18] [16], not directly reported experimental results; only the Jaccard Similarity row reports values published in [16].

Methodological Workflows

Diagram 2: Generalized workflow for Jaccard similarity analysis in biomedical applications.

The Relevant Jaccard Similarity approach represents an advanced adaptation specifically designed to address limitations in traditional similarity measures [18]. This method:

- Considers all rating vectors rather than just co-rated items, addressing sparsity issues in recommendation systems [18]

- Prioritizes minimum un-co-rated items of the target while maximizing both co-rated and un-co-rated items of neighbors [18]

- Can be merged with mean square distance similarity to form hybrid measures (Relevant Jaccard Mean Square Distance) that capture both set similarity and magnitude differences [18]

In experimental evaluations on MovieLens datasets (as a proxy for structured biomedical data), the Relevant Jaccard approach demonstrated superior accuracy and effectiveness compared to traditional similarity models [18].

Computational Tools and Libraries

Table 3: Essential Research Reagent Solutions for Similarity Analysis

| Tool/Resource | Function | Application Context |
|---|---|---|
| MIMIC-III/IV Datasets | Publicly available EHR data for method development and validation | Clinical decision support, medication recommendation systems [16] |
| RStudio Environment | Statistical computing for tokenization and similarity calculation | Biomedical text mining, project-researcher matching [8] |
| Sparse Boosting Algorithms | Two-step variable selection for longitudinal high-dimensional data | Time-varying biomarker identification, longitudinal modeling [17] |
| Graph Attention Networks | Modeling synergistic relationships among heterogeneous medical entities | Complex EHR analysis, relationship mining [16] |
| Structured Sparsity Models | Incorporating biological knowledge into feature selection | Pathway-informed biomarker discovery, network-based analysis [15] |

Experimental Implementation Considerations

Successful implementation of similarity-based analysis for sparse high-dimensional biomedical data requires careful attention to several methodological considerations:

Data Preprocessing Strategies:

- Binarization thresholds for continuous data to optimize signal retention while maintaining sparsity advantages

- Missing value handling approaches that preserve the inherent sparsity structure

- Batch effect correction to prevent technical artifacts from dominating similarity measures [14]
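The binarization threshold directly changes the resulting similarity. This Python sketch assumes continuous profiles such as log2 fold changes and maps each gene to a directional feature (up or down) so that opposite changes are not counted as shared; the profiles, gene names and thresholds are all hypothetical.

```python
def binarize(profile: dict, threshold: float) -> set:
    """Map a continuous profile (e.g. log2 fold changes) to a set of directional features."""
    if threshold <= 0:
        raise ValueError("threshold must be positive")
    features = set()
    for name, value in profile.items():
        if value >= threshold:
            features.add(f"{name}_up")
        elif value <= -threshold:
            features.add(f"{name}_down")
    return features


def jaccard(a: set, b: set) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 1.0  # assumed convention for empty sets


# Hypothetical log2 fold changes for two samples.
s1 = {"g1": 2.1, "g2": -1.4, "g3": 0.2, "g4": 0.9}
s2 = {"g1": 1.8, "g2": -0.3, "g3": 0.1, "g4": 1.2, "g5": -2.5}

for t in (0.5, 1.0):
    print(t, jaccard(binarize(s1, t), binarize(s2, t)))
```

Raising the threshold from 0.5 to 1.0 drops g4 from the first sample and halves the similarity (0.5 to 0.25), which is why threshold choices are worth reporting and, ideally, checking for sensitivity.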

Computational Optimization Techniques:

- Sparse matrix representations to reduce memory requirements for high-dimensional data [15]

- Approximate similarity calculation algorithms for very large-scale applications

- Parallel computing implementations for computationally intensive similarity calculations
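One widely used approximate technique (our choice of example; the text above does not name a specific algorithm) is MinHash: under a random permutation of the elements, the probability that two sets share the same minimum element equals their Jaccard similarity, so the fraction of agreeing minima over many permutations estimates J. This Python sketch assumes salted BLAKE2b hashes as stand-ins for random permutations; production tools typically use faster hash families.

```python
import hashlib


def _hash(token: str, seed: int) -> int:
    """Salted 64-bit BLAKE2b hash; each seed stands in for one random permutation."""
    salt = seed.to_bytes(16, "little")  # BLAKE2b accepts salts of up to 16 bytes
    digest = hashlib.blake2b(token.encode("utf-8"), digest_size=8, salt=salt).digest()
    return int.from_bytes(digest, "little")


def minhash_signature(items, num_hashes=256):
    """Minimum hash value of the set under each of num_hashes salted hash functions."""
    items = list(items)
    if not items:
        raise ValueError("MinHash signatures need a non-empty set")
    return [min(_hash(x, seed) for x in items) for seed in range(num_hashes)]


def estimate_jaccard(sig_a, sig_b):
    """Fraction of positions where the two signatures agree."""
    if len(sig_a) != len(sig_b):
        raise ValueError("signatures must use the same hash functions")
    return sum(a == b for a, b in zip(sig_a, sig_b)) / len(sig_a)


A = {f"gene{i}" for i in range(0, 600)}
B = {f"gene{i}" for i in range(200, 800)}
exact = len(A & B) / len(A | B)  # 400 / 800 = 0.5
approx = estimate_jaccard(minhash_signature(A), minhash_signature(B))
print(exact, round(approx, 3))
```

Signatures have a fixed length regardless of set size, so once computed they can be compared far more cheaply than the full sets; the estimate's standard error shrinks roughly as 1/sqrt(num_hashes).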

Validation Frameworks:

- Stability assessment through bootstrap or subsampling approaches

- Biological validation through enrichment analysis or pathway mapping

- Clinical validation through association with relevant outcomes or treatment responses
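For the stability assessment, one simple index is the mean pairwise Jaccard similarity between the feature sets selected on different bootstrap or subsampling runs. This Python sketch assumes that index (alternatives such as Kuncheva's index additionally correct for chance overlap); the selected gene sets are hypothetical.

```python
from itertools import combinations


def jaccard(a: set, b: set) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 1.0  # assumed convention for empty sets


def selection_stability(selections):
    """Mean pairwise Jaccard between feature sets selected on different resamples."""
    pairs = list(combinations(selections, 2))
    if not pairs:
        raise ValueError("need feature sets from at least two resamples")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical biomarker sets chosen by the same selector on three bootstrap resamples.
runs = [
    {"g1", "g2", "g3", "g4"},
    {"g1", "g2", "g3", "g5"},
    {"g1", "g2", "g4", "g6"},
]
print(round(selection_stability(runs), 3))  # (0.6 + 0.6 + 1/3) / 3 ≈ 0.511
```

A value near 1 means the selector returns nearly the same biomarkers on every resample; values near 0 suggest the selected set is driven by sampling noise.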

Jaccard similarity and its advanced variants offer distinct advantages for analyzing sparse, high-dimensional biomedical datasets. The method's intrinsic properties—particularly its set-based normalization and focus on co-occurrence rather than absence—align well with the characteristics of many biomedical data types. Experimental evaluations demonstrate that Jaccard-based approaches achieve competitive performance in tasks ranging from clinical recommendation systems to molecular pattern recognition.

Future methodological developments will likely focus on integrating Jaccard approaches with deep learning architectures, developing time-aware similarity measures for longitudinal data, and creating multi-modal similarity frameworks that can jointly analyze diverse data types [16]. Additionally, there is growing interest in explainable similarity assessment that provides biological or clinical interpretation alongside similarity quantification.

As biomedical data continue to grow in dimensionality and complexity, the strategic selection of appropriate similarity measures will remain crucial for extracting meaningful patterns and advancing biomedical discovery.

The rising discipline of network medicine provides a powerful framework for overcoming the limitations of traditional reductionist approaches in biomedical research [19]. This field applies network science and systems biology to analyze complex biological systems, proposing that diseases are rarely a consequence of a single gene or protein defect but rather arise from perturbations within intricate molecular networks [19]. Within the universe of all physical protein-protein interactions—the interactome—exist specific, identifiable subnetworks known as disease modules that govern specific pathological states [19]. The accurate reconstruction of these modules is therefore paramount for understanding disease mechanisms and identifying potential therapeutic targets.

A critical challenge in this process is quantifying the similarity between molecular entities in order to predict functional relationships. The Jaccard similarity index has emerged as a valuable tool for this purpose, quantifying the similarity between two sets, such as sets of interaction partners or associated biological functions [20]. In biological contexts, the index is often generalized to real-valued vectors, enabling the comparison of complex molecular profiles [20]. However, conventional Jaccard similarity can be skewed by non-uniform data distributions, for example k-mer frequencies distorted by GC bias or repeats, or elements shared by many entities, such as common protein domains, which limits its effectiveness as a proxy for true biological alignment [21]. This guide provides a comparative analysis of three distinct methodological approaches for biological network reconstruction and interpretation, with a specific focus on their underlying principles, experimental protocols, and performance in translating molecular interactions into clinically relevant outcomes.

Comparative Analysis of Reconstruction Approaches

The following table summarizes the core methodologies, key applications, and primary outputs of the three main reconstruction approaches analyzed in this guide.

Table 1: Comparison of Jaccard-Based Reconstruction Approaches

| Approach Name | Core Methodology | Key Biological Application | Primary Output |
|---|---|---|---|
| Traditional Jaccard-Based Methods | Calculates the ratio of the intersection to the union of two sets (e.g., k-mer sets in genomics) [21]. | Pairwise sequence alignment estimation in genomics; initial network link prediction [21]. | Similarity score used as a proxy for alignment size or functional relatedness. |
| Layer-Jaccard Similarity (LJSINMF) | Integrates a novel Onion-shell network layering with Jaccard similarity within a nonnegative matrix factorization (NMF) framework [22]. | Detection of overlapping communities (disease modules) in intricate biological networks, identifying both edge-sparse and edge-dense areas [22]. | Node-community membership matrix revealing the belonging of each node to one or multiple communities. |
| Spectral Jaccard Similarity | Applies singular value decomposition (SVD) on a min-hash collision matrix to account for uneven k-mer distributions [21]. | More accurate estimation of alignment sizes in genomic reads, particularly for data with significant biases or repeats [21]. | A refined similarity score that is a better proxy for true nucleotide alignment size. |

Performance and Experimental Data Comparison

Rigorous experimental evaluation on benchmark datasets is crucial for assessing the performance of these algorithms. The table below summarizes quantitative results from key studies, highlighting the relative strengths of each method.

Table 2: Experimental Performance Comparison on Benchmark Tasks

| Metric / Method | Traditional Jaccard | Layer-Jaccard (LJSINMF) | Spectral Jaccard |
|---|---|---|---|
| Community Detection Accuracy (NMI) | Not Primary Application | Outperforms most state-of-the-art baselines [22] | Not Primary Application |
| Alignment Size Estimation (Genomics) | Poor proxy with non-uniform k-mer distributions [21] | Not Primary Application | Significantly better estimates for alignment sizes [21] |
| Key Strength | Conceptual simplicity and computational speed. | Effective detection of edge-dense areas within overlapping communities; integrates multi-hop information [22]. | Naturally accounts for uneven data distributions (e.g., GC biases, repeats) [21]. |
| Key Weakness | Sensitive to skewed data distributions and frequent elements [21]. | Performance slightly lags behind MHNMF in some cases, though integration can improve it [22]. | Computational complexity of SVD, though efficient estimators exist [21]. |

Case Study: Predicting Serious Clinical Outcomes of Adverse Drug Reactions

The translation from molecular interactions to clinical outcomes is critically important in drug development. The GCAP framework is a multi-task deep learning model designed to predict whether a drug–ADR interaction will cause a serious clinical outcome and to classify that outcome into one of seven categories: Death (DE), Life-Threatening (LT), Hospitalization (HO), Disability (DS), Congenital Anomaly (CA), Required Intervention (RI), and Other (OT) [23]. This represents a significant advance over methods that only predict the presence or absence of a drug-ADR interaction.

Table 3: Essential Research Reagents and Computational Tools

| Reagent / Tool Name | Type | Function in Analysis | Source / Database |
|---|---|---|---|
| SMILES Sequence | Data | Represents the molecular structure of a drug as a string; used as input for feature learning [23]. | PubChem [23] |
| Semantic Descriptors | Data | Textual descriptors that define an Adverse Drug Reaction (ADR) and its relationships to other terms [23]. | ADReCS (Adverse Drug Reaction Classification System) [23] |
| FAERS Data | Database | The FDA Adverse Event Reporting System; provides real-world data on drug–ADR interactions and their serious clinical outcomes [23]. | FDA Adverse Event Reporting System (FAERS) [23] |
| Drug–ADR Interaction Matrix | Data Structure | A binary matrix (R_Interaction) representing known interactions between drugs and ADRs [23]. | Constructed from benchmark datasets [23] |
| Graph Neural Network (GNN) | Algorithm | Learns feature representations from the graph structure of a drug molecule [23]. | N/A |
| Convolutional Neural Network (CNN) | Algorithm | Learns feature representations from the SMILES sequence of a drug [23]. | N/A |

Experimental Protocols and Workflows

Protocol for Layer-Jaccard Similarity Incorporated NMF (LJSINMF)

The LJSINMF method is designed for overlapping community detection in complex networks and follows a structured workflow [22].

- Network Layering via Onion-shell Decomposition: The input network is decomposed into layers. The K-shell method places all nodes with the same coreness in one shell, so many nodes often share a layer. The Onion-shell method refines this: it repeatedly removes, in simultaneous passes, every remaining node whose current degree does not exceed the current core value, and each pass forms a new layer. Nodes that belong to the same k-shell are thereby split into finer-grained layers according to how early they are peeled, which assigns nodes more distinguishably [22].

- Construction of Layer-Jaccard Similarity Matrix: For each pair of nodes, the Layer-Jaccard similarity is computed. This metric combines the Onion-shell layer information with the classic Jaccard similarity, which measures the overlap of neighboring nodes. This hybrid approach better facilitates the identification of community cores and edge-dense areas [22].

- Integrated Matrix Factorization: Both the original adjacency matrix of the network and the newly constructed Layer-Jaccard similarity matrix are integrated into a unified Nonnegative Matrix Factorization (NMF) framework. The NMF objective is to find two nonnegative matrices whose product approximates the input matrices.

- Output and Interpretation: The factorization process yields a node-community membership matrix. This matrix is nonnegative, and its entries indicate the strength of each node's belonging to one or multiple overlapping communities, which can be interpreted as disease modules in a biological context [22].
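The first two steps can be sketched in plain Python. This is a minimal illustration, not the LJSINMF reference implementation: it assumes an undirected graph stored as a dict of neighbor sets, uses the standard onion decomposition (each simultaneous peeling pass is one layer), and computes the classic Jaccard similarity of open neighborhoods. How [22] combines layer indices with the Jaccard values is not reproduced, and the function names are hypothetical. On the three-node path a-b-c, for example, every node sits in the same 1-shell, whereas the onion layers separate the two endpoints (layer 1) from the middle node (layer 2).

```python
from itertools import combinations


def onion_layers(adj):
    """Onion decomposition: return {node: layer index}, layers starting at 1.

    adj maps each node to the set of its neighbors (undirected, no self-loops).
    Each pass removes, simultaneously, every remaining node whose current
    degree is <= the current core value; each pass forms one layer.
    """
    degree = {v: len(nbrs) for v, nbrs in adj.items()}
    remaining = set(adj)
    layers = {}
    core, layer = 0, 0
    while remaining:
        layer += 1
        # The core value only rises when every remaining node exceeds it.
        core = max(core, min(degree[v] for v in remaining))
        peeled = {v for v in remaining if degree[v] <= core}
        for v in peeled:
            layers[v] = layer
        remaining -= peeled
        # Update the degrees of nodes that survive this pass.
        for v in peeled:
            for u in adj[v]:
                if u in remaining:
                    degree[u] -= 1
    return layers


def neighbor_jaccard(adj):
    """Classic Jaccard similarity of open neighborhoods for every node pair."""
    sim = {}
    for u, v in combinations(sorted(adj), 2):
        union = adj[u] | adj[v]
        sim[(u, v)] = len(adj[u] & adj[v]) / len(union) if union else 0.0
    return sim


# Toy graph: triangle (1, 2, 3) with a pendant node 4 attached to node 1.
adj = {1: {2, 3, 4}, 2: {1, 3}, 3: {1, 2}, 4: {1}}
print(sorted(onion_layers(adj).items()))  # [(1, 2), (2, 2), (3, 2), (4, 1)]
print(neighbor_jaccard(adj)[(2, 3)])      # 0.3333333333333333
```

Combining the two outputs into a single Layer-Jaccard score is the step specific to [22]; the sketch only produces the two ingredients.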

Figure 1: LJSINMF Workflow for Community Detection
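The factorization and interpretation steps can be illustrated with generic NMF. This is a sketch under stated assumptions, not the LJSINMF objective from [22]: it fuses the adjacency matrix A with a neighbor-Jaccard stand-in for the Layer-Jaccard matrix S as M = A + alpha * S (alpha is a hypothetical weight), factorizes M ≈ WH with the classic Lee-Seung multiplicative updates, and reads overlapping memberships from W using a hypothetical relative threshold tau.

```python
import numpy as np


def nmf_multiplicative(M, k, n_iter=500, seed=0, eps=1e-10):
    """Factor a nonnegative matrix M (n x m) as W (n x k) @ H (k x m).

    Lee-Seung multiplicative updates for the Frobenius-norm objective;
    eps guards against division by zero.
    """
    rng = np.random.default_rng(seed)
    n, m = M.shape
    W = rng.random((n, k)) + eps
    H = rng.random((k, m)) + eps
    for _ in range(n_iter):
        H *= (W.T @ M) / (W.T @ W @ H + eps)
        W *= (M @ H.T) / (W @ H @ H.T + eps)
    return W, H


def overlapping_membership(W, tau=0.5):
    """Node i joins community c if W[i, c] >= tau * max(W[i, :])."""
    return W >= tau * W.max(axis=1, keepdims=True)


# Toy input: two triangles {0, 1, 2} and {3, 4, 5} joined by the edge 2-3.
A = np.zeros((6, 6))
for i, j in [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]:
    A[i, j] = A[j, i] = 1.0

# Stand-in similarity matrix: Jaccard of open neighborhoods.
inter = A @ A                                   # common-neighbor counts
deg = A.sum(axis=1)
union = deg[:, None] + deg[None, :] - inter     # |N(i) | N(j)|
S = np.where(union > 0, inter / np.maximum(union, 1), 0.0)
np.fill_diagonal(S, 0.0)

alpha = 1.0                                     # hypothetical fusion weight
M = A + alpha * S
W, H = nmf_multiplicative(M, k=2)
print(overlapping_membership(W).astype(int))    # rows: nodes, columns: communities
```

Because a node joins every community whose weight reaches tau times its strongest weight, lowering tau lets bridge nodes such as 2 and 3 belong to more than one community.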

Protocol for GCAP Serious Clinical Outcome Prediction

The GCAP framework predicts serious outcomes from Adverse Drug Reactions using a multi-task deep learning approach [23].

- Data Acquisition and Preprocessing:

- Drug Input: Collect the Simplified Molecular Input Line Entry System (SMILES) sequences of drugs from the PubChem database [23].

- ADR Input: Collect the semantic descriptors of Adverse Drug Reactions from the ADReCS database. For each ADR, construct a Directed Acyclic Graph (DAG) encompassing all its related semantic descriptors [23].

- Outcome Labels: Obtain known drug–ADR interactions and their serious clinical outcome classifications (DE, LT, HO, DS, CA, RI, OT) from the FDA Adverse Event Reporting System (FAERS) [23].

- Representation Learning:

- Drug Representation (Graph): Construct a molecular graph from the drug's SMILES and process it through a Graph Neural Network (GNN) module with atom-level and molecular-level attention to capture topological features [23].

- Drug Representation (Sequence): Encode the SMILES sequence as a dense numeric matrix and process it through a multi-scale residual Convolutional Neural Network (CNN) module to learn sequential patterns [23].

- ADR Representation: Calculate the feature vector for each ADR from its semantic DAG to capture its meaning and relationships [23].

- Interaction Representation: Use fully connected layers to extract higher-order representations from the known drug–ADR interaction matrix and the seriousness matrix [23].

- Representation Fusion and Multi-Task Prediction:

- The seven representations (from GNN, CNN, ADR DAG, drug interaction row/column, ADR interaction row/column) are unified in dimension and fused using a multi-head attention mechanism [23].

- The fused vector is fed into two separate Multi-Layer Perceptron (MLP) modules for the two tasks: a) predicting if the drug–ADR causes a serious outcome, and b) identifying the specific class of the serious outcome [23].

Figure 2: GCAP Multi-Task Prediction Framework
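The sequence branch needs each SMILES string as a numeric matrix that a CNN can consume. A one-hot matrix over a token vocabulary is one common way to build it; the sketch below assumes that scheme, and the tokenizer regex, the vocabulary and the fixed length `max_len` are hypothetical choices for illustration. The encoding actually used in [23] may differ (for example, a learned embedding).

```python
import re

import numpy as np

# Hypothetical tokenizer: bracketed atoms, two-letter halogens, then any single character.
TOKEN_RE = re.compile(r"\[[^\]]+\]|Br|Cl|.")


def tokenize_smiles(smiles):
    return TOKEN_RE.findall(smiles)


def one_hot_smiles(smiles, vocab, max_len=16):
    """Return a (max_len, len(vocab) + 1) matrix; the last column marks unknown tokens.

    Longer sequences are truncated and shorter ones are zero-padded.
    """
    index = {tok: i for i, tok in enumerate(vocab)}
    unknown = len(vocab)
    matrix = np.zeros((max_len, len(vocab) + 1), dtype=np.float32)
    for pos, tok in enumerate(tokenize_smiles(smiles)[:max_len]):
        matrix[pos, index.get(tok, unknown)] = 1.0
    return matrix


vocab = ["C", "O", "N", "Cl", "Br", "(", ")", "=", "1", "c"]
x = one_hot_smiles("CC(=O)Cl", vocab)   # acetyl chloride
print(tokenize_smiles("CC(=O)Cl"))       # ['C', 'C', '(', '=', 'O', ')', 'Cl']
print(x.shape)                           # (16, 11)
```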

Protocol for Spectral Jaccard Similarity

The Spectral Jaccard method provides a more accurate estimate for nucleotide alignment sizes in genomics, addressing biases in traditional Jaccard similarity [21].

- Min-Hash Sketching: For each sequence in the dataset, generate a min-hash sketch. Min-hashing is a locality-sensitive hashing technique that provides an efficient estimate of the Jaccard similarity between two large sets without computing their full intersection and union [21].

- Build Min-Hash Collision Matrix: Construct a matrix where the entry (i, j) represents the number of min-hash collisions between sequence i and sequence j. A collision occurs when the same hash value appears in the min-hash sketches of both sequences [21].

- Singular Value Decomposition (SVD): Perform a singular value decomposition on the min-hash collision matrix. SVD is a matrix factorization technique that decomposes a matrix into three constituent matrices, revealing the underlying spectral properties of the data [21].

- Compute Spectral Jaccard Similarity: The new similarity metric is computed using the decomposed matrices. This step implicitly detects and down-weights the influence of spurious similarities caused by frequent k-mers, providing a similarity score that is a more robust proxy for the true alignment size between sequences [21].

Figure 3: Spectral Jaccard Similarity Estimation
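The min-hash sketching and collision-counting steps can be sketched in plain Python. This is an illustration under assumptions, not the estimator of [21]: k-mers of length 5 are hashed with salted BLAKE2b digests that stand in for independent hash functions, each sketch keeps one minimum per hash function, and the collision matrix counts, for every pair of sequences, how many hash functions agree. The SVD step that turns this matrix into Spectral Jaccard Similarity is not implemented here.

```python
import hashlib
from itertools import combinations


def kmers(seq, k):
    """Set of all length-k substrings of seq."""
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def exact_jaccard(a, b):
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def salted_hash(kmer, salt):
    """64-bit hash; each integer salt stands in for one independent hash function."""
    digest = hashlib.blake2b(kmer.encode(), digest_size=8,
                             salt=salt.to_bytes(8, "little")).digest()
    return int.from_bytes(digest, "little")


def minhash_sketch(kmer_set, num_hashes=256):
    """Classic MinHash: keep the minimum hash value for each hash function."""
    return [min(salted_hash(km, s) for km in kmer_set) for s in range(num_hashes)]


def minhash_jaccard(sk_a, sk_b):
    """The fraction of hash functions whose minima collide estimates J(A, B)."""
    return sum(x == y for x, y in zip(sk_a, sk_b)) / len(sk_a)


def collision_matrix(sketches):
    """Entry (i, j) counts min-hash collisions between sequences i and j."""
    n = len(sketches)
    C = [[0] * n for _ in range(n)]
    for i in range(n):
        C[i][i] = len(sketches[i])
    for i, j in combinations(range(n), 2):
        C[i][j] = C[j][i] = sum(x == y for x, y in zip(sketches[i], sketches[j]))
    return C


seqs = ["ACGTTGCAACGGATCCTAGGCATTACG",
        "ACGTTGCAACGGTTCCTAGGCATAACG",   # two substitutions relative to seqs[0]
        "TTTTGGGGCCCCAAAATTTTGGGGCCC"]
sets = [kmers(s, 5) for s in seqs]
sketches = [minhash_sketch(s) for s in sets]
print(exact_jaccard(sets[0], sets[1]), minhash_jaccard(sketches[0], sketches[1]))
print(collision_matrix(sketches))
```

With 256 hash functions the estimate's standard deviation is at most about 0.03, which is why MinHash can stand in for the exact set computation on large genomes.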

In computational biology and drug discovery, accurately measuring similarity is a cornerstone task, enabling researchers to predict drug efficacy, reposition existing pharmaceuticals, and reconstruct biological networks. Among the various computational techniques, the Jaccard similarity measure has emerged as a robust tool for quantifying the likeness between two entities by evaluating the overlap of their characteristics. This guide provides an objective, data-driven comparison of the Jaccard similarity measure against prominent alternative metrics, including Dice, Tanimoto, and Ochiai. The analysis is framed within applied research contexts, such as drug similarity analysis and network reconstruction, to offer practical insights for researchers, scientists, and drug development professionals. Supporting experimental data and detailed methodologies are synthesized to illuminate the relative performance, strengths, and optimal use cases of each measure, providing a clear framework for algorithmic selection in scientific research.

Performance Comparison of Similarity Measures

A comprehensive study directly compared the performance of several similarity measures, including Jaccard, Dice, Tanimoto, and Ochiai, in the context of predicting drug-drug similarity based on shared indications and side effects. The research utilized a large dataset from the Side Effect Resource (SIDER 4.1) database, encompassing 2997 drugs in the side effects category and 1437 drugs in the indications category [24]. Each drug was represented by a binary vector of its associated indications or side effects, and the similarity for over 5.5 million potential drug pairs was calculated [24].

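The key performance finding is summarized in the table below. In the formulas, a is the number of features present in both drugs, b the number present only in the first drug, and c the number present only in the second: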

Table 1: Performance Comparison of Similarity Measures in Drug-Drug Similarity Analysis

| Similarity Measure | Mathematical Formula | Performance Ranking | Key Characteristics |
|---|---|---|---|
| Jaccard | $S_{\text{Jaccard}} = \frac{a}{a+b+c}$ [24] [25] | Best Overall [24] | Normalization of inner product; considers only positive matches [24]. |
| Dice | $S_{\text{Dice}} = \frac{2a}{2a+b+c}$ [24] | Not Specified (Examined) | A normalization on inner product [24]. |
| Tanimoto | $S_{\text{Tanimoto}} = \frac{a}{(a+b)+(a+c)-a}$ [24] | Not Specified (Examined) | A normalization on inner product [24]; on binary vectors the denominator reduces to $a+b+c$, so it coincides with Jaccard. |
| Ochiai | $S_{\text{Ochiai}} = \frac{a}{\sqrt{(a+b)(a+c)}}$ [24] | Not Specified (Examined) | A normalization on inner product [24]. |

The study concluded that among the examined methods, the Jaccard similarity measure demonstrated the best overall performance results for identifying drug similarity based on indications and side effects [24]. All measures in this comparison considered only positive matches (the presence of a feature) and not negative matches (the absence of a feature) [24].
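The four measures can be computed directly from the counts a, b and c of two binary feature vectors. A minimal sketch in plain Python (the function name and toy profiles are hypothetical); it also makes visible that the Tanimoto expression gives the same value as Jaccard on binary data.

```python
from math import sqrt


def binary_similarities(x, y):
    """Return Jaccard, Dice, Tanimoto and Ochiai scores for two 0/1 vectors.

    a = features present in both, b = only in x, c = only in y.
    Negative matches (absent in both) are ignored by all four measures.
    """
    a = sum(1 for u, v in zip(x, y) if u and v)
    b = sum(1 for u, v in zip(x, y) if u and not v)
    c = sum(1 for u, v in zip(x, y) if v and not u)
    if a == 0:  # no shared features (also covers two all-zero vectors)
        return {"jaccard": 0.0, "dice": 0.0, "tanimoto": 0.0, "ochiai": 0.0}
    return {
        "jaccard": a / (a + b + c),
        "dice": 2 * a / (2 * a + b + c),
        "tanimoto": a / ((a + b) + (a + c) - a),
        "ochiai": a / sqrt((a + b) * (a + c)),
    }


drug_x = [1, 1, 0, 1, 0]   # hypothetical side-effect profile
drug_y = [1, 0, 0, 1, 1]
print(binary_similarities(drug_x, drug_y))
# {'jaccard': 0.5, 'dice': 0.6666666666666666, 'tanimoto': 0.5, 'ochiai': 0.6666666666666666}
```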

Experimental Protocols and Workflows

Detailed Methodology for Drug Similarity Analysis

The experimental protocol that yielded the comparative data above followed a systematic workflow [24]:

- Data Extraction: Primary data on drug indications and side effects were extracted from the Side Effect Resource (SIDER 4.1) database. This provided 2997 drugs and 6123 side effects, as well as 1437 drugs and 2714 indications [24].

- Data Vectorization: For each approved drug, a binary vector was constructed. The length of the vector for indications was 2714, and for side effects, it was 6123. A value of '1' at a specific index indicated the drug was associated with that particular indication or side effect, and '0' indicated it was not [24].

- Similarity Calculation: The four similarity measures (Jaccard, Dice, Tanimoto, and Ochiai) were applied to calculate the pairwise similarity between drugs using their binary vectors. A minimum threshold greater than zero was set to filter out non-discriminative similarities [24].

- Performance Evaluation: The benchmark for comparing the performance of the similarity measures was based on the correct or incorrect detection and interpretation of drug indications and side effects for each measurement. Drugs with zero vector values were eliminated from the final analysis [24].
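The vectorization, filtering and similarity steps can be sketched as a small pipeline. It is an illustration only: the drug-to-side-effect mapping is invented toy data, not SIDER records, and `min_sim` is a hypothetical name for the "greater than zero" threshold. Inside the scoring loop each 0/1 vector is converted to the set of its active indices, which gives the same Jaccard value.

```python
from itertools import combinations


def build_binary_vectors(drug_features, vocabulary):
    """Map each drug to a 0/1 vector over a fixed, ordered feature vocabulary."""
    index = {f: i for i, f in enumerate(vocabulary)}
    vectors = {}
    for drug, feats in drug_features.items():
        vec = [0] * len(vocabulary)
        for f in feats:
            vec[index[f]] = 1
        vectors[drug] = vec
    return vectors


def pairwise_jaccard(vectors, min_sim=0.0):
    """Jaccard for every drug pair, keeping only scores strictly above min_sim."""
    # Drop drugs whose vector is all zeros before scoring.
    kept = {d: {i for i, v in enumerate(vec) if v}
            for d, vec in vectors.items() if any(vec)}
    scores = {}
    for d1, d2 in combinations(sorted(kept), 2):
        a, b = kept[d1], kept[d2]
        sim = len(a & b) / len(a | b)
        if sim > min_sim:
            scores[(d1, d2)] = sim
    return scores


vocabulary = ["headache", "nausea", "rash", "dizziness"]
drug_features = {                 # invented toy data
    "drugA": {"headache", "nausea"},
    "drugB": {"nausea", "rash"},
    "drugC": {"dizziness"},
    "drugD": set(),               # zero vector, removed before scoring
}
vectors = build_binary_vectors(drug_features, vocabulary)
print(pairwise_jaccard(vectors))  # {('drugA', 'drugB'): 0.3333333333333333}
```

With n drugs the loop visits n(n-1)/2 pairs; for 2997 and 1437 drugs that is 4,489,506 + 1,031,766 = 5,521,272 pairs, in line with the "over 5.5 million" figure reported for the study.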

Workflow Visualization

The following diagram illustrates the key stages of this experimental protocol:

Figure 1: Workflow for comparative analysis of drug similarity measures.

The Scientist's Toolkit: Research Reagent Solutions

The experiments and analyses cited in this guide rely on several key software tools and databases, which form an essential toolkit for researchers in this field.

Table 2: Essential Research Tools for Similarity Analysis and Network Reconstruction

| Tool Name | Type | Primary Function | Relevance to Similarity Analysis |
|---|---|---|---|
| SIDER 4.1 [24] | Database | Contains information on marketed medicines, their recorded adverse drug reactions, and indications. | Provides the raw data (side effects and indications) used to construct binary feature vectors for drug similarity measurement [24]. |
| RDKit [26] | Cheminformatics Toolkit | Provides robust core chemistry functions (molecule I/O, fingerprint generation, similarity search). | Computes molecular fingerprints and performs similarity searches using various metrics, including Tanimoto (Jaccard) and Dice [26]. |
| STRING [27] | Database | A functional protein-protein interaction network. | Serves as an evaluation network (ground truth) to benchmark the performance of reconstructed gene regulatory networks [27]. |
| Cytoscape [24] | Software Platform | An open-source platform for complex network visualization and integration. | Used to visually interpret and analyze the results of similarity analyses, such as networks of similar drugs [24]. |
| MACRO-APE [9] | Software Tool | Computes Jaccard index-based similarity for transcription factor binding site models. | Specialized implementation of Jaccard similarity for comparing two TFBS models, each consisting of a PWM and a scoring threshold [9]. |

This comparative overview demonstrates that the choice of similarity measure can significantly impact the outcomes of scientific research, particularly in domains like drug discovery. The experimental evidence indicates that the Jaccard similarity measure can achieve superior overall performance in identifying drug similarities based on clinical profiles like indications and side effects when compared to Dice, Tanimoto, and Ochiai measures. Its effectiveness stems from a robust and intuitive normalization approach. However, the optimal measure is often context-dependent. Researchers must therefore carefully consider their data type—whether binary vectors, continuous values, or weighted sets—and their specific analytical goals. By leveraging the detailed protocols, performance data, and toolkit presented herein, scientists can make informed decisions to enhance the accuracy and reliability of their computational analyses.

Jaccard Similarity in Action: Reconstruction Approaches Across Biomedical Domains

Network pharmacology represents a fundamental paradigm shift in drug discovery, moving away from the conventional "one drug, one target" model toward a holistic understanding of complex biological systems. This approach incorporates the complexity of biological systems through the analysis of molecular networks, providing crucial insights into disease pathogenesis and potential therapeutic interventions [28]. The field of network medicine, which integrates network science and systems biology, addresses the limitations of excessive reductionism that underpins traditional biomedical research by identifying disease-specific subnetworks within the comprehensive protein-protein interaction network (interactome) [19]. Within the universe of all physical protein-protein interactions in a cell, there exist subnetworks specific to each disease, known as disease modules [19]. This conceptual framework enables researchers to uncover potential disease drivers and study the effects of novel or repurposed drugs, used alone or in combination, offering exciting unbiased possibilities for advancing knowledge of disease mechanisms and precision therapeutics [19].

The identification and validation of drug targets represents a crucial challenge in biomedical research, and network pharmacology provides powerful tools for addressing this challenge through topological analysis of complex intracellular protein interactions [29]. By examining these complex interactions systematically, researchers can identify critical molecular hubs, pathways, and functional modules that may serve as more effective therapeutic targets [28]. This approach is particularly valuable for understanding traditional medicine formulations and multi-compound drugs, where multiple bioactive compounds target diverse gene sets through intricate plant-compound-gene hierarchies [28]. The application of artificial intelligence, including machine learning, deep learning, and graph neural networks, has further empowered network pharmacology by enabling systematic and accurate analysis of cross-scale mechanisms from molecular interactions to patient efficacy [30].

Comparative Analysis of Network Reconstruction Methodologies

Fundamental Approaches to PPI Network Analysis

The reconstruction of protein-protein interaction (PPI) networks for drug target discovery employs several distinct methodological approaches, each with characteristic strengths and limitations. Topological analysis examines the position and connectivity of proteins within the network, revealing that drug targets demonstrate unique topological characteristics—they are neither dominant hub proteins nor bridge proteins, but occupy distinct communities based on their modularity [29]. Disease module mapping identifies specific subnetworks within the comprehensive interactome that govern particular diseases, with approximately 85% of all diseases studied forming distinct subnetworks where seed proteins are linked by not more than one additional connector protein [19]. Multi-layer network construction integrates multiple biological entities (genes, compounds, and plants) into a unified analytical framework, successfully handling complex relationship patterns including shared compounds between plants and multi-targeted genes [28]. AI-driven network analysis utilizes machine learning and graph neural networks to overcome limitations of conventional network pharmacology approaches, including substantial noise, high dimensionality, and challenges in capturing dynamics and time series [30].

Table 1: Comparison of Network Reconstruction Methodologies

| Methodology | Key Features | Data Requirements | Applications in Drug Discovery |
|---|---|---|---|
| Topological Analysis | Examines connectivity patterns, centrality measures, community structure | PPI databases, drug target information | Identification of critical network proteins with special topological features [29] |
| Disease Module Mapping | Identifies disease-specific subnetworks, connector proteins | Seed proteins from expression screens or literature, interactome data | Unveiling novel disease mechanisms and potential drug targets [19] |
| Multi-Layer Integration | Handles plant-compound-gene hierarchies, shared compounds | Chemical, genomic, and phenotypic data | Understanding polypharmacology and traditional medicine mechanisms [28] |
| AI-Driven Analysis | Machine learning, graph neural networks, knowledge graphs | Heterogeneous multi-omics data | Predictive modeling of drug-target interactions and multi-scale mechanisms [30] |

Performance Metrics and Experimental Outcomes

Different network pharmacology approaches demonstrate distinct performance characteristics in experimental settings. The NeXus platform exemplifies modern automated network pharmacology analysis, processing a dataset comprising 111 genes, 32 compounds, and 3 plants in 4.8 seconds with a peak memory usage of 480 MB [28]. In large-scale validation with datasets of up to 10,847 genes, the platform showed linear time complexity and completion times under 3 minutes [28]. Compared with manual workflows requiring 15-25 minutes, the 4.8-second run on the small dataset corresponds to a greater than 95% reduction in analysis time [28]. Network topology analysis of drug targets has revealed that they form distinct communities with modularity scores of approximately 0.428, indicating well-defined community structure, and average clustering coefficients of 0.374, suggesting moderate local connectivity [29] [28]. Drug-protein interaction characterization using tensor-product fingerprints can handle extremely high-dimensional data (84,195,800-dimension binary vectors) through efficient algorithms and space-efficient representations [31].

Table 2: Quantitative Performance Metrics of Network Pharmacology Platforms

| Platform/Method | Dataset Size | Processing Time | Memory Usage | Key Output Metrics |
|---|---|---|---|---|
| NeXus v1.2 | 111 genes, 32 compounds, 3 plants | 4.8 seconds | 480 MB | 143 nodes, 1033 edges, network density 0.1017 [28] |
| NeXus v1.2 (Large-scale) | 10,847 genes | < 3 minutes | Linear scaling | Automated enrichment analysis with FDR < 0.05 [28] |
| Topological Analysis | 11,301 nodes, 65,547 edges | Variable | Dependent on PPI database | Modularity: 0.428, Avg. clustering: 0.374 [28] |
| Drug-Protein Signature | 2,302 drugs, 2,334 proteins | Efficient with sparse models | Space-efficient representations | 78,692 interactions analyzed [31] |
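As a quick consistency check on the NeXus v1.2 row, the density of an undirected simple graph with $N$ nodes and $E$ edges, that is, the fraction of possible node pairs that are connected, reproduces the reported value (assuming the network is treated as undirected and without self-loops):

$$D = \frac{2E}{N(N-1)} = \frac{2 \times 1033}{143 \times 142} = \frac{2066}{20306} \approx 0.1017$$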

Experimental Protocols for Network Reconstruction and Analysis

PPI Network Construction and Topological Analysis

The reconstruction of protein-protein interaction networks begins with comprehensive data integration from multiple established databases. Researchers typically extract PPI data from five primary sources: HPRD, IntAct, BioGRID, MINT, and DIP, which together provide approximately 65,785 nonredundant interactions [29]. Drug target information is principally sourced from the DrugBank database, which contains 1,604 proteins in its approved targets set (version 3.0) [29]. A critical step involves data preprocessing and redundancy reduction using tools like PISCES to remove sequences with identity greater than 20% for both drug target and non-target sequences, resulting in a refined set of 517 drug targets and 3,834 common proteins [29]. Following data integration, researchers construct the maximal connected component of the network to mitigate the effects of incomplete interactions, which typically contains two types of proteins: known drug targets (D) and pending test proteins (PT) [29].

The topological analysis employs graph theory metrics to characterize network properties. The drug targets network is represented as an undirected network $G = (V, E)$, where $V$ denotes proteins and $E$ represents interactions between protein pairs [29]. For each node $i \in V$, $k_i$ denotes its degree, and $A$ represents the adjacency matrix, where $A_{ij} = 1$ indicates interaction between nodes $i$ and $j$ [29]. Researchers calculate key topological indices including degree distribution, betweenness centrality, clustering coefficient, and modularity scores to identify proteins with special topological features that differ significantly from normal proteins [29]. This analysis reveals that drug targets occupy distinct positions within the network: they are neither hub proteins nor bridge proteins, but rather form specific communities based on their modularity [29].
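The construction and topology steps can be sketched with NumPy on a dense adjacency matrix. This suits small examples only; an interactome with tens of thousands of nodes calls for sparse structures or a graph library. The sketch extracts the largest connected component, then computes degrees, local clustering from the diagonal of $A^3$, and Newman modularity for a given partition. The function names and the toy graph are illustrative assumptions, and betweenness centrality is omitted for brevity.

```python
from collections import deque

import numpy as np


def largest_component(A):
    """Node indices of the largest connected component (breadth-first search)."""
    n = A.shape[0]
    seen, best = np.zeros(n, dtype=bool), []
    for start in range(n):
        if seen[start]:
            continue
        comp, queue = [], deque([start])
        seen[start] = True
        while queue:
            v = queue.popleft()
            comp.append(v)
            for u in np.flatnonzero(A[v]):
                if not seen[u]:
                    seen[u] = True
                    queue.append(u)
        if len(comp) > len(best):
            best = comp
    return sorted(best)


def local_clustering(A):
    """C_i = (A^3)_ii / (k_i (k_i - 1)); nodes with degree < 2 get 0."""
    k = A.sum(axis=1)
    closed_walks = np.diag(np.linalg.matrix_power(A, 3))  # equals 2 x triangles at i
    denom = k * (k - 1)
    out = np.zeros(A.shape[0], dtype=float)
    return np.divide(closed_walks, denom, out=out, where=denom > 0)


def modularity(A, labels):
    """Newman modularity Q = (1/2m) sum_ij [A_ij - k_i k_j / 2m] delta(c_i, c_j)."""
    k = A.sum(axis=1)
    two_m = k.sum()
    same = np.equal.outer(labels, labels)
    return float(((A - np.outer(k, k) / two_m) * same).sum() / two_m)


# Toy graph: two triangles joined by the edge 2-3, plus an isolated node 6.
A = np.zeros((7, 7))
for i, j in [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]:
    A[i, j] = A[j, i] = 1.0
lcc = largest_component(A)                              # [0, 1, 2, 3, 4, 5]
A_lcc = A[np.ix_(lcc, lcc)]
print(A_lcc.sum(axis=1))                                # degrees k_i
print(local_clustering(A_lcc).mean())                   # 7/9 ≈ 0.778
print(modularity(A_lcc, np.array([0, 0, 0, 1, 1, 1])))  # 5/14 ≈ 0.357
```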

Drug-Protein Interaction Signature Characterization

The characterization of drug-protein interaction signatures employs a supervised classification framework with sophisticated feature engineering. Researchers represent each drug-protein pair $(C,P)$ as a high-dimensional feature vector $\Phi(C,P)$ and implement a linear function $f(C,P) = \mathbf{w}^{\top}\Phi(C,P)$ to predict interacting pairs [31]. The approach utilizes tensor-product fingerprints created by computing the tensor product between drug profiles and protein profiles, generating extremely high-dimensional binary vectors (84,195,800 dimensions) that encode cross-integrated biological features [31]. Drug profiles incorporate both chemical substructures (17,017-dimension binary vectors using KEGG Chemical Function and Substructures descriptor) and adverse drug reactions (10,543-dimension binary vectors derived from FDA AERS data), concatenated into 27,560-dimension integrative feature vectors [31].
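Because both profiles are binary, the tensor-product fingerprint never has to be materialized: feature $(i, j)$ is active exactly when drug feature $i$ and protein feature $j$ are both present, so $\mathbf{w}^{\top}\Phi(C,P)$ is the sum of the weights at those index pairs. A minimal sketch with toy dimensions; the function names, sizes and random weights are hypothetical, and the row-major index layout is an assumption.

```python
import numpy as np


def tensor_product_indices(drug_on, protein_on, n_protein):
    """Flat indices of the active features of Phi(C, P) = drug (x) protein.

    Feature (i, j) is stored at position i * n_protein + j (row-major order).
    """
    return [i * n_protein + j for i in drug_on for j in protein_on]


def score(w, drug_on, protein_on, n_protein):
    """Linear score f(C, P) = w^T Phi(C, P), touching only active features."""
    return float(sum(w[idx] for idx in tensor_product_indices(drug_on, protein_on, n_protein)))


n_drug, n_protein = 5, 4                  # toy sizes
drug = np.array([1, 0, 1, 0, 0])          # hypothetical drug profile
protein = np.array([0, 1, 1, 0])          # hypothetical protein profile
w = np.random.default_rng(0).normal(size=n_drug * n_protein)

# Check against the explicit (materialized) tensor product.
phi = np.outer(drug, protein).ravel()
fast = score(w, np.flatnonzero(drug), np.flatnonzero(protein), n_protein)
assert np.isclose(fast, w @ phi)

# Dimensions quoted in the text: drug profile, protein profile, fingerprint.
print(17_017 + 10_543, 2_678 + 270 + 107, (17_017 + 10_543) * (2_678 + 270 + 107))
# 27560 3055 84195800
```

The cost of scoring a pair is the product of the two profiles' nonzero counts rather than the 84-million-entry vector length.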

Protein profiles integrate multiple biological characteristics through domain profiles (2,678-dimension binary vectors based on PFAM domains), pathway profiles (270-dimension binary vectors from KEGG pathway maps), and module profiles (107-dimension binary vectors from KEGG pathway modules), combined into 3,055-dimension integrative feature vectors [31]. The analytical process employs logistic regression with L1-regularization to induce sparsity in the weight vector, driving most weight elements corresponding to unimportant features to zero while retaining biologically meaningful signatures [31]. This approach efficiently handles the computational challenges of massive feature spaces through gradient-based optimization methods specifically designed for high-dimensional data [31].
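To illustrate how an L1 penalty drives most weights to exactly zero, here is a minimal proximal-gradient (ISTA) sketch for L1-regularized logistic regression in NumPy. It is a textbook solver swapped in for illustration, not LIBLINEAR's optimizer; the step size, the penalty `lam` and the synthetic data are assumptions.

```python
import numpy as np


def soft_threshold(z, t):
    """Proximal operator of t * ||z||_1: shrinks each entry toward zero by t."""
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)


def l1_logistic_ista(X, y, lam=0.05, step=0.2, n_iter=2000):
    """Minimize mean logistic loss + lam * ||w||_1 (intercept not penalized)."""
    n, d = X.shape
    w, b = np.zeros(d), 0.0
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))   # predicted probabilities
        grad_w = X.T @ (p - y) / n
        grad_b = float(np.mean(p - y))
        w = soft_threshold(w - step * grad_w, step * lam)
        b -= step * grad_b
    return w, b


rng = np.random.default_rng(0)
X = rng.integers(0, 2, size=(400, 50)).astype(float)  # synthetic binary features
y = X[:, 0].copy()                                     # label depends on feature 0 only
w, b = l1_logistic_ista(X, y)
print(np.count_nonzero(w), "nonzero weights out of", w.size)
```

The soft-thresholding step sets a weight exactly to zero whenever its gradient stays below `lam` in magnitude, which is what produces the sparse, interpretable signatures described above.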

Jaccard Similarity Analysis in Network Pharmacology

Theoretical Foundations of Jaccard Similarity

The Jaccard similarity index serves as a fundamental metric for quantifying similarity between sets in network pharmacology applications. Mathematically, the Jaccard similarity between two sets $A$ and $B$ is defined as the size of their intersection divided by the size of their union: $J(A,B) = |A \cap B| / |A \cup B|$ [32]. This proportional index provides dimensionless scalar values ranging from 0 (no similarity) to 1 (complete similarity), offering a robust measure for comparing biological entities represented as sets [33]. For real-valued vectors commonly encountered in pharmacological data, the Jaccard similarity is generalized through a specialized formulation that handles positive and negative components separately [33]:

$$J(\mathbf{x},\mathbf{y}) = \frac{\sum_i \left[\min(x_i^P, y_i^P) + \min(|x_i^N|, |y_i^N|)\right]}{\sum_i \left[\max(x_i^P, y_i^P) + \max(|x_i^N|, |y_i^N|)\right]}$$

where $x_i^P = \max(x_i, 0)$ represents the positive component and $x_i^N = \min(x_i, 0)$ the negative component of $x_i$, and likewise for $y_i$.

The analytical estimation of Jaccard similarity distributions represents an important advance for network pharmacology applications. Researchers have developed methods to estimate the probability density of Jaccard similarity values for data elements following specific statistical distributions, particularly uniform and normal ones [33]. This analytical approach enables researchers to better understand and anticipate the properties of similarity comparisons within datasets, including heterogeneity, skewness, magnitude variations, and potential multimodality [33]. Because it is built from minimum and maximum operations, the Jaccard index is non-linear and tends to yield sharper comparisons than alternatives such as cosine similarity, inner products, and distances [33]. This sharpness can be further controlled by raising similarity values to a power $D$, with higher values of $D$ producing increasingly sharp comparisons [33].
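The real-valued generalization and the power-$D$ sharpening can be written in a few lines of NumPy. This is a sketch of the real-valued formula given earlier in this section, with $x_i^P = \max(x_i, 0)$ and $x_i^N = \min(x_i, 0)$; the function name is hypothetical, and returning 0 for two all-zero vectors is an assumed convention.

```python
import numpy as np


def real_jaccard(x, y, D=1.0):
    """Real-valued Jaccard: positive and negative parts are compared separately.

    Raising the result to the power D (D > 1) sharpens the comparison.
    """
    x, y = np.asarray(x, dtype=float), np.asarray(y, dtype=float)
    xp, yp = np.maximum(x, 0.0), np.maximum(y, 0.0)                    # positive parts
    xn, yn = np.abs(np.minimum(x, 0.0)), np.abs(np.minimum(y, 0.0))    # |negative parts|
    num = np.minimum(xp, yp).sum() + np.minimum(xn, yn).sum()
    den = np.maximum(xp, yp).sum() + np.maximum(xn, yn).sum()
    if den == 0:
        return 0.0  # assumed convention for two all-zero vectors
    return float((num / den) ** D)


x, y = [1.0, -2.0, 3.0], [2.0, -1.0, -1.0]
print(real_jaccard(x, y))                        # 0.25
print(real_jaccard(x, y, D=3))                   # 0.015625
print(real_jaccard([1, 0, 1, 1], [1, 1, 0, 1]))  # 0.5, same as |A ∩ B| / |A ∪ B| for binary sets
```

Components with opposite signs contribute only to the denominator, so vectors that disagree in sign everywhere score 0.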

Applications in Network-Based Drug Discovery

Jaccard similarity analysis enables critical functionalities in network pharmacology through similarity network construction. By representing biological entities as nodes and assigning link weights based on Jaccard similarity between entity pairs, researchers can create comprehensive similarity networks that reveal interrelationships, heterogeneity, and modular organization within datasets [33]. In genomic sequence analysis, Jaccard similarity provides efficient estimation of alignment sizes through min-hash based approaches, though standard implementations face limitations when k-mer distributions are significantly non-uniform due to GC biases or repeats [21]. The Spectral Jaccard Similarity method addresses these limitations by performing singular value decomposition on min-hash collision matrices, naturally accounting for uneven k-mer distributions and providing more accurate alignment size estimates [21].

In drug target identification, Jaccard similarity facilitates the comparison of drug chemical substructures, protein functional domains, and adverse reaction profiles, enabling the detection of non-obvious relationships within drug-protein interaction networks [31]. Multiplying the Jaccard index by the interiority index (overlap coefficient) yields the coincidence similarity index, which further enhances comparisons between biological entities [33]. For traditional medicine research, Jaccard-based similarity measures help identify shared compounds between plants and multi-targeted genes, revealing synergistic therapeutic effects within complex plant-compound-gene hierarchies [28]. The proportional nature of the Jaccard index has been shown to be particularly useful for classifying data with right-skewed features, which are common in pharmacological datasets [33].
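For set-valued data, the interiority and coincidence indices can be sketched as follows. This assumes the set forms $I(A,B) = |A \cap B| / \min(|A|, |B|)$ and $C(A,B) = J(A,B)\,I(A,B)$; the target-gene sets are invented for illustration.

```python
def jaccard(a, b):
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def interiority(a, b):
    """Overlap coefficient: equals 1 whenever one set is contained in the other."""
    smaller = min(len(a), len(b))
    return len(a & b) / smaller if smaller else 0.0


def coincidence(a, b):
    """Coincidence index as the product of the Jaccard and interiority indices."""
    return jaccard(a, b) * interiority(a, b)


targets_x = {"TNF", "IL6", "AKT1"}                              # hypothetical target sets
targets_y = {"TNF", "IL6", "AKT1", "VEGFA", "PTGS2", "MAPK1"}
print(jaccard(targets_x, targets_y), interiority(targets_x, targets_y),
      coincidence(targets_x, targets_y))
# 0.5 1.0 0.5
```

Interiority alone reports a perfect match whenever one set contains the other; multiplying by Jaccard reintroduces the penalty for the difference in set sizes.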

Table 3: Essential Research Reagents and Computational Tools for Network Pharmacology

| Resource Category | Specific Tools/Databases | Key Functionality | Application in Research |
|---|---|---|---|
| PPI Databases | HPRD, IntAct, BioGRID, MINT, DIP | Provide non-redundant protein-protein interactions | Network construction and topological analysis [29] |
| Drug Target Databases | DrugBank, ChEMBL, KEGG, PDSP Ki, Matador | Curated drug-protein interaction information | Validation of predicted targets and interaction networks [29] [31] |
| Chemical Information Resources | KEGG Chemical Function and Substructures (KCF-S) | Chemical substructure descriptors for drugs | Drug profile construction and similarity analysis [31] |
| Protein Functional Annotation | PFAM, KEGG Pathways, KEGG Modules | Functional domains, biological pathways, pathway modules | Protein profile construction and functional enrichment [31] |
| Adverse Reaction Data | FDA AERS (Adverse Event Reporting System) | Drug side effect and adverse reaction profiles | Drug safety profiling and polypharmacology assessment [31] |
| Computational Platforms | NeXus, LIBLINEAR, Cytoscape | Network analysis, machine learning, visualization | Implementation of algorithms and result interpretation [28] [31] |

Successful network pharmacology research requires integration of diverse data types through standardized data processing protocols. Chemical structures are represented using 17,017 chemical substructures via the KEGG Chemical Function and Substructures (KCF-S) descriptor, creating 17,017-dimension binary vectors where presence or absence of each substructure is coded as 1 or 0 [31]. Adverse drug reaction information derived from the FDA Adverse Event Reporting System (AERS) encompasses 10,543 ADRs, represented as 10,543-dimension binary vectors for each drug [31]. Protein functional annotation integrates 2,678 PFAM domains, 270 KEGG pathway maps, and 107 KEGG pathway modules into comprehensive 3,055-dimension feature vectors [31]. For specialized applications involving traditional medicine, research must comply with the Network Pharmacology Evaluation Methodology Guidance developed by the World Federation of Chinese Medicine Societies (WFCMS), assessing data collection, network analysis, and result validation based on reliability, standardization, and rationality [34].
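To illustrate how such binary profiles are compared, the sketch below packs feature indices into Python integers used as bit vectors and computes the Jaccard (Tanimoto) coefficient with bitwise AND and OR. The dimensions come from the text above. Concatenating the chemical and ADR features into a single drug profile, the helper names, and the feature indices are all assumptions made for illustration.

```python
from typing import Iterable

N_SUBSTRUCTURES = 17_017  # KCF-S chemical substructure descriptors [31]
N_ADRS = 10_543           # AERS adverse drug reaction terms [31]


def to_bits(active: Iterable[int]) -> int:
    """Pack the indices of present features into the bits of a Python int."""
    bits = 0
    for i in active:
        bits |= 1 << i
    return bits


def drug_profile(substructures: Iterable[int], adrs: Iterable[int]) -> int:
    """Concatenate chemical and ADR features (ADR bits offset by N_SUBSTRUCTURES)."""
    subs, reactions = list(substructures), list(adrs)
    if any(not 0 <= i < N_SUBSTRUCTURES for i in subs):
        raise ValueError("substructure index out of range")
    if any(not 0 <= j < N_ADRS for j in reactions):
        raise ValueError("ADR index out of range")
    return to_bits(subs + [N_SUBSTRUCTURES + j for j in reactions])


def popcount(x: int) -> int:
    """Number of set bits (portable alternative to int.bit_count)."""
    return bin(x).count("1")


def binary_jaccard(x: int, y: int) -> float:
    """Jaccard (Tanimoto) coefficient of two binary vectors stored as ints."""
    union = popcount(x | y)
    return popcount(x & y) / union if union else 1.0


# Hypothetical feature indices for two drugs.
d1 = drug_profile([3, 150, 9000], [12])
d2 = drug_profile([3, 150, 42], [12, 77])
print(binary_jaccard(d1, d2))
```

The two drugs share three active features (two substructures and one ADR) out of six in the union, so their coefficient is 0.5.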

Network pharmacology analyses also place substantial demands on computational infrastructure. The tensor-product fingerprint approach generates extremely high-dimensional representations (84,195,800-dimension binary vectors), which require space-efficient representations and sparsity-inducing classifiers [31]. Current platforms can nonetheless be efficient: NeXus v1.2 processed a dataset of 111 genes, 32 compounds, and 3 plants in 4.8 seconds with a peak memory usage of 480 MB, and its running time scaled linearly on larger datasets of up to 10,847 genes [28]. Artificial intelligence approaches, particularly graph neural networks and knowledge graphs, require substantial computational resources but enable multi-scale analysis spanning molecular interactions through patient-level efficacy [30].
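The reported dimension is consistent with the outer product of a 27,560-dimension drug vector (17,017 chemical substructures plus 10,543 ADRs) and the 3,055-dimension protein vector, since 27,560 × 3,055 = 84,195,800. That decomposition is our inference rather than a statement from [31]. Under that assumption, the sketch below shows why sparse storage matters: only products of active features are kept, as flat indices, instead of the full vector. The function names are hypothetical.

```python
DRUG_DIM = 17_017 + 10_543         # chemical substructures + ADRs = 27,560 (assumed layout)
PROTEIN_DIM = 2_678 + 270 + 107    # PFAM + KEGG pathways + KEGG modules = 3,055
PAIR_DIM = DRUG_DIM * PROTEIN_DIM  # 84,195,800


def tensor_product(drug_active: set, protein_active: set) -> set:
    """Active features of the outer product of two binary vectors, kept sparse.

    Feature (i, j) is flattened to i * PROTEIN_DIM + j; only the
    len(drug_active) * len(protein_active) non-zero entries are stored.
    """
    return {i * PROTEIN_DIM + j for i in drug_active for j in protein_active}


def decode(flat_index: int) -> tuple:
    """Recover the (drug feature, protein feature) pair of a flat index."""
    return divmod(flat_index, PROTEIN_DIM)


# Hypothetical active features for one drug-protein pair.
pair = tensor_product({0, 5, DRUG_DIM - 1}, {1, PROTEIN_DIM - 1})
print(PAIR_DIM, len(pair), decode(max(pair)))
```

A pair with three active drug features and two active protein features stores six indices rather than 84 million bits.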

Drug repurposing offers a promising strategy for drug discovery by identifying new therapeutic indications for existing, marketed drugs, thereby significantly reducing the risks, costs, and time typically required for drug development [35]. Traditional drug development is a time-consuming and high-risk endeavor, with recent estimates suggesting an average cost ranging from 314 million to 2.8 billion US dollars and a timeline of approximately 12 to 15 years from initial concept to completion [35]. Various methods exist for drug repurposing, including high-throughput screening of drug compound libraries, computational in silico approaches, and literature-based methods [35]. While numerous methods utilize literature for data mining in drug repositioning, relatively few approaches leverage literature citation networks for this purpose [35].

Literature-based discovery methods support drug repurposing by mining large-scale repositories of scientific literature to identify and curate repurposed drugs [35]. These approaches typically link a drug to publications through its target genes, connecting each drug-target coding gene to the scientific publications associated with that gene [35]. The methodology therefore applies mainly to drugs with known targets, since the target-gene associations are what connect a drug to the literature.

Table 1: Comparison of Drug Repurposing Approaches

| Method Type | Key Features | Advantages | Limitations |
|---|---|---|---|
| High-Throughput Screening | Experimental screening of compound libraries | Direct biological evidence | High cost, resource-intensive |
| Computational In Silico | Chemical similarity, target prediction | Scalable, cost-effective | Limited by model accuracy |
| Literature-Based Citation Analysis | Network analysis of scientific publications | Leverages existing knowledge, comprehensive | Dependent on literature coverage |
| Machine Learning | Pattern recognition in complex datasets | Handles multidimensional data | "Black box" interpretation challenges |

Jaccard Similarity in Biomedical Research

Fundamental Principles

The Jaccard similarity index, named after the Swiss botanist Paul Jaccard, is a metric that quantifies the similarity between two sets [32]. For two sets A and B, it is the size of their intersection divided by the size of their union: J(A,B) = |A ∩ B| / |A ∪ B| [32]. The result is a dimensionless value between 0 (no shared elements) and 1 (identical sets), which makes the index particularly useful for comparing binary data such as presence-absence patterns in biological systems [36].
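The definition translates directly into set operations. In the minimal Python sketch below, returning 1.0 for two empty sets is a convention chosen here (some implementations return 0 or leave the value undefined), and the target sets are hypothetical.

```python
def jaccard(a: set, b: set) -> float:
    """J(A, B) = |A ∩ B| / |A ∪ B|."""
    union = a | b
    if not union:
        return 1.0  # convention chosen here: two empty sets count as identical
    return len(a & b) / len(union)


# Hypothetical target sets for two drugs.
targets_1 = {"PTGS1", "PTGS2"}
targets_2 = {"PTGS2", "ALOX5", "PLA2G4A"}
print(jaccard(targets_1, targets_2))
```

The two sets share one element out of four in their union, giving J = 0.25.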

Jaccard similarity has been applied in diverse areas, including text analysis, genomic studies, and social network analysis [32]. Its proportional nature has been shown to suit the classification of data with right-skewed features [33]. When extended to real-valued vectors, the Jaccard index takes a more complex form that treats positive and negative vector components separately [33]. This flexibility allows researchers to apply the same underlying similarity concept across data types and research domains.

Statistical Foundations and Hypothesis Testing

Until recently, statistical hypothesis testing with the Jaccard similarity coefficient had seldom been used or studied [36]. To support rigorous scientific applications, researchers have developed a suite of statistical methods for the Jaccard coefficient on binary data that make it straightforward to incorporate probabilistic measures into an analysis [36]. These include unbiased estimation of the expected coefficient and a centered Jaccard coefficient that accounts for differing probabilities of occurrence; negative and positive values of the centered coefficient correspond naturally to negative and positive associations [36].
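The centering idea can be sketched as the observed coefficient minus its expected value under independence. This sketch assumes the approximation E[J] ≈ p_x p_y / (p_x + p_y - p_x p_y), where p_x and p_y are the fractions of 1s in each binary vector. We believe this matches the form used in [36], but the sketch omits that work's unbiased estimator and exact p-values, and the function names and profiles are hypothetical.

```python
from typing import Sequence


def jaccard_binary(x: Sequence[int], y: Sequence[int]) -> float:
    both = sum(1 for a, b in zip(x, y) if a and b)
    either = sum(1 for a, b in zip(x, y) if a or b)
    return both / either if either else 1.0


def centered_jaccard(x: Sequence[int], y: Sequence[int]) -> float:
    """Observed Jaccard minus its approximate expectation under independence.

    Assumes E[J] ~ px*py / (px + py - px*py), where px and py are the
    fractions of 1s in x and y.
    """
    if len(x) != len(y) or not x:
        raise ValueError("vectors must be non-empty and of equal length")
    px, py = sum(x) / len(x), sum(y) / len(y)
    if px == 0 or py == 0:
        raise ValueError("centering is undefined for an all-zero vector")
    expected = (px * py) / (px + py - px * py)
    return jaccard_binary(x, y) - expected


# Hypothetical presence/absence profiles over 10 features.
x = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
y = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
z = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]
print(round(centered_jaccard(x, y), 3), round(centered_jaccard(x, z), 3))
```

Profiles x and y overlap more than independence predicts, so their centered value is positive; x and z never co-occur, so theirs is negative.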

The exact distribution of the Jaccard coefficient under independence can be derived, yielding accurate p-values for hypothesis testing [36]. For large datasets, where exact computation becomes expensive, efficient estimation algorithms based on the bootstrap and on measurement concentration reduce the computational burden imposed by high dimensionality [36]. Together, these methods make it possible to test rigorously whether an observed similarity deviates significantly from what chance alone would produce.
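As a simple stand-in for the exact, bootstrap, and measurement concentration procedures of [36], which are not reproduced here, a Monte Carlo permutation test shuffles one binary profile (preserving its number of presences) and counts how often the shuffled Jaccard coefficient is at least as high as the observed one. The function names, the number of permutations, and the example profiles are assumptions.

```python
import random
from typing import Sequence


def jaccard_binary(x: Sequence[int], y: Sequence[int]) -> float:
    both = sum(1 for a, b in zip(x, y) if a and b)
    either = sum(1 for a, b in zip(x, y) if a or b)
    return both / either if either else 0.0


def permutation_pvalue(x: Sequence[int], y: Sequence[int],
                       n_perm: int = 2000, seed: int = 0) -> float:
    """One-sided Monte Carlo p-value for an unusually high Jaccard coefficient.

    Null hypothesis: the positions of the 1s in y are unrelated to x.
    Shuffling y keeps its number of 1s fixed while breaking any association.
    """
    rng = random.Random(seed)
    observed = jaccard_binary(x, y)
    shuffled = list(y)
    at_least_as_high = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if jaccard_binary(x, shuffled) >= observed:
            at_least_as_high += 1
    # Add-one correction: the observed arrangement counts as one permutation.
    return (at_least_as_high + 1) / (n_perm + 1)


# Hypothetical profiles over 100 features: y shares 8 of x's 10 presences.
x = [1] * 10 + [0] * 90
y = [1] * 8 + [0] * 2 + [1] * 2 + [0] * 88
print(permutation_pvalue(x, y))
```

The permutation count bounds the smallest reportable p-value at 1 / (n_perm + 1), which is one reason exact or concentration-based methods are preferable for very small p-values.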

Data Collection and Preprocessing

The experimental framework for literature-based drug repurposing through citation networks begins with data collection. Researchers collected 1,978 FDA-approved or clinically investigational drugs, each with at least two targets, from previous studies [35]. After removing duplicates, these drugs were associated with 2,254 unique targets, with an average of 6 targets per drug (median 3, maximum 256) [35]. Each target was linked to an average of 249 articles (median 108, maximum 6,563), and each drug to an average of 2,658 articles (median 1,397, maximum 70,878) [35].

To relate drugs to the scientific literature, researchers linked each drug's target-coding genes to the publications associated with those genes [35]. This approach draws on literature accumulated over more than a century: resources such as OpenAlex, a fully open scientific knowledge graph that includes metadata for journal articles, books, and disambiguated authors, cover approximately 200 million scientific articles [35].

Network Construction and Similarity Calculation

For pairwise combinations of drugs, researchers constructed a citation network from the literature related to the drugs [35]. Literature-based similarities between drug pairs were then calculated on this network, which also allowed the researchers to assess how different types of data affect drug-drug similarity [35]. The underlying assumption is that the more the literature associated with two drugs overlaps, the more similar the two drugs are.
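Under that assumption, the literature-based similarity of a drug pair reduces to a Jaccard coefficient over the article sets reached through each drug's target genes. The sketch below is a hypothetical illustration with toy identifiers (not OpenAlex records). Exactly how [35] incorporated the citation network into the comparison is not specified here, so the optional expansion with cited references is an assumption, and is labeled as such in the code.

```python
from typing import Dict, Optional, Set


def drug_articles(drug: str,
                  drug_targets: Dict[str, Set[str]],
                  target_articles: Dict[str, Set[str]],
                  references: Optional[Dict[str, Set[str]]] = None) -> Set[str]:
    """Articles linked to a drug through its target genes.

    If a references map (article -> cited articles) is given, the set is
    expanded with the works those articles cite; this expansion is an
    illustrative assumption, not a documented step of the cited study.
    """
    articles: Set[str] = set()
    for gene in drug_targets.get(drug, set()):
        articles |= target_articles.get(gene, set())
    if references is not None:
        cited: Set[str] = set()
        for article in articles:
            cited |= references.get(article, set())
        articles |= cited
    return articles


def literature_jaccard(d1: str, d2: str,
                       drug_targets: Dict[str, Set[str]],
                       target_articles: Dict[str, Set[str]],
                       references: Optional[Dict[str, Set[str]]] = None) -> float:
    """Jaccard coefficient of the two drugs' article sets."""
    a = drug_articles(d1, drug_targets, target_articles, references)
    b = drug_articles(d2, drug_targets, target_articles, references)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical toy data: drugs -> target genes -> article IDs.
drug_targets = {"drugA": {"G1", "G2"}, "drugB": {"G2", "G3"}}
target_articles = {"G1": {"W1", "W2"}, "G2": {"W3"}, "G3": {"W4", "W5"}}
references = {"W1": {"W9"}, "W4": {"W9"}}
print(literature_jaccard("drugA", "drugB", drug_targets, target_articles))
print(literature_jaccard("drugA", "drugB", drug_targets, target_articles, references))
```

In the toy data the two drugs share only the articles of gene G2 (J = 0.2); once a commonly cited work W9 is added, the overlap rises to 1/3.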

Because drugs are linked to the literature through their targets, the literature-based drug-drug similarity is effectively computed from literature-based target-target similarity [35]. In other words, the more literature two targets share, the closer their relationship, which suggests a high degree of functional similarity. The approach also draws on the references cited by drug-related articles, on the premise that authors' citation practices follow logical and structural patterns rather than being arbitrary [35].

Figure 1: Experimental workflow for literature-based drug repurposing using citation networks and Jaccard similarity analysis

Performance Validation Methods

To validate the performance of literature-based similarity metrics, researchers created a validation set containing true positives and true negatives for drug pairs, sourced from the repoDB database, a standard dataset for drug repurposing [35]. They compared literature-based similarities with human interactome-based separation using this validation set, evaluating performance in terms of Area Under the Curve (AUC), F1 score, and Area Under the Precision-Recall Curve (AUCPR) [35].
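The three evaluation metrics can be computed from a list of scored pairs. The sketch below uses plain Python so that each definition is visible: AUC as the probability that a positive pair outscores a negative one, F1 at a fixed threshold, and AUCPR approximated by step-wise average precision. The function names, scores, and labels are hypothetical and stand in for validation pairs such as those drawn from repoDB. Production analyses would more likely rely on a library such as scikit-learn.

```python
from typing import Sequence


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUC as the probability that a positive pair outscores a negative pair (ties = 1/2)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def f1_at(scores: Sequence[float], labels: Sequence[int], threshold: float) -> float:
    """F1 score when pairs scoring >= threshold are predicted positive."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def average_precision(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Step-wise AUCPR: mean precision at the rank of each positive (ties not handled)."""
    ranked = sorted(zip(scores, labels), key=lambda t: t[0], reverse=True)
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("need at least one positive")
    hits, total = 0, 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / rank
    return total / n_pos


# Hypothetical Jaccard scores for six validation pairs (1 = positive, 0 = negative).
scores = [0.42, 0.35, 0.30, 0.12, 0.08, 0.05]
labels = [1, 1, 0, 1, 0, 0]
print(roc_auc(scores, labels), f1_at(scores, labels, 0.30), average_precision(scores, labels))
```

AUC and AUCPR are threshold-free summaries of the ranking, whereas F1 depends on the chosen cutoff, which is why studies typically report both kinds of metric.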

Drug pairs were ranked by Jaccard similarity from highest to lowest, and de novo drug repurposing candidates were identified using a threshold defined as the upper quantile value of the Jaccard similarities [35]. This systematic approach prioritizes the most promising candidates by restricting attention to the most similar pairs. Together with the validation against known positive and negative pairs, it increases confidence in the predicted repurposing opportunities, although a quantile cutoff by itself is not a formal control of false discoveries.
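The ranking-and-cutoff step can be sketched with the standard library's `statistics.quantiles`. The text does not say which quantile was used, so taking the upper quartile (75th percentile, inclusive method) is an assumption, as are the `prioritize` helper and the drug-pair names and scores.

```python
import statistics
from typing import Dict, List, Tuple

Pair = Tuple[str, str]


def prioritize(pair_scores: Dict[Pair, float]) -> Tuple[float, List[Tuple[Pair, float]]]:
    """Rank drug pairs by Jaccard similarity and keep those at or above the cutoff.

    The cutoff is the 75th percentile, an assumed reading of "upper quantile".
    """
    if len(pair_scores) < 2:
        raise ValueError("need at least two scored pairs")
    cutoff = statistics.quantiles(pair_scores.values(), n=4, method="inclusive")[2]
    ranked = sorted(pair_scores.items(), key=lambda kv: kv[1], reverse=True)
    return cutoff, [(pair, s) for pair, s in ranked if s >= cutoff]


# Hypothetical Jaccard scores for eight drug pairs.
pair_scores = {
    ("drug1", "drug2"): 0.05, ("drug1", "drug3"): 0.10,
    ("drug2", "drug3"): 0.15, ("drug1", "drug4"): 0.20,
    ("drug2", "drug4"): 0.30, ("drug3", "drug4"): 0.40,
    ("drug1", "drug5"): 0.50, ("drug2", "drug5"): 0.60,
}
cutoff, candidates = prioritize(pair_scores)
print(round(cutoff, 3), candidates)
```

Swapping the quantile (for example, `n=10` and index `8` for the 90th percentile) changes only the cutoff line.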

Comparative Performance Analysis

Quantitative Comparison of Similarity Metrics

The performance evaluation demonstrated that the literature-based Jaccard similarity was the most effective similarity metric for identifying drug repurposing opportunities [35]. When compared to other similarity measures, the Jaccard coefficient outperformed alternative approaches based on AUC and F1 score metrics [35]. Researchers identified 19,553 potential drug pairs for repurposing by analyzing biomedical literature data through the Jaccard coefficient, applying a threshold defined by the upper quantile value to prioritize the most promising de novo drug repurposing candidates [35].

The positive correlation between literature-based Jaccard similarity and various biological and pharmacological similarities (including GO similarities, chemical similarity, clinical similarity, co-expression similarity, and sequence similarity) provided additional validation of the approach [35]. As the Jaccard coefficient for a drug pair increased, corresponding increases were observed in these complementary similarity measures, confirming that literature-based similarity captures biologically meaningful relationships [35]. This correlation analysis strengthens the premise that drugs sharing substantial literature overlap are likely to share therapeutic properties.
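The monotone relationship described here is the kind of trend a rank correlation captures. A minimal Spearman correlation sketch with average ranks for ties follows. The function names and score lists are hypothetical, and [35] may have quantified the relationship differently.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks, with tied values sharing the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for k in range(start, end + 1):
            ranks[order[k]] = (start + end) / 2 + 1
        start = end + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman correlation: Pearson correlation of the average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences of length >= 2")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (vx * vy) ** 0.5


# Hypothetical scores for five drug pairs.
literature_jaccard = [0.02, 0.10, 0.25, 0.40, 0.55]
go_similarity = [0.30, 0.35, 0.33, 0.60, 0.72]
print(round(spearman(literature_jaccard, go_similarity), 3))
```

A rank correlation only asks whether higher Jaccard values go with higher complementary similarities, so it is insensitive to the very different scales of GO, chemical, and co-expression scores.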

Table 2: Performance Metrics of Literature-Based Jaccard Similarity in Drug Repurposing

| Evaluation Metric | Performance | Comparative Advantage |
|---|---|---|
| AUC (Area Under Curve) | Superior to other similarity measures | Better discrimination of true drug associations |
| F1 Score | Highest among tested metrics | Optimal balance of precision and recall |
| AUCPR (Area Under Precision-Recall Curve) | Strong performance | Effective in imbalanced data scenarios |
| Biological Correlation | Positive with GO, chemical, clinical similarities | Captures meaningful pharmacological relationships |

Case Studies of Identified Drug Pairs