Kinetic vs. Stoichiometric Modeling: A Strategic Guide for Biomedical Researchers

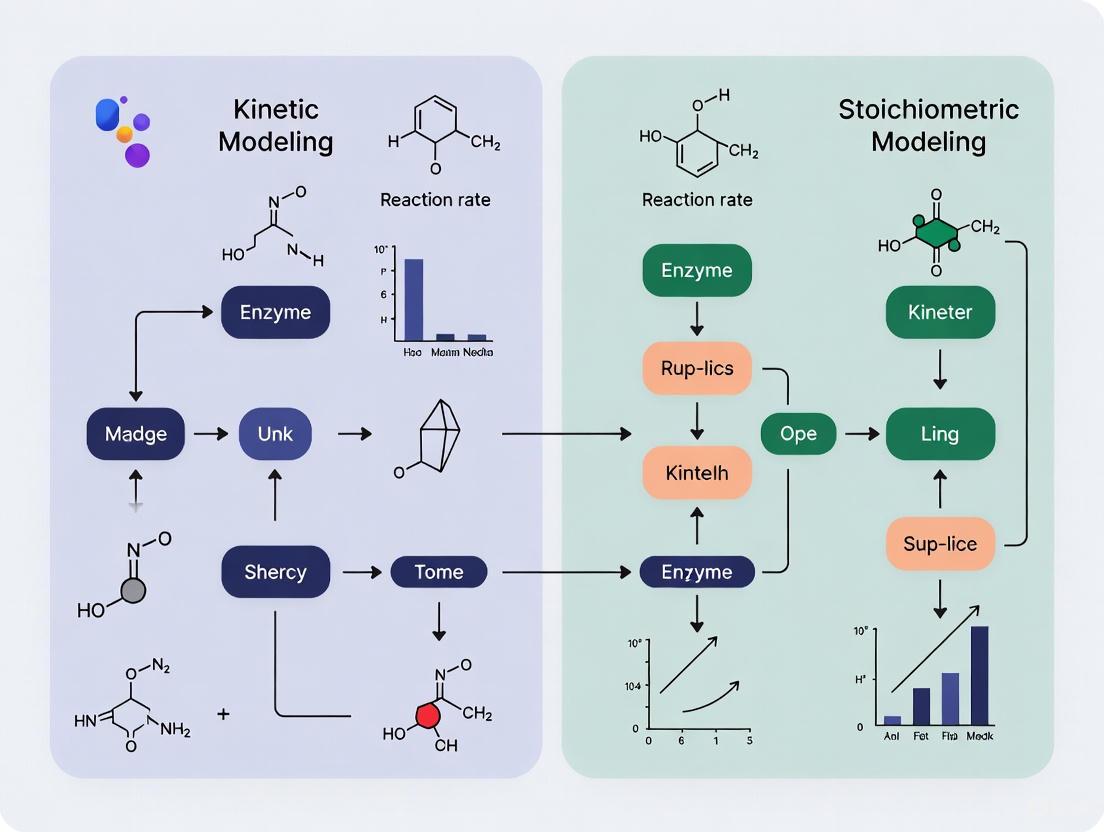

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on selecting between kinetic and stoichiometric metabolic modeling approaches. It covers the foundational principles of each method, explores their specific applications from pathway design to drug stability prediction, and addresses common challenges and optimization strategies. A comparative analysis outlines explicit criteria for method selection based on research goals, data availability, and computational resources, empowering scientists to build more accurate and predictive models for biotechnology and biomedical research.

Core Principles: Understanding the Fundamental Mechanics of Kinetic and Stoichiometric Models

Stoichiometric modeling has emerged as an indispensable tool in systems biology and metabolic engineering for analyzing the capabilities of metabolic networks. Unlike kinetic models that require extensive parameterization of enzyme kinetics, stoichiometric models rely fundamentally on the principle of mass balance and the steady-state assumption to predict metabolic flux distributions at a network scale. This approach provides a powerful framework for understanding how metabolic networks supply energy and building blocks for cell growth and maintenance under various conditions [1]. The methodology has been successfully applied to diverse areas including pharmaceutical development, where it guides drug discovery and helps elucidate the mechanisms of target-mediated drug disposition [2] [3].

The foundation of stoichiometric modeling lies in representing metabolism as a network of biochemical reactions interconnected through shared metabolites. Each reaction is characterized by its stoichiometric coefficients, which quantify the precise molecular relationships between reactants and products. When combined with constraint-based optimization techniques, this approach enables researchers to predict cellular phenotypes, identify potential drug targets, and optimize bioprocesses without requiring detailed kinetic information [3] [4]. As the field progresses, standardization of reconstruction methods and model representation formats remains a crucial challenge, particularly for human metabolic models used in biomedical research [5].

Core Principles of Stoichiometric Modeling

The Mass Balance Foundation

At the heart of stoichiometric modeling lies the mass balance principle, which ensures that the total mass of each chemical element is conserved in every biochemical reaction. For any metabolite in a network, its rate of change can be expressed mathematically as a function of the reaction fluxes and their stoichiometric coefficients [1]. This fundamental relationship is captured in the equation:

[ \frac{dx_i}{dt} = \sum_{j=1}^{r} n_{ij} \cdot v_j ]

Where (x_i) represents the concentration of metabolite (i), (n_{ij}) is the net stoichiometric coefficient of metabolite (i) in reaction (j), (v_j) is the flux through reaction (j), and (r) is the number of reactions. The stoichiometric coefficient is negative when the metabolite is consumed and positive when it is produced [1]. This mass balance constraint must hold for all internal metabolites in the network. In addition, each reaction equation must itself be balanced: the number of atoms of each type (C, H, O, N, P, S) and the net charge must be equal on both sides [1].

In practice, these relationships are collectively represented using a stoichiometric matrix S, where rows correspond to metabolites and columns represent reactions. Each entry (S_{ij}) in this matrix contains the stoichiometric coefficient of metabolite (i) in reaction (j). The stoichiometric matrix thus serves as the mathematical backbone for all subsequent analyses, encoding the network topology and defining the permissible flux distributions through mass conservation constraints [1] [6].
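As a minimal sketch of the matrix form, the snippet below builds S for a three-reaction toy network and evaluates the mass balance for one flux vector. The network, the metabolite names, and the flux values are assumptions chosen for illustration; they do not come from any cited reconstruction.

```python
import numpy as np

# Hypothetical toy network (illustrative, not taken from the article):
#   R1:   -> A    (uptake)
#   R2: A -> B
#   R3: B ->      (secretion)
metabolites = ["A", "B"]
reactions = ["R1", "R2", "R3"]

# Rows are metabolites, columns are reactions.
# Negative entries mean consumption, positive entries mean production.
S = np.array([
    [1.0, -1.0,  0.0],   # A
    [0.0,  1.0, -1.0],   # B
])

v = np.array([2.0, 1.5, 1.0])   # assumed flux values, arbitrary units

# Mass balance for every metabolite at once: dx/dt = S · v
dxdt = S @ v

for name, rate in zip(metabolites, dxdt):
    print(f"d[{name}]/dt = {rate:+.2f}")
```

With these assumed fluxes both metabolites accumulate, because each is produced faster than it is consumed.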

The Steady-State Assumption

The steady-state assumption is a simplifying constraint that dramatically reduces the complexity of analyzing metabolic networks. At steady state, the concentration of internal metabolites remains constant over time, meaning that the net rate of production equals the net rate of consumption for each metabolite. This assumption transforms the mass balance equation into:

[ \frac{d\mathbf{x}}{dt} = \mathbf{S} \cdot \mathbf{v} = 0 ]

Where (\mathbf{S}) is the stoichiometric matrix and (\mathbf{v}) is the flux vector [1] [6]. This steady-state condition implies that the flux vector must reside in the null space of the stoichiometric matrix, meaning all internal metabolites are simultaneously balanced without accumulation or depletion [1].

The steady-state assumption is particularly justified when analyzing metabolic processes where internal metabolite concentrations change slowly compared to metabolic fluxes, or when studying balanced cellular growth. However, this assumption does not apply to external metabolites (nutrients, waste products) or to transient conditions where metabolite concentrations fluctuate significantly. For such dynamic scenarios, kinetic models that explicitly account for temporal changes may be more appropriate [7].
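The null-space condition can be checked numerically. The sketch below uses `scipy.linalg.null_space` on a hypothetical three-reaction chain (uptake, conversion, secretion); the matrix and the candidate flux vectors are illustrative assumptions.

```python
import numpy as np
from scipy.linalg import null_space

# Hypothetical chain: R1: -> A, R2: A -> B, R3: B ->
S = np.array([
    [1.0, -1.0,  0.0],   # A
    [0.0,  1.0, -1.0],   # B
])

# Columns of K form an orthonormal basis of {v : S v = 0}.
K = null_space(S)

def is_steady_state(S, v, tol=1e-9):
    """Return True when the flux vector v balances every internal metabolite."""
    return bool(np.allclose(S @ v, 0.0, atol=tol))

balanced = is_steady_state(S, np.array([2.0, 2.0, 2.0]))     # equal flux through the chain
unbalanced = is_steady_state(S, np.array([2.0, 1.5, 1.0]))   # A and B would accumulate

print("null-space dimension:", K.shape[1])
print("balanced:", balanced, "| unbalanced:", unbalanced)
```

For a linear chain the null space is one-dimensional: the only steady states carry the same flux through every step.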

Chemical Moiety Conservation

In metabolic networks, certain metabolites function as cofactors that are continuously recycled rather than consumed. Examples include ATP, NAD(P)H, and coenzyme A, which participate in numerous reactions while maintaining relatively constant total pools. These chemical moiety conservation relationships impose additional constraints on the system [1].

For instance, the conservation of the adenosine moiety can be expressed as:

[ A_T = [ATP] + [ADP] + [AMP] ]

Where (A_T) represents the total adenosine pool. Similar relationships exist for phosphate conservation across adenine nucleotides [1]. These conservation relationships create linear dependencies between metabolites, reducing the number of independent metabolites in the system. Mathematically, this is represented as:

[ \mathbf{L}_0 \cdot \mathbf{x} = \mathbf{t} ]

Where (\mathbf{L}_0) is the moiety conservation matrix, (\mathbf{x}) is the metabolite concentration vector, and (\mathbf{t}) is the vector of total moiety concentrations [1]. These relationships can be derived from the left null-space of the stoichiometric matrix and further constrain the feasible metabolic states.
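To illustrate how a conservation relation falls out of the left null space, the sketch below uses a hypothetical three-reaction adenylate network. Phosphate and all other species are deliberately omitted, and the concentrations are made-up values.

```python
import numpy as np
from scipy.linalg import null_space

# Rows: ATP, ADP, AMP. Columns (hypothetical, phosphate omitted):
#   R1: ATP -> ADP            (ATP consumption)
#   R2: ADP -> ATP            (ATP regeneration)
#   R3: 2 ADP -> ATP + AMP    (adenylate kinase)
S = np.array([
    [-1.0,  1.0,  1.0],   # ATP
    [ 1.0, -1.0, -2.0],   # ADP
    [ 0.0,  0.0,  1.0],   # AMP
])

# Left null space: vectors l with l^T S = 0, i.e. the null space of S^T.
left = null_space(S.T)

# Rescale so that the ATP coefficient equals 1, then store as a row.
L0 = (left / left[0, 0]).T

x = np.array([3.0, 1.0, 0.2])   # assumed concentrations of ATP, ADP, AMP
total = L0 @ x                  # conserved pool A_T = [ATP] + [ADP] + [AMP]

print("L0 =", np.round(L0, 6))
print("A_T =", total)
```

Because L0 · S = 0, the product L0 · x cannot change over time whatever the fluxes are, which is exactly the statement that the adenylate pool is conserved.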

Network-Scale Analysis

Stoichiometric modeling enables network-scale analysis by considering the entire metabolic system as an interconnected whole rather than isolated pathways. This comprehensive perspective allows researchers to study systemic properties such as metabolic robustness, plasticity, and the organism's ability to cope with environmental changes [1].

The network-scale approach reveals emergent properties that cannot be predicted from individual components alone. For example, elementary flux modes represent minimal sets of reactions that can operate at steady state, while flux balance analysis identifies optimal flux distributions with respect to biological objectives [1]. These methods have been instrumental in predicting metabolic behaviors in various biological systems, from microorganisms to human tissues [4].

Table 1: Key Mathematical Concepts in Stoichiometric Modeling

| Concept | Mathematical Representation | Biological Interpretation |
|---|---|---|
| Stoichiometric Matrix (S) | (S_{ij}): coefficient of metabolite (i) in reaction (j) | Network connectivity and reaction stoichiometry |
| Mass Balance | (\frac{d\mathbf{x}}{dt} = \mathbf{S} \cdot \mathbf{v}) | Metabolite concentration dynamics |
| Steady-State Assumption | (\mathbf{S} \cdot \mathbf{v} = 0) | Homeostasis of internal metabolites |
| Null Space | (\{\mathbf{v} \mid \mathbf{S} \cdot \mathbf{v} = 0\}) | All feasible steady-state flux distributions |
| Chemical Moiety Conservation | (\mathbf{L}_0 \cdot \mathbf{x} = \mathbf{t}) | Conservation of recycled cofactor pools |

Methodologies and Experimental Protocols

Metabolic Network Reconstruction

The construction of a reliable stoichiometric model begins with metabolic network reconstruction. This process involves systematically assembling all known biochemical transformations for a specific organism or cell type based on genomic, biochemical, and physiological data [5] [4]. The protocol generally follows these essential steps:

Genome Annotation: Identify metabolic genes and their associated enzyme functions using databases such as KEGG, BRENDA, BioCyc, and UniProt [4].

Reaction Assembly: Compile the complete set of biochemical reactions, including transport processes across cellular compartments.

Stoichiometric Matrix Formation: Construct the stoichiometric matrix S where rows represent metabolites and columns represent reactions.

Charge and Elemental Balancing: Verify that each reaction is balanced for all chemical elements and charge.

Gap Filling: Identify and address "gaps" in the network where dead-end metabolites or orphan reactions exist, using biochemical knowledge and experimental data [5].

Validation: Test the network's functionality by ensuring it can produce known biomass components and essential metabolites.

For mammalian systems, additional challenges include complex regulatory mechanisms, compartmentalization, and the requirement for more complex nutrient media [4]. Recent efforts have produced comprehensive reconstructions such as Recon, a global human metabolic network that accounts for 1496 genes, 2766 metabolites, and 3311 metabolic and transport reactions [4].
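The charge and elemental balancing step lends itself to automation. The following is a minimal sketch using only the standard library; the function names are hypothetical, and the formulas and charges for the hexokinase reaction are assumed values for one common protonation state, included purely for illustration.

```python
import re
from collections import Counter

def parse_formula(formula):
    """Count atoms in a simple formula such as 'C6H12O6' (no brackets or hydrates)."""
    counts = Counter()
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] += int(number) if number else 1
    return counts

def imbalance(reaction, species):
    """Return the net element and charge imbalance; an empty dict means balanced.

    reaction maps species name -> coefficient (negative for reactants).
    species maps species name -> (formula, charge).
    """
    net = Counter()
    for name, coeff in reaction.items():
        formula, charge = species[name]
        for element, n in parse_formula(formula).items():
            net[element] += coeff * n
        net["charge"] += coeff * charge
    return {key: value for key, value in net.items() if value != 0}

# Assumed formulas and charges (illustrative).
species = {
    "glucose": ("C6H12O6", 0),
    "atp": ("C10H12N5O13P3", -4),
    "g6p": ("C6H11O9P", -2),
    "adp": ("C10H12N5O10P2", -3),
    "h": ("H", 1),
}

# glucose + ATP -> G6P + ADP + H+
hexokinase = {"glucose": -1, "atp": -1, "g6p": 1, "adp": 1, "h": 1}
# Same reaction with the proton forgotten, a typical curation error.
hexokinase_no_proton = {"glucose": -1, "atp": -1, "g6p": 1, "adp": 1}

print(imbalance(hexokinase, species))
print(imbalance(hexokinase_no_proton, species))
```

Running a check like this over every reaction before gap filling helps separate genuine network gaps from simple bookkeeping errors.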

Flux Balance Analysis (FBA) Protocol

Flux balance analysis is a constraint-based optimization method used to predict steady-state flux distributions in metabolic networks. The standard FBA protocol consists of the following steps [1] [6]:

Define the Stoichiometric Matrix: Construct matrix S of dimensions m×n, where m is the number of metabolites and n is the number of reactions.

Set Flux Constraints: Apply lower and upper bounds for each reaction flux ((v_j)):

- For irreversible reactions: (0 \leq v_j \leq v_j^{max})

- For reversible reactions: (v_j^{min} \leq v_j \leq v_j^{max})

Define Objective Function: Formulate a linear objective function to optimize, typically biomass production or ATP synthesis. The general form is: [ Z = \mathbf{c}^T \cdot \mathbf{v} ] Where (\mathbf{c}) is a vector of weights indicating how much each flux contributes to the objective.

Solve Linear Programming Problem: [ \begin{aligned} \text{Maximize } & Z = \mathbf{c}^T \cdot \mathbf{v} \\ \text{Subject to } & \mathbf{S} \cdot \mathbf{v} = 0 \\ & \mathbf{v}_{min} \leq \mathbf{v} \leq \mathbf{v}_{max} \end{aligned} ]

Validate Predictions: Compare model predictions with experimental data, such as measured growth rates or substrate uptake rates.

The COBRA (Constraint-Based Reconstruction and Analysis) Toolbox provides a standardized implementation of FBA and related methods, with default flux bounds typically set to [-1000, 1000] for reversible reactions and [0, 1000] for irreversible ones [6].
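The FBA steps above can be sketched with a general-purpose linear programming solver. The example below uses `scipy.optimize.linprog` rather than the COBRA Toolbox, and the four-reaction network, its bounds, and the choice of R4 as a stand-in "biomass" reaction are all assumptions made for illustration.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical network:
#   R1:   -> A   (substrate uptake, capped at 10)
#   R2: A -> B
#   R3: A ->     (byproduct secretion)
#   R4: B ->     (stand-in for biomass formation)
S = np.array([
    [1.0, -1.0, -1.0,  0.0],   # A
    [0.0,  1.0,  0.0, -1.0],   # B
])

# All reactions irreversible; the default-style upper bound of 1000 acts as "unbounded".
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000)]

# Objective weights c: maximize flux through R4.
c = np.array([0.0, 0.0, 0.0, 1.0])

# linprog minimizes, so the objective is negated to maximize c^T v.
res = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")

fluxes = res.x
objective_value = c @ fluxes

print("status:", res.message)
print("optimal fluxes:", np.round(fluxes, 6))
print("objective Z:", objective_value)
```

In this toy case the optimum routes all available substrate to R4 and leaves the byproduct route unused. In genome-scale models the optimum is often not unique, which is one reason flux variability analysis is commonly run alongside FBA.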

Metabolic Flux Analysis (MFA) with Isotopic Labeling

Metabolic flux analysis using isotopic labeling (particularly ¹³C) enhances the resolution of flux estimation by tracking the fate of individual atoms through metabolic networks. The experimental protocol involves [4]:

Tracer Selection: Choose appropriate ¹³C-labeled substrates (e.g., [1-¹³C]glucose, [U-¹³C]glutamine) based on the pathways of interest.

Isotope Labeling Experiment: Cultivate cells with the labeled substrate until isotopic steady state is reached (typically 24-72 hours for mammalian cells).

Mass Spectrometry Analysis: Measure ¹³C labeling patterns in intracellular metabolites using GC-MS or LC-MS.

Stoichiometric Modeling: Incorporate isotopic labeling data into the stoichiometric model to constrain feasible flux distributions.

Flux Estimation: Solve a weighted least-squares problem to find the flux distribution that best fits the measured labeling patterns and extracellular flux data.

This approach is particularly valuable for distinguishing between parallel pathways, quantifying reaction reversibility, and resolving metabolic cycles that are otherwise unobservable from net exchange rates alone [4].
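The flux estimation step can be illustrated with a deliberately simplified, linear version of the problem. Real ¹³C-MFA is nonlinear, because labeling patterns depend nonlinearly on fluxes; the sketch below omits labeling data entirely and fits only three measured exchange rates, weighted by their assumed standard deviations, under the steady-state constraint. The network and all numbers are hypothetical.

```python
import numpy as np
from scipy.linalg import null_space

# Hypothetical branched network:
#   R1: -> A, R2: A -> B, R3: A -> C, R4: B ->, R5: C ->
S = np.array([
    [1.0, -1.0, -1.0,  0.0,  0.0],   # A
    [0.0,  1.0,  0.0, -1.0,  0.0],   # B
    [0.0,  0.0,  1.0,  0.0, -1.0],   # C
])

# Assumed measurements of R1, R4 and R5 with their standard deviations.
measured_idx = [0, 3, 4]
y = np.array([10.2, 6.1, 3.8])
sigma = np.array([0.5, 0.3, 0.3])

# Any steady-state flux vector can be written as v = K @ u.
K = null_space(S)

M = np.eye(S.shape[1])[measured_idx]   # picks the measured fluxes out of v
W = np.diag(1.0 / sigma)               # weights residuals by 1 / sigma

# Weighted least squares: minimize || W (M K u - y) ||^2 over u.
u, *_ = np.linalg.lstsq(W @ M @ K, W @ y, rcond=None)
v_hat = K @ u

print("estimated fluxes:", np.round(v_hat, 4))
print("weighted residuals:", np.round(W @ (M @ v_hat - y), 4))
```

The raw measurements violate the balance (6.1 + 3.8 is not 10.2); the estimate reconciles them, shifting the less precise uptake measurement the most.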

Diagram 1: Metabolic Network Reconstruction Workflow. The process begins with genome annotation and progresses through successive refinement stages before culminating in experimental validation.

Stoichiometric vs. Kinetic Modeling: A Comparative Analysis

Fundamental Differences and Applications

The choice between stoichiometric and kinetic modeling approaches depends on the research question, available data, and desired predictive capabilities. While stoichiometric models focus on network structure and mass balance constraints, kinetic models incorporate detailed enzyme mechanisms and regulatory interactions to capture system dynamics [7].

Table 2: Comparison of Stoichiometric and Kinetic Modeling Approaches

| Characteristic | Stoichiometric Models | Kinetic Models |
|---|---|---|
| Fundamental Basis | Mass balance, steady-state assumption | Enzyme mechanisms, reaction kinetics |
| Mathematical Form | Linear equations: (\mathbf{S} \cdot \mathbf{v} = 0) | Nonlinear ODEs: (\frac{d\mathbf{x}}{dt} = \mathbf{f}(\mathbf{x},\mathbf{p})) |
| Data Requirements | Network topology, exchange fluxes | Kinetic parameters, metabolite concentrations |
| Computational Demand | Relatively low (linear programming) | High (nonlinear optimization, ODE integration) |
| Time Resolution | Steady-state only | Dynamic responses, transient states |
| Regulatory Effects | Indirectly through constraints | Explicit representation of regulation |
| Network Scale | Genome-scale possible | Typically pathway-scale |
| Key Applications | Flux prediction, gap filling, strain design | Dynamic behavior, metabolic control analysis |

Stoichiometric models excel in network-wide analyses and can handle genome-scale reconstructions with thousands of reactions. Their computational efficiency enables high-throughput applications such as predicting gene essentiality, optimizing metabolic engineering strategies, and integrating omics data [1] [4]. However, they cannot capture transient metabolic behaviors or predict metabolite concentration changes over time.

Kinetic models, in contrast, provide dynamic and mechanistic insights but require extensive parameterization that often limits their scope to specific pathways. Recent advances in parameter estimation, machine learning integration, and database development are gradually overcoming these limitations, making larger-scale kinetic models more feasible [7].

Decision Framework: When to Use Each Approach

Selecting the appropriate modeling strategy requires careful consideration of the biological question and available resources. The following decision framework provides guidance:

Choose Stoichiometric Modeling When:

- Analyzing network capabilities and pathway redundancy

- Predicting flux distributions at steady state

- Working with genome-scale networks

- Limited kinetic data is available

- High-throughput analysis of multiple conditions is needed

- Identifying potential drug targets or metabolic engineering strategies [3] [4]

Choose Kinetic Modeling When:

- Studying dynamic responses to perturbations

- Analyzing metabolic regulation and control mechanisms

- Detailed enzyme mechanism information is available

- Predicting metabolite concentration time courses

- Transient states or oscillatory behaviors are of interest

- Investigating allosteric regulation and signaling interactions [7]

In practice, a hybrid approach often proves most powerful, using stoichiometric models to define network boundaries and flux constraints, while incorporating kinetic details for specific pathways of interest [8] [7]. For instance, Mass Action Stoichiometric Simulation (MASS) models represent one such integration, combining stoichiometric network structure with mass action kinetics to create scalable dynamic models [8].
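The idea of layering mass action kinetics on a stoichiometric scaffold can be sketched in a few lines. This example does not use MASSpy or its API; it is a generic illustration in which each rate is a rate constant times the product of substrate concentrations, with the pathway, rate constants, and initial state all assumed.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Hypothetical linear pathway A -> B -> C.
# Rows: A, B, C. Columns: R1 (A -> B), R2 (B -> C).
S = np.array([
    [-1.0,  0.0],
    [ 1.0, -1.0],
    [ 0.0,  1.0],
])

k = np.array([1.0, 0.5])   # assumed mass action rate constants

# Reaction orders: each substrate enters with the magnitude of its (negative) coefficient.
orders = np.clip(-S, 0.0, None)

def rates(x):
    """Mass action rate of every reaction: k_j * prod_i x_i ** order_ij."""
    return k * np.prod(x[:, None] ** orders, axis=0)

def rhs(t, x):
    """Dynamic mass balance dx/dt = S · v(x)."""
    return S @ rates(x)

x0 = np.array([1.0, 0.0, 0.0])
sol = solve_ivp(rhs, (0.0, 10.0), x0, method="LSODA", rtol=1e-8, atol=1e-10)

print("final concentrations:", np.round(sol.y[:, -1], 6))
print("total mass at end:", sol.y[:, -1].sum())
```

The same matrix S that defines the steady-state constraint in FBA drives the dynamics here; only the rate function is new, which is what makes this kind of hybrid comparatively easy to scale.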

Essential Research Reagents and Computational Tools

Successful implementation of stoichiometric modeling requires both computational tools and experimental reagents for model validation and refinement.

Table 3: Research Reagent Solutions for Stoichiometric Modeling Applications

| Reagent/Tool | Type | Function | Example Applications |
|---|---|---|---|
| ¹³C-Labeled Substrates | Experimental reagent | Enables metabolic flux analysis via isotopic tracing | Mapping pathway contributions, quantifying flux distributions [4] |
| COBRA Toolbox | Computational tool | MATLAB-based suite for constraint-based modeling | FBA, FVA, network gap filling [6] |
| MC3 (Model & Constraint Consistency Checker) | Computational tool | Identifies topological issues in stoichiometric models | Detecting dead-end metabolites, blocked reactions [6] |
| SKiMpy | Computational tool | Python-based framework for kinetic model construction | Integrating stoichiometric and kinetic approaches [7] |
| MASSpy | Computational tool | Python package for kinetic modeling with mass action kinetics | Dynamic simulations of metabolic networks [7] |
| Tellurium | Computational tool | Platform for systems and synthetic biology modeling | Kinetic model simulation, parameter estimation [7] |

Diagram 2: Stoichiometric Modeling Framework Integrating Multiple Data Types. Experimental data including exchange fluxes, isotopic labeling, and gene expression constraints are integrated with stoichiometric models through computational analysis methods like FBA and FVA.

Applications in Pharmaceutical Research and Drug Development

Stoichiometric modeling has found particularly valuable applications in pharmaceutical research, where it helps elucidate complex biological mechanisms and optimize therapeutic protein production.

Target-Mediated Drug Disposition

In pharmacokinetics, stoichiometric modeling has revealed critical insights into target-mediated drug disposition (TMDD) for monoclonal antibodies. Traditional TMDD models often assume 1:1 binding stoichiometry between drugs and targets, while in reality, most antibodies possess two binding sites. This discrepancy can significantly impact model predictions, especially for soluble targets when the elimination rate of the drug-target complex is comparable to or lower than the drug elimination rate [2].

Correct stoichiometric assumptions are essential for adequate description of observed data, particularly when measurements of both total drug and total target concentrations are available. Models with 2:1 target-to-antibody binding stoichiometry, or more comprehensive allosteric binding frameworks, may be necessary to accurately capture the system behavior [2]. This highlights how stoichiometric considerations directly impact predictive accuracy in pharmacological applications.

Metabolic Engineering for Therapeutic Protein Production

Stoichiometric models have been extensively applied to optimize therapeutic protein production in mammalian cell systems, particularly Chinese Hamster Ovary (CHO) cells and hybridoma cells [4]. These models help identify metabolic bottlenecks, optimize nutrient feeding strategies, and enhance protein yields by:

Analyzing Central Carbon Metabolism: Identifying optimal ratios of glucose, glutamine, and other nutrients to maximize energy production while minimizing waste accumulation.

Reducing Byproduct Formation: Predicting genetic modifications that decrease lactate and ammonia production, which can inhibit cell growth and protein production.

Balancing Redox Cofactors: Ensuring adequate regeneration of NADPH for biosynthesis and oxidative stress protection.

Optimizing Biomass Formation: Tuning metabolic fluxes to balance energy generation, biomass production, and recombinant protein synthesis.

These applications demonstrate how stoichiometric modeling bridges fundamental metabolic principles with practical bioprocess optimization in pharmaceutical manufacturing [4].

Current Challenges and Future Directions

Despite significant advances, stoichiometric modeling faces several challenges that represent active areas of research. Standardization of reconstruction methods, representation formats, and model repositories remains a critical need, particularly for human metabolic models [5]. The current proliferation of models with different naming conventions, compartmentalization schemes, and levels of completeness hinders direct comparison and integration.

Model validation and consistency checking represent another challenge. Tools like MC3 have been developed to identify common issues such as dead-end metabolites, blocked reactions, and thermodynamic inconsistencies [6]. However, manual curation is still often required to resolve these issues, especially for large-scale models.

The integration of multi-omics data represents a promising frontier for enhancing stoichiometric models. Incorporating transcriptomic, proteomic, and metabolomic data allows the generation of tissue-specific or condition-specific models with improved predictive accuracy [5] [4]. Methods for contextualizing generic models using omics data continue to evolve, offering increasingly sophisticated approaches for studying human health and disease.

Looking forward, the convergence of stoichiometric and kinetic approaches through hybrid modeling frameworks promises to combine the network-scale coverage of stoichiometric models with the dynamic predictive power of kinetic models [8] [7]. Advances in machine learning, parameter estimation, and high-performance computing are accelerating this integration, potentially enabling a new generation of comprehensive metabolic models that capture both structural constraints and dynamic behaviors across entire metabolic networks.

Core Principles of Kinetic Modeling

Kinetic modeling represents a powerful methodology for capturing the dynamic, time-dependent behaviors of metabolic systems. Unlike stoichiometric models that predict steady-state fluxes, kinetic models are formulated as systems of ordinary differential equations (ODEs) that describe the temporal evolution of metabolite concentrations, providing a detailed and realistic representation of cellular processes. These models simultaneously link enzyme levels, metabolite concentrations, and metabolic fluxes, enabling researchers to study transient states, regulatory mechanisms, and cellular responses under fluctuating conditions [7]. Because they capture how metabolic responses to diverse perturbations evolve over time, kinetic models are particularly valuable in drug development, metabolic engineering, and systems biology, where understanding dynamic behavior is crucial.

The development and application of kinetic models have historically lagged behind stoichiometric models due to requirements for detailed parametrization and significant computational resources. However, recent advancements are transforming this field, ushering in an era where large kinetic models, including near-genome-scale models, can propel metabolic research forward [7]. This guide examines the core components of kinetic modeling—differential equations, enzyme parameters, and dynamic simulations—within the context of selecting the appropriate modeling framework for specific research questions in pharmaceutical and biotechnology applications.

Kinetic versus Stoichiometric Modeling: A Comparative Framework

Understanding when to employ kinetic modeling versus stoichiometric modeling requires a clear comparison of their capabilities, assumptions, and applications. The table below summarizes the key distinctions:

Table 1: Comparison Between Stoichiometric and Kinetic Modeling Approaches

| Feature | Stoichiometric Models (e.g., FBA) | Kinetic Models |
|---|---|---|
| Mathematical Basis | Linear algebra (stoichiometric matrix S) | Nonlinear ordinary differential equations (ODEs) |
| Time Resolution | Steady-state only | Dynamic, time-course simulations |
| Parameters Required | Stoichiometry, uptake/secretion rates | Kinetic constants (KM, kcat), enzyme concentrations, initial metabolite levels |
| Regulatory Mechanisms | Cannot natively capture | Explicitly models inhibition, activation, allosteric regulation |
| Predictive Capabilities | Flux distributions at steady state | Metabolite concentration dynamics, transient states, multi-omics integration |
| Computational Demand | Relatively low | High, requires sophisticated ODE solvers |
| Parameterization Challenge | Moderate | High, limited by available kinetic data |
| Ideal Application Context | Growth phenotype prediction, pathway analysis | Drug perturbation studies, metabolic dynamics, enzyme-targeted therapies |

The choice between modeling approaches depends fundamentally on the research question. Stoichiometric models, particularly Flux Balance Analysis (FBA), excel when predicting steady-state metabolic fluxes under genetic or environmental perturbations, making them ideal for growth phenotype prediction and pathway analysis. In contrast, kinetic models become essential when investigating dynamic responses, transient metabolic states, or regulatory mechanisms such as allosteric control and feedback inhibition [7]. For drug development professionals, this distinction is critical—kinetic models provide the necessary framework to simulate how pharmaceutical interventions alter metabolic dynamics over time, capturing complex behaviors that steady-state approaches cannot represent.

Mathematical Foundation of Kinetic Models

Core Differential Equation Framework

At the heart of kinetic modeling lies a system of ODEs derived from biochemical reaction principles. The fundamental equation describing the change in metabolite concentrations over time is:

dm(t)/dt = S · v(t, m(t), θ) [9]

Where:

- m(t) = vector of metabolite concentrations at time t

- S = stoichiometric matrix defining the metabolic network structure

- v(t, m(t), θ) = vector of reaction rate functions (kinetic laws)

- θ = vector of kinetic parameters (e.g., KM, kcat, KI)

For enzyme-catalyzed reactions, the ODE system is derived from mass-action kinetics. Consider the classical Michaelis-Menten enzyme mechanism:

E + S ⇌ ES → E + P [10]

The corresponding ODEs describing this system are:

- d[S]/dt = -k₁[E][S] + k₋₁[ES]

- d[ES]/dt = k₁[E][S] - (k₋₁ + k₂)[ES]

- d[P]/dt = k₂[ES] [10]

With the enzyme conservation law: [E] = [E]T - [ES], where [E]T represents the total enzyme concentration.
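As a minimal sketch, the three ODEs and the enzyme conservation law can be integrated directly with `scipy.integrate.solve_ivp`. The rate constants, total enzyme concentration, and initial substrate level below are assumed values, chosen only so that the time course is easy to inspect.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Assumed elementary rate constants (arbitrary units).
k1, k_minus1, k2 = 10.0, 1.0, 1.0
E_total = 0.1     # [E]T, total enzyme
S0 = 5.0          # initial substrate

# Derived quantities for reference: K_M = (k_-1 + k_2) / k_1, V_max = k_2 * [E]T
K_M = (k_minus1 + k2) / k1
V_max = k2 * E_total

def michaelis_menten_mechanism(t, y):
    """ODEs for E + S <-> ES -> E + P, with free enzyme from [E] = [E]T - [ES]."""
    S, ES, P = y
    E = E_total - ES
    dS = -k1 * E * S + k_minus1 * ES
    dES = k1 * E * S - (k_minus1 + k2) * ES
    dP = k2 * ES
    return [dS, dES, dP]

sol = solve_ivp(
    michaelis_menten_mechanism,
    (0.0, 100.0),
    [S0, 0.0, 0.0],
    method="LSODA",
    rtol=1e-8,
    atol=1e-10,
)
S_t, ES_t, P_t = sol.y

print(f"K_M = {K_M}, V_max = {V_max}")
print(f"final [S] = {S_t[-1]:.3e}, final [P] = {P_t[-1]:.4f}")
```

Because substrate is only ever free, bound, or converted, the sum [S] + [ES] + [P] stays at its initial value throughout, which is a convenient sanity check on any implementation of this mechanism.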

Kinetic Rate Laws and Enzyme Parameters

Kinetic rate laws define how reaction rates depend on metabolite concentrations and enzyme levels. The most common rate laws and their parameters include:

Table 2: Common Kinetic Rate Laws and Their Parameters

| Rate Law | Mathematical Form | Key Parameters | Applicability |
|---|---|---|---|
| Michaelis-Menten | v = (Vmax × [S]) / (KM + [S]) | Vmax, KM | Single-substrate, irreversible reactions |
| Reversible Michaelis-Menten | v = (Vf×[S]/KmS - Vr×[P]/KmP) / (1 + [S]/KmS + [P]/KmP) | Vf, Vr, KmS, KmP | Single-substrate, reversible reactions |
| Mass Action | v = k × [S1] × [S2] | k (rate constant) | Elementary reactions |
| Hill Equation | v = Vmax × [S]^n / (K0.5^n + [S]^n) | Vmax, K0.5, n (Hill coefficient) | Cooperative enzymes |
| Inhibition Models | v = Vmax × [S] / (KM(1 + [I]/KI) + [S]) | Vmax, KM, KI (inhibition constant) | Competitive inhibition |

It is important to note that the classical Michaelis-Menten equation assumes enzyme concentrations ([E]T) are substantially lower than the KM constant. When this condition is violated in vivo, a modified equation that accounts for enzyme concentration may be necessary for accurate predictions in applications such as physiologically based pharmacokinetic (PBPK) modeling [11].
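The common rate laws translate directly into small functions. The sketch below is plain Python with hypothetical function and argument names; the Hill form places K0.5 raised to the power n in the denominator, so that K0.5 is the substrate concentration giving half-maximal rate for any n.

```python
def michaelis_menten(s, vmax, km):
    """Irreversible single-substrate rate: vmax * s / (km + s)."""
    return vmax * s / (km + s)

def reversible_michaelis_menten(s, p, vf, vr, km_s, km_p):
    """Reversible single-substrate, single-product rate; positive means net forward."""
    return (vf * s / km_s - vr * p / km_p) / (1.0 + s / km_s + p / km_p)

def mass_action(k, *concentrations):
    """Elementary rate: k times the product of all reactant concentrations."""
    rate = k
    for c in concentrations:
        rate *= c
    return rate

def hill(s, vmax, k_half, n):
    """Cooperative rate; k_half is the half-saturation concentration."""
    return vmax * s**n / (k_half**n + s**n)

def competitive_inhibition(s, i, vmax, km, ki):
    """Competitive inhibitor raises the apparent km by the factor (1 + i / ki)."""
    return vmax * s / (km * (1.0 + i / ki) + s)

# Illustrative checks with assumed parameter values.
print(michaelis_menten(0.5, vmax=2.0, km=0.5))                      # half of vmax at s = km
print(hill(2.0, vmax=1.0, k_half=2.0, n=3))                         # half of vmax at s = k_half
print(competitive_inhibition(1.0, 1.0, vmax=2.0, km=0.5, ki=1.0))   # apparent km doubled
```

Two limiting cases are useful as unit tests: the Hill equation with n = 1 and competitive inhibition with no inhibitor both reduce to the Michaelis-Menten rate.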

Parameter Estimation Methodologies

Experimental Data Requirements

Parameterizing kinetic models requires quantitative data from various experimental sources. Key data types include:

- Time-resolved metabolomics: Measurements of metabolite concentrations over time following perturbations [7]

- Enzyme kinetic parameters: KM, kcat, KI values from in vitro or in vivo studies [12]

- Steady-state fluxes and concentrations: From ¹³C-metabolic flux analysis or other flux measurements [7]

- Enzyme abundance data: Quantitative proteomics measurements of enzyme concentrations [7]

- Thermodynamic data: Gibbs free energy of reactions for thermodynamically consistent models [7]

For example, in modeling fatty acid synthesis, researchers have compiled kinetic data for key enzymes including acetyl-CoA carboxylase (ACC), fatty acid synthase (FAS), very-long-chain fatty acid elongases (ELOVL 1-7), and desaturases to enable dynamic modeling of these pathways [12].

Computational Parameter Estimation Framework

Modern parameter estimation employs sophisticated computational frameworks. The following workflow diagram illustrates a robust parameter estimation process:

Diagram 1: Parameter Estimation Workflow

The loss function used during optimization must account for the large differences in scale between metabolite concentrations that are common in biological systems. A mean-centered loss function, which divides each residual by the mean observed concentration, prevents domination by metabolites with high absolute concentrations:

J(m_pred, m_obs) = 1/N × Σ((m_pred - m_obs)/⟨m_obs⟩)² [9]

Where:

- m_pred = predicted metabolite concentrations

- m_obs = observed metabolite concentrations

- ⟨m_obs⟩ = mean of observed concentrations

Advanced training protocols perform gradient descent in log parameter space to handle parameters spanning orders of magnitude, with gradient clipping (global norm typically set to 4) to stabilize training [9]. The adjoint state method computes gradients at a cost that does not grow substantially with the number of parameters, making it suitable for large-scale models [9].
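The following sketch puts the three ingredients together on a toy problem: a loss scaled by the mean observed concentration, updates in log-parameter space, and clipping of the gradient to a global norm of 4. Several simplifications are assumptions made for brevity: the mean is taken per metabolite, the model is a pair of analytic exponential decays rather than an ODE system, gradients come from finite differences rather than the adjoint method, and plain gradient descent stands in for an adaptive optimizer.

```python
import numpy as np

def mean_scaled_loss(m_pred, m_obs):
    """J = 1/N * sum(((m_pred - m_obs) / <m_obs>)**2), with <m_obs> per metabolite."""
    scale = m_obs.mean(axis=0, keepdims=True)
    return float(np.mean(((m_pred - m_obs) / scale) ** 2))

def clip_by_global_norm(grad, max_norm=4.0):
    """Rescale the gradient if its Euclidean norm exceeds max_norm."""
    norm = np.linalg.norm(grad)
    return grad if norm <= max_norm else grad * (max_norm / norm)

# Hypothetical toy model: two metabolites on very different concentration scales.
t = np.linspace(0.0, 5.0, 20)
m0 = np.array([100.0, 0.01])

def simulate(log_k):
    k = np.exp(log_k)                      # parameters are stored as logarithms
    return m0 * np.exp(-np.outer(t, k))    # shape (time points, metabolites)

k_true = np.array([0.5, 2.0])
m_obs = simulate(np.log(k_true))           # noise-free synthetic "observations"

def loss_at(log_k):
    return mean_scaled_loss(simulate(log_k), m_obs)

def finite_difference_grad(f, q, eps=1e-6):
    """Central-difference gradient; a stand-in for adjoint-based gradients."""
    grad = np.zeros_like(q)
    for i in range(q.size):
        step = np.zeros_like(q)
        step[i] = eps
        grad[i] = (f(q + step) - f(q - step)) / (2.0 * eps)
    return grad

log_k = np.log(np.array([0.1, 0.5]))       # deliberately poor initial guess
initial_loss = loss_at(log_k)
learning_rate = 0.05

for _ in range(2000):
    grad = clip_by_global_norm(finite_difference_grad(loss_at, log_k))
    log_k = log_k - learning_rate * grad

k_fit = np.exp(log_k)
final_loss = loss_at(log_k)
print("fitted k:", k_fit, "| loss:", initial_loss, "->", final_loss)
```

Without the scaling, the metabolite starting at 100 would dominate the loss by roughly eight orders of magnitude and the second rate constant would barely be fitted at all.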

Computational Tools and Implementation

Software Frameworks for Kinetic Modeling

Several computational frameworks support the development and parameterization of kinetic models:

Table 3: Computational Frameworks for Kinetic Modeling

| Tool/Framework | Language | Key Features | Applicability |
|---|---|---|---|
| jaxkineticmodel | Python/JAX | Automatic differentiation, SBML support, hybrid neural-mechanistic models, adjoint sensitivity analysis [9] | Large-scale kinetic model parameterization |
| SKiMpy | Python | Uses stoichiometric network as scaffold, efficient parameter sampling, ensures physiologically relevant time scales [7] | High-throughput kinetic modeling |
| pyPESTO | Python | Multi-start optimization, various parameter estimation techniques, compatible with AMICI for sensitivity computation [9] [7] | Parameter estimation for ODE models |
| Tellurium | Python | Standardized model structures, integrates various simulation and analysis tools [7] | Systems and synthetic biology applications |
| MASSpy | Python | Mass-action kinetics, integrated with constraint-based modeling tools (COBRApy) [7] | Kinetic modeling with flux sampling |

Table 4: Essential Research Reagents and Computational Resources

| Item | Function/Application | Technical Specifications |
|---|---|---|
| Time-series metabolomics data | Model training and validation | Quantitative measurements of metabolite concentrations across multiple time points post-perturbation |
| Enzyme kinetic parameters | Parameterizing rate laws | KM, kcat, KI values from databases or experimental studies [12] |
| SBML models | Model sharing and reproduction | Standardized XML format for exchanging kinetic models [9] |
| JAX-based differentiable programming | Efficient model optimization | Automatic differentiation, just-in-time compilation, GPU acceleration [9] |
| Stiff ODE solvers (e.g., Kvaerno5) | Numerical integration | Handles widely separated time scales in biological systems [9] |

Application Case Study: Glycolysis Modeling

A compelling application of kinetic modeling is the fitting of a large-scale kinetic model of glycolysis (141 parameters) to experimental data from feast/famine feeding strategies [9]. The implementation used jaxkineticmodel with the following protocol:

- Model Setup: Imported SBML model or constructed from predefined kinetic mechanisms

- Data Preparation: Time-series concentration data for glycolytic intermediates

- Solver Configuration: Kvaerno5 stiff ODE solver with relative tolerance 10⁻⁸ and absolute tolerance 10⁻¹¹

- Optimization Setup: AdaBelief optimizer with gradient norm clipping (ĝ = 4) in log-parameter space

- Training: Iterative parameter adjustment using adjoint state method for gradient computation

This approach demonstrated robust convergence even for a model with more than a hundred parameters, highlighting the potential for large-scale kinetic model training in pharmaceutical research contexts, particularly for simulating metabolic responses to drug treatments.
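The solver configuration step can be illustrated on a toy problem. The sketch below is an assumption-laden stand-in, not the published setup: it uses SciPy's implicit Radau method in place of Kvaerno5, and a hypothetical three-metabolite mass-action chain whose two rate constants differ by five orders of magnitude, which is what makes the system stiff. Only the tolerances are taken from the protocol above.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Toy chain A -> B -> C; rows are metabolites, columns are reactions.
N = np.array([[-1.0, 0.0],
              [1.0, -1.0],
              [0.0, 1.0]])

def rates(x, k):
    """Mass-action rate laws for the two reactions."""
    return np.array([k[0] * x[0], k[1] * x[1]])

def rhs(t, x, k):
    """Right-hand side dx/dt = N v(x, k)."""
    return N @ rates(x, k)

k = np.array([1e4, 1e-1])  # hypothetical rate constants (fast, slow)
sol = solve_ivp(rhs, (0.0, 50.0), [1.0, 0.0, 0.0], method="Radau",
                args=(k,), rtol=1e-8, atol=1e-11)

total_mass = sol.y.sum(axis=0)  # A + B + C should stay constant
final_c = sol.y[2, -1]
```

An explicit solver would need very small steps to remain stable on the fast reaction for the entire simulation, whereas an implicit method can take large steps once the fast transient has decayed.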

Kinetic modeling provides an essential framework for predicting dynamic metabolic behaviors that stoichiometric approaches cannot capture. The choice between modeling approaches should be guided by specific research needs:

- Employ stoichiometric models for steady-state flux predictions, growth phenotype analysis, and large-network simulations where comprehensive kinetic data are unavailable

- Implement kinetic models when investigating dynamic responses, transient states, regulatory mechanisms, or integrating multi-omics data, particularly in drug development contexts where understanding temporal metabolic changes is critical

Recent advancements in machine learning integration, novel parameter estimation methodologies, and increased computational resources are making kinetic modeling increasingly accessible for high-throughput applications in pharmaceutical research and metabolic engineering [7]. The emerging capability to create hybrid models that combine mechanistic understanding with neural network components offers particular promise for modeling complex biological systems where some reaction mechanisms remain unknown [9].

In the realm of computational biology, particularly in metabolic engineering and drug development, two dominant mathematical frameworks have emerged for modeling cellular processes: Linear Programming-based Flux Balance Analysis (FBA) and Systems of Ordinary Differential Equations (ODEs). These approaches represent fundamentally different philosophies for capturing biological system behavior. FBA utilizes constraint-based optimization to predict steady-state metabolic fluxes, while kinetic modeling with ODEs describes the dynamic changes in metabolite concentrations over time [13] [14]. The choice between these architectures carries significant implications for model scalability, data requirements, predictive capability, and practical implementation. This technical guide examines the core architectures of both approaches, providing a structured comparison to inform researchers' selection of appropriate modeling frameworks for specific biological questions and experimental contexts within drug development and metabolic engineering research.

Theoretical Foundations and Mathematical Formulations

Flux Balance Analysis: A Constraint-Based Linear Programming Approach

Flux Balance Analysis operates on the fundamental principle that metabolic networks reach a steady state where metabolite concentrations remain constant over time. This steady-state assumption transforms the system of mass balance equations into a set of linear constraints [13] [15]. The core mathematical representation in FBA is the stoichiometric matrix S of size m×n, where m represents the number of metabolites and n the number of metabolic reactions in the network. Each element Sᵢⱼ contains the stoichiometric coefficient of metabolite i in reaction j [13].

The mass balance equation at steady state is represented as: S · v = 0 where v is the vector of metabolic fluxes (reaction rates) of length n [13]. Since metabolic networks typically contain more reactions than metabolites (n > m), this system is underdetermined, allowing multiple feasible flux distributions. FBA identifies a unique solution by optimizing an objective function Z = cᵀv, where c is a vector of weights indicating how much each reaction contributes to the biological objective [13] [15]. Common objectives include maximizing biomass production, ATP synthesis, or synthesis of a target metabolite.

The complete FBA problem formulation is:

- Maximize cᵀv

- Subject to S · v = 0

- And vₘᵢₙ ≤ v ≤ vₘₐₓ (lower and upper flux bounds)

The bounds on v represent biochemical constraints such as enzyme capacity, substrate availability, or thermodynamic feasibility [13]. This linear programming problem can be solved efficiently even for genome-scale models with thousands of reactions.
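To make the formulation concrete, the following sketch solves the FBA problem for a hypothetical four-reaction network using SciPy's general-purpose `linprog` routine. The network, bounds, and reaction ordering are illustrative assumptions; dedicated constraint-based modeling packages wrap the same linear program behind a model-oriented interface.

```python
import numpy as np
from scipy.optimize import linprog

# Toy network (assumed): uptake -> A, A -> B, B -> biomass, A -> byproduct.
# Rows: metabolites A, B. Columns: uptake, conversion, biomass, secretion.
S = np.array([[1.0, -1.0, 0.0, -1.0],
              [0.0, 1.0, -1.0, 0.0]])

c = np.array([0.0, 0.0, 1.0, 0.0])          # objective: biomass flux
bounds = [(0.0, 10.0), (0.0, 1000.0),       # uptake limited to 10 units
          (0.0, 1000.0), (0.0, 1000.0)]

# linprog minimizes, so the objective vector is negated to maximize c^T v.
result = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                 bounds=bounds, method="highs")

optimal_fluxes = result.x
optimal_growth = -result.fun
```

In this toy case the optimum routes all uptake to biomass, so the objective equals the uptake bound; tightening or relaxing that bound changes the prediction directly, which is why exchange bounds are usually the most influential inputs to an FBA simulation.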

Kinetic Modeling: Systems of Ordinary Differential Equations

Kinetic models describe metabolic systems through explicit mathematical functions that relate reaction rates to metabolite concentrations, enzyme levels, and effectors [14]. Unlike FBA, kinetic modeling does not assume steady state and instead captures the transient dynamics of metabolic networks. The core architecture consists of a system of ODEs where the rate of change of each metabolite concentration is determined by the balance of fluxes producing and consuming it [14].

For a system with m metabolites, the dynamics are described by: dx/dt = N · v(x, p) where x is the vector of metabolite concentrations, N is the stoichiometric matrix, and v(x, p) is the vector of kinetic rate laws that depend on x and parameters p [14]. The rate laws v(x, p) can take various mathematical forms including mass action, Michaelis-Menten, or more complex mechanistic representations that account for allosteric regulation and enzyme inhibition [14].

Kinetic parameters p include catalytic rate constants (kcat), Michaelis constants (Km), inhibition constants (Ki), and activation constants (Ka). Parameterizing these models requires significant experimental data, which can be derived from in vitro enzyme assays, in vivo flux measurements, or isotopic labeling experiments [14]. The system of ODEs is typically solved numerically, and the complexity increases substantially with network size.
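A small example may help show how the equation dx/dt = N · v(x, p) is assembled in practice. The sketch below assumes a hypothetical two-metabolite pathway with a constant influx and two irreversible Michaelis-Menten steps; all parameter values and names are illustrative.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Rows: metabolites A, B. Columns: influx, A -> B, B -> out.
N = np.array([[1.0, -1.0, 0.0],
              [0.0, 1.0, -1.0]])

def rate_laws(x, p):
    """Vector of reaction rates v(x, p)."""
    a, b = x
    v_in = p["v_in"]                               # constant supply
    v2 = p["vmax2"] * a / (p["km2"] + a)           # Michaelis-Menten
    v3 = p["vmax3"] * b / (p["km3"] + b)           # Michaelis-Menten
    return np.array([v_in, v2, v3])

def dxdt(t, x, p):
    """Mass balances: dx/dt = N v(x, p)."""
    return N @ rate_laws(x, p)

# Hypothetical parameter set.
p = {"v_in": 1.0, "vmax2": 2.0, "km2": 0.5, "vmax3": 4.0, "km3": 1.0}

sol = solve_ivp(dxdt, (0.0, 50.0), [0.0, 0.0], args=(p,),
                rtol=1e-8, atol=1e-10)
x_end = sol.y[:, -1]
steady_state_residual = N @ rate_laws(x_end, p)
```

The trajectory relaxes to the concentrations at which both Michaelis-Menten fluxes equal the influx. At that point N · v vanishes, which is exactly the steady-state condition that FBA imposes from the outset.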

Comparative Analysis: Mathematical and Practical Implementation

Table 1: Core Architectural Comparison Between FBA and ODE-Based Kinetic Modeling

| Feature | Flux Balance Analysis (FBA) | ODE-Based Kinetic Models |

|---|---|---|

| Mathematical Foundation | Linear programming with steady-state assumption | Systems of ordinary differential equations |

| Core Equation | S · v = 0 [13] | dx/dt = N · v(x, p) [14] |

| Primary Variables | Metabolic fluxes (v) | Metabolite concentrations (x), sometimes enzyme levels |

| Key Parameters | Stoichiometric coefficients, flux bounds [13] | kcat, Km, Ki, enzyme concentrations [14] |

| Time Resolution | Steady-state (no temporal dynamics) [13] | Dynamic (captures transients) [14] |

| Typical Network Size | Genome-scale (thousands of reactions) [15] | Pathway-scale (dozens to hundreds of reactions) [14] |

| Computational Demand | Low (linear programming) [15] | High (numerical integration of ODEs) [14] |

| Data Requirements | Stoichiometry, uptake/secretion rates [13] | Comprehensive kinetic parameters, concentration data [14] |

| Regulatory Integration | Limited (requires extensions) [13] | Direct (allosteric regulation, gene expression) [14] |

Table 2: Applications and Limitations in Metabolic Engineering and Drug Development

| Aspect | Flux Balance Analysis (FBA) | ODE-Based Kinetic Models |

|---|---|---|

| Strengths | High scalability; No need for kinetic parameters; Predicts capabilities; Fast computation [13] [15] | Predicts dynamics and concentrations; Captures regulation; Identifies rate-limiting steps [14] |

| Limitations | Cannot predict metabolite concentrations; Limited regulatory integration; Steady-state assumption [13] | High parameter requirements; Limited scalability; Computationally intensive [14] |

| Ideal Use Cases | Gene knockout predictions; Growth phenotype simulation; Genome-scale strain design [13] [15] | Pathway optimization; Understanding metabolic dynamics; Drug target identification [14] |

| Metabolic Engineering Applications | Identifying gene knockout strategies for product yield improvement [13] | Optimizing enzyme expression levels; Engineering allosteric regulation [14] |

| Drug Development Applications | Identifying essential pathogen genes as drug targets [15] | Understanding metabolic pathway dynamics in disease states [14] |

Hybrid Approaches: Bridging the Architectural Divide

Recognizing the complementary strengths of FBA and kinetic modeling, researchers have developed hybrid frameworks that integrate aspects of both architectures. Linear Kinetics-Dynamic FBA (LK-DFBA) incorporates linear kinetic constraints into the FBA framework to capture metabolite dynamics while retaining a linear programming structure [16]. This approach discretizes time and unrolls the temporal aspect into a larger stoichiometric matrix, enabling dynamic simulations with reduced computational complexity compared to full kinetic models [16].

Another hybrid approach, Dynamic FBA (dFBA), combines FBA at each time point with ordinary differential equations that describe extracellular substrate concentrations and biomass changes [17]. In dFBA, the system is solved sequentially: at each time step, FBA computes intracellular fluxes assuming quasi-steady state, and these fluxes then update the extracellular environment through ODEs [17]. This method has been successfully applied to simulate batch and fed-batch fermentation processes where changing substrate concentrations significantly impact metabolic behavior.
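The sequential dFBA scheme can be sketched in a few lines. The example below is a simplified illustration under several assumptions not stated in the source: a one-metabolite toy network, Michaelis-Menten uptake kinetics to set the uptake bound, a fixed biomass yield, and explicit Euler updates of the extracellular state. It does not reproduce the interface of any dFBA package.

```python
import numpy as np
from scipy.optimize import linprog

S = np.array([[1.0, -1.0]])   # internal metabolite A: uptake -> A -> biomass
YIELD = 0.1                   # hypothetical yield, gDW per mmol substrate
VMAX, KM = 10.0, 0.5          # hypothetical uptake kinetics

def fba_step(uptake_ub):
    """Maximize growth for the current uptake bound (quasi-steady state)."""
    c = np.array([0.0, -YIELD])  # negated because linprog minimizes
    res = linprog(c, A_eq=S, b_eq=[0.0],
                  bounds=[(0.0, uptake_ub), (0.0, None)], method="highs")
    return res.x[0], YIELD * res.x[1]  # uptake flux, growth rate

def simulate(glc0=10.0, x0=0.01, dt=0.05, n_steps=200):
    glc, biomass = glc0, x0
    traj = [(0.0, glc, biomass)]
    for i in range(n_steps):
        ub = VMAX * glc / (KM + glc)
        ub = min(ub, glc / (biomass * dt))  # cannot consume more than remains
        v_up, mu = fba_step(ub)
        # Euler update of the extracellular environment.
        glc = max(glc - v_up * biomass * dt, 0.0)
        biomass = biomass + mu * biomass * dt
        traj.append(((i + 1) * dt, glc, biomass))
    return np.array(traj)

traj = simulate()
```

Each iteration solves one linear program, so the cost of a dFBA run scales with the number of time steps rather than with the stiffness of intracellular kinetics.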

Table 3: Experimental Reagents and Computational Tools for Model Implementation

| Resource Type | Specific Tools/Reagents | Function/Application |

|---|---|---|

| Software Tools | COBRA Toolbox [13] | MATLAB-based suite for FBA and constraint-based modeling |

| Software Tools | DyMMM, DFBAlab [17] | Dynamic FBA implementation frameworks |

| Software Tools | LK-DFBA [16] | Framework with linear kinetic constraints for dynamic modeling |

| Software Tools | ORACLE [14] | Kinetics-based framework for metabolic modeling and engineering |

| Experimental Data for Parameterization | Isotopic labeling (¹³C, ²H) [14] | Determination of in vivo metabolic fluxes for model validation |

| Experimental Data for Parameterization | Enzyme kinetics assays [14] | Measurement of Km, kcat values for kinetic models |

| Experimental Data for Parameterization | Metabolomics profiles [14] | Time-course concentration data for model parameterization |

| Experimental Data for Parameterization | Proteomics data [14] | Enzyme abundance levels for constraint-based and kinetic models |

Experimental Protocols and Implementation Workflows

Protocol for FBA-Based Gene Essentiality Analysis

Model Preparation: Obtain a genome-scale metabolic reconstruction in SBML format and load it using the COBRA Toolbox function readCbModel [13]. The model structure should include reaction lists (rxns), metabolite lists (mets), and the stoichiometric matrix (S).

Constraint Definition: Set the upper and lower flux bounds for exchange reactions using changeRxnBounds to reflect specific growth conditions (e.g., glucose-limited aerobic conditions) [13]. For aerobic E. coli growth simulation, set glucose uptake to 18.5 mmol/gDW/h and oxygen uptake to a high value (e.g., 20 mmol/gDW/h).

Objective Specification: Define the biological objective function, typically biomass production. For the COBRA Toolbox, use optimizeCbModel with the appropriate objective coefficient vector c [13].

Simulation and Validation: Solve the linear programming problem to obtain the wild-type growth rate. For gene essentiality analysis, sequentially constrain each reaction flux associated with a target gene to zero and re-optimize [15]. Compare the resulting growth rate to the wild-type, classifying genes whose deletion reduces growth below a threshold (e.g., <5% of wild-type) as essential.
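The knockout loop in this protocol can be illustrated independently of the COBRA Toolbox. The Python sketch below uses SciPy's `linprog` on a hypothetical four-reaction network with two parallel routes; the gene names, gene-to-reaction map, and network are assumptions made for illustration, and only the 18.5 mmol/gDW/h uptake bound and the 5% threshold come from the text.

```python
import numpy as np
from scipy.optimize import linprog

# Rows: metabolites A, B. Columns follow the order in `reactions`.
reactions = ["uptake", "route_1", "route_2", "biomass"]
S = np.array([[1.0, -1.0, -1.0, 0.0],
              [0.0, 1.0, 1.0, -1.0]])
wild_type_bounds = [(0.0, 18.5), (0.0, 1000.0), (0.0, 1000.0), (0.0, 1000.0)]
c = np.array([0.0, 0.0, 0.0, 1.0])  # maximize biomass flux

# Hypothetical gene-reaction associations.
gene_to_reactions = {"geneU": ["uptake"], "geneX": ["route_1"],
                     "geneY": ["route_2"], "geneZ": ["biomass"]}

def max_growth(bounds):
    res = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                  bounds=bounds, method="highs")
    return -res.fun

def find_essential_genes(threshold=0.05):
    wild_type = max_growth(wild_type_bounds)
    essential = []
    for gene, rxns in gene_to_reactions.items():
        knockout = list(wild_type_bounds)
        for rxn in rxns:
            knockout[reactions.index(rxn)] = (0.0, 0.0)  # block the reaction
        if max_growth(knockout) < threshold * wild_type:
            essential.append(gene)
    return wild_type, essential

wild_type_growth, essential_genes = find_essential_genes()
```

In this toy network the two parallel routes back each other up, so deleting either associated gene leaves growth unchanged, while the uptake and biomass genes are classified as essential. Real gene-protein-reaction rules also include isozymes and enzyme complexes, which this one-gene-per-reaction map does not capture.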

Protocol for Kinetic Model Parameterization and Validation

Network Definition: Construct a stoichiometric matrix for the target pathway, identifying all metabolites, reactions, and known regulatory interactions [14].

Rate Law Selection: Assign appropriate kinetic rate laws to each reaction. Common formulations include Michaelis-Menten for irreversible reactions, reversible Michaelis-Menten for bidirectional reactions, and Hill equations for cooperativity [14].

Parameter Estimation: Use in vitro kinetic parameters from databases like BRENDA as initial values, then refine using in vivo data. Implement parameter estimation algorithms such as nonlinear least squares regression to minimize the difference between simulated and experimental metabolite concentrations and fluxes [14].

Model Validation: Test the parameterized model against experimental data not used in parameter estimation, such as time-course metabolite concentrations following a perturbation or flux measurements under different genetic backgrounds [14].

Sensitivity Analysis: Perform metabolic control analysis (MCA) to identify flux control coefficients and quantify the effect of changes in enzyme activity on pathway flux and metabolite concentrations [14].
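As an illustration of the sensitivity analysis step, the sketch below estimates flux control coefficients by finite differences for a hypothetical two-enzyme pathway with a fixed external substrate. The rate laws and parameter values are assumptions chosen so that the steady state can be bracketed reliably; the result can be checked against the summation theorem of metabolic control analysis, which states that flux control coefficients sum to one.

```python
import numpy as np
from scipy.optimize import brentq

# Hypothetical pathway: X0 (fixed) -> S -> product.
X0, KEQ, K1, K2, KM = 1.0, 5.0, 1.0, 3.0, 0.5

def v1(s, e1):
    """Reversible mass-action step, proportional to enzyme level e1."""
    return e1 * K1 * (X0 - s / KEQ)

def v2(s, e2):
    """Irreversible Michaelis-Menten step, proportional to enzyme level e2."""
    return e2 * K2 * s / (KM + s)

def steady_state_flux(e1, e2):
    """Solve v1(s) = v2(s) for s, then return the pathway flux J."""
    s_ss = brentq(lambda s: v1(s, e1) - v2(s, e2), 0.0, X0 * KEQ)
    return v2(s_ss, e2)

def flux_control_coefficients(e1=1.0, e2=1.0, h=1e-3):
    """C_i = d ln J / d ln e_i, by central differences in log space."""
    denom = np.log(1.0 + h) - np.log(1.0 - h)
    c1 = (np.log(steady_state_flux(e1 * (1 + h), e2))
          - np.log(steady_state_flux(e1 * (1 - h), e2))) / denom
    c2 = (np.log(steady_state_flux(e1, e2 * (1 + h)))
          - np.log(steady_state_flux(e1, e2 * (1 - h)))) / denom
    return c1, c2

c1, c2 = flux_control_coefficients()
```

A coefficient close to one identifies the step whose enzyme level most strongly limits pathway flux, which is the quantity of interest when ranking candidate targets for overexpression or inhibition.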

Decision Framework and Research Recommendations

The choice between FBA and ODE-based kinetic modeling depends on the research question, available data, and system characteristics. FBA is recommended when: (1) studying genome-scale networks where kinetic parameterization is infeasible; (2) the primary interest is in steady-state capabilities rather than dynamics; (3) data are limited to stoichiometry and uptake/secretion rates; and (4) high-throughput simulations are needed for multiple genetic or environmental perturbations [13] [15].

ODE-based kinetic modeling is preferable when: (1) understanding dynamic behavior is essential; (2) the pathway is well-characterized with sufficient kinetic data available; (3) regulatory mechanisms (allosteric, post-translational) play a critical role; (4) predicting metabolite concentrations is necessary; and (5) the system operates far from steady-state [14].

For researchers investigating metabolic engineering strategies for compound production, a combined approach is often most effective: using FBA to identify potential genetic modifications at genome scale, then employing kinetic modeling to refine the design and optimize expression levels in the targeted pathway [14]. In drug development, FBA can identify essential pathogen genes as broad-spectrum targets, while kinetic models can elucidate mechanism of action and resistance development for specific inhibitors [15].

Figure 1: Decision workflow for selecting between FBA, ODE-based kinetic modeling, and hybrid approaches based on research requirements and data availability.

In the computational analysis of biological systems, mathematical models serve as essential tools for predicting cellular behavior and guiding metabolic engineering. Two predominant approaches—kinetic modeling and stoichiometric modeling—offer distinct methodologies for representing metabolism. Despite their differences, both frameworks are fundamentally underpinned by a set of universal physical constraints that govern all natural systems, ensuring model predictions remain biologically feasible [18]. These constraints include mass conservation, energy balance, and thermodynamic laws, which together form the foundation upon which reliable metabolic models are built.

The critical importance of these constraints becomes evident when deciding between modeling approaches for research and biotechnological applications. Stoichiometric models, requiring fewer parameters, can encompass genome-scale networks by applying these universal laws as boundary conditions [18] [19]. In contrast, kinetic models incorporate the same physical principles directly into their rate equations, allowing dynamic simulation of metabolite concentrations but typically covering smaller pathway subsets due to data requirements [18] [20]. This whitepaper provides an in-depth technical examination of how these universal constraints operate within both frameworks, offering researchers a principled basis for selecting appropriate methodologies for specific applications in drug development and metabolic engineering.

Theoretical Foundations of Universal Constraints

The Principle of Mass Conservation

The law of mass conservation states that matter cannot be created or destroyed in an isolated system. In metabolic modeling, this principle translates directly to the stoichiometric matrix, which quantifies the mass balance for each metabolite in the network [19]. For any metabolic system with m metabolites and n reactions, the mass balance constraint is mathematically represented as:

S · v = 0

where S is the m × n stoichiometric matrix and v is the vector of reaction fluxes [19]. This equation formalizes the requirement that for each internal metabolite, the total production rate must equal the total consumption rate at steady state, ensuring no metabolite accumulates or depletes indefinitely.
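The mass balance condition is easy to check numerically. The following sketch, based on a hypothetical two-metabolite network, tests whether a candidate flux vector satisfies S · v = 0 and computes a basis of the null space of S, which spans all steady-state flux distributions before bounds are applied.

```python
import numpy as np

# Rows: metabolites A, B. Columns: uptake, A -> B, B -> biomass, A -> byproduct.
S = np.array([[1.0, -1.0, 0.0, -1.0],
              [0.0, 1.0, -1.0, 0.0]])

def is_mass_balanced(S, v, tol=1e-9):
    """True if production equals consumption for every internal metabolite."""
    return bool(np.all(np.abs(S @ v) <= tol))

def null_space_basis(S, tol=1e-10):
    """Orthonormal basis of {v : S v = 0} from the singular value decomposition."""
    _, singular_values, vt = np.linalg.svd(S)
    rank = int(np.sum(singular_values > tol))
    return vt[rank:].T

balanced = np.array([10.0, 10.0, 10.0, 0.0])
unbalanced = np.array([10.0, 5.0, 5.0, 0.0])   # A would accumulate

basis = null_space_basis(S)
degrees_of_freedom = basis.shape[1]
```

With four reactions and two independent mass balances, two degrees of freedom remain, which is why additional bounds and an objective function are needed to single out one flux distribution.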

In kinetic models, mass conservation is embedded directly within the system of differential equations that describe metabolite concentration changes over time:

dX/dt = S · v(X,p)

where X represents metabolite concentrations, v(X,p) represents reaction rates that are functions of metabolite concentrations and parameters p, and dX/dt represents concentration time derivatives [18]. At steady state, dX/dt = 0, reducing to the same mass balance condition used in stoichiometric models [18]. This shared foundation enables cross-validation between frameworks, where steady-state fluxes from kinetic models can verify feasibility in stoichiometric models and vice versa [18].

Energy Balance and Thermodynamic Principles

The first law of thermodynamics, concerning energy conservation, provides another universal constraint for metabolic models. While mass conservation deals specifically with material balances, energy conservation ensures that energy transfers and transformations obey fundamental physical laws [21] [18]. In living systems, this primarily manifests through the balance of enthalpy and Gibbs free energy across biochemical reactions.

The second law of thermodynamics introduces the critical concept of entropy, stating that for any spontaneous process, the total entropy of an isolated system always increases [21]. In metabolic terms, this dictates the directionality of biochemical reactions—they must proceed in the direction of negative Gibbs free energy change (ΔG < 0) [18]. This thermodynamic constraint has profound implications for both modeling frameworks:

- In stoichiometric models, reaction directionality constraints are applied as flux boundaries (vᵢ ≥ 0 for irreversible reactions) based on thermodynamic feasibility [18] [19].

- In kinetic models, thermodynamic constraints are embedded within rate expressions themselves, ensuring that reaction rates approach zero as the reaction nears equilibrium [20].
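The link between Gibbs free energy and flux bounds can be sketched as follows. The function evaluates ΔG = ΔG°' + RT ln Q for given concentrations and converts its sign into a directionality constraint; the numerical values, the unit stoichiometry, and the function names are illustrative assumptions.

```python
import math

R_KJ = 8.314462618e-3  # gas constant in kJ/(mol*K)

def gibbs_energy(dg0_prime, substrates, products, temperature=298.15):
    """Return dG = dG0' + RT ln Q in kJ/mol.

    Concentrations are in mol/L; unit stoichiometric coefficients are assumed.
    """
    q = math.prod(products) / math.prod(substrates)
    return dg0_prime + R_KJ * temperature * math.log(q)

def directional_bounds(dg, v_max=1000.0, tol=1e-9):
    """Translate the sign of dG into flux bounds for a stoichiometric model."""
    if dg < -tol:
        return (0.0, v_max)      # forward direction only
    if dg > tol:
        return (-v_max, 0.0)     # reverse direction only
    return (0.0, 0.0)            # at equilibrium: no net flux

# Hypothetical reaction with an unfavorable standard free energy (+5 kJ/mol)
# that becomes feasible because the product is kept at low concentration.
dg = gibbs_energy(5.0, substrates=[1e-3], products=[1e-5])
bounds = directional_bounds(dg)
```

The example shows why directionality cannot always be assigned from ΔG°' alone: physiological concentration ratios can reverse the sign of ΔG.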

Statistical mechanics provides a microscopic explanation of the second law in terms of probability distributions of molecular states, connecting cellular metabolism with fundamental physical principles [21]. The Clausius statement of the second law—"Heat can never pass from a colder to a warmer body without some other change, connected therewith, occurring at the same time"—has direct analogs in metabolic energy transformations, where energy must be coupled to drive thermodynamically unfavorable reactions [21].

Table 1: Universal Constraints in Metabolic Modeling Frameworks

| Constraint Type | Physical Principle | Stoichiometric Implementation | Kinetic Implementation |

|---|---|---|---|

| Mass Conservation | Matter cannot be created or destroyed | Stoichiometric matrix S with S·v = 0 | Differential equations dX/dt = S·v(X) |

| Energy Balance | Energy conservation (1st Law) | ATP, reducing equivalent balances | Energy currency concentration dynamics |

| Reaction Directionality | Entropy increase (2nd Law) | Irreversibility constraints (vᵢ ≥ 0) | Equilibrium constants in rate laws |

| Thermodynamic Feasibility | Negative ΔG requirement | Flux Balance Analysis with thermodynamic constraints | Convenience kinetics with Haldane relationship |

Constraint Implementation in Modeling Frameworks

Stoichiometric Modeling Approach

Stoichiometric modeling employs mass conservation as its foundational constraint through the steady-state assumption, which posits that internal metabolite concentrations remain constant over time despite ongoing metabolic fluxes [19]. This assumption, mathematically represented as S·v = 0, defines the space of all possible steady-state flux distributions that a metabolic network can support [19]. When combined with capacity constraints (vₘᵢₙ ≤ v ≤ vₘₐₓ) and thermodynamic constraints on reaction directionality, this creates a bounded flux solution space that can be explored using computational techniques.

Flux Balance Analysis (FBA) extends this basic framework by incorporating an objective function (e.g., biomass production, ATP synthesis) to identify optimal flux distributions within the constraint-defined space [19]. The general FBA formulation is:

Maximize: Z = cᵀv

Subject to: S·v = 0

vₘᵢₙ ≤ v ≤ vₘₐₓ

where c is a vector of weights defining the biological objective [19]. This constraint-based optimization approach has proven remarkably successful in predicting metabolic behavior across diverse organisms and conditions.

Thermodynamic constraints enhance the biological realism of stoichiometric models by eliminating flux distributions that would violate the second law of thermodynamics. Methods such as Thermodynamic Flux Balance Analysis (TFBA) explicitly incorporate Gibbs free energy calculations to ensure that flux directions align with negative ΔG values under physiological metabolite concentrations [18]. These thermodynamic considerations naturally give rise to multireaction dependencies, where groups of reactions become coupled through shared thermodynamic constraints [22]. The concept of forcedly balanced complexes—mathematical constructs derived from reaction stoichiometries—provides a framework for identifying these dependencies and understanding their impact on metabolic network functionality [22].

Kinetic Modeling Approach

Kinetic modeling implements universal constraints through dynamic equations that describe how metabolite concentrations change over time in response to metabolic reactions. Unlike stoichiometric models that assume steady state, kinetic models explicitly represent the time-dependent behavior of metabolic networks using ordinary differential equations (ODEs) [18]. The general form of these equations is:

dX/dt = S · v(X, p)

where X is the vector of metabolite concentrations, S is the stoichiometric matrix implementing mass conservation, and v(X, p) is the vector of reaction rates that are functions of metabolite concentrations and kinetic parameters p [18] [20].

The choice of rate laws for the components of v(X, p) determines how thermodynamic constraints are incorporated. The convenience kinetics approach provides a general form that ensures thermodynamic consistency by deriving rate expressions from simplified enzyme mechanisms [20]. For a reversible reaction A ⇌ B, convenience kinetics takes the form:

v(a,b) = E · (k₊ᶜᵃᵗ · ã - k₋ᶜᵃᵗ · b̃) / (1 + ã + b̃)

where E is enzyme concentration, k₊ᶜᵃᵗ and k₋ᶜᵃᵗ are turnover rates, and ã and b̃ are scaled metabolite concentrations (e.g., ã = a/Kₐᴹ) [20]. This formulation naturally incorporates enzyme saturation effects and ensures that the net reaction rate approaches zero as the reaction nears thermodynamic equilibrium.
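The rate law for the single-substrate, single-product case can be written directly as a function. The sketch below also computes the equilibrium constant implied by the kinetic parameters through the Haldane relationship, K_eq = (k₊ᶜᵃᵗ / k₋ᶜᵃᵗ) · (K_b / K_a), so that the zero-rate condition at equilibrium can be verified. Parameter values are hypothetical.

```python
def convenience_rate(a, b, enzyme, kcat_f, kcat_r, km_a, km_b):
    """Convenience kinetics for a reversible reaction A <-> B."""
    a_scaled = a / km_a
    b_scaled = b / km_b
    numerator = kcat_f * a_scaled - kcat_r * b_scaled
    return enzyme * numerator / (1.0 + a_scaled + b_scaled)

def haldane_keq(kcat_f, kcat_r, km_a, km_b):
    """Equilibrium constant b_eq / a_eq implied by the kinetic parameters."""
    return (kcat_f / kcat_r) * (km_b / km_a)

# Hypothetical parameter set.
params = dict(enzyme=1.0, kcat_f=100.0, kcat_r=10.0, km_a=0.5, km_b=2.0)
keq = haldane_keq(params["kcat_f"], params["kcat_r"],
                  params["km_a"], params["km_b"])

rate_far_from_eq = convenience_rate(0.1, 0.0, **params)
rate_at_eq = convenience_rate(0.1, 0.1 * keq, **params)
rate_saturated = convenience_rate(1e9, 0.0, **params)
```

When only an equilibrium constant from thermodynamic data is available, the same relationship can be rearranged to fix one kinetic parameter (for example the reverse turnover rate) in terms of the others, which keeps the fitted model thermodynamically consistent.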

To maintain biological feasibility, kinetic models often implement additional organism-level constraints:

- Total enzyme activity constraint: Limits the sum of enzyme concentrations based on the organism's protein synthesis capacity [18].

- Homeostatic constraint: Restricts optimized metabolite concentrations to remain within physiological ranges [18].

- Cytotoxicity constraints: Prevents metabolite concentrations from reaching levels that would damage cellular structures [18].

These constraints work together with universal physical laws to ensure kinetic models generate biologically plausible predictions despite incomplete parameter information.

Experimental Protocols for Constraint Application

Protocol 1: Constraint-Based Model Reconstruction

This protocol outlines the systematic development of a constraint-based stoichiometric model, demonstrating how universal constraints are applied to build predictive computational models of metabolism.

Step 1: Network Reconstruction

- Compile all metabolic reactions present in the target organism from genome annotation and biochemical databases [19].

- Represent the network as a stoichiometric matrix S where element Sᵢⱼ indicates the stoichiometric coefficient of metabolite i in reaction j [19].

- Define reaction reversibility based on thermodynamic principles and biochemical evidence [18].

Step 2: Apply Mass Balance Constraints

- Formulate the steady-state constraint S·v = 0 for all internal metabolites [19].

- Identify and remove blocked reactions that cannot carry flux under any steady state [22].

- Verify mass balance for each metabolite across the network.

Step 3: Define System Boundaries

- Specify exchange reactions that allow metabolites to enter or leave the system [19].

- Set appropriate bounds on exchange fluxes based on experimental measurements.

- Define internal flux constraints based on enzyme capacity measurements when available.

Step 4: Incorporate Thermodynamic Constraints

- Apply directionality constraints to irreversible reactions (vᵢ ≥ 0) [18].

- Implement more advanced thermodynamic constraints using Gibbs free energy data if available [18].

- Identify forcedly balanced complexes to reveal multireaction dependencies [22].

Step 5: Validate with Experimental Data

- Compare model predictions with measured growth rates, substrate uptake rates, and byproduct secretion [19].

- Use flux variability analysis to determine the range of possible fluxes for each reaction [19].

- Iteratively refine the model to improve agreement with experimental data.
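Flux variability analysis, mentioned in Step 5, can be sketched with two linear programs per reaction. The example below assumes a hypothetical network with two parallel routes and requires the objective to stay within 90% of its optimum; the network, bounds, and the 0.9 fraction are illustrative choices.

```python
import numpy as np
from scipy.optimize import linprog

# Rows: metabolites A, B. Columns: uptake, route_1, route_2, biomass.
S = np.array([[1.0, -1.0, -1.0, 0.0],
              [0.0, 1.0, 1.0, -1.0]])
bounds = [(0.0, 10.0), (0.0, 1000.0), (0.0, 1000.0), (0.0, 1000.0)]
c = np.array([0.0, 0.0, 0.0, 1.0])

def flux_variability(S, bounds, c, fraction=0.9):
    """Min and max of each flux with the objective held near its optimum."""
    n_mets, n_rxns = S.shape
    b_eq = np.zeros(n_mets)
    best = linprog(-c, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs")
    z_opt = -best.fun
    # Extra constraint c^T v >= fraction * z_opt, written as -c^T v <= -fraction * z_opt.
    A_ub = -c.reshape(1, -1)
    b_ub = np.array([-fraction * z_opt])
    ranges = []
    for j in range(n_rxns):
        e = np.zeros(n_rxns)
        e[j] = 1.0
        lo = linprog(e, A_ub=A_ub, b_ub=b_ub, A_eq=S, b_eq=b_eq,
                     bounds=bounds, method="highs").fun
        hi = -linprog(-e, A_ub=A_ub, b_ub=b_ub, A_eq=S, b_eq=b_eq,
                      bounds=bounds, method="highs").fun
        ranges.append((lo, hi))
    return z_opt, ranges

z_opt, ranges = flux_variability(S, bounds, c)
```

Reactions whose range collapses to zero in such an analysis carry no flux under the tested conditions, while wide ranges (as for the two parallel routes here) indicate fluxes that the model cannot determine without further data.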

Table 2: Research Reagent Solutions for Metabolic Modeling

| Reagent/Resource | Function/Application | Example Use Cases |

|---|---|---|

| Stoichiometric Matrix | Encodes mass balance constraints | FBA, Metabolic Flux Analysis [19] |

| Thermodynamic Database | Provides ΔG° values for reactions | Determining reaction directionality [18] |

| Isotope Labeling Data | Experimental flux determination | ¹³C Metabolic Flux Analysis [23] |

| Enzyme Assay Data | Kinetic parameter determination | kcat, KM measurements for kinetic models [20] |

| Convenience Kinetics | Thermodynamically consistent rate laws | Building kinetic models without full mechanistic data [20] |

Protocol 2: Kinetic Model Development with Thermodynamic Constraints

This protocol describes the development of kinetic models with embedded thermodynamic constraints, using the convenience kinetics framework to ensure physical plausibility.

Step 1: Define Model Scope and Reactions

- Select the metabolic pathway(s) to be included in the model [18].

- Define the complete set of reactions and their stoichiometries.

- Compile available kinetic data for each reaction (kcat, KM, KI values).

Step 2: Formulate Rate Equations

- Implement convenience kinetics for each reaction to ensure thermodynamic consistency [20].

- For reactions with known inhibitors or activators, incorporate appropriate regulatory terms.

- Parameterize rate equations using available enzyme kinetic data.

Step 3: Establish Thermodynamic Parameters

- Calculate or compile standard Gibbs free energy changes (ΔG°') for each reaction [20].

- Relate kinetic parameters through Haldane relationships to ensure consistency with thermodynamics.

- For reactions with unknown parameters, use estimates from similar enzymes or fitting procedures.

Step 4: Implement Homeostatic Constraints

- Define physiologically plausible concentration ranges for each metabolite [18].

- Incorporate these ranges as constraints during model simulation and optimization.

- Apply cytotoxicity limits for metabolites known to be harmful at high concentrations.

Step 5: Validate and Refine Model

- Compare model simulations with time-course metabolite concentration data.

- Verify that steady-state fluxes align with stoichiometric model predictions [18].

- Use parameter estimation techniques to refine unknown parameters against experimental data.

Decision Framework: Selecting Appropriate Modeling Approaches

The choice between kinetic and stoichiometric modeling depends on multiple factors including research objectives, data availability, and system scale. The following decision framework provides guidance for selecting the most appropriate approach.

Table 3: Modeling Approach Selection Guide

| Criterion | Stoichiometric Modeling | Kinetic Modeling |

|---|---|---|

| System Scale | Genome-scale networks [18] | Pathway-scale systems [18] |

| Data Requirements | Stoichiometry, growth/uptake rates [19] | Kinetic parameters, concentration data [20] |

| Time Resolution | Steady-state predictions [19] | Dynamic simulations [18] |

| Computational Demand | Lower (linear/convex optimization) | Higher (ODE integration, parameter estimation) |

| Primary Applications | Flux prediction, gap analysis, strain design [19] | Metabolic control analysis, dynamic response [18] |

| Constraint Implementation | S·v = 0, flux bounds [19] | Embedded in ODEs and rate laws [20] |

Universal constraints—mass conservation, energy balance, and thermodynamic laws—form the common foundation upon which both stoichiometric and kinetic metabolic models are built. While these modeling frameworks differ significantly in their implementation details and application domains, their shared basis in physical principles enables complementary insights into metabolic function. Mass conservation provides the fundamental structure through stoichiometric matrices, energy balance ensures thermodynamic plausibility, and the laws of thermodynamics dictate reaction directionality and flux coupling.

For researchers and drug development professionals, selecting the appropriate modeling approach requires careful consideration of research goals, system scale, and data availability. Stoichiometric models offer powerful capabilities for genome-scale analysis and flux prediction when steady-state assumptions are valid and comprehensive kinetic data are lacking [19]. Kinetic models provide unparalleled insights into dynamic metabolic behaviors and control mechanisms when sufficient kinetic parameters are available, albeit for smaller pathway subsets [18] [20].

Future advances in metabolic modeling will likely focus on hybrid approaches that leverage the strengths of both frameworks, such as incorporating kinetic constraints into stoichiometric models or using stoichiometric models to initialize kinetic parameters [18]. As systems biology continues to mature, these constraint-based methodologies will play increasingly important roles in drug discovery, metabolic engineering, and understanding fundamental biological processes.

Strategic Applications: Choosing the Right Tool for Pathway Engineering, Drug Discovery, and Stability

Stoichiometric models have become cornerstone tools in systems biology for predicting cellular phenotypes from genetic makeup. Unlike kinetic models that describe dynamic system behavior through differential equations and detailed enzymatic parameters, stoichiometric models rely on network topology, mass balance, and steady-state assumptions to enable genome-scale analysis with minimal parameter requirements. This technical guide examines the core principles, methodological workflows, and specific applications where stoichiometric modeling provides distinct advantages, particularly for growth phenotype prediction and gene essentiality analysis. We further contextualize these strengths within the broader modeling landscape, clarifying the division of labor between stoichiometric and kinetic approaches for researchers and drug development professionals.

Stoichiometric modeling represents metabolic networks through reaction stoichiometry and mass balance constraints, creating a mathematical framework that predicts feasible metabolic states without requiring detailed kinetic parameters. The core component is the stoichiometric matrix (S), where rows represent metabolites and columns represent biochemical reactions. Each element S_ij corresponds to the stoichiometric coefficient of metabolite i in reaction j. Under the steady-state assumption, which posits that metabolite concentrations remain constant over time, the system is described by S·v = 0, where v is the vector of metabolic fluxes [24] [25].
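
As a minimal sketch of the representation described above, the snippet below builds a stoichiometric matrix for a small, hypothetical five-reaction network (the reaction names, coefficients, and flux values are illustrative assumptions, not taken from any cited model) and checks the steady-state condition S·v = 0 with NumPy and SciPy.

```python
import numpy as np
from scipy.linalg import null_space

# Hypothetical toy network (illustrative only):
#   EX_A:          -> A    (uptake)
#   R1:    A       -> B
#   R2:    A       -> C
#   BIO:   B + C   ->      (biomass drain)
#   EX_B:  B       ->      (secretion)
metabolites = ["A", "B", "C"]
reactions = ["EX_A", "R1", "R2", "BIO", "EX_B"]

# Rows are metabolites, columns are reactions. S[i, j] is the coefficient of
# metabolite i in reaction j (negative = consumed, positive = produced).
S = np.array([
    [1, -1, -1,  0,  0],   # A
    [0,  1,  0, -1, -1],   # B
    [0,  0,  1, -1,  0],   # C
], dtype=float)

# A candidate flux vector is a steady state if every metabolite balances.
v = np.array([10.0, 5.0, 5.0, 5.0, 0.0])
is_steady_state = np.allclose(S @ v, 0.0)

# The null space of S spans all steady-state flux vectors; its dimension is
# the number of degrees of freedom left after applying mass balance alone.
N = null_space(S)
degrees_of_freedom = N.shape[1]

print(is_steady_state, degrees_of_freedom)
```

Because mass balance alone leaves several degrees of freedom, additional constraints (flux bounds) and an objective function are needed to single out a particular flux distribution.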

This approach enables genome-scale reconstruction of metabolic networks for hundreds of organisms, incorporating known biochemical transformations and gene-protein-reaction (GPR) associations that link genes to enzymatic functions [24] [26]. Unlike kinetic models that capture transient dynamics and regulatory mechanisms through ordinary differential equations, stoichiometric models identify possible steady-state flux distributions constrained by reaction stoichiometry, thermodynamic feasibility, and nutrient uptake rates [7] [25]. This fundamental difference makes stoichiometric modeling particularly valuable for large-scale network analysis and phenotype prediction where comprehensive kinetic data remains unavailable.

Methodological Framework: Core Stoichiometric Modeling Techniques

Constraint-Based Reconstruction and Analysis (COBRA)

The COBRA methodology provides a systematic framework for constructing, validating, and analyzing stoichiometric models:

Figure 1: COBRA Method Workflow for Building Stoichiometric Models

Computational Techniques and Objective Functions

Stoichiometric models employ several computational approaches to analyze metabolic networks:

Flux Balance Analysis (FBA): A linear programming approach that identifies an optimal flux distribution maximizing or minimizing a biological objective function, most commonly biomass production as a proxy for cellular growth [24] [25]. FBA formulates this as: maximize c^T·v subject to S·v = 0 and v_min ≤ v ≤ v_max, where c is a vector of weights defining the objective function and v_min and v_max are the lower and upper bounds on each flux.

Gene-Protein-Reaction (GPR) Transformation: A model transformation that explicitly represents GPR associations within the stoichiometric matrix, enabling gene-level analysis by accounting for enzyme complexes, isozymes, and promiscuous enzymes [24]. This transformation converts Boolean logic relationships into pseudo-reactions that connect gene products to metabolic functions.

Minimization of Metabolic Adjustment (MOMA): A quadratic programming approach that predicts mutant metabolic states by identifying flux distributions minimally deviating from the wild-type state, based on the hypothesis that knockout strains undergo minimal metabolic reorganization [25].
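
The MOMA idea can be sketched as a small quadratic program. The example below is a hedged illustration rather than a reference implementation: the network, the wild-type flux vector `v_wt`, and the helper name `moma` are assumptions made for this example, and SciPy's general-purpose SLSQP solver stands in for a dedicated QP solver.

```python
import numpy as np
from scipy.optimize import minimize

# Hypothetical network with two routes from A to B (illustrative only):
#   EX_A: -> A,  R1: A -> B,  R2: A -> C,  R3: C -> B,  BIO: B ->
reactions = ["EX_A", "R1", "R2", "R3", "BIO"]
S = np.array([
    [1, -1, -1,  0,  0],   # A
    [0,  1,  0,  1, -1],   # B
    [0,  0,  1, -1,  0],   # C
], dtype=float)
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000), (0, 1000)]

# Assumed wild-type reference flux distribution (satisfies S @ v_wt == 0).
v_wt = np.array([10.0, 8.0, 2.0, 2.0, 10.0])

def moma(S, bounds, v_wt, knockout):
    """Return the steady-state mutant fluxes closest to v_wt (Euclidean)."""
    # Fixing v_ko = 0 is equivalent to dropping the knocked-out column; its
    # term in the objective is then a constant and can be left out.
    keep = [j for j in range(S.shape[1]) if j != knockout]
    S_ko, ref = S[:, keep], v_wt[keep]
    res = minimize(
        fun=lambda v: np.sum((v - ref) ** 2),
        x0=np.zeros(len(keep)),
        jac=lambda v: 2.0 * (v - ref),
        bounds=[bounds[j] for j in keep],
        constraints=[{"type": "eq",
                      "fun": lambda v: S_ko @ v,
                      "jac": lambda v: S_ko}],
        method="SLSQP",
    )
    if not res.success:
        raise RuntimeError(res.message)
    v_mut = np.zeros(S.shape[1])
    v_mut[keep] = res.x
    return v_mut

v_mut = moma(S, bounds, v_wt, knockout=reactions.index("R1"))
print(dict(zip(reactions, np.round(v_mut, 3))))
```

In this toy case, knocking out R1 forces all remaining fluxes to be equal, and the analytic minimum of the distance lies at a flux of 6 through the bypass. FBA on the same mutant would instead predict the maximal biomass flux of 10, which illustrates how MOMA can predict suboptimal growth immediately after a knockout.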

Table 1: Key Stoichiometric Modeling Algorithms and Applications

| Method | Mathematical Formulation | Primary Application | Key Advantages |
|---|---|---|---|
| Flux Balance Analysis (FBA) | Linear programming: max c^T·v subject to S·v = 0 | Growth phenotype prediction under different conditions | Fast computation, genome-scale applicability, minimal parameter requirements |
| Parsimonious FBA (pFBA) | Two-step optimization: FBA followed by min Σ\|v_i\| | Identification of thermodynamically feasible flux distributions | Reduces solution degeneracy, more realistic flux distributions |
| Minimization of Metabolic Adjustment (MOMA) | Quadratic programming: min Σ(v_mut − v_wt)^2 | Predicting metabolic effects of gene knockouts | Improved mutant phenotype prediction without regulatory constraints |
| Gene Inactivation Analysis | Set v_ko = 0 for reaction(s) associated with gene | Gene essentiality assessment | Systematic identification of essential genes and potential drug targets |

Application 1: Genome-Scale Metabolic Analysis

Stoichiometric models excel at genome-scale analysis by leveraging network topology to predict systemic metabolic capabilities. The transformation of GPR associations into an extended stoichiometric representation enables direct analysis of genetic contributions to metabolic functions [24]. This approach untangles complex genetic relationships, including enzyme complexes (multiple genes producing one functional enzyme), isozymes (multiple enzymes catalyzing the same reaction), and promiscuous enzymes (single enzymes catalyzing multiple reactions).

Statistical analysis of the iAF1260 genome-scale model for E. coli reveals the complexity of these associations: over 16% of enzymes form protein complexes (up to 13 subunits), 31% of reactions are catalyzed by multiple isozymes (up to 7), and 72% of reactions involve at least one promiscuous enzyme [24]. This genetic complexity creates challenges for reaction-level analysis, which GPR transformation addresses by introducing enzyme usage variables that quantify the flux contribution of each gene product.

The primary advantage of stoichiometric models in genome-scale analysis is their comprehensive coverage of metabolic networks without requiring extensive parameter estimation. This enables researchers to model hundreds to thousands of reactions simultaneously, providing a systems-level perspective on metabolic network structure and function [24] [26]. Stoichiometric models serve as knowledge bases that integrate genomic, biochemical, and physiological information into a structured, computable format for hypothesis generation and experimental design.

Application 2: Growth Phenotype Prediction

Growth phenotype prediction represents one of the most successful applications of stoichiometric modeling, with FBA achieving remarkable accuracy in predicting microbial growth rates, auxotrophies, and substrate utilization patterns. The key to this success lies in formulating biologically relevant objective functions that capture evolutionary optimization principles [24] [25].

Biomass Objective Function

The biomass objective function represents the drain of metabolic precursors toward biomass composition, including amino acids, nucleotides, lipids, and carbohydrates in proportions reflecting cellular composition. When maximized, this function predicts growth-optimized flux distributions that frequently match experimental measurements [24].
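
Maximizing a biomass drain under mass balance and flux bounds is a linear program. The sketch below solves one with `scipy.optimize.linprog` for a hypothetical toy network. The reactions, bounds, and the single-reaction "biomass" drain are illustrative assumptions; a genome-scale biomass reaction would drain many precursors in measured proportions.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network (illustrative only):
#   EX_A: -> A,  R1: A -> B,  R2: A -> C,  BIO: B + C ->,  EX_B: B ->
reactions = ["EX_A", "R1", "R2", "BIO", "EX_B"]
S = np.array([
    [1, -1, -1,  0,  0],   # A
    [0,  1,  0, -1, -1],   # B
    [0,  0,  1, -1,  0],   # C
], dtype=float)

# Flux bounds: uptake of A is capped at 10 (assumed units: mmol/gDW/h).
lb = np.array([0.0, 0.0, 0.0, 0.0, 0.0])
ub = np.array([10.0, 1000.0, 1000.0, 1000.0, 1000.0])

# Objective vector c selects the biomass reaction.
c = np.zeros(len(reactions))
c[reactions.index("BIO")] = 1.0

# linprog minimizes, so pass -c to maximize c^T v subject to S v = 0.
res = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]),
              bounds=list(zip(lb, ub)), method="highs")

growth = -res.fun
fluxes = dict(zip(reactions, res.x))
print(f"status={res.status}, growth={growth:.3f}")
print(fluxes)
```

Here the biomass drain needs one B and one C per unit flux, so an uptake limit of 10 supports a maximal biomass flux of 5 with no secretion of B.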

Recent methodological improvements include:

MiMBl (Minimization of Metabolites Balance): A representation-independent algorithm that formulates objective functions using metabolite turnovers rather than reaction fluxes, eliminating artifacts caused by subjective scaling of stoichiometric coefficients [25].

Gene-level pFBA: Implementation of parsimonious flux balance analysis at the gene level, minimizing total enzyme usage rather than total flux, which better aligns with proteomic constraints and resource allocation principles [24].
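
For orientation, the two-step structure of parsimonious FBA can be sketched at the reaction level (not the gene-level variant described above, which would minimize enzyme usage variables instead of fluxes). The network is hypothetical, and all reactions are assumed irreversible so that Σ|v_i| reduces to Σv_i; reversible reactions would first need to be split into forward and backward parts.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical network with a short and a long route from A to B:
#   EX_A: -> A,  R1: A -> B,  R2: A -> C,  R3: C -> B,  BIO: B ->
reactions = ["EX_A", "R1", "R2", "R3", "BIO"]
S = np.array([
    [1, -1, -1,  0,  0],   # A
    [0,  1,  0,  1, -1],   # B
    [0,  0,  1, -1,  0],   # C
], dtype=float)
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000), (0, 1000)]
b_eq = np.zeros(S.shape[0])
bio = reactions.index("BIO")

# Step 1: ordinary FBA to find the maximal biomass flux.
c = np.zeros(len(reactions))
c[bio] = -1.0                      # negate because linprog minimizes
fba = linprog(c, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs")
mu_opt = -fba.fun

# Step 2: hold biomass at its optimum (small slack for solver round-off)
# and minimize the total flux through the network.
pfba_bounds = list(bounds)
pfba_bounds[bio] = (mu_opt - 1e-9, bounds[bio][1])
pfba = linprog(np.ones(len(reactions)), A_eq=S, b_eq=b_eq,
               bounds=pfba_bounds, method="highs")

total_flux = pfba.fun
print(dict(zip(reactions, np.round(pfba.x, 6))), round(total_flux, 6))
```

Both routes support the same maximal growth, so plain FBA is degenerate here. The second step selects the shorter route through R1 because it carries less total flux.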

Table 2: Growth Prediction Performance Across Modeling Approaches

| Organism | Model | Conditions Tested | Accuracy | Limitations |
|---|---|---|---|---|
| E. coli | iAF1260 (GPR-transformed) | Carbon sources, gene knockouts | ~80% correct growth/no-growth predictions | Underpredicts growth in complex media |
| S. cerevisiae | iFF708, iAZ900 | 30 gene knockouts | 60-70% essential gene prediction | Limited regulatory network integration |
| Mammalian cells | Generic models | Cell line proliferation | Qualitative agreement | Tissue-specific functions not fully captured |

Protocol: Growth Phenotype Simulation

1. Model Constraining: Set exchange reaction bounds to reflect experimental conditions, including carbon source uptake rate, oxygen availability, and nutrient limitations.

2. Objective Definition: Define the biomass reaction as the optimization target, ensuring its composition reflects the appropriate physiological state.

3. Problem Solution: Apply a linear programming solver to identify an optimal flux distribution: max v_biomass subject to S·v = 0 and LB ≤ v ≤ UB, where LB and UB are the lower and upper flux bounds.

4. Solution Validation: Compare the predicted growth rate and byproduct secretion with experimental measurements.

5. Sensitivity Analysis: Perturb constraint bounds to identify critical nutrients and potential limitations.
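
The protocol above can be sketched end to end on a hypothetical network with a respiratory and a fermentative route. All stoichiometries, bounds, and names below are illustrative assumptions. Uptake is written as a positive flux for simplicity, whereas COBRA-style models conventionally encode uptake as a negative exchange flux.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical network (illustrative only):
#   EX_glc: -> glc            EX_o2: -> o2
#   RESP:   glc + 2 o2 -> 4 pre     (high-yield route, needs oxygen)
#   FERM:   glc -> pre + byp        (low-yield route, secretes byproduct)
#   EX_byp: byp ->            BIO: pre ->
reactions = ["EX_glc", "EX_o2", "RESP", "FERM", "EX_byp", "BIO"]
S = np.array([
    [1, 0, -1, -1,  0,  0],   # glc
    [0, 1, -2,  0,  0,  0],   # o2
    [0, 0,  4,  1,  0, -1],   # pre (biomass precursor)
    [0, 0,  0,  1, -1,  0],   # byp
], dtype=float)

def simulate(glc_max, o2_max):
    """Return (growth, byproduct secretion) for the given uptake limits."""
    # Model constraining: exchange bounds encode the medium.
    bounds = [(0, glc_max), (0, o2_max)] + [(0, 1000)] * 4
    # Objective definition: maximize the biomass drain.
    c = np.zeros(len(reactions))
    c[reactions.index("BIO")] = -1.0
    # Problem solution: linear program with S v = 0 and flux bounds.
    res = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                  bounds=bounds, method="highs")
    if res.status != 0:
        raise RuntimeError(res.message)
    flux = dict(zip(reactions, res.x))
    return flux["BIO"], flux["EX_byp"]

# Solution validation: these predictions would be compared with measured
# growth and secretion rates (no experimental values are assumed here).
growth, byproduct = simulate(glc_max=10, o2_max=15)

# Sensitivity analysis: perturb the oxygen bound and record the response.
scan = {o2: simulate(10, o2) for o2 in (0, 5, 10, 15, 20, 30)}
for o2, (mu, byp) in scan.items():
    print(f"o2_max={o2:>2}: growth={mu:6.2f}, byproduct={byp:6.2f}")
```

In this toy setting, growth rises with oxygen availability until the glucose limit becomes binding, and byproduct secretion falls to zero at the same point. This is the kind of pattern the sensitivity step is meant to expose.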

Application 3: Gene Knockout Simulations

Gene knockout simulation represents a powerful application of stoichiometric modeling for metabolic engineering and drug target identification. By constraining reactions associated with a deleted gene to zero flux, researchers can predict the phenotypic consequences of genetic manipulations [24] [25].

Gene Essentiality Analysis

Gene essentiality analysis identifies genes required for growth under specific environmental conditions. Essential genes represent potential drug targets for pathogens, while non-essential genes indicate potential knockouts for metabolic engineering. GPR-aware stoichiometric models provide more reliable essentiality predictions by correctly handling isozymes and protein complexes that can compensate for lost gene functions [24].
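
A GPR-aware single-gene deletion screen can be sketched as follows. The network, gene names, GPR rules, and the 1% growth threshold are assumptions chosen for illustration. GPR rules are stored in disjunctive normal form (a list of complexes, each a set of genes), which is one possible encoding rather than the extended stoichiometric representation of [24].

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical network: EX_A: -> A, R1: A -> B, R2: A -> C, BIO: B + C ->
reactions = ["EX_A", "R1", "R2", "BIO"]
S = np.array([
    [1, -1, -1,  0],   # A
    [0,  1,  0, -1],   # B
    [0,  0,  1, -1],   # C
], dtype=float)
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000)]

# A reaction stays active if at least one complex has all its genes present.
gpr = {
    "R1": [{"g1"}, {"g2"}],    # isozymes: g1 OR g2
    "R2": [{"g3", "g4"}],      # enzyme complex: g3 AND g4
}
genes = sorted(set().union(*(cx for rule in gpr.values() for cx in rule)))

def max_growth(deleted_genes):
    """Maximal biomass flux after deleting the given set of genes."""
    ko_bounds = list(bounds)
    for j, rxn in enumerate(reactions):
        rule = gpr.get(rxn)    # None: no gene association, never disabled
        if rule is not None and not any(
                cx.isdisjoint(deleted_genes) for cx in rule):
            ko_bounds[j] = (0.0, 0.0)   # no intact complex left
    c = np.zeros(len(reactions))
    c[reactions.index("BIO")] = -1.0
    res = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                  bounds=ko_bounds, method="highs")
    return -res.fun if res.status == 0 else 0.0

wild_type = max_growth(set())
THRESHOLD = 0.01   # assumed cutoff: below 1% of wild-type growth = essential
essential = [g for g in genes if max_growth({g}) < THRESHOLD * wild_type]
print(wild_type, essential)
```

In this example the isozyme genes g1 and g2 are individually dispensable because each can replace the other, while both subunits of the g3/g4 complex are essential. Deleting g1 and g2 together abolishes growth, a synthetic lethal pair that reaction-level knockouts alone would not attribute to specific genes.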

Strain Design Algorithms

Strain design methodologies leverage gene knockout predictions to identify genetic interventions that optimize desired metabolic phenotypes, particularly for biochemical production:

- OptKnock: A bilevel optimization method that identifies reaction knockouts coupling biochemical production to biomass formation, so that the engineered strain must produce the target compound in order to grow

- ROOM: Regulatory on/off minimization, which predicts mutant flux distributions by minimizing the number of significant flux changes relative to the wild type

Implementation of these algorithms using GPR-transformed models ensures predicted interventions are genetically feasible, avoiding designs that require manipulating partial enzyme functions or specific subunits of essential complexes [24].

Figure 2: Gene Essentiality Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Stoichiometric Modeling

| Resource Type | Specific Tools/Databases | Function | Application Context |
|---|---|---|---|
| Modeling Software | COBRA Toolbox, COBRApy, MASSpy | Model construction, simulation, and analysis | Implementing FBA, pFBA, and variant analysis |
| Strain Design Algorithms | OptKnock, ROOM, GDBB | Identifying gene knockout strategies | Metabolic engineering for biochemical production |
| Kinetic Parameter Databases | BRENDA, SABIO-RK | Enzyme kinetic parameters | Limited use in stoichiometric modeling; critical for kinetic approaches |
| Genome Annotation | KEGG, MetaCyc, UniProt | Reaction and GPR association data | Model reconstruction and curation |
| Constraint Data | ECMDB, YMDB | Organism-specific metabolite data (E. coli and yeast metabolome databases) | Informing physiological constraint bounds |

Stoichiometric vs. Kinetic Modeling: Decision Framework

The choice between stoichiometric and kinetic modeling approaches depends on research objectives, data availability, and system characteristics:

When to Prefer Stoichiometric Models

Stoichiometric models provide distinct advantages for:

- Genome-scale network analysis requiring comprehensive metabolic coverage

- Growth phenotype prediction under different genetic and environmental conditions

- Gene essentiality analysis and identification of potential drug targets

- Strain design for metabolic engineering applications

- Systems where comprehensive kinetic parameters are unavailable

- Applications requiring high-throughput simulation of multiple scenarios

When to Consider Kinetic Models

Kinetic models become necessary for:

- Analyzing transient metabolic behaviors and dynamic responses

- Studying metabolic regulation including allosteric control and signaling

- Predicting metabolite concentration changes over time

- Systems where enzyme saturation significantly affects flux control

- Metabolic engineering interventions targeting enzyme expression or allosteric regulation [7] [14]

Hybrid Approaches

Recent advances enable combined approaches leveraging strengths of both frameworks:

- Resource Balance Analysis (RBA): Incorporates proteomic constraints into stoichiometric models

- Dynamic FBA: Uses FBA solutions at sequential time points with changing constraints

- Metabolic Control Analysis: Combines stoichiometric network analysis with limited kinetic parameters
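
Of the hybrid approaches listed above, dynamic FBA is the simplest to sketch: a kinetic expression sets the uptake bound at each time step, FBA supplies the growth rate, and the external concentrations are advanced by explicit Euler integration. Everything in the example (network, Michaelis-Menten parameters, step size, initial conditions) is a hypothetical assumption for illustration.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical network: EX_S: -> A,  R1: A -> B,  BIO: 2 B -> biomass
# (so the biomass flux equals half of the uptake flux).
S = np.array([
    [1, -1,  0],   # A
    [0,  1, -2],   # B
], dtype=float)

V_MAX, K_M = 10.0, 0.5     # assumed uptake kinetics (mmol/gDW/h, mmol/L)
DT, N_STEPS = 0.05, 60     # assumed Euler step (h) and number of steps

def fba(uptake_max):
    """Return (growth rate, uptake flux) for a given uptake limit."""
    res = linprog([0.0, 0.0, -1.0], A_eq=S, b_eq=[0.0, 0.0],
                  bounds=[(0, uptake_max), (0, None), (0, None)],
                  method="highs")
    if res.status != 0:
        raise RuntimeError(res.message)
    return res.x[2], res.x[0]

biomass, substrate = 0.01, 10.0          # assumed initial gDW/L and mmol/L
trajectory = [(0.0, biomass, substrate)]
for step in range(1, N_STEPS + 1):
    # Kinetic constraint: Michaelis-Menten limit on the uptake flux.
    kinetic_limit = V_MAX * substrate / (K_M + substrate)
    # Never take up more substrate than is left in this time step.
    supply_limit = substrate / (biomass * DT)
    mu, uptake = fba(min(kinetic_limit, supply_limit))
    # Explicit Euler update of the extracellular state.
    substrate = max(substrate - uptake * biomass * DT, 0.0)
    biomass += mu * biomass * DT
    trajectory.append((step * DT, biomass, substrate))

print(f"final biomass={biomass:.4f}, final substrate={substrate:.4f}")
```

Because growth is half of uptake in this toy network, the quantity biomass + 0.5 × substrate is conserved by the update, which provides a quick sanity check on the integration. A production implementation would typically use an adaptive ODE solver and a genome-scale model rather than fixed-step Euler on three reactions.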