Mastering Omics Integration: A 2024 Guide to Data Normalization Methods for Multi-Omics Analysis


Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with a detailed framework for data normalization in multi-omics integration. We cover foundational concepts, from defining omics data types and the necessity of integration to core normalization principles and the major challenges of technical bias and batch effects. We then delve into the methodology of prevalent techniques (e.g., quantile, ComBat, SVA, scaling methods) and their specific applications across transcriptomics, proteomics, and metabolomics. The guide offers practical solutions for troubleshooting common pitfalls, optimizing method selection for specific biological questions and data structures, and validating results through established metrics, visualization, and benchmarking. Finally, we synthesize key takeaways and discuss emerging trends in AI-driven normalization and clinical translation.

The Foundation of Multi-Omics Integration: Why Data Normalization is Non-Negotiable

Introduction to Omics Data Types and the Integration Imperative

Technical Support Center: Troubleshooting Guides & FAQs

FAQs: Data Acquisition & Pre-processing

Q1: My transcriptomic (RNA-seq) and proteomic (LC-MS/MS) data from the same cell line show poor correlation. Is this expected?

A: Yes, to a degree. mRNA levels do not always predict protein abundance, because of post-transcriptional regulation, translation efficiency, and protein turnover. Before interpreting the discrepancy biologically, confirm that your pre-processing is correct.

- Check Normalization: Each omics layer requires type-specific normalization before integration. For RNA-seq, check if you used methods like TMM or DESeq2's median-of-ratios. For LC-MS/MS, confirm proper normalization like quantile or vsn. Applying inappropriate normalization is a common source of discrepancy.

- Review Protein Inference: In proteomics, the same protein can be identified by multiple peptides. Ensure your protein inference algorithm (e.g., in MaxQuant) is consistent across samples.

Q2: During multi-omics integration, my integration method (e.g., DIABLO) fails with a "different number of rows" error. How do I align samples?

A: This indicates a sample mismatch: the omics matrices do not contain the same samples in the same order. The critical first step in integration is creating a Master Sample Metadata Table.

| Step | Action | Tool/Example |

|---|---|---|

| 1 | Assign a unique sample ID to each aliquot used for each omics assay. | Manual curation |

| 2 | Create a table with rows as unique biological samples and columns as omics data matrices & metadata. | CSV/Excel file |

| 3 | Verify technical replicates map to the correct biological sample. | In-house script |

| 4 | Use this table to subset and re-order rows in each omics data matrix to be identical. | R: match(), merge() |
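Step 4 of the table can be sketched in a few lines. The example below is a minimal illustration in plain Python, not the R `match()`/`merge()` route named above; the function name `align_samples` and the toy sample IDs are hypothetical.

```python
def align_samples(layers):
    """Subset every omics layer to the shared sample IDs, in one common order.

    `layers` maps a layer name to a dict of {sample_id: feature_vector}.
    Returns (shared_ids, aligned), where aligned[layer] is a list of feature
    vectors ordered exactly like shared_ids.
    """
    id_sets = [set(samples) for samples in layers.values()]
    shared_ids = sorted(set.intersection(*id_sets))
    aligned = {
        name: [samples[sid] for sid in shared_ids]
        for name, samples in layers.items()
    }
    return shared_ids, aligned


# Hypothetical toy data: S3 has no proteomics run, S4 has no RNA-seq run.
layers = {
    "rna": {"S2": [5.1, 0.3], "S1": [4.8, 0.1], "S3": [6.0, 0.2]},
    "protein": {"S1": [10.2], "S4": [9.9], "S2": [11.0]},
}
shared_ids, aligned = align_samples(layers)
print(shared_ids)          # ['S1', 'S2']
print(aligned["protein"])  # [[10.2], [11.0]]
```

Samples missing from any layer are dropped silently here; in practice it is worth logging which IDs were removed and why.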

Q3: How do I handle missing values in metabolomics data before integration with genomics data?

A: Metabolomics data often contain missing values (non-detects). Arbitrary replacement (e.g., with zeros) can introduce bias, so choose the imputation method according to why the values are missing.

| Method | Best For | Protocol (Summarized) |

|---|---|---|

| Minimum Imputation | Missing due to low abundance below detection limit. | Replace NA with a small value (e.g., minimum observed value for that feature across samples * 0.5). |

| k-NN Imputation | Data with strong sample clustering patterns. | 1. Normalize data (e.g., Pareto scaling). 2. Use impute.knn() function (impute R package). 3. Select k (e.g., k=10) based on sample size. |

| MissForest Imputation | Complex, non-linear data structures. | 1. Use missForest() R function. 2. It models missing values using a random forest trained on the observed data. 3. Iterate until convergence. |
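As a rough illustration of the minimum-imputation row, the sketch below replaces each non-detect with half of that feature's smallest observed value. It assumes positive, linear-scale intensities and uses `None` for missing values; the function name `half_min_impute` is hypothetical.

```python
def half_min_impute(matrix, fraction=0.5):
    """Replace None in each feature row with fraction * that row's observed minimum.

    `matrix` is a list of feature rows (one value per sample); None marks a
    non-detect. Rows with no observed values are returned unchanged.
    """
    imputed = []
    for row in matrix:
        observed = [v for v in row if v is not None]
        if not observed:
            imputed.append(list(row))
            continue
        fill = fraction * min(observed)
        imputed.append([fill if v is None else v for v in row])
    return imputed


# Two metabolites measured in four samples (toy values).
metabolites = [
    [120.0, None, 80.0, 100.0],
    [None, None, 40.0, 60.0],
]
print(half_min_impute(metabolites))
# [[120.0, 40.0, 80.0, 100.0], [20.0, 20.0, 40.0, 60.0]]
```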

The Scientist's Toolkit: Research Reagent Solutions for Multi-Omic Profiling

| Item | Function in Omics Integration Research |

|---|---|

| Reference Standard (e.g., SILAC Spike-In) | Provides an internal quantitative control for proteomics, allowing correction for technical variation when integrating across batches. |

| ERCC RNA Spike-In Mix | Exogenous RNA controls added before RNA-seq library prep to monitor technical performance and normalize across sequencing runs. |

| Pooled QC Sample | An aliquot created by combining small amounts of all experimental samples; analyzed repeatedly throughout acquisition batches to monitor and correct instrumental drift (crucial for metabolomics/lipidomics). |

| Cell Hashing/Oligo-tagged Antibodies | Enables multiplexing of samples in single-cell experiments, ensuring the same cell identities are maintained across scRNA-seq and scATAC-seq data layers. |

| DNA/RNA/Protein Co-extraction Kits | Allows simultaneous isolation of multiple molecular types from a single, limited biological specimen, minimizing sample-source variation for integration. |

Experimental Protocol: Cross-Platform Normalization for Transcriptomic Data Integration

Objective: To harmonize gene expression data from microarray and RNA-seq platforms for downstream integration analysis.

Detailed Methodology:

- Data Acquisition: Obtain raw data. For microarray: CEL files. For RNA-seq: FASTQ files.

- Independent Pre-processing:

  - Microarray: Perform RMA normalization using the `oligo` or `affy` packages in R/Bioconductor. Summarize to gene level.

  - RNA-seq: Align FASTQ reads to a reference genome (e.g., using STAR). Generate gene counts (e.g., via featureCounts). Normalize using DESeq2's median-of-ratios method (accounting for library size and composition).

- Gene Identifier Matching: Map all gene identifiers to a common standard (e.g., Official Gene Symbol) using Bioconductor annotation packages (e.g., `org.Hs.eg.db`).

- Gene Intersection: Retain only genes measured reliably on both platforms.

- Cross-Platform Normalization (ComBat): Apply batch-effect correction treating "platform" as the batch variable.

  - Use the `sva` R package.

  - Input: A merged matrix of log2-transformed expression values (microarray: log2(RMA signal), RNA-seq: log2(DESeq2 normalized counts+1)).

  - Run: `combat_data <- ComBat(dat=merged_matrix, batch=platform_batch_vector)`

- Output: A single, platform-harmonized gene expression matrix ready for integration with other omics data.

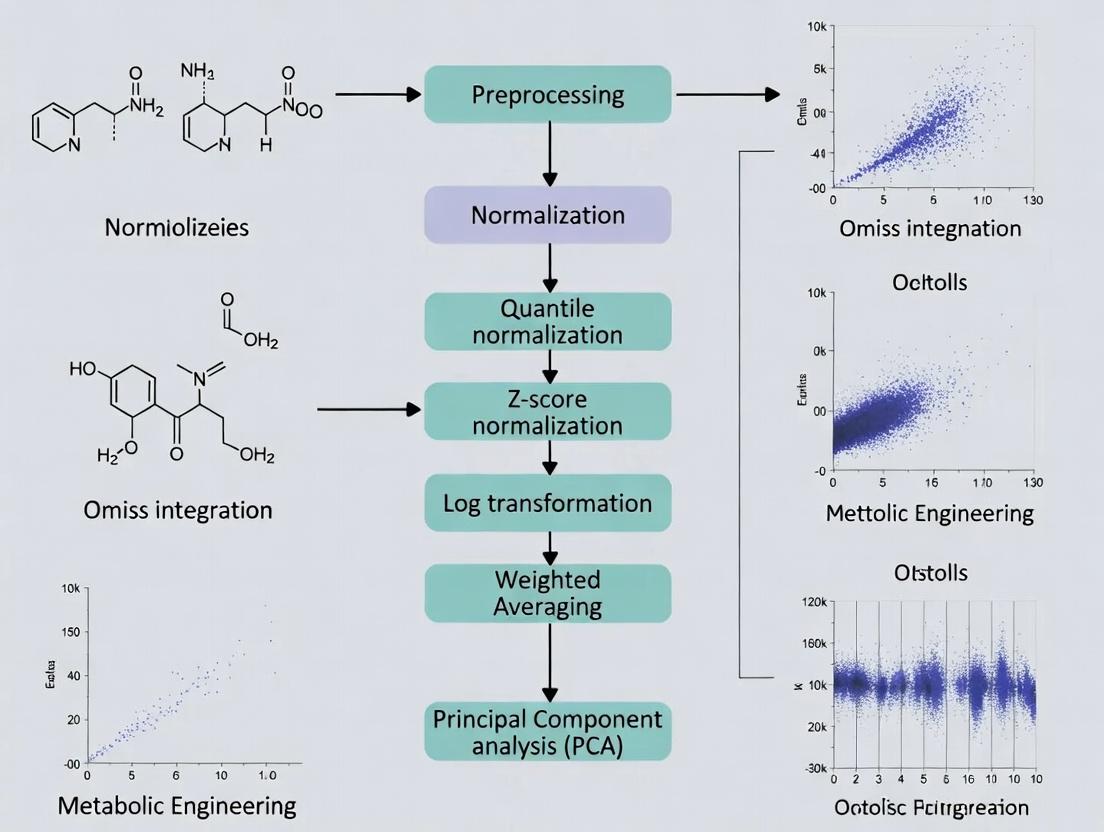

Diagram 1: Multi-Omic Data Integration Workflow

Diagram 2: Key Data Normalization Methods Taxonomy

Technical Support Center

Welcome to the technical support center for data normalization in omics integration research. This guide addresses common issues encountered during preprocessing of genomics, transcriptomics, and proteomics data.

Troubleshooting Guides & FAQs

Q1: My principal component analysis (PCA) plot shows strong batch effects post-normalization. What went wrong?

- A: This often indicates that the chosen normalization method does not address the technical variation in your dataset. For multi-omics integration, consider:

  - Action: Apply a two-step normalization. First, use an intra-assay method suited to the data type (e.g., TMM or median-of-ratios for RNA-seq counts, quantile for microarray intensities). Second, apply a batch-correction method such as ComBat or limma's `removeBatchEffect` to explicitly model and remove batch covariates.

  - Check: Verify your batch metadata is accurate and complete. Run a diagnostic like `pvca` (Principal Variance Component Analysis) to quantify the variance contributed by batch vs. biological factors.

Q2: After log-transforming my proteomics data, I still have skewed distributions. How should I proceed?

- A: Simple log transformation may not normalize data with heteroscedasticity (variance that changes with the mean).

  - Action: Implement Variance Stabilizing Normalization (VSN), which is designed for proteomics and microarray data. It simultaneously estimates a transformation and performs scaling to achieve homoscedasticity. Follow this protocol:

    - Load raw (not log-transformed) intensity data (from MaxQuant or DIA-NN) into R and attach the `vsn` package.

    - Apply the `justvsn()` function to the entire matrix.

    - Validate by plotting mean vs. standard deviation before and after transformation.

Q3: I am integrating RNA-seq (counts) and microarray (intensities) data. Can I normalize them together?

- A: No. Different technologies have distinct noise profiles and must be normalized separately before integration.

  - Action:

    - RNA-seq: Normalize using a method like `DESeq2`'s median-of-ratios or `edgeR`'s TMM on the count matrix.

    - Microarray: Normalize using `limma`'s `normalizeBetweenArrays` (e.g., quantile normalization) on the log-intensity matrix.

    - Post-individual normalization: Use a cross-platform integration algorithm (e.g., MOFA+, DIABLO) that can handle residual technical differences, or perform an additional harmonization step like singular value decomposition (SVD) adjustment.

Q4: How do I choose between Quantile Normalization and Median-centric scaling for my metabolomics dataset?

- A: The choice depends on what you assume about the data.

  - Use Quantile Normalization if you assume the overall distribution of metabolite abundances should be identical across samples (e.g., in tightly controlled cell line studies). It forces all sample distributions to be the same.

  - Use median scaling if you expect only a subset of metabolites to change and wish to preserve more of the biological variance. This is common in clinical cohort studies. Median scaling divides each sample by its median intensity. Pareto scaling, by contrast, is a feature-wise step (each metabolite is mean-centered and divided by the square root of its standard deviation) and is usually applied after sample-wise normalization rather than instead of it.
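The difference between the two sample-wise methods is easiest to see on a toy matrix. The sketch below is a minimal plain-Python illustration, with each sample given as a list of feature values; ties are broken by position rather than averaged, which is a simplification compared with standard implementations.

```python
from statistics import mean, median


def quantile_normalize(samples):
    """Force every sample (a list of feature values) onto one shared distribution.

    The reference distribution is the mean of the sorted values at each rank.
    """
    n_features = len(samples[0])
    sorted_samples = [sorted(s) for s in samples]
    reference = [mean(s[r] for s in sorted_samples) for r in range(n_features)]
    normalized = []
    for s in samples:
        # Feature indices ordered from lowest to highest value in this sample.
        order = sorted(range(n_features), key=lambda i: s[i])
        out = [0.0] * n_features
        for rank, idx in enumerate(order):
            out[idx] = reference[rank]
        normalized.append(out)
    return normalized


def median_scale(samples):
    """Divide each sample by its own median, so every sample has median 1."""
    return [[v / median(s) for v in s] for s in samples]


samples = [[2.0, 4.0, 6.0], [10.0, 30.0, 20.0]]
print(quantile_normalize(samples))  # [[6.0, 12.0, 18.0], [6.0, 18.0, 12.0]]
print(median_scale(samples))        # [[0.5, 1.0, 1.5], [0.5, 1.5, 1.0]]
```

After quantile normalization both samples contain exactly the same set of values; after median scaling only their centers agree, and the spread within each sample is kept.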

Quantitative Data Comparison of Common Normalization Methods

Table 1: Characteristics of Core Data Normalization Methods for Omics

| Method | Primary Use Case | Assumption | Key Strength | Key Limitation |

|---|---|---|---|---|

| Quantile | Microarray, metabolomics | Overall distribution is consistent across samples. | Removes technical variation effectively; produces identical distributions. | Overly aggressive; can remove biological signal. |

| Median/IQR Scaling | Metabolomics, proteomics | Most features are not differentially abundant. | Simple, preserves structure of the data. | Less effective against severe batch effects. |

| TMM/Median-of-Ratios | RNA-seq (count data) | Most genes are not differentially expressed. | Robust to composition bias; good for heterogeneous samples. | Designed for count data only. |

| VSN | Proteomics, microarray | Technical variance is a function of mean intensity. | Stabilizes variance across the dynamic range. | More complex parameter estimation. |

| ComBat (Batch Correction) | All (post-initial norm.) | Batch effect is additive/multiplicative. | Powerful removal of known batch effects. | Risk of over-correction with small sample sizes. |

Experimental Protocol: Two-Step Normalization for Multi-Batch RNA-seq Data

Title: Integrated Normalization and Batch Correction Protocol.

Objective: To generate comparable gene expression values from RNA-seq data derived from multiple sequencing runs or laboratories.

Materials:

- Raw gene count matrix (HTSeq-count, featureCounts output).

- Sample metadata file with `Batch` and `Condition` columns.

- R environment with `DESeq2`, `sva`, and `limma` packages installed.

Procedure:

- Primary Intra-Assay Normalization:

  - Create a `DESeqDataSet` object from the count matrix and metadata.

  - Estimate size factors using `estimateSizeFactors`. This performs median-of-ratios scaling.

  - Obtain normalized counts using `counts(dds, normalized=TRUE)`. Log-transform (+1 pseudocount) for downstream analysis: `log2(norm_counts + 1)`.

- Batch Effect Diagnosis:

  - Perform PCA on the log-transformed normalized matrix.

  - Color points by `Batch` and `Condition`. If samples cluster primarily by batch, proceed.

- Cross-Batch Harmonization (using limma):

  - Use the `removeBatchEffect` function: `corrected_matrix <- removeBatchEffect(log_norm_matrix, batch=metadata$Batch, design=model.matrix(~Condition, data=metadata))`.

  - Note: This function adjusts the data for batch effects while preserving the experimental design (`Condition`).

- Validation:

  - Re-run PCA on the `corrected_matrix`. Clusters should now be driven by `Condition`.
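To make the size-factor step of the procedure above concrete, here is a minimal plain-Python sketch of median-of-ratios scaling. It is an illustration of the idea only, not DESeq2's implementation; the function names and the toy count matrix are hypothetical, and genes with a zero count in any sample are skipped because their geometric mean is zero.

```python
from math import exp, log, log2
from statistics import median


def size_factors(counts):
    """Median-of-ratios size factors for a genes x samples count matrix."""
    n_samples = len(counts[0])
    log_ratios = [[] for _ in range(n_samples)]
    for gene in counts:
        if min(gene) <= 0:
            continue  # geometric mean is zero, so the ratio is undefined
        log_geo_mean = sum(log(c) for c in gene) / n_samples
        for j, c in enumerate(gene):
            log_ratios[j].append(log(c) - log_geo_mean)
    # Raises statistics.StatisticsError if every gene contains a zero.
    return [exp(median(r)) for r in log_ratios]


def log2_normalized(counts):
    """Divide each sample by its size factor, then apply log2(x + 1)."""
    sf = size_factors(counts)
    return [[log2(c / sf[j] + 1) for j, c in enumerate(gene)] for gene in counts]


# Toy matrix: sample 2 was sequenced twice as deeply as sample 1.
counts = [
    [10, 20],
    [100, 200],
    [5, 10],
    [0, 3],  # skipped when estimating size factors
]
sf = size_factors(counts)
log_norm = log2_normalized(counts)
print([round(s, 3) for s in sf])  # [0.707, 1.414]
```

The two size factors differ by a factor of two, matching the depth difference, so the first three genes end up with equal normalized values in both samples.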

Visualization: Decision Workflow for Normalization

Title: Omics Data Normalization Decision Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Packages for Data Normalization Research

| Item | Function | Example/Provider |

|---|---|---|

| R/Bioconductor | Open-source software environment for statistical computing and omics data analysis. | Core platform for all below packages. |

| `limma` | Fits linear models to assess differential expression for microarray/RNA-seq; includes `removeBatchEffect`. | Bioconductor Package. |

| `DESeq2` / `edgeR` | Professional packages for normalization and differential analysis of RNA-seq count data. | Bioconductor Packages. |

| `vsn` | Performs variance stabilization and calibration for microarray or proteomics data. | Bioconductor Package. |

| `sva` | Contains ComBat and surrogate variable analysis for advanced batch effect modeling. | Bioconductor Package. |

| MOFA+ | Bayesian framework for multi-omics integration, internally handles scale differences. | Python/R Package. |

| Reference Biomaterials | Standardized control samples (e.g., SCP, ERCC RNA spikes) to monitor technical variation. | Commercial vendors (e.g., Agilent, Thermo Fisher). |

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions (FAQs)

Q1: My integrated multi-omics dataset shows strong batch effects after merging data from two sequencing runs. What are the first steps to diagnose and correct this?

A1: The first step is to perform a Principal Component Analysis (PCA) or similar dimensionality reduction to visualize the data clustering by batch versus biological group. Use negative control samples or technical replicates if available. Apply a batch correction method such as ComBat, limma's removeBatchEffect, or Harmony, but only after ensuring batch is not confounded with your primary biological condition. Always validate correction by checking if batch-associated variance is reduced while biological signal is preserved.

Q2: How can I distinguish between a true biological confounder (e.g., patient age) and a technical artifact in my metabolomics data?

A2: Conduct a variance partitioning analysis. Correlate the principal components (PCs) of your dataset with both technical (run date, instrument ID) and biological (age, BMI, sex) metadata. As a rough heuristic, a technical artifact often loads strongly on one or two PCs that track batch, while a biological confounder tends to spread across several PCs; neither pattern is conclusive on its own. Use linear mixed models (e.g., `lmer` from the lme4 R package) to quantify the proportion of variance explained by each factor.
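For a categorical factor, the "proportion of variance explained" in a PC can be approximated with a one-way R² (between-group sum of squares over total sum of squares). The sketch below is a simplified fixed-effects stand-in for the mixed-model approach, not a replacement for it; the PC scores and metadata are hypothetical.

```python
from statistics import mean


def variance_explained(values, groups):
    """Fraction of the variance in `values` explained by a categorical factor.

    Equals the R^2 of a one-way linear model: between-group sum of squares
    divided by total sum of squares.
    """
    grand = mean(values)
    total_ss = sum((v - grand) ** 2 for v in values)
    if total_ss == 0:
        return 0.0
    by_group = {}
    for v, g in zip(values, groups):
        by_group.setdefault(g, []).append(v)
    between_ss = sum(len(vs) * (mean(vs) - grand) ** 2 for vs in by_group.values())
    return between_ss / total_ss


# Hypothetical PC1 scores for six samples and two metadata columns.
pc1 = [-2.1, -1.9, -2.0, 2.0, 1.9, 2.1]
run_date = ["d1", "d1", "d1", "d2", "d2", "d2"]
sex = ["F", "M", "F", "M", "F", "M"]

r2_run = variance_explained(pc1, run_date)
r2_sex = variance_explained(pc1, sex)
print(round(r2_run, 3), round(r2_sex, 3))  # 0.998 0.134
```

Here PC1 is almost entirely explained by run date, which would point to a technical artifact rather than a sex effect.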

Q3: My normalized RNA-seq counts show a systematic offset between samples processed with two different RNA extraction kits. Which normalization method is most robust?

A3: When kit type is a known, recorded batch variable, model it explicitly rather than relying on normalization alone. For downstream differential expression, use methods like limma-voom with the batch factor included in the design matrix. For integration, ComBat-seq can adjust the count matrix for kit (it works on raw counts), followed by a scaling method such as TMM (Trimmed Mean of M-values). Be cautious with methods that assume all samples share the same global distribution, such as Quantile Normalization, if the batch effect is severe.

Q4: What quality control (QC) metrics are essential to monitor for technical variance in a high-throughput proteomics experiment?

A4: Key QC metrics to track in a table format include:

- Total ion current (TIC) chromatogram consistency.

- Missing value rate per sample (should be <20% in label-free quant).

- Coefficient of variation (CV) for technical replicate pools across the run.

- Median coefficient of variation for all proteins across samples.

- Retention time drift over the experiment.
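Two of these metrics, the per-sample missing value rate and the median CV, are simple to compute from an intensity matrix. The sketch below assumes a proteins x samples matrix of linear-scale intensities with `None` for missing values; the function names and toy values are hypothetical.

```python
from statistics import mean, median, stdev


def missing_rate_per_sample(matrix):
    """Fraction of proteins with a missing value (None) in each sample."""
    n_proteins = len(matrix)
    n_samples = len(matrix[0])
    return [
        sum(row[j] is None for row in matrix) / n_proteins
        for j in range(n_samples)
    ]


def median_cv(matrix):
    """Median over proteins of the CV (sd / mean) of the observed intensities."""
    cvs = []
    for row in matrix:
        observed = [v for v in row if v is not None]
        if len(observed) >= 2 and mean(observed) > 0:
            cvs.append(stdev(observed) / mean(observed))
    return median(cvs)


# Toy data: three proteins measured in four repeated injections of a QC pool.
qc_pool = [
    [100.0, 110.0, 90.0, None],
    [50.0, 50.0, 50.0, 50.0],
    [10.0, None, 12.0, 8.0],
]
rates = missing_rate_per_sample(qc_pool)
cv = median_cv(qc_pool)
print([round(r, 2) for r in rates], round(cv, 2))  # [0.0, 0.33, 0.0, 0.33] 0.1
```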

Q5: After applying a batch correction algorithm, how do I assess if I have over-corrected and removed biological signal?

A5: Perform the following validation checks:

- Positive Controls: Check the signal strength of known, expected biological differences (e.g., treated vs. untreated controls) before and after correction. A significant drop is a red flag.

- Negative Controls: Check if biologically identical samples (replicates) still cluster together post-correction.

- Simulation: If possible, spike in synthetic biomarkers or use positive control genes/proteins to monitor their recovery.

- Downstream Analysis: Perform a pilot statistical test; the number of significant findings should be plausible, not zero or excessively high.

Troubleshooting Guides

Issue: High Technical Variance in Early Time Points in Cell-Based Screening

Symptoms: Excessive variability in readouts (e.g., luminescence) for the first two columns of a 96-well plate compared to the rest.

Diagnosis: This is often a "plate edge effect" or "equilibration effect" caused by temperature or CO₂ gradients while the plate stabilizes in the incubator.

Solution:

- Protocol Adjustment: Begin the assay by seeding cells in the center wells first, moving outwards, or include a pre-incubation step where the filled plate rests in the incubator for 30 minutes before adding the stimulus.

- Experimental Design: Use randomized plate layouts for treatments and include dedicated negative/positive control wells distributed across the entire plate.

- Data Correction: Use spatial normalization within the plate, modeling the row and column effects using local regression (LOESS) on the control wells.

Issue: Drifting Baseline in LC-MS Metabolomics Runs

Symptoms: Gradual increase or decrease in the total detected ion count or internal standard intensity over the sequence run time.

Diagnosis: Instrument performance drift, often due to column degradation, source fouling, or changing mobile phase composition.

Solution:

- Preventive Protocol: Implement a randomized sample injection order to avoid confounding drift with study group. Include blank washes and pooled QC samples every 4-6 injections.

- Corrective Data Processing: Use the QC samples for signal correction. Apply LOESS or smoothing spline regression to the QC feature intensities over run order, then use this model to adjust the experimental samples (e.g., using the `stats::loess` function in R).

- Reagent/Material: Use high-purity, mass-spec grade solvents and fresh mobile phases prepared daily.
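The QC-based correction can be illustrated with a deliberately simplified model. The sketch below fits a straight line, not LOESS or a spline, to the pooled QC intensities of one feature and divides every injection by the fitted trend; it assumes the drift is roughly linear and the fitted trend stays positive. All names and values are hypothetical.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    return slope, y_bar - slope * x_bar


def correct_drift(run_order, intensity, is_qc):
    """Correct one feature for drift using a trend fitted to QC injections only.

    Each intensity is divided by the fitted QC trend at its injection position
    and rescaled to the mean QC intensity.
    """
    qc_x = [x for x, q in zip(run_order, is_qc) if q]
    qc_y = [y for y, q in zip(intensity, is_qc) if q]
    slope, intercept = fit_line(qc_x, qc_y)
    target = mean(qc_y)
    return [y * target / (slope * x + intercept) for x, y in zip(run_order, intensity)]


# One feature across seven injections; QC pool injected at positions 1, 4 and 7.
run_order = [1, 2, 3, 4, 5, 6, 7]
is_qc = [True, False, False, True, False, False, True]
intensity = [100.0, 190.0, 180.0, 85.0, 160.0, 150.0, 70.0]

corrected = correct_drift(run_order, intensity, is_qc)
print([round(v, 6) for v in corrected])
# [85.0, 170.0, 170.0, 85.0, 170.0, 170.0, 85.0]
```

In this toy case the downward trend is removed: the QC injections collapse to one value, and so do the study samples, which were constructed to differ only by drift.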

Table 1: Common Normalization Methods for Multi-Omics Integration

| Method Name | Primary Omics Use | Key Principle | Pros | Cons | Suitability for Integration |

|---|---|---|---|---|---|

| Quantile Normalization | Transcriptomics, Methylation | Forces all sample distributions to be identical. | Removes strong technical biases, makes distributions comparable. | Assumes most features are non-DE, can remove global biological variance. | Moderate. Use as initial step if platforms are identical. |

| TMM / RLE (DESeq2) | RNA-Seq | Estimates a sample-specific scaling factor relative to a reference. | Robust to a high proportion of differentially abundant features. | Designed for count data; less direct for other types. | Low for cross-omics. Can be used within RNA-seq data prior to integration. |

| ComBat / ComBat-seq | Multi-omics | Empirical Bayes framework to adjust for known batch effects. | Powerful for known batches, preserves within-batch variance. | Risk of over-correction; requires careful model specification. | High. Often used as a final step on individually normalized datasets. |

| Harmony / BBKNN | Single-cell, Multi-omics | Dimensionality reduction followed by iterative clustering and integration. | Integrates datasets without needing joint dimensionality reduction. | Computationally intensive; parameters need tuning. | Very High. State-of-the-art for integrating disparate datasets. |

| SVA / RUV-seq | Transcriptomics | Estimates surrogate variables for unmodeled technical factors. | Corrects for unknown confounders. | Can inadvertently remove biological signal; interpretation complex. | Moderate. Useful when batch factors are unrecorded. |

| Cyclic LOESS (MA) | Microarrays, Proteomics | Normalizes intensity-dependent biases by pairwise sample adjustment. | Non-parametric, performs well for two-color arrays. | Computationally slow for large datasets. | Low to Moderate. Mainly for within-platform normalization. |

| Median Polish / Robust Scaling | Metabolomics, Proteomics | Summarizes rows/columns by medians to calculate additive effects. | Simple, robust to outliers. | May not capture complex, non-additive biases. | Moderate. Simple baseline method for intensity data. |

Experimental Protocols

Protocol 1: Performing a Batch Effect Diagnostic PCA

Objective: To visualize and quantify the relative impact of batch versus biology on dataset variance.

Materials: Normalized feature matrix (e.g., gene expression), metadata table with batch and group IDs, R/Python environment.

Steps:

- Log-transform the normalized data if needed for variance stabilization.

- Center and scale the data (perform PCA on the correlation matrix).

- Compute principal components (PCs) using the `prcomp()` function in R or `sklearn.decomposition.PCA` in Python.

- Extract the variance explained by each PC.

- Correlate the sample coordinates (scores) for the top 10 PCs with both batch and biological group variables using linear models.

- Create a scatter plot of PC1 vs. PC2, colored by batch and shaped by biological group.

- Interpretation: If samples cluster primarily by batch in PC1/PC2, a significant batch effect is present. If biological groups separate well within batches, correction may be straightforward.

Protocol 2: Applying ComBat for Known Batch Correction

Objective: To remove variation associated with a known batch factor (e.g., processing date) prior to integrative analysis.

Materials: Feature matrix (one omics type), batch variable, optional biological covariates.

Software: R package sva.

Steps:

- Data Preparation: Ensure your input data (`dat`) is a normalized matrix (features x samples). Define your batch variable (`batch`) and a model matrix for any biological covariates you wish to preserve (`mod`).

- Run ComBat: Use the `ComBat` function for normally distributed data (e.g., microarray, log-transformed RNA-seq) and store the result as `corrected_data`.

- Validation: Repeat the diagnostic PCA (Protocol 1) on the `corrected_data`. The correlation between top PCs and the batch variable should be minimized.
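To show what "removing variation associated with a known batch factor" means at its simplest, the sketch below shifts each batch's per-feature mean onto the feature's overall mean. This is plain per-feature mean-centering, not ComBat: it has no empirical Bayes shrinkage, no scale adjustment, and no protection of biological covariates, so it is only reasonable when batch and condition are balanced. The function name and toy matrix are hypothetical.

```python
from statistics import mean


def center_batches(matrix, batch):
    """Remove per-feature batch mean shifts from a features x samples matrix.

    For each feature, every batch mean is moved onto the feature's overall mean.
    """
    corrected = []
    for row in matrix:
        grand = mean(row)
        batch_means = {
            b: mean(v for v, bb in zip(row, batch) if bb == b)
            for b in set(batch)
        }
        corrected.append([v - batch_means[b] + grand for v, b in zip(row, batch)])
    return corrected


# Two features, four samples; batch B reads systematically higher than batch A.
dat = [
    [5.0, 6.0, 8.0, 9.0],
    [2.0, 2.0, 2.5, 2.5],
]
batch = ["A", "A", "B", "B"]

corrected_data = center_batches(dat, batch)
print(corrected_data)
# [[6.5, 7.5, 6.5, 7.5], [2.25, 2.25, 2.25, 2.25]]
```

Within-batch differences (6.5 vs. 7.5 in the first feature) are preserved, while the offset between batches is gone.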

Visualizations

Title: Multi-Omics Integration & Batch Correction Workflow

Title: Decision Tree for Confounder and Batch Effect Management

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Materials for Mitigating Technical Variance

| Item | Function in Mitigating Variance | Example Product/Kit | Key Consideration |

|---|---|---|---|

| Universal Reference RNA | Provides an inter-laboratory, inter-platform standard for transcriptomics to calibrate and benchmark performance. | Stratagene Universal Human Reference RNA, ERCC ExFold RNA Spike-In Mix | Use at consistent dilution across all batches to track technical sensitivity. |

| Pooled QC Samples | A homogenized aliquot of sample material run repeatedly throughout the sequence to monitor and correct for instrument drift. | Custom-made pool from a subset of study samples. | Must be representative of the entire sample set (e.g., mix equal amounts from all groups). |

| Internal Standards (IS) | Corrects for variability in sample prep, injection volume, and ion suppression in MS-based proteomics/metabolomics. | Stable Isotope-Labeled peptides (AQUA), Deuterated metabolites. | Should be added as early as possible in the protocol and cover a range of chemical properties. |

| Blocking/Matched Reagents | Minimizes non-specific binding and variability in immunoassays (ELISA, Luminex). | Blocking Buffers (BSA, Casein), Antibody Diluents. | Must be optimized for the specific antibody-antigen pair to reduce background noise. |

| DNA/RNA Storage Stabilization Buffer | Preserves nucleic acid integrity at variable temperatures pre-processing, reducing degradation-related bias. | RNAlater, DNA/RNA Shield. | Crucial for multi-center studies with inconsistent cold chain logistics. |

| Single-Lot Assay Kits/Plates | Using the same manufacturing lot for a large study reduces kit-to-kit reagent variability. | All ELISA, qPCR Master Mix, or sequencing library prep kits from the same lot. | Requires advanced planning and procurement for large-scale studies. |

| Automated Liquid Handlers | Improves precision and reproducibility of pipetting steps compared to manual handling, especially for high-throughput screens. | Beckman Coulter Biomek, Hamilton STAR, Echo Acoustic Liquid Handler. | Requires regular calibration and validation of dispensed volumes. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: Why does my integrated multi-omics analysis show high technical batch effects even after quantile normalization?

Answer: Quantile normalization forces every sample onto the same overall distribution, an assumption that is often violated in multi-batch omics studies. It also leaves feature-specific batch shifts in place, so batch structure can survive it. Implement a two-step correction: first, apply ComBat or limma::removeBatchEffect to each omics dataset separately, using the recorded batch variable. Then, if unwanted variation remains, estimate and adjust for hidden factors with a latent-factor method such as SVA or RUVSeq (the latter is designed for RNA-seq counts). Always validate with PCA plots pre- and post-correction, using batch as a color variable.

FAQ 2: How do I handle missing values in proteomics data before integrating with transcriptomics?

Answer: The strategy depends on the nature of the 'missingness'. For data missing not at random (MNAR), typical in proteomics, use methods tailored to left-censored data.

- For low % missing (<10%): Impute with `knn` or `Random Forest` (see Protocol 1).

- For high % missing (>20%): Use a multi-step approach: 1) Filter proteins with >50% missingness. 2) For the remaining proteins, apply `QRILC` (Quantile Regression Imputation of Left-Censored data) or `MinProb` imputation. 3) Validate imputation by checking the distribution of complete and imputed values.

FAQ 3: My pathway analysis results differ drastically between single-omics and integrated multi-omics approaches. Which should I trust?

Answer: Discrepancies are expected. Single-omics analysis identifies pathways dysregulated at one molecular layer. Integrated analysis (e.g., Multi-Omics Factor Analysis) reveals convergent pathways across layers, which are often more biologically coherent. Trust the integrated result if your normalization pipeline is sound (see Protocol 2). Use a consensus score (e.g., integrative pathway enrichment via IMPaLA or multiGSEA) to rank pathways by combined evidence.

FAQ 4: What is the best method to normalize scRNA-seq data for integration with bulk proteomics?

Answer: Direct integration is challenging due to sparsity and scale differences. Recommended workflow:

- Normalize scRNA-seq: Use `SCTransform` (v2 regularized negative binomial) to stabilize variances and remove technical noise.

- Pseudobulk Creation: Aggregate scRNA-seq counts by sample/condition to create a "bulk-like" expression profile.

- Cross-platform scaling: Apply a cross-modality alignment method (e.g., `MMD-MA`, or `Seurat's CCA` anchor-based integration for paired samples) to align the two feature spaces.

- Validation: Correlate key ligand-receptor pair expression between platforms.
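The pseudobulk step amounts to summing counts over all cells that belong to the same sample. The sketch below shows this with plain dictionaries; it assumes raw counts as input (sums of transformed values are harder to interpret), and the cell IDs, sample IDs, and function name are hypothetical.

```python
from collections import defaultdict


def pseudobulk(cell_counts, cell_to_sample):
    """Sum raw counts over all cells of each sample.

    cell_counts: {cell_id: {gene: count}}
    cell_to_sample: {cell_id: sample_id}
    Returns {sample_id: {gene: summed_count}}.
    """
    bulk = defaultdict(lambda: defaultdict(int))
    for cell, counts in cell_counts.items():
        sample = cell_to_sample[cell]
        for gene, n in counts.items():
            bulk[sample][gene] += n
    return {sample: dict(genes) for sample, genes in bulk.items()}


# Three cells from two patients (toy counts).
cell_counts = {
    "c1": {"GAPDH": 3, "CD3E": 1},
    "c2": {"GAPDH": 2},
    "c3": {"CD3E": 4},
}
cell_to_sample = {"c1": "P1", "c2": "P1", "c3": "P2"}

profiles = pseudobulk(cell_counts, cell_to_sample)
print(profiles)
# {'P1': {'GAPDH': 5, 'CD3E': 1}, 'P2': {'CD3E': 4}}
```

The resulting per-sample profiles can then be normalized like bulk RNA-seq before being compared with the proteomics layer.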

Experimental Protocols

Protocol 1: Random Forest Imputation for Missing Proteomics Values

Objective: Accurately impute missing values (MNAR) in a protein intensity matrix.

Materials: See "Research Reagent Solutions" table.

Method:

- Pre-processing: Log2-transform the observed (non-missing) values of your intensity matrix.

- Initialization: Impute all missing values using the minimum value per column (protein) shifted down by a small noise distribution (mean=0, sd=0.1).

- Iterative Imputation: Use the `missForest` R package (or `sklearn.impute.IterativeImputer` in Python, which must be enabled via `sklearn.experimental.enable_iterative_imputer`).

  - Set `maxiter = 10`, `ntree = 100`.

  - For each iteration, the random forest predicts missing values for each protein using all other proteins as predictors.

  - Stop when the imputed matrix changes by less than a set threshold between iterations (e.g., a tolerance of 0.01; `IterativeImputer` exposes this as `tol`, whereas `missForest` stops on its own once the difference between successive imputations stops decreasing).

- Post-imputation: Reverse the log2 transformation to return to a linear scale for downstream integration.

Protocol 2: Multi-Omic Data Integration via MOFA+

Objective: Integrate normalized matrices from transcriptomics, proteomics, and metabolomics to identify latent factors driving variation.

Method:

- Input Preparation: Ensure each omics data view is a samples x features matrix, independently normalized and scaled (e.g., Z-scored across features).

- Model Training: Run MOFA+ (v1.0+).

- Factor Interpretation: Use `plot_factor_cor(mofa_trained)` to check that the learned factors are not strongly correlated with one another, relate factors to technical covariates (e.g., with `correlate_factors_with_covariates()`) to flag technical factors, and use `plot_weights(mofa_trained, view="transcriptomics")` to identify top feature loadings.

- Downstream Analysis: Regress factor values against clinical phenotypes to identify biologically relevant latent drivers.

Data Presentation

Table 1: Comparison of Normalization Methods for Bulk RNA-seq Integration

| Method | Principle | Best For | Key Metric (Median CV Reduction) | Suitability for Cross-Omics |

|---|---|---|---|---|

| DESeq2 (Median of Ratios) | Size factor based on geometric mean | Within-platform RNA-seq | 25-30% | Low |

| TMM (edgeR) | Trimmed Mean of M-values | RNA-seq with composition bias | 28-33% | Medium |

| Cross-Contaminant Correction (CCC) | Mutual information maximization | RNA-seq + Proteomics | 40-45%* | High |

| Quantile Normalization | Empirical distribution alignment | Microarray platforms | 20-25% | Medium |

| Cyclic LOESS (limma) | Intensity-dependent smoothing | Multi-batch microarray | 35-40% | Medium |

Data synthesized from recent benchmarks (Smyth et al., 2023; Prakash et al., 2024). CV = Coefficient of Variation.

Diagrams

Diagram 1: Multi-Omic Integration Workflow

Diagram 2: Missing Data Imputation Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Tools for Normalized Integration Experiments

| Item | Function in Integration Pipeline | Example Product/Code |

|---|---|---|

| Reference RNA Sample | Inter-batch calibration standard for transcriptomics. | Universal Human Reference RNA (Agilent) |

| Pooled QC Sample | A consistent sample injected in each batch to track and correct LC-MS/MS (proteomics/metabolomics) performance drift. | Pooled from equal aliquots of all study samples |

| Isotope-Labeled Internal Standards | Absolute quantification and normalization in mass spectrometry-based assays. | Thermo Scientific Pierce Heavy Peptide Standards |

| Batch Effect Correction Software | Statistical removal of technical variation. | sva R package (ComBat), limma |

| Multi-Omic Integration Suite | Joint dimensionality reduction and factor analysis. | MOFA+ (R/Python), mixOmics |

| Containerization Software | Ensures computational reproducibility of the entire pipeline. | Docker, Singularity |

A Practical Toolkit: Key Data Normalization Methods for Each Omics Layer

Troubleshooting Guides & FAQs

Q1: After applying within-sample normalization (e.g., using housekeeping genes), my across-sample batch effects appear worse. What went wrong? A: This is a common pitfall. Within-sample normalization controls for technical variation within a single run (e.g., differences in total RNA input). It is not designed to correct for systematic technical variation between different batches or experimental runs. Applying within-sample methods first can sometimes amplify across-sample differences. The recommended workflow is to:

- Perform within-sample normalization.

- Integrate or batch-correct your data using dedicated across-sample methods (e.g., ComBat, limma's removeBatchEffect, or integration tools like Harmony).

- Always visualize data with PCA before and after each step to assess the impact.

Q2: For single-cell RNA-seq, should I perform normalization within each cell or across the entire cell population?

A: You typically need both, in sequence. First, normalize within each cell to account for differences in sequencing depth (e.g., total-count scaling per cell, usually followed by a log transform; DESeq2's median-of-ratios is poorly suited to sparse per-cell counts because genes containing zeros have no usable geometric mean). This gives you comparable expression values across cells. Second, scale the data across cells so that each gene is centered and has unit variance, which keeps highly expressed genes from dominating dimensionality reduction and clustering. This two-step process is standard in pipelines like Seurat (NormalizeData followed by ScaleData).

Q3: When integrating proteomics data from different platforms (e.g., label-free and TMT), which normalization scope is primary? A: Across-sample (cross-platform) normalization is critical. First, perform within-run normalization for each platform separately (e.g., median centering for label-free). Then, you must apply a robust across-sample method to align the distributions from different platforms. Methods like Quantile Normalization or robust scaling (e.g., using "reference" samples run on both platforms) are often employed. Failure to do this will result in platform-driven clustering.

Q4: My normalized data shows high correlation between technical replicates but poor correlation between biological replicates. Is this a normalization issue? A: Not necessarily. Strong technical replicate correlation validates that your within-sample normalization is working correctly to minimize run-to-run noise. Poor biological replicate correlation suggests high biological variability or potential issues in experimental design/sample collection. Normalization cannot create biological consistency; it can only remove technical bias. Investigate sample quality and biological variance sources.

Q5: Does log-transformation count as within-sample or across-sample normalization? A: Log-transformation (e.g., log2(x+1)) is a variance-stabilizing transformation, not a normalization step per se. However, it is applied across all samples universally to make the data conform to statistical modeling assumptions (homoscedasticity). It is typically applied after within-sample count normalization but before across-sample batch correction.

Experimental Protocols

Protocol 1: Two-Step Normalization for Bulk RNA-Seq Integration Objective: Integrate RNA-seq datasets from two studies performed at different sequencing centers.

- Within-Sample Normalization:

- Input: Raw gene count matrices from each study.

- Method: Apply DESeq2's "median of ratios" method separately to each dataset. This corrects for library size and RNA composition bias within each study.

- Output: Normalized count matrices.

- Across-Sample Normalization & Batch Correction:

- Input: Combined normalized count matrices from step 1.

- Method: Log-transform the normalized counts (ComBat assumes approximately Gaussian, log-scale data), then use the sva package's ComBat function, treating "Study" as the known batch covariate. Optional: include biological covariates (e.g., disease status) in the model so that their effects are preserved.

- Validation: Perform PCA. Batch clustering (by study) should be minimized, while biological group clustering should be maintained or enhanced.
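
The within-sample step of Protocol 1 can be illustrated with a minimal Python sketch of the median-of-ratios idea. It is a simplified illustration, not DESeq2's implementation: the function name and the toy matrix are hypothetical, and only genes with non-zero counts in every sample contribute to the size factors.

```python
import math
from statistics import median

def median_of_ratios_size_factors(counts):
    """counts: list of genes, each a list of raw counts per sample."""
    n_samples = len(counts[0])
    # Genes with any zero count have no finite log geometric mean, so skip them.
    usable = [g for g in counts if all(c > 0 for c in g)]
    log_geo_means = [sum(math.log(c) for c in g) / n_samples for g in usable]
    factors = []
    for j in range(n_samples):
        # Ratio of each gene to its geometric mean, on the log scale.
        log_ratios = [math.log(g[j]) - m for g, m in zip(usable, log_geo_means)]
        factors.append(math.exp(median(log_ratios)))
    return factors

# Toy data (hypothetical): sample 2 was sequenced twice as deeply as sample 1.
counts = [[10, 20], [100, 200], [5, 10], [0, 3]]
size_factors = median_of_ratios_size_factors(counts)
normalized = [[c / s for c, s in zip(g, size_factors)] for g in counts]
```

Dividing each sample by its size factor puts the two libraries on a common scale before any across-sample batch correction is attempted.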

Protocol 2: Normalization for Cross-Platform Metabolomics Objective: Align peak intensity data from GC-MS and LC-MS runs.

- Within-Sample (Within-Run) Normalization:

- For each run, calculate the median intensity of all peaks or of a set of stable internal standards.

- Divide all peak intensities in that run by this median value.

- This sets the median intensity to 1 for each run, controlling for overall signal strength differences.

- Across-Sample (Between-Platform) Alignment:

- Identify a set of metabolites confidently detected in both platforms.

- For these "bridge" metabolites, calculate a scaling factor based on the ratio of their median intensities (LC-MS/GC-MS) across all shared samples.

- Apply this platform-specific scaling factor to all metabolites from the respective platform before merging datasets.
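
As a rough illustration of Protocol 2, the sketch below median-normalizes each run and then derives one LC-MS/GC-MS scaling factor from the bridge metabolites. The metabolite names, intensities, and function names are hypothetical, and the choice to rescale the GC-MS data onto the LC-MS scale is an assumption made for the example.

```python
from statistics import median

def median_normalize(run):
    """run: dict metabolite -> intensity. Divide by the run's median intensity."""
    m = median(run.values())
    return {name: value / m for name, value in run.items()}

def bridge_scaling_factor(lc_runs, gc_runs, bridge):
    """Ratio of median bridge-metabolite intensities (LC-MS / GC-MS)."""
    lc = median(run[b] for run in lc_runs for b in bridge)
    gc = median(run[b] for run in gc_runs for b in bridge)
    return lc / gc

# Hypothetical single-run example for each platform.
lc_runs = [median_normalize({"ala": 200.0, "gly": 400.0, "lac": 800.0})]
gc_runs = [median_normalize({"ala": 10.0, "gly": 20.0, "pyr": 5.0})]
bridge = ["ala", "gly"]  # metabolites detected on both platforms

factor = bridge_scaling_factor(lc_runs, gc_runs, bridge)
# Bring GC-MS intensities onto the LC-MS scale before merging.
gc_aligned = [{name: v * factor for name, v in run.items()} for run in gc_runs]
```

A single global factor only corrects an overall scale difference; metabolite-specific response differences between platforms would need a per-feature approach.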

Table 1: Common Normalization Methods by Scope

| Scope | Method Name | Primary Use Case | Key Assumption |

|---|---|---|---|

| Within-Sample | Total Count / Library Size | Bulk RNA-seq, early single-cell RNA-seq | Total read output per sample is representative of input. |

| Within-Sample | DESeq2's Median of Ratios | Bulk RNA-seq | Most genes are not differentially expressed. |

| Within-Sample | TMM (Trimmed Mean of M-values) | Bulk RNA-seq between experiments | The majority of genes are non-DE and expression is symmetric. |

| Across-Sample | Quantile Normalization | Microarrays, making distributions identical | The empirical distribution across samples should be the same. |

| Across-Sample | ComBat / limma removeBatchEffect | Removing known batch effects | Batch effects are additive or multiplicative and can be modeled. |

| Across-Sample | Z-score / Standard Scaling | Proteomics, metabolomics, pre-ML | Features should have mean=0 and SD=1 across samples. |

Table 2: Impact of Normalization Scope on Data Metrics

| Analysis Metric | Before Any Normalization | After Within-Sample Only | After Within & Across-Sample |

|---|---|---|---|

| Correlation (Technical Replicates) | Low (e.g., 0.85-0.92) | High (e.g., >0.98) | Maintains High |

| PCA Plot: Batch Clustering | Strong | May Persist or Change | Minimized |

| PCA Plot: Biological Group Separation | Obscured by Batch | May Improve | Optimal |

| Differential Expression False Positives | Very High | Reduced | Minimized |

Visualizations

Title: Sequential Normalization Workflow for Data Integration

Title: Methods Categorized by Within vs. Across-Sample Scope

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Normalization Context |

|---|---|

| Spike-in RNAs (e.g., ERCC) | Exogenous controls added at known concentrations for across-sample normalization, especially in single-cell RNA-seq, to distinguish technical noise from biological variation. |

| Housekeeping Gene Panels | Endogenous genes assumed to have stable expression across samples/conditions. Used as internal reference for within-sample normalization in qPCR and some sequencing analyses. |

| Internal Standards (IS) - Isotopically Labeled | Chemically identical but heavy-isotope-labeled compounds spiked into each sample in proteomics/metabolomics. Corrects for within-sample ionization efficiency and across-sample instrument drift. |

| Reference/QC Pool Sample | A homogeneous sample (mix of all study samples) run repeatedly across batches/platforms. Serves as a technical anchor for across-sample alignment and monitoring of longitudinal performance. |

| UMI (Unique Molecular Identifier) | Short random barcodes attached to each mRNA molecule before amplification in single-cell protocols. Enables within-sample correction for PCR amplification bias by deduplication. |

| Bead-Based Counting (e.g., 10x Genomics) | Provides an accurate estimate of the number of recovered cells, forming the basis for within-sample "cell-aware" normalization in single-cell genomics. |

Troubleshooting Guides & FAQs

Q1: My quantile-normalized gene expression matrix shows reduced biological variance between sample groups. What went wrong? A: This is a known risk when quantile normalization is applied to datasets whose sample groups differ globally. The method forces the distribution of all samples to be identical, which can remove true biological signal. Solution: Use diagnostic plots pre- and post-normalization, for example boxplots of each sample colored by group. If groups were globally different before normalization (e.g., cases had systematically higher expression than controls), quantile normalization is inappropriate. Consider a method like TMM (for RNA-seq), which only assumes that most genes are not differentially expressed.
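
The effect described in Q1 can be reproduced with a small Python sketch of quantile normalization. This is a bare-bones version written for illustration (ties are not averaged, and the function name and data are hypothetical); packages such as preprocessCore handle those details.

```python
def quantile_normalize(samples):
    """samples: list of equal-length lists, one list of values per sample."""
    n = len(samples[0])
    sorted_samples = [sorted(s) for s in samples]
    # Reference distribution: mean of the k-th smallest value across samples.
    reference = [sum(s[k] for s in sorted_samples) / len(samples) for k in range(n)]
    result = []
    for s in samples:
        order = sorted(range(n), key=lambda i: s[i])
        normalized = [0.0] * n
        for rank, i in enumerate(order):
            normalized[i] = reference[rank]  # replace value by reference quantile
        result.append(normalized)
    return result

control = [1.0, 2.0, 3.0, 4.0]
case = [11.0, 12.0, 13.0, 14.0]  # globally shifted by +10 (toy example)
qn_control, qn_case = quantile_normalize([control, case])
```

After normalization both samples hold exactly the same values, so the global shift between case and control is gone. That is the desired behavior for a technical offset and a loss of signal when the shift is biological.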

Q2: After Median/IQR scaling, my proteomics data still has batch effects. Why wasn't it removed?

A: Median/IQR scaling (often a form of robust z-scoring) is primarily a within-sample normalization. It centers and scales each sample's measurements but does not align distributions across samples or batches. Solution: Apply Median/IQR scaling per sample first to handle technical variance within runs. Then, apply a between-sample batch correction method (e.g., ComBat, limma's removeBatchEffect) using your batch metadata. The workflow should be: 1) Within-sample scaling, 2) Between-sample batch correction.

Q3: When calculating Z-scores for metabolomics integration, should I scale by feature (metabolite) or by sample? A: This is context-critical and a common source of error. Scaling by sample (column) adjusts for overall intensity differences between samples and is usually handled by an initial normalization step. Scaling by feature (row) expresses each metabolite relative to its own mean and spread, which shows in which samples it is elevated or depleted. For integration, the goal is typically to make features from different platforms comparable. Solution: For multi-omics integration where samples are the common unit, scale by feature (metabolite/gene) across all samples. This places all measurements on a common, unit-less scale (mean=0, sd=1) for each analyte, enabling cross-dataset comparison.
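
A minimal sketch of feature-wise Z-scoring, assuming a features x samples layout and using the sample standard deviation. The function name and the two toy features are hypothetical.

```python
from statistics import mean, stdev

def zscore_features(matrix):
    """matrix: list of features (rows), each a list of values across samples."""
    scaled = []
    for row in matrix:
        mu, sd = mean(row), stdev(row)
        # A constant feature carries no information; map it to zeros.
        scaled.append([(v - mu) / sd if sd > 0 else 0.0 for v in row])
    return scaled

rna = [[5.0, 6.0, 7.0]]             # e.g., log2 expression of one gene
metab = [[1000.0, 2000.0, 3000.0]]  # e.g., peak intensity of one metabolite
combined = zscore_features(rna + metab)
```

Both features end up on the same unit-less scale even though their raw ranges differ by orders of magnitude, which is the property integration methods rely on.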

Q4: TMM normalization fails with an error about zero library sizes or all-zero counts for some samples in my single-cell RNA-seq project. A: TMM calculates scaling factors relative to a reference sample, and zero or extremely low library sizes can break its log-ratio calculations. Solution:

- Pre-filtering: Remove genes with zero counts across all cells and filter out cells (samples) with an extremely low total count (library size) prior to TMM. This is often done in quality control.

- Pseudo-count: While TMM uses a log transformation inherently, ensure your count matrix hasn't been improperly transformed prior to TMM input. TMM should be run on raw counts.

- Alternative: For sparse single-cell data, consider alternate normalization-scaling methods like those in Seurat (log normalization) or SCTransform.

Q5: For integrating microarray and RNA-seq data, which normalization method is most robust? A: Direct application of any single method (Quantile, Z-score, etc.) to the combined raw data will fail due to platform-specific technical distributions. Solution: A two-stage approach is required:

- Within-platform normalization: Normalize each dataset separately using platform-appropriate methods (e.g., Quantile for microarray, TMM for RNA-seq).

- Between-platform alignment: Use a cross-platform normalization method, such as ComBat (for known batch=platform) or Cross-Platform Normalization (XPN), on the combined, separately normalized data. This aligns the statistical distributions without removing biological signal.

Table 1: Comparison of Core Normalization Techniques

| Technique | Primary Use Case | Assumptions | Robust to Outliers? | Output Data Scale |

|---|---|---|---|---|

| Quantile | Microarray data, making sample distributions identical. | The overall distribution of expression is similar across samples. | No | All samples have identical value distribution. |

| Median/IQR | Scaling individual samples (e.g., metabolomics, proteomics runs). | The median and spread of the sample is a good technical reference. | Yes (uses median, not mean) | Each sample median=0, IQR=1. |

| Z-Score | Placing features (genes/metabolites) on a comparable scale for integration. | Data is roughly normally distributed per feature. | No (uses mean, SD) | Each feature has mean=0, standard deviation=1 across samples. |

| TMM | RNA-seq data (bulk) to correct for library size and composition. | Most genes are not differentially expressed (DE), and DE is symmetric. | Yes (uses trimmed mean) | Effective library size adjusted, log-CPM values are comparable. |

Table 2: Suitability for Omics Data Types

| Data Type | Recommended Primary Normalization | Key Consideration |

|---|---|---|

| RNA-seq (Bulk) | TMM (or related: DESeq2's median-of-ratios) | Addresses composition bias and varying sequencing depths. |

| Microarray | Quantile Normalization | Standard for Affymetrix/Illumina to force identical distributions. |

| Proteomics (Label-Free) | Median/IQR per sample, then between-sample alignment. | High technical variance per run; median is robust to high-abundance outliers. |

| Metabolomics | Sample-specific scaling (Median/IQR), followed by feature-wise Z-scoring for integration. | Handles run drift and puts diverse metabolites on a common scale. |

| Multi-Omics Integration | Platform-specific method first, then feature-wise Z-scoring across the combined dataset. | Harmonizes vastly different numerical ranges and variances from each platform. |

Experimental Protocols

Protocol 1: Executing TMM Normalization for Bulk RNA-seq Data (via edgeR)

- Input: Raw count matrix (genes x samples).

- Filtering: Remove genes with very low counts (e.g., filterByExpr in edgeR).

- DGEList Object: Create a DGEList object containing the counts and sample group information.

- Calculate Factors: calcNormFactors(object, method = "TMM") computes a scaling factor for each sample relative to a reference sample (by default, the library whose upper quartile is closest to the mean upper quartile across libraries).

- Output: The DGEList object now contains the factors in $samples$norm.factors. Downstream differential expression models multiply them by the library sizes to obtain effective library sizes.
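
To show the idea behind the scaling factors, here is a deliberately simplified Python sketch of a trimmed mean of M-values. It is not edgeR's algorithm: it omits the trimming on average intensity (A-values), the precision weights, and the final rescaling of factors, and the function name and toy libraries are hypothetical.

```python
import math

def simple_tmm_factor(sample, reference, trim=0.3):
    """Unweighted trimmed mean of log2 ratios (M-values) against a reference."""
    n_s, n_r = sum(sample), sum(reference)
    m_values = sorted(
        math.log2((s / n_s) / (r / n_r))
        for s, r in zip(sample, reference)
        if s > 0 and r > 0  # log ratios are undefined for zero counts
    )
    k = int(len(m_values) * trim)
    kept = m_values[k:len(m_values) - k]  # drop the most extreme ratios
    return 2 ** (sum(kept) / len(kept))

reference = [100] * 10
sample = [100] * 9 + [1000]  # one strongly up-regulated gene inflates the library

factor = simple_tmm_factor(sample, reference)
effective_library_size = sum(sample) * factor
```

In this toy case the raw library size (1900) is inflated by a single gene, while the effective library size comes out close to the reference library (1000), which is the composition bias TMM is meant to correct.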

Protocol 2: Cross-Platform Integration of Microarray and RNA-seq Data

- Separate Normalization:

- Microarray Data: Apply Quantile Normalization (e.g., normalize.quantiles() from the preprocessCore package).

- RNA-seq Data: Apply TMM normalization and convert to log2-Counts-Per-Million (log2-CPM) using the cpm() function in edgeR with log = TRUE and a prior count.

- Common Gene Space: Map both datasets to a common gene identifier (e.g., official gene symbol) and retain only intersecting genes.

- Batch Correction: Treat "platform" as a batch covariate. Use the ComBat function from the sva package on the combined, log-transformed matrices ([Microarray, RNA-seq]). Specify the platform as the batch parameter.

- Validation: Perform PCA on the integrated matrix. Samples should cluster by biological group, not by platform (batch).

Visualizations

Multi-Omics Integration Workflow

TMM Normalization Process for RNA-seq

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Normalization & Integration |

|---|---|

| edgeR / limma (R packages) | Industry-standard tools for TMM normalization and differential expression analysis of RNA-seq and microarray data. |

| preprocessCore (R package) | Provides optimized, efficient algorithms for quantile normalization of large datasets (microarrays). |

| sva / ComBat (R packages) | Critical for removing batch effects (technical, platform, lab) in high-dimensional data prior to integration. |

| Reference RNA Samples (e.g., ERCC Spike-Ins) | Synthetic exogenous controls added to RNA-seq experiments to monitor technical variance and sometimes aid normalization. |

| Common Gene ID Mappers (e.g., biomaRt, EnsDb) | Essential for mapping gene identifiers across platforms (e.g., Ensembl ID to Symbol) to find the common feature space for integration. |

| RobustScaler / StandardScaler (Python, scikit-learn) | Implementations of Median/IQR (robust) and Z-score (standard) scaling for Python-based analysis pipelines. |

| Single-Cell Specific Tools (e.g., Seurat, Scanpy) | Provide tailored normalization methods (e.g., log-normalization, SCTransform) for sparse single-cell data where TMM may fail. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My ComBat-corrected data still shows strong batch separation in the PCA. What went wrong? A: This often indicates that the batch variable is confounded with a biological variable of interest (e.g., all controls from Batch 1, all treatments from Batch 2). ComBat cannot disentangle these. First, visually inspect the design.

- Actionable Protocol: Create a design matrix modeling your biological factor. Use model.matrix(~biological_group, data=pheno_data). Then, run ComBat with the mod parameter set to this matrix: ComBat(dat=expression_matrix, batch=batch_vector, mod=design_matrix). This protects the biological signal while removing batch effects orthogonal to it.

Q2: When using SVA, how do I determine the correct number of surrogate variables (SVs) to estimate? A: Over-estimation removes biological signal; under-estimation leaves residual batch effects.

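- Actionable Protocol: Use the num.sv function from the sva package with the expression matrix and the full model matrix, e.g., num.sv(dat, mod). The default method ("be") is permutation-based; "leek" selects an asymptotic alternative. Pass the resulting number as n.sv to sva(), together with the full (mod) and null (mod0) models.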

Q3: After using removeBatchEffect, my corrected data yields perfect group separation. Is this valid for downstream differential expression?

A: No. This is a critical misuse. limma::removeBatchEffect is designed for visualization, not for direct input into differential expression (DE) tests. It removes batch-associated variation without preserving the statistical uncertainty needed for DE.

- Actionable Protocol: For correct DE analysis with batch correction, incorporate the batch term directly into your linear model in limma, e.g., design <- model.matrix(~ batch + group).

Q4: I get an error "Error in solve.default(t(mod) %*% mod) : system is computationally singular" in ComBat. How do I fix it?

A: This indicates perfect collinearity in your model matrix (mod). Your model is over-specified (e.g., including a covariate that is a linear combination of batch).

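- Actionable Protocol: Check the rank of your design matrix: qr(design_matrix)$rank. It must equal the number of columns, ncol(design_matrix); a smaller rank means some columns are linearly dependent. Run the same check on a design that also contains batch, e.g., model.matrix(~ batch + biological_group). Simplify the model by removing the confounded covariate. If biological group and batch are perfectly confounded, batch correction is statistically impossible without additional prior information.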
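
The rank check can be sketched in Python without any linear algebra library. The helper names, the batch labels ("B1", "B2"), and the group labels are hypothetical; the design has an intercept, one batch indicator, and one group indicator.

```python
def matrix_rank(rows, tol=1e-9):
    """Rank of a small matrix by Gaussian elimination."""
    m = [list(map(float, r)) for r in rows]
    rank = 0
    for col in range(len(m[0])):
        pivot = next((r for r in range(rank, len(m)) if abs(m[r][col]) > tol), None)
        if pivot is None:
            continue  # no pivot in this column: it depends on earlier columns
        m[rank], m[pivot] = m[pivot], m[rank]
        for r in range(rank + 1, len(m)):
            f = m[r][col] / m[rank][col]
            m[r] = [a - f * b for a, b in zip(m[r], m[rank])]
        rank += 1
    return rank

def design(batch, group):
    """Columns: intercept, batch == 'B2', group == 'treated'."""
    return [[1, int(b == "B2"), int(g == "treated")] for b, g in zip(batch, group)]

batches = ["B1", "B1", "B2", "B2"]
confounded = design(batches, ["ctrl", "ctrl", "treated", "treated"])
balanced = design(batches, ["ctrl", "treated", "ctrl", "treated"])

rank_confounded = matrix_rank(confounded)  # fewer than 3 columns' worth
rank_balanced = matrix_rank(balanced)      # full column rank
```

When every control sits in one batch and every treated sample in the other, the batch and group columns are identical and the design loses a rank, which is the situation that triggers the singularity error.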

Q5: Should I correct for batch effects before or after normalizing my RNA-seq/gene expression data? A: Batch correction is typically the final step in pre-processing, applied to already normalized (e.g., TPM, FPKM, or log2-counts-per-million) and filtered data.

- Standardized Workflow Protocol:

- Raw Counts: Start with a counts matrix.

- Filtering: Remove lowly expressed genes (e.g., requiring >10 counts in at least n samples).

- Primary Normalization: Apply between-sample normalization for library size and composition (e.g., TMM normalization in edgeR, or variance stabilizing transformation in DESeq2).

- Transformation: Log2-transform the normalized data (e.g., log2(CPM + k)).

- Batch Correction: Apply ComBat, SVA, or removeBatchEffect to the log2-transformed, normalized data.

Table 1: Key Characteristics of Batch Correction Methods

| Feature | ComBat (sva package) | Surrogate Variable Analysis (SVA) | limma::removeBatchEffect |

|---|---|---|---|

| Core Approach | Empirical Bayes shrinkage of batch means. | Estimates hidden factors (SVs) from data residuals. | Simple linear model to subtract batch means. |

| Model Flexibility | High. Can include biological covariates (mod). | High. Models biological factors to protect signal. | Moderate. Can include other covariates. |

| Preserves DE Integrity | Yes, when used correctly with mod. | Yes, SVs are added to the DE model. | No. For visualization only. |

| Handles Unknown Factors | No. Only known batches. | Yes. Primary strength is estimating unknown SVs. | No. Only known batches. |

| Output Data Use | Direct input for DE/analysis (with caution). | SVs used as covariates in DE model; corrected data for visualization. | Visualization and clustering only. |

| Best For | Adjusting for known, documented batch effects. | Complex studies with unmeasured confounders. | Preparing publication-quality plots. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Batch Effect Correction & Analysis

| Item | Function & Relevance |

|---|---|

| R/Bioconductor | The essential software environment for statistical analysis of omics data. |

| sva Package | Contains the ComBat and sva functions for empirical Bayes correction and surrogate variable estimation. |

| limma Package | Industry-standard package for linear modeling of omics data, includes removeBatchEffect. |

| High-Quality Phenotypic Metadata | Accurate, detailed sample information (batch, processing date, technician, biological group) is the most critical non-software "reagent." |

| Reference RNA Samples | Technical controls (e.g., Universal Human Reference RNA) spiked-in across batches to diagnose and quantify batch effects. |

| ggplot2 & pheatmap Packages | For creating PCA plots and heatmaps pre- and post-correction to visually assess effectiveness. |

Methodology & Visualization

Experimental Protocol: Integrated Batch Correction Assessment

- Data Preparation: Log2-transform your normalized expression matrix. Generate a PCA plot (colored by batch and biological group) as a baseline.

- Correction Execution:

- ComBat: Execute combat_corrected <- ComBat(dat=log2_data, batch=batch, mod=model.matrix(~group)). Store output.

- SVA: Estimate SVs using the protocol in Q2. For visualization, regress out the SVs while protecting the group effect: sva_corrected <- removeBatchEffect(log2_data, covariates=svobj$sv, design=model.matrix(~group)). Avoid taking residuals from a model that contains group, because that removes the biological signal together with the SVs.

- removeBatchEffect: Execute limma_corrected <- removeBatchEffect(log2_data, batch=batch, design=model.matrix(~group)).

- Assessment: Generate PCA plots for each corrected dataset. Calculate key metrics: (a) Percent of variance explained by batch before/after (PERMANOVA), and (b) Average within-biological-group variance.

Diagram: Batch Correction Decision Workflow

Title: Choosing a Batch Correction Method

Diagram: Omics Data Pre-processing Pipeline

Title: Standard Omics Pre-processing Workflow

Technical Support Center: Troubleshooting Guides & FAQs

This support center provides solutions for common issues encountered when applying RPKM, TPM, LFQ, and PQN normalization within integrated omics studies, a core component of robust data integration for multi-omics research.

FAQs & Troubleshooting

Q1: My RPKM values from RNA-seq are highly correlated with gene length. Is this normal, and how does it affect integration with proteomics (LFQ) data? A: Yes, RPKM (Reads Per Kilobase per Million mapped reads) inherently retains a length bias. This can confound integration with LFQ proteomics data, where quantification is less directly length-dependent.

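- Troubleshooting: Use TPM (Transcripts Per Million) instead. TPM normalizes for gene length first and then rescales each sample to sum to one million, so values represent comparable proportions across samples and yield more comparable distributions for integration. Within a sample, TPM and RPKM differ only by a constant factor, so switching does not by itself remove residual length dependence; check this separately if it matters for your analysis.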

Q2: After LFQ normalization in MaxQuant, my proteomics data still shows a batch effect across experimental runs. What should I do? A: MaxQuant's LFQ algorithm normalizes for run-to-run variation, but strong batch effects may persist.

- Troubleshooting: Apply an additional post-processing normalization like PQN (Probabilistic Quotient Normalization). Use the median spectrum from all high-quality samples as a reference to correct for systematic shifts. Ensure batch information is included in your experimental design for statistical modeling later.

Q3: When applying PQN to my metabolomics dataset, some features become disproportionately scaled. What could be the cause? A: This often occurs when the chosen reference spectrum (e.g., median sample) is not representative of the entire dataset, or if the dataset contains many missing values or non-biological outliers.

- Troubleshooting:

- Pre-filter: Remove features with >20% missing values and impute the remainder (e.g., with k-nearest neighbors).

- Reference Selection: Visually inspect PCA plots to identify a representative sample pool. Consider using a pooled QC sample as the reference if available.

- Iterate: Recalculate the median reference after removing obvious outlier samples.

Q4: How do I handle zero or missing values when calculating TPM or applying PQN? A: These methods handle zeros differently.

- TPM: Zero counts are valid and remain zero. Do not impute before TPM calculation.

- PQN: Zeros/missing values are problematic. Imputation (e.g., with half the minimum positive value for the feature) is required before PQN to calculate reliable quotients. Always document your imputation strategy.
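
The half-minimum imputation mentioned for PQN can be sketched as follows. This is an illustrative Python version with a hypothetical function name and toy data; it treats None and non-positive values as missing, which is an assumption that may not suit every dataset.

```python
def impute_half_min(matrix):
    """matrix: list of samples (rows); None or a value <= 0 marks a missing feature."""
    n_features = len(matrix[0])
    out = [list(row) for row in matrix]
    for j in range(n_features):
        positives = [row[j] for row in matrix if row[j] is not None and row[j] > 0]
        if not positives:
            continue  # feature never observed: leave untouched, filter it upstream
        fill = min(positives) / 2  # half the minimum positive value of this feature
        for row in out:
            if row[j] is None or row[j] <= 0:
                row[j] = fill
    return out

data = [[4.0, None], [2.0, 10.0], [0.0, 6.0]]
imputed = impute_half_min(data)
```

Filling per feature rather than with one global constant keeps low-abundance and high-abundance analytes on their own scales.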

Q5: Can I directly compare TPM (transcriptomics) and LFQ intensity (proteomics) values after normalization? A: No. While each method renders data within its platform comparable, the absolute scales between platforms are different.

- Troubleshooting: For integration, further steps are needed: 1) Within-platform standardization (e.g., z-scoring), or 2) Model-based integration (e.g., multi-omics factor analysis) which operates on relative patterns, not raw normalized values.

Key Experimental Protocols

Protocol 1: Generating TPM from RNA-seq Read Counts

- Input: Gene/transcript raw count matrix (post-alignment and quantification with tools like HTSeq or featureCounts).

- Calculate Reads per Kilobase (RPK): Divide the read counts for each gene by its length in kilobases. RPK = Count / (Gene Length / 1000)

- Calculate Per Million Scaling Factor: Sum all RPK values in a sample and divide by 1,000,000.

- Calculate TPM: Divide each gene's RPK value by the sample-specific scaling factor. TPM = RPK / Scaling Factor

- Output: A matrix where the sum of all TPM values in each sample is 1,000,000.
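
The three arithmetic steps above translate directly into a short Python sketch for a single sample. Gene lengths are assumed to be in base pairs, and the function name and example values are hypothetical.

```python
def counts_to_tpm(counts, lengths_bp):
    """counts and lengths_bp: per-gene raw counts and gene lengths for one sample."""
    # Step 1: reads per kilobase.
    rpk = [c / (length / 1000) for c, length in zip(counts, lengths_bp)]
    # Step 2: per-million scaling factor for this sample.
    scaling_factor = sum(rpk) / 1_000_000
    # Step 3: TPM.
    return [r / scaling_factor for r in rpk]

# Three genes whose counts are proportional to their lengths.
tpm = counts_to_tpm([100, 200, 300], [1000, 2000, 3000])
```

Because counts scale with length in this example, all three genes receive the same TPM, and the values sum to one million.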

Protocol 2: LFQ Normalization in MaxQuant (Typical Workflow)

- Raw Data Input: Provide Thermo .raw files and an experimental design template.

- Parameter Setting: In the Group-specific parameters tab, check the "LFQ" box. Set the LFQ min. ratio count to 2 (default). Enable "Match between runs" so that identifications are transferred across runs by retention-time alignment.

- Processing: MaxQuant performs:

- Feature detection and MS/MS identification.

- Intensity extraction for all features across all runs.

- LFQ Algorithm: Pairs MS1 feature intensities between runs, constructs an intensity profile matrix, and normalizes using a stable median ratio between runs.

- Output: The proteinGroups.txt file with columns LFQ intensity [Sample] for downstream analysis.

Protocol 3: Applying PQN to Metabolomics/Proteomics Data

- Input: A feature intensity matrix (samples x features), post-missing value imputation.

- Calculate the Reference: Typically, the median spectrum (feature-wise median across all samples) is computed.

- Calculate Quotients: For each sample, divide the intensity of each feature by the corresponding intensity in the reference spectrum.

- Determine the Scaling Factor: Calculate the median of all quotients for that sample.

- Normalize: Divide all feature intensities in the sample by its median scaling factor.

- Output: A matrix corrected for global dilution/concentration differences, preserving biological variance ratios.
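
The PQN steps can be sketched in a few lines of Python. This assumes a samples x features matrix of strictly positive, already imputed intensities and uses the feature-wise median as the reference; the function name and the dilution series are hypothetical.

```python
from statistics import median

def pqn(matrix):
    """matrix: list of samples (rows), each a list of positive feature intensities."""
    n_features = len(matrix[0])
    # Reference spectrum: feature-wise median across all samples.
    reference = [median(sample[j] for sample in matrix) for j in range(n_features)]
    normalized = []
    for sample in matrix:
        # Most probable dilution factor: median of the feature-wise quotients.
        factor = median(v / r for v, r in zip(sample, reference))
        normalized.append([v / factor for v in sample])
    return normalized

base = [10.0, 20.0, 40.0, 80.0]
samples = [base, [2 * v for v in base], [0.5 * v for v in base]]  # same profile, diluted
result = pqn(samples)
```

The three samples differ only by a dilution factor, so after PQN they collapse onto the same profile while the ratios between features are unchanged.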

Table 1: Comparison of Normalization Methods Across Omics Domains

| Method | Primary Domain | Core Purpose | Handles Zeros? | Removes Sample Dilution Effect? | Output Scale |

|---|---|---|---|---|---|

| RPKM/FPKM | Transcriptomics | Enables comparison of expression levels across genes and samples. | Yes (zeros remain). | No | Not sum-constrained |

| TPM | Transcriptomics | Improved within-sample comparison; mitigates gene length bias. | Yes (zeros remain). | No | Sum = 1 million per sample |

| LFQ (MaxQuant) | Proteomics | Label-free quantification correcting run-to-run variation. | Yes (inferred from matched runs). | Partially (via median ratios) | Linear-scale intensities (log2-transform downstream) |

| PQN | Metabolomics/Proteomics | Corrects for global concentration/dilution differences (e.g., urine). | No (requires imputation). | Yes | Preserves original unit ratios |

Visualizations

Title: TPM Calculation Workflow from Raw Counts

Title: Probabilistic Quotient Normalization (PQN) Logic

Title: Normalization Path for Multi-Omics Integration

The Scientist's Toolkit: Research Reagent & Essential Materials

Table 2: Essential Resources for Omics Normalization Experiments

| Item | Function in Context | Example/Note |

|---|---|---|

| High-Quality Reference Genome/Proteome | Essential for accurate read/gene assignment (RPKM/TPM) and peptide identification (LFQ). | Ensembl, RefSeq, UniProt. Version control is critical. |

| Spike-in Controls (External) | Added to samples prior to processing to monitor technical variation for potential post-LFQ/PQN correction. | S. pombe spike-in for RNA-seq; stable isotope-labeled peptide/protein standards for proteomics. |

| Pooled Quality Control (QC) Sample | A mixture of all study samples, run repeatedly throughout the MS sequence. Serves as a robust reference for PQN and monitors instrument stability for LFQ. | Crucial for metabolomics and proteomics batch correction. |

| Standard Reference Material | Provides a known benchmark to assess quantification accuracy across platforms. | NIST SRM 1950 (metabolites in plasma), UPS2 proteome standard. |

| Bioinformatics Software/Packages | Implement the normalization algorithms and downstream integration. | RSEM/Kallisto for TPM; MaxQuant for LFQ; R/Python (e.g., nortools package) for PQN; MOFA2, mixOmics for integration. |

| Parameter Configuration File | A documented text file specifying all software settings (e.g., MaxQuant mqpar.xml). Ensures reproducibility of LFQ/TPM results. | Must be archived with the raw data. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: I receive "Error in svd(x, nu = 0) : infinite or missing values in 'x'" when running ComBat from the sva package in R. What does this mean and how do I fix it?

A: This error indicates your input data matrix contains NA, NaN, or infinite values, which the SVD calculation cannot process. To resolve:

- Check for NAs: Run sum(is.na(your_data_matrix)) or any(is.infinite(your_data_matrix)).

- Filter or Impute: Remove features (rows) with excessive missing values or use imputation. For gene expression, consider impute.knn from the impute package.

- Protocol: Before running ComBat, clean your data:
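
The cleaning logic can be sketched in Python as follows. This is an illustration of the filtering step only, not the R code for use with sva; the function name, the threshold parameter, and the toy features are hypothetical.

```python
import math

def drop_nonfinite_rows(matrix, max_missing_fraction=0.0):
    """matrix: list of (feature_name, values). Keep rows within the missing-value limit."""
    kept = []
    for name, values in matrix:
        # Count None, NaN, and +/- infinity as missing.
        bad = sum(1 for v in values if v is None or not math.isfinite(v))
        if bad / len(values) <= max_missing_fraction:
            kept.append((name, values))
    return kept

data = [
    ("g1", [1.0, 2.0]),
    ("g2", [float("nan"), 3.0]),
    ("g3", [float("inf"), 1.0]),
]
clean = drop_nonfinite_rows(data)
kept_names = [name for name, _ in clean]
```

With the default threshold of zero, any feature containing a non-finite value is removed. A higher threshold keeps partially observed features, which then still need imputation before batch correction.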

Q2: When using sklearn.preprocessing.StandardScaler for single-cell RNA-seq data normalization, my downstream clustering results are poor. Am I applying it incorrectly?

A: Likely yes. StandardScaler scales features (genes) to mean=0 and variance=1, which can amplify technical noise in sparse scRNA-seq data. This method is not typical for primary count normalization.

- Correct Workflow: Apply gene- or sample-specific scaling after count normalization and transformation.

- Protocol:
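
A minimal sketch of that order of operations, written with the standard library so that each step is explicit: per-cell total-count normalization, log1p transformation, then gene-wise scaling. The last step uses the population standard deviation, which is what StandardScaler computes. The function name, the target of 10,000 counts per cell, and the toy matrix are assumptions for illustration.

```python
import math
from statistics import mean, pstdev

def normalize_log_scale(counts, target=10_000):
    """counts: list of cells (rows), each a list of raw gene counts."""
    # Steps 1 and 2: scale each cell to a common total, then log1p-transform.
    logged = [[math.log1p(c / sum(cell) * target) for c in cell] for cell in counts]
    # Step 3: scale each gene (column) to mean 0 and unit variance across cells.
    n_genes = len(counts[0])
    scaled_cols = []
    for j in range(n_genes):
        col = [cell[j] for cell in logged]
        mu, sd = mean(col), pstdev(col)
        scaled_cols.append([(v - mu) / sd if sd > 0 else 0.0 for v in col])
    return [[scaled_cols[j][i] for j in range(n_genes)] for i in range(len(counts))]

# Cells 1 and 2 have the same composition at different depths; cell 3 differs.
cells = [[10, 90], [100, 900], [50, 50]]
scaled = normalize_log_scale(cells)
```

Cells that differ only in sequencing depth end up with identical scaled profiles, whereas scaling raw counts directly would separate them by depth.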

Q3: How do I choose between removeBatchEffect (limma) and ComBat (sva) for correcting batch effects in my multi-omic dataset integration?

A: The choice depends on study design and data structure. See the comparison table below.

Table 1: Comparison of Common Batch Effect Correction Methods in R

| Method (Package) | Primary Use Case | Key Assumption | Handles Complex Design? | Output For |

|---|---|---|---|---|

| removeBatchEffect (limma) | Linear models, microarray/RNA-seq | Batch effects are additive | Yes (uses design matrix) | Visualization and clustering (for DE analysis, put batch in the design matrix instead) |

| ComBat / ComBat_seq (sva) | Empirical Bayes, high-dimensional data | Batch means and variances follow a prior distribution | Limited (uses model with intercept) | Exploratory analysis & clustering |

| fastMNN (batchelor) | scRNA-seq integration, mutual nearest neighbors | A subset of cells are biological matches across batches | Yes | Common low-dimensional embedding for clustering |

Q4: I get convergence warnings when running ComBat with many batches (>20). Is the result still reliable?

A: Warnings may occur with many small batches. Results may be suboptimal.

- Action:

- Check batch sizes: merge very small batches (<5 samples) if biologically justified.

- Use the mean.only=TRUE argument if variance across batches is not a concern.

- Consider alternative methods like harmony or fastMNN designed for many batches.
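
As a quick way to act on the batch-size check, the sketch below flags batches that fall under a minimum size. The helper name small_batches and the example labels are hypothetical; the threshold of 5 samples comes from the guidance above.

```python
from collections import Counter

def small_batches(batch_labels, min_size=5):
    """Return the batches that have fewer than min_size samples."""
    sizes = Counter(batch_labels)
    return sorted(batch for batch, n in sizes.items() if n < min_size)

labels = ["b1"] * 8 + ["b2"] * 3 + ["b3"] * 5 + ["b4"] * 1
flagged = small_batches(labels)
print(flagged)
```

Flagged batches are candidates for merging, but only where merging is biologically justified.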

Experimental Protocols

Protocol 1: Batch Effect Correction for Bulk RNA-seq using sva::ComBat_seq

- Objective: Correct for technical batch effects while preserving biological variance in count-based RNA-seq data.

- Steps:

- Input: Raw count matrix (genes x samples), batch factor vector, optional biological covariate(s).

- Filter Low-Expression Genes: Remove genes with near-zero counts across most samples (e.g., edgeR::filterByExpr).

- Run ComBat_seq: Apply the model. Include known biological covariates in the group or covar_mod argument to protect them.
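
The filtering step can be illustrated with a simple counts-per-million (CPM) rule. This is not the algorithm used by edgeR::filterByExpr; it is a simplified stand-in, and the function name and both thresholds are assumptions.

```python
import numpy as np

def filter_low_expression(counts, min_cpm=1.0, min_samples=2):
    """counts: genes x samples. Keep genes with CPM >= min_cpm in enough samples."""
    counts = np.asarray(counts, dtype=float)
    lib_sizes = counts.sum(axis=0)
    lib_sizes[lib_sizes == 0] = 1.0              # guard against empty libraries
    cpm = counts / lib_sizes * 1e6
    keep = (cpm >= min_cpm).sum(axis=1) >= min_samples
    return counts[keep], keep

counts = np.array([
    [500_000, 600_000, 550_000],
    [0, 1, 0],                     # detected in a single sample: dropped
    [499_999, 399_999, 450_000],
    [1, 0, 0],                     # detected in a single sample: dropped
])
filtered, keep = filter_low_expression(counts)
print(keep)
```

The raw (unscaled) counts of the retained genes are what would then be passed on to the batch correction step.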

Protocol 2: Data Scaling and Centering for Proteomics Feature Integration using sklearn

- Objective: Standardize protein abundance measurements from different mass spectrometry runs for integrated analysis.

- Steps:

- Input: Post-quantification abundance matrix (proteins x samples), possibly log-transformed.

- Handle Missing Values: Impute missing abundances (e.g., using minimum value per protein or KNN imputation).

- Choose Scaling Axis:

- Sample-wise scaling: Use StandardScaler(with_std=False) to center columns (samples) to mean=0. Corrects for run-specific loading differences.

- Feature-wise scaling: Use StandardScaler on rows (proteins) to make protein variances comparable for distance-based analysis. Because StandardScaler operates on columns, transpose the matrix first.

- Fit & Transform: Fit the scaler on the imputed matrix and apply the transformation.
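
The two scaling choices can be sketched in plain NumPy, which keeps the example dependency-free. The arithmetic mirrors what StandardScaler computes (mean removal and division by the population standard deviation); the matrix values are made up for illustration.

```python
import numpy as np

# Hypothetical log2 abundance matrix: proteins (rows) x samples (columns).
abund = np.array([
    [20.0, 21.0, 22.0],
    [10.0, 11.0, 12.0],
    [15.0, 17.0, 19.0],
])

# Sample-wise centering: subtract each column (sample) mean.
sample_centered = abund - abund.mean(axis=0, keepdims=True)

# Feature-wise scaling: z-score each row (protein).
row_mean = abund.mean(axis=1, keepdims=True)
row_std = abund.std(axis=1, keepdims=True)   # population std (ddof=0)
row_std[row_std == 0] = 1.0                  # leave constant proteins at zero
protein_scaled = (abund - row_mean) / row_std

print(sample_centered.mean(axis=0), protein_scaled.std(axis=1))
```

With scikit-learn, the feature-wise variant on a proteins x samples matrix would correspond to transforming the transposed matrix and transposing the result back.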

Diagrams

Title: Sequential Workflow for Omics Data Preprocessing & Integration

Title: Decision Flowchart for Choosing a Batch Correction Method

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Omics Data Normalization & Integration

| Item | Function in Workflow | Example Package/Tool |

|---|---|---|

| Count Normalization Tool | Corrects for library size/depth differences between samples, a prerequisite for comparison. | DESeq2 (median of ratios), edgeR (TMM), scanpy.pp.normalize_total |

| Variance Stabilizer | Transforms count data to stabilize variance across the mean range, making it more homoscedastic. | DESeq2::varianceStabilizingTransformation, sctransform |

| Batch Effect Corrector | Models and removes unwanted technical variation while preserving biological signal. | sva::ComBat, limma::removeBatchEffect, harmony-pytorch |

| Feature Scaler | Centers and scales features to comparable ranges, critical for distance-based algorithms. | sklearn.preprocessing.StandardScaler, scale in base R |

| Dimensionality Reducer | Reduces high-dimensional omics data to key components for visualization and integration. | stats::prcomp (PCA), umap-learn (umap.UMAP), Seurat::RunUMAP |

| Integration Anchors Finder | Identifies mutual nearest neighbors or "anchors" across datasets to enable integration. | Seurat::FindIntegrationAnchors, batchelor::fastMNN |

Navigating Pitfalls and Optimizing Your Normalization Strategy

Troubleshooting Guides & FAQs

Q1: How do I know if my batch effect correction has caused over-correction, erasing genuine biological signal? A: Over-correction is suspected when biologically distinct sample groups (e.g., tumor vs. normal from the same batch) become artificially clustered together post-normalization. To diagnose, perform Principal Component Analysis (PCA) before and after correction.

- Protocol: Generate PCA plots colored by both batch and biological condition. If the post-correction plot shows strong batch mixing but also a loss of separation between known biological groups, over-correction is likely.

- Quantitative Check: Calculate the within-group variance for biological groups relative to the between-group variance. A drastic increase in this ratio post-correction suggests signal erosion.

Q2: What metrics indicate that my normalization method is leading to significant information loss? A: Information loss often manifests as reduced ability to detect differentially expressed genes (DEGs) or biomarkers.

- Protocol: Conduct a negative control analysis using known, stable housekeeping genes or spike-in controls. Compare their variance before and after normalization.

- Quantitative Data: A significant decrease in the estimated effect size (or detection rate) for known true positive DEGs post-normalization indicates information loss.

Q3: Why does my normalized dataset show amplified technical noise for low-abundance features? A: This is common with aggressive scaling methods applied to sparse or low-count omics data (e.g., single-cell RNA-seq, proteomics). Methods assuming a global distribution can disproportionately inflate the variance of near-zero measurements.

- Protocol: Plot the mean-variance relationship pre- and post-normalization. For low-abundance features, calculate the coefficient of variation (CV).

- Quantitative Check: An upward shift in the CV vs. Mean plot (higher CV at the same mean) for low counts indicates noise amplification.
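
One way to put a number on this check is to compare the CV of the lowest-abundance features before and after normalization. The sketch below is an assumption-laden illustration: the helper name is hypothetical, features are ranked by their pre-normalization mean, and both matrices are assumed to have strictly positive feature means so that the CV is defined.

```python
import numpy as np

def cv_change_low_abundance(before, after, bottom_frac=0.10):
    """Relative change in median CV for the lowest-mean features.

    before, after: features x samples matrices on a positive scale.
    """
    before = np.asarray(before, dtype=float)
    after = np.asarray(after, dtype=float)
    means = before.mean(axis=1)
    n_low = max(1, int(np.ceil(bottom_frac * before.shape[0])))
    low = np.argsort(means)[:n_low]          # lowest-mean features, chosen pre-normalization
    cv_before = before[low].std(axis=1) / before[low].mean(axis=1)
    cv_after = after[low].std(axis=1) / after[low].mean(axis=1)
    return float(np.median(cv_after) / np.median(cv_before) - 1.0)

before = np.array([[1.0, 2.0, 1.0, 2.0]] + [[100.0, 110.0, 90.0, 100.0]] * 9)
after = before.copy()
after[0] = [0.5, 2.5, 0.5, 2.5]              # spread of the low feature is inflated
change = cv_change_low_abundance(before, after)
print(change > 0.5)
```

A returned value above 0.5 corresponds to a CV increase of more than 50% for the selected low-abundance features.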

Data Presentation

Table 1: Common Normalization Issues & Diagnostic Metrics

| Issue | Primary Symptom | Key Diagnostic Metric | Threshold for Concern |

|---|---|---|---|

| Over-correction | Loss of biological group separation | Ratio of biological-to-batch variance (PVE from PCA) | Ratio < 1.5 post-correction |

| Information Loss | Reduced DEG detection power | Percent recovery of known true positive DEGs (p<0.05) | Recovery < 70% |

| Noise Amplification | High variance in low-abundance features | Coefficient of Variation (CV) for bottom 10% of features | CV increase > 50% |

Table 2: Comparison of Normalization Methods & Associated Risks

| Method (Example) | Best For | High Risk of Over-correction? | High Risk of Info Loss? | High Risk of Noise Amp.? |

|---|---|---|---|---|

| ComBat | Microarray, Bulk RNA-seq | Yes, if model is overfit | Moderate | Low |

| Quantile Normalization | Microarray, Methylation | Yes, forces identical dist. | High for global shifts | Low |

| Log+Scale (CPM, TPM) | Bulk Sequencing | Low | Low | High for sparse data |

| SCTransform | Single-cell RNA-seq | Low | Low | Low |

Experimental Protocols

Protocol 1: Diagnosing Over-correction via PVCA (Principal Variance Component Analysis)

- Input: Normalized data matrix (features x samples) with batch and condition labels.

- Fit a linear mixed model for each feature: Feature ~ Condition + (1|Batch).

- Extract variance components attributed to Condition and Batch.

- Calculate the average variance explained (AVE) by each factor across all features.

- Interpretation: A post-normalization AVE(Condition) that is drastically lower than its pre-normalization value, or lower than AVE(Batch), confirms over-correction.
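
The sketch below illustrates the comparison of variance explained by Condition and Batch. It does not fit the mixed model from the protocol: as a deliberately simpler stand-in it computes, per feature, the one-way fraction of variance explained by each factor (eta squared) and averages it across features. The function name and the toy data are assumptions.

```python
import numpy as np

def variance_explained(x, labels):
    """Mean per-feature fraction of variance explained by one factor.

    x: features x samples. Simplified stand-in for a variance component.
    """
    x = np.asarray(x, dtype=float)
    labels = np.asarray(labels)
    grand = x.mean(axis=1, keepdims=True)
    ss_total = ((x - grand) ** 2).sum(axis=1)
    ss_between = np.zeros(x.shape[0])
    for level in np.unique(labels):
        cols = labels == level
        group_mean = x[:, cols].mean(axis=1, keepdims=True)
        ss_between += cols.sum() * ((group_mean - grand) ** 2).ravel()
    valid = ss_total > 0                      # skip constant features
    return float((ss_between[valid] / ss_total[valid]).mean())

condition = ["A", "A", "B", "B"]
batch = ["1", "2", "1", "2"]
before = np.array([[0.0, 3.0, 2.0, 5.0]])    # condition effect 2, batch effect 3
after = np.array([[0.0, 0.0, 2.0, 2.0]])     # batch effect removed, condition kept

ave_cond_before = variance_explained(before, condition)
ave_cond_after = variance_explained(after, condition)
ave_batch_after = variance_explained(after, batch)
print(ave_cond_before < ave_cond_after, ave_batch_after)
```

In this toy case the condition share rises after correction, which is the healthy pattern; a condition share that collapses instead would point toward over-correction.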

Protocol 2: Assessing Information Loss Using Spike-in Controls

- Spike-in Addition: Add a known quantity of exogenous spike-in molecules (e.g., ERCC RNA) to each sample prior to processing.

- Post-normalization Analysis: Isolate the spike-in feature counts from the normalized matrix.

- Calculate the correlation (e.g., Pearson R²) between the known input log-concentration and the measured normalized abundance for each spike-in across samples.

- Interpretation: A significant drop in R² post-normalization compared to the correlation with raw counts indicates the method is distorting or losing quantitative information.
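
The R² comparison can be sketched as below. The concentrations and measurements are invented for illustration, and spike_in_r2 is a hypothetical helper that simply squares the Pearson correlation between two vectors.

```python
import numpy as np

def spike_in_r2(log_conc, measured):
    """Squared Pearson correlation between known and measured spike-in levels."""
    r = np.corrcoef(log_conc, measured)[0, 1]
    return float(r ** 2)

log_conc = np.array([0.0, 1.0, 2.0, 3.0, 4.0])    # known input (log scale)
raw_log = np.array([1.0, 2.1, 2.9, 4.0, 5.1])     # log raw signal tracks the input
normalized = np.array([2.0, 2.1, 2.0, 2.2, 2.1])  # dynamic range compressed

r2_raw = spike_in_r2(log_conc, raw_log)
r2_norm = spike_in_r2(log_conc, normalized)
print(r2_norm < r2_raw)
```

A drop of this kind between the raw and normalized R² is the signal the interpretation step asks you to look for.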

Diagrams

Title: Workflow for Diagnosing Over-correction in Data Normalization

Title: Assessing Noise Amplification for Low-Abundance Features

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Normalization Diagnostics

| Item | Function in Diagnosis | Example Product/Category |

|---|---|---|

| External Spike-in Controls | Provides a known ground truth to quantify information loss and technical noise. | ERCC RNA Spike-In Mix (Thermo), SIRV Isoform Mix (Lexogen) |

| Housekeeping Gene Panel | Set of genes expected to be stable across conditions; used to assess over-correction and variance inflation. | ACTB, GAPDH, HPRT1, PGK1 (Validated for your system) |

| Reference Standard Sample | A technical replicate or control sample run across all batches; anchors comparison for batch effect assessment. | Commercial reference RNA (e.g., Universal Human Reference RNA), Pooled QC Sample |

| Variance-Stabilizing Software | Implements algorithms designed to minimize noise amplification (especially for sparse data). | sctransform R package, DESeq2 (vst), vsn R package |

| Batch Effect Metrics Package | Quantifies batch strength before/after correction to inform diagnosis. | pvca R package, limma::removeBatchEffect with diagnostics |

Troubleshooting Guides & FAQs

Q1: My integrated omics dataset has a dominant batch effect post-normalization. The data types are bulk RNA-seq (counts) and LC-MS proteomics (intensity). What should I check first?

A: First, verify you used a variance-stabilizing transformation for the RNA-seq count data (e.g., DESeq2's vst or rlog) and not just log2 on raw counts. For LC-MS intensity data, confirm you used a method robust to missing values (e.g., limma::normalizeQuantiles). Then, apply a batch-effect correction method (e.g., ComBat) suited for continuous, normalized data from both platforms. Always visualize with PCA before and after correction.

Q2: When integrating single-cell RNA-seq (scRNA-seq) with microarray gene expression, should I normalize them separately or together? A: Normalize separately first, respecting their unique technical biases. For scRNA-seq, use a method like SCTransform (regularized negative binomial regression) to handle sparsity. For microarray, use robust multi-array average (RMA). For integration, select common variable features, then use a mutual nearest neighbors (MNN) or CCA-based method (e.g., in Seurat) to align the normalized scRNA-seq and microarray data.

Q3: After normalizing and integrating my metabolomics (peak area) and epigenomics (methylation beta values) data, the downstream clustering is driven by platform, not biology. Is my normalization wrong? A: Not necessarily. The scaling ranges of the final combined matrix may be platform-dominant. Ensure both datasets are independently scaled to mean=0 and variance=1 (z-scoring) after within-platform normalization but before concatenation. If the problem persists, consider a supervised integration like DIABLO (mixOmics) which finds components maximally correlated with your phenotype of interest, not just technical variance.
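
A minimal sketch of per-platform z-scoring before concatenation, assuming both blocks are already normalized within platform and share the same sample order. The function name and the example values are hypothetical.

```python
import numpy as np

def zscore_features(x):
    """x: samples x features. Scale each feature to mean 0 and variance 1."""
    x = np.asarray(x, dtype=float)
    std = x.std(axis=0)
    std[std == 0] = 1.0                      # constant features stay at zero
    return (x - x.mean(axis=0)) / std

# Rows are the same three samples in both blocks.
metab = np.array([[1e5, 2e6], [3e5, 1e6], [2e5, 3e6]])    # peak areas
methyl = np.array([[0.1, 0.8], [0.2, 0.7], [0.3, 0.9]])   # beta values

combined = np.hstack([zscore_features(metab), zscore_features(methyl)])
print(combined.shape)
```

After this step no feature dominates distance calculations purely because of its platform's numeric range.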

Q4: I am getting "NaN" errors when running quantile normalization on my miRNA-seq data. What causes this and how do I fix it?

A: This is often caused by rows (miRNAs) with many zero counts or identical values across all samples, leading to undefined quantiles. Solution: (1) Filter out low-abundance features (e.g., miRNAs with >90% zero counts). (2) Use a variant like preprocessCore::normalize.quantiles.robust which handles ties better. (3) Consider an alternative normalization like TMM (edgeR) designed for sparse count data.
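
Solution (1) followed by a basic quantile normalization can be sketched as follows. This is a plain NumPy illustration, not the preprocessCore implementation: ties are broken arbitrarily rather than averaged, and the function names and the 90% zero cutoff are assumptions taken from the example threshold above.

```python
import numpy as np

def filter_sparse_features(counts, max_zero_frac=0.9):
    """Drop features (rows) whose fraction of zero counts exceeds the cutoff."""
    counts = np.asarray(counts, dtype=float)
    zero_frac = (counts == 0).mean(axis=1)
    return counts[zero_frac <= max_zero_frac]

def quantile_normalize(x):
    """x: features x samples. Give every sample the same value distribution."""
    x = np.asarray(x, dtype=float)
    ranks = np.argsort(np.argsort(x, axis=0), axis=0)  # rank within each column
    mean_sorted = np.sort(x, axis=0).mean(axis=1)      # reference distribution
    return mean_sorted[ranks]

counts = np.array([
    [5.0, 4.0, 3.0],
    [2.0, 1.0, 4.0],
    [0.0, 0.0, 0.0],   # all-zero miRNA, removed by the filter
    [3.0, 4.0, 6.0],
    [4.0, 2.0, 8.0],
])
filtered = filter_sparse_features(counts)
normalized = quantile_normalize(filtered)
print(np.sort(normalized, axis=0)[:, 0])
```

Every column of the result contains the same set of values, which is the defining property of quantile normalization.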

Q5: For integrating ChIP-seq (peak scores) and RNA-seq (FPKM) to find regulatory links, which normalization scheme is most appropriate? A: Do not normalize to a global distribution like quantile, as it destroys the absolute signal intensity crucial for regulatory correlation. Instead, transform each dataset to be approximately normally distributed: use a log2(x+1) transform for both ChIP-seq peak scores and RNA-seq FPKM values. (The voom transformation in limma expects raw counts, so apply it only if counts are available, not to FPKM.) Then, scale (z-score) by gene/peak for correlation-based integration analyses.

Table 1: Recommended Normalization Methods by Primary Omics Data Type

| Omics Data Type | Typical Format | Key Characteristics | Recommended Normalization Method(s) | Purpose in Integration |

|---|---|---|---|---|

| Bulk RNA-seq | Counts | Discrete, over-dispersed, library size dependent | TMM (edgeR), DESeq2's median-of-ratios, VST | Variance stabilization, remove compositional bias |

| Microarray | Intensity | Continuous, background noise, probe-specific bias | RMA (Robust Multi-array Average), quantile normalization | Background correction, probe summarization, inter-array alignment |