Metabolic Network Reconstruction: A Comprehensive Guide for Biomedical Research and Drug Discovery

Abstract

Metabolic network reconstruction integrates genomic, biochemical, and omics data to build computational models that simulate cellular metabolism. This article provides a comprehensive guide for researchers and drug development professionals, covering the foundational principles of reconstructing these networks—from draft generation to curated models. It explores core methodologies like Flux Balance Analysis (FBA) and their direct applications in identifying drug targets and predicting patient-specific metabolic responses. The content also addresses common challenges in model quality and refinement, particularly for non-model organisms, and outlines established standards for model validation and comparative analysis against experimental data. Finally, it synthesizes how these validated models are revolutionizing systems pharmacology and paving the way for personalized therapeutic strategies.

The Blueprint of Life: Core Concepts and Components of Metabolic Networks

Metabolic network reconstruction represents a foundational process in systems biology that formally organizes biochemical knowledge into a structured, computational framework [1]. This process involves determining and systematically cataloging the biochemical transformations that occur within a cell or organism, creating a bridge between the genomic information (genotype) and the observable physiological characteristics (phenotype) [1]. Reconstructions provide a platform for interpreting high-throughput omics data and enable predictive computational simulations of metabolic behavior using constraint-based modeling approaches [1].

The first genome-scale metabolic reconstruction was completed for Haemophilus influenzae in 1999, marking a pivotal advancement in the field [1]. Since then, the discipline has expanded significantly, with reconstructions now available for numerous prokaryotic and eukaryotic organisms, including the global human metabolic network known as Recon 1 [1]. These reconstructions serve as biochemical, genetic, and genomic (BiGG) knowledge-bases that abstract pertinent information on the biochemical transformations taking place within specific target organisms [2].

Quantitative Dimensions of Metabolic Reconstructions

The scope and complexity of metabolic reconstructions vary significantly across organisms, reflecting differences in genome size and biochemical characterization. The table below summarizes quantitative details for representative reconstructions.

Table 1: Quantitative Details of Genome-Scale Metabolic Reconstructions

| Organism | Genes | Reactions | Metabolites | Compartments | Key Applications |
|---|---|---|---|---|---|
| Recon 1 (Human) | 1,496 | 3,311 | 2,712 | 7 (cytoplasm, nucleus, mitochondria, lysosome, peroxisome, Golgi, ER) | Study of cancer, diabetes, host-pathogen interactions, metabolic disorders [1] |
| H. influenzae | Not Specified | Not Specified | Not Specified | Not Specified | First genome-scale reconstruction [1] |
| E. coli | Not Specified | Not Specified | Not Specified | Not Specified | Metabolic engineering, network validation [2] |
| S. cerevisiae | Not Specified | Not Specified | Not Specified | Not Specified | Systems biology studies [1] |

The reconstruction process is typically labor-intensive, ranging from approximately six months for well-studied bacteria to two years with multiple researchers for complex organisms like humans [2]. The human Recon 1 reconstruction alone accounts for 1,496 open reading frames, 2,004 proteins, and 3,311 metabolic reactions distributed across seven cellular compartments [1].

Core Principles and Mathematical Framework

The Reconstruction Protocol

Metabolic network reconstruction employs a rigorous "bottom-up" protocol that begins with genomic data and incorporates biochemical, physiological, and bibliomic information [1] [2]. The process involves:

- Draft Reconstruction: Creating an initial network based on genome annotation and database mining [2]

- Manual Curation: Refining the network through evaluation of primary literature with direct physical evidence [1]

- Network Validation: Testing basic metabolic functionality through computational simulations [1]

- Conversion to Mathematical Model: Formatting the reconstruction for constraint-based analysis [1]

For Recon 1, this process involved over 1,500 literature sources and validation against 288 known human metabolic functions [1].

Gene-Protein-Reaction Associations

The genotype-phenotype relationship is mechanistically defined through Gene-Protein-Reaction (GPR) annotations [1]. These Boolean statements account for:

- Isozymes: Multiple genes encoding proteins that catalyze the same reaction

- Protein Complexes: Multiple gene products required to form a functional enzyme

- Splice Variants: Alternative transcripts of the same gene whose products have different metabolic capabilities
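The Boolean logic behind GPR associations can be illustrated with a minimal sketch. It assumes a rule is encoded as nested tuples, with "and" for the subunits of a protein complex and "or" for isozymes; the encoding, the function name `reaction_is_active`, and the gene IDs are hypothetical and are not taken from Recon 1.

```python
def reaction_is_active(gpr, active_genes):
    """Evaluate a GPR rule against the set of genes that are still functional.

    A rule is either a gene ID (str) or a tuple ("and" | "or", rule, rule, ...).
    """
    if isinstance(gpr, str):
        return gpr in active_genes
    operator, *operands = gpr
    results = (reaction_is_active(rule, active_genes) for rule in operands)
    if operator == "and":   # protein complex: every subunit is required
        return all(results)
    if operator == "or":    # isozymes: any one gene product is sufficient
        return any(results)
    raise ValueError(f"unknown operator: {operator}")


# Hypothetical rule: a two-subunit complex (geneA AND geneB) with an isozyme geneC.
rule = ("or", ("and", "geneA", "geneB"), "geneC")
all_genes = {"geneA", "geneB", "geneC"}

# Knocking out geneA alone leaves the isozyme, so the reaction stays available.
single_knockout = reaction_is_active(rule, all_genes - {"geneA"})
# Knocking out geneA and geneC removes both routes to the reaction.
double_knockout = reaction_is_active(rule, all_genes - {"geneA", "geneC"})
```

Evaluating rules this way is what lets a simulated gene knockout be translated into a set of disabled reactions.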

Constraint-Based Modeling and Flux Balance Analysis

The biochemical network is converted into a mathematical format represented by the stoichiometric matrix (S), where rows correspond to metabolites and columns represent reactions [1]. This matrix forms the foundation for constraint-based modeling and Flux Balance Analysis (FBA), which enables prediction of metabolic flux distributions under steady-state conditions [1].
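For orientation, FBA is usually stated as a linear program. The formulation below is the standard textbook form rather than anything specific to Recon 1; the symbols $c$ (objective weights, for example a single 1 on a biomass reaction), $v^{\mathrm{lb}}$, and $v^{\mathrm{ub}}$ (lower and upper flux bounds) are notation assumed here, while $S$ is the stoichiometric matrix described above and $v$ is the vector of reaction fluxes.

$$
\begin{aligned}
\max_{v}\quad & c^{\top} v \\
\text{subject to}\quad & S v = 0, \\
& v^{\mathrm{lb}} \le v \le v^{\mathrm{ub}}
\end{aligned}
$$

The equality constraint encodes the steady-state assumption, and the bounds carry reaction directionality and nutrient availability.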

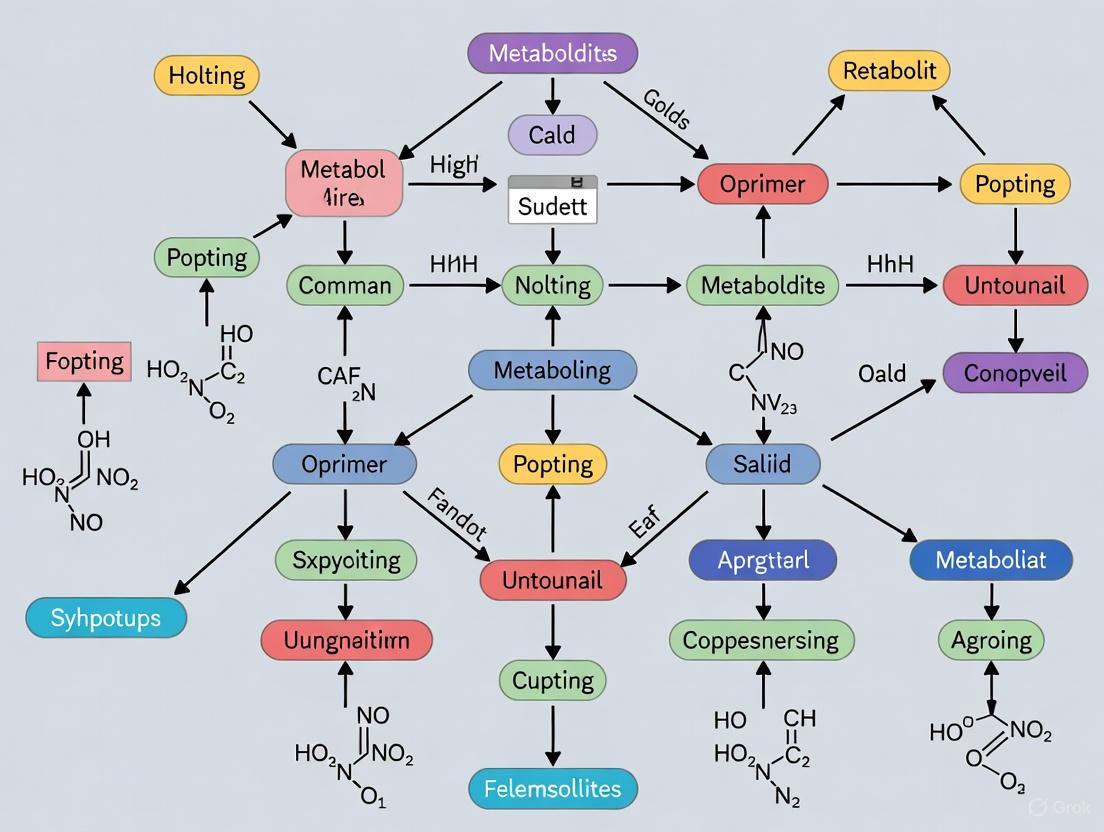

Figure 1: Metabolic Network Reconstruction Workflow

Detailed Reconstruction Methodology

Stage 1: Creating a Draft Reconstruction

The initial draft reconstruction begins with genome sequence data (e.g., Human Build 35) to define an initial set of metabolic genes [1] [2]. Key steps include:

- Gene Identification: Using genome annotations to identify genes encoding metabolic enzymes

- Reaction Assignment: Associating enzymes with their catalyzed biochemical reactions using databases such as KEGG and ExPASy [1]

- Compartmentalization: Assigning reactions to appropriate subcellular locations based on localization data

- Mass and Charge Balancing: Ensuring elemental and charge conservation for all reactions
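The mass-balancing step lends itself to a small automated check. The sketch below covers elemental balance only and assumes simple neutral formulas without brackets or charges; the function names are hypothetical, and a charge check could follow the same pattern with a table of metabolite charges.

```python
import re
from collections import Counter


def parse_formula(formula):
    """Count atoms in a simple formula such as 'C6H12O6' (no brackets or charges)."""
    counts = Counter()
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] += int(number) if number else 1
    return counts


def elemental_imbalance(stoichiometry, formulas):
    """Return the net atom count per element; an empty dict means balanced.

    stoichiometry maps metabolite -> coefficient (negative = substrate).
    """
    net = Counter()
    for metabolite, coefficient in stoichiometry.items():
        for element, n in parse_formula(formulas[metabolite]).items():
            net[element] += coefficient * n
    return {element: n for element, n in net.items() if n != 0}


# Hexokinase written with neutral formulas: glucose + ATP -> G6P + ADP.
formulas = {
    "glucose": "C6H12O6",
    "atp": "C10H16N5O13P3",
    "g6p": "C6H13O9P",
    "adp": "C10H15N5O10P2",
}
hexokinase = {"glucose": -1, "atp": -1, "g6p": 1, "adp": 1}
balanced = elemental_imbalance(hexokinase, formulas)

# Forgetting ADP leaves atoms unaccounted for on the product side.
missing_adp = {"glucose": -1, "atp": -1, "g6p": 1}
unbalanced = elemental_imbalance(missing_adp, formulas)
```

A non-empty result points directly at the elements that need attention during curation.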

Stage 2: Manual Reconstruction and Curation

Manual curation addresses organism-specific features that automated approaches often miss [2]. This critical phase involves:

- Literature Integration: Incorporating data from primary literature with direct physical evidence

- Gap Filling: Identifying and addressing missing metabolic functions

- Directionality Assignment: Determining reaction reversibility based on thermodynamic constraints

- Network Evaluation: Testing network functionality against known metabolic capabilities

For Recon 1, this iterative process involved checking 288 known human metabolic functions to ensure the network could successfully complete these biochemical tasks [1].

Stage 3: Conversion to Mathematical Model

The curated reconstruction is converted into a stoichiometric matrix where coefficients represent stoichiometric relationships [1]. The matrix is structured as:

- Rows: Metabolites

- Columns: Reactions

- Coefficients: Negative for substrates, positive for products

This matrix enables constraint-based modeling by defining the system constraints: Sv = 0, where v represents the flux vector of all reactions in the network.
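A toy example may make the matrix layout and the steady-state condition concrete. This is a minimal sketch using plain Python lists; the three-reaction network and the function names are invented for illustration.

```python
def build_stoichiometric_matrix(reactions):
    """Build S with one row per metabolite and one column per reaction.

    reactions maps reaction ID -> {metabolite: coefficient}, with negative
    coefficients for substrates and positive coefficients for products.
    """
    metabolites = sorted({m for stoich in reactions.values() for m in stoich})
    reaction_ids = list(reactions)
    S = [[reactions[r].get(m, 0) for r in reaction_ids] for m in metabolites]
    return metabolites, reaction_ids, S


def is_steady_state(S, v, tol=1e-9):
    """Check S v = 0 row by row, i.e. no metabolite accumulates or depletes."""
    return all(abs(sum(s * flux for s, flux in zip(row, v))) <= tol for row in S)


# Toy network: uptake produces A, A is converted to B, B is exported.
reactions = {
    "uptake_A": {"A": 1},
    "A_to_B": {"A": -1, "B": 1},
    "export_B": {"B": -1},
}
metabolites, reaction_ids, S = build_stoichiometric_matrix(reactions)

balanced_flux = is_steady_state(S, [2.0, 2.0, 2.0])    # every flux matches
accumulating = is_steady_state(S, [2.0, 1.0, 1.0])     # A would build up
```

Genome-scale models use sparse matrices for the same structure, since most metabolites take part in only a few reactions.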

Stage 4: Network Validation and Debugging

Network validation involves comparing model predictions with experimental data [2]. Key validation approaches include:

- Growth Simulation: Testing the model's ability to produce biomass precursors

- Gene Essentiality: Comparing predicted essential genes with experimental knockouts

- Metabolic Capabilities: Verifying the network can perform known metabolic functions

- Physiological Consistency: Ensuring predictions align with physiological observations
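The gene essentiality comparison listed above reduces to a confusion matrix. The following sketch assumes both predictions and experimental calls are available as gene-to-boolean mappings; the function name and the gene IDs are hypothetical.

```python
def essentiality_agreement(predicted, observed):
    """Compare predicted and observed essentiality for the shared genes.

    Both arguments map gene ID -> True if the gene is essential.
    """
    genes = predicted.keys() & observed.keys()
    if not genes:
        raise ValueError("no genes in common")
    counts = {"tp": 0, "tn": 0, "fp": 0, "fn": 0}
    for gene in genes:
        if predicted[gene]:
            counts["tp" if observed[gene] else "fp"] += 1
        else:
            counts["fn" if observed[gene] else "tn"] += 1
    counts["accuracy"] = (counts["tp"] + counts["tn"]) / len(genes)
    return counts


# Hypothetical model predictions and knockout results for four genes.
predicted = {"g1": True, "g2": True, "g3": False, "g4": False}
observed = {"g1": True, "g2": False, "g3": False, "g4": True}
summary = essentiality_agreement(predicted, observed)
```

False positives and false negatives are worth inspecting separately, because they tend to point to different kinds of model error (for instance missing alternative routes versus missing essential functions).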

Stage 5: Application and Iterative Refinement

The validated model enables numerous applications, with results feeding back into iterative refinement [1]. Applications include:

- Context-Specific Modeling: Creating tissue/cell-type specific models

- Omics Data Integration: Mapping transcriptomic, proteomic, and metabolomic data

- Pathological State Modeling: Simulating metabolic changes in disease states

- Drug Target Identification: Predicting metabolic vulnerabilities

Table 2: Key Phases in Metabolic Network Reconstruction

| Phase | Primary Activities | Key Outputs | Validation Approaches |
|---|---|---|---|
| Draft Reconstruction | Genome annotation, reaction assignment, compartmentalization | Initial reaction list, metabolite inventory | Basic network connectivity assessment |
| Manual Curation | Literature review, gap filling, directionality assignment | Curated network, GPR associations, mass/charge balanced reactions | Functional tests for known metabolic capabilities |
| Mathematical Formulation | Stoichiometric matrix construction, constraint definition | Computational model ready for simulation | Matrix rank analysis, network property evaluation |
| Validation & Debugging | Growth simulation, gene essentiality testing, phenotype comparison | Validated predictive model | Comparison with experimental growth data, knockout phenotypes |

Table 3: Essential Resources for Metabolic Network Reconstruction

| Resource Category | Specific Tools/Databases | Function/Purpose | Availability |
|---|---|---|---|
| Genome Databases | Comprehensive Microbial Resource (CMR), NCBI Entrez Gene, SEED | Provide gene annotations and genomic context | Publicly accessible [2] |
| Biochemical Databases | KEGG, BRENDA, TransportDB | Enzyme function, reaction kinetics, metabolite transport | Mixed (some require subscription) [2] |
| Organism-Specific Databases | EcoCyc, GeneCards, PyloriGene | Curated organism-specific metabolic information | Publicly accessible [2] |
| Protein Localization | PSORT, PA-SUB | Subcellular localization predictions | Publicly accessible [2] |
| Reconstruction Software | COBRA Toolbox, CellNetAnalyzer | Network simulation, constraint-based analysis | Publicly accessible [2] |
| Chemical Information | PubChem, pKa DB | Metabolite structures, chemical properties | Publicly accessible [2] |

Figure 2: Data Integration in Network Reconstruction

Applications in Biomedical Research

Metabolic network reconstructions have enabled numerous applications in biomedical research, particularly through the use of Recon 1 and its derivatives [1]:

Tissue and Cell-Specific Modeling

Algorithmic approaches such as GIMME (Gene Inactivity Moderated by Metabolism and Expression) tailor the global human metabolic network to specific cell or tissue types by integrating high-throughput data such as transcriptomics and proteomics [1]. These contextualized models provide insights into tissue-specific metabolic behavior.

Pathological State Investigation

Metabolic reconstructions have been applied to study various disease states including:

- Cancer Metabolism: Identifying metabolic dependencies in cancer cells

- Diabetes: Investigating metabolic alterations in insulin resistance

- Inborn Errors of Metabolism: Understanding systemic consequences of enzyme deficiencies

- Host-Pathogen Interactions: Modeling metabolic interactions between hosts and pathogens

Drug Discovery and Development

Reconstructions facilitate drug discovery through:

- Off-Target Effect Prediction: Identifying unintended metabolic consequences of drug candidates

- Metabolic Vulnerability Identification: Discovering essential metabolic functions in pathogens or cancer cells

- Personalized Medicine Approaches: Developing context-specific models for patient populations

Pre-Recon 1 studies demonstrated the potential of these approaches, such as using erythrocyte models to detect glycolytic enzymes causing hemolytic anemia and studying metabolic states in cardiac myocytes under normal, diabetic, ischemic, and dietetic conditions [1].

The reconstruction of organism-specific metabolic networks from genomic data is a fundamental challenge in systems biology. This process translates the genetic blueprint of an organism into a functional biochemical network, enabling researchers to model and predict metabolic behavior across different environmental conditions and genetic backgrounds [3]. The four-partite graph—a computational framework integrating genes, enzymes, reactions, and metabolites as distinct node types—provides a formal structure for addressing the inherent complexity and probabilistic nature of metabolic network reconstruction [3]. This approach moves beyond traditional pathway representations to model metabolism as an interconnected system, offering a more realistic representation of cellular physiology that has profound implications for drug target identification, metabolic engineering, and understanding human disease [4].

The critical importance of this framework stems from its ability to integrate probabilistic information derived from bioinformatics predictions with established biochemical knowledge [3]. High-quality metabolic reconstructions for model organisms like Escherichia coli and Saccharomyces cerevisiae have taken years to assemble manually, highlighting the need for robust computational methods that can streamline this process while maximizing the inclusion of available genomic and experimental data [3]. The four-partite graph formalism addresses this need by providing a mathematical foundation for automated reconstruction that mimics the criteria satisfied by high-quality manual reconstructions, particularly the clustering of connected components into biologically meaningful functional units [3].

Defining the Four-Partite Graph in Metabolism

Fundamental Components

In the context of metabolic networks, the four-partite graph is defined by four distinct node types with specific biological meanings and interrelationships:

- Genes: Represent the genetic elements that code for metabolic enzymes. These nodes form the foundational layer as they provide the genomic potential for metabolic capabilities.

- Enzymes: Represent the functional proteins catalyzing biochemical transformations. These nodes serve as the bridge between genetic information and biochemical function.

- Reactions: Represent the biochemical transformations converting substrate metabolites to product metabolites. Each reaction is defined by its stoichiometry, reversibility, and compartmentalization.

- Metabolites: Represent the chemical compounds that participate as substrates, products, cofactors, or allosteric regulators in biochemical reactions [3] [4].

The reconstruction of a metabolic network begins with the assembly of a preliminary "raw metabolic network" derived from genomic sequence data through a multi-step process: (I) establishing gene models, (II) sequence similarity search, (III) gene product annotation using enzyme databases, (IV) enzyme-reaction association using reaction databases, and (V) pathway mapping [3]. The outcome of steps (I) to (IV) naturally yields the four biological object types that constitute the four-partite graph.

Formal Graph Definition

Formally, the four-partite graph is defined as $G = (V, E, w)$, where:

- $V = V_G \cup V_E \cup V_R \cup V_M$ is the node set, the union of genes, enzymes, reactions, and metabolites.

- $E \subseteq (V_G \times V_E) \cup (V_E \times V_R) \cup (V_R \times V_M)$ defines the edges connecting these heterogeneous node types.

- $w: E \to (0, 1]$ assigns weights to edges reflecting the accuracy or confidence of predicted relationships [3].

This mathematical formulation captures the probabilistic nature of metabolic reconstruction, where gene-enzyme relationships are weighted by annotation accuracy, and enzyme-reaction relationships are weighted based on evidence from comparative genomics and experimental data [3].
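One way to hold such a graph in memory is sketched below. It is a minimal illustration under stated assumptions: the class name `FourPartiteGraph`, the layer labels, and the node IDs are hypothetical, and the only rules enforced are the ones in the formal definition (edges run gene to enzyme, enzyme to reaction, or reaction to metabolite, with weights in (0, 1]).

```python
from dataclasses import dataclass, field

# Layer pairs permitted by the edge definition of the four-partite graph.
ALLOWED_LAYER_PAIRS = {
    ("gene", "enzyme"),
    ("enzyme", "reaction"),
    ("reaction", "metabolite"),
}


@dataclass
class FourPartiteGraph:
    layer: dict = field(default_factory=dict)    # node -> layer name
    weight: dict = field(default_factory=dict)   # (u, v) -> confidence in (0, 1]

    def add_node(self, node, layer):
        self.layer[node] = layer

    def add_edge(self, u, v, w):
        pair = (self.layer[u], self.layer[v])
        if pair not in ALLOWED_LAYER_PAIRS:
            raise ValueError(f"edges between layers {pair} are not allowed")
        if not 0 < w <= 1:
            raise ValueError("edge weight must lie in (0, 1]")
        self.weight[(u, v)] = w


# Hypothetical fragment: one gene, the enzyme it encodes, one reaction, one metabolite.
graph = FourPartiteGraph()
graph.add_node("gene_1", "gene")
graph.add_node("EC 3.1.3.24", "enzyme")
graph.add_node("rxn_1", "reaction")
graph.add_node("sucrose", "metabolite")
graph.add_edge("gene_1", "EC 3.1.3.24", 0.9)
graph.add_edge("EC 3.1.3.24", "rxn_1", 0.8)
graph.add_edge("rxn_1", "sucrose", 1.0)
```

Rejecting malformed edges at insertion time keeps later graph algorithms free of layer checks.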

Table 1: Node Types and Relationships in the Four-Partite Metabolic Graph

| Node Type | Biological Role | Connected To | Relationship Type | Edge Weight Meaning |
|---|---|---|---|---|
| Genes | DNA sequences encoding enzymes | Enzymes | Codes-for | Annotation confidence based on sequence similarity |
| Enzymes | Protein catalysts | Genes, Reactions | Is-product-of; Catalyzes | Evidence strength for catalytic function |
| Reactions | Biochemical transformations | Enzymes, Metabolites | Is-catalyzed-by; Consumes/produces | Likelihood in specific organism |
| Metabolites | Chemical compounds | Reactions | Is-substrate-of; Is-product-of | Participation confidence |

The Automated Metabolic Network Reconstruction Problem

Problem Formulation

The automated reconstruction of metabolic networks can be formalized as an optimization problem on the four-partite graph. Given a raw metabolic network represented as a weighted four-partite graph G = (V, E, w), the goal is to find a reduced graph G' = (V', E', w') that maximizes the sum of edge weights while ensuring the graph decomposes into a small number of connected components [3]. This formulation integrates three critical aspects: (1) probabilistic information from bioinformatics predictions, (2) connectedness of the final network, and (3) functional clustering of components.

Mathematically, the problem is defined as follows. Let $P = \{H_1, H_2, \ldots, H_k\}$ be a collection of connected edge-disjoint subgraphs of $G$. The objective is to maximize:

$$\sum_{H_i \in P} \sum_{e \in E(H_i)} w(e)$$

subject to the constraint that the number of connected components of $G\left[\bigcup_{H_i \in P} E(H_i)\right]$ is at most $d$, where $d$ is a small positive integer [3]. This optimization captures the biological reality that high-quality metabolic networks should consist of a limited number of connected pathways (modeled by parameter $d$) while incorporating relationships with the highest confidence scores.
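To make the objective and the constraint tangible, the sketch below scores a candidate edge selection: it sums the selected edge weights and counts connected components with a union-find structure. It only evaluates a given selection and does not search for the optimum; the function names and the example edges are hypothetical.

```python
def connected_components(edges):
    """Count connected components of the graph induced by the given edges."""
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]   # path halving
            x = parent[x]
        return x

    for u, v in edges:
        parent[find(u)] = find(v)           # merge the two components
    return len({find(x) for x in parent})


def evaluate_selection(selected_edges, weights, d):
    """Return (total weight, feasibility) for a candidate edge selection."""
    total = sum(weights[e] for e in selected_edges)
    feasible = connected_components(selected_edges) <= d
    return total, feasible


# Hypothetical raw network with two separate gene-enzyme-reaction chains.
weights = {
    ("g1", "e1"): 0.9,
    ("e1", "r1"): 0.8,
    ("g2", "e2"): 0.4,
    ("e2", "r2"): 0.7,
}
everything = list(weights)
first_chain = [("g1", "e1"), ("e1", "r1")]

total_all, feasible_all = evaluate_selection(everything, weights, d=1)
total_one, feasible_one = evaluate_selection(first_chain, weights, d=1)
```

With d = 1 the full selection has the higher weight but is infeasible, which shows the trade-off between confidence and connectedness that the formulation is meant to capture.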

Computational Complexity

The automated metabolic network reconstruction problem defined on the four-partite graph is NP-hard [3]. This has direct practical implications for algorithm design: unless P = NP, no algorithm can be guaranteed to solve every instance exactly in polynomial time. The hardness argument in [3] relates the problem to the set cover problem, which is known to be NP-hard and MAX SNP-hard; MAX SNP-hardness means that no polynomial-time approximation scheme exists unless P = NP [3].

Despite this challenging complexity landscape, researchers have developed practical algorithmic approaches:

- Polynomial-time algorithm for trees: When the graph structure is restricted to trees, an optimal polynomial-time algorithm exists based on edge contraction and counting arguments [3].

- Approximation algorithms: For general graphs, approximation algorithms provide viable solutions with proven performance guarantees relative to the optimal solution [3].

- Heuristic methods: In practice, heuristic approaches that incorporate biological constraints often yield biologically plausible networks while addressing the computational complexity.

The complexity analysis confirms that metabolic network reconstruction is fundamentally challenging and justifies the use of sophisticated computational strategies rather than simple threshold-based approaches that discard potentially valuable information.

Methodological Framework for Reconstruction

Data Integration and Pre-processing

The reconstruction process begins with the acquisition and integration of heterogeneous biological data. Sequence similarity search using tools like BLAST provides the initial evidence for gene function annotation, with E-values transformed into confidence scores for gene-enzyme relationships [3]. Enzyme databases such as KEGG, BRENDA, and MetaCyc provide critical information for establishing enzyme-reaction relationships [3]. Reaction databases including KEGG LIGAND offer stoichiometric and thermodynamic information necessary for modeling reaction mechanisms [3].
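The text does not give the E-value transformation used in [3], so the following is purely an illustrative assumption: a capped, scaled negative log10 that maps smaller E-values to higher scores while staying inside (0, 1]. The function name and the `cap` and `floor` parameters are hypothetical.

```python
import math


def evalue_to_confidence(evalue, cap=100.0, floor=1e-6):
    """Map a BLAST E-value to a confidence score in (0, 1].

    Assumed transform, not the one from the cited work: -log10(E) / cap,
    clipped to [floor, 1] so that weak hits still get a positive weight.
    """
    if evalue < 0:
        raise ValueError("E-values cannot be negative")
    if evalue == 0.0:
        return 1.0                      # reported as 0 for near-perfect hits
    score = -math.log10(evalue) / cap
    return min(1.0, max(floor, score))


strong_hit = evalue_to_confidence(1e-50)
weak_hit = evalue_to_confidence(10.0)
```

Any monotone mapping into (0, 1] would serve the graph formalism equally well; the choice mainly affects how strongly weak hits are penalized relative to strong ones.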

A key challenge in this phase is the appropriate handling of pool metabolites: common metabolites such as ATP, NADH, water, and protons that appear in numerous reactions and can obscure meaningful connectivity [5]. Rather than removing these metabolites, as simpler approaches do, flux-based graphs discount their over-representation through weighting schemes based on metabolic flux [5].

Graph Construction Workflow

The construction of a biologically meaningful four-partite graph follows a systematic workflow:

- Gene Annotation: Annotate genes with Enzyme Commission (EC) numbers based on sequence similarity, assigning confidence scores derived from statistical measures like E-values.

- Enzyme-Reaction Mapping: Establish connections between enzymes and reactions using curated database information, accounting for organism-specific variations in enzyme function.

- Stoichiometric Matrix Construction: Compile the stoichiometric matrix $S$ where rows represent metabolites and columns represent reactions, with elements $S_{ij}$ indicating the net number of metabolite $i$ molecules produced (positive) or consumed (negative) by reaction $j$ [5].

- Directionality Assignment: Incorporate reaction directionality based on thermodynamic constraints and organism-specific context, unfolding each reversible reaction into separate forward and backward directions when necessary [5].

- Edge Weight Assignment: Assign probabilistic weights to all edges in the four-partite graph based on accumulated evidence from genomic, biochemical, and experimental sources.

Diagram 1: Four-partite graph reconstruction workflow showing the flow from genomic data to functional metabolic network.
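The directionality step of the workflow above, in which reversible reactions are unfolded into forward and backward halves, can be sketched as follows. The `_fwd` and `_bwd` suffixes and the input layout are assumptions made for illustration.

```python
def unfold_reversible(reactions):
    """Split each reversible reaction into two irreversible ones.

    reactions maps reaction ID -> (stoichiometry dict, reversible flag).
    The backward copy has every coefficient negated.
    """
    unfolded = {}
    for reaction_id, (stoich, reversible) in reactions.items():
        if reversible:
            unfolded[reaction_id + "_fwd"] = dict(stoich)
            unfolded[reaction_id + "_bwd"] = {m: -c for m, c in stoich.items()}
        else:
            unfolded[reaction_id] = dict(stoich)
    return unfolded


# Phosphoglucose isomerase is treated as reversible, hexokinase as irreversible.
reactions = {
    "PGI": ({"g6p": -1, "f6p": 1}, True),
    "HK": ({"glucose": -1, "atp": -1, "g6p": 1, "adp": 1}, False),
}
unfolded = unfold_reversible(reactions)
```

After unfolding, every flux can be constrained to be non-negative, which simplifies flux-weighted graph constructions.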

Experimental Validation Protocol

Validating reconstructed metabolic networks requires integration of experimental data. A powerful approach combines constraint-based modeling with multi-omics data visualization:

- Flux Balance Analysis (FBA): Use the reconstructed network to compute metabolic fluxes under different environmental conditions by solving the linear programming problem that maximizes biomass production subject to stoichiometric constraints [4].

- Multi-omics Data Mapping: Integrate transcriptomic, proteomic, and metabolomic data onto the network structure using visualization tools like Shu, which enables comparison of multiple conditions and visualization of distributions [6].

- Genetic Perturbation Validation: Compare model predictions with experimental data from gene knockout studies, using the agreement between predicted and observed growth phenotypes as the validation metric.

- Community Structure Analysis: Examine the modular organization of the network under different conditions, as changes in community structure often reflect functional adaptations to environmental changes [5].

Table 2: Key Databases for Four-Partite Graph Reconstruction

| Database | Primary Content | Role in Reconstruction | URL |
|---|---|---|---|
| KEGG | Pathways, genes, enzymes, reactions | Gene annotation, pathway mapping | www.kegg.jp |
| BRENDA | Enzyme functional data | Enzyme kinetics, reaction associations | www.brenda-enzymes.org |
| MetaCyc | Curated metabolic pathways | Reaction information, organism-specific data | metacyc.org |
| BioCyc | Collection of organism-specific databases | Template for reconstruction | biocyc.org |
| Reactome | Curated biological pathways | Human metabolism, signaling pathways | reactome.org |

Case Study: Sucrose Biosynthesis Pathway Reconstruction

Experimental Implementation

To illustrate the practical application of the four-partite graph approach, we examine the reconstruction of the sucrose biosynthesis pathway in Chlamydomonas reinhardtii [3]. This case study demonstrates how the formal framework translates into concrete biological insights.

The reconstruction began with six genes: CHLRE_18029, CHLRE_78737, CHLRE_81483, CHLRE_119219, CHLRE_149366, and CHLRE_176209. Sequence similarity search produced E-values that were transformed into confidence scores for gene-enzyme relationships [3]. For example, CHLRE_18029 showed high similarity to sucrose-phosphate phosphatase genes, with a transformed E-value of 0.95 indicating high confidence.

The enzyme-reaction relationships were established using KEGG LIGAND database, with enzymes mapped to specific reactions in sucrose biosynthesis:

- Sucrose-phosphate phosphatase (EC 3.1.3.24)

- Sucrose phosphate synthase (EC 2.4.1.14)

- Hexokinase (EC 2.7.1.1)

- Fructokinase (EC 2.7.1.4)

Graph Reduction and Analysis

The raw four-partite graph was reduced by applying the optimization criteria to maximize confidence scores while maintaining connectivity. The resulting network consisted of two connected components: one representing the core sucrose biosynthesis pathway and one representing related hexose phosphorylation reactions [3].

Flux Balance Analysis was performed on the reconstructed network to predict metabolic behavior under different light conditions. The model successfully predicted increased sucrose production under high-light conditions, consistent with experimental observations of Chlamydomonas metabolism. This validation confirmed that the reconstructed network captured essential functional characteristics of the organism's metabolic system.

Analytical Techniques for Reconstructed Networks

Centrality Metrics for Reaction-Centric Analysis

Once reconstructed, metabolic networks can be analyzed using various centrality metrics to identify critical nodes. In directed reaction-centric graphs, several metrics provide complementary insights:

- Cascade Number: A novel metric that quantifies how many nodes become inaccessible from information flow when a specific node is removed, identifying reactions that control local connectivity [7].

- Bridging Centrality: Identifies nodes that act as bridges between functional modules, often corresponding to biologically essential reactions [7].

- Betweenness Centrality: Measures the proportion of shortest paths passing through a node, highlighting reactions involved in global connectivity [7].

- Flux-based Centrality: Incorporates flux distributions from FBA to weight connections, creating context-dependent centrality measures that vary with environmental conditions [5].

Research has shown that reactions with high bridging centrality and cascade numbers tend to have higher essentiality compared to those identified by traditional centrality metrics, making these measures particularly valuable for drug target identification [7].
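Of the metrics above, betweenness centrality has a well-known standard algorithm, so it serves as a concrete example. The sketch below implements Brandes' algorithm for a directed, unweighted reaction graph and returns unnormalized scores; the reaction IDs are invented, and the cascade number and bridging centrality from [7] are not implemented here.

```python
from collections import deque


def betweenness_centrality(graph):
    """Unnormalized betweenness for a directed, unweighted graph.

    graph maps node -> list of successor nodes.
    """
    nodes = set(graph) | {v for successors in graph.values() for v in successors}
    centrality = dict.fromkeys(nodes, 0.0)
    for source in nodes:
        # Breadth-first search that counts shortest paths from the source.
        order = []
        predecessors = {n: [] for n in nodes}
        n_paths = dict.fromkeys(nodes, 0)
        distance = dict.fromkeys(nodes, -1)
        n_paths[source], distance[source] = 1, 0
        queue = deque([source])
        while queue:
            u = queue.popleft()
            order.append(u)
            for v in graph.get(u, []):
                if distance[v] < 0:
                    distance[v] = distance[u] + 1
                    queue.append(v)
                if distance[v] == distance[u] + 1:
                    n_paths[v] += n_paths[u]
                    predecessors[v].append(u)
        # Accumulate dependencies from the farthest nodes back to the source.
        dependency = dict.fromkeys(nodes, 0.0)
        for v in reversed(order):
            for u in predecessors[v]:
                dependency[u] += n_paths[u] / n_paths[v] * (1 + dependency[v])
            if v != source:
                centrality[v] += dependency[v]
    return centrality


# Toy reaction graph: r1 feeds r2, which feeds both r3 and r4.
reaction_graph = {"r1": ["r2"], "r2": ["r3", "r4"]}
scores = betweenness_centrality(reaction_graph)
```

In this toy graph only r2 lies on shortest paths between other reactions (r1 to r3 and r1 to r4), so it is the only node with a non-zero score.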

Gaussian Graphical Models for Metabolite Correlation Analysis

Gaussian Graphical Models (GGMs) provide a powerful method for reconstructing metabolic interactions from high-throughput metabolomics data [8]. Unlike standard correlation networks, GGMs use partial correlation coefficients that measure the correlation between two variables conditioned on all other variables in the system, thereby eliminating indirect correlations and revealing direct metabolic relationships [8].

The GGM approach has been successfully applied to human population cohort data, demonstrating that high partial correlation coefficients frequently correspond to known metabolic reactions. In one study analyzing 1020 blood serum samples with 151 metabolites, GGMs revealed strong modular structure aligned with biochemical pathway organization and successfully reconstructed aspects of fatty acid metabolism [8].

Diagram 2: Gaussian Graphical Models distinguish direct metabolic interactions from indirect correlations through conditional dependence.
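The difference between ordinary and partial correlation can be shown with the smallest possible case of three variables, where conditioning on "all other variables" means conditioning on one. The data below are constructed for illustration (x and y each depend on z plus independent noise) and are not taken from the cohort study; the function names are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equally long sequences."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def partial_correlation(x, y, z):
    """Correlation of x and y after conditioning on a single variable z."""
    r_xy, r_xz, r_yz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz**2) * (1 - r_yz**2))


# x = 2z + noise_1 and y = 2z + noise_2, with noise terms orthogonal to z
# and to each other, so x and y are linked only through z.
z = [1, 1, -1, -1]
x = [3, 1, -1, -3]
y = [3, 1, -3, -1]

raw = pearson(x, y)
conditioned = partial_correlation(x, y, z)
```

Here the raw correlation between x and y is high, while the partial correlation vanishes up to floating-point error, which is the behavior that lets GGMs drop indirect associations. For many metabolites, partial correlations are typically obtained from the inverse of the covariance matrix rather than from this pairwise formula.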

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Metabolic Reconstruction

| Resource Type | Specific Tool/Database | Function in Research | Application Context |
|---|---|---|---|
| Gene Annotation | BLAST Suite | Sequence similarity search | Establishing gene-enzyme relationships with E-values |
| Enzyme Database | BRENDA | Enzyme kinetics, reaction data | Determining enzyme-reaction associations |
| Pathway Database | KEGG, MetaCyc | Reference pathways | Template for pathway mapping and validation |
| Modeling Software | COBRA Toolbox | Constraint-based modeling | Flux Balance Analysis of reconstructed networks |
| Visualization Tool | Shu, Escher | Metabolic map visualization | Contextualizing multi-omics data on pathways |
| Network Analysis | Cytoscape with metabolic plugins | Topological analysis | Calculating centrality metrics, module detection |
| Stoichiometric Modeling | SBML | Model representation | Standardized format for model exchange and simulation |
| Omics Data Integration | Gaussian Graphical Models | Network inference from data | Reconstructing metabolic interactions from correlations |

Discussion and Future Perspectives

The four-partite graph framework represents a significant advancement in metabolic network reconstruction by explicitly representing the multi-layered nature of metabolic systems and providing a mathematical foundation for integrating probabilistic data. This approach has demonstrated practical utility in applications ranging from microbial metabolic engineering to understanding human metabolic disorders [5] [4].

Future developments in this field will likely focus on several key areas. First, the integration of machine learning methods for improved edge weight assignment could enhance the accuracy of reconstructed networks. Second, the development of dynamic four-partite graphs that incorporate temporal changes in gene expression and metabolic flux would provide more realistic models of cellular metabolism across different physiological states. Finally, the application of multi-omics data integration through tools like Shu will enable researchers to visualize and analyze metabolic networks across multiple experimental conditions, revealing condition-specific network adaptations [6].

The four-partite graph approach bridges genomic information with biochemical function, giving researchers a formal framework for understanding metabolic complexity. As reconstruction methods continue to improve and incorporate additional biological layers, this approach is likely to play an increasingly important role in metabolic engineering, drug discovery, and personalized medicine.

Metabolic network reconstruction is a powerful, systematic process for building a biochemical knowledgebase that correlates an organism's genome with its molecular physiology [9]. This process creates a mathematical representation of metabolism, which serves as a structured platform to investigate the underlying principles of metabolism and growth, with applications ranging from metabolic engineering to drug discovery [10] [11]. A reconstruction breaks down metabolic pathways into their respective reactions and enzymes, analyzing them within the perspective of the entire network [9]. The validity and predictive power of a reconstruction are highly dependent on the quality of the underlying biochemical, genetic, and genomic data [9]. The general workflow for building a reconstruction is methodical, proceeding through the key stages of drafting a reconstruction, refining the model, and finally converting it into a mathematical/computational representation for evaluation and debugging through experimentation [9].

Phase 1: Drafting the Reconstruction

The initial drafting phase involves compiling all known metabolic reactions for a target organism from various databases and literature sources to create a first-pass network.

Drafting a reconstruction relies heavily on accessing curated biochemical databases. The table below summarizes the primary resources used for this purpose.

Table 1: Key Databases for Metabolic Network Reconstruction

| Database | Scope and Primary Use | Reference |
|---|---|---|
| KEGG | A comprehensive resource containing information on genes, proteins, reactions, and pathways. | [9] [12] |
| BioCyc/MetaCyc | A collection of organism-specific Pathway/Genome Databases (PGDBs); MetaCyc is a universal reference database of experimentally elucidated pathways. | [10] [9] |
| BRENDA | A comprehensive enzyme database providing functional data, including kinetic parameters and organism-specific information. | [9] |
| BiGG | A knowledgebase of curated, genome-scale metabolic network reconstructions in a standardized format. | [10] [9] |
| ENZYME | An enzyme nomenclature database that provides the reaction catalyzed by a given enzyme and links to other resources. | [9] |

Methodology for Draft Assembly

The draft assembly process typically follows a semi-automated approach:

- Gene Annotation and Reaction Identification: The process begins with an annotated genome. Genes are associated with the enzymes they encode, often via EC numbers or Gene Ontology terms. Tools like PathwayTools can infer probable metabolic reactions and pathways from an annotated genome to produce a new pathway/genome database [9].

- Homology-Based Inference: For an organism with a newly sequenced genome, its proteome is compared to those of closely related organisms with existing, curated reconstructions. Identified homologous genes are used to infer the presence of corresponding metabolic reactions [10] [9].

- Automated Draft Generation: Tools like the ModelSEED can automatically process genome annotations from the RAST system to produce a draft metabolic model, including a network of reactions, gene-protein-reaction (GPR) associations, and a biomass composition reaction [9]. The moped Python package also supports draft reconstruction directly from genome/proteome sequences and pathway/genome databases utilizing GPR annotations [10].
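
The annotation-driven part of draft assembly can be sketched in a few lines. This is a minimal illustration, not the procedure used by PathwayTools, ModelSEED, or moped: the `EC_TO_REACTIONS` table, the reaction identifiers, and the gene names are hypothetical placeholders for what a curated database would supply.

```python
from collections import defaultdict

# Hypothetical reference table: EC number -> reaction identifiers.
# A real pipeline would obtain this from a curated database.
EC_TO_REACTIONS = {
    "2.7.1.1": ["HEX1"],
    "5.3.1.9": ["PGI"],
    "2.7.1.11": ["PFK"],
}

def draft_from_annotation(gene_to_ec):
    """Return {reaction_id: sorted supporting genes} for an annotated genome."""
    draft = defaultdict(set)
    for gene, ec_numbers in gene_to_ec.items():
        for ec in ec_numbers:
            # EC numbers missing from the reference table are skipped.
            for rxn in EC_TO_REACTIONS.get(ec, []):
                draft[rxn].add(gene)
    return {rxn: sorted(genes) for rxn, genes in draft.items()}

# Toy annotation: two genes share an EC number (candidate isozymes).
annotation = {
    "geneA": ["2.7.1.1"],
    "geneB": ["2.7.1.1"],
    "geneC": ["5.3.1.9"],
    "geneD": ["9.9.9.9"],  # not in the reference table
}
draft = draft_from_annotation(annotation)
print(draft)
```

The gene lists attached to each reaction are the raw material for GPR associations; deciding whether several genes form a complex or act as isozymes still requires curation.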

Phase 2: Refining and Curating the Model

The initial draft reconstruction is invariably incomplete and requires rigorous refinement and curation to become a biologically accurate model. This phase is critical for ensuring the model's predictive reliability.

Gap Filling and Network Validation

A common issue in draft models is the presence of "gaps"—metabolic dead-ends that prevent the synthesis of essential biomass components.

- Topological Gap-Filling: Tools like Meneco can be used to identify which reactions need to be added to a network to enable the production of a set of target metabolites from a defined seed set of compounds [10]. This is a topological approach based on the network structure.

- Growth-Based Gap-Filling: A more advanced method involves using the draft model to simulate growth under defined conditions (e.g., a rich medium). If the model fails to grow, an algorithm searches a universal biochemical database (e.g., MetaCyc) for reactions that, if added, would enable growth under the simulated conditions [9]. The moped package facilitates this process by providing an interface to gap-filling tools and allowing easy manual alteration of the model structure by adding, removing, or modifying reactions [10].
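
The topological idea behind gap-filling can be illustrated with a brute-force sketch: compute what the draft can produce from the seed, then search a universal reaction set for the smallest addition that makes the targets producible. This is an assumption-laden toy (hypothetical reaction and metabolite names, exhaustive search) and not the algorithm implemented in Meneco or any other tool.

```python
from itertools import combinations

def scope(reactions, seeds):
    """Network expansion: all metabolites producible from the seed set."""
    available = set(seeds)
    changed = True
    while changed:
        changed = False
        for substrates, products in reactions.values():
            if set(substrates) <= available and not set(products) <= available:
                available |= set(products)
                changed = True
    return available

def gap_fill(draft, universal, seeds, targets, max_added=3):
    """Smallest set of universal reactions that makes every target producible."""
    candidates = [r for r in universal if r not in draft]
    for size in range(max_added + 1):
        for added in combinations(candidates, size):
            trial = dict(draft)
            trial.update({r: universal[r] for r in added})
            if set(targets) <= scope(trial, seeds):
                return list(added)
    return None  # no solution within max_added reactions

# Each reaction is (substrates, products); the draft lacks the g6p -> f6p step.
draft = {"R1": (["glc"], ["g6p"]), "R3": (["f6p"], ["fbp"])}
universal = {"R2": (["g6p"], ["f6p"]), "R9": (["xyl"], ["xu5p"])}
universal.update(draft)

filled = gap_fill(draft, universal, seeds=["glc"], targets=["fbp"])
print(filled)
```

Exhaustive search is only practical for tiny examples; it is used here because it makes the objective (a minimal set of added reactions) explicit.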

Cofactor and Reversibility Handling

For certain analyses, particularly those based on network topology, special handling of cofactors and reaction reversibility is required to generate biologically meaningful results.

- Cofactor Duplication: Many reactions depend on ubiquitous cofactor pairs (e.g., ATP/ADP, NAD+/NADH). In topological analyses, this can lead to a drastic underestimation of a network's biosynthetic capacity. A pragmatic solution is to duplicate reactions involving these cofactor pairs, creating versions that use "mock cofactors" which can be included in the initial seed. This allows reactions that use cofactors in their role as energy carriers to proceed, while reactions that synthesize or degrade the cofactors themselves require the real metabolites to be produced [10].

- Reversibility Duplication: For reversible reactions, the expansion process requires both the forward and reverse directions to be explicitly considered. This is handled by adding a reversed version of each reversible reaction to the network, ensuring the model correctly accounts for metabolite production and consumption in both directions [10].
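
Both duplication steps are simple network transformations. The sketch below shows one way to express them; the `__rev`, `__cof`, and `__mock` suffixes and the data layout are assumptions made for this example and are not taken from moped or any other package.

```python
def add_reversed(reactions):
    """Add an explicit reverse copy of every reversible reaction.

    Input values are (substrates, products, reversible_flag).
    """
    expanded = {}
    for rid, (subs, prods, reversible) in reactions.items():
        expanded[rid] = (subs, prods)
        if reversible:
            expanded[rid + "__rev"] = (prods, subs)
    return expanded

def add_mock_cofactor_copies(reactions, cofactor_pairs):
    """Duplicate reactions that interconvert a cofactor pair, using mock cofactors."""
    expanded = dict(reactions)
    for rid, (subs, prods) in reactions.items():
        for a, b in cofactor_pairs:
            uses_pair = (a in subs and b in prods) or (b in subs and a in prods)
            if uses_pair:
                rename = {a: a + "__mock", b: b + "__mock"}
                expanded[rid + "__cof"] = (
                    [rename.get(m, m) for m in subs],
                    [rename.get(m, m) for m in prods],
                )
    return expanded

reactions = {
    "HEX": (["glc", "atp"], ["g6p", "adp"], False),
    "PGI": (["g6p"], ["f6p"], True),
}
network = add_mock_cofactor_copies(add_reversed(reactions), [("atp", "adp")])
for rid in sorted(network):
    print(rid, network[rid])
```

Adding `atp__mock` and `adp__mock` to the seed then lets the duplicated reaction fire during expansion, while any reaction that synthesizes ATP itself still needs the real metabolite.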

Phase 3: Mathematical Representation and Analysis

The curated metabolic network is converted into a mathematical format that enables computational simulation and analysis. This transformation is the cornerstone of model utility.

Stoichiometric Matrix and Constraint-Based Modeling

The most common mathematical representation is the stoichiometric matrix, S, where rows represent metabolites and columns represent reactions. Each element Sᵢⱼ is the stoichiometric coefficient of metabolite i in reaction j [11]. This matrix forms the basis for constraint-based modeling, with the core equation:

S · v = 0

subject to lower and upper bounds on the reaction fluxes, v [11]. This equation defines the space of all possible steady-state flux distributions achievable by the network.
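
As a concrete illustration, the sketch below builds S for a hypothetical three-reaction chain and tests whether a candidate flux vector satisfies S · v = 0 and its bounds. The reaction names, bounds, and flux values are invented for the example.

```python
def build_S(reactions):
    """Build S with one row per metabolite and one column per reaction."""
    metabolites = sorted({m for stoich in reactions.values() for m in stoich})
    rxn_ids = list(reactions)
    S = [[reactions[r].get(m, 0) for r in rxn_ids] for m in metabolites]
    return metabolites, rxn_ids, S

def is_steady_state(S, v, tol=1e-9):
    """True if every row of S . v is zero within the tolerance."""
    return all(abs(sum(s * x for s, x in zip(row, v))) <= tol for row in S)

def within_bounds(v, bounds):
    """True if each flux lies between its lower and upper bound."""
    return all(lo <= x <= hi for x, (lo, hi) in zip(v, bounds))

# Negative coefficients are consumed, positive coefficients are produced.
reactions = {
    "EX_A": {"A": 1},           # uptake:   -> A
    "R1": {"A": -1, "B": 1},    # A -> B
    "EX_B": {"B": -1},          # secretion: B ->
}
metabolites, rxn_ids, S = build_S(reactions)
bounds = [(0, 10), (0, 1000), (0, 1000)]

balanced = [2, 2, 2]
unbalanced = [2, 1, 1]  # A would accumulate
print(is_steady_state(S, balanced), is_steady_state(S, unbalanced))
```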

Simulation Techniques

Table 2: Key Mathematical Analysis Techniques for Metabolic Models

| Technique | Principle | Application | Reference |
|---|---|---|---|
| Flux Balance Analysis (FBA) | Finds a steady-state flux vector that maximizes or minimizes a biological objective function (e.g., biomass growth). | Predicting growth rates, nutrient uptake, and byproduct secretion under specific conditions. | [10] [11] [13] |
| Flux Sampling | Instead of predicting a single optimal flux, this method samples the space of all possible fluxes satisfying the constraints. | Capturing phenotypic diversity and understanding network flexibility and robustness. | [11] |
| Metabolic Network Expansion | A topological analysis that computes the set of metabolites (the "scope") producible from an initial seed set. | Characterizing the biosynthetic capacities of a network and supporting gap-filling. | [10] |

Alternative Network Representations

Beyond the stoichiometric matrix, other mathematical representations are useful for different analytical purposes.

- Reaction Graphs and m-DAGs: A reaction graph is a directed graph where nodes are reactions, and edges connect two reactions if a product of the first is a substrate of the second. This can be simplified into a metabolic Directed Acyclic Graph (m-DAG) by collapsing strongly connected components (subnetworks where any reaction can be reached from any other) into single nodes called Metabolic Building Blocks (MBBs). This greatly reduces complexity while preserving network connectivity, facilitating topological analysis and comparison [12] [14]. Tools like MetaDAG automate the construction of these models from KEGG data [12].

- Hypergraphs: Metabolic networks can be natively represented as hypergraphs, where each reaction (a hyperedge) connects multiple substrate nodes to multiple product nodes. This representation more naturally captures the stoichiometry of biochemical transformations [15].
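
The reaction graph and its collapse into an m-DAG can be sketched as follows. This is an illustrative implementation using Tarjan's strongly connected components algorithm on a hypothetical four-reaction network; it is not MetaDAG's code, and the data layout is an assumption.

```python
def reaction_graph(reactions):
    """Edge r1 -> r2 if some product of r1 is a substrate of r2."""
    edges = {r: set() for r in reactions}
    for r1, (_, prods1) in reactions.items():
        for r2, (subs2, _) in reactions.items():
            if r1 != r2 and set(prods1) & set(subs2):
                edges[r1].add(r2)
    return edges

def strongly_connected_components(edges):
    """Tarjan's algorithm (recursive, adequate for small examples)."""
    index, low, on_stack, stack, comps = {}, {}, set(), [], []

    def visit(v):
        index[v] = low[v] = len(index)
        stack.append(v)
        on_stack.add(v)
        for w in edges[v]:
            if w not in index:
                visit(w)
                low[v] = min(low[v], low[w])
            elif w in on_stack:
                low[v] = min(low[v], index[w])
        if low[v] == index[v]:  # v is the root of a component
            comp = set()
            while True:
                w = stack.pop()
                on_stack.discard(w)
                comp.add(w)
                if w == v:
                    break
            comps.append(frozenset(comp))

    for v in edges:
        if v not in index:
            visit(v)
    return comps

def m_dag(edges):
    """Collapse each strongly connected component into one building block."""
    comps = strongly_connected_components(edges)
    block_of = {r: c for c in comps for r in c}
    dag = {c: set() for c in comps}
    for r, targets in edges.items():
        for t in targets:
            if block_of[r] != block_of[t]:
                dag[block_of[r]].add(block_of[t])
    return dag

# R2 and R3 form a cycle (B -> C -> B), so they collapse into one block.
reactions = {
    "R1": (["A"], ["B"]),
    "R2": (["B"], ["C"]),
    "R3": (["C"], ["B"]),
    "R4": (["C"], ["D"]),
}
dag = m_dag(reaction_graph(reactions))
for block, successors in dag.items():
    print(sorted(block), "->", [sorted(s) for s in successors])
```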

Experimental Protocols for Validation

The final, critical step is to validate the model's predictions through experimentation, creating an iterative cycle of improvement.

Protocol for In Silico Prediction of Essential Genes

Purpose: To identify genes critical for growth under specific environmental conditions.

Workflow:

- The reconstructed model is simulated using FBA with biomass production as the objective function.

- A list of all genes associated with model reactions (via GPR rules) is compiled.

- Each gene is systematically "knocked out" in silico by setting the flux bounds of all reactions dependent on that gene to zero.

- FBA is run again for each knockout, and the resulting simulated growth rate is recorded.

- Genes whose knockout leads to a zero or significantly reduced growth rate are predicted to be essential.

- These predictions are validated against experimental gene essentiality data from wet-lab experiments (e.g., gene knockout studies) [9].
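
The knockout loop above can be sketched independently of any particular solver. In this minimal example the FBA step is abstracted as a user-supplied `growth_fn`; the toy version below is only a stand-in (growth requires all three pathway reactions) and does not perform FBA. The GPR layout, gene names, and the 1% growth threshold are assumptions, and reactions without gene associations are not handled.

```python
def active_reactions(gpr, deleted_genes):
    """Reactions whose GPR rule is still satisfied after deleting genes.

    Each rule is a list of alternative enzyme complexes (isozymes);
    a complex is a set of genes that must all be present.
    """
    return {
        rxn
        for rxn, complexes in gpr.items()
        if any(not (complex_ & deleted_genes) for complex_ in complexes)
    }

def predict_essential_genes(gpr, growth_fn, threshold=0.01):
    """Genes whose deletion drops growth below threshold * wild-type growth."""
    genes = sorted({g for cs in gpr.values() for c in cs for g in c})
    wild_type = growth_fn(active_reactions(gpr, set()))
    essential = []
    for gene in genes:
        growth = growth_fn(active_reactions(gpr, {gene}))
        if growth < threshold * wild_type:
            essential.append(gene)
    return essential

gpr = {
    "R1": [{"g1"}, {"g2"}],   # isozymes: g1 OR g2
    "R2": [{"g3", "g4"}],     # complex:  g3 AND g4
    "R3": [{"g5"}],
}

def toy_growth(active):
    # Stand-in for an FBA solve: the linear pathway needs R1, R2 and R3.
    return 1.0 if {"R1", "R2", "R3"} <= active else 0.0

essential = predict_essential_genes(gpr, toy_growth)
print(essential)
```

In a real workflow, `growth_fn` would set the bounds of the inactive reactions to zero and return the optimal biomass flux from the solver.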

Protocol for Community Interaction Analysis

Purpose: To predict and validate pairwise metabolic interactions (e.g., mutualism, competition) between bacterial species in a community.

Workflow (as applied to bacterial vaginosis-associated bacteria):

- Model Reconstruction: Build genome-scale metabolic reconstructions (GENREs) for each species in the community, for example, from metagenomic data [16].

- Pairwise Simulation: For each pair of organisms, A and B, simulate their growth in a shared medium environment. The simulation measures the change in biomass flux for organism A when organism B is present compared to when A is in isolation, and vice versa.

- Interaction Scoring: An increase in biomass in co-culture indicates a mutualistic interaction, while a decrease indicates competition [16].

- Experimental Validation: The top predicted interactions are validated in vitro:

  - Grow the primary bacterium on spent media from the co-occurring bacterium.

  - Use metabolomics to identify metabolites consumed and produced, revealing potential cross-feeding or competition.

  - Measure growth yields (e.g., via OD600) to confirm the predicted mutualistic or competitive behavior [16].
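
The interaction-scoring step reduces to comparing each organism's biomass flux in isolation and in co-culture. The sketch below is one possible formalization: the 5% tolerance and the labels beyond mutualism and competition follow common ecological usage and are assumptions, not definitions taken from [16]. The biomass values are invented.

```python
def classify_interaction(mono_a, co_a, mono_b, co_b, tol=0.05):
    """Classify a pairwise interaction from biomass fluxes.

    mono_x: biomass flux of organism x alone; co_x: biomass flux in co-culture.
    Relative changes within +/- tol are treated as no effect.
    """
    def effect(mono, co):
        change = (co - mono) / mono
        if change > tol:
            return "+"
        if change < -tol:
            return "-"
        return "0"

    labels = {
        "++": "mutualism",
        "--": "competition",
        "+0": "commensalism", "0+": "commensalism",
        "+-": "parasitism", "-+": "parasitism",
        "-0": "amensalism", "0-": "amensalism",
        "00": "neutral",
    }
    return labels[effect(mono_a, co_a) + effect(mono_b, co_b)]

print(classify_interaction(1.0, 1.3, 0.8, 1.0))  # both organisms grow better
print(classify_interaction(1.0, 0.6, 0.8, 0.5))  # both organisms grow worse
```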

Visualizing the Workflow and Network Architecture

The following diagrams, generated using Graphviz, illustrate the core reconstruction workflow and a key network representation.

Diagram: Metabolic Reconstruction Workflow

Diagram: Reaction Graph to m-DAG Transformation

The Scientist's Toolkit

This section details essential software tools and resources that form the core toolkit for modern metabolic reconstruction.

Table 3: Essential Tools for Metabolic Reconstruction and Analysis

| Tool / Resource | Type | Function and Application | Reference |
|---|---|---|---|
| PathwayTools | Software Package | Assists in building organism-specific PGDBs, provides visualization, and can generate FBA-ready models from a database. | [9] |
| ModelSEED | Web Resource | Enables automated construction of draft metabolic models from annotated genomes and provides analysis tools. | [9] |
| cobrapy | Python Package | A widely used tool for constraint-based modeling of metabolic networks, including FBA and FVA. | [10] |
| moped | Python Package | Serves as an integrative hub for reproducible model construction, modification, curation, and analysis (e.g., network expansion). | [10] |
| MetaDAG | Web Tool | Constructs and analyzes metabolic networks from KEGG data, generating simplified reaction graphs and m-DAGs for topological comparison. | [12] [14] |
| AGORA2 | Resource Database | A collection of 7,302 curated, strain-level genome-scale metabolic models of human gut microbes. | [13] |
| APOLLO | Resource Database | A massive resource of 247,092 microbial genome-scale metabolic reconstructions from the human microbiome. | [17] |

Metabolic network reconstruction is a foundational process in systems biology that integrates genomic, biochemical, and physiological information to build organism-specific metabolic networks. These reconstructions serve as computational platforms for analyzing, simulating, and predicting metabolic functions, with applications ranging from basic biological discovery to drug target identification and metabolic engineering. The process involves identifying all metabolic reactions present in an organism and linking them to corresponding genes and proteins, creating a genotype-phenotype relationship that can be queried and manipulated in silico. The four knowledge bases discussed in this guide (KEGG, BioCyc, BRENDA, and BiGG) provide the essential data infrastructure and computational tools that make large-scale metabolic reconstruction possible. They are complementary components of the modern systems biology toolkit, each with distinctive strengths, data structures, and applications in biomedical and biotechnological research.

Database Comparative Analysis

The following tables provide a detailed comparison of the four primary databases used in metabolic network reconstruction, highlighting their distinctive features, data content, and primary applications.

Table 1: Core Characteristics of Metabolic Databases

| Database | Primary Focus | Data Sources | Coverage | Access Model |
|---|---|---|---|---|
| KEGG | Reference pathways and functional hierarchies | Manual curation, genomic sequencing | 5,000+ organisms [18] | Freemium (partial free access) |
| BioCyc | Pathway/Genome Databases (PGDBs) | Computational prediction with manual review | 20,080 PGDBs total [19] [20] | Tiered (free & subscription) |
| BRENDA | Enzyme function and kinetics | Manual literature extraction, text mining | 30,000+ organisms [21] | Free (CC BY 4.0) |
| BiGG | Genome-scale metabolic models | Biochemical, genetic, genomic data | 5,000+ metabolites, 10,000+ reactions [22] | Open access |

Table 2: Technical Specifications and Applications

| Database | Data Structure | Key Tools | Model Export | Primary Research Applications |
|---|---|---|---|---|
| KEGG | Reference pathways, orthology groups | KEGG Mapper, Reconstruct [23] | KEGG Markup Language | Pathway mapping, comparative analysis [18] |
| BioCyc | Tiered PGDBs (1-3) based on curation level | Cellular Overview, PathoLogic, Omics Dashboard [19] [20] | SBML | Metabolic engineering, comparative genomics [19] |
| BRENDA | Enzyme-centric with ligand data | EnzymeDetector, 3D Viewer, BRENDA Tissue Ontology [21] [24] | SBML, SOAP API | Enzyme kinetics, drug discovery, biomarker identification [21] |
| BiGG | Biochemical, genetically structured models | Model visualization, metabolic map exploration [22] | SBML | Constraint-based modeling, flux balance analysis [22] |

Database-Specific Methodologies and Workflows

KEGG: Reconstruction from Orthology and Pathways

KEGG employs a standardized framework for metabolic network reconstruction based on its KO (KEGG Orthology) system and reference pathways. The reconstruction methodology involves:

- Gene Annotation: Query genes or proteins are assigned K numbers (KO identifiers) using annotation tools like BlastKOALA or KAAS, which establish orthologous relationships [23].

- Pathway Reconstruction: The K numbers are processed through KEGG Mapper's Reconstruct tool, which maps them onto reference pathway maps [23]. This generates organism-specific metabolic networks by highlighting enzymes present in the query organism on standard KEGG pathway diagrams.

- Network Analysis: The reconstructed pathways enable comparative analysis against KEGG's organism-specific pathways, allowing researchers to identify metabolic capabilities and deficiencies [23].
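
The mapping step can be pictured as set operations between the K numbers assigned to a genome and the K numbers required by each reference pathway. The sketch below is a simplified illustration only: the identifiers (`KO_1`, `pathway_X`, and so on) are hypothetical placeholders rather than real KEGG entries, and KEGG Mapper's own logic is not reproduced here.

```python
def pathway_completeness(assigned_kos, reference_pathways):
    """Report which required orthologs of each pathway are present or missing."""
    report = {}
    for pathway, required in reference_pathways.items():
        present = required & assigned_kos
        report[pathway] = {
            "present": sorted(present),
            "missing": sorted(required - present),
            "fraction": len(present) / len(required),
        }
    return report

# Hypothetical reference pathways and annotation result.
reference_pathways = {
    "pathway_X": {"KO_1", "KO_2", "KO_3", "KO_4"},
    "pathway_Y": {"KO_5", "KO_6"},
}
assigned_kos = {"KO_1", "KO_2", "KO_4", "KO_5", "KO_9"}

report = pathway_completeness(assigned_kos, reference_pathways)
for pathway, info in report.items():
    print(pathway, info["fraction"], "missing:", info["missing"])
```

Missing entries point to candidate metabolic deficiencies, or to annotation gaps that deserve a second look during curation.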

The KEGG reconstruction approach is particularly valuable for comparative studies across multiple organisms, as it leverages standardized reference maps that maintain consistent pathway definitions and nomenclature.

BioCyc: Computational Generation of Pathway/Genome Databases

BioCyc utilizes a tiered system for metabolic network reconstruction, with varying levels of manual curation:

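Tier Classification: PGDBs are assigned to one of three tiers (Tier 1 to Tier 3) according to their level of curation [19] [20].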

PathoLogic Pipeline: The core reconstruction algorithm involves:

- Importing annotated genomes from RefSeq and other sources [19]

- Predicting metabolic pathways using the PathoLogic program [19]

- Forecasting operons, pathway hole fillers, and transporter reactions [19]

- Generating organism-specific metabolic map diagrams (Cellular Overview) [19]

- Importing additional data from UniProt, PSORTdb, and regulatory databases [19]

Ortholog Computation: BioCyc calculates orthologs using Diamond sequence comparison with an E-value cutoff of 0.001, defining orthologs as bidirectional best hits between proteomes [19].
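
The bidirectional-best-hit rule is easy to state in code. The sketch below assumes hit tables of `(query, subject, evalue)` tuples, ranks hits by E-value, and treats the 0.001 cutoff as inclusive; these are assumptions for illustration, and the protein identifiers and E-values are invented. It does not reproduce BioCyc's pipeline or DIAMOND's output format.

```python
def best_hits(hits, max_evalue=1e-3):
    """Best subject per query among hits passing the E-value cutoff."""
    best = {}
    for query, subject, evalue in hits:
        if evalue > max_evalue:
            continue
        if query not in best or evalue < best[query][1]:
            best[query] = (subject, evalue)
    return {query: subject for query, (subject, _) in best.items()}

def bidirectional_best_hits(a_vs_b, b_vs_a, max_evalue=1e-3):
    """Pairs (a, b) where a's best hit is b and b's best hit is a."""
    forward = best_hits(a_vs_b, max_evalue)
    reverse = best_hits(b_vs_a, max_evalue)
    return sorted((a, b) for a, b in forward.items() if reverse.get(b) == a)

a_vs_b = [
    ("a1", "b1", 1e-50), ("a1", "b2", 1e-10),
    ("a2", "b2", 1e-30),
    ("a3", "b3", 0.5),      # fails the cutoff
]
b_vs_a = [
    ("b1", "a1", 1e-48),
    ("b2", "a1", 1e-12), ("b2", "a2", 1e-9),
    ("b3", "a3", 0.4),      # fails the cutoff
]
orthologs = bidirectional_best_hits(a_vs_b, b_vs_a)
print(orthologs)
```

Here `a2` is not paired with `b2` because the best hit of `b2` is `a1`, so the relationship is not reciprocal.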

This multi-tier approach enables BioCyc to scale to thousands of organisms while maintaining high-quality reconstructions for key model organisms through manual curation.

Diagram 1: BioCyc PGDB Reconstruction Workflow [19]

BRENDA: Enzyme-Centric Data Integration for Network Refinement

BRENDA takes a fundamentally different approach focused on enzyme function and kinetics:

Data Acquisition: Data are obtained through manual extraction from the literature, supplemented by text mining [21].

Enzyme Classification: All enzymes are classified according to IUBMB Enzyme Commission (EC) numbers, with data organized into >8,000 EC classes [21] [24].

Data Structure: BRENDA organizes information around several key domains:

- Enzyme kinetics parameters (Km, kcat, Ki values)

- Organism-specific enzyme expression and localization

- Ligand data (substrates, products, inhibitors, activators)

- Disease relationships and physiological contexts

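Tool Integration: BRENDA provides specialized tools including EnzymeDetector, a 3D Viewer, the BRENDA Tissue Ontology, the BKMS-react reaction database, and a SOAP API for programmatic access [21] [24].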

BRENDA serves as a critical resource for constraining and parameterizing metabolic models with experimentally-derived kinetic data, moving beyond stoichiometric networks to dynamic models.
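
As an example of how kinetic parameters of the kind stored in BRENDA enter a dynamic model, the sketch below evaluates the Michaelis-Menten rate law. The parameter values are invented for illustration and are not BRENDA entries; the units are assumptions noted in the comments.

```python
def michaelis_menten_rate(substrate, enzyme, kcat, km):
    """Reaction rate v = kcat * [E] * [S] / (Km + [S])."""
    return kcat * enzyme * substrate / (km + substrate)

# Hypothetical parameters: kcat in 1/s, concentrations and Km in mM.
kcat, km, enzyme = 100.0, 0.5, 0.01
vmax = kcat * enzyme  # maximal rate, a natural upper bound on the flux

rate_at_km = michaelis_menten_rate(substrate=km, enzyme=enzyme, kcat=kcat, km=km)
print(vmax, rate_at_km)  # at [S] = Km the rate is half of vmax
```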

BiGG: Structured Metabolic Models for Systems Biology

BiGG specializes in generating biochemically, genetically, and genomically structured genome-scale metabolic reconstructions:

Data Integration: BiGG integrates published genome-scale metabolic networks into a unified resource with standardized nomenclature, enabling direct comparison of metabolic components across organisms [22].

Model Structure: BiGG models are structured to include:

- Over 5,000 metabolites and 10,000 reactions [22]

- Gene-protein-reaction (GPR) associations

- Compartmentalization information

- Charge and formula balance

Model Applications: BiGG models are specifically designed for systems biology applications including:

- Constraint-based reconstruction and analysis (COBRA)

- Flux balance analysis (FBA)

- Network gap filling and validation

- Synthetic biology design

The standardized nomenclature in BiGG allows researchers to consistently map metabolic components across different organism models, facilitating comparative systems biology.

Integrated Reconstruction Workflow

Combining these databases creates a powerful workflow for comprehensive metabolic network reconstruction:

Diagram 2: Integrated Metabolic Reconstruction Pipeline

Table 3: Essential Computational Tools for Metabolic Reconstruction

| Tool/Resource | Function | Database Association | Application in Reconstruction |
|---|---|---|---|
| KEGG Mapper | Visual mapping of molecular data | KEGG | Pathway completeness assessment [23] |
| PathoLogic | Pathway prediction from genomes | BioCyc | Initial pathway annotation [19] |
| EnzymeDetector | Enzyme annotation verification | BRENDA | Validation of functional assignments [21] |
| Cellular Overview | Metabolic network visualization | BioCyc | Visual exploration of network structure [20] |
| BKMS-react | Biochemical reaction database | BRENDA | Reaction reference and validation [21] |
| SOAP API | Programmatic data access | BRENDA | Automated data retrieval [24] |
| SBML Export | Model exchange format | All databases | Model sharing and simulation [22] |

Advanced Applications in Research

Network Comparison and Evolutionary Analysis

The two-level representation approach implemented in tools like MetNet enables both local (pathway-by-pathway) and global comparison of metabolic networks [18]. This methodology involves:

- Structural Level Analysis: Examining the overall structure of metabolic networks in terms of KEGG pathways and their connections through shared molecular compounds [18].

- Functional Level Analysis: Comparing the functional role of each pathway in terms of its reaction content and connectivity [18].

- Similarity Quantification: Implementing similarity measures at both levels to identify metabolic similarities and differences between organisms, potentially revealing evolutionary relationships and adaptive specializations [18].
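
A two-level comparison can be illustrated with a simple set-based similarity. The sketch below uses the Jaccard index for both levels; this choice is an assumption made for the example and is not necessarily the measure used by MetNet [18]. Pathway and reaction names are hypothetical.

```python
def jaccard(a, b):
    """Jaccard index of two collections (1.0 if both are empty)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def compare_networks(org1, org2):
    """Return (per-pathway similarities, similarity of pathway repertoires).

    Each organism is a dict: pathway name -> set of reaction identifiers.
    """
    pathways = sorted(set(org1) | set(org2))
    local = {p: jaccard(org1.get(p, ()), org2.get(p, ())) for p in pathways}
    global_score = jaccard(org1.keys(), org2.keys())
    return local, global_score

org1 = {"glycolysis": {"R1", "R2", "R3"}, "TCA": {"R4", "R5"}}
org2 = {"glycolysis": {"R1", "R2"}, "PPP": {"R6"}}

local, global_score = compare_networks(org1, org2)
print(local)
print(global_score)
```

The local scores compare reaction content pathway by pathway, while the global score here only compares which pathways are present; a fuller structural comparison would also account for how pathways connect through shared compounds.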

Metabolic Modeling for Drug Discovery

Metabolic reconstructions facilitate drug target identification through:

- Essentiality Analysis: Using constraint-based models to predict metabolic enzymes essential for pathogen survival.

- Network-Based Target Identification: Applying iterative algorithms for metabolic network-based drug target identification [25].

- Toxicology Assessment: Utilizing reconstructed human metabolic networks to predict drug metabolism and potential toxicity [25].

Omics Data Integration and Visualization

BioCyc's Omics Dashboard and Cellular Overview provide powerful environments for visualizing high-throughput data within metabolic contexts [20]. This enables researchers to:

- Map Transcriptomics Data: Visualize gene expression patterns directly on metabolic pathways.

- Integrate Metabolomics: Correlate metabolite abundance with pathway activities.

- Identify Regulatory Patterns: Discover coordinated regulation of metabolic genes through visualization of omics data in metabolic contexts.

KEGG, BioCyc, BRENDA, and BiGG represent complementary pillars of the metabolic reconstruction infrastructure, each contributing unique data types, annotation methodologies, and analytical capabilities. KEGG provides the standardized reference framework and orthology-based mapping system; BioCyc offers scalable organism-specific pathway databases with multi-tier curation; BRENDA delivers the essential enzyme kinetic parameters and functional annotations; while BiGG supplies the structured models needed for computational simulation. Together, these resources enable researchers to move from genomic sequences to predictive metabolic models that can drive discoveries in basic biology, drug development, and biotechnology. As metabolic reconstruction continues to evolve toward more complete and quantitative models, the integration of these knowledge bases will remain fundamental to advancing systems biology research.

The steady-state assumption and the stoichiometric matrix constitute the foundational mathematical framework for constraint-based modeling and analysis of metabolic networks. The steady-state assumption posits that for metabolic intermediates, the rate of production equals the rate of consumption, leading to no net accumulation over time. The stoichiometric matrix provides a complete mathematical representation of all metabolic reactions in a system, enabling the quantitative analysis of metabolic fluxes. This technical guide explores the mathematical foundations of these concepts, their integration into computational models, and their critical application in modern metabolic research, particularly in drug development and disease modeling.

Metabolic network reconstruction involves creating a biochemical knowledge base for a specific organism or cell type from genomic, bibliomic, and experimental data. These reconstructions represent structured representations of metabolism that can be converted into mathematical models to simulate metabolic flux distributions [26]. Genome-scale metabolic models (GEMs) have emerged as a formal concept to describe metabolic pathways reconstructed from information present in the genome of living systems as well as biochemical reactions described in the literature [26].

The process of metabolic reconstruction requires gathering a variety of genomic, biochemical, and physiological data from primary literature and databases. Based on extensive evaluations of these sources, generic human metabolic models have been developed, such as Recon 1, which accounts for functions of 1496 ORFs, 2004 proteins, 2766 metabolites, and 3311 metabolic and transport reactions [27]. The latest iteration, Recon3D, provides a thermodynamically constrained version of the global knowledge base of metabolic functions categorised for the human genome [28].

Mathematical Foundation of the Steady-State Assumption

Theoretical Basis and Formalism

The steady-state assumption states that the production and consumption of metabolites inside the cell are balanced. This assumption can be motivated from two different perspectives [29]:

Time-scales perspective: Metabolism is much faster than other cellular processes such as gene expression. Hence, the steady-state assumption is derived as a quasi-steady-state approximation of the metabolism that adapts to changing cellular conditions.

Long-term perspective: Over extended time periods, no metabolite can accumulate or deplete indefinitely.

A proper mathematical representation of the reactions obtained for a specific organism is necessary for any structural analysis of metabolic networks. For a network with m intermediates and r reactions, the stoichiometric matrix N has dimensions m × r, where the entry nᵢⱼ represents the stoichiometric coefficient of metabolite i in reaction j [30].

In a biochemical reaction network, the rate of change of the concentration of the i-th intermediate sᵢ is given by:

$$\frac{ds_i}{dt} = \sum_{j=1}^{r} n_{ij} v_j$$

where vⱼ represents the flux of reaction j. At steady state, the rates of change of all intermediates are zero:

$$\sum_{j=1}^{r} n_{ij} v_j = 0 \quad (i = 1, \dots, m), \qquad \text{i.e.} \quad N v = 0$$

This equation represents the fundamental steady-state constraint in metabolic network analysis [30].

Application in Growing and Oscillating Systems

Contrary to traditional understanding, the steady-state assumption also applies to oscillating and growing systems without requiring quasi-steady-state at any time point. A mathematical framework based on averages demonstrates that the assumption holds for long time periods in such systems, justifying its successful use in many applications [29].

In these systems, however, the average concentrations may not be compatible with the average fluxes. This leads to unintuitive effects when metabolite concentrations are integrated into long-term steady-state models through nonlinear constraints [29].

The Stoichiometric Matrix: Structure and Properties

Fundamental Representation

The stoichiometric matrix provides a complete mathematical representation of a metabolic network's structure. In this representation, the rows and columns of the stoichiometric matrix represent metabolites and reactions, respectively. A cell with a nonzero value in the matrix represents the stoichiometric coefficient of the corresponding metabolite in the corresponding reaction [30].

In the stoichiometric matrix:

- Positive values indicate products

- Negative values indicate substrates

- Zero values indicate the metabolite does not participate in the reaction

This representation is a full representation of the network structure, enabling several quantitative analysis methods including flux balance analysis, elementary flux mode analysis, and extreme pathway analysis [30].

Enhanced Matrix Representations

For more complex analyses, the stoichiometric matrix can be augmented with additional information. For example, adding a hypothetical reaction to a system results in an augmented stoichiometric matrix that can be processed to its reduced row echelon form (RREF) [30].

The RREF of the augmented stoichiometric matrix helps identify key and nonkey reactions. Reactions in pivot columns are the nonkey ones, meaning they can be written as linear combinations of nonpivot (key) reactions. This analysis reveals the fundamental equality:

This relationship is critical for understanding the balances in metabolic systems [30].
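
The row-reduction step itself can be sketched with exact rational arithmetic. The example below reduces a hypothetical 2 × 4 stoichiometric matrix (not the augmented matrix of [30]) and reports its pivot columns; the function name and the matrix are assumptions made for illustration.

```python
from fractions import Fraction

def rref(matrix):
    """Return (reduced row echelon form, list of pivot column indices)."""
    A = [[Fraction(x) for x in row] for row in matrix]
    n_rows, n_cols = len(A), len(A[0])
    pivots, row = [], 0
    for col in range(n_cols):
        # Find a row at or below `row` with a nonzero entry in this column.
        pivot = next((r for r in range(row, n_rows) if A[r][col] != 0), None)
        if pivot is None:
            continue
        A[row], A[pivot] = A[pivot], A[row]
        pivot_value = A[row][col]
        A[row] = [x / pivot_value for x in A[row]]
        # Eliminate this column from every other row.
        for r in range(n_rows):
            if r != row and A[r][col] != 0:
                factor = A[r][col]
                A[r] = [x - factor * y for x, y in zip(A[r], A[row])]
        pivots.append(col)
        row += 1
        if row == n_rows:
            break
    return A, pivots

# Rows: metabolites A, B. Columns: uptake, A -> B, A secretion, B drain.
N = [
    [1, -1, -1, 0],
    [0, 1, 0, -1],
]
R, pivots = rref(N)
print([[int(x) for x in r] for r in R], pivots)
```

Read against the steady-state condition, each row of the reduced matrix expresses one pivot flux in terms of the nonpivot fluxes: here the uptake flux equals the sum of the two drain fluxes, and the A → B flux equals the B drain flux.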

Constraint-Based Modeling and Analysis Methods

Flux Balance Analysis

Flux Balance Analysis (FBA) is a constraint-based modeling approach that uses linear programming to optimize metabolic fluxes based on the stoichiometric coefficients of each reaction throughout the entire metabolic network. FBA assumes that metabolite concentrations are at steady state rather than requiring measured concentrations, and it can handle large-scale systems without needing kinetic information [31].

FBA operates under the steady-state assumption and uses the stoichiometric matrix to define constraints on the system. The solution space is further constrained by additional factors such as enzyme capacities and nutrient availability. An objective function (e.g., biomass production, ATP yield) is then optimized within this constrained space to predict metabolic behavior.
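
To make the optimization concrete, the sketch below solves FBA for a hypothetical four-reaction network without an external solver. It enumerates the vertices of the feasible region, which is a valid way to solve a bounded linear program but only practical for tiny networks; genome-scale work relies on a dedicated LP solver. The network, bounds, and function names are assumptions, and the matrix is assumed to have full row rank.

```python
from fractions import Fraction
from itertools import combinations, product

def solve_square(A, b):
    """Solve A x = b by Gauss-Jordan elimination; None if A is singular."""
    n = len(A)
    M = [[Fraction(x) for x in row] + [Fraction(y)] for row, y in zip(A, b)]
    for col in range(n):
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            return None
        M[col], M[pivot] = M[pivot], M[col]
        pivot_value = M[col][col]
        M[col] = [x / pivot_value for x in M[col]]
        for r in range(n):
            if r != col and M[r][col] != 0:
                factor = M[r][col]
                M[r] = [x - factor * y for x, y in zip(M[r], M[col])]
    return [M[r][n] for r in range(n)]

def toy_fba(S, bounds, objective):
    """Maximize objective . v subject to S v = 0 and lower/upper bounds."""
    m, n = len(S), len(S[0])
    best_value, best_v = None, None
    for basic in combinations(range(n), m):
        nonbasic = [j for j in range(n) if j not in basic]
        # Fix every nonbasic flux at its lower (0) or upper (1) bound.
        for sides in product((0, 1), repeat=len(nonbasic)):
            v = [None] * n
            for j, side in zip(nonbasic, sides):
                v[j] = Fraction(bounds[j][side])
            rhs = [-sum(S[i][j] * v[j] for j in nonbasic) for i in range(m)]
            sol = solve_square([[S[i][j] for j in basic] for i in range(m)], rhs)
            if sol is None:
                continue
            for j, x in zip(basic, sol):
                v[j] = x
            if all(bounds[j][0] <= v[j] <= bounds[j][1] for j in range(n)):
                value = sum(c * x for c, x in zip(objective, v))
                if best_value is None or value > best_value:
                    best_value, best_v = value, v
    return best_value, best_v

# Columns: uptake (-> A), A -> B, A secretion, biomass drain (B ->).
S = [[1, -1, -1, 0],
     [0, 1, 0, -1]]
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000)]  # uptake limited to 10
objective = [0, 0, 0, 1]                              # maximize biomass drain

growth, fluxes = toy_fba(S, bounds, objective)
print(growth, [int(x) for x in fluxes])
```

The uptake bound plays the role of nutrient availability: it is the only constraint that limits the optimum in this toy network.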

Metabolic Pathway Analysis

Metabolic Pathway Analysis includes methods such as Elementary Flux Mode Analysis and Extreme Pathway Analysis. These methods decompose complex metabolic networks into meaningful functional units or pathways [30].

However, when dealing with large-scale genome-based metabolic networks, these methods often face serious computational problems. The combinatorial explosion problem resulting from huge numbers of pathways often makes it difficult or even impossible to calculate all elementary modes or extreme pathways in genome-scale metabolic networks [30].

Table 1: Constraint-Based Analysis Methods for Metabolic Networks

| Method | Mathematical Basis | Key Applications | Limitations |
|---|---|---|---|
| Flux Balance Analysis (FBA) | Linear programming with stoichiometric constraints | Prediction of growth rates, nutrient uptake, gene essentiality | Requires objective function; may have multiple optimal solutions |
| Elementary Flux Mode Analysis | Convex analysis of stoichiometric matrix | Pathway identification, network redundancy assessment | Computationally intensive for large networks |
| Extreme Pathway Analysis | Systemically independent pathways | Analysis of network capabilities, pathway redundancy | Similar computational challenges as elementary modes |
| 13C-Metabolic Flux Analysis (13C-MFA) | Isotope labeling patterns combined with stoichiometry | Quantification of intracellular fluxes in central metabolism | Requires extensive experimental data; limited to central metabolism |

Experimental Protocols for Model Validation

Protocol for Steady-State Validation Using Isotope Tracing

Purpose: To validate metabolic steady-state assumptions and quantify intracellular metabolic fluxes using stable isotope tracing.

Materials and Reagents:

- Stable isotope-labeled substrates (e.g., 13C6-glucose, 13C5-glutamine)

- Modified culture media without natural abundance compounds of interest

- Ice-cold methanol for metabolite extraction

- Internal standards (e.g., D6-glutaric acid)

- Chloroform for phase separation

Procedure:

- Culture cells until 70-80% confluency in appropriate growth medium.

- Replace medium with flux media containing isotope-labeled substrates.

- Incubate for specific time intervals (typically 24-48 hours) to allow isotope incorporation.

- Quench metabolism rapidly with ice-cold methanol.

- Extract intracellular metabolites using a methanol-chloroform-water system.

- Analyze metabolite labeling patterns using LC-MS or GC-MS.

- Calculate metabolic fluxes using computational tools such as INCA (Isotopomer Network Compartmental Analysis) [28].
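
Before flux fitting, labeling data are usually summarized as mass isotopologue distributions (MIDs). The sketch below normalizes raw intensities, computes fractional 13C enrichment, and compares MIDs from two time points as a simple check for isotopic steady state. It is a simplified illustration: natural-abundance correction is omitted, the 0.02 tolerance is an assumption, the intensities are invented, and none of this reproduces INCA.

```python
def mass_isotopologue_distribution(intensities):
    """Normalize intensities for M+0 ... M+n so that they sum to 1."""
    total = sum(intensities)
    return [x / total for x in intensities]

def fractional_enrichment(mid):
    """Average fraction of labeled carbons: sum(i * m_i) / n."""
    n_carbons = len(mid) - 1
    return sum(i * fraction for i, fraction in enumerate(mid)) / n_carbons

def is_isotopic_steady_state(mid_early, mid_late, tol=0.02):
    """True if no isotopologue fraction changes by more than tol."""
    return max(abs(a - b) for a, b in zip(mid_early, mid_late)) <= tol

# Hypothetical intensities for a three-carbon metabolite (M+0 ... M+3).
mid_24h = mass_isotopologue_distribution([400, 100, 100, 400])
mid_48h = mass_isotopologue_distribution([390, 105, 100, 405])

print(mid_24h, fractional_enrichment(mid_24h))
print(is_isotopic_steady_state(mid_24h, mid_48h))
```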

Protocol for Constraint-Based Model Reconstruction

Purpose: To reconstruct a cell-specific genome-scale constraint-based model from transcriptomic data and the global human metabolic reconstruction.

Materials and Computational Tools:

- Transcriptomic data (e.g., from NCBI Gene Expression Omnibus)

- Global metabolic reconstruction (e.g., Recon3D)

- Algorithms: mCADRE, redHuman, Metabotools

- Software: COBRA Toolbox, MATLAB

Procedure:

- Retrieve tissue-specific or cell-specific transcriptomic data from public databases.

- Map expression data to reactions in the global metabolic model (Recon3D).

- Use the mCADRE algorithm to generate a cell-specific model by removing reactions with low expression support.

- Apply thermodynamic constraints using the redHuman algorithm subroutine.

- Constrain the model to mimic specific growth conditions (e.g., RPMI medium) using Metabotools.

- Validate the resulting model against experimental data including growth rates and respiration rates [28].
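
The expression-mapping and pruning steps can be pictured with a deliberately simplified sketch. It is not mCADRE: it only applies a fixed threshold to GPR-mapped expression scores, whereas the procedure above also checks that the pruned model stays functional. The min/max mapping rule, the threshold, the reaction names, and the expression values are assumptions for illustration.

```python
def reaction_score(complexes, expression):
    """Map gene expression to a reaction through its GPR rule.

    Genes in a complex (AND) contribute their minimum; alternative
    complexes (OR) contribute their maximum. Unmeasured genes count as 0.
    """
    return max(min(expression.get(g, 0.0) for g in c) for c in complexes)

def prune_by_expression(gpr, expression, threshold, protected=()):
    """Keep reactions that are protected, lack a GPR, or pass the threshold."""
    kept = []
    for rxn, complexes in gpr.items():
        if rxn in protected or not complexes:
            kept.append(rxn)
        elif reaction_score(complexes, expression) >= threshold:
            kept.append(rxn)
    return kept

gpr = {
    "HEX1": [{"HK1"}, {"HK2"}],      # isozymes
    "PDH": [{"PDHA1", "PDHB"}],      # complex
    "LDH": [{"LDHA"}],
    "DIFF": [],                      # no gene association
}
expression = {"HK1": 2.0, "HK2": 15.0, "PDHA1": 20.0, "PDHB": 3.0, "LDHA": 1.0}

kept = prune_by_expression(gpr, expression, threshold=5.0, protected={"LDH"})
print(kept)
```

The `protected` set stands in for reactions that must be retained regardless of expression, for example those required to reproduce measured growth or respiration.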

Table 2: Research Reagent Solutions for Metabolic Flux Studies

| Reagent/Category | Specific Examples | Function in Metabolic Analysis |
|---|---|---|
| Isotope-Labeled Substrates | 13C6-glucose, 13C5-glutamine | Tracing carbon fate through metabolic pathways; quantifying flux distributions |
| Metabolic Inhibitors | Oligomycin, Rotenone, Antimycin A | Probing specific pathway functions; measuring respiratory capacity |
| Extraction Solvents | Ice-cold methanol, chloroform | Quenching metabolism; extracting intracellular metabolites for analysis |
| Analytical Standards | D6-glutaric acid | Normalizing sample analysis; quantifying metabolite concentrations |
| Cell Culture Media | RPMI 1640, DMEM | Providing controlled nutrient environment for steady-state experiments |
| Computational Tools | COBRA Toolbox, INCA, Metabotools | Constraining metabolic models; simulating fluxes; analyzing isotopic labeling |

Application in Mammalian Systems and Disease Modeling

Challenges in Mammalian Metabolic Modeling

Applying stoichiometric analysis tools to mammalian cells presents unique challenges compared to microbial systems. Limited experimental data, intricate regulatory mechanisms, and the need for richer nutrient media are major obstacles. Additionally, mammalian systems often involve compartmentalization (e.g., cytosol and mitochondria) and complex transport mechanisms that must be accounted for in models [27].

Despite these challenges, mammalian cells have been used to produce therapeutic proteins, characterize disease states, and analyze toxicological effects of drugs. Therefore, there is a growing need for extending metabolic engineering principles to mammalian cells to understand their underlying metabolic functions [27].

Case Study: Multiple Myeloma Metabolic Interactions

A recent study employed an interdisciplinary approach to model metabolic interactions in multiple myeloma (MM), a malignancy of bone marrow plasma cells. Researchers developed a functional multicellular in-silico model that facilitates qualitative and quantitative analysis of the metabolic network in an in-vitro co-culture of bone marrow mesenchymal stem cells (BMMSC) and myeloma cell lines [28].

The computational workflow transformed Recon3D into two cell-specific models coupled with a metabolic network spanning a shared growth medium. When cross-validating the in-silico model against the in-vitro model, researchers found that the in-silico model successfully reproduced vital metabolic behaviors, including cell growth predictions, respiration rates, and cross-shuttling of redox-active metabolites between cells [28].

This approach demonstrates how the steady-state assumption and stoichiometric analysis can be applied to understand complex metabolic interactions in disease contexts, potentially revealing novel therapeutic targets.

Visualization of Metabolic Networks and Analysis Workflows

Diagram: Stoichiometric Matrix Structure and Metabolic Network

Diagram: Constraint-Based Modeling and Analysis Workflow

The steady-state assumption and stoichiometric matrix provide a powerful mathematical foundation for analyzing and simulating metabolic networks. While the steady-state assumption simplifies complex dynamic systems by balancing production and consumption of metabolites, the stoichiometric matrix completely represents the network structure of metabolic reactions. Together, they enable constraint-based modeling approaches that can predict metabolic behavior, identify potential drug targets, and elucidate disease mechanisms.

Recent advances have demonstrated that the steady-state assumption applies not only to traditional constant systems but also to oscillating and growing systems over long time periods. Combined with sophisticated computational workflows that integrate transcriptomic data and thermodynamic constraints, these mathematical foundations continue to drive innovations in metabolic research and therapeutic development.

The continued refinement of genome-scale metabolic models and the integration of multi-omics data promise to further enhance the predictive power and application scope of these foundational mathematical concepts in biomedical research and drug development.

From Theory to Therapy: Methodologies and Drug Discovery Applications

Constraint-Based Modeling is a computational approach that uses genome-scale metabolic models (GEMs) to predict cellular metabolism by applying physico-chemical and biological constraints. Within this framework, Flux Balance Analysis (FBA) has emerged as a fundamental mathematical method for analyzing the flow of metabolites through biochemical networks. FBA operates on the principle that metabolic systems reach a steady state, where the production and consumption of metabolites are balanced. This approach utilizes the stoichiometric coefficients of every reaction in a GEM to form a numerical matrix, which defines the solution space of all possible metabolic fluxes. By applying an optimization function to this space, FBA identifies a specific flux distribution that maximizes a biological objective, such as biomass production or the synthesis of a target metabolite [32].

The power of FBA lies in its ability to predict metabolic behavior without requiring difficult-to-measure kinetic parameters. It effectively characterizes how engineered genetic circuits rewire metabolism, accounting for competing pathways and the reallocation of cellular resources. However, a key limitation of traditional FBA is its assumption of steady-state operation, which may not fully capture the time-dependent dynamics of real biological systems. Furthermore, FBA primarily relies on stoichiometric constraints and can sometimes predict unrealistically high fluxes. To address this, advanced variants incorporate additional layers of biological complexity, such as enzyme constraints, regulatory rules, and multi-species interactions, enhancing their predictive accuracy and applicability in fields ranging from metabolic engineering to drug discovery [32] [33].

Core Mathematical Principles of FBA

The mathematical foundation of FBA is built upon the stoichiometric matrix, S, where m rows represent metabolites and n columns represent biochemical reactions. Each element Sij corresponds to the stoichiometric coefficient of metabolite i in reaction j. The fundamental equation governing mass balance is:

S · v = 0

where v is a vector of reaction fluxes. This equation encapsulates the steady-state assumption, meaning internal metabolite concentrations do not change over time.
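To make the mass-balance equation concrete, the sketch below builds the stoichiometric matrix for a hypothetical three-reaction chain and checks whether two candidate flux vectors satisfy S · v = 0. The network and flux values are illustrative only.

```python
# Toy linear pathway (hypothetical):
#   R1: -> A      (uptake)
#   R2: A -> B
#   R3: B ->      (secretion)
# Rows are metabolites (A, B); columns are reactions (R1, R2, R3).
S = [
    [1, -1,  0],   # A: produced by R1, consumed by R2
    [0,  1, -1],   # B: produced by R2, consumed by R3
]


def mass_balance(S, v):
    """Return S . v, the net production rate of each metabolite."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]


steady = mass_balance(S, [2, 2, 2])      # every metabolite balanced
unbalanced = mass_balance(S, [2, 1, 1])  # A accumulates at rate 1

print("steady state:", steady)
print("not steady:  ", unbalanced)
```

A nonzero entry in the result means the corresponding metabolite would accumulate or deplete, so that flux vector lies outside the steady-state solution space.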

The solution space for possible flux distributions is further constrained by lower and upper bounds for each reaction flux:

α ≤ v ≤ β

These bounds encode the irreversibility of reactions and incorporate knowledge about nutrient availability and enzyme capacities. The space of all flux vectors satisfying these constraints is a convex polyhedron, which is a bounded polytope when every bound is finite. FBA identifies an optimal flux distribution within this space by maximizing or minimizing a specific cellular objective function, Z = c · v, where c is a vector of weights. Common objectives include maximizing biomass growth, ATP production, or the synthesis rate of a target biochemical [32].
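The full FBA problem is a linear program, and a minimal sketch of it can be written with SciPy's `linprog`. The four-reaction network, its bounds, and the choice of biomass as the objective are hypothetical; genome-scale work would normally use a dedicated package such as COBRApy or the COBRA Toolbox.

```python
import numpy as np
from scipy.optimize import linprog

# Toy network (hypothetical). Rows: metabolites A, B.
# Columns: EX_A (-> A), R1 (A -> B), R2 (A -> waste), BIO (B ->).
reactions = ["EX_A", "R1", "R2", "BIO"]
S = np.array([
    [1, -1, -1,  0],   # A
    [0,  1,  0, -1],   # B
])

# Bounds alpha <= v <= beta: all reactions irreversible, uptake capped at 10.
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000)]

# Objective Z = c . v with weight 1 on the biomass reaction.
c = np.array([0, 0, 0, 1])

# linprog minimizes, so the objective is negated to maximize Z.
result = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                 bounds=bounds, method="highs")

fluxes = dict(zip(reactions, result.x))
max_biomass = -result.fun
print("Optimal biomass flux:", max_biomass)
print("Flux distribution:", fluxes)
```

In this toy case the optimum routes all substrate through R1 and leaves the waste reaction R2 unused, because any flux through R2 reduces the biomass objective.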

Table 1: Key Components of the FBA Mathematical Framework

| Component | Symbol | Description | Role in FBA |

|---|---|---|---|

| Stoichiometric Matrix | S | m x n matrix of stoichiometric coefficients | Defines the mass-balance constraints for the metabolic network. |

| Flux Vector | v | n-dimensional vector of reaction rates | The variable to be solved; represents the flux through each reaction. |

| Objective Function | Z = c · v | Linear combination of fluxes to be optimized | Defines the biological goal of the cell (e.g., biomass maximization). |

| Flux Constraints | α ≤ v ≤ β | Lower and upper bounds for each flux | Incorporates thermodynamic and environmental constraints. |

Advanced FBA Frameworks and Methodological Variants

Enzyme-Constrained FBA (ecFBA)

Standard FBA can predict unrealistically high fluxes because it lacks constraints on enzyme capacity and availability. The Enzyme-Constrained FBA (ecFBA) variant addresses this by incorporating kinetic data, capping fluxes based on enzyme abundance and catalytic efficiency (kcat values). This avoids arbitrarily high flux predictions and improves model accuracy. A practical implementation, such as the ECMpy workflow, modifies a base GEM by splitting reversible reactions into forward and reverse directions and splitting reactions catalyzed by multiple isoenzymes, so that each resulting reaction can be assigned its own kcat value. Molecular weights are calculated from protein subunit compositions, and a total enzyme budget is imposed based on the measured protein mass fraction of the cell. This approach improves predictive accuracy without significantly altering the GEM structure or adding pseudo-reactions, in contrast to other frameworks such as GECKO or MOMENT [32].
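One way to picture the enzyme budget is as a single extra inequality on top of the FBA linear program: the sum over reactions of flux times MW/kcat must stay below the available enzyme mass. The sketch below assumes this simplified form (no enzyme saturation term) and uses a hypothetical network with a high-yield but enzymatically expensive route and a low-yield cheap route; all molecular weights, kcat values, and the budget are invented for illustration.

```python
import numpy as np
from scipy.optimize import linprog

# Toy network (hypothetical). Rows: metabolites A, B.
# Columns: EX_A (-> A), R_hi (A -> 2 B), R_lo (A -> B), BIO (B ->).
S = np.array([
    [1, -1, -1,  0],   # A
    [0,  2,  1, -1],   # B
])
bounds = [(0, 10), (0, None), (0, None), (0, None)]
c = np.array([0, 0, 0, 1])  # maximize the biomass reaction

# Hypothetical enzyme data: MW in g/mmol, kcat in 1/h.
# EX_A and BIO are treated as enzyme-free (zero cost).
mw = np.array([0.0, 72.0, 36.0, 0.0])
kcat = np.array([1.0, 3600.0, 18000.0, 1.0])
enzyme_cost = mw / kcat  # g enzyme per gDW needed per unit flux


def max_growth(enzyme_budget=None):
    """Solve FBA, optionally with sum(cost_i * v_i) <= enzyme_budget."""
    extra = {}
    if enzyme_budget is not None:
        extra = {"A_ub": enzyme_cost.reshape(1, -1), "b_ub": [enzyme_budget]}
    res = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                  bounds=bounds, method="highs", **extra)
    return -res.fun, res.x


growth_plain, v_plain = max_growth()
growth_ec, v_ec = max_growth(enzyme_budget=0.1)  # hypothetical budget
print("Without enzyme constraint:", growth_plain, v_plain)
print("With enzyme constraint:   ", growth_ec, v_ec)
```

Without the budget, all flux goes through the high-yield route. With it, the optimum mixes both routes, which is the kind of trade-off that stoichiometry alone cannot produce.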

Dynamic FBA (dFBA) and Multi-Species Modeling

For systems where the external environment changes over time, Dynamic FBA (dFBA) extends the standard framework. dFBA simulates time-course profiles of metabolite concentrations and cell growth by iteratively performing FBA and updating the extracellular conditions. This is particularly useful for modeling microbial communities, such as gut microbiomes. A multiscale framework for a community of species uses FBA to predict the growth and metabolic activity of each species on a short time scale given available nutrients. The shared environment is then updated based on the species' predicted uptake and secretion rates, creating dynamic, emergent metabolic interactions. The biomass of each species k is updated for the next time point using an exponential growth function that incorporates dilution, ensuring realistic community dynamics [34].
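The iterative scheme described above can be sketched for two hypothetical species sharing one nutrient. This is a minimal illustration under stated assumptions, not the cited framework: each species is reduced to a two-variable FBA problem, uptake is capped so the community cannot consume more than is available in a time step, the nutrient is updated with an explicit Euler step, and biomass follows X(t + dt) = X(t) · exp((mu - D) · dt). All parameter values are invented, and nutrient inflow is omitted.

```python
import math
from scipy.optimize import linprog


def fba_growth(uptake_cap, biomass_yield):
    """Maximize growth rate mu subject to v_upt - mu / yield = 0."""
    res = linprog(
        c=[0.0, -1.0],                       # maximize mu
        A_eq=[[1.0, -1.0 / biomass_yield]],  # balance of internal metabolite
        b_eq=[0.0],
        bounds=[(0.0, uptake_cap), (0.0, None)],
        method="highs",
    )
    v_upt, mu = res.x
    return v_upt, mu


def simulate(species, nutrient, biomass, dt=0.1, steps=120, dilution=0.0):
    """Run dFBA; species maps name -> (vmax, yield). Returns a history list."""
    history = [(0.0, nutrient, dict(biomass))]
    for step in range(1, steps + 1):
        total = sum(biomass.values())
        # Cap uptake so total consumption in this step cannot exceed supply.
        available = nutrient / (total * dt) if total > 0 else 0.0
        consumed, new_biomass = 0.0, {}
        for name, (vmax, y) in species.items():
            v_upt, mu = fba_growth(min(vmax, available), y)
            consumed += v_upt * biomass[name] * dt
            new_biomass[name] = biomass[name] * math.exp((mu - dilution) * dt)
        nutrient = max(nutrient - consumed, 0.0)
        biomass = new_biomass
        history.append((step * dt, nutrient, dict(biomass)))
    return history


# Hypothetical parameters: vmax in mmol/gDW/h, yield in gDW/mmol
species = {"sp1": (10.0, 0.05), "sp2": (8.0, 0.08)}
history = simulate(species, nutrient=20.0, biomass={"sp1": 0.01, "sp2": 0.01})
t_end, nutrient_end, biomass_end = history[-1]
print(f"t = {t_end:.1f} h, nutrient = {nutrient_end:.3g}, biomass = {biomass_end}")
```

The interaction between the species is emergent here: neither model references the other, and competition arises only through the shared nutrient pool.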

Topology-Informed and Objective-Finding Frameworks

Selecting an appropriate objective function is critical for FBA accuracy. The TIObjFind (Topology-Informed Objective Find) framework integrates Metabolic Pathway Analysis (MPA) with FBA to systematically infer metabolic objectives from experimental data. This method determines Coefficients of Importance (CoIs) that quantify each reaction's contribution to a hypothesized objective function, aligning model predictions with experimental flux data. TIObjFind solves an optimization problem that minimizes the difference between predicted and experimental fluxes while maximizing an inferred metabolic goal. It then maps FBA solutions onto a Mass Flow Graph (MFG), enabling a pathway-based interpretation of metabolic flux distributions and revealing shifting metabolic priorities under different conditions [33].

Modeling Multireaction Dependencies

Recent research has moved beyond pairwise reaction dependencies to explore multireaction dependencies. The concept of a forcedly balanced complex provides an efficient way to determine the effects of these dependencies on metabolic network functions. A complex, defined as a set of species jointly consumed or produced by a reaction, is considered balanced if the sum of fluxes of its incoming reactions equals the sum of fluxes of its outgoing reactions in every steady-state flux distribution. By forcing a specific non-balanced complex to become balanced, new functional relationships and vulnerabilities within the network can be identified, offering a novel means to control metabolic functions, with potential applications in areas like cancer therapy [35].

Experimental Protocols and Implementation

Protocol: Implementing an Enzyme-Constrained Model with ECMpy

This protocol outlines the steps for creating an enzyme-constrained metabolic model from a base GEM, adapted from the iGEM Virginia 2025 workflow [32].

Model Curation and Modification:

- Begin with a well-curated GEM (e.g., iML1515 for E. coli).

- Incorporate genetic modifications relevant to your study. This includes updating Gene-Protein-Reaction (GPR) relationships and modifying kcat values and gene abundance levels to reflect engineered enzymes (e.g., removed feedback inhibition, increased activity).

- Perform gap-filling to add missing reactions essential for the pathways of interest (e.g., thiosulfate assimilation pathways).

- Split all reversible reactions into forward and reverse reactions to assign directional kcat values.

- Split reactions catalyzed by multiple isoenzymes into independent reactions.

Data Integration:

- Obtain enzyme kinetic data (kcat values) from databases like BRENDA.

- Acquire enzyme abundance data (e.g., from PAXdb) and protein subunit composition (e.g., from EcoCyc).

- Set the total protein mass fraction for the cell (e.g., 0.56 for E. coli).

Defining Medium Conditions:

- Update the upper bounds of exchange reactions to reflect the uptake rates of metabolites in the cultivation medium.

- These rates can be approximated from initial medium concentrations and molecular weights if well-characterized uptake data is unavailable.

- Block the uptake of metabolites that could lead to trivial solutions (e.g., blocking L-serine and L-cysteine uptake to force flux through their biosynthesis pathways).

Model Simulation and Optimization:

- Use a tool like ECMpy to apply the enzyme capacity constraints to the curated GEM.

- Perform FBA using a package like COBRApy.

- To avoid solutions with zero biomass growth, use lexicographic optimization: first optimize for biomass, then constrain the model to require a percentage of this optimal growth (e.g., 30%) while optimizing for the target objective (e.g., L-cysteine export).
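The two-stage optimization in the last step can be sketched on a toy problem with SciPy's `linprog`. The network below is hypothetical (one substrate split between biomass and a product), and the 30% growth requirement follows the example in the text; with a real GEM the same pattern would typically be expressed through a package such as COBRApy by fixing the lower bound of the biomass reaction after the first solve.

```python
import numpy as np
from scipy.optimize import linprog

# Toy network (hypothetical). One metabolite A.
# Columns: EX_A (-> A), BIO (A -> biomass), PROD (A -> exported product).
S = np.array([[1, -1, -1]])
IDX_BIO, IDX_PROD = 1, 2
base_bounds = [(0, 10), (0, None), (0, None)]


def maximize(index, bounds):
    """Maximize the flux at position `index` subject to S . v = 0."""
    c = np.zeros(S.shape[1])
    c[index] = -1.0  # linprog minimizes, so negate
    res = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                  bounds=bounds, method="highs")
    return res.x


# Stage 1: find the maximum achievable growth.
growth_opt = maximize(IDX_BIO, base_bounds)[IDX_BIO]

# Stage 2: require at least 30% of that growth, then maximize the product.
stage2_bounds = list(base_bounds)
stage2_bounds[IDX_BIO] = (0.3 * growth_opt, None)
v_final = maximize(IDX_PROD, stage2_bounds)

print("Maximum growth:", growth_opt)
print("Growth and product flux after stage 2:",
      v_final[IDX_BIO], v_final[IDX_PROD])
```

Maximizing the product alone on this network would drive the biomass flux to zero, which is the degenerate solution the growth floor is meant to exclude.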

Diagram 1: Enzyme-Constrained FBA Workflow. This flowchart outlines the key steps for constructing and simulating an enzyme-constrained metabolic model, from initial curation to final analysis.

Protocol: Simulating a Multi-Species Community with dFBA

This protocol describes how to simulate the growth and metabolism of a microbial community using dFBA, based on a method for modeling gut species [34].

Model and Environment Setup: