Monte Carlo Sampling for Kinetic Model Parameterization: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive overview of Monte Carlo sampling methods for parameterizing kinetic models, a critical task in systems biology and drug development. It covers foundational principles, key algorithms like Markov Chain Monte Carlo (MCMC) and Kinetic Monte Carlo (KMC), and their application to complex biological systems. The content delves into advanced methodologies, including hybrid optimization strategies and specialized techniques like Grand Canonical Monte Carlo for drug discovery. It further addresses practical challenges such as computational cost, convergence diagnostics, and performance benchmarking, offering troubleshooting and optimization guidance. Finally, it synthesizes validation frameworks and comparative analyses of different methods, providing researchers and drug development professionals with the knowledge to robustly estimate parameters for predictive biological models.

Understanding the Basics: Why Monte Carlo Methods are Essential for Kinetic Modeling

Monte Carlo methods represent a broad class of computational algorithms that rely on repeated random sampling to obtain numerical results and solve problems that might be deterministic in principle [1] [2]. The name refers to the Monte Carlo Casino in Monaco, where the uncle of mathematician Stanisław Ulam, one of the method's originators, used to gamble [1]. These methods have become fundamental across diverse scientific disciplines, including physics, engineering, and finance, and particularly in kinetic model parameterization, where they enable the simulation of complex stochastic processes that are analytically intractable.

The core concept involves using randomness to solve deterministic problems by approximating probability distributions through random sampling [3]. In the context of kinetic parameterization, this approach allows researchers to estimate parameters for systems with significant uncertainty or combinatorial complexity, such as cellular signaling pathways and molecular interactions [4]. Monte Carlo methods have revolutionized these fields by providing tools to model phenomena with coupled degrees of freedom and inherent uncertainty, making them indispensable for modern scientific research, including drug development where understanding kinetic parameters is crucial for pharmacokinetic and pharmacodynamic modeling.

Core Principles and Mathematical Foundation

Fundamental Algorithmic Pattern

Monte Carlo methods follow a consistent pattern across applications, comprising four key stages [1]:

- Define a domain of possible inputs: Establish the parameter space and boundaries for the system under investigation.

- Generate inputs randomly: Sample from probability distributions over the domain, typically using pseudorandom number generators.

- Perform deterministic computation: Execute the core model or simulation using the random inputs.

- Aggregate the results: Combine individual outputs to form statistical estimates and approximations.

This workflow transforms complex integration problems into more manageable averaging problems through the mechanism of random sampling, leveraging the Law of Large Numbers to ensure convergence to correct solutions [1].
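As a minimal illustration, the sketch below maps the four stages onto a one-dimensional integral, $\int_0^1 x^2\,\mathrm{d}x = 1/3$. The function name `monte_carlo_integral` and the choice of integrand are illustrative assumptions; NumPy's `default_rng` supplies the pseudorandom numbers.

```python
import numpy as np


def monte_carlo_integral(f, a, b, n, seed=0):
    """Estimate the integral of f over [a, b] using the four Monte Carlo stages."""
    rng = np.random.default_rng(seed)
    # 1. Domain of possible inputs: the interval [a, b]
    # 2. Generate inputs randomly: uniform draws over the domain
    x = rng.uniform(a, b, size=n)
    # 3. Deterministic computation: evaluate the integrand at every input
    values = f(x)
    # 4. Aggregate: the scaled sample mean estimates the integral and the
    #    sample standard deviation gives its standard error
    estimate = (b - a) * values.mean()
    std_error = (b - a) * values.std(ddof=1) / np.sqrt(n)
    return estimate, std_error


est, se = monte_carlo_integral(lambda x: x**2, 0.0, 1.0, 100_000)
print(f"estimate = {est:.4f} +/- {se:.4f} (exact value 1/3)")
```

Reporting the standard error alongside the estimate makes the $1/\sqrt{N}$ convergence visible when $N$ is varied.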

Mathematical Framework

The mathematical foundation of Monte Carlo methods rests on probability theory and statistical estimation. Consider a probability distribution $p(\mathbf{x})\mathrm{d}\mathbf{x}$ on a sample space $\Omega$ parametrized by $\mathbf{x}\in\Omega$, where $p(\mathbf{x})$ is non-negative and $\int_\Omega p(\mathbf{x})\mathrm{d}\mathbf{x} = 1$. For an observable or random variable $\mathcal{O}(\mathbf{x})$, the expectation value is given by:

$$\mathbb{E}[ \mathcal{O} ] = \int_{\Omega}\, \mathcal{O}(\mathbf{x})\, p(\mathbf{x}) \mathrm{d}\mathbf{x}.$$

Monte Carlo approximation uses independent samples $\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_N$ distributed according to $p$ to estimate this expectation:

$$\mathbb{E}[ \mathcal{O} ] \approx \frac{1}{N} \sum_{i=1}^N \mathcal{O}(\mathbf{x}_i).$$

This transformation from integration to sampling makes previously intractable problems computationally feasible [5].

Sampling Techniques

Multiple sampling strategies exist, with the choice dependent on problem structure and computational constraints:

- Direct Sampling: Independent samples generated from the target distribution using operational procedures, as demonstrated by the classic pebble game for estimating π [5]. This approach is efficient when straightforward generation mechanisms exist.

- Markov Chain Monte Carlo (MCMC): Samples generated sequentially through a Markov process where each sample depends only on the previous state [1] [5]. This method is particularly valuable for complex distributions where direct sampling is infeasible.

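- Importance Sampling: A variance reduction technique that draws samples from an alternative distribution concentrated on the important regions, then weights each sample by the ratio of the target density to the sampling density so that the estimate remains unbiased.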
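A minimal importance-sampling sketch, assuming a hypothetical rare-event target: the tail probability $P(X > 4)$ for $X \sim \mathcal{N}(0,1)$ (about $3.2\times10^{-5}$). Plain sampling sees only a few exceedances in $10^5$ draws, whereas drawing from the shifted proposal $\mathcal{N}(4,1)$ and reweighting each draw by $p(x)/q(x)$ gives a low-variance estimate. The proposal choice and function names are assumptions for illustration.

```python
import math

import numpy as np


def tail_prob_importance(threshold=4.0, n=100_000, seed=1):
    rng = np.random.default_rng(seed)
    # Proposal q = N(threshold, 1) places about half its mass in the rare region
    x = rng.normal(loc=threshold, scale=1.0, size=n)
    # Weight w(x) = p(x) / q(x) for target p = N(0, 1); normalizing constants cancel
    w = np.exp(-0.5 * x**2 + 0.5 * (x - threshold) ** 2)
    return np.mean((x > threshold) * w)


def tail_prob_naive(threshold=4.0, n=100_000, seed=1):
    rng = np.random.default_rng(seed)
    return np.mean(rng.standard_normal(n) > threshold)


exact = 0.5 * math.erfc(4.0 / math.sqrt(2.0))
print(tail_prob_importance(), tail_prob_naive(), exact)
```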

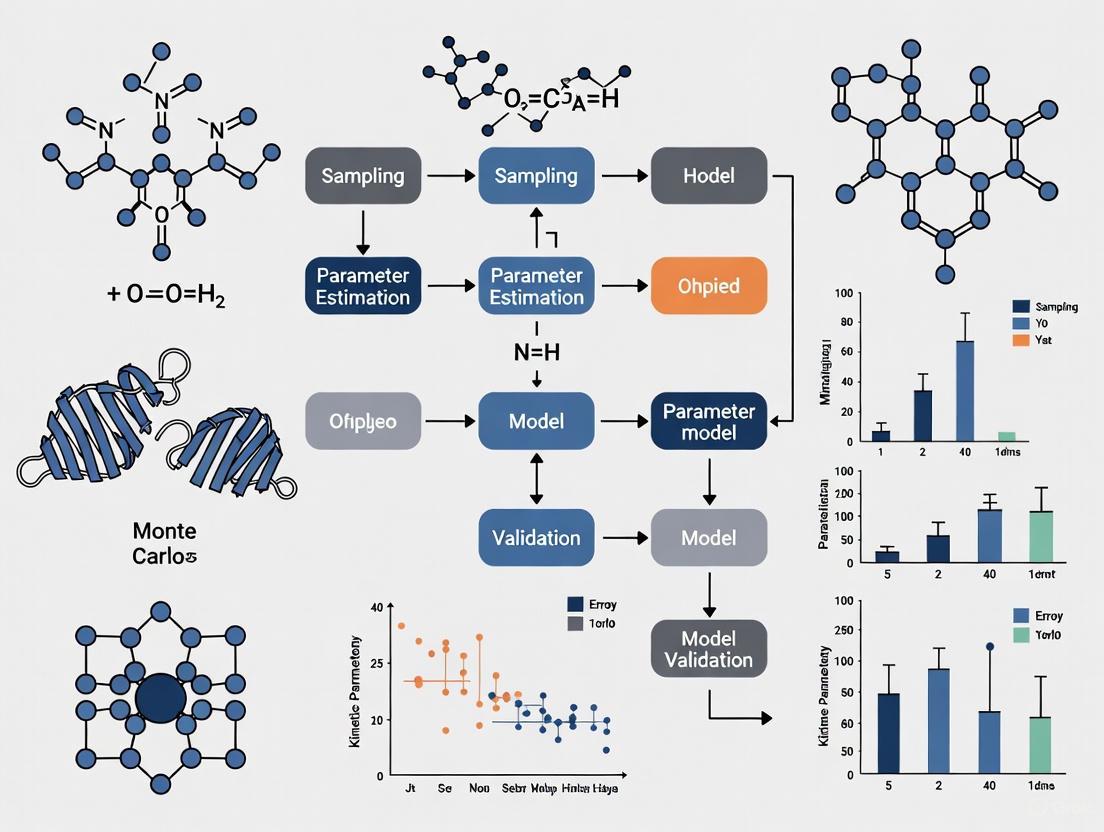

Figure: Monte Carlo Method Workflow and Sampling Approaches

Monte Carlo Methods in Kinetic Parameterization

Rule-Based Kinetic Monte Carlo

In kinetic parameterization research, traditional population-based simulation methods face significant challenges due to combinatorial complexity, where proteins and other macromolecules can interact in myriad ways to generate vast numbers of distinct chemical species and reactions [4]. Rule-based Kinetic Monte Carlo (KMC) addresses this by directly simulating chemical transformations specified by reaction rules rather than explicitly enumerating all possible reactions.

The rule-based KMC method operates on molecules $P = \{P_1, \ldots, P_N\}$ composed of components $C = \{C_1, \ldots, C_n\}$, each with a local state $S_i$ representing type, binding partners, and internal states [4]. Reactions are defined by rules $R = \{R_1, \ldots, R_m\}$ that identify molecular components involved in transformations, conditions affecting whether transformations occur, and associated rate laws. The computational cost thus depends on the number of rules $m$ rather than the potentially astronomical number of reactions $M$, making previously intractable systems computationally feasible.

Application to Multivalent Ligand-Receptor Interactions

A demonstrative application involves modeling multivalent ligand-receptor interactions, particularly relevant to drug development [4]. The TLBR (Trivalent Ligand-Bivalent Receptor) model characterizes interactions between trivalent ligands and bivalent cell-surface receptors, representing systems with potential for aggregation and percolation transitions. The model employs three fundamental rules governing association and dissociation kinetics:

- Rule R1: Initial association between binding sites on free ligands and free receptor sites, with rate $r_1 = (k_{+1}/V)\,|X_{11}| \cdot |X_{12}|$

- Rule R2: Association between free binding sites on ligands already bound to a receptor and free receptor sites, with rate $r_2 = (k_{+2}/V)\,|X_{21}| \cdot |X_{22}|$

- Rule R3: Dissociation of bound complexes, with rate $r_3 = k_{\text{off}}|X_{31}| = k_{\text{off}}|X_{32}|$

Here $|X_{ij}|$ denotes the number of components that can act as the $j$-th reactant of rule $R_i$, and $V$ is the reaction volume.

This rule-based approach efficiently handles the explosive combinatorial complexity that arises near percolation transitions where mean aggregate size increases dramatically [4].

Nuclear Kinetic Parameter Calculation

Monte Carlo methods also find application in calculating kinetic parameters for nuclear systems, demonstrating their versatility across scientific domains. Research on Accelerator Driven Subcritical TRIGA reactors employs multiple Monte Carlo techniques, including Feynman-α (variance-to-mean), Rossi-α (correlation), and slope fit methods to determine effective delayed neutron fraction (βeff) and neutron reproduction time (Λ) [6]. These parameters are crucial for understanding reactor safety and time-dependent power behavior following reactivity insertion. The MCNPX code implements these Monte Carlo approaches, validating results against both reference data and established experimental methods [6].
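The variance-to-mean idea behind the Feynman-α method can be illustrated with a minimal sketch: for detector counts collected in equal time gates, the excess ratio $Y = \mathrm{var}/\mathrm{mean} - 1$ is near zero for an uncorrelated (Poisson) source and positive when counts arrive in correlated bursts, as with fission chains. The sketch computes $Y$ for a single gate width only; fitting α from $Y$ over several gate widths is not shown, and the synthetic "burst" source (every source event yielding exactly three counts) is an illustrative assumption.

```python
import numpy as np


def feynman_y(counts):
    """Excess variance-to-mean ratio Y = var/mean - 1 of counts recorded in equal gates."""
    counts = np.asarray(counts, dtype=float)
    return counts.var(ddof=1) / counts.mean() - 1.0


rng = np.random.default_rng(7)
n_gates = 20_000
# Uncorrelated source: Poisson counts per gate, so Y should be close to 0
poisson_counts = rng.poisson(20.0, size=n_gates)
# Hypothetical correlated source: each source event yields exactly 3 counts,
# so var = 9*lam and mean = 3*lam, giving Y close to 2
burst_counts = 3 * rng.poisson(20.0 / 3.0, size=n_gates)
print(feynman_y(poisson_counts), feynman_y(burst_counts))
```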

Experimental Protocols and Implementation

Basic Monte Carlo Integration Protocol

Objective: Estimate the value of π through direct Monte Carlo simulation.

Principle: The ratio of areas between a unit circle and its circumscribing square equals π/4. Random sampling within the square provides an estimate of this ratio [1] [5].

Procedure:

- Define a square domain $[-1,1] \times [-1,1]$ that circumscribes the unit circle

- Generate $N$ random points $(x_i, y_i)$ uniformly distributed within the square

- For each point, determine whether it lies within the unit circle: $x_i^2 + y_i^2 < 1$

- Count the number of points $N_{\text{circle}}$ satisfying this condition

- Calculate the estimate: $\pi_{\text{estimate}} = 4 \times N_{\text{circle}} / N$

Python Implementation:
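A minimal NumPy sketch of the procedure above; the function name `estimate_pi` and the fixed seed are illustrative choices. It also reports the binomial standard error $4\sqrt{\hat p(1-\hat p)/N}$ with $\hat p = N_{\text{circle}}/N$, which shrinks as $1/\sqrt{N}$.

```python
import numpy as np


def estimate_pi(n, seed=None):
    rng = np.random.default_rng(seed)
    # Uniform points in the square [-1, 1] x [-1, 1]
    x = rng.uniform(-1.0, 1.0, size=n)
    y = rng.uniform(-1.0, 1.0, size=n)
    # The fraction of points inside the unit circle estimates pi / 4
    p_hat = np.mean(x**2 + y**2 < 1.0)
    pi_hat = 4.0 * p_hat
    # Standard error of 4 * p_hat for a binomial proportion
    std_error = 4.0 * np.sqrt(p_hat * (1.0 - p_hat) / n)
    return pi_hat, std_error


for n in (1_000, 100_000, 1_000_000):
    est, se = estimate_pi(n, seed=42)
    print(f"N={n:>9,d}  pi ~ {est:.5f} +/- {se:.5f}")
```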

Considerations:

- Accuracy improves with increasing $N$; standard error decreases as $1/\sqrt{N}$

- For results bounded in $[a,b]$, keeping the error below $\epsilon$ with 99% confidence requires $N \geq 10.6(b-a)^2/\epsilon^2$ [1]

- Computational cost scales linearly with $N$, making parallelization effective [1]

Rule-Based Kinetic Monte Carlo Protocol

Objective: Simulate chemical kinetics for systems with combinatorial complexity without explicit reaction network generation.

Principle: Transformations are applied directly to molecular representations based on reaction rules, with stochastic selection proportional to rule rates [4].

Procedure:

- System Representation:

  - Represent molecules as structured objects with components and associated states

  - Define component sets $C = \{C_1, \ldots, C_n\}$ with states $S = \{S_1, \ldots, S_n\}$

- Rule Specification:

  - Define reaction rules $R = \{R_1, \ldots, R_m\}$ specifying:

    - Molecular components involved as reactants

    - Transformation of component states

    - Contextual conditions affecting reactivity

    - Rate law $r_i$ for each rule

- Simulation Algorithm:

  - Initialize the molecular population and time $t = 0$

  - While $t < t_{\text{max}}$:

    - a. Identify all rule instances applicable to the current system state

    - b. Calculate the total rate $R_{\text{total}} = \sum_i r_i$ over all applicable rules

    - c. Generate two random numbers $u_1, u_2 \sim U(0,1)$

    - d. Use $u_2$ to select rule $j$ with probability $r_j / R_{\text{total}}$

    - e. Update time: $t \leftarrow t - \ln(u_1)/R_{\text{total}}$

    - f. Apply the selected rule to update the system state

  - Record trajectories and statistics

Implementation Considerations:

- Use efficient data structures for tracking molecular states and rule applicability

- For systems with spatial heterogeneity, incorporate appropriate diffusion operators

- Employ rate adjustment strategies to handle stiffness in multi-scale systems
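A minimal, hypothetical sketch of the simulation algorithm applied to the three TLBR rules, in plain Python. Molecule counts, rate constants and the volume are arbitrary illustrative values, and the model is deliberately simplified: it does not track aggregates or forbid ring closure, and it allows a ligand to occupy both sites of one receptor. Rule instances are found by linear scans at every event, and rule selection uses `random.choices` rather than an explicit $u_2$, so this shows the logic rather than the efficient data structures a production code would use.

```python
import math
import random


def simulate_tlbr(n_lig=300, n_rec=300, k1=1.0, k2=1.0, koff=0.1, volume=100.0,
                  t_max=20.0, seed=0):
    """Hypothetical rule-based KMC sketch of TLBR rules R1-R3.

    Only per-molecule state is stored (bonds per ligand, free sites per receptor,
    explicit bond list); no species list or reaction network is generated.
    """
    rng = random.Random(seed)
    lig_bonds = [0] * n_lig      # occupied sites on each trivalent ligand (0..3)
    rec_free = [2] * n_rec       # free sites on each bivalent receptor (0..2)
    bonds = []                   # (ligand, receptor) pairs
    t = 0.0
    while t < t_max:
        # (a) identify rule instances by counting matching components
        free_lig = [i for i, b in enumerate(lig_bonds) if b == 0]
        bound_lig = [i for i, b in enumerate(lig_bonds) if 0 < b < 3]
        x_rec = sum(rec_free)                            # free receptor sites
        x11 = 3 * len(free_lig)                          # sites on free ligands
        x21 = sum(3 - lig_bonds[i] for i in bound_lig)   # free sites on bound ligands
        # (b) rule rates and their total
        rates = [k1 / volume * x11 * x_rec,              # R1
                 k2 / volume * x21 * x_rec,              # R2
                 koff * len(bonds)]                      # R3
        r_total = sum(rates)
        if r_total == 0.0:
            break
        # (c)-(e) exponential waiting time; 1 - random() lies in (0, 1]
        t -= math.log(1.0 - rng.random()) / r_total
        rule = rng.choices(range(3), weights=rates)[0]
        # (f) apply the selected rule directly to the molecules
        if rule == 2:
            lig, rec = bonds.pop(rng.randrange(len(bonds)))
            lig_bonds[lig] -= 1
            rec_free[rec] += 1
        else:
            pool = free_lig if rule == 0 else bound_lig
            lig = rng.choices(pool, weights=[3 - lig_bonds[i] for i in pool])[0]
            rec = rng.choices(range(n_rec), weights=rec_free)[0]
            lig_bonds[lig] += 1
            rec_free[rec] -= 1
            bonds.append((lig, rec))
    return {
        "time": t,
        "bonds": len(bonds),
        "free_ligands": sum(1 for b in lig_bonds if b == 0),
        "occupied_receptor_sites": 2 * n_rec - sum(rec_free),
    }


print(simulate_tlbr())
```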

Figure: Rule-Based KMC for Ligand-Receptor Systems

Determining Sample Size for Desired Precision

Objective: Calculate the number of Monte Carlo samples needed to achieve a specified statistical accuracy.

Procedure:

- Pilot Simulation: Run $k$ initial simulations (typically $k \geq 100$) to estimate variance

- Variance Estimation: Compute sample variance $s^2$ from pilot results

- Sample Size Calculation: For error tolerance $\epsilon$, with $z$ the standard normal quantile for the desired confidence level (e.g., $z \approx 1.96$ for 95%), the required number of samples is:

$$n \geq s^2 z^2 / \epsilon^2$$

Alternative for Bounded Results: When results are bounded between $[a,b]$, use:

$$n \geq 2(b-a)^2 \ln(2/(1-(\delta/100)))/\epsilon^2$$

where $\delta$ is the desired confidence percentage [1].
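Both formulas can be evaluated directly, as in this sketch. The function names and the pilot data are illustrative; the normal quantile comes from Python's `statistics.NormalDist`. With $[a,b]=[0,1]$, $\epsilon = 0.01$ and $\delta = 99$, the bounded-result formula gives roughly $10.6/\epsilon^2 \approx 1.06\times10^5$ samples.

```python
import math
from statistics import NormalDist, variance


def samples_from_pilot(pilot_results, epsilon, confidence=0.95):
    """n >= s^2 z^2 / epsilon^2, with s^2 the pilot sample variance."""
    s2 = variance(pilot_results)                        # unbiased (n - 1) estimator
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)   # two-sided normal quantile
    return math.ceil(s2 * z**2 / epsilon**2)


def samples_for_bounded(a, b, epsilon, delta_percent=95.0):
    """n >= 2 (b - a)^2 ln(2 / (1 - delta/100)) / epsilon^2 for results in [a, b]."""
    return math.ceil(2.0 * (b - a) ** 2
                     * math.log(2.0 / (1.0 - delta_percent / 100.0)) / epsilon**2)


pilot = [9.0, 11.0] * 50          # hypothetical pilot results with s^2 = 100/99
print(samples_from_pilot(pilot, epsilon=0.1))                              # 389
print(samples_for_bounded(0.0, 1.0, epsilon=0.01, delta_percent=99.0))    # 105967
```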

Quantitative Data and Research Reagents

Kinetic Parameters from Monte Carlo Simulations

Table 1: Kinetic Parameters Calculated by Monte Carlo Methods in Nuclear Systems [6]

| Parameter | Method | Value | Relative Difference | Application |
|---|---|---|---|---|
| Effective delayed neutron fraction (βeff) | Feynman-α | Reference | -5.4% | Reactor safety analysis |
| Effective delayed neutron fraction (βeff) | Rossi-α | Reference | +1.2% | Reactor safety analysis |
| Effective delayed neutron fraction (βeff) | Slope fit | Reference | -10.6% | Reactor safety analysis |
| Neutron reproduction time (Λ) | Multiple methods | Reference | ~2.1% | Power transient analysis |
| Kinetic parameters | MCNPX code | Benchmark | Varies | Accelerator Driven Systems |

Computational Performance Characteristics

Table 2: Monte Carlo Method Performance and Scaling Properties

| Method Type | Computational Cost | Memory Requirements | Key Advantage | Typical Applications |
|---|---|---|---|---|
| Conventional KMC | $O(\log_2 M)$ per event | Proportional to $M$ reactions | Established method | Simple reaction networks |
| Rule-based KMC | Independent of $M$ | Independent of $M$ | Handles combinatorial complexity | Cellular signaling, aggregation |
| Direct sampling | Linear with $N$ samples | Constant | Simple implementation | Integration, π estimation |
| MCMC sampling | Linear with steps | Constant | Handles complex distributions | Bayesian inference, statistical physics |
| Population ODE | Polynomial in species | Cubic for stiff systems | Deterministic | Well-mixed large populations |

Research Reagent Solutions

Table 3: Essential Computational Reagents for Monte Carlo Research

| Reagent / Tool | Function | Application Context | Implementation Notes |
|---|---|---|---|
| Pseudorandom Number Generator | Generate random sequences | All Monte Carlo simulations | Use cryptographically secure generators for sensitive applications |
| MCNPX Code | Neutron transport simulation | Nuclear kinetic parameters | Version 2.4+; supports complex geometries [6] |
| κ-calculus/BNGL | Rule specification language | Biochemical network modeling | Provides formal semantics for reaction rules [4] |
| Markov Chain Sampler | Generate correlated samples | Complex probability distributions | Requires convergence diagnostics (Gelman-Rubin) |
| Variance Estimator | Quantify statistical uncertainty | Result validation | Running variance algorithms available [1] |
| Data Tables | Batch simulation management | Excel-based Monte Carlo | Enable multiple recalculations with recording [7] |

Monte Carlo methods provide an indispensable framework for tackling complex problems in kinetic parameterization and beyond. By transforming deterministic challenges into statistical sampling problems, these techniques enable researchers to extract meaningful parameters from systems characterized by uncertainty, combinatorial complexity, and multi-scale dynamics. The core principles of random sampling, statistical aggregation, and computational approximation form a versatile foundation that continues to evolve with advancements in computing power and algorithmic sophistication.

For kinetic model parameterization specifically, rule-based Monte Carlo approaches represent a significant advancement over traditional methods, efficiently handling the combinatorial explosion inherent in biological systems like multivalent ligand-receptor interactions [4]. Similarly, in nuclear applications, Monte Carlo techniques enable precise calculation of kinetic parameters essential for safety analysis [6]. As computational capabilities grow and Monte Carlo methodologies continue to develop, these approaches will undoubtedly remain at the forefront of scientific research, providing powerful tools for understanding and predicting the behavior of complex systems across diverse domains, including pharmaceutical development where accurate kinetic parameters are essential for drug design and optimization.

The Parameter Estimation Challenge in Kinetic Models of Biological Systems

Kinetic models are indispensable for deciphering the dynamic behavior of biological systems, from intracellular signaling pathways to metabolic networks and gene regulatory circuits. The predictive power of these models, however, hinges on accurate parameter estimation—a formidable challenge given the inherent noise in experimental data, structural uncertainties in model topology, and the frequently non-identifiable nature of parameters. Within the context of Monte Carlo sampling for kinetic model parameterization, researchers increasingly leverage stochastic algorithms to navigate complex, high-dimensional parameter spaces where traditional optimization methods falter. These methods are particularly valuable for approximating posterior distributions in Bayesian inference frameworks, enabling researchers to quantify uncertainty in parameter estimates and model predictions [8].

The parameter estimation challenge manifests in several forms: structural non-identifiability, where redundant parameter dependencies allow different parameter sets to yield identical model outputs; practical non-identifiability, where limited or noisy data cannot constrain parameters that are identifiable in principle; and computational bottlenecks in evaluating likelihood functions for complex models. Monte Carlo methods, particularly Markov Chain Monte Carlo (MCMC) and Kinetic Monte Carlo (KMC), provide powerful frameworks to address these challenges by generating representative samples from posterior distributions, even when the normalizing constants are computationally intractable [9]. This protocol explores the application of these methods to kinetic models in biological systems, providing detailed methodologies, benchmarking data, and practical implementation guidelines.

Background and Significance

Kinetic Models in Biological Systems

Kinetic models of biological systems typically employ ordinary differential equations (ODEs) to describe the temporal evolution of molecular species concentrations. The general form of these models can be represented as:

$$\dot{x} = f(x, t, \eta), \quad x(t_0) = x_0(\eta)$$

where $x(t)$ represents the state vector of molecular concentrations, $\eta$ denotes the parameter vector (typically kinetic rate constants), $f$ defines the vector field describing the system dynamics, and $x_0$ specifies the initial conditions [8]. Experimental observations of these systems are invariably corrupted by measurement noise, commonly modeled as:

$$\tilde{y}_{ik} = y_i(t_k) + \varepsilon_{ik}, \quad \varepsilon_{ik} \sim \mathcal{N}(0, \sigma_i^2)$$

where $\tilde{y}_{ik}$ represents the noisy measurement of observable $i$ at time $t_k$, and $\sigma_i$ denotes the standard deviation of the measurement noise [8].

The Parameter Estimation Problem

Parameter estimation for kinetic models involves determining the parameter values $\theta = (\eta, \sigma)$ that best explain the experimental data $D = \{(t_k, \tilde{y}_k)\}_{k=1}^{n_t}$. In Bayesian frameworks, this translates to sampling from the posterior distribution:

$$p(\theta \mid D) = \frac{p(D \mid \theta)\, p(\theta)}{p(D)}$$

where $p(D \mid \theta)$ is the likelihood function, $p(\theta)$ represents the prior distribution encapsulating existing knowledge about parameters, and $p(D)$ is the marginal likelihood [8]. For systems with complex likelihood surfaces featuring multimodality, strong parameter correlations, or pronounced tails, traditional optimization methods often converge to local minima, necessitating the use of stochastic sampling approaches.

Monte Carlo Methods for Parameter Estimation

Monte Carlo methods encompass a family of computational algorithms that rely on repeated random sampling to obtain numerical results. These methods are particularly valuable for solving problems that might be deterministic in principle but are too complex for analytical solutions [1]. In the context of parameter estimation for kinetic models, several Monte Carlo approaches have been developed:

Table 1: Monte Carlo Methods for Parameter Estimation

| Method | Key Characteristics | Applicability | Computational Considerations |
|---|---|---|---|
| Markov Chain Monte Carlo (MCMC) | Generates correlated samples from posterior distribution; includes adaptation mechanisms | High-dimensional parameter spaces; multi-modal posteriors | Convergence diagnostics required; chain mixing can be slow |
| Kinetic Monte Carlo (KMC) | Models discrete events; simulates reaction dynamics in stochastic systems | Chemical kinetics; cellular processes; rare event simulation | Computational cost scales with number of possible events |
| Monte Carlo Expectation Maximization (MCEM) | Combines Monte Carlo simulation with EM algorithm for maximum likelihood estimation | Models with latent variables or missing data | Multiple simulations per iteration; can be computationally intensive |
| Natural Move Monte Carlo (NMMC) | Uses collective coordinates to reduce dimensionality; customized moves | Biomolecular systems; functional motion analysis | Several orders of magnitude faster than atomistic methods |

Markov Chain Monte Carlo (MCMC) Algorithms

MCMC algorithms have become the cornerstone of Bayesian parameter estimation for kinetic models, enabling researchers to sample from complex posterior distributions without calculating intractable normalizing constants. The core principle involves constructing a Markov chain that converges to the target posterior distribution as its stationary distribution [9]. Several MCMC variants have been developed to improve sampling efficiency:

- Adaptive Metropolis (AM): Updates proposal distribution based on previously sampled parameters, aligning it with the posterior covariance structure [8]

- Delayed Rejection Adaptive Metropolis (DRAM): Combines adaptation with delayed rejection, where alternative proposals are considered after rejection to reduce in-chain autocorrelation [8]

- Metropolis-Adjusted Langevin Algorithm (MALA): Utilizes gradient information to propose moves aligned with the posterior topography, improving sampling efficiency [8]

- Parallel Tempering: Employs multiple chains at different temperatures to facilitate escape from local modes, with occasional state exchanges between chains [8]

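The basic Metropolis algorithm follows a straightforward procedure: (1) initialize the parameter values; (2) generate a proposed jump using a symmetric proposal distribution; (3) compute the acceptance probability $p_{\text{move}} = \min\left(1, \frac{P(\theta_{\text{proposed}})}{P(\theta_{\text{current}})}\right)$; (4) accept the proposal with this probability, otherwise keep the current value, and record the current state as the next sample in either case; (5) repeat until the chain has converged and enough samples have been collected [9]. Because only the ratio of densities enters the acceptance step, $P$ needs to be known only up to its normalizing constant.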
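A minimal random-walk Metropolis sketch of these steps, assuming a Gaussian symmetric proposal with a user-chosen step size. It works with log densities, so the acceptance ratio becomes a difference and an unnormalized posterior suffices; the standard normal target in the usage line is a stand-in for a real posterior.

```python
import numpy as np


def metropolis(log_p, theta0, step, n_iter, seed=0):
    """Random-walk Metropolis with symmetric Gaussian proposals."""
    rng = np.random.default_rng(seed)
    theta = np.atleast_1d(np.asarray(theta0, dtype=float))   # (1) initialize
    current_lp = log_p(theta)
    chain = np.empty((n_iter, theta.size))
    accepted = 0
    for i in range(n_iter):
        # (2) symmetric Gaussian jump
        proposal = theta + step * rng.standard_normal(theta.size)
        proposal_lp = log_p(proposal)
        # (3)-(4) accept with probability min(1, P(proposed) / P(current))
        if rng.random() < np.exp(min(0.0, proposal_lp - current_lp)):
            theta, current_lp = proposal, proposal_lp
            accepted += 1
        # the current state is recorded whether or not the move was accepted
        chain[i] = theta
    return chain, accepted / n_iter


# Stand-in target: standard normal log density, known only up to a constant
chain, acc_rate = metropolis(lambda th: -0.5 * np.sum(th**2), 0.0, 2.4, 30_000)
print(chain.mean(), chain.var(), acc_rate)
```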

Kinetic Monte Carlo (KMC) for Biological Systems

While traditionally applied in materials science and catalysis, KMC is increasingly finding applications in biological systems. KMC simulates the stochastic time evolution of a system by propagating it from one state to another through discrete events, with the probability of each event determined by its rate constant [10]. The algorithm follows these essential steps:

- Catalog all possible events $w$ and their corresponding rates $k_w$

- Compute the total rate $k_{\text{total}} = \sum_w k_w$

- Select an event $q$ with probability $P(q) = k_q / k_{\text{total}}$

- Advance time by $\Delta t = -\ln(\rho)/k_{\text{total}}$, where $\rho \in (0,1]$ is a uniform random number (excluding zero keeps the logarithm finite)

- Execute the selected event and update the system state [10]

KMC is particularly valuable for simulating biochemical networks where stochasticity plays a crucial role, such as gene expression with low copy numbers or cellular decision-making processes.
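A minimal sketch of these KMC steps for a hypothetical low-copy-number gene-expression model: mRNA is produced at a constant rate $k_{\text{prod}}$ and degraded at rate $k_{\text{deg}}$ per molecule, giving an event catalog with two entries. The rate values are illustrative; for this linear birth-death process the stationary mean copy number is $k_{\text{prod}}/k_{\text{deg}}$, which the time-averaged trajectory should approach.

```python
import math
import random


def kmc_birth_death(k_prod=10.0, k_deg=1.0, n0=0, t_max=1000.0, seed=0):
    """KMC for mRNA birth-death: 0 -> mRNA (k_prod), mRNA -> 0 (k_deg * n).

    Returns the time-weighted mean copy number over [0, t_max].
    """
    rng = random.Random(seed)
    n, t, area = n0, 0.0, 0.0
    while True:
        rates = [k_prod, k_deg * n]                     # catalog events and rates k_w
        k_total = sum(rates)
        dt = -math.log(1.0 - rng.random()) / k_total    # rho = 1 - random() in (0, 1]
        if t + dt > t_max:
            area += n * (t_max - t)                     # last partial interval
            break
        area += n * dt                                  # state n holds during dt
        t += dt
        # select event q with probability k_q / k_total, then execute it
        if rng.random() * k_total < rates[0]:
            n += 1
        else:
            n -= 1
    return area / t_max


print(kmc_birth_death())   # close to k_prod / k_deg = 10
```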

Experimental Protocol: MCMC for Kinetic Parameter Estimation

Problem Formulation and Model Specification

Objective: Estimate parameters of an ODE-based kinetic model from noisy experimental data using MCMC sampling.

Preparatory Steps:

- Define the Biological System: Formulate the reaction network and identify molecular species, reactions, and putative regulatory interactions.

- Specify Mathematical Model: Convert the reaction network to ODEs using mass action kinetics or appropriate rate laws.

- Identify Unknown Parameters: Determine which kinetic parameters, initial conditions, and noise parameters require estimation.

- Collect Experimental Data: Gather time-course measurements of relevant molecular species under appropriate experimental conditions.

- Formulate Likelihood Function: Based on error assumptions (typically Gaussian), define $p(D \mid \theta) = \prod_{i=1}^{n_y} \prod_{k=1}^{n_t} \frac{1}{\sigma_i \sqrt{2\pi}} \exp\left(-\frac{(\tilde{y}_{ik} - y_i(t_k))^2}{2\sigma_i^2}\right)$ [8]

- Specify Prior Distributions: Define prior distributions ( p(\theta) ) based on existing biological knowledge or computational constraints.
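The likelihood and prior can be coded as a single log-posterior function, sketched below for a hypothetical one-species decay model $\dot{x} = -kx$ with a single observable ($n_y = 1$), so $\theta = (k, x_0, \sigma)$. The analytic solution stands in for the numerical ODE solve a realistic model would need, and the uniform box prior and "true" values used to simulate data are assumptions. Working on the log scale avoids numerical underflow of the product over many data points; the posterior is unnormalized, up to an additive constant.

```python
import numpy as np


def model_output(k, x0, t):
    """Hypothetical model dx/dt = -k x, x(0) = x0, solved analytically."""
    return x0 * np.exp(-k * t)


def log_likelihood(theta, t, y_obs):
    """Gaussian log-likelihood: sum over time points of log N(y_obs | y(t), sigma^2)."""
    k, x0, sigma = theta
    if sigma <= 0:
        return -np.inf
    resid = y_obs - model_output(k, x0, t)
    return (-y_obs.size * np.log(sigma * np.sqrt(2.0 * np.pi))
            - np.sum(resid**2) / (2.0 * sigma**2))


def log_posterior(theta, t, y_obs, lower, upper):
    """Unnormalized log posterior: log-likelihood plus a uniform (box) log-prior."""
    theta = np.asarray(theta, dtype=float)
    if np.any(theta < lower) or np.any(theta > upper):
        return -np.inf                      # zero prior density outside the box
    return log_likelihood(theta, t, y_obs)


# Synthetic data from assumed true values k = 0.8, x0 = 10, sigma = 0.3
rng = np.random.default_rng(3)
t_obs = np.linspace(0.0, 5.0, 20)
y_obs = model_output(0.8, 10.0, t_obs) + rng.normal(0.0, 0.3, size=t_obs.size)
lower, upper = np.array([0.0, 0.0, 0.01]), np.array([5.0, 50.0, 5.0])
print(log_posterior([0.8, 10.0, 0.3], t_obs, y_obs, lower, upper))
print(log_posterior([2.0, 10.0, 0.3], t_obs, y_obs, lower, upper))
```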

MCMC Implementation Protocol

Step 1: Algorithm Selection

- For moderately complex problems (≤20 parameters), begin with Adaptive Metropolis

- For multi-modal posteriors or complex correlation structures, use Parallel Tempering

- For problems with computable gradients, consider MALA for improved efficiency [8]

Step 2: Initialization

- Generate initial parameter values by sampling from prior distributions

- For multi-chain methods, initialize chains from dispersed starting points

- Consider using preliminary optimization to identify promising regions of parameter space [8]

Step 3: Adaptation Configuration

- For AM, set adaptation frequency (e.g., update covariance every 100 iterations)

- Configure temperature ladder for Parallel Tempering (geometric progression typically works well)

- Set initial proposal scales (e.g., 0.1-1.0 times parameter standard deviations) [8]
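A minimal Adaptive Metropolis sketch in the spirit of Haario et al.: after an initial period, the Gaussian proposal covariance is re-estimated from the whole chain history every `adapt_every` iterations and scaled by $2.4^2/d$. The schedule, initial scale and the regularizing `eps` term are illustrative choices, and the correlated two-dimensional Gaussian target is a stand-in for a posterior with strong parameter correlations.

```python
import numpy as np


def adaptive_metropolis(log_p, theta0, n_iter, adapt_start=500, adapt_every=100,
                        init_scale=0.5, eps=1e-6, seed=0):
    rng = np.random.default_rng(seed)
    theta = np.asarray(theta0, dtype=float)
    d = theta.size
    s_d = 2.4**2 / d                          # dimension-dependent scaling
    chol = init_scale * np.eye(d)             # Cholesky factor of the proposal covariance
    lp = log_p(theta)
    chain = np.empty((n_iter, d))
    for i in range(n_iter):
        proposal = theta + chol @ rng.standard_normal(d)
        lp_prop = log_p(proposal)
        if rng.random() < np.exp(min(0.0, lp_prop - lp)):
            theta, lp = proposal, lp_prop
        chain[i] = theta
        # periodically re-estimate the proposal covariance from all samples so far
        if i + 1 >= adapt_start and (i + 1) % adapt_every == 0:
            emp_cov = np.atleast_2d(np.cov(chain[: i + 1], rowvar=False))
            chol = np.linalg.cholesky(s_d * (emp_cov + eps * np.eye(d)))
    return chain


# Stand-in target: zero-mean 2-D Gaussian with correlation 0.9
target_cov = np.array([[1.0, 0.9], [0.9, 1.0]])
precision = np.linalg.inv(target_cov)


def log_p(x):
    return -0.5 * x @ precision @ x


chain = adaptive_metropolis(log_p, np.zeros(2), 30_000)
print(np.corrcoef(chain[5_000:], rowvar=False)[0, 1])
```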

Step 4: Sampling Execution

- Run chains for sufficient iterations (typically 10,000-1,000,000 depending on problem complexity)

- Implement appropriate thinning to reduce storage requirements

- Monitor convergence using diagnostic statistics (Gelman-Rubin statistic, effective sample size) [8]

Step 5: Post-processing and Analysis

- Discard burn-in samples (typically 10-50% of chain length)

- Assess convergence through visual inspection and diagnostic tests

- Compute summary statistics (posterior means, medians, credible intervals)

- Validate model against withheld experimental data [8]
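A minimal post-processing sketch for a single parameter, covering burn-in removal, the Gelman-Rubin statistic and posterior summaries. It implements the original (non-split) Gelman-Rubin $\hat{R}$; current tools such as Stan and ArviZ default to a rank-normalized split-$\hat{R}$, so their values can differ slightly. The 25% burn-in fraction, the 95% equal-tailed interval and the synthetic chains are illustrative assumptions.

```python
import numpy as np


def gelman_rubin(chains):
    """Potential scale reduction factor R-hat; chains has shape (m, n)."""
    chains = np.asarray(chains, dtype=float)
    m, n = chains.shape
    W = chains.var(axis=1, ddof=1).mean()           # mean within-chain variance
    B = n * chains.mean(axis=1).var(ddof=1)         # between-chain variance
    var_hat = (n - 1) / n * W + B / n               # pooled posterior variance estimate
    return np.sqrt(var_hat / W)


def summarize(chains, burn_in_frac=0.25, level=0.95):
    chains = np.asarray(chains, dtype=float)
    kept = chains[:, int(burn_in_frac * chains.shape[1]):]   # discard burn-in
    pooled = kept.ravel()
    lo, hi = np.quantile(pooled, [(1 - level) / 2, (1 + level) / 2])
    return {"rhat": gelman_rubin(kept), "mean": pooled.mean(),
            "median": np.median(pooled), "ci": (lo, hi)}


rng = np.random.default_rng(11)
mixed = rng.standard_normal((4, 2_000))              # four well-mixed chains
stuck = mixed + 3.0 * np.arange(4)[:, None]          # chains stuck in different regions
print(summarize(mixed)["rhat"], summarize(stuck)["rhat"])
```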

Benchmarking and Performance Evaluation

Comparative Performance of MCMC Algorithms

Comprehensive benchmarking of MCMC algorithms reveals significant differences in performance across problem types. In a systematic evaluation employing >16,000 MCMC runs across diverse biological models, multi-chain methods consistently outperformed single-chain approaches [8].

Table 2: MCMC Algorithm Performance Comparison

| Algorithm | Mixing Efficiency | Multi-modal Exploration | Convergence Rate | Implementation Complexity |
|---|---|---|---|---|
| Adaptive Metropolis | Moderate | Limited | Moderate | Low |
| DRAM | Good | Limited | Moderate | Low |
| MALA | High (with good gradients) | Moderate | Fast | Moderate |
| Parallel Tempering | High | Excellent | Moderate | High |
| Parallel Hierarchical Sampling | High | Good | Moderate | High |

Key findings from benchmarking studies include:

- Multi-chain algorithms demonstrate superior performance for multi-modal posteriors

- Adaptation mechanisms significantly improve sampling efficiency

- For problems with identifiable parameters, all methods perform adequately

- For complex problems with non-identifiabilities, Parallel Tempering is most robust [8]

Computational Considerations

The computational cost of MCMC methods can be substantial, particularly for models requiring numerical integration of ODEs at each evaluation. Several strategies can mitigate these challenges:

- Parallelization: MCMC chains can be run independently in parallel, with post-sampling combination

- Surrogate Modeling: Replace computationally expensive models with efficient emulators

- Gradient Approximation: Use adjoint methods or automatic differentiation for efficient gradient computation

- Early Termination: Implement convergence diagnostics to minimize unnecessary sampling [8]

Application Notes for Biological Systems

Special Considerations for Biological Models

Biological kinetic models present unique challenges that necessitate methodological adaptations:

Non-identifiabilities: Many biological models contain practically non-identifiable parameters, where different parameter combinations yield nearly identical model outputs. In such cases, MCMC samples will reveal the correlated posterior structure, enabling appropriate uncertainty quantification [8].

Multi-scale Dynamics: Biological systems often exhibit processes operating at vastly different timescales. For KMC applications, this necessitates specialized algorithms to handle timescale disparities and focus computational effort on rare, slow events that often govern system behavior [10].

Sparse, Noisy Data: Experimental limitations in biology often result in limited temporal resolution and significant measurement noise. Bayesian approaches naturally handle this through explicit noise models and prior distributions that incorporate biological constraints.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Research Reagent Solutions for Kinetic Modeling

| Reagent/Resource | Function | Application Notes |
|---|---|---|
| ODE Integration Suites (e.g., SUNDIALS, deSolve) | Numerical solution of differential equations | Use with sensitivity analysis for gradient computation |
| MCMC Software (e.g., DRAM, PyMC, Stan) | Bayesian parameter estimation | Select based on problem dimension and complexity |
| KMC Platforms (e.g., kmos, Lattice Microbes) | Stochastic simulation of reaction networks | Ideal for systems with low copy numbers |
| Sensitivity Analysis Tools | Identify influential parameters | Guide experimental design and model reduction |
| Benchmark Problem Collections | Method validation and comparison | Ensure algorithmic robustness across problem types |

Advanced Methodologies and Future Directions

Handling Complex Posterior Topologies

Advanced MCMC methods address challenges posed by complex posterior distributions:

Parallel Tempering: Also known as replica exchange, this method runs multiple chains at different temperatures, with higher temperatures flattening the posterior to facilitate exploration. Occasional swaps between chains allow information transfer, improving sampling of multi-modal distributions [8].
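A minimal parallel-tempering sketch, assuming a hypothetical one-dimensional bimodal target (an equal mixture of $\mathcal{N}(-4, 0.5^2)$ and $\mathcal{N}(4, 0.5^2)$) that a single random-walk chain would rarely cross. Each replica runs Metropolis on $p(x)^{1/T}$; adjacent replicas $j, j+1$ swap states with probability $\min\{1, \exp[(\beta_j - \beta_{j+1})(\log p(x_{j+1}) - \log p(x_j))]\}$ with $\beta = 1/T$, and only the $T = 1$ replica is kept. The geometric temperature ladder and step sizes are illustrative choices.

```python
import math
import random


def log_target(x):
    """Unnormalized log density of an equal mixture of N(-4, 0.5^2) and N(4, 0.5^2)."""
    a = -0.5 * ((x + 4.0) / 0.5) ** 2
    b = -0.5 * ((x - 4.0) / 0.5) ** 2
    m = max(a, b)
    return m + math.log(math.exp(a - m) + math.exp(b - m))


def parallel_tempering(log_p, temps, n_iter, step=0.5, seed=0):
    rng = random.Random(seed)
    x = [0.0] * len(temps)                   # one replica per temperature
    lp = [log_p(v) for v in x]               # untempered log densities
    cold = []
    for _ in range(n_iter):
        # Metropolis update of each replica on its tempered density p(x)^(1/T)
        for j, T in enumerate(temps):
            prop = x[j] + step * math.sqrt(T) * rng.gauss(0.0, 1.0)
            lp_prop = log_p(prop)
            if rng.random() < math.exp(min(0.0, (lp_prop - lp[j]) / T)):
                x[j], lp[j] = prop, lp_prop
        # propose swapping the states of a random adjacent pair of temperatures
        j = rng.randrange(len(temps) - 1)
        log_ratio = (lp[j + 1] - lp[j]) * (1.0 / temps[j] - 1.0 / temps[j + 1])
        if rng.random() < math.exp(min(0.0, log_ratio)):
            x[j], x[j + 1] = x[j + 1], x[j]
            lp[j], lp[j + 1] = lp[j + 1], lp[j]
        cold.append(x[0])                    # keep samples from T = 1 only
    return cold


temps = [64.0 ** (i / 4) for i in range(5)]  # geometric ladder from 1 to 64
cold = parallel_tempering(log_target, temps, 20_000)
print(sum(v > 0 for v in cold) / len(cold))  # fraction of time in the right-hand mode
```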

Hamiltonian Monte Carlo (HMC): Utilizes Hamiltonian dynamics to propose distant moves with high acceptance probability, particularly effective for high-dimensional correlated posteriors. The No-U-Turn Sampler (NUTS) automates tuning of HMC parameters [9].
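A minimal HMC sketch with a fixed step size and a fixed number of leapfrog steps, assuming the gradient of the log posterior is available in closed form. It does not implement NUTS, which additionally adapts the trajectory length (Stan and PyMC also tune the step size automatically). The strongly correlated two-dimensional Gaussian target and the tuning values are illustrative.

```python
import numpy as np


def hmc(log_p, grad_log_p, theta0, n_iter, step_size=0.1, n_leapfrog=20, seed=0):
    rng = np.random.default_rng(seed)
    theta = np.asarray(theta0, dtype=float)
    samples = np.empty((n_iter, theta.size))
    for i in range(n_iter):
        p0 = rng.standard_normal(theta.size)        # resample momentum
        q, p = theta.copy(), p0.copy()
        # leapfrog integration of H(q, p) = -log p(q) + |p|^2 / 2
        p += 0.5 * step_size * grad_log_p(q)
        for _ in range(n_leapfrog - 1):
            q += step_size * p
            p += step_size * grad_log_p(q)
        q += step_size * p
        p += 0.5 * step_size * grad_log_p(q)
        # Metropolis correction for the integration error
        h_old = -log_p(theta) + 0.5 * p0 @ p0
        h_new = -log_p(q) + 0.5 * p @ p
        if rng.random() < np.exp(min(0.0, h_old - h_new)):
            theta = q
        samples[i] = theta
    return samples


# Stand-in target: zero-mean 2-D Gaussian with correlation 0.95
target_cov = np.array([[1.0, 0.95], [0.95, 1.0]])
precision = np.linalg.inv(target_cov)
samples = hmc(lambda q: -0.5 * q @ precision @ q, lambda q: -precision @ q,
              np.zeros(2), 3_000)
print(np.corrcoef(samples, rowvar=False)[0, 1], samples.var(axis=0))
```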

Slice Sampling: Auxiliary variable method that adapts naturally to local posterior geometry, requiring minimal tuning while efficiently exploring complex distributions.
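A minimal univariate slice sampler using the stepping-out and shrinkage procedures of Neal's slice sampling, assuming an initial interval width `w` and a cap `max_steps` on interval expansion (both illustrative). For a multi-parameter posterior it would be applied to one coordinate at a time; the standard normal target is a stand-in.

```python
import math
import random


def slice_sample(log_p, x0, n_iter, w=1.0, max_steps=50, seed=0):
    rng = random.Random(seed)
    x, samples = x0, []
    for _ in range(n_iter):
        # auxiliary height: log y = log p(x) + log(u), with u = 1 - random() in (0, 1]
        log_y = log_p(x) + math.log(1.0 - rng.random())
        # stepping out: randomly position an interval of width w around x, then expand
        left = x - w * rng.random()
        right = left + w
        j = math.floor(max_steps * rng.random())
        k = (max_steps - 1) - j
        while j > 0 and log_p(left) > log_y:
            left -= w
            j -= 1
        while k > 0 and log_p(right) > log_y:
            right += w
            k -= 1
        # shrinkage: sample uniformly, shrinking the interval toward x on rejection
        while True:
            x_new = left + (right - left) * rng.random()
            if log_p(x_new) >= log_y:
                x = x_new
                break
            if x_new < x:
                left = x_new
            else:
                right = x_new
        samples.append(x)
    return samples


samples = slice_sample(lambda x: -0.5 * x * x, 0.0, 20_000)
m = sum(samples) / len(samples)
print(m, sum((s - m) ** 2 for s in samples) / len(samples))
```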

Emerging Trends and Methodological Innovations

The field of kinetic parameter estimation continues to evolve with several promising directions:

- Integration with Single-cell Data: Developing methods to leverage high-throughput single-cell measurements for parameter estimation

- Machine Learning Hybrids: Combining Monte Carlo methods with neural networks for surrogate modeling and likelihood approximation

- Multi-omics Integration: Developing frameworks to simultaneously estimate parameters from transcriptomic, proteomic, and metabolomic data

- Experimental Design: Using uncertainty estimates to guide optimal experimental design for parameter identifiability

- Cloud-native Implementations: Leveraging distributed computing resources for computationally intensive sampling problems

Monte Carlo methods, particularly MCMC and KMC, provide powerful approaches for addressing the parameter estimation challenge in kinetic models of biological systems. By generating representative samples from posterior distributions, these methods enable comprehensive uncertainty quantification and robust parameter estimation even for complex, non-identifiable models. The protocols outlined in this application note provide a foundation for implementing these methods, with specific guidance on algorithm selection, workflow design, and performance evaluation. As biological models increase in complexity and scope, continued development and refinement of Monte Carlo methods will be essential for maximizing the predictive power of kinetic models in biological research and therapeutic development.

Monte Carlo (MC) methods represent a broad class of computational algorithms that rely on repeated random sampling to obtain numerical results and solve problems that might be deterministic in principle [1]. These methods are particularly valuable for modeling phenomena with significant uncertainty in inputs and for solving complex problems in optimization, numerical integration, and generating draws from probability distributions [1]. In kinetic model parameterization research, particularly in drug development and biomedical sciences, Monte Carlo techniques enable researchers to quantify uncertainty, handle complex models, and make robust inferences from limited data.

The core principle of Monte Carlo methods involves using randomness to solve problems. The basic pattern followed by most Monte Carlo methods includes: defining a domain of possible inputs, generating inputs randomly from a probability distribution over the domain, performing deterministic computations on these inputs, and aggregating the results [1]. This approach allows researchers to approximate solutions to problems that are otherwise analytically intractable.
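As a minimal illustration of this four-step pattern (a textbook example, not specific to kinetic models), the sketch below estimates π from random points in the unit square. `estimate_pi` is a hypothetical helper name.

```python
import math
import random


def estimate_pi(n_samples, seed=42):
    """Estimate pi by following the four-step Monte Carlo pattern."""
    rng = random.Random(seed)
    inside = 0
    for _ in range(n_samples):
        # Steps 1-2: the domain is the unit square; draw a point uniformly from it.
        x, y = rng.random(), rng.random()
        # Step 3: deterministic computation on the input.
        if x * x + y * y <= 1.0:
            inside += 1
    # Step 4: aggregate; the fraction inside the quarter circle approximates pi / 4.
    return 4.0 * inside / n_samples


for n in (1_000, 100_000):
    print(n, abs(estimate_pi(n) - math.pi))
```

The typical error shrinks roughly in proportion to 1/√n, which is why Monte Carlo estimates need many samples to reach high precision.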

This article focuses on three specialized Monte Carlo flavors particularly relevant to kinetic modeling: Markov Chain Monte Carlo (MCMC), Kinetic Monte Carlo (KMC), and Grand Canonical Monte Carlo (GCMC). Each method has distinct characteristics, applications, and implementation considerations that suit it to different aspects of kinetic model parameterization in pharmaceutical and biomedical research.

The table below summarizes the key characteristics, applications, and considerations for MCMC, KMC, and GCMC methods in kinetic model parameterization.

Table 1: Comparative Analysis of Monte Carlo Methods for Kinetic Modeling

| Feature | Markov Chain Monte Carlo (MCMC) | Kinetic Monte Carlo (KMC) | Grand Canonical Monte Carlo (GCMC) |
|---|---|---|---|
| Primary Function | Sampling from complex probability distributions; Bayesian parameter estimation [11] [12] | Simulating time evolution of stochastic systems; modeling dynamic processes [13] | Sampling from the grand canonical ensemble; modeling open systems at constant chemical potential, volume, and temperature |
| Key Principle | Constructs a Markov chain whose equilibrium distribution matches the target distribution [11] | Uses transition rates between states to simulate system kinetics [13] | Samples particle configurations and particle numbers according to their grand canonical Boltzmann weights |
| Typical Applications | Parameter estimation in ODE/PDE models, Bayesian inference, uncertainty quantification [14] [15] | Reaction kinetics, molecular dynamics, surface processes, biochemical networks [13] | Molecular simulation, adsorption studies, phase equilibria, material properties |
| Computational Load | High, especially for complex models; requires many iterations [1] [12] | Depends on number of possible transitions; can be high for complex systems | Moderate to high, depending on system size and interactions |
| Convergence Requirements | Ergodicity, irreducibility, aperiodicity; validated using diagnostic tools [11] | Proper calculation of propensity functions; adequate sampling of rare events | Adequate sampling of phase space; proper handling of particle exchanges |
| Key Advantages | Handles complex, multi-modal distributions; provides full uncertainty quantification [14] [12] | Naturally captures stochasticity of chemical kinetics; provides realistic time evolution | Maintains constant thermodynamic conditions; naturally samples equilibrium fluctuations in particle number |
| Main Challenges | Computational intensity; difficulty in tuning parameters; convergence assessment [16] [12] | Computational cost for large systems; rarity of important events | Handling of particle insertion/deletion; efficiency at high densities |

Methodological Deep Dive and Protocols

Markov Chain Monte Carlo (MCMC)

MCMC methods create samples from a continuous random variable with probability density proportional to a known function [11]. These algorithms construct a Markov chain whose elements' distribution approximates the target probability distribution, with the approximation improving as more steps are included [11]. In kinetic model parameterization, MCMC is particularly valuable for Bayesian parameter estimation where it enables researchers to estimate posterior distributions for model parameters and quantify estimation uncertainty [14] [15].

Table 2: MCMC Algorithms and Their Applications in Kinetic Modeling

| Algorithm | Mechanism | Kinetic Modeling Applications | Implementation Considerations |
|---|---|---|---|
| Metropolis-Hastings | Proposes new parameter values, accepts/rejects based on probability ratio [14] [12] | General parameter estimation for ODE/PDE models [15] | Proposal distribution tuning critical for efficiency [12] |
| Gibbs Sampling | Samples each parameter conditional on current values of all others [12] | Hierarchical models, population PK/PD modeling | Efficient when conditional distributions are tractable |
| Hamiltonian Monte Carlo (HMC) | Uses gradient information to propose distant states with high acceptance [12] | High-dimensional parameter spaces, complex differential equation models | Requires gradient computations; more complex implementation |
| Tempered MCMC (TMCMC) | Samples from powered posteriors to improve mixing and handle multi-modality [14] | Multi-modal posteriors, model choice via Bayes factors [14] | Requires careful tuning of inverse temperatures (perks) [14] |

The following workflow illustrates a typical MCMC procedure for kinetic model parameter estimation:

Protocol 1: MCMC for ODE Kinetic Model Parameter Estimation

This protocol outlines the steps for implementing MCMC for parameter estimation in ordinary differential equation (ODE) models of cellular kinetics, such as those describing CAR-T cell dynamics in cancer therapy [15].

Model Specification

- Define the ODE system representing the kinetic processes

- Specify parameters to be estimated and their prior distributions based on biological knowledge

- Formulate the likelihood function relating model predictions to experimental data

Algorithm Configuration

- Select appropriate MCMC algorithm (Metropolis-Hastings, HMC, etc.)

- Tune proposal distributions for efficient exploration of parameter space

- Set chain parameters (number of iterations, burn-in period, thinning interval)

Implementation

- Code the model using scientific programming languages (Python, R, MATLAB)

- Implement MCMC sampler manually or using libraries (PyMC, Stan)

- Validate implementation with synthetic data where true parameters are known

Convergence Assessment

- Run multiple chains from dispersed starting points

- Monitor convergence using diagnostic statistics (Gelman-Rubin statistic, trace plots)

- Ensure adequate sampling of posterior distribution

Posterior Analysis

- Extract point estimates (posterior means, medians) and uncertainty intervals

- Analyze parameter correlations and identifiability

- Perform posterior predictive checks to validate model adequacy
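The Model Specification step above can be sketched in Python with SciPy as follows. This is an illustrative assumption, not the CAR-T model of [15]: a hypothetical logistic-growth ODE with log-normal priors on the growth rate r and capacity K, a Gaussian likelihood with a known noise level, and a synthetic-data check in the spirit of the Implementation step. The resulting `log_posterior` is the function an MCMC sampler (hand-written, or through PyMC or Stan) would evaluate.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.stats import lognorm, norm


def logistic_rhs(t, n, r, k):
    # Hypothetical kinetic model: logistic expansion dN/dt = r N (1 - N / K).
    return r * n * (1.0 - n / k)


def simulate(theta, t_obs, n0=1.0):
    r, k = theta
    sol = solve_ivp(logistic_rhs, (0.0, t_obs[-1]), [n0], t_eval=t_obs,
                    args=(r, k), rtol=1e-8, atol=1e-10)
    return sol.y[0] if sol.success else np.full(t_obs.shape, np.nan)


def log_prior(theta):
    r, k = theta
    if r <= 0.0 or k <= 0.0:
        return -np.inf
    # Illustrative log-normal priors: median 0.5 for r, median 100 for K.
    return lognorm.logpdf(r, s=1.0, scale=0.5) + lognorm.logpdf(k, s=1.0, scale=100.0)


def log_likelihood(theta, t_obs, y_obs, sigma):
    # Independent Gaussian measurement errors with known standard deviation sigma.
    pred = simulate(theta, t_obs)
    if not np.all(np.isfinite(pred)):
        return -np.inf
    return norm.logpdf(y_obs, loc=pred, scale=sigma).sum()


def log_posterior(theta, t_obs, y_obs, sigma):
    lp = log_prior(theta)
    return lp if not np.isfinite(lp) else lp + log_likelihood(theta, t_obs, y_obs, sigma)


# Validation with synthetic data: simulate from known parameters, add noise,
# and check that the true values score better than a clearly wrong guess.
rng = np.random.default_rng(1)
t_obs = np.linspace(0.0, 20.0, 15)
theta_true = (0.6, 80.0)
y_obs = simulate(theta_true, t_obs) + rng.normal(0.0, 2.0, t_obs.size)
print(log_posterior(theta_true, t_obs, y_obs, 2.0),
      log_posterior((0.3, 120.0), t_obs, y_obs, 2.0))
```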

Kinetic Monte Carlo (KMC)

KMC methods simulate the time evolution of a system as a sequence of discrete events, where the probability that a given event occurs next is proportional to its rate (propensity) [13]. Unlike MCMC, which focuses on sampling from probability distributions, KMC directly simulates the stochastic kinetics of a system, making it particularly valuable for modeling chemical reactions, molecular processes, and other dynamic systems in which the timing and sequence of events are crucial.

Table 3: KMC Variants and Applications in Biochemical Modeling

| KMC Variant | Mechanism | Biochemical Applications | Advantages |
|---|---|---|---|
| Direct KMC | Selects events based on propensity functions; uses random numbers to determine next event and time step [13] | Enzyme kinetics, gene expression networks, signaling pathways | Conceptually simple; exact simulation of master equation |
| First-Reaction Method | Calculates putative time for each reaction; selects reaction with smallest time | Complex reaction networks with varying timescales | Simple implementation; naturally handles varying timescales |
| Next-Reaction Method | Uses more efficient data structures to manage reaction times | Large-scale biochemical systems with many reaction channels | Improved computational efficiency for large systems |
| Accelerated KMC | Uses approximate methods to simulate beyond typical timescales | Slow processes (protein folding, rare binding events) | Enables simulation of experimentally relevant timescales |

The following diagram illustrates the core KMC algorithm for simulating kinetic processes:

Protocol 2: KMC for Biochemical Reaction Networks

This protocol describes the implementation of KMC for simulating biochemical reaction networks, such as those encountered in pharmacokinetics and cellular signaling pathways.

System Definition

- Enumerate all possible chemical species and reactions in the network

- Specify reaction rate constants based on experimental measurements or theoretical estimates

- Define initial concentrations or molecule counts for all species

Algorithm Implementation

- Calculate propensity functions for each reaction (product of rate constant and reactant combinations)

- Implement reaction selection using the direct method or more efficient variants

- Advance simulation time using exponentially distributed time steps based on total propensity

Simulation Execution

- Run multiple independent trajectories to gather statistics

- Monitor key system properties over time (concentrations, fluxes, etc.)

- Ensure simulation reaches steady state or desired endpoint

Analysis and Interpretation

- Calculate statistical properties of system behavior (means, variances, distributions)

- Identify rare events and their characteristic timescales

- Compare simulation results with experimental data and deterministic approximations
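The core of the Algorithm Implementation step, Gillespie's direct method, can be sketched in Python with NumPy as below. The birth-death network, its rate constants, and the helper names are hypothetical; the check relies on the fact that this network's stationary mean copy number is k1/k2.

```python
import numpy as np


def gillespie_direct(x0, stoich, propensities, t_end, rng):
    """Gillespie's direct method; returns event times and the state after each event."""
    t = 0.0
    x = np.array(x0, dtype=int)
    times, states = [t], [x.copy()]
    while True:
        a = propensities(x)                # propensity of every reaction channel
        a0 = a.sum()                       # total propensity
        if a0 <= 0.0:                      # nothing can fire any more
            break
        t += rng.exponential(1.0 / a0)     # waiting time ~ Exponential(rate a0)
        if t > t_end:
            break
        j = rng.choice(a.size, p=a / a0)   # channel j fires with probability a_j / a0
        x += stoich[j]
        times.append(t)
        states.append(x.copy())
    return np.array(times), np.array(states)


# Usage: hypothetical birth-death network 0 -> X (rate k1), X -> 0 (rate k2 * X).
k1, k2, t_end = 10.0, 0.1, 1000.0
stoich = np.array([[1], [-1]])


def props(x):
    return np.array([k1, k2 * x[0]])


times, states = gillespie_direct([100], stoich, props, t_end, np.random.default_rng(7))
# Time-weighted average: each state persists until the next event (or t_end).
dwell = np.diff(np.append(times, t_end))
mean_x = (states[:, 0] * dwell).sum() / t_end
print(mean_x)  # should fluctuate around the stationary mean k1 / k2 = 100
```

Note that the time average must weight each state by how long it persists; a plain average over events would be biased towards states with high total propensity.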

Grand Canonical Monte Carlo (GCMC)

GCMC methods sample from the grand canonical ensemble (μVT ensemble), in which the chemical potential (μ), volume (V), and temperature (T) are held constant while the number of particles (N) fluctuates through insertion and deletion moves. This approach is particularly useful for studying open systems at thermal equilibrium and for calculating thermodynamic properties. In kinetic modeling, GCMC can be employed to study molecular adsorption, binding equilibria, and other processes where particle number fluctuations are important.

Table 4: GCMC Move Types and Applications in Molecular Systems

| Move Type | Purpose | Acceptance Criteria | Molecular Applications |
|---|---|---|---|
| Particle Displacement | Sample configuration space at fixed particle number | Metropolis criterion based on energy change | Conformational sampling, structural relaxation |
| Particle Insertion | Increase particle number in system | Chemical potential-based criterion | Adsorption studies, solvation processes |
| Particle Deletion | Decrease particle number in system | Chemical potential-based criterion | Desorption processes, binding/unbinding |
| Volume Change | Sample volume fluctuations in the isothermal-isobaric (NPT) ensemble; not part of standard GCMC, where volume is fixed | Pressure-based acceptance criterion | Dense systems, phase transitions |

Protocol 3: GCMC for Molecular Binding and Adsorption

This protocol outlines the application of GCMC to study molecular binding and adsorption processes relevant to drug-receptor interactions and material design.

System Preparation

- Define simulation box with appropriate dimensions and periodic boundaries

- Specify interaction potentials between all particle types

- Set temperature and chemical potential based on experimental conditions

Monte Carlo Cycle Design

- Determine relative probabilities of different move types (displacement, insertion, deletion)

- Implement efficient particle insertion methods, especially for dense systems

- Ensure detailed balance through proper acceptance probabilities

Equilibration and Production

- Equilibrate system from initial configuration

- Run production simulation with adequate sampling of particle number fluctuations

- Monitor convergence of thermodynamic averages

Analysis of Results

- Calculate adsorption isotherms and binding affinities

- Determine spatial distribution of adsorbed molecules

- Compute thermodynamic properties (free energies, enthalpies, entropies)
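The insertion and deletion acceptance rules in the Monte Carlo Cycle Design step can be illustrated with a deliberately simple system: a non-interacting (ideal) gas, for which the grand canonical particle number is Poisson-distributed with mean zV, where z = exp(βμ)/Λ³ is the activity. The sketch below assumes the standard textbook acceptance rules and omits the energy terms that a real binding or adsorption study would include; all names are illustrative.

```python
import random


def gcmc_ideal_gas(activity, volume, n_steps, max_disp=0.5, seed=3):
    """Grand canonical MC for non-interacting particles in a periodic cubic box.

    activity = exp(beta * mu) / Lambda**3. For interacting particles, each
    acceptance ratio below would also include the factor exp(-beta * dU).
    """
    rng = random.Random(seed)
    box = volume ** (1.0 / 3.0)
    particles = []                         # particle positions as (x, y, z) tuples
    n_trace = []
    for _ in range(n_steps):
        move = rng.random()
        n = len(particles)
        if move < 1 / 3:
            # Displacement: dU = 0 for an ideal gas, so the Metropolis test always accepts.
            if n > 0:
                i = rng.randrange(n)
                particles[i] = tuple((c + rng.uniform(-max_disp, max_disp)) % box
                                     for c in particles[i])
        elif move < 2 / 3:
            # Insertion at a uniformly random position: acc = min(1, activity * V / (N + 1)).
            if rng.random() < min(1.0, activity * volume / (n + 1)):
                particles.append(tuple(rng.uniform(0.0, box) for _ in range(3)))
        elif n > 0:
            # Deletion of a uniformly chosen particle: acc = min(1, N / (activity * V)).
            if rng.random() < min(1.0, n / (activity * volume)):
                particles.pop(rng.randrange(n))
        n_trace.append(len(particles))
    return n_trace


trace = gcmc_ideal_gas(activity=0.5, volume=40.0, n_steps=60_000)
production = trace[5_000:]                 # discard equilibration
mean_n = sum(production) / len(production)
print(mean_n)  # should approach the ideal-gas value activity * V = 20
```

Insertion and deletion are attempted with equal probability, and a deletion attempt in an empty box is simply rejected; keeping those attempt probabilities symmetric is what preserves detailed balance.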

Table 5: Essential Resources for Monte Carlo Simulations in Kinetic Modeling

| Resource Category | Specific Tools/Solutions | Function/Purpose | Application Context |
|---|---|---|---|
| Programming Frameworks | Python with NumPy/SciPy, R, MATLAB, Julia | Core implementation and numerical computation | General-purpose implementation across all MC methods |
| Specialized MC Libraries | PyMC (Python), Stan (multi-language), GROMACS (C++, molecular dynamics) | Pre-built MC algorithms and utilities | Accelerated development; MCMC for Bayesian inference [15] |
| ODE Solver Libraries | SUNDIALS (C), LSODA (Fortran), SciPy ODE solvers (Python) | Numerical solution of differential equations | Kinetic model simulation in MCMC and KMC |
| Parallel Computing | MPI, OpenMP, CUDA (GPU computing) | Distributed computation for multiple chains/ensembles | Handling computationally intensive models [1] |
| Visualization Tools | Matplotlib (Python), ggplot2 (R), ParaView (3D) | Results visualization and analysis | Diagnostic checking and result interpretation |
| Convergence Diagnostics | Gelman-Rubin statistic (R-hat), Geweke test | Assessing MCMC convergence and sampling quality | Validating inference results [11] |
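For the convergence diagnostics listed above, a minimal sketch of the classic (non-split) Gelman-Rubin statistic in Python follows; `gelman_rubin` is a hypothetical helper. Current tools such as Stan and ArviZ favor a rank-normalized split R-hat, so treat this as an illustration of the idea rather than a replacement for them.

```python
import numpy as np


def gelman_rubin(chains):
    """Potential scale reduction factor R-hat for an (m, n) array of m chains."""
    chains = np.asarray(chains, dtype=float)
    m, n = chains.shape
    chain_means = chains.mean(axis=1)
    w = chains.var(axis=1, ddof=1).mean()       # mean within-chain variance
    b = n * chain_means.var(ddof=1)             # between-chain variance
    var_hat = (n - 1) / n * w + b / n           # pooled estimate of the posterior variance
    return np.sqrt(var_hat / w)


rng = np.random.default_rng(0)
mixed = rng.normal(0.0, 1.0, size=(4, 1000))              # four chains that agree
stuck = mixed + np.array([[0.0], [0.0], [3.0], [3.0]])    # two chains stuck elsewhere
print(gelman_rubin(mixed), gelman_rubin(stuck))           # close to 1 versus clearly above 1
```

Values close to 1 (commonly below about 1.01 to 1.1, depending on the guideline used) are consistent with convergence; the second case shows how chains trapped in different modes inflate R-hat.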

Integrated Workflow for Kinetic Model Parameterization

The following comprehensive workflow integrates multiple Monte Carlo methods for complete kinetic model parameterization, from preliminary analysis to final validation:

Integrated Protocol: Multi-Method Approach to Kinetic Model Parameterization

This protocol combines MCMC, KMC, and GCMC methods for comprehensive kinetic model development and validation in pharmaceutical research.

Model Development and Preliminary Analysis

- Use GCMC binding and adsorption simulations (Protocol 3) to estimate binding affinities that help identify feasible ranges for binding-related parameters

- Perform sensitivity analysis to identify most influential parameters

- Establish preliminary parameter ranges and correlations

Rigorous Parameter Estimation

- Implement MCMC for Bayesian parameter estimation using experimental data

- Use tempered MCMC variants (TMCMC) to handle multi-modal distributions and compute Bayes factors for model comparison [14]

- Employ adaptive MCMC algorithms to automatically tune proposal distributions

- Validate convergence using multiple chains and diagnostic statistics

Model Validation and Refinement

- Use KMC to simulate stochastic dynamics and validate model predictions

- Compare KMC simulations with experimental time-course data

- Identify potential limitations in deterministic model formulations

- Refine model structure based on validation results

Predictive Application

- Use calibrated models for predictive simulations under new conditions

- Quantify prediction uncertainties using posterior parameter distributions

- Perform virtual screening of experimental conditions or compound properties

- Optimize design of future experiments

This integrated approach leverages the complementary strengths of different Monte Carlo methods to develop more robust and reliable kinetic models for drug development and biomedical research.

Addressing Ill-Conditioning and Multi-Modal Landscapes in Biological Optimization

Parameter estimation for kinetic models in systems biology is fundamentally challenged by ill-conditioned parameter landscapes and multi-modal objective functions. These problems arise when calibrating non-linear ordinary differential equation (ODE) models to experimental data, where the number of unknown parameters far exceeds the information provided by available measurements [17] [18]. Ill-conditioning manifests as large parameter variances, overfitting, and poor generalization performance, often resulting from highly correlated parameters and limited experimental data [18]. Simultaneously, the complex nonlinear dynamics of biological systems create optimization landscapes characterized by numerous local optima, requiring global optimization methods that can effectively explore these multi-modal spaces [17] [19].

The transition from explanatory modeling of fitted data to predictive modeling of unseen data necessitates robust parameter estimation protocols that can accurately recover underlying reaction parameters despite measurement noise and biological variability [19]. This application note provides detailed methodologies and protocols for addressing these challenges through advanced Monte Carlo sampling and evolutionary optimization strategies, specifically framed within kinetic model parameterization research.

Understanding Ill-Conditioned Estimation Problems

Mathematical Foundation and Causes

In mathematical modeling, an estimation problem is ill-conditioned when the system's sensitivity matrix has a high condition number, so that small changes in the experimental data can produce large changes in the parameter estimates [18]. For a linear regression model Y = Xβ + ε with independent noise of variance σ², the covariance matrix of the least-squares estimate is Cov(β̂) = σ²(XᵀX)⁻¹, and strong correlations between the columns of X (and hence between parameters) inflate this variance [18]. In biological systems, this frequently arises from:

- Limited experimental data with insufficient excitation of system dynamics

- High parameter correlations from feedback/feedforward loops in network structures

- Poor parameter estimability where different parameter combinations yield similar outputs

- Non-linearities in reaction kinetics that create complex parameter interactions
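The variance inflation behind ill-conditioning can be seen numerically. The sketch below (an illustrative assumption using simulated regressors, not data from [18]) evaluates σ²(XᵀX)⁻¹ for two regressors with correlation near 0 and near 0.99; for two regressors, the variance of each estimate grows roughly by the factor 1/(1 − ρ²).

```python
import numpy as np


def ols_covariance(X, sigma=1.0):
    """Cov(beta_hat) = sigma^2 (X^T X)^{-1} for ordinary least squares."""
    return sigma ** 2 * np.linalg.inv(X.T @ X)


rng = np.random.default_rng(0)
n = 200
x1 = rng.normal(size=n)
z = rng.normal(size=n)
results = {}
for rho in (0.0, 0.99):
    # Second regressor built to have (population) correlation rho with the first.
    x2 = rho * x1 + np.sqrt(1.0 - rho ** 2) * z
    X = np.column_stack([x1, x2])
    results[rho] = (ols_covariance(X)[0, 0], np.linalg.cond(X))
    print(f"rho={rho}: var(beta_1)={results[rho][0]:.4f}, cond(X)={results[rho][1]:.1f}")
```

With ρ = 0.99 the variance of each coefficient grows by roughly a factor of 50 while the condition number of X rises accordingly, even though nothing about the noise level has changed.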

Practical Implications for Kinetic Modeling

For kinetic models of metabolism, ill-conditioning directly impacts the accuracy of parameter estimation and predictive capability. As noted in studies of metabolic networks, "ill-conditioning results in large parameter variance implying 'overfitting' and poor generalization performance" [18]. In severe cases, this leads to non-identifiability where unique parameter values cannot be determined from available data, fundamentally limiting model utility for predicting cellular states under novel conditions [18] [20].

Monte Carlo Sampling Methods for Ill-Conditioned Problems

Sampling Algorithms for Metabolic Networks

Monte Carlo sampling provides powerful approaches for characterizing solution spaces in ill-conditioned biological optimization problems. For metabolic networks, several sampling algorithms have been developed to address the high-dimensional polytopes formed by stoichiometric constraints:

Figure 1: Monte Carlo sampling algorithms for metabolic networks with highlighted recommended methods.

The Coordinate Hit-and-Run with Rounding (CHRR) algorithm performs best among methods for deterministic formulations, offering guaranteed distributional convergence, which is particularly valuable for genome-scale models [21]. For stochastic formulations that incorporate measurement noise and relax steady-state constraints, Gibbs sampling is the only practical method appropriate for genome-scale sampling, though it ranks as less efficient than samplers used for deterministic formulations [21].

Protocol: CHRR for Metabolic Flux Sampling

Application: Sampling flux distributions from metabolic networks with stoichiometric constraints

Materials and Reagents:

- Stoichiometric matrix (S) of the metabolic network

- Flux capacity constraints (v_lb, v_ub)

- Computing environment with CHRR implementation (e.g., COBRA Toolbox)

Procedure:

- Formulate the solution space polytope: Sv = 0 with v_lb ≤ v ≤ v_ub

- Initialize CHRR with a feasible starting point v⁽⁰⁾ within the polytope

- Apply rounding (an affine transformation that makes the polytope approximately isotropic) to counter the anisotropy of the solution space

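- Execute the hit-and-run algorithm for sampling:

  - Choose a random direction (in CHRR, a randomly selected coordinate axis of the rounded space; in standard hit-and-run, a direction drawn uniformly from the unit sphere)

  - Compute the intersection points of the line through the current point with the polytope boundaries

  - Draw a new point uniformly on the resulting line segment

  - Update the current position to this new feasible point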

- Collect samples after appropriate burn-in period

- Validate convergence using diagnostic metrics

Technical Notes: CHRR's rounding procedure is essential for handling anisotropic polytopes common in metabolic networks. For genome-scale models, convergence typically requires 10,000-100,000 sampling steps depending on network complexity [21].
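The hit-and-run steps in the procedure above can be sketched in Python for a toy network. This is a simplified illustration under stated assumptions: it samples in an orthonormal basis of the null space of S (so S v = 0 holds by construction) and omits CHRR's rounding step, so it only suits small, well-conditioned polytopes; for genome-scale models the COBRA Toolbox implementation should be used. The three-reaction network and the function name are hypothetical.

```python
import numpy as np
from scipy.linalg import null_space


def coordinate_hit_and_run(S, lb, ub, v0, n_samples, thin=10, seed=0):
    """Coordinate hit-and-run on {v : S v = 0, lb <= v <= ub}, without CHRR's rounding step."""
    rng = np.random.default_rng(seed)
    N = null_space(S)                       # orthonormal basis of the null space of S
    v = np.array(v0, dtype=float)           # must be a feasible starting flux vector
    samples = []
    for it in range(n_samples * thin):
        d = N[:, rng.integers(N.shape[1])]  # random coordinate axis in null-space coordinates
        # Step sizes t that keep lb <= v + t * d <= ub along this line.
        with np.errstate(divide="ignore", invalid="ignore"):
            t_lb = (lb - v) / d
            t_ub = (ub - v) / d
        t_min = np.where(d > 0, t_lb, np.where(d < 0, t_ub, -np.inf)).max()
        t_max = np.where(d > 0, t_ub, np.where(d < 0, t_lb, np.inf)).min()
        v = v + rng.uniform(t_min, t_max) * d   # uniform point on the feasible chord
        if (it + 1) % thin == 0:
            samples.append(v.copy())
    return np.array(samples)


# Usage: toy network with uptake v1 -> A and two drains A -> (v2, v3); S v = 0 gives v1 = v2 + v3.
S = np.array([[1.0, -1.0, -1.0]])
lb, ub = np.zeros(3), np.full(3, 10.0)
samples = coordinate_hit_and_run(S, lb, ub, v0=[2.0, 1.0, 1.0], n_samples=2000)
print(samples.mean(axis=0))  # uniform on the flux triangle: roughly [6.67, 3.33, 3.33]
```

Because N has orthonormal columns, sampling uniformly in null-space coordinates corresponds to sampling uniformly over the feasible flux polytope itself.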

Evolutionary Strategies for Multi-Modal Landscapes

Algorithm Comparison and Performance

Evolutionary algorithms (EAs) provide robust optimization capabilities for multi-modal parameter landscapes in biological systems. Comparative studies evaluating EAs for kinetic parameter estimation reveal significant performance differences:

Table 1: Performance comparison of evolutionary algorithms for kinetic parameter estimation under measurement noise conditions [19]

| Algorithm | Best For | Noise Resilience | Computational Cost | Convergence Reliability |
|---|---|---|---|---|
| CMAES | GMA & Linear-Logarithmic Kinetics (no noise) | Low | Low (fraction of other EAs) | High without noise |
| SRES | GMA Kinetics with noise | High | High | High across formulations |
| ISRES | GMA Kinetics with noise | High | High | High with marked noise |
| G3PCX | Michaelis-Menten Kinetics | Medium | Low (fold savings) | High for specific formulations |
| DE | Not Recommended | N/A | N/A | Poor performance |

Figure 2: Decision workflow for selecting evolutionary algorithms based on noise conditions and kinetic formulations.

Protocol: SRES for Noisy Kinetic Data

Application: Parameter estimation for kinetic models with significant measurement noise

Materials and Reagents:

- Kinetic model structure (ODE system)

- Experimental time-series data

- Parameter bounds based on biological constraints

- Computing environment with SRES implementation

Procedure:

- Formulate the objective function (typically the sum of squared errors): SSE(m(c)) = Σᵢ Σⱼ (Yᵢ[j] − Ŷᵢ[j])², where Yᵢ[j] is the measured value and Ŷᵢ[j] the model prediction for observable i at sampling point j [17]

- Initialize SRES with population size of 50-100 individuals

- Set parameter bounds based on physiological constraints

- For each generation:

  - Evaluate fitness of all individuals

  - Apply stochastic ranking to handle constraints

  - Select parents based on ranked performance

  - Generate offspring through mutation and recombination

  - Maintain diversity through carefully tuned strategy parameters

- Continue for predefined generations or until convergence criteria met

- Validate parameter estimates on withheld test data

Technical Notes: SRES incorporates constraint handling through stochastic ranking, making it particularly effective for problems with parameter boundaries and noisy data. For medium-scale models (50-100 parameters), typical runtimes range from several hours to days depending on ODE complexity [19].
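The stochastic ranking step mentioned above (Runarsson and Yao's procedure) can be sketched as a bubble-sort-like pass in which adjacent individuals are compared by objective value when both are feasible or with probability `pf`, and otherwise by constraint violation. This covers the ranking step only, not a complete SRES implementation; `pf = 0.45` is a commonly quoted default and should be treated as a tunable assumption.

```python
import random


def stochastic_rank(objective, penalty, pf=0.45, seed=0):
    """Order individuals (best first) by stochastic ranking for minimization.

    objective: e.g. the SSE of each individual; penalty: total constraint
    violation of each individual (0 if feasible).
    """
    rng = random.Random(seed)
    n = len(objective)
    order = list(range(n))
    for _ in range(n):                               # at most n bubble-sort sweeps
        swapped = False
        for j in range(n - 1):
            a, b = order[j], order[j + 1]
            if (penalty[a] == 0 and penalty[b] == 0) or rng.random() < pf:
                worse = objective[a] > objective[b]  # compare by objective
            else:
                worse = penalty[a] > penalty[b]      # compare by constraint violation
            if worse:
                order[j], order[j + 1] = b, a
                swapped = True
        if not swapped:
            break
    return order


sse = [0.0, 5.0, 1.0, 3.0]
violation = [2.0, 0.0, 0.0, 0.0]
print(stochastic_rank(sse, violation, pf=0.0))  # feasible individuals first: [2, 3, 1, 0]
```

With `pf = 0` the ranking strictly prefers feasibility; a positive `pf` occasionally lets a low-SSE but slightly infeasible individual rank highly, which helps the search approach optima lying on constraint boundaries.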

Advanced Integration: Machine Learning with Evolution Strategies

RENAISSANCE Framework Protocol

The RENAISSANCE (REconstruction of dyNAmIc models through Stratified Sampling using Artificial Neural networks and Concepts of Evolution strategies) framework represents a cutting-edge integration of machine learning and evolution strategies for kinetic model parameterization [20].

Application: Large-scale kinetic model parameterization with integrated omics data

Materials and Reagents:

- Metabolic network structure with stoichiometric matrix

- Multi-omics data (fluxomics, metabolomics, transcriptomics, proteomics)

- Thermodynamic constraints

- Feed-forward neural network architectures

- Natural evolution strategies (NES) optimization implementation

Procedure:

Input Preparation:

- Compute steady-state profiles of metabolite concentrations and metabolic fluxes using thermodynamics-based flux balance analysis

- Integrate available omics data and thermodynamic constraints

Generator Network Setup:

- Initialize population of generator neural networks with random weights

- Configure network architecture commensurate with kinetic model complexity

Evolutionary Optimization Loop:

- Step I: Each generator produces batch of kinetic parameters from Gaussian noise input

- Step II: Parameterize kinetic model with generated parameters

- Step III: Evaluate model dynamics using Jacobian eigenvalues and dominant time constants

- Step IV: Assign rewards based on biological relevance (e.g., λ_max < -2.5 for E. coli doubling time)

- Step V: Update generator weights using natural evolution strategies based on normalized rewards

- Step VI: Create new generation through mutation of parent generators

Validation:

- Test phenotypic stability through perturbation analysis (±50% metabolite concentrations)

- Verify return to steady state within biologically relevant timescales

- Validate against experimental bioreactor data

Technical Notes: RENAISSANCE dramatically reduces computation time compared to traditional kinetic modeling methods. For the E. coli model with 113 ODEs and 502 parameters, the framework achieved up to 100% incidence of valid models capturing experimentally observed doubling times [20].

Figure 3: RENAISSANCE workflow for machine learning-enhanced kinetic model parameterization [20].
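The reward criterion in Step IV can be illustrated with a small sketch: compute the Jacobian of the ODE right-hand side at steady state, take the largest real part of its eigenvalues, and compare it with a threshold. This is not the RENAISSANCE code; the finite-difference Jacobian, the two-step linear pathway, and the helper names are assumptions, and the threshold of −2.5 is taken from the text without units.

```python
import numpy as np


def numerical_jacobian(f, x, h=1e-6):
    """Central-difference Jacobian of the ODE right-hand side f at state x."""
    x = np.asarray(x, dtype=float)
    n = x.size
    J = np.empty((n, n))
    for j in range(n):
        step = np.zeros(n)
        step[j] = h * max(1.0, abs(x[j]))
        J[:, j] = (f(x + step) - f(x - step)) / (2.0 * step[j])
    return J


def passes_time_scale_check(f, x_ss, lambda_threshold=-2.5):
    """Keep a parameter set only if its slowest mode decays faster than the threshold."""
    lam_max = np.linalg.eigvals(numerical_jacobian(f, x_ss)).real.max()
    return bool(lam_max < lambda_threshold), lam_max


def make_pathway(v_in, k1, k2):
    # Hypothetical two-step linear pathway: -> x1 -> x2 ->
    return lambda x: np.array([v_in - k1 * x[0], k1 * x[0] - k2 * x[1]])


for k2 in (4.0, 1.0):
    f = make_pathway(v_in=6.0, k1=3.0, k2=k2)
    x_ss = np.array([6.0 / 3.0, 6.0 / k2])        # analytic steady state
    print(k2, passes_time_scale_check(f, x_ss))
```

Here the eigenvalues are −k1 and −k2, so the set with k2 = 4 passes (slowest mode −3) and the set with k2 = 1 fails (slowest mode −1).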

Research Reagent Solutions

Table 2: Essential computational tools for biological optimization problems

| Tool/Algorithm | Application Context | Key Features | Implementation Sources |
|---|---|---|---|
| CHRR | Sampling metabolic flux distributions | Guaranteed convergence, rounding for anisotropy | COBRA Toolbox [21] |
| Gibbs Sampling | Stochastic formulation with measurement noise | Handles experimental noise, relaxed steady state | limSolve R package [21] |
| SRES | Parameter estimation with noisy data | Stochastic ranking for constraints, high noise resilience | HEBO, specialized implementations [19] |
| CMAES | Efficient parameter estimation without noise | Self-adaptation, low computational cost | Various Python/Matlab implementations [19] |
| RENAISSANCE | Large-scale kinetic model parameterization | Integration of NES with neural networks | Specialized framework [20] |
| Parameter Subset Selection | Ill-conditioned problems with correlated parameters | Reduces decision variables, enhances estimability | Custom implementations [18] |

Integrated Protocol for Kinetic Model Parameterization

Comprehensive Workflow: Addressing ill-conditioning and multi-modality in biological optimization

Phase 1: Problem Assessment and Preprocessing

- Perform identifiability analysis using singular value decomposition of sensitivity matrix

- Apply parameter subset selection methods to ill-conditioned problems to reduce the number of estimated parameters and improve estimability

- Establish parameter bounds based on biological constraints and prior knowledge
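The SVD-based identifiability check from Phase 1 can be sketched in Python as follows. The model is a hypothetical example in which two rate constants enter only through their sum, so the sensitivity matrix has a numerically zero singular value and the corresponding right singular vector names the non-identifiable parameter combination. Practical analyses often scale sensitivities by parameter values or work with log-parameters; that choice is left out here for brevity.

```python
import numpy as np


def model(theta, t):
    # Hypothetical observable in which k1 and k2 enter only through their sum.
    a, k1, k2 = theta
    return a * np.exp(-(k1 + k2) * t)


def sensitivity_matrix(theta, t, h=1e-6):
    """Central-difference sensitivities dy(t_i)/dtheta_j, one column per parameter."""
    theta = np.asarray(theta, dtype=float)
    cols = []
    for j in range(theta.size):
        up, down = theta.copy(), theta.copy()
        up[j] += h
        down[j] -= h
        cols.append((model(up, t) - model(down, t)) / (2.0 * h))
    return np.column_stack(cols)


t = np.linspace(0.0, 5.0, 25)
sens = sensitivity_matrix([2.0, 0.3, 0.5], t)
_, sv, vt = np.linalg.svd(sens, full_matrices=False)
print("singular values:", sv)
print("least identifiable direction:", vt[-1])  # close to (0, 1, -1)/sqrt(2), up to sign
```

A singular value many orders of magnitude below the largest signals that the matching direction (here, increasing k1 while decreasing k2) leaves the outputs unchanged and cannot be estimated from these data.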

Phase 2: Algorithm Selection and Configuration

- For well-conditioned subproblems without significant noise, apply CMAES for efficient optimization

- For problems with substantial measurement noise, implement SRES or ISRES

- For high-dimensional correlated parameters, employ Monte Carlo sampling approaches:

  - Use CHRR for deterministic formulations with exact constraints

  - Apply Gibbs sampling for stochastic formulations with measurement noise

Phase 3: Multi-stage Optimization

- Initial broad exploration using evolutionary algorithms with large population sizes

- Refinement phase with hybrid approaches combining global and local methods

- Validation through Bayesian approaches to quantify parameter uncertainty

Phase 4: Machine Learning Enhancement (for large-scale models)

- Implement RENAISSANCE framework for models with hundreds of parameters

- Utilize generator neural networks with natural evolution strategies

- Validate dynamic properties against experimental timescale observations

This comprehensive protocol provides researchers with a structured approach to overcome the dual challenges of ill-conditioning and multi-modal landscapes in biological optimization, enabling more robust and predictive kinetic model parameterization for metabolic engineering and drug development applications.

Implementing Monte Carlo Methods: From Theory to Practice in Biomedicine

Markov Chain Monte Carlo (MCMC) constitutes a class of algorithms for drawing samples from probability distributions that are too complex for direct sampling or analytical computation [22] [11]. In kinetic model parameterization, where ordinary differential equation (ODE) systems describe dynamical biological processes, MCMC enables Bayesian inference of uncertain kinetic parameters [23]. Unlike optimization methods that find single best-fit parameter values, MCMC characterizes the full posterior distribution of parameters given experimental data, quantifying uncertainty in model predictions [23] [24]. This is particularly valuable in systems biology and drug development, where parameters are often non-identifiable or poorly constrained by available data [23].

The core principle involves constructing a Markov chain that randomly explores the parameter space such that its stationary distribution matches the target posterior distribution [11]. After a "burn-in" period, samples from this chain approximate the true posterior, allowing estimation of parameter means, credible intervals, and other properties [22] [25].

Theoretical Foundations

Bayesian Inference Framework

MCMC for kinetic parameter estimation operates within a Bayesian framework, where prior knowledge is updated with experimental data. According to Bayes' theorem:

\[ p(\theta \mid D) \propto p(D \mid \theta)\, p(\theta) \]

where \(\theta\) represents the kinetic parameters, \(D\) denotes the experimental data, \(p(\theta \mid D)\) is the posterior distribution, \(p(D \mid \theta)\) is the likelihood, and \(p(\theta)\) is the prior [25]. For ODE models, the likelihood typically measures the mismatch between model predictions and experimental observations, often assuming Gaussian noise [23].

Markov Chain Theory

A Markov chain is a sequence of random variables where each state depends only on the previous state [22] [26]. For MCMC, this property ensures computational feasibility. Theoretical guarantees of convergence require the chain to be irreducible (able to reach any state), aperiodic, and positive recurrent [11]. Under these conditions, the chain eventually forgets its initial state and produces samples from the target distribution.
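This convergence property can be illustrated with a small two-state chain (a hypothetical transition matrix) that is irreducible and aperiodic: propagating the state distribution from either starting state gives the same limit, which equals the stationary distribution computed as the left eigenvector of the transition matrix for eigenvalue 1.

```python
import numpy as np

# Hypothetical two-state chain; P[i, j] is the probability of moving from state i to j.
P = np.array([[0.9, 0.1],
              [0.3, 0.7]])

finals = []
for start in ([1.0, 0.0], [0.0, 1.0]):
    dist = np.array(start)
    for _ in range(50):
        dist = dist @ P                     # propagate the state distribution one step
    finals.append(dist)
    print(start, "->", dist)

# Stationary distribution: left eigenvector of P for eigenvalue 1, normalized to sum to 1.
vals, vecs = np.linalg.eig(P.T)
pi = np.real(vecs[:, np.argmin(np.abs(vals - 1.0))])
pi = pi / pi.sum()
print(pi)                                   # approximately [0.75, 0.25]
```

MCMC algorithms are designed so that the posterior plays the role of this stationary distribution, which is why sufficiently long chains forget their starting values.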

Monte Carlo Integration

Once samples \(\{\theta_1, \theta_2, \ldots, \theta_N\}\) are obtained from the posterior \(p(\theta \mid D)\), Monte Carlo integration approximates expectations:

\[ \mathbb{E}[f(\theta)] = \int f(\theta)\, p(\theta \mid D)\, d\theta \approx \frac{1}{N} \sum_{i=1}^{N} f(\theta_i) \]

This enables calculation of posterior means, variances, and credible intervals without analytical integration [26] [25].
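A minimal sketch of these estimators in Python follows. To keep exact answers available for comparison, it uses a hypothetical conjugate Poisson-Gamma model whose posterior can be sampled directly. MCMC output would be used in exactly the same way, although its autocorrelation lowers the effective number of samples.

```python
import numpy as np
from scipy.stats import gamma

# Hypothetical conjugate example: counts y ~ Poisson(theta) with a Gamma(2, rate=1)
# prior give a Gamma(2 + sum(y), rate=1 + n) posterior, so exact answers are known.
y = np.array([4, 6, 3, 5, 7])
shape, rate = 2 + y.sum(), 1 + y.size
rng = np.random.default_rng(0)
theta = rng.gamma(shape, 1.0 / rate, size=100_000)   # NumPy parameterizes by scale = 1/rate

post_mean = theta.mean()                    # f(theta) = theta
p_above_5 = (theta > 5.0).mean()            # f(theta) = indicator(theta > 5)
ci_95 = np.percentile(theta, [2.5, 97.5])   # equal-tailed 95% credible interval

print(post_mean, shape / rate)                              # MC vs exact posterior mean
print(p_above_5, gamma.sf(5.0, shape, scale=1.0 / rate))    # MC vs exact tail probability
print(ci_95)
```

Probabilities are just expectations of indicator functions, so the same sample average yields means, tail probabilities, and (through quantiles) credible intervals.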

Key MCMC Algorithms and Protocols

Metropolis-Hastings Algorithm

The Metropolis-Hastings algorithm forms the foundation for many MCMC methods [22] [11]. It uses a proposal distribution to suggest new parameter values and an acceptance criterion to maintain correct sampling probabilities.

Protocol: Metropolis-Hastings for Kinetic Parameter Estimation

Initialization: Choose initial parameter values \(\theta_0\) and a proposal distribution \(q(\theta' \mid \theta)\) (often multivariate normal). Set the iteration counter \(i = 0\).

Proposal Generation: Sample a candidate \(\theta'\) from \(q(\theta' \mid \theta_i)\).

Acceptance Probability Calculation: Compute

\[ \alpha = \min\left(1, \frac{p(D \mid \theta')\, p(\theta')\, q(\theta_i \mid \theta')}{p(D \mid \theta_i)\, p(\theta_i)\, q(\theta' \mid \theta_i)}\right) \]

Note: for ODE models, evaluating \(p(D \mid \theta)\) requires a numerical solution of the ODE system.

Accept/Reject: Draw \(u \sim \text{Uniform}(0,1)\). If \(u \leq \alpha\), set \(\theta_{i+1} = \theta'\); otherwise set \(\theta_{i+1} = \theta_i\).

Iteration: Increment \(i\) and return to Proposal Generation until enough samples have been collected.

Burn-in and Thinning: Discard initial samples (typically 10-50%) and optionally thin chain to reduce autocorrelation [27].

Figure 1: Metropolis-Hastings Algorithm Workflow for ODE Models

Gibbs Sampling

Gibbs sampling is effective when the full conditional distribution of each parameter can be sampled directly, for example under conjugate priors [22]. It updates each parameter in turn by sampling from its full conditional distribution.

Protocol: Gibbs Sampling for Hierarchical Kinetic Models

Initialization: Choose initial values for all parameters (\theta = (\theta_1, \theta_2, \ldots, \theta_d)).

Cyclic Update: For each iteration (i), sequentially update:

$$\begin{aligned}
\theta_1^{(i+1)} &\sim p\left(\theta_1 \mid \theta_2^{(i)}, \theta_3^{(i)}, \ldots, \theta_d^{(i)}, D\right) \\
\theta_2^{(i+1)} &\sim p\left(\theta_2 \mid \theta_1^{(i+1)}, \theta_3^{(i)}, \ldots, \theta_d^{(i)}, D\right) \\
&\;\;\vdots \\
\theta_d^{(i+1)} &\sim p\left(\theta_d \mid \theta_1^{(i+1)}, \theta_2^{(i+1)}, \ldots, \theta_{d-1}^{(i+1)}, D\right)
\end{aligned}$$

Iteration: Repeat until convergence and sufficient samples obtained.
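As an illustration of tractable full conditionals, the sketch below assumes hypothetical replicate measurements of a log rate constant, y_j ~ N(μ, 1/τ), with a normal prior on μ and a gamma prior on the noise precision τ. These conjugate choices make both full conditionals standard distributions, so each sweep draws μ and then τ exactly, every draw is accepted, and no proposal tuning is needed. The function name, prior hyperparameters, and data are assumptions made for the example.

```python
import math
import random


def gibbs_normal_gamma(y, n_iter, rng, m0=0.0, t0=1e-2, a0=1e-3, b0=1e-3):
    """Gibbs sampler for y_j ~ N(mu, 1/tau) with conjugate priors
    mu ~ N(m0, 1/t0) and tau ~ Gamma(shape=a0, rate=b0)."""
    n, sum_y = len(y), sum(y)
    mu, tau = sum_y / n, 1.0                       # initial values
    samples = []
    for _ in range(n_iter):
        # mu | tau, y ~ Normal: precision-weighted combination of prior and data
        prec = t0 + n * tau
        mu = rng.gauss((t0 * m0 + tau * sum_y) / prec, 1.0 / math.sqrt(prec))
        # tau | mu, y ~ Gamma(a0 + n/2, rate = b0 + SS/2); gammavariate takes (shape, scale)
        ss = sum((yj - mu) ** 2 for yj in y)
        tau = rng.gammavariate(a0 + n / 2.0, 1.0 / (b0 + 0.5 * ss))
        samples.append((mu, tau))
    return samples


rng = random.Random(7)
# Hypothetical replicate measurements of log k (true mean -1.2, noise sd 0.1)
y = [rng.gauss(-1.2, 0.1) for _ in range(50)]
samples = gibbs_normal_gamma(y, 5_000, rng)[500:]           # drop burn-in
mu_mean = sum(m for m, _ in samples) / len(samples)
sd_mean = sum(1.0 / math.sqrt(t) for _, t in samples) / len(samples)
print(f"posterior mean of mu ~ {mu_mean:.3f}, of noise sd ~ {sd_mean:.3f}")
```

In a hierarchical kinetic model the same pattern applies block by block; parameters without conjugate conditionals are typically updated with a Metropolis step inside the sweep.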

Hamiltonian Monte Carlo

Hamiltonian Monte Carlo (HMC) uses gradient information to propose distant states with high acceptance probability [26]. It is particularly effective for high-dimensional correlated distributions common in kinetic models.

Protocol: Hamiltonian Monte Carlo for ODE Parameters

Momentum Variable: Introduce an auxiliary momentum variable (r \sim \mathcal{N}(0, M)), where (M) is a positive-definite mass matrix (often the identity).

Hamiltonian Dynamics: Define the Hamiltonian

$$H(\theta, r) = -\log p(\theta \mid D) + \frac{1}{2} r^{T} M^{-1} r$$

and simulate its dynamics with the leapfrog integrator using step size (\epsilon) for (L) steps, producing a proposed state ((\theta^*, r^*)).

Metropolis Acceptance: Accept the proposed state ((\theta^*, r^*)) at the end of the leapfrog trajectory with probability

$$\alpha = \min\left(1, \exp\left(H(\theta, r) - H(\theta^*, r^*)\right)\right)$$

Momentum Refreshment: Draw a fresh momentum (r \sim \mathcal{N}(0, M)) at the start of the next iteration.
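A minimal HMC sketch with an identity mass matrix (M = I), assuming a correlated two-dimensional Gaussian as a stand-in for -log p(θ|D). For an ODE model, the gradient would come from sensitivity equations or automatic differentiation instead of the closed form used here. The step size ε = 0.2, the L = 10 leapfrog steps, and the function names are illustrative assumptions, not recommendations.

```python
import math
import random

RHO = 0.8                        # correlation of the stand-in posterior
DET = 1.0 - RHO * RHO


def neg_log_post(th):
    """-log p(theta|D) up to a constant: zero-mean, unit-variance 2-D Gaussian with correlation RHO."""
    x, y = th
    return 0.5 * (x * x - 2.0 * RHO * x * y + y * y) / DET


def grad_neg_log_post(th):
    x, y = th
    return [(x - RHO * y) / DET, (y - RHO * x) / DET]


def hmc_step(theta, eps, n_leapfrog, rng):
    """One HMC transition with identity mass matrix M = I; returns (new_theta, accepted)."""
    r = [rng.gauss(0.0, 1.0) for _ in theta]                 # momentum refreshment, r ~ N(0, I)
    h_old = neg_log_post(theta) + 0.5 * sum(ri * ri for ri in r)
    th, p = list(theta), list(r)
    g = grad_neg_log_post(th)
    p = [pi - 0.5 * eps * gi for pi, gi in zip(p, g)]        # leapfrog: initial half step in momentum
    for step in range(n_leapfrog):
        th = [ti + eps * pi for ti, pi in zip(th, p)]        # full step in position
        g = grad_neg_log_post(th)
        if step < n_leapfrog - 1:
            p = [pi - eps * gi for pi, gi in zip(p, g)]      # full step in momentum
    p = [pi - 0.5 * eps * gi for pi, gi in zip(p, g)]        # final half step in momentum
    h_new = neg_log_post(th) + 0.5 * sum(pi * pi for pi in p)
    # Metropolis acceptance with probability min(1, exp(H_old - H_new))
    if math.log(1.0 - rng.random()) < h_old - h_new:
        return th, True
    return list(theta), False


rng = random.Random(3)
theta, samples, n_acc = [2.0, -2.0], [], 0
for _ in range(5_000):
    theta, accepted = hmc_step(theta, eps=0.2, n_leapfrog=10, rng=rng)
    samples.append(theta)
    n_acc += accepted
kept = samples[500:]
mean_x = sum(s[0] for s in kept) / len(kept)
e_xy = sum(s[0] * s[1] for s in kept) / len(kept)     # equals RHO for this target
print(f"acceptance {n_acc / 5_000:.2f}, E[x] ~ {mean_x:.2f}, E[xy] ~ {e_xy:.2f}")
```

Because the leapfrog integrator nearly conserves H, the acceptance rate stays high even though each trajectory moves a distance comparable to the posterior width.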

Advanced MCMC Variants

Adaptive MCMC: Gradually tunes the proposal distribution based on the chain's history to improve efficiency [23] [27]. For example, the adaptive Metropolis algorithm updates the proposal covariance matrix using the empirical covariance of previous samples.

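Parallel Tempering MCMC: Runs multiple chains at different "temperatures" (flattened versions of the posterior) and periodically proposes swapping states between chains at adjacent temperatures, so that hot chains, which cross between modes easily, pass those states down to the cold chain that samples the posterior itself [23].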

Differential Evolution MCMC: Runs multiple chains and generates proposals from scaled differences between the states of other chains, which automatically aligns proposals with correlations in the posterior [24].
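To illustrate why tempering helps with multimodality, the sketch below assumes a bimodal one-dimensional target (an equal mixture of N(-4, 0.5²) and N(4, 0.5²)) and a hypothetical temperature ladder of 1, 2, 4, 8, 16. Each chain runs random-walk Metropolis on p(x)^(1/T), and one adjacent pair per iteration is offered a swap with acceptance probability min(1, exp[(β_i - β_j)(log p(x_j) - log p(x_i))]), where β = 1/T. Only the T = 1 chain is kept. The ladder, step size, and function names are illustrative assumptions.

```python
import math
import random


def log_target(x):
    """Bimodal stand-in posterior: equal mixture of N(-4, 0.5^2) and N(4, 0.5^2), unnormalized."""
    a = -0.5 * ((x - 4.0) / 0.5) ** 2
    b = -0.5 * ((x + 4.0) / 0.5) ** 2
    m = max(a, b)
    return m + math.log(math.exp(a - m) + math.exp(b - m))    # stable log-sum-exp


def parallel_tempering(log_p, temps, n_iter, rng, x0=4.0, step=0.5):
    """Random-walk Metropolis at each temperature plus adjacent swaps; returns the T = 1 chain."""
    xs = [x0] * len(temps)                     # every chain starts in the right-hand mode
    lps = [log_p(x0)] * len(temps)
    cold = []
    for _ in range(n_iter):
        for i, t in enumerate(temps):
            # Metropolis step on the tempered density p(x)^(1/T), with a wider step when hotter
            prop = xs[i] + rng.gauss(0.0, step * math.sqrt(t))
            lp_prop = log_p(prop)
            if math.log(1.0 - rng.random()) < (lp_prop - lps[i]) / t:
                xs[i], lps[i] = prop, lp_prop
        if len(temps) > 1:
            # Offer a swap between one random pair of adjacent temperatures
            i = rng.randrange(len(temps) - 1)
            log_ratio = (1.0 / temps[i] - 1.0 / temps[i + 1]) * (lps[i + 1] - lps[i])
            if math.log(1.0 - rng.random()) < log_ratio:
                xs[i], xs[i + 1] = xs[i + 1], xs[i]
                lps[i], lps[i + 1] = lps[i + 1], lps[i]
        cold.append(xs[0])
    return cold


rng = random.Random(11)
cold = parallel_tempering(log_target, [1.0, 2.0, 4.0, 8.0, 16.0], 50_000, rng)
frac_right = sum(x > 0 for x in cold) / len(cold)
single = parallel_tempering(log_target, [1.0], 5_000, rng)
frac_right_single = sum(x > 0 for x in single) / len(single)
print(f"tempered: {frac_right:.2f} of samples in the right mode; single chain: {frac_right_single:.2f}")
```

With the single-temperature ladder `[1.0]`, the same code reduces to ordinary random-walk Metropolis, which stays in the starting mode; the tempered run should split its cold-chain samples roughly evenly between the two modes.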

Comparative Analysis of MCMC Algorithms

Table 1: Comparison of MCMC Algorithms for Kinetic Parameter Estimation

| Algorithm | Key Features | Computational Requirements | Convergence Properties | Best-Suited Problems |
|---|---|---|---|---|
| Metropolis-Hastings | Simple implementation, flexible proposal distributions | Moderate (1 ODE solve/iteration) | Slow for correlated parameters | Low-dimensional models, initial exploration |
| Gibbs Sampling | No tuning parameters; every draw is accepted | High if conditionals are expensive | Fast when parameters are weakly correlated | Hierarchical models, conjugate priors |
| Hamiltonian Monte Carlo | Uses gradients, distant proposals | High (gradient calculations + multiple ODE solves/iteration) | Fast mixing in high dimensions | Differentiable models, >10 parameters |
| Adaptive MCMC | Self-tuning proposals, improved efficiency | Moderate (additional covariance calculations) | Valid if adaptation diminishes over time or stops after warm-up | Production runs, unknown parameter correlations |
| Parallel Tempering | Multiple temperatures, handles multimodality | High (multiple chains) | Excellent for multimodal posteriors | Complex landscapes, multiple minima |
| Differential Evolution MCMC | Population-based, handles correlations | Moderate (multiple chains) | Robust to parameter correlations | Irregular posteriors, strong parameter dependencies |

Application Notes for Kinetic Models in Drug Development

CAR-T Cell Therapy Modeling

MCMC enables parameter estimation for ODE models of CAR-T cell kinetics, which is critical for predicting therapy response and optimizing dosing regimens [24]. A recent study demonstrated MCMC estimation of 15+ parameters in a 5-compartment CAR-T cell model using clinical data.

Protocol: CAR-T Cell Model Parameterization

Model Specification: Define ODE system for CAR-T cell phenotypes (distributed, effector, memory, exhausted) and tumor cells [24].

Prior Selection: Use log-normal priors for kinetic rate parameters informed by literature values [23].

Likelihood Definition: Assume multivariate normal distribution for experimental measurements with informed noise priors.

MCMC Implementation: Apply DEMetropolisZ algorithm with 4 parallel chains, 50,000 iterations per chain.

Convergence Assessment: Monitor (\hat{R}) statistics, effective sample size, and trace plots.

Posterior Prediction: Simulate model with posterior samples to predict treatment outcomes with uncertainty intervals.
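DEMetropolisZ belongs to the DE-MC-Z family of samplers, in which proposals are built from differences between past states stored in an archive Z rather than between concurrent chains. The following is a simplified single-chain sketch of that idea, not PyMC's implementation. It assumes a strongly correlated two-dimensional Gaussian in place of the CAR-T posterior, the common jump scale γ = 2.38/√(2d), a hypothetical initial archive of 100 draws from N(0, 2²), and a small jitter term; all names are illustrative.

```python
import math
import random


def log_target(th):
    """Stand-in posterior: 2-D Gaussian with strongly correlated parameters (rho = 0.95)."""
    x, y = th
    rho = 0.95
    return -0.5 * (x * x - 2 * rho * x * y + y * y) / (1 - rho * rho)


def de_mc_z(log_post, n_iter, rng, d=2, n_init=100, thin=10, noise=1e-4):
    """Single-chain sketch of DE-MC-Z: proposals use differences of past states from an archive Z."""
    # Seed the archive with dispersed draws (hypothetical N(0, 2^2) initial spread)
    archive = [[rng.gauss(0.0, 2.0) for _ in range(d)] for _ in range(n_init)]
    gamma = 2.38 / math.sqrt(2 * d)                 # standard DE-MC jump scale
    x = list(archive[-1])
    lp = log_post(x)
    samples, n_accepted = [], 0
    for it in range(n_iter):
        z1, z2 = rng.sample(archive, 2)             # two distinct archived states
        # Symmetric proposal: (z1, z2) and (z2, z1) are equally likely
        prop = [xi + gamma * (a - b) + rng.gauss(0.0, noise) for xi, a, b in zip(x, z1, z2)]
        lp_prop = log_post(prop)
        if math.log(1.0 - rng.random()) < lp_prop - lp:
            x, lp = prop, lp_prop
            n_accepted += 1
        samples.append(list(x))
        if it % thin == 0:                          # grow the archive with thinned chain history
            archive.append(list(x))
    return samples, n_accepted / n_iter


rng = random.Random(5)
samples, acc_rate = de_mc_z(log_target, 20_000, rng)
kept = samples[5_000:]
xs = [s[0] for s in kept]
ys = [s[1] for s in kept]
mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
sxy = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
corr = sxy / math.sqrt(sum((a - mx) ** 2 for a in xs) * sum((b - my) ** 2 for b in ys))
print(f"acceptance {acc_rate:.2f}, posterior correlation ~ {corr:.3f}")
```

Because differences of archived states inherit the posterior's shape, the proposals stretch along the correlated direction without any explicit covariance estimate. In the protocol above, four such chains would be run and compared with R̂.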

Figure 2: CAR-T Cell Kinetics Model Structure

Signaling Pathway Parameter Estimation

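MCMC is also used to estimate parameters in nonlinear signaling pathways (e.g., MAPK cascades), where parameters are often structurally non-identifiable [23]. Rather than returning a misleading point estimate, the posterior then reveals non-identifiability as broad marginals or strong correlations between parameters.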

Protocol: MAPK Pathway Parameter Estimation with Parallel Adaptive MCMC

Experimental Design: Collect time-course phosphorylation data under multiple perturbations.

Prior Construction: Use informative log-normal priors centered on known kinase-substrate interaction rates [23].

Likelihood Model: Employ Student's t-distribution for robust inference against outliers.

Algorithm Configuration: Implement parallel adaptive MCMC with 8 chains, adapting proposal covariance every 100 iterations.

Identifiability Analysis: Compute posterior correlations and profile likelihoods to diagnose non-identifiability.
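The configuration and identifiability steps can be sketched on a deliberately non-identifiable toy model, a hypothetical stand-in for a MAPK cascade: observations y = k1·k2·t, so the data constrain only the product k1·k2. The sketch assumes a Student's t likelihood (ν = 4, scale 0.5) with one injected outlier, N(0, 1) priors on log k1 and log k2, and a single chain rather than 8. The proposal covariance is re-estimated every 100 iterations with the 2.38²/d scaling of adaptive Metropolis, using the more recent half of the history so the initial transient is forgotten, and is frozen after warm-up so the sampling phase is an ordinary Metropolis chain. All names and settings are illustrative.

```python
import math
import random

T = [float(t) for t in range(1, 11)]     # hypothetical sampling times


def log_post(th, data, nu=4.0, scale=0.5):
    """Toy model y = k1 * k2 * t (only k1*k2 is identifiable), th = (log k1, log k2)."""
    prod = math.exp(th[0] + th[1])
    # Student's t likelihood (up to a constant): heavy tails down-weight outliers
    log_lik = sum(-0.5 * (nu + 1) * math.log1p(((y - prod * t) / scale) ** 2 / nu)
                  for t, y in zip(T, data))
    return log_lik - 0.5 * (th[0] ** 2 + th[1] ** 2)      # N(0, 1) priors on log k1, log k2


def cov2(pts):
    """Sample covariance (sxx, sxy, syy) of a list of 2-D points."""
    n = len(pts)
    mx = sum(p[0] for p in pts) / n
    my = sum(p[1] for p in pts) / n
    sxx = sum((p[0] - mx) ** 2 for p in pts) / (n - 1)
    syy = sum((p[1] - my) ** 2 for p in pts) / (n - 1)
    sxy = sum((p[0] - mx) * (p[1] - my) for p in pts) / (n - 1)
    return sxx, sxy, syy


def adaptive_metropolis(data, n_iter, rng, adapt_every=100, eps=1e-6):
    sd = 2.38 ** 2 / 2                       # adaptive Metropolis scaling for d = 2
    th, lp = [0.0, 0.0], log_post([0.0, 0.0], data)
    l11, l21, l22 = 0.05, 0.0, 0.05          # Cholesky factor of the initial proposal covariance
    chain = []
    for it in range(1, n_iter + 1):
        z1, z2 = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        prop = [th[0] + l11 * z1, th[1] + l21 * z1 + l22 * z2]
        lp_prop = log_post(prop, data)
        if math.log(1.0 - rng.random()) < lp_prop - lp:
            th, lp = prop, lp_prop
        chain.append(list(th))
        # Re-estimate the proposal covariance every `adapt_every` steps, during warm-up only
        if it % adapt_every == 0 and it <= n_iter // 2:
            sxx, sxy, syy = cov2(chain[len(chain) // 2:])
            a, b, c = sd * (sxx + eps), sd * sxy, sd * (syy + eps)
            l11 = math.sqrt(a)                # 2x2 Cholesky factorization
            l21 = b / l11
            l22 = math.sqrt(max(c - l21 * l21, 1e-12))
    return chain[n_iter // 2:]               # keep post-warm-up samples only


rng = random.Random(3)
data = [0.6 * t + rng.gauss(0.0, 0.5) for t in T]
data[4] += 3.0                                # one hypothetical outlier at t = 5
post = adaptive_metropolis(data, 20_000, rng)
sxx, sxy, syy = cov2(post)
corr = sxy / math.sqrt(sxx * syy)
mean_log_prod = sum(p[0] + p[1] for p in post) / len(post)
print(f"posterior corr(log k1, log k2) ~ {corr:.3f}; k1*k2 ~ {math.exp(mean_log_prod):.3f}")
```

A posterior correlation near -1 between log k1 and log k2 is the signature of the ridge created by the non-identifiable pair, while their sum (the log of the product) remains tightly constrained.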

Fragment-Based Drug Discovery

Grand Canonical nonequilibrium candidate Monte Carlo (GCNCMC) enhances sampling of fragment binding in drug discovery [28].

Protocol: GCNCMC for Fragment Binding Assessment

System Preparation: Solvate protein structure in explicit solvent, equilibrate with standard MD.

GCNCMC Setup: Define chemical potential for fragments, insertion/deletion regions.

Simulation Protocol: Alternate between MD phases (1-10 ps) and GCNCMC moves (fragment insertion/deletion attempts).

Analysis: Compute binding probabilities, preferred binding sites, and free energies from insertion/deletion statistics.
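The insertion/deletion statistics can be illustrated with a much simpler relative of GCNCMC. This sketch assumes plain, instantaneous grand canonical Monte Carlo with Adams' B parameter (B = μ/kT + ln(V/Λ³)) and non-interacting molecules, so every energy change is zero. In GCNCMC each insertion or deletion is instead performed gradually through a nonequilibrium switching protocol, and in a real system ΔU would come from the force field. For this ideal case the average number of molecules should equal exp(B), and the fraction of frames with at least one molecule plays the role of a binding probability. The function name is illustrative.

```python
import math
import random


def gcmc_ideal(b_value, n_steps, rng):
    """Instantaneous grand canonical insertion/deletion moves (Adams B formulation)
    for non-interacting molecules in a sampling region; returns N after each move."""
    n, history = 0, []
    for _ in range(n_steps):
        d_u = 0.0     # hypothetical: ideal molecules, so the energy change (in kT) is zero
        if rng.random() < 0.5:
            # Insertion: accept with min(1, exp(B) / (N + 1) * exp(-dU/kT))
            if rng.random() < math.exp(b_value - d_u) / (n + 1):
                n += 1
        elif n > 0:
            # Deletion: accept with min(1, N * exp(-B) * exp(-dU/kT)); impossible when N = 0
            if rng.random() < n * math.exp(-b_value - d_u):
                n -= 1
        history.append(n)
    return history


rng = random.Random(2024)
b_value = math.log(3.0)                                  # for an ideal system, <N> = exp(B) = 3
counts = gcmc_ideal(b_value, 200_000, rng)[10_000:]
mean_n = sum(counts) / len(counts)
occupancy = sum(c > 0 for c in counts) / len(counts)    # fraction of frames with >= 1 molecule
print(f"<N> ~ {mean_n:.2f} (expected 3.00), occupancy ~ {occupancy:.3f}")
```

For this ideal system the stationary distribution of N is Poisson with mean exp(B), so the expected occupancy is 1 - exp(-3) ≈ 0.95; with interacting fragments, the same bookkeeping over insertion/deletion outcomes yields binding probabilities at each site.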

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Function in MCMC Workflow | Application Context |
|---|---|---|---|
| PyMC [24] | Python library | Flexible implementation of MCMC samplers | General Bayesian modeling, CAR-T cell kinetics |
| TensorFlow Probability [29] | Python library | Gradient-based MCMC (HMC, NUTS) | Large-scale differentiable models |
| BioModels Database [23] | Data repository | Source for prior parameter distributions | Systems biology model parameterization |
| BRENDA Database [23] | Enzyme kinetics database | Informative priors for Michaelis-Menten constants | Metabolic pathway modeling |
| COPASI [23] | Software platform | ODE simulation, parameter estimation | Biochemical network modeling |
| Jupyter Notebooks [24] | Computational environment | Interactive analysis, visualization | Protocol development, result exploration |
| coda R package [27] | R package | MCMC diagnostics, convergence assessment | Posterior analysis, chain diagnostics |
| Grand Canonical NCMC [28] | Specialized algorithm | Enhanced sampling of molecular binding | Fragment-based drug discovery |

Implementation Considerations

Convergence Diagnostics

Effective MCMC requires careful convergence assessment [27]:

- Gelman-Rubin statistic ((\hat{R})): Compare between-chain and within-chain variance (target <1.1).

- Effective Sample Size (ESS): Estimate the number of independent draws that carry the same information as the autocorrelated chain (target >1000 for key parameters).

- Trace plots: Visual inspection for stationarity and mixing.

- Autocorrelation: Assess sampling efficiency (lower is better).
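The first two diagnostics can be computed from stored chains in a few lines. The sketch below implements the classic (non-split) Gelman-Rubin R̂ and a simple ESS estimator that truncates the autocorrelation sum at the first negative lag, and it tests them on synthetic AR(1) chains standing in for MCMC output; the helper names are illustrative. Dedicated tools such as the coda package's `gelman.diag` and `effectiveSize`, or ArviZ in Python, should be preferred in practice; ArviZ, for example, defaults to a rank-normalized split R̂.

```python
import random


def mean(x):
    return sum(x) / len(x)


def sample_var(x):
    m = mean(x)
    return sum((v - m) ** 2 for v in x) / (len(x) - 1)


def gelman_rubin(chains):
    """Classic (non-split) potential scale reduction factor R-hat for equal-length chains."""
    n = len(chains[0])
    w = mean([sample_var(c) for c in chains])            # within-chain variance W
    b = n * sample_var([mean(c) for c in chains])        # between-chain variance B
    var_plus = (n - 1) / n * w + b / n                   # pooled posterior variance estimate
    return (var_plus / w) ** 0.5


def effective_sample_size(x):
    """ESS = N / (1 + 2 * sum_k rho_k), truncating the sum at the first negative autocorrelation."""
    n, mu = len(x), mean(x)
    c0 = sum((v - mu) ** 2 for v in x) / n
    tau = 1.0
    for lag in range(1, n // 2):
        rho = sum((x[i] - mu) * (x[i + lag] - mu) for i in range(n - lag)) / (n * c0)
        if rho < 0:
            break
        tau += 2.0 * rho
    return n / tau


def ar1_chain(phi, n, rng, offset=0.0):
    """Autocorrelated test chain x_t = phi * x_{t-1} + e_t (a stand-in for MCMC output)."""
    x, out = 0.0, []
    for _ in range(n):
        x = phi * x + rng.gauss(0.0, 1.0)
        out.append(x + offset)
    return out


rng = random.Random(0)
good = [ar1_chain(0.5, 5_000, rng) for _ in range(4)]
bad = good[:3] + [ar1_chain(0.5, 5_000, rng, offset=3.0)]   # one chain stuck elsewhere
print(f"R-hat (mixed) = {gelman_rubin(good):.3f}, R-hat (one chain offset) = {gelman_rubin(bad):.3f}")
print(f"ESS of one chain ~ {effective_sample_size(good[0]):.0f} of {len(good[0])} draws")
```

For an AR(1) chain with coefficient φ, the ESS is approximately N(1 - φ)/(1 + φ), about 1,667 of 5,000 draws for φ = 0.5, which the estimator should roughly reproduce; the offset chain pushes R̂ well above the 1.1 threshold.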

Performance Optimization

- Proposal tuning: Adjust proposal scales to achieve 20-40% acceptance rates for random walk Metropolis, 60-80% for HMC [27] [25].

- Parallelization: Run multiple chains simultaneously to improve throughput and diagnostics.

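- Gradient utilization: When gradients of the log posterior are available (analytically, by automatic differentiation, or from ODE sensitivity equations), use gradient-based samplers such as HMC, which typically explore high-dimensional posteriors far more efficiently than random-walk methods.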

- Adaptive methods: Implement adaptation periods to automatically tune proposal distributions.
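Proposal tuning and adaptation periods can be combined in one loop. The sketch below is an illustrative assumption rather than a specific published scheme: a one-dimensional random-walk Metropolis sampler on a standard normal target adjusts log(step) during warm-up with a decaying Robbins-Monro gain so that the mean acceptance probability approaches a target of 0.3 (inside the 20-40% range above), then freezes the step for sampling. The function name and settings are hypothetical.

```python
import math
import random


def tuned_rwm(log_post, x0, n_warmup, n_sample, rng, target=0.3, step=1.0):
    """1-D random-walk Metropolis with step-size adaptation during warm-up only."""
    x, lp = x0, log_post(x0)
    log_step = math.log(step)
    samples, n_accepted = [], 0
    for it in range(n_warmup + n_sample):
        prop = x + rng.gauss(0.0, math.exp(log_step))
        lp_prop = log_post(prop)
        accept_prob = math.exp(min(0.0, lp_prop - lp))
        accepted = rng.random() < accept_prob
        if accepted:
            x, lp = prop, lp_prop
        if it < n_warmup:
            # Robbins-Monro update with decaying gain: widen the step when accepting
            # more often than `target`, narrow it when accepting less often
            log_step += (accept_prob - target) / (it + 1) ** 0.6
        else:
            samples.append(x)
            n_accepted += accepted
    return samples, math.exp(log_step), n_accepted / n_sample


rng = random.Random(8)
samples, step, acc = tuned_rwm(lambda x: -0.5 * x * x, x0=0.0, n_warmup=5_000, n_sample=20_000, rng=rng)
print(f"tuned step ~ {step:.2f}, post-warm-up acceptance ~ {acc:.2f}")
```

For this target, the acceptance rate of a Gaussian random walk with step s is (2/π)·arctan(2/s), so a 0.3 target corresponds to s ≈ 3.9. Freezing the step after warm-up keeps the sampling phase a valid Markov chain.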

Practical Recommendations

For kinetic model parameterization:

- Start with simpler algorithms (Metropolis-Hastings) for initial exploration

- Progress to gradient-based methods (HMC) for production runs on refined models

- Use parallel tempering for suspected multimodal posteriors

- Always run multiple chains from dispersed starting points

- Invest in informative priors from biochemical databases to constrain parameters [23]

Kinetic Monte Carlo (KMC) for Modeling Reaction Kinetics and Battery Interfaces

Kinetic Monte Carlo (KMC) has emerged as a powerful computational technique for simulating the time evolution of complex physical and chemical processes. Within the broader context of Monte Carlo sampling for kinetic model parameterization research, KMC offers a distinct bottom-up approach that bridges molecular-scale phenomena with macroscopic models, effectively balancing computational cost and atomic-scale accuracy [10]. Unlike traditional macroscopic models used in systems like Battery Management Systems (BMS), which often suffer from parameter uncertainties spanning 4-5 orders of magnitude, KMC provides more precise modeling by explicitly simulating stochastic events based on their intrinsic rates [10]. This application note details the principles, implementation protocols, and specific applications of KMC for investigating reaction kinetics and battery interfaces, providing researchers with practical frameworks for integrating these methods into their parameterization workflows.

Theoretical Foundations of Kinetic Monte Carlo

Fundamental Principles

KMC-based methods perform simulations through repeated random sampling of stochastic events. The evolution of physical/chemical processes is described by the Markovian Master equation [10]:

$$\frac{dP_\alpha}{dt} = \sum_{\beta} \left( k_{\beta\alpha} P_\beta - k_{\alpha\beta} P_\alpha \right)$$

where (P_\alpha) and (P_\beta) are the probabilities of the system being in states α and β at time t, and (k_{\alpha\beta}) and (k_{\beta\alpha}) are the rate constants for transitions from α to β and from β to α, respectively. KMC assumes that transitions occur as a Poisson process, so the waiting time for a transition from α to β has the exponential probability density (p_{\alpha\beta}(t) = k_{\alpha\beta}e^{-k_{\alpha\beta}t}) [10].
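For a system with only two states A and B, the master equation reduces to a single linear ODE, which gives a closed-form reference that a correct KMC simulation should reproduce:

$$\begin{aligned}
\frac{dP_A}{dt} &= k_{BA} P_B - k_{AB} P_A = k_{BA} - (k_{AB} + k_{BA}) P_A, \qquad P_A + P_B = 1, \\
P_A(t) &= P_A^{\infty} + \left(P_A(0) - P_A^{\infty}\right) e^{-(k_{AB} + k_{BA}) t}, \qquad P_A^{\infty} = \frac{k_{BA}}{k_{AB} + k_{BA}}.
\end{aligned}$$

The relaxation rate (k_{AB} + k_{BA}) and the stationary occupancy (P_A^{\infty}) are the two quantities against which a KMC run of this system can be checked.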

The variable step size method is one of the most widely used KMC algorithms. Starting from a given system configuration, the algorithm enumerates all possible processes (w) and their rate constants (k_w). The total rate constant is (k_{tot} = \sum_w k_w). A process (q) is selected by drawing a uniform random number ρ₁ ∈ [0,1) and finding the integer (q) that satisfies:

$$\sum_{w=1}^{q-1} k_w \leq \rho_1 k_{tot} < \sum_{w=1}^{q} k_w$$

After executing the selected event, simulation time advances according to:

$$\Delta t = -\frac{\ln \rho_2}{k_{tot}}$$

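where ρ₂ is another uniform random number, drawn from (0,1] so that the logarithm is finite [10]. Because each step jumps directly to the next event instead of resolving atomic vibrations, KMC can reach simulated times far longer than atomistic methods such as molecular dynamics while retaining molecular resolution.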
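The selection rule and time update translate almost line by line into code. The sketch below is a generic step function plus a hypothetical two-state reaction A ⇌ B (k_AB = 2, k_BA = 1) used only as a check: in the long run, the fraction of simulated time spent in A should approach k_BA/(k_AB + k_BA) = 1/3. It draws ρ₂ from (0, 1] so that ln ρ₂ is always finite. Function names and rates are illustrative.

```python
import math
import random


def kmc_step(rates, rng):
    """One variable-step-size KMC step: select process q with probability k_q / k_tot
    and draw the time increment dt = -ln(rho2) / k_tot."""
    k_tot = sum(rates)
    threshold = rng.random() * k_tot            # rho1 * k_tot, with rho1 in [0, 1)
    cumulative = 0.0
    q = len(rates) - 1                          # fallback guards against float round-off
    for w, k in enumerate(rates):
        cumulative += k
        if threshold < cumulative:              # first q with rho1 * k_tot < sum_{w<=q} k_w
            q = w
            break
    rho2 = 1.0 - rng.random()                   # rho2 in (0, 1], so ln(rho2) is finite
    return q, -math.log(rho2) / k_tot


def two_state_occupancy(k_ab, k_ba, t_end, rng):
    """Fraction of simulated time spent in state A for the hypothetical reaction A <-> B."""
    state, t, time_in_a = "A", 0.0, 0.0
    while t < t_end:
        rates = [k_ab] if state == "A" else [k_ba]   # one process leaves each state
        _, dt = kmc_step(rates, rng)
        if state == "A":
            time_in_a += min(dt, t_end - t)
        t += dt
        state = "B" if state == "A" else "A"
    return time_in_a / t_end


rng = random.Random(9)
occ = two_state_occupancy(k_ab=2.0, k_ba=1.0, t_end=10_000.0, rng=rng)
print(f"time fraction in A ~ {occ:.3f} (expected {1.0 / 3.0:.3f})")
```

In a lattice or off-lattice model, `rates` would list every currently possible event (hops, adsorptions, reactions), and the chosen index would determine which configuration update to apply before the rate list is rebuilt.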

KMC Variants and Representations

KMC implementations primarily follow two structural paradigms:

- Lattice KMC: Represents atoms/molecules as points occupying discrete lattice sites, making it computationally efficient for modeling surface reactions and growth processes [10].

- Off-lattice KMC: Allows particles to exist in continuous space, providing greater flexibility for modeling amorphous materials and structural heterogeneity [30].

Table 1: Comparison of KMC Methodologies

| Feature | Lattice KMC | Off-lattice KMC |
|---|---|---|
| Spatial Representation | Discrete lattice sites | Continuous space |
| Computational Efficiency | Higher | Lower |
| Structural Flexibility | Limited | High |
| Ideal Applications | Surface reactions, interface growth | Amorphous polymers, complex fluids |
| Implementation Complexity | Lower | Higher |

KMC Applications for Battery Interfaces

Solid Electrolyte Interphase (SEI) Formation and Evolution