Navigating Overfitting: Strategies for Robust Kinetic Modeling in Drug Development

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on identifying, preventing, and managing overfitting in complex kinetic models. Covering foundational concepts to advanced validation techniques, it explores why overfitting is a critical concern not only in high-dimensional machine learning but also in traditional kinetic modeling of biological systems. The content synthesizes the latest methodologies, including simplified kinetic frameworks, regularization, and rigorous cross-validation, with practical applications in predicting biotherapeutic stability, drug-target interactions, and drug release kinetics. By offering a troubleshooting toolkit and comparative analysis of model performance, this guide aims to equip scientists with the knowledge to build reliable, generalizable models that accelerate biomedical research and therapeutic development.

Why Overfitting Undermines Kinetic Models: From Biotherapeutics to Drug Discovery

Frequently Asked Questions

What is overfitting in the context of kinetic modeling? Overfitting occurs when a model learns not only the underlying signal in your training data but also the noise and random fluctuations [1]. In kinetic modeling, the result is a model that reproduces the data it was fitted to, such as concentration profiles from a single experimental condition, with extremely high accuracy but fails to generalize. It will perform poorly when predicting new scenarios, such as the metabolic response of a mutant strain or dynamics under a different bioreactor condition [2] [3].

What are the common symptoms that my kinetic model is overfitted? You can identify a potentially overfitted model through several key symptoms [1]:

- Excellent training fit, poor testing performance: The model achieves a low error on the data it was trained on but a high error on a separate, unseen validation dataset.

- High model complexity: The model has an unnecessarily large number of parameters (e.g., a complex neural network or a kinetic model with many redundant terms) relative to the amount and quality of your experimental data.

- Sensitivity to noise: The model's predictions are highly sensitive to small changes or perturbations in the input data, indicating it has learned the noise.
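
The first two symptoms can be checked numerically. The following is a minimal sketch, assuming synthetic first-order decay data and using polynomial degree as a stand-in for model complexity; the data, noise level, and the helper name `rmse` are all hypothetical and not taken from any cited study.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical data: first-order decay with additive measurement noise.
t = np.linspace(0.0, 1.0, 24)
y = np.exp(-2.0 * t) + rng.normal(0.0, 0.02, t.size)

# Interleave the points into training and validation subsets.
train, val = np.arange(0, 24, 2), np.arange(1, 24, 2)

def rmse(deg):
    """Fit a polynomial of the given degree on the training points only
    and return (training RMSE, validation RMSE)."""
    coef = np.polyfit(t[train], y[train], deg)
    errors = []
    for idx in (train, val):
        resid = y[idx] - np.polyval(coef, t[idx])
        errors.append(float(np.sqrt(np.mean(resid ** 2))))
    return tuple(errors)

for deg in (2, 9):
    tr, va = rmse(deg)
    print(f"degree {deg}: train RMSE = {tr:.4f}, validation RMSE = {va:.4f}")
```

The training error of the more flexible fit can never exceed that of the simpler one, so a low training error alone says nothing about generalization; the comparison that matters is the validation column.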

What strategies can I use to prevent overfitting? Several proven methodologies can help mitigate overfitting [1]:

- Data splitting: Always partition your data into distinct training, validation, and test sets. Use the validation set to tune hyperparameters and choose among candidate models, and the test set for a final, unbiased evaluation.

- Regularization: Apply techniques like Ridge (L2) or LASSO (L1) regression during model training. These methods add a penalty to the model's loss function based on the magnitude of its parameters, discouraging over-complexity.

- Cross-validation: Use k-fold cross-validation to ensure your model's performance is consistent across different subsets of your data.

- Simplify the model: Reduce the number of parameters, for instance, by using approximative rate laws with fewer constants instead of modeling every elementary reaction step [2].

- Increase data volume and quality: The predictive power of any ML approach is dependent on the availability of high volumes of high-quality data [1].
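
To make the regularization item concrete, here is a minimal sketch of a Ridge (L2) penalty for a model that is linear in its parameters, solved through the normal equations. The polynomial basis, the data, and the penalty strengths are illustrative assumptions; nonlinear kinetic models would need an iterative optimizer with the same penalty added to the cost.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Minimize ||y - X w||^2 + lam * ||w||^2 via the normal equations.
    Note: this simple version also penalizes the intercept column."""
    n_params = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(n_params), X.T @ y)

rng = np.random.default_rng(1)
t = np.linspace(0.0, 1.0, 10)
X = np.vander(t, 8, increasing=True)   # 8 polynomial terms for only 10 points
y = np.exp(-2.0 * t) + rng.normal(0.0, 0.02, t.size)

w_weak = ridge_fit(X, y, 1e-8)    # almost unpenalized
w_ridge = ridge_fit(X, y, 1e-2)   # penalized
print("coefficient norm, weak penalty:  ", np.linalg.norm(w_weak))
print("coefficient norm, strong penalty:", np.linalg.norm(w_ridge))
```

A larger penalty shrinks the coefficient vector; the penalty strength itself is a hyperparameter that should be chosen on validation data, not on the training fit.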

How does symbolic regression help with overfitting compared to neural networks? Symbolic regression identifies an analytical, closed-form mathematical expression for the kinetic rates from data without assuming a pre-defined model structure [3]. This often results in simpler, more interpretable models that are less prone to overfitting, especially with small datasets. In contrast, complex neural networks can have millions of parameters and are notorious for overfitting if not properly regularized or supplied with massive amounts of data [1] [3]. One study found that a symbolic regression approach even slightly outperformed neural network benchmarks in some bioprocess applications [3].

What are the best practices for reporting models to prove they are not overfitted? Transparent reporting is crucial. Best practices include:

- Detail all datasets: Clearly describe the size and origin of the training, validation, and test sets.

- Report performance metrics: Provide key metrics (e.g., Mean Squared Error, R²) for all datasets, not just the training set.

- Document methodology: Specify the techniques used to prevent overfitting, such as the type of regularization, cross-validation strategy, or model selection criteria [1] [4].

- Perform uncertainty quantification: Use frameworks like Maud, which employs Bayesian statistical inference, to quantify the uncertainty in your parameter estimates, giving readers confidence in the model's robustness [2].
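
One way to follow the "report performance metrics" practice is to compute the same metrics for every split in a single pass. The sketch below assumes a hypothetical fitted model `predict` and placeholder data; the function names are illustrative only.

```python
import numpy as np

def mse(y_true, y_pred):
    """Mean squared error."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.mean((y_true - y_pred) ** 2))

def r_squared(y_true, y_pred):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

def report(predict, splits):
    """Evaluate `predict` on every named split; `splits` maps name -> (x, y)."""
    return {
        name: {"n": len(y), "mse": mse(y, predict(x)), "r2": r_squared(y, predict(x))}
        for name, (x, y) in splits.items()
    }

# Hypothetical fitted model and placeholder data, for illustration only.
predict = lambda t: np.exp(-0.5 * np.asarray(t))
splits = {
    "train": (np.array([0.0, 1.0, 2.0]), np.array([1.00, 0.62, 0.36])),
    "validation": (np.array([0.5, 1.5]), np.array([0.80, 0.45])),
    "test": (np.array([3.0, 4.0]), np.array([0.25, 0.10])),
}
for name, row in report(predict, splits).items():
    print(name, row)
```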

Troubleshooting Guide: Diagnosing and Fixing Overfitting

| Symptom | Potential Cause | Corrective Action |
|---|---|---|
| Large gap between training and validation error | Model is too complex for the available data | Apply regularization (L1/L2), simplify model structure, or collect more data [1]. |
| Model fails to predict mutant strain dynamics | Trained on a single strain/condition; cannot generalize | Incorporate multi-condition data (wild-type and mutants) during training, as in the KETCHUP framework [2]. |
| Unstable predictions with slight data variations | Model parameters are overly sensitive and fit to noise | Use parameter sampling methods (e.g., in SKiMpy or MASSpy) to find robust parameter sets [2]. |
| Poor performance on all new data | Validation set was used for model tuning, leading to information leakage | Perform a final evaluation on a completely held-out test set that was never used during model development [1]. |

Experimental Protocols for Model Validation

Protocol 1: k-Fold Cross-Validation for Model Selection

This protocol provides a robust estimate of model performance by systematically partitioning the data.

- Shuffle your entire dataset randomly.

- Split the data into k consecutive folds (typically k=5 or 10).

- For each fold:

a. Designate the current fold as the validation set.

b. Designate the remaining k-1 folds as the training set.

c. Train your kinetic model on the training set.

d. Validate the model on the validation set and record the performance metric (e.g., RMSE).

- Calculate the average performance across all k folds. The model with the best average performance is selected.
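
The steps of this protocol can be sketched in a few lines of NumPy. This is an illustrative implementation under stated assumptions: the candidate "models" are polynomials of different degree fitted to synthetic decay data, and the names `kfold_indices` and `cross_validate` are hypothetical helpers, not part of any cited framework.

```python
import numpy as np

def kfold_indices(n_samples, k, seed=0):
    """Shuffle indices 0..n_samples-1 and split them into k nearly equal folds."""
    rng = np.random.default_rng(seed)
    return np.array_split(rng.permutation(n_samples), k)

def cross_validate(fit, predict, x, y, k=5, seed=0):
    """Return the mean validation RMSE over k folds."""
    folds = kfold_indices(len(x), k, seed)
    scores = []
    for i, val_idx in enumerate(folds):
        # All folds except the current one form the training set.
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        params = fit(x[train_idx], y[train_idx])
        resid = y[val_idx] - predict(params, x[val_idx])
        scores.append(np.sqrt(np.mean(resid ** 2)))
    return float(np.mean(scores))

# Hypothetical data: first-order decay with measurement noise.
rng = np.random.default_rng(2)
t = np.linspace(0.0, 1.0, 30)
c = np.exp(-2.0 * t) + rng.normal(0.0, 0.02, t.size)

for deg in (1, 2, 6):
    score = cross_validate(lambda a, b, d=deg: np.polyfit(a, b, d), np.polyval, t, c)
    print(f"degree {deg}: mean validation RMSE = {score:.4f}")
```

Any pair of `fit` and `predict` callables can be substituted, for example an ODE-based parameter estimator and its simulator.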

Protocol 2: Hold-Out Test Set for Final Evaluation

This protocol assesses the generalizability of your final chosen model.

- Partition your data into three subsets: Training Set (~70%), Validation Set (~15%), and Test Set (~15%).

- Use the Training Set to train candidate models.

- Use the Validation Set to tune hyperparameters and select the best-performing model.

- Once the final model is chosen, perform a single evaluation on the Test Set to report its expected real-world performance. The test set must not be used for any decision-making during the model development phase [1].
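
A minimal sketch of the three-way partition, assuming the samples are independent and can be shuffled freely; `three_way_split` is a hypothetical helper name.

```python
import numpy as np

def three_way_split(n_samples, frac_train=0.70, frac_val=0.15, seed=0):
    """Return shuffled, disjoint index arrays for training, validation, and test."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(n_samples)
    n_train = int(round(frac_train * n_samples))
    n_val = int(round(frac_val * n_samples))
    # Whatever remains after the first two subsets becomes the test set.
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

train_idx, val_idx, test_idx = three_way_split(100)
print(len(train_idx), len(val_idx), len(test_idx))
```

For time-course data, it may be more appropriate to assign whole experiments or conditions to a subset rather than individual time points, since neighboring points from the same run are strongly correlated.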

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Kinetic Modeling |
|---|---|
| SKiMpy | A semiautomated workflow framework that constructs and parametrizes large kinetic models using a stoichiometric model as a scaffold, efficiently sampling kinetic parameters [2]. |
| MASSpy | A Python framework for building, simulating, and analyzing kinetic models, often with mass-action kinetics. It is well-integrated with constraint-based modeling tools like COBRApy [2]. |
| Tellurium | A versatile modeling environment for systems and synthetic biology that supports standardized model structures, simulation, and parameter estimation [2]. |
| KETCHUP | A method for efficient model parametrization that relies on experimental steady-state fluxes and concentrations from both wild-type and mutant strains [2]. |
| Maud | A tool that uses Bayesian statistical inference to quantify the uncertainty of parameter values, which is critical for assessing model confidence and robustness [2]. |
| Symbolic Regression | A machine learning technique that discovers analytical, interpretable mathematical expressions for kinetic rates directly from data, avoiding pre-defined model structures [3]. |

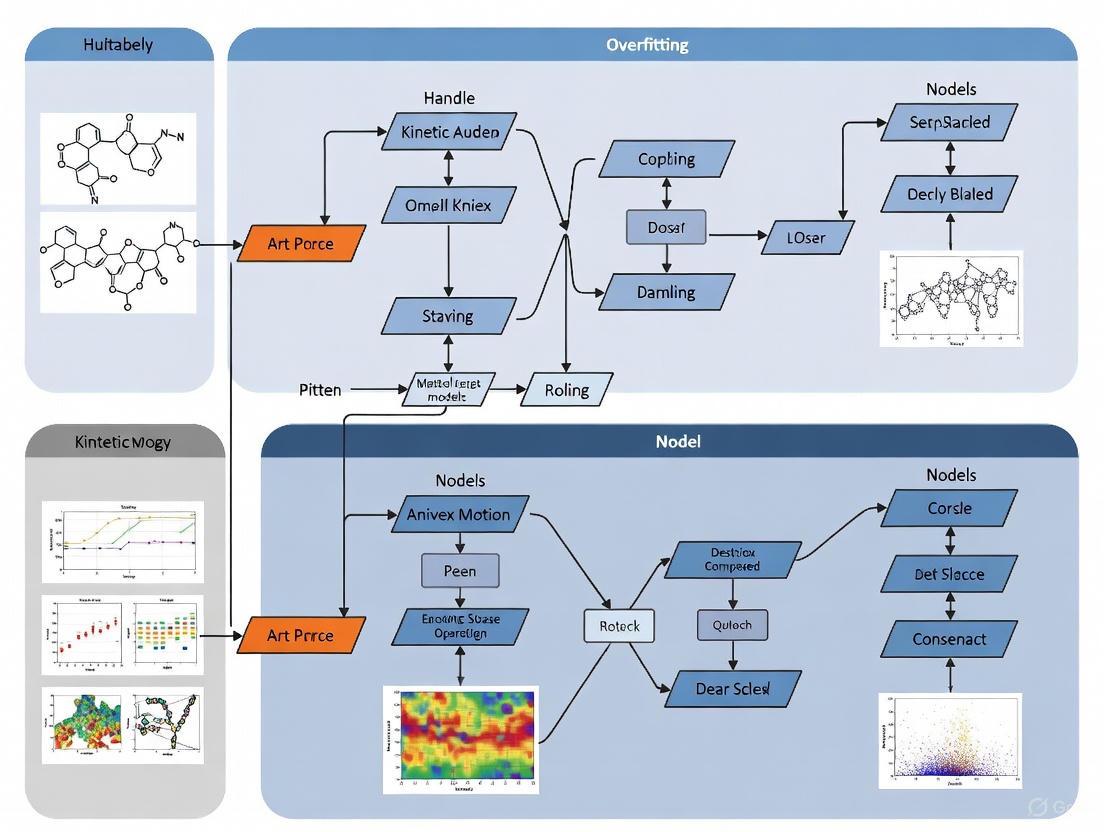

Workflow Diagram: Managing Overfitting in Kinetic Modeling

The diagram below illustrates a robust workflow for developing kinetic models that actively manages the risk of overfitting.

Model Complexity vs. Generalization Diagram

This diagram conceptualizes the relationship between model complexity and error, highlighting the "sweet spot" before overfitting occurs.

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: My complex kinetic model fits my training data perfectly but fails to predict new experimental results. What is the likely cause and how can I address it?

A: This is a classic symptom of overfitting. When a model has too many parameters relative to the amount of data, it can memorize noise and specific data points rather than learning the underlying generalizable relationship [5]. To address this:

- Simplify your model: Reduce the number of fitted parameters. A first-order kinetic model can often effectively describe stability profiles for attributes like protein aggregation, enhancing robustness and reliability by reducing the number of parameters that need to be fitted [6].

- Use hyperparameter optimization with caution: Extensive hyperparameter optimization can itself lead to overfitting on your validation set. In some cases, using a set of pre-optimized hyperparameters can yield similar performance with a drastic reduction in computational effort [5].

- Apply Occam's razor principles: Use methods like FixFit, which employs deep learning to identify the largest set of lower-dimensional latent parameters uniquely resolved by model outputs. This reduces the effective parameter space and helps find a unique best fit for your data [7].

Q2: I suspect my model parameters are redundant or "sloppy." How can I identify and resolve these degeneracies?

A: Parameter redundancy, where different parameter combinations produce identical model outputs, is a common issue in complex kinetic models [7]. To resolve it:

- Identify composite parameters: Use a neural network with a bottleneck layer (like the FixFit method) to automatically identify parameter combinations that the model output is sensitive to. This compresses the parameter space to only those values that are uniquely determined by the data [7].

- Perform global sensitivity analysis: After identifying latent parameters, establish the relationship between these latent variables and the original model parameters to understand which specific parameters are interacting and causing degeneracy [7].
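
Before training a bottleneck network, a quick local check can already reveal redundant parameter combinations. The sketch below is not the FixFit procedure; it is a simpler, local linear diagnostic based on the singular values of a finite-difference sensitivity matrix, applied to a hypothetical model in which only the product of two parameters matters.

```python
import numpy as np

def model(params, t):
    """Hypothetical model in which only the product a*b affects the output."""
    a, b, k = params
    return a * b * np.exp(-k * t)

def jacobian(params, t, rel_step=1e-6):
    """Central finite-difference sensitivities of the outputs to each parameter."""
    params = np.asarray(params, dtype=float)
    cols = []
    for i in range(params.size):
        step = np.zeros_like(params)
        step[i] = rel_step * max(abs(params[i]), 1.0)
        cols.append((model(params + step, t) - model(params - step, t)) / (2 * step[i]))
    return np.column_stack(cols)

t = np.linspace(0.0, 5.0, 20)
J = jacobian([2.0, 3.0, 0.7], t)
_, sing_vals, vt = np.linalg.svd(J, full_matrices=False)
print("singular values:", sing_vals)
print("least-identifiable direction (a, b, k):", vt[-1])
```

A singular value that is many orders of magnitude smaller than the largest one signals a direction in parameter space that the outputs cannot resolve; here that direction mixes `a` and `b` and leaves `k` untouched.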

Q3: How can I design my stability study to make kinetic modeling more reliable and less prone to overfitting?

A: Careful experimental design is crucial for building reliable models.

- Strategic temperature selection: Choose accelerated stability test temperatures that activate only the dominant degradation pathway relevant to storage conditions. This prevents the activation of secondary mechanisms that would require a more complex model and more parameters, thereby reducing the risk of overfitting [6].

- Prioritize data quality and cleaning: Ensure careful data aggregation from multiple sources to avoid data duplication, which can lead to biased estimates of model accuracy [5].

Essential Experimental Protocols

Protocol 1: Implementing FixFit for Model Reduction

This protocol outlines the steps to apply the FixFit method to identify and resolve parameter redundancies in a kinetic model [7].

- Model Simulation: Run a large number of simulations of your computational model, sampling widely across the entire input parameter space. This generates a dataset of parameter sets and their corresponding model outputs.

- Neural Network Training: Train a feedforward deep neural network on the simulation data. The network has a specific architecture:

- Encoder: Takes the original model parameters as input.

- Bottleneck Layer: Contains a reduced number of nodes (k), which forces the network to learn a compressed representation of the input parameters.

- Decoder: Reconstructs the model outputs from the bottleneck representation.

- Dimensionality Determination: Repeat the training process with varying bottleneck widths (values of k). The optimal latent dimension is identified as the smallest k that still achieves low prediction error on a validation set of simulated data.

- Latent Parameter Fitting: Once trained, use the decoder part of the network combined with a global optimizer to fit the latent (bottleneck) parameters to experimental data. This ensures a unique fit.

- Sensitivity Analysis: Use the encoder part of the network to perform a global sensitivity analysis, determining the influence of the original input parameters on the latent representation.

Protocol 2: First-Order Kinetic Modeling for Protein Aggregation Predictions

This protocol details the methodology for applying a simplified first-order kinetic model to predict long-term protein aggregation, a key quality attribute in biotherapeutics development [6].

- Sample Preparation and Storage:

- Filter the fully formulated drug substance through a 0.22 µm membrane filter.

- Aseptically fill into glass vials.

- Incubate samples at a range of temperatures (e.g., 5°C, 25°C, 40°C) for up to 36 months. The selection of temperatures is critical to ensure only the relevant degradation pathway is active.

- Data Collection via Size Exclusion Chromatography (SEC):

- At predefined time points, analyze samples using SEC.

- Dilute the protein solution to 1 mg/mL.

- Inject the sample and perform a run (e.g., 12 minutes at 40°C with a specified mobile phase).

- Quantify the percentage of high-molecular-weight species (aggregates) relative to the total area of the chromatogram.

- Model Fitting and Prediction:

- Model the formation of aggregates using a first-order kinetic model.

- Apply the Arrhenius equation to model the temperature dependence of the reaction rate.

- Use the data from the accelerated stability conditions (higher temperatures) to fit the model parameters.

- Predict the level of aggregates at the long-term storage condition (e.g., 5°C).
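
The model fitting and prediction steps can be sketched as follows. This is an illustration under explicit assumptions, not the cited study's implementation: the monomer fraction is assumed to decay as M(t) = M0·exp(-k·t), aggregates are taken as the complement, and every numerical value (pre-exponential factor, activation energy, temperatures, time points) is synthetic.

```python
import numpy as np

R = 8.314  # gas constant, J/(mol*K)

def fit_first_order(t, monomer_pct):
    """Fit ln(M) = ln(M0) - k*t by linear least squares and return k."""
    slope, _ = np.polyfit(t, np.log(monomer_pct), 1)
    return -slope

def fit_arrhenius(temps_c, rate_constants):
    """Fit ln(k) = ln(A) - Ea/(R*T) and return (A, Ea)."""
    inv_T = 1.0 / (np.asarray(temps_c, dtype=float) + 273.15)
    slope, intercept = np.polyfit(inv_T, np.log(rate_constants), 1)
    return float(np.exp(intercept)), float(-slope * R)

def predict_aggregate_pct(A, Ea, temp_c, t, m0=100.0):
    """Predicted aggregate percentage after time t at temperature temp_c."""
    k = A * np.exp(-Ea / (R * (temp_c + 273.15)))
    return m0 * (1.0 - np.exp(-k * t))

# Synthetic, noise-free illustration (all numbers hypothetical).
true_A, true_Ea = 1.0e12, 9.0e4          # 1/month, J/mol
months = np.array([0.0, 1.0, 3.0, 6.0])
temps = [25.0, 30.0, 40.0]               # accelerated conditions, deg C
ks = []
for T in temps:
    k_true = true_A * np.exp(-true_Ea / (R * (T + 273.15)))
    ks.append(fit_first_order(months, 100.0 * np.exp(-k_true * months)))

A_fit, Ea_fit = fit_arrhenius(temps, ks)
pred = predict_aggregate_pct(A_fit, Ea_fit, 5.0, 24.0)
print(f"fitted Ea = {Ea_fit / 1000:.1f} kJ/mol")
print(f"predicted aggregates after 24 months at 5 C: {pred:.3f} %")
```

The whole model has two fitted Arrhenius parameters, which is what makes it hard to overfit; with real, noisy data the extrapolation to 5°C should be reported with an uncertainty estimate.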

Table 1: Impact of Data Curation and Model Complexity on Predictive Performance

| Model / Strategy | Key Characteristic | Reported Performance | Computational Cost |
|---|---|---|---|
| Graph-Based Models (e.g., ChemProp) [5] | Used hyperparameter optimization on large parameter space | Potential for overfitting when measured on the same data | Very high (reference point) |
| Models with Pre-Set Hyperparameters [5] | Uses a fixed, pre-optimized set of hyperparameters | Similar performance to fully optimized models | ~10,000 times lower |
| TransformerCNN [5] | Representation learning from SMILES strings | Higher accuracy than graph-based methods in 26/28 comparisons | Fraction of the time of other methods |
| First-Order Kinetic Model [6] | Reduced number of parameters; avoids secondary degradation pathways | Robust and precise long-term stability predictions | Enhanced reliability and lower risk of overfitting |

Table 2: FixFit Model Reduction Applied to Known Systems

| Model System | Original Parameters | FixFit-Derived Composite Parameters | Outcome of Reduction |
|---|---|---|---|
| Kepler Orbit Model [7] | Four parameters (m1, m2, r0, ω0) | Two parameters: Eccentricity (e) and Semi-latus rectum (l) | Recovered known analytical solution; enabled unique fitting. |
| Blood Glucose Regulation [7] | Parameters of a dynamic systems model | A reduced set of latent parameters | Allowed for unique fitting of latent parameters to real data. |
| Larter-Breakspear Neural Mass Model [7] | Parameters for a multi-scale brain model | A reduced set of latent parameters | Identified previously unknown parameter redundancies; reduced viable parameter search space. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Kinetic Stability Modeling of Biologics

| Material / Reagent | Function in the Experiment | Example from Protocol |
|---|---|---|
| Proteins (Various Modalities) | The analyte of interest whose stability is being studied. Different formats (IgG1, scFv, DARPin, etc.) test model applicability [6]. | IgG1, IgG2, Bispecific IgG, Fc-fusion, scFv, DARPin (e.g., ensovibep) [6]. |
| Pharmaceutical Grade Formulation Excipients | To create the stable buffer environment for the protein drug substance; composition affects stability [6]. | Specific formulation details are intellectual property but are crucial for the experimental context [6]. |
| Size Exclusion Chromatography (SEC) Column | To separate and quantify protein monomers from aggregates (high-molecular-weight species) in the sample [6]. | Acquity UHPLC protein BEH SEC column 450 Å [6]. |
| SEC Mobile Phase | The liquid solvent that carries the sample through the SEC column; its composition is critical for achieving accurate separation. | 50 mM sodium phosphate and 400 mM sodium perchlorate at pH 6.0 [6]. |
| Molecular Weight Markers | Used to calibrate the SEC system and verify column performance and separation accuracy before sample analysis [6]. | Bovine serum albumin/thyroglobulin/NaCl solution [6]. |

Workflow and Relationship Visualizations

FixFit Model Reduction Workflow

Complex vs Simple Model Outcomes

Technical Support Center: Troubleshooting Guides and FAQs

Troubleshooting Guide: Overfitting in Aggregation Prediction Models

Problem: My model performs well on training data but fails to predict new experimental aggregation data. This is a classic symptom of overfitting, where a model learns patterns from the training data too closely, including noise, and loses its ability to generalize [8].

| Step | Action | Expected Outcome |
|---|---|---|
| 1 | Verify Data Splitting | Ensure a clean hold-out test set was never used during training. |
| 2 | Compare Performance Metrics | A significant drop in accuracy (e.g., from 99.9% to 45%) on the test set indicates overfitting [9]. |
| 3 | Simplify the Model | Reduce layers/units or increase regularization (L1/L2); this often improves test set performance [10]. |
| 4 | Implement Cross-Validation | Use k-fold cross-validation to ensure the model performs consistently across different data subsets [8] [9]. |
| 5 | Apply Early Stopping | Halt training when validation loss stops improving to prevent the model from memorizing the training data [10]. |

Problem: My kinetic model for predicting aggregate formation has too many parameters and is unstable. Over-complex kinetic models with many parameters are difficult to fit uniquely and are prone to overfitting experimental data [6] [11].

| Step | Action | Expected Outcome |
|---|---|---|
| 1 | Perform Parameter Subset Selection | Identify and estimate only the most critical parameters, fixing others to literature values [11]. |
| 2 | Use a Simplified Rate Law | Replace a complex mechanistic model with a robust, approximative rate law (e.g., first-order kinetics) to reduce the number of fitted parameters [6]. |
| 3 | Incorporate More Experimental Data | Use data from various stress conditions (e.g., different temperatures) to constrain the model better [6]. |
| 4 | Apply Regularization | Add penalty terms to the cost function during parameter estimation to prevent parameters from taking extreme values [9]. |
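
Step 1 of this table can be illustrated with a small example. The sketch assumes a hypothetical saturating model y = A·(1 - exp(-k·t)) in which the rate constant `k` is fixed to a prior value and only the plateau `A` is estimated; the model form, data, and function name are illustrative assumptions.

```python
import numpy as np

def fit_plateau_with_fixed_k(t, y, k_fixed):
    """Estimate only the plateau A in y = A * (1 - exp(-k*t)), with k held fixed.

    With k fixed, the model is linear in A, so least squares has a closed form.
    """
    basis = 1.0 - np.exp(-k_fixed * t)
    return float(basis @ y / (basis @ basis))

# Hypothetical example: k comes from prior knowledge and is not re-estimated.
t = np.array([0.5, 1.0, 2.0, 4.0, 8.0])
y = 5.0 * (1.0 - np.exp(-0.3 * t))       # noise-free synthetic data, A = 5
A_hat = fit_plateau_with_fixed_k(t, y, k_fixed=0.3)
print("estimated plateau:", A_hat)
```

Fixing a parameter trades flexibility for stability: the estimate of `A` is only as good as the assumed `k`, so the fixed value and its source should be reported alongside the fit.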

Frequently Asked Questions (FAQs)

Q1: What is overfitting, and why is it a particular risk in protein aggregation studies? A: Overfitting occurs when a machine learning model gives accurate predictions for training data but fails to generalize to new, unseen data [8]. This is a significant risk in protein aggregation studies because experimental data can be scarce, noisy, and biased toward a few well-known amyloidogenic proteins [12]. When a complex model is trained on limited data, it may "memorize" this specific data rather than learning the underlying principles of aggregation.

Q2: How can I detect overfitting in my predictive models? A: The most straightforward method is to split your data into training and testing sets. A high error rate on the testing set that is not present in the training set indicates overfitting [8]. For a more robust evaluation, use k-fold cross-validation, where the data is split into k subsets. The model is trained on k-1 folds and validated on the remaining one, repeating the process for each fold [8] [9]. A model that performs well across all folds is less likely to be overfit.

Q3: My dataset on aggregation-prone sequences is small. How can I prevent overfitting? A: With a small dataset, consider these strategies:

- Data Augmentation: If possible, artificially expand your dataset. In sequence-based tasks, this could involve generating valid synthetic variants [10].

- Use Simpler Models: Opt for models with fewer parameters. A simpler, more interpretable model might outperform a complex "black-box" AI when data is limited [12].

- Leverage Pre-trained Models and Databases: Use existing tools and databases (e.g., CPAD, AmyPro, A3D) that have been trained on large datasets as a starting point for your analysis [13].

Q4: Are complex AI models always better for predicting protein aggregation? A: Not necessarily. While complex AI models can be powerful, they can also act as "black boxes" and are susceptible to overfitting, especially without massive, high-quality datasets. A study developing the CANYA AI tool deliberately sacrificed some predictive power for interpretability, making its decisions transparent to humans. Despite being less complex, it was about 15% more accurate than existing models because it was trained on a massive, novel dataset of over 100,000 random protein fragments [12].

Experimental Protocols for Robust Model Validation

Protocol: K-Fold Cross-Validation for an Aggregation Predictor

Objective: To reliably assess the generalization error of a machine learning model trained to predict aggregation-prone regions from protein sequences.

- Dataset Preparation: Compile a curated dataset of sequences labeled as aggregation-prone or not. Ensure the dataset is as large and unbiased as possible.

- Data Splitting: Randomly split the entire dataset into k equally sized subsets (folds). A common value for k is 5 or 10.

- Iterative Training and Validation: For each fold i (where i ranges from 1 to k):

- Training Set: Use the k-1 folds not equal to i to train the model.

- Validation Set: Use fold i as the validation data to compute the model's performance metrics (e.g., accuracy, F1-score).

- Performance Calculation: After k iterations, each fold has been used exactly once as the validation set. Calculate the average of the k performance metrics to produce a single, robust estimation of the model's predictive accuracy [8] [9].

Protocol: Simplified Kinetic Modeling for Predicting Aggregate Formation

Objective: To predict long-term stability and aggregate levels for biotherapeutics using a first-order kinetic model, avoiding the overparameterization of complex models.

- Stability Study Design: Incubate the purified protein drug substance at the intended storage temperature and under accelerated stress conditions (e.g., 5°C for storage; 25°C and 40°C for stress) for a predefined period (e.g., 12-36 months) [6].

- Data Collection: At regular time points, withdraw samples and analyze them using Size Exclusion Chromatography (SEC) to quantify the percentage of high-molecular-weight aggregates [6].

- Model Fitting: Fit the aggregate formation data at each temperature to a first-order kinetic reaction model. The model characterizes the stability profile through an exponential function, which is robust and requires fewer parameters [6].

- Long-Term Prediction: Use the Arrhenius equation to relate the reaction rate constants at different temperatures to predict the rate of aggregate formation at the recommended storage condition (e.g., 5°C), thus estimating the product's shelf life [6].
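
The relations behind the last two steps can be written out explicitly. The symbols below are assumptions introduced for illustration: $M(t)$ is the monomer percentage, $M_0$ its initial value, $k(T)$ the first-order rate constant at absolute temperature $T$, $A$ the pre-exponential factor, $E_a$ the activation energy, and $R$ the gas constant.

$$
\frac{dM}{dt} = -k(T)\,M
\quad\Longrightarrow\quad
M(t) = M_0\,e^{-k(T)\,t},
\qquad
\mathrm{Agg}(t) = M_0 - M(t)
$$

$$
k(T) = A\,\exp\!\left(-\frac{E_a}{R\,T}\right)
\quad\Longleftrightarrow\quad
\ln k = \ln A - \frac{E_a}{R}\cdot\frac{1}{T}
$$

$$
\ln\frac{k(T_{\text{storage}})}{k(T_{\text{stress}})}
= -\frac{E_a}{R}\left(\frac{1}{T_{\text{storage}}} - \frac{1}{T_{\text{stress}}}\right)
$$

Because $\ln k$ is linear in $1/T$, rate constants fitted at two or more stress temperatures determine $E_a$ and $A$, and the third relation then gives the rate constant at the storage temperature.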

Research Reagent Solutions

Essential computational tools and databases for protein aggregation research.

| Resource Name | Type | Function |
|---|---|---|
| CPAD 2.0 [13] | Database | Provides a comprehensive, curated collection of experimental data on protein/peptide aggregation for training and validating models. |
| A3D (Aggrescan3D) [13] | Server/Tool | Uses 3D protein structures (including AlphaFold predictions) to compute structure-based aggregation propensity scores and test the impact of mutations. |
| CANYA [12] | AI Tool | An interpretable deep learning model that predicts amyloid aggregation from sequence and explains the chemical patterns driving its decisions. |
| PASTA 2.0 [13] | Server | Predicts protein aggregation propensity from sequence by evaluating the energy of putative cross-beta pairings. |
| SKiMpy [2] | Modeling Framework | A semiautomated workflow for constructing and parameterizing kinetic models, helping to ensure physiologically relevant time scales and avoid over-complexity. |

Model Complexity vs. Performance Relationship

Workflow for Validating Predictive Models

Frequently Asked Questions

Q1: What is overfitting, and why is it a problem in low-dimensional kinetic models? Overfitting creates a model that accurately represents your training data but fails to generalize to new data because it has learned patterns that are not representative of the population [14]. In kinetic modeling, this can mean your model fits your experimental data perfectly but makes unreliable predictions for new experimental conditions, potentially leading to incorrect conclusions in drug development research.

Q2: How can I detect overfitting in my low-dimensional dataset? A significant warning sign is a model that performs exceptionally well on training data but poorly on validation data. Visually, this can appear as a complex, "wiggly" regression line that perfectly follows the training data points but fails to capture the overall trend of the population data [14]. In practice, you should monitor for inflection points where further training increases training data accuracy but decreases validation performance [14].

Q3: What are common protocol errors that lead to overfitting? A critical error is conducting feature selection on the entire dataset before splitting it into training and testing sets (Partial Cross-Validation). This biases the error estimation. The unbiased alternative is to perform all feature selection and model fitting steps solely within the training portion of the data (Full Cross-Validation) [14]. Using training data error alone to estimate generalization performance will also give unduly optimistic results [14].

Q4: Does hyperparameter optimization always prevent overfitting? No. An optimization over a large parameter space can itself lead to overfitting, especially when evaluated using the same statistical measures [15]. In some cases, using sensible pre-set hyperparameters can achieve similar generalization performance with a fraction of the computational cost [15].

Troubleshooting Guides

Problem: Model fails during external validation despite excellent training performance.

- Potential Cause: The model is overfitted, potentially due to learning idiosyncratic "noise" in the training data [14].

- Solution:

Problem: Uncertainty in which model to select from many similarly performing candidates.

- Potential Cause: Lack of a robust model selection framework interacting with error estimation procedures [14].

- Solution:

Quantitative Data on Overfitting Scenarios

Table 1: Impact of Modeling Protocol on Error Estimation Bias in High-Dimensional Data with No True Signal

| Protocol Name | Description of Protocol | Resulting Estimate of Generalization Error | Bias Level |
|---|---|---|---|
| Biased Resubstitution | Feature selection & error estimation on all data. | Can indicate perfect classification | High Bias |
| Partial Cross-Validation | Feature selection on all data, then CV. | Intermediate, overly optimistic estimates | Intermediate Bias |
| Full Cross-Validation | Feature selection & model fitting within training portion only. | Unbiased, performs at chance level | No Bias |

Source: Adapted from Simon et al. demonstration in genomics-driven discovery [14].

Table 2: Comparison of Model Performance and Computational Effort

| Modeling Approach | Typical Relative Computational Effort | Generalization Performance | Risk of Overfitting |
|---|---|---|---|
| Pre-set Hyperparameters | 1X (Baseline) | Good (Context-dependent) | Lower |
| Full Hyperparameter Optimization | ~10,000X | Can be similar to pre-set parameters [15] | Higher (if not carefully managed) |

Experimental Protocols

Protocol: Fully Cross-Validated Model Development

Objective: To build a predictive model with an unbiased estimate of its generalization error, minimizing the risk of overfitting.

Methodology:

- Data Splitting: Randomly split the entire dataset into K folds (e.g., K=5 or K=10).

- Iterative Training/Validation: For each iteration i (from 1 to K):

a. Set aside fold i as the temporary validation set.

b. Use the remaining K-1 folds as the training set.

c. Perform all feature selection, parameter tuning, and model fitting steps exclusively on this training set.

d. Apply the final model from step (c) to the temporary validation set (fold i) to obtain a performance metric.

- Model Assembly: After all K iterations, train the final model on the entire dataset, using the same procedure established in the cross-validation loops.

- Error Estimation: The average performance metric across all K folds provides an unbiased estimate of the model's generalization error [14].
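
The difference between partial and full cross-validation can be reproduced on pure noise. The sketch below is an illustration under stated assumptions: the features are random numbers unrelated to the labels, feature ranking uses a simple difference of class means, and the classifier is a nearest-centroid rule; none of these choices come from the cited work.

```python
import numpy as np

def top_features(X, y, n_keep):
    """Rank features by the absolute difference between class means."""
    diff = np.abs(X[y == 1].mean(axis=0) - X[y == 0].mean(axis=0))
    return np.argsort(diff)[-n_keep:]

def nearest_centroid_predict(X_train, y_train, X_test):
    """Assign each test sample to the class with the closer training centroid."""
    c0 = X_train[y_train == 0].mean(axis=0)
    c1 = X_train[y_train == 1].mean(axis=0)
    d0 = np.linalg.norm(X_test - c0, axis=1)
    d1 = np.linalg.norm(X_test - c1, axis=1)
    return (d1 < d0).astype(int)

def cv_accuracy(X, y, k=5, n_keep=10, select_inside=True, seed=0):
    """Cross-validated accuracy with feature selection inside or outside the loop."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    leaky_feats = top_features(X, y, n_keep)   # selected using ALL labels
    correct = 0
    for i, val in enumerate(folds):
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        if select_inside:
            feats = top_features(X[train], y[train], n_keep)   # full CV
        else:
            feats = leaky_feats                                # partial CV
        pred = nearest_centroid_predict(X[train][:, feats], y[train], X[val][:, feats])
        correct += int(np.sum(pred == y[val]))
    return correct / len(y)

# Pure-noise data: the labels carry no information about the features.
rng = np.random.default_rng(3)
X = rng.normal(size=(40, 2000))
y = np.repeat([0, 1], 20)
partial_acc = cv_accuracy(X, y, select_inside=False)
full_acc = cv_accuracy(X, y, select_inside=True)
print(f"partial CV accuracy: {partial_acc:.2f}, full CV accuracy: {full_acc:.2f}")
```

Since there is no true signal, any accuracy well above chance in the partial variant is purely an artifact of selecting features with knowledge of the validation labels.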

Protocol: Identifying the Overfitting Inflection Point in ANNs

Objective: To determine the optimal number of training iterations for an Artificial Neural Network (ANN) before overfitting begins.

Methodology:

- Split data into training and validation sets.

- Train the ANN, and at regular intervals (e.g., every 50 epochs), pause to calculate the model's error on both the training and validation sets.

- Plot the training error and validation error against the number of training iterations.

- Identify the "breaking point" or inflection point where the validation error stops decreasing and starts to increase, while the training error continues to decrease. The model state just before this point is optimal for generalization [14].
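
Locating this point can be automated with a patience rule, which is one common way to implement early stopping. The sketch below assumes the validation errors have already been recorded at regular checkpoints; the function name, the patience value, and the numbers are hypothetical.

```python
def best_epoch(val_errors, patience=3):
    """Return the index of the checkpoint with the lowest validation error,
    ending the scan once the error has failed to improve for `patience`
    consecutive checkpoints."""
    best_idx, best_err, waited = 0, float("inf"), 0
    for idx, err in enumerate(val_errors):
        if err < best_err:
            best_idx, best_err, waited = idx, err, 0
        else:
            waited += 1
            if waited >= patience:
                break
    return best_idx

# Hypothetical validation errors, one value per checkpoint.
val_curve = [0.90, 0.60, 0.45, 0.40, 0.42, 0.47, 0.55, 0.38]
checkpoint = best_epoch(val_curve, patience=3)
print("restore the model saved at checkpoint", checkpoint)
```

The patience setting is a trade-off: a small value stops early on a noisy validation curve, while a large value spends more training time and may miss nothing, as with the late dip in this example that the rule never reaches.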

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Kinetic Modeling

| Item | Function in Research |
|---|---|
| Fully Cross-Validated Modeling Protocol | Provides an unbiased framework for model development and error estimation, crucial for preventing overconfidence in results [14]. |
| Nested Cross-Validation | A specific, robust protocol for model selection and performance estimation that helps avoid biases from over-optimizing hyperparameters. |
| Simple Benchmark Models | Acts as a baseline to ensure that complex models provide a meaningful improvement over simple, interpretable alternatives. |
| Multiple Statistical Measures | Using a variety of evaluation metrics provides a more holistic view of model performance and helps avoid overfitting to a single metric [15]. |
| Transformer CNN (NLP-based) | A representation learning method that can provide strong baseline performance with reduced computational effort in some domains [15]. |

Diagram: Overfitting in Model Complexity

Diagram: Model Validation Workflow

Diagram: Error Progression During Training

The Impact of Data Quality and Quantity on Model Generalization

Core Concepts: Overfitting, Generalization, and Data

What is the relationship between overfitting and model generalization?

Overfitting occurs when a machine learning model fits too closely to its training data, capturing noise and irrelevant details instead of the underlying pattern. This results in accurate predictions on the training data but poor performance on new, unseen data [8] [16].

Generalization is the desired opposite of overfitting. A model that generalizes well makes accurate predictions on new data, indicating it has learned the true underlying relationships rather than memorizing the training set [17].

How do data quality and quantity specifically influence overfitting in kinetic models?

In complex kinetic modeling, such as fitting systems of Ordinary Differential Equations (ODEs) to reaction data, both the quality and quantity of data are critical for preventing overfitting and ensuring the model generalizes.

- Data Quantity: Limited kinetic data, especially from a narrow range of initial conditions, fails to capture the full dynamics of the chemical system. This can lead to the model overfitting to a specific scenario, making it unable to predict behaviors under different conditions. Research indicates that with limited data, algorithms may struggle to discover correct reaction scenarios and accurately estimate kinetic parameters [18].

- Data Quality: Kinetic data must be accurate, complete, and representative. Noisy or inaccurate measurements (e.g., from instrumentation) act as "noise" that the model can learn, harming its predictive power. Furthermore, if the data does not adequately represent all possible reaction pathways or conditions, the model will not generalize [18] [19]. High-quality data for kinetics requires precise measurements of concentrations over time under well-controlled conditions [20].

Troubleshooting Guides

Guide 1: Diagnosing Overfitting in Your Kinetic Model

| Symptom | Possible Causes | Diagnostic Steps |

|---|---|---|

| Low training error but high validation/test error [8] [16] | (1) Model is too complex for the amount of data [17]. (2) Training data contains noise or artifacts the model has learned [8]. (3) The training and validation sets have different statistical distributions [17]. | (1) Plot loss curves for both training and validation sets. Diverging curves, where validation loss increases while training loss decreases, are a clear indicator [17]. (2) Perform k-fold cross-validation. A high variance in scores across folds suggests overfitting [8] [16]. |

| Model parameters (e.g., rate constants) are physically implausible or have extremely large confidence intervals [18]. | (1) Insufficient data to reliably estimate all parameters. (2) High correlation between parameters (lack of identifiability). (3) Noisy or low-quality experimental data. | (1) Conduct a sensitivity analysis to determine which parameters the model output is most sensitive to. (2) Check the correlation matrix of the parameter estimates. (3) Validate parameters against known literature values or physical constraints. |

| Model fails to predict new experimental runs, even with similar initial conditions. | (1) The model has memorized the training data without learning the fundamental kinetics. (2) "Hidden" species or reactions not accounted for in the model topology [18]. | (1) Test the model on a completely held-out test set from a new experiment. (2) Review the model topology (reaction network) for missing pathways or deactivation processes [18]. |

Guide 2: Evaluating Your Data's Fitness for Kinetic Modeling

| Data Issue | Impact on Generalization | Corrective Actions |

|---|---|---|

| Insufficient Data Quantity: Too few time points or experimental runs. | High variance in parameter estimates; model cannot capture complex reaction dynamics [18]. | (1) Use algorithms like Chemfit to perform a pre-study to estimate the data required for reliable parameter discovery [18]. (2) Design experiments to maximize information gain (e.g., vary initial conditions widely). |

| Poor Data Quality (Noise & Outliers): High measurement error in concentration data. | Model learns experimental noise, leading to inaccurate rate constants and poor predictive performance [8] [19]. | (1) Implement data smoothing or filtering techniques with care. (2) Increase replication of experiments to better estimate true signal. (3) Improve experimental protocols and calibration. |

| Non-Representative Data: Training data only covers a narrow range of concentrations/temperatures. | Model will not generalize to conditions outside the training range [17]. | (1) Ensure your training data is Independently and Identically Distributed (IID) and covers the operational space of interest [17]. (2) Shuffle data thoroughly before splitting into train/validation/test sets. |

| Incomplete Data: Missing measurements for key species at critical time points. | Inability to constrain the ODE system, leading to multiple possible models fitting the data equally well. | (1) Use techniques like data augmentation (e.g., interpolation with caution) or algorithms that can handle missing data. (2) Redesign experiments to measure critical species. |

Frequently Asked Questions (FAQs)

How can I detect overfitting early during model training?

The most effective method is to use a validation set. Reserve a portion of your data (not used in training) and periodically evaluate your model's performance on it during the training process. Plot the generalization curves (training and validation loss vs. training iterations). When the validation loss stops decreasing and begins to rise while the training loss continues to fall, you are likely overfitting [17] [16]. This can also inform early stopping, where you halt training once performance on the validation set plateaus or degrades [8].

My model is complex, but I have limited data. What are my options?

With limited data, simplifying the model might not be desirable if the kinetics are inherently complex. Consider these strategies:

- Regularization: Apply penalties to the model's complexity (e.g., L1 or L2 regularization) to discourage overfitting by keeping parameter values small [8] [16].

- Data Augmentation: Artificially increase the size of your training set by creating modified versions of your existing data. For kinetic data, this could involve adding controlled noise or using interpolation to generate more time points, though this must be done carefully to avoid introducing physically impossible values [8].

- Use a Physical Model: Basing your workflow on actual physical/chemical models (ODEs) is a more feasible strategy with limited data than purely data-extensive empirical methods, as it incorporates prior scientific knowledge [18].

What are the key dimensions of data quality I should measure for kinetic modeling?

For kinetic models, the most critical data quality dimensions are [21] [22]:

- Accuracy: Does the concentration data accurately represent the true values in the reaction vessel? [21]

- Completeness: Are there missing time points or measurements for any species? [21]

- Consistency: Is the data collection method uniform across all experiments? [21]

- Timeliness: Is the data fresh and relevant to the current reaction system being studied? [21]

- Relevance: Is all the collected data necessary and informative for the kinetic model? [21]

How do I split my data to best evaluate generalization?

A robust method is k-fold cross-validation [8] [16]. Your dataset is randomly split into k equally sized folds. The model is trained k times, each time using k-1 folds for training and the remaining fold for validation. The performance scores from all k iterations are averaged to produce a more reliable estimate of model generalization than a single train/test split.

Experimental Protocols & Methodologies

Protocol 1: K-Fold Cross-Validation for Model Assessment

Purpose: To reliably estimate the predictive performance of a kinetic model and detect overfitting.

Methodology:

- Data Preparation: Prepare your full kinetic dataset (e.g., concentration-time data for multiple runs).

- Splitting: Randomly partition the dataset into k (typically 5 or 10) non-overlapping subsets (folds).

- Iterative Training and Validation: For each iteration i (from 1 to k):

  - Set aside fold i as the validation set.

  - Use the remaining k-1 folds as the training set.

  - Train the kinetic model on the training set.

  - Evaluate the model on the validation set and record the performance metric (e.g., Mean Squared Error).

- Performance Calculation: Calculate the average performance across all k iterations. The standard deviation of the scores also indicates the model's stability [8] [16].
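As an illustration of this protocol, the following sketch runs k-fold cross-validation on a deliberately simple kinetic model. Everything in it is an assumption made for the example: the model is a single first-order decay C(t) = C0·exp(-kt) fitted by linear regression on ln C, the data are synthetic, and the helper names (`fit_first_order`, `k_fold_mse`) are hypothetical. For a real ODE system, the fitting step would be replaced by your own parameter estimation routine.

```python
import numpy as np


def fit_first_order(t, c):
    """Fit C(t) = C0 * exp(-k t) by linear regression on ln C; return (C0, k)."""
    slope, intercept = np.polyfit(t, np.log(c), 1)
    return np.exp(intercept), -slope


def predict_first_order(t, c0, k):
    return c0 * np.exp(-k * t)


def k_fold_mse(t, c, n_folds=5, seed=0):
    """Return (mean, standard deviation) of the validation MSE across folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(t)), n_folds)
    scores = []
    for i in range(n_folds):
        val_idx = folds[i]
        train_idx = np.concatenate([folds[j] for j in range(n_folds) if j != i])
        c0, k = fit_first_order(t[train_idx], c[train_idx])
        pred = predict_first_order(t[val_idx], c0, k)
        scores.append(np.mean((pred - c[val_idx]) ** 2))
    return float(np.mean(scores)), float(np.std(scores))


# Synthetic data: first-order decay with 2% multiplicative noise (assumed values).
rng = np.random.default_rng(42)
t = np.linspace(0.0, 10.0, 30)
c = 5.0 * np.exp(-0.3 * t) * np.exp(rng.normal(0.0, 0.02, t.size))

mean_mse, std_mse = k_fold_mse(t, c)
print(f"Validation MSE: {mean_mse:.5f} +/- {std_mse:.5f}")
```

This sketch splits individual time points at random. When the dataset consists of several experimental runs, splitting by run instead is a common alternative, because points from the same run are correlated.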

Protocol 2: Assessing Data Quality and Quantity Requirements with Synthetic Data

Purpose: To determine the quality and quantity of experimental data needed for reliable kinetic parameter discovery before conducting costly lab experiments. This is a core function of tools like the Chemfit algorithm [18].

Methodology:

- Model Construction: Construct a candidate set of kinetic models (systems of ODEs) based on chemical knowledge, ranging from simple to complex [18].

- Synthetic Data Generation: Use a known "true" model to generate synthetic kinetic data. This data can be corrupted with different levels of noise and sampled at different resolutions to mimic real-world data quality and quantity issues [18].

- Fitting and Evaluation: Fit your candidate models to the synthetic datasets.

- Analysis: Analyze how the quality (noise level) and quantity (number of time points, range of conditions) of the synthetic data affect the accuracy of the recovered kinetic parameters. This helps define the minimum data requirements for your real experiment [18].
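The idea behind this pre-study can be sketched in a few lines. The code below is not the Chemfit algorithm [18]; it is a simplified stand-in that assumes a single first-order decay as the "true" model, multiplicative log-normal noise, and a log-linear fit. The function name `recovered_k_error` and all numerical values are hypothetical. It reports how the relative error of the recovered rate constant changes with noise level and number of time points.

```python
import numpy as np


def recovered_k_error(true_k, noise_sd, n_points, n_trials=200, seed=0):
    """Mean relative error of k recovered from noisy synthetic first-order data."""
    rng = np.random.default_rng(seed)
    t = np.linspace(0.0, 10.0, n_points)
    errors = []
    for _ in range(n_trials):
        # Synthetic "measurement": true decay curve with multiplicative noise.
        c = np.exp(-true_k * t) * np.exp(rng.normal(0.0, noise_sd, n_points))
        slope, _ = np.polyfit(t, np.log(c), 1)
        errors.append(abs(-slope - true_k) / true_k)
    return float(np.mean(errors))


# Scan data quality (noise level) and quantity (number of time points).
for noise_sd in (0.01, 0.05, 0.20):
    for n_points in (5, 20):
        err = recovered_k_error(true_k=0.3, noise_sd=noise_sd, n_points=n_points)
        print(f"noise={noise_sd:.2f}  points={n_points:2d}  mean rel. error in k={err:.3f}")
```

A table like the one this prints can be read against a tolerance you choose in advance (for example, an acceptable relative error in k) to decide how many time points and how much measurement precision the real experiment needs.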

Essential Workflow Visualizations

Diagram 1: Data Quality's Impact on Model Generalization

Diagram Title: How Data Quality Drives Model Generalization

Diagram 2: Kinetic Model Development and Validation Workflow

Diagram Title: Kinetic Model Development Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item or Tool | Function in Kinetic Modeling Research |

|---|---|

| ODE Solvers (e.g., in SciPy) | Numerical engines for simulating the time-dependent behavior of chemical species described by systems of ordinary differential equations [18]. |

| Parameter Estimation Algorithms (e.g., lmfit) | Tools to find the values of kinetic parameters (e.g., rate constants) that minimize the difference between model predictions and experimental data [18]. |

| Synthetic Data Generators | Functions within workflows (e.g., Chemfit) that create simulated kinetic data with user-defined noise and resolution. Used to test modeling strategies and data requirements before wet-lab experiments [18]. |

| K-Fold Cross-Validation Scripts | Code to automatically partition data and perform iterative training/validation, providing a robust estimate of model generalization error [8] [16]. |

| Regularization Techniques (L1/Lasso, L2/Ridge) | Mathematical methods that add a penalty to the model's loss function to prevent parameter values from becoming too large, thereby reducing model complexity and overfitting [16]. |

| Sensitivity Analysis Tools | Methods to determine how uncertainty in the model's output can be apportioned to different sources of uncertainty in its input parameters. This helps identify which parameters are most critical to measure accurately [18]. |

Building Defenses: Methodological Frameworks to Prevent Overfit Kinetic Models

Leveraging Simplified First-Order Kinetics for Robust Long-Term Predictions

Troubleshooting Guide: Common Experimental Issues

1. Problem: Model predictions are inaccurate for new, unseen data (Overfitting)

- Possible Cause: The kinetic model is too complex and has learned the noise or specific quirks of the training dataset instead of the underlying trend [23] [8].

- Solution:

- Simplify the Model: Use a first-order kinetic model, which reduces the number of parameters that need to be fitted, enhancing robustness and reliability [6].

- Cross-Validation: Use k-fold cross-validation during model tuning. This involves splitting the data into k subsets and iteratively training the model on k-1 folds while using the remaining fold for validation [23] [8].

- Regularization: Apply techniques that artificially force the model to be simpler by adding a penalty parameter to the cost function [23].

2. Problem: Poor or no signal in binding or stability assays

- Possible Cause: Instability of reagents (proteins, ligands) over the duration of the assay can lead to loss of signal [24].

- Solution:

- Confirm Reagent Stability: Ensure the protein, target, and tracer are stable over the duration of the experiment [24].

- Check Incubation Conditions: Verify that incubation times and temperatures are correct and sufficient [25].

- Validate Reagents: Use freshly prepared reagents and confirm the activity and specificity of antibodies or other detection reagents [25].

3. Problem: Unable to achieve a good fit with a first-order model

- Possible Cause: The degradation or binding process may involve multiple pathways that are activated at the temperatures or conditions used in the study [6].

- Solution:

- Optimize Temperature Selection: Carefully design stability studies by selecting appropriate temperature conditions. This helps ensure that only one dominant degradation pathway, relevant to storage conditions, is present across all temperature conditions [6].

- Design of Experiments (DoE): Employ optimal experimental design frameworks, which can help maximize the information gained from experiments and ensure the data is suitable for model identification [26].

4. Problem: Model fails to generalize from accelerated to long-term storage data

- Possible Cause: Linear extrapolation from short-term data may not capture the true kinetic behavior [6].

- Solution:

- Use Arrhenius-Based Kinetics: Apply Advanced Kinetic Modelling (AKM) that combines first-order kinetics with the Arrhenius equation to predict long-term stability based on short-term accelerated studies [6].

- Ensure Data Quality: The training data should be clean and relevant. If the data is too noisy or limited, the model will not learn the underlying "signal" [23].

Frequently Asked Questions (FAQs)

Q1: Why should I use a simple first-order kinetic model when my biologic is complex? A first-order kinetic model reduces the number of parameters that need to be fitted, which minimizes the risk of overfitting and enhances the robustness of long-term predictions. For many quality attributes of complex biologics, a single dominant degradation pathway can be effectively described by a simple model, provided the stability study is designed with appropriate temperature conditions [6].

Q2: How can I detect if my kinetic model is overfit? A key method is to split your dataset into training and test subsets. If your model shows high accuracy (e.g., 99%) on the training data but performs poorly (e.g., 55%) on the test data, it is likely overfit [23]. Techniques like k-fold cross-validation can also help detect this issue by providing a more reliable estimate of model performance on unseen data [8].

Q3: What is the key advantage of kinetic experiments over equilibrium experiments? Kinetics experiments measure the rate constants for forward and reverse reactions. The ratio of these rate constants gives you the equilibrium constant. Therefore, a single kinetics experiment provides information about both the dynamics (rates) and the thermodynamics (affinity) of the system, whereas an equilibrium experiment only reveals the affinity [27].

Q4: When is it appropriate to use a simplified model like the Michaelis-Menten (mTMDD) model for Target-Mediated Drug Disposition (TMDD)? The mTMDD model, a simplified model, is accurate only when the initial drug concentration significantly exceeds the total target concentration. For cases where target concentration is comparable to or exceeds the drug concentration, more robust approximations like the quasi-steady-state (qTMDD) model should be used [28].

Experimental Protocol: Predicting Protein Aggregation Stability

Objective: To predict long-term aggregation of a biotherapeutic (e.g., an IgG1) under recommended storage conditions (e.g., 5°C) using short-term stability data and a first-order kinetic model [6].

Materials (Research Reagent Solutions):

| Reagent / Material | Function in the Protocol |

|---|---|

| Formulated Drug Substance | The biotherapeutic protein of interest (e.g., IgG1, bispecific IgG) whose stability is being studied [6]. |

| Size Exclusion Chromatography (SEC) Column | To separate and quantify the amount of protein monomers and aggregates in the samples [6]. |

| Stability Chambers | For precise, quiescent incubation of samples at various stress temperatures (e.g., 5°C, 25°C, 40°C) [6]. |

| Mobile Phase (e.g., 50 mM sodium phosphate, 400 mM sodium perchlorate, pH 6.0) | The solvent used in SEC to elute the protein from the column; additives like sodium perchlorate help reduce secondary interactions [6]. |

Methodology:

- Sample Preparation: Aseptically fill glass vials with the filtered, formulated drug substance [6].

- Stress Storage: Incubate samples upright at a minimum of three different temperatures (e.g., 5°C, 25°C, and 40°C) for a predefined period (e.g., up to 36 months). Include the recommended storage temperature (5°C) [6].

- Periodic Sampling: At predetermined time points (pull points), remove samples and analyze them using SEC [6].

- Data Collection: For each sample, record the percentage of high-molecular weight species (aggregates) from the SEC chromatogram [6].

- Kinetic Modeling:

- Fit the aggregate vs. time data at each temperature to a first-order kinetic model.

- Use the Arrhenius equation to relate the observed degradation rate constants (k) at different temperatures to the activation energy (Ea).

- Using the fitted Arrhenius parameters, extrapolate the degradation rate to the recommended storage temperature (e.g., 5°C) and predict the aggregation profile over the desired shelf-life [6].
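The three modeling steps can be sketched as follows. This is a minimal illustration under stated assumptions rather than the procedure used in [6]: monomer loss is assumed to be first order, so that ln(monomer fraction) is linear in time, the %HMW values are noise-free synthetic data generated from hypothetical parameters (`true_ea`, `true_ln_a`), and the function names are invented for the example.

```python
import numpy as np

R = 8.314  # universal gas constant, J/(mol*K)


def fit_rate_constant(t_months, hmw_percent):
    """First-order k from the slope of ln(monomer fraction) vs. time."""
    monomer = 1.0 - np.asarray(hmw_percent, dtype=float) / 100.0
    slope, _ = np.polyfit(t_months, np.log(monomer), 1)
    return -slope


def fit_arrhenius(temps_c, ks):
    """Return (Ea in J/mol, ln A) from linear regression of ln k on 1/T."""
    inv_t = 1.0 / (np.asarray(temps_c, dtype=float) + 273.15)
    slope, intercept = np.polyfit(inv_t, np.log(ks), 1)
    return -slope * R, intercept


def rate_at(temp_c, ea, ln_a):
    """Arrhenius rate constant at temp_c (degrees Celsius)."""
    return np.exp(ln_a - ea / (R * (temp_c + 273.15)))


# Synthetic stability data from hypothetical "true" parameters (per month).
true_ea, true_ln_a = 90e3, 30.0
t = np.array([0.0, 1.0, 3.0, 6.0])        # pull points in months
temps = [5.0, 25.0, 40.0]                  # storage and stress temperatures
ks = []
for temp in temps:
    k_true = rate_at(temp, true_ea, true_ln_a)
    hmw = 100.0 * (1.0 - 0.99 * np.exp(-k_true * t))  # 1% HMW at t = 0
    ks.append(fit_rate_constant(t, hmw))

ea, ln_a = fit_arrhenius(temps, ks)
k_5c = rate_at(5.0, ea, ln_a)
hmw_36m = 100.0 * (1.0 - 0.99 * np.exp(-k_5c * 36.0))
print(f"Ea = {ea / 1000:.1f} kJ/mol, predicted HMW at 5 C after 36 months = {hmw_36m:.2f}%")
```

Only two Arrhenius parameters are fitted across all temperatures, which is the parameter economy that makes the first-order approach resistant to overfitting. With real, noisy data the prediction should be reported with a confidence interval rather than as a single value.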

Workflow: Stability Prediction

Key Experimental Parameters for First-Order Kinetics

The table below summarizes critical parameters and their typical considerations for designing a robust stability prediction study [6].

| Parameter | Consideration & Best Practice |

|---|---|

| Protein Modalities | The model has been validated for IgG1, IgG2, Bispecific IgG, Fc fusion, scFv, Nanobodies, DARPins [6]. |

| Temperature Selection | Use at least 3 temperatures. Choose to activate only the degradation pathway relevant to storage conditions [6]. |

| Study Duration | Varies by temperature (e.g., 12-36 months). Must be long enough to observe measurable degradation at each stress condition [6]. |

| Key Output | % High-Molecular Weight Species (HMW) or other quality attributes (purity, charge variants) [6]. |

| Core Kinetic Model | First-order kinetics combined with the Arrhenius equation for long-term prediction [6]. |

Model Simplification & Overfitting Prevention

Strategies to Prevent Overfitting

The Role of the Arrhenius Equation in Accelerated Predictive Stability (APS)

Accelerated Predictive Stability (APS) studies are modern approaches designed to predict the long-term stability of pharmaceutical products in a more efficient and less time-consuming manner compared to traditional methods [29]. These studies are carried out over a 3-4 week period by combining extreme temperatures and relative humidity (RH) conditions, typically ranging from 40-90°C and 10-90% RH [29].

The foundation of APS is the Arrhenius equation, a fundamental principle in chemical kinetics that describes the temperature dependence of reaction rates. The equation is expressed as: k = A · e^(-Ea/RT) where:

- k is the reaction rate constant

- A is the pre-exponential factor (frequency factor)

- Ea is the activation energy (J/mol)

- R is the universal gas constant (8.314 J/mol·K)

- T is the absolute temperature in Kelvin [30] [31]

For pharmaceutical stability testing, this relationship is often modified to account for humidity effects, becoming: k = A · e^(-Ea/RT) · e^(B·RH) where RH is the relative humidity and B is the humidity sensitivity factor [32] [33].
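Taking logarithms turns the humidity-modified equation into a linear model, ln k = ln A - Ea/(RT) + B·RH, so the three parameters can be estimated by ordinary least squares. The sketch below assumes exactly that linearization. The function name `fit_humidity_arrhenius`, the five storage conditions, and the "true" parameter values are hypothetical, and the rate constants are noise-free synthetic values used only to show that the fit recovers them.

```python
import numpy as np

R = 8.314  # universal gas constant, J/(mol*K)


def fit_humidity_arrhenius(temps_c, rh_percent, ks):
    """Least-squares fit of ln k = ln A - Ea/(R T) + B*RH.

    Returns (ln A, Ea in kJ/mol, B per %RH).
    """
    temps_k = np.asarray(temps_c, dtype=float) + 273.15
    rh = np.asarray(rh_percent, dtype=float)
    # Columns: intercept, -1000/(R T) (so the coefficient is Ea in kJ/mol), RH.
    design = np.column_stack([np.ones_like(temps_k), -1000.0 / (R * temps_k), rh])
    coef, *_ = np.linalg.lstsq(design, np.log(ks), rcond=None)
    ln_a, ea_kj, b = coef
    return float(ln_a), float(ea_kj), float(b)


# Five hypothetical temperature/humidity conditions.
temps_c = np.array([50.0, 60.0, 70.0, 70.0, 80.0])
rh = np.array([75.0, 40.0, 10.0, 75.0, 40.0])

# Synthetic rate constants from assumed "true" parameters.
true_ln_a, true_ea_kj, true_b = 30.0, 100.0, 0.03
ks = np.exp(true_ln_a - true_ea_kj * 1000.0 / (R * (temps_c + 273.15)) + true_b * rh)

ln_a, ea_kj, b = fit_humidity_arrhenius(temps_c, rh, ks)
print(f"ln A = {ln_a:.2f}, Ea = {ea_kj:.1f} kJ/mol, B = {b:.3f} per %RH")
```

Temperature and humidity must vary independently across the conditions. If every high-temperature condition is also a high-humidity condition, the design matrix becomes nearly collinear and Ea and B cannot be separated.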

Table 1: Key Variables in the Arrhenius Equation for APS

| Variable | Description | Role in APS | Typical Units |

|---|---|---|---|

| k | Reaction rate constant | Measures degradation speed at given conditions | Varies (s⁻¹, M⁻¹s⁻¹) |

| A | Pre-exponential factor | Related to molecular collision frequency | Same as k |

| Ea | Activation energy | Minimum energy required for degradation | kJ/mol or J/mol |

| T | Temperature | Primary acceleration factor | Kelvin (K) |

| RH | Relative Humidity | Secondary acceleration factor | Percentage (%) |

| B | Humidity sensitivity | Quantifies moisture impact on degradation | Per unit RH (e.g., %RH⁻¹) |

Frequently Asked Questions (FAQs)

Q1: How does APS using the Arrhenius equation reduce stability testing time from years to weeks?

Traditional ICH stability studies require long-term testing over a minimum of 12 months at 25°C ± 2°C/60% RH ± 5% RH or at 30°C ± 2°C/65% RH ± 5% RH, with accelerated testing covering at least 6 months [29]. In contrast, APS studies leverage the mathematical relationship established by the Arrhenius equation to extrapolate from high-temperature, short-term data (typically 3-4 weeks) to predict stability under normal storage conditions [29] [32].

The Arrhenius equation enables this acceleration because it quantifies how reaction rates increase with temperature. For every 10°C rise in temperature, degradation rates typically increase by 2-5 times. By studying degradation at elevated temperatures (e.g., 50°C, 60°C, 70°C) and applying the Arrhenius relationship, scientists can mathematically project how the product will behave at recommended storage temperatures (e.g., 5°C, 25°C) over much longer timeframes [34] [35].
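The size of the acceleration follows directly from the Arrhenius equation: the ratio of rate constants at two temperatures is exp[(Ea/R)·(1/T_low - 1/T_high)], and the pre-exponential factor cancels. The short sketch below evaluates this ratio. The activation energy of 83 kJ/mol is an assumed, illustrative value, not a figure from the cited studies, and the function name is hypothetical.

```python
import math

R = 8.314  # universal gas constant, J/(mol*K)


def acceleration_factor(ea_j_per_mol, t_low_c, t_high_c):
    """Ratio k(T_high) / k(T_low) implied by the Arrhenius equation."""
    t_low = t_low_c + 273.15
    t_high = t_high_c + 273.15
    return math.exp(ea_j_per_mol / R * (1.0 / t_low - 1.0 / t_high))


ea = 83e3  # assumed activation energy in J/mol (illustrative only)
for t_high in (35.0, 50.0, 60.0, 70.0):
    factor = acceleration_factor(ea, 25.0, t_high)
    print(f"25 C -> {t_high:.0f} C: degradation about {factor:.0f}x faster")
```

For this assumed Ea, a 10°C rise from 25°C to 35°C gives roughly a threefold increase, consistent with the 2-5 times range quoted above. The factor depends strongly on Ea, which is why Ea has to be measured rather than assumed for a real product.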

Q2: What are the practical limitations of the Arrhenius equation in predicting biologics stability?

While the Arrhenius equation works well for small molecules, biologics like monoclonal antibodies present unique challenges due to their complex structure and multiple degradation pathways [6] [35]. The main limitations include:

- Multiple degradation mechanisms: Biologics can degrade through various pathways (aggregation, fragmentation, deamidation, oxidation) that may have different activation energies and temperature dependencies [35].

- Non-Arrhenius behavior: Some protein degradation processes don't follow Arrhenius kinetics, particularly when structural unfolding occurs at higher temperatures [6] [35].

- Concentration-dependent aggregation: For attributes like protein aggregation, the degradation rate depends on protein concentration, complicating simple kinetic modeling [6].

However, recent research demonstrates that with careful experimental design, Arrhenius-based predictions can successfully predict long-term stability (up to 3 years) of therapeutic monoclonal antibodies using short-term (up to 6 months) accelerated stability data [35].

Q3: How do I determine the activation energy (Ea) for my drug substance?

Activation energy can be determined experimentally using the linear form of the Arrhenius equation: ln(k) = (-Ea/R)(1/T) + ln(A) [30] [31]

The step-by-step process involves:

- Measuring degradation rates (k) at multiple temperatures (at least 3-4 different temperatures)

- Plotting ln(k) versus 1/T

- Fitting a straight line to the data points

- Calculating Ea from the slope: Ea = -slope × R

For precise determination, use temperatures that stimulate relatively fast degradation but don't destroy the fundamental characteristics of the product. Very high temperatures may activate different degradation mechanisms not relevant at storage conditions [34].

Q4: What is the minimum number of temperature conditions needed for reliable APS modeling?

For robust APS modeling, a minimum of five sets of randomized temperature and humidity conditions is recommended [32]. Each condition should include several time points with repetitions to ensure statistical significance. This approach helps build a reliable model while minimizing the risk of overfitting.

Using multiple conditions is particularly important because:

- It allows verification of Arrhenius behavior across temperature ranges

- It helps identify when degradation mechanisms change at certain temperatures

- It provides sufficient data points for reliable regression analysis [6] [34]

Troubleshooting Common Experimental Issues

Problem 1: Non-linear Arrhenius Plot

Symptoms: Data points on the ln(k) vs. 1/T plot don't form a straight line; predictions at storage temperature are inaccurate.

Possible Causes:

- Different degradation mechanisms dominating at different temperatures [6]

- Phase transitions (e.g., crystallization, melting) occurring within the temperature range

- Exhaustion of reactants or catalyst effects at higher temperatures

Solutions:

- Limit temperature range: Use only temperatures where similar degradation mechanisms operate [6]

- Apply multi-mechanism models: Use parallel reaction models with different activation energies [6]

- Verify analytical methods: Ensure analytical techniques are detecting the same degradation products across all temperatures
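One way to quantify the deviation from linearity is to fit a straight line to ln(k) versus 1/T and inspect R² and the residual pattern. The sketch below is an illustrative diagnostic, not a validated acceptance criterion. The function name `arrhenius_linearity`, the temperatures, and the two synthetic datasets (one single-pathway, one with two parallel pathways of different activation energy) are all assumptions made for the example.

```python
import numpy as np

R = 8.314  # universal gas constant, J/(mol*K)


def arrhenius_linearity(temps_c, ks):
    """Return (R^2, residuals) of a straight-line fit to ln k vs. 1/T."""
    inv_t = 1.0 / (np.asarray(temps_c, dtype=float) + 273.15)
    ln_k = np.log(np.asarray(ks, dtype=float))
    slope, intercept = np.polyfit(inv_t, ln_k, 1)
    resid = ln_k - (slope * inv_t + intercept)
    ss_res = float(np.sum(resid ** 2))
    ss_tot = float(np.sum((ln_k - ln_k.mean()) ** 2))
    return 1.0 - ss_res / ss_tot, resid


def pathway_rate(temps_c, ea, t_ref_c=50.0):
    """Rate constant of one pathway, normalized to 1 at the reference temperature."""
    temps_k = np.asarray(temps_c, dtype=float) + 273.15
    return np.exp(-ea / R * (1.0 / temps_k - 1.0 / (t_ref_c + 273.15)))


temps = [30.0, 40.0, 50.0, 60.0, 70.0]
k_single = pathway_rate(temps, 60e3)                            # one mechanism
k_mixed = pathway_rate(temps, 60e3) + pathway_rate(temps, 150e3)  # two mechanisms

r2_single, _ = arrhenius_linearity(temps, k_single)
r2_mixed, resid_mixed = arrhenius_linearity(temps, k_mixed)
print(f"R^2 single pathway: {r2_single:.4f}")
print(f"R^2 two pathways:   {r2_mixed:.4f}")
print("Residuals (two pathways):", np.round(resid_mixed, 3))
```

With two pathways the residuals are positive at both ends of the temperature range and negative in the middle. A systematic pattern like this, rather than random scatter, suggests a change in mechanism and supports restricting the temperature range.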

Problem 2: Poor Model Fit at Recommended Storage Conditions

Symptoms: Good prediction at accelerated conditions but poor correlation with real-time stability data.

Possible Causes:

- Different dominant degradation pathways at low vs. high temperatures [6]

- Insufficient data points near storage temperature

- Humidity effects not properly accounted for in the model

Solutions:

- Include intermediate temperatures: Add study points between accelerated and storage temperatures

- Modify Arrhenius equation for humidity: Use k = A · e^(-Ea/RT) · e^(B·RH) for humidity-sensitive products [32] [33]

- Validate with partial real-time data: Use available real-time data to calibrate the model

Problem 3: Overfitting in Complex Kinetic Models

Symptoms: Excellent fit to training data but poor predictive performance; model too complex with too many parameters.

Possible Causes:

- Using overly complex models with limited experimental data [6]

- Fitting too many parameters relative to available data points

- Insufficient experimental design with limited conditions

Solutions:

- Use simplified kinetics: Apply first-order kinetic models where possible [6]

- Apply parsimony principle: Choose the simplest model that adequately describes the data

- Increase experimental conditions: Use more conditions with fewer time points rather than few conditions with many time points [32]

- Cross-validate: Reserve some experimental data for model validation

Table 2: Troubleshooting Common APS Modeling Issues

| Problem | Root Cause | Detection Method | Solution Approach |

|---|---|---|---|

| Non-linear Arrhenius behavior | Multiple degradation mechanisms | Deviation from linearity in ln(k) vs. 1/T plot | Limit temperature range or use parallel reaction models |

| Poor low-temperature prediction | Different pathways at low vs high temp | Model validation failures at storage temp | Include intermediate temperatures in study design |

| Overfitting | Too many model parameters | Good training fit but poor prediction | Use simplified models; follow parsimony principle |

| High prediction uncertainty | Insufficient data points | Wide confidence intervals in predictions | Increase number of experimental conditions |

| Humidity effects unaccounted for | Humidity sensitivity not modeled | Poor correlation in humid conditions | Use modified Arrhenius equation with RH term |

Essential Materials and Experimental Protocols

Research Reagent Solutions for APS Studies

Table 3: Essential Materials for APS Experiments

| Material/Reagent | Function in APS | Application Notes |

|---|---|---|

| Type I Glass Vials | Primary container for stability samples | Chemically inert; minimal leachables [6] [35] |

| Stability Chambers | Controlled temperature and humidity environments | Require precise control (±2°C, ±5% RH) [29] |

| Size Exclusion Chromatography (SEC) | Quantification of protein aggregates and fragments | Critical for biologics stability assessment [6] [35] |

| HPLC Systems with UV Detection | Analysis of degradants and potency | Standard for small molecule quantification [32] |

| Pharmaceutical Grade Excipients | Formulation components | Must be consistent with commercial product [35] |

| Temperature and Humidity Data Loggers | Environmental monitoring | Verification of controlled storage conditions |

Standard Operating Procedure: Designing an APS Study

Objective: Predict long-term stability using short-term accelerated data while avoiding overfitting.

Step 1: Pre-study Formulation Characterization

- Determine physicochemical properties (melting point, deliquescence, hydration)

- Establish specification limits for degradants

- Define optimal storage conditions [32]

Step 2: Analytical Method Validation

- Develop stability-indicating methods for each degradant

- Validate method sensitivity, accuracy, and reliability

- Ensure methods can detect changes larger than experimental variability [34]

Step 3: Experimental Design

- Select 5-8 temperature conditions (typically 40-80°C)

- Include appropriate humidity conditions (10-75% RH) for humidity-sensitive products

- Plan time points to encompass degradation progression at each condition

- Include replicates for statistical significance [32] [33]

Step 4: Sample Aging and Data Collection

- Store samples under controlled conditions

- Analyze samples at predetermined time points

- Record degradation levels for each condition and time point [6]

Step 5: Kinetic Analysis

- Calculate degradation rates (k) at each condition

- Fit data to Arrhenius equation to determine Ea and A

- Apply humidity modification if necessary [32] [33]

Step 6: Model Validation and Prediction

- Validate model with any available real-time data

- Predict degradation at recommended storage conditions

- Establish shelf-life with appropriate confidence limits [34]

Advanced Topics: Managing Overfitting in Complex Models

Strategies for Robust Kinetic Modeling

Overfitting poses a significant challenge when developing kinetic models for stability prediction, particularly with complex biologics. The following strategies help maintain model robustness:

1. Temperature Selection for Single-Mechanism Dominance

Carefully choose temperature conditions to ensure only one degradation pathway (relevant at storage conditions) is present across all temperature conditions. This enables the use of simple first-order kinetic models that are less prone to overfitting [6].

2. Parameter Reduction Techniques

- Use a first-order kinetic model instead of more complex models when possible

- Reduce the number of fitted parameters by fixing well-established values

- Apply the isoconversion principle to eliminate the need for complex degradation kinetics [6] [32]

3. Model Validation Approaches

- Reserve a portion of the experimental data for validation, not model building

- Use statistical measures like prediction intervals rather than just fit quality

- Apply cross-validation techniques when data is limited [6] [34]

4. Confidence Interval Implementation

Always report shelf-life predictions with appropriate confidence intervals rather than as single values. The labeled shelf life should be the lower confidence limit of the estimated time to ensure public safety [34].

The movement toward simplified kinetic modeling demonstrates that for many biologics, including monoclonal antibodies, fusion proteins, and various protein modalities, first-order kinetics combined with the Arrhenius equation can provide accurate long-term stability predictions while minimizing overfitting risks [6]. This approach enhances reliability by reducing the number of parameters that need to be fitted and minimizes the number of samples required, making the models more robust and generalizable [6].

Incorporating Regularization Techniques to Penalize Model Complexity

Frequently Asked Questions (FAQs)

FAQ 1: What is regularization and why is it critical for kinetic modeling? Regularization is a set of methods for reducing overfitting in machine learning models by intentionally increasing training error slightly to gain significantly better performance on new, unseen data [36]. In kinetic modeling, this is crucial because complex models with many parameters can easily memorize noise in experimental training data rather than learning the underlying biological mechanisms. This memorization leads to poor predictions when applied to new experimental conditions or biological systems [6].

FAQ 2: How do I choose between L1 (Lasso) and L2 (Ridge) regularization for my kinetic models? The choice depends on your specific modeling goals and the characteristics of your kinetic parameters. L1 regularization (Lasso) is preferable when you suspect many features or kinetic parameters have minimal actual effect and should be eliminated entirely, as it can shrink coefficients to zero [37] [36]. L2 regularization (Ridge) is better when you want to maintain all parameters but constrain their magnitudes, which is useful for handling correlated parameters in kinetic models [37] [38]. For models where both feature selection and parameter shrinkage are desirable, Elastic Net combines both L1 and L2 penalties [37].

FAQ 3: What are the practical signs that my kinetic model needs regularization? Your model likely needs regularization if you observe any of the following: a significant gap between training and validation performance, unreasonably large values for kinetic constants, poor convergence when starting from different initial parameter guesses, or predictions that violate known biological constraints when extrapolated beyond training conditions [6] [2]. These indicate overfitting, where your model has become too complex and has memorized noise rather than learned generalizable patterns.

FAQ 4: How can I implement regularization without specialized machine learning expertise? Many scientific computing platforms now include regularization capabilities. For Python users, scikit-learn provides Lasso, Ridge, and ElasticNet classes with straightforward implementations [37]. For R users, the glmnet package offers efficient regularization implementations. These tools handle the optimization and require you to specify only the regularization strength (λ, named alpha in scikit-learn and lambda in glmnet) and, for Elastic Net, the mixing ratio between the L1 and L2 penalties. This makes advanced techniques accessible to researchers focused on kinetic applications rather than algorithmic details [38].

FAQ 5: Can regularization help with the limited experimental data common in kinetic studies? Yes, regularization is particularly valuable when experimental data is limited, which is common in kinetic studies due to experimental costs and time constraints [6]. By constraining model complexity, regularization helps prevent overfitting to small datasets and can provide more reliable parameter estimates than unregularized models when training data is scarce. This makes it possible to develop useful models even before comprehensive experimental data is available [36] [38].

Troubleshooting Guides

Problem 1: Model Exhibits High Variance Between Training and Validation Performance

Symptoms

- Excellent fit to training data (low error) but poor performance on validation data

- Large changes in predictions with small changes in training data

- Parameter estimates that vary widely with different data subsets

Solution Steps

- Apply L2 (Ridge) regularization to constrain parameter magnitudes without eliminating them

- Systematically tune regularization strength using cross-validation

- Standardize all input features to ensure regularization is applied fairly across parameters

- Monitor learning curves to identify appropriate regularization strength

Implementation Example
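A minimal sketch of the solution steps above, assuming a linear surrogate model fitted with scikit-learn. The data are synthetic, with more features than the sample size comfortably supports and one strongly correlated feature pair. Note that scikit-learn calls the regularization strength `alpha`, which corresponds to λ in this guide.

```python
import numpy as np
from sklearn.linear_model import LinearRegression, RidgeCV
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data (hypothetical): 40 samples, 25 features, only 3 informative
rng = np.random.default_rng(42)
n_samples, n_features = 40, 25
X = rng.normal(size=(n_samples, n_features))
X[:, 1] = X[:, 0] + 0.05 * rng.normal(size=n_samples)  # correlated pair
true_coef = np.zeros(n_features)
true_coef[:3] = [1.5, 1.0, -2.0]
y = X @ true_coef + 0.5 * rng.normal(size=n_samples)

X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.3, random_state=0
)

# Unregularized baseline on standardized features
baseline = make_pipeline(StandardScaler(), LinearRegression()).fit(X_train, y_train)

# Ridge with strength chosen by 5-fold cross-validation over a log-spaced grid
alphas = np.logspace(-3, 3, 25)
ridge = make_pipeline(StandardScaler(), RidgeCV(alphas=alphas, cv=5)).fit(
    X_train, y_train
)

for name, model in [("unregularized", baseline), ("ridge", ridge)]:
    print(
        f"{name}: train R2 = {model.score(X_train, y_train):.3f}, "
        f"validation R2 = {model.score(X_val, y_val):.3f}"
    )
print("selected regularization strength:", ridge[-1].alpha_)
```

Placing the scaler inside the pipeline means it is fitted on the training rows only, so the validation score is not influenced by validation-set statistics.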

Problem 2: Model is Too Complex with Many Insignificant Parameters

Symptoms

- Difficulty interpreting which parameters most influence predictions

- Long training times with minimal performance benefits

- Parameters with values very close to zero that don't meaningfully contribute

Solution Steps

- Implement L1 (Lasso) regularization to drive unimportant parameter coefficients to zero

- Use feature importance scoring to identify parameters to potentially eliminate

- Apply sequential feature selection with regularization to simplify model structure

- Validate simplified model to ensure performance hasn't degraded substantially

Implementation Example
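A minimal sketch of L1-based simplification, assuming a linear surrogate model and synthetic data in which only three of thirty candidate features carry signal. The feature indices and noise level are illustrative assumptions.

```python
import numpy as np
from sklearn.linear_model import LassoCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data (hypothetical): 30 candidate features, 3 truly informative
rng = np.random.default_rng(7)
n_samples, n_features = 80, 30
X = rng.normal(size=(n_samples, n_features))
true_coef = np.zeros(n_features)
true_coef[[0, 5, 12]] = [2.0, -1.5, 1.0]
y = X @ true_coef + 0.3 * rng.normal(size=n_samples)

# LassoCV chooses the penalty strength by 5-fold cross-validation
model = make_pipeline(StandardScaler(), LassoCV(cv=5, random_state=0)).fit(X, y)
lasso = model[-1]

kept = np.flatnonzero(lasso.coef_)  # indices of features with nonzero coefficients
print("selected regularization strength:", lasso.alpha_)
print(f"features kept: {kept.size} of {n_features} -> {kept.tolist()}")
```

Cross-validated Lasso often retains a few spurious features alongside the informative ones, so the simplified model should still be checked against held-out data as the last solution step recommends.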

Problem 3: Model Fails to Generalize to New Experimental Conditions

Symptoms

- Good performance under training conditions but fails with slightly different conditions

- Predictions that violate known biological constraints

- Inability to extrapolate beyond narrow training data range

Solution Steps

- Implement Elastic Net regularization to balance feature selection and parameter constraint

- Incorporate physical constraints into regularization penalties

- Use domain knowledge to weight regularization appropriately for different parameters

- Validate across multiple conditions during regularization tuning

Implementation Example
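A minimal sketch of Elastic Net tuning with validation across conditions, assuming each row carries a label for its experimental condition. The splits are built with `GroupKFold` so that every validation fold contains only conditions absent from the corresponding training fold. In scikit-learn, `alpha` is the overall strength (λ) and `l1_ratio` is the weight on the L1 term, so under the formulation in Table 1, where α multiplies the L2 term, `l1_ratio` roughly plays the role of 1 - α.

```python
import numpy as np
from sklearn.linear_model import ElasticNetCV
from sklearn.model_selection import GroupKFold
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data (hypothetical): 6 experimental conditions, 12 samples each
rng = np.random.default_rng(3)
n_conditions, n_per_condition, n_features = 6, 12, 15
groups = np.repeat(np.arange(n_conditions), n_per_condition)  # condition per row
X = rng.normal(size=(groups.size, n_features))
X[:, 1] = X[:, 0] + 0.1 * rng.normal(size=groups.size)  # correlated pair
coef = np.zeros(n_features)
coef[[0, 1, 4]] = [1.0, 1.0, -1.5]
y = X @ coef + 0.3 * rng.normal(size=groups.size)

# Precompute condition-wise splits; ElasticNetCV accepts an iterable of splits
splits = list(GroupKFold(n_splits=3).split(X, y, groups))

enet_cv = ElasticNetCV(l1_ratio=[0.2, 0.5, 0.8, 1.0], cv=splits, max_iter=10000)
# For brevity the scaler is fitted on all rows before the internal CV runs
model = make_pipeline(StandardScaler(), enet_cv).fit(X, y)
enet = model[-1]

print("selected strength:", enet.alpha_, "selected l1_ratio:", enet.l1_ratio_)
print("nonzero coefficients:", np.flatnonzero(enet.coef_).tolist())
```

This sketch does not cover physical constraints or parameter-specific penalty weights. Those usually require a custom objective rather than an off-the-shelf linear estimator.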

Regularization Techniques Comparison

Table 1: Comparison of Regularization Techniques for Kinetic Modeling

| Technique | Mathematical Formulation | Best For | Advantages | Limitations |
|---|---|---|---|---|
| L1 (Lasso) | Cost = MSE + λ∑\|β\| [37] | Feature selection, high-dimensional data [36] | Creates sparse models, eliminates irrelevant features [37] | May eliminate correlated features arbitrarily, unstable with correlated features [38] |
| L2 (Ridge) | Cost = MSE + λ∑β² [37] | Handling multicollinearity, small datasets [37] | Stable with correlated features, always keeps all features [36] | Does not perform feature selection, all features remain in model [38] |
| Elastic Net | Cost = MSE + λ[(1-α)∑\|β\| + α∑β²] [37] | Balanced approach, grouped feature selection | Combines benefits of L1 and L2, handles correlated features better than L1 alone [37] | Two parameters to tune (λ, α), more computationally intensive [36] |

Table 2: Regularization Hyperparameter Guidelines for Kinetic Models

| Scenario | Recommended Technique | Typical α Range | Typical λ Range | Validation Approach |
|---|---|---|---|---|
| High-throughput kinetic parameter screening | Lasso (L1) | N/A | 0.001-0.1 [37] | Cross-validation with emphasis on sparsity |
| Traditional kinetic modeling with limited data | Ridge (L2) | N/A | 0.01-1.0 [38] | Time-series cross-validation |
| Genome-scale kinetic models | Elastic Net | 0.2-0.8 [37] | 0.001-0.1 [37] | Block cross-validation by biological replicate |
| Mechanistic ODE-based models | Custom weighted L2 | N/A | Domain-dependent | Physiological constraint satisfaction |
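For the "Custom weighted L2" row, one possible realization (an assumption, not a method taken from the cited sources) is to append penalty terms to the residual vector of a nonlinear least-squares fit. The sketch below uses a Michaelis-Menten substrate-depletion ODE as a stand-in mechanistic model; the prior values, weights, and bounds are hypothetical.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.optimize import least_squares

def simulate(log_params, t_eval, s0=10.0):
    """Substrate depletion dS/dt = -Vmax * S / (Km + S); parameters on a log scale."""
    vmax, km = np.exp(log_params)
    sol = solve_ivp(lambda t, s: -vmax * s / (km + s), (0.0, t_eval[-1]), [s0],
                    t_eval=t_eval, rtol=1e-8, atol=1e-10)
    return sol.y[0]

def residuals(log_params, t, data, sigma, prior, weights, lam):
    """Scaled data misfit followed by weighted L2 penalty terms."""
    misfit = (simulate(log_params, t) - data) / sigma
    penalty = np.sqrt(lam) * weights * (log_params - prior)
    return np.concatenate([misfit, penalty])

# Synthetic observations (hypothetical true values Vmax = 2, Km = 3)
rng = np.random.default_rng(1)
t = np.linspace(0.0, 10.0, 8)
sigma = 0.2
data = simulate(np.log([2.0, 3.0]), t) + sigma * rng.normal(size=t.size)

prior = np.log([1.0, 5.0])      # hypothetical literature values for Vmax, Km
weights = np.array([0.5, 2.0])  # hypothetical: the Km prior is trusted more
bounds = (np.log([1e-2, 1e-2]), np.log([1e2, 1e2]))  # hard physical limits

def fit(lam):
    return least_squares(residuals, x0=prior, bounds=bounds,
                         args=(t, data, sigma, prior, weights, lam))

fit_free, fit_reg = fit(0.0), fit(1.0)
print("unregularized Vmax, Km:", np.exp(fit_free.x))
print("regularized   Vmax, Km:", np.exp(fit_reg.x))
```

Because `least_squares` minimizes the sum of squared residuals, the objective becomes the data misfit plus λ∑wᵢ²(θᵢ - θ_prior,ᵢ)², so a larger weight pulls that parameter more strongly toward its prior. The bounds enforce hard limits independently of the penalty.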

Experimental Protocols

Protocol 1: Systematic Regularization Implementation for Kinetic Models

Purpose

To implement and validate regularization techniques for preventing overfitting in kinetic models of biological systems.

Materials

- Kinetic modeling software (Tellurium, COPASI, or custom ODE solver) [2]

- Dataset with training and validation conditions

- Computational environment (Python with scikit-learn or R with glmnet) [37]

Procedure

1. Data Preparation

- Split data into training (60-70%), validation (15-20%), and test (15-20%) sets

- Standardize all input features to zero mean and unit variance, using statistics computed on the training set only

- Document any known biological constraints on parameter values

2. Baseline Model Development

- Develop an unregularized model as a baseline

- Record training and validation performance

- Identify signs of overfitting (validation error much larger than training error)

3. Regularization Implementation

- Implement the chosen regularization technique (L1, L2, or Elastic Net)

- Set up a hyperparameter grid for cross-validation

- For kinetic models, consider biologically informed regularization weights

4. Model Training & Validation

- Train regularized models across the hyperparameter range

- Select optimal hyperparameters using validation set performance

- Verify that the model satisfies essential biological constraints

5. Final Evaluation

- Evaluate the selected model on the held-out test set

- Compare with the baseline unregularized model

- Document the improvement in generalization performance

Expected Results

Properly regularized models should show:

- Similar training and validation performance (reduced overfitting)

- Biologically plausible parameter estimates

- Improved generalization to new experimental conditions
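The protocol can be sketched end to end as follows, assuming a linear surrogate model, synthetic data, and a 70/15/15 split. Variable names and the hyperparameter grid are illustrative assumptions.

```python
import numpy as np
from sklearn.linear_model import LinearRegression, Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Synthetic data (hypothetical): 100 samples, 40 features, 4 informative
rng = np.random.default_rng(11)
X = rng.normal(size=(100, 40))
coef = np.zeros(40)
coef[:4] = [1.0, -2.0, 0.5, 1.5]
y = X @ coef + 0.5 * rng.normal(size=100)

# Data preparation: 70/15/15 split, scaler fitted on the training set only
X_train, X_tmp, y_train, y_tmp = train_test_split(X, y, test_size=0.30, random_state=0)
X_val, X_test, y_val, y_test = train_test_split(X_tmp, y_tmp, test_size=0.50, random_state=0)
scaler = StandardScaler().fit(X_train)
X_train_s, X_val_s, X_test_s = (scaler.transform(a) for a in (X_train, X_val, X_test))

# Baseline: unregularized fit
baseline = LinearRegression().fit(X_train_s, y_train)

# Regularization: train across a grid, select on the validation set
alphas = np.logspace(-3, 3, 13)
val_mse = {}
for alpha in alphas:
    candidate = Ridge(alpha=alpha).fit(X_train_s, y_train)
    val_mse[alpha] = mean_squared_error(y_val, candidate.predict(X_val_s))
best_alpha = min(val_mse, key=val_mse.get)
final = Ridge(alpha=best_alpha).fit(X_train_s, y_train)

# Final evaluation: the test set is touched once, after selection
for name, model in [("baseline", baseline), ("ridge", final)]:
    print(
        f"{name}: train MSE = {mean_squared_error(y_train, model.predict(X_train_s)):.3f}, "
        f"test MSE = {mean_squared_error(y_test, model.predict(X_test_s)):.3f}"
    )
print("selected regularization strength:", best_alpha)
```

The check for biological plausibility is omitted here because it depends on the specific model. In practice it would inspect the fitted parameters against the constraints documented in the data preparation step.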

Protocol 2: Cross-Validation for Regularization Parameter Tuning

Purpose

To determine optimal regularization parameters for kinetic models using systematic cross-validation.

Materials

- Kinetic model with identified need for regularization

- Comprehensive dataset covering expected operating conditions

- Computational resources for multiple model fits

Procedure

1. Design Cross-Validation Strategy

- Choose k-fold (typically 5-10) or leave-one-out cross-validation based on data size

- For time-series kinetic data, use blocked CV to preserve temporal structure

- Ensure each fold represents expected variability in application conditions

2. Define Parameter Search Space

- For L2: λ typically between 0.001 and 1000 (logarithmic scale)

- For L1: λ typically between 0.001 and 10 (logarithmic scale)

- For Elastic Net: search both λ (0.001-1.0) and α (0-1)

3. Execute Cross-Validation

- For each parameter combination, train model on training folds

- Evaluate performance on validation folds

- Compute average performance across all folds

4. Select Optimal Parameters

- Choose parameters with best cross-validation performance

- Consider simpler models if performance difference is minimal

- Verify selected parameters yield biologically plausible results

5. Final Model Assessment

- Train final model with selected parameters on full training set

- Assess on completely held-out test set

- Document cross-validation results and final test performance
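A minimal sketch of the tuning procedure above, assuming Ridge regression on synthetic data. To make "consider simpler models if performance difference is minimal" concrete, it applies the one-standard-error rule, a common heuristic that is an assumption here rather than something the protocol prescribes: among strengths whose mean score lies within one standard error of the best, take the largest.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, KFold
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data (hypothetical): 60 samples, 20 features, 3 informative
rng = np.random.default_rng(21)
X = rng.normal(size=(60, 20))
coef = np.zeros(20)
coef[:3] = [1.0, -1.0, 0.5]
y = X @ coef + 0.5 * rng.normal(size=60)

# Log-spaced search space and 5-fold cross-validation
pipe = make_pipeline(StandardScaler(), Ridge())
param_grid = {"ridge__alpha": np.logspace(-3, 3, 13)}
cv = KFold(n_splits=5, shuffle=True, random_state=0)
search = GridSearchCV(pipe, param_grid, cv=cv, scoring="neg_mean_squared_error").fit(X, y)

# Mean score and its standard error for every grid point
results = search.cv_results_
alphas = np.array([p["ridge__alpha"] for p in results["params"]])
mean_score = results["mean_test_score"]
sem = results["std_test_score"] / np.sqrt(cv.get_n_splits())

# One-standard-error rule: strongest penalty that is still close to the best
best = int(np.argmax(mean_score))
within_one_se = mean_score >= mean_score[best] - sem[best]
alpha_1se = alphas[within_one_se].max()

print("best-scoring strength:", search.best_params_["ridge__alpha"])
print("one-standard-error strength:", alpha_1se)
```

For time-series kinetic data, a splitter such as scikit-learn's `TimeSeriesSplit` or `GroupKFold` could replace `KFold` to respect temporal or replicate structure.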

The Scientist's Toolkit

Table 3: Essential Software Tools for Regularization Experiments

| Tool/Software | Primary Function | Application in Regularization | Key Features |
|---|---|---|---|
| scikit-learn [37] | Machine learning library | Implementation of L1, L2, Elastic Net | Lasso, Ridge, ElasticNet classes; cross-validation tools [37] |
| glmnet (R package) | Regularized generalized linear models | Efficient regularization for statistical models | Fast computation for high-dimensional data [38] |
| Tellurium [2] | Kinetic modeling environment | Building and simulating biological models | Standardized model structures; parameter estimation [2] |
| SKiMpy [2] | Kinetic modeling framework | Large-scale kinetic model construction | Automatic rate law assignment; parameter sampling [2] |
| MASSpy [2] | Metabolic modeling | Constraint-based modeling integration | Mass action kinetics; parallelizable sampling [2] |

Regularization Workflow Visualization

[Figure: Regularization Method Selection Workflow / Regularization Method Decision Tree]

Troubleshooting Guides and FAQs

This technical support center addresses common challenges researchers face when using automated kinetic modeling frameworks, with a specific focus on mitigating overfitting in complex models for drug development and pharmaceutical research.

Frequently Asked Questions

Q1: What is the primary cause of overfitting in automated kinetic modeling, and how can I detect it?

Overfitting occurs when your model learns the training data too well, including noise and random fluctuations, resulting in poor generalization to new data. Key indicators include:

- Training vs. Validation Performance: A significant and growing gap where training loss decreases while validation loss increases [39] [40]

- Model Complexity: Overly complex models with many parameters relative to the amount of training data [39] [6]

- Feature Importance Inconsistency: Erratic feature importance rankings that vary significantly with small changes in the dataset [41]
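The first two indicators can be illustrated with a small sketch in which polynomial degree stands in for model complexity. This is an assumption made for illustration, not a kinetic model: noisy first-order decay data are fitted with increasing degree while training and held-out errors are tracked.

```python
import numpy as np

rng = np.random.default_rng(5)
t = np.linspace(0.0, 1.0, 24)                          # scaled time axis
y = np.exp(-3.0 * t) + 0.03 * rng.normal(size=t.size)  # noisy first-order decay

train = np.arange(t.size) % 2 == 0  # even-indexed points used for fitting
val = ~train                        # odd-indexed points held out

train_mse, val_mse = {}, {}
for degree in (1, 3, 6, 10):
    coeffs = np.polyfit(t[train], y[train], degree)
    pred = np.polyval(coeffs, t)
    train_mse[degree] = np.mean((pred[train] - y[train]) ** 2)
    val_mse[degree] = np.mean((pred[val] - y[val]) ** 2)
    print(
        f"degree {degree:2d}: train MSE = {train_mse[degree]:.2e}, "
        f"validation MSE = {val_mse[degree]:.2e}"
    )
```

A training error that keeps falling while the held-out error stalls or rises as parameters are added is the pattern to watch for. With 12 training points, the degree-10 fit has almost as many parameters as data points.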

Q2: How does the Mixed Integer Linear Programming (MILP) approach help prevent overfitting during model selection?

The MILP framework contributes to robust model selection through several mechanisms:

- Comprehensive Model Generation: Systematically generates all possible reaction models based on mass balance, creating a complete library for evaluation [42] [43]