Optimization-Based Modeling of Stoichiometries and Kinetics: Accelerating Drug Development from Discovery to Clinic

Abstract

This article provides a comprehensive overview of optimization-based modeling techniques that simultaneously resolve reaction stoichiometries and kinetics, a critical challenge in understanding complex biological and chemical systems. Tailored for researchers, scientists, and drug development professionals, we explore the foundational principles of integrating stoichiometric and kinetic data, detail advanced methodologies from Mixed-Integer Linear Programming (MILP) to machine learning-enhanced frameworks, and address common troubleshooting and optimization constraints. Through validation case studies and comparative analyses across pharmaceutical and metabolic engineering applications, we demonstrate how these 'fit-for-purpose' models enhance predictive accuracy, streamline development timelines, and improve the probability of success in bringing new therapies to market. This synthesis aims to serve as a strategic roadmap for implementing these powerful computational approaches throughout the drug development lifecycle.

Laying the Groundwork: Integrating Stoichiometry and Kinetics in Complex Systems

The Critical Need for Simultaneous Modeling in Pharmaceutical and Metabolic Systems

Simultaneous modeling represents a paradigm shift in the analysis of complex biological and chemical systems, integrating multiple processes into a unified computational framework. In pharmaceutical and metabolic research, this approach simultaneously captures the interplay between drug pharmacokinetics, metabolite dynamics, and cellular metabolism, enabling more accurate predictions of system behavior. Unlike traditional stepwise methods that analyze system components in isolation, simultaneous modeling maintains the critical dependencies between interconnected processes, providing a mechanistic understanding that is essential for reliable predictions in drug development and metabolic engineering [1] [2].

The foundational principle of simultaneous modeling rests on solving for all system components concurrently rather than sequentially. This is particularly valuable when dealing with formation-rate limited kinetics, where metabolite concentrations are directly governed by their rate of formation from parent compounds, creating an inherent dependency that sequential models struggle to capture accurately [1]. In optimization-based modeling of stoichiometries and kinetics, simultaneous approaches let researchers incorporate stoichiometric constraints and kinetic parameters directly into a single optimization problem, leading to more physiologically realistic and predictive models [2].

Key Applications in Pharmaceutical Development

Parent Drug and Metabolite Pharmacokinetics

The simultaneous modeling of parent drugs and their metabolites has proven particularly valuable when metabolites contribute significantly to overall pharmacological activity or toxicity. A prominent example comes from the development of rolofylline, an adenosine A1 receptor antagonist investigated for acute congestive heart failure. Rolofylline is primarily metabolized via CYP3A4 to two pharmacologically active metabolites (M1-trans and M1-cis), both exhibiting similar affinity to the human adenosine A1 receptor as the parent drug and demonstrating comparable diuretic and natriuretic effects in preclinical models [1].

Table 1: Pharmacokinetic Parameters for Rolofylline and Metabolites in Healthy Volunteers

| Analyte | Clearance (L/h) | Volume of Distribution at Steady State (L) | Apparent Terminal Half-life (h) |
|---|---|---|---|
| Rolofylline | 24.4 | 239 | ~15 |
| M1-trans metabolite | Not separately reported | Not separately reported | ~12 |
| M1-cis metabolite | Not separately reported | Not separately reported | ~14 |

The development of a simultaneous pharmacokinetic model for rolofylline and its metabolites required innovative modeling approaches, including provisions for both the conversion of rolofylline to its metabolites and the stereochemical interconversion between M1-trans and M1-cis forms. The final model successfully described a two-compartment linear pharmacokinetic model for rolofylline while simultaneously capturing metabolite pharmacokinetics, demonstrating the power of this approach to maintain critical relationships between parent drug and metabolite dispositions [1].
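
The structure described above can be sketched as a small ODE system in Python, assuming SciPy is available. Only the clearance and steady-state volume come from Table 1; the split of that volume into central and peripheral compartments, the intercompartmental clearance, the formation fractions, the metabolite clearances and volumes, and the interconversion rate constants are hypothetical placeholders, and amounts are treated on a molar basis. This is an illustration of the model shape, not the published model.

```python
import numpy as np
from scipy.integrate import solve_ivp

# CL and V1 + V2 = Vss follow Table 1; every other value is a hypothetical placeholder.
CL, V1, V2, Q = 24.4, 60.0, 179.0, 30.0   # L/h, L, L, L/h
FM_TRANS, FM_CIS = 0.3, 0.2               # fraction of parent clearance forming each metabolite
CL_MT, CL_MC = 10.0, 8.0                  # metabolite clearances, L/h
V_MT, V_MC = 50.0, 50.0                   # metabolite volumes, L
K_TC, K_CT = 0.05, 0.04                   # trans->cis and cis->trans interconversion, 1/h

def rhs(t, a):
    """State: amounts of central parent, peripheral parent, M1-trans, M1-cis."""
    a1, a2, mt, mc = a
    c1 = a1 / V1
    da1 = -CL * c1 - Q * c1 + Q * a2 / V2
    da2 = Q * c1 - Q * a2 / V2
    dmt = FM_TRANS * CL * c1 - (CL_MT / V_MT) * mt - K_TC * mt + K_CT * mc
    dmc = FM_CIS * CL * c1 - (CL_MC / V_MC) * mc - K_CT * mc + K_TC * mt
    return [da1, da2, dmt, dmc]

def simulate(dose=10.0):
    """Simulate an IV bolus and report amounts at the sampling times of Protocol 1."""
    t_eval = np.array([0.5, 1, 2, 3, 5, 9, 13, 25, 37, 49], dtype=float)
    sol = solve_ivp(rhs, (0.0, 49.0), [dose, 0.0, 0.0, 0.0], t_eval=t_eval,
                    method="LSODA", rtol=1e-8, atol=1e-10)
    return sol.t, sol.y

if __name__ == "__main__":
    t, y = simulate()
    for ti, row in zip(t, y.T):
        print(f"t={ti:5.1f} h  parent={row[0]:.3f}  M1-trans={row[2]:.3f}  M1-cis={row[3]:.3f}")
```

Because the metabolite equations take their input directly from the parent concentration, parent and metabolite parameters are estimated against one shared set of equations, which is the dependency that sequential fitting loses.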

Integration of Cellular Metabolism into Whole-Body Models

Multiscale modeling represents an advanced application of simultaneous modeling principles, integrating genome-scale metabolic networks at the cellular level with physiologically-based pharmacokinetic (PBPK) models at the whole-body level. This approach, implemented through techniques such as dynamic flux balance analysis (dFBA), allows researchers to quantitatively describe metabolic behavior in the context of time-resolved metabolite concentration profiles throughout the body [3].

Table 2: Applications of Multiscale Whole-Body Modeling

| Application Area | Research Question | Model Components Integrated |
|---|---|---|
| Hyperuricemia Therapy | Distribution and therapeutic effect of allopurinol | Drug pharmacokinetics, uric acid production, cellular metabolism |
| Ammonia Detoxification | Effect of impaired ammonia metabolism on blood plasma levels | Ammonia transport, hepatic urea cycle, biomarker identification |
| Drug-Induced Toxication | Paracetamol-induced liver function impairment | Drug metabolism, toxic intermediate formation, cellular damage |

This integrative methodology provides mechanistic insights into pathology and medication by simultaneously considering multiple layers of biological organization. The approach has been successfully applied to investigate hyperuricemia therapy, ammonia detoxification, and paracetamol-induced toxication, demonstrating its broad utility in pharmaceutical development [3].
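
The dFBA idea mentioned above can be illustrated with a deliberately tiny example: at each time step a linear program picks the fluxes, and the extracellular concentrations are then advanced with an explicit Euler step. The two-reaction network, the Michaelis-Menten uptake bound, and all parameter values are assumptions made for this sketch; a real application would couple a genome-scale network to a PBPK model instead.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network: v_uptake imports substrate into an internal pool A,
# v_growth converts A into biomass. Steady state for A: v_uptake - v_growth = 0.
N_INTERNAL = np.array([[1.0, -1.0]])
VMAX, KM, YIELD = 10.0, 0.5, 0.1   # mmol/gDW/h, mmol/L, gDW/mmol (all assumed)

def fba_step(substrate, biomass, dt):
    """Maximize v_growth subject to N v = 0 and a concentration-dependent uptake bound."""
    kinetic_cap = VMAX * substrate / (KM + substrate)
    supply_cap = substrate / (biomass * dt)   # cannot consume more than is present in one step
    cap = max(min(kinetic_cap, supply_cap), 0.0)
    res = linprog(c=[0.0, -1.0], A_eq=N_INTERNAL, b_eq=[0.0],
                  bounds=[(0.0, cap), (0.0, None)], method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    return res.x   # [v_uptake, v_growth]

def dfba(substrate0=10.0, biomass0=0.05, dt=0.05, t_end=12.0):
    """Static-optimization dFBA: solve an LP per step, then advance with explicit Euler."""
    n_steps = int(round(t_end / dt))
    traj = np.zeros((n_steps + 1, 3))          # columns: time, substrate, biomass
    s, x = substrate0, biomass0
    traj[0] = (0.0, s, x)
    for i in range(1, n_steps + 1):
        v_uptake, v_growth = fba_step(s, x, dt)
        s_new = max(s - v_uptake * x * dt, 0.0)
        x = x + YIELD * v_growth * x * dt
        s = s_new
        traj[i] = (i * dt, s, x)
    return traj

if __name__ == "__main__":
    out = dfba()
    print("final substrate:", out[-1, 1], "final biomass:", out[-1, 2])
```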

Experimental Protocols and Methodologies

Protocol 1: Simultaneous PK/PD Modeling of Drugs and Active Metabolites

Objective: To develop an integrated pharmacokinetic-pharmacodynamic (PK/PD) model that simultaneously describes the time course of parent drug, active metabolites, and resulting pharmacological effects.

Materials and Reagents:

- Analytical Standard: Parent drug and synthesized metabolite standards

- Biological Matrix: Blank plasma and tissue homogenates

- Sample Preparation: Protein precipitation reagents (acetonitrile, methanol)

- Analytical Instrumentation: LC-MS/MS system with validated bioanalytical method

- Software: NONMEM, MONOLIX, or other nonlinear mixed-effects modeling software

Procedure:

- Study Design: Conduct a single rising-dose trial in healthy volunteers or animal models with intensive blood sampling at pre-dose, 0.5, 1, 2, 3, 5, 9, 13, 25, 37, and 49 hours post-dose [1].

- Sample Analysis: Quantify parent drug and metabolite concentrations in all samples using a validated LC-MS/MS method [4].

- Base Model Development:

- Structure a two-compartment linear PK model for the parent drug

- Incorporate formation clearance parameters for metabolite generation

- Include interconversion parameters if metabolites undergo stereochemical conversion

- Estimate clearance and volume of distribution for all analytes [1]

- Covariate Model Building: Evaluate the influence of demographic, physiological, and genetic factors on key model parameters

- Model Validation: Perform visual predictive checks and bootstrap analysis to evaluate model performance

- PD Model Integration: Link drug and metabolite concentrations to effect measures using appropriate Emax or linear models

Data Analysis: The simultaneous model should be evaluated using standard goodness-of-fit plots, including observed vs. predicted concentrations, conditional weighted residuals vs. time/predictions, and visual predictive checks. The final model should adequately describe the observed concentration-time profiles for both parent drug and metabolites while providing physiologically plausible parameter estimates [1] [5].

Protocol 2: Optimization-Based Kinetic Model Development

Objective: To develop a mixed integer linear programming (MILP) model that simultaneously identifies reaction stoichiometries and kinetic parameters from time-resolved concentration data.

Materials and Reagents:

- Experimental Data: Time-course concentration measurements for all reactants and products

- Candidate Stoichiometries: Preliminary screening of possible reaction pathways

- Software Environment: MATLAB or Python with optimization toolboxes

- ODE Solver: Stiff ODE solver (e.g., ode15s in MATLAB) for model simulation

Procedure:

- Data Collection: Conduct kinetic experiments to measure concentration profiles of all species under varied initial conditions

- Pre-screening: Identify candidate stoichiometries through preliminary data analysis to reduce model size [2]

- Model Formulation:

- Define a global ODE model structure that incorporates all possible stoichiometric candidates

- Establish material balance equations for each component

- Formulate reaction rates using power law or mechanistic expressions

- Optimization Problem:

- Define binary variables for stoichiometry selection

- Define continuous variables for kinetic parameters

- Formulate objective function to minimize difference between simulated and experimental data

- Include constraints for reaction fluxes and parameter bounds [2]

- Solution Strategy: Apply MILP solution algorithms to identify the optimal combination of stoichiometries and kinetic parameters

- Model Validation: Test the identified model against unused experimental data to evaluate predictive performance

Data Analysis: The performance of the optimization-based modeling approach should be evaluated using root mean squared error (RMSE) between model predictions and experimental data. The method should be compared against traditional stepwise approaches to demonstrate improvements in computational efficiency and model accuracy [2].
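
The optimization problem in Protocol 2 can be sketched with `scipy.optimize.milp` (SciPy 1.9 or later). The sketch below is one possible linear formulation, not necessarily the one used in [2]: it fits finite-difference derivatives of the concentration profiles, uses absolute residuals so that the objective stays linear, and links each rate constant to a binary selection variable through a big-M bound. The candidate list, the synthetic data, the complexity penalty, and the bound `k_max` are all assumptions made for illustration.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.optimize import milp, LinearConstraint, Bounds

# Hypothetical candidates over species (A, B, C): name, stoichiometric vector, mass-action orders.
CANDIDATES = [
    ("A -> C",     [-1, 0, 1],  [1, 0, 0]),
    ("B -> C",     [0, -1, 1],  [0, 1, 0]),
    ("A + B -> C", [-1, -1, 1], [1, 1, 0]),
    ("A -> B",     [-1, 1, 0],  [1, 0, 0]),
]

def synthetic_data(k_true=0.5):
    """Noise-free profiles generated from A + B -> C, standing in for measurements."""
    t = np.linspace(0.0, 10.0, 101)
    def rhs(_, c):
        r = k_true * c[0] * c[1]
        return [-r, -r, r]
    sol = solve_ivp(rhs, (0.0, 10.0), [1.0, 0.8, 0.0], t_eval=t, rtol=1e-10, atol=1e-12)
    return t, sol.y.T   # shape (time, species)

def identify(t, conc, penalty=0.1, k_max=10.0):
    """Select reactions (binary y) and fit rate constants (continuous k) in one MILP."""
    n_t, n_s = conc.shape
    n_r = len(CANDIDATES)
    dcdt = np.gradient(conc, t, axis=0, edge_order=2)
    # Column j holds nu_j * r_j(t) for a unit rate constant, flattened over (time, species).
    A = np.zeros((n_t * n_s, n_r))
    for j, (_, nu, orders) in enumerate(CANDIDATES):
        rate = np.prod(conc ** np.array(orders), axis=1)
        A[:, j] = np.outer(rate, nu).ravel()
    d = dcdt.ravel()
    n_e = d.size
    # Variable order: k (continuous), y (binary), e (absolute residuals).
    cost = np.concatenate([np.zeros(n_r), penalty * np.ones(n_r), np.ones(n_e)])
    eye_r, eye_e, zeros_er = np.eye(n_r), np.eye(n_e), np.zeros((n_e, n_r))
    rows = np.vstack([
        np.hstack([eye_r, -k_max * eye_r, np.zeros((n_r, n_e))]),  # k_j <= k_max * y_j
        np.hstack([A, zeros_er, -eye_e]),                          # A k - e <= d
        np.hstack([-A, zeros_er, -eye_e]),                         # -A k - e <= -d
    ])
    upper = np.concatenate([np.zeros(n_r), d, -d])
    integrality = np.concatenate([np.zeros(n_r), np.ones(n_r), np.zeros(n_e)])
    ub = np.concatenate([np.full(n_r, k_max), np.ones(n_r), np.full(n_e, np.inf)])
    res = milp(cost, integrality=integrality, bounds=Bounds(np.zeros(cost.size), ub),
               constraints=LinearConstraint(rows, np.full(upper.shape, -np.inf), upper))
    if not res.success:
        raise RuntimeError(res.message)
    k, y = res.x[:n_r], res.x[n_r:2 * n_r]
    model = {CANDIDATES[j][0]: float(k[j]) for j in range(n_r) if y[j] > 0.5}
    rmse = float(np.sqrt(np.mean((d - A @ k) ** 2)))   # RMSE on the rate residuals
    return model, rmse

if __name__ == "__main__":
    t, conc = synthetic_data()
    print(identify(t, conc))
```

Fitting derivatives keeps the problem linear in the rate constants but amplifies measurement noise; with real data, smoothing the profiles or using an integral form of the balances would be a reasonable alternative.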

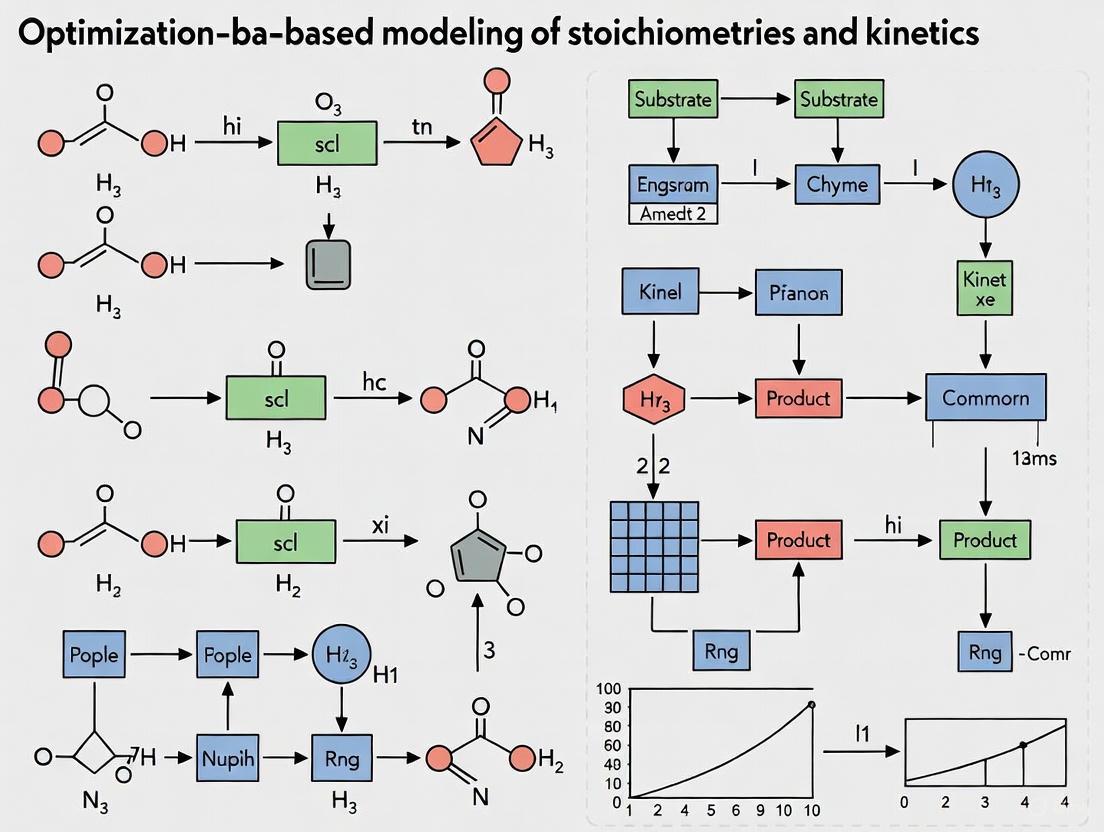

Visualization of Modeling Approaches

[Figure: Simultaneous PK/PD Modeling Workflow]

[Figure: Multiscale Metabolic Modeling Architecture]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for Simultaneous Modeling

| Tool/Category | Specific Examples | Function in Simultaneous Modeling |
|---|---|---|
| Kinetic Modeling Software | NONMEM, MONOLIX, MATLAB | Parameter estimation for complex PK/PD models |
| Stoichiometric Analysis Tools | DAISY, COBRApy, MASSpy | Structural identifiability analysis and metabolic flux calculation |
| Optimization Frameworks | MILP solvers, pyPESTO | Simultaneous identification of stoichiometries and kinetic parameters |
| PBPK Platforms | PK-Sim, GastroPlus | Whole-body physiological modeling and integration with cellular metabolism |
| Bioanalytical Instruments | LC-MS/MS, Q-TOF systems | Simultaneous quantification of parent drug and metabolites |
| Model Evaluation Tools | Xpose, Pirana, PsN | Diagnostic testing and model quality assessment |

Simultaneous modeling approaches represent a critical advancement in pharmaceutical and metabolic research, enabling researchers to capture the complex interplay between drug disposition, metabolite kinetics, and cellular metabolism. By integrating optimization-based methodologies with mechanistic biological knowledge, these approaches provide a powerful framework for predicting system behavior under various physiological and pathological conditions. Continued development and application of simultaneous modeling strategies is expected to accelerate drug development and improve our understanding of complex metabolic systems, supporting more effective and safer therapeutic interventions.

The integration of stoichiometric networks with kinetic rate laws represents a foundational challenge in computational biochemistry and systems biology. While stoichiometry defines the static, topological possibilities of a reaction network, kinetics describe the dynamic, time-evolving behavior of the system. Optimization-based modeling has emerged as a crucial methodology for reconciling these two aspects, enabling researchers to construct predictive models even when complete mechanistic details are unknown. This approach is particularly valuable for fine chemical synthesis and drug development, where rapid process development is essential for responding to market pressures and regulatory requirements [6]. By providing a structured framework for integrating available data—from reaction stoichiometries to partial kinetic information—these methods bridge the gap between network structure and dynamic function, supporting the development of more reliable in silico models for biological and chemical systems.

Core Principles and Methodologies

Fundamental Theoretical Frameworks

The mathematical foundation for bridging stoichiometry and kinetics begins with the standard mass balance equation for biochemical networks:

Equation 1: General Dynamic Mass Balance

\[ \frac{d\mathbf{S}}{dt} = \mathbf{N} \cdot \mathbf{v}(\mathbf{S}, \mathbf{k}) \]

where \(\mathbf{S}\) is the vector of metabolite concentrations, \(\mathbf{N}\) is the stoichiometric matrix, and \(\mathbf{v}(\mathbf{S}, \mathbf{k})\) is the vector of reaction rates dependent on concentrations and kinetic parameters \(\mathbf{k}\) [7]. The stoichiometric matrix \(\mathbf{N}\) defines the network topology, with elements \(N_{ij}\) representing the stoichiometric coefficient of metabolite \(i\) in reaction \(j\) [6].
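
As a concrete reading of Equation 1, the following Python sketch integrates the mass balance for a hypothetical three-species chain S1 → S2 → S3 with mass-action rates. The network, rate laws, and parameter values are illustrative assumptions; only the form dS/dt = N · v(S, k) comes from the text.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Hypothetical chain S1 -> S2 -> S3; rows are metabolites, columns are reactions.
N = np.array([[-1.0, 0.0],
              [1.0, -1.0],
              [0.0, 1.0]])

def v(S, k):
    """Mass-action rate vector v(S, k) for the two reactions."""
    return np.array([k[0] * S[0], k[1] * S[1]])

def simulate(S0, k, t_end=10.0):
    """Integrate dS/dt = N @ v(S, k) from the initial concentrations S0."""
    sol = solve_ivp(lambda t, S: N @ v(S, k), (0.0, t_end), S0, rtol=1e-8, atol=1e-10)
    return sol.t, sol.y

if __name__ == "__main__":
    t, y = simulate([1.0, 0.0, 0.0], [0.5, 0.2])
    print("final concentrations:", y[:, -1])
```

Because every column of this particular N sums to zero, the total concentration is conserved, which gives a quick sanity check on any candidate stoichiometric matrix.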

The challenge arises because the exact functional forms of (\mathbf{v}(\mathbf{S}, \mathbf{k})) are often unknown for many enzymatic reactions. Several methodological approaches address this fundamental limitation:

Structural Kinetic Modeling (SKM): This approach develops a parametric representation of the system Jacobian without requiring explicit knowledge of rate law functional forms. The Jacobian matrix \(\mathbf{J}\) is constructed as \(\mathbf{J} = \mathbf{\Lambda} \cdot \mathbf{\Theta}_{\mathbf{x}}^{\mathbf{\mu}}\), where \(\mathbf{\Lambda}\) combines the stoichiometric matrix \(\mathbf{N}\) with the steady-state concentrations and fluxes, and \(\mathbf{\Theta}_{\mathbf{x}}^{\mathbf{\mu}}\) contains normalized saturation parameters for enzyme-metabolite interactions [7].

Parameter-Rich Kinetics: This methodology disentangles parametric dependencies from structural analysis, allowing independent specification of steady-state concentrations and reactivity values. This flexibility facilitates the analysis of network dynamics, including oscillatory behavior, directly from stoichiometric information [8].

Convenience Kinetics: This general rate law derived from a random-order enzyme mechanism can describe enzyme saturation, regulation by activators and inhibitors, and covers all possible reaction stoichiometries with a relatively small number of parameters [9].

Optimization-Based Framework Integration

Optimization techniques provide the computational machinery for parameterizing stoichio-kinetic models when experimental data are available:

Table 1: Optimization Approaches for Stoichio-Kinetic Modeling

| Optimization Method | Application Context | Key Features |
|---|---|---|
| Stoichiometric Identification [6] | Determining stoichiometric models from concentration data | Uses target factor analysis; identifies stoichiometric matrix from initial and final concentrations without exact mechanism knowledge |
| Bayesian Optimization (LVGP-BO) [10] | Mixed-variable problems with qualitative & quantitative factors | Maps qualitative factors to latent variables; efficient for expensive simulations; handles material compositions and processing conditions |
| Pareto Optimization [11] | Multi-objective problems in agent-based models | Heuristic approach for guided search of solution space; identifies non-dominated solutions when objectives conflict |
| Control Vector Parameterization [6] | Batch reactor optimization | Converts optimal control to non-linear programming problem; handles temperature and feed flow rate profiles |

The integration of these optimization methods with stoichio-kinetic modeling enables:

- Model Identification: Determining both stoichiometric and kinetic parameters from experimental data [6]

- Scale-Up Prediction: Anticipating reaction behavior in large-scale reactors with thermal exchange limitations [6]

- Dynamic Capability Analysis: Exploring oscillatory potential and stability properties of metabolic networks [7] [8]

Application Protocols

Protocol: Stoichio-Kinetic Model Identification for Fine Chemical Synthesis

This protocol outlines the procedure for developing integrated stoichio-kinetic models for fine chemical synthesis, adapted from methodologies applied to aldolic condensation of furfural on acetone [6].

Materials and Reagents

Table 2: Essential Research Reagent Solutions

| Reagent/Solution | Function/Purpose | Critical Specifications |
|---|---|---|
| Reaction Substrates (e.g., furfural, acetone) | Primary reactants for the synthesis process | High purity; standardized concentration in appropriate solvent |
| Catalyst System (e.g., alkaline medium) | Enables reaction progression; determines reaction pathway | Precise concentration; pH control for consistent activity |
| Analytical Standards (FAc, F2Ac) | Quantification of products and byproducts | Chromatographically pure; prepared at multiple concentrations for calibration |
| Mobile Phases (for GC/LC) | Separation of reaction components for analysis | HPLC or GC grade; filtered and degassed before use |

Procedure

Experimental Data Collection

- Conduct batch reactions at controlled temperatures (e.g., 24°C, 29°C, 34°C, 40°C) under atmospheric pressure [6]

- Withdraw samples at regular time intervals for analysis

- Quench reactions appropriately to prevent further conversion

Analytical Measurement

- Quantify concentrations of substrates and products using gas chromatography (GC) or liquid chromatography (LC) [6]

- Establish calibration curves using analytical standards

- Perform triplicate measurements to ensure statistical reliability

Stoichiometric Model Identification

- Apply target factor analysis to concentration profiles to identify stoichiometric matrices [6]

- Define pseudo-reactions or pseudo-compounds to simplify complex reaction mechanisms

- Validate stoichiometric model through mass balance closure
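
The stoichiometric identification step can be illustrated with a simplified linear-algebra sketch. The matrix of concentration changes across runs has a rank equal to the number of independent reactions, and a candidate stoichiometric vector is accepted when it lies in the row space of that matrix. The two reactions used to generate the synthetic data, the omission of water, and the tolerances are assumptions for this example; it is a reduced version of target testing, not the full procedure of [6].

```python
import numpy as np

SPECIES = ["F", "Ac", "FAc", "F2Ac"]
# Assumed reactions used only to generate synthetic data (water omitted for brevity).
TRUE_N = np.array([[-1.0, -1.0, 1.0, 0.0],    # F + Ac -> FAc
                   [-1.0, 0.0, -1.0, 1.0]])   # FAc + F -> F2Ac

def reaction_space(delta, tol=1e-8):
    """Return the number of independent reactions and an orthonormal basis of the row space."""
    _, s, vt = np.linalg.svd(delta, full_matrices=False)
    rank = int(np.sum(s > tol * s[0]))
    return rank, vt[:rank]

def target_test(candidate, basis, tol=1e-6):
    """Accept a candidate stoichiometric vector if projecting it onto the row space reproduces it."""
    candidate = np.asarray(candidate, dtype=float)
    projected = basis.T @ (basis @ candidate)
    return bool(np.linalg.norm(candidate - projected) <= tol * np.linalg.norm(candidate))

# Synthetic (final - initial) concentration changes for six runs with random reaction extents.
rng = np.random.default_rng(0)
extents = rng.uniform(0.01, 0.1, size=(6, 2))
delta = extents @ TRUE_N
rank, basis = reaction_space(delta)

if __name__ == "__main__":
    print("independent reactions:", rank)
    print("2 F + Ac -> F2Ac accepted:", target_test([-2, -1, 0, 1], basis))
    print("F -> FAc accepted:", target_test([-1, 0, 1, 0], basis))
```

With noisy measurements the rank decision and the acceptance tolerance have to be set relative to the measurement error, which is where mass balance closure becomes a useful cross-check.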

Kinetic Parameter Estimation

- Use concentration-time profiles at different temperatures for kinetic parameter identification [6]

- Apply non-linear regression to estimate rate constants

- Validate parameters by comparing simulated and experimental concentration profiles

Model-Based Optimization

- Implement identified model for production optimization

- Determine optimal temperature profiles and reactor operation policies

- Use control vector parameterization (CVP) techniques for batch reactor optimization [6]

Troubleshooting Notes

- If model fails to predict concentration profiles accurately, verify stoichiometric assumptions and consider additional reaction pathways

- For poor parameter identifiability, design additional experiments at different initial concentrations to decouple parameter influences

- When scaling up laboratory models, account for heat and mass transfer limitations that may become significant at larger scales [6]

Protocol: Structural Kinetic Modeling of Metabolic Pathways

This protocol describes the application of Structural Kinetic Modeling (SKM) to analyze the dynamic capabilities of metabolic networks without precise knowledge of enzyme kinetic mechanisms [7].

Procedure

Network Definition and Steady-State Identification

- Define the stoichiometric matrix \(\mathbf{N}\) for the metabolic system

- Identify a physiologically relevant steady state \(\mathbf{S}^0\) with corresponding fluxes \(\mathbf{v}^0\) satisfying \(\mathbf{N} \cdot \mathbf{v}^0 = 0\)

- Determine feasible concentration ranges \(S_i^- \leq S_i^0 \leq S_i^+\) and flux ranges \(v_j^- \leq v_j^0 \leq v_j^+\) based on experimental data

Parameter Space Definition

- Construct the matrix \(\mathbf{\Lambda}\) from the stoichiometric matrix \(\mathbf{N}\), the steady-state concentrations \(\mathbf{S}^0\), and the fluxes \(\mathbf{v}^0\), with elements \(\Lambda_{ij} = N_{ij} v_j^0 / S_i^0\)

- Define saturation parameters \(\theta_{x_i}^{\mu_j}\) representing the normalized degree of saturation of reaction \(v_j\) with respect to substrate \(S_i\)

- Constrain saturation parameters to biochemically plausible intervals (typically [0,1] for Michaelis-Menten type kinetics) [7]

Jacobian Construction and Stability Analysis

- Compute the normalized Jacobian as \(\mathbf{J} = \mathbf{\Lambda} \cdot \mathbf{\Theta}_{\mathbf{x}}^{\mathbf{\mu}}\)

- Analyze eigenvalues to assess steady-state stability

- Identify parameter combinations leading to oscillatory dynamics or bifurcations

Statistical Exploration of Dynamic Capabilities

- Systematically sample the physiologically admissible parameter space

- Quantify the robustness of observed dynamics to parameter variations

- Identify critical parameters controlling system behavior
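
The steps of this protocol can be sketched in a few lines of Python. The example assumes a hypothetical two-metabolite pathway with constant steady-state fluxes, substrate saturation parameters sampled from (0, 1], and a negative feedback of the second metabolite on the input flux sampled from [-4, 0]. The network, steady state, and sampling ranges are illustrative assumptions.

```python
import numpy as np

# Hypothetical pathway: v1 produces S1, v2 converts S1 to S2, v3 consumes S2.
N = np.array([[1.0, -1.0, 0.0],
              [0.0, 1.0, -1.0]])
S0 = np.array([1.0, 2.0])          # assumed steady-state concentrations
V0 = np.array([1.0, 1.0, 1.0])     # assumed steady-state fluxes
assert np.allclose(N @ V0, 0.0)    # steady-state condition N v0 = 0

# Lambda_ij = N_ij * v_j^0 / S_i^0
LAMBDA = N * V0[np.newaxis, :] / S0[:, np.newaxis]

def sample_theta(rng):
    """Saturation matrix (reactions x metabolites) with only the assumed dependencies non-zero."""
    theta = np.zeros((3, 2))
    theta[1, 0] = rng.uniform(1e-3, 1.0)   # v2 depends on its substrate S1
    theta[2, 1] = rng.uniform(1e-3, 1.0)   # v3 depends on its substrate S2
    theta[0, 1] = rng.uniform(-4.0, 0.0)   # feedback inhibition of v1 by S2
    return theta

def explore(n_samples=2000, seed=0):
    """Return the fractions of sampled Jacobians that are stable and that have complex eigenvalues."""
    rng = np.random.default_rng(seed)
    stable = 0
    oscillatory = 0
    for _ in range(n_samples):
        eig = np.linalg.eigvals(LAMBDA @ sample_theta(rng))
        stable += int(np.max(eig.real) < 0)
        oscillatory += int(np.any(np.abs(eig.imag) > 1e-12))
    return stable / n_samples, oscillatory / n_samples

if __name__ == "__main__":
    print("stable fraction, oscillatory fraction:", explore())
```

For this small network every sampled Jacobian is stable because its trace is negative and its determinant positive, but a sizeable share of samples shows damped oscillations; larger networks with stronger feedback can lose stability, which is what the sampling step is meant to reveal.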

Case Study: Aldolic Condensation of Furfural on Acetone

Quantitative Results and Analysis

The stoichio-kinetic modeling approach was applied to the aldolic condensation of furfural (F) on acetone (Ac), a reaction producing 4-(2-furyl)-3-buten-2-one (FAc), which is used as an aroma compound in the food industry [6]. This synthesis valorizes residues from sugar cane treatment, making it an economically and environmentally significant process.

Table 3: Experimental Concentration Data for Aldolic Condensation at 24°C [6]

| Compound | Initial Concentration (mol) | Final Concentration (mol) | Conversion/Formation |
|---|---|---|---|
| Furfural (F) | 0.100 | 0.005 | 95% conversion |
| Acetone (Ac) | 0.500 | 0.190 | 62% conversion |
| FAc | 0.000 | 0.055 | Primary product formation |
| F₂Ac | 0.000 | 0.040 | Secondary product formation |

Table 4: Identified Kinetic Parameters at Different Temperatures [6]

| Rate Constant | 24°C | 29°C | 34°C | 40°C | Reaction Step |
|---|---|---|---|---|---|
| k₁ (L·mol⁻¹·min⁻¹) | 0.015 | 0.022 | 0.032 | 0.047 | F + Ac → FAc |
| k₂ (L·mol⁻¹·min⁻¹) | 0.025 | 0.036 | 0.052 | 0.075 | FAc + F → F₂Ac |
| k₃ (L·mol⁻¹·min⁻¹) | 0.008 | 0.012 | 0.017 | 0.025 | F + Ac → F₂Ac |
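
The temperature dependence in Table 4 can be summarized with an Arrhenius fit. The sketch below regresses ln k against 1/T for each rate constant in the table; treating the values as following a single Arrhenius law over 24 to 40 °C is an assumption of this example, and the resulting activation energies are estimates derived here, not values reported in [6].

```python
import numpy as np

R = 8.314  # J/(mol K)
TEMPS_C = np.array([24.0, 29.0, 34.0, 40.0])
RATE_CONSTANTS = {   # L/mol/min, copied from Table 4
    "k1": np.array([0.015, 0.022, 0.032, 0.047]),
    "k2": np.array([0.025, 0.036, 0.052, 0.075]),
    "k3": np.array([0.008, 0.012, 0.017, 0.025]),
}

def arrhenius_fit(temps_c, k):
    """Least-squares fit of ln k = ln A - Ea / (R T); returns (Ea in J/mol, A)."""
    inv_t = 1.0 / (temps_c + 273.15)
    slope, intercept = np.polyfit(inv_t, np.log(k), 1)
    return -slope * R, np.exp(intercept)

fits = {name: arrhenius_fit(TEMPS_C, k) for name, k in RATE_CONSTANTS.items()}

if __name__ == "__main__":
    for name, (ea, pre_exp) in fits.items():
        print(f"{name}: Ea = {ea / 1000:.1f} kJ/mol, A = {pre_exp:.3g} L/mol/min")
```

Fitted activation energies that are close to one another across the steps would indicate that temperature shifts all rates by a similar factor, so selectivity gains from temperature alone may be modest.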

Model Application and Optimization

The identified stoichio-kinetic model was used to optimize FAc production through temperature profile optimization and operational policy development [6]. The model successfully simulated concentration evolution for reagents and products, with good agreement between experimental data and simulations for compounds that are only reactants or products. The optimization demonstrated that:

- Temperature control significantly impacts product selectivity between FAc and F₂Ac

- Optimal operating policies can maximize conversion while minimizing byproduct formation

- The model enables scale-up predictions by accounting for thermal exchange limitations in larger reactors [6]

Computational Tools and Platforms

Table 5: Essential Computational Resources for Stoichio-Kinetic Modeling

| Tool/Platform | Primary Function | Application Notes |
|---|---|---|
| Python Scientific Stack (NumPy, SciPy, Pandas) | Data manipulation, optimization algorithms, numerical integration | Extensive ecosystem for custom model development; scikit-learn for machine learning enhancement [12] |
| R Statistical Language | Statistical analysis, parameter estimation, visualization | Comprehensive statistics packages; tidyverse for data manipulation; specialized biochemical packages [12] |
| MATLAB Optimization Toolbox | Parameter identification, constrained optimization, control vector parameterization | Robust algorithms for non-linear programming problems; direct implementation of CVP techniques [6] |
| Cloud-Native Platforms (Google BigQuery, Amazon Redshift) | Large-scale data management and analysis | Handle high-dimensional parameter spaces; facilitate collaborative model development [12] |
| Containerization (Docker, Kubernetes) | Reproducible computational environments | Ensure model portability and reproducibility across research teams [12] |

Experimental Design Considerations

Effective stoichio-kinetic modeling requires carefully designed experiments to generate data sufficient for parameter identification:

- Temperature Variant Experiments: Conduct reactions at multiple temperatures to decouple kinetic parameters from concentration dependencies [6]

- Initial Rate Measurements: Determine reaction orders and initial kinetic parameters through systematic variation of initial substrate concentrations

- Time-Course Sampling: Collect sufficient temporal resolution to capture reaction dynamics, especially for complex networks with intermediates

- Mass Balance Verification: Ensure accurate quantification of all major species to validate stoichiometric assumptions

For metabolic networks, additional considerations include:

- Perturbation Experiments: Implement substrate pulses, inhibitor additions, or enzyme activity modulations to probe network responses [7]

- Isotopic Tracer Studies: Employ labeled substrates to elucidate pathway fluxes and network topology

- Multi-Omics Integration: Incorporate transcriptomic, proteomic, and metabolomic data to constrain possible network states

Challenges of Stepwise Modeling and the Combinatorial Explosion Problem

In the field of chemical kinetics and metabolic engineering, the development of accurate models for complex reaction systems is paramount for advancing research in pharmaceutical development and synthetic biology. Stepwise modeling approaches, which involve sequentially identifying reaction stoichiometries before fitting kinetic parameters, have traditionally been employed to understand these systems [2]. However, this methodological framework encounters significant computational barriers when applied to large-scale problems, primarily due to the combinatorial explosion of possible stoichiometric groupings. This application note examines these challenges within the broader context of optimization-based modeling of stoichiometries and kinetics, detailing both the limitations of conventional approaches and the advanced simultaneous methodologies that overcome them.

The combinatorial nature of forming stoichiometric groups in complex organic reaction systems creates intractable computational problems as system size increases [2]. This phenomenon, known as combinatorial explosion, occurs when the number of possible combinations grows exponentially with system complexity, rendering stepwise approaches ineffective for large-scale investigations. Recent advancements in optimization-based simultaneous modeling address these limitations through reformulated mathematical programming approaches that dramatically improve computational efficiency while maintaining model accuracy.
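
A short calculation makes the growth rate concrete. Assuming, for illustration, that a stepwise search must consider every subset of up to a given size drawn from a pool of candidate stoichiometries, the number of groupings is a sum of binomial coefficients. The function name and the example pool sizes are hypothetical.

```python
from math import comb

def n_groupings(n_candidates, max_reactions):
    """Number of non-empty subsets of at most max_reactions candidate stoichiometries."""
    return sum(comb(n_candidates, r) for r in range(1, max_reactions + 1))

if __name__ == "__main__":
    for n in (10, 20, 40, 80):
        print(n, "candidates ->", n_groupings(n, n), "possible groupings")
```

With no size limit the count is 2^n - 1, so each additional candidate doubles the search space, which is why enumerating groupings before fitting kinetics stops scaling.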

Quantitative Analysis of Stepwise Modeling Limitations

Computational Efficiency Comparison

Table 1 presents a quantitative comparison of computational performance between traditional stepwise modeling and modern simultaneous approaches, highlighting the significant efficiency gains achieved through advanced optimization methods.

Table 1: Computational Performance Comparison of Modeling Approaches

| Modeling Approach | Computational Method | Problem Scale | Solution Time | Key Limitations |
|---|---|---|---|---|
| Stepwise Modeling | Sequential stoichiometry identification followed by kinetics fitting | Small-scale networks | Not specified for direct comparison | Combinatorial nature of stoichiometric groups creates computational bottlenecks [2] |
| Simultaneous Modeling | Mixed Integer Linear Programming (MILP) | Complex organic reaction systems | Highly efficient compared to stepwise | Requires reformulation from MINLP and MIQP to MILP [2] |
| "Compress and Solve" Algorithm | Combinatorial compression | Tiling problem (190 solutions) | 0.88 seconds vs. 16,475 seconds for traditional methods [13] | Development of compression technology for more complex conditions ongoing [13] |

The Combinatorial Explosion Phenomenon

Combinatorial explosion presents a fundamental challenge across multiple scientific domains, particularly in chemical reaction network identification and optimization. This phenomenon occurs when the number of possible combinations grows exponentially with system complexity:

Network Identification Challenges: In complex organic synthesis reactions, particularly in pharmaceutical applications, reaction networks contain numerous intermediate compounds with concurrent and interlinked reaction paths [2]. The number of possible stoichiometric groupings increases combinatorially with system complexity, creating computational barriers that stepwise approaches cannot overcome efficiently.

Algorithmic Implications: Research in combinatorial algorithms addresses this challenge by identifying similar combinations, grouping them, and compressing the overall representation so that the full set can be handled at a reduced size [13]. This "compress and solve" approach departs from traditional methods that rely on approximations to keep calculations tractable, enabling more accurate solutions while maintaining computational efficiency.

Simultaneous Modeling Methodologies

Optimization-Based Frameworks

Advanced simultaneous modeling methodologies have emerged to address the limitations of stepwise approaches through innovative mathematical programming techniques:

Mixed Integer Linear Programming (MILP): Reformulation of the original nonlinear optimization model into an MILP framework enables efficient identification of reaction stoichiometries and kinetic parameters from time-resolved concentration data [2]. This approach simultaneously combines stoichiometry grouping and kinetics fitting, overcoming the combinatorial challenges of stepwise methods.

Global ODE Model Structure: The simultaneous modeling method defines a global ordinary differential equation (ODE) model structure to explore reaction network structure and accompanying kinetics of complex chemical reaction systems [2]. This provides a more comprehensive framework compared to sequential identification processes used in stepwise approaches.

High-Throughput Kinetic Modeling

Recent advancements are transforming kinetic modeling capabilities, ushering in an era where large kinetic models can propel metabolic research forward:

Speed Improvements: Methodologies based on generative machine learning and novel nonlinear optimization formulations enable rapid construction of models and analysis of phenotypes, achieving speeds one to several orders of magnitude faster than predecessors [14].

Scope Expansion: Current modeling efforts focus on developing large kinetic models encompassing broad ranges of organisms and physiological conditions, with genome-scale kinetic models on the horizon [14]. These offer unique insights into metabolic processes and enable robust identification of optimal genetic and environmental interventions.

Experimental Protocols

Simultaneous Stoichiometry and Kinetics Identification Protocol

This protocol details the procedure for implementing optimization-based simultaneous modeling of stoichiometries and kinetics in complex organic reaction systems, addressing combinatorial explosion challenges.

Materials and Equipment

Table 2: Essential Research Reagent Solutions for Kinetic Modeling

| Reagent/Software Tool | Function/Purpose | Specifications |
|---|---|---|
| MATLAB with ode15s solver | Numerical integration of stiff ODE systems for concentration data simulation | MathWorks 2021 or later [2] |
| SKiMpy Framework | Semiautomated construction and parametrization of large kinetic models using stoichiometric models as scaffold | Python-based; includes built-in kinetic rate law library [14] |
| ORACLE Framework | Sampling kinetic parameter sets consistent with thermodynamic constraints and experimental data | Integrated with SKiMpy for parameter pruning based on physiologically relevant time scales [14] |
| Tellurium | Kinetic modeling tool for systems and synthetic biology applications | Supports standardized model formulations; integrates external packages for ODE simulation [14] |
| MASSpy | Kinetic model construction using mass-action rate laws or custom mechanisms | Built on COBRApy; integrates constraint-based metabolic modeling strengths [14] |

Procedure

Data Collection and Preprocessing

- Collect dynamic experimental measurements of concentration data using spectroscopic or chromatographic techniques [2].

- For simulated validation studies, generate concentration data using stiff ODE solvers (e.g., ode15s in MATLAB) with established kinetic models as reference [2].

- Preprocess data to identify candidate stoichiometries, reducing model size before optimization.
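One common way to screen candidate stoichiometries before optimization is a rank analysis of the concentration changes. The sketch below is an illustration under stated assumptions, not the preprocessing procedure of [2]: it assumes a batch reactor, a hypothetical chain A -> B -> C with made-up rate constants, and noise-free data. For such a system the matrix of concentration changes has a rank equal to the number of independent reactions, and a candidate stoichiometric vector is only plausible if it lies in the space spanned by that matrix.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Hypothetical batch system A -> B -> C, used only to generate illustrative data.
S_true = np.array([[-1.0, 0.0],
                   [1.0, -1.0],
                   [0.0, 1.0]])              # rows: A, B, C; columns: reactions
k = np.array([1.0, 0.5])                     # assumed rate constants

def rhs(t, x):
    v = np.array([k[0] * x[0], k[1] * x[1]])  # mass-action rates
    return S_true @ v

t_eval = np.linspace(0.0, 8.0, 40)
sol = solve_ivp(rhs, (0.0, 8.0), [1.0, 0.0, 0.0], t_eval=t_eval,
                rtol=1e-9, atol=1e-12)

# Concentration changes relative to the initial state, shape (time points, species).
D = (sol.y - sol.y[:, [0]]).T

# The numerical rank of D estimates the number of independent reactions.
_, sing, Vt = np.linalg.svd(D, full_matrices=False)
n_reactions = int(np.sum(sing > 1e-8 * sing[0]))
reaction_space = Vt[:n_reactions]            # orthonormal basis of the reaction space

def is_compatible(candidate, tol=1e-6):
    """True if the candidate stoichiometric vector lies in the observed reaction space."""
    c = np.asarray(candidate, dtype=float)
    residual = c - reaction_space.T @ (reaction_space @ c)
    return np.linalg.norm(residual) <= tol * np.linalg.norm(c)

print(n_reactions, is_compatible([-1, 1, 0]), is_compatible([-2, 1, 0]))
```

Note that the lumped candidate A -> C, written as [-1, 0, 1], also passes this test because it is a linear combination of the two true reactions. Screening of this kind narrows the candidate set but does not by itself resolve the network, which is why the subsequent optimization step is needed.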

Model Formulation

- Define the global ODE model structure representing the balance between production and consumption of metabolites within the network [2].

- For homogeneous organic reaction systems, formulate the mixed integer nonlinear programming (MINLP) model to simultaneously address stoichiometry identification and kinetics fitting.

- Reformulate the MINLP, by way of a mixed integer quadratic programming (MIQP) model, into the final MILP model to improve computational efficiency [2].

Parameter Identification and Optimization

- Apply the MILP model to identify reaction stoichiometries and kinetic parameters from time-resolved concentration data [2].

- Utilize sampling algorithms to generate kinetic parameter sets consistent with thermodynamic constraints and experimental data.

- Prune parameter sets based on physiologically relevant time scales to ensure biological feasibility.

Model Validation

- Compare time-course and steady-state predictions against experimental data from various sources.

- Assess model fit to data, generalization capability to unobserved responses, and robustness across different conditions [14].

- For simulation studies, compare optimized kinetic models against true kinetic information to validate methodological effectiveness [2].

Combinatorial Algorithm Implementation Protocol

This protocol applies combinatorial algorithms to mitigate combinatorial explosion in large-scale reaction network identification.

Procedure

Problem Assessment

- Identify combinatorial problems in reaction network identification where the number of possible combinations grows exponentially with system complexity.

- Analyze network design patterns for fault-resistant structures considering complex conditions and budget constraints [13].

Algorithm Selection and Implementation

- Implement combinatorial algorithms that identify similar combinations and group them together.

- Apply compression techniques to shrink combinatorial spaces without discarding data, so that the compressed representation can be queried like a database for properties of the combinations [13].

- Perform calculations on compressed data to improve computational efficiency and speed while maintaining accuracy.

Performance Validation

- Compare processing times against traditional methods for benchmark problems (e.g., tiling problem with 190 solutions).

- Verify solution accuracy and completeness against established reference datasets.

- Test algorithmic scalability with increasingly complex conditions and problem sizes.

Discussion and Future Perspectives

The challenges of stepwise modeling and combinatorial explosion represent significant hurdles in advancing kinetic modeling for complex biological and chemical systems. The development of simultaneous modeling approaches using optimization-based frameworks marks a paradigm shift in how researchers address these challenges, enabling more efficient and accurate modeling of complex reaction networks.

Future research directions should focus on further enhancing computational efficiency through advanced compression algorithms and machine learning integration [13] [14]. Additionally, the development of comprehensive parameter databases and standardized benchmarking datasets will accelerate adoption of these methodologies across pharmaceutical development and metabolic engineering applications. As these computational approaches mature, their integration with high-throughput experimental data will enable unprecedented scale and accuracy in kinetic modeling, ultimately accelerating drug development and biotechnological innovation.

The evolution of modeling frameworks from traditional Ordinary Differential Equations (ODEs) to modern flexible neural networks represents a paradigm shift in how researchers approach the optimization-based modeling of stoichiometries and kinetics. Ordinary differential equations have long served as the foundational tool for modeling dynamic systems across chemical, biological, and engineering disciplines, providing a mechanistic approach to understanding system behavior over time [15]. However, contemporary research challenges demand increasingly sophisticated approaches that can handle complex reaction networks, stiffness, nonlinearity, and high-dimensional parameter spaces while maintaining physical interpretability.

The integration of computational optimization techniques with both classical and novel modeling frameworks has enabled significant advances in predicting complex system behaviors. This transition is particularly evident in chemical kinetics and metabolic engineering, where the accurate determination of reaction parameters and network structures from experimental data remains a persistent challenge [2] [16]. The emergence of hybrid methodologies that combine first principles with data-driven learning represents the current state-of-the-art, offering enhanced predictive capability while preserving the physical consistency essential for scientific application.

Foundational ODE Frameworks and Limitations

Classical ODE Formulations

Ordinary Differential Equations provide a mathematical framework for modeling system dynamics where time is the primary independent variable. In the context of chemical kinetics, ODE systems typically describe the rate of change of species concentrations based on reaction stoichiometries and rate laws. A general ODE system for chemical kinetics takes the form:

dx/dt = F(x(t), ψ, t)

where x(t) represents the vector of species concentrations, ψ denotes the kinetic parameters, and F defines the reaction rates based on mass action or other kinetic principles [2]. These equations form the basis for mechanistic modeling of reaction systems, enabling the prediction of concentration profiles over time under specified conditions.
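As a concrete instance of this general form, the following minimal sketch integrates a hypothetical first-order chain A -> B -> C with SciPy. The rate constants, initial concentrations, and time grid are assumptions chosen purely for illustration.

```python
import numpy as np
from scipy.integrate import solve_ivp

def F(t, x, psi):
    """Right-hand side dx/dt = F(x, psi, t) for the hypothetical chain A -> B -> C."""
    k1, k2 = psi
    a, b, c = x
    return [-k1 * a, k1 * a - k2 * b, k2 * b]

psi = (1.0, 0.5)                  # assumed rate constants (1/time)
x0 = [1.0, 0.0, 0.0]              # assumed initial concentrations
t_eval = np.linspace(0.0, 10.0, 51)
sol = solve_ivp(F, (0.0, 10.0), x0, args=(psi,), t_eval=t_eval,
                rtol=1e-8, atol=1e-10)

# The chain converts species one-to-one, so the total concentration stays constant.
total = sol.y.sum(axis=0)
print(sol.y[:, -1], total[-1])
```

Checking a conserved quantity such as the total concentration is a cheap sanity test for both the rate expressions and the solver tolerances.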

The classical approach to solving higher-order ODEs often involves conversion to first-order systems, which can be addressed through numerical integration techniques such as Runge-Kutta methods, Adams-Bashforth-Moulton predictor-corrector schemes, and backward differentiation formulas for stiff systems [17]. Each method presents distinct advantages regarding stability, accuracy, and computational efficiency, with selection dependent on specific problem characteristics.

Limitations in Complex Applications

Traditional ODE solvers face significant challenges when applied to complex reaction systems exhibiting stiffness, nonlinearity, or multi-scale dynamics. Stiff ODEs, characterized by widely separated time scales, necessitate impractically small time steps for explicit solvers, while implicit methods introduce substantial computational overhead [17]. Furthermore, conventional numerical methods struggle with:

- Oscillatory and exponential features in higher-order ODEs leading to stability loss

- High-dimensional parameter estimation for complex reaction networks

- Numerical dispersion and error accumulation in long-time integration

- Computational expense for systems requiring repeated solution during optimization
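The cost of stiffness can be seen in a small experiment. The sketch below assumes a hypothetical chain A -> B -> C whose two rate constants differ by three orders of magnitude, and compares the number of right-hand-side evaluations needed by an explicit Runge-Kutta solver and an implicit BDF solver in SciPy. The specific constants and tolerances are illustrative choices.

```python
import numpy as np
from scipy.integrate import solve_ivp

k_fast, k_slow = 1000.0, 1.0      # assumed rate constants with separated time scales

def rhs(t, x):
    a, b, c = x
    return [-k_fast * a, k_fast * a - k_slow * b, k_slow * b]

x0 = [1.0, 0.0, 0.0]
span = (0.0, 10.0)

# Explicit solver: the step size is limited by stability, not by accuracy.
explicit = solve_ivp(rhs, span, x0, method="RK45", rtol=1e-6, atol=1e-9)
# Implicit solver: larger steps are possible once the fast transient has decayed.
implicit = solve_ivp(rhs, span, x0, method="BDF", rtol=1e-6, atol=1e-9)

print("RK45 right-hand-side evaluations:", explicit.nfev)
print("BDF  right-hand-side evaluations:", implicit.nfev)
```

Inside an optimization loop the ODE system is solved many times, so a difference of this kind in solver work per solve carries over directly to the total run time.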

Table 1: Comparison of Traditional Numerical Methods for ODE-Based Kinetic Modeling

| Method Type | Representative Algorithms | Strengths | Limitations |
|---|---|---|---|
| Single-step | Euler, Runge-Kutta (RK4) | Simple implementation, self-starting | Limited stability, poor stiffness handling |
| Multi-step | Adams-Bashforth, Adams-Moulton | Higher accuracy, efficient | Not self-starting, step size constraints |
| Implicit | Backward Euler, BDF methods | Excellent stability for stiff systems | Computational cost, implementation complexity |
| Block methods | Block backward differentiation | Parallel computation potential | Ineffective for nonlinear/stiff ODEs |

Optimization-Based Modeling Frameworks

Simultaneous Stoichiometry and Kinetics Estimation

A significant advancement in kinetic modeling is the development of optimization-based frameworks that simultaneously address stoichiometry identification and kinetic parameter estimation. This approach formulates the modeling problem as a mathematical programming challenge, where the objective is to minimize the discrepancy between model predictions and experimental data while respecting physicochemical constraints [2].

The simultaneous methodology can be framed as a Mixed Integer Linear Programming (MILP) problem after reformulation from the original nonlinear optimization model. This reformulation enables efficient identification of reaction stoichiometries and kinetic parameters from time-resolved concentration data, addressing the combinatorial nature of stoichiometric grouping that challenges stepwise approaches [2]. The MILP framework balances model complexity with data compatibility, avoiding overfitting while enhancing model portability across conditions.
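To make the idea of an MILP for simultaneous structure selection and rate fitting more tangible, here is a deliberately simplified sketch. It is not the formulation of [2]: it assumes that reaction rates are available directly (here generated synthetically and without noise), that each candidate reaction has a known mass-action rate expression with an unknown rate constant, and that the fit is measured by absolute rather than squared residuals so that the problem stays linear. Binary variables switch candidates on or off through a big-M constraint, and a small penalty `lam` discourages unnecessary reactions. All names and numbers are hypothetical.

```python
import numpy as np
from scipy.optimize import milp, LinearConstraint, Bounds

# Candidate reactions over species (A, B, C); columns are A->B, B->C, A->C.
S_cand = np.array([[-1.0, 0.0, -1.0],
                   [1.0, -1.0, 0.0],
                   [0.0, 1.0, 1.0]])

def rate_basis(x):
    """Mass-action rate of each candidate for a unit rate constant."""
    return np.array([x[0], x[1], x[0]])

# Synthetic, noise-free data: sampled states and the net rates they imply.
k_true = np.array([1.0, 0.5, 0.0])           # the third candidate is inactive
states = np.array([[1.0, 0.0, 0.0], [0.6, 0.3, 0.1],
                   [0.3, 0.4, 0.3], [0.1, 0.3, 0.6]])
A_fit = np.vstack([S_cand * rate_basis(x) for x in states])   # shape (T*m, n)
r_obs = A_fit @ k_true

n, R = S_cand.shape[1], A_fit.shape[0]
big_m, lam = 10.0, 0.01                      # assumed bound on k and sparsity weight

# Decision vector z = [k (n), y (n, binary), e (R, absolute residuals)].
c = np.concatenate([np.zeros(n), lam * np.ones(n), np.ones(R)])
zeros_Rn, eye_R = np.zeros((R, n)), np.eye(R)
constraints = [
    # A k - e <= r_obs
    LinearConstraint(np.hstack([A_fit, zeros_Rn, -eye_R]), np.full(R, -np.inf), r_obs),
    # A k + e >= r_obs
    LinearConstraint(np.hstack([A_fit, zeros_Rn, eye_R]), r_obs, np.full(R, np.inf)),
    # k_j <= big_m * y_j, so a reaction can only be active if it is selected
    LinearConstraint(np.hstack([np.eye(n), -big_m * np.eye(n), np.zeros((n, R))]),
                     np.full(n, -np.inf), np.zeros(n)),
]
bounds = Bounds(np.zeros(2 * n + R),
                np.concatenate([big_m * np.ones(n), np.ones(n), np.full(R, np.inf)]))
integrality = np.concatenate([np.zeros(n), np.ones(n), np.zeros(R)])

res = milp(c, constraints=constraints, integrality=integrality, bounds=bounds)
k_hat = res.x[:n]
selected = np.round(res.x[n:2 * n]).astype(int)
print(selected, k_hat)
```

With real data the rates would have to be estimated from noisy concentration profiles, and the choice of `big_m` and `lam` would need care, since both affect which network is selected.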

Parameter Estimation Framework

For complex chemical reactions, a sequential optimization framework has demonstrated effectiveness in kinetic parameter estimation. This approach employs stochastic optimization methods in conjunction with process simulators and experimental data to estimate kinetic parameters consistent with the Arrhenius equation, enabling practical implementation in commercial simulation environments [16].

The methodology involves minimizing an objective function that quantifies the difference between experimental observations and model predictions:

min Σ (y_exp - y_model)²

subject to the ODE system describing the reaction kinetics and parameter bounds ensuring physical meaningfulness. The sequential approach maintains feasible solutions throughout the optimization process while generating a smaller optimization problem compared to simultaneous formulations [16].
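A minimal sketch of the sequential idea follows, with SciPy standing in for the process simulator of [16]. The ODE system is solved inside every objective evaluation, and a stochastic optimizer (differential evolution here) searches within parameter bounds. The reaction chain, the synthetic noisy data, the bounds, and the optimizer settings are all assumptions made for illustration.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.optimize import differential_evolution

def simulate(params, t_eval):
    """Solve the hypothetical chain A -> B -> C for the given rate constants."""
    k1, k2 = params
    rhs = lambda t, x: [-k1 * x[0], k1 * x[0] - k2 * x[1], k2 * x[1]]
    sol = solve_ivp(rhs, (t_eval[0], t_eval[-1]), [1.0, 0.0, 0.0],
                    t_eval=t_eval, method="LSODA", rtol=1e-8, atol=1e-10)
    return sol.y

# Synthetic "experimental" data: true constants (1.0, 0.5) plus small Gaussian noise.
t_obs = np.linspace(0.0, 8.0, 17)
rng = np.random.default_rng(0)
y_exp = simulate((1.0, 0.5), t_obs) + rng.normal(0.0, 0.005, size=(3, t_obs.size))

def sse(params):
    """Sum of squared errors; each call performs one full ODE solve."""
    return float(np.sum((y_exp - simulate(params, t_obs)) ** 2))

result = differential_evolution(sse, bounds=[(0.01, 5.0), (0.01, 5.0)],
                                seed=1, maxiter=60, tol=1e-8, polish=True)
k1_hat, k2_hat = result.x
print(k1_hat, k2_hat, result.fun)
```

Because every candidate parameter set is simulated, each iterate is a feasible trajectory of the model, which is the property the sequential approach trades against the cost of repeated ODE solves.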

Model Selection Protocols

With multiple potential ODE models often available for a single phenomenon, model selection becomes crucial. A testing-based approach rooted in the model misspecification framework adapts classical statistical paradigms (Vuong and Hotelling) to the ODE context, enabling comparison and ranking of diverse causal explanations without constraints of nested models [18].

The protocol employs the following steps:

- Estimate pseudo-true parameters for each candidate model via maximum likelihood

- Compute likelihood ratio statistics between competing models

- Apply hypothesis testing to determine if models differ significantly in approximation quality

- Rank models based on their divergence from the true data-generating mechanism

This approach is particularly valuable when disparate scientific theories exist about causal relations or when deciding the appropriate abstraction level in mathematical representation [18].
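The likelihood-ratio step can be illustrated with the classical Vuong statistic. The sketch below is a simplified illustration rather than the procedure of [18]: it compares two non-nested candidates, a first-order decay and a zero-order decay, on synthetic data, assumes independent Gaussian errors, and omits any correction for model complexity because both candidates have the same number of parameters.

```python
import numpy as np
from scipy.optimize import curve_fit
from scipy.stats import norm

rng = np.random.default_rng(0)
t = np.linspace(0.0, 3.0, 50)
y = np.exp(-t) + rng.normal(0.0, 0.01, size=t.size)    # synthetic observations

# Model 1: first-order decay, the solution of dx/dt = -k x.
(a1, k1), _ = curve_fit(lambda t, a, k: a * np.exp(-k * t), t, y, p0=(1.0, 1.0))
res1 = y - a1 * np.exp(-k1 * t)

# Model 2: zero-order decay, the solution of dx/dt = -k (a straight line in t).
slope, intercept = np.polyfit(t, y, 1)
res2 = y - (intercept + slope * t)

def pointwise_loglik(res):
    """Gaussian log-likelihood per observation, using the ML estimate of the variance."""
    var = np.mean(res ** 2)
    return -0.5 * np.log(2.0 * np.pi * var) - res ** 2 / (2.0 * var)

# Vuong statistic: scaled mean of the pointwise log-likelihood differences.
d = pointwise_loglik(res1) - pointwise_loglik(res2)
z = np.sqrt(d.size) * d.mean() / d.std(ddof=0)
p_value = 2.0 * norm.sf(abs(z))                         # two-sided test
print(z, p_value)
```

A large positive `z` favors the first model, a large negative `z` favors the second, and a value near zero means the data do not discriminate between them.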

Neural Network-Enhanced Frameworks

Neural Ordinary Differential Equations

Neural ODEs represent a groundbreaking fusion of differential equation modeling and deep learning, parameterizing the derivative function with a neural network rather than a predefined analytical form [19]. This approach enables the learning of continuous-time dynamics directly from data, effectively creating models of "continuous depth" where the output is computed by numerically integrating the learned dynamics.

The core components of a Neural ODE model include:

- Encoder: Transforms input data into an initial hidden state (often using RNN or feedforward network)

- ODE Function: Neural network defining the continuous dynamics (dh/dt)

- ODE Solver: Numerical integration of the ODE to produce trajectory

- Decoder: Maps hidden state to observable predictions [19]

Training employs the adjoint sensitivity method to compute gradients through the ODE solver with constant memory usage, regardless of integration steps. This enables efficient learning of complex, nonlinear dynamics without discrete time steps, particularly beneficial for irregularly sampled data [19].
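The structure of such a model can be sketched without a deep learning framework. The example below is a toy stand-in, not a torchdiffeq implementation: it uses a scalar state, identity encoder and decoder, a one-hidden-layer tanh network for the derivative, a fixed-step RK4 solver, and finite-difference gradients inside SciPy's L-BFGS-B instead of the adjoint method. The network size, data, and optimizer settings are assumptions.

```python
import numpy as np
from scipy.optimize import minimize

H = 4  # number of hidden units (assumed)

def f_theta(h, theta):
    """Learned derivative dh/dt = w2 . tanh(w1 * h + b1) + b2 for a scalar state h."""
    w1, b1, w2, b2 = theta[:H], theta[H:2 * H], theta[2 * H:3 * H], theta[3 * H]
    return float(w2 @ np.tanh(w1 * h + b1) + b2)

def integrate(theta, h0, t_grid):
    """ODE solver component: fixed-step RK4 through the learned dynamics."""
    traj, h = [h0], h0
    for t0, t1 in zip(t_grid[:-1], t_grid[1:]):
        dt = t1 - t0
        k1 = f_theta(h, theta)
        k2 = f_theta(h + 0.5 * dt * k1, theta)
        k3 = f_theta(h + 0.5 * dt * k2, theta)
        k4 = f_theta(h + dt * k3, theta)
        h = h + dt * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0
        traj.append(h)
    return np.array(traj)

t_grid = np.linspace(0.0, 2.0, 21)
x_obs = np.exp(-t_grid)                       # synthetic first-order decay data

def loss(theta):
    """Mean squared error between the integrated trajectory and the data."""
    return float(np.mean((integrate(theta, x_obs[0], t_grid) - x_obs) ** 2))

rng = np.random.default_rng(0)
theta0 = 0.1 * rng.standard_normal(3 * H + 1)
fit = minimize(loss, theta0, method="L-BFGS-B", options={"maxiter": 100})
initial_loss, final_loss = loss(theta0), fit.fun
print(initial_loss, final_loss)
```

For realistic problems the finite-difference gradients used here scale poorly with the number of weights, which is exactly the gap that automatic differentiation and the adjoint method fill.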

Neural-ODE Hybrid Block Method

For solving higher-order ODEs with oscillatory or stiff characteristics, the Neural-ODE Hybrid Block Method combines the approximation power of neural networks with the stability of block numerical methods [17]. This approach directly solves higher-order ODEs without converting to first-order systems, enhancing stability and efficiency for challenging problems.

The method integrates spectral collocation techniques with neural networks, leveraging Chebyshev and Legendre orthogonal polynomials for exceptional accuracy in complex systems. The neural network approximates solution spaces across the problem domain while the block method handles derivative computations, creating a synergistic framework that outperforms conventional solvers for stiff problems and boundary conditions [17].

Table 2: Neural Network-Enhanced Frameworks for Kinetic Modeling

| Framework | Architecture | Key Features | Application Scope |
|---|---|---|---|
| Neural ODEs | Continuous-depth networks | Adaptive computation, irregular sampling | Time-series forecasting, system identification |
| Neural-ODE Hybrid Block | Neural network + block methods | Direct higher-order ODE solution, stiffness handling | Oscillatory systems, boundary value problems |
| Chemical Reaction Neural Networks | Physics-constrained networks | Interpretable, Arrhenius/mass action compliance | Reaction kinetics discovery |
| KA-CRNNs | Kolmogorov-Arnold networks | Pressure-dependent kinetics, physical consistency | Combustion, pressure-varying systems |
| +/- ExpNet | Formation-consumption networks | Atom conservation, source term computation | Catalytic systems, multiscale simulation |

Chemistry-Specific Neural Architectures

Specialized neural architectures have emerged for chemical applications, combining physical consistency with learning capabilities. Chemical Reaction Neural Networks (CRNNs) provide an interpretable machine learning framework for discovering reaction kinetics while strictly adhering to Arrhenius and mass action laws [20]. The Kolmogorov-Arnold CRNN (KA-CRNN) extension models kinetic parameters as learnable functions of system pressure, enabling representation of pressure-dependent behavior without empirical formulations [20].

The Formation-Consumption Neural Network (+/- ExpNet) implements a physics-inspired architecture that models latent formation and consumption rates, subtracted to compute source terms while enforcing atom conservation as a hard constraint [21]. This approach learns kinetics directly from integral reactor data and integrates with computational fluid dynamics for multiscale reactive simulations.
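The reason such architectures stay interpretable is that mass action and Arrhenius laws become linear after taking logarithms, so a reaction can be written as a single neuron with an exponential activation. The sketch below illustrates that general idea and a simple element-balance check. It is not the code of [20] or [21]; the two-reaction network, the species compositions, and all numerical values are hypothetical.

```python
import numpy as np

R_GAS = 8.314  # gas constant, J/(mol K)

def crnn_rates(conc, temperature, nu_in, ln_A, Ea):
    """Reaction rates as the exponential of a linear layer in log-concentration space.

    ln r_j = ln A_j + sum_i nu_in[j, i] * ln c_i - Ea_j / (R T)
    """
    log_c = np.log(np.clip(conc, 1e-30, None))   # clip to avoid log(0)
    return np.exp(ln_A + nu_in @ log_c - Ea / (R_GAS * temperature))

# Hypothetical network: A + B -> C, C -> D (species order A, B, C, D).
nu_in = np.array([[1.0, 1.0, 0.0, 0.0],          # reaction orders act as layer weights
                  [0.0, 0.0, 1.0, 0.0]])
S = np.array([[-1.0, 0.0],
              [-1.0, 0.0],
              [1.0, -1.0],
              [0.0, 1.0]])                        # net stoichiometry, species x reactions
ln_A = np.log(np.array([1.0e3, 5.0e2]))           # assumed pre-exponential factors
Ea = np.array([2.0e4, 3.0e4])                     # assumed activation energies, J/mol

conc = np.array([0.8, 0.5, 0.2, 0.0])
T = 350.0
rates = crnn_rates(conc, T, nu_in, ln_A, Ea)
dcdt = S @ rates

# Assumed compositions: A = X, B = Y, C = XY, D = XY (rows: elements X and Y).
E = np.array([[1.0, 0.0, 1.0, 1.0],
              [0.0, 1.0, 1.0, 1.0]])
# If E @ S = 0, then E @ dc/dt = 0 for any rate values, so atoms are conserved.
print(rates, E @ S, E @ dcdt)
```

Building the source term as a stoichiometric matrix times a rate vector is one way to make conservation hold by construction instead of leaving it to be learned from data.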

Experimental Protocols and Implementation

Protocol: Optimization-Based Kinetic Parameter Estimation

Objective: Determine kinetic parameters from experimental concentration data for complex reaction systems.

Materials and Equipment:

- Time-resolved concentration data (HPLC, GC, or spectroscopic measurements)

- Process simulator (e.g., Aspen Plus) or custom ODE solver (MATLAB, Python)

- Stochastic optimization algorithm (differential evolution, particle swarm)

- Computational resources for iterative simulation

Procedure:

Design Experimental Campaign

- Conduct experiments under isothermal conditions in batch or CSTR reactors

- Measure concentration profiles at sufficient time points to capture dynamics

- Vary initial conditions to enhance parameter identifiability

Define Candidate Stoichiometries

- Identify possible reactions based on detected intermediates

- Establish stoichiometric matrix covering all plausible pathways

- Set bounds for stoichiometric coefficients based on chemical feasibility

Formulate Optimization Problem

- Define objective function as sum of squared errors between experimental and predicted concentrations

- Implement ODE constraints representing mass balances

- Set parameter bounds based on physical constraints (e.g., positive rate constants)

Solve MILP Reformulation

- Apply linearization techniques to reformulate nonlinear kinetics

- Implement in optimization solver (Gurobi, CPLEX)

- Identify optimal stoichiometries and initial parameter estimates

Refine Parameters via Sequential Optimization

- Use stochastic optimizer to adjust parameters

- At each iteration, solve ODE system with current parameters

- Compare predicted and experimental profiles

- Update parameters to minimize objective function

Validate Model

- Perform cross-validation with withheld experimental data

- Assess predictive capability under new initial conditions

- Check physical consistency of parameters (e.g., Arrhenius behavior)

Troubleshooting:

- For convergence issues, refine parameter bounds or initial guesses

- For identifiability problems, collect additional experimental data under informative conditions

- For stiffness, employ specialized ODE solvers (e.g., ode15s in MATLAB)
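The Arrhenius consistency check in the validation step can be done with a linear fit of ln k against 1/T. The sketch below assumes rate constants have already been estimated at several temperatures. The values are synthetic and generated from an assumed pre-exponential factor and activation energy, so the fit is exact by construction.

```python
import numpy as np

R_GAS = 8.314  # gas constant, J/(mol K)

# Hypothetical rate constants estimated at four temperatures (synthetic values).
T = np.array([300.0, 320.0, 340.0, 360.0])           # K
A_true, Ea_true = 1.0e6, 5.0e4                        # used only to generate the example
k_est = A_true * np.exp(-Ea_true / (R_GAS * T))

# Arrhenius law in linear form: ln k = ln A - Ea / (R T).
slope, intercept = np.polyfit(1.0 / T, np.log(k_est), 1)
Ea_fit = -slope * R_GAS
A_fit = np.exp(intercept)

# Coefficient of determination of the linear fit as a simple consistency measure.
pred = intercept + slope / T
ss_res = np.sum((np.log(k_est) - pred) ** 2)
ss_tot = np.sum((np.log(k_est) - np.log(k_est).mean()) ** 2)
r_squared = 1.0 - ss_res / ss_tot
print(Ea_fit, A_fit, r_squared)
```

With real estimates, a poor linear fit or a negative fitted activation energy would suggest that the rate constants are compensating for a structural error in the model.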

Protocol: Neural ODE Training for Kinetic Systems

Objective: Train Neural ODE model to learn reaction kinetics from time-series data.

Materials and Equipment:

- Normalized time-series concentration data

- PyTorch or TensorFlow with ODE solver integration (torchdiffeq)

- GPU acceleration recommended for training efficiency

Procedure:

Data Preprocessing

- Standardize concentration data (zero mean, unit variance)

- Segment into overlapping windows for training sequences

- Normalize time within each window to fixed integration interval

Architecture Specification

- Define ODE function network (typically 2-3 hidden layers with tanh activation)

- Implement encoder (GRU or LSTM for initial state estimation)

- Specify decoder (linear layer for output predictions)

- Add explicit temporal features (sin/cos transformations for periodicities)

Model Training

- Initialize parameters following standard deep learning practices

- Select ODE solver (Dormand-Prince adaptive step size recommended)

- Implement adjoint method for memory-efficient backpropagation

- Train with Adam optimizer with learning rate scheduling

Model Validation

- Assess prediction accuracy on test time series

- Evaluate extrapolation capability beyond training time horizon

- Test with irregularly sampled data to verify continuous-time nature

Interpretation and Analysis

- Extract learned dynamics for physical interpretation

- Compare with conventional kinetic models

- Validate against mechanistic knowledge

Troubleshooting:

- For training instability, reduce learning rate or employ gradient clipping

- For poor performance, adjust network architecture or hidden dimension size

- For numerical precision issues, switch to double precision or different ODE solver
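The data preprocessing step of this protocol can be sketched in NumPy as follows. The helper name `make_windows`, the window length, the stride, and the synthetic two-species series are assumptions for illustration. The function standardizes each species, cuts the series into overlapping windows, and rescales time within each window to the interval [0, 1].

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def make_windows(series, times, window, stride):
    """Standardize a (T, n_species) series and cut it into overlapping windows."""
    mean = series.mean(axis=0)
    std = series.std(axis=0)
    std = np.where(std > 0, std, 1.0)            # guard against constant channels
    z = (series - mean) / std
    # sliding_window_view appends the window axis last; reorder to (n_win, window, n_species).
    x_win = sliding_window_view(z, window, axis=0)[::stride].transpose(0, 2, 1)
    t_win = sliding_window_view(times, window)[::stride]
    # Normalize time within each window to a fixed integration interval [0, 1].
    t_norm = (t_win - t_win[:, [0]]) / (t_win[:, [-1]] - t_win[:, [0]])
    return x_win, t_norm, (mean, std)

times = np.linspace(0.0, 10.0, 101)
series = np.stack([np.exp(-times), 1.0 - np.exp(-times)], axis=1)   # synthetic data
x_win, t_norm, stats = make_windows(series, times, window=20, stride=5)
print(x_win.shape, t_norm.shape)
```

The stored mean and standard deviation are returned so that predictions can be mapped back to concentration units after training.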

Research Reagent Solutions

Table 3: Essential Computational Tools for Optimization-Based Kinetic Modeling

| Tool/Category | Specific Examples | Function | Implementation Considerations |
|---|---|---|---|
| ODE Solvers | ode15s (MATLAB), scipy.integrate.solve_ivp (Python), SUNDIALS | Numerical integration of differential equations | Select based on stiffness; implicit methods for stiff systems |
| Optimization Algorithms | Differential Evolution, Particle Swarm, NSGA-II | Parameter estimation, multi-objective optimization | Stochastic methods reduce the risk of getting trapped in local minima; parallelization beneficial |
| Process Simulators | Aspen Plus, COMSOL | Rigorous unit operation models, thermodynamics | Enable integration with established engineering tools |
| Neural Network Frameworks | PyTorch, TensorFlow, torchdiffeq | Neural ODE implementation, automatic differentiation | GPU acceleration essential for large models |
| Model Selection Tools | Custom Python implementation (Vuong test) | Statistical comparison of competing models | Requires careful definition of nestedness for ODE models |
| Metaheuristic Libraries | pymoo, DEAP, Platypus | Multi-objective optimization, evolutionary algorithms | Configurable population size and operators |

Visualization Frameworks

[Figure: Framework Evolution Diagram]

[Figure: Optimization Workflow Diagram]

In the field of systems biology, optimization-based modeling serves as a fundamental framework for deciphering the complex stoichiometries and kinetics of biological systems. The core challenge involves formulating a mathematical representation that can accurately predict cellular behavior by identifying reaction networks and their corresponding rate parameters from experimental data. This approach is particularly valuable for applications in drug development and bioprocess engineering, where understanding metabolic pathways is crucial for identifying therapeutic targets or optimizing production strains. The fundamental optimization problem in this context involves minimizing the difference between model predictions and experimental observations while satisfying constraints derived from physicochemical laws and biological principles [2].

The move from traditional stepwise modeling to simultaneous identification methodologies represents a significant advancement in the field. Earlier approaches suffered from computational limitations, especially when dealing with large-scale biological networks containing numerous intermediate compounds and interlinked reaction paths. Modern optimization frameworks address this challenge by integrating stoichiometric identification and kinetic parameter fitting into a unified model, enabling more efficient and accurate reconstruction of biological networks [2]. This is particularly relevant for modeling metabolic pathways in organisms used for pharmaceutical production, where both the reaction stoichiometry and the enzymatic kinetics must be precisely determined to develop reliable predictive models.

Theoretical Framework: Optimization Problem Formulation

Core Mathematical Structure

At its foundation, modeling biological reaction systems involves defining an objective function that quantifies how well a candidate model fits experimental data, subject to constraints that ensure biological feasibility. The dynamic behavior of metabolic networks is typically described by a system of Ordinary Differential Equations (ODEs) that represent mass balances for each metabolite. For a system with m metabolites and n reactions, the core dynamic model is expressed as:

dx/dt = S · v(x, p)

Where x is the vector of metabolite concentrations, S is the m × n stoichiometric matrix, and v(x, p) is the vector of reaction rates (kinetics) dependent on parameters p. The corresponding optimization problem for identifying both stoichiometries and kinetics can be formulated as [2]:

min J = ∑(x_exp - x_model)²

subject to:

dx/dt = S · v(x, p)

v_min ≤ v(x, p) ≤ v_max

S ∈ Z (stoichiometric constraints)

p ≥ 0 (parameter non-negativity)

Here, the objective function J minimizes the sum of squared errors between experimental measurements (x_exp) and model predictions (x_model). The constraints include the system dynamics, bounds on reaction fluxes, integer constraints on stoichiometric coefficients (S ∈ Z), and non-negativity of kinetic parameters [2].
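For a fixed stoichiometric matrix, the continuous part of this problem reduces to a bounded nonlinear least-squares fit. The sketch below covers only that sub-problem: it assumes a known S for a hypothetical chain of three metabolites and two reactions, mass-action rate laws, and noise-free synthetic data, and it enforces only the non-negativity constraint on p. The integer search over S and the flux bounds are left out.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.optimize import least_squares

S = np.array([[-1.0, 0.0],
              [1.0, -1.0],
              [0.0, 1.0]])                        # m = 3 metabolites, n = 2 reactions

def v(x, p):
    """Reaction rate vector v(x, p); mass action is an assumed rate law."""
    return np.array([p[0] * x[0], p[1] * x[1]])

def simulate(p, t_obs, x0):
    """Integrate dx/dt = S . v(x, p) and return concentrations at t_obs."""
    sol = solve_ivp(lambda t, x: S @ v(x, p), (t_obs[0], t_obs[-1]), x0,
                    t_eval=t_obs, method="LSODA", rtol=1e-8, atol=1e-10)
    return sol.y

t_obs = np.linspace(0.0, 8.0, 17)
x0 = [1.0, 0.0, 0.0]
x_exp = simulate([1.0, 0.5], t_obs, x0)            # synthetic, noise-free "data"

def residuals(p):
    return (simulate(p, t_obs, x0) - x_exp).ravel()

# bounds=(0, inf) enforces p >= 0 during the fit.
fit = least_squares(residuals, x0=[0.3, 0.3], bounds=(0.0, np.inf))
J = float(np.sum(fit.fun ** 2))
print(fit.x, J)
```

A local solver of this kind depends on the starting point, so in practice it is often run from several initial guesses or combined with a global search.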

Advanced Optimization Formulations

For complex biological networks, the basic formulation often requires extension to more sophisticated optimization frameworks. Mixed Integer Linear Programming (MILP) has emerged as a powerful approach for handling the combinatorial nature of stoichiometric identification while maintaining computational efficiency. Through reformulation of the original nonlinear optimization model, MILP enables simultaneous identification of reaction stoichiometries and kinetic parameters from time-resolved concentration data [2].

In cases where multiple conflicting objectives must be considered, such as simultaneously maximizing product yield while minimizing metabolic burden, multi-objective optimization approaches become necessary. These methods generate a Pareto front representing trade-offs between competing objectives, allowing researchers to select appropriate operating points based on their specific requirements [22]. For biological systems with discrete decision variables or structural alternatives, recent advances in biogeography-based multi-objective discrete optimization provide frameworks for handling both continuous and discrete aspects of model identification [23].
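The notion of a Pareto front can be made concrete with a small non-dominated filter. The sketch below is a generic illustration and not one of the algorithms in [22] or [23]. The candidate designs, given as pairs of product yield and metabolic burden, are hypothetical numbers.

```python
import numpy as np

def pareto_mask(objectives):
    """Boolean mask of non-dominated rows; every column is treated as minimized."""
    obj = np.asarray(objectives, dtype=float)
    keep = np.ones(obj.shape[0], dtype=bool)
    for i in range(obj.shape[0]):
        # A row dominates row i if it is no worse everywhere and strictly better somewhere.
        dominates_i = np.all(obj <= obj[i], axis=1) & np.any(obj < obj[i], axis=1)
        keep[i] = not dominates_i.any()
    return keep

# Hypothetical designs: (product yield, metabolic burden).
designs = np.array([[0.90, 0.80],
                    [0.70, 0.40],
                    [0.60, 0.50],
                    [0.40, 0.10],
                    [0.40, 0.30]])

# Yield is to be maximized, so it is negated to fit the minimization convention.
mask = pareto_mask(np.column_stack([-designs[:, 0], designs[:, 1]]))
front = designs[mask]
print(front)
```

Each point on the resulting front represents a trade-off in which yield cannot be increased without also increasing burden.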

Table 1: Key Components of the Biological Optimization Problem

| Component | Mathematical Representation | Biological Significance |
|---|---|---|
| Decision Variables | Stoichiometric coefficients (S), Kinetic parameters (p) | Define network structure and reaction rates |
| Objective Function | J = ∑(x_exp - x_model)² (Least squares) | Quantifies model fit to experimental data |
| Equality Constraints | dx/dt = S · v(x, p) (Mass balance) | Enforces mass conservation for each metabolite |
| Inequality Constraints | v_min ≤ v(x, p) ≤ v_max | Reflects enzyme capacity limits |
| Integer Constraints | S ∈ Z (Stoichiometric coefficients) | Maintains biological meaning of molecular counts |

Computational Methods and Protocols

Protocol: Simultaneous Stoichiometry and Kinetics Identification

This protocol outlines the procedure for simultaneous identification of reaction stoichiometries and kinetic parameters from time-course metabolite concentration data, adapted from optimization-based modeling approaches [2].

Step 1: Experimental Data Collection and Preprocessing

- Measure time-resolved concentrations of metabolites using techniques such as LC-MS, GC-MS, or NMR spectroscopy. For the DHA production case study in Crypthecodinium cohnii, measurements included biomass growth, substrate consumption (glucose, ethanol, glycerol), and PUFA accumulation tracked via FTIR spectroscopy [24].

- Normalize concentration data to account for variations in initial conditions and measurement scales.

- Partition data into training and validation sets (typically 70%/30% split).

Step 2: Preliminary Stoichiometric Screening

- Compile candidate stoichiometries based on known biochemical transformations and potential metabolic pathways.

- Form stoichiometric groups to reduce combinatorial complexity while maintaining biological relevance.

- This preliminary screening significantly reduces the search space for the subsequent optimization [2].

Step 3: Formulate the Optimization Problem

- Define the objective function as the weighted sum of squared errors between experimental and predicted metabolite concentrations.

- Implement system dynamics as equality constraints using ODEs for mass balances.

- Incorporate inequality constraints for reaction fluxes based on enzyme capacity and thermodynamic feasibility.

- For large-scale problems, reformulate the original MINLP as an MILP to improve computational efficiency [2].

Step 4: Solve the Optimization Problem

- Implement the optimization using appropriate solvers (e.g., CPLEX, Gurobi for MILP).

- For high-dimensional parameter spaces, employ specialized frameworks like DeePMO, which uses an iterative sampling-learning-inference strategy with hybrid deep neural networks to efficiently explore parameter spaces [25].

- Validate solution robustness through multiple runs with different initial parameter guesses.

Step 5: Model Validation and Refinement

- Test the identified model against the validation dataset not used during parameter estimation.

- Perform sensitivity analysis to identify most influential parameters and refine their estimates if necessary.

- Validate predictive capability by simulating untested experimental conditions.
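The sensitivity analysis in Step 5 can be approximated with finite differences. The sketch below computes normalized local sensitivities, d ln x_i / d ln p_j, at a single time point for a hypothetical chain A -> B -> C. The model, the nominal parameters, the end time, and the relative step size are assumptions for illustration.

```python
import numpy as np
from scipy.integrate import solve_ivp

def final_state(p, t_end=4.0):
    """Concentrations at t_end for the hypothetical chain A -> B -> C."""
    rhs = lambda t, x: [-p[0] * x[0], p[0] * x[0] - p[1] * x[1], p[1] * x[1]]
    sol = solve_ivp(rhs, (0.0, t_end), [1.0, 0.0, 0.0], method="LSODA",
                    rtol=1e-10, atol=1e-12)
    return sol.y[:, -1]

def normalized_sensitivities(p, rel_step=1e-3):
    """Central-difference estimate of d ln x_i / d ln p_j at the final time."""
    p = np.asarray(p, dtype=float)
    x_ref = final_state(p)
    sens = np.zeros((x_ref.size, p.size))
    for j in range(p.size):
        dp = rel_step * p[j]
        up, down = p.copy(), p.copy()
        up[j] += dp
        down[j] -= dp
        sens[:, j] = (final_state(up) - final_state(down)) / (2.0 * dp) * p[j] / x_ref
    return sens

sens = normalized_sensitivities([1.0, 0.5])
print(np.round(sens, 3))
```

Rows correspond to species and columns to parameters. Entries close to zero flag parameters that the selected measurements cannot constrain, which is useful when deciding which estimates to refine.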

The following workflow diagram illustrates the iterative nature of this protocol:

Protocol: Inverse Optimal Control for Biological Systems

For dynamic biological processes where cellular behavior appears optimized for certain objectives, Inverse Optimal Control (IOC) provides a method to infer these underlying optimality principles directly from data [26].

Step 1: Experimental Design for IOC

- Design experiments that capture system dynamics across relevant conditions, measuring both state and control variables where possible.

- Ensure sufficient data sampling to reconstruct temporal trajectories of key biological variables.

Step 2: Forward Model Development

- Develop a mathematical model describing the system dynamics, typically formulated as ODEs or PDEs.

- Identify known constraints acting on the system (physical limits, resource constraints, etc.).

Step 3: Candidate Objective Function Selection

- Propose parameterized candidate objective functions based on biological knowledge (e.g., maximize growth rate, minimize energy expenditure, maximize robustness).

- Incorporate multi-criteria optimality where appropriate to handle conflicting objectives.

Step 4: Inverse Optimization

- Solve the inverse problem to identify objective function parameters that best explain the observed biological behavior.

- Account for potential switching of optimality principles during the observed time horizon.

- Quantify uncertainty in the estimated objective function parameters.

Step 5: Validation and Forward Prediction

- Validate inferred optimality principles by testing predictions against new experimental data.

- Use the identified objective functions in forward optimal control problems to predict and manipulate biological system behavior.

Case Study: DHA Production Optimization in Crypthecodinium cohnii

Application of Stoichiometric and Kinetic Modeling

A comprehensive study of Docosahexaenoic acid (DHA) production by the marine dinoflagellate Crypthecodinium cohnii demonstrates the practical application of optimization-based modeling in a biotechnological context [24]. Researchers combined fermentation experiments with pathway-scale kinetic modeling and constraint-based stoichiometric modeling to compare DHA production potential from glycerol, glucose, and ethanol.

The objective was to identify optimal substrate conditions for maximizing DHA yield, while the constraints included stoichiometric balances for central carbon metabolism and kinetic limitations of key enzymatic steps. The modeling revealed that although glycerol supported slower biomass growth compared to glucose, it achieved higher polyunsaturated fatty acids (PUFAs) fractions with DHA as the dominant component. Furthermore, glycerol demonstrated the best experimentally observed carbon transformation rate into biomass, approaching theoretical upper limits more closely than other substrates [24].

Table 2: Performance Metrics for DHA Production from Different Substrates [24]

| Substrate | Biomass Growth Rate | PUFAs Fraction | Carbon to Biomass Efficiency | DHA Dominance |
|---|---|---|---|---|
| Glycerol | Slowest | Highest | Closest to theoretical maximum | Dominant |
| Ethanol | Moderate | High | Intermediate | Significant |
| Glucose | Fastest | Lower | Further from theoretical maximum | Less pronounced |


Implementation of Multi-Objective Optimization

The DHA production optimization inherently involved multiple competing objectives: maximizing DHA yield, maximizing biomass productivity, and minimizing substrate cost. This multi-objective nature required specialized optimization approaches capable of generating Pareto-optimal solutions representing trade-offs between these objectives. Recent algorithms such as the Multi-Objective Crested Porcupine Optimization (MOCPO) algorithm demonstrate how bio-inspired approaches can effectively handle such complex multi-objective problems in biological contexts [22].
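To make the notion of Pareto optimality concrete, the sketch below filters a handful of candidate designs down to the non-dominated set. It is a brute-force dominance check, not the MOCPO algorithm, and the design values are hypothetical numbers chosen only for illustration.

```python
def dominates(a, b):
    """True if a is no worse than b in every objective and strictly better in at least one.

    All objectives are treated as minimized.
    """
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(points):
    """Return the non-dominated subset of points, preserving input order."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical designs as (-DHA yield, -biomass productivity, substrate cost).
# Maximized objectives are negated so that every entry is minimized.
designs = [
    (-0.30, -0.50, 1.0),
    (-0.20, -0.80, 1.2),
    (-0.25, -0.45, 1.1),   # worse than the first design in all three objectives
    (-0.10, -0.90, 0.8),
]
front = pareto_front(designs)
```

The pairwise check costs O(n²) comparisons, which is acceptable for small candidate sets; population-based multi-objective algorithms apply the same dominance relation inside their selection step.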

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Optimization-Based Modeling

| Reagent/Tool | Function/Application | Specific Examples |
|---|---|---|
| SKiMpy | Semiautomated workflow for constructing and parametrizing kinetic models | Uses stoichiometric models as scaffold; samples kinetic parameters consistent with thermodynamic constraints [14] |
| Tellurium | Kinetic modeling tool for systems and synthetic biology | Supports standardized model formulations; integrates packages for ODE simulation and parameter estimation [14] |
| MASSpy | Kinetic modeling framework built on COBRApy | Uses mass-action rate laws by default; integrates constraint-based modeling strengths [14] |
| DeePMO | Deep learning-based kinetic model optimization | Handles high-dimensional parameters via iterative sampling-learning-inference strategy [25] |
| FTIR Spectroscopy | Rapid analysis of PUFA accumulation in microbial biomass | Enables tracking of DHA production via characteristic spectral feature at 3014 cm⁻¹ [24] |
| LC-MS/GC-MS | Quantitative measurement of metabolite concentrations | Provides time-resolved data for parameter estimation and model validation |
| MILP Solvers | Numerical solution of mixed integer linear programming problems | Enables efficient stoichiometric network identification [2] |

Advanced Computational Methods and Their Real-World Applications

Mathematical programming approaches are fundamental for solving complex optimization problems in metabolic engineering and process systems engineering. Among these, Mixed-Integer Linear Programming (MILP) and Mixed-Integer Nonlinear Programming (MINLP) represent powerful frameworks for modeling decision-making problems that involve both discrete choices and continuous variables. While MILP deals with problems where the objective function and constraints are linear, MINLP accommodates nonlinear relationships, which are ubiquitous in modeling biological and chemical systems [27] [16]. The application of these techniques is particularly relevant in optimization-based modeling of stoichiometries and kinetics, enabling researchers to predict organism behavior, design metabolic pathways, and estimate kinetic parameters from experimental data [27] [16] [24]. Despite the advanced state of MILP and convex programming solvers, general MINLPs remain challenging due to complex algebraic representations and the lack of efficient convexification routines [28]. This section provides detailed application notes and protocols for reformulating and solving such problems, framed within stoichiometric and kinetic research for drug development and bioprocess optimization.

Theoretical Foundations

MILP Reformulations in Bilevel Optimization

Bilevel optimization problems involve two nested levels of decision-making: an upper-level (leader) and a lower-level (follower) problem. These arise in numerous real-world applications, including metabolic engineering where one level might optimize for biomass production while the other manages enzyme allocation [29]. When integer variables are present in the follower's problem, conventional single-level reformulations often fail.

A recent advancement presents a single-level reformulation for mixed-integer linear bilevel optimization that does not rely on the follower's value function [29]. This approach is based on:

- Convexifying the follower's problem via a Dantzig-Wolfe reformulation

- Exploiting strong duality of the reformulated problem

- Transforming the resulting nonlinear single-level problem into a MILP using standard linearization techniques

- Computing bounds on dual variables via a polynomial-time solvable problem based purely on primal problem data [29]

This reformulation enables a new branch-and-cut approach for mixed-integer linear bilevel optimization and facilitates a penalty alternating direction method for computing high-quality feasible solutions [29].
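The role of strong duality is easiest to see in the textbook case of a continuous linear follower, sketched below. This is not the formulation of [29], which handles integer follower variables through the Dantzig-Wolfe step; the symbols $F$, $X$, $A$, $B$, $b$, $d$, and $\lambda$ are generic notation assumed here, and the optimistic bilevel setting is assumed.

```latex
% Bilevel problem with a continuous linear follower
\min_{x \in X,\; y}\; F(x, y)
\quad \text{s.t.} \quad
y \in \arg\min_{y' \ge 0} \left\{ d^{\top} y' \;:\; B y' \ge b - A x \right\}

% Dual of the follower problem for fixed x
\max_{\lambda \ge 0} \left\{ (b - A x)^{\top} \lambda \;:\; B^{\top} \lambda \le d \right\}

% Single-level reformulation via strong duality
\begin{aligned}
\min_{x \in X,\; y,\; \lambda}\quad & F(x, y) \\
\text{s.t.}\quad & B y \ge b - A x,\quad y \ge 0 && \text{(primal feasibility)} \\
& B^{\top} \lambda \le d,\quad \lambda \ge 0 && \text{(dual feasibility)} \\
& d^{\top} y \le (b - A x)^{\top} \lambda && \text{(strong duality)}
\end{aligned}
```

Weak duality already gives $d^{\top} y \ge (b - A x)^{\top} \lambda$ for any feasible pair, so the last constraint forces equality and therefore follower optimality. The product $x^{\top} A^{\top} \lambda$ is the bilinear term that must be linearized, which is why finite bounds on the dual variables matter.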

MINLP Reformulations and Global Optimization

For MINLP problems, which are common in kinetic modeling of metabolic pathways, a graphical framework based on decision diagrams (DDs) has been introduced for global optimization [28]. This approach addresses challenges that remain intractable for conventional algebraic techniques.

The core components of this framework include:

- Graphical reformulation of MINLP constraints

- Convexification techniques derived from the constructed graphs

- Efficient cutting plane methods to generate linear outer approximations

- A spatial branch-and-bound scheme with convergence guarantees [28]

This method is particularly valuable for complex problem structures, found across many application domains, that modern global solvers cannot parse or handle efficiently.

Table 1: Key Mathematical Programming Reformulation Approaches

| Approach | Problem Type | Core Methodology | Applications in Metabolism |
|---|---|---|---|
| Dantzig-Wolfe Single-Level Reformulation [29] | Mixed-Integer Linear Bilevel | Convexification via Dantzig-Wolfe, strong duality, branch-and-cut | Metabolic network optimization with discrete enzyme allocation decisions |
| Decision Diagram-Based Framework [28] | General MINLP | Graphical constraint reformulation, convexification from graphs, spatial branch-and-bound | Kinetic model parameter estimation, pathway optimization with nonlinear kinetics |
| Sequential Optimization Framework [16] | Nonlinear Parameter Estimation | Stochastic optimization with process simulators, Arrhenius equation fitting | Kinetic parameter estimation for catalytic systems, metabolic reaction networks |

Application Protocols

Protocol 1: Bilevel Metabolic Network Optimization Using MILP

This protocol details the application of the Dantzig-Wolfe single-level reformulation for optimizing metabolic networks with discrete regulation decisions.

Experimental Workflow

Step-by-Step Procedures

Step 1: Problem Definition

- Define the upper-level (leader) objective, typically representing biotechnological goals such as maximizing product yield or biomass production

- Formulate the lower-level (follower) problem, representing cellular objectives such as maximizing growth rate or minimizing metabolic adjustment

- Identify discrete decisions in the follower problem, such as enzyme activation/deactivation or pathway knock-outs [29]

Step 2: Follower Problem Convexification

- Apply Dantzig-Wolfe reformulation to the follower's mixed-integer problem

- Express the follower's feasible set as a convex combination of its extreme points

- This transforms the original problem into a form to which duality theory can be applied [29]

Step 3: Single-Level Reformulation

- Replace the lower-level problem with its optimality conditions using strong duality

- The resulting single-level problem will contain complementarity constraints and nonlinearities

- Transform these nonlinearities using binary variables and big-M constraints to obtain a MILP [29]
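The big-M step can be checked on the simplest case: the product of a binary variable `x` and a bounded continuous variable `lam` (for example a dual variable with `0 <= lam <= big_m`). The sketch below enumerates candidate values of the auxiliary variable `z` and keeps those satisfying the four standard linear inequalities. It is a brute-force verification for illustration under these assumptions, not a solver call, and the function names are hypothetical.

```python
def product_linearization_feasible(z, lam, x, big_m, tol=1e-9):
    """Check the four big-M inequalities that model z = lam * x.

    Assumes x is binary (0 or 1) and 0 <= lam <= big_m.
    """
    return (
        z <= big_m * x + tol                  # x = 0 forces z <= 0
        and z <= lam + tol                    # z never exceeds lam
        and z >= lam - big_m * (1 - x) - tol  # x = 1 forces z >= lam
        and z >= -tol                         # z is nonnegative
    )


def feasible_z_values(lam, x, big_m, steps=100):
    """Enumerate z on a uniform grid over [0, big_m] and keep the feasible values."""
    candidates = [big_m * i / steps for i in range(steps + 1)]
    return [z for z in candidates if product_linearization_feasible(z, lam, x, big_m)]


z_when_on = feasible_z_values(lam=3.0, x=1, big_m=10.0)    # only z = lam survives
z_when_off = feasible_z_values(lam=3.0, x=0, big_m=10.0)   # only z = 0 survives
```

The linearization is exact only if `big_m` is a valid upper bound on `lam`. A value that is too small cuts off feasible solutions, while a needlessly large one weakens the linear relaxation.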

Step 4: Solution and Validation

- Implement the branch-and-cut algorithm to solve the reformulated MILP

- Apply the penalty alternating direction method to compute high-quality feasible points when exact solution is computationally expensive

- Validate the biological feasibility of solutions by checking against known physiological constraints [27] [29]

Protocol 2: MINLP-Based Kinetic Parameter Estimation

This protocol addresses the estimation of kinetic parameters for metabolic pathways using MINLP reformulations, essential for understanding cellular metabolism and designing bioprocesses.

Experimental Workflow

Step-by-Step Procedures

Step 1: Experimental Data Collection

- Collect time-course data of metabolite concentrations and reaction fluxes

- For metabolic engineering applications, measure substrate consumption rates, biomass growth, and product formation under different conditions [16] [24]

- Design experiments to capture system dynamics across physiologically relevant ranges

Step 2: MINLP Problem Formulation

- Define the objective function, typically minimizing the difference between model predictions and experimental data

- Incorporate nonlinear kinetic expressions such as Michaelis-Menten, Hill equations, or power-law approximations

- Add relevant biological constraints:

  - Mass balance constraints for metabolites

  - Energy balance constraints

  - Thermodynamic constraints on reaction directionality

  - Homeostatic constraints on metabolite concentrations [27]
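The continuous core of this formulation, a nonlinear rate law fitted by least squares, can be sketched as follows. The example assumes a single Michaelis-Menten reaction, synthetic noise-free data generated from assumed parameter values, and an exhaustive grid search standing in for an NLP or MINLP solver. The discrete part of an MINLP (for example, selecting among candidate rate laws) and the constraints listed above are not shown.

```python
def michaelis_menten(s, vmax, km):
    """Michaelis-Menten rate v = vmax * s / (km + s)."""
    return vmax * s / (km + s)


def sse(params, data):
    """Sum of squared errors between model rates and measured rates."""
    vmax, km = params
    return sum((michaelis_menten(s, vmax, km) - v) ** 2 for s, v in data)


def grid_search(data, vmax_grid, km_grid):
    """Exhaustive search over a parameter grid; a stand-in for a real solver."""
    candidates = ((vmax, km) for vmax in vmax_grid for km in km_grid)
    return min(candidates, key=lambda p: sse(p, data))


# Synthetic, noise-free data generated from assumed values vmax = 2.0, km = 0.5.
substrate = [0.1, 0.25, 0.5, 1.0, 2.0, 5.0]
data = [(s, michaelis_menten(s, 2.0, 0.5)) for s in substrate]

vmax_grid = [0.5 * i for i in range(1, 9)]    # 0.5 ... 4.0
km_grid = [0.1 * i for i in range(1, 21)]     # 0.1 ... 2.0
vmax_hat, km_hat = grid_search(data, vmax_grid, km_grid)
```

Because the data are noise-free and the true values lie on the grid, the search recovers them exactly. With real measurements the objective surface is typically nonconvex, which is the motivation for the global optimization steps that follow.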

Step 3: Decision Diagram-Based Reformulation

- Construct decision diagrams to represent complex MINLP constraints graphically

- Apply graph-based convexification techniques to derive convex relaxations

- Generate linear outer approximations using cutting plane methods [28]

Step 4: Global Optimization

- Implement spatial branch-and-bound to systematically partition the search space

- Use convex relaxations to compute lower bounds for minimization problems

- Prune suboptimal regions based on bound comparisons

- Continue until the gap between the best lower bound and the incumbent solution falls below the convergence tolerance [28]
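The partition, bound, and prune loop above can be sketched in one dimension. For simplicity this example derives lower bounds from an assumed Lipschitz constant rather than from the decision-diagram relaxations of [28], and the test function is an arbitrary nonconvex example, not a kinetic model.

```python
import heapq
import math


def branch_and_bound(f, lipschitz, lo, hi, tol=1e-4):
    """Minimize f on [lo, hi] by interval bisection with Lipschitz lower bounds."""
    def lower_bound(a, b):
        # For an L-Lipschitz f, f(x) >= f(mid) - L * (b - a) / 2 on [a, b].
        return f(0.5 * (a + b)) - lipschitz * 0.5 * (b - a)

    best_x = 0.5 * (lo + hi)
    best_val = f(best_x)                      # incumbent (upper bound)
    heap = [(lower_bound(lo, hi), lo, hi)]    # open nodes ordered by lower bound
    while heap:
        bound, a, b = heapq.heappop(heap)
        if bound > best_val - tol:            # prune: cannot beat incumbent by more than tol
            continue
        mid = 0.5 * (a + b)
        for left, right in ((a, mid), (mid, b)):   # branch by bisection
            x = 0.5 * (left + right)
            val = f(x)
            if val < best_val:                # update incumbent
                best_x, best_val = x, val
            child_bound = lower_bound(left, right)
            if child_bound <= best_val - tol:
                heapq.heappush(heap, (child_bound, left, right))
    return best_x, best_val


def objective(x):
    """Nonconvex test function with two local minima on [0, 4]."""
    return math.sin(3.0 * x) + 0.5 * x


# |f'(x)| = |3 cos(3x) + 0.5| <= 3.5, so 3.5 is a valid Lipschitz constant.
x_star, f_star = branch_and_bound(objective, lipschitz=3.5, lo=0.0, hi=4.0)
```

On termination the incumbent is within `tol` of the global minimum, because every discarded interval had a lower bound no better than the incumbent minus `tol`. Tighter relaxations shrink the number of intervals explored but leave this logic unchanged.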

Step 5: Model Validation and Implementation

- Validate estimated parameters with independent datasets not used in parameter estimation

- Implement the validated kinetic model in process simulators (e.g., Aspen Plus) for scale-up and techno-economic analysis [16]

- For metabolic engineering applications, integrate with stoichiometric models to verify feasibility at genome scale [27]

Case Study: DHA Production Optimization

A practical application of these mathematical programming approaches is demonstrated in the optimization of docosahexaenoic acid (DHA) production by Crypthecodinium cohnii from various carbon substrates [24].

Experimental Design and Data Collection

Researchers compared DHA production potential from glycerol, ethanol, and glucose by combining:

- Fermentation experiments with different substrate concentrations

- Pathway-scale kinetic modeling of central metabolism

- Constraint-based stoichiometric modeling of C. cohnii metabolism [24]

Table 2: Performance Metrics for DHA Production from Different Substrates

| Substrate | Biomass Growth Rate | PUFAs Fraction | Carbon Transformation Efficiency | Key Findings |
|---|---|---|---|---|
| Glucose | Fastest | Lowest | Moderate | Faster growth but lower PUFAs accumulation |
| Ethanol | Moderate | Moderate | Moderate | Good balance between growth and DHA production |
| Glycerol | Slowest | Highest | Closest to theoretical upper limit | Superior for DHA accumulation despite slower growth |

Data sourced from experimental results published in [24].

Mathematical Modeling Approach

The study implemented:

- Kinetic modeling to analyze enzymatic capacity of metabolic pathways towards acetyl-CoA (DHA precursor)

- Stoichiometric modeling to assess availability of metabolic resources at central metabolism scale

- Application of organism-level constraints

This integrated approach revealed that glycerol, despite supporting the slowest biomass growth, enabled the highest PUFA fraction where DHA was dominant, and achieved carbon transformation rates closest to theoretical upper limits [24].

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Optimization-Based Modeling

| Research Reagent | Function/Application | Example Use Case |
|---|---|---|
| Process Simulators (Aspen Plus) | Reproduction of unit operation models, thermodynamic and kinetic calculations | Kinetic parameter estimation for complex chemical/biological reactions [16] |
| Stochastic Optimization Algorithms | Global search of parameter spaces, handling nonlinearities | Parameter estimation for kinetic models of metabolic pathways [16] |
| Metabolic Network Models | Stoichiometric representation of metabolism, constraint-based analysis | Flux balance analysis for predicting metabolic capabilities [27] |
| Kinetic Model Libraries | Collection of enzymatic mechanisms and parameters | Building dynamic models of metabolic pathways [27] |
| Decision Diagram Software | Graphical representation of MINLP constraints | Global optimization of challenging MINLP problems [28] |