Scaling Metabolic Gap-Filling: Advanced Algorithms and Strategies for Large Networks



Abstract

Gap-filling is a critical but computationally intensive step in refining genome-scale metabolic models (GEMs), directly impacting their predictive accuracy in drug discovery and systems biology. This article explores the latest computational strategies designed to overcome scalability challenges in large metabolic networks. We cover foundational concepts of metabolic gaps, examine next-generation methods from topology-based machine learning to hypothesis-driven workflows, and provide optimization techniques for efficient computation. A comparative analysis of tools and validation frameworks equips researchers and drug development professionals with the knowledge to select and implement scalable gap-filling solutions, ultimately enhancing model utility for biomedical applications.

Understanding the Scalability Challenge in Metabolic Network Gap-Filling

Metabolic gaps represent missing knowledge in genome-scale metabolic models (GEMs), which are mathematical representations of an organism's metabolic capabilities. These gaps manifest primarily as dead-end metabolites—compounds that are produced but not consumed, or consumed but not produced within the network—and phenotypic inconsistencies, where model predictions contradict experimental growth data. Identifying and resolving these gaps is crucial for creating accurate metabolic models that can reliably predict organism behavior in biotechnological and biomedical applications.

The scalability of gap-filling methods becomes particularly important when working with large metabolic networks or with models of many organisms. Traditional formulations, particularly mixed-integer linear programs (MILPs), scale poorly as network size increases, which has prompted the development of more efficient algorithmic and machine learning approaches.

Understanding Core Concepts: FAQ

What are dead-end metabolites and why do they matter? Dead-end metabolites (DEMs) are compounds that lack the requisite reactions (either metabolic or transport) that would account for their production or consumption within the metabolic network [1]. Their presence reflects either a deficit in our representation of the network or in our knowledge of metabolism. In E. coli K-12 alone, 127 dead-end metabolites were identified from 995 network compounds, highlighting the pervasiveness of this issue [1]. DEMs act as signposts to the 'known unknowns' of metabolism and serve as starting points for database curation and experimental research.

What is the difference between gap-filling and dead-end metabolite analysis? Dead-end metabolite analysis focuses specifically on identifying metabolites that are network-isolated due to missing production or consumption reactions. Gap-filling is a broader process that addresses DEMs along with other model inconsistencies, including incorrect growth phenotype predictions. Gap-filling typically involves adding missing reactions from universal databases to resolve these issues [2] [3].

Why is scalability important in gap-filling algorithms? Large, compartmentalized metabolic models can contain thousands of reactions and metabolites, making many gap-filling algorithms computationally intractable [3]. Scalable algorithms remain efficient as model complexity increases, enabling researchers to work with comprehensive, compartmentalized models rather than simplified decompartmentalized versions that sacrifice biological accuracy [3].

What types of experimental data can help identify metabolic gaps? High-throughput phenotyping data, including growth profiles of knockout mutants under specific media conditions, can reveal inconsistencies between model predictions and experimental observations [2]. Time-course metabolomic data tracks cellular changes over time, providing dynamic insights into metabolic states that can highlight network deficiencies [4].

Troubleshooting Common Experimental Issues

Problem: Gap-filling solutions seem biologically irrelevant

- Potential Cause: The algorithm may prioritize mathematical solutions over biologically plausible ones, especially when using unweighted reaction databases.

- Solution: Use methods that incorporate biological priors. CHESHIRE uses topological features of the metabolic network to predict missing reactions and outperforms other topology-based methods in recovering artificially removed reactions [5]. DNNGIOR uses a deep neural network trained on >11,000 bacterial species to weight candidate reactions; DNNGIOR-guided gap-filling was 14 times more accurate for draft reconstructions than unweighted gap-filling [6].

Problem: Computational time for gap-filling becomes prohibitive with large models

- Potential Cause: Non-scalable algorithms struggle with compartmentalized genome-scale models.

- Solution: Implement efficient algorithms like fastGapFill, which is specifically designed for compartmentalized reconstructions. It can handle models like Recon 2 (3,187 metabolites and 5,837 reactions) with a core computation of approximately 30 minutes (plus a preprocessing step of similar length), compared to methods that may require 24 hours or more [3].

Problem: Model produces false-positive growth predictions

- Potential Cause: Missing regulatory constraints or incorrect essential biomass components.

- Solution: Iteratively refine the model using experimental data. Use algorithms like GLOBALFIT, which simultaneously match growth and non-growth data sets, to correct multiple in silico growth phenotypes more efficiently than earlier methods [2].

Problem: Difficulty visualizing metabolic network dynamics

- Potential Cause: Static representations cannot adequately capture time-course data.

- Solution: Use visualization tools like GEM-Vis, which creates animated sequences of dynamically changing network maps. This method represents metabolite amounts through node fill levels, allowing researchers to observe metabolic state changes over time [4].

Methodologies & Protocols

Protocol 1: Identifying Dead-End Metabolites

Principle: Systematically detect metabolites that are produced but not consumed (or vice versa) within the metabolic network, including transport reactions.

Procedure:

- Network Compilation: Extract all metabolic reactions and transport reactions from your metabolic reconstruction.

- Metabolite-Reaction Mapping: Create a complete mapping of each metabolite to all reactions in which it participates as substrate or product.

- DEM Identification: For each metabolite, check if it has both at least one producing reaction and at least one consuming reaction (including transport).

- Classification: Tag metabolites missing either production or consumption reactions as dead-end metabolites.

- Curation: Manually inspect each DEM to identify potential missing reactions or database representation errors.

Applications: This protocol was used to identify 127 DEMs in EcoCyc's E. coli metabolic network, leading to the addition of 38 transport reactions and 3 metabolic reactions through literature curation [1].
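The mapping, identification, and classification steps of this protocol can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: reactions are held in a plain dictionary (a hypothetical format, not the EcoCyc or Pathway Tools data model) that maps each reaction ID to its stoichiometry and a reversibility flag, and a reversible reaction is counted as both producing and consuming each of its participants.

```python
def find_dead_end_metabolites(reactions):
    """Flag metabolites that no reaction can produce or no reaction can consume.

    reactions: {reaction_id: (stoichiometry, reversible)}, where stoichiometry maps
    metabolite_id -> coefficient (negative = substrate, positive = product).
    Transport and exchange reactions must be included (step 1 of the protocol).
    """
    producible, consumable, seen = set(), set(), set()
    for stoichiometry, reversible in reactions.values():
        for met, coeff in stoichiometry.items():
            if coeff == 0:
                continue
            seen.add(met)
            # A reversible reaction can both produce and consume each participant.
            if coeff > 0 or reversible:
                producible.add(met)
            if coeff < 0 or reversible:
                consumable.add(met)
    dead_ends = {}
    for met in sorted(seen):
        if met not in producible:
            dead_ends[met] = "not produced"
        elif met not in consumable:
            dead_ends[met] = "not consumed"
    return dead_ends


# Hypothetical toy network with compartment-suffixed metabolite IDs.
toy = {
    "EX_glc": ({"glc_e": -1}, True),                # reversible exchange
    "GLCt": ({"glc_e": -1, "glc_c": 1}, False),     # transport into the cytosol
    "R1": ({"glc_c": -1, "g6p_c": 1}, False),
    "R2": ({"g6p_c": -1, "x_c": 1}, False),         # x_c is never consumed
    "R3": ({"y_c": -1, "g6p_c": 1}, False),         # y_c is never produced
}
print(find_dead_end_metabolites(toy))  # {'x_c': 'not consumed', 'y_c': 'not produced'}
```

The scan is purely topological and runs in a single pass, so it flags only metabolites that literally lack a producer or a consumer. Metabolites that are reachable only through reactions that can never carry flux are not flagged; finding those requires removing the affected reactions and repeating the scan, or a flux-based check.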

Protocol 2: Topology-Based Gap-Filling Using CHESHIRE

Principle: Predict missing reactions purely from metabolic network topology using deep learning, without requiring experimental phenotypic data.

Procedure:

- Hypergraph Representation: Represent the metabolic network as a hypergraph where each reaction is a hyperlink connecting all participating metabolites [5].

- Feature Initialization: Generate initial feature vectors for each metabolite from the incidence matrix using an encoder-based neural network.

- Feature Refinement: Refine metabolite features using Chebyshev spectral graph convolutional network (CSGCN) to capture metabolite-metabolite interactions.

- Reaction-level Pooling: Integrate metabolite-level features into reaction-level representations using maximum minimum-based and Frobenius norm-based pooling functions.

- Scoring: Produce probabilistic scores indicating reaction existence confidence using a one-layer neural network.

Performance: CHESHIRE outperforms other topology-based methods in recovering artificially removed reactions across 926 GEMs and improves phenotypic predictions for 49 draft GEMs [5].
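To make the hypergraph, pooling, and scoring steps concrete, the toy sketch below builds a binary incidence matrix in which each reaction column is a hyperlink, pools metabolite features into one vector per reaction, and scores each reaction with a single logistic layer. It is not CHESHIRE's implementation and rests on heavy assumptions: the learned encoder and the Chebyshev spectral graph convolution are replaced by using each metabolite's incidence row as its feature vector, the Frobenius norm-based pooling is omitted, and the scoring weights are arbitrary rather than trained. The max-min pooling shown (element-wise maximum minus element-wise minimum over a reaction's metabolites) is one plausible reading of the pooling described above.

```python
import numpy as np


def incidence_matrix(reactions, metabolites):
    """Binary metabolite x reaction matrix; each column is one hyperlink."""
    index = {m: i for i, m in enumerate(metabolites)}
    H = np.zeros((len(metabolites), len(reactions)), dtype=int)
    for j, participants in enumerate(reactions):
        for m in participants:
            H[index[m], j] = 1
    return H


def max_min_pool(features, H):
    """Per reaction: element-wise max minus element-wise min over its metabolites."""
    rows = []
    for j in range(H.shape[1]):
        members = features[H[:, j] == 1]
        rows.append(members.max(axis=0) - members.min(axis=0))
    return np.vstack(rows)


def score_reactions(pooled, weights, bias=0.0):
    """One-layer scorer: a logistic function of a linear map, giving a value in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-(pooled @ weights + bias)))


metabolites = ["A", "B", "C", "D"]
reactions = [["A", "B"], ["B", "C", "D"]]   # hypothetical hyperlinks
H = incidence_matrix(reactions, metabolites)
features = H.astype(float)                  # placeholder for learned embeddings
pooled = max_min_pool(features, H)
scores = score_reactions(pooled, weights=np.array([0.5, -0.5]))  # untrained weights
print(pooled)
print(scores)
```

In a real gap-filling run, candidate reactions from a universal pool would be turned into hyperlinks in the same way and ranked by their scores; only a trained model makes those scores meaningful.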

Protocol 3: Constraint-Based Gap-Filling with fastGapFill

Principle: Efficiently identify a near-minimal set of reactions to add from universal databases to enable growth on specified media.

Procedure:

- Problem Formulation: Identify blocked reactions in the metabolic model that cannot carry flux.

- Global Model Construction: Expand the model with a universal reaction database (e.g., KEGG) placed in each cellular compartment.

- Reaction Addition: Add intercompartmental transport reactions for metabolites in non-cytosolic compartments and exchange reactions for extracellular metabolites.

- Solution Calculation: Compute a subnetwork containing all core reactions plus a minimal number of reactions from the universal database that renders all reactions flux-consistent.

- Validation: Test the gap-filled model for growth prediction accuracy and compare with experimental data.

Scalability: fastGapFill can process large models like Recon 2 (8 compartments, 5,837 reactions) with approximately 30 minutes of preprocessing plus about 30 minutes for the core algorithm [3].
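The problem-formulation step (finding blocked reactions that cannot carry flux) can be illustrated with a flux-variability-style check: a reaction is blocked if both its minimum and its maximum steady-state flux are zero. The sketch below assumes a dense stoichiometric matrix and reaction bounds that all contain zero, and uses SciPy's `linprog` with the HiGHS solver. It solves two LPs per reaction, which becomes slow for genome-scale models; that cost is one reason dedicated tools rely on more efficient consistency checks.

```python
import numpy as np
from scipy.optimize import linprog


def find_blocked_reactions(S, bounds, tol=1e-9):
    """Return indices of reactions whose flux is zero in every steady state.

    S: metabolites x reactions stoichiometric matrix; bounds: (lower, upper) per
    reaction, each interval assumed to contain 0 so that v = 0 is always feasible.
    """
    n = S.shape[1]
    b_eq = np.zeros(S.shape[0])
    blocked = []
    for j in range(n):
        c = np.zeros(n)
        c[j] = 1.0
        v_min = linprog(c, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs").fun
        v_max = -linprog(-c, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs").fun
        if abs(v_min) < tol and abs(v_max) < tol:
            blocked.append(j)
    return blocked


# Hypothetical toy network. Rows: A, B, C. Columns: EX_A (-> A), R1 (A -> B),
# EX_B (B ->), R2 (A -> C). C has no consuming reaction, so R2 is blocked.
S = np.array([
    [1, -1,  0, -1],
    [0,  1, -1,  0],
    [0,  0,  0,  1],
])
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000)]
print(find_blocked_reactions(S, bounds))  # [3]
```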

Comparative Analysis of Gap-Filling Methods

Table 1: Comparison of Gap-Filling Algorithms and Their Scalability

| Method | Approach | Data Requirements | Scalability | Best Use Cases |
|---|---|---|---|---|
| CHESHIRE [5] | Deep learning using hypergraph topology | Network structure only | High (tested on 926 GEMs) | Draft model curation without experimental data |
| fastGapFill [3] | Linear programming optimization | Growth media specification | High (handles compartmentalized models) | Rapid gap-filling of large models with defined media |
| DNNGIOR [6] | Deep neural network | Phylogenetic context | High (trained on >11,000 bacteria) | Uncultured bacteria with incomplete genomes |
| GLOBALFIT [2] | Bi-level linear optimization | Growth and non-growth data | Medium | Resolving multiple growth phenotype inconsistencies |
| NHP [5] | Graph-based machine learning | Network structure | Medium | Small to medium networks with limited computational resources |

Table 2: Performance Metrics of Gap-Filling Methods

| Method | Accuracy | Computational Efficiency | Biological Relevance | Implementation Complexity |
|---|---|---|---|---|
| CHESHIRE | Superior in recovering removed reactions [5] | Moderate (requires GPU) | High (uses topological features) | High (specialized deep learning) |
| fastGapFill | High for core metabolic functions [3] | High (LP formulation) | Medium (mathematically driven) | Medium (COBRA toolbox) |
| DNNGIOR | F1 score 0.85 for frequent reactions [6] | High (pre-trained network) | High (incorporates phylogeny) | Medium (with pre-trained model) |
| Traditional MILP | High (optimal solutions) | Low (intractable for large models) | Medium (mathematically driven) | High (complex implementation) |

Visualization of Metabolic Gap Analysis

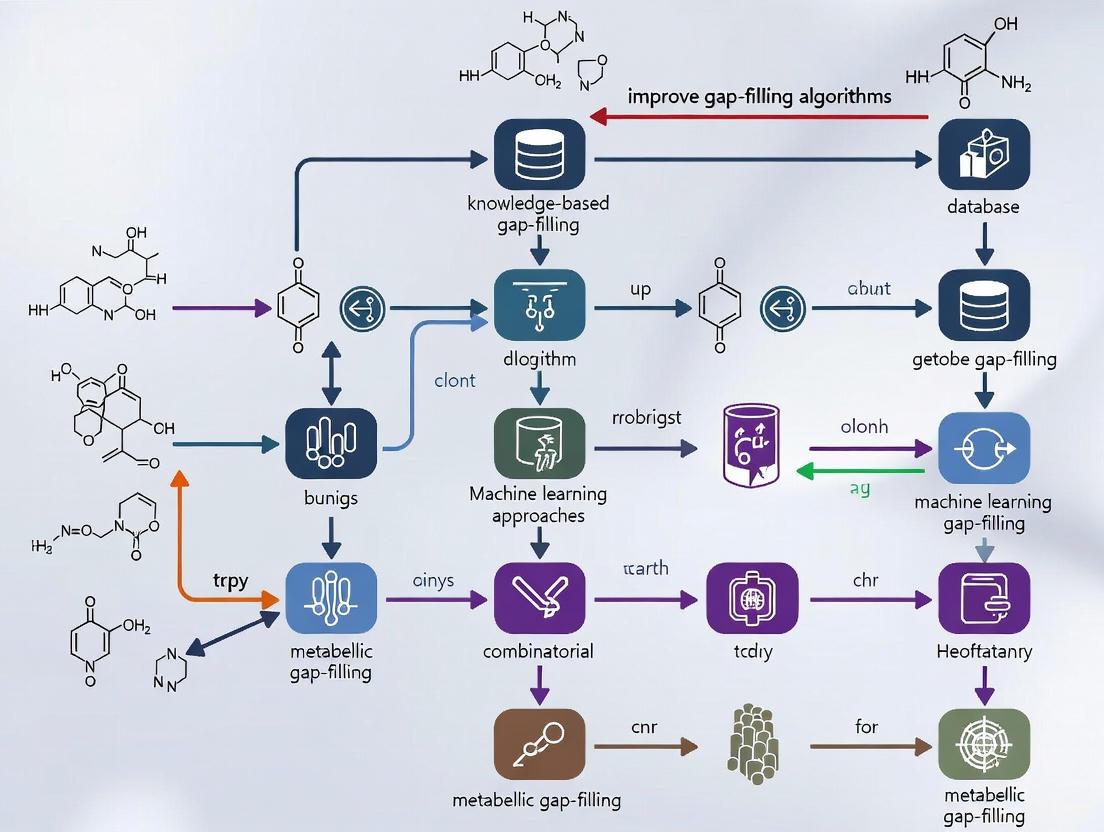

Metabolic Gap Analysis Workflow: This diagram illustrates the comprehensive process for identifying and resolving metabolic gaps, showing the integration of different gap-filling methodologies.

CHESHIRE Architecture: Visualizing the deep learning approach for topology-based gap-filling using hypergraph representation and spectral graph convolutional networks.

Research Reagent Solutions

Table 3: Essential Resources for Metabolic Gap Analysis

| Resource Type | Specific Tools/Databases | Function | Application Context |
|---|---|---|---|
| Metabolic Databases | KEGG, ModelSEED, BiGG Models | Universal reaction databases for gap-filling candidates | Source of potential reactions to fill metabolic gaps [3] |
| Software Platforms | COBRA Toolbox, Pathway Tools | Provide computational infrastructure for gap-filling algorithms | Implementation and testing of gap-filling methods [3] |
| Visualization Tools | GEM-Vis, Escher, Cytoscape | Dynamic visualization of metabolic networks and time-course data | Identifying network deficiencies and presenting results [4] |
| Gap-Filling Algorithms | CHESHIRE, fastGapFill, DNNGIOR | Computational methods for identifying missing reactions | Adding missing knowledge to metabolic reconstructions [5] [6] [3] |
| DEM Identification | EcoCyc DEM Finder Tool | Systematic detection of dead-end metabolites | Initial assessment of network completeness [1] |

Troubleshooting Guide: Scalability and Performance

Q: My gap-filling analysis is taking too long or running out of memory. What are the main strategies to make it more scalable?

A: Computational bottlenecks in gap-filling primarily arise from the explosion in problem size, especially with compartmentalized models or large universal reaction databases. The main strategies to improve scalability involve using more efficient algorithms, incorporating additional biological constraints to reduce the solution space, and employing parallel computing techniques [3] [7] [2].

- Strategy 1: Employ Efficient Algorithms. For traditional optimization-based gap-filling, use tools like fastGapFill, which is specifically designed as a computationally efficient and scalable extension to the COBRA Toolbox. It uses a series of L1-norm regularized linear programs to find a near-minimal set of reactions to add, making it tractable for compartmentalized genome-scale models [3].

- Strategy 2: Integrate Thermodynamic Constraints. Many topologically feasible pathways are biologically irrelevant because they are thermodynamically infeasible. Integrating Network-Embedded Thermodynamic (NET) analysis into elementary flux mode analysis, as the tEFMA method does, identifies and removes these infeasible pathways during the computation. This can drastically reduce memory consumption and runtime because the algorithm never extends infeasible intermediate solutions [7].

- Strategy 3: Leverage Machine Learning and Hypergraph Topology. For a data-free approach, deep learning methods like CHESHIRE can predict missing reactions purely from the network's topology. This avoids the need to solve complex optimization problems repeatedly. CHESHIRE uses a hypergraph representation of the metabolic network and a Chebyshev spectral graph convolutional network to achieve high accuracy in recovering missing reactions without phenotypic data [5].

- Strategy 4: Utilize Parallel Computing. The core computation of fundamental pathway analyses, such as enumerating Elementary Flux Modes (EFMs), can be parallelized. For example, the parallelized Nullspace Algorithm distributes the computational workload across multiple processors to handle the combinatorial explosion in large networks [8].

Q: How do I choose between an optimization-based method and a machine learning method for gap-filling my draft network?

A: The choice depends on the availability of experimental data and the specific goal of your analysis.

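- Use Optimization-Based Methods (e.g., GLOBALFIT) when you have phenotypic data to validate the model, such as growth profiles or metabolite secretion data. These methods are powerful because they directly resolve inconsistencies between model predictions and experimental observations [2]. fastGapFill is also optimization-based but needs only a growth medium specification, so it remains an option for large models with defined media when phenotypic data are sparse [3].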

- Use Topology-Based Machine Learning Methods (e.g., CHESHIRE) when you are working with a draft reconstruction for a non-model organism and high-throughput phenotypic data is not readily available. These methods allow for rapid in silico curation and hypothesis generation before embarking on resource-intensive experiments [5].

Q: A gap-filling algorithm suggested a large number of reactions to add. How can I prioritize which ones to test experimentally?

A: This is a common challenge. You can prioritize candidate reactions using the following approaches:

- Confidence Scores: Machine learning methods like CHESHIRE output a probabilistic score for each candidate reaction, indicating the confidence of its existence. Focus on reactions with the highest scores [5].

- Minimal Set: Optimization-based methods like fastGapFill aim to find a near-minimal set of reactions that resolve all gaps. This set provides a compact starting point for validation [3].

- Gene Assignment Tools: Use algorithms that not only suggest reactions but also assign candidate genes to them. Tools that incorporate data like sequence similarity, co-expression, and phylogenetic profiles (e.g., GLOBUS) can provide stronger evidence for a reaction's presence [2].

Performance Comparison of Scalable Gap-Filling Methods

The table below summarizes the performance of the fastGapFill algorithm on various metabolic reconstructions, demonstrating its scalability [3].

| Model Name | Original Model Size (Metabolites × Reactions) | Global Model Size (Metabolites × Reactions) | Number of Gap-Filling Reactions | fastGapFill Runtime (seconds) |
|---|---|---|---|---|
| Thermotoga maritima | 418 × 535 | 14,020 × 31,566 | 87 | 21 |
| Escherichia coli | 1,501 × 2,232 | 21,614 × 49,355 | 138 | 238 |
| Synechocystis sp. | 632 × 731 | 28,174 × 62,866 | 172 | 435 |
| sIEC | 834 × 1,260 | 48,970 × 109,522 | 14 | 194 |
| Recon 2 | 3,187 × 5,837 | 58,672 × 132,622 | 400 | 1,826 |

The table below compares the performance of topology-based machine learning methods in recovering artificially removed reactions from metabolic models, with AUROC (Area Under the Receiver Operating Characteristic curve) as a key metric [5].

| Method | Core Approach | AUROC (vs. negative reactions) | AUROC (vs. real reaction database) |
|---|---|---|---|
| CHESHIRE | Deep learning on hypergraphs | 0.95 | 0.85 |
| NHP | Neural network on graph approximations | 0.93 | 0.80 |
| C3MM | Clique closure & matrix minimization | 0.90 | 0.75 |
| NVM (Baseline) | Node2Vec embedding & mean pooling | 0.83 | 0.72 |
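AUROC can be read as the probability that a randomly chosen held-out (truly present) reaction receives a higher score than a randomly chosen negative reaction, with ties counted as one half. The sketch below computes it directly from that pairwise definition, which equals the normalized Mann-Whitney U statistic; the scores are made-up illustrative values, not results from [5]. The nested loop costs O(n·m) and is written for clarity; a rank-based computation or a library routine is preferable for large benchmarks.

```python
def auroc(positive_scores, negative_scores):
    """Probability that a random positive outscores a random negative (ties count 1/2)."""
    wins = 0.0
    for p in positive_scores:
        for q in negative_scores:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(positive_scores) * len(negative_scores))


# Hypothetical scores: held-out (true) reactions vs. negative reactions.
print(auroc([0.9, 0.8], [0.1, 0.85]))  # 0.75
```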

Experimental Protocols

Protocol 1: Gap-Filling with fastGapFill

This protocol uses the fastGapFill algorithm to efficiently identify a minimal set of reactions to add from a universal database (e.g., KEGG) to a compartmentalized metabolic model [3].

- Input Preparation: Provide your metabolic model S and a universal biochemical reaction database U.

- Preprocessing: The algorithm generates a global model SUX by:
  - Placing a copy of U in each cellular compartment of S.
  - Adding reversible transport reactions X for metabolites in non-cytosolic compartments.
  - Adding exchange reactions for extracellular metabolites.
  - Identifying previously blocked reactions B that become flux-consistent (Bs) in the global model.

- Core Computation: The fastcore algorithm is repurposed to compute a subnetwork of SUX that includes all core reactions (from S and Bs) plus a minimal number of reactions from UX, ensuring all reactions in the resulting network are flux-consistent.

- Output: The algorithm returns a set of candidate gap-filling reactions. The solutions can be prioritized by applying linear weightings to favor metabolic reactions over transport reactions during the computation.
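The preprocessing step that builds the global model SUX can be sketched with plain dictionaries. All conventions here are hypothetical assumptions for illustration: metabolite IDs carry a compartment suffix after the last underscore (e.g. glc_c, glc_e), database reactions are stored without compartments, the cytosol is "c" and the extracellular space is "e", and the generated reaction names (TR_..., EX_...) are invented. Identifying Bs and running fastcore are not shown.

```python
def build_global_model(core, universal, compartments, cytosol="c", extracellular="e"):
    """Sketch of the SUX construction (reaction format and naming are hypothetical).

    core: {reaction_id: (stoichiometry, reversible)} with compartment-suffixed
        metabolite IDs such as "glc_c"
    universal: same format, but metabolite IDs have no compartment suffix
    """
    model = {rid: (dict(st), rev) for rid, (st, rev) in core.items()}
    # U: one copy of every database reaction in each compartment of S.
    for comp in compartments:
        for rid, (st, rev) in universal.items():
            model[f"{rid}_{comp}"] = ({f"{m}_{comp}": v for m, v in st.items()}, rev)
    metabolites = {m for st, _ in model.values() for m in st}
    for met in sorted(metabolites):
        base, comp = met.rsplit("_", 1)
        # X: reversible transport between each non-cytosolic compartment and the cytosol.
        if comp != cytosol:
            model[f"TR_{base}_{comp}"] = ({met: -1, f"{base}_{cytosol}": 1}, True)
        # Exchange reactions for extracellular metabolites.
        if comp == extracellular:
            model[f"EX_{base}"] = ({met: -1}, True)
    return model


core = {"R1": ({"a_c": -1, "b_c": 1}, False)}
universal = {"U1": ({"b": -1, "c": 1}, True)}
global_model = build_global_model(core, universal, compartments=["c", "e"])
print(sorted(global_model))
# ['EX_b', 'EX_c', 'R1', 'TR_b_e', 'TR_c_e', 'U1_c', 'U1_e']
```

Even in this one-reaction example the global model grows from one to seven reactions, which illustrates why the global models in the fastGapFill table above are far larger than the original reconstructions.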

Protocol 2: Thermodynamic-Feasibility Guided EFMA with tEFMA

This protocol uses the tEFMA package to compute only the thermodynamically feasible Elementary Flux Modes (EFMs), significantly reducing computational time and resources [7].

- Input Preparation: Provide the stoichiometric matrix of your metabolic network (converted to irreversible reactions) and metabolomics data (metabolite concentrations).

- EFM Enumeration: Use the binary null-space algorithm to iteratively generate intermediate EFMs.

- Thermodynamic Checking: At the beginning of each algorithm iteration, check every intermediate EFM for thermodynamic feasibility using Network Embedded Thermodynamic (NET) analysis. This is done by solving a linear program to verify if all reactions in the EFM can simultaneously proceed in their defined directions given the metabolite concentrations.

- Pruning: Immediately remove any intermediate EFM that is found to be thermodynamically infeasible.

- Output: The final output is the complete set of thermodynamically feasible EFMs. Removing infeasible modes early prevents the algorithm from exploring their supersets, which curbs combinatorial explosion.
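The thermodynamic check at the heart of this protocol can be sketched as a linear feasibility problem over log-concentrations. For each reaction j active in a candidate mode, the sketch requires ΔrG'j = ΔrG'°j + RT Σi Nij ln ci < 0, with every ln ci bounded by measured or assumed concentration ranges. This is a simplification of the NET analysis used in tEFMA: standard Gibbs energies are treated as exact (no uncertainty ranges), and the function name, arguments, and numbers are hypothetical. It uses SciPy's `linprog` with the HiGHS solver.

```python
import numpy as np
from scipy.optimize import linprog

R = 8.314e-3  # gas constant, kJ/(mol*K)


def is_thermo_feasible(N, dG0, active, c_min, c_max, T=298.15, margin=1e-6):
    """Check whether all active reactions can have negative Gibbs energy at once.

    N: metabolites x reactions stoichiometric matrix (irreversible directions)
    dG0: standard transformed Gibbs energy of each reaction (kJ/mol), taken as exact
    active: indices of reactions carrying flux in the (intermediate) mode
    c_min, c_max: concentration bounds (mol/L) for each metabolite
    """
    RT = R * T
    # One row per active reaction: dG0_j + RT * sum_i N_ij * ln(c_i) <= -margin
    A_ub = RT * N[:, active].T
    b_ub = -np.asarray(dG0, dtype=float)[active] - margin
    bounds = list(zip(np.log(c_min), np.log(c_max)))
    # Zero objective: only the feasibility of the constraints matters.
    res = linprog(np.zeros(N.shape[0]), A_ub=A_ub, b_ub=b_ub, bounds=bounds,
                  method="highs")
    return res.status == 0  # status 2 means the LP is infeasible


# Hypothetical toy example: column 0 is A -> B, column 1 is B -> A.
N = np.array([[-1, 1],
              [1, -1]])
c_min, c_max = [1e-6, 1e-6], [1e-2, 1e-2]
print(is_thermo_feasible(N, [5.0, -5.0], [0], c_min, c_max))     # True
print(is_thermo_feasible(N, [5.0, -5.0], [0, 1], c_min, c_max))  # False: cycle
print(is_thermo_feasible(N, [30.0, -30.0], [0], c_min, c_max))   # False
```

A single reaction with ΔrG'° = +5 kJ/mol can still run forward within these concentration bounds, whereas the cycle A → B → A is infeasible because its concentration terms cancel and its standard energies sum to zero; an intermediate mode containing both reactions would be pruned.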

The Scientist's Toolkit: Essential Research Reagents

This table lists key computational tools and databases essential for conducting scalable gap-filling analyses.

| Item Name | Function / Explanation |
|---|---|
| COBRA Toolbox | A fundamental MATLAB/Octave software suite for constraint-based modeling. It is the platform for tools like fastGapFill [3]. |
| fastGapFill | An algorithm for efficient gap-filling in compartmentalized metabolic networks, available as an extension to the COBRA Toolbox [3]. |
| CHESHIRE | A deep learning method that predicts missing reactions in metabolic models using only topological features from hypergraphs [5]. |
| tEFMA | A Java package that integrates metabolomics and thermodynamics into Elementary Flux Mode analysis to reduce computational costs [7]. |
| KEGG Reaction Database | A universal biochemical reaction database often used as a source of candidate reactions for gap-filling algorithms [3]. |
| BiGG Models | A resource of high-quality, curated genome-scale metabolic models, used as a benchmark for testing new methods [5]. |

Workflow Diagram for Scalable Gap-Filling

The diagram below illustrates the general workflow and decision points for applying scalable gap-filling techniques.

Method Comparison: Traditional vs. ML Gap-Filling

This diagram contrasts the fundamental workflows of traditional optimization-based gap-filling with the newer machine learning approach.

Troubleshooting Guides

Guide 1: Troubleshooting False-Positive Essential Gene Predictions

Problem: Your metabolic model incorrectly predicts that a gene is essential for growth (a false-positive), suggesting a gap in the metabolic network. Explanation: This often occurs due to unannotated genes or underground metabolism, where an existing enzyme possesses promiscuous activity that is not captured in the model.

Steps for Resolution:

- Identify Gaps: Systematically compare model predictions with experimental phenotype data (e.g., from gene knockout studies) to identify false essential gene predictions [9].

- Generate Hypothetical Reactions: Use an extensive biochemical database that includes both known and hypothetical reactions, such as the ATLAS of Biochemistry, to propose potential reactions that could fill the identified gap [9].

- Annotate Candidate Genes: Employ a tool like BridgIT to identify which enzymes in the organism's genome could potentially catalyze the proposed hypothetical reactions, providing gene-protein-reaction (GPR) associations [9].

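- Score and Select Solutions: Rank the proposed gap-filling reaction sets using a scoring system that considers thermodynamic feasibility, the impact of the added reactions on the model, and the confidence of the enzyme-reaction associations [9].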

- Validate the Extended Model: Integrate the highest-ranked reactions into your model and validate its improved performance against additional experimental data (e.g., growth on different carbon sources) [9].
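One simple way to turn these criteria into a ranking is a lexicographic sort. The sketch below is a hypothetical illustration and not NICEgame's scoring function [9]: it discards thermodynamically infeasible reaction sets, then prefers sets that add fewer reactions (as a rough proxy for minimal model impact), then sets with a higher mean enzyme-association confidence (for example, scores derived from a tool such as BridgIT). The class and field names are invented.

```python
from dataclasses import dataclass


@dataclass
class CandidateSolution:
    reactions: list         # reaction IDs proposed to fill one gap
    thermo_feasible: bool   # outcome of a thermodynamic feasibility check
    enzyme_scores: list     # hypothetical per-reaction enzyme confidence in [0, 1]

    def mean_enzyme_score(self):
        return sum(self.enzyme_scores) / len(self.enzyme_scores)


def rank_solutions(solutions):
    """Drop infeasible sets, then prefer fewer reactions, then stronger enzyme support."""
    feasible = [s for s in solutions if s.thermo_feasible]
    return sorted(feasible, key=lambda s: (len(s.reactions), -s.mean_enzyme_score()))


solutions = [
    CandidateSolution(["R_a", "R_b"], True, [0.9, 0.8]),
    CandidateSolution(["R_x"], True, [0.4]),
    CandidateSolution(["R_y"], False, [0.99]),
    CandidateSolution(["R_c", "R_d"], True, [0.95, 0.95]),
]
print([s.reactions for s in rank_solutions(solutions)])
# [['R_x'], ['R_c', 'R_d'], ['R_a', 'R_b']]
```

A lexicographic order makes the trade-offs explicit but rigid; a weighted combined score is a common alternative when no single criterion should dominate.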

Guide 2: Resolving Inability to Produce Biomass in a Draft Model

Problem: A newly reconstructed draft metabolic model is unable to produce biomass when simulated, indicating missing critical reactions. Explanation: Draft models are frequently incomplete due to missing annotations, especially for transporters, leading to gaps in essential metabolic pathways [10].

Steps for Resolution:

- Perform Gapfilling: Use a gapfilling algorithm to find a minimal set of reactions that, when added to the model, enable biomass production [10].

- Choose Appropriate Media:

- For initial gapfilling, using a minimal media is often recommended, as it forces the algorithm to add reactions necessary for the biosynthesis of many common substrates [10].

- Using "Complete" media (an abstraction where any compound with a known transporter is available) will result in a different solution, often adding more transport reactions [10].

- Inspect the Gapfilling Solution: After running the gapfilling app, examine the output to see which reactions were added. Reactions marked with "=>" or "<=" are new additions, while reactions whose directionality was changed to reversible ("<=>") were already present in the draft model [10].

- Manual Curation: The gapfilling solution is a prediction and may require manual adjustment. If certain added reactions are biologically implausible, you can force their flux to zero and re-run the gapfilling to find an alternative solution [10].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental cause of gaps in metabolic network models? Gaps arise primarily from incomplete biochemical knowledge and genomic information. Key sources include: (1) Unannotated genes, where a gene exists in the genome but its metabolic function is unknown; (2) Underground metabolism, where enzymes exhibit promiscuous activities not yet documented in databases; and (3) Database biases, where reliance on known reactions from limited databases fails to capture the full scope of possible biochemistry [9].

Q2: Our model fails to grow on a minimal medium even after gapfilling. What should we check? First, verify that the correct medium condition was specified during the gapfilling process. If the media field was left blank, the algorithm defaults to "Complete" media, which may not force the addition of all necessary biosynthetic pathways. Re-run the gapfilling, explicitly selecting a minimal media condition relevant to your organism [10].

Q3: How does the gapfilling algorithm decide which reactions to add? The gapfilling algorithm uses an optimization strategy (typically Linear Programming) to find a set of reactions that enables a defined objective, such as biomass production, with minimal cost. Reactions are assigned penalties; non-KEGG reactions, transporters, and reactions with uncertain thermodynamics are often penalized more heavily, steering the solution toward biologically preferred reactions [10].
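The penalty idea can be illustrated with a small continuous linear program: minimize the penalty-weighted flux through candidate database reactions, subject to steady state (S v = 0), reaction bounds, and a minimum flux through the objective reaction. This is a sketch, not the ModelSEED/KBase formulation; it is closer to the L1-norm relaxation described above for fastGapFill, and minimizing weighted flux only approximates minimizing the number of added reactions. The function name, arguments, and toy network are hypothetical, and the code relies on SciPy's `linprog` with the HiGHS solver.

```python
import numpy as np
from scipy.optimize import linprog


def lp_gap_fill(S, rxn_ids, candidates, bounds, objective, min_flux=1.0,
                penalties=None, excluded=(), tol=1e-7):
    """Return candidate reactions used by a minimum-penalty flux that drives `objective`.

    Candidates are assumed irreversible (lower bound 0), so their weighted flux sum
    is a weighted L1 norm. `excluded` candidates are forced to zero flux.
    """
    penalties = penalties or {}
    cost = np.zeros(len(rxn_ids))
    bnds = list(bounds)
    for j, rid in enumerate(rxn_ids):
        if rid in candidates:
            cost[j] = penalties.get(rid, 1.0)   # unlisted candidates cost 1
        if rid in excluded:
            bnds[j] = (0.0, 0.0)
    t = rxn_ids.index(objective)
    bnds[t] = (max(bnds[t][0], min_flux), bnds[t][1])
    res = linprog(cost, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bnds, method="highs")
    if res.status != 0:
        return None  # no allowed combination of candidates rescues the objective
    return [rid for j, rid in enumerate(rxn_ids) if rid in candidates and res.x[j] > tol]


# Hypothetical toy: uptake of A, a "biomass" drain of C, and three database reactions.
rxn_ids = ["EX_A", "BIO", "U1", "U2", "U3"]   # U1: A -> B, U2: B -> C, U3: A -> C
S = np.array([
    [1,  0, -1,  0, -1],   # A
    [0,  0,  1, -1,  0],   # B
    [0, -1,  0,  1,  1],   # C
])
bounds = [(0, 10)] + [(0, 1000)] * 4
candidates = {"U1", "U2", "U3"}
print(lp_gap_fill(S, rxn_ids, candidates, bounds, "BIO"))                          # ['U3']
print(lp_gap_fill(S, rxn_ids, candidates, bounds, "BIO", penalties={"U3": 5.0}))  # ['U1', 'U2']
print(lp_gap_fill(S, rxn_ids, candidates, bounds, "BIO", excluded={"U1", "U3"}))  # None
```

With uniform penalties the cheapest fix is the single reaction U3. Penalizing U3 (for instance, because it is a transporter) or excluding it, as in the manual-curation step of Guide 2, switches the solution to the two-step route through U1 and U2; excluding both U1 and U3 leaves the objective unreachable.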

Q4: What is the advantage of using hypothetical reactions from ATLAS over known reactions from KEGG for gapfilling? Using ATLAS, which contains known and hypothetical reactions, dramatically increases the number of potential solutions for filling a metabolic gap. One study found an average of 252.5 solutions per rescued reaction using ATLAS, compared to only 2.3 solutions using the KEGG database. This greatly enhances the ability to explore underground metabolism and identify novel enzyme functions [9].

Q5: What is the difference between a balanced complex and a concordant complex in metabolic network analysis? A balanced complex has zero activity (net formation rate) in every steady state. Concordant complexes are pairs (or groups) of complexes whose activities are related by a fixed non-zero constant (αi = γij αj) in every steady state. Balanced complexes satisfy this relation trivially and are therefore mutually concordant, but concordance also captures multi-reaction dependencies among non-balanced complexes that reveal the hidden simplicity and tight coordination in metabolic networks [11].

Quantitative Data on Metabolic Gaps and Solutions

Table 1: Comparative Performance of Gap-Filling Reaction Databases

This table summarizes a case study comparing the use of different reaction databases for filling gaps in an E. coli metabolic model [9].

| Database Type | Database Name | Number of Rescued Reactions | Average Solutions per Rescued Reaction | Key Advantage |
|---|---|---|---|---|
| Known Reactions Only | KEGG | 53 | 2.3 | Solutions are based on well-established biochemistry. |
| Known + Hypothetical Reactions | ATLAS of Biochemistry | 93 | 252.5 | Enables discovery of novel biochemistry and underground metabolism. |

Table 2: Key Reagent Solutions for Metabolic Network Research

This table lists essential computational tools and databases used in modern metabolic network reconstruction and gap-filling.

| Research Reagent | Function/Brief Explanation |
|---|---|
| ATLAS of Biochemistry | An extensive database of both known and hypothetical biochemical reactions, used as a comprehensive reaction pool for gap-filling to propose novel solutions [9]. |
| BridgIT | A computational tool that links biochemical reactions to known enzymes by identifying similarities in substrate reactive sites, facilitating gene annotation for gap-filled reactions [9]. |
| NICEgame Workflow | An integrated workflow (Network Integrated Computational Explorer for Gap Annotation of Metabolism) that systematically identifies and reconciles knowledge gaps in metabolic models using ATLAS and BridgIT [9]. |
| ModelSEED | A platform and biochemistry database used for high-throughput reconstruction, optimization, and analysis of genome-scale metabolic models (GEMs) [10]. |
| SCIP/GLPK Solvers | Optimization solvers used in constraint-based modeling. GLPK is used for pure-linear problems, while SCIP is used for more complex problems involving integer variables, such as some gapfilling formulations [10]. |

Experimental Protocols

Protocol 1: The NICEgame Gap-Filling Workflow

Purpose: To systematically identify and reconcile knowledge gaps in a genome-scale metabolic model (GEM) using both known and hypothetical reactions.

Methodology:

- Input Preparation: Gather the target GEM and high-throughput gene essentiality data (e.g., from knockout experiments) [9].

- Identify False Essentiality: Simulate single-gene knockout phenotypes using the model and compare the results with experimental data. False essential gene predictions (where the model predicts no growth but the experiment shows growth) indicate potential metabolic gaps [9].

- Propose Gap-Filling Solutions: For each gap, query a comprehensive reaction database (e.g., ATLAS) to find all possible reaction sets that would rescue growth [9].

- Annotate with Enzymes: Use BridgIT to identify potential enzymes in the organism's genome that could catalyze the proposed gap-filling reactions [9].

- Rank Solutions: Apply a scoring system to rank the reaction subsets based on thermodynamic feasibility, minimal model impact, and the confidence of the enzyme-reaction association [9].

- Validate the Expanded Model: Incorporate the highest-ranked reactions into the GEM and validate the improved model against a separate set of experimental phenotyping data [9].

Protocol 2: Identifying Concordant Complexes to Simplify Network Analysis

Purpose: To efficiently identify multireaction dependencies (concordant complexes) in a metabolic network, which can reveal functional modules and simplify the apparent complexity of the network.

Methodology:

- Network Representation: Represent the metabolic network as a set of reactions, species, and complexes (the left- and right-hand sides of reactions) [11].

- Formulate Steady-State Equations: Describe the system using the stoichiometric matrix, which can be derived from the species-complex matrix (Y) and the graph incidence matrix (A). The steady-state is given by dx/dt = YAv = 0, where v is a flux distribution [11].

- Define Complex Activity: For a given flux distribution v, the activity of a complex j is defined as αj = Aj · v, which is the net flux through that complex [11].

- Test for Concordance: Complexes i and j are concordant if there exists a non-zero constant γij such that αi - γijαj = 0 for every feasible steady-state flux distribution v in the set S [11].

- Efficient Identification: Use Linear Fractional Programming to computationally determine all pairs of concordant complexes in a large-scale network [11].
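The definitions in steps 2 to 4 can be checked numerically on a toy network. The sketch below builds Y and A for a hypothetical five-reaction network (0 → A, 0 → B, A + B → C, C → 0, A → 0), forms the stoichiometric matrix as N = YA, computes complex activities α = Av for sampled steady-state fluxes, and tests whether the ratio αi/αj stays constant. Sampling can only refute concordance; establishing it over the whole steady-state set requires the linear fractional programming of step 5.

```python
import numpy as np

complexes = ["0", "A", "B", "A+B", "C"]
# Y: species (rows A, B, C) x complexes; entry = stoichiometry of the species in the complex
Y = np.array([
    [0, 1, 0, 1, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 0, 0, 1],
])
# A: complexes x reactions; -1 at each reaction's reactant complex, +1 at its product
# Reactions: r1: 0 -> A, r2: 0 -> B, r3: A + B -> C, r4: C -> 0, r5: A -> 0
A = np.array([
    [-1, -1,  0,  1,  1],
    [ 1,  0,  0,  0, -1],
    [ 0,  1,  0,  0,  0],
    [ 0,  0, -1,  0,  0],
    [ 0,  0,  1, -1,  0],
])
N = Y @ A  # stoichiometric matrix, so dx/dt = Y A v


def complex_activities(v):
    """Return alpha = A v after checking that v is a steady state (Y A v = 0)."""
    v = np.asarray(v, dtype=float)
    if not np.allclose(N @ v, 0.0):
        raise ValueError("v is not a steady-state flux distribution")
    return A @ v


def ratio_is_constant(i, j, flux_samples, tol=1e-9):
    """Necessary (not sufficient) check for concordance of complexes i and j."""
    ratios = []
    for v in flux_samples:
        a = complex_activities(v)
        if abs(a[j]) < tol:
            if abs(a[i]) > tol:
                return False  # alpha_j = 0 while alpha_i != 0 rules out a fixed ratio
            continue
        ratios.append(a[i] / a[j])
    return len(ratios) > 0 and bool(np.allclose(ratios, ratios[0]))


samples = [[3, 1, 1, 1, 2], [7, 2, 2, 2, 5]]
print(complex_activities(samples[0]))      # activities of 0, A, B, A+B, C
print(ratio_is_constant(1, 2, samples))    # A vs. B
```

In this network complex C is balanced (zero activity), while A, B, A + B, and the empty complex all have activity of magnitude t, the flux through A + B → C, so the sampled ratios between any two of them stay fixed at ±1.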

Workflow and Pathway Diagrams

Diagram Title: NICEgame Gap-Filling Workflow

Diagram Title: Primary Sources of Metabolic Gaps

Diagram Title: Gapfilling Algorithm Formulations

The Impact of Incomplete Networks on Drug Target Prediction and Phenotypic Forecasting

Frequently Asked Questions

FAQ 1: Why does my metabolic model consistently produce false-negative essential gene predictions, and how can I resolve this? False negatives often arise from knowledge gaps in the metabolic reconstruction, where the model lacks reactions that exist in the biological system. This can be addressed through computational gap-filling. A workflow like NICEgame uses extensive databases of known and hypothetical biochemical reactions to propose thermodynamically feasible solutions that reconcile these false predictions, significantly improving model accuracy [9].

FAQ 2: My phenotypic screen identified a hit, but I don't know its protein target. What in-silico methods can generate testable hypotheses? You can use platforms that combine ligand and protein-structure information. One approach involves fragmenting the hit compound and comparing these fragments to a database of protein-bound ligands from the PDB. This identifies similar sub-pockets, allowing the platform to propose and rank potential macromolecular targets in the pathogen, along with a predicted binding pose for your compound [12].

FAQ 3: How can I integrate metabolomics data to find a drug's off-targets? An effective strategy is a multi-layered workflow. This involves analyzing global metabolomics data with machine learning to identify mechanism-specific perturbations, using metabolic modeling to pinpoint pathways whose inhibition matches the data, and performing structural analysis to find proteins with active sites similar to the drug's known target. This integrated approach prioritizes candidate off-targets for experimental validation [13].

FAQ 4: Are simplified or incomplete network models still useful for predicting cell-fate decisions? Yes, due to a property known as minimal frustration in biological regulatory networks. This feature ensures that even large, complex networks exhibit simple, low-dimensional steady-state behavior. Consequently, simpler network models that lack many nodes and edges can successfully recapitulate the core steady states corresponding to biological cell fates, making them useful predictive tools [14].

Troubleshooting Guides

Problem: High False-Prediction Rates in Metabolic Models

Issue: Your Genome-Scale Metabolic Model (GEM) produces a high rate of false essentiality predictions, indicating gaps in the network.

Background: Gaps are caused by unannotated genes, promiscuous enzymes, and unknown reactions. Traditional gap-filling that relies only on known biochemical databases offers limited solutions [9].

Solution: Implement a comprehensive gap-filling workflow.

Protocol: The NICEgame Gap-Filling Workflow

- Identify False Predictions: Compare your model's gene essentiality predictions with experimental phenotype data (e.g., from gene knockout studies) in a defined medium [9].

- Source a Comprehensive Reaction Pool: Use an extensive biochemical database like ATLAS, which contains both known and hypothetical reactions, to provide a wider set of potential gap-filling solutions [9].

- Propose and Score Solutions: The algorithm will propose multiple reaction sets to fill each gap. These subsets are scored and ranked based on:

  - Thermodynamic feasibility.

  - Minimal introduction of new metabolites.

  - Minimal introduction of novel enzyme functions [9].

- Annotate Genes: Use a tool like BridgIT to identify and score possible enzyme-encoding genes in the organism's genome for the proposed gap-filling reactions [9].

- Validate the Expanded Model: Test the performance of the gap-filled model against a new set of experimental phenotype data to validate the accuracy of its predictions [9].
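Step 1 of this workflow amounts to a set comparison between predicted and observed essentiality. A minimal sketch, assuming the predicted essential genes have already been obtained from single-gene deletion simulations of the GEM and that the experimental calls are available as a set; the gene identifiers are hypothetical. False-essential genes (predicted essential, observed viable) are the ones that point to missing reactions.

```python
def essentiality_confusion(predicted_essential, observed_essential, tested_genes):
    """Compare in silico and experimental essentiality calls on a gene set.

    Returns confusion counts, accuracy, and the false-essential genes
    (predicted essential but viable in the experiment), which point to gaps."""
    tested = set(tested_genes)
    pred = set(predicted_essential) & tested
    obs = set(observed_essential) & tested
    tp = len(pred & obs)                      # essential in both
    fp = len(pred - obs)                      # false essential: candidate gaps
    fn = len(obs - pred)                      # false non-essential
    tn = len(tested) - tp - fp - fn           # non-essential in both
    accuracy = (tp + tn) / len(tested) if tested else float("nan")
    return {
        "tp": tp, "fp": fp, "fn": fn, "tn": tn,
        "accuracy": accuracy,
        "false_essential": sorted(pred - obs),
    }


result = essentiality_confusion(
    predicted_essential={"g1", "g2", "g3"},
    observed_essential={"g1", "g4"},
    tested_genes=["g1", "g2", "g3", "g4", "g5"],
)
print(result["false_essential"])   # ['g2', 'g3']
```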

Expected Outcome:

Table: Example Performance Improvement from Gap-Filling

| Metric | Original Model (iML1515) | Gap-Filled Model (iEcoMG1655) | Change |
|---|---|---|---|
| Gene Essentiality Predictions (Accuracy) | Baseline | +23.6% | Improvement [9] |
| False Essential Gene Gaps Identified | 148 | - | - [9] |
| Gaps Rescued with KEGG Reactions | 53 | - | Limited [9] |
| Gaps Rescued with ATLAS Reactions | 93 | - | Significant [9] |

Problem: Target Deconvolution for Phenotypic Screening Hits

Issue: You have a compound active in a phenotypic screen but lack knowledge of its molecular target, hindering lead optimization.

Background: Experimental target identification is complex and time-consuming. Computational prediction can rapidly generate testable hypotheses by leveraging structural and systems biology data [12].

Solution: Utilize a fragment-based target prediction platform.

Protocol: Fragment-Based Target Prediction

- Input the Phenotypic Hit: Start with the 2D chemical structure of your active compound [12].

- Fragment the Compound: Generate multiple molecular fragments from the hit in silico to reduce molecular complexity, analogous to fragment-based drug discovery [12].

- Map to a PDB Fragment Database: Compare the generated fragments against a pre-computed database of fragments derived from small-molecule ligands in the Protein Data Bank (PDB). Identify the PDB fragment most similar to your hit's fragment [12].

- Search Pathogen Proteome for Similar Cavities: Identify proteins within the pathogen's proteome (from crystal structures or high-quality homology models) that possess a binding cavity similar to the one that binds the identified PDB fragment [12].

- Dock and Rank Hypotheses: Dock the entire phenotypic hit molecule into the proposed binding site of candidate targets. Generate a ranked list of potential targets and visualize the proposed binding mode to rationalize structure-activity relationships (SAR) [12].
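The fragment-to-PDB matching step can be illustrated with a Tanimoto similarity search. A minimal sketch under stated assumptions: fingerprints are represented as Python sets of on-bit indices (in practice they would come from a cheminformatics toolkit), the cutoff of 0.5 is illustrative, and the source does not specify which similarity metric the platform in [12] uses. Fragmentation, cavity comparison, and docking are not shown.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity of two fingerprints given as sets of on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def best_pdb_matches(hit_fragments, pdb_fragments, min_sim=0.5):
    """For each hit fragment, find the most similar PDB-derived fragment.

    hit_fragments: {fragment_id: set_of_bits}
    pdb_fragments: {pdb_fragment_id: (set_of_bits, binding_site_id)}
    Returns (fragment_id, pdb_fragment_id, site_id, similarity) tuples,
    best first, keeping only matches at or above min_sim."""
    matches = []
    for frag_id, bits in hit_fragments.items():
        best = max(
            ((pid, site, tanimoto(bits, fp)) for pid, (fp, site) in pdb_fragments.items()),
            key=lambda t: t[2],
            default=None,
        )
        if best is not None and best[2] >= min_sim:
            matches.append((frag_id, *best))
    return sorted(matches, key=lambda m: m[3], reverse=True)


hits = {"f1": {1, 2, 3, 4}, "f2": {10, 11}}
pdb = {"p1": ({1, 2, 3}, "siteA"), "p2": ({10, 12}, "siteB")}
print(best_pdb_matches(hits, pdb))   # [('f1', 'p1', 'siteA', 0.75)]
```

The binding sites of the retained PDB fragments would then seed the cavity search in the pathogen proteome.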

The following workflow diagram illustrates the multi-stage process of this target prediction method:

Problem: Identifying Off-Targets from Metabolomic Perturbation Data

Issue: Metabolomics data shows your drug causes widespread perturbation, but it's difficult to pinpoint the specific protein off-targets responsible.

Background: Machine learning can find patterns in metabolomics data but lacks interpretability. Combining it with mechanistic models improves target identification resolution [13].

Solution: Apply a multi-scale analysis framework.

Protocol: Integrated Metabolomics-Guided Off-Target Discovery

- Acquire and Preprocess Data: Perform untargeted global metabolomics on the pathogen treated with your drug versus untreated control across multiple growth phases [13].

- Machine Learning Analysis: Train a multi-class classifier (e.g., logistic regression) on a reference dataset of metabolomic responses to antibiotics with known mechanisms. Use this to identify if your drug's metabolic signature aligns with a known mechanism of action [13].

- Metabolic Modeling: Use a genome-scale metabolic model (GEM) to simulate the effect of reaction knockouts. Identify pathways whose inhibition results in growth defects that can be rescued by the same metabolites that rescue your drug's effect [15] [13].

- Structural Similarity Analysis: Compare the 3D structure and active site properties of the drug's known target to other proteins in the pathogen's proteome to identify potential off-targets with similar binding sites [13].

- Experimental Validation: Prioritize candidate targets from the above steps for validation using gene overexpression, in vitro enzyme activity assays, and cellular imaging [13].
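The metabolic-modeling step compares knockout rescue profiles with the metabolites that rescue the drug. A minimal flux balance analysis sketch using SciPy's linear programming solver on a hypothetical toy network: nutrient uptake EX_S, a target reaction R_a converting S to P, a biomass drain BIO, and a supplement exchange EX_P that is closed by default. Real analyses would use a full GEM and a constraint-based modeling package. A blocked reaction whose rescue profile matches the drug's is a candidate target.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network: EX_S (-> S), R_a (S -> P), BIO (P ->), EX_P (-> P).
RXNS = ["EX_S", "R_a", "BIO", "EX_P"]
S_MATRIX = np.array([
    [1, -1, 0, 0],   # metabolite S
    [0, 1, -1, 1],   # metabolite P
])
DEFAULT_BOUNDS = {"EX_S": (0, 10), "R_a": (0, 1000), "BIO": (0, 1000), "EX_P": (0, 0)}


def max_growth(knockouts=(), supplements=(), supplement_uptake=10.0):
    """FBA: maximise BIO flux subject to S v = 0 and flux bounds."""
    bounds = dict(DEFAULT_BOUNDS)
    for rxn in knockouts:
        bounds[rxn] = (0, 0)                      # reaction blocked
    for rxn in supplements:
        bounds[rxn] = (0, supplement_uptake)      # open the supplement exchange
    c = np.zeros(len(RXNS))
    c[RXNS.index("BIO")] = -1.0                   # linprog minimises, so negate
    res = linprog(c, A_eq=S_MATRIX, b_eq=np.zeros(S_MATRIX.shape[0]),
                  bounds=[bounds[r] for r in RXNS], method="highs")
    return -res.fun if res.status == 0 else 0.0


def rescue_profile(knockout, candidate_supplements, threshold=1e-6):
    """Supplements that restore growth after blocking one reaction,
    or None if blocking it does not abolish growth."""
    if max_growth([knockout]) > threshold:
        return None
    return {s for s in candidate_supplements
            if max_growth([knockout], [s]) > threshold}


# A drug whose growth defect is rescued by supplying P matches a block of R_a.
drug_rescuers = {"EX_P"}
print(rescue_profile("R_a", ["EX_P"]) == drug_rescuers)   # True
```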

The following chart outlines the sequential stages of this integrative approach:

The Scientist's Toolkit

Table: Key Research Reagent Solutions

| Item | Function in Context | Example Use Case |
|---|---|---|
| ATLAS of Biochemistry | A database of both known and hypothetical biochemical reactions used for comprehensive metabolic network gap-filling [9]. | Provides a large solution space of possible reactions to reconcile false predictions in GEMs, moving beyond limited known reactions [9]. |
| BridgIT | A computational tool that links biochemical reactions to known enzyme sequences, suggesting candidate genes for gap-filled reactions [9]. | Annotates proposed reactions from gap-filling with possible genes in the organism's genome, facilitating experimental testing [9]. |
| Protein Data Bank (PDB) | A repository of 3D structural data of proteins and protein-ligand complexes [12]. | Serves as a source for ligand fragmentation and cavity comparison in fragment-based target prediction platforms [12]. |
| Genome-Scale Model (GEM) | A computational reconstruction of an organism's metabolism that allows for simulation of metabolic fluxes using constraints [15]. | Used with Flux Balance Analysis (FBA) to predict gene essentiality and simulate the metabolic impact of drug treatments or gene knockouts [15]. |
| Knowledge Graph (e.g., PPIKG) | A network representing relationships between biological entities (e.g., proteins, drugs) [16]. | Helps narrow down candidate drug targets from hundreds to a more manageable number for further computational or experimental validation [16]. |

Next-Generation Scalable Algorithms and Workflows for Efficient Gap-Filling

Performance Benchmarks: CHESHIRE vs. State-of-the-Art Methods

The table below summarizes the quantitative performance of CHESHIRE against other topology-based machine learning methods during internal validation on high-quality BiGG models. The evaluation is based on the ability to recover artificially removed reactions, a standard test for gap-filling algorithms [5].

Table 1: Performance Comparison on BiGG Models (n=108 models) [5]

| Method | Architecture | AUROC (Average) | Key Limitation |
|---|---|---|---|
| CHESHIRE | Hypergraph Learning with Chebyshev Spectral Graph Convolutional Network | Best Performance | Requires negative sampling during training |
| NHP (Neural Hyperlink Predictor) | Neural Network (approximates hypergraphs as graphs) | Lower than CHESHIRE | Loss of higher-order information |
| C3MM (Clique Closure-based Coordinated Matrix Minimization) | Integrated training-prediction (Matrix Minimization) | Lower than CHESHIRE | Limited scalability; model must be re-trained for each new reaction pool |
| Node2Vec-mean (NVM) | Random walk-based graph embedding with mean pooling | Baseline Performance | Simple architecture without feature refinement |
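AUROC, the metric summarized in Table 1, equals the probability that a randomly chosen real reaction receives a higher score than a randomly chosen negative one. A minimal pure-Python sketch using that pairwise (Mann-Whitney) definition; it is quadratic in the number of examples, so large evaluations would use a sorting-based implementation instead.

```python
def auroc(scores, labels):
    """Area under the ROC curve via the Mann-Whitney U statistic.

    scores: predicted scores (higher = more likely a real reaction)
    labels: 1 for positive (real) reactions, 0 for negative (sampled) ones.
    A tie between a positive and a negative counts as half a correct ordering."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


print(auroc([0.9, 0.8, 0.4, 0.3], [1, 0, 1, 0]))   # 0.75
```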

Key Experiment: Internal Validation Protocol

This protocol tests a model's ability to recover known, artificially removed reactions, which is crucial for verifying its gap-filling capability before applying it to real-world, unknown gaps [5].

Detailed Methodology

- Input Preparation: Start with a high-quality, curated Genome-Scale Metabolic Model (GEM), such as those from the BiGG database [5].

- Reaction Set Splitting: Split the metabolic reactions in the GEM into a training set (e.g., 60%) and a testing set (e.g., 40%). Perform this over multiple Monte Carlo runs (e.g., 10 runs) to ensure statistical robustness [5].

- Negative Sampling: Create artificial negative (non-existent) reactions for both training and testing sets at a 1:1 ratio with the positive reactions. This is done by replacing half of the metabolites in each positive reaction with randomly selected metabolites from a universal metabolite pool. This step teaches the model to distinguish between plausible and implausible reactions [5].

- Model Training & Testing:

  - For the training phase, combine the training set of positive reactions with its derived negative reactions.

  - For the testing phase, combine the testing set of positive reactions with its derived negative reactions.

  - For a more challenging test, the testing set can be mixed with real reactions from a universal database (like KEGG) instead of artificial negatives [5].

- Performance Evaluation: Use classification performance metrics like the Area Under the Receiver Operating Characteristic curve (AUROC) to evaluate the model's prediction accuracy [5].
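The negative-sampling step can be sketched as follows. A minimal sketch, not CHESHIRE's code, assuming each reaction is represented as a set of metabolite IDs (a hyperedge, ignoring stoichiometry and direction) and that "half" is rounded up for odd-sized reactions. Candidates that coincide with a known reaction are resampled, a partial guard against the plausible-negative problem discussed in Table 3. The function name and parameters are illustrative.

```python
import random


def sample_negatives(positive_reactions, metabolite_pool,
                     known_reactions=frozenset(), seed=0, max_tries=100):
    """Create one artificial negative reaction per positive reaction (1:1).

    Half of each reaction's metabolites (rounded up) are replaced by randomly
    chosen metabolites from the pool that were not in the original reaction."""
    rng = random.Random(seed)
    pool = sorted(metabolite_pool)            # sorted so results are reproducible
    negatives = []
    for rxn in positive_reactions:
        members = sorted(rxn)
        k = (len(members) + 1) // 2           # number of metabolites to replace
        outside = [m for m in pool if m not in rxn]
        for _ in range(max_tries):
            kept = rng.sample(members, len(members) - k)
            swapped = rng.sample(outside, k)  # requires len(outside) >= k
            candidate = frozenset(kept + swapped)
            if candidate not in known_reactions:
                negatives.append(candidate)
                break
        # If every try collides with a known reaction, no negative is added.
    return negatives


positives = [frozenset({"a", "b"}), frozenset({"c", "d", "e", "f"})]
pool = set("abcdefghij")
print(sample_negatives(positives, pool, known_reactions=set(positives), seed=1))
```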

Table 2: Key Resources for Metabolic Network Gap-Filling Experiments [5] [3]

| Item Name | Function / Purpose in the Experiment |
|---|---|
| Curated GEMs (e.g., BiGG Models) | Provide the high-quality, structured metabolic network data used as the gold standard for training and internal validation [5]. |
| Universal Reaction Database (e.g., KEGG) | Serves as a comprehensive pool of known biochemical reactions from which candidate reactions can be drawn to fill gaps in a draft model [3]. |
| Reaction Pool | A curated list of candidate reactions (often sourced from universal databases) from which the gap-filling algorithm selects reactions to add to the model [5]. |
| Metabolite Pool | A comprehensive list of known metabolites used during the negative sampling process to create artificial, implausible reactions for model training [5]. |

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My model has limited phenotypic data. Can I still use CHESHIRE for gap-filling?

Yes. A key advantage of CHESHIRE and other topology-only methods is that they do not require experimental phenotypic data as input. They rely purely on the topological structure of the metabolic network, making them ideal for non-model organisms where such data is scarce or unavailable [5].

Q2: How does CHESHIRE handle the complexity of metabolic networks better than graph-based methods?

Metabolic networks are inherently hypergraphs, where a single reaction (a hyperedge) can connect multiple metabolites (nodes). Traditional graph-based methods force this structure into a simple graph where edges connect only two nodes, which loses crucial higher-order information. CHESHIRE operates directly on the hypergraph structure, preserving this information and leading to more accurate predictions [5] [17].
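The information loss described above is easy to demonstrate. In this sketch (illustrative helper names, not CHESHIRE's code), a single three-metabolite reaction and three separate two-metabolite reactions have different hypergraph incidence matrices but produce the identical graph after clique expansion, so a purely graph-based method cannot tell them apart.

```python
from itertools import combinations


def incidence_matrix(reactions, metabolites):
    """Metabolite-by-reaction 0/1 incidence matrix of the hypergraph."""
    index = {m: i for i, m in enumerate(metabolites)}
    H = [[0] * len(reactions) for _ in metabolites]
    for j, rxn in enumerate(reactions):
        for m in rxn:
            H[index[m]][j] = 1
    return H


def clique_expansion(reactions):
    """Graph approximation: connect every pair of metabolites that co-occur."""
    edges = set()
    for rxn in reactions:
        edges |= {frozenset(pair) for pair in combinations(sorted(rxn), 2)}
    return edges


one_rxn = [{"A", "B", "C"}]                     # one hyperedge
three_rxns = [{"A", "B"}, {"B", "C"}, {"A", "C"}]  # three ordinary edges
mets = ["A", "B", "C"]
print(clique_expansion(one_rxn) == clique_expansion(three_rxns))   # True
print(incidence_matrix(one_rxn, mets) == incidence_matrix(three_rxns, mets))  # False
```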

Q3: I am working with a very large draft model. Will CHESHIRE be scalable enough?

CHESHIRE was designed to be computationally efficient. Evidence from internal validation shows it was successfully tested on 108 BiGG models and 818 AGORA models, demonstrating its scalability. This is a significant advantage over methods like C3MM, which have limited scalability and require re-training for every new reaction pool, making them cumbersome for large models [5].

Q4: What are the most common failure points when setting up a CHESHIRE experiment, and how can I avoid them?

Table 3: Common Troubleshooting Guide

| Issue | Potential Cause | Solution |
|---|---|---|
| Poor prediction accuracy on your model. | The universal reaction pool or metabolite pool is too limited or not relevant. | Curate a comprehensive, high-quality reaction database tailored to your organism's phylogeny. |
| Model fails to learn or performs poorly during training. | Issues with negative sampling, such as generating unrealistic "negative" reactions that are actually biochemically plausible. | Review and refine the negative sampling strategy. Ensure the random metabolite replacement creates truly implausible reactions [5]. |
| The gap-filled model produces biologically unrealistic flux predictions. | Topology-based methods lack biochemical context (e.g., reaction directionality, metabolite energetics). | Use CHESHIRE's output as a prioritized candidate list. Follow up with biochemical validation and integration with constraint-based modeling techniques that incorporate directionality and thermodynamic constraints [17]. |

Frequently Asked Questions (FAQs)

Q1: What is the ATLAS of Biochemistry and how does it support hypothesis generation in metabolic engineering?

The ATLAS of Biochemistry is a comprehensive repository of all theoretical biochemical reactions based on known biochemical principles and compounds. It was developed using the computational framework BNICE.ch along with cheminformatic tools to assemble the entire theoretical reactome from the known metabolome through expansion of the known biochemistry in the KEGG database. ATLAS includes more than 130,000 hypothetical enzymatic reactions that connect two or more KEGG metabolites through novel enzymatic reactions not previously reported in living organisms. This repository allows researchers to search for all possible metabolic routes from any substrate to any product, providing potential targets for protein engineering and synthetic biology applications [18].
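The substrate-to-product route search that ATLAS supports can be illustrated with a breadth-first enumeration. This is a minimal sketch, not the ATLAS search tool: reactions are given as hypothetical (substrates, products) sets, routes are limited to max_steps reactions, and cofactors and stoichiometry are ignored. In a real network, currency metabolites such as ATP or NAD+ would need to be excluded to avoid meaningless shortcuts.

```python
from collections import deque


def find_routes(reactions, source, target, max_steps=4):
    """Enumerate simple reaction routes from source to target metabolite.

    reactions: {reaction_id: (substrates, products)} with sets of metabolite IDs.
    A route is a list of reaction IDs; each step consumes the current metabolite
    and produces the next one. Breadth-first order returns shorter routes first."""
    routes = []
    queue = deque([(source, [], {source})])
    while queue:
        met, path, visited = queue.popleft()
        if met == target and path:
            routes.append(path)
            continue
        if len(path) == max_steps:
            continue
        for rid, (subs, prods) in reactions.items():
            if met in subs and rid not in path:
                for nxt in prods:
                    if nxt not in visited:      # avoid cycles
                        queue.append((nxt, path + [rid], visited | {nxt}))
    return routes


toy = {
    "r1": ({"A"}, {"B"}),
    "r2": ({"B"}, {"C"}),
    "r3": ({"A"}, {"C"}),
    "r4": ({"C"}, {"D"}),
}
print(find_routes(toy, "A", "C"))   # [['r3'], ['r1', 'r2']]
```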

Q2: What percentage of previously unintegrated KEGG metabolites does ATLAS incorporate into novel enzymatic reactions?

ATLAS reactions successfully integrate 42% of KEGG metabolites that were not previously present in any KEGG reaction into one or more novel enzymatic reactions. This significantly expands the biochemical reaction space available for metabolic engineering and pathway design [18].

Q3: How can researchers access the ATLAS of Biochemistry database?

The generated repository is organized in a web-based database accessible at: http://lcsb-databases.epfl.ch/atlas/ [18].

Q4: What are the common scalability challenges when using ATLAS for gap-filling in large metabolic networks?

The primary scalability challenges include computational resource demands when processing over 130,000 theoretical reactions, identifying biologically relevant pathways among numerous possibilities, and prioritizing hypothetical enzymatic activities for experimental validation. The database's sheer size requires efficient filtering algorithms to make gap-filling computationally tractable for genome-scale metabolic models [18].

Troubleshooting Guides

Problem 1: High Computational Load During Network Gap-Filling

Symptoms: Slow processing times, memory overflow errors, or inability to complete pathway identification when using ATLAS for large-scale metabolic models.

Possible Causes & Solutions:

| Cause | Solution | Verification Method |
|---|---|---|
| Large search space from 130,000+ reactions | Apply reaction filters based on enzyme commission numbers or reaction centers | Monitor reduction in candidate reaction sets |
| Inefficient pathway ranking | Implement multi-criteria prioritization (thermodynamics, enzyme existence) | Compare pathway scores pre/post optimization |
| Memory limitations | Use chunked processing of metabolic modules | System resource monitoring during computation |

Prevention Strategies: Implement pre-filtering of ATLAS reactions to include only relevant biochemical domains for your specific organism or metabolic subsystem. Establish quantitative thresholds for pathway feasibility before initializing large-scale gap-filling analyses [18].
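Two of the mitigations above, pre-filtering the reaction pool and chunked processing, can be sketched in a few lines. A minimal sketch, assuming each candidate reaction is a dict with hypothetical keys 'id', 'metabolites', and 'ec'; this is not the ATLAS file format. Keeping only reactions whose metabolites already occur in the model mirrors the idea of a metabolite-restricted subset, and the optional filter keeps only the listed top-level EC classes.

```python
from itertools import islice


def filter_reaction_pool(pool, model_metabolites, allowed_ec_classes=None):
    """Keep candidate reactions whose metabolites are all already in the model
    and, optionally, whose first EC digit is in allowed_ec_classes."""
    kept = []
    for rxn in pool:
        if not rxn["metabolites"] <= model_metabolites:
            continue                            # would add new metabolites
        if allowed_ec_classes is not None:
            if rxn.get("ec", "").split(".")[0] not in allowed_ec_classes:
                continue                        # outside the chosen EC classes
        kept.append(rxn)
    return kept


def chunks(items, size):
    """Yield successive lists of at most `size` items to bound memory use."""
    it = iter(items)
    while True:
        block = list(islice(it, size))
        if not block:
            return
        yield block


pool = [
    {"id": "R1", "metabolites": {"a", "b"}, "ec": "1.1.1.1"},
    {"id": "R2", "metabolites": {"a", "z"}, "ec": "2.7.1.1"},
    {"id": "R3", "metabolites": {"b", "c"}, "ec": "2.7.1.2"},
]
for batch in chunks(filter_reaction_pool(pool, {"a", "b", "c"}), 1):
    print([r["id"] for r in batch])
```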

Problem 2: Identification of Theoretically Possible but Biologically Irrelevant Pathways

Symptoms: Identified pathways contain enzymatically challenging reactions, require incompatible compartmentalization, or generate toxic intermediates.

Possible Causes & Solutions:

| Cause | Solution | Validation Approach |
|---|---|---|
| Missing constraint integration | Incorporate thermodynamic feasibility checks | Calculate reaction Gibbs free energy |
| Organism-specific limitations | Apply compartmentalization constraints | Compare with subcellular localization data |
| Toxic intermediate accumulation | Screen for known toxic metabolites | Cross-reference with metabolite toxicity databases |

Verification Protocol:

- Apply organism-specific reaction rules to filter ATLAS output

- Check pathway stoichiometry and energy balances

- Validate against known metabolic network topology

- Test for pathway redundancy and essential reactions [18]
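The thermodynamic check can be sketched with the standard relation ΔrG' = ΔrG'° + RT Σ νi ln ci. A minimal sketch, assuming ΔrG'° values are already available (for example, from group contribution estimates) and that metabolite concentrations are bounded by illustrative ranges in mol/L. Each reaction is tested for whether it can run forward at some concentrations within the bounds. Because reactions are checked independently, a pathway can pass this test yet be infeasible once shared metabolite concentrations are considered jointly; that requires a pathway-level optimization.

```python
import math

R = 8.314462618e-3   # gas constant, kJ/(mol*K)


def min_delta_g(dg0_prime, stoich, conc_bounds, temperature=298.15):
    """Most negative attainable reaction Gibbs energy (kJ/mol).

    dg0_prime: standard transformed Gibbs energy of reaction (kJ/mol)
    stoich: {metabolite: coefficient}, negative for substrates, positive for products
    conc_bounds: {metabolite: (c_min, c_max)} in mol/L
    Products are placed at c_min and substrates at c_max, which minimises dG'."""
    rt = R * temperature
    total = dg0_prime
    for met, nu in stoich.items():
        c_min, c_max = conc_bounds[met]
        c = c_min if nu > 0 else c_max
        total += rt * nu * math.log(c)
    return total


def pathway_is_feasible(reactions, conc_bounds, temperature=298.15):
    """Per-reaction check: every step must be able to run forward (dG' < 0).

    reactions: list of (dg0_prime, stoich) pairs."""
    return all(min_delta_g(dg0, st, conc_bounds, temperature) < 0
               for dg0, st in reactions)


bounds = {"A": (1e-6, 1e-2), "B": (1e-6, 1e-2)}
print(round(min_delta_g(5.0, {"A": -1, "B": 1}, bounds), 1))   # -17.8
```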

Problem 3: Experimental Validation Challenges for ATLAS-Predicted Novel Reactions

Symptoms: Difficulty expressing putative enzymes, inability to detect predicted metabolites, or low reaction fluxes in engineered strains.

Troubleshooting Workflow:

Systematic Approach:

- Verify enzyme expression and folding using Western blot and activity assays

- Confirm cofactor availability and compatibility with host metabolism

- Optimize reaction conditions including pH, temperature, and substrate concentrations

- Test enzyme variants from different organism sources

- Validate analytical methods for detecting novel metabolites [18]

Research Reagent Solutions

Essential materials and computational tools for implementing ATLAS-driven metabolic engineering:

| Research Reagent | Function/Application | Specification Notes |
|---|---|---|
| BNICE.ch Framework | Generate novel biochemical reactions using reaction rules | Required for expanding beyond known biochemistry [18] |
| KEGG Compound Database | Source of known metabolites for pathway reconstruction | Essential reference for mapping metabolic networks [18] |
| Cheminformatic Tools | Analyze molecular structures and predict reaction centers | Compatible with ATLAS reaction prediction pipeline [18] |
| Pathway Analysis Software | Calculate route from substrate to product | Should handle both known and hypothetical reactions [18] |
| Protein Engineering Tools | Create enzymes for novel ATLAS reactions | Critical for validating hypothetical enzymatic activities [18] |

Experimental Protocol: Validating ATLAS-Predicted Novel Pathways

Objective: Experimental verification of a novel biochemical pathway predicted by ATLAS of Biochemistry.

Workflow Diagram:

Step-by-Step Methodology:

Pathway Retrieval

- Query ATLAS database for pathways connecting desired substrates to products

- Apply organism-specific constraints to filter plausible pathways

- Select top 3-5 candidate pathways based on:

  - Minimal novel steps required

  - Thermodynamic feasibility

  - Enzyme engineering complexity

Enzyme Selection & Engineering

- Identify homologous enzymes for predicted reaction steps

- For novel reactions without homologs:

  - Apply structure-based enzyme design

  - Test promiscuous activities from related enzyme families

  - Use directed evolution for activity optimization

In Vitro Validation

- Express and purify candidate enzymes

- Establish assay conditions for novel reactions

- Detect intermediate and final products using:

  - LC-MS for metabolite identification

  - NMR for structural confirmation

- Determine kinetic parameters (Km, kcat)
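Km and kcat are commonly obtained by fitting the Michaelis-Menten equation to initial-rate data. A minimal sketch using SciPy's nonlinear least-squares fit, assuming initial rates measured at several substrate concentrations and a known total enzyme concentration; kcat is computed as Vmax divided by the enzyme concentration, so units must be consistent. The synthetic data are illustrative.

```python
import numpy as np
from scipy.optimize import curve_fit


def michaelis_menten(s, vmax, km):
    """Initial rate v = Vmax * [S] / (Km + [S])."""
    return vmax * s / (km + s)


def fit_kinetics(substrate, rates, enzyme_conc):
    """Nonlinear least-squares fit of Vmax and Km; kcat = Vmax / [E]_total."""
    s = np.asarray(substrate, dtype=float)
    v = np.asarray(rates, dtype=float)
    p0 = [v.max(), float(np.median(s))]       # rough starting guesses
    (vmax, km), _ = curve_fit(michaelis_menten, s, v, p0=p0)
    return {"vmax": vmax, "km": km, "kcat": vmax / enzyme_conc}


s = [0.5, 1, 2, 4, 8, 16]
v = [michaelis_menten(x, 10.0, 2.0) for x in s]   # noiseless synthetic rates
print(fit_kinetics(s, v, enzyme_conc=0.1))         # Km near 2, Vmax near 10, kcat near 100
```

Fitting the hyperbola directly avoids the error distortion introduced by linearizations such as Lineweaver-Burk plots.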

In Vivo Implementation

- Construct expression vectors for pathway enzymes

- Engineer host strain with necessary genetic modifications

- Implement dynamic regulation for balanced expression

- Monitor cell growth and product formation

Pathway Optimization

- Apply flux balance analysis to identify bottlenecks

- Fine-tune enzyme expression levels

- Implement cofactor recycling systems

- Scale up production in bioreactors

Quantitative Success Metrics:

- Pathway Functionality: Production of target metabolite above detection limits

- Flux Efficiency: Minimum 30% of theoretical maximum metabolic flux

- Growth Compatibility: Engineered strain growth rate ≥70% of wild-type

- Product Titer: Economically viable yields for scaling (compound-dependent) [18]
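The three numeric criteria above can be expressed as a simple check. A minimal sketch; the thresholds default to the 30% flux and 70% growth values listed, and the argument names are illustrative. Titer viability is compound-dependent and is left out.

```python
def evaluate_success(titer, detection_limit, flux, max_theoretical_flux,
                     growth_rate, wild_type_growth_rate,
                     min_flux_fraction=0.30, min_growth_fraction=0.70):
    """Apply the functionality, flux-efficiency and growth criteria.

    Inputs must use consistent units (e.g., titers in g/L, fluxes in
    mmol/gDW/h, growth rates in 1/h)."""
    return {
        "functional": titer > detection_limit,
        "flux_efficient": flux >= min_flux_fraction * max_theoretical_flux,
        "growth_compatible": growth_rate >= min_growth_fraction * wild_type_growth_rate,
    }


print(evaluate_success(0.5, 0.01, 3.5, 10.0, 0.45, 0.6))
# {'functional': True, 'flux_efficient': True, 'growth_compatible': True}
```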

Frequently Asked Questions (FAQs)

Q1: What is the core advantage of combining NICEgame with BridgIT over traditional gap-filling methods?

Traditional gap-filling methods are limited to databases of known biochemical reactions, which can restrict solutions for reconciling metabolic gaps [9]. The integrated NICEgame and BridgIT framework uses the ATLAS of Biochemistry, a database of known and over 150,000 hypothetical reactions, to explore a vastly larger biochemical space [19] [9]. This allows the workflow to propose novel biochemical capabilities and identify candidate genes for these reactions, systematically exploring an organism's underground metabolism and leading to more complete functional annotation [19] [9].

Q2: What specific quantitative improvement does this framework offer for genome annotation?

In a case study on the E. coli model iML1515, the framework identified 152 false essential reactions associated with 148 false essential gene predictions. It proposed 77 new reactions associated with 35 candidate E. coli genes, reconciling 47% of the identified gaps [19] [9]. The resulting model, iEcoMG1655, improved the accuracy of gene essentiality predictions on 15 carbon sources by 23.6% [9].

Q3: How does the framework rank alternative gap-filling solutions?

The framework uses a scoring system to rank alternative reaction sets. It penalizes solutions that introduce longer pathways (energetically costly), add new metabolites, or propose novel enzyme functions not present in the original model. Reactions annotated by BridgIT with higher confidence scores are favored [9].
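The ranking logic can be sketched as a weighted penalty score. A minimal sketch, assuming pathway length is measured as the number of reactions in a solution, BridgIT confidence scores are available per reaction on a 0 to 1 scale, and all weights are illustrative rather than the values used in [9]. Lower scores rank higher; raising w_met or w_func corresponds to increasing the penalties as suggested in the troubleshooting guide.

```python
from dataclasses import dataclass


@dataclass
class Solution:
    reactions: list          # reaction IDs in this alternative set
    new_metabolites: int     # metabolites not present in the original model
    novel_functions: int     # enzyme functions absent from the original model
    bridgit_scores: list     # annotation confidence per reaction, assumed in [0, 1]


def score(sol, w_len=1.0, w_met=1.0, w_func=1.0, w_conf=2.0):
    """Lower is better: penalties for length, new metabolites and novel
    functions, minus a bonus for mean annotation confidence."""
    mean_conf = (sum(sol.bridgit_scores) / len(sol.bridgit_scores)
                 if sol.bridgit_scores else 0.0)
    return (w_len * len(sol.reactions) + w_met * sol.new_metabolites
            + w_func * sol.novel_functions - w_conf * mean_conf)


def rank(solutions, **weights):
    """Sort alternative solutions from best (lowest score) to worst."""
    return sorted(solutions, key=lambda s: score(s, **weights))


a = Solution(["r1"], 0, 0, [0.9])
b = Solution(["r1", "r2", "r3"], 1, 1, [0.9, 0.9, 0.9])
c = Solution(["r4"], 0, 1, [0.2])
print([s.reactions for s in rank([b, c, a])])   # [['r1'], ['r4'], ['r1', 'r2', 'r3']]
```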

Q4: My research involves non-model organisms with limited phenotypic data. Can this framework still be applied?

Yes. A key strength of the NICEgame workflow is that it can be applied to any organism with a Genome-scale Metabolic Model (GEM) and functions with open-source software [19]. While the initial identification of metabolic gaps is enhanced by comparing in silico predictions with experimental phenotyping data (e.g., gene knockout studies), the gap-filling process itself leverages the ATLAS of Biochemistry and BridgIT, which are not dependent on an organism's specific experimental data [19].

Troubleshooting Guides

Issue: Low Yield of Plausible Gap-Filling Solutions

Problem: The workflow runs, but the proposed gap-filling reaction sets are biologically implausible, introduce too many new metabolites, or are thermodynamically unfavorable.

Solutions:

- Check Reaction Pool Parameters: NICEgame allows the use of different subsets of the ATLAS database. To reduce uncertainty and complexity, start with the "E. coli metabolites subset," which adds reactions involving only metabolites already in your GEM, thus avoiding an expansion of the metabolite space [19].

- Review Thermodynamic Feasibility: The workflow includes an assessment of the thermodynamic feasibility of suggested reactions. Ensure this step is activated and carefully review the scores. Prioritize solutions with higher thermodynamic feasibility [19] [9].

- Adjust Solution Ranking Criteria: Revisit the scoring system's weights. Increase the penalty for solutions that introduce a large number of new reactions or metabolites and those that add redundant functionality to the network [19] [9].

Issue: Poor Confidence in Candidate Gene Annotations

Problem: The BridgIT tool assigns low confidence scores to the candidate genes proposed for the gap-filling reactions.

Solutions:

- Validate with Genomic Context: Do not rely solely on the BridgIT score. Perform additional checks on the genomic context of the candidate gene (e.g., operon structure, co-expression data) to see if it supports a metabolic function [9].

- Consider Enzyme Promiscuity: Many of the candidate genes identified may function through enzyme or substrate promiscuity. In the E. coli case, 33 of the 35 candidate genes were already present in the original model but were assigned new, promiscuous functions [9]. Consult literature for known promiscuous activities of similar enzymes.

- Iterative Experimental Validation: Use the computational predictions as a starting point for targeted experimental validation, such as in vitro enzyme assays for the top-ranked candidate genes [19].

Issue: Handling Large Metabolic Networks and Scalability

Problem: The computational workflow becomes slow or fails to complete when applied to a large, complex metabolic network.

Solutions:

- Benchmark Against Advanced Methods: For large-scale or draft networks, consider that newer deep learning methods like CHESHIRE have been developed specifically for rapid gap-filling using only network topology and can handle large reaction pools efficiently [5]. CHESHIRE may be used as a complementary pre-processing step.

- Parallelize Computations: Check the NICEgame implementation for opportunities to run independent processes (e.g., gap-filling for different subsystems) in parallel to reduce total runtime.

- Utilize High-Performance Computing (HPC): Deploy the workflow on a cluster or HPC environment to manage the significant computational resources required for genome-scale analyses [19].

Key Experimental Protocols & Data

Core Workflow of the NICEgame and BridgIT Integration

The following diagram summarizes the integrated seven-step workflow for annotating knowledge gaps in metabolic reconstructions.

Quantitative Performance Data

Table 1: Performance Comparison of Gap-Filling Reaction Pools in E. coli Case Study [9]

| Reaction Pool Used for Gap-Filling | Number of Rescued Reactions (out of 152) | Average Number of Solutions per Rescued Reaction |
|---|---|---|
| KEGG (Known reactions) | 53 | 2.3 |
| ATLAS of Biochemistry (Known & Hypothetical) | 93 | 252.5 |

Table 2: Outcomes of Applied Framework on E. coli iML1515 Model [19] [9]

| Metric | Result |
|---|---|
| Identified False Essential Gene Predictions | 148 genes |
| Associated False Essential Reactions | 152 reactions |
| New Reactions Proposed | 77 reactions |
| Candidate E. coli Genes Proposed | 35 genes |
| Resolved Metabolic Gaps | 47% |
| Accuracy Increase in Gene Essentiality Prediction (iEcoMG1655) | 23.6% |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Databases for the Workflow

| Item Name | Type | Function / Description |
|---|---|---|
| ATLAS of Biochemistry | Reaction Database | A comprehensive database of known and over 150,000 hypothetical biochemical reactions, providing the solution space for novel metabolic pathways [19] [9]. |
| BridgIT | Tool / Algorithm | A computational method that maps biochemical reactions, including hypothetical ones from ATLAS, to candidate enzymes and genes in a genome [19] [9]. |
| NICEgame | Computational Workflow | The core workflow that identifies and curates non-annotated metabolic functions in genomes using GEMs and the ATLAS database [19]. |
| Genome-Scale Model (GEM) | Model / Data Structure | A mathematical representation of an organism's metabolism used to simulate metabolic capabilities and identify gaps [19] [9]. |
| CHESHIRE | Tool / Algorithm | A deep learning-based method for gap-filling that uses network topology alone, useful for large networks or when phenotypic data is scarce [5]. |

Troubleshooting Guides

Installation and Dependencies

Q: What are the common installation errors and how can I resolve them?

| Error Message | Possible Cause | Solution |
|---|---|---|
| 'lib = "../R/library"' is not writable | R library directory permissions [20] | Run: Rscript -e 'if( file.access(Sys.getenv("R_LIBS_USER"), mode=2) == -1 ) dir.create(path = Sys.getenv("R_LIBS_USER"), showWarnings = FALSE, recursive = TRUE)' [20] |
| Error: Unknown argument: "qcov_hsp_perc" | Outdated NCBI BLAST+ version [20] | Upgrade to BLAST+ version 2.2.30 (10/2014) or newer [20] |
| Blast test fails in Singularity | Tool downloads data into read-only repository [21] | Clone the GitHub repo in your home/project folder, not in the container itself [21] |
| Missing R packages | Packages not installed in correct R environment [20] [21] | Run the R installation commands from the gapseq documentation [20] |

Q: How do I install and configure gapseq for different operating systems?

The following table summarizes the key system dependencies for different environments.

| System | Dependencies (Command Line) | R Packages [20] [21] |
|---|---|---|
| Ubuntu/Debian/Mint | sudo apt install ncbi-blast+ git libglpk-dev r-base-core exonerate bedtools barrnap bc parallel curl libcurl4-openssl-dev libssl-dev libsbml5-dev [20] | data.table, stringr, getopt, R.utils, stringi, jsonlite, httr, pak, Biostrings, Waschina/cobrar [20] |
| Centos/Fedora/RHEL | sudo yum install ncbi-blast+ git glpk-devel BEDTools exonerate hmmer bc parallel libcurl-devel curl openssl-devel libsbml-devel [20] | Same as above [20] |
| MacOS (Homebrew) | brew install coreutils binutils git glpk blast bedtools r brewsci/bio/barrnap grep bc gzip parallel curl brewsci/bio/libsbml [20] | Same as above (note: some macOS-specific issues may occur) [20] |
| Conda (Stable) | conda create -c conda-forge -c bioconda -n gapseq gapseq [20] | Pre-installed in the conda environment [20] |

Workflow Execution and Performance

Q: My "doall" run is taking several hours. Is this normal?

Yes, this is expected behavior. The gapseq doall command is a comprehensive workflow that can take up to four hours for a single genome, as noted in the documentation [22]. The process involves multiple computationally intensive steps: homology searches (find), draft network reconstruction (draft), and gap-filling (fill) [23] [22]. For high-throughput analyses, consider leveraging the newer pan-Draft module, which uses a pan-reactome-based approach to reconstruct species-representative models from multiple genomes more efficiently [24].
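Because each `gapseq doall` run is independent, several genomes can be processed side by side when cores and memory allow. The Python helper below is a hypothetical sketch, not part of gapseq: it assumes the `gapseq` executable is on `PATH`, passes only the genome file to `gapseq doall`, and assumes each run names its outputs after its input file, so genomes need distinct file names. Keep `max_workers` small, since each run may itself be computationally heavy.

```python
import shutil
import subprocess
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def build_commands(genomes):
    """Return one `gapseq doall <genome>` command per input FASTA file."""
    return [["gapseq", "doall", str(Path(g))] for g in genomes]


def run_batch(genomes, max_workers=2):
    """Run gapseq doall for each genome concurrently (hypothetical helper).

    Returns the exit codes in input order. Assumes output files are named after
    each input file, so genomes should have distinct file names.
    """
    if shutil.which("gapseq") is None:
        raise RuntimeError("gapseq executable not found on PATH")
    commands = build_commands(genomes)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Each worker thread just waits on one external gapseq process.
        results = pool.map(lambda cmd: subprocess.run(cmd, check=False).returncode, commands)
        return list(results)
```

For example, `run_batch(["genome1.fna", "genome2.fna"], max_workers=2)` would return one exit code per genome, in input order (file names illustrative).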

Q: How can I improve the solver performance for gap-filling large networks?

gapseq uses Linear Programming (LP) for its gap-filling algorithm [23]. While GLPK is the default open-source solver, you can install and configure the commercial CPLEX solver, which is typically faster [20]. CPLEX is available for free to students and academics through the IBM Academic Initiative. After installing CPLEX, you can install the R interface cobrarCPLEX from GitHub (Waschina/cobrarCPLEX) to enable this integration [20].

Frequently Asked Questions (FAQs)

General Usage

Q: What input formats does gapseq accept?

gapseq is flexible and requires only a genome sequence in FASTA format as its primary input. It does not need a pre-computed annotation file, as it performs its own annotation internally [23].

Q: Can gapseq be used for eukaryotes or archaea?

The current version of gapseq and its core biochemistry database are primarily optimized for bacterial metabolism [23]. The developers note that archaea-specific and eukaryotic-specific reactions are not fully included but are planned for a future release [23].

Q: What is the pan-Draft module and how does it improve scalability?

pan-Draft is an extension integrated into the gapseq pipeline that addresses a key challenge in scalability: generating high-quality models from incomplete Metagenome-Assembled Genomes (MAGs) [24]. Instead of building a model from a single, often fragmented genome, pan-Draft leverages multiple MAGs from the same species cluster. It performs a pan-reactome analysis to determine a solid core set of metabolic reactions, resulting in a more complete and accurate species-level model [24]. This is particularly valuable for large-scale studies of uncultured species.
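The core idea of a pan-reactome filter can be illustrated with a frequency threshold: a reaction is kept for the species-level model if it appears in a sufficient fraction of the MAGs in the cluster. This is a simplified sketch under that assumption; the MAG and reaction identifiers and the `min_frequency` value are hypothetical, and pan-Draft's actual criteria may differ.

```python
from collections import Counter


def pan_reactome_core(mag_reactions, min_frequency=0.5):
    """Keep reactions found in at least `min_frequency` of the MAGs in a cluster.

    mag_reactions: dict mapping a MAG identifier to the reaction IDs predicted
    for that MAG. The threshold is an illustrative parameter.
    """
    if not mag_reactions:
        return []
    n_mags = len(mag_reactions)
    # Count each reaction at most once per MAG.
    counts = Counter(r for rxns in mag_reactions.values() for r in set(rxns))
    return sorted(r for r, c in counts.items() if c / n_mags >= min_frequency)


# Hypothetical cluster of four incomplete MAGs
cluster = {
    "MAG1": {"r1", "r2", "r3"},
    "MAG2": {"r1", "r2"},
    "MAG3": {"r1", "r4"},
    "MAG4": {"r1", "r2", "r5"},
}
core = pan_reactome_core(cluster, min_frequency=0.5)  # ['r1', 'r2']
```

Reactions missing from a single fragmented MAG (here `r2` in MAG3) still enter the core, while reactions seen in only one MAG are dropped at this threshold.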

Interpreting Results

Q: How accurate are gapseq's predictions compared to other tools?

gapseq has been benchmarked against other automated tools like CarveMe and ModelSEED. The following table summarizes its performance based on experimental data.

| Prediction Type | gapseq Performance | Comparison to CarveMe/ModelSEED | Validation Basis |
|---|---|---|---|
| Enzyme Activity | 53% True Positive Rate [23] | Outperforms CarveMe (27%) and ModelSEED (30%) [23] | 10,538 enzyme activity tests for 3,017 organisms [23] |
| Fermentation Products & Carbon Utilization | High accuracy in predicting metabolic phenotypes [23] | Outperforms state-of-the-art tools [23] | Scientific literature and experimental data for 14,931 bacterial phenotypes [23] |
| Pathway Prediction | Based on key enzyme detection and reaction completeness [25] | N/A | Internal curated database and homology searches [23] [25] |

Q: How do I interpret the main output files from gapseq find?

- `*-Pathways.tbl`: This file details the predicted metabolic pathways. Key columns include `Prediction` (true/false for pathway presence), `Completeness` (% of reactions found), and `KeyReactionsFound` (number of key enzymes detected) [25].
- `*-Reactions.tbl`: This file lists all checked reactions and the evidence for them. The `status` column indicates the homology search result (e.g., `good_blast`, `no_blast`), and the `pathway.status` column explains why a pathway was predicted (e.g., `full`, `keyenzyme`) [25].
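A short script can pull the predicted pathways out of a `*-Pathways.tbl` file for downstream comparison. This sketch assumes a tab-separated file with a header row containing `ID`, `Prediction`, `Completeness`, and `KeyReactionsFound`, and treats lines starting with `#` as comments; check these assumptions against your gapseq version's output. The sample rows are made up.

```python
import csv
import io


def predicted_pathways(lines, min_completeness=0.0):
    """Return (ID, Completeness, KeyReactionsFound) for pathways predicted present.

    Assumes a tab-separated table with a header row; lines starting with '#'
    are skipped as comments. Results are sorted by completeness, highest first.
    """
    data = (line for line in lines if not line.startswith("#"))
    hits = []
    for row in csv.DictReader(data, delimiter="\t"):
        try:
            completeness = float(row["Completeness"])
        except ValueError:
            continue  # skip rows without a numeric completeness value
        if row["Prediction"].strip().lower() == "true" and completeness >= min_completeness:
            hits.append((row["ID"], completeness, row["KeyReactionsFound"]))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Made-up rows standing in for a real *-Pathways.tbl file
SAMPLE = (
    "ID\tName\tPrediction\tCompleteness\tKeyReactionsFound\n"
    "PWY-A\tpathway A\ttrue\t100\t3\n"
    "PWY-B\tpathway B\ttrue\t85.7\t2\n"
    "PWY-C\tpathway C\tfalse\t20\t0\n"
)
print(predicted_pathways(io.StringIO(SAMPLE), min_completeness=90))  # [('PWY-A', 100.0, '3')]
```

For a real file, pass an open file handle instead of the `StringIO` sample.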

Visualized Workflows

Core gapseq Workflow

The following diagram illustrates the standard workflow for reconstructing a metabolic model from a single genome using gapseq, integrating the individual commands into a logical pipeline [22].

pan-Draft Workflow for Scalable Reconstruction

For large-scale studies, the pan-Draft module provides a more robust and scalable workflow by leveraging multiple genomes from the same species cluster to overcome the limitations of individual, often incomplete, MAGs [24].

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key resources and materials used in a typical gapseq experiment for metabolic network reconstruction.

| Item | Function / Purpose | Example / Note |
|---|---|---|
| Genomic Sequence | Primary input for predicting metabolic potential. Format: FASTA [22] | Can be a complete genome or a Metagenome-Assembled Genome (MAG) [24]. |
| Curated Reaction Database | Universal set of biochemical reactions and pathways used for annotation and model building [23] | gapseq uses a manually curated database derived from ModelSEED, comprising ~15,150 reactions [23]. |
| Reference Protein Sequences | Dataset of known enzyme sequences used for homology searches (BLAST) [23] | Sourced from UniProt and TCDB; updated automatically by gapseq [23]. |
| Growth Medium Definition | List of available metabolites in the environment; crucial for the gap-filling step [22] | A CSV file specifying extracellular metabolites. Pre-defined media are included (e.g., TSBmed.csv) [22]. |
| Linear Programming (LP) Solver | Software that performs the optimization during gap-filling and Flux Balance Analysis (FBA) [23] [20] | GLPK (open-source, default) or CPLEX (commercial, faster, free for academics) [20]. |

Optimizing Performance and Overcoming Common Pitfalls in Large-Scale Gap-Filling

Frequently Asked Questions (FAQs)

1. What is reaction pool curation and why is it critical for gap-filling? Reaction pool curation is the process of selecting and managing a database of biochemical reactions used to fill knowledge gaps in genome-scale metabolic models (GEMs). The quality and composition of this pool directly impact the accuracy and computational cost of gap-filling. A poorly curated pool can lead to biologically irrelevant solutions, while an overly large one makes the optimization problem prohibitively expensive to solve [5].

2. How do I choose between different gap-filling algorithms? The choice depends on your specific goals and available data. Optimization-based methods like ModelSEED, which use Linear Programming (LP), are well established for ensuring growth on a specified medium [10]. For scenarios where no phenotypic data is available, topology-based machine learning methods like CHESHIRE can predict missing reactions using only the network structure, often outperforming earlier topology-based methods such as NHP and C3MM [5].

3. What is the trade-off between using LP vs. MILP in gapfilling? KBase's experience shows that a Linear Programming (LP) formulation, which minimizes the sum of flux through gapfilled reactions, often finds solutions just as minimal as the more complex Mixed-Integer Linear Programming (MILP) but requires far less computation time. While MILP guarantees a minimal set of reactions, LP's minimization of total flux typically results in a similarly minimal set of reactions when using a stoichiometrically consistent database [10].

4. Why does my gapfilled model include seemingly irrelevant reactions? Gapfilling algorithms prioritize network functionality (e.g., biomass production) over biological precision. Reactions are added from the pool based on a cost function, which may penalize, but not entirely exclude, less likely reactions (e.g., transporters or non-KEGG reactions). The solution is a mathematical prediction that requires manual curation to ensure biological relevance [10].

5. How does the selection of a growth medium influence the gapfilling solution? The chosen medium dictates which nutrients the model can import. Using "complete" media will cause the algorithm to add many transport reactions, as all transportable compounds are available. Using a minimal, biologically relevant medium is often recommended for an initial gapfill, as it forces the model to biosynthesize essential substrates, leading to a more functionally complete metabolic network [10].

Troubleshooting Guides

Problem 1: High Computational Cost and Long Run Times

Issue: Gapfilling a large metabolic network is taking too long or failing to complete.

Solutions:

- Switch to LP Formulation: If using a MILP-based gapfilling tool, see if an LP alternative is available. As demonstrated in KBase, this can drastically reduce compute time with minimal impact on solution quality [10].

- Curate the Reaction Pool: Prune the universal reaction pool to remove biologically irrelevant reactions for your organism (e.g., plant-specific reactions in a bacterial model). This reduces the search space for the algorithm.

- Leverage Topology-Based Pre-Screening: Use a fast, topology-based method like CHESHIRE to generate a shortlist of high-probability missing reactions. This refined pool can then be fed into a more computationally intensive optimization-based gapfiller [5].
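The pruning and pre-screening steps above can be combined into a single filter over the candidate pool. In this sketch, the taxonomic annotations and scores are hypothetical placeholders: in practice, the scores would come from a topology-based predictor such as CHESHIRE, and the scope labels from whatever annotation your reaction database provides.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PoolReaction:
    rxn_id: str
    taxa: frozenset   # hypothetical annotation of where the reaction is known to occur
    score: float      # hypothetical confidence from a topology-based predictor


def curate_pool(pool, organism_taxon, top_k, min_score=0.0):
    """Drop out-of-scope or low-scoring reactions, then keep the top_k by score."""
    kept = [r for r in pool if organism_taxon in r.taxa and r.score >= min_score]
    kept.sort(key=lambda r: r.score, reverse=True)
    return [r.rxn_id for r in kept[:top_k]]


pool = [
    PoolReaction("rxn_a", frozenset({"bacteria"}), 0.92),
    PoolReaction("rxn_b", frozenset({"plants"}), 0.99),            # removed: wrong taxon
    PoolReaction("rxn_c", frozenset({"bacteria", "fungi"}), 0.40),
    PoolReaction("rxn_d", frozenset({"bacteria"}), 0.75),
    PoolReaction("rxn_e", frozenset({"bacteria"}), 0.10),          # removed: low score
]
shortlist = curate_pool(pool, "bacteria", top_k=3, min_score=0.2)  # ['rxn_a', 'rxn_d', 'rxn_c']
```

The resulting shortlist, rather than the universal database, would then be passed as the candidate pool to the optimization-based gapfiller.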

Problem 2: Biologically Implausible Gapfilling Solutions

Issue: The model grows after gapfilling, but the added reactions are not genetically encoded or are inappropriate for the organism.

Solutions:

- Adjust Reaction Costs: Many gapfilling algorithms use a cost function. Increase the penalty for adding reactions from less trusted sources, such as non-KEGG reactions or those with unknown thermodynamics [10].

- Iterative Gapfilling with Manual Curation: Do not accept the first solution. Manually review the added reactions, disable those that are implausible (e.g., by setting their flux bounds to zero), and re-run the gapfilling to find an alternative solution [10].

- Use a Context-Specific Reaction Pool: Instead of a universal database, use a reaction pool tailored to your organism's phylogeny or ecological niche to improve biological relevance.
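Reaction costs and iterative exclusion fit into the same LP formulation: costs weight the flux through each pool reaction, and a rejected reaction is fixed to zero flux before re-solving. The network, the `kegg`/`transport` flags, and the penalty values below are hypothetical, chosen only to show how a penalty and a curator's exclusion change the solution; the sketch requires SciPy.

```python
import numpy as np
from scipy.optimize import linprog

METABOLITES = ["A", "B", "C"]
# Hypothetical network. "draft" reactions are already in the model; the rest form the pool.
REACTIONS = {
    "EX_A":    {"stoich": {"A": 1.0}, "draft": True},
    "BIOMASS": {"stoich": {"C": -1.0}, "draft": True},
    "R1":      {"stoich": {"A": -1.0, "B": 1.0}, "draft": False, "kegg": True},
    "R2":      {"stoich": {"B": -1.0, "C": 1.0}, "draft": False, "kegg": True},
    "R_alt":   {"stoich": {"A": -1.0, "C": 1.0}, "draft": False, "kegg": False},
}


def reaction_cost(meta, non_kegg_penalty=5.0, transport_penalty=3.0):
    """Illustrative cost function: base cost 1, higher for less trusted reactions."""
    cost = 1.0
    if not meta.get("kegg", True):
        cost += non_kegg_penalty
    if meta.get("transport", False):
        cost += transport_penalty
    return cost


def weighted_gapfill(excluded=frozenset(), min_biomass=1.0, vmax=10.0):
    """Minimise cost-weighted flux through pool reactions; excluded ones are fixed to zero."""
    names = list(REACTIONS)
    S = np.zeros((len(METABOLITES), len(names)))
    for j, name in enumerate(names):
        for met, coef in REACTIONS[name]["stoich"].items():
            S[METABOLITES.index(met), j] = coef
    c = np.array([0.0 if REACTIONS[nm]["draft"] else reaction_cost(REACTIONS[nm]) for nm in names])
    bounds = [
        (min_biomass if nm == "BIOMASS" else 0.0, 0.0 if nm in excluded else vmax)
        for nm in names
    ]
    res = linprog(c, A_eq=S, b_eq=np.zeros(len(METABOLITES)), bounds=bounds, method="highs")
    if not res.success:
        return None  # no feasible solution with the current exclusions
    return [nm for j, nm in enumerate(names) if not REACTIONS[nm]["draft"] and res.x[j] > 1e-9]


# Curation loop: reject an implausible reaction and re-run to get an alternative.
first = weighted_gapfill()          # ['R1', 'R2']: cost 2 beats the non-KEGG R_alt (cost 6)
second = weighted_gapfill({"R1"})   # ['R_alt']: the curator rejected R1
```

If every alternative route is excluded, the function returns `None`, signalling that the pool itself must be widened or the exclusions revisited.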

Problem 3: Model Fails to Grow After Gapfilling

Issue: Even after gapfilling, the model is still unable to produce biomass on the expected medium.

Solutions:

- Verify Media Composition: Double-check that your growth medium includes all essential nutrients and that the corresponding exchange reactions are open.

- Inspect Dead-End Metabolites: Identify metabolites that cannot be produced or consumed. Their presence may indicate gaps that the algorithm failed to fill, requiring a broader reaction pool or manual intervention.

- Stack Gapfilling Solutions: Perform an initial gapfill on a rich ("complete") media to ensure all basic metabolic functions are present. Then, gapfill the same model a second time on your target minimal media to add only the additional reactions required for growth in that condition [10].
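Root dead-end metabolites can be found directly from reaction stoichiometry and reversibility. This minimal sketch flags metabolites that no reaction can produce or no reaction can consume; it does not propagate blockage through the network (a metabolite reachable only via a dead-end reaction is not flagged), and the toy network is hypothetical.

```python
def find_dead_ends(reactions):
    """Return metabolites that are never produced or never consumed.

    reactions: dict mapping reaction ID -> (stoichiometry dict, reversible flag).
    Negative coefficients are substrates, positive ones products; a reversible
    reaction can both produce and consume each of its metabolites.
    """
    produced, consumed = set(), set()
    for stoich, reversible in reactions.values():
        for met, coef in stoich.items():
            if reversible or coef > 0:
                produced.add(met)
            if reversible or coef < 0:
                consumed.add(met)
    return sorted((produced | consumed) - (produced & consumed))


network = {
    "EX_a":    ({"a": 1}, True),            # exchange: a can enter or leave
    "R1":      ({"a": -1, "b": 1}, False),
    "R2":      ({"b": -1, "c": 1}, False),
    "R3":      ({"a": -1, "d": 1}, False),  # d is produced but never consumed
    "R4":      ({"e": -1, "c": 1}, False),  # e is consumed but never produced
    "BIOMASS": ({"c": -1}, False),
}
print(find_dead_ends(network))  # ['d', 'e']
```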

Quantitative Performance of Gap-filling Methods

The table below summarizes the performance of various methods for predicting missing reactions, a key part of reaction pool curation.

| Method | Type | Key Feature | Reported Performance | Reference |
|---|---|---|---|---|
| CHESHIRE | Topology-based (ML) | Uses hypergraph learning and Chebyshev spectral graph CNNs. | Outperformed NHP and C3MM in tests on 926 GEMs. | [5] |
| NHP (Neural Hyperlink Predictor) | Topology-based (ML) | Approximates hypergraphs as graphs for node feature generation. | Lower performance than CHESHIRE in comparative benchmarks. | [5] |
| C3MM (Clique Closure-based Coordinated Matrix Minimization) | Topology-based (ML) | Integrated training-prediction process. | Lower performance than CHESHIRE; limited scalability. | [5] |
| ModelSEED Gapfill | Optimization-based | Uses LP to minimize flux through gapfilled reactions. | Solutions found to be as minimal as MILP solutions, with faster computation. | [10] |
| SynRBL (Rule-based) | Rebalancing | Rule-based for non-carbon compounds; maximum common substructure (MCS)-based for carbon compounds. | 81.19% to 99.33% accuracy for carbon compounds. | [26] |

Experimental Protocols

Protocol 1: Topology-Based Gapfilling with CHESHIRE

This protocol is for predicting missing reactions using only the network topology of a GEM [5].

- Input Preparation: Represent your metabolic network as a hypergraph, where each reaction is a hyperlink connecting all its substrate and product metabolites.

- Feature Initialization: Generate an initial feature vector for each metabolite using an encoder-based neural network applied to the hypergraph incidence matrix.

- Feature Refinement: Refine the metabolite features using a Chebyshev Spectral Graph Convolutional Network (CSGCN) on a decomposed graph to capture metabolite-metabolite interactions.

- Pooling and Scoring: Pool the refined metabolite features into a single feature vector for each candidate reaction. Feed this vector into a neural network to produce a confidence score for the reaction's existence.

- Validation: The method's efficacy can be internally validated by artificially removing known reactions and assessing recovery rates (AUROC). External validation involves testing if the gapfilled model improves predictions for metabolic phenotypes like fermentation product secretion.
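Two pieces of this protocol are easy to sketch independently of the neural network itself: the hypergraph input (as a binary metabolite-by-reaction incidence matrix) and the AUROC used in the artificial-removal validation. The code below is not CHESHIRE; the toy network is hypothetical and the scores passed to `auroc` are made-up stand-ins for a trained model's outputs on held-out and negative (fake) reactions.

```python
import numpy as np


def incidence_matrix(reactions, metabolites):
    """Binary metabolite x reaction matrix: each reaction is a hyperlink joining
    all of its substrates and products (stoichiometry and direction are ignored)."""
    row = {m: i for i, m in enumerate(metabolites)}
    H = np.zeros((len(metabolites), len(reactions)), dtype=int)
    for j, (substrates, products) in enumerate(reactions):
        for m in set(substrates) | set(products):
            H[row[m], j] = 1
    return H


def mask_reactions(H, held_out):
    """Remove held-out reaction columns to build the training hypergraph."""
    return np.delete(H, list(held_out), axis=1)


def auroc(pos_scores, neg_scores):
    """AUROC as the probability that a held-out true reaction outscores a negative
    reaction, counting ties as one half (Mann-Whitney formulation)."""
    pos = np.asarray(pos_scores, dtype=float)[:, None]
    neg = np.asarray(neg_scores, dtype=float)[None, :]
    return float(np.mean((pos > neg) + 0.5 * (pos == neg)))


metabolites = ["A", "B", "C", "D"]
reactions = [(["A"], ["B"]), (["B", "C"], ["D"]), (["A"], ["C"])]
H = incidence_matrix(reactions, metabolites)
H_train = mask_reactions(H, held_out=[2])   # hide the third reaction from training
print(auroc([0.9, 0.8, 0.4], [0.5, 0.3]))   # 5 of 6 pairs ranked correctly
```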

Protocol 2: Optimization-Based Gapfilling with ModelSEED

This protocol uses the KBase framework to enable model growth on a specified medium [10].