Statistical Validation of E. coli Flux Balance Analysis: Methods, Metrics, and Model Selection

Abstract

This article provides a comprehensive guide for researchers and scientists on statistically validating Flux Balance Analysis (FBA) predictions for Escherichia coli metabolism. It covers foundational principles, from the structure of E. coli metabolic models such as the genome-scale iML1515 and the medium-scale iCH360 to advanced techniques for model selection and accuracy assessment. We detail methodological applications, including the use of high-throughput mutant fitness data and enzyme constraints to refine predictions. The article further addresses common troubleshooting scenarios and optimization strategies, such as correcting for vitamin availability and refining gene-protein-reaction mappings. Finally, we present a comparative evaluation of validation metrics and emerging machine-learning approaches, offering a robust framework to enhance confidence in FBA for biomedical and biotechnological applications.

Foundations of E. coli FBA and the Imperative for Statistical Validation

Constraint-Based Modeling (CBM) is a mathematical framework used to simulate and analyze cellular metabolism at a systems level. By applying known constraints that represent physico-chemical and biological limitations, these models can predict metabolic behavior without requiring detailed kinetic parameters, which are often unavailable [1]. The core principle is to define the solution space of all flux distributions a given network can support, then use constraints to narrow this space to biologically relevant solutions.

Flux Balance Analysis (FBA) is the most widely used computational method within the CBM framework. FBA calculates the flow of metabolites through a metabolic network, enabling the prediction of growth rates, nutrient uptake, and byproduct secretion [1] [2]. A key strength of FBA is its ability to predict optimal metabolic states, such as maximizing biomass production for a given set of nutrients, making it particularly valuable for metabolic engineering applications aimed at optimizing the production of target compounds in E. coli [2].
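In conventional constraint-based notation, the FBA problem is a linear program. The symbols are the standard ones rather than definitions taken from the cited works. S is the stoichiometric matrix and v is the flux vector. c is a weight vector, typically 1 for the biomass reaction and 0 elsewhere. lb and ub are the lower and upper flux bounds.

```latex
\begin{aligned}
\max_{v}\quad & c^{\top} v \\
\text{subject to}\quad & S\,v = 0 \\
& lb_j \le v_j \le ub_j \quad \text{for each reaction } j
\end{aligned}
```

The equality constraint encodes the steady-state assumption. The bounds carry medium composition, reaction irreversibility and any measured uptake or secretion rates.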

Comparative Analysis of E. coli Metabolic Models

The predictive accuracy and computational tractability of FBA depend heavily on the quality and scope of the underlying Genome-Scale Metabolic Model (GEM). Several generations of E. coli GEMs have been developed, each with expanding coverage and refinement. The table below summarizes key E. coli metabolic models relevant for FBA.

Table 1: Comparison of Key E. coli Metabolic Models for FBA

| Model Name | Genes | Reactions | Metabolites | Key Features & Applications | Key References |
|---|---|---|---|---|---|
| iML1515 | 1,515 | 2,712 | 1,192 | The most complete reconstruction for E. coli K-12 MG1655; well-curated; used as a base for enzyme-constrained modeling. [1] [3] | [1] |
| iCH360 | 360 | 547 | 360 | Manually curated "Goldilocks-sized" model of core/biosynthetic metabolism; derived from iML1515; high interpretability and rich annotation. [4] | [4] |
| k-ecoli457 | N/A | 457 | 337 | Genome-scale kinetic model; satisfies flux data for 25 mutant strains; superior yield prediction over FBA but more complex. [5] | [5] |
| iJO1366 | 1,366 | 2,255 | 1,135 | Earlier genome-scale model; used as a template for medium design and recombinant protein production studies. [2] | [2] |

| iJO1366 | 1,366 | 2,255 | 1,135 | Earlier genome-scale model; used as a template for medium design and recombinant protein production studies. [2] | [2] |

Beyond the core stoichiometric models, specialized formulations have been developed to incorporate additional biological layers. Enzyme-Constrained Models (ecModels), such as those created using the ECMpy workflow, integrate catalytic capacity to avoid predictions of unrealistically high fluxes and improve prediction accuracy [1]. Models of Metabolism and Expression (ME-models), like the rETFL formulation, simulate proteome allocation and can predict the metabolic burden associated with recombinant protein expression, providing crucial insights for biopharmaceutical production [6].
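A pooled enzyme-capacity constraint can be sketched as a check on the enzyme mass implied by a flux vector, Σ|v_i|·MW_i/kcat_i. That mass must stay within a budget, written here as total protein content times an assumed enzyme mass fraction. This is a simplified form for illustration only; ECMpy's published formulation may include further terms. The reactions, kcat values, molecular weights and budget below are hypothetical.

```python
# Hypothetical pooled enzyme-capacity check; all names and numbers are illustrative.
SECONDS_PER_HOUR = 3600.0


def enzyme_mass_demand(fluxes, kcat_per_s, mw_kda):
    """Enzyme mass (g/gDW) needed to carry the given fluxes.

    fluxes: mmol/gDW/h; kcat_per_s: 1/s; mw_kda: kDa, numerically equal to g/mmol.
    """
    total = 0.0
    for rxn, v in fluxes.items():
        kcat_per_h = kcat_per_s[rxn] * SECONDS_PER_HOUR
        # |v| / kcat is mmol enzyme per gDW; multiplying by MW (g/mmol) gives g per gDW.
        total += abs(v) / kcat_per_h * mw_kda[rxn]
    return total


def within_enzyme_budget(fluxes, kcat_per_s, mw_kda, p_total, f_enzyme):
    """True if the enzyme demand fits the budget p_total * f_enzyme (g/gDW)."""
    return enzyme_mass_demand(fluxes, kcat_per_s, mw_kda) <= p_total * f_enzyme


# Hypothetical two-reaction example.
kcat = {"R1": 100.0, "R2": 10.0}  # 1/s
mw = {"R1": 50.0, "R2": 40.0}     # kDa
demand = enzyme_mass_demand({"R1": 10.0, "R2": 5.0}, kcat, mw)
ok = within_enzyme_budget({"R1": 10.0, "R2": 5.0}, kcat, mw, p_total=0.5, f_enzyme=0.4)
too_high = within_enzyme_budget({"R1": 10.0, "R2": 5000.0}, kcat, mw, p_total=0.5, f_enzyme=0.4)
```

In an enzyme-constrained model, this sum is added as one more linear constraint of the LP. Reversible reactions are usually split into forward and backward directions so that the constraint stays linear. The solver then trades flux through slow, heavy enzymes against cheaper routes, which is what suppresses unrealistically high fluxes.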

Experimental Protocols for FBA

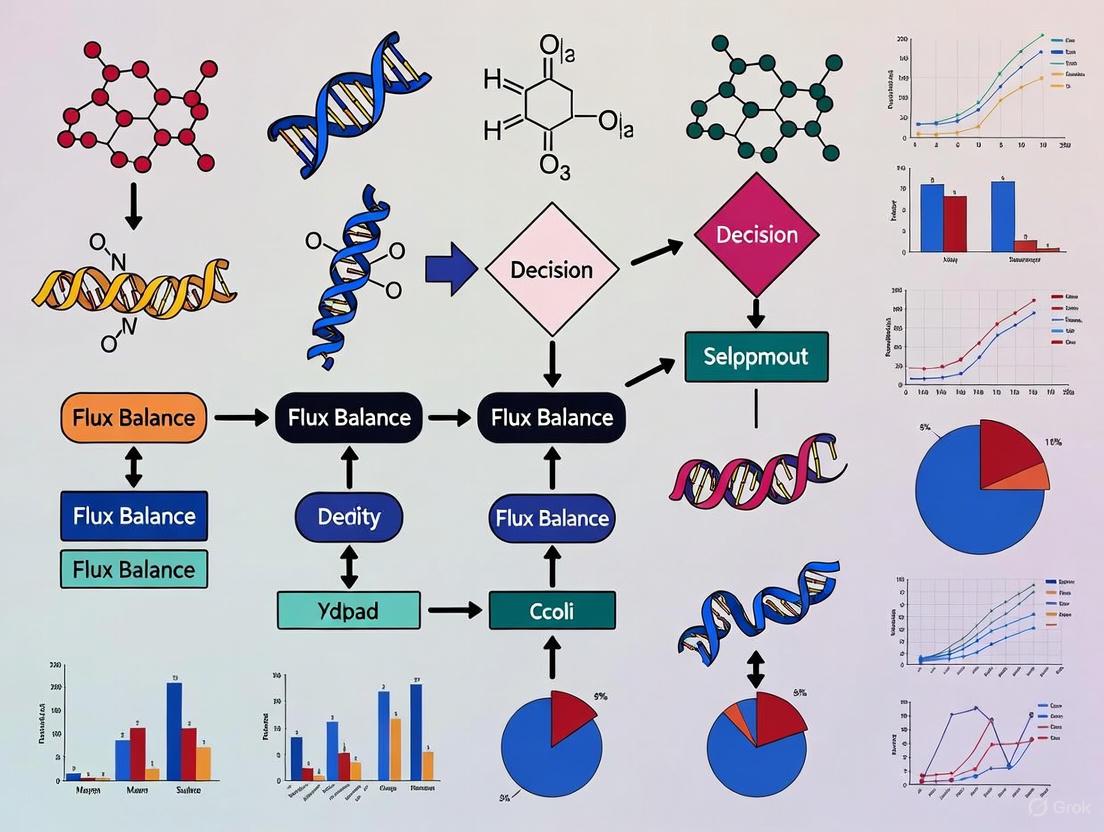

A standard FBA workflow involves multiple steps, from model selection and curation to simulation and validation. The following diagram outlines the core process for a typical FBA study in E. coli.

Diagram 1: Core FBA Workflow.

Protocol 1: Standard FBA for Growth Prediction

This protocol details the steps for performing a standard FBA to predict growth rates.

- Model Selection and Curation: Begin with a well-curated GEM like iML1515. Check and update Gene-Protein-Reaction (GPR) relationships and reaction directions based on authoritative databases like EcoCyc [1].

- Define Medium Composition: Set the upper and lower bounds of exchange reactions to reflect the experimental medium. For instance, in a defined SM1 medium, the glucose uptake rate (EX_glc__D_e) might be set to ~55.5 mmol/gDW/h, while other metabolites like sulfate and ammonium are similarly constrained [1].

- Set the Objective Function: Typically, the objective is set to maximize the biomass reaction, which represents the drain of biomass precursors for cellular growth.

- Solve the Model: Use a linear programming solver via packages like COBRApy to find the flux distribution that maximizes the objective function [1].

- Output and Validation: The primary output is the optimal growth rate and the underlying flux map. Predictions should be compared with experimental growth data.
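The steps above can be traced on a deliberately tiny, hypothetical network. Its only internal reaction is the biomass reaction, so the LP optimum has a closed form and no solver is needed. Uptake is written here as a positive capacity for readability; COBRA models usually express uptake as a negative lower bound on the exchange reaction. With a real GEM, the same steps would act on the model's exchange reactions and be solved with COBRApy.

```python
# Hypothetical toy network: a single biomass reaction consumes nutrients in fixed
# amounts, and each nutrient's uptake is capped by the medium definition.
BIOMASS_DEMAND = {"glc": 10.0, "nh4": 5.0}  # mmol nutrient per gDW of biomass


def max_growth(uptake_capacity, demand=BIOMASS_DEMAND):
    """Maximize biomass flux v_bio subject to demand[m] * v_bio <= uptake_capacity[m].

    For this one-reaction network the LP optimum is the smallest capacity/demand
    ratio. Nutrients missing from the medium have capacity 0 (no uptake allowed).
    Returns (growth rate in 1/h, limiting nutrient).
    """
    ratios = {m: uptake_capacity.get(m, 0.0) / need for m, need in demand.items()}
    limiting = min(ratios, key=ratios.get)
    return ratios[limiting], limiting


# Medium definition: uptake capacities in mmol/gDW/h; objective and solve happen in max_growth.
mu, limiting = max_growth({"glc": 8.0, "nh4": 8.0})
# Output and validation: compare with a hypothetical measured growth rate (1/h).
measured_mu = 0.72
relative_error = abs(mu - measured_mu) / measured_mu
```

Removing ammonium from the medium dictionary drives the prediction to zero growth with "nh4" as the limiting nutrient. This mirrors how an incorrectly closed exchange bound shows up in a real model.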

Protocol 2: FBA for Recombinant Metabolite Production

To engineer strains for overproduction, the objective function must be modified. This protocol outlines the process for maximizing the production of a target compound, such as L-cysteine.

- Model Customization: Incorporate genetic modifications. This involves altering kinetic parameters (e.g., Kcat values) to reflect mutant enzyme activity and updating gene abundances to account for modified promoters or plasmid copy numbers [1].

- Gap Filling: Identify and add missing reactions essential for the production pathway. For example, thiosulfate assimilation pathways for L-cysteine production were added to iML1515 via gap-filling [1].

- Lexicographic Optimization: Directly maximizing product export can lead to predictions of zero growth. To obtain realistic solutions, perform a two-step optimization:

- First, optimize for biomass.

- Second, constrain the model to maintain a percentage (e.g., 30%) of the maximum biomass and then optimize for product export [1].

- Output: The result is a flux distribution that supports both cell growth and high-yield production of the target metabolite.
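The two-step (lexicographic) scheme can be seen on a hypothetical two-flux network. The network is small enough to solve by enumerating the vertices of its feasible polygon instead of calling an LP solver. A real study would run the same two optimizations with COBRApy on iML1515. The network, yield and 30% threshold here are illustrative.

```python
from fractions import Fraction
from itertools import combinations


def solve_lp_2d(c, A, b):
    """Maximize c[0]*x0 + c[1]*x1 subject to A x <= b, by vertex enumeration.

    Assumes the feasible region is a non-empty, bounded polygon, so an optimum
    lies at an intersection of two constraint lines. Returns (value, (x0, x1)).
    """
    best = None
    for i, j in combinations(range(len(A)), 2):
        (a11, a12), (a21, a22) = A[i], A[j]
        det = Fraction(a11 * a22 - a12 * a21)
        if det == 0:
            continue  # parallel lines: no unique intersection point
        # Cramer's rule for the intersection of constraints i and j.
        x0 = (b[i] * a22 - a12 * b[j]) / det
        x1 = (a11 * b[j] - a21 * b[i]) / det
        if all(r0 * x0 + r1 * x1 <= rhs for (r0, r1), rhs in zip(A, b)):
            value = c[0] * x0 + c[1] * x1
            if best is None or value > best[0]:
                best = (value, (x0, x1))
    return best


# Hypothetical toy model: glucose uptake (capped at U) is split between a biomass
# route x0 and a product-export route x1; growth rate = YIELD * x0.
U = Fraction(10)                # mmol/gDW/h
YIELD = Fraction(1, 10)         # gDW per mmol glucose (illustrative)
A = [(1, 1), (-1, 0), (0, -1)]  # x0 + x1 <= U, x0 >= 0, x1 >= 0
b = [U, Fraction(0), Fraction(0)]

# Maximizing product export alone drives the biomass route to zero.
_, (bio_naive, prod_naive) = solve_lp_2d((0, 1), A, b)

# Step 1: maximize the biomass route.
max_bio, _ = solve_lp_2d((1, 0), A, b)

# Step 2: require at least 30% of maximal growth, then maximize product export.
A2 = A + [(-1, 0)]                     # -x0 <= -0.3 * max_bio
b2 = b + [-Fraction(3, 10) * max_bio]
prod_opt, (bio_opt, _) = solve_lp_2d((0, 1), A2, b2)
growth = YIELD * bio_opt
```

Here the single-step product optimum has zero growth. The two-step solution keeps 30% of the biomass flux and sends the remaining 70% of glucose to the product.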

Statistical Validation of FBA Predictions

Robust validation is critical for assessing the predictive power of FBA and guiding model improvements. High-throughput mutant fitness data provides a powerful resource for this task.

Validation Using Mutant Fitness Data

A 2023 study systematically evaluated the accuracy of four successive E. coli GEMs using published mutant fitness data across thousands of genes and 25 carbon sources [3]. The area under a precision-recall curve (AUPR) was identified as a more informative metric for quantifying model accuracy than alternative metrics [3]. This large-scale analysis pinpointed specific sources of prediction errors, highlighting that isoenzyme gene-protein-reaction mapping is a major source of inaccurate predictions [3]. Furthermore, the study used machine learning to identify that metabolic fluxes through hydrogen ion exchange and specific central metabolism branch points are important determinants of model accuracy [3].
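AUPR is commonly computed as average precision. Genes are ranked by the model's predicted fitness defect, and the precision at each rank where a true experimental positive appears is averaged. The sketch below is a plain-Python version with hypothetical scores and labels; it is not necessarily the exact procedure of the cited study.

```python
def average_precision(scores, labels):
    """Area under the precision-recall curve, computed as average precision (AP).

    scores: model confidence that a knockout has a fitness defect (higher = more confident).
    labels: 1 if the experiment shows the defect, 0 otherwise.
    Tied scores keep their input order, a simplification of proper tie handling.
    """
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("need at least one positive label")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    true_pos = 0
    total = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label:
            true_pos += 1
            total += true_pos / rank  # precision at the recall level reached here
    return total / n_pos


# Hypothetical scores for five gene knockouts and their experimental outcomes.
scores = [0.9, 0.8, 0.7, 0.6, 0.5]
labels = [1, 0, 1, 0, 0]
aupr = average_precision(scores, labels)
baseline = sum(labels) / len(labels)  # approximate AUPR of a random ranking
```

A random ranking scores roughly the positive prevalence (0.4 here) rather than 0.5. As a result, AUPR exposes poor performance on a rare positive class more clearly than ROC-based AUC does.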

Table 2: Key Metrics for FBA Model Validation

| Validation Method | Description | Application Example | Outcome |
|---|---|---|---|
| High-Throughput Mutant Screening | Compares predicted vs. actual growth of gene knockout mutants across many conditions. [3] | Quantifying accuracy of iML1515 using AUPR on fitness data for 25 carbon sources. [3] | Identifies isoenzyme GPR rules and vitamin availability as key areas for model refinement. [3] |
| Product Yield Correlation | Calculates correlation (e.g., Pearson's) between predicted and experimentally measured product yields. [5] | Comparing k-ecoli457 (R=0.84) against FBA (R=0.18) for 320 engineered strains. [5] | Kinetic models like k-ecoli457 can show significantly higher correlation than stoichiometric FBA. [5] |
| Fluxomics Comparison | Directly compares predicted internal fluxes with measured ¹³C-flux data. | Core kinetic model validation against wild-type and mutant flux data. [5] | Validates the accuracy of the predicted flux distribution in central metabolism. |
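The product-yield comparison in the table relies on a correlation coefficient. A minimal Pearson implementation is sketched below; the four strains and their yields are hypothetical and are not the k-ecoli457 data.

```python
import math


def pearson_r(predicted, measured):
    """Pearson correlation coefficient between predicted and measured values."""
    n = len(predicted)
    if n != len(measured) or n < 2:
        raise ValueError("need two equal-length sequences with at least two points")
    mp, mm = sum(predicted) / n, sum(measured) / n
    sxy = sum((p - mp) * (m - mm) for p, m in zip(predicted, measured))
    sxx = sum((p - mp) ** 2 for p in predicted)
    syy = sum((m - mm) ** 2 for m in measured)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical predicted vs. measured product yields for four strains.
predicted_yield = [0.10, 0.25, 0.40, 0.55]
measured_yield = [0.12, 0.20, 0.43, 0.50]
r = pearson_r(predicted_yield, measured_yield)
```

Pearson's r measures linear agreement only. A model with a systematic offset can still score highly, so r is usually reported alongside an error measure.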

Dynamic FBA for Bioprocess Optimization

Dynamic FBA (dFBA) extends FBA to incorporate time-varying changes in the extracellular environment, such as nutrient depletion. This is particularly valuable for designing fed-batch fermentation processes.

A 2022 study used dFBA with the iJO1366 model to optimize a chemically defined medium for recombinant scFv antibody production in E. coli [2]. The simulation predicted the depletion of ammonium during the process. To compensate, the model suggested supplementing the medium with the amino acids asparagine, glutamine, and arginine. Experimental validation confirmed that adding these amino acids led to an approximately two-fold increase in both growth rate and total recombinant protein expression compared to the base minimal medium [2]. This case demonstrates how GEMs can rationally guide medium design and feeding strategies to improve protein production.
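The static-optimization form of dFBA alternates two steps. First, kinetic uptake bounds are set from the current medium. Second, the biomass and substrate state is advanced with the fluxes that the inner optimization returns. In the sketch below, the inner LP is replaced by a fixed biomass yield, which is its optimum for a single-substrate, yield-limited toy network. A full implementation would re-solve a GEM such as iJO1366 at each step and track several nutrients, such as ammonium. All parameter values are hypothetical.

```python
def simulate_dfba(x0, s0, vmax, km, yield_xs, dt, t_end):
    """Static-optimization dFBA sketch with explicit Euler steps.

    x: biomass (gDW/L); s: substrate (mmol/L); vmax: mmol/gDW/h; km: mmol/L;
    yield_xs: gDW/mmol. Each step, Michaelis-Menten kinetics set the uptake bound
    and the inner FBA is replaced by a fixed yield (growth rate = yield * uptake).
    Returns a list of (time in h, biomass, substrate) tuples.
    """
    t, x, s = 0.0, x0, s0
    trajectory = [(t, x, s)]
    while t < t_end - 1e-9:
        uptake = vmax * s / (km + s)   # uptake bound from the current substrate level
        demand = uptake * x * dt       # substrate the culture would take up this step
        consumed = min(demand, s)      # cannot take up more substrate than remains
        # Biomass formed = yield * substrate consumed, i.e. mu * x * dt with
        # mu = yield * uptake whenever the substrate is not exhausted mid-step.
        x += yield_xs * consumed
        s -= consumed
        t += dt
        trajectory.append((t, x, s))
    return trajectory


# Hypothetical batch: 0.05 gDW/L inoculum, 20 mmol/L glucose.
traj = simulate_dfba(x0=0.05, s0=20.0, vmax=10.0, km=0.5, yield_xs=0.09, dt=0.01, t_end=10.0)
t_final, x_final, s_final = traj[-1]
```

Scanning the trajectory for the time at which a nutrient approaches zero is the in silico analogue of the ammonium-depletion prediction described above.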

The Scientist's Toolkit

Successful implementation of FBA requires a suite of computational tools, models, and databases. The table below lists essential resources for conducting FBA research in E. coli.

Table 3: Essential Research Reagents and Tools for E. coli FBA

| Tool / Resource | Type | Function in FBA | Key Features / Examples |
|---|---|---|---|
| Genome-Scale Models (GEMs) | Metabolic Network | Provides the stoichiometric matrix and network topology for simulations. | iML1515 [1], iJO1366 [2], iCH360 [4] |
| COBRApy | Software Package | A primary Python toolbox for performing CBM and FBA. | Used for model simulation, modification, and analysis. [1] |
| ECMpy | Software Package | Workflow for constructing enzyme-constrained models. | Adds enzyme capacity constraints without altering GEM structure. [1] |
| BRENDA Database | Kinetic Database | Source of enzyme kinetic parameters (e.g., Kcat). | Used to parameterize enzyme-constrained models. [1] |
| EcoCyc Database | Knowledge Base | Curated database of E. coli biology for model validation and curation. | Used to update GPR rules and verify metabolic pathways. [1] |
| PAXdb | Protein Abundance Database | Provides data on cellular protein concentrations. | Used to set constraints on total enzyme capacity. [1] |

Genome-scale metabolic models (GEMs) represent comprehensive knowledge bases of an organism's metabolism, mathematically encoding the biochemical reactions, gene-protein-reaction relationships, and transport processes that define metabolic capabilities [7]. For Escherichia coli K-12 MG1655, perhaps the best-characterized model organism, these reconstructions have evolved through iterative generations of refinement, each expanding genomic coverage and improving predictive accuracy. The conversion of these metabolic reconstructions into computational models enables quantitative phenotype prediction through methods such as flux balance analysis (FBA), which computes metabolic flux distributions by optimizing cellular objectives such as growth yield, subject to physicochemical and enzymatic constraints [8] [7].

Within the specific context of flux balance analysis research, statistical validation provides the critical foundation for model credibility and utility. As GEMs grow in complexity and scope, robust validation methodologies are essential to quantify prediction accuracy, identify model shortcomings, and guide future refinement efforts [8]. This comparison guide examines the progression of E. coli GEMs through the lens of statistical validation, highlighting how each model generation has been assessed against experimental data and how these evaluations have shaped our understanding of microbial metabolism.

The Evolutionary Trajectory of E. coli Metabolic Models

The development of E. coli metabolic models represents a remarkable case study in systems biology, demonstrating how iterative curation and expansion of biochemical knowledge has enhanced our ability to simulate cellular physiology. The table below chronicles this evolutionary trajectory, highlighting key expansions in model content and scope.

Table 1: Progression of E. coli Genome-Scale Metabolic Models

| Model Name | Publication Year | Genes | Reactions | Metabolites | Key Advances and Features |
|---|---|---|---|---|---|
| iJR904 | 2003 | 904 | 931 | 625 | Elementally and charge-balanced reactions; direct inclusion of GPR associations; updated quinone specificity in electron transport chain [9] |
| iAF1260 | 2007 | 1,260 | 2,077 | 1,039 | Expansion of transport and biosynthetic pathways; improved energy metabolism representation |
| iJO1366 | 2011 | 1,366 | 2,583 | 1,137 | Integration of new metabolic discoveries; enhanced predictive accuracy for gene essentiality |
| EcoCyc-18.0-GEM | 2014 | 1,445 | 2,286 | 1,453 | Automated generation from EcoCyc database; 23% more reactions than iJO1366; updated three times annually [10] |
| iML1515 | 2017 | 1,515 | 2,719 | 1,192 | Incorporation of reactive oxygen species metabolism; metabolite repair pathways; protein structural information; 3.7% increase in gene essentiality prediction accuracy over iJO1366 [11] |
| iCH360 | 2024 (preprint) | 360 | 562 | 360 | Manually curated medium-scale model focusing on central metabolism; enriched with thermodynamic and kinetic data; improved prediction realism [12] [4] |

This progression demonstrates a clear trend toward more comprehensive biochemical coverage, with the most recent genome-scale model (iML1515) encompassing roughly 1.7 times as many genes as the early iJR904 model (1,515 versus 904). However, the recent introduction of iCH360 represents a strategic pivot toward curated precision rather than expanded scope, addressing the tradeoffs between model comprehensiveness and biological realism [4].

Statistical Validation Frameworks for Metabolic Models

Robust validation is particularly challenging in metabolic modeling because in vivo metabolic fluxes cannot be directly measured and must be inferred from other data types [8]. The validation approaches discussed in this section provide the statistical foundation for evaluating model predictive performance.

Gene Essentiality Prediction

Gene essentiality prediction represents one of the most fundamental validation tests for GEMs, assessing a model's ability to correctly identify whether knockout of a specific gene will prevent growth under defined conditions. The standard validation protocol involves:

- Computational Gene Deletion: Simulating gene knockouts in silico using methods such as FBA with a biomass optimization objective

- Growth Phenotype Classification: Categorizing predictions as essential (no growth) or non-essential (growth)

- Experimental Comparison: Comparing predictions with empirical essentiality data from targeted knockouts or high-throughput mutant screens [11]

Statistical performance is typically quantified using metrics such as accuracy, precision, and recall, with the Matthews Correlation Coefficient (MCC) providing a balanced measure particularly useful for imbalanced datasets [11].
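The three steps can be sketched in a few lines. First, a simulated knockout/wild-type growth ratio is turned into an essential/non-essential call with a cutoff. The cutoff of 5% used here is an assumed value; studies use different thresholds. Second, the calls are compared with experimental calls. Third, the comparison is summarized as MCC. Gene IDs and values are hypothetical.

```python
import math


def classify_essential(growth_ratios, threshold=0.05):
    """Call a gene essential if knockout growth / wild-type growth < threshold."""
    return {gene: ratio < threshold for gene, ratio in growth_ratios.items()}


def confusion(predicted, observed):
    """Confusion-matrix counts with 'essential' as the positive class."""
    tp = sum(predicted[g] and observed[g] for g in observed)
    tn = sum(not predicted[g] and not observed[g] for g in observed)
    fp = sum(predicted[g] and not observed[g] for g in observed)
    fn = sum(not predicted[g] and observed[g] for g in observed)
    return tp, tn, fp, fn


def mcc(tp, tn, fp, fn):
    """Matthews Correlation Coefficient; 0.0 when a marginal total is zero."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0


# Hypothetical simulated growth ratios and experimental essentiality calls.
growth_ratios = {"g1": 0.00, "g2": 0.01, "g3": 0.80, "g4": 0.95, "g5": 0.03, "g6": 0.60}
observed = {"g1": True, "g2": True, "g3": True, "g4": False, "g5": False, "g6": False}
predicted = classify_essential(growth_ratios)
tp, tn, fp, fn = confusion(predicted, observed)
score = mcc(tp, tn, fp, fn)
```

Accuracy for this example is 4/6, but MCC is only 1/3. This gap is why MCC is preferred when essential genes are a small minority.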

Nutrient Utilization Prediction

This validation approach tests a model's ability to correctly predict growth capabilities across different nutrient conditions. The methodology encompasses:

- Environmental Specification: Defining the extracellular environment in the model by enabling specific carbon sources and other nutrients

- Growth Simulation: Performing FBA to predict growth capability

- Phenotypic Comparison: Comparing predictions with experimental growth data across hundreds of conditions [10]

The overall accuracy across all tested conditions serves as the primary metric, with condition-specific analyses identifying systematic prediction errors.
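Scoring growth/no-growth calls is simple, but splitting the mismatches by direction is what makes the comparison diagnostic. False negatives, where cells grow but the model does not, point to missing pathways or transporters. False positives suggest reactions that should be absent or constrained. The condition labels and outcomes below are hypothetical.

```python
def growth_call_accuracy(predicted, observed):
    """Score growth/no-growth predictions against experimental calls.

    predicted, observed: dicts mapping condition -> True (growth) / False (no growth).
    Returns overall accuracy plus false-positive and false-negative conditions.
    """
    conditions = sorted(observed)
    mismatches = [c for c in conditions if predicted[c] != observed[c]]
    accuracy = 1 - len(mismatches) / len(conditions)
    false_pos = [c for c in mismatches if predicted[c]]      # model grows, cells do not
    false_neg = [c for c in mismatches if not predicted[c]]  # cells grow, model does not
    return accuracy, false_pos, false_neg


# Hypothetical carbon-source conditions A-D.
predicted = {"A": True, "B": True, "C": False, "D": True}
observed = {"A": True, "B": False, "C": True, "D": True}
accuracy, false_pos, false_neg = growth_call_accuracy(predicted, observed)
```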

Mutant Fitness Correlation Analysis

A more recent and rigorous validation approach utilizes high-throughput mutant fitness data from techniques such as RB-TnSeq to quantitatively compare model predictions with experimental fitness measurements across thousands of genes and multiple growth conditions [13]. The key steps include:

- Condition-Specific Simulations: Simulating gene knockout effects across multiple environmental conditions

- Quantitative Fitness Comparison: Comparing simulated growth rates with experimental fitness measurements

- Precision-Recall Analysis: Computing precision-recall curves to evaluate prediction quality, with the area under the precision-recall curve (AUPR) providing a robust metric that appropriately handles dataset imbalance [13]

This approach offers more statistical power than binary essentiality classification and can identify subtle model inaccuracies.

Table 2: Statistical Validation Metrics for Metabolic Models

| Validation Method | Key Metrics | Advantages | Limitations |
|---|---|---|---|
| Gene Essentiality Prediction | Accuracy, Precision, Recall, Matthews Correlation Coefficient (MCC) | Binary classification simplifies analysis; extensive historical data for comparison | Does not validate internal flux distributions; sensitive to biomass composition |
| Nutrient Utilization Prediction | Overall accuracy, Condition-specific accuracy | Tests metabolic network completeness; identifies missing pathways | Qualitative (growth/no-growth) rather than quantitative |
| Mutant Fitness Correlation | Area Under Precision-Recall Curve (AUPR), Correlation coefficients | Quantitative assessment; condition-dependent evaluation; identifies subtle model errors | Requires extensive experimental data; complex statistical interpretation |
| Flux Prediction Validation | χ² goodness-of-fit, Confidence intervals for fluxes | Directly validates internal flux predictions; most physiologically relevant | Requires ¹³C-labeling data; technically challenging and resource-intensive |

Comparative Performance Analysis of E. coli GEMs

Gene Essentiality Prediction Accuracy

A critical validation of iML1515 demonstrated 93.4% accuracy in predicting gene essentiality across 16 different carbon sources, representing a 3.7% improvement over the iJO1366 model (89.8% accuracy) [11]. This evaluation used experimental genome-wide knockout screens of the KEIO collection (3,892 gene knockouts), with growth profiles quantitatively assessed through lag time, maximum growth rate, and growth saturation point measurements. When customized with condition-specific proteomics data to remove reactions associated with non-expressed genes, iML1515 achieved a further 12.7% decrease in false-positive predictions and a 2.1% increase in essentiality prediction performance as measured by the MCC score [11].

Quantitative Assessment with Mutant Fitness Data

A comprehensive 2023 evaluation quantified prediction accuracy across four successive E. coli GEMs using high-throughput mutant fitness data across thousands of genes and 25 different carbon sources [13]. This analysis revealed several important trends:

- Model Scope Expansion: The number of genes matched between models and experimental datasets has steadily increased from iJR904 to iML1515, reflecting improved genomic coverage [13]

- Precision-Recall Superiority: The area under the precision-recall curve (AUPR) provided more robust accuracy quantification than alternative metrics, particularly for handling dataset imbalance [13]

- Error Pattern Identification: Analysis of iML1515 prediction errors highlighted vitamin/cofactor biosynthesis pathways (biotin, R-pantothenate, thiamin, tetrahydrofolate, NAD+) as major sources of false negatives, suggesting metabolite carry-over or cross-feeding in experimental systems rather than model errors [13]

Tradeoffs Between Scale and Realism

The recent introduction of the iCH360 model highlights an important tradeoff in metabolic modeling between comprehensive scope and predictive realism. While iML1515 represents the most complete E. coli metabolic reconstruction, its genome-scale complexity can generate biologically unrealistic predictions due to metabolically irrelevant bypass routes [4]. The manually curated iCH360 model, while smaller in scope, demonstrates improved prediction realism in several scenarios:

- Avoidance of unrealistically high acetate production fluxes predicted by iML1515 [14] [4]

- Enhanced suitability for advanced modeling techniques including enzyme-constrained FBA, elementary flux mode analysis, and thermodynamic analysis [12] [4]

- Superior interpretability and visualization capabilities through focused scope on central metabolic pathways [4]

Experimental Protocols for Model Validation

High-Throughput Mutant Fitness Validation

The protocol for quantitative model validation using mutant fitness data involves these key steps:

Experimental Data Collection:

- Generate mutant fitness data using RB-TnSeq or similar high-throughput techniques

- Measure fitness across multiple conditions (e.g., 25 different carbon sources)

- Normalize fitness values to establish essentiality thresholds [13]

Computational Simulation:

- For each experimental condition, simulate gene knockout effects using FBA

- Convert simulated growth rates to binary essentiality calls or quantitative fitness predictions [13]

Statistical Comparison:

- Compute precision-recall curves comparing predictions to experimental data

- Calculate the area under the precision-recall curve (AUPR) as the primary accuracy metric

- Identify systematic errors through pathway enrichment analysis [13]
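One common way to run the enrichment step is a one-sided hypergeometric test. It asks whether mispredicted genes fall in a given pathway more often than random sampling would produce. The cited study's exact procedure may differ. The counts below are hypothetical, and testing many pathways requires a multiple-testing correction such as Benjamini-Hochberg.

```python
from math import comb


def hypergeom_enrichment_p(n_total, n_pathway, n_errors, n_errors_in_pathway):
    """One-sided hypergeometric p-value, P(X >= k).

    n_total (N): genes evaluated; n_pathway (K): of those, genes in the pathway;
    n_errors (n): mispredicted genes; n_errors_in_pathway (k): mispredicted genes
    that fall in the pathway.
    """
    upper = min(n_pathway, n_errors)
    hits = sum(
        comb(n_pathway, i) * comb(n_total - n_pathway, n_errors - i)
        for i in range(n_errors_in_pathway, upper + 1)
    )
    return hits / comb(n_total, n_errors)


# Hypothetical counts: 1,000 genes scored, 20 in a cofactor pathway,
# 50 false negatives overall, 8 of them in that pathway (about 1 expected by chance).
p = hypergeom_enrichment_p(1000, 20, 50, 8)
```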

Figure 1: Workflow for model validation using mutant fitness data. The process integrates experimental measurements with computational simulations to generate statistically robust accuracy assessments.

Condition-Specific Model Customization

Improving model accuracy through proteomic integration follows this protocol:

Proteomics Data Acquisition:

- Obtain mass spectrometry-based proteomics data for specific growth conditions

- Establish expression thresholds for gene product inclusion [11]

Model Customization:

- Remove reactions catalyzed by non-expressed genes from the metabolic network

- Adjust gene-protein-reaction associations to reflect expression patterns [11]

Validation:

- Compare prediction accuracy of condition-specific models versus the general model

- Quantify reduction in false-positive predictions and improvement in essentiality prediction [11]
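Removing reactions catalyzed by non-expressed genes requires evaluating each reaction's GPR rule. In these rules, "and" joins subunits of an enzyme complex and "or" joins isoenzymes. The sketch represents rules as nested tuples, a format chosen only for illustration; COBRA tools usually store rules as strings such as "geneA and geneB". Gene and reaction IDs are hypothetical, and reactions without a GPR, such as spontaneous ones, are kept.

```python
def gpr_active(rule, expressed):
    """Evaluate a GPR rule against the set of expressed genes.

    rule: a gene ID string, or a tuple ("and" | "or", subrule, subrule, ...).
    """
    if isinstance(rule, str):
        return rule in expressed
    op, *parts = rule
    results = [gpr_active(part, expressed) for part in parts]
    if op == "and":
        return all(results)  # complex: every subunit must be present
    if op == "or":
        return any(results)  # isoenzymes: any one suffices
    raise ValueError(f"unknown GPR operator {op!r}")


def reactions_to_remove(gprs, expressed):
    """Reactions whose GPR cannot be satisfied by the expressed gene set."""
    return sorted(
        rxn for rxn, rule in gprs.items()
        if rule is not None and not gpr_active(rule, expressed)
    )


# Hypothetical GPRs and a proteomics-derived set of expressed genes.
gprs = {"R1": "g1", "R2": ("or", "g2", "g3"), "R3": ("and", "g4", "g5"), "R4": None}
expressed = {"g1", "g3", "g4"}
removed = reactions_to_remove(gprs, expressed)
```

The same evaluation supports in silico knockouts: each rule is evaluated against all genes minus the deleted one. An "or" rule stays true when one isoenzyme is removed, so an incorrect isoenzyme annotation translates directly into a wrong essentiality call.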

Table 3: Key Research Reagents and Computational Tools for E. coli Metabolic Modeling

| Resource Name | Type | Function and Application | Access Information |
|---|---|---|---|
| COBRA Toolbox | Software Package | MATLAB-based suite for constraint-based reconstruction and analysis; implements FBA and related methods [8] | https://opencobra.github.io/cobratoolbox/ |
| cobrapy | Software Package | Python-based counterpart to COBRA Toolbox; enables FBA and other constraint-based analyses [8] | https://cobrapy.readthedocs.io/ |
| MEMOTE | Quality Control Tool | Automated test suite for metabolic model quality assurance; checks stoichiometry, mass, and charge balance [8] | https://memote.io/ |
| BiGG Models | Model Database | Curated repository of genome-scale metabolic models, including E. coli GEMs in standardized formats [8] [11] | http://bigg.ucsd.edu |
| EcoCyc | Knowledgebase | Encyclopedia of E. coli genes and metabolism; source for automated model generation [10] | https://ecocyc.org/ |
| KEIO Collection | Experimental Resource | Complete set of E. coli single-gene knockouts; essential reference data for model validation [11] | http://ecoli.aist-nara.ac.jp |

Future Directions in Model Validation and Refinement

The statistical validation of E. coli metabolic models continues to evolve with several promising frontiers:

Machine Learning Integration: Hybrid approaches that combine FBA with machine learning, such as FlowGAT, which uses graph neural networks to predict gene essentiality from wild-type metabolic phenotypes, are emerging as powerful validation tools [15].

Multi-Omics Data Integration: The development of multi-scale models that incorporate transcriptomic, proteomic, and metabolomic constraints will require more sophisticated validation frameworks that assess prediction accuracy across multiple biological layers [11].

Consensus Modeling: Tools such as GEMsembler, which enables cross-tool model comparison and consensus model assembly, represent a promising approach for leveraging the unique strengths of different reconstruction methodologies [15].

Thermodynamic Constraining: Incorporation of thermodynamic data, as demonstrated in the iCH360 model, provides an additional validation dimension by ensuring flux predictions are thermodynamically feasible [12] [4].

As these advanced validation methodologies mature, they will further strengthen the role of GEMs as predictive tools in metabolic engineering, systems biology, and biotechnology.

Flux Balance Analysis (FBA) has become an indispensable tool for predicting metabolic behavior in systems biology and metabolic engineering. As a constraint-based modeling approach, FBA enables researchers to predict steady-state metabolic flux distributions in genome-scale metabolic models. The core principle underlying FBA and related constraint-based methods is the steady-state assumption, which posits that the production and consumption of metabolites inside the cell are balanced, resulting in constant concentrations of metabolic intermediates. This article examines the mathematical foundation of this critical assumption, explores validation methodologies for FBA predictions in E. coli research, and compares the statistical rigor of different validation approaches in the context of drug development and biotechnology applications.

The Steady-State Assumption: Mathematical and Biological Foundations

In biochemical terms, steady state refers to the maintenance of constant internal concentrations of molecules and ions in living systems, where a continuous flux of mass and energy results in constant synthesis and breakdown of molecules via biochemical pathways [16]. This represents a dynamic steady state where internal composition remains relatively constant but different from equilibrium concentrations, essentially functioning as homeostasis at the cellular level [16].

The mathematical foundation of the steady-state assumption has evolved beyond traditional quasi-steady-state approximations. Recent theoretical work demonstrates that steady-state analysis applies to oscillating and growing systems without requiring quasi-steady-state at any time point [17]. This perspective is based on the concept that over the long term, no metabolite can accumulate or deplete, providing a mathematical framework that justifies the successful use of steady-state assumptions in many applications.

In FBA, this assumption translates to the stoichiometric matrix equation S·v = 0, where S represents the stoichiometric matrix and v the flux vector, constraining the system such that metabolite concentrations remain constant over time [1] [8]. This formulation enables the analysis of genome-scale metabolic networks by eliminating the need for difficult-to-measure kinetic parameters [1].
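The constraint S·v = 0 can be checked directly. Each row of S is a metabolite and each column a reaction, so the product S·v gives each metabolite's net production rate. The three-reaction network below is hypothetical.

```python
# Hypothetical toy network with metabolites A, B and reactions
#   EX_A: -> A   (uptake)
#   R1:   A -> B
#   R2:   B ->   (drain / secretion)
S = [
    # EX_A  R1  R2
    [1, -1, 0],   # A
    [0, 1, -1],   # B
]


def mass_balance_residuals(S, v):
    """Return S·v: the net production rate of each metabolite."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]


def is_steady_state(S, v, tol=1e-9):
    """True if no metabolite accumulates or depletes under flux vector v."""
    return all(abs(r) <= tol for r in mass_balance_residuals(S, v))


balanced = [5.0, 5.0, 5.0]    # uptake = conversion = drain
unbalanced = [5.0, 3.0, 3.0]  # A is taken up faster than it is converted
```

With the unbalanced vector, the residual for A is +2, meaning A would accumulate. FBA excludes such vectors by imposing S·v = 0 as an equality constraint.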

Validation Frameworks for FBA Predictions

Statistical Validation in 13C-MFA and FBA

Robust validation is essential for establishing confidence in FBA predictions, particularly for applications in drug development and metabolic engineering. The χ²-test of goodness-of-fit is the most widely used quantitative validation approach in 13C-Metabolic Flux Analysis (13C-MFA), though its limitations have prompted the development of complementary validation methods [8].

For FBA, validation techniques are more varied and less standardized. Common approaches include:

- Qualitative Growth/No-Growth Comparisons: Testing presence/absence of reactions necessary for substrate utilization and biomass synthesis [8].

- Quantitative Growth Rate Comparisons: Assessing consistency of metabolic network, biomass composition, and maintenance costs with observed efficiency of substrate-to-biomass conversion [8].

- Flapjack Analysis: Comparing predicted and experimentally measured fluxes for a set of reactions across multiple strains or conditions [8].

Quality Control Frameworks

The COnstraint-Based Reconstruction and Analysis (COBRA) framework includes functions and pipelines to ensure basic model functionality, such as testing the inability to generate ATP without an external energy source [8]. The MEMOTE (MEtabolic MOdel TEsts) pipeline provides additional validation by ensuring biomass precursors can be successfully synthesized in various growth media [8].

Table 1: Validation Techniques for FBA Predictions

| Validation Method | Type | Application | Limitations |
|---|---|---|---|
| χ²-test of goodness-of-fit | Statistical | 13C-MFA flux validation | Requires sufficient degrees of freedom; sensitive to measurement errors |
| Growth/no-growth comparison | Qualitative | Essentiality analysis | Only indicates existence of metabolic routes |
| Growth rate comparison | Quantitative | Biomass synthesis efficiency | Uninformative about internal flux accuracy |
| MEMOTE pipeline | Quality control | Model functionality | Doesn't validate condition-specific predictions |
| Flux sampling + correlation | Statistical | Genome-scale models | Computationally intensive |

Comparative Analysis of Validation Approaches

Traditional vs. Emerging Methods

Traditional validation approaches typically rely on comparing FBA predictions with experimentally measured fluxes, often using correlation analysis or goodness-of-fit tests [8]. While these methods provide valuable validation, they often lack statistical rigor for discriminating between alternative model architectures.

Emerging approaches incorporate machine learning and omics integration to improve validation accuracy. Supervised machine learning models using transcriptomics and/or proteomics data have demonstrated smaller prediction errors compared to standard parsimonious FBA approaches [18]. These data-driven methods represent a shift from purely knowledge-driven approaches toward hybrid validation frameworks.

The TIObjFind Framework for Objective Function Validation

A significant challenge in FBA validation is selecting appropriate objective functions that accurately represent cellular priorities under different conditions. The TIObjFind (Topology-Informed Objective Find) framework addresses this by integrating Metabolic Pathway Analysis (MPA) with FBA to analyze adaptive shifts in cellular responses [19]. This approach:

- Reformulates objective function selection as an optimization problem minimizing differences between predicted and experimental fluxes

- Maps FBA solutions onto a Mass Flow Graph (MFG) for pathway-based interpretation

- Determines Coefficients of Importance (CoIs) that quantify each reaction's contribution to objective functions [19]

This framework demonstrates that static objectives like biomass maximization may not always align with experimental flux data, particularly under changing environmental conditions [19].

Experimental Protocols for Validation

Protocol 1: Model Validation Using MEMOTE

Purpose: Quality control for genome-scale metabolic models

- Load model in SBML format

- Run basic functionality tests (ATP generation without energy source, biomass synthesis without required substrates)

- Test biomass precursor synthesis in different growth media

- Verify stoichiometric consistency and charge balance

- Generate validation report with quantitative scores
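MEMOTE automates checks like these across an entire model. To illustrate what the stoichiometric and charge-balance step verifies, the sketch below checks a single reaction in plain Python. It is not MEMOTE's API. It assumes simple formulas without parentheses or hydrates, and the hexokinase formulas and charges are illustrative BiGG-style values.

```python
import re
from collections import Counter

def parse_formula(formula):
    """Count atoms in a simple formula such as 'C6H12O6'.

    Assumes no parentheses, hydrates, or isotope labels.
    """
    counts = Counter()
    for element, number in re.findall(r"([A-Z][a-z]*)(\d*)", formula):
        counts[element] += int(number) if number else 1
    return counts

def reaction_imbalance(stoichiometry, formulas, charges, tol=1e-9):
    """Return (element imbalance, charge imbalance) for one reaction.

    stoichiometry maps metabolite id -> coefficient (negative = consumed).
    An empty dict and a charge imbalance of 0 mean the reaction is balanced.
    """
    elements = Counter()
    charge = 0
    for met, coeff in stoichiometry.items():
        for element, n in parse_formula(formulas[met]).items():
            elements[element] += coeff * n
        charge += coeff * charges[met]
    unbalanced = {e: v for e, v in elements.items() if abs(v) > tol}
    return unbalanced, charge

# Illustrative BiGG-style formulas and charges for hexokinase:
# glc__D_c + atp_c -> g6p_c + adp_c + h_c
FORMULAS = {"glc__D_c": "C6H12O6", "atp_c": "C10H12N5O13P3", "g6p_c": "C6H11O9P",
            "adp_c": "C10H12N5O10P2", "h_c": "H"}
CHARGES = {"glc__D_c": 0, "atp_c": -4, "g6p_c": -2, "adp_c": -3, "h_c": 1}
HEXOKINASE = {"glc__D_c": -1, "atp_c": -1, "g6p_c": 1, "adp_c": 1, "h_c": 1}

print(reaction_imbalance(HEXOKINASE, FORMULAS, CHARGES))  # ({}, 0)
```

If the proton is dropped from the reaction, the function reports one missing hydrogen and a charge imbalance of -1. This is the kind of error such tests are meant to catch.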

Protocol 2: Flux Validation Using 13C-MFA

Purpose: Quantitative comparison of FBA-predicted and experimentally determined fluxes

- Grow E. coli culture in defined medium with 13C-labeled substrate (e.g., [1-13C]glucose)

- Measure isotopic labeling patterns (mass isotopomer distributions) of metabolites using mass spectrometry and/or NMR

- Calculate flux maps from isotopic labeling data

- Compare with FBA predictions using χ2-test or residual sum of squares

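- Perform flux variability analysis (FVA) to determine the range each predicted flux can take at the optimum, and check these ranges against the 13C-MFA confidence intervals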
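FVA ranges describe alternative optimal flux distributions, not statistical uncertainty. Comparing them with 13C-MFA confidence intervals is therefore best read as a consistency check. The minimal sketch below assumes both have already been computed, for example FVA with cobrapy or the COBRA Toolbox. The reaction ids follow BiGG naming, but every number is hypothetical.

```python
def compare_to_fva(fva_ranges, mfa_intervals):
    """Relate each 13C-MFA confidence interval to the FVA range of the same reaction.

    fva_ranges: reaction id -> (min, max) flux from FVA at the FBA optimum.
    mfa_intervals: reaction id -> (lower, upper) 13C-MFA confidence interval.
    Only reactions present in both dicts are compared.
    """
    result = {}
    for rxn in sorted(fva_ranges.keys() & mfa_intervals.keys()):
        fva_lo, fva_hi = fva_ranges[rxn]
        mfa_lo, mfa_hi = mfa_intervals[rxn]
        if fva_lo <= mfa_lo and mfa_hi <= fva_hi:
            result[rxn] = "contained"      # MFA interval lies inside the FVA range
        elif mfa_hi < fva_lo or mfa_lo > fva_hi:
            result[rxn] = "disjoint"       # no flux value satisfies both
        else:
            result[rxn] = "overlapping"    # partial agreement
    return result

# Hypothetical values in mmol/gDW/h, for illustration only
fva = {"PGI": (4.5, 8.0), "G6PDH2r": (0.0, 2.0), "CS": (1.5, 1.5)}
mfa = {"PGI": (6.0, 7.0), "G6PDH2r": (2.5, 3.5), "CS": (1.2, 2.0)}
print(compare_to_fva(fva, mfa))
# {'CS': 'overlapping', 'G6PDH2r': 'disjoint', 'PGI': 'contained'}
```

Reactions classed as "disjoint" are the most informative, because no optimal FBA solution reproduces the measured flux there.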

Protocol 3: Machine Learning-Assisted Validation

Purpose: Integrate multi-omics data for improved validation

- Collect transcriptomics and/or proteomics data under target conditions

- Train supervised machine learning models to predict fluxes from omics data

- Compare ML-predicted fluxes with FBA predictions and experimental data

- Calculate mean squared error and correlation coefficients for method comparison
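The last step can be made concrete with a small helper that scores any number of prediction methods against the same measured fluxes. This is a plain-Python sketch with hypothetical flux values. In practice the vectors must refer to the same reactions, in the same order and units.

```python
import math

def mse(predicted, measured):
    """Mean squared error between two equal-length flux vectors."""
    if len(predicted) != len(measured) or not measured:
        raise ValueError("vectors must be non-empty and of equal length")
    return sum((p - m) ** 2 for p, m in zip(predicted, measured)) / len(measured)

def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation undefined for constant vectors")
    return cov / (sx * sy)

def compare_methods(measured, predictions):
    """Score each prediction method against the same measured fluxes."""
    return {name: {"mse": mse(pred, measured), "r": pearson_r(pred, measured)}
            for name, pred in predictions.items()}

# Hypothetical fluxes for four reactions (e.g., normalized to glucose uptake)
measured = [10.0, 20.0, 30.0, 40.0]
scores = compare_methods(measured, {"ML": [11.0, 19.0, 31.0, 39.0],
                                    "FBA": [15.0, 10.0, 35.0, 45.0]})
print(scores["ML"]["mse"], scores["FBA"]["mse"])  # 1.0 43.75
```

The two metrics answer different questions. MSE penalizes absolute deviations, while correlation only rewards getting the pattern across reactions right, so reporting both avoids over-reading either one.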

Research Reagent Solutions

Table 2: Essential Research Tools for E. coli FBA Validation

| Tool/Resource | Function | Application in Validation |
|---|---|---|
| COBRA Toolbox | MATLAB-based suite for constraint-based modeling | Implement FBA and perform basic validation tests |
| cobrapy | Python package for constraint-based modeling | Scriptable validation workflows and flux sampling |
| MEMOTE | Automated testing of genome-scale models | Quality control and model functionality verification |
| BRENDA database | Enzyme kinetic parameters | Incorporate enzyme constraints into models |
| EcoCyc database | E. coli genes and metabolism | Reference for model reconstruction and validation |
| BiGG Models database | Curated genome-scale metabolic models | Benchmarking and comparative validation |

Visualization of Validation Workflows

Diagram 1: FBA Validation Framework

Diagram 2: Multi-Method Validation Approach

The steady-state assumption remains the cornerstone of constraint-based metabolic modeling, with recent mathematical frameworks extending its applicability to oscillating and growing systems. For researchers relying on FBA predictions in drug development and biotechnology applications, robust validation is not optional but essential. The comparison of validation methods presented here reveals an evolving landscape in which traditional statistical tests are being supplemented with machine learning approaches and multi-omics integration. The development of frameworks like TIObjFind for objective function identification and the standardization of quality control through tools like MEMOTE represent significant advances in model validation practices. As FBA continues to be applied to increasingly complex biological systems and engineering challenges, the adoption of rigorous, multi-faceted validation protocols will be crucial for enhancing confidence in model predictions and ensuring successful translation to real-world applications.

In the realm of systems biology and metabolic engineering, accurately predicting phenotypic outcomes is crucial for advancing biological research and biotechnological applications. Validation serves as the critical process that ensures these computational predictions reflect biological reality, bridging the gap between in silico models and in vivo functionality. For Escherichia coli researchers utilizing Flux Balance Analysis (FBA), validation provides the necessary confidence in model-derived fluxes by quantifying their agreement with experimental measurements. Without robust validation procedures, FBA predictions risk remaining theoretical exercises with limited practical utility. This guide examines the current validation methodologies for E. coli FBA, comparing statistical frameworks, experimental protocols, and emerging approaches to equip researchers with practical strategies for assessing prediction accuracy across diverse biological contexts.

Comparative Analysis of Validation Methods for FBA Predictions

Validation methods for FBA span qualitative to quantitative approaches, each with distinct strengths, limitations, and appropriate use cases. The table below summarizes the primary validation techniques employed in constraint-based modeling:

Table 1: Validation Methods for Flux Balance Analysis Predictions

| Validation Method | Description | Strengths | Limitations | Best Applications |
|---|---|---|---|---|
| Growth/No-Growth Comparison | Qualitative assessment of model's ability to predict viability on specific substrates [8] | Simple implementation; clear biological interpretation | Only indicates existence of metabolic routes; uninformative for internal flux accuracy [8] | Testing essential genes or auxotrophies; network gap analysis |
| Quantitative Growth Rate Comparison | Quantitative comparison of predicted vs. measured growth rates [8] | Tests overall metabolic efficiency; incorporates multiple constraints | Does not validate internal flux distributions; sensitive to biomass composition [8] | Optimizing growth conditions; media formulation |
| 13C-MFA Flux Comparison | Comparison of FBA-predicted internal fluxes against 13C-Metabolic Flux Analysis estimates [8] [20] | Gold standard for internal flux validation; highly quantitative | Experimentally intensive; requires isotopic labeling data [20] | Critical model validation; algorithm development |
| Machine Learning Integration | Supervised ML models using omics data to predict fluxes [18] | Can outperform traditional FBA; incorporates multi-omics data | Black-box nature; requires large training datasets [18] | Condition-specific predictions; high-throughput screening |
| Comparative Flux Sampling (CFSA) | Statistical comparison of flux spaces for different phenotypes [21] | Identifies engineering targets; enables growth-uncoupled strategies [21] | Computationally intensive; requires well-curated models | Metabolic engineering; strain design |

Experimental Protocols for Key Validation Approaches

Protocol 1: Validation Against 13C-Metabolic Flux Analysis

13C-MFA provides the most rigorous validation of internal flux predictions and follows a standardized experimental and computational workflow:

Experimental Design: Cultivate E. coli with 13C-labeled substrates (typically [1-13C]glucose or [U-13C]glucose) under controlled conditions [8] [20].

Sampling: Once isotopic steady state is reached, harvest cells during the mid-exponential growth phase and extract intracellular metabolites.

Mass Spectrometry Analysis: Measure mass isotopomer distributions (MIDs) of metabolic intermediates using GC-MS or LC-MS [20].

Flux Estimation: Compute metabolic fluxes by minimizing the residual between measured and simulated MIDs using specialized software.

Statistical Comparison: Calculate goodness-of-fit metrics between FBA-predicted and 13C-MFA-estimated fluxes, typically using χ2-test or confidence intervals from Monte Carlo sampling [20].

This protocol validates the accuracy of internal flux distributions rather than just growth phenotypes, providing crucial information about pathway usage and network function.
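The Monte Carlo intervals mentioned in the statistical comparison step can be obtained as follows. Perturb the measurements with their estimated noise, re-estimate the flux each time, and take percentiles. In the sketch below the estimator is a hypothetical one-line stand-in. A real analysis would re-run the full 13C-MFA fit for every sample, which is far more expensive. Independent Gaussian measurement errors are assumed.

```python
import random

def monte_carlo_interval(estimator, measurements, sds, n_samples=2000, level=0.95, seed=0):
    """Percentile interval of an estimated flux under Gaussian measurement noise.

    estimator: function mapping a list of measurement values to one flux value
    (a hypothetical stand-in for re-running a full 13C-MFA fit).
    """
    rng = random.Random(seed)  # fixed seed for reproducibility
    estimates = sorted(
        estimator([rng.gauss(m, s) for m, s in zip(measurements, sds)])
        for _ in range(n_samples)
    )
    tail = (1 - level) / 2
    lower = estimates[int(tail * (n_samples - 1))]
    upper = estimates[int((1 - tail) * (n_samples - 1))]
    return lower, upper

def toy_estimator(values):
    # Hypothetical: flux (per 100 units of substrate uptake) taken as 100x the
    # mean of two labeling-derived split-fraction measurements
    return 100.0 * sum(values) / len(values)

lower, upper = monte_carlo_interval(toy_estimator, [0.30, 0.34], [0.02, 0.02])
fba_prediction = 40.0  # hypothetical FBA flux for the same reaction
print(lower <= fba_prediction <= upper)  # False: the prediction lies outside the interval
```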

Protocol 2: Multi-Omic Machine Learning Validation

An emerging approach leverages machine learning with multi-omics data to validate and potentially enhance FBA predictions:

Data Collection: Acquire paired transcriptomic/proteomic and flux data (from 13C-MFA or similar) across multiple growth conditions [18].

Feature Engineering: Preprocess omics data to identify informative features (gene expression levels, protein abundances).

Model Training: Train supervised ML models (random forests, neural networks) to predict metabolic fluxes from omics inputs.

Performance Assessment: Compare ML-predicted fluxes against both experimental data and FBA predictions using error metrics (RMSE, MAE).

Model Interpretation: Identify features most predictive of flux changes to generate biological insights [18].

This data-driven approach can capture regulatory effects not incorporated in standard FBA and may outperform traditional constraint-based methods in certain applications.

Visualization of Validation Workflows

Diagram: FBA Validation Framework

Research Reagent Solutions for Validation Experiments

Implementing robust validation requires specific experimental and computational tools. The following table details essential reagents and their applications in FBA validation workflows:

Table 2: Essential Research Reagents for FBA Validation Studies

| Reagent/Resource | Function in Validation | Application Notes |
|---|---|---|
| 13C-Labeled Substrates | Enables 13C-MFA for internal flux validation | [1-13C]glucose recommended for initial studies; multiple tracers improve resolution [20] |
| iML1515 Genome-Scale Model | Base metabolic model for E. coli K-12 | Contains 1,515 genes, 2,719 reactions; well-curated for validation studies [1] |
| COBRA Toolbox | MATLAB software for FBA and validation | FBA and flux variability analysis; model quality control is typically run alongside it with MEMOTE, a separate Python tool [8] |
| ECMpy Workflow | Adds enzyme constraints to FBA | Improves prediction realism by incorporating enzyme kinetics and abundance [1] |
| BRENDA Database | Source of enzyme kinetic parameters (kcat values) | Critical for enzyme-constrained models; limited coverage of transport reactions [1] |

Validating FBA predictions against biological reality requires a multifaceted approach tailored to specific research questions. While growth phenotype comparisons offer initial validation, 13C-MFA remains the gold standard for internal flux validation despite its experimental complexity. Emerging methods, including machine learning integration and comparative flux sampling, provide promising avenues for enhancing predictive accuracy across diverse conditions. For E. coli researchers, combining multiple validation strategies—leveraging well-curated models like iML1515 with appropriate experimental designs—offers the most robust approach to ensure biological realism. As the field advances, developing standardized validation benchmarks and reporting standards will be crucial for translating FBA predictions into successful metabolic engineering outcomes.

Methodologies for Validation: From Goodness-of-Fit to Phenotypic Screens

The χ2-test of Goodness-of-Fit in 13C-MFA and Its Application to FBA

Quantifying intracellular metabolic fluxes is fundamental to advancing both basic biological understanding and biotechnological applications in Escherichia coli research. Constraint-based modeling frameworks, primarily 13C-Metabolic Flux Analysis (13C-MFA) and Flux Balance Analysis (FBA), are the most commonly used methods for estimating or predicting these in vivo fluxes, which cannot be measured directly [20]. Both methods rely on metabolic network models operating at a metabolic steady-state. A critical, yet sometimes underappreciated, aspect of employing these techniques is the statistical validation of the resulting flux maps and the selection of the most appropriate model architecture. The χ2-test of goodness-of-fit is the most widely used quantitative validation and selection approach in 13C-MFA, providing a statistical measure for how well a model explains the experimental data [20]. Its application, however, presents distinct challenges and differs from validation practices in FBA.

This guide objectively compares the role of the χ2-test in validating 13C-MFA models with the approaches used for FBA in E. coli research. We summarize quantitative performance data, detail key experimental protocols, and provide resources to inform the choices of researchers, scientists, and drug development professionals engaged in E. coli flux analysis.

The χ2-Test in 13C-Metabolic Flux Analysis (13C-MFA)

Principle and Application

In 13C-MFA, cells are fed a 13C-labeled substrate (e.g., [U-13C]glucose), and the resulting mass isotopomer distributions (MIDs) of metabolites are measured using techniques like mass spectrometry [20] [22]. The core of the method involves fitting an assumed metabolic network model to this isotopic labeling data by varying the flux estimates to minimize the difference between the measured and simulated MIDs [20]. The χ2-test of goodness-of-fit is then used to statistically assess whether the discrepancies between the experimental data and the model predictions are likely due to random measurement error alone. A model that passes the χ2-test (typically at a 5% significance level) is considered statistically acceptable and not rejected [22].

Workflow and Protocol

The standard iterative workflow for model development and validation in 13C-MFA is as follows [22]:

- Experiment Design and Data Collection: Cells are cultivated in a defined medium containing a specific 13C-labeled tracer. At metabolic steady-state, samples are taken, and MIDs for key metabolites are measured.

- Parameter Estimation: An initial metabolic network model is defined, and fluxes are estimated by minimizing the weighted sum of squared residuals (SSR) between measured and simulated MIDs.

- χ2-test of Goodness-of-Fit: The SSR is compared to a critical χ2 value. The degrees of freedom for this test are calculated as the number of independent labeling measurements minus the number of free parameters estimated [22].

- Model Selection and Iteration: If the model is rejected by the χ2-test, the network structure is modified (e.g., by adding or removing reactions), and the parameter-estimation and χ2-test steps are repeated until a statistically acceptable model is found.
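The SSR and the χ2 comparison can be sketched in plain Python. The chi-square CDF is evaluated with the standard power series for the regularized incomplete gamma function. This is adequate for the moderate degrees of freedom typical of 13C-MFA, though `scipy.stats.chi2.ppf` is a more robust alternative. The test takes the one-sided, upper-tail form described above, and the MID values are hypothetical.

```python
import math

def weighted_ssr(measured, simulated, sds):
    """Sum of squared residuals, each scaled by its measurement standard deviation."""
    return sum(((m - s) / sd) ** 2 for m, s, sd in zip(measured, simulated, sds))

def chi2_cdf(x, dof):
    """Chi-square CDF, i.e. the regularized lower incomplete gamma P(dof/2, x/2),
    evaluated with its power series."""
    if x <= 0:
        return 0.0
    a, z = dof / 2.0, x / 2.0
    term = total = 1.0 / a
    n = 0
    while term > 1e-16 * total and n < 100000:
        n += 1
        term *= z / (a + n)
        total += term
    return total * math.exp(a * math.log(z) - z - math.lgamma(a))

def chi2_critical(dof, alpha=0.05):
    """Upper critical value x with P(X <= x) = 1 - alpha, found by bisection."""
    lo, hi = 0.0, max(1.0, float(dof))
    while chi2_cdf(hi, dof) < 1 - alpha:
        hi *= 2
    for _ in range(100):
        mid = (lo + hi) / 2
        if chi2_cdf(mid, dof) < 1 - alpha:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def chi2_goodness_of_fit(measured, simulated, sds, n_free_params, alpha=0.05):
    """Return (SSR, critical value, accepted) for a one-sided chi-square test."""
    dof = len(measured) - n_free_params  # independent measurements minus free parameters
    if dof <= 0:
        raise ValueError("need more independent measurements than free parameters")
    ssr = weighted_ssr(measured, simulated, sds)
    critical = chi2_critical(dof, alpha)
    return ssr, critical, ssr <= critical

# Hypothetical MIDs: 6 measurements, 2 free flux parameters -> 4 degrees of freedom
measured = [0.50, 0.30, 0.20, 0.45, 0.35, 0.20]
simulated = [0.495, 0.31, 0.195, 0.45, 0.34, 0.21]
print(chi2_goodness_of_fit(measured, simulated, [0.01] * 6, n_free_params=2))
# SSR about 3.5, critical value about 9.49 -> accepted
print(chi2_goodness_of_fit(measured, simulated, [0.002] * 6, n_free_params=2))
# same residuals with 5x smaller SDs: SSR about 87.5 -> rejected
```

The second call keeps the same residuals but uses five-fold smaller standard deviations. The model is unchanged, yet it now fails the test, which illustrates the sensitivity to error estimation discussed below.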

Table 1: Key Reagents for 13C-MFA Tracer Experiments

| Research Reagent | Function in Experiment | Example Specifics |
|---|---|---|
| [U-13C]Glucose | Uniformly labeled carbon tracer; reveals comprehensive flux pathways | 98 atom% 13C; used in parallel labeling experiments [23] |
| [1,2-13C2]Glucose | Positionally labeled tracer; resolves specific isomerase fluxes | Resolves phosphoglucoisomerase flux [24] |
| [U-13C]Glutamine | Labeled amino acid precursor for complex/mammalian systems | Used in optimal mixture designs with glucose tracers [24] |
| Custom Tracer Mixtures | Optimizes information content and cost-effectiveness | E.g., mixtures of [1,2-13C2]glucose and [U-13C]glucose [24] |

Limitations and Complementary Methods

While foundational, reliance solely on the χ2-test for model selection in 13C-MFA has recognized limitations [20] [22]:

- Sensitivity to Error Estimation: The test's correctness depends on accurate knowledge of measurement uncertainties (σ). These are often estimated from biological replicates but may not account for all error sources, such as instrumental bias or small deviations from steady-state. Underestimated errors make it very difficult for any model to pass the test [22].

- Informal Model Development: The iterative process of modifying a model until it passes the χ2-test on a single dataset can lead to overfitting or underfitting, as the same data is used for both parameter estimation and model selection [22].

- Alternative Selection Criteria: Other methods exist, such as selecting the model that passes the χ2-test with the greatest margin or that minimizes information criteria like AIC or BIC [22].
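The information-criteria alternative simplifies when measurement standard deviations are treated as known. The Gaussian log-likelihood then differs from the weighted SSR only by a constant. For a model with p free parameters and n measurements, this gives AIC = SSR + 2p and BIC = SSR + p ln n. The sketch below applies these to hypothetical fits of three nested network variants.

```python
import math

def information_criteria(ssr, n_params, n_measurements):
    """AIC and BIC for a weighted least-squares fit with known measurement SDs.

    With Gaussian errors of known variance, -2 log-likelihood equals the weighted
    SSR plus a constant shared by all models fitted to the same data; that
    constant is dropped, so only differences between models are meaningful.
    """
    aic = ssr + 2 * n_params
    bic = ssr + n_params * math.log(n_measurements)
    return aic, bic

def select_by_criterion(fits, n_measurements, criterion="aic"):
    """fits: model name -> (weighted SSR, number of free parameters)."""
    if criterion not in ("aic", "bic"):
        raise ValueError("criterion must be 'aic' or 'bic'")
    index = 0 if criterion == "aic" else 1
    scores = {name: information_criteria(ssr, p, n_measurements)[index]
              for name, (ssr, p) in fits.items()}
    return min(scores, key=scores.get), scores

# Hypothetical fits of three nested network variants to the same 60 measurements
fits = {"model_A": (30.0, 10), "model_B": (18.0, 12), "model_C": (17.5, 14)}
print(select_by_criterion(fits, 60, "aic")[0])  # model_B
print(select_by_criterion(fits, 60, "bic")[0])  # model_B
```

Model C fits slightly better than model B. However, its two extra parameters cost more than the 0.5 reduction in SSR, so both criteria prefer model B.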

To address these issues, a validation-based model selection method has been proposed. This approach involves splitting the data into an estimation set (Dest) for fitting and a separate validation set (Dval) for evaluation. The model that best predicts the independent validation data is selected. This method has been shown to be more robust to uncertainties in measurement error and consistently selects the correct model in simulation studies [22].
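The logic of validation-based selection does not depend on the model class. The sketch below therefore uses a toy regression in place of 13C-MFA network variants. Each candidate is fitted only on the estimation set and scored only on the held-out validation set. The two candidate models, the data, and the standard deviation are all hypothetical. In a real study the two sets might be, for example, labeling data from different tracer experiments, and the cited method's exact scoring may differ.

```python
def fit_proportional(xs, ys):
    """Least-squares fit of y = a*x (no intercept); returns a prediction function."""
    a = sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)
    return lambda x: a * x

def fit_affine(xs, ys):
    """Ordinary least-squares fit of y = a*x + b; returns a prediction function."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    a = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    b = my - a * mx
    return lambda x: a * x + b

def validation_ssr(predict, xs, ys, sd):
    """Weighted SSR of a fitted model on data it was not fitted to."""
    return sum(((y - predict(x)) / sd) ** 2 for x, y in zip(xs, ys))

def validation_based_selection(candidates, d_est, d_val, sd):
    """Fit every candidate on d_est; select the one that best predicts d_val."""
    scores = {name: validation_ssr(fit(*d_est), *d_val, sd)
              for name, fit in candidates.items()}
    return min(scores, key=scores.get), scores

# Hypothetical data: estimation set and a separate validation set
d_est = ([1.0, 2.0, 3.0, 4.0], [3.1, 4.9, 7.0, 9.1])
d_val = ([5.0, 6.0], [11.0, 12.9])
best, scores = validation_based_selection(
    {"proportional": fit_proportional, "affine": fit_affine}, d_est, d_val, sd=0.1)
print(best)  # affine
```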

Model Validation in Flux Balance Analysis (FBA)

The Role of Objective Function Selection and FBA Validation

Flux Balance Analysis (FBA) predicts metabolic fluxes by using linear optimization to identify a flux map that maximizes or minimizes a defined objective function (e.g., biomass growth or ATP production) within a constrained solution space [20]. Unlike 13C-MFA, FBA does not use intracellular isotopic labeling data, so the χ2-test of goodness-of-fit is not directly applicable for validating its internal flux predictions. Instead, FBA models are most commonly validated by comparing model predictions with experimental growth phenotypes [20] [13].

The choice of the objective function is a key determinant of the predicted flux map. Therefore, a crucial step in FBA is the evaluation of alternative objective functions to identify those that result in the best agreement with experimental data [20] [10]. Validation typically involves assessing the model's accuracy in predicting gene essentiality and nutrient utilization.
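Comparing alternative objectives against measured fluxes can be sketched as a ranking by distance. The version below normalizes each flux vector to a substrate uptake of 100, a common convention for central-carbon flux maps, and then uses Euclidean distance. Both choices are assumptions. The objective names are labels only, and all flux values are hypothetical.

```python
import math

def normalize_to_uptake(fluxes, uptake_id):
    """Rescale a flux dict so that the substrate uptake flux has magnitude 100."""
    scale = 100.0 / abs(fluxes[uptake_id])
    return {rxn: v * scale for rxn, v in fluxes.items()}

def rank_objectives(predictions, measured, uptake_id):
    """Rank candidate objective functions by Euclidean distance to measured fluxes.

    predictions: objective name -> {reaction id: predicted flux}
    measured: {reaction id: measured flux}; only shared reactions are compared.
    """
    ref = normalize_to_uptake(measured, uptake_id)
    distances = {}
    for name, fluxes in predictions.items():
        pred = normalize_to_uptake(fluxes, uptake_id)
        shared = ref.keys() & pred.keys()
        distances[name] = math.sqrt(sum((pred[r] - ref[r]) ** 2 for r in shared))
    return sorted(distances.items(), key=lambda item: item[1])

# Hypothetical fluxes (mmol/gDW/h); reaction ids follow BiGG naming
measured = {"EX_glc__D_e": -10.0, "PGI": 7.0, "G6PDH2r": 2.8, "CS": 2.0}
predictions = {
    "max_biomass": {"EX_glc__D_e": -10.0, "PGI": 6.0, "G6PDH2r": 3.8, "CS": 1.5},
    "max_ATP": {"EX_glc__D_e": -10.0, "PGI": 9.9, "G6PDH2r": 0.0, "CS": 6.0},
}
print(rank_objectives(predictions, measured, "EX_glc__D_e")[0][0])  # max_biomass
```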

Workflow and Validation Metrics

The process for developing and validating a genome-scale FBA model for E. coli involves:

- Model Reconstruction: Building a stoichiometric matrix based on genomic and biochemical data.

- Constraint Definition: Setting constraints on uptake and secretion fluxes based on experimental conditions.

- Objective Function Optimization: Solving the linear programming problem to predict growth rates or other phenotypes.

- Phenotypic Validation: Comparing model predictions against high-throughput experimental data.

Table 2: Common Validation Metrics for E. coli FBA Models

| Validation Type | Experimental Data Used | Reported Performance of Latest Models |
|---|---|---|
| Gene Essentiality | Growth phenotypes of single-gene knockout mutants (e.g., Keio collection) | EcoCyc-18.0-GEM: 95.2% accuracy in predicting essentiality [10] |
| Nutrient Utilization | Growth/no-growth data on different carbon sources | EcoCyc-18.0-GEM: 80.7% accuracy across 431 conditions [10] |
| Quantitative Flux Comparison | 13C-MFA flux maps for core metabolism | Used as a robust validation for internal flux predictions [20] |

A key validation is comparing FBA-predicted fluxes against 13C-MFA measured fluxes, which provides a direct check on the accuracy of internal flux predictions [20]. Advanced validation of E. coli FBA models using mutant fitness data across 25 carbon sources has highlighted specific areas for model improvement, such as the mapping of isoenzyme gene-protein-reaction rules and the availability of vitamins/cofactors in the experimental environment that may not be present in the simulation [13].

Comparative Analysis and Future Outlook

Direct Comparison of 13C-MFA and FBA Validation

Table 3: Comparison of Validation Practices in 13C-MFA and FBA

| Aspect | 13C-MFA | Flux Balance Analysis (FBA) |
|---|---|---|
| Primary Validation Method | χ2-test of goodness-of-fit on isotopic labeling data. | Comparison of predicted vs. observed growth phenotypes (gene essentiality, nutrient use). |
| Key Assumption | The metabolic network model is complete and measurement errors are accurately known. | The objective function (e.g., growth maximization) reflects the cell's evolutionary goal. |
| Data Used for Validation | Internal mass isotopomer distributions (MIDs). | External, phenotypic data (growth/no-growth). |
| Model Selection | Iterative process of modifying network structure based on χ2-test or validation data. | Evaluation of different objective functions or network reconstructions based on prediction accuracy. |
| Strength | Provides a direct statistical test for model consistency with high-resolution intracellular data. | Allows validation of genome-scale models with high-throughput mutant fitness data. |
| Primary Challenge | Sensitivity to error estimation; potential for overfitting during iterative model development. | Difficult to validate internal flux predictions without 13C-MFA data; potential environmental mismatches. |

Synergies and Future Directions

The most robust validation for FBA-predicted internal fluxes is a direct comparison with fluxes estimated by 13C-MFA [20]. This synergy highlights the importance of considering both modeling approaches in tandem. Future developments are likely to focus on:

- Robust Model Selection: Wider adoption of validation-based model selection in 13C-MFA to mitigate overfitting and reduce dependence on accurate a priori error estimates [22].

- Model Refinement: Using inconsistencies between FBA predictions and experimental data (e.g., false essentiality predictions for vitamin genes) to drive targeted curation of genome-scale models, such as refining gene-protein-reaction rules [13] [10].

- Integrated Frameworks: Developing combined validation frameworks for 13C-MFA that incorporate additional data types, such as metabolite pool sizes, to enhance confidence in flux estimates [20].

The Scientist's Toolkit

Table 4: Essential Research Reagents and Computational Tools

| Tool/Reagent Name | Category | Primary Function in E. coli Flux Research |
|---|---|---|
| [U-13C]Glucose | Biochemical Tracer | Gold-standard tracer for validating network models in parallel labeling experiments [23]. |
| [1,2-13C2]Glucose | Biochemical Tracer | Resolves specific flux ambiguities (e.g., around phosphoglucoisomerase) [24]. |
| Keio Collection | Biological Resource | Library of E. coli single-gene knockouts for essentiality validation of FBA models [25]. |
| EcoCyc Database | Bioinformatics Database | Curated knowledge base for generating and inspecting E. coli metabolic models [10]. |
| 13C-FLUX2 / influx_s | Software Platform | Software used for the design of 13C-tracer experiments and estimation of metabolic fluxes [24]. |
| Precision-Recall AUC | Validation Metric | Robust metric for quantifying FBA model accuracy using imbalanced mutant fitness data [13]. |

Leveraging High-Throughput Mutant Fitness Data (e.g., RB-TnSeq) for Validation

Constraint-based metabolic modeling, particularly Flux Balance Analysis (FBA), provides a powerful mathematical framework for simulating cellular metabolism at genome-scale. These models simulate metabolic flux distributions using stoichiometric coefficients of metabolic reactions and optimization principles to predict biochemical network behavior under various conditions. A critical challenge in the field has been validating the accuracy of these model predictions against reliable experimental data. The emergence of high-throughput mutant fitness profiling technologies, especially Random Barcode Transposon Site Sequencing (RB-TnSeq), has revolutionized model validation by enabling genome-scale assessment of gene importance across diverse environmental conditions. This approach allows researchers to quantitatively evaluate model predictions against empirical fitness measurements, identifying specific areas where models succeed or fail in capturing biological reality.

RB-TnSeq and related high-throughput functional genomics technologies have enabled systematic quantification of gene fitness contributions by generating pooled mutant libraries where each strain contains a unique genetic barcode. This allows parallel fitness measurement of thousands of mutants through sequencing-based counting, creating rich datasets for model validation. For Escherichia coli, a model organism with extensively curated metabolic models, these fitness data provide an unprecedented opportunity to rigorously assess prediction accuracy and drive model improvement through iterative refinement cycles.

High-Throughput Mutant Fitness Profiling Technologies

Key Functional Genomic Technologies

Multiple high-throughput technologies have been developed for comprehensive functional genomic analysis in bacteria, each with distinct advantages and applications:

RB-TnSeq (Random Barcode Transposon Site Sequencing) utilizes genome-wide transposon insertion mutants labeled with unique DNA barcodes. The barcodes enable quantification of mutant abundance through sequencing, allowing fitness measurements across various growth conditions. A key limitation is that it only assays non-essential genes, as essential genes cannot tolerate transposon insertions [26].

CRISPRi (CRISPR interference) employs a catalytically dead Cas9 protein (dCas9) guided by single-guide RNA (sgRNA) to programmably knock down gene expression. This partial loss-of-function approach allows probing of all genes, including essential ones, and enables more precise targeting of intergenic regions [26].

Dub-seq (Dual-barcoded shotgun expression library sequencing) uses shotgun cloning of randomly sheared genomic DNA fragments on a dual-barcoded plasmid for gain-of-function screens. This approach identifies genes whose overexpression confers fitness advantages or reveals dominant-negative effects [26].

Table 1: Comparison of High-Throughput Functional Genomic Technologies

| Technology | Type | Genes Covered | Key Advantages | Limitations |
|---|---|---|---|---|
| RB-TnSeq | Loss-of-function | Non-essential genes | Highly parallel, cost-effective | Cannot assay essential genes |
| CRISPRi | Partial loss-of-function | All genes | Targets essential genes, precise | Partial knockdown only |
| Dub-seq | Gain-of-function | All genes | Identifies overexpression effects | May not reflect physiological levels |

RB-TnSeq Experimental Workflow

The RB-TnSeq methodology follows a standardized workflow that enables reproducible fitness profiling:

Diagram 1: RB-TnSeq experimental workflow for model validation.

The experimental pipeline begins with transposon mutagenesis to create a comprehensive library of mutant strains, each with a unique barcode linked to its insertion site. The mutant library is then pooled and subjected to conditional screening across various environmental conditions, such as different carbon sources or stress conditions. After growth, barcode sequencing quantifies the abundance of each mutant before and after selection. Fitness calculations then determine the relative importance of each gene for growth under each condition, generating datasets that can be directly compared to model predictions [26] [27].

The scalability of RB-TnSeq makes it particularly valuable for metabolic model validation, as fitness data can be generated for thousands of genes across dozens of conditions, creating rich datasets for statistical evaluation of model accuracy. This comprehensive coverage enables researchers to identify systematic errors in model predictions rather than just isolated inaccuracies.

Quantitative Assessment of E. coli Metabolic Model Accuracy

Performance Evaluation Across Model Generations

A comprehensive study evaluating four successive versions of E. coli genome-scale metabolic models (iJR904, iAF1260, iJO1366, and iML1515) against RB-TnSeq fitness data revealed important trends in model development. The analysis utilized mutant fitness data across thousands of genes and 25 different carbon sources, providing a robust statistical foundation for accuracy assessment [3] [28].

The study employed the area under a precision-recall curve (AUC) as the primary accuracy metric, which was found to be more informative than overall accuracy or receiver operating characteristic curves due to the imbalanced nature of the dataset (far more non-essential than essential genes). This metric focuses on the correct prediction of gene essentiality, which is biologically more meaningful than predicting non-essentiality [28].
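A precision-recall AUC can be computed from any score that ranks genes by predicted essentiality, for instance one minus the knockout growth rate relative to wild type. The sketch below uses average precision, one common way to summarize the curve; the cited study's exact procedure may differ. A random ranking scores roughly the fraction of positives, so the metric stays informative when essential genes are rare.

```python
def precision_recall_auc(scores, labels):
    """Area under the precision-recall curve, computed as average precision.

    scores: higher means the gene is more confidently predicted essential.
    labels: True if the gene was observed to be essential (positive class).
    Genes with tied scores enter the curve at the same threshold.
    """
    n_pos = sum(1 for label in labels if label)
    if n_pos == 0:
        raise ValueError("at least one positive (essential) label is required")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    tp = fp = 0
    prev_recall = auc = 0.0
    i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Consume every gene whose score equals the current threshold
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1]:
                tp += 1
            else:
                fp += 1
            i += 1
        recall, precision = tp / n_pos, tp / (tp + fp)
        auc += (recall - prev_recall) * precision  # step-wise area
        prev_recall = recall
    return auc

# Hypothetical example: 4 genes, 2 observed essential
print(precision_recall_auc([0.9, 0.8, 0.7, 0.6], [True, False, True, False]))  # approximately 0.833
```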

Table 2: Accuracy Comparison of E. coli GEM Versions Using RB-TnSeq Validation

| Model Version | Publication Year | Genes in Model | Precision-Recall AUC | Key Improvements |
|---|---|---|---|---|
| iJR904 | 2003 | 904 | 0.69 | Early comprehensive reconstruction |
| iAF1260 | 2007 | 1,260 | 0.67 | Expanded gene coverage |
| iJO1366 | 2011 | 1,366 | 0.65 | Improved biomass formulation |
| iML1515 | 2017 | 1,515 | 0.66 (0.72 after corrections) | Most complete gene coverage |

Interestingly, while the number of genes matched between models and experimental datasets steadily increased—indicating improved coverage of metabolic functions—the initial analysis showed a decrease in accuracy across subsequent model versions. However, this trend was reversed after identifying and correcting systematic errors in the analysis approach, particularly regarding vitamin and cofactor availability [28].

Detailed investigation of errors in the latest E. coli model (iML1515) revealed several key sources of systematic prediction inaccuracies:

Vitamin and cofactor availability accounted for a substantial number of false-negative predictions. Specifically, 21 different genes involved in biosynthesis of biotin, R-pantothenate, thiamin, tetrahydrofolate, and NAD+ were predicted as essential when knocked out, while experimental fitness data showed high viability. This discrepancy was traced to these metabolites being available to mutants despite their absence from the defined experimental growth medium, likely due to cross-feeding between mutants or cellular carry-over of stable metabolites [28].

Isoenzyme gene-protein-reaction mapping was identified as another prominent source of inaccurate predictions. Incorrect annotation of isoenzyme relationships led to erroneous essentiality predictions when non-redundant functions were assumed for actually redundant isoenzymes [3] [28].

Machine learning analysis of errors identified metabolic fluxes through hydrogen ion exchange and specific central metabolism branch points as important determinants of model accuracy, highlighting these areas as priorities for future model refinement [28].

After correcting the environmental conditions in the model to include available vitamins and cofactors, and addressing isoenzyme mapping issues, the accuracy of the iML1515 model improved substantially, with the precision-recall AUC increasing from 0.66 to 0.72 [28].

Experimental Protocols for Model Validation

RB-TnSeq Library Construction and Fitness Profiling

The generation of high-quality mutant fitness data requires careful execution of a standardized experimental protocol:

Stage 1: Library Construction

- Generate transposon mutagenesis library using a custom Mariner transposon containing random barcode sequences

- Introduce the barcoded transposon into the target E. coli strain (typically K-12 MG1655 or BW25113), for example by conjugation from a donor strain

- Sequence the library to map barcodes to insertion sites and verify coverage

- Archive library as frozen stock for all future experiments [26]

Stage 2: Competitive Growth Experiments

- Inoculate pooled library into defined minimal medium with specific carbon sources

- Harvest samples at exponential phase (approximately 5 generations) and stationary phase (approximately 12-15 generations)

- Isolate genomic DNA and amplify barcodes using PCR with indexing primers for multiplexing

- Sequence barcode amplicons using high-throughput sequencing [26] [28]

Stage 3: Fitness Calculation

- Count the sequencing reads assigned to each barcode in each sample

- Calculate fitness values for each gene based on mutant abundance changes

- Normalize fitness values across conditions and batches

- Perform statistical analysis to identify significant fitness defects [26] [29]
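The core of the fitness calculation is a log-ratio of barcode abundances. The sketch below is deliberately simplified. Strain fitness is the log2 change in relative abundance, gene fitness is the mean over that gene's strains, and genes are centered on the median. The published BarSeq analysis adds read-count filters, strain weighting, and further normalization that are omitted here, and the pseudocount of 0.5 is an assumption.

```python
import math
import statistics
from collections import defaultdict

def strain_fitness(before, after, pseudocount=0.5):
    """log2 change in each barcode's relative abundance between two samples.

    before/after: barcode -> read count. A pseudocount avoids log(0)
    for strains that drop out entirely.
    """
    total_before, total_after = sum(before.values()), sum(after.values())
    return {
        bc: math.log2(((after.get(bc, 0) + pseudocount) / total_after)
                      / ((n0 + pseudocount) / total_before))
        for bc, n0 in before.items()
    }

def gene_fitness(strain_fit, barcode_to_gene):
    """Average strain fitness per gene, then center so the median gene is 0."""
    per_gene = defaultdict(list)
    for bc, f in strain_fit.items():
        gene = barcode_to_gene.get(bc)
        if gene is not None:  # skip insertions not assigned to a gene
            per_gene[gene].append(f)
    raw = {gene: statistics.mean(values) for gene, values in per_gene.items()}
    center = statistics.median(raw.values())
    return {gene: f - center for gene, f in raw.items()}

# Hypothetical counts: 8 barcoded strains in 4 genes, 100 reads each at time zero
before = {f"bc{i}": 100 for i in range(1, 9)}
after = {"bc1": 110, "bc2": 90, "bc3": 105, "bc4": 95,
         "bc5": 8, "bc6": 12, "bc7": 100, "bc8": 100}
genes = {"bc1": "geneA", "bc2": "geneA", "bc3": "geneB", "bc4": "geneB",
         "bc5": "geneC", "bc6": "geneC", "bc7": "geneD", "bc8": "geneD"}
fit = gene_fitness(strain_fitness(before, after), genes)
print(round(fit["geneC"], 2))  # strongly negative: geneC mutants were depleted
```

Median centering removes the apparent gain of neutral strains that arises only because the total read count changed between samples.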

Model Validation Workflow

The validation of metabolic models against RB-TnSeq data follows a systematic computational pipeline:

Diagram 2: Metabolic model validation workflow using mutant fitness data.

The validation process begins with processing experimental fitness data into binary growth/no-growth calls using appropriate thresholding. In parallel, in silico gene knockout simulations are performed using Flux Balance Analysis (FBA) for each corresponding condition. Model predictions are then compared to experimental results, with accuracy quantified using metrics such as precision-recall AUC. Finally, systematic analysis of errors identifies specific areas for model refinement [3] [28].
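The thresholding and comparison steps can be sketched as follows. The fitness threshold of -1 and the 5% growth cutoff for in silico knockouts are hypothetical choices, as are the genes and values. In practice the model calls would come from FBA knockout simulations such as cobrapy's `single_gene_deletion`.

```python
def fitness_to_calls(gene_fit, threshold=-1.0):
    """Experimental call: True (mutant fails to grow) if fitness is below threshold."""
    return {gene: f < threshold for gene, f in gene_fit.items()}

def knockout_to_calls(ko_growth, wt_growth, cutoff=0.05):
    """Model call: True if in silico knockout growth is below cutoff x wild type."""
    return {gene: g < cutoff * wt_growth for gene, g in ko_growth.items()}

def compare_calls(predicted, observed):
    """Confusion counts and summary metrics; positive class = essential."""
    tp = fp = fn = tn = 0
    for gene in predicted.keys() & observed.keys():
        if predicted[gene] and observed[gene]:
            tp += 1
        elif predicted[gene]:
            fp += 1
        elif observed[gene]:
            fn += 1
        else:
            tn += 1
    total = tp + fp + fn + tn
    return {
        "tp": tp, "fp": fp, "fn": fn, "tn": tn,
        "accuracy": (tp + tn) / total if total else float("nan"),
        "precision": tp / (tp + fp) if tp + fp else float("nan"),
        "recall": tp / (tp + fn) if tp + fn else float("nan"),
    }

# Hypothetical genes, fitness values, and FBA knockout growth rates (1/h)
observed = fitness_to_calls({"g1": -0.2, "g2": -0.3, "g3": -2.5, "g4": 0.1})
predicted = knockout_to_calls({"g1": 0.0, "g2": 0.85, "g3": 0.0, "g4": 0.87}, wt_growth=0.88)
print(compare_calls(predicted, observed))
# tp=1, fp=1, fn=0, tn=2; accuracy 0.75, precision 0.5, recall 1.0
```

Here g1 reproduces the vitamin-biosynthesis pattern described above: the model predicts no growth, but the mutant grows. With essentiality as the positive class it counts as a false positive. Studies that treat growth as the positive class report the same error as a false negative.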

Successful implementation of RB-TnSeq validation requires specific experimental and computational resources:

Table 3: Essential Research Reagents and Resources for RB-TnSeq Validation

| Resource | Type | Function | Example Sources |
|---|---|---|---|
| E. coli K-12 GEMs | Computational | Metabolic simulation | BiGG Models, MetaNetX |
| RB-TnSeq Library | Biological | Mutant fitness profiling | Academic core facilities |
| BarSeq Protocol | Methodological | Barcode sequencing | Wetmore et al. 2015 |
| COBRA Toolbox | Computational | Constraint-based modeling | COBRApy, MATLAB COBRA |
| iML1515 Model | Computational | E. coli metabolic reconstruction | BiGG Models |
| Defined Media Components | Chemical | Controlled growth conditions | Sigma-Aldrich |

The COBRA (Constraint-Based Reconstruction and Analysis) toolbox provides essential computational tools for performing FBA simulations and in silico gene knockouts [1]. At the time of its release, the iML1515 model was the most complete reconstruction of E. coli K-12 MG1655 metabolism, containing 1,515 genes, 2,719 metabolic reactions, and 1,192 metabolites [1] [4]. For experimental work, defined minimal media with carefully controlled carbon sources and nutrient supplementation are crucial for generating reproducible fitness data [28].
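Gene knockouts reach the FBA problem through gene-protein-reaction (GPR) rules. A deleted gene disables a reaction only if the rule evaluates to false, so isoenzymes (OR) buffer deletions while subunits of a complex (AND) do not. The sketch below shows only this boolean step, using hypothetical reaction and gene names. In a full workflow, such as COBRApy's `single_gene_deletion`, the bounds of the disabled reactions are then set to zero and FBA is re-solved to predict growth.

```python
import re

# Parentheses, or any run of characters that is not whitespace or a parenthesis
TOKEN = re.compile(r"\(|\)|[^\s()]+")

def reaction_active(gpr: str, deleted: set) -> bool:
    """Evaluate a GPR rule after deleting genes; an empty rule needs no gene."""
    tokens = TOKEN.findall(gpr)
    if not tokens:
        return True
    pos = 0

    def parse_or():
        nonlocal pos
        value = parse_and()
        while pos < len(tokens) and tokens[pos].lower() == "or":
            pos += 1
            rhs = parse_and()  # always parse, so all tokens are consumed
            value = value or rhs
        return value

    def parse_and():
        nonlocal pos
        value = parse_atom()
        while pos < len(tokens) and tokens[pos].lower() == "and":
            pos += 1
            rhs = parse_atom()
            value = value and rhs
        return value

    def parse_atom():
        nonlocal pos
        tok = tokens[pos]
        pos += 1
        if tok == "(":
            value = parse_or()
            pos += 1  # skip the closing ")"
            return value
        return tok not in deleted  # a gene is "on" unless deleted

    return parse_or()

def blocked_reactions(gprs: dict, deleted: set) -> list:
    """Reactions whose GPR rule is false after the deletion."""
    return sorted(r for r, rule in gprs.items() if not reaction_active(rule, deleted))

# Hypothetical rules: isoenzymes, a two-subunit complex, a mixed rule, no GPR
gprs = {"R_iso": "g1 or g2", "R_complex": "g3 and g4",
        "R_mixed": "(g1 and g3) or g5", "R_spont": ""}
```

For example, deleting `g1` alone blocks nothing because `g2` is an isoenzyme, while deleting both `g1` and `g2` blocks `R_iso`. Missing or wrong OR branches in real GPRs are one source of the isoenzyme-related prediction errors discussed below.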

The integration of high-throughput mutant fitness data from technologies like RB-TnSeq has transformed the validation of metabolic models from qualitative assessment to quantitative evaluation. The systematic comparison of E. coli metabolic models against genome-scale fitness data has revealed both substantial progress in model development and persistent challenges in accurate phenotypic prediction.

The identification of systematic error sources, particularly regarding nutrient availability in experimental conditions and isoenzyme annotation, provides a roadmap for future model refinement. Furthermore, the demonstration that machine learning approaches can identify key flux determinants of model accuracy suggests promising avenues for integrating data-driven and knowledge-driven modeling approaches.

As metabolic modeling continues to expand into non-model organisms and complex community systems, the rigorous validation framework established for E. coli will serve as an essential reference for assessing model reliability. The continued development of both experimental fitness profiling technologies and computational validation methods will be crucial for advancing systems biology from descriptive network reconstruction to predictive phenotype simulation.

Genome-scale metabolic models (GEMs) have become indispensable tools in systems biology and metabolic engineering, enabling researchers to predict cellular behavior under various genetic and environmental conditions. For Escherichia coli researchers utilizing Flux Balance Analysis (FBA), the predictive performance of these models directly impacts experimental design and biotechnological applications, from biofuel production to pharmaceutical development [30] [31]. However, the reliability of FBA predictions depends critically on the quality of the underlying metabolic model, where issues such as incorrect stoichiometry, missing annotations, or energy-generating cycles can lead to untrustworthy predictions [32]. The absence of standardized quality control has historically hampered model reproducibility, reuse, and comparative analysis across different research groups, creating a critical bottleneck in the field.

MEMOTE (METabolic MOdel TEsts) represents a community-driven response to this challenge, providing an open-source, standardized test suite for comprehensive quality assessment of GEMs [32]. This comparison guide examines MEMOTE's role within statistical validation methods for E. coli FBA research, objectively evaluating its capabilities alongside alternative approaches. By analyzing experimental data and implementation protocols, we provide researchers with a scientific basis for selecting appropriate quality control frameworks that ensure model reliability and predictive accuracy.

MEMOTE Testing Framework: A Comprehensive Quality Assessment Suite

Core Testing Modules and Scoring Methodology

MEMOTE implements a structured, multi-faceted testing approach that evaluates metabolic models against fundamental biochemical principles and modeling standards. Its testing framework is organized into four primary areas, each targeting distinct aspects of model quality [32]:

Annotation Tests: These verify that model components are properly annotated according to community standards with MIRIAM-compliant cross-references, ensuring identifiers belong to consistent namespaces and components are described using appropriate Systems Biology Ontology (SBO) terms. Standardized annotations are crucial for model interoperability, comparison, and extension across research teams [32].

Basic Tests: This module checks the formal correctness of model structure by verifying the presence and completeness of essential components, including metabolites, compartments, reactions, and genes. It also validates metabolite formula and charge information and Gene-Protein-Reaction (GPR) rules, and it calculates general quality metrics such as metabolic coverage, the ratio of reactions to genes [32].

Biomass Reaction Tests: For models simulating growth, MEMOTE assesses the biomass reaction's ability to produce necessary precursors under different conditions, checks for biomass consistency, verifies non-zero growth rates, and identifies direct precursors. This is particularly critical for E. coli FBA research where accurate growth prediction is often a primary objective [32].

Stoichiometric Tests: These identify stoichiometric inconsistencies, erroneously produced energy metabolites, and permanently blocked reactions. Errors in stoichiometries may result in thermodynamically infeasible phenomena such as ATP production from nothing, fundamentally undermining flux-based analysis [32].
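A simpler check, related to these tests, verifies the elemental and charge balance of a single reaction. The sketch below illustrates the kind of defect these tests target. It is not MEMOTE's network-wide stoichiometric consistency test, which is a linear-programming check over the whole stoichiometric matrix. The parser also handles only plain formulas without parentheses. The hexokinase example uses BiGG-style metabolite IDs, formulas, and charges for illustration.

```python
import re
from collections import Counter

def parse_formula(formula: str) -> Counter:
    """Parse a plain formula like 'C6H12O6' (no parentheses or hydrates)."""
    counts = Counter()
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] += int(number) if number else 1
    return counts

def imbalance(stoichiometry: dict, formulas: dict, charges: dict) -> dict:
    """Net elements and charge across a reaction; an empty dict means balanced.

    stoichiometry: {metabolite: coefficient}, negative = consumed.
    """
    net = Counter()
    for met, coeff in stoichiometry.items():
        for element, n in parse_formula(formulas[met]).items():
            net[element] += coeff * n
        net["charge"] += coeff * charges[met]
    return {key: value for key, value in net.items() if value != 0}

formulas = {"glc__D_c": "C6H12O6", "atp_c": "C10H12N5O13P3", "g6p_c": "C6H11O9P",
            "adp_c": "C10H12N5O10P2", "h_c": "H"}
charges = {"glc__D_c": 0, "atp_c": -4, "g6p_c": -2, "adp_c": -3, "h_c": 1}
# Hexokinase: glucose + ATP -> glucose 6-phosphate + ADP + H+
hex1 = {"glc__D_c": -1, "atp_c": -1, "g6p_c": 1, "adp_c": 1, "h_c": 1}
# The same reaction with the proton omitted, a typical curation error
hex1_no_h = {k: v for k, v in hex1.items() if k != "h_c"}
```

Here `imbalance(hex1, formulas, charges)` returns an empty dict. The proton-free version reports one missing hydrogen and one missing positive charge.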

MEMOTE calculates an overall score as a weighted sum of individual test results normalized by the maximally achievable score. The scoring system allows researchers to quickly assess model quality and track improvements over time, with weights assignable to entire test categories or individual tests based on research priorities [33]. The framework is implemented in Python and supports models encoded in Systems Biology Markup Language (SBML) level 3 with the flux balance constraints package, considered the community standard for encoding GEMs [32].
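The normalization described above can be sketched as a weighted mean of per-test scores. The test names and weights here are hypothetical, and MEMOTE's actual implementation organizes tests into sections with its own configurable weights. This sketch only illustrates the arithmetic.

```python
def overall_score(results: dict, weights: dict) -> float:
    """Weighted sum of test scores divided by the maximum achievable sum.

    results: {test_name: score in [0, 1]}; weights default to 1.0 when absent.
    """
    achieved = sum(weights.get(test, 1.0) * score for test, score in results.items())
    maximum = sum(weights.get(test, 1.0) for test in results)
    return achieved / maximum if maximum else 0.0

# Hypothetical results, with consistency weighted three times as heavily
results = {"annotation": 0.5, "consistency": 1.0, "biomass": 0.8}
weights = {"consistency": 3.0}
score = overall_score(results, weights)  # (0.5 + 3.0 + 0.8) / 5.0
```

Raising a category's weight makes the overall score more sensitive to that category, which is how research priorities enter the score.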

Experimental Implementation and Workflow Integration

MEMOTE supports two primary workflows tailored to different stages of the model lifecycle [32]. For peer review, it generates "snapshot reports" for individual models or "diff reports" for comparing multiple models. For ongoing model development, it facilitates version-controlled repositories with "history reports" that track quality metrics across model edits, encouraging continuous quality improvement through platforms like GitHub and GitLab [32].

The implementation protocol for MEMOTE involves:

Model Format Conversion: Ensuring the GEM is encoded in SBML format, preferably SBML3FBC for optimal compatibility [32].

Test Suite Execution: Running the core test battery through MEMOTE's command-line interface or Python API.

Results Interpretation: Analyzing the report output, which uses a color-coded system (red-to-green gradient) to indicate performance levels across test categories [33].

Iterative Refinement: Addressing identified issues and rerunning tests to validate corrections, with the history report tracking quality improvements over time.

For E. coli research specifically, MEMOTE can be incorporated into established reconstruction pipelines. Reports for a new or modified model can then be compared with those of a well-curated reference reconstruction such as iML1515, which includes 1,515 open reading frames, 2,719 metabolic reactions, and 1,192 metabolites [1].

Comparative Analysis of Quality Control Approaches

MEMOTE Versus Alternative Model Testing Frameworks

To objectively evaluate MEMOTE's position in the ecosystem of metabolic model quality control, we compare its capabilities against other validation approaches used in the field. This analysis is based on experimental assessments of model collections and reports from comparative studies.

Table 1: Capability Comparison of Metabolic Model Quality Control Approaches

| Quality Control Feature | MEMOTE | Manual Curation | Tool-Specific Checks | Consensus Modeling |
|---|---|---|---|---|
| Standardized Test Suite | Comprehensive | Limited, expert-dependent | Variable by tool | Not applicable |
| Stoichiometric Balance | Automated testing | Manual verification | Limited implementation | Inherited from source models |
| Annotation Completeness | MIRIAM compliance checks | Inconsistent application | Database-dependent | Varies by reconstruction tool |
| Biomass Reaction Validation | Specialized tests | Case-by-case basis | Often implemented | Dependent on constituent models |
| Quantitative Scoring | Weighted scoring system | Subjective assessment | Not typically provided | Not directly applicable |
| Interoperability Focus | SBML3FBC standard | Format agnostic | Tool-specific formats | Mapping challenges |
| Community Adoption | Growing, openCOBRA | Traditional practice | Tool users only | Emerging approach |

Large-scale model evaluations illustrate MEMOTE's usefulness. In one study encompassing 10,780 models from seven GEM collections, MEMOTE revealed substantial quality variation, with approximately 70% of published models containing at least one stoichiometrically unbalanced metabolite [32]. The same study found that automatically reconstructed GEMs (except those from Path2Models) were generally more stoichiometrically consistent than manually curated ones. This result underscores the value of applying systematic, automated checks to all models, including hand-curated ones.

Quantitative Performance Benchmarks Across Model Collections

The application of standardized testing to diverse model collections provides insightful performance benchmarks. When analyzing models from major reconstruction sources, MEMOTE assessments have revealed distinct structural and functional characteristics across platforms.

Table 2: Performance Metrics Across Model Reconstruction Platforms Based on MEMOTE Evaluation

| Reconstruction Platform | Stoichiometric Consistency | Reactions without GPR Rules | Universally Blocked Reactions | Annotation Compliance |
|---|---|---|---|---|
| CarveMe | High | ~15% | Very low | Limited to platform-specific |
| gapseq | Variable | Varies by model | Moderate | Comprehensive biochemical |
| KBase | Variable | ~15% | ~30% | SBML-compliant |
| BiGG Models | High variability | Up to 85% in subgroups | ~20% | SBML-compliant, MetaNetX |
| AGORA | Variable | ~15% | ~30% | SBML-compliant |
| Path2Models | Problematic | Varies by model | Very low | Limited |

Comparative analysis reveals that model sources strongly influence quality metrics. A t-distributed stochastic neighbor embedding (t-SNE) analysis of normalized MEMOTE test results demonstrated that models from the same source are generally more similar to each other than to models from other sources, confirming platform-specific quality profiles [32]. This has important implications for E. coli FBA research, where model selection directly impacts predictive accuracy.

Integration with Advanced Flux Analysis Techniques

Complementary Roles in the Validation Ecosystem

MEMOTE operates as a foundational component within a broader validation ecosystem for constraint-based modeling. While MEMOTE focuses on structural and stoichiometric correctness, other specialized methods address complementary aspects of model validation:

13C-MFA Validation: Traditional 13C Metabolic Flux Analysis uses the χ² goodness-of-fit test to validate flux maps against experimental isotopic labeling data [8]. This approach provides statistical validation of flux predictions but requires extensive experimental data.

Bayesian Flux Sampling: Methods like BayFlux use Bayesian inference and Markov Chain Monte Carlo sampling to identify probability distributions of fluxes compatible with experimental data, providing robust uncertainty quantification [34].

Hybrid Constraining Approaches: NEXT-FBA represents an emerging methodology that uses neural networks to correlate exometabolomic data with intracellular flux constraints, improving prediction accuracy with minimal input data requirements [35].
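For the 13C-MFA case described above, the χ² test is commonly formulated as follows. In this formulation, x_i are the measured labeling (and flux) data, x̂_i(v) are the values simulated from flux vector v, σ_i are the measurement standard deviations, n is the number of independent measurements, p is the number of fitted free fluxes, and α is the significance level (often 0.05). A fit is accepted when the minimized SSR falls inside the χ² range for n − p degrees of freedom. This is a generic textbook formulation, not necessarily the exact criterion used in [8].

```latex
\mathrm{SSR}(\mathbf{v}) = \sum_{i=1}^{n} \frac{\left(x_i - \hat{x}_i(\mathbf{v})\right)^2}{\sigma_i^2},
\qquad
\chi^2_{\alpha/2}(n - p) \;\le\; \min_{\mathbf{v}} \mathrm{SSR}(\mathbf{v}) \;\le\; \chi^2_{1-\alpha/2}(n - p)
```

An SSR above the upper bound indicates that the model or the measurement errors cannot explain the data. An SSR below the lower bound suggests that the measurement errors were overestimated.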

MEMOTE's unique contribution lies in its ability to identify structural problems that would compromise any subsequent flux analysis, regardless of the specific technique employed. For example, a model with stoichiometric inconsistencies can generate biologically impossible flux predictions, such as ATP produced from nothing, even with advanced sampling algorithms.

Workflow Integration for E. coli FBA Research

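For E. coli researchers implementing FBA, MEMOTE fits into a comprehensive quality control workflow that progresses from structural validation to functional prediction. The model is first tested and corrected with MEMOTE and then used for FBA simulations. Finally, its predictions are compared with experimental data such as mutant fitness profiles or 13C-MFA flux estimates.

Placing the structural checks first ensures that defects are identified and corrected before resource-intensive experimental validation, improving research efficiency and reliability.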

Essential Research Reagents and Computational Tools

Successful implementation of quality control pipelines requires specific computational tools and resources. The following table details essential components for establishing a robust model testing framework.

Table 3: Essential Research Reagents and Computational Tools for Metabolic Model Quality Control

| Tool/Resource | Type | Primary Function | Implementation Notes |
|---|---|---|---|
| MEMOTE Suite | Open-source Python package | Core quality testing and scoring | Requires Python 3.7+; integrates with CI/CD pipelines |