Strategic Integration of High-Throughput and Targeted Screening: Accelerating Precision Drug Discovery

Abstract

This article explores the synergistic coupling of high-throughput (HTS) and targeted screening workflows, a transformative strategy in modern drug discovery. We detail how AI-driven HTS rapidly identifies potential drug candidates from vast compound libraries, while targeted screening provides deep mechanistic validation for specific biological targets. Aimed at researchers and drug development professionals, the content covers foundational principles, advanced methodological applications, troubleshooting for common pitfalls, and rigorous validation frameworks. By integrating these approaches, researchers can significantly enhance the efficiency, accuracy, and clinical relevance of the therapeutic development pipeline, paving the way for more effective personalized cancer therapies and treatments for complex diseases.

Laying the Groundwork: Core Principles and the Strategic Rationale for Hybrid Screening

High-throughput screening (HTS) represents a foundational methodology in modern scientific discovery, particularly in the fields of drug discovery, biology, materials science, and chemistry [1]. This approach utilizes integrated robotics, sophisticated data processing software, liquid handling devices, and sensitive detectors to rapidly conduct millions of chemical, genetic, or pharmacological tests [1] [2]. The primary objective of HTS is to quickly identify active compounds, antibodies, or genes—collectively termed "hits"—that modulate specific biomolecular pathways [1]. These hits provide crucial starting points for drug design and understanding biological interactions [1].

The fundamental principle underlying HTS is the ability to process vast libraries of compounds or samples in parallel, testing them for biological activity at the model organism, cellular, pathway, or molecular level [2]. In its most common implementation, HTS enables researchers to screen between 10³ and 10⁶ small-molecule compounds of known structure in parallel [2]. The methodology has since expanded beyond pharmaceutical applications to include toxicity testing, chemical genomics, and synthetic biology [3] [4].

Core Components of an HTS System

Essential Hardware Components

A functional HTS platform relies on several integrated hardware components that work in concert to achieve rapid screening capabilities. Microtiter plates serve as the fundamental labware, featuring grids of small wells arranged in standardized formats of 96, 384, 1536, 3456, or 6144 wells [1]. These disposable plastic containers hold the test items, which may include different chemical compounds, cells, or enzymes dissolved in appropriate solvents [1].

Robotic automation systems form the backbone of HTS operations, transporting assay microplates between specialized stations for sample and reagent addition, mixing, incubation, and final readout [1]. Modern HTS systems can prepare, incubate, and analyze many plates simultaneously, dramatically accelerating data collection [1]. Contemporary HTS robots can test up to 100,000 compounds per day, and screening at rates above 100,000 compounds per day is termed ultra-high-throughput screening (uHTS) [1].

Additional critical instrumentation includes liquid handling devices for precise transfer of minute liquid volumes (often in nanoliters), plate readers for detection, incubators for maintaining optimal environmental conditions, centrifuges, and imaging systems for capturing experimental results [5]. The integration of these components enables the massive parallel processing that defines HTS.

Research Reagent Solutions

Table 1: Essential Research Reagents and Materials in HTS

| Reagent/Material | Function in HTS | Application Examples |
|---|---|---|
| Microtiter Plates | Testing vessel with multiple wells | 96, 384, 1536-well formats for assay execution [1] |
| Compound Libraries | Collections of chemical entities for screening | ChemBridge, ChemDiv, NCI libraries; small molecules, natural products [2] [5] |
| HiBiT Detection System | Protein quantification method | Rapid assessment of protein expression in microbial/mammalian strains [4] |
| Detection Reagents | Enable measurement of biological responses | Fluorescent dyes, luminescent substrates, Alamar Blue for viability [2] |
| Cell Lines | Biological models for screening | THP-1 cells (human monocytic leukemia) for immunology screens [6] |
| CRISPR Guide RNA Libraries | Genetic perturbation tools | Pooled gRNA libraries for genetic screening (e.g., 4k gRNA libraries) [7] |

HTS Assay Design and Implementation

Assay Plate Preparation

The HTS workflow begins with careful assay plate preparation. Screening facilities typically maintain libraries of stock plates whose contents are meticulously catalogued [1]. These stock plates may be created internally or obtained from commercial sources [1]. Rather than using stock plates directly in experiments, researchers create assay plates by pipetting small amounts of liquid (often measured in nanoliters) from the stock plates to corresponding wells of empty plates [1].

The wells of the assay plate are then filled with the biological entities targeted for investigation, such as proteins, cells, or animal embryos [1]. Following an appropriate incubation period to allow the biological material to interact with the compounds in the wells, measurements are taken across all plate wells using either manual or automated methods [1]. Automated analysis machines can measure dozens of plates within minutes, generating thousands of data points rapidly [1].
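The stock-to-assay plate transfer described above depends on a consistent well-naming scheme. The sketch below generates row-major well labels for the 96-, 384- and 1536-well formats and, as one common convention (an assumption here, not a step stated in the source), maps four 96-well stock plates into a single 384-well assay plate by interleaved quadrant stamping. The function names are hypothetical and not part of any instrument's software.

```python
import string
from typing import Dict, List, Tuple

# (rows, columns) for common SBS-format microtiter plates.
PLATE_FORMATS: Dict[int, Tuple[int, int]] = {96: (8, 12), 384: (16, 24), 1536: (32, 48)}


def row_label(index: int) -> str:
    """0-based row index -> row label: A..Z, then AA, AB, ... (1536-well plates run to AF)."""
    letters = string.ascii_uppercase
    if index < 26:
        return letters[index]
    return letters[index // 26 - 1] + letters[index % 26]


def well_labels(n_wells: int) -> List[str]:
    """All well labels for a plate format in row-major order (A1, A2, ..., last row/column)."""
    rows, cols = PLATE_FORMATS[n_wells]
    return [f"{row_label(r)}{c + 1}" for r in range(rows) for c in range(cols)]


def stamp_96_to_384(row: int, col: int, quadrant: int) -> str:
    """Destination 384-well label for a 0-based 96-well position under interleaved quadrants.

    Quadrant 0 -> A1, A3, ...; quadrant 1 shifts one column right; quadrant 2 shifts one
    row down; quadrant 3 shifts both, so four 96-well plates fill one 384-well plate.
    """
    if not 0 <= quadrant <= 3:
        raise ValueError("quadrant must be 0, 1, 2 or 3")
    return f"{row_label(2 * row + quadrant // 2)}{2 * col + quadrant % 2 + 1}"


# Well H12 (row 7, column 11) of the fourth stock plate lands in the bottom-right corner.
print(stamp_96_to_384(7, 11, 3))  # P24
```

A plain one-to-one replication into "corresponding wells", as the text describes, simply reuses the same label on the destination plate; the quadrant mapping only matters when stock and assay plates differ in density.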

Experimental Workflow and Process

HTS Experimental Workflow

Quantitative HTS (qHTS): An Advanced Paradigm

Principles of qHTS

Quantitative high-throughput screening (qHTS) extends traditional HTS by testing each compound at multiple concentrations rather than at a single concentration [3] [2]. Using automation and low-volume assay formats, qHTS generates a complete concentration-response curve for every compound during the primary screen, pharmacologically profiling large chemical libraries in a single pass [3] [1].

The primary advantage of qHTS lies in its ability to more fully characterize the biological effects of chemicals while decreasing rates of false positives and false negatives [2]. By providing richer datasets early in the discovery process, qHTS enables more informed decisions about compound prioritization and optimization.

Hill Equation Modeling in qHTS

The Hill equation (HEQN) serves as the most common nonlinear model for describing qHTS response profiles [3]. The logistic form of the Hill equation is expressed as:

Rᵢ = E₀ + (E∞ - E₀) / [1 + exp{-h[logCᵢ - logAC₅₀]}] [3]

Where:

- Rᵢ represents the measured response at concentration Cᵢ

- E₀ denotes the baseline response

- E∞ signifies the maximal response

- AC₅₀ indicates the concentration for half-maximal response

- h represents the shape parameter [3]

The parameters AC₅₀ and E_max (calculated as E∞ - E₀) are frequently used in pharmacological and toxicological assessments as approximations for compound potency and efficacy, respectively [3]. However, parameter estimates obtained from the Hill equation can be highly variable if the tested concentration range fails to include at least one of the two asymptotes, if responses are heteroscedastic, or if concentration spacing is suboptimal [3].
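A minimal Python sketch of the logistic Hill model above, assuming log10-transformed concentrations (a common choice for qHTS dilution series; the log base only rescales h). The helper `window_coverage` is a hypothetical diagnostic, not from the source: it reports what fraction of the E₀-to-E∞ response window a tested concentration range actually spans, which makes the missing-asymptote problem concrete.

```python
import math


def hill_response(conc: float, e0: float, e_inf: float, ac50: float, h: float) -> float:
    """Logistic Hill model: R = E0 + (Einf - E0) / (1 + exp(-h * (log C - log AC50))).

    Concentrations are log10-transformed here (an assumption); natural logs would
    only rescale the fitted shape parameter h.
    """
    x = math.log10(conc) - math.log10(ac50)
    return e0 + (e_inf - e0) / (1.0 + math.exp(-h * x))


def e_max(e0: float, e_inf: float) -> float:
    """Efficacy as defined in the text: the difference between the two asymptotes."""
    return e_inf - e0


def window_coverage(c_min: float, c_max: float, ac50: float, h: float) -> float:
    """Fraction of the full E0-to-Einf window spanned between the lowest and highest tested doses."""
    low = hill_response(c_min, 0.0, 1.0, ac50, h)
    high = hill_response(c_max, 0.0, 1.0, ac50, h)
    return abs(high - low)


# AC50 = 1e-3 uM: compare a range centred on AC50 with one that starts at AC50.
wide = window_coverage(1e-6, 1.0, 1e-3, 1.0)    # AC50 +/- 3 log units
narrow = window_coverage(1e-3, 1.0, 1e-3, 1.0)  # lower asymptote never sampled
```

For h = 1, the centred range covers about 90% of the response window, whereas the range starting at AC₅₀ covers less than half of it, leaving the lower asymptote (and therefore E_max) poorly constrained in any fit.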

Table 2: Impact of Experimental Replicates on Parameter Estimation in qHTS

| True AC₅₀ (μM) | True E_max (%) | Number of Replicates (n) | Mean AC₅₀ Estimate (μM) [95% CI] | Mean E_max Estimate (%) [95% CI] |
|---|---|---|---|---|
| 0.001 | 25 | 1 | 7.92e-05 [4.26e-13, 1.47e+04] | 1.51e+03 [-2.85e+03, 3.1e+03] |
| 0.001 | 25 | 3 | 4.70e-05 [9.12e-11, 2.42e+01] | 30.23 [-94.07, 154.52] |
| 0.001 | 25 | 5 | 7.24e-05 [1.13e-09, 4.63] | 26.08 [-16.82, 68.98] |
| 0.001 | 50 | 1 | 6.18e-05 [4.69e-10, 8.14] | 50.21 [45.77, 54.74] |
| 0.001 | 50 | 3 | 1.74e-04 [5.59e-08, 0.54] | 50.03 [44.90, 55.17] |
| 0.001 | 100 | 1 | 1.99e-04 [7.05e-08, 0.56] | 85.92 [-1.16e+03, 1.33e+03] |
| 0.001 | 100 | 5 | 7.24e-04 [4.94e-05, 0.01] | 100.04 [95.53, 104.56] |

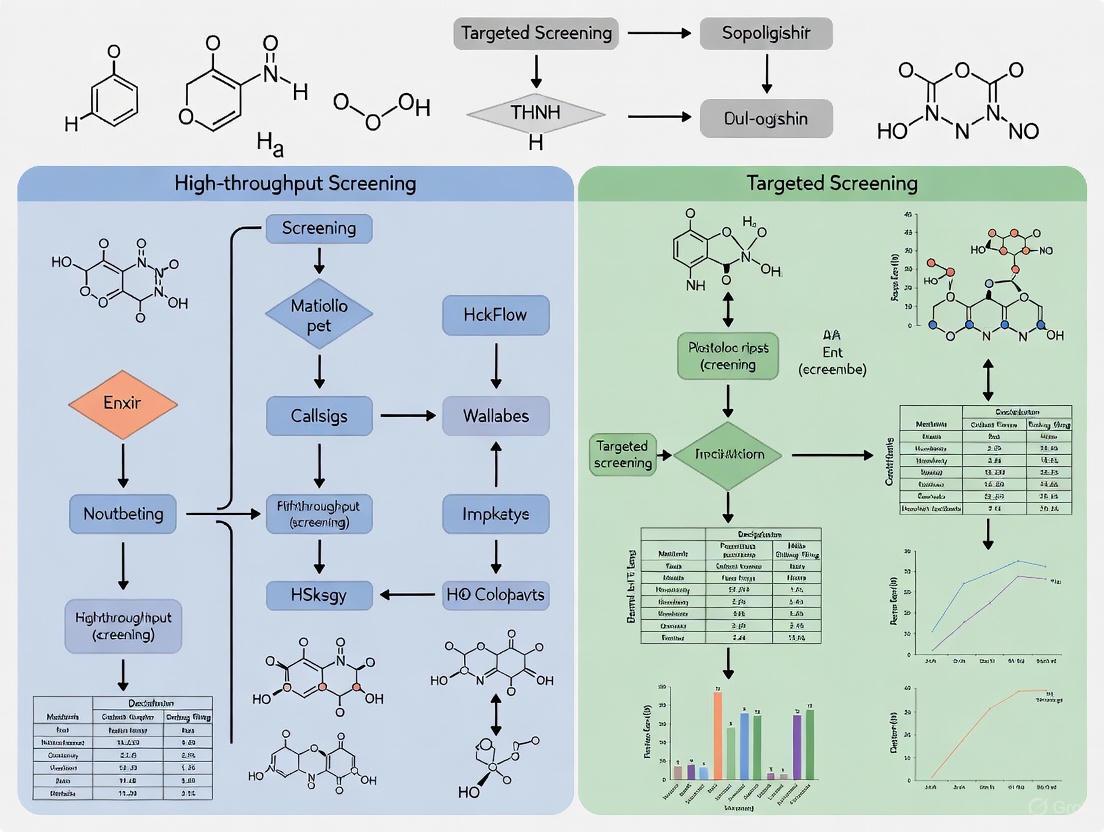

Coupled HTS and Targeted Screening Workflows

A Novel Framework for Metabolic Engineering

Recent research has demonstrated the power of coupling high-throughput screening with targeted validation in metabolic engineering applications [7]. This approach addresses a fundamental challenge in strain development: many industrially interesting molecules cannot be screened at sufficient throughput to leverage modern high-throughput genetic engineering methodologies [7].

The proposed workflow involves initial high-throughput screening of common precursors (e.g., amino acids) that can be screened directly or through artificial biosensors, followed by low-throughput targeted validation of the actual molecule of interest [7]. This strategy enables researchers to uncover non-intuitive beneficial metabolic engineering targets and combinations that might be missed through conventional approaches.

Implementation Case Study

In a practical demonstration of this coupled approach, researchers identified non-obvious targets for improving p-coumaric acid (p-CA) and L-DOPA production in Saccharomyces cerevisiae using large 4k gRNA libraries, each deregulating 1,000 metabolic genes [7]. The initial high-throughput screen, which used intracellular betaxanthin content as a proxy readout, identified 30 targets that increased betaxanthin 3.5- to 5.7-fold [7]. Subsequent targeted screening narrowed these to six targets that increased secreted p-CA titer by up to 15% [7].

Further investigation of combinatorial effects revealed that simultaneous regulation of PYC1 and NTH2 resulted in the highest (threefold) improvement of betaxanthin content, with an additive trend also observed in the p-CA producing strain [7]. When applied to L-DOPA production, the approach identified 10 targets that increased secreted titer by up to 89%, validating the screening by proxy workflow [7].
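The two-stage "screening by proxy" logic can be sketched as a simple funnel: a permissive fold-change cut on the high-throughput proxy readout, then a titer-based filter applied only to the survivors. Gene names, fold changes, titer gains and both thresholds below are hypothetical placeholders, not values from the study.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Target:
    gene: str
    proxy_fold: float                    # fold change in the high-throughput proxy readout
    titer_gain: Optional[float] = None   # fractional titer change from targeted validation, if measured


def proxy_screen(targets: List[Target], min_fold: float) -> List[Target]:
    """Stage 1: keep targets whose proxy signal clears a fold-change threshold, strongest first."""
    hits = [t for t in targets if t.proxy_fold >= min_fold]
    return sorted(hits, key=lambda t: t.proxy_fold, reverse=True)


def targeted_validation(hits: List[Target], min_gain: float) -> List[Target]:
    """Stage 2: keep only hits whose measured product titer rises by at least min_gain."""
    return [t for t in hits if t.titer_gain is not None and t.titer_gain >= min_gain]


# Hypothetical library: only proxy hits are carried into low-throughput titer measurements.
library = [
    Target("geneA", 4.1, titer_gain=0.12),
    Target("geneB", 5.7, titer_gain=0.02),
    Target("geneC", 1.3),
    Target("geneD", 3.6, titer_gain=0.15),
]
hits = proxy_screen(library, min_fold=3.5)
validated = targeted_validation(hits, min_gain=0.05)
```

In this toy example, geneB has the strongest proxy signal but fails validation, which is the reason the second, product-specific stage exists: a proxy hit does not guarantee a higher titer of the molecule of interest.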

Coupled HTS and Targeted Screening Workflow

Advanced Protocols and Applications

High-Throughput Flow Cytometry Screening Protocol

Flow cytometry represents a powerful method for analyzing protein expression at the single-cell level but presents challenges when applied to large sample numbers. Recent protocols have addressed this limitation by developing methodologies for high-throughput small molecule screening using flow cytometry analysis of THP-1 cells, a human monocytic leukemia cell line [6].

This approach enables researchers to identify compounds that regulate specific surface proteins (e.g., PD-L1) in stimulated cells and has been successfully used to screen collections of approximately 200,000 compounds [6]. The protocol exemplifies how traditional lower-throughput techniques can be adapted to HTS formats while maintaining the rich information content of single-cell analysis.

HiBiT-Tagged Protein Screening

The HiBiT assay, developed by Promega, provides a valuable screening method for rapid assessment of protein expression across large numbers of candidate microbial and mammalian strains [4]. Implementation of this assay using automated platforms has demonstrated significant efficiency improvements, with one study reporting an 80% reduction in hands-on time compared to standalone lab automation instrumentation [4].

This application enabled quantification of nearly 10,000 protein samples without in-person monitoring or intervention, highlighting how specialized detection technologies coupled with automation can dramatically increase screening throughput while maintaining data quality [4]. The average fold difference between normalized protein concentrations obtained from previous semi-automated protocols versus the new fully-automated system was only 2%, demonstrating excellent reproducibility [4].

Data Analysis and Quality Control

Quality Control Metrics

High-quality HTS assays are critical for successful screening campaigns, requiring integration of both experimental and computational approaches for quality control [1]. Three essential means of quality control include: (i) proper plate design, (ii) selection of effective positive and negative controls, and (iii) development of effective QC metrics to identify assays with inferior data quality [1].

Several quality-assessment measures have been proposed to evaluate data quality, including signal-to-background ratio, signal-to-noise ratio, signal window, assay variability ratio, and Z-factor [1]. More recently, strictly standardized mean difference (SSMD) has been proposed for assessing data quality in HTS assays, offering improved statistical properties for quality assessment [1].
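Several of these measures reduce to a few lines of arithmetic on control-well readings. The sketch below computes signal-to-background, signal-to-noise and SSMD from positive- and negative-control wells. S/N has more than one definition in the literature; the one used here (mean separation over the background SD) is an assumption, and the control readings are hypothetical.

```python
import math
import statistics as st


def signal_to_background(pos, neg):
    """S/B: mean positive-control signal divided by mean negative-control (background) signal."""
    return st.mean(pos) / st.mean(neg)


def signal_to_noise(pos, neg):
    """S/N (one common definition): mean separation divided by the background SD."""
    return (st.mean(pos) - st.mean(neg)) / st.stdev(neg)


def ssmd(pos, neg):
    """SSMD for two independent groups: mean difference over the SD of that difference."""
    return (st.mean(pos) - st.mean(neg)) / math.sqrt(st.variance(pos) + st.variance(neg))


# Hypothetical control-well readings from one plate.
pos_ctrl = [100.0, 102.0, 98.0]
neg_ctrl = [10.0, 12.0, 8.0]
```

Unlike S/B, both S/N and SSMD penalize noisy controls, which is why variability-aware metrics are preferred for judging whether an assay can separate actives from inactives.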

Hit Selection Methodologies

The process of identifying active compounds with desired effects, termed "hit selection," employs different statistical approaches depending on the screening design [1]. For primary screens without replicates, commonly used methods include average fold change, percent inhibition, z-score, and SSMD-based approaches [1]. Because these methods are sensitive to outliers, robust alternatives have been developed, such as the z*-score (robust z-score), SSMD*, B-score, and quantile-based methods [1].
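The outlier sensitivity is easy to demonstrate. In this sketch with hypothetical single-replicate plate data, one very strong active inflates the plate standard deviation enough that the classic z-score misses a moderate active, while the robust z-score, which substitutes the median and the median absolute deviation (MAD) for the mean and SD, recovers both. The |score| ≥ 3 cut-off is an illustrative choice.

```python
import statistics as st

MAD_TO_SD = 1.4826  # scales the MAD to estimate the SD for normally distributed data


def z_scores(values):
    """Classic z-score: distance from the plate mean in units of the plate SD."""
    mu, sd = st.mean(values), st.stdev(values)
    return [(v - mu) / sd for v in values]


def robust_z_scores(values):
    """Robust z-score (z*): the median and scaled MAD replace the mean and SD."""
    med = st.median(values)
    mad = st.median([abs(v - med) for v in values])
    if mad == 0:
        raise ValueError("MAD is zero; robust z-scores are undefined for this plate")
    return [(v - med) / (MAD_TO_SD * mad) for v in values]


def select_hits(scores, threshold=3.0):
    """Indices of wells whose absolute score meets the hit threshold."""
    return [i for i, s in enumerate(scores) if abs(s) >= threshold]


# Hypothetical plate: a moderate active (index 10) and a strong one (index 11).
plate = [10, 11, 9, 10, 11, 9, 10, 11, 9, 10, 18, 100]
classic_hits = select_hits(z_scores(plate))   # the strong active inflates the SD
robust_hits = select_hits(robust_z_scores(plate))
```

Here the classic z-score flags only the strongest well, whereas the robust version flags both actives.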

In screens with replicates, researchers can directly estimate variability for each compound, making SSMD or t-statistics more appropriate as they don't rely on the strong assumptions required by z-score methods [1]. Importantly, SSMD directly assesses effect size rather than merely testing for mean differences, making it particularly valuable for hit selection where effect size represents the primary interest [1].
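For a replicated screen, one common setup (assumed here) takes per-replicate differences between a compound well and its matched negative control. The sketch shows why SSMD suits effect-size-driven hit selection: the paired t-statistic equals SSMD multiplied by √n, so it grows with replicate count even when the underlying effect is unchanged, whereas SSMD estimates the effect size itself. The difference values are hypothetical.

```python
import math
import statistics as st


def paired_ssmd(differences):
    """Method-of-moments SSMD: mean of the paired differences divided by their SD."""
    return st.mean(differences) / st.stdev(differences)


def paired_t(differences):
    """Paired t-statistic: the same mean difference divided by its standard error."""
    n = len(differences)
    return st.mean(differences) / (st.stdev(differences) / math.sqrt(n))


# Hypothetical compound-minus-control differences from three replicate plates.
diffs = [1.0, 2.0, 3.0]
effect_size = paired_ssmd(diffs)   # estimates the effect size itself
t_stat = paired_t(diffs)           # equals effect_size * sqrt(3); grows with replicate count
```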

High-throughput screening continues to evolve technologically and conceptually. Recent innovations include drop-based microfluidics, which enables screening 1,000 times faster than conventional techniques at one-millionth of the cost, using roughly 10⁻⁷ times the reagent volume [1]. Another advance is a silicon sheet of lenses placed over microfluidic arrays, which allows fluorescence from 64 output channels to be measured simultaneously and can analyze 200,000 drops per second [1].

The transition from 2D to 3D cell culture models in HTS represents another significant advancement, better representing in vivo microenvironments despite the physical challenges inherent in mass-testing 3D structures [5]. As equipment, supplies, and HTS systems continue to evolve, they enable more physiologically relevant screening applications aided by synthetic scaffolding and self-assembling hydrogels [5].

The integration of machine learning and artificial intelligence has further transformed HTS, enabling predictive patterning that has contributed to recent discoveries for Ebola and tuberculosis [5]. These computational approaches enhance the value of HTS data by identifying patterns and relationships that might escape conventional analysis.

In conclusion, high-throughput screening has established itself as an indispensable tool in modern biological research and drug discovery. The evolution from simple single-concentration screens to sophisticated quantitative approaches and coupled workflows has dramatically enhanced the quality and information content of screening data. As HTS technologies continue to advance and integrate with complementary methodologies, they promise to further accelerate scientific discovery and therapeutic development.

In the contemporary drug discovery landscape, the strategic integration of high-throughput and targeted screening frameworks is paramount for enhancing lead identification efficiency and success rates. Targeted screening represents a paradigm shift from indiscriminate massive screening toward focused interrogation of specific biological mechanisms, molecular targets, or specialized chemical space. This approach delivers precision, depth, and unparalleled mechanistic insight that complements broader high-throughput screening (HTS) campaigns. The global HTS market, projected to reach $26.4 billion by 2025 with a compound annual growth rate of 11.5%, underscores the scaling of screening infrastructures, yet simultaneously highlights the growing need for smarter, more focused approaches to navigate this expanding capability [8].

Targeted screening methodologies have evolved beyond mere target-based filtering to encompass sophisticated workflows that integrate patient stratification biomarkers, structural biology insights, computational predictions, and functional phenotypic readouts. The adoption of these approaches is driven by the pressing need to reduce attrition rates in late-stage development by front-loading mechanistic validation and ensuring target engagement in physiologically relevant systems. This application note details the implementation protocols, strategic frameworks, and practical tools for deploying targeted screening within integrated discovery workflows, providing researchers with actionable methodologies for enhancing the precision and predictive power of their screening campaigns.

Application Note: Implementing Targeted Screening Across Discovery Workflows

Strategic Implementation and Comparative Value

Targeted screening operates not as a replacement for HTS but as a powerful complementary approach that follows initial broad screening or leverages existing biological knowledge to focus resources on higher-probability spaces. Its strategic value is most evident in its ability to:

- Enrich hit rates by focusing on pre-validated targets or chemical scaffolds with established relevance to the disease pathology

- Reduce resource utilization by screening smaller, more intelligent compound libraries against biologically relevant systems

- Accelerate mechanistic de-risking through integrated target engagement assessment early in the screening workflow

- Enable difficult target classes such as protein-protein interactions, allosteric modulators, and complex phenotypic assays that challenge traditional HTS formats

The empirical validation of this approach comes from large-scale studies demonstrating that computational targeted screening can achieve hit rates of 6.7-7.6% across diverse target classes, substantially exceeding typical HTS hit rates of 0.001-0.15% [9]. This performance advantage is particularly pronounced for novel target classes where chemical starting points are scarce, and for addressing the challenges of emerging therapeutic modalities.
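As an order-of-magnitude check on the quoted ranges, the enrichment of the AI-directed hit rate over the typical HTS range spans:

```latex
\frac{6.7\%}{0.15\%} \approx 45 \quad \text{(most conservative)}, \qquad
\frac{7.6\%}{0.001\%} = 7600 \quad \text{(most generous)}
```

These ratios compare rates with different denominators (dose-response-confirmed hits among a relatively small set of computationally selected compounds versus primary hits across full HTS libraries), so they illustrate scale rather than a like-for-like comparison.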

Quantitative Performance Metrics Across Screening Applications

Table 1: Performance Metrics of Targeted Screening Across Applications

| Screening Application | Key Performance Metric | Reported Value | Contextual Comparison |
|---|---|---|---|
| AI-Directed Virtual Screening | Average hit rate (dose-response) | 6.7% (internal portfolio), 7.6% (academic collaborations) | Substantially exceeds typical HTS hit rates of 0.001-0.15% [9] |
| Computational Hit Expansion | Analog screening hit rate | 26-29.8% | Demonstrates robust structure-activity relationship identification [9] |
| Enzyme Engineering HTS | Z'-factor (assay quality) | 0.449 | Meets acceptance criteria for high-quality HTS (Z' > 0.4) [10] |
| HNC Liquid Biopsy Screening | Sensitivity for early detection | High (specific value not reported) | Superior to visual inspection for HPV- and EBV-related cancers [11] |

Protocol 1: Bioinformatics-Driven Target Identification and Validation

Objective and Principle

This protocol outlines a comprehensive computational approach for identifying and validating therapeutic targets specific to breast cancer, leveraging bioinformatics pipelines, molecular docking, and dynamics simulations. The methodology enables researchers to prioritize targets with high disease relevance and identify compounds with optimized binding characteristics before committing to experimental validation [12]. The approach integrates reverse drug screening strategies with structural analysis to establish a mechanistic basis for compound selection.

Materials and Reagents

Table 2: Essential Research Reagent Solutions for Bioinformatics-Driven Screening

| Reagent/Resource | Function/Application | Specification Notes |
|---|---|---|
| SwissTargetPrediction Database | Predicts potential therapeutic targets for query compounds | Species specification: "Homo sapiens" [12] |
| PubChem Database | Screens protein targets and bioactive compounds | Keyword filters: "MDA-MB and MCF-7" for breast cancer targets [12] |
| Discovery Studio 2019 Client | Molecular docking and ligand library construction | CHARMM force field for ligand shape refinement [12] |
| GROMACS 2020.3 | Molecular dynamics simulations for binding stability | AMBER99SB-ILDN force field for protein optimization [12] |
| VMD 1.9.3 | 3D visualization and trajectory analysis of binding dynamics | Frame-by-frame analysis of molecular binding process [12] |

Step-by-Step Procedure

Compound Selection and Conformational Optimization

- Select 23 reference compounds with documented inhibitory effects on MDA-MB and MCF-7 breast cancer cell lines from published literature

- Perform conformational optimization to generate 249 distinct conformers for comprehensive spatial analysis

- Conduct split analysis to construct five distinct pharmacophore models representing key structural features influencing biological activity [12]

Target Intersection Analysis

- Input chemical structures of the five most potent compounds from each pharmacophore category into SwissTargetPrediction, specifying "Homo sapiens" as the species

- Identify potential therapeutic targets for each compound, focusing on overlapping targets across multiple active compounds

- Use the Venny online tool (https://bioinfogp.cnb.csic.es/tools/venny/) to perform intersection analysis of the 500 predicted targets, identifying the adenosine A1 receptor as a shared target [12]
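The intersection step can also be scripted rather than done in the Venny web tool. This sketch assumes predictions have been exported as one target set per query compound; the compound IDs and target sets are hypothetical (ADORA1 is the gene symbol for the adenosine A1 receptor). `shared_targets` ranks targets by how many compounds predict them, and `strict_intersection` returns only the targets common to every compound.

```python
from collections import Counter
from typing import Dict, List, Set, Tuple


def shared_targets(predictions: Dict[str, Set[str]], min_compounds: int) -> List[Tuple[str, int]]:
    """Targets predicted for at least min_compounds compounds, most widely shared first."""
    counts = Counter(t for targets in predictions.values() for t in set(targets))
    shared = [(t, n) for t, n in counts.items() if n >= min_compounds]
    return sorted(shared, key=lambda item: (-item[1], item[0]))


def strict_intersection(predictions: Dict[str, Set[str]]) -> Set[str]:
    """Targets predicted for every compound (the centre region of a Venn diagram)."""
    sets = [set(v) for v in predictions.values()]
    return set.intersection(*sets) if sets else set()


# Hypothetical per-compound predictions (compound IDs and target sets are placeholders).
predictions = {
    "cpd1": {"ADORA1", "EGFR", "CA2"},
    "cpd2": {"ADORA1", "ESR1"},
    "cpd3": {"ADORA1", "EGFR"},
}
```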

Molecular Docking and Validation

- Create a ligand library using Discovery Studio 2019 Client

- Perform docking simulations, using the CHARMM force field to refine ligand shapes and charge distributions

- Analyze binding interactions between compounds and the adenosine A1 receptor-Gi2 protein complex (PDB ID: 7LD3)

- Retain compounds with LibDock scores above 130, which indicate high-confidence binding interactions [12]

Molecular Dynamics Simulation for Binding Stability

- Optimize protein structures using the AMBER99SB-ILDN force field

- Model water molecules with the TIP3P model in a cubic box with minimum atom-box boundary distance of 0.8 nm

- Perform initial energy minimization followed by a 150 ps restrained MD simulation at 298.15 K

- Conduct unrestrained MD simulations with a time step of 0.002 ps for 15 ns, maintaining isothermal-isobaric (NPT) conditions at 298.15 K and 1 bar [12]
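The run lengths above translate directly into integration-step counts: 150 ps at 0.002 ps per step is 75,000 steps, and 15 ns is 7,500,000 steps. The sketch below computes these and renders the production settings as GROMACS `.mdp` lines. The option names are standard `.mdp` parameters, but the thermostat, barostat, coupling constants and bond constraints are assumptions, since the protocol specifies only NPT conditions at 298.15 K and 1 bar.

```python
def n_steps(duration_ps: float, dt_ps: float) -> int:
    """Integration steps needed to cover duration_ps with a time step of dt_ps."""
    return round(duration_ps / dt_ps)


def production_mdp(length_ns: float = 15.0, dt_ps: float = 0.002,
                   temp_k: float = 298.15, pressure_bar: float = 1.0) -> str:
    """Render the protocol's production-run settings as GROMACS .mdp lines.

    Only dt, run length, temperature and pressure come from the protocol; the
    coupling algorithms, time constants and constraints are assumed values.
    """
    options = {
        "integrator": "md",
        "dt": dt_ps,
        "nsteps": n_steps(length_ns * 1000.0, dt_ps),
        "tcoupl": "V-rescale",           # assumed thermostat
        "tc-grps": "System",
        "tau_t": 0.1,                    # assumed, ps
        "ref_t": temp_k,
        "pcoupl": "Parrinello-Rahman",   # assumed barostat
        "tau_p": 2.0,                    # assumed, ps
        "ref_p": pressure_bar,
        "compressibility": 4.5e-5,       # bar^-1, typical value for water
        "constraints": "h-bonds",        # assumed; commonly paired with a 2 fs time step
    }
    return "\n".join(f"{key:<16}= {value}" for key, value in options.items())


restrained_steps = n_steps(150.0, 0.002)   # steps for the 150 ps restrained run
print(production_mdp())
```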

Trajectory Analysis and Binding Position Assessment

- Use VMD 1.9.3 software to analyze the motion trajectory of the molecule interacting with the target

- Record data every 200 frames from the initial to the 8220th frame to document molecular dynamics throughout the binding process

- Identify potential intermediate states and temporal binding characteristics to elucidate the dynamic behavior during target engagement [12]
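Sampling every 200 frames from frame 0 does not land exactly on frame 8220, because 8220 is not a multiple of 200; the last regularly sampled frame is 8200. The short helper below (a hypothetical utility, independent of VMD) lists the sampled frame indices and optionally appends the final frame; whether the original analysis kept frame 8220 is not stated.

```python
from typing import List


def sampled_frames(last_frame: int, stride: int = 200, first_frame: int = 0,
                   include_last: bool = True) -> List[int]:
    """Frame indices sampled every `stride` frames, optionally forcing in the final frame."""
    frames = list(range(first_frame, last_frame + 1, stride))
    if include_last and frames[-1] != last_frame:
        frames.append(last_frame)
    return frames


regular = sampled_frames(8220, include_last=False)   # 0, 200, ..., 8200
with_final = sampled_frames(8220)                     # ... 8200, 8220
```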

Expected Results and Interpretation

Researchers implementing this protocol can expect to identify the adenosine A1 receptor as a high-value target for breast cancer intervention. Promising compounds should yield LibDock scores above 130, and the 15 ns MD simulations should confirm binding stability. In the original study, the workflow led to the design and synthesis of Molecule 10, which showed potent antitumor activity against MCF-7 cells (IC50 = 0.032 µM), roughly 14-fold more potent than the positive control 5-FU (IC50 = 0.45 µM) [12].

Diagram 1: Bioinformatics target identification workflow.

Protocol 2: High-Throughput Screening for Isomerase Engineering

Objective and Principle

This protocol establishes a robust high-throughput screening method for directed evolution of isomerases, specifically using L-rhamnose isomerase (L-RI) as a model system. The method enables efficient screening of large mutant libraries to identify variants with enhanced activity, employing a colorimetric assay based on Seliwanoff's reaction to detect D-allulose depletion. The optimized protocol meets all quality criteria for reliable HTS implementation in protein engineering applications [10].

Materials and Reagents

- L-rhamnose isomerase (L-RI) enzyme variants

- D-allulose substrate (≥95% purity)

- Seliwanoff's reagent (0.5% resorcinol in 95% ethanol with concentrated HCl)

- 96-well plates suitable for colorimetric assays

- High-performance liquid chromatography system for validation

- Plate reader capable of measuring absorbance at appropriate wavelengths

Step-by-Step Procedure

Single-Tube Protocol Optimization

- Conduct initial optimization in single-tube format to refine reaction conditions and minimize interfering factors

- Validate the optimized single-tube protocol against HPLC measurements to confirm accurate quantification of D-allulose depletion

- Establish linear range for the colorimetric detection and determine optimal reaction time and temperature [10]

Adaptation to 96-Well Plate Format

- Transfer the optimized protocol to a 96-well plate format with adjustments for miniaturization

- Implement methods for cell harvest, supernatant removal, and filtration to remove denatured enzymes and reduce assay interference

- Include appropriate controls in each plate (positive, negative, and blank) to normalize results across plates [10]
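Per-plate controls put every well on a common scale. This sketch assumes the negative control (for example, a no-enzyme or inactive-variant well) shows the highest Seliwanoff absorbance because no D-allulose is consumed, and the positive control (for example, the parental enzyme) shows lower absorbance; wells are scored as percent activity between those two anchors. Control identities and readings are assumptions, not details from the source.

```python
import statistics as st
from typing import List, Sequence


def percent_activity(sample_abs: Sequence[float], neg_abs: Sequence[float],
                     pos_abs: Sequence[float]) -> List[float]:
    """Score wells by D-allulose depletion relative to per-plate controls.

    Depletion lowers the Seliwanoff colour, so activity is the absorbance drop from
    the negative-control mean, scaled so negative control = 0% and positive control = 100%.
    """
    neg, pos = st.mean(neg_abs), st.mean(pos_abs)
    window = neg - pos
    if window <= 0:
        raise ValueError("positive control should read lower than negative control")
    return [100.0 * (neg - a) / window for a in sample_abs]


# Hypothetical absorbance readings from one plate.
scores = percent_activity([1.0, 0.75, 0.25], neg_abs=[1.0, 1.0], pos_abs=[0.5, 0.5])
```

Subtracting a blank reading from every well leaves these percentages unchanged, since the blank cancels in both numerator and denominator; blanks remain useful for spotting plate-level background drift.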

Quality Control and Validation

- Calculate the Z'-factor as Z' = 1 - (3×σ_pos + 3×σ_neg) / |μ_pos - μ_neg|, where μ and σ are the mean and standard deviation of the positive- and negative-control wells

- Determine the signal window (SW) and assay variability ratio (AVR) to validate assay robustness

- Verify that the Z'-factor exceeds 0.4, SW is greater than 2, and AVR is below 0.6 to meet HTS quality standards [10]
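The three acceptance metrics can be computed together from control wells. The signal-window formula below follows one published convention (the single-replicate form described for HTS assay validation in the Assay Guidance Manual, using the SD of the higher-signal control); other variants exist, so treat it as an assumption. With AVR defined as 3(σ_pos + σ_neg)/|μ_pos − μ_neg|, AVR equals 1 − Z', which is consistent with the reported pair Z' = 0.449 and AVR = 0.551.

```python
import statistics as st


def assay_quality(pos, neg):
    """Z'-factor, signal window (SW) and assay variability ratio (AVR) from control wells."""
    mu_p, mu_n = st.mean(pos), st.mean(neg)
    sd_p, sd_n = st.stdev(pos), st.stdev(neg)
    separation = abs(mu_p - mu_n)
    spread = 3 * (sd_p + sd_n)
    sd_max = sd_p if mu_p >= mu_n else sd_n   # SD of the higher-signal control
    return {
        "z_prime": 1 - spread / separation,
        "avr": spread / separation,           # identical to 1 - Z'
        "sw": (separation - spread) / sd_max,
    }


def passes_hts_criteria(metrics, min_z=0.4, min_sw=2.0, max_avr=0.6):
    """Acceptance thresholds from the protocol: Z' > 0.4, SW > 2 and AVR < 0.6."""
    return metrics["z_prime"] > min_z and metrics["sw"] > min_sw and metrics["avr"] < max_avr


# Hypothetical control readings.
metrics = assay_quality([100.0, 102.0, 98.0], [10.0, 12.0, 8.0])
```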

Library Screening and Hit Identification

- Screen the isomerase variant library using the optimized 96-well plate protocol

- Identify hits as variants showing significantly greater D-allulose depletion (lower Seliwanoff absorbance) than the negative controls

- Confirm hit variants through repeat testing and secondary validation using HPLC

Expected Results and Interpretation

Successful implementation of this protocol yields a high-quality HTS assay with a Z'-factor of 0.449, signal window of 5.288, and assay variability ratio of 0.551, all meeting acceptance criteria for robust high-throughput screening [10]. The established protocol enables efficient screening of isomerase activity with high reliability for identifying improved enzyme variants in directed evolution campaigns.

Protocol 3: Risk-Stratified Screening for Head and Neck Cancer

Objective and Principle

This protocol outlines a targeted screening strategy for head and neck cancer (HNC) that moves beyond broad population approaches to focus on well-defined high-risk cohorts. The methodology integrates risk stratification, contemporary screening modalities, and emerging technologies to enable early detection when intervention is most effective. This approach addresses the critical challenge that most HNCs are diagnosed at advanced stages, resulting in poor prognosis despite well-known risk factors [11].

Materials and Reagents

Table 3: Research Solutions for Risk-Stratified HNC Screening

| Reagent/Technology | Function/Application | Performance Characteristics |
|---|---|---|
| Liquid Biopsy Platforms | Detection of HPV and EBV DNA in circulation | High sensitivity for early detection and recurrence monitoring [11] |
| Narrow-Band Imaging | Enhanced visual detection of mucosal abnormalities | Improved diagnostic accuracy over white light inspection [11] |
| Raman Spectroscopy | Optical biopsy for molecular tissue characterization | Promising diagnostic accuracy, requires further validation [11] |
| Panendoscopy | Comprehensive examination of upper aerodigestive tract | Remains standard tool but with limited effectiveness and cost-efficiency [11] |

Step-by-Step Procedure

Risk Stratification and Cohort Identification

- Identify high-risk individuals based on established risk factors: tobacco use, alcohol consumption, HPV infection (for oropharyngeal cancer), EBV infection (for nasopharyngeal cancer), and oral potentially malignant disorders

- Prioritize special populations including Fanconi anemia patients (500-800× increased risk), HNC survivors (2-4% per year risk of second primary cancer), and immunodeficient individuals [11]

- Consider regional disease prevalence when determining screening strategy intensity
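To see why survivors warrant ongoing surveillance, the annual second-primary risk quoted above can be compounded over time. Assuming a constant, independent annual risk (a simplification that ignores competing mortality and changes in exposure), 2-4% per year corresponds to roughly 10% to 18% over five years.

```python
def cumulative_risk(annual_risk: float, years: int) -> float:
    """P(at least one event in `years`), assuming a constant, independent annual risk."""
    return 1.0 - (1.0 - annual_risk) ** years


# Annual second-primary risk of 2% and 4% compounded over five years.
low, high = cumulative_risk(0.02, 5), cumulative_risk(0.04, 5)
```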

Screening Modality Selection

- Implement liquid biopsy techniques targeting HPV- and EBV-related HNC for high-sensitivity detection

- Utilize novel imaging technologies including narrow-band imaging and Raman spectroscopy for improved diagnostic accuracy

- Consider opportunistic screening in high-risk individuals, particularly in regions with high HNC prevalence [11]

Screening Implementation and Monitoring

- Establish regular screening intervals based on individual risk profile

- For HNC survivors, implement ongoing surveillance for metachronous primary tumors, with particular attention to those who continue smoking

- Monitor patients with oral potentially malignant disorders (leukoplakia, erythroplakia, oral submucous fibrosis) for malignant transformation [11]

Validation and Follow-up

- Confirm positive screening results with histopathological evaluation

- Implement multidisciplinary review for treatment planning of screen-detected lesions

- Document outcomes to refine risk stratification and screening protocols

Expected Results and Interpretation

A targeted screening approach focused on high-risk populations demonstrates improved cost-effectiveness compared with broad-based screening programs. Liquid biopsy techniques show high sensitivity for detecting HPV- and EBV-related HNC at early stages, while advanced imaging technologies provide improved diagnostic accuracy. Implementing this risk-stratified protocol should increase the proportion of cancers detected at an early stage, with corresponding improvements in survival, because advanced HNC carries a markedly poorer prognosis (3-year survival of 50% for late-stage oral cancer versus 80% for early-stage disease) [11].

Diagram 2: Risk-stratified screening for head and neck cancer.

Integration with High-Throughput Workflows: Strategic Framework

The power of targeted screening is fully realized when strategically coupled with high-throughput approaches within an integrated discovery pipeline. This framework leverages the scale of HTS with the precision of targeted approaches to maximize efficiency and success rates.

Computational-to-Experimental Screening Pipeline

The emergence of AI-directed screening represents a transformative integration of computational and experimental approaches. The workflow encompasses:

Virtual Screening at Scale: Implementation of deep learning systems like AtomNet to screen trillion-compound libraries, requiring massive computational resources (40,000 CPUs, 3,500 GPUs, 150 TB memory) [9]

Algorithmic Compound Selection: Automated clustering of top-ranked molecules and selection of highest-scoring exemplars from each cluster, eliminating manual cherry-picking bias

Synthesis-on-Demand Chemistry: Procurement of selected compounds from on-demand libraries such as Enamine, with quality control to >90% purity via LC-MS and NMR validation [9]

Experimental Validation: Physical testing with standard assay-interference mitigation (Tween-20, Triton X-100, DTT) at reputable contract research organizations

Hit Expansion: Follow-up with analog screening achieving dramatically enhanced hit rates of 26-29.8% compared to primary screening [9]
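The "Algorithmic Compound Selection" step can be illustrated with a minimal sketch. The source does not say which clustering method or fingerprint type the AtomNet workflow uses, so this example assumes leader (sphere-exclusion) clustering on Tanimoto similarity, with fingerprints stored as Python sets of on-bit indices. The function names, the 0.35 similarity threshold, and the toy compounds are all hypothetical. Because compounds are visited in descending score order, the first member of each cluster is also its highest-scoring member, which matches the "highest-scoring exemplar from each cluster" rule described above.

```python
from typing import List, Set, Tuple


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto similarity of two binary fingerprints stored as sets of on-bit indices."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def pick_cluster_exemplars(scored: List[Tuple[str, float, Set[int]]],
                           threshold: float = 0.35,
                           top_n: int = 1000) -> List[str]:
    """Leader clustering over the top_n model-ranked compounds.

    Compounds are visited in descending score order. A compound that is at least
    `threshold`-similar to an existing leader is treated as a member of that
    cluster and not returned; otherwise it becomes a new leader. Each leader is
    therefore the top-scoring member of its cluster and is returned as the exemplar.
    """
    ranked = sorted(scored, key=lambda item: item[1], reverse=True)[:top_n]
    leaders: List[Tuple[str, Set[int]]] = []
    for compound_id, _score, fp in ranked:
        if all(tanimoto(fp, leader_fp) < threshold for _, leader_fp in leaders):
            leaders.append((compound_id, fp))
    return [compound_id for compound_id, _ in leaders]


# Hypothetical model scores and toy fingerprints.
library = [
    ("cpd_A", 9.1, {1, 2, 3, 4}),
    ("cpd_B", 8.7, {1, 2, 3, 5}),    # Tanimoto to cpd_A = 3/5 = 0.6
    ("cpd_C", 8.2, {10, 11, 12}),    # no bits shared with cpd_A
    ("cpd_D", 7.9, {10, 11, 13}),    # Tanimoto to cpd_C = 2/4 = 0.5
]
print(pick_cluster_exemplars(library))
```

In a real campaign the fingerprints would come from a cheminformatics toolkit and the scores from the virtual-screening model. Lowering `threshold` merges more compounds into each cluster and therefore returns fewer exemplars.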

Functional Validation and Target Engagement

Contemporary screening workflows increasingly prioritize functional validation and confirmation of target engagement within physiologically relevant systems:

Cellular Thermal Shift Assay (CETSA): Implementation for validating direct target engagement in intact cells and tissues, providing quantitative, system-level validation of compound mechanism [13]

High-Content Phenotypic Screening: Integration of multiparametric readouts to capture complex biological responses beyond single-target binding

Multi-omics Integration: Layering of genomic, proteomic, and metabolomic data to contextualize screening results within broader biological networks [14]

This integrated approach ensures that screening hits not only demonstrate binding affinity but also functional activity in biologically relevant systems, de-risking subsequent development stages.

Targeted screening represents an essential component of modern drug discovery, providing the precision and mechanistic depth necessary to navigate increasingly challenging target landscapes. When strategically coupled with high-throughput approaches, these methodologies create a powerful synergistic workflow that maximizes both scale and intelligence in lead identification.

The continued evolution of targeted screening will be shaped by several key trends: the maturation of AI and machine learning algorithms for predictive compound prioritization, the integration of multi-omics data for enhanced target validation, the development of increasingly sophisticated biomimetic assay systems, and the growing emphasis on patient stratification biomarkers to enable precision medicine approaches from the earliest discovery stages [15] [13].

For research teams implementing these protocols, the strategic priority should be creating integrated workflows that leverage the complementary strengths of high-throughput and targeted screening approaches. This includes establishing computational infrastructure for virtual screening, implementing functional validation technologies like CETSA, developing risk-stratified models for patient-derived system screening, and fostering cross-disciplinary expertise spanning computational chemistry, structural biology, and systems pharmacology. Through this integrated approach, targeted screening will continue to enhance the precision, depth, and mechanistic insight of therapeutic discovery, ultimately accelerating the delivery of impactful medicines to patients.

The modern drug discovery pipeline faces increasing pressure to deliver novel therapeutics both rapidly and cost-effectively. While high-throughput screening (HTS) and targeted screening are powerful methodologies individually, their strategic integration creates a synergistic workflow that significantly enhances lead identification and optimization. This convergent approach leverages the broad screening capacity of HTS to explore vast chemical spaces, followed by the focused, deep biological interrogation of targeted screening to validate and characterize promising hits. By combining these methods, researchers can accelerate the discovery timeline, improve the quality of lead candidates, and reduce late-stage attrition rates. This application note provides a detailed framework and validated protocols for implementing this integrated strategy, complete with quantitative comparisons, reagent solutions, and visual workflows to guide researchers in building more efficient and productive discovery campaigns.

Core Concepts and Quantitative Comparison

High-Throughput Screening (HTS) is an automated, rapid-assessment method that uses robotics, miniaturized assays, and data analytics to test the biological activity of hundreds of thousands of chemical compounds against a specific target or disease model [16]. Its primary strength is its capacity to process vast compound libraries, typically 10,000 to 100,000 compounds per day, to identify initial "hits" [16] [17]. Ultra-High-Throughput Screening (uHTS) extends this capacity to more than 100,000, and in some cases millions, of compounds per day [16] [18].

In contrast, Targeted Screening employs more focused, hypothesis-driven assays to delve deeper into the mechanism of action, selectivity, and efficacy of hits identified from primary HTS campaigns. These assays are often lower in throughput but provide rich, multi-parametric biological data.

The table below summarizes the distinct yet complementary profiles of these two approaches:

Table 1: Characteristics of HTS and Targeted Screening

| Attribute | High-Throughput Screening (HTS) | Targeted Screening |
|---|---|---|
| Throughput | High (10,000 - 100,000 compounds/day) [16] [17] | Medium to Low (Tens to hundreds of compounds) |
| Assay Format | Biochemical, cell-based in 96- to 1536-well plates [16] | High-content imaging, electrophysiology, complex phenotypic models [19] |
| Primary Goal | Rapid identification of initial "hits" from large libraries | Hit confirmation, mechanism of action studies, lead optimization |
| Data Output | Single or few data points (e.g., inhibition %) [16] | Multiparametric data at the single-cell level (morphology, localization) [19] |
| Key Strength | Breadth of exploration, unbiased discovery | Depth of biological insight, functional validation |

Integrated Workflow and Experimental Protocols

The power of the convergent model is realized in a sequential, iterative workflow where the output of one stage informs the design of the next.

Visual Workflow: The Convergent Screening Pipeline

The following diagram illustrates the integrated pathway from primary screening to validated leads:

Stage 1: Primary HTS Campaign Protocol

This initial stage is designed for speed and breadth to identify starting points from a large compound library.

Objective: To rapidly screen a diverse chemical library (e.g., 100,000 - 1,000,000 compounds) against a defined molecular target or cellular phenotype to identify initial hits.

Materials & Reagents:

- Compound Library: Plated in 384-well or 1536-well source plates [16].

- Assay Reagents: Target protein, substrate, fluorescent probe, or cell line.

- Microplates: 384-well or 1536-well assay-ready plates [16].

- Automation: Robotic liquid handling system [18] [20].

Procedure:

- Assay Development & Validation: Establish a robust, miniaturizable assay. Determine the Z'-factor (>0.5 indicates a robust assay for HTS) using positive and negative controls [16].

- Library Reformatting: Use an automated liquid handler to transfer nanoliter volumes of compounds from source plates into assay plates [16] [18].

- Reagent Dispensing: Dispense assay reagents (e.g., enzyme, substrate, cells) into all wells of the assay plate.

- Incubation & Readout: Incubate plates under controlled conditions and measure the signal using an appropriate detector (e.g., fluorescence, luminescence) [16].

- Primary Data Analysis: Normalize data to controls (0% and 100% inhibition/activation). Apply a hit-selection threshold (e.g., >50% inhibition/activation at a single concentration).
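The Z'-factor check and the control-based normalization in the procedure above can be expressed in a few lines. This sketch uses the standard definition Z' = 1 − 3(σ₊ + σ₋)/|μ₊ − μ₋| with sample standard deviations; the control values, compound IDs, and the strict `>` comparison against the 50% threshold are illustrative assumptions.

```python
import statistics
from typing import Dict, List


def z_prime(ctrl_a: List[float], ctrl_b: List[float]) -> float:
    """Z'-factor from two control populations (e.g., 0% and 100% inhibition wells)."""
    spread = 3 * (statistics.stdev(ctrl_a) + statistics.stdev(ctrl_b))
    window = abs(statistics.mean(ctrl_a) - statistics.mean(ctrl_b))
    return 1 - spread / window


def percent_activity(signal: float, ctrl_0: List[float], ctrl_100: List[float]) -> float:
    """Map a raw well signal onto a 0-100% scale defined by the two control means."""
    m0, m100 = statistics.mean(ctrl_0), statistics.mean(ctrl_100)
    return 100 * (signal - m0) / (m100 - m0)


def call_hits(wells: Dict[str, float], ctrl_0: List[float], ctrl_100: List[float],
              threshold: float = 50.0) -> List[str]:
    """Return compound IDs whose normalized activity exceeds the single-concentration threshold."""
    return [cid for cid, signal in wells.items()
            if percent_activity(signal, ctrl_0, ctrl_100) > threshold]


# Hypothetical plate: DMSO wells define 0% inhibition, a reference inhibitor defines 100%.
dmso = [1000.0, 980.0, 1020.0, 1000.0]
inhibitor = [100.0, 110.0, 90.0, 100.0]
wells = {"cpd1": 300.0, "cpd2": 550.0, "cpd3": 950.0}

zp = z_prime(dmso, inhibitor)
print(f"Z' = {zp:.2f}")
if zp > 0.5:  # only call hits on plates that pass the robustness criterion
    print(call_hits(wells, dmso, inhibitor))
```

Normalizing against both control means makes the same code work for signal-decrease (inhibition) and signal-increase (activation) assays, since only the sign of `m100 - m0` changes.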

Data Analysis: Hits from the primary screen are selected based on the predetermined activity threshold. Triaging is critical here to remove false positives caused by assay interference, compound autofluorescence, or colloidal aggregation [16]. This can be achieved using cheminformatics filters and machine learning models trained on historical HTS data [16].

Stage 2: Targeted Secondary Screening Protocol

This stage subjects the HTS hits to rigorous, information-rich biological scrutiny.

Objective: To confirm the activity of primary hits and gather preliminary data on mechanism of action, cellular toxicity, and selectivity.

Materials & Reagents:

- Hit Compounds: Selected from the primary HTS, reconfirmed and re-supplied.

- Cell Lines: Relevant disease models, including engineered lines and potentially more physiologically relevant primary cells or 3D cultures [19].

- Assay Kits: Reagents for multiparametric staining (e.g., nuclear, cytoskeletal, and target-specific dyes).

- Instrumentation: High-content imaging (HCI) system or high-throughput flow cytometer [21] [19].

Procedure:

- Dose-Response Confirmation: Re-test hits in a dose-response format (e.g., 8-point, 1:3 serial dilution) using the primary assay to generate IC50/EC50 values.

- Counter-Screening & Selectivity: Test active compounds against related but distinct targets (e.g., kinase isoforms) to assess selectivity.

- High-Content Analysis (HCA):

- Seed cells in 384-well imaging plates.

- Treat with compounds at multiple concentrations.

- Fix, permeabilize, and stain with fluorescent dyes (e.g., Hoechst for nuclei, Phalloidin for actin, antibodies for target protein).

- Image plates using an automated microscope.

- Use image analysis software to extract quantitative features: intensity, texture, morphology, and object counts (e.g., neurite outgrowth, nuclear translocation) [19].

- Cytotoxicity Assessment: Run parallel assays to measure cell viability (e.g., ATP-based assays) to triage compounds that act through general cytotoxicity [21] [22].
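The dose-response confirmation step above can be sketched as follows. Production workflows usually fit a four-parameter logistic model (for example with `scipy.optimize.curve_fit`); to stay dependency-free, this sketch instead estimates IC50 by linear interpolation in log-concentration between the two points that bracket 50% inhibition. The 30 µM top concentration and the synthetic response data (a Hill curve with a true IC50 of 1 µM) are assumptions for illustration.

```python
import math
from typing import List, Optional


def dilution_series(top_conc: float, points: int = 8, fold: float = 3.0) -> List[float]:
    """Concentrations of a serial dilution, highest first (default: 8-point, 1:3)."""
    return [top_conc / fold ** i for i in range(points)]


def interpolate_ic50(concs: List[float], inhibition: List[float]) -> Optional[float]:
    """Estimate IC50 by interpolating in log10(concentration) between the two adjacent
    points that bracket 50% inhibition. Assumes inhibition rises with concentration;
    returns None if the 50% level is never crossed."""
    pairs = sorted(zip(concs, inhibition))  # ascending concentration
    for (c_lo, y_lo), (c_hi, y_hi) in zip(pairs, pairs[1:]):
        if y_lo < 50.0 <= y_hi:
            frac = (50.0 - y_lo) / (y_hi - y_lo)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None


# Synthetic data: Hill slope 1, true IC50 = 1 µM, top concentration 30 µM.
concs_uM = dilution_series(30.0)
inhib = [100.0 * c / (c + 1.0) for c in concs_uM]
print([round(c, 3) for c in concs_uM])
print(f"estimated IC50 ≈ {interpolate_ic50(concs_uM, inhib):.2f} µM")
```

Because interpolation uses only two points, it is sensitive to noise near the 50% level; a fitted curve uses every point and also yields the Hill slope and maximal efficacy.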

Data Analysis: Analyze multi-parametric HCA data to create a "phenotypic fingerprint" for each compound. Compounds with similar mechanisms of action often cluster together, allowing for target and pathway prediction [19]. This step is crucial for prioritizing the most promising and novel leads for further optimization.
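The "phenotypic fingerprint" idea can be made concrete with a small sketch. Each compound is represented by a vector of HCA features (assumed here to be already normalized, for example as z-scores against DMSO wells), profiles are compared by Pearson correlation, and compounds whose profiles correlate above a cutoff are grouped by single linkage. The feature set, the 0.8 cutoff, and the compound values are hypothetical; published pipelines often use other distance metrics and clustering algorithms.

```python
import math
from typing import Dict, List


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation; returns 0.0 if either profile has zero variance."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


def group_by_fingerprint(profiles: Dict[str, List[float]], min_r: float = 0.8) -> List[List[str]]:
    """Single-linkage grouping: any two compounds with r >= min_r share a group."""
    parent = {name: name for name in profiles}

    def find(name: str) -> str:
        while parent[name] != name:
            parent[name] = parent[parent[name]]  # path halving
            name = parent[name]
        return name

    names = list(profiles)
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            if pearson(profiles[a], profiles[b]) >= min_r:
                parent[find(a)] = find(b)

    groups: Dict[str, List[str]] = {}
    for name in names:
        groups.setdefault(find(name), []).append(name)
    return sorted(sorted(members) for members in groups.values())


# Hypothetical normalized features:
# [nuclear area, actin intensity, NF-kB nuclear/cytoplasmic ratio, cell count]
profiles = {
    "cpd1": [2.0, -1.0, 0.5, -2.0],
    "cpd2": [1.8, -0.9, 0.6, -1.7],
    "cpd3": [-1.0, 2.0, -1.5, 0.5],
    "cpd4": [-0.8, 1.7, -1.6, 0.4],
}
print(group_by_fingerprint(profiles))
```

Compounds of unknown mechanism that group with reference compounds of known mechanism are the ones for which a target or pathway hypothesis can be proposed.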

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of the convergent workflow depends on a suite of reliable reagents and tools. The following table details key solutions for critical steps in the pipeline.

Table 2: Key Research Reagent Solutions for Convergent Screening

| Reagent / Solution | Function in Workflow | Specific Application Example |
|---|---|---|
| Liquid Handling Systems | Automated, precise transfer of nanoliter volumes for assay setup and compound dispensing [16] [18]. | Beckman Coulter Cydem VT System; SPT Labtech firefly platform [23]. |
| Ion Channel Readers (ICRs) | High-throughput, functional screening of ion channel modulators using atomic absorption spectroscopy [24]. | Aurora Biomed's ICR series for cardiac safety pharmacology and neurological target screening [24]. |
| High-Content Imaging (HCI) Assays | Multiplexed, single-cell analysis of complex phenotypes, including morphology, protein translocation, and cytotoxicity [19]. | Cell-based assays for neurite outgrowth, mitochondrial health, or nuclear factor translocation (e.g., NF-κB) [19]. |
| Cell-Based Reporter Assays | Physiologically relevant screening for receptor activation or pathway modulation in a live-cell format [23]. | INDIGO Biosciences' Melanocortin Receptor Reporter Assay family [23]. |
| CRISPR Screening Platforms | Genome-wide functional genomics to identify and validate novel drug targets [23]. | CIBER platform for studying extracellular vesicle release regulators [23]. |
| AI/ML-Integrated Data Analytics | Analysis of massive HTS/HCA datasets, pattern recognition, and prediction of compound activity and toxicity [23] [18]. | Schrödinger and Insilico Medicine platforms for virtual screening and lead optimization [23]. |

Convergence in Action: Strategic Integration for Enhanced Output

The true synergy of HTS and targeted screening is realized through specific strategic integrations:

- From Phenotype to Target: Initiate discovery with a phenotypic HTS in a disease-relevant cell model. The active compounds ("hits") are then profiled in a panel of targeted HCA assays designed to report on specific pathway activities. This allows for mechanism of action prediction for compounds discovered in an unbiased manner [19].

- In Silico Triaging Convergence: Leverage artificial intelligence (AI) and machine learning (ML) to analyze primary HTS data. These tools can predict potential false positives and cluster compounds based on structural and initial response features. This in-silico triaging informs the selection of the most promising hits for downstream targeted experimental validation, optimizing resource allocation [23] [18].

- Pharmacotranscriptomics as a Bridge: This emerging field represents a powerful convergence point. It involves large-scale profiling of gene expression changes after drug perturbation [25]. A broad HTS can identify active compounds, which are then subjected to transcriptomic analysis. The resulting gene expression signatures can be compared to databases to hypothesize mechanisms of action and select targeted biological assays for direct confirmation, effectively closing the loop between phenotypic and target-based discovery [25].

The convergent workflow of HTS and targeted screening is not merely sequential but deeply iterative and synergistic. By strategically combining breadth of scope with depth of analysis, this approach de-risks the drug discovery process and significantly enhances the probability of identifying high-quality, novel therapeutic candidates. The protocols, tools, and strategies outlined herein provide an actionable roadmap for research teams to implement this powerful paradigm.

High-Throughput Screening (HTS) technology enables the routine testing of large chemical libraries to discover novel hit compounds in drug discovery campaigns [26]. However, traditional HTS approaches face significant challenges that hamper their efficiency and reliability. These limitations include substantial financial costs, high rates of false positives and false negatives, and the resource-intensive nature of follow-up verification studies [27] [28]. False positives, or assay artifacts, are compounds that appear active in primary screens but show no actual activity in confirmatory assays, often due to various interference mechanisms [26]. The pharmaceutical research community has developed advanced methodologies to address these limitations, including quantitative HTS (qHTS) and computational triage tools, which together enable more informed decision-making in hit selection and validation.

The integration of these approaches within a coupled high-throughput and targeted screening framework allows researchers to maximize the value of HTS data while minimizing resource expenditure on pursuing artifactual compounds. This application note details practical protocols and solutions for addressing traditional HTS limitations, with a focus on reducing costs, mitigating false positives, and implementing efficient triage strategies.

Quantitative HTS (qHTS): A Paradigm Shift

Protocol: Quantitative HTS (qHTS) Implementation

Principle: Traditional HTS tests compounds at a single concentration, making it susceptible to false positives and false negatives, and unable to identify complex pharmacologies [27]. Quantitative HTS (qHTS) addresses these limitations by generating concentration-response curves for every compound in a library, transforming HTS from a binary screening tool to a quantitative profiling method [27].

Materials:

- Chemical library (e.g., 60,000+ compounds)

- 1,536-well assay plates

- Low-volume dispensing system

- High-sensitivity detector

- Robotic plate handler

- Analysis software for curve fitting and classification

Procedure:

Preparation of Titration Plates:

- Prepare a chemical library as a titration series with at least seven concentrations using 5-fold dilutions.

- This creates a concentration range of approximately four orders of magnitude (e.g., 3.7 nM to 57 μM final concentration after transfer).

- Use inter-plate titrations to replicate the entire library at different concentrations.

Assay Implementation:

- Transfer compounds to 1,536-well plates containing assay mixture using pin tools.

- Maintain a final assay volume of 4 μL.

- Include appropriate controls on each plate (e.g., ribose-5-phosphate as the activator control and luteolin as the inhibitor control for the pyruvate kinase assay).

- Run the screen continuously with automated systems (368 plates screened over 30 hours in the prototype).

Data Quality Control:

- Monitor assay performance using statistical measures.

- Target Z' factor (measure of assay quality) ≥ 0.8.

- Ensure signal-to-background ratio ≥ 9:1.

- Verify consistency of control compound concentration-response curves.

Concentration-Response Analysis:

- Automatically fit concentration-response curves for all compounds.

- Classify curves according to quality of fit (r²), response magnitude (efficacy), and number of asymptotes.

- Calculate half-maximal activity concentration (AC₅₀) values for active compounds.
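The titration described in the procedure can be checked with a short calculation. Assuming a 57 µM top final concentration and seven 5-fold steps (values taken from the example range above), the lowest concentration is 57/5⁶ µM, about 3.65 nM, consistent with the ~3.7 nM quoted, and the series spans roughly 4.2 log units. The function name is hypothetical.

```python
import math
from typing import List


def qhts_titration(top_uM: float = 57.0, points: int = 7, fold: float = 5.0) -> List[float]:
    """Final assay concentrations (µM), highest first, for an inter-plate titration.
    Each library plate is replicated once per concentration, i.e. `points` copies."""
    return [top_uM / fold ** i for i in range(points)]


concs = qhts_titration()
lowest_nM = concs[-1] * 1000.0
span_log10 = math.log10(concs[0] / concs[-1])
for c in concs:
    print(f"{c:10.4f} µM")
print(f"lowest ≈ {lowest_nM:.2f} nM; span ≈ {span_log10:.2f} log10 units")
```

Changing `points` or `fold` shows the trade-off directly: more points per curve improve the fit but multiply the number of assay plates, since every concentration is a full copy of the library.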

Troubleshooting:

- Poor curve fits may require adjustment of fitting parameters or range of concentrations.

- If control curves are inconsistent, check reagent stability and dispensing accuracy.

- Low Z' factors may indicate assay variability requiring protocol optimization.

qHTS Data Analysis and Compound Classification

Concentration-Response Curve Classification Criteria [27]:

Table 1: Concentration-Response Curve Classification System for qHTS

| Class | Description | Efficacy | r² | Asymptotes | Interpretation |
|---|---|---|---|---|---|
| 1a | Complete response | >80% | ≥0.9 | Upper and lower | High-quality curve with full efficacy |
| 1b | Complete but shallow response | 30-80% | ≥0.9 | Upper and lower | High-quality curve with partial efficacy |
| 2a | Incomplete response | >80% | ≥0.9 | One | Potent compound but limited concentration range |
| 2b | Weak incomplete response | <80% | <0.9 | One | Weak activity with poor curve fit |
| 3 | Single-point activity | >30% at highest concentration only | N/A | N/A | Inconclusive; requires verification |
| 4 | Inactive | <30% | N/A | N/A | No significant activity |
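The classification rules in Table 1 can be expressed as a small decision function. This is a simplified reading of the table: it assumes efficacy is given as the absolute maximal response in percent and that the number of asymptotes and the "single-point" flag come from an upstream curve-fitting step. The table does not define a class for a two-asymptote curve with r² < 0.9, so the sketch returns "unclassified" for manual review. The class and field names are hypothetical apart from the class labels themselves.

```python
from dataclasses import dataclass


@dataclass
class CurveFit:
    efficacy: float             # absolute maximal response, % of control
    r2: float                   # goodness of fit of the concentration-response model
    asymptotes: int             # asymptotes supported by the data: 0, 1 or 2
    single_point: bool = False  # activity seen only at the highest concentration


def classify_curve(fit: CurveFit) -> str:
    """Assign a qHTS curve class following a simplified reading of Table 1."""
    if fit.efficacy < 30:
        return "4"                      # inactive
    if fit.single_point:
        return "3"                      # single-point activity, needs verification
    if fit.asymptotes == 2 and fit.r2 >= 0.9:
        return "1a" if fit.efficacy > 80 else "1b"
    if fit.asymptotes == 1:
        return "2a" if fit.efficacy > 80 and fit.r2 >= 0.9 else "2b"
    return "unclassified"               # combination not covered by the table


examples = {
    "full curve": CurveFit(95.0, 0.97, 2),
    "shallow curve": CurveFit(55.0, 0.95, 2),
    "potent, incomplete": CurveFit(90.0, 0.92, 1),
    "weak, incomplete": CurveFit(60.0, 0.70, 1),
    "top well only": CurveFit(40.0, 0.0, 0, single_point=True),
    "flat": CurveFit(10.0, 0.2, 2),
}
for label, fit in examples.items():
    print(f"{label:20s} -> class {classify_curve(fit)}")
```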

Data Analysis:

- Compare AC₅₀ values for interscreen replicates to assess reproducibility (r² ≥ 0.98 expected).

- Evaluate "intervendor duplicates" (same compound from different suppliers) to identify sample-specific issues (r² ≈ 0.81 typical).

- Identify structure-activity relationships directly from primary screening data.
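The replicate comparison in the first bullet can be computed as the squared Pearson correlation of log10(AC₅₀) values, since potencies are compared on a log scale. This sketch skips compounds that are inactive (no AC₅₀) in either run; the function name and AC₅₀ values are invented for illustration.

```python
import math
from typing import Dict, Optional


def log_ac50_r2(run1: Dict[str, Optional[float]], run2: Dict[str, Optional[float]]) -> float:
    """Squared Pearson correlation of log10(AC50) for compounds active (non-None) in both runs."""
    shared = [k for k in run1 if run1[k] is not None and run2.get(k) is not None]
    x = [math.log10(run1[k]) for k in shared]
    y = [math.log10(run2[k]) for k in shared]
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy ** 2 / (sxx * syy)


# Hypothetical AC50 values (µM) from two replicate screens; None = inactive.
screen_a = {"c1": 0.10, "c2": 1.0, "c3": 10.0, "c4": 3.0, "c5": None}
screen_b = {"c1": 0.12, "c2": 0.9, "c3": 11.0, "c4": 2.8, "c5": 25.0}
print(f"r^2 = {log_ac50_r2(screen_a, screen_b):.3f}")
```

The same function applied to intervendor duplicates, keyed by compound rather than by sample, gives the lower r² expected when sample identity or purity differs between suppliers.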

Advantages of qHTS over Traditional HTS:

- Eliminates false negatives that occur when single-point screening thresholds fall near inflection points

- Identifies compounds with a wide range of potencies and efficacies directly from primary screens

- Provides rich datasets immediately available for mining reliable biological activities

- Enables detection of subtle complex pharmacologies like partial agonism/antagonism
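The first and last advantages can be illustrated with the Hill equation. In this sketch, three hypothetical compounds are "tested" at a single 10 µM concentration against a 50% threshold: a full-efficacy compound whose AC₅₀ (12 µM) sits just above the test concentration and a partial agonist with 40% maximal efficacy are both missed, whereas a titration would reveal a complete curve for the first and a shallow (Class 1b-like) curve for the second. All parameters are invented for illustration.

```python
from typing import Dict


def hill_response(conc_uM: float, ac50_uM: float, efficacy: float = 100.0, hill: float = 1.0) -> float:
    """Percent response predicted by the Hill equation."""
    return efficacy * conc_uM ** hill / (ac50_uM ** hill + conc_uM ** hill)


def single_point_calls(compounds: Dict[str, dict], conc_uM: float = 10.0,
                       threshold: float = 50.0) -> Dict[str, bool]:
    """True if the compound would be called a hit from one well at `conc_uM`."""
    return {name: hill_response(conc_uM, **params) > threshold
            for name, params in compounds.items()}


compounds = {
    "near_inflection": {"ac50_uM": 12.0},                   # 10/(12+10) -> about 45% at 10 µM
    "partial_agonist": {"ac50_uM": 0.5, "efficacy": 40.0},  # plateaus at 40%
    "potent_full": {"ac50_uM": 0.5},                        # about 95% at 10 µM
}
print(single_point_calls(compounds))
```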

Computational Triage of HTS Artifacts

Protocol: Predicting Assay Interference Compounds

Principle: Assay interference mechanisms cause false positives in HTS and can persist into hit-to-lead optimization, wasting significant resources [26]. Computational prediction of chemical liabilities enables triage of interference compounds before expensive experimental follow-up.

Materials:

- Compound structures in appropriate chemical format (e.g., SMILES, SDF)

- Liability Predictor webtool (https://liability.mml.unc.edu/)

- PAINS filters (as benchmark, though limited)

- Chemical structure visualization software

Procedure:

Data Preparation:

- Compile list of hit compounds from HTS campaign.

- Ensure chemical structures are correctly represented (check valences, stereochemistry).

- Export structures in standard format compatible with prediction tools.

Liability Prediction:

- Submit compound structures to Liability Predictor webtool.

- Select appropriate interference models based on assay technology:

- Thiol reactivity model (for cysteine-targeting compounds)

- Redox activity model (for redox-cycling compounds)

- Luciferase interference models (firefly and nano)

- Download results with prediction scores.

Result Interpretation:

- Identify compounds predicted with high probability for specific interference mechanisms.

- Compare results against traditional PAINS filters (note: PAINS are oversensitive and miss many true interferers).

- Prioritize compounds without predicted interference liabilities for follow-up.

Integration with Experimental Data:

- Cross-reference computational predictions with experimental data.

- For predicted interferers, consider conducting counter-screens.

- Use quantitative activity data (e.g., from qHTS) to distinguish specific from non-specific activity.

Validation:

- Experimental testing of 256 virtual hits for each assay showed 58-78% external balanced accuracy for liability prediction models [26].

- Compare performance against PAINS filters, which disproportionately flag compounds as interference compounds while failing to identify most truly interfering compounds [26].
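Balanced accuracy, the metric quoted above, averages sensitivity and specificity, so a model cannot score well simply by predicting the majority (non-interfering) class. A minimal sketch with a hypothetical validation set of 50 true interferers and 200 clean compounds:

```python
from typing import Sequence


def balanced_accuracy(y_true: Sequence[bool], y_pred: Sequence[bool]) -> float:
    """Mean of sensitivity (recall on interferers) and specificity (recall on clean compounds)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t and p)
    fn = sum(1 for t, p in pairs if t and not p)
    tn = sum(1 for t, p in pairs if not t and not p)
    fp = sum(1 for t, p in pairs if not t and p)
    return 0.5 * (tp / (tp + fn) + tn / (tn + fp))


# Hypothetical validation set: 50 true interferers, 200 clean compounds.
y_true = [True] * 50 + [False] * 200
# Model flags 30 of the interferers and 50 of the clean compounds.
y_pred = [True] * 30 + [False] * 20 + [True] * 50 + [False] * 150
print(f"balanced accuracy = {balanced_accuracy(y_true, y_pred):.3f}")

# A model that never flags anything has plain accuracy 200/250 = 0.8,
# but its balanced accuracy is only 0.5 because it finds no interferers.
never_flag = [False] * 250
print(f"never-flag baseline = {balanced_accuracy(y_true, never_flag):.3f}")
```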

Common Assay Interference Mechanisms

Table 2: Major Assay Interference Mechanisms and Detection Methods

| Interference Mechanism | Description | Assay Technologies Affected | Detection Methods |
|---|---|---|---|
| Chemical Reactivity | Nonspecific covalent modification | Cell-based and biochemical assays | MSTI fluorescence reactivity assay, redox activity assay |
| Redox Activity | Hydrogen peroxide production in reducing buffers | Assays with reducing agents | Redox activity assay, follow-up counterscreens |
| Luciferase Inhibition | Direct inhibition of reporter enzyme | Luciferase reporter assays | Luciferase inhibition assays (firefly and nano) |
| Compound Aggregation | Nonspecific perturbation via colloidal aggregates | Biochemical and cell-based assays | SCAM Detective, detergent sensitivity tests |
| Fluorescence Interference | Autofluorescence or quenching | Fluorescence-based assays | Red-shifted fluorophores, control experiments |
| Absorbance Interference | Colored compounds interfering with detection | Absorbance-based assays | Spectral analysis, control experiments |

Research Reagent Solutions

Table 3: Essential Research Reagents and Tools for HTS Triage

| Reagent/Tool | Function | Application Notes |
|---|---|---|
| Liability Predictor Webtool | Predicts HTS artifacts and chemical liabilities | Free resource; outperforms PAINS filters; covers thiol reactivity, redox activity, luciferase interference |
| qHTS Platform | Generates concentration-response curves for entire libraries | Requires 1,536-well plates, low-volume dispensing, high-sensitivity detection |
| Thiol Reactivity Assay | Detects compounds that covalently modify cysteine residues | Uses (E)-2-(4-mercaptostyryl)-1,3,3-trimethyl-3H-indol-1-ium (MSTI) fluorescence |
| Redox Activity Assay | Identifies redox-cycling compounds | Detects hydrogen peroxide production in reducing conditions |
| Luciferase Inhibition Assays | Identifies luciferase inhibitors | Separate assays for firefly and nano luciferases |
| SCAM Detective | Predicts colloidal aggregators | Common cause of false positives in HTS campaigns |
| PAINS Filters | Substructure alerts for assay interference | Use with caution; high false positive rate; limited predictive value |

Workflow Integration and Visualization

Integrated HTS Triage Workflow

HTS Triage Workflow Diagram

Assay Interference Mechanisms

Assay Interference Mechanisms Diagram

Cost-Benefit Analysis and Implementation Strategy

Economic Considerations of Advanced HTS Approaches

Table 4: Comparative Analysis of HTS Approaches

| Parameter | Traditional HTS | Quantitative HTS (qHTS) | Computational Triage |
|---|---|---|---|
| Throughput | 10,000-100,000 compounds per day [28] | ~60,000 compounds with full titrations in 30 hours [27] | Instant prediction for compound libraries |
| Cost Factors | Reagents, plates, robotics: >$300,000 for large library screen [28] | Higher initial setup; reduced follow-up costs | Free webtool (Liability Predictor) [26] |
| False Positive Rate | High, requiring extensive confirmatory screening | Reduced through curve quality assessment | 58-78% external balanced accuracy for interference prediction [26] |
| False Negative Rate | Significant, with active compounds missed at single concentration [27] | Minimal, as full concentration range tested | Limited data available |
| Data Richness | Single activity point per compound | Complete concentration-response curves with potency and efficacy | Predicted interference mechanisms |
| Implementation Barrier | Moderate (established technology) | High (specialized equipment and expertise) | Low (accessible webtool) |

Implementation Protocol: Integrated HTS Triage Strategy

Principle: A coupled screening approach that integrates qHTS with computational triage maximizes efficiency while minimizing pursuit of artifactual compounds.

Procedure:

Primary Screening Design:

- Implement qHTS instead of traditional single-concentration HTS where feasible.

- For larger libraries (>100,000 compounds), consider single-point primary screening followed by qHTS on hits.

- Design assays to minimize inherent interference (e.g., use red-shifted fluorophores).

Data Analysis Phase:

- Process concentration-response data and classify compounds according to established criteria.

- Prioritize Class 1a, 1b, and 2a curves for further evaluation.

- Exercise caution with Class 3 (single-point actives) due to high artifact potential.

Computational Triage:

- Submit prioritized hits to Liability Predictor webtool.

- Filter compounds with high prediction scores for interference mechanisms relevant to your assay.

- Compare with PAINS filters but weight Liability Predictor results more heavily.

Experimental Counterscreening:

- For remaining hits, conduct targeted counterscreens based on predicted liabilities.

- Include assay-specific interference tests (e.g., detergent addition for aggregation).

- Evaluate promising hits in orthogonal assay formats.

Hit Confirmation and Progression:

- Confirm activity of triaged hits in dose-response using original assay.

- Progress confirmed hits to secondary assays and early ADMET profiling.

- Document triage process and rationale for hit selection.
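The decision logic of this integrated triage can be summarized as a small routing function. This is a sketch only: the dictionary keys, the liability labels (such as `"firefly_luciferase"`), and the bucket names are hypothetical, and the Liability Predictor's actual output format is not reproduced here. Each hit is assumed to carry its qHTS curve class and the set of interference mechanisms predicted with a high score.

```python
from typing import Dict, List, Set

PRIORITY_CLASSES = {"1a", "1b", "2a"}  # classes prioritized in the data-analysis phase


def triage(hits: List[dict], relevant_liabilities: Set[str]) -> Dict[str, List[str]]:
    """Sort qHTS hits into follow-up buckets.

    Each hit is a dict with hypothetical keys:
      id                     - compound identifier
      curve_class            - qHTS curve class label ("1a", "1b", "2a", "2b", "3", "4")
      predicted_liabilities  - set of interference mechanisms predicted with a high score
    """
    buckets: Dict[str, List[str]] = {
        "counterscreen": [],         # prioritized class, no assay-relevant predicted liability
        "flagged_interference": [],  # prioritized class but predicted to interfere with this assay
        "caution_class3": [],        # single-point actives: high artifact potential
        "deprioritized": [],         # weak or inactive curve classes
    }
    for hit in hits:
        cls = hit["curve_class"]
        if cls == "3":
            buckets["caution_class3"].append(hit["id"])
        elif cls not in PRIORITY_CLASSES:
            buckets["deprioritized"].append(hit["id"])
        elif hit["predicted_liabilities"] & relevant_liabilities:
            buckets["flagged_interference"].append(hit["id"])
        else:
            buckets["counterscreen"].append(hit["id"])
    return buckets


# Hypothetical hits from a firefly-luciferase reporter assay.
hits = [
    {"id": "A", "curve_class": "1a", "predicted_liabilities": set()},
    {"id": "B", "curve_class": "1b", "predicted_liabilities": {"firefly_luciferase"}},
    {"id": "C", "curve_class": "3", "predicted_liabilities": set()},
    {"id": "D", "curve_class": "2b", "predicted_liabilities": set()},
    {"id": "E", "curve_class": "2a", "predicted_liabilities": {"redox_activity"}},
]
relevant = {"firefly_luciferase", "thiol_reactivity"}
print(triage(hits, relevant))
```

Compound E still reaches the counterscreen bucket because redox activity was not listed as relevant to this assay; its predicted liability can then guide which counterscreen to run.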

Expected Outcomes:

- Significant reduction in resource expenditure on artifactual compounds

- Higher quality hit lists with enriched true actives

- Accelerated transition from screening to lead optimization

- Comprehensive dataset for structure-activity relationship analysis

The integrated approach outlined in this application note provides a robust framework for addressing traditional HTS limitations. By implementing qHTS and computational triage strategies, researchers can substantially reduce the impact of false positives while maximizing the value of screening data, ultimately accelerating the drug discovery process.

The Impact of AI and Machine Learning on Foundational Screening Efficiency and Data Interpretation

The integration of Artificial Intelligence (AI) and Machine Learning (ML) is fundamentally reshaping high-throughput screening (HTS) and targeted screening in modern drug discovery. These technologies are transitioning from supportive tools to core components of the research workflow, enabling scientists to manage unprecedented data complexity and extract meaningful biological insights with enhanced speed and accuracy. This document details practical applications and protocols for coupling AI-driven high-throughput and targeted screening workflows, a methodology gaining significant traction for identifying non-obvious, beneficial metabolic engineering targets [29]. The focus is on providing actionable guidance for researchers, scientists, and drug development professionals.

Quantitative Impact of AI/ML on Screening Efficiency

The adoption of AI and ML is delivering measurable improvements in screening efficiency and data interpretation. The following tables summarize key quantitative findings from industry surveys and specific research applications.

Table 1: Organizational AI Adoption and Impact Metrics (2024-2025)

| Metric | Value | Source/Context |
|---|---|---|
| Organizations using AI regularly | 78% - 88% | Reported use in at least one business function [30]. |
| Organizations scaling AI | ~33% | Majority remain in experimentation or piloting phases [30]. |
| AI high performers | ~6% | Organizations reporting significant EBIT impact from AI [30]. |
| Cost reduction from AI | 54% | Proportion of businesses reporting cost savings from AI implementation [31]. |
| Data analyst time spent on data cleaning | 70-90% | Manual data preparation, a key target for AI automation [31]. |

Table 2: AI Performance in Specific Screening and Research Applications

| Application / Metric | Performance / Outcome | Source/Context |
|---|---|---|
| CRISPRi/a screening with proxy assay | 3.5-5.7 fold increase in intracellular betaxanthin content; 15% increase in secreted p-Coumaric acid titer [29]. | Identified 30 gene targets improving precursor production [29]. |
| Machine learning virtual screening | Identification of Glucozaluzanin C as a potential inhibitor of mutant PBP2x in Streptococcus pneumoniae [32]. | Combined ML-based virtual screening with ADMET profiling and DFT analysis [32]. |
| AI-driven molecule to trials | 12-18 months vs. traditional 4-5 years [33]. | As reported for AI-designed molecules by companies like Exscientia and Insilico Medicine [33]. |

AI-Enhanced Screening Workflows: Protocols and Applications

This section provides detailed methodologies for implementing AI and ML in coupled screening workflows.

Protocol: Coupled High-Throughput and Targeted Screening for Metabolic Engineering

This protocol outlines a workflow for using a high-throughput proxy assay to identify targets for a molecule of interest that lacks a direct high-throughput (HTP) assay [29].

1. Research Objective: To identify non-intuitive metabolic engineering targets that improve the production of a target molecule (e.g., p-Coumaric acid or L-DOPA) by initially screening for an enhanced precursor supply (e.g., L-tyrosine) using an HTP-compatible proxy (e.g., fluorescent betaxanthins) [29].

2. Experimental Design and Workflow: A visual representation of the integrated screening workflow is provided below.

3. Materials and Reagents

- gRNA Library: CRISPRi (dCas9-Mxi1) and/or CRISPRa (dCas9-VPR) libraries targeting metabolic genes [29].

- Host Strain: A betaxanthin-producing S. cerevisiae strain (e.g., ST9633 with feedback-insensitive ARO4 and ARO7 alleles) [29].

- Screening Media: Defined mineral media (e.g., 20 g/L glucose) [29].

- Validation Strains: Engineered yeast strains for producing the target molecule (e.g., p-Coumaric acid or L-DOPA) [29].

4. Step-by-Step Procedure

Phase 1: High-Throughput Proxy Screening

1. Library Transformation: Transform the CRISPRi/a gRNA library into the betaxanthin screening strain. A single transformation can generate significant diversity (e.g., 10²–10⁶ strains) [29].

2. FACS Sorting: Use fluorescence-activated cell sorting (FACS) to isolate the top 1-3% of the population with the highest fluorescence (betaxanthin excitation: ~463 nm, emission: ~512 nm) [29].

3. Recovery & Colony Selection: Recover sorted cells in liquid mineral media overnight. Plate on solid mineral media and incubate for 3-4 days to form single colonies. Visually select ~350 of the most pigmented (yellow) colonies [29].

4. Microplate Assay: Cultivate selected clones in 96-deep-well plates for 48 hours. Measure fluorescence and benchmark against the parent strain. Select hits based on a pre-defined fold-change threshold (e.g., >3.5-fold) [29].

5. Target Identification: Isolate and sequence the sgRNA plasmids from the selected hit strains to identify the genetic targets responsible for the enhanced phenotype [29].
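The FACS gate and the microplate fold-change selection in Phase 1 both reduce to simple threshold rules. This sketch assumes per-cell fluorescence values exported from the sorter and per-clone plate-reader readings; the 2% gate, the parent signal, the gRNA names, and all values are hypothetical, and in practice the sort gate is usually drawn in the instrument software rather than computed offline.

```python
import math
from typing import Dict, List


def top_fraction_cutoff(fluorescence: List[float], fraction: float = 0.02) -> float:
    """Fluorescence value at or above which the brightest `fraction` of events lie."""
    ranked = sorted(fluorescence, reverse=True)
    n_keep = max(1, math.ceil(len(ranked) * fraction))
    return ranked[n_keep - 1]


def fold_change_hits(clone_signal: Dict[str, float], parent_signal: float,
                     min_fold: float = 3.5) -> Dict[str, float]:
    """Clones whose fluorescence exceeds the parent strain by more than `min_fold`."""
    return {clone: signal / parent_signal
            for clone, signal in clone_signal.items()
            if signal / parent_signal > min_fold}


# Hypothetical sorter export: 100 events with evenly spread intensities.
events = [float(i) for i in range(1, 101)]
print("sort gate at >=", top_fraction_cutoff(events, 0.02))

# Hypothetical 96-deep-well readings (arbitrary units) after 48 h.
parent = 1000.0
clones = {"gRNA_01": 4200.0, "gRNA_02": 3000.0, "gRNA_03": 5700.0}
print(fold_change_hits(clones, parent))
```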

Phase 2: Targeted Validation

1. Individual Target Validation: Clone and express each identified gRNA individually into the target molecule production strain (e.g., p-CA strain) [29].

2. LTP Analytical Validation: Cultivate engineered strains and measure the titer of the target molecule (e.g., p-Coumaric acid) using low-throughput analytical methods like HPLC or LC-MS. This step validates whether the targets identified via the proxy assay are effective for the molecule of interest [29].

3. Multiplexing: Create a gRNA multiplexing library combining the most effective individual targets. Repeat the coupled screening workflow (Phase 1 and 2) to identify additive or synergistic combinations [29].

5. Data Analysis and Interpretation

- Fold-change calculations for fluorescence and product titer are central to identifying hits.

- Statistical significance testing (e.g., p-values < 0.05) should be applied to validate improvements [29].

- The primary outcome is a curated list of validated genetic targets and combinations that enhance the production of the final target molecule.
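A minimal sketch of the fold-change and significance calculation for product titers, assuming triplicate HPLC or LC-MS titers for an engineered strain and its parent. It uses Welch's t-test (unequal variances), which is one reasonable choice and not necessarily the test used in [29]; the titer values are hypothetical.

```python
import math
import statistics


def fold_change(treated, control):
    """Ratio of mean titers (engineered strain / parent strain)."""
    return statistics.mean(treated) / statistics.mean(control)


def welch_t(treated, control):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    n1, n2 = len(treated), len(control)
    # Squared standard errors of each mean, using sample variances (n - 1 denominator).
    se1 = statistics.variance(treated) / n1
    se2 = statistics.variance(control) / n2
    t = (statistics.mean(treated) - statistics.mean(control)) / math.sqrt(se1 + se2)
    df = (se1 + se2) ** 2 / (se1 ** 2 / (n1 - 1) + se2 ** 2 / (n2 - 1))
    return t, df


# Hypothetical p-coumaric acid titers (mg/L) from triplicate cultures.
parent_titer = [10.0, 12.0, 11.0]
engineered_titer = [20.0, 22.0, 21.0]
fc = fold_change(engineered_titer, parent_titer)
t_stat, dof = welch_t(engineered_titer, parent_titer)
print(f"fold change = {fc:.2f}, t = {t_stat:.2f}, df = {dof:.1f}")
```

A two-sided p-value can then be read from Student's t distribution with `dof` degrees of freedom, for example `2 * scipy.stats.t.sf(abs(t_stat), dof)` if SciPy is available.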

Protocol: ML-Based Virtual Screening for Natural Product Inhibitors

This protocol describes an in silico approach to identify potential natural inhibitors from phytocompound libraries, combining machine learning with computational chemistry [32].

1. Research Objective

To rapidly identify and characterize plant-derived natural compounds with potential inhibitory activity against a specific drug-resistant bacterial target (e.g., mutant PBP2x in S. pneumoniae) [32].

2. Experimental Design and Workflow

The sequential workflow for virtual screening and characterization is illustrated below.

3. Materials and Software

- Compound Libraries and Bioactivity Data: Phytocompound databases (e.g., IMPPAT) and bioactivity data (e.g., PubChem BioAssay AID 438298 for anti-pneumococcal activity) [32].

- Software for Descriptor Calculation: PaDEL-Descriptor for generating 1D, 2D, and 3D molecular descriptors and fingerprints [32].

- Machine Learning Environment: WEKA (Waikato Environment for Knowledge Analysis) with classifiers such as Random Forest, J48, PART, and REPTree [32].

- ADMET Prediction Tools: ADMETlab 3.0 and ProTox 3.0 for pharmacokinetic and toxicity profiling [32].

- Computational Chemistry Suites: Gaussian 09W and GaussView 6.0 for density functional theory (DFT) calculations [32].

- Molecular Modeling Software: PyMOL, Swiss-PdbViewer (SPDBV), and molecular dynamics software (e.g., GROMACS) for structure preparation, analysis of docked complexes, and simulations [32].

4. Step-by-Step Procedure

5. Data Analysis and Interpretation

- Model Performance: A high AUC and F1-score indicate a robust predictive model for virtual screening.

- DFT Descriptors: A small HOMO-LUMO gap suggests high reactivity, while ESP maps identify nucleophilic/electrophilic regions.

- Docking and Dynamics: Stable RMSD and RMSF profiles, along with persistent hydrogen bonds, indicate a stable and high-affinity interaction, suggesting a promising inhibitor.
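WEKA reports AUC and F1 directly, but a small sketch makes clear what each number measures: AUC is the probability that a randomly chosen active compound is scored above a randomly chosen inactive one, while F1 is the harmonic mean of precision and recall at a chosen decision threshold. The scores, labels, and 0.5 threshold below are hypothetical; this is an illustration, not the evaluation pipeline used in [32].

```python
def roc_auc(scores, labels):
    """ROC AUC via pairwise comparison (Mann-Whitney formulation); ties count as 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("both active (1) and inactive (0) labels are required")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def f1_score(predicted, labels):
    """Harmonic mean of precision and recall for binary predictions (1 = active)."""
    tp = sum(1 for p, y in zip(predicted, labels) if p == 1 and y == 1)
    fp = sum(1 for p, y in zip(predicted, labels) if p == 1 and y == 0)
    fn = sum(1 for p, y in zip(predicted, labels) if p == 0 and y == 1)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical classifier outputs for six phytocompounds (1 = active, 0 = inactive).
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.1]
predicted = [1 if s >= 0.5 else 0 for s in scores]
auc = roc_auc(scores, labels)
f1 = f1_score(predicted, labels)
print(f"AUC = {auc:.3f}, F1 = {f1:.3f}")
```

The pairwise loop is quadratic in library size; rank-based formulas give the same AUC faster for large compound sets.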

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for AI-Enhanced Screening

| Item | Function & Application in AI/ML Screening |
|---|---|
| CRISPRi/a gRNA Libraries (e.g., dCas9-VPR/Mxi1) | Enable high-throughput transcriptional activation or repression of metabolic genes to uncover non-obvious beneficial targets [29]. |
| Biosensor Strains / Proxy Assay Systems (e.g., Betaxanthin-producing yeast) | Provide a high-throughput, FACS-compatible readout (fluorescence/color) for compounds or precursors that are otherwise difficult to screen directly [29]. |
| 3D Cell Culture Systems (e.g., Spheroids, Organoids) | Offer more physiologically relevant models for screening. Automation platforms (e.g., mo:re's MO:BOT) standardize 3D culture, improving reproducibility and predictive power [34] [35]. |
| Automated Liquid Handlers & Integrated Platforms (e.g., Veya, firefly+) | Provide nanoliter precision and walk-up automation for robust, reproducible assay setup and execution, reducing human error and freeing scientist time [34] [35]. |
| Data Integration & Lab Management Platforms (e.g., Cenevo, Sonrai Analytics) | Unify fragmented data from instruments and experiments, creating structured, AI-ready datasets. Embedded AI assistants can support search and workflow generation [34]. |

From Theory to Bench: Implementing Integrated Screening Workflows in Biomedical Research

In modern drug discovery, the integration of high-throughput screening (HTS) with subsequent targeted validation represents a critical pathway for identifying and characterizing promising therapeutic candidates. HTS is an automated approach that enables the rapid testing of thousands to hundreds of thousands of chemical compounds against biological targets, significantly accelerating the early drug discovery pipeline [22]. This process allows researchers to screen vast libraries generated by combinatorial chemistry, identifying initial "hits" that interact with a specific target. However, the primary HTS phase is merely the starting point. The true value emerges through a rigorous, stepwise workflow that progresses from these initial hits to thoroughly validated leads via targeted secondary screening. This guide details a comprehensive protocol for this essential transition, ensuring that identified compounds have genuine therapeutic potential before committing substantial resources to development.

The strategic coupling of high-throughput and targeted screening addresses a fundamental challenge in pharmaceutical research: balancing the need for broad screening coverage with the requirement for deep biological characterization. HTS methods have evolved substantially, with ultra-high-throughput screening (UHTS) now capable of conducting over 100,000 assays per day [22]. This initial broad net is designed for maximum sensitivity, where accepting false positives is preferable to missing potential hits [36]. The subsequent targeted validation phases then apply increasing stringency to separate true biological activity from artifactual signals, ultimately yielding chemically tractable leads with a confirmed mechanism of action and early toxicity profiles. This structured progression from plate-based screening to targeted analysis forms the backbone of modern drug discovery programs in both academic and industrial settings.
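The "broad net" logic of the primary screen can be made concrete with a simple normalization and hit-calling rule. The sketch below assumes an inhibition assay in which vehicle (DMSO) wells give the high signal and a reference inhibitor gives the low signal, and it flags any compound whose percent inhibition exceeds the vehicle-control mean plus three standard deviations. That cutoff is a common convention used here as an assumption, and all well values are hypothetical.

```python
import statistics


def percent_inhibition(signal, neg_mean, pos_mean):
    """Scale a raw signal so vehicle wells map to 0% and full inhibition to 100%."""
    return 100.0 * (neg_mean - signal) / (neg_mean - pos_mean)


def call_primary_hits(samples, neg_ctrl, pos_ctrl, n_sd=3.0):
    """Return ({compound_id: % inhibition}, cutoff) using a mean + n_sd * SD rule on vehicle wells."""
    neg_mean = statistics.mean(neg_ctrl)
    pos_mean = statistics.mean(pos_ctrl)
    neg_inh = [percent_inhibition(s, neg_mean, pos_mean) for s in neg_ctrl]
    cutoff = statistics.mean(neg_inh) + n_sd * statistics.stdev(neg_inh)
    hits = {}
    for compound_id, signal in samples.items():
        inh = percent_inhibition(signal, neg_mean, pos_mean)
        if inh > cutoff:
            hits[compound_id] = round(inh, 1)
    return hits, cutoff


# Hypothetical raw signals from one plate.
vehicle = [1000.0, 980.0, 1020.0, 1010.0, 990.0]  # DMSO only (0% inhibition)
reference = [100.0, 110.0, 90.0]                  # known inhibitor (~100% inhibition)
compounds = {"cpd1": 550.0, "cpd2": 990.0, "cpd3": 820.0}
hits, cutoff = call_primary_hits(compounds, vehicle, reference)
print(hits, round(cutoff, 1))
```

Raising `n_sd` or requiring agreement between replicate wells tightens the rule; in the workflow described here, that added stringency belongs to the later, targeted stages.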

Core Principles of HTS and Validation

A successful screening campaign is built upon several foundational principles. First, assay robustness is paramount; the biological system must produce a stable, reproducible signal that can withstand the demands of automation and miniaturization. Second, appropriate controls must be strategically implemented throughout the process to monitor assay performance and identify systematic errors. Third, the screening strategy must balance sensitivity (the ability to identify true activators/inhibitors) and specificity (the ability to reject inactive compounds), with the emphasis shifting between these priorities as the workflow progresses from primary to secondary screening [36]. Finally, the entire process should be designed with translational relevance in mind, ensuring that the biological context (e.g., cell type, stimulus, readout) reflects the intended therapeutic application.

The design considerations begin with target identification and reagent preparation, where the biological target (e.g., enzyme, receptor, cellular pathway) is selected and the necessary reagents are optimized for stability and compatibility with HTS automation [22]. For cell-based assays, this includes selecting appropriate cell lines, ensuring their health and authenticity, and optimizing culture conditions for miniaturized formats. Recent advances have introduced innovative models, such as stem cell-derived systems, that enhance the physiological relevance of HTS-compatible models [37].

Workflow Schematic and Decision Points

The complete pathway from primary HTS to secondary targeted validation involves multiple stages with key decision points. The following diagram visualizes this integrated workflow:

Diagram 1: Integrated workflow from primary HTS to validated leads with key quality control checkpoints.

This workflow emphasizes critical quality control checkpoints, such as the calculation of Z' factors to ensure sufficient assay robustness and the implementation of orthogonal assays to eliminate false positives early in the process. Each stage applies increasing stringency to refine the candidate list, with decision gates that may return compounds to earlier stages for re-evaluation or remove them entirely from the pipeline.

Materials and Reagents

Essential Research Reagent Solutions

The successful implementation of an HTS to validation workflow requires careful selection and quality control of reagents. The following table details essential materials and their functions within the screening pipeline:

Table 1: Key Research Reagent Solutions for HTS and Validation Workflows

| Reagent/Material | Function | Specification Notes |
|---|---|---|
| Compound Libraries | Source of chemical diversity for screening | 40,000+ compounds; structurally diverse collections; typically stored in DMSO at 2-10 mM [38] |
| Microplates | Platform for miniaturized reactions | 384-well or 1536-well formats; working volume 2.5-10 μL; tissue-culture treated for cell-based assays [22] |
| Detection Reagents | Signal generation for activity measurement | Fluorescence (e.g., FRET), luminescence, or absorbance-based; compatible with automation and miniaturization [22] |
| Cell Lines | Biological context for phenotypic screening | Robust growth in microplates; authenticated and mycoplasma-free; relevant to target biology [36] |
| Target Proteins | Molecular targets for biochemical assays | High purity (>90%); functional activity validated; compatible with HTS buffer conditions [38] |
| Primary Antibodies | Detection of specific epitopes in binding assays | Validated for specificity; compatible with HTS detection systems [36] |
| Assay Buffers | Maintain physiological conditions for reactions | Optimized pH and ionic strength; contain necessary cofactors; minimal background signal [36] |

Quality Control of Reagents

Reagent quality directly affects screening outcomes, making rigorous quality control essential. All reagents should undergo stability testing under screening conditions, including storage stability and stability during unplanned holds, such as when a run is interrupted by an instrument failure [36]. Critical biological reagents, especially cell lines, must be routinely monitored for contamination (e.g., mycoplasma) and phenotypic drift. For enzyme preparations, specific activity should be verified across batches to ensure consistency. Liquid-handling validation with colored dyes is recommended to confirm accurate and precise dispensing before committing valuable reagents to full-scale production screening [36].
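A dye-based liquid-handling check is commonly summarized as accuracy (bias of the mean dispensed volume from the target) and precision (coefficient of variation across wells). The sketch below assumes absorbance readings have already been converted to volumes with a dye standard curve; the 10% acceptance limits are placeholder assumptions, not values taken from [36].

```python
import statistics


def dispense_qc(volumes_ul, target_ul, max_cv_pct=10.0, max_bias_pct=10.0):
    """Summarize dispense accuracy (bias) and precision (CV) against hypothetical limits."""
    mean = statistics.mean(volumes_ul)
    cv_pct = 100.0 * statistics.stdev(volumes_ul) / mean
    bias_pct = 100.0 * (mean - target_ul) / target_ul
    passed = cv_pct <= max_cv_pct and abs(bias_pct) <= max_bias_pct
    return {"cv_pct": round(cv_pct, 2), "bias_pct": round(bias_pct, 2), "pass": passed}


# Hypothetical per-well volumes back-calculated from dye absorbance (target 10 uL).
print(dispense_qc([9.8, 10.1, 10.0, 9.9, 10.2], target_ul=10.0))
print(dispense_qc([8.0, 12.0, 10.0], target_ul=10.0))
```

The second call fails on precision (CV of 20%) even though its mean volume is on target, which is exactly the kind of problem a dye run is meant to catch before reagents are committed.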

Stepwise Experimental Protocols

Phase 1: Primary High-Throughput Screening

Assay Development and Optimization

Before initiating a full-scale screen, extensive assay development is required to optimize conditions for automation and miniaturization:

- Target Selection: Define the biological target (e.g., specific enzyme, receptor, or pathway) and its relevance to the disease context.

- Reagent Preparation: Optimize expression and purification of recombinant proteins or culture conditions for cell-based systems. For cellular microarrays, this may involve robotic spotting of biomolecules or soft lithography to create patterned surfaces [22].

- Assay Miniaturization: Transition from benchtop protocols to microplate formats (384-well or higher density). Determine the optimal well volume (typically 5-10 μL for 384-well plates) while maintaining the signal-to-noise ratio [22].

- Signal Optimization: Test multiple detection methods (e.g., fluorescence, luminescence, FRET, HTRF) to identify the most robust readout [22]. For the HCV NS3/4A protease screening example, a fluorescence-based enzymatic assay was implemented [38].

- Control Selection: Establish appropriate positive controls (known modulators) and negative controls (vehicle-only, e.g., DMSO). These are critical for assessing assay performance.
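Miniaturization also fixes how much compound stock reaches each well. Using the dilution relation C1·V1 = C2·V2, the sketch below computes the transfer volume and the resulting DMSO percentage. It assumes compounds are dispensed directly from a 100% DMSO stock into the final assay volume and ignores the small volume the transfer itself adds; the 10 µM test concentration is a hypothetical example, while the 10 mM stock and 10 µL well volume fall within the ranges given in Table 1 and the miniaturization step.

```python
def transfer_volume_nl(stock_mm, final_um, well_volume_ul):
    """Volume of DMSO stock (nL) needed to reach `final_um` in `well_volume_ul` (C1*V1 = C2*V2)."""
    final_mm = final_um / 1000.0                       # uM -> mM
    volume_ul = final_mm * well_volume_ul / stock_mm   # V1 = C2 * V2 / C1
    return volume_ul * 1000.0                          # uL -> nL


def dmso_percent(transfer_nl, well_volume_ul):
    """Final DMSO content (% v/v), assuming the stock is 100% DMSO."""
    return 100.0 * (transfer_nl / 1000.0) / well_volume_ul


v = transfer_volume_nl(stock_mm=10.0, final_um=10.0, well_volume_ul=10.0)
print(f"transfer {v:.1f} nL -> {dmso_percent(v, 10.0):.2f}% DMSO")
```

The nanoliter result illustrates why compound transfer at this scale relies on automated dispensing rather than manual pipetting.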

Validation and Quality Control

Once assay conditions are established, formal validation tests ensure reliability:

- Plate Uniformity Assessment: Evaluate edge effects and signal drift across the plate. For 384-well plates, the outer rows and columns are typically left empty to minimize edge effects [36].

- Robustness Calculation: Determine the Z' factor using positive and negative controls. The Z' factor is a statistical measure of assay quality that accounts for both the dynamic range and the data variation. A Z' factor ≥ 0.5 is conventionally regarded as excellent; in practice, a Z' > 0.4 is generally considered suitable for HTS, while a value > 0.3 can be acceptable for cell-based screens [36].

- Formula: Z' = 1 - [3×(σp + σn) / |μp - μn|], where σp and σn are the standard deviations of positive and negative controls, and μp and μn are their means.

- Replicate Experiment: Run at least two replicate experiments on different days to assess biological reproducibility and robustness [36].
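The Z' calculation and the edge-well exclusion described above are straightforward to script once control wells are read out. This sketch assumes a standard 16 × 24 (384-well) layout with row letters A-P and leaves the outer ring of wells empty; it implements the Z' formula given above using sample standard deviations, and the control values are hypothetical.

```python
import statistics
import string


def z_prime(pos, neg):
    """Z' = 1 - 3*(sd_p + sd_n) / |mean_p - mean_n| from positive and negative control wells."""
    spread = 3 * (statistics.stdev(pos) + statistics.stdev(neg))
    window = abs(statistics.mean(pos) - statistics.mean(neg))
    return 1 - spread / window


def interior_wells(n_rows=16, n_cols=24):
    """Well IDs excluding the outermost rows and columns (384-well default: 14 x 22 = 308)."""
    rows = string.ascii_uppercase[:n_rows]
    return [f"{r}{c}" for r in rows[1:-1] for c in range(2, n_cols)]


# Hypothetical control readings (arbitrary units).
positive = [100.0, 104.0, 96.0, 100.0]
negative = [10.0, 12.0, 8.0, 10.0]
print(f"Z' = {z_prime(positive, negative):.2f}")  # -> Z' = 0.84
print(len(interior_wells()))                       # -> 308
```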

Production Screening

Execute the full-scale primary screen:

- Compound Transfer: Using automated liquid handling, transfer compounds from library plates to assay plates. A JANUS liquid-handling workstation or a similar system is typically employed [36].

- Reagent Addition: Add assay components (e.g., enzyme, substrate, cells) according to the optimized protocol.