Strategies for Robust HTS Assays: A Guide to Improving Product Tolerance in High-Throughput Screening

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to optimizing product tolerance in High-Throughput Screening (HTS) assays. Covering topics from foundational principles to advanced validation techniques, it explores key performance metrics like Z'-factor, methodological strategies including universal biochemical assays and automation, and practical troubleshooting for common challenges like DMSO sensitivity and compound interference. The content also outlines rigorous validation and comparative analysis frameworks to ensure the identification of true, physiologically relevant hits, ultimately aiming to enhance screening efficiency, reduce false positives, and accelerate the drug discovery pipeline.

The Bedrock of Robust Screening: Core Principles and Challenges of HTS Assay Performance

Assay robustness is a critical determinant of success in high-throughput screening (HTS) and early drug discovery. It refers to an assay's reliability and reproducibility in producing consistent results despite minor variations in experimental conditions, reagents, or operators. For researchers and scientists in drug development, understanding and optimizing assay robustness is fundamental to ensuring that screening campaigns identify genuine biological hits rather than experimental artifacts. This technical guide focuses on three fundamental metrics—Z'-factor, Signal-to-Background ratio, and Coefficient of Variation (CV)—that form the cornerstone of quantitative assay validation. By mastering these metrics, professionals can significantly improve product tolerance and the overall success of their HTS research, saving valuable time and resources [1] [2].

Key Metrics for Assessing Assay Robustness

Z'-factor

The Z'-factor has become the gold standard metric for evaluating the quality and robustness of HTS assays. It is a dimensionless statistical parameter that measures the separation between positive and negative control populations, taking into account both the means and the variations of these controls [1] [3].

Definition and Calculation: The Z'-factor is calculated using the following formula:

Z' = 1 - [3(σp + σn) / |μp - μn|]

where σp and σn are the standard deviations of the positive (p) and negative (n) controls, and μp and μn are their respective means [1] [3].

Interpretation and Acceptable Ranges: The Z'-factor ranges from -∞ to 1 and is interpreted as follows [1] [3]:

| Z'-factor Value | Assay Quality Assessment |
|---|---|
| 1.0 | An ideal assay (theoretical) |
| 0.5 ≤ Z' < 1.0 | An excellent assay |
| 0 < Z' < 0.5 | A marginal assay. For complex phenotypes (e.g., in high-content screening), hits in this range may still be valuable [1]. |
| Z' = 0 | The positive and negative control populations overlap at the 3-sigma level. |
| Z' < 0 | There is significant overlap between the two control populations. |

Advantages and Limitations:

- Advantages: The Z'-factor is easy to calculate, accounts for variability in both control groups, and is widely available in commercial and open-source HTS analysis software [1].

- Limitations: Its calculation assumes the control data follows a normal distribution, which is often not verified in cell-based assays. The presence of outliers can skew the standard deviation, leading to a misleading Z'-factor. Furthermore, it does not scale linearly with signal strength [1].
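The Z'-factor formula above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `z_prime`, the use of the sample standard deviation, and the example well values are all assumptions made for the sketch.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z' = 1 - 3 * (sd_p + sd_n) / |mean_p - mean_n|.

    Uses the sample standard deviation (an assumption; the text does not
    say whether the sample or population form is intended).
    """
    window = abs(mean(positive) - mean(negative))
    if window == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1 - 3 * (stdev(positive) + stdev(negative)) / window


# Hypothetical raw signals from the control wells of one plate.
pos_wells = [100.0, 102.0, 98.0, 101.0, 99.0]
neg_wells = [10.0, 11.0, 9.0, 10.5, 9.5]

print(f"Z' = {z_prime(pos_wells, neg_wells):.3f}")
```

Because the standard deviation is sensitive to outliers, a single aberrant control well can noticeably lower the value returned here, which is the limitation noted above.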

Signal-to-Background Ratio (S/B)

The Signal-to-Background Ratio is a fundamental, though incomplete, measure of assay window size.

Definition and Calculation: It is the simple ratio of the mean signal of the positive control to the mean signal of the negative control.

S/B = μp / μn [3]

Interpretation: A higher S/B ratio indicates a larger dynamic range between the positive and negative controls.

Advantages and Limitations:

- Advantage: It is a very simple and intuitive calculation.

- Critical Limitation: The S/B ratio does not contain any information regarding data variation. Therefore, it is an inadequate metric for evaluating assay robustness on its own, as it cannot distinguish between an assay with low background variability and one with high background variability, even if their mean backgrounds are identical [3].
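To make this limitation concrete, the sketch below compares two hypothetical assays whose controls have identical means (and therefore identical S/B) but different background variability. The well values and function names are invented for illustration.

```python
from statistics import mean, stdev


def signal_to_background(pos, neg):
    """S/B = mean of positive controls / mean of negative controls."""
    return mean(pos) / mean(neg)


def z_prime(pos, neg):
    """Z' = 1 - 3 * (sd_p + sd_n) / |mean_p - mean_n| (sample SD assumed)."""
    return 1 - 3 * (stdev(pos) + stdev(neg)) / abs(mean(pos) - mean(neg))


# Hypothetical data: both negative-control sets have a mean of 10.
pos = [50.0, 52.0, 48.0, 51.0, 49.0]
tight_neg = [10.0, 11.0, 9.0, 10.5, 9.5]
noisy_neg = [10.0, 22.0, 1.0, 16.0, 1.0]

for label, neg in (("tight background", tight_neg), ("noisy background", noisy_neg)):
    sb = signal_to_background(pos, neg)
    zp = z_prime(pos, neg)
    print(f"{label}: S/B = {sb:.1f}, Z' = {zp:.2f}")
```

Both cases report the same S/B, while the Z'-factor separates the assay with the tight background from the one with the noisy background.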

Coefficient of Variation (CV)

The Coefficient of Variation measures the relative variability of a data set, expressed as a percentage.

Definition and Calculation: It is calculated as the standard deviation of a population (e.g., positive control replicates) divided by its mean.

CV = (σ / μ) * 100% [2]

Interpretation and Acceptance Criteria: A lower CV indicates higher precision and lower variability among replicates. During assay validation, it is generally required that the CV values for raw "high," "medium," and "low" signals be less than 20% across all validation plates [2].
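As a rough illustration of the CV calculation and the 20% criterion, the following sketch computes the CV for a set of replicate wells. The helper names and the example values are hypothetical, and the sample standard deviation is assumed.

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation as a percentage: (sd / mean) * 100."""
    m = mean(values)
    if m == 0:
        raise ValueError("mean is zero; CV is undefined")
    return stdev(values) / abs(m) * 100


def cv_within_limit(values, limit=20.0):
    """True when the CV is below the limit (20% is the criterion cited above)."""
    return cv_percent(values) < limit


# Hypothetical replicate signals for two control groups.
high_wells = [100.0, 102.0, 98.0, 101.0, 99.0]
erratic_wells = [10.0, 22.0, 1.0, 16.0, 1.0]

print(f"high: CV = {cv_percent(high_wells):.2f}%")
print(f"erratic: CV = {cv_percent(erratic_wells):.2f}%")
```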

The table below summarizes the core metrics used to define assay robustness, highlighting what each one measures and its primary use case.

| Metric | Calculation | What It Measures | Primary Use |
|---|---|---|---|
| Z'-factor | 1 - [3(σp + σn) / \|μp - μn\|] | Separation between positive and negative controls, accounting for means and variances of both [1] [3]. | Overall assay quality and robustness for screening. |
| Signal-to-Background (S/B) | μp / μn | The ratio of the average positive control signal to the average negative control signal [3]. | Basic assessment of the assay's dynamic range (without considering variability). |
| Coefficient of Variation (CV) | (σ / μ) * 100% | The precision and relative variability of replicate measurements within a single population [2]. | Assessing replicate consistency for controls or samples. |
| Signal-to-Noise (S/N) | (μp - μn) / σn | Confidence in quantifying a signal above the background noise [3]. | Evaluating detection confidence for signals near the background level. |

Assay Validation Workflow

Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

Q1: My assay's Z'-factor is below 0.4. What are the first things I should check? First, inspect the raw data for edge effects or systematic drift across the plate, which can be caused by uneven temperature in incubators. Next, check the CV of your controls. If the CV is high (>20%), the issue is likely high variability. Ensure consistent reagent preparation and liquid handling. If the CV is low but the Z'-factor is still poor, the problem is a small signal window; consider optimizing your positive control concentration or assay incubation times to increase the separation between controls [1] [2] [4].
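The CV-versus-window part of this checklist can be expressed as a small decision function. This is only a sketch: the function name, the returned messages, and the example data are hypothetical, and the inspection for edge effects or drift still has to be done on the raw plate data first.

```python
from statistics import mean, stdev


def diagnose_low_z_prime(pos, neg, z_min=0.4, cv_max=20.0):
    """Return a coarse hint for why Z' is low, following the Q1 logic."""
    cv_pos = stdev(pos) / abs(mean(pos)) * 100
    cv_neg = stdev(neg) / abs(mean(neg)) * 100
    z = 1 - 3 * (stdev(pos) + stdev(neg)) / abs(mean(pos) - mean(neg))
    if z >= z_min:
        return "ok"
    if max(cv_pos, cv_neg) > cv_max:
        # High CV: look at reagent preparation and liquid handling.
        return "high variability"
    # Low CV but poor Z': the controls are too close together.
    return "small signal window"


# Hypothetical control data for two failing assays.
noisy = diagnose_low_z_prime(
    [50.0, 52.0, 48.0, 51.0, 49.0], [10.0, 22.0, 1.0, 16.0, 1.0]
)
narrow = diagnose_low_z_prime(
    [12.0, 12.5, 11.5, 12.2, 11.8], [10.0, 10.5, 9.5, 10.2, 9.8]
)
print(noisy, "|", narrow)
```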

Q2: Is a Z'-factor above 0.5 always necessary for a screen to be successful? Not necessarily. While a Z'-factor > 0.5 is considered excellent, assays with complex phenotypes, such as those in high-content screening (HCS), can still yield valuable, biologically relevant hits with a Z'-factor in the 0 to 0.5 range. The decision should factor in the biological value of the hits and the tolerance for false positives that can be filtered out in subsequent confirmation screens [1].

Q3: My Signal-to-Background ratio is high, but my Z'-factor is low. Why? A high S/B with a low Z'-factor indicates that while your positive and negative controls are well-separated on average, there is excessive variation in one or both of the control populations. The Z'-factor penalizes this variability, while the S/B ratio ignores it. Focus on reducing variability by ensuring consistent cell health, reagent quality, and automated, precise liquid handling [3].

Q4: How many replicates and controls are sufficient for a robust assay validation? For a formal validation, it is recommended to run the assay on three different days with at least three plates per day. Each plate should contain a minimum of 16 replicates each of positive and negative controls, distributed in an interleaved fashion to capture positional effects. For the main screen, duplicate runs are typical for large-scale HTS, with more replicates reserved for confirmation assays [1] [2].

Common Problems and Troubleshooting Table

| Problem | Possible Causes | Tests & Corrective Actions |
|---|---|---|
| Low Z'-factor | 1. High variability in controls. 2. Low separation between controls. | 1. Check CVs. Improve reagent consistency and washing steps (e.g., add a soak step) [4]. 2. Titrate positive control concentration; optimize incubation times. |
| High Background | 1. Incomplete washing. 2. Non-specific binding. | 1. Increase number of washes; ensure proper function of plate washer [4]. 2. Optimize blocking conditions; titrate detection antibody. |
| Poor Replicate Consistency (High CV) | 1. Inconsistent liquid handling. 2. Edge effects. 3. Contaminated reagents. | 1. Use automated, non-contact dispensers for critical reagents [5]. 2. Use plate sealers; avoid incubating plates in areas with temperature gradients [4]. 3. Prepare fresh buffers and reagents. |
| Edge Effects | Evaporation from edge wells and uneven temperature across the plate. | Use plate sealers during all incubation steps. If possible, use a layout that does not place critical controls only on the edges [1] [4]. |
| Signal Drift Across Plate | Reagents not at uniform temperature before adding; slow or interrupted assay setup. | Ensure all reagents are at room temperature and the assay setup is continuous and swift [4]. |

Troubleshooting Logic for Assay Robustness

Experimental Protocol for Assay Validation

A rigorous assay validation protocol is essential to demonstrate robustness before initiating a large-scale screen. The following procedure, adapted from the Assay Guidance Manual, provides a standardized framework [2].

Detailed Step-by-Step Methodology

Plate Design and Controls:

- Define three types of control samples: "High" signal (positive control), "Low" signal (negative control), and "Medium" signal (e.g., EC50 of a reference compound).

- For the validation run, prepare three identical plates per day for three separate days.

- Use an interleaved layout to detect spatial biases. For a 384-well plate, distribute the controls in the following column-wise order:

  - Plate 1: High, Medium, Low, High, Medium, Low...

  - Plate 2: Low, High, Medium, Low, High, Medium...

  - Plate 3: Medium, Low, High, Medium, Low, High...

- Include a minimum of 16 replicates per control type on each plate.
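The interleaved layout described above can be generated programmatically. The sketch below assumes a standard 384-well plate of 16 rows by 24 columns and assigns one control type per column; the function names and the dictionary of patterns are illustrative only.

```python
ROWS, COLS = 16, 24  # standard 384-well plate geometry

# Column-wise starting order for each of the three validation plates.
PATTERNS = {
    1: ("High", "Medium", "Low"),
    2: ("Low", "High", "Medium"),
    3: ("Medium", "Low", "High"),
}


def interleaved_layout(plate_number):
    """Return a ROWS x COLS grid of control labels, one label per column."""
    pattern = PATTERNS[plate_number]
    return [[pattern[col % 3] for col in range(COLS)] for _ in range(ROWS)]


def replicate_counts(layout):
    """Count how many wells carry each control label."""
    counts = {}
    for row in layout:
        for label in row:
            counts[label] = counts.get(label, 0) + 1
    return counts


for plate in (1, 2, 3):
    layout = interleaved_layout(plate)
    print(plate, layout[0][:6], replicate_counts(layout))
```

With this column-wise scheme each control type occupies 8 columns, which comfortably exceeds the minimum of 16 replicates per plate.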

Assay Execution:

- Prepare fresh samples and reagents on each of the three validation days.

- Use the same automated protocols and instruments that are planned for the full-scale screen.

- Document all reagent details (vendor, lot number, preparation time) and instrument parameters meticulously.

Data Collection and Analysis:

- Collect raw data from the plate reader.

- For each plate, calculate the following for the High, Medium, and Low controls:

  - Mean (μ) and Standard Deviation (σ)

  - Coefficient of Variation (CV) = (σ / μ) * 100%

- Calculate the Z'-factor between the High and Low controls.

- Visually inspect the data using scatter plots (plotting raw signal in well order) to identify any systematic patterns, drift, or edge effects [2].

Acceptance Criteria:

- The CV for all control wells must be < 20% on all nine plates.

- The Z'-factor should be > 0.4 on all plates (or the Signal Window should be > 2).

- The standard deviation of the normalized "Medium" signal should be < 20.

- No strong spatial patterns should be present in the scatter plots.
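The numeric acceptance criteria above could be checked with a short script such as the one below. Several points are assumptions: "normalized" is taken to mean percent activity on a 0 to 100 scale between the Low and High means, the alternative Signal Window criterion is not implemented, and the visual check for spatial patterns is left to the analyst. All names and data are hypothetical.

```python
from statistics import mean, stdev


def cv_percent(values):
    return stdev(values) / abs(mean(values)) * 100


def plate_passes(high, medium, low, cv_max=20.0, z_min=0.4, mid_sd_max=20.0):
    """Apply the CV, Z'-factor, and normalized Medium SD criteria to one plate."""
    window = mean(high) - mean(low)
    z_prime = 1 - 3 * (stdev(high) + stdev(low)) / abs(window)
    # Assumption: normalize Medium wells to percent activity (Low = 0, High = 100).
    medium_norm = [100 * (x - mean(low)) / window for x in medium]
    return (
        all(cv_percent(wells) < cv_max for wells in (high, medium, low))
        and z_prime > z_min
        and stdev(medium_norm) < mid_sd_max
    )


def validation_passes(plates):
    """True only if every plate (e.g., all nine) meets the criteria."""
    return all(plate_passes(*plate) for plate in plates)


# Hypothetical (high, medium, low) well signals.
good = (
    [100.0, 102.0, 98.0, 101.0, 99.0],
    [55.0, 57.0, 53.0, 56.0, 54.0],
    [10.0, 11.0, 9.0, 10.5, 9.5],
)
noisy = (good[0], good[1], [10.0, 22.0, 1.0, 16.0, 1.0])

print(validation_passes([good] * 9))
print(validation_passes([good] * 8 + [noisy]))
```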

Research Reagent Solutions

The table below lists essential materials and their critical functions in ensuring a robust assay.

| Reagent / Material | Function | Considerations for Robustness |
|---|---|---|
| Positive & Negative Controls | Defines the upper and lower bounds of the assay signal; critical for calculating Z'-factor and S/B. | Select controls that are biologically relevant and comparable in strength to expected hits. Avoid overly strong controls that give a false sense of robustness [1]. |
| Cell Lines | The biological system in cell-based assays. | Maintain consistent passage number, splitting routine, and health. Characterize response variability during development [2]. |
| Detection Reagents (e.g., Antibodies, Dyes) | Generate the measurable signal. | Titrate to optimal concentrations to maximize signal window and minimize background. Use consistent lots throughout a campaign. |
| Assay Buffers | Provide the chemical environment for the reaction. | Monitor pH and osmolality. Prepare fresh or freeze aliquots to prevent contamination and ensure stability [4]. |
| Microtiter Plates | The platform for miniaturized reactions. | Use plates designed for specific assays (e.g., ELISA, cell culture). Be aware of potential edge effects and test plate brands for consistency [4]. |
| Reference Standard | Used for potency calculations and standard curves. | Handle according to directions. Use a frozen, large-quantity master stock for long-running campaigns to avoid inter-batch variability [2]. |

The Critical Impact of Product Tolerance on Hit Identification and False Positives

Understanding Product Tolerance and False Positives

What is "product tolerance" in High-Throughput Screening (HTS)?

In High-Throughput Screening (HTS), product tolerance refers to the ability of an assay's detection system to accurately measure the intended enzyme reaction product without interference from screening compounds or assay components. Poor product tolerance leads to false-positive results, where compounds are incorrectly identified as "hits" not due to biological activity, but because they interfere with the detection mechanism itself [6].

Even advanced detection methods like mass spectrometry (MS), which are less prone to artefacts like fluorescence interference, are not immune. Recently, novel mechanisms for false-positive hits have been identified in RapidFire MRM-based screening that are not seen in classical assays, necessitating new pipelines for their detection and mitigation [6].

Why is managing product tolerance critical for HTS success?

Effective management of product tolerance is crucial because false positives consume significant resources and time to resolve. They can obscure genuine hits and lead research down unproductive paths. A well-validated assay with high product tolerance rapidly identifies and eliminates such compounds at the initial screen, saving cost and accelerating the discovery process [6] [7].

Key Consequences of Poor Product Tolerance:

- Resource Drain: Wasted time and materials on investigating false leads.

- Missed Opportunities: Genuine hits can be overlooked amidst noise.

- Pipeline Delays: Extended timelines for lead identification and optimization.

Troubleshooting Guides

Guide 1: Diagnosing a Sudden Increase in False-Positive Rates

Problem: A previously robust HTS assay has begun to show an unacceptably high rate of false-positive hits.

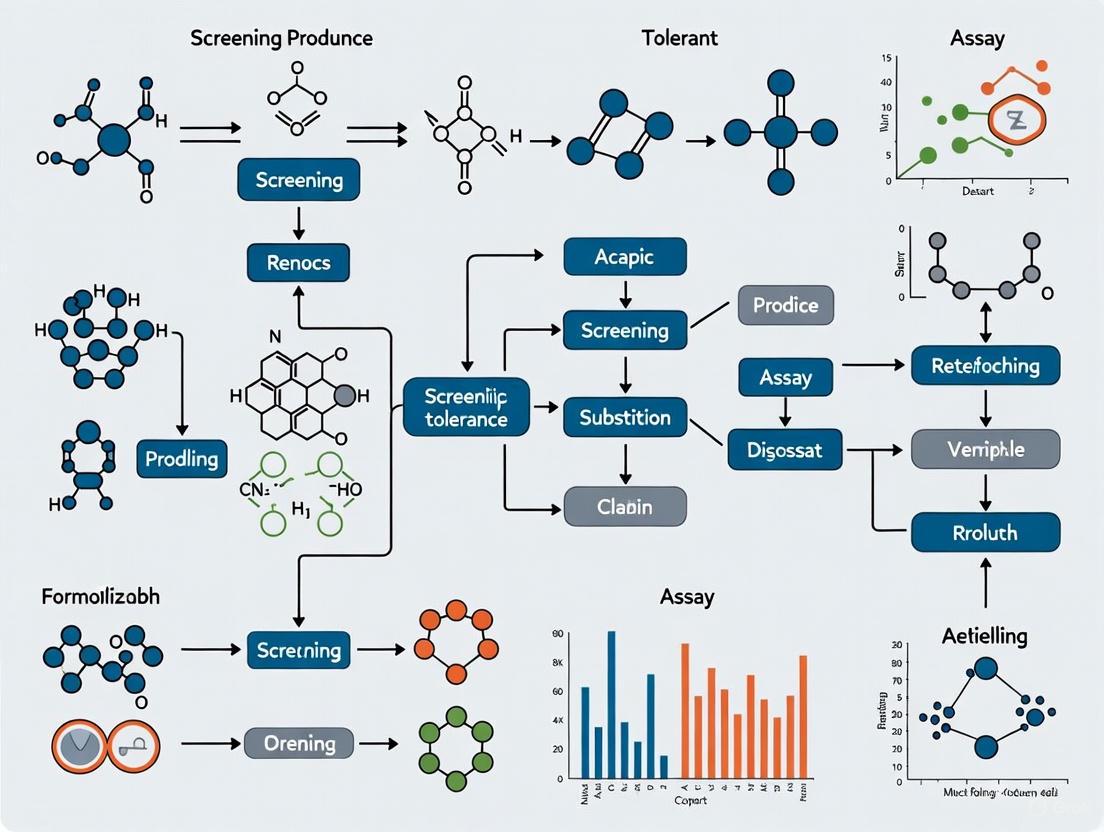

Investigation Workflow: The following diagram outlines a systematic approach to diagnose the root cause of increased false positives.

Diagnostic Steps:

Check Reagent Integrity and Lot Numbers:

- Action: Confirm that all reagents, including enzymes, substrates, and buffers, are within their expiration dates. Compare the current performance against data from the previous reagent lot.

- Rationale: Reagent degradation or subtle variations between lots can alter assay kinetics and signal stability, leading to increased interference [7].

Verify Liquid Handler Performance and Dispensing Accuracy:

- Action: Perform gravimetric checks or use fluorescent dyes to verify the precision and accuracy of all liquid dispensing steps, especially for compounds and DMSO.

- Rationale: Inaccurate dispensing in miniaturized assays (e.g., 1536-well plates) amplifies volumetric errors, causing incorrect compound concentrations and solvent effects that manifest as false positives [8].

Run a Plate Uniformity and Signal Variability Assessment:

- Action: Run control plates containing only "Max" (high signal) and "Min" (low signal) controls distributed across the entire plate.

- Rationale: This test identifies "edge effects" or spatial biases caused by uneven temperature or evaporation across the microplate. A high coefficient of variation (CV) in control wells indicates poor robustness [7] [8].

Assess DMSO Tolerance:

- Action: Run the assay with a dilution series of DMSO (e.g., 0% to 3%) in the absence of test compounds.

- Rationale: The final concentration of the compound solvent DMSO can affect enzyme activity and signal detection. The validated assay should be run with the DMSO concentration that will be used in screening, typically kept under 1% for cell-based assays [7].

Investigate Novel Interference Mechanisms:

- Action: For mass spectrometry-based assays, implement specific counter-screens designed to detect the newly reported false-positive mechanism that involves non-classical interference with the product detection step [6].
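For the DMSO dilution series described in the diagnostic steps above, one simple way to summarize the result is to find the highest DMSO concentration at which the mean signal stays close to the 0% DMSO baseline. The sketch below assumes a 10% allowed change, which is an arbitrary illustrative threshold rather than a value from this guide; the function name and the data are also hypothetical.

```python
from statistics import mean


def max_tolerated_dmso(signals_by_percent, max_change=0.10):
    """Highest DMSO % whose mean signal is within max_change of the 0% baseline.

    signals_by_percent maps DMSO concentration (%) to replicate signals and
    must include a 0.0 entry. Scanning stops at the first concentration
    that exceeds the allowed change.
    """
    baseline = mean(signals_by_percent[0.0])
    tolerated = 0.0
    for conc in sorted(signals_by_percent):
        change = abs(mean(signals_by_percent[conc]) - baseline) / baseline
        if change > max_change:
            break
        tolerated = conc
    return tolerated


# Hypothetical signals from a 0% to 3% DMSO series without test compounds.
series = {
    0.0: [100.0, 101.0, 99.0],
    0.5: [98.0, 99.0, 97.0],
    1.0: [95.0, 96.0, 94.0],
    2.0: [80.0, 82.0, 78.0],
    3.0: [60.0, 62.0, 58.0],
}

print(max_tolerated_dmso(series))
```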

Guide 2: Validating Assay Robustness for Hit Identification

Objective: To establish that your HTS assay is statistically robust and has the necessary product tolerance to reliably distinguish true hits from false positives.

Validation Workflow: This workflow details the key experiments required for full assay validation.

Experimental Protocol:

Stability and Process Studies [7]:

- Method: Determine the stability of all critical reagents under storage and assay conditions. Test stability after multiple freeze-thaw cycles and the stability of daily leftover reagents.

- Output: Defined storage conditions and validated in-assay stability for the duration of the measurement.

Plate Uniformity Study [7]:

- Method: Perform a 3-day study using an interleaved-signal plate format. Each plate contains a statistical distribution of wells representing "Max," "Min," and "Mid" signals. This should be done with the final screening concentration of DMSO.

  - "Max" Signal: Represents the maximum assay response (e.g., uninhibited enzyme reaction).

  - "Min" Signal: Represents the background or minimum assay response (e.g., fully inhibited reaction).

  - "Mid" Signal: Represents a mid-point response (e.g., IC50 concentration of a control inhibitor).

- Output: Data on signal window stability and intra-plate variability over time.

Replicate-Experiment Study [7]:

- Method: Conduct the assay on multiple days (e.g., 3 separate days) with independently prepared reagents to assess inter-day reproducibility.

- Output: Assessment of the assay's reproducibility and the identification of day-to-day variance.

Calculate Key Statistical Metrics [8]:

- Use the data from the Plate Uniformity Study to calculate the following metrics. The table below summarizes the formulas and acceptance criteria.

Table 1: Key Statistical Metrics for Assay Validation

| Metric | Formula | Interpretation | Target Value |
|---|---|---|---|
| Z'-Factor | $1 - \frac{3(SD_{max} + SD_{min})}{\lvert Mean_{max} - Mean_{min} \rvert}$ | Assay robustness and suitability for HTS. | > 0.5 [8] |
| Signal-to-Background (S:B) | $\frac{Mean_{max}}{Mean_{min}}$ | The dynamic range of the assay signal. | As high as possible, assay-dependent. |
| Signal Window (SW) | $\frac{\lvert Mean_{max} - Mean_{min} \rvert}{\sqrt{(SD_{max})^2 + (SD_{min})^2}}$ | The separation between max and min signals. | > 2 [8] |
| Coefficient of Variation (CV) | $(SD / Mean) \times 100$ | The variability of the control signals. | < 10% for controls [8] |

Establish QC Pass/Fail Criteria:

- Action: Based on the validation data, set quantifiable criteria for each screening plate. For example, a plate may be rejected and repeated if its Z'-factor falls below 0.5 or if the CV of the controls exceeds 10% [8].

Frequently Asked Questions (FAQs)

What defines an acceptable Z'-factor for an HTS assay?

An assay is generally considered excellent and robust for HTS if it has a Z'-factor greater than 0.5. A Z'-factor between 0 and 0.5 may be considered marginal or require careful monitoring, while a Z'-factor below 0 indicates the assay is not suitable for screening as it cannot reliably distinguish between the Max and Min signals [8].

How does plate miniaturization (e.g., moving to 1536-well format) impact false positives?

Plate miniaturization significantly reduces reagent costs but increases the risk of false positives due to amplified volumetric errors and increased evaporation. The higher surface-to-volume ratio accelerates solvent evaporation, which can concentrate compounds and DMSO, leading to solvent tolerance issues and non-specific effects. This necessitates the use of high-precision dispensers and strict environmental controls to maintain assay integrity [8].

We use MS detection to avoid fluorescence interference. Why are we still seeing false positives?

While mass spectrometry is less susceptible to common artefacts like compound auto-fluorescence, novel mechanisms for false positives have been discovered. These can involve compounds that interfere with the specific MS detection process in unexpected ways. It is critical to develop and implement specific counter-assays or a detection pipeline designed to identify and mitigate these newly understood mechanisms [6].

What is the primary function of a "Plate Drift Analysis"?

Plate Drift Analysis is performed during assay validation to confirm that the assay's signal window and statistical performance (like Z'-factor) remain stable over the entire duration of a large screen. It detects systematic temporal errors, such as instrument drift, detector fatigue, or reagent degradation, that could lead to signal inconsistencies and increased false-positive or false-negative rates between plates screened at the start versus the end of an HTS run [8].
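A basic form of drift analysis is to fit a straight line to a control statistic (for example, the mean Max signal per plate) in screening order and look at the slope. The sketch below uses an ordinary least-squares fit written with the standard library only; the function names and example values are hypothetical, and what counts as an acceptable drift is left to the assay owner.

```python
from statistics import mean


def drift_slope(values):
    """Least-squares slope of values against their position in run order."""
    xs = list(range(len(values)))
    x_bar, y_bar = mean(xs), mean(values)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, values))
    return sxy / sxx


def relative_drift(values):
    """Fitted change from first to last plate, as a fraction of the mean signal."""
    return drift_slope(values) * (len(values) - 1) / mean(values)


# Hypothetical mean Max-control signal for five plates in run order.
max_means = [100.0, 99.0, 98.0, 97.0, 96.0]

print(f"slope per plate: {drift_slope(max_means):.2f}")
print(f"relative drift over run: {relative_drift(max_means):.1%}")
```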

Why are "edge effects" a major concern in HTS?

Edge effects—systematic signal gradients between wells at the edge and the center of a microplate—are a major concern because they are a significant source of false positives and negatives. They are primarily caused by uneven heating and differential evaporation across the plate. This can be mitigated by using specialized plate seals, humidified incubators, and strategic placement of controls during validation and screening [8].
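One quick numeric check for edge effects on a uniform control plate is to compare the mean signal of the outermost wells with that of the interior wells. The following sketch assumes the plate is available as a rectangular list of rows; the function name and the small example plate are made up for illustration.

```python
from statistics import mean


def edge_to_interior_ratio(plate):
    """Mean signal of perimeter wells divided by mean signal of interior wells."""
    n_rows, n_cols = len(plate), len(plate[0])
    edge, interior = [], []
    for r, row in enumerate(plate):
        for c, value in enumerate(row):
            on_edge = r in (0, n_rows - 1) or c in (0, n_cols - 1)
            (edge if on_edge else interior).append(value)
    return mean(edge) / mean(interior)


# Hypothetical 4 x 6 uniform plate where perimeter wells read 10% low.
plate = [
    [90.0 if r in (0, 3) or c in (0, 5) else 100.0 for c in range(6)]
    for r in range(4)
]

print(f"edge/interior ratio: {edge_to_interior_ratio(plate):.2f}")
```

A ratio close to 1 suggests no gross edge effect; a plate heat map or scatter plot remains the better tool for spotting gradients that do not follow the perimeter.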

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials and Reagents for Robust HTS Assays

| Item | Function & Criticality | Key Considerations |
|---|---|---|
| Microplates | The physical platform for the assay. Material and surface chemistry are critical for performance. | Select material (e.g., polystyrene, polypropylene) and surface treatment (e.g., non-binding) compatible with assay components to minimize non-specific binding [8]. |
| Enzyme/Protein Target | The biological component of the assay. Purity and stability are paramount. | Validate specific activity for each new lot. Determine stability under storage and assay conditions (freeze-thaw cycles, in-assay longevity) [7]. |
| Substrate/Ligand | The molecule converted or bound by the target to generate a detectable product. | The quality (purity, stability) directly impacts the background signal and the dynamic range of the assay (S:B ratio) [7]. |
| Control Compounds | Pharmacological tools to define the Max, Min, and Mid signals for validation and QC. | Use a well-characterized reference agonist/antagonist for the Mid signal (e.g., at its IC50 concentration). Purity and stability must be assured [7]. |
| DMSO | The universal solvent for compound libraries. | Test for assay compatibility; final concentration should be as low as possible (typically <1% for cell-based assays). Use a consistent, high-quality source [7]. |
| Detection Reagents | Components required to generate the measurable signal (e.g., fluorescent probes, MS buffers). | Must be validated for stability and lack of interference with the product of interest. In MS assays, buffers must be MS-compatible [6] [7]. |

In high-throughput screening (HTS), the pursuit of novel therapeutic candidates is often hindered by technical challenges that can compromise data integrity and lead to costly false leads. Assay interference, compound artifacts, and reagent instability represent a triad of fundamental hurdles that directly impact the success and efficiency of drug discovery campaigns. These issues are particularly critical within the context of improving product tolerance—the ability of an assay system to reliably produce accurate results despite the presence of potentially disruptive factors. This guide provides researchers with practical troubleshooting frameworks and strategic approaches to identify, mitigate, and prevent these common pitfalls, thereby enhancing the robustness and predictive power of HTS experiments.

Understanding Common Interference Mechanisms

In HTS, various forms of compound-mediated interference can generate false positives—compounds that appear active but are not genuinely modulating the intended biological target. These artifacts are reproducible and concentration-dependent, making them particularly challenging to distinguish from true activity [9]. Understanding their origins is the first step toward developing effective countermeasures.

Key Interference Mechanisms:

- Compound Aggregation: Compounds can form colloidal aggregates that non-specifically sequester or inhibit proteins. This is a predominant mechanism of false positives in biochemical assays and can sometimes account for 90-95% of initial actives in a screen [9]. Inhibition from aggregates is often characterized by steep Hill slopes in dose-response curves and is sensitive to detergent addition and enzyme concentration [9].

- Optical Interference: Many compounds have intrinsic properties that interfere with light-based detection methods.

  - Fluorescence: Compounds that fluoresce at wavelengths similar to the assay's reporter can cause a false increase or decrease in signal [9]. In certain assays using blue-shifted fluorescence, fluorescent compounds can constitute up to 50% of the initial actives [9].

  - Absorbance/Quenching: Compounds that absorb the excitation light or quench the emitted light can artificially suppress the assay signal [10].

- Luciferase Inhibition: In cell-based or biochemical assays utilizing firefly luciferase, compounds can directly inhibit the luciferase enzyme itself, mimicking a true inhibitory response. This single mechanism can be responsible for up to 60% of actives in some cell-based assays [9].

- Redox Activity & Covalent Modifiers: Some compounds can undergo redox cycling in the presence of reducing agents like DTT to generate hydrogen peroxide, which may inactivate enzymes. Others contain reactive functional groups that covalently modify the target protein, leading to non-druglike, irreversible inhibition [9] [10].

- Chemical Instability: Compounds or critical assay reagents may degrade under assay conditions (e.g., in DMSO stocks or aqueous buffer), leading to a loss of signal over time and false negatives. Instability can arise from hydrolysis, oxidation, or photodegradation [10].

Table 1: Summary of Major Assay Interference Types and Mitigation Strategies

| Interference Type | Effect on Assay | Key Characteristics | Prevention & Mitigation Strategies |
|---|---|---|---|
| Compound Aggregation [9] | Non-specific enzyme inhibition; protein sequestration. | Steep Hill slopes; inhibition reversible by detergent or dilution; sensitive to enzyme concentration. | Include 0.01-0.1% Triton X-100 in assay buffer; use biophysical methods for confirmation. |
| Compound Fluorescence [9] | False increase or decrease in fluorescent signal. | Reproducible, concentration-dependent; varies with excitation/emission wavelengths. | Use red-shifted fluorophores; perform a fluorescence "pre-read"; use time-resolved fluorescence (TR-FRET). |
| Firefly Luciferase Inhibition [9] | False inhibition signal in luciferase-based assays. | Concentration-dependent inhibition of purified luciferase. | Counter-screen against purified luciferase; use orthogonal assays with a different reporter (e.g., β-lactamase). |
| Redox Cyclers [9] | Generation of H₂O₂ leading to enzyme inactivation. | Activity diminished by high [DTT] or eliminated by catalase; time-dependent. | Replace DTT/TCEP with weaker reducing agents (e.g., glutathione); include catalase in the assay. |
| Covalent Modifiers [9] [10] | Irreversible, non-specific target inhibition. | Often time-dependent; not reversible by dilution. | Use computational filters to identify reactive functional groups; assess reversibility by dilution. |
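The fluorescence "pre-read" listed in the table can be turned into a simple flagging rule: read each compound well before the detection reagents are added and flag wells whose signal stands well above compound-free wells. The sketch below uses a threshold of mean plus three standard deviations of the blank wells, which is an assumed convention for illustration and not a value taken from this guide; the compound IDs and signals are hypothetical.

```python
from statistics import mean, stdev


def flag_autofluorescent(pre_read, blank_wells, n_sd=3.0):
    """Return compound IDs whose pre-read signal exceeds blank mean + n_sd * SD."""
    threshold = mean(blank_wells) + n_sd * stdev(blank_wells)
    return sorted(cid for cid, signal in pre_read.items() if signal > threshold)


# Hypothetical pre-read signals (compound only, no detection reagents).
blank_wells = [100.0, 102.0, 98.0, 101.0, 99.0]
pre_read = {"cmpd_A": 101.0, "cmpd_B": 450.0, "cmpd_C": 104.0, "cmpd_D": 106.0}

print(flag_autofluorescent(pre_read, blank_wells))
```

Flagged compounds are candidates for follow-up in an orthogonal or red-shifted format rather than automatic exclusion, since a fluorescent compound can still be a genuine active.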

Troubleshooting Guides & FAQs

Frequently Asked Questions on Assay Interference

Q1: My primary HTS yielded a high hit rate. How can I quickly triage these hits to identify false positives? Begin with a rigorous data analysis to identify non-physiological patterns, such as compounds that are active only at the highest concentration or show activity across multiple unrelated assays (frequent hitters) [10]. The most efficient first step is to re-test the top actives from the primary screen in a dose-response format using the original assay. This confirms reproducibility. Follow this immediately with a series of counter-screens designed to rule out common interference mechanisms, such as testing for luciferase inhibition or compound fluorescence [9].
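The "frequent hitter" check mentioned in this answer amounts to counting how many unrelated assays each compound was active in. The following is a minimal sketch under the assumption that hit lists are available per assay; the cutoff of three assays, the assay names, and the compound IDs are all hypothetical.

```python
from collections import Counter


def frequent_hitters(hits_by_assay, min_assays=3):
    """Return compounds that appear as hits in at least min_assays assays."""
    counts = Counter(
        compound
        for hits in hits_by_assay.values()
        for compound in set(hits)  # count each compound once per assay
    )
    return sorted(c for c, n in counts.items() if n >= min_assays)


# Hypothetical hit lists from unrelated screening campaigns.
hits_by_assay = {
    "kinase_screen": ["cmpd_1", "cmpd_2", "cmpd_7"],
    "protease_screen": ["cmpd_2", "cmpd_3", "cmpd_7"],
    "gpcr_reporter_screen": ["cmpd_2", "cmpd_9"],
    "phosphatase_screen": ["cmpd_2", "cmpd_7", "cmpd_7"],
}

print(frequent_hitters(hits_by_assay))
```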

Q2: What are the best practices for designing a secondary assay to validate primary screen hits? An effective secondary assay should be orthogonal—meaning it uses a different detection technology or assay format than the primary screen [9]. For example, if the primary screen was a luminescence-based reporter assay, a good orthogonal assay could be a high-content imaging assay quantifying a downstream phenotypic change [11] [10]. This ensures that the observed activity is due to a genuine effect on the biology, not the assay format. The secondary assay should also be more mechanistically informative to help establish the compound's mechanism of action [9].

Q3: How can I prevent compound aggregation during screening? The most common and effective strategy is to include a non-ionic detergent, such as 0.01-0.1% Triton X-100, in the assay buffer [9]. This concentration is typically sufficient to disrupt aggregates without denaturing most proteins. Other strategies include using lower compound concentrations during follow-up studies and employing biophysical methods like dynamic light scattering (DLS) to confirm aggregation in problematic compounds [10].

Q4: My assay shows high well-to-well variability, especially on the edges of the plate. What could be the cause? This is a classic "edge effect," often caused by evaporation in the outer wells of the microplate during incubation, leading to increased compound and reagent concentrations [10]. This is exacerbated in miniaturized assays with lower volumes. Mitigation strategies include using plates with optically clear lids, ensuring high humidity in incubators, using automated lid removal to minimize exposure time, or pre-incubating plates to allow for thermal equilibration before reading [10].

Q5: How does reagent instability manifest in an HTS assay, and how can I monitor for it? Reagent instability can lead to a progressive decline in assay signal-to-background (S/B) or Z'-factor over the course of a screening run [10]. This is often seen as a "drift" in the values of the control wells from the beginning to the end of a plate or screen. To monitor this, include robust positive and negative controls in multiple locations on every plate (e.g., top, middle, bottom) [10]. If a time-dependent degradation of signal is observed, consider aliquoting and freezing reagents, preparing fresh reagents daily, or adding stabilizers to the assay buffer.

Decision Workflow for Identifying Interference

The following diagram outlines a logical workflow for systematically investigating and resolving the root cause of artifactual activity in screening hits.

Proactive Strategies for Robust Assay Design

Preventing interference is more efficient than troubleshooting it post-screening. Integrating robustness into the initial assay design is paramount for improving product tolerance.

1. Prioritize Orthogonal Assay Development: From the outset, plan for a primary screen and an orthogonal confirmation assay. This forward planning influences the choice of the primary format, ensuring a viable orthogonal technology is available [9] [10]. For instance, pairing a biochemical assay with a cell-based phenotypic readout can effectively separate target-specific actives from technology-specific artifacts.

2. Employ Robust Assay Formats: Certain assay technologies are inherently less prone to specific interferences.

- Time-Resolved FRET (TR-FRET): This method uses long-lived lanthanide fluorophores, introducing a time delay between excitation and measurement. This effectively bypasses short-lived background fluorescence from compounds or plastics, significantly reducing fluorescent interference [12].

- Label-Free Technologies: Methods like surface plasmon resonance (SPR) or mass spectrometry (MS)-based readouts avoid optical artifacts entirely by directly measuring binding or mass changes [13] [10]. While throughput can be a limitation, they are powerful for confirmation.

- Cellular Imaging Assays: High-content screening (HCS) provides multi-parameter data that can help distinguish specific activity from general cytotoxicity [14].

3. Implement Rigorous QC and Control Strategies: A well-designed plate is a key diagnostic tool.

- Controls: Include multiple positive and negative controls distributed across the plate (e.g., in columns 1 and 2, and throughout the plate) to monitor for spatial biases and assay drift [10].

- QC Metrics: Consistently track statistical parameters like the Z'-factor (for yes/no assays) or Minimum Significant Ratio (MSR) (for potency values) to quantitatively assess assay robustness and reproducibility over time [14] [10].

- Compound Controls: Include known interferers (e.g., a fluorescent compound, a known aggregator) as internal controls to validate the performance of your interference counterscreens.

Experimental Protocols for Identifying Artifacts

Protocol 1: Detecting and Mitigating Compound Aggregation

Principle: This protocol determines if a compound's apparent inhibition is caused by the formation of colloidal aggregates that non-specifically sequester proteins. The addition of non-ionic detergent disrupts these aggregates, abolishing the inhibitory effect if aggregation is the cause [9].

Materials:

- Compound of interest (in DMSO)

- Assay buffer (without detergent)

- Triton X-100 (10% v/v stock in water)

- Standard assay reagents (enzyme, substrate, etc.)

Method:

- Prepare two identical sets of serial dilutions of the test compound in assay buffer.

- To one set, add Triton X-100 to a final concentration of 0.01% - 0.1%. To the other set, add an equivalent volume of water or buffer.

- Run the standard assay protocol in parallel for both sets, ensuring the final DMSO concentration is identical (typically ≤1%).

- Measure the IC₅₀ values for the compound in the presence and absence of detergent.

Interpretation: A significant right-shift (increase) in the IC₅₀ value (e.g., >10-fold) in the presence of detergent is a strong indicator that the inhibition was caused by compound aggregation.
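The interpretation rule can be written as a small helper. This is a sketch, not a validated classifier: the function name `detergent_fold_shift` is hypothetical, and the default 10-fold cutoff is simply the example threshold quoted above.

```python
def detergent_fold_shift(ic50_without_detergent, ic50_with_detergent, fold_threshold=10.0):
    """Return (fold_shift, likely_aggregator) for a detergent-sensitivity test.

    Both IC50 values must be in the same units (e.g. micromolar).
    fold_shift > 1 means the compound looks weaker once detergent is present.
    """
    if ic50_without_detergent <= 0 or ic50_with_detergent <= 0:
        raise ValueError("IC50 values must be positive")
    fold_shift = ic50_with_detergent / ic50_without_detergent
    # Assumption: a shift larger than the threshold is treated as an aggregation flag.
    return fold_shift, fold_shift > fold_threshold
```

For example, a compound with an IC₅₀ of 1 µM without detergent and 25 µM with 0.01% Triton X-100 gives a 25-fold shift and would be flagged, whereas a shift from 1 µM to 1.5 µM would not.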

Protocol 2: Counterscreen for Firefly Luciferase (FLuc) Inhibitors

Principle: This protocol confirms whether a compound's activity in a FLuc-based assay is due to direct inhibition of the luciferase enzyme rather than the intended biological pathway [9].

Materials:

- Recombinant firefly luciferase

- Luciferin substrate (at Kₘ concentration)

- ATP

- Luciferase assay buffer

- Test compounds and a control FLuc inhibitor (if available)

Method:

- In a white, solid-bottom plate, mix recombinant FLuc with its substrate luciferin and ATP in a buffer that mimics the ionic and pH conditions of your primary assay.

- Critical: Use the Kₘ concentration of luciferin to ensure the assay is sensitive to competitive inhibitors.

- Add the test compounds in a dose-response series.

- Initiate the reaction and measure luminescence immediately.

- Normalize data to vehicle control (0% inhibition) and no-enzyme control (100% inhibition).

Interpretation: Compounds that show direct, concentration-dependent inhibition of the purified luciferase are likely FLuc inhibitors, and their activity in the primary cell-based or biochemical assay is suspect.
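The normalization step in the method (vehicle control as 0% inhibition, no-enzyme control as 100% inhibition) can be sketched as follows. The function name `percent_inhibition` and the list-based inputs are illustrative assumptions.

```python
from statistics import mean


def percent_inhibition(signal, vehicle_wells, no_enzyme_wells):
    """Convert a raw luminescence reading to percent inhibition.

    vehicle_wells:   readings from vehicle-only wells (defines 0% inhibition)
    no_enzyme_wells: readings from wells lacking luciferase (defines 100% inhibition)
    """
    top = mean(vehicle_wells)       # uninhibited signal
    bottom = mean(no_enzyme_wells)  # background signal
    if top == bottom:
        raise ValueError("controls are indistinguishable; cannot normalize")
    return 100.0 * (top - signal) / (top - bottom)
```

Applying this to each point of the dose-response series gives the curve from which concentration-dependent inhibition of the purified enzyme can be judged.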

The Scientist's Toolkit: Essential Reagents & Materials

Table 2: Key Research Reagent Solutions for Mitigating Interference and Instability

| Reagent / Material | Function in Troubleshooting | Key Considerations |

|---|---|---|

| Triton X-100 [9] | Non-ionic detergent used to disrupt compound aggregates in biochemical assays. | Effective at 0.01-0.1%. Test for compatibility with your target protein, as it can denature some sensitive proteins. |

| Tween-20 | Alternative non-ionic detergent for preventing aggregation. | Can be used similarly to Triton X-100. Some assays may show preference for one over the other. |

| Catalase [9] | Enzyme that decomposes hydrogen peroxide (H₂O₂). Used to identify redox cycling compounds. | Addition to the assay will abolish activity caused by H₂O₂ generation from redox cyclers. |

| Non-reducing Assay Buffers [9] | Buffers without DTT or TCEP help identify redox-sensitive artifacts. | Replacing strong reducing agents with weaker ones (e.g., glutathione) can minimize redox cycling without compromising essential reducing environments. |

| BSA or Carrier Proteins | Can stabilize dilute proteins, reduce non-specific binding, and sometimes mitigate weak aggregation. | May interfere with some protein-protein interactions or compound binding. Requires empirical testing. |

| DMSO-tolerant Assay Components | Ensures assay robustness to the solvent used for compound storage and dilution. | Validate that all assay components (enzymes, cells, detectors) are tolerant to the final DMSO concentration (typically 0.5-1%). |

| Recombinant Reporter Enzymes [9] (e.g., Firefly Luciferase) | Essential for running specific counterscreens for assay interference. | Use under the same substrate conditions (Kₘ) as your primary assay for relevant results. |

| Stable Cell Lines with Alternative Reporters | Provides a ready path to orthogonal assay confirmation. | Having a cell line with a β-lactamase or SEAP (secreted embryonic alkaline phosphatase) reporter allows quick confirmation of activity independent of luciferase. |

Advanced Methodologies for Enhancing Assay Resilience and Throughput

Leveraging Universal Biochemical Assays for Broader Target Applicability

Universal biochemical assays represent a transformative approach in high-throughput screening (HTS) for drug discovery, offering a flexible platform that can be applied across diverse target classes rather than being limited to a single specific target. These assays, such as the Transcreener platform, utilize a common, detectable signal (for example, the formation of ADP) to monitor enzymatic activity for a wide range of targets including kinases, ATPases, GTPases, helicases, PARPs, and sirtuins [15]. This universality provides significant advantages for improving product tolerance in HTS campaigns, as a single, well-characterized assay format can be deployed across multiple projects, thereby reducing development time, validation resources, and the inter-assay variability that often plagues target-specific assay systems [15]. By focusing on universal reaction products rather than target-specific events, these assays offer researchers a powerful tool for accelerating hit identification and lead optimization while maintaining robust performance metrics essential for reliable screening outcomes.

Key Advantages and Implementation Strategies

Fundamental Benefits for Screening Efficiency

The implementation of universal biochemical assays directly addresses several critical challenges in high-throughput screening environments. First, these assays provide exceptional methodological consistency across different target classes, which simplifies training, protocol standardization, and data interpretation across multiple projects or screening campaigns [15]. This consistency is particularly valuable for large-scale screening operations where assay robustness directly impacts data quality and reproducibility.

Second, universal assays offer significant economic advantages by reducing the need to develop, optimize, and validate new assay systems for each novel target. The substantial resource investment required for assay development—including reagents, personnel time, and instrumentation—can be amortized across multiple projects, making the screening process more cost-effective without compromising data quality [15].

Third, these assays enhance product tolerance by providing a consistent analytical framework that accommodates variations in enzyme targets while maintaining reliable performance metrics. This tolerance for target diversity enables researchers to apply the same quality control standards and troubleshooting approaches across different projects, leading to more predictable and reproducible outcomes throughout the drug discovery pipeline [15].

Practical Implementation Framework

Successfully implementing universal biochemical assays requires careful consideration of several key factors. The selection of appropriate detection methods—such as fluorescence polarization (FP), fluorescence intensity (FI), or time-resolved FRET (TR-FRET)—should align with both the universal readout (e.g., ADP formation) and the available instrumentation [15]. Additionally, researchers must establish target-specific validation parameters to ensure that the universal assay format maintains appropriate sensitivity and specificity for each new application.

Proper plate selection and automation compatibility are also critical implementation factors. Universal assays are typically configured in miniaturized formats (96-, 384-, or 1536-well plates) to maximize throughput while minimizing reagent consumption [15]. Ensuring compatibility with automated liquid handling systems is essential for maintaining assay precision and reproducibility in high-throughput environments. Furthermore, researchers should establish rigorous quality control measures, including appropriate Z'-factor calculations, signal-to-noise determinations, and control strategies to monitor assay performance across multiple screening campaigns and target classes [16] [15].

Essential Research Reagent Solutions

The successful implementation of universal biochemical assays relies on a foundation of critical reagents and materials that ensure robust, reproducible performance across diverse targets and screening campaigns. The following table summarizes these essential components and their functions:

| Reagent/Material | Primary Function | Application Notes |

|---|---|---|

| Transcreener ADP² Assay | Universal detection of ADP formation for multiple enzyme classes | Compatible with kinases, ATPases, GTPases; works with FP, FI, or TR-FRET detection [15] |

| Universal Nuclease | Degradation of contaminating nucleic acids in protein samples | Available in various unit sizes (5kU-100kU); critical for sample preparation [17] |

| Control Sets (CHO, HEK, E. coli) | Run-to-run quality control for specific sample matrices | Aliquot for single use; store at -80°C; establishes statistical performance ranges [16] |

| White Microplates | Luminescence signal optimization with clear bottoms | Reduces crosstalk; ideal for bioluminescent detection methods [18] |

| Master Mix Reagents | Minimizing variability between replicates and experiments | Prepare in bulk; use calibrated multichannel pipettes for distribution [18] |

Troubleshooting Guide: Common Experimental Challenges and Solutions

Assay Performance and Signal Issues

Problem: Weak or No Signal Detection. Weak or absent signals can result from multiple factors, including reagent instability, low enzyme activity, or suboptimal assay conditions.

- Solution: First, verify reagent functionality and check plasmid DNA quality if using transfected systems. Scale up reaction volumes per well to increase signal intensity. For transfection-based systems, optimize DNA-to-transfection reagent ratios to improve efficiency. Ensure signals exceed background and negative controls by a statistically significant margin [18].

- Preventive Measures: Regularly quality test critical reagents, especially luciferin and coelenterazine in luminescent assays, as these compounds can lose efficiency over time. Use freshly prepared reagents and measure signals before reaching the reagent's half-life period [18].

Problem: High Background Signal. Elevated background signals compromise assay sensitivity and can lead to false positives.

- Solution: Switch to white microplates with clear bottoms to reduce optical crosstalk and improve signal-to-background ratios. Replace all reagents with fresh preparations to eliminate contamination as a potential source. For luminescence-based systems, use a luminometer with an injector to precisely control reaction timing [18].

- Advanced Troubleshooting: If high background persists, implement a washing step to remove unbound reagents or incorporate a quenching agent if compatible with the detection chemistry. For binding assays, optimize incubation times and temperatures to maximize specific binding while minimizing non-specific interactions.

Problem: High Signal Variability Between Replicates. Inconsistent results between technical replicates undermine data reliability and statistical power.

- Solution: Prepare master mixes for all working solutions to ensure consistent reagent distribution across wells. Use calibrated multichannel pipettes with regular maintenance records. Implement an internal control reporter system, such as the dual luciferase assay, which calculates the ratio between firefly and Renilla luciferase activities to normalize experimental variations [18].

- Process Improvement: Establish strict pipette calibration schedules and technician training protocols. Implement automated liquid handling systems for critical dispensing steps to minimize human error. Introduce standardized plate maps with strategically positioned controls to identify spatial bias.
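The dual-luciferase normalization mentioned above comes down to a ratio of ratios. The sketch below assumes one firefly and one Renilla reading per well and a reference (vehicle) well to scale against; the function name `normalized_reporter_activity` is hypothetical.

```python
def normalized_reporter_activity(firefly, renilla, control_firefly, control_renilla):
    """Firefly/Renilla ratio of a test well, relative to the same ratio in a control well.

    Dividing by the Renilla signal corrects for well-to-well differences in
    cell number or transfection efficiency; dividing by the control ratio
    expresses the result as fold-change versus the control condition.
    """
    if renilla <= 0 or control_renilla <= 0 or control_firefly <= 0:
        raise ValueError("luciferase readings must be positive")
    test_ratio = firefly / renilla
    control_ratio = control_firefly / control_renilla
    return test_ratio / control_ratio
```

A well with half as many cells produces half of both signals, so the ratio is unchanged; that cancellation is what makes the internal control useful for reducing replicate variability.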

Interference and Specificity Challenges

Problem: Compound Interference with Detection Signals. Some chemical compounds can interfere with assay detection systems, leading to false results.

- Solution: Identify and avoid known luciferase inhibitors such as resveratrol and specific flavonoids. Implement proper controls, including compound-only wells without biological components, to detect interference. Modify incubation times or reduce compound concentrations to minimize inhibitory effects while maintaining biological relevance [18].

- Interference Testing: Pre-screen compound libraries for known assay interference properties using computational tools. Implement counter-screens that use orthogonal detection methods to confirm putative hits. For colorimetric assays, avoid compounds with intrinsic absorbance at detection wavelengths.

Problem: Inadequate Assay Robustness (Low Z'-factor). Poor assay robustness, indicated by Z'-factors below 0.5, compromises the ability to distinguish true hits from background noise.

- Solution: Optimize enzyme and substrate concentrations to maximize the dynamic range between positive and negative controls. Reduce well-to-well variability through improved mixing and temperature uniformity. Extend incubation times if the reaction is not reaching endpoint. For binding assays, optimize washing stringency and detection reagent concentrations [15].

- Systematic Optimization: Perform statistical design of experiments (DoE) to simultaneously optimize multiple assay parameters. Implement routine monitoring of assay performance metrics to detect gradual degradation. Establish thresholds for Z'-factor (≥0.5), signal-to-noise ratio (≥10), and coefficient of variation (≤15%) as minimum acceptance criteria [15].
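The minimum acceptance criteria listed above (Z'-factor ≥ 0.5, signal-to-noise ≥ 10, CV ≤ 15%) lend themselves to an automated plate-level gate. The following sketch assumes the three metrics have already been computed elsewhere; the function name `qc_failures` and the message strings are illustrative.

```python
def qc_failures(z_prime, signal_to_noise, cv_percent,
                min_z_prime=0.5, min_signal_to_noise=10.0, max_cv_percent=15.0):
    """Return a list of human-readable reasons a plate fails QC (empty list = pass).

    Default thresholds mirror the minimum acceptance criteria quoted in the text;
    adjust them to your own validated limits.
    """
    failures = []
    if z_prime < min_z_prime:
        failures.append(f"Z'-factor {z_prime:.2f} is below {min_z_prime}")
    if signal_to_noise < min_signal_to_noise:
        failures.append(f"S/N {signal_to_noise:.1f} is below {min_signal_to_noise}")
    if cv_percent > max_cv_percent:
        failures.append(f"CV {cv_percent:.1f}% exceeds {max_cv_percent}%")
    return failures
```

Returning the reasons rather than a bare pass/fail makes it easier to see which parameter is degrading when performance is monitored over time.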

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between biochemical and cell-based HTS assays? Biochemical assays measure direct enzyme or receptor activity in a purified, defined system, providing precise information about compound-target interactions. In contrast, cell-based assays capture pathway activity, phenotypic changes, or cellular responses in living cells, offering more physiological context but with increased complexity and potential for indirect effects [15].

Q2: What constitutes an excellent Z'-factor value in HTS, and why is it important? A Z'-factor between 0.5 and 1.0 is considered excellent and indicates a robust, reproducible assay with a wide separation between positive and negative controls. This statistical parameter is crucial for ensuring that an assay can reliably distinguish active compounds from inactive ones in high-throughput screens [15].

Q3: How can researchers minimize false positives and false negatives in universal biochemical assays? Employ careful assay design with appropriate controls, implement counter-screening approaches to identify assay artifacts, and use simple "mix and read" assay formats without coupling enzymes when possible. Additionally, utilize far-red tracers to reduce compound interference and conduct statistical analysis to establish meaningful hit identification thresholds [15].

Q4: What are the key considerations when modifying a universal assay protocol for a specific target? While universal assays are robust and allow protocol modifications to optimize performance for specific targets, any changes to sample volume, incubation times, or sequential schemes must be thoroughly qualified to ensure acceptable accuracy, specificity, and precision. It's essential to validate that modifications achieve the desired analytical performance without introducing new variables or compromising assay robustness [16].

Q5: How should universal assays be quality controlled across different target applications? Implement laboratory-specific controls made using your source of analyte in your sample matrices. Prepare these controls in bulk, aliquot for single use, and store at -80°C until stability is established. Use 2-3 controls spanning the analytical range (low, medium, and high concentrations) to monitor assay performance. Avoid relying solely on curve fit parameters for quality control, as they lack the sensitivity and specificity of true analyte controls [16].

Experimental Workflow and Performance Metrics

Universal Biochemical Assay Workflow

The following diagram illustrates the generalized workflow for implementing universal biochemical assays in high-throughput screening:

Quantitative Performance Metrics for Universal Biochemical Assays

The successful implementation of universal biochemical assays requires careful monitoring of key performance parameters. The following table outlines critical metrics and their optimal ranges:

| Performance Parameter | Optimal Range | Calculation Method | Significance in HTS |
|---|---|---|---|
| Z'-factor | 0.5 - 1.0 | 1 - (3σₚ + 3σₙ) / \|μₚ - μₙ\| | Measures assay robustness and quality; higher values indicate better separation between controls [15] |
| Signal-to-Noise Ratio (S/N) | ≥10:1 | Signalₘₑₐₙ / Noiseₘₑₐₙ | Indicates ability to detect true signals above background; critical for sensitivity [15] |
| Coefficient of Variation (CV) | ≤15% | (σ/μ) × 100 | Measures well-to-well precision; lower values indicate higher reproducibility [15] |
| Signal Window | ≥2 | | Dynamic range to distinguish active from inactive compounds; wider windows improve hit identification [15] |
| Replicate %CV | <5% (excellent); <20% (acceptable) | (σ_replicates / μ_replicates) × 100 | When precision is very good (%CV <5%), duplicate analysis is adequate. Samples with %CV >20% between replicates should be repeated [16] |
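The replicate %CV rule in the table can be applied per sample with a few lines of code. This is a sketch under assumptions: it uses the sample standard deviation, treats a %CV of exactly 20% as acceptable (the source does not say which side that boundary falls on), and the function name `replicate_status` is hypothetical.

```python
from statistics import mean, stdev


def replicate_status(values, excellent_cv=5.0, repeat_cv=20.0):
    """Classify a set of replicate readings by their percent CV.

    Returns (cv_percent, status) where status is "excellent", "acceptable",
    or "repeat".
    """
    if len(values) < 2:
        raise ValueError("need at least two replicates")
    cv = 100.0 * stdev(values) / mean(values)  # sample SD divided by mean
    if cv < excellent_cv:
        status = "excellent"
    elif cv <= repeat_cv:  # assumption: boundary value counts as acceptable
        status = "acceptable"
    else:
        status = "repeat"
    return cv, status
```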

Advanced Applications and Emerging Trends

Universal biochemical assays are evolving to incorporate new technologies and approaches that enhance their utility in modern drug discovery. The integration of artificial intelligence and virtual screening with experimental HTS allows for more efficient compound prioritization and library design [15]. Similarly, the adoption of 3D cell cultures and organoids in secondary screening applications provides more physiologically relevant contexts for validating hits identified through initial biochemical screens [15].

The field is also witnessing increased implementation of high-content screening approaches that combine imaging with multiparametric analysis, adding layers of information to traditional biochemical readouts [15]. Microfluidics and miniaturization continue to advance, enabling further reductions in reagent costs and increases in throughput while maintaining data quality. Additionally, next-generation detection chemistries are pushing the boundaries of sensitivity, allowing researchers to detect increasingly subtle compound-target interactions [15].

These technological advancements, combined with the inherent flexibility of universal assay platforms, are creating new opportunities for accelerating drug discovery across diverse target classes and therapeutic areas. By adopting these innovative approaches within a robust quality control framework, researchers can further enhance product tolerance and screening efficiency in their HTS campaigns.

Troubleshooting Guides

Addressing High Variability and Poor Reproducibility

Problem: High well-to-well variability and inability to reproduce results across different users or days, leading to unreliable data and false positives/negatives [5].

Solutions:

- Implement Automated Liquid Handling: Utilize non-contact dispensers equipped with verification technology (e.g., DropDetection) to confirm dispensed volumes, standardizing pipetting and reducing human error [5].

- Conduct Plate Uniformity Studies: Before screening, run a 3-day plate uniformity assessment. Use interleaved-signal plate layouts with "Max," "Min," and "Mid" control signals to objectively quantify signal window and variability [7].

- Validate Reagent Stability: Determine the stability of all reagents under storage and assay conditions. Perform time-course experiments for each incubation step to establish acceptable timing ranges [7].
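An interleaved-signal layout places "Max" (H), "Mid" (M), and "Min" (L) wells next to one another across the whole plate so that spatial bias affects all three signals equally. The sketch below generates one possible interleaving; the diagonal H/M/L pattern and the function name `interleaved_layout` are assumptions for illustration, and the exact pattern used in reference [7] may differ.

```python
def interleaved_layout(n_rows=16, n_cols=24, signals=("H", "M", "L")):
    """Build a plate map in which control signals alternate along rows and columns.

    Defaults describe a 384-well plate (16 rows x 24 columns).
    Well (r, c) receives signals[(r + c) % len(signals)], so neighbouring wells
    in either direction always carry different signal types.
    """
    return [
        [signals[(r + c) % len(signals)] for c in range(n_cols)]
        for r in range(n_rows)
    ]


if __name__ == "__main__":
    # Print the first three rows of a 384-well map as a quick visual check.
    for row in interleaved_layout()[:3]:
        print(" ".join(row))
```

On a 384-well plate this pattern gives each signal type the same number of wells, which keeps the per-signal statistics comparable.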

Managing Evaporation in Miniaturized Assays

Problem: Significant evaporation in 384-well and 1536-well formats, causing edge-well effects, concentration shifts, and high well-to-well variability [19].

Solutions:

- Use Optimal Microplates: Select microplates designed for miniaturization, such as those with seals or lids that minimize evaporation.

- Automate Liquid Handling with Precision: Employ automated, non-contact liquid handlers that reduce open-plate time. Systems capable of handling low volumes (sub-microliter) with high accuracy are essential [20] [19].

- Control Environmental Conditions: Perform assays in temperature- and humidity-controlled environments to mitigate evaporation.

Overcoming Liquid Handling Inaccuracies in Low Volumes

Problem: Liquid handling errors such as tip clogging, unsatisfactory reagent retrieval (high dead volumes), poor mixing, and carryover during miniaturization [19].

Solutions:

- Regularly Maintain and Calibrate Equipment: Establish a strict schedule for maintaining and calibrating automated liquid handlers.

- Utilize Non-Contact Dispensers: Implement liquid handlers using ink-jet or acoustic dispensing technology for sub-microliter volumes. This avoids issues like tip clogging and reduces cross-contamination [5] [20].

- Perform DMSO Compatibility Tests: Early in assay development, test the tolerance of your assay for the DMSO concentration used to deliver compounds. Run validation experiments with this final DMSO concentration [7].

Troubleshooting Poor Data Quality from Complex Biological Assays

Problem: Increased variability, imaging artifacts, and poor cell viability when adapting complex, biologically relevant assays (e.g., 3D cell cultures, iPSCs) to high-density microplates [19].

Solutions:

- Optimize Cell Handling Protocols: For sensitive cells (e.g., iPSCs), ensure even cell distribution during seeding. Use automated dispensers for consistent, gentle handling.

- Implement Multiplexed Readouts: Use advanced detection modalities (e.g., FRET, TR-FRET) or high-content imaging to gain more data per well, improving the robustness of your conclusions [19].

- Develop Enzyme-Coupled Reporter Systems: For enzymes whose products are hard to detect, couple the primary reaction to a secondary enzyme cascade that produces a measurable colorimetric or fluorescent output. This amplifies signal and improves sensitivity [21].

Frequently Asked Questions (FAQs)

Q1: What are the primary benefits of automating a high-throughput screening (HTS) workflow? Automation enhances data quality and reproducibility by standardizing processes and reducing human error. It significantly increases throughput and efficiency, allows for easy scaling of protocols, reduces costs through miniaturization (reagent savings up to 90%), and streamlines the management and analysis of vast multiparametric data sets [5].

Q2: Our lab is new to automation. What should we consider before implementing an automated system? First, assess your current workflow to identify bottlenecks and labor-intensive tasks (e.g., liquid handling, compound dilutions). When selecting equipment, consider your specific requirements for scale, precision at low volumes, and workflow flexibility. Also, evaluate the vendor's technical support, the system's ease of use, and its software integration capabilities [5] [22] [23].

Q3: How can we validate that our miniaturized assay is robust enough for an HTS campaign? A robust validation is essential. This includes [7]:

- Stability and Process Studies: Determining reagent stability and optimal incubation times.

- Plate Uniformity Assessment: A multi-day study using "Max," "Min," and "Mid" controls in an interleaved format to calculate key performance metrics like Z'-factor.

- Replicate-Experiment Study: Conducting experiments on different days to confirm reproducibility.

Q4: What are the key parameters for assessing assay performance during validation? The table below summarizes the key statistical parameters used to validate assay performance [7].

Table: Key Statistical Parameters for HTS Assay Validation

| Parameter | Description | Target Value |

|---|---|---|

| Z'-Factor | A measure of the assay signal window, accounting for both the dynamic range and the data variation of the positive and negative controls. | Z' > 0.5 is excellent for HTS. |

| Signal-to-Background (S/B) | The ratio of the mean signal of the positive control to the mean signal of the negative control. | A high ratio is desirable. |

| Coefficient of Variation (CV) | The ratio of the standard deviation to the mean, expressed as a percentage. Measures well-to-well variability. | < 10% is typically acceptable. |

Q5: What are common sources of assay artifacts in miniaturized HTS? Common artifacts include compound fluorescence or quenching at low volumes, DMSO sensitivity in cell-based assays (keep final concentration <1%), and meniscus effects or bubbles that interfere with optical readings in small wells. Using controls like "Max" and "Min" signals helps identify these interferences [7] [19].

Experimental Protocols & Data Presentation

Protocol 1: Plate Uniformity and Variability Assessment

This protocol is critical for validating any HTS assay before a full-scale screen [7].

1. Objective: To assess the signal uniformity, variability, and robustness of an assay across multiple plates and days.

2. Materials:

- Assay reagents (enzymes, substrates, cells, buffers)

- Appropriate microplates (96-, 384-, or 1536-well)

- Liquid handling automation

- Plate reader or detector

3. Procedure:

- Day 1-3: For a new assay, run the study over three separate days. For a transferred assay, two days may suffice.

- Plate Layout: Use an Interleaved-Signal Format on each plate.

- "Max" Signal (H): Represents the maximum assay response (e.g., uninhibited enzyme reaction, maximal cell agonist response).

- "Min" Signal (L): Represents the background or minimum signal (e.g., fully inhibited reaction, unstimulated cells).

- "Mid" Signal (M): Represents a mid-point signal (e.g., IC50 concentration of an inhibitor, EC50 concentration of an agonist).

- Preparation: Use independently prepared reagents each day.

- Data Collection: Read the plates according to your standard assay protocol.

4. Data Analysis:

- Calculate the mean (Mean) and standard deviation (SD) for each signal type (Max, Min, Mid) on each plate.

- Calculate the Z'-Factor for each plate: Z' = 1 - [ (3 × SD_Max + 3 × SD_Min) / |Mean_Max - Mean_Min| ]

- Calculate the Coefficient of Variation (CV) for each signal: CV = (SD / Mean) × 100%

- Calculate the Signal-to-Background (S/B): S/B = Mean_Max / Mean_Min
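The data-analysis formulas above translate directly into code. The following is a minimal sketch using only the Python standard library; the function name `plate_metrics` and the dictionary keys are illustrative, and the sample standard deviation is assumed.

```python
from statistics import mean, stdev


def plate_metrics(max_wells, min_wells):
    """Compute Z'-factor, CV, and S/B for one plate from raw control readings.

    max_wells: readings from the "Max" signal wells
    min_wells: readings from the "Min" signal wells
    """
    mean_max, mean_min = mean(max_wells), mean(min_wells)
    sd_max, sd_min = stdev(max_wells), stdev(min_wells)  # sample SD (n - 1)

    return {
        # Z' = 1 - (3*SD_Max + 3*SD_Min) / |Mean_Max - Mean_Min|
        "z_prime": 1 - (3 * sd_max + 3 * sd_min) / abs(mean_max - mean_min),
        # CV = (SD / Mean) * 100%
        "cv_max_percent": 100 * sd_max / mean_max,
        "cv_min_percent": 100 * sd_min / mean_min,
        # S/B = Mean_Max / Mean_Min
        "signal_to_background": mean_max / mean_min,
    }
```

Running this on each plate of the multi-day study gives one row of summary statistics per plate, which can then be compared across days to judge reproducibility.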

Table: Example Plate Uniformity Results from a 384-Well Assay

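| Day | Signal Type | Mean (RFU) | SD (RFU) | CV (%) | S/B | Z'-Factor |
|---|---|---|---|---|---|---|
| 1 | Max | 15,250 | 850 | 5.6 | 12.5 | 0.80 |
| 1 | Min | 1,220 | 105 | 8.6 | | |
| 2 | Max | 14,980 | 920 | 6.1 | 11.8 | 0.77 |
| 2 | Min | 1,270 | 115 | 9.1 | | |
| 3 | Max | 15,500 | 810 | 5.2 | 13.1 | 0.81 |
| 3 | Min | 1,183 | 98 | 8.3 | | |

S/B and Z'-Factor are per-plate values and are listed on the Max row for each day. The Z'-Factor values are calculated from the tabulated means and SDs using the formula given under Data Analysis.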

Protocol 2: Implementing an Enzyme-Coupled Cascade Assay for Detection

This protocol is used when the primary enzymatic product is not easily measurable [21].

1. Objective: To create a detectable signal (absorbance or fluorescence) from a primary enzyme's activity by coupling it to one or more secondary enzymatic reactions.

2. Materials:

- Primary enzyme and its substrate

- Auxiliary enzymes and their co-substrates (e.g., Glucose Oxidase, Horseradish Peroxidase)

- Detectable substrate (e.g., Amplex UltraRed for fluorescence, formazan dye for absorbance)

- Buffer

- Multi-well plates and automated liquid handler

3. Workflow Diagram:

Enzyme Cascade Signal Amplification

4. Procedure:

- Step 1: In a microplate well, combine the primary enzyme, its substrate ("Substrate A"), and the auxiliary enzymes in excess. The auxiliary enzymes must not be the rate-limiting step.

- Step 2: Incubate the reaction under defined conditions (pH, temperature). The primary enzyme converts "Substrate A" to "Product B".

- Step 3: "Product B" becomes the substrate for the first auxiliary enzyme ("Enzyme 2"), which converts it to "Product C".

- Step 4: "Product C" is used by the final auxiliary enzyme ("Enzyme 3"), often an oxidase/peroxidase pair, to generate a colored or fluorescent signal.

- Step 5: Monitor the change in absorbance or fluorescence over time. The rate of signal generation is proportional to the activity of the primary enzyme.
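Because the rate of signal generation is the readout in Step 5, the analysis usually comes down to fitting a slope to the early, linear part of each kinetic trace. The sketch below is one way to do that with a plain least-squares fit. The function names are hypothetical, and the subtraction of a blank rate (a well without primary enzyme) is an added assumption to account for background turnover by the coupling enzymes.

```python
from statistics import mean


def signal_rate(times, signals):
    """Least-squares slope of signal vs. time (signal units per time unit).

    Pass only the time points from the linear phase of the reaction.
    """
    if len(times) != len(signals) or len(times) < 2:
        raise ValueError("need matching time and signal lists with at least two points")
    t_bar, s_bar = mean(times), mean(signals)
    stt = sum((t - t_bar) ** 2 for t in times)
    sts = sum((t - t_bar) * (s - s_bar) for t, s in zip(times, signals))
    return sts / stt


def relative_activity(times, test_signals, vehicle_signals, blank_signals):
    """Primary-enzyme activity in a test well as a fraction of the vehicle well.

    blank_signals: trace from a well lacking the primary enzyme (assumed control).
    """
    blank = signal_rate(times, blank_signals)
    test = signal_rate(times, test_signals) - blank
    vehicle = signal_rate(times, vehicle_signals) - blank
    return test / vehicle
```

This comparison is only meaningful when the auxiliary enzymes are in excess, as required in Step 1; otherwise the fitted slope reflects the coupling reactions rather than the primary enzyme.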

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for HTS Automation and Miniaturization

| Item | Function / Explanation |

|---|---|

| Non-Contact Liquid Handler | Automates dispensing of sub-microliter volumes with high precision, reducing variability and cross-contamination. Key for miniaturization [5] [20]. |

| High-Density Microplates (384/1536) | The physical platform for miniaturized assays. Choosing the right plate is critical to mitigate evaporation and optical artifacts [19]. |

| Stable, QC'ed Reagent Lots | Consistent reagent quality is fundamental to reproducibility. Validate new lots against previous ones in bridging studies [7]. |

| DMSO-Tolerant Assay Components | Test compounds are often dissolved in DMSO. Assay components must be stable at the final screening concentration (typically 0.1-1%) [7]. |

| Enzyme Cascade Kits | Pre-optimized mixtures of auxiliary enzymes (e.g., Glucose Oxidase/HRP) to easily create detectable readouts for otherwise "invisible" enzymatic reactions [21]. |

| Validated Control Compounds | Pharmacological standards (full agonists, antagonists, IC50/EC50 compounds) for generating "Max," "Min," and "Mid" signals during validation and screening [7]. |

Optimizing Reagent Formulations and Buffer Systems for Maximum Stability

Troubleshooting Guides and FAQs

This section addresses common challenges researchers face when optimizing reagent formulations and buffer systems for high-throughput screening (HTS) assays, with a focus on improving product tolerance and assay robustness.

Frequently Asked Questions

1. What is the ideal pH for a protein formulation in HTS assays? There is no single "ideal" pH that applies to all proteins. Each protein has a unique isoelectric point (pI) and an optimal pH range for stability. The goal is to find a pH that reduces both physical aggregation and chemical degradation. For many monoclonal antibodies, this range is often slightly acidic, typically between pH 5.0 and 6.5, but this must be determined experimentally for each molecule [24].

2. How does pH affect the viscosity of a protein solution in high-concentration formulations? pH changes alter the net charge of a protein, which directly affects intermolecular interactions. At certain pH values, particularly near the protein's isoelectric point, attractive interactions can increase, leading to higher viscosity. Adjusting the pH away from the pI can increase electrostatic repulsion between molecules, which often helps to lower viscosity [24].

3. Which buffers are most commonly used for protein formulations in HTS? Commonly used buffers include histidine, acetate, citrate, and phosphate. Histidine has become especially popular for high-concentration antibody formulations because it functions well in the pH 5.5 to 6.5 range and can help reduce viscosity in some cases [24].
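To make the link between buffer choice and target pH concrete, the sketch below applies the Henderson-Hasselbalch relationship to estimate what fraction of a buffer is in its base form at a given pH. The pKa values are approximate textbook figures near 25 °C and are assumptions here; real values shift with temperature and ionic strength. The "within one pH unit of the pKa" window is a common rule of thumb, not a requirement from the cited source.

```python
# Approximate pKa values (assumed, ~25 degrees C); verify for your conditions.
APPROX_PKA = {
    "acetate": 4.76,
    "histidine (imidazole)": 6.0,
    "citrate (pKa3)": 6.4,
    "phosphate (pKa2)": 7.2,
}


def base_fraction(ph, pka):
    """Fraction of the buffer present as the conjugate base
    (Henderson-Hasselbalch: pH = pKa + log10([base]/[acid]))."""
    return 1.0 / (1.0 + 10 ** (pka - ph))


def within_buffering_range(ph, pka, window=1.0):
    """Rule-of-thumb check: useful buffering within +/- window of the pKa."""
    return abs(ph - pka) <= window


for name, pka in APPROX_PKA.items():
    usable = within_buffering_range(5.5, pka)
    print(f"{name}: base fraction at pH 5.5 = {base_fraction(5.5, pka):.2f}, usable = {usable}")
```

Under these assumed pKa values, histidine sits inside its buffering window across pH 5.5 to 6.5, which is consistent with its popularity in that range.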

4. What are the primary causes of protein instability and aggregation in HTS assays? Proteins are sensitive molecules prone to aggregation and degradation during manufacturing, storage, and administration. Key issues include [24] [25]:

- Molecular Crowding: At high concentrations, dense molecular packing increases unintended interactions.

- Suboptimal pH: Deviations from the optimal pH range can disrupt the weak bonds maintaining the protein's 3D structure.

- Chemical Degradation: Processes like deamidation and oxidation compromise protein structure and function.

- Shear Stress: Mechanical forces during processing can induce denaturation and aggregation.

5. How early in development should pH and buffer screening begin? It is advisable to start formulation and chemistry, manufacturing, and controls (CMC) strategies as early as the preclinical stage. Early screening for optimal pH and buffer conditions can identify potential stability problems before they become major hurdles, creating a smoother path to clinical trials and eventual market approval [24].

Troubleshooting Common Experimental Issues

| Issue | Possible Cause | Solution |

|---|---|---|

| High Viscosity | Protein concentration too high; pH near pI; strong protein-protein interactions. | Incorporate viscosity-reducing excipients (e.g., L-Proline, L-Arginine); adjust pH away from pI [26] [24]. |

| Protein Aggregation | Suboptimal buffer pH; insufficient stabilizers; exposure to mechanical or thermal stress. | Screen buffers and excipients (e.g., sugars, surfactants) using high-throughput platforms like UNCLE; optimize thermal stability [26] [27]. |

| Chemical Degradation | Oxidative or deamidation pathways; inappropriate storage conditions. | Add stabilizing excipients like L-Methionine (antioxidant); optimize buffer composition and pH to slow degradation [26] [24]. |

| Poor Assay Reproducibility | Inconsistent buffer preparation; pH shifts during UF/DF processing. | Use automated buffer preparation systems; account for the Gibbs-Donnan effect during ultrafiltration/diafiltration (UF/DF) [25] [28]. |

| Unexpected pH Shifts | Gibbs-Donnan effect during UF/DF; volume-exclusion effects. | Perform UF/DF feasibility studies; fine-tune diafiltration buffer conditions to maintain formulation integrity [25]. |

Experimental Protocols for Formulation Optimization

Protocol 1: High-Throughput Formulation Screening Using an Automated Platform

This protocol uses integrated computational and experimental screening to rapidly identify optimal buffer and excipient conditions, minimizing aggregation and viscosity in high-concentration protein formulations [26].

Materials:

- Protein of interest (e.g., mAb)

- Buffer stock solutions (e.g., Histidine, Acetate, Citrate, Succinate)

- Excipients (See "The Scientist's Toolkit" table below)

- High-throughput protein stability analyzer (e.g., UNCLE)

- 96-well plates

- Liquid handling robot

Method:

- In Silico Risk Assessment: Use computational modeling (e.g., CamSol, TAP scores) to predict the molecule's developability, including risks for solubility, viscosity, and aggregation propensity based on its structural characteristics [26].

- Buffer and Excipient Preparation:

  - Prepare a 96-well formulation plate using an automated liquid handler. Each well contains a unique combination of buffer, pH, and excipients.

  - Common buffers: 20 mM Histidine, Acetate, Citrate; pH range 5.0-6.5.

  - Include excipients: sucrose (stabilizer), L-Arginine-HCl (viscosity reducer), Polysorbate 80 (surfactant) [26].

- Buffer Exchange: Exchange the protein solution into each formulation condition using 50 kDa ultrafiltration centrifuge tubes. Centrifuge at 4000 rpm for 30 minutes per cycle at 4°C until buffer exchange efficiency exceeds 99% [26].

- High-Throughput Stability Measurement:

  - Load the 96-well plate onto the UNCLE system.

  - Measure key stability parameters simultaneously for all samples:

    - Melting Temperature (Tm): Assesses conformational stability.

    - Aggregation Temperature (Tagg): Indicates colloidal stability.

    - Polydispersity Index (PDI): Measures sample homogeneity.

    - G22: Quantifies intermolecular interaction strength [26].

- Data Analysis: Use multivariate regression analysis to identify statistically significant formulation factors that maximize Tm and Tagg while minimizing PDI and G22. The optimal formulation is selected based on the best overall stability profile [27].
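The plate-preparation step can be sketched as a simple full-factorial layout. The example below is illustrative only: the 0.5-unit pH grid and the presence/absence treatment of each excipient are assumptions chosen because 3 buffers x 4 pH values x 8 excipient combinations happen to fill a 96-well plate exactly. A real design would also reserve wells for replicates and controls and would specify excipient concentrations.

```python
from itertools import product
import string

BUFFERS = ["Histidine", "Acetate", "Citrate"]             # 20 mM each, per the protocol
PH_VALUES = [5.0, 5.5, 6.0, 6.5]                          # assumed 0.5-unit grid over 5.0-6.5
EXCIPIENTS = ["sucrose", "L-Arginine-HCl", "Polysorbate 80"]


def build_plate():
    """Map each well (A1..H12) to one buffer/pH/excipient combination."""
    wells = [f"{row}{col}" for row in string.ascii_uppercase[:8] for col in range(1, 13)]
    # Every on/off combination of the three excipients (2**3 = 8 sets)
    excipient_sets = list(product([False, True], repeat=len(EXCIPIENTS)))
    conditions = list(product(BUFFERS, PH_VALUES, excipient_sets))
    if len(conditions) > len(wells):
        raise ValueError("design does not fit on one 96-well plate")
    layout = {}
    for well, (buffer_name, ph, flags) in zip(wells, conditions):
        layout[well] = {
            "buffer": buffer_name,
            "buffer_mM": 20,
            "pH": ph,
            "excipients": [name for name, on in zip(EXCIPIENTS, flags) if on],
        }
    return layout


layout = build_plate()
print(len(layout), layout["A1"], layout["H12"])
```

A dictionary keyed by well ID is convenient because the same keys can later index the Tm, Tagg, PDI, and G22 readouts for the regression step.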

Protocol 2: HT-PELSA for System-Wide Protein-Ligand Profiling

This high-throughput peptide-centric local stability assay is used to map protein-ligand interactions and determine binding affinities, which is crucial for understanding off-target effects in drug screening [29].

Materials:

- Crude cell, tissue, or bacterial lysates

- Ligand of interest (e.g., small molecule inhibitor)

- 96-well C18 plates

- Trypsin

- Mass spectrometer (e.g., Orbitrap Astral)

Method:

- Sample Preparation in 96-Well Format:

  - Distribute lysates into a 96-well plate.

  - Treat replicates with different concentrations of the ligand (e.g., staurosporine) or vehicle control [29].

- Limited Proteolysis:

  - Add trypsin to all wells simultaneously for a standardized 4-minute digestion at room temperature.

  - This step is streamlined from the original PELSA protocol, enhancing throughput and reproducibility [29].

- Peptide Separation:

  - Use 96-well C18 plates to separate intact, undigested proteins from shorter peptides. The peptides elute through the plate.

  - This step replaces molecular weight cut-off filters, preventing clogging and enabling the use of crude lysates [29].

- Mass Spectrometry Analysis:

  - Analyze the eluted peptides using a next-generation mass spectrometer.

  - Identify and quantify peptides that show increased or decreased abundance upon ligand binding.

- Dose-Response and Affinity Calculation:

  - For significantly stabilized or destabilized peptides, generate dose-response curves across the different ligand concentrations.

  - Calculate the half-maximum effective concentration (EC₅₀) values to determine binding affinities for numerous protein targets in parallel [29].
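The dose-response step can be illustrated with a four-parameter logistic (4PL) model. The sketch below is not the fitting procedure of the cited HT-PELSA work: it is a dependency-free stand-in that fixes the bottom and top plateaus to the responses at the lowest and highest ligand concentrations and grid-searches EC₅₀ and the Hill slope. All function names and the grid settings are assumptions; a production analysis would normally use a proper nonlinear least-squares fit with all four parameters free.

```python
import math


def four_pl(conc, bottom, top, ec50, hill):
    """Four-parameter logistic response at a ligand concentration > 0."""
    return bottom + (top - bottom) / (1.0 + (ec50 / conc) ** hill)


def fit_ec50(concs, responses, n_grid=200, hill_options=(0.5, 0.75, 1.0, 1.5, 2.0)):
    """Coarse least-squares grid search for EC50 and Hill slope.

    Assumes the curve reaches both plateaus within the tested range, so
    bottom/top are taken from the lowest/highest concentration. Exclude
    the vehicle control (concentration 0) before calling.
    """
    pairs = sorted(zip(concs, responses))
    bottom, top = pairs[0][1], pairs[-1][1]
    log_lo, log_hi = math.log10(pairs[0][0]), math.log10(pairs[-1][0])
    best = None
    for i in range(n_grid + 1):
        ec50 = 10 ** (log_lo + (log_hi - log_lo) * i / n_grid)
        for hill in hill_options:
            sse = sum((four_pl(c, bottom, top, ec50, hill) - r) ** 2 for c, r in pairs)
            if best is None or sse < best[0]:
                best = (sse, ec50, hill)
    return {"ec50": best[1], "hill": best[2], "sse": best[0]}


# Synthetic peptide-abundance data generated from a known curve (EC50 = 1, Hill = 1)
concs = [10 ** e for e in range(-3, 4)]
responses = [four_pl(c, 0.0, 1.0, 1.0, 1.0) for c in concs]
fit = fit_ec50(concs, responses)
```

Because the model is written in terms of `top - bottom`, the same code handles peptides whose abundance decreases with ligand concentration; the plateaus simply swap order.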

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and their functions in optimizing formulations for stability in high-throughput screening environments.

| Item | Function | Example Application |

|---|---|---|

| L-Histidine / Histidine-HCl | Buffer system for maintaining stable pH, commonly in the 5.5-6.5 range [24]. | High-concentration mAb formulations [26]. |

| L-Proline | Viscosity reducer for high-concentration protein solutions [26]. | Improving syringeability and manufacturability of subcutaneous injections [26]. |

| L-Arginine-HCl | Viscosity reducer and stabilizer [26] [24]. | Mitigating protein-protein interactions in concentrated solutions [26]. |

| Sucrose / Trehalose | Stabilizers (osmolytes) that improve conformational stability [24]. | Protecting proteins from denaturation during storage and freeze-thaw cycles [24]. |

| Polysorbate 20 / 80 | Surfactants that minimize aggregation at interfaces [26] [24]. | Preventing surface-induced stress during mixing and filling operations [26]. |

| L-Methionine | Antioxidant that mitigates oxidative degradation [26]. | Stabilizing methionine and cysteine residues in formulations [26]. |

Workflow Visualization

High-Throughput Formulation Screening Workflow

This diagram illustrates the integrated computational and experimental workflow for optimizing protein formulations.

HT-PELSA for Protein-Ligand Interaction Mapping

This diagram outlines the high-throughput workflow for identifying ligand binding sites and determining binding affinities.

Implementing DMSO Tolerance Testing to Mitigate Solvent Effects

Troubleshooting Guides and FAQs

Frequently Asked Questions

What is the primary concern with using DMSO in HTS assays? DMSO can act as a differential inhibitor of enzymes and interfere with assay signals. It is not an inert solvent and can directly modulate biological activity, potentially leading to false positives or false negatives in screening campaigns [30].

How can I prevent my inhibitor compound from precipitating when added to an aqueous assay mixture? Avoid making serial dilutions of a DMSO stock solution directly into buffer. Instead, perform initial serial dilutions in DMSO itself, then add this final diluted sample to your buffer or incubation medium. The compound may only be soluble in an aqueous medium at its working concentration [31].

What is the generally tolerated final concentration of DMSO in cell-based assays? Most cells can tolerate up to 0.1% final DMSO concentration. It is crucial to include a control with DMSO alone in every experiment to account for any solvent effects [31].
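The dilution advice and the DMSO limit above can be combined into a short calculation. The sketch below is an illustration under stated assumptions: the compound series is diluted in neat DMSO, a fixed small volume of each DMSO stock is then transferred into the assay well, and the final volume already includes the transferred volume. Function names and the example numbers are hypothetical.

```python
def dmso_serial_dilution(top_stock_mM, dilution_factor, n_points):
    """Stock concentrations (mM) for a serial dilution made entirely in DMSO."""
    return [top_stock_mM / dilution_factor ** i for i in range(n_points)]


def final_assay_conditions(stock_mM, transfer_uL, final_volume_uL):
    """Compound concentration (uM) and DMSO % (v/v) after transferring a
    DMSO stock into the assay well. Assumes the stock is in neat DMSO."""
    fraction = transfer_uL / final_volume_uL
    return {
        "compound_uM": stock_mM * 1000.0 * fraction,
        "dmso_percent_v_v": 100.0 * fraction,
    }


# Hypothetical example: 10 mM top stock, 3-fold series, 0.1 uL into 100 uL
stocks = dmso_serial_dilution(10.0, 3.0, 4)
for stock in stocks:
    print(final_assay_conditions(stock, 0.1, 100.0))
```

Because every point in the series is transferred at the same volume, the DMSO percentage is identical in every well, including the DMSO-only control, while the compound concentration varies.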

How does DMSO specifically affect Aldose Reductase (AR) assays? DMSO acts as a weak, differential inhibitor of Aldose Reductase. It inhibits L-idose reduction competitively, inhibits the reduction of HNE (trans-4-hydroxy-2,3-nonenal) through a mixed non-competitive mechanism, and has no effect on the reduction of the glutathionyl-HNE adduct (GSHNE) or D,L-glyceraldehyde (GAL). This substrate-dependent behavior is critical when identifying differential inhibitors [30].

What is a key step in reagent preparation to maintain compound integrity? Use a fresh stock bottle of anhydrous DMSO. Moisture contamination can accelerate compound degradation or cause insolubility [31].

Experimental Protocols

Detailed Methodology: DMSO Tolerance Test for Aldose Reductase (AR) Assay

This protocol is adapted from a study investigating DMSO as a differential inhibitor of Aldose Reductase [30].

1. Reagent Preparation

- Purified Human Recombinant AR (hAR): Express and purify hAR, confirming purity via SDS-PAGE (a single band at ~34 kDa). Dialyze the enzyme extensively against a 10 mM sodium phosphate buffer, pH 7.0, before use [30].

- Substrate Solutions: Prepare separate solutions of L-idose and HNE (trans-4-hydroxy-2,3-nonenal) in appropriate buffers [30].

- DMSO Stock Solutions: Prepare DMSO so that it reaches the desired final concentration in the assay mixture (e.g., 40 mM, 100 mM, 200 mM). Keep the DMSO concentration constant when varying other parameters such as inhibitor or substrate concentration [30].
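Because the protocol states DMSO in millimolar while screening guidance usually quotes percent by volume, a unit conversion helps relate the two. The sketch below assumes a molar mass of 78.13 g/mol and a density of about 1.10 g/mL for neat DMSO near room temperature, and it treats volumes as additive on mixing; both the constants and the function names are assumptions for illustration.

```python
DMSO_MOLAR_MASS_G_PER_MOL = 78.13
DMSO_DENSITY_G_PER_ML = 1.10  # approximate, near 20 degrees C


def dmso_mM_to_percent_vv(conc_mM):
    """Convert a DMSO concentration in mM to approximate % (v/v)."""
    mg_per_L = conc_mM * DMSO_MOLAR_MASS_G_PER_MOL   # mmol/L * g/mol = mg/L
    uL_per_L = mg_per_L / DMSO_DENSITY_G_PER_ML      # g/mL is the same as mg/uL
    return uL_per_L / 10000.0                        # 1% (v/v) = 10,000 uL per L


def dmso_percent_vv_to_mM(percent_vv):
    """Inverse conversion: % (v/v) to mM."""
    return percent_vv * 10000.0 * DMSO_DENSITY_G_PER_ML / DMSO_MOLAR_MASS_G_PER_MOL


for conc in (40, 100, 200):
    print(f"{conc} mM DMSO is roughly {dmso_mM_to_percent_vv(conc):.2f}% (v/v)")
```

Under these assumptions the three example concentrations correspond to roughly 0.28%, 0.71%, and 1.42% (v/v), which places them in and slightly above the 0.1-1% range commonly used in screening.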

2. Assay Procedure

- The AR activity is determined by monitoring the decrease in absorbance at 340 nm, which corresponds to NADPH oxidation [30].

- The standard assay mixture contains [30]:

  - 0.25 M sodium phosphate buffer, pH 6.8

  - 0.18 mM NADPH

  - 0.4 M ammonium sulphate

  - 0.5 mM EDTA

  - Substrate (e.g., 4.7 mM GAL, or varying concentrations of L-idose or HNE)

  - Purified hAR

  - DMSO at the test concentration.

- Perform the assay at 37°C.

- Vary the substrate concentrations while keeping the DMSO concentration constant to determine the kinetic model of inhibition (e.g., competitive, mixed).
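For reference, the two kinetic models named above can be written in their standard textbook forms. These are general Michaelis-Menten expressions, not equations quoted from the cited study; here $[I]$ denotes the DMSO concentration, $K_i$ the dissociation constant of the enzyme-inhibitor complex, and $K_i'$ that of the enzyme-substrate-inhibitor (ESI) complex.

```latex
% Competitive inhibition: the inhibitor raises the apparent K_m only
v = \frac{V_{\max}[S]}{K_m\left(1 + \dfrac{[I]}{K_i}\right) + [S]}

% Mixed inhibition: the inhibitor binds both E and ES
v = \frac{V_{\max}[S]}{K_m\left(1 + \dfrac{[I]}{K_i}\right) + [S]\left(1 + \dfrac{[I]}{K_i'}\right)}
```

The competitive form is the limit of the mixed form as $K_i' \to \infty$, which is why varying substrate at fixed DMSO concentration distinguishes the two: only mixed inhibition lowers the apparent $V_{\max}$.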

3. Data Analysis

- Calculate enzyme activity based on NADPH oxidation (ε₃₄₀ = 6.22 mM⁻¹·cm⁻¹).

- For each substrate (L-idose and HNE), determine the inhibitory constant (Ki) and, if applicable, the dissociation constant for the ESI complex (Ki') using kinetic models.

- Use statistical analysis (e.g., two-way ANOVA) to compare the effects of different DMSO concentrations on the inhibition features of test compounds.
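The first two analysis steps can be sketched as follows. The extinction coefficient is the one given above; everything else (function names, the 1 cm default path length, the example numbers) is an assumption for illustration. In a microplate the optical path is usually shorter than 1 cm and depends on fill volume, so the path length should be measured or corrected rather than assumed.

```python
EPSILON_NADPH_340 = 6.22  # mM^-1 cm^-1, as stated in the data analysis step


def nadph_oxidation_rate(delta_a340_per_min, path_length_cm=1.0):
    """NADPH oxidation rate in uM/min from the slope of A340 vs time
    (Beer-Lambert law). The sign is dropped because absorbance falls."""
    rate_mM_per_min = abs(delta_a340_per_min) / (EPSILON_NADPH_340 * path_length_cm)
    return rate_mM_per_min * 1000.0


def inhibited_rate(s, i, vmax, km, ki, ki_prime=None):
    """Predicted rate under competitive (ki_prime=None) or mixed inhibition.

    s and km share one concentration unit; i, ki and ki_prime share another.
    """
    alpha = 1.0 + i / ki
    alpha_prime = 1.0 if ki_prime is None else 1.0 + i / ki_prime
    return vmax * s / (km * alpha + s * alpha_prime)


# Hypothetical example: A340 falls by 0.0622 per minute in a 1 cm cuvette
print(nadph_oxidation_rate(-0.0622))

# Hypothetical parameters comparing the two models at one substrate level
print(inhibited_rate(s=1.0, i=1.0, vmax=10.0, km=1.0, ki=1.0))
print(inhibited_rate(s=1.0, i=1.0, vmax=10.0, km=1.0, ki=1.0, ki_prime=1.0))
```

Fitting Ki and Ki' then amounts to finding the parameter values for which `inhibited_rate` best reproduces the measured rates across the substrate and DMSO concentrations tested.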