Taming Combinatorial Explosion in Biomedical Research: Smart Strategies for Pathway and Drug Combination Testing


Abstract

Combinatorial explosion presents a fundamental challenge in biomedical research, rendering exhaustive testing of pathway variants or drug combinations experimentally infeasible. This article provides a comprehensive guide for researchers and drug development professionals on systematic strategies to overcome this bottleneck. We explore the foundational causes of combinatorial complexity in metabolic engineering and polypharmacology, detail cutting-edge methodological approaches including machine learning-guided Design-Build-Test-Learn (DBTL) cycles and network-based prediction models, offer practical troubleshooting and optimization techniques for reducing experimental effort, and finally, present robust validation frameworks and comparative analyses of computational tools. By synthesizing insights from recent advances, this work aims to equip scientists with a practical toolkit for navigating high-dimensional biological design spaces efficiently.

Understanding the Bottleneck: The Root Causes of Combinatorial Explosion in Biological Systems

Defining Combinatorial Explosion in Pathway Variant and Drug Combination Spaces

FAQs on Combinatorial Explosion in Research

What is combinatorial explosion and why is it a problem in biological research? Combinatorial explosion refers to the rapid growth in the number of possible combinations as the number of variables in a system increases [1]. In biological research, such as testing pathway variants or drug combinations, this growth makes it experimentally infeasible to test every possible combination under realistic resource constraints [2] [3]. For example, the number of possible Latin squares, a combinatorial object, grows from 2 for n=2 to over 9.9 x 10³⁶ for n=10 [1]. The same growth renders full factorial searches in high-dimensional spaces, like those common in metabolic engineering or combination therapy screening, impossible [2].

What are some real-world examples of combinatorial explosion in a research setting? A concrete example is high-throughput drug combination screening. A single drug combination tested in an 8x8 dose-response matrix requires 64 viability measurements [4]. Screening 466 drugs, each paired with one fixed drug (e.g., Ibrutinib), in a single cell line would therefore require 29,824 data points in total (466 x 64), and this scales multiplicatively with additional cell lines or patient samples [4]. In metabolic engineering, optimizing a pathway by simultaneously varying just 4 elements, each with 10 variants, creates 10,000 (10⁴) different genetic configurations to test [2].
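
The arithmetic behind these examples can be reproduced in a few lines. The sketch below is only illustrative; the function names are hypothetical and the inputs are the figures quoted above.

```python
def full_factorial_size(variants_per_element: int, n_elements: int) -> int:
    """Number of configurations when every element is varied independently."""
    return variants_per_element ** n_elements


def matrix_screen_measurements(n_pairs: int, doses_a: int, doses_b: int,
                               n_samples: int = 1) -> int:
    """Viability measurements needed for full dose-response matrices."""
    return n_pairs * doses_a * doses_b * n_samples


if __name__ == "__main__":
    # 4 pathway elements with 10 variants each
    print(full_factorial_size(10, 4))                            # 10000
    # 466 drug pairs, 8x8 matrix, one cell line
    print(matrix_screen_measurements(466, 8, 8))                 # 29824
    # the same screen repeated across 10 cell lines or samples
    print(matrix_screen_measurements(466, 8, 8, n_samples=10))   # 298240
```

Running the same functions with your own library dimensions before committing reagents gives a quick check on whether a full factorial design is even plausible.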

What computational strategies can help manage combinatorial explosion? Machine learning models, such as the DECREASE framework, can predict full dose-response synergy landscapes using a minimal set of measured data points (e.g., a single row, column, or diagonal of a full dose-response matrix), drastically reducing experimental burden [4]. Statistical experimental design methods, like pairwise (or "all-pairs") testing, can provide high coverage of interacting variables while using a tiny fraction of the tests required for full combinatorial coverage [3].
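
To make the pairwise idea concrete, the following is a minimal greedy sketch of all-pairs test generation. It is not taken from [3]; the function name and the example parameters are assumptions, and the greedy strategy enumerates the full factorial as its candidate pool, so it is only suitable for small illustrative spaces.

```python
from itertools import combinations, product


def all_pairs(parameters: dict[str, list[str]]) -> list[dict[str, str]]:
    """Greedily pick tests until every pair of parameter values is covered."""
    names = list(parameters)

    def pairs_of(test: dict[str, str]) -> set:
        # All (parameter=value, parameter=value) pairs exercised by one test.
        return {((a, test[a]), (b, test[b])) for a, b in combinations(names, 2)}

    # Every value pair that must appear in at least one test.
    uncovered = {
        ((a, va), (b, vb))
        for a, b in combinations(names, 2)
        for va in parameters[a]
        for vb in parameters[b]
    }
    # Candidate pool: the full factorial (acceptable only for small examples).
    candidates = [dict(zip(names, values))
                  for values in product(*parameters.values())]

    suite = []
    while uncovered:
        # Take the candidate that covers the most still-uncovered pairs.
        best = max(candidates, key=lambda t: len(pairs_of(t) & uncovered))
        suite.append(best)
        uncovered -= pairs_of(best)
    return suite


if __name__ == "__main__":
    params = {
        "promoter": ["P1", "P2", "P3"],
        "rbs": ["R1", "R2", "R3"],
        "cds": ["C1", "C2", "C3"],
        "terminator": ["T1", "T2", "T3"],
    }
    suite = all_pairs(params)
    print(f"{len(suite)} pairwise tests instead of {3 ** 4} full factorial")
```

The trade-off is that pairwise coverage only guarantees that every two-way interaction is observed; higher-order interactions may still be missed.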

What are the key differences between synergy-driven and potency-driven efficacy in drug combinations? Synergy-driven efficacy prioritizes combinations where the combined effect is greater than expected (e.g., using the Bliss or Loewe models) [5]. In contrast, potency-driven efficacy, measured by metrics like the Index of Achievable Efficacy (IAE) from the BRAID model, prioritizes combinations based on their overall potent effect, which may occur even without strong synergy [5]. This distinction is crucial, as some potent combinations may be missed if screened for synergy alone.

Troubleshooting Common Experimental Challenges

Problem: Inaccurate Prediction of Drug Combination Effects

Issue: Machine learning models like DECREASE provide poor synergy predictions from limited data. Solution:

- Verify Experimental Design: The fixed-concentration design using a row or column at the IC50 of single agents has been shown to lead to markedly decreased prediction accuracy (rBLISS = 0.58) [4]. Instead, use a design that measures the diagonal of the dose-response matrix or random points, which showed high accuracy (rBLISS = 0.82–0.91) [4].

- Check for Outliers: Use the first step of the DECREASE pipeline, which detects outlier measurements inherent in HTS experiments by analyzing differences between observed responses and those expected based on the Bliss approximation [4].

- Model Selection: Ensure the use of an ensemble of composite Non-negative Matrix Factorization (cNMF) and regularized boosted regression trees (XGBoost), which demonstrated the best prediction accuracy in comparative analysis [4].

Problem: Unmanageable Library Size in Pathway Optimization

Issue: The number of genetic variants or pathway configurations is too large to test. Solution:

- Apply Heuristics and Rational Library Reduction: Do not perform full factorial searches. Instead, use methods like "rationally reduced libraries" that exploit prior knowledge or empirical rules to restrict the experimental effort to an affordable size [2] [6].

- Use Equivalence Classes: When creating a test plan, bias towards fewer values by grouping parameters into equivalence classes—sets of values expected to behave similarly—especially for early draft tests [3].

- Employ Oligo-linker Mediated Assembly (OLMA) or Similar Methods: Utilize modern DNA assembly techniques that facilitate the creation of variant libraries where several pathway elements are diversified simultaneously in a more controlled and efficient manner [2].

Problem: Unstable or Biased Synergy Scores

Issue: Traditional synergy metrics (e.g., Combination Index, Bliss Independence) yield unstable or biased results. Solution:

- Switch to a Response-Surface Model: Replace index-based methods with response-surface approaches like the BRAID model, which has been shown to be more consistent and unbiased across different pharmacological conditions [5].

- Understand Model Biases: Be aware that the Bliss independence method is biased by the shape of the individual dose-response curves (Hill slopes). A Loewe additive surface combining two compounds with low Hill slopes will falsely appear antagonistic to Bliss, while combinations with high Hill slopes will appear synergistic [5].

- Use Robust Fitting: When using the Combination Index method, employ a robust least-squares optimization for dose-response analysis instead of the median effect method to improve stability [5].

Experimental Protocols for Efficient Screening

Protocol 1: Predicting Drug Combination Synergy with a Minimal Experimental Design

This protocol is based on the DECREASE machine learning method [4].

Objective: To accurately predict drug combination synergy and antagonism using a minimal set of pairwise dose-response measurements.

Materials:

- Cell line of interest

- Two drugs for combination testing

- Cell viability assay kit (e.g., ATP-based luminescence)

- Liquid handler or multichannel pipettes for high-throughput plating

- Plate reader

Method:

- Experimental Design Selection: Choose a cost-effective design. The most accurate and practical option is to measure only the diagonal of the full dose-response matrix (a fixed-ratio diagonal design) [4].

- Plate Layout: In a 96-well plate, format an 8x8 matrix for the two drugs. Prepare a dilution series for each drug along the axes.

- Sample Preparation:

- Seed cells at an optimal density for the assay duration in all wells.

- For the selected diagonal design, add the corresponding combination of Drug A and Drug B concentrations to wells forming the diagonal of the matrix. Leave control wells for single-agent effects and no-treatment controls.

- Dosing and Incubation: Add drugs according to the diagonal layout. Incubate for the predetermined time.

- Viability Assay: Perform the cell viability assay (e.g., add reagent, incubate, measure luminescence).

- Data Processing:

- Calculate percent inhibition for each well relative to controls.

- Input the measured diagonal dose-response data into the DECREASE web tool.

- Synergy Prediction: The DECREASE model, using an ensemble of cNMF and XGBoost, will predict the full dose-response matrix and calculate overall synergy scores using a reference model of your choice (e.g., Loewe, Bliss, HSA, or ZIP) [4].

Validation: In a validation study, this method using a diagonal design captured almost the same degree of synergy information as fully-measured dose-response matrices, with Pearson correlations (rBLISS) between 0.82 and 0.91 [4].
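
The data-processing step of this protocol can be sketched as follows. This is an illustrative helper, not part of the DECREASE tool: the function names are hypothetical, and the use of a full-inhibition (positive) control alongside the no-treatment control is an assumption about the plate layout.

```python
from statistics import mean


def diagonal_wells(n_doses: int = 8) -> list[tuple[int, int]]:
    """(Drug A dose index, Drug B dose index) pairs on the matrix diagonal."""
    return [(i, i) for i in range(n_doses)]


def percent_inhibition(signal: float,
                       untreated_controls: list[float],
                       full_inhibition_controls: list[float]) -> float:
    """Scale a raw readout so untreated = 0% and full inhibition = 100%."""
    untreated = mean(untreated_controls)
    full = mean(full_inhibition_controls)
    return 100.0 * (untreated - signal) / (untreated - full)


if __name__ == "__main__":
    print(diagonal_wells(8))  # 8 wells instead of 64 for the full matrix
    # Hypothetical luminescence values for one diagonal well and its controls
    print(percent_inhibition(525.0, [1000.0, 1020.0, 980.0], [50.0, 50.0]))
```

With the hypothetical readings above, the well sits halfway between the two control means, so the result is 50% inhibition.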

Protocol 2: A Strategic Heuristic for Combinatorial Pathway Optimization

This protocol is based on principles for reducing experimental effort in metabolic engineering [2].

Objective: To optimize a multi-gene pathway for product yield without testing all combinatorial variants.

Materials:

- Plasmid backbone(s)

- Library of genetic variants (e.g., promoter libraries, RBS libraries, gene homologs)

- Standard molecular biology reagents for assembly (e.g., restriction enzymes, ligase, or Gibson assembly mix)

- Host microbial strain (e.g., E. coli, S. cerevisiae)

- Selective media and product assay (e.g., HPLC, colorimetric assay)

Method:

- Parameter Identification: Identify the n pathway elements to be optimized (e.g., Promoter_Gene1, RBS_Gene2, CDS_Gene3).

- Value Selection: For each parameter, select a limited number of variants (v). Use heuristics like homolog performance or promoter strength rankings to pre-select the most promising 3-5 variants per element, rather than a random 10-20 [2].

- Library Design - Saturated Factorial Subset: Instead of creating a full factorial library (size = vⁿ), which is often impractically large, use a combinatorial method like Oligo-linker Mediated Assembly (OLMA) or Golden Gate assembly to create a rationally reduced library that covers many combinations but not all [2].

- High-Throughput Screening:

- Transform the library into your host strain.

- Screen clones in a 96-well or 384-well deep-well plate format with selective media and conditions for production.

- Measure the product titer for each clone after a fixed fermentation time.

- Hit Analysis:

- Isolate the top 1% of performing clones.

- Sequence the pathway elements in these top hits to identify the combinations of variants that confer high performance.

- Iterative Optimization: Use the information from the first round to refine the variant selection for a subsequent, smaller library (e.g., by fixing the best-performing variant for some well-behaved elements and re-diversifying others) [2].

Key Reduction Strategy: This approach relies on the principle that a relatively small number of combinations, if well-chosen, can capture the global optimal solution without requiring a full factorial search, thus "taming" the combinatorial explosion [2] [6].
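
The hit-analysis step can be sketched in code. The example below assumes each screened clone is recorded as a dictionary with a `titer` value and a `genotype` mapping; both the record layout and the function names are hypothetical.

```python
import math
from collections import Counter


def top_hits(clones: list[dict], fraction: float = 0.01) -> list[dict]:
    """Return the best-performing fraction of clones, ranked by titer."""
    n_hits = max(1, math.ceil(len(clones) * fraction))
    return sorted(clones, key=lambda c: c["titer"], reverse=True)[:n_hits]


def variant_frequencies(hits: list[dict], elements: list[str]) -> dict:
    """Count how often each variant of each pathway element occurs in the hits."""
    return {e: Counter(hit["genotype"][e] for hit in hits) for e in elements}


if __name__ == "__main__":
    # Synthetic screening data: 200 clones with made-up titers.
    clones = [
        {"genotype": {"promoter": f"P{i % 3}", "rbs": f"R{i % 2}"},
         "titer": float(i)}
        for i in range(200)
    ]
    hits = top_hits(clones, fraction=0.01)
    print(variant_frequencies(hits, ["promoter", "rbs"]))
```

Variants that dominate the hit set are candidates to fix in the next round, while elements with no clear winner are candidates for re-diversification.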

Quantitative Data on Combinatorial Explosion and Mitigation

Table 1: Impact of Different Experimental Designs on Synergy Prediction Accuracy (DECREASE Model)

| Experimental Design | Number of Measurements (8x8 grid) | Prediction Accuracy (Pearson rBLISS) |

|---|---|---|

| Full Dose-Response Matrix | 64 | 1.00 (Baseline) |

| Matrix Diagonal | 8 | 0.82 - 0.91 |

| Single Row (Random) | 8 | 0.82 - 0.91 |

| Single Column (Random) | 8 | 0.82 - 0.91 |

| Single Row (at IC50) | 8 | 0.58 |

Source: Adapted from [4].

Table 2: Growth of Combinatorial Spaces and Practical Constraints

| Combinatorial Scenario | Number of Variables (n) | Variants per Variable (v) | Total Possible Combinations |

|---|---|---|---|

| Latin Squares [1] | n (order) | n | ~5.5 x 10²⁷ (n=9) |

| Metabolic Pathway | 4 genes | 10 variants each | 10,000 (10⁴) |

| Drug Combination Screen | 466 drug pairs (466 drugs + 1 fixed drug) | 8x8 dose matrix | 29,824 data points in total (466 x 64) [4] |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Combinatorial Screening Experiments

| Reagent / Material | Function in Experiment | Example Application |

|---|---|---|

| cNMF & XGBoost Ensemble Model | Predicts full dose-response combination matrices from a minimal set of measurements. | DECREASE framework for drug synergy prediction [4]. |

| BRAID Model | A response surface model for analyzing drug combinations; provides stable, unbiased interaction parameters. | Overcoming instability and bias of traditional index methods (CI, Bliss) [5]. |

| Oligo-linker Mediated Assembly (OLMA) | A DNA assembly method for creating combinatorial genetic libraries. | Simultaneous diversification of multiple pathway elements in metabolic engineering [2]. |

| Promoter & RBS Libraries | Sets of genetic parts with varying strengths to fine-tune gene expression levels. | Combinatorial optimization of pathway expression to balance metabolic flux [2]. |

| Homolog Libraries | Collections of coding sequences from different species for the same enzyme. | Identifying the most efficient enzyme variant for a specific step in a heterologous pathway [2]. |

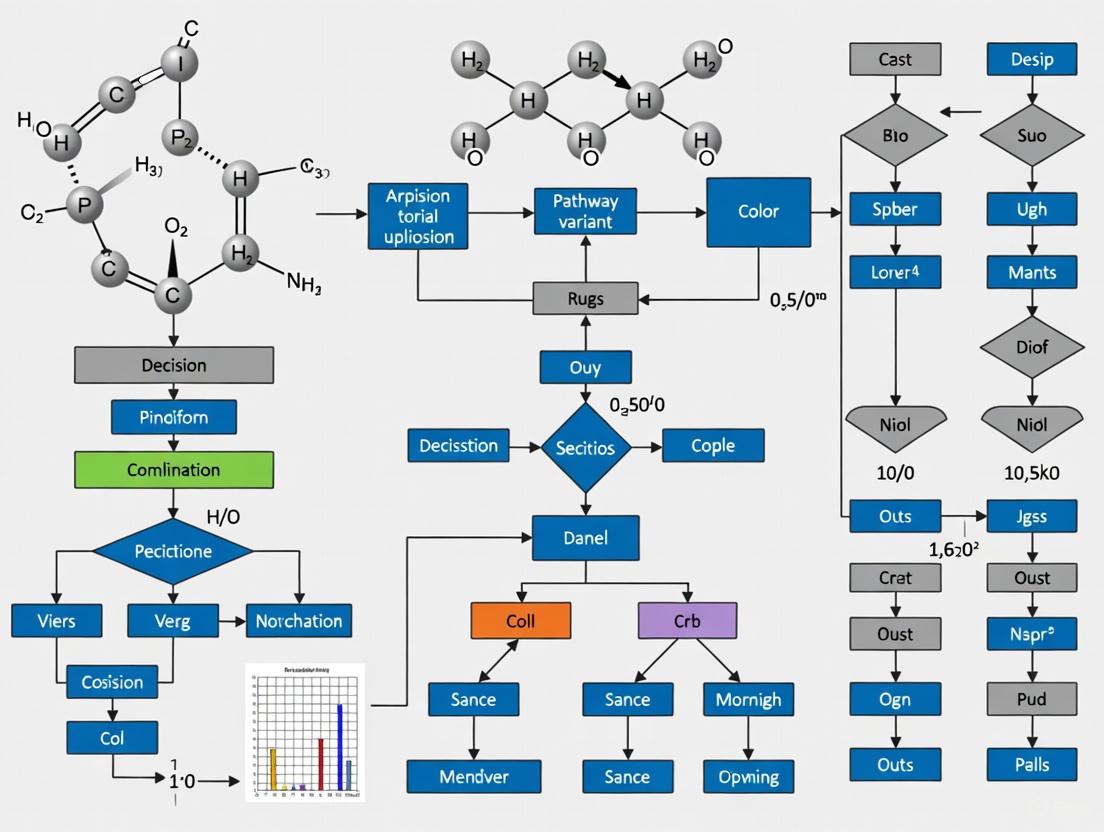

Visualizing Concepts and Workflows

[Figure: Managing Combinatorial Explosion in Pathway Engineering]

[Figure: Minimal Experiment Design for Drug Screening]

Frequently Asked Questions (FAQs)

FAQ 1: What is the core challenge of combinatorial explosion in pathway engineering? Combinatorial explosion refers to the phenomenon where the number of potential variants in a multi-gene pathway becomes impractically large to test exhaustively. For example, a three-gene pathway using RBS libraries with just 4 expression levels per gene creates 64 (4³) combinations. With 8 expression levels, this jumps to 512 combinations (8³), making comprehensive experimental screening infeasible due to resource and time constraints [7].

FAQ 2: How can computational models help reduce experimental effort? Computational algorithms like RedLibs can rationally design reduced, smart libraries. By analyzing the translation initiation rate (TIR) distributions of all possible degenerate RBS sequences, these tools identify a single, optimal degenerate sequence that encodes a small, user-specified library. This library uniformly samples the entire expression level space, maximizing the likelihood of finding a functional "metabolic sweet spot" with a minimal number of clones to test experimentally [7].

FAQ 3: What are the major sources of variation in High-Throughput Screening (HTS) data? Public HTS data can be affected by several technical and biological sources of variation. Key technical sources include batch effects, plate effects, and positional effects (row or column biases) within plates. Biologically, the presence of non-selective binders can lead to false positives. Before using public HTS data for drug repurposing, it is crucial to perform quality control and normalization to account for these variations [8].

FAQ 4: What is the key distinction between a multi-target drug and a promiscuous drug? A multi-target drug is intentionally designed to engage a predefined set of molecular targets to achieve a synergistic therapeutic effect for complex diseases, a strategy known as rational polypharmacology. In contrast, a promiscuous drug often lacks specificity, binding to a broad and unintended range of targets, which can lead to off-target effects and toxicity. The critical difference lies in the intentionality and specificity of the target selection [9].

FAQ 5: How can Thermal Shift Assays (TSAs) be used in drug discovery? TSAs, including DSF, PTSA, and CETSA, are valuable tools for detecting direct physical interactions between small molecules and their target proteins. They are based on the principle that a small molecule binding to a protein can alter its thermal stability, observed as a shift in its melting temperature (Tm). These label-free assays can be used in both biochemical (cell-free) and biological (cell-based) settings to study target engagement throughout the drug discovery process [10].

Troubleshooting Guides

Guide 1: Addressing Poor or Irregular Melt Curves in Differential Scanning Fluorimetry (DSF)

Problem: Irregular melt curves during DSF experiments, making it difficult to determine a reliable Tm.

| Symptom | Potential Cause | Solution |

|---|---|---|

| No transition curve (flat line) | Protein concentration too low; incompatible buffer components (e.g., detergents) quenching the dye. | Increase protein concentration; check dye compatibility with buffer additives [10]. |

| Irregular curve shape (e.g., non-sigmoidal, sharp dips) | Intrinsic fluorescence of the test compound; compound-dye interactions; compound-induced protein aggregation at low temperatures. | Run a control with compound and dye but no protein; inspect raw fluorescence data [10]. |

| High background fluorescence at low temperatures | Contaminants in the buffer; detergent levels too high. | Use ultrapure water and high-grade buffer components; optimize detergent concentration [10]. |

Guide 2: Mitigating Batch and Plate Effects in Public HTS Data

Problem: Analysis of public HTS data (e.g., from PubChem) reveals significant variation in quality metrics (like Z'-factor) across different assay run dates, but plate-level metadata is missing, preventing correction [8].

| Step | Action | Goal |

|---|---|---|

| 1. Data Quality Assessment | Examine distributions of raw readouts (e.g., fluorescence) and quality metrics (Z'-factor) by run date. | Identify batches or dates with anomalous data that may need to be excluded [8]. |

| 2. Choose Normalization Method | If the original raw data with plate annotation can be obtained, apply normalization like Percent Inhibition or Z-score. This requires plate-level control data. | Remove technical variation to make activity scores comparable across plates and batches [8]. |

| 3. Validate with Original Screeners | If plate data is unavailable from the database, the chosen normalization method cannot be validated. Contacting the original screening center for full data is the best recourse. | Ensure the reliability of bioactivity results before using them for computational drug repurposing [8]. |
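
Step 1 of this guide can be sketched as below. The Z'-factor formula used is the standard one, Z' = 1 − 3(σ_pos + σ_neg) / |μ_pos − μ_neg|; the 0.5 cutoff is a commonly used convention rather than a value taken from [8], and the function names and example readouts are hypothetical.

```python
from statistics import mean, stdev


def z_prime(pos_controls: list[float], neg_controls: list[float]) -> float:
    """Z'-factor: separation of positive and negative control distributions."""
    spread = 3.0 * (stdev(pos_controls) + stdev(neg_controls))
    return 1.0 - spread / abs(mean(pos_controls) - mean(neg_controls))


def flag_low_quality_runs(runs: dict, threshold: float = 0.5) -> list[str]:
    """Return run dates whose Z'-factor falls below the chosen threshold."""
    return [date for date, (pos, neg) in runs.items()
            if z_prime(pos, neg) < threshold]


def z_scores(values: list[float]) -> list[float]:
    """Z-score normalization of sample readouts within one plate or batch."""
    mu, sd = mean(values), stdev(values)
    return [(v - mu) / sd for v in values]


if __name__ == "__main__":
    runs = {
        "run_A": ([100.0, 102.0, 98.0], [1000.0, 1010.0, 990.0]),   # tight controls
        "run_B": ([100.0, 300.0, 200.0], [1000.0, 1200.0, 800.0]),  # noisy controls
    }
    print(flag_low_quality_runs(runs))
```

Grouping by run date is only a fallback; when plate-level annotation is available, the same functions can be applied per plate.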

Guide 3: Optimizing a Multi-Gene Pathway with Limited Screening Capacity

Problem: The combinatorial number of potential expression level variants for a multi-gene pathway far exceeds your laboratory's screening throughput.

| Step | Action | Key Consideration |

|---|---|---|

| 1. Define Goal & Constraint | Clearly state the pathway performance goal (e.g., maximize product titer, minimize byproduct). Define the maximum number of variants you can screen. | A clear objective is essential for evaluating success. A realistic screening capacity is critical for library design [7]. |

| 2. Design a Smart Library | Use a computational algorithm (e.g., RedLibs) to design a degenerate RBS library for each gene. The algorithm will output a single DNA sequence per gene that encodes a small, uniform distribution of expression levels. | This step rationally reduces the library from billions of theoretical combinations to a few hundred or thousand that are practical to screen [7]. |

| 3. Implement & Screen | Synthesize the degenerate oligonucleotides and clone them into your pathway host. Screen the resulting library for your performance metric. | The high density of functional clones in the smart library increases the probability of finding improved variants even with low-throughput assays [7]. |

Experimental Data & Protocols

| Gene Target | Fully Degenerate Library Size | Target Library Size | Number of Possible Sub-Libraries Evaluated | Key Outcome |

|---|---|---|---|---|

| mCherry | 65,536 (N8) | 4 | 4.3 million | Generated a minimal library covering low, medium-low, medium-high, and high TIRs. |

| mCherry | 65,536 (N8) | 12 | 25.7 million | Created a near-uniform distribution of TIRs across the accessible range. |

| mCherry | 65,536 (N8) | 24 | 70.2 million | Achieved a highly uniform sampling of the TIR space with a 2,730-fold reduction from the original library. |

| sfGFP & mCherry | ~4.3 x 10⁹ (for 2 genes, N8 each) | 144 (12 TIRs/gene) | N/A | Enabled one-pot cloning and identification of a wide range of fluorescence profiles in vivo. |

Table 2: Essential Research Reagent Solutions

| Reagent / Material | Function / Explanation |

|---|---|

| Degenerate Oligonucleotides | DNA sequences containing degenerate bases (e.g., N) used to create smart RBS libraries for one-pot cloning and pathway optimization [7]. |

| Polarity-Sensitive Fluorescent Dye (e.g., Sypro Orange) | Used in DSF assays. The dye fluoresces strongly when bound to hydrophobic protein regions exposed upon unfolding, allowing melt curve generation [10]. |

| Heat-Stable Control Proteins (e.g., SOD1) | Used as loading controls in PTSA and CETSA experiments for normalization during Western Blot analysis, as they remain stable at high temperatures [10]. |

| Public HTS Databases (e.g., PubChem Bioassay, ChemBank) | Provide bioactivity data for thousands of compounds against various targets, serving as a primary resource for computational drug repurposing efforts [8]. |

Protocol: Differential Scanning Fluorimetry (DSF) for Target Engagement

Objective: To confirm target engagement of a small molecule by detecting a shift in the protein's melting temperature (Tm).

Materials:

- Purified recombinant protein.

- Test compounds and a DMSO control.

- A polarity-sensitive fluorescent dye (e.g., Sypro Orange).

- A real-time PCR instrument or other thermal gradient-capable fluorometer.

- Suitable assay buffer.

Method:

- Prepare Sample Mixture: In a multi-well plate, combine purified protein, the test compound (or DMSO), and the fluorescent dye in an optimized buffer. A typical final volume is 20-25 µL.

- Run Thermal Ramp: Place the plate in the instrument and program a thermal ramp (e.g., from 25°C to 95°C with a gradual increase of 0.5-1°C per minute). Monitor fluorescence continuously.

- Data Analysis:

- Plot raw fluorescence against temperature to generate melt curves for each well.

- Normalize the data by converting fluorescence to the fraction of protein unfolded.

- Fit the data to a sigmoidal curve to determine the Tm (the temperature at which 50% of the protein is unfolded).

- A positive shift in Tm (ΔTm) in the presence of a compound relative to the DMSO control suggests stabilizing binding.
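
The data-analysis steps can be approximated with a short sketch. As a simplification, it normalizes by the minimum and maximum fluorescence and finds the 50% crossing by linear interpolation rather than fitting a sigmoidal curve, so it assumes a clean, monotonically rising transition. The function names and the synthetic curves are hypothetical.

```python
import math


def fraction_unfolded(fluorescence: list[float]) -> list[float]:
    """Min-max normalize fluorescence to an approximate unfolded fraction."""
    lo, hi = min(fluorescence), max(fluorescence)
    return [(f - lo) / (hi - lo) for f in fluorescence]


def melting_temperature(temps: list[float], fluorescence: list[float]) -> float:
    """Temperature at which the normalized curve first crosses 50%."""
    frac = fraction_unfolded(fluorescence)
    for i in range(len(temps) - 1):
        f0, f1 = frac[i], frac[i + 1]
        if f0 < 0.5 <= f1:
            # Linear interpolation between the two bracketing points.
            return temps[i] + (0.5 - f0) * (temps[i + 1] - temps[i]) / (f1 - f0)
    raise ValueError("melt curve never crosses 50% unfolded")


def synthetic_melt_curve(temps: list[float], tm: float,
                         slope: float = 2.0) -> list[float]:
    """Idealized sigmoidal melt curve, used here only as test data."""
    return [1.0 / (1.0 + math.exp((tm - t) / slope)) for t in temps]


temps = [25.0 + i for i in range(71)]  # 25 to 95 degrees C in 1-degree steps
dmso_tm = melting_temperature(temps, synthetic_melt_curve(temps, tm=50.0))
compound_tm = melting_temperature(temps, synthetic_melt_curve(temps, tm=54.0))
delta_tm = compound_tm - dmso_tm  # positive value suggests stabilizing binding
print(f"Tm (DMSO) = {dmso_tm:.1f}, Tm (compound) = {compound_tm:.1f}, "
      f"delta Tm = {delta_tm:.1f}")
```

Real melt curves often decline after the peak because of aggregation, so truncating the data at the fluorescence maximum, or using a proper sigmoidal fit, is usually preferable to this shortcut.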

[Figure: DSF workflow and data interpretation]

Protocol: Designing a Smart RBS Library with RedLibs

Objective: To rationally design a small, smart RBS library that uniformly samples the expression level space for a pathway gene, minimizing experimental screening effort.

Materials:

- Coding sequence of the target gene.

- RedLibs algorithm (freely available online).

- RBS prediction software (e.g., the RBS Calculator).

Method:

- Generate Input Data: Use the RBS prediction software to generate a list of all possible RBS sequences (e.g., for an N8 degenerate sequence) and their corresponding predicted Translation Initiation Rates (TIRs) for your specific gene.

- Run RedLibs: Input the gene-specific TIR data into RedLibs. Specify the desired final library size (e.g., 12).

- Receive Output: RedLibs performs an exhaustive search, comparing the TIR distributions of all possible sub-libraries of the target size. It returns a ranked list of optimal degenerate RBS sequences that most closely match a uniform TIR distribution.

- Library Construction: Synthesize the top-ranked degenerate oligonucleotide sequence and use it to clone a one-pot combinatorial library for your pathway.
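
The search described in this method can be illustrated with a toy example. The sketch below is not the RedLibs implementation: it uses a 3-base region instead of N8, a placeholder `toy_tir` function in place of real RBS Calculator predictions, and a Kolmogorov-Smirnov-style distance to a uniform distribution chosen here for illustration. All names are hypothetical.

```python
import math
from itertools import product

# Standard IUPAC degenerate nucleotide codes.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def expand(degenerate: str) -> list[str]:
    """All concrete sequences encoded by a degenerate sequence."""
    return ["".join(b) for b in product(*(IUPAC[c] for c in degenerate))]


def toy_tir(seq: str) -> float:
    """Placeholder TIR predictor (hypothetical, not biologically meaningful)."""
    weights = {"A": 1.0, "C": 0.0, "G": 3.0, "T": 2.0}
    return sum(weights[base] * 4 ** i for i, base in enumerate(seq))


def ks_distance_to_uniform(values: list[float], lo: float, hi: float) -> float:
    """One-sample KS statistic against a uniform distribution on [lo, hi]."""
    u = sorted((v - lo) / (hi - lo) for v in values)
    k = len(u)
    return max(max((i + 1) / k - x, x - i / k) for i, x in enumerate(u))


def best_degenerate_sequence(length: int, target_size: int) -> tuple[str, float]:
    """Exhaustively score every degenerate sequence of the target library size."""
    full = [toy_tir(s) for s in expand("N" * length)]
    lo, hi = min(full), max(full)
    best = None
    for codes in product(IUPAC, repeat=length):
        # Library size is the product of the per-position degeneracies.
        if math.prod(len(IUPAC[c]) for c in codes) != target_size:
            continue
        candidate = "".join(codes)
        dist = ks_distance_to_uniform([toy_tir(s) for s in expand(candidate)],
                                      lo, hi)
        if best is None or dist < best[1]:
            best = (candidate, dist)
    if best is None:
        raise ValueError("no degenerate sequence encodes the requested size")
    return best


if __name__ == "__main__":
    seq, dist = best_degenerate_sequence(length=3, target_size=4)
    print(seq, expand(seq), round(dist, 3))
```

In practice the TIR values would come from the RBS prediction software for your specific gene, and the sequence length and target size would match your screening capacity.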

[Figure: RedLibs library reduction concept]

Frequently Asked Questions

What is combinatorial explosion in pathway engineering? Combinatorial explosion occurs when you attempt to optimize multiple pathway elements simultaneously. The number of possible variants increases exponentially with each additional component you try to engineer. For a pathway with m proteins and n expression levels tested per protein, you face a search space of n^m combinations [2] [11]. This creates fundamental experimental limitations since comprehensively screening all variants becomes physically impossible.

How can I reduce library size while maintaining diversity? The RedLibs algorithm addresses this by designing degenerate ribosomal binding site (RBS) sequences that create uniform sampling across translation initiation rate (TIR) space. This method can reduce library sizes from >65,000 variants to smart libraries of just 4-24 members while maintaining broad coverage of expression levels [11].

What are the main experimental limitations in combinatorial testing? The primary constraints are screening throughput and analytical capabilities. As noted in combinatorial testing research, "screening is often limited on the analytical side, generating a strong incentive to construct small but smart libraries" [11]. This limitation makes it essential to prioritize library quality over quantity.

How do constraints affect combinatorial test generation? In practical applications, many parameter combinations are invalid due to biological or technical constraints. Handling these constraints requires specialized algorithms like multi-objective particle swarm optimization, which can satisfy constraints while maintaining coverage [12].
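
The simplest form of constraint handling is to filter invalid designs out of the candidate space before any test selection is done. The sketch below shows only that filtering step, not the multi-objective particle swarm optimization cited in [12]; the parameter names and the example constraint are hypothetical.

```python
from itertools import product
from typing import Callable, Iterator


def valid_designs(parameters: dict[str, list[str]],
                  constraints: list[Callable[[dict], bool]]) -> Iterator[dict]:
    """Yield only those full-factorial designs that satisfy every constraint."""
    names = list(parameters)
    for values in product(*parameters.values()):
        design = dict(zip(names, values))
        if all(rule(design) for rule in constraints):
            yield design


# Hypothetical rule: a strong promoter on a high-copy plasmid is assumed toxic.
def not_strong_on_high_copy(design: dict) -> bool:
    return not (design["promoter"] == "strong" and design["copy_number"] == "high")


if __name__ == "__main__":
    parameters = {
        "promoter": ["weak", "medium", "strong"],
        "copy_number": ["low", "high"],
        "host": ["E. coli", "S. cerevisiae"],
    }
    designs = list(valid_designs(parameters, [not_strong_on_high_copy]))
    print(len(designs))  # 10 of the 12 full-factorial designs remain
```

Encoding each constraint as a small predicate keeps the rules readable and lets the same list be reused by whatever test-generation algorithm is applied afterwards.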

Troubleshooting Guides

Problem: Library Size Growing Exponentially

Symptoms

- Your variant library contains more members than you can realistically screen

- Experimental resources are being stretched too thin

- Most library members show poor performance

Solutions

- Implement Rational Library Design

- Adopt Iterative Optimization

- Start with broad, low-resolution screening

- Use results to inform focused, high-resolution libraries

- Progressively refine toward optimal regions [11]

Problem: Poor Pathway Performance Despite Combinatorial Optimization

Symptoms

- Optimized pathways still show metabolic imbalances

- Toxic intermediate accumulation

- Growth inhibition in host organisms

Solutions

- Diversification Strategy Assessment

- Analytical Framework Implementation

Problem: Constraint Handling in Test Design

Symptoms

- Generated test suites contain biologically impossible combinations

- Invalid parameter configurations in your experimental design

- Reduced testing efficiency due to constraint violations

Solutions

- Constraint-Aware Algorithm Selection

Experimental Data and Protocols

Table 1: Library Size Reduction Using Smart Algorithms

| Scenario | Native Library Size | Reduced Library Size | Coverage Maintained |

|---|---|---|---|

| N8 RBS Library | 65,536 variants | 24 variants | >90% TIR range [11] |

| 3-Gene Pathway | 6.9 × 10^10 combinations | Smart sub-library | Uniform TIR sampling [11] |

| Violacein Biosynthesis | Full combinatorial | 2-step iterative | Improved product selectivity [11] |

Table 2: Combinatorial Optimization Method Comparison

| Method | Key Feature | Experimental Effort | Best Application |

|---|---|---|---|

| RBS Engineering | Translation rate control | Medium | Microbial systems [11] |

| Homolog Screening | Natural enzyme diversity | High | Novel pathway installation [2] |

| Promoter Engineering | Transcriptional control | Low-Medium | Fine-tuning expression [2] |

| Multi-level Optimization | Combined approaches | High | Complex pathway refactoring [2] |

Research Reagent Solutions

Essential Materials for Combinatorial Pathway Optimization

| Reagent/Resource | Function | Application Notes |

|---|---|---|

| RBS Calculator | Predicts translation initiation rates | Enables computational library design [11] |

| RedLibs Algorithm | Designs optimal degenerate RBS sequences | Generates uniform-coverage libraries [11] |

| Degenerate Oligonucleotides | Library construction with controlled diversity | Implements designed variant libraries [11] |

| Fluorescent Reporter Proteins | High-throughput screening readout | Enables rapid library evaluation [11] |

| MOPSO Algorithms | Constrained test suite generation | Handles biological constraints in experimental design [12] |

Experimental Workflows

[Figure: Pathway Optimization Workflow]

[Figure: Constraint Handling in Experimental Design]

Key Insight: The most successful combinatorial optimization strategies combine smart computational design with iterative experimental validation, always respecting the fundamental constraints of your experimental screening capacity [2] [11].

Troubleshooting Guide: Overcoming Combinatorial Explosion

Problem: My screening results are saturated with low-performing variants, and I can't find the optimal combination.

- Potential Cause: The library design is skewed towards weak expression levels, creating a redundant and inefficient search space [7].

- Solution: Implement a rational library design algorithm (e.g., RedLibs) to generate a uniform distribution of expression levels, ensuring comprehensive coverage of the design space with a minimal library size [7].

Problem: My predictive models for drug combinations are too slow and don't generalize to new cell lines.

- Potential Cause: The model relies on indirect prediction methods and extensive computational modeling of cell responses [14].

- Solution: Adopt a direct prediction framework like PDGrapher, a causally-inspired graph neural network that directly predicts perturbagens, enabling faster training and more robust performance across novel samples and cell lines [14].

Problem: I need to optimize a multi-gene pathway, but the number of possible RBS combinations is too vast to test.

- Potential Cause: A fully degenerate RBS design leads to combinatorial explosion. For example, a three-gene pathway in which each RBS has 6 randomized bases can produce (4⁶)³ ≈ 6.9 × 10^10 unique combinations [7].

- Solution: Replace fully randomized RBS regions with rationally designed, partially degenerate sequences. This creates a "smart" sub-library that uniformly samples the expression level space with a user-defined, manageable number of variants [7].

Problem: My model predicts drug synergy accurately in training but fails in clinical translation.

- Potential Cause: The model may suffer from limited mechanistic explanation and has not been validated against comprehensive multi-omics data (e.g., genomics, transcriptomics, proteomics) that more closely reflect the clinical reality [15].

- Solution: Integrate multi-omics data into the predictive model and prioritize methods that offer better interpretability. Focus on clinical validation of predicted combinations to bridge the gap between computational prediction and therapeutic application [15].

Frequently Asked Questions (FAQs)

Q1: What is combinatorial explosion in the context of strain optimization? A1: In strain optimization, combinatorial explosion refers to the astronomical number of potential genetic variants that can be created when trying to balance a multi-gene pathway. For example, randomizing just six nucleotides in the ribosomal binding site (RBS) of each gene in a three-gene pathway can generate nearly 69 billion (6.9 × 10^10) possible combinations, making comprehensive experimental screening impossible [7].

Q2: How can machine learning help overcome combinatorial explosion in drug discovery? A2: Machine learning provides powerful tools to navigate the vast combinatorial space of drug-target interactions. Techniques include:

- Multi-target Prediction: Using models like graph neural networks to predict interactions between drugs and multiple biological targets simultaneously [9].

- Synergy Prediction: Integrating multi-omics data to predict synergistic drug combinations, thereby reducing the need for exhaustive experimental testing of all possible pairs [15].

- Direct Perturbagen Prediction: Employing causally-inspired models like PDGrapher to directly identify the set of therapeutic targets needed to reverse a disease phenotype, which is significantly faster than traditional indirect methods [14].

Q3: What are the key metrics for evaluating drug combination effects? A3: Two common quantitative metrics are:

- Bliss Independence (BI) Score: Calculated as \( S = E_{A+B} - (E_A + E_B) \), where \( E_{A+B} \) is the effect of the combination and \( E_A \), \( E_B \) are the effects of each drug alone. A positive \( S \) indicates synergy, while a negative \( S \) suggests antagonism [15].

- Combination Index (CI): A measure where a CI < 1 indicates synergy, CI = 1 indicates an additive effect, and CI > 1 indicates antagonism [15].
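
The two metrics above can be sketched in a few lines. This is a minimal illustration, not code from [15]: the function names and the tolerance band around CI = 1 are assumptions. The default follows the formula as written above; the optional flag switches to the classical Bliss expectation for fractional effects, \( E_A + E_B - E_A E_B \), which is often used when effects are expressed as fractions between 0 and 1.

```python
def bliss_excess(e_a, e_b, e_ab, subtract_product=False):
    """Observed combination effect minus the expected effect.

    Effects are fractional (0 = no effect, 1 = full effect).
    subtract_product=False: expectation is E_A + E_B (as written in the text).
    subtract_product=True:  expectation is E_A + E_B - E_A*E_B (classical Bliss).
    """
    expected = e_a + e_b
    if subtract_product:
        expected -= e_a * e_b
    return e_ab - expected


def classify_ci(ci, tol=0.05):
    """Label a Combination Index; `tol` is an illustrative band around CI = 1,
    not a published cutoff."""
    if ci < 1 - tol:
        return "synergy"
    if ci > 1 + tol:
        return "antagonism"
    return "additive"


# Hypothetical fractional inhibition values for drug A, drug B, and A+B.
s = bliss_excess(e_a=0.2, e_b=0.3, e_ab=0.6)
print("Bliss excess:", round(s, 3))
print("CI = 0.7 ->", classify_ci(0.7))
```

In practice the sign of the excess should be interpreted against replicate variability rather than taken at face value.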

Q4: What is a rationally reduced library, and how does it minimize experimental effort? A4: A rationally reduced library is a smartly designed subset of all possible variants. Algorithms like RedLibs analyze the full combinatorial space and select a small set of variants that most uniformly cover the range of possible expression levels. This allows researchers to maximize the chance of finding high-performing combinations while minimizing the number of clones they need to synthesize and screen [7].

Q5: What are the common challenges in using AI for multi-target drug discovery? A5: Key challenges include [9]:

- Data Sparsity: Limited availability of high-quality, labeled data for many drug-target interactions.

- Model Interpretability: The "black box" nature of some complex models makes it difficult to understand the biological rationale behind their predictions.

- Generalizability: Models trained on one dataset or cell line may not perform well on others.

Quantitative Data on Combinatorial Challenges and Solutions

Table 1: Impact of Combinatorial Explosion in Pathway Engineering

| Scenario | Number of Genes | Randomized Bases per RBS | Possible DNA Sequences | Cloning & Screening Feasibility |

|---|---|---|---|---|

| Small Pathway | 3 | N6 (6 bases) | (4⁶)³ ≈ 6.9 × 10¹⁰ | Impossible |

| Small Pathway | 3 | N8 (8 bases) | (4⁸)³ ≈ 2.8 × 10¹⁴ | Impossible |

| Solution: RedLibs | 3 | Partially degenerate sequence | User-defined (e.g., 24) | Highly Feasible |
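
The library sizes in Table 1 follow directly from the number of randomized positions: four bases per position, raised to the number of positions, raised again to the number of genes. A minimal sketch of that arithmetic (the function name `rbs_library_size` is illustrative and not part of RedLibs):

```python
def rbs_library_size(randomized_bases, genes):
    """Number of distinct pathway variants when every gene carries an RBS
    with `randomized_bases` fully degenerate (N) positions."""
    per_gene = 4 ** randomized_bases   # A, C, G or T at each N position
    return per_gene ** genes           # independent choice for each gene


for n in (6, 8):
    print(f"N{n}, 3 genes: {rbs_library_size(n, 3):.1e} variants")
```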

Table 2: Performance of Computational Models in Therapeutic Discovery

| Model / Method | Key Application | Key Metric | Reported Performance / Advantage |

|---|---|---|---|

| RedLibs [7] | Pathway Library Design | Library Size Reduction | Reduces library from billions to dozens of variants while uniformly covering expression space. |

| PDGrapher [14] | Target Perturbation Prediction | Ranking Accuracy & Speed | Ranks ground-truth targets up to 35% higher; trains up to 25-30x faster than existing methods. |

| DeepSynergy [15] | Drug Synergy Prediction | Predictive Accuracy | Mean Pearson Correlation: 0.73; AUC: 0.90. |

| AuDNNsynergy [15] | Drug Synergy Prediction | Data Integration | Integrates genomic data with other omics information for improved prediction. |

Experimental Protocols

Protocol 1: Rational Library Design for Pathway Optimization using RedLibs

This protocol uses the RedLibs algorithm to create a minimal, smart library for optimizing a multi-gene pathway [7].

Gene-Specific TIR Data Generation:

- Input: For each gene in your pathway, provide its coding sequence (CDS).

- Tool: Use RBS prediction software (e.g., the RBS Calculator) to generate a list of all possible RBS sequences (e.g., for an N8 region) and their corresponding predicted Translation Initiation Rates (TIRs).

- Output: A gene-specific dataset of sequence-TIR pairs.

Define Target Library Size:

- Based on the throughput constraints of your analytical assay (e.g., chromatography, fluorescence), decide on the number of variants you can realistically screen (e.g., 12, 24, 96).

Run RedLibs Algorithm:

- Input: Feed the gene-specific TIR dataset and the target library size into the RedLibs algorithm.

- Process: RedLibs performs an exhaustive search to identify the single, partially degenerate RBS sequence whose resulting TIR distribution most closely matches a uniform target distribution across the accessible TIR space. It uses the Kolmogorov-Smirnov distance (dKS) to rank the solutions.

- Output: A ranked list of optimal degenerate RBS sequences for your gene.

Library Construction:

- Using the top-ranked degenerate sequence, synthesize the RBS library for each pathway gene via a one-pot PCR or assembly strategy.

- Clone the variant libraries into your expression construct.

Screening and Analysis:

- Screen the reduced library for your desired phenotypic outcome (e.g., product titer, growth).

- Due to the uniform coverage, a high density of functional clones will be present, accelerating the identification of the optimal pathway balance.
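
The search in the "Run RedLibs Algorithm" step can be illustrated in miniature. The sketch below is not the RedLibs implementation: it enumerates every degenerate sequence over a two-base region, keeps those that encode exactly the target number of variants, and ranks them by Kolmogorov-Smirnov distance to a uniform distribution. The TIR values come from a made-up lookup (`toy_tir`) standing in for RBS Calculator predictions, and all function names are hypothetical.

```python
from itertools import product

# IUPAC degenerate nucleotide codes and the bases they allow.
IUPAC = {"A": "A", "C": "C", "G": "G", "T": "T",
         "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
         "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT"}


def expand(degenerate):
    """All concrete sequences encoded by a degenerate sequence."""
    return ["".join(p) for p in product(*(IUPAC[c] for c in degenerate))]


def ks_distance_to_uniform(values, lo, hi):
    """Largest gap between the empirical CDF of `values` and Uniform(lo, hi)."""
    xs = sorted(values)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs):
        u = (x - lo) / (hi - lo)
        d = max(d, abs((i + 1) / n - u), abs(i / n - u))
    return d


def rank_degenerate_sequences(tir_of, length, target_size, lo, hi):
    """Exhaustively score every degenerate sequence of the target size."""
    scored = []
    for codes in product(IUPAC, repeat=length):
        candidate = "".join(codes)
        members = expand(candidate)
        if len(members) == target_size:
            tirs = [tir_of[m] for m in members]
            scored.append((ks_distance_to_uniform(tirs, lo, hi), candidate))
    return sorted(scored)


# Made-up TIRs for all 16 two-base sequences (placeholder for real predictions).
rank = {"A": 0, "C": 1, "G": 2, "T": 3}
toy_tir = {a + b: 4 * rank[a] + rank[b] for a in "ACGT" for b in "ACGT"}

ranked = rank_degenerate_sequences(toy_tir, length=2, target_size=4, lo=0, hi=15)
for d_ks, seq in ranked[:3]:
    print(seq, round(d_ks, 3))
```

Real RBS regions of six to eight bases have a far larger space of degenerate sequences, which is why a dedicated tool is used rather than a naive loop like this one.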

Protocol 2: Predicting Therapeutic Targets with PDGrapher

This protocol outlines the use of PDGrapher for phenotype-driven discovery of combinatorial therapeutic targets [14].

Data Preparation:

- Network Data: Compile a protein-protein interaction (PPI) network or gene regulatory network (GRN) from databases like BioGrid or Interactome Atlas.

- Gene Expression Data: Collect gene expression profiles for paired diseased and treated cell states from resources like CLUE.

- Perturbagen Information: Gather known drug targets or genetic perturbagens from databases like DrugBank and COSMIC.

Model Input:

- For a given sample, input the paired gene expression data (diseased state and desired treated state) along with the biological network.

Model Execution:

- PDGrapher, a graph neural network (GNN), learns a latent representation of the disease state within the context of the network.

- It then directly computes and outputs a ranked list of genes whose perturbation is predicted to shift the network state from "diseased" to "treated."

Validation:

- The top-ranked genes are identified as primary combinatorial therapeutic targets.

- Validate these predictions experimentally in relevant cellular or animal models.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Resources for Combinatorial Research

| Item | Function | Example / Source |

|---|---|---|

| RBS Calculator | Predicts translation initiation rates (TIR) for a given RBS sequence, providing the input data for rational library design [7]. | RBS Calculator v2.0 |

| RedLibs Algorithm | Generates globally optimal, degenerate RBS sequences that create uniform TIR libraries of a user-specified size [7]. | https://www.bsse.ethz.ch/bpl/software/redlibs |

| Protein-Protein Interaction (PPI) Network Data | Serves as a causal graph representing biological systems for models like PDGrapher [14]. | BioGrid, Interactome Atlas |

| Gene Expression Datasets | Provides data on cellular states under diseased and treated conditions for phenotype-driven discovery [14]. | CLUE, LINCS |

| Drug-Target Databases | Curated sources of known drug-target interactions for model training and validation [9] [14]. | DrugBank, ChEMBL, TTD |

| Pre-trained Protein Language Models | Provides advanced vector representations of protein targets for machine learning models [9]. | ESM, ProtBERT |

| Chemical Building Block Catalogs | Virtual or physical sources of diverse chemical matter for synthesizing proposed compounds or libraries [16]. | Enamine MADE, eMolecules, Chemspace |

Workflow Visualization

[Figure: Computational Workflow to Defeat Combinatorial Explosion]

[Figure: Core Framework for Combinatorial Prediction]

Navigating the Design Space: Computational and Experimental Methodologies

Machine Learning-Guided DBTL Cycles for Iterative Pathway Optimization

Troubleshooting Guide: Common DBTL Cycle Challenges

This section addresses specific, high-frequency issues researchers encounter when implementing Machine Learning-guided Design-Build-Test-Learn (DBTL) cycles for metabolic pathway optimization.

FAQ 1: Our initial DBTL cycle yielded poor predictive model performance. How can we improve learning from a small initial dataset?

- Problem: Low predictive accuracy in the first DBTL cycle due to limited training data, a common scenario when exploring a large combinatorial space.

- Solution: Prioritize algorithmic choices and strategic design.

- Algorithm Selection: In low-data regimes, tree-based models like Gradient Boosting and Random Forest have been shown to outperform other methods. They are robust to experimental noise and can handle complex, non-linear relationships between pathway modifications and product flux [17].

- Initial Design Strategy: When the total number of strains you can build is constrained, opt for a larger initial DBTL cycle. Building a more substantial initial dataset provides a better foundation for the ML model to learn from, which is more favorable than distributing the same number of builds evenly across multiple smaller cycles [17].

- Data Representation: Ensure biological perturbations (e.g., enzyme levels) are implemented in the model by changing relevant kinetic parameters, such as Vmax, to accurately reflect the effect of your genetic modifications [17].

FAQ 2: Our ML model's recommendations seem to exploit experimental noise rather than identifying robust biological trends. What can we do?

- Problem: The model is overfitting to noise in the high-throughput screening data, leading to poor generalizability and ineffective recommendations for subsequent cycles.

- Solution: Enhance model robustness through data and algorithm practices.

- Robust Algorithms: As identified in simulated frameworks, Gradient Boosting and Random Forest models demonstrate resilience to typical experimental noise and biases that may be present in training data from DNA libraries [17].

- Recommendation Algorithm: Use a recommendation algorithm that balances exploration (testing new, uncertain regions of the design space) with exploitation (focusing on designs predicted to be high-performing). This prevents the cycle from getting stuck in a local optimum based on noisy data [17].

FAQ 3: How can we effectively navigate the combinatorial explosion of pathway variants?

- Problem: The number of possible combinations of pathway genes (e.g., promoters, coding sequences) is vast, making exhaustive experimental testing infeasible.

- Solution: Leverage ML to intelligently guide the search.

- Iterative DBTL Framework: The core solution is to adopt an iterative DBTL cycle, as illustrated below. The "Learn" phase is where ML analyzes data from the "Test" phase to propose a focused set of new designs for the next "Build" cycle, avoiding brute-force screening [17].

- Kinetic Modeling: Using a mechanistic kinetic model as a simulated framework allows for the benchmarking of ML methods and DBTL strategies before costly real-world experiments. This helps optimize the workflow to find the best-producing strain with minimal experimental effort [17].

Experimental Protocols for Key DBTL Workflows

Protocol 1: Setting Up a Simulated DBTL Framework for Benchmarking

Purpose: To create an in silico environment for testing machine learning methods and DBTL cycle strategies without the cost and time of wet-lab experiments [17].

Methodology:

- Pathway Representation: Integrate the synthetic pathway of interest into a core kinetic model of the host organism (e.g., the E. coli core kinetic model in the SKiMpy package). This model uses ordinary differential equations (ODEs) to describe reaction fluxes and metabolite concentrations [17].

- Define Optimization Objective: Set the modeling objective, such as maximizing the production flux of a target compound (e.g., compound G in a sample pathway) [17].

- Simulate Strain Designs: In silico, vary enzyme levels (simulating different DNA library components like promoters) by adjusting the Vmax parameters in the model.

- Generate Training Data: Run the model for each simulated strain design to generate a dataset of enzyme level combinations and their corresponding product fluxes.

- Benchmark ML Models: Use this simulated dataset to train and compare different ML models (e.g., Random Forest, Gradient Boosting, Neural Networks) for their predictive performance and robustness.
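
The "simulate designs, then generate training data" steps can be sketched with a deliberately tiny stand-in for a kinetic model. This is not SKiMpy and not the E. coli core model: it is a hypothetical two-step batch pathway (S → I → P) with irreversible Michaelis-Menten kinetics, integrated with a simple Euler scheme. The enzyme levels, Km, and time span are arbitrary illustration values.

```python
from itertools import product


def simulate_product(vmax1, vmax2, s0=10.0, km=1.0, t_end=5.0, dt=0.001):
    """Euler integration of a toy pathway S -> I -> P.

    vmax1 and vmax2 play the role of enzyme levels set by the genetic design.
    Returns the amount of product P formed by time t_end.
    """
    s, i, p = s0, 0.0, 0.0
    for _ in range(int(round(t_end / dt))):
        v1 = vmax1 * s / (km + s)   # rate of S -> I
        v2 = vmax2 * i / (km + i)   # rate of I -> P
        s, i, p = s - v1 * dt, i + (v1 - v2) * dt, p + v2 * dt
    return p


# Hypothetical relative enzyme levels, e.g. from four promoter strengths.
levels = (0.5, 1.0, 2.0, 4.0)

# One row per simulated strain design: features plus the simulated response.
training_data = [
    {"vmax1": v1, "vmax2": v2, "product": simulate_product(v1, v2)}
    for v1, v2 in product(levels, repeat=2)
]

best = max(training_data, key=lambda row: row["product"])
print(len(training_data), "designs simulated; best design:", best)
```

A dataset of this shape (design features plus a measured or simulated response) is what the benchmarking step would feed to the ML models being compared.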

Protocol 2: ML-Driven Recommendation for the Next DBTL Cycle

Purpose: To automate the process of selecting which strain designs to build in the next DBTL cycle based on data from previous cycles.

Methodology:

- Model Training: Train a chosen ML model (e.g., Gradient Boosting) on all experimental data collected from previous DBTL cycles. The features are the genetic design elements, and the target is the performance metric (e.g., titer, yield, rate) [17].

- Prediction and Exploration: Use the trained model to predict the performance of all untested genetic designs within the possible combinatorial space.

- Design Selection: Implement a recommendation algorithm that selects a mix of designs:

- Exploitation: Some designs with the highest predicted performance.

- Exploration: Some designs where the model is most uncertain, to gather information on under-explored regions of the design space [17].

- Cycle Continuation: The selected designs form the "Build" list for the next DBTL cycle, making the process (semi)-automated.
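
The design-selection step above can be sketched as follows. This is one simple way to mix exploitation and exploration, not the specific recommendation algorithm of [17]. It assumes each untested design already has a predicted performance and an uncertainty estimate (for example, the spread of per-tree predictions in a Random Forest, which is an assumption here); the design labels and numbers are invented.

```python
def recommend(predictions, n_exploit, n_explore):
    """Pick designs for the next Build phase.

    predictions: {design: (predicted_performance, uncertainty)} for all
    untested designs. Returns exploitation picks followed by exploration picks.
    """
    # Exploitation: highest predicted performance.
    by_mean = sorted(predictions, key=lambda d: predictions[d][0], reverse=True)
    exploit = by_mean[:n_exploit]

    # Exploration: most uncertain designs among those not already chosen.
    remaining = [d for d in predictions if d not in exploit]
    by_uncertainty = sorted(remaining, key=lambda d: predictions[d][1], reverse=True)
    explore = by_uncertainty[:n_explore]

    return exploit + explore


# Hypothetical (promoter, RBS) designs with model outputs.
predictions = {
    ("P1", "R1"): (0.90, 0.05),
    ("P1", "R2"): (0.70, 0.30),
    ("P2", "R1"): (0.40, 0.50),
    ("P2", "R2"): (0.80, 0.10),
    ("P3", "R1"): (0.20, 0.45),
}

print(recommend(predictions, n_exploit=2, n_explore=2))
```

The ratio of exploitation to exploration picks is a tuning choice; shifting it toward exploration in early cycles is one common heuristic.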

The Scientist's Toolkit: Essential Research Reagents & Materials

The table below details key resources for establishing ML-guided DBTL cycles.

| Item / Reagent | Function in DBTL Cycles | Specific Examples / Details |

|---|---|---|

| DNA Library Components | Provides the genetic variability for combinatorial pathway optimization. | Promoters, ribosomal binding sites (RBS), and coding sequences for tuning enzyme expression levels and properties [17]. |

| Host Organism | The chassis for assembling and testing pathway designs. | Escherichia coli core kinetic model provides a physiologically relevant simulation environment [17]. |

| Kinetic Modeling Software | Creates a mechanistic in silico framework to simulate pathway behavior and benchmark DBTL strategies. | Symbolic Kinetic Models in Python (SKiMpy) package [17]. |

| Machine Learning Algorithms | Learns from experimental data to predict strain performance and recommend new designs. | Gradient Boosting and Random Forest are top performers in low-data regimes [17]. VAEs & GANs are used for de novo molecular design in related drug discovery contexts [18]. |

| High-Throughput Screening Assay | The "Test" phase; measures the performance (Titer, Yield, Rate - TYR) of thousands of strain variants. | Assays must be scalable and generate quantitative data on product formation (e.g., from a 1L batch bioreactor model) [17]. |

DBTL Cycle Workflow and Pathway Optimization Visualizations

[Figure: DBTL Cycle for Pathway Optimization]

[Figure: Combinatorial Pathway Optimization]

ML Model Performance Comparison

The table below summarizes quantitative findings on ML model performance from simulated DBTL studies, crucial for selecting the right algorithm.

| Machine Learning Model | Performance in Low-Data Regime | Robustness to Training Set Bias | Robustness to Experimental Noise | Key Application in DBTL |

|---|---|---|---|---|

| Gradient Boosting | High | High | High | Recommendation of new strain designs [17] |

| Random Forest | High | High | High | Recommendation of new strain designs [17] |

| Other Tested Methods | Lower | Lower | Lower | Benchmarking baseline [17] |

Core Concepts: The Network Proximity Framework

This technical guide outlines the principles and methodologies for using human protein-protein interactome proximity to predict synergistic drug combinations, a key strategy for addressing the combinatorial explosion in therapeutic development.

Fundamental Metrics and Formulas

The following quantitative measures form the computational foundation for predicting drug synergy.

Table 1: Key Network Proximity Metrics for Drug Synergy Prediction

| Metric Name | Mathematical Formula | Key Inputs | Interpretation | Primary Application |

|---|---|---|---|---|

| Drug-Disease Proximity (z-score) [19] [20] | \( z = \frac{d - \mu}{\sigma} \), where \( d(S,T) = \frac{1}{\Vert T \Vert} \sum_{t \in T} \min_{s \in S} d(s,t) \) | Drug targets (T), Disease-associated proteins (S) | Significant z-score (z < -2.5) indicates drug's potential efficacy for a disease. | Validating single drug efficacy against a disease module [19]. |

| Drug-Drug Separation (sAB) [20] | \( s_{AB} \equiv d_{AB} - \frac{d_{AA} + d_{BB}}{2} \) | Targets of Drug A, Targets of Drug B | sAB < 0: Targets are in the same network neighborhood. sAB ≥ 0: Targets are topologically separated [20]. | Predicting drug combinations; separated targets (sAB ≥ 0) with complementary disease exposure correlate with synergy [20]. |
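
Both metrics reduce to shortest-path computations on the interactome. The sketch below evaluates them on a six-node toy graph using breadth-first search. Two points are assumptions rather than statements from the table: d_AA is taken as the mean distance from each target of A to its nearest other target of A, and d_AB as the mean nearest-neighbor distance between the two target sets. The code also assumes a connected graph and at least two targets per drug; the z-score normalization against a random reference distribution is omitted.

```python
from collections import deque


def bfs_distances(graph, source):
    """Shortest path length from `source` to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def nearest(graph, node, others):
    """Distance from `node` to the closest member of `others`."""
    dist = bfs_distances(graph, node)
    return min(dist[o] for o in others)


def closest_distance(graph, S, T):
    """d(S,T): mean over targets t in T of the distance to the nearest s in S."""
    return sum(nearest(graph, t, S) for t in T) / len(T)


def separation(graph, A, B):
    """s_AB = d_AB - (d_AA + d_BB) / 2 for two target sets A and B."""
    d_aa = sum(nearest(graph, a, A - {a}) for a in A) / len(A)
    d_bb = sum(nearest(graph, b, B - {b}) for b in B) / len(B)
    d_ab = (sum(nearest(graph, a, B) for a in A)
            + sum(nearest(graph, b, A) for b in B)) / (len(A) + len(B))
    return d_ab - (d_aa + d_bb) / 2


# Toy "interactome": a simple chain 1-2-3-4-5-6.
graph = {1: [2], 2: [1, 3], 3: [2, 4], 4: [3, 5], 5: [4, 6], 6: [5]}

print(separation(graph, {1, 2}, {5, 6}))        # targets far apart
print(separation(graph, {1, 2, 3}, {2, 3, 4}))  # targets share a neighborhood
print(closest_distance(graph, S={3, 4}, T={1, 2}))
```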

Network Configurations for Drug Combinations

All possible drug-drug-disease interactions can be classified into six topologically distinct classes. Only one class has been strongly correlated with clinically efficacious combinations.

Table 2: Network-Based Classes of Drug-Drug-Disease Combinations

| Configuration Class | Schematic Description | Relationship Between Drug Targets | Relationship to Disease Module | Correlation with Clinical Efficacy |

|---|---|---|---|---|

| P1: Overlapping Exposure [20] | Two overlapping circles overlapping with a third. | Overlapping (sAB < 0) | Both drugs' target modules overlap with the disease module. | Not significant for therapeutic effect [20]. |

| P2: Complementary Exposure [20] | Two separate circles that both overlap with a third. | Separated (sAB ≥ 0) | Both drugs' target modules individually overlap with the disease module. | Correlates with therapeutic effects in hypertension and cancer [20]. |

| P3: Indirect Exposure [20] | Two overlapping circles, only one of which overlaps with a third. | Overlapping (sAB < 0) | Only one drug's target module overlaps with the disease module. | Not significant for therapeutic effect [20]. |

| P4: Single Exposure [20] | Two separate circles, only one of which overlaps with a third. | Separated (sAB ≥ 0) | Only one drug's target module overlaps with the disease module. | Not significant for therapeutic effect [20]. |

| P5: Non-Exposure [20] | Two overlapping circles separate from a third. | Overlapping (sAB < 0) | Both drug modules are separated from the disease module. | Not significant for therapeutic effect [20]. |

| P6: Independent Action [20] | Three separate circles. | Separated (sAB ≥ 0) | Both drug modules and the disease module are all separated. | Not significant for therapeutic effect [20]. |
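
Table 2 amounts to a lookup on two facts: whether the drug target sets overlap (the sign of sAB) and how many of the two drugs' target modules overlap with the disease module. A minimal sketch of that lookup follows. The function name is hypothetical, and deciding whether a drug module "overlaps" the disease module is left to the caller as a boolean because the table does not fix a threshold.

```python
def classify_configuration(s_ab, a_overlaps_disease, b_overlaps_disease):
    """Map a drug-drug-disease triple to one of the six classes in Table 2."""
    targets_overlap = s_ab < 0
    exposed = int(a_overlaps_disease) + int(b_overlaps_disease)
    classes = {
        (True, 2): "P1: Overlapping Exposure",
        (False, 2): "P2: Complementary Exposure",
        (True, 1): "P3: Indirect Exposure",
        (False, 1): "P4: Single Exposure",
        (True, 0): "P5: Non-Exposure",
        (False, 0): "P6: Independent Action",
    }
    return classes[(targets_overlap, exposed)]


# Separated targets, both drug modules overlapping the disease module.
print(classify_configuration(0.4, True, True))
```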

Troubleshooting Guides

Computational and Data Issues

Issue: Low predictive accuracy of z-score for drug-drug relationships.

- Problem: The z-score metric fails to discriminate known effective drug pairs from random pairs [20].

- Solution: Use the separation measure (sAB) for analyzing drug-drug pairs, as it is designed for smaller target sets and correlates better with pharmacological similarities [20].

- Prevention: Always validate the applicability of the z-score by checking if the reference distribution for your specific drug targets is Gaussian [20].

Issue: Inability to handle the combinatorial complexity of pathway optimization.

- Problem: The number of permutations for multi-gene pathways makes full factorial screening impossible [2] [11].

- Solution: Implement library reduction algorithms like RedLibs to design degenerate ribosomal-binding site (RBS) libraries that uniformly sample the translation initiation rate (TIR) space with a user-specified, manageable library size [11].

- Prevention: Integrate computational library design early in the experimental planning phase to minimize redundant cloning and screening efforts.

Experimental Validation Issues

Issue: High false positive rate from computational predictions.

- Problem: Network-based predictions identify associations, but not all are causally related or therapeutically relevant.

- Solution: Adopt a validation pipeline that integrates pharmacoepidemiologic analyses of large-scale patient data (>200 million patients) with in vitro experiments [19]. Use a new-user active comparator design and propensity score matching to minimize confounding in patient data [19].

- Prevention: Select predictions for validation based on novelty, strength of the network signal, and the availability of appropriate comparator drugs and high-fidelity disease data in healthcare databases [19].

Frequently Asked Questions (FAQs)

Q1: Why is the "Combinatorial Explosion" a fundamental problem in drug combination and pathway testing? The number of possible drug combinations or pathway variants grows far faster than the number of components. For example, 1,000 FDA-approved drugs yield 499,500 possible pairwise combinations for a single disease; across 3,000 diseases that is roughly 1.5 billion drug-pair/disease tests, before different dosages are even considered [20]. In pathway engineering, a pathway with 'm' enzymes and 'n' expression levels per enzyme creates an expression level space of n^m permutations, which quickly becomes impossible to test comprehensively [2] [11].
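
The counts in this answer are straightforward to reproduce. The snippet below is a small arithmetic check; the eight-enzyme, five-level pathway is an arbitrary example, not a figure from the cited studies.

```python
from math import comb

drugs, diseases = 1000, 3000

pairs_per_disease = comb(drugs, 2)          # unordered drug pairs
print("pairs per disease:", pairs_per_disease)
print("pair-disease tests:", pairs_per_disease * diseases)


def expression_space(levels_per_enzyme, enzymes):
    """n^m: every enzyme independently takes one of n expression levels."""
    return levels_per_enzyme ** enzymes


print("5 levels, 8 enzymes:", expression_space(5, 8))
```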

Q2: What is the single most important network configuration for predicting successful drug combinations? The P2: Complementary Exposure configuration is the only one that has been shown to correlate with therapeutic effects for diseases like hypertension and cancer [20]. In this configuration, the two drugs have topologically separated targets (sAB ≥ 0), and both of their target modules individually overlap with the disease module.

Q3: My computational model predicts synergy, but my wet-lab experiments do not confirm it. What could be wrong? This discrepancy can arise from several points of failure:

- Interactome Quality: The human protein-protein interactome you are using may be incomplete or contain false-positive interactions. Ensure you are using a high-quality, consolidated interactome built from multiple experimental sources (e.g., Y2H, kinase-substrate interactions, AP-MS) [19] [20].

- Disease Module Definition: The set of proteins you have defined as the "disease module" may be inaccurate or lack critical components.

- Off-Target Effects: The drug's effect in a biological system may involve off-target binding not captured by the known target list used in your model.

- Dosage: Synergy is often highly dependent on the specific dosages of the drugs used, which the network model does not account for.

Q4: Are there machine learning approaches that can complement these network-based strategies? Yes, ensemble machine learning methods can be highly effective. For predicting drug synergy scores, one can use an ensemble-based differential evolution (DE) approach to optimize Support Vector Machine (SVM) parameters, which has been shown to minimize prediction errors [21]. Other promising approaches include multi-task learning and ensemble methods that integrate different compound representations and similarity networks [22].

Experimental Protocols & Workflows

Core Protocol: Validating a Predicted Drug-Disease Association

This protocol outlines the integrated validation pipeline demonstrated for drug repurposing [19].

Step 1: Computational Prediction.

- Calculate the network proximity (z-score) between the drug's targets and the disease module within a consolidated human interactome [19].

- Select promising associations based on a strongly negative z-score (e.g., z < -2.5) and novelty.

Step 2: Validation with Longitudinal Healthcare Data.

- Cohort Definition: Use a new-user active comparator design. For the drug of interest, identify a comparator drug used for the same underlying condition but predicted to have no network association with the target disease [19].

- Confounding Adjustment: Use propensity score matching to balance the two cohorts on demographics, comorbidities, and other medication use [19].

- Outcome Analysis: Estimate the hazard ratio (HR) for the disease outcome (e.g., coronary artery disease) using a Cox proportional hazards model. An HR whose confidence interval excludes 1 supports the predicted association in patient data [19].

Step 3: Mechanistic In Vitro Validation.

- Design cell-based assays to test the predicted mechanism. For example, if a drug is predicted to reduce CAD risk, test its effect on attenuating pro-inflammatory cytokine-mediated activation in relevant human cells (e.g., human aortic endothelial cells) [19].

Core Protocol: Combinatorial Pathway Optimization with RedLibs

This protocol is for optimizing metabolic pathways while managing combinatorial complexity [11].

Step 1: Generate Input Data.

- For each gene in your pathway, use RBS prediction software (e.g., the RBS Calculator) to generate a list of all possible RBS sequences (e.g., for an N8 library) and their corresponding predicted Translation Initiation Rates (TIRs).

Step 2: Run RedLibs Algorithm.

- Input the gene-specific TIR data into RedLibs.

- Specify the desired target library size (e.g., 12, 24, 96) for each gene.

- RedLibs will perform an exhaustive search to identify the partially degenerate RBS sequence whose TIR distribution most closely matches a uniform target distribution across the TIR space [11].

Step 3: Library Construction and Screening.

- Use the output degenerate sequences to synthesize a one-pot combinatorial RBS library for your pathway.

- Clone the library into your production host.

- Screen the resulting variant library for the desired phenotype (e.g., improved product yield). The reduced, uniform library size maximizes the chance of finding optimal clones with minimal screening effort [11].

Table 3: Key Research Reagent Solutions for Network-Based Drug Synergy Research

| Reagent / Resource | Function / Description | Example Use Case | Key Consideration |

|---|---|---|---|

| Consolidated Human Interactome [19] [20] | A high-quality network of 243,603+ experimentally confirmed PPIs connecting 16,677 proteins, built from Y2H, signaling, and structure data. | Serves as the foundational map for all network proximity calculations (d(S,T), sAB). | Quality is critical. Prefer interactomes using unbiased, experimental data over computationally predicted interactions. |

| Drug-Target Binding Profiles [19] [20] | A compiled set of drugs with experimentally confirmed targets, using binding affinity cutoffs (e.g., Kd ≤ 10 µM). | Defining the target set (T) for a given drug. | The accuracy of your target list directly impacts prediction reliability. |

| RBS Calculator Software [11] | A biophysical model that predicts Translation Initiation Rates (TIRs) from RBS sequences. | Generating the input data for the RedLibs algorithm to design optimized RBS libraries. | Predictions are approximate; empirical screening is still required. |

| RedLibs Algorithm [11] | An algorithm that designs globally optimal, degenerate RBS libraries of a user-specified size for uniform TIR sampling. | Drastically reducing the combinatorial library size for multi-gene pathway optimization. | Computationally intensive for large libraries but essential for manageable experimental effort. |

| Large Healthcare Databases [19] | Longitudinal patient data (e.g., insurance claims) with tens to hundreds of millions of patients. | Validating predicted drug-disease associations using pharmacoepidemiologic methods. | Requires careful study design and statistical adjustment (e.g., propensity score matching) to control for confounding. |

Troubleshooting Guides

Problem 1: Low Protein Expression Yield

- Question: "Why is my final protein expression yield too low after optimizing coding sequences?"

- Investigation:

- Verify the sequence of the synthesized DNA construct to confirm the correct coding sequence (CDS) is present.

- Check the culture conditions (e.g., temperature, inducer concentration, induction time) for the expression host.

- Analyze the cellular fractionation to determine if the protein is expressed in the insoluble inclusion bodies.

- Assess the codon adaptation index (CAI) of the engineered sequence for your specific host organism.

- Solution:

- Re-engineer the CDS to use host-preferred codons, especially for the first ~10 N-terminal codons.

- Lower the induction temperature (e.g., to 18-25°C) to slow down protein synthesis and favor proper folding.

- Test different expression vectors with varying promoter strengths and copy numbers to fine-tune expression levels.
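
The codon adaptation index mentioned in the investigation steps is the geometric mean of per-codon relative adaptiveness weights. The sketch below shows the calculation only. The weight table is a made-up four-codon example, not real usage data for any host; in practice the weights are derived from a reference set of highly expressed genes in the expression organism.

```python
import math


def codon_adaptation_index(cds, weights):
    """Geometric mean of relative adaptiveness values over the codons of `cds`.

    Codons missing from `weights` (e.g. stop codons) are skipped.
    """
    usable = len(cds) - len(cds) % 3
    codons = [cds[i:i + 3] for i in range(0, usable, 3)]
    logs = [math.log(weights[c]) for c in codons if c in weights]
    if not logs:
        raise ValueError("no codons with known weights")
    return math.exp(sum(logs) / len(logs))


# Hypothetical weights: 1.0 marks the preferred codon for each amino acid.
toy_weights = {"GCG": 1.0, "GCC": 0.5, "AAA": 1.0, "AAG": 0.25}

print(round(codon_adaptation_index("GCGAAA", toy_weights), 3))  # preferred codons only
print(round(codon_adaptation_index("GCCAAG", toy_weights), 3))  # rarer codons
```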

Problem 2: Unintended Phenotypic Outcomes from Pathway Modulation

- Question: "When testing multiple pathway variants, how can I determine which specific genetic combination is causing an observed growth defect or metabolic imbalance?"

- Investigation:

- Use combinatorial design matrices to trace back phenotypic outputs to specific input combinations.

- Employ analytical methods like HPLC or LC-MS to profile metabolites and identify bottlenecks.

- Check for plasmid instability or loss in systems with multiple expression vectors.

- Solution:

- Systematically reduce combinatorial complexity by testing sub-pools of variants.

- Implement a more granular t-way combinatorial testing strategy to isolate interactions between a smaller number of factors (e.g., promoter strength and terminator efficiency).

- Incorporate complementary assays (e.g., RNA-seq, proteomics) to observe system-wide effects.

Problem 3: High Experimental Variance in Multi-Well Assays

- Question: "How can I reduce noise and improve reproducibility when screening a large number of pathway variants in parallel cultures?"

- Investigation:

- Confirm the integrity and accuracy of liquid handling robots.

- Check for edge effects in multi-well plates due to evaporation.

- Validate that inoculum cultures are in the same growth phase.

- Solution:

- Include at least three biological replicates per variant and distribute multiple negative and positive controls randomly across each plate.

- Apply statistical outlier detection methods to the dataset.

- Utilize internal fluorescence or absorbance standards to normalize for well-to-well volume or path length differences.
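
One common choice for the outlier-detection step is the modified z-score based on the median absolute deviation (MAD), which is less distorted by the outliers themselves than a mean-and-standard-deviation rule. The sketch below is one option among several; the 3.5 cutoff is a widely used convention, and the replicate readings are invented.

```python
from statistics import median


def mad_outliers(values, threshold=3.5):
    """Indices of values whose modified z-score exceeds `threshold`.

    Modified z-score: 0.6745 * |x - median| / MAD.
    """
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread around the median; nothing can be flagged
    return [i for i, v in enumerate(values)
            if 0.6745 * abs(v - med) / mad > threshold]


# Hypothetical normalized readings for replicate wells of one variant.
readings = [1.0, 1.1, 0.9, 1.05, 0.95, 5.0]
print(mad_outliers(readings))
```

Flagged wells are best inspected (position on the plate, liquid-handling logs) before being excluded.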

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of using a combinatorial approach in metabolic engineering? A combinatorial approach allows researchers to efficiently explore a vast space of genetic variants (e.g., promoters, CDS, terminators) without the need for exhaustive testing of every single possible combination. This is crucial for identifying high-performing, synergistic genetic configurations that would be impractical to find through sequential, one-factor-at-a-time experimentation [23].

Q2: How does t-way combinatorial testing specifically help my research on pathway variants? t-way combinatorial testing is a systematic methodology that ensures all possible interactions between any 't' number of factors (e.g., 2-way or 3-way) are covered by at least one test case in your experimental suite [23]. For example, a 2-way (pairwise) strategy ensures that every possible pair of promoter strength and codon adaptation index (CAI) value is tested together at least once. This can significantly reduce the number of experiments needed while still capturing the most critical interactions that influence phenotype [23].

Q3: My library size is still too large. How can I further reduce it? Beyond employing t-way strategies, you can:

- Prioritize Factors: Use prior knowledge or preliminary data to focus on the most influential genetic parts.

- Apply D-Optimal Design: This statistical method selects a subset of experiments that maximizes the information gain for model fitting.

- Use Sparse Sampling: Sample from the full combinatorial space using algorithms that maximize coverage diversity.

Q4: Are there any software tools to help design these combinatorial experiments? Yes, several tools exist, though many have limitations for high-strength combinations [23]. Tools like PICT and ACTS can generate test suites for 2-way to 6-way interactions. For highly complex scenarios, advanced strategies using heuristic or metaheuristic algorithms (e.g., Evolutionary Heuristics) are being developed to generate near-optimal test suites more efficiently [23].

Data Presentation

Table 1: Combinatorial Test Suite Sizes for a Hypothetical 4-Factor Pathway Experiment

This table illustrates how the number of required test cases grows with increasing combination strength 't'. The system has 4 factors, each with 3 possible variants (e.g., Promoter: Weak, Medium, Strong; CDS: CAI-low, CAI-medium, CAI-high, etc.) [23].

| Combination Strength (t) | Description | Minimum Number of Test Cases | t-way Interactions to Cover |
|---|---|---|---|
| 1-way | Every level of each factor appears at least once | 3 | 12 (4 factors × 3 levels) |
| 2-way | Every pair of levels from any two factors appears at least once | 9 | 54 (6 factor pairs × 9 level pairs) |
| 3-way | Every triplet of levels from any three factors appears at least once | 27 | 108 (4 factor triplets × 27 level triplets) |
| 4-way (Exhaustive) | All possible combinations | 81 (3^4) | 81 |
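
The interaction counts in the last column follow from a simple formula: with k factors of v levels each, there are C(k, t) · v^t distinct t-way level combinations. The short Python check below reproduces them for the hypothetical 4-factor, 3-level system; the function name is an illustrative choice.

```python
from math import comb

def t_way_interaction_count(num_factors, levels, t):
    """Number of distinct t-way level combinations: C(k, t) * v**t."""
    return comb(num_factors, t) * levels ** t

# 4 factors with 3 variants each, as in Table 1.
for t in range(1, 5):
    print(f"{t}-way interactions to cover: {t_way_interaction_count(4, 3, t)}")
```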

Table 2: Quantitative Analysis of Protein Expression under Combinatorial Conditions

This table provides a template for reporting key outcomes from a combinatorial experiment, linking specific genetic combinations to measurable protein expression data.

| Test Case ID | Promoter Strength | CDS CAI | Inducer Concentration (mM) | Specific Yield (mg/L/OD) | Soluble Fraction (%) |
|---|---|---|---|---|---|
| TC-01 | Strong | High | 1.0 | 150 | 85 |
| TC-02 | Strong | Low | 0.1 | 25 | 95 |
| TC-03 | Weak | High | 1.0 | 80 | 90 |
| TC-04 | Weak | Low | 0.1 | 10 | 98 |
| ... | ... | ... | ... | ... | ... |

Experimental Protocols

Protocol 1: Generating a t-Way Combinatorial Test Suite using a Logical Combination Index Table (LCIT)

Purpose: To systematically generate a minimal set of experiments that cover all t-way interactions between genetic factors [23].

Methodology:

- Define Factors and Levels: List each genetic part (Factor) to be engineered and its possible variants (Levels). E.g., Factor A (Promoter): A1, A2, A3; Factor B (RBS): B1, B2.

- Calculate Combinations: Determine all possible t-way combinations (e.g., 2-way pairs: A1B1, A1B2, A2B1, ...).

- Construct LCIT: Build a table where each row represents a potential test case. The algorithm (e.g., a Refined Evolutionary Heuristic) iteratively adds rows that cover the most uncovered t-way combinations until all are covered [23].

- Output Test Suite: The final LCIT is your optimized experimental plan. Each row in the table specifies one combination of factor levels to be tested.
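
The iterative "add the row that covers the most uncovered combinations" step can be sketched with a plain greedy algorithm, shown below in Python. This is a deliberately simple stand-in, not the Refined Evolutionary Heuristic or the LCIT data structure described in [23], and it enumerates the full factorial, so it is only practical for small design spaces. The promoter and RBS levels follow the example above; the terminator factor and all function names are hypothetical.

```python
from itertools import combinations, product

def interactions(row, names, t):
    """All t-way (factor, level) combinations contained in one test case."""
    return {tuple((f, row[f]) for f in group) for group in combinations(names, t)}

def greedy_t_way_suite(factors, t=2):
    """Greedily pick rows from the full factorial until every t-way interaction is covered."""
    names = list(factors)
    candidates = [dict(zip(names, combo)) for combo in product(*factors.values())]
    uncovered = set().union(*(interactions(row, names, t) for row in candidates))
    suite = []
    while uncovered:
        # Choose the candidate that covers the most still-uncovered interactions.
        best = max(candidates, key=lambda row: len(interactions(row, names, t) & uncovered))
        suite.append(best)
        uncovered -= interactions(best, names, t)
    return suite

factors = {
    "promoter": ["A1", "A2", "A3"],
    "rbs": ["B1", "B2"],
    "terminator": ["T1", "T2", "T3"],  # hypothetical third factor for illustration
}
suite = greedy_t_way_suite(factors, t=2)
print(f"{len(suite)} test cases instead of {3 * 2 * 3} exhaustive combinations")
for row in suite:
    print(row)
```

A greedy pass does not guarantee a minimal suite, which is why heuristic and metaheuristic generators are used for larger or higher-strength problems.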

Protocol 2: High-Throughput Screening of Pathway Variants in a Microplate Format

Purpose: To experimentally measure the performance (e.g., growth, productivity) of pathway variants specified by the combinatorial test suite.

Methodology:

- Strain Library Construction: Use automated DNA assembly to create the library of pathway variants in the production host.

- Inoculation and Growth: Inoculate variants into deep-well 96-well plates containing culture medium using a liquid handling robot. Include control strains.

- Induction and Expression: Grow cultures to mid-log phase, then induce protein expression under standardized conditions (temperature, inducer).

- Data Collection:

  - Optical Density (OD): Measure at 600 nm to monitor cell growth.

  - Fluorescence/Absorbance: If the product is a pigment or fluorescent protein, measure directly in the plate.

- Cell Harvesting: Centrifuge plates to pellet cells for downstream analysis (e.g., SDS-PAGE, enzyme assays).
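
For the inoculation step, it can help to fix the mapping between test cases and wells before the liquid handler is programmed. The sketch below assigns controls and variants to a 96-well plate in row-major order; the control names, the test-case IDs, and the layout policy are assumptions for illustration rather than part of the protocol.

```python
from string import ascii_uppercase

def plate_layout(variant_ids, control_ids, n_rows=8, n_cols=12):
    """Map controls, then variants, to wells A1..H12 in row-major order."""
    wells = [f"{ascii_uppercase[r]}{c}" for r in range(n_rows) for c in range(1, n_cols + 1)]
    samples = list(control_ids) + list(variant_ids)
    if len(samples) > len(wells):
        raise ValueError(f"{len(samples)} samples do not fit on a {len(wells)}-well plate")
    return dict(zip(wells, samples))

layout = plate_layout(
    variant_ids=[f"TC-{i:02d}" for i in range(1, 10)],  # hypothetical test-case IDs
    control_ids=["blank_medium", "empty_vector"],       # hypothetical control strains
)
for well, sample in layout.items():
    print(well, sample)
```

In a real screen, replicates and randomized well positions would usually be added to guard against edge and position effects.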

Mandatory Visualization

Diagram 1: Combinatorial Pathway Variant Testing Workflow

Diagram 2: Logical Combination Index Table (LCIT) Concept

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Combinatorial Pathway Engineering

| Item Name | Function / Explanation |
|---|---|
| Codon-Optimized Gene Fragments | Synthetic DNA sequences engineered with host-preferred codons to maximize translation efficiency and protein yield. |
| Modular Cloning Vector System (e.g., MoClo) | A standardized assembly system using Golden Gate cloning that allows for efficient, reproducible combinatorial assembly of multiple genetic parts. |
| Inducible Promoter Plasmids | A library of vectors with promoters of varying strengths (weak, medium, strong) that can be induced by specific chemicals (e.g., IPTG, arabinose). |
| High-Throughput Screening Microplates | Deep-well 96-well or 384-well plates compatible with automation for parallel cultivation and analysis of variant libraries. |
| Fluorescent Protein Reporters | Genes encoding proteins like GFP or RFP, used as fusion tags or transcriptional reporters to quantitatively measure expression levels. |

Leveraging Multi-Omics Data Integration for Enhanced Prediction Accuracy

Technical Support & Troubleshooting Hub

This section addresses frequent computational and experimental challenges encountered in multi-omics data integration projects.

Frequently Asked Questions (FAQs)

Q: How should I normalize my multi-omics data before integration? A: Proper normalization is critical. The recommended approach varies by data type [24]:

- For count-based data (e.g., RNA-seq, ATAC-seq): Apply library size normalization (e.g., size factor normalization) followed by variance-stabilizing transformation. Inputting raw counts directly is not recommended.

- General Practice: Data should be normalized to account for differences in sample size or concentration, converted to a common scale, and technical biases should be removed [25]. Always release both raw and preprocessed data in public repositories to ensure reproducibility and allow for alternative analyses [25].
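
To make the count-data recommendation concrete, the sketch below applies library size normalization (counts per million) followed by a log2 transform. The log transform is used here as a simple stand-in for a dedicated variance-stabilizing transformation, and the sample names and counts are made up for illustration.

```python
from math import log2

def log_cpm(counts):
    """Library-size-normalize raw counts to counts per million, then apply log2(x + 1).

    counts: dict mapping sample name -> list of raw counts (same feature order per sample).
    """
    normalized = {}
    for sample, values in counts.items():
        library_size = sum(values)
        normalized[sample] = [log2(v / library_size * 1e6 + 1) for v in values]
    return normalized

# Made-up example: the same composition sequenced at two different depths.
raw = {
    "sample_shallow": [10, 30, 60],
    "sample_deep": [100, 300, 600],
}
for sample, values in log_cpm(raw).items():
    print(sample, [round(v, 3) for v in values])
```

After normalization the two samples yield the same values, which shows that the difference in sequencing depth has been removed.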

Q: My datasets have vastly different dimensionalities (e.g., thousands of transcripts vs. hundreds of metabolites). Will this bias the integration? A: Yes, larger data modalities can be overrepresented in the integrated model [24]. To mitigate this:

- Filter uninformative features from the larger datasets (e.g., based on a minimum variance threshold) to bring all modalities to a similar order of magnitude [24].

- Perform feature selection to retain highly variable features (e.g., highly variable genes, HVGs, for transcriptomics) per assay, which helps the model focus on biologically relevant signals [24].
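
A variance-based filter of this kind can be sketched in a few lines of Python. The function name, the toy matrix, and the choice to keep a fixed number of features (rather than apply a variance threshold) are assumptions for illustration.

```python
from statistics import variance

def top_variable_features(matrix, feature_names, n_keep):
    """Return the names of the n_keep features with the highest sample variance.

    matrix: list of samples, each a list of feature values in feature_names order.
    """
    columns = list(zip(*matrix))  # transpose to one tuple per feature
    ranked = sorted(zip(feature_names, columns), key=lambda fc: variance(fc[1]), reverse=True)
    return [name for name, _ in ranked[:n_keep]]

# Toy data: 3 samples x 3 features (values are made up).
matrix = [
    [1.0, 10.0, 5.0],
    [1.0, 20.0, 6.0],
    [1.0, 30.0, 7.0],
]
print(top_variable_features(matrix, ["f_const", "f_high", "f_low"], n_keep=2))
```

Applying the same `n_keep` (or a similar order of magnitude) to each modality is one simple way to keep a large assay from dominating the integration.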

Q: Should I remove technical factors like batch effects before running an integration tool like MOFA+?

A: Yes. If clear technical factors (e.g., batch, processing date) are known, it is strongly encouraged to regress them out a priori using methods like a linear model in limma [24]. If not removed, the integration model will prioritize capturing this dominant technical variability, potentially causing smaller, biologically relevant sources of variation to be missed.
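
The idea of regressing out a known batch variable can be illustrated with per-batch mean centering of a single feature, as in the sketch below. This is a simplified stand-in for a proper linear-model correction such as the one in limma: it assumes batch is the only covariate and that biological groups are balanced across batches. The function name and the values are made up.

```python
from statistics import mean

def remove_batch_means(values, batches):
    """Subtract each batch's mean from its samples and add back the overall mean."""
    grand_mean = mean(values)
    batch_mean = {
        b: mean(v for v, label in zip(values, batches) if label == b)
        for b in set(batches)
    }
    return [v - batch_mean[b] + grand_mean for v, b in zip(values, batches)]

# One feature measured in two batches with a constant offset of 10 (made-up values).
values = [1.0, 2.0, 3.0, 11.0, 12.0, 13.0]
batches = ["batch_a", "batch_a", "batch_a", "batch_b", "batch_b", "batch_b"]
print(remove_batch_means(values, batches))
```

If batch is confounded with the biological variable of interest, this kind of correction removes real signal as well, so the experimental design should be checked first.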

Q: How do I handle missing data points, which are common in omics like proteomics and metabolomics? A: Many advanced integration methods, including matrix factorization models like MOFA+, are inherently robust to missing values [24]. They ignore missing values in the likelihood calculation without requiring an imputation step. For other pipelines, dedicated imputation methods such as k-Nearest Neighbors (k-NN) or matrix factorization can be used to estimate missing values [26].

Q: What is the minimum sample size required for a multi-omics study?

A: Multi-omics studies must be adequately powered. For factor analysis models like MOFA+, a sample size of at least 15 is suggested as a minimum, but larger sample sizes are typically necessary for robust results [24]. Tools like MultiPower are available to perform formal sample size and power estimations for multi-omics study designs [27].

Q: What is the risk of data leakage in machine learning for multi-omics, and how can I avoid it? A: Data leakage is a major problem that occurs when information from the test dataset is inadvertently used during model training, leading to overly optimistic performance [28]. To prevent it:

- Ensure a strict separation between training, validation, and test sets before any preprocessing.

- Perform steps like feature selection and normalization within the training set only, and then apply the derived parameters to the test set.
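
The two rules above can be sketched as follows: the data are split first, the scaling parameters are estimated from the training samples only, and those parameters are then reused unchanged on the test samples. The helper names, the 80/20 split, and the feature values are illustrative assumptions.

```python
import random
from statistics import mean, stdev

def split_indices(n_samples, test_fraction=0.2, seed=0):
    """Shuffle sample indices reproducibly and return (train, test) index lists."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_test = max(1, round(n_samples * test_fraction))
    return indices[n_test:], indices[:n_test]

def fit_standardizer(values):
    """Estimate scaling parameters (mean, standard deviation) from training data only."""
    return mean(values), stdev(values)

def standardize(values, params):
    """Apply previously fitted parameters without re-estimating them."""
    mu, sigma = params
    return [(v - mu) / sigma for v in values]

# One made-up feature measured on 10 samples.
feature = [2.0, 4.0, 6.0, 8.0, 10.0, 12.0, 14.0, 16.0, 18.0, 20.0]
train_idx, test_idx = split_indices(len(feature))

params = fit_standardizer([feature[i] for i in train_idx])           # training set only
train_scaled = standardize([feature[i] for i in train_idx], params)
test_scaled = standardize([feature[i] for i in test_idx], params)    # reuse, never refit
print(params, test_scaled)
```

The same pattern applies to feature selection and imputation: any step that learns something from the data is fitted on the training split and only applied to the others.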

Troubleshooting Common Experimental Issues

| Symptom | Potential Cause | Solution |
|---|---|---|
| A single dominant factor drives sample separation that correlates with technical variables. | Strong batch effects or library size differences not corrected for. | Regress out known technical covariates before integration. For count data, ensure proper library size normalization and variance stabilization [24]. |
| The model fails to identify known biological signal. | Insufficient statistical power (sample size too small) or over-filtering of informative features. | Use power analysis tools (e.g., MultiPower [27]) during study design. Re-evaluate feature selection thresholds. |
| Poor generalization of a trained model to new, independent datasets. | Data shift or overfitting. Data the model was trained on is not representative of "real-world" data [28]. | Simplify the model, increase training data diversity, and use rigorous cross-validation splits that keep independent cohorts separate. |
| One data type (e.g., genomics) dominates the integrated factors, while others (e.g., metabolomics) are ignored. | Large differences in data dimensionality between omics layers. | Filter uninformative features from the larger datasets to balance the influence of each modality [24]. |
| Inconsistent sample IDs or nomenclature across datasets. | Data heterogeneity and lack of standardized data formatting [27]. | Use domain-specific ontologies and standardized data formats for metadata. Implement consistent sample ID schemes across the project [25]. |

Experimental Protocols & Methodologies

This section provides detailed workflows for key multi-omics integration experiments cited in the literature.

Protocol 1: Multi-Omics Factor Analysis (MOFA) for Subtype Identification

This protocol details the use of MOFA+, a widely used tool for unsupervised integration of multi-omics data to discover latent sources of variation and identify patient or sample subgroups [24] [29].

1. Sample and Data Preparation

- Input Data: Collect matched multi-omics measurements (e.g., genomics, transcriptomics, methylomics) from the same set of samples.

- Data Normalization: Normalize each data modality appropriately. For RNA-seq data, use size factor normalization and variance-stabilizing transformation. Do not input raw counts [24].

- Feature Selection: Filter each data view to retain highly variable features (e.g., highly variable genes, HVGs, for transcriptomics). When dealing with multiple groups, regress out the group effect before selecting these features [24].

- Batch Effect Correction: Regress out known technical factors like batch effects using a tool like limma before fitting the MOFA model [24].

- Data Formatting: Format data into a samples-by-features matrix for each modality.

2. Model Training and Factor Inference

- Model Setup: Specify the appropriate likelihoods for your data (Gaussian for continuous, Bernoulli for binary, Poisson for counts).

- Determine Factor Number: Train the model with a generous number of factors (e.g., 15-20). The model will automatically prune irrelevant factors.

- Training: Run the training algorithm until the Evidence Lower Bound (ELBO) converges.

3. Downstream Analysis and Interpretation

- Factor Interpretation: Correlate factors with known sample covariates (e.g., clinical data) to assign biological meaning to each factor.

- Weight Analysis: Examine the feature weights for each factor to identify which genes, metabolites, or genomic regions drive each factor.

- Subtype Identification: Use the factor values to cluster samples into novel molecular subtypes.
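
The factor interpretation step can be sketched independently of any particular toolkit: given the per-sample values of one factor, compute its correlation with each covariate and rank the covariates by absolute correlation. The code below uses Pearson correlation in plain Python; the covariate names and all numbers are made up, and in practice the factor values would be exported from the trained model.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation of two equal-length sequences (both must have non-zero variance)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)

def rank_covariates(factor_values, covariates):
    """Sort covariates by the absolute correlation with one factor, strongest first."""
    scores = {name: pearson(factor_values, values) for name, values in covariates.items()}
    return sorted(scores.items(), key=lambda item: abs(item[1]), reverse=True)

# Made-up values of one latent factor across 5 samples and two hypothetical covariates.
factor_1 = [-1.2, -0.4, 0.1, 0.9, 1.5]
covariates = {
    "age": [61, 48, 70, 55, 66],
    "tumor_stage": [1, 2, 2, 3, 4],
}
for name, r in rank_covariates(factor_1, covariates):
    print(f"{name}: r = {r:.2f}")
```

Correlation only suggests an interpretation; the feature weights of the factor should be inspected as well before assigning it a biological label.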

The following workflow summarizes the key steps in this protocol:

Protocol 2: Deep Learning-Based Integration for Complex Trait Prediction

This protocol outlines the use of deep learning frameworks, such as Flexynesis, for supervised integration tasks like drug response prediction or survival analysis [30].

1. Data Preprocessing and Feature Selection

- Data Collection: Assemble a dataset where samples have multi-omics profiles and associated outcome variables (e.g., drug sensitivity, survival status, disease subtype).

- Data Cleansing: Handle missing values using imputation or model-specific methods. Normalize and scale features within each omics layer, estimating any normalization parameters on the training samples only to avoid data leakage.

- Feature Selection: Apply feature selection methods (e.g., variance-based, univariate statistical tests) to reduce dimensionality and mitigate overfitting.

2. Model Architecture Configuration

- Encoder Selection: Choose encoder networks (e.g., fully connected, graph-convolutional) to transform each omics data type into a lower-dimensional representation.

- Supervision Heads: Attach Multi-Layer Perceptron (MLP) "heads" on top of the encoders for specific tasks:

3. Model Training, Validation, and Benchmarking

- Training Setup: Split data into training, validation, and test sets. Perform hyperparameter tuning (e.g., learning rate, hidden layer size) on the validation set.