Validating Kinetic Models with Experimental Metabolomics: A Guide for Enhancing Drug Discovery

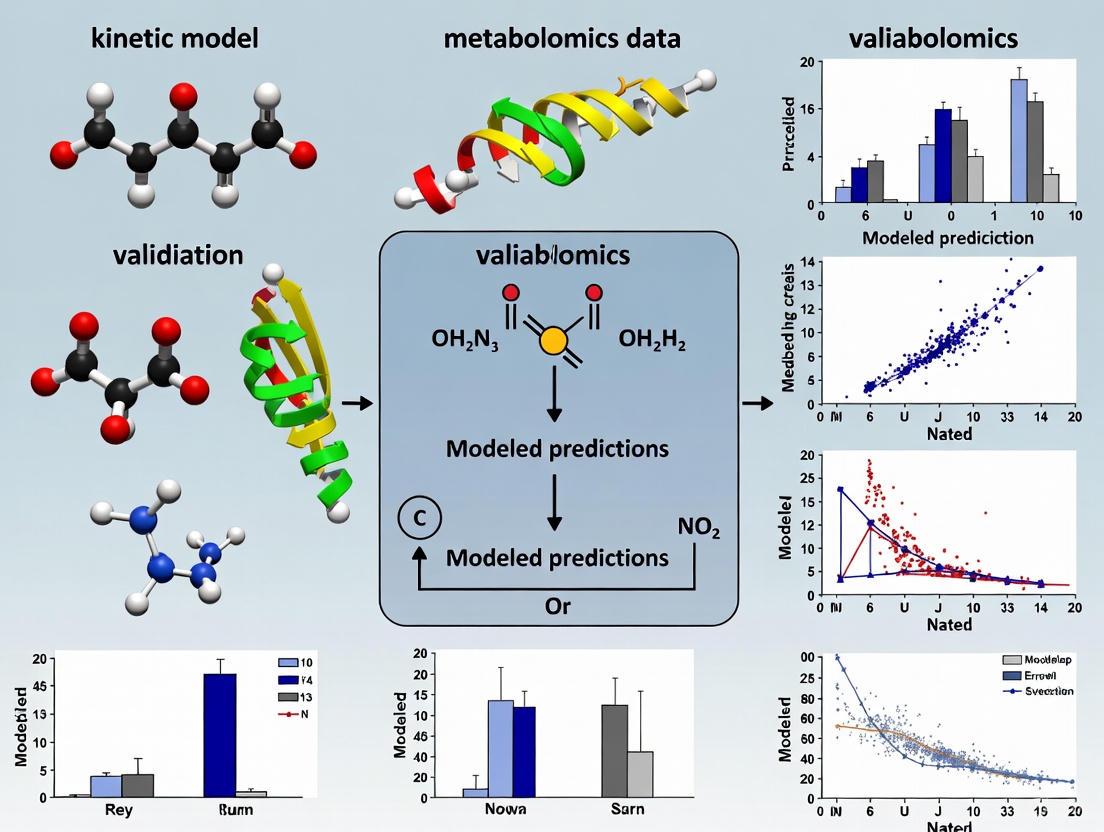

This article provides a comprehensive guide for researchers and drug development professionals on validating kinetic models against experimental metabolomics data.

Validating Kinetic Models with Experimental Metabolomics: A Guide for Enhancing Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on validating kinetic models against experimental metabolomics data. It covers the foundational principles of kinetic modeling and metabolomics technologies, explores advanced methodologies including machine learning and high-throughput frameworks, addresses common troubleshooting and optimization challenges, and establishes robust validation and comparative analysis techniques. By integrating these approaches, scientists can enhance the predictive accuracy of metabolic models, thereby accelerating therapeutic discovery and development, from target identification to clinical translation.

Kinetic Modeling and Metabolomics Fundamentals: Building a Foundational Understanding

Frequently Asked Questions (FAQs)

Q1: What are the primary analytical platforms used in metabolomics to generate data for kinetic model validation? The two main platforms are Mass Spectrometry (MS) and Nuclear Magnetic Resonance (NMR) spectroscopy [1]. MS is often coupled with separation techniques like Liquid Chromatography (LC-MS) or Gas Chromatography (GC-MS) for improved resolution and is widely used for its sensitivity and ability to reliably identify metabolites [1]. NMR is a non-destructive, highly reproducible technique that requires less sample preparation but generally has lower sensitivity compared to MS [1].

Q2: During data preprocessing, how should I handle missing values or zeros in my metabolomics dataset? Handling missing values is a critical preprocessing step [2]. The appropriate method depends on the nature of the data and the biological hypothesis, in particular on why values are missing. Zeros often reflect signals below the limit of detection (missing not at random), whereas other gaps arise from random technical failures, and the two call for different treatment. Evaluate and apply a strategy for zero and/or missing values before statistical analysis to prevent misinterpretation of results [2].
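The sketch below shows one common strategy. It assumes that missing values are stored as `None` and that zeros mostly reflect signals below the detection limit. Features missing in too many samples are dropped, and the remaining gaps are filled with half of the feature's minimum observed value. The 50% cutoff, the half-minimum rule, and the toy data are illustrative assumptions, not recommendations from the cited source. Values that are missing at random may call for a different method, such as k-nearest-neighbour imputation.

```python
from typing import Dict, List, Optional

Matrix = Dict[str, List[Optional[float]]]  # feature name -> one value per sample


def filter_and_impute(data: Matrix, max_missing_frac: float = 0.5) -> Dict[str, List[float]]:
    """Drop sparse features, then fill gaps with half the feature minimum.

    Treats None and 0.0 as missing and assumes missingness is mostly due to
    signals below the detection limit (missing not at random).
    """
    cleaned: Dict[str, List[float]] = {}
    for feature, values in data.items():
        observed = [v for v in values if v is not None and v > 0]
        missing_frac = 1 - len(observed) / len(values)
        if not observed or missing_frac > max_missing_frac:
            continue  # too sparse to impute reliably; exclude the feature
        fill = min(observed) / 2  # half-minimum imputation (assumed rule)
        cleaned[feature] = [v if v is not None and v > 0 else fill for v in values]
    return cleaned


# Hypothetical toy data: 4 samples, 3 features
raw = {
    "lactate": [10.0, 12.0, None, 11.0],
    "citrate": [None, 0.0, None, 4.0],  # 75% missing -> dropped
    "alanine": [5.0, 0.0, 6.0, 7.0],
}
print(filter_and_impute(raw))
```

In this example, lactate's gap is filled with 5.0, alanine's zero becomes 2.5, and citrate is excluded. Whether to drop or impute a sparse feature is a judgment call: a metabolite that is absent in one treatment group may itself be the biological signal.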

Q3: What reporting standards should I follow when publishing my metabolomics data and kinetic models? The Metabolomics Standards Initiative (MSI) provides reporting standards for all stages of metabolomics analysis [3]. Define metabolite identification levels using MSI guidelines (from Level 1, identified metabolites, to Level 4, unknown compounds) in publications and when submitting data to repositories [1]. Adhering to these standards helps make data Findable, Accessible, Interoperable, and Reusable (FAIR) [3].

Q4: My kinetic model predictions do not align with experimental metabolomic data. What are potential sources of this discrepancy? Discrepancies can arise from several points in the experimental workflow:

- Data Quality: Inconsistent quality control (QC) during data acquisition or improper data normalization can introduce technical variance [1] [2].

- Annotation Quality: Reporting the wrong metabolite identification level (e.g., treating a putative annotation as if it had been confirmed against an authentic standard) can lead to invalid comparisons [1].

- Biological Context: The model may not account for all relevant biological perturbations, such as environmental influences on metabolic pathways [4] [3]. Review your experimental design and metadata reporting against MSI guidelines [3].

Troubleshooting Guides

Issue 1: High Variance in Metabolite Feature Measurements

Problem: Quality Control (QC) samples show unacceptably high variance for multiple metabolite features, making it difficult to distinguish technical noise from biological signal.

Solution:

- Systematic Monitoring: Use QC samples to monitor the analytical platform's performance throughout the run [1].

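- Filter Features: Calculate the variability of each metabolite feature across the QC samples, typically as the relative standard deviation (RSD, also called CV%), so that features of different abundance are judged on the same scale. Remove any feature whose variability exceeds a pre-defined threshold [1].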

- Apply Normalization: Use data normalization techniques to reduce systematic bias or technical variation. The choice of normalization method should be determined by the data's characteristics and the statistical analysis method [2].
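The QC-based feature filter can be sketched as follows. It assumes a dictionary of peak areas from repeated QC injections, one list per feature. Variability is expressed as RSD (CV%) rather than raw variance, because raw variance grows with signal intensity. The 30% cutoff is a placeholder threshold you would set for your own platform, and the feature names and values are hypothetical.

```python
import statistics
from typing import Dict, List


def qc_rsd(values: List[float]) -> float:
    """Relative standard deviation (%) of one feature across QC injections."""
    return 100 * statistics.stdev(values) / statistics.mean(values)


def filter_by_qc_rsd(qc_data: Dict[str, List[float]], max_rsd: float = 30.0) -> Dict[str, float]:
    """Return the RSD of every feature that passes the (assumed) threshold."""
    kept = {}
    for feature, values in qc_data.items():
        rsd = qc_rsd(values)
        if rsd <= max_rsd:
            kept[feature] = round(rsd, 1)
    return kept


# Hypothetical QC peak areas for three features
qc = {
    "glucose": [100.0, 104.0, 98.0, 102.0],   # stable
    "feature_517": [50.0, 90.0, 20.0, 70.0],  # noisy -> removed
    "glutamine": [30.0, 33.0, 27.0, 30.0],
}
print(filter_by_qc_rsd(qc))
```

Filtering before normalization keeps unstable features from distorting normalization factors that are computed from many features at once.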

Issue 2: Failure to Identify Key Metabolites from MS Data

Problem: After peak detection and alignment, you cannot match mass spectrometry data to known metabolites.

Solution:

- Internal Library Check: First, compare your MS peak data against an in-house library of authentic standard data [1].

- Utilize Public Databases: If an in-house library is unavailable, use public metabolomics databases. For untargeted metabolomics, databases like the Human Metabolome Database are essential [1].

- Report Annotation Level: Clearly report the level of metabolite identification according to MSI guidelines. If you only have a putative annotation (Level 2), do not report it as a confirmed identification (Level 1) [1].
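Database matching by accurate mass is usually done within a parts-per-million (ppm) tolerance. The sketch below assumes a tiny, hypothetical in-house library of monoisotopic neutral masses and considers only [M+H]+ ions. Real workflows also consider other adducts, isotopes, retention time, and MS/MS spectra. The glucose/fructose pair shows why a mass-only match is at best tentative: isomers share the same mass.

```python
from typing import Dict, List, Tuple

PROTON_MASS = 1.007276  # Da

# Hypothetical in-house library: name -> monoisotopic neutral mass (Da)
LIBRARY: Dict[str, float] = {
    "glucose": 180.063388,
    "fructose": 180.063388,  # isomer of glucose: identical mass
    "citric acid": 192.027003,
    "glutamine": 146.069142,
}


def ppm_error(observed: float, theoretical: float) -> float:
    return (observed - theoretical) / theoretical * 1e6


def match_mz(mz: float, tol_ppm: float = 5.0) -> List[Tuple[str, float]]:
    """Candidate annotations for an observed [M+H]+ ion within tol_ppm."""
    hits = []
    for name, neutral_mass in LIBRARY.items():
        err = ppm_error(mz, neutral_mass + PROTON_MASS)
        if abs(err) <= tol_ppm:
            hits.append((name, round(err, 2)))
    return hits


print(match_mz(181.0707))  # both hexoses match: RT or MS/MS is needed to decide
print(match_mz(147.0760))  # glutamine only
```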

Issue 3: Integrating Multi-Omics Data for Kinetic Model Context

Problem: Your kinetic model of metabolism is isolated and would benefit from the broader context of transcriptomic or proteomic data.

Solution:

- Adopt a Multi-Omics Mindset: A single-omics approach cannot exhaustively describe biological processes, and integrating different omics layers requires specialized statistical and bioinformatics software [1].

- Leverage Computational Metabolomics: Employ in-silico approaches and molecular docking methods. These can simulate interactions between ligands and receptors, helping to identify potential therapeutics and shed light on a drug's mode of action, which provides a richer context for your models [5].

Key Experimental Protocols

Protocol 1: Untargeted Metabolomics Workflow for Kinetic Model Parameterization

This protocol outlines the steps for acquiring global metabolomic data to inform kinetic models [1].

- Sample Preparation: Homogenize tissue or biofluid samples. Use appropriate extraction solvents (e.g., methanol, acetonitrile/water) to isolate a wide range of metabolites.

- Data Acquisition:

  - For LC-MS: Separate extracts using a C18 column with a water-acetonitrile gradient. Acquire data in full-scan mode.

  - For GC-MS: Derivatize extracts to make compounds volatile. Use a standard DB-5MS column for separation.

- Data Preprocessing: Process raw data files using software like XCMS, MZmine, or MAVEN. This includes peak detection, retention time correction, and chromatographic alignment [1].

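- Compound Identification: Annotate features against databases and reference libraries [1]. Under MSI guidelines, a Level 1 identification requires matching at least two orthogonal properties (e.g., retention time and MS/MS spectrum) to an authentic standard analyzed under the same conditions. A match to library spectra without a standard is a putative annotation (Level 2), and accurate mass alone supports only a tentative assignment.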

Protocol 2: A High-Throughput Phenotyping and Metabolomics Integration Setup

This protocol, adapted from a plant drought stress study, demonstrates how to correlate dynamic metabolic phenotypes with observable traits [4].

- Experimental Design: Grow twelve genotypes under controlled conditions. Subject them to a stressor (e.g., drought) with a control group, and include a recovery phase.

- Automated Phenotyping: Use a high-throughput phenotyping platform (e.g., LemnaTec-Scanalyzer 3D system) to automatically collect daily imaging-based data on growth parameters like plant height and biomass [4].

- Metabolic Profiling: Perform metabolic profiling at multiple, critical time points, including during stress application and the recovery stage [4].

- Data Integration: Statistically correlate the nearly 200 identified metabolites (organic acids, sugars, amino acids, etc.) with the 17 phenotypic traits measured to identify key metabolic biomarkers for the stress response [4].
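The integration step can be sketched as a Spearman rank correlation between each metabolite and each phenotypic trait across plants or time points. Rank correlation is one plausible choice, assumed here for illustration; the cited study's exact statistical method is not described in this guide. The data and names are hypothetical. With about 200 metabolites and 17 traits, you would also need to correct for multiple testing.

```python
from typing import Dict, List


def ranks(values: List[float]) -> List[float]:
    """1-based ranks; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            result[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return result


def pearson(x: List[float], y: List[float]) -> float:
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def spearman(x: List[float], y: List[float]) -> float:
    """Spearman correlation = Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Hypothetical measurements on five plants
metabolites: Dict[str, List[float]] = {
    "proline": [1.0, 2.5, 4.0, 6.0, 9.0],
    "sucrose": [5.0, 4.0, 4.5, 3.0, 2.0],
}
traits: Dict[str, List[float]] = {"plant_height_cm": [40.0, 35.0, 31.0, 28.0, 20.0]}

for met, m_vals in metabolites.items():
    for trait, t_vals in traits.items():
        print(met, trait, round(spearman(m_vals, t_vals), 2))
```

Rank correlation is less sensitive than Pearson correlation to a few extreme plants and to non-linear but monotonic relationships, which are common in stress-response data.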

Data Presentation Tables

Table 1: Metabolomics Platforms and Their Characteristics

| Platform | Separation Technique | Typical Metabolites Detected | Advantages | Disadvantages |
|---|---|---|---|---|
| Mass Spectrometry (MS) | Liquid Chromatography (LC-MS) | Fatty acids, lipids, nucleotides, polyphenols, terpenes [1] | High sensitivity; reliable identification; selective qualitative/quantitative analysis [1] | High instrument cost; requires sample separation/purification [1] |
| Mass Spectrometry (MS) | Gas Chromatography (GC-MS) | Amino acids, organic acids, sugars, sugar phosphates (requires derivatization) [1] | High resolution; improved compound identification with separation [1] | Limited to volatile or derivatizable compounds [1] |
| Nuclear Magnetic Resonance (NMR) | Not required (can be used with HRMAS for tissues) [1] | Broad range of metabolites in a single run | Non-destructive; highly reproducible; minimal sample preparation [1] | Lower sensitivity; potentially masking low-concentration compounds [1] |

Table 2: Essential Metabolomics Data Preprocessing Steps

| Processing Step | Description | Common Tools / Methods |
|---|---|---|
| Peak Detection & Alignment | Identifying metabolite signals from raw data and aligning them across samples [1] | XCMS, MZmine, MAVEN [1] |
| Quality Control (QC) | Using QC samples to monitor and correct for technical variance; removing high-variance features [1] | Statistical evaluation of QC sample data [1] |
| Normalization | Reducing systematic bias or technical variation to make samples comparable [2] | Various methods exist; choice depends on data and hypothesis [2] |
| Handling Missing Values | Addressing zeros or missing data points in the data matrix [2] | Imputation or removal; strategy depends on the nature of the missingness [2] |

Experimental Workflow and Pathway Visualizations

Metabolomics to Kinetic Model Workflow

Plant Drought Stress Metabolic Response

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Metabolomics and Kinetic Modeling

| Item | Function | Example / Specification |
|---|---|---|
| LC-MS Grade Solvents | High-purity solvents for sample preparation and chromatography to minimize background noise and ion suppression. | Methanol, Acetonitrile, Water |
| Derivatization Reagents | For GC-MS analysis; chemically modifies metabolites to increase volatility and thermal stability. | MSTFA (N-Methyl-N-(trimethylsilyl)trifluoroacetamide) |
| Quality Control (QC) Pool | A pooled sample from all experimental samples used to monitor and correct for instrumental drift over the acquisition sequence [1]. | Created from an aliquot of each study sample |
| Authentic Chemical Standards | Used to build in-house libraries for definitive metabolite identification (MSI Level 1) [1]. | Commercially available purified compounds |
| Stable Isotope-Labeled Internal Standards | Added to samples to correct for variability in extraction and analysis efficiency; crucial for quantitative accuracy. | ¹³C or ¹⁵N labeled amino acids, lipids |
| Data Processing Software | Platforms for converting raw instrument data into a quantifiable matrix of metabolite features [1]. | XCMS, MZmine, MAVEN [1] |

Analytical Technologies at a Glance

The core technological pillars of modern metabolomics are Mass Spectrometry (MS) and Nuclear Magnetic Resonance (NMR) spectroscopy. The table below summarizes their key characteristics to guide platform selection.

Table 1: Comparison of Core Metabolomics Technologies

| Feature | Mass Spectrometry (MS) | Nuclear Magnetic Resonance (NMR) |
|---|---|---|
| Sensitivity | High (detects low-abundance metabolites) [6] | Lower than MS; typically quantifies abundant metabolites [7] |
| Analytical Throughput | High [6] | High-throughput and low-cost [6] |
| Quantification | Relative quantification common; absolute quantification requires standards | Excellent for precise, absolute quantification [7] |
| Structural Elucidation | Provides molecular formula via high mass accuracy; requires fragmentation (MS/MS) for detailed structure [8] | Excellent for de novo structural elucidation and identification of unknown metabolites [7] [8] |
| Sample Nature | Destructive analysis [7] | Non-destructive; sample can be recovered for further analysis [7] |
| Metabolite Coverage | Broad, especially for lipids; enhanced with chromatography [6] | Effective for core metabolites in key pathways [6] |
| Key Strength | High sensitivity and wide metabolite coverage [6] | Highly reproducible, non-destructive, and quantitative [9] [7] |
| Primary Challenge | Limited reproducibility; ion suppression can affect detection [7] [10] | Lower sensitivity compared to MS [7] |

Workflow Diagram: Integrating MS and NMR Data for Kinetic Modeling

The following diagram illustrates a workflow for integrating data from MS and NMR platforms to build and validate kinetic models, leveraging data fusion strategies.

Troubleshooting Guides & FAQs

This section addresses common experimental challenges and provides guidance on data integration for kinetic modeling.

FAQ 1: How do I choose between a targeted vs. an untargeted metabolomics approach?

- Untargeted Metabolomics: An unbiased approach that aims to detect as many metabolites as possible to discern phenotypic patterns and generate hypotheses [6] [8]. It is ideal for discovery-phase research, such as finding novel biomarkers or unexpected metabolic perturbations.

- Targeted Metabolomics: Focuses on the accurate identification and precise quantification of a predefined set of metabolites or specific metabolic pathways [6] [8]. It is best for hypothesis-driven studies where specific metabolites are of interest, and for validating findings from untargeted screens. For kinetic modeling, targeted data providing absolute concentrations of pathway metabolites are often essential.

FAQ 2: What are the most critical factors for ensuring my metabolomics data are reproducible?

Reproducibility is a major challenge in metabolomics. Key factors to control include:

- Study Design:

  - Clear Hypothesis: A clearly stated research hypothesis is crucial for a well-designed experiment, yet fewer than 50% of published studies state one [9].

  - Sample Size: The number of biological replicates must be sufficient to achieve adequate statistical power, given the high biological variability of samples [9].

- Sample Preparation:

  - Standardized Protocols: Use strict, harmonized protocols for sample collection, quenching, and storage, as these steps are major sources of variation [11] [10].

  - Consider Pre-analytical Factors: The metabolome is influenced by diet, lifestyle, age, sex, and medications. Document and control for these factors where possible [10].

- Reporting: Adopt community-developed reporting standards (e.g., from the Metabolomics Association of North America) to ensure all critical experimental details are documented for evaluation and repetition [9].
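For a rough starting point on replicate numbers, you can use a normal-approximation power calculation for a two-group comparison of a single metabolite: n = 2 (z₁₋α/₂ + z₁₋β)² σ² / Δ² per group. This sketch assumes roughly normal data (e.g., log-transformed abundances) and a standard deviation σ estimated from hypothetical pilot data. Because a study measures hundreds of metabolites, the multiple-testing burden raises the required n. The Bonferroni adjustment in the example is one simple, conservative way to account for it.

```python
import math
from statistics import NormalDist


def samples_per_group(delta: float, sd: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate n per group to detect a mean difference `delta` (two-sided test).

    Normal approximation: n = 2 * (z_{1-alpha/2} + z_{power})^2 * sd^2 / delta^2
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_beta) ** 2 * sd ** 2 / delta ** 2)


# Hypothetical pilot estimate: log2 SD of 0.6; goal: detect a 0.5 log2 shift
n_single = samples_per_group(delta=0.5, sd=0.6)
# Bonferroni-adjusted alpha for 200 metabolites demands more replicates
n_adjusted = samples_per_group(delta=0.5, sd=0.6, alpha=0.05 / 200)
print(n_single, n_adjusted)
```

The normal approximation slightly underestimates n for small groups compared with a t-test-based calculation, so treat the result as a lower bound.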

FAQ 3: When and how should I integrate NMR and MS data?

Integrating NMR and MS is powerful because their strengths are highly complementary. Data fusion (DF) strategies are used to combine these datasets [7].

Diagram: Data Fusion Strategies for MS and NMR Integration

Table 2: Data Fusion Strategies for MS and NMR Integration

| Fusion Level | Description | Advantages | Considerations |
|---|---|---|---|
| Low-Level | Direct concatenation of raw or pre-processed data matrices from NMR and MS [7]. | Retains the maximum amount of information from both platforms. | Requires careful data scaling to prevent one platform from dominating the model due to higher dimensionality [7]. |
| Mid-Level | Integration of extracted features (e.g., principal components) from each platform [7]. | Reduces data dimensionality and can balance the contribution of each technique. | Requires a separate feature extraction step before fusion. |
| High-Level | Combination of final predictions or decisions from models built on each platform separately [7]. | Offers high flexibility as models are built independently. | Most complex to implement; requires building multiple models. |
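Low-level fusion, including the scaling step noted in the table, can be sketched as follows. Each variable is first autoscaled. Each platform's block is then divided by the square root of its number of variables, so the NMR and MS blocks contribute equal total variance however many features each has. This block-scaling rule is one common choice, assumed here for illustration. The small matrices are hypothetical, with samples in the same order in both blocks.

```python
import statistics
from typing import List

Block = List[List[float]]  # rows = samples, columns = variables


def autoscale(block: Block) -> Block:
    """Mean-center each column and divide by its standard deviation."""
    cols = list(zip(*block))
    means = [statistics.mean(c) for c in cols]
    sds = [statistics.stdev(c) for c in cols]
    return [[(v - m) / s for v, m, s in zip(row, means, sds)] for row in block]


def block_scale(block: Block) -> Block:
    """Divide by sqrt(n_variables) so the block's total variance becomes 1."""
    factor = len(block[0]) ** 0.5
    return [[v / factor for v in row] for row in block]


def low_level_fusion(nmr: Block, ms: Block) -> Block:
    """Concatenate autoscaled, block-scaled NMR and MS rows sample by sample."""
    nmr_s = block_scale(autoscale(nmr))
    ms_s = block_scale(autoscale(ms))
    return [r1 + r2 for r1, r2 in zip(nmr_s, ms_s)]


def total_variance(block: Block) -> float:
    return sum(statistics.variance(c) for c in zip(*block))


# Hypothetical data: 3 samples, 2 NMR bins and 4 MS features
nmr = [[1.0, 0.5], [2.0, 0.7], [3.0, 0.6]]
ms = [[100.0, 10.0, 5.0, 1.0], [150.0, 12.0, 4.0, 2.0], [130.0, 11.0, 6.0, 3.0]]
fused = low_level_fusion(nmr, ms)
print(len(fused[0]))  # 6 fused columns
print(round(total_variance([r[:2] for r in fused]), 6), round(total_variance([r[2:] for r in fused]), 6))
```

Without the block-scaling step, the MS block here would carry twice the total variance of the NMR block simply because it has more columns.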

FAQ 4: How can I use my metabolomics data to build and validate kinetic models?

Kinetic models explicitly link metabolite concentrations, metabolic fluxes, and enzyme levels, making them powerful tools for understanding metabolic regulation [12] [13].

- Data Requirements: Kinetic models require, and can integrate, diverse data types, including metabolite concentrations (metabolomics), metabolic fluxes (fluxomics), and enzyme levels (proteomics).

- Model Parameterization: A major challenge is determining the kinetic parameters (e.g., Michaelis constants, Vmax) that govern cellular physiology. Generative machine learning frameworks (e.g., RENAISSANCE) can now efficiently parameterize large-scale kinetic models by integrating omics data and ensuring that the model's dynamic properties match experimental observations [13].

- Validation Workflow:

  - Model Construction: Define the network stoichiometry based on known pathways.

  - Data Integration: Incorporate experimental metabolomics and fluxomics data.

  - Parameter Estimation: Use computational methods to find kinetic parameters that fit the experimental data.

  - Model Prediction: Simulate the model under a new condition not used for parameterization.

  - Experimental Validation: Compare model predictions against new, independent experimental results (e.g., from a genetic perturbation or changed nutrient environment). Accurate prediction of new metabolic states is the strongest validation of a kinetic model [14].
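The workflow above can be illustrated with a deliberately small model: one substrate S consumed by a single Michaelis-Menten enzyme, dS/dt = -Vmax·S/(Km + S). The sketch assumes noise-free synthetic "experimental" data, a fixed-step RK4 integrator, and a coarse grid search for Vmax and Km. It is a toy walk-through of the workflow, not the RENAISSANCE method or a substitute for dedicated parameter-estimation software.

```python
from typing import List, Tuple


def simulate(s0: float, vmax: float, km: float, times: List[float], dt: float = 0.01) -> List[float]:
    """Integrate dS/dt = -vmax*S/(km+S) with RK4; return S at each requested time."""
    def rate(s: float) -> float:
        return -vmax * s / (km + s)

    out, s, t = [], s0, 0.0
    for target in times:
        while t < target - 1e-12:
            h = min(dt, target - t)
            k1 = rate(s)
            k2 = rate(s + h * k1 / 2)
            k3 = rate(s + h * k2 / 2)
            k4 = rate(s + h * k3)
            s += h * (k1 + 2 * k2 + 2 * k3 + k4) / 6
            t += h
        out.append(s)
    return out


def sse(pred: List[float], obs: List[float]) -> float:
    return sum((p - o) ** 2 for p, o in zip(pred, obs))


def fit_grid(s0: float, times: List[float], obs: List[float]) -> Tuple[float, float]:
    """Coarse grid search over (vmax, km) minimizing the sum of squared errors."""
    best = (float("inf"), 0.0, 0.0)
    for vmax in [0.5 + 0.1 * i for i in range(21)]:     # 0.5 .. 2.5
        for km in [0.5 + 0.25 * j for j in range(15)]:  # 0.5 .. 4.0
            err = sse(simulate(s0, vmax, km, times), obs)
            best = min(best, (err, vmax, km))
    return best[1], best[2]


# Construction + data integration + estimation: hypothetical training data
# generated with vmax=1.2, km=2.0 from an initial substrate level of 5.0
times = [0.5, 1.0, 2.0, 3.0, 4.0]
train_obs = simulate(5.0, 1.2, 2.0, times)
vmax_hat, km_hat = fit_grid(5.0, times, train_obs)
print(f"fitted vmax={vmax_hat:.2f}, km={km_hat:.2f}")

# Prediction + validation: a new condition (lower starting substrate) not used
# for fitting, compared with independent data (here also synthetic)
test_obs = simulate(1.0, 1.2, 2.0, times)
pred = simulate(1.0, vmax_hat, km_hat, times)
print(f"prediction SSE on new condition: {sse(pred, test_obs):.2e}")
```

Because the data are noise-free, the grid recovers the generating parameters exactly. With real replicates you would expect residual error, and you would typically switch to a gradient-based or global optimizer.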

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Metabolomics Workflows

| Item | Function in Experiment |
|---|---|
| Internal Standards (IS) | Correct for analyte loss during sample preparation and instrument variability. Essential for precise quantification, especially in MS [9]. |
| Deuterated Solvent (e.g., D₂O) | Provides the deuterium lock signal that keeps the magnetic field stable during NMR acquisition [7]. |

| Chemical Shift Reference (e.g., TMS, DSS) | Provides a reference peak (0 ppm) for calibrating chemical shifts in NMR spectra [9]. |
| Stable Isotope-Labeled Nutrients (e.g., ¹³C-Glucose) | Used in fluxomics to trace metabolic pathways and quantify metabolic reaction rates (fluxes) [12]. |
| Quality Control (QC) Pool Sample | A pooled sample made from a small aliquot of all study samples, analyzed repeatedly throughout the batch run to monitor instrument stability and performance [9]. |
| Buffers & Extraction Solvents (e.g., Methanol, Acetonitrile) | Quench metabolism and extract metabolites from cells or tissues. Solvent choice impacts the range of metabolites recovered [11]. |

Why is validating kinetic models with experimental data crucial in metabolomics research?

In metabolomics, kinetic models are powerful tools for predicting how metabolite concentrations change over time. However, these mathematical models are built on assumptions and simplifications. Validation against experimental data is the critical process that assesses whether a model accurately reflects real-world biology. Without this step, there is a high risk of drawing incorrect conclusions, which can misdirect research and hinder drug development efforts.

Proper validation ensures your model is not just fitting noise or artifacts in a specific dataset but has genuine predictive capability for new, unseen data from the population of interest [15]. It confirms that the model's parameters are precise and that its predictions are reliable enough to inform scientific and clinical decisions [16].

Troubleshooting Guide: Common Kinetic Model Validation Issues

| Problem Area | Specific Issue | Potential Causes | Corrective Actions |
|---|---|---|---|
| Data Quality | High residuals or poor fit across all data points. | Inaccurate measurements; high technical noise; inappropriate internal standards [17] [18]. | Verify instrument calibration; use isotopically labeled internal standards; implement rigorous QC protocols with pooled quality control (QC) samples [17] [18]. |
| Model Overfitting | The model fits the training data perfectly but fails to predict new experimental data. | The model is too complex, capturing noise rather than the underlying biological trend [15]. | Use cross-validation; split data into independent training and test sets; apply simpler models or regularization techniques [16] [15]. |
| Parameter Uncertainty | Fitted parameters (e.g., rate constants) have very wide confidence intervals. | Insufficient or low-quality data; parameters are highly correlated [16] [19]. | Increase replicate experiments; redesign experiments to collect data over a wider range of conditions; check for parameter correlation [16]. |
| Systematic Residuals | Residuals show a non-random pattern (e.g., a curve) when plotted against time or predicted values. | An incorrect model structure was chosen, failing to capture a key process in the system [16]. | Re-evaluate the model's underlying assumptions; consider alternative model structures that better reflect the biology [16] [19]. |
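Wide confidence intervals and correlated parameters, the Parameter Uncertainty row above, can be diagnosed from the model's sensitivity (Jacobian) matrix J at the fitted parameters. The covariance is approximately s²(JᵀJ)⁻¹, where s² is the residual variance. The sketch assumes an initial-rate Michaelis-Menten model v = Vmax·S/(Km + S), hypothetical fitted values, and hypothetical residuals that are identical in both designs, so only the choice of substrate levels differs. The resulting intervals are asymptotic approximations.

```python
from typing import Dict, List, Tuple

VMAX, KM = 10.0, 2.0                        # hypothetical fitted values
OFFSETS = [0.02, -0.03, 0.01, 0.03, -0.02]  # hypothetical measurement residuals


def mm_rate(s: float, vmax: float, km: float) -> float:
    return vmax * s / (km + s)


def param_uncertainty(subs: List[float], rates: List[float], vmax: float, km: float) -> Tuple[float, float, float]:
    """Approximate SE(vmax), SE(km) and corr(vmax, km) via cov = s^2 (J^T J)^-1."""
    # Analytic sensitivities: dv/dvmax = S/(km+S), dv/dkm = -vmax*S/(km+S)^2
    jac = [(s / (km + s), -vmax * s / (km + s) ** 2) for s in subs]
    resid = [r - mm_rate(s, vmax, km) for s, r in zip(subs, rates)]
    s2 = sum(e * e for e in resid) / (len(subs) - 2)  # n - p degrees of freedom
    a = sum(j[0] ** 2 for j in jac)                   # entries of J^T J
    b = sum(j[0] * j[1] for j in jac)
    d = sum(j[1] ** 2 for j in jac)
    det = a * d - b * b
    var_v, var_k, cov = s2 * d / det, s2 * a / det, -s2 * b / det
    return var_v ** 0.5, var_k ** 0.5, cov / (var_v * var_k) ** 0.5


designs = {"narrow": [0.1, 0.2, 0.3, 0.4, 0.5],  # all S << Km
           "wide": [0.5, 1.0, 2.0, 8.0, 16.0]}   # spans Km
results: Dict[str, Tuple[float, float, float]] = {}
for label, subs in designs.items():
    rates = [mm_rate(s, VMAX, KM) + e for s, e in zip(subs, OFFSETS)]
    results[label] = param_uncertainty(subs, rates, VMAX, KM)
    se_v, se_k, corr = results[label]
    print(f"{label}: Km = {KM} +/- {1.96 * se_k:.2f}, corr(Vmax, Km) = {corr:.3f}")
```

When all substrate levels sit far below Km, the rate depends almost only on the ratio Vmax/Km. The two parameters then become nearly perfectly correlated, and Km's interval becomes very wide. Extending the design across Km is the "wider range of conditions" remedy in the table.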

Frequently Asked Questions (FAQs)

How do I know if my model is complex enough, but not overfitted?

A good fit is judged not only by low residuals but also by the analysis of residuals and the model's predictive capability. The residuals (the differences between observed data and model predictions) should be randomly distributed. If they show a systematic pattern, it indicates the model is missing a key element of the underlying biology [16]. To avoid overfitting, you must test the model on an independent dataset that was not used during the model-building process (a "test set"). A significant performance gap between the training and test data is a classic sign of overfitting [15].
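One quick check on whether residuals are randomly distributed is a runs test on their signs, taken in time order. Too few sign changes suggest a systematic trend, and too many suggest suspicious alternation. The sketch uses the Wald-Wolfowitz normal approximation on hypothetical residuals. With only a handful of time points the approximation is rough, so inspect the residual plot as well rather than relying on the z-score alone.

```python
import math
from typing import List


def runs_test_z(residuals: List[float]) -> float:
    """Wald-Wolfowitz runs test on the signs of time-ordered residuals.

    Zeros are dropped. z well below -1.96 means too few sign changes
    (a systematic trend); z well above +1.96 means suspicious alternation.
    """
    signs = [r > 0 for r in residuals if r != 0]
    n_pos = sum(signs)
    n_neg = len(signs) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("all residuals have the same sign: clearly systematic")
    n = n_pos + n_neg
    runs = 1 + sum(1 for a, b in zip(signs, signs[1:]) if a != b)
    expected = 2 * n_pos * n_neg / n + 1
    variance = 2 * n_pos * n_neg * (2 * n_pos * n_neg - n) / (n ** 2 * (n - 1))
    return (runs - expected) / math.sqrt(variance)


# Hypothetical residuals, ordered by sampling time
scattered = [0.1, -0.2, -0.05, 0.15, 0.05, -0.1, 0.2, -0.15, -0.1, 0.1, -0.05, 0.05]
curved = [-0.3, -0.2, -0.1, -0.05, 0.1, 0.2, 0.25, 0.2, 0.1, -0.05, -0.2, -0.3]
print(round(runs_test_z(scattered), 2))  # within +/-1.96: no evidence of structure
print(round(runs_test_z(curved), 2))     # strongly negative: the model misses a trend
```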

What is the role of quality control (QC) samples in validating metabolomics data?

QC samples are essential for monitoring technical performance and enabling post-acquisition data correction. In large-scale metabolomics studies, a pooled QC sample (a mixture of a small amount of all study samples) is analyzed repeatedly throughout the analytical sequence. This helps track and correct for instrumental drift, such as a drop in MS signal over time [17]. The consistency of the QC samples, measured by metrics like the coefficient of variation (CV%), is a key indicator of data quality, with CV% ideally below 15% for targeted analysis and below 30% for untargeted studies [18].

What are the best practices for reporting model validation results?

When reporting validation, transparency is key. You should:

- Clearly define your population of interest and ensure your test data is representative of it [15].

- Report the validation scheme used (e.g., train/test split, double cross-validation) and any data preprocessing steps, ensuring they were performed without using the test data [15].

- Provide clear performance metrics and openly discuss any limitations or potential risks in your validation strategy [15].

- Go beyond a single goodness-of-fit metric. Evaluate parameter precision and the model's predictive ability outside the training sample [16].

Essential Protocols for Validation

Protocol: Using Internal Standards and QC Samples for Data Normalization

This protocol is critical for ensuring data quality in large-scale metabolomic studies where instrumental drift can occur [17].

- Internal Standards (IS) Preparation: Add a mix of isotopically labeled standards (e.g., deuterated or ¹³C-labeled metabolites) to every sample before processing. These compounds mimic the chemical behavior of native metabolites but are distinguishable by mass spectrometry [17] [18].

- QC Sample Preparation: Create a pooled QC sample by combining a small aliquot of every biological sample in the study [17] [18].

- Sequential Analysis: Analyze samples and QCs in a randomized order. Inject the pooled QC sample repeatedly throughout the sequence (e.g., every 8-10 injections) to monitor system stability [17].

- Data Normalization: Apply normalization algorithms (e.g., SERRF) that use the signal trends from the IS and pooled QCs to correct for intra- and inter-batch systematic errors in the experimental data [17] [20].
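The sequence design and a basic QC-based drift correction can be sketched as follows. For brevity, the correction interpolates one feature's pooled-QC signal linearly between neighbouring QC injections and divides each injection by that trend, rescaled to the QC median. This is a deliberately simpler stand-in for SERRF, which fits random-forest models instead. The sample names, the QC interval, and the 1%-per-injection signal decay are hypothetical.

```python
import random
import statistics
from typing import List


def build_sequence(samples: List[str], qc_every: int = 8, seed: int = 1) -> List[str]:
    """Randomize sample order, start with a QC and insert a QC every `qc_every` samples."""
    order = samples[:]
    random.Random(seed).shuffle(order)
    seq = ["QC"]
    for i, name in enumerate(order, start=1):
        seq.append(name)
        if i % qc_every == 0:
            seq.append("QC")
    if seq[-1] != "QC":
        seq.append("QC")  # end on a QC so every sample is bracketed
    return seq


def qc_drift_correct(seq: List[str], intensity: List[float]) -> List[float]:
    """Divide each injection by the linearly interpolated QC trend (one feature)."""
    qc_pos = [i for i, name in enumerate(seq) if name == "QC"]
    qc_median = statistics.median([intensity[i] for i in qc_pos])
    corrected = []
    for i, value in enumerate(intensity):
        left = max(p for p in qc_pos if p <= i)   # nearest QC before (or at) i
        right = min(p for p in qc_pos if p >= i)  # nearest QC after (or at) i
        if left == right:
            trend = intensity[left]
        else:
            w = (i - left) / (right - left)
            trend = (1 - w) * intensity[left] + w * intensity[right]
        corrected.append(value * qc_median / trend)
    return corrected


samples = [f"S{k:02d}" for k in range(1, 17)]
seq = build_sequence(samples, qc_every=8)
# Hypothetical feature with constant true level whose signal decays 1% per injection
raw = [1000.0 * (0.99 ** i) for i in range(len(seq))]
corrected = qc_drift_correct(seq, raw)
print(f"raw max/min: {max(raw) / min(raw):.3f}, corrected: {max(corrected) / min(corrected):.4f}")
```

Linear interpolation only tracks drift that is smooth between QC injections. This is one reason to inject QCs frequently and to consider model-based methods such as SERRF for large or multi-batch studies.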

Protocol: A Framework for Kinetic Model Validation

This protocol provides a step-by-step approach to rigorously validate your kinetic models [16] [15].

- Data Splitting: Split your experimental dataset into two independent parts: a model-building set (e.g., 70-80% of data) and a test set (the remaining 20-30%). The test set must not be used in any model fitting or selection steps [15].

- Model Fitting and Selection: Use the model-building set to train and select your candidate kinetic models. This step can involve techniques like cross-validation to tune meta-parameters [15].

- Residual Analysis: Fit the model to the entire model-building set and plot the residuals. Check that they are randomly distributed around zero. Systematic patterns indicate a poor model fit [16].

- Parameter Evaluation: Examine the estimated parameters (e.g., rate constants) for precision. Wide confidence intervals suggest the data is insufficient to reliably estimate that parameter [16].

- Final Validation Test: Apply the final, fully-trained model from Step 2 to the held-out test set. Compare the model's predictions against the actual experimental data in the test set to evaluate its true predictive power [16] [15].
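Steps 1 and 2 can be sketched with plain index bookkeeping: a one-time random hold-out split, followed by k-fold cross-validation folds drawn only from the model-building set. The 75/25 split, k = 5, and the seeds are illustrative assumptions. If your data contain related samples, such as repeated measurements of one culture or patient, split by group rather than by sample so that the test set stays independent.

```python
import random
from typing import List, Tuple


def holdout_split(n: int, test_frac: float = 0.25, seed: int = 0) -> Tuple[List[int], List[int]]:
    """Return (model_building_idx, test_idx); the test set is set aside once."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_test = round(n * test_frac)
    return sorted(idx[n_test:]), sorted(idx[:n_test])


def kfold(indices: List[int], k: int = 5, seed: int = 0) -> List[Tuple[List[int], List[int]]]:
    """Split model-building indices into k (train, validation) pairs."""
    idx = indices[:]
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    return [
        (sorted(j for f, fold in enumerate(folds) if f != i for j in fold), sorted(folds[i]))
        for i in range(k)
    ]


build_idx, test_idx = holdout_split(40)
for train, val in kfold(build_idx, k=5):
    # Fit candidate models on `train` and score them on `val` (tuning only)
    print(len(train), len(val))
# Only the final, chosen model is evaluated once on `test_idx`.
```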

Kinetic Model Validation Workflow

The Scientist's Toolkit

| Category | Item / Tool | Function in Validation |
|---|---|---|
| Research Reagents | Isotopically Labeled Internal Standards (e.g., ¹³C-glucose, deuterated amino acids) | Added to samples to correct for matrix effects, extraction efficiency, and instrument signal drift during data normalization [17] [18]. |
| Research Reagents | Certified Reference Materials | Provide known metabolite concentrations for absolute quantification and to verify method accuracy across laboratories [18]. |
| Research Reagents | Pooled Quality Control (QC) Samples | A pooled aliquot of all study samples, analyzed throughout the sequence to monitor system stability and for post-acquisition data correction [17] [18]. |
| Computational Tools | SERRF (Systematic Error Removal using Random Forest) | A normalization tool that uses the signals from pooled QC samples to correct technical variance and batch effects in metabolomics datasets [20]. |
| Computational Tools | Cross-Validation Routines | A statistical technique used to assess how the results of a model will generalize to an independent dataset, crucial for preventing overfitting [16] [15]. |
| Computational Tools | COVRECON | A computational workflow that integrates metabolomics data with metabolic network models to infer key biochemical regulations and interactions [21]. |

Validation Component Relationships

Core Concepts: Target Identification and Mechanism of Action

What are Target Identification (TID) and Mechanism of Action (MoA), and why are they critical in drug discovery?

Target Identification (TID) is the process of determining the specific molecular target (e.g., a protein, RNA molecule) that a drug interacts with. The Mechanism of Action (MoA) describes the broader biological consequences of this interaction, detailing how the drug's binding to its target produces a phenotypic change at the cellular or tissue level [22]. Understanding TID and MoA is crucial for optimizing drug efficacy, predicting and mitigating side effects, and guiding medicinal chemistry efforts. While some beneficial drugs were developed without this knowledge, elucidating TID/MoA provides tangible benefits for creating improved drug generations and is foundational for personalized medicine, as exemplified by trastuzumab for HER2-positive breast cancer [22] [23].

What are the two main screening approaches in early drug discovery?

The two general approaches are target-based screens and phenotypic screens [22].

- Target-Based Screens: This is a reductionist approach. It uses in vitro biochemical assays to screen compounds against a specific, purified molecular target hypothesized to be relevant to a disease. Its advantages include high efficiency, cost-effectiveness, and high throughput. A major disadvantage is that it requires substantial prior understanding of the disease cause, and drugs discovered this way can fail in clinical trials if the initial target validation was incomplete [22].

- Phenotypic Screens: This is a holistic approach. It tests whether small molecules induce a desirable phenotypic change in a more biologically relevant system, such as cells, tissues, or whole animals. This method can discover new therapeutic targets and "pre-validates" the drug in a disease-relevant context. The primary disadvantage is that it requires a subsequent, often complex, effort to identify the molecular target responsible for the observed phenotype [22] [23].

Table: Comparison of Screening Approaches

| Feature | Target-Based Screening | Phenotypic Screening |
|---|---|---|
| Approach | Reductionist | Holistic |
| Assay System | In vitro, purified target | Cell-based, tissue-based, or whole-animal |
| Primary Readout | Interaction with a specific target | Observable phenotypic change |
| Key Advantage | Efficient, high-throughput, accelerates analog development | Disease-relevant context, discovers novel targets |
| Key Challenge | Requires deep prior disease knowledge; risk of incomplete target validation | Requires deconvolution to identify the molecular target(s) |

Methodological Guides: Experimental Protocols for Target Identification

FAQ: What are the primary experimental methods for identifying a drug's target?

The three main complementary approaches are direct biochemical methods, genetic interaction methods, and computational inference methods [23]. Most successful projects integrate findings from multiple approaches to confirm the target and understand off-target effects.

Protocol 1: Affinity-Based Pull-Down Methods

This direct biochemical method involves conjugating the small molecule to an affinity tag and using it to isolate its binding partners from a complex biological mixture [24].

Detailed Methodology:

- Probe Design and Synthesis: Chemically modify the small molecule of interest by conjugating it to an affinity tag (e.g., biotin) or immobilizing it directly on a solid support (e.g., agarose beads). A linker (e.g., polyethylene glycol, PEG) is often used to attach the molecule in a way that minimizes interference with its biological activity [24].

- Control Preparation: Prepare control beads loaded with an inactive analog of the compound or capped without any compound. This is critical for distinguishing specific binding from nonspecific background interactions [23].

- Incubation with Biological Sample: Incubate the compound-conjugated beads with a cell lysate or protein mixture containing the putative target proteins.

- Wash and Elution: Wash the beads with a suitable buffer to remove non-specifically bound proteins. Elute the specifically bound proteins. Stringent wash conditions can bias results towards high-affinity binders, while milder washes may retain protein complexes [23].

- Target Analysis: Separate the eluted proteins using SDS-PAGE and identify them via mass spectrometry [24].
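When ranking mass spectrometry hits, the control beads and the competition assay described in this protocol supply the background. The scoring sketch below assumes spectral counts per protein from three samples: compound beads, control beads, and a competition sample in which the lysate was pre-incubated with free compound. Specific binders should be enriched over control and should lose signal under competition. The pseudocount, the 4-fold (log2 = 2) cutoffs, and the protein names and counts are hypothetical.

```python
import math
from typing import Dict, List, Tuple


def log2_ratio(a: float, b: float, pseudo: float = 1.0) -> float:
    """log2 of (a + pseudo) / (b + pseudo); the pseudocount avoids division by zero."""
    return math.log2((a + pseudo) / (b + pseudo))


def rank_candidates(
    probe: Dict[str, int], control: Dict[str, int], competed: Dict[str, int], min_log2: float = 2.0
) -> List[Tuple[str, float, float]]:
    """Keep proteins enriched over control beads AND reduced by free-compound competition."""
    hits = []
    for protein, count in probe.items():
        enrich = log2_ratio(count, control.get(protein, 0))
        competition = log2_ratio(count, competed.get(protein, 0))
        if enrich >= min_log2 and competition >= min_log2:
            hits.append((protein, round(enrich, 2), round(competition, 2)))
    return sorted(hits, key=lambda h: h[1], reverse=True)


# Hypothetical spectral counts
probe = {"KinaseX": 45, "HSP90": 60, "Tubulin": 30, "ReductaseY": 12}
control = {"KinaseX": 1, "HSP90": 55, "Tubulin": 28, "ReductaseY": 0}
competed = {"KinaseX": 3, "HSP90": 58, "Tubulin": 29, "ReductaseY": 10}
print(rank_candidates(probe, control, competed))
```

In this toy example, abundant "sticky" proteins (HSP90, tubulin) are removed by the control comparison. ReductaseY is enriched but not competed away, which hints that it binds the tag or linker rather than the pharmacophore.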

Troubleshooting Guide:

- Issue: High background of non-specifically bound proteins.

  - Solution: Optimize wash stringency (e.g., salt concentration, detergents). Use a different control compound or perform a competition assay by pre-incubating lysate with the free, unlabeled compound [23].

- Issue: The tagged molecule loses biological activity.

  - Solution: Redesign the probe, varying the site of attachment and the linker chemistry to preserve the pharmacophore [24].

Protocol 2: Photoaffinity Labeling (PAL)

A variant of affinity methods, PAL uses a photoreactive group to form a permanent, covalent bond with the target protein upon light activation, which is useful for capturing low-abundance or transient interactions [24].

Detailed Methodology:

- Probe Design: Synthesize a probe containing three elements: the small molecule of interest, a photoreactive group (e.g., benzophenone, diazirine), and an affinity tag (e.g., biotin, alkyne for click chemistry).

- Incubation and Cross-Linking: Incubate the probe with cells or lysates. Irradiate the sample with a specific wavelength of light to activate the photoreactive group, triggering covalent bond formation with nearby target proteins.

- Cell Lysis and Capture: Lyse the cells and capture the biotin-tagged protein complexes on streptavidin beads.

- Wash and Elution: Wash the beads thoroughly and elute the proteins.

- Identification: Identify the captured target proteins using SDS-PAGE and mass spectrometry [24].

Protocol 3: Genetic Interaction Methods

These methods perturb gene dosage or function, or select for genetic changes, and then measure how the perturbation affects a compound's potency.

Detailed Methodology:

- Resistance Mutagenesis: Grow cells under long-term selection with the drug. Isolate resistant clones and sequence their genomes to identify mutations. The mutated gene often encodes the drug target or a protein in the same pathway [23].

- Haploinsufficiency Profiling (HIP): In yeast, screen a library of heterozygous deletion strains against the drug. Deleting one copy of the drug-target gene lowers the target's dosage and makes that strain hypersensitive to the drug, so the most sensitized strain points to the target [23].

- CRISPR-based Genetic Screens: Use genome-wide CRISPR knockout or activation libraries to identify genes whose modification alters cellular sensitivity to the compound [23].

Diagram 1: Genetic Interaction Workflows for Target Identification.
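For pooled HIP screens, a common readout is the abundance of each strain's barcode with and without the drug. The Python below is a minimal scoring sketch under that assumption. The strain names and counts are hypothetical, and the library-size normalization and z-scoring are illustrative choices rather than the cited method.

```python
import numpy as np

def hip_scores(control_counts, drug_counts, pseudocount=0.5):
    """Return log2 fitness ratios and z-scores for heterozygous strains.

    Counts are normalized to library size so that differences in total
    sequencing depth between conditions do not masquerade as sensitivity.
    """
    ctrl = np.asarray(control_counts, dtype=float) + pseudocount
    drug = np.asarray(drug_counts, dtype=float) + pseudocount
    ctrl /= ctrl.sum()
    drug /= drug.sum()
    log_ratio = np.log2(drug / ctrl)          # < 0 means hypersensitive
    z = (log_ratio - log_ratio.mean()) / log_ratio.std(ddof=1)
    return log_ratio, z

strains = ["geneA/+", "geneB/+", "geneC/+", "geneD/+", "geneE/+"]  # hypothetical
ctrl = [1000, 950, 1020, 980, 1010]
drug = [1010, 940, 120, 990, 1000]
log_ratio, z = hip_scores(ctrl, drug)
candidate = strains[int(np.argmin(z))]        # most depleted strain
print(candidate)  # geneC/+
```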

Troubleshooting in Metabolomics and Bioanalysis

Metabolomics is key for understanding MoA by providing a snapshot of the biochemical phenotype. However, the data is prone to technical noise that can confound results if not corrected [25] [26].

FAQ: Why is my metabolomics data highly variable or irreproducible?

Technical variability can arise from multiple sources, including sample preparation inconsistencies, instrument drift, batch effects, and matrix effects (ion suppression/enhancement in MS) [27] [25] [26]. A systematic data correction process is essential to distinguish true biological signal from technical noise.

Troubleshooting Guide for Metabolomics & LC-MS/MS:

- Issue: No or few metabolites detected.

- Potential Causes & Fixes:

- Issue: Ion suppression in LC-MS/MS.

- Potential Causes & Fixes:

- Matrix effects from co-eluting compounds: Improve chromatographic separation. Use a stable isotope-labeled internal standard (SIL-IS) for each analyte. Because the SIL-IS co-elutes with the analyte, it experiences the same suppression, so quantifying by the analyte-to-IS signal ratio corrects for it [27] [26].

- Diagnosis: Continuously infuse the analyte post-column while injecting an extracted blank matrix. Suppression zones then appear as dips (negative peaks) in the otherwise steady signal trace [27].


- Issue: High analytical variance or batch effects.

- Potential Causes & Fixes:

- Inadequate normalization and correction: Apply a systematic data correction pipeline including normalization (to total signal or internal standards), batch effect correction, and drift alignment [25] [26].

- Best Practice: Use a universally ¹³C-labeled biological matrix as an internal standard spiked into every sample to correct for sample loss, ion suppression, and instrument drift [26].
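The reason a co-eluting SIL-IS corrects for ion suppression comes down to one ratio. Suppression scales the analyte and IS signals by the same factor, and that factor cancels. The Python below is a minimal sketch. The concentrations are hypothetical, and `response_factor` stands in for a value you would normally take from a calibration curve. It is set to 1.0 here as an assumption.

```python
def sil_is_concentration(analyte_area, is_area, is_conc, response_factor=1.0):
    """Estimate analyte concentration from the analyte/SIL-IS peak-area ratio.

    Assumes the SIL-IS co-elutes with the analyte, so ion suppression scales
    both peaks by the same factor and cancels in the ratio. response_factor
    (analyte signal per unit concentration relative to the IS) should come
    from calibration; 1.0 is a placeholder assumption.
    """
    return (analyte_area / is_area) * is_conc / response_factor

true_conc = 5.0   # µM, hypothetical
is_conc = 2.0     # µM of SIL-IS spiked into every sample
for suppression in (1.0, 0.6, 0.3):   # fraction of signal surviving the matrix
    analyte_area = 1e4 * true_conc * suppression
    is_area = 1e4 * is_conc * suppression
    # The estimate stays at ~5.0 µM regardless of the suppression level.
    print(suppression, sil_is_concentration(analyte_area, is_area, is_conc))
```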

Table: Common Bioanalytical Biases and Mitigation Strategies

| Bias Category | Example | Impact | Mitigation Strategy |
|---|---|---|---|
| Sample Preparation | Deviation in extraction, quenching, or storage time [25] | Alters measured analyte levels | Standardize and rigorously validate all protocols |
| Analytical Conditions | Instrument drift between runs [26] | Introduces batch effects | Use quality control (QC) reference samples and correct for drift |
| Sample Complexity | Matrix effects causing ion suppression/enhancement [27] | Distorts quantification accuracy | Use stable isotope-labeled internal standards (SIL-IS) |
| Interpretive | Assuming data is Gaussian-distributed and uncorrelated [25] | Leads to incorrect statistical inferences | Use non-parametric stats and account for metabolite correlations |
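The drift row of the table ("use QC reference samples and correct for drift") can be illustrated with a simple QC-anchored correction for one feature. This Python sketch fits a low-order polynomial to pooled-QC intensities versus injection order. It then divides every injection by the fitted trend and rescales to the median QC level. LOESS-based QC correction is common in practice, so the polynomial fit is a simplifying assumption, and the synthetic batch is hypothetical.

```python
import numpy as np

def qc_drift_correct(intensities, injection_order, is_qc, degree=2):
    """Correct within-batch signal drift for one feature using pooled QCs.

    A polynomial in injection order, fitted to the QC injections only,
    models the drift. All injections are divided by the fitted trend and
    rescaled to the median observed QC intensity.
    """
    y = np.asarray(intensities, dtype=float)
    x = np.asarray(injection_order, dtype=float)
    qc = np.asarray(is_qc, dtype=bool)
    coeffs = np.polyfit(x[qc], y[qc], deg=degree)
    trend = np.polyval(coeffs, x)
    return y / trend * np.median(y[qc])

# Synthetic batch: signal decays linearly by 1% per injection.
order = np.arange(30)
drift = 1.0 - 0.01 * order
is_qc = order % 5 == 0                         # a QC every fifth injection
signal = np.where(is_qc, 1000.0, 800.0)        # QCs and study samples differ
observed = signal * drift
corrected = qc_drift_correct(observed, order, is_qc, degree=1)
print(np.round(corrected[is_qc], 1))           # QC values are now flat
```

The correction removes the trend but keeps the ratio between study samples and QCs (here 800/1000). This is the behavior you want before feeding data into kinetic-model validation.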

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Reagents for Target ID and Metabolomics Experiments

| Reagent / Material | Function | Key Application Example |
|---|---|---|
| Biotin-Avidin/Streptavidin System | High-affinity interaction for purifying protein complexes. | Affinity-based pull-down; biotin-tagged small molecule is used to isolate target proteins with streptavidin beads [24]. |
| Photoaffinity Groups (e.g., Diazirines, Benzophenones) | Form covalent bonds with target proteins upon UV light activation. | Photoaffinity Labeling (PAL); captures transient or low-affinity drug-target interactions [24]. |
| Stable Isotope-Labeled Internal Standards (SIL-IS) | Correct for variability in sample preparation and analysis; enable absolute quantification. | Metabolomics data correction; corrects for matrix effects, ion suppression, and instrument drift [27] [26]. |
| CRISPR Library (Knockout/Activation) | Systematically modulate gene expression across the genome. | Genetic interaction screens; identify genes that confer resistance or sensitivity to a drug, pointing to its target or pathway [23]. |

Integrating TID and MoA with Kinetic Models in Metabolomics

Validating kinetic models against experimental metabolomics data requires high-quality, bias-corrected data. Understanding a drug's MoA provides the biological context to constrain and interpret these models.

Workflow for Integration:

- Perturbation: Treat a biological system with the compound whose TID/MoA is being studied.

- Metabolomic Profiling: Collect high-quality, quantitative metabolomics data at multiple time points. This data must be corrected for technical biases (see Troubleshooting section) to ensure it reflects true biology [26].

- Data Integration and Inverse Modeling: Use computational methods like inverse Jacobian analysis (e.g., COVRECON workflow) to infer the differential biochemical regulations between treated and untreated states [21]. This analysis can identify key regulatory processes impacted by the drug.

- Kinetic Model Validation/Refinement: The inferred regulatory changes from the data, guided by the known or hypothesized MoA, are used to test and refine kinetic models of the metabolic network. The model's predictions should align with the empirical MoA data [21].

Diagram 2: Integrating Metabolomics with Kinetic Model Validation.
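Inverse Jacobian approaches are generally built on a fluctuation-dissipation relation, J C + C Jᵀ = −2D. Here J is the Jacobian, C is the covariance of metabolite levels across replicates, and D is a fluctuation matrix. The Python below shows only the forward direction: given an assumed J and D, it computes the C they imply. Inverse methods such as the COVRECON workflow tackle the harder problem of estimating J from measured C. The matrices are hypothetical, and the diagonal D is an assumption.

```python
import numpy as np
from scipy.linalg import solve_continuous_lyapunov

# Hypothetical 3-metabolite Jacobian (stable: all eigenvalues have negative real parts).
J = np.array([[-1.0, 0.0, 0.2],
              [0.8, -1.5, 0.0],
              [0.0, 0.6, -0.9]])
# Fluctuation matrix D: assumed diagonal, i.e. independent noise on each metabolite.
D = np.diag([0.05, 0.02, 0.03])

# solve_continuous_lyapunov(A, Q) solves A X + X A^H = Q,
# so passing Q = -2D returns the steady-state covariance C.
C = solve_continuous_lyapunov(J, -2.0 * D)

residual = J @ C + C @ J.T + 2.0 * D
print(np.abs(residual).max())   # ~0 up to floating-point error
```

Changing an entry of J, for example to mimic a drug-induced regulatory change, changes C. Comparing covariance structures between treated and untreated states lets the inverse analysis point to differential regulation.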

Advanced Methodologies: Machine Learning and High-Throughput Kinetic Modeling

Generative Machine Learning for Kinetic Parameterization (e.g., RENAISSANCE, DeePMO)

Core Concepts: Frameworks for Kinetic Parameterization

What are RENAISSANCE and DeePMO, and how do they address key challenges in kinetic modeling?

RENAISSANCE (REconstruction of dyNAmIc models through Stratified Sampling using Artificial Neural networks and Concepts of Evolution strategies) and DeePMO (Deep learning-based kinetic model optimization) are generative machine learning frameworks designed to overcome the primary bottleneck in kinetic modeling: the lack of knowledge about in vivo kinetic parameter values [13] [29]. They enable the efficient parameterization of large-scale, biologically relevant kinetic models.

The table below summarizes their core characteristics:

| Feature | RENAISSANCE | DeePMO |
|---|---|---|
| Primary Approach | Generative machine learning using Evolution Strategies [13] [29] | Iterative deep learning with a hybrid DNN [30] |
| Key Innovation | Parameterizes models without needing pre-existing training data [13] [29] | Maps high-dimensional parameters to multi-source performance metrics [30] |
| Learning Strategy | Natural Evolution Strategies (NES) to optimize generator networks [13] [29] | Iterative sampling-learning-inference strategy [30] |
| Typical Application | Intracellular metabolic states (e.g., E. coli metabolism) [13] [29] | Chemical kinetic models (e.g., fuel combustion) [30] |
| Key Advantage | Dramatically reduces computation time; characterizes metabolic states accurately [13] [29] | Effectively explores high-dimensional parameter spaces; versatile across fuel types [30] |

How does the RENAISSANCE framework specifically work?

RENAISSANCE operates through a four-step iterative process to create kinetic models that match experimentally observed dynamics [13] [29]:

- Initialization: A population of feed-forward neural networks (generators) is initialized with random weights.

- Generation: Each generator takes random noise as input and produces a batch of kinetic parameter sets.

- Evaluation: Each parameter set is used to parameterize a kinetic model. The model's dynamics are evaluated (e.g., by calculating the dominant time constant from the Jacobian's eigenvalues), and the generator is rewarded based on how many models match experimental observations.

- Evolution: The generators' weights are updated based on their rewards using Natural Evolution Strategies (NES), creating a new generation of generators. This process repeats until the generators reliably produce valid models.
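The generate-evaluate-evolve loop can be sketched at toy scale in Python. Several things here are assumptions, not the RENAISSANCE implementation. The "kinetic model" is a two-step pathway with eigenvalues −k1 and −k2. The generator is a linear map from noise to log rate constants rather than a multilayer network. The NES update uses Gaussian weight perturbations, which play the role of the generator population, with standardized rewards. The reward, as in the description above, is the fraction of generated models that meet a timescale criterion.

```python
import numpy as np

# Toy stand-in for a kinetic model: a two-step pathway whose Jacobian is
# lower-triangular with eigenvalues -k1 and -k2 (rate constants in 1/h).
def max_eigenvalue(k):
    J = np.array([[-k[0], 0.0],
                  [k[0], -k[1]]])
    return np.linalg.eigvals(J).real.max()

def is_valid(k, lam_threshold=-2.5):
    # "Valid" = the slowest mode decays faster than the threshold (1/h).
    return max_eigenvalue(k) < lam_threshold

def generate(theta, noise):
    # Linear "generator": noise (n x 3) -> positive rate constants (n x 2).
    W = theta[:6].reshape(3, 2)
    b = theta[6:]
    return np.exp(noise @ W + b)

def reward(theta, rng, batch=32):
    # Reward = incidence of valid models in a batch of generated parameter sets.
    params = generate(theta, rng.standard_normal((batch, 3)))
    return float(np.mean([is_valid(k) for k in params]))

def nes(generations=20, pop=20, sigma=0.1, lr=0.2, seed=0):
    rng = np.random.default_rng(seed)
    theta = np.concatenate([0.3 * rng.standard_normal(6), [0.5, 0.5]])
    history = []
    for _ in range(generations):
        eps = rng.standard_normal((pop, theta.size))      # weight perturbations
        rewards = np.array([reward(theta + sigma * e, rng) for e in eps])
        if rewards.std() > 0:                              # no signal -> no update
            shaped = (rewards - rewards.mean()) / rewards.std()
            # NES gradient estimate: reward-weighted average of perturbations.
            theta = theta + lr / (pop * sigma) * (eps.T @ shaped)
        history.append(reward(theta, rng))
    return theta, history

theta, history = nes()
print(f"incidence: first {history[0]:.2f}, last {history[-1]:.2f}")
```

Because the reward counts valid models, the update never needs gradients of the kinetic model itself. That is the main appeal of an evolution-strategy optimizer when each evaluation involves eigenvalue analysis or simulation.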

Troubleshooting Guide: Common Experimental Issues

My generative model fails to converge to biologically plausible kinetic parameters. What could be wrong?

This is often related to input data quality or model configuration. Below is a table of common issues and solutions:

| Problem | Potential Causes | Solutions & Verification Steps |
|---|---|---|
| Slow or No Convergence | Poorly defined steady-state input profile; incorrect hyperparameters [13]. | Verify steady-state fluxes and concentrations with thermodynamics-based flux balance analysis [13] [29]. Perform hyperparameter tuning for the generator network [13]. |
| Generated Models are Theoretically Invalid | Thermodynamically infeasible parameters are being generated. | Ensure thermodynamic constraints (e.g., reaction directionality from Gibbs free energy) are integrated into the model's structure during steady-state calculation [31]. |
| Poor Generalization to New Data | Overfitting to the specific steady-state data used for training. | Validate model robustness by testing if the system returns to steady state after perturbing metabolite concentrations (e.g., ±50% perturbation) [13]. |
| High Uncertainty in Parameter Estimates | Sparse or low-quality experimental data for reconciliation. | Use the framework's ability to integrate diverse omics data (proteomics, transcriptomics) and reconcile them with sparse kinetic data to reduce uncertainty [13] [29]. |
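The directionality constraint in the table follows from the second law. A reaction can carry net flux only in the direction in which its Gibbs free energy change is negative, using ΔG' = ΔG'° + RT ln Q. The Python below is a minimal check for a single reaction. It assumes unit stoichiometric coefficients and concentrations in molar, and the ΔG'° and concentrations are hypothetical.

```python
import numpy as np

R = 8.314e-3  # gas constant, kJ/(mol*K)

def reaction_gibbs(dg0_prime, substrates, products, temperature=310.15):
    """ΔG' = ΔG'° + RT ln Q for a reaction with unit stoichiometries.

    substrates/products are concentrations in M; unit stoichiometry is a
    simplifying assumption (otherwise raise each term to its coefficient).
    """
    q = np.prod(products) / np.prod(substrates)
    return dg0_prime + R * temperature * np.log(q)

def direction_is_feasible(flux, dg):
    # Net flux must run down the ΔG' gradient: flux and ΔG' have opposite signs.
    return flux * dg < 0

dg = reaction_gibbs(-10.0, substrates=[1e-3], products=[1e-3])
print(dg)                                   # -10.0 (Q = 1)
print(direction_is_feasible(+1.0, dg))      # True
print(direction_is_feasible(-1.0, dg))      # False
```

A high product-to-substrate ratio can reverse the sign of ΔG'. Checking each steady-state profile this way catches flux directions that no choice of kinetic parameters could reproduce.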

How do I validate a kinetic model parameterized by RENAISSANCE against my experimental metabolomics data?

Validation should assess both dynamic behavior and steady-state predictions. A key metric is the dominant time constant. It is derived from λmax, the largest real part among the eigenvalues of the model's Jacobian matrix at steady state, which corresponds to the slowest-relaxing mode. This constant should correspond to biologically observed timescales, such as the cell's doubling time [13].

- Protocol: Dynamic Timescale Validation

- Objective: Confirm the model's dynamic response matches experimental observations (e.g., E. coli doubling time of 134 min) [13].

- Procedure:

- Compute the Jacobian matrix for the parameterized kinetic model at steady state.

- Calculate the eigenvalues of the Jacobian.

- Identify λmax, the largest real part among the eigenvalues (negative for a stable model).

- Calculate the dominant time constant as τ = -1/λmax.

- Success Criterion: The dominant time constant should be consistent with physiology. For example, in a validated E. coli model, λmax was required to be below -2.5 h⁻¹. This corresponds to τ of at most 0.4 h (~24 min), which ensures that metabolic processes settle well before cell division [13].

- Robustness Check: Perturb the model's steady-state metabolite concentrations (e.g., ±50%) and verify that the system returns to the original steady state within the expected timeframe [13].
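The timescale and robustness checks can be sketched with NumPy and SciPy. The model is a hypothetical two-step Michaelis-Menten pathway with time in hours, so eigenvalues come out in h⁻¹. The Jacobian is obtained by central finite differences rather than analytically. The 5-hour return window and the tolerance are illustrative assumptions.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Hypothetical pathway: constant influx -> x1 -> x2 -> out (time in hours).
V_IN = 1.0
VMAX = np.array([4.0, 6.0])
KM = np.array([0.5, 0.5])

def rhs(t, x):
    v1 = VMAX[0] * x[0] / (KM[0] + x[0])
    v2 = VMAX[1] * x[1] / (KM[1] + x[1])
    return [V_IN - v1, v1 - v2]

def steady_state():
    # Analytical steady state: each step carries the influx V_IN.
    return KM * V_IN / (VMAX - V_IN)

def jacobian(x, h=1e-6):
    # Central finite differences of the right-hand side.
    n = len(x)
    J = np.zeros((n, n))
    for j in range(n):
        dx = np.zeros(n)
        dx[j] = h
        J[:, j] = (np.array(rhs(0, x + dx)) - np.array(rhs(0, x - dx))) / (2 * h)
    return J

x_ss = steady_state()
lam_max = np.linalg.eigvals(jacobian(x_ss)).real.max()   # 1/h
tau_min = -1.0 / lam_max * 60.0                          # minutes
print(lam_max, tau_min)   # ≈ -4.5 and ≈ 13.3

# Robustness check: scale steady-state concentrations by 0.5 and 1.5
# (i.e. ±50%) and confirm the trajectory returns to x_ss.
returns = {}
for factor in (0.5, 1.5):
    sol = solve_ivp(rhs, (0.0, 5.0), x_ss * factor, rtol=1e-8, atol=1e-10)
    returns[factor] = np.allclose(sol.y[:, -1], x_ss, rtol=1e-3)
print(returns)
```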

Experimental Protocols & Methodologies

What is a detailed protocol for implementing a RENAISSANCE-like pipeline to characterize a metabolic network?

The following methodology outlines the key steps, as demonstrated in an E. coli case study [13].

| Phase | Action | Purpose & Technical Notes |
|---|---|---|
| 1. Input Preparation | Compute steady-state profiles. Use thermodynamics-based flux balance analysis to integrate experimental data (e.g., metabolomics, fluxomics) and generate thousands of possible steady-state profiles of metabolite concentrations and fluxes [13]. | Provides a physiologically feasible starting point for kinetic parameterization. |
| 2. Model Scaffolding | Define the network structure. Compile the stoichiometric matrix, regulatory structures, and rate laws for all reactions in the network. This often uses an existing model as a scaffold [13]. | Defines the mathematical structure of the ODEs that form the kinetic model. |
| 3. ML Configuration | Set up the generator and NES. Configure a feed-forward neural network as the generator. Define NES hyperparameters (e.g., population size, noise injection level, reward function). A three-layer network has been successfully used [13]. | The core engine for generating and optimizing kinetic parameters. |
| 4. Iterative Optimization | Run the RENAISSANCE loop. Execute the four-step process (Initialize, Generate, Evaluate, Evolve) for multiple generations (e.g., 50 generations). Track the incidence of valid models [13]. | Evolves the generator to produce increasingly better parameter sets. |
| 5. Validation & Analysis | Test model dynamics and robustness. Validate the final models using the timescale and perturbation checks described in the troubleshooting section [13]. | Confirms the biological relevance and predictive power of the generated models. |

What are the essential computational tools and databases for generative kinetic parameterization?

This table lists key resources mentioned in the research for building and validating kinetic models.

| Category | Tool / Resource | Function & Application |
|---|---|---|
| Generative Frameworks | RENAISSANCE [13] [29] | Generative ML framework for parameterizing metabolic kinetic models without training data. |
| | DeePMO [30] | Deep learning-based optimization for high-dimensional parameters in chemical kinetic models. |
| Kinetic Modeling Tools | SKiMpy [31] | Semiautomated workflow that uses stoichiometric models as a scaffold to construct and parameterize large kinetic models. |
| | MASSpy [31] | A framework for kinetic model construction, often using mass-action rate laws, and well-integrated with constraint-based modeling tools. |
| | Tellurium [31] | A versatile tool for kinetic modeling in systems and synthetic biology, supporting standardized model formulations. |
| Data Integration | COVRECON [21] | A method for analyzing causal molecular dynamics and inferring metabolic network interactions from multi-omics data. |
| Analytical Platforms | LC-MS / NMR [32] [6] | Predominant analytical platforms for metabolomics data generation, which serve as critical input and validation data for kinetic models. |

Core Concepts and Workflow

What is the value of integrating proteomics, fluxomics, and metabolomics?

Integrating proteomics, fluxomics, and metabolomics provides a comprehensive view of cellular processes by connecting different regulatory layers. Proteomics identifies and quantifies proteins, including the enzymes that catalyze metabolic reactions. Metabolomics measures metabolite concentrations, which represent the end products of cellular processes. Fluxomics quantifies metabolic reaction rates, showing the actual metabolic activity. Analyzed together, they provide bidirectional insights: which enzymes regulate metabolic fluxes, and how metabolic changes feed back to modulate protein function through allosteric regulation or post-translational modifications [33] [12].

This integration is particularly powerful for kinetic model validation, as it allows researchers to:

- Test whether predicted flux distributions align with measured enzyme abundances and metabolite concentrations

- Identify discrepancies between enzyme abundance and activity that suggest post-translational regulation

- Validate model predictions against multiple types of experimental data simultaneously [13] [12]

What are the typical workflows for multi-omics analysis?

A typical multi-omics workflow involves several interconnected phases, as outlined below:

Experimental Phase:

- Sample Preparation: Use joint extraction protocols when possible to simultaneously recover proteins and metabolites from the same biological material. Keep samples on ice and process rapidly to minimize degradation. Include internal standards for accurate quantification [33].

- Data Acquisition:

- Proteomics: Use LC-MS/MS with Data-Independent Acquisition (DIA) for high reproducibility and broad proteome coverage, or Tandem Mass Tags (TMT) for multiplexed quantification across samples.

- Metabolomics: Employ LC-MS or GC-MS platforms. LC-MS offers broader metabolite coverage, while GC-MS provides excellent resolution for volatile compounds.

- Fluxomics: Utilize stable isotope tracing and metabolic flux analysis (MFA) to quantify metabolic reaction rates [33] [12].

Computational Phase:

- Data Preprocessing: Apply normalization techniques (log transformation, quantile normalization) and batch effect correction to harmonize datasets [33] [34].

- Kinetic Model Parameterization: Use frameworks like RENAISSANCE that employ machine learning to efficiently parameterize kinetic models matching experimental observations [13].

- Integration & Analysis: Apply multivariate statistics, correlation analysis, and pathway mapping to identify relationships across omics layers [35] [33].

- Validation & Interpretation: Compare model predictions with experimental data, identify regulatory mechanisms, and generate testable hypotheses [12].

Troubleshooting Common Experimental Issues

Data Quality and Technical Variability

Q: How do I handle different data scales and technical variability across omics datasets?

A: Technical variability arises from different measurement techniques, dynamic ranges, and noise distributions. Address this through:

- Normalization Strategies:

- Metabolomics: Apply log transformation to stabilize variance and reduce skewness

- Proteomics: Use quantile normalization or variance stabilization

- Fluxomics: Normalize fluxes to reference reactions or total flux

- Batch Effect Correction: Use tools like ComBat to mitigate technical variation across different processing batches [33]

- Common Reference Materials: Implement ratio-based profiling by scaling absolute feature values of study samples relative to a concurrently measured common reference sample (e.g., using materials like the Quartet Project references) [36]
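Two of the normalization steps above, log transformation and quantile normalization, can be combined in a short sketch. The Python below log2-transforms a features × samples matrix and then forces every sample onto the same value distribution, namely the mean of the sorted columns. Tie handling (ties are broken by order) and the pseudocount are simplifying assumptions. ComBat-style batch correction is not shown.

```python
import numpy as np

def log_quantile_normalize(X, pseudocount=1.0):
    """log2-transform, then quantile-normalize a features x samples matrix."""
    L = np.log2(np.asarray(X, dtype=float) + pseudocount)
    order = np.argsort(L, axis=0)                 # row indices sorted per column
    rank_means = np.sort(L, axis=0).mean(axis=1)  # shared reference distribution
    Q = np.empty_like(L)
    for j in range(L.shape[1]):
        # The k-th smallest value in each column receives the k-th rank mean.
        Q[order[:, j], j] = rank_means
    return Q

X = np.array([[5.0, 4.0, 3.0],
              [2.0, 1.0, 4.0],
              [3.0, 4.5, 6.0],
              [4.0, 2.0, 8.0]])   # 4 metabolites x 3 samples, hypothetical
Q = log_quantile_normalize(X)
print(np.sort(Q, axis=0))         # every column now has identical sorted values
```

Quantile normalization assumes most features do not change between samples. That assumption is often reasonable for technical replicates, but it may erase genuine global shifts, such as a drug that changes total metabolite content.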

Q: My datasets have different dimensionalities - will this affect integration?

A: Yes, larger data modalities tend to be overrepresented in integrated analyses. To address this:

- Filter uninformative features based on minimum variance thresholds

- Aim to have different omics layers within the same order of magnitude in terms of feature numbers

- If unavoidable, be aware that smaller sources of variation in the smaller dataset might be missed [34]
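A minimal way to bring omics layers to comparable sizes is to keep only the most variable features of the larger layer. In the Python below, capping the larger layer at the size of the smaller one is a hypothetical choice. A minimum-variance threshold, as described above, is an equally valid alternative. The layer sizes are illustrative.

```python
import numpy as np

def top_variance_features(X, n_keep):
    """Keep the n_keep most variable features (rows) of a features x samples matrix."""
    X = np.asarray(X, dtype=float)
    variances = X.var(axis=1, ddof=1)
    keep = np.sort(np.argsort(variances)[::-1][:n_keep])   # preserve original order
    return X[keep], keep

rng = np.random.default_rng(1)
proteomics = rng.normal(size=(5000, 12))      # hypothetical layer sizes
metabolomics = rng.normal(size=(300, 12))
prot_filtered, _ = top_variance_features(proteomics, n_keep=metabolomics.shape[0])
print(prot_filtered.shape, metabolomics.shape)   # (300, 12) (300, 12)
```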

Kinetic Model Validation Challenges

Q: How do I resolve discrepancies between model predictions and experimental measurements?

A: Discrepancies often reveal important biology. Follow this systematic approach:

- Verify Data Quality: Check for consistency in sample processing, normalization effectiveness, and potential batch effects [33] [36].

- Check for Post-Translational Regulation: High enzyme abundance with low flux may indicate inhibitory modifications (phosphorylation, acetylation) not captured in the model [12].

- Examine Allosteric Regulation: Metabolite concentrations inconsistent with flux patterns may suggest unmodeled allosteric regulation [12].

- Validate Kinetic Parameters: Use frameworks like RENAISSANCE to estimate missing kinetic parameters and reconcile them with sparse experimental data [13].

- Update Model Structure: Add missing regulatory interactions or pathway branches suggested by multi-omics discrepancies [14].

Q: How can I estimate missing kinetic parameters for my model?

A: The RENAISSANCE framework provides an efficient approach:

- Uses generative machine learning with natural evolution strategies to parameterize kinetic models

- Requires steady-state profiles of metabolite concentrations and metabolic fluxes as input

- Optimizes kinetic parameters to produce dynamic metabolic responses with timescales matching experimental observations

- Can substantially reduce parameter uncertainty and improve accuracy compared to traditional methods [13]

Biological Interpretation Challenges

Q: How do I interpret relationships between protein abundance, metabolic fluxes, and metabolite concentrations?

A: These relationships provide insights into regulatory mechanisms:

| Relationship Pattern | Potential Interpretation | Regulatory Mechanism |
|---|---|---|
| High enzyme abundance + High flux + Elevated product metabolites | Active pathway with minimal regulation | Transcriptional control |
| High enzyme abundance + Low flux + Accumulated substrate metabolites | Potential inhibition | Post-translational modification or allosteric regulation |
| Low enzyme abundance + High flux + Appropriate metabolite levels | High enzyme efficiency | Evolutionary optimization or compensatory mechanisms |
| Disconnect between flux and metabolite changes | Regulatory network effects | Feedback/feedforward regulation [37] [12] |

Q: How can I identify which enzymes are most important for controlling metabolic fluxes?

A: Apply Inverse Metabolic Control Analysis (IMCA):

- Use kinetic models and metabolomics data to predict changes in enzyme activities

- The method identifies enzymes that strongly regulate metabolite distributions

- For example, IMCA applied to yeast sphingolipid metabolism identified SPO14 as a key regulator of sphingolipid distribution among species [14]
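To first order, relative changes in metabolite levels relate to relative changes in enzyme activities through the concentration control coefficients of the kinetic model: Δln x ≈ Cˣ Δln e. Inverting this relation by least squares gives an IMCA-style estimate of which enzyme activities changed. The Python below is a simplified linear sketch, not the cited IMCA implementation. The control coefficients are hypothetical, and in practice they would be computed from your kinetic model.

```python
import numpy as np

def estimate_enzyme_changes(control_coeffs, dlog_metabolites):
    """Least-squares estimate of relative enzyme-activity changes.

    control_coeffs: (n_metabolites x n_enzymes) concentration control
        coefficients from a kinetic model (assumed known).
    dlog_metabolites: measured log fold changes of metabolite levels.
    Needs at least as many informative metabolites as enzymes.
    """
    dlog_e, *_ = np.linalg.lstsq(control_coeffs, dlog_metabolites, rcond=None)
    return dlog_e

C = np.array([[0.8, -0.3, 0.1],
              [-0.2, 0.9, 0.0],
              [0.1, -0.4, 0.7],
              [0.5, 0.2, -0.6]])          # hypothetical coefficients
true_dlog_e = np.array([0.0, 0.7, 0.0])   # only enzyme 2 changed
measured = C @ true_dlog_e
print(np.round(estimate_enzyme_changes(C, measured), 3))   # change only in enzyme 2
```

With real data, measurement noise and the linear approximation mean the estimate will not be exact. Large inferred changes should therefore be treated as hypotheses to test, such as candidate regulators, rather than as measurements.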

Essential Research Reagents and Computational Tools

Reference Materials and Standards

Table: Essential Multi-Omics Reference Materials

| Material Type | Purpose | Example Resources |
|---|---|---|
| Common Reference Materials | Enable ratio-based profiling across batches and platforms | Quartet Project reference materials (DNA, RNA, protein, metabolites from matched cell lines) [36] |
| Isotope-Labeled Internal Standards | Accurate quantification for proteomics and metabolomics | Stable isotope-labeled peptides and metabolites |
| Quality Control Materials | Monitor technical variability across experiments | Commercially available QC pools for each omics type |

Computational Tools and Frameworks

Table: Computational Tools for Multi-Omics Integration

| Tool Name | Primary Function | Application in Kinetic Modeling |
|---|---|---|
| RENAISSANCE | Parameterization of kinetic models using machine learning | Efficiently generates biologically relevant kinetic models matching experimental dynamics [13] |
| MOFA2 | Multi-omics factor analysis to capture latent factors | Identifies shared and unique sources of variation across omics layers [33] [34] |
| IMCA | Inverse metabolic control analysis | Predicts changes in enzyme activities from metabolomics data [14] |
| MetaboAnalyst | Pathway analysis and integration with proteomic data | Maps identified metabolites and proteins to biological pathways [33] |
| xMWAS | Network-based integration | Visualizes protein-metabolite interaction networks [33] |

Experimental Protocols for Model Validation

Protocol: Validating Kinetic Models Against Multi-Omics Data

Objective: Test whether a kinetic model accurately predicts cellular metabolic states using integrated proteomics, fluxomics, and metabolomics data.

Materials:

- Kinetic model of the metabolic network

- Proteomics data (enzyme abundances)

- Fluxomics data (reaction rates from MFA)

- Metabolomics data (metabolite concentrations)

- Computational tools (RENAISSANCE, IMCA, or similar frameworks)

Procedure:

Data Preprocessing:

Model Parameterization:

- Use steady-state profiles of metabolite concentrations and metabolic fluxes as input

- Apply machine learning frameworks like RENAISSANCE to estimate kinetic parameters

- Validate that generated models produce dynamic responses with biologically relevant timescales [13]

Model Validation:

- Compare model-predicted fluxes with experimentally measured fluxomics data

- Test whether model simulations recapitulate measured metabolite concentration changes

- Check consistency between enzyme abundances and predicted flux control coefficients

Discrepancy Analysis:

- Identify reactions where predictions disagree with experimental data

- Apply Inverse Metabolic Control Analysis to identify potential regulatory mechanisms

- Update model structure to include missing regulatory interactions [14]

Robustness Testing:

- Perturb steady-state metabolite concentrations (e.g., ±50%)

- Verify that the system returns to steady state within biologically relevant timescales

- Test model performance under different physiological conditions [13]

Troubleshooting Tips:

- If model fails to return to steady state after perturbation, check kinetic parameters and regulatory constraints

- If specific pathway predictions consistently disagree with data, consider missing isozymes or transporter reactions

- For poor agreement between predicted and measured fluxes, verify thermodynamic constraints and reaction reversibility
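The Model Validation and Discrepancy Analysis steps of this protocol can start with a simple comparison of predicted fluxes against MFA estimates. In the Python below, each deviation is scaled by the reported measurement SD, and reactions beyond a cut-off are flagged. The reaction names, flux values, and the cut-off of 2 are hypothetical.

```python
import numpy as np

def flux_discrepancies(reactions, predicted, measured, measured_sd, z_cut=2.0):
    """Flag reactions whose predicted flux deviates from MFA estimates.

    Deviations are scaled by the measurement SD from the flux analysis;
    z_cut = 2 is an illustrative choice.
    """
    predicted = np.asarray(predicted, dtype=float)
    measured = np.asarray(measured, dtype=float)
    z = (predicted - measured) / np.asarray(measured_sd, dtype=float)
    flagged = [rxn for rxn, zi in zip(reactions, z) if abs(zi) > z_cut]
    pearson_r = np.corrcoef(predicted, measured)[0, 1]
    return z, flagged, pearson_r

reactions = ["PGI", "PFK", "G6PDH", "PYK"]       # hypothetical data
z, flagged, pearson_r = flux_discrepancies(reactions,
                                           predicted=[70, 72, 30, 140],
                                           measured=[68, 71, 18, 138],
                                           measured_sd=[3, 3, 4, 6])
print(flagged)   # ['G6PDH']
```

A high overall correlation can hide a single badly predicted branch point, so the per-reaction scores, not the correlation, should drive the discrepancy analysis.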

Protocol: Ratio-Based Multi-Omics Profiling for Enhanced Reproducibility

Objective: Implement ratio-based profiling to improve reproducibility and integration across omics datasets.

Materials:

- Common reference materials (e.g., Quartet Project references)

- Study samples

- Appropriate omics measurement platforms

Procedure:

Experimental Design:

- Include common reference materials in each processing batch

- Process reference and study samples concurrently using identical protocols

Data Generation:

- Measure absolute feature values for both study samples and reference materials

- Apply standard quality control metrics for each omics type

Ratio Calculation:

- For each feature, calculate study sample values relative to reference values

- Use these ratios for all downstream analyses instead of absolute values [36]

Quality Assessment:

- Evaluate signal-to-noise ratio using built-in truth from reference materials

- Assess ability to correctly classify samples based on known relationships

Advantages:

- Reduces batch effects and technical variability

- Enables more reliable integration across platforms and laboratories

- Provides built-in quality assessment using known sample relationships [36]
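The ratio calculation step can be sketched in a few lines. The Python below divides each study feature by the mean of the reference material measured in the same batch and takes log2. It assumes batch effects are multiplicative and feature-specific, which is the case in which they cancel exactly. The data and bias factors are hypothetical.

```python
import numpy as np

def ratio_profile(study, reference):
    """log2 ratios of study samples to the batch's common reference.

    study: features x samples measured in one batch
    reference: features x replicates of the reference material, same batch
    """
    ref_mean = np.asarray(reference, dtype=float).mean(axis=1, keepdims=True)
    return np.log2(np.asarray(study, dtype=float) / ref_mean)

true_study = np.array([[10.0, 20.0], [5.0, 5.0]])   # 2 features x 2 samples
true_ref = np.array([[10.0, 10.0], [2.5, 2.5]])      # reference replicates
# Two batches with different feature-specific multiplicative biases (hypothetical).
bias_a = np.array([[1.0], [1.0]])
bias_b = np.array([[1.8], [0.6]])
ratios_a = ratio_profile(true_study * bias_a, true_ref * bias_a)
ratios_b = ratio_profile(true_study * bias_b, true_ref * bias_b)
print(np.allclose(ratios_a, ratios_b))   # True: the batch bias cancels
```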

High-Throughput and Genome-Scale Kinetic Modeling Frameworks

Frequently Asked Questions (FAQs)

General Framework Questions

What are the foundational mathematical components of a genome-scale kinetic model? Genome-scale kinetic models are built upon two core data matrices: the Stoichiometric Matrix (S) and the Gradient Matrix (G). The stoichiometric matrix, S, is derived from genomic data and describes the network structure and all biochemical transformations in a chemically accurate manner. The gradient matrix, G, contains the partial derivatives of the reaction rates with respect to the metabolite concentrations. The Jacobian matrix (J), which is central to dynamic analysis, is the product of these two matrices: J = S · G. This decomposition separates chemical network topology (S) from kinetic and thermodynamic properties (G) [38].
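The decomposition J = S · G can be made concrete with a three-reaction pathway. In the Python below, S is metabolites × reactions and G is reactions × metabolites, with G[i, j] = ∂vᵢ/∂xⱼ. The local sensitivities k2 and k3 are hypothetical values, not taken from any model in the cited work.

```python
import numpy as np

# Hypothetical linear pathway: v1 (constant uptake) -> x1 -> v2 -> x2 -> v3 (export)
# S: metabolites x reactions
S = np.array([[1, -1,  0],
              [0,  1, -1]], dtype=float)

# G: reactions x metabolites, G[i, j] = d v_i / d x_j at the steady state.
k2, k3 = 2.0, 5.0          # assumed local sensitivities (1/h)
G = np.array([[0.0, 0.0],  # uptake does not depend on x1 or x2
              [k2,  0.0],  # v2 depends on its substrate x1
              [0.0, k3]])  # v3 depends on its substrate x2

J = S @ G                  # metabolites x metabolites
print(J)                   # [[-2, 0], [2, -5]]
print(np.linalg.eigvals(J))
```

Only G changes when kinetic parameters or the steady state change; S stays fixed for a given network. This is why sampling approaches can concentrate on generating G while reusing the same S.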

What is the primary challenge in developing large-scale kinetic models? The primary challenge is the parameterization of models. Knowledge of exact reaction mechanisms and their associated parameters (e.g., Michaelis constants, maximal velocities) is often lacking. Furthermore, the mathematical equations describing biological systems are inherently underdetermined, meaning multiple parameter sets can reproduce the same experimental measurements, making it difficult to identify a unique, correct model [39].

Troubleshooting Computational and Modeling Issues

How can I generate kinetic models with biologically relevant dynamic properties more efficiently? Traditional Monte Carlo sampling methods often produce a large number of dynamically unstable or physiologically irrelevant models. To overcome this, use deep-learning-based frameworks like REKINDLE (Reconstruction of Kinetic Models using Deep Learning). REKINDLE employs generative adversarial networks (GANs) trained on existing kinetic parameter sets to efficiently generate new models that match experimentally observed dynamic responses, significantly improving the incidence of biologically relevant models from less than 1% to over 97% in some cases [39].

Our kinetic model simulations are computationally expensive. How can we speed them up? Integrating surrogate machine learning (ML) models can drastically boost computational efficiency. A demonstrated strategy involves replacing computationally intensive Flux Balance Analysis (FBA) calculations within integrated genome-scale and kinetic models with ML surrogates. This approach can achieve simulation speed-ups of at least two orders of magnitude, enabling tasks like large-scale parameter sampling and dynamic control optimization [40].

How can we integrate a new heterologous pathway model with an existing genome-scale model of a host? A novel strategy involves blending a detailed kinetic model of the heterologous pathway with a genome-scale metabolic model (GEM) of the production host. This method simulates the local nonlinear dynamics of the pathway enzymes and metabolites while being informed by the global metabolic state predicted by the GEM. Using surrogate ML models for the GEM calculations makes this integration computationally feasible for practical applications like predicting metabolite dynamics under genetic perturbations [40].

Troubleshooting Experimental and Data Integration

How do we reduce interindividual variation in metabolomic data used for model validation? The metabolome is highly sensitive to genetic, environmental, and gut microbiota pressures. To reduce confounding variation:

- In clinical studies: Use strict inclusion criteria (age, BMI), admit volunteers to a clinic to standardize diet and environment, and collect samples over a controlled period [32].

- In animal models: Co-house and breed animals in identical conditions, and carefully regulate diet [32].

- Utilize "metabotypes": Consider stratifying populations based on their metabolic fingerprint to account for inherent variation [32].

What is the best way to manage large-scale LC-MS metabolomic batches to ensure data quality?

- Pre-batch Preparation: Prepare sufficient mobile phase for the entire experiment to avoid variability. Clean the MS ionization source between batches [17].

- Batch Sequence Design: Begin with "no-injection" runs and blank (extracting solvent) injections to condition the system and identify carry-over. Inject quality control (QC) samples repeatedly at the start for conditioning and then intersperse them throughout the run [17].

- Handling Instrument Stoppages: If the instrument stops mid-batch, treat the resulting data as separate batches (e.g., Batch 2a, Batch 2b) and apply inter-batch normalization during data processing [17].
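The batch sequence design above can be generated programmatically so that every batch follows the same layout. The Python below is a sketch in which all counts are illustrative assumptions: one no-injection run, two solvent blanks, five conditioning QCs, and a QC after every eight study samples. Study samples are randomized with a fixed seed so the order is reproducible.

```python
import random

def build_sequence(samples, n_no_injection=1, n_blanks=2, n_conditioning_qc=5,
                   qc_every=8, seed=42):
    """Build an LC-MS injection list for one batch (counts are illustrative)."""
    order = list(samples)
    random.Random(seed).shuffle(order)                # randomized run order
    seq = (["NO_INJECTION"] * n_no_injection          # system equilibration
           + ["BLANK"] * n_blanks                     # solvent blanks: carry-over check
           + ["QC"] * n_conditioning_qc)              # repeated QCs condition the system
    for i, sample in enumerate(order, start=1):
        seq.append(sample)
        if i % qc_every == 0:
            seq.append("QC")                          # interspersed QC for drift monitoring
    if seq[-1] != "QC":
        seq.append("QC")                              # close the batch with a QC
    return seq

samples = [f"S{i:02d}" for i in range(1, 21)]         # 20 hypothetical samples
seq = build_sequence(samples)
print(len(seq), seq[:8])
# 31 ['NO_INJECTION', 'BLANK', 'BLANK', 'QC', 'QC', 'QC', 'QC', 'QC']
```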

How should we use internal standards (IS) in untargeted metabolomics for kinetic model validation? In untargeted LC-MS studies, use a mix of isotopically labeled analogues (e.g., with ²H or ¹³C) of various metabolite classes. Select IS compounds with a range of physicochemical properties to cover different retention times and m/z values. Note that the intensity of these IS should be used to monitor instrument performance but is generally not recommended for correcting systematic errors between batches due to potential interference from metabolites in the sample [17].
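Using IS intensities for monitoring rather than correction can look like the Python below. It reports a coefficient of variation per IS and flags injections in which any IS deviates strongly from its median. The values are only inspected and are never used to rescale metabolite features. The thresholds and intensities are hypothetical.

```python
import numpy as np

def is_performance(is_intensities, max_cv=0.15, max_dev=0.3):
    """Monitor instrument performance from internal-standard (IS) intensities.

    is_intensities: injections x IS compounds. Returns the per-IS coefficient
    of variation, a mask of IS exceeding max_cv, and the indices of injections
    where any IS deviates from its median by more than max_dev (fractional).
    Thresholds are illustrative assumptions.
    """
    X = np.asarray(is_intensities, dtype=float)
    cv = X.std(axis=0, ddof=1) / X.mean(axis=0)
    rel_dev = np.abs(X / np.median(X, axis=0) - 1.0)
    flagged_injections = np.where((rel_dev > max_dev).any(axis=1))[0]
    return cv, cv > max_cv, flagged_injections

X = np.array([[1.00e5, 5.0e4],
              [1.05e5, 5.2e4],
              [0.98e5, 4.9e4],
              [0.55e5, 5.1e4],   # injection 3: first IS drops sharply
              [1.02e5, 5.0e4]])
cv, unstable, flagged = is_performance(X)
print(flagged)   # [3]
```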

Troubleshooting Guides

Problem 1: Low Incidence of Biologically Relevant Kinetic Models During Sampling

Issue: Traditional Monte Carlo sampling yields a very low percentage (e.g., <1%) of parameter sets that result in models with desired dynamic properties, such as stability and experimentally observed response times [39].

Solution: Implement a deep learning framework to generate tailored kinetic models.

Protocol: The REKINDLE Framework [39]

- Generate and Label Training Data: Use an existing kinetic modeling framework (e.g., ORACLE) to produce a large set of kinetic parameter sets. Simulate the dynamics for each parameter set and label them as "biologically relevant" or "not relevant" based on predefined criteria (e.g., stability, matching observed time constants).

- Train Conditional GANs: Train a conditional Generative Adversarial Network (GAN) on the labeled dataset so that generation can be conditioned on the relevance label. The generator learns to produce new parameter sets, while the discriminator learns to distinguish generated parameter sets from those in the training set.

- Generate New Models: Use the trained generator to create new kinetic parameter sets conditioned on the "biologically relevant" label.

- Validate Output: Validate the generated models by checking:

  - Statistical similarity to the training data (e.g., Kullback-Leibler divergence).

  - Linear stability via eigenvalue analysis of the Jacobian matrix.

  - Dynamic responses to perturbations.
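The first two validation checks can be sketched with NumPy alone. A model is locally stable if every Jacobian eigenvalue has a negative real part. Passing a more negative threshold is one way to encode a required response time. The KL divergence is estimated here from histograms of a single parameter's marginal distribution. The threshold values, the log-normal stand-in samples, and the histogram estimator are illustrative assumptions, not REKINDLE's exact implementation.

```python
import numpy as np

def is_locally_stable(jacobian, max_real_part=0.0):
    """True if every Jacobian eigenvalue has real part below max_real_part.

    max_real_part=0 is plain linear stability; a negative value (assumed,
    case-specific) additionally demands sufficiently fast dynamics.
    """
    eigvals = np.linalg.eigvals(np.asarray(jacobian, dtype=float))
    return bool(np.all(eigvals.real < max_real_part))

def kl_divergence(samples_p, samples_q, bins=30, eps=1e-10):
    """Histogram estimate of KL(P || Q) for one parameter's marginal distribution."""
    lo = min(np.min(samples_p), np.min(samples_q))
    hi = max(np.max(samples_p), np.max(samples_q))
    p, _ = np.histogram(samples_p, bins=bins, range=(lo, hi))
    q, _ = np.histogram(samples_q, bins=bins, range=(lo, hi))
    p = p / p.sum() + eps          # smooth empty bins to avoid log(0)
    q = q / q.sum() + eps
    p, q = p / p.sum(), q / q.sum()
    return float(np.sum(p * np.log(p / q)))

# Stand-in samples of one kinetic parameter (e.g., a Km), compared on a log scale
rng = np.random.default_rng(0)
log_train = np.log(rng.lognormal(mean=0.0, sigma=1.0, size=5000))
log_generated = np.log(rng.lognormal(mean=0.1, sigma=1.0, size=5000))

print(is_locally_stable([[-2.0, 1.0], [0.0, -0.5]]))  # True
print(is_locally_stable([[0.1, 0.0], [0.0, -1.0]]))   # False
print(kl_divergence(log_train, log_generated))         # small, non-negative
```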

Table 1: Performance of REKINDLE for E. coli Central Carbon Metabolism [39]

| Physiology Case | Incidence of Relevant Models (Training Data) | Incidence of Relevant Models (REKINDLE, Best Epoch) |
|---|---|---|
| Physiology 1 | ~55-61% | 97.7% |
| Physiology 2 | ~55-61% | >97% |
| Physiology 3 | ~55-61% | >97% |
| Physiology 4 | ~55-61% | >97% |

Problem 2: Integrating Kinetic Models with Experimental Metabolomic Data

Issue: Metabolomic data from large-scale studies are often acquired in multiple batches, leading to technical variation (signal drift, retention time shifts) that can invalidate model validation if not corrected.

Solution: Implement a rigorous experimental and computational workflow for multi-batch LC-MS metabolomics.

Protocol: Large-Scale LC-MS Metabolomics for Robust Data Acquisition [17]

Sample Preparation:

- Prepare samples in small, manageable sets to maintain consistency.

- Include a Quality Control (QC) sample. Ideally, this is a pool of all study samples. If this is not feasible, use a pool from a random subset that represents the population.

- Include a labeled Internal Standard (IS) mix to monitor instrument performance.

Instrumental Sequence and Batch Design:

- Conditioning: Start the batch sequence with several "no-injection" runs and blank injections, followed by multiple QC injections (e.g., 10) to equilibrate the system.

- Randomization: Randomize the injection of experimental samples across the entire sequence to avoid confounding biological effects with batch effects.

- QC Placement: Inject a QC sample after every 5-10 experimental samples to monitor and correct for instrumental drift.

- Replicates: Include a subset of case samples as technical replicates across all batches to assess inter-batch variation.
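The sequence rules above can be sketched as a small generator for a single batch. It places no-injection runs and blanks first, then conditioning QCs, then the randomized samples with a QC after every N samples, and closes the batch with a QC. All counts and the sample IDs are assumed defaults for illustration. Cross-batch technical replicates are not handled here.

```python
import random

def build_injection_sequence(samples, qc_every=8, n_conditioning_qc=10,
                             n_no_injection=2, n_blanks=2, seed=42):
    """Return one batch's injection list (assumed default counts; adjust per study)."""
    rng = random.Random(seed)
    order = list(samples)
    rng.shuffle(order)                        # randomize experimental samples
    seq = ["NO_INJECTION"] * n_no_injection + ["BLANK"] * n_blanks
    seq += ["QC"] * n_conditioning_qc         # equilibrate the system
    for i, sample in enumerate(order, start=1):
        seq.append(sample)
        if i % qc_every == 0:
            seq.append("QC")                  # periodic drift monitoring
    if seq[-1] != "QC":
        seq.append("QC")                      # close the batch with a QC
    return seq

samples = [f"S{i:02d}" for i in range(1, 21)]  # hypothetical sample IDs
seq = build_injection_sequence(samples)
print(len(seq), seq.count("QC"))  # 37 13
```

Fixing the random seed makes the randomized order reproducible, so the same sequence can be regenerated when documenting the batch.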

Data Normalization and Processing:

- Do not rely solely on Internal Standards for inter-batch normalization in untargeted studies.

- Use the data from the frequently injected QC samples in post-acquisition normalization algorithms (e.g., QC-SVRC, QC-norm) to correct both intra- and inter-batch systematic errors.
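To show the principle behind QC-based correction, the sketch below models each feature's drift from the QC injections and divides it out. For brevity it uses linear interpolation between QC injections in place of the support vector regression used by QC-SVRC. It is a simplified stand-in, not an implementation of QC-SVRC or QC-norm. The drift profile in the example is synthetic.

```python
import numpy as np

def qc_drift_correct(intensity, injection_order, is_qc):
    """Per-feature QC-based drift correction (linear interpolation stand-in).

    intensity: array of shape (n_injections, n_features).
    Outside the first/last QC, np.interp holds the trend constant.
    """
    X = np.asarray(intensity, dtype=float)
    order = np.asarray(injection_order, dtype=float)
    qc = np.asarray(is_qc, dtype=bool)
    corrected = np.empty_like(X)
    for j in range(X.shape[1]):
        qc_order, qc_vals = order[qc], X[qc, j]
        idx = np.argsort(qc_order)
        trend = np.interp(order, qc_order[idx], qc_vals[idx])   # estimated drift curve
        corrected[:, j] = X[:, j] / trend * np.median(qc_vals)  # rescale to QC median
    return corrected

# Synthetic batch: two features with constant true levels and 1% signal drift per injection
order = np.arange(11)
is_qc = np.isin(order, [0, 5, 10])
observed = np.array([[100.0, 40.0]] * 11) * (1 + 0.01 * order)[:, None]
corrected = qc_drift_correct(observed, order, is_qc)
print(corrected[0], corrected[-1])  # both rows ≈ [105. 42.]
```

For inter-batch correction, one option is to apply this within each batch and then align per-feature QC medians across batches.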

Problem 3: High Computational Cost of Genome-Scale Dynamic Simulations

Issue: Simulating dynamics by directly coupling kinetic pathways with genome-scale models is computationally prohibitive, limiting their use in strain design and virtual screening.

Solution: Use machine learning surrogates to replace expensive computations.

Protocol: Machine Learning-Accelerated Host–Pathway Dynamics [40]

- Model Integration: Formulate a multi-scale model that integrates a kinetic model of a heterologous pathway with a Genome-Scale Metabolic (GEM) model of the host. The GEM provides boundary fluxes and metabolic states for the kinetic model at each simulation step.

- Generate Training Data: Run multiple simulations using the integrated model under various conditions (e.g., different carbon sources, gene knockouts) to generate a dataset of inputs (pathway parameters, environmental conditions) and outputs (FBA-predicted fluxes and metabolite concentrations).

- Train Surrogate Model: Train a machine learning model (e.g., a neural network) to learn the mapping from the inputs to the outputs of the FBA simulation. This surrogate model approximates the FBA solution.

- Deploy for Simulation: Replace the original FBA solver with the trained, fast-executing ML surrogate during dynamic simulations. This enables rapid parameter sampling and optimization for tasks like screening dynamic control circuits.
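The surrogate pattern works the same way for any inexpensive regressor. Sample inputs, call the expensive model, fit a cheap approximation, and check it on held-out inputs. In the sketch below, `expensive_host_model` is a hypothetical smooth toy function standing in for a genome-scale FBA solve. A cubic polynomial fitted by least squares replaces the neural network so that only NumPy is needed.

```python
import numpy as np

def expensive_host_model(glucose_uptake, pathway_demand):
    """Hypothetical stand-in for a GEM/FBA call returning a host growth rate."""
    return 0.09 * glucose_uptake / (1.0 + 0.1 * glucose_uptake) - 0.05 * pathway_demand

def poly_features(x1, x2, degree=3):
    """All monomials x1**i * x2**j with i + j <= degree."""
    cols = [x1**i * x2**j for i in range(degree + 1) for j in range(degree + 1 - i)]
    return np.column_stack(cols)

# 1) Generate training data from "expensive" runs over the input space
rng = np.random.default_rng(1)
u_train = rng.uniform(1.0, 10.0, 200)   # glucose uptake (arbitrary units)
d_train = rng.uniform(0.0, 2.0, 200)    # pathway demand (arbitrary units)
y_train = expensive_host_model(u_train, d_train)

# 2) Fit the surrogate
coef, *_ = np.linalg.lstsq(poly_features(u_train, d_train), y_train, rcond=None)

def surrogate(u, d):
    """Cheap approximation used in place of the solver inside dynamic simulations."""
    return poly_features(np.atleast_1d(u), np.atleast_1d(d)) @ coef

# 3) Check accuracy on held-out inputs before deploying
u_test, d_test = rng.uniform(1.0, 10.0, 50), rng.uniform(0.0, 2.0, 50)
max_err = np.max(np.abs(surrogate(u_test, d_test) - expensive_host_model(u_test, d_test)))
print(max_err)
```

A surrogate is only trustworthy inside the region it was trained on. Keep dynamic simulations within the sampled input ranges.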

Table 2: Key Research Reagent Solutions for Kinetic Modeling & Validation

| Item | Function/Application |
|---|---|
| Stoichiometric Matrix (S) | Defines network structure; derived from annotated genome. Forms the foundation of the mass balance equations [38]. |
| Isotopically Labeled Internal Standards | Used in LC-MS to monitor instrument performance and aid in metabolite identification in untargeted metabolomics [17]. |
| Quality Control (QC) Samples | A pooled sample analyzed repeatedly throughout an LC-MS batch sequence to monitor drift and enable post-acquisition data normalization [17]. |
| Generative Adversarial Network (GAN) | A deep learning architecture used in frameworks like REKINDLE to efficiently generate new, valid kinetic parameter sets [39]. |
| Surrogate Machine Learning Model | A fast, approximating model (e.g., neural network) that replaces a slower, mechanistic model (e.g., FBA) to drastically speed up integrated simulations [40]. |

FAQs: Kinetic Modeling and Metabolomics

1. What are the primary methods for determining intracellular metabolic fluxes in E. coli? The primary methods are ¹³C tracer experiments followed by ¹³C-constrained flux analysis. Cells are grown on a defined medium containing ¹³C-labeled carbon sources (e.g., lactate). The resulting labeling patterns in proteinogenic amino acids are measured by Gas Chromatography-Mass Spectrometry (GC-MS). These patterns constrain a stoichiometric metabolic model, which allows calculation of the intracellular flux distribution that defines the metabolic state [41].

2. How can kinetic models overcome limitations of constraint-based models like FBA? While constraint-based models (e.g., FBA) predict flux distributions at steady-state using stoichiometry and optimization principles, they cannot predict metabolite concentrations or dynamic responses. Kinetic models explicitly incorporate enzyme kinetics and regulatory mechanisms, linking metabolite concentrations, metabolic fluxes, and enzyme levels. This allows them to capture dynamic metabolic responses to perturbations, providing a more detailed characterization of the intracellular state [12] [13].

3. What common issues affect the accuracy of intracellular metabolite quantification? Accurate quantification requires rapid and efficient quenching of metabolism to preserve the in vivo state. Common issues include:

- Metabolite Leakage or Degradation: During quenching and extraction, metabolites can leak from cells or degrade.

- Incomplete Extraction: The extraction method (e.g., using perchloric acid) must fully release intracellular metabolites.

- Instrument Sensitivity: Methods such as Liquid Chromatography-Electrospray Ionization Tandem Mass Spectrometry (LC-ESI-MS/MS) must be optimized to be sensitive and selective enough to identify and quantify many intracellular metabolites in parallel (more than 15 in the cited study) from small sample volumes [42].

4. Why might a kinetic model fail to predict experimentally observed metabolite concentrations? Failures can stem from:

- Incorrect Kinetic Parameters: A lack of accurate in vivo kinetic parameters (e.g., kcat, KM) is a major challenge.

- Missing Regulatory Loops: The model may omit key allosteric regulations or post-translational modifications.

- Inadequate Model Structure: The model may not include all relevant metabolic reactions or may incorrectly represent network topology [13] [14].

5. How can machine learning improve the creation of kinetic models? Generative machine learning frameworks, like RENAISSANCE, can efficiently parameterize large-scale kinetic models. They integrate diverse omics data (metabolomics, fluxomics, proteomics) and use natural evolution strategies to optimize model parameters. This approach drastically reduces computation time and helps generate models whose dynamic properties match experimental observations, such as cellular doubling times [13].
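To show the idea behind evolution-strategy parameterization at toy scale, the sketch below fits Vmax and Km of a single Michaelis-Menten rate law to synthetic rate data. It uses a fixed-variance Gaussian search distribution with antithetic sampling and updates only the mean. This is a simplified relative of natural evolution strategies and does not reproduce RENAISSANCE. The data, starting point, and step settings are assumptions chosen for illustration.

```python
import numpy as np

S = np.array([0.1, 0.25, 0.5, 1.0, 2.0, 5.0, 10.0])  # hypothetical substrate levels
V_OBS = 2.0 * S / (0.8 + S)                          # synthetic rates (Vmax=2, Km=0.8)

def losses(log_params):
    """Mean squared error for each row of log_params = [[log Vmax, log Km], ...]."""
    vmax = np.exp(log_params[:, :1])
    km = np.exp(log_params[:, 1:])
    pred = vmax * S / (km + S)
    return np.mean((pred - V_OBS) ** 2, axis=1)

def es_fit(theta0, sigma=0.02, lr=0.1, pop=20, iters=5000, seed=0):
    """Search-gradient descent with a fixed isotropic Gaussian (log-parameter space)."""
    rng = np.random.default_rng(seed)
    theta = np.array(theta0, dtype=float)   # copy so the caller's array is untouched
    for _ in range(iters):
        eps = rng.standard_normal((pop, theta.size))
        # Antithetic pairs: the difference isolates the first-order (gradient) term
        diff = losses(theta + sigma * eps) - losses(theta - sigma * eps)
        grad = (diff[:, None] * eps).mean(axis=0) / (2 * sigma)
        theta -= lr * grad
    return np.exp(theta)

vmax_fit, km_fit = es_fit(np.log([1.0, 1.0]))
print(vmax_fit, km_fit)  # close to 2.0 and 0.8
```

Working in log space keeps both parameters positive without explicit bounds. That is one reason log-parameterization is common in kinetic model fitting.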

Troubleshooting Guides

Problem: Discrepancy Between Model Predictions and Experimental Flux Data

| Possible Cause | Solution |
|---|---|
| Incorrect network stoichiometry | Verify and curate the model's reaction list and mass balance using genomic annotation and biochemical databases [12]. |
| Suboptimal objective function in FBA | Test biological objective functions (e.g., maximize ATP yield, minimize nutrient uptake) for your specific condition [12]. |
| Missing pathways or gaps | Use model-driven gap-filling tools and consult organism-specific databases (e.g., VMH) to add missing metabolic capabilities [43] [44]. |
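Checking mass balance during curation amounts to confirming that each reaction conserves every element. The sketch below takes the hexokinase reaction (glucose + ATP → glucose-6-phosphate + ADP) with neutral molecular formulas. Charge and proton balance, which genome-scale models also track, are left out for simplicity. The dictionary layout is a hypothetical choice, not a specific toolbox's format.

```python
import numpy as np

ELEMENTS = ["C", "H", "N", "O", "P"]
# Neutral molecular formulas as element counts in the order C, H, N, O, P
FORMULAS = {
    "glc": [6, 12, 0, 6, 0],      # glucose, C6H12O6
    "atp": [10, 16, 5, 13, 3],    # ATP, C10H16N5O13P3
    "g6p": [6, 13, 0, 9, 1],      # glucose-6-phosphate, C6H13O9P
    "adp": [10, 15, 5, 10, 2],    # ADP, C10H15N5O10P2
}

def elemental_imbalance(reaction):
    """Net element counts for a reaction {metabolite: coefficient}.

    Substrates carry negative coefficients, products positive ones;
    a balanced reaction returns an empty dict.
    """
    total = np.zeros(len(ELEMENTS), dtype=int)
    for met, coeff in reaction.items():
        total += coeff * np.array(FORMULAS[met])
    return {el: int(n) for el, n in zip(ELEMENTS, total) if n != 0}

hexokinase = {"glc": -1, "atp": -1, "g6p": 1, "adp": 1}
print(elemental_imbalance(hexokinase))                          # {}
print(elemental_imbalance({"glc": -1, "atp": -1, "g6p": 1}))  # ADP omitted
```

In the second call the missing product shows up as exactly one ADP's worth of atoms (`{'C': -10, 'H': -15, 'N': -5, 'O': -10, 'P': -2}`). Imbalance signatures of this kind often reveal the missing species directly.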

Problem: Low Accuracy in Quantifying Intracellular Metabolite Concentrations

| Possible Cause | Solution |
|---|---|
| Inadequate quenching of metabolism | Optimize the quenching protocol; use cold methanol or other cryogenic solutions for rapid metabolic arrest [42]. |
| Inefficient metabolite extraction | Validate the extraction method (e.g., perchloric acid) for the target metabolites and cell type to ensure complete release [42]. |
| Co-elution or signal interference in LC-MS | Optimize chromatographic separation and use tandem MS (MS/MS) for better specificity and sensitivity [42]. |

Problem: Kinetic Model is Unable to Replicate Dynamic Metabolite Pools

| Possible Cause | Solution |
|---|---|
| Poor parameter estimation | Use frameworks like RENAISSANCE that leverage machine learning and evolution strategies for large-scale parameterization against integrated omics data [13]. |
| Overlooked metabolite homeostasis mechanisms | Review literature for potential substrate-channeling or enzyme clustering mechanisms not captured in a "watery bag" model [45]. |
| Lack of integrated regulatory constraints | Incorporate known transcriptional or post-translational regulatory rules into the model structure where kinetic data are scarce [14]. |
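To see what a kinetic model adds over a flux-only view, consider a minimal two-step pathway: a fixed substrate S is converted to an intermediate X, which is then consumed, with both steps following Michaelis-Menten kinetics. Simulating the pool of X gives a concentration time course that can be compared with measured metabolite pools. All parameter values are hypothetical, and explicit Euler integration is used only to keep the example dependency-light.

```python
def simulate(x0, vmax1, t_end=20.0, dt=0.001, s=1.0, km1=1.0, vmax2=2.0, km2=0.5):
    """Integrate dX/dt = v1 - v2 with explicit Euler; substrate S is held constant.

    Returns the final pool of X and the consumption flux v2 (hypothetical units).
    """
    x, v2 = x0, 0.0
    for _ in range(int(t_end / dt)):
        v1 = vmax1 * s / (km1 + s)   # production of X by enzyme 1
        v2 = vmax2 * x / (km2 + x)   # consumption of X by enzyme 2
        x += dt * (v1 - v2)
    return x, v2

# Reference steady state, then a perturbation that doubles enzyme 1 capacity
x_ref, flux_ref = simulate(x0=0.0, vmax1=1.0)
x_pert, flux_pert = simulate(x0=x_ref, vmax1=2.0)
print(round(x_ref, 4), round(flux_ref, 4))    # 0.1667 0.5
print(round(x_pert, 4), round(flux_pert, 4))  # 0.5 1.0
```

Doubling enzyme 1 doubles the pathway flux but triples the pool of X. The size of that pool change depends on Km2 and Vmax2, which is why poorly estimated parameters show up as mismatched metabolite pools even when fluxes agree.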

Experimental Protocols for Key Methodologies

Protocol 1: ¹³C Metabolic Flux Analysis (¹³C-MFA) in E. coli

Objective: To determine intracellular metabolic flux distributions during growth on a gluconeogenic carbon source.

Materials:

- Bacterial Strain: E. coli K-12 (e.g., MG1655).

- Growth Medium: M9 minimal medium supplemented with a mixture of 20% uniformly labeled [U-¹³C]L-lactate and 80% [3-¹³C]L-lactate.

- Equipment: Baffled Erlenmeyer flasks, magnetic stir bars, GC-MS system (e.g., Trace GC/Trace MS Plus).

Procedure:

- Culture and Harvest: Inoculate medium to a low initial OD600 (<0.005). Grow at 30°C with aeration. Harvest cells at mid-exponential phase (OD600 ~0.5) by centrifugation.

- Hydrolysis and Derivatization: Wash cell pellet with saline. Hydrolyze with 6 M HCl at 105°C for 24 hours. Derivatize the hydrolysate with N-methyl-N-(tert-butyldimethylsilyl)trifluoroacetamide (MTBSTFA) at 85°C for 1 hour.

- GC-MS Analysis: Inject the derivatized sample. Collect mass isotopomer distribution data for amino acid fragments.

- Flux Calculation: Correct the raw mass isotopomer data for natural isotopes. Use computational software (e.g., Metano, COBRA Toolbox) with a genome-scale model to perform ¹³C-constrained flux analysis and compute the flux distribution [41].
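The natural-isotope correction step can be illustrated with a carbon-only correction matrix. Column j gives the probability that a fragment carrying j tracer-derived ¹³C atoms appears at each mass M+i, because naturally occurring ¹³C (about 1.07%) occupies some of the remaining carbons. Solving this linear system recovers the tracer-derived distribution. This sketch ignores the Si, O, N, and H isotopes contributed by the TBDMS derivative, which dedicated correction software also accounts for. The example distribution is synthetic.

```python
import numpy as np
from math import comb

P13C = 0.0107  # approximate natural abundance of 13C

def carbon_correction_matrix(n_carbons, p=P13C):
    """C[i, j] = P(observed at M+i | j tracer-labeled carbons), carbon isotopes only."""
    n = n_carbons
    C = np.zeros((n + 1, n + 1))
    for j in range(n + 1):
        for i in range(j, n + 1):
            k = i - j  # natural 13C atoms among the n - j unlabeled carbons
            C[i, j] = comb(n - j, k) * p**k * (1 - p) ** (n - j - k)
    return C

def correct_mid(measured, n_carbons):
    """Least-squares tracer-derived MID, clipped at zero and renormalized to sum to 1."""
    C = carbon_correction_matrix(n_carbons)
    x, *_ = np.linalg.lstsq(C, np.asarray(measured, dtype=float), rcond=None)
    x = np.clip(x, 0.0, None)
    return x / x.sum()

# Synthetic check for a 3-carbon fragment: distort a known MID, then recover it
true_mid = np.array([0.5, 0.2, 0.1, 0.2])
measured = carbon_correction_matrix(3) @ true_mid
print(np.round(correct_mid(measured, 3), 4))  # [0.5 0.2 0.1 0.2]
```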

Protocol 2: LC-ESI-MS/MS Quantification of Intracellular Metabolites

Objective: To identify and quantify key intracellular metabolites (e.g., glycolytic intermediates, nucleotides).

Materials:

- Extraction Solvent: Perchloric acid.

- Equipment: LC system coupled to a tandem mass spectrometer with an electrospray ionization (ESI) source.

Procedure:

- Rapid Quenching and Extraction: Rapidly quench culture metabolism (e.g., with cold methanol). Extract intracellular metabolites using perchloric acid.

- LC-MS/MS Analysis: Separate metabolites using liquid chromatography. Use tandem mass spectrometry in Multiple Reaction Monitoring (MRM) mode for sensitive and specific quantification.

- Quantification and Validation: Quantify metabolites against standard curves from pure analytical standards. Verify method accuracy by comparing results with established enzymatic assays [42].
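Quantifying against a standard curve amounts to fitting a calibration line to the responses of the pure standards and inverting it for each sample. The concentrations and MRM peak areas below are hypothetical. A linear, unweighted fit is assumed, and some assays need weighted or nonlinear calibration.

```python
import numpy as np

# Hypothetical calibration standards: concentration (µM) vs. MRM peak area
std_conc = np.array([0.5, 1.0, 2.5, 5.0, 10.0, 25.0])
std_area = np.array([5200.0, 10100.0, 24800.0, 50500.0, 99800.0, 251000.0])

slope, intercept = np.polyfit(std_conc, std_area, deg=1)   # least-squares line
r_squared = np.corrcoef(std_conc, std_area)[0, 1] ** 2      # linearity check

def quantify(sample_area):
    """Back-calculate concentration; only valid within the calibrated range."""
    return (np.asarray(sample_area, dtype=float) - intercept) / slope

print(round(float(quantify(50000.0)), 2))  # ≈ 4.99 µM
```

Reporting the calibration range next to each result makes it clear when a sample needs dilution and re-injection rather than extrapolation.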

Pathway and Workflow Visualizations

Experimental and Computational Workflow for Kinetic Model Validation

Logical Relationships in Model Validation

Research Reagent Solutions

| Reagent / Tool | Function / Application |
|---|---|
| ¹³C-labeled substrates (e.g., [U-¹³C]L-lactate) | Serve as tracers for elucidating intracellular metabolic flux routes via ¹³C-MFA [41]. |